A Beginner's Guide to Machine Learning in Drug Discovery: Foundations, Applications, and Future Trends


Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive introduction to the application of machine learning (ML) in modern drug discovery. It covers foundational ML concepts, explores specific methodologies and their applications across the drug development pipeline—from target identification to clinical trials—addresses common challenges and optimization strategies, and examines real-world validation and the evolving competitive landscape. By synthesizing current trends and case studies, this article serves as a primer for understanding how ML is reshaping pharmaceutical R&D to improve efficiency, reduce costs, and accelerate the delivery of new therapies.

Machine Learning Fundamentals: Why AI is Reshaping Pharmaceutical R&D

Defining Machine Learning and its Role in Drug Discovery

Machine Learning (ML), a subset of Artificial Intelligence (AI), refers to a set of techniques that train algorithms to improve performance on a task based on data [1]. In drug discovery, ML provides computational methods that learn from complex pharmaceutical data, identify patterns, and make predictions, thereby accelerating research and reducing the risk and cost associated with clinical trials [2] [3]. The traditional drug development process is notoriously lengthy, often exceeding 10 years, and costly, with an average expenditure of approximately $2.558 billion to bring a novel drug to market [2] [3]. Machine intelligence is now being tailored to tasks that mimic human reasoning, namely interpreting these data and extracting knowledge from them, and it is transforming the pharmaceutical industry [2].

ML's ability to analyze "big data" within short periods positions it as a transformative technology across the entire drug development pipeline [3]. This capability is crucial given the expansion of chemical space and the increasing complexity of biological data. From a practical perspective, ML approaches have evolved from theoretical curiosities to tangible forces, with AI-designed therapeutics now advancing into human trials across diverse therapeutic areas [4]. The field has progressed remarkably, with over 75 AI-derived molecules reaching clinical stages by the end of 2024, a significant leap from just a few years prior when essentially no AI-designed drugs had entered human testing [4].

Core Machine Learning Approaches and Techniques

Multiple ML algorithms have gained importance in drug discovery, each with distinct strengths for handling different types of pharmaceutical data. The most prominent algorithms include Support Vector Machines (SVM), Random Forest (RF), Naive Bayes (NB), and various types of Artificial Neural Networks (ANN), including Deep Learning (DL) models [2] [5]. These techniques enable fundamental ML activities such as classification, regression, predictions, and optimization across complex biological and chemical datasets [2].

Deep Learning, a specialized subset of ML algorithms, has demonstrated particular success in public challenges and is increasingly becoming a framework of choice within biomedical machine learning [2] [6]. DL architectures, including Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), learn hierarchical representations directly from raw data, eliminating the need for manual feature engineering in many applications [2].

Graph Machine Learning (GML) represents another emerging framework, especially well-suited for biomedical data due to its inherent ability to model interconnected structures [6]. GML methods learn effective feature representations of nodes, edges, or entire graphs, with Graph Neural Networks (GNNs) attracting growing interest for their ability to propagate information through graph structures [6]. This approach is particularly valuable for representing biomolecular structures, functional relationships between biological entities, and integrating multi-omic datasets [6].

Table 1: Key Machine Learning Algorithms in Drug Discovery

| Algorithm | Primary Applications | Key Advantages |
|---|---|---|
| Random Forest (RF) | QSAR analysis, virtual screening, biomarker discovery | Handles high-dimensional data, provides feature importance metrics, robust to outliers |
| Support Vector Machines (SVM) | Compound classification, toxicity prediction | Effective in high-dimensional spaces, memory efficient, versatile with different kernel functions |
| Naive Bayes (NB) | Target prediction, adverse drug reaction monitoring | Simple implementation, works well with small datasets, computationally efficient |
| Artificial Neural Networks (ANN) / Deep Learning | Molecular modeling, de novo drug design, image analysis (digital pathology) | Learns complex non-linear relationships, automatic feature extraction, handles unstructured data |
| Graph Neural Networks (GNN) | Molecular property prediction, drug-target interaction, protein-protein interaction | Naturally handles graph-structured data, incorporates relational inductive biases |

The selection of appropriate ML techniques depends heavily on the specific problem domain, data characteristics, and desired outcomes. For instance, quantitative structure-activity relationship (QSAR) analysis frequently employs RF and SVM models, while molecular design and protein structure prediction increasingly utilize DL and GNN architectures [2] [6] [5].

Key Applications in the Drug Discovery Pipeline

ML technologies are being deployed across the entire drug development lifecycle, from initial target identification to clinical trials and post-marketing surveillance. Their implementation is delivering tangible benefits in accelerating timelines, reducing costs, and improving prediction accuracy [3].

Target Identification and Validation

ML approaches are revolutionizing target identification by analyzing complex biological networks and multi-omic data to identify novel therapeutic targets [2] [3]. Knowledge graphs that capture specific types of relationships between biomolecular species provide powerful frameworks for representing the complex interactions between drugs, targets, side effects, and disease mechanisms [6]. Companies like BenevolentAI have successfully utilized AI for target discovery, exemplified by their identification of Baricitinib as a repurposing candidate for COVID-19 treatment, which subsequently received emergency use authorization [3].

Graph machine learning approaches have set the state of the art for mining graph-structured data including drug-target-indication interaction and relationship prediction through knowledge graph embedding [6]. These methods can identify novel biological targets by propagating information across heterogeneous biological networks, significantly accelerating the initial stages of drug discovery.

Compound Design and Screening

ML has dramatically transformed compound design and screening through virtual screening, de novo molecular design, and property prediction [2] [5]. Traditional high-throughput screening (HTS) approaches are expensive and time-consuming, whereas AI-enabled virtual screening can analyze properties of millions of molecular compounds more rapidly and cost-effectively [3].

Generative models, particularly Generative Adversarial Networks (GANs) and variational autoencoders (VAEs), are being used to design novel chemical entities with specific biological properties [3]. These approaches can explore chemical space more efficiently than traditional methods, generating compounds optimized for specific target profiles. For instance, Insilico Medicine demonstrated the power of this approach by designing a novel drug candidate for idiopathic pulmonary fibrosis in just 18 months, substantially faster than traditional timelines [4] [3].

Table 2: ML Applications Across the Drug Discovery Pipeline

| Drug Discovery Stage | ML Applications | Notable Examples |
|---|---|---|
| Target Identification | Biological network analysis, knowledge graph mining, multi-omic data integration | BenevolentAI's identification of Baricitinib for COVID-19 [3] |
| Compound Screening | Virtual screening, binding affinity prediction, QSAR modeling | Atomwise's CNN platforms predicting molecular interactions for Ebola and multiple sclerosis [3] |
| Compound Design | Generative chemistry, de novo molecular design, lead optimization | Insilico Medicine's generative AI-designed IPF drug [4]; Exscientia's AI-designed clinical compounds [4] |
| Preclinical Development | Toxicity prediction, ADME profiling, biomarker identification | GML for predicting ADME profiles [6]; Digital pathology and prognostic biomarkers [2] |
| Clinical Trials | Patient recruitment, trial design optimization, outcome prediction | AI analysis of EHRs for patient stratification [3] |

Preclinical Development and Optimization

In preclinical development, ML models are utilized to predict critical properties such as absorption, distribution, metabolism, excretion, and toxicity (ADMET) profiles, thereby reducing reliance on animal models and accelerating safety assessment [2] [3]. ML approaches can analyze biological data to simulate drug behavior in the human body, potentially identifying critical safety issues earlier in the development process [3].

Graph ML methods have shown particular promise for molecular property prediction, including the prediction of ADME profiles [6]. For example, directed message passing GNNs operating on molecular structures have been used to propose repurposing candidates for antibiotic development, with validation of these predictions in vivo demonstrating the capability to identify suitable repurposing candidates structurally distinct from known antibiotics [6].

Experimental Protocols and Methodologies

Implementing ML in drug discovery requires rigorous experimental protocols to ensure robust and reproducible results. Below are detailed methodologies for key experiments commonly cited in ML-driven drug discovery research.

Quantitative Structure-Activity Relationship (QSAR) Modeling

QSAR modeling represents a fundamental application of ML in drug discovery, aiming to establish relationships between chemical structures and biological activities.

Protocol:

- Data Collection and Curation: Compile a dataset of chemical structures with associated biological activity measurements. Sources include ChEMBL, PubChem, or proprietary corporate databases.

- Molecular Featurization: Represent chemical structures using numerical descriptors or fingerprints. Common approaches include:

  - Molecular Descriptors: Calculate physicochemical properties (e.g., molecular weight, logP, polar surface area).

  - Fingerprints: Generate binary vectors representing molecular substructures (e.g., ECFP, MACCS keys) [5].

- Data Splitting: Divide the dataset into training, validation, and test sets using techniques such as random splitting or time-based splitting to assess model generalizability.

- Model Training: Train ML algorithms (e.g., Random Forest, Support Vector Machines, or Neural Networks) on the training set to learn the relationship between features and activity.

- Model Validation: Evaluate model performance on the validation set using metrics such as AUC-ROC, precision-recall curves, or RMSE. Employ cross-validation to ensure robustness.

- External Validation: Test the final model on a held-out test set to estimate real-world performance.
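As a rough illustration of the protocol above, the following sketch trains a Random Forest classifier on synthetic binary vectors that stand in for molecular fingerprints. The data, the activity rule, and all variable names are assumptions made for illustration only; in practice the features would be computed from real structures and the labels taken from a bioactivity database.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_score, train_test_split

rng = np.random.default_rng(0)

# Synthetic stand-in for fingerprints: 500 "molecules" x 64 bits (assumption).
X = rng.integers(0, 2, size=(500, 64))
# Hypothetical activity rule: active when at least 3 of the first 5 bits are set.
y = (X[:, :5].sum(axis=1) >= 3).astype(int)

# Hold out a test set first, then carve a validation set from the remainder.
X_rest, X_test, y_rest, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0, stratify=y
)
X_train, X_val, y_train, y_val = train_test_split(
    X_rest, y_rest, test_size=0.25, random_state=0, stratify=y_rest
)

model = RandomForestClassifier(n_estimators=200, random_state=0)
model.fit(X_train, y_train)

# Validation-set AUC, 5-fold cross-validated AUC, and a final held-out test AUC.
val_auc = roc_auc_score(y_val, model.predict_proba(X_val)[:, 1])
cv_auc = cross_val_score(model, X_rest, y_rest, cv=5, scoring="roc_auc")
test_auc = roc_auc_score(y_test, model.predict_proba(X_test)[:, 1])

print(f"validation AUC: {val_auc:.3f}")
print(f"5-fold CV AUC:  {cv_auc.mean():.3f} +/- {cv_auc.std():.3f}")
print(f"test AUC:       {test_auc:.3f}")
```

The split here is random; a time-based split, as mentioned in the protocol, would replace the two `train_test_split` calls with a partition on a date column.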

[Figure: QSAR Modeling Workflow]

Graph Neural Networks for Molecular Property Prediction

GNNs have emerged as powerful tools for predicting molecular properties by directly learning from graph representations of molecules.

Protocol:

- Graph Representation: Represent molecules as graphs where atoms correspond to nodes and bonds to edges. Initialize node features using atom properties (e.g., element type, charge) and edge features using bond characteristics (e.g., bond type, conjugation).

- Graph Neural Network Architecture:

  - Message Passing: Implement multiple message passing layers where nodes aggregate information from their neighbors. Each layer updates node representations by combining a node's current state with aggregated messages from adjacent nodes.

  - Readout Phase: After several message passing layers, generate a graph-level representation by aggregating all node embeddings using methods such as global mean pooling or attention-based pooling.

  - Property Prediction: Feed the graph-level representation into a fully connected neural network to predict target properties (e.g., solubility, toxicity, binding affinity).

- Training: Train the model using appropriate loss functions (e.g., mean squared error for regression, cross-entropy for classification) and optimization algorithms (e.g., Adam).

- Interpretation: Utilize explainability techniques (e.g., attention mechanisms, saliency maps) to identify molecular substructures contributing to predictions.
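The message passing and readout steps above can be sketched in a few lines of NumPy. This is a minimal forward pass under stated assumptions: the toy graph, the two-element vocabulary, the hidden size, and the randomly initialised weights are all illustrative, and no training is performed. Real work would use a dedicated graph learning library and learn the weights by gradient descent.

```python
import numpy as np

# Toy molecular graph for the heavy atoms of ethanol: C0 - C1 - O2.
adjacency = np.array([
    [0, 1, 0],
    [1, 0, 1],
    [0, 1, 0],
], dtype=float)

# One-hot node features over the element vocabulary [C, O].
node_features = np.array([
    [1.0, 0.0],  # C
    [1.0, 0.0],  # C
    [0.0, 1.0],  # O
])

rng = np.random.default_rng(0)
hidden_dim = 4
# (self weight, neighbour weight) per layer; random here, learned in practice.
layer_weights = [
    (rng.normal(size=(2, hidden_dim)), rng.normal(size=(2, hidden_dim))),
    (rng.normal(size=(hidden_dim, hidden_dim)), rng.normal(size=(hidden_dim, hidden_dim))),
]
w_out = rng.normal(size=(hidden_dim, 1))


def message_passing_layer(h, adj, w_self, w_neigh):
    """Combine each node's state with the sum of its neighbours' states."""
    messages = adj @ h  # sum aggregation over adjacent nodes
    return np.maximum(0.0, h @ w_self + messages @ w_neigh)  # ReLU update


def embed_graph(features, adj):
    """Run all message passing layers, then apply global mean pooling."""
    h = features
    for w_self, w_neigh in layer_weights:
        h = message_passing_layer(h, adj, w_self, w_neigh)
    return h.mean(axis=0)  # readout: one vector per graph


graph_embedding = embed_graph(node_features, adjacency)
prediction = graph_embedding @ w_out  # linear head standing in for the MLP
print(graph_embedding.shape, prediction.shape)
```

Because both the neighbour sum and the mean readout ignore node order, relabelling the atoms leaves the graph embedding unchanged, which is the property that makes this family of models suitable for molecules.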

[Figure: GNN Molecular Property Prediction]

Virtual Screening with Deep Learning

Virtual screening uses DL models to rapidly evaluate large chemical libraries for potential activity against a biological target.

Protocol:

- Benchmark Dataset Preparation: Curate a dataset of known actives and inactives/decoys for a specific target. Apply careful curation to address biases and ensure data quality.

- Model Selection and Training:

  - Structure-Based: If 3D target structure is available, use docking-based DL approaches or 3D convolutional neural networks.

  - Ligand-Based: If only ligand information is available, employ fingerprint-based DNNs, SMILES-based RNNs, or graph neural networks.

- Library Screening: Apply the trained model to screen large virtual compound libraries (e.g., ZINC, Enamine). Utilize GPU acceleration for computationally intensive evaluations.

- Hit Selection and Analysis: Rank compounds based on predicted activity scores and select top candidates for experimental testing. Apply diversity analysis and chemical clustering to ensure structural variety in selected compounds.

- Experimental Validation: Subject computational hits to experimental validation through biochemical or cellular assays to confirm activity.
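The hit selection step above (rank by predicted score, then enforce structural variety) might look like the following sketch. It uses plain Python sets of "on" bits as fingerprints and a greedy Tanimoto filter; the compound identifiers, scores, bit sets, and the 0.7 similarity cutoff are hypothetical values chosen for illustration, and greedy filtering is only one of several possible diversity strategies.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity between two fingerprints stored as sets of on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def select_diverse_hits(scored, n_hits, max_similarity=0.7):
    """Walk down the ranking and skip near-duplicates of already chosen hits."""
    ranked = sorted(scored, key=lambda item: item["score"], reverse=True)
    selected = []
    for candidate in ranked:
        if all(tanimoto(candidate["fp"], s["fp"]) <= max_similarity for s in selected):
            selected.append(candidate)
        if len(selected) == n_hits:
            break
    return selected


# Hypothetical model outputs: compound id, predicted activity score, on-bit set.
library = [
    {"id": "cpd-1", "score": 0.95, "fp": frozenset({1, 2, 3, 4})},
    {"id": "cpd-2", "score": 0.93, "fp": frozenset({1, 2, 3, 4, 5})},  # near-duplicate of cpd-1
    {"id": "cpd-3", "score": 0.80, "fp": frozenset({7, 8, 9})},
    {"id": "cpd-4", "score": 0.40, "fp": frozenset({1, 9, 10})},
]

hits = select_diverse_hits(library, n_hits=2)
print([h["id"] for h in hits])  # ['cpd-1', 'cpd-3']
```

Here `cpd-2` scores second highest but is skipped because its Tanimoto similarity to `cpd-1` is 0.8, above the cutoff, so the structurally distinct `cpd-3` is chosen instead.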

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of ML in drug discovery requires both computational tools and experimental resources. The following table details key research reagent solutions and their functions in ML-driven drug discovery workflows.

Table 3: Essential Research Reagent Solutions for ML-Driven Drug Discovery

| Category | Specific Tools/Reagents | Function in ML Workflow |
|---|---|---|
| Chemical Libraries | Enamine REAL Space, ZINC Database, MCULE | Provide large-scale compound datasets for virtual screening and training generative models [3] |
| Bioactivity Databases | ChEMBL, PubChem BioAssay, BindingDB | Supply curated structure-activity relationship data for model training and validation [5] |
| Protein Structure Resources | AlphaFold Protein Structure Database, PDB | Offer protein structural data for structure-based drug design and target validation [3] |
| Omics Data Resources | GEO, TCGA, KEGG, Gene Ontology | Provide transcriptomic, genomic, and proteomic data for target identification and biomarker discovery [6] [5] |
| ML Software Frameworks | TensorFlow, PyTorch, DeepGraph, RDKit | Enable implementation, training, and deployment of ML models for drug discovery applications [6] [5] |
| ADME-Tox Prediction Tools | GastroPlus, Simcyp, ADMET Predictor | Generate pharmacokinetic and toxicity data for model training and compound prioritization [2] [3] |

Current Landscape and Future Directions

The landscape of ML in drug discovery has evolved rapidly from experimental curiosity to clinical utility. As of 2025, multiple AI-driven drug candidates have reached Phase I trials in a fraction of the 5+ years traditionally needed for discovery and preclinical work [4]. Leading AI-driven discovery platforms have emerged, specializing in various approaches including generative chemistry, phenomics-first systems, integrated target-to-design pipelines, knowledge-graph repurposing, and physics-enabled ML design [4].

Companies such as Exscientia, Insilico Medicine, and Schrödinger have demonstrated the practical impact of AI-driven approaches. Exscientia reported in silico design cycles approximately 70% faster and requiring 10 times fewer synthesized compounds than industry norms [4]. Similarly, the advancement of the Nimbus-originated TYK2 inhibitor, zasocitinib (TAK-279), into Phase III clinical trials exemplifies physics-enabled ML design strategies reaching late-stage clinical testing [4].

Regulatory agencies are also adapting to this changing landscape. The U.S. Food and Drug Administration (FDA) has recognized the increased use of AI throughout the drug product life cycle and has seen a significant increase in drug application submissions using AI components in recent years [1]. The FDA has published draft guidance providing recommendations on the use of AI to support regulatory decision-making for drugs, indicating the maturation of this field from research concept to regulatory consideration [1].

Despite these advances, challenges remain in the widespread adoption of ML in drug discovery. Issues of model interpretability, data quality and standardization, and the need for methodological validation continue to be active areas of research and development [2] [3]. Furthermore, as noted in recent analyses, while AI has accelerated progress into clinical stages, the fundamental question remains whether AI is truly delivering better success rates or simply faster failures [4]. Continued advancements in explainable AI, robust validation frameworks, and high-quality data generation will be essential to fully realize ML's potential in transforming drug discovery.

Machine Learning has fundamentally redefined the approach to drug discovery, providing powerful computational methods to navigate the complexity of biological systems and chemical space. From target identification to clinical trial optimization, ML approaches are delivering tangible benefits in accelerating timelines, reducing costs, and improving prediction accuracy. While challenges remain in model interpretability, data quality, and validation, the continued advancement of ML technologies, coupled with growing regulatory frameworks, promises to further integrate computational intelligence into the pharmaceutical research paradigm. As the field evolves from experimental applications to clinically validated outcomes, ML is poised to become an indispensable component of drug discovery, potentially transforming how therapeutics are developed and delivering more effective treatments to patients faster than ever before.

The Staggering Cost and High Attrition of Drug Development

The traditional drug development process is characterized by immense costs, protracted timelines, and a high probability of failure. Understanding these bottlenecks is crucial for appreciating the transformative value of artificial intelligence (AI) and machine learning (ML).

On average, it takes 10 to 15 years and costs over $2.5 billion to bring a new drug from initial discovery to market approval [7] [8]. This exorbitant cost is largely driven by a failure rate that exceeds 90%; for every 10,000 compounds initially tested, only a handful ever reach clinical trials, and just a fraction of those are approved [7].

The table below quantifies the primary challenges that contribute to these inefficiencies.

Table 1: Key Bottlenecks in Traditional Drug Development

| Bottleneck | Impact & Statistics |
|---|---|
| High Failure Rate | Approximately 90% of drug candidates entering clinical trials fail to receive approval [7] [9] [8]. |
| Time-Intensive Process | The preclinical phase alone can take 6.5 years, with the total process averaging 12 years [9]. |
| Astronomical Costs | The $2.6 billion average cost per approved drug is compounded by sunk costs from failed candidates [7] [8]. |
| Inefficient Clinical Trials | Nearly 80% of trials fail to meet enrollment timelines, and about 50% of research sites enroll one or no patients [7]. |
| Target Selection Uncertainty | Many promising biological targets fail in later stages due to unforeseen complications or side effects [9]. |

A core concept that encapsulates the industry's productivity crisis is Eroom's Law (Moore's Law spelled backward). This principle observes that the number of new drugs approved per billion US dollars spent on R&D has halved roughly every nine years, indicating that drug development becomes slower and more expensive over time despite technological advances [8].
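To make the halving rule concrete, the short sketch below expresses it as an exponential decay. The function name and the choice of year 0 as the reference point are assumptions for illustration; the nine-year halving period is the figure quoted above.

```python
HALVING_PERIOD_YEARS = 9.0  # "roughly every nine years", per the text


def relative_rd_productivity(years_elapsed: float) -> float:
    """Approvals per billion US dollars of R&D spending, relative to year 0."""
    return 0.5 ** (years_elapsed / HALVING_PERIOD_YEARS)


for years in (9, 18, 27):
    print(years, relative_rd_productivity(years))
# 9 0.5
# 18 0.25
# 27 0.125
```

Under this rule, three halving periods (27 years) leave one eighth of the original output per dollar, which is why a modest-sounding rate compounds into a large productivity gap.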

The following diagram maps the high-attrition pathway of a traditional drug development pipeline, illustrating the stage-by-stage probability of success.

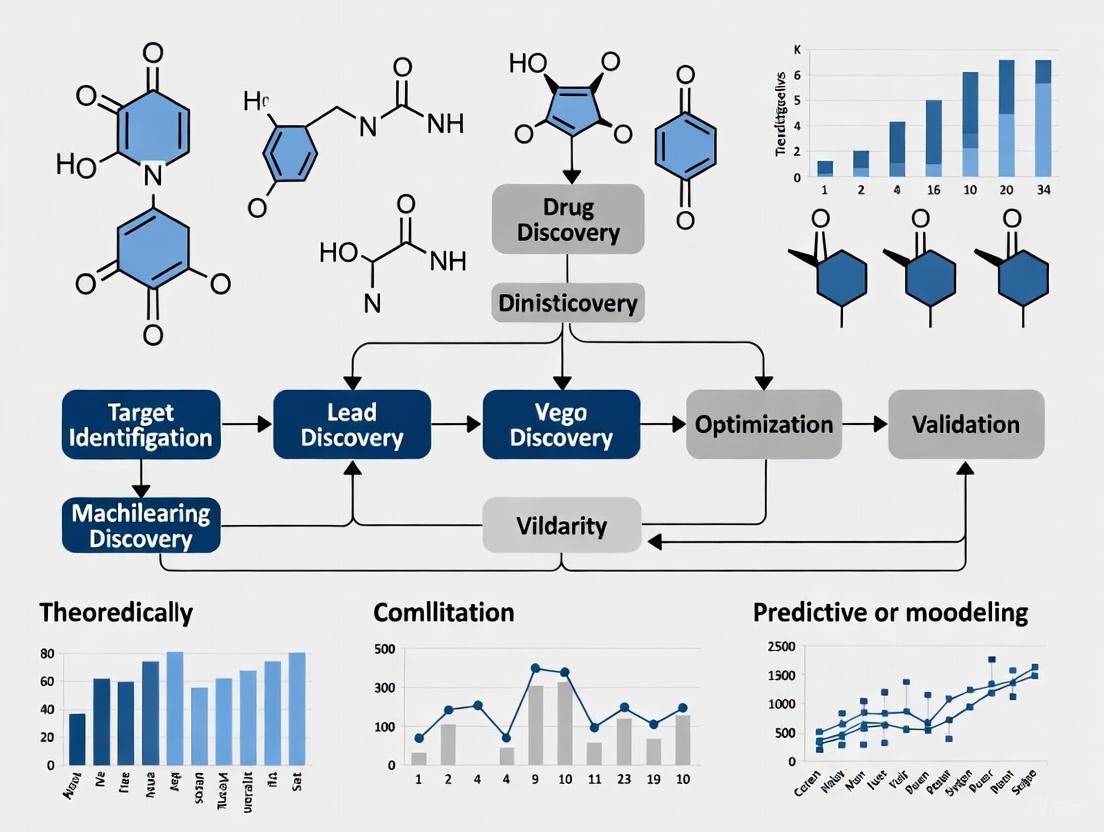

Figure 1: The Traditional Drug Development Pipeline with High Attrition Rates. This sequential, siloed process results in significant time and resource loss at each stage, with the highest failure occurring in Phase II clinical trials [7] [8].

How AI and Machine Learning Are Transforming the Pipeline

AI and ML are not merely automating single tasks; they are fundamentally reshaping the entire drug development lifecycle by enabling data-driven decision-making, predicting failures earlier, and uncovering novel insights from complex biological data.

AI Applications Across the Drug Development Workflow

Incorporating AI creates a more connected, intelligent system with feedback loops, in sharp contrast to the traditional linear pipeline.

Table 2: AI/ML Applications Addressing Key Drug Development Challenges

| Development Stage | AI/ML Application | Impact |
|---|---|---|
| Target Identification | Analyzing genomic, proteomic, and scientific literature to identify novel disease-associated targets and biomarkers [7] [9]. | Reduces initial target identification from 2-3 years to months or weeks, with one analysis showing AI helped avoid dead-end experiments in 22% of projects [10]. |
| Compound Screening & Design | Virtual screening of millions of compounds; generative AI designs novel molecules with desired properties from scratch [7] [8]. | Cuts discovery phase by 1-2 years. For example, generative AI designed novel fibrosis drug candidates in 46 days, a process that traditionally takes 2-4 years [7] [10]. |
| Preclinical Testing | Predicting drug toxicity, absorption, distribution, metabolism, and excretion (ADMET) using in-silico models [7] [3]. | Flags safety issues earlier, reduces reliance on animal studies, and accelerates the preclinical stage [3]. |
| Clinical Trials | Optimizing patient recruitment via analysis of electronic health records (EHRs); enabling adaptive trial designs [7] [3] [10]. | Addresses a major bottleneck, as 86% of trials miss enrollment timelines. AI can also create synthetic control arms, reducing needed participants [10]. |

Emerging evidence suggests that AI-discovered molecules are showing promising early clinical results. An analysis of AI-native biotech companies found that AI-discovered molecules have an 80-90% success rate in Phase I trials, substantially higher than historical industry averages [11]. Because Phase I trials primarily assess safety and tolerability, this result suggests that AI is effective at generating molecules with drug-like properties; it does not by itself demonstrate improved efficacy.

The following workflow illustrates how an AI-powered, end-to-end drug discovery system operates, highlighting the continuous feedback loops that enable learning and optimization across stages.

Figure 2: AI-Powered End-to-End Drug Discovery System. This integrated approach uses a central AI/ML engine that learns from all stages of development, creating continuous feedback loops to optimize the entire pipeline, unlike traditional siloed stages [8].

Experimental Protocol: Predicting Aqueous Solubility with Machine Learning

A critical step in early drug discovery is predicting a compound's aqueous solubility (LogS), a key physicochemical property influencing bioavailability. The following section provides a detailed protocol for building a simple ML model to predict LogS, based on the ESOL (Estimating Aqueous Solubility Directly from Molecular Structure) method [12].

Research Reagent and Computational Toolkit

Table 3: Essential Materials and Tools for the ML Solubility Protocol

| Item / Tool | Function & Description |
|---|---|
| Delaney Solubility Dataset | A curated dataset of 1,144 molecules with experimental LogS values, used for training and validating the model [12]. |
| RDKit (Python Cheminformatics Library) | An open-source toolkit used to handle chemical structures (e.g., convert SMILES strings to molecular objects) and calculate molecular descriptors [12]. |
| Python Programming Environment | (e.g., Jupyter Notebook, Google Colab). The core programming environment for implementing the machine learning workflow. |
| Scikit-learn (sklearn) Library | A core ML library in Python used for data splitting, model training (e.g., Linear Regression), and performance evaluation. |
| Molecular Descriptors | Quantitative features of molecules calculated by RDKit. For this protocol: • cLogP: Octanol-water partition coefficient (measure of lipophilicity). • MW: Molecular weight. • RB: Number of rotatable bonds (measure of molecular flexibility). • AP: Aromatic proportion (ratio of aromatic atoms to heavy atoms) [12]. |

Step-by-Step Methodology

- Computing Environment Setup: Begin by setting up a Python environment, ideally using Jupyter Notebooks either locally or on the cloud (e.g., Google Colab). Install the necessary libraries, primarily `rdkit` and `scikit-learn` [12].

- Dataset Acquisition: Download the Delaney solubility dataset (`delaney.csv`). This file contains the chemical structures in SMILES notation and their corresponding experimental LogS values [12].

- Data Preprocessing and Descriptor Calculation:
  - SMILES to Molecule Conversion: Use RDKit's `MolFromSmiles()` function to convert each SMILES string in the dataset into a molecular object [12].
  - Descriptor Calculation: Write a function to calculate the four key molecular descriptors for each molecule:
    - `Descriptors.MolLogP(mol)` for cLogP.
    - `Descriptors.MolWt(mol)` for Molecular Weight.
    - `Descriptors.NumRotatableBonds(mol)` for Rotatable Bonds.
    - Calculate Aromatic Proportion (AP) as `(number of aromatic atoms) / (number of heavy atoms)` [12].

    This step results in a feature matrix (X) where each row is a molecule and each column is one of the four descriptors.

- Data Splitting: The target variable (Y) is the experimental LogS values from the dataset. Split the data into training and testing sets (e.g., 80/20 split) using `train_test_split` from `sklearn` to enable unbiased model evaluation [12].

- Model Training and Validation:
  - Training: Train a Linear Regression model from the `sklearn` library on the training set (`X_train`, `y_train`).
  - Validation: Use the trained model to predict LogS values for both the training (`X_train`) and testing (`X_test`) sets.
  - Performance Assessment: Evaluate model performance by calculating metrics like the coefficient of determination (R²) and root mean square error (RMSE) for both sets, allowing you to assess accuracy and check for overfitting [12].

This practical protocol demonstrates how ML can rapidly predict a crucial drug property computationally, reducing the need for resource-intensive lab experiments early in the discovery process.
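The descriptor calculation step of the protocol could be sketched as follows. This is a minimal illustration that assumes RDKit is installed; the helper names `aromatic_proportion` and `featurize` are hypothetical, and the three SMILES strings are illustrative inputs (ethanol, benzene, aspirin) rather than rows taken from `delaney.csv`.

```python
from rdkit import Chem
from rdkit.Chem import Descriptors


def aromatic_proportion(mol) -> float:
    """Ratio of aromatic atoms to heavy (non-hydrogen) atoms."""
    aromatic_atoms = sum(1 for atom in mol.GetAtoms() if atom.GetIsAromatic())
    return aromatic_atoms / Descriptors.HeavyAtomCount(mol)


def featurize(smiles: str) -> list:
    """Return [cLogP, MW, rotatable bonds, aromatic proportion] for one molecule."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:  # MolFromSmiles returns None when the string cannot be parsed
        raise ValueError(f"Could not parse SMILES: {smiles!r}")
    return [
        Descriptors.MolLogP(mol),
        Descriptors.MolWt(mol),
        Descriptors.NumRotatableBonds(mol),
        aromatic_proportion(mol),
    ]


# Illustrative inputs only; the protocol would iterate over the dataset's SMILES column.
example_smiles = ["CCO", "c1ccccc1", "CC(=O)Oc1ccccc1C(=O)O"]
X = [featurize(s) for s in example_smiles]

for smiles, row in zip(example_smiles, X):
    print(smiles, [round(float(v), 3) for v in row])
```

The resulting list of rows plays the role of the feature matrix X in the protocol and can be passed, together with the LogS column, to `train_test_split` and a `LinearRegression` model.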

Regulatory Landscape and Future Outlook

Regulatory agencies are actively adapting to the increasing use of AI in drug development. The U.S. Food and Drug Administration (FDA) has issued draft guidance titled "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products," providing recommendations for using AI-generated data in regulatory submissions [7]. Similarly, the European Medicines Agency has published a reflection paper on the use of AI in the medicinal product lifecycle [7]. These frameworks emphasize assessing AI credibility based on risk and meeting established standards for safety, quality, and compliance.

Looking forward, the convergence of AI with other transformative technologies like quantum computing promises to tackle problems currently beyond the reach of classical computers. Hybrid AI-quantum systems are projected to enable real-time simulation of molecular interactions at an unprecedented scale, potentially reducing development timelines by up to 60% and opening up new frontiers in the treatment of complex diseases [13].

While the definition of a fully "AI-developed" drug is still evolving and no drug has yet been fully discovered, developed, and approved purely by AI, the technology is already shortening timelines and lowering costs in parts of the process, and it may improve the odds of success. The first approval of a fully AI-designed drug appears to be on the near horizon [10].

The process of discovering and developing new drugs is notoriously time-consuming and expensive, often taking over 12 years and costing more than $2.8 billion with a success rate of only 1 in 5,000 compounds [14]. In recent years, machine learning (ML) has emerged as a transformative force in pharmaceutical research, offering the potential to accelerate this process, reduce costs, and increase the probability of success. Machine learning, a subset of artificial intelligence (AI), enables systems to learn from data, identify patterns, and make decisions with minimal human intervention [15]. For researchers, scientists, and drug development professionals, understanding the core types of machine learning—supervised, unsupervised, and reinforcement learning—is no longer a specialized skill but an essential competency for modern drug discovery.

The application of AI in drug discovery spans multiple stages, from initial drug design to clinical trial optimization [14]. These technologies can predict molecular properties, design novel compounds, identify drug-target interactions, and even forecast adverse drug effects. As noted in a recent review, "AI is expected to significantly contribute to the development of new medications and therapies in the next few years" [16]. This guide provides a comprehensive technical overview of the three primary ML paradigms, framed specifically for their applications in drug discovery research.

Supervised Learning

Core Concepts and Definition

Supervised learning operates similarly to learning with a teacher, where the model is trained on a labeled dataset containing input-output pairs [15]. In this paradigm, each training example includes input data along with its corresponding correct output or label. The algorithm learns a mapping function from the inputs to the outputs, which can then be used to predict outcomes for new, unseen data. This approach requires a substantial amount of labeled data for training, which can be a limitation in domains where labeled data is scarce or expensive to obtain [17].

In the context of drug discovery, supervised learning has become the most widely used category of ML, helping organizations solve several real-world problems in pharmaceutical development [18]. The availability of large, well-curated chemical databases such as ChEMBL, PubChem, and ZINC has facilitated the application of supervised learning across multiple stages of the drug development pipeline [19] [20].

Key Algorithms and Methodologies

Supervised learning algorithms can be broadly categorized based on the type of problem they solve:

Classification Algorithms: Used when the output variable is categorical. Common algorithms include Logistic Regression, Support Vector Machines (SVM), Decision Trees, Random Forests, and Neural Networks [15] [18]. In drug discovery, these are typically used for tasks such as classifying compounds as active or inactive against a biological target.

Regression Algorithms: Employed when predicting a continuous value. Key algorithms include Linear Regression, Bayesian Linear Regression, and Non-linear Regression methods [17]. These are commonly applied to predict continuous molecular properties such as solubility, lipophilicity, or binding affinity.

The experimental protocol for implementing supervised learning typically involves: (1) data collection and curation, (2) feature selection and engineering, (3) model selection and training, (4) model validation using techniques like k-fold cross-validation, and (5) model deployment and monitoring [18]. For drug discovery applications, particular attention must be paid to data quality and potential biases in historical compound data [19].
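Step (4) of this protocol, k-fold cross-validation, can be illustrated with a minimal sketch. It uses only the Python standard library; the function name `k_fold_indices` and the sample count are assumptions made for illustration, and in practice a library routine would normally be used instead.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs for k-fold cross-validation."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # shuffle once, reproducibly
    # Distribute samples as evenly as possible across the k folds.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, val_idx
        start += size


# Ten hypothetical compounds split into five folds.
folds = list(k_fold_indices(n_samples=10, k=5))
```

Each compound appears in exactly one validation fold, so every data point contributes to both training and validation across the k rounds.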

Drug Discovery Applications

Supervised learning has found extensive applications across the drug development pipeline:

Molecular Property Prediction: Models are trained to predict key molecular properties such as solubility, permeability, and toxicity from chemical structure data [14]. For instance, supervised learning can predict the efficacy and toxicity of potential drug compounds with high accuracy, enabling more informed decisions in early discovery stages [16].

Drug-Target Interaction Prediction: By training on known drug-target pairs, supervised models can predict novel interactions, facilitating drug repurposing and identifying potential off-target effects [14]. Deep learning algorithms have been successfully used to predict protein-ligand binding affinities, significantly accelerating virtual screening processes [16].

Clinical Trial Recruitment: Supervised models can identify qualified patients and suitable investigators for clinical trials by analyzing electronic health records and other healthcare data [14]. This application helps reduce recruitment times and improve trial success rates.

QSAR Modeling: Quantitative Structure-Activity Relationship (QSAR) models represent a classic application of supervised learning in drug discovery, where regression or classification models predict biological activity from chemical descriptors [20].

Experimental Protocol: Building a QSAR Model

A typical protocol for building a QSAR model using supervised learning involves:

Data Curation: Collect and curate a dataset of compounds with measured biological activity against the target of interest. Public databases like ChEMBL and PubChem are common sources [20].

Molecular Featurization: Convert chemical structures into numerical descriptors using methods like molecular fingerprints, topological indices, or physicochemical properties [19].

Model Training: Split data into training and test sets (typically 80:20). Train multiple algorithms (e.g., Random Forest, SVM, Neural Networks) on the training set using cross-validation to optimize hyperparameters [20].

Model Validation: Evaluate model performance on the held-out test set using metrics appropriate for the problem (e.g., ROC-AUC for classification, R² for regression). Apply additional validation through external test sets or temporal validation to assess generalizability [18].

Model Interpretation: Use feature importance analysis or model-specific interpretation methods to identify structural features driving activity, providing insights for medicinal chemistry optimization [18].
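The splitting and validation steps of this protocol can be sketched in plain Python. The helper names (`train_test_split`, `roc_auc`, `r_squared`) and the toy labels and scores are illustrative assumptions, not part of any cited workflow; the sketch only shows how an 80:20 split, ROC-AUC, and R² are computed.

```python
import random


def train_test_split(items, test_fraction=0.2, seed=0):
    """Shuffle and split items into (train, test); 80:20 by default."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def roc_auc(labels, scores):
    """ROC-AUC as the chance that a random active outscores a random inactive."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one active and one inactive compound")
    # Ties between an active and an inactive count as half a win.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def r_squared(y_true, y_pred):
    """Coefficient of determination for regression models."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Toy values for illustration only (1 = active, 0 = inactive).
train, test = train_test_split(range(10))
auc = roc_auc([1, 1, 0, 0, 1], [0.9, 0.4, 0.5, 0.2, 0.8])
r2 = r_squared([1.0, 2.0, 3.0], [1.1, 1.9, 3.2])
```

A random split is the simplest choice; the external or temporal validation mentioned above is a stricter test of generalizability than this sketch provides.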

Unsupervised Learning

Core Concepts and Definition

Unsupervised learning operates without labeled outputs, instead identifying inherent patterns, structures, and relationships within the input data alone [15] [21]. This approach is particularly valuable in drug discovery when the underlying data relationships are not explicitly known or when researchers are exploring data without predefined hypotheses about what they might find [17]. Unlike supervised learning that predicts known outcomes, unsupervised learning discovers the unknown organization of data, making it an essential tool for knowledge discovery in complex biological and chemical datasets.

The fundamental principle behind unsupervised learning is that data possesses an inherent structure that can be revealed through mathematical techniques. As noted in recent literature, "Unsupervised learning is a category of machine learning where the algorithm is tasked with discovering patterns, structures, or relationships within a dataset without the guidance of labeled or predefined outputs" [21]. This capability is especially valuable in early drug discovery when exploring new target spaces or compound collections where limited prior knowledge exists.

Key Algorithms and Methodologies

Unsupervised learning techniques primarily fall into two categories:

Clustering Algorithms: Group similar data points together based on their inherent properties. Key algorithms include K-means Clustering, Hierarchical Clustering, and Self-Organizing Maps (SOM) [15] [19] [21]. These methods identify natural clusters or segments within data without predefined categories.

Dimensionality Reduction Methods: Reduce the number of random variables under consideration while preserving essential information. Principal Component Analysis (PCA), t-Distributed Stochastic Neighbor Embedding (t-SNE), and Autoencoders are commonly used techniques [15] [21]. These methods are particularly valuable for visualizing and understanding high-dimensional chemical and biological data.

Other important unsupervised approaches include association rule learning for identifying frequently co-occurring itemsets (valuable for market basket analysis in pharmaceutical sales data) and hidden Markov models for analyzing sequential data like protein sequences [21].

Drug Discovery Applications

Unsupervised learning enables multiple critical applications in drug discovery:

Compound Clustering and Scaffold Analysis: K-means and similar algorithms group compounds based on structural similarity, enabling researchers to select diverse compound subsets for screening, identify novel chemotypes, and analyze structure-activity relationships [21]. This approach helps in "mapping molecular representations from the 1990s to the current deep chemistry" [19].

Patient Stratification: By clustering patient omics data (genomics, proteomics, transcriptomics), researchers can identify distinct disease subtypes that may respond differently to treatments, enabling precision medicine approaches [15] [21].

Target Discovery and Validation: Unsupervised analysis of gene expression data can reveal novel disease-associated pathways and targets. Hidden Markov Models (HMMs) are particularly valuable for protein homology detection and family classification, helping identify new drug targets [21].

Chemical Space Visualization: t-SNE and PCA enable visualization of high-dimensional chemical descriptor spaces in two or three dimensions, allowing researchers to explore the distribution of compound libraries and identify underrepresented regions [21].
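As a rough illustration of how PCA finds a low-dimensional view of descriptor data, the sketch below extracts the first principal component. It uses power iteration rather than a full eigendecomposition, a simplification chosen here to keep the code standard-library only. The descriptor values are invented, and production work would typically rely on a numerical library.

```python
import math


def first_principal_component(rows, n_iter=200):
    """Leading PCA direction via power iteration on the covariance matrix."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    cov = [[sum(c[a] * c[b] for c in centered) / (n - 1) for b in range(d)]
           for a in range(d)]
    vec = [1.0] * d
    for _ in range(n_iter):
        # Repeated multiplication by the covariance matrix converges to
        # the direction of largest variance.
        vec = [sum(cov[a][b] * vec[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(v * v for v in vec))
        vec = [v / norm for v in vec]
    return means, vec


# Hypothetical 3-descriptor table: the second column is twice the first,
# and the third column barely varies.
descriptors = [(1.0, 2.0, 0.5), (2.0, 4.0, 0.5), (3.0, 6.0, 0.4), (4.0, 8.0, 0.6)]
means, component = first_principal_component(descriptors)

# Project each compound onto the component to get a 1-D coordinate.
scores = [sum((row[j] - means[j]) * component[j] for j in range(3))
          for row in descriptors]
```

Plotting two such components against each other gives the kind of 2-D chemical space map described above.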

Experimental Protocol: Compound Clustering with K-means

A standard protocol for compound clustering using K-means includes:

Molecular Representation: Calculate molecular descriptors or fingerprints for all compounds in the dataset. Common representations include Morgan fingerprints, physicochemical properties, or molecular graph embeddings [21].

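Similarity Calculation: Choose a similarity or distance metric suited to the representation (e.g., Tanimoto similarity for fingerprints, Euclidean distance for continuous descriptors). Note that standard K-means operates directly on feature vectors and minimizes squared Euclidean distance, so precomputed pairwise similarity matrices are mainly useful for cluster validation or for alternative methods such as hierarchical clustering.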

Dimensionality Reduction (Optional): Apply PCA or t-SNE to reduce dimensionality before clustering, particularly for visual exploration [21].

Cluster Number Determination: Use the elbow method, silhouette analysis, or gap statistics to determine the optimal number of clusters (k) [21].

Model Application: Apply K-means clustering with the selected k value. Multiple random initializations are recommended to avoid local optima.

Cluster Validation and Interpretation: Analyze cluster characteristics using descriptive statistics, visualize clusters in chemical space, and identify representative compounds from each cluster for further analysis [21].
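The core of this protocol can be sketched in plain Python: a Tanimoto similarity for fingerprints stored as sets of on-bits, and K-means (Lloyd's algorithm) with several random initializations on continuous descriptors. All names and data points are hypothetical, and real projects would normally use a cheminformatics toolkit and a clustering library instead of this hand-written version.

```python
import random


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 1.0


def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def assign(points, centroids):
    """Label each point with the index of its nearest centroid."""
    return [min(range(len(centroids)), key=lambda c: squared_distance(p, centroids[c]))
            for p in points]


def kmeans_once(points, k, rng, n_iter=100):
    """One run of Lloyd's algorithm from a random initialization."""
    centroids = rng.sample(points, k)
    for _ in range(n_iter):
        labels = assign(points, centroids)
        new_centroids = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            # Keep the old centroid if a cluster happens to be empty.
            new_centroids.append(
                tuple(sum(dim) / len(members) for dim in zip(*members))
                if members else centroids[c])
        if new_centroids == centroids:
            break
        centroids = new_centroids
    labels = assign(points, centroids)
    inertia = sum(squared_distance(p, centroids[lab]) for p, lab in zip(points, labels))
    return labels, centroids, inertia


def kmeans(points, k, n_init=10, seed=0):
    """Keep the best of several random restarts (lowest within-cluster inertia)."""
    rng = random.Random(seed)
    return min((kmeans_once(points, k, rng) for _ in range(n_init)),
               key=lambda run: run[2])


# Hypothetical 2-D descriptors forming two well-separated groups.
points = [(1.0, 1.1), (1.2, 0.9), (0.8, 1.0), (5.0, 5.2), (5.1, 4.9), (4.9, 5.0)]
labels, centroids, inertia = kmeans(points, k=2)
similarity = tanimoto({1, 2, 3}, {2, 3, 4})
```

Running the algorithm for a range of k values and comparing the resulting inertia is the basis of the elbow method mentioned in the protocol.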

Reinforcement Learning

Core Concepts and Definition

Reinforcement Learning (RL) represents a fundamentally different approach from both supervised and unsupervised learning. In RL, an agent learns to make decisions by performing actions in an environment and receiving rewards or penalties based on the consequences of those actions [15]. Rather than learning from a static dataset, the agent learns through trial-and-error interactions with a dynamic environment, aiming to maximize cumulative long-term rewards [22]. This learning paradigm is particularly well-suited for sequential decision-making problems where the optimal strategy must be discovered through experience.

The core components of an RL system include: (1) an agent that makes decisions, (2) an environment with which the agent interacts, (3) actions that the agent can perform, (4) states that describe the current situation, and (5) rewards that provide feedback on the quality of actions [20] [22]. In drug discovery, RL has shown remarkable potential for molecular design and optimization, where the agent learns to generate compounds with desired properties through iterative refinement.

Key Algorithms and Methodologies

Reinforcement learning encompasses several algorithmic families:

Value-Based Methods: These algorithms, including Q-learning and SARSA, learn the value of being in a given state and taking specific actions [15]. The agent selects actions that maximize the expected cumulative reward.

Policy-Based Methods: Algorithms like REINFORCE directly learn the optimal policy (action selection strategy) without explicitly estimating value functions [20] [22]. These methods are particularly effective for high-dimensional or continuous action spaces.

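Actor-Critic Methods: Hybrid approaches that combine value-based and policy-based methods, using both a value function (critic) and a policy function (actor) [22]. Examples include Advantage Actor-Critic (A2C) and Deep Deterministic Policy Gradient (DDPG). Deep Q-Networks (DQN), by contrast, are value-based methods that approximate the Q-function with a deep neural network.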

Model-Based RL: These methods learn a model of the environment's dynamics and use it to plan optimal actions. While potentially more sample-efficient, they require accurate environment models [22].

In recent years, deep reinforcement learning—combining RL with deep neural networks—has achieved remarkable success in complex domains including molecular design [22].
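To make the agent, environment, state, action, and reward concepts concrete, here is a minimal tabular Q-learning sketch on an invented five-state chain. The environment, hyperparameters, and function names are all assumptions for illustration; molecular design problems use far larger state spaces and neural function approximators.

```python
import random

N_STATES = 5          # states 0..4; state 4 is the rewarding terminal state
ACTIONS = (-1, +1)    # move left or right along the chain


def step(state, action):
    """Hypothetical toy environment: reward 1.0 only on reaching the last state."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore occasionally (or on ties), otherwise exploit.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning target: immediate reward plus discounted best future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q_table = q_learning()
# Greedy action in each non-terminal state after training.
greedy_policy = [ACTIONS[row.index(max(row))] for row in q_table[:-1]]
```

After training, the greedy policy moves right in every non-terminal state, which is the shortest path to the reward in this toy setting.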

Drug Discovery Applications

Reinforcement learning has enabled several advanced applications in drug discovery:

De Novo Molecular Design: RL agents can learn to generate novel molecular structures with optimized properties. Approaches like ReLeaSE (Reinforcement Learning for Structural Evolution) integrate generative and predictive models to design compounds with specific physical, chemical, or biological properties [22]. These systems can explore the vast chemical space (estimated at 10^30 to 10^60 compounds) more efficiently than traditional methods [22].

Molecular Optimization: RL can optimize lead compounds by sequentially modifying their structures to improve multiple properties simultaneously, such as potency, selectivity, and metabolic stability [20]. Techniques like REINVENT and RationaleRL have demonstrated successful optimization of compounds for specific targets [20].

Reaction Optimization: In synthetic chemistry, RL can optimize reaction conditions (catalysts, solvents, temperature) to maximize yield or minimize impurities [14].

Clinical Trial Design: RL can adapt trial parameters based on accumulating results, potentially reducing trial duration and improving success rates [14].

Experimental Protocol: De Novo Molecular Design with REINVENT

The REINVENT approach for de novo molecular design using RL involves:

Initialization: Pre-train a generative model (typically a Recurrent Neural Network) on a large dataset of drug-like molecules (e.g., from ChEMBL) to learn the syntax of valid molecular representations (SMILES strings) and the distribution of chemical space [20].

Predictor Model Training: Train a predictive model to estimate the properties of interest (e.g., bioactivity, ADMET properties) from molecular structure [20] [22].

RL Environment Setup: Define the reward function that combines multiple objectives (e.g., activity, synthesizability, novelty) and the episode termination conditions [20].

Policy Optimization: Use policy gradient methods to fine-tune the generative model to maximize the expected reward. Techniques like experience replay and reward shaping help address the sparse reward problem common in molecular design [20].

Iterative Refinement: Generate molecules with the current policy, evaluate them with the predictor model, compute rewards, and update the policy. This cycle continues until performance converges [20] [22].

Validation: Synthesize and experimentally test selected generated compounds to validate predicted activities [20].
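The policy optimization step can be illustrated with a deliberately simplified REINFORCE loop. This is not the REINVENT implementation: the "policy" here chooses a single hypothetical fragment instead of generating a SMILES string, and the `PREDICTED_SCORE` table is an invented stand-in for a trained predictor model. The sketch only shows how a policy gradient shifts probability toward high-reward outputs.

```python
import math
import random

# Hypothetical fragment vocabulary and stand-in "predictor" scores (not real data).
FRAGMENTS = ["frag_A", "frag_B", "frag_C", "frag_D"]
PREDICTED_SCORE = {"frag_A": 0.1, "frag_B": 0.9, "frag_C": 0.3, "frag_D": 0.2}


def softmax(logits):
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce(steps=2000, lr=0.5, seed=0):
    rng = random.Random(seed)
    logits = [0.0] * len(FRAGMENTS)   # uniform starting policy in this toy
    baseline = 0.0                    # running mean reward reduces gradient variance
    for t in range(1, steps + 1):
        probs = softmax(logits)
        action = rng.choices(range(len(FRAGMENTS)), weights=probs)[0]
        reward = PREDICTED_SCORE[FRAGMENTS[action]]
        advantage = reward - baseline
        baseline += (reward - baseline) / t
        # Gradient of log pi(action) w.r.t. logit i is (1[i == action] - probs[i]).
        for i in range(len(logits)):
            grad = (1.0 if i == action else 0.0) - probs[i]
            logits[i] += lr * advantage * grad
    return softmax(logits)


final_probs = reinforce()
best_fragment = FRAGMENTS[final_probs.index(max(final_probs))]
```

In a multi-objective setting, the single score would be replaced by a reward that combines activity, synthesizability, and novelty, as described in the RL Environment Setup step.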

Comparative Analysis

Technical Comparison

The table below summarizes the key technical differences between the three machine learning approaches:

| Criteria | Supervised Learning | Unsupervised Learning | Reinforcement Learning |
|---|---|---|---|
| Definition | Learns from labeled data to predict outcomes [15] | Identifies patterns in unlabeled data [15] | Learns through interaction with environment [15] |
| Data Requirements | Labeled datasets with input-output pairs [17] | Unlabeled data only [17] | No predefined data; learns from environment [15] |
| Problem Types | Classification, Regression [15] [17] | Clustering, Association [15] | Sequential decision-making [15] |
| Supervision Level | High (requires full supervision) [15] | None (completely unsupervised) [15] | Partial (reward signals only) [15] |
| Common Algorithms | SVM, Decision Trees, Neural Networks, Linear Regression [15] | K-Means, PCA, Autoencoders [15] | Q-learning, DQN, SARSA [15] |
| Primary Goal | Predict outcomes accurately [15] | Discover hidden patterns [15] | Optimize actions for maximum rewards [15] |
| Drug Discovery Applications | Molecular property prediction, QSAR models, virtual screening [18] [14] | Compound clustering, patient stratification, target discovery [21] | De novo molecular design, reaction optimization [20] [22] |

Selection Guidelines for Drug Discovery Problems

Choosing the appropriate ML approach depends on the specific drug discovery problem:

Use Supervised Learning when you have high-quality labeled data and a clear predictive task, such as classifying compounds as active/inactive, predicting binding affinities, or forecasting clinical outcomes [15] [18]. This approach is most suitable when the relationship between inputs and outputs is consistent and representative examples are available.

Use Unsupervised Learning when exploring data without predefined labels or hypotheses, such as identifying novel disease subtypes from omics data, discovering natural clusters in compound libraries, or detecting anomalous biological responses [15] [21]. This approach is valuable for knowledge discovery in early research stages.

Use Reinforcement Learning for sequential decision-making problems or optimization tasks where an agent must learn a series of actions to achieve a goal, such as designing novel molecular structures, optimizing synthetic routes, or adapting clinical trial protocols [15] [20] [22].

In practice, hybrid approaches often yield the best results. For example, unsupervised learning can preprocess data or generate features for supervised models, while reinforcement learning can use supervised learning predictions as reward functions [19] [22].

Implementation Workflows

Supervised Learning Workflow

Diagram: Supervised Learning Workflow for Drug Discovery.

Unsupervised Learning Workflow

Diagram: Unsupervised Learning Workflow for Drug Discovery.

Reinforcement Learning Workflow

Diagram: Reinforcement Learning Workflow for Drug Discovery.

Key Computational Tools and Databases

Successful implementation of machine learning in drug discovery requires access to appropriate tools, datasets, and computational resources. The following table outlines essential components of the ML drug discovery toolkit:

| Resource Type | Examples | Key Functionalities |
|---|---|---|
| Chemical Databases | ChEMBL [20], PubChem [19], ZINC [19] | Provide curated chemical structures and associated bioactivity data for model training and validation |
| Descriptor Calculation | RDKit, PaDEL, Dragon | Generate molecular descriptors and fingerprints from chemical structures for featurization |
| Deep Learning Frameworks | TensorFlow, PyTorch, Keras | Implement and train neural network models for various drug discovery tasks |
| Specialized Drug Discovery Platforms | DeepChem [14], REINVENT [20], MolDesigner [14] | Provide end-to-end pipelines for specific drug discovery applications like molecular design |
| Visualization Tools | t-SNE [21], PCA, UMAP | Enable visualization and exploration of high-dimensional chemical and biological data |
| Validation Resources | Therapeutics Data Commons (TDC) [14], external test sets | Provide benchmark datasets and standardized evaluation protocols |

Implementation Considerations

When implementing ML approaches in drug discovery, several practical considerations emerge:

Data Quality and Curation: The success of any ML approach depends heavily on data quality. Pharmaceutical data often requires significant curation to address errors, inconsistencies, and biases [19]. As noted in recent literature, "protein X-ray data needs the so-called data curation before use" [19].

Feature Representation: The choice of molecular representation significantly impacts model performance. Representations should balance expressiveness, simplicity, invariance to molecular rotations, and interpretability [19].

Model Interpretability: Especially in regulated pharmaceutical environments, understanding model predictions is crucial. Techniques like SHAP, LIME, and attention mechanisms help interpret complex models and build trust among stakeholders [16].

Hardware Requirements: Deep learning and reinforcement learning approaches often require substantial computational resources, including GPUs for efficient training, particularly when working with large compound libraries or complex biological networks [22].

The integration of machine learning into drug discovery continues to evolve rapidly. Emerging trends include the development of more sophisticated generative models for molecular design, increased emphasis on explainable AI to build trust in model predictions, and greater integration of multimodal data (genomics, proteomics, clinical data) for more comprehensive biological modeling [23] [16]. Foundation models pre-trained on massive chemical and biological datasets are showing promise for transfer learning across multiple drug discovery tasks [23].

As the field progresses, the most successful implementations will likely combine multiple ML approaches—using unsupervised learning for initial data exploration and feature discovery, supervised learning for predictive modeling, and reinforcement learning for optimization—in integrated workflows that leverage the strengths of each paradigm [19] [22]. Furthermore, close collaboration between ML experts and domain specialists in medicinal chemistry and biology remains essential for translating computational predictions into tangible therapeutic advances [23].

For researchers and drug development professionals, developing literacy in these core ML approaches is no longer optional but essential for driving innovation in modern pharmaceutical research. By understanding the strengths, limitations, and appropriate applications of supervised, unsupervised, and reinforcement learning, scientists can more effectively leverage these powerful technologies to accelerate the delivery of new medicines to patients.

The traditional drug discovery pipeline, often described as a high-stakes gamble, is grappling with a systemic crisis known as “Eroom’s Law”—the counterintuitive trend of declining R&D efficiency despite monumental technological advances [24]. This model, characterized by a linear and sequential process from target identification to clinical trials, requires an average of 10 to 15 years and an investment exceeding $2.23 billion for a single new medicine [24]. The probability of success is vanishingly small, with only one compound emerging successfully from an initial pool of 20,000 to 30,000 candidates [24]. This unsustainable economic reality, with industry returns on investment having hit a record low, is the primary driver for a fundamental restructuring of the discovery process.

Artificial intelligence (AI), and particularly its subset machine learning (ML), promises to break the chains of Eroom's Law by orchestrating a paradigm shift from a process reliant on serendipity and brute-force screening to one that is data-driven, predictive, and intelligent [24]. This report will argue that this shift is not merely incremental but represents a fundamental rewiring of the R&D engine. At its core, this transformation is a move away from the costly and time-consuming "make-then-test" approach—where physical compounds are synthesized and then screened—toward a "predict-then-make" paradigm. In this new paradigm, hypotheses are generated, molecules are designed, and their properties are validated at a massive scale in silico (via computer simulation), reserving precious laboratory resources for confirming only the most promising, AI-vetted candidates [24]. This inversion of the workflow has the potential to slash years and billions of dollars from the development lifecycle, ultimately delivering more life-saving medicines to patients more quickly.

Deconstructing the Traditional "Make-then-Test" Paradigm

The conventional drug development pipeline is a linear marathon of rigorously defined stages, each acting as a gatekeeper to the next. While designed to ensure patient safety, this rigid framework is also the source of the industry's immense costs and protracted timelines [24]. The following diagram and table elucidate this traditional, sequential gauntlet.

Diagram 1: The Sequential "Make-then-Test" Drug Development Pipeline.

Quantitative Challenges of the Traditional Pipeline

Table 1: Key Challenges in the Traditional "Make-then-Test" Model

| Challenge | Quantitative Impact | Consequence |
|---|---|---|
| Attrition Rate | 1 successful drug per 20,000-30,000 compounds screened [24] | Colossal waste of resources and time in early stages |
| Cost | Average cost > $2.23 billion per approved drug [24] | Unsustainable R&D expenditure and high drug prices |
| Timeline | 10-15 years from discovery to market [24] | Slow delivery of new therapies to patients |
| Probability of Success | Overall success rate from Phase I to approval as low as 6.2% [25] | High financial risk and low return on investment |
| Late-Stage Failure | Failure in Phase III trials is most common and costly [24] | Maximizes the cost of failure after massive investment |

The fundamental architecture of this pipeline creates a system where the cost of failure is maximized at the latest stages. A drug failing in Phase III incurs nearly the full R&D cost without generating any return [24]. This linear structure also creates information silos, where insights from late-stage clinical trials cannot easily feed back to optimize the initial discovery process for the next drug candidate. The process is inherently low-probability and high-risk, making it vulnerable to the disruption that machine learning promises.

The Machine Learning Arsenal: Core Techniques for a New Paradigm

Machine learning provides the technical foundation for the "predict-then-make" paradigm. ML is the practice of using algorithms to parse data, learn from it, and then make determinations or predictions without being explicitly programmed for the task [24] [25]. The predictive power of any ML approach is dependent on the availability of high volumes of high-quality data [25]. The following section details the core ML techniques being deployed in the pharmaceutical arsenal.

A Primer on Core Machine Learning Techniques

Table 2: Core Machine Learning Techniques in Drug Discovery

| Technique | Purpose | Learning Approach | Drug Discovery Applications |
|---|---|---|---|
| Supervised Learning [24] [25] | Predict outcomes from labeled data | Learns from known input-output pairs to map new inputs to correct outputs. Used for classification and regression. | Classifying compound activity (active/inactive), predicting binding affinity values, toxicity prediction [24]. |
| Unsupervised Learning [24] [25] | Find hidden patterns in data without labels | Discovers intrinsic structures and clusters in unlabeled data for exploratory analysis. | Patient stratification for clinical trials, identifying novel disease subtypes from omics data [25]. |
| Reinforcement Learning [26] | Optimize decision-making over time | Learns optimal actions through trial and error, receiving feedback from a dynamic environment. | Optimizing multi-step chemical synthesis routes, molecular design through iterative reward signals [26]. |
| Deep Learning (DL) [25] | Learn from massive, complex datasets | Uses multi-layered (deep) neural networks to detect complex, hierarchical patterns from raw data. | Bioactivity prediction, de novo molecular design, analysis of biological images (e.g., histology) [25]. |

Key Deep Learning Architectures

Deep learning, a subset of ML using sophisticated, multi-level deep neural networks (DNNs), has been particularly impactful [25]. Several architectures are commonly used:

- Convolutional Neural Networks (CNNs): Excel at processing data with a grid-like topology, making them ideal for image analysis in digital pathology and for analyzing molecular structures [25].

- Recurrent Neural Networks (RNNs): Designed for sequential data, making them suitable for analyzing time-series data or biological sequences [25].

- Graph Convolutional Networks (GCNs): Generalize the convolution operation from regular grids to graph structures, making them well suited for molecular graphs where atoms are nodes and bonds are edges [25].

- Generative Adversarial Networks (GANs): Consist of two competing networks: one generates new molecular structures (generator), and the other evaluates their authenticity (discriminator). This is a powerful technique for de novo molecular design [25].
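The idea of treating a molecule as a graph can be sketched with a single neighborhood-aggregation step on ethanol (SMILES `CCO`, heavy atoms only). This is a simplified illustration: a real graph convolutional layer also applies learned weight matrices and a nonlinearity, which are omitted here, and the three-element vocabulary is an assumption made for brevity.

```python
# Ethanol (CCO) heavy-atom graph: atoms by index, bonds as index pairs.
ATOMS = ["C", "C", "O"]
BONDS = [(0, 1), (1, 2)]

# One-hot atom features over a tiny, illustrative element vocabulary.
ELEMENTS = ["C", "N", "O"]


def one_hot(symbol):
    return [1.0 if symbol == e else 0.0 for e in ELEMENTS]


def build_neighbors(n_atoms, bonds):
    """Adjacency list: bonds are undirected, so add both directions."""
    neighbors = {i: [] for i in range(n_atoms)}
    for a, b in bonds:
        neighbors[a].append(b)
        neighbors[b].append(a)
    return neighbors


def graph_conv_step(features, neighbors):
    """Mean-aggregate each atom's own features with those of its bonded neighbors."""
    updated = []
    for i, own in enumerate(features):
        group = [own] + [features[j] for j in neighbors[i]]
        updated.append([sum(vals) / len(group) for vals in zip(*group)])
    return updated


features = [one_hot(a) for a in ATOMS]
neighbors = build_neighbors(len(ATOMS), BONDS)
hidden = graph_conv_step(features, neighbors)

# Sum-pooling gives a fixed-length molecule vector that does not depend
# on the order in which atoms are listed.
molecule_vector = [sum(vals) for vals in zip(*hidden)]
```

After one step, the central carbon's representation already mixes in information from the neighboring oxygen; stacking several such steps lets each atom see a wider chemical environment.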

Implementing "Predict-then-Make": Experimental Protocols and Workflows

The "predict-then-make" paradigm is operationalized through a continuous, iterative cycle that integrates AI-driven decision-making and feedback loops. This is often framed as the "Design-Decide-Make-Test-Learn" (D2MTL) framework [27]. The following workflow and detailed protocols illustrate this modern approach.

Diagram 2: The AI-Powered "Design-Decide-Make-Test-Learn" (D2MTL) Cycle.

Detailed Experimental Protocol for AI-Driven Molecule Optimization

This protocol outlines a specific application of the D2MTL cycle for optimizing lead compounds, a common task in drug discovery.

Objective: To iteratively design and prioritize novel small molecules with optimized potency and reduced toxicity for a specific protein target.

Materials & Computational Tools (The Scientist's Toolkit):

Table 3: Essential Research Reagents and Computational Tools

| Item / Tool | Function / Explanation |
|---|---|
| High-Quality Bioactivity Datasets (e.g., ChEMBL) | Curated, public repositories of chemical structures and their associated biological assay data. Used as the foundational training data for predictive models [25]. |
| Molecular Representation Software (e.g., RDKit) | An open-source toolkit for cheminformatics. Used to convert chemical structures into computer-readable formats (e.g., SMILES strings, molecular fingerprints, graphs) for ML model input. |
| Deep Learning Frameworks (e.g., TensorFlow, PyTorch) | Open-source libraries that provide the foundational building blocks for designing, training, and deploying deep neural networks [25]. |
| Generative Chemistry Software (e.g., using GANs or VAEs) | Specialized software or algorithms capable of generating novel, valid chemical structures that satisfy desired constraints learned from training data [25]. |
| ADMET Prediction Platforms (e.g., QSAR/QSPR models) | AI/ML models that predict Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties in silico, enabling early safety screening [27]. |
| Automated Synthesis & Screening Hardware | Closed-loop automation systems that physically synthesize the AI-prioritized compounds and run high-throughput assays to generate new experimental data for the "Learn" phase [28]. |

Step-by-Step Methodology:

Step 1. Learn (Data Curation and Model Training):

- Data Collection: Gather a large, curated dataset of molecules with known binding affinities (IC50/Ki values) for the target of interest and ADMET properties from public and proprietary sources.

- Feature Engineering: Use tools like RDKit to convert the 2D chemical structures of these molecules into numerical representations (e.g., molecular fingerprints, graph representations).

- Model Training: Train multiple supervised learning models (e.g., Random Forest, Graph Neural Networks) to predict bioactivity and key ADMET endpoints. Validate model performance on a held-out test set using metrics like AUC-ROC and root mean square error (RMSE).

Step 2. Design (Generative Molecular Design):

- Employ a generative model, such as a Generative Adversarial Network (GAN) or a Variational Autoencoder (VAE), which has been trained on the general chemical space (e.g., the ZINC database).

- Use techniques like Reinforcement Learning or Bayesian optimization to guide the generative model. The predictive models from Step 1 act as the "reward function," encouraging the generator to create molecules that maximize predicted bioactivity while minimizing predicted toxicity.

Step 3. Decide (Virtual Screening and Prioritization):

- Generate a large virtual library (e.g., 1,000,000 compounds) using the guided generative model.

- Screen this entire library in silico using the trained predictive models from Step 1.

- Apply multi-parameter optimization to rank the virtual compounds based on a weighted sum of desired properties (e.g., high predicted activity, low predicted liver toxicity, good predicted solubility).

- Select a shortlist of 50-100 top-ranking compounds for synthesis. This step replaces the initial brute-force HTS of the traditional paradigm.

Step 4. Make (Chemical Synthesis):

- Chemists synthesize the shortlist of AI-prioritized compounds. AI tools can also be used here to predict feasible synthetic routes [27].

Step 5. Test (Experimental Validation):

- The synthesized compounds are tested in biochemical and cell-based assays to determine their actual potency, selectivity, and cytotoxicity.

- This step generates a new, high-quality dataset of real-world results.

Step 6. Learn (Model Retraining and Feedback):

- The experimental results from Step 5 are fed back into the initial dataset.

- The predictive models are retrained on this new, larger dataset, which now includes the AI-designed compounds. This iterative feedback loop continuously improves the model's accuracy and domain-specific predictive power, closing the D2MTL cycle [28].
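The multi-parameter optimization used in the Decide step can be sketched as a weighted sum over predicted properties, followed by selection of the top-ranked compounds. The compound identifiers, predicted values, and weights below are invented for illustration; real campaigns would tune the weights to the project and score far larger libraries.

```python
# Hypothetical model outputs for a few virtual compounds (illustrative values only).
candidates = [
    {"id": "cmpd_1", "activity": 0.92, "liver_tox": 0.40, "solubility": 0.55},
    {"id": "cmpd_2", "activity": 0.85, "liver_tox": 0.10, "solubility": 0.70},
    {"id": "cmpd_3", "activity": 0.60, "liver_tox": 0.05, "solubility": 0.90},
    {"id": "cmpd_4", "activity": 0.95, "liver_tox": 0.80, "solubility": 0.30},
]

# Assumed weights: a positive weight rewards a property, a negative one penalizes it.
WEIGHTS = {"activity": 0.5, "liver_tox": -0.3, "solubility": 0.2}


def mpo_score(compound, weights=WEIGHTS):
    """Weighted sum of predicted properties for one compound."""
    return sum(w * compound[prop] for prop, w in weights.items())


def shortlist(compounds, top_n):
    """Rank compounds by score (highest first) and keep the top_n."""
    return sorted(compounds, key=mpo_score, reverse=True)[:top_n]


selected = [c["id"] for c in shortlist(candidates, top_n=2)]
```

Note that the most potent compound (`cmpd_4`) is not selected because its predicted liver toxicity outweighs its activity, which is the kind of trade-off that multi-parameter ranking is meant to surface before synthesis.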

Real-World Impact and Regulatory Evolution

The "predict-then-make" paradigm is not theoretical; it is actively being implemented by pharmaceutical companies and biotechs, yielding measurable improvements in R&D efficiency.

Case Studies and Industry Adoption

- Bristol Myers Squibb's "Predict First" Strategy: The company has moved from predicting about 5% of molecules to applying a "predict-first" mindset across its entire small molecule portfolio. This has shifted their approach from a traditional funnel to a "tailored, dynamic screening strategy," resulting in a "measurable and meaningful impact to the rate of progression and the quality of progression" of their programs [28].

- Insilico Medicine and BenevolentAI: These AI-native companies have demonstrated the ability to rapidly identify and design novel therapeutic candidates. Insilico developed a candidate for idiopathic pulmonary fibrosis in a fraction of the traditional time, while BenevolentAI identified baricitinib as a potential treatment for COVID-19 [29].

- Cellarity: This company exemplifies a radical shift by moving the starting point of drug discovery from a single molecular target to overall cellular dysfunction, which it characterizes using single-cell omics and ML. It has developed predictive models for liabilities such as drug-induced liver injury that reportedly have greater predictive power than existing models [30].

The Evolving Regulatory Landscape

The U.S. Food and Drug Administration (FDA) is actively adapting to this technological shift. Noting a surge in submissions referencing AI/ML (over 100 in 2021 alone), the FDA has issued a discussion paper to shape future regulatory guidance [31]. The agency is focusing on three key areas to ensure the safe and effective use of AI/ML in drug development:

- Human-led governance, accountability, and transparency.

- Quality, reliability, and representativeness of data.

- Model development, performance, monitoring, and validation [31].

Engaging with the FDA early in the process through programs like the ISTAND Pilot Program is recommended to address these considerations effectively [31].

The transition from the "make-then-test" to the "predict-then-make" paradigm represents a fundamental and necessary recalibration of pharmaceutical R&D. Driven by the unsustainable economics of Eroom's Law and enabled by advances in machine learning, this shift places computational prediction and data-driven intelligence at the center of the drug discovery process. By moving from a linear, high-attrition funnel to an iterative, AI-powered cycle, the industry can significantly increase the probability of technical and regulatory success, reduce development timelines and costs, and ultimately unlock novel treatments for patients with unmet medical needs. While challenges surrounding data quality, model interpretability, and regulatory alignment remain, the ongoing integration of human expertise with powerful ML tools—"collaborative hybrid intelligence"—is poised to recode the future of medicine [28].

The application of machine learning (ML) in drug discovery represents a paradigm shift from traditional, labor-intensive methods to data-driven approaches that can dramatically compress timelines and reduce costs [25] [4]. For ML models to generalize effectively and produce accurate predictions, they require large volumes of high-quality, well-structured training data [25]. The foundational premise is that the predictive power of any ML approach is directly dependent on the availability of such data, with data processing and cleaning often constituting up to 80% of the work in a typical ML project [25]. This guide provides a comprehensive overview of the key data sources—encompassing chemical, genomic, clinical, and high-throughput screening data—that form the essential infrastructure for modern, AI-powered drug discovery pipelines [32].

Chemical Structure Databases

Chemical structure data provides the fundamental representation of molecular entities, enabling ML models to learn structure-activity relationships (SAR) and predict the behavior of novel compounds.

Key Public Chemical Databases

Table 1: Major Public Chemical Databases for Drug Discovery

| Database Name | Primary Focus | Key Features | Common Use Cases in ML |
|---|---|---|---|
| ChEMBL [33] [34] | Bioactive molecules | Manually curated data on drug-like molecules, bioactivities, and ADMET properties [32]. | Supervised learning for bioactivity and toxicity prediction [25] [33]. |
| PubChem [32] | Chemical substances | Massive repository of chemical structures and their biological screening results [32]. | Large-scale virtual screening and chemical property prediction [33]. |
| DrugBank [33] | Drug and drug target data | Combines detailed drug data with comprehensive drug target information [33]. | Drug-target interaction prediction and drug repurposing studies [33]. |
| Protein Data Bank (PDB) [33] [35] | 3D macromolecular structures | Atomic-level structures of proteins, nucleic acids, and complexes [35]. | Structure-based drug design and binding site prediction [33]. |

Data Processing and Standardization

Raw chemical data is inherently messy and requires sophisticated processing to be useful for ML. Key challenges and solutions include:

- Tautomer Handling: Tautomers are structural isomers that readily interconvert. Database representations often treat them as distinct compounds, which can fragment data and mislead ML models. The solution is to establish a canonical tautomer form for each compound, using transformation rules like SMIRKS patterns to ensure consistency [36].

- Structure Representation: Chemical structures exist in multiple formats (e.g., SMILES, InChI, SDF, MOL), each with different dimensionality and information content. Standardizing to a single, stereo-aware format (e.g., V3000 connection tables with enhanced stereochemistry) is crucial for accurate model training [36].

- Assay Data Normalization: Bioactivity data from sources like ChEMBL and PubChem are often reported as different endpoint types (e.g., IC50, Ki, % inhibition) and in inconsistent concentration units. Converting comparable endpoints to a common unit and scale is a prerequisite for building robust predictive models [36].
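As a small illustration of assay normalization, the sketch below converts concentration-type endpoints to the negative log10 of the molar value (so that pIC50 = -log10 of the IC50 in mol/L). The record layout and function names are assumptions. Tautomer and structure standardization are not shown, since they require a cheminformatics toolkit.

```python
import math

# Conversion factors from common concentration units to molar (M).
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}

def to_p_scale(value, unit):
    """Convert a concentration measurement (IC50, Ki, ...) to -log10(molar)."""
    if unit not in UNIT_TO_MOLAR:
        raise ValueError(f"unsupported unit: {unit!r}")
    if value <= 0:
        raise ValueError("concentration must be positive")
    return -math.log10(value * UNIT_TO_MOLAR[unit])

def normalize_records(records):
    """Keep concentration endpoints only and attach a p-scale activity to each."""
    normalized = []
    for rec in records:
        if rec["type"] not in {"IC50", "Ki"}:
            # % inhibition cannot be converted without a dose-response curve.
            continue
        p_activity = round(to_p_scale(rec["value"], rec["unit"]), 2)
        normalized.append({**rec, "p_activity": p_activity})
    return normalized

# Made-up records in a hypothetical layout.
raw = [
    {"compound": "cmpd-1", "type": "IC50", "value": 10.0, "unit": "nM"},
    {"compound": "cmpd-2", "type": "Ki", "value": 1.5, "unit": "uM"},
    {"compound": "cmpd-3", "type": "% inhibition", "value": 62.0, "unit": "%"},
]

for rec in normalize_records(raw):
    print(rec["compound"], rec["type"], rec["p_activity"])
```

The endpoint type is kept on each record because IC50 and Ki values are not interchangeable even on the same scale. Whether to pool them in one training set is a modeling decision.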

Figure 1: Chemical Data Standardization Workflow for ML.

Genomic data enables a deeper understanding of disease mechanisms and facilitates the identification and validation of novel therapeutic targets.

Major Genomic Data Repositories

Table 2: Core Genomic Data Resources for Target Discovery

| Resource | Type of Data | Scale and Content | ML Application |
|---|---|---|---|
| GenBank / dbSNP [37] | Genetic sequences & variations | Stores genetic sequences from diverse organisms; catalogs single nucleotide polymorphisms (SNPs) [37]. | Feature identification for target-disease association models [25]. |
| GWAS Catalog [37] | Genome-wide association studies | Structured repository of summary statistics linking genetic markers to complex diseases and traits [37]. | Identification of genetically validated targets and patient stratification biomarkers [25] [37]. |
| The Cancer Genome Atlas (TCGA) [34] | Cancer genomics | Multi-dimensional maps of key genomic changes in over 30 types of cancer [34]. | Oncology target discovery and biomarker development for personalized medicine [25]. |
| 1000 Genomes Project [34] | Human genetic variation | Sequencing data from 2,500 individuals across 26 global populations [34]. | Understanding population-specific genetic diversity in drug response [37]. |
| UK Biobank [35] [37] | Integrated genetic & health data | Large-scale biomedical database containing genetic, clinical, and lifestyle data from ~500,000 participants [37]. | Training multi-modal models for disease progression and drug response prediction [37]. |

Functional Genomics and Emerging Technologies

Beyond static genomic sequences, functional genomics data reveals how genes and proteins operate within biological systems. Key technologies generating data for ML include:

- CRISPR-Cas9 Screening: This gene-editing technology enables the creation of libraries of CRISPR reagents to systematically knock out or activate every gene in the genome. The resulting high-content phenotypic data, when combined with ML, helps pinpoint genes critical for disease [37].

- Single-Cell Sequencing: This technology allows for the analysis of gene expression profiles in individual cells, revealing cellular heterogeneity in diseases like cancer. When combined with CRISPR (scCRISPR screening), it accelerates target validation and provides detailed mechanistic insights [37].

Clinical and Medical Record Data

Clinical data provides the critical link between molecular discoveries and patient outcomes, enabling the development of safer and more effective therapies.

Key Clinical Data Repositories

- Electronic Health Records (EHRs): These are comprehensive digital records of patient health information generated by healthcare providers. For research, de-identified or anonymized EHR datasets are essential.

- MIMIC-III: A prominent, freely accessible critical care database containing de-identified health-related data associated with over 40,000 intensive care unit (ICU) patients. It includes vital signs, medications, laboratory measurements, and more [34].

- Healthcare Cost and Utilization Project (HCUP): A family of healthcare databases and related software tools from the Agency for Healthcare Research and Quality (AHRQ). It is the largest collection of longitudinal hospital care data in the U.S., encompassing encounter-level information [34].

- Alzheimer's Disease Neuroimaging Initiative (ADNI): A longitudinal multicenter study designed to develop clinical, imaging, genetic, and biochemical biomarkers for the early detection and tracking of Alzheimer's disease. It includes MRI and PET images, genetic data, and cognitive tests [34].

Data Integration and Privacy

A major challenge with clinical data is its heterogeneity and the need to protect patient privacy. Successful ML initiatives often use trusted research environments where advanced AI pipelines can be applied to layered, multi-modal datasets (e.g., imaging, omics, clinical outcomes) without raw data leaving a secure platform [23]. This approach maintains privacy while enabling the discovery of links between molecular features and clinical endpoints.

Figure 2: Secure Clinical Data Integration and Analysis Pathway.

High-Throughput and High-Content Screening Data

High-throughput (HTS) and high-content screening (HCS) generate massive, information-rich datasets that are ideally suited for ML, particularly deep learning models.

- Corporate Proprietary Datasets: Large pharmaceutical companies and AI-focused biotechs (e.g., Recursion, Exscientia) maintain massive, fit-for-purpose screening datasets. Recursion's dataset, for example, is generated in an automated wet lab using robotics and microscopy, producing millions of standardized images per week of cells perturbed by CRISPR or compounds [35] [4].

- Public HCS Datasets: While less common, high-quality public HCS datasets are emerging. RxRx3-core is a notable example: an 18 GB dataset of 222,601 microscopy images spanning 736 CRISPR knockouts and 1,674 compounds at various concentrations. It is specifically designed for benchmarking ML models in drug-target interaction prediction [35].

- Microscopy Image Data: HCS produces high-dimensional image data from which ML models can extract features related to cell morphology, protein localization, and other phenotypic changes. This allows for the connection of genetic or compound-induced perturbations to complex cellular outcomes [25] [35].

Experimental Protocol: A Typical HCS Workflow for ML

A standardized HCS protocol is critical for generating reproducible, ML-ready data.

- Cell Culture and Seeding: Human-derived cells (e.g., HUVECs) are cultured and automatically seeded into multi-well plates using robotic liquid handlers to ensure consistency [35] [23].

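- Perturbation Introduction: Cells are perturbed using either CRISPR-Cas9 reagents (targeted genetic perturbations) or compounds from small-molecule libraries applied at defined concentrations.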

- Staining and Fixation: At predetermined time points, cells are fixed and stained with fluorescent dyes or antibodies to mark specific cellular components (e.g., nuclei, cytoskeleton, organelles).

- Automated Imaging: High-content microscopes automatically acquire multi-channel images of the stained cells in each well [35].

- Image Processing and Feature Extraction: An automated analysis pipeline, often involving a deep learning foundation model (e.g., a convolutional neural network), processes the images. The model segments individual cells and computes numerical embeddings or "feature vectors" that quantitatively represent the cellular phenotype [35] [23].

- Data Structuring and Metadata Capture: The extracted features are linked to comprehensive metadata describing the experimental conditions (perturbation, concentration, time, etc.). This creates a structured, multi-dimensional dataset ready for downstream ML analysis [23].
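The final structuring step can be sketched as follows: each well's feature vector is stored together with its experimental metadata, and replicate wells are averaged per condition. The `WellRecord` fields and the example values are assumptions for illustration, not a description of any particular platform's schema.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class WellRecord:
    plate: str
    well: str
    perturbation: str        # gene knockout or compound name
    concentration_um: float  # 0.0 for genetic perturbations
    timepoint_h: int
    features: tuple          # embedding produced by the image model

def aggregate_by_condition(records):
    """Average feature vectors over replicate wells that share a condition."""
    groups = defaultdict(list)
    for rec in records:
        key = (rec.perturbation, rec.concentration_um, rec.timepoint_h)
        groups[key].append(rec.features)
    return {
        key: tuple(sum(col) / len(col) for col in zip(*vectors))
        for key, vectors in groups.items()
    }

# Made-up two-dimensional embeddings; real embeddings have many more dimensions.
records = [
    WellRecord("P1", "A01", "compound-X", 1.0, 24, (1.0, 2.0)),
    WellRecord("P1", "A02", "compound-X", 1.0, 24, (3.0, 4.0)),
    WellRecord("P1", "B01", "GENE1-KO", 0.0, 24, (0.5, 0.5)),
]

profiles = aggregate_by_condition(records)
for condition, profile in profiles.items():
    print(condition, profile)
```

Keying the aggregate on perturbation, concentration, and time point keeps every profile traceable to its experimental condition, which downstream models rely on when relating perturbations to phenotypes.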

Table 3: Essential Research Reagents and Tools for HCS

| Item / Solution | Function in HCS Workflow |
|---|---|
| CRISPR-Cas9 Reagents | Introduces targeted genetic perturbations to study gene function [35] [37]. |
| Compound Libraries | Collections of small molecules used to perturb cellular systems and identify bioactive compounds [35]. |
| Fluorescent Dyes & Antibodies | Label specific cellular structures or proteins for visualization and quantification via microscopy [35]. |
| Cell Culture Media & Supplements | Maintains cell health and supports specific experimental conditions during the assay. |
| Robotic Liquid Handlers (e.g., Tecan Veya) | Automates plate preparation, reagent dispensing, and cell seeding to ensure reproducibility and scale [23]. |
| High-Content Microscopes | Automated imaging systems that capture high-resolution, multi-channel images of stained cells in multi-well plates [35]. |

Integrated Data Strategy and Future Outlook