A Comprehensive Agent-Based Model Verification Workflow for Robust Biomedical Research and Drug Development

Abstract

This article provides a detailed framework for the verification of Agent-Based Models (ABMs), with a specific focus on applications in drug development and biomedical research. As regulatory authorities increasingly consider in silico trial evidence, establishing model credibility through rigorous verification, validation, and uncertainty quantification (VV&UQ) has become paramount. We outline a structured workflow encompassing foundational principles, practical methodological steps, troubleshooting and optimization techniques, and finally, robust validation and comparative strategies. This guide is designed to help researchers and scientists ensure their ABMs are robust, reliable, and suitable for supporting critical regulatory decisions.

Laying the Groundwork: Core Principles and Regulatory Importance of ABM Verification

Defining Verification and Validation (V&V) in the Context of Agent-Based Models

Troubleshooting Guide: Common V&V Challenges

Problem 1: Insufficient Empirical Grounding

- Symptoms: Model outcomes are based on assumptions that seem reasonable but lack connection to real-world data. The model is a "black box" where outputs cannot be traced to empirically justified inputs or processes [1].

- Solutions:

- Implement a multi-faceted validation strategy that includes input validation, process validation, descriptive output validation, and predictive output validation [2].

- Use participatory modeling, collaborating with domain experts and stakeholders in an iterative process to ground the model in real-world knowledge [2].

- Integrate process mining techniques using event data to discover, check, and enhance the processes represented in your model [3].

Problem 2: Inadequate Verification of Computational Implementation

- Symptoms: The simulation produces unexpected errors or outputs, suggesting that the code does not correctly implement the intended model design.

- Solutions:

- Apply a tailored subset of Verification and Validation (V&V) techniques throughout the entire software development lifecycle, as no single standard exists for ABMs [4].

- Follow established principles, such as those from Balci, which emphasize that V&V must be a continuous process and thoroughly documented [4].

Problem 3: Lack of Standardization Hindering Cumulative Science

- Symptoms: Difficulty comparing results across different ABM studies; each model is a unique, tailor-made construct that hinders the building of cumulative knowledge [1].

- Solutions:

- Adopt and report standardized validation practices to improve reproducibility and allow for meaningful comparisons [1].

- When using LLM-powered generative agents, be extra vigilant about their black-box nature, stochasticity, and cultural biases, which can further complicate validation. Relying solely on outcome measures or "face-validity" is insufficient [1].

Problem 4: Validating Models with Multiple Equivalent Formulations

- Symptoms: Different model formulations (e.g., a primal and its dual) yield different intermediate results or objective values but represent the same underlying problem, causing false positives or negatives in validation [5].

- Solutions:

- Move beyond simple syntactic equivalence or objective value comparison. Develop a problem-level testing interface that validates model outputs against the natural language specification of the problem itself [5].

- Employ mutation testing, a software testing technique that creates small faults (mutations) in the model to assess the fault-detection power of your test suite [5].

Frequently Asked Questions (FAQs)

What is the fundamental difference between Verification and Validation?

- Verification answers the question "Did we build the model right?" It is the process of ensuring that the computational model has been implemented correctly according to its design specifications—that the code is free of bugs and accurately represents the intended conceptual model [4].

- Validation answers the question "Did we build the right model?" It is the process of ensuring that the conceptual model is an accurate and useful representation of the real-world system being studied for the intended purpose [2] [4]. A famous quote from G.E.P. Box reminds us that "Essentially, all models are wrong, but some are useful," underscoring that validation is about usefulness for a specific purpose [2].

How can I validate the behavior of individual agents in my ABM?

This is a persistent challenge, especially with traditional, simple rule-following agents. The integration of Large Language Models (LLMs) as generative agents promises greater behavioral realism but introduces new validation challenges due to their black-box nature and potential biases [1]. Techniques include:

- Input Validation: Ensuring the initial state conditions and parameters for agents are empirically meaningful [2].

- Process Validation: Checking that the agents' decision-making and interaction rules reflect real-world behaviors, which can be informed by role-playing games or participatory exercises [2].

What are the key aspects of a comprehensive empirical validation strategy for an ABM?

A robust strategy should address multiple facets, as outlined by Tesfatsion [2]:

- Input Validation: Are the model's exogenous inputs (initial conditions, parameters) empirically appropriate?

- Process Validation: Do the modeled processes (social, physical, biological) realistically reflect their real-world counterparts?

- Descriptive Output Validation: How well does the model capture the key features of the sample data it was built on (in-sample fitting)?

- Predictive Output Validation: How well can the model forecast outcomes for new or withheld data (out-of-sample forecasting)?

My ABM involves LLM-powered agents. Does this change how I should approach V&V?

Yes, significantly. While LLMs can enhance agent realism, they also exacerbate validation challenges [1].

- Increased Complexity: The stochastic and opaque nature of LLMs makes it harder to understand why an agent produced a specific output.

- Need for Rigor: Studies show that validation in generative ABMs often relies on loose outcome measures. To be scientifically valuable, you must implement more rigorous, multi-faceted validation protocols that go beyond surface-level checks [1].

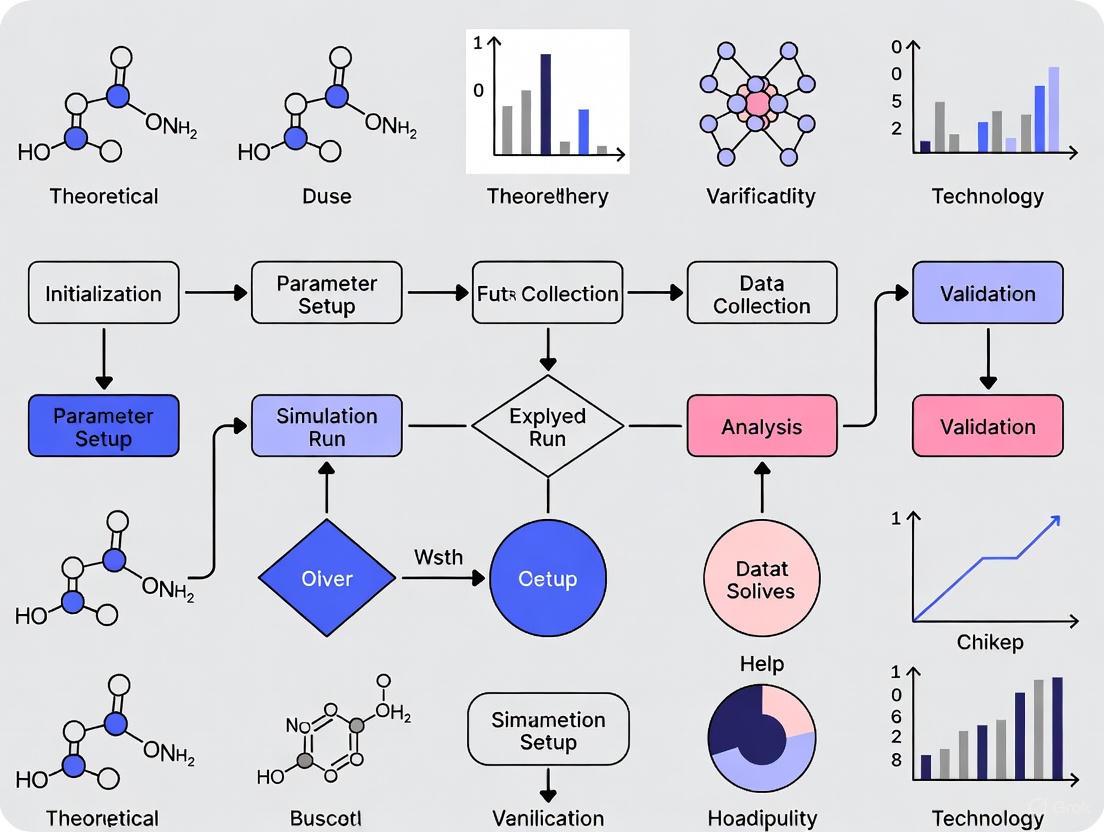

Experimental Protocol: An Agent-Based Validation Workflow

The following diagram outlines a rigorous, iterative workflow for verifying and validating an Agent-Based Model, integrating best practices from the literature.

ABM V&V Workflow

Detailed Methodology

- Define Model Purpose: Clearly articulate the intended use and the questions the ABM is designed to answer. This guides all subsequent V&V activities [2].

- Develop Conceptual Model & Specifications: Create a detailed design document describing the agents, their environment, interaction rules, and the overall processes. This document is the basis for verification.

- Implementation (Coding): Translate the conceptual model into executable code.

- Verification Phase: Ensure the code is bug-free and aligns with the specifications.

- Activities: Unit testing of agent behaviors, consistency checks, and debugging [4].

- Input Validation: Assess the empirical meaningfulness of the model's exogenous inputs.

- Activities: Calibrating initial state conditions, functional forms, and parameter values using historical data or established literature [2].

- Process Validation: Evaluate how well the model's internal mechanisms reflect reality.

- Output Validation: Compare model-generated outcomes with real-world data.

- Mutation Testing: Systematically assess the quality and power of your entire test suite.

- Activities: An automated framework introduces small, specific faults (mutations) into the model. A powerful test suite should be able to detect and "kill" these mutated models by failing the tests [5].

- Iterative Refinement: If validation is unsatisfactory at any stage, return to the implementation or even the conceptual model stage to refine the design. This loop continues until the model is sufficiently validated for its purpose.

The Scientist's Toolkit: Key Research Reagents & Solutions

The following table details essential "research reagents"—methodologies and tools—for conducting rigorous V&V in agent-based modeling.

| Reagent / Solution | Function / Purpose in V&V | Key Considerations |
|---|---|---|
| Multi-faceted Validation Framework [2] | Provides a comprehensive structure for validation, breaking it down into Input, Process, and Output (Descriptive & Predictive) components. | Ensures that the model is scrutinized from multiple angles, not just on its final output. |
| Mutation Testing [5] | A fault-based testing technique that assesses the quality of a test suite by measuring its ability to detect intentionally seeded faults (mutations). | A high "mutation score" indicates a powerful test suite, increasing confidence in the model's correctness. |
| Problem-Level Testing API [5] | Creates an interface to test the model's solutions directly against the original natural language problem description, independent of the specific formulation. | Crucial for avoiding false positives/negatives when multiple, mathematically different models can solve the same problem. |
| Process Mining Techniques [3] | Uses event data to discover, check conformance, and enhance process models within the ABM, providing data-driven insights into agent behaviors. | Helps bridge the gap between simulated processes and real-world workflow data. |
| Iterative Participatory Modeling (IPM) [2] | A collaborative approach where researchers and stakeholders jointly develop and validate the model through iterative loops of field study, role-playing, and computational experiments. | Grounds the model in practical expertise and increases its credibility and usefulness for stakeholders. |
| Generative Agent Validation Protocols [1] | Specialized procedures for validating ABMs that use LLMs to power agent reasoning and communication. | Addresses unique challenges like LLM stochasticity, cultural bias, and the "black-box" problem, moving beyond simple face-validity checks. |

The Critical Role of V&V in Regulatory Acceptance of In Silico Trials for Medicinal Products

FAQs: Core Concepts of V&V for In Silico Trials

FAQ 1: What is the fundamental difference between verification and validation (V&V) in the context of in silico trials?

Verification and validation are distinct but complementary processes. Verification answers the question "Are we building the model correctly?" It ensures that the computational model is implemented correctly and without errors, typically through code verification and numerical accuracy checks [6] [7]. Validation answers the question "Are we building the correct model?" It ensures the model accurately represents the real-world biological and physiological phenomena it intends to simulate, achieved by comparing model predictions with experimental or clinical data [6] [7].

FAQ 2: Why is V&V critically important for the regulatory acceptance of in silico trials?

Regulatory agencies such as the FDA expect any method used in a regulatory submission, including a computational model, to be "qualified" [7]. A comprehensive V&V process is the primary pathway to demonstrating the credibility of a model for a specific Context of Use [7]. This is formalized in frameworks like the ASME V&V 40 standard, which provides a structured approach for assessing model credibility based on the risk of the regulatory decision [6] [7]. Without rigorous V&V, in silico evidence is unlikely to be accepted for critical decisions regarding drug safety and efficacy.

FAQ 3: What is a 'Context of Use' and why is it the starting point for V&V?

The Context of Use (COU) is a precise definition of how the simulation will be used to inform a specific regulatory decision [7]. It defines the specific question the model aims to answer, the patient population, and the clinical endpoint. The COU is the foundation of the entire V&V strategy because it determines the required level of model credibility and the scope of the validation activities [7]. The risk associated with the regulatory decision directly influences the stringency of the V&V requirements.

FAQ 4: What are the key pillars of a credibility assessment for a computational model?

The credibility assessment is built upon several key pillars, which are evaluated relative to the model's Context of Use [6] [7]:

- Verification: Ensuring the computational model is solved correctly.

- Validation: Assessing the model's accuracy in representing reality.

- Uncertainty Quantification: Characterizing the uncertainty in model predictions, which includes managing model parameter uncertainty, model structure uncertainty, and numerical uncertainty [6].

- Related Activities: This includes documentation, transparency of assumptions, and the qualifications of the modelers.

Troubleshooting Guides: Common V&V Challenges and Solutions

Guide 1: Addressing Inadequate Model Validation

Problem: Model predictions do not sufficiently match real-world experimental or clinical data, raising doubts about its predictive power for the intended Context of Use.

Troubleshooting Steps:

- Review the Context of Use: Re-examine the COU to ensure the validation data is appropriate and relevant. The clinical endpoint being predicted by the model must align with the validation dataset [7].

- Assess Data Quality and Relevance: Evaluate whether the source data used for validation is of high quality, sufficiently large, and representative of the target patient population. A virtual cohort must be validated against real datasets to ensure its biological plausibility [8].

- Expand the Validation Scope: Move beyond simple descriptive output validation. Implement a comprehensive validation strategy that includes:

- Descriptive Output Validation: How well the model captures salient features of the sample data used for its identification (in-sample fitting) [2].

- Predictive Output Validation: How well the model can forecast outcomes for new data withheld from the model identification process (out-of-sample forecasting) [2].

- Process Validation: Ensure the internal mechanisms of the model reflect real-world biological and physical processes [2].

Guide 2: Managing Model Uncertainty and Variability

Problem: The model fails to adequately account for uncertainty, making its predictions unreliable for regulatory decision-making.

Troubleshooting Steps:

- Categorize Sources of Uncertainty: Systematically identify and document all sources of uncertainty. These typically include [6]:

- Model Parameter Uncertainty: Arising from variability in material properties or anatomical parameters.

- Model Structure Uncertainty: Stemming from limitations in the mathematical formulation.

- Numerical Uncertainty: Resulting from computational approximations.

- Implement Uncertainty Quantification (UQ): Use UQ methods to propagate these uncertainties through the model to quantify their impact on the final prediction. This provides a confidence interval for the model's output, which is critical for regulators to understand the model's limitations [7].

- Perform Sensitivity Analysis: Conduct a sensitivity analysis to identify which input parameters have the greatest influence on the model's output. This helps prioritize efforts for uncertainty reduction and model refinement [6].
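As a rough illustration of the last two steps, the sketch below propagates input uncertainty through a model by Monte Carlo sampling and then runs a one-at-a-time sensitivity check. The `toy_model` function, the parameter names, and their ranges are assumptions made for the example; a real study would call the ABM itself and would likely use a more thorough global sensitivity method.

```python
import random
import statistics

def toy_model(clearance, dose):
    """Hypothetical stand-in for one model evaluation: steady-state drug exposure."""
    return dose / clearance

def propagate(n_samples=2000, seed=42):
    """Sample the uncertain inputs and return the resulting model outputs."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(n_samples):
        clearance = rng.uniform(4.0, 6.0)   # assumed parameter range
        dose = rng.gauss(100.0, 5.0)        # assumed dosing variability
        outputs.append(toy_model(clearance, dose))
    return outputs

outputs = sorted(propagate())
lower = outputs[int(0.025 * len(outputs))]   # empirical 2.5th percentile
upper = outputs[int(0.975 * len(outputs))]   # empirical 97.5th percentile
print(f"mean = {statistics.mean(outputs):.2f}, 95% interval = [{lower:.2f}, {upper:.2f}]")

def oat_sensitivity(base, delta=0.10):
    """One-at-a-time sensitivity: relative output change for a +10% change in each input."""
    reference = toy_model(**base)
    effects = {}
    for name in base:
        perturbed = dict(base, **{name: base[name] * (1 + delta)})
        effects[name] = (toy_model(**perturbed) - reference) / reference
    return effects

effects = oat_sensitivity({"clearance": 5.0, "dose": 100.0})
print(effects)
```

Inputs with the largest absolute effect are the natural first candidates for better measurement or tighter priors.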

Guide 3: Navigating Regulatory Hurdles for a Novel In Silico Model

Problem: Lack of clarity on the evidence package needed to secure regulatory qualification for a new in silico model.

Troubleshooting Steps:

- Adhere to Established Frameworks: Structure your V&V plan according to recognized regulatory frameworks, such as the FDA's Credibility Assessment Framework and the ASME V&V 40 standard [6] [7].

- Engage Regulators Early: Seek early feedback from regulatory agencies through pre-submission meetings (e.g., FDA's Q-Sub program). Present your proposed COU and V&V plan to establish the acceptability of your approach before investing significant resources [6].

- Build a Comprehensive Evidence Dossier: Your submission should be more than just model predictions. It must include [6] [7]:

- A clearly defined COU.

- A detailed V&V report, including all methods and results.

- A thorough uncertainty quantification.

- Transparent reporting of all model assumptions and limitations.

Experimental Protocols & Data

Detailed Methodology for Virtual Cohort Validation

The following protocol, derived from the EU-Horizon SIMCor project, outlines the steps for generating and validating a virtual cohort, a cornerstone of in silico trials [8].

Objective: To create a virtual patient cohort that is statistically indistinguishable from a real-world patient population for specific biomarkers and clinical parameters.

Procedure:

- Data Acquisition and Curation: Collect high-quality, real-world patient data from clinical trials, registries, or electronic health records. This dataset must be cleaned and curated.

- Define Target Distributions: From the real data, define the multivariate statistical distributions (e.g., joint distributions of age, weight, biomarkers) that the virtual cohort must replicate.

- Cohort Generation: Use statistical sampling methods (e.g., multivariate Gaussian sampling, copula-based methods) to generate a virtual cohort that reflects the target distributions.

- Initial Comparison: Perform initial visual checks (e.g., histograms, Q-Q plots) and basic statistical tests to compare the virtual and real cohorts.

- Formal Statistical Validation: Apply a suite of formal statistical tests to demonstrate equivalence. The table below summarizes key techniques as implemented in open-source tools like the SIMCor statistical web application [8].

Statistical Techniques for Virtual Cohort Validation

Table 1: Statistical Methods for Validating Virtual Cohorts against Real-World Data [8]

| Technique Category | Specific Method | Function in Validation |
|---|---|---|
| Descriptive Statistics | Summary Statistics (Mean, SD, Quantiles) | Initial comparison of central tendency and dispersion for key variables. |
| Goodness-of-Fit Tests | Kolmogorov-Smirnov Test, Anderson-Darling Test | Test whether a sample (virtual cohort) comes from a specified distribution (derived from real data). |
| Multivariate Comparison | Hotelling's T² Test, Mahalanobis Distance | Compare means of multiple variables simultaneously between the virtual and real cohorts. |
| Correlation Analysis | Pearson/Spearman Correlation | Compare the correlation structures of multiple parameters within the cohorts. |
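To make the goodness-of-fit row concrete, here is a minimal two-sample Kolmogorov-Smirnov sketch in plain Python. It is not the SIMCor implementation: the cohorts are synthetic, the variable is an assumed "age" with made-up means, and the critical value uses the standard large-sample approximation for a significance level of 0.05. In practice a statistics library would normally be used instead.

```python
import math
import random

def ks_two_sample(sample_a, sample_b):
    """Return the two-sample Kolmogorov-Smirnov statistic D (largest ECDF gap)."""
    a, b = sorted(sample_a), sorted(sample_b)
    i = j = 0
    d = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Advance both ECDFs past every observation equal to x (handles ties).
        while i < len(a) and a[i] == x:
            i += 1
        while j < len(b) and b[j] == x:
            j += 1
        d = max(d, abs(i / len(a) - j / len(b)))
    return d

def ks_critical_value(n, m, c_alpha=1.358):
    """Large-sample critical value; c_alpha = 1.358 corresponds to alpha = 0.05."""
    return c_alpha * math.sqrt((n + m) / (n * m))

rng = random.Random(0)
real = [rng.gauss(60.0, 10.0) for _ in range(300)]      # stand-in for real patient ages
matched = [rng.gauss(60.0, 10.0) for _ in range(300)]   # virtual cohort, same distribution
shifted = [rng.gauss(70.0, 10.0) for _ in range(300)]   # virtual cohort with a biased mean

critical = ks_critical_value(300, 300)
for label, cohort in (("matched", matched), ("shifted", shifted)):
    d = ks_two_sample(real, cohort)
    print(f"{label}: D = {d:.3f}, critical = {critical:.3f}, reject = {d > critical}")
```

A virtual cohort drawn from the right distribution should usually fall below the critical value, while the biased one should exceed it clearly. Failing to reject is not proof of equivalence, so this check complements rather than replaces the multivariate and correlation comparisons in the table.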

Agent-Based Model V&V Protocol

For agent-based models (ABMs) used in in silico trials, a comprehensive empirical validation strategy is required.

Objective: To ensure the ABM is consistent with empirical data and fit for its intended purpose.

Procedure:

- Input Validation: Ensure all exogenous inputs to the model are empirically meaningful. This includes initial conditions, parameter values (e.g., from literature or estimated from data), and functional forms [2].

- Process Validation: Verify that the physical, biological, and behavioral rules governing the agents' actions are consistent with real-world knowledge and constraints [2]. This is crucial for building trust in the model's internal mechanisms.

- Descriptive Output Validation (In-Sample Fitting): Assess how well the model-generated outputs can capture the salient features of the sample data used for its identification and calibration [2].

- Predictive Output Validation (Out-of-Sample Forecasting): This is the strongest test of a model's predictive power. Validate the model's ability to forecast distributions or moments for new data that was withheld during the model development phase [2].

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Tools and Frameworks for In Silico Trial V&V

| Tool / Framework | Type | Function in V&V |
|---|---|---|
| ASME V&V 40 Standard | Regulatory Framework | Provides a structured methodology for assessing the credibility of computational models used in medical applications, based on model risk and Context of Use [6] [7]. |
| SIMCor R-Statistical Web App | Open-Source Software | An open-source menu-driven web application providing a statistical environment specifically for validating virtual cohorts and analyzing in-silico trials [8]. |
| Leadscope Hazard Assessment Platform | Commercial Software | An interactive platform for implementing integrated hazard assessment protocols (e.g., ICH M7), integrating both experimental and in silico results for a weight-of-evidence approach [9]. |
| FDA Credibility Assessment Framework | Regulatory Guidance | Outlines the FDA's approach for evaluating the credibility of computational models submitted in medical device applications, based on the ASME V&V 40 standard [6]. |
| Digital Twins | Computational Model | A virtual representation of a patient or population that integrates multi-omics and real-world data to simulate disease progression and treatment response; requires extensive V&V [10]. |
| In Silico Toxicology Protocols | Standardized Method | Published protocols (e.g., for genetic toxicology, skin sensitization) that define a battery of tests and rules for combining in silico and experimental data to ensure consistent, defendable assessments [9]. |

Technical Support Center: ABM Verification

Frequently Asked Questions (FAQs)

Q1: Why are my ABM simulation results not reproducible even with the same input parameters?

This is a fundamental issue in ABM verification, and it usually stems from uncontrolled stochastic elements. Unlike deterministic models, ABMs use pseudo-random number generators (PRNGs) for initial agent distribution, environmental factors, and agent interactions. If the seeds of these generators are not fixed and recorded, results will vary from run to run.

- Solution: Implement a Random Seed Control Protocol. Systematically record the seed values for all stochastic variables used in a simulation run. For complete reproducibility, ensure your model uses separate PRNGs for different stochastic processes, as seen in the UISS-TB model which uses three different generators for initial distribution, environmental factors, and HLA types [11].
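A minimal sketch of such a seed-control protocol is shown below. The stream names mirror the three processes mentioned for UISS-TB, but the seed values, the toy draws, and the `run_simulation` function are assumptions for illustration. Python's `random.Random` is a Mersenne Twister generator; the sketch uses one instance per process rather than three different algorithms.

```python
import json
import random

# Hypothetical seed values, one per stochastic process.
SEEDS = {"initial_distribution": 101, "environment": 202, "hla_types": 303}

def build_streams(seeds):
    """Create one independent PRNG per stochastic process."""
    return {name: random.Random(seed) for name, seed in seeds.items()}

def run_simulation(seeds, n_agents=5):
    """Toy run: each process draws only from its own stream."""
    streams = build_streams(seeds)
    positions = [streams["initial_distribution"].randrange(100) for _ in range(n_agents)]
    temperature = streams["environment"].gauss(37.0, 0.5)
    hla = [streams["hla_types"].getrandbits(8) for _ in range(n_agents)]
    return {"seeds": dict(seeds), "positions": positions,
            "temperature": temperature, "hla": hla}

first = run_simulation(SEEDS)
second = run_simulation(SEEDS)
print(json.dumps(first["seeds"]))          # the seed record to store with the results
print("identical runs:", first == second)

# Changing one seed perturbs only the process that owns that stream.
third = run_simulation(dict(SEEDS, environment=999))
print("positions unchanged:", third["positions"] == first["positions"])
```

Keeping the streams separate means one source of randomness can be varied while the others are held fixed, which is what makes the later deterministic and stochastic studies possible.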

Q2: How can I determine if my ABM has converged to a solution, and how many simulation runs are needed?

Because ABMs are stochastic, a single run is not enough: the system's behavior must be characterized over multiple runs. Deciding how many runs suffice is a core epistemic challenge.

- Solution: Perform a Stochastic Output Analysis. Conduct a pilot study by running the model multiple times (e.g., 50-100 runs) for a fixed set of inputs. Calculate the mean and variance of your key output metrics. Continue increasing the number of runs until the confidence intervals for these outputs stabilize. This process, part of distributional equivalence, identifies the number of repetitions needed to establish the model's statistical properties [11].
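One way to operationalize this is sketched below. It assumes a hypothetical `run_model` that returns a single output metric, interprets "stabilize" as the 95% confidence-interval half-width of the mean dropping below a chosen target, and uses the normal approximation (the factor 1.96). All three are assumptions, not part of the cited procedure.

```python
import math
import random
import statistics

def run_model(seed):
    """Hypothetical stand-in for one ABM run returning a single output metric."""
    rng = random.Random(seed)
    return sum(rng.random() < 0.3 for _ in range(200))  # e.g. infected agents out of 200

def runs_needed(target_half_width, pilot=50, step=50, max_runs=5000):
    """Add runs in batches until the 95% CI half-width of the mean meets the target."""
    outputs = [run_model(seed) for seed in range(pilot)]
    while True:
        half_width = 1.96 * statistics.stdev(outputs) / math.sqrt(len(outputs))
        if half_width <= target_half_width or len(outputs) >= max_runs:
            return len(outputs), statistics.mean(outputs), half_width
        start = len(outputs)
        outputs.extend(run_model(seed) for seed in range(start, start + step))

n_runs, mean, half_width = runs_needed(target_half_width=0.5)
print(f"{n_runs} runs: mean = {mean:.2f} +/- {half_width:.2f}")
```

The target half-width should come from the precision the Context of Use requires. The same loop can also track the variance or a quantile if those are the outputs of interest.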

Q3: My ABM code is bug-free, but the results still don't match expected trends. Is this a verification or validation problem?

This touches on the critical distinction between verification and validation. If the code correctly implements the intended rules but the outcomes are unexpected, it is likely a validation issue (checking if the model accurately represents the real world). Verification ensures you are "building the model right," while validation ensures you are "building the right model" [11]. Relational alignment, which involves comparing predictions with expected trends, is part of validation, not verification [11].

Q4: What is the difference between code verification and solution (model) verification for ABMs?

This is a crucial distinction in the verification workflow.

- Code Verification: Ensures the software is implemented correctly and is free of programming bugs. This involves standard software testing practices like unit and integration tests [11].

- Solution (Model) Verification: Aims to quantify the numerical approximation errors of the model itself. For ABMs, this involves specific studies to evaluate errors from sources like spatial discretization and stochasticity, which conventional equation-based methods cannot address [11].

Experimental Protocols for ABM Verification

Protocol 1: Deterministic Verification Test

Objective: To verify the deterministic logic of agent rules by removing stochastic influences.

- Isolate Stochastic Variables: Identify all points in the model where random number generators are used (e.g., agent movement, rule selection).

- Fix Random Seeds: Set all random seeds to a fixed, known value at the start of the simulation.

- Run Simulation: Execute the model with a specific set of initial conditions.

- Re-run and Compare: Execute the model again with the same fixed random seeds and initial conditions. The results must be bit-for-bit identical. Any divergence indicates a problem in the deterministic logic of the code or an uncontrolled external dependency [11].
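The re-run-and-compare step can be automated by hashing the raw output bytes, so that the comparison is truly bit-for-bit rather than "close enough". The sketch below assumes a hypothetical `run_model` (a seeded random walk) in place of the real ABM.

```python
import hashlib
import random
import struct

def run_model(seed, steps=100):
    """Hypothetical stand-in for an ABM: a seeded random walk returning its trajectory."""
    rng = random.Random(seed)
    position, trajectory = 0.0, []
    for _ in range(steps):
        position += rng.uniform(-1.0, 1.0)
        trajectory.append(position)
    return trajectory

def fingerprint(trajectory):
    """Hash the raw IEEE-754 bytes so the comparison is bit-for-bit, not approximate."""
    raw = struct.pack(f"<{len(trajectory)}d", *trajectory)
    return hashlib.sha256(raw).hexdigest()

run_a = fingerprint(run_model(seed=1234))
run_b = fingerprint(run_model(seed=1234))
run_c = fingerprint(run_model(seed=1235))
print("reproducible:", run_a == run_b)
print("seed-sensitive:", run_a != run_c)
```

Storing the fingerprint alongside the seed record gives a cheap regression check: a changed hash after a refactor signals that the deterministic behavior has shifted.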

Protocol 2: Grid Convergence Study for Spatial Discretization Error

Objective: To quantify the numerical error introduced by the spatial discretization (e.g., the Cartesian lattice used in UISS-TB) [11].

- Define a Baseline: Select a spatial grid with a very fine resolution that can be considered a "reference" solution.

- Create Coarser Grids: Define a series of progressively coarser grids (e.g., 2x, 4x coarser than the baseline).

- Run Simulations: Execute the model on each grid level using the same fixed random seeds from Protocol 1.

- Quantify Error: For each key output metric, calculate the difference between the coarse-grid result and the baseline reference solution. This helps establish how sensitive your model is to the choice of spatial resolution [11].
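The structure of such a study is sketched below with a deliberately simple, deterministic stand-in for the model: the area of a circular region estimated by counting lattice cells. The function `lesion_area`, the radius, and the list of resolutions are all assumptions. In a stochastic ABM, each call would be a run with the fixed seeds described above.

```python
def lesion_area(cells_per_side, radius=0.3):
    """Hypothetical lattice output: area of a circular region on the unit square,
    estimated by counting lattice cells whose centres fall inside it."""
    h = 1.0 / cells_per_side
    inside = 0
    for i in range(cells_per_side):
        for j in range(cells_per_side):
            x, y = (i + 0.5) * h, (j + 0.5) * h
            if (x - 0.5) ** 2 + (y - 0.5) ** 2 <= radius ** 2:
                inside += 1
    return inside * h * h

def grid_convergence(resolutions):
    """Compare each grid with the finest one, which serves as the reference solution."""
    reference = lesion_area(max(resolutions))
    return {n: abs(lesion_area(n) - reference) / reference for n in sorted(resolutions)}

errors = grid_convergence([25, 50, 100, 200, 400])
for n, err in errors.items():
    print(f"{n:>4} cells per side: relative error = {err:.4%}")
```

If the error does not shrink as the grid is refined, either the reference grid is still too coarse or the output depends on lattice resolution in a way the model design needs to account for.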

ABM Verification Workflow

The following diagram illustrates the step-by-step procedure for verifying an Agent-Based Model, integrating both deterministic and stochastic studies.

The Scientist's Toolkit: Research Reagent Solutions for ABM Verification

The table below details key components required for a rigorous ABM verification process, as exemplified by the UISS-TB model [11].

Table: Essential Components for an ABM Verification Framework

| Component | Function in Verification | Example from UISS-TB Model |
|---|---|---|
| Pseudo-Random Number Generators (PRNGs) | Introduces controlled stochasticity for testing; different algorithms can be used for different processes. | MT19937, TAUS2, and RANLUX algorithms for different random seeds [11]. |
| Fixed Random Seeds | Enables deterministic verification by ensuring the same "random" sequence is used across runs for reproducibility testing [11]. | Used to separate deterministic and stochastic aspects of the model for individual study [11]. |
| Vector of Input Features | Provides a standardized set of inputs with defined ranges to test model behavior across the operational domain. | 22 input parameters (e.g., Th1 cells, IL-2, patient age) with min/max values [11]. |
| Spatial Domain (Lattice) | The environment for agent interaction; its resolution must be tested for convergence as part of solution verification. | A two-dimensional Cartesian lattice structure [11]. |
| Agent Interaction Rules | The core logic of the ABM (e.g., bit-string matching); must be verified for correct implementation. | Receptor-ligand binding modeled with bit string matching rules based on Hamming distance [11]. |
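For readers unfamiliar with bit-string matching, the sketch below shows a Hamming-distance computation and a simple binding rule that can be unit tested. The 8-bit length, the threshold, and the convention that binding requires complementary (mismatching) bits are assumptions made for illustration; the source does not specify the exact rule used in UISS-TB.

```python
def hamming_distance(a, b):
    """Number of bit positions in which two equal-length bit strings differ."""
    return bin(a ^ b).count("1")

def binds(receptor, ligand, n_bits=8, min_mismatch=6):
    """Hypothetical rule: binding occurs when the strings are sufficiently
    complementary, i.e. the Hamming distance reaches the chosen threshold."""
    mask = (1 << n_bits) - 1
    return hamming_distance(receptor & mask, ligand & mask) >= min_mismatch

receptor = 0b10110010
perfect_complement = 0b01001101
near_copy = 0b10110011

print(hamming_distance(receptor, perfect_complement), binds(receptor, perfect_complement))
print(hamming_distance(receptor, near_copy), binds(receptor, near_copy))
```

Rules this small are ideal targets for exhaustive unit tests, since every pair of 8-bit strings can be enumerated.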

Quantitative Data from Verified ABM Studies

The UISS-TB model, used as a case study for verification, relies on a specific set of quantitative inputs to simulate the immune response to tuberculosis [11].

Table: Example Input Parameters for the UISS-TB Agent-Based Model [11]

| Input Parameter | Description | Minimum Value | Maximum Value |
|---|---|---|---|
| Mtb_Sputum | Bacterial load in the sputum smear | 0 CFU/ml | 10,000 CFU/ml |
| Th1 | CD4 T cell type 1 | 0 cells/μl | 100 cells/μl |
| TC | CD8 T cell | 0 cells/μl | 1134 cells/μl |
| IL-2 | Interleukin 2 | 0 pg/ml | 894 pg/ml |
| IFN-g | Interferon gamma | 0 pg/ml | 432 pg/ml |
| Patient Age | Age of the virtual patient | 18 years | 65 years |

Troubleshooting Guides

Guide 1: Resolving Discrepancies Between Model Outputs and Expected Results

Problem: Your model produces unexpected outcomes or fails to replicate known behaviors, raising questions about its internal correctness.

Solution: This is often a verification issue. Follow this systematic procedure to diagnose and resolve the problem.

Step 1: Code Verification

- Action: Conduct unit testing on individual agent functions and interaction rules.

- Example: If your model has a move_agent() function, verify with a simple test case that the agent's position updates correctly. Check that probabilistic rules (e.g., infection probability) produce outcomes consistent with their defined distributions over many runs.

- Rationale: Ensures that the computational implementation is free of software bugs and correctly translates the model's design into code [11].
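The two kinds of unit test described in Step 1 might look like the sketch below. The `move_agent` signature, the periodic boundary, and the `is_infected` rule are hypothetical; the statistical check allows about four standard errors around the expected frequency so that a correct implementation very rarely fails.

```python
import random

def move_agent(position, step, width, height):
    """Hypothetical movement rule on a lattice with periodic (wrap-around) boundaries."""
    x, y = position
    dx, dy = step
    return ((x + dx) % width, (y + dy) % height)

def is_infected(rng, probability):
    """Hypothetical probabilistic rule: infection occurs with the given probability."""
    return rng.random() < probability

def test_move_updates_position():
    assert move_agent((2, 3), (1, 0), 10, 10) == (3, 3)

def test_move_wraps_at_boundary():
    assert move_agent((9, 0), (1, -1), 10, 10) == (0, 9)

def test_infection_frequency_matches_probability():
    rng = random.Random(7)
    n, p = 20_000, 0.3
    hits = sum(is_infected(rng, p) for _ in range(n))
    tolerance = 4 * (p * (1 - p) / n) ** 0.5   # about four standard errors
    assert abs(hits / n - p) <= tolerance

for test in (test_move_updates_position,
             test_move_wraps_at_boundary,
             test_infection_frequency_matches_probability):
    test()
print("all unit tests passed")
```

In a real project these functions would normally live in a test module and be collected by a test runner instead of being called in a loop.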

Step 2: Deterministic Model Verification

- Action: Run the model with fixed random seeds and simplified initial conditions.

- Example: Initialize all agents at the same location with identical properties and disable stochastic elements. Verify that the model produces identical, predictable results each time it is run. This checks the core logic without the confounding factor of randomness [11].

- Rationale: Isolates and tests the deterministic skeleton of your model, ensuring that state transitions and interactions function as designed when randomness is removed.

Step 3: Stochastic Model Verification

- Action: Assess the impact of numerical approximations and stochastic elements.

- Example: Run the model multiple times (hundreds or thousands) using different random seeds. Analyze the ensemble of outputs to ensure the distribution of results is plausible and that the model does not exhibit extreme, unexplainable variance.

- Rationale: Quantifies the numerical error associated with the model's stochasticity and ensures that random elements are integrated correctly [11].

Step 4: Solution Verification

- Action: Evaluate the numerical convergence of your model.

- Example: If your model uses a spatial or temporal grid, run simulations with progressively finer resolutions. If the results do not change significantly beyond a certain resolution, your model has likely converged numerically [11].

- Rationale: Confirms that the model's outcomes are not artifacts of discretization choices.

Guide 2: Addressing Failed Calibration with Observational Data

Problem: Your model has been verified but cannot be adequately calibrated to fit real-world observational data, even after adjusting parameters.

Solution: The issue may lie in the model structure, the calibration method, or the data itself.

Step 1: Perform a Stand-alone Calibration Verification

- Action: Use synthetic data generated by your own model for calibration.

- Example: Choose a known set of parameter values, run your model to generate "fake" observed data, and then attempt to recover the original parameters using your calibration method. This isolates the calibration process from model structure error [12].

- Rationale: If your calibration method cannot recover known parameters from synthetic data, the calibration process itself is flawed, and no amount of real-world data will fix it [12].
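A parameter-recovery check of this kind can be sketched as follows. The `simulate` function is a hypothetical stand-in ABM with one parameter, and the calibration method is a plain grid search on squared error; both are assumptions chosen to keep the example short, not the method of the cited work.

```python
import random

def simulate(infection_prob, seed, n_agents=2000, n_steps=10):
    """Hypothetical stand-in ABM: each susceptible agent is infected with a fixed
    probability per step; returns the cumulative infected count after each step."""
    rng = random.Random(seed)
    susceptible, curve = n_agents, []
    for _ in range(n_steps):
        new_cases = sum(rng.random() < infection_prob for _ in range(susceptible))
        susceptible -= new_cases
        curve.append(n_agents - susceptible)
    return curve

def mean_curve(infection_prob, seeds):
    """Average the output curve over several seeded runs."""
    curves = [simulate(infection_prob, s) for s in seeds]
    return [sum(values) / len(values) for values in zip(*curves)]

def calibrate(observed, candidates, seeds):
    """Grid search: pick the candidate whose mean curve has the least squared error."""
    def loss(p):
        return sum((m - o) ** 2 for m, o in zip(mean_curve(p, seeds), observed))
    return min(candidates, key=loss)

TRUE_PROB = 0.12
synthetic = simulate(TRUE_PROB, seed=999)                 # "fake" observed data
candidates = [round(0.02 * k, 2) for k in range(1, 16)]   # 0.02, 0.04, ..., 0.30
estimate = calibrate(synthetic, candidates, seeds=range(5))
print(f"true = {TRUE_PROB}, recovered = {estimate}")
```

If the recovered value lands far from the known one, the fault lies in the calibration procedure (loss function, search strategy, or number of replicate runs) rather than in the model structure or the real data.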

Step 2: Review Input Validation

- Action: Check the empirical meaningfulness of your model's inputs and parameters.

- Example: Ensure that initial state conditions, parameter ranges, and functional forms are biologically or socially plausible and appropriate for the intended purpose of the model [2].

- Rationale: A model cannot be credible if its foundational inputs are not empirically justified.

Step 3: Conduct Process Validation

- Action: Verify that the model's internal processes reflect real-world mechanisms.

- Example: In a disease model, check that the rules for agent interaction, transmission, and recovery are consistent with known epidemiological principles and data [2].

- Rationale: Ensures the model is not just fitting curves but is simulating processes in a way that mirrors reality.

Step 4: Evaluate Predictive Output Validation

- Action: Test the model's ability to forecast out-of-sample data.

- Example: Calibrate your model on the first 30 days of an outbreak and validate its predictions against the subsequent 15 days of data that were withheld from the calibration process [2].

- Rationale: A model that fits calibration data well but fails to predict new data may be overfitted and lacks true predictive power.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a deterministic and a stochastic model? A deterministic model lacks any randomness. Given a fixed set of inputs and initial conditions, it will always produce the exact same output. It establishes a transparent cause-and-effect relationship [13] [14]. In contrast, a stochastic model incorporates inherent randomness. Even with identical inputs and initial conditions, it will produce an ensemble of different outputs, which can be analyzed statistically [13] [15]. This makes stochastic models better suited for capturing the uncertainty and variability present in real-world biological systems.

Q2: When should I choose a stochastic modeling approach over a deterministic one for my agent-based model? You should prioritize a stochastic approach when your system involves inherent randomness or when component copy numbers are small [15]. This is critical in biological applications like intracellular signaling, gene regulation, and epidemic spread, where random molecular interactions or individual contact events can significantly influence macro-level outcomes [15]. Stochastic models prevent the oversimplification of these complex, noisy processes.

Q3: My stochastic model shows a bimodal distribution of outcomes, but my corresponding deterministic model has only one stable fixed point. Why does this discrepancy occur? This challenging scenario can arise in mesoscopic systems that are not close to the thermodynamic limit. Factors such as large stoichiometric coefficients and the presence of nonlinear reactions can synergistically promote large, asymmetric fluctuations [15]. As a result, a system that is monostable from a deterministic perspective can exhibit bimodality (two distinct outcome peaks) in its stochastic probability distribution. This highlights a key limitation of deterministic ODE modeling in systems with low copy numbers [15].

Q4: What is the difference between model verification and validation? Verification is the process of ensuring that the model is built and implemented correctly—that is, "Are we building the model right?" It involves checking the internal correctness of the code and the numerical solution, often through tests like deterministic and stochastic verification [11] [2]. Validation, on the other hand, is the process of ensuring that the right model has been built for its intended purpose—that is, "Are we building the right model?" It involves comparing model outputs with real-world data to assess the model's accuracy and usefulness [2] [16].

Q5: What is Simulation-Based Calibration (SBC) and why is it useful? Simulation-Based Calibration is a calibration verification method that uses synthetic data. The core process involves: 1) drawing parameters from a prior distribution, 2) generating synthetic data using these parameters in your model, 3) performing a full Bayesian inference to recover the posterior distribution of the parameters, and 4) analyzing the resulting posteriors to check for systematic biases [12]. SBC is useful because it isolates and tests the calibration procedure independently of model structure error and problems with real-world data quality. It can reveal calibration issues that might be hidden by standard validation techniques like posterior predictive checks [12].

Experimental Protocols & Data Presentation

Detailed Protocol: Simulation-Based Calibration for Verification

This protocol provides a methodology for verifying the calibration process of a stochastic agent-based model, as discussed in [12].

1. Objective: To verify that the chosen model calibration method (e.g., Bayesian inference) can accurately recover known model parameters from synthetic data, thereby isolating calibration errors from other model deficiencies.

2. Materials:

- A fully implemented stochastic agent-based model.

- A defined set of parameters to be calibrated and their prior distributions.

- Access to high-performance computing resources for thousands of model runs.

3. Procedure:

- Step 3.1: Define a prior distribution, P(θ), for the parameters of interest, θ.

- Step 3.2: For each calibration trial i (where i ranges from 1 to N, e.g., N = 1000):

- a. Sample a parameter vector, θ*i, from the prior P(θ).

- b. Run the ABM using θ*i to generate a synthetic dataset, Di.

- c. Treat Di as observed data and perform a full Bayesian calibration to compute the posterior distribution, P(θ | Di).

- Step 3.3: For each parameter, analyze the collection of posteriors across all trials. In a well-calibrated method, the normalized rank of the true parameter value θ*i among the samples drawn from its posterior, P(θ | Di), should be uniformly distributed between 0 and 1.

4. Analysis:

- Deviations from a uniform distribution indicate a bias in the calibration process. For example, if the true parameter value consistently lies in the extreme tails of its posterior, the calibration method is failing to capture it accurately, suggesting issues with the likelihood function or sampling algorithm [12].
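The procedure and rank analysis above can be sketched with a toy problem. To keep the example self-contained, a conjugate normal model (prior N(0, 1), unit-variance observations) stands in for the ABM plus Bayesian inference engine, so the exact posterior is available in closed form. The function names and the `posterior_sd_scale` knob, which deliberately breaks the posterior to mimic a faulty calibration, are hypothetical.

```python
import random

def sbc_ranks(n_trials, n_obs, n_draws, posterior_sd_scale, seed):
    """Run SBC trials and return the rank of each true parameter among
    n_draws posterior samples (rank is an integer in 0..n_draws)."""
    rng = random.Random(seed)
    ranks = []
    for _ in range(n_trials):
        theta_true = rng.gauss(0.0, 1.0)                 # Step 3.2a: sample from prior
        data = [rng.gauss(theta_true, 1.0) for _ in range(n_obs)]  # Step 3.2b
        # Step 3.2c: exact conjugate posterior for N(0, 1) prior, unit noise.
        post_mean = sum(data) / (n_obs + 1)
        post_sd = posterior_sd_scale * (1.0 / (n_obs + 1)) ** 0.5
        draws = [rng.gauss(post_mean, post_sd) for _ in range(n_draws)]
        ranks.append(sum(d < theta_true for d in draws))
    return ranks

def chi_square_uniform(ranks, n_draws, n_bins=10):
    """Chi-square statistic of the rank histogram against a uniform one."""
    width = (n_draws + 1) / n_bins
    counts = [0] * n_bins
    for r in ranks:
        counts[min(int(r / width), n_bins - 1)] += 1
    expected = len(ranks) / n_bins
    return sum((c - expected) ** 2 / expected for c in counts)

# Correct posterior versus a posterior that is too narrow (overconfident).
good_ranks = sbc_ranks(1000, 5, 99, posterior_sd_scale=1.0, seed=1)
bad_ranks = sbc_ranks(1000, 5, 99, posterior_sd_scale=0.5, seed=1)
chi2_good = chi_square_uniform(good_ranks, 99)
chi2_bad = chi_square_uniform(bad_ranks, 99)

# With 10 bins (9 degrees of freedom) the 99th percentile is about 21.67.
print(f"chi-square, correct posterior:       {chi2_good:.1f}")
print(f"chi-square, overconfident posterior: {chi2_bad:.1f}")
```

The overconfident posterior places the true value in the tails far too often, which produces a U-shaped rank histogram and a very large chi-square statistic. In a real ABM, step 3.2c would be replaced by an MCMC or ABC run, which is what makes SBC computationally expensive.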

Quantitative Data: Model Comparison

Table 1: Comparative Analysis of Deterministic and Stochastic Modeling Approaches.

| Feature | Deterministic Model | Stochastic Model |

|---|---|---|

| Core Concept | Fixed inputs produce identical outputs; no randomness [13] [14]. | Incorporates randomness; produces a distribution of possible outputs [13] [15]. |

| Handling of Uncertainty | Does not account for uncertainty or randomness [14]. | Explicitly considers uncertainty and randomness, providing a range of outcomes [13] [14]. |

| Data Requirements | Lower; can be accurate with less data [14]. | Higher; requires extensive data to capture variability [14]. |

| Computational Cost | Generally lower and more computationally efficient [13] [14]. | Higher; requires many simulations (e.g., Monte Carlo) for statistical power [13] [14]. |

| Interpretability | High; clear cause-and-effect facilitates interpretation [14]. | Can be more complex to interpret due to probabilistic outputs [14]. |

| Ideal Application Context | Systems with well-defined inputs and outputs, high copy numbers, and negligible noise [14] [15]. | Systems with inherent randomness, small copy numbers, and unpredictable futures (e.g., finance, disease spread) [13] [14] [15]. |

Table 2: Key Aspects of a Comprehensive Model Credibility Framework [2].

| Validation Aspect | Description | Key Question |

|---|---|---|

| Input Validation | Assessing the empirical meaningfulness of exogenous model inputs. | Are the initial conditions, parameters, and functional forms appropriate and realistic? |

| Process Validation | Evaluating how well the model's internal mechanisms reflect reality. | Do the simulated physical, biological, and social processes match real-world counterparts? |

| Descriptive Output Validation | Measuring how well model outputs fit the sample data used for calibration. | How well does the model capture the features of the calibration data (in-sample fitting)? |

| Predictive Output Validation | Testing the model's ability to forecast new, out-of-sample data. | How well does the model predict data that was withheld from the calibration process? |

Model Verification Workflow Visualization

Verification Workflow Logic: This diagram outlines the sequential stages of a credibility framework for agent-based models, moving from internal verification tasks (yellow) to external validation against data (blue), with calibration verification (green) serving as a critical bridge.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational and Analytical Tools for ABM Verification.

| Item | Function / Description | Application in Verification |

|---|---|---|

| Pseudo-Random Number Generators (PRNGs) | Algorithms (e.g., MT19937, TAUS 2, RANLUX) that produce reproducible sequences of "random" numbers [11]. | Enables deterministic verification by using fixed seeds. Allows for stochastic verification by generating independent random streams for different model elements (e.g., initial agent distribution, environmental factors) [11]. |

| Sensitivity Analysis Tools | Software services (often agent-based themselves) that automate running large numbers of model simulations across parameter spaces [16]. | Used to test model robustness, identify critical parameters, and perform model calibration. Helps in understanding how variation in inputs affects outputs. |

| Bayesian Inference Engines | Computational tools for Markov Chain Monte Carlo (MCMC) sampling and Approximate Bayesian Computation (ABC) [12]. | The core engine for advanced calibration and calibration verification. Used to estimate parameter posterior distributions and perform Simulation-Based Calibration (SBC). |

| Ensemble Run Managers | Scripts or software that orchestrate and manage thousands of independent stochastic model simulations [11]. | Critical for stochastic verification and generating the data needed to analyze outcome distributions, variances, and other statistical properties. |

| Synthetic Data Generators | The model itself, configured to produce simulated datasets with known ground-truth parameters [12]. | The fundamental "reagent" for calibration verification. Used to test the accuracy and bias of parameter inference methods in a controlled setting. |

A Step-by-Step Guide to Implementing the ABM Verification Workflow

Conducting Existence and Uniqueness Analysis for Numerical Robustness

Frequently Asked Questions

Q1: What are the core aspects of empirical validation for an Agent-Based Model? A comprehensive empirical validation framework for ABMs should address four key aspects [2]:

- Input Validation: Ensuring exogenous model inputs (initial conditions, parameters, functional forms) are empirically meaningful and appropriate.

- Process Validation: Verifying that the modeled physical, biological, and social processes realistically reflect aspects critical to the research purpose and are consistent with scaffolding constraints like physical laws.

- Descriptive Output Validation: Assessing how well model-generated outputs capture salient features of the sample data used for model identification (in-sample fitting).

- Predictive Output Validation: Evaluating how well the model can forecast distributions or moments for withheld sample data or new data acquired later (out-of-sample forecasting).

Q2: Why is model verification distinct from validation, and how is it achieved? Verification ensures the computational model is implemented correctly and behaves as the modeler intends, essentially asking "Did we build the model right?" [2]. This is a prerequisite for validation, which asks "Did we build the right model?" [2]. Verification involves rigorous code testing, debugging, and ensuring that the agent behavior rules and model dynamics are correctly translated into code.

Q3: My ABM produces a wide distribution of outcomes. How should I report these results? The stochastic nature of ABMs means outcomes are often distributions rather than single points. Researchers should run the model numerous times to obtain a representative distribution of outcomes [17]. Results should be summarized across these multiple runs, and reports should accurately communicate this distribution of findings, for example, by using visualizations that show outcome ranges and probabilities [2].

Q4: How can I ensure the findings from my ABM are robust and not just overfitting? Robustness checks ensure model outcomes reflect persistent aspects of the real-world system, not just overfitting to temporary features. This can involve sensitivity analysis on key parameters, testing the model under different initial conditions, and using cross-validation techniques where the model is calibrated on one dataset and tested on another [2].

Q5: What is a common mistake when starting with ABM development? A common mistake is attempting to create an overly complex model that incorporates too many elements from a broad conceptual model at once [17]. Good models balance simplicity and adequate representation. It is recommended to start with a simple model incorporating the core elements and processes, then iteratively expand complexity [17].

Troubleshooting Guides

Problem: Unstable or Non-Convergent Model Behavior

| Potential Cause | Diagnostic Steps | Resolution |

|---|---|---|

| Faulty Agent Logic | Review agent decision rules and utility functions for logical errors; check for unintended circular dependencies. | Simplify agent behavior rules, incorporate bounded rationality with randomness [17], and verify the code implementation. |

| Unrealistic Parameterization | Conduct sensitivity analysis on key input parameters to identify which ones disproportionately drive instability. | Revisit empirical data or theoretical grounds for parameter estimation; ensure inputs are empirically meaningful [2]. |

| Missing Feedback Loops | Analyze model outputs for explosive growth or decay to extinction; map core system feedbacks. | Review the conceptual model to identify and incorporate essential balancing or reinforcing feedbacks [17]. |

Problem: Model Fails Empirical Validation

| Symptom | Investigation | Solution |

|---|---|---|

| Poor In-Sample Fit | Compare model outputs against the full calibration dataset; identify which specific empirical patterns are not captured. | Re-evaluate and refine the conceptual model, agent characteristics, and behavior rules that drive the mismatched patterns [2]. |

| Poor Out-of-Sample Forecasting | Withhold a portion of data during model calibration, then test the calibrated model on this withheld data. | Avoid overfitting by simplifying the model; ensure the model captures general underlying mechanisms rather than noise [2]. |

| Process Inconsistency | Check if model processes violate known physical laws, accounting identities, or institutional constraints. | Adjust the model to conform to all necessary scaffolding constraints as part of process validation [2]. |

Experimental Protocols for Robustness Analysis

Protocol 1: Establishing Solution Existence and Uniqueness

This methodology is adapted from analytical techniques used in fractional dynamics [18] for ABM contexts.

- Problem Formulation: Define the ABM's core dynamics as a system of equations or rules governing agent interactions and state changes.

- Fixed-Point Theorem Application: Frame the problem of finding a steady state or equilibrium within the model as a fixed-point problem, \( s = T(s) \), where T is a model operator.

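- Mathematical Analysis: Apply appropriate fixed-point theorems (e.g., Banach, Brouwer). This often involves demonstrating that the model operator T is a contraction mapping on a complete metric space (Banach, which also gives uniqueness) or that T is continuous and maps a compact convex set into itself (Brouwer, which gives existence only).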

- Conclusion: If the conditions of the theorem are satisfied, the existence (and potentially uniqueness) of a solution or equilibrium for the model is rigorously established [18].
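As a numerical companion to this protocol, the sketch below checks the contraction property empirically and runs the fixed-point iteration from two different starting states. The operator `T` is a hypothetical mean-field update for an adopter fraction, chosen because its derivative is bounded by 0.5 on [0, 1]. The sampled Lipschitz estimate supports, but does not replace, the analytical proof.

```python
def T(s):
    """Hypothetical mean-field update operator for a fraction s in [0, 1]."""
    return 0.2 + 0.5 * s * (1.0 - s)

def estimate_lipschitz(op, lo=0.0, hi=1.0, n=1001):
    """Largest slope between neighbouring grid points: a numerical
    estimate (not a proof) of the contraction constant q on [lo, hi]."""
    xs = [lo + (hi - lo) * i / (n - 1) for i in range(n)]
    return max(abs(op(b) - op(a)) / (b - a) for a, b in zip(xs, xs[1:]))

def fixed_point(op, s0, tol=1e-12, max_iter=1000):
    """Iterate s <- op(s) until successive iterates differ by less than tol."""
    s = s0
    for _ in range(max_iter):
        s_next = op(s)
        if abs(s_next - s) < tol:
            return s_next
        s = s_next
    raise RuntimeError("fixed-point iteration did not converge")

q = estimate_lipschitz(T)            # q < 1 is consistent with a contraction
s_from_low = fixed_point(T, 0.0)     # start from the lower end of the state space
s_from_high = fixed_point(T, 1.0)    # start from the upper end

print(f"estimated contraction constant q = {q:.4f}")
print(f"equilibrium from s0=0: {s_from_low:.10f}")
print(f"equilibrium from s0=1: {s_from_high:.10f}")
```

Both starting points reaching the same equilibrium is what uniqueness predicts. For this particular operator the equilibrium can also be found by hand from s² + s − 0.4 = 0, which gives an independent check.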

Protocol 2: A-Posteriori Numerical Verification

This protocol uses model outputs to rigorously verify properties, based on methods from PDE analysis [19].

- Model Simulation: Run the ABM multiple times to generate a distribution of solution trajectories.

- Norm Tracking: From the simulation data, compute the time evolution of a key metric or norm \( \|s(t)\| \) that signifies the system's state.

- Differential Inequality Construction: Derive a scalar differential equation or inequality that describes the growth of this norm, \( \frac{d}{dt} \|s(t)\| \leq F(\|s(t)\|) \), where the function F may depend on empirical data from the simulations.

- Numerical Bound Propagation: Instead of solving the inequality analytically, use numerical methods to compute rigorous upper bounds for its solution. If the norm remains bounded, it verifies the stability and robustness of the model solution over time [19].

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Analysis |

|---|---|

| Fixed-Point Theorems | Provide the mathematical foundation for rigorously proving that a solution or equilibrium to the model equations exists and is unique [18]. |

| Sensitivity Analysis | A computational technique to determine how variations in model input parameters affect the outputs, identifying critical parameters that influence robustness. |

| Ulam-Hyers Stability | A mathematical framework for assessing whether small perturbations in model inputs or rules lead to only small changes in outputs, indicating model stability [18]. |

| Adomian Decomposition Method (ADM) | An analytical approximation method useful for breaking down complex non-linear problems into simpler components, which can aid in analysis and verification [18]. |

| A-Posteriori Error Analysis | A verification method that uses numerical solutions (simulation data) to derive rigorous, computable bounds on the error of the solution [19]. |

Workflow Diagram

The diagram below outlines a structured workflow for integrating existence and uniqueness analysis into an ABM verification framework.

Performing Time Step Convergence Analysis to Minimize Discretization Error

How do I determine if my Agent-Based Model requires time step convergence analysis?

Any Agent-Based Model (ABM) used for mission-critical scenarios, such as predicting patient treatment responses or in silico drug trials, requires rigorous verification, including time step convergence analysis [11]. This process is a fundamental part of solution verification, which aims to identify, quantify, and reduce numerical errors associated with the model [11]. If your model involves simulating dynamic processes where agents interact and evolve over discrete time steps, the choice of time step can introduce discretization errors that affect the accuracy and reliability of your results. Conducting this analysis is essential before using your model for predictive purposes or to inform scientific conclusions [17].

What is a step-by-step protocol for performing time step convergence analysis?

The following methodology, adapted from general ABM verification frameworks, provides a detailed protocol for assessing time step convergence [11].

Step 1: Define a Key Model Output (QoI)

Select one or more Quantities of Interest (QoI) that are critical to your model's purpose. These should be specific, measurable outputs like "total tumor cell count at 100 days" or "percentage of infected agents at equilibrium."

Step 2: Run Simulations with Progressively Smaller Time Steps (Δt)

Execute your model multiple times, systematically reducing the time step (Δt) with each run. Ensure all other model parameters, including the random seed for stochastic components, remain constant to isolate the effect of the time step.

Step 3: Calculate the Relative Error

For each time step (Δt), calculate the relative error of your QoI compared to a reference value. The reference value is typically the result from the simulation with the finest (smallest) time step. The relative error (E) for a given Δt is:

E(Δt) = | (QoI(Δt) - QoI_ref) / QoI_ref |

Step 4: Plot Error vs. Time Step and Analyze Convergence

Create a log-log plot of the relative error E(Δt) against the time step Δt. A converging model will show a clear trend of decreasing error as the time step decreases. The following diagram illustrates this workflow.

What key metrics should I track during convergence analysis?

Tracking the right quantitative data is crucial for a robust analysis. The table below summarizes the core metrics to monitor during a convergence study.

Table 1: Key Metrics for Time Step Convergence Analysis

| Metric | Description | Interpretation |

|---|---|---|

| Time Step (Δt) | The discrete interval used to advance the simulation. | The independent variable in the convergence study. |

| Quantity of Interest (QoI) | The specific model output being analyzed (e.g., final population size, average concentration). | The dependent variable whose accuracy is being assessed. |

| Relative Error (E) | The absolute difference between the QoI at a given Δt and the reference QoI, normalized by the reference QoI. | Measures the numerical error due to discretization. Should decrease as Δt decreases. |

| Observed Order of Convergence (p) | The rate at which the error decreases as the time step is refined. Calculated from the slope of the log(E) vs log(Δt) plot. | A higher value indicates faster convergence. A positive value confirms the model is converging. |
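The metrics in this table can be computed with a short script. The sketch below is an assumption-laden illustration: a forward-Euler update of logistic growth stands in for the model's time-stepping loop, the parameter values are arbitrary, and the reference run simply uses a much finer time step than any run under test.

```python
import math

def simulate_qoi(n_steps, t_end=10.0, r=0.5, k=1000.0, n0=10.0):
    """Forward-Euler update of logistic growth (stand-in for a model's
    time-stepping loop). Returns the QoI: population at t_end."""
    dt = t_end / n_steps
    n = n0
    for _ in range(n_steps):
        n += dt * r * n * (1.0 - n / k)
    return n

T_END = 10.0
step_counts = [200, 400, 800, 1600]          # progressively finer time steps
reference_qoi = simulate_qoi(1600 * 64)      # reference: much finer than any test run

time_steps = [T_END / s for s in step_counts]
rel_errors = [abs((simulate_qoi(s) - reference_qoi) / reference_qoi)
              for s in step_counts]

# Observed order of convergence p: least-squares slope of log(E) vs log(dt).
log_dt = [math.log(dt) for dt in time_steps]
log_err = [math.log(e) for e in rel_errors]
mean_x = sum(log_dt) / len(log_dt)
mean_y = sum(log_err) / len(log_err)
order_p = (sum((x - mean_x) * (y - mean_y) for x, y in zip(log_dt, log_err))
           / sum((x - mean_x) ** 2 for x in log_dt))

for dt, err in zip(time_steps, rel_errors):
    print(f"dt = {dt:.5f}  relative error = {err:.3e}")
print(f"observed order of convergence p = {order_p:.2f}")
```

Forward Euler is a first-order method, so a slope close to 1 is the expected outcome here. A slope near zero or a non-monotonic error curve in your own model would be a signal to investigate further.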

My model is stochastic. How does this affect convergence analysis?

Stochasticity is a fundamental feature of many ABMs, and it must be accounted for in verification [11] [17]. A single model run for a given time step is insufficient because random variation will obscure the underlying discretization error.

Enhanced Protocol for Stochastic ABMs:

- Repeat Runs: For each time step (Δt) in your analysis, run the model multiple times (e.g., 30-100 runs) using different random seeds [17].

- Compute Statistics: Calculate the average (or median) of your QoI across all runs for that specific Δt.

- Use Averages for Error Calculation: In the convergence analysis procedure (Step 3 above), use the average QoI value for each Δt instead of a single value. This ensures you are analyzing the convergence of the expected outcome, filtering out the random noise [11].
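The enhanced protocol can be sketched as follows. The decay model, its parameters, and the number of replicates are hypothetical. The model was chosen because the expected number of survivors for each time step is known exactly, which makes the discretization error easy to see once the runs are averaged.

```python
import random
import statistics

def run_decay_abm(n_steps, seed, n_agents=200, rate=1.0, t_end=1.0):
    """Hypothetical ABM: each surviving agent is removed with probability
    rate*dt per step. Returns the QoI: number of survivors at t_end."""
    rng = random.Random(seed)
    p_remove = rate * (t_end / n_steps)
    alive = n_agents
    for _ in range(n_steps):
        alive = sum(rng.random() >= p_remove for _ in range(alive))
    return alive

step_counts = [2, 4, 8, 16]      # dt = 0.5, 0.25, 0.125, 0.0625
reference_steps = 64             # finest time step serves as the reference
seeds = range(200)               # same set of seeds reused for every dt

def mean_and_sem(n_steps):
    """Ensemble mean of the QoI and its standard error for one time step."""
    values = [run_decay_abm(n_steps, s) for s in seeds]
    return statistics.mean(values), statistics.stdev(values) / len(values) ** 0.5

mean_qoi = {n: mean_and_sem(n) for n in step_counts}
ref_mean, ref_sem = mean_and_sem(reference_steps)

# Relative error of the ensemble mean against the reference ensemble mean.
rel_error = {n: abs((m - ref_mean) / ref_mean) for n, (m, _) in mean_qoi.items()}

for n in step_counts:
    m, sem = mean_qoi[n]
    print(f"steps = {n:3d}  mean QoI = {m:6.2f} +/- {sem:.2f}  rel. error = {rel_error[n]:.3f}")
print(f"reference (steps = {reference_steps}): {ref_mean:.2f} +/- {ref_sem:.2f}")
```

Reporting the standard error next to each mean shows when the remaining difference between two time steps is smaller than the sampling noise, at which point more replicates are needed before drawing conclusions about convergence.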

The error in my results is not decreasing with a smaller time step. What should I do?

If your analysis does not show a clear convergence trend, this indicates a potential issue with your model or implementation. Follow this troubleshooting guide.

Table 2: Troubleshooting Guide for Non-Converging Models

| Problem | Potential Causes | Recommended Solutions |

|---|---|---|

| High Stochastic Variability | The randomness in the model is so large that it dominates the discretization error. | Increase the number of runs per time step to get a more reliable average QoI [17]. |

| Instability or Divergence | The model's rules or equations become unstable with smaller time steps. | Check for implementation errors (code verification). Review the logic of agent interaction rules and state transitions for potential oversimplifications or contradictions [11] [2]. |

| Insufficiently Small Reference Δt | Your finest time step is not small enough to serve as a true "reference solution." | Attempt to run with an even smaller time step, if computationally feasible. Alternatively, if available, compare against an analytical solution for a simplified version of your model. |

| Bug in the Model Code | A software defect is causing unexpected behavior. | Perform unit testing on individual agent functions and verify that the time-stepping mechanism is implemented correctly [11] [2]. |

What are the essential "research reagents" for a robust ABM verification workflow?

Just as a wet lab requires specific reagents, a computational modeling lab needs a toolkit for verification. The following table details essential components.

Table 3: Research Reagent Solutions for ABM Verification

| Item | Function in Verification |

|---|---|

| Version-Controlled Codebase | Tracks all changes to the model code, ensuring that verification tests are always run against a known, stable version of the model. |

| Automated Testing Framework | Automates the process of running the convergence analysis (and other tests) across multiple time steps and random seeds, ensuring consistency and saving time. |

| High-Performance Computing (HPC) Resources | Provides the computational power needed to execute the hundreds or thousands of simulation runs required for a thorough convergence analysis on stochastic models. |

| Reference Dataset / Analytical Solution | Serves as a benchmark to calculate the error. This could be high-fidelity simulation data, a known mathematical solution, or a simplified, stable version of your model. |

| Formal Model Charter | A documented definition of the model's scope, objectives, and stakeholders. This provides the context for deciding which QoIs are critical to verify [11]. |

Assessing Solution Smoothness to Identify Stiffness and Discontinuities

Troubleshooting Guides

FAQ: Stiffness and Discontinuities in Computational Models

1. What are stiff equations and why do they cause problems in numerical simulations?

Stiff equations are differential equations for which certain numerical methods become unstable unless the step size is taken to be extremely small. The primary issue is that these equations include terms that can lead to rapid variation in the solution [20]. During numerical integration, one would expect the step size to be relatively small in regions where the solution curve displays significant variation and relatively large where the solution curve straightens out. However, for stiff problems, this is not the case—the step size is required to be unacceptably small even in regions where the solution curve is very smooth [20]. This phenomenon is particularly problematic in agent-based modeling where computational efficiency is crucial.

2. How can I identify if my system of equations is stiff?

A linear constant coefficient system is often considered stiff if all its eigenvalues have negative real part and the stiffness ratio is large [20]. The stiffness ratio can be calculated as \( |\mathrm{Re}(\overline{\lambda})| / |\mathrm{Re}(\underline{\lambda})| \), where \( \overline{\lambda} \) and \( \underline{\lambda} \) are the eigenvalues with the largest and smallest absolute values of their real parts, respectively [20]. More qualitatively, stiffness occurs when some components of your solution decay much more rapidly than others [20], or when stability requirements, rather than accuracy requirements, constrain your step length [20].

3. What numerical methods are most affected by stiffness and discontinuities?

Methods with finite regions of absolute stability are particularly vulnerable to stiffness [20]. For example, Euler's method exhibits significant instability when applied to stiff equations unless the step size is drastically reduced [20]. The trapezoidal method (a two-stage Adams-Moulton method) generally performs better for stiff systems due to its improved stability properties [20]. Discontinuities pose particular challenges for machine learning approaches and root-finding algorithms that require differentiability, as derivatives may become unbounded near collision barriers or other discontinuous boundaries [21].

4. What specific issues do discontinuities create in optimization and machine learning applications?

Discontinuities create significant problems for approaches requiring differentiability, which are typical in machine learning, inverse problems, and control [21]. The derivative of collision time with respect to parameters becomes infinite as one approaches the barrier separating colliding from not colliding [21]. Standard backpropagation approaches often fail because they utilize standard rules of differentiation but ignore more advanced mathematical principles like L'Hopital's rule that are necessary near discontinuities [21].

5. How does stiffness affect agent-based models specifically?

In agent-based modeling, stiffness can significantly impact the temporal dynamics of your simulation. Since ABM often involves modeling heterogeneous agents with different time scales of behavior, stiffness can force you to use excessively small time steps to maintain stability, making long-term simulations computationally prohibitive [17]. The high heterogeneity in agent characteristics and interactions between agents and environments that ABM can accommodate [17] may inadvertently introduce stiffness if not carefully considered during model design.

Experimental Protocols for Stiffness and Discontinuity Assessment

Protocol 1: Eigenvalue Analysis for Linear Systems

- Objective: Quantitatively identify stiffness in linear constant coefficient systems.

- Methodology:

- For a system represented as y' = Ay + f(x), compute all eigenvalues \( \lambda_t \) of matrix A [20].

- Verify that \( \mathrm{Re}(\lambda_t) < 0 \) for all eigenvalues to ensure system stability [20].

- Identify \( \overline{\lambda} \) and \( \underline{\lambda} \), the eigenvalues with the largest and smallest absolute values of their real parts [20].

- Calculate the stiffness ratio as \( |\mathrm{Re}(\overline{\lambda})| / |\mathrm{Re}(\underline{\lambda})| \) [20].

- Interpretation: A large stiffness ratio (often > 1000) indicates a stiff system that may require specialized numerical methods [20].
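The eigenvalue protocol can be illustrated on a small system. The sketch below uses a hypothetical 2×2 matrix and solves its characteristic polynomial directly so that it runs with the standard library alone. For larger systems a numerical routine such as `numpy.linalg.eigvals` would be the usual choice.

```python
import cmath

def eigenvalues_2x2(a):
    """Eigenvalues of a 2x2 matrix from its characteristic polynomial
    lambda^2 - trace*lambda + det = 0."""
    (a11, a12), (a21, a22) = a
    trace = a11 + a22
    det = a11 * a22 - a12 * a21
    disc = cmath.sqrt(trace * trace - 4.0 * det)
    return (trace + disc) / 2.0, (trace - disc) / 2.0

def stiffness_ratio(eigs):
    """Ratio of the largest to smallest |Re(lambda)|; requires Re < 0 for all."""
    real_parts = [e.real for e in eigs]
    if any(re >= 0.0 for re in real_parts):
        raise ValueError("stiffness ratio assumes Re(lambda) < 0 for all eigenvalues")
    magnitudes = [abs(re) for re in real_parts]
    return max(magnitudes) / min(magnitudes)

# Hypothetical coupled system with one slow and one fast decay mode.
A = [[-500.5, 499.5],
     [499.5, -500.5]]

eigs = eigenvalues_2x2(A)
ratio = stiffness_ratio(eigs)

print("eigenvalues:", [round(e.real, 6) for e in eigs])
print("stiffness ratio:", ratio)
```

The two modes of this matrix decay at rates 1 and 1000, so the ratio sits right at the threshold quoted above, even though neither matrix entry looks extreme on its own.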

Protocol 2: Step Size Sensitivity Testing

- Objective: Empirically detect stiffness through numerical experimentation.

- Methodology:

- Apply a numerical method with a finite region of absolute stability (e.g., Euler's method) to your system [20].

- Systematically vary the step size while monitoring solution stability.

- Compare the required step size for stability with the smoothness of the exact solution in each interval.

- Interpretation: If the method is forced to use a step length that is excessively small relative to the smoothness of the exact solution in a given interval, the system is likely stiff in that interval [20].
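A step size sensitivity test might be sketched as below, using the linear test equation y' = λy with λ = −15 as an assumed example. Explicit Euler is stable only when |1 + hλ| < 1, which here means h < 2/15. Implicit (backward) Euler is included for contrast because it has no such restriction for this problem.

```python
def explicit_euler(lam, h, t_end=2.0, y0=1.0):
    """Explicit Euler for y' = lam * y; returns y at t_end."""
    y = y0
    for _ in range(round(t_end / h)):
        y += h * lam * y
    return y

def implicit_euler(lam, h, t_end=2.0, y0=1.0):
    """Implicit (backward) Euler for y' = lam * y; returns y at t_end."""
    y = y0
    for _ in range(round(t_end / h)):
        y /= (1.0 - h * lam)
    return y

lam = -15.0
step_sizes = [1 / 4, 1 / 8, 1 / 16, 1 / 32]

# The exact solution decays, so a stable run must not grow beyond |y0| = 1.
explicit_stable = {h: abs(explicit_euler(lam, h)) <= 1.0 for h in step_sizes}
implicit_stable = {h: abs(implicit_euler(lam, h)) <= 1.0 for h in step_sizes}

stability_limit = 2.0 / abs(lam)     # theoretical limit for explicit Euler
for h in step_sizes:
    print(f"h = {h:.5f}  explicit stable: {explicit_stable[h]}  "
          f"implicit stable: {implicit_stable[h]}")
print(f"explicit Euler stability limit: h < {stability_limit:.4f}")
```

The exact solution is smooth and almost zero for most of the interval, yet explicit Euler diverges at h = 1/4. A step size dictated by stability rather than by accuracy is the signature of stiffness described in this protocol.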

Protocol 3: Discontinuity Localization in Physical Simulations

- Objective: Identify and characterize discontinuities that may cause numerical instability.

- Methodology:

- Focus on processes with inherent mathematical discontinuities, such as collisions between rigid or deformable bodies [21].

- Analyze the derivative of key parameters (e.g., collision time) with respect to system parameters.

- Monitor for unbounded derivatives as the system approaches decision boundaries (e.g., the barrier separating colliding from not colliding) [21].

- Interpretation: Unbounded derivatives near decision boundaries indicate the presence of significant discontinuities that may require specialized treatment such as complexification or mollification [21].
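The unbounded derivative can be seen in a very simple collision problem. The example below is a hypothetical illustration, not taken from [21]: a ball is launched upward at speed v toward a ceiling at a fixed height, and the first impact time is differentiated with respect to v as v approaches the minimum speed needed to reach the ceiling.

```python
import math

G = 9.81          # gravitational acceleration (m/s^2)
CEILING = 5.0     # ceiling height (m); hypothetical value

def collision_time(v):
    """Time at which a ball launched upward at speed v first reaches the
    ceiling, or None if it never does."""
    disc = v * v - 2.0 * G * CEILING
    if disc < 0.0:
        return None                       # ball turns around below the ceiling
    return (v - math.sqrt(disc)) / G

def collision_time_derivative(v):
    """Analytical d(collision_time)/dv, valid only above the barrier speed."""
    disc = v * v - 2.0 * G * CEILING
    return (1.0 - v / math.sqrt(disc)) / G

# Barrier separating "collides" from "does not collide".
v_crit = math.sqrt(2.0 * G * CEILING)

offsets = [1.0, 0.1, 0.01, 0.001, 0.0001]
derivatives = [collision_time_derivative(v_crit + eps) for eps in offsets]

for eps, d in zip(offsets, derivatives):
    print(f"v = v_crit + {eps:<7g} d(t_collision)/dv = {d:10.3f}")

# Finite-difference cross-check far from the barrier, where the derivative is tame.
h = 1e-6
fd = (collision_time(v_crit + 1.0 + h) - collision_time(v_crit + 1.0 - h)) / (2 * h)
print(f"finite-difference check at v_crit + 1: {fd:.6f}")
```

The derivative magnitude grows roughly like the inverse square root of the distance to the barrier, and below the barrier the collision time is undefined. Gradient-based calibration of a model containing such an event would encounter exactly this behaviour.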

Diagnostic Workflows

[Figure: Diagnostic Workflow for Numerical Stability]

The Scientist's Toolkit: Research Reagent Solutions

Computational Methods for Stiffness and Discontinuity Handling

| Method/Technique | Function | Application Context |

|---|---|---|

| Stiffness Ratio Calculation | Quantitative measure of stiffness through eigenvalue analysis [20] | Linear constant coefficient ODE systems |

| Implicit Integration Methods | Maintain numerical stability with larger step sizes for stiff systems [20] | Differential equations with rapidly decaying transient solutions |

| Complexification | Lift solution space to complex numbers to handle discontinuous barriers [21] | Root-finding near collision boundaries in physical simulations |

| Mollification | Smooth sharp transitions to enable standard numerical approaches [21] | Discontinuous physical processes (e.g., collisions) |

| Agent-Based Model Verification | Framework for testing heterogeneous agent interactions and system dynamics [17] | Complex systems with multiple interacting components |

Experimental Workflow for ABM Verification

ABM Verification Workflow

Advanced Diagnostic Tables

Quantitative Metrics for Stiffness Assessment

| Metric | Calculation Method | Interpretation Threshold |

|---|---|---|

| Stiffness Ratio | $\lvert \mathrm{Re}(\bar{\lambda}) \rvert / \lvert \mathrm{Re}(\underline{\lambda}) \rvert$, where $\bar{\lambda}$ and $\underline{\lambda}$ are the eigenvalues with the largest and smallest $\lvert \mathrm{Re}(\lambda) \rvert$ [20] | > 10³ indicates significant stiffness [20] |

| Step Size Sensitivity | Maximum stable step size / Solution smoothness scale | Ratio << 1 indicates stiffness constraints dominate [20] |

| Derivative Boundedness | $\sup \lvert \partial t_{\text{collision}} / \partial \text{parameters} \rvert$ near barriers [21] | Unbounded derivatives indicate significant discontinuities [21] |
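As a minimal sketch of the stiffness ratio metric, the snippet below computes it with NumPy for a linear constant-coefficient system y' = Ay. The helper name `stiffness_ratio` and the example matrix are illustrative assumptions. The matrix was chosen so that its eigenvalues are -1 and -2000.

```python
import numpy as np

def stiffness_ratio(jacobian):
    """Ratio of the largest to the smallest |Re(lambda)| over all eigenvalues.

    Assumes a linear constant-coefficient system y' = A y whose eigenvalues
    all have nonzero (in practice, negative) real parts.
    """
    eigenvalues = np.linalg.eigvals(np.asarray(jacobian, dtype=float))
    real_parts = np.abs(eigenvalues.real)
    if np.any(real_parts == 0.0):
        raise ValueError("an eigenvalue has zero real part; the ratio is undefined")
    return real_parts.max() / real_parts.min()

# Characteristic polynomial: lambda^2 + 2001*lambda + 2000 = (lambda + 1)(lambda + 2000)
A = np.array([[0.0, 1.0],
              [-2000.0, -2001.0]])

ratio = stiffness_ratio(A)
print(f"stiffness ratio = {ratio:.1f}, stiff by the 10^3 threshold: {ratio > 1e3}")
```

For nonlinear models the same calculation is usually applied to the Jacobian evaluated along the trajectory, so the ratio can change from one interval to the next.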

Common Failure Modes in Discontinuous Systems

| Failure Mode | Symptoms | Remediation Approach |

|---|---|---|

| Unbounded Derivatives | Derivatives approach infinity near decision boundaries [21] | Complexification of solution space; Barrier mollification [21] |

| Collision Detection Errors | Incorrect collision resolution in rigid/deformable bodies [21] | Implicit differentiation of governing equations; Specialized root-finding [21] |

| Backpropagation Failures | Training instability in machine learning applications [21] | Address mathematical nature of problem; Apply L'Hôpital's rule where appropriate [21] |

Executing Parameter Sweep and Sensitivity Analysis (e.g., LHS-PRCC, Sobol)

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between local and global sensitivity analysis, and why is the latter critical for Agent-Based Models (ABMs)?

Local sensitivity analysis assesses how small perturbations of model parameters around specific reference values influence the model output. It is unsuitable for most ABMs because it assumes model linearity, does not account for interactions between parameters, and explores only a limited portion of the input space. In contrast, global sensitivity analysis varies all uncertain factors across their entire feasible space, revealing the global effect of each parameter on the model output, including interaction effects. Because ABMs typically exhibit nonlinear dynamics and interactions, global sensitivity analysis is the preferred approach [22].

FAQ 2: When should I use LHS-PRCC versus Sobol' indices for my sensitivity analysis?

The choice depends on your analysis goals and the nature of your model's input-output relationships.

- LHS-PRCC (Latin Hypercube Sampling - Partial Rank Correlation Coefficient) is a robust technique for screening influential parameters. It is particularly effective for identifying monotonic (consistently increasing or decreasing) relationships between inputs and outputs. It is often computationally less expensive than Sobol' analysis and is well-suited for an initial factor fixing or prioritization step [23].

- Sobol' indices (a variance-based method) provide a more comprehensive decomposition of the output variance. This method can quantify not only the first-order (main) effect of each parameter but also higher-order interaction effects between parameters. This makes it ideal for a detailed factor prioritization, as it can reveal which parameters, and their interactions, contribute most to the output uncertainty [24] [23].
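The monotonicity caveat for LHS-PRCC can be made concrete with a small NumPy sketch. It is an illustration under stated assumptions, not a reference implementation: `latin_hypercube`, `ranks`, `prcc`, and the toy response are hypothetical names. In the toy model, `x0` acts monotonically, `x1` acts strongly but non-monotonically, and `x2` is inert.

```python
import numpy as np

def latin_hypercube(n_samples, n_params, rng):
    """Draw one point from each of n_samples equal-width strata per parameter, on [0, 1)."""
    strata = np.column_stack([rng.permutation(n_samples) for _ in range(n_params)])
    return (strata + rng.random((n_samples, n_params))) / n_samples

def ranks(a):
    """Column-wise ranks 0..n-1 (assumes no ties, which holds for continuous samples)."""
    return np.argsort(np.argsort(a, axis=0), axis=0).astype(float)

def prcc(X, y):
    """Partial rank correlation coefficient of each column of X with y."""
    Xr = ranks(X)
    yr = ranks(y.reshape(-1, 1)).ravel()
    n, d = Xr.shape
    out = np.empty(d)
    for i in range(d):
        others = np.column_stack([np.ones(n), np.delete(Xr, i, axis=1)])
        # Residuals after removing the linear effect of the other ranked inputs.
        res_x = Xr[:, i] - others @ np.linalg.lstsq(others, Xr[:, i], rcond=None)[0]
        res_y = yr - others @ np.linalg.lstsq(others, yr, rcond=None)[0]
        out[i] = np.corrcoef(res_x, res_y)[0, 1]
    return out

rng = np.random.default_rng(0)
X = latin_hypercube(1000, 3, rng)
# Toy response: monotonic in x0, non-monotonic (parabolic) in x1, independent of x2.
y = 5.0 * X[:, 0] + 10.0 * (X[:, 1] - 0.5) ** 2
coeffs = prcc(X, y)
print(dict(zip(["x0", "x1", "x2"], np.round(coeffs, 2))))
```

In this sketch `x1` contributes a sizeable share of the output variance, yet its PRCC is close to zero because the relationship is symmetric rather than monotonic. A variance-based method is the safer choice for parameters like this one.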

FAQ 3: How do I determine the number of model runs needed for a sensitivity analysis to be reliable?

The required number of model runs depends on the complexity of your model, the number of parameters, and the sensitivity analysis method.

- For LHS-PRCC, a general rule of thumb is to use at least N = (4/3)K samples, where K is the number of parameters, though larger samples improve reliability [23].

- For Sobol' analysis, the sampling requirement is more intensive. A common approach uses N(d+2) model evaluations, where d is the number of parameters and N is a base sample size (often in the thousands) needed to achieve stable estimates of the indices [24].

- For general stochastic models like ABMs, you must also account for inherent randomness. The model should be run multiple times (e.g., 10-30 runs) for each unique parameter set to obtain a representative distribution of outcomes. The number of runs can be determined by checking if summary statistics (e.g., the mean) of the output stabilize across runs [17].
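One way to apply the stabilization check for stochastic replications is to track the running mean and stop once it stops moving. The sketch below uses only the standard library. `toy_abm`, the window length, and the 1% tolerance are hypothetical choices made for illustration, not recommendations from [17].

```python
import random
import statistics

def toy_abm(infection_prob, seed, n_agents=200):
    """Hypothetical stochastic model: number of agents infected in a single step."""
    rng = random.Random(seed)
    return sum(rng.random() < infection_prob for _ in range(n_agents))

def replications_until_stable(run_model, max_runs=200, window=10, tol=0.01):
    """Return (n_runs, running_mean) once the running mean has varied by less
    than `tol` (relative) over the last `window` added replications."""
    outputs, means = [], []
    for seed in range(max_runs):
        outputs.append(run_model(seed))
        means.append(statistics.fmean(outputs))
        if len(means) > window:
            recent = means[-(window + 1):]
            if max(recent) - min(recent) <= tol * abs(means[-1]):
                return len(outputs), means[-1]
    return max_runs, means[-1]  # budget exhausted without meeting the criterion

n_runs, mean_output = replications_until_stable(lambda seed: toy_abm(0.3, seed))
print(f"running mean stabilized after {n_runs} replications at {mean_output:.1f}")
```

The same loop can be repeated for other summary statistics, such as the median or a variance estimate, since those often need more replications to settle than the mean does.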

FAQ 4: My model is computationally expensive. What is the most efficient sampling scheme to reduce the number of required evaluations?

For computationally expensive models, efficient sampling schemes are crucial. While random sampling is simple, it is inefficient. Sobol sequences, a type of low-discrepancy (quasi-random) sequence, provide superior uniformity and faster convergence to the true output distribution compared to random sampling and Latin Hypercube Sampling (LHS). This often allows for a smaller sample size to achieve the same accuracy. Furthermore, generating Sobol sequences is computationally less expensive than generating LHS samples [25].
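The three sampling schemes can be compared directly on a small problem before committing to a large run. The sketch below assumes SciPy 1.7 or later, which provides the `scipy.stats.qmc` module. The parameter bounds are hypothetical placeholders. Discrepancy is used here as a rough proxy for space-filling quality, with lower values being more uniform.

```python
import numpy as np
from scipy.stats import qmc

d, m = 4, 8      # 4 parameters; Sobol' sequences behave best with 2**m points
n = 2 ** m       # 256 samples

samples = {
    "random": np.random.default_rng(0).random((n, d)),
    "lhs": qmc.LatinHypercube(d=d, seed=0).random(n),
    "sobol": qmc.Sobol(d=d, scramble=True, seed=0).random_base2(m),
}

# Centered discrepancy (SciPy's default): lower means more uniform coverage.
disc = {name: qmc.discrepancy(points) for name, points in samples.items()}
for name, value in disc.items():
    print(f"{name:>6}: discrepancy = {value:.2e}")

# Map unit-cube points onto (hypothetical) parameter ranges before running the model.
lower = [0.01, 1.0, 0.0, 10.0]
upper = [0.10, 5.0, 1.0, 100.0]
scaled = qmc.scale(samples["sobol"], lower, upper)
```

Discrepancy measures only how evenly the inputs are spread. Whether that translates into faster convergence of a particular model output still has to be checked on the model itself.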

FAQ 5: What are the specific verification steps for an Agent-Based Model before conducting a parameter sweep?

Before a full parameter sweep, a robust verification workflow should be followed to ensure the model is functioning as intended. Key deterministic verification steps include:

- Existence and Uniqueness: Verify that the model produces an output for all parameter sets in the relevant range and that identical inputs produce identical outputs (within numerical tolerance).

- Time Step Convergence Analysis: Run the model with progressively smaller time-steps to ensure the solution is not sensitive to the chosen discretization.

- Smoothness Analysis: Check output time series for unnatural stiffness, singularities, or discontinuities that may indicate numerical errors.

- Parameter Sweep Analysis: Perform an initial sweep to check for ill-conditioned behavior, where slight parameter variations lead to disproportionately large or invalid output changes [23].
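The first two checks in this list can be sketched on a toy model as follows. This is not the Model Verification Tools implementation. `toy_model` is a hypothetical drug-clearance model integrated with explicit Euler steps, and the step sizes and tolerance are illustrative assumptions.

```python
import math
import random

def toy_model(clearance_rate, dt=0.05, seed=0, t_end=4.0, noise=0.0):
    """Hypothetical drug-clearance model: explicit Euler steps plus optional noise."""
    rng = random.Random(seed)
    x = 1.0  # initial concentration (arbitrary units)
    for _ in range(round(t_end / dt)):
        x += -clearance_rate * x * dt + noise * math.sqrt(dt) * rng.gauss(0.0, 1.0)
    return x

def exists_and_unique(param_values, seed=42, tol=1e-12):
    """Every parameter value yields a finite output, and reruns with the same seed agree."""
    for k in param_values:
        first = toy_model(k, seed=seed, noise=0.01)
        second = toy_model(k, seed=seed, noise=0.01)
        if not math.isfinite(first) or abs(first - second) > tol:
            return False
    return True

def time_step_errors(clearance_rate, steps=(0.1, 0.05, 0.025), reference_dt=0.00625):
    """Percentage difference from a fine-step reference run, for each time step."""
    ref = toy_model(clearance_rate, dt=reference_dt)
    return {dt: abs(toy_model(clearance_rate, dt=dt) - ref) / abs(ref) * 100.0
            for dt in steps}

print("existence/uniqueness passed:", exists_and_unique([0.1, 0.5, 1.0]))
for dt, err in time_step_errors(0.5).items():
    print(f"dt = {dt}: {err:.2f}% difference from the reference run")
```

The convergence check runs with the noise switched off so that any difference between runs comes from the discretization alone. The error should shrink as the time step is refined, and the largest step whose error falls under the project's tolerance is a reasonable choice for the sweep.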

Troubleshooting Guides

Issue 1: Sensitivity Analysis Results are Not Converging or are Erratic

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Insufficient sample size | Gradually increase the sample size (for Sobol/LHS) and the number of replications per parameter set (for ABMs). Plot sensitivity indices against sample size to see if they stabilize. | Increase the sample size until key sensitivity indices show less than a target variation (e.g., 5%) [24] [17]. |

| High inherent stochasticity | For a fixed parameter set, run the model many times and observe the distribution of outputs. A very wide distribution indicates high inherent variance. | Increase the number of replications per parameter set. Consider using more robust output metrics (e.g., median over mean) [17]. |

| Non-monotonic relationships | Plot scatterplots of input parameters against the output. | If relationships are non-monotonic, LHS-PRCC may be inappropriate. Switch to a variance-based method like Sobol' which can handle any type of relationship [24] [22]. |

| Faulty sampling strategy | Verify the coverage of your parameter space visually with 2D scatter plots for the first few parameters. | Use a more efficient sampling scheme like Sobol sequences instead of random sampling to ensure better space-filling properties [25]. |

Issue 2: Parameter Sweep Reveals Unexpected or Invalid Model Behavior

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Model ill-conditioning | Identify the specific parameter values that lead to invalid outcomes. Check if these parameters are causing numerical errors (e.g., division by zero). | Implement safeguards in the model code, such as parameter boundaries and exception handling, to prevent invalid operations [23]. |

| Overly broad parameter ranges | Check whether biologically or physically implausible parameter values are being sampled. | Refine the parameter space by narrowing the distributions for the sweep based on empirical data or literature [22]. |

| Errors in model logic | Isolate the problematic parameter sets and run the model in a debug mode to step through the agent behaviors and interactions. | This is a verification issue. Revisit the model's conceptual design and implementation to fix logical errors [2]. |

| Violation of model assumptions | Use factor mapping to trace which parameter combinations lead to the "invalid" region of output space. | Document the boundaries of model validity and refine the underlying assumptions to better reflect the system being modeled [22]. |

Issue 3: High Computational Cost is Prohibiting a Comprehensive Analysis

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Too many uncertain parameters | Perform a preliminary factor screening (e.g., using a smaller LHS-PRCC study) to identify and fix non-influential parameters. | Use a two-step approach: first, fix non-influential parameters to their nominal values, then perform a detailed analysis on the remaining influential subset [22]. |

| Inefficient sampling | Compare the convergence speed of different samplers (Random, LHS, Sobol) on a smaller, test version of your problem. | Adopt Sobol sequences for faster convergence and deterministic, reproducible samples, reducing the total number of required evaluations [25]. |

| Individual model run is too slow | Profile your model code to identify performance bottlenecks. | Optimize the model code. If possible, use techniques like parallel computing to distribute model evaluations across multiple processors or machines [25] [26]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Computational Tools for Sensitivity Analysis and Model Verification.

| Tool / Technique | Function | Key Properties & Use Cases |

|---|---|---|

| Sobol Sequences | A quasi-random number generator for creating efficient input samples. | Deterministic, fast convergence, low discrepancy. Ideal for variance-based sensitivity analysis and reducing the number of model evaluations [25]. |

| Latin Hypercube Sampling (LHS) | A statistical method for generating a near-random sample of parameter values from a multidimensional distribution. | Ensures full stratification over each parameter's range. Good for building response surfaces and for use with correlation-based methods like PRCC [27] [23]. |

| SALib (Sensitivity Analysis Library) | A Python library implementing global sensitivity analysis methods. | Provides implementations of Sobol' analysis, Morris method, and others. Works seamlessly with NumPy and SciPy [23]. |

| Model Verification Tools (MVT) | An open-source toolkit for the verification of discrete-time stochastic models, including ABMs. | Automates key verification steps: existence/uniqueness, time step convergence, smoothness analysis, and parameter sweep analysis [23]. |

| Partial Rank Correlation Coefficient (PRCC) | A statistical measure to determine the strength of monotonic relationships between inputs and output. | Robust to non-normality. Used with LHS to identify key influential parameters in complex, nonlinear models [23]. |

Table 2: Comparative Analysis of Sampling Schemes for Sensitivity Analysis [25].

| Sampling Scheme | Computational Cost (to Generate) | Reproducibility | Space-Filling Properties | Best Use Case in SA |

|---|---|---|---|---|

| Random Sampling | Lowest | Low (requires seed management) | Poor, can miss regions | Baseline comparison, simple models |

| Latin Hypercube Sampling (LHS) | Highest | Moderate (depends on implementation) | Good, ensures projection properties | LHS-PRCC for monotonic relationships |

| Sobol Sequences | Low (slightly higher than random) | High (deterministic by design) | Excellent, low discrepancy | Variance-based methods (Sobol' indices), computationally expensive models |

Experimental Protocols

Protocol 1: Executing a Sobol' Variance-Based Sensitivity Analysis

Purpose: To quantify the contribution of each input parameter and their interactions to the variance of the model output.

Methodology:

- Define Inputs and Outputs: Identify d uncertain parameters and their probability distributions. Select the model output Y of interest.

- Generate Sample Matrix: Use the Saltelli extension of the Sobol' sequence to generate the sample matrices from a base sample of size N: two independent N × d matrices, A and B, and d matrices AB_i in which all columns are from A except the i-th, which is from B [24].

- Run Model Evaluations: Execute the model for each row in the A, B, and all AB_i matrices. This requires N(d + 2) model runs for first- and total-order indices; N(2d + 2) runs are needed only when second-order indices are also estimated. For stochastic ABMs, run multiple replications per parameter set and use the average output for each set.

- Calculate Indices: Use the model outputs f(A), f(B), and f(AB_i) to compute the first-order (S_i) and total-order (ST_i) Sobol' indices using established estimators [24]:

- First-order index ($S_i$): Measures the main effect of $X_i$ on the output variance.

- Total-order index ($S_{Ti}$): Measures the total contribution of $X_i$, including all its interaction effects with other parameters.
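The A, B, and AB_i construction in this protocol can be sketched in a few lines of NumPy. Two assumptions are worth flagging. First, plain pseudo-random sampling stands in for the Sobol' sequence to keep the example dependency-free. Second, the estimators used are the commonly cited Saltelli (2010) first-order and Jansen total-order forms, which may differ from the exact estimators in [24]. `sobol_indices` and `toy_model` are hypothetical names.

```python
import numpy as np

def sobol_indices(model, d, n_base, rng):
    """Estimate first-order (S) and total-order (ST) indices for inputs on [0, 1)^d.

    Uses n_base * (d + 2) model evaluations: A, B, and one AB_i per parameter.
    """
    A = rng.random((n_base, d))
    B = rng.random((n_base, d))
    fA, fB = model(A), model(B)
    var_y = np.var(np.concatenate([fA, fB]))
    S, ST = np.empty(d), np.empty(d)
    for i in range(d):
        AB_i = A.copy()
        AB_i[:, i] = B[:, i]          # all columns from A except the i-th, taken from B
        fAB = model(AB_i)
        S[i] = np.mean(fB * (fAB - fA)) / var_y          # first-order estimator
        ST[i] = 0.5 * np.mean((fA - fAB) ** 2) / var_y   # total-order estimator
    return S, ST

def toy_model(X):
    """Additive test function: Y = X0 + 2*X1, with X2 inert."""
    return X[:, 0] + 2.0 * X[:, 1]

S, ST = sobol_indices(toy_model, d=3, n_base=50_000, rng=np.random.default_rng(0))
print("first-order:", np.round(S, 3))
print("total-order:", np.round(ST, 3))
```

For this additive function the analytic indices are 0.2, 0.8, and 0, and the first-order and total-order values coincide because there are no interactions. A gap between S_i and ST_i in a real model indicates that the parameter acts partly through interactions.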

Protocol 2: Performing an LHS-PRCC Analysis

Purpose: To identify and rank parameters that have a significant monotonic influence on the model output.

Methodology:

- Define Input Space: Specify the d parameters and their statistical distributions.