A Comprehensive Protocol for Validating Computational Target Prediction Methods in Drug Discovery

Abstract

This article provides a comprehensive, step-by-step protocol for the rigorous validation of computational target prediction methods, which are essential tools in modern drug discovery and development. Aimed at researchers, scientists, and drug development professionals, it covers the foundational principles of why validation is critical, details methodological approaches for implementation, offers strategies for troubleshooting common pitfalls like data bias and overfitting, and establishes a framework for robust performance evaluation and comparison. By integrating guidelines from recent literature, the protocol emphasizes the importance of 'targeted validation'—ensuring models are evaluated in contexts that match their intended clinical use—to produce reliable, actionable predictions that can effectively guide experimental efforts and reduce research waste.

Understanding the Critical Role of Validation in Computational Target Prediction

The paradigm of small-molecule drug discovery has transitioned from traditional phenotypic screening to more precise target-based approaches, increasing the focus on understanding mechanisms of action (MoA) and target identification [1]. Computational target prediction has emerged as a crucial discipline that leverages artificial intelligence (AI), machine learning (ML), and structural bioinformatics to decipher drug-target interactions (DTIs) with the potential to significantly reduce both time and costs in pharmaceutical development [1] [2]. By revealing hidden polypharmacology—how a single drug can interact with multiple targets—these computational methods facilitate off-target drug repurposing and enhance our understanding of therapeutic efficacy and safety profiles [1] [3].

The identification of druggable binding sites on protein targets represents a pivotal stage in modern drug discovery, offering a strategic pathway for elucidating disease mechanisms [2]. While traditional experimental methods like X-ray crystallography provide high-resolution structural insights, they are often constrained by lengthy timelines, substantial costs, and limitations in capturing dynamic conformational states of proteins [2]. Computational methodologies provide powerful, efficient, and cost-effective alternatives for large-scale binding site prediction and druggability assessment, enabling researchers to explore chemical and biological spaces at unprecedented scales [4] [2].

Key Computational Methodologies

Computational target prediction methods can be broadly categorized into several complementary approaches, each with distinct strengths and applications in drug discovery pipelines.

Structure-Based Prediction Methods

Structure-based methods leverage the three-dimensional architecture of proteins to identify potential binding sites and predict interactions [2]. Geometric and energetic approaches, implemented in tools such as Fpocket and Q-SiteFinder, rapidly identify potential binding cavities by analyzing surface topography or interaction energy landscapes with molecular probes [2]. While computationally efficient, these methods often treat proteins as static entities, overlooking the critical role of conformational dynamics. To address this limitation, molecular dynamics (MD) simulation techniques have been increasingly integrated. Methods like Mixed-Solvent MD (MixMD) and Site-Identification by Ligand Competitive Saturation (SILCS) probe protein surfaces using organic solvent molecules, identifying binding hotspots that account for some degree of flexibility [2]. For more complex conformational transitions, advanced frameworks like Markov State Models (MSMs) and enhanced sampling algorithms (e.g., Gaussian accelerated MD) enable the exploration of long-timescale dynamics and the discovery of cryptic pockets absent in static structures [2].

Ligand-Based Prediction Methods

Ligand-centric methods focus on the similarity between a query molecule and a large set of known molecules annotated with their targets [1]. Their effectiveness depends on the knowledge of known ligands and established ligand-target relationships. These approaches include similarity searching techniques that use molecular fingerprints (e.g., Morgan fingerprints, MACCS keys) and similarity metrics (e.g., Tanimoto scores) to identify potential targets based on the principle that structurally similar molecules are likely to share biological targets [1]. With data on proven interactions, several small-molecule drugs have been successfully repurposed using these methods. For example, MolTarPred discovered hMAPK14 as a potent target of mebendazole, which was further validated through in vitro experiments [1].
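The similarity principle above can be illustrated with a minimal sketch. It assumes fingerprints are represented as sets of on-bit indices and uses a toy reference library with placeholder target names; the function names are illustrative and are not taken from MolTarPred or any other tool. In practice the bit sets would come from a cheminformatics toolkit.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity: shared on-bits divided by the union of on-bits."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def dice(a: frozenset, b: frozenset) -> float:
    """Dice similarity: twice the shared on-bits divided by the total on-bits."""
    if not a and not b:
        return 0.0
    return 2 * len(a & b) / (len(a) + len(b))


def predict_targets(query_bits, reference, top_k=3):
    """Rank targets by the highest similarity of any annotated ligand to the query."""
    best = {}
    for bits, targets in reference:
        score = tanimoto(query_bits, bits)
        for target in targets:
            best[target] = max(best.get(target, 0.0), score)
    return sorted(best.items(), key=lambda kv: kv[1], reverse=True)[:top_k]


# Hypothetical reference library: (fingerprint on-bits, annotated targets).
reference = [
    (frozenset({1, 2, 3, 4}), {"TARGET_A"}),
    (frozenset({3, 4, 5, 6}), {"TARGET_B"}),
    (frozenset({7, 8, 9}), {"TARGET_C"}),
]
query = frozenset({1, 2, 3, 5})
ranked = predict_targets(query, reference)
print(ranked)
```

The ranking keeps only the best-matching ligand per target, which is one common nearest-neighbor convention; averaging over the top few neighbors is an equally plausible choice.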

Machine Learning and Deep Learning Approaches

The advent of machine learning, particularly deep learning, has ushered in a transformative era for computational target prediction [2] [5]. Traditional machine learning algorithms, including Support Vector Machines (SVMs), Random Forests (RF), and Gradient Boosting Decision Trees (GBDT), have been successfully deployed in tools like COACH, P2Rank, and various affinity prediction models [2]. These methods excel at integrating diverse feature sets—encompassing geometric, energetic, and evolutionary descriptors—to achieve robust predictions. Deep learning architectures have demonstrated superior capability in automatically learning discriminative features from raw data. Convolutional Neural Networks (CNNs) process 3D structural representations in tools like DeepSite and DeepSurf, while Graph Neural Networks (GNNs), as implemented in GraphSite, natively handle the non-Euclidean structure of biomolecules, modeling proteins as graphs of atoms or residues to effectively capture local chemical environments and spatial relationships [2]. Furthermore, Transformer models, inspired by natural language processing, are repurposed to interpret protein sequences as "biological language," learning contextualized representations that facilitate binding site prediction and even de novo ligand design [2].

Integrated and Hybrid Approaches

Recognizing that no single method is universally superior, integrated approaches have gained prominence [2]. Ensemble learning methods, such as the COACH server, combine predictions from multiple independent algorithms, often yielding superior accuracy and coverage by leveraging their complementary strengths [2]. Simultaneously, multimodal fusion techniques aim to create unified representations by jointly modeling heterogeneous data types, including protein sequences, 3D structures, and physicochemical properties [2]. Platforms like MultiSeq and MPRL exemplify this trend, seeking to provide a more holistic analysis of protein characteristics and binding behaviors.

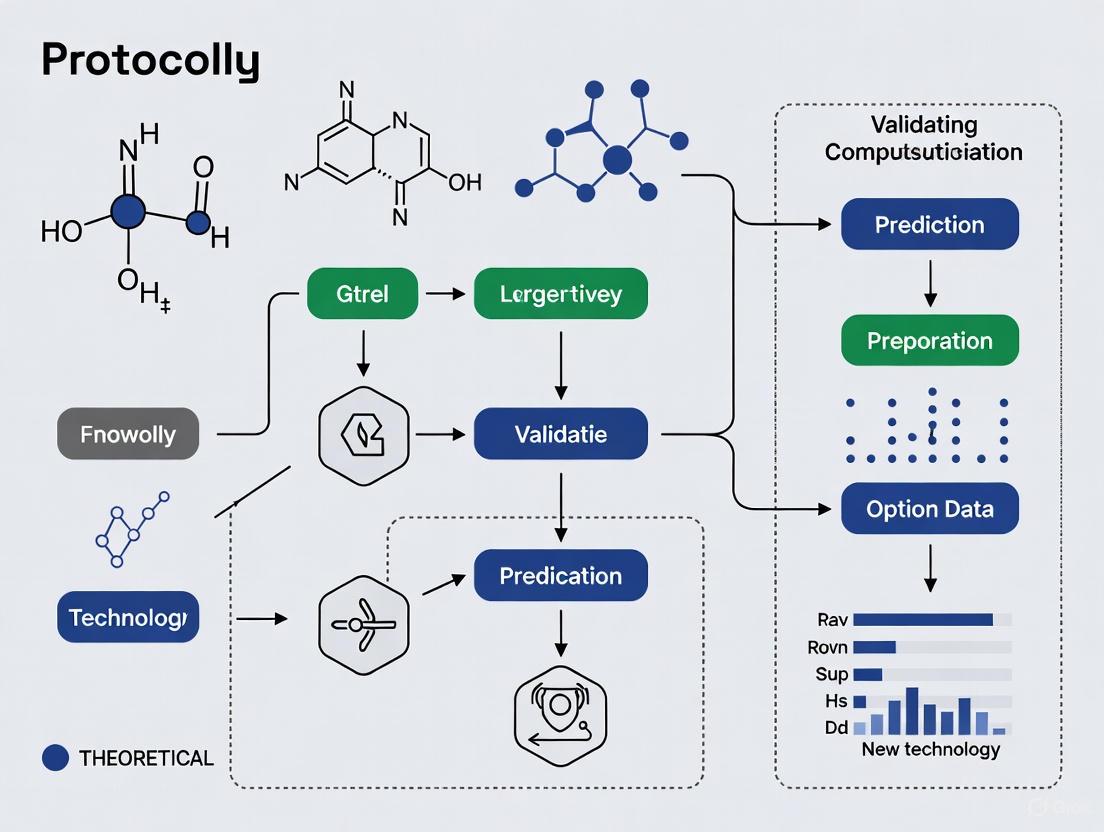

Figure 1: Computational Target Prediction Method Categories. This diagram illustrates the major categories of computational methods used for target prediction in drug discovery.

Comparative Analysis of Target Prediction Methods

Performance Benchmarking

A 2025 benchmark systematically compared seven target prediction methods, spanning stand-alone codes and web servers (MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred), on a shared dataset of FDA-approved drugs [1]. MolTarPred was the most effective of the methods tested [1]. The study also explored optimization strategies such as high-confidence filtering; because this filtering reduces recall, it is less suitable for drug repurposing, where broad target identification is valuable [1]. For MolTarPred, Morgan fingerprints with Tanimoto scores outperformed MACCS fingerprints with Dice scores [1].
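The trade-off behind high-confidence filtering can be shown with a small worked example. The scores and labels below are invented for illustration and are not results from the benchmark; the function name is hypothetical.

```python
def precision_recall_at(predictions, threshold, n_true):
    """Precision and recall when only predictions scoring >= threshold are kept."""
    kept = [is_true for _, score, is_true in predictions if score >= threshold]
    true_positives = sum(kept)
    precision = true_positives / len(kept) if kept else 0.0
    recall = true_positives / n_true if n_true else 0.0
    return precision, recall


# Hypothetical ranked predictions: (target, confidence score, is a known target).
predictions = [
    ("T1", 0.95, True),
    ("T2", 0.80, True),
    ("T3", 0.60, False),
    ("T4", 0.40, True),
    ("T5", 0.20, False),
]
n_true = 3  # number of known targets for the query drug

unfiltered = precision_recall_at(predictions, 0.0, n_true)
filtered = precision_recall_at(predictions, 0.7, n_true)
print("no filter:", unfiltered)
print("score >= 0.7:", filtered)
```

In this toy case the filter raises precision but drops one of the three known targets, which is the behavior that makes strict filtering a poor fit for repurposing screens.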

Table 1: Comparison of Seven Target Prediction Methods [1]

| Method | Type | Algorithm | Database | Fingerprints/Features |
|---|---|---|---|---|
| MolTarPred | Ligand-centric | 2D similarity | ChEMBL 20 | MACCS |
| PPB2 | Ligand-centric | Nearest neighbor/Naïve Bayes/deep neural network | ChEMBL 22 | MQN, Xfp, ECFP4 |
| RF-QSAR | Target-centric | Random forest | ChEMBL 20&21 | ECFP4 |
| TargetNet | Target-centric | Naïve Bayes | BindingDB | FP2, Daylight-like, MACCS, E-state, ECFP2/4/6 |
| ChEMBL | Target-centric | Random forest | ChEMBL 24 | Morgan |
| CMTNN | Target-centric | ONNX runtime | ChEMBL 34 | Morgan |
| SuperPred | Ligand-centric | 2D/fragment/3D similarity | ChEMBL and BindingDB | ECFP4 |

Binding Affinity Prediction Methods

Beyond binary classification of drug-target interactions, predicting drug-target binding affinity (DTBA) is valuable because it reflects the strength of the interaction and the potential efficacy of the drug [5]. DTBA methods are more informative but also more challenging to develop. Most in silico DTBA methods use 3D structural information in molecular docking, followed by search algorithms or scoring functions that estimate binding affinity [5]. A scoring function (SF) quantifies the strength of binding between a ligand and a protein [5]. Machine learning-based SFs are data-driven models that capture nonlinear relationships in the data, which makes them more general and accurate, while deep learning-based SFs learn features directly and predict binding affinity without extensive feature engineering [5].

Experimental Protocols and Applications

Database Preparation and Curation

For reliable computational target prediction, proper database preparation is essential. The following protocol outlines the steps for creating a benchmark dataset based on the ChEMBL database, which is widely used for its extensive and experimentally validated bioactivity data, including drug-target interactions, inhibitory concentrations, and binding affinities [1]:

- Database Selection: Select ChEMBL (version 34 or newer) for its extensive chemogenomic data, containing targets, compounds, and interactions [1].

- Data Retrieval: Host the PostgreSQL version of the ChEMBL database locally and connect to it with pgAdmin4. Retrieve data from the molecule_dictionary and target_dictionary tables, including unique ChEMBL IDs for compounds and targets, bioactivity interactions, and canonical SMILES strings [1].

- Bioactivity Filtering: Retrieve bioactivity records with standard values for IC50, Ki, or EC50 below 10000 nM from the activities table [1].

- Data Cleaning: Filter out targets associated with non-specific or multi-protein targets by excluding targets whose names contain keywords such as "multiple" or "complex." Remove duplicate compound-target pairs, retaining only unique pairs [1].

- Confidence Scoring: Employ a filtered database containing only highly confident interactions with a minimum confidence score of 7, which ensures that only well-validated interactions are included in the analysis [1].

- Benchmark Preparation: Collect molecules with FDA approval years to build a benchmark dataset of FDA-approved drugs. Exclude these molecules from the main database so that no benchmark drug overlaps with the reference data during prediction, which would otherwise bias results and overestimate performance. Randomly select a sample (e.g., 100 drugs) from the FDA-approved set for validation [1].
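The filtering steps above can be sketched on in-memory records. This is a simplified illustration that assumes the rows have already been exported from the database; the `Activity` class, its field names, and the identifiers are hypothetical and do not mirror the ChEMBL schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Activity:
    compound_id: str
    target_id: str
    target_name: str
    standard_type: str
    standard_value_nm: float
    confidence_score: int


ALLOWED_TYPES = {"IC50", "Ki", "EC50"}
EXCLUDED_KEYWORDS = ("multiple", "complex")


def curate(records, benchmark_compounds, max_nm=10000.0, min_confidence=7):
    """Return unique (compound, target) pairs that pass every curation filter."""
    seen = set()
    kept = []
    for r in records:
        if r.standard_type not in ALLOWED_TYPES:
            continue  # keep only IC50, Ki, or EC50 measurements
        if not r.standard_value_nm < max_nm:
            continue  # potency filter: value must be below the cutoff
        if r.confidence_score < min_confidence:
            continue  # keep only well-validated target assignments
        name = r.target_name.lower()
        if any(keyword in name for keyword in EXCLUDED_KEYWORDS):
            continue  # drop non-specific or multi-protein targets
        if r.compound_id in benchmark_compounds:
            continue  # hold benchmark drugs out of the reference database
        pair = (r.compound_id, r.target_id)
        if pair in seen:
            continue  # remove duplicate compound-target pairs
        seen.add(pair)
        kept.append(pair)
    return kept


records = [
    Activity("CPD1", "TGT1", "Kinase A", "IC50", 50.0, 9),
    Activity("CPD1", "TGT1", "Kinase A", "Ki", 80.0, 9),
    Activity("CPD2", "TGT2", "Receptor complex B", "IC50", 10.0, 9),
    Activity("CPD3", "TGT1", "Kinase A", "IC50", 25000.0, 9),
    Activity("CPD4", "TGT3", "Enzyme C", "EC50", 300.0, 5),
    Activity("CPD5", "TGT3", "Enzyme C", "EC50", 300.0, 8),
    Activity("CPD6", "TGT3", "Enzyme C", "Ki", 900.0, 7),
]
curated = curate(records, benchmark_compounds={"CPD5"})
print(curated)
```

Applying the benchmark exclusion inside the same pass keeps the held-out drugs from ever entering the reference set, which is the property the protocol depends on.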

Figure 2: Database Preparation Workflow. This diagram outlines the sequential steps for preparing a validated database for computational target prediction.

Case Study: Fenofibric Acid Repurposing

A practical application of these methods was demonstrated in a case study on fenofibric acid, which showed its potential for drug repurposing as a THRB (thyroid hormone receptor beta) modulator for thyroid cancer treatment [1]. The protocol for such target repurposing studies involves:

- Query Molecule Preparation: Obtain the canonical SMILES string or structural representation of the drug candidate (e.g., fenofibric acid).

- Target Prediction: Submit the query molecule to one or more target prediction methods (e.g., MolTarPred, PPB2, RF-QSAR) to generate a list of potential protein targets.

- Consensus Prediction: Compare results across multiple methods to identify high-confidence targets.

- Binding Affinity Assessment: Use molecular docking or binding affinity prediction tools to estimate the strength of interaction between the drug and predicted targets.

- Mechanism of Action Hypothesis: Generate MoA hypotheses based on the biological functions of the predicted targets and their relevance to disease pathways.

- Experimental Validation: Design in vitro experiments to validate the top predictions, including binding assays and functional cellular assays.
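The consensus step in this workflow can be approximated by simple vote counting. The sketch below assumes each method returns a plain list of target identifiers; the method keys and target labels are placeholders, not actual predictions for fenofibric acid.

```python
from collections import Counter


def consensus_targets(predictions, min_votes=2):
    """Return (target, votes) for targets predicted by at least min_votes methods."""
    votes = Counter()
    for targets in predictions.values():
        votes.update(set(targets))  # each method votes once per target
    ranked = sorted(votes.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(target, n) for target, n in ranked if n >= min_votes]


# Hypothetical outputs from three prediction methods for one query molecule.
predictions = {
    "method_a": ["TARGET_1", "TARGET_2", "TARGET_3"],
    "method_b": ["TARGET_2", "TARGET_1"],
    "method_c": ["TARGET_1", "TARGET_4"],
}
consensus = consensus_targets(predictions)
print(consensus)
```

Equal-weight voting is an assumption made for simplicity; weighting each method by its benchmark performance would be a natural refinement.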

AI-Driven Drug Discovery Platforms

Leading AI-driven drug discovery platforms have demonstrated remarkable progress in advancing candidates to clinical stages. By mid-2025, over 75 AI-derived molecules had reached clinical stages, representing exponential growth from the first examples appearing around 2018-2020 [4]. Notable platforms include:

- Exscientia: Uses deep learning models trained on vast chemical libraries and experimental data to propose new molecular structures that satisfy precise target product profiles, including potency, selectivity, and ADME properties [4]. The company reported in silico design cycles approximately 70% faster and requiring 10× fewer synthesized compounds than industry norms [4].

- Insilico Medicine: Developed a generative-AI-designed idiopathic pulmonary fibrosis drug that progressed from target discovery to Phase I in 18 months, significantly compressing traditional timelines [4].

- Schrödinger: Leverages physics-enabled design strategies, with its TYK2 inhibitor, zasocitinib (TAK-279), advancing into Phase III clinical trials [4].

- Recursion: Integrated phenomic screening with automated precision chemistry into a full end-to-end platform, later merging with Exscientia to create an integrated AI drug discovery platform [4].

Research Reagent Solutions

Table 2: Essential Research Resources for Computational Target Prediction

| Resource | Type | Function | Application |
|---|---|---|---|
| ChEMBL Database | Bioactivity Database | Provides curated bioactivity data, drug-target interactions, and compound information [1]. | Training and testing predictive models; benchmark creation. |
| MolTarPred | Target Prediction Tool | Ligand-centric method using 2D similarity searching with molecular fingerprints [1]. | Predicting potential targets for query molecules. |
| PPB2 (Polypharmacology Browser 2) | Web Server | Uses nearest neighbor, Naïve Bayes, or deep neural network algorithms for target prediction [1]. | Multi-target profiling and polypharmacology prediction. |
| RF-QSAR | Web Server | Target-centric method using random forest algorithm and ECFP4 fingerprints [1]. | Quantitative structure-activity relationship modeling. |
| Fpocket | Structure-Based Tool | Geometric approach for binding site detection based on protein 3D structure [2]. | Identifying potential binding pockets on protein surfaces. |
| COACH | Meta-Server | Combines multiple independent algorithms using ensemble learning [2]. | Consensus ligand-binding site prediction. |
| DeepSite | Deep Learning Tool | Uses 3D convolutional neural networks to process structural representations [2]. | Protein-binding site prediction with deep learning. |

Validation Framework and Future Perspectives

Validation Protocol for Computational Predictions

Establishing a robust validation framework is essential for assessing the reliability and translational potential of computational target predictions. The following protocol outlines a comprehensive approach:

Computational Validation:

- Perform k-fold cross-validation (e.g., 5-fold or 10-fold) on benchmark datasets to assess model performance.

- Use precision, recall, F1-score, and area under the curve (AUC) for classification tasks, and mean squared error (MSE) for binding affinity predictions.

- Compare performance against random predictors and existing state-of-the-art methods.

Experimental Validation:

- Select top predictions for experimental testing based on confidence scores and biological relevance.

- Perform binding assays (e.g., surface plasmon resonance, isothermal titration calorimetry) to confirm physical interactions.

- Conduct functional cellular assays to assess biological activity and therapeutic potential.

- Validate target engagement in relevant disease models.

Clinical Correlation:

- Analyze clinical data and patient-derived samples to assess translational relevance.

- Investigate correlation between predicted targets and clinical outcomes where available.

Figure 3: Multi-Level Validation Framework. This diagram illustrates the comprehensive approach to validating computational target predictions at computational, experimental, and clinical levels.

Current Challenges and Future Directions

Despite significant progress, the field of computational target prediction continues to face several challenges that define its future trajectory [2]:

- Enhancing Prediction Accuracy: Further refinement of algorithms, more effective ensemble and multimodal fusion techniques, and deeper integration of experimental data are needed to improve predictive accuracy [2].

- Accounting for Protein Dynamics: Accurately simulating full protein dynamics remains crucial, especially for capturing transient cryptic pockets; this requires continued development of advanced sampling methods and analysis frameworks [2].

- Integration of Multi-Source Data: The efficient integration of multi-source and multi-scale information—from genomic data to atomic-level interaction energies—poses a significant informatics challenge but is essential for precise target localization [2].

- Computational-Experimental Integration: Closing the loop between computation and experiment is vital. The establishment of robust, standardized computational-experimental validation pipelines and benchmark datasets will be critical for rigorously evaluating new methods and enhancing translational impact [2].

- Addressing Data Limitations: Methods still face limitations related to data availability and quality. Ligand-based approaches suffer from low performance when the number of known ligands of target proteins is small, while structure-based methods are limited by the availability of high-quality 3D protein structures [5].

As computational methods continue to evolve and integrate with experimental approaches, they hold the promise of fundamentally transforming drug discovery by enabling more precise target identification, rational drug design, and successful therapeutic repurposing, ultimately accelerating the delivery of effective treatments to patients.

The integration of artificial intelligence (AI) and computational methods into drug discovery has catalyzed a transformative shift from traditional phenotypic screening toward precise target-based approaches [6] [1]. These computational methodologies now routinely inform target prediction, compound prioritization, and virtual screening strategies, demonstrating potential to significantly compress traditional discovery timelines [6] [7]. However, as these in silico tools increasingly support critical decisions in therapeutic development, establishing rigorous validation frameworks transitions from an academic exercise to a fundamental requirement for clinical translation.

The core challenge lies in the translational gap between computational predictions and clinical applicability. Despite promising technical capabilities, many AI systems remain confined to retrospective validations and preclinical settings, seldom advancing to prospective evaluation in clinical workflows [8]. This limitation stems not only from technological immaturity but also from insufficient validation frameworks that adequately address the complexity of biological systems and regulatory requirements [9] [8]. As noted in recent oncology research, even algorithms demonstrating high accuracy in controlled evaluations rarely undergo assessment in routine clinical practice across diverse healthcare settings and patient populations [8].

Method validation provides the critical foundation for bridging this gap, serving as documented evidence that a computational procedure fulfills its intended purpose [10] [11]. In the context of computational target prediction, validation moves beyond mere algorithmic performance to encompass fitness-for-purpose, ensuring models generate reliable, interpretable, and actionable insights for downstream decision-making [10]. This comprehensive approach to validation is particularly crucial given the high-dimensional, stochastic, and nonlinear nature of biological systems, which often behave in ways that challenge human intuition and conventional statistical methods [9].

Comprehensive Validation Framework for Computational Target Prediction

Foundational Principles and Regulatory Context

Validation in computational sciences constitutes a multi-faceted process addressing distinct but complementary questions: verification ("Are we building the system right?") ensures components meet their specifications, while validation ("Are we building the right system?") confirms the system fulfills customer needs and intended uses [10]. For computational target prediction methods, this distinction proves critical—a model may be perfectly executed (verification) yet fail to address the appropriate biological context or clinical need (validation).

Regulatory agencies require documented evidence providing "a high degree of assurance that a planned process will uniformly deliver results conforming to expected specifications" [11]. This principle underpins regulatory frameworks including the FDA's guidelines for computer system validation [11] [12] and ISO standards for computational model validation [7]. Within these frameworks, validation encompasses the entire model lifecycle—from development and implementation to deployment and monitoring—ensuring continued reliability in real-world environments characterized by data heterogeneity and operational variability [8].

The risk-based approach to validation prioritizes resources toward systems with greatest impact on patient safety and product quality [11]. For target prediction methodologies, risk assessment should consider the consequence of false positives (pursuing irrelevant targets) and false negatives (overlooking promising targets), with more stringent validation required for models informing clinical decisions or regulatory submissions [8].

Hierarchical Validation Strategy

A comprehensive validation strategy for computational target prediction incorporates multiple evidence layers, progressing from technical performance to clinical relevance.

Technical Performance Validation

Technical validation establishes that the computational method executes its intended function reliably and reproducibly. This begins with standard performance metrics evaluated through appropriate statistical methods.

Table 1: Key Performance Metrics for Classification Models in Target Prediction

| Metric Category | Specific Metrics | Interpretation in Target Prediction Context |
|---|---|---|
| Overall Performance | Accuracy, Precision, Recall, F1-score | Balanced assessment of correct target identification [10] |
| Statistical Validation | k-fold cross-validation, Leave-one-out cross-validation | Reduces bias in model evaluation and mitigates overfitting [10] |
| Error Metrics | Mean Absolute Error (MAE), Root Mean Square Error (RMSE) | Quantifies closeness of predictions to actual outcomes [10] |
| Correlation Measures | Correlation coefficient (R) | Quantifies strength and direction of linear relationships [10] |

For models predicting continuous values (e.g., binding affinity), validation should include mean absolute error (MAE) and root mean square error (RMSE), which quantify the magnitude of prediction errors, with correlation coefficients assessing relationship strength between predicted and actual values [10]. In classification tasks (e.g., target vs. non-target), metrics including accuracy, precision, recall, and F1-score provide complementary insights, with preference for precision and recall in imbalanced datasets common to drug-target interactions [10].
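As a concrete reference for the metrics named above, the following sketch computes them from scratch on toy data. The labels and affinity values are invented for illustration, and the function names are not from any particular library.

```python
import math


def classification_metrics(y_true, y_pred):
    """Precision, recall, and F1 for binary labels (1 = target, 0 = non-target)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def regression_metrics(y_true, y_pred):
    """MAE, RMSE, and Pearson correlation for continuous predictions."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    # Pearson R is undefined when either series is constant.
    r = cov / math.sqrt(var_t * var_p) if var_t and var_p else float("nan")
    return {"mae": mae, "rmse": rmse, "pearson_r": r}


# Toy imbalanced classification example: 3 true targets among 8 candidates.
cls = classification_metrics([1, 1, 1, 0, 0, 0, 0, 0], [1, 1, 0, 1, 0, 0, 0, 0])
# Toy affinity example on a pKd-like scale (hypothetical values).
reg = regression_metrics([5.0, 6.0, 7.0, 8.0], [5.5, 6.0, 6.5, 9.0])
print(cls)
print(reg)
```

RMSE penalizes the single large error (1.0) more heavily than MAE does, which is why reporting both gives a fuller picture of affinity prediction quality.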

The experimental setup must rigorously address potential data leakage, where information from the test set inadvertently influences model training, generating optimistically biased performance estimates [1]. Implementation of k-fold cross-validation or leave-one-out cross-validation provides more reliable performance estimates, particularly for smaller datasets [10].
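One way to guard against a common form of leakage is to keep every interaction of a given compound in the same fold, so that no compound appears in both training and test data. The sketch below implements that grouped k-fold split in plain Python; grouping by compound is an assumption made here for illustration (grouping by scaffold or by target may suit other settings better), and the identifiers are placeholders.

```python
import random


def grouped_k_fold(pairs, k=5, seed=0):
    """Yield (train, test) splits where all pairs of a compound share one fold."""
    compounds = sorted({compound for compound, _ in pairs})
    if len(compounds) < k:
        raise ValueError("need at least k distinct compounds")
    rng = random.Random(seed)
    rng.shuffle(compounds)
    # Assign compounds to folds round-robin after shuffling.
    fold_of = {compound: i % k for i, compound in enumerate(compounds)}
    for fold in range(k):
        test = [p for p in pairs if fold_of[p[0]] == fold]
        train = [p for p in pairs if fold_of[p[0]] != fold]
        yield train, test


# Hypothetical dataset: 10 compounds, each annotated with 2 targets.
pairs = [(f"CPD{i}", f"TGT{j}") for i in range(10) for j in range(2)]
splits = list(grouped_k_fold(pairs, k=5))
for train, test in splits:
    shared = {c for c, _ in train} & {c for c, _ in test}
    print(len(train), len(test), "shared compounds:", len(shared))
```

Because folds are assigned at the compound level, fold sizes can be uneven when compounds have different numbers of annotated targets.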

Biological and Functional Validation

Technical excellence alone is insufficient; predictive models must demonstrate biological relevance and functional utility. Biological validation confirms that computational predictions align with established biological knowledge and experimental observations.

Experimental correlation represents the most direct approach, comparing computational predictions with wet-lab results. Recent advances in high-throughput experimental techniques, including Cellular Thermal Shift Assay (CETSA) for target engagement and high-content screening, enable medium-to-large scale experimental validation of computational predictions [6]. For example, Mazur et al. (2024) applied CETSA with high-resolution mass spectrometry to quantitatively validate drug-target engagement in complex biological systems, confirming dose-dependent stabilization ex vivo and in vivo [6].

Benchmarking against established methods provides relative performance assessment. A 2025 systematic comparison of seven target prediction methods using a shared benchmark dataset of FDA-approved drugs revealed significant performance variation, with MolTarPred demonstrating superior effectiveness, particularly when using Morgan fingerprints with Tanimoto scores [1]. Such comparative studies highlight the importance of methodological choices, including fingerprint selection and similarity metrics, in optimizing prediction accuracy.

Table 2: Comparative Performance of Target Prediction Methods (Adapted from He et al., 2025)

| Method | Type | Algorithm/Approach | Key Findings | Optimal Configuration |
|---|---|---|---|---|
| MolTarPred | Ligand-centric | 2D similarity | Most effective method in benchmark study | Morgan fingerprints with Tanimoto scores [1] |
| RF-QSAR | Target-centric | Random Forest | Performance varies by target class | ECFP4 fingerprints [1] |
| TargetNet | Target-centric | Naïve Bayes | Competitive performance across diverse datasets | Multiple fingerprint types [1] |
| PPB2 | Ligand-centric | Nearest neighbor/Naïve Bayes/DNN | Comprehensive polypharmacology profiling | MQN, Xfp and ECFP4 fingerprints [1] |
| CMTNN | Target-centric | Multitask Neural Network | Local execution advantage | Morgan fingerprints [1] |

Clinical and Regulatory Validation

The ultimate validation test for computational target prediction lies in demonstrating clinical utility and regulatory compliance. Prospective validation represents the critical missing link for many AI tools in drug development, assessing how systems perform when making forward-looking predictions in real-world clinical environments rather than identifying patterns in historical data [8].

The randomized controlled trial (RCT) represents the gold standard for clinical validation, with evidence requirements correlating directly with the innovativeness of AI claims [8]. As with therapeutic interventions, AI systems promising clinical benefit must meet comparable evidence standards, including demonstration of statistically significant and clinically meaningful impact on patient outcomes [8]. Adaptive trial designs that accommodate continuous model updates while preserving statistical rigor offer promising approaches for evaluating rapidly evolving AI technologies [8].

Regulatory validation encompasses both the computational model itself and the computer system implementing it [11]. The FDA's framework for computer system validation emphasizes the "V-model" approach, incorporating Installation Qualification (IQ), Operational Qualification (OQ), and Performance Qualification (PQ) [11] [12]. This systematic methodology ensures computerized systems—including AI-driven prediction tools—are properly installed, function according to specifications, and consistently perform their intended functions in production environments [11].

Application Notes: Implementing Validation Protocols

Protocol 1: Benchmarking Target Prediction Methods

Experimental Workflow

Benchmarking workflow for target prediction methods

Detailed Methodology

Database Selection and Preparation

- Source Repositories: Utilize established bioactivity databases (ChEMBL, BindingDB, PubChem) with experimentally validated interactions [1]. ChEMBL version 34 provides 2,431,025 compounds, 15,598 targets, and 20,772,701 interactions, offering extensive coverage of drug-target interactions [1].

- Quality Filtering: Apply confidence scoring (e.g., ChEMBL's confidence score ≥7 indicating direct protein target assignment) to ensure high-quality interaction data [1]. Exclude entries associated with non-specific or multi-protein targets by filtering out targets containing keywords like "multiple" or "complex" [1].

- Redundancy Removal: Eliminate duplicate compound-target pairs, retaining only unique interactions. For FDA-approved drug benchmarking, ensure no overlap between benchmark molecules and training data to prevent overoptimistic performance estimates [1].

Experimental Design

- Dataset Partitioning: Implement stratified splitting to maintain target distribution across training, validation, and test sets (70%/15%/15% recommended). For temporal validation, use time-split partitioning where models trained on older data predict newer interactions.

- Method Selection: Include diverse algorithmic approaches (ligand-centric, target-centric, hybrid) that represent the current methodological spectrum [1]. In the 2025 benchmark, seven methods were evaluated: MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred [1].

- Performance Metrics: Employ a comprehensive metric suite, including area under the precision-recall curve (AUPRC), area under the receiver operating characteristic curve (AUC-ROC), precision, recall, F1-score, and enrichment factors [10] [1].
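Two elements of this design, time-split partitioning and the enrichment factor, are easy to misread, so a minimal sketch follows. The record layout (a dictionary with a `year` key), the cutoff year, and the scores are all assumptions made for illustration.

```python
def time_split(records, cutoff_year):
    """Train on interactions reported before cutoff_year, test on the rest."""
    train = [r for r in records if r["year"] < cutoff_year]
    test = [r for r in records if r["year"] >= cutoff_year]
    return train, test


def enrichment_factor(scores, labels, fraction=0.1):
    """Hit rate in the top-scoring fraction divided by the overall hit rate."""
    total_hits = sum(labels)
    if not labels or total_hits == 0:
        raise ValueError("need at least one active to compute enrichment")
    n_top = max(1, round(len(labels) * fraction))
    ranked = sorted(zip(scores, labels), key=lambda sl: sl[0], reverse=True)
    top_hits = sum(label for _, label in ranked[:n_top])
    return (top_hits / n_top) / (total_hits / len(labels))


# Hypothetical interaction records with the year each was reported.
records = [
    {"pair": ("CPD1", "TGT1"), "year": 2012},
    {"pair": ("CPD2", "TGT1"), "year": 2016},
    {"pair": ("CPD3", "TGT2"), "year": 2019},
    {"pair": ("CPD4", "TGT3"), "year": 2021},
]
train, test = time_split(records, cutoff_year=2018)

# Hypothetical ranked candidates: 2 true targets among 10.
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
labels = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]
ef_20 = enrichment_factor(scores, labels, fraction=0.2)
print(len(train), len(test), ef_20)
```

An enrichment factor above 1 means the top of the ranking contains more true targets than random selection would; here one of the two actives falls in the top 20%, giving a value of about 2.5.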

Protocol 2: Experimental Correlation Study

Experimental Workflow

Experimental correlation protocol workflow

Detailed Methodology

Computational Predictions

- Generate target predictions for query molecules using optimized methods (e.g., MolTarPred with Morgan fingerprints and Tanimoto similarity) [1].

- Apply appropriate confidence thresholds to identify high-priority targets for experimental validation. For novel target identification, prioritize predictions with both high confidence scores and biological plausibility within disease context.

Experimental Validation Techniques

- Binding Affinity Assays: Implement surface plasmon resonance (SPR) or thermal shift assays to quantify direct binding interactions. Cellular Thermal Shift Assay (CETSA) enables target engagement validation in physiologically relevant cellular environments [6].

- Functional Activity Assays: Employ cell-based reporter systems or enzymatic activity assays to confirm functional modulation of predicted targets.

- Selectivity Profiling: Utilize broad profiling panels (e.g., kinase panels, safety panels) to assess target specificity and identify potential off-target effects.

Success Criteria Definition

Establish predefined validation criteria before experimental initiation:

- Statistical Significance: Binding or functional response that differs from the negative control at p < 0.05.

- Potency Thresholds: Minimum binding affinity (e.g., KD < 10 μM) or functional potency (e.g., IC50 < 10 μM).

- Dose-Response Relationship: Confirmation of concentration-dependent effects.

- Reproducibility: Technical and biological replicates demonstrating consistent results.
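One way to make these criteria auditable is to encode them before any assay is run. The sketch below is an assumption-laden illustration: the `AssayResult` fields, the minimum of three replicates, and the coefficient-of-variation cutoff (`max_cv`) are hypothetical choices, not values taken from the protocol.

```python
from dataclasses import dataclass
from statistics import mean, stdev

@dataclass
class AssayResult:
    kd_um: float             # measured KD in micromolar
    p_value: float           # test against the negative control
    dose_response: bool      # concentration-dependent effect observed
    replicate_kds_um: list   # KD values from independent replicates

def meets_criteria(result, kd_max_um=10.0, alpha=0.05, max_cv=0.5):
    """Return (overall pass, per-criterion dict) for predefined success criteria."""
    reps = result.replicate_kds_um
    # Coefficient of variation across replicates; undefined for fewer than two.
    cv = stdev(reps) / mean(reps) if len(reps) >= 2 else float("inf")
    checks = {
        "significance": result.p_value < alpha,
        "potency": result.kd_um < kd_max_um,
        "dose_response": result.dose_response,
        "reproducibility": len(reps) >= 3 and cv <= max_cv,  # assumed rule
    }
    return all(checks.values()), checks

hit = AssayResult(kd_um=2.0, p_value=0.01, dose_response=True,
                  replicate_kds_um=[1.8, 2.0, 2.3])
weak = AssayResult(kd_um=25.0, p_value=0.01, dose_response=True,
                   replicate_kds_um=[24.0, 25.0, 26.0])
hit_ok, hit_checks = meets_criteria(hit)
weak_ok, weak_checks = meets_criteria(weak)
```

Returning the per-criterion dictionary alongside the overall verdict makes it easy to report which criterion a failed prediction missed.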

Protocol 3: Clinical Translation Framework

Clinical Validation Workflow

Clinical translation validation workflow

Detailed Methodology

Regulatory Compliance Framework

- Computer System Validation (CSV): Implement IQ/OQ/PQ protocols for computational tools [11] [12]. Installation Qualification (IQ) verifies proper system installation; Operational Qualification (OQ) confirms operational ranges; Performance Qualification (PQ) demonstrates consistent performance in production environment [12].

- Documentation Requirements: Maintain comprehensive validation documentation including requirements specifications, design documents, test protocols, traceability matrices, and validation summary reports [11].

- Change Control Procedures: Establish formal change management processes to maintain validated state through system modifications and updates.

Prospective Clinical Validation

- Adaptive Trial Designs: Implement platform trials or master protocols that accommodate continuous model evaluation and refinement while maintaining statistical integrity [8].

- Clinical Utility Endpoints: Define endpoints measuring clinical impact beyond technical accuracy, including diagnostic efficiency, therapeutic decision modification, and patient outcomes [8].

- Real-World Performance Monitoring: Deploy continuous monitoring systems to assess model performance across diverse clinical settings and patient populations, detecting performance degradation or dataset shift [8].

Essential Research Reagent Solutions

Table 3: Research Reagent Solutions for Validation Studies

| Reagent Category | Specific Tools/Platforms | Function in Validation | Key Features |
|---|---|---|---|
| Bioactivity Databases | ChEMBL, BindingDB, PubChem | Provide annotated compound-target interactions for model training and benchmarking [1] | Experimentally validated interactions, confidence scoring, standardized data formats [1] |
| Target Prediction Methods | MolTarPred, RF-QSAR, TargetNet, CMTNN | Enable comparative performance assessment and method selection [1] | Ligand-centric and target-centric approaches; various fingerprinting and algorithm options [1] |
| Structure-Based Tools | AutoDock, SwissADME, Fpocket, DeepSite | Facilitate binding site prediction and druggability assessment [6] [2] | Molecular docking, binding cavity identification, machine learning-enhanced prediction [6] [2] |
| Experimental Validation Assays | CETSA, SPR, High-Content Screening | Confirm computational predictions through experimental measurement [6] | Cellular target engagement, binding affinity quantification, functional activity assessment [6] |
| Validation Metrics Platforms | Scikit-learn, DeepCheminet, Model-specific evaluation | Standardized performance assessment and statistical validation [10] [1] | Comprehensive metric suites, cross-validation implementations, statistical testing [10] |

Rigorous validation constitutes the critical pathway translating computational promise into clinical reality in target prediction. The framework presented—encompassing technical, biological, and clinical validation tiers—provides a structured approach for establishing model credibility, reliability, and ultimately, clinical utility. As computational methods continue evolving toward more complex AI and quantum computing approaches [7], validation frameworks must similarly advance, incorporating adaptive regulatory pathways [8] and robust performance monitoring systems.

The future of computational drug discovery hinges not merely on algorithmic sophistication but on demonstrable validation rigor—objectively confirming that these powerful tools consistently deliver actionable insights improving therapeutic development efficiency and patient outcomes. Through implementation of comprehensive validation protocols, researchers can bridge the current translational gap, transforming computational target prediction from promising technology to validated component of the drug discovery toolkit.

In the field of computational drug discovery, validation is the critical process that assesses how well a predictive model will perform in real-world scenarios. For computational target prediction methods, robust validation is the cornerstone of scientific credibility and practical utility, ensuring that predictions about drug-target interactions (DTIs) are reliable and can inform downstream experimental work. The core validation types—internal, external, and targeted—serve complementary purposes in establishing a model's predictive power and applicability. Internal validation provides an initial, optimistic estimate of performance on data similar to that used for training. External validation tests the model's ability to generalize to new, independent data sources. Targeted validation, a more nuanced concept, specifically assesses performance within a precisely defined intended-use population and setting, sharpening the focus on the model's practical application [13] [14]. The choice and execution of these validation strategies directly impact the trustworthiness of computational methods and their potential to accelerate drug discovery.

Defining the Core Validation Types

Internal Validation

Internal validation assesses the expected performance of a prediction method on data drawn from a population similar to the original training sample. Its primary purpose is to correct for in-sample optimism, the tendency of models to overfit the specific development data. This process does not involve truly external data; instead, it uses resampling techniques on the development dataset itself. Common methodologies include cross-validation and bootstrapping. For instance, in internal validation via bootstrapping, the model is developed on multiple bootstrap samples (samples drawn with replacement from the original data), and its performance is tested on the data not included in each sample. This process yields an optimism-adjusted estimate of performance, providing a more realistic view of how the model might perform on new subjects from the same underlying population [13] [15].

External Validation

External validation is an examination of model performance using entirely new participant-level data, external to the development dataset. It is often regarded as a gold standard for establishing model credibility, as it tests the model's generalizability. The key differentiator from internal validation is the use of a distinct dataset, which is critical because model performance is highly dependent on the population and setting [13] [14]. External validation studies can take several forms, including assessing reproducibility (in a similar population/setting), transportability (in a different population/setting, e.g., a model developed for adults tested in children), or generalisability (across multiple relevant populations and settings) [13]. A model that performs well in a broad external validation demonstrates stronger robustness.

Targeted Validation

Targeted validation is the process of estimating how well a model performs within its specific intended population and setting. This concept sharpens the focus on the model's intended use, which may increase applicability and avoid misleading conclusions. The central tenet of targeted validation is that a model should not be considered "validated" in a general sense, but only "valid for" the particular contexts in which its performance has been assessed. For example, a clinical prediction model developed for use in a specific hospital requires a targeted validation using data from that same hospital, not just a general external validation in arbitrary, conveniently available datasets [13]. This framework exposes that a robust internal validation may sometimes be sufficient if the development data is large and perfectly matches the intended-use population, and it highlights "validation gaps" where performance in the intended context remains unknown.

Table 1: Comparative Overview of Core Validation Types

| Validation Type | Core Purpose | Key Characteristics | Primary Data Source | Addresses Overfitting? |
|---|---|---|---|---|
| Internal Validation | Estimate performance on data from the same population as the training set; correct for over-optimism. | Uses resampling methods (e.g., cross-validation, bootstrapping). Does not use new subjects. | Original development dataset. | Yes, directly. |
| External Validation | Test model generalizability and transportability to new data sources. | Uses a completely independent dataset. Considered a stronger test of real-world performance. | A new dataset, external to the development data. | Indirectly, by testing on new data. |
| Targeted Validation | Estimate performance for a specific intended-use population and setting. | Defined by the specific context of intended use, not just data availability. Can be internal or external. | A dataset representative of the intended target population/setting. | Ensures relevance, not just generalizability. |

Experimental Protocols for Validation

Implementing a comprehensive validation strategy is a multi-stage process. The following protocols provide a structured approach for each validation type, which should be tailored to the specific computational method and application domain.

Protocol for Internal Validation via Cross-Validation

Objective: To obtain an optimism-adjusted estimate of model performance on data from a population similar to the development dataset and to prevent overfitting.

Materials:

- Development dataset (e.g., known drug-target pairs with features and labels).

- Computational resources for model training and evaluation.

Procedure:

- Data Preparation: Randomly shuffle the entire development dataset.

- Data Partitioning: Split the shuffled dataset into k equally sized folds (e.g., k=5 or k=10 is common).

- Iterative Training and Validation:

- For each fold i (where i ranges from 1 to k):

- a. Set Aside Validation Fold: Designate fold i as the temporary validation set.

- b. Train Model: Use the remaining k-1 folds as the training set to develop (train) the model.

- c. Validate Model: Apply the trained model to the held-out fold i and calculate the chosen performance metric(s) (e.g., AUC, accuracy).

- Performance Aggregation: Calculate the final internal performance estimate by averaging the performance metrics obtained from all k iterations.

- Final Model Training: Train the final model on the entire development dataset for subsequent use or external validation.

This protocol provides a more robust performance estimate than a single train-test split, because every observation is used for validation exactly once and for training in the remaining k-1 iterations [16].
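The procedure can be sketched without any external library. The example below is illustrative only: `fit_threshold` and `accuracy` stand in for whatever model and metric are actually being validated, and the one-dimensional toy data is invented for the demonstration.

```python
import random
from statistics import mean

def k_fold_indices(n_samples, k=5, seed=0):
    """Shuffle sample indices and deal them into k nearly equal folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def cross_validate(X, y, fit, score, k=5, seed=0):
    """Train on k-1 folds, score on the held-out fold, and average the scores."""
    folds = k_fold_indices(len(X), k, seed)
    scores = []
    for i, held_out in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        model = fit([X[j] for j in train_idx], [y[j] for j in train_idx])
        scores.append(score(model, [X[j] for j in held_out], [y[j] for j in held_out]))
    return mean(scores), scores

# Toy stand-in model: a threshold midway between the two class means.
def fit_threshold(X, y):
    pos = [x for x, t in zip(X, y) if t == 1]
    neg = [x for x, t in zip(X, y) if t == 0]
    return (mean(pos) + mean(neg)) / 2

def accuracy(threshold, X, y):
    return mean(1.0 if (x > threshold) == (t == 1) else 0.0 for x, t in zip(X, y))

X = [0.1 * i for i in range(20)] + [3.0 + 0.1 * i for i in range(20)]
y = [0] * 20 + [1] * 20
cv_mean, cv_scores = cross_validate(X, y, fit_threshold, accuracy, k=5)

# Final step of the protocol: refit on the entire development dataset.
final_model = fit_threshold(X, y)
```

The cross-validated mean estimates how the final model, refit on all data, is expected to perform; the per-fold scores give a rough sense of the variability of that estimate.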

Protocol for External Validation

Objective: To independently assess the model's performance and generalizability on a completely new dataset, providing a realistic evaluation of its real-world applicability.

Materials:

- The final model developed on the entire development dataset.

- An independent external validation dataset, collected from a different source, time period, or population.

Procedure:

- Dataset Curation: Secure a validation dataset that is entirely independent of the development data. This dataset should have the same input features and output labels.

- Model Application: Apply the pre-trained final model (from the Final Model Training step of the internal validation protocol) to the external validation dataset to generate predictions.

- Performance Calculation: Calculate the model's performance metrics (e.g., AUC, precision, recall) based on the predictions and the true labels in the external dataset.

- Performance Comparison: Compare the performance on the external dataset to the internal validation estimates. A significant drop in performance may indicate overfitting or differences in the data distributions (e.g., case-mix variation).

- Model Analysis (Optional): If performance is poor, investigate reasons, which may include differences in baseline risk, predictor-outcome associations, or data quality between the development and validation populations [13]. Model updating (e.g., recalibration) may be considered.

Protocol for Targeted Validation

Objective: To validate the model within a specific, pre-defined population and setting that matches its intended clinical or practical use case.

Materials:

- The final model developed on the entire development dataset.

- A validation dataset that is representative of the intended target population and setting.

Procedure:

- Define Intended Use: Clearly articulate the intended population (e.g., patients with a specific disease subtype, a specific demographic) and setting (e.g., primary care, a particular hospital network) for the model.

- Identify Targeted Dataset: Procure or collect a validation dataset that closely matches the intended population and setting defined in the Define Intended Use step. This dataset could be a subset of the development data (if it perfectly matches the target) or a new external dataset. The critical factor is representativeness, not merely convenience [13].

- Assess Dataset Relevance: Formally document how the chosen dataset aligns with the intended use, noting any potential gaps (e.g., differences in demographics, disease severity, or measurement protocols).

- Execute Validation: Apply the model to the targeted dataset and evaluate its performance using relevant metrics.

- Contextualize Findings: Report the validation results with explicit reference to the intended-use context. The conclusion should be framed as "the model is validated for use in [specific context]," not as a general statement of validity.

Diagram: A strategic workflow for selecting the appropriate validation type based on data availability and the model's intended use.

Successful validation of computational methods relies on both data and software resources. The following table details key components of a validation toolkit.

Table 2: Key Research Reagent Solutions for Validation Studies

| Resource Category | Example(s) | Function in Validation |
|---|---|---|
| Benchmark Datasets | Yamanishi_08's dataset, Hetionet | Provide standardized, curated data for the development and external validation of drug-target prediction models, enabling fair comparison between different methods [17]. |
| Structured Databases | MBGD (Microbial genome database), ModelArchive, CAZyme3D, ExoCarta, Papillomavirus Episteme (PaVE) | Offer organized, annotated biological data that can be used to construct validation datasets specific to certain targets or pathways [18]. |
| Software Tools & Web Servers | DINC-ensemble, GRAMMCell, Phyre2.2, AFflecto, AlphaFold Protein Structure Database, RNAproDB | Provide computational platforms for generating structural models, simulating interactions, or extracting features that can be used as inputs for model validation or as orthogonal validation methods [18]. |
| Analysis & Scripting Environments | R, Python, scHiCcompare R package, rcsb-api Python toolkit | Offer programming environments and specialized packages for implementing cross-validation, calculating performance metrics, and analyzing validation results [18]. |
| Performance Metrics | Area Under the Curve (AUC), C-index, Precision, Recall, Calibration Slopes | Quantitative measures used to assess model performance in discrimination, calibration, and overall accuracy during validation [13] [17]. |

Advanced Concepts and Future Directions

Simulation-Based Validation

Beyond traditional data-splitting, simulation-based validation is a powerful advanced technique. This involves generating synthetic data where the underlying "truth" is known, based on realistic assumptions and parameters. The model is then validated against this simulated data to assess its ability to recover known signals and its robustness to various biases. For example, a study validated a model for detecting changes in SARS-CoV-2 reinfection risk by simulating datasets that incorporated real-world biases like imperfect observation and mortality. This approach allowed the researchers to confirm the model could accurately detect true risk changes and not just artifacts of data limitations [19]. This method is particularly valuable when large, high-quality real-world validation datasets are scarce.

Addressing Cold-Start Scenarios and Model Generalization

A significant challenge in computational drug discovery is the cold-start problem, where predictions are needed for novel drugs or targets that have no known interactions in the training data. Validation protocols must specifically address this. This involves designing cold-start cross-validation settings where, for example, all drugs (or targets) in the validation fold are absent from the training fold [17]. The performance of advanced methods like DTIAM, which uses self-supervised pre-training on large amounts of unlabeled data to learn meaningful representations, demonstrates the field's move towards models that maintain robust performance even in these challenging scenarios [17]. Properly validating for cold-start conditions is essential for ensuring a model's practical utility in discovering truly novel interactions.
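A cold-start split for novel drugs can be sketched by assigning whole drugs, rather than individual drug-target pairs, to folds. The function name `cold_drug_folds`, the `(drug, target, label)` tuple layout, and the toy pairs below are assumptions made for illustration; the same idea applies to targets by grouping on the second element instead.

```python
import random

def cold_drug_folds(pairs, k=5, seed=0):
    """Yield (train, valid) splits in which no drug appears on both sides."""
    drugs = sorted({drug for drug, _target, _label in pairs})
    random.Random(seed).shuffle(drugs)
    fold_of = {drug: i % k for i, drug in enumerate(drugs)}
    for i in range(k):
        train = [p for p in pairs if fold_of[p[0]] != i]
        valid = [p for p in pairs if fold_of[p[0]] == i]
        yield train, valid

# Toy data (hypothetical): 10 drugs x 4 targets with arbitrary binary labels.
pairs = [(f"drug{d}", f"target{t}", (d + t) % 2) for d in range(10) for t in range(4)]
splits = list(cold_drug_folds(pairs, k=5))
```

Because every pair involving a held-out drug is removed from training, the validation score reflects performance on compounds the model has never seen, which a random pair-level split would overestimate.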

Diagram: A strategy to overcome the cold-start problem in drug-target prediction, using pre-training and targeted validation.

Computational prediction of drug-target interactions is a cornerstone of modern drug discovery, enabling the rapid identification and prioritization of candidate molecules. These methods are broadly categorized into three paradigms: ligand-based, structure-based, and machine learning (ML) approaches [20]. Ligand-based methods rely on the principle that structurally similar molecules are likely to exhibit similar biological activities, while structure-based methods leverage the three-dimensional structure of the target protein to predict ligand binding [1] [20]. Machine learning, a subset of artificial intelligence (AI), encompasses a range of algorithms that can learn complex patterns from data to make predictions, and it can be applied to both ligand- and structure-based paradigms [20]. The integration of these methods is transforming the field, offering powerful tools for hit identification, lead optimization, and drug repurposing [1] [20]. This document provides detailed application notes and protocols for these methods within the context of validating computational target prediction protocols.

Ligand-Based Prediction Methods

Ligand-based methods are employed when the three-dimensional structure of the biological target is unknown but there is information about known active ligands [20]. These methods are founded on the "similarity principle," which posits that molecules with similar structural features are likely to share similar biological properties and target interactions [21].

Key Algorithms and Protocols

The core of ligand-based screening involves molecular similarity calculations. The typical workflow involves representing molecules as numerical or binary fingerprints and then computing a similarity score between the query molecule and a database of known actives [1] [21].

- Molecular Fingerprints: These are vector representations of molecular structure. Common types include:

- MACCS Keys: A set of 166 predefined structural fragments (bits) that indicate the presence or absence of specific molecular features [1] [21].

- Extended Connectivity Fingerprints (ECFP): Circular fingerprints that capture atom environments within a specified radius, providing a more nuanced representation of molecular structure [1] [21].

- Morgan Fingerprints: Circular fingerprints similar to ECFP, as implemented in the RDKit cheminformatics toolkit; they have been shown to offer high performance in target prediction [1].

- Similarity Metrics: The choice of similarity measure is critical. The Tanimoto coefficient is the most widely used metric for comparing binary fingerprints; the Dice score is a common alternative [1] [21].
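For binary fingerprints, both metrics reduce to simple set arithmetic on the indices of the bits that are switched on. The sketch below assumes fingerprints are represented as Python sets of on-bit indices, which is a simplification; in practice a cheminformatics toolkit would generate the fingerprints. The example bit sets are invented.

```python
def tanimoto(a, b):
    """Tanimoto coefficient: shared on-bits divided by the union of on-bits."""
    a, b = set(a), set(b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def dice(a, b):
    """Dice score: twice the shared on-bits divided by the total on-bit count."""
    a, b = set(a), set(b)
    total = len(a) + len(b)
    return 2 * len(a & b) / total if total else 0.0

# Toy fingerprints (hypothetical on-bit indices).
fp_query = {1, 5, 9, 42}
fp_ref = {1, 5, 7, 42, 100}

t = tanimoto(fp_query, fp_ref)   # 3 shared bits / 6 bits in the union
d = dice(fp_query, fp_ref)       # 2 * 3 shared bits / (4 + 5) bits
```

The two scores are monotonically related (Dice = 2T / (1 + T)), so they rank neighbors identically; they differ only in the numerical thresholds one would apply.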

Protocol 1: Ligand-Based Virtual Screening using MolTarPred

MolTarPred is a ligand-centric method that has been demonstrated as one of the most effective for target prediction [1].

- Database Curation: Obtain a comprehensive database of ligand-target interactions, such as ChEMBL (version 34 or higher) [1]. Filter bioactivity records (e.g., IC50, Ki) to include only high-confidence interactions (e.g., confidence score ≥ 7) and remove duplicates and non-specific protein targets [1].

- Fingerprint Calculation: For the query molecule and all molecules in the database, compute molecular fingerprints. The literature suggests Morgan fingerprints (radius 2, 2048 bits) offer superior performance over MACCS keys for this application [1].

- Similarity Search: Calculate the similarity (e.g., Tanimoto coefficient) between the query molecule's fingerprint and every molecule in the curated database.

- Target Prediction: Rank the database molecules by their similarity to the query. The known targets of the top K most similar molecules (e.g., top 1, 5, 10, or 15) are retrieved as potential targets for the query [1].

- Validation: To prevent bias, ensure that the query molecule and its close analogs are excluded from the database during the benchmarking phase [1].
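The similarity search, target ranking, and leakage-avoidance steps can be sketched together as follows. This is a generic nearest-neighbor illustration, not MolTarPred's actual implementation: the database layout, the `predict_targets` function, its `exclude_ids` parameter, and the compound and target names are all hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto coefficient on sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def predict_targets(query_fp, database, top_k=5, exclude_ids=()):
    """Rank targets by the best similarity among the top_k nearest neighbors.

    database: list of (compound_id, fingerprint_set, set_of_target_names).
    exclude_ids: compounds to hide during benchmarking (the query and analogs).
    """
    scored = [
        (tanimoto(query_fp, fp), cid, targets)
        for cid, fp, targets in database
        if cid not in exclude_ids
    ]
    scored.sort(key=lambda item: item[0], reverse=True)
    best = {}
    for sim, _cid, targets in scored[:top_k]:
        for target in targets:
            best[target] = max(best.get(target, 0.0), sim)
    return sorted(best.items(), key=lambda kv: kv[1], reverse=True)

# Toy database (hypothetical compounds, fingerprints, and targets).
database = [
    ("c1", {1, 2, 3, 4}, {"T1"}),
    ("c2", {1, 2, 3, 9}, {"T2"}),
    ("c3", {7, 8}, {"T3"}),
    ("query", {1, 2, 3, 4}, {"T1"}),  # the query itself is in the database
]
predictions = predict_targets({1, 2, 3, 4}, database, top_k=2, exclude_ids={"query"})
```

Excluding the query (and, in a real benchmark, its close analogs) prevents the trivial self-match from inflating the measured accuracy.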

Ligand-based screening workflow.

Application Notes and Performance

Ligand-based methods are particularly valuable for target fishing or polypharmacology prediction, where the goal is to identify all potential targets for a small molecule [1]. A case study on fenofibric acid using MolTarPred successfully predicted its potential for repurposing as a THRB modulator for thyroid cancer treatment [1]. Performance is highly dependent on the similarity metric and fingerprint combination, and it is recommended to test multiple configurations for a given dataset [21].

Table 1: Common Ligand-Based Methods and Their Characteristics

| Method Name | Type | Key Algorithm | Fingerprint Used | Application |
|---|---|---|---|---|
| MolTarPred [1] | Stand-alone Code | 2D Similarity | MACCS, Morgan | General Target Prediction |
| SuperPred [1] | Web Server | 2D/Fragment/3D Similarity | ECFP4 | General Target Prediction |
| PPB2 [1] | Web Server | Nearest Neighbor/Naïve Bayes | MQN, ECFP4 | Polypharmacology Profiling |
| LiSiCA [21] | Stand-alone Code | 3D Pharmacophore & Shape | Molecular Graph & 3D Coordinates | Similarity based on 3D alignment |

Structure-Based Prediction Methods

Structure-based drug design (SBDD) relies on the three-dimensional structure of the target protein to identify and optimize potential drugs [20]. The core technique is molecular docking, which predicts the preferred orientation (pose) of a small molecule bound to a target protein and uses a scoring function to estimate the strength of the interaction [22].

Key Algorithms and Protocols

The SBDD process involves several key steps, from obtaining a reliable protein structure to docking and scoring ligand poses.

- Receptor Modeling: A high-quality 3D structure of the target is essential. Sources include:

- Experimental Structures: The Protein Data Bank (PDB) is the primary repository for structures determined by X-ray crystallography, cryo-EM, or NMR [22].

- Computational Prediction: When experimental structures are unavailable, AI-based tools like AlphaFold2 and RoseTTAFold can generate highly accurate protein models from amino acid sequences [22]. For GPCRs and other dynamic targets, generating state-specific (e.g., active vs. inactive) models is crucial for success [22].

- Molecular Docking: This process involves sampling possible ligand conformations and orientations within the binding site and ranking them using a scoring function.

- Emerging Co-folding Methods: A recent breakthrough involves co-folding methods, such as NeuralPLexer, RoseTTAFold All-Atom, and Boltz-1/Boltz-1x, which predict the protein-ligand complex structure directly from the protein sequence and ligand information [23]. These deep learning approaches can model structural changes induced by ligand binding (induced fit) but are currently biased towards well-characterized orthosteric sites [23].

Protocol 2: Structure-Based Hit Identification using Molecular Docking

This protocol outlines a standard docking workflow for hit identification.

- Protein Preparation:

- Obtain the protein structure from the PDB or generate a model using AlphaFold2.

- Remove water molecules and co-crystallized ligands, unless they are critical for binding.

- Add hydrogen atoms and assign protonation states to residues (e.g., using H++ or PROPKA).

- Define the binding site, typically around the known active site or a predicted allosteric site.

- Ligand Preparation:

- Obtain the 3D structure of the query small molecule(s) in a suitable format (e.g., SDF, MOL2).

- Generate possible tautomers and protonation states at physiological pH.

- Perform energy minimization to ensure correct geometry.

- Docking Execution:

- Use docking software (e.g., AutoDock Vina, Glide, GOLD) to perform flexible ligand docking into the rigid protein binding site.

- Set the search space to encompass the entire binding site.

- Generate multiple poses (e.g., 10-20) per ligand.

- Pose Scoring and Analysis:

- Rank the generated poses based on the scoring function provided by the docking program.

- Visually inspect the top-ranked poses for sensible interactions (e.g., hydrogen bonds, hydrophobic contacts, pi-stacking).

- Select the most promising poses and compounds for further experimental validation.
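Defining the search space is the step most easily made reproducible in code. Docking programs such as AutoDock Vina take a box center and box dimensions in ångströms; the sketch below derives both from the coordinates of binding-site atoms. The function name `docking_box`, the 4 Å padding, and the coordinates are assumptions for illustration, and parsing the coordinates from a structure file is left out.

```python
def docking_box(site_coords, padding=4.0):
    """Return (center, size) of an axis-aligned box around binding-site atoms.

    site_coords: iterable of (x, y, z) tuples in angstroms.
    padding: extra margin added on every side (assumed value, tune per target).
    """
    xs, ys, zs = zip(*site_coords)
    mins = (min(xs), min(ys), min(zs))
    maxs = (max(xs), max(ys), max(zs))
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size

# Toy coordinates (hypothetical binding-site atoms).
site = [(10.0, 20.0, 30.0), (14.0, 26.0, 30.0), (12.0, 22.0, 38.0)]
center, size = docking_box(site)
```

Recording the computed center and size alongside the docking results lets others rerun the same search space, which matters when poses are later compared across tools.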

Structure-based docking workflow.

Application Notes and Performance

Structure-based methods are indispensable when little is known about active ligands but the target structure is available [20]. They are particularly powerful for lead optimization, as the binding pose can guide medicinal chemistry efforts to improve potency and selectivity [22]. The success of docking is highly dependent on the accuracy of the protein structure and the quality of the scoring function. While AI-predicted structures have revolutionized the field, they may still contain inaccuracies in flexible loops and side-chain conformations in the binding site, which can impact docking accuracy [22]. Co-folding methods show great promise but currently struggle with predicting allosteric ligand binding, as their training data is dominated by orthosteric sites [23].

Table 2: Common Structure-Based Methods and Tools

| Method/Tool | Type | Key Principle | Application |
|---|---|---|---|
| Molecular Docking (e.g., AutoDock Vina) [20] | Stand-alone/Server | Sampling & Empirical Scoring | Hit Identification, Pose Prediction |
| AlphaFold2 [22] | Web Server/Code | Deep Learning (AI) | Protein Structure Prediction |
| NeuralPLexer [23] | Deep Learning Model | Co-folding from Sequence | Protein-Ligand Complex Prediction |
| Boltz-1/Boltz-1x [23] | Deep Learning Model | Co-folding from Sequence | High-Quality Pose Prediction (>90% pass quality checks) |

Machine Learning Approaches

Machine learning (ML) models can learn complex, non-linear relationships between molecular structures and their biological activities from large datasets, making them powerful tools for predictive modeling in drug discovery [20]. These models can be applied in both ligand- and structure-based contexts.

Key Algorithms and Protocols

ML algorithms can be categorized into traditional ML and deep learning (DL). The choice of algorithm depends on the problem type (classification vs. regression) and the size and nature of the available data [20] [24].

- Traditional Machine Learning: These models require pre-computed molecular features (descriptors or fingerprints).

- Random Forest (RF): An ensemble method that builds multiple decision trees and aggregates their results, often used for classification and regression tasks. Averaging over many trees makes it relatively robust to overfitting [1] [24].

- Naïve Bayes: A probabilistic classifier based on Bayes' theorem, suitable for high-dimensional data [1] [24].

- Support Vector Machine (SVM): Effective for binary classification by finding the optimal hyperplane that separates classes in a high-dimensional space [24].

- Deep Learning (DL): A subset of ML that uses neural networks with many layers to automatically learn relevant features from raw data (e.g., SMILES strings, graphs) [20].

Protocol 3: Building a ML-QSAR Model for Target Prediction

This protocol describes building a Quantitative Structure-Activity Relationship (QSAR) model using ML.

- Data Collection and Curation:

- Collect a dataset of molecules with known activity (e.g., IC50, Ki) against a specific target from a database like ChEMBL [1].

- Divide the data into active and inactive classes based on a predefined activity threshold.

- Split the data into training (~70%), validation (~15%), and test sets (~15%). Ensure that the test set is held back until the final model evaluation.

- Feature Calculation:

- Calculate molecular descriptors (e.g., molecular weight, logP) or fingerprints (e.g., ECFP4, Morgan) for all compounds [1].

- Model Training and Validation:

- Train a model (e.g., Random Forest) on the training set using the features as input and the activity class as the output.

- Use the validation set to tune hyperparameters (e.g., number of trees in RF) to prevent overfitting.

- Model Evaluation:

- Use the independent test set to evaluate the final model's performance. Common metrics include Accuracy, Precision, Recall, and the Area Under the ROC Curve (AUC-ROC) [25].

- Perform cross-validation on the training data to assess the model's robustness to the choice of split, keeping the test set untouched.
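The class-assignment step in data curation is usually done on a logarithmic potency scale. The sketch below converts IC50 values in nanomolar to pIC50 and labels compounds as active or inactive; the 1 μM cutoff (pIC50 of 6), the function names, and the example records are assumptions, and the appropriate threshold depends on the target and assay.

```python
import math

def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L), i.e. 9 - log10(IC50 in nM)."""
    return 9.0 - math.log10(ic50_nm)

def label_actives(records, threshold_pic50=6.0):
    """Label each (compound_id, IC50 in nM) record: 1 = active, 0 = inactive."""
    return [
        (cid, 1 if pic50_from_nm(ic50_nm) >= threshold_pic50 else 0)
        for cid, ic50_nm in records
    ]

# Toy records (hypothetical compounds and potencies).
records = [("cpd_a", 50.0), ("cpd_b", 800.0), ("cpd_c", 25000.0)]
labels = label_actives(records)
```

Fixing the threshold before modeling, and reporting it with the results, avoids tuning the class boundary to flatter the final metrics.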

Application Notes and Performance

ML models are widely used for predicting drug-target interactions, virtual screening, and assessing pharmacokinetic properties [20]. A systematic comparison of target prediction methods found that MolTarPred (ligand-centric) and RF-QSAR (target-centric) were among the most effective [1]. Deep learning models excel with large datasets but require substantial computational resources and data, whereas traditional ML can be effective with smaller, well-curated datasets [20]. It is critical to avoid data leakage by ensuring that molecules very similar to the query are not present in the training data during benchmark validation [1].

Table 3: Common Machine Learning Algorithms and Their Uses in Drug Discovery

| Algorithm | Type | Key Characteristics | Common Drug Discovery Application |
|---|---|---|---|
| Random Forest (RF) [1] [24] | Ensemble (Traditional ML) | Robust, handles high-dimensional data, reduces overfitting | QSAR, Classification (e.g., RF-QSAR) |
| Naïve Bayes [1] [24] | Probabilistic (Traditional ML) | Fast, works well with high-dimensional data | Target Prediction, Document Classification |
| Support Vector Machine (SVM) [24] | Traditional ML | Effective for binary classification, finds complex boundaries | Compound Classification, Toxicity Prediction |
| Multitask Neural Networks [1] | Deep Learning (DL) | Learns multiple tasks simultaneously, can improve accuracy | Polypharmacology Prediction, Multi-target Activity |
| Graph Neural Networks [20] | Deep Learning (DL) | Learns directly from molecular graph structure | Molecular Property Prediction, de novo Design |

Experimental Validation & Reagent Solutions

Validation is a critical step to ensure the predictive power and real-world applicability of any computational method.

Model Evaluation Metrics

For classification models (e.g., active vs. inactive), standard evaluation metrics should be employed [25].

- Confusion Matrix: A table showing true positives, true negatives, false positives, and false negatives [25].

- Precision and Recall: Precision measures the correctness of positive predictions, while Recall measures the ability to find all positive instances [25].

- F1-Score: The harmonic mean of Precision and Recall, providing a single metric for model balance [25].

- AUC-ROC: The Area Under the Receiver Operating Characteristic curve measures the model's ability to distinguish between classes across all classification thresholds [25].
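As a worked illustration of the four metrics listed above, the following sketch computes them from scratch on a toy set of labels and scores. The function names, the example data, and the 0.5 decision threshold are assumptions made for the example. AUC-ROC is computed through its rank interpretation: the probability that a randomly chosen active is scored above a randomly chosen inactive.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, TN, FP, FN) for binary labels coded as 1 (active) and 0 (inactive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn


def precision_recall_f1(y_true, y_pred):
    """Precision, recall, and their harmonic mean (F1); 0.0 when undefined."""
    tp, _, fp, fn = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def roc_auc(y_true, scores):
    """AUC as P(score of a positive > score of a negative); ties count as 0.5."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy data: hypothetical labels and model scores
y_true = [1, 0, 1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.3, 0.5, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # 0.5 threshold is an assumption

print(confusion_counts(y_true, y_pred))
print(precision_recall_f1(y_true, y_pred))
print(roc_auc(y_true, scores))
```

Unlike precision, recall, and F1, the AUC does not depend on the chosen threshold, which is why it is computed from the raw scores rather than from `y_pred`.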

Table 4: Key Reagents and Databases for Computational Target Prediction

| Resource Name | Type | Function in Validation | Access |

|---|---|---|---|

| ChEMBL [1] | Bioactivity Database | Provides curated, experimentally validated ligand-target interactions for model training and benchmarking. | Web Server / Local PostgreSQL |

| PDB (Protein Data Bank) [22] | Protein Structure Database | Source of experimentally solved 3D protein structures for structure-based methods and model validation. | Web Server |

| BindingDB [1] | Bioactivity Database | Provides binding affinity data for drug targets, used for model training and testing. | Web Server |

| RDKit [21] | Cheminformatics Toolkit | Open-source software for calculating fingerprints, descriptors, and performing molecular operations. | Stand-alone Code |

| AlphaFold Protein Structure Database [22] | Protein Structure Database | Source of high-accuracy AlphaFold2-predicted protein structures for targets without experimental structures. | Web Server |

| MolTarPred [1] | Target Prediction Tool | A high-performing, ligand-based method for benchmarking against new models. | Stand-alone Code |

Integrated Workflow and Decision Framework

No single method is universally superior. The choice of method depends on the available data and the specific research question. A synergistic approach that integrates multiple methods often yields the most reliable results.

Integrated method selection workflow.

Decision Framework for Method Selection:

- If a protein structure is available (experimental, or a high-confidence prediction such as one from AlphaFold2): Prioritize structure-based methods like docking. For novel targets, also consider co-folding methods if the ligand is not expected to bind in a deeply buried orthosteric site [23] [22].

- If protein structure is unavailable, but known active ligands exist: Prioritize ligand-based methods like similarity searching or ML models trained on bioactivity data (e.g., from ChEMBL) [1] [21].

- If large, high-quality bioactivity datasets are available: Machine Learning (especially Random Forest or Multitask Neural Networks) is highly suitable for building robust predictive models [1] [20].

- For comprehensive validation: Always use an integrated approach. For example, use a fast ligand-based method to screen a large chemical library, then apply more computationally intensive structure-based methods to the top hits. Finally, use consensus scoring from multiple methods to generate high-confidence target hypotheses for experimental testing [1] [21].
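The consensus step in the last item can be illustrated with a simple mean-rank aggregation. This is only one of several possible consensus schemes and is not prescribed by the cited sources. The method names and target lists below are hypothetical, and a target missing from a method's list is assigned the rank just past the end of that list (an assumption of this sketch).

```python
def consensus_rank(rankings):
    """Aggregate ranked target lists by mean rank.

    rankings: dict mapping method name -> list of targets ordered best-first.
    A target absent from a list receives rank len(list) + 1 for that method.
    Returns (target, mean_rank) tuples sorted best-first; ties break by name.
    """
    targets = {t for ranked in rankings.values() for t in ranked}
    mean_rank = {}
    for t in targets:
        ranks = [
            ranked.index(t) + 1 if t in ranked else len(ranked) + 1
            for ranked in rankings.values()
        ]
        mean_rank[t] = sum(ranks) / len(ranks)
    return sorted(mean_rank.items(), key=lambda kv: (kv[1], kv[0]))


# Hypothetical outputs of three methods for one query compound
rankings = {
    "ligand_similarity": ["EGFR", "ABL1", "CDK2"],
    "docking": ["ABL1", "EGFR", "SRC"],
    "ml_model": ["EGFR", "SRC", "ABL1"],
}
consensus = consensus_rank(rankings)
for target, rank in consensus:
    print(f"{target}: mean rank {rank:.2f}")
```

Targets supported by several methods rise to the top, while targets proposed by only one method are penalised rather than discarded.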

Navigating Biases in Bioactivity and Structural Data and Their Impact on Validation

Computational target prediction is a cornerstone of modern drug discovery, but the validity of its predictions is heavily dependent on the quality of the underlying data. Biases in bioactivity and structural data can significantly skew model outputs, leading to failed validation and costly late-stage attrition. This application note provides a structured framework for identifying, quantifying, and mitigating these biases to strengthen the validation protocols for computational prediction methods. We detail specific experimental protocols and provide actionable checklists to help researchers navigate the complex landscape of data bias.

Quantifying Bias in Research Data

A comprehensive analysis of nonclinical research articles reveals significant gaps in the reporting of measures against bias, which directly impacts the reliability of data used for computational modeling [26]. The following table summarizes key reporting deficiencies across a sample of 860 life sciences articles published in 2020.

Table 1: Reporting Rates of Anti-Bias Measures in Nonclinical Research (2020)

| Measure Against Bias | Reporting Rate in In Vivo Articles (n=320) | Reporting Rate in In Vitro Articles (n=187) | Reporting Rate in Combined In Vivo/In Vitro Articles (n=353) |

|---|---|---|---|

| Randomization | 0% - 63% (varies by journal) | 0% - 4% (varies by journal) | Not separately reported |

| Blinded Conduct of Experiments | 11% - 71% (varies by journal) | 0% - 86% (varies by journal) | Not separately reported |

| A Priori Sample Size Calculation | Low (specific rates not reported) | Low (specific rates not reported) | Not separately reported |

This systemic under-reporting of critical methodological details introduces selection bias and measurement bias into public datasets, which are then propagated through computational models [26]. Furthermore, studies have confirmed the presence of technical bias in widely used repositories like The Cancer Genome Atlas (TCGA), where models can achieve nearly 70% accuracy in predicting a sample's data source center—a clear indicator of learned site-specific technical artifacts rather than biological signals [27].

A Protocol for Bias Detection and Mitigation in Computational Workflows

The following integrated protocol provides a step-by-step guide for detecting and mitigating bias throughout the computational target prediction pipeline, from data curation to model validation.

Experimental Protocol: Bias-Aware Model Validation

Purpose: To validate a computational target prediction model while accounting for and mitigating biases in the training and test data.

Procedure:

Data Collection and Preprocessing

- Data Source Identification: Gather data from diverse, well-documented public repositories (e.g., TCGA, ChEMBL, FooDB) and proprietary sources [28] [29].

- Data Curation: Implement stringent preprocessing to handle inconsistencies, standardize nomenclature, and normalize measurement units (e.g., converting all bioactivity concentrations to nM and all compound amounts to mg/100 g) [28].

- Metadata Annotation: Preserve and curate all available metadata, including demographic information (if any), experimental conditions, instrumentation, and data source center.
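The unit normalization mentioned under Data Curation can be sketched as a small lookup-based converter. The function name and the set of supported units are assumptions for illustration. Unknown units raise an error rather than being silently passed through, so that inconsistent records surface during curation.

```python
# Multipliers that convert a molar concentration unit to nanomolar (nM)
_TO_NM = {"pM": 1e-3, "nM": 1.0, "uM": 1e3, "mM": 1e6, "M": 1e9}


def to_nanomolar(value: float, unit: str) -> float:
    """Convert a concentration to nM; raises ValueError for unsupported units."""
    # Normalise the micro sign (U+00B5) and Greek mu (U+03BC) to ASCII 'u'
    key = unit.strip().replace("\u00b5", "u").replace("\u03bc", "u")
    if key not in _TO_NM:
        raise ValueError(f"Unsupported concentration unit: {unit!r}")
    return value * _TO_NM[key]


# Hypothetical records as they might appear in a raw bioactivity export
records = [(1.5, "uM"), (250.0, "pM"), (12.0, "nM")]
print([to_nanomolar(v, u) for v, u in records])
```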

Bias Auditing

- Performance Disparity Testing: Train a classifier to predict protected attributes (e.g., data source center, demographic group) from your model's primary features. Accuracy well above the chance level for that attribute (e.g., the nearly 70% reported for predicting the source center in TCGA) indicates strong technical or sampling bias [27].

- Cross-Group Performance Analysis: Calculate key performance metrics (e.g., AUC, accuracy) separately for different subgroups defined by data source, experimental batch, or demographic variables. Disparities in error rates indicate potential bias [30] [31].

- Reference Dataset Benchmarking: Use balanced benchmarking datasets, whether simulated (with known ground truth) or real-world (with carefully documented limitations), to evaluate model performance across different conditions [32].
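The auditing steps above can be illustrated with two small helpers: one that computes accuracy per subgroup and the largest gap between subgroups, and one that gives the majority-class baseline against which a source-prediction accuracy can be judged. The function names and toy data are assumptions. What counts as an unacceptable gap is a project-specific decision that this sketch does not settle.

```python
from collections import Counter, defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Accuracy computed separately for each subgroup label."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        total[g] += 1
        correct[g] += int(t == p)
    return {g: correct[g] / total[g] for g in total}


def max_disparity(per_group):
    """Largest difference in a metric between any two subgroups."""
    values = list(per_group.values())
    return max(values) - min(values)


def majority_baseline(groups):
    """Accuracy obtained by always predicting the most frequent group."""
    counts = Counter(groups)
    return counts.most_common(1)[0][1] / len(groups)


# Toy predictions from two hypothetical data source centers
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0, 1, 0]
groups = ["site_A"] * 4 + ["site_B"] * 4

per_group = accuracy_by_group(y_true, y_pred, groups)
print(per_group)
print(max_disparity(per_group))
print(majority_baseline(groups))
```

The same pattern applies to AUC or any other metric: compute it per subgroup, then report both the per-group values and the gap.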

Bias Mitigation

- Pre-processing: Apply re-sampling or re-weighting techniques to balance underrepresented groups in the training data [30].

- In-processing: Use adversarial debiasing during model training, where a secondary network attempts to predict the protected attribute (e.g., data source) from the main model's features, forcing the main model to learn features invariant to that attribute [30].

- Post-processing: Adjust decision thresholds for different subgroups to equalize specified fairness metrics, such as equalized odds [30].
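As an example of the pre-processing option, the sketch below assigns inverse-frequency sample weights so that each group contributes equally to the training loss. This is one common re-weighting scheme, shown here as an assumption rather than the specific method of [30]. The function name and the group labels are hypothetical.

```python
from collections import Counter


def inverse_frequency_weights(groups):
    """Per-sample weights n / (k * count[group]).

    n is the number of samples and k the number of distinct groups, so the
    weights sum to n and every group carries the same total weight (n / k).
    """
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]


# Three samples from an over-represented source, one from a rare source
groups = ["center_1", "center_1", "center_1", "center_2"]
weights = inverse_frequency_weights(groups)
print(weights)
```

Many training APIs accept such values through a per-sample weight argument. Whether a particular model supports this should be checked in its documentation.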

Model Validation and Reporting

- External Validation: Always validate the final model on a completely external dataset, preferably one from a different source institution or acquired with different protocols, to test generalizability [27] [31].

- Comprehensive Reporting: Document all bias auditing results, mitigation strategies employed, and subgroup performance analyses. Transparency is critical for assessing validation robustness [26].

Diagram: Bias Mitigation Pathways in AI Model Lifecycle

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for Bias-Aware Computational Research

| Resource Name | Type | Primary Function in Bias Mitigation |

|---|---|---|

| BASIL DB [28] | Knowledge Graph Database | Provides semantically integrated bioactivity data from multiple sources (FooDB, ChEMBL, PubMed), using NLP to standardize information and link compounds to health outcomes. |

| TCGA (The Cancer Genome Atlas) [27] | Biomedical Dataset | Serves as a primary source for histopathology and genomic data. Note: Requires rigorous bias auditing for site-specific effects. |

| ARRIVE 2.0 Guidelines [26] | Reporting Guideline | Provides a checklist to improve the design, analysis, and reporting of in vivo research, enhancing data quality and reproducibility for model training. |

| PROBAST [31] | Risk of Bias Assessment Tool | A structured tool to assess the risk of bias and applicability of prediction model studies. |

| Adversarial Debiasing [30] | Algorithmic Technique | An in-processing mitigation technique that uses an adversary network to remove dependence on protected attributes in the model's latent features. |

Robust validation of computational target prediction methods requires a fundamental shift from simply evaluating performance to actively interrogating and mitigating data bias. By integrating the outlined protocols for bias auditing, mitigation, and transparent reporting into their workflows, researchers can build more reliable, generalizable, and equitable models. This proactive approach is essential for reducing attrition rates in drug discovery and ensuring that computational predictions translate into tangible clinical benefits.

A Step-by-Step Guide to Implementing Robust Validation Strategies

Within the protocol for validating computational target prediction methods, the selection of an appropriate validation strategy is a critical determinant of the reliability and interpretability of research outcomes. This document provides detailed application notes and protocols for two fundamental validation methods: the hold-out test and k-fold cross-validation. The guidance is structured to enable researchers, scientists, and drug development professionals to make informed, context-driven choices to robustly evaluate their predictive models.

Core Concepts and Comparative Analysis

Hold-Out Validation

The hold-out method, also known as the train-test split, involves partitioning the available dataset into two distinct subsets: a training set and a test set. The model is trained exclusively on the training set, and its performance is evaluated once on the held-out test set, which provides an estimate of its performance on unseen data [33] [34]. A common partition is to use 80% of the data for training and the remaining 20% for testing [33].
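A minimal sketch of such a split, using only the standard library, is shown below. The function name, the fixed seed, and the 20% default are illustrative assumptions. Note that a purely random split does not by itself prevent near-duplicate molecules from landing on both sides of the partition, so the similarity-based leakage check discussed earlier still applies.

```python
import random


def holdout_split(items, test_fraction=0.2, seed=42):
    """Shuffle once with a fixed seed and split into (train, test) lists."""
    if not 0 < test_fraction < 1:
        raise ValueError("test_fraction must be between 0 and 1")
    indices = list(range(len(items)))
    random.Random(seed).shuffle(indices)  # local RNG keeps the split reproducible
    n_test = max(1, round(len(items) * test_fraction))
    test = [items[i] for i in indices[:n_test]]
    train = [items[i] for i in indices[n_test:]]
    return train, test


# Hypothetical compound identifiers
compounds = [f"cmpd_{i}" for i in range(100)]
train_set, test_set = holdout_split(compounds, test_fraction=0.2)
print(len(train_set), len(test_set))
```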

k-Fold Cross-Validation

k-fold cross-validation is a resampling technique that uses the available data more comprehensively. The dataset is randomly split into k approximately equal-sized subsets, or folds [35]. The model is trained and evaluated k times; in each iteration, k-1 folds are used for training, and the remaining single fold is used as the test set. Each fold serves as the test set exactly once [35] [36]. The final performance metric is the average of the k individual performance estimates [37]. A value of k=5 or k=10 is typically suggested [35].
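The procedure can be sketched with a small index generator and an averaging loop. Both function names are assumptions, and `fit_and_score` is a hypothetical callback standing in for whatever model training and scoring routine is being validated.

```python
import random


def kfold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) for each of k shuffled folds."""
    if k < 2 or k > n_samples:
        raise ValueError("k must be between 2 and the number of samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # fold sizes differ by at most one
    for i in range(k):
        test = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


def cross_validate(n_samples, fit_and_score, k=5, seed=0):
    """Average the score returned by fit_and_score(train, test) over k folds."""
    scores = [fit_and_score(tr, te) for tr, te in kfold_indices(n_samples, k, seed)]
    return sum(scores) / len(scores), scores


# Dummy scorer for illustration only: returns the test-fold fraction
mean_score, fold_scores = cross_validate(10, lambda tr, te: len(te) / 10, k=5)
print(mean_score, fold_scores)
```

Reporting the individual fold scores alongside the mean makes the variability of the estimate visible.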

Strategic Comparison for Research Decisions

The choice between these methods is not one-size-fits-all and must be guided by the specific context of the research, particularly in computational target prediction where data characteristics can vary significantly.

Table 1: Comparative Analysis of Hold-Out and k-Fold Cross-Validation Methods

| Feature | Hold-Out Validation | k-Fold Cross-Validation |

|---|---|---|

| Core Principle | Single train-test split [33] | k iterative train-test splits; each data point is tested once [35] |

| Computational Cost | Lower; model is trained and evaluated once [33] | Higher; model is trained and evaluated k times [35] [37] |

| Variance of Estimate | Higher; dependent on a single, potentially unlucky, data split [33] [38] | Lower; averaging over k results provides a more stable estimate [38] [36] |

| Data Utilization | Less efficient; a portion of data (the test set) is never used for training [34] | More efficient; every data point is used for training in k-1 iterations and for testing exactly once [35] [37] |

| Ideal Use Context | Very large datasets, initial model prototyping, or when computational time is a constraint [33] [39] | Small to medium-sized datasets, final model evaluation, and when a reliable performance estimate is paramount [35] [40] |

| Risk of Overfitting | Assessed once, but information can leak from the test set if it is reused for hyperparameter tuning [34] | Reduced through averaging, though a separate test set is still recommended for final model assessment [34] |

For research requiring high reliability of performance estimates, such as in peer-reviewed publications or before initiating costly in vitro experiments, k-fold cross-validation is generally preferred [40]. Its averaging process provides a more robust and trustworthy measure of a model's generalizability [38] [36].

Experimental Protocols

Protocol 1: Implementing the Hold-Out Method

This protocol is suitable for rapid model assessment during initial development phases or when working with very large datasets.

Step-by-Step Procedure: