A Practical Guide to Computational Chemistry Model Evaluation: From Foundations to Validation

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to evaluate computational chemistry models effectively. It covers foundational principles, practical methodologies, common troubleshooting strategies, and rigorous validation techniques. By addressing critical aspects such as data set preparation, performance metrics, error analysis, and comparative benchmarking, this guide aims to equip scientists with the knowledge to assess model reliability, avoid common pitfalls, and make informed decisions in practical applications like virtual screening and binding affinity prediction.

Laying the Groundwork: Core Principles and Common Pitfalls in Model Evaluation

Why Proper Model Evaluation is Critical for Practical Decision Making

In computational chemistry, model evaluation is the discipline that separates successful research and development from costly failures. As of 2025, evaluation practice has moved beyond simple accuracy metrics toward a comprehensive framework that assesses real-world impact, ethical considerations, and business value [1]. This shift reflects the growing understanding that a model's performance on historical data means little if it cannot deliver tangible value while operating responsibly in production environments.

The contemporary approach extends technical validation into comprehensive assessment. Where earlier practice focused primarily on statistical measures and optimization metrics, modern evaluation covers the entire ecosystem in which models operate: traditional performance metrics, fairness assessments, robustness testing, business impact analysis, and continuous monitoring [1]. The stakes are high: organizations that implement comprehensive model evaluation frameworks are reported to see significantly higher ROI from their AI initiatives and substantially fewer production incidents [1].

For computational chemistry researchers and drug development professionals, this paradigm shift is particularly relevant. The ability to simulate large molecular systems with quantum-level accuracy would help scientists rapidly design new energy storage technologies, new medicines, and beyond [2]. However, the usefulness of any machine learning interatomic potential (MLIP) depends entirely on the rigor of evaluation applied to validate its predictions [2]. Proper evaluation provides the critical bridge between theoretical simulations and practical decision-making in drug discovery and materials science.

Essential Metrics for Comprehensive Model Evaluation

The metrics used in model evaluation have evolved significantly to address the limitations of traditional approaches while providing deeper insights into model behavior and impact. For computational chemistry applications, selecting appropriate metrics is crucial for ensuring that models will perform reliably in practical decision-making scenarios.

Classification and Regression Metrics

While accuracy remains the most intuitive metric for classification problems, representing the proportion of correct predictions among all predictions, modern evaluation recognizes that accuracy alone often provides a misleading picture, particularly in imbalanced datasets or scenarios where different types of errors have asymmetric costs [1]. The evolution of classification metrics has led to widespread adoption of precision, recall, and F1-score as fundamental components of model evaluation [1].
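To make the point about imbalanced data concrete, here is a minimal sketch in plain Python. The screening set (5 actives among 100 compounds) and both sets of predictions are hypothetical values chosen for illustration, not results from any cited study.

```python
def classification_metrics(y_true, y_pred):
    """Return accuracy, precision, recall and F1 for binary labels (1 = active)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical screen: 5 actives followed by 95 inactives.
y_true = [1] * 5 + [0] * 95
# A trivial model that labels every compound inactive.
always_inactive = [0] * 100
# A model that recovers 4 of 5 actives at the cost of 6 false positives.
useful_model = [1, 1, 1, 1, 0] + [1] * 6 + [0] * 89

trivial = classification_metrics(y_true, always_inactive)
useful = classification_metrics(y_true, useful_model)
print("always inactive:", trivial)
print("useful model:   ", useful)
```

In this toy case the trivial model scores higher on accuracy (0.95 versus 0.93) while finding no actives at all, which is exactly the situation precision, recall, and F1 are meant to expose.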

For regression problems in computational chemistry, such as predicting molecular energies or properties, model evaluation employs metrics tailored to continuous outcomes. Mean Absolute Error (MAE) gives the average magnitude of prediction error in the units of the target and is less sensitive to outliers than squared-error metrics, making it valuable for understanding typical performance. Mean Squared Error (MSE) and Root Mean Squared Error (RMSE) penalize larger errors more heavily, making them suitable for applications where large errors are particularly undesirable, such as predicting reaction energies or binding affinities [1].
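The difference between MAE and RMSE is easiest to see on a small example. The sketch below uses hypothetical binding free energies (in kcal/mol) and two invented prediction sets that share the same MAE but distribute their error differently.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MAE, MSE, RMSE and R-squared for paired reference/predicted values."""
    errors = [p - t for t, p in zip(y_true, y_pred)]
    n = len(errors)
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e * e for e in errors) / n
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - (mse * n) / ss_tot
    return {"mae": mae, "mse": mse, "rmse": math.sqrt(mse), "r2": r2}


# Hypothetical reference values (kcal/mol).
reference = [-9.0, -8.0, -7.0, -6.0, -5.0]
# Every prediction is off by 0.5 kcal/mol.
uniform_errors = [-8.5, -8.5, -6.5, -6.5, -4.5]
# Four exact predictions and one that is off by 2.5 kcal/mol.
one_outlier = [-9.0, -8.0, -7.0, -6.0, -2.5]

uniform = regression_metrics(reference, uniform_errors)
outlier = regression_metrics(reference, one_outlier)
print("uniform errors:", uniform)
print("one outlier:   ", outlier)
```

Both prediction sets have an MAE of 0.5 kcal/mol, but the RMSE of the second is more than twice that of the first because the single large miss dominates the squared error.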

Table 1: Essential Model Evaluation Metrics for Computational Chemistry

| Metric Category | Specific Metrics | Computational Chemistry Application | Interpretation |
|---|---|---|---|
| Classification Metrics | Accuracy, Precision, Recall, F1-Score | Classification of molecular properties, active/inactive compounds | F1-Score balances precision and recall for imbalanced datasets |
| Regression Metrics | MAE, MSE, RMSE, R-squared | Predicting molecular energies, properties, binding affinities | MAE is robust to outliers; MSE penalizes large errors |
| Probabilistic Metrics | Brier Score, Log Loss | Assessing uncertainty in molecular property predictions | Measures calibration of predicted probabilities |
| Business-Oriented Metrics | Expected value frameworks, Cost-sensitive metrics | Prioritizing compound synthesis, resource allocation | Converts model predictions to practical business impact |

Advanced Evaluation Frameworks

The most significant advancement in model evaluation comes from the integration of probabilistic and business-oriented measurements. Probabilistic metrics like Brier Score and Log Loss evaluate the quality of predicted probabilities rather than just class labels, while calibration metrics assess how well predicted probabilities match actual outcomes—a crucial consideration for decision-making under uncertainty [1]. Simultaneously, business-oriented metrics have emerged that directly measure commercial impact, including expected value frameworks that convert model predictions to monetary value, and cost-sensitive metrics that incorporate asymmetric costs of different error types based on actual business consequences [1].
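As an illustration of the probabilistic metrics mentioned above, the following sketch computes the Brier score and log loss for binary activity predictions. The labels and probabilities are hypothetical, and the clipping constant `eps` is an implementation choice made here to avoid `log(0)`.

```python
import math


def brier_score(y_true, p_pred):
    """Mean squared difference between predicted probability and the 0/1 outcome."""
    return sum((p - t) ** 2 for t, p in zip(y_true, p_pred)) / len(y_true)


def log_loss(y_true, p_pred, eps=1e-15):
    """Average negative log-likelihood of the observed labels."""
    total = 0.0
    for t, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip to keep the logarithm finite
        total -= t * math.log(p) + (1 - t) * math.log(1.0 - p)
    return total / len(y_true)


# Hypothetical activity labels and predicted probabilities of being active.
y_true = [1, 0, 1, 0]
well_calibrated = [0.9, 0.1, 0.8, 0.2]
# Same predictions, except one active compound is confidently called inactive.
overconfident = [0.9, 0.1, 0.01, 0.2]

brier_good = brier_score(y_true, well_calibrated)
brier_bad = brier_score(y_true, overconfident)
loss_good = log_loss(y_true, well_calibrated)
loss_bad = log_loss(y_true, overconfident)
print(f"Brier: {brier_good:.4f} vs {brier_bad:.4f}")
print(f"Log loss: {loss_good:.4f} vs {loss_bad:.4f}")
```

Both metrics get worse for the overconfident predictions, with log loss reacting more sharply to the single confident mistake.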

In computational chemistry, the OMol25 dataset team has developed exceptionally thorough evaluations to give fellow researchers more confidence in the capabilities of MLIPs trained on the dataset [2]. These evaluations drive innovation through friendly competition, as the results are ranked publicly. Potential users can see which models run smoothly and developers can see how their model stacks up against others [2].

Implementation Strategies for Effective Model Evaluation

The implementation of effective model evaluation requires careful consideration of methodological approaches that ensure reliable, generalizable results while accounting for practical constraints in computational chemistry research.

Cross-Validation Techniques

Cross-validation techniques form the backbone of robust evaluation, with k-fold cross-validation serving as the standard approach for most scenarios [1]. This method involves partitioning data into k folds, using k-1 folds for training and one fold for testing, then rotating through all folds to obtain comprehensive performance estimates. For imbalanced datasets common in chemical discovery, stratified k-fold cross-validation preserves the percentage of samples for each class across folds, preventing skewed performance estimates [1].
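The stratified variant can be sketched in a few lines of standard-library Python. This is a simplified illustration (indices of each class are shuffled and dealt round-robin into folds), not the implementation of any particular library; the 10-active/90-inactive label set is hypothetical.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k, seed=0):
    """Yield (train_idx, test_idx) pairs with similar class ratios in every fold."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the members of each class round-robin across the k folds.
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)

    for i in range(k):
        test_idx = sorted(folds[i])
        train_idx = sorted(j for f, fold in enumerate(folds) if f != i for j in fold)
        yield train_idx, test_idx


# Hypothetical imbalanced dataset: 10 actives, 90 inactives.
labels = [1] * 10 + [0] * 90
splits = list(stratified_kfold(labels, k=5))
for train_idx, test_idx in splits:
    n_active = sum(labels[i] for i in test_idx)
    print(f"test size={len(test_idx)}, actives in test fold={n_active}")
```

Each of the five test folds here contains 20 compounds with exactly 2 actives, so no fold ends up without positive examples.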

Temporal data in chemical simulations introduces unique challenges that require specialized cross-validation approaches. Standard random splitting can create data leakage by allowing models to inadvertently learn from future information. Time series cross-validation addresses this through forward chaining methods that train on past data and test on future data, expanding window approaches that gradually increase the training set over time, and rolling window techniques that maintain a fixed training window that moves through time [1].
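The expanding-window and rolling-window schemes can be sketched as index generators. The function names and parameters are illustrative choices made here, and samples are assumed to be already sorted in time order.

```python
def expanding_window_splits(n_samples, n_splits, test_size):
    """Forward chaining: each split trains on everything before its test block."""
    for i in range(n_splits):
        test_start = n_samples - (n_splits - i) * test_size
        if test_start <= 0:
            raise ValueError("not enough samples for the requested splits")
        train = list(range(0, test_start))
        test = list(range(test_start, test_start + test_size))
        yield train, test


def rolling_window_splits(n_samples, n_splits, test_size, train_size):
    """Fixed-length training window that slides forward with each test block."""
    for i in range(n_splits):
        test_start = n_samples - (n_splits - i) * test_size
        train_start = test_start - train_size
        if train_start < 0:
            raise ValueError("training window does not fit before the first test block")
        train = list(range(train_start, test_start))
        test = list(range(test_start, test_start + test_size))
        yield train, test


expanding = list(expanding_window_splits(n_samples=10, n_splits=3, test_size=2))
rolling = list(rolling_window_splits(n_samples=10, n_splits=3, test_size=2, train_size=3))
for train, test in expanding:
    print("expanding", train, "->", test)
for train, test in rolling:
    print("rolling  ", train, "->", test)
```

In both schemes every training index precedes every test index, which is the property that prevents a model from learning from future information.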

Nested cross-validation has emerged as a best practice for model evaluation when both model selection and performance estimation are required [1]. This approach uses an outer loop for performance estimation and an inner loop for model selection, preventing optimistic bias that occurs when the same data is used for both purposes. The implementation involves partitioning data into multiple outer folds, with each outer fold further divided into inner folds for hyperparameter tuning, ensuring that performance estimates reflect true generalization capability rather than overfitting to the validation process [1].
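A compact way to see the two loops is with a toy model. The sketch below assumes a one-dimensional k-nearest-neighbour regressor whose only hyperparameter is the number of neighbours; the synthetic data, the hyperparameter grid, and all function names are illustrative assumptions rather than anything prescribed by the cited sources.

```python
import random


def kfold_indices(n, k):
    """Yield (train, test) index lists; fold i takes positions i, i+k, i+2k, ..."""
    for i in range(k):
        test = list(range(i, n, k))
        held_out = set(test)
        train = [j for j in range(n) if j not in held_out]
        yield train, test


def knn_predict(train_x, train_y, query, k):
    """Average the targets of the k training points closest to the query."""
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - query))[:k]
    return sum(train_y[i] for i in nearest) / len(nearest)


def knn_mae(train_idx, test_idx, x, y, k):
    tx = [x[i] for i in train_idx]
    ty = [y[i] for i in train_idx]
    errors = [abs(knn_predict(tx, ty, x[i], k) - y[i]) for i in test_idx]
    return sum(errors) / len(errors)


def nested_cv(x, y, k_grid, outer_k=5, inner_k=3):
    outer_scores, chosen = [], []
    for outer_train, outer_test in kfold_indices(len(x), outer_k):
        # Inner loop: pick the hyperparameter using the outer-training data only.
        best_k, best_score = None, float("inf")
        for k in k_grid:
            inner = []
            for tr_pos, va_pos in kfold_indices(len(outer_train), inner_k):
                tr = [outer_train[p] for p in tr_pos]
                va = [outer_train[p] for p in va_pos]
                inner.append(knn_mae(tr, va, x, y, k))
            score = sum(inner) / len(inner)
            if score < best_score:
                best_k, best_score = k, score
        # Outer loop: score the selected setting on data the inner loop never saw.
        chosen.append(best_k)
        outer_scores.append(knn_mae(outer_train, outer_test, x, y, best_k))
    return outer_scores, chosen


# Synthetic data: a noisy quadratic, standing in for any property to be predicted.
rng = random.Random(0)
x = [0.1 * i for i in range(60)]
y = [xi ** 2 + rng.gauss(0.0, 0.5) for xi in x]
k_grid = [1, 3, 5, 9]
outer_scores, chosen = nested_cv(x, y, k_grid)
print("chosen k per outer fold:", chosen)
print("outer MAE estimate:", sum(outer_scores) / len(outer_scores))
```

The reported estimate is the mean of the outer-fold scores; the inner-loop scores are used only for selection and are discarded, which is what removes the optimistic bias.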

Data Splitting Strategies

The strategic splitting of data into training, validation, and test sets remains fundamental to reliable model evaluation [1]. Traditional splits using ratios like 60-20-20 work well for moderate-sized datasets, while large datasets might use 98-1-1 splits to maximize training data. For small datasets common in novel chemical research, specialized strategies like repeated cross-validation or bootstrapping provide more stable estimates [1].

Temporal splitting requires strict chronological separation, with all training data preceding validation data, which in turn precedes test data. Implementing appropriate gaps between splits helps prevent leakage from near-boundary observations. Stratified splitting maintains the distribution of important variables across splits, particularly crucial for rare molecular classes or subgroups where random splitting might create unrepresentative subsets [1].
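A chronological split with gaps can be sketched as follows. The function name, the integer split sizes, and the `gap` parameter (the number of samples dropped at each boundary) are choices made for this illustration; the timestamps are hypothetical.

```python
import random


def chronological_split(timestamps, n_val, n_test, gap=0):
    """Split sample indices into train/val/test by time, dropping `gap` samples
    at each boundary to limit leakage from near-boundary observations."""
    order = sorted(range(len(timestamps)), key=lambda i: timestamps[i])
    train_end = len(order) - n_val - n_test - 2 * gap
    if train_end <= 0:
        raise ValueError("not enough samples for the requested split sizes")
    val_start = train_end + gap
    test_start = val_start + n_val + gap
    train = order[:train_end]
    val = order[val_start:val_start + n_val]
    test = order[test_start:test_start + n_test]
    return train, val, test


# Hypothetical, unsorted acquisition times for 20 samples.
timestamps = random.Random(0).sample(range(100), 20)
train, val, test = chronological_split(timestamps, n_val=4, n_test=4, gap=1)
print("latest train time:", max(timestamps[i] for i in train))
print("val time range:   ", min(timestamps[i] for i in val), max(timestamps[i] for i in val))
print("earliest test time:", min(timestamps[i] for i in test))
```

With 20 samples this yields 10 training, 4 validation, and 4 test samples, with one sample discarded at each of the two boundaries.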

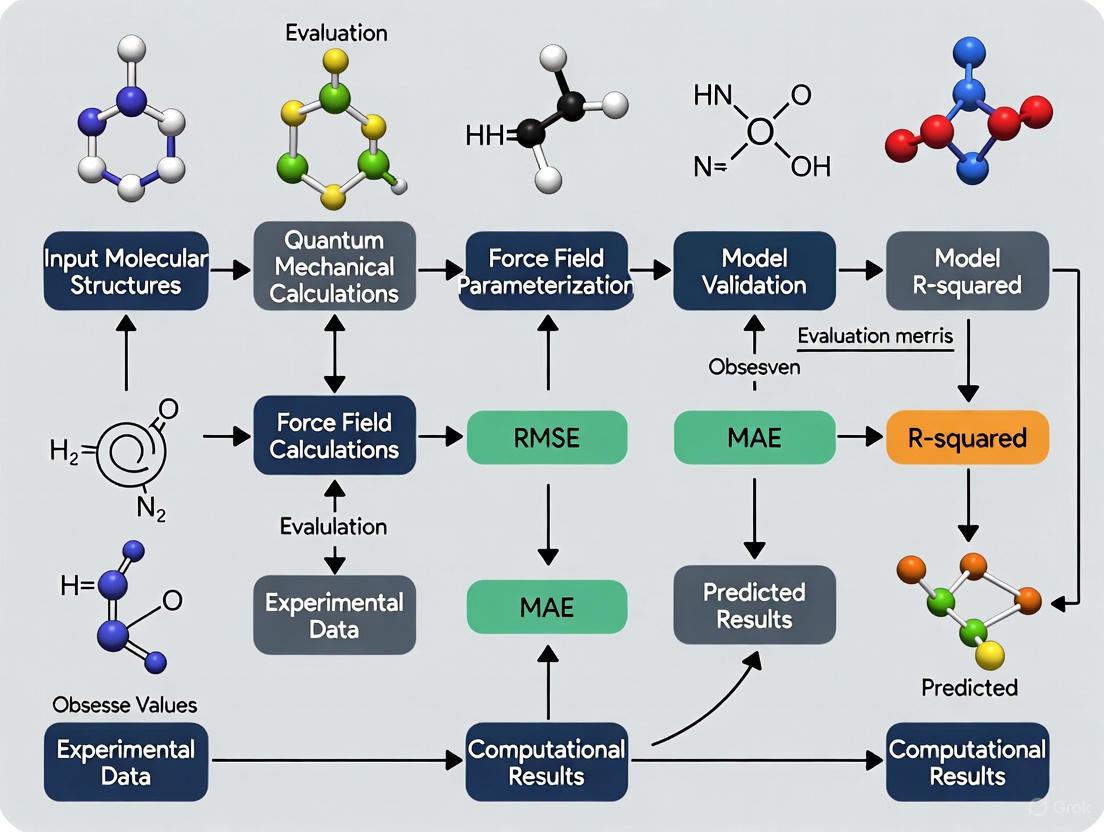

Diagram 1: Model evaluation workflow showing cross-validation approaches

Specialized Evaluation Frameworks for Computational Chemistry

As computational chemistry applications have diversified, model evaluation frameworks have evolved to address the unique characteristics and requirements of molecular simulations and property predictions.

Quantum Chemistry Evaluation Methods

Density Functional Theory (DFT) has been an incredibly powerful tool for modeling precise details of atomic interactions, allowing scientists to predict the force on each atom and the energy of the system, which in turn dictate the molecular motion and chemical reactions that determine larger-scale properties [2]. However, DFT calculations demand a lot of computing power, and their appetite increases dramatically as the molecules involved get bigger, making it impossible to model scientifically relevant molecular systems and reactions of real-world complexity, even with the largest computational resources [2].

Recent advances in machine learning offer a way to overcome these limitations. Machine Learned Interatomic Potentials (MLIPs) trained on DFT data can provide predictions of the same caliber 10,000 times faster, unlocking the ability to simulate the large atomic systems that have always been out of reach, while running on standard computing systems [2]. However, the usefulness of an MLIP depends on the amount, quality, and breadth of the data that it has been trained on.

Coupled-cluster theory, or CCSD(T), represents the gold standard of quantum chemistry [3]. The results of CCSD(T) calculations are much more accurate than what you get from DFT calculations, and they can be as trustworthy as those currently obtainable from experiments. The problem is that carrying out these calculations on a computer is very slow, and the scaling is bad: If you double the number of electrons in the system, the computations become 100 times more expensive [3]. For that reason, CCSD(T) calculations have normally been limited to molecules with a small number of atoms.
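The "100 times" figure is consistent with a short piece of arithmetic. The sketch below assumes the nominal formal scaling exponents usually quoted for these methods (about N^7 for canonical CCSD(T) and about N^3 for conventional Kohn-Sham DFT); these exponents are an assumption added here, and practical implementations can scale differently.

```python
def relative_cost(size_ratio, exponent):
    """Cost multiplier when system size grows by `size_ratio` under O(N**exponent) scaling."""
    return size_ratio ** exponent


# Nominal formal scaling exponents (assumed for illustration).
CCSDT_EXPONENT = 7
DFT_EXPONENT = 3

ccsdt_factor = relative_cost(2, CCSDT_EXPONENT)
dft_factor = relative_cost(2, DFT_EXPONENT)
print(f"Doubling system size: CCSD(T) cost x{ccsdt_factor}, DFT cost x{dft_factor}")
```

Under these assumptions, doubling the system size multiplies the CCSD(T) cost by 2^7 = 128, on the order of the quoted factor of 100, while the DFT cost grows by a factor of 8.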

Table 2: Computational Chemistry Evaluation Methods Comparison

| Method | Accuracy | Computational Cost | System Size Limit | Best Use Cases |
|---|---|---|---|---|
| DFT | Medium | High | Hundreds of atoms | Screening molecular candidates, property prediction |
| CCSD(T) | High (Gold Standard) | Very High | Tens of atoms | Benchmarking, training data for MLIPs |
| MLIPs | DFT-level (when properly trained) | Low (10,000x faster than DFT) | Thousands of atoms | Large system simulation, high-throughput screening |
| MEHnet | CCSD(T)-level | Medium | Thousands of atoms | Multi-property prediction, optical properties |

Multi-Task Evaluation Approaches

The "Multi-task Electronic Hamiltonian network," or MEHnet, represents a significant advancement in computational chemistry evaluation by shedding light on multiple electronic properties simultaneously, such as the dipole and quadrupole moments, electronic polarizability, and the optical excitation gap [3]. The excitation gap affects the optical properties of materials because it determines the frequency of light that can be absorbed by a molecule [3]. Another advantage of CCSD-trained models is that they can reveal properties of not only ground states, but also excited states. The model can also predict the infrared absorption spectrum of a molecule related to its vibrational properties, where the vibrations of atoms within a molecule are coupled to each other, leading to various collective behaviors [3].

The strength of this approach owes much to the network architecture. MEHnet uses a so-called E(3)-equivariant graph neural network, in which the nodes represent atoms and the edges that connect the nodes represent the bonds between atoms, together with customized algorithms that incorporate physics principles directly into the model [3]. Building physical principles into the model in this way helps ensure physically plausible results, which is essential for trustworthy decision-making in drug development.

Experimental Protocols for Computational Chemistry Evaluation

Implementing rigorous experimental protocols is essential for generating reliable, reproducible results in computational chemistry research. The following protocols provide detailed methodologies for key experiments in the field.

MLIP Training and Validation Protocol

Purpose: To train and validate Machine Learned Interatomic Potentials (MLIPs) using quantum chemistry data for accurate molecular simulations.

Materials and Data Requirements:

- Reference quantum chemistry data (DFT or CCSD(T) calculations)

- Training dataset such as OMol25 with diverse molecular configurations

- Computational resources (CPU/GPU clusters)

- MLIP training framework (e.g., TensorFlow, PyTorch, specialized chemistry packages)

Procedure:

- Data Preparation: Curate training dataset containing molecular structures and corresponding quantum chemical properties (energies, forces). The OMol25 dataset provides over 100 million 3D molecular snapshots with properties calculated with DFT, featuring configurations ten times larger and substantially more complex than previous datasets, with up to 350 atoms from across most of the periodic table [2].

- Model Architecture Selection: Choose appropriate network architecture. E(3)-equivariant graph neural networks have demonstrated strong performance, where nodes represent atoms and edges represent bonds between atoms [3].

- Training Regimen: Implement k-fold cross-validation with stratified sampling to ensure representative distribution of molecular classes across folds [1]. Use an appropriate train/validation/test split (e.g., 70/15/15 for moderate datasets) [4].

- Multi-Task Learning: For comprehensive evaluation, train on multiple properties simultaneously. The MEHnet approach demonstrates that a single model can evaluate multiple electronic properties, including dipole moments, polarizability, and excitation gaps [3].

- Validation Against Gold Standards: Compare model predictions against CCSD(T) calculations where feasible. CCSD(T) represents the gold standard of quantum chemistry, with results as trustworthy as those obtainable from experiments [3].

- Performance Benchmarking: Evaluate using multiple metrics including MAE, RMSE, and application-specific metrics. Implement temporal cross-validation for time-dependent properties [1].

Quality Control:

- Monitor for overfitting by tracking validation performance during training

- Test on held-out molecules not present in training data

- Validate against experimental data where available

- Ensure physical plausibility of predictions (e.g., energy conservation)
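The first quality-control item, tracking validation performance to catch overfitting, is often implemented as a patience-based early-stopping rule. The sketch below is one common variant, not a procedure specified by the cited sources; the loss values, `patience`, and `min_delta` are hypothetical.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a patience-based stopping rule.

    Training stops once the validation loss has failed to improve on its best
    value by more than `min_delta` for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses) - 1


# Hypothetical validation losses: improvement stalls after epoch 3, then degrades.
val_losses = [0.50, 0.40, 0.35, 0.33, 0.34, 0.36, 0.39, 0.45]
best_epoch, stop_epoch = early_stopping_epoch(val_losses, patience=3)
print(f"best epoch: {best_epoch}, training stopped at epoch: {stop_epoch}")
```

A rising validation loss alongside a still-falling training loss is the usual signature of overfitting; the checkpoint from `best_epoch` would be the one carried forward to held-out testing.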

Cross-Dataset Generalization Assessment

Purpose: To evaluate model performance across diverse molecular families and system sizes, assessing generalization capability.

Materials:

- Multiple benchmark datasets (e.g., OMol25, Open Polymers)

- Model trained on primary dataset

- Computational resources for inference

Procedure:

- Dataset Curation: Assemble evaluation datasets covering diverse chemical spaces. The OMol25 dataset is composed of content divided into three major focus areas: biomolecules, electrolytes, and metal complexes (molecules arranged around a central metal ion) [2].

- Transfer Learning Assessment: Evaluate model performance on molecular families not represented in training data. Test the model's ability to generalize from small molecules to larger systems.

- Scalability Testing: Assess performance as system size increases. Previous calculations were limited to analyzing hundreds of atoms with DFT and just tens of atoms with CCSD(T) calculations, while modern approaches can handle thousands of atoms and, eventually, perhaps tens of thousands [3].

- Statistical Significance Testing: Use appropriate statistical tests such as the Wilcoxon signed-rank test for comparing model performances across multiple datasets or folds [1]. Compute confidence intervals through bootstrap methods to quantify uncertainty in performance estimates [1].

- Failure Mode Analysis: Identify specific molecular classes or properties where model performance degrades. Document systematic errors and limitations.
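For the statistical significance step, a paired bootstrap confidence interval on the difference in MAE between two models can be sketched with the standard library alone. The per-molecule errors below are hypothetical, and the percentile interval is one of several bootstrap variants. (For the Wilcoxon signed-rank test itself, SciPy provides `scipy.stats.wilcoxon`; it is not used here so that the sketch has no dependencies.)

```python
import random


def paired_bootstrap_ci(errors_a, errors_b, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for MAE(model A) - MAE(model B) on the same molecules."""
    if len(errors_a) != len(errors_b):
        raise ValueError("paired comparison needs errors on the same molecules")
    rng = random.Random(seed)
    n = len(errors_a)
    diffs = []
    for _ in range(n_resamples):
        # Resample molecules with replacement, keeping each A/B pair together.
        sample = [rng.randrange(n) for _ in range(n)]
        diffs.append(sum(errors_a[i] - errors_b[i] for i in sample) / n)
    diffs.sort()
    lower = diffs[round((alpha / 2) * n_resamples)]
    upper = diffs[round((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


# Hypothetical absolute errors (kcal/mol) of two models on the same 30 molecules.
errors_b = [0.10 + 0.01 * i for i in range(30)]
errors_a = [b + 0.10 + 0.02 * (i % 3) for i, b in enumerate(errors_b)]
lower, upper = paired_bootstrap_ci(errors_a, errors_b)
print(f"95% CI for MAE(A) - MAE(B): [{lower:.3f}, {upper:.3f}]")
```

If the interval excludes zero, as it does for this constructed example, the difference between the two models is unlikely to be an artifact of which molecules happened to be in the test set.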

Diagram 2: Comprehensive model evaluation framework for computational chemistry

Successful computational chemistry research requires access to specialized datasets, software tools, and computational resources. The following table details key resources essential for proper model evaluation in drug development and materials science.

Table 3: Essential Research Reagents and Resources for Computational Chemistry

| Resource Category | Specific Resource | Function and Application | Key Features |
|---|---|---|---|
| Reference Datasets | OMol25 (Open Molecules 2025) | Training and benchmarking MLIPs; contains 100M+ 3D molecular snapshots | DFT-level accuracy; 10x larger/more complex than previous datasets; biomolecules, electrolytes, metal complexes [2] |
| Quantum Chemistry Data | CCSD(T) calculations | Gold standard reference data for training and validation | High accuracy comparable to experiments; limited to small molecules [3] |
| Software Frameworks | MEHnet (Multi-task Electronic Hamiltonian network) | Predicting multiple electronic properties from single model | E(3)-equivariant graph neural network; physics-principled algorithms [3] |
| Evaluation Benchmarks | Custom evaluations for MLIPs | Standardized challenges for model comparison | Thorough evaluations for useful tasks; public ranking drives innovation [2] |
| Computational Resources | High-performance computing clusters | Running DFT, CCSD(T), and ML model training | Meta's computing resources used for OMol25 (6B CPU hours) [2] |

Proper model evaluation is not merely a technical formality but a critical component of responsible scientific research and practical decision-making in computational chemistry. As models grow more complex and their applications expand into drug discovery and materials design, comprehensive evaluation frameworks ensure that predictions are accurate, reliable, and physically plausible. The evolution of evaluation practices from simple accuracy metrics to multi-faceted assessments incorporating robustness, generalization, and business impact represents a necessary maturation of the field.

For computational chemistry researchers and drug development professionals, rigorous model evaluation provides the foundation for trustworthy simulations that can accelerate discovery while reducing costly experimental failures. By implementing the protocols, metrics, and frameworks outlined in this guide, scientists can build more robust, reliable, and ethical AI systems that meet the demands of a rapidly evolving technological landscape in chemical research and pharmaceutical development.

Understanding the Gap Between Retrospective Studies and Operational Reality

In computational chemistry, the disparity between the promising results achieved in controlled, retrospective research and the performance of models when deployed in real-world operational settings represents a critical challenge. Retrospective studies typically utilize existing, static datasets where all data is pre-collected and known in advance [5]. While this approach minimizes the impact on clinical sites and reduces lead times, it inherently limits the assessment of a model's ability to generalize to novel chemical spaces or perform reliably in dynamic research environments [5] [6]. As the field advances toward more complex applications in drug discovery and materials science, understanding and bridging this gap becomes essential for developing robust, trustworthy computational tools that can accelerate scientific discovery [7] [8].

This guide examines the methodological foundations of this gap, presents current strategies for addressing it, and provides practical evaluation protocols to help researchers develop models that transition more successfully from retrospective validation to operational deployment in computational chemistry research.

Defining the Gap: Retrospective vs. Prospective Approaches

The distinction between retrospective and prospective methodologies forms the core of the validation gap in computational chemistry. Retrospective studies rely exclusively on previously acquired data, often curated from idealized systems or limited chemical spaces [5]. This approach dominates current research due to its convenience and lower resource requirements, but introduces significant limitations: the data may lack completeness for specific research questions, contain unconscious biases in chemical space coverage, and provide inadequate representation of realistic operational conditions where models will ultimately be applied [7] [6].

In contrast, prospective studies are designed to intentionally collect new data tailored to specific evaluation objectives, often building upon existing real-world data sources [5]. This methodology enables researchers to address specific chemical questions with appropriate data quality and completeness, though it requires greater investment in computational resources and careful experimental design. The recent emergence of massive, chemically diverse datasets like OMol25, containing over 100 million density functional theory (DFT) calculations, represents a hybrid approach: it leverages retrospective data collection at unprecedented scale while aiming for broader chemical coverage that better approximates operational reality [2] [9].

Table 1: Key Differences Between Retrospective and Prospective Approaches

| Characteristic | Retrospective Studies | Prospective Studies |
|---|---|---|
| Data Collection | Pre-existing data | New data collection tailored to study objectives |
| Chemical Diversity | Often limited to previously studied systems | Can target underrepresented chemical spaces |
| Resource Requirements | Lower computational cost | High computational investment (e.g., 6 billion CPU hours for OMol25) [2] |
| Operational Relevance | May not reflect real-world application conditions | Better approximation of operational environments through targeted design |
| Common Applications | Initial model validation, benchmarking | Regulatory submissions, control arm augmentation, post-marketing studies [5] |

Current Landscape and Emerging Solutions

The Data Challenge in Computational Chemistry

The foundation of reliable computational chemistry models lies in the quality, diversity, and relevance of their training data. Traditional datasets have suffered from limited chemical diversity, focusing predominantly on simple organic molecules with few heavy atoms and a narrow range of elements [7] [10]. For instance, early datasets like ANI-1 contained only simple organic structures with four elements, while the QM9 dataset was limited to molecules with up to 9 heavy atoms [7] [10]. This restricted coverage creates a fundamental gap between the controlled retrospective environments where models are developed and the diverse operational scenarios where they must perform.

The OMol25 dataset represents a significant step toward addressing this gap, encompassing 83 elements and systems of up to 350 atoms across diverse chemical domains including biomolecules, electrolytes, and metal complexes [2] [9]. With over 100 million DFT calculations at the ωB97M-V/def2-TZVPD level of theory, this dataset reduces the chemical diversity gap, though challenges remain in areas like polymer chemistry [2] [10].

Architectural Advances for Better Generalization

Neural network potentials (NNPs) have evolved substantially to improve generalization across chemical space. The eSEN architecture incorporates equivariant spherical-harmonic representations and a transformer-style design that improves the smoothness of potential-energy surfaces, leading to more stable molecular dynamics and geometry optimizations [10]. The recently introduced Universal Models for Atoms (UMA) framework employs a novel Mixture of Linear Experts (MoLE) architecture that enables knowledge transfer across disparate datasets computed at different levels of theory, enhancing performance without significantly increasing inference times [10] [11].

These architectural improvements allow single models to perform comparably or better than specialized models across diverse chemical domains, moving the field toward more robust and operationally viable computational tools [11].

Table 2: Performance Comparison of Models on Molecular Energy Benchmarks

| Model/Dataset | Architecture | WTMAD-2 (neutral/organic) | Chemical Diversity | Training Data Size |
|---|---|---|---|---|
| ANI-1 | Neural Network Potential | Higher error | 4 elements | Limited organic molecules [10] |
| OMol25-trained Models | eSEN/UMA | ~0 | 83 elements | 100M+ calculations [10] |
| Previous SOTA | Various | Moderate error | Varies (typically <30 elements) | Typically <1M calculations [10] |

Methodologies for Bridging the Gap

Rigorous Evaluation Frameworks

Comprehensive evaluation strategies are essential for assessing how models will perform in operational settings. The following protocols provide structured approaches to model validation:

Protocol 1: Chemical Space Coverage Assessment

- Objective: Quantify model performance across diverse chemical domains to identify blind spots and biases.

- Procedure:

  - Partition test data by chemical domain (biomolecules, electrolytes, metal complexes)

  - Evaluate model performance metrics separately for each domain

  - Compare performance disparities across domains

  - Identify chemical spaces with significantly degraded performance

- Metrics: Mean Absolute Error (MAE), Root Mean Square Error (RMSE), maximum error, failure rate

- Operational Relevance: Reveals limitations before deployment in specific application contexts [9] [10]
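The procedure in Protocol 1 can be sketched as a per-domain error report. The record layout, the domain names used as keys, the energy values, and the 1.0 kcal/mol failure threshold are all assumptions made for this illustration; a suitable threshold depends on the application.

```python
import math
from collections import defaultdict


def per_domain_report(records, failure_threshold):
    """Compute MAE, RMSE, maximum error and failure rate for each chemical domain.

    `records` is an iterable of (domain, reference_value, predicted_value).
    A prediction counts as a failure when its absolute error exceeds the threshold.
    """
    grouped = defaultdict(list)
    for domain, reference, predicted in records:
        grouped[domain].append(abs(predicted - reference))

    report = {}
    for domain, errors in grouped.items():
        n = len(errors)
        report[domain] = {
            "n": n,
            "mae": sum(errors) / n,
            "rmse": math.sqrt(sum(e * e for e in errors) / n),
            "max_error": max(errors),
            "failure_rate": sum(e > failure_threshold for e in errors) / n,
        }
    return report


# Hypothetical (domain, reference, prediction) energies in kcal/mol.
records = [
    ("biomolecules", -10.0, -10.2), ("biomolecules", -5.0, -4.6),
    ("biomolecules", -3.0, -3.3), ("biomolecules", -8.0, -7.9),
    ("metal_complexes", -20.0, -20.5), ("metal_complexes", -15.0, -13.5),
    ("metal_complexes", -12.0, -14.0), ("metal_complexes", -9.0, -8.2),
]
report = per_domain_report(records, failure_threshold=1.0)
for domain, stats in report.items():
    print(domain, {k: round(v, 3) for k, v in stats.items()})
```

In this toy data the aggregate error would hide the fact that half of the metal-complex predictions exceed the threshold while none of the biomolecule predictions do, which is the kind of disparity the protocol is designed to surface.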

Protocol 2: Out-of-Distribution Generalization Testing

- Objective: Assess model performance on novel chemical scaffolds not represented in training data.

- Procedure:

  - Curate benchmark sets containing molecular scaffolds excluded from training

  - Evaluate model performance on these held-out scaffolds

  - Systematically increase the structural dissimilarity from training examples

  - Measure performance degradation as a function of dissimilarity

- Metrics: Performance drop relative to in-distribution test sets, correlation with molecular similarity metrics

- Operational Relevance: Models frequently encounter novel chemistries in real discovery applications [6]
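One way to measure degradation against dissimilarity is to bin test molecules by their maximum Tanimoto similarity to the training set and report the mean error per bin. In the sketch below, fingerprints are represented as plain Python sets of "on" bit indices; the fingerprints, errors, and bin edges are hypothetical, and in practice the fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def error_by_similarity_bin(test_items, training_fps, edges):
    """Mean absolute error per bin of maximum similarity to the training set.

    `test_items` is a list of (fingerprint, abs_error); `edges` are ascending bin
    edges. Bins are half-open [lo, hi), except the last, which includes its upper edge.
    """
    bins = {(edges[i], edges[i + 1]): [] for i in range(len(edges) - 1)}
    for fingerprint, abs_error in test_items:
        similarity = max(tanimoto(fingerprint, t) for t in training_fps)
        for (lo, hi), errors in bins.items():
            if lo <= similarity < hi or (hi == edges[-1] and similarity == hi):
                errors.append(abs_error)
                break
    return {b: (sum(e) / len(e) if e else None) for b, e in bins.items()}


# Hypothetical training fingerprints and (fingerprint, absolute error) test items.
training_fps = [{1, 2, 3, 4}, {5, 6, 7, 8}]
test_items = [
    ({1, 2, 3, 4}, 0.1),      # identical to a training molecule
    ({1, 2, 3, 9}, 0.3),      # close analogue
    ({1, 10, 11, 12}, 0.9),   # distant analogue
    ({20, 21}, 1.5),          # unrelated scaffold
]
binned = error_by_similarity_bin(test_items, training_fps, edges=[0.0, 0.4, 0.7, 1.0])
for (lo, hi), mean_error in binned.items():
    print(f"max similarity in [{lo}, {hi}]: mean abs error = {mean_error}")
```

A steep rise in error as similarity falls, as in this constructed example, indicates that in-distribution test performance will overstate how the model behaves on novel scaffolds.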

Protocol 3: Prospective Validation Campaign

- Objective: Validate model predictions through targeted computational experiments on strategically selected compounds.

- Procedure:

  - Identify chemical space regions with high predictive uncertainty

  - Select diverse representative compounds from these regions

  - Perform high-level theory calculations (e.g., CCSD(T)) on selected compounds

  - Compare model predictions with ground-truth calculations

- Metrics: Error statistics on prospective predictions, early performance indicators

- Operational Relevance: Most closely mimics real-world deployment conditions [5]
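The selection step of Protocol 3 can be sketched by ranking compounds on predictive uncertainty. The sketch assumes uncertainty is estimated from the spread of an ensemble of models, which is one common choice and not something the protocol prescribes; the compound identifiers and predictions are hypothetical, and the diversity criterion from the procedure is deliberately left out to keep the example short.

```python
import statistics


def select_for_validation(ensemble_predictions, n_select):
    """Rank compounds by ensemble disagreement and return the most uncertain ones.

    `ensemble_predictions` maps a compound id to the list of predictions made by
    each ensemble member. The population standard deviation is the uncertainty proxy.
    """
    uncertainty = {
        compound: statistics.pstdev(predictions)
        for compound, predictions in ensemble_predictions.items()
    }
    ranked = sorted(uncertainty, key=uncertainty.get, reverse=True)
    return ranked[:n_select], uncertainty


# Hypothetical predictions (kcal/mol) from a three-member ensemble.
ensemble_predictions = {
    "cmpd_A": [-7.0, -7.5, -6.5],
    "cmpd_B": [-8.0, -8.1, -7.9],
    "cmpd_C": [-5.0, -7.0, -9.0],
    "cmpd_D": [-6.0, -6.0, -6.0],
}
selected, uncertainty = select_for_validation(ensemble_predictions, n_select=2)
print("send to high-level calculation:", selected)
```

The selected compounds would then be computed at the reference level of theory and compared with the model's predictions using the same error statistics as in retrospective testing.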

Workflow Integration and Validation

The following diagram illustrates a comprehensive framework for evaluating computational chemistry models, emphasizing the transition from retrospective assessment to prospective validation:

The Scientist's Toolkit: Essential Research Reagents

Implementing robust model evaluation requires leveraging specialized tools and frameworks. The following table details key resources available to researchers:

Table 3: Essential Tools for Computational Chemistry Model Evaluation

| Tool/Resource | Type | Primary Function | Application in Evaluation |
|---|---|---|---|
| OMol25 Dataset [2] [9] | Dataset | Provides diverse quantum chemical calculations | Benchmarking model performance across chemical space; training data for transfer learning |
| ChemBench [12] | Evaluation Framework | Standardized assessment of chemical knowledge and reasoning | Evaluating model capabilities against human expertise; identifying knowledge gaps |
| ChemTorch [6] | Development Framework | Unified platform for chemical reaction property prediction | Developing and benchmarking models with consistent protocols; avoiding privileged information leakage |
| UMA Models [10] [11] | Pre-trained Models | Universal models for atoms across chemical domains | Baseline performance comparison; starting point for transfer learning |
| eSEN Models [10] | Pre-trained Models | Neural network potentials with conservative forces | Molecular dynamics simulations; geometry optimization benchmarks |
| Flatiron PCG Study [5] | Methodology Framework | Prospective data collection approach | Designing prospective validation studies; understanding real-world data requirements |

Implementation Roadmap

Bridging the gap between retrospective studies and operational reality requires a systematic approach to model development and evaluation. Researchers should:

- Establish Comprehensive Baselines: Begin with rigorous retrospective evaluation using diverse benchmarks like ChemBench and OMol25 to establish performance baselines across chemical domains [12] [10].

- Identify Performance Gaps: Systematically analyze results to identify specific chemical spaces or task types where model performance degrades, using Protocols 1 and 2 [6].

- Design Targeted Prospective Validations: Develop prospective validation campaigns focused on the identified gap areas, following Protocol 3 to collect decisive evidence of operational readiness [5].

- Iterate and Refine: Use prospective validation results to refine models, architectures, and training strategies, focusing improvement efforts on the most critical limitations for operational deployment.

- Implement Continuous Monitoring: Establish ongoing evaluation protocols to detect performance degradation as models encounter novel chemical spaces in operational use.

This structured approach enables researchers to progressively de-risk the transition from retrospective validation to operational deployment, creating computational chemistry tools that deliver reliable performance in real-world drug development and materials discovery applications.

Evaluating computational chemistry models is a critical step in ensuring their reliability and utility in drug discovery. The performance of these models is typically assessed across three cornerstone tasks: virtual screening, pose prediction, and affinity estimation. Virtual screening involves the computational sifting of large compound libraries to identify molecules most likely to bind to a target, with success measured by the enrichment of active compounds over inactive ones [13]. Pose prediction, also known as molecular docking, focuses on forecasting the precise three-dimensional orientation of a ligand within a protein's binding site, where accuracy is quantified by the root-mean-square deviation (RMSD) between predicted and experimentally determined structures [14]. Affinity estimation aims to predict the strength of the binding interaction, often reported as binding free energy (ΔG) or inhibitory concentration (IC50), with model performance evaluated through correlation coefficients and error metrics like mean absolute error (MAE) [15]. Rigorous benchmarking using standardized datasets and protocols is essential for comparing different computational methods and guiding their strategic application in the drug discovery pipeline [16].

Quantitative Benchmarking Data

The following tables consolidate key quantitative findings from recent studies and benchmarks, providing a snapshot of the current performance landscape in virtual screening, pose prediction, and affinity estimation.

Table 1: Performance Comparison of Virtual Screening Methods on Standardized Benchmarks (DUD-E, DEKOIS, LIT-PCBA)

| Method Category | Method Name | Average Enrichment Factor (EF1%) | AUC-ROC | Key Characteristics |

|---|---|---|---|---|

| Foundation Model | LigUnity [15] | >50% improvement over baselines | ~0.90 | Unified model for screening & optimization; 10^6x faster than docking. |

| Traditional Docking | Glide-SP [15] | Baseline | ~0.70 | Physics-based, computationally expensive. |

| Machine Learning | DrugCLIP, ActFound [15] | Varies | ~0.80-0.85 | Data-driven, efficient, but often task-specific. |

Table 2: Pose Prediction Performance on the PDBBind Benchmark (RMSD in Ångströms)

| Method Category | Method Name | Average RMSD (Å) | Success Rate (<2.0 Å) | Key Characteristics |

|---|---|---|---|---|

| Data-Driven Baseline | TEMPL (MCS-based) [14] | ~1.5 - 2.5* | ~60-80%* | Simple, interpolation-sensitive, risk of data leakage. |

| Deep Learning | DeepLearningPose (representative) [14] | ~1.0 - 2.0 | >80% | Outperforms traditional docking; generalizability concerns. |

| Traditional Docking | Molecular Docking (representative) [14] | ~1.5 - 3.0 | ~50-70% | Physics-based; can underperform in interpolative tasks. |

Note (*): TEMPL performance is highly benchmark-dependent, with lower scores on challenging benchmarks like PoseBusters [14].

Table 3: Affinity Prediction Performance (Regression Metrics)

| Method Category | Method Name | Pearson's R | Mean Absolute Error (MAE) | Key Characteristics / Dataset |

|---|---|---|---|---|

| Foundation Model | LigUnity (Hit-to-Lead) [15] | >0.80 | Approaches FEP+ accuracy | Cost-efficient alternative to FEP; high accuracy. |

| Physics-Based | Free Energy Perturbation (FEP) [15] | High | High Accuracy | High computational cost. |

| Machine Learning | PBCNet, ActFound [15] | ~0.70-0.80 | Varies | Efficient, specialized for optimization. |

Detailed Experimental Protocols

Virtual Screening Evaluation Protocol

Objective: To assess a model's ability to prioritize active compounds over inactive ones in a large virtual library.

Materials:

- Target Protein: A protein structure with a known binding site (e.g., from PDB).

- Compound Library: A benchmark dataset containing known actives and decoys (e.g., DUD-E, DEKOIS, LIT-PCBA) [15].

- Computing Environment: High-performance computing (HPC) clusters or cloud-based servers, often with GPU acceleration [17].

Procedure:

- Preparation: Prepare the protein structure (e.g., add hydrogens, assign charges) and the compound library (e.g., convert to 3D, minimize energy).

- Screening: Execute the virtual screening workflow using the model under evaluation. For a foundational model like LigUnity, this involves computing pocket and ligand embeddings and scoring their complementarity [15].

- Ranking: Rank the entire library of compounds based on the model's output score (e.g., predicted binding probability or affinity).

- Analysis:

  - Calculate the Enrichment Factor (EF) at a specific percentage of the screened library (e.g., EF1%) to measure the concentration of actives in the top-ranked compounds.

  - Generate the Receiver Operating Characteristic (ROC) curve and calculate the Area Under the Curve (AUC) to evaluate the binary classification performance across all thresholds [15].

  - Plot the recall of actives as a function of the fraction of the screened library inspected.

Interpretation: A higher EF and AUC indicate a more effective screening model. LigUnity, for instance, demonstrated a greater than 50% improvement in EF over traditional docking methods, highlighting the power of integrated ML approaches [15].
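The two headline metrics of this protocol can be sketched in a few lines. The following is a minimal, dependency-free illustration, assuming that higher scores mean "more likely active" and that labels are 1 for actives and 0 for decoys; the function names and the toy library are hypothetical. The AUC is computed through the pairwise-ranking identity (the probability that a random active outscores a random decoy), which is quadratic in library size and therefore only suitable for small examples.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """Hit rate in the top-ranked slice divided by the hit rate of the whole library."""
    n = len(scores)
    n_actives = sum(labels)
    if n == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one active")
    # Rank compounds from best (highest score) to worst.
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_top = max(1, int(round(fraction * n)))
    hits_top = sum(label for _, label in ranked[:n_top])
    return (hits_top / n_top) / (n_actives / n)


def roc_auc(scores, labels):
    """AUC-ROC as the fraction of active/decoy pairs ranked correctly (ties count 0.5)."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not decoys:
        raise ValueError("need both actives and decoys")
    wins = 0.0
    for a in actives:
        for d in decoys:
            if a > d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(actives) * len(decoys))


# Toy library of 10 compounds: 3 actives, 7 decoys (illustrative values only).
toy_scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.0]
toy_labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
ef_20 = enrichment_factor(toy_scores, toy_labels, fraction=0.2)
auc = roc_auc(toy_scores, toy_labels)
```

For the toy library, the top 20% contains two actives out of two compounds against a base rate of 3/10, so EF20% is 10/3, and 20 of the 21 active/decoy pairs are ordered correctly. For real benchmarks, a tested library implementation is preferable to this sketch.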

Pose Prediction Evaluation Protocol

Objective: To quantify the spatial accuracy of a model's predicted ligand pose compared to an experimentally determined reference structure.

Materials:

- Crystal Structures: A set of high-resolution protein-ligand complexes from a database like the PDBBind benchmark [14].

- Computing Software: The pose prediction algorithm (e.g., TEMPL, a deep learning-based method, or a traditional docking tool).

Procedure:

- Data Preparation: Extract and prepare the protein and ligand from the crystal structure. The ligand is separated and its coordinates serve as the "ground truth."

- Pose Generation: For each complex, the ligand is positioned outside the binding site and the model is tasked with predicting its bound pose. Data-driven methods like TEMPL use maximal common substructure (MCS) matching to reference molecules followed by constrained 3D embedding [14].

- Structural Alignment: Superimpose the predicted ligand pose onto the crystallographic ligand pose using the protein's binding site atoms as a reference frame.

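- Calculation of RMSD: Calculate the Root-Mean-Square Deviation (RMSD) between the heavy atoms of the predicted and crystal ligand poses in the shared protein reference frame established in the previous step. The ligand itself should not be refitted onto the reference, because that would hide translational and rotational errors in the predicted pose.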

RMSD = √[ Σ( (x_i - x_ref_i)² + (y_i - y_ref_i)² + (z_i - z_ref_i)² ) / N ]

where (x_i, y_i, z_i) are the coordinates of heavy atom i in the predicted pose, (x_ref_i, y_ref_i, z_ref_i) are its coordinates in the reference pose, and N is the number of heavy atoms.

- Success Classification: A prediction is typically considered a "success" if the RMSD is less than 2.0 Å [14].

Interpretation: The percentage of successful predictions across the benchmark set is the primary metric. Lower average RMSD and higher success rates indicate better performance. It is critical to evaluate on benchmarks with challenging splits (e.g., by scaffold) to avoid over-optimism due to data leakage [14].
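The RMSD calculation and the 2.0 Å success criterion can be sketched as follows. This is a simplified illustration that assumes both poses list the same heavy atoms in the same order and already share the protein's reference frame. It does not handle symmetry-equivalent atoms, which a production evaluation would need to account for. The function names are hypothetical.

```python
import math


def ligand_rmsd(pred, ref):
    """Heavy-atom RMSD (in Å) between two poses given as lists of (x, y, z) tuples."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("poses must be non-empty and have the same number of atoms")
    squared = sum(
        (px - rx) ** 2 + (py - ry) ** 2 + (pz - rz) ** 2
        for (px, py, pz), (rx, ry, rz) in zip(pred, ref)
    )
    return math.sqrt(squared / len(pred))


def success_rate(rmsds, threshold=2.0):
    """Fraction of complexes whose RMSD is strictly below the threshold."""
    if not rmsds:
        raise ValueError("need at least one RMSD value")
    return sum(1 for r in rmsds if r < threshold) / len(rmsds)


# Toy two-atom ligand displaced by exactly 1 Å along z.
predicted_pose = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
crystal_pose = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
toy_rmsd = ligand_rmsd(predicted_pose, crystal_pose)

# Illustrative per-complex RMSDs for a four-complex benchmark.
toy_rate = success_rate([0.5, 1.9, 2.0, 3.2])
```

Note that a pose at exactly 2.0 Å is not counted as a success here, following the "less than 2.0 Å" wording of the protocol.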

Affinity Estimation Evaluation Protocol

Objective: To evaluate a model's accuracy in predicting the strength of protein-ligand binding, typically reported as binding free energy (ΔG) or as inhibition or dissociation constants (Ki/Kd).

Materials:

- Curated Affinity Datasets: Databases such as PDBBind, BindingDB, or ChEMBL, which contain experimental affinity measurements for protein-ligand complexes [15].

- Pocket-Structure Database: For structure-aware models, a resource like PocketAffDB, which links affinity data to specific binding pocket structures, is required [15].

Procedure:

- Data Sourcing and Curation: Collect a dataset of protein-ligand pairs with reliable experimental affinity data. For foundation models, this can involve creating a unified dataset like PocketAffDB (0.8 million data points across 53,406 pockets) [15].

- Data Splitting: Split the data into training, validation, and test sets. Crucially, to test generalizability, splits should be time-based, by molecular scaffold, or by protein unit, rather than random [15].

- Model Training & Prediction: Train the model (e.g., LigUnity, which uses a combined scaffold discrimination and pharmacophore ranking objective) on the training data. Then, use it to predict affinities for the held-out test set [15].

- Performance Calculation:

  - Calculate the Pearson's Correlation Coefficient (R) between the predicted and experimental values to measure the linear relationship.

  - Calculate the Mean Absolute Error (MAE) to quantify the average magnitude of prediction errors.

    MAE = (1/N) * Σ |y_pred_i - y_true_i|

Interpretation: A higher Pearson's R and a lower MAE signify a more accurate affinity prediction model. In benchmark studies, models like LigUnity have shown correlation coefficients exceeding 0.8, approaching the accuracy of costly physics-based methods like Free Energy Perturbation (FEP) but at a fraction of the computational cost [15].
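The two regression metrics above can be sketched without external dependencies. The toy values are invented for illustration and were chosen to show why both metrics are reported: predictions with a constant offset correlate perfectly with experiment yet still carry a non-zero MAE.

```python
import math


def mean_absolute_error(y_pred, y_true):
    """MAE = (1/N) * sum(|y_pred_i - y_true_i|)."""
    if len(y_pred) != len(y_true) or not y_pred:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - t) for p, t in zip(y_pred, y_true)) / len(y_pred)


def pearson_r(y_pred, y_true):
    """Pearson correlation coefficient between predictions and experiment."""
    if len(y_pred) != len(y_true) or len(y_pred) < 2:
        raise ValueError("need two equal-length inputs with at least two values")
    n = len(y_pred)
    mean_p = sum(y_pred) / n
    mean_t = sum(y_true) / n
    cov = sum((p - mean_p) * (t - mean_t) for p, t in zip(y_pred, y_true))
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    if var_p == 0 or var_t == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_p * var_t)


# Toy affinities (arbitrary units): every prediction is 0.5 too high.
experimental = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 2.5, 3.5, 4.5]
toy_mae = mean_absolute_error(predicted, experimental)
toy_r = pearson_r(predicted, experimental)
```

Here the correlation is 1.0 while the MAE is 0.5, so a model can rank compounds perfectly and still be systematically biased in absolute terms.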

Workflow Visualization

The following diagrams illustrate the logical workflows and data flows for the key evaluation scenarios and unified models described in this guide.

Diagram Title: Virtual Screening Evaluation Flow

Diagram Title: Pose Prediction Evaluation Flow

Diagram Title: Affinity Estimation Evaluation Flow

Diagram Title: Unified Model Architecture (LigUnity)

Table 4: Key Software, Datasets, and Tools for Model Evaluation

| Item Name | Type | Primary Function in Evaluation | Key Features / Examples |

|---|---|---|---|

| MoleculeNet [16] | Benchmark Suite | Standardized benchmarking for molecular ML. | Curates 17+ datasets; offers metrics and data splits for properties from quantum mechanics to physiology. |

| ChemBench [12] | Evaluation Framework | Systematically evaluates chemical knowledge and reasoning of LLMs. | Over 2,700 curated QA pairs; compares model performance against human chemist expertise. |

| PDBBind | Dataset | Primary benchmark for pose prediction and affinity estimation. | Provides high-quality protein-ligand complexes with experimental binding affinity data. |

| DUD-E / DEKOIS [15] | Dataset | Benchmark for virtual screening. | Contain known actives and carefully selected decoys to test a model's enrichment capability. |

| DeepChem [16] | Software Framework | Developing and benchmarking deep learning models on molecular data. | Implements featurizations (SMILES, graphs) and models; foundation for MoleculeNet. |

| TEMPL [14] | Software Tool | Provides a simple, data-driven baseline for pose prediction. | MCS-based 3D embedding; highlights risks of data leakage in benchmarks. |

| LigUnity [15] | Foundation Model | Unified model for both virtual screening and hit-to-lead affinity prediction. | Learns a shared pocket-ligand embedding space; >50% improvement in screening, FEP-level accuracy in optimization. |

| Glide, GOLD | Software Tool | Traditional molecular docking for pose prediction and virtual screening. | Physics-based scoring functions; standard against which new ML methods are often compared. |

| BindingDB / ChEMBL | Database | Sources of experimental binding data for training and testing affinity prediction models. | Contain large volumes of public bioactivity data. |

The Critical Importance of Data Sharing and Reproducibility

The scientific community currently faces a significant challenge termed the "reproducibility crisis," a phenomenon that exists somewhere between urban legend and established fact [18]. Concerns about reproducibility initially gained prominence with a seminal 2005 paper by Ioannidis entitled "Why Most Published Research Findings Are False," which sparked widespread examination of scientific rigor across disciplines [18]. Alarming evidence has emerged from various fields: in psychology, only 36% of 100 representative studies from major journals could be replicated with statistically significant findings, with effect sizes approximately halved in subsequent attempts [18]. Similarly worrisome results have been observed in oncology drug development, where researchers successfully confirmed findings in only 6 out of 53 "landmark" studies despite attempts to work with original authors and exchange reagents [18].

In computational sciences, including computational chemistry and drug discovery, this crisis manifests as a translational gap often called the "valley of death" – the inability to translate promising preclinical discoveries into successful human trials and eventual therapies [19]. The failure rate for drugs progressing from phase 1 trials to final approval reaches approximately 90%, highlighting the urgent need to address replicability challenges earlier in the research pipeline [19]. This crisis not only wastes valuable research resources but also erodes public trust in scientific research and impedes therapeutic advancements [18].

Defining Reproducibility and Replicability

In scientific discourse, reproducibility and replicability represent distinct but complementary concepts essential for research credibility. Reproducibility refers to the ability to obtain the same results when reanalyzing the original data while following the original analysis strategy, answering questions such as: "Within my study, if I repeat the data management and analysis, will I get an identical answer?" or "Within my study, if someone else starts with the same raw data, will they draw a similar conclusion?" [18] [20].

Replicability, by contrast, refers to the ability to confirm findings in different data and populations, addressing questions such as: "If someone else tries to repeat my study as exactly as possible, will they draw a similar conclusion?" or "If someone else tries to perform a similar study, will they draw a similar conclusion?" [18] [20]. While computational reproducibility requires only shared data and analysis programming code, independent reproducibility focuses on effective communication of critical design and analytic choices necessary for assessing potential sources of bias and facilitating replication with differently structured data [20].

Table 1: Types of Reproducibility in Scientific Research

| Type | Definition | Key Question | Requirements |

|---|---|---|---|

| Analytical Reproducibility | Ability to repeat data management and analysis on the same data | "Within a study, if the investigator repeats the data management and analysis, will she get an identical answer?" [18] | Raw data, analysis code, computational environment |

| Results Reproducibility | Ability for others to draw similar conclusions from the same raw data | "Within a study, if someone else starts with the same raw data, will she draw a similar conclusion?" [18] | Raw data, detailed analytical protocols |

| Direct Replicability | Ability to repeat experiments as exactly as possible | "If someone else tries to repeat an experiment as exactly as possible, will she draw a similar conclusion?" [18] | Detailed experimental protocols, reagents |

| Conceptual Replicability | Ability to confirm findings through similar studies | "If someone else tries to perform a similar study, will she draw a similar conclusion?" [18] | Clear theoretical framework, methodological transparency |

Quantitative Evidence of the Reproducibility Problem

Empirical assessments of reproducibility across scientific domains reveal both encouraging trends and significant concerns. A large-scale systematic review of 150 real-world evidence (RWE) studies published in peer-reviewed journals found that original and reproduction effect sizes were strongly correlated (Pearson's correlation = 0.85), indicating a solid foundation with room for improvement [20]. The median relative magnitude of effect (e.g., the original hazard ratio divided by the reproduction hazard ratio) was 1.0 with an interquartile range of [0.9, 1.1] and a range of [0.3, 2.1], demonstrating that while most results were closely reproduced, a concerning subset diverged significantly [20].

The reproduction of study population sizes proved more challenging, with a median relative sample size (original/reproduction) of 0.9 for both comparative and descriptive studies [20]. For 21% of reproduced studies, the reproduction study size was less than half or more than twice the original, primarily due to ambiguous reporting of inclusion-exclusion criteria and temporality requirements [20]. Baseline characteristics were generally better reproduced, with a median difference in prevalence (original minus reproduction) of 0.0% and an interquartile range of [-1.7%, 2.6%] [20].

Table 2: Reproducibility Assessment Across Study Types and Domains

| Field/Domain | Reproducibility Rate | Key Findings | Primary Challenges |

|---|---|---|---|

| Psychology | 36% of 100 studies [18] | Only 36% of replications had statistically significant findings; average effect size halved [18] | Selective reporting, low statistical power |

| Oncology Drug Development | 6 of 53 "landmark" studies [18] | Findings confirmed in only 6 studies despite collaboration with original authors [18] | Reagent quality control, protocol variations |

| Real-World Evidence Studies | Strong correlation (0.85) but subset of diverged results [20] | Median relative effect size 1.0 [0.9, 1.1]; 21% had significant population size differences [20] | Incomplete reporting, ambiguous temporality |

| Computational Chemistry | Varies by method and implementation [21] [8] | Hierarchical approaches balance accuracy and computational cost [21] | Method selection, computational constraints, parameter reporting |

Fundamental Principles for Enhancing Reproducibility

Transparency and Detailed Documentation

Complete methodological transparency forms the cornerstone of reproducible research. This requires explicit documentation of data transformations, study design choices, and statistical analysis plans [20]. Research indicates that key parameters frequently suffer from inadequate reporting: for example, algorithms defining exposure duration were provided in at most 55% of real-world evidence studies, whereas the criteria defining cohort entry dates were reported in 89% of studies [20]. For computational chemistry, this translates to detailed documentation of force field parameters, convergence criteria, basis sets, solvation models, and all computational methods employed [21] [8].

Data Management and Analysis Protocols

Robust data management practices create an auditable trail from raw data to analytical results. This process involves maintaining copies of the original raw data file, final analysis file, and all data management programs [18]. Data cleaning should be performed blinded before data analysis to prevent cognitive biases from influencing decisions about handling outliers or missing data [18]. Modern workflow management systems like Nextflow and Snakemake enable researchers to create contiguous data-processing pipelines that ensure consistent data handling across analyses [22]. Similarly, computational chemistry workflows benefit from version-controlled scripts that document every step from molecular structure preparation to property calculation [21] [23].
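One lightweight way to start such an audit trail is to write a small manifest next to every pipeline step, recording a checksum of the input and the settings used. The sketch below is an illustration under stated assumptions, not a feature of any workflow system named above: the function name `build_manifest`, the manifest fields, and the calculation settings are all hypothetical.

```python
import hashlib
import json
import platform


def build_manifest(step_name, raw_bytes, parameters):
    """Describe one pipeline step: what went in and how it was processed."""
    return {
        "step": step_name,
        # The checksum lets anyone verify they start from the same raw data.
        "input_sha256": hashlib.sha256(raw_bytes).hexdigest(),
        "parameters": dict(parameters),
        "python_version": platform.python_version(),
    }


# Hypothetical inputs: a tiny CSV payload and made-up calculation settings.
raw = b"smiles,value\nCCO,-0.77\n"
settings = {"method": "DFT", "basis_set": "def2-SVP", "solvation": "implicit"}
manifest = build_manifest("single_point_energy", raw, settings)
print(json.dumps(manifest, indent=2, sort_keys=True))
```

Committing such manifests alongside version-controlled scripts makes it possible to check later whether two analyses really used identical inputs and parameters.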

Standardized Experimental Protocols

Standardization minimizes protocol drift and technical variability. The Assay Guidance Manual (AGM) program creates best-practice guidelines and shares them with the scientific community to raise awareness of rigorous experimental design [22]. Initiatives like the high-throughput screening (HTS) ring testing, where multiple institutions run the same HTS assay using identical guidelines, help identify sources of irreproducibility, such as improper instrument calibration [22]. In computational chemistry, standardized benchmark datasets like the NIST Computational Chemistry Comparison and Benchmark Database provide reference data for method validation and comparison [24].

Diagram 1: Reproducibility Workflow and Barriers - This diagram illustrates the research workflow from data collection through publication and independent reproduction, highlighting common barriers that impede successful reproduction.

Practical Implementation Strategies

Computational and Data Management Tools

Implementing specialized computational tools significantly enhances reproducibility. Electronic laboratory notebooks with edit tracking provide superior documentation compared to paper systems [18]. For computational analysis, Jupyter or R Markdown notebooks enable literate programming that combines code with explanatory prose, documenting the analyst's thought process alongside the implementation [22]. Workflow management systems like Nextflow and Snakemake ensure data is always processed consistently, making analyses traceable and reproducible [22]. Specialized frameworks such as ProQSAR formalize end-to-end quantitative structure-activity relationship development while permitting independent use of each component, generating versioned artifact bundles with full provenance metadata [23].

Methodological Approaches in Computational Chemistry

Computational chemistry employs hierarchical approaches to balance accuracy and computational cost. Studies systematically evaluating computational methods for predicting redox potentials of quinone-based electroactive compounds found that geometry optimizations at low-level theories followed by single-point energy DFT calculations with implicit solvation models offered comparable accuracy to high-level DFT methods at significantly lower computational costs [21]. Modular computational workflows begin with SMILES representations converted to two-dimensional geometrical representations, then to three-dimensional geometries using force field optimization, followed by further refinement using semi-empirical quantum mechanics, density functional tight binding, or density functional theory methods [21].

Table 3: Research Reagent Solutions for Computational Chemistry

| Tool Category | Specific Examples | Function | Application in Computational Chemistry |

|---|---|---|---|

| Electronic Lab Notebooks | Various software platforms [18] | Document experimental procedures, parameters, and results | Track computational methods, parameters, and results |

| Workflow Management Systems | Nextflow, Snakemake [22] | Create reproducible data-processing pipelines | Automate multi-step computational workflows |

| Computational Frameworks | ProQSAR [23] | Formalize end-to-end model development | Standardized QSAR modeling with validated protocols |

| Benchmark Databases | NIST CCCBDB [24] | Provide reference data for validation | Method comparison and validation |

| Quantum Chemistry Methods | DFT, DFTB, SEQM [21] [8] | Calculate molecular properties | Predict redox potentials, optimized geometries |

Cultural and Institutional Practices

Beyond technical solutions, addressing the reproducibility crisis requires cultural shifts within the scientific community. Senior investigators should take greater ownership of research details through active laboratory management practices, such as random audits of raw data, more hands-on time overseeing experiments, and encouraging healthy skepticism from all contributors [18]. The publishing ecosystem must value replication studies and negative results alongside novel findings, with journals implementing more rigorous methods reporting requirements and reagent authentication verification [22]. Research funding agencies and institutions should incentivize reproducibility through training programs that emphasize robust assay design, appropriate statistical power, and transparent reporting [22].

Diagram 2: Computational Chemistry Workflow - This diagram outlines a systematic computational workflow for molecular property prediction, demonstrating how hierarchical methods balance accuracy and computational efficiency.

The critical importance of data sharing and reproducibility in computational chemistry and drug development cannot be overstated. As research becomes increasingly computational and data-intensive, establishing robust practices for transparency, documentation, and validation is essential for bridging the "valley of death" between preclinical discovery and clinical application [19]. The reproducibility crisis presents both a challenge and an opportunity to strengthen the scientific enterprise through enhanced methodological rigor, improved reporting standards, and cultural shifts that value transparency alongside innovation.

By implementing the principles and practices outlined in this review—including detailed documentation, robust data management, standardized protocols, and appropriate computational tools—researchers can contribute to a more cumulative and self-corrective scientific process. Ultimately, enhancing reproducibility accelerates discovery, strengthens public trust, and increases the likelihood that scientific investments will translate into meaningful health outcomes. The path forward requires collective commitment from individual researchers, institutions, publishers, and funders to foster a culture where rigor + transparency = reproducibility [18].

Within computational chemistry model evaluation, two pervasive failures systematically compromise the validity of published results: information leakage and inadequate benchmarks. These issues, often subtle and unintentional, lead to overly optimistic performance estimates, hindering the reliable application of models in drug discovery. This guide provides a technical framework for identifying and mitigating these failures, serving as a critical foundation for rigorous research in the field.

The Peril of Information Leakage

Information leakage occurs when information that would not be available at prediction time, most often from the test set, influences how the model is built, artificially inflating its performance on test data. In molecular property prediction, this often manifests as structural or experimental data leakage.

Common Leakage Pathways in Molecular Data

The following workflow illustrates how data can be improperly handled, leading to leakage.

Diagram Title: Data Leakage in Model Workflow

Table 1: Quantitative Impact of Data Leakage on Model Performance (RMSE)

| Dataset/Task | Model Type | Clean Test RMSE | Leaky Test RMSE | Performance Inflation |

|---|---|---|---|---|

| ESOL (Solubility) | Random Forest | 1.05 log mol/L | 0.68 log mol/L | ~35% |

| FreeSolv (Hydration) | Graph Neural Net | 1.80 kcal/mol | 1.10 kcal/mol | ~39% |

| PDBbind (Protein-Ligand Aff.) | CNN | 1.50 pKd | 1.15 pKd | ~23% |
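The "Performance Inflation" column appears to be the relative reduction in RMSE caused by leakage, expressed as a percentage of the clean RMSE. That reading is an assumption, but it reproduces the tabulated percentages, as the short check below shows (the function name is hypothetical).

```python
def performance_inflation(clean_rmse, leaky_rmse):
    """Relative RMSE reduction due to leakage, as a percentage of the clean RMSE."""
    if clean_rmse <= 0:
        raise ValueError("clean_rmse must be positive")
    return 100.0 * (clean_rmse - leaky_rmse) / clean_rmse


# (clean RMSE, leaky RMSE) pairs copied from the table above.
table_rows = {
    "ESOL": (1.05, 0.68),
    "FreeSolv": (1.80, 1.10),
    "PDBbind": (1.50, 1.15),
}
inflation = {name: performance_inflation(c, l) for name, (c, l) in table_rows.items()}
for name, value in inflation.items():
    print(f"{name}: {value:.1f}%")
```

Rounded to whole numbers, the three values match the roughly 35%, 39%, and 23% reported in the table.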

Experimental Protocol: Detecting Feature Scaling Leakage

This protocol outlines steps to test for a common leakage source.

- Objective: To determine if feature scaling was performed pre-split (leakage) or post-split (clean).

- Materials: See "The Scientist's Toolkit" below.

- Procedure:

  - Data Splitting: Randomly split the full dataset (e.g., QM9) into a training set (80%) and a holdout test set (20%). Do not apply any transformations.

  - Scenario A (Leaky):
    a. Calculate the mean (μ) and standard deviation (σ) for each feature from the entire dataset (training + test).
    b. Scale both training and test sets using these global μ and σ.

  - Scenario B (Clean):
    a. Calculate μ and σ for each feature from the training set only.
    b. Scale the training set using these parameters.
    c. Scale the test set using the μ and σ from the training set.

  - Model Training & Evaluation: Train an identical model (e.g., a Multilayer Perceptron) on both the leaky and clean training sets. Evaluate both models on their respective test sets.

  - Analysis: Compare the Root Mean Square Error (RMSE) on the test sets. A significantly lower RMSE in Scenario A indicates the model has benefited from information leakage.
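The difference between Scenario A and Scenario B comes down to which rows are used to fit the scaling parameters. The sketch below illustrates only that scaling step, for a single feature with invented values. Model training and RMSE comparison are omitted, and the helper names are hypothetical.

```python
import math


def fit_scaler(column):
    """Return (mean, standard deviation) of a feature column."""
    n = len(column)
    mu = sum(column) / n
    sigma = math.sqrt(sum((v - mu) ** 2 for v in column) / n)
    if sigma == 0:
        sigma = 1.0  # avoid division by zero for constant features
    return mu, sigma


def transform(column, mu, sigma):
    """Standardize a column with previously fitted parameters."""
    return [(v - mu) / sigma for v in column]


# One toy feature; the test molecules lie outside the training range.
train = [1.0, 2.0, 3.0, 4.0]
test = [10.0, 12.0]

# Scenario A (leaky): parameters are fitted on training + test rows together.
mu_all, sigma_all = fit_scaler(train + test)
train_leaky = transform(train, mu_all, sigma_all)
test_leaky = transform(test, mu_all, sigma_all)

# Scenario B (clean): parameters are fitted on the training rows only,
# then reused unchanged for the test rows.
mu_train, sigma_train = fit_scaler(train)
train_clean = transform(train, mu_train, sigma_train)
test_clean = transform(test, mu_train, sigma_train)
```

In Scenario A the test rows shift the fitted mean from 2.5 to about 5.33, so the training features already encode where the test set lies. In Scenario B the test values remain visibly out of range, which is the honest picture of what the model will face.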

The Problem of Inadequate Benchmarks

Benchmarks that are not representative, too easy, or lack chemical diversity fail to stress-test models, leading to false confidence.

Benchmark Taxonomy and Deficiencies

Table 2: Comparison of Common Molecular Property Benchmarks

| Benchmark Name | Primary Task | Key Strength | Common Deficiency | Impact on Evaluation |

|---|---|---|---|---|

| PDBbind | Protein-Ligand Binding Affinity (pKd) | High-quality structural data | High redundancy, assay bias | Overestimates generalization |

| QM9 | Quantum Mechanical Properties | Large size, diverse properties | Limited chemical space (small molecules) | Underestimates real-world complexity |

| MoleculeNet | Curated collection of datasets | Standardized tasks and splits | Inconsistent data quality across subsets | Misleading aggregate results |

| ChEMBL | Bioactivity Data | Massive scale, broad target coverage | High noise, heterogeneous sources | Obscures true model precision |

Experimental Protocol: Evaluating Benchmark Robustness

This protocol assesses a model's performance degradation when faced with a more challenging, scaffold-split benchmark.

- Objective: To evaluate model generalization to novel chemical scaffolds.

- Materials: See "The Scientist's Toolkit."

- Procedure:

  - Dataset Selection: Select a benchmark dataset (e.g., a bioactivity dataset from ChEMBL).

  - Data Splitting:
    a. Random Split: Split the dataset randomly into 80% training and 20% test.
    b. Scaffold Split: Use the Bemis-Murcko method to assign each molecule a molecular scaffold. Split the data such that molecules in the test set have scaffolds not present in the training set.

  - Model Training: Train the same model architecture (e.g., a Message Passing Neural Network) on both the random-split and scaffold-split training sets.

  - Evaluation: Evaluate both models on their respective test sets. Calculate metrics appropriate to the task, such as AUC-ROC and Precision-Recall AUC for classification or RMSE for regression.

  - Analysis: The performance drop from the random-split test set to the scaffold-split test set quantifies the model's reliance on memorizing local chemical patterns versus learning generalizable structure-activity relationships.
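The scaffold-split step can be sketched as a group-wise split over precomputed scaffold strings. The example assumes each molecule already has a Bemis-Murcko scaffold SMILES (in practice these could be generated with RDKit's MurckoScaffold utilities). The strategy of assigning the largest scaffold groups to training first is one common heuristic, chosen here for illustration, and the function name is hypothetical.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Return (train_idx, test_idx) such that no scaffold occurs in both sets.

    `scaffolds` holds one precomputed scaffold string per molecule.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest groups first, so rare scaffolds tend to land in the test set.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_test_target = int(round(test_fraction * len(scaffolds)))
    n_train_target = len(scaffolds) - n_test_target
    train_idx, test_idx = [], []
    for group in ordered:
        # Whole groups are assigned together; a scaffold is never divided.
        if len(train_idx) + len(group) <= n_train_target:
            train_idx.extend(group)
        else:
            test_idx.extend(group)
    return train_idx, test_idx


# Toy dataset: 10 molecules over 4 scaffolds (illustrative SMILES).
toy_scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCCCC1", "c1ccoc1"]
train_idx, test_idx = scaffold_split(toy_scaffolds, test_fraction=0.2)
train_scaffolds = {toy_scaffolds[i] for i in train_idx}
test_scaffolds = {toy_scaffolds[i] for i in test_idx}
```

Because whole groups are assigned at once, the realized test fraction may deviate from the requested one when scaffold groups are large. The two singleton scaffolds end up in the test set here, so every test molecule has a scaffold unseen during training.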

The logical relationship between benchmark quality and model trust is shown below.

Diagram Title: Impact of Poor Benchmarks

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Resources for Rigorous Model Evaluation

| Item Name | Type | Function & Purpose |

|---|---|---|

| RDKit | Software Kit | Open-source cheminformatics for molecule manipulation, featurization, and scaffold splitting. |

| Scikit-learn | Python Library | Provides tools for data splitting, preprocessing, and model evaluation metrics. |

| DeepChem | Python Library | A deep learning framework specifically designed for molecular data and life sciences. |

| TensorFlow/PyTorch | Framework | Flexible libraries for building and training custom deep learning models. |

| Matplotlib/Seaborn | Python Library | Creates publication-quality plots and visualizations for data analysis and results. |

| Docker/Singularity | Container | Ensures computational reproducibility by encapsulating the entire software environment. |

Implementation Framework: Data Preparation, Metrics, and Benchmarking Strategies

Best Practices for Benchmark Data Set Preparation and Curation

The rigorous evaluation of computational methods through benchmarking is a cornerstone of progress in computational chemistry and drug design. Benchmarks provide the empirical foundation needed to validate new methodologies, compare them against existing approaches, and guide practical decision-making for research applications. A serious weakness within the field has been a historical lack of standards with respect to quantitative evaluation of methods, data set preparation, and data set sharing [25]. The ultimate goal of benchmarking should be to report new methods or comparative evaluations in a manner that supports decision-making for practical applications, essentially predicting performance on problems not already known at the time of method application [25]. Properly executed benchmarks allow researchers to distinguish genuine methodological advances from incremental improvements and provide the scientific community with reliable assessments of a method's capabilities and limitations across diverse chemical spaces.

The critical importance of robust benchmarking has been highlighted across multiple computational chemistry domains. In density functional theory (DFT) development, benchmarks against highly accurate coupled-cluster theory (CCSD(T)) or experimental data have revealed significant limitations in popular but outdated method combinations like B3LYP/6-31G* [26]. Similarly, in molecular generation, flaws in evaluation metrics for 3D molecular structures have led to chemically implausible valencies being counted as valid, potentially misleading the research community about model capabilities [27]. These examples underscore how benchmarking quality directly impacts methodological progress and the reliability of computational predictions in real-world applications.

Fundamental Principles of Benchmark Curation

Core Philosophical Foundations

Effective benchmark curation rests on two fundamental premises. First, the reporting of new methods or evaluations must communicate the likely real-world performance of methods in practical applications, with clear relationships between methodological advances and performance benefits [25]. Second, we must recognize that methods of broad utility in pharmaceutical research ultimately predict properties that are not known when the methods are applied [25]. Rejection of the first premise can reduce scientific reports to advertisements, while misunderstanding the second can distort conclusions about practical utility.

Benchmarking should prioritize robustness over "peak performance" demonstrated on idealized datasets. In predictive applications, reliability and the avoidance of large unexpected errors are often more important than achieving optimal performance on standard thermochemical benchmark sets [26]. This principle applies equally across computational chemistry domains, from quantum mechanics to molecular generation and machine learning.

Data Set Composition and Character

The relationship between information available to a method (input) and information to be predicted (output) must be carefully managed. If knowledge of the input creeps into the output either actively or passively, nominal test results may significantly overestimate real-world performance [25]. Similarly, if the relationship between input and output in a test dataset doesn't accurately reflect the operational application of the method, reported performance may be unrelated to practical utility.

The composition of benchmark datasets should reflect the intended application domain while avoiding artificial simplicity. For virtual screening, this means ensuring that active compounds aren't all chemically similar and that decoy molecules form an adequate, challenging background rather than being easily distinguishable from actives [25]. For quantum chemical methods, this involves testing across diverse molecular types, elements, and properties rather than focusing narrowly on small organic molecules where performance may be unrepresentative.
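One way to make the composition checks above concrete is to measure how similar the actives are to each other and how close each decoy sits to its nearest active. The following is a minimal sketch, assuming each molecule has already been reduced to a set of fingerprint on-bits by some cheminformatics toolkit; the function names and the toy fingerprints are illustrative and not taken from the cited work.

```python
from itertools import combinations


def tanimoto(a: set, b: set) -> float:
    """Tanimoto (Jaccard) similarity between two sets of fingerprint on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def mean_pairwise_similarity(fingerprints: list) -> float:
    """Average Tanimoto similarity over all distinct pairs of molecules."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


def nearest_active_similarity(decoy: set, actives: list) -> float:
    """Similarity of a decoy to its most similar active (0.0 if no actives)."""
    return max((tanimoto(decoy, active) for active in actives), default=0.0)


# Toy fingerprints (hypothetical on-bit indices, for illustration only).
actives = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 4, 5}]
decoys = [{10, 11, 12}, {1, 2, 10, 11}]

print("mean active-active similarity:", mean_pairwise_similarity(actives))
for decoy in decoys:
    print("decoy nearest-active similarity:", nearest_active_similarity(decoy, actives))
```

A high mean similarity among actives suggests the set is dominated by one chemical series, while decoys with near-zero similarity to every active may be too easy to reject. Any thresholds applied to these numbers are a judgment call that depends on the fingerprint used.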

Table 1: Key Principles for Benchmark Data Set Curation

| Principle | Description | Common Pitfalls |
|---|---|---|
| Realism | Dataset difficulty and composition should match real-world applications | Using artificially simple decoys; all actives being chemically similar |
| Independence | Input information must not leak into output predictions | Using cognate ligand poses; optimizing protein structures with same scoring function |
| Comprehensiveness | Coverage of relevant chemical space and property ranges | Focusing only on "easy" cases; limited molecular diversity |
| Transparency | Complete documentation of data sources and processing | Insufficient metadata; undocumented preprocessing steps |
| Reproducibility | Others should be able to recreate datasets exactly | Missing atomic coordinates; undefined protonation states |

Technical Protocols for Data Preparation

Structure Preparation and Validation

The preparation of molecular structures represents a critical foundation for reliable benchmarking. In protein-ligand docking, for instance, simply providing Protein Data Bank (PDB) codes is inadequate for four key reasons [25]:

- Protonation States: PDB structures lack all proton positions for proteins and ligands, yet most docking approaches require at least polar proton positions.

- Bond Orders: Ligands in PDB structures lack bond order information and often lack atom connectivity, making protonation and tautomeric states ambiguous.

- Input Geometries: Different methods have varying sensitivities to input ligand geometries, including absolute pose, conformational strain, and ring conformations.

- Structure Preparation: Different protein structure preparation methods can introduce subtle biases favoring certain docking and scoring approaches.

These concerns necessitate that benchmark datasets include complete, usable structural data in routinely parsable formats with all atomic coordinates for both proteins and ligands [25]. For small molecules, this means providing definitive bond orders, formal charges, and stereochemistry. For proteins, this includes protonation states and resolved ambiguities in residue conformations.
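A simple audit can flag benchmark entries that lack the structural information listed above before the data set is released. The sketch below assumes a hypothetical in-memory record layout (the field names such as `bond_orders` and `protonation_states` are placeholders, not a standard schema); a real pipeline would read these from the structure files themselves.

```python
# Hypothetical field names for illustration; adapt to the actual file format.
REQUIRED_LIGAND_FIELDS = ("coordinates", "bond_orders", "formal_charges", "stereochemistry")
REQUIRED_PROTEIN_FIELDS = ("coordinates", "protonation_states")


def missing_fields(record: dict, required: tuple) -> list:
    """Return the required fields that are absent (None or not present)."""
    return [name for name in required if record.get(name) is None]


def audit_entry(entry: dict) -> dict:
    """Report which ligand and protein fields are missing for one benchmark entry."""
    problems = {}
    ligand_gaps = missing_fields(entry.get("ligand", {}), REQUIRED_LIGAND_FIELDS)
    protein_gaps = missing_fields(entry.get("protein", {}), REQUIRED_PROTEIN_FIELDS)
    if ligand_gaps:
        problems["ligand"] = ligand_gaps
    if protein_gaps:
        problems["protein"] = protein_gaps
    return problems


# Toy entry: the ligand has coordinates but no bond orders were recorded.
entry = {
    "ligand": {
        "coordinates": [(0.0, 0.0, 0.0)],
        "formal_charges": [0],
        "stereochemistry": [],  # empty is allowed: the molecule may be achiral
    },
    "protein": {"coordinates": [(1.0, 0.0, 0.0)], "protonation_states": {"HIS57": "HID"}},
}
print(audit_entry(entry))
```

The check treats an empty value as present, since an achiral ligand legitimately has no stereocentres to record; only a field that was never filled in is reported.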

Data Set Separation and Integrity

Proper separation of training, validation, and test sets is essential for meaningful benchmarking. Data leakage between these sets invalidates performance estimates and creates unrealistic expectations of method capabilities. For molecular generation benchmarks like GEOM-drugs, a consistent evaluation framework also requires excluding molecules that were fragmented by the underlying calculations (e.g., GFN2-xTB) [27].
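A basic safeguard against leakage is to verify that no molecule appears in more than one split. The sketch below assumes every molecule has been assigned a canonical identifier (for example an InChIKey or a canonical SMILES string produced by one consistent toolkit); the identifiers shown are placeholders.

```python
def find_leakage(splits: dict) -> dict:
    """Return identifiers shared between any pair of splits.

    `splits` maps a split name to an iterable of canonical molecule identifiers.
    The result maps (split_a, split_b) to the set of shared identifiers and is
    empty when the splits are disjoint.
    """
    names = list(splits)
    overlaps = {}
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            shared = set(splits[first]) & set(splits[second])
            if shared:
                overlaps[(first, second)] = shared
    return overlaps


# Placeholder identifiers for illustration only.
splits = {
    "train": ["KEY-A", "KEY-B", "KEY-C"],
    "validation": ["KEY-D"],
    "test": ["KEY-C", "KEY-E"],
}
print(find_leakage(splits))
```

Exact-identifier overlap is only the most obvious form of leakage. Near-duplicates such as salts, tautomers, or stereoisomers of training compounds need standardization before comparison, which this sketch does not attempt.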

In machine learning applications, temporal splits (where training data precedes test data in publication time) often provide more realistic performance estimates than random splits, as they better simulate the real-world scenario of predicting new compounds rather than existing ones. Similarly, scaffold-based splits that separate structurally distinct molecules provide more challenging evaluation than random splits.
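Both splitting strategies can be sketched in a few lines. The example assumes each molecule already carries a scaffold key (for instance a Bemis-Murcko scaffold SMILES computed with a toolkit such as RDKit) or a publication year; the record layout and the rule of filling the training set with the largest scaffold groups first are illustrative conventions rather than a prescribed protocol.

```python
from collections import defaultdict


def scaffold_split(records, test_fraction=0.2):
    """Split (molecule_id, scaffold_key) records so no scaffold spans both sets.

    Scaffold groups are assigned whole. Larger groups are placed in the
    training set first, so rarer scaffolds tend to end up in the test set.
    """
    groups = defaultdict(list)
    for mol_id, scaffold in records:
        groups[scaffold].append(mol_id)

    # Largest groups first; ties broken by first molecule id for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    train_target = len(records) * (1 - test_fraction)

    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= train_target:
            train.extend(group)
        else:
            test.extend(group)
    return train, test


def temporal_split(records, cutoff_year):
    """Split (molecule_id, year) records: train strictly before the cutoff."""
    train = [mol_id for mol_id, year in records if year < cutoff_year]
    test = [mol_id for mol_id, year in records if year >= cutoff_year]
    return train, test


# Toy data: four scaffolds of decreasing size (keys are placeholders).
by_scaffold = [
    ("m1", "A"), ("m2", "A"), ("m3", "A"), ("m4", "A"),
    ("m5", "B"), ("m6", "B"), ("m7", "B"),
    ("m8", "C"), ("m9", "C"),
    ("m10", "D"),
]
train, test = scaffold_split(by_scaffold, test_fraction=0.2)
print("scaffold split:", train, test)

by_year = [("m1", 2015), ("m2", 2019), ("m3", 2021)]
print("temporal split:", temporal_split(by_year, cutoff_year=2019))
```

Because groups are kept intact, the realized test fraction can differ from the requested one; reporting the actual split sizes alongside the results avoids ambiguity.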

Diagram 1: Benchmark dataset creation workflow

Domain-Specific Considerations

Quantum Chemistry Methods

For quantum chemical methods like density functional theory (DFT), benchmarking requires careful attention to reference data quality and methodology. Best practices include [26]:

- Using multi-level approaches that balance accuracy, robustness, and computational efficiency

- Selecting functionals and basis sets based on comprehensive benchmarking against high-quality experimental data or CCSD(T) reference calculations

- Avoiding outdated method combinations with known systematic errors

- Including corrections for London dispersion effects and basis set superposition error

The development of the MEHnet (Multi-task Electronic Hamiltonian network) approach demonstrates how machine learning can enhance benchmarking by enabling CCSD(T)-level accuracy, considered the quantum chemistry "gold standard", for larger molecules than previously possible [3]. Such advances create new opportunities for more comprehensive benchmarking across diverse chemical spaces.

Molecular Generation and 3D Structure Prediction

For generative models of 3D molecular structures, rigorous evaluation requires chemically meaningful metrics. The GEOM-drugs dataset has served as a key benchmark, but evaluation protocols have suffered from critical flaws including [27]:

- Incorrect valency definitions due to implementation bugs

- Chemically implausible entries in valency lookup tables

- Reliance on force fields inconsistent with reference data

Corrected evaluation frameworks must include chemically accurate valency tables derived from refined datasets and energy-based evaluation methodologies for accurate assessment of generated 3D geometries [27]. The valency computation must properly handle aromatic systems, where simple assumptions about bond order contributions can lead to significant errors.
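The effect of the aromatic bond-order assumption is easy to see with a small calculation. The sketch below is not the evaluation code from [27]; it uses a deliberately tiny, hypothetical lookup table of allowed valencies for neutral atoms and compares counting an aromatic bond as 1.5 against rounding it down to 1.

```python
BOND_ORDER = {"single": 1.0, "double": 2.0, "triple": 3.0}

# Hypothetical minimal table of allowed valencies for neutral atoms.
ALLOWED_VALENCIES = {"H": {1}, "C": {4}, "N": {3}, "O": {2}}


def atom_valency(bond_types, aromatic_order=1.5):
    """Sum bond-order contributions for one atom, including bonds to hydrogens."""
    return sum(
        aromatic_order if bond == "aromatic" else BOND_ORDER[bond]
        for bond in bond_types
    )


def is_stable(element, bond_types, aromatic_order=1.5):
    """True if the summed valency is an integer listed for this element."""
    valency = atom_valency(bond_types, aromatic_order)
    return valency == int(valency) and int(valency) in ALLOWED_VALENCIES[element]


# A benzene carbon: two aromatic ring bonds plus one C-H single bond.
benzene_carbon = ["aromatic", "aromatic", "single"]

print(atom_valency(benzene_carbon))                      # aromatic counted as 1.5
print(atom_valency(benzene_carbon, aromatic_order=1.0))  # aromatic rounded to 1
print(is_stable("C", benzene_carbon))
print(is_stable("C", benzene_carbon, aromatic_order=1.0))
```

With the 1.5 contribution the benzene carbon sums to 4; with the rounded value it sums to 3, so a lookup table built from rounded values would have to list 3 as an acceptable carbon valency. Note that a ring-fusion atom with three aromatic bonds sums to 4.5 under this simple rule, which illustrates why a single integer table is not sufficient for aromatic systems.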

Table 2: Molecular Generation Evaluation Metrics

| Metric Category | Specific Metrics | Best Practices | Common Issues |
|---|---|---|---|
| Chemical Validity | Atom stability, Molecule stability | Aromatic-dependent valency calculations; Chemically accurate lookup tables | Rounding aromatic bonds to 1 instead of 1.5; Implausible valency entries |
| 3D Structure Quality | Energy evaluation, Geometry optimization | Consistent theory level with training data; GFN2-xTB benchmarks | Different theory levels for training vs evaluation; Oversimplified distance tables |
| Distribution Metrics | Unique validity, Novelty | Interpretable, chemically grounded metrics | Difficult to interpret; Limited chemical meaning |

Machine Learning and Large Language Models

For machine learning models, particularly large language models (LLMs) applied to chemistry, benchmarking requires comprehensive evaluation frameworks like ChemBench, which includes [12]:

- Diverse question-answer pairs covering knowledge, reasoning, calculation, and intuition

- Both multiple-choice and open-ended questions

- Special encoding for chemical entities (SMILES, equations)

- Evaluation of not just knowledge recall but reasoning capabilities

Such frameworks must be designed to handle the special treatment of scientific information, with appropriate tagging of chemical structures and notation to enable proper model interpretation [12]. The benchmark should contextualize model performance against human expert capabilities across different chemical specializations.

Implementation and Reporting Standards

Data Sharing and Reproducibility

Authors reporting methodological advances or comparisons must provide usable primary data to enable replication and assessment by independent groups [25]. "Usable" means data in routinely parsable formats that include all atomic coordinates for proteins and ligands used as input to the methods studied. The commitment to share data should be made at the time of manuscript submission.

When proprietary data prevent full sharing, authors should include a parallel analysis of publicly available data and show that the proprietary data were scientifically necessary [25]. Shared data should include complete documentation of preprocessing steps, parameter settings, and any corrections applied to raw data.

Statistical Reporting and Validation

Comprehensive statistical reporting goes beyond simple performance averages to include:

- Variability estimates (standard errors, confidence intervals)

- Analysis of performance across different molecular classes or difficulty levels

- Statistical significance testing for method comparisons

- Clear description of evaluation metrics and their calculation

For machine learning models, this includes proper cross-validation protocols, separate validation sets for hyperparameter tuning, and final evaluation on completely held-out test sets. Performance should be reported across multiple criteria rather than optimizing for a single metric.
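Variability estimates and method comparisons of the kind listed above can be obtained with a percentile bootstrap. The following is a minimal sketch using only the Python standard library; the resample count, the fixed seed, and the toy error values are arbitrary choices for illustration, and other interval constructions or significance tests may be more appropriate for a given study.

```python
import random
import statistics


def bootstrap_ci(values, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the mean of `values`.

    Returns (sample_mean, lower_bound, upper_bound).
    """
    rng = random.Random(seed)
    n = len(values)
    means = sorted(
        statistics.fmean(rng.choices(values, k=n)) for _ in range(n_resamples)
    )
    lower = means[int((alpha / 2) * n_resamples)]
    upper = means[int((1 - alpha / 2) * n_resamples) - 1]
    return statistics.fmean(values), lower, upper


def paired_bootstrap_difference(errors_a, errors_b, **kwargs):
    """Bootstrap CI for the mean per-molecule difference (method A minus B).

    Both methods must be evaluated on the same molecules in the same order.
    An interval that excludes zero suggests a systematic difference.
    """
    differences = [a - b for a, b in zip(errors_a, errors_b)]
    return bootstrap_ci(differences, **kwargs)


# Toy absolute errors (arbitrary units) for two methods on the same molecules.
errors_a = [1.2, 0.8, 1.5, 2.0, 0.9, 1.1, 1.7, 1.3]
errors_b = [0.9, 0.7, 1.1, 1.6, 1.0, 0.8, 1.2, 1.1]

print("method A mean error and 95% CI:", bootstrap_ci(errors_a))
print("A minus B mean difference and 95% CI:", paired_bootstrap_difference(errors_a, errors_b))
```

Pairing the errors by molecule removes the variation that both methods share from easy and hard cases, which usually gives a sharper comparison than contrasting two independent intervals.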

Diagram 2: Multi-dimensional evaluation and reporting framework

Essential Research Reagents and Tools

Table 3: Essential Research Reagents for Computational Benchmarking

| Tool Category | Specific Tools/Resources | Function in Benchmarking | Critical Considerations |
|---|---|---|---|
| Quantum Chemistry | DFT codes (Various), CCSD(T) implementations | Reference calculations; Method validation | Theory level consistency; Basis set selection; Dispersion corrections |
| Cheminformatics | RDKit, Open Babel | Chemical structure manipulation; Standardization | Aromaticity perception; Tautomer handling; Stereochemistry |
| Molecular Generation | GEOM-drugs, QM9 | Standardized benchmark datasets; Model training | Data preprocessing; Valency calculations; Split methodology |
| Evaluation Metrics | Custom implementations (Valency, Energy) | Performance quantification | Chemically meaningful metrics; Proper statistical analysis |
| Data Management | Public repositories (GitHub, Zenodo) | Data sharing; Reproducibility | Complete metadata; Standardized formats; Version control |

Robust benchmark data set preparation and curation is both a scientific and an ethical imperative in computational chemistry research. By adhering to principles of realism, independence, comprehensiveness, transparency, and reproducibility, researchers can create evaluation frameworks that genuinely advance the field rather than mischaracterizing methodological capabilities. The development of corrected evaluation frameworks for established benchmarks like GEOM-drugs demonstrates how continued refinement of benchmarking practices enables more accurate assessment of methodological progress [27].

As the field continues to evolve with new machine learning approaches and increasingly complex applications, the fundamental importance of rigorous benchmarking only grows. By implementing the protocols and standards outlined in this guide, researchers can ensure their contributions provide meaningful advances rather than incremental optimizations on flawed metrics. Ultimately, better benchmarking practices lead to more rapid scientific progress and more reliable computational tools for drug discovery and materials design.