A Practical Guide to Data Augmentation for Molecular Property Prediction: Strategies to Overcome Data Scarcity

Abstract

This guide provides a comprehensive framework for researchers, scientists, and drug development professionals to implement effective data augmentation strategies for molecular property prediction. It addresses the critical challenge of scarce and noisy experimental data, which often limits the performance of AI/ML models in early-stage drug discovery. The article systematically explores the foundational principles of data augmentation in cheminformatics, details practical methodologies from multi-task learning to SMILES enumeration, outlines solutions to common implementation challenges, and establishes rigorous validation protocols. By synthesizing the latest research, this guide offers actionable recommendations to enhance predictive accuracy, improve model generalizability, and ultimately accelerate the drug discovery pipeline.

Why Data Augmentation is Crucial for Molecular Property Prediction

Molecular property prediction is a cornerstone of modern drug discovery and materials science. However, the field is fundamentally constrained by the dual challenges of data scarcity and data noise. The process of generating high-quality experimental biological and physicochemical data is often costly, time-consuming, and subject to experimental variability, leading to sparse, heterogeneous, and sometimes inconsistent datasets [1] [2] [3]. This reality severely limits the performance of data-hungry deep learning models and poses a significant risk of overfitting and poor generalization to novel molecular structures or properties [2]. This Application Note addresses these core challenges by presenting a structured framework and practical protocols for data augmentation and consistency assessment to empower more robust and reliable predictive modeling.

Quantifying the Challenge: Data Landscape

The scale and nature of these challenges are revealed through systematic analysis of public data. The following table summarizes common issues in molecular datasets that hinder model development.

Table 1: Common Challenges in Molecular Property Datasets

| Challenge Category | Specific Issue | Impact on Model Performance |
|---|---|---|
| Data Scarcity | Limited labeled data for specific properties (e.g., ADME) [1] [3] | Inability to train complex models; high risk of overfitting [2] |
| Annotation Noise | Inconsistent property annotations between gold-standard and benchmark sources [1] | Introduction of erroneous signals; degradation of predictive accuracy [1] |
| Distributional Shifts | Significant misalignments in data distributions across different sources [1] | Poor generalization and transfer learning across datasets [1] [2] |
| Data Heterogeneity | Variability in experimental protocols and conditions [1] | Obscured biological signals; increased model complexity required [1] |

Practical Solutions: Augmentation and Assessment Frameworks

To combat data scarcity and noise, researchers can employ a multi-faceted strategy. The solutions can be broadly categorized into data-level and model-level approaches, each with distinct mechanisms and benefits.

Table 2: Frameworks for Addressing Data Scarcity and Noise

| Method Category | Core Principle | Key Techniques | Applicable Scenarios |
|---|---|---|---|
| Data-Level Augmentation | Artificially expand the training set by creating modified versions of existing data. | SMILES Enumeration [4]; Noise Injection (e.g., Gaussian, token masking, swapping) [5] [6] | Low-data regimes for specific properties; need for robust feature learning. |
| Model-Level Learning | Leverage model architecture and training strategies to learn from limited or heterogeneous data. | Multi-Task Learning (MTL) [7] [3]; Transfer Learning (TL) [3]; Few-Shot Learning [2] | Availability of auxiliary (even weakly related) tasks; pre-trained models exist. |
| Data Consistency Assessment | Systematically identify and address data quality issues before modeling. | Distribution analysis; outlier detection; identification of annotation conflicts [1] | Integration of multiple data sources; quality control for critical predictions. |

Data Augmentation via SMILES Enumeration and Perturbation

One potent data-level strategy exploits the fact that a single molecular structure can be represented by multiple valid SMILES strings. This protocol outlines the steps for implementing this augmentation.

Protocol 1: SMILES-Based Data Augmentation

- Objective: To increase the size and diversity of molecular sequence data for training deep learning models.

- Principle: A single molecule can be represented by numerous equivalent SMILES strings due to different atom traversal orders. Treating these as distinct training samples improves model robustness [4].

- Materials/Reagents:

- Methodology:

- Data Preparation: Start with a cleaned set of canonical SMILES.

- Augmentation Execution:

- Strategy 1 (SMILES Enumeration): For each molecule, generate a predefined number of unique, randomized SMILES representations [4].

- Strategy 2 (Noise Injection): For each SMILES string, apply perturbations with a specified probability. Common operations include token masking and token swapping [5] [6].

- Training: Use the original and augmented SMILES as independent data points during model training. For noise-injection methods, contrastive learning can be used to ensure the model learns representations that are consistent between the original and perturbed versions [6].
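As a rough illustration of the noise-injection strategy in Protocol 1, the sketch below tokenizes a SMILES string and applies token masking and adjacent-token swapping with a given probability. The tokenizer pattern, the `[MASK]` placeholder, and the function names are assumptions made for this example; they are not taken from INTransformer or maxsmi. Perturbed strings are generally not valid SMILES, so they are meant as noisy views for contrastive training rather than as new molecules.

```python
import random
import re

# Simplified SMILES tokenizer (an assumption for illustration): bracket atoms,
# two-letter halogens, two-digit ring closures, single letters, digits, symbols.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-\+\\/\(\)\.@:~\*\$])"
)

def tokenize(smiles):
    """Split a SMILES string into tokens; fail loudly if characters are dropped."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"cannot tokenize SMILES: {smiles!r}")
    return tokens

def mask_tokens(tokens, p, rng, mask="[MASK]"):
    """Replace each token with a mask placeholder with probability p."""
    return [mask if rng.random() < p else tok for tok in tokens]

def swap_tokens(tokens, p, rng):
    """Swap each token with its right neighbour with probability p."""
    out = list(tokens)
    for i in range(len(out) - 1):
        if rng.random() < p:
            out[i], out[i + 1] = out[i + 1], out[i]
    return out

rng = random.Random(0)  # fixed seed so the perturbations are reproducible
tokens = tokenize("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
noisy_views = [
    "".join(mask_tokens(tokens, 0.15, rng)),
    "".join(swap_tokens(tokens, 0.15, rng)),
]
```

Each noisy view would be paired with its original string during training. SMILES enumeration (Strategy 1) needs a cheminformatics toolkit to guarantee that every generated string is valid, so it is not covered by this string-level sketch.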

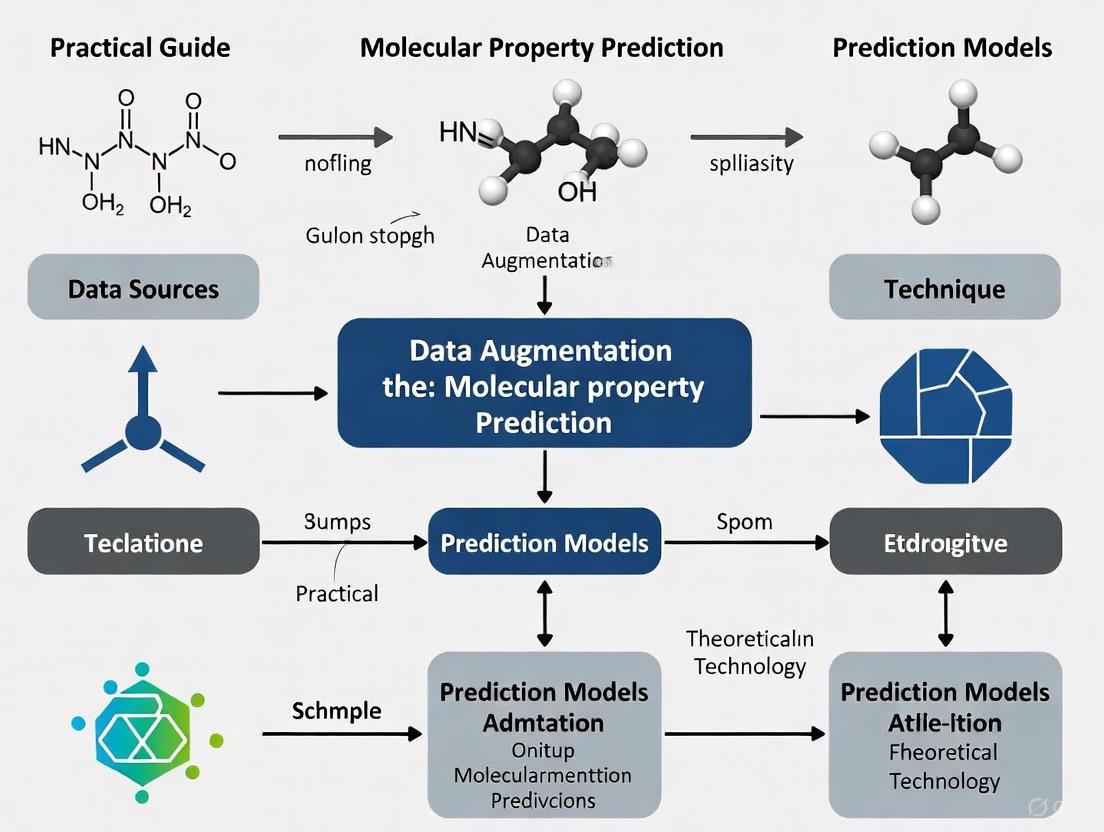

The following workflow diagram illustrates the two main augmentation paths and their integration into a model training pipeline.

Data Consistency Assessment with AssayInspector

Before integrating multiple datasets, a rigorous consistency check is crucial. Naive aggregation of disparate sources can introduce more noise than signal [1].

Protocol 2: Pre-Modeling Data Consistency Assessment

- Objective: To systematically identify distributional misalignments, annotation conflicts, and outliers across molecular datasets prior to integration and model training.

- Principle: Diagnose inconsistencies arising from differences in experimental conditions, measurement protocols, or chemical space coverage [1].

- Materials/Reagents:

- Methodology:

- Data Loading: Load all datasets to be compared into the AssayInspector tool.

- Descriptive Statistics Generation: Run the tool to generate a summary report containing key parameters (e.g., sample size, mean, standard deviation, quartiles) for each dataset.

- Distribution Comparison: Use the tool's statistical testing (e.g., two-sample Kolmogorov–Smirnov test for regression tasks) and visualization features to compare the endpoint distributions across sources [1].

- Chemical Space Analysis: Perform a UMAP projection based on molecular fingerprints to visually assess the overlap and coverage of the chemical space for each dataset [1].

- Conflict Identification: For molecules present in multiple datasets (overlap), directly compare their property annotations to flag significant inconsistencies [1].

- Insight Report Review: Generate and review the tool's automated insight report, which provides alerts on divergent datasets, conflicting annotations, and outliers.
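The distribution-comparison and conflict-identification steps can be approximated without any dedicated tool. The sketch below is not the AssayInspector API; it is a minimal standard-library illustration, with hypothetical function names and toy values, of a two-sample Kolmogorov-Smirnov statistic and a check for conflicting annotations on molecules shared by two sources.

```python
from bisect import bisect_right

def ks_statistic(a, b):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(a), sorted(b)
    largest_gap = 0.0
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        largest_gap = max(largest_gap, abs(cdf_a - cdf_b))
    return largest_gap

def find_conflicts(source_a, source_b, tolerance):
    """Molecules annotated in both sources whose values differ by more than tolerance."""
    shared = source_a.keys() & source_b.keys()
    return {
        mol: (source_a[mol], source_b[mol])
        for mol in sorted(shared)
        if abs(source_a[mol] - source_b[mol]) > tolerance
    }

# Toy endpoint values keyed by canonical SMILES (illustrative numbers only).
source_a = {"CCO": -0.3, "c1ccccc1": 2.1, "CC(=O)O": -0.2}
source_b = {"CCO": -0.3, "c1ccccc1": 3.4, "CCN": -0.1}

shift = ks_statistic(list(source_a.values()), list(source_b.values()))
conflicts = find_conflicts(source_a, source_b, tolerance=0.5)
```

A large statistic suggests the two endpoint distributions are misaligned, and a non-empty conflict dictionary lists the overlapping molecules that need manual review. The tolerance is a project-specific choice that depends on the expected experimental error of the assay.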

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational tools and resources essential for implementing the protocols described in this note.

Table 3: Key Research Reagent Solutions for Data Augmentation and Assessment

| Tool/Resource Name | Type | Primary Function | Access/Reference |
|---|---|---|---|
| AssayInspector | Software Package | Data consistency assessment (DCA) via statistics, visualization, and diagnostic summaries [1]. | GitHub Repository [1] |
| RDKit | Cheminformatics Library | Calculation of molecular descriptors and fingerprints; SMILES manipulation and canonicalization [1]. | https://www.rdkit.org [1] |
| maxsmi | Code Library | Provides strategies for SMILES augmentation and model training with confidence estimation [4]. | GitHub Repository [4] |
| INTransformer | Deep Learning Model | Transformer-based property prediction using noise injection and contrastive learning for data augmentation [6]. | Methodology described in Jiang et al. [6] |
| Therapeutic Data Commons (TDC) | Data Repository | Provides standardized benchmarks, including ADME datasets, for molecular property prediction [1]. | https://tdc.broadinstitute.org [1] |

Validation and Performance Metrics

Implementing the aforementioned strategies has demonstrated significant benefits in real-world scenarios. The following diagram and table summarize the validation workflow and expected outcomes.

Table 4: Key Performance Indicators for Validation

| Validation Aspect | Metric | Interpretation of Improvement |
|---|---|---|
| Predictive Accuracy | Mean Absolute Error (MAE), Root Mean Square Error (RMSE), Area Under the Curve (AUC) | Lower MAE/RMSE or higher AUC indicates better predictive performance. |
| Generalization | Performance on held-out test sets and external validation sets | Smaller performance drop between training and test sets indicates better generalization and reduced overfitting. |
| Data Efficiency | Model performance as a function of training set size | Achieving comparable accuracy with fewer original data points demonstrates effective augmentation [5]. |
| Robustness | Performance variance across different data splits or noise levels | Lower variance indicates a more stable and reliable model. |
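For readers who want to see exactly what the predictive-accuracy metrics in Table 4 compute, the following standard-library sketch implements MAE, RMSE, and ROC AUC. The function names are chosen for this example; in practice an established metrics library would normally be used instead. AUC is written here as the probability that a randomly chosen positive is scored above a randomly chosen negative, with ties counted as one half.

```python
import math

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Root mean square error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def roc_auc(labels, scores):
    """ROC AUC via pairwise comparison of positive and negative scores."""
    pos = [s for lab, s in zip(labels, scores) if lab == 1]
    neg = [s for lab, s in zip(labels, scores) if lab == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

print(mae([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))         # 2/3
print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
```

The pairwise AUC is quadratic in the number of examples, which is acceptable for the small datasets discussed here. Generalization and robustness from Table 4 can then be estimated by comparing these metrics between training and test sets, or across repeated data splits.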

Scarcity and noise in molecular data are not merely inconveniences but fundamental challenges that must be proactively managed. By adopting a systematic approach that combines rigorous data consistency assessment with modern data augmentation techniques and model-level strategies like multi-task learning, researchers can significantly enhance the accuracy, robustness, and generalizability of molecular property prediction models. The protocols and tools outlined in this Application Note provide a practical pathway to build more trustworthy AI systems, ultimately accelerating drug discovery and materials design.

Understanding the Few-Shot Learning Paradigm in Cheminformatics

Few-shot learning (FSL) is a machine learning paradigm in which models learn to make accurate predictions from only a very small number of labeled examples per class [8]. This approach stands in stark contrast to traditional supervised learning, which requires hundreds or thousands of labeled examples to achieve reliable performance [9]. In cheminformatics and drug discovery, FSL has emerged as a powerful response to the fundamental challenge of data scarcity: generating labeled biological activity data through wet lab experiments is both time-consuming and costly, and bringing a new drug to market often takes 12 years and costs 1.8 billion dollars [10].

The core value of few-shot learning in cheminformatics lies in its ability to leverage prior knowledge acquired from related tasks to enable rapid learning in new contexts with limited data [11]. This capability is particularly valuable for predicting molecular properties, screening compound libraries, and repurposing existing drugs, where comprehensive experimental data for every target of interest is simply unavailable [12] [13]. By mimicking the human ability to learn from just a few examples, FSL approaches accelerate the drug discovery pipeline and reduce associated costs [9] [14].

Theoretical Foundations of Few-Shot Learning

Problem Formulation and Key Terminology

Few-shot learning problems are typically framed as N-way-K-shot classification tasks [9] [8]. In this formulation:

- N-way refers to the number of classes (e.g., active vs. inactive compounds) the model must discriminate between

- K-shot indicates the number of labeled examples available per class for learning

The learning process relies on two fundamental concepts [14]:

- Support Set: The few labeled samples from novel categories used to adapt a pre-trained model (typically K examples for each of N classes)

- Query Set: The unlabeled samples from the same categories on which the model must make predictions after learning from the support set

This framework encompasses specialized cases including one-shot learning (K=1) and zero-shot learning (K=0), though the latter requires different techniques as it must recognize new classes without any direct examples [9] [8].
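To make the N-way-K-shot formulation concrete, the sketch below samples one episode, consisting of a support set and a disjoint query set, from a pool of labeled molecules. The function name `sample_episode`, the toy identifiers, and the dictionary layout are assumptions made for illustration.

```python
import random

def sample_episode(labeled, n_way, k_shot, n_query, rng):
    """Sample one N-way-K-shot episode.

    labeled maps a class label to a list of examples.
    Returns (support, query) as lists of (example, label) pairs.
    """
    eligible = [c for c, xs in labeled.items() if len(xs) >= k_shot + n_query]
    if len(eligible) < n_way:
        raise ValueError("not enough classes with k_shot + n_query examples")
    classes = rng.sample(sorted(eligible), n_way)
    support, query = [], []
    for c in classes:
        picked = rng.sample(labeled[c], k_shot + n_query)
        support += [(x, c) for x in picked[:k_shot]]  # K labeled examples per class
        query += [(x, c) for x in picked[k_shot:]]    # held out for evaluation
    return support, query

# Toy pool: identifiers stand in for molecules of a single assay.
pool = {
    "active": [f"active_{i}" for i in range(10)],
    "inactive": [f"inactive_{i}" for i in range(10)],
}
support, query = sample_episode(pool, n_way=2, k_shot=3, n_query=4,
                                rng=random.Random(0))
```

Here a 2-way-3-shot episode yields six support examples and eight query examples. The query labels are retained only to score predictions; the model does not see them during adaptation.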

Meta-Learning: The "Learning to Learn" Paradigm

Meta-learning represents the dominant approach for few-shot learning, where models are trained across numerous related tasks so they can quickly adapt to new tasks with minimal examples [9]. In the context of cheminformatics, this involves:

- Meta-Training Phase: The model is exposed to a variety of different predefined contexts (e.g., different biological targets or assay systems), each represented by numerous training samples [11]

- Meta-Testing Phase: The model is presented with a new context not seen previously, and further learning occurs on a small number of new samples [9]

The key insight is that by learning across multiple related tasks during meta-training, the model acquires prior knowledge that can be efficiently transferred to solve new problems in the low-data regime [11].

Key Methodological Approaches in Cheminformatics

Metric Learning and Prototypical Networks

Metric learning approaches aim to learn an embedding space where samples from the same class are close together while those from different classes are far apart [9] [8]. Prototypical networks operate on the principle that there exists an embedding where several points cluster around a single prototype representation for each class [9]. These networks:

- Compute M-dimensional prototype representations for each class as the mean vector of embedded support points belonging to that class

- Classify query samples based on their distance to these prototypes in the learned embedding space

- Have demonstrated particular effectiveness in molecular few-shot learning benchmarks [10]
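The prototype computation and nearest-prototype classification described above can be sketched in a few lines. This example assumes the embeddings have already been produced by some learned encoder (for instance a graph neural network), which is not shown. The two-dimensional vectors and the function names are illustrative only.

```python
import math
from collections import defaultdict

def class_prototypes(support):
    """Mean embedding per class; support is a list of (embedding, label) pairs."""
    groups = defaultdict(list)
    for embedding, label in support:
        groups[label].append(embedding)
    return {
        label: [sum(column) / len(embeddings) for column in zip(*embeddings)]
        for label, embeddings in groups.items()
    }

def classify(query_embedding, prototypes):
    """Assign the label of the nearest prototype (Euclidean distance)."""
    return min(prototypes, key=lambda label: math.dist(query_embedding, prototypes[label]))

support = [
    ([0.0, 0.0], "inactive"), ([0.0, 2.0], "inactive"),
    ([4.0, 4.0], "active"), ([6.0, 4.0], "active"),
]
prototypes = class_prototypes(support)     # inactive -> [0, 1], active -> [5, 4]
print(classify([1.0, 1.0], prototypes))    # inactive
print(classify([4.0, 5.0], prototypes))    # active
```

During training, the encoder would be optimized so that query embeddings fall close to the prototype of their own class. Only the inference-time logic is shown here.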

Model-Agnostic Meta-Learning (MAML)

The MAML algorithm provides a general framework for meta-learning by finding optimal initial parameters that can rapidly adapt to new tasks with few gradient steps [9]. For molecular applications:

- The algorithm performs an inner loop update where it adapts parameters using one or multiple gradient steps on the support set

- An outer loop update then optimizes the initial parameters based on performance across multiple tasks

- While powerful, MAML can be challenging to train due to computational requirements and hyperparameter sensitivity [9]
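The inner and outer loops can be illustrated on a deliberately tiny problem. The sketch below is a toy under stated assumptions, not a molecular model: each task is a one-dimensional regression y = a·x with its own slope a, and the model has a single weight w. Because the model is scalar, the second-order term of the MAML meta-gradient can be written analytically as (1 − α·H), where H is the Hessian of the support loss. All names and hyperparameters are hypothetical.

```python
import random

def grad(w, xs, ys):
    """Gradient of the mean squared error of the model y = w * x."""
    return 2 * sum(x * (w * x - y) for x, y in zip(xs, ys)) / len(xs)

def hessian(xs):
    """Second derivative of the mean squared error with respect to w."""
    return 2 * sum(x * x for x in xs) / len(xs)

def inner_adapt(w, xs, ys, alpha):
    """Inner loop: one gradient step on the support set."""
    return w - alpha * grad(w, xs, ys)

def maml_train(task_slopes, w0, alpha, beta, steps, rng, n_points=5):
    """Outer loop: update the initialization using post-adaptation query loss."""
    w = w0
    for _ in range(steps):
        meta_grad = 0.0
        for slope in task_slopes:
            support_x = [rng.uniform(-1, 1) for _ in range(n_points)]
            query_x = [rng.uniform(-1, 1) for _ in range(n_points)]
            support_y = [slope * x for x in support_x]
            query_y = [slope * x for x in query_x]
            w_adapted = inner_adapt(w, support_x, support_y, alpha)
            # Chain rule through the inner step: d(w_adapted)/dw = 1 - alpha * H.
            meta_grad += grad(w_adapted, query_x, query_y) * (1 - alpha * hessian(support_x))
        w -= beta * meta_grad / len(task_slopes)
    return w

w_init = maml_train([1.0, 2.0, 3.0], w0=0.0, alpha=0.1, beta=0.05,
                    steps=500, rng=random.Random(0))
```

In this toy setting the learned initialization settles near the middle of the task slopes, from which a single inner step moves toward any individual task. In real molecular models the second-order term requires automatic differentiation, which is one source of the computational cost noted above.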

Fine-Tuning Approaches

Recent research has demonstrated that straightforward fine-tuning approaches can achieve highly competitive performance compared to more complex meta-learning strategies [13] [10]. These methods:

- Utilize models pre-trained in standard supervised settings on base datasets

- Employ specialized fine-tuning techniques such as regularized quadratic-probe loss based on Mahalanobis distance

- Offer advantages in black-box settings where model weights cannot be accessed directly [10]

- Have shown particular robustness to domain shifts in molecular applications [10]

Data-Level Approaches and Augmentation

Data-level approaches address few-shot learning by augmenting limited datasets through various techniques [9]. In cheminformatics, this includes:

- Molecular graph augmentation that modifies molecular topology while preserving key physicochemical properties [15]

- Molecular connectivity index-based augmentation which ensures generated molecules retain the same topological indices as original data [15]

- Generative models such as GANs that can produce additional synthetic examples for training [9]

Table 1: Comparison of Major Few-Shot Learning Approaches in Cheminformatics

| Approach | Key Mechanism | Advantages | Limitations |
|---|---|---|---|
| Metric Learning | Learns similarity space where similar molecules cluster | Intuitive; strong performance on standard benchmarks | May struggle with highly diverse molecular classes |
| MAML | Finds optimal parameter initialization for fast adaptation | Model-agnostic; theoretically grounded | Computationally intensive; training instability |
| Fine-Tuning | Adapts pre-trained models to new tasks with limited data | Simple; works with standard models; black-box compatible | Requires relevant pre-training data |
| Data Augmentation | Generates additional synthetic training examples | Directly addresses data scarcity | Risk of generating unrealistic molecules |

Applications in Drug Discovery and Development

Predicting Drug Response and Biomarker Identification

Few-shot learning has demonstrated remarkable success in predicting drug response across biological contexts. The Translation of Cellular Response Prediction (TCRP) model exemplifies this application, showing exceptional capability in:

- Transferring predictive models of drug response learned in cell lines to patient-derived tumor cells (PDTCs) and patient-derived xenografts (PDXs) [11]

- Rapidly adapting to new tissue types with minimal samples, achieving performance gains of up to 829% after examining only 5 additional samples [11]

- Identifying key molecular features important for drug response, highlighting critical roles for RB1 and SMAD4 in response to CDK inhibition and RNF8 and CHD4 in response to ATM inhibition [11]

This approach creates a vital bridge from the numerous samples surveyed in high-throughput screens (n-of-many) to the distinctive contexts of individual patients (n-of-one) [11].

Molecular Property Prediction

FSL enables accurate prediction of molecular properties with limited labeled data, addressing a fundamental challenge in cheminformatics:

- Blood-brain barrier penetration prediction using SMILES strings as textual input for large language models [16]

- hERG liability assessment to identify compounds with cardiac toxicity risks [16]

- BACE-1 inhibition prediction for Alzheimer's disease drug discovery [16]

- General molecular property prediction using topological indices and molecular fingerprints [15] [13]

Pharmaceutical Repurposing and CNS Drug Discovery

Integration of few-shot meta-learning with brain activity mapping (BAMing) has created powerful platforms for central nervous system (CNS) therapeutic discovery [12]. This approach:

- Utilizes patterns from previously validated CNS drugs to rapidly identify potential drug candidates from limited datasets

- Demonstrates enhanced stability and improved prediction accuracy over traditional machine-learning methods through Meta-CNN models

- Facilitates classification of CNS drugs and aids in pharmaceutical repurposing and repositioning strategies [12]

Experimental Protocols and Implementation

Protocol: Implementing Few-Shot Learning for Molecular Property Prediction

Objective: Predict binary molecular properties (e.g., active/inactive) using limited labeled data.

Materials and Datasets:

- Base dataset (e.g., ChEMBL, PubChem) with multiple related tasks for meta-training

- Target task with limited labeled examples (typically 5-20 per class)

- Molecular representation (e.g., fingerprints, graph representations, SMILES strings)

- Computational environment with GPU acceleration

Procedure:

Data Preparation and Splitting

- Split base dataset into multiple tasks, ensuring no overlap between meta-training and target tasks

- For each task in meta-training, further split into support and query sets to simulate few-shot conditions

- For target task, reserve a small portion as support set and the remainder as query set

Model Selection and Configuration

- Choose appropriate architecture (e.g., Graph Neural Network, Transformer)

- Select learning approach (metric learning, meta-learning, or fine-tuning)

- Configure hyperparameters (learning rate, embedding dimension, etc.)

Meta-Training Phase

- For each training episode, sample a batch of tasks from the base dataset

- For each task, extract support set and compute loss on query set

- Update model parameters to minimize loss across tasks

- Repeat for predetermined number of episodes or until convergence

Few-Shot Adaptation

- For target task, use small support set to adapt model (via fine-tuning or similarity computation)

- Evaluate performance on query set using appropriate metrics (AUC-ROC, accuracy, etc.)

Validation and Interpretation

- Assess model calibration and confidence estimates

- Interpret important molecular features contributing to predictions

- Perform ablation studies to validate design choices

Protocol: Molecular Connectivity Index-Based Data Augmentation

Objective: Generate augmented molecular data while preserving topology-based physicochemical properties.

Procedure:

Calculate Molecular Connectivity Indices

- Compute topological indices (χ) for each molecule in the original dataset

- These indices reflect molecular branching, size, and flexibility

Graph Modification

- Apply structure-preserving transformations to molecular graphs

- Ensure modified molecules maintain identical connectivity indices to originals

- Validate that augmented structures are chemically feasible

Model Training with Augmented Data

- Combine original and augmented molecules in training set

- Proceed with standard few-shot learning pipeline

- Evaluate impact on prediction accuracy and generalization [15]
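The text does not specify which connectivity index is used or how candidate structures are generated, so the sketch below makes two assumptions: it uses the classical first-order connectivity (Randić) index on a hydrogen-suppressed graph, and it takes candidate graphs as given. It only shows how an index-preservation filter might look; chemical feasibility would still need to be validated separately with a cheminformatics toolkit.

```python
import math

def connectivity_index(bonds):
    """First-order connectivity (Randic) index of a hydrogen-suppressed graph.

    bonds is a list of (i, j) atom-index pairs; each bond contributes
    1 / sqrt(degree_i * degree_j).
    """
    degree = {}
    for i, j in bonds:
        degree[i] = degree.get(i, 0) + 1
        degree[j] = degree.get(j, 0) + 1
    return sum(1.0 / math.sqrt(degree[i] * degree[j]) for i, j in bonds)

def keep_index_preserving(original_bonds, candidates, tol=1e-9):
    """Keep only candidate graphs whose index matches the original within tol."""
    target = connectivity_index(original_bonds)
    return [c for c in candidates if abs(connectivity_index(c) - target) <= tol]

n_butane = [(0, 1), (1, 2), (2, 3)]         # linear chain of four carbons
isobutane = [(0, 1), (1, 2), (1, 3)]        # branched isomer
relabeled_chain = [(3, 2), (2, 1), (1, 0)]  # same chain, different atom order

print(round(connectivity_index(n_butane), 4))   # 1.9142
print(round(connectivity_index(isobutane), 4))  # 1.7321
kept = keep_index_preserving(n_butane, [relabeled_chain, isobutane])
```

The branched isomer has a lower index than the linear chain, so the filter keeps only the relabeled chain. Valence-corrected variants of the index additionally account for heteroatoms and bond orders.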

Table 2: Research Reagent Solutions for Molecular Few-Shot Learning

| Resource | Type | Function | Example Sources |
|---|---|---|---|
| FS-mol Benchmark | Dataset | Standardized evaluation of FSL methods | Stanley et al. [10] |
| Molecular Fingerprints | Representation | Encodes molecular structure as fixed-length vectors | ECFP, Morgan fingerprints |
| Graph Neural Networks | Model | Learns directly from molecular graph structure | GNN, MPNN [10] |
| Molecular Connectivity Indices | Descriptor | Captures topology-based physicochemical properties | RDKit [15] |
| Pre-trained Language Models | Model | Processes SMILES strings as textual data | ChemBERTa, SMILES Transformer [16] |
| TCRP Framework | Methodology | Transfers predictions across biological contexts | Civeni et al. [11] |

Performance Comparison and Benchmarking

Table 3: Quantitative Performance of Few-Shot Learning Methods on Molecular Tasks

| Method | Benchmark | Performance (AUC-ROC) | Data Efficiency | Domain Shift Robustness |
|---|---|---|---|---|
| Prototypical Networks | FS-mol | 0.71 ± 0.02 | Moderate | Low to Moderate |
| MAML | FS-mol | 0.69 ± 0.03 | Low | Moderate |
| Fine-tuning + Quadratic Probe | FS-mol | 0.73 ± 0.02 | High | High |
| TCRP (Drug Response) | GDSC1000 to PDTC | 0.35 (at 10 samples) | Very High | High [11] |
| Connectivity Index Augmentation | Molecular Properties | +5-8% improvement | High | Moderate [15] |

Visualization of Workflows

[Figure: Few-Shot Learning Workflow for Molecular Data]

[Figure: Data Augmentation Approach for Molecular Graphs]

Few-shot learning represents a transformative paradigm in cheminformatics, directly addressing the field's fundamental challenge of data scarcity. By leveraging meta-learning, metric learning, and sophisticated fine-tuning approaches, FSL enables accurate prediction of molecular properties, drug responses, and biological activities with minimal labeled examples. The integration of molecular-specific strategies—such as connectivity index-preserving data augmentation and graph-based representations—further enhances the capability of these models to generalize from limited data.

As drug discovery increasingly focuses on personalized medicine and rare targets, the ability to extract meaningful insights from small datasets becomes increasingly valuable. Few-shot learning provides the methodological foundation to bridge the gap between data-rich preliminary screening and data-poor clinical contexts, ultimately accelerating the development of novel therapeutics and expanding the scope of computational approaches in molecular design and optimization.

Molecular Property Prediction (MPP) is a critical task in drug discovery and materials science, where the goal is to build models that can accurately predict properties for new molecules and for new property types. The core challenges that hinder this are cross-property generalization and cross-molecule generalization [17]. Cross-property generalization refers to the difficulty a model faces when it must transfer knowledge learned from predicting one set of properties to a different, potentially weakly related, property. This is complicated by the fact that each property may follow a different data distribution. Cross-molecule generalization arises from the immense structural diversity of molecules; a model trained on one set of chemical scaffolds may perform poorly on molecules with novel, unseen structures [17]. These challenges are exacerbated in real-world research by the scarcity of labeled experimental data for many properties and compounds. This application note outlines practical data augmentation strategies and detailed experimental protocols to overcome these barriers, providing a toolkit for researchers to build more robust and generalizable MPP models.

Core Concepts and Problem Definitions

Defining the Generalization Problems

- Cross-Property Generalization: This challenge occurs when the statistical relationship between molecular structure and property value shifts across different prediction tasks. For instance, a model trained to predict metabolic stability may fail to generalize to predicting solubility because the underlying structural features that determine each property differ. The problem is acute in few-shot learning scenarios where a new property has only a handful of labeled examples [17].

- Cross-Molecule Generalization: This challenge concerns a model's ability to make accurate predictions for molecules that are structurally dissimilar to those in its training set. This is critical for exploring new chemical spaces, such as in scaffold hopping—the discovery of new core structures that retain a desired biological activity [18]. Models often overfit to specific functional groups or scaffolds seen during training and fail when encountering novel ones [17].

The following table summarizes the primary data augmentation strategies discussed in this note, their core principles, and their primary application.

Table 1: Data Augmentation Strategies for Molecular Property Prediction

| Strategy | Core Principle | Target Generalization Challenge | Key Advantage |
|---|---|---|---|
| Multi-task Learning [7] | Jointly train a single model on multiple property prediction tasks. | Cross-Property | Leverages auxiliary data, even if sparse or weakly related, to learn a more robust shared representation. |
| Virtual Data Augmentation [19] | Generate new training examples by replacing functional groups with chemically similar alternatives (e.g., Cl with Br, I). | Cross-Molecule | Systematically expands chemical space coverage without altering reaction sites or atom valences. |
| LLM-Based Knowledge Augmentation [20] | Extract prior knowledge and molecular vectorization rules from Large Language Models (e.g., GPT-4o, DeepSeek). | Cross-Property | Injects human-like reasoning and feature design for properties with limited labeled data. |
| Multi-modal & Self-Supervised Learning [21] | Fuse different molecular representations (graph, SMILES, 3D geometry) and use pretext tasks on unlabeled data. | Cross-Molecule & Cross-Property | Creates rich, transferable representations that are not over-reliant on a single data type or labeled examples. |

Detailed Experimental Protocols

This section provides step-by-step protocols for implementing key data augmentation strategies.

Protocol 1: Multi-task Learning with Graph Neural Networks

Objective: To improve model performance on a primary, data-scarce molecular property task by jointly training on one or more auxiliary property tasks [7].

Materials:

- Primary dataset (e.g., small set of fuel ignition properties)

- One or more auxiliary datasets (e.g., subsets from QM9)

- Graph Neural Network architecture (e.g., MPNN, GIN)

Procedure:

- Data Preparation:
  a. Standardize all molecular structures across datasets (e.g., convert to canonical SMILES).
  b. Handle missing values in auxiliary tasks; techniques like mask-based learning can be employed where labels are unavailable for some tasks for given molecules [7].
  c. Split each dataset (primary and auxiliary) into training, validation, and test sets, ensuring no data leakage.

- Model Architecture Setup:
  a. Design a GNN with a shared backbone for feature extraction from the molecular graph.
  b. Attach separate task-specific prediction heads (typically a linear layer) for each property to be predicted.
  c. The loss function \( \mathcal{L} \) is a weighted sum of the losses for each task: \( \mathcal{L} = \sum_{i=1}^{T} \lambda_i \mathcal{L}_i \), where \( T \) is the number of tasks, \( \mathcal{L}_i \) is the loss for task \( i \), and \( \lambda_i \) is a weighting hyperparameter [7].

- Model Training and Validation:
  a. Train the model on the combined training data from all tasks.
  b. Use the validation set to monitor performance on the primary task and to tune hyperparameters, including the task weights \( \lambda_i \).
  c. Apply early stopping based on the primary task's validation performance.

- Model Evaluation:
  a. Evaluate the final model on the held-out test set for the primary task.
  b. Compare its performance against a single-task model trained only on the primary dataset.
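The weighted multi-task loss and the mask-based handling of missing labels from this protocol can be sketched independently of any deep learning framework. In the example below, a missing label is represented by `None`, and each task's loss is a mean squared error over the molecules that actually have a label for that task. The function name, the data layout, and the choice of squared error are assumptions for illustration.

```python
def masked_multitask_loss(predictions, targets, task_weights):
    """Weighted sum of per-task mean squared errors, skipping missing labels.

    predictions and targets are lists over molecules of per-task values;
    targets use None where a molecule has no label for a task.
    """
    total = 0.0
    for task, weight in enumerate(task_weights):
        errors = [
            (pred[task] - true[task]) ** 2
            for pred, true in zip(predictions, targets)
            if true[task] is not None  # mask: ignore unlabeled entries
        ]
        if errors:  # a task with no labels in this batch contributes nothing
            total += weight * sum(errors) / len(errors)
    return total

# Two molecules, two tasks: task 0 is the primary task, task 1 is auxiliary.
predictions = [[1.0, 2.0], [3.0, 4.0]]
targets = [[1.5, None], [2.0, 5.0]]
print(masked_multitask_loss(predictions, targets, task_weights=[1.0, 0.5]))  # 1.125
```

Task 0 contributes 1.0 × 0.625 and task 1 contributes 0.5 × 1.0, giving 1.125. In a GNN implementation the same masking would be applied to the outputs of the task-specific heads before averaging.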

Protocol 2: Virtual Data Augmentation for Reaction Prediction

Objective: To augment a small reaction dataset by creating "fake" data through the substitution of functionally similar groups, thereby improving model generalization to novel reactants [19].

Materials:

- Small, targeted reaction dataset (e.g., Suzuki, Buchwald-Hartwig from Reaxys)

- Cheminformatics toolkit (e.g., RDKit in Python)

Procedure:

- Data Curation:
  a. Export and preprocess reaction SMILES from a database like Reaxys, removing duplicates and irrelevant information (yield, temperature) [19].
  b. Canonicalize all SMILES strings.

- Virtual Augmentation:
  a. Identify Replaceable Groups: For a given reaction type, identify functional groups that can be substituted without altering the reaction's core mechanism (e.g., halogens: Cl, Br, I; boron groups).
  b. Generate Fake Data:
     i. Single Augmentation: Replace the identified group in one reactant with a similar group [19].
     ii. Simultaneous Augmentation: Replace groups in multiple reactants simultaneously (e.g., in a Suzuki reaction, augment both the halogen and boron reactants) [19].
  c. Validation: Ensure the generated fake SMILES are chemically valid and that the replacements do not change the atom valences or reaction sites.

- Dataset Construction:
  a. Split the raw data into training, validation, and test sets before augmenting.
  b. Apply augmentation only to the training set to avoid evaluation bias [19], then combine the raw training data with the generated fake data and remove any duplicates.

- Model Training and Evaluation:
  a. Train a reaction prediction model (e.g., a Molecular Transformer) on the augmented training set.
  b. Evaluate the model on the pristine, non-augmented test set.
  c. Compare the accuracy against a baseline model trained only on the raw data.
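The single-augmentation step for halogens can be illustrated with a string-level substitution on reactant SMILES. This is a simplified sketch with hypothetical function names, not the procedure from [19]. It swaps one halogen token at a time among Cl, Br, and I, leaves bracket atoms such as `[Ir]` untouched, and does not check chemical validity, which in practice should be done with a toolkit such as RDKit.

```python
import re

# Bracket atoms are kept whole so that, e.g., the "I" in "[Ir]" is never touched.
TOKEN = re.compile(r"(\[[^\]]+\]|Br|Cl|.)")
HALOGENS = ("Cl", "Br", "I")

def halogen_variants(smiles):
    """All SMILES obtained by replacing exactly one halogen with another halogen."""
    tokens = TOKEN.findall(smiles)
    variants = set()
    for idx, tok in enumerate(tokens):
        if tok in HALOGENS:
            for alt in HALOGENS:
                if alt != tok:
                    variants.add("".join(tokens[:idx] + [alt] + tokens[idx + 1:]))
    return sorted(variants)

def augment_training_set(train_smiles):
    """Add halogen variants to the training split only, removing duplicates."""
    augmented = set(train_smiles)
    for smiles in train_smiles:
        augmented.update(halogen_variants(smiles))
    return sorted(augmented)

print(halogen_variants("Brc1ccccc1"))  # ['Clc1ccccc1', 'Ic1ccccc1']
train = augment_training_set(["Brc1ccccc1", "CCO"])
```

Because only the training split is passed to `augment_training_set`, the validation and test sets remain free of generated examples. Simultaneous augmentation would apply the same substitution to more than one reactant of a reaction before recombining them.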

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for MPP Data Augmentation

| Item | Function / Application | Example Tools / Libraries |
|---|---|---|
| Graph Neural Network Library | Provides the core architecture for multi-task and representation learning. | PyTorch Geometric, Deep Graph Library (DGL) |
| Cheminformatics Toolkit | Handles molecule standardization, SMILES manipulation, and fingerprint generation; essential for virtual data augmentation. | RDKit |
| Large Language Model API | Source for extracting prior knowledge and generating molecular features for knowledge augmentation. | GPT-4o/4.1, DeepSeek-R1 [20] |
| Pre-trained Molecular Model | Provides robust structural feature embeddings that can be fused with LLM-generated knowledge. | Models from frameworks like KPGT [21] or other self-supervised GNNs [20] |
| Molecular Database | Source of raw data for primary and auxiliary tasks, as well as for pre-training. | QM9 [7], USPTO [19], Reaxys [19] |

Experimental Workflow Visualization

[Figure: integrated workflow for combining structural and knowledge-based features to tackle generalization challenges.]

The Impact of Data Heterogeneity and Distributional Shifts

Data heterogeneity and distributional misalignments represent critical challenges for machine learning models in molecular property prediction, often compromising predictive accuracy and generalizability. These issues are particularly acute in preclinical safety modeling and early-stage drug discovery, where limited data availability and experimental constraints exacerbate integration difficulties [1]. The fundamental problem stems from aggregating data from multiple sources—such as various public databases, experimental protocols, and literature sources—which introduces inconsistencies in data distributions, chemical space coverage, and property annotations [1]. Analyzing public ADME (Absorption, Distribution, Metabolism, and Excretion) datasets has revealed significant misalignments and inconsistent property annotations between gold-standard and popular benchmark sources, including Therapeutic Data Commons (TDC) [1]. These discrepancies can arise from differences in experimental conditions, measurement techniques, and chemical space coverage, ultimately introducing noise that degrades model performance [1]. Even data standardization efforts, despite harmonizing discrepancies and increasing training set size, may not consistently improve predictive performance, highlighting the necessity for rigorous data consistency assessment prior to modeling [1].

The impact of these challenges extends across multiple facets of molecular property prediction. In few-shot learning scenarios, models must overcome both cross-property generalization under distribution shifts and cross-molecule generalization under structural heterogeneity [2]. For out-of-distribution (OOD) prediction, which is essential for discovering high-performance materials and molecules with property values outside known distributions, traditional models struggle with extrapolation to unseen property value ranges [22]. Furthermore, class imbalance problems in multitask classification scenarios necessitate specialized adversarial augmentation techniques to maintain model robustness [23]. Understanding and addressing these heterogeneity and distributional shift challenges is therefore paramount for developing reliable predictive models that can accelerate drug discovery and materials design.

Understanding Data Challenges in Molecular Property Prediction

Data heterogeneity in molecular property prediction manifests in several distinct forms, each presenting unique challenges for model development and deployment. Experimental heterogeneity arises from differences in measurement protocols, assay conditions, and laboratory-specific procedures across data sources [1]. For example, pharmacokinetic parameters obtained from high-throughput in vitro screenings may exhibit systematic differences from those curated from published literature or in vivo studies [1]. Representational heterogeneity occurs when molecular structures are encoded using different schemas, including Simplified Molecular Input Line Entry System (SMILES) strings, molecular graphs, fingerprints, or 3D conformations [2] [24]. Temporal heterogeneity emerges when data collected over extended time periods incorporates evolving experimental standards and technologies, creating distributional shifts that reflect methodological advances rather than biological truths [1].

The chemical space coverage variability across datasets represents another significant dimension of heterogeneity. Publicly available molecular datasets often exhibit substantial differences in the structural diversity and property ranges they encompass [1] [22]. For instance, analysis of half-life datasets from five different sources revealed notable disparities in molecular structural diversity and property value distributions, complicating direct integration efforts [1]. Similarly, clearance datasets gathered from seven distinct sources demonstrated misalignments that introduced noise and degraded model performance when aggregated without proper harmonization [1].

Consequences of Distributional Shifts

Distributional shifts in molecular data lead to several critical failure modes in predictive modeling. Covariate shift occurs when the distribution of input features (molecular structures or descriptors) differs between training and testing conditions, while the conditional distribution of properties given structures remains unchanged [22]. Concept shift arises when the fundamental relationship between molecular structures and their properties changes across different experimental contexts or biological systems [1] [2]. Label noise and annotation inconsistencies represent particularly pernicious problems, where the same molecular property may be annotated inconsistently between gold-standard and benchmark sources [1].

The practical consequences of these shifts include performance degradation on out-of-distribution compounds, overfitting to dataset-specific artifacts rather than generalizable structure-property relationships, and reduced reliability for decision-making in drug discovery pipelines [1] [22]. In extreme cases, models may learn to exploit confounding factors specific to individual datasets, completely failing to generalize to new chemical spaces or experimental settings [1].

Tools and Frameworks for Data Assessment

Specialized Tools for Consistency Evaluation

Table 1: Tools and Frameworks for Data Consistency Assessment

| Tool Name | Primary Function | Key Features | Compatibility |
|---|---|---|---|
| AssayInspector [1] | Data consistency assessment and visualization | Statistical comparisons, outlier detection, chemical space visualization, batch effect identification | Python, RDKit, SciPy |
| MMFRL [24] | Multimodal fusion with relational learning | Cross-modal knowledge transfer, relational metrics, explainable representations | Deep learning frameworks |
| MatEx [22] | Out-of-distribution property prediction | Bilinear transduction, extrapolation to high-value regions | Materials and molecules |
| AAIS [23] | Adversarial augmentation | Influence function-based sample selection, class imbalance handling | Graph Neural Networks |

AssayInspector is a model-agnostic Python package designed for systematic data consistency assessment before data enter a modeling pipeline [1]. Its functionality has three primary components: (1) descriptive statistics, including molecule counts, endpoint statistics (mean, standard deviation, quartiles), within- and between-source feature similarity values, and outlier identification; (2) visualization plots for property distribution, chemical space coverage, dataset discrepancies, and molecular overlaps; and (3) automated insight reports with alerts and recommendations for data cleaning and preprocessing [1]. The tool has built-in functionality to calculate traditional chemical descriptors, including ECFP4 fingerprints and 1D/2D descriptors using RDKit, and supports both regression and classification tasks [1].
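To make the descriptive statistics concrete, the following sketch computes an endpoint summary and a Tanimoto coefficient using only the Python standard library. It does not use or reproduce the AssayInspector API. The function names and the representation of a fingerprint as a set of on-bit indices are assumptions made for illustration.

```python
import statistics


def endpoint_summary(values):
    """Mean, sample standard deviation, and quartiles of one source's endpoint values."""
    q1, median, q3 = statistics.quantiles(values, n=4)
    return {
        "n": len(values),
        "mean": statistics.mean(values),
        "std": statistics.stdev(values),
        "q1": q1,
        "median": median,
        "q3": q3,
    }


def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


# Toy endpoint values from a single hypothetical source.
source_a = [1.0, 2.0, 3.0, 4.0, 5.0]
summary = endpoint_summary(source_a)

# Two toy fingerprints sharing 2 of 5 distinct on-bits.
similarity = tanimoto({1, 5, 9, 12}, {1, 5, 7})
print(summary, similarity)
```

Averaging `tanimoto` over molecule pairs within one source and across two sources gives the kind of within- and between-source similarity values described above.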

MMFRL (Multimodal Fusion with Relational Learning) addresses heterogeneity challenges through a framework that leverages relational learning to enrich embedding initialization during multimodal pre-training [24]. This approach enables downstream models to benefit from auxiliary modalities even when these are absent during inference, effectively addressing the data availability and incompleteness issues common in molecular property prediction [24]. The system systematically investigates modality fusion at early, intermediate, and late stages, providing unique advantages for different data scenarios and task requirements [24].

Research Reagent Solutions

Table 2: Essential Research Reagents and Computational Tools

| Reagent/Tool | Type | Primary Function | Application Context |
|---|---|---|---|
| AssayInspector [1] | Software package | Data consistency assessment | Preprocessing of heterogeneous molecular datasets |
| RDKit [1] | Cheminformatics library | Molecular descriptor calculation | Feature generation from chemical structures |
| DGL/LifeSci [23] | Graph neural network library | Molecular graph representation | Graph-based property prediction |
| OGB [23] | Benchmarking suite | Model performance evaluation | Standardized assessment of prediction accuracy |
| GROMACS [25] | Molecular dynamics engine | MD simulation and property extraction | Calculation of dynamics-based descriptors |
| WebAIM Contrast Checker [26] | Accessibility tool | Color contrast verification | Compliance with visualization standards |

Experimental Protocols and Methodologies

Protocol 1: Data Consistency Assessment with AssayInspector

Objective: Systematically identify distributional misalignments, outliers, and batch effects across multiple molecular property datasets prior to model training.

Materials and Reagents:

- Molecular datasets from heterogeneous sources (e.g., ChEMBL, TDC, proprietary collections)

- AssayInspector Python package [1]

- RDKit cheminformatics library [1]

- Computational environment with Python 3.7+ and required dependencies (Scipy, Plotly, Matplotlib, Seaborn)

Procedure:

Data Collection and Preparation: Gather molecular property datasets from diverse sources, ensuring consistent structural representation (SMILES or molecular graph format). Compile associated property annotations and experimental metadata.

Descriptive Statistics Generation: Execute AssayInspector's statistical analysis module to compute key parameters for each data source:

- Number of molecules and endpoint statistics (mean, standard deviation, quartiles) for regression tasks

- Class counts and ratios for classification tasks

- Statistical comparisons of endpoint distributions using two-sample Kolmogorov-Smirnov test (regression) or Chi-square test (classification)

- Within- and between-source feature similarity calculations using Tanimoto coefficient (ECFP4) or standardized Euclidean distance (RDKit descriptors)

Visualization and Exploratory Analysis: Generate comprehensive visualization plots:

- Property distribution plots with pairwise statistical testing

- Chemical space visualization using UMAP dimensionality reduction

- Dataset intersection analysis to identify molecular overlaps

- Feature similarity plots to detect representation discrepancies

Insight Report Generation: Review automated alerts and recommendations for:

- Dissimilar datasets based on descriptor profiles

- Conflicting datasets with differing annotations for shared molecules

- Divergent datasets with low molecular overlap

- Redundant datasets with high proportion of shared molecules

Data Preprocessing Decisions: Based on AssayInspector outputs, implement appropriate data cleaning strategies:

- Remove or correct conflicting annotations

- Apply distribution alignment techniques for misaligned datasets

- Exclude outlier molecules or datasets with extreme distributional differences

- Strategically aggregate datasets with complementary chemical coverage
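Two of the checks in this protocol, the two-sample Kolmogorov-Smirnov comparison and the search for conflicting annotations on shared molecules, can be sketched in plain Python. This is an illustration of the underlying calculations, not AssayInspector's implementation. The function names, the tolerance value, and the assumption that both sources are keyed by canonical SMILES are ours.

```python
def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    d = 0.0
    for x in sorted(set(a) | set(b)):
        cdf_a = sum(v <= x for v in a) / len(a)
        cdf_b = sum(v <= x for v in b) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d


def conflicting_annotations(source_a, source_b, tolerance):
    """Shared molecules whose annotated values differ by more than `tolerance`."""
    shared = source_a.keys() & source_b.keys()
    return sorted(k for k in shared if abs(source_a[k] - source_b[k]) > tolerance)


# Toy sources keyed by canonical SMILES (values are made up for illustration).
source_a = {"CCO": 1.2, "c1ccccc1": 3.5, "CC(=O)O": 0.8}
source_b = {"CCO": 1.3, "c1ccccc1": 5.0, "CCN": 2.0}

d_stat = ks_statistic(source_a.values(), source_b.values())
conflicts = conflicting_annotations(source_a, source_b, tolerance=0.5)
overlap = len(source_a.keys() & source_b.keys()) / len(source_a.keys() | source_b.keys())
print(d_stat, conflicts, overlap)
```

The sketch returns only the KS statistic. When a p-value is needed, SciPy's `scipy.stats.ks_2samp` computes both.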

Protocol 2: Adversarial Augmentation for Imbalanced Data

Objective: Enhance model robustness for molecular property prediction tasks with class imbalance using adversarial augmentation techniques.

Materials and Reagents:

- Imbalanced molecular property datasets

- AAIS (Adversarial Augmentation to Influential Sample) framework [23]

- Graph Neural Network architecture (e.g., DMPNN, GIN)

- OGB (Open Graph Benchmark) evaluation framework [23]

Procedure:

Initial Model Training: Train baseline Graph Neural Network on available imbalanced molecular property data using standard cross-entropy or mean squared error loss.

Influential Sample Identification: Apply influence function analysis to identify data points that significantly impact model training:

- Compute one-step influence function to assess training data contributions

- Identify samples located near decision boundaries with high influence values

- Select candidates for adversarial augmentation based on influence rankings

Adversarial Augmentation Generation: Implement AAIS framework for distributionally robust optimization:

- Generate adversarial examples by perturbing influential molecular graphs

- Ensure augmented samples maintain biochemical validity through structure constraints

- Balance augmentation intensity to maximize diversity while preserving semantic meaning

Robust Model Training: Integrate original and augmented samples in training process:

- Employ balanced sampling strategies to address class imbalance

- Utilize adaptive weighting to prioritize challenging examples

- Implement consistency regularization between original and augmented views

Validation and Evaluation: Assess model performance using appropriate metrics:

- For classification: AUC, F1-score (with emphasis on minority classes)

- For regression: Mean Absolute Error, R-squared across value ranges

- Compare against non-augmented baselines and alternative augmentation strategies
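The balanced weighting and minority-class evaluation steps can be sketched independently of the augmentation method. The code below is not the AAIS algorithm. It shows only one common way to derive class weights (inverse class frequency) and to compute the F1-score for a chosen class; both function names are illustrative.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


def f1_for_class(y_true, y_pred, positive):
    """F1-score treating `positive` as the class of interest."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy imbalanced labels: 8 inactive (0) and 2 active (1) molecules.
y_true = [0] * 8 + [1] * 2
y_pred = [0] * 7 + [1] + [1, 0]

weights = inverse_frequency_weights(y_true)
minority_f1 = f1_for_class(y_true, y_pred, positive=1)
print(weights, minority_f1)
```

Weights of this kind can be passed to a weighted loss or used as sampling probabilities. Reporting F1 on the minority class separately keeps a high overall accuracy from hiding poor recall on rare actives.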

Protocol 3: Out-of-Distribution Property Prediction

Objective: Enable extrapolative prediction of molecular properties beyond the training distribution range using transductive approaches.

Materials and Reagents:

- Molecular property datasets with defined value ranges

- MatEx (Materials Extrapolation) implementation [22]

- Composition-based or graph-based molecular representations

- Benchmark datasets (AFLOW, Matbench, Materials Project, MoleculeNet)

Procedure:

Data Stratification and Splitting: Partition molecular property data into in-distribution (ID) and out-of-distribution (OOD) sets:

- Define OOD ranges based on property value thresholds (e.g., top 30% of values)

- Ensure chemical diversity within both ID and OOD splits

- Maintain representative molecular scaffolds across splits

Bilinear Transduction Model Setup: Implement MatEx framework for extrapolative prediction:

- Represent molecules using stoichiometry-based or graph-based features

- Configure bilinear transduction to learn property changes as functions of molecular differences

- Parameterize prediction based on training examples and representation space differences

Transductive Learning Optimization: Train model using analogical input-target relationships:

- Leverage training-test analogies rather than direct input-output mapping

- Optimize for relative property differences rather than absolute values

- Incorporate chemical similarity constraints to maintain biochemical plausibility

Extrapolative Performance Evaluation: Assess model capability to predict high-value properties:

- Compute Mean Absolute Error specifically for OOD samples

- Measure extrapolative precision (fraction of true top candidates correctly identified)

- Evaluate recall of high-performing candidates in OOD regions

- Compare against baseline methods (Ridge Regression, MODNet, CrabNet)

Applicability Domain Analysis: Characterize model confidence and reliability:

- Estimate prediction uncertainty for OOD compounds

- Identify chemical regions with reliable extrapolation performance

- Establish confidence thresholds for high-stakes predictions
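The value-based split in the first step of this protocol can be sketched as follows. The function name and the `(identifier, value)` record layout are assumptions for illustration. The sketch handles only the property-value threshold and does not address scaffold or chemical-diversity balancing.

```python
def split_by_value(records, ood_fraction=0.3):
    """Hold out the highest-valued fraction of records as the OOD set."""
    ranked = sorted(records, key=lambda record: record[1])
    n_ood = max(1, round(len(ranked) * ood_fraction))
    return ranked[:-n_ood], ranked[-n_ood:]


# Toy dataset of (molecule id, property value) pairs.
records = [(f"mol{i}", float(i)) for i in range(10)]
id_set, ood_set = split_by_value(records, ood_fraction=0.3)

print("ID max:", max(value for _, value in id_set))
print("OOD min:", min(value for _, value in ood_set))
```

Every OOD value lies above every ID value, so a model trained on the ID set is evaluated strictly on extrapolation in property space.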

Implementation Guidelines and Best Practices

Data Collection and Curation Strategies

Effective management of data heterogeneity begins with strategic data collection and curation. Proactive source evaluation should assess potential data sources for methodological consistency, chemical space coverage, and annotation reliability before integration [1]. Implementing standardized metadata capture ensures comprehensive documentation of experimental conditions, measurement protocols, and data processing steps, facilitating later consistency assessment [1]. Structured data provenance tracking enables retrospective analysis of performance variations attributable to specific data sources or processing decisions [1].

For molecular representation, multimodal approaches that integrate graph-based, descriptor-based, and potentially image-based representations can enhance robustness to representation-specific biases [24]. The MMFRL framework demonstrates that cross-modal knowledge transfer during pre-training enables models to benefit from auxiliary modalities even when unavailable during inference, effectively addressing modality-specific distributional shifts [24].

Model Selection and Training Considerations

Model architecture and training strategies should explicitly account for distributional shifts and heterogeneity. For few-shot learning scenarios with limited labeled data, approaches that leverage external chemical knowledge and structural constraints help address both cross-property generalization under distribution shifts and cross-molecule generalization under structural heterogeneity [2]. Adversarial augmentation techniques like AAIS significantly improve performance on imbalanced molecular property prediction tasks, with demonstrated improvements of 1%-15% in AUC and 1%-35% in F1-score [23].

When targeting out-of-distribution prediction, bilinear transduction methods have shown substantial improvements in extrapolative precision—1.8× for materials and 1.5× for molecules—with up to 3× boost in recall of high-performing candidates [22]. These approaches reparameterize the prediction problem to focus on how property values change as functions of molecular differences rather than predicting absolute values from new materials directly [22].
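One way to write this reparameterization is sketched below. The notation is ours and may differ from that used in [22]: $x_{\mathrm{tr}}$ is a training (anchor) example, $x_{\mathrm{te}}$ is the new sample, and $f$ and $g$ are learned embedding functions whose inner product gives a predictor that is bilinear in the two embeddings.

```latex
\hat{y}(x_{\mathrm{te}})
  = h\left(\Delta x,\; x_{\mathrm{tr}}\right)
  = \left\langle f(\Delta x),\; g(x_{\mathrm{tr}}) \right\rangle,
\qquad
\Delta x = x_{\mathrm{te}} - x_{\mathrm{tr}}
```

Under this form, extrapolation is possible when the difference $\Delta x$ resembles differences already seen between pairs of training examples, even if $x_{\mathrm{te}}$ itself lies outside the training distribution.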

Validation and Performance Assessment

Robust validation strategies must explicitly address data heterogeneity challenges. Stratified evaluation that separately assesses performance across different data sources, chemical scaffolds, and property value ranges provides clearer insight into model limitations and failure modes [1]. Cross-dataset validation, where models trained on one dataset are evaluated on entirely separate datasets with the same property annotations, offers the most realistic assessment of real-world generalization capability [1].
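A stratified evaluation can be as simple as grouping errors by data source (or scaffold, or value bin) before averaging. The sketch below assumes each test molecule carries a group label; the function name and the source names are placeholders.

```python
from collections import defaultdict


def mae_by_group(groups, y_true, y_pred):
    """Mean absolute error computed separately for each group label."""
    errors = defaultdict(list)
    for group, truth, prediction in zip(groups, y_true, y_pred):
        errors[group].append(abs(truth - prediction))
    return {group: sum(errs) / len(errs) for group, errs in errors.items()}


# Toy predictions for molecules drawn from two hypothetical sources.
groups = ["source_A", "source_A", "source_B", "source_B"]
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.5, 3.0, 6.0]

per_source = mae_by_group(groups, y_true, y_pred)
overall = sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)
print(per_source, overall)
```

Here the overall MAE of 0.75 hides the fact that the error on `source_B` is twice that on `source_A`, which is the kind of failure mode stratified reporting is meant to expose.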

For OOD scenarios, extrapolative precision metrics that measure the fraction of true top candidates correctly identified provide more actionable assessments than aggregate error metrics alone [22]. Similarly, in few-shot learning contexts, meta-validation approaches that simulate few-shot conditions during model development help optimize for target deployment scenarios [2].
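As a rough illustration of such a metric, the sketch below compares the set of truly top-valued candidates with the set the model ranks highest. The exact definition used in [22] may differ. Here precision is the share of the model's top-k picks that are true top candidates, and recall is the share of true top candidates that were picked.

```python
def top_indices(values, count):
    """Indices of the `count` largest values."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    return set(order[:count])


def extrapolative_precision_recall(y_true, y_pred, n_true_top, k):
    """Precision and recall of the model's top-k picks against the true top set."""
    true_top = top_indices(y_true, n_true_top)
    predicted_top = top_indices(y_pred, k)
    hits = len(true_top & predicted_top)
    return hits / k, hits / n_true_top


# Toy property values and model predictions for six candidates.
y_true = [0.1, 0.9, 0.4, 0.8, 0.3, 0.7]
y_pred = [0.2, 0.8, 0.9, 0.5, 0.1, 0.3]

precision, recall = extrapolative_precision_recall(y_true, y_pred, n_true_top=3, k=2)
print(precision, recall)
```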

The challenges posed by data heterogeneity and distributional shifts in molecular property prediction are significant but addressable through systematic assessment, appropriate methodological choices, and robust validation practices. Tools like AssayInspector enable researchers to identify and characterize data inconsistencies before model development, preventing the integration of misaligned datasets that degrade performance [1]. Advanced learning techniques including adversarial augmentation for imbalanced data [23], bilinear transduction for OOD prediction [22], and multimodal fusion with relational learning [24] provide powerful approaches for maintaining model robustness and generalization across diverse data conditions.

The implementation of these strategies within a comprehensive framework that spans data collection, model development, and validation represents a practical pathway toward more reliable molecular property prediction systems. By explicitly acknowledging and addressing data heterogeneity rather than assuming dataset homogeneity, researchers and drug development professionals can develop models that maintain predictive accuracy across diverse chemical spaces and experimental contexts, ultimately accelerating the discovery and optimization of novel therapeutic compounds.

Molecular representation is a cornerstone of computational chemistry and drug design, bridging the gap between chemical structures and their biological, chemical, or physical properties. It involves converting molecules into mathematical or computational formats that algorithms can process to model, analyze, and predict molecular behavior [18]. Effective molecular representation is essential for various drug discovery tasks, including virtual screening, activity prediction, and scaffold hopping, enabling efficient and precise navigation of chemical space [18].

The evolution from traditional rule-based representations to modern AI-driven approaches has significantly advanced molecular property prediction. These representations serve as the foundational input for machine learning (ML) and deep learning (DL) models, with the choice of representation profoundly impacting model performance, particularly in data-scarce scenarios common to molecular property prediction [18].

Foundational Representation Methods

Molecular Graph Representations

Molecules can naturally be viewed as graph structures, where atoms are considered as nodes and covalent bonds between atoms as edges [20]. This representation preserves the topological structure and connectivity of molecules, making it particularly valuable for capturing spatial relationships and functional groups.
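As a minimal illustration of this view, the sketch below stores a molecule as a list of atoms and a list of bonds and derives its adjacency matrix. The `MolGraph` class is a hypothetical container written for this example. GNN libraries use their own data structures.

```python
from dataclasses import dataclass, field


@dataclass
class MolGraph:
    atoms: list                                  # element symbol per node
    bonds: list = field(default_factory=list)    # (i, j, bond order) per edge

    def add_bond(self, i, j, order=1):
        self.bonds.append((i, j, order))

    def neighbors(self, i):
        """Indices of atoms bonded to atom i."""
        return sorted(b if a == i else a for a, b, _ in self.bonds if i in (a, b))

    def adjacency(self):
        """Symmetric 0/1 adjacency matrix as nested lists."""
        n = len(self.atoms)
        matrix = [[0] * n for _ in range(n)]
        for a, b, _ in self.bonds:
            matrix[a][b] = matrix[b][a] = 1
        return matrix


# Ethanol (SMILES: CCO) with hydrogens left implicit.
ethanol = MolGraph(atoms=["C", "C", "O"])
ethanol.add_bond(0, 1)
ethanol.add_bond(1, 2)

print(ethanol.neighbors(1))
print(ethanol.adjacency())
```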

With the advancement of graph neural networks (GNNs), many studies have shifted towards using GNNs for molecular property prediction tasks [20]. GNNs can be trained end-to-end directly on molecular graphs, enabling them to capture higher-order nonlinear relationships more effectively, eliminate human biases, and dynamically adapt to different tasks [20].

SMILES String Representations

The Simplified Molecular Input Line Entry System (SMILES) provides a compact and efficient way to encode chemical structures as strings [18]. Introduced by Weininger in 1988, SMILES remains a mainstream molecular representation because it is human-readable and compact [18]. Despite its widespread use, SMILES has inherent limitations in capturing the full complexity of molecular interactions, particularly the relationships between molecular structure and key drug-related characteristics [18].

Table 1: Comparison of Foundational Molecular Representation Methods

| Representation Type | Format | Key Features | Common Applications | Limitations |
|---|---|---|---|---|
| Molecular Graph | Graph (Nodes & Edges) | Preserves topological structure; Natural for GNN processing | Graph Neural Networks; Structure-activity relationship analysis | Computational complexity; Requires specialized architectures |
| SMILES | Line Notation/String | Human-readable; Compact format; Extensive tool support | Language model-based approaches; Sequence-based learning | Limited spatial awareness; Variability in canonical forms |
| Molecular Fingerprints | Binary Vectors/Bit Strings | Encodes substructural presence; Computational efficiency | Similarity search; Clustering; QSAR analyses | Predefined features limit novelty discovery |
| Molecular Descriptors | Quantitative Features | Physicochemical properties; Interpretable features | Traditional ML models; Property prediction | Dependent on expert knowledge; May miss complex patterns |

Data Augmentation Strategies for Molecular Property Prediction

Multi-Task Learning Approaches

Multi-task learning is a promising approach for training ML models in low-data regimes: it leverages additional molecular data, even data that are sparse or only weakly related, to enhance prediction quality [7]. Through controlled experiments, researchers have evaluated the conditions under which multi-task learning outperforms single-task models and have offered recommendations for augmenting training sets with auxiliary data to improve predictive accuracy [7].

This approach is particularly valuable for few-shot molecular property prediction (FSMPP), which has emerged as an expressive paradigm that enables learning from only a few labeled examples [2]. The primary challenge of FSMPP lies in the risk of overfitting and memorization under limited molecular property annotations, which significantly hampers generalization ability to new rare chemical properties or novel molecular structures [2].

Integration of External Knowledge

Recent approaches have integrated knowledge extracted from large language models (LLMs) with structural features derived from pre-trained molecular models to enhance molecular property prediction [20]. These methods prompt LLMs to generate both domain-relevant knowledge and executable code for molecular vectorization, producing knowledge-based features that are subsequently fused with structural representations [20].

This integration addresses the long-tail distribution of molecular knowledge in LLMs, where well-studied molecular properties may have sufficient reference information, while less-explored areas may lack adequate reference rules [20]. By combining knowledge features with structural features, models can leverage both human expertise and direct mappings between structure and properties [20].

Experimental Protocols and Workflows

Protocol: Multi-Task Graph Neural Network for Property Prediction

Objective: Enhance molecular property prediction accuracy in low-data regimes using multi-task learning with graph neural networks.

Materials and Reagents:

- Molecular dataset (e.g., QM9 dataset or fuel ignition properties dataset)

- Graph neural network framework (PyTorch Geometric or DGL)

- RDKit for molecular descriptor calculation

- Compute resources (GPU recommended for training)

Procedure:

Data Preparation:

- Collect molecular datasets with property annotations

- Convert molecules to graph representations (nodes=atoms, edges=bonds)

- Apply data standardization to address distributional misalignments between datasets [27]

- Split data into training, validation, and test sets

Model Architecture Setup:

- Implement graph neural network with shared encoder layers

- Add task-specific output heads for each property

- Configure loss function with weighted multi-task objective

- Set up optimization algorithm (Adam, learning rate 0.001)

Training Procedure:

- Initialize model parameters

- Train with mini-batch gradient descent

- Monitor validation loss for each task

- Apply early stopping based on combined validation metric

- Save best-performing model checkpoint

Evaluation:

- Calculate performance metrics on test set

- Compare against single-task baselines

- Analyze transfer learning benefits across tasks
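The weighted multi-task objective from the model setup step can be sketched without any deep learning framework. The version below assumes regression tasks, marks missing labels with `None` (auxiliary datasets are often sparse), and uses illustrative task names and weights. In practice the same logic would be written with tensors and masks in the chosen GNN framework.

```python
def multitask_mse(predictions, targets, task_weights):
    """Weighted sum of per-task MSE; molecules with a missing label (None) are skipped."""
    total = 0.0
    for task, weight in task_weights.items():
        pairs = [
            (pred, target)
            for pred, target in zip(predictions[task], targets[task])
            if target is not None
        ]
        if not pairs:
            continue  # no labels for this task in the batch
        mse = sum((pred - target) ** 2 for pred, target in pairs) / len(pairs)
        total += weight * mse
    return total


# Toy batch of two molecules; the auxiliary label of the first molecule is missing.
predictions = {"primary": [1.0, 2.0], "aux": [0.0, 4.0]}
targets = {"primary": [1.0, 4.0], "aux": [None, 2.0]}
task_weights = {"primary": 1.0, "aux": 0.5}

loss = multitask_mse(predictions, targets, task_weights)
print(loss)
```

Lowering the auxiliary weight is one simple lever for limiting the influence of a weakly related task on the shared encoder.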

Protocol: LLM Knowledge Integration for Enhanced Prediction

Objective: Leverage knowledge from large language models to augment molecular representations for improved property prediction.

Materials:

- Pre-trained LLMs (GPT-4o, GPT-4.1, or DeepSeek-R1)

- Molecular structure encoders (GNNs or Transformers)

- SMILES representations of molecules

- Feature fusion framework

Procedure:

Knowledge Extraction from LLMs:

- Prompt LLMs with molecular property-specific queries

- Generate both relevant domain knowledge and executable function code

- Extract knowledge-based molecular features through LLM vectorization

Structural Feature Extraction:

- Process molecular graphs through pre-trained GNNs

- Alternatively, use SMILES sequences with molecular language models

- Extract structural embeddings from final network layers

Feature Fusion:

- Combine knowledge features with structural features

- Implement attention mechanisms for weighted feature integration

- Apply dimensionality reduction if necessary

Prediction and Validation:

- Train predictive models on fused representations

- Validate against experimental data

- Compare performance against structure-only and knowledge-only baselines
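The fusion step can be illustrated with the simplest possible scheme: standardize each feature block and concatenate. This is a stand-in for the attention-based integration mentioned above, not the method of [20]. The function names and toy feature values are assumptions.

```python
import statistics


def standardize_columns(rows):
    """Z-score each column; constant columns are centered and left unscaled."""
    columns = list(zip(*rows))
    means = [statistics.mean(col) for col in columns]
    stds = [statistics.pstdev(col) or 1.0 for col in columns]
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]


def fuse(knowledge_rows, structure_rows):
    """Concatenate standardized knowledge and structural features per molecule."""
    knowledge = standardize_columns(knowledge_rows)
    structure = standardize_columns(structure_rows)
    return [k_row + s_row for k_row, s_row in zip(knowledge, structure)]


# Two molecules: two knowledge-based features and one structural embedding dimension.
knowledge_features = [[0.0, 10.0], [2.0, 10.0]]
structure_features = [[1.0], [3.0]]

fused = fuse(knowledge_features, structure_features)
print(fused)
```

Standardizing before concatenation keeps one block from dominating the other purely because of scale. The statistics should be computed on the training set only and reused for validation and test data.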

Table 2: Research Reagent Solutions for Molecular Property Prediction

| Reagent/Category | Specific Examples | Function/Purpose | Application Context |
|---|---|---|---|
| Molecular Datasets | QM9 [7], ChEMBL [2], TDC ADME [27] | Provide experimental property annotations for training and evaluation | Benchmarking; Model training; Transfer learning |
| Graph Neural Networks | GNNs [7] [20], Multi-task GNNs [7] | Learn molecular representations directly from graph structure | End-to-end property prediction; Structure-property mapping |
| Large Language Models | GPT-4o, GPT-4.1, DeepSeek-R1 [20] | Extract human prior knowledge; Generate molecular features | Knowledge augmentation; Feature vectorization |
| Molecular Descriptors | ECFP4 fingerprints [27], RDKit descriptors [27] | Provide predefined chemical features for traditional ML | Feature-based models; Similarity analysis |
| Data Consistency Tools | AssayInspector [27] | Detect distributional misalignments and annotation discrepancies | Data quality assessment; Preprocessing |
| Visualization Software | PyMOL [28], ChimeraX [29] | Molecular structure visualization and analysis | Result interpretation; Publication graphics |

Critical Considerations and Best Practices

Addressing Data Heterogeneity and Distribution Shifts

Data heterogeneity and distributional misalignments pose critical challenges for machine learning models, often compromising predictive accuracy [27]. These challenges are particularly evident in preclinical safety modeling, where limited data and experimental constraints exacerbate integration issues [27]. When integrating public molecular datasets, researchers have uncovered significant misalignments as well as inconsistent property annotations between gold-standard and popular benchmark sources [27].

To address these challenges, rigorous data consistency assessment prior to modeling is essential. Tools like AssayInspector provide model-agnostic packages that leverage statistics, visualizations, and diagnostic summaries to identify outliers, batch effects, and discrepancies across diverse datasets [27]. This approach enables effective transfer learning across heterogeneous data sources and supports reliable integration across diverse scientific domains [27].

Overcoming Few-Shot Learning Challenges

Few-shot molecular property prediction faces two core challenges: cross-property generalization under distribution shifts and cross-molecule generalization under structural heterogeneity [2]. Cross-property generalization involves transferring knowledge across weakly correlated tasks with diverse labels and biochemical mechanisms, while cross-molecule generalization addresses the tendency to overfit limited molecular structures [2].

Successful approaches to these challenges include data-level, model-level, and learning paradigm-level interventions [2]. At the data level, techniques include molecular mining and augmentation strategies. At the model level, approaches focus on stages of representation learning and architecture design. For learning paradigms, methods include generalization-oriented optimization mechanisms that incorporate external chemical domain knowledge and structural constraints [2].

Foundational representations such as SMILES strings and molecular graphs underpin modern molecular property prediction research. When combined with data augmentation strategies such as multi-task learning and LLM knowledge integration, they provide powerful frameworks for addressing the data scarcity challenges pervasive in drug discovery. The experimental protocols and considerations outlined in this application note offer researchers practical guidance for implementing these approaches, ultimately contributing to more robust and generalizable molecular property prediction models that can accelerate early-stage drug discovery and materials design.

Practical Data Augmentation Techniques for Molecular Data

In molecular property prediction, a significant challenge is data scarcity, as obtaining high-fidelity, experimentally measured properties is often costly and time-consuming. Multi-task learning (MTL) addresses this by jointly learning multiple related tasks, allowing a model to leverage shared information and improve generalization on the primary task. This approach is particularly promising for drug discovery and materials informatics, where data can be sparse but the relationships between different molecular properties are rich. By sharing representations across tasks, MTL mitigates overfitting and enables knowledge transfer, especially in low-data regimes [7] [30].

Two dominant paradigms exist within this framework. Auxiliary Learning deliberately uses secondary tasks to improve the primary task's performance, often employing strategies to weight these tasks or align their learning signals. Classical MTL aims to achieve good performance across all tasks simultaneously. The core challenge, known as negative transfer, occurs when irrelevant or conflicting tasks impede learning. Success hinges on identifying related tasks and managing gradient conflicts during optimization [31] [32].

Key Multi-Task Learning Strategies and Performance

The effectiveness of an MTL strategy depends on the relatedness of the tasks and the specific approach used to combine them. The table below summarizes the core strategies identified in recent literature for molecular and polymer informatics.

Table 1: Multi-Task Learning Strategies for Molecular and Polymer Property Prediction

| Strategy | Core Methodology | Reported Performance Improvement | Application Context |
|---|---|---|---|
| Gradient Surgery (RCGrad) [31] | Aligns conflicting auxiliary task gradients through rotation during training. | Up to 7.7% improvement over vanilla fine-tuning on molecular property prediction [31]. | Adapting pretrained Graph Neural Networks (GNNs) with auxiliary self-supervised tasks. |
| Bi-Level Optimization (BLO+RCGrad) [31] | Learns optimal auxiliary task weights via bi-level optimization, often combined with gradient rotation. | Consistent improvements over fine-tuning, particularly in limited data scenarios [31]. | Molecular property prediction with multiple self-supervised auxiliary tasks. |
| Auxiliary Task Selection [32] | Uses status theory and a maximum flow algorithm to select the most relevant auxiliary tasks for a given primary task. | Outperforms both single-task learning and standard multi-task learning methods [32]. | Predicting Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties. |
| Supervised Auxiliary Training [30] | Augments a primary task with supervised auxiliary tasks (e.g., other polymer properties) during training. | Improves performance and mitigates data scarcity [30]. | Polymer property prediction with limited experimental data. |
| FetterGrad Algorithm [33] | Mitigates gradient conflicts by minimizing the Euclidean distance between task gradients. | Achieved CI: 0.897, MSE: 0.146 on KIBA dataset; outperformed state-of-the-art models [33]. | Unified framework for predicting drug-target affinity and generating novel drugs. |

Experimental Protocols for Multi-Task Learning

Protocol: Auxiliary Learning with Adaptive Gradient Alignment

This protocol is adapted from methods used to enhance pretrained Graph Neural Networks (GNNs) for molecular property prediction [31].

1. Problem Formulation and Model Setup

- Objective: Improve performance on a target molecular property prediction task \(\mathcal{T}_t\).

- Model: Initialize with an off-the-shelf pretrained GNN with parameters \(\Theta\).

- Auxiliary Tasks: Select \(k\) self-supervised tasks (e.g., masked atom prediction, context prediction, graph infomax) designed to capture diverse chemical semantics.

2. Joint Optimization Setup

- The model is trained to minimize a combined loss function: \(\min_{\Theta, \Psi, \Phi_{i \in \{1..k\}}} \mathcal{L}_t + \sum_{i=1}^{k} \mathbf{w}_i \mathcal{L}_{a,i}\), where \(\mathcal{L}_t\) is the target task loss, \(\mathcal{L}_{a,i}\) is the \(i\)-th auxiliary task loss, and \(\mathbf{w}_i\) is its weight.

- Parameters are updated as: \(\Theta^{(t+1)} := \Theta^{(t)} - \alpha \left( \mathbf{g}_t + \sum_{i=1}^{k} \mathbf{w}_i \mathbf{g}_{a,i} \right)\), where \(\mathbf{g}_t\) and \(\mathbf{g}_{a,i}\) are gradients from the target and auxiliary tasks.
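To make the update rule concrete, here is a minimal sketch of one optimization step on plain Python lists. It assumes that the step size in the update formula acts as an ordinary learning rate (called `lr` here) and that the gradients have already been computed; the function name and the numbers are hypothetical.

```python
def combined_update(theta, g_target, aux_grads, weights, lr):
    """One step: theta <- theta - lr * (g_target + sum_i weights[i] * aux_grads[i])."""
    step = list(g_target)
    for w, g_aux in zip(weights, aux_grads):
        for j, value in enumerate(g_aux):
            step[j] += w * value
    return [p - lr * s for p, s in zip(theta, step)]

# Toy example: two shared parameters, two auxiliary tasks.
theta = [1.0, -2.0]
g_t = [0.5, 0.0]
aux = [[1.0, 1.0], [0.0, -2.0]]
w = [0.5, 0.25]

new_theta = combined_update(theta, g_t, aux, w, lr=0.1)
print(new_theta)
```

In this toy case the two auxiliary gradients cancel along the second coordinate, so only the first parameter moves. In a real setting the gradients would come from an automatic differentiation framework rather than being written by hand.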

3. Gradient Conflict Mitigation

- Implement the Rotation of Conflicting Gradients (RCGrad) algorithm.

- For each auxiliary gradient \(\mathbf{g}_{a,i}\), compute its projection onto the target gradient \(\mathbf{g}_t\).

- If the projection is negative (indicating conflict), rotate the auxiliary gradient to align with the target gradient's direction, preserving its magnitude.

- Alternatively, use Bi-Level Optimization (BLO+RCGrad) to learn the optimal weights \(\mathbf{w}_i\) automatically, eliminating the need for manual tuning.
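The conflict check in step 3 can be sketched as follows. This is one simple reading of the description above, not the published RCGrad algorithm. A conflicting auxiliary gradient has its component opposing the target gradient removed, and the result is rescaled to the original norm. That rotates the gradient to the nearest non-conflicting direction rather than fully onto the target direction. The function names are hypothetical.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def norm(u):
    return math.sqrt(dot(u, u))

def resolve_conflict(g_aux, g_target):
    """Leave g_aux unchanged if it agrees with g_target; otherwise turn it
    to the nearest non-conflicting direction while keeping its norm."""
    gt_sq = dot(g_target, g_target)
    overlap = dot(g_aux, g_target)
    if gt_sq == 0.0 or overlap >= 0.0:
        return list(g_aux)  # no conflict (or no target signal)
    # Remove the component that points against the target gradient.
    coeff = overlap / gt_sq
    orthogonal = [a - coeff * t for a, t in zip(g_aux, g_target)]
    orth_norm = norm(orthogonal)
    if orth_norm == 0.0:
        # Exactly anti-parallel: nothing non-conflicting remains.
        return [0.0] * len(g_aux)
    scale = norm(g_aux) / orth_norm  # preserve the original magnitude
    return [scale * o for o in orthogonal]

rotated = resolve_conflict([-1.0, 1.0], [1.0, 0.0])
unchanged = resolve_conflict([1.0, 1.0], [1.0, 0.0])
print(rotated, unchanged)
```

After this step the adjusted auxiliary gradient has a non-negative inner product with the target gradient, so adding it to the update no longer pushes the shared parameters against the target task.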

4. Evaluation and Validation

- Evaluate the final model on a held-out test set for the target task.

- Compare performance against a baseline model that uses only vanilla fine-tuning on the target task.

Protocol: Multi-Task Graph Learning for ADMET Prediction

This protocol outlines the "one primary, multiple auxiliaries" paradigm for predicting multiple ADMET properties [32].

1. Auxiliary Task Selection

- Objective: Predict a primary ADMET property (e.g., metabolic stability).

- Selection: Use status theory combined with a maximum flow algorithm to automatically identify the most relevant set of auxiliary ADMET tasks from a pool of candidates. This step promotes task synergy and reduces the risk of negative transfer.

2. Model Architecture and Training

- Framework: Implement a Multi-Task Graph Learning framework for ADMET (MTGL-ADMET).

- Input: Represent drug-like small molecules as graphs.

- Architecture: A GNN encoder shared across all tasks extracts unified molecular representations.

- Task-Specific Heads: Each property (primary and auxiliary) has a dedicated prediction head.

- Training: Jointly train the shared encoder and all task-specific heads using a combined loss function. The framework incorporates interpretability modules to highlight molecular substructures crucial for the predictions.
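The combined loss used for joint training can be sketched as a weighted sum of per-task losses. The example below is an illustration under stated assumptions rather than the MTGL-ADMET implementation: it assumes regression tasks scored with mean squared error, uses `None` to mark molecules without a label for a given task, and takes the task names and weights as hypothetical inputs.

```python
def multitask_loss(predictions, labels, task_weights):
    """Weighted sum of per-task mean squared errors, skipping missing labels."""
    total = 0.0
    per_task = {}
    for task, weight in task_weights.items():
        pairs = [(p, y) for p, y in zip(predictions[task], labels[task]) if y is not None]
        if not pairs:
            continue  # no labeled molecules for this task in the batch
        mse = sum((p - y) ** 2 for p, y in pairs) / len(pairs)
        per_task[task] = mse
        total += weight * mse
    return total, per_task

# Toy batch of three molecules; each task head produced one value per molecule.
predictions = {"primary": [1.0, 2.0, 3.0], "aux": [0.0, 1.0, 0.5]}
labels = {"primary": [1.0, None, 2.0], "aux": [None, 1.0, 1.5]}
task_weights = {"primary": 1.0, "aux": 0.5}

total, per_task = multitask_loss(predictions, labels, task_weights)
print(total, per_task)
```

Masking missing labels in this way lets molecules that were measured for only some endpoints still contribute to training the shared encoder.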

3. Model Interpretation and Validation

- Interpretability: Use the model's built-in interpretability modules to visualize and identify the key molecular substructures (e.g., functional groups, rings) that the model associates with each ADMET endpoint.

- Validation: Compare the predictive performance of MTGL-ADMET against state-of-the-art single-task and multi-task learning baselines on benchmark ADMET datasets.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Multi-Task Learning in Molecular Property Prediction

| Resource Name | Type | Primary Function/Application |
|---|---|---|
| Graph Neural Networks (GNNs) [31] [7] | Model Architecture | Learns effective structural and relational representations of molecules represented as graphs. |
| Self-Supervised Learning (SSL) Tasks [31] | Auxiliary Tasks | Provides pre-training and auxiliary signals for GNNs; includes tasks like masked atom prediction and context prediction. |
| QM9 Dataset [7] | Benchmark Data | A public dataset of quantum mechanical properties for ~133k small molecules; used for controlled MTL experiments. |
| KIBA, Davis, BindingDB [33] | Benchmark Data | Real-world datasets used for benchmarking Drug-Target Affinity (DTA) prediction models. |
| CoPolyGNN [30] | Software/Model | A multi-scale GNN model with an attention-based readout, designed for polymer property prediction using MTL. |
| RDKit [30] | Software | Open-source cheminformatics toolkit used for handling molecular data and calculating molecular descriptors. |

Workflow Visualization: Multi-Task Learning with Gradient Alignment

The following diagram illustrates the core workflow for adapting a pre-trained model using auxiliary learning with gradient alignment, as described in the first experimental protocol.

Workflow Visualization: Multi-Task Graph Learning for ADMET

This diagram outlines the "one primary, multiple auxiliaries" paradigm for predicting ADMET properties, which involves adaptive auxiliary task selection.

Simplified Molecular Input Line Entry System (SMILES) is a single-line text representation that encodes the two-dimensional structure of a molecule [34]. A fundamental characteristic of the SMILES notation is its non-univocal nature; the same molecule can be represented by multiple, equally valid SMILES strings [34] [35]. This variation arises from choices in the starting atom for the graph traversal and the direction in which the molecular graph is navigated [34].

SMILES enumeration (also referred to as SMILES randomization) is a data augmentation technique that leverages this non-univocality by generating multiple SMILES string representations for a single chemical structure [34] [36]. This process artificially inflates the size and diversity of molecular datasets, a crucial strategy for training "data-hungry" deep learning models, particularly in low-data scenarios common in molecular property prediction and de novo drug design [34] [37]. By exposing a model to different syntactic representations of the same underlying molecular structure, SMILES enumeration helps the model learn the inherent chemical rules rather than memorizing specific text patterns, ultimately improving model robustness and generalization performance [37] [38].
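The idea that one molecular graph yields many valid strings can be shown with a deliberately small toy writer. The sketch below is restricted to acyclic molecules with single bonds and organic-subset atoms, and represents a molecule as a hypothetical atom list plus bond list. It is not a general SMILES generator, and all names are illustrative.

```python
import random

def random_smiles(atoms, bonds, rng):
    """Write one randomized SMILES for an acyclic, single-bonded molecule."""
    neighbors = {i: [] for i in range(len(atoms))}
    for a, b in bonds:
        neighbors[a].append(b)
        neighbors[b].append(a)

    def walk(atom, parent):
        children = [n for n in neighbors[atom] if n != parent]
        rng.shuffle(children)  # random traversal order at each branch point
        text = atoms[atom]
        # All but the last child are written as parenthesized branches.
        for child in children[:-1]:
            text += "(" + walk(child, atom) + ")"
        if children:
            text += walk(children[-1], atom)
        return text

    start = rng.randrange(len(atoms))  # random starting atom
    return walk(start, None)

def enumerate_smiles(atoms, bonds, n_samples, seed=0):
    """Collect the distinct strings produced by n_samples random traversals."""
    rng = random.Random(seed)
    return sorted({random_smiles(atoms, bonds, rng) for _ in range(n_samples)})

# Ethanol as a graph: C-C-O
ETHANOL_ATOMS = ["C", "C", "O"]
ETHANOL_BONDS = [(0, 1), (1, 2)]

variants = enumerate_smiles(ETHANOL_ATOMS, ETHANOL_BONDS, n_samples=50)
print(variants)
```

Every string this produces for ethanol is one of `CCO`, `OCC`, `C(C)O`, or `C(O)C`, all of which denote the same molecule. For real datasets a cheminformatics toolkit should handle rings, bond orders, aromaticity, and stereochemistry; RDKit's `Chem.MolToSmiles`, for example, accepts a `doRandom=True` option for randomized output.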

Experimental Protocols and Implementation

Core SMILES Enumeration Protocol

The following workflow details the steps for implementing SMILES enumeration for a molecular dataset.

Title: SMILES Enumeration Workflow

Procedure:

- Input Preparation: Begin with a dataset of molecules, typically in a structure data file (SDF) or a list of canonical SMILES. Pre-process the structures by removing salts, standardizing tautomers, and explicitly defining aromaticity if needed [39].

- SMILES Enumeration/Randomization: For each molecule in the dataset, generate multiple, non-canonical SMILES representations. This is achieved by:

  - Randomizing the atom order (selecting a different starting atom for the traversal).

  - Varying the direction (clockwise or counter-clockwise) of traversing the molecular graph [34] [36].

  - Using a tool like the `SmilesEnumerator` class, which relies on RDKit to ensure all generated SMILES are chemically valid and sanitizable [36].

- Vectorization: Convert the enumerated SMILES strings into a numerical format suitable for model input. This involves:

  - Defining a character set (`charset`) that includes all unique symbols present in the entire dataset of SMILES.

  - Determining a fixed sequence length (`pad`) to which all SMILES will be standardized, typically by truncating longer strings or padding shorter ones with spaces [36].

  - Transforming each character in the SMILES string into a one-hot encoded vector based on the defined character set [36] [39].

- Model Training: Train the neural network model (e.g., LSTM, GRU) using the augmented and vectorized dataset. The model learns to predict the next token in the sequence given the previous tokens [34] [39].

- Prediction and Inference (for Predictive Tasks): For property prediction tasks, during the inference phase, generate multiple enumerated SMILES for the query molecule. Pass each enumerated SMILES through the trained model to obtain a prediction. The final, stabilized prediction for the molecule is the average of the predictions from all its enumerated SMILES representations [40] [37].
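The vectorization and inference-time averaging steps above can be sketched in a few lines. This is a minimal illustration, not the `SmilesEnumerator` implementation: the function names are hypothetical, the encoding is character-level (so a two-letter symbol such as `Cl` occupies two positions), and `toy_model` is a stand-in for a trained network.

```python
def build_charset(smiles_list, pad_char=" "):
    """Map every character seen in the dataset (plus the pad character) to an index."""
    chars = sorted(set("".join(smiles_list)) | {pad_char})
    return {ch: i for i, ch in enumerate(chars)}

def one_hot(smiles, charset, pad, pad_char=" "):
    """Truncate or right-pad to length `pad`, then one-hot encode each character.
    Characters absent from the charset raise KeyError in this sketch."""
    text = smiles[:pad].ljust(pad, pad_char)
    matrix = []
    for ch in text:
        row = [0] * len(charset)
        row[charset[ch]] = 1
        matrix.append(row)
    return matrix

def averaged_prediction(model, variants, charset, pad):
    """Average the model output over several SMILES strings of one molecule."""
    scores = [model(one_hot(s, charset, pad)) for s in variants]
    return sum(scores) / len(scores)

# Four equivalent SMILES strings for ethanol.
ethanol_variants = ["CCO", "OCC", "C(C)O", "C(O)C"]
charset = build_charset(ethanol_variants)
encoded = one_hot("CCO", charset, pad=6)

def toy_model(matrix):
    """Stand-in for a trained model: counts oxygen characters in the input."""
    return float(sum(row[charset["O"]] for row in matrix))

prediction = averaged_prediction(toy_model, ethanol_variants, charset, pad=6)
print(len(encoded), len(encoded[0]), prediction)
```

A real model's output would typically vary somewhat across the enumerated strings of a molecule, which is why averaging stabilizes the final prediction. The toy model here is invariant by construction, so the average equals each individual score.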

Advanced Augmentation Strategies

Recent research has introduced strategies that go beyond identity-preserving enumeration. The following protocols are designed for experimental use to potentially enhance model robustness and performance further [34] [35].

Protocol 1: Atom Masking

- Objective: To improve the model's ability to learn physicochemical properties, especially in very low-data regimes, by forcing it to reason about incomplete structural information [34] [35].

- Procedure:

- Variants: The masking can be performed completely randomly or can be targeted to atoms belonging to pre-defined, chemically relevant functional groups [34].
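The random variant can be illustrated with a short sketch. Because the procedure steps are not given here, everything below is an assumption made for illustration: the mask symbol `*`, the masking probability `p`, the simplified tokenization regex, and the function name are hypothetical and do not reproduce the cited protocol.

```python
import random
import re

# Simplified tokenization: bracket atoms, two-letter halogens, then single characters.
TOKEN_RE = re.compile(r"\[[^\]]+\]|Br|Cl|.")
# Tokens treated as atoms (organic subset, aromatic atoms, bracket atoms).
ATOM_RE = re.compile(r"\[[^\]]+\]|Br|Cl|[BCNOSPFI]|[bcnosp]")

def mask_atoms(smiles, p, rng, mask="*"):
    """Replace each atom token with `mask` with probability p; keep bonds,
    branches, and ring-closure digits unchanged."""
    out = []
    for tok in TOKEN_RE.findall(smiles):
        if ATOM_RE.fullmatch(tok) and rng.random() < p:
            out.append(mask)
        else:
            out.append(tok)
    return "".join(out)

rng = random.Random(0)
fully_masked = mask_atoms("CC(=O)O", 1.0, rng)
partially_masked = mask_atoms("CC(=O)O", 0.3, rng)
print(fully_masked, partially_masked)
```

A targeted variant would restrict the candidate tokens to atoms belonging to selected functional groups, which requires a substructure search on the parsed molecule rather than string processing alone.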

Protocol 2: Token Deletion