A Practical Guide to Molecular Property Prediction with Machine Learning for Drug Discovery

Abstract

This article provides a comprehensive roadmap for researchers and drug development professionals to implement machine learning for molecular property prediction. It covers the foundational concepts of molecular representations, explores predictive and generative modeling methodologies, addresses critical troubleshooting and optimization challenges like dataset bias and model uncertainty, and outlines rigorous validation and comparative analysis techniques. The guide synthesizes current best practices to help scientists navigate the complexities of AI-driven drug discovery, from initial setup to reliable deployment, with a focus on practical application and building confidence in predictive outcomes.

Laying the Groundwork: Core Concepts and Molecular Representations for ML

The Challenge of Navigating Vast Chemical Space

The chemical space of possible, stable organic molecules is estimated to encompass 10⁶⁰ to 10¹⁰⁰ distinct structures. At that scale, exhaustive experimental characterization is impossible. This presents a fundamental challenge for scientific research and industrial development in fields like drug discovery and materials science. Experimentally determining molecular properties is slow, expensive, and resource-intensive, and it often requires sophisticated equipment. For instance, the traditional drug discovery process can take over a decade and cost billions of dollars, with a success rate of only 1 in 5,000 compounds [1].

Machine learning (ML) has emerged as a transformative tool for navigating this expansive space. By learning from existing chemical data, ML models can estimate the properties of new, unsynthesized molecules far faster than those molecules could be synthesized and measured. This dramatically accelerates the search for new drugs, materials, and energy carriers. However, several significant challenges constrain the effectiveness of these models:

- the scarcity of high-quality experimental data;
- the difficulty of generalizing predictions to new regions of chemical space;
- the complexity of selecting the appropriate model architecture and featurization for a given task [2] [3] [4].

This guide provides a technical overview for researchers and scientists embarking on ML-driven molecular property prediction, with a focus on overcoming these central challenges.

Core Machine Learning Approaches

Molecular property prediction leverages several classes of ML algorithms, each with distinct strengths and methodological considerations. The selection of an approach often depends on the volume of available data and the specific prediction task.

Multi-Task Learning (MTL) and the Negative Transfer Problem

MTL is a powerful strategy designed to alleviate data scarcity by training a single model on multiple related property prediction tasks simultaneously. Because the tasks are learned jointly, the model can discover and exploit shared underlying structures and correlations. This can improve generalization, particularly for tasks with few labels [2].

- Architecture: A typical MTL model consists of a shared backbone (e.g., a Graph Neural Network) that learns a general-purpose molecular representation, and multiple task-specific heads (e.g., Multi-Layer Perceptrons) that map the shared representation to a specific property value [2].

- Methodology: The model is trained on a dataset where each molecule has labels for one or more properties. The total loss is often a weighted sum of the losses for each individual task.

- Challenge - Negative Transfer (NT): A major obstacle to effective MTL is negative transfer, which occurs when updates driven by one task are detrimental to the performance of another. This is often exacerbated by task imbalance, where certain tasks have far fewer labeled examples than others, limiting their influence on the shared model parameters [2].

- Advanced Protocol: Adaptive Checkpointing with Specialization (ACS)

  - Purpose: To mitigate negative transfer while preserving the benefits of inductive transfer [2].

  - Procedure:

    - Train a single MTL model with a shared GNN backbone and task-specific MLP heads.

    - Throughout training, track the validation loss of each task separately (e.g., after every epoch).

    - Independently for each task, checkpoint the model parameters (both the shared backbone and that task's head) whenever that task's validation loss reaches a new minimum.

    - Upon completion, each task has its own specialized backbone-head pair. Each pair is captured at the point in training where the shared representation best served that property.

  - Validation: This method has been shown to consistently match or surpass the performance of recent supervised methods, particularly in ultra-low data regimes. In one real-world application, it enabled accurate predictions for sustainable aviation fuel properties with as few as 29 labeled samples [2].
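The weighted multi-task loss described under Methodology can be sketched in a few lines of numpy. This is a minimal illustration that assumes missing labels are stored as NaN and that every task uses mean squared error. The function name `masked_multitask_loss` and the per-task weights are hypothetical choices, not taken from the ACS reference implementation. Masking matters under task imbalance: a molecule with no label for a task should contribute nothing to that task's loss.

```python
import numpy as np


def masked_multitask_loss(preds, targets, task_weights):
    """Weighted sum of per-task MSE losses that ignores missing labels.

    preds, targets: arrays of shape (n_molecules, n_tasks).
    Missing labels are encoded as NaN in `targets` (an assumption of this sketch).
    task_weights: sequence of length n_tasks.
    Returns (total_loss, per_task_losses).
    """
    preds = np.asarray(preds, dtype=float)
    targets = np.asarray(targets, dtype=float)
    mask = ~np.isnan(targets)                          # True where a label exists
    sq_err = np.where(mask, (preds - np.nan_to_num(targets)) ** 2, 0.0)
    counts = mask.sum(axis=0)                          # labeled molecules per task
    # Average over labeled entries only; a task with no labels contributes 0
    per_task = np.divide(sq_err.sum(axis=0), counts,
                         out=np.zeros(sq_err.shape[1]), where=counts > 0)
    total = float(np.dot(task_weights, per_task))
    return total, per_task


# Three molecules, two tasks; NaN marks an unmeasured property
preds = [[1.0, 0.0], [2.0, 1.0], [3.0, 5.0]]
targets = [[1.0, np.nan], [1.0, 2.0], [np.nan, 3.0]]
total, per_task = masked_multitask_loss(preds, targets, task_weights=[1.0, 0.5])
```

Here task 0 averages over molecules 0 and 1, and task 1 averages over molecules 1 and 2. Only the weighting then mixes the two tasks.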

Geometric Deep Learning

Geometric deep learning incorporates three-dimensional structural information into the model, which is crucial for accurately predicting properties that depend on molecular conformation and quantum-chemical interactions.

- Architecture: The Directed Message Passing Neural Network (D-MPNN) is a state-of-the-art architecture for molecular graphs [4]. Hidden states and messages are associated with directed edges (bonds). The message sent along bond v→w excludes the information that just arrived from w, so messages cannot immediately flow back to where they came from. This reduces redundant updates and noise. A Geometric D-MPNN extends this by incorporating 3D molecular coordinates and other quantum-chemical descriptors into the featurization of nodes (atoms) and edges [4].

- Featurization: Models can be featurized with 2D information (the molecular graph) or 3D information (atomic coordinates from methods like Density Functional Theory, DFT). Whether 3D information is necessary depends on the property being predicted [4].
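The directed-edge update can be sketched with numpy as follows. It follows the general D-MPNN scheme: hidden states live on directed bonds, and the message for bond v→w sums the states entering v except the one arriving from w. The function name, ReLU activation, sum aggregation, and random weights are illustrative assumptions. In a Geometric D-MPNN, 3D or quantum-chemical descriptors would be appended to `atom_feats` and `bond_feats`.

```python
import numpy as np


def dmpnn_encode(atom_feats, bond_list, bond_feats, W_i, W_m, W_a, depth=3):
    """Minimal D-MPNN-style encoder (sketch; assumes at least one bond).

    atom_feats: (n_atoms, d_atom); bond_list: list of undirected (u, v) bonds;
    bond_feats: (n_bonds, d_bond). Returns atom embeddings (n_atoms, hidden).
    """
    relu = lambda x: np.maximum(x, 0.0)
    # Each undirected bond becomes two directed edges: 2b is u->v, 2b+1 is v->u
    src, dst, efeat = [], [], []
    for b, (u, v) in enumerate(bond_list):
        src += [u, v]
        dst += [v, u]
        efeat += [bond_feats[b], bond_feats[b]]
    src, dst, efeat = np.array(src), np.array(dst), np.array(efeat)
    rev = np.arange(len(src)) ^ 1              # index of each edge's reverse edge
    n_atoms = atom_feats.shape[0]

    # Initial state of each directed edge from its source atom and bond features
    h0 = relu(np.concatenate([atom_feats[src], efeat], axis=1) @ W_i)
    h = h0
    for _ in range(depth - 1):
        incoming = np.zeros((n_atoms, h.shape[1]))
        np.add.at(incoming, dst, h)            # sum of edge states entering each atom
        m = incoming[src] - h[rev]             # for v->w: all into v except w->v
        h = relu(h0 + m @ W_m)

    # Atom embeddings: aggregate incoming edge states, combine with atom features
    incoming = np.zeros((n_atoms, h.shape[1]))
    np.add.at(incoming, dst, h)
    return relu(np.concatenate([atom_feats, incoming], axis=1) @ W_a)


rng = np.random.default_rng(0)
atom_feats = rng.normal(size=(3, 4))           # e.g. a 3-heavy-atom chain
bond_feats = rng.normal(size=(2, 2))
hidden = 8
W_i = rng.normal(size=(4 + 2, hidden))
W_m = rng.normal(size=(hidden, hidden))
W_a = rng.normal(size=(4 + hidden, hidden))
atom_emb = dmpnn_encode(atom_feats, [(0, 1), (1, 2)], bond_feats, W_i, W_m, W_a)
mol_emb = atom_emb.sum(axis=0)                 # simple sum readout
```

For a two-atom molecule, every per-bond message is zero, so increasing `depth` leaves the output unchanged. A node-level MPNN would instead echo each atom's state back to itself.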

- Advanced Protocol: Achieving Chemical Accuracy with Δ-ML and Transfer Learning

  - Chemical Accuracy: A key target, especially for thermochemistry, is an error of ~1 kcal mol⁻¹, which is required for constructing thermodynamically consistent models [4].

  - Δ-ML Protocol:

    - Obtain property calculations for a set of molecules at both a low (LL) and high (HL) level of theory.

    - Train an ML model to predict the residual (difference) between the HL and LL values: $\Delta = \mathrm{Property}_{\mathrm{HL}} - \mathrm{Property}_{\mathrm{LL}}$.

    - The final prediction for a new molecule is the sum of the easily computed low-level value and the ML-predicted delta: $\mathrm{Property}_{\mathrm{HL}}^{\mathrm{pred}} = \mathrm{Property}_{\mathrm{LL}} + \Delta^{\mathrm{pred}}$ [4].

  - Transfer Learning Protocol:

    - Pre-training: A model is first trained on a large, diverse database of molecules with properties calculated at a lower level of theory (e.g., via COSMO-RS). This teaches the model a robust general molecular representation.

    - Fine-tuning: The pre-trained model is then further trained (fine-tuned) for a few epochs on a small, high-quality dataset (e.g., experimental data or high-level quantum chemical data) for the specific property of interest. This adapts the model to achieve high accuracy on the target domain [4].
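A minimal Δ-ML sketch follows. It assumes each molecule is already described by a fixed-length descriptor vector. Closed-form ridge regression stands in for the learned model; in [4] that role is played by a geometric D-MPNN. The low- and high-level values below are synthetic, generated only to exercise the code, and the function names are hypothetical.

```python
import numpy as np


def fit_delta_model(X, y_ll, y_hl, alpha=1e-3):
    """Ridge regression on the residual Delta = y_HL - y_LL.

    X: (n, d) descriptors; y_ll, y_hl: (n,) low- and high-level values.
    Returns a weight vector of length d + 1 (last entry is the bias).
    """
    delta = y_hl - y_ll                                   # the learning target
    Xb = np.hstack([X, np.ones((X.shape[0], 1))])         # append a bias column
    A = Xb.T @ Xb + alpha * np.eye(Xb.shape[1])           # bias is lightly regularized too
    return np.linalg.solve(A, Xb.T @ delta)


def predict_hl(X, y_ll, weights):
    """Property_HL_pred = Property_LL + Delta_pred."""
    Xb = np.hstack([X, np.ones((X.shape[0], 1))])
    return y_ll + Xb @ weights


# Synthetic data, generated only to exercise the code
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
y_ll = (X @ rng.normal(size=5)) * 10.0                    # cheap low-level values
true_delta = X @ np.array([0.5, -0.2, 0.1, 0.0, 0.3]) + 1.5
y_hl = y_ll + true_delta + rng.normal(scale=0.05, size=200)

w = fit_delta_model(X[:150], y_ll[:150], y_hl[:150])
pred_hl = predict_hl(X[150:], y_ll[150:], w)
mae_delta_ml = np.abs(pred_hl - y_hl[150:]).mean()        # Delta-ML error
mae_ll_only = np.abs(y_ll[150:] - y_hl[150:]).mean()      # error of using LL as-is
```

The model only has to learn the correction, which is usually smaller and smoother than the property itself. As a result, it can typically reach low error with fewer high-level labels than a model that predicts the high-level property directly.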

Addressing the Out-of-Distribution (OOD) Generalization Challenge

A model's ability to make accurate predictions for molecules that are structurally different from those in its training set—known as OOD generalization—is critical for real-world discovery, which inherently involves exploring new chemical territories.

- The Problem: Standard ML models often suffer from significant performance degradation when applied to OOD molecules. The BOOM benchmark study found that even top-performing models exhibited an average OOD error three times larger than their in-distribution error [3].

- Benchmarking Insights: The BOOM benchmark evaluated over 140 model and task combinations, revealing that no single existing model achieves strong OOD generalization across all tasks. Key findings include [3]:

- Models with high inductive bias (e.g., strong architectural constraints suited to the problem) can perform well on OOD tasks with simple, specific properties.

- Current large-scale chemical foundation models, while promising for limited-data scenarios, do not yet show strong OOD extrapolation capabilities.

- Methodological Recommendations: To enhance OOD performance, the benchmark emphasizes careful attention to data generation procedures, model pre-training strategies, hyperparameter optimization, and molecular representation [3].

Experimental Protocols and Workflows

Implementing a robust ML pipeline for property prediction requires a structured workflow from data preparation to model deployment.

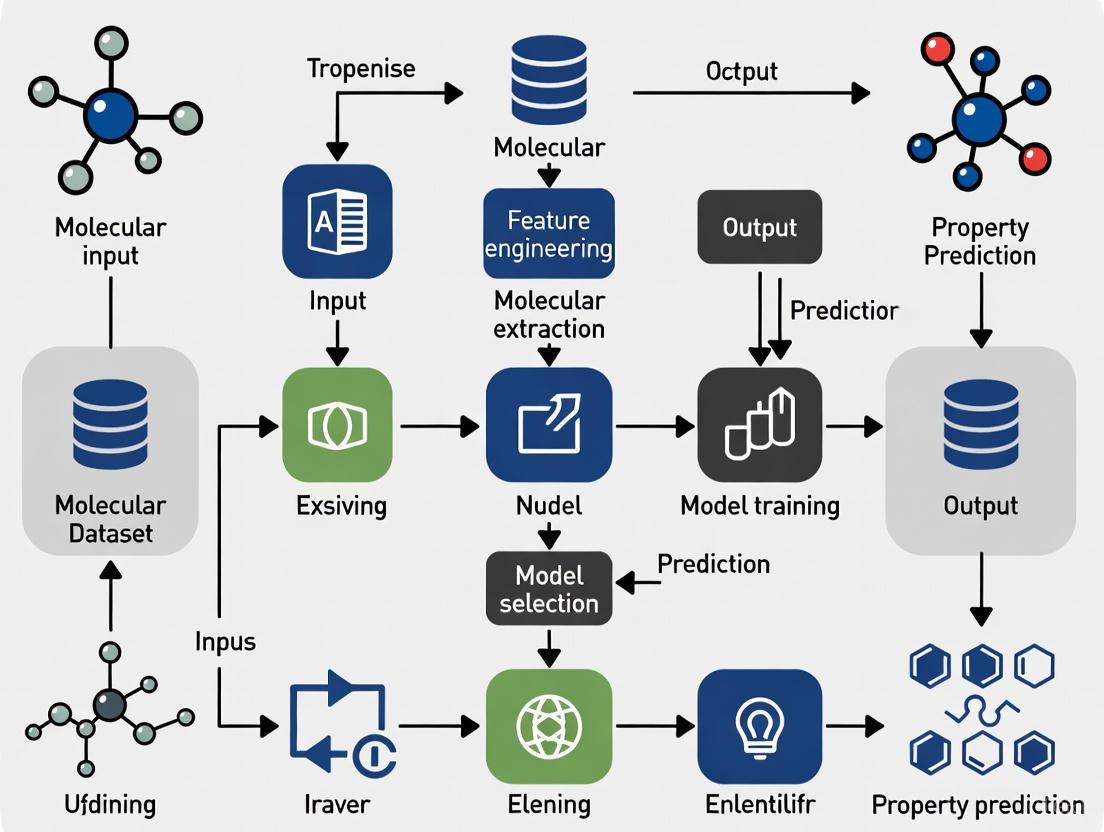

A Standard Workflow for Molecular Property Prediction

The following diagram illustrates a generalized experimental protocol that incorporates best practices for handling data splits and specialized training techniques.

Data Splitting Methodologies

The strategy for splitting data into training, validation, and test sets is critical for a realistic performance estimate.

- Random Splitting: Divides molecules randomly. This often leads to over-optimistic performance estimates because molecules in the test set can be highly structurally similar to those in the training set [2] [3].

- Scaffold Splitting (Murcko Scaffold): Partitions molecules by their Bemis-Murcko scaffold (the ring systems and the linkers connecting them, with side chains removed). All molecules that share a scaffold are assigned to the same split, so the test set contains core structures the model never saw during training. This provides a more challenging and realistic assessment of a model's ability to generalize to novel chemotypes [2].

- Temporal Splitting: Splits data based on the year of measurement or publication. This best simulates a real-world discovery scenario where models are used to predict properties for molecules synthesized in the future. Studies have shown that temporal splits can reveal inflated performance estimates from random/scaffold splits [2].
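A scaffold split can be sketched as shown below. The `murcko_scaffold` helper calls RDKit's `MurckoScaffold.MurckoScaffoldSmiles`. The splitting function itself works on any per-molecule group key, so it runs without RDKit. The greedy assignment, which sends the largest scaffold groups to training first, is one common convention. It is assumed here rather than prescribed by [2], and the function names are illustrative.

```python
from collections import defaultdict


def murcko_scaffold(smiles):
    """Bemis-Murcko scaffold SMILES for one molecule (requires RDKit)."""
    from rdkit.Chem.Scaffolds import MurckoScaffold  # imported lazily
    return MurckoScaffold.MurckoScaffoldSmiles(smiles=smiles, includeChirality=False)


def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Assign whole scaffold groups to train/valid/test.

    scaffolds: one scaffold key per molecule (e.g. from murcko_scaffold).
    Largest groups fill the training set first, so rarer scaffolds tend to land
    in validation/test. Returns three lists of molecule indices.
    """
    groups = defaultdict(list)
    for idx, scaf in enumerate(scaffolds):
        groups[scaf].append(idx)
    # Sort by group size (descending), then by key, for a deterministic split
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))

    n = len(scaffolds)
    train, valid, test = [], [], []
    for _, idxs in ordered:
        if len(train) + len(idxs) <= frac_train * n:
            train += idxs
        elif len(valid) + len(idxs) <= frac_valid * n:
            valid += idxs
        else:
            test += idxs
    return train, valid, test


# Precomputed scaffold keys for 10 hypothetical molecules
scaffolds = ["A"] * 5 + ["B"] * 3 + ["C", "D"]
train, valid, test = scaffold_split(scaffolds)
```

Acyclic molecules have an empty Murcko scaffold, so they all fall into a single group. A temporal split follows the same pattern, using the measurement year as the key and a cut-off date instead of size ordering.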

Successful ML projects rely on a suite of software tools, datasets, and computational resources. The table below summarizes key "research reagent solutions" for molecular property prediction.

Table 1: Essential Tools and Resources for Molecular Property Prediction

| Item Name | Type | Primary Function | Key Features / Considerations |
|---|---|---|---|
| Directed MPNN (D-MPNN) [2] [4] | Model Architecture | Graph-based property prediction | Reduces redundant messaging; supports 2D and 3D featurization. |
| ACS Training Scheme [2] | Training Algorithm | Mitigates negative transfer in MTL | Adaptive checkpointing for task specialization; ideal for imbalanced data. |
| Δ-ML & Transfer Learning [4] | Training Protocol | Achieves high accuracy with limited data | Δ-ML corrects low-level calculations; transfer learning adapts from large pretraining sets. |
| Therapeutics Data Commons (TDC) [1] | Data Resource | Benchmark datasets for drug discovery | Provides curated datasets for various drug development tasks. |
| BOOM Benchmark [3] | Benchmarking Tool | Evaluates out-of-distribution (OOD) generalization | Systematically tests model performance on OOD molecules. |
| ChemXploreML [5] | Software Application | User-friendly desktop app for predictions | No programming skills required; operates offline; uses molecular embedders. |
| RDKit [6] | Software Library | Open-source cheminformatics | Fundamental for molecule handling, fingerprint generation, and descriptor calculation. |
| Geometric Datasets (ThermoG3, DrugLib36) [4] | Data Resource | Large-scale quantum chemical data | Provides high-quality, industrially-relevant data for training and pretraining. |

Quantitative Performance Benchmarks

To guide model selection and expectation setting, it is essential to consider typical performance metrics across different model types and data regimes.

Table 2: Performance Benchmarks of ML Models on Molecular Property Prediction

| Model / Approach | Dataset / Context | Key Performance Metric | Result / Accuracy | Notes / Implication |
|---|---|---|---|---|
| ACS (MTL) [2] | ClinTox, SIDER, Tox21 | Average Improvement vs. Single-Task Learning | +8.3% improvement | Demonstrates effectiveness of transfer and NT mitigation. |
| Geometric D-MPNN [4] | Novel Thermochemistry Data | Mean Absolute Error (MAE) | Achieved <1 kcal mol⁻¹ ("Chemical Accuracy") | Crucial for reliable thermochemical kinetic models. |
| Schrödinger Formulation ML [7] | Polymer Glass Transition (Tg) | Coefficient of Determination (R²) | R² = 0.97 | High accuracy for complex mixture properties. |
| ChemXploreML App [5] | Critical Temperature Prediction | Accuracy Score | Up to 93% accuracy | Validates use of accessible, non-programmatic tools. |
| Top BOOM Benchmark Model [3] | Various OOD Tasks | OOD vs. In-Distribution Error | OOD error 3x larger than ID error | Highlights the pervasive challenge of OOD generalization. |
| ACS (MTL) [2] | Sustainable Aviation Fuels | Minimum Viable Dataset Size | Accurate models with 29 samples | Enables work in ultra-low data regimes. |

Machine learning provides a powerful and necessary set of tools for navigating the vastness of chemical space. The field has moved beyond simple models to sophisticated frameworks that can handle multi-task learning, 3D geometric information, and specialized training protocols like ACS and Δ-ML to achieve chemical accuracy. However, significant challenges remain. Out-of-distribution generalization is the next frontier, with current benchmarks showing that even the best models struggle to reliably extrapolate [3]. Future progress will likely come from models with stronger inductive biases, improved pre-training strategies on large, diverse datasets, and more rigorous evaluation protocols that prioritize real-world generalization over performance on narrow benchmarks. By leveraging the tools, protocols, and insights summarized in this guide, researchers can effectively harness ML to accelerate the discovery of new molecules with tailored properties.

Key Computational Representations of Molecules

The acceleration of materials discovery and drug development increasingly hinges on the ability of machine learning (ML) models to accurately predict molecular properties. The foundation of any successful ML model in this domain is its computational molecular representation, which translates chemical structures into a format that algorithms can process. The choice of representation fundamentally shapes the model's capacity to capture the physical and chemical principles that govern molecular behavior.

This guide provides a technical overview of the core representations used in modern ML-driven molecular property prediction, detailing their methodologies, comparative advantages, and protocols for implementation.

Molecular Graph Representations

Molecular graphs provide a natural and intuitive framework for representing molecules by treating atoms as nodes and chemical bonds as edges. This structure is inherently compatible with Graph Neural Networks (GNNs), which learn through a process of message passing [8] [9].

Core Methodology: Message Passing Neural Networks (MPNNs)

The message-passing framework allows atoms (nodes) to aggregate information from their local chemical environments. The process can be formalized in three key steps [8]:

- Message (M): For each node (atom) $v$, a message is constructed from its neighboring nodes $u$ and the connecting edges (bonds). This function, $M$, typically combines the current state of the neighbor, $h_u$, and the features of the bond, $e_{uv}$.

- Aggregation (AGG): The messages from all neighbors are aggregated into a single vector. Common aggregation functions include sum, mean, or maximum.

- Update (U): The central node's state $h_v$ is updated based on its previous state and the aggregated message from its neighbors.

This cycle is repeated for multiple steps, allowing each atom to incorporate information from atoms increasingly farther away in the molecular structure, effectively capturing the topological environment [9].

Diagram 1: The Message Passing Neural Network (MPNN) workflow for learning molecular representations from graphs.
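The three steps can be written out for a single layer in numpy. This is a sketch under simple assumptions: M and U are single linear maps followed by `tanh`, the same weights are reused at every step, and aggregation is sum or mean (max would work the same way with a different reduction). The function name `mpnn_step` is hypothetical.

```python
import numpy as np


def mpnn_step(h, edges, edge_feats, W_msg, W_upd, aggregate="sum"):
    """One message-passing step on an undirected molecular graph.

    h: (n_atoms, d) node states; edges: list of (u, v) bonds;
    edge_feats: (n_bonds, d_e). Messages flow in both directions of each bond.
    """
    n = h.shape[0]
    agg = np.zeros((n, W_msg.shape[1]))
    count = np.zeros(n)
    for (u, v), e in zip(edges, edge_feats):
        for src, dst in ((u, v), (v, u)):
            # Message M(h_u, e_uv): neighbor state concatenated with bond features
            msg = np.tanh(np.concatenate([h[src], e]) @ W_msg)
            agg[dst] += msg                    # AGG: running sum per receiving atom
            count[dst] += 1
    if aggregate == "mean":
        agg = agg / np.maximum(count, 1)[:, None]
    # Update U(h_v, m_v): combine the previous state with the aggregated message
    return np.tanh(np.concatenate([h, agg], axis=1) @ W_upd)


rng = np.random.default_rng(0)
h0 = rng.normal(size=(3, 4))              # 3 atoms, 4 features each
edges = [(0, 1), (1, 2)]                  # a 3-atom chain
e = rng.normal(size=(2, 2))               # 2 bonds, 2 features each
W_msg = rng.normal(size=(4 + 2, 4))
W_upd = rng.normal(size=(4 + 4, 4))
h = h0
for _ in range(3):                        # T = 3 steps: each atom sees up to 3 bonds away
    h = mpnn_step(h, edges, e, W_msg, W_upd)
```

Neighbor messages are summed, so relabeling the atoms only permutes the output rows. Pairing this step with a permutation-invariant readout is what makes graph representations invariant to atom indexing.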

Experimental Protocol: Implementing a GNN for Property Prediction

A standard protocol for training a GNN on molecular property prediction involves the following steps [8]:

- Data Preparation: A dataset such as MoleculeNet is used. Molecules are converted into graphs, where nodes are featurized with atomic properties (e.g., atom type, degree, hybridization) and edges are featurized with bond properties (e.g., bond type, conjugation).

- Model Architecture: A GNN model like a Graph Convolutional Network (GCN) or Graph Attention Network (GAT) is constructed. The model consists of several message-passing layers followed by a readout function.

- Readout / Pooling: After the final message-passing layer, the updated node states are combined to form a single graph-level representation. This is often done via global mean pooling, sum pooling, or more advanced attention-based methods.

- Prediction Head: The graph-level representation is passed through a fully connected neural network to produce the final property prediction (e.g., solubility, toxicity).

- Training: The model is trained using a relevant loss function (e.g., Mean Squared Error for regression, Cross-Entropy for classification) and an optimizer like Adam.

Geometric and 3D Representations

While 2D graph representations capture topological connectivity, they ignore the crucial three-dimensional spatial arrangement of atoms. Geometric representations are essential for predicting properties that depend on molecular conformation, such as energy, vibrational spectra, and reactivity [10] [11].

Density Functional Theory (DFT) as a Data Source

Geometric ML models are typically trained on data generated from high-fidelity quantum mechanical calculations, primarily dispersion-inclusive Density Functional Theory (DFT) [10] [11]. DFT provides the ground-truth labels for properties like system energy and atomic forces. Large-scale DFT datasets, such as the Open Molecules 2025 (OMol25) dataset, provide the foundational data for training these models [12] [11].

OMol25 is a collection of over 100 million DFT calculations on systems containing up to 350 atoms, spanning 83 elements and a wide range of chemical interactions [11]. This data is used to train Machine Learning Interatomic Potentials (MLIPs). MLIPs can approach DFT-level accuracy at a fraction of the computational cost, which enables simulations of large systems that would otherwise be prohibitively expensive [12].

Advanced Architectural Innovation: Kolmogorov-Arnold GNNs (KA-GNNs)

Recent research has explored enhancing GNNs by integrating them with Kolmogorov-Arnold Networks (KANs). KA-GNNs replace the standard linear transformations and fixed activation functions in traditional GNNs with learnable univariate functions. These functions sit on the edges of the neural network, meaning the connections between neurons rather than the bonds of the molecular graph [13].

The methodology involves integrating KAN modules into the three core components of a GNN [13]:

- Node Embedding: KANs are used to initialize node features from atomic and local environmental data.

- Message Passing: The aggregation and update functions within the GNN are handled by KAN layers, which use Fourier-series-based basis functions to capture complex patterns.

- Readout: The final pooling step to a graph-level representation is also performed by a KAN.

This integration has been shown to improve both prediction accuracy and computational efficiency while offering enhanced interpretability by highlighting chemically meaningful substructures [13].
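A simplified Fourier-series KAN layer makes the idea concrete. Every input-output connection carries its own learnable univariate function φ_ij(x) = Σ_k [a_ijk cos(kx) + b_ijk sin(kx)], and each output sums these functions over its inputs. In a KA-GNN, layers of this kind take the place of linear-plus-activation blocks in node embedding, message passing, and readout. The class name, number of frequencies, initialization, and absence of a bias term are assumptions of this sketch; the KA-GNN authors' implementation may differ.

```python
import numpy as np


class FourierKANLayer:
    """Simplified Fourier-series KAN layer (illustrative sketch).

    Each connection i -> j has its own function
        phi_ij(x) = sum_k a[j, i, k] * cos(k x) + b[j, i, k] * sin(k x),  k = 1..K
    and output j is the sum over inputs: y_j = sum_i phi_ij(x_i).
    """

    def __init__(self, in_dim, out_dim, n_freq=4, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(in_dim * n_freq)
        self.a = rng.normal(scale=scale, size=(out_dim, in_dim, n_freq))  # cosine coeffs
        self.b = rng.normal(scale=scale, size=(out_dim, in_dim, n_freq))  # sine coeffs
        self.k = np.arange(1, n_freq + 1)                                  # frequencies 1..K

    def __call__(self, x):
        # x: (batch, in_dim) -> kx: (batch, in_dim, n_freq)
        kx = x[..., None] * self.k
        # Sum over inputs i and frequencies k for every output j
        return (np.einsum("bik,oik->bo", np.cos(kx), self.a)
                + np.einsum("bik,oik->bo", np.sin(kx), self.b))


layer = FourierKANLayer(in_dim=3, out_dim=2, n_freq=2, seed=1)
x = np.array([[0.1, -0.5, 2.0]])
y = layer(x)                     # shape (1, 2)
```

The learnable part is the coefficient tensors `a` and `b`. Each φ_ij is a smooth periodic function whose shape can be plotted directly, which is one source of the interpretability claimed for KAN-based models.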

Emerging and Multimodal Representations

Functional Group-Level Reasoning with LLMs

For large language models (LLMs), a key representation is the functional group-level annotation. Benchmarks like FGBench provide datasets where functional groups (e.g., hydroxyl, carbonyl) are precisely annotated and localized within the molecule [14]. This representation provides valuable prior knowledge that links molecular structures with textual descriptions, allowing LLMs to reason about the impact of specific chemical moieties on overall molecular properties [14].

Multimodal Representations: Integrating Text and Geometry

Another emerging approach is multimodal learning, which enriches geometric graph representations with textual descriptors from public databases like PubChem. These descriptors can include IUPAC names, molecular formulas, and physicochemical properties [15].

The experimental protocol for this approach involves [15]:

- Feature Extraction: Separate feature vectors are generated for the molecular graph (using a GNN) and for the textual descriptors (using an encoder network).

- Gated Fusion: A gated fusion mechanism dynamically balances and combines the geometric and textual feature vectors into a unified representation. This allows the model to leverage complementary information from both data types.
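One common form of gated fusion is sketched below. It assumes the graph and text encoders already produce vectors of the same width. A sigmoid gate computed from both vectors decides, feature by feature, how much to take from each. This particular gating formula and the function name are assumptions and may differ from those used in [15].

```python
import numpy as np


def gated_fusion(h_graph, h_text, W_g, b_g):
    """Fuse graph and text embeddings (both width d) with a learned gate.

    g = sigmoid([h_graph; h_text] @ W_g + b_g) is a per-feature weight in (0, 1);
    the fused vector is g * h_graph + (1 - g) * h_text.
    W_g: (2d, d), b_g: (d,).
    """
    z = np.concatenate([h_graph, h_text], axis=-1) @ W_g + b_g
    g = 1.0 / (1.0 + np.exp(-z))
    return g * h_graph + (1.0 - g) * h_text


h_graph = np.array([1.0, 2.0])   # e.g. GNN embedding
h_text = np.array([3.0, 0.0])    # e.g. text-encoder embedding of PubChem descriptors
fused = gated_fusion(h_graph, h_text, np.zeros((4, 2)), np.zeros(2))
```

With zero weights, the gate is 0.5 everywhere and the result is a plain average. Training moves the gate toward whichever modality is more informative for each feature.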

Comparative Analysis of Molecular Representations

The table below summarizes the key characteristics, strengths, and limitations of the primary computational representations.

Table 1: Comparative Analysis of Key Molecular Representations

| Representation | Core Idea | Best For | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Molecular Graph (GNN) [8] [9] | Atoms as nodes, bonds as edges. | Predicting properties dependent on 2D topology (e.g., toxicity, drug-likeness). | Natural representation; automatically learns features; invariant to atom indexing. | Neglects 3D spatial and electronic structure. |
| Geometric (3D) Representation [10] [11] | Includes 3D atomic coordinates. | Energetics, forces, spectroscopy, and conformation-dependent properties. | Captures essential physics; enables accurate force fields (MLIPs). | Computationally intensive; requires high-quality 3D data. |
| KAN-enhanced Graph (KA-GNN) [13] | GNNs with learnable activation functions on edges. | General molecular property prediction with enhanced accuracy/interpretability. | Improved parameter efficiency and interpretability; strong theoretical foundation. | Emerging technology; less established than traditional GNNs. |
| Functional-Group (LLM) [14] | Textual annotation of chemical substructures. | Reasoning about structure-property relationships in language models. | Provides chemical prior knowledge; interpretable; leverages LLM capabilities. | Struggles with precise quantitative prediction; model may hallucinate. |
| Multimodal [15] | Combines graph and textual descriptors. | Leveraging existing chemical metadata to boost prediction on benchmarks. | Enriches representation with diverse data sources; can improve performance. | Gains can be task-dependent; adds model complexity. |

Table 2: Key datasets, models, and tools for molecular property prediction research.

| Resource Name | Type | Key Features | Primary Use Case |
|---|---|---|---|
| OMol25 Dataset [11] [16] | Dataset | >100M DFT calculations, 83 elements, up to 350 atoms, electronic densities/wavefunctions. | Training next-generation MLIPs and electronic property prediction models. |
| OMC25 Dataset [10] | Dataset | ~27M molecular crystal structures from DFT relaxation trajectories. | Developing models for crystalline materials and solid-state properties. |
| FGBench [14] | Dataset & Benchmark | 625K problems with functional-group annotations for reasoning. | Training and evaluating LLMs on fine-grained molecular reasoning tasks. |
| MoleculeNet [8] [2] | Benchmark Suite | Curated collection of datasets for molecular property prediction. | Standardized benchmarking of machine learning models. |
| KA-GNN [13] | Model Architecture | Integrates KANs into GNNs for node, edge, and graph-level processing. | Developing accurate and interpretable graph models with strong theoretical guarantees. |
| ACS Training Scheme [2] | Training Method | Adaptive checkpointing for multi-task GNNs to mitigate negative transfer. | Reliable model training in ultra-low-data regimes and with imbalanced tasks. |

The field of molecular representation is evolving toward richer, more physically grounded, and multi-faceted paradigms. The integration of geometric information, the application of novel mathematical frameworks like KANs, and the fusion of multiple data modalities are pushing the boundaries of what is possible in computational molecular science. Selecting the appropriate representation is the critical first step in building ML models that can reliably accelerate the discovery of new molecules and materials.

Molecular property prediction stands as a cornerstone of modern computational chemistry, enabling the accelerated discovery of novel pharmaceuticals, materials, and energy solutions. Machine learning (ML) models have emerged as powerful tools for predicting molecular properties, but their performance and generalizability are fundamentally constrained by the quality, breadth, and composition of the datasets on which they are trained. Understanding the landscape of available molecular property datasets—including their strengths, inherent biases, and methodological limitations—is therefore a critical prerequisite for effective research in this domain. This guide provides a comprehensive technical overview of popular molecular property datasets, systematically categorizes their inherent biases, and outlines experimental protocols to mitigate these challenges, framed within the broader context of initiating ML research for molecular property prediction.

The following table summarizes key characteristics of major molecular property datasets, highlighting their scope, common applications, and illustrative examples.

Table 1: Overview of Major Molecular Property Datasets

| Dataset Name | Primary Focus / Property Types | Approximate Size (Molecules) | Notable Features / Use Cases | Example Properties |
|---|---|---|---|---|
| OMol25 (Open Molecules 2025) [17] [12] | Quantum chemical calculations for neural network potentials | Over 100 million calculations | High-accuracy DFT (ωB97M-V/def2-TZVPD) on diverse structures; targets biomolecules, electrolytes, metal complexes [17]. | Potential energy surfaces, atomic forces, energies of large systems [17]. |
| MoleculeNet [18] [19] [2] | Curated benchmark for multiple property types | Varies by sub-dataset (e.g., 600–4,200 in common sets) [18] | Aggregates multiple public sources; standardized benchmarks for ML model evaluation [19]. | Quantum mechanics, physical chemistry, biophysics (e.g., ESOL, FreeSolv, BACE) [18] [19]. |
| FGBench [19] | Functional group-level property reasoning | 625K question-answer pairs | Focuses on impact of single and multiple functional groups on properties; includes atom-level localization [19]. | Property changes from functional group additions/deletions [19]. |
| OMC25 (Open Molecular Crystals 2025) [10] | Molecular crystal structures and properties | Over 27 million structures | Dispersion-inclusive DFT relaxation trajectories of organic molecular crystals [10]. | Crystal structure properties, lattice energies [10]. |
| Therapeutics Data Commons (TDC) [20] | ADME (Absorption, Distribution, Metabolism, Excretion) and toxicity | Varies by sub-dataset | Focus on preclinical drug discovery safety and pharmacokinetic parameters [20]. | Half-life, clearance, toxicity endpoints [20]. |

Inherent Biases in Molecular Property Datasets

Despite their utility, all molecular property datasets contain inherent biases that can severely compromise model performance and generalizability if not properly addressed.

Chemical Space and Structural Biases

Datasets often suffer from limited diversity and uneven coverage of the chemical space. Early datasets were frequently restricted to simple organic molecules with only a handful of elements [17]. Although newer datasets like OMol25 have made significant strides by including biomolecules, electrolytes, and metal complexes, gaps remain in areas like polymer chemistry [12]. This structural bias means models may perform poorly on molecule classes underrepresented in training data. Furthermore, the source of molecular structures can introduce bias; for instance, datasets built primarily from commercial compound libraries may overrepresent certain structural motifs while underrepresenting natural products or novel scaffolds.

Experimental and Annotation Biases

Significant distributional misalignments often exist between different data sources for the same property, arising from variations in experimental protocols, measurement conditions, or biological assays [20]. Naive integration of such heterogeneous data can introduce noise and degrade model performance rather than improve it [20]. For ADME properties in particular, inconsistencies have been identified between gold-standard sources and commonly used benchmarks like TDC [20]. These annotation biases are especially problematic in low-data regimes where researchers must aggregate multiple sources to achieve sufficient training volume.

Property Value Distribution Biases

The distribution of property values within a dataset creates another critical bias. Most ML models excel at interpolation but struggle with extrapolation, making it difficult to identify molecular extremes—precisely the candidates often sought in materials and drug discovery [18]. When training data lacks representation of certain property value ranges (e.g., exceptionally high binding affinity or extreme stability), models cannot reliably predict these out-of-distribution (OOD) values [18]. This range limitation fundamentally constrains a model's utility in virtual screening for novel materials or drugs with exceptional characteristics.

Methodologies for Bias Assessment and Mitigation

Workflow for Dataset Evaluation and Bias Mitigation

Implementing a systematic workflow for dataset evaluation is crucial for robust molecular property prediction. The following diagram outlines key stages from initial dataset analysis to model training, highlighting steps to identify and address common biases.

Key Experimental Protocols

Data Consistency Assessment (DCA) Protocol

Purpose: To identify distributional misalignments, outliers, and annotation discrepancies across datasets before integration [20].

Tools: AssayInspector package or similar custom analysis [20].

Procedure:

- Input Preparation: Compile datasets from multiple sources for the target property. Include molecular structures (SMILES) and measured property values.

- Statistical Comparison: Calculate descriptive statistics (mean, standard deviation, quartiles) for each data source. Perform pairwise two-sample Kolmogorov-Smirnov tests to compare property value distributions across sources [20].

- Chemical Space Analysis: Generate molecular descriptors (e.g., ECFP4 fingerprints, RDKit 2D descriptors) and project them into a lower-dimensional space using UMAP. Visually inspect whether molecules cluster by data source rather than by chemical similarity. Source-specific clusters indicate that the sources cover different regions of chemical space [20].

- Overlap Analysis: Identify molecules present in multiple sources and quantify annotation differences. Flag significant discrepancies for further investigation.

- Report Generation: Document all inconsistencies, including datasets with significantly different distributions, conflicting annotations, or divergent chemical spaces.
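The statistical-comparison and overlap-analysis steps can be sketched with numpy alone. This is not the AssayInspector API; the function names and the `max_abs_diff` conflict threshold are hypothetical. The sketch assumes SMILES are already canonicalized (for example with RDKit), so that the same molecule has the same key in every source. For p-values, `scipy.stats.ks_2samp` computes the same two-sample statistic along with a significance test.

```python
import numpy as np
from itertools import combinations


def ks_statistic(x, y):
    """Two-sample Kolmogorov-Smirnov statistic D = max_t |F_x(t) - F_y(t)|."""
    x, y = np.sort(np.asarray(x, float)), np.sort(np.asarray(y, float))
    grid = np.concatenate([x, y])                     # the sup is attained at a data point
    cdf_x = np.searchsorted(x, grid, side="right") / len(x)
    cdf_y = np.searchsorted(y, grid, side="right") / len(y)
    return float(np.max(np.abs(cdf_x - cdf_y)))


def consistency_report(sources, max_abs_diff=0.5):
    """sources: {source_name: {canonical_smiles: value}}.

    Returns per-source descriptive statistics, pairwise KS statistics, and
    conflicting annotations for molecules measured in more than one source.
    """
    stats = {}
    for name, d in sources.items():
        vals = np.array(list(d.values()), dtype=float)
        stats[name] = {"n": len(vals), "mean": float(vals.mean()), "std": float(vals.std()),
                       "quartiles": np.percentile(vals, [25, 50, 75]).tolist()}
    ks, conflicts = {}, []
    for a, b in combinations(sorted(sources), 2):
        ks[(a, b)] = ks_statistic(list(sources[a].values()), list(sources[b].values()))
        for smi in sorted(set(sources[a]) & set(sources[b])):   # overlap analysis
            diff = abs(sources[a][smi] - sources[b][smi])
            if diff > max_abs_diff:
                conflicts.append((smi, a, b, diff))
    return stats, ks, conflicts


sources = {
    "A": {"CCO": 1.0, "c1ccccc1": 2.0, "CC": 0.5},
    "B": {"CCO": 1.1, "c1ccccc1": 3.0, "CCC": 0.7},
}
stats, ks, conflicts = consistency_report(sources)
```

A large KS statistic flags sources whose value distributions differ. The `conflicts` list pinpoints individual molecules whose annotations disagree beyond the chosen tolerance.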

Protocol for Out-of-Distribution (OOD) Property Prediction

Purpose: To enhance a model's ability to extrapolate to property values outside the training distribution [18].

Method: Bilinear Transduction for zero-shot extrapolation [18].

Procedure:

- Data Partitioning: Split data into training and test sets, ensuring the test set contains property values outside the range represented in the training data.

- Model Training:

- Instead of learning to predict property values directly, train the model to learn how property values change as a function of differences in material representations [18].

- Reparameterize the prediction problem: during inference, predict property values based on a chosen training example and the representation-space difference between it and the new sample [18].

- Evaluation: Assess performance using OOD-specific metrics including extrapolative precision (fraction of true top candidates correctly identified) and recall of high-performing OOD candidates [18].
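The following toy sketch shows the reparameterization on synthetic data, assuming only NumPy. The published method learns neural embeddings of the difference and the anchor. Here both embeddings are swapped for fixed affine features `[x, 1]`, which turns the bilinear model into ordinary least squares over training pairs. This is not the implementation from [18]: `ood_split`, `pair_features`, `fit_bilinear`, `predict_bilinear` and `top_k_precision` are hypothetical names, and the linear "property" was chosen so that the model class contains it.

```python
import numpy as np


def ood_split(X, y, quantile=0.8):
    """Train on property values up to `quantile`; hold out everything above it as OOD test data."""
    cutoff = np.quantile(y, quantile)
    train = y <= cutoff
    return X[train], y[train], X[~train], y[~train]


def pair_features(delta, anchor):
    """Flattened outer product of [delta, 1] and [anchor, 1]; a linear model on these
    features is a bilinear function of the difference and anchor embeddings."""
    ones = np.ones((len(delta), 1))
    d1, a1 = np.hstack([delta, ones]), np.hstack([anchor, ones])
    return np.einsum("ni,nk->nik", d1, a1).reshape(len(delta), -1)


def fit_bilinear(X_train, y_train):
    """Least squares over all ordered training pairs (target x_a, anchor x_b)."""
    n = len(X_train)
    a_idx, b_idx = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
    a_idx, b_idx = a_idx.ravel(), b_idx.ravel()
    feats = pair_features(X_train[a_idx] - X_train[b_idx], X_train[b_idx])
    w, *_ = np.linalg.lstsq(feats, y_train[a_idx], rcond=None)
    return w


def predict_bilinear(w, X_train, X_query):
    """Predict each query from every training anchor and average the estimates."""
    return np.array([(pair_features(x - X_train, X_train) @ w).mean() for x in X_query])


def top_k_precision(y_true, y_pred, k):
    """Fraction of the true top-k candidates that also appear in the predicted top-k."""
    true_top = set(np.argsort(y_true)[-k:].tolist())
    pred_top = set(np.argsort(y_pred)[-k:].tolist())
    return len(true_top & pred_top) / k


rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
y = X @ np.array([1.5, -2.0, 0.5]) + 1.0        # toy linear "property"
X_tr, y_tr, X_te, y_te = ood_split(X, y)
w = fit_bilinear(X_tr, y_tr)
y_hat = predict_bilinear(w, X_tr, X_te)
```

Every test value here lies above the largest training value. The point of the sketch is that the prediction is assembled from a known anchor plus a learned function of the difference, rather than read directly off an input-to-value map.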

Protocol for Low-Data Regime Modeling

Purpose: To achieve accurate predictions when labeled data is extremely scarce [2]. Method: Adaptive Checkpointing with Specialization (ACS) for multi-task graph neural networks [2]. Procedure:

- Architecture Setup: Implement a shared graph neural network (GNN) backbone with task-specific multi-layer perceptron (MLP) heads.

- Training with Checkpointing:

- Train the model on all available tasks simultaneously.

- Monitor validation loss for each task independently.

- Checkpoint the best backbone-head pair for each task whenever its validation loss reaches a new minimum [2].

- Inference: Use the specialized backbone-head pair for each task during prediction.
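The checkpointing logic can be illustrated without a deep learning framework. This minimal NumPy sketch makes two simplifying assumptions: a linear map stands in for the GNN backbone, and linear heads stand in for the MLP heads, all trained jointly by plain gradient descent with per-task masking of missing labels. `train_acs` and `predict_acs` are hypothetical names, this is not the reference implementation of [2], and the data are synthetic.

```python
import numpy as np


def train_acs(X, Y, mask, X_val, Y_val, mask_val, hidden=8, epochs=500, lr=0.05, seed=0):
    """Joint multi-task training with per-task checkpointing of (backbone, head) pairs.

    Y and Y_val are (n, T) label matrices with NaN for missing labels; mask and
    mask_val are boolean (n, T) arrays that are True where a label is observed.
    """
    rng = np.random.default_rng(seed)
    n_tasks = Y.shape[1]
    W = rng.normal(scale=0.3, size=(X.shape[1], hidden))  # shared backbone (linear here)
    V = rng.normal(scale=0.3, size=(hidden, n_tasks))     # one linear head per task
    Y, Y_val = np.nan_to_num(Y), np.nan_to_num(Y_val)      # missing labels are masked out below
    n_obs = np.maximum(mask.sum(axis=0), 1)
    n_obs_val = np.maximum(mask_val.sum(axis=0), 1)
    best = {t: (np.inf, None, None) for t in range(n_tasks)}
    for _ in range(epochs):
        H = X @ W
        err = np.where(mask, H @ V - Y, 0.0) / n_obs       # d(0.5 * per-task MSE) / d(outputs)
        grad_V, grad_W = H.T @ err, X.T @ (err @ V.T)
        V -= lr * grad_V
        W -= lr * grad_W
        # Monitor each task's validation loss independently.
        val_err = np.where(mask_val, X_val @ W @ V - Y_val, 0.0)
        val_loss = (val_err ** 2).sum(axis=0) / n_obs_val
        for t in range(n_tasks):
            if val_loss[t] < best[t][0]:
                # New minimum for task t: snapshot the backbone together with its head.
                best[t] = (float(val_loss[t]), W.copy(), V[:, t].copy())
    return best


def predict_acs(best, X, task):
    """Predict with the specialized backbone-head pair checkpointed for `task`."""
    _, W, head = best[task]
    return X @ W @ head


rng = np.random.default_rng(1)
coef = np.array([[1.0, 0.5], [-1.0, 1.0], [0.5, -1.0], [2.0, 0.0]])  # toy weights, 2 tasks
X, X_val = rng.normal(size=(60, 4)), rng.normal(size=(30, 4))
Y, Y_val = X @ coef, X_val @ coef
mask = np.ones(Y.shape, dtype=bool)
mask[30:, 1] = False                      # task 1 is the low-data task (30 labels)
Y[~mask] = np.nan
mask_val = np.ones(Y_val.shape, dtype=bool)
best = train_acs(X, Y, mask, X_val, Y_val, mask_val)
pred = predict_acs(best, X_val, task=1)
```

The key design choice is that each task keeps its own snapshot of the shared backbone. Later updates driven by other tasks, which may transfer negatively, therefore cannot overwrite the state that worked best for that task.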

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Software Tools and Methodologies for Molecular Property Prediction

| Tool / Method Name | Type | Primary Function | Application Context |
|---|---|---|---|
| AssayInspector [20] | Software Package | Data consistency assessment; detects distributional misalignments, outliers, and batch effects across datasets. | Preprocessing before model training; identifying dataset discrepancies in ADME and physicochemical properties [20]. |
| Bilinear Transduction [18] | Machine Learning Method | Enables zero-shot extrapolation to out-of-distribution (OOD) property values. | Virtual screening for materials/molecules with property values beyond the training distribution [18]. |
| ACS (Adaptive Checkpointing with Specialization) [2] | Training Scheme | Mitigates negative transfer in multi-task learning under data imbalance. | Accurate modeling in ultra-low data regimes (e.g., <30 samples per task) [2]. |
| ChemXploreML [5] | Desktop Application | User-friendly ML for property prediction without programming; includes molecular embedders. | Rapid prototyping and prediction of physicochemical properties by experimental chemists [5]. |
| Functional Group Annotation (e.g., AccFG) [19] | Analysis Method | Precisely annotates and localizes functional groups within molecules. | Building interpretable, structure-aware models and analyzing structure-activity relationships [19]. |

The evolving landscape of molecular property datasets presents both major opportunities and significant challenges for ML researchers. Foundational datasets such as MoleculeNet provide standardized benchmarks, and massive new resources such as OMol25 offer quantum chemical data at an unprecedented scale, but success in this field still requires careful attention to the biases inherent in these datasets. Researchers must systematically address limitations in chemical space coverage, experimental inconsistencies, and property value distributions through rigorous assessment protocols and specialized methodological approaches. By implementing the data consistency checks, OOD prediction strategies, and low-data regime techniques outlined in this guide, researchers can build more robust, reliable, and generalizable molecular property prediction models that accelerate scientific discovery across chemistry, materials science, and drug development.

Understanding the Applicability Domain of a Model

In machine learning for molecular property prediction, the Applicability Domain (AD) refers to the region of chemical space where the model's predictions are expected to be reliable and accurate [21]. The concept is fundamental to ensuring trustworthy predictions in drug discovery and materials science, where decisions based on model outputs can significantly impact research outcomes and resource allocation. According to the OECD principles for model validation, defining the AD is a crucial requirement for quantitative structure-activity relationship (QSAR) models, emphasizing that reliable predictions are generally limited to chemicals structurally similar to those used in model training [22].

The core challenge stems from a fundamental machine learning limitation: models are typically trained on specific types or ranges of data, and their performance often degrades when applied to structurally dissimilar compounds [23] [24]. This is particularly problematic in molecular property prediction, where the vastness of synthesizable chemical space means that most potential compounds will be distant from previously characterized molecules [24]. Understanding and defining the AD allows researchers to identify the boundaries of their models, recognize potentially unreliable predictions, and make informed decisions about model application.

Core Methodologies for Defining Applicability Domains

Taxonomy of AD Methods

Various methodologies have been developed to define and characterize the applicability domain of predictive models. These approaches can be broadly classified into several categories based on their underlying principles and implementation strategies [22].

Table: Classification of Applicability Domain Methods

| Method Category | Core Principle | Representative Techniques | Key Advantages | Main Limitations |
|---|---|---|---|---|
| Range-Based | Defines boundaries based on descriptor ranges in training data | Bounding Box, PCA Bounding Box [22] | Simple to implement and interpret | Cannot identify empty regions or descriptor correlations |
| Geometric | Establishes geometric boundaries enclosing training data | Convex Hull [22] | Clear boundary definition | Computationally complex for high dimensions; ignores internal data distribution |
| Distance-Based | Measures similarity/distance to training set representatives | Leverage, k-NN, Mahalanobis Distance [22] [21] | Intuitive similarity measures | Threshold selection can be arbitrary; depends on distance metric choice |
| Density-Based | Estimates probability density of training data in feature space | Kernel Density Estimation (KDE) [23] | Naturally accounts for data sparsity; handles complex geometries | Bandwidth selection impacts results; computationally intensive for large datasets |
| Model-Specific | Leverages internal model characteristics for uncertainty | Bayesian Neural Networks, Ensemble Variance [21] | Directly measures prediction uncertainty | Tied to specific model architectures |

Figure 1: Taxonomy of Applicability Domain Methods

Detailed Technical Implementation

Kernel Density Estimation (KDE) Approach

Kernel Density Estimation has emerged as a powerful technique for AD determination due to its ability to naturally account for data sparsity and handle arbitrarily complex geometries of data distributions [23]. The fundamental principle involves estimating the probability density function of the training data in feature space, where regions with high density are considered in-domain, and regions with low density are considered out-of-domain.

The multivariate kernel density estimate for a query point \( x \) is given by:

\[ \hat{f}_h(x) = \frac{1}{n} \sum_{i=1}^{n} K_h(x - x_i) \]

where \( x_i \) are the training samples, \( n \) is the number of training points, and \( K_h \) is the kernel function with bandwidth \( h \). The Gaussian kernel is commonly used:

\[ K_h(u) = \frac{1}{(2\pi)^{d/2} h^d} \exp\left(-\frac{\|u\|^2}{2h^2}\right) \]

where \( d \) is the dimensionality of the feature space. The bandwidth parameter \( h \) controls the smoothness of the density estimate and is typically optimized via cross-validation [23].
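The two equations can be transcribed directly into NumPy. This sketch works in log space with the log-sum-exp trick, because with high-dimensional fingerprints the individual kernel terms easily underflow to zero. The function name `kde_log_density` is illustrative, not taken from [23].

```python
import numpy as np


def kde_log_density(X_train, x, h):
    """log f_h(x) for the Gaussian KDE above, computed with log-sum-exp for stability."""
    n, d = X_train.shape
    sq_dist = np.sum((X_train - x) ** 2, axis=1)       # ||x - x_i||^2 for every training point
    log_k = -sq_dist / (2.0 * h ** 2)                   # log of the unnormalized kernel terms
    m = log_k.max()
    log_mean = m + np.log(np.mean(np.exp(log_k - m)))   # log((1/n) * sum_i exp(log_k_i))
    # Subtract log of the normalizing constant (2*pi)^(d/2) * h^d.
    return log_mean - 0.5 * d * np.log(2.0 * np.pi) - d * np.log(h)
```

As a check, a single training point at the origin with \( h = 1 \) in one dimension gives \( \log(1/\sqrt{2\pi}) \), the log-density of a standard normal at its mean.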

Distance-Based Methods

Distance-based methods are among the most widely used approaches for AD definition, particularly in QSAR modeling [22]. These methods calculate the distance of query compounds from a reference point in the training data descriptor space, with several distance metrics commonly employed:

Mahalanobis Distance: Accounts for descriptor correlations through the covariance matrix:

\[ D_M(x) = \sqrt{(x - \mu)^T \Sigma^{-1} (x - \mu)} \]

where \( \mu \) is the mean of the training descriptors and \( \Sigma \) is their covariance matrix [22].

k-Nearest Neighbor (k-NN) Distance: Measures proximity to the closest training instances, with common variants including:

- \( \kappa \): Distance to the k-th nearest neighbor

- \( \gamma \): Mean distance to the k nearest neighbors

- \( \delta \): Length of the mean vector to the k nearest neighbors [21]

Leverage: Based on the hat matrix in regression analysis:

\[ h_i = x_i^T (X^T X)^{-1} x_i \]

where \( x_i \) is the descriptor vector of compound \( i \) and \( X \) is the model matrix of the training data [21].
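The three distance-based measures can be computed in a few lines of NumPy. This is a minimal sketch with hypothetical function names. It assumes Euclidean distance for k-NN and uses a pseudo-inverse so that singular covariance or cross-product matrices do not raise an error. Whether the model matrix \( X \) includes an intercept column depends on the underlying regression model and is left to the caller.

```python
import numpy as np


def mahalanobis(X_train, x):
    """Mahalanobis distance of x from the training-set mean."""
    mu = X_train.mean(axis=0)
    cov_inv = np.linalg.pinv(np.cov(X_train, rowvar=False))  # pinv tolerates singular covariances
    diff = np.asarray(x, dtype=float) - mu
    return float(np.sqrt(diff @ cov_inv @ diff))


def knn_measures(X_train, x, k=3):
    """(kappa, gamma, delta) for query x, using Euclidean distances."""
    diffs = X_train - np.asarray(x, dtype=float)   # vectors from x to every training point
    dists = np.linalg.norm(diffs, axis=1)
    idx = np.argsort(dists)[:k]                    # indices of the k nearest neighbours
    kappa = float(dists[idx[-1]])                  # distance to the k-th nearest neighbour
    gamma = float(dists[idx].mean())               # mean distance to the k nearest neighbours
    delta = float(np.linalg.norm(diffs[idx].mean(axis=0)))  # length of the mean vector
    return kappa, gamma, delta


def leverage(X_train, x):
    """h = x^T (X^T X)^{-1} x, with X the training model matrix."""
    x = np.asarray(x, dtype=float)
    return float(x @ np.linalg.pinv(X_train.T @ X_train) @ x)


X_train = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
kappa, gamma, delta = knn_measures(X_train, [-1.0, 0.0], k=3)
```

A useful sanity check on leverage is that, summed over the training rows, it equals the number of columns of a full-rank \( X \), because that sum is the trace of the hat matrix.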

Bayesian and Ensemble Methods

Model-specific approaches leverage internal model characteristics to estimate prediction uncertainty. Bayesian Neural Networks provide a principled framework for uncertainty quantification by learning probability distributions over model weights rather than point estimates [21]. The predictive uncertainty can be captured through techniques such as Monte Carlo dropout or variational inference.

Similarly, ensemble methods generate uncertainty estimates by measuring the variance in predictions across multiple models [21]. The standard deviation of predictions from a heterogeneous or homogeneous ensemble serves as an indicator of model confidence for a given input.
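The following minimal sketch shows ensemble-variance uncertainty with a homogeneous bootstrap ensemble. For brevity it assumes least-squares linear models in place of neural networks, and the function names are hypothetical. The spread of the ensemble's predictions grows for queries far from the training data.

```python
import numpy as np


def bootstrap_ensemble(X, y, n_models=50, seed=0):
    """Fit `n_models` least-squares models, each on a bootstrap resample of the data."""
    rng = np.random.default_rng(seed)
    A = np.hstack([X, np.ones((len(X), 1))])          # add an intercept column
    coefs = []
    for _ in range(n_models):
        idx = rng.integers(0, len(X), size=len(X))    # sample rows with replacement
        w, *_ = np.linalg.lstsq(A[idx], y[idx], rcond=None)
        coefs.append(w)
    return np.array(coefs)


def ensemble_predict(coefs, X_query):
    """Ensemble mean and standard deviation (the uncertainty estimate) per query."""
    A = np.hstack([X_query, np.ones((len(X_query), 1))])
    preds = A @ coefs.T                               # shape (n_query, n_models)
    return preds.mean(axis=1), preds.std(axis=1)


rng = np.random.default_rng(1)
X = rng.uniform(-1, 1, size=(40, 1))
y = 2.0 * X[:, 0] + rng.normal(scale=0.3, size=40)
mean, std = ensemble_predict(bootstrap_ensemble(X, y), np.array([[0.0], [10.0]]))
```

The query at 10.0 lies far outside the training range of [-1, 1] and receives a much larger standard deviation than the query at the center. This is the behavior an ensemble-based AD criterion relies on.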

Experimental Protocols and Validation Frameworks

Benchmarking AD Methods

Rigorous evaluation of applicability domain methods requires standardized protocols and metrics. Recent research has established comprehensive frameworks for comparing AD techniques across multiple models and datasets [21]. A typical validation workflow involves:

- Dataset Curation: Collecting diverse molecular datasets with experimentally validated properties

- Model Training: Developing QSAR models using various algorithms (random forests, neural networks, etc.)

- AD Method Application: Implementing multiple AD techniques on the trained models

- Performance Assessment: Evaluating how effectively each AD method identifies unreliable predictions

Table: Experimental Framework for AD Method Validation

| Validation Component | Implementation Details | Evaluation Metrics |
|---|---|---|
| Data Splitting | Scaffold-based splits to assess extrapolation capability | Coverage rate, domain size |
| Domain Definition | Four domain types: chemical, residual (point/group), uncertainty [23] | Precision, recall, F1-score |
| Threshold Selection | Percentile-based, error-based, or density-based cutoffs | ROC curves, precision-recall curves |
| Performance Correlation | Relationship between AD measures and prediction errors | Spearman correlation, monotonicity assessment |
| Comparative Analysis | Benchmark against multiple baseline methods | Relative improvement, statistical significance |
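The performance-correlation and coverage rows can be computed for any AD score with a short helper. This sketch assumes NumPy and SciPy and assumes that a higher AD score means "farther from the training data". The name `evaluate_ad` and the toy numbers are illustrative.

```python
import numpy as np
from scipy.stats import spearmanr


def evaluate_ad(ad_score, abs_error, threshold):
    """Summarize how well an AD score (higher = farther from training data) tracks errors."""
    ad_score = np.asarray(ad_score, dtype=float)
    abs_error = np.asarray(abs_error, dtype=float)
    rho = spearmanr(ad_score, abs_error)[0]            # rank correlation, score vs. error
    inside = ad_score <= threshold                     # compounds classified as in-domain
    return {
        "spearman_rho": float(rho),
        "coverage": float(inside.mean()),              # fraction of compounds kept in-domain
        "mae_in_domain": float(abs_error[inside].mean()),
        "mae_out_of_domain": float(abs_error[~inside].mean()),
    }


report = evaluate_ad([0.1, 0.2, 0.5, 0.9, 1.5], [0.05, 0.1, 0.3, 0.8, 2.0], threshold=0.5)
```

A useful AD measure should show a positive correlation with error and a clearly lower in-domain error than out-of-domain error at an acceptable coverage.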

Case Study: CYP2B6 Inhibition Prediction

A practical implementation of AD analysis was demonstrated in a study aiming to expand the applicability domain for CYP2B6 inhibition prediction [25] [26]. The experimental protocol included:

Data Collection: CYP2B6 inhibition IC50 values were downloaded from ChEMBL and binarized (active: IC50 ≤ 10 μM; inactive: IC50 > 10 μM), resulting in 100 active and 401 inactive compounds [26].

Applicability Domain Definition: A distance-based approach defined the AD using Euclidean distance on molecular fingerprints (MACCS keys). Chemical diversity was visualized using t-distributed stochastic neighbor embedding (t-SNE) plots [25].

Domain Expansion Strategy: A drug repurposing library was screened to identify plates with the highest average minimum Euclidean distance from the training set. Selected compounds were tested experimentally for CYP2B6 inhibition [26].

Model Retraining and Evaluation: New experimental data was incorporated into the training set, and model performance was re-evaluated using one-class classification to assess domain expansion efficacy [26].
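The binarization rule and the plate-selection criterion described above can be sketched in NumPy. The fingerprints below are tiny made-up bit vectors standing in for 166-bit MACCS keys, which in practice would come from a cheminformatics toolkit such as RDKit. `binarize_ic50`, `min_distance_to_training` and `rank_plates` are hypothetical names, not code from [25] or [26].

```python
import numpy as np


def binarize_ic50(ic50_um, cutoff_um=10.0):
    """1 = active (IC50 <= cutoff, in uM), 0 = inactive (IC50 > cutoff)."""
    return (np.asarray(ic50_um, dtype=float) <= cutoff_um).astype(int)


def min_distance_to_training(train_fps, query_fps):
    """Euclidean distance from each query fingerprint to its nearest training fingerprint."""
    train = np.asarray(train_fps, dtype=float)
    query = np.asarray(query_fps, dtype=float)
    diff = query[:, None, :] - train[None, :, :]          # (n_query, n_train, n_bits)
    return np.sqrt((diff ** 2).sum(axis=2)).min(axis=1)


def rank_plates(train_fps, library_fps, plate_ids):
    """Plates ordered by the average minimum distance of their compounds to the training set."""
    dists = min_distance_to_training(train_fps, library_fps)
    plate_ids = np.asarray(plate_ids)
    plates = np.unique(plate_ids)
    scores = np.array([dists[plate_ids == p].mean() for p in plates])
    order = np.argsort(scores)[::-1]                      # most distant plate first
    return plates[order].tolist(), scores[order]


labels = binarize_ic50([5.0, 10.0, 12.0])
train = np.zeros((3, 8))                                  # toy 8-bit training fingerprints
library = np.vstack([np.zeros((2, 8)), np.ones((2, 8))])
order, scores = rank_plates(train, library, ["P1", "P1", "P2", "P2"])
```

Plate P2, whose compounds share no bits with the training set, is ranked first. This mirrors the study's strategy of prioritizing the plates that are least represented in the current training data.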

The results demonstrated that while intentional expansion of the applicability domain did not substantially increase model performance, it successfully identified new CYP2B6 inhibitors (vilanterol and allylestrenol) and increased training set diversity [25].

Protocol for KDE-Based AD Assessment

A general protocol for implementing KDE-based applicability domain assessment includes these critical steps [23]:

- Feature Selection: Choose relevant molecular descriptors (e.g., ECFP, physicochemical properties)

- KDE Training:

- Standardize features to zero mean and unit variance

- Optimize bandwidth parameter via cross-validation

- Fit KDE model to training data

- Threshold Determination:

- Calculate density values for training compounds

- Set density threshold based on desired coverage (e.g., 5th percentile of training densities)

- Domain Classification:

- For query molecules, compute density estimate using trained KDE

- Classify as in-domain if density exceeds threshold, out-of-domain otherwise

- Validation:

- Assess correlation between density values and prediction errors

- Verify that out-of-domain compounds show higher errors and chemical dissimilarity
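The protocol steps above can be sketched with scikit-learn's `KernelDensity`, `GridSearchCV` and `StandardScaler`, assuming scikit-learn is available. `fit_kde_domain`, `in_domain`, the bandwidth grid and the 5th-percentile default are illustrative choices rather than settings prescribed by [23]. The random descriptors are synthetic.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV
from sklearn.neighbors import KernelDensity
from sklearn.preprocessing import StandardScaler


def fit_kde_domain(X_train, bandwidths=np.logspace(-1, 1, 20), coverage_pct=5.0, cv=5):
    """Standardize, pick the KDE bandwidth by cross-validated log-likelihood, set a threshold."""
    scaler = StandardScaler().fit(X_train)             # zero mean, unit variance per feature
    Z = scaler.transform(X_train)
    search = GridSearchCV(KernelDensity(kernel="gaussian"), {"bandwidth": bandwidths}, cv=cv)
    search.fit(Z)                                      # KernelDensity.score is the total log-likelihood
    kde = search.best_estimator_
    # score_samples returns log-densities; thresholding them is equivalent to thresholding densities.
    threshold = np.percentile(kde.score_samples(Z), coverage_pct)
    return scaler, kde, threshold


def in_domain(scaler, kde, threshold, X_query):
    """True where the query's estimated density is at or above the training-set threshold."""
    return kde.score_samples(scaler.transform(X_query)) >= threshold


rng = np.random.default_rng(0)
X_train = rng.normal(size=(200, 3))                    # stand-in for molecular descriptors
scaler, kde, threshold = fit_kde_domain(X_train)
flags = in_domain(scaler, kde, threshold, np.array([[0.0, 0.0, 0.0], [10.0, 10.0, 10.0]]))
```

With a 5th-percentile threshold, about 95% of the training compounds are in-domain by construction. The validation step then checks whether the excluded region actually carries larger prediction errors.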

Table: Key Research Reagents and Computational Tools

| Tool/Resource | Type | Function/Purpose | Example Applications |
|---|---|---|---|
| MACCS Keys | Molecular Descriptor | 166-bit structural keys encoding substructural features | Chemical space analysis, similarity assessment [26] |
| ECFP Fingerprints | Molecular Descriptor | Extended-Connectivity Fingerprints capturing circular substructures | Similarity searching, machine learning features [27] |
| t-SNE | Visualization Algorithm | Dimensionality reduction for chemical space visualization | Comparing training and test set distributions [25] |
| KDE Implementation | Statistical Tool | Non-parametric density estimation in high-dimensional spaces | Determining data-dense regions in feature space [23] |
| Bayesian Neural Networks | Modeling Framework | Provides uncertainty estimates alongside predictions | Confidence estimation for molecular property prediction [21] |
| One-Class Classification | Validation Method | Determines if new data belongs to training distribution | Evaluating applicability domain boundaries [26] |
| Federated Learning Platforms | Collaborative Framework | Enables multi-institutional model training without data sharing | Expanding chemical space coverage for ADMET models [28] |

Advanced Applications and Future Directions

AD for Generative Molecular Design

The concept of applicability domains is extending beyond predictive models to generative artificial intelligence for molecular design [27]. In this context, the AD defines the chemical space where generative models produce structures with acceptable drug-likeness and synthesizability. Research has explored various AD definitions for generative models, combining structural similarity to training compounds, physicochemical property similarity, unwanted substructure filters, and quantitative drug-likeness estimates (QED) [27].
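A composite generative AD can be sketched by combining two of the criteria listed above: structural similarity (maximum Tanimoto similarity to the training set) and physicochemical property similarity (every property inside the training range). The 0.4 similarity cutoff, the function names and the toy (MW, logP) values are hypothetical. Unwanted-substructure filters and QED are omitted here and would need a cheminformatics toolkit such as RDKit.

```python
import numpy as np


def max_tanimoto(query_fp, train_fps):
    """Highest Tanimoto similarity between a binary query fingerprint and the training set."""
    q = np.asarray(query_fp, dtype=bool)
    T = np.asarray(train_fps, dtype=bool)
    inter = (T & q).sum(axis=1)
    union = (T | q).sum(axis=1)
    sims = np.where(union > 0, inter / np.maximum(union, 1), 1.0)  # two empty fingerprints count as identical
    return float(sims.max())


def in_generative_ad(query_fp, query_props, train_fps, train_props, min_similarity=0.4):
    """Hypothetical composite AD: similar enough to a training compound AND every
    property inside the range observed in the training set."""
    similar = max_tanimoto(query_fp, train_fps) >= min_similarity
    lo, hi = np.min(train_props, axis=0), np.max(train_props, axis=0)
    props = np.asarray(query_props, dtype=float)
    return similar and bool(np.all((props >= lo) & (props <= hi)))


train_fps = np.array([[1, 1, 0, 0], [0, 0, 1, 1]])
train_props = np.array([[300.0, 2.0], [450.0, 3.5]])  # toy (molecular weight, logP) values
```

A generated molecule is accepted only if it passes every criterion, so adding further filters can only shrink the domain.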

Studies demonstrate that appropriate AD definitions strongly influence the drug-likeness of AI-generated molecules. Molecular Turing tests, where generated molecules are evaluated by medicinal chemists alongside human-designed compounds, provide validation of whether generative AD definitions successfully produce chemically plausible structures [27].

Federated Learning for Domain Expansion

Federated learning has emerged as a promising approach for expanding model applicability domains by enabling collaborative training across distributed datasets without centralizing sensitive data [28]. This technique is particularly valuable for ADMET prediction, where individual organizations typically have limited data coverage of relevant chemical space.

Key benefits of federated learning for AD expansion include:

- Altering the geometry of chemical space a model can learn from, improving coverage

- Systematic outperformance of local baselines, with improvements scaling with participant diversity

- Expanded applicability domains, with increased robustness across unseen scaffolds

- Persistent benefits across heterogeneous data sources and assay protocols [28]

Large-scale initiatives like the MELLODDY project have demonstrated that cross-pharma federated learning at unprecedented scale unlocks benefits in QSAR without compromising proprietary information [28].

Figure 2: Applicability Domain Assessment Workflow

Defining and understanding the applicability domain of molecular property prediction models is not merely an academic exercise but a practical necessity for reliable drug discovery and materials science research. As machine learning continues to transform molecular design, robust AD methods provide the guardrails that enable responsible application of these powerful technologies. The continuing evolution of AD methodologies—from simple distance-based approaches to sophisticated density estimation and Bayesian uncertainty quantification—reflects the growing recognition that knowing when not to trust a model is as important as knowing when to trust it. For researchers beginning their journey in molecular property prediction, establishing rigorous practices for applicability domain assessment should be considered an essential component of any modeling workflow.

Building Your Model: Predictive and Generative Modeling Approaches

The accurate prediction of molecular properties is a critical challenge in accelerating drug discovery and materials science. Traditional experimental methods are often associated with significant costs and time investments [5]. Machine learning (ML) has emerged as a powerful tool to mitigate these burdens; however, selecting the appropriate modeling approach is paramount for success. This guide provides a structured framework for researchers and drug development professionals to choose between two fundamental ML paradigms: deterministic and probabilistic modeling. The core distinction lies in their treatment of uncertainty. Deterministic models provide single, point estimates for a given molecule, while probabilistic models output a distribution of possible values, thereby quantifying the model's confidence in its own predictions [29] [30]. Within the context of molecular property prediction—a field often characterized by small, sparse, and noisy datasets—understanding and leveraging this distinction is key to building reliable and informative models [31] [32].

Core Conceptual Differences

At its heart, the choice between a deterministic and probabilistic model is a choice about how to handle uncertainty. This section breaks down the fundamental characteristics of each approach.

Deterministic Machine Learning

Deterministic models map each input to exactly one output: given the same input (a molecular representation), a trained deterministic model will always produce the same output (a predicted property value) [33]. These models are trained to optimize a scalar-valued loss function, such as mean squared error or cross-entropy, and provide a single best estimate for each input [29].

Key Characteristics:

- Predictability: Outputs are entirely determined by the inputs and the learned model weights.

- Transparency: The logic behind the system's decision-making is often more easily understandable and auditable.

- Limited Adaptability: Cannot inherently express confidence or learn from uncertainty without structural changes [30] [33].

Probabilistic Machine Learning

Probabilistic models, in contrast, incorporate uncertainty and express outcomes as likelihoods rather than certainties. They use statistical models to analyze data and provide a probabilistic characterization of their predictions [29] [34]. Instead of a single value, the output may be a distribution (e.g., a mean and variance for a continuous property) which allows the model to know what it does not know [29].

Key Characteristics:

- Statistical Reasoning: Uses probability theory to express confidence in outcomes.

- Adaptability: Can evolve as new data becomes available and is designed to work with ambiguous or incomplete information.

- Uncertainty Quantification (UQ): Provides a natural framework for quantifying predictive uncertainty, which is crucial for identifying unreliable predictions [30] [32].

The table below summarizes the core differences between these two approaches.

Table 1: Core Differences Between Deterministic and Probabilistic Models

| Factor | Deterministic Model | Probabilistic Model |
|---|---|---|
| Output Type | Single, point estimate (e.g., a property value) | Probability distribution (e.g., mean and variance) |
| Uncertainty Handling | Does not quantify its own uncertainty | Explicitly quantifies predictive uncertainty |
| Data Requirements | Requires complete, clean data to function optimally | Tolerates incomplete or noisy data better |
| Transparency | Easy to audit and explain due to fixed logic paths | Can be a "black box"; may require tools for explainability |
| Primary Strength | Precision and predictability in well-defined scenarios | Pattern recognition and decision-making under uncertainty |

Molecular Property Prediction Context

The theoretical differences between deterministic and probabilistic models have profound practical implications in molecular property prediction. Drug discovery pipelines are long and complex, with a low overall success rate, creating a strong business need for technologies that can lower attrition and costs [35]. ML models are applied across all stages, from identifying novel targets and optimizing small-molecule compounds to analyzing digital pathology data [35].

A significant challenge in this domain is that the effectiveness of ML is often limited by scarce and incomplete experimental datasets [31]. This "low-data regime" makes it difficult for models to generalize well. Furthermore, poor predictive accuracy is often related to two key issues:

- Regions of chemical space with steep structure-activity relationships (SAR), where small structural changes lead to large property differences.

- A lack of representation of test molecules in the training data [32].

Probabilistic models directly address these challenges by providing a measure of reliability for their predictions. This allows researchers to flag molecules where the model's prediction is likely to be inaccurate, either because the molecule is too different from the training set or because it lies in a structurally complex region of the chemical space [32]. As noted in recent research, "reliable methods to quantify the predictive uncertainty of machine learning models can significantly increase the impact of molecular property prediction" in applications like active learning and ML-guided property optimization [32].

Technical Comparison and Selection Framework

Choosing the right model is a contextual decision. The following table and workflow diagram provide a structured guide for researchers.

Table 2: Model Selection Guide for Molecular Property Prediction

| Decision Factor | Deterministic Model | Probabilistic Model |
|---|---|---|
| Data Quality & Availability | Large, high-quality datasets with precise labels and consistent identifiers. | Smaller, noisier, or fragmented datasets; data with inherent uncertainty. |
| Regulatory & Audit Needs | High-priority; decisions must be explainable and reproducible (e.g., for regulatory filings). | Less critical, or can be addressed with additional explainability tools (e.g., SHAP values). |
| Task Nature | Prediction of properties where a single, best answer is required and uncertainty is low. | Prediction in complex SAR regions or for applications like active learning, where understanding confidence is key. |
| Desired Output | A single property value for each molecule (e.g., predicted IC50). | A value with a confidence interval (e.g., IC50 ± SD) or a full probability distribution. |
| Example Applications | Initial high-throughput screening where speed and a clear cutoff are needed. | Prioritizing compounds for synthesis in a lead optimization campaign, where knowing the confidence can prevent wasted resources. |

Diagram 1: Model Selection Workflow

Experimental Protocols and Implementations

To ground the theoretical comparison, this section outlines detailed methodologies for implementing both model types in a molecular property prediction task.

A Deterministic CNN for Property Prediction

A deterministic approach can be implemented using a Convolutional Neural Network (CNN) that operates on molecular graph representations or other structured data [29] [35].

Architecture:

- Input Layer: Accepts a featurized representation of the molecule (e.g., a graph, fingerprint, or image).

- Convolutional Layer: Performs a convolutional operation with a set of filters and a ReLU activation function to extract local molecular features.

- Max-Pooling Layer: Reduces the spatial dimensionality of the feature maps, retaining the most salient information.

- Flatten Layer: Converts the pooled feature maps into a one-dimensional vector.

- Dense (Output) Layer: A fully-connected layer with a linear activation function (for regression) or softmax (for classification) that produces the final prediction: a single value for regression, or one probability per class for classification [29].

Training Protocol:

- Loss Function: Mean Squared Error (MSE) for regression tasks; Sparse Categorical Crossentropy for classification.

- Optimizer: RMSprop or Adam.

- Training: The model is trained to minimize the difference between its scalar output and the experimental value for each molecule in the training set [29].
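The architecture above can be illustrated with a NumPy forward pass, which makes the deterministic property explicit: the same input and weights always yield the same scalar. This sketch covers only the forward pass and the MSE loss. Training with Adam or RMSprop would normally be done in a framework such as TensorFlow or PyTorch. The one-hot sequence input, the layer sizes and all function names are assumptions made for illustration.

```python
import numpy as np


def conv1d_relu(x, filters, bias):
    """'Valid' 1D convolution (cross-correlation, as in most DL libraries) followed by ReLU.

    x: (length, channels); filters: (n_filters, width, channels); bias: (n_filters,).
    """
    n_filters, width, _ = filters.shape
    steps = x.shape[0] - width + 1
    out = np.empty((steps, n_filters))
    for t in range(steps):
        out[t] = np.tensordot(filters, x[t:t + width], axes=([1, 2], [0, 1])) + bias
    return np.maximum(out, 0.0)


def max_pool1d(h, size=2):
    """Non-overlapping max pooling along the length axis."""
    steps = h.shape[0] // size
    return h[:steps * size].reshape(steps, size, -1).max(axis=1)


def cnn_forward(x, params):
    """Conv -> ReLU -> max-pool -> flatten -> linear dense output (regression)."""
    h = max_pool1d(conv1d_relu(x, params["filters"], params["conv_bias"]))
    return float(h.ravel() @ params["dense_w"] + params["dense_b"])


def mse(y_true, y_pred):
    """Mean squared error, the regression training loss."""
    return float(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2))


rng = np.random.default_rng(0)
params = {
    "filters": rng.normal(scale=0.1, size=(8, 3, 4)),  # 8 filters of width 3 over 4 channels
    "conv_bias": np.zeros(8),
    "dense_w": rng.normal(scale=0.1, size=(9 * 8,)),   # 18 conv steps -> 9 after pooling
    "dense_b": 0.0,
}
x = np.eye(4)[rng.integers(0, 4, size=20)]             # hypothetical one-hot encoded sequence
y_hat = cnn_forward(x, params)
```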

A Probabilistic Model with Uncertainty Quantification

A probabilistic model extends the deterministic architecture to output a distribution. One effective method is to model the output as a Gaussian distribution.

Architecture:

- Feature Extraction Layers: Identical to the deterministic CNN (Convolutional, Max-Pooling, Flatten).

- Dense Layer: A fully-connected layer that maps the flattened features to a higher-dimensional space.

- Probabilistic Output Layer: This layer is configured to output two parameters: the mean (μ) and the variance (σ²) of a Gaussian distribution. The loss function is then the negative log-likelihood of the training data under this predicted distribution [29] [34] [32].

Training Protocol:

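- Loss Function: Negative Log-Likelihood (NLL). Because the squared error is divided by the predicted variance and a log-variance term is added, this loss penalizes confident (low-variance) predictions that are wrong more heavily, and it also penalizes unnecessarily large variances on predictions that are accurate.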

- Optimizer: RMSprop or Adam.

- Training: The model learns to predict a distribution for each molecule. The mean represents the most likely property value, and the variance represents the model's uncertainty in that prediction [29] [32].
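The Gaussian output layer and its NLL loss can be sketched in NumPy as follows. The head maps features to a mean and, through a softplus, to a strictly positive variance. The function names, the softplus choice and the small `eps` floor are assumptions for illustration; frameworks such as TensorFlow Probability provide equivalent distribution layers.

```python
import numpy as np


def softplus(z):
    """log(1 + exp(z)), computed stably; maps any real number to a positive one."""
    return np.logaddexp(0.0, z)


def gaussian_head(h, w_mu, w_var, eps=1e-6):
    """Two outputs per molecule: the mean and a strictly positive variance."""
    mu = h @ w_mu
    var = softplus(h @ w_var) + eps
    return mu, var


def gaussian_nll(y, mu, var):
    """Mean negative log-likelihood of y under N(mu, var)."""
    y, mu, var = (np.asarray(a, dtype=float) for a in (y, mu, var))
    return float(np.mean(0.5 * (np.log(2 * np.pi * var) + (y - mu) ** 2 / var)))


rng = np.random.default_rng(0)
h = rng.normal(size=(5, 16))                  # stand-in for flattened CNN features
mu, var = gaussian_head(h, rng.normal(size=16), rng.normal(size=16))
```

For a target of 3.0 and a predicted mean of 0.0, a variance of 0.1 gives a far larger loss than a variance of 9.0. When the mean is exactly right, the smaller variance gives the lower loss. These are the two pressures described in the training protocol.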

Diagram 2: Deterministic vs. Probabilistic Model Architectures

Successfully implementing ML models for molecular property prediction requires a suite of computational tools and datasets.

Table 3: Essential Research Reagents for Molecular Property Prediction

| Tool / Resource | Type | Function in Research |
|---|---|---|
| TensorFlow with TensorFlow Probability | Programmatic Framework | Provides a flexible, open-source ecosystem for building and training both deterministic and probabilistic deep learning models [29] [35]. |
| PyTorch | Programmatic Framework | An alternative open-source ML library popular for research, offering dynamic computation graphs and robust support for deep learning [35]. |
| ChemXploreML | Desktop Application | A user-friendly, offline-capable app that allows chemists to make property predictions without deep programming expertise, automating molecular featurization [5]. |
| Graph Neural Networks (GNNs) | Model Architecture | A specialized neural network architecture that operates directly on graph-based molecular structures, often achieving state-of-the-art results [31] [36]. |
| Multi-task Learning | Training Methodology | A technique to improve model generalization by training a single model on multiple, related property prediction tasks simultaneously, which is especially useful with sparse data [31]. |
| QM9 Dataset | Benchmark Dataset | A public dataset containing quantum-mechanical properties for ~134,000 small organic molecules, commonly used for training and benchmarking models [31]. |
| Active Learning Loop | Experimental Design | A process that uses a model's uncertainty estimates (from a probabilistic model) to intelligently select which compounds to test next in an experiment, maximizing information gain [32]. |
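The selection step of an active learning loop can be sketched as a ranking over a probabilistic model's outputs. Two common acquisition rules are shown, both assumed here rather than prescribed by the text: pure uncertainty sampling (largest predicted standard deviation) and an upper confidence bound (largest mean plus `beta` standard deviations, assuming higher property values are better). `select_next` is a hypothetical name.

```python
import numpy as np


def select_next(mu, sigma, batch_size, strategy="uncertainty", beta=1.0):
    """Indices of the next compounds to test.

    "uncertainty": largest predicted standard deviation (pure exploration).
    "ucb": largest mu + beta * sigma (upper confidence bound; assumes higher is better).
    """
    mu, sigma = np.asarray(mu, dtype=float), np.asarray(sigma, dtype=float)
    if strategy == "uncertainty":
        score = sigma
    elif strategy == "ucb":
        score = mu + beta * sigma
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    return np.argsort(score)[::-1][:batch_size].tolist()
```

After the selected compounds are measured, their labels are added to the training set, the model is refit, and the selection is repeated.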

The journey to selecting the right modeling approach for molecular property prediction is a strategic one. Deterministic models offer simplicity, speed, and transparency, making them suitable for well-defined problems with abundant, high-quality data. However, the inherent challenges of molecular data—its sparsity, noise, and complex structure-activity landscapes—often make probabilistic models the more robust and informative choice. By quantifying predictive uncertainty, probabilistic models empower researchers to make risk-aware decisions, prioritize experimental resources effectively, and ultimately increase the impact of machine learning in accelerating drug discovery and materials design. A modern research workflow may even leverage both, using deterministic models for initial screening and probabilistic models for finer, more critical optimization tasks. The key is to align the model's capabilities with the project's specific data context, regulatory requirements, and strategic goals.

Model Architectures for Different Representations

Molecular property prediction stands as a cornerstone in accelerated drug discovery and materials science. The paradigm has shifted from traditional descriptor-based machine learning to geometric deep learning, where models directly learn from molecular graph structures. This transition enables more accurate capture of intricate topological and chemical information, moving beyond the limitations of manual feature engineering [37]. The core challenge lies in selecting and implementing model architectures that align with specific molecular representations and property characteristics. This guide provides a comprehensive technical overview of contemporary architectures, their experimental protocols, and performance benchmarks to inform researchers' model selection and development.

Foundational Architectures and Their Representations

Core Architectural Paradigms

Different molecular properties stem from distinct structural and geometric characteristics, necessitating specialized model architectures for their prediction. The following table summarizes the primary architectural families, their core principles, and ideal application domains.

Table 1: Foundational Model Architectures for Molecular Property Prediction

| Architecture | Core Principle | Molecular Representation | Ideal for Property Types |
|---|---|---|---|
| Graph Isomorphism Network (GIN) [37] | Uses injective aggregation functions to capture local node neighborhoods and substructures, providing high expressiveness for graph isomorphism. | 2D topological graph (atoms=nodes, bonds=edges). | Properties determined by molecular topology/functional groups (e.g., mutagenicity in MUTAG [37]). |
| Equivariant GNN (EGNN) [37] | Incorporates 3D atomic coordinates and preserves Euclidean symmetries (translation, rotation, reflection) in its operations. | 3D geometric graph (includes spatial atom coordinates). | Quantum chemical and spatially-sensitive properties (e.g., dipole moment, Air-Water Partition Coefficient - log Kaw [37]). |
| Graphormer [37] | Integrates graph topology with global attention mechanisms, allowing nodes to interact directly based on structural encoding. | 2D/3D graph enhanced with spatial encoding. | Complex properties requiring long-range dependency modeling (e.g., bioactivity on OGB-MolHIV [37]). |
| Kolmogorov-Arnold GNN (KA-GNN) [13] | Replaces standard MLPs with learnable, interpretable univariate functions (e.g., Fourier series) placed on the edges of the network (the connections between neurons), used throughout the GNN pipeline. | Standard 2D molecular graph. | General molecular prediction with enhanced interpretability and parameter efficiency [13]. |
| Multi-Task GNN with ACS [2] | Combines a shared GNN backbone with task-specific heads and adaptive checkpointing to mitigate negative transfer in multi-task learning. | 2D molecular graph. | Predicting multiple properties simultaneously, especially in ultra-low data regimes for specific tasks [2]. |

Architectural Selection Workflow

The following diagram outlines the logical decision process for selecting an appropriate model architecture based on the property of interest and available data.

Diagram 1: Model Selection Workflow
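The decision logic summarized in Table 1 and Diagram 1 can be approximated by a small rule-based function. This is a minimal sketch, not a procedure taken from [37], [13], or [2]. The `Task` fields, the order in which the rules are checked, and the 1,000-label cutoff for an "ultra-low data" task are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical summary of a prediction problem (fields are illustrative)."""
    depends_on_3d_geometry: bool = False    # e.g. dipole moment, log Kaw
    needs_long_range_context: bool = False  # e.g. bioactivity on OGB-MolHIV
    needs_interpretability: bool = False    # mechanistic insight is a goal
    n_tasks: int = 1                        # properties predicted jointly
    min_labels_per_task: int = 10_000       # smallest labeled set among tasks


LOW_DATA_CUTOFF = 1_000  # assumed threshold for an "ultra-low data" task


def suggest_architecture(task: Task) -> str:
    """Map task traits onto the architecture families of Table 1 (heuristic)."""
    if task.n_tasks > 1 and task.min_labels_per_task < LOW_DATA_CUTOFF:
        return "Multi-task GNN with ACS"
    if task.depends_on_3d_geometry:
        return "EGNN"
    if task.needs_long_range_context:
        return "Graphormer"
    if task.needs_interpretability:
        return "KA-GNN"
    return "GIN"  # default: topology-driven properties


print(suggest_architecture(Task(depends_on_3d_geometry=True)))      # EGNN
print(suggest_architecture(Task(n_tasks=4, min_labels_per_task=29)))  # Multi-task GNN with ACS
```

The multi-task check comes first only because ACS specifically targets settings where some tasks have very few labels. Reorder or extend the rules to suit the property at hand.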

Advanced and Specialized Frameworks

Kolmogorov-Arnold Networks (KANs) for Graphs

KA-GNNs are a recent development that integrates KAN modules into the three fundamental components of a GNN: node embedding, message passing, and readout. Traditional multi-layer perceptrons (MLPs) apply fixed activation functions at their nodes. KANs instead place learnable univariate functions (e.g., Fourier series, B-splines) on their edges [13]. This design is reported to improve expressivity, parameter efficiency, and interpretability. The Fourier-series-based functions in KA-GNNs are particularly effective at capturing both low-frequency and high-frequency structural patterns in molecular graphs, and they come with theoretical approximation guarantees grounded in Fourier analysis and Carleson's theorem [13].
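The sketch below shows a Fourier-series KAN layer in plain Python, which makes the idea of a "learnable function on every edge" concrete. It is a minimal, untrained sketch under stated assumptions. The class name `FourierKANLayer`, the number of frequencies, and the Gaussian initialization are illustrative choices, not the KA-GNN implementation from [13], and the training loop is omitted.

```python
import math
import random


class FourierKANLayer:
    """Untrained sketch of a Fourier-series KAN layer (not the KA-GNN code).

    Every input-output pair (i, j) has its own learnable univariate function
        phi_ij(x) = sum_{k=1..K} a_ijk * cos(k * x) + b_ijk * sin(k * x)
    and output j is the sum of phi_ij(x_i) over all inputs i.
    """

    def __init__(self, in_dim: int, out_dim: int, n_freq: int = 4, seed: int = 0):
        rng = random.Random(seed)
        scale = 1.0 / math.sqrt(in_dim * n_freq)  # keeps initial outputs small
        # coeffs[j][i][k] holds (a, b) for frequency k + 1 on the edge i -> j
        self.coeffs = [
            [[(rng.gauss(0.0, scale), rng.gauss(0.0, scale)) for _ in range(n_freq)]
             for _ in range(in_dim)]
            for _ in range(out_dim)
        ]

    def phi(self, j: int, i: int, x: float) -> float:
        """Evaluate the learnable function on the edge from input i to output j."""
        return sum(a * math.cos((k + 1) * x) + b * math.sin((k + 1) * x)
                   for k, (a, b) in enumerate(self.coeffs[j][i]))

    def __call__(self, x: list[float]) -> list[float]:
        return [sum(self.phi(j, i, xi) for i, xi in enumerate(x))
                for j in range(len(self.coeffs))]


layer = FourierKANLayer(in_dim=3, out_dim=2)
print(layer([0.1, -0.5, 1.2]))  # two outputs, one per output unit
```

During training, the coefficients `a` and `b` are the trainable parameters. Each learned `phi_ij` can then be plotted as a one-dimensional curve, which is the usual basis for KAN interpretability claims.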

Experimental Protocol for KA-GNNs:

- Architecture Variants: Two primary variants are developed: KA-Graph Convolutional Networks (KA-GCN) and KA-Graph Attention Networks (KA-GAT). In KA-GCN, initial node embeddings are created by passing concatenated atomic and neighboring bond features through a KAN layer. Message passing follows the GCN scheme, with node updates via residual KANs. KA-GAT further incorporates edge embeddings initialized with KAN layers [13].

- Training & Evaluation: Models are evaluated across seven diverse molecular benchmarks. Performance is measured by prediction accuracy (e.g., ROC-AUC, MAE) and computational efficiency. Interpretability is qualitatively assessed by the model's ability to highlight chemically meaningful substructures [13].

- Key Results: KA-GNNs consistently outperform conventional GNNs in accuracy and computational efficiency. The learned network mappings often align with known chemical principles, providing valuable insights for lead optimization [13].
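The KA-GCN update described above consists of GCN-style message passing followed by a residual KAN update. A single layer can be sketched as follows. This is a hypothetical simplification, not the architecture in [13]. `ka_gcn_layer` applies one shared univariate Fourier function to each aggregated feature independently, whereas a full KAN layer would learn a separate function for every input-output pair. The KAN-based node and edge embedding steps are also omitted, and the coefficients in the example are placeholders.

```python
import math


def gcn_aggregate(h, edges):
    """GCN-style aggregation with self-loops and symmetric degree normalization.

    h: list of node feature vectors; edges: undirected (u, v) bond pairs.
    Returns m_v = sum over u in N(v) plus v itself of h_u / sqrt(d_u * d_v).
    """
    nbrs = [{v} for v in range(len(h))]  # every node is its own neighbor
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    deg = [len(s) for s in nbrs]
    out = []
    for v, neighbors in enumerate(nbrs):
        m = [0.0] * len(h[v])
        for u in neighbors:
            w = 1.0 / math.sqrt(deg[u] * deg[v])
            for d, value in enumerate(h[u]):
                m[d] += w * value
        out.append(m)
    return out


def fourier_fn(x, coeffs):
    """Univariate Fourier function: sum_k a_k cos(k x) + b_k sin(k x)."""
    return sum(a * math.cos((k + 1) * x) + b * math.sin((k + 1) * x)
               for k, (a, b) in enumerate(coeffs))


def ka_gcn_layer(h, edges, coeffs):
    """Hypothetical KA-GCN-style layer: aggregate, then residual KAN update."""
    m = gcn_aggregate(h, edges)
    return [[h_vd + fourier_fn(m_vd, coeffs) for h_vd, m_vd in zip(h_v, m_v)]
            for h_v, m_v in zip(h, m)]


# Heavy-atom graph of ethanol (C-C-O) with one-hot [is_carbon, is_oxygen] features
h0 = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [(0, 1), (1, 2)]
h1 = ka_gcn_layer(h0, bonds, coeffs=[(0.5, 0.1), (0.0, 0.2)])
print(h1)
```

The residual form `h + KAN(m)` means that a layer whose Fourier coefficients are all zero passes node features through unchanged.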

Frameworks for Imperfect and Multi-Task Data

Real-world molecular datasets are often imperfectly annotated, meaning most properties are labeled for only a subset of molecules. The OmniMol framework addresses this by modeling the entire molecule-property universe as a hypergraph, where each property is a hyperedge connecting all molecules annotated with it [38]. This structure captures three key relationships: molecule-molecule, molecule-property, and property-property.
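The hypergraph view can be built directly from sparse annotations. Each property becomes a hyperedge over the molecules measured for it, and the members that two hyperedges share expose property-property relationships. The sketch below assumes that labels arrive as (molecule, property) pairs. The function names and the example annotations are hypothetical, and the code illustrates the data structure only, not OmniMol's model [38].

```python
from collections import defaultdict


def build_property_hypergraph(pairs):
    """One hyperedge per property, containing every molecule annotated with it.

    pairs: iterable of (molecule_id, property_name) for annotated entries only;
    unmeasured molecule-property combinations are simply absent.
    """
    hyperedges = defaultdict(set)
    for molecule, prop in pairs:
        hyperedges[prop].add(molecule)
    return {prop: sorted(mols) for prop, mols in hyperedges.items()}


def property_overlap(hyperedges):
    """Number of molecules shared by each pair of properties."""
    props = sorted(hyperedges)
    return {(p, q): len(set(hyperedges[p]) & set(hyperedges[q]))
            for i, p in enumerate(props) for q in props[i + 1:]}


# Hypothetical sparse annotation: not every molecule has every property
annotated = [("CCO", "solubility"), ("CCO", "toxicity"),
             ("c1ccccc1", "solubility"), ("CC(=O)O", "permeability")]
graph = build_property_hypergraph(annotated)
print(graph)
print(property_overlap(graph))
```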

Experimental Protocol for OmniMol:

- Architecture: Built upon a Graphormer backbone, OmniMol integrates a task-routed Mixture of Experts (t-MoE) that uses task embeddings to dynamically activate specialized model pathways. This enables task-adaptive predictions with O(1) complexity, independent of the number of tasks. It also includes an SE(3)-equivariant encoder to incorporate 3D molecular conformation and enforce physical symmetries [38].

- Training: The model is trained end-to-end on all available molecule-property pairs. A scale-invariant message passing strategy and equilibrium conformation supervision are used to facilitate learning-based conformational relaxation [38].

- Key Results: OmniMol achieves state-of-the-art performance on 47 of 52 ADMET-P (absorption, distribution, metabolism, excretion, toxicity, and physicochemical) prediction tasks and demonstrates strong chirality awareness, which is critical for drug safety [38].
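Task routing in a mixture of experts can be illustrated with a gate that scores experts from the task embedding alone. This sketch shows the general technique under stated assumptions and is not OmniMol's t-MoE [38]. The linear gate, the softmax weighting, the toy experts, and all numbers are illustrative. Each prediction evaluates a fixed set of experts, so its cost does not grow with the number of tasks, and adding a task only requires one new embedding vector.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def task_routed_moe(x, task_embedding, gate_weights, experts):
    """Weight expert outputs by gates computed from the task embedding only.

    gate_weights[e] is a vector dotted with task_embedding to score expert e;
    experts[e] is any callable mapping a molecular representation x to a float.
    Returns (prediction, gate values).
    """
    scores = [sum(w * t for w, t in zip(row, task_embedding)) for row in gate_weights]
    gates = softmax(scores)
    prediction = sum(g * expert(x) for g, expert in zip(gates, experts))
    return prediction, gates


# Two toy experts over a 2-D molecular embedding (all numbers are placeholders)
experts = [lambda x: x[0] + x[1], lambda x: x[0] - x[1]]
gate_weights = [[2.0, 0.0], [0.0, 2.0]]
x = [1.0, 0.5]
print(task_routed_moe(x, [1.0, 0.0], gate_weights, experts))  # leans on expert 0
print(task_routed_moe(x, [0.0, 1.0], gate_weights, experts))  # leans on expert 1
```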

For multi-task learning, the Adaptive Checkpointing with Specialization (ACS) scheme effectively mitigates negative transfer. Negative transfer occurs when updates from one task degrade performance on another, a common issue in multi-task learning with imbalanced data [2].

Experimental Protocol for ACS:

- Architecture: A single, shared GNN backbone learns general-purpose molecular representations. These feed into separate, task-specific MLP heads.

- Training Scheme: Standard multi-task learning (MTL) saves a single final model. ACS instead monitors the validation loss of each task throughout training and checkpoints the backbone-head pair for a task whenever that task's validation loss reaches a new minimum. The result is a specialized model for each task, protected from detrimental parameter updates that occur later in training [2].

- Key Results: On benchmarks like ClinTox, SIDER, and Tox21, ACS matched or surpassed state-of-the-art supervised methods. It demonstrated particular efficacy in ultra-low data regimes, accurately predicting sustainable aviation fuel properties with as few as 29 labeled samples per task [2].
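The ACS checkpointing rule reduces to tracking a running minimum of each task's validation loss. Whenever that minimum improves, the shared backbone is snapshotted together with the task's head. The sketch below assumes a dictionary-based model state and caller-supplied `train_one_epoch` and `evaluate` callbacks. These names and the scripted loss curves are hypothetical and stand in for a real GNN training loop [2].

```python
import copy


def train_with_acs(model_state, train_one_epoch, evaluate, tasks, n_epochs):
    """Adaptive checkpointing with specialization (minimal sketch of the idea in [2]).

    model_state: dict with a shared "backbone" and per-task "heads".
    train_one_epoch(model_state): updates model_state in place using all tasks.
    evaluate(model_state): returns {task: validation loss}.
    Returns {task: (best_epoch, best_loss, backbone_snapshot, head_snapshot)}.
    """
    best = {t: (None, float("inf"), None, None) for t in tasks}
    for epoch in range(n_epochs):
        train_one_epoch(model_state)
        losses = evaluate(model_state)
        for t in tasks:
            if losses[t] < best[t][1]:
                # New minimum for task t: freeze this backbone-head pair for t
                best[t] = (epoch, losses[t],
                           copy.deepcopy(model_state["backbone"]),
                           copy.deepcopy(model_state["heads"][t]))
    return best


# Scripted validation curves: task "A" peaks early, task "B" keeps improving
curves = {"A": [0.9, 0.5, 0.6, 0.7], "B": [0.8, 0.7, 0.6, 0.4]}
state = {"backbone": {"step": 0}, "heads": {"A": {}, "B": {}}}


def fake_epoch(s):
    s["backbone"]["step"] += 1  # stands in for a gradient update


def fake_eval(s):
    return {t: c[s["backbone"]["step"] - 1] for t, c in curves.items()}


best = train_with_acs(state, fake_epoch, fake_eval, ["A", "B"], n_epochs=4)
print({t: (b[0], b[1]) for t, b in best.items()})  # {'A': (1, 0.5), 'B': (3, 0.4)}
```

At inference time, each task uses its own stored backbone-head pair. A task that peaked early is therefore unaffected by later updates driven by the other tasks.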

Benchmarking and Performance

Quantitative benchmarking is essential for evaluating model efficacy across diverse property types. The following table synthesizes key performance metrics from comparative analyses of major architectures.

Table 2: Architectural Benchmarking on Standardized Tasks

| Architecture | Dataset & Task | Key Metric | Reported Performance | Comparative Context |
|---|---|---|---|---|
| Graphormer [37] | OGB-MolHIV (bioactivity) | ROC-AUC | 0.807 | Best among the compared architectures on this bioactivity classification task. |
| Graphormer [37] | MoleculeNet (log Kow) | Mean absolute error (MAE) | 0.18 | Best among the compared architectures for this octanol-water partition coefficient. |
| EGNN [37] | MoleculeNet (log Kaw) | MAE | 0.25 | Best among the compared architectures for this geometry-sensitive air-water partition coefficient. |
| EGNN [37] | MoleculeNet (log Kd) | MAE | 0.22 | Best among the compared architectures for this soil-water partition coefficient. |
| GIN [37] | MUTAG (mutagenicity) | Accuracy | ~0.90 (inferred; not explicitly reported) | Performs well on topology-based tasks. |
| ACS scheme [2] | ClinTox (FDA approval / toxicity) | Average improvement | +15.3% vs. single-task learning (STL) | Effective mitigation of negative transfer in a multi-task setting. |
| KA-GNN [13] | 7 benchmark datasets | Prediction accuracy | Consistently outperforms conventional GNNs | Superior accuracy and computational efficiency. |
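The two metrics that recur in Table 2 are simple to compute from their definitions, as the plain-Python sketch below shows. The quadratic pairwise ROC-AUC loop is written for clarity only. Library routines such as scikit-learn's `roc_auc_score` and `mean_absolute_error` are the usual choice for real evaluations.

```python
def mean_absolute_error(y_true, y_pred):
    """MAE: average absolute deviation, used for regression targets such as log Kow."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def roc_auc(y_true, scores):
    """ROC-AUC as the probability that a random positive outscores a random negative.

    Ties count as half a win (the Mann-Whitney U formulation). O(P * N) for clarity.
    """
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
print(mean_absolute_error([1.0, 2.0], [1.5, 1.0]))   # 0.75
```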

The Scientist's Toolkit

Implementing and evaluating these models requires a standardized set of software tools, datasets, and molecular featurization methods.

Table 3: Essential Research Reagents for Molecular Property Prediction

| Tool / Resource | Type | Primary Function | Example in Context |
|---|---|---|---|
| MoleculeNet [19] [37] | Benchmark Dataset Collection | Provides standardized datasets for fair model comparison across quantum mechanics, physical chemistry, biophysics, and physiology. | Used as the primary benchmark for models like GIN, EGNN, and Graphormer [37]. |
| OGB (Open Graph Benchmark) [37] | Benchmark Dataset Collection | Provides large-scale, realistic benchmark datasets for graph ML. | OGB-MolHIV is a key dataset for evaluating bioactivity prediction [37]. |
| Functional Group (FG) Annotations | Molecular Featurization | Provides fine-grained, chemically meaningful substructures that link structure to property. | The FGBench dataset provides 625K problems with FG annotations to enhance LLM and GNN reasoning [19]. |
| Graph Kernel Algorithms | Similarity Metric | Quantifies structural similarity between molecules from a global perspective. | Used in MSSM-GNN to build a similarity graph that enhances molecular representation learning [39]. |
| Adaptive Checkpointing with Specialization (ACS) [2] | Training Scheme | Mitigates negative transfer in multi-task learning with imbalanced data. | Enabled accurate prediction of fuel properties with as few as 29 labeled samples per task [2]. |
| SE(3)-Equivariant Encoder [38] | Model Component | Encodes 3D molecular conformation while respecting rotations and translations. Because SE(3) excludes reflections, mirror-image structures remain distinguishable. | A core component of OmniMol, enabling chirality-aware predictions without expert features [38]. |
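A quick numerical check shows why an SE(3)-equivariant encoder can be chirality-aware. Interatomic distances are unchanged by both rotations and reflections, so a model built only on distances cannot tell enantiomers apart. A signed volume (triple product), by contrast, is invariant under rotation but flips sign under reflection. The coordinates below are an idealized tetrahedron standing in for four substituents around a stereocenter (an assumption for illustration). The code demonstrates the symmetry argument only and is not OmniMol's encoder [38].

```python
import math


def sub(a, b):
    return [x - y for x, y in zip(a, b)]


def signed_volume(p0, p1, p2, p3):
    """Triple product (p1 - p0) . ((p2 - p0) x (p3 - p0)); its sign encodes handedness."""
    u, v, w = sub(p1, p0), sub(p2, p0), sub(p3, p0)
    cross = [v[1] * w[2] - v[2] * w[1],
             v[2] * w[0] - v[0] * w[2],
             v[0] * w[1] - v[1] * w[0]]
    return sum(a * b for a, b in zip(u, cross))


def pairwise_distances(points):
    return [math.dist(p, q) for i, p in enumerate(points) for q in points[i + 1:]]


def rotate_z(p, theta):
    """Proper rotation about the z axis (an element of SE(3))."""
    c, s = math.cos(theta), math.sin(theta)
    return [c * p[0] - s * p[1], s * p[0] + c * p[1], p[2]]


def mirror_x(p):
    """Reflection through the yz plane (in E(3) but not in SE(3))."""
    return [-p[0], p[1], p[2]]


# Idealized tetrahedron: four substituent positions around a stereocenter
subs = [[1, 1, 1], [1, -1, -1], [-1, 1, -1], [-1, -1, 1]]
rotated = [rotate_z(p, 0.7) for p in subs]
mirrored = [mirror_x(p) for p in subs]

print(signed_volume(*subs), signed_volume(*mirrored))           # -16 16
print(pairwise_distances(subs) == pairwise_distances(mirrored))  # True
print(signed_volume(*rotated) < 0)                               # True
```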

The field of molecular property prediction has evolved beyond one-size-fits-all models toward specialized architectures tailored to the nature of the target property and the data landscape. As the comparisons above show, the right choice of model depends critically on whether the property is rooted in 2D topology, depends on 3D geometry, or requires global attention. Practical challenges such as imperfect annotation and multi-task interference are now being addressed by frameworks like OmniMol and ACS. Mastering this diverse toolkit of architectures and their respective strengths is fundamental for researchers aiming to deploy machine learning effectively in drug discovery and materials science. Future progress will likely involve a deeper fusion of these architectural paradigms and a stronger emphasis on data-efficient, explainable models that can reliably guide experimental efforts.

The fundamental goal of de novo molecular design is to identify novel compounds with desired properties within a virtually infinite chemical space, estimated to contain between 10^60 and 10^100 potential drug-like molecules [40]. Exhaustively searching a space of this size is computationally intractable with traditional methods, so more sophisticated computational approaches are needed. Recent advances in artificial intelligence (AI) and machine learning (ML) have transformed this field, enabling researchers to generate novel molecular structures efficiently and to predict their properties with increasing accuracy [41]. This part of the guide explores the core paradigms, methodologies, and practical implementations of generative models for molecular design. It frames them within the broader context of ML-based molecular property prediction, which is a critical prerequisite for effective generative design.

The integration of generative models into the drug discovery pipeline represents a paradigm shift from traditional screening-based approaches to automated design. However, the efficacy of these models relies heavily on accurate molecular property prediction, which faces significant challenges including data scarcity, imbalanced datasets, and the need for robust validation frameworks [2]. Understanding these challenges and the solutions being developed, such as multi-task learning and specialized architectures, provides the essential foundation for successful implementation of de novo molecular design systems.

Fundamentals of Molecular Representation

Before implementing generative models, molecules must be translated into numerical representations comprehensible to machine learning algorithms. This translation process is a critical first step in both property prediction and generative design.

Molecular Representations and Descriptors

- SMILES Strings: Simplified Molecular-Input Line-Entry System (SMILES) provides a linear notation representing molecular structure using ASCII strings, enabling the application of natural language processing techniques to chemical structures [40].

- Molecular Fingerprints: Binary vectors that encode the presence or absence of specific molecular substructures or patterns. RDKit provides multiple fingerprint types, including Morgan fingerprints (RDKit's implementation of the circular-fingerprint approach behind ECFP), RDKit fingerprints, and MACCS keys [42].

- Molecular Graph Representations: Graph-based representations treat atoms as nodes and bonds as edges, preserving the topological structure of molecules and enabling the application of graph neural networks (GNNs) [2].

- Molecular Descriptors: Computational chemistry features such as molecular weight, logP, topological polar surface area, and Lipinski rule counts, which can be calculated using toolkits like RDKit and used as input for predictive models [42].
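Sequence models need SMILES split into chemically meaningful tokens (for example, `Cl` and `[nH]` as single units) rather than individual characters. The sketch below shows a regex-based tokenizer of the kind commonly used for SMILES language models. The exact pattern is an assumption: it covers bracket atoms, organic-subset atoms, bonds, branches, and ring closures. It is not a full SMILES grammar and does not check chemical validity.

```python
import re

# Assumed token pattern: bracket atoms, two-letter halogens, organic-subset atoms,
# aromatic atoms, branches, bonds, and one- or two-digit ring closures.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize_smiles(smiles: str) -> list[str]:
    """Split a SMILES string into tokens, failing loudly on unrecognized characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


print(tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O"))  # aspirin, 21 tokens
print(tokenize_smiles("c1cc[nH]c1"))             # pyrrole: [nH] stays one token
print(tokenize_smiles("ClCCBr"))                 # ['Cl', 'C', 'C', 'Br']
```

The round-trip check (`"".join(tokens) == smiles`) catches characters that the pattern silently skipped, which would otherwise corrupt the token sequence fed to a model.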

Comparison of Molecular Representations

Table 1: Comparison of Molecular Representation Approaches for Machine Learning

| Representation Type | Format | Advantages | Limitations | Common Use Cases |
|---|---|---|---|---|
| SMILES Strings | Text/sequence | Simple, compact, widely supported | One molecule has many valid SMILES unless canonicalized; generated strings may be invalid | Transformer-based generation, sequence models |
| Molecular Fingerprints | Fixed-length binary vectors | Fast similarity search, well established | May miss structural nuances; fixed dimensionality | Similarity screening, QSAR models, classification |
| Molecular Graphs | Graph (nodes + edges) | Preserves topological structure; natural representation | Computationally intensive; complex model architectures | Graph neural networks, property prediction |
| 3D Coordinate Sets | Atomic coordinates and elements | Encodes spatial conformation, essential for some properties | Conformational flexibility; alignment sensitivity | Structure-based design, docking studies |
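The fast similarity search that fingerprints enable comes from comparing bit vectors with the Tanimoto (Jaccard) coefficient, |A ∩ B| / |A ∪ B|. The sketch below represents each fingerprint as a set of on-bit indices. The bit indices and molecule names are hypothetical placeholders. With RDKit, one would normally compute Morgan fingerprints and call `DataStructs.TanimotoSimilarity` instead.

```python
def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto (Jaccard) similarity of two fingerprints given as on-bit index sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0  # two empty prints: 0.0 by convention here


def nearest_neighbors(query: set[int], library: dict, k: int = 3):
    """Rank library entries (name -> on-bit set) by similarity to the query."""
    scored = [(name, tanimoto(query, bits)) for name, bits in library.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Hypothetical on-bit indices; real ones would come from a Morgan/ECFP fingerprint
library = {"mol_a": {1, 2, 3}, "mol_b": {7, 8}, "mol_c": {1, 2, 3, 4}}
print(nearest_neighbors({1, 2, 3}, library, k=2))  # [('mol_a', 1.0), ('mol_c', 0.75)]
```

A brute-force scan like this is linear in library size. RDKit's `DataStructs.BulkTanimotoSimilarity` performs the same one-query-against-many comparison in compiled code.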

Core Paradigms in Generative Molecular Design

De novo molecular design strategies can be categorized according to the coarseness of their molecular representation, each with distinct advantages and implementation considerations [41].

Atom-Based Generation

Atom-based approaches generate molecules atom by atom, providing maximum flexibility but requiring careful constraint management to ensure chemical validity.

- Implementation: Typically uses recurrent neural networks (RNNs) or transformers that sequentially add atoms to a growing molecular structure, with rules to maintain chemical validity during the generation process.