Active Learning and Hyperparameter Tuning for Molecular Models: A Strategic Guide for Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on integrating active learning with hyperparameter optimization to enhance molecular model performance. It covers the foundational synergy between these techniques, details methodological implementations in drug response and synergy prediction, addresses advanced troubleshooting for optimization challenges, and presents rigorous validation frameworks. By synthesizing current research and real-world applications, this guide aims to equip scientists with strategies to significantly reduce experimental costs, accelerate the identification of promising drug candidates and synergistic pairs, and build more robust and efficient predictive models in biomedical research.

The Synergy of Active Learning and Hyperparameter Tuning in Molecular Machine Learning

Frequently Asked Questions

What is the primary goal of Active Learning in molecular design? The primary goal is to find optimized molecules for a given design task, such as binding to a target protein, while intelligently selecting the most informative data points to label. This minimizes the use of expensive computational or experimental resources, closely mimicking the iterative design-make-test-analysis (DMTA) cycle of laboratory experiments [1] [2].

My AL model's performance has plateaued. What could be wrong? Performance plateaus are a common challenge. This can occur when the acquisition function no longer selects informative samples or when the surrogate model cannot generalize further with the current data. It may indicate that you have reached the limits of your initial chemical space exploration. Consider switching your query strategy, re-examining the diversity of your initial data pool, or incorporating a generative model to create novel, informative candidates instead of relying on a static library [3] [1] [2].

How do I choose the right query strategy for my regression task, like predicting binding affinity? For regression tasks, uncertainty-driven strategies are often effective. In benchmark studies, strategies such as LCMD and tree-based uncertainty (Tree-based-R) outperformed random sampling and geometry-based methods, especially in the early stages of an AL campaign when data are scarce [3]. As the labeled set grows, the differences between strategies tend to diminish.

What is the role of the 'oracle' in an Active Learning setup? The oracle is the source of ground-truth labels. In molecular design, this is typically a computationally expensive and high-fidelity method, such as Absolute Binding Free Energy (ABFE) calculations using molecular dynamics (e.g., ESMACS), or it could be actual experimental results [1]. The surrogate model is trained to approximate this oracle at a much lower computational cost.

What batch size should I use in Generative Active Learning (GAL) cycles? The choice of batch size involves a trade-off between exploration efficiency and computational load. In GAL protocols, larger batch sizes (e.g., up to 1000 molecules per cycle) have been shown to give a more comprehensive picture of the chemical space and can lead to higher-scoring molecules [1]. However, the optimal value depends on your computational resources and on the diversity of the generated molecules.

Troubleshooting Guides

Problem: The AL algorithm gets stuck in a local optimum, generating similar molecules.

This is a sign that the algorithm is over-exploiting a specific region of chemical space and lacks sufficient exploration.

- Potential Cause 1: The acquisition function over-emphasizes exploitation. An acquisition function based purely on the surrogate model's predicted score will continuously select molecules it already believes are good.

- Potential Cause 2: Lack of diversity in the generated batches. The generative model or the selection strategy is not incentivized to create or choose structurally diverse candidates.

- Potential Cause 3: The surrogate model's predictions are overconfident outside its domain of applicability.

| Solution | Methodology | Expected Outcome |
|---|---|---|
| Implement Hybrid Query Strategies | Combine an uncertainty-based acquisition function with a diversity-based one. For example, use a strategy like RD-GS, which balances model uncertainty with data diversity [3]. | Broader exploration of the chemical space, reducing the recurrence of structurally similar molecules. |
| Use a Generative Model with Diversity Penalties | In a GAL workflow, incorporate scoring components that penalize similarity to already-sampled compounds or reward novelty during the reinforcement learning phase [1]. | The generative AI creates a more diverse set of candidate molecules in each cycle. |
| Adjust Batch Size | Increase the batch size in each AL cycle. Studies on exascale computing platforms have shown that larger batch sizes (e.g., 1000) can improve the diversity of discovered ligands [1]. | A more comprehensive and representative sample of the chemical space is selected for oracle evaluation per cycle. |
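
The hybrid strategy in the first row can be illustrated with a small greedy selector. This is a generic uncertainty-times-novelty heuristic, not the RD-GS algorithm from [3]; fingerprints are assumed to be Python sets of on-bit indices (for example, the on bits of a Morgan fingerprint), and the `diversity_weight` parameter is a hypothetical knob introduced here.

```python
from typing import Dict, List, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two fingerprints given as sets of on-bit indices."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def select_diverse_batch(
    uncertainty: Dict[str, float],
    fingerprints: Dict[str, Set[int]],
    batch_size: int,
    diversity_weight: float = 1.0,
) -> List[str]:
    """Greedily build a batch favouring uncertain molecules that differ from those already chosen.

    Each candidate is scored as
        uncertainty * (1 - diversity_weight * max Tanimoto similarity to the current batch),
    so a near-duplicate of an already selected molecule is heavily penalised.
    """
    batch: List[str] = []
    remaining = sorted(uncertainty)  # sorted so that ties are broken deterministically

    def score(mol_id: str) -> float:
        max_sim = max((tanimoto(fingerprints[mol_id], fingerprints[b]) for b in batch), default=0.0)
        return uncertainty[mol_id] * (1.0 - diversity_weight * max_sim)

    while remaining and len(batch) < batch_size:
        best = max(remaining, key=score)
        batch.append(best)
        remaining.remove(best)
    return batch


# Toy data: "B" is an exact fingerprint copy of "A", "C" is structurally unrelated.
uncertainty = {"A": 0.90, "B": 0.85, "C": 0.50}
fingerprints = {"A": {1, 2, 3}, "B": {1, 2, 3}, "C": {7, 8}}
print(select_diverse_batch(uncertainty, fingerprints, batch_size=2))  # ['A', 'C']
```

Setting `diversity_weight=0.0` recovers plain uncertainty sampling, which would pick the redundant pair A and B instead.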

Problem: The surrogate model's predictions are inaccurate and mislead the AL process.

A poor surrogate model causes the AL algorithm to select suboptimal or uninformative candidates.

- Potential Cause 1: The initial training set for the surrogate model is too small or non-representative.

- Potential Cause 2: Model drift has occurred because the underlying hypothesis space of the AutoML system has changed. The surrogate model is no longer a good fit for the data being evaluated [3].

- Potential Cause 3: The surrogate model is used outside its applicability domain.

| Solution | Methodology | Expected Outcome |
|---|---|---|
| Leverage Automated Machine Learning (AutoML) | Use an AutoML framework to automatically search and optimize between different model families (e.g., random forest, neural networks) and their hyperparameters. This ensures the surrogate model is robust and well-tuned for the specific dataset [3]. | A surrogate model with higher predictive accuracy and better generalization to new, unseen molecules. |
| Implement a Robust Model Update Protocol | In each AL cycle, retrain the surrogate model on the newly expanded labeled dataset. For neural network-based surrogates like ChemProp, this involves a defined hyperparameter optimization routine using cross-validation [1]. | The surrogate model adapts to new data and maintains its predictive power as the chemical space exploration evolves. |
| Apply Domain Awareness | Use tools like QSARtuna for automatic model selection or incorporate filters that detect when a generated molecule falls outside the structural space of the training data [1]. | Prevents the AL algorithm from being misled by highly uncertain predictions on molecules that are too dissimilar from the training set. |
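
A simple way to implement the domain-awareness filter is a nearest-neighbour similarity check against the training set. The sketch below assumes fingerprints stored as sets of on-bit indices; the 0.4 threshold is an arbitrary illustrative value, and this is not how QSARtuna itself defines an applicability domain.

```python
from typing import Iterable, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def in_applicability_domain(
    candidate: Set[int], training_fps: Iterable[Set[int]], threshold: float = 0.4
) -> bool:
    """Return True if the candidate's most similar training molecule reaches `threshold`.

    The 0.4 default is illustrative only; calibrate it for each dataset and fingerprint type.
    """
    nearest = max((tanimoto(candidate, fp) for fp in training_fps), default=0.0)
    return nearest >= threshold


training = [{1, 2, 3, 4}, {10, 11}]
print(in_applicability_domain({1, 2, 3, 5}, training))  # True  (nearest similarity 3/5 = 0.6)
print(in_applicability_domain({20, 21}, training))      # False (no shared bits)
```

Predictions for molecules that fail this check can be discarded or down-weighted before acquisition.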

Problem: The computational cost of the oracle is prohibitively high.

The whole premise of AL is to minimize oracle calls, but the process can still be expensive.

- Potential Cause 1: The acquisition function selects too many candidates that are ultimately uninformative.

- Potential Cause 2: The oracle used (e.g., detailed MD simulations) is inherently resource-intensive.

| Solution | Methodology | Expected Outcome |
|---|---|---|
| Adopt a Multi-Fidelity Modeling Approach | Use a cheaper, low-fidelity oracle (like a docking score) to pre-screen candidates. Only the most promising molecules from this pre-screening are then evaluated with the high-fidelity oracle (like ABFE calculations) [1]. | A significant reduction in the number of expensive oracle calls, streamlining the DMTA cycle. |
| Optimize Query Strategy for Informativeness | Shift from a pure expected-model-change strategy to an uncertainty-sampling strategy. This selects molecules the surrogate model is most uncertain about, maximizing the information gain per oracle query [4] [5]. | Fewer oracle evaluations are needed to achieve the same level of model performance or to find a high-affinity ligand. |
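
The multi-fidelity funnel in the first row reduces to ranking by a cheap score and spending the expensive budget on a shortlist. In this minimal sketch, both oracles are hypothetical callables (stand-ins for, e.g., a docking program and an ABFE workflow), lower scores are assumed to be better, and `keep_fraction` is an illustrative parameter.

```python
from typing import Callable, List, Sequence, Tuple


def multi_fidelity_screen(
    candidates: Sequence[str],
    cheap_oracle: Callable[[str], float],
    expensive_oracle: Callable[[str], float],
    keep_fraction: float = 0.1,
) -> List[Tuple[str, float]]:
    """Rank all candidates with the cheap oracle, then call the expensive oracle on the top fraction only.

    Lower scores are treated as better for both oracles, as with docking scores and
    binding free energies. Returns (molecule, expensive score) pairs, best first.
    """
    n_keep = max(1, int(len(candidates) * keep_fraction))
    shortlist = sorted(candidates, key=cheap_oracle)[:n_keep]  # low-fidelity pre-screen
    scored = [(mol, expensive_oracle(mol)) for mol in shortlist]  # high-fidelity evaluation
    return sorted(scored, key=lambda pair: pair[1])
```

With `keep_fraction=0.1`, screening 10,000 molecules costs 10,000 cheap evaluations but only 1,000 expensive ones.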

Experimental Protocols

Protocol 1: Generative Active Learning (GAL) for Molecular Optimization

This protocol combines generative AI with physics-based oracles for de novo molecular design [1].

- Initialization: Train an initial surrogate model on a small set of molecules with labels from the oracle (e.g., a docking score or a small set of binding affinities).

- Generation: Use a generative model (e.g., REINVENT) to propose a large pool of novel molecules. The generation is guided by a scoring function that heavily weights the prediction of the surrogate model.

- Acquisition: From the generated pool, select a batch of molecules for oracle evaluation. The selection can be based on the surrogate model's prediction (for exploitation) and its uncertainty (for exploration).

- Oracle Evaluation: Evaluate the selected batch of molecules using the high-fidelity oracle (e.g., run ESMACS molecular dynamics simulations to compute binding free energies).

- Model Update: Add the new molecule-oracle label pairs to the training set. Retrain and update the surrogate model with this expanded dataset.

- Iteration: Repeat the Generation, Acquisition, Oracle Evaluation, and Model Update steps until a stopping criterion is met, such as reaching a target performance level or a maximum number of iterations.
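
The loop above can be sketched generically, with the generator, surrogate, and oracle passed in as functions. This is a toy illustration under stated assumptions, not the workflow of [1]: molecules are stand-in integer IDs, higher oracle scores are treated as better (negate binding free energies before use), acquisition uses an upper confidence bound, and `toy_generate`, `toy_fit`, and `toy_oracle` are hypothetical placeholders for REINVENT, ChemProp, and ESMACS respectively.

```python
from typing import Callable, Dict, Iterable, Tuple

Surrogate = Callable[[int], Tuple[float, float]]  # molecule -> (predicted score, uncertainty)


def ucb(mean: float, std: float, kappa: float) -> float:
    """Upper confidence bound: exploitation (mean) plus kappa-weighted exploration (std)."""
    return mean + kappa * std


def generative_active_learning(
    generate: Callable[[Surrogate], Iterable[int]],
    fit_surrogate: Callable[[Dict[int, float]], Surrogate],
    oracle: Callable[[int], float],
    seed_labels: Dict[int, float],
    n_cycles: int,
    batch_size: int,
    kappa: float = 1.0,
) -> Dict[int, float]:
    """Generic GAL loop; higher oracle scores are assumed to be better."""
    labels = dict(seed_labels)
    for _ in range(n_cycles):
        surrogate = fit_surrogate(labels)                                  # initialization / model update
        pool = [m for m in set(generate(surrogate)) if m not in labels]   # generation
        pool.sort(key=lambda m: (-ucb(*surrogate(m), kappa), m))          # acquisition (ties by ID)
        for mol in pool[:batch_size]:
            labels[mol] = oracle(mol)                                      # oracle evaluation
    return labels


def toy_oracle(x: int) -> float:
    return -float((x - 7) ** 2)  # hypothetical oracle whose best "molecule" is 7


def toy_fit(labels: Dict[int, float]) -> Surrogate:
    def predict(x: int) -> Tuple[float, float]:
        nearest = min(labels, key=lambda k: (abs(k - x), k))
        return labels[nearest], float(abs(nearest - x))  # 1-NN value, distance as uncertainty
    return predict


def toy_generate(surrogate: Surrogate) -> Iterable[int]:
    return range(21)  # a real generator would be steered by the surrogate


found = generative_active_learning(toy_generate, toy_fit, toy_oracle, {0: -49.0, 20: -169.0}, 3, 2)
print(max(found, key=found.get))  # 7
```

In this toy run, three cycles of two oracle calls each reach the optimum (ID 7) from two poorly scoring seeds; the `kappa` term is what pushes the loop away from the already-labeled neighbourhood.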

Protocol 2: Pool-Based Active Learning for Virtual Screening

This protocol is used to efficiently screen large, static molecular libraries [3] [2].

- Pool Setup: Define a large pool of unlabeled molecules (e.g., a commercial compound library).

- Initial Labeling: Randomly select a small subset of molecules from the pool and label them using the oracle.

- Model Training: Train a surrogate model (which can be an AutoML-optimized model) on the initially labeled set.

- Query Strategy Application: Apply a query strategy (e.g., uncertainty sampling, query-by-committee) to the entire unlabeled pool to score and rank all molecules by their expected informativeness.

- Batch Selection: Select the top-ranked molecules (a batch) from the pool for oracle evaluation.

- Iteration and Update: The newly labeled data is added to the training set, the model is retrained, and the process repeats.
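
A compact version of Protocol 2, using query-by-committee as the query strategy, is shown below. It is a sketch under simplifying assumptions: molecules are numeric feature tuples, the committee is a set of k-nearest-neighbour regressors fitted on bootstrap resamples (a lazy learner, so the Model Training step amounts to storing the labeled set), and disagreement is the standard deviation of committee predictions.

```python
import random
import statistics
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, ...]
Labeled = List[Tuple[Point, float]]


def knn_predict(train: Labeled, x: Point, k: int = 3) -> float:
    """Mean label of the k nearest training points (squared Euclidean distance)."""
    nearest = sorted(train, key=lambda pair: sum((a - b) ** 2 for a, b in zip(pair[0], x)))[:k]
    return statistics.fmean(y for _, y in nearest)


def committee_disagreement(train: Labeled, x: Point, rng: random.Random, n_members: int = 5) -> float:
    """Std. dev. of predictions from k-NN models fitted on bootstrap resamples."""
    predictions = []
    for _ in range(n_members):
        bootstrap = [rng.choice(train) for _ in train]
        predictions.append(knn_predict(bootstrap, x))
    return statistics.pstdev(predictions)


def pool_based_active_learning(
    pool: Sequence[Point],
    oracle: Callable[[Point], float],
    n_initial: int,
    batch_size: int,
    n_rounds: int,
    seed: int = 0,
) -> Labeled:
    rng = random.Random(seed)
    unlabeled = list(pool)
    rng.shuffle(unlabeled)
    # Initial labeling: a random subset of the pool.
    labeled: Labeled = [(x, oracle(x)) for x in unlabeled[:n_initial]]
    unlabeled = unlabeled[n_initial:]
    for _ in range(n_rounds):
        if not unlabeled:
            break
        # Query strategy: score every unlabeled point by committee disagreement.
        scores = {x: committee_disagreement(labeled, x, rng) for x in unlabeled}
        # Batch selection: the most contested points go to the oracle.
        batch = sorted(unlabeled, key=lambda x: scores[x], reverse=True)[:batch_size]
        labeled.extend((x, oracle(x)) for x in batch)
        chosen = set(batch)
        unlabeled = [x for x in unlabeled if x not in chosen]
    return labeled
```

Swapping `committee_disagreement` for another scoring function changes the query strategy without touching the rest of the loop.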

The Scientist's Toolkit: Key Research Reagents & Solutions

| Item | Function in Active Learning Experiments |
|---|---|
| REINVENT | A generative molecular AI model that uses reinforcement learning to generate novel compounds optimized for a specified scoring function, acting as the "design" engine in a GAL cycle [1]. |
| ChemProp | A directed message-passing neural network (D-MPNN) specifically designed for molecular property prediction. It commonly serves as the high-quality surrogate model in GAL workflows [1]. |
| ESMACS (Enhanced Sampling of MD with Approximation of Continuum Solvent) | A molecular dynamics simulation protocol used as a high-fidelity oracle to calculate absolute binding free energies (as scores) for protein-ligand complexes [1]. |
| QSARtuna | An automated QSAR modeling tool that performs automatic model selection from various classical machine learning algorithms, useful for bootstrapping initial surrogate models from small datasets [1]. |
| AutoML Frameworks | Automated machine learning systems that search for the best model family and hyperparameters, ensuring the surrogate model is robust and saving researchers from manual, repetitive tuning [3]. |

The following table summarizes findings from a large-scale benchmark study of 17 AL strategies within an AutoML framework for small-sample regression, a common scenario in materials and molecular science [3].

| AL Strategy Type | Example Strategies | Early-Stage Performance (Data-Scarce) | Late-Stage Performance (Data-Rich) |
|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperforms random sampling and geometry-based methods. | Differences narrow as all methods converge. |
| Diversity-Hybrid | RD-GS | Clearly outperforms random sampling by selecting informative and diverse samples. | Differences narrow as all methods converge. |
| Geometry-Only | GSx, EGAL | Less effective than uncertainty and hybrid methods initially. | Converges with other methods. |
| Baseline | Random-Sampling | The benchmark against which other strategies are compared. | The benchmark against which other strategies are compared. |

The Critical Role of Hyperparameters in Model Performance and Generalization

FAQs and Troubleshooting Guides

This technical support center provides solutions for researchers working at the intersection of active learning and hyperparameter tuning for molecular models. The following guides address common experimental issues, offering detailed methodologies and data to help you optimize your drug discovery pipelines.

FAQ 1: My Active Learning Model is Not Converging. How Can I Improve Sample Selection?

Issue: The active learning (AL) model shows poor performance or fails to identify high-value compounds after multiple iterations, often due to inefficient sampling from the unlabeled data pool.

Solution: Implement a strategic sampling method that goes beyond random selection. The choice of strategy is critical, especially in the early, data-scarce stages of your experiment [3].

Experimental Protocol: A comprehensive benchmark study evaluated 17 different AL strategies within an Automated Machine Learning (AutoML) framework for materials science regression tasks. The process is as follows [3]:

- Initialization: Start with a small labeled set $L = \{(x_i, y_i)\}_{i=1}^{l}$ and a large pool of unlabeled data $U = \{x_i\}_{i=l+1}^{n}$.

- Iterative Sampling: In each AL iteration, select the most informative sample $x^*$ from $U$ according to the chosen strategy.

- Annotation & Update: Label the selected sample (e.g., via costly computation or experiment) to obtain $y^*$, then update the sets: $L \leftarrow L \cup \{(x^*, y^*)\}$ and $U \leftarrow U \setminus \{x^*\}$.

- Model Retraining: Retrain the AutoML model on the expanded set $L$ and evaluate its performance on a held-out test set.

- Stopping: The process repeats until a stopping criterion (e.g., performance plateau or budget exhaustion) is met. Model performance is tracked using metrics like Mean Absolute Error (MAE) and R².
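
The evaluation metrics and a plateau-based stopping rule from the last two steps might look like this. The `patience` and `min_delta` values are hypothetical defaults, not settings from the benchmark in [3].

```python
from typing import Sequence


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean Absolute Error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination (undefined if all y_true values are equal)."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def has_plateaued(mae_history: Sequence[float], patience: int = 3, min_delta: float = 0.01) -> bool:
    """True if the best MAE of the last `patience` iterations did not beat the earlier best by min_delta."""
    if len(mae_history) <= patience:
        return False
    best_before = min(mae_history[:-patience])
    best_recent = min(mae_history[-patience:])
    return best_recent > best_before - min_delta
```

Calling `has_plateaued` after each retraining round, alongside a check on the remaining labeling budget, gives the two stopping criteria named above.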

Data Presentation: The benchmark tested various strategies against a random-sampling baseline. The table below summarizes the performance of key strategy types in the early data-scarce phase [3].

| Strategy Type | Key Principle | Early-Stage Performance (vs. Random Sampling) |
|---|---|---|
| Uncertainty-Driven | Selects samples where the model's prediction is most uncertain. | Clearly outperforms baseline |
| Diversity-Hybrid | Selects samples that are both informative and diverse in the feature space. | Clearly outperforms baseline |
| Geometry-Only | Selects samples based solely on data distribution geometry. | Underperforms compared to uncertainty and hybrid methods |

Key Takeaway: For optimal results in small-sample regimes, use uncertainty-driven (e.g., LCMD, Tree-based-R) or diversity-hybrid (e.g., RD-GS) strategies. As the labeled set grows, the performance gap between different strategies narrows [3].

FAQ 2: Which Hyperparameter Tuning Method Should I Use for My Molecular Property Prediction Model?

Issue: A Graph Neural Network (GNN) model for molecular property prediction is not achieving state-of-the-art performance, and manual hyperparameter tuning is proving inefficient and computationally prohibitive.

Solution: Automate the Hyperparameter Optimization (HPO) process using a systematic sampling algorithm. The choice of algorithm depends on your computational budget and search space [6].

Experimental Protocol: Azure Machine Learning's framework provides a robust methodology for HPO. The core steps are [6]:

- Define Search Space: Specify the range of values for each hyperparameter (e.g., learning rate, batch size, number of hidden layers). Ranges can be discrete (`Choice`) or continuous (`Uniform`, `Normal`).

- Select Sampling Algorithm: Choose a method to explore the search space:

  - Random Sampling: A good starting point for identifying promising regions. Supports early termination of low-performing jobs.

  - Bayesian Sampling: Recommended if you have a sufficient budget, i.e., a maximum number of trials at least 20 times the number of hyperparameters being tuned. It selects new samples based on the results of previous ones to improve the primary metric efficiently.

  - Grid Sampling: Feasible only for small, discrete search spaces, because it exhaustively tries every combination.

- Set Objective: Define the `primary_metric` (e.g., accuracy, AUC-ROC) and the `goal` (maximize or minimize) that the sweep job will optimize.

- Configure Early Termination: Use a policy such as `BanditPolicy` to automatically terminate poorly performing jobs, freeing up computational resources.
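
To make the interplay between random sampling and early termination concrete, here is a self-contained sketch. It does not use the Azure Machine Learning SDK; the termination rule is a simplified re-implementation of the idea behind `BanditPolicy` (stop a trial whose metric falls below the best value seen at the same evaluation point divided by `1 + slack_factor`), and the search space and `toy_train` learning curve are hypothetical.

```python
import math
import random
from typing import Callable, Dict, Iterable, List, Tuple

Config = Dict[str, float]


def sample_config(rng: random.Random) -> Config:
    """Draw one configuration from an illustrative search space."""
    return {
        "learning_rate": 10 ** rng.uniform(-4, -1),  # log-uniform over [1e-4, 1e-1]
        "hidden_layers": float(rng.choice([1, 2, 3])),
        "dropout": rng.uniform(0.0, 0.5),
    }


def random_search_with_bandit(
    train_fn: Callable[[Config], Iterable[float]],
    n_trials: int,
    slack_factor: float = 0.1,
    seed: int = 0,
) -> List[Tuple[Config, float, bool]]:
    """Random search with bandit-style early stopping.

    A trial stops when its metric at some epoch is below best_at_that_epoch / (1 + slack_factor).
    Metrics are assumed positive and higher-is-better (e.g. accuracy, AUC-ROC).
    Returns (config, last reported metric, stopped_early) for every trial.
    """
    rng = random.Random(seed)
    best_at_epoch: Dict[int, float] = {}
    results = []
    for _ in range(n_trials):
        config = sample_config(rng)
        last_metric, stopped = -math.inf, False
        for epoch, metric in enumerate(train_fn(config)):
            last_metric = metric
            best = best_at_epoch.get(epoch)
            if best is not None and metric < best / (1 + slack_factor):
                stopped = True  # early termination frees resources for other trials
                break
            best_at_epoch[epoch] = metric if best is None else max(best, metric)
        results.append((config, last_metric, stopped))
    return results


def toy_train(config: Config, epochs: int = 5) -> Iterable[float]:
    """Hypothetical learning curve that peaks for learning rates near 10**-2.5."""
    quality = 1.0 - abs(math.log10(config["learning_rate"]) + 2.5) / 3.0
    for epoch in range(epochs):
        yield quality * (1.0 - 0.5 ** (epoch + 1))


trials = random_search_with_bandit(toy_train, n_trials=20)
best_config, best_metric, _ = max(trials, key=lambda t: t[1])
```

Comparing trials at the same epoch, rather than against a finished trial's final score, avoids killing every new trial during its first few epochs.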

Data Presentation: The table below compares the key hyperparameter sampling algorithms to guide your selection [6].

| Sampling Algorithm | Best For | Key Advantage | Key Limitation |
|---|---|---|---|
| Random | Initial exploration; diverse search spaces. | Efficiently finds promising regions; supports early termination. | May not find the absolute optimal point. |
| Bayesian | Maximizing performance with a sufficient budget. | Efficiently uses prior results to select new samples. | Requires a higher number of jobs; lower parallelism can be beneficial. |
| Grid | Small, discrete search spaces. | Exhaustively searches all combinations. | Computationally intractable for large spaces. |

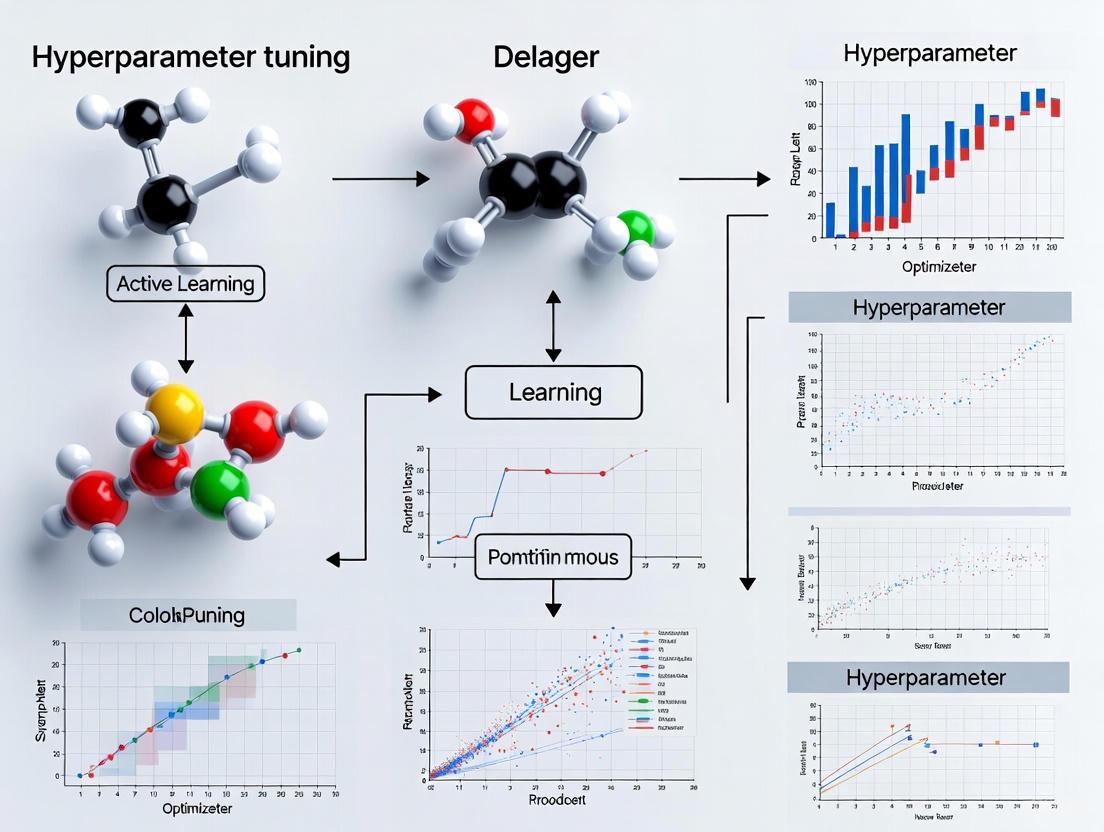

Visual Workflow: The following diagram illustrates the logical relationship between the tuning method and the model training process.

FAQ 3: How Can I Effectively Integrate Active Learning with an Automated Hyperparameter Tuning Pipeline?

Issue: The surrogate model in an active learning loop is underperforming, but its hyperparameters are fixed, leading to suboptimal sample selection and wasted computational resources.

Solution: Integrate Automated Machine Learning (AutoML) into your active learning cycle to dynamically optimize the surrogate model's architecture and hyperparameters at each iteration [3].

Experimental Protocol: This protocol combines the concepts from FAQ 1 and FAQ 2 into a robust, automated pipeline for molecular optimization [3]:

- Setup: Begin with a small initial labeled dataset $L$ and a large pool of unlabeled molecular structures $U$.

- Active Learning Loop: For a predetermined number of iterations:

  a. AutoML Optimization: Use an AutoML framework on $L$ to automatically search for the best model family (e.g., linear models, tree-based ensembles, neural networks) and its hyperparameters. This yields an optimally tuned surrogate model for the current iteration.

  b. Inference: Use the AutoML-tuned model to predict properties and, for some strategies, uncertainties for all molecules in $U$.

  c. Informed Selection: Apply your chosen AL strategy (e.g., uncertainty sampling, greedy selection) to select the most informative batch of molecules $\{x^*\}$ from $U$.

  d. Oracle Evaluation: Obtain the true labels for the selected molecules from your "oracle" (e.g., alchemical free energy calculations, experimental measurement) [7].

  e. Data Update: Add the newly labeled pairs $\{(x^*, y^*)\}$ to $L$ and remove the selected molecules from $U$.

- Output: The final model and the set of identified high-performance molecules.
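
Step a can be reduced to its essentials: score several candidate model families by cross-validation on the labeled set and refit the winner. The sketch below only stands in for a real AutoML framework; it assumes a single scalar feature per molecule for brevity, and the candidate set (mean baseline, linear fit, two k-nearest-neighbour models) is illustrative.

```python
import statistics
from typing import Callable, Dict, List, Tuple

Data = List[Tuple[float, float]]  # (feature, label) pairs; one scalar feature for brevity
Model = Callable[[float], float]
Fitter = Callable[[Data], Model]


def fit_mean(data: Data) -> Model:
    mean = statistics.fmean(y for _, y in data)
    return lambda x: mean


def fit_linear(data: Data) -> Model:
    """Ordinary least squares with one feature."""
    mx = statistics.fmean(x for x, _ in data)
    my = statistics.fmean(y for _, y in data)
    sxx = sum((x - mx) ** 2 for x, _ in data)
    slope = sum((x - mx) * (y - my) for x, y in data) / sxx if sxx else 0.0
    return lambda x: my + slope * (x - mx)


def make_knn(k: int) -> Fitter:
    def fit(data: Data) -> Model:
        def predict(x: float) -> float:
            nearest = sorted(data, key=lambda pair: abs(pair[0] - x))[:k]
            return statistics.fmean(y for _, y in nearest)
        return predict
    return fit


def cv_mae(fitter: Fitter, data: Data, n_folds: int = 3) -> float:
    """Cross-validated mean absolute error with interleaved folds."""
    errors = []
    for fold in range(n_folds):
        train = [p for i, p in enumerate(data) if i % n_folds != fold]
        test = [p for i, p in enumerate(data) if i % n_folds == fold]
        model = fitter(train)
        errors.extend(abs(model(x) - y) for x, y in test)
    return statistics.fmean(errors)


def automl_step(candidates: Dict[str, Fitter], labeled: Data) -> Tuple[str, Model]:
    """Pick the candidate with the lowest CV MAE, then refit it on all labeled data."""
    best_name = min(candidates, key=lambda name: cv_mae(candidates[name], labeled))
    return best_name, candidates[best_name](labeled)


CANDIDATES: Dict[str, Fitter] = {
    "mean": fit_mean,
    "linear": fit_linear,
    "knn_1": make_knn(1),
    "knn_3": make_knn(3),
}
```

Calling `automl_step` at the start of every iteration lets the selected model family change as the labeled set grows, which is the behaviour the protocol relies on.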

Visual Workflow: The integrated pipeline for molecular optimization, combining AutoML and Active Learning, is illustrated below.

The Scientist's Toolkit: Essential Research Reagents for Computational Experiments

This table details key "reagents" used in building and tuning molecular models within active learning frameworks.

| Item / "Reagent" | Function & Explanation | Example from Literature |
|---|---|---|
| Alchemical Free Energy Calculations | Serves as a high-accuracy "oracle" to provide training data for the active learning model by calculating binding affinities [7]. | Used as the oracle to identify high-affinity phosphodiesterase 2 (PDE2) inhibitors; provided accurate labels for ML model training [7]. |
| Molecular Representations (Features) | Encodes a molecule's structure into a fixed-size vector for machine learning model consumption [7]. | Benchmarked representations include 2D/3D RDKit descriptors, PLEC fingerprints (protein-ligand interaction), and interaction energy matrices (MDenerg) [7]. |
| Ligand Selection Strategies | The algorithm that decides which molecules from the unlabeled pool should be evaluated next by the oracle [7]. | Strategies include "greedy" (top predicted binders), "uncertain" (largest prediction uncertainty), and "mixed" (balances both criteria) [7]. |
| Functional Group Masking (MLM-FG) | A pre-training task for molecular language models that masks chemically significant subsequences in SMILES strings, forcing the model to learn fundamental chemical concepts [8]. | Used in the MLM-FG model, which outperformed existing SMILES- and graph-based models on 9 out of 11 molecular property prediction tasks [8]. |
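
The three ligand selection strategies in the table can be expressed as ranking rules over the surrogate's predictions. In this sketch, each molecule has a predicted binding free energy (more negative is better) and an uncertainty; the `mixed` rule shown here, which picks the most uncertain molecules from a shortlist of top predicted binders, is one plausible way of balancing both criteria and may differ from the exact definition used in [7].

```python
from typing import Dict, List, Tuple

# molecule id -> (predicted binding free energy in kcal/mol, prediction uncertainty);
# a more negative free energy means a stronger predicted binder.
Predictions = Dict[str, Tuple[float, float]]


def greedy(preds: Predictions, n: int) -> List[str]:
    """Top predicted binders."""
    return sorted(preds, key=lambda m: preds[m][0])[:n]


def uncertain(preds: Predictions, n: int) -> List[str]:
    """Largest prediction uncertainty."""
    return sorted(preds, key=lambda m: preds[m][1], reverse=True)[:n]


def mixed(preds: Predictions, n: int, shortlist_factor: int = 3) -> List[str]:
    """Most uncertain molecules within a shortlist of the best predicted binders."""
    shortlist = greedy(preds, shortlist_factor * n)
    return sorted(shortlist, key=lambda m: preds[m][1], reverse=True)[:n]


preds = {"a": (-10.0, 0.1), "b": (-9.0, 0.5), "c": (-8.0, 2.0), "d": (-5.0, 3.0)}
print(greedy(preds, 1), uncertain(preds, 1), mixed(preds, 1))  # ['a'] ['d'] ['c']
```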

Frequently Asked Questions (FAQs)

Q1: What are the most common rookie mistakes in molecular modeling and how can I avoid them? Several common, yet easily avoidable, errors can compromise modeling results:

- Incorrect Ionization States: At physiological pH, basic aliphatic amines are typically protonated and carboxylic acids deprotonated. An incorrect state can drastically alter docking results and electrostatic interactions [9].

- Wrong Stereochemistry: Always verify the absolute stereochemistry (R/S) of stereocenters, especially after modifying a structure, as atom priority can change [9].

- Unrealistic Conformations: Converting 2D structures to 3D can introduce high-energy conformations like axial substituents on rings, syn-pentane interactions, or non-planar aromatic rings. Always inspect the final minimized structure [9].

- Incorrect Cis/Trans Bonds: Energy minimization can sometimes incorrectly flip amide bonds from trans to cis. Check the cis/trans geometry of amide and double bonds in your final structure [9].

Q2: I'm getting a "Residue not found in topology database" error in GROMACS. What should I do?

This error in pdb2gmx means the force field you selected lacks parameters for a molecule or residue in your structure [10]. Your options are:

- Check Naming: Verify if the residue exists in the database under a different name and rename your structure accordingly [10].

- Find Topology Parameters: Search a reputable source for a topology file (.itp) for the molecule and include it in your system's .top file [10].

- Parameterize the Molecule: Parameterize the residue yourself (advanced, time-consuming) or find published parameters consistent with your force field [10].

- Use a Different Force Field: Switch to a force field that already includes parameters for your molecule [10].

Q3: My molecular dynamics job is failing with an "Out of memory" error. How can I fix this? This occurs when your system demands more memory than is available. You can:

- Reduce System Scope: Analyze a smaller number of atoms or a shorter trajectory [10].

- Check Unit Errors: A common cause is confusing nanometers and Ångströms, which creates a simulation box whose edges are 10 times too long and whose volume is therefore 10³ times too large [10].

- Scale Up Hardware: Use a computer with more RAM or install more memory [10].
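
The unit error above is easy to quantify, because box volume scales with the cube of the edge length. GROMACS uses nanometers for lengths; the water number density below (about 33.4 molecules per nm³) is an approximate figure for liquid water near room temperature, used only to show how the solvent count, and hence memory use, explodes. The estimate ignores the volume occupied by the solute.

```python
ANGSTROM_PER_NM = 10.0
WATERS_PER_NM3 = 33.4  # approximate number density of liquid water near room temperature


def cubic_box_volume_nm3(edge_nm: float) -> float:
    return edge_nm ** 3


intended_edge_nm = 50.0 / ANGSTROM_PER_NM  # a 50 Å box is 5 nm per edge
mistaken_edge_nm = 50.0                    # "50" entered into a tool whose length unit is nm

volume_ratio = cubic_box_volume_nm3(mistaken_edge_nm) / cubic_box_volume_nm3(intended_edge_nm)
intended_waters = cubic_box_volume_nm3(intended_edge_nm) * WATERS_PER_NM3
mistaken_waters = cubic_box_volume_nm3(mistaken_edge_nm) * WATERS_PER_NM3

print(volume_ratio)                                    # 1000.0
print(round(intended_waters), round(mistaken_waters))  # 4175 4175000
```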

Q4: Why is my software reporting that it cannot find force fields? This typically indicates a problem with the software installation or environment paths: the program cannot locate its database of force-field information. Reinstalling the software or correctly configuring your environment variables usually resolves this [10].

Q5: How can I find 3D structures that are geometrically similar to my protein of interest? The NCBI's VAST (Vector Alignment Search Tool) service can identify structurally similar proteins or 3D domains based purely on shape, which can find distant homologs missed by sequence comparison [11].

- If your structure is in the MMDB database, retrieve its summary page and use the "Molecules and interactions" table to find similar structures [11].

- You can also go directly to the VAST home page and enter your structure's PDB or MMDB ID [11].

Troubleshooting Guides

Issue 1: Handling Missing Atoms and Long Bonds in Structure Preparation

Problem: During topology generation (e.g., with pdb2gmx), the software reports long bonds and/or missing atoms, often halting the process [10].

Diagnosis and Solution:

- Check the Output Log: The screen output specifies which atom is missing. This is your starting point for investigation [10].

- Identify the Cause:

  - Missing Hydrogens: Use the `-ignh` flag to ignore all hydrogens in the input file and let the software add them correctly according to the force field's database [10].

  - Incomplete Model: Check for `REMARK 465` (missing residues) and `REMARK 470` (missing atoms) entries in your PDB file. GROMACS has no built-in tool for this; you must use external software such as WHAT IF to model in the missing atoms before proceeding [10].

  - Terminal Residue Issues: For N-terminal residues, ensure you have properly specified the `-ter` flag and, when using AMBER force fields, that the residue name is correctly prefixed (e.g., `NALA` for an N-terminal alanine) [10].

Issue 2: Navigating the Computational Cost of Ultralarge Virtual Screens

Problem: Screening multi-billion-compound libraries with traditional molecular docking is computationally prohibitive, requiring massive resources and time [12].

Solution: Implement a machine learning-guided docking workflow to reduce the number of compounds that require explicit docking by over 1,000-fold [12].

Protocol: Machine Learning-Accelerated Virtual Screening

- Objective: Rapidly identify top-scoring compounds from a library of billions.

- Workflow Overview: The following diagram illustrates the hybrid ML-docking pipeline that efficiently traverses vast chemical space:

- Step-by-Step Methodology [12]:

- Initial Docking & Training Set Creation: Perform molecular docking of a randomly selected subset of 1 million compounds from the vast library against your target protein.

- Classifier Training: Use the docking scores from step 1 to train a machine learning classifier (e.g., CatBoost with Morgan2 fingerprints) to distinguish between high-scoring ("active") and low-scoring compounds.

- Conformal Prediction: Apply the trained model to the entire multi-billion-compound library using the conformal prediction framework. This statistical method allows you to control the error rate and select a "virtual active" set of compounds likely to be top-binders.

- Focused Docking: Perform explicit molecular docking only on the greatly reduced "virtual active" set (e.g., ~10% of the original library).

- Validation: Send the final top-ranking compounds from the focused docking for experimental testing.
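
The conformal prediction step can be illustrated with a class-conditional (Mondrian) inductive conformal predictor. This is a generic sketch, not the exact implementation in [12]: classifier probabilities are taken as given (in [12] they would come from the CatBoost model), the toy calibration set is tiny so the significance level `epsilon` has to be large, and a compound counts as a virtual active only when its prediction set is exactly {1}.

```python
from typing import List, Sequence, Set


def nonconformity(p_active: float, label: int) -> float:
    """1 minus the probability the classifier assigns to `label` (label 1 = top-scoring 'active')."""
    return 1.0 - (p_active if label == 1 else 1.0 - p_active)


def mondrian_p_value(
    calib_probs: Sequence[float], calib_labels: Sequence[int], p_active_new: float, label: int
) -> float:
    """Class-conditional p-value: compare only with calibration examples whose true label is `label`."""
    scores = [nonconformity(p, y) for p, y in zip(calib_probs, calib_labels) if y == label]
    alpha = nonconformity(p_active_new, label)
    return (sum(s >= alpha for s in scores) + 1) / (len(scores) + 1)


def prediction_set(
    calib_probs: Sequence[float], calib_labels: Sequence[int], p_active_new: float, epsilon: float
) -> Set[int]:
    """All labels whose p-value exceeds the significance level epsilon."""
    return {c for c in (0, 1) if mondrian_p_value(calib_probs, calib_labels, p_active_new, c) > epsilon}


def virtual_actives(
    calib_probs: Sequence[float], calib_labels: Sequence[int], library_probs: Sequence[float], epsilon: float
) -> List[int]:
    """Indices of library compounds whose prediction set is exactly {1}."""
    return [
        i for i, p in enumerate(library_probs)
        if prediction_set(calib_probs, calib_labels, p, epsilon) == {1}
    ]


calib_probs = [0.9, 0.8, 0.7, 0.2, 0.1, 0.3, 0.05, 0.15]
calib_labels = [1, 1, 1, 0, 0, 0, 0, 0]
print(virtual_actives(calib_probs, calib_labels, [0.95, 0.02, 0.5], epsilon=0.3))  # [0]
```

Only the compounds returned by `virtual_actives` would go on to explicit docking; lowering `epsilon` (which requires a larger calibration set) makes the selection stricter.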

Issue 3: Hyperparameter Tuning for Active Learning in Yield Prediction

Problem: Building generalizable machine learning models for chemical reaction yield prediction requires efficient exploration of vast substrate spaces with limited data.

Solution: Use an active learning loop with uncertainty sampling to strategically select experiments for hyperparameter tuning and model improvement.

Protocol: Active Learning for Substrate Space Mapping

- Objective: Construct a predictive yield model for a virtual space of >22,000 compounds using fewer than 400 initial data points [13].

- Workflow Overview: The following diagram shows the iterative active learning cycle for model building:

- Step-by-Step Methodology [13]:

- Define Chemical Space: Create a virtual product space from commercially available building blocks (e.g., 8 aryl bromides × 2,776 alkyl bromides, giving 22,208 possible products).

- Featurization: Generate molecular features using Density Functional Theory (DFT) calculations and difference Morgan fingerprints.

- Initial Sampling: Use dimensionality reduction (UMAP) and hierarchical clustering on the features to select a diverse, representative initial set of substrates for high-throughput experimentation (HTE).

- Model Training & Uncertainty Querying:

- Train a Random Forest model on the acquired HTE yield data.

- Use the model to predict on the entire virtual space.

- Select the next substrates for experimentation based on the model's highest prediction uncertainty (active learning).

- Iterate: Incorporate new experimental results and retrain the model, repeating the uncertainty querying step until model performance plateaus or meets a predefined success criterion.
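
For a Random Forest, prediction uncertainty is commonly estimated from the spread of the individual trees' predictions. The sketch below assumes those per-tree predictions are already available (with scikit-learn they can be collected by calling `predict` on each member of a fitted `RandomForestRegressor.estimators_`); the substrate names and yield values are made up.

```python
import statistics
from typing import Dict, List, Sequence, Set


def tree_spread(per_tree_predictions: Sequence[float]) -> float:
    """Population standard deviation of the individual trees' predicted yields."""
    return statistics.pstdev(per_tree_predictions)


def next_experiments(
    per_tree: Dict[str, Sequence[float]], already_tested: Set[str], batch_size: int
) -> List[str]:
    """Untested substrates with the largest disagreement between trees, most uncertain first."""
    candidates = [s for s in per_tree if s not in already_tested]
    return sorted(candidates, key=lambda s: tree_spread(per_tree[s]), reverse=True)[:batch_size]


per_tree = {
    "s1": [50, 52, 51],   # trees agree: little to learn from testing this substrate
    "s2": [10, 60, 35],   # trees disagree strongly
    "s3": [20, 40, 30],
    "s4": [0, 100, 50],   # most uncertain, but already tested
}
print(next_experiments(per_tree, already_tested={"s4"}, batch_size=2))  # ['s2', 's3']
```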

Molecular Modeling Software & Cost Analysis

The substantial cost of professional molecular modeling software is a key factor driving the need for efficient methods. The table below summarizes cost structures and considerations.

Table 1: 3D Molecular Modeling Software Cost & Licensing

| Software / Aspect | Cost Structure | Key Features & Considerations |
|---|---|---|
| Typical Commercial Software [14] | $50,000 - $1,000,000+ per year | Wide range; costs vary with capabilities, computational resources, support, and training. |
| BioPharmics Platform [14] | $100,000 - $250,000 per year (subscription) | All-inclusive, unlimited users/CPU. Includes Surflex-Dock, ForceGen, training, and support. |
| Critical Cost Factors [14] | Per-token vs. site licenses; computational resources; support & training; maintenance fees | Ease of integration, scalability, and required user training significantly impact total cost of ownership. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Computational Tools for Featured Experiments

| Item / Tool | Function / Role in the Experiment |
|---|---|
| Enamine REAL Space [12] | A "make-on-demand" chemical library containing billions of readily synthesizable compounds used for ultralarge virtual screening. |
| CatBoost Classifier [12] | A machine learning gradient boosting algorithm identified as optimal for balancing speed and accuracy in classifying docking scores. |
| Morgan Fingerprints (ECFP4) [12] | A circular fingerprint that encodes molecular structure and substructures, serving as a key feature for machine learning models. |
| Conformal Prediction (CP) Framework [12] | A statistical framework that provides valid prediction intervals, allowing control of error rates when selecting compounds from vast libraries. |
| AutoQchem Software [13] | An automated tool for generating Density Functional Theory (DFT) features (e.g., LUMO energy) for machine learning featurization. |
| ChEMBL Database [15] | A manually curated database of bioactive molecules with drug-like properties, used for model training and validation. |

A technical guide for streamlining computational drug discovery

This technical support center provides troubleshooting guides and FAQs for researchers using active learning and hyperparameter tuning for molecular models. These resources address common challenges in computational drug discovery, helping you optimize workflows and improve model performance.

Frequently Asked Questions

What are the primary methods for molecular optimization in AI-driven drug discovery? AI-aided molecular optimization methods primarily operate in two distinct spaces [16]:

- Discrete Chemical Space: Methods use direct structural modifications based on molecular representations like SMILES, SELFIES, or molecular graphs. They explore space through iterative generation and selection, including Genetic Algorithm (GA)-based and Reinforcement Learning (RL)-based methods.

- Continuous Latent Space: Methods use encoder-decoder frameworks to transform molecules into continuous vector representations, enabling optimization in a differentiable space. This includes deep learning models like Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs).

How can active learning specifically reduce my experimental burden? Active learning reduces experimental burden by iteratively selecting the most informative experiments to run, rather than relying on exhaustive screening [17] [18]. A well-designed active learning framework proactively tests unseen, informative working conditions to enrich the training data, which significantly improves the generalization of data-driven models. With this approach, learning objectives that would be infeasible with traditional methods can be reached in approximately 300 experiments [17] [19].

My model is performing poorly. How do I systematically diagnose the issue? First, determine if your model is overfitting (high variance, low bias) or underfitting (high bias, low variance) the training data [20].

- For overfitting: Use more training data, reduce model complexity, apply regularization (e.g., Ridge, Lasso), add dropout layers (for neural networks), or employ early stopping.

- For underfitting: Increase model complexity (more parameters), add more input features, perform better feature engineering, or train for more epochs [20] [21].

What's a strategic approach to hyperparameter tuning? Adopt an incremental tuning strategy. For a given experimental goal, categorize your hyperparameters as follows [22]:

- Scientific Hyperparameters: The core parameters whose effect you are trying to measure (e.g., number of model layers, choice of optimizer).

- Nuisance Hyperparameters: Parameters that must be optimized to fairly compare different scientific hyperparameters (e.g., learning rate, which often interacts with model architecture).

- Fixed Hyperparameters: Parameters held constant for the current experiment to manage complexity.

This categorization allows you to design efficient experiments by focusing resources on tuning the most critical parameters [22].

Troubleshooting Guides

Issue: High Computational Cost of Active Learning

Problem: The active learning process is too slow or computationally expensive, especially with large datasets.

Solution: Implement a compute-efficient active learning framework. This involves strategically choosing and annotating data points to optimize the process [23].

Methodology:

- Initialization: Start with a small, randomly selected seed dataset and train an initial model.

- Iterative Loop:

  - Uncertainty Sampling: Use the current model to predict on the unlabeled pool. Prioritize samples where the model's prediction confidence is lowest (e.g., highest entropy).

  - Diversity Sampling: Incorporate a measure of diversity to ensure selected samples are not too similar to each other, improving the coverage of the chemical space. A distance-based cost metric can be useful here [19].

  - Batch Selection: Select a batch of samples that balance uncertainty and diversity for expert labeling (or computational evaluation).

  - Model Update: Retrain the model on the enlarged, annotated dataset.

- Stopping Criterion: Stop when a performance plateau is reached or a computational budget is exhausted.
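
The loop above can be sketched in a few dozen lines of standard-library Python. This is a toy under stated assumptions: each "molecule" is a single float descriptor, the surrogate is a hypothetical bootstrap committee of k-nearest-neighbour regressors (its prediction variance stands in for confidence), diversity is the distance to already-labelled or already-chosen points, and `oracle` is any callable that returns a label. Real pipelines would use fingerprints, a proper model, and a chemically meaningful distance such as Tanimoto.

```python
import random
import statistics

def knn_predict(train, x, k=3):
    """Toy surrogate: mean label of the k nearest labelled points (1-D descriptor)."""
    nearest = sorted(train, key=lambda xy: abs(xy[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)

def committee_variance(labelled, x, rng, n_members=5):
    """Uncertainty = variance of a bootstrap committee's predictions at x."""
    preds = []
    for _ in range(n_members):
        boot = [rng.choice(labelled) for _ in labelled]  # bootstrap resample
        preds.append(knn_predict(boot, x))
    return statistics.pvariance(preds)

def select_batch(labelled, pool, batch_size, rng, diversity_weight=1.0):
    """Greedy batch: high committee variance plus distance from already-chosen points."""
    uncertainty = {x: committee_variance(labelled, x, rng) for x in pool}
    chosen = []
    for _ in range(min(batch_size, len(pool))):
        anchors = [x for x, _ in labelled] + chosen
        def score(x):
            diversity = min(abs(x - a) for a in anchors)  # distance-based diversity term
            return uncertainty[x] + diversity_weight * diversity
        chosen.append(max((x for x in pool if x not in chosen), key=score))
    return chosen

def active_learning(oracle, pool, n_seed=5, batch_size=3, n_cycles=4, seed=0):
    rng = random.Random(seed)
    seed_x = rng.sample(list(pool), n_seed)                    # initialization: random seed set
    labelled = [(x, oracle(x)) for x in seed_x]
    pool = [x for x in pool if x not in seed_x]
    for _ in range(n_cycles):                                  # stopping criterion: fixed budget
        batch = select_batch(labelled, pool, batch_size, rng)  # uncertainty + diversity
        labelled += [(x, oracle(x)) for x in batch]            # labelling / oracle evaluation
        pool = [x for x in pool if x not in batch]
        # model update: the committee is refit from `labelled` on the next call
    return labelled
```

For example, `active_learning(lambda x: x * x, [i / 10 for i in range(50)])` returns 5 seed labels plus 4 batches of 3 acquired labels. The additive `uncertainty + diversity_weight * diversity` score is one simple way to balance the two criteria; in practice the two terms should be put on comparable scales.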

Compute-Efficient Active Learning Workflow

Issue: Model Optimization with Multiple Conflicting Objectives

Problem: You need to optimize a molecule for multiple properties simultaneously (e.g., high bioactivity, good drug-likeness (QED), and synthetic accessibility), but improving one property often degrades another.

Solution: Utilize multi-objective optimization algorithms that can identify a set of optimal compromises (the Pareto front), rather than a single "best" solution [16] [24].

Methodology:

- Pareto-Based Genetic Algorithms (e.g., GB-GA-P): These methods maintain a population of candidate molecules and use evolutionary operations (crossover, mutation) to explore the chemical space. Selection is based on non-domination, meaning a solution is preferred if it is better in at least one objective without being worse in any other. This yields a diverse set of Pareto-optimal molecules [16].

- Reinforcement Learning with Multi-Objective Rewards (e.g., MolDQN, GCPN): An RL agent iteratively modifies a molecule. The reward function is a weighted sum or a more complex function that combines scores from all target properties, guiding the agent toward regions of chemical space that balance all objectives [16] [24].

- Property-Guided Generation in Latent Space: For deep learning models like VAEs, perform Bayesian optimization or other search strategies in the continuous latent space. The acquisition function for the search can be designed to handle multiple objectives, proposing latent vectors that decode into molecules with a better balance of properties [24].
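
Non-domination, as defined above, is straightforward to check directly. The sketch below extracts the first Pareto front from a set of scored molecules. It assumes every objective has been oriented so that larger is better (for example, a synthetic-accessibility score where lower is better would be negated first), and it illustrates only the selection criterion, not the full GB-GA-P algorithm with crossover, mutation, and ranking of later fronts.

```python
def dominates(a, b):
    """True if a is at least as good as b in every objective and strictly better in one.
    All objectives are assumed to be maximised."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(candidates):
    """Names of non-dominated candidates; `candidates` maps name -> tuple of objective values."""
    return [
        name
        for name, obj in candidates.items()
        if not any(dominates(other, obj) for o_name, other in candidates.items() if o_name != name)
    ]
```

For example, `pareto_front({"A": (0.9, 0.4, 0.6), "B": (0.7, 0.8, 0.5), "C": (0.6, 0.3, 0.4), "D": (0.9, 0.4, 0.7)})` returns `["B", "D"]`: `D` dominates `A`, and `C` is dominated by every other molecule. The pairwise check is O(n²), which is acceptable for GA populations of a few hundred molecules.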

Comparison of Multi-Objective Optimization Methods:

| Method | Type | Key Mechanism | Key Feature |
|---|---|---|---|
| GB-GA-P [16] | Genetic Algorithm | Pareto-based selection & evolutionary operations | Identifies a diverse set of Pareto-optimal molecules |
| MolDQN [16] | Reinforcement Learning | Multi-property reward function | Iteratively modifies molecules based on combined rewards |
| Latent Space BO [24] | Deep Learning/Bayesian | Multi-objective acquisition function | Efficiently searches continuous representations |

Issue: Poor Generalization of Data-Driven Models

Problem: Your model performs well on training data but poorly on new, unseen data (overfitting), or it fails to capture the underlying patterns altogether (underfitting).

Solution: A comprehensive approach involving data, features, and model tuning is required [20] [21].

Methodology:

- Data Diagnosis: Plot training and validation error curves. A growing gap between them indicates overfitting; consistently high errors on both indicate underfitting [20].

- Address Data Issues:

  - Feature Engineering & Selection:

    - Feature Creation: Derive new variables from existing ones (e.g., creating molecular descriptors from structural data) [21].

    - Feature Transformation: Normalize data or remove skewness via log/square root transformations [21].

    - Feature Selection: Use domain knowledge, statistical parameters (p-values), or PCA to select the most relevant features and reduce dimensionality [21].

- Model and Hyperparameter Tuning:

  - Apply Regularization: Use L1 (Lasso) or L2 (Ridge) regularization to penalize model complexity [20] [21].

  - Hyperparameter Optimization (HPO): Systematically tune hyperparameters. Following the incremental strategy [22]:

    - Goal: "Determine the impact of model depth."

    - Scientific HP: Number of hidden layers.

    - Nuisance HP: Learning rate (must be re-tuned for each depth).

    - Fixed HP: Activation function (if prior evidence suggests its best choice does not depend on depth).
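
The depth study in the list above can be written as a small driver. It is a sketch: `train_and_eval` is a hypothetical callable that trains a model with the given hyperparameters and returns a validation error, and the learning-rate grid is illustrative. The key point is structural: the nuisance hyperparameter is re-tuned for every value of the scientific one, while the fixed hyperparameters are passed through unchanged.

```python
def incremental_study(train_and_eval, depths, learning_rates, fixed):
    """Measure the effect of depth (scientific HP) after tuning the learning rate
    (nuisance HP) separately for every depth; `fixed` HPs are passed unchanged."""
    results = {}
    for depth in depths:
        # Nuisance HP: try every learning rate for this particular depth
        trials = [(train_and_eval(depth=depth, lr=lr, **fixed), lr) for lr in learning_rates]
        best_error, best_lr = min(trials)  # best nuisance setting for this depth
        results[depth] = {"val_error": best_error, "best_lr": best_lr}
    return results
```

Comparing `results[depth]["val_error"]` across depths is then a fair comparison, because each depth is evaluated at its own best learning rate rather than at one shared value that might favour a particular depth.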

The Scientist's Toolkit

| Research Reagent / Solution | Function in the Context of Molecular Models |
|---|---|
| Genetic Algorithms (GAs) | Heuristic search methods that use crossover and mutation on a population of molecules to evolve towards optimal solutions [16]. |
| Reinforcement Learning (RL) | Trains an agent to take sequential actions (modifying molecules) within a chemical environment, guided by a reward function based on desired properties [16] [24]. |
| Bayesian Optimization (BO) | A sample-efficient strategy for optimizing expensive-to-evaluate functions (like molecular property prediction), often used in the latent space of generative models [24]. |
| Stacked Autoencoder (SAE) | A deep learning model used for unsupervised feature extraction and dimensionality reduction, learning hierarchical representations of molecular data [25]. |
| Particle Swarm Optimization (PSO) | A population-based, swarm-intelligence optimization algorithm that optimizes model parameters by simulating the social behavior of a flock of birds or a school of fish [25]. |
| Active Learning Framework | A closed-loop system that integrates automated actuation, measurement, and a learning function to iteratively select the most informative experiments [17]. |

Implementing Active Learning Loops and Optimization Techniques for Drug Discovery

Frequently Asked Questions (FAQs)

Q1: What is the core purpose of an Active Learning loop in molecular design? Active Learning (AL) is a machine learning strategy designed to optimize the iterative Design-Make-Test-Analyze (DMTA) cycle. Its core purpose is to achieve high model performance or discover optimized molecules while minimizing the number of expensive and time-consuming laboratory or high-fidelity computational experiments (oracle calls). An AL algorithm intelligently selects the most informative data points to label, thereby accelerating the learning process and reducing resource consumption [1] [26].

Q2: In a generative molecular AI context, is data automatically used for retraining after human validation? No, the process is not automatic. In platforms like UiPath's Document Understanding, validated data from an Action Center does not automatically pass back into the model for retraining. A dedicated training module must be included in the workflow. After validation, the task should use the document and validated data to train the model, often involving a "Train Scope" activity. The retrained model must then be uploaded to the relevant system (e.g., an AI Center) to update the pipelines and skills [27]. Similarly, in generative molecular AI, a deliberate step to update the surrogate model with the new, validated data is required in each AL cycle [1].

Q3: What are the common types of Active Learning sampling strategies? Three sampling strategies are commonly described in Active Learning [28]:

- Random Sampling: Data is selected at random from the pool. This is a simple baseline that avoids selection bias but is usually less efficient.

- Uncertainty Sampling (Stream-Based Selective Sampling): The model evaluates unlabeled data points one by one and requests a label whenever its prediction confidence is low, for example below a set threshold.

- Pool-Based Sampling: The model assesses the entire pool of unlabeled data and selects a batch of samples that are most "informative," often based on criteria like uncertainty or diversity, to improve the model most effectively.
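
The difference between the stream-based and pool-based settings is easiest to see side by side. In this sketch, `predict_proba` is a hypothetical callable returning class probabilities for a molecule, prediction entropy is used as the (assumed) confidence measure, and the threshold and batch size are arbitrary. Random sampling, the baseline, would simply be `random.sample(pool, batch_size)`.

```python
import math

def entropy(probs):
    """Shannon entropy of a predicted class distribution (higher = less confident)."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def stream_based_select(stream, predict_proba, threshold):
    """Stream-based selective sampling: inspect one molecule at a time and
    query its label only if the prediction entropy exceeds a threshold."""
    return [x for x in stream if entropy(predict_proba(x)) > threshold]

def pool_based_select(pool, predict_proba, batch_size):
    """Pool-based sampling: rank the whole unlabelled pool and take the top batch."""
    return sorted(pool, key=lambda x: entropy(predict_proba(x)), reverse=True)[:batch_size]
```

With `predict_proba = lambda x: (x, 1 - x)` and the pool `[0.1, 0.48, 0.9, 0.3, 0.65]`, the pool-based selector with `batch_size=2` returns `[0.48, 0.65]`, the two most ambiguous predictions. The stream-based selector never sees the whole pool, so its output depends on the threshold rather than on a fixed batch size.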

Q4: How do I know if my Active Learning loop is working effectively? You should track performance metrics across learning cycles. Effective AL shows a steeper increase in performance (e.g., hit discovery, model accuracy) versus the number of oracle calls compared to passive learning (e.g., random selection). The table below summarizes quantitative improvements observed in molecular design studies [29].

Table 1: Performance Metrics of Active Learning in Molecular Design

| Metric | Baseline (e.g., Random Screening, RL alone) | With Active Learning | Improvement Factor |
|---|---|---|---|
| Hit Discovery Efficiency | Low number of hits for a fixed oracle budget | 5x to 66x more hits for the same budget [29] | 5–66 fold increase |
| Computational Time | Longer time to find a specific number of hits | 4x to 64x reduction in time [29] | 4–64 fold reduction |
| Multi-parameter Optimization | Lower objective score enrichment | Substantial enrichment of the scoring objective [29] | Superior efficacy |

Q5: What is a common pitfall when combining Reinforcement Learning (RL) and Active Learning (AL)? A significant challenge in RL–AL is the feedback loop between the generative model and the surrogate model. The RL agent generates data that is used to train the surrogate, and the surrogate's predictions then guide the RL agent. This can lead to the agent "exploiting" the weaknesses of the surrogate model, potentially generating molecules that score highly on the surrogate but perform poorly with the true oracle. Careful design of the acquisition function and incorporating diversity metrics are crucial to mitigate this [29].

Troubleshooting Guides

Problem 1: The Model is Not Improving Across Active Learning Cycles

Description: After several iterations of the AL loop, the performance of the model (e.g., accuracy, hit rate) has plateaued or is improving very slowly.

Diagnosis and Solution:

- Check Data Diversity: The selected batches may lack diversity, causing the model to overfit to a specific region of the chemical space.

  - Solution: Incorporate diversity-based acquisition functions. Move beyond simple uncertainty sampling and use methods that select a batch of data points that are both uncertain and diverse from each other. For example, methods that maximize the joint entropy or the determinant of the covariance matrix of the batch predictions can enforce diversity [30] [29].

- Assess Surrogate Model Quality: The surrogate model may be inaccurate or have a narrow applicability domain.

- Review Oracle Function: The computational oracle (e.g., a docking score) might be too noisy or not correlate well with the desired real-world property.

  - Solution: Consider using a more accurate, albeit expensive, oracle (e.g., free energy perturbation calculations) for a subset of critical points to guide the learning process more reliably [29].
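
Diversity via the determinant of the batch covariance can be illustrated with a greedy approximation. The sketch assumes you already have a matrix of sampled predictions for the candidates (rows are Monte Carlo dropout passes or ensemble members, columns are molecules); it builds their covariance and adds, one at a time, the molecule that most increases the determinant of the selected submatrix. This is a common greedy heuristic for determinant maximization, not necessarily the exact procedure of the methods cited in [30] [29].

```python
def covariance(samples):
    """Sample covariance between candidates; rows = stochastic passes or ensemble members."""
    n_s, n_c = len(samples), len(samples[0])
    means = [sum(row[j] for row in samples) / n_s for j in range(n_c)]
    return [[sum((row[i] - means[i]) * (row[j] - means[j]) for row in samples) / (n_s - 1)
             for j in range(n_c)] for i in range(n_c)]

def det(matrix):
    """Determinant by Gaussian elimination with partial pivoting."""
    a = [row[:] for row in matrix]
    n, result = len(a), 1.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            return 0.0  # (numerically) singular
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            result = -result  # a row swap flips the sign
        result *= a[col][col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
    return result

def greedy_max_det_batch(cov, batch_size):
    """Greedily add the candidate that maximises det(cov[S, S]) of the selected set S."""
    chosen = []
    for _ in range(min(batch_size, len(cov))):
        best, best_det = None, -1.0
        for i in range(len(cov)):
            if i in chosen:
                continue
            idx = chosen + [i]
            d = det([[cov[r][c] for c in idx] for r in idx])
            if d > best_det:
                best, best_det = i, d
        chosen.append(best)
    return chosen
```

With samples `[[2, 2, 1], [-2, -2, 1], [2, 2, -1], [-2, -2, -1]]`, molecules 0 and 1 have identical, perfectly correlated predictions. Ranking by variance alone would pick both, whereas `greedy_max_det_batch(covariance(samples), 2)` returns `[0, 2]`, because adding molecule 1 would make the determinant zero.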

Problem 2: The Active Learning Loop Fails to Find Any Hits

Description: The AL process is running but is not discovering any molecules that meet the target criteria (e.g., binding affinity threshold).

Diagnosis and Solution:

- Initial Training Pool is Too Small or Non-Representative: The AL loop might not have enough starting information to explore the space effectively.

- Acquisition Function is Too Explorative: The algorithm may be prioritizing complete uncertainty and exploring regions of chemical space that are not fruitful.

  - Solution: Adjust the acquisition function to balance exploration (trying new regions) with exploitation (refining known good regions). For multi-parameter optimization, develop acquisition strategies that are specifically designed for the MPO problem [29].

- Check the Reward Function in RL–AL: In a generative AL setup, the reward function used by the RL agent might be mis-specified.

  - Solution: Decompose the reward function. Instead of relying solely on the expensive oracle, include secondary scoring components like Quantitative Estimate of Drug-likeness (QED) or synthetic accessibility filters to guide the generator towards more realistic and drug-like molecules [1].

Problem 3: Inefficient Retraining After Human-in-the-Loop Validation

Description: The workflow involves human validation (e.g., in Action Center), but the validated data is not efficiently used to update the model.

Diagnosis and Solution:

- Missing Automated Retraining Step: The workflow may lack an explicit step to pass the validated data back to the training module.

  - Solution: Explicitly design the workflow to include a "Train Classifier" or "Train Extractor" activity after the validation task is completed. The resumed task should pass the validated data to this training scope [27].

- Disconnect Between Modern and Classic Approaches: In some platforms, modern project interfaces may not have built-in retraining activities, requiring the use of classic activities that integrate with an AI Center.

  - Solution: You may need to import and use "Intelligent OCR" activities or similar packages that contain the necessary retraining components and connect to a dedicated AI model management system [27].

Experimental Protocols and Workflows

Protocol: Generative Active Learning (GAL) for Molecular Optimization

This protocol combines generative AI with active learning for de novo molecular design, as demonstrated in recent studies [1] [29].

Initialization:

- Define the Objective: Assemble a multi-parameter scoring function (e.g., combining predicted binding affinity, drug-likeness QED, and absence of structural alerts).

- Prepare the Oracle: Select the high-fidelity, expensive computational method that will act as the ground truth (e.g., ESMACS/MMPBSA for absolute binding free energy, FEP).

- Bootstrap the Surrogate Model: Train an initial surrogate model (e.g., a directed message-passing neural network (D-MPNN) such as ChemProp) on a pre-existing dataset or a set of molecules generated and scored with a cheaper method (e.g., docking).

Generative Active Learning Loop:

- Step 1 - Generate Candidates: Use a generative model (e.g., REINVENT) to propose a large pool of novel molecules. The model is guided by a reward function that heavily weights the prediction from the surrogate model.

- Step 2 - Select Batch: From the generated pool, use an acquisition function to select a batch of molecules that are most uncertain and diverse according to the surrogate model. A method like maximizing the determinant of the epistemic covariance matrix (COVDROP) is effective here [30].

- Step 3 - Oracle Evaluation: Evaluate the selected batch of molecules using the expensive oracle (e.g., run ESMACS simulations).

- Step 4 - Update Surrogate Model: Add the newly labeled data (molecules and their oracle scores) to the training set and retrain the surrogate model.

- Step 5 - Iterate: Repeat steps 1-4 for a fixed number of cycles or until a performance target is reached.
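
The five steps map onto a short control loop. The sketch below keeps every component abstract: `generate`, `fit_surrogate`, `acquire`, and `oracle` are hypothetical callables standing in for, respectively, a REINVENT-style generator, a ChemProp-style surrogate, an acquisition function such as COVDROP, and an expensive oracle such as ESMACS. It shows only the data flow between the steps, not how any of those tools is invoked.

```python
def generative_active_learning(generate, fit_surrogate, acquire, oracle,
                               labelled, n_cycles, pool_size, batch_size):
    """Skeleton of the GAL loop; every callable is a hypothetical stand-in.

    generate(surrogate, n) -> list of candidates (generator rewarded by the surrogate)
    fit_surrogate(labelled) -> surrogate        (model trained on oracle labels)
    acquire(surrogate, candidates, k) -> batch  (acquisition function)
    oracle(candidate) -> score                  (expensive ground truth)
    """
    labelled = list(labelled)
    surrogate = fit_surrogate(labelled)                     # bootstrap the surrogate
    for _ in range(n_cycles):                               # Step 5: iterate
        candidates = generate(surrogate, pool_size)         # Step 1: generate candidates
        batch = acquire(surrogate, candidates, batch_size)  # Step 2: select batch
        labelled += [(c, oracle(c)) for c in batch]         # Step 3: oracle evaluation
        surrogate = fit_surrogate(labelled)                 # Step 4: update surrogate
    return labelled, surrogate
```

Because the surrogate is refit after every oracle batch, each generation round is steered by a model that has seen all ground-truth labels collected so far; here a fixed `n_cycles` plays the role of the stopping criterion in Step 5.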

Generative Active Learning (GAL) Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Active Learning in Molecular Design

| Tool / Reagent | Function / Description | Application in Active Learning |
|---|---|---|
| REINVENT | A SMILES-based generative model using Reinforcement Learning (RL). | Serves as the agent that proposes novel molecular structures based on a reward function, enabling exploration of vast chemical space [1] [29]. |
| ChemProp | A directed message-passing neural network (D-MPNN) for molecular property prediction. | Acts as the surrogate model that predicts molecular properties (e.g., binding affinity) quickly, guiding the generative model between expensive oracle calls [1]. |
| ESMACS (MMPBSA) | A molecular dynamics-based method for estimating absolute binding free energies. | Functions as the high-fidelity, computationally expensive oracle that provides accurate ground-truth labels for selected molecules [1]. |
| AutoDock Vina | A widely used molecular docking program. | Can be used as a medium-cost oracle or for bootstrapping the initial surrogate model before moving to more expensive methods [29]. |
| ROCS | A tool for shape-based virtual screening and pharmacophore matching. | Used as a cheap oracle or a component in a multi-parameter objective to steer molecules towards desired shapes or pharmacophores [29]. |
| Active Learning Acquisition Functions (e.g., COVDROP) | Algorithms for batch selection (e.g., based on Monte Carlo Dropout). | The core logic that selects the most informative and diverse batch of molecules for evaluation by the oracle, maximizing learning efficiency [30]. |

Troubleshooting: Model Not Improving

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary sampling strategies in active learning for molecular selection, and when should I use each?

Active learning (AL) for molecular selection primarily employs three types of strategy, each suited to different experimental goals. Uncertainty-based sampling selects molecules for which the current model's predictions are most uncertain; it is ideal for rapidly improving model accuracy for a specific property [31] [32]. Diversity-based sampling prioritizes molecules that are structurally dissimilar to those already in the training set, ensuring broad coverage of the chemical space; it is best used during initial exploration [32]. Hybrid approaches combine these, often with physics-informed objectives, to balance exploration of new chemical areas with targeted optimization of desired properties, which is crucial for complex multi-objective tasks such as photosensitizer design or scaffold hopping [33] [32].

FAQ 2: How can I address class imbalance in my molecular dataset during active learning?

Class imbalance, where inactive molecules vastly outnumber active ones, is a common challenge in toxicity prediction and drug discovery. To address this, you can integrate strategic data sampling within your AL framework. One approach is to modify the training data distribution, for example, by dividing it into k subsets with set class ratios so that the ensemble model is trained on a more balanced distribution of toxic and nontoxic compounds [34]. Another is to enhance uncertainty sampling with category information: pre-trained feature extractors and similarity metrics are used to explicitly ensure that all molecular classes (e.g., different types of protein ligands) are represented in the selected batch, preventing the model from ignoring rare but important categories [31].
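
One possible reading of the k-ratio splitting above is to partition the majority (nontoxic) class into k disjoint chunks and combine each chunk with all minority (toxic) compounds, giving k more balanced training sets, one per ensemble member. The sketch below implements that reading; it is an assumption rather than the exact scheme of [34], and the function name is hypothetical.

```python
import random

def balanced_subsets(minority, majority, k, seed=0):
    """Split the majority class into k disjoint chunks and pair each chunk with
    every minority example, giving k more balanced training sets for an ensemble."""
    rng = random.Random(seed)
    shuffled = majority[:]
    rng.shuffle(shuffled)                            # avoid ordering artefacts in the split
    chunks = [shuffled[i::k] for i in range(k)]      # k disjoint, near-equal chunks
    return [minority + chunk for chunk in chunks]
```

With 10 actives, 90 inactives, and `k=3`, each ensemble member trains on 40 compounds at a 1:3 ratio instead of the original 1:9, while every inactive compound is still used by exactly one member. Averaging the members' predicted probabilities then gives the ensemble output.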

FAQ 3: My generative active learning model is converging on a limited chemical space. How can I improve diversity?

This is a typical sign of over-exploitation. To encourage greater diversity in your Generative Active Learning (GAL) outputs, you should adjust your acquisition function. Ensure it includes a term that explicitly rewards structural diversity, perhaps by quantifying dissimilarity to the existing training set [1] [32]. Furthermore, you can modify the reinforcement learning (RL) objective in generative models like REINVENT. Instead of relying solely on a property-prediction score, aggregate it with other scoring components like Quantitative Estimate of Drug-likeness (QED) and structural filters. Using a weighted geometric mean for aggregation helps maintain chemical reasonableness and diversity [1].
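
The weighted geometric mean mentioned above can be written as follows. Component names and weights are illustrative assumptions, and every component is assumed to be normalized to [0, 1] before aggregation.

```python
import math

def weighted_geometric_mean(scores, weights):
    """Aggregate component scores in [0, 1] (e.g. surrogate affinity, QED, alert filter).
    A zero in any component drives the aggregate to zero."""
    if any(s <= 0.0 for s in scores.values()):
        return 0.0
    total_w = sum(weights[name] for name in scores)
    log_sum = sum(weights[name] * math.log(scores[name]) for name in scores)
    return math.exp(log_sum / total_w)
```

Unlike an arithmetic mean, a single zero (for example, a molecule that fails the structural-alert filter) pulls the total to zero, so a high surrogate score cannot compensate for a chemically unacceptable structure. For instance, affinity 0.8 with weight 2, QED 0.5 with weight 1, and a passed alert filter (1.0) with weight 1 aggregate to 0.32^(1/4), about 0.75.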

FAQ 4: How do I validate that my active learning model is performing efficiently and accurately?

Validation should assess both the model's predictive performance and the chemical quality of its selections. Key steps include:

- Track Performance Metrics: Monitor standard metrics like AUC-ROC (Area Under the Receiver Operating Characteristic Curve) and AUC-PR (Area Under the Precision-Recall Curve) on a held-out test set across AL cycles. A well-performing model will show rapid improvement in these metrics [34].

- Benchmark Against Baselines: Compare your AL strategy's performance against random sampling to quantify the efficiency gain [32] [35].

- Analyze Selected Compounds: Use tools like molecular docking or binding free energy calculations (e.g., ESMACS) to physically validate the predicted properties of top-ranked molecules [34] [1]. Also, check that the selected compounds occupy a diverse and synthetically accessible chemical space [1] [35].
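
AUC-ROC on the held-out set can be computed without external libraries through its rank interpretation: the probability that a randomly chosen active is scored above a randomly chosen inactive. The sketch below is O(n_active × n_inactive), which is acceptable for modest test sets; in practice you would more likely call a library routine such as scikit-learn's `roc_auc_score`. AUC-PR is not shown.

```python
def roc_auc(labels, scores):
    """ROC AUC as P(score of random active > score of random inactive); ties count 1/2.
    labels: 1 = active, 0 = inactive."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one active and one inactive compound")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

Recording this value after every AL cycle, alongside the same curve for a random-sampling baseline, gives the efficiency comparison described above.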

Troubleshooting Guides

Problem: High Computational Cost of Oracle Evaluations

Description: The computational expense of the oracle (e.g., molecular dynamics simulations, free energy calculations, or quantum chemical methods) severely limits the number of AL cycles you can perform.

Solution Checklist:

- Implement a Robust Surrogate Model: Train a fast, QSAR-like surrogate model (e.g., using a Directed Message Passing Neural Network (D-MPNN) or Graph Neural Network) to approximate the expensive oracle. This model is updated iteratively with new data from the oracle and handles the bulk of the molecular scoring [1] [32].

- Use Multi-Fidelity Oracles: When possible, employ a hierarchy of oracles. Use a cheap, low-fidelity method (e.g., docking) for initial screening and reserve high-fidelity, expensive methods (e.g., absolute binding free energy calculations) only for the most promising candidates [1].

- Optimize Batch Size: Experiment with the batch size (number of molecules sent to the oracle per cycle). A larger batch can improve parallel efficiency on HPC clusters but may reduce the informational value of each individual selection. Studies have shown that tuning this parameter is crucial for optimal performance on exascale computing platforms [1].
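
A two-stage multi-fidelity funnel can be sketched as below. Both oracles are hypothetical callables assumed to return scores where higher is better (a docking score, where more negative is better, would be negated first), and the 10% shortlist fraction is an arbitrary illustration.

```python
def multi_fidelity_screen(candidates, cheap_oracle, expensive_oracle, keep_fraction=0.1):
    """Rank every candidate with the cheap oracle, then spend the expensive oracle
    only on the top `keep_fraction` (both oracles: higher score = better)."""
    ranked = sorted(candidates, key=cheap_oracle, reverse=True)       # low-fidelity pass
    shortlist = ranked[:max(1, int(len(ranked) * keep_fraction))]     # most promising subset
    return {c: expensive_oracle(c) for c in shortlist}                # high-fidelity labels
```

With 100 candidates and `keep_fraction=0.1`, the expensive oracle is called only 10 times; the cheap oracle absorbs all 100 first-pass evaluations.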

Problem: Model Instability and Poor Generalization

Description: The model performs well on the training and validation sets but fails to generalize to new regions of chemical space or produces unstable molecular dynamics simulations.

Solution Checklist:

- Adversarial Active Learning with Calibration: Integrate algorithms like Calibrated Adversarial Geometry Optimization (CAGO). This technique intentionally generates molecular structures that challenge the model and optimizes them to a user-defined target error level. Adding these "adversarial" examples to the training set significantly improves model robustness and stability for simulating dynamical systems [36].

- Leverage Ensemble Models: Use a committee of models for uncertainty estimation. The variance in the committee's predictions is a reliable indicator of the model's uncertainty on a given molecule. This uncertainty can then directly guide the acquisition function [32] [36].

- Incorporate Physics-Based and Knowledge-Based Constraints: Guide the sampling process with domain knowledge. In drug design, this can include using protein-ligand interaction profiles (PLIP) from crystallographic fragments in the scoring function or applying filters for drug-likeness (QED) and structural alerts to avoid problematic groups [1] [35].

Problem: Inefficient Exploration-Exploitation Trade-off

Description: The AL algorithm either gets stuck in a local optimum (over-exploitation) or wanders randomly without improving the target objective (over-exploration).

Solution Checklist:

- Apply a Hybrid Acquisition Strategy: Combine multiple acquisition functions. For example, a unified framework might use diversity-based sampling in the early AL cycles to map the chemical space broadly, then gradually shift towards uncertainty-based and property-based sampling to home in on high-performance candidates [32].

- Dynamic Strategy Scheduling: Program your AL framework to change strategies based on the cycle number or model confidence. Early stages should prioritize exploration (diversity), while later stages should prioritize exploitation (uncertainty or expected improvement) [32].

- Seed with Purchasable Compounds: To ensure practical outcomes, seed the initial chemical space with molecules from on-demand chemical libraries (e.g., Enamine REAL). This grounds the exploration in synthetically tractable space from the beginning, making the exploitation phase more directly relevant to experimental efforts [35].
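
Dynamic strategy scheduling can be as simple as annealing a weight between exploration and exploitation terms across cycles. In the sketch below, the linear schedule, the blending formula, and the assumption that all three inputs are normalized to comparable scales are illustrative choices, not a prescription from [32].

```python
def diversity_weight(cycle, n_cycles, start=1.0, end=0.0):
    """Linearly anneal the diversity weight from `start` (explore) to `end` (exploit)."""
    if n_cycles <= 1:
        return end
    frac = cycle / (n_cycles - 1)  # 0.0 at the first cycle, 1.0 at the last
    return start + (end - start) * frac

def hybrid_score(uncertainty, predicted_property, diversity, cycle, n_cycles):
    """Blend exploration (diversity) with exploitation (uncertainty + predicted property)."""
    w = diversity_weight(cycle, n_cycles)
    return w * diversity + (1.0 - w) * (uncertainty + predicted_property)
```

At cycle 0 the score is pure diversity; by the final cycle it depends only on uncertainty and the predicted property. Nonlinear schedules, or switching based on model confidence rather than cycle number, are equally valid variants.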

Experimental Protocols & Data

Table 1: Comparison of Core Acquisition Functions for Molecular Active Learning

| Acquisition Function | Key Principle | Best Use Case | Reported Performance |
|---|---|---|---|
| Uncertainty Sampling [31] [32] | Selects samples where model prediction confidence is lowest (e.g., based on entropy or committee variance). | Rapidly improving predictive accuracy for a specific molecular property. | Achieved competitive mAP scores in object detection and ~0.08 eV MAE for photosensitizer T1/S1 energy levels [32]. |
| Diversity Sampling [32] | Maximizes structural or feature-space diversity in the selected batch. | Initial exploration of a vast, unknown chemical space. | Enabled discovery of chemically diverse ligands, occupying a different space than a baseline model [1]. |
| Hybrid (Uncertainty + Diversity) [32] | Balances the selection of uncertain and diverse samples in a single acquisition function. | Maintaining diversity while optimizing for a property; preventing mode collapse. | Outperformed static baselines by 15-20% in test-set MAE for predicting photophysical properties [32]. |
| Knowledge-Enhanced [31] [35] | Integrates domain knowledge (e.g., category info, interaction profiles) into the sampling decision. | Multi-class problems with imbalance or when specific protein-ligand interactions are critical. | Mitigated the long-tail effect in sampled datasets and identified molecules with high similarity to known active inhibitors [31] [35]. |

Table 2: Key Software Tools for Implementing Molecular Active Learning

| Tool / Reagent | Type | Primary Function in Workflow |
|---|---|---|
| REINVENT [1] | Generative Model | Uses reinforcement learning (RL) to generate novel molecules optimized for a user-defined scoring function. |
| ChemProp [1] | Surrogate Model | A D-MPNN-based model that provides fast, QSAR-like property predictions for molecules. |
| FEgrow [35] | Structure-Based De Novo Design | Builds and scores congeneric series of ligands in a protein binding pocket by growing R-groups and linkers from a core. |
| gnina [35] | Scoring Function | A convolutional neural network used to predict the binding affinity of a ligand pose within a protein. |
| ESMACS [1] | Physics-Based Oracle | An enhanced sampling MD protocol that provides absolute binding free energy estimates, acting as a high-fidelity oracle. |
| ML-xTB [32] | Quantum Chemical Method | A machine-learning accelerated quantum chemistry method that provides accurate photophysical property labels at low cost. |
| Core Hunter / Core Finder [37] | Core Set Selection | Algorithms originally from genetics, adapted to select a maximally diverse core subset from a larger molecular library. |

Detailed Protocol: Generative Active Learning for Ligand Discovery

This protocol is adapted from studies targeting SARS-CoV-2 Mpro and TNKS2 proteins [1] [35].

1. Initialization:

- Define Objective: Specify the target property (e.g., binding affinity for a specific protein, optimal T1/S1 energy levels).

- Prepare Initial Data: Assemble a small, labeled dataset to train the initial surrogate model. This can come from known actives, a high-throughput virtual screen, or crystallographic fragment hits [1] [35].

- Choose Generative Model & Oracle: Select a generative model (e.g., REINVENT) and a high-fidelity, physics-based oracle (e.g., ESMACS for binding free energy, ML-xTB for photophysical properties).

2. Active Learning Cycle:

- Step A - Generate Candidates: Use the generative model to propose a large set of candidate molecules (e.g., 10,000-100,000).

- Step B - Surrogate Scoring & Selection: Score the entire candidate pool using the current surrogate model (e.g., ChemProp). Apply the chosen acquisition function (see Table 1) to select a batch of molecules (e.g., 50-1000) for oracle evaluation. A hybrid strategy might be used here [32].

- Step C - Oracle Evaluation: Send the selected batch to the expensive oracle (e.g., ESMACS, ML-xTB) to obtain high-fidelity property labels.

- Step D - Model Update: Add the newly labeled molecules to the training set and retrain/update the surrogate model.

- Iterate: Repeat steps A-D for a predetermined number of cycles or until performance converges.

3. Validation:

- Select the top-performing molecules from the final cycle based on the oracle's scores.

- Validate these compounds using independent methods, such as experimental assays or higher-level theoretical calculations [35].

Workflow Visualizations

Diagram 1: The iterative Generative Active Learning (GAL) cycle for molecular design.

Diagram 2: A hybrid acquisition function combining multiple sampling strategies.

Troubleshooting Guides

Why is my hyperparameter optimization taking too long and how can I speed it up?

Problem: The optimization process is computationally expensive and time-consuming, significantly slowing down research progress.

Solution: The choice of optimization method directly impacts computational efficiency.

- If you are using Grid Search (GS): GS exhaustively tests all combinations in a defined hyperparameter space [38] [39]. This "brute force" method is simple but becomes computationally prohibitive as the number of hyperparameters grows [38]. Switch to Randomized Search (RS) or Bayesian Optimization (BO). RS often finds good parameters much faster by evaluating a random subset of the space [39].

- Assess the necessity of each hyperparameter: Reduce the dimensionality of your search space by focusing on hyperparameters with the greatest impact on your model.

- Leverage parallel computing: Many BO frameworks, like Bayesianopt and GPyOpt, support parallel evaluation of multiple parameter sets, dramatically reducing wall-clock time [40].

- Use a cheaper surrogate objective: If your final evaluation is an expensive molecular dynamics simulation, use a faster proxy like a docking score during the optimization phase [1] [35].
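
Random search is easy to implement directly. In this sketch, `objective` is a hypothetical callable that trains and validates a model for one configuration and returns a loss; `space` maps each hyperparameter either to a list of discrete choices or to a sampler, which lets continuous parameters such as the learning rate be drawn on a log scale.

```python
import random

def random_search(objective, space, n_trials, seed=0):
    """Evaluate `n_trials` random configurations instead of the full grid.
    `space` maps each hyperparameter to a list of choices or to a sampler f(rng)."""
    rng = random.Random(seed)
    best_cfg, best_loss = None, float("inf")
    for _ in range(n_trials):
        cfg = {name: (rng.choice(v) if isinstance(v, list) else v(rng))
               for name, v in space.items()}
        loss = objective(cfg)  # e.g. validation error of a model trained with cfg
        if loss < best_loss:
            best_cfg, best_loss = cfg, loss
    return best_cfg, best_loss

# Example search space: discrete depth, log-uniform learning rate in [1e-4, 1e-1]
space = {"max_depth": [3, 5, 7, 9], "learning_rate": lambda r: 10 ** r.uniform(-4, -1)}
```

For comparison, a grid with 4 values for each of 5 hyperparameters requires 4^5 = 1,024 trainings, whereas random search caps the cost at `n_trials` regardless of how many hyperparameters are searched.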

How do I address overfitting in my optimized machine learning model for molecular property prediction?

Problem: The model performs well on training data but generalizes poorly to new, unseen molecular structures.

Solution: Overfitting often indicates that the hyperparameter optimization is overly tailored to the training set.

- Revisit your cross-validation strategy: Ensure that your optimization process uses robust cross-validation. A study on heart failure prediction found that while Support Vector Machine (SVM) models initially showed high accuracy, Random Forest (RF) models demonstrated superior robustness after 10-fold cross-validation [38].

- Incorporate regularization: Tune hyperparameters that control model complexity, such as the regularization parameter C in SVMs (smaller C means stronger regularization), weight decay in neural networks, or maximum depth in tree-based methods. Bayesian Optimization is particularly effective at navigating this trade-off [41].

- Validate on an external test set: Always hold out a completely separate test set that is never used during the optimization process to get an unbiased estimate of real-world performance.
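A minimal scikit-learn sketch of both recommendations follows. The SVM's C is tuned by 10-fold cross-validation on the training split only, and the refit model is then scored once on a held-out test split. The synthetic `make_classification` data is an assumption standing in for featurized molecules, and the C grid is illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for a featurized molecular dataset.
X, y = make_classification(n_samples=400, n_features=50, n_informative=8, random_state=0)

# The test split is carved off first and never seen by the search.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Scaling sits inside the pipeline, so it is refit on each CV training fold (no leakage).
pipe = make_pipeline(StandardScaler(), SVC())
search = GridSearchCV(
    pipe,
    param_grid={"svc__C": [0.01, 0.1, 1, 10, 100]},  # smaller C = stronger regularization
    cv=StratifiedKFold(n_splits=10, shuffle=True, random_state=0),
    scoring="roc_auc",
)
search.fit(X_train, y_train)

test_auc = search.score(X_test, y_test)  # uses the same roc_auc scorer
print(f"best C={search.best_params_['svc__C']}, CV AUC={search.best_score_:.3f}, test AUC={test_auc:.3f}")
```

A large gap between the cross-validated score and the held-out score is a practical warning sign that the search has overfit the training data.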

My Bayesian Optimization is converging to a poor local minimum. What should I do?

Problem: The BO algorithm seems to get stuck and fails to find a globally optimal set of hyperparameters.

Solution: This is often related to the balance between exploration and exploitation.

- Adjust the acquisition function: The acquisition function guides the search. If it's too greedy (over-exploitation), it can get stuck. Tune the parameter that controls the exploration-exploitation balance (often denoted as λ or ξ) [40].

- Restart the optimization with different initial points: BO can be sensitive to the initial set of evaluated points. Run the optimization multiple times with different random seeds to probe different regions of the search space.

- Consider a different surrogate model: For rough, high-dimensional search spaces common in molecular design (e.g., with activity cliffs), standard Gaussian Process (GP) surrogates may struggle. Emerging methods like Rank-based Bayesian Optimization (RBO) use ranking models instead of regression, which can be more robust in these scenarios [42].
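To see how the exploration parameter changes what BO picks, here is a standard-library sketch of two acquisition functions: Expected Improvement (for maximization) with a margin ξ, and Upper Confidence Bound with a weight κ. Parameter names vary between libraries, and the two candidate points are made-up surrogate predictions.

```python
import math


def _norm_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _norm_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, best: float, xi: float = 0.01) -> float:
    """EI for maximization. A larger xi demands more improvement over `best`,
    which shifts preference from confident points to uncertain ones."""
    gain = mu - best - xi
    if sigma <= 0.0:
        return max(gain, 0.0)
    z = gain / sigma
    return gain * _norm_cdf(z) + sigma * _norm_pdf(z)


def upper_confidence_bound(mu: float, sigma: float, kappa: float = 2.0) -> float:
    # Larger kappa weights the predictive uncertainty more heavily (more exploration).
    return mu + kappa * sigma


# Made-up surrogate predictions (mean, std) for two candidate configurations.
exploit = (1.10, 0.01)  # confident and slightly better than the incumbent
explore = (0.90, 0.30)  # worse on average but highly uncertain
best_so_far = 1.0

for xi in (0.0, 0.1):
    ei_exploit = expected_improvement(*exploit, best=best_so_far, xi=xi)
    ei_explore = expected_improvement(*explore, best=best_so_far, xi=xi)
    print(f"xi={xi}: EI exploit={ei_exploit:.4f}, EI explore={ei_explore:.4f}")
```

The value of ξ decides which candidate wins:

- With ξ = 0, the confident candidate has the higher EI.
- With ξ = 0.1, the uncertain candidate wins.

Nudging ξ (or κ) upward is therefore one lever against premature convergence. Restarting with different seeds is a complementary one.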

Which hyperparameter optimization method is best for my active learning workflow in drug discovery?

Problem: Selecting the most efficient and effective optimization technique for a resource-intensive active learning cycle.

Solution: The best method depends on your priorities: computational cost, sample efficiency, or handling complex spaces.

- For simplicity and small search spaces: Use Grid Search. It is straightforward to implement and parallelize but is rarely optimal for large-scale molecular problems [39].

- For a good balance of speed and performance in medium-complexity spaces: Use Randomized Search. It often finds good parameters much faster than Grid Search [38] [39].

- For maximum sample efficiency and high-dimensional spaces: Use Bayesian Optimization. BO is ideal when each function evaluation is expensive, such as running free energy calculations or synthesizing a compound [1]. It has been shown to consistently require less processing time than GS and RS while finding superior configurations [38] [41].

Frequently Asked Questions (FAQs)

What is the fundamental difference between Grid Search, Random Search, and Bayesian Optimization?

- Grid Search (GS) is an exhaustive search over a predefined set of hyperparameters. It is guaranteed to find the best combination within the grid but is computationally expensive and inefficient for high-dimensional spaces [38] [39].

- Randomized Search (RS) randomly samples hyperparameter combinations from a defined distribution. It is more efficient than GS for large spaces, as it has a high chance of finding good parameters without evaluating every possible combination [38] [39].

- Bayesian Optimization (BO) is a sequential, model-based approach. It builds a probabilistic surrogate model (e.g., a Gaussian Process) of the objective function and uses an acquisition function to decide which hyperparameters to evaluate next. This makes it highly sample-efficient, especially for expensive-to-evaluate functions [40] [38].
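The sequential, model-based loop can be sketched in a few dozen lines. This sketch uses scikit-learn's GaussianProcessRegressor as the surrogate and Expected Improvement as the acquisition function. It is an illustration, not how Ax or BoTorch are implemented, and it rests on two assumptions:

- The one-dimensional objective over log10(learning rate) is a hypothetical stand-in for an expensive cross-validation run.
- Candidates are picked from a fixed grid rather than by optimizing the acquisition function.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def objective(log_lr: float) -> float:
    # Hypothetical expensive objective: a validation score that peaks at log10(lr) = -2.5.
    return -(log_lr + 2.5) ** 2


def bayes_opt(n_init=3, n_iter=12, xi=0.01, seed=0):
    rng = np.random.default_rng(seed)
    low, high = -5.0, -1.0
    X = rng.uniform(low, high, size=(n_init, 1))  # random initial design
    y = np.array([objective(x[0]) for x in X])
    candidates = np.linspace(low, high, 401).reshape(-1, 1)

    for _ in range(n_iter):
        # 1. Fit the probabilistic surrogate to every evaluation so far.
        gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), alpha=1e-6,
                                      normalize_y=True, random_state=seed)
        gp.fit(X, y)
        # 2. Score all candidates with Expected Improvement (maximization).
        mu, sigma = gp.predict(candidates, return_std=True)
        sigma = np.maximum(sigma, 1e-9)
        gain = mu - y.max() - xi
        z = gain / sigma
        ei = gain * norm.cdf(z) + sigma * norm.pdf(z)
        # 3. Spend one expensive evaluation on the most promising candidate.
        x_next = candidates[np.argmax(ei)]
        X = np.vstack([X, x_next])
        y = np.append(y, objective(x_next[0]))

    best = int(np.argmax(y))
    return X[best, 0], y[best], len(y)


best_x, best_y, n_evals = bayes_opt()
print(f"best log10(lr)={best_x:.2f} after {n_evals} evaluations")
```

Unlike grid or random search, every new point here depends on all previous results. This dependence is what makes BO sample-efficient, and it is also what makes it inherently sequential.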

In which scenarios is Bayesian Optimization clearly superior?

Bayesian Optimization is clearly superior in scenarios where:

- Evaluations are extremely expensive: This includes guiding experiments in self-driving laboratories, running high-fidelity molecular simulations (e.g., ESMACS for binding affinity), or conducting wet-lab experiments [1] [35].

- The search space is high-dimensional and complex: BO efficiently navigates complex relationships between hyperparameters that GS and RS struggle with [40].

- Sample efficiency is critical: BO typically requires far fewer evaluations than GS and RS to reach a good optimum. In a study predicting biomass gasification gases, for example, BO was used to tune eight different machine learning models, bringing XGBoost to R² values of 0.951 (CO) and 0.981 (H₂) [41].

How do I choose the right hyperparameter space to search?

- Start with literature and prior knowledge: Use recommended values from similar studies or model documentation to define a plausible initial range.

- Use log-scale for certain parameters: Parameters like learning rates or regularization strengths often benefit from a log-uniform distribution (e.g., from 1e-5 to 1e-1) rather than a linear one.

- Iteratively refine the space: If your optimization consistently suggests values at the boundary of your space, consider expanding the search in that direction in a subsequent, focused optimization run.
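A short standard-library sketch shows why log scaling matters and how to check for boundary hits. It samples the range 1e-5 to 1e-1 linearly and log-uniformly, then compares the share of draws that land below 1e-3. `near_boundary` is a hypothetical helper, and its 5% tolerance is an arbitrary choice.

```python
import math
import random

LOW, HIGH = 1e-5, 1e-1
rng = random.Random(0)


def sample_linear() -> float:
    return rng.uniform(LOW, HIGH)


def sample_log_uniform() -> float:
    # Uniform in log10 space, then exponentiate: each decade gets equal probability.
    return 10 ** rng.uniform(math.log10(LOW), math.log10(HIGH))


def fraction_below(sampler, threshold=1e-3, n=10_000) -> float:
    return sum(sampler() < threshold for _ in range(n)) / n


def near_boundary(value, low=LOW, high=HIGH, rel_tol=0.05, log_scale=True) -> bool:
    """Hypothetical helper: True if `value` lies within rel_tol of either edge
    (measured in log space by default), hinting that the range should be widened."""
    f = math.log10 if log_scale else (lambda v: v)
    position = (f(value) - f(low)) / (f(high) - f(low))
    return position < rel_tol or position > 1.0 - rel_tol


print(f"linear:      {fraction_below(sample_linear):.3f} of draws below 1e-3")
print(f"log-uniform: {fraction_below(sample_log_uniform):.3f} of draws below 1e-3")
print(near_boundary(1.2e-5))
```

The two sampling schemes distribute the draws very differently:

- Under linear sampling, the two lowest decades together receive only about 1% of draws.
- Under log-uniform sampling, each of the four decades receives roughly a quarter of them.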

Can these methods be combined with Active Learning for molecular design?

Yes, they are often the core of Active Learning (AL) cycles. In molecular design, the workflow typically is:

- A generative model or compound library proposes candidate molecules.

- A surrogate model (e.g., a QSAR model) predicts their properties.

- Hyperparameter optimization is used to tune the surrogate model itself.

- An acquisition function, which can be part of a BO framework, selects the most informative or promising candidates for the next expensive evaluation (e.g., synthesis or simulation) [1] [35]. This creates a closed loop where hyperparameter optimization ensures the surrogate model is accurate, while the AL cycle efficiently explores the chemical space.
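The closed loop described above can be sketched with scikit-learn under several assumptions:

- The compound library is a random feature matrix.
- `oracle` is a hypothetical noisy function standing in for synthesis or simulation.
- The surrogate is a random forest retuned each cycle with RandomizedSearchCV.
- The acquisition is an upper-confidence-bound score that uses the spread of per-tree predictions as uncertainty.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import RandomizedSearchCV


def run_cycles(n_cycles=3, n_init=30, batch_size=10, kappa=1.0, seed=0):
    rng = np.random.default_rng(seed)
    X_pool = rng.normal(size=(500, 10))  # stand-in for featurized candidate molecules

    def oracle(X):
        # Hypothetical expensive evaluation (e.g., simulation or assay), with noise.
        return X[:, 0] - 0.5 * X[:, 1] ** 2 + 0.1 * rng.normal(size=len(X))

    labeled = rng.choice(len(X_pool), size=n_init, replace=False).tolist()
    y = oracle(X_pool[labeled]).tolist()
    tuned_params = []

    for cycle in range(n_cycles):
        # Hyperparameter optimization: retune the surrogate on the current labeled set.
        search = RandomizedSearchCV(
            RandomForestRegressor(random_state=seed),
            {"max_depth": [3, 5, None], "min_samples_leaf": [1, 2, 5], "n_estimators": [50, 100]},
            n_iter=5, cv=3, random_state=cycle,
        )
        search.fit(X_pool[labeled], y)
        tuned_params.append(search.best_params_)
        model = search.best_estimator_

        # Acquisition: mean + kappa * std across the forest's trees (UCB-style).
        unlabeled = np.setdiff1d(np.arange(len(X_pool)), labeled)
        per_tree = np.stack([tree.predict(X_pool[unlabeled]) for tree in model.estimators_])
        score = per_tree.mean(axis=0) + kappa * per_tree.std(axis=0)
        batch = unlabeled[np.argsort(score)[-batch_size:]]

        # Expensive evaluation of the selected batch, then grow the training set.
        labeled += batch.tolist()
        y += oracle(X_pool[batch]).tolist()

    return labeled, y, tuned_params


labeled, y, tuned = run_cycles()
print(tuned)
```

Retuning every cycle is a design choice, not a requirement. When cross-validation is expensive, some workflows retune only every few cycles, as the labeled set grows.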

Comparative Performance Data

The following tables summarize key quantitative comparisons between the three optimization methods.

Table 1: Method Comparison and Characteristic Workflows

| Feature | Grid Search | Randomized Search | Bayesian Optimization |
|---|---|---|---|
| Core Principle | Exhaustive brute-force search [38] | Random sampling from distributions [38] | Sequential model-based optimization [40] |
| Search Strategy | Tests all combinations in a predefined grid [39] | Evaluates a fixed number of random combinations [39] | Uses an acquisition function to select the most promising next parameters [40] |
| Key Hyperparameter | The grid resolution itself | Number of iterations (n_iter) [39] | Exploitation/exploration balance (λ) [40] |
| Best For | Small, low-dimensional search spaces [39] | Faster results on larger spaces [38] [39] | Expensive, high-dimensional black-box functions [40] [1] |
| Python Implementation | GridSearchCV from sklearn [39] | RandomizedSearchCV from sklearn [39] | Packages like Ax, BoTorch, BayesianOptimization [40] |

Table 2: Experimental Performance Comparison from Recent Studies

| Study Context | Grid Search Performance | Randomized Search Performance | Bayesian Optimization Performance | Key Metric |
|---|---|---|---|---|
| Heart Failure Prediction [38] | N/A | N/A | Consistently required less processing time than GS and RS | Computational Time |
| Biomass Gas Prediction [41] | N/A | N/A | Optimized XGBoost to R² values of 0.951 (CO) and 0.981 (H₂) | Model Accuracy (R²) |
| HVAC Performance Modeling [43] | 288 configurations tested systematically | Identified as a common comparative method | Identified as a common comparative method | Methodology |
| Molecular Design [1] [35] | Not typically used due to intractable search space | Not typically used due to intractable search space | Core component of active learning and generative AI workflows for drug discovery | Applicability & Integration |

Experimental Protocols

Protocol: Hyperparameter Tuning for a Predictive Model in Drug Discovery

This protocol outlines a methodology for optimizing a machine learning model used to predict compound activity, similar to those used in active learning pipelines [38] [1].

1. Define the Objective and Model

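- Objective: Maximize the area under the ROC curve (ROC AUC) for classifying compounds as active or inactive against a target protein (e.g., binders versus non-binders at a chosen binding-affinity threshold).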

- Model: Select a model such as Random Forest (RF), eXtreme Gradient Boosting (XGBoost), or a Support Vector Machine (SVM).

2. Preprocess the Dataset

- Handle Missing Values: Apply imputation techniques such as Multivariate Imputation by Chained Equations (MICE), k-Nearest Neighbors (kNN), or Random Forest imputation [38].

- Encode Categorical Features: Use one-hot encoding to convert categorical variables into a binary matrix [38].

- Normalize Continuous Features: Apply z-score normalization to standardize continuous features to a mean of 0 and a standard deviation of 1 [38].
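The three preprocessing steps can be combined into one scikit-learn ColumnTransformer. This keeps them fit only on training data when the whole pipeline is later cross-validated. The sketch assumes a tiny hypothetical descriptor table with two continuous columns and one categorical column. It uses KNNImputer; for a MICE-style alternative, scikit-learn's IterativeImputer is the closest built-in analogue, and it must first be enabled via `sklearn.experimental.enable_iterative_imputer`.

```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.impute import KNNImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical descriptor table: [molecular weight, logP, target class]; np.nan marks gaps.
X = np.array(
    [
        [250.3, 2.1, "kinase"],
        [310.8, np.nan, "GPCR"],
        [np.nan, 3.4, "kinase"],
        [198.5, 1.2, "protease"],
        [402.0, 4.0, "GPCR"],
    ],
    dtype=object,
)

numeric = Pipeline([
    ("impute", KNNImputer(n_neighbors=2)),  # fill gaps from the 2 most similar rows
    ("scale", StandardScaler()),            # z-score: mean 0, std 1 per column
])
preprocess = ColumnTransformer(
    [
        ("num", numeric, [0, 1]),
        ("cat", OneHotEncoder(handle_unknown="ignore"), [2]),  # binary indicator columns
    ],
    sparse_threshold=0.0,  # always return a dense array
)

X_ready = preprocess.fit_transform(X)
print(X_ready.shape)  # 2 scaled numeric columns + 3 one-hot columns
```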

3. Establish the Hyperparameter Search Space

Define the distributions for each hyperparameter. For example, for an XGBoost model:

- learning_rate: A log-uniform distribution between 0.01 and 0.3.

- max_depth: A uniform integer distribution between 3 and 10.

- n_estimators: A uniform integer distribution between 100 and 500.

- subsample: A uniform distribution between 0.6 and 1.0.
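One way to encode these distributions is with scipy.stats objects, which scikit-learn's RandomizedSearchCV and ParameterSampler accept directly. The sketch relies on two SciPy conventions: randint excludes its upper bound, and uniform(loc, scale) covers [loc, loc + scale]. The keys match the parameter names of xgboost's scikit-learn wrapper (XGBClassifier), but xgboost itself is not needed to run this snippet.

```python
from scipy.stats import loguniform, randint, uniform
from sklearn.model_selection import ParameterSampler

xgb_space = {
    "learning_rate": loguniform(0.01, 0.3),  # log-uniform on [0.01, 0.3]
    "max_depth": randint(3, 11),             # integers 3..10 (upper bound exclusive)
    "n_estimators": randint(100, 501),       # integers 100..500
    "subsample": uniform(0.6, 0.4),          # floats on [0.6, 1.0]
}

# Draw a few configurations to sanity-check the ranges before a long search.
samples = list(ParameterSampler(xgb_space, n_iter=20, random_state=42))
for params in samples[:3]:
    print(params)
```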

4. Execute the Optimization Method

- Grid Search:

  - Define a finite list of values for each hyperparameter.

  - Use GridSearchCV to exhaustively evaluate all combinations.

  - This is often used as a baseline for smaller search spaces [39].

- Randomized Search:

  - Use RandomizedSearchCV from scikit-learn.

  - Set the number of iterations (n_iter) based on your computational budget (e.g., 50-100).

  - The search will sample and evaluate n_iter random combinations from the defined distributions [39].

- Bayesian Optimization:

  - Use a library like Ax or BayesianOptimization.

  - Configure the Gaussian Process surrogate model and the acquisition function (e.g., Expected Improvement).

  - Run the optimization for a set number of trials (e.g., 30-50). The algorithm will intelligently select the next hyperparameters to evaluate based on previous results [40] [41].

5. Validate and Select the Best Model

- The best-performing set of hyperparameters is selected based on the highest average score from cross-validation [38].

- Final Evaluation: The model refit with the best hyperparameters is evaluated on a held-out test set that was not used during the optimization process to assess its generalization performance.
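Steps 4 and 5 can be sketched end to end with scikit-learn. To keep the example self-contained, two stand-ins are used:

- A RandomForestClassifier replaces XGBoost.
- make_classification replaces a labeled compound set.

The grids, distributions, and budgets are illustrative and far smaller than the ranges suggested above. A Bayesian Optimization run would swap the search object for a library such as Ax; that branch is not shown here.

```python
from scipy.stats import randint, uniform
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV, train_test_split

# Synthetic stand-in for an active/inactive compound dataset.
X, y = make_classification(n_samples=600, n_features=30, n_informative=6, random_state=0)
# The test split is set aside before any tuning and used only once, in step 5.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

searches = {
    "grid": GridSearchCV(
        RandomForestClassifier(random_state=0),
        param_grid={"max_depth": [3, None], "n_estimators": [50, 150]},
        cv=3,
        scoring="roc_auc",
    ),
    "random": RandomizedSearchCV(
        RandomForestClassifier(random_state=0),
        param_distributions={
            "max_depth": randint(3, 11),
            "n_estimators": randint(50, 201),
            "max_features": uniform(0.1, 0.9),  # fraction of features per split, [0.1, 1.0]
        },
        n_iter=6,
        cv=3,
        scoring="roc_auc",
        random_state=0,
    ),
}

results = {}
for name, search in searches.items():
    search.fit(X_train, y_train)  # step 4: CV-based search on training data only
    # Step 5: best mean CV score, then one evaluation of the refit model on the test split.
    results[name] = (search.best_score_, search.score(X_test, y_test))
    print(f"{name}: {search.best_params_}, CV AUC {results[name][0]:.3f}, test AUC {results[name][1]:.3f}")
```

Because `refit=True` is the default, `search.score` uses the model retrained on the full training split with the best hyperparameters, which is the model step 5 asks you to evaluate.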

Workflow and Relationship Diagrams

Hyperparameter Optimization in an Active Learning Cycle

Method Decision Logic

Research Reagent Solutions

Table 3: Essential Software and Libraries for Hyperparameter Optimization

| Item/Reagent | Function/Application | Example Packages & Notes |
|---|---|---|
| General ML & Optimization | Core infrastructure for model training and standard hyperparameter tuning. | scikit-learn (GridSearchCV, RandomizedSearchCV) [39] |
| Bayesian Optimization Frameworks | Specialized libraries for implementing sample-efficient BO. | Ax [40], BoTorch [40], BayesianOptimization [40], GPyOpt [40] |
| Chemistry & Materials Science BO | Domain-specific packages tailored for chemical problems. | GAUCHE (Gaussian processes for chemistry) [42], Olympus [40], Phoenics [40] |
| Surrogate Model | The statistical model that approximates the objective function. | Gaussian Process (GP): Flexible, provides uncertainty [40] [42]. Random Forest: Handles high-dimensional spaces well [40]. Deep Ranking Models: Effective for rough landscapes with activity cliffs [42]. |
| Acquisition Function | The strategy for selecting the next hyperparameters to evaluate. | Expected Improvement (EI): Balances exploration and exploitation. Upper Confidence Bound (UCB): Explicitly tunable exploration. |
| Molecular Simulation Oracle | The high-fidelity, expensive evaluation that provides ground-truth data. | ESMACS: Absolute binding free energy calculations [1]. Docking Scores: Faster, approximate proxies for binding affinity [35]. |