Active Learning for Chemical Space Exploration: Advanced Data Sampling Techniques to Accelerate Drug Discovery

Abstract

This article provides a comprehensive overview of active learning (AL) data sampling techniques for exploring the vast chemical space in drug discovery and materials science. It covers foundational principles, from the core challenge of data scarcity to the pool-based AL framework, and details a wide array of methodological strategies including uncertainty-based, diversity-driven, and hybrid sampling. The content further addresses practical troubleshooting for integration with Automated Machine Learning (AutoML) and handling model drift, and offers validation through rigorous benchmarking studies and real-world case studies in ADMET prediction and lead optimization. Designed for researchers and drug development professionals, this guide synthesizes current evidence to help efficiently navigate chemical space, significantly reduce experimental costs, and accelerate the development of new therapeutics and materials.

The 'Needle in a Haystack' Problem: Why Active Learning is Essential for Navigating Chemical Space

Defining the Vastness of Chemical Space and the High Cost of Data Acquisition

Frequently Asked Questions (FAQs)

What is chemical space? Chemical space is the universe of all possible chemical compounds, including both known and hypothetical molecules. It encompasses all conceivable combinations of atoms and bonds, forming a multi-dimensional space where each dimension can represent a different molecular property or structural feature [1]. The size of drug-like chemical space is estimated to be between 10²³ and 10¹⁸⁰ compounds, with a commonly cited middle-ground figure of 10⁶⁰ for synthetically accessible small organic molecules [2] [3]. This number is so vast that it dwarfs the number of stars in the observable universe [4].

Why is exploring chemical space so costly and challenging? The primary challenge is its sheer size. To date, fewer than one trillion compounds have ever been synthesized and experimentally characterized [4]. Traditional drug discovery relies on physically synthesizing and testing compounds, which is slow and expensive: a single organization typically synthesizes only about 1,000 compounds per year for analysis [4]. This makes broad exploration cost-prohibitive, because high-throughput experimental investigation demands significant financial investment, specialized equipment, and time.

How can computational methods reduce the cost of exploration? Computational assays can evaluate billions of molecules in silico (via computer simulation) per week, drastically reducing the need for costly physical experiments in the early stages [4]. These methods use physics-based predictions and machine learning to triage the vast chemical space down to the most promising candidates for synthesis and lab testing [1] [4]. This allows much wider and faster exploration than traditional lab-based methods.

What role does data quality play in this process? The underlying data defines the solution space for a model and the boundaries of what it can reliably predict [5]. The adage "garbage in, garbage out" is highly relevant. While perfect datasets are rare, using high-quality, relevant data is crucial for building accurate predictive models. A strategic focus on generating high-quality datasets has been shown to be a critical factor in achieving breakthroughs in AI for drug discovery [5].

What are the key computational tools for navigating chemical space? Several key tools and databases are essential for this work:

- ZINC Database: A free database of commercially available compounds for virtual screening [1].

- PubChem: A public repository of chemical molecules and their activities [1].

- RDKit: An open-source toolkit for cheminformatics used for analyzing chemical space [1].

- ChemGPS: A tool for navigating chemical space, providing a visual representation of chemical diversity [1].

- Deep Origin Balto: A platform to retrieve chemical information, calculate molecular properties, and perform ADMET profiling [1].

Troubleshooting Guides

Problem: Low Hit Rate in Virtual Screening

- Symptoms: Computational screening fails to identify compounds with desired biological activity during subsequent lab validation.

- Potential Causes & Solutions:

- Cause 1: Biased or Non-Diverse Training Library. The model was trained on a chemical library lacking diversity, causing it to perform poorly in unexplored regions of chemical space [2].

- Solution: Analyze your chemical library's coverage of chemical space using tools like ChemGPS or RDKit [1]. Intentionally expand the library to include more diverse molecular structures, such as those inspired by natural products, which are known to modulate biological processes [2].

- Cause 2: Inadequate Molecular Descriptors. The choice of descriptors used to represent molecules dramatically impacts their distribution in the defined chemical space [2].

- Solution: Experiment with different sets of molecular descriptors (e.g., 2D structural fingerprints, 3D coordinates, physicochemical properties) to find the optimal representation for your specific biological target [3].

Problem: Poor Synthesizability of AI-Generated Compounds

- Symptoms: Generative AI or de novo design suggests novel compounds that are challenging or impossible to synthesize in the lab [5].

- Potential Causes & Solutions:

- Cause: The AI model is not constrained by synthetic chemistry rules. The algorithm optimizes for biological activity without a sufficient penalty for synthetic complexity [5].

- Solution: Use reinforcement learning (RL) to fine-tune the generative model toward user-defined targets, including synthesizability and drug-like properties [5]. Implement iterative cycles in which a computational scientist's model suggests compounds and a medicinal chemist provides feedback on synthetic feasibility, closing the feedback loop [5].

Problem: Inaccurate Prediction of Physicochemical Properties

- Symptoms: Computationally predicted properties (e.g., solubility, lipophilicity) do not match experimental results.

- Potential Causes & Solutions:

- Cause: Model trained on small or low-quality datasets. Traditional QSAR models can struggle with predicting complex properties when trained on small, noisy datasets [3].

- Solution: Employ deep learning models, which have shown significant improvements over traditional machine learning for predicting ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties, particularly on large, complex datasets [3]. Tools such as ADMET Predictor give access to these algorithms [3].

Quantitative Data on Chemical Space and Screening

Table 1: Estimates of Chemical Space Size

| Type of Chemical Space | Estimated Size (Number of Compounds) | Key Constraints |
|---|---|---|
| Total Drug-Like Chemical Space [2] | 10²³ to 10¹⁸⁰ | Based on various calculation methods and molecular constraints. |
| Synthetically Accessible Space [1] [2] | 10⁶⁰ (often cited) | Limited by molecular size (e.g., ~30 atoms of C, N, O, S), stability, and lead-like properties. |
| Historically Explored Space [4] | < 10¹² (less than one trillion) | Limited by the cumulative history of synthesis and experimental characterization. |

Table 2: Comparison of Screening Methodologies

| Screening Method | Throughput | Relative Cost | Key Tools & Techniques |
|---|---|---|---|
| Traditional Lab-Based [4] | ~1,000 compounds/year | Very High | High-throughput experimental screening, robotic automation. |
| Computational (Virtual) [4] | Billions of compounds/week | Low (per compound) | Physics-based simulations, machine learning, virtual screening [1]. |
| Active Learning-Driven [6] | Highly focused subsets | Low (only the most informative compounds are labeled) | Query by Committee (QBC), deep learning ensembles (e.g., ANI). |

Experimental Protocols for Active Learning in Chemical Space

Protocol: Active Learning for Sampling Chemical Space using Query by Committee (QBC)

1. Principle: This protocol uses an ensemble of machine learning potentials (the "committee"). The disagreement among committee members is used to identify regions of chemical space where the model's predictions are unreliable. These uncertain regions are then prioritized for targeted data acquisition, maximizing the information gain from each expensive simulation or experiment [6].

2. Materials/Software:

- Initial Training Set: A small, curated set of molecules with known properties (e.g., potential energy).

- Machine Learning Model Ensemble: Multiple instances of a model architecture (e.g., ANI deep learning potentials) [6].

- Unlabeled Molecular Pool: A large, diverse database of molecules representing the region of chemical space to be explored.

- Quantum Chemistry Software: For generating new reference data (e.g., Gaussian, ORCA, NWChem).

3. Step-by-Step Procedure:

- Step 1: Initialization. Train an ensemble of machine learning models on the initial, small training set.

- Step 2: Prediction and Disagreement Scoring. Use the trained ensemble to predict properties for all molecules in the unlabeled pool. Calculate the disagreement (e.g., standard deviation) between the predictions of each committee member for every molecule.

- Step 3: Querying. Rank the molecules in the unlabeled pool by their disagreement score. Select the top N molecules with the highest disagreement for which reference data will be acquired.

- Step 4: Expensive Calculation. Use high-fidelity, computationally expensive methods (e.g., Density Functional Theory) to calculate the accurate properties for the selected N molecules.

- Step 5: Dataset Augmentation. Add the newly calculated data points (molecules and their properties) to the training set.

- Step 6: Model Retraining. Retrain the machine learning ensemble on the newly augmented training set.

- Step 7: Iteration. Repeat steps 2-6 until the model achieves the desired level of accuracy across the chemical space of interest.

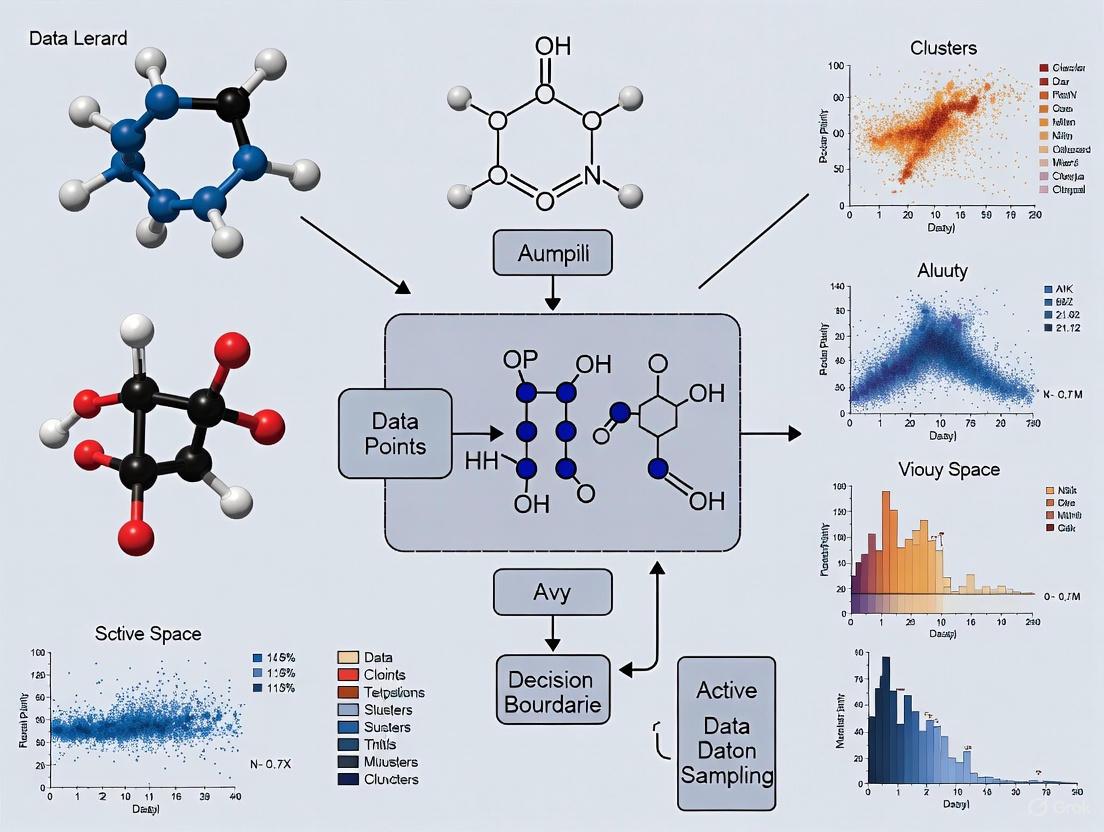

4. Visualization of Workflow: The following diagram illustrates the iterative active learning cycle.
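Steps 2 and 3 of the QBC protocol (disagreement scoring and querying) can be sketched in plain Python. This is a minimal sketch, not the ANI implementation: it assumes each committee member is simply a callable that maps a feature vector to a scalar prediction (e.g., a potential energy), and it uses the population standard deviation across members as the disagreement score, as suggested in Step 2.

```python
import statistics
from typing import Callable, List, Sequence

# A committee member: any callable mapping a molecule's feature vector to a scalar.
Model = Callable[[Sequence[float]], float]


def committee_disagreement(committee: Sequence[Model], x: Sequence[float]) -> float:
    """Step 2: standard deviation of the committee's predictions for one molecule."""
    predictions = [model(x) for model in committee]
    return statistics.pstdev(predictions)


def qbc_query(committee: Sequence[Model], pool: Sequence[Sequence[float]], n: int) -> List[int]:
    """Step 3: indices of the n pool molecules with the highest disagreement."""
    scores = [committee_disagreement(committee, x) for x in pool]
    ranked = sorted(range(len(pool)), key=lambda i: scores[i], reverse=True)
    return ranked[:n]
```

For example, with a toy committee of three linear models `[lambda x, w=w: w * x[0] for w in (0.9, 1.0, 1.1)]`, which agree near zero and diverge as the input grows, `qbc_query(committee, [[0.1], [2.0], [-5.0], [0.5]], n=2)` returns `[2, 1]`: the points where the members disagree most are sent for DFT labeling.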

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Resources for Chemical Space Research

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| ZINC Database [1] | Database | Provides a large, freely available database of commercially available compounds for virtual screening. |
| PubChem [1] [3] | Database | A public repository of chemical molecules and their biological activities, useful for training models. |
| RDKit [1] | Software | An open-source cheminformatics toolkit used for calculating molecular properties, descriptor generation, and similarity mapping. |
| ChemGPS [1] | Software | Acts as a global positioning system for chemical space, enabling visualization and navigation of chemical diversity. |
| ANAKIN-ME (ANI) [6] | AI Model | A deep learning potential for molecular energetics that can be trained and sampled using active learning protocols. |
| DeepVS [3] | AI Tool | A deep learning-based virtual screening tool for docking ligands to receptors and identifying hit compounds. |
| ADMET Predictor [3] | AI Tool | Uses neural networks to predict critical pharmacokinetic and toxicity properties of molecules early in the discovery process. |

Active learning (AL) has emerged as a powerful machine learning paradigm to address the high costs of data acquisition in fields like drug discovery and materials science. By iteratively selecting the most informative data points for labeling, AL builds high-performance models and identifies promising candidates with far fewer experiments than traditional approaches. This technical guide addresses common challenges and provides proven protocols for implementing AL in chemical space research.

Core Concepts & Frequently Asked Questions

What is the fundamental workflow of an Active Learning cycle?

The AL process is an iterative feedback loop. It starts with an initial, often small, set of labeled data to train a model. This model then scores the vast pool of unlabeled data, and a query strategy selects the most valuable points for experimental labeling. These newly labeled points are added to the training set, and the model is retrained, creating a self-improving cycle [7]. The process stops when a performance goal is met or a resource budget is exhausted.
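The query, label, retrain cycle can be expressed as a short pool-based loop. This is a minimal sketch under stated assumptions: the "model" is deliberately trivial (a candidate's uncertainty is approximated by its distance to the nearest labeled point, a placeholder for a real surrogate's uncertainty), `oracle` is a hypothetical stand-in for the experiment or high-fidelity calculation, and only the resource-budget stopping rule is implemented.

```python
import math
from typing import Callable, Dict, Sequence, Tuple

Point = Tuple[float, ...]


def active_learning_loop(
    pool: Sequence[Point],
    oracle: Callable[[Point], float],
    initial: Sequence[int],
    batch_size: int,
    budget: int,
) -> Dict[int, float]:
    """Pool-based AL: query, label, retrain, repeat until the label budget is spent."""
    if not initial:
        raise ValueError("at least one initial labeled point is required")
    labeled = {i: oracle(pool[i]) for i in initial}  # small initial labeled set
    while len(labeled) < budget:
        unlabeled = [i for i in range(len(pool)) if i not in labeled]
        if not unlabeled:
            break  # pool exhausted before the budget

        def uncertainty(i: int) -> float:
            # Placeholder uncertainty: distance to the closest labeled point.
            return min(math.dist(pool[i], pool[j]) for j in labeled)

        # Query: the most uncertain candidates, never exceeding the budget.
        n_query = min(batch_size, budget - len(labeled))
        batch = sorted(unlabeled, key=uncertainty, reverse=True)[:n_query]
        # Label: call the expensive oracle on the selected batch only.
        for i in batch:
            labeled[i] = oracle(pool[i])
        # Retrain: a real workflow would refit the surrogate model on `labeled` here.
    return labeled
```

With `pool = [(0.0,), (1.0,), (2.0,), (10.0,)]`, a toy oracle `lambda p: p[0] ** 2`, one initial point (index 0), `batch_size=1` and `budget=3`, the loop first queries the isolated point at 10.0 and then the point at 2.0, ending with indices `{0, 2, 3}` labeled.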

Why is the choice of query strategy critical, and what are the main types?

The query strategy is the decision engine of AL, directly controlling the balance between exploration (probing diverse regions of chemical space) and exploitation (refining predictions in promising areas). An ineffective strategy wastes resources. Common approaches include:

- Uncertainty Sampling: Selects points where the model's prediction is least confident. This is highly effective for improving the model's accuracy quickly [8] [9].

- Diversity Sampling: Selects a set of points that are maximally different from each other and the existing training data. This ensures broad coverage of the chemical space and helps prevent the model from overfitting to a specific region [8] [10].

- Greedy (or Exploitation) Sampling: Selects points predicted to have the best property (e.g., highest synergy, strongest binding). This directly optimizes for hit discovery [8] [11].

- Hybrid Strategies: Combine elements of the above. For example, a strategy might seek points that are both highly uncertain and have a high predicted performance, or that are uncertain while also being diverse [8] [9].
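For a regression target scored by an ensemble, the uncertainty, greedy, and hybrid strategies above differ only in how each candidate's predictions are turned into a score. The sketch below assumes each candidate comes with a list of ensemble predictions; the hybrid score is an upper-confidence-bound style `mean + beta * std`, which is one common way (our choice here, not necessarily the one used in the cited studies) to favor points that are both promising and uncertain. Diversity is handled separately (see the farthest point sampling sketch later in this guide).

```python
import statistics
from typing import Dict, List, Sequence


def acquisition_score(predictions: Sequence[float], strategy: str, beta: float = 1.0) -> float:
    """Score one candidate from its ensemble predictions (higher = query sooner)."""
    mean = statistics.fmean(predictions)
    std = statistics.pstdev(predictions)
    if strategy == "uncertainty":  # exploration: least confident prediction
        return std
    if strategy == "greedy":  # exploitation: best predicted property
        return mean
    if strategy == "hybrid":  # UCB-style trade-off; beta sets the exploration weight
        return mean + beta * std
    raise ValueError(f"unknown strategy: {strategy}")


def select(candidates: Dict[str, Sequence[float]], strategy: str, k: int, beta: float = 1.0) -> List[str]:
    """Return the k candidate IDs with the highest acquisition score."""
    ranked = sorted(
        candidates,
        key=lambda c: acquisition_score(candidates[c], strategy, beta),
        reverse=True,
    )
    return ranked[:k]
```

For candidates `{"A": [5, 5, 5], "B": [3, 4, 5], "C": [1, 4, 7]}`, greedy selection picks A (highest mean), uncertainty sampling picks C (widest spread), and the hybrid score picks C at `beta=1.0` but A at `beta=0.0`, showing how one parameter moves the balance between exploration and exploitation.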

The following workflow diagram illustrates how these strategies are integrated into the AL cycle.

What are the proven performance benefits of Active Learning?

Extensive simulations in drug discovery show that AL delivers substantial efficiency gains. The table below summarizes key quantitative results from recent studies.

Table 1: Quantitative Benefits of Active Learning in Drug Discovery

| Application Area | Performance Metric | AL Result | Control (Random Screening) | Citation |
|---|---|---|---|---|
| Synergistic Drug Combination Screening | Proportion of synergistic pairs found | Found 60% of synergistic pairs after exploring 10% of the combinatorial space | Required screening 82% of the space to find the same number | [12] |
| Virtual Screening (Docking) | Identification of top-100 scoring ligands | Found 66.8% of top ligands after screening 6% of library | Found only 5.6% after screening 6% of library (11.9x enrichment) | [11] |
| Low-Data Drug Discovery | Hit discovery rate | Up to 6-fold improvement | Baseline performance of traditional screening | [13] |
| Anti-Cancer Drug Response | Hit identification | Significant improvement for most drugs | Lower hit identification rate | [8] |

Troubleshooting Common Experimental Issues

Problem: The AL model is not discovering new hits and gets stuck in a local optimum.

This is a classic sign of over-exploitation or a lack of diversity in the selected samples.

- Solution 1: Adjust the Query Strategy. Pure greedy sampling can quickly exhaust a local region of chemical space. Incorporate exploration-focused strategies:

- Switch to uncertainty sampling to improve the model in poorly understood regions [8].

- Use diversity sampling or a hybrid method to force exploration. For example, the Density-Aware Greedy Sampling (DAGS) method integrates data density with uncertainty for more effective exploration in regression tasks [10].

- Solution 2: Reduce the Batch Size. Selecting fewer compounds per AL iteration allows the model to adapt more frequently. Research has shown that smaller batch sizes yield a higher synergy discovery rate [12].

- Solution 3: Enforce Diversity Algorithmically. Use a method like Farthest Point Sampling (FPS) in the chemical feature space. FPS selects compounds that are maximally distant from each other, ensuring a diverse and representative training set and improving model generalization [14].
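Farthest Point Sampling is a simple greedy procedure: start from one compound, then repeatedly add the compound whose distance to its nearest already-selected compound is largest. The sketch below assumes fingerprints are given as sets of "on" bit indices (for example, extracted from Morgan/ECFP bit vectors) and uses Tanimoto distance (1 minus Tanimoto similarity) as the feature-space metric; both choices are illustrative assumptions rather than the method of the cited study.

```python
from typing import FrozenSet, List, Sequence

Fingerprint = FrozenSet[int]  # indices of "on" bits in a binary fingerprint


def tanimoto_distance(a: Fingerprint, b: Fingerprint) -> float:
    """1 - |intersection| / |union| of the bit sets; 0.0 for two empty fingerprints."""
    union = len(a | b)
    if union == 0:
        return 0.0
    return 1.0 - len(a & b) / union


def farthest_point_sampling(fps: Sequence[Fingerprint], k: int, start: int = 0) -> List[int]:
    """Greedy FPS: repeatedly add the compound farthest from all compounds already chosen."""
    if not fps or k <= 0:
        return []
    selected = [start]
    chosen = {start}
    # min_dist[i]: distance from compound i to its nearest selected compound
    min_dist = [tanimoto_distance(fp, fps[start]) for fp in fps]
    while len(selected) < min(k, len(fps)):
        nxt = max((i for i in range(len(fps)) if i not in chosen), key=lambda i: min_dist[i])
        selected.append(nxt)
        chosen.add(nxt)
        # Only the new pick can shrink each compound's nearest-selected distance.
        min_dist = [min(d, tanimoto_distance(fp, fps[nxt])) for d, fp in zip(min_dist, fps)]
    return selected
```

Keeping a running `min_dist` array means each iteration costs one distance per compound, so selecting k compounds from a pool of N needs roughly k × N distance evaluations.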

Problem: Model performance is poor when predicting for new cell lines or structurally novel compounds.

This indicates a failure to generalize, often because the initial training set or the AL-selected compounds do not adequately represent the wider chemical or biological space.

- Solution: Prioritize Informative Cellular Context Features.

- Use Gene Expression Profiles: Incorporating genomic features like gene expression profiles from databases like GDSC has been shown to significantly boost prediction quality and generalizability across cell lines [12].

- Feature Selection is Key: You don't need to profile all genes. Research indicates that a small set of informative genes (e.g., as few as 10) can be sufficient for accurate predictions, making the approach more efficient [12].

Problem: High computational cost of the AL iteration cycle.

Training complex models like message-passing neural networks on large libraries can be slow.

- Solution: Benchmark Model Architecture for Efficiency. The best model for AL is not always the largest. In a virtual screening study, a well-tuned feedforward neural network on molecular fingerprints performed comparably to a more complex graph-based model but trained faster [11]. Start with a simpler, more data-efficient architecture and only scale up if necessary.

Detailed Experimental Protocols

Protocol 1: Implementing an AL Cycle for Drug Synergy Screening

This protocol is based on the methodology that found 60% of synergistic pairs while exploring only 10% of the combinatorial experimental space [12].

Initialization:

- Data Pool: Compile your library of all possible drug pairs and target cell lines.

- Base Model: Pre-train a model on any existing public synergy data (e.g., O'Neil, ALMANAC). A Multi-Layer Perceptron (MLP) is a robust starting point.

- Features: Encode drugs using Morgan fingerprints and cell lines using GDSC gene expression profiles (10-908 key genes).

Iterative Active Learning Loop:

- Step 1: Predict. Use the current model to predict synergy scores for all untested drug pairs in the pool.

- Step 2: Query. Apply your chosen strategy. For a balanced approach, select a batch that includes:

- The top-k drug pairs with the highest predicted synergy (greedy).

- A subset of pairs where the model is most uncertain (uncertainty).

- Step 3: Experiment. Conduct the wet-lab synergy screening experiments for the selected batch.

- Step 4: Retrain. Add the new experimental results to the training data and update the model parameters.

- Step 5: Check Stopping Criterion. Repeat until the desired number of synergistic pairs is found or the experimental budget is spent.
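The balanced batch in Step 2 (top-k greedy picks plus a subset of the most uncertain pairs) can be assembled as below. This is a sketch under assumptions: `pred_mean` and `pred_std` are hypothetical per-pair predicted synergy scores and uncertainty estimates (for example from an ensemble of MLPs); the protocol does not specify how uncertainty is computed. The uncertain picks are drawn only from pairs not already chosen greedily, so no experiment is duplicated.

```python
from typing import List, Sequence


def mixed_batch(
    pred_mean: Sequence[float],
    pred_std: Sequence[float],
    n_greedy: int,
    n_uncertain: int,
) -> List[int]:
    """Top-n_greedy pairs by predicted synergy, then the n_uncertain most uncertain others."""
    indices = range(len(pred_mean))
    greedy = sorted(indices, key=lambda i: pred_mean[i], reverse=True)[:n_greedy]
    taken = set(greedy)
    remaining = [i for i in indices if i not in taken]
    uncertain = sorted(remaining, key=lambda i: pred_std[i], reverse=True)[:n_uncertain]
    return greedy + uncertain
```

With predicted synergies `[0.9, 0.1, 0.5, 0.8]`, uncertainties `[0.5, 0.4, 0.05, 0.3]`, two greedy slots and one uncertainty slot, the batch is `[0, 3, 1]`: pair 0 is not counted twice even though it is also the most uncertain. Shrinking `n_greedy + n_uncertain` corresponds to the smaller batch sizes recommended above.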

Protocol 2: Expanding the Applicability Domain for Property Prediction

This protocol uses AL to improve prediction accuracy for a new class of compounds, as demonstrated for Ionization Efficiency (IE) [9].

- Define Spaces: Label your existing training data as the "Explored Space." The new chemical class (e.g., PFAS, natural products) is the "Unexplored Space."

- Train Initial Model: Train an ML model (e.g., XGBoost) on the Explored Space.

- Active Learning Iteration:

- Compute Distances: Calculate the Euclidean distance in the molecular descriptor space from each compound in the Unexplored Space to its nearest neighbors in the Explored Space.

- Query Strategy (Clustering-based): Cluster the Unexplored Space and select compounds from the most distant clusters, ensuring coverage of diverse structural types.

- Experiment and Update: Acquire experimental labels for the selected compounds, add them to the Explored Space, and retrain the model. This significantly reduces prediction RMSE for the new chemical class [9].
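The distance and clustering steps of Protocol 2 can be sketched with the standard library alone. Several assumptions are made for illustration: descriptors are plain numeric vectors (e.g., scaled PaDEL descriptors), the tiny k-means below with first-k initialization stands in for whatever clustering method the study used, clusters are ranked by the mean nearest-neighbor distance of their members, and from each selected cluster the member farthest from the Explored Space is picked.

```python
import math
from typing import Dict, List, Sequence

Vector = Sequence[float]


def nn_distance(x: Vector, explored: Sequence[Vector]) -> float:
    """Euclidean distance from x to its nearest neighbor in the Explored Space."""
    return min(math.dist(x, e) for e in explored)


def kmeans(points: Sequence[Vector], k: int, iters: int = 20) -> List[int]:
    """Minimal Lloyd's k-means; centroids initialized from the first k points."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iters):
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def select_from_distant_clusters(
    unexplored: Sequence[Vector], explored: Sequence[Vector], n_clusters: int, n_select: int
) -> List[int]:
    """One compound from each of the n_select clusters lying farthest from the Explored Space."""
    dists = [nn_distance(u, explored) for u in unexplored]
    clusters: Dict[int, List[int]] = {}
    for i, lab in enumerate(kmeans(unexplored, n_clusters)):
        clusters.setdefault(lab, []).append(i)
    # Rank clusters by the mean nearest-neighbor distance of their members.
    ranked = sorted(clusters.values(), key=lambda m: sum(dists[i] for i in m) / len(m), reverse=True)
    return [max(members, key=lambda i: dists[i]) for members in ranked[:n_select]]
```

Selecting one compound per cluster, rather than simply the single most distant compounds, keeps a batch from being dominated by near-duplicates of one unusual structural type.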

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Resources for Active Learning Experiments in Drug Discovery

| Resource / Reagent | Function in Active Learning Workflow | Example Sources / Types |
|---|---|---|
| Chemical Libraries | The vast pool of unlabeled candidates for the AL algorithm to screen. | PubChem [14], ZINC [11], Enamine [11] |
| Cell Line Panels | Provides the biological context (cellular environment) for screening; genomic features are critical for model accuracy. | Cancer Cell Line Encyclopedia (CCLE) [8], NCI-60 |
| Genomic Feature Data | Numerical representation of the cellular context; significantly enhances model generalizability. | Gene Expression (e.g., from GDSC) [12], Mutation profiles |
| Molecular Descriptors | Numerical representation of chemical structures; the input features for the AI model. | Morgan Fingerprints [12], MAP4 [12], PaDEL Descriptors [9] |
| High-Throughput Screening Assays | The "oracle" that provides experimental labels (e.g., IC50, synergy score) for AL-selected compounds. | Automated viability assays, high-content imaging |
| Benchmarked AI Models | The core predictive algorithm that guides the selection of experiments. | MLP, Random Forest, XGBoost, D-MPNN [12] [8] [11] |

Troubleshooting Guide: Common Active Learning Challenges

1. Problem: Model Performance Has Plateaued

- Issue: The model shows little to no improvement despite several AL cycles.

- Solution: Re-evaluate your acquisition function. A strategy focused only on uncertainty might be stuck exploring a complex but uninformative region. Switch to a hybrid strategy that incorporates diversity sampling to select data points from broader regions of the chemical space, ensuring better generalization [15].

2. Problem: Sampling is Redundant and Non-Diverse

- Issue: Selected compounds are structurally too similar, leading to an unbalanced training set.

- Solution: Implement a diversity-hybrid strategy like RD-GS, which was shown to outperform geometry-only heuristics in early acquisition phases [16]. Ensure your molecular descriptors (e.g., ECFP, ChemDist embeddings) capture relevant structural features for a meaningful diversity calculation [17].

3. Problem: Poor Performance on Imbalanced Datasets

- Issue: The model fails to predict rare but critical active compounds (e.g., toxic chemicals).

- Solution: Integrate strategic data sampling (like k-ratio sampling) within the AL framework to balance the distribution of active and inactive compounds during training [18]. Combine this with an uncertainty-based AL strategy to focus the limited labeling resources on the most informative minority class examples [18].

4. Problem: High Computational Cost of Labeling

- Issue: The quantum chemical calculations (e.g., for T1/S1 energy levels) required to label selected molecules are prohibitively expensive.

- Solution: Use a hybrid quantum mechanics/machine learning pipeline like ML-xTB to generate accurate labels at a fraction of the cost (e.g., 1% of TD-DFT cost) [19]. This makes the iterative AL process computationally feasible.

5. Problem: Acquisition Strategy is Inefficient Under a Dynamic Model

- Issue: When using AutoML, the underlying surrogate model may change between AL iterations, making static acquisition strategies less effective.

- Solution: Early in the process, prioritize uncertainty-driven (LCMD, Tree-based-R) and diversity-hybrid (RD-GS) strategies, which have been benchmarked to perform robustly even when the model family evolves in an AutoML workflow [16].
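For the imbalanced-data problem (item 3 above), the rebalancing step can be sketched as undersampling the majority class. The source does not define k-ratio sampling, so this code rests on an assumed reading: keep every active compound and randomly keep at most k inactives per active. The fixed `seed` is only there to make the sketch reproducible.

```python
import random
from typing import List, Sequence


def k_ratio_sample(labels: Sequence[int], k: float, seed: int = 0) -> List[int]:
    """Indices of all actives (label 1) plus at most k inactives (label 0) per active.

    Assumed interpretation of "k-ratio sampling", for illustration only.
    """
    actives = [i for i, y in enumerate(labels) if y == 1]
    inactives = [i for i, y in enumerate(labels) if y == 0]
    n_inactive = min(len(inactives), int(k * len(actives)))
    rng = random.Random(seed)
    kept = actives + rng.sample(inactives, n_inactive)
    return sorted(kept)
```

The rebalanced indices would then be used to train the model inside each AL cycle, while the uncertainty-based query still scores the full unlabeled pool.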

Frequently Asked Questions (FAQs)

Q1: What is the most effective AL strategy for a brand-new project with very little labeled data? For a cold start, uncertainty-driven sampling is highly effective. In the AutoML benchmark, strategies such as LCMD and tree-based uncertainty (Tree-based-R) quickly identify the most informative points, allowing the model to learn the most from each new label; the diversity-hybrid RD-GS also performs strongly in this early phase. As the labeled set grows, the advantage shrinks and all strategies, including random sampling, tend to converge [16].

Q2: How can I leverage a large pool of unlabeled chemical compounds? The Partially Labeled Noisy Student (PLANS) method is designed for this. It uses a self-training approach where a "teacher" model labels the unlabeled pool, and a "student" model is then trained on this larger, noisier dataset. This process can be iterated, significantly improving model generalizability by exploiting the vast unlabeled chemical space [20].

Q3: My dataset is severely imbalanced. Which AL strategy should I use? For imbalanced data, such as in toxicity prediction, an uncertainty-based method has been shown to provide superior stability. In a benchmark study on thyroid-disrupting chemicals, uncertainty sampling maintained strong performance (AUROC >0.82) even under severe class imbalance, achieving high performance with up to 73.3% less labeled data [18].

Q4: How do I visually validate and guide my AL exploration of chemical space? Dimensionality Reduction (DR) techniques like UMAP and t-SNE are crucial. They project high-dimensional chemical descriptor data into 2D or 3D maps. These "chemical space maps" allow you to visually track where your AL strategy is sampling, ensuring it explores diverse regions and validates the model's understanding of the chemical landscape [21] [17].

Q5: What is a unified AL framework for a complex task like photosensitizer design? A robust framework integrates multiple components: a generative model or large pool for candidate generation, a surrogate model (like a Graph Neural Network) for fast property prediction, and a hybrid acquisition strategy. This strategy should balance exploration (diversity), exploitation (property optimization), and model uncertainty, all while using a cost-effective labeling pipeline (like ML-xTB) [19].

Quantitative Benchmarks of Active Learning Strategies

The table below summarizes the performance of various AL strategies benchmarked in a regression task with an AutoML framework, measured by the Mean Absolute Error (MAE) at different stages of data acquisition [16].

Table 1: Benchmark of AL Strategies in an AutoML Workflow for Regression [16]

| Strategy Category | Example Strategy | Key Principle | Early-Stage Performance (MAE) | Late-Stage Performance (MAE) | Key Strength |
|---|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Selects points where model is most uncertain | Outperforms baseline & geometry methods | Converges with other methods | High impact with very little data |
| Diversity-Hybrid | RD-GS | Balances uncertainty with sample diversity | Outperforms baseline & geometry methods | Converges with other methods | Prevents redundant sampling |
| Geometry-Only | GSx, EGAL | Selects points based on feature space structure | Underperforms vs. top strategies | Converges with other methods | Simpler computation |
| Baseline | Random-Sampling | Selects data points at random | Lower performance | Converges with other strategies | Useful performance benchmark |

Detailed Experimental Protocol: A Unified AL Framework for Molecular Design

This protocol outlines the methodology for implementing a unified active learning framework to discover photosensitizers, as detailed by Chen et al. (2025) [19].

Objective: To efficiently discover high-performance photosensitizers by iteratively training a model to predict key photophysical properties (S1/T1 energy levels) with minimal costly quantum chemical calculations.

Workflow Overview:

Step-by-Step Methodology:

Preparation of Molecular Dataset and Chemical Space Definition

- Action: Compile a large, diverse pool of candidate molecules from public databases (e.g., ChEMBL). The study by Chen et al. started with a pool of 655,197 candidates [19].

- Action: Generate an initial small set of labeled data. This can be done via random sampling or by using available experimental data.

Training of the Surrogate Model

- Action: Train a Graph Neural Network (GNN) as a surrogate model on the current labeled dataset. GNNs are well-suited for representing molecular structures [19].

- Action: The model learns to predict target properties (e.g., S1/T1 energies) from the molecular structure.

Prediction and Molecule Selection via Hybrid Acquisition

- Action: Use the trained surrogate model to predict properties and uncertainties for all molecules in the unlabeled candidate pool.

- Action: Apply a hybrid acquisition strategy to select the most valuable molecules for labeling. The unified framework uses a combination of [19]:

- Uncertainty-based: Selects molecules where the model's prediction is most uncertain.

- Diversity-based: Ensures selected molecules are structurally diverse, covering the chemical space.

- Property-based: Focuses on molecules predicted to have desirable properties (exploitation).

- Pro Tip: The study found that a sequential strategy, which first prioritizes diversity for exploration before switching to a more objective-focused strategy, yielded superior results [19].
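One way to express a sequential hybrid acquisition is a weighted sum of normalized uncertainty, diversity, and property scores whose weights switch after an exploration phase. The weights (0.25/0.75) and the min-max normalization below are hypothetical choices made for illustration; they are not the values or the functional form used by Chen et al.

```python
from typing import List, Sequence


def minmax(values: Sequence[float]) -> List[float]:
    """Scale values to [0, 1]; a constant vector maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def hybrid_scores(
    uncertainty: Sequence[float],
    diversity: Sequence[float],
    prop: Sequence[float],
    cycle: int,
    n_explore_cycles: int,
) -> List[float]:
    """Per-candidate acquisition score; diversity-first, then objective-focused."""
    if cycle < n_explore_cycles:
        w_unc, w_div, w_prop = 0.25, 0.75, 0.0  # exploration phase (hypothetical weights)
    else:
        w_unc, w_div, w_prop = 0.25, 0.0, 0.75  # exploitation phase (hypothetical weights)
    u, d, p = minmax(uncertainty), minmax(diversity), minmax(prop)
    return [w_unc * a + w_div * b + w_prop * c for a, b, c in zip(u, d, p)]
```

Normalizing each term first keeps quantities with different units (an ensemble standard deviation, a distance, a predicted energy gap) from dominating the sum simply because of their scale.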

High-Fidelity Labeling and Model Update

- Action: Label the selected molecules using a high-accuracy but computationally expensive method. To reduce cost, the framework uses the ML-xTB pipeline, which provides DFT-level accuracy at ~1% of the computational cost [19].

- Action: Add the newly labeled molecules to the training set.

- Action: Retrain the surrogate model with the augmented dataset.

Iteration and Stopping

- Action: Repeat surrogate model training, hybrid selection, and high-fidelity labeling with model update until a stopping criterion is met (e.g., a performance target is achieved, a computational budget is exhausted, or consecutive iterations no longer improve the model).

- Output: A list of top candidate molecules with predicted high performance for experimental validation.
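The three stopping criteria listed above can be combined into a single check run at the end of each cycle. This sketch assumes the model is tracked with a validation error such as MAE (lower is better); `patience` and `min_delta` are hypothetical tuning parameters for the "no further improvement" rule.

```python
from typing import Optional, Sequence


def should_stop(
    history: Sequence[float],
    budget_used: float,
    budget: float,
    target: Optional[float] = None,
    patience: int = 3,
    min_delta: float = 0.0,
) -> bool:
    """history[t] is the validation error after AL cycle t (lower is better)."""
    if budget_used >= budget:
        return True  # computational budget exhausted
    if target is not None and history and history[-1] <= target:
        return True  # performance target reached
    if len(history) > patience:
        best_before = min(history[:-patience])
        best_recent = min(history[-patience:])
        if best_recent > best_before - min_delta:
            return True  # no improvement over the last `patience` cycles
    return False
```

A steadily decreasing error such as `[1.0, 0.9, 0.8, 0.7]` keeps the loop running, whereas `[0.5, 0.6, 0.7, 0.55]` stops it because none of the last three cycles beat the earlier best.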

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational Tools for Active Learning in Chemical Research

| Item Name | Function in Active Learning | Example / Note |
|---|---|---|
| Molecular Descriptors & Fingerprints | Translate molecular structure into a numerical format for ML models. | Morgan Fingerprints (ECFP): Encode substructure information [17]. MACCS Keys: Predefined structural key fingerprints [17]. |
| Graph Neural Network (GNN) | Acts as the surrogate model to predict molecular properties directly from the graph structure of molecules. | Superior for capturing structural relationships compared to traditional fingerprints [19] [20]. |
| Dimensionality Reduction (DR) Algorithms | Create 2D/3D visualizations ("chemical space maps") of the high-dimensional molecular data for analysis and validation. | UMAP and t-SNE are high-performing non-linear methods for neighborhood preservation [17]. PCA is a common linear method [17]. |
| Automated Machine Learning (AutoML) | Automates the selection and optimization of machine learning models within the AL loop, reducing manual tuning. | Ensures the surrogate model is always near-optimal, making the AL process more robust and efficient [16]. |
| Cost-Effective Labeling Methods | Provide accurate ground-truth labels for selected molecules at a reduced computational cost. | The ML-xTB pipeline combines semi-empirical quantum calculations with machine learning to achieve high accuracy at 1% of the cost of TD-DFT [19]. |
| Acquisition Functions | The core algorithms that decide which unlabeled data points to select next. | Key types include Uncertainty Sampling (e.g., LCMD), Diversity Sampling, and Hybrid approaches (e.g., RD-GS) [16] [15]. |

Active Learning (AL) is a machine learning paradigm designed to maximize model performance while minimizing the costly process of data labeling, a bottleneck particularly acute in chemical space research where experiments can take "weeks, months to get data points" [22]. This is achieved through an iterative cycle where the model itself strategically selects the most informative data points from a vast pool of unlabeled candidates to be labeled next [23] [24]. For researchers and drug development professionals, this method provides a powerful framework to efficiently navigate massive chemical spaces, potentially reducing labeling efforts by 30% to 70% compared to traditional approaches [23]. The core of this methodology is the iterative loop of Query, Label, Retrain, and Repeat, which enables a targeted exploration of the chemical universe.

The Core Workflow Diagram

The following diagram illustrates the continuous, iterative cycle of the Active Learning workflow.

Detailed Breakdown of the Workflow Cycle

Query: Strategic Data Selection

The "Query" phase is the intelligence core of the AL cycle, where the model selects which unlabeled data points would be most valuable for its own improvement. The choice of strategy depends on the specific research goal.

- Uncertainty Sampling: Selects data points where the model's prediction is least confident (e.g., class probabilities closest to 0.5 in binary classification) [23] [24]. This is highly effective for refining decision boundaries.

- Diversity Sampling: Aims for broad coverage of the chemical space by selecting a diverse set of data points, often using clustering or distance metrics [23] [19]. This prevents the model from over-sampling a single, highly uncertain region.

- Query-by-Committee (QBC): Uses an ensemble of models. Data points with the highest disagreement among the committee members are selected for labeling [23] [25]. This approach helps reduce model-specific bias.

- Hybrid Strategies: Many advanced frameworks combine strategies. For instance, a unified AL framework for photosensitizer design used a hybrid acquisition function balancing ensemble-based uncertainty with a physics-informed objective and an early-cycle diversity schedule [19].
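A hybrid acquisition function of this kind can be sketched in a few lines. This is a minimal illustration, assuming each candidate already has an ensemble uncertainty (`sigma`), a physics-informed objective score (`objective`), and a descriptor vector. The additive weighting and the linear decay of the diversity weight across cycles are hypothetical choices, not the formulation used in the cited photosensitizer study [19].

```python
import math


def hybrid_scores(features, sigma, objective, labeled, cycle, n_cycles,
                  w_obj=0.5, w_div_start=1.0):
    """Score candidates as uncertainty + objective + a decaying diversity bonus.

    Hypothetical schedule: the diversity weight falls linearly from
    w_div_start at cycle 0 to zero at the last cycle, so early cycles
    explore and later cycles exploit.
    """
    w_div = w_div_start * max(0.0, 1.0 - cycle / max(1, n_cycles - 1))
    scores = []
    for x, s, obj in zip(features, sigma, objective):
        # Diversity term: distance to the nearest already-labeled molecule.
        d_min = min((math.dist(x, z) for z in labeled), default=0.0)
        scores.append(s + w_obj * obj + w_div * d_min)
    return scores


# Toy usage: candidate 2 is far from the labeled set but less uncertain.
features = [(0.0, 0.0), (0.1, 0.0), (3.0, 4.0)]
sigma = [0.5, 0.5, 0.2]
objective = [0.0, 0.0, 0.0]
labeled = [(0.0, 0.1)]
early = hybrid_scores(features, sigma, objective, labeled, cycle=0, n_cycles=5)
late = hybrid_scores(features, sigma, objective, labeled, cycle=4, n_cycles=5)
pick_early = max(range(len(early)), key=early.__getitem__)  # diversity dominates
pick_late = max(range(len(late)), key=late.__getitem__)     # uncertainty dominates
```

Because the three terms live on different scales, a real pipeline would normally normalize each one (for example to [0, 1]) before combining them.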

Label: The Experimental Bottleneck

In the context of chemical space research, the "Label" phase involves the actual physical experiment or high-fidelity calculation to obtain the property of interest for the selected compounds.

- High-Fidelity Calculation: This could involve quantum chemical calculations like TD-DFT or alchemical free energy calculations to label properties like binding affinity or electronic energy levels [26] [19]. One study used an ML-xTB pipeline to generate data at DFT-level accuracy for 655,197 photosensitizer candidates at a fraction of the cost [19].

- Wet-Lab Experiment: This is the ultimate validation, where a compound is synthesized and its properties (e.g., ionization efficiency, biological activity) are measured empirically [22] [9]. Research highlights that going "all the way to experiments as a final output" is crucial for real-world applicability [22].

Retrain: Integrating New Knowledge

Once the new data is labeled, it is added to the existing training set, and the model is retrained from scratch or fine-tuned. This step updates the model's parameters to internalize the new information, expanding its understanding of the chemical space and refining its predictive accuracy for subsequent cycles [23] [19].

Repeat: The Path to Convergence

The cycle repeats until a predefined stopping criterion is met. Key metrics to monitor for deciding when to stop include:

- Performance Plateau: The model's performance (e.g., RMSE, accuracy) on a held-out validation set no longer improves significantly with new data [23].

- Cost Exhaustion: The allocated budget for experimentation or computation is reached [23].

- Low Overall Uncertainty: The model's average uncertainty across the remaining unlabeled pool drops below a certain threshold [23].
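The three stopping rules can be combined into a single check that runs at the end of every cycle. A minimal sketch; the patience window, the 1% improvement threshold, and the uncertainty threshold are illustrative assumptions that would need tuning for a real project.

```python
def should_stop(val_rmse_history, labels_used, budget, mean_pool_uncertainty,
                patience=3, min_improvement=0.01, uncertainty_threshold=0.05):
    """Return (stop, reason) using the three stopping criteria above.

    All default thresholds are illustrative assumptions.
    """
    # Cost exhaustion: the labeling budget has been spent.
    if labels_used >= budget:
        return True, "budget exhausted"
    # Low overall uncertainty across the remaining unlabeled pool.
    if mean_pool_uncertainty < uncertainty_threshold:
        return True, "pool uncertainty below threshold"
    # Performance plateau: the best RMSE of the last `patience` cycles is not
    # at least `min_improvement` (relative) better than the best before them.
    if len(val_rmse_history) > patience:
        best_before = min(val_rmse_history[:-patience])
        best_recent = min(val_rmse_history[-patience:])
        if best_recent > best_before * (1.0 - min_improvement):
            return True, "validation RMSE plateaued"
    return False, "continue"
```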

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for an Active Learning Pipeline in Chemical Research

| Item/Resource | Function in the AL Workflow |
|---|---|
| Initial Seed Data | A small, initially labeled dataset (e.g., 58-100 data points) to bootstrap the first iteration of the AL model [22] [9]. |
| Unlabeled Chemical Pool | A large, diverse library of candidate molecules (e.g., a virtual library of 1 million electrolytes [22] or 655,197 photosensitizers [19]) representing the target chemical space for exploration. |
| High-Fidelity Calculator/Lab | The "labeling oracle" that provides ground-truth data, such as a quantum chemistry computation pipeline (ML-xTB [19], alchemical free energy [26]) or an experimental laboratory for synthesis and testing [22]. |
| Machine Learning Model | The surrogate model that learns structure-property relationships. Common choices include Graph Neural Networks (GNNs) for molecules [19] or ensemble methods like XGBoost [9]. |
| Query Strategy Algorithm | The computational logic (e.g., uncertainty, diversity, QBC) that ranks and selects the most informative candidates from the unlabeled pool for the next labeling round [23] [19]. |

Performance Data from Research Studies

Table: Quantitative Outcomes of Active Learning in Chemical Research

| Study Focus | AL Strategy | Key Quantitative Result |
|---|---|---|
| Battery Electrolyte Screening [22] | Active Learning with experimental output | Identified 4 high-performing battery electrolytes from a search space of 1 million candidates, starting from only 58 initial data points. |
| Ionization Efficiency (IE) Prediction [9] | Uncertainty-based vs. Clustering-based | Uncertainty-based AL was most efficient for sampling ≤10 chemicals/iteration. AL reduced RMSE by up to 0.3 log units, improving quantification fold error from 4.13× to 2.94×. |
| Universal ML Potential (ANI-1x) [25] | Query-by-Committee (QBC) | The AL-based model outperformed a model trained on randomly sampled data using only 10% of the data, and achieved state-of-the-art accuracy with only 25% of the data. |
| Photosensitizer Design [19] | Hybrid (Uncertainty + Diversity) | The sequential AL strategy (explore then exploit) outperformed static baselines by 15-20% in test-set Mean Absolute Error (MAE) for predicting T1/S1 energy levels. |

Troubleshooting Guides and FAQs

FAQ 1: My model's performance is not improving significantly after several AL cycles. What could be wrong?

Answer: This performance plateau is a common issue. Several factors could be at play:

- Inadequate Query Strategy: Your sampling strategy might be stuck in a local region of the chemical space. Pure uncertainty sampling can sometimes overfit to noisy or outlier data points [23].

- Poor Quality or Noisy Labels: If the experimental or computational method used for "labeling" has high variability or systematic error, the model cannot learn a clean signal.

- Solution: Audit your labeling pipeline. Replicate a few key measurements to assess experimental noise and ensure your high-fidelity calculations are appropriately validated [9].

- Saturation: You may have simply exhausted the informative potential of your current chemical library.

- Solution: Consider expanding your unlabeled pool with new, structurally diverse compounds or employing a generative model to create novel candidates that fulfill the acquisition function's criteria [19].

FAQ 2: How do I choose the right query strategy for my specific problem?

Answer: The optimal strategy is dictated by your primary goal and the nature of your chemical space.

- For Rapidly Improving a Specific Predictive Task: Start with Uncertainty Sampling. It is simple to implement and highly effective for quickly refining a model around a decision boundary [23] [24].

- For Broad Exploration of an Unknown Chemical Space: Begin with Diversity or Clustering-based Sampling. This ensures your initial training set is representative of the entire space, which is crucial for building a generally applicable model [9] [19].

- For Complex, Multi-Objective Optimization (e.g., balancing potency and solubility): Use a Hybrid Strategy. Combine uncertainty with a custom, physics-informed or multi-objective acquisition function to guide the search toward practically useful regions [19].

- When Model Calibration is Poor: Query-by-Committee (QBC) is robust as it relies on model disagreement rather than calibrated probabilities, making it suitable when uncertainty estimates are not reliable [23] [25].

FAQ 3: The experimental labeling process is too slow for a rapid AL cycle. How can I mitigate this?

Answer: This is a fundamental challenge. The key is to create a tiered labeling system.

- Solution: Implement a multi-fidelity approach. Use a fast, inexpensive computational method (e.g., semi-empirical quantum mechanics, molecular docking) as a "cheap oracle" for the initial AL cycles to narrow down the candidate pool. Only the most promising finalists are then passed to the slow, high-fidelity experimental or computational method (e.g., synthesis & assay, TD-DFT) for final validation and model refinement [19]. This creates an efficient funnel that reserves your most expensive resources for the highest-value compounds.
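The funnel logic itself is simple: score everything with the cheap oracle, then spend the expensive oracle only on the top slice. A minimal sketch, assuming both oracles are plain Python callables where a higher score is better; `cheap_score` and `expensive_score` below are hypothetical stand-ins for, e.g., a semi-empirical or docking score and a TD-DFT calculation or assay.

```python
def multi_fidelity_funnel(candidates, cheap_oracle, expensive_oracle,
                          keep_fraction=0.1, max_expensive=None):
    """Screen all candidates cheaply, then label only the finalists expensively."""
    candidates = list(candidates)
    cheap_scores = {c: cheap_oracle(c) for c in candidates}
    ranked = sorted(candidates, key=cheap_scores.__getitem__, reverse=True)
    n_keep = max(1, int(len(ranked) * keep_fraction))
    if max_expensive is not None:
        n_keep = min(n_keep, max_expensive)
    finalists = ranked[:n_keep]
    # Only the finalists consume the high-fidelity budget.
    return {c: expensive_oracle(c) for c in finalists}


# Toy usage with hypothetical stand-in oracles.
expensive_calls = []


def cheap_score(c):
    return -abs(c - 50)          # pretend compounds near 50 look promising


def expensive_score(c):
    expensive_calls.append(c)    # record every costly evaluation
    return 2.0 * c


labels = multi_fidelity_funnel(range(100), cheap_score, expensive_score)
```

Here 100 candidates are screened cheaply and only 10 reach the expensive oracle.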

FAQ 4: My AL model seems to be introducing bias towards certain chemical classes. How can I ensure diversity?

Answer: Bias occurs when the acquisition function oversamples from a specific region.

- Solution: Explicitly integrate diversity constraints into your query strategy. Instead of selecting only the top N most uncertain points, you can:

- Use Clustering: Cluster the unlabeled data in a descriptor space and select the most uncertain points from each cluster [23].

- Apply a Distance Penalty: Formulate an acquisition function that selects points that are both uncertain and far from the existing training set [19].

- Monitor Chemical Space Coverage: Use visualization techniques like UMAP or t-SNE to track how well your selected compounds cover the target chemical space over iterations [9]. Proactive diversity management is essential for building robust and generalizable models.
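The distance-penalty idea can be made concrete with a greedy batch selector. A minimal sketch, assuming Euclidean distance on descriptor vectors; the hinge-shaped penalty `max(0, min_dist - d)` and its default parameters are illustrative assumptions rather than the acquisition function of [19]. Each pick is added to the reference set, so later picks are pushed away from earlier ones.

```python
import math


def distance_penalized_batch(pool, uncertainty, train, batch_size,
                             min_dist=1.0, penalty=1.0):
    """Greedy batch: prefer uncertain points, penalize those close to known points."""
    reference = list(train)  # labeled points plus points chosen so far

    def score(i):
        d = min((math.dist(pool[i], r) for r in reference), default=math.inf)
        return uncertainty[i] - penalty * max(0.0, min_dist - d)

    chosen = []
    remaining = set(range(len(pool)))
    for _ in range(min(batch_size, len(pool))):
        best = max(sorted(remaining), key=score)  # sorted() makes ties deterministic
        chosen.append(best)
        remaining.remove(best)
        # Treat the pick as "known" so the next pick moves away from it.
        reference.append(pool[best])
    return chosen
```

With two near-duplicate, highly uncertain molecules and one distant, less uncertain one, the selector takes one duplicate and then the distant molecule instead of both duplicates.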

A Practical Toolkit: Key Active Learning Strategies for Chemical Data

Uncertainty-based sampling is a powerful active learning (AL) strategy that strategically selects unlabeled data points for annotation by identifying instances where the model exhibits low prediction confidence. In chemical space research, where experimental data is often scarce and costly to obtain, this approach prioritizes the most informative molecular data for labeling, significantly accelerating drug discovery pipelines like quantitative structure-activity relationship (QSAR) modeling and molecular property prediction [27] [28]. By focusing resources on ambiguous regions of the chemical space—often near decision boundaries—this methodology enables the construction of more accurate and robust predictive models with substantially less labeled data [29] [30].

# Comparison of Primary Uncertainty Metrics

The following table summarizes the key uncertainty quantification metrics used for query selection in classification tasks.

| Metric Name | Mathematical Formulation | Primary Use Case | Key Advantage |
|---|---|---|---|
| Least Confidence [27] [29] | $1 - P(\hat{y} \mid \mathbf{x})$, where $\hat{y} = \arg\max_y P(y \mid \mathbf{x})$ | General classification tasks | Simple to implement and computationally efficient [30] |
| Margin Sampling [27] [29] | $1 - [P(\hat{y}_1 \mid \mathbf{x}) - P(\hat{y}_2 \mid \mathbf{x})]$, where $\hat{y}_1$ and $\hat{y}_2$ are the top two predicted classes | Distinguishing between top candidate classes | Focuses on the gap between the two most likely classes [27] |
| Entropy Sampling [27] [29] | $-\sum_{i=1}^{C} P(y_i \mid \mathbf{x}) \log P(y_i \mid \mathbf{x})$, where $C$ is the number of classes | Multi-class classification with complex decision boundaries | Captures overall predictive uncertainty across all classes [27] |
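The three formulas in the table translate directly into Python. A minimal sketch, assuming `probs` is the list of predicted class probabilities for one molecule (summing to 1, with at least two classes for the margin).

```python
import math


def least_confidence(probs):
    """1 - P(y_hat | x), where y_hat is the most probable class."""
    return 1.0 - max(probs)


def margin_uncertainty(probs):
    """1 - [P(y_1 | x) - P(y_2 | x)] for the two most probable classes."""
    p1, p2 = sorted(probs, reverse=True)[:2]
    return 1.0 - (p1 - p2)


def entropy(probs):
    """-sum_i P(y_i | x) log P(y_i | x); zero-probability classes contribute 0."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)
```

For all three scores, a higher value means a more uncertain prediction, so the query step simply ranks the pool in descending order of the chosen score.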

Figure 1: Generalized Active Learning Workflow with Uncertainty Sampling. This iterative process involves model training, uncertainty calculation on an unlabeled pool, expert labeling of the most uncertain samples, and model updating until a performance threshold is met.

# Core Experimental Protocol for Molecular Property Prediction

Implementing uncertainty-based sampling for molecular property prediction involves a standardized, iterative protocol.

1. Initial Model Training: Begin by training an initial model on a small, randomly selected subset of labeled molecular data (e.g., 1-5% of the total data) [28] [31].

2. Uncertainty Calculation & Query:

   - Use the trained model to predict on a large pool of unlabeled molecules.

   - Calculate an uncertainty score (e.g., entropy, least confidence) for each molecule in the pool [27] [29].

   - Rank the molecules based on their uncertainty scores and select the top K most uncertain molecules (the "batch") for labeling [32].

3. Oracle Labeling: Send the selected batch of molecules to an "oracle" (e.g., experimental assay or computational simulation, such as Density Functional Theory) to obtain the true property values [28] [31].

4. Model Update & Iteration: Add the newly labeled molecules to the training set, retrain the model, and repeat steps 2-4 until a predefined performance target or labeling budget is reached [28] [12].
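The query step of this protocol (score every pool molecule, rank, take the top K) can be sketched as follows. A minimal illustration, assuming the model's predicted class probabilities for the pool are already available as a list of lists; `select_uncertain_batch` is a hypothetical helper name, and predictive entropy is used as the default score.

```python
import math


def predictive_entropy(probs):
    """Entropy of one molecule's predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def select_uncertain_batch(pool_probs, k, score=predictive_entropy,
                           already_labeled=()):
    """Return indices of the k most uncertain pool molecules not yet labeled."""
    skip = set(already_labeled)
    candidates = [i for i in range(len(pool_probs)) if i not in skip]
    candidates.sort(key=lambda i: score(pool_probs[i]), reverse=True)
    return candidates[:k]


# Toy pool of four molecules with binary-class predictions.
pool_probs = [[0.9, 0.1], [0.5, 0.5], [0.6, 0.4], [0.99, 0.01]]
batch = select_uncertain_batch(pool_probs, k=2)
```

Here the two molecules predicted closest to 50/50 (indices 1 and 2) form the batch sent to the oracle.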

# Troubleshooting Common Experimental Issues

FAQ: My uncertainty sampling strategy is performing poorly on my high-dimensional molecular dataset, sometimes even worse than random sampling. What could be wrong?

This is a documented challenge, particularly when material or molecular descriptors are high-dimensional (e.g., 2048-bit Morgan fingerprints) and the data distribution is unbalanced [31]. The "curse of dimensionality" can make the data sparse, and uncertainty estimates can become unreliable.

- Solution 1: Incorporate Diversity. Use a hybrid strategy that combines uncertainty with diversity sampling. Instead of selecting only the most uncertain points, choose a batch that is both uncertain and diverse relative to each other. Methods like clustering or using the log-determinant of the covariance matrix (which maximizes joint entropy) can help ensure the batch covers a broader region of the chemical space [32] [31].

- Solution 2: Dimensionality Reduction. Apply techniques like Principal Component Analysis (PCA), UMAP, or feature selection to project the data into a lower-dimensional, more manageable space before applying the uncertainty sampling strategy [29] [31].
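The log-determinant idea from Solution 1 can be sketched as greedy batch selection. A minimal illustration, assuming an RBF kernel on descriptor vectors scaled by each point's uncertainty (`K_ij = u_i * u_j * k(x_i, x_j)`) as a stand-in for the model's predictive covariance; with a Gaussian process one would use the posterior covariance directly. Each step adds the candidate that most increases the log-determinant of the batch's covariance, which rewards points that are both uncertain and mutually dissimilar.

```python
import math


def rbf(x, y, length_scale=1.0):
    return math.exp(-math.dist(x, y) ** 2 / (2.0 * length_scale ** 2))


def log_det_spd(matrix):
    """Log-determinant of a small symmetric positive-definite matrix
    (Gaussian elimination without pivoting; -inf if not positive definite)."""
    a = [list(row) for row in matrix]
    n = len(a)
    total = 0.0
    for col in range(n):
        pivot = a[col][col]
        if pivot <= 0.0:
            return -math.inf
        total += math.log(pivot)
        for r in range(col + 1, n):
            factor = a[r][col] / pivot
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
    return total


def logdet_batch(features, uncertainty, batch_size, length_scale=1.0, jitter=1e-9):
    """Greedily pick the batch that maximizes log det of the scaled kernel matrix."""
    def entry(i, j):
        k = uncertainty[i] * uncertainty[j] * rbf(features[i], features[j], length_scale)
        return k + (jitter if i == j else 0.0)

    chosen = []
    for _ in range(min(batch_size, len(features))):
        best, best_val = None, -math.inf
        for cand in range(len(features)):
            if cand in chosen:
                continue
            idx = chosen + [cand]
            val = log_det_spd([[entry(i, j) for j in idx] for i in idx])
            if val > best_val:
                best, best_val = cand, val
        if best is None:
            break
        chosen.append(best)
    return chosen
```

Given two nearly identical, highly uncertain molecules and one distant, slightly less uncertain molecule, the second pick is the distant one, because a near-duplicate adds almost nothing to the determinant.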

FAQ: My model's uncertainty scores are poorly calibrated, leading to the selection of uninformative samples. How can I improve calibration?

Poor calibration, where the model's predicted confidence does not match its actual accuracy, is a common pitfall. This can be caused by model overfitting or distribution shifts between training and unlabeled data [28].

- Solution 1: Use Model Ensembles. Instead of a single model, train an ensemble of models (e.g., with different initializations or architectures). The variance in the predictions across the ensemble provides a robust estimate of epistemic (model) uncertainty. Query the points where the ensemble disagrees the most [28] [33].

- Solution 2: Leverage Bayesian Methods. Techniques like Monte Carlo Dropout (MCDO) approximate Bayesian inference by performing multiple stochastic forward passes during prediction. The variance across these passes provides a useful uncertainty measure for query selection [28] [32].

- Solution 3: Utilize Censored Labels. In pharmaceutical settings, many experimental results are censored (e.g., "IC50 > 10 μM"). Adapt uncertainty quantification methods to learn from these censored labels using survival analysis techniques like the Tobit model, which can lead to more reliable uncertainty estimates [34].
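To illustrate how a censored label enters the loss, here is a minimal Tobit-style negative log-likelihood for a single measurement under a Gaussian predictive distribution with mean `mu` and standard deviation `sigma`. This is a sketch of the general idea, not the method of [34]. Note that "IC50 > 10 μM" is an "above" censor in IC50 units but becomes a "below" censor (pIC50 < 5) after the usual negative-log transform.

```python
import math


def normal_logpdf(z):
    return -0.5 * z * z - 0.5 * math.log(2.0 * math.pi)


def normal_logsf(z):
    """log P(Z > z) for a standard normal. Underflows for very large z;
    a production version would use a dedicated routine such as
    scipy.special.log_ndtr."""
    return math.log(0.5 * math.erfc(z / math.sqrt(2.0)))


def tobit_nll(mu, sigma, y, censor="none"):
    """Negative log-likelihood of one label under N(mu, sigma^2).

    censor="none":  exact measurement y.
    censor="above": only known that the true value exceeds y.
    censor="below": only known that the true value is below y.
    """
    z = (y - mu) / sigma
    if censor == "none":
        return -(normal_logpdf(z) - math.log(sigma))
    if censor == "above":
        return -normal_logsf(z)
    if censor == "below":
        return -normal_logsf(-z)  # P(Z < z) = P(Z > -z)
    raise ValueError(f"unknown censor type: {censor}")
```

A censored label only penalizes the model when its prediction falls on the wrong side of the threshold, so these measurements still contribute information instead of being discarded.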

FAQ: I am dealing with a highly imbalanced dataset in toxicity prediction. How can I prevent my uncertainty sampler from ignoring the rare, toxic class?

Standard uncertainty sampling can be biased toward the majority class in imbalanced scenarios.

- Solution: Strategic Sampling and Hybrid Approaches. Combine uncertainty sampling with strategic over-sampling of the minority class or use algorithm-level methods that adjust the learning process. One effective framework involves using a stacking ensemble trained with strategic k-sampling, which creates balanced data subsets during the active learning process. This has been shown to maintain stability and performance even under severe class imbalance [18].
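One way to build balanced training subsets is to split the majority class into chunks roughly the size of the minority class and pair each chunk with every minority sample. Each subset can then train one base learner of the stacking ensemble. This is a minimal sketch of one plausible reading of "strategic k-sampling"; the framework in [18] may partition the data differently.

```python
import random


def balanced_subsets(labels, minority_label=1, seed=0):
    """Return k index subsets, each holding one majority chunk plus all minority indices."""
    minority = [i for i, y in enumerate(labels) if y == minority_label]
    majority = [i for i, y in enumerate(labels) if y != minority_label]
    if not minority:
        raise ValueError("no minority-class samples")
    rng = random.Random(seed)
    rng.shuffle(majority)
    k = max(1, len(majority) // len(minority))  # number of balanced subsets
    chunks = [majority[j::k] for j in range(k)]
    return [sorted(chunk + minority) for chunk in chunks]
```

For a 90:10 dataset this yields nine subsets of 20 samples, each with a 1:1 class ratio, and together the subsets still cover every majority-class sample.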

FAQ: Why does my uncertainty sampling work well initially but show diminishing returns in later active learning cycles?

This can occur if the model becomes overconfident in its predictions or if the selected batches become redundant.

- Solution: Dynamic Exploration-Exploitation Tuning. Implement a strategy that dynamically balances exploring new regions of chemical space with exploiting known uncertain regions. For example, use Thompson Sampling or adjust the acquisition function to occasionally select samples with high predicted performance (high "exploitation") alongside highly uncertain ones (high "exploration") [12] [31]. Reducing the batch size in later stages can also help maintain efficiency [12].
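Thompson Sampling can be approximated with an existing model ensemble: each draw treats one randomly chosen member as a posterior sample and takes that member's best candidate. A minimal sketch, assuming `member_predictions[m][i]` is member `m`'s predicted property for candidate `i` (higher is better); this ensemble approximation is an assumption, not necessarily the implementation used in [12] or [31].

```python
import random


def thompson_batch(member_predictions, batch_size, seed=0):
    """Approximate Thompson sampling: one random ensemble member per draw."""
    rng = random.Random(seed)
    n_candidates = len(member_predictions[0])
    chosen = []
    while len(chosen) < min(batch_size, n_candidates):
        member = rng.choice(member_predictions)  # stand-in for a posterior sample
        best = max((i for i in range(n_candidates) if i not in chosen),
                   key=lambda i: member[i])
        chosen.append(best)
    return chosen


preds = [[0.1, 0.9, 0.5],
         [0.2, 0.8, 0.7],
         [0.0, 0.95, 0.3]]
first_pick = thompson_batch(preds, 1)
```

Candidates that every member rates highly are exploited first, while candidates that only some members favor still get picked occasionally, which supplies the exploration.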

# Research Reagent Solutions: Essential Components for Implementation

The following table lists key computational "reagents" and their functions for setting up an uncertainty-based sampling experiment in chemical research.

| Research Reagent | Function & Purpose | Example Tools / Methods |
|---|---|---|
| Molecular Features/Descriptors | Translates molecular structure into a numerical representation for model input. | Morgan Fingerprints, MAP4, Matminer Descriptors, Graph Neural Networks [28] [12] [31] |
| Base Predictive Model | The core machine learning model used for initial property prediction. | Fully-Connected Neural Networks, Graph Neural Networks (GNNs), Gaussian Process Regression (GPR), Gradient Boosting Machines (GBM) [28] [31] [33] |
| Uncertainty Quantifier | The algorithm responsible for calculating the uncertainty score from model predictions. | Model Ensemble Variance, Monte Carlo Dropout (MCDO), Laplace Approximation, Predictive Entropy [28] [32] [34] |
| Acquisition Function | Uses uncertainty scores to select the most valuable data points for labeling. | Uncertainty Sampling (US), Thompson Sampling (TS), Hybrid Diversity-Acquisition functions [27] [31] |
| Experimental Oracle | The source of ground-truth labels for selected molecules. | High-Throughput Screening Assays, DFT Calculations, Public Databases (e.g., PubChem, ChEMBL) [28] [12] |

Figure 2: Logical relationships between a base predictive model, various uncertainty quantification methods, and the resulting uncertainty metrics used for query selection.

Frequently Asked Questions

What is the fundamental principle behind Query by Committee (QBC)? QBC operates on the principle of measuring disagreement among an ensemble of models (the "committee") to identify data points for which the model predictions are most uncertain. These points of high disagreement are considered the most informative for improving the model if selected for labeling [35] [36].

My active learning process is slow because I retrain my model after every new data point. What can I do? You can implement Batch Mode Deep Active Learning (BMDAL). This approach selects multiple data points simultaneously in each iteration, which allows for parallel computation of expensive ab initio labels and reduces the frequency of model retraining, making the overall process much more efficient [35].

The batch of points selected by my QBC algorithm is not diverse and contains many similar structures. How can I improve this? A naive QBC that only considers informativeness can select similar points. To fix this, use advanced BMDAL algorithms that also enforce diversity and representativeness. This ensures the selected batch covers different regions of the chemical space and is representative of the entire data pool, avoiding redundancy [35].

What are some practical measures of "disagreement" or "uncertainty" in a neural network committee? For neural network-based potentials, common measures include the variance in the predicted energies or forces across the ensemble members [35]. Alternative methods use the gradient of the network's output with respect to its parameters to construct a kernel for uncertainty estimation [35].
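Both variance-based measures are easy to compute from committee outputs. A minimal sketch, assuming each member returns a total energy and a list of per-atom force vectors. Taking the maximum per-atom RMS force deviation is one possible choice, stated here as an assumption rather than as the measure used in [35].

```python
import math
import statistics


def energy_disagreement(energies):
    """Population standard deviation of the committee's predicted total energies."""
    return statistics.pstdev(energies)


def max_force_disagreement(forces):
    """forces[m][a] = (fx, fy, fz) predicted by member m for atom a.

    Returns the largest per-atom RMS deviation of the force vectors from the
    committee mean force on that atom.
    """
    n_members, n_atoms = len(forces), len(forces[0])
    worst = 0.0
    for a in range(n_atoms):
        mean = tuple(sum(forces[m][a][d] for m in range(n_members)) / n_members
                     for d in range(3))
        msd = sum(math.dist(forces[m][a], mean) ** 2
                  for m in range(n_members)) / n_members
        worst = max(worst, math.sqrt(msd))
    return worst
```

Force-based measures can flag a configuration in which a single atom is poorly described even when the total energies happen to agree.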

Can QBC be applied to interatomic potentials for molecular systems? Yes, QBC and other active learning methods are successfully used to build machine-learned interatomic potentials (MLIPs). They help in selectively generating datasets for molecular and periodic bulk systems by identifying rare or under-sampled molecular configurations during simulations [35].

Troubleshooting Guides

Problem: The committee members agree too quickly, failing to find new informative points.

Potential Cause 1: Lack of Committee Diversity. The ensemble models are too similar, often because they are initialized with the same parameters or trained on an identical, small dataset.

- Solution: Ensure diversity by using different random initializations for each model in the committee. You can also vary the architecture or training hyperparameters for each member, or use bootstrap sampling (training each model on a different subset of the current training data) [36].
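Bootstrap resampling is the simplest way to decorrelate committee members. A minimal sketch, using one-dimensional least-squares lines as stand-ins for ML potentials (an assumption made only to keep the example small). Members agree near the training data and diverge when extrapolating, which is exactly where the committee should query next.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a + b * x."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    b = 0.0 if sxx == 0 else sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return my - b * mx, b


def bootstrap_committee(xs, ys, n_members=5, seed=0):
    """Train each member on its own bootstrap resample of the training data."""
    rng = random.Random(seed)
    members = []
    for _ in range(n_members):
        idx = [rng.randrange(len(xs)) for _ in xs]  # sample with replacement
        members.append(fit_line([xs[i] for i in idx], [ys[i] for i in idx]))
    return members


def committee_variance(members, x):
    """Variance of the members' predictions at x (the disagreement)."""
    return statistics.pvariance([a + b * x for a, b in members])


# Toy usage: noisy line sampled on [0, 1]; query where members disagree most.
xs = [i / 19 for i in range(20)]
ys = [2.0 * x + 0.1 * (-1) ** i for i, x in enumerate(xs)]
committee = bootstrap_committee(xs, ys, n_members=8)
query = max([0.5, 2.0, 10.0], key=lambda x: committee_variance(committee, x))
```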

Potential Cause 2: Exploration-Exploitation Imbalance. The algorithm is stuck in a well-sampled region of the chemical space and is not exploring new areas.

- Solution: Incorporate diversity metrics into your selection algorithm. Instead of selecting only the top-K most uncertain points, use a method that balances uncertainty with the diversity of the selected batch, such as cluster-based selection or core-set selection [35].
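Core-set selection is commonly implemented with the greedy k-center heuristic: repeatedly take the candidate farthest from everything already labeled or chosen. A minimal sketch, assuming Euclidean distance on descriptor (or learned embedding) vectors. To combine it with uncertainty, one option is to restrict `pool` to the top-M most uncertain candidates first.

```python
import math


def k_center_greedy(pool, labeled, k):
    """Core-set style selection by max-min Euclidean distance."""
    # Distance from each pool point to its nearest labeled point.
    nearest = [min((math.dist(p, q) for q in labeled), default=math.inf)
               for p in pool]
    chosen = []
    for _ in range(min(k, len(pool))):
        best = max((i for i in range(len(pool)) if i not in chosen),
                   key=lambda i: nearest[i])
        chosen.append(best)
        # The new pick now counts as covered territory.
        nearest = [min(d, math.dist(p, pool[best])) for d, p in zip(nearest, pool)]
    return chosen
```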

Problem: The model performance plateaus or even deteriorates after adding new data from active learning.

Potential Cause 1: Noisy or Incorrect Labels. The ab initio calculations used to label the selected data points may have failures or inaccuracies, introducing noise into the training set.

- Solution: Implement a validation step to check the consistency of new ab initio calculations. Visually inspect the structures that receive the highest uncertainty scores to ensure they are physically reasonable and not artifacts.

Potential Cause 2: Inadequate Uncertainty Quantification. The committee's disagreement is not a good proxy for the true prediction error, leading to the selection of uninformative or outlier points.

- Solution: Experiment with different uncertainty metrics. If using neural networks, try approximating the neural tangent kernel (NTK) using gradient features or random projections, which can provide a more robust uncertainty estimate than simple committee variance [35].

Problem: The active learning process is computationally too expensive.

- Potential Cause: Inefficient Retraining and Data Generation. Frequently retraining a large model and running molecular dynamics (MD) simulations for data generation is inherently costly.

- Solution:

- Adopt a batch active learning strategy to reduce the number of retraining cycles [35].

- Use a cheaper, pre-trained potential or a force field to run the initial MD simulations and generate the pool of candidate structures. This defers the use of expensive ab initio methods until the final labeling step [35].

- For the neural network potential, consider using an architecture designed for fast training to lower the cost of each retraining iteration [35].


Experimental Protocols & Data

Protocol 1: Standard QBC Workflow for Sampling Chemical Space

This protocol is based on the fully automated approach described by Smith et al. for generating datasets to train universal machine learning potentials [36].

1. Generate Candidate Pool: Use an atomistic simulation (e.g., Molecular Dynamics with a cheap Hamiltonian) to generate a large pool of unlabeled molecular structures.

2. Initialize Committee: Train an ensemble of machine learning potentials (the "committee") on the initial, small training set. Ensure model diversity.

3. Evaluate Disagreement: For each structure in the candidate pool, calculate the committee's disagreement. This is often the variance of the predicted total energies [36].

4. Query Labels: Select the structure(s) with the highest disagreement.

5. Obtain Labels: Use reference ab initio methods to calculate the accurate energy and forces for the selected structure(s).

6. Update Training Set: Add the newly labeled data points to the training set.

7. Retrain Committee: Retrain the ensemble of models on the updated training set.

8. Repeat: Iterate steps 3-7 until a performance metric (e.g., error on a test set) meets the desired threshold.
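The loop structure of this protocol can be shown end to end on a toy problem. A minimal sketch under loud assumptions: a one-dimensional grid replaces the MD-generated pool, `math.sin` replaces the ab initio oracle, and a bootstrap committee of 2-nearest-neighbor regressors replaces the ML potentials. Only the control flow mirrors the protocol.

```python
import math
import random


def knn_predict(train_x, train_y, x, k=2):
    """Mean label of the k training points nearest to x (1-D inputs)."""
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return sum(train_y[i] for i in nearest) / len(nearest)


def committee_disagreement(committee, x):
    """Variance of the committee members' predictions at x."""
    preds = [knn_predict(cx, cy, x) for cx, cy in committee]
    mean = sum(preds) / len(preds)
    return sum((p - mean) ** 2 for p in preds) / len(preds)


def qbc_loop(pool, oracle, initial, n_iterations, n_members=5, seed=0):
    rng = random.Random(seed)
    labeled = {x: oracle(x) for x in initial}  # initial training set
    for _iteration in range(n_iterations):
        candidates = [x for x in pool if x not in labeled]
        if not candidates:
            break
        xs, ys = list(labeled), list(labeled.values())
        # Initialize / retrain the committee: one bootstrap resample per member.
        committee = []
        for _member in range(n_members):
            idx = [rng.randrange(len(xs)) for _ in xs]
            committee.append(([xs[i] for i in idx], [ys[i] for i in idx]))
        # Evaluate disagreement and query the most contentious candidate.
        query = max(candidates, key=lambda x: committee_disagreement(committee, x))
        # Obtain its label and update the training set.
        labeled[query] = oracle(query)
    return labeled


pool = [i * 2 * math.pi / 49 for i in range(50)]
labels = qbc_loop(pool, math.sin, initial=[pool[0], pool[-1]], n_iterations=10)
```

Each iteration labels exactly one new structure, matching Protocol 1's one-point-at-a-time selection; the batch variant below changes only the selection step.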

Protocol 2: Batch Mode BMDAL with Diversity Enforcement

This protocol extends the standard QBC for batch selection, incorporating methods from Zaverkin et al. to ensure a diverse and representative batch [35].

Steps 1 & 2: Same as Protocol 1 (generate the candidate pool and initialize the committee).

3. Define Informativeness: For each candidate structure, compute an informativeness score. This can be the committee's predictive variance or a kernel-based uncertainty measure derived from the neural network's gradient features [35].

4. Enforce Diversity: Instead of simply selecting the top-K most informative points, use a selection algorithm that considers the similarity between candidate points. Greedily maximizing the distance of each new pick to the points already selected is one such method [35].

5. Ensure Representativeness: To make the batch representative of the entire data pool, a cluster-based approach can be used. Cluster all data (training and candidate) and select the most informative points from large, underrepresented clusters [35].

6. Query, Label, and Update: Select the final batch, obtain labels for all points in the batch in parallel, and update the training set.

7. Retrain and Repeat: Retrain the model and iterate from step 3. Batch mode significantly reduces the number of retraining cycles [35].
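The representativeness step can be sketched with plain k-means followed by a round-robin over clusters. A minimal illustration, assuming Euclidean descriptor vectors and a precomputed informativeness score per candidate. Ordering clusters by fewest training points, then by most candidates, is a hypothetical reading of "large, underrepresented clusters", not the exact procedure of [35].

```python
import math
import random


def kmeans(points, k, n_iter=20, seed=0):
    """Plain Lloyd's k-means; returns a cluster index for every point."""
    rng = random.Random(seed)
    centers = rng.sample(points, k)

    def assign_all():
        return [min(range(k), key=lambda c: math.dist(p, centers[c])) for p in points]

    for _ in range(n_iter):
        assign = assign_all()
        for c in range(k):
            members = [p for p, a in zip(points, assign) if a == c]
            if members:  # keep the previous center if a cluster empties
                centers[c] = tuple(sum(col) / len(members) for col in zip(*members))
    return assign_all()


def representative_batch(train, pool, informativeness, k_clusters, batch_size, seed=0):
    """Cluster training + candidate data, then fill the batch from the most
    under-represented clusters, most informative candidate first."""
    points = list(train) + list(pool)
    labels = kmeans(points, k_clusters, seed=seed)
    train_counts = [0] * k_clusters
    for a in labels[:len(train)]:
        train_counts[a] += 1
    per_cluster = {c: [] for c in range(k_clusters)}
    for i, a in enumerate(labels[len(train):]):
        per_cluster[a].append(i)  # i indexes into `pool`
    for c in per_cluster:
        per_cluster[c].sort(key=lambda i: informativeness[i], reverse=True)
    # Fewest training points first; among ties, the largest clusters first.
    order = sorted(range(k_clusters), key=lambda c: (train_counts[c], -len(per_cluster[c])))
    batch = []
    while len(batch) < min(batch_size, len(pool)):
        for c in order:  # round-robin so one cluster cannot fill the whole batch
            if per_cluster[c] and len(batch) < batch_size:
                batch.append(per_cluster[c].pop(0))
    return batch
```

In a toy case where all training points sit near the origin, the first pick comes from the unexplored far cluster even if a more informative candidate exists near the origin.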

The table below summarizes the key differences between a naive high-uncertainty selection and a batch method with diversity.

| Criterion | Naive High-Uncertainty Selection | BMDAL with Diversity & Representativeness |
|---|---|---|
| Informativeness | Yes (selects highest uncertainty) | Yes (selects high uncertainty points) |
| Diversity | No (batch may contain similar points) | Yes (enforces dissimilarity in the batch) |
| Representativeness | No | Yes (ensures coverage of data distribution) |

Key Research Reagent Solutions

| Item / Concept | Function in QBC for Chemical Space |
|---|---|
| Ensemble of ML Potentials | The "committee" whose disagreement is used to quantify prediction uncertainty and identify informative points [36]. |
| Ab Initio Calculation Software | Provides the high-fidelity "ground truth" labels (energy, forces) for the selected data points [35]. |
| Molecular Dynamics (MD) Engine | Generates the pool of candidate molecular configurations using a cheap potential, from which the active learning algorithm selects [35]. |
| Gradient Feature Calculator | Calculates the gradient of the network's output with respect to its parameters, used to build a kernel for advanced uncertainty estimation [35]. |

Standard QBC Active Learning Workflow

Batch Mode Selection for Efficient Learning

In the fields of drug discovery and materials science, the concept of "chemical space" represents the vast, multidimensional ensemble of all possible molecules and compounds. Navigating this space efficiently is a fundamental challenge. Diversity-driven sampling strategies are essential for ensuring broad coverage of this chemical landscape, enabling researchers to build robust models, discover novel materials, and identify potential drug candidates with limited experimental resources. These strategies are particularly powerful when integrated with active learning (AL) frameworks, where the sampling algorithm intelligently selects the most informative data points to query next, thereby maximizing the knowledge gained from each experiment. This technical support center provides troubleshooting guides and detailed FAQs to help researchers implement these sophisticated strategies effectively.

FAQs on Chemical Space and Sampling Strategies

What is chemical space and why is its representation important?

Chemical space is an intuitive concept that has become a cornerstone in many areas of chemistry. It can be roughly defined as the set of all possible chemical compounds or the descriptor space in which these compounds are represented [37]. The choice of how to define this space—what molecular descriptors to use—is critical, as it leads to the "chemical multiverse." The representation is vital because if the subset of chemical space you are working with is not representative of the broader space, it introduces a bias that propagates to all conclusions drawn from it, such as predictions of material properties or drug activity [38]. Unbiased exploration is key to discovering truly novel phenomena and building machine learning models with high transferability [38].

What is the difference between random sampling and active learning for sampling chemical space?

- Random Sampling: This is a passive approach where data points are selected randomly from the chemical space for experimentation or inclusion in a training set. A recent 2025 study on building machine learning potentials for quantum liquid water found that, contrary to common understanding, random sampling could sometimes lead to smaller test errors than active learning for a given dataset size, demonstrating a degree of robustness [39].

- Active Learning (AL): This is an iterative, intelligent process where a machine learning model selects the most informative data points to be labeled next by an "oracle" (e.g., a wet-lab experiment or a computational simulation). The core idea is that ML algorithms can achieve greater performance with fewer labeled data points by choosing the data they learn from [18]. AL is particularly useful when acquiring data is expensive or time-consuming. Its goal is to minimize the number of experiments required to train an accurate model by focusing on regions of chemical space where the model is most uncertain or where data can provide the most information gain [40].

How can I handle highly imbalanced datasets in toxicity prediction?

Imbalanced datasets, where one class (e.g., "toxic") is vastly outnumbered by another (e.g., "non-toxic"), are a major challenge in machine learning. Strategies to address this include:

- Strategic Data Sampling: This is a data-level method that involves modifying the training data. One effective approach is to divide the training data into k-ratios to achieve a more balanced distribution between the majority and minority classes before model training [18].

- Algorithm-Level Methods: Using advanced deep learning models like Convolutional Neural Networks (CNNs), Bidirectional Long Short-Term Memory (BiLSTM) networks, and attention mechanisms can help capture complex molecular features and relationships, improving the model's ability to learn from the minority class [18].

- Active Learning Frameworks: Integrating strategic sampling within an AL framework can significantly improve predictive performance on imbalanced datasets. For instance, an uncertainty-based AL method combined with stacking ensemble learning has demonstrated superior stability and required up to 73.3% less labeled data to achieve high performance in predicting thyroid-disrupting chemicals [18].

What are some specific active learning strategies for regression tasks in materials science?

Regression tasks, which involve predicting continuous properties, are generally considered more complex in the AL framework than classification. A key challenge is uncertainty estimation in a continuous output space. The Density-Aware Greedy Sampling (DAGS) method is a state-of-the-art AL technique designed for this purpose. DAGS integrates uncertainty estimation with data density to select optimal data points for labeling. It has been shown to consistently outperform both random sampling and other AL techniques when training regression models with a limited number of data points for functionalized nanoporous materials like metal-organic frameworks (MOFs) and covalent-organic frameworks (COFs) [10].
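The general idea of weighting uncertainty by data density can be illustrated with the classic "information density" heuristic from the active learning literature. This is a stand-in, not the DAGS algorithm of [10], whose exact formulation is not given here. The RBF similarity, `beta`, and `length_scale` are illustrative assumptions.

```python
import math


def information_density_scores(pool, uncertainty, beta=1.0, length_scale=1.0):
    """score(x) = uncertainty(x) * (mean RBF similarity of x to the pool) ** beta.

    Uncertain points in dense, representative regions score highest;
    isolated outliers are down-weighted.
    """
    n = len(pool)
    scores = []
    for i, x in enumerate(pool):
        sim = sum(math.exp(-math.dist(x, y) ** 2 / (2 * length_scale ** 2))
                  for j, y in enumerate(pool) if j != i) / max(1, n - 1)
        scores.append(uncertainty[i] * sim ** beta)
    return scores


pool = [(0.0, 0.0), (0.1, 0.0), (0.2, 0.0), (10.0, 10.0)]
scores = information_density_scores(pool, [0.5, 0.5, 0.5, 0.9])
```

Here the isolated point at (10, 10) has the highest raw uncertainty but the lowest score, while the most central point of the dense group scores highest.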

Troubleshooting Guides

Problem: My machine learning model performs well on the training set but generalizes poorly to new regions of chemical space.

- Potential Cause: Sampling Bias. Your training data is not representative of the broader chemical space you are trying to model. It may be clustered in a specific, narrow region.

- Solution:

  - Diversity Assessment: Use a chemical space visualization tool such as ChemMaps, or a dimensionality-reduction method such as Principal Component Analysis (PCA), to plot your training data alongside new test data. Check whether the test data fall outside the dense regions of your training set [37].

  - Implement Strategic Sampling: Instead of random sampling, use diversity-based methods to build your initial training set. For example, "periphery sampling" selects molecules from the outside in, ensuring coverage of the chemical space's boundaries, while "medoid-periphery sampling" alternates between central and outlying molecules to give a balanced spread [37].

  - Adopt Active Learning: Switch to an AL workflow. The model itself identifies "out-of-sample" regions where it is uncertain and requests new data from those areas, continuously expanding the covered chemical space and improving generalizability [40].
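The two diversity-based orderings can be illustrated with a simple stand-in. The ChemMaps work [37] ranks molecules with extended similarity indices. This sketch instead assumes continuous descriptor vectors (e.g., PCA coordinates), uses Euclidean distance to the centroid, and treats the point nearest the centroid as an approximate medoid. The function names are hypothetical.

```python
import math


def _centroid(points):
    n = len(points)
    return tuple(sum(p[d] for p in points) / n for d in range(len(points[0])))


def periphery_order(points):
    """Indices ordered from the outside in: farthest from the centroid first."""
    c = _centroid(points)
    dist = [math.dist(p, c) for p in points]
    return sorted(range(len(points)), key=lambda i: dist[i], reverse=True)


def medoid_periphery_order(points):
    """Alternate between the most central and the most peripheral remaining points."""
    outside_in = periphery_order(points)
    order, lo, hi = [], 0, len(outside_in) - 1
    take_central = True
    while lo <= hi:
        if take_central:
            order.append(outside_in[hi])  # closest remaining point to the centroid
            hi -= 1
        else:
            order.append(outside_in[lo])  # farthest remaining point from the centroid
            lo += 1
        take_central = not take_central
    return order
```

Taking the first n indices of either ordering gives a small "satellite" subset. The medoid-periphery variant trades some boundary coverage for a more even spread between the core and the edges of the dataset.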

Problem: My active learning algorithm is stuck in a local region of chemical space and is not exploring broadly.

- Potential Cause: Over-exploitation. The query strategy (e.g., pure uncertainty sampling) may be over-prioritizing a specific region without sufficient exploration of the global space.

- Solution:

  - Hybrid Query Strategy: Combine an exploitation criterion (such as model uncertainty) with an exploration criterion (such as diversity or density). The DAGS algorithm is a prime example, as it balances uncertainty with data density to guide sampling [10].

  - Density-Based Sampling: Incorporate a measure of data density into your selection process. Prioritize points in sparse regions of the already-sampled chemical space to force broader exploration [10].

  - Committee-Based Methods: Use Query by Committee (QBC), in which an ensemble of models is trained and the disagreement among their predictions (e.g., the variance) serves as the selection criterion. This naturally steers sampling toward regions where the model's knowledge is incomplete [40].
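Query by Committee can be sketched without any ML library. In this minimal, hypothetical version, the committee is a set of k-nearest-neighbour regressors, each fitted to a bootstrap resample of the labeled data, and disagreement is the population variance of their predictions. In practice the members would be stronger models (e.g., neural network ensembles); `knn_predict` and `qbc_select` are illustrative names.

```python
import math
import random
import statistics


def knn_predict(X, y, x, k=3):
    """Mean target of the k training points nearest to x (Euclidean distance)."""
    nearest = sorted(range(len(X)), key=lambda i: math.dist(X[i], x))[:k]
    return statistics.fmean(y[i] for i in nearest)


def qbc_select(train_X, train_y, pool_X, batch_size, n_members=5, k=3, seed=0):
    """Return indices of the pool points on which the committee disagrees most."""
    rng = random.Random(seed)
    n = len(train_X)
    committee = []
    for _ in range(n_members):
        idx = [rng.randrange(n) for _ in range(n)]  # bootstrap resample
        committee.append(([train_X[i] for i in idx], [train_y[i] for i in idx]))

    # Disagreement = variance of the members' predictions for each candidate
    disagreement = [
        statistics.pvariance([knn_predict(X, y, x, k) for X, y in committee])
        for x in pool_X
    ]
    ranked = sorted(range(len(pool_X)), key=lambda i: disagreement[i], reverse=True)
    return ranked[:batch_size]
```

Bootstrap resampling is what makes the members differ; without it, identical members would never disagree and the criterion would carry no information.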

Problem: My chemical dataset is small and highly imbalanced, leading to biased model predictions.

- Potential Cause: Class Imbalance. The model is biased towards predicting the majority class because it has not seen enough examples of the minority class.

- Solution:

  - Strategic k-Sampling: Integrate strategic sampling into your training process by creating balanced batches (k-ratios) from the imbalanced dataset, so that the model sees a more representative class distribution during training [18].

  - Active Stacking-Deep Learning: Employ a stacking ensemble model (combining CNNs, BiLSTMs, and attention mechanisms) within an AL framework. The ensemble improves robustness, while the AL loop focuses on strategically acquiring informative samples from the minority class [18].

  - Validate with Docking: For molecular property prediction tasks such as toxicity, use computational validation methods such as molecular docking to corroborate the model's predictions for the minority class (e.g., highly toxic compounds), adding credibility to the identified actives [18].

Experimental Protocols and Workflows

Protocol 1: Density-Aware Active Learning for Materials Property Prediction

This protocol is designed for efficiently mapping structure-property relationships in materials science, such as for metal-organic frameworks.

- Initialization: Start with a small, randomly selected initial training set (e.g., 1-5% of the available unlabeled data pool). Calculate the target property for these points using high-fidelity computational methods (e.g., DFT).

- Model Training: Train an initial ensemble of machine learning regression models (e.g., Gaussian process models or neural networks) on the current training set.

- Density-Aware Query:

  a. For every candidate in the unlabeled pool, calculate the uncertainty (e.g., the predictive variance across the model ensemble).

  b. Calculate a density term for each candidate relative to the current training set, for example the inverse of the average similarity to its k nearest neighbors in the training set, so that candidates in sparsely sampled regions score higher.

  c. Combine uncertainty and the density term into a single acquisition score (e.g., a weighted sum).

  d. Select the top N candidates with the highest acquisition scores for labeling.

- Oracle & Update: Use the "oracle" (e.g., run a DFT calculation) to get the true property values for the selected candidates. Add these new data points to the training set.

- Iteration: Repeat the model training, density-aware query, and oracle update steps until a performance threshold or the experimental budget is reached.

The following workflow illustrates the iterative cycle of this density-aware active learning protocol:
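As a concrete illustration of this cycle, here is a minimal plain-Python sketch under stated assumptions; it is not the DAGS implementation of [10]. The "ensemble" is a set of bootstrap k-nearest-neighbour regressors, similarity between descriptor vectors is taken as 1/(1 + Euclidean distance) (Tanimoto similarity would be the natural choice for fingerprints), both terms are min-max normalised before the weighted sum, and `oracle` is a hypothetical stand-in for a DFT calculation. For brevity the loop stops on a labeling budget only.

```python
import math
import random
import statistics


def similarity(a, b):
    # Assumed similarity for continuous descriptors; use Tanimoto for fingerprints
    return 1.0 / (1.0 + math.dist(a, b))


def minmax(values):
    lo, hi = min(values), max(values)
    return [0.0 if hi == lo else (v - lo) / (hi - lo) for v in values]


def knn_predict(X, y, member, x, k=3):
    # A committee member is a bootstrap list of training indices
    nearest = sorted(member, key=lambda i: math.dist(X[i], x))[:k]
    return statistics.fmean(y[i] for i in nearest)


def density_aware_query(X, y, pool_X, n_select, weight=0.5, n_members=5, k=3, rng=None):
    if rng is None:
        rng = random.Random(0)
    n = len(X)
    committee = [[rng.randrange(n) for _ in range(n)] for _ in range(n_members)]
    # (a) uncertainty: variance of the committee's predictions
    uncertainty = [
        statistics.pvariance([knn_predict(X, y, m, x, k) for m in committee])
        for x in pool_X
    ]
    # (b) density term: inverse mean similarity to the k nearest training points,
    # so candidates in sparsely sampled regions score higher
    density = [
        1.0 / statistics.fmean(sorted((similarity(x, t) for t in X), reverse=True)[:k])
        for x in pool_X
    ]
    # (c) weighted sum of the normalised terms
    score = [weight * u + (1 - weight) * d
             for u, d in zip(minmax(uncertainty), minmax(density))]
    # (d) top-N candidates by acquisition score
    return sorted(range(len(pool_X)), key=lambda i: score[i], reverse=True)[:n_select]


def active_learning_loop(pool_X, oracle, n_init=3, batch_size=2, budget=9, seed=0):
    rng = random.Random(seed)
    order = list(range(len(pool_X)))
    rng.shuffle(order)
    labeled, unlabeled = order[:n_init], order[n_init:]   # random initial set
    labels = [oracle(pool_X[i]) for i in labeled]
    while len(labeled) < budget and unlabeled:
        X = [pool_X[i] for i in labeled]  # current training set; committee refit in query
        picks = density_aware_query(X, labels, [pool_X[i] for i in unlabeled],
                                    min(batch_size, budget - len(labeled)), rng=rng)
        chosen = [unlabeled[j] for j in picks]
        for i in chosen:                                   # oracle & update
            labeled.append(i)
            labels.append(oracle(pool_X[i]))
        unlabeled = [i for i in unlabeled if i not in chosen]
    return labeled, labels
```

The `weight` parameter sets the exploitation/exploration balance: `weight=1.0` reduces to pure uncertainty sampling, while `weight=0.0` samples purely by coverage of the design space.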

Protocol 2: Active Stacking-Deep Learning for Imbalanced Toxicity Data

This protocol is tailored for building classification models with imbalanced biological activity data.

- Data Preparation: Curate and preprocess data from sources such as the EPA ToxCast program. Standardize SMILES notations, remove inorganics and mixtures, and eliminate duplicates. Split the data into a small initial training subset, an unlabeled pool from which the AL loop will query, and a hold-out test set.

- Feature Calculation: Compute a diverse set of 12 molecular fingerprints (e.g., substructure, topological, electrotopological) for all compounds to comprehensively represent molecular structure.

- Initial Model Setup: Construct a stacking ensemble model with base learners including a CNN, a BiLSTM, and a model with an attention mechanism. Train this ensemble using strategic k-sampling to create balanced mini-batches from the imbalanced initial data.

- Active Learning Loop:

  a. Use the trained ensemble to predict on the unlabeled pool.

  b. Apply a selection strategy (e.g., uncertainty sampling) to identify the most informative candidates. For classification, uncertainty is often highest for predictions near the decision boundary (e.g., probability ~0.5).

  c. Query the experimental oracle (e.g., run the high-throughput assay) to label these candidates as "active" or "inactive."

  d. Add the newly labeled data to the training set.

  e. Retrain the stacking ensemble model with strategic k-sampling on the updated training set.

- Termination: Stop when the model's performance on the balanced test set (e.g., measured by AUROC and AUPRC) converges or the experimental budget is exhausted.
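Step b of the loop can be made concrete. A minimal sketch, assuming the stacking ensemble outputs a single probability of "active" per candidate: the margin-based score is 1 at p = 0.5 and 0 at p = 0 or 1, and the batch consists of the top-scoring candidates. `margin_uncertainty` and `select_uncertain` are hypothetical helper names, and the probabilities are made-up illustration values.

```python
def margin_uncertainty(p):
    """1.0 at the decision boundary (p = 0.5), 0.0 for fully confident predictions."""
    return 1.0 - abs(2.0 * p - 1.0)


def select_uncertain(probs, batch_size):
    """Indices of the batch_size candidates whose P(active) is closest to 0.5."""
    ranked = sorted(range(len(probs)),
                    key=lambda i: margin_uncertainty(probs[i]), reverse=True)
    return ranked[:batch_size]


probs = [0.05, 0.48, 0.90, 0.62, 0.30]  # hypothetical ensemble outputs
print(select_uncertain(probs, 2))       # [1, 3]
```

For binary classification, predictive entropy ranks candidates identically, since both scores decrease monotonically with |p - 0.5|.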

Key Reagent Solutions for Featured Experiments

The following table lists key computational and experimental "reagents" essential for implementing the described diversity-driven strategies.

| Research Reagent | Function & Application |
|---|---|
| Molecular Fingerprints [18] | Binary vectors representing molecular structure. Used as input features for ML models to quantify chemical similarity and navigate chemical space. Categories include predefined substructures and topological indices. |
| Extended Similarity Indices [37] | A computational tool for comparing multiple molecules simultaneously with O(N) scaling. Used in ChemMaps to efficiently evaluate library diversity and sample chemical "satellites" for visualization. |
| CETSA (Cellular Thermal Shift Assay) [41] | An experimental method for validating direct drug-target engagement in intact cells and tissues. Provides functionally relevant data for AL loops in drug discovery, confirming mechanistic hypotheses. |
| Strategic k-Sampling [18] | A data-level algorithm for handling class imbalance. Divides training data into k-ratios to create balanced batches, preventing model bias toward the majority class during training. |
| Density-Aware Greedy Sampling (DAGS) [10] | An active learning query strategy for regression. Integrates model uncertainty with data density to select the most informative data points, optimizing the exploration of large design spaces. |
| Stacking Ensemble Model [18] | A machine learning architecture combining predictions from multiple base models (e.g., CNN, BiLSTM). Serves as a robust and accurate predictor within AL frameworks, improving generalization. |

Performance Data and Comparisons

Table 1: Comparison of Chemical Space Sampling Strategies

This table summarizes the core characteristics and applications of different sampling methodologies.

| Sampling Strategy | Key Principle | Best For | Key Advantage / Finding |
|---|---|---|---|
| Random Sampling [39] | Passive, uniform selection of data points. | Establishing baseline performance; robust initial datasets. | Can be surprisingly robust; shown to yield lower test errors than AL for ML potentials of quantum water with small datasets [39]. |
| Active Learning (QBC) [40] | Query by Committee; uses model disagreement to select data. | Maximizing knowledge gain per experiment; general purpose. | Achieved accuracy on par with the best ML potentials using only 25% of the data required by random sampling [40]. |
| Periphery & Medoid Sampling [37] | Selects compounds from the outside in (periphery) or from the center out (medoid) of chemical space. | Ensuring broad, diversity-based coverage of chemical space for visualization or initial library design. | Provides a systematic way to approximate the distribution of compounds in large datasets using a small number of "satellite" molecules [37]. |
| Strategic k-Sampling in AL [18] | Combines active learning with balanced batch sampling to address class imbalance. | Imbalanced classification tasks (e.g., toxicity prediction). | Achieved AUROC of 0.824 and AUPRC of 0.851 for thyroid toxicity, requiring up to 73.3% less labeled data [18]. |
| Density-Aware Greedy Sampling (DAGS) [10] | Integrates uncertainty and data density for data point selection. | Regression tasks in materials science with large design spaces (e.g., MOFs, COFs). | Consistently outperformed random sampling and other AL techniques in training accurate regression models with limited data [10]. |

Table 2: Quantitative Results from Recent Sampling Studies

This table presents specific performance metrics from recent research, highlighting the efficacy of advanced sampling strategies.

| Study / Method | Application Context | Key Performance Metrics |
|---|---|---|
| Active Learning (ANI-1x) [40] | Training universal ML potentials for organic molecules (CHNO). | Outperformed the original model (trained on 100% of the data) using only 25% of the data, selected via AL. |
| Active Stacking-Deep Learning [18] | Predicting Thyroid-Disrupting Chemicals (TDCs). | MCC: 0.51; AUROC: 0.824; AUPRC: 0.851; used up to 73.3% less labeled data. |
| Complexity-to-Diversity (CtD) Synthesis [42] | Derivatizing Andrographolide for drug discovery. | Identified potent leads: anti-SARS-CoV-2 EC₅₀ = 2.8 µM; anti-nasopharyngeal carcinoma EC₅₀ = 5.4 µM. |
| Representative Random Sampling (RRS) [38] | Unbiased exploration of chemical space. | Provides a method to generate unbiased samples and estimate database representativeness for molecules up to ~30 atoms. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the core components of a hybrid active learning strategy, and why is their combination important? A robust hybrid active learning strategy typically combines uncertainty sampling and diversity sampling [43] [44]. Uncertainty sampling identifies data points where the model's predictions are least confident, often targeting areas of the chemical space where the model is likely to improve most with new data [45] [7]. Diversity sampling ensures that the selected batch of data represents a broad and heterogeneous set of instances, preventing the selection of redundant, highly similar compounds and promoting scaffold diversity [45] [46]. Individually, these approaches have limitations; uncertainty sampling can yield repetitive instances, while diversity sampling may select trivial examples [43]. Their hybrid combination ensures that the model is trained on a set of compounds that are both challenging for the current model and broadly representative of the chemical space, leading to more robust and generalizable performance [43] [47] [44].
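The combination can be illustrated with a greedy batch selector. A minimal sketch, assuming fingerprints are stored as Python sets of on-bit indices (in practice they would come from a cheminformatics toolkit) and uncertainties are already scaled to [0, 1]. Each pick maximises `lam` × uncertainty + (1 - `lam`) × the Tanimoto distance to the nearest already-selected compound. This weighting scheme and the `lam` parameter are illustrative assumptions, not a method taken from the cited works.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def hybrid_batch(fps, uncertainty, batch_size, lam=0.5):
    """Greedily pick compounds that are both uncertain and unlike earlier picks."""
    selected = []
    remaining = list(range(len(fps)))
    while remaining and len(selected) < batch_size:
        def score(i):
            # Distance to the closest compound already in the batch (1.0 if empty)
            diversity = min((1.0 - tanimoto(fps[i], fps[j]) for j in selected),
                            default=1.0)
            return lam * uncertainty[i] + (1.0 - lam) * diversity
        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


fps = [{1, 2, 3}, {1, 2, 3, 4}, {7, 8, 9}, {1, 2}]
unc = [0.9, 0.85, 0.5, 0.2]
print(hybrid_batch(fps, unc, 2))           # [0, 2]
print(hybrid_batch(fps, unc, 2, lam=1.0))  # [0, 1]: pure uncertainty sampling
```

With `lam=1.0` the selector reduces to uncertainty sampling and returns the near-duplicate pair (Tanimoto similarity 0.75). At `lam=0.5` the second pick moves to the structurally distinct, moderately uncertain compound.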

FAQ 2: In a low-data regime, how can I prevent my active learning model from over-exploiting a narrow region of chemical space? Over-exploitation, leading to analog identification with limited scaffold diversity, is a common challenge in early-stage projects with limited training data [45]. To mitigate this:

- Implement a Diversity Filter: Incorporate a rule-based filter that assigns a zero score to new molecules that are structurally too similar (e.g., within a Tanimoto distance threshold) to previously selected hits. This forces the algorithm to explore new regions [46].

- Adopt a Paired Representation Approach: Methods like ActiveDelta can be particularly effective. Instead of predicting absolute molecular properties, they learn from and predict the property differences between a candidate molecule and the current best compound. This approach has been shown to identify more chemically diverse inhibitors in terms of Murcko scaffolds [45].

- Use Clustering in the Feature Space: As part of a hybrid strategy, cluster the molecular representations (e.g., fingerprints or model embeddings) of the unlabeled pool. When selecting a batch, choose instances from different clusters to ensure diversity is maintained [43] [44].
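The diversity filter described in the first point can be sketched as a post-processing step on acquisition scores. A minimal sketch, assuming set-of-bits fingerprints, positive scores, and an illustrative similarity cut-off of 0.7 (equivalently, a Tanimoto distance of 0.3). The threshold and the function names `diversity_filter` and `select_with_filter` are hypothetical, and the cut-off would need tuning per project.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def diversity_filter(fps, scores, hit_fps, max_similarity=0.7):
    """Zero the score of any candidate too similar to a previously selected hit."""
    return [
        0.0 if any(tanimoto(fp, h) >= max_similarity for h in hit_fps) else s
        for fp, s in zip(fps, scores)
    ]


def select_with_filter(fps, scores, hit_fps, batch_size, max_similarity=0.7):
    """Pick top-scoring candidates, adding each pick to the hit list as we go."""
    hits = list(hit_fps)
    remaining = list(range(len(fps)))
    chosen = []
    while remaining and len(chosen) < batch_size:
        filtered = diversity_filter([fps[i] for i in remaining],
                                    [scores[i] for i in remaining],
                                    hits, max_similarity)
        best = max(range(len(remaining)), key=lambda t: filtered[t])
        if filtered[best] <= 0.0:
            break  # every remaining candidate has been filtered out
        idx = remaining.pop(best)
        chosen.append(idx)
        hits.append(fps[idx])
    return chosen
```

Because each new pick is appended to the hit list, the filter also prevents near-duplicates within a single batch, not only repeats of earlier rounds.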