Active Learning for Chemical Space Exploration: Strategies, Applications, and Future Directions in Drug Discovery

This article provides a comprehensive overview of active learning (AL) methodologies for navigating vast chemical spaces in drug discovery and materials science.

Active Learning for Chemical Space Exploration: Strategies, Applications, and Future Directions in Drug Discovery

Abstract

This article provides a comprehensive overview of active learning (AL) methodologies for navigating vast chemical spaces in drug discovery and materials science. It covers foundational concepts, including the challenge of exploring immense molecular libraries and the core AL iterative loop. The review details cutting-edge methodological strategies, from multi-level Bayesian optimization to alchemical free energy calculations and machine learning-guided docking. It addresses critical troubleshooting aspects for low-data regimes and model generalizability, and presents rigorous validation through case studies and performance benchmarks against traditional methods. Aimed at researchers and drug development professionals, this article synthesizes recent advances to guide the effective implementation of AL for accelerated molecular discovery.

Navigating the Vastness of Chemical Space: The Active Learning Imperative

The concept of "chemical space" encompasses all possible organic molecules and materials of interest for drug discovery, a universe estimated to contain up to 10^60 drug-like compounds [1]. This vast expanse presents both extraordinary opportunity and formidable challenge for researchers seeking new therapeutics. The size of actionable chemical spaces is surging due to novel computational and experimental techniques, generating novel molecular matter that cannot be neglected in early-phase drug discovery [2]. Huge, combinatorial, make-on-demand chemical spaces with high probability of synthetic success rise exponentially in content, generative machine learning models work alongside synthesis prediction, and DNA-encoded libraries offer new ways of hit structure discovery [2]. These technologies enable searching for new chemical matter in a much broader and deeper manner with less effort and fewer financial resources, yet they simultaneously create unprecedented cheminformatics challenges for making these spaces searchable and analyzable [2].

The 'Big Data' era in medicinal chemistry presents new challenges for analysis. While modern computers can store and process millions of molecular structures, final decisions in medicinal chemistry remain in human hands [3]. However, the ability of humans to analyze large chemical data sets is limited by cognitive constraints, creating a demand for methods and tools to visualize chemical space and facilitate navigation [3]. This whitepaper examines the current state of chemical space exploration, focusing specifically on the transformative role of active learning methodologies in bridging the gap between theoretical possibility and practical drug discovery.

Representing and Visualizing Chemical Space

Chemical Space Networks and Molecular Complexity

Traditional coordinate-based representations of chemical space face significant limitations, including lack of invariance to chosen features and difficulty handling both discrete and continuous features [4]. Chemical Space Networks (CSNs) have emerged as a powerful alternative, representing chemicals as nodes connected by edges based on molecular similarity [4]. This approach allows application of graph theory metrics—degree, betweenness, and eigenvector centrality—to characterize chemical behavior within the network. Research has demonstrated that CSNs exhibit complex non-random organization and can reveal meaningful structural patterns related to biological activity, such as in developmental toxicity prediction where CSNs highlight well-established toxicophores like aryl derivatives, neurotoxic hydantoins, barbiturates, and amino alcohols [4].

Molecular complexity represents another critical dimension in chemical space navigation, reflecting the diversity and intricacy of substructures in a molecule and indicating synthetic difficulty [5]. While medicinal chemists often navigate a "love-hate relationship" with complexity—appreciating simple, elegant molecules while recognizing the need for added complexity to optimize potency, selectivity, and metabolic stability—recent work has proposed simplified complexity measures [5].

Table 1: Molecular Complexity and Synthetic Accessibility Measures

| Name | Type | Description | Reference |

|---|---|---|---|

| MC1 | Complexity | Fraction of non-divalent nodes in molecular graph | [5] |

| MC2 | Complexity | Number of non-divalent nodes (excluding certain carbonyl groups) | [5] |

| FCFP4 | Complexity | Number of on-bits in a binary 2048-bit FCFP4 fingerprint | [5] |

| Data Warrior | Complexity | Fractal complexity using Minkowski–Bouligand dimension concept | [5] |

| SAscore | Synthesizability | Fragment occurrence combined with complexity penalty | [5] |

| SCS | Synthesizability | Machine-learned score predicting synthetic steps from Reaxys data | [5] |

Visualization Approaches for High-Dimensional Data

As chemical libraries grow to millions of compounds, efficient visualization methods become increasingly important [3]. Recent advances include tree-maps (TMAPs) organized by substructure similarity using molecular fingerprints like MAP4, where each molecule is a point color-coded according to properties or complexity metrics [5]. Deep generative modeling combined with chemical space visualization paves the way for interactive exploration of chemical space, extending beyond chemical compounds to include reactions and chemical libraries [3]. These visualization approaches also support visual validation of QSAR/QSPR models and analysis of activity/property landscapes [3].

Figure 1: Chemical Space Visualization Workflow

Active Learning for Chemical Space Exploration

The Active Learning Paradigm in Drug Discovery

Active learning represents a paradigm shift in computational drug discovery, addressing the fundamental limitation of traditional virtual screening: the inability to evaluate all compounds in ultralarge libraries [6]. The core concept involves an iterative approach where machine learning models suggest new compounds for evaluation by an oracle (experimental measurement or computational predictor), with these compounds and their scores incorporated back into the training set for continuous model improvement [1]. This methodology is particularly valuable in low-data scenarios typical of drug discovery, where it can achieve up to a sixfold improvement in hit discovery compared to traditional screening methods [6].

In practice, active learning driven prioritization has demonstrated significant utility in target-focused drug discovery. For example, in targeting the SARS-CoV-2 main protease (Mpro), researchers have interfaced structure-based growing algorithms with active learning to improve the efficiency of searching the combinatorial space of possible linkers and functional groups [7]. This approach successfully identified several small molecules with high similarity to molecules discovered by the COVID moonshot effort, using only structural information from a fragment screen in a fully automated fashion [7].

Active Learning Strategies and Configurations

Several strategic approaches exist for compound selection within active learning cycles, each with distinct advantages for different discovery scenarios:

- Greedy Selection: Chooses only the top predicted binders at every iteration step, focusing exclusively on exploitation of the current model [1].

- Uncertainty Selection: Selects ligands with the largest prediction uncertainty, prioritizing exploration of chemical space regions where the model is least confident [1].

- Mixed Strategy: Identifies top predicted binders then selects those with the most uncertain predictions, balancing exploration and exploitation [1].

- Narrowing Strategy: Combines broad selection in initial iterations with subsequent switch to greedy approach, beginning with exploration before focusing on exploitation [1].

Table 2: Active Learning Performance Across Molecular Representations

| Representation | Description | Best For | Limitations |

|---|---|---|---|

| 2D_3D Features | Constitutional, electrotopological, and molecular surface area descriptors | General-purpose screening | Computationally intensive |

| Atom-hot | Grid-based 3D voxel counting of ligand atoms | Structure-based design | Requires binding poses |

| PLEC Fingerprints | Protein-ligand interaction fingerprints | Target-specific optimization | Protein structure dependent |

| MDenerg | Residue-based interaction energies | Accurate binding affinity prediction | Computationally expensive |

| R-group-only | Focused on variable substituents | Lead optimization series | Limited scaffold diversity |

The effectiveness of active learning depends critically on both the molecular representation and the selection strategy. Studies have systematically analyzed active learning strategies combined with deep learning architectures on large-scale molecular libraries, identifying the most important determinants of success in low-data regimes [6]. Optimal performance typically requires matching the exploration strategy to the specific drug discovery stage—broad exploration for early hit identification versus focused exploitation for lead optimization.

Experimental Protocols and Case Studies

Integrated Active Learning Workflow for SARS-CoV-2 Mpro

A recent study demonstrated a complete active learning workflow for targeting SARS-CoV-2 main protease (Mpro) using the FEgrow software package [7]. The methodology provides a template for structure-based active learning applications:

Initialization Phase:

- Protein Preparation: Obtain and prepare the receptor structure from crystallographic data (PDB ID: 7BQY for Mpro)

- Ligand Core Definition: Define a constrained core based on fragment hits from crystallographic screens

- Chemical Space Definition: Specify linkers (from library of 2000) and R-groups (from library of ~500) or seed with purchasable compounds from on-demand libraries like Enamine REAL

Active Learning Cycle:

- Compound Generation: FEgrow builds ligands by growing user-defined functional groups off the constrained core

- Pose Optimization: Hybrid machine learning/molecular mechanics (ML/MM) potential energy functions optimize bioactive conformers

- Scoring: gnina convolutional neural network scoring function predicts binding affinity

- Model Training: Random forest or neural network models trained on scored compounds

- Compound Selection: Mixed strategy selection of additional compounds for next cycle

- Iteration: Repeated for 10-20 cycles or until convergence

Experimental Validation:

- Compound Procurement: Top-ranked compounds ordered from on-demand libraries

- Activity Testing: Fluorescence-based Mpro assay to confirm inhibitory activity

- Hit Confirmation: Dose-response measurements for active compounds

This protocol successfully identified three weakly active Mpro inhibitors from 19 designed compounds, demonstrating the feasibility of fully automated structure-based design guided by active learning [7].

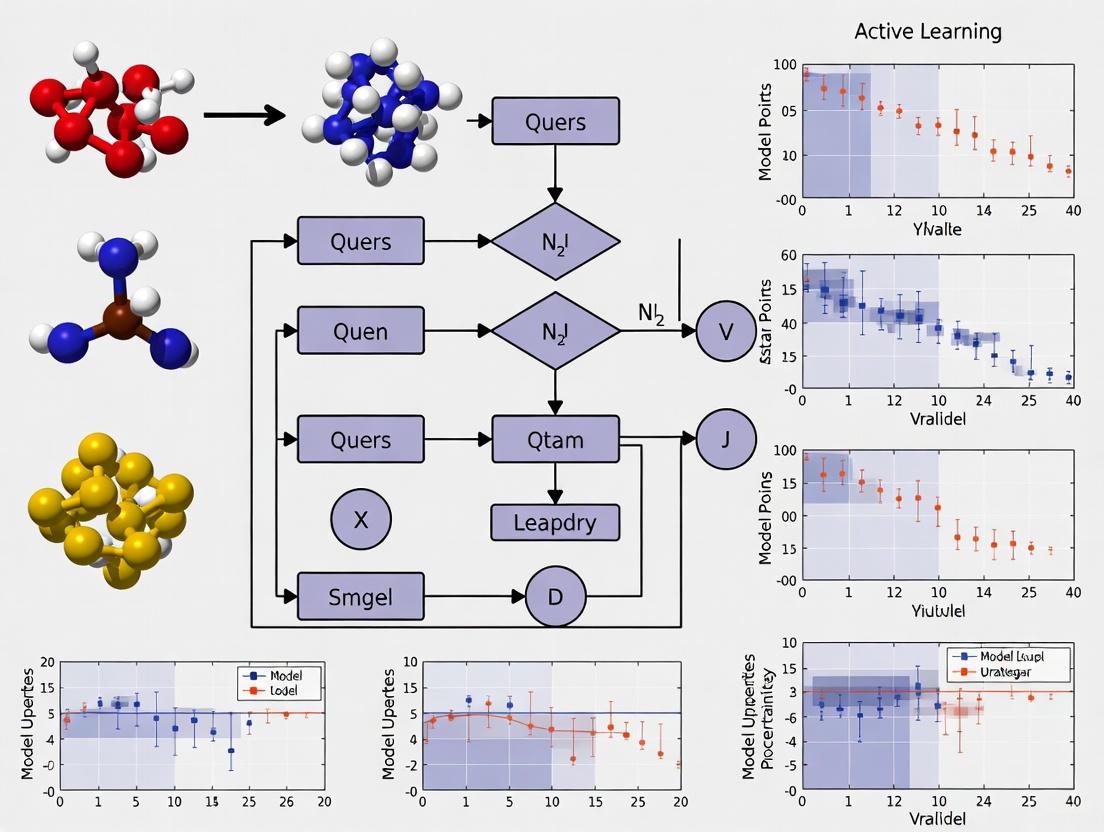

Figure 2: Active Learning Cycle for Drug Discovery

Prospective PDE2 Inhibitor Discovery with Alchemical Free Energy Calculations

Another sophisticated implementation combined active learning with alchemical free energy calculations for phosphodiesterase 2 (PDE2) inhibitor discovery [1]. This approach addresses the critical need for accurate binding affinity prediction in chemical space navigation:

Ligand Pose Generation Protocol:

- Reference Selection: Identify reference crystal structures (4D08, 4D09, 4HTX for PDE2)

- Similarity Matching: For each library compound, select reference with highest Dice similarity based on RDKit topological fingerprint

- Constrained Embedding: Generate initial poses using ETKDG algorithm with core atoms constrained to reference geometry

- Pose Refinement: Molecular dynamics simulation in vacuum with hybrid topology morphing

Active Learning with Free Energy Oracle:

- Initialization: Weighted random selection based on t-SNE embedding diversity

- Free Energy Calculations: Relative binding free energies using alchemical transformation

- Model Training: Gaussian process regression or neural networks on free energy data

- Batch Selection: 100 compounds per iteration using mixed strategy

- Termination: After 10-15 iterations or when high-affinity binders identified

This prospective application successfully identified potent PDE2 inhibitors while explicitly evaluating only a small fraction (∼5%) of the virtual library, demonstrating the efficiency gains possible through active learning [1].

Emerging Frontiers and Research Directions

Chemical-Linguistic Space Integration

An emerging frontier involves navigating chemical-linguistic sharing space through heterogeneous molecular encoding [8]. This approach addresses the semantic gap between natural language and molecular representations by unifying features from multiple perspectives:

- 1D SMILES Encoder: Embeds SMILES strings into text feature space

- 2D Graph Encoder: Captures molecular connectivity and topology

- 3D Coordinate Encoder: Incorporates spatial atomic arrangements

- Molecular Fragment Encoder: Provides explicit substructure information through learned fragments

The Heterogeneous Molecular Encoding (HME) framework compresses molecular features from fragment sequence, topology, and conformation with Q-learning, enabling more effective bidirectional mapping between chemical structures and textual descriptions [8]. This facilitates both chemical space exploration with linguistic guidance and linguistic space exploration with molecular guidance.

Multi-Objective Optimization

Future directions increasingly focus on multi-objective optimization, simultaneously balancing potency, selectivity, synthetic accessibility, and pharmacokinetic properties [6]. Active learning protocols are being extended to incorporate:

- Synthetic Complexity Scores (SCS): Machine-learned predictions of synthetic steps from Reaxys data [5]

- Molecular Complexity Metrics: MC1 and MC2 for synthetic accessibility assessment [5]

- Property Predictions: Integrated models for ADMET and physicochemical properties

- Multi-fidelity Approaches: Combining cheap approximate predictions with expensive accurate calculations

Table 3: Key Research Reagents and Computational Tools for Chemical Space Exploration

| Tool/Resource | Type | Function | Access |

|---|---|---|---|

| FEgrow | Software | Builds and scores congeneric ligand series in protein binding pockets | Open-source [7] |

| Enamine REAL | Compound Library | >5.5 billion make-on-demand compounds for virtual screening | Commercial [7] |

| RDKit | Cheminformatics | Core molecular informatics functionality for fingerprinting and similarity | Open-source [7] |

| OpenMM | Molecular Dynamics | Platform for molecular simulation and energy minimization | Open-source [7] |

| gnina | Docking Software | Convolutional neural network scoring for protein-ligand binding | Open-source [7] |

| GDB Databases | Chemical Space | Enumerated hypothetical molecules from mathematical graphs | Research use [5] |

| TMAP | Visualization | Tree-based visualization of high-dimensional chemical space | Open-source [5] |

| PLIP | Analysis | Protein-ligand interaction profiling for structural motifs | Open-source [7] |

The grand challenge of navigating 10^60 possible compounds to practical drug discovery is being transformed by active learning approaches. By combining sophisticated molecular representations with iterative experimental design, researchers can now efficiently explore relevant regions of chemical space that would otherwise remain inaccessible. The integration of structure-based design, synthetic accessibility metrics, and multi-objective optimization within active learning frameworks represents a paradigm shift in early-phase drug discovery. As these methodologies continue to mature, incorporating emerging capabilities in chemical-linguistic space navigation and heterogeneous molecular encoding, they promise to significantly accelerate the identification of novel therapeutic candidates while reducing resource requirements. The future of chemical space exploration lies not in exhaustive enumeration, but in intelligent navigation—a journey guided by active learning from theoretical possibility to practical discovery.

Active learning represents a paradigm shift in machine learning for scientific discovery, moving from passive model training on static datasets to an intelligent, iterative process that optimizes data acquisition. In the context of chemical space exploration—a domain characterized by vast molecular diversity and computationally expensive data generation—active learning has emerged as a critical strategy for accelerating research. This approach strategically selects the most informative data points for labeling and model training, dramatically reducing computational costs while maintaining or even improving model accuracy. For researchers and drug development professionals, implementing active learning iterative loops enables efficient navigation of complex chemical landscapes that would otherwise be prohibitively expensive to explore exhaustively. The core principle hinges on recognizing that not all data points contribute equally to model improvement, and that selectively querying the most valuable samples creates a self-improving cycle that maximizes learning efficiency while minimizing resource expenditure [9] [10].

The Active Learning Iterative Loop: Core Components and Workflow

Fundamental Operating Principle

The active learning iterative loop operates through a cyclic process of model training, data selection, and knowledge incorporation. Unlike traditional supervised learning that uses a fixed, pre-defined dataset, active learning algorithms actively query a human expert or information source to annotate specifically chosen data points. The primary objective is to minimize the labeled data required for training while maximizing model performance, creating an efficient learning trajectory that focuses resources on the most chemically relevant regions of feature space. This approach is particularly valuable in chemical research where obtaining labeled data through experimentation or simulation is costly, time-consuming, or scarce [9].

The loop begins with a small initial set of labeled data points, which serves as the starting point for training the first model iteration. Through successive cycles, the model identifies gaps in its knowledge and strategically requests annotations for samples that will provide the greatest information gain. This creates a continuous improvement mechanism where each iteration enhances the model's understanding of chemical space, enabling more informed exploration in subsequent cycles [9] [10].

The Five-Step Iterative Process

The active learning framework implements a structured, five-step process that transforms raw data into refined predictive models:

Step 1: Planning and Requirements - Researchers define project objectives and outline essential requirements that must be met for project success. This establishes the foundation for all subsequent iterations and ensures the active learning process remains aligned with research goals [11].

Step 2: Analysis and Design - The team focuses on the specific business needs and technical requirements of the project. This phase involves designing the initial model architecture and determining which chemical features or properties will be prioritized during the exploration process [11].

Step 3: Implementation - The development team creates the first iteration of the active learning model. This initial implementation aims to achieve the primary project objectives while establishing the framework for subsequent refinement cycles [11].

Step 4: Testing - The current model iteration undergoes rigorous evaluation, typically through validation against known chemical data or through computational experiments. In chemical space exploration, this often involves comparing predictions against established quantum mechanical calculations or experimental results [10] [11].

Step 5: Evaluation and Review - Researchers assess the iteration's success and identify necessary improvements. The model's performance is analyzed to determine which chemical regions require additional sampling. Based on this evaluation, the process returns to Step 2 to create the next iteration, with each cycle building upon previous knowledge while maintaining focus on the original project objectives [11].

This iterative methodology stands in contrast to non-iterative approaches like the Waterfall method, where project phases are sequentially completed without revisiting previous stages. The iterative nature of active learning provides the flexibility needed to adapt to new insights discovered during chemical space exploration [11].

Workflow Visualization

The following diagram illustrates the core active learning iterative loop as implemented in chemical research:

Figure 1: Active Learning Iterative Loop

Key Query Strategies for Chemical Space Exploration

Query strategies form the decision-making engine of the active learning loop, determining which unlabeled samples would provide maximum information gain if annotated. In chemical space exploration, these strategies guide the efficient sampling of molecular configurations, reactions, or properties. Three primary configurations are commonly implemented in research settings:

Pool-Based Sampling - The model assesses all available unlabeled data points in a "pool" and queries the most informative samples based on specific criteria. This approach is particularly effective when researchers have access to large databases of uncharacterized molecules or molecular configurations [12].

Stream-Based Selective Sampling - Each data point is individually considered as it becomes available, with the model determining whether to query it or reject it based on current information needs. This method is valuable when chemical data is generated continuously, such as in real-time monitoring of reactions or high-throughput computational screening [9].

Membership Query Synthesis - The model generates its own synthetic samples for annotation, creating novel molecular structures or configurations not present in the original dataset. This advanced approach enables exploration beyond known chemical spaces but requires careful validation to ensure synthetic samples remain chemically plausible [12].

Specific Query Algorithms

Within these broader configurations, several algorithmic approaches determine how "informativeness" is quantified for sample selection:

Table 1: Active Learning Query Strategies

| Strategy | Mechanism | Chemical Application Context |

|---|---|---|

| Random Sampling | Selects samples randomly from unlabeled pool | Establishing baseline performance; initial diverse sampling |

| Least Confidence Sampling | Prioritizes samples with lowest prediction confidence | Identifying regions of chemical space with high uncertainty |

| Entropy Sampling | Selects samples with highest entropy in prediction distribution | Molecular property prediction where multiple outcomes are plausible |

| Discriminative Active Learning | Chooses samples to make labeled and unlabeled sets indistinguishable | Ensuring representative coverage of diverse chemical classes |

Each strategy offers distinct advantages for different phases of chemical space exploration. Least Confidence and Entropy Sampling are particularly valuable for identifying regions where quantum mechanical calculations would provide maximum information gain, while Discriminative approaches ensure comprehensive coverage of chemical diversity [12].

Implementation Framework: PALIRS for Infrared Spectra Prediction

The practical implementation of active learning for chemical space exploration is exemplified by PALIRS (Python-based Active Learning Code for Infrared Spectroscopy), a recently developed framework for efficiently predicting IR spectra of organic molecules. This system addresses a critical challenge in computational chemistry: the accurate simulation of IR spectra traditionally requires computationally expensive density functional theory-based ab-initio molecular dynamics (AIMD) calculations. PALIRS overcomes this limitation by implementing an active learning-enhanced machine-learned interatomic potential (MLIP) that achieves accurate spectral predictions at a fraction of the computational cost [10].

The PALIRS framework demonstrates how active learning iterative loops can be specifically designed for chemical applications. By focusing sampling efforts on molecular configurations that maximize information gain, the system efficiently explores the relevant chemical space while minimizing quantum mechanical computations. This approach has shown particular success for small catalytically relevant organic molecules, accurately reproducing IR spectra computed with AIMD while dramatically reducing computational requirements [10].

Detailed Workflow and Experimental Protocol

The PALIRS implementation follows a structured four-step methodology for IR spectrum prediction:

Step 1: Active Learning for MLIP Development - Initial machine-learned interatomic potentials are trained on molecular geometries sampled along normal vibrational modes. An active learning strategy then iteratively expands the training set through machine learning-assisted molecular dynamics (MLMD) simulations. The acquisition function selects molecular configurations with the highest uncertainty in force predictions, enriching the dataset with the most informative structures while minimizing redundancy. To balance exploration and exploitation, MLMD simulations are performed at multiple temperatures (300K, 500K, and 700K) [10].

Step 2: Dipole Moment Model Training - A separate machine learning model, based on the MACE framework, is specifically trained to predict dipole moments necessary for IR spectra calculations. This specialization ensures accurate representation of the electronic properties critical for spectral simulations [10].

Step 3: MLMD Production Simulations - Using the refined MLIP for energies and forces, researchers conduct production molecular dynamics simulations. The trajectory provides the structural evolution data required for spectral calculation [10].

Step 4: IR Spectrum Calculation - Dipole moments are computed for all structures along the trajectory using the specialized dipole model. IR spectra are then derived by computing the autocorrelation function of these dipole moments, reproducing the anharmonic effects captured by more computationally intensive methods [10].

The iterative active learning process in PALIRS continues until model performance plateaus or computational budgets are exhausted. Through 40 active learning iterations, the final dataset consists of 16,067 structures (approximately 600-800 structures per molecule), significantly fewer than would be required for exhaustive sampling [10].

Workflow Visualization

The following diagram details the PALIRS active learning workflow for infrared spectra prediction:

Figure 2: PALIRS Active Learning Workflow

Research Reagents and Computational Tools

Successful implementation of active learning for chemical space exploration requires specific computational tools and methodological components:

Table 2: Essential Research Reagents and Computational Tools

| Component | Function | Implementation Example |

|---|---|---|

| Initial Dataset | Provides starting point for active learning loop | Molecular geometries from normal mode sampling [10] |

| MLIP Architecture | Machine-learned interatomic potential for energy/force prediction | MACE (Multi-Atomic Cluster Expansion) models [10] |

| Uncertainty Quantification | Estimates model confidence for sample selection | Ensemble of three MACE models [10] |

| Query Strategy | Selects most informative samples for annotation | Highest uncertainty in force predictions [10] |

| Ab-Initio Calculator | Provides ground-truth labels for selected samples | FHI-aims DFT code [10] |

| Active Learning Framework | Manages iterative learning process | PALIRS (Python-based Active Learning Code) [10] |

Performance Assessment and Validation

Quantitative Performance Metrics

The effectiveness of active learning iterative loops must be rigorously quantified through appropriate performance metrics. In the PALIRS implementation, researchers evaluated model improvement during active learning by comparing predictions against a predefined test set of harmonic frequencies. These metrics provide reliable validation of the model's accuracy and progression through successive iterations [10].

Key quantitative assessments include:

Mean Absolute Error (MAE) - Measures the average magnitude of errors between MLIP-computed harmonic frequencies and DFT reference values, providing a comprehensive view of model accuracy across the chemical space of interest [10].

Learning Curves - Track model performance as a function of training set size, demonstrating the efficiency gains achieved through strategic sample selection compared to random sampling approaches [10].

Computational Cost Reduction - Quantifies the reduction in required quantum mechanical calculations while maintaining target accuracy levels. The PALIRS framework demonstrated accurate IR spectrum prediction at a fraction of the computational cost of traditional AIMD approaches [10].

Advantages and Limitations

The implementation of active learning iterative loops offers significant advantages for chemical space exploration, along with some important considerations:

Advantages:

- Reduced Labeling Costs - By selectively choosing the most informative samples for expensive quantum mechanical calculations, active learning significantly reduces computational requirements [9] [10].

- Improved Accuracy - Focusing on high-information regions of chemical space often produces more accurate models than training on larger but less informative datasets [9].

- Faster Convergence - Strategic sample selection enables models to achieve target performance levels with fewer training iterations [9].

- Adaptability - The iterative nature allows models to adapt to new discoveries and refine their understanding of chemical space throughout the exploration process [9].

Challenges and Limitations:

- Initial Data Requirements - Effective active learning requires sufficient initial data to bootstrap the iterative process, which can be challenging for novel chemical domains [10].

- Uncertainty Quantification - Accurate estimation of model uncertainty is critical for sample selection but can be computationally expensive or methodologically complex [10].

- Temporal Overhead - The iterative cycle of training, selection, and annotation introduces organizational complexity compared to single-batch training [11].

- Potential for Bias - If not properly managed, active learning can over-exploit certain regions of chemical space while neglecting others [9].

Future Directions and Integration with Foundation Models

The field of active learning for chemical space exploration is rapidly evolving, with several emerging trends shaping its future development. Recent advances in molecular foundation models like MIST (Molecular Insight SMILES Transformers) present opportunities for enhancing active learning frameworks. These models, trained on billions of molecular structures using novel tokenization schemes that capture nuclear, electronic, and geometric features, provide rich pretrained representations that can accelerate active learning iterations [13].

The integration of active learning with foundation models creates a powerful synergy: foundation models provide comprehensive initial representations of chemical space, while active learning efficiently targets computational resources to refine these representations for specific chemical properties or reactions. This combination is particularly valuable for exploring underrepresented regions of chemical space or extending models to novel molecular classes not well-represented in pretraining datasets [13].

Future methodological developments will likely focus on improving uncertainty quantification for complex molecular representations, developing multi-objective acquisition functions that balance multiple chemical priorities simultaneously, and creating more efficient integration between active learning cycles and high-throughput computational screening platforms. As these technical advances mature, active learning iterative loops will become increasingly central to computational chemical research, enabling more efficient exploration of the vast chemical universe and accelerating the discovery of novel molecules with valuable properties [10] [13].

In the pursuit of novel chemical entities, such as drugs or catalysts, researchers face a search space of astronomical proportions, estimated to contain up to 10^60 drug-like compounds [1]. Active learning (AL) has emerged as a powerful artificial intelligence (AI) paradigm to navigate this vast chemical space efficiently. An AL cycle operates as an iterative feedback loop: a machine learning (ML) model selects promising candidate molecules, an oracle evaluates them, and the results are used to retrain and improve the model for the next cycle [14]. While the ML model is often the focus of development, the nature of the oracle—the source of feedback—is equally critical for the success of any AL campaign.

The oracle provides the ground-truth data that guides the entire exploration process. It can be computational, using physics-based simulations to predict molecular properties, or experimental, relying on high-throughput laboratory measurements. The choice of oracle involves a fundamental trade-off between cost, throughput, and accuracy. This paper provides an in-depth examination of oracle definitions within AL for chemical space exploration, detailing their implementation, relative merits, and integration into robust experimental protocols for the drug development community.

Oracle Typology: Computational and Experimental Feedback

Oracles in active learning can be broadly categorized into two classes: those based on computational simulations and those relying on experimental data. The table below summarizes the primary oracle types used in the field.

Table 1: Types of Oracles Used in Active Learning for Chemical Discovery

| Oracle Type | Specific Method | Primary Output | Typical Use Case | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| Computational | Alchemical Free Energy Calculations [15] [1] | Binding Affinity (ΔG) | Lead Optimization | High accuracy close to experiments; rigorous physical basis [1] | Computationally very demanding |

| Computational | Molecular Docking & Scoring (e.g., gnina) [7] | Docking Score | Virtual Screening & Hit Identification | Very high throughput; low cost per compound [7] | Approximate; can suffer from scoring errors |

| Computational | Hybrid ML/MM & Graph Neural Networks [16] [7] | Binding Affinity, Energy, Forces | Binding Pose Optimization & Property Prediction | Balances accuracy and speed; can enhance scoring [7] | Dependent on quality of training data |

| Experimental | High-Throughput In Vitro Assays [17] [14] | Inhibitory Activity (e.g., IC50), Toxicity | Experimental Validation & Toxicity Prediction | Provides direct, biological relevant data [17] | Costly and time-consuming relative to computation |

| Experimental | Fluorescence-Based Bioassay [7] | Enzyme Inhibition Activity | Confirmatory Testing of Designed Compounds | Direct functional readout | Lower throughput; requires compound synthesis |

Computational Oracles

Computational oracles provide a cost-effective way to evaluate vast regions of chemical space without the need for physical materials or complex laboratory setups.

Alchemical Free Energy Calculations: These methods are considered a high-accuracy computational oracle for predicting binding affinities. They work by using molecular dynamics simulations to compute the free energy difference between a ligand and a reference compound through a non-physical (alchemical) pathway [1]. While their accuracy is high, they are computationally demanding, often taking days to screen hundreds to thousands of ligands on high-performance computing clusters [1]. Consequently, they are ideally suited for the lead optimization phase, where precision is paramount, and are used as the oracle in AL cycles to train more efficient ML models [15] [1].

Molecular Docking and Scoring: In contrast, docking serves as a high-throughput computational oracle. Tools like gnina use convolutional neural networks to score how well a small molecule (ligand) fits into a protein's binding pocket [7]. This method is a cornerstone of virtual screening, allowing researchers to quickly triage millions of compounds. However, its approximations can lead to false positives and negatives. For instance, in a study targeting the SARS-CoV-2 main protease, the docking score from gnina was used as the primary oracle in an AL-driven workflow to prioritize compounds for synthesis [7].

Hybrid Machine Learning/Molecular Mechanics (ML/MM): Emerging approaches seek to balance speed and accuracy. The FEgrow software, for example, uses ML/MM potential energy functions to optimize the conformations of growing ligands within a protein binding pocket, using a docking score or other functions as its objective [7]. This creates a more refined, structure-based oracle for de novo molecular design.

Experimental Oracles

Experimental oracles provide the ultimate validation, as they measure real-world biological activity or properties.

High-Throughput In Vitro Assays: These are the workhorses of experimental feedback. Data from programs like the U.S. EPA ToxCast provide large-scale biological activity data that can be used to train and validate models for toxicity prediction [17]. In an AL context, such assays can be used directly as the oracle to select compounds for subsequent testing rounds, efficiently focusing experimental resources on the most informative candidates [14].

Confirmatory Biochemical Assays: In prospective drug discovery campaigns, computationally prioritized compounds are synthesized and tested in specific biochemical assays. For example, in the SARS-CoV-2 Mpro study, 19 designed compounds were ordered and tested in a fluorescence-based Mpro activity assay to confirm inhibitory activity, with three showing weak activity [7]. This experimental result closes the AL loop and provides hard validation of the overall strategy.

Implementation: Protocols and Workflows

Implementing an effective active learning system requires a carefully designed protocol that integrates the oracle, the machine learning model, and the chemical library.

General Active Learning Workflow

The following diagram illustrates the standard iterative cycle of an active learning campaign in chemical discovery.

This workflow is agnostic to the specific oracle used. The key steps are:

- Initialization: The process begins with a small, initially labeled set of compounds. This can be a random sample or a strategically chosen set to maximize diversity [1].

- Model Training: A machine learning model is trained on the current set of labeled data (e.g., compound structures and their associated oracle outputs).

- Prediction & Selection: The trained model predicts the properties of a large, unlabeled pool of compounds. A query strategy is then used to select the most promising candidates for evaluation. Common strategies include:

- Uncertainty Sampling: Selects compounds where the model's prediction is most uncertain, aiming to improve the model itself [7] [1].

- Greedy Selection: Selects the top-ranked compounds predicted to have the best properties (e.g., highest binding affinity), focusing on optimization [1].

- Mixed Strategy: Selects the best-predicted compounds from a subset where uncertainty is high, balancing exploration and exploitation [1].

- Oracle Evaluation: The selected candidates are evaluated by the chosen oracle, be it a free energy calculation, a docking run, or a biological experiment.

- Iteration: The newly acquired data is added to the training set, and the cycle repeats until a stopping criterion is met, such as a performance target or exhaustion of resources.

Case Study Protocol: AL with Free Energy Oracle

A robust protocol for using alchemical free energy calculations as an oracle was detailed by Khalak et al. [1]. The methodology below can be adapted for other oracles with appropriate modifications.

Table 2: Essential Research Reagents and Tools for an AL Cycle

| Category | Item / Software | Specification / Version | Critical Function in the Workflow |

|---|---|---|---|

| Chemical Library | Enamine REAL / In-house Library | >5.5 Billion Compounds [7] | Source of unlabeled candidate molecules for exploration. |

| Structure Preparation | RDKit [7] [1] | v.2020.09 or later | Canonicalization of SMILES, 2D/3D descriptor calculation, and fingerprint generation. |

| Binding Pose Generation | Hybrid Topology (pmx) [1] | N/A | Generates physically plausible ligand binding poses for free energy calculations. |

| Oracle Software | Gromacs [1] | 2021.1 or later | Performs molecular dynamics and alchemical free energy calculations. |

| Machine Learning | Scikit-learn, PyTorch, etc. | N/A | Builds models to predict oracle outcomes from molecular representations. |

| Representation | 2D_3D Features, PLEC Fingerprints [1] | N/A | Encodes molecular structure and protein-ligand interactions for ML input. |

Step-by-Step Protocol:

Library Curation and Preparation:

Ligand Pose Generation for a Structural Oracle:

- For each ligand, identify a reference crystal structure with a high-similarity inhibitor.

- Align the largest common substructure of the ligand to the reference inhibitor in the protein binding pocket.

- Use a constrained embedding algorithm (e.g., ETKDG in RDKit) to generate initial poses for the variable parts of the ligand [1].

- Refine the poses using molecular dynamics with strong restraints on the common core, "morphing" the reference inhibitor into the new ligand to ensure a physically realistic binding mode [1].

Feature Engineering for ML:

- Calculate fixed-size vector representations for each ligand. A comprehensive ("2D_3D") representation may include:

- For R-group optimization, consider using representations that focus only on the variable parts of the molecule to reduce noise.

Active Learning Cycle Execution:

- Initialization: Select an initial training set of 50-100 compounds using a diversity-oriented strategy, such as weighted random selection based on t-SNE clustering of molecular fingerprints [1].

- Oracle Evaluation: Run alchemical free energy calculations on the selected compounds to obtain binding affinities. This involves setting up hybrid topology systems and running thermodynamic integration (TI) or free energy perturbation (FEP) simulations [1].

- Model Training and Selection: Train multiple ML models (e.g., random forest, neural networks) using different molecular representations. Select the top-performing models based on cross-validation error.

- Candidate Selection: Apply the chosen query strategy (e.g., "mixed strategy") to the pooled library. The ML model predicts affinities and uncertainties for all unlabeled compounds. Select the next batch (e.g., 100 compounds) for oracle evaluation.

- Iteration: Continue the cycle for a predefined number of iterations (e.g., 10-20) or until a performance plateau is observed.

Performance and Validation

The effectiveness of an AL framework is ultimately judged by its ability to efficiently identify hits. Quantitative metrics from recent studies demonstrate this success.

Table 3: Performance Metrics of Active Learning Frameworks with Different Oracles

| Study & Application | Oracle Used | Key Performance Metric | Result | Data Efficiency |

|---|---|---|---|---|

| Thyroid Toxicity Prediction [17] | In Vitro Assay (ToxCast) | Matthews Correlation Coefficient (MCC) | 0.51 | Achieved with up to 73.3% less labeled data |

| SARS-CoV-2 Mpro Inhibitor Design [7] | Docking Score (gnina) & Fluorescence Assay | Experimental Hit Rate | 3 out of 19 tested compounds showed activity | Enabled prioritization from billions of compounds |

| PDE2 Inhibitor Discovery [1] | Alchemical Free Energy | Enrichment of Potent Binders | Identified high-affinity inhibitors | Required evaluation of only a small subset of the library |

The integration of computational and experimental feedback is powerfully exemplified in the work by Cree et al. [7]. Their AL-driven workflow for targeting SARS-CoV-2 Mpro used the gnina docking score as the primary computational oracle to prioritize compounds from the Enamine REAL library. The most promising designs were then synthesized and tested in a fluorescence-based biochemical assay, the experimental oracle. This closed loop allowed them to identify novel, active inhibitors based solely on initial fragment data, with several designs showing high similarity to those discovered by the large-scale COVID moonshot consortium [7]. This validates the entire AL pipeline, from computational pre-screening to experimental confirmation.

The "oracle" is the cornerstone of any active learning system for chemical discovery. Its definition—whether a computationally intensive free energy calculation, a high-throughput docking score, or a wet-lab assay—directly determines the cost, speed, and ultimate success of the exploration campaign. As the field advances, the integration of multiple oracles into a single workflow will become more prevalent, leveraging the speed of computational screens for broad exploration and the fidelity of experimental assays for final validation. Furthermore, the development of more accurate and efficient computational oracles, such as those based on hybrid ML/MM or advanced machine learning potentials trained on high-quality datasets like QDπ [18], will continue to narrow the gap between in silico prediction and experimental reality. By making informed choices about the oracle and meticulously implementing the associated protocols, researchers can dramatically accelerate the journey from a hypothesis to a novel, functional molecule.

The process of translating molecular structures into a computer-readable format, known as molecular representation, serves as the foundational step in computational chemistry and drug design [19]. It bridges the critical gap between chemical structures and their biological, chemical, or physical properties [19]. Effective molecular representation is paramount for various drug discovery tasks, including virtual screening, activity prediction, and scaffold hopping, as it enables researchers to navigate the vast chemical space efficiently and precisely [19]. The evolution of these methods has progressively enhanced our ability to characterize molecules, moving from simple, human-defined rules to complex, data-driven algorithms that capture deeper structural and functional relationships [19].

This evolution is particularly crucial within the framework of active learning for chemical space exploration. In this context, the choice of molecular representation directly influences how a model queries, selects, and prioritizes compounds for synthesis or testing [20]. The representation must not only encode chemical structure but also enable the model to efficiently explore regions of chemical space with desired biological properties, thereby accelerating the iterative cycle of prediction and experimental validation [19] [20].

Traditional Molecular Representation Methods

Traditional molecular representation methods rely on explicit, rule-based feature extraction. These methods are computationally efficient and have laid a strong foundation for many computational approaches in drug discovery [19].

Molecular Descriptors and Fingerprints

Molecular descriptors quantify the physical or chemical properties of molecules, such as molecular weight, hydrophobicity, or topological indices [19]. Molecular fingerprints, on the other hand, typically encode substructural information as binary strings or numerical values [19]. A prominent example is the Extended-Connectivity Fingerprint (ECFP), which represents local atomic environments in a compact and efficient manner, making it invaluable for representing complex molecules [19]. These representations are highly effective for tasks like similarity search, clustering, and quantitative structure-activity relationship (QSAR) modeling due to their interpretability and computational efficiency [19].

Table 1: Key Traditional Molecular Representation Methods

| Method Type | Specific Example | Representation Format | Primary Applications |

|---|---|---|---|

| Molecular Fingerprint | Extended-Connectivity Fingerprints (ECFP) | Binary or count-based vector | Similarity search, QSAR, clustering |

| Molecular Descriptor | alvaDesc descriptors | Numerical vector (e.g., molecular weight, logP) | QSAR/QSPR modeling, property prediction |

| String-Based | SMILES (Simplified Molecular-Input Line-Entry System) | Character string | Data storage, exchange, sequence-based ML |

| String-Based | SELFIES | Character string | Robust molecular generation |

String-Based Representations

The Simplified Molecular-Input Line-Entry System (SMILES) is a widely used linear notation that encodes chemical structures as strings [19]. For instance, the SMILES string for propylene glycol is "CC(O)CO" [21]. Despite its simplicity and convenience, SMILES has inherent limitations in capturing the full complexity of molecular interactions and can be sensitive to small syntactic changes that do not alter the chemical structure [19]. Improved versions like CXSMILES and SMILES Arbitrary Target Specification (SMARTS) have been developed to extend its functionalities [19].

Modern AI-Driven Latent Representations

Recent advancements in artificial intelligence have ushered in a new era of molecular representation, shifting from predefined rules to data-driven learning paradigms [19]. These approaches leverage deep learning models to directly extract and learn intricate, high-dimensional features—latent representations—from large molecular datasets.

Graph-Based Representations

Graph-based methods model a molecule as a graph (G = (V, E)), where (V = {v1, v2, \ldots, vn}) represents atoms and (E = {e1, e2, \ldots, em}) represents bonds [22]. Graph Neural Networks (GNNs) operate on this structure using a message-passing mechanism, where nodes aggregate information from their neighbors to update their own features [22]. The Graph Isomorphism Network (GIN) is a particularly powerful variant, noted for its strong discriminative power in distinguishing different graph structures, which is crucial for capturing subtle yet important structural patterns [22].

Advanced frameworks like OmniMol have further developed this concept by formulating entire molecules and their corresponding properties as a hypergraph [23]. This unified view allows the model to extract and learn from three key relationships: among properties, between molecules and properties, and among molecules themselves [23].

Sequence-Based and Transformer Models

Inspired by natural language processing (NLP), transformer models treat molecular sequences (e.g., SMILES) as a specialized chemical language [19]. The string is tokenized at the atomic or substructure level, with each token mapped into a continuous vector [19]. Models like SMILES-BERT and other chemical transformers are pre-trained on large-scale molecular data to learn the underlying "syntax" and "semantics" of chemical structures, generating powerful contextual embeddings [21].

A cutting-edge application is the source-target molecular transformer, trained on hundreds of billions of molecular pairs and regularized via a similarity kernel function [24]. This model establishes a direct relationship between the probability of generating a target molecule and its similarity to a source molecule, enabling an approximately exhaustive sampling of the local chemical space around a given compound—a critical capability for active learning [24].

Multi-View and Hierarchical Representations

To overcome the limitations of single-view models, methods like MvMRL integrate multiple molecular representations [21]. This approach typically uses a multiscale CNN with squeeze-and-excitation blocks to learn from SMILES sequences, a multiscale GNN to encode molecular graphs, and a multilayer perceptron (MLP) to process molecular fingerprints, with a dual cross-attention mechanism fusing these views [21].

Similarly, hierarchical models like HLN-DDI explicitly encode molecular structures at multiple levels—atom-level, motif-level, and whole-molecule level [22]. Motifs, which are small, conserved substructures, are identified using algorithms like BRICS. These hierarchical representations are then integrated using a co-attention mechanism to produce a comprehensive molecular embedding [22].

Table 2: Comparison of Modern AI-Driven Molecular Representation Methods

| Method Category | Key Architecture | Molecular Input | Key Advantage |

|---|---|---|---|

| Graph-Based | Graph Neural Network (GNN) / Graphormer | Molecular Graph (2D/3D) | Captures intrinsic topology and spatial relationships |

| Sequence-Based | Transformer / BERT | SMILES/SELFIES String | Leverages powerful NLP architectures for context-aware embeddings |

| Hypergraph-Based | OmniMol | Molecular Graph & Property Data | Unifies molecules and properties to model complex relationships |

| Multi-View | MvMRL | SMILES, Graph, & Fingerprint | Combines strengths of multiple representations for a comprehensive view |

| Hierarchical | HLN-DDI | Molecular Graph (decomposed) | Captures complex, multi-level structures and substructures |

Experimental Protocols in Molecular Representation Learning

Protocol: Training a Regularized Molecular Transformer for Exhaustive Local Sampling

This protocol, derived from a large-scale study [24], aims to train a transformer model that systematically generates target molecules that are both highly probable (precedented) and similar to a source molecule.

- Dataset Curation: Assemble a massive dataset of molecular pairs. The exemplary study used PubChem to extract over 200 billion source-target pairs [24].

- Model Architecture: Adopt a standard encoder-decoder transformer architecture, treating SMILES strings as sequences of tokens [24].

- Loss Function Regularization: Introduce a key innovation by adding a regularization term to the standard negative log-likelihood (NLL) loss. This term penalizes the model if the similarity (e.g., based on ECFP4 fingerprints) between the generated target and the source molecule does not align with the generation probability. The combined loss function is: (L = L{NLL} + \lambda \cdot L{Rank}) where (L_{Rank}) enforces a correlation between the NLL of a generated sequence and its similarity to the source [24].

- Model Training: Train the model with the regularized loss function. The hyperparameter (\lambda) controls the strength of the similarity correlation [24].

- Exhaustive Sampling via Beam Search: For a given source molecule, use beam search to generate and rank a large number of candidate molecules by their NLL. The regularization ensures that sampling up to a specific NLL threshold corresponds to an approximately exhaustive enumeration of the precedented, near-neighborhood chemical space [24].

Protocol: Multi-View Molecular Representation Learning (MvMRL)

This protocol outlines the methodology for integrating features from multiple molecular representations [21].

- Multi-View Feature Learning:

- SMILES View: Pass the embedded SMILES sequence through a multiscale CNN-SE (Squeeze-and-Excitation) component. This uses convolutional kernels of different sizes to capture local semantic information at multiple scales, with the SE block adaptively weighting channel features [21].

- Molecular Graph View: Process the 2D molecular graph using a multiscale GNN encoder (e.g., GIN) to capture both local atom environments and global graph topology [21].

- Fingerprint View: Feed a traditional molecular fingerprint (e.g., ECFP) into a Multi-Layer Perceptron (MLP) to capture complex non-linear relationships in this feature space [21].

- Multi-View Feature Fusion: The feature vectors from the three views are integrated using a dual cross-attention component. This mechanism allows the model to focus on the most crucial features from each view and how they interact with one another [21].

- Prediction: The fused, comprehensive representation vector is then used for downstream prediction tasks, such as molecular property prediction [21].

Protocol: Hierarchical Molecular Representation with Motif Decomposition

This protocol, used in HLN-DDI, creates a hierarchical graph structure for a more chemically meaningful representation [22].

- Motif Decomposition: Convert the SMILES string of a drug into a molecular graph (G = (V, E)). Decompose this graph into a sequence of motifs (V^m = {V{1}^m, V{2}^m, ..., V_{k}^m}) using an enhanced BRICS (Breakdown of Recurrent Chemical Substructures) method. The enhancement involves further disintegrating larger ring fragments into their smallest constituent rings [22].

- Augmented Molecular Graph Construction: Construct an augmented graph (\tilde{G} = (\tilde{V}, \tilde{E})) where: (\tilde{V} = [V, V^m, V^g]) The node set now includes original atom-level nodes (V), new motif-level nodes (V^m), and a single molecule-level node (V^g). The edge set (\tilde{E} = [E, E^m, E^g]) is also expanded to include atom-motif edges (E^m) (connecting a motif node to all its constituent atoms) and motif-molecule edges (E^g) (connecting all motif nodes to the whole-molecule node) [22].

- Hierarchical Representation Encoding: Use a GNN (e.g., GIN) to perform message passing on this augmented graph. This process generates integrated node representations that encapsulate information from the atomic, motif, and molecular levels [22].

- Readout and Prediction: For a task like drug-drug interaction (DDI) prediction, the hierarchical representations for two drug molecules are integrated with a co-attention mechanism and combined with interaction-type information to predict the probability of an interaction [22].

Table 3: Key Research Reagents and Computational Tools for Molecular Representation Learning

| Item / Resource | Function / Description | Example Use Case |

|---|---|---|

| RDKit | An open-source cheminformatics toolkit used to convert SMILES to molecular graphs, calculate fingerprints, and perform molecular operations. | Converting SMILES "CC(O)CO" into a 2D/3D molecular graph for GNN input [22]. |

| PubChem | A massive public database of chemical molecules and their biological activities, containing over 100 million unique chemical structures. | Sourcing large-scale molecular data for pre-training transformer models or benchmarking [24]. |

| ECFP4 Fingerprints | A type of circular fingerprint that captures molecular substructures up to a diameter of 4 bonds, represented as a bit string or count vector. | Measuring molecular similarity for loss function regularization or as a baseline feature [24]. |

| BRICS Algorithm | A method for decomposing molecules into retrosynthetically interesting chemical substructures (motifs). | Performing motif decomposition for hierarchical graph construction [22]. |

| Graph Isomorphism Network (GIN) | A GNN variant with high discriminative power, theoretically as powerful as the Weisfeiler-Lehman graph isomorphism test. | Encoding molecular graphs to capture subtle structural differences [22]. |

| Squeeze-and-Excitation (SE) Block | A neural network component that adaptively recalibrates channel-wise feature responses by modeling interdependencies between channels. | Enhancing a CNN for SMILES sequence learning by highlighting important features [21]. |

Workflow and Conceptual Diagrams

Multi-View Molecular Representation Learning (MvMRL) Workflow

MvMRL Workflow

Hierarchical Molecular Graph Construction

Hierarchical Graph Construction

Active Learning Cycle with Molecular Representations

Active Learning Cycle

The journey from simple molecular fingerprints to sophisticated, multi-view latent representations marks a significant paradigm shift in computational chemistry [19]. Modern AI-driven methods, including graph neural networks, transformers, and hierarchical models, now provide a more powerful and comprehensive means to encode chemical structures, capturing both local and global features that are intimately linked to molecular properties and functions [23] [21] [22].

The integration of these advanced representations into active learning frameworks is reshaping the process of chemical space exploration [24] [20]. By enabling more efficient and intelligent navigation of the vast chemical space, these tools help prioritize the most promising candidates for synthesis and testing. This synergistic combination of representation learning and active learning holds the potential to dramatically accelerate the discovery of novel therapeutics and functional materials, systematically reducing the time and cost associated with traditional research and development pipelines [23] [24].

Advanced Active Learning Strategies and Real-World Applications

The process of drug and material discovery is often described as a search for a needle in a haystack, requiring the identification of optimal molecular structures from a virtually infinite pool of possibilities known as chemical space [25] [26]. The immense combinatorial complexity of possible molecular arrangements presents a fundamental challenge for conventional screening methods. Traditional high-throughput screening, whether experimental or computational, becomes prohibitively expensive and time-consuming when applied to ultralarge chemical libraries [27]. This limitation has catalyzed the development of more intelligent, efficient exploration strategies that prioritize promising regions of chemical space while minimizing the number of costly evaluations.

Within this context, hierarchical and funnel-like strategies have emerged as powerful frameworks for navigating chemical space systematically. These approaches leverage the core principles of active learning, where iterative cycles of prediction and experimental validation guide the exploration process [26]. By organizing the search across multiple levels of resolution or specificity, these methods effectively balance the competing demands of broad exploration and detailed exploitation. The hierarchical nature of these strategies allows researchers to compress the chemical space initially, then progressively refine their search toward the most promising candidates, dramatically improving the efficiency of molecular discovery [27] [28].

Core Methodological Frameworks

Multi-Resolution Coarse-Graining for Chemical Space Compression

Coarse-graining methodologies address chemical space complexity by grouping atoms into pseudo-particles or beads, effectively creating multiple simplified representations of molecular structures [27]. This approach enables researchers to explore compressed versions of chemical space before proceeding to more detailed levels. The process typically involves two fundamental steps: mapping groups of atoms to beads, and defining interactions between these beads using transferable force fields.

In practice, multi-resolution coarse-graining employs hierarchical models that share the same atom-to-bead mapping but differ in their assignment of transferable bead types [27]. Lower-resolution models with fewer bead types create a smaller, more manageable chemical space that is easier to explore initially, while higher-resolution models capture finer chemical details but present greater combinatorial complexity. The hierarchical relationship between these representations allows systematic mapping from higher to lower resolutions, creating a structured pathway for navigation.

Key parameters for multi-resolution coarse-graining:

| Resolution Level | Bead Types | Chemical Space Size | Information Detail | Primary Function |

|---|---|---|---|---|

| Low Resolution | Fewer | Smaller | Reduced | Broad exploration |

| Medium Resolution | Moderate | Medium | Moderate | Guided search |

| High Resolution | More | Larger | Higher | Detailed optimization |

Funnel-Learning Framework for Targeted Discovery

The hierarchy-boosted funnel learning (HiBoFL) framework represents another powerful approach to hierarchical chemical space exploration [28]. This methodology operates through a sequential narrowing process that efficiently identifies materials with desired properties. The framework integrates both unsupervised and supervised learning techniques in a complementary workflow that progressively focuses computational resources on the most promising regions of chemical space.

The HiBoFL framework implements a four-stage funnel [28]:

- Data Preparation: Initial dataset collection and preprocessing

- Unsupervised Learning: Identification of problem-specific clusters using similarity metrics

- Data Annotation: Targeted labeling of promising candidates through low-cost calculations

- Supervised Learning: Refined prediction using interpretable machine learning models

This approach has demonstrated significant success in identifying semiconductors with ultralow lattice thermal conductivity, where it enabled efficient discovery by training on only a few hundred materials targeted by unsupervised learning from a pool of hundreds of thousands [28]. The funnel strategy effectively circumvents large-scale brute-force calculations without clear objectives, dramatically reducing computational costs while maintaining discovery effectiveness.

Experimental Protocols and Workflows

Multi-Level Bayesian Optimization with Active Learning

The integration of multi-resolution coarse-graining with Bayesian optimization creates a powerful active learning protocol for molecular discovery [27]. This approach combines the computational efficiency of coarse-grained representations with the guided search capabilities of Bayesian optimization, enabling efficient navigation of chemical space. The methodology transforms discrete molecular spaces into smooth latent representations using graph neural network-based autoencoders, facilitating the application of Bayesian optimization across multiple resolution levels.

Step-by-Step Protocol:

Multi-Resolution Chemical Space Definition

- Define coarse-grained (CG) models at 3-4 resolution levels using the same atom-to-bead mapping but varying bead type assignments

- Higher-resolution models should correspond to established CG frameworks (e.g., Martini3 model [27])

- Enumerate all possible CG molecules for each resolution level within the target chemical space region

Latent Space Embedding

- Encode CG structures into continuous latent space using graph neural network-based autoencoders

- Encode each resolution level separately to maintain hierarchical relationships

- Validate latent space quality through reconstruction accuracy and similarity preservation metrics

Multi-Level Bayesian Optimization Loop

- Initialize with random sampling or prior knowledge across all resolution levels

- For each iteration:

- Select promising candidates using acquisition functions (e.g., Expected Improvement)

- Perform molecular dynamics simulations to calculate target free energies

- Update Gaussian process models with new data

- Transfer promising neighborhood information from lower to higher resolutions

- Continue for predetermined iterations or until convergence criteria met

Validation and Experimental Follow-up

- Select top candidates from highest-resolution optimization

- Validate predictions through alchemical free energy calculations or experimental testing

- Analyze chemical neighborhoods of successful candidates for design insights

This protocol was successfully applied to optimize molecules for enhancing phase separation in phospholipid bilayers, demonstrating how neighborhood information from lower resolutions effectively guides optimization at higher resolutions [27].

Active Learning with Alchemical Free Energy Calculations

For drug discovery applications, active learning can be productively combined with alchemical free energy calculations to identify high-affinity inhibitors [26]. This approach was specifically validated for phosphodiesterase 2 (PDE2) inhibitors, demonstrating robust identification of true positives while explicitly evaluating only a small subset of compounds in large chemical libraries.

Detailed Methodology:

Procedure Calibration Phase

- Begin with experimentally characterized binders for the target protein

- Optimize alchemical free energy calculation parameters (soft-core potentials, λ-scheduling)

- Establish accuracy benchmarks against experimental binding affinities

- Determine optimal machine learning model architecture and hyperparameters

Prospective Active Learning Cycle

- Initialize with diverse compound selection from large chemical library

- For each active learning iteration (typically 10-20 cycles):

- Probe 1-5% of remaining compounds using alchemical free energy calculations

- Train machine learning models (random forest, neural networks) on obtained affinities

- Apply trained models to predict affinities for entire library

- Select next batch for evaluation based on prediction confidence and estimated improvement

- Continue until identification of high-affinity binders or resource exhaustion

Experimental Validation

- Synthesize or acquire predicted high-affinity compounds

- Determine binding affinities using experimental techniques (SPR, ITC, enzymatic assays)

- Compare predicted vs. experimental affinities for method validation

- Iterate with additional rounds if necessary

This protocol enables the identification of high-affinity binders while explicitly evaluating only a small fraction (typically 5-15%) of a large chemical library, providing substantial computational savings [26].

Research Reagent Solutions: Computational Tools

Successful implementation of hierarchical exploration strategies requires specialized computational tools and resources. The table below details essential research reagents for conducting multi-level chemical space exploration.

| Tool Category | Specific Tools/Platforms | Function | Application Context |

|---|---|---|---|

| Coarse-Grained Force Fields | Martini3 [27] | Provides transferable bead types and interactions | Molecular dynamics simulations at reduced resolution |

| Chemical Libraries | ZINC20 [25], GDB-17 [26] | Ultralarge collections of synthesizable compounds | Initial screening libraries for exploration |

| Latent Space Encoding | Graph Neural Network Autoencoders [27], Variational Autoencoders (VAE) [27] | Creates continuous representations of discrete molecular structures | Enabling Bayesian optimization in chemical space |

| Bayesian Optimization | Gaussian Process Regression, Expected Improvement acquisition [27] | Guides selection of promising candidates for evaluation | Active learning loops across multiple resolutions |

| Free Energy Calculations | Alchemical free energy methods [26], Thermodynamic Integration (TI) [27] | Computes binding affinities or property differences | High-accuracy evaluation of selected compounds |

| Clustering & Dimensionality Reduction | K-means [28], PCA [28], t-SNE [28] | Identifies problem-specific clusters in chemical space | Unsupervised learning phase of funnel frameworks |

Performance Metrics and Comparative Analysis

Hierarchical and funnel-like strategies demonstrate significant advantages over conventional screening methods across multiple performance dimensions. The tables below summarize key quantitative comparisons and experimental outcomes.

Table 1: Performance Comparison of Exploration Strategies

| Exploration Strategy | Computational Cost | Success Rate | Chemical Space Coverage | Required Prior Knowledge |

|---|---|---|---|---|

| High-Throughput Screening | High (100% evaluation) | Low (0.001-0.01%) | Broad but shallow | Minimal |

| Standard Virtual Screening | Medium (10-30% evaluation) | Low-Medium (0.01-0.1%) | Moderate | Moderate (structure or ligands) |

| Single-Level Active Learning | Low-Medium (5-20% evaluation) | Medium (0.1-1%) | Focused | Moderate |

| Multi-Level Hierarchical | Low (1-10% evaluation) | High (1-5%) | Strategic depth progression | Low-Medium |

Table 2: Experimental Outcomes from Implemented Strategies

| Study | Target System | Strategy | Library Size | Compounds Evaluated | Success Rate |

|---|---|---|---|---|---|

| Khalak et al. [26] | PDE2 Inhibitors | Active Learning + Alchemical Free Energy | Large library | Small subset (exact % not specified) | Identified high-affinity binders |

| Walter & Bereau [27] | Lipid Bilayer Phase Separation | Multi-Level Bayesian Optimization | Not specified | Not specified | Enhanced phase separation |

| HiBoFL Framework [28] | Ultralow κL Semiconductors | Hierarchy-Boosted Funnel Learning | 154,718 materials | Few hundred | Efficient identification of target materials |

The performance data demonstrates that hierarchical strategies achieve significantly higher success rates while evaluating substantially fewer compounds compared to conventional approaches. This efficiency stems from their strategic navigation of chemical space, focusing computational resources on the most promising regions while maintaining the flexibility to explore novel chemical neighborhoods [26] [27] [28].

Hierarchical and funnel-like strategies represent a paradigm shift in chemical space exploration, moving beyond brute-force screening toward intelligent, guided navigation. By organizing the search process across multiple levels of resolution or specificity, these approaches achieve unprecedented efficiency in molecular discovery. The integration of active learning with multi-resolution modeling creates a powerful framework that balances exploration and exploitation, adapting to the complex structure of chemical space.

Future developments in this field will likely focus on several key areas: improved automated coarse-graining methodologies, enhanced latent space representations that better capture molecular similarities, and more efficient transfer of information across resolution levels. As these hierarchical strategies mature and integrate with emerging experimental techniques, they promise to dramatically accelerate the discovery of novel therapeutics and functional materials, transforming the landscape of molecular design and optimization.

The exploration of massive chemical spaces for drug discovery is fundamentally limited by the high computational cost of molecular simulations. This whitepaper presents a novel framework that integrates active learning (AL) strategies with alchemical free energy calculations and molecular dynamics (MD) to dramatically accelerate the screening of molecular compounds. By employing Oracle's cloud infrastructure for workflow orchestration, this approach enables efficient navigation of chemical spaces exceeding one million compounds, starting with minimal initial data. We demonstrate how this methodology identifies promising battery electrolyte solvents with state-of-the-art performance, providing a template for transformative acceleration in computational drug development.

The chemical space relevant to drug discovery is astronomically large, estimated at approximately 10^60 potential compounds [29]. Traditional computational methods for evaluating these compounds are prohibitively expensive, with each molecular dynamics simulation requiring weeks or months to generate sufficient data [29]. This fundamental limitation necessitates innovative approaches that can maximize information gain from minimal data.

Alchemical free energy calculations represent a powerful class of computational methods that predict free energy differences associated with molecular transfer processes, such as drug binding to protein targets or solute partitioning between environments [30]. These methods use "bridging" potential energy functions representing alchemical intermediate states that cannot exist as real chemical species, enabling efficient computation of transfer free energies with orders of magnitude less simulation time than direct transfer simulation [30].

Theoretical Foundations

Alchemical Free Energy Methods

Alchemical free energy calculations compute free energy differences associated with various transfer processes through non-physical intermediate states. The potential governing atomic interactions is modified for the atoms being changed, inserted, or deleted [30]. Key applications include:

- Relative binding free energies: Estimating differences in binding affinities between chemically related ligands [30]

- Absolute binding free energies: Computing the binding affinity of a single ligand to its receptor [30]