Active Learning for Compound-Target Interaction Prediction: A Practical Guide for Accelerating Drug Discovery

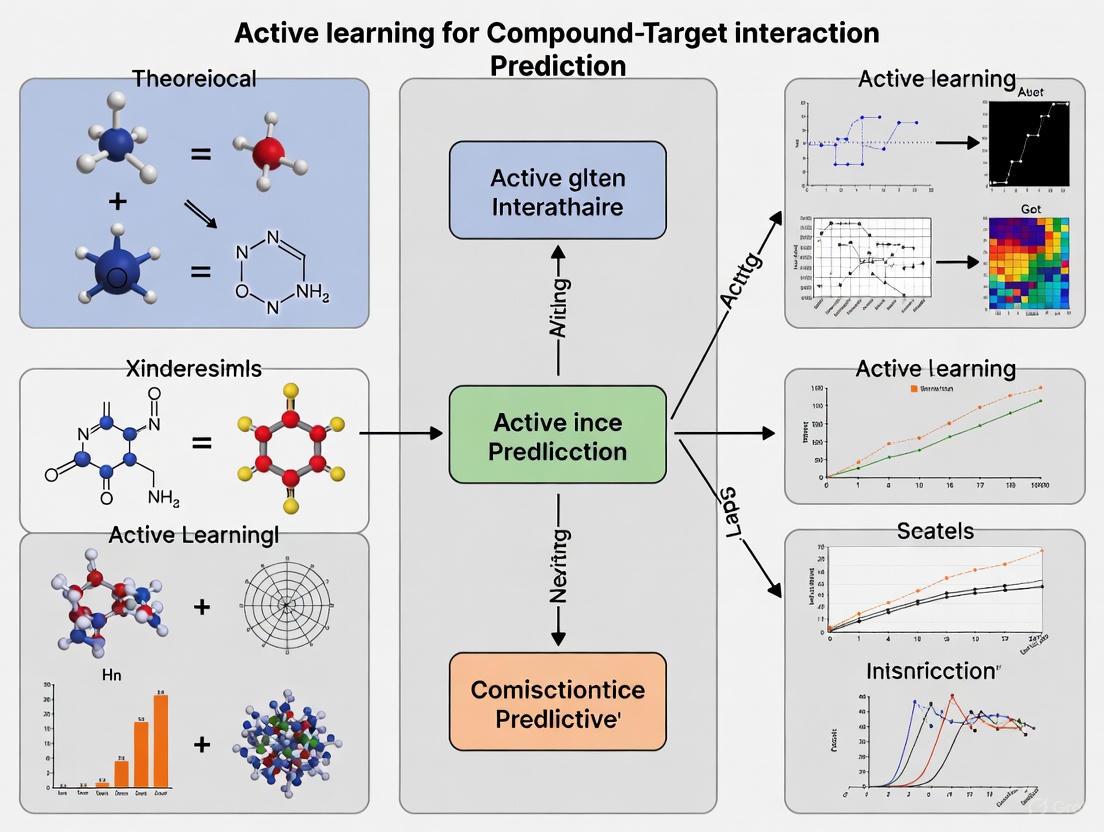

This article provides a comprehensive overview of Active Learning (AL) applications in predicting compound-target interactions, a critical task in modern drug discovery.

Abstract

This article provides a comprehensive overview of Active Learning (AL) applications in predicting compound-target interactions, a critical task in modern drug discovery. Aimed at researchers and drug development professionals, it explores the foundational principles of AL as an iterative, data-efficient machine learning strategy that addresses the challenges of vast chemical space and limited labeled data. The content details methodological implementations, from virtual screening to lead optimization, examines troubleshooting for common pitfalls like model selection and data imbalance, and offers a comparative analysis of state-of-the-art frameworks and their validation in real-world R&D settings. By synthesizing current trends and practical insights, this guide serves as a roadmap for integrating AL to reduce costs, compress timelines, and improve the predictive accuracy of drug-target models.

The Foundations of Active Learning in Drug Discovery: Addressing Data Scarcity and Vast Chemical Space

In the context of compound-target interaction prediction, active learning (AL) is an iterative, feedback-driven machine learning process designed to intelligently select the most valuable data points for experimental testing from a vast, unexplored chemical space [1]. This approach is particularly crucial in drug discovery, where the combinatorial search space for potential drug-target pairs is immense, and the phenomenon of interest, such as drug synergy or specific binding, is often rare [2]. Conventional machine learning models rely on static, pre-existing datasets, which can be biased and inefficient. In contrast, active learning creates a closed-loop system where the model's predictions guide the next cycle of experiments, and the results from those experiments are fed back to refine the model [3]. This iterative feedback loop enables researchers to maximize the information gain from a limited experimental budget, significantly accelerating the identification of promising drug candidates [2] [1].

Key Principles and Quantitative Impact

Active learning frameworks for drug discovery are built upon a core cycle involving a prediction model and a strategic selection criterion. This process addresses the fundamental exploration-exploitation trade-off, balancing the testing of uncertain but promising regions of the chemical space (exploration) against the verification of predicted high-value candidates (exploitation) [2]. The selection of the acquisition function, such as uncertainty sampling or expected model change, is critical to this balance.
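The exploration-exploitation trade-off can be made concrete with a single tunable score. The sketch below assumes an upper-confidence-bound style acquisition (predicted value plus a weighted uncertainty term), which the cited study does not name; the prediction values, the `kappa` parameter, and the function names are all illustrative.

```python
from typing import List, Sequence


def ucb_scores(means: Sequence[float], stds: Sequence[float], kappa: float) -> List[float]:
    """Score each candidate as predicted value + kappa * predictive uncertainty."""
    return [m + kappa * s for m, s in zip(means, stds)]


def select_top_k(scores: Sequence[float], k: int) -> List[int]:
    """Return the indices of the k highest-scoring candidates, best first."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:k]


# Hypothetical model outputs for four candidate drug pairs.
pred_mean = [12.0, 9.0, 4.0, 11.0]  # predicted synergy score
pred_std = [0.5, 4.0, 6.0, 1.0]     # model uncertainty for each prediction

# kappa = 0 ignores uncertainty (pure exploitation).
exploit = select_top_k(ucb_scores(pred_mean, pred_std, kappa=0.0), k=2)
# A larger kappa favours uncertain candidates (more exploration).
explore = select_top_k(ucb_scores(pred_mean, pred_std, kappa=2.0), k=2)

print(exploit, explore)
```

With `kappa=0.0` the two highest predicted scores are chosen; raising `kappa` shifts the batch toward candidates the model knows least about.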

The quantitative benefits of implementing active learning in drug discovery are substantial. In the task of identifying synergistic drug combinations, one study demonstrated that an active learning strategy could discover 60% of synergistic drug pairs by exploring only 10% of the total combinatorial space [2]. This represents a dramatic increase in efficiency, saving an estimated 82% of experimental time and materials compared to a random screening approach [2]. Furthermore, the study found that the synergy yield ratio is even higher with smaller batch sizes, and that dynamic tuning of the exploration-exploitation strategy can further enhance performance [2].

Table 1: Key Software and Tools for Active Learning and DTI Prediction

| Tool/Algorithm Name | Type/Function | Key Features/Description |
|---|---|---|
| RECOVER [2] | Active Learning Framework | A two-layer MLP for synergistic drug combination screening; uses pre-training and iterative batch selection. |
| DeepSynergy [2] | Deep Learning Model | A multi-layer perceptron (MLP) that predicts synergy using chemical and genomic descriptors as inputs. |
| Komet [4] | Scalable Prediction Pipeline | A scalable DTI prediction pipeline using a three-step framework with efficient computations and the Nyström approximation. |
| BarlowDTI [4] | Feature Extraction & Prediction | Uses the Barlow Twins architecture for feature extraction from target proteins; employs a gradient boosting machine for fast prediction. |
| MDCT-DTA [4] | Affinity Prediction Model | Combines multi-scale graph diffusion convolution and a CNN-Transformer Network for drug-target affinity prediction. |
| LCIdb [4] | Curated Dataset | An extensive, curated DTI dataset with enhanced molecule and protein space coverage. |

Experimental Protocols for Active Learning in Compound-Target Interaction Prediction

Protocol 1: Active Learning Setup for Drug Synergy Screening

This protocol is adapted from benchmark studies on synergistic drug combination discovery [2].

1. Problem Formulation and Initial Data Preparation

- Objective: To iteratively identify drug pairs with a Loewe synergy score >10.

- Public Data Pre-processing: Download a dataset such as O'Neil (15,117 measurements, 38 drugs, 29 cell lines). Define synergistic pairs based on the chosen synergy score threshold (e.g., 10 for Loewe) [2].

- Feature Engineering:

- Drug Representation: Encode drugs using Morgan fingerprints (radius 2, 1024 bits), which have shown superior performance in low-data regimes [2].

- Cellular Context: Represent cell lines using gene expression profiles from databases like GDSC. Research indicates that as few as 10 key genes may be sufficient for modeling inhibition, but using a larger set (e.g., 908 genes) is common to recapitulate transcriptional information [2].

- Model Selection: Choose a data-efficient algorithm. A Multi-Layer Perceptron (MLP) with an addition operation to combine drug representations is a validated starting point [2].
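The point of combining drug representations by addition is that the pair (A, B) and the pair (B, A) yield the same input to the downstream layers. The following is a minimal sketch of that idea only: the 4-bit fingerprints, the weight matrix `W`, and the function names are made up for illustration, and a real model would learn the weights and use 1024-bit fingerprints plus cell-line features.

```python
from typing import List, Sequence

Vector = List[float]


def linear(x: Sequence[float], weights: Sequence[Sequence[float]]) -> Vector:
    """Apply a bias-free linear layer: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def relu(x: Sequence[float]) -> Vector:
    return [max(0.0, v) for v in x]


def embed_drug(fingerprint: Sequence[float], weights: Sequence[Sequence[float]]) -> Vector:
    """Shared single-drug encoder (one hidden layer in this toy version)."""
    return relu(linear(fingerprint, weights))


def combine_pair(fp_a: Sequence[float], fp_b: Sequence[float],
                 weights: Sequence[Sequence[float]]) -> Vector:
    """Embed each drug with the same encoder, then add the embeddings."""
    emb_a = embed_drug(fp_a, weights)
    emb_b = embed_drug(fp_b, weights)
    return [a + b for a, b in zip(emb_a, emb_b)]


# Toy 4-bit fingerprints and arbitrary (untrained) weights, for illustration only.
W = [[0.5, -1.0, 0.25, 0.0],
     [1.0, 0.5, -0.5, 0.75]]
drug_a = [1.0, 0.0, 1.0, 0.0]
drug_b = [0.0, 1.0, 0.0, 1.0]

pair_ab = combine_pair(drug_a, drug_b, W)
pair_ba = combine_pair(drug_b, drug_a, W)
print(pair_ab, pair_ab == pair_ba)
```

Because addition is commutative, the model never has to learn separately that the order of the two drugs is irrelevant.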

2. Initial Model Pre-training

- Split off a small, held-out test set (e.g., 10% of the public data).

- Use a portion of the remaining public data (e.g., another 10%) to conduct a few initial training epochs to initialize the model parameters. This "warm-starts" the model before the active loop begins [2].

3. Designing the Active Learning Loop

- Batch Size: Determine the batch size (k) for each cycle. Smaller batch sizes (e.g., 1-5% of total budget) often lead to higher synergy yield but require more cycles [2].

- Acquisition Function: Select a strategy for querying the most informative samples. For synergy prediction, this often involves:

- Uncertainty Sampling: Selecting drug-cell combinations where the model's prediction is most uncertain.

- Expected Model Change: Selecting samples that would cause the greatest change to the current model parameters.

- Stopping Criterion: Define a termination point, such as a fixed total experimental budget or a target number of synergistic pairs discovered.
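The three design choices above (batch size, acquisition function, stopping criterion) fit together as in the following sketch. It is a toy, not the benchmark setup of [2]: the hidden scoring function stands in for a wet-lab measurement, and the acquisition rule (query the neighbours of the best result so far) is a deliberately simple placeholder for a model-based strategy. All names and numbers are assumptions.

```python
from typing import Callable, Dict, List


def measure_synergy(candidate: int) -> float:
    """Hidden ground truth standing in for a measured Loewe synergy score."""
    return 20.0 - 0.5 * abs(candidate - 70)


def greedy_neighbor_acquire(unlabeled: List[int], labeled: Dict[int, float]) -> List[int]:
    """Rank unlabeled candidates; placeholder for a model-based acquisition function."""
    if not labeled:
        # No data yet: spread the first batch evenly over the pool.
        step = max(1, len(unlabeled) // 5)
        return unlabeled[::step]
    best = max(labeled, key=labeled.get)
    return sorted(unlabeled, key=lambda c: abs(c - best))


def run_active_loop(pool: List[int],
                    acquire: Callable[[List[int], Dict[int, float]], List[int]],
                    oracle: Callable[[int], float],
                    batch_size: int, budget: int, target_hits: int,
                    threshold: float = 10.0) -> Dict[int, float]:
    """Query batches until the budget is spent or enough hits are found."""
    labeled: Dict[int, float] = {}
    while len(labeled) < budget:
        unlabeled = [c for c in pool if c not in labeled]
        if not unlabeled:
            break
        k = min(batch_size, budget - len(labeled))
        for candidate in acquire(unlabeled, labeled)[:k]:
            labeled[candidate] = oracle(candidate)  # "run the experiment"
        hits = sum(1 for s in labeled.values() if s > threshold)
        if hits >= target_hits:  # stopping criterion: target reached
            break
    return labeled


results = run_active_loop(list(range(100)), greedy_neighbor_acquire, measure_synergy,
                          batch_size=5, budget=30, target_hits=10)
n_hits = sum(1 for s in results.values() if s > 10.0)
print(len(results), n_hits)
```

In a real campaign `acquire` would wrap the trained model and `oracle` would be the screening assay; the loop structure stays the same.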

Diagram 1: Active learning workflow for drug screening.

Protocol 2: Active Learning with GANs for Imbalanced DTI Data

This protocol addresses the common challenge of imbalanced datasets in Drug-Target Interaction (DTI) prediction, where known interacting pairs are rare [4].

1. Framework Construction

- Feature Extraction:

- Drug Features: Use MACCS keys to extract structural fingerprints from drug compounds.

- Target Features: Represent target proteins using their amino acid composition and dipeptide composition.

- Data Balancing with GANs:

- Train a Generative Adversarial Network (GAN) on the minority class (known interacting pairs).

- The generator learns to produce synthetic drug-target feature vectors that resemble real interactions.

- The discriminator learns to distinguish between real and synthetic interactions.

- Classifier Training: Use a Random Forest Classifier, optimized for high-dimensional data, to make the final DTI predictions on the balanced dataset (original data + synthetic minority data) [4].

2. Active Learning Integration

- After the initial GAN+RFC model is trained, use it to screen a large virtual library of unlabeled drug-target pairs.

- Apply an acquisition function (e.g., prediction confidence or margin-based uncertainty) to select the most informative candidates for experimental validation.

- The newly acquired experimental results are added to the training set. The GAN can be fine-tuned with the new positive interactions, and the RFC is retrained, closing the feedback loop.
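Margin-based uncertainty, mentioned above as one possible acquisition function, can be sketched in a few lines. The example assumes the classifier outputs a probability of interaction for each unlabeled pair; the pair identifiers and probabilities are invented for illustration.

```python
from typing import Dict, List


def margin(p_interact: float) -> float:
    """Binary margin |P(interact) - P(no interaction)|; a small margin means high uncertainty."""
    return abs(p_interact - (1.0 - p_interact))


def select_by_margin(probabilities: Dict[str, float], k: int) -> List[str]:
    """Return the k drug-target pairs with the smallest margin."""
    ranked = sorted(probabilities, key=lambda pair: margin(probabilities[pair]))
    return ranked[:k]


# Hypothetical classifier outputs for four unlabeled drug-target pairs.
predicted = {
    "drugA|targetX": 0.95,
    "drugB|targetX": 0.52,
    "drugC|targetY": 0.10,
    "drugD|targetY": 0.45,
}

to_validate = select_by_margin(predicted, k=2)
print(to_validate)
```

Pairs predicted near 0.5 are sent for experimental validation first, since their labels are the ones the current model can least infer on its own.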

Table 2: Key Research Reagents and Computational Tools

| Reagent/Solution | Function in Experiment | Specifications & Notes |
|---|---|---|
| Morgan Fingerprints [2] | Drug Representation | A circular fingerprint representing the topology of a molecule. Typically used with a radius of 2 and 1024 bits. |
| MACCS Keys [4] | Drug Representation | A binary fingerprint based on a predefined set of 166 structural fragments. Used for capturing key molecular features. |
| Gene Expression Profiles [2] | Cellular Context | Gene expression data for cell lines (e.g., from GDSC). Critical for contextualizing predictions in a biological environment. |
| Generative Adversarial Network (GAN) [4] | Data Balancing | Generates synthetic data for the minority class (e.g., interacting drug-target pairs) to mitigate dataset imbalance. |
| Random Forest Classifier (RFC) [4] | Prediction Model | An ensemble ML algorithm used for making final DTI predictions; robust against overfitting and handles high-dimensional data well. |
| BindingDB Dataset [4] | Benchmarking | A public database of measured binding affinities between small molecules and proteins considered to be drug targets. Used for model training and validation. |

Implementation and Best Practices

The Active Learning Feedback Loop in Practice

The core of active learning is a tightly integrated cycle of prediction and experimentation. As the model is exposed to more strategically selected data, its performance improves, particularly for the task of identifying rare events. The feedback mechanism is crucial for correcting model biases and steering exploration toward fruitful regions of the chemical space [3]. This requires close collaboration between computational scientists and wet-lab researchers to ensure the rapid turnaround of experiments and the seamless integration of results into the model's training pipeline [5].

Diagram 2: The core active learning feedback loop.

Key Considerations for Success

- Batch Size: This is a critical hyperparameter. Smaller batch sizes generally lead to more efficient discovery but require more iterative cycles. The batch size should be optimized based on experimental throughput and cost [2].

- Feature Selection: While molecular encoding (e.g., fingerprint type) has a limited impact, the inclusion of cellular environment features (e.g., gene expression) consistently and significantly enhances prediction quality [2].

- Addressing Data Imbalance: For DTI prediction, where positive interactions are scarce, techniques like GAN-based data augmentation are highly effective in improving model sensitivity and reducing false negatives [4].

- Dynamic Tuning: The strategy for balancing exploration and exploitation should not be static. Dynamically tuning this trade-off based on the model's current performance and the yield of previous batches can lead to further performance gains [2].
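As one illustration of dynamic tuning, the exploration weight can be adjusted after each batch according to how many hits that batch produced. The rule below is a hypothetical heuristic, not the scheme evaluated in [2]; `target_yield`, `step`, and the bounds are arbitrary assumptions.

```python
def update_exploration_weight(kappa: float, batch_yield: float,
                              target_yield: float = 0.3, step: float = 0.5,
                              lower: float = 0.0, upper: float = 5.0) -> float:
    """Raise kappa (explore more) after a poor batch, lower it after a good one."""
    if batch_yield < target_yield:
        kappa += step  # few hits: the model's favourites are not paying off
    else:
        kappa -= step  # many hits: keep exploiting the current region
    return min(upper, max(lower, kappa))


kappa = 1.0
for batch_yield in (0.1, 0.2, 0.6):  # fraction of hits in three successive batches
    kappa = update_exploration_weight(kappa, batch_yield)
print(kappa)
```

Whether a low yield should trigger more exploration or a different response is itself a design decision that would need to be validated on retrospective data.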

Active learning, defined by its iterative feedback loop for intelligent data selection, represents a paradigm shift in computational drug discovery. By moving beyond static models to a dynamic, adaptive process, it offers a powerful strategy to navigate the vast and complex landscape of compound-target interactions. The structured protocols and evidence presented here provide a foundation for researchers to implement active learning, enabling more efficient use of resources and accelerating the journey from hypothesis to validated therapeutic candidate.

Modern drug discovery remains a challenging endeavor, characterized by prohibitively high costs and extensive development timelines. The traditional process from lead compound identification to regulatory approval typically spans over 12 years with cumulative expenditures often exceeding $2.5 billion [6]. Clinical trial success probabilities decline precipitously from Phase I (52%) to Phase II (28.9%), culminating in an overall success rate of merely 8.1% [6]. This inefficiency represents a critical bottleneck in delivering novel therapeutics to patients.

A fundamental challenge underpinning this crisis is the data acquisition bottleneck. Preclinical drug screening experiments for anti-cancer drug discovery, for example, involve testing candidate drugs against cancer cell lines, creating an experimental space that can be prohibitively large and expensive to explore exhaustively [7]. The characterization of compound activities through biophysical, biochemical, or cell-based experiments generates data that is often sparse, unbalanced, and from multiple sources [8].

Active learning (AL) represents a paradigm shift in addressing these challenges. As a strategic machine learning approach, AL minimizes labeling costs while maintaining or enhancing model accuracy by selectively querying the most informative data points for annotation [9]. This methodology is particularly valuable in domains like drug discovery where obtaining labeled data requires expert knowledge, specialized instrumentation, and intricate experimental protocols [10]. By intelligently selecting which experiments to perform or which compounds to screen, AL enables researchers to build robust predictive models while substantially reducing the volume of labeled data required [10].

Active Learning Fundamentals for Drug Discovery

Core Concepts and Workflow

Active learning operates through an iterative process of selection, labeling, and model retraining. The fundamental AL cycle consists of these key stages [11]:

1. Initialization: Beginning with a small set of labeled data points

2. Model Training: Training a predictive model on the available labeled data

3. Query Strategy: Selecting the most informative unlabeled data points based on a defined strategy

4. Human Annotation: Obtaining ground truth labels for selected points through experimentation

5. Model Update: Incorporating newly labeled data and retraining the model

6. Iteration: Repeating steps 3-5 until meeting stopping criteria

This iterative process is particularly suited to drug discovery applications, where each cycle corresponds to a round of costly experimental screening [7]. The core value proposition lies in the strategic selection of experiments to maximize information gain while minimizing resource expenditure.

Key Query Strategies for Compound-Target Interaction Prediction

Different AL query strategies offer distinct advantages for various drug discovery scenarios:

Table 1: Active Learning Query Strategies for Drug Discovery Applications

| Strategy Type | Mechanism | Best For | Considerations |
|---|---|---|---|
| Uncertainty Sampling | Selects samples where model prediction confidence is lowest [7] | Lead optimization stages, activity cliff compounds [8] | May select outliers; requires good initial model |
| Diversity Sampling | Chooses samples that maximize coverage of chemical space [7] | Virtual screening, hit identification [8] | Ensures broad representation but may include uninformative samples |
| Hybrid Approaches | Combines uncertainty and diversity principles [10] [7] | Balanced exploration-exploitation; general purpose | More complex implementation; tuning required |
| Expected Model Change | Selects samples that would most alter current model [10] | Model refinement, addressing knowledge gaps | Computationally intensive; theoretical guarantees limited |

Quantitative Evidence: Benchmarking Active Learning in Drug Discovery

Performance in Real-World Drug Screening Applications

Recent comprehensive investigations have demonstrated the significant advantages of AL strategies over conventional approaches in anti-cancer drug response prediction. In a study evaluating 57 drugs across hundreds of cancer cell lines, AL approaches showed substantial improvement in identifying hits (responsive treatments) compared to random and greedy sampling methods [7]. This capability to rapidly identify effective treatments enables the active learning process to stop sooner, achieving comparable results with reduced reliance on obtaining labeled data.

The performance of various AL strategies has been systematically benchmarked in materials science and drug discovery contexts, revealing that uncertainty-driven and diversity-hybrid strategies clearly outperform geometry-only heuristics and random baselines, especially during early acquisition phases when labeled data is most scarce [10]. As the labeled set grows, the performance gap narrows, indicating diminishing returns from AL under AutoML frameworks.

Data Efficiency and Cost Reduction Metrics

The data efficiency afforded by AL strategies translates directly into cost savings and accelerated timelines. Research has demonstrated that active learning can achieve performance parity with full-data baselines while querying merely 30% of the pool, equivalent to a 70–95% savings in computational or labeling resources [10]. For certain prediction tasks, such as band gap predictions in materials science, as little as 10% of the data were sufficient to reach target accuracy thresholds [10].

Table 2: Quantitative Performance of Active Learning in Biomedical Applications

| Application Domain | Performance Metric | AL Performance | Baseline Comparison |
|---|---|---|---|
| Anti-cancer Drug Screening | Hit Identification Rate | Significant improvement over random selection [7] | Random selection less efficient |
| Materials Property Prediction | Data Requirement for Target Accuracy | 10-30% of full dataset [10] | 100% required for random sampling |
| Small-Sample Regression | Early-Stage Model Accuracy | Uncertainty-driven strategies outperform [10] | Geometry-only heuristics less effective |
| Experimental Design | Cost Reduction | 60% reduction in experimental campaigns [10] | Traditional exhaustive screening |

Application Notes: Implementing Active Learning for Compound-Target Interaction Prediction

Protocol: Pool-Based Active Learning for Drug Response Prediction

Objective: To efficiently build high-performance drug response prediction models while simultaneously discovering validated responsive treatments with limited experimental resources.

Materials and Data Requirements:

- Unlabeled candidate set: 500-1000 compound-target pairs

- Initial labeled seed set: 50-100 representative samples

- Feature representations: Compound fingerprints (ECFP, MACCS) and target descriptors (sequence, structure)

- Response metric: IC₅₀, AUC, or AAC values

Procedure:

1. Initial Model Training

- Train initial predictive model (e.g., Random Forest, GNN, or ensemble) on labeled seed set

- Perform 5-fold cross-validation to establish baseline performance

- Compute evaluation metrics (MAE, R², ROC-AUC as appropriate)

2. Query Strategy Implementation

- Apply uncertainty sampling using entropy-based measures or margin sampling

- Alternative: Implement diversity sampling using k-means clustering or core-set approaches

- Advanced: Deploy hybrid strategy combining uncertainty and diversity principles

3. Iterative Active Learning Cycle

- Select top-k most informative samples (typically 10-50 per iteration)

- Experimental validation: Obtain ground truth labels through targeted screening

- Add newly labeled samples to training set

- Retrain model and update performance metrics

- Repeat for 10-20 cycles or until performance plateaus
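The query-strategy step can be illustrated with a small hybrid selector that combines entropy-based uncertainty with a diversity term. This is a sketch under stated assumptions: the diversity term is a greedy farthest-point distance rather than k-means or a full core-set method, the additive weighting is an arbitrary choice, and the features and probabilities are toy values.

```python
import math
from typing import List, Sequence, Tuple


def binary_entropy(p: float) -> float:
    """Entropy (in bits) of a binary prediction; maximal at p = 0.5."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))


def euclidean(a: Sequence[float], b: Sequence[float]) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def hybrid_select(features: Sequence[Tuple[float, ...]], probs: Sequence[float],
                  k: int, diversity_weight: float = 1.0) -> List[int]:
    """Greedily pick k samples scoring high on entropy and far from earlier picks."""
    selected: List[int] = []
    remaining = list(range(len(probs)))

    def score(i: int) -> float:
        uncertainty = binary_entropy(probs[i])
        if not selected:
            return uncertainty
        nearest = min(euclidean(features[i], features[j]) for j in selected)
        return uncertainty + diversity_weight * nearest

    while remaining and len(selected) < k:
        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


# Toy pool: two near-duplicate uncertain samples and two samples in another region.
features = [(0.0, 0.0), (0.1, 0.0), (1.0, 1.0), (0.9, 1.0)]
probs = [0.50, 0.49, 0.60, 0.95]

hybrid_batch = hybrid_select(features, probs, k=2)
uncertainty_only = hybrid_select(features, probs, k=2, diversity_weight=0.0)
print(hybrid_batch, uncertainty_only)
```

Pure uncertainty sampling spends both queries on the near-duplicate pair, whereas the hybrid score sends the second query to a different region of feature space.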

Validation and Quality Control:

- Maintain hold-out test set for unbiased performance evaluation

- Implement early stopping based on performance convergence

- Monitor for distribution shift between selected and overall populations

Protocol: Cross-Assay Generalization for Virtual Screening

Objective: To leverage active learning for building predictive models that generalize across experimental assays and conditions, addressing the challenge of multiple data sources in real-world compound activity data [8].

Materials and Special Considerations:

- Source assays from public databases (ChEMBL, BindingDB)

- Distinguish between Virtual Screening (VS) and Lead Optimization (LO) assay types [8]

- Address biased protein exposure through stratified sampling

- Account for congeneric compounds in LO assays

Procedure:

1. Assay Characterization and Typing

- Calculate pairwise compound similarities within assays

- Classify as VS-type (diffused pattern) or LO-type (aggregated pattern)

- Apply assay-specific splitting strategies

2. Cross-Assay Active Learning

- Initialize model with diverse representation across assay types

- Implement query strategy that considers inter-assay relationships

- Prioritize samples that bridge assay conditions and protein families

3. Transfer Learning Integration

- Use pre-trained models on large-scale compound databases

- Fine-tune with AL-selected samples from target assay

- Employ multi-task learning where appropriate
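The assay-typing step can be sketched with Tanimoto similarity on fingerprint bit sets. This is an illustrative simplification: the 0.5 cut-off on mean pairwise similarity is a hypothetical threshold, not a value taken from [8], and the fingerprints are tiny made-up sets of "on" bit indices.

```python
from itertools import combinations
from typing import List, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of 'on' bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def mean_pairwise_similarity(fingerprints: List[Set[int]]) -> float:
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


def classify_assay(fingerprints: List[Set[int]], threshold: float = 0.5) -> str:
    """Label an assay LO-type if its compounds are, on average, highly similar."""
    return "LO" if mean_pairwise_similarity(fingerprints) >= threshold else "VS"


# Toy assays: a congeneric series versus a structurally diverse screening set.
congeneric = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3, 6}]
diverse = [{1, 2}, {3, 4}, {5, 6, 1}]

print(classify_assay(congeneric), classify_assay(diverse))
```

In practice the fingerprints would come from a cheminformatics toolkit and the threshold would be calibrated on assays of known type.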

Table 3: Essential Research Reagents and Computational Tools for AL-Driven Drug Discovery

| Resource Category | Specific Tools/Reagents | Function and Application |
|---|---|---|
| Compound Databases | ChEMBL [8], BindingDB [8], PubChem [8] | Source of compound structures and annotated activities for initial model training |
| Bioactivity Data | CARA benchmark [8], CTRP [7], GDSC | Curated compound activity data with assay annotations for model training and evaluation |
| Feature Representation | ECFP fingerprints, molecular descriptors, SMILES strings [7], protein sequences | Numerical representations of compounds and targets for machine learning |
| AL Frameworks | AutoML systems [10], Bayesian optimization tools [9] | Automated model selection and hyperparameter optimization integrated with AL |
| Uncertainty Estimation | Monte Carlo Dropout [10], ensemble methods, Bayesian neural networks | Quantifying model uncertainty for query strategy implementation |
| Validation Resources | Benchmark datasets [12], temporal split protocols [12] | Ensuring model robustness and real-world generalizability |

Workflow Visualization and Decision Pathways

Diagram: Core active learning cycle for drug discovery.

Diagram: Strategic decision pathway for query strategy selection.

Active learning represents a transformative approach to addressing the fundamental challenges of cost and efficiency in modern drug discovery. By strategically guiding experimental efforts toward the most informative data points, AL enables researchers to build robust predictive models for compound-target interactions while dramatically reducing resource requirements. The protocols and strategies outlined in this document provide a foundation for implementing AL methodologies across various drug discovery stages, from initial virtual screening to lead optimization.

As the field advances, the integration of active learning with emerging technologies—including large language models for compound representation [13], AlphaFold-generated protein structures [13], and automated experimental systems—promises to further accelerate therapeutic development. The continued development of standardized benchmarks [8] [12] and rigorous evaluation protocols will be essential for realizing the full potential of active learning in creating the next generation of medicines.

Active learning (AL) is a machine learning paradigm that operates through an iterative feedback process, efficiently identifying the most valuable data within a vast chemical space, even when starting with limited labeled data [1]. This characteristic renders it a particularly valuable approach for tackling the persistent challenges in drug discovery, such as the ever-expanding exploration space and the scarcity of expensive-to-acquire labeled data [1]. Consequently, AL is increasingly becoming a cornerstone methodology in modern drug development pipelines. This protocol will frame the core AL workflow specifically within the context of compound-target interaction prediction, providing researchers and drug development professionals with detailed application notes and experimental methodologies.

Core Active Learning Workflow

The following diagram illustrates the iterative cycle of pool-based active learning, which is the most prevalent scenario in drug discovery applications [14]. This workflow is designed to maximize data efficiency by strategically selecting the most informative compounds for labeling.

Workflow Phase Descriptions

- Initial Model: The process begins with a small, initially labeled dataset. An initial predictive model is trained on this data. The performance of this starting point is less critical than its ability to provide a baseline for uncertainty estimation [14].

- Prediction: The trained model is used to generate predictions for the entire pool of unlabeled compounds. This generates a landscape of predictions and associated uncertainty scores across the chemical space [10].

- Query Strategy: This is the core decision-making component of the AL cycle. A query strategy analyzes the model's predictions to select the most "informative" compounds from the unlabeled pool. Common strategies include [14]:

- Uncertainty Sampling: Selects compounds for which the model is least certain in its predictions.

- Query-by-Committee: Selects compounds where a committee of diverse models disagrees the most.

- Expected Model Change: Selects compounds that would cause the most significant change to the current model if their labels were known.

- Oracle: The selected compounds are sent for labeling by an "oracle." In drug discovery, this typically represents a costly and time-consuming experimental assay or high-throughput screen to determine the true compound-target interaction (e.g., binding affinity) [15].

- Update & Retrain: The newly acquired compound-target interaction data is added to the training set. The model is then retrained on this expanded dataset, incorporating the new knowledge [10].

- Evaluation & Decision: The updated model's performance is evaluated on a held-out test set. A stopping criterion (e.g., performance plateau, sufficient accuracy, or exhausted budget) is checked to determine whether to continue the AL cycle [10].
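The Evaluation & Decision phase reduces to a check run after every cycle. The sketch below covers the three stopping criteria named above; the `patience` and `min_delta` parameters and the returned reason strings are illustrative assumptions, and the history is assumed to hold a score where higher is better.

```python
from typing import List, Optional


def should_stop(history: List[float], labels_used: int, budget: int,
                target: Optional[float] = None,
                patience: int = 3, min_delta: float = 0.005) -> Optional[str]:
    """Return the reason to stop, or None to continue the active learning cycle.

    history holds one held-out test score per completed cycle (higher is better).
    """
    if labels_used >= budget:
        return "budget exhausted"
    if target is not None and history and history[-1] >= target:
        return "target accuracy reached"
    if len(history) > patience:
        best_before = max(history[:-patience])
        best_recent = max(history[-patience:])
        if best_recent - best_before < min_delta:
            return "performance plateau"
    return None


scores = [0.60, 0.70, 0.75, 0.751, 0.752, 0.750]
print(should_stop(scores, labels_used=60, budget=100))
```

For an error metric such as MAE, the same check applies after negating the scores.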

Experimental Protocols for Key AL Experiments

Protocol: Prospective Validation of AL for Phenotypic Profiling

This protocol is adapted from a foundational study that demonstrated the practical utility of AL-driven biological experimentation where potential phenotypes were unknown in advance [15].

1. Experiment Space Construction:

- Biological Targets: Select 48 different protein clones (e.g., via CD-tagging in NIH-3T3 cells) endogenously expressing EGFP-tagged proteins, representing a broad range of subcellular location patterns.

- Compound Library: Assemble a library of 48 chemically diverse treatment conditions, including 47 compounds suspected to affect subcellular trafficking, structure, or localization, plus a vehicle-only control (e.g., DMSO).

- Replication: To enable internal validation, assign two unique identifiers to each clone and drug, effectively creating a 96x96 experiment space while hiding the duplication from the AL algorithm.

2. Active Learning Setup:

- Initialization: Begin with a small, randomly selected subset of the experiment space (e.g., 1-2%) as the initial labeled dataset.

- Model: Implement an active learner capable of handling multiple targets and perturbagens simultaneously. The algorithm should iteratively select experiments based on maximizing information gain or model change.

- Automation: Fully integrate the AL algorithm with liquid handling robotics for drug manipulation and cell culture, and an automated microscope for image acquisition to close the experiment loop without human intervention.

3. Iterative Experimentation:

- Cycle: The AL algorithm requests a new experiment (a specific clone-drug combination). The automated system performs the experiment, acquiring fluorescent microscopy images.

- Labeling: An image analysis pipeline extracts the protein localization pattern (the "label") for the requested experiment.

- Model Update: The AL model is updated with the new experimental outcome. The process repeats, with the algorithm selecting the next most informative experiment.

4. Validation:

- Performance: Assess the model's ability to predict the outcomes of all held-out experiments (including the hidden duplicates) not performed by the AL algorithm.

- Efficiency: Calculate the fraction of the total experiment space (29% in the original study) required by the AL system to achieve accurate predictions.
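The hidden-duplicate design in step 1 can be checked with a little bookkeeping. The sketch assumes a simple modulo mapping from masked identifiers to true clones and conditions; the original study's actual identifier scheme is not described here, so the mapping is purely illustrative.

```python
from collections import Counter
from itertools import product

N_CLONES = 48
N_CONDITIONS = 48


def true_identity(masked_id: int, n_unique: int) -> int:
    """Map a masked identifier back to the underlying clone or condition (assumed scheme)."""
    return masked_id % n_unique


# Each clone and each condition appears under two identifiers.
masked_clones = range(2 * N_CLONES)
masked_conditions = range(2 * N_CONDITIONS)
experiment_space = list(product(masked_clones, masked_conditions))

# Count how many masked experiments correspond to each true clone-condition pair.
replicates = Counter(
    (true_identity(c, N_CLONES), true_identity(d, N_CONDITIONS))
    for c, d in experiment_space
)

print(len(experiment_space), len(replicates), set(replicates.values()))
```

Every true clone-condition pair therefore appears four times in the 96x96 space, which is what allows predictions for unperformed experiments to be compared against measured duplicates.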

Protocol: Benchmarking AL Strategies within an AutoML Framework

This protocol outlines a systematic approach for evaluating different AL query strategies in a regression setting typical of materials and drug property prediction, adaptable to compound-target interaction tasks [10].

1. Data Preparation:

- Obtain a dataset relevant to compound-target interactions or material properties.

- Partition the data into an initial labeled set $L = \{(x_i, y_i)\}_{i=1}^{l}$ and a large pool of unlabeled data $U = \{x_i\}_{i=l+1}^{n}$. Perform an 80:20 train-test split.

2. AutoML and AL Configuration:

- AutoML Setup: Configure an AutoML framework to automatically search and optimize across different model families (e.g., linear models, tree-based ensembles, neural networks) and their hyperparameters. Use 5-fold cross-validation within the AutoML workflow for model validation.

- AL Strategies: Define the AL strategies to benchmark. These should be based on principles like:

- Uncertainty Estimation (e.g., LCMD, Tree-based-R)

- Diversity (e.g., GSx)

- Hybrids (e.g., RD-GS combining Representativeness and Diversity)

- Include a Random-Sampling baseline for comparison.

3. Iterative Benchmarking Loop:

- For each AL strategy, iteratively select the most informative sample $x^*$ from $U$ based on the strategy's criterion.

- "Label" the sample by obtaining its true target value $y^*$ from the held-out data.

- Expand the labeled set: $L = L \cup \{(x^*, y^*)\}$.

- Use the updated set (L) to run the AutoML process, fitting a new model.

- Record the model's performance (e.g., MAE, R²) on the test set.

4. Analysis:

- Compare the performance of all strategies and the random baseline across the acquisition steps.

- Analyze performance particularly during the early, data-scarce phase, where the superiority of uncertainty-driven and diversity-hybrid strategies is often most pronounced.
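The benchmarking loop can be sketched end to end on a toy problem. Several simplifications are assumed: a 1-nearest-neighbour regressor stands in for the AutoML step, only a GSx-style rule (pick the pool point farthest from all labeled inputs) and a random baseline are compared, and the data are integers with a quadratic target. None of this reproduces the setup of [10].

```python
import random
from typing import Callable, List, Tuple

Labeled = List[Tuple[int, float]]


def predict_1nn(labeled: Labeled, x: int) -> float:
    """Toy surrogate model: return the label of the nearest labeled input."""
    return min(labeled, key=lambda pair: abs(pair[0] - x))[1]


def mae(labeled: Labeled, test: Labeled) -> float:
    return sum(abs(predict_1nn(labeled, x) - y) for x, y in test) / len(test)


def gsx_pick(labeled: Labeled, pool: List[int]) -> int:
    """Greedy sampling on inputs: choose the pool point farthest from the labeled set."""
    return max(pool, key=lambda x: min(abs(x - lx) for lx, _ in labeled))


def make_random_pick(seed: int) -> Callable[[Labeled, List[int]], int]:
    rng = random.Random(seed)
    return lambda labeled, pool: rng.choice(pool)


def benchmark(pick, seed_set: Labeled, pool: List[int], test: Labeled,
              oracle: Callable[[int], float], n_steps: int):
    """Run one strategy; return the queried points and the test MAE after each query."""
    labeled, pool = list(seed_set), list(pool)
    picks, curve = [], []
    for _ in range(n_steps):
        x_star = pick(labeled, pool)              # query strategy
        pool.remove(x_star)
        labeled.append((x_star, oracle(x_star)))  # reveal the held-back label
        picks.append(x_star)
        curve.append(mae(labeled, test))          # refit (trivial for 1-NN) and evaluate
    return picks, curve


def oracle(x: int) -> float:
    return float(x * x)


test_set = [(x, oracle(x)) for x in range(21) if x % 4 == 2]
seed_set = [(0, oracle(0))]
pool = [x for x in range(1, 21) if x % 4 != 2]

gsx_picks, gsx_curve = benchmark(gsx_pick, seed_set, pool, test_set, oracle, n_steps=3)
rnd_picks, rnd_curve = benchmark(make_random_pick(0), seed_set, pool, test_set, oracle, n_steps=3)
print(gsx_picks, gsx_curve, rnd_curve)
```

Comparing the two MAE curves step by step is the analysis described in step 4; a real benchmark would average over many random seeds and initial labeled sets.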

Quantitative Comparison of Active Learning Strategies

Performance Benchmark of AL Strategies in AutoML

The following table summarizes findings from a comprehensive benchmark study evaluating various AL strategies within an Automated Machine Learning (AutoML) framework for small-sample regression tasks, which are directly analogous to early-stage drug discovery problems [10].

Table 1: Benchmark Performance of Active Learning Strategies in an AutoML Workflow

| Strategy Category | Example Strategies | Key Principle(s) | Early-Stage Performance (Data-Scarce) | Late-Stage Performance (Data-Rich) |
|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Selects instances where model prediction is most uncertain. | Clearly outperforms random baseline and geometry-based heuristics. | Performance gap narrows; all methods eventually converge. |
| Diversity-Hybrid | RD-GS | Combines representativeness and diversity to select a varied set of informative samples. | Outperforms random baseline and geometry-only heuristics. | Convergence with other methods as labeled set grows. |
| Geometry-Only | GSx, EGAL | Selects samples based on geometric coverage of the feature space. | Less effective than uncertainty and hybrid methods early on. | Converges with other strategies. |
| Baseline | Random-Sampling | Selects data points at random from the unlabeled pool. | Serves as the baseline for comparison; less data-efficient. | Convergence with active strategies. |

Query Strategy Specifications

The table below details standard query strategies used in active learning, which can be implemented to drive the selection process in the core workflow [14].

Table 2: Common Active Learning Query Strategies

| Strategy Name | Core Principle | Typical Use Case in Drug Discovery |

|---|---|---|

| Uncertainty Sampling | Query the instances for which the current model is least certain. | Prioritizing compounds with ambiguous predicted activity for assay testing. |

| Query-by-Committee (QBC) | Train a committee of models; query instances where committee disagreement is highest. | Used when multiple, equally viable models exist (e.g., different algorithms). |

| Expected Model Change | Query instances that would cause the greatest change to the current model. | Useful when the model is in a rapid learning phase and can be significantly improved. |

| Expected Error Reduction | Query instances that are expected to most reduce the model's future generalization error. | Computationally expensive but aims for optimal long-term performance. |

| Diversity-Based | Query a set of instances that are diverse and representative of the unlabeled pool. | Ensuring broad exploration of chemical space, not just model uncertainty. |
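
As a rough illustration of the first two rows of the table, the sketch below ranks compounds by predictive entropy (uncertainty sampling) and by vote entropy across a committee (QBC). The function names and the toy probabilities are illustrative assumptions; any classifier that outputs an activity probability could supply the inputs.

```python
import math

def binary_entropy(p):
    """Shannon entropy (bits) of a predicted activity probability p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))

def uncertainty_sampling(probs, k):
    """Indices of the k compounds whose predictions are closest to a coin flip."""
    order = sorted(range(len(probs)),
                   key=lambda i: binary_entropy(probs[i]), reverse=True)
    return order[:k]

def committee_disagreement(votes):
    """Vote entropy for one compound; votes holds one 0/1 label per committee member."""
    return binary_entropy(sum(votes) / len(votes))

def query_by_committee(vote_matrix, k):
    """vote_matrix[i] holds the committee's votes for compound i."""
    order = sorted(range(len(vote_matrix)),
                   key=lambda i: committee_disagreement(vote_matrix[i]), reverse=True)
    return order[:k]

# Toy predicted activity probabilities for five compounds.
probs = [0.95, 0.52, 0.10, 0.40, 0.99]
uncertain = uncertainty_sampling(probs, 2)

# Toy votes from a four-member committee for four compounds.
votes = [[1, 1, 1, 1], [1, 1, 0, 0], [0, 0, 0, 0], [1, 0, 0, 0]]
contested = query_by_committee(votes, 1)

print(uncertain, contested)
```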

The Scientist's Toolkit: Research Reagent Solutions

This section details key reagents and materials used in the prospective AL experimentation protocol for phenotypic profiling [15].

Table 3: Essential Research Reagents and Materials for AL-Driven Biological Experimentation

| Item | Function/Description | Example from Protocol [15] |

|---|---|---|

| CD-tagged Cell Clones | Provides a library of biological targets (proteins) with endogenously expressed fluorescent tags for visualization. | 48 different NIH-3T3 mouse fibroblast clones, each expressing a distinct EGFP-tagged protein. |

| Compound Library | A chemically diverse set of perturbagens to test against the biological targets. | 47 chemical compounds affecting subcellular structures + 1 vehicle control (DMSO). Stock concentrations varied (e.g., Apicidin 2.00 mM, Cytochalasin D 2.45 mM). |

| Liquid Handling Robotics | Automates the process of cell culture and compound addition to enable high-throughput, reproducible experimentation. | System under control of the AL algorithm to prepare assay plates. |

| Automated Microscope | Acquires high-content image data from the assays without manual intervention, closing the loop with the AL algorithm. | Fluorescent microscope used to image protein localization in response to compounds. |

| Image Analysis Pipeline | Processes acquired images to extract meaningful biological labels (phenotypes) for the AL model. | Software to quantify and classify changes in protein subcellular localization patterns. |

Active Learning (AL) has emerged as a pivotal methodology in computational drug discovery, particularly for compound-target interaction (CTI) prediction where acquiring labeled data is both costly and time-intensive. This paradigm strategically selects the most informative data points for labeling, optimizing experimental resources and accelerating model development. Within the context of CTI research, AL must navigate three fundamental challenges: data imbalance, where known interactions are vastly outnumbered by non-interactions; data redundancy, arising from chemical libraries with high structural similarity; and the exploration-exploitation dilemma, which involves balancing the verification of predicted high-affinity compounds with the exploration of chemically novel space. This article details practical protocols and application notes to address these challenges, providing a framework for the efficient implementation of AL in pharmaceutical research and development.

Addressing Data Imbalance in Compound-Target Interaction Prediction

Data imbalance is a prevalent issue in CTI datasets, where confirmed active compounds are significantly outnumbered by inactive or untested ones. This can bias predictive models toward the majority class (inactive compounds), reducing their ability to identify promising drug candidates.

Application Notes

- Challenge Impact: In CTI prediction, models trained on imbalanced data may achieve high overall accuracy but fail to identify the rare but critical active compounds, directly impacting hit discovery rates [16].

- Core Strategy - Data Re-balancing: Techniques such as the Synthetic Minority Over-sampling Technique (SMOTE) and its variants are used to generate synthetic samples for the minority class (active compounds) by interpolating between existing minority class instances [16].

- Considerations for CTI: SMOTE interpolates descriptor vectors rather than molecules, so when it is applied to molecular descriptor data it is crucial to check that the synthetic feature vectors remain chemically plausible. Domain knowledge should be integrated to ensure the generated features correspond to realistic molecular structures.
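
A short worked example shows why overall accuracy can hide a failure to find actives. The confusion-matrix counts below are hypothetical (1,000 compounds, 20 active); the point is only that a model predicting "inactive" for everything scores higher on accuracy than a model that recovers most actives, while the Matthews Correlation Coefficient (MCC) ranks them the other way.

```python
import math

def accuracy(tp, fp, tn, fn):
    return (tp + tn) / (tp + fp + tn + fn)

def mcc(tp, fp, tn, fn):
    """Matthews correlation coefficient; returned as 0.0 when the denominator is zero."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

# Hypothetical screen: 1,000 compounds, 20 of them active (2%).
always_inactive = dict(tp=0, fp=0, tn=980, fn=20)   # never predicts "active"
useful_model = dict(tp=15, fp=30, tn=950, fn=5)     # finds 15 of the 20 actives

for name, cm in [("always inactive", always_inactive), ("useful model", useful_model)]:
    print(f"{name:16s} accuracy={accuracy(**cm):.3f}  MCC={mcc(**cm):.3f}")
```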

Protocol P1: Implementing SMOTE for Imbalanced CTI Data

This protocol guides the use of SMOTE to re-balance a CTI dataset before training a predictive model.

Objective: To improve model sensitivity in identifying active compounds by generating a balanced training set.

Materials: Imbalanced CTI dataset (e.g., bioactivity data from ChEMBL), Python environment with imbalanced-learn library, molecular descriptor calculation software (e.g., RDKit).

| Step | Procedure Description | Key Parameters & Notes |

|---|---|---|

| 1. Data Preparation | Load the bioactivity dataset. Encode compounds using molecular descriptors (e.g., ECFP4 fingerprints, molecular weight, logP). Label data points as "active" (minority) or "inactive" (majority) based on experimental IC50/Ki values. | Set a biologically relevant threshold for activity (e.g., IC50 < 1 µM). Ensure descriptors are normalized. |

| 2. SMOTE Application | Apply the SMOTE algorithm from the imbalanced-learn library to the training set only. Do not apply to the test set to maintain evaluation integrity. | sampling_strategy: set to 'auto' to balance classes, or a float to specify the desired ratio. k_neighbors: typically 5; check for small disjuncts. |

| 3. Model Training & Validation | Train a classification model (e.g., Random Forest, XGBoost) on the resampled dataset. Evaluate performance using metrics appropriate for imbalanced data. | Use metrics like Precision-Recall AUC, F1-score, and Matthews Correlation Coefficient (MCC) instead of accuracy. |
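
To make step 2 concrete, the following sketch shows the interpolation idea behind SMOTE using only the standard library. It is an illustration of the principle, not the imbalanced-learn implementation; `smote_sketch` and the toy descriptor values are assumptions made for this example.

```python
import random

def smote_sketch(minority, n_new, k=5, seed=0):
    """Create n_new synthetic minority points by interpolating toward near neighbours."""
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)          # cannot have more neighbours than other points
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Other minority points, sorted by squared Euclidean distance to `base`.
        others = [m for m in minority if m is not base]
        others.sort(key=lambda m: sum((a - b) ** 2 for a, b in zip(base, m)))
        neighbour = rng.choice(others[:k])
        gap = rng.random()                 # position along the segment base -> neighbour
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbour)])
    return synthetic

# Toy normalized descriptors (two features) for four active compounds.
actives = [[0.10, 1.00], [0.20, 1.10], [0.15, 0.90], [0.30, 1.20]]
new_points = smote_sketch(actives, n_new=6, k=2)
print(len(new_points), new_points[0])
```

Every synthetic point lies on a line segment between two real actives, which is why interpolating binary fingerprints yields fractional bit values that no real molecule has. In practice, the `SMOTE` class in imbalanced-learn exposes the same idea through `fit_resample`, applied to the training split only.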

Workflow Visualization: SMOTE for CTI Data

Mitigating Data Redundancy in Chemical Libraries

High redundancy in compound libraries, characterized by structural analogs, limits the chemical space explored during screening. AL strategies that prioritize diversity ensure a more comprehensive exploration of structure-activity relationships.

Application Notes

- Challenge Impact: Redundant data wastes experimental resources on structurally similar compounds, providing little new information for the predictive model [10] [17].

- Core Strategy - Diversity-Based Sampling: These methods select compounds that are maximally dissimilar from each other and from the already labeled set. Common approaches include cluster-based sampling and core-set selection [10].

- Considerations for CTI: Molecular diversity should be assessed using relevant representations, such as molecular fingerprints (e.g., ECFP4) that capture features important for biological activity. The choice of distance metric (e.g., Tanimoto distance) is critical.

Protocol P2: Diversity-Based Active Learning for Virtual Screening

This protocol uses a clustering approach to select a diverse subset of compounds for experimental testing from a large virtual library.

Objective: To select a representative and non-redundant set of compounds for initial screening or subsequent AL cycles.

Materials: Large database of unlabeled compounds (e.g., ZINC, in-house library), clustering tool (e.g., Scikit-learn, Butina clustering in RDKit), fingerprint generator.

| Step | Procedure Description | Key Parameters & Notes |

|---|---|---|

| 1. Compound Featurization | Encode all compounds in the unlabeled pool using a molecular fingerprint. | ECFP4 is a standard choice. Consider using feature fingerprints for scaffold diversity. |

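| 2. Clustering | Perform clustering on the fingerprint representations to group structurally similar compounds. | Butina clustering is a common choice for fingerprint data, but it relies on pairwise similarities, so its cost grows quadratically with pool size; very large pools may need pre-filtering or batching. Adjust the similarity cutoff to control cluster granularity. |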

| 3. Representative Selection | From each cluster, select one or a few representative compounds for labeling. | Select compounds closest to the cluster centroid. This ensures coverage of different chemical regions. |

| 4. Model Update & Iteration | After experimental testing, add the new labeled data to the training set. Retrain the model and initiate a new AL cycle, potentially switching to a different strategy. | In subsequent cycles, hybrid strategies (e.g., combining diversity and uncertainty) can be highly effective. |
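
Steps 1 to 3 can be sketched with fingerprints represented as sets of on-bit indices. The clustering below is a simplified Butina-style (sphere-exclusion) routine written for illustration; it is not RDKit's implementation, and `butina_like`, the cutoff, and the toy fingerprints are assumptions.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def butina_like(fps, cutoff=0.5):
    """Cluster fingerprints; returns clusters as lists of indices, centroid first."""
    n = len(fps)
    neighbours = {
        i: {j for j in range(n) if j != i and tanimoto(fps[i], fps[j]) >= cutoff}
        for i in range(n)
    }
    unassigned = set(range(n))
    clusters = []
    while unassigned:
        # Centroid: the unassigned compound with the most unassigned neighbours.
        centroid = max(sorted(unassigned), key=lambda i: len(neighbours[i] & unassigned))
        members = neighbours[centroid] & unassigned
        clusters.append([centroid] + sorted(members))
        unassigned -= members | {centroid}
    return clusters

# Toy fingerprints: two analog series and one singleton.
fps = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3, 4, 5},
       {10, 11, 12}, {10, 11, 13}, {20, 21}]
clusters = butina_like(fps, cutoff=0.5)
representatives = [cluster[0] for cluster in clusters]   # one compound per cluster
print(clusters, representatives)
```

Selecting the first member of each cluster gives one representative per chemical region, which is the selection rule described in step 3.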

Workflow Visualization: Diversity-Based Selection

Navigating the Exploration-Exploitation Dilemma

The exploration-exploitation trade-off is central to AL. In CTI, exploitation involves selecting compounds predicted with high confidence to be active, while exploration prioritizes compounds with high predictive uncertainty, which may belong to novel chemotypes or activity cliffs.

Application Notes

- Challenge Impact: Pure exploitation may lead to local optima (e.g., analogs of a known scaffold), missing novel chemotypes. Pure exploration may be inefficient, testing many inactive compounds [18] [19].

- Core Strategies:

- ε-Greedy: With probability (1-ε), select the compound with the highest predicted activity (exploit); with probability ε, select a random compound (explore) [19].

- Upper Confidence Bound (UCB): Select compounds based on a score that combines the predicted activity (exploitation) and the model's uncertainty (exploration). This "optimism under uncertainty" principle is highly effective [19].

- Thompson Sampling: A probabilistic method that selects compounds based on the probability that they are optimal, given the current model's posterior distribution [18].
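
Thompson Sampling is the least intuitive of the three strategies, so a small sketch may help. It assumes each compound's predicted activity has an independent Gaussian posterior (mean and standard deviation, as a Gaussian Process would provide); `thompson_pick` and the toy values are illustrative only.

```python
import random

def thompson_pick(means, stds, rng):
    """Draw one plausible activity per compound from N(mean, std); pick the best draw."""
    draws = [rng.gauss(m, s) for m, s in zip(means, stds)]
    return max(range(len(draws)), key=draws.__getitem__)

# Toy posterior over predicted pIC50 for three compounds.
means = [7.2, 6.1, 8.0]
stds = [0.1, 1.5, 0.2]

rng = random.Random(0)
counts = [0, 0, 0]
for _ in range(1000):
    counts[thompson_pick(means, stds, rng)] += 1
print(counts)   # how often each compound was selected
```

The compound with the highest mean is chosen most often, but the uncertain compound (index 1) is still selected in a share of rounds, in proportion to the chance that it is actually the best. That share is the exploration component.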

Protocol P3: ε-Greedy and UCB for Iterative CTI Screening

This protocol outlines an iterative AL cycle using strategies that explicitly balance exploration and exploitation.

Objective: To efficiently refine a CTI model by strategically selecting compounds that either confirm high-confidence predictions or improve model knowledge in uncertain regions.

Materials: An initial, small set of labeled CTI data, a trained probabilistic predictive model (e.g., Gaussian Process, Deep Learning with dropout for uncertainty), an unlabeled compound pool.

| Step | Procedure Description | Key Parameters & Notes |

|---|---|---|

| 1. Initial Model Training | Train a model on the initial labeled dataset. The model must provide both a prediction and an uncertainty estimate. | For neural networks, use Monte Carlo Dropout at inference to estimate predictive variance [10]. |

| 2. Query Strategy Application | For each compound in the unlabeled pool, calculate the acquisition function. | ε-Greedy: Set ε (e.g., 0.1). Roll a random number to decide action. UCB: Use formula: $Score = \mu(x) + c \cdot \sigma(x)$, where $\mu$ is predicted mean, $\sigma$ is standard deviation, and $c$ is a constant controlling exploration weight. |

| 3. Compound Selection & Labeling | Select the top-ranked compound(s) based on the chosen acquisition function. Submit them for experimental testing (e.g., binding assay). | Batch mode (selecting multiple compounds per cycle) is more practical. Use diverse batch selection to avoid redundancy. |

| 4. Model Update | Incorporate the newly labeled compounds into the training set. Retrain the model and repeat from Step 2. | The value of ε in ε-Greedy or $c$ in UCB can be annealed over time to shift from exploration to exploitation. |
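
The two acquisition rules in step 2 and the annealing idea in step 4 can be sketched as follows. The predicted means and standard deviations are toy values, and the geometric decay in `annealed` is one hypothetical schedule among many.

```python
import random

def select_ucb(mu, sigma, batch_size, c=1.0):
    """Rank compounds by Score = mu + c * sigma and return the top batch."""
    scores = [m + c * s for m, s in zip(mu, sigma)]
    return sorted(range(len(scores)), key=scores.__getitem__, reverse=True)[:batch_size]

def select_epsilon_greedy(mu, batch_size, epsilon, rng):
    """Fill the batch one compound at a time: explore with probability epsilon."""
    remaining = list(range(len(mu)))
    chosen = []
    for _ in range(min(batch_size, len(remaining))):
        if rng.random() < epsilon:
            pick = rng.choice(remaining)                 # explore: random compound
        else:
            pick = max(remaining, key=mu.__getitem__)    # exploit: best prediction
        chosen.append(pick)
        remaining.remove(pick)
    return chosen

def annealed(value, cycle, decay=0.8):
    """Hypothetical geometric schedule: shrink epsilon or c with each AL cycle."""
    return value * decay ** cycle

# Toy predicted pIC50 means and standard deviations for five compounds.
mu = [7.2, 6.1, 8.0, 5.5, 6.9]
sigma = [0.1, 1.5, 0.2, 2.0, 0.3]

ucb_batch = select_ucb(mu, sigma, batch_size=2, c=1.0)
greedy_batch = select_epsilon_greedy(mu, 2, epsilon=0.0, rng=random.Random(0))
print(ucb_batch, greedy_batch, annealed(0.1, cycle=2))
```

With `c=1.0`, UCB promotes the uncertain compound at index 1 into the batch, whereas pure exploitation (`epsilon=0.0`) selects only the two highest predicted means.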

Quantitative Comparison of Exploration-Exploitation Strategies

The table below summarizes the performance characteristics of different AL strategies as benchmarked in a materials science regression study, which shares similarities with CTI prediction [10].

Table 1: Benchmarking of Active Learning Strategies in Small-Data Regime

| Strategy Type | Example Methods | Early-Stage Performance (Data-Scarce) | Late-Stage Performance (Data-Rich) | Key Principle |

|---|---|---|---|---|

| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperforms random sampling and geometry-based methods | Converges with other methods | Selects samples where model prediction is most uncertain |

| Diversity-Hybrid | RD-GS | Clearly outperforms baseline | Gap narrows as data grows | Combines representativeness with diversity in feature space |

| Geometry-Only | GSx, EGAL | Underperforms compared to uncertainty and hybrid methods | All methods eventually converge | Selects samples based on geometric coverage of space only |

| Random Sampling | (Baseline) | Serves as a reference point for comparison | Converges with other methods | Selects samples randomly (no strategy) |

Workflow Visualization: Exploration vs. Exploitation

The Scientist's Toolkit: Essential Research Reagents & Solutions

The table below lists key computational tools and resources essential for implementing the protocols described in this article.

Table 2: Key Research Reagents and Computational Tools for Active Learning in CTI Prediction

| Item Name | Type/Function | Brief Explanation of Role in AL Workflow |

|---|---|---|

| ChEMBL Database | Data Resource | A manually curated database of bioactive molecules with drug-like properties, providing a primary source of labeled CTI data for initial model training and benchmarking [6]. |

| ZINC Database | Data Resource | A free database of commercially available compounds for virtual screening, often serving as the initial "unlabeled pool" for AL campaigns [6]. |

| RDKit | Software Library | An open-source toolkit for Cheminformatics used to calculate molecular descriptors, generate fingerprints, perform clustering, and assess chemical similarity [17]. |

| scikit-learn | Software Library | A fundamental Python library for machine learning, providing implementations of standard models, clustering algorithms, and data preprocessing tools. |

| imbalanced-learn | Software Library | A Python library built on scikit-learn that provides numerous implementations of re-sampling techniques, including SMOTE and its many variants [16]. |

| AutoML Systems (e.g., AutoSklearn) | Software Framework | Automated Machine Learning systems can be integrated into the AL loop to automatically select and optimize the best predictive model at each iteration, reducing manual tuning [10]. |

| Monte Carlo Dropout | Algorithmic Technique | A method used with deep learning models to estimate predictive uncertainty without changing the model architecture, crucial for uncertainty-based AL strategies [10]. |

Implementing Active Learning: Strategies and Real-World Applications in CTI Prediction

The emergence of ultra-large, make-on-demand chemical libraries, containing billions of readily available compounds, presents a transformative opportunity for hit identification in drug discovery [20]. However, the computational cost and time required for exhaustive physics-based virtual screening of these libraries are often prohibitive [21]. Active Learning (AL) has emerged as a powerful machine learning strategy to overcome this challenge, enabling the efficient exploration of vast chemical spaces by iteratively selecting the most informative compounds for evaluation [22]. The approach trains a machine learning model on physics-based data sampled iteratively from the full library, and thereby identifies the highest-scoring compounds at a fraction of the cost and time of brute-force methods [22]. By framing the virtual screening problem within a Bayesian optimization framework, AL significantly improves sample efficiency, allowing researchers to recover a high percentage of top-scoring compounds while docking only a tiny fraction of the library [23].

The Active Learning Paradigm in Virtual Screening

Active Learning for virtual screening operates on a cyclic workflow of prediction, evaluation, and model refinement. This process strategically minimizes the number of computationally expensive physics-based calculations required to identify promising hits.

Core Workflow and Key Components

The following diagram illustrates the iterative feedback loop that is central to the Active Learning process.

Quantitative Performance of Active Learning Platforms

The efficiency of Active Learning is demonstrated by its ability to identify a high proportion of top-binding compounds while evaluating only a small fraction of the library. Different implementations have shown remarkable results, as summarized in the table below.

Table 1: Performance Metrics of Active Learning and Related Screening Platforms

| Platform / Method | Reported Performance | Screening Scale | Key Innovation |

|---|---|---|---|

| Schrödinger Active Learning Glide [22] | Recovers ~70% of top hits with 0.1% of the cost of exhaustive docking. | Multi-billion compounds | Machine learning trained on Glide docking scores. |

| Pretrained Transformer Model [23] | Identified 58.97% of top-50,000 compounds after screening 0.6% of a 99.5M compound library. | 99.5 million compounds | Bayesian optimization framework with pretrained molecular representation. |

| HelixVS [24] | 2.6x higher enrichment factor (EF) and >10x faster speed than Vina on DUD-E benchmark. | Millions of compounds per day | Multi-stage screening integrating docking with a deep learning pose-scoring model (RTMscore). |

| RosettaVS [21] | 14% hit rate for KLHDC2, 44% for NaV1.7; screening completed in <7 days. | Multi-billion compound libraries | Improved physics-based forcefield (RosettaGenFF-VS) with receptor flexibility. |

| REvoLd [20] | Hit rate improvements by factors of 869 to 1622 compared to random selection. | 20+ billion compound space (Enamine REAL) | Evolutionary algorithm for combinatorial make-on-demand libraries. |
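
To put the percentages in the table into absolute terms, the arithmetic for the pretrained transformer row [23] can be worked through directly. The only figures used are those reported in the table; the comparison with a random subset is a standard back-of-envelope estimate, not a result from the cited study.

```python
library_size = 99_500_000
fraction_screened = 0.006      # 0.6% of the library was docked
top_k = 50_000
recall_of_top_k = 0.5897       # 58.97% of the top-50,000 compounds recovered

compounds_docked = library_size * fraction_screened
top_hits_found = top_k * recall_of_top_k

# A random subset covering 0.6% of the library would be expected to contain
# about 0.6% of the top-k compounds.
expected_random_hits = top_k * fraction_screened
enrichment_over_random = top_hits_found / expected_random_hits

print(f"docked: {compounds_docked:,.0f} compounds")
print(f"top-{top_k:,} recovered: {top_hits_found:,.0f}")
print(f"expected from random selection: {expected_random_hits:,.0f}")
print(f"enrichment over random: {enrichment_over_random:.1f}x")
```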

Application Notes & Protocols

This section provides a detailed methodology for implementing an Active Learning-driven virtual screening campaign, from target preparation to hit selection.

Protocol: Multi-Stage Active Learning Screen

This protocol integrates concepts from several state-of-the-art platforms [21] [22] [24] to create a robust workflow for screening ultra-large libraries.

A. Pre-screening Phase: System Setup

- Target Preparation:

- Obtain a high-resolution 3D structure of the target protein from sources like the Protein Data Bank (PDB) or via homology modeling.

- Prepare the protein structure using standard tools (e.g., Schrödinger's Protein Preparation Wizard, Rosetta prepack) to add hydrogens, assign protonation states, and optimize side-chain conformations.

- Define the binding site of interest using a known ligand's coordinates or a predicted binding pocket.

- Library Curation:

- Select a commercially available ultra-large library, such as the Enamine REAL space [20] or other multi-billion compound collections.

- Perform standard molecular preprocessing: de-salting, tautomer standardization, and filtering for undesirable functional groups or drug-like properties (e.g., Lipinski's Rule of Five).

B. Active Learning Cycle Configuration

- Initial Sampling:

- Randomly select a small, diverse subset (e.g., 10,000-50,000 compounds) from the full library to serve as the initial training set for the machine learning model.

- Surrogate Model Selection:

- Choose a machine learning model to act as the surrogate predictor. Pretrained models are highly recommended for their sample efficiency [23]. Options include:

- Graph Neural Networks (GNNs): Effective at learning from molecular structure.

- Transformer-based Models: Powerful for processing SMILES strings or other molecular representations.

- Acquisition Function:

- Define the strategy for selecting the next batch of compounds. A common approach is "expected improvement," which prioritizes compounds with the highest predicted potential to be top-binders, while also balancing exploration of uncertain regions of chemical space.
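
Expected improvement has a closed form when the surrogate returns a Gaussian prediction (mean and standard deviation) for each compound. The sketch below assumes that form and assumes that a lower docking score is better; both are assumptions of this example, and the function names are illustrative.

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best_score):
    """EI for minimisation: expected amount by which a compound beats best_score."""
    gain = best_score - mu
    if sigma <= 0.0:
        return max(gain, 0.0)      # no uncertainty: improvement is known exactly
    z = gain / sigma
    return gain * normal_cdf(z) + sigma * normal_pdf(z)

best_so_far = -9.0                 # best docking score observed so far (toy value)
candidates = {
    "confident, predicted better": (-9.5, 0.1),
    "uncertain, predicted worse": (-8.5, 1.0),
    "confident, predicted worse": (-8.5, 0.1),
}
for name, (mu, sigma) in candidates.items():
    print(f"{name:28s} EI={expected_improvement(mu, sigma, best_so_far):.4f}")
```

The uncertain compound keeps a non-trivial EI even though its predicted score is worse than the current best, which is how the acquisition function balances exploitation against exploration.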

C. Iterative Docking and Learning

- Docking and Scoring:

- Dock the currently selected batch of compounds using a physics-based docking program. For the initial cycles or large batches, a faster method (e.g., AutoDock Vina, RosettaVS VSX mode [21]) can be used.

- For final-stage refinement, a more accurate and precise method (e.g., Glide SP, RosettaVS VSH mode [21], or FEP+ [22]) is recommended.

- Model Retraining:

- Append the new docking scores to the growing training set.

- Retrain the surrogate machine learning model on this updated dataset to improve its predictive accuracy for the next cycle.

- Informed Selection:

- Use the retrained model to predict scores for all remaining unscreened compounds.

- Apply the acquisition function to this list to select the next batch of compounds for docking.

- Convergence Check:

- Repeat the docking, retraining, and selection steps above until a predefined stopping criterion is met. This can be a set number of cycles, a computational budget, or the point at which the rate of new top-hit discovery falls below a threshold.
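
The three stopping criteria named in the convergence check can be combined in a single helper. All thresholds below (`max_cycles`, `max_docked`, `min_new_hits`, `patience`) are hypothetical defaults chosen for illustration and would need tuning for a real campaign.

```python
def should_stop(new_top_hits_per_cycle, docked_per_cycle, *,
                max_cycles=20, max_docked=5_000_000,
                min_new_hits=50, patience=2):
    """Return (stop, reason) given the history of completed AL cycles."""
    if len(new_top_hits_per_cycle) >= max_cycles:
        return True, "cycle limit reached"
    if sum(docked_per_cycle) >= max_docked:
        return True, "docking budget exhausted"
    recent = new_top_hits_per_cycle[-patience:]
    if len(recent) == patience and all(hits < min_new_hits for hits in recent):
        return True, "top-hit discovery rate below threshold"
    return False, "continue"

# Toy history: new top-scoring compounds found in each of four cycles.
history_hits = [400, 120, 40, 30]
history_docked = [100_000] * 4
decision = should_stop(history_hits, history_docked)
print(decision)
```

Requiring the discovery rate to stay low for `patience` consecutive cycles avoids stopping on a single unlucky batch.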

D. Post-Screening Analysis

- Hit Identification and Clustering:

- Compile the final list of top-ranking compounds from all docking rounds.

- Cluster these hits based on molecular similarity to prioritize diverse chemotypes for experimental validation.

- Interaction Analysis:

- Visually inspect the predicted binding poses of the top hits from each cluster to verify plausible binding modes and key protein-ligand interactions.

- Experimental Validation:

- Procure the selected hit compounds and test their binding affinity and/or functional activity in biochemical or cellular assays.

Reagent Solutions and Computational Tools

A successful virtual screening campaign relies on a suite of specialized software and libraries.

Table 2: Essential Research Reagent Solutions for AL-Based Virtual Screening

| Item / Resource | Type | Function in Workflow | Examples / Notes |

|---|---|---|---|

| Ultra-Large Compound Library | Data | The search space for discovering novel hits. | Enamine REAL, ZINC, other make-on-demand libraries [20]. |

| Protein Structure | Data | The target for docking simulations. | From PDB, homology models, or co-crystal structures. |

| Docking Software | Software | Predicts binding pose and affinity of a ligand to a protein. | Glide [22], AutoDock Vina [24], RosettaLigand/VS [21] [20]. |

| Surrogate ML Model | Software | Fast approximation of docking scores; core of the AL loop. | Pretrained Transformers [23], GNNs, or other QSAR models. |

| Active Learning Platform | Software | Manages the iterative workflow, model training, and compound selection. | Schrödinger Active Learning [22], REvoLd [20], RosettaVS [21], HelixVS [24]. |

| High-Performance Computing (HPC) | Infrastructure | Provides the computational power for docking and ML. | Local CPU/GPU clusters or cloud computing resources [21] [24]. |

Advanced Strategies and Considerations

Integrating Deep Learning and Multi-Stage Screening

To maximize both accuracy and efficiency, leading platforms like HelixVS have adopted a multi-stage funnel that combines the strengths of classical and AI methods [24]. The workflow progressively applies faster, less precise methods to filter down the library to a manageable size for slower, high-precision methods.

Addressing Receptor Flexibility

A key limitation of many docking protocols is the treatment of the receptor as a rigid body. Incorporating receptor flexibility is critical for targets that undergo induced conformational changes upon ligand binding [21] [20]. The RosettaVS platform, for example, accommodates full side-chain flexibility and limited backbone movement in its high-precision (VSH) mode, which has been validated to be crucial for successful screening campaigns against challenging targets [21].

Active Learning has fundamentally changed the paradigm of virtual screening, transforming it from a static, brute-force computation into a dynamic, intelligent exploration of chemical space. By leveraging machine learning to guide physics-based calculations, AL protocols enable researchers to triage billion-compound libraries with unprecedented efficiency and cost-effectiveness. The continued integration of more accurate docking methods, pretrained deep learning models, and strategies to handle biological complexity like receptor flexibility will further solidify AL as an indispensable tool in modern computational drug discovery.

Lead optimization is a critical stage in the drug discovery pipeline, focused on modifying a "hit" or "lead" compound to improve its potency, selectivity, and pharmacokinetic properties while reducing toxicity. This process primarily involves navigating the congeneric chemical space, where structural analogs sharing a common core are systematically evaluated and optimized [25]. The extensive optimization space for a lead, spanning hundreds to thousands of compounds, necessitates substantial resources for experimental evaluations, creating an urgent need for efficient predictive tools [25].

Artificial Intelligence (AI), particularly active learning (AL) frameworks, is revolutionizing this domain by enabling data-driven prioritization of compounds. These frameworks intelligently select the most informative candidates for expensive experimental validation, thereby accelerating the iterative design-make-test-analyze (DMTA) cycle. This article details the integration of advanced AI models and AL strategies to efficiently navigate congeneric chemical space, providing structured application notes and experimental protocols for researchers.

AI-Driven Methodologies for Lead Optimization

Several deep learning models have been developed specifically to address the challenge of predicting relative binding affinity within congeneric series. The table below summarizes the core architectures and their key applications.

Table 1: AI Models for Lead Optimization in Congeneric Chemical Space

| Model Name | Core Architecture | Primary Application | Key Advantage | Benchmark Performance |

|---|---|---|---|---|

| PBCNet [25] | Physics-informed graph attention network; Siamese network for pairwise comparison | Relative Binding Affinity (RBA) ranking for congeneric ligands | High accuracy and computational efficiency; outperforms many end-point methods. | RMSE ~1.11 kcal/mol on benchmark sets; comparable to FEP+ after fine-tuning. |

| EviDTI [26] | Evidential Deep Learning (EDL); integrates 2D drug graphs, 3D drug structures, and target sequences | Drug-Target Interaction (DTI) prediction with uncertainty quantification | Provides well-calibrated uncertainty estimates, identifying reliable predictions. | Competitive AUC/AUPR on DrugBank, Davis, and KIBA datasets. |

| KANO [27] | Knowledge graph-enhanced molecular contrastive learning | Molecular property prediction using fundamental chemical knowledge | Incorporates elemental knowledge and functional groups for interpretable predictions. | State-of-the-art on 14 molecular property prediction datasets. |

| Network Propagation [28] | Data mining on ensemble chemical similarity networks | Lead identification via activity score correlation | Identifies novel compounds by propagating information on similarity networks. | Validated in case study: 2 out of 5 predicted CLK1 candidates showed binding activity. |

Key Protocol: Relative Binding Affinity Prediction with PBCNet

PBCNet is specifically designed for ranking the relative binding affinity among congeneric ligands, a common task in lead optimization campaigns [25].

Experimental Workflow:

Input Preparation:

- Ligand Preparation: Generate and optimize 3D structures for the congeneric ligand pair. Ensure structures are in a format compatible with molecular docking (e.g., SDF, MOL2).

- Protein Preparation: Obtain the 3D structure of the target protein (e.g., from PDB or AlphaFold2 prediction). Prepare the protein by adding hydrogen atoms, assigning partial charges, and defining the binding pocket. The pocket typically comprises residues within 8.0 Å of the bound ligand.

- Complex Generation: Molecular docking is used to generate the binding pose for each ligand in the prepared protein pocket.

Model Inference:

- The pair of pocket-ligand complexes (with identical protein structures) is fed into the PBCNet model.

- The model's graph neural network processes the complexes, and the physics-informed attention mechanism identifies key protein-ligand atom interactions.

- The output is a prediction of the relative binding affinity (e.g., ΔpIC50 or ΔBinding Affinity) between the two ligands.

Result Interpretation:

- Taking ΔpIC50 as pIC50(i) − pIC50(j), a negative value suggests ligand (j) has a lower IC50 (higher potency) than ligand (i).

- PBCNet provides attention scores that highlight molecular substructures and protein-ligand interactions critical to the binding difference, offering valuable medicinal chemistry insights.
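
A short numerical example may help when reading the model output. It assumes the sign convention ΔpIC50 = pIC50(i) − pIC50(j), and it converts to an approximate free-energy difference under the further assumption that IC50 ratios track binding-constant ratios at 25 °C. Neither convention is confirmed for PBCNet here, so the model's documentation should be checked before relying on the sign.

```python
import math

# RT * ln(10) in kcal/mol at 298.15 K: roughly 1.36 kcal/mol per log unit.
RT_LN10_KCAL = 1.987e-3 * 298.15 * math.log(10)

def pic50_from_nM(ic50_nM):
    return -math.log10(ic50_nM * 1e-9)

def interpret(ic50_i_nM, ic50_j_nM):
    delta = pic50_from_nM(ic50_i_nM) - pic50_from_nM(ic50_j_nM)   # ΔpIC50 (i minus j)
    fold = 10.0 ** (-delta)          # IC50(i) / IC50(j)
    ddg = -RT_LN10_KCAL * delta      # approximate ΔΔG (i minus j), kcal/mol
    return delta, fold, ddg

# Toy pair: ligand i at 100 nM, ligand j at 10 nM.
delta, fold, ddg = interpret(ic50_i_nM=100.0, ic50_j_nM=10.0)
print(f"ΔpIC50={delta:.2f}  IC50(i)/IC50(j)={fold:.1f}  ΔΔG≈{ddg:.2f} kcal/mol")
```

Under these assumptions, a ΔpIC50 of −1 means ligand (j) is about ten times more potent than ligand (i), which corresponds to roughly 1.4 kcal/mol.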

Active Learning for Efficient Navigation of Chemical Space

Active Learning (AL) optimizes the lead optimization process by iteratively selecting the most valuable compounds for experimental testing, thereby maximizing the information gain while minimizing resource expenditure.

An Active Learning Framework for Lead Optimization

The following workflow diagram illustrates the iterative cycle of an AL-driven lead optimization campaign.

Workflow Description:

- Initialization: Begin with a congeneric library derived from the lead compound.

- Prediction: An AI model (e.g., PBCNet for relative affinity, EviDTI for interaction and uncertainty) screens the entire library.

- Acquisition: An acquisition function uses the model's predictions (e.g., prioritizing compounds with high predicted potency and high uncertainty) to rank candidates for experimental testing.

- Testing: The top-ranked compounds are synthesized and experimentally tested for binding affinity or functional activity.

- Update: The newly acquired experimental data is used to fine-tune and improve the AI model.

- Iteration: The cycle repeats until a compound meets the predefined potency goal.

A simulation-based experiment demonstrated that this AL-optimized approach could accelerate lead optimization campaigns by 473% compared to conventional methods [25].

Key Protocol: Implementing an Uncertainty-Guided AL Cycle

This protocol leverages a model like EviDTI that provides uncertainty estimates to guide the exploration of chemical space [26].

Model Setup:

- Select a pre-trained DTI model with uncertainty quantification capabilities, such as EviDTI.

- Configure the model to output both the predicted interaction probability and an associated uncertainty score.

Acquisition Strategy:

- For the initial iteration, the model screens a large virtual congeneric library.

- The acquisition function combines the predicted probability (e.g., p(Interaction)) and the uncertainty estimate. A common strategy is to select compounds where the model predicts high activity but with low confidence, indicating a high potential for learning.

- Rank all compounds based on the acquisition score.
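
One way to combine probability and uncertainty is sketched below. It uses the standard evidential deep learning summary for a two-class Dirichlet output (probability = α_active / S, uncertainty = K / S with K = 2 and S the sum of the α values). Whether EviDTI exposes exactly these quantities is an assumption, and the multiplicative `acquisition` score is a hypothetical choice for illustration.

```python
def evidential_summary(alpha_inactive, alpha_active):
    """Class probability and uncertainty from two-class Dirichlet parameters."""
    strength = alpha_inactive + alpha_active      # S: total evidence + number of classes
    p_active = alpha_active / strength
    uncertainty = 2.0 / strength                  # K / S with K = 2 classes
    return p_active, uncertainty

def acquisition(p_active, uncertainty, weight=1.0):
    """Hypothetical score: favour compounds predicted active on little evidence."""
    return p_active * (1.0 + weight * uncertainty)

def rank_library(dirichlet_params, top_k):
    scored = []
    for idx, (a_inactive, a_active) in enumerate(dirichlet_params):
        p, u = evidential_summary(a_inactive, a_active)
        scored.append((acquisition(p, u), idx))
    scored.sort(reverse=True)
    return [idx for _, idx in scored[:top_k]]

# Toy Dirichlet parameters (alpha_inactive, alpha_active) for four analogs.
params = [(1.0, 9.0), (1.5, 2.5), (20.0, 2.0), (1.2, 1.8)]
selected = rank_library(params, top_k=2)
print(selected)
```

Compound 3 is selected ahead of compound 1 despite a slightly lower predicted probability, because the model has seen less evidence for it. A confidently inactive compound (index 2) ranks last.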

Experimental Validation and Model Update:

- Synthesize or procure the top K (e.g., 5-10) ranked compounds.

- Conduct binding assays (e.g., SPR, ITC) or functional assays to determine the true activity of the selected compounds.

- Add the new experimental data (compound structure, target, measured activity) to the existing training dataset.

- Fine-tune the EviDTI model on the updated dataset. This step is crucial for adapting the model to the specific chemical space of the lead series and improving its predictive accuracy for subsequent iterations.

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of computational protocols requires integration with experimental biology and chemistry. The following table lists key reagents and materials essential for the workflows described.

Table 2: Essential Research Reagents and Materials for AI-Driven Lead Optimization

| Item Name | Specifications / Example | Critical Function in Protocol |
|---|---|---|
| Target Protein | Recombinant human protein, >95% purity (e.g., kinase domain of FAK). | Essential for in vitro binding and activity assays to generate ground-truth data for model training and validation. |
| Congeneric Compound Library | 100-1000+ analogs with shared core; sourced from in-house collection or vendors (e.g., ZINC). [28] | Provides the chemical space for AI model screening and the source of candidates for synthesis and testing. |
| 3D Protein Structure | PDB ID or AlphaFold2-predicted model; binding pocket defined. [25] | Required for structure-based AI models like PBCNet to generate input complexes for relative affinity predictions. |
| Binding Assay Kit | TR-FRET, SPR, or FP-based competitive binding assay kit. | Measures the experimental binding affinity (e.g., IC50, Kd) of candidate compounds, generating the critical data for the AL loop. |
| Pre-trained AI Model | PBCNet, EviDTI, or KANO; available via web service or GitHub. [26] [25] [27] | The core computational tool for virtual screening and prediction, providing the decision support for compound prioritization. |

The integration of active learning with advanced AI models like PBCNet and EviDTI represents a paradigm shift in lead optimization. By systematically navigating the congeneric chemical space, these approaches prioritize the most promising and informative compounds for experimental testing, dramatically accelerating the journey from a lead molecule to a potent drug candidate. The protocols and application notes provided herein offer a practical roadmap for researchers to implement these powerful strategies, fostering more efficient and successful drug discovery campaigns.

The field of drug discovery has witnessed a paradigm shift with the integration of advanced machine learning (ML) models, particularly in predicting compound-target interactions. Traditional methods for identifying drug-target interactions (DTIs) are often expensive, time-consuming, and prone to failure, creating a pressing need for robust computational approaches [29]. The emergence of deep learning, transformer architectures, and multi-task learning (MTL) frameworks has provided powerful alternatives that can handle large-scale biological data, learn complex non-linear relationships, and improve prediction accuracy. These technologies are particularly valuable when combined with active learning strategies, creating a dynamic cycle where computational predictions guide experimental validation, which in turn refines the predictive models [30]. This application note details how these advanced ML methodologies are being implemented within active learning frameworks to accelerate drug discovery, complete with experimental protocols, performance benchmarks, and practical implementation resources.

Advanced Model Architectures in Drug Discovery

Transformer-Based Models for Representation Learning

Transformer architectures, renowned for their success in natural language processing, have been adapted to model biological sequences and molecular structures. Their self-attention mechanisms excel at capturing long-range dependencies and contextual information within drug molecules and target proteins.

- DTIAM Framework: The DTIAM model employs transformers through self-supervised pre-training on large amounts of unlabeled data. For drug molecules, it processes molecular graphs segmented into substructures, learning representations through masked language modeling, molecular descriptor prediction, and functional group prediction tasks. For target proteins, it uses transformer attention maps to extract features directly from amino acid sequences [31].

- Chemical Language Modeling: Models like ChemBERTa create meaningful molecular representations by treating Simplified Molecular-Input Line-Entry System (SMILES) strings as textual data, applying transformer-based language model pre-training to learn rich, contextualized features that benefit downstream prediction tasks [29] [30].

Multi-Task Learning Frameworks

MTL has emerged as a powerful paradigm for simultaneously learning related tasks, improving generalization by leveraging shared information across objectives. In drug discovery, MTL allows models to capture the complex, interconnected nature of biological systems.

- DeepTraSynergy: This framework employs MTL to predict drug combination synergy as the main task, with drug-target interaction and toxicity prediction as auxiliary tasks. The auxiliary losses help the model learn a more robust feature representation that improves synergy prediction while providing valuable additional pharmacological insights [32].

- DeepDTAGen: This model unifies drug-target affinity (DTA) prediction and target-aware drug generation within a single MTL framework. A shared feature space ensures that the learned representations capture ligand-receptor interaction knowledge applicable to both predictive and generative tasks [33].

- MultiComb: Specifically designed for combination therapy, MultiComb uses an MTL approach to simultaneously predict synergy and sensitivity scores for drug combinations. The model employs a cross-stitch mechanism to learn relationships between these related tasks, enhancing prediction accuracy for both objectives [34].

Graph Neural Networks

Graph-based representations naturally capture molecular structure by representing atoms as nodes and bonds as edges. Graph Neural Networks (GNNs) operate directly on these structures, learning features that preserve topological information.

- Molecular Graph Processing: In frameworks like DeepDDS, drugs are represented as graphs with atoms as nodes and chemical bonds as edges. GNNs then extract molecular features that capture both atomic properties and connectivity patterns, providing a richer representation than traditional fingerprints or descriptors [34] [33].

- Heterogeneous Network Integration: Some models construct multimodal graphs incorporating drug-drug interaction networks, drug-target interaction networks, and protein-protein interaction (PPI) networks. GNNs process these complex relational structures to predict properties like drug combination synergy [32].

Table 1: Performance Comparison of Advanced ML Models on Key Drug Discovery Tasks

| Model | Architecture | Task | Dataset | Performance |
|---|---|---|---|---|
| DTIAM [31] | Transformer + Self-Supervision | DTI, DTA, MoA | Yamanishi_08, Hetionet | Substantial improvement in cold-start scenarios |
| DeepDTAGen [33] | MTL + FetterGrad | DTA Prediction & Drug Generation | KIBA | MSE: 0.146, CI: 0.897, r²m: 0.765 |
| DeepTraSynergy [32] | MTL + Transformer | Drug Synergy | DrugCombDB | Accuracy: 0.7715 |
| MultiComb [34] | MTL + GNN | Synergy & Sensitivity | O'Neil | Synergy MSE: 232.37, Sensitivity MSE: 15.59 |
| RECOVER [30] | MLP + Active Learning | Drug Synergy | O'Neil | Identifies 60% of synergies with 10% of experiments |

Integration with Active Learning Frameworks

Active learning creates a closed-loop system where models selectively query the most informative data points for experimental validation, dramatically reducing the resources required for screening. The integration of advanced ML models with active learning is particularly valuable in drug discovery due to the vast combinatorial space and low frequency of positive hits.

Active Learning Cycle Implementation

The typical active learning workflow for drug discovery consists of several iterative stages [35] [30]:

- Initial Model Training: A pre-trained model is fine-tuned on existing bioactivity data (e.g., known DTIs, binding affinities, or synergy scores).

- Informativeness Scoring: The model evaluates untested compounds or combinations, assigning scores based on selection criteria.

- Batch Selection: The most promising candidates are selected for experimental testing.

- Model Refinement: New experimental results are incorporated into the training set to update the model parameters.

- Iteration: Informativeness scoring, batch selection, and model refinement repeat until a stopping criterion is met (e.g., budget exhaustion or target performance).
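The cycle above can be sketched end to end with a toy example. Everything here is a stand-in chosen for illustration: the `oracle` function replaces a wet-lab assay, the nearest-neighbour `predict` function replaces a fine-tuned deep model, and the `beta`, `batch_size`, and `budget` values are arbitrary assumptions.

```python
def oracle(x):
    """Stand-in for an experimental assay: hidden activity that peaks at x = 0.7."""
    return 1.0 - abs(x - 0.7)


def predict(x, labeled):
    """Toy surrogate model: copy the nearest labeled point's activity and
    report the distance to that point as the uncertainty."""
    nearest_x, nearest_y = min(labeled, key=lambda xy: abs(xy[0] - x))
    return nearest_y, abs(nearest_x - x)


def active_learning(pool, initial, batch_size=2, budget=8, beta=1.0):
    # Initial model training: start from the data that is already labeled.
    labeled = [(x, oracle(x)) for x in initial]
    unlabeled = [x for x in pool if x not in initial]
    spent = 0
    # Iteration: stop when the experimental budget is exhausted.
    while spent < budget and unlabeled:
        # Informativeness scoring: predicted activity plus weighted uncertainty.
        def score(x):
            mean, uncertainty = predict(x, labeled)
            return mean + beta * uncertainty

        # Batch selection: take the top-scoring candidates within budget.
        n = min(batch_size, budget - spent)
        batch = sorted(unlabeled, key=score, reverse=True)[:n]
        # "Experiment" and model refinement: add the new labels to the training set.
        for x in batch:
            labeled.append((x, oracle(x)))
            unlabeled.remove(x)
        spent += len(batch)
    return labeled


pool = [round(i / 50, 2) for i in range(51)]  # 51 candidate "compounds" on [0, 1]
labeled = active_learning(pool, initial=[0.0, 1.0])
best_x, best_y = max(labeled, key=lambda xy: xy[1])
```

Because the surrogate is retrained implicitly (its predictions depend on the current labeled set), each round's selection reflects the previous round's results, which is the defining property of the closed loop.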

Critical Implementation Factors

Several factors significantly impact the success of active learning implementations:

- Batch Size: Smaller batch sizes typically yield higher synergy discovery rates but increase computational overhead. Dynamic tuning of the exploration-exploitation balance can further enhance performance [30].

- Molecular Representation: While Morgan fingerprints with Tanimoto scores generally perform well, the specific molecular encoding has limited impact compared to cellular context features [30].

- Cellular Context Features: Gene expression profiles of target cells significantly enhance prediction quality, with as few as 10 genes sometimes sufficient to capture relevant biological information [30].

- Algorithm Data Efficiency: In low-data regimes typical of early discovery, parameter-light algorithms (e.g., logistic regression, XGBoost) can compete with deep learning models, though transformers and GNNs excel with sufficient data [30].
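For readers unfamiliar with the Tanimoto score mentioned above, it is the ratio of shared "on" bits to total "on" bits between two fingerprints. The sketch below represents each fingerprint as a set of bit indices; the indices are invented for illustration, and in practice they would come from a cheminformatics toolkit that computes Morgan fingerprints.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity between two fingerprints given as sets
    of 'on' bit indices: |A ∩ B| / |A ∪ B|."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Hypothetical bit indices for two analogs sharing part of their substructure.
fp_lead = {1, 5, 9, 12}
fp_analog = {1, 5, 20}

similarity = tanimoto(fp_lead, fp_analog)  # 2 shared bits / 5 bits in the union
```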

Experimental Protocols & Methodologies

Protocol: Transformer-Based DTI Prediction with DTIAM

Objective: Predict drug-target interactions, binding affinities, and mechanisms of action using self-supervised pre-training.

Materials:

- ChEMBL database (v34) containing 2.4M+ compounds, 15,598 targets, and 20.7M+ interactions [36]

- Molecular graphs of drug compounds

- Amino acid sequences of target proteins

Procedure:

Drug Representation Learning:

- Segment molecular graphs into substructures

- Generate n × d embedding matrix where each substructure is a d-dimensional vector

- Process through Transformer encoder with three self-supervised tasks:

  - Masked Language Modeling: Randomly mask substructures and predict them

  - Molecular Descriptor Prediction: Predict quantitative chemical descriptors

  - Functional Group Prediction: Identify presence of key molecular functional groups

Target Representation Learning:

- Process protein sequences through Transformer architecture with unsupervised language modeling

- Extract attention maps to identify important residues and contacts

Interaction Prediction:

- Combine drug and target representations

- Feed into automated ML framework with multi-layer stacking and bagging

- Output predictions for DTI (binary classification), DTA (regression), and MoA (activation/inhibition classification)

Validation:

- Perform warm start, drug cold start, and target cold start cross-validation

- Compare against baseline methods (CPIGNN, TransformerCPI, MPNNCNN, KGE_NFM) using AUC-ROC, AUC-PR metrics [31]
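The difference between warm-start and cold-start validation lies in how pairs are assigned to the held-out set: in a drug (or target) cold start, every pair involving a held-out drug (or target) is removed from training. The sketch below shows one simple way to build such a split; the function name, the 20% hold-out fraction, and the toy pair list are illustrative assumptions rather than DTIAM's actual evaluation code.

```python
import random


def cold_start_split(pairs, key_index, test_fraction=0.2, seed=0):
    """Split (drug, target, label) tuples so that held-out entities never
    appear in training. key_index=0 gives a drug cold start, key_index=1 a
    target cold start."""
    entities = sorted({p[key_index] for p in pairs})
    rng = random.Random(seed)
    rng.shuffle(entities)
    n_test = max(1, int(len(entities) * test_fraction))
    held_out = set(entities[:n_test])
    train = [p for p in pairs if p[key_index] not in held_out]
    test = [p for p in pairs if p[key_index] in held_out]
    return train, test


# Toy dataset: 5 drugs x 2 targets with made-up binary labels.
pairs = [(f"drug{i}", f"target{j}", (i + j) % 2) for i in range(5) for j in range(2)]

drug_train, drug_test = cold_start_split(pairs, key_index=0)
target_train, target_test = cold_start_split(pairs, key_index=1)
```

A warm-start split, by contrast, would shuffle the pairs themselves, so the same drug or target can appear on both sides of the split.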

Protocol: Multi-Task Learning with DeepTraSynergy

Objective: Simultaneously predict drug combination synergy, drug-target interactions, and toxicity.

Materials:

- DrugCombDB or Oncology-Screen dataset

- Drug-chemical structures (SMILES)

- Protein-protein interaction networks

- Cell-target interaction data

Procedure:

Feature Extraction:

- Drug Representation: Process SMILES strings through transformer architecture to generate molecular embeddings

- PPI Network: Construct graph of protein-protein interactions

- Cell-Target Interaction: Incorporate gene expression data for specific cell lines

Multi-Task Architecture:

- Main Task: Synergy prediction using combined drug and cellular features

- Auxiliary Task 1: Drug-target interaction prediction using binding affinity model

- Auxiliary Task 2: Toxicity prediction to prevent overlapping exposure

Loss Function Configuration:

- Implement three separate loss functions: synergy loss, toxic loss, DTI loss

- Balance loss contributions through weighted summation

- Use one-class learning for DTI to focus on active compound-target pairs

Training & Validation:

- Train on 80% of data, validate on 10%, test on 10%

- Evaluate synergy prediction using accuracy, AUC-ROC

- Assess auxiliary tasks with task-specific metrics (AUPR for DTI) [32]
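The weighted summation of the three task losses described in this protocol can be written in a few lines. The sketch below is framework-agnostic and uses plain floats; the task names, per-batch loss values, and weights are placeholders, since the weights used by DeepTraSynergy are not given here.

```python
def weighted_multitask_loss(losses, weights):
    """Combine per-task losses into one training objective by weighted sum."""
    missing = set(losses) - set(weights)
    if missing:
        raise KeyError(f"no weight provided for tasks: {sorted(missing)}")
    return sum(weights[task] * losses[task] for task in losses)


# Hypothetical per-batch losses for the main task and the two auxiliary tasks.
batch_losses = {"synergy": 0.50, "toxicity": 0.20, "dti": 0.10}
# Assumed weights: the main task dominates, auxiliary tasks act as regularizers.
task_weights = {"synergy": 1.0, "toxicity": 0.5, "dti": 0.5}

total_loss = weighted_multitask_loss(batch_losses, task_weights)
```

In a deep learning framework the same sum would be taken over loss tensors so that gradients from all three tasks flow into the shared representation.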

Protocol: Active Learning with FEgrow for Compound Prioritization

Objective: Efficiently search chemical space of linkers and R-groups using active learning-driven molecular growing.

Materials:

- FEgrow software package (github.com/cole-group/FEgrow)

- Initial fragment or ligand core structure

- Receptor structure (from crystallography or AlphaFold)