Active Learning for Computational Cost Reduction: Strategies to Accelerate Drug Discovery and Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging active learning (AL) to significantly reduce the computational and experimental costs of machine learning projects. It explores the foundational principles of AL as a powerful alternative to traditional supervised learning, detailing core query strategies like uncertainty sampling and diversity-based methods. The piece delves into advanced methodological adaptations for real-world challenges, including batch selection for drug discovery and decreasing-budget strategies for medical imaging. It further offers practical troubleshooting advice for common pitfalls and a comparative analysis of strategy performance across various biomedical applications, synthesizing evidence from recent benchmarks and case studies to inform efficient and cost-effective research design.

The High Cost of Data: Why Active Learning is a Game-Changer for Computational Efficiency

The Data Annotation Bottleneck in Scientific Machine Learning

Frequently Asked Questions (FAQs)

FAQ 1: What is the core of the data annotation bottleneck in scientific machine learning? The bottleneck stems from the high cost, time, and expert labor required to create high-quality labeled datasets. In scientific fields, data annotation is not a simple preprocessing step but a core part of the machine learning lifecycle that can consume 50-80% of a project's budget and significantly extend timelines. Success depends less on model design and more on label quality [1] [2].

FAQ 2: Why is active learning a promising strategy to reduce annotation costs? Active learning is a machine learning technique that intelligently selects the most informative data points for labeling, reducing the amount of labeled data required. It can reduce hand-labeling needs by 30-70% and allows models to achieve performance comparable to using full datasets with only a fraction of the samples [3] [2] [4].

FAQ 3: What are the unique annotation challenges in medical and scientific domains?

- Expert Dependency: Annotations require certified domain experts (e.g., radiologists, materials scientists), who are costly and scarce [5] [6].

- Subjectivity and Complexity: Even among experts, annotation agreement can be as low as 70% for subtle features, and tasks often require pixel-level accuracy in 3D data [6].

- Data Scarcity and Diversity: Acquiring sufficient data that covers population, device, and condition variations is difficult and expensive due to regulatory constraints and fragmented infrastructure [5] [6] [7].

FAQ 4: How can I implement a human-in-the-loop annotation workflow? A hybrid pipeline combines model pre-labeling with structured human review. The model automatically labels high-confidence samples, while low-confidence predictions are routed to human experts for review. This balances automation with expert oversight for label fidelity and corner case detection [1].

FAQ 5: What are common query strategies in active learning?

- Uncertainty Sampling: Selects data points where the model has the highest prediction uncertainty [3] [8].

- Query-by-Committee: Uses a committee of models to select samples with the most disagreement [3].

- Diversity Sampling: Chooses data points that are representative of the overall distribution in the unlabeled pool [8].

- Expected Model Change: Selects samples that would cause the greatest change to the current model if labeled [3].
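As a rough illustration of uncertainty sampling, the sketch below computes three common uncertainty scores (least confidence, margin, and entropy) from a matrix of predicted class probabilities. The function names and the example probabilities are assumptions made for illustration, not taken from the cited sources.

```python
import numpy as np

def least_confidence(probs):
    """1 minus the top class probability; higher means more uncertain."""
    return 1.0 - probs.max(axis=1)

def margin_score(probs):
    """Negative gap between the two most probable classes; higher means more uncertain."""
    ordered = np.sort(probs, axis=1)
    return -(ordered[:, -1] - ordered[:, -2])

def entropy_score(probs, eps=1e-12):
    """Shannon entropy of the predicted distribution; higher means more uncertain."""
    return -(probs * np.log(probs + eps)).sum(axis=1)

# Hypothetical predicted probabilities for three unlabeled samples.
probs = np.array([
    [0.98, 0.01, 0.01],   # confident prediction
    [0.40, 0.35, 0.25],   # spread across all classes
    [0.55, 0.44, 0.01],   # two classes nearly tied
])

lc = least_confidence(probs)
mg = margin_score(probs)
en = entropy_score(probs)

# Index of the sample each score would send to the annotator first.
query_lc = int(np.argmax(lc))
query_mg = int(np.argmax(mg))
query_en = int(np.argmax(en))
```

In this toy example all three scores agree on the second sample, but on real data they can rank samples differently, which is one reason to benchmark them.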

Troubleshooting Guides

Issue 1: High Annotation Costs and Slow Project Progress

Problem: Your project is consuming excessive resources for data labeling, slowing iteration cycles.

Solution: Implement a verified auto-labeling pipeline with targeted human review.

- Root Cause: Relying solely on manual, frame-by-frame annotation [2].

- Actionable Steps:

  - Integrate a pre-trained model for auto-labeling. Vision-language models (VLMs) like Grounding DINO or CLIP can perform zero-shot detection and classification [2].

  - Set a confidence threshold (e.g., 0.85). Auto-accept high-confidence labels and flag low-confidence predictions for expert review [1] [2].

  - Use an evaluation dashboard to monitor performance metrics (mAP, F1 score) and identify failure modes [2].

- Expected Outcome: One study reported a ~100,000x cost reduction and 95% agreement with expert labels using this approach, transforming annotation from a months-long expense to a task completed in hours [2].
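The confidence-threshold step can be sketched as follows. This is a minimal illustration that assumes the auto-labeling model returns per-class probabilities; `route_by_confidence` and the example values are hypothetical names and data, and the 0.85 threshold is simply the example value mentioned above.

```python
import numpy as np

def route_by_confidence(probs, threshold=0.85):
    """Split predictions into auto-accepted labels and items needing expert review."""
    confidence = probs.max(axis=1)        # top class probability per sample
    predicted = probs.argmax(axis=1)      # proposed label per sample
    auto_idx = np.flatnonzero(confidence >= threshold)
    review_idx = np.flatnonzero(confidence < threshold)
    return predicted, auto_idx, review_idx

# Hypothetical model outputs for four unlabeled samples (two classes).
probs = np.array([
    [0.95, 0.05],
    [0.60, 0.40],
    [0.10, 0.90],
    [0.51, 0.49],
])

predicted, auto_idx, review_idx = route_by_confidence(probs)
# auto_idx holds the samples whose labels are accepted automatically;
# review_idx holds the samples routed to a human expert.
```

The threshold is a tuning knob: raising it sends more items to experts and lowers the risk of accepting wrong labels, so it is worth calibrating against a small expert-verified sample.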

Issue 2: Poor Model Performance Despite Extensive Labeling

Problem: Your model is not achieving expected accuracy, potentially due to poor label quality or uninformative training data.

Solution: Enhance label provenance and implement active learning for data selection.

- Root Cause: Noisy labels or a training set that lacks informative examples [1] [3].

- Actionable Steps:

  - Establish Label Provenance: Track who labeled each data point, under which schema version, and whether it was reviewed. Treat this as an audit log for your data [1].

  - Run an Active Learning Cycle:

    - Start with a small set of labeled data and train an initial model.

    - Use an uncertainty-based query strategy (e.g., least confidence or margin sampling) to select the most informative batch of unlabeled samples [3] [4].

    - Send only this selected batch for expert labeling.

    - Retrain the model with the expanded labeled set and iterate.

  - For Medical Data: Use a tiered workflow where trained non-medical annotators pre-label, and medical experts perform quality control and adjudication [5].

- Expected Outcome: A more robust model with better generalization, achieved with a significantly smaller, higher-quality labeled dataset [3].
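One way to capture the label provenance described above is a small immutable record per label. The sketch below is only an assumption about what such a record might contain; the field names (`annotator_id`, `schema_version`, `reviewed_by`) and the sample values are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

@dataclass(frozen=True)
class LabelRecord:
    """Audit-log entry for one label: who, under which schema, and whether reviewed."""
    sample_id: str
    label: str
    annotator_id: str
    schema_version: str
    reviewed_by: Optional[str] = None   # None until an expert has reviewed the label
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

def unreviewed(records):
    """Return the records that still need expert review."""
    return [r for r in records if r.reviewed_by is None]

# Hypothetical records for two samples.
records = [
    LabelRecord("img_001", "tumor", "annotator_a", "v1.2", reviewed_by="expert_x"),
    LabelRecord("img_002", "normal", "annotator_b", "v1.2"),
]

pending = unreviewed(records)
```

Keeping the record frozen means a correction is stored as a new entry rather than an overwrite, which preserves the audit trail.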

Issue 3: Active Learning is Not Selecting Useful Data Points

Problem: Your active learning loop seems inefficient, not leading to rapid model improvement.

Solution: Re-evaluate and potentially switch your query strategy.

- Root Cause: The query strategy may be mismatched to the data distribution or model state [3] [4].

- Actionable Steps:

  - Benchmark Strategies: Systematically test different query strategies on your data. In materials science, uncertainty-driven (LCMD, Tree-based-R) and diversity-hybrid (RD-GS) strategies have been shown to outperform random sampling early in the learning process [4].

  - Use a Validation Set: Regularly evaluate model performance on a separate validation set to track the actual improvement from each actively selected batch [3].

  - Consider Hybrid Methods: Combine uncertainty with diversity-based sampling to avoid selecting redundant or outlier examples [3] [8].

- Expected Outcome: Faster convergence and higher model accuracy with fewer labeled examples, as the strategy more effectively identifies the most valuable data for the model to learn from [4] [8].
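A hybrid method can be sketched in a few lines. The version below is one simple possibility, not the RD-GS method from the benchmark: it shortlists the most uncertain samples and then greedily picks points that are far apart in feature space (farthest-point selection). The function name, the `shortlist_factor` parameter, and the toy data are all assumptions.

```python
import numpy as np

def hybrid_batch(features, uncertainty, batch_size, shortlist_factor=2):
    """Pick a batch that is both uncertain and spread out in feature space."""
    n_short = min(len(uncertainty), batch_size * shortlist_factor)
    # Shortlist: indices of the most uncertain samples, most uncertain first.
    shortlist = np.argsort(uncertainty)[::-1][:n_short]
    chosen = [int(shortlist[0])]
    # Distance from each shortlisted point to its nearest already-chosen point.
    min_dist = np.linalg.norm(features[shortlist] - features[chosen[0]], axis=1)
    while len(chosen) < min(batch_size, n_short):
        nxt = int(np.argmax(min_dist))          # farthest from everything chosen so far
        chosen.append(int(shortlist[nxt]))
        new_dist = np.linalg.norm(features[shortlist] - features[shortlist[nxt]], axis=1)
        min_dist = np.minimum(min_dist, new_dist)
    return chosen

# Toy pool: samples 0, 1 and 3 are near-duplicates, sample 4 is a confident outlier.
features = np.array([[0.0, 0.0], [0.01, 0.0], [5.0, 5.0], [0.02, 0.0], [9.0, 9.0]])
uncertainty = np.array([0.90, 0.89, 0.70, 0.65, 0.10])

selected = hybrid_batch(features, uncertainty, batch_size=2, shortlist_factor=2)
top_uncertain = np.argsort(uncertainty)[::-1][:2].tolist()
```

Pure uncertainty sampling would pick the two near-duplicates (samples 0 and 1), whereas the hybrid selection replaces the redundant second pick with sample 2. The shortlist also keeps the low-uncertainty outlier (sample 4) out of the batch.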

Issue 4: Handling Subjectivity and Disagreement in Expert Labels

Problem: Inconsistent annotations from different experts are introducing noise and bias into your training data.

Solution: Implement a structured adjudication process.

- Root Cause: Inherent ambiguity in scientific data and evolving medical standards [6] [9].

- Actionable Steps:

  - Develop Clear Annotation Protocols: Create detailed guidelines with examples to reduce subjectivity [5].

  - Conduct Inter-observer Agreement Studies: Measure consistency between annotators to quantify and understand sources of disagreement [5].

  - Adjudication Workflow: Have multiple experts label the same sample. Where disagreements occur, a senior expert makes the final call. This "ground truth" is then used for training [6] [9].

- Expected Outcome: A more consistent and reliable training set, leading to more robust and trustworthy models [5].
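Inter-observer agreement is often summarized with Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below computes kappa for two annotators and lists the samples that would go to adjudication; the expert labels are made-up illustrative data.

```python
from collections import Counter

def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same samples."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement from each annotator's marginal label frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    # Undefined (division by zero) if both annotators always use one identical label.
    return (observed - expected) / (1 - expected)

def needs_adjudication(labels_a, labels_b):
    """Indices where the two annotators disagree."""
    return [i for i, (a, b) in enumerate(zip(labels_a, labels_b)) if a != b]

# Hypothetical labels from two experts on ten samples.
expert_1 = ["pos", "pos", "neg", "neg", "pos", "neg", "neg", "pos", "neg", "neg"]
expert_2 = ["pos", "neg", "neg", "neg", "pos", "neg", "pos", "pos", "neg", "neg"]

kappa = cohen_kappa(expert_1, expert_2)
disputed = needs_adjudication(expert_1, expert_2)
```

Here the experts agree on 8 of 10 samples, but kappa is about 0.58 because roughly half of that agreement would be expected by chance. The two disputed samples would be passed to the senior expert.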

Experimental Protocols & Data

Table 1: Benchmarking of Active Learning Query Strategies in Scientific Regression Tasks

The following table summarizes the performance of various Active Learning (AL) strategies integrated with AutoML, as benchmarked on materials science datasets. Performance is measured by how quickly the model's accuracy improves as more data is labeled [4].

| Query Strategy | Strategy Type | Early-Stage Performance (Data-Scarce) | Late-Stage Performance (Data-Rich) | Key Characteristic |
|---|---|---|---|---|
| LCMD | Uncertainty | High | Converges | Leverages model uncertainty for sample selection. |
| Tree-based-R | Uncertainty | High | Converges | Effective with tree-based models within the AutoML pipeline. |
| RD-GS | Diversity-Hybrid | High | Converges | Combines redundancy and graph-based sampling for diversity. |
| GSx | Diversity (Geometry) | Moderate | Converges | Relies on geometric structure of the data. |
| EGAL | Diversity (Geometry) | Moderate | Converges | Emphasizes diverse sample selection. |
| Random Sampling | Baseline (No Strategy) | Low (Baseline) | Converges | Serves as a baseline for comparison. |

Protocol for Benchmarking:

1. Initialization: Start with a small, randomly sampled labeled set \(L = \{(x_i, y_i)\}_{i=1}^{l}\) and a large pool of unlabeled data \(U\) [4].

2. AutoML Model Fitting: In each AL cycle, fit an AutoML model to the current labeled set \(L\). AutoML automatically handles model selection (e.g., linear models, tree-based ensembles, neural networks) and hyperparameter tuning [4].

3. Query and Label: Use the AL strategy to select the most informative sample \(x^*\) from \(U\). Obtain its label \(y^*\) (simulated from the full dataset in a benchmark) [4].

4. Update: Expand the training set: \(L = L \cup \{(x^*, y^*)\}\) [4].

5. Iterate and Evaluate: Repeat steps 2-4 for multiple rounds. Track model performance (e.g., Mean Absolute Error - MAE, R²) on a fixed test set after each round [4].

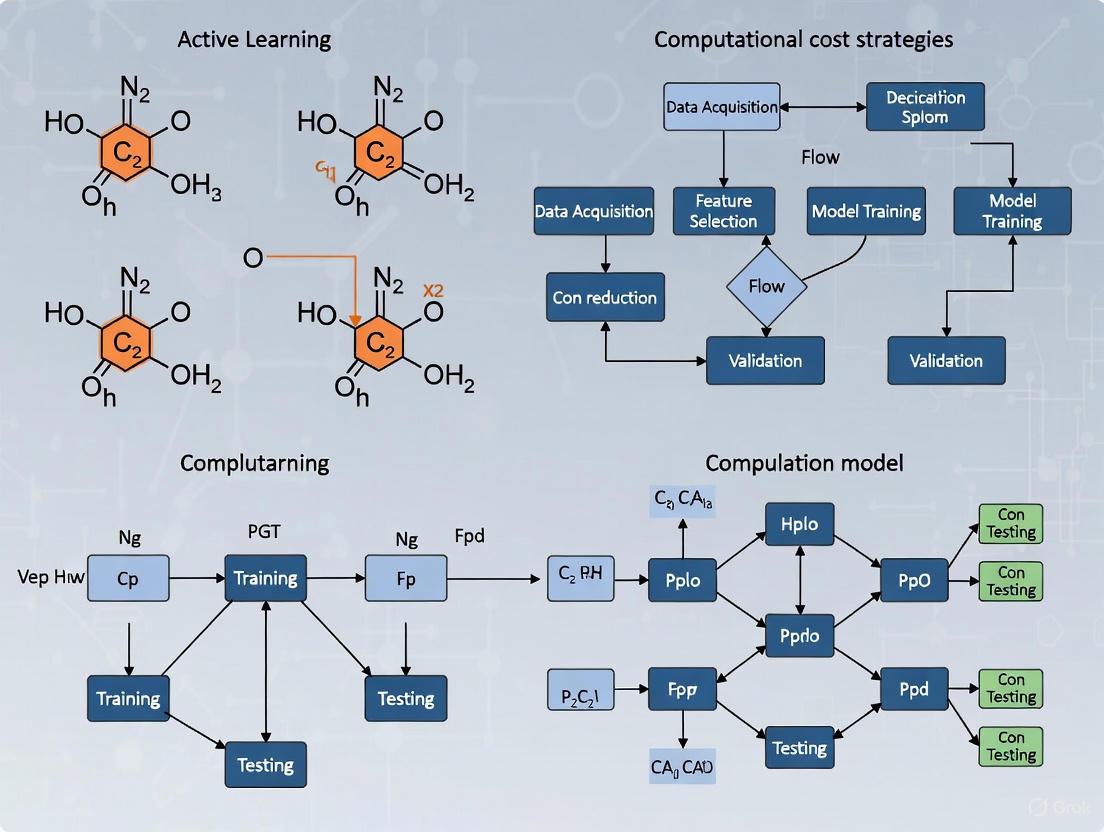

Workflow Diagram: Human-in-the-Loop Active Learning Pipeline

Workflow Diagram: Verified Auto-Labeling for Cost Reduction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Managing the Annotation Bottleneck

This table details key tools and materials used to implement efficient data annotation workflows in scientific machine learning.

| Tool / Solution | Function | Application Context |
|---|---|---|
| AutoML Frameworks (e.g., AutoSklearn, TPOT) | Automates model selection and hyperparameter tuning, creating a robust surrogate model for the active learning loop. | Model-centric optimization to improve performance with limited data [4]. |
| Vision-Language Models (VLMs) (e.g., CLIP, Grounding DINO) | Enables zero-shot detection and classification, forming the backbone of auto-labeling pipelines without task-specific training. | Verified Auto-Labeling to generate initial labels for large unlabeled datasets [2]. |
| Annotation Platforms (e.g., FiftyOne, RedBrick.AI) | Provides integrated environments for visualization, auto-labeling, human review, and dataset management with QA workflows. | Streamlining the entire annotation lifecycle, especially for complex medical images [5] [2]. |
| Bayesian Neural Networks / Monte Carlo Dropout | Provides uncertainty estimates for model predictions, which is the foundation for uncertainty-based active learning strategies. | Quantifying model uncertainty to query the most informative samples [4]. |
| Synthetic Data Generators | Creates artificial training data using physical modeling or generative AI (e.g., GANs, Diffusion Models) to fill data gaps. | Addressing data scarcity for rare conditions or edge cases in medical and materials science [1] [6]. |
| Tiered Annotation Workforce | A structured team of non-expert annotators for pre-labeling and domain experts for QC and adjudication. | Managing costs and ensuring clinical validity in medical data annotation [5]. |

Frequently Asked Questions

What is the fundamental difference between Active Learning and Passive Supervised Learning? The core difference lies in how the learning algorithm acquires its training data. Passive Supervised Learning is trained on a static, pre-labeled dataset where the algorithm has no control over which data points it learns from [10]. In contrast, Active Learning starts with a small labeled dataset and iteratively queries a human annotator to label the most "informative" data points from a large pool of unlabeled data, actively influencing its training data [8] [10].

Why is Active Learning considered a key strategy for reducing computational costs? Active Learning reduces costs primarily by minimizing the most expensive part of the machine learning pipeline: data labeling [3]. By intelligently selecting only the most informative examples for human annotation, it avoids the cost of labeling large, redundant datasets. This can lead to significant reductions in labeling effort, time, and associated financial costs while achieving model performance comparable to models trained on much larger passively-labeled datasets [3] [11].

In which scenarios is Active Learning most beneficial? Active Learning is particularly beneficial in domains where [3] [8] [11]:

- Labeling data is expensive or time-consuming (e.g., requires medical experts, drug discovery scientists).

- You have large amounts of unlabeled data but a limited budget for annotation.

- The problem domain involves complex data where some examples are more informative for the model than others.

What are common query strategies in Active Learning? Common strategies for selecting which data to label include [3] [8]:

- Uncertainty Sampling: Selects data points for which the model's current prediction is most uncertain.

- Query-by-Committee: Uses an ensemble of models and selects data points where the models disagree the most.

- Diversity Sampling: Aims to select a diverse set of data points to ensure the training set is representative of the overall data distribution.
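Query-by-Committee can be illustrated with vote entropy, which measures how evenly the committee's votes are spread across classes for each sample. The sketch below is a minimal illustration; the committee votes are hypothetical and the models that produced them are not shown.

```python
import numpy as np

def vote_entropy(votes, n_classes):
    """Disagreement per sample for votes of shape (n_members, n_samples)."""
    n_members = votes.shape[0]
    scores = np.zeros(votes.shape[1])
    for c in range(n_classes):
        frac = (votes == c).sum(axis=0) / n_members   # share of members voting class c
        nonzero = frac > 0                            # skip 0 * log(0) terms
        scores[nonzero] -= frac[nonzero] * np.log(frac[nonzero])
    return scores

# Hypothetical votes: rows are committee members, columns are unlabeled samples.
votes = np.array([
    [0, 1, 2],
    [0, 1, 0],
    [0, 0, 1],
])

scores = vote_entropy(votes, n_classes=3)
query = int(np.argmax(scores))   # sample with the most disagreement
```

The committee is unanimous on the first sample (score 0), split two to one on the second, and fully split on the third, so the third sample is queried first.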

What are the typical performance outcomes when using Active Learning? When implemented effectively, Active Learning can achieve model accuracy that matches or even surpasses that of Passive Supervised Learning, but with a significantly smaller labeled dataset. The following table summarizes a general expected performance trend.

| Labeled Dataset Size | Expected Passive Learning Performance | Expected Active Learning Performance |
|---|---|---|
| Small | Low | Higher (due to focused learning on informative samples) |
| Medium | Medium | Competitive/High |
| Large | High | High (with potential for faster convergence) |

Troubleshooting Common Experimental Issues

Problem: My Active Learning model's performance has plateaued, and new queries are not improving it.

- Check Your Query Strategy: Your acquisition function might be selecting noisy or outlier data points that don't help the model generalize. Consider switching from pure uncertainty sampling to a diversity-based method or a hybrid approach to ensure a more representative training set [3] [8].

- Re-evaluate the Stopping Criterion: Define a clear stopping policy before starting the experiment. This could be a pre-defined performance threshold on a validation set or a labeling budget. Stop the process once this criterion is met to avoid wasteful labeling [3].

- Inspect for Label Noise: If the domain expert makes mistakes in labeling the selected examples, the model may learn incorrect patterns. Implement a mechanism for verifying and correcting labels, especially in early, critical iterations [3].
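A stopping policy can be written down before the experiment starts. The sketch below combines the three criteria mentioned above (labeling budget, validation target, and performance plateau). The function name, the `patience` and `min_delta` parameters, and their default values are assumptions chosen for illustration.

```python
def should_stop(history, labels_used, budget, target=None, patience=3, min_delta=0.005):
    """Decide whether to halt the AL loop.

    history: validation scores (higher is better), one entry per completed round.
    """
    if labels_used >= budget:
        return True, "budget exhausted"
    if target is not None and history and history[-1] >= target:
        return True, "target reached"
    if len(history) > patience:
        best_before = max(history[:-patience])
        # Plateau: the last `patience` rounds did not beat the earlier best by min_delta.
        if max(history[-patience:]) - best_before < min_delta:
            return True, "plateau"
    return False, "continue"

# Hypothetical validation accuracies after six rounds.
plateau_case = should_stop([0.70, 0.78, 0.80, 0.801, 0.802, 0.800], labels_used=120, budget=500)
early_case = should_stop([0.70, 0.78], labels_used=40, budget=500)
target_case = should_stop([0.70, 0.92], labels_used=40, budget=500, target=0.90)
budget_case = should_stop([0.70], labels_used=500, budget=500)
```

Checking the budget first means the loop never requests labels that have not been paid for, even if the model is still improving.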

Problem: The model performance is unstable across iterations.

- Validate on a Hold-Out Set: It is essential to regularly evaluate the model's performance on a separate, static validation set that is not part of the active learning pool. This provides an unbiased measure of progress and helps detect overfitting to the selected samples [3].

- Manage Dataset Balance: Active learning can inadvertently skew the class distribution of the labeled set, over-sampling some classes while leaving others under-represented. Employ strategies to maintain dataset balance, such as incorporating class-aware sampling into your query strategy [3].

Problem: Implementing Active Learning is computationally expensive per iteration.

- Consider a Decreasing-Budget Strategy: Instead of selecting a fixed number of samples in each iteration, start with a larger budget in the initial rounds and gradually reduce it. This prioritizes annotator effort where it has the most impact—early in the learning process—and reduces workload in later stages [11].

- Leverage Transfer Learning: Start with a pre-trained model as your initial model. A model already trained on a large, general dataset (e.g., ImageNet for vision tasks) provides a strong feature extractor, allowing the active learning process to fine-tune more efficiently on your specific domain [8].
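The decreasing-budget idea can be sketched as a schedule that splits a total labeling budget across rounds. The geometric decay used here is an assumption made for illustration; the cited work [11] may define its schedule differently, and `decreasing_budgets` and `decay` are hypothetical names.

```python
def decreasing_budgets(total_budget, n_rounds, decay=0.7):
    """Split a total labeling budget into per-round budgets that shrink geometrically."""
    weights = [decay ** r for r in range(n_rounds)]
    scale = total_budget / sum(weights)
    budgets = [int(w * scale) for w in weights]     # round down each allocation
    budgets[0] += total_budget - sum(budgets)       # give the rounding remainder to round 1
    return budgets

# Hypothetical setting: 500 labels spread over 5 rounds.
budgets = decreasing_budgets(total_budget=500, n_rounds=5, decay=0.7)
constant = [500 // 5] * 5   # fixed-budget baseline for comparison
```

With these settings the first round receives well over the 100 labels a constant schedule would give it, and each later round receives fewer, while the total stays at 500.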

Quantitative Data on Cost and Performance

The following table summarizes quantitative data from various studies on the effectiveness of Active Learning.

| Application Domain | Observed Cost/Efficiency Improvement | Key Metric | Source Context |
|---|---|---|---|
| General Data Labeling | Significant reduction in number of labeled examples required | Cost Reduction | [3] |
| Marketing & Software Processes | 20% to 30% reduction in costs | Cost Reduction | [12] |
| Medical Image Annotation (Digital Pathology) | Reduced specialist workload; model performance maximized with reduced effort | Workload Reduction & Model Performance | [11] |
| Customer Support Operations | Reduction in operating expenses by a third ($100M bottom-line impact) | Cost Reduction | [12] |
| Preventive Maintenance | Decreased cost by more than 40% | Cost Reduction | [12] |

Experimental Protocol: Implementing a Pool-Based Active Learning Cycle

This protocol outlines a standard methodology for setting up a pool-based active learning experiment, suitable for image classification or object detection tasks in domains like digital pathology [8] [11].

1. Initial Setup:

- Data Splitting: Divide your entire dataset into the following sets:

  - Initial Training Set (`Tr`): A small set of labeled data (e.g., 5-10% of total data) to train the initial model.

  - Pool (`P`): A large set of unlabeled data (e.g., 70-80% of total data) from which the active learning algorithm will query.

  - Validation (`Va`) and Test (`Te`) Sets: Fixed sets to evaluate model performance and generalizability.

- Model Selection: Choose an initial model architecture (e.g., MobileNetV3, InceptionV3 for classification; YOLOv8 for object detection) [11].

2. Iterative Active Learning Loop: Repeat the following steps until a stopping criterion (e.g., performance plateau, budget exhaustion) is met.

- Step A - Model Training: Train the model on the current `Tr`.

- Step B - Model Inference & Scoring: Use the trained model to make predictions on the entire unlabeled `P`. Score each data point in `P` using an acquisition function (e.g., prediction entropy for uncertainty sampling).

- Step C - Query Selection: Select the top `B` (the budget) data points from `P` with the highest scores according to the acquisition function.

- Step D - Oracle Labeling: Send the selected `B` data points to a human expert (the "oracle") for labeling.

- Step E - Dataset Update: Remove the newly labeled `B` data points from `P` and add them to `Tr`.
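Steps A to E can be sketched end to end on synthetic data. The example below is a toy under stated assumptions: a nearest-centroid classifier stands in for the CNN models named above, the oracle is simulated by revealing labels that were held back from the pool, and the validation set is omitted for brevity. All function and variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic two-class data; pool labels stay hidden until the simulated oracle reveals them.
X = np.vstack([rng.normal(-1.0, 1.0, (200, 2)), rng.normal(1.0, 1.0, (200, 2))])
y = np.repeat([0, 1], 200)
order = rng.permutation(len(X))
X, y = X[order], y[order]

X_te, y_te = X[:100], y[:100]                      # fixed test set (Te)
X_rest, y_rest = X[100:], y[100:]
init = np.concatenate([np.flatnonzero(y_rest == c)[:10] for c in (0, 1)])
in_pool = np.ones(len(X_rest), dtype=bool)
in_pool[init] = False
X_tr, y_tr = X_rest[init], y_rest[init]            # initial training set (Tr), 10 per class
X_pool, y_pool = X_rest[in_pool], y_rest[in_pool]  # pool (P)

def fit_centroids(X, y):
    """Toy model: one centroid per class."""
    return np.stack([X[y == c].mean(axis=0) for c in (0, 1)])

def predict_proba(centroids, X):
    """Softmax over negative distances to the class centroids."""
    dist = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
    logits = -dist
    logits -= logits.max(axis=1, keepdims=True)
    p = np.exp(logits)
    return p / p.sum(axis=1, keepdims=True)

def entropy(p, eps=1e-12):
    return -(p * np.log(p + eps)).sum(axis=1)

B = 10          # budget per iteration
accuracy = []   # test accuracy of the model trained in each round
for _ in range(5):
    model = fit_centroids(X_tr, y_tr)                    # Step A: train on Tr
    scores = entropy(predict_proba(model, X_pool))       # Step B: score the pool
    query = np.argsort(scores)[-B:]                      # Step C: top-B by entropy
    new_labels = y_pool[query]                           # Step D: simulated oracle
    X_tr = np.vstack([X_tr, X_pool[query]])              # Step E: move batch from P to Tr
    y_tr = np.concatenate([y_tr, new_labels])
    keep = np.ones(len(X_pool), dtype=bool)
    keep[query] = False
    X_pool, y_pool = X_pool[keep], y_pool[keep]
    accuracy.append(float((predict_proba(model, X_te).argmax(axis=1) == y_te).mean()))
```

In a real project Step D is where the cost lies, so the loop would pause there until the expert returns labels, and a stopping criterion would replace the fixed five rounds.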

The workflow for this iterative process is illustrated below.

The Scientist's Toolkit: Research Reagent Solutions

The table below details key components for building an active learning system.

| Item / Component | Function in the Active Learning Experiment |
|---|---|
| Initial Labeled Dataset (`Tr`) | A small, often random, sample of labeled data used to bootstrap the initial model. Its quality is critical for the first query cycle. |
| Unlabeled Data Pool (`P`) | The large reservoir of unlabeled data from which the most informative samples are selected for expert annotation. |
| Human Expert (Oracle) | A domain specialist (e.g., a pathologist, drug discovery scientist) responsible for providing accurate labels for the queried samples. This is often the most costly resource. |
| Acquisition Function | The algorithm or "query strategy" that quantifies the informativeness of each unlabeled sample (e.g., using uncertainty, diversity metrics). It is the core of the selection logic [3] [8]. |
| Base Model Architecture | The underlying machine learning model (e.g., CNN, Transformer) that is iteratively retrained. Choices include task-specific models like YOLOv8 for detection or InceptionV3 for classification [11]. |
| Stopping Criterion | A pre-defined rule to halt the iterative process, preventing unnecessary labeling. This can be a performance target on `Va` or a total labeling budget. |

Troubleshooting Guides and FAQs

This technical support resource addresses common challenges researchers face when implementing active learning (AL) loops to reduce computational costs in scientific domains like materials science and drug development.

Frequently Asked Questions

Q1: My AutoML model performance plateaus or even degrades after several AL iterations. What could be causing this, and how can I address it?

Model degradation often stems from sampling bias or a shift in the model's hypothesis space. As your labeled set grows, the informative value of newly selected samples decreases.

- Recommended Action: Implement hybrid sampling strategies that balance uncertainty with diversity, such as the RD-GS (Diversity-hybrid) method, which has been shown to outperform geometry-only heuristics, especially in early acquisition phases [4]. Furthermore, monitor the type of models AutoML selects in each iteration. A strategy effective for a tree-based model may not be for a neural network.

Q2: With a limited annotation budget, which AL strategy will give me the best model performance fastest?

Uncertainty-driven strategies are particularly effective early in the AL process when data is scarce.

- Recommended Action: For regression tasks, start with strategies like LCMD or Tree-based-R, which have been benchmarked to show clear outperformance early in the acquisition process by selecting more informative samples [4]. Consider a decreasing-budget strategy that allocates more resources to initial iterations to build a robust core dataset quickly [11].

Q3: How do I efficiently manage the high and variable cost of expert annotation in AL workflows?

A fixed budget per iteration may not be optimal when expert time is costly and limited.

- Recommended Action: Adopt a decreasing-budget-based strategy (\(S_{DB}\)). This approach optimizes budget allocation by encouraging annotators to focus more effort in initial iterations, which improves model performance faster and reduces the specialist's workload in subsequent rounds [11].

Q4: How can I ensure my AL strategy remains effective when my AutoML surrogate model changes type (e.g., from a linear regressor to a neural network)?

This "model drift" is a key challenge when using AL with AutoML. The sampling strategy must be robust to changes in the hypothesis space.

- Recommended Action: Prefer acquisition functions that are less dependent on the specific internal mechanics of a single model class. Diversity-based and hybrid methods (like RD-GS) have shown greater robustness in this dynamic environment compared to some uncertainty methods tailored to a specific model family [4].

Q5: What is the minimum viable initial labeled dataset size to start an AL loop?

While the exact size is project-dependent, the principle is to start with a very small but statistically representative set.

- Recommended Action: In benchmark studies, the process often begins with a small set of samples (\(n_{init}\)) randomly sampled from the unlabeled dataset to create the initial labeled dataset \(L\) [4]. The key is to ensure the initial set is representative of the problem space. A common practice is to start with a number just large enough to train a simple baseline model.

Benchmarking Active Learning Strategies for Computational Cost Reduction

The table below summarizes the performance of various AL strategies within an AutoML framework for small-sample regression, a common scenario in materials science and drug development. The data shows that strategy choice is crucial for data efficiency, especially with limited budgets [4].

| Strategy Category | Example Strategies | Key Principle | Performance in Early Stages (Data-Scarce) | Performance with Larger Labeled Sets | Best Use Case |
|---|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Selects samples where the model's prediction is most uncertain. | Clearly outperforms baseline and other heuristics [4]. | Convergence with other methods; diminishing returns. | Maximizing initial performance gains; fast error reduction. |
| Diversity-Hybrid | RD-GS | Combines uncertainty with a measure of data diversity. | Outperforms geometry-only heuristics [4]. | Convergence with other methods. | Preventing sampling bias; building a representative dataset. |
| Geometry-Only | GSx, EGAL | Selects samples to cover the feature space geometry. | Underperforms compared to uncertainty and hybrid methods [4]. | Convergence with other methods. | Ensuring broad data coverage when uncertainty is unreliable. |
| Baseline | Random-Sampling | Selects samples randomly from the unlabeled pool. | Lower model accuracy compared to informed strategies [4]. | Serves as a convergence point for other methods. | Establishing a performance baseline; control experiments. |

Experimental Protocol: Benchmarking AL Strategies with AutoML

This protocol details the methodology for evaluating AL strategies within an AutoML pipeline for a regression task, as used in comprehensive benchmarks [4].

1. Problem Setup and Data Preparation

- Objective: Iteratively select the most informative samples from an unlabeled pool \(U = \{x_i\}_{i=l+1}^{n}\) to expand a small initial labeled set \(L = \{(x_i, y_i)\}_{i=1}^{l}\), minimizing the total number of samples required for a performant model.

- Initialization: Randomly sample \(n_{init}\) instances from \(U\) to create the initial labeled set \(L\).

- Data Split: Partition the entire dataset into training (including the initial \(L\) and subsequently selected samples) and a fixed test set in an 80:20 ratio. Validation within the AutoML workflow is performed using 5-fold cross-validation.

2. Active Learning Loop

The iterative process, which can be run for dozens of rounds, is as follows:

- Step 1 - Model Training: Fit an AutoML model on the current labeled dataset \(L\). The AutoML system automatically searches and optimizes across model families (e.g., linear models, tree-based ensembles, neural networks) and their hyperparameters.

- Step 2 - Sample Selection: Using the trained model, score all instances in the unlabeled pool \(U\) with an acquisition function \(f(M_i, P)\). The acquisition function implements the AL strategy (e.g., uncertainty, diversity).

- Step 3 - Annotation & Update: Select the top-scoring sample \(x^*\) (or a batch \(B\)), acquire its label \(y^*\) (in benchmarks, simulated by revealing the known label that was held back from the pool), and update the datasets: \(L = L \cup \{(x^*, y^*)\}\), \(U = U \setminus \{x^*\}\).

- Step 4 - Evaluation: Record the model's performance (e.g., MAE, \(R^2\)) on the fixed test set.

- Stopping Criterion: Repeat Steps 1-4 until a predefined budget is exhausted or \(U\) is empty.

3. Strategy Comparison

- Compare the performance trajectories (e.g., test MAE vs. number of labeled samples) of all AL strategies against a Random-Sampling baseline.

- The effectiveness is primarily judged by the rate of performance improvement in the early, data-scarce phase of the loop.
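The benchmarking protocol can be sketched on a toy regression problem. To stay self-contained, this example swaps in a cubic ridge regressor for the AutoML model and uses the prediction variance of a bootstrap committee as the uncertainty score. These are stand-ins chosen for illustration, not the LCMD or Tree-based-R strategies from the benchmark [4], and the synthetic data and all names are assumptions.

```python
import numpy as np

def features(x):
    """Cubic polynomial features for a 1-D input."""
    return np.column_stack([np.ones_like(x), x, x**2, x**3])

def fit_ridge(Phi, y, lam=1e-3):
    """Closed-form ridge regression weights."""
    return np.linalg.solve(Phi.T @ Phi + lam * np.eye(Phi.shape[1]), Phi.T @ y)

def committee_predict(Phi_l, y_l, Phi_q, rng, n_members=10):
    """Predictions of models fit on bootstrap resamples of the labeled set."""
    preds = []
    for _ in range(n_members):
        idx = rng.integers(0, len(y_l), len(y_l))
        preds.append(Phi_q @ fit_ridge(Phi_l[idx], y_l[idx]))
    return np.array(preds)

def run(strategy, seed=0, n_init=5, n_rounds=20):
    """Return the test MAE trajectory for one strategy."""
    rng = np.random.default_rng(seed)
    x = rng.uniform(-3, 3, 250)
    y = np.sin(x) + rng.normal(0, 0.1, 250)
    Phi = features(x)
    test = np.arange(200, 250)            # fixed 20% test set
    labeled = list(range(n_init))         # initial labeled set L (inputs are i.i.d. random)
    pool = list(range(n_init, 200))       # unlabeled pool U
    mae = []
    for _ in range(n_rounds):
        w = fit_ridge(Phi[labeled], y[labeled])                      # train on L
        mae.append(float(np.abs(Phi[test] @ w - y[test]).mean()))    # evaluate on test set
        if strategy == "uncertainty":
            var = committee_predict(Phi[labeled], y[labeled], Phi[pool], rng).var(axis=0)
            pick = int(np.argmax(var))                               # most uncertain sample
        else:
            pick = int(rng.integers(len(pool)))                      # random baseline
        labeled.append(pool.pop(pick))                               # reveal label, update L and U
    return mae

mae_uncertainty = run("uncertainty")
mae_random = run("random")
```

Plotting `mae_uncertainty` and `mae_random` against the number of labeled samples gives the performance trajectories described above. A single seed is not conclusive, so averaging over several seeds is advisable before drawing conclusions.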

Workflow Visualization: The Active Learning Loop with AutoML

The following diagram illustrates the iterative pool-based active learning workflow integrated with an AutoML system.

Research Reagent Solutions: Essential Components for an AL Experiment

The table below lists the key "research reagents" or computational components required to set up and run a robust AL experiment.

| Component / Solution | Function / Description | Exemplars / Notes |
|---|---|---|
| Base Model Architectures | Core learning algorithms that AutoML can optimize. Provides the predictive function and uncertainty estimates. | For classification: MobileNetV3, InceptionV3 [11]. For object detection: YOLOv8, DETR, Faster R-CNN [11]. |
| Acquisition Functions | The core "strategy" that scores and selects samples from the unlabeled pool. | Uncertainty (LCMD, Tree-based-R), Diversity (GSx), Hybrid (RD-GS) [4]. |
| AutoML Framework | Automates the selection and hyperparameter tuning of base models, reducing manual effort and bias. | Frameworks that can dynamically switch between model families (e.g., from linear models to gradient boosting) within the AL loop [4]. |
| Annotation Oracle | The source of ground-truth labels for selected samples; often a domain expert or a high-fidelity simulation. | In medical applications, this is a specialist (e.g., a pathologist). The cost of this component is a primary target for reduction [11]. |
| Budget Management Strategy | Defines how the annotation budget is allocated across AL iterations. | Constant budget per iteration; Decreasing-budget-based strategy (\(S_{DB}\)) for optimized resource allocation [11]. |

Quantifying Cost Reduction: Key Benchmarks and Data

Extensive research demonstrates that active learning (AL) can significantly reduce the resources required for machine learning projects. The following tables summarize quantified reductions in labeling effort and computational overhead achieved by various AL strategies.

Table 1: Projected Reductions in Labeling Effort from Active Learning

| Domain / Task Type | AL Strategy | Reduction in Labeling Effort | Performance Achieved |
|---|---|---|---|
| General Classification Tasks [13] | Uncertainty Sampling | 60% less data to reach target performance | 90% of final model performance using only 40% of labeled data |
| Named Entity Recognition (NER) [13] | Hybrid (Diversity & Uncertainty) | 50% fewer labeled sentences required | Target performance with half the original data volume |
| Benchmark Datasets [13] | Various (e.g., modAL, Cleanlab) | 30% to 70% less labeling effort | Varies by domain and task complexity |
| Materials Science Regression [4] | Uncertainty-driven (LCMD, Tree-based-R) & Hybrid (RD-GS) | Significant early-stage efficiency | Outperformed random sampling early in the acquisition process |

Table 2: Reduction in Computational and Experimental Overhead

| Application Domain | AL Strategy / Framework | Computational/Experimental Savings |
|---|---|---|
| Alloy Design (Experimental) [4] | Uncertainty-driven AL | Reduced experimental campaigns by >60% |
| Machine-Learned Potentials [14] | PAL (Parallel Active Learning) | Substantial speed-ups via asynchronous parallelization on CPU/GPU |
| First-Principles Databases [4] | Query-by-Committee | 70-95% savings in computational resources; 90% data reduction for some tasks |
| Ternary Phase-Diagram Regression [4] | Not Specified | State-of-the-art accuracy using only 30% of typically required data |

Experimental Protocols for Quantifying AL Value

To reliably reproduce the cost-saving benefits of active learning, researchers should adhere to structured experimental protocols. The following methodologies are drawn from the cited research.

This protocol is designed for materials science regression tasks where data acquisition is costly.

Initial Dataset Partitioning:

- Begin with a dataset split into an unlabeled pool U and a small, initially labeled set L.

- Partition the entire dataset into an 80% training pool and a held-out 20% test set. The initial L is a small subset of the training pool.

Initial Sampling:

- Randomly select n_init samples from U to form the initial labeled dataset L.

Iterative Active Learning Cycle:

- Model Training & Validation: Fit an Automated Machine Learning (AutoML) model on L. Use 5-fold cross-validation within the AutoML workflow for robust validation and hyperparameter tuning.

- Sample Selection: Use an AL query strategy (e.g., uncertainty, diversity) to select the most informative sample(s) x* from the unlabeled pool U.

- Oracle Labeling: Obtain the true label y* for the selected sample(s) x* (simulating a costly experiment or expert annotation).

- Dataset Update: Expand the labeled set, L = L ∪ {(x*, y*)}, and remove x* from U.

- Performance Evaluation: Test the updated model on the held-out 20% test set. Track metrics like Mean Absolute Error (MAE) and Coefficient of Determination (R²).

Stopping Criterion:

- Continue the cycle until the model performance on the test set plateaus or a predefined labeling budget is exhausted.
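The cycle above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the benchmark's implementation: the AutoML model is replaced by a toy 1-nearest-neighbour regressor, the acquisition score is the distance to the nearest labeled point (a crude stand-in for predictive uncertainty), and all function names (`run_active_learning`, `fit`, `acquisition`, `evaluate`) are hypothetical.

```python
import math
import random


def run_active_learning(pool, oracle, fit, acquisition, evaluate,
                        n_init=5, budget=25, seed=0):
    """Pool-based AL loop; returns (labeled set L, remaining pool U, learning curve)."""
    rng = random.Random(seed)
    unlabeled = list(pool)
    rng.shuffle(unlabeled)
    # Initial sampling: n_init random points form L, the rest stay in U.
    labeled = [(x, oracle(x)) for x in unlabeled[:n_init]]
    unlabeled = unlabeled[n_init:]
    history = []
    while len(labeled) < budget and unlabeled:
        model = fit(labeled)                                  # train on L
        x_star = max(unlabeled, key=lambda x: acquisition(model, labeled, x))
        labeled.append((x_star, oracle(x_star)))              # oracle labeling
        unlabeled.remove(x_star)                              # remove x* from U
        history.append((len(labeled), evaluate(fit(labeled))))  # test-set metric
    return labeled, unlabeled, history


# Toy stand-ins (assumptions for illustration only).
def oracle(x):
    return math.sin(x)  # plays the role of a costly experiment


def fit(labeled):
    snapshot = list(labeled)  # freeze L at training time
    return lambda x: min(snapshot, key=lambda pair: abs(pair[0] - x))[1]


def acquisition(model, labeled, x):
    # Distance to the nearest labeled point, used as an uncertainty proxy.
    return min(abs(x - xl) for xl, _ in labeled)


all_x = [6.0 * i / 249 for i in range(250)]
random.Random(1).shuffle(all_x)
test_x, pool = all_x[:50], all_x[50:]  # 20% held-out test set, 80% pool


def evaluate(model):
    return sum(abs(model(x) - oracle(x)) for x in test_x) / len(test_x)  # MAE


labeled, unlabeled, history = run_active_learning(
    pool, oracle, fit, acquisition, evaluate)
```

`history` holds `(number of labels, test MAE)` pairs, which is the learning curve used to compare strategies. Swapping in a different `acquisition` function changes the query strategy without touching the loop.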

This protocol outlines the workflow for the PAL framework, which reduces computational overhead via parallelization.

Kernel Initialization: Deploy the five core kernels of PAL concurrently using Message Passing Interface (MPI):

- Generator Kernel: Runs exploration algorithms (e.g., Molecular Dynamics steps).

- Prediction Kernel: Hosts the machine learning model for inference.

- Oracle Kernel: Performs high-fidelity calculations (e.g., Density Functional Theory).

- Training Kernel: Retrains the ML model with newly labeled data.

- Controller Kernel: Manages communication and data flow between all kernels.

Parallel Workflow Execution:

- The Generator produces new data instances (e.g., atomic geometries).

- The Controller sends these to the Prediction Kernel and receives ML predictions (e.g., energies, forces).

- Based on uncertainty quantification from the Controller, the Generator decides whether to trust the prediction or request a label from the Oracle.

- The Oracle Kernel calculates high-fidelity labels for selected data.

- Newly labeled data is sent to the Training Kernel for model updates.

- The updated model weights are periodically replicated in the Prediction Kernel.

Shutdown:

- The workflow terminates when a user-defined criterion is met (e.g., energy convergence, step count). Any kernel can signal the Controller to initiate shutdown.
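The routing decision at the heart of this workflow (trust the ML prediction or request an Oracle label, without blocking exploration) can be illustrated with a small sketch. This is not PAL's API: it uses Python threads instead of MPI, a three-model committee as the uncertainty estimate, and hypothetical names (`committee_predict`, `explore`, `threshold`) chosen only for illustration.

```python
import statistics
from concurrent.futures import ThreadPoolExecutor


def committee_predict(models, x):
    """Mean and spread of the committee's predictions for one candidate."""
    preds = [m(x) for m in models]
    return statistics.mean(preds), statistics.pstdev(preds)


def oracle(x):
    """Stand-in for an expensive high-fidelity calculation."""
    return x * x


def explore(candidates, models, threshold, max_workers=2):
    """Trust confident predictions; send uncertain candidates to the oracle."""
    trusted, pending = [], []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for x in candidates:
            mean, std = committee_predict(models, x)
            if std > threshold:
                # Non-blocking: exploration continues while the oracle works.
                pending.append((x, pool.submit(oracle, x)))
            else:
                trusted.append((x, mean))
        labeled = [(x, future.result()) for x, future in pending]
    return trusted, labeled


# Toy committee whose members disagree more as |x| grows.
models = [
    lambda x: x * x,
    lambda x: x * x + 0.1 * x ** 3,
    lambda x: x * x - 0.1 * x ** 3,
]
trusted, labeled = explore([0.0, 0.5, 1.0, 2.0], models, threshold=0.05)
```

In a real deployment the newly labeled pairs would be forwarded to a training process, and the refreshed weights copied back to the prediction process at intervals.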

Troubleshooting Guides and FAQs

FAQ 1: Why is my active learning model not converging, or why is its performance plateauing too early?

A. This common issue can stem from several factors in the AL loop:

- Check Your Sampling Strategy: A pure uncertainty sampling strategy can overfocus on ambiguous outliers or a specific region of the input space, leading to redundant samples and poor generalization [13]. Consider switching to a hybrid strategy that combines uncertainty with diversity sampling (e.g., using clustering in the feature space) to ensure broader coverage [13].

- Verify the Oracle's Labels: Noisy or incorrect labels can corrupt the training process. Implement label quality control measures. One method is to treat the annotator as a model and select samples that are prone to mislabeling for verification, adaptively allocating resources between labeling new data and checking existing labels [15].

- Re-evaluate the Stopping Criterion: You may have reached a performance plateau given the model's capacity and the chosen AL strategy. Plot the performance gain per labeled batch and stop when the improvement rate shows a steep drop or flatlines [13].

- Assess Model Capacity: The underlying model might be too simple to learn the complexity of the problem, even with informative samples. Within an AutoML framework, ensure the optimizer is allowed to explore more complex model families as the dataset grows [4].

FAQ 2: How can I reduce the significant computational time of my active learning cycle?

A. The sequential nature of traditional AL can be a major bottleneck. Implement parallelization:

- Adopt a Parallel AL Framework: Use frameworks like PAL (Parallel Active Learning) that decouple data generation, labeling, model training, and inference into separate kernels that run simultaneously. This eliminates idle time for computational resources [14].

- Asynchronous Operations: In PAL, while the Oracle is labeling a batch of data, the Generator can continue exploring using the current Prediction model, and the Training kernel can retrain the model on previously labeled data. This asynchronous parallelization has been shown to achieve substantial speed-ups on CPU and GPU hardware [14].

- Batch Active Learning: Instead of querying one sample at a time, use batch methods that select multiple diverse and informative samples in each iteration. Methods like BatchBALD are designed to reduce the probability of selecting redundant samples within the same batch, making the labeling process more efficient [15].

A. This is a typical pitfall of uncertainty-based sampling.

- Switch to a Hybrid Strategy: Incorporate diversity-based sampling. This strategy selects data points that are diverse from each other to avoid labeling redundant patterns. This can be measured using clustering, distance metrics, or embedding-based similarity [13].

- Use Query-by-Committee (QBC): Train multiple models (a committee) and select samples where the models disagree the most. This inherently introduces diversity as different models may be uncertain about different types of data [3] [13].

- Implement a Core-Set Approach: This method aims to select a set of labeled samples such that the entire data distribution is covered. It chooses unlabeled samples so as to minimize the maximum distance from any point in the pool to its nearest labeled point, ensuring broad coverage [15].
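A common way to approximate the core-set objective is the greedy k-center heuristic: repeatedly pick the pool point that is farthest from everything already labeled or selected. The sketch below assumes Euclidean distance on raw feature tuples and a non-empty labeled set; the function name `k_center_greedy` is illustrative.

```python
import math


def k_center_greedy(pool, labeled, k):
    """Return indices of k pool points chosen by farthest-point selection."""
    # Distance from each pool point to its nearest labeled point.
    min_dist = [min(math.dist(p, l) for l in labeled) for p in pool]
    chosen = []
    for _ in range(min(k, len(pool))):
        idx = max(range(len(pool)), key=min_dist.__getitem__)
        chosen.append(idx)
        # The new point now counts as covered territory.
        for j, p in enumerate(pool):
            min_dist[j] = min(min_dist[j], math.dist(p, pool[idx]))
    return chosen


pool = [(0.0, 0.0), (1.0, 0.0), (10.0, 0.0), (10.0, 1.0), (5.0, 5.0)]
picked = k_center_greedy(pool, labeled=[(0.0, 0.0)], k=2)
```

In practice the distances are usually computed on learned embeddings rather than raw inputs, and the quadratic cost can become a concern for very large pools.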

Workflow Visualization: Parallel Active Learning (PAL)

The following diagram illustrates the parallelized workflow of the PAL framework, which is designed to minimize computational overhead by executing key tasks simultaneously [14].

The Scientist's Toolkit: Essential Research Reagents & Software

This table lists key computational tools and frameworks used in advanced active learning research, enabling the replication of the quantified benefits.

Table 3: Key Research Reagents & Software Solutions

| Tool/Reagent Name | Type | Primary Function in Research |

|---|---|---|

| PAL (Parallel Active Learning) [14] | Software Library | An automated, modular Python library using MPI for parallel AL workflows. Dramatically reduces computational overhead by running data generation, labeling, and model training simultaneously. |

| RAFFLE [16] | Software Package | Accelerates interface structure prediction in materials science. Uses active learning to guide atom placement by refining structural descriptors, efficiently exploring vast configuration spaces. |

| modAL [13] | Python Library | A lightweight, modular toolkit for building active learning workflows, integrated with scikit-learn. Facilitates rapid prototyping of various query strategies (uncertainty, committee, etc.). |

| Cleanlab [13] | Python Library | Helps identify mislabeled data and uncertain samples within datasets. Used for data quality control and improving the reliability of the data entering the AL loop. |

| AutoML Frameworks [4] | Methodology/Software | Automates the selection and hyperparameter tuning of machine learning models. Crucial for AL benchmarks to ensure performance gains are from sample selection, not manual model optimization. |

| Message Passing Interface (MPI) [14] | Programming Protocol | Enables parallel communication between different processes in high-performance computing environments. The backbone of the PAL framework for achieving scalability on clusters. |

Core Strategies and Niche Applications: Implementing AL in Drug Discovery and Biomedicine

Frequently Asked Questions & Troubleshooting Guides

This technical support resource addresses common challenges researchers face when implementing active learning (AL) strategies to reduce computational and experimental costs in scientific domains, particularly drug discovery.

FAQ: How do I choose between uncertainty, diversity, and QBC strategies for my specific problem?

Answer: The choice depends on your primary goal, data characteristics, and available computational resources. The table below provides a comparative overview to guide your selection.

Table 1: Guide to Selecting an Active Learning Query Strategy

| Strategy | Primary Mechanism | Best-Suited For | Key Advantages | Common Pitfalls |

|---|---|---|---|---|

| Uncertainty Sampling | Queries samples where the model's prediction confidence is lowest [17]. | - Rapidly refining a model's decision boundary [18]- Tasks with high annotation cost per sample | - Simple to implement and computationally efficient [18]- Directly targets model uncertainty | - Can select outliers [18]- Ignores data distribution, potentially causing imbalance [17] |

| Diversity Sampling | Queries a set of samples that broadly cover the data distribution [19]. | - Initial model training phases [18]- Exploring the input space efficiently | - Mitigates model bias- Good for discovering new, rare cases | - May select many irrelevant samples [18]- Can be computationally intensive for large pools |

| Query-by-Committee (QBC) | Queries samples that cause the most disagreement among an ensemble of models [18]. | - Scenarios where model architecture or parameters are uncertain- Complex, high-dimensional spaces | - Robustly identifies informative samples- Less sensitive to the bias of a single model | - High computational cost from training multiple models [18]- Complexity in managing the ensemble |

For many real-world applications, hybrid approaches that combine these strategies often yield the best results by balancing exploration and exploitation [18]. Furthermore, the optimal strategy can change during the AL lifecycle; for example, starting with a diversity-focused approach and later incorporating more uncertainty-based sampling can be effective.
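For reference, the scoring rules behind uncertainty sampling and QBC are short enough to write out. The sketch below shows three standard uncertainty scores computed from a class-probability vector, and vote entropy computed from the hard votes of a committee. Higher scores mean "more worth querying"; the function names are illustrative.

```python
import math
from collections import Counter


def least_confidence(probs):
    """1 minus the probability of the most likely class."""
    return 1.0 - max(probs)


def margin_score(probs):
    """1 minus the gap between the two most likely classes."""
    top, second = sorted(probs, reverse=True)[:2]
    return 1.0 - (top - second)


def entropy(probs):
    """Shannon entropy (nats) of the predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def vote_entropy(votes):
    """Disagreement among committee members' predicted labels (QBC)."""
    n = len(votes)
    return -sum((c / n) * math.log(c / n) for c in Counter(votes).values())


confident = [0.9, 0.05, 0.05]
ambiguous = [0.4, 0.35, 0.25]
```

A hybrid strategy can then combine one of these scores with a diversity term, for example by clustering the pool and taking the top-scoring sample from each cluster.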

FAQ: How can I prevent sampling bias and class imbalance in my selected batches?

Problem: The model consistently selects samples from only a few dominant classes, leading to poor performance on under-represented classes.

Solutions:

- Integrate Category Information: Enhance traditional uncertainty sampling by incorporating class-specific data. One method uses a pre-trained model (e.g., VGG16) to extract deep features from unlabeled samples and computes their cosine similarity to a labeled calibration set for each class. This category information is then fused with the uncertainty score to ensure balanced sampling across all classes [17].

- Use Diversity-Based Strategies: Employ methods that explicitly consider the "label distribution morphology." This involves selecting samples that are not only representative of the feature space but also have label ranking relationships that are diverse from the already labeled data. This ensures the selected batch covers a wide variety of label patterns [19].

- Dynamic Strategy Switching: For complex tasks like clinical Named Entity Recognition (NER), a dynamic strategy that begins with a diversity-based approach (to build a broad base of knowledge) and later switches to an uncertainty-based method (to refine difficult cases) has been shown to require up to 22.5% fewer annotations for high-difficulty entities compared to static strategies [20].
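To make the category-enhanced idea concrete, the following sketch fuses an uncertainty score with cosine similarity to per-class prototype vectors and then fills the batch class by class. The exact fusion rule of the cited method is not given here, so the weighted sum (`alpha`), the round-robin selection, and the names `balanced_select` and `prototypes` are assumptions made purely for illustration.

```python
import math


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def balanced_select(features, uncertainties, prototypes, batch_size, alpha=0.5):
    """Pick a batch that spreads queries across the likely classes."""
    per_class = {name: [] for name in prototypes}
    for idx, (feat, unc) in enumerate(zip(features, uncertainties)):
        sims = {name: cosine(feat, proto) for name, proto in prototypes.items()}
        best = max(sims, key=sims.get)  # most similar class prototype
        score = alpha * unc + (1.0 - alpha) * sims[best]
        per_class[best].append((score, idx))
    for ranked in per_class.values():
        ranked.sort(reverse=True)
    selected = []
    # Round-robin over classes so that no single class dominates the batch.
    while len(selected) < batch_size and any(per_class.values()):
        for name in sorted(per_class):
            if per_class[name] and len(selected) < batch_size:
                selected.append(per_class[name].pop(0)[1])
    return selected


features = [(1.0, 0.1), (1.0, 0.2), (0.9, 0.0), (0.1, 1.0)]
uncertainties = [0.9, 0.8, 0.7, 0.2]
prototypes = {"A": (1.0, 0.0), "B": (0.0, 1.0)}
batch = balanced_select(features, uncertainties, prototypes, batch_size=2)
```

Ranking by uncertainty alone would pick samples 0 and 1, both close to class A. The balanced variant returns one sample near each class prototype instead.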

Table 2: Summary of Solutions for Mitigating Sampling Bias

| Solution | Underlying Principle | Reported Outcome | Applicable Domains |

|---|---|---|---|

| Category-Enhanced Uncertainty | Combines prediction uncertainty with semantic category similarity [17]. | Balanced dataset representation and reduced long-tail effect. | Computer Vision, Multi-class Classification |

| Diversity with Label Morphology | Selects samples based on diverse feature-space coverage and label-ranking relationships [19]. | Prevents information overlap in selected batches, improving model generalization. | Label Distribution Learning, Multi-label Tasks |

| Dynamic CLC/CNBSE Strategy | Switches from diversity-based to uncertainty-based sampling during the AL process [20]. | 20.4%–22.5% reduction in annotation edits needed for high target effectiveness. | Clinical NER, Text Mining |

The following workflow diagram illustrates how to integrate these strategies into a robust active learning pipeline:

FAQ: What are the most effective strategies for batch-mode active learning in drug discovery?

Problem: Selecting a single, optimal sample at a time is impractical for wet-lab experiments. Batch selection is necessary, but selecting a batch of highly similar samples wastes resources.

Solutions:

- Maximize Joint Entropy: This method selects a batch of samples that collectively have the highest information content. It works by computing a covariance matrix between model predictions for unlabeled samples and then iteratively selecting a sub-matrix (the batch) with the maximal determinant. This approach inherently balances high individual uncertainty (variance) with low correlation between samples (diversity) [21].

- Leverage Fisher Information: Methods like BAIT use a probabilistic framework to select the batch of samples that would maximize the Fisher information, thus optimally reducing the uncertainty of the model parameters [21].

- Consider Batch Size Dynamics: In drug synergy discovery, smaller batch sizes have been observed to yield a higher synergy discovery ratio. Implementing dynamic tuning of the exploration-exploitation trade-off can further enhance performance in these scenarios [22].
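A short worked equation shows why a maximal determinant balances uncertainty against diversity. Assuming (for illustration only) that the model's predictions on a candidate batch $B$ are jointly Gaussian with covariance $C_B$, the joint entropy is

$$
H(\mathbf{y}_B) = \frac{|B|}{2}\log(2\pi e) + \frac{1}{2}\log\det C_B .
$$

For a batch of two samples with predictive variances $\sigma_1^2$, $\sigma_2^2$ and correlation $\rho$,

$$
\det C_B = \sigma_1^2\,\sigma_2^2\,(1 - \rho^2),
$$

so the determinant grows with each sample's individual variance and shrinks toward zero as the two predictions become strongly correlated ($|\rho| \to 1$). Two near-duplicate molecules therefore contribute little joint information, even if each is highly uncertain on its own.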

Table 3: Batch-Mode Active Learning Methods for Drug Discovery

| Method | Key Mechanism | Supporting Evidence | Compatibility |

|---|---|---|---|

| COVDROP & COVLAP [21] | Maximizes the joint entropy (log-determinant) of the epistemic covariance matrix of batch predictions. | Outperformed random selection and other batch methods on ADMET and affinity datasets, leading to significant potential savings in experiments. | DeepChem, other deep learning libraries. |

| BAIT [21] | Uses Fisher information to optimally select a batch that minimizes the uncertainty of the model's parameters. | Effective in theoretical benchmarks and image classification; performance can vary for molecular data. | Deep learning models. |

| Small Batch Sizes with Dynamic Tuning [22] | Uses smaller sequential batches and dynamically adjusts the sampling strategy between exploration and exploitation. | Can discover 60% of synergistic drug pairs by exploring only 10% of the combinatorial space. | Drug synergy screening platforms. |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools and Datasets for Active Learning Experiments

| Tool / Resource Name | Type | Function in Active Learning | Relevant Context |

|---|---|---|---|

| modAL [18] | Python Library | Provides a flexible, scikit-learn compatible framework for rapidly implementing and testing various query strategies. | General-purpose AL prototyping. |

| DeepChem [21] | Deep Learning Library | Offers implementations of molecular featurization and graph neural networks, compatible with custom AL methods like COVDROP. | Drug discovery, molecular property prediction. |

| BioClinicalBERT [20] | Pre-trained Model | Serves as a powerful foundation model for NLP tasks, fine-tuned for clinical NER to reduce required labeled data. | Clinical text mining, Named Entity Recognition. |

| VGG16 [17] | Pre-trained Model | Used for efficient feature extraction without retraining, enabling category-information integration in sampling strategies. | Computer vision, image-based AL. |

| i2b2 2009, n2c2 2018 [20] | Benchmark Dataset | Gold-standard datasets for evaluating the performance and cost-reduction of AL strategies in clinical NLP. | Method validation in healthcare NLP. |

| Oneil, ALMANAC [22] | Benchmark Dataset | Curated datasets for synergistic drug combination screening, used to simulate and benchmark AL campaigns. | Drug synergy discovery. |

Experimental Protocol: Benchmarking Active Learning Strategies

This protocol provides a standardized methodology for comparing the performance and cost-efficiency of different AL query strategies, based on established practices in the literature [20] [4] [21].

1. Objective: To quantitatively evaluate and compare the data efficiency of Uncertainty Sampling, Diversity Sampling, and Query-by-Committee strategies on a specific dataset.

2. Materials and Software:

- Dataset: A labeled dataset relevant to your field (e.g., Oneil for drug synergy [22], i2b2 for clinical NER [20]).

- Model: A chosen machine learning model (e.g., BioClinicalBERT for NER [20], Graph Neural Network for molecules [21]).

- AL Framework: A library such as modAL [18] or a custom implementation.

- Computing Environment: Standard workstation or HPC cluster.

3. Methodology:

- Step 1 - Initialization: Randomly sample a small subset (e.g., 1-5%) from the full dataset to serve as the initial labeled training set ( L_0 ). The remainder constitutes the unlabeled pool ( U ) [4].

- Step 2 - Active Learning Loop: For a predetermined number of cycles or until the unlabeled pool is exhausted:

- Step 2.1 - Model Training: Train the model on the current labeled set ( L_i ).

- Step 2.2 - Query Selection: Apply each AL strategy (Uncertainty, Diversity, QBC) to select a batch of ( B ) samples from the unlabeled pool ( U ). For a fair comparison, the batch size ( B ) should be consistent across strategies [21].

- Step 2.3 - Simulated Annotation: "Label" the selected batch by revealing its ground-truth labels, which were withheld from the model while the samples sat in the unlabeled pool.

- Step 2.4 - Update Sets: Remove the newly labeled batch from ( U ) and add it to ( L_i ) to create ( L_{i+1} ).

- Step 3 - Performance Evaluation: After each AL cycle, evaluate the model's performance on a fixed, held-out test set. Record metrics such as Accuracy, F1-score, RMSE, or PR-AUC [20] [22].

4. Data Analysis and Visualization:

- Plot the model performance (y-axis) against the cumulative number of labeled samples (x-axis) for each strategy.

- The strategy whose curve rises fastest and to the highest level is the most data-efficient.

- Calculate the percentage of data savings: the reduction in labeled data required to reach a target performance level compared to random sampling or a baseline.
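The data-savings calculation can be made explicit as follows. The sketch assumes each learning curve is a list of `(number of labeled samples, score)` pairs sorted by sample count, and that higher scores are better; the function names and the example curves are hypothetical, not results from the cited studies.

```python
def labels_to_target(curve, target):
    """Smallest number of labels at which the curve reaches the target score."""
    for n_labels, score in curve:
        if score >= target:
            return n_labels
    return None  # target never reached


def data_savings(strategy_curve, baseline_curve, target):
    """Percentage of labels saved relative to the baseline at a given target."""
    n_strategy = labels_to_target(strategy_curve, target)
    n_baseline = labels_to_target(baseline_curve, target)
    if n_strategy is None or n_baseline is None:
        return None
    return 100.0 * (n_baseline - n_strategy) / n_baseline


# Made-up curves for illustration.
random_curve = [(100, 0.60), (200, 0.70), (300, 0.80), (400, 0.85), (500, 0.90)]
al_curve = [(100, 0.65), (200, 0.85), (300, 0.90), (400, 0.91), (500, 0.92)]
savings = data_savings(al_curve, random_curve, target=0.85)
```

For error metrics such as RMSE, where lower is better, the comparison in `labels_to_target` would need to be flipped. Averaging over several random seeds gives a more trustworthy estimate than a single run.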

The following diagram visualizes this iterative benchmarking workflow:

Troubleshooting Common Batch Active Learning Issues

FAQ: Why does my model performance improve slowly in early active learning cycles?

This is often due to inadequate initial sampling or a poor acquisition function. The initial, small labeled dataset must be representative of the broader data distribution. If it fails to capture key regions of the feature space, the model starts with a poor understanding, and subsequent queries are less effective [4].

- Solution: Ensure your initial pool is sufficiently diverse. For regression tasks, consider using space-filling designs as your initial sampling strategy to build a robust foundational model before active learning cycles begin [23].
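One widely used space-filling design is Latin hypercube sampling, which places exactly one point in each of n equal-width strata along every input dimension. The sketch below generates such a design on the unit cube using only the standard library; the function name and its parameters are illustrative.

```python
import random


def latin_hypercube(n_samples, n_dims, seed=0):
    """Return n_samples points in [0, 1)^n_dims, one per stratum per dimension."""
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        # One random point inside each stratum [k/n, (k+1)/n) ...
        col = [(k + rng.random()) / n_samples for k in range(n_samples)]
        # ... then shuffle so strata are paired randomly across dimensions.
        rng.shuffle(col)
        columns.append(col)
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


design = latin_hypercube(n_samples=8, n_dims=3, seed=0)
```

When the candidates come from a fixed pool rather than a continuous space, each design point can be rescaled to the feature ranges and mapped to its nearest unlabeled pool sample.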

FAQ: My selected batches lack diversity and are highly redundant. How can I fix this?

This is a classic challenge where selecting samples based solely on individual uncertainty leads to choosing many similar, high-uncertainty points from the same region. Batch active learning must explicitly manage the trade-off between uncertainty and diversity [24] [25].

- Solution: Implement batch selection methods that maximize the joint entropy of the selected set. Techniques like COVDROP use the determinant of the epistemic covariance matrix to form batches that are both informative and diverse, thereby rejecting highly correlated samples [24].

FAQ: How do I manage annotation resources effectively across multiple experimental cycles?

A common pitfall is using a constant budget (selecting the same number of samples each cycle), which may not be optimal. Annotator effort can be better optimized by front-loading the more intensive work.

- Solution: Adopt a decreasing-budget strategy. Start with a larger batch size in the initial cycles to rapidly improve the model, then gradually reduce the batch size in subsequent iterations. This optimizes resource allocation and annotator focus [11].

FAQ: The surrogate model in my AutoML-driven active learning is unstable. What could be wrong?

In an AutoML framework, the underlying surrogate model (e.g., linear regressor, tree-based ensemble, neural network) can change between cycles. An acquisition function that works well for one type of model may not be robust to these changes [4].

- Solution: Prefer uncertainty-driven or diversity-hybrid acquisition functions that have demonstrated robustness in benchmark studies, even when the model family evolves. Strategies like LCMD and RD-GS have been shown to perform well early in the AutoML-active learning process [4].

Performance Comparison of Batch Active Learning Strategies

The table below summarizes the performance of various strategies across different domains, as reported in benchmark studies.

| Strategy | Core Principle | Application Domain | Reported Performance |

|---|---|---|---|

| COVDROP/COVLAP [24] | Maximizes joint entropy via covariance matrix determinant | Drug Discovery (ADMET, Affinity) | Greatly improves on existing methods, leading to significant savings in experiments needed. |

| Decreasing-Budget [11] | Reduces batch size over iterations | Medical Image Annotation | Optimizes annotator effort and resource allocation, improving model performance with reduced effort. |

| MMD-based [25] | Minimizes distribution difference between labeled/unlabeled data | General Classification (UCI datasets), Biomedical Imaging | Selects representative samples; achieves superior/comparable performance efficiently. |

| Uncertainty (LCMD) [4] | Queries points with highest predictive uncertainty | Materials Science (via AutoML) | Clearly outperforms baseline and geometry-based heuristics early in the acquisition process. |

| Diversity-Hybrid (RD-GS) [4] | Combines representativeness and diversity | Materials Science (via AutoML) | Outperforms baseline early on; gap narrows as labeled set grows. |

Detailed Experimental Protocols

Protocol 1: Evaluating Batch Strategies for Drug Property Prediction

This protocol is adapted from the study "Deep Batch Active Learning for Drug Discovery" [24].

- Data Preparation: Obtain public ADMET/affinity datasets (e.g., aqueous solubility, lipophilicity). Split the data into an initial small labeled set (L), a large unlabeled pool (U), and a fixed test set.

- Model Setup: Use a graph neural network as the base model. For methods like COVDROP, enable Monte Carlo dropout at inference to estimate uncertainty.

- Active Learning Loop:

- Step 1: Train the model on the current labeled set L.

- Step 2: Using the trained model, compute the covariance matrix C over the predictions for all samples in the unlabeled pool U.

- Step 3: Select a batch of B samples from U such that the submatrix C_B of C has the maximal determinant. This can be done with a greedy algorithm.

- Step 4: "Label" the selected batch (in a retrospective study, the labels are retrieved from the hold-out set) and add them to L.

- Step 5: Repeat steps 1-4 for a fixed number of cycles or until the unlabeled pool is exhausted.

- Evaluation: Track the Root Mean Square Error (RMSE) on the fixed test set after each cycle to compare the convergence speed of different batch selection strategies (e.g., vs. random, k-means, BAIT).
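Steps 2 and 3 can be sketched without any deep learning library. The code below is an illustrative approximation, not the published COVDROP implementation: it estimates a covariance matrix from repeated stochastic predictions (such as Monte Carlo dropout passes, here passed in as plain lists) and then greedily builds a batch with a large determinant by always adding the candidate with the largest remaining conditional variance (a pivoted Cholesky scheme). The function names are hypothetical.

```python
import math


def sample_covariance(draws):
    """Covariance across samples from T stochastic prediction vectors."""
    t, n = len(draws), len(draws[0])
    means = [sum(d[i] for d in draws) / t for i in range(n)]
    return [[sum((d[i] - means[i]) * (d[j] - means[j]) for d in draws) / (t - 1)
             for j in range(n)] for i in range(n)]


def greedy_max_det(cov, batch_size, eps=1e-12):
    """Greedy selection of indices whose covariance submatrix has a large determinant."""
    n = len(cov)
    cond_var = [cov[i][i] for i in range(n)]  # variance left after conditioning
    factors = [[] for _ in range(n)]          # rows of the partial Cholesky factor
    selected = []
    while len(selected) < min(batch_size, n):
        j = max((i for i in range(n) if i not in selected),
                key=lambda i: cond_var[i])
        if cond_var[j] <= eps:
            break  # every remaining candidate is redundant
        pivot = math.sqrt(cond_var[j])
        row_j = list(factors[j])
        for i in range(n):
            if i == j:
                continue
            e = (cov[i][j] - sum(a * b for a, b in zip(factors[i], row_j))) / pivot
            factors[i].append(e)
            cond_var[i] -= e * e  # remove what sample j already explains
        factors[j].append(pivot)
        cond_var[j] = 0.0
        selected.append(j)
    return selected


# Samples 0 and 1 are uncertain but strongly correlated; sample 2 is independent.
cov = [[1.1, 0.95, 0.0],
       [0.95, 1.0, 0.0],
       [0.0, 0.0, 0.5]]
batch = greedy_max_det(cov, batch_size=2)
```

Ranking by variance alone would choose samples 0 and 1. The determinant-based rule skips sample 1 because most of its uncertainty is already explained by sample 0. Greedy selection is a heuristic and does not guarantee the globally maximal determinant.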

Protocol 2: Benchmarking Strategies with AutoML for Materials Science

This protocol is based on the benchmark study from Scientific Reports [4].

- Framework Setup: Configure an AutoML system (e.g., based on TPOT, Auto-sklearn) to automatically search over model families (linear models, tree ensembles, neural networks) and their hyperparameters.

- Data Setup: Use materials formulation datasets. Create an initial labeled set L with n_init samples randomly drawn from the full dataset. The rest constitutes the unlabeled pool U.

- Integrated AL Loop:

- Step 1: Run the AutoML optimizer on the current labeled set L to find the best-performing model and pipeline.

- Step 2: Use this optimized model to score all samples in U with a chosen acquisition function (e.g., predictive uncertainty for LCMD).

- Step 3: Select the top-scoring sample (or batch) from U, retrieve its label, and add it to L.

- Step 4: Repeat steps 1-3 for multiple rounds.

- Evaluation: Monitor performance metrics like Mean Absolute Error (MAE) and R² on a held-out test set. The key comparison is the learning curve, showing how quickly each AL strategy improves model performance with the number of samples acquired.
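The two evaluation metrics named above are simple to compute directly, which is useful when the AutoML system changes model families between rounds and a framework-independent check is wanted. A minimal sketch with illustrative function names:

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute deviation between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


y_true = [1.0, 2.0, 3.0]
perfect = [1.0, 2.0, 3.0]
mean_only = [2.0, 2.0, 2.0]  # always predicts the mean of y_true
```

A model that always predicts the mean scores R² = 0, so values near zero early in the acquisition process indicate that the surrogate has not yet learned useful structure. Note that `r_squared` is undefined when all targets are identical.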

Workflow and Strategy Selection Diagrams

Diagram Title: Batch Active Learning Core Cycle

Diagram Title: Strategy Selection Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Batch Active Learning Experiments |

|---|---|

| DeepChem [24] | An open-source toolkit that facilitates the use of deep learning in drug discovery and related fields. It can serve as a platform for implementing active learning methods like COVDROP. |

| Monte Carlo Dropout [24] [4] | A technique used to estimate the predictive uncertainty of a neural network. It is a key component for uncertainty-based acquisition functions in deep learning settings. |

| AutoML Framework [4] | Software like TPOT or Auto-sklearn that automates the process of model selection and hyperparameter tuning. Essential for benchmarking AL strategies when the surrogate model is not fixed. |

| Space-Filling Design [23] | An initial experimental design (e.g., Latin Hypercube) used to select the first batch of labeled data. It ensures the initial model has a good broad understanding of the input space. |

| Fisher Information Matrix [24] [25] | A mathematical tool used in some batch active learning methods (e.g., BAIT) to select data points that are expected to maximally reduce the uncertainty of model parameters. |

| Maximum Mean Discrepancy (MMD) [25] | A kernel-based statistic that measures the difference between two probability distributions. It can be used as an objective for selecting batches that best represent the unlabeled data. |

This guide provides technical support for researchers implementing the decreasing-budget active learning strategy. This approach strategically allocates a larger annotation budget to initial learning cycles, reducing it in subsequent iterations to optimize computational resources and expert annotator effort, which is crucial in domains like drug development [11].

Troubleshooting Guides

Problem 1: Model Performance Stagnates After Initial Iterations

Q: After strong initial gains, my model's performance stopped improving significantly in later iterations, even though the budget was still decreasing. What could be the cause?

- Potential Cause 1: Non-Representative Initial Sample. The initial large batch of data may not have been sufficiently diverse or representative of the entire data pool.

- Solution: Incorporate a diversity-based sampling method into your acquisition function. Instead of selecting samples based solely on model uncertainty, also ensure selected batches represent different data clusters. Using Sentence-BERT to create semantic clusters of data for selection has proven effective in clinical NER tasks [20].

- Solution: Validate the cluster structure or data distribution of your initial selected batch against the entire unlabeled pool to ensure coverage.

- Potential Cause 2: Overfitting to Early Data. The model may be over-optimizing for the specific patterns in the first large batch of data it received.

- Solution: Implement a more robust validation strategy. Use a held-out test set that is completely separate from the active learning pool to monitor for overfitting at every iteration [20].

- Solution: Introduce regularization techniques (e.g., dropout, weight decay) during model training to prevent it from becoming over-confident on the initial data patterns [3].

Problem 2: Determining the Optimal Starting Budget and Decay Rate

Q: How do I decide the size of the initial budget and how quickly it should decrease? I have a fixed total annotation budget.

- Potential Cause: Lack of a Pilot Study. The data distribution and learning complexity are unknown.

- Solution: Conduct a small pilot study. Randomly sample a small subset of your data (e.g., 2-5% of your total budget), annotate it, and train a preliminary model. The learning curve from this pilot can help estimate the initial budget size needed to get the model to a reasonable performance level [3].

- Solution: Use a conservative decay rate if uncertain. A common approach is to reduce the budget by a fixed percentage (e.g., 10-20%) each iteration or to use a logarithmic decay schedule. A slower decay is more forgiving if the problem is complex [11].
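A fixed-percentage decay under a fixed total budget can be turned into concrete per-round batch sizes as sketched below. The geometric schedule and the rounding rule (leftover samples go to the earliest rounds) are assumptions chosen for illustration; the cited work does not prescribe this exact formula, and the function name is hypothetical.

```python
def decreasing_budget_schedule(total_budget, n_rounds, decay=0.15):
    """Split total_budget into n_rounds batches that shrink by `decay` per round."""
    weights = [(1.0 - decay) ** i for i in range(n_rounds)]
    scale = total_budget / sum(weights)
    batches = [int(w * scale) for w in weights]  # round down first
    # Hand the samples lost to rounding back to the earliest rounds.
    leftover = total_budget - sum(batches)
    for i in range(leftover):
        batches[i % n_rounds] += 1
    return batches


schedule = decreasing_budget_schedule(total_budget=1000, n_rounds=6, decay=0.2)
```

A smaller `decay` flattens the schedule toward a constant budget, which is the more forgiving choice when the pilot study suggests the task is hard.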

Problem 3: High Computational Cost of Iterative Training

Q: Re-training my deep learning model from scratch at every active learning iteration is computationally expensive and time-consuming. How can I reduce this cost?

- Potential Cause: Inefficient Re-training Strategy. The standard pool-based active learning protocol often involves full model re-training after each new batch of data is added [11].

- Solution: Utilize transfer learning with a pre-trained model. Start with a model pre-trained on a large, general dataset (e.g., BioClinicalBERT for clinical text, or a model pre-trained on ImageNet for medical images). Fine-tuning this model in each active learning iteration is significantly faster and requires less data than training from scratch [20].

- Solution: Investigate incremental training or warm-starting. Instead of initializing weights randomly for each re-training, use the weights from the previous iteration as a starting point. This can drastically reduce the number of epochs needed for convergence [3].
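The effect of warm-starting can be seen even on a toy problem. The sketch below fits a one-dimensional linear model by full-batch gradient descent, first on an initial labeled set and then on an enlarged set, once from zero weights and once from the previous round's weights. The model, data, learning rate, and tolerance are all illustrative assumptions. Deep networks behave less predictably, and warm-started networks can sometimes generalize worse than freshly trained ones, so this is worth validating on your own task.

```python
import math


def fit_linear_gd(data, w=0.0, b=0.0, lr=0.5, tol=1e-6, max_steps=100_000):
    """Gradient descent on mean squared error; returns (w, b, steps_used)."""
    n = len(data)
    for step in range(max_steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        if math.hypot(grad_w, grad_b) < tol:
            return w, b, step
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b, max_steps


def target(x):
    return 2.0 * x + 1.0 + 0.1 * math.sin(5.0 * x)


# Round 1: initial labeled set. Round 2: the same set plus a new batch.
round_1 = [(i / 20, target(i / 20)) for i in range(16)]
round_2 = round_1 + [(i / 20, target(i / 20)) for i in range(16, 21)]

w1, b1, _ = fit_linear_gd(round_1)
_, _, cold_steps = fit_linear_gd(round_2)              # restart from zeros
_, _, warm_steps = fit_linear_gd(round_2, w=w1, b=b1)  # reuse previous weights
```

Comparing `warm_steps` with `cold_steps` shows how many update steps the previous round's weights save on the enlarged dataset.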

Frequently Asked Questions (FAQs)

Q: How does the decreasing-budget strategy differ from standard active learning?

A: Standard active learning often uses a constant budget per iteration, meaning the annotator's workload remains similar each round. The decreasing-budget strategy starts with a larger budget in the initial iterations and systematically reduces it over time. This focuses human effort where it has the most impact, when the model is learning the most fundamental patterns, and optimizes resource allocation as the model matures [11].

Q: Can this strategy be combined with different query strategies (e.g., uncertainty sampling)?

A: Yes, the decreasing-budget strategy is orthogonal to the choice of acquisition (query) function. It defines how many samples to query in each round, not which ones. It can be effectively combined with uncertainty sampling (selecting the most uncertain samples), diversity-based methods (selecting a representative set), or dynamic strategies that switch between them [20] [11]. For example, the CNBSE strategy dynamically combines diversity and uncertainty sampling and can be paired with a decreasing budget [20].

Q: What is a key metric to track when using this strategy in a machine-assisted annotation setup?

A: In a machine-assisted context where humans review model pre-annotations, a key metric is the number of edits or corrections the human annotator must make to achieve a target label quality (e.g., 98% F1 score). A successful decreasing-budget implementation should show this number dropping significantly over iterations, proving that the model is learning accurately and reducing the human workload [20].

Q: When should I stop the active learning process with a decreasing budget? A: Define a clear stopping criterion before you start. Stop when any of the following conditions is met:

- The model's performance on a held-out validation set reaches a pre-defined target threshold [3].

- The performance improvement between two consecutive iterations falls below a minimum delta (e.g., less than 0.5% F1 score improvement) [3].

- The total cumulative annotation budget has been exhausted [26].
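The three conditions above could be checked after every iteration with a small helper like the one below. This is a sketch under assumptions: the function name, the default thresholds (a 0.98 target and a 0.005 minimum improvement, mirroring the examples in the text), and the returned reason strings are all illustrative.

```python
def should_stop(val_scores, labels_used, target=0.98, min_delta=0.005,
                total_budget=None):
    """Return a reason to stop, or None to keep going.

    val_scores: validation scores (e.g. F1) per completed iteration, oldest first.
    labels_used: cumulative number of annotations spent so far.
    """
    if val_scores and val_scores[-1] >= target:
        return "target reached"
    if len(val_scores) >= 2 and val_scores[-1] - val_scores[-2] < min_delta:
        return "plateau"
    if total_budget is not None and labels_used >= total_budget:
        return "budget exhausted"
    return None


print(should_stop([0.80, 0.86], labels_used=300, total_budget=1000))
print(should_stop([0.90, 0.902], labels_used=300, total_budget=1000))
```

A single noisy iteration can trigger the plateau check, so in practice you may prefer to require several consecutive small improvements before stopping.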

Experimental Protocols & Data

The table below synthesizes parameters from successful implementations of active learning in medical and clinical domains, which can serve as a benchmark for your experiments.

| Experimental Parameter | Reported Values / Methods | Application Context |
|---|---|---|
| Base Model Architectures | BioClinicalBERT [20], MobileNetV3, InceptionV3, YOLOv8 [11] | Clinical NER, Medical Image Classification & Object Detection |
| Initial Training Set Size | Small, randomly selected subset of the entire pool (e.g., 1-5%) [11] | Various (Computer Vision) |
| Acquisition Functions | Least Confidence (LC) [20], Cluster-based (CLUSTER) [20], Dynamic (CNBSE) [20] | Clinical NER |
| Budget Decay Schedule | Linear or non-linear decrease from a high initial budget [11] | Medical Image Analysis |
| Stopping Criterion | Performance plateau (minimal improvement between iterations) [3] [11] | Various |
| Primary Performance Metric | F1 Score, Accuracy, mAP [20] [11] | Clinical NER, Object Detection |
| Annotation Cost Metric | Number of human edits to reach target effectiveness [20] | Machine-assisted Clinical NER |

Detailed Methodology for a Classification Experiment

This protocol outlines the steps for implementing a decreasing-budget strategy for an image classification task, based on established research [11].

Initial Setup:

- Dataset: Split your data into a fixed test set (`Te`), a validation set (`Va`), and a large unlabeled pool (`P`).

- Initial Model: Randomly select a very small initial training set (`Tr_0`) from `P`. Train your initial model (`M_0`) on `Tr_0`.

- Budgeting: Define your total annotation budget (`B_total`) and an initial budget (`B_0`). Define a decay rule (e.g., `B_i = B_0 * (1 - decay_rate)^i`).
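As a small worked example of the budgeting step, the sketch below expands the exponential decay rule into a list of per-iteration budgets that never exceeds the total. The function name, the `min_batch` floor, and the truncation to whole labels are assumptions added for illustration; the cited work may round or schedule differently.

```python
def budget_schedule(b0, decay_rate, b_total, min_batch=1):
    """Per-iteration budgets B_i = B_0 * (1 - decay_rate)**i, capped by b_total.

    min_batch must be at least 1 so that every round spends something.
    """
    budgets, spent, i = [], 0, 0
    while spent < b_total:
        b_i = max(min_batch, int(b0 * (1 - decay_rate) ** i))  # truncate to whole labels
        b_i = min(b_i, b_total - spent)  # never exceed the total budget
        budgets.append(b_i)
        spent += b_i
        i += 1
    return budgets


schedule = budget_schedule(b0=200, decay_rate=0.5, b_total=400)
print(schedule, "total:", sum(schedule))
```

A smaller `decay_rate` spreads the same total over more evenly sized rounds, while a larger one front-loads the annotation effort.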

Active Learning Loop (Iterative Process):

- Step 1: Score Pool. Use the current model (`M_i`) and your chosen acquisition function (e.g., uncertainty sampling) to score all instances in the unlabeled pool `P`.

- Step 2: Select and Annotate. Select the top `B_i` scoring instances from `P`. Annotate these instances (or correct model pre-annotations) and add them to your training set to create `Tr_{i+1}`.

- Step 3: Re-train Model. Re-train (or fine-tune) the model using the updated training set `Tr_{i+1}` to create a new model `M_{i+1}`.

- Step 4: Validate and Decide. Evaluate `M_{i+1}` on the fixed validation set (`Va`) and test set (`Te`). Apply your stopping criterion.

- Step 5: Update Budget. Apply the decay rule to calculate the budget `B_{i+1}` for the next iteration.

- Repeat Steps 1-5 until the stopping criterion is met.
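Steps 1-5 can be sketched end to end on a toy problem. Everything below is an illustrative assumption rather than the cited study's setup: the "model" is a single decision threshold on 1-D data, the `oracle` function stands in for the human annotator, uncertainty is distance to the threshold, and only the validation set is used (a final check on `Te` is omitted for brevity).

```python
import random


def oracle(x):
    """Stand-in for the human annotator (hypothetical labeling rule)."""
    return int(x > 0.62)


def train(labeled):
    """Toy model: a decision threshold midway between the two classes."""
    zeros = [x for x, y in labeled if y == 0]
    ones = [x for x, y in labeled if y == 1]
    if zeros and ones:
        return (max(zeros) + min(ones)) / 2
    return 0.5  # uninformed default until both classes have been seen


def accuracy(threshold, data):
    return sum(int(x > threshold) == y for x, y in data) / len(data)


def run(pool, tr0, val, b0=16, decay_rate=0.5, b_total=40, target=0.99):
    pool, labeled = list(pool), list(tr0)
    model = train(labeled)  # M_0 trained on Tr_0
    budget, spent, history = float(b0), 0, []
    while pool and spent < b_total:
        # Step 1: score the pool; points nearest the threshold are most uncertain.
        pool.sort(key=lambda x: abs(x - model))
        # Step 2: select the top B_i instances and annotate them.
        b_i = min(int(budget), b_total - spent, len(pool))
        batch, pool = pool[:b_i], pool[b_i:]
        labeled += [(x, oracle(x)) for x in batch]
        spent += b_i
        # Step 3: re-train on the enlarged training set.
        model = train(labeled)
        # Step 4: validate and apply the stopping criterion.
        acc = accuracy(model, val)
        history.append((b_i, acc))
        if acc >= target:
            break
        # Step 5: decay the budget, never dropping below one label per round.
        budget = max(1.0, budget * (1 - decay_rate))
    return model, history


rng = random.Random(0)
pool = [i / 200 for i in range(200)]
val = [(i / 97, oracle(i / 97)) for i in range(97)]
tr0 = [(x, oracle(x)) for x in rng.sample(pool, 4)]
seen = {x for x, _ in tr0}
model, history = run([x for x in pool if x not in seen], tr0, val)
for b_i, acc in history:
    print(f"labels this round: {b_i:2d}  validation accuracy: {acc:.3f}")
```

If the random initial set happens to contain only one class, this toy model stays uninformed for a while, which is one reason a purely uncertainty-driven strategy is often paired with some diversity in early rounds.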

Workflow Visualization

The following diagram illustrates the iterative workflow of the decreasing-budget active learning strategy.

The Scientist's Toolkit: Research Reagent Solutions

The table below lists essential computational "reagents" and tools for constructing a decreasing-budget active learning experiment in a scientific context.

| Tool / Resource | Function / Description | Exemplar in Research |
|---|---|---|
| Pre-trained Models | Provides a robust feature extractor or base model to fine-tune, reducing data needs and training time. | BioClinicalBERT for clinical text [20]; MobileNetV3, InceptionV3 for images [11]. |
| Acquisition Functions | The algorithm that quantifies the "informativeness" of an unlabeled sample to prioritize annotation. | Least Confidence (LC), Margin Sampling [3]; Cluster-based (CLUSTER), Dynamic (CNBSE) [20]. |
| Annotation Management Platform | Software to manage the iterative labeling process, often integrating pre-annotation and active learning. | Platforms that allow pathologists to create annotations and employ AL for ranking images [11]. |
| Specialist Annotators | Domain experts (e.g., pathologists, pharmacologists) required for high-quality, reliable labels. | Pathologists annotating medical images for tumor detection [11] or clinical concepts in text [20]. |
| Validation & Test Sets | Curated, fixed datasets used for unbiased evaluation of model performance and guiding stopping decisions. | Held-out splits from i2b2, n2c2, or MADE corpora for clinical NER [20]. |

Troubleshooting Guides and FAQs

This technical support resource addresses common challenges in integrating active learning (AL) with virtual screening and ADMET prediction. The guidance is framed within a research thesis focused on computational cost-reduction strategies, helping you optimize resources and improve model performance with limited data.

Virtual Screening & Molecular Docking

FAQ: What are the first steps for preparing a compound library for virtual screening?

A robust virtual screening pipeline begins with careful preparation of both the target protein and the ligand library.

- Procedure: For the protein target (e.g., BACE1, PDB ID: 6ej3), obtain the 3D structure from the RCSB database. Prepare it by refining hydrogen bonds, adding missing hydrogen atoms, and removing water molecules. Finally, optimize the structure using a force field like OPLS 2005 for energy minimization [27].

- Procedure: For ligand libraries (e.g., from the ZINC database), filter compounds based on Lipinski's Rule of Five to ensure drug-likeness. Use tools like Schrödinger's LigPrep to generate 3D structures, minimize energy, and create multiple tautomers and conformers [27].
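The drug-likeness filter itself is simple once descriptors are available. The sketch below applies the standard Rule of Five thresholds (molecular weight at most 500 Da, logP at most 5, at most 5 hydrogen-bond donors, at most 10 hydrogen-bond acceptors) to precomputed values. The `Descriptors` class, the compound names, and their values are hypothetical; in a real pipeline the descriptors would come from a cheminformatics toolkit, and whether one violation is tolerated is a choice the cited protocol does not specify.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    mol_weight: float  # molecular weight in Da
    logp: float        # octanol-water partition coefficient
    h_donors: int      # hydrogen-bond donors
    h_acceptors: int   # hydrogen-bond acceptors


def lipinski_violations(d):
    """Count how many of the four Rule of Five criteria are violated."""
    return sum([
        d.mol_weight > 500,
        d.logp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])


def passes_rule_of_five(d, max_violations=0):
    """Strict by default; set max_violations=1 to tolerate a single violation."""
    return lipinski_violations(d) <= max_violations


# Hypothetical library entries with made-up descriptor values.
library = {
    "cmpd_A": Descriptors(350.0, 2.5, 2, 5),
    "cmpd_B": Descriptors(650.0, 2.5, 2, 5),
    "cmpd_C": Descriptors(480.0, 5.8, 6, 9),
}
kept = [name for name, d in library.items() if passes_rule_of_five(d)]
print(kept)
```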

FAQ: How do I validate my molecular docking protocol to ensure results are reliable?

Validation is critical to ensure your docking setup can reproduce known binding modes.

- Solution: Perform a re-docking experiment. Take the co-crystallized ligand (e.g., inhibitor B7T from the 6ej3 BACE1 structure) out of the protein's binding site and re-dock it. A reliable protocol will re-dock the ligand into a nearly identical position, with a Root Mean Square Deviation (RMSD) value of less than 2 Å compared to its original position. An RMSD of 0.77 Å, for example, indicates excellent reproducibility [27].
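For reference, the RMSD check can be computed directly from two sets of atom coordinates. This is a minimal sketch that assumes both poses list the same heavy atoms in the same order and are already in the receptor's coordinate frame, so no superposition is applied; it also ignores symmetry-equivalent atoms, which dedicated docking tools usually handle. The function name and the toy coordinates are illustrative.

```python
import math


def rmsd(coords_a, coords_b):
    """RMSD between two equal-length lists of (x, y, z) atom coordinates.

    The result is in the same length unit as the inputs (e.g. angstroms).
    """
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("poses must contain the same non-zero number of atoms")
    squared = sum(
        (a - b) ** 2
        for p, q in zip(coords_a, coords_b)
        for a, b in zip(p, q)
    )
    return math.sqrt(squared / len(coords_a))


# Toy example: every atom of the re-docked pose is shifted by 1 unit along z.
crystal_pose = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
redocked_pose = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
value = rmsd(crystal_pose, redocked_pose)
print(f"RMSD = {value:.2f}; passes 2 A cutoff: {value < 2.0}")
```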

ADMET Property Prediction

FAQ: My ADMET prediction model performs poorly on new, unseen compounds. What could be wrong?

This is often a data quality or representation issue.

- Solution: Focus on data preprocessing and feature engineering.

- Data Quality: Ensure your dataset is clean and normalized. For imbalanced datasets, use sampling techniques [28].

- Feature Engineering: Move beyond simple 1D molecular fingerprints. Employ multi-view molecular representations that integrate 1D fingerprints, 2D molecular graphs, and 3D geometric information. This provides a more comprehensive description of the molecule, significantly improving model generalizability [29].

- Feature Selection: Use filter, wrapper, or embedded methods to identify the most relevant molecular descriptors and remove redundant features, which can enhance model accuracy [28].
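As one example of a filter method, the sketch below drops descriptors that are nearly constant or almost perfectly correlated with a descriptor that has already been kept. The thresholds, function names, and descriptor columns are assumptions chosen for illustration; wrapper and embedded methods would need a trained model and are not shown.

```python
import math


def variance(col):
    mean = sum(col) / len(col)
    return sum((v - mean) ** 2 for v in col) / len(col)


def pearson(a, b):
    """Pearson correlation; returns 0.0 if either column is constant."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    norm = math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return cov / norm if norm else 0.0


def filter_features(columns, min_var=1e-8, max_corr=0.95):
    """columns: dict mapping descriptor name -> list of values.

    Returns the names kept, in input order.
    """
    kept = []
    for name, col in columns.items():
        if variance(col) <= min_var:
            continue  # near-constant descriptor carries no signal
        if any(abs(pearson(col, columns[k])) > max_corr for k in kept):
            continue  # redundant with a descriptor already kept
        kept.append(name)
    return kept


# Hypothetical descriptor table for four compounds.
columns = {
    "mw": [100.0, 200.0, 300.0, 400.0],
    "mw_x2": [200.0, 400.0, 600.0, 800.0],  # perfectly correlated with mw
    "const": [1.0, 1.0, 1.0, 1.0],          # zero variance
    "logp": [1.0, 3.0, 2.0, 0.5],
}
print(filter_features(columns))
```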

FAQ: Are there specific machine learning architectures that are better for ADMET prediction?

Yes, model architecture plays a key role in prediction accuracy.

- Solution: Consider frameworks that use multi-task learning (MTL) and multi-view fusion. For instance, the MolP-PC framework integrates 1D, 2D, and 3D molecular representations through an attention-gated fusion mechanism. It then uses a multi-task adaptive learning strategy to simultaneously predict multiple ADMET endpoints. This approach has been shown to achieve optimal performance, especially on small-scale datasets, by leveraging shared information across related tasks [29].

Active Learning for Cost Reduction

FAQ: With a limited budget for experimental data, which Active Learning strategy should I use first?

The most effective strategy can depend on the size of your current labeled dataset.

- Solution: Based on benchmark studies, uncertainty-driven and diversity-hybrid strategies are highly effective early on.

- For Initial Stages (Very Little Data): Prioritize uncertainty-based methods (e.g., LCMD, Tree-based-R) or diversity-hybrid methods (e.g., RD-GS). These strategies seek out the most informative or representative samples, providing the highest model performance boost per data point acquired [4].

- As Data Grows: The performance advantage of complex AL strategies diminishes. As your labeled set expands, the difference between various strategies and random sampling narrows, indicating reduced returns on investment from AL [4].

FAQ: How can I structure an AL experiment for a typical drug discovery regression task (e.g., predicting binding affinity)?

A standard pool-based AL framework can be implemented as follows [4]:

- Initialization: Start with a small set of labeled data (L) and a large pool of unlabeled data (U).

- Model Training: Train an initial model (e.g., using an AutoML system) on L.

- Querying: Use an acquisition function (e.g., an uncertainty metric) to score all samples in U and select the most informative one(s), `x*`.

- Labeling: Obtain the target value `y*` for `x*` (e.g., via a lab experiment or computation).

- Expansion: Add the newly labeled sample `(x*, y*)` to L and remove it from U.

- Iteration: Retrain the model on the expanded L and repeat from the Querying step until a stopping criterion (e.g., budget exhaustion) is met.
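The loop above can be sketched for a 1-D regression toy problem. Note the substitution: instead of an AutoML system, this example trains a small committee of least-squares lines on bootstrap resamples of L and uses the variance of their predictions as the uncertainty metric. The committee approach, the function names, and the quadratic `oracle` are all assumptions made so that the example runs with the standard library only.

```python
import random


def fit_line(points):
    """Least-squares line through (x, y) points; returns (slope, intercept)."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points)
    if sxx == 0:
        return 0.0, my  # all x identical: fall back to a constant prediction
    slope = sum((x - mx) * (y - my) for x, y in points) / sxx
    return slope, my - slope * mx


def committee(labeled, n_models, rng):
    """Fit one line per bootstrap resample of the labeled set."""
    return [fit_line([rng.choice(labeled) for _ in labeled]) for _ in range(n_models)]


def disagreement(models, x):
    """Variance of the committee's predictions at x (the uncertainty score)."""
    preds = [a * x + b for a, b in models]
    mean = sum(preds) / len(preds)
    return sum((p - mean) ** 2 for p in preds) / len(preds)


def active_learning(labeled, unlabeled, oracle, n_queries, n_models=10, seed=0):
    rng = random.Random(seed)
    labeled, unlabeled = list(labeled), list(unlabeled)
    for _ in range(n_queries):
        if not unlabeled:
            break
        models = committee(labeled, n_models, rng)                       # train
        x_star = max(unlabeled, key=lambda x: disagreement(models, x))   # query
        y_star = oracle(x_star)                                          # label
        labeled.append((x_star, y_star))                                 # expand L
        unlabeled.remove(x_star)                                         # shrink U
    return labeled, unlabeled


L0 = [(0.0, 0.0), (1.0, 1.0)]
U0 = [0.25, 0.5, 2.0, 3.0]
L1, U1 = active_learning(L0, U0, oracle=lambda x: x * x, n_queries=3)
print("queried:", [x for x, _ in L1[len(L0):]], "remaining:", U1)
```

Here the stopping criterion is simply a fixed number of queries, which corresponds to budget exhaustion.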

Workflow & Integration

FAQ: Our team struggles with managing data between virtual screening, ADMET prediction, and experimental results. Are there tools to help?

Yes, integrated digital environments are designed to address this exact problem.

- Solution: Platforms like CDD Vault provide a collaborative biological and chemical database to securely manage private and external data. These platforms allow teams to intuitively organize chemical structures, biological assay data, and experimental protocols, linking computational and experimental workflows within a single workspace. This integration streamlines data analysis and decision-making from virtual screening to lead optimization [30].

Experimental Protocols & Data

Detailed Methodologies

Protocol: Structure-Based Virtual Screening for Identifying BACE1 Inhibitors [27]

- Protein Preparation:

- Download the BACE1 crystal structure (PDB: 6ej3).

- Use a protein preparation wizard to add hydrogens, assign bond orders, and correct for missing residues.

- Delete all water molecules and optimize the side chains.

- Perform energy minimization using the OPLS 2005 force field.

- Ligand Library Preparation:

- Obtain ~80,000 natural compounds from ZINC.

- Filter using Lipinski's Rule of Five, resulting in ~1,200 compounds.

- Prepare ligands using LigPrep: generate 3D structures, desalt, and generate possible ionization states and tautomers at a target pH of 7.0 ± 2.0.

- Molecular Docking:

- Grid Generation: Define the binding site around the co-crystallized ligand's coordinates.

- Virtual Screening:

- Step 1 (HTVS): Dock the 1,200-compound library using High-Throughput Virtual Screening mode. Select the top 50 hits based on docking score (G-Score).

- Step 2 (SP): Re-dock the top 50 hits using Standard Precision mode. Select the top 7 compounds for further analysis.

- Step 3 (XP): Dock the top 7 hits using Extra Precision mode for refined binding affinity estimation.

- Validation:

- Validate the docking protocol by re-docking the native ligand and calculating the RMSD.
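The three-stage docking cascade in this protocol follows a general funnel pattern: score everything with a cheap method, keep the best, then re-score the survivors with a more expensive method. The sketch below shows that pattern only. The scoring function is a hypothetical placeholder, not a call into any docking software, and the stage sizes (1,200 to 50 to 7) are taken from the protocol above.

```python
def screening_funnel(compounds, stages):
    """Run a multi-stage screen.

    stages: list of (name, score_fn, keep) tuples, applied in order.
    Lower (more negative) scores are treated as better, as with docking scores.
    """
    survivors = list(compounds)
    for name, score_fn, keep in stages:
        survivors = sorted(survivors, key=score_fn)[:keep]
        print(f"{name}: kept {len(survivors)} compounds")
    return survivors


def placeholder_score(compound_id):
    """Hypothetical stand-in for a docking score; not a real scoring function."""
    return -((compound_id * 37) % 101) / 10


library = range(1200)  # compound IDs after the drug-likeness filter
stages = [
    ("HTVS", placeholder_score, 50),
    ("SP", placeholder_score, 7),
    ("XP", placeholder_score, 7),  # final stage refines scores, no further cut
]
hits = screening_funnel(library, stages)
print(hits)
```

In a real cascade each stage would use a different, progressively more accurate scoring mode, so the ranking can change between stages.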

Protocol: Implementing an Active Learning Cycle for a Regression Task [4]

- Data Setup:

- Partition the full dataset into a labeled training set (L), an unlabeled pool (U), and a fixed test set (Te). A common initial split is 5-10% of data in L.

- Active Learning Loop:

- for iteration i = 1 to N do:

- Train Model: Use an AutoML system on the current labeled set L to find the best-performing model.

- Evaluate: Record the model's performance (e.g., MAE, R²) on the test set Te.

- Acquire Samples: If U is not empty, use the chosen AL strategy (e.g., uncertainty sampling) to select the top `B` most informative samples from U. `B` can be a fixed number or follow a decreasing-budget strategy.

- Label: Simulate an expensive experiment by revealing the target values for the selected `B` samples.

- Update: Add the newly labeled `B` samples to L and remove them from U.

- end for

- Analysis:

- Plot the model performance (e.g., MAE) against the number of labeled samples acquired. Compare the learning curves of different AL strategies against a random sampling baseline.
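Alongside the plot, a single number per strategy can make the comparison easier to tabulate. One option, shown here as a sketch, is the trapezoidal area under the learning curve: for an error metric such as MAE, a smaller area means the strategy reached low error with fewer labels. The function name is an assumption, and the curve values are made-up placeholders, not results from the cited benchmark.

```python
def area_under_curve(n_labeled, metric):
    """Trapezoidal area under a learning curve (metric vs. number of labeled samples)."""
    pts = list(zip(n_labeled, metric))
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(pts, pts[1:]))


# Placeholder learning curves (MAE at each labeled-set size); illustrative only.
n_labeled = [50, 100, 150, 200]
curves = {
    "random": [1.00, 0.80, 0.65, 0.55],
    "uncertainty": [1.00, 0.65, 0.55, 0.50],
}
for name, mae in curves.items():
    print(f"{name:12s} area under MAE curve: {area_under_curve(n_labeled, mae):.2f}")
```

Because the area depends on the range of labeled-set sizes, compare strategies only over the same range and ideally averaged over several random seeds.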

Summarized Quantitative Data

Table 1: Performance of Top Docked Ligands Against BACE1 [27]

| Ligand ID | Docking Score (kcal/mol) | Key Interacting Residues |
|---|---|---|
| L2 | -7.626 | ASP32, ASP228, GLY230, ILE226, TYR198 |
| L1 | -7.185 | ASN37, SER35, LEU30, GLN73 |
| L3 | -6.924 | THR329, THR72, ARG128, VAL69 |