Active Learning for Molecular Optimization: Strategies, Applications, and Benchmarking in Drug Discovery

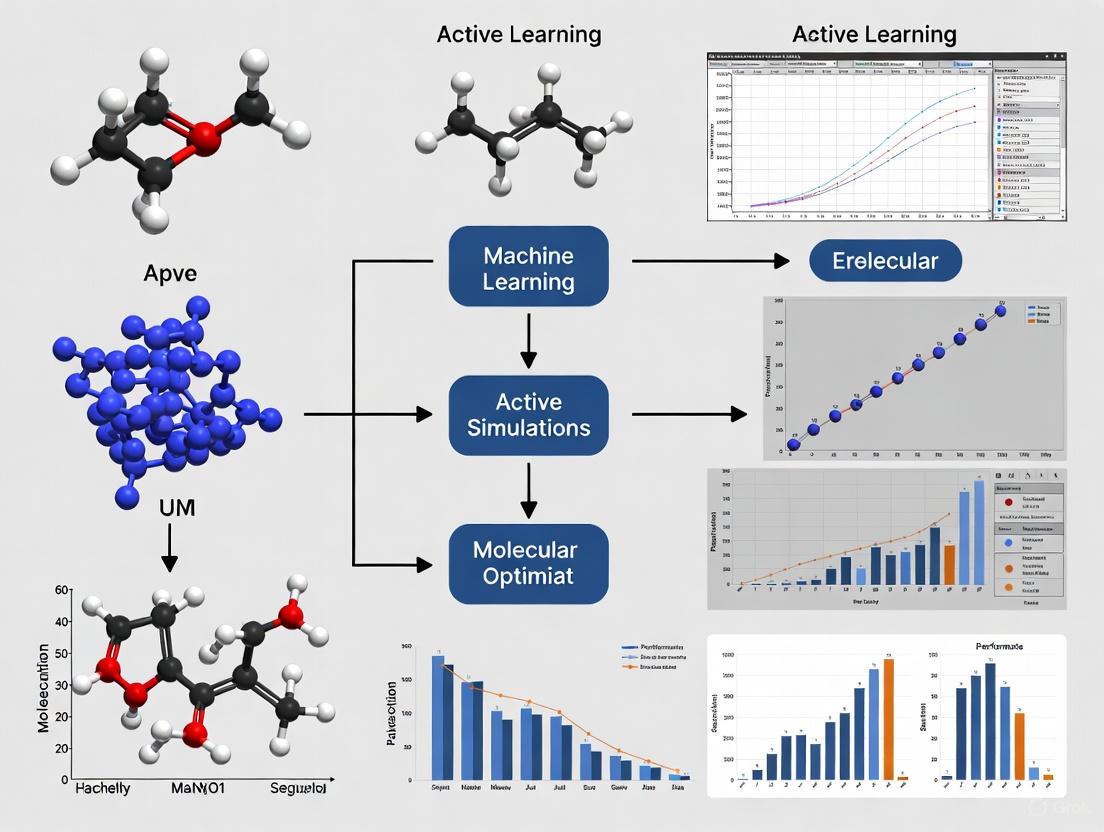

This article provides a comprehensive evaluation of active learning (AL) as a transformative machine learning strategy for accelerating molecular optimization in drug discovery.

Active Learning for Molecular Optimization: Strategies, Applications, and Benchmarking in Drug Discovery

Abstract

This article provides a comprehensive evaluation of active learning (AL) as a transformative machine learning strategy for accelerating molecular optimization in drug discovery. It explores the foundational principles of AL, where algorithms iteratively select the most informative molecules for expensive experimental testing to maximize learning efficiency. The review systematically analyzes diverse methodological approaches, including novel strategies like ActiveDelta and batch selection techniques, and their successful application in optimizing key drug properties such as potency, solubility, and permeability. Critical challenges such as data scarcity, model exploitation leading to limited scaffold diversity, and balancing exploration with exploitation are addressed with practical troubleshooting and optimization strategies. Finally, the article presents rigorous validation through benchmarking studies across numerous biological targets, comparing AL performance against traditional methods and highlighting its significant potential to reduce experimental costs and timelines while identifying more potent and chemically diverse compounds.

Understanding Active Learning: A Foundational Guide for Efficient Molecular Exploration

Defining Active Learning and Its Core Mechanism in Machine Learning

Active learning is a specialized machine learning approach that optimizes data annotation by strategically selecting the most informative data points for labeling, thereby maximizing model performance while minimizing labeling costs [1]. Unlike traditional supervised learning that uses a fixed, pre-labeled dataset, active learning employs an iterative process where the algorithm interactively queries a human annotator to label data with the desired outputs [2] [3]. This human-in-the-loop paradigm is particularly valuable in domains like molecular optimization research where data labeling requires specialized expertise, expensive equipment, or time-consuming experimental procedures [4].

Core Mechanism of Active Learning

The fundamental mechanism of active learning operates through a carefully orchestrated cycle that combines model prediction, strategic sample selection, and human expertise. The core belief is that an algorithm can achieve higher accuracy with fewer training labels if allowed to choose which data to learn from [3].

The Active Learning Loop

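The active learning process follows an iterative cycle that progressively improves model performance [5]: a model is trained on the current labeled set, a query strategy selects the most informative unlabeled samples, an oracle (a human expert, an experiment, or a simulation) labels them, and the newly labeled samples are added to the training set before the model is retrained.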

This continuous feedback loop enables the model to learn systematically from its uncertainties, improving predictive accuracy while strategically expanding the labeled dataset [5]. The process typically continues until the model reaches a performance plateau, achieves target accuracy, or exhausts a predetermined labeling budget [3].

Key Query Strategies

The selection of data points for labeling is governed by query strategies that determine which unlabeled instances would be most valuable for model improvement:

Uncertainty Sampling: The model selects unlabeled samples where it is least confident about its predictions. Common techniques include least-confident sampling, margin sampling (selecting samples with the smallest gap between the two most probable predictions), and entropy sampling (selecting samples with the highest prediction entropy) [5].

Diversity Sampling: This approach selects data points that represent the overall diversity of the dataset, often using clustering methods or core-set approaches to ensure broad coverage of the feature space [5].

Query-by-Committee (QBC): Multiple models form a "committee" through ensemble methods, and the algorithm selects data points where committee members disagree most, indicating high uncertainty [5].

Membership Query Synthesis: Rather than selecting from existing data, the algorithm generates synthetic examples for labeling, though this can be challenging for human annotators to label effectively [3].

Hybrid Approaches: Combine multiple strategies, such as selecting samples that are both uncertain and diverse, to overcome limitations of individual methods [5].
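The three uncertainty measures named above can be computed directly from a classifier's predicted class probabilities. The sketch below assumes a NumPy array `probs` of shape (n_samples, n_classes) from any probabilistic model; the function names and the toy probabilities are illustrative, not taken from the cited sources.

```python
import numpy as np


def least_confident(probs):
    """1 minus the top class probability: higher means less confident."""
    return 1.0 - probs.max(axis=1)


def margin(probs):
    """Gap between the two most probable classes: smaller means more uncertain."""
    top_two = np.sort(probs, axis=1)[:, -2:]
    return top_two[:, 1] - top_two[:, 0]


def entropy(probs, eps=1e-12):
    """Shannon entropy of each predicted distribution: higher means more uncertain."""
    return -(probs * np.log(probs + eps)).sum(axis=1)


def select_top_k(scores, k, higher_is_more_informative=True):
    """Return the indices of the k most informative samples under a given score."""
    order = np.argsort(-scores if higher_is_more_informative else scores, kind="stable")
    return order[:k]


# Hypothetical class probabilities for four unlabeled molecules (3 classes)
probs = np.array([
    [0.90, 0.05, 0.05],  # confident prediction
    [0.40, 0.35, 0.25],  # spread over all classes
    [0.50, 0.49, 0.01],  # near tie between two classes
    [0.36, 0.33, 0.31],  # almost uniform
])

print(select_top_k(least_confident(probs), 2))                          # [3 1]
print(select_top_k(margin(probs), 2, higher_is_more_informative=False))  # [2 3]
print(select_top_k(entropy(probs), 2))                                  # [3 1]
```

In this toy batch, least-confident and entropy sampling both pick the nearly uniform predictions (rows 3 and 1), while margin sampling favors row 2, whose two leading classes are almost tied even though the third class is nearly ruled out.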

Experimental Comparison of Active Learning Strategies

Performance Benchmarking in Materials Science

A comprehensive 2025 benchmark study evaluated 17 active learning strategies combined with Automated Machine Learning (AutoML) for small-sample regression in materials science [4]. The study employed a pool-based active learning framework where algorithms iteratively selected the most informative samples from unlabeled data pools.

Table 1: Performance Comparison of Active Learning Strategies in Materials Science [4]

| Strategy Category | Representative Methods | Early-Stage Performance | Data Efficiency | Key Characteristics |
|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Superior | High | Targets samples with highest prediction uncertainty |
| Diversity-Hybrid | RD-GS | Strong | High | Balances uncertainty with dataset diversity |
| Geometry-Only | GSx, EGAL | Moderate | Medium | Based on data distribution geometry |
| Random Sampling | Baseline | Lower | Low | Serves as comparison baseline |

The benchmark revealed that uncertainty-driven and diversity-hybrid strategies significantly outperformed other approaches early in the acquisition process, demonstrating the importance of strategic sample selection in data-scarce environments typical of molecular optimization research [4]. As the labeled set expanded, performance gaps between strategies narrowed, indicating diminishing returns from active learning under AutoML frameworks.

Drug Design Applications

In a 2025 drug discovery application, researchers developed a generative AI workflow integrating a variational autoencoder (VAE) with nested active learning cycles for optimizing molecules targeting CDK2 and KRAS proteins [6].

Table 2: Active Learning Performance in Drug Design [6]

| Metric | CDK2 Target | KRAS Target |
|---|---|---|
| Generated Molecules | Diverse, drug-like molecules with excellent docking scores | Novel scaffolds distinct from known inhibitors |
| Experimental Validation | 8/9 synthesized molecules showed in vitro activity | 4 molecules with potential activity identified |
| Potency Achievement | 1 molecule with nanomolar potency | In silico validation completed |
| Synthetic Accessibility | High predicted synthesis accessibility | High predicted synthesis accessibility |

The nested active learning architecture included inner cycles focused on chemical validity and synthetic accessibility, and outer cycles employing molecular docking simulations as affinity oracles [6]. This approach successfully generated novel molecular scaffolds with high predicted binding affinity while maintaining drug-like properties.

Detailed Experimental Protocols

Benchmarking Framework for Materials Informatics

The materials science benchmark employed the following rigorous methodology [4]:

Dataset Preparation: Nine materials formulation datasets with high data acquisition costs were partitioned 80:20 into training and test sets.

Initialization: The process began with randomly selected initial labeled samples ($n_{\text{init}}$) from the unlabeled dataset.

Active Learning Cycle:

- Model training using current labeled set

- Sample selection using various AL strategies

- "Oracle" labeling of selected samples

- Model retraining with expanded labeled set

Evaluation: Performance was measured using Mean Absolute Error (MAE) and Coefficient of Determination (R²) across multiple acquisition steps.

Validation: Five-fold cross-validation was automatically performed within the AutoML workflow to ensure robustness.
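The two evaluation metrics above can be written out in a few lines. The stdlib-only sketch below is illustrative (in practice, `sklearn.metrics.mean_absolute_error` and `sklearn.metrics.r2_score` compute the same quantities); calling both functions once per acquisition step yields the learning curves on which strategies are compared. The toy values are invented.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute deviation between measured and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R² is undefined when all true values are identical")
    return 1.0 - ss_res / ss_tot


# Hypothetical test-set values after one acquisition step
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 4.0]
print(mean_absolute_error(y_true, y_pred))  # 0.25
print(r2_score(y_true, y_pred))             # 0.9
```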

The study specifically addressed the challenge of dynamic model selection in AutoML environments, where the surrogate model may switch between algorithm families (linear regressors, tree-based ensembles, neural networks) across iterations [4].

Molecular Optimization Workflow in Drug Discovery

The drug design implementation featured a specialized workflow for molecular generation and optimization [6]:

Data Representation: Training molecules were represented as SMILES strings, tokenized, and converted into one-hot encoding vectors for VAE processing.

Initial Training: The VAE was initially trained on a general dataset, then fine-tuned on target-specific data to enhance target engagement.

Inner Active Learning Cycles: Generated molecules were evaluated using chemoinformatics oracles for drug-likeness, synthetic accessibility, and similarity thresholds. Molecules meeting criteria were added to a temporal-specific set for VAE fine-tuning.

Outer Active Learning Cycles: After multiple inner cycles, accumulated molecules underwent docking simulations as affinity oracles. Successful molecules were transferred to a permanent-specific set for further fine-tuning.

Candidate Selection: Promising candidates underwent intensive molecular modeling simulations (PELE) and absolute binding free energy (ABFE) calculations before experimental validation.

This structured approach enabled the exploration of novel chemical spaces while maintaining focus on molecules with high predicted affinity and synthetic accessibility [6].
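The data-representation step (SMILES strings tokenized and one-hot encoded for the VAE) can be sketched as follows. The tokenizer regex is a simplified assumption that handles bracket atoms, the two-letter halogens Br and Cl, and two-digit ring closures; the cited workflow's actual tokenizer, vocabulary, and padding scheme are not described in the source, and the `PAD` token name is hypothetical.

```python
import re

# Simplified SMILES tokenizer (an assumption): bracket atoms, Br, Cl,
# two-digit ring closures (%nn), then any single character.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|.")
PAD = "PAD"  # hypothetical padding token, always index 0


def tokenize(smiles):
    """Split a SMILES string into chemically meaningful tokens."""
    return SMILES_TOKEN.findall(smiles)


def build_vocab(smiles_list):
    """Map every token seen in the training molecules to an integer index."""
    tokens = sorted({tok for s in smiles_list for tok in tokenize(s)})
    return {tok: i for i, tok in enumerate([PAD] + tokens)}


def one_hot(smiles, vocab, max_len):
    """Encode one molecule as a max_len x len(vocab) matrix of 0/1 values."""
    tokens = tokenize(smiles)
    if len(tokens) > max_len:
        raise ValueError(f"{smiles!r} has {len(tokens)} tokens; max_len is {max_len}")
    tokens += [PAD] * (max_len - len(tokens))
    matrix = [[0] * len(vocab) for _ in range(max_len)]
    for row, tok in zip(matrix, tokens):
        row[vocab[tok]] = 1  # raises KeyError for tokens outside the vocabulary
    return matrix


vocab = build_vocab(["CCO", "c1ccccc1Cl"])
print(tokenize("CC(=O)Nc1ccc(Cl)cc1"))
print(vocab)  # {'PAD': 0, '1': 1, 'C': 2, 'Cl': 3, 'O': 4, 'c': 5}
print([row.index(1) for row in one_hot("CCO", vocab, max_len=5)])  # [2, 2, 4, 0, 0]
```

Treating "Cl" as one token matters: a naive character split would produce a carbon followed by a stray "l", which the decoder could then recombine into invalid SMILES.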

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Active Learning in Molecular Optimization

| Tool Category | Specific Solutions | Function in Research |
|---|---|---|
| AutoML Frameworks | Automated machine learning platforms | Automates model selection, hyperparameter tuning, and preprocessing; reduces manual optimization effort [4] |
| Molecular Representations | SMILES, One-hot encoding, Molecular fingerprints | Converts chemical structures into machine-readable formats for model processing [6] |
| Cheminformatics Oracles | Drug-likeness predictors, Synthetic accessibility scorers | Provides computational assessment of molecular properties before experimental validation [6] |
| Physics-Based Simulators | Molecular docking, PELE simulations, Absolute binding free energy calculations | Offers reliable affinity predictions using physics principles, especially valuable in low-data regimes [6] |
| Active Learning Libraries | Lightly, Custom AL implementations | Provides query strategies, uncertainty estimation, and data selection capabilities [5] |
| Generative Models | Variational Autoencoders (VAEs) | Generates novel molecular structures with controlled interpolation in latent space [6] |

Active learning represents a paradigm shift in machine learning for molecular optimization research, strategically addressing the data scarcity challenges inherent in experimental sciences. By implementing iterative query strategies that selectively target the most informative data points for labeling, active learning frameworks demonstrably accelerate materials discovery and drug design while significantly reducing resource expenditures.

The core mechanism—centered on uncertainty quantification, strategic sample selection, and human-in-the-loop validation—enables researchers to maximize information gain from minimal data. As evidenced by recent breakthroughs in materials informatics and drug discovery, integrating active learning with complementary technologies like AutoML and generative AI creates powerful workflows capable of navigating complex molecular spaces with unprecedented efficiency. For researchers in drug development and materials science, mastering these active learning approaches provides a critical competitive advantage in the rapidly evolving landscape of data-driven molecular optimization.

Active learning (AL) has emerged as a transformative paradigm in machine learning, particularly for data-scarce and high-cost domains like molecular optimization and drug discovery. It functions as a sophisticated iterative loop where a model strategically selects the most informative data points for labeling, thereby maximizing learning efficiency and minimizing experimental resource expenditure [1]. In molecular contexts, where synthesizing and assaying compounds is both time-consuming and expensive, this approach shifts the discovery process from one of random screening to a guided, intelligent exploration of chemical space [6]. The core of this methodology is a carefully orchestrated cycle—from initialization to model update—whose precise implementation critically determines the success of a molecular optimization campaign. This guide provides a detailed comparison of the components and performance of this iterative loop, offering a scientific benchmark for its application in research.

Deconstructing the Core Active Learning Loop

The active learning loop is a systematic process designed to optimize the acquisition of knowledge. The following diagram illustrates the complete workflow and logical relationships between each stage.

Component 1: Initialization Strategy

The process begins with the Initialization Strategy, which establishes the foundational labeled dataset for training the initial model.

- Objective: To create a small, initial labeled dataset $L = \{(x_i, y_i)\}_{i=1}^{l}$ that is representative enough to bootstrap the learning process [4].

- Common Practices: In molecular settings, this often involves random sampling from a historical compound library. However, strategic sampling based on chemical diversity or known active scaffolds can provide a superior starting point, mitigating the "cold start" problem [7].

- Research Consideration: The choice of initialization can significantly influence the early trajectory of the AL cycle. For novel targets with scant data, leveraging transfer learning from related targets or using coarse-grained simulations for initial sampling is an advanced technique gaining traction [6].
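Diversity-based initialization is often implemented with a MaxMin picker: start from one compound, then repeatedly add the compound whose nearest already-picked neighbor is most dissimilar. The sketch below assumes fingerprints are stored as Python sets of on-bit indices (for example, from a circular fingerprint) and uses Tanimoto distance; the `first` seed index is a hypothetical parameter (pickers often choose it at random), and toolkits such as RDKit provide optimized MaxMin pickers for real libraries.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def maxmin_pick(fingerprints, n_pick, first=0):
    """Greedy MaxMin selection of a diverse initial labeled set."""
    if not 0 < n_pick <= len(fingerprints):
        raise ValueError("n_pick must be between 1 and the number of compounds")
    picked = [first]
    # Distance from each compound to its nearest already-picked compound
    nearest = [1.0 - tanimoto(fp, fingerprints[first]) for fp in fingerprints]
    while len(picked) < n_pick:
        remaining = [i for i in range(len(fingerprints)) if i not in picked]
        nxt = max(remaining, key=lambda i: nearest[i])  # most isolated compound
        picked.append(nxt)
        for i, fp in enumerate(fingerprints):
            nearest[i] = min(nearest[i], 1.0 - tanimoto(fp, fingerprints[nxt]))
    return picked


# Hypothetical fingerprints: compounds 0, 1 and 3 share a scaffold
fps = [{1, 2, 3}, {1, 2, 4}, {10, 11, 12}, {1, 2, 3, 5}, {20, 21}]
print(maxmin_pick(fps, 3))  # [0, 2, 4]
```

With three picks, the sketch covers one member of the shared scaffold plus the two unrelated chemotypes instead of spending the budget on near-duplicates.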

Component 2: Query Strategy

The Query Strategy is the intellectual core of the loop, determining which unlabeled data points ($x^*$ from the unlabeled pool $U$) are most valuable to label next [4]. The selection is based on a predefined notion of "informativeness."

Table: Comparison of Primary Active Learning Query Strategies

| Strategy | Core Principle | Typical Use Case in Molecular Optimization | Key Advantage | Key Limitation |
|---|---|---|---|---|
| Uncertainty Sampling [1] [7] | Selects samples where the model's prediction is least confident. | Optimizing a lead compound with a well-defined structure-activity relationship (SAR). | Simple to implement; highly effective for refining model confidence. | Can miss diverse, novel scaffolds; prone to selecting outliers. |
| Diversity Sampling [1] | Selects samples that are most dissimilar to the existing labeled set. | Early-stage exploration of a vast chemical space to identify novel chemotypes. | Ensures broad coverage of the chemical space; prevents model over-specialization. | May label many irrelevant compounds if the space is not well-constrained. |
| Query-by-Committee [7] | Uses an ensemble of models; selects samples with the greatest disagreement among committee members. | Complex molecular properties where no single model architecture is clearly superior. | Reduces model bias; robust for complex, multi-faceted prediction tasks. | Computationally expensive due to training and querying multiple models. |
| Expected Model Change [8] | Selects samples that would cause the largest change in the current model parameters. | High-risk, high-reward scenarios where a single informative sample could dramatically shift understanding. | Theoretically selects the most impactful data points. | Computationally intensive to calculate for large models and datasets. |
| Hybrid (e.g., RD-GS) [4] | Combines multiple principles, e.g., uncertainty and diversity. | Most real-world applications, balancing exploitation of known leads with exploration of new areas. | Mitigates the weaknesses of individual strategies; generally more robust performance. | More complex to tune and implement effectively. |
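Expected model change is commonly instantiated as expected gradient length (EGL): score each candidate by the norm of the training-loss gradient it would induce, averaged over the labels the current model believes it could receive. The sketch below assumes a binary logistic-regression model with weight vector `w`; it illustrates the general principle and is not the specific method of [8]. For this model the gradient for a sample $(x, y)$ is $(p - y)\,x$ with $p = \sigma(w^\top x)$, so the expectation reduces to $2p(1-p)\lVert x \rVert$.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def expected_gradient_length(w, X):
    """EGL for binary logistic regression.

    The log-loss gradient for one sample is (p - y) * x with p = sigmoid(w @ x).
    Averaging its norm over y ~ Bernoulli(p) gives p*(1-p)*|x| + (1-p)*p*|x|.
    """
    p = sigmoid(X @ w)
    norms = np.linalg.norm(X, axis=1)
    grad_norm_if_1 = np.abs(p - 1.0) * norms  # gradient size if the label is 1
    grad_norm_if_0 = np.abs(p) * norms        # gradient size if the label is 0
    return p * grad_norm_if_1 + (1.0 - p) * grad_norm_if_0


# Hypothetical current weights and three candidate descriptor vectors
w = np.array([1.0, 0.0])
X = np.array([
    [0.0, 1.0],  # p = 0.5, small norm
    [0.0, 3.0],  # p = 0.5, large norm
    [4.0, 0.0],  # p ~ 0.98, confident
])
scores = expected_gradient_length(w, X)
print(int(np.argmax(scores)))  # 1
```

Rows 0 and 1 are equally uncertain ($p = 0.5$), but row 1 would move the weights three times as far, so EGL ranks it first; plain uncertainty sampling cannot separate the two.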

Component 3: Human-in-the-Loop and Experimental Oracle

The selected candidates are passed to the Oracle for labeling. In molecular optimization, this "oracle" is often a costly experimental process or a high-fidelity simulation [6] [9].

- Wet-Lab Experiment: This involves the actual synthesis of the proposed compound and its subsequent biological assay (e.g., measuring binding affinity against a target protein like KRAS or CDK2) [6].

- Computational Oracle: To reduce costs, a computational proxy like a docking score or a physics-based simulation (e.g., Absolute Binding Free Energy calculations) can be used as a surrogate for experimental measurement [6]. In either case, the oracle supplies the label $y^*$ for the selected sample $x^*$; with a computational proxy, that label is an estimate rather than an experimental ground truth.

Component 4: Model Update and Iteration

The newly acquired labeled sample $(x^*, y^*)$ is added to the training set ($L \leftarrow L \cup \{(x^*, y^*)\}$), and the model is retrained on this augmented dataset [4]. This step is more than a simple refresh: in an Automated Machine Learning (AutoML) framework, the entire model architecture and hyperparameters may be re-optimized in each cycle [4]. The loop continues until a stopping criterion is met, such as performance saturation, exhaustion of the time or labeling budget, or the identification of a sufficient number of candidate molecules [1] [10].
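A performance-saturation stopping rule can be as simple as a patience check on a validation metric. In the sketch below, `history` holds one lower-is-better score per cycle (e.g., validation MAE); `patience`, `min_delta`, and `budget_left` are hypothetical parameters chosen for illustration, not values from the cited studies.

```python
def should_stop(history, patience=3, min_delta=0.01, budget_left=None):
    """Decide whether to end the active learning loop.

    history: one lower-is-better validation score per completed cycle.
    Stops when the labeling budget is exhausted, or when the best score of the
    last `patience` cycles improves on the earlier best by less than `min_delta`.
    """
    if budget_left is not None and budget_left <= 0:
        return True
    if len(history) <= patience:
        return False  # not enough cycles to judge a plateau
    best_before = min(history[:-patience])
    best_recent = min(history[-patience:])
    return best_before - best_recent < min_delta


mae_per_cycle = [0.90, 0.60, 0.45, 0.40, 0.398, 0.397, 0.399]
print(should_stop(mae_per_cycle[:5]))     # False: still improving
print(should_stop(mae_per_cycle))         # True: gain below 0.01 over 3 cycles
print(should_stop([0.9], budget_left=0))  # True: budget exhausted
```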

Performance Comparison & Experimental Data

The efficacy of the active learning loop is best demonstrated through its application in real-world scientific benchmarks. The following data synthesizes findings from recent, high-impact studies.

Benchmarking AL Strategies with AutoML

A comprehensive 2025 benchmark study evaluated 17 different AL strategies within an AutoML framework for small-sample regression tasks in materials science, a field analogous to molecular optimization in its data constraints [4].

Table: Performance of Select AL Strategies in AutoML Benchmark [4]

| AL Strategy | Underlying Principle | Early-Stage Performance (Data-Scarce) | Late-Stage Performance (Data-Rich) | Remarks |
|---|---|---|---|---|
| Random Sampling | Baseline (No active selection) | Baseline | Baseline | Converges with others given enough data. |
| LCMD | Uncertainty | Clearly outperforms baseline | Gap narrows, converges | A top performer for rapid initial learning. |
| Tree-based-R | Uncertainty | Clearly outperforms baseline | Gap narrows, converges | Effective for tree-based model families within AutoML. |
| RD-GS | Diversity-Hybrid | Clearly outperforms baseline | Gap narrows, converges | Balances exploration and exploitation effectively. |
| GSx | Geometry / Diversity | Mixed performance | Gap narrows, converges | Purely diversity-driven heuristics were less effective early on. |
| EGAL | Geometry / Diversity | Mixed performance | Gap narrows, converges | Similar to GSx. |

Key Finding: The benchmark concluded that uncertainty-driven (LCMD, Tree-based-R) and diversity-hybrid (RD-GS) strategies significantly outperformed random sampling and geometry-only heuristics, especially during the critical early stages of learning. As the labeled set grows, the performance gap diminishes, highlighting the paramount importance of strategic data acquisition under a limited budget [4].

Case Study: Drug Design for CDK2 and KRAS

A 2025 study on drug design provides compelling experimental data on the real-world efficiency gains of an AL loop. The research used a generative model (Variational Autoencoder) embedded within a dual-loop AL framework to design inhibitors for the CDK2 and KRAS proteins [6].

Table: Experimental Outcomes of AL-Driven Drug Discovery [6]

| Metric | CDK2 Target | KRAS Target | Context & Implication |
|---|---|---|---|
| Molecules Synthesized | 9 | N/A (In silico validation) | Demonstrates the workflow's ability to generate synthesizable candidates. |
| Experimentally Active Molecules | 8 out of 9 | 4 (Predicted) | Shows a remarkably high success rate, validating the model's precision. |
| Potency of Best Hit | Nanomolar | Potential activity | Led to a highly potent inhibitor for CDK2. |
| Key Workflow Feature | Nested AL cycles with chemical and physics-based oracles. | Relied on computational oracles (docking, ABFE). | The nested loop structure was critical for refining candidate quality. |

Key Finding: The AL-driven workflow successfully generated novel, synthesizable scaffolds with high predicted affinity. For CDK2, the model's predictions were experimentally validated with a ~89% success rate (8 out of 9 synthesized molecules showing activity), a hit rate far exceeding traditional high-throughput screening [6].

Case Study: Catalyst Development for Alcohol Synthesis

A 2024 study in catalysis showcases AL's power in optimizing both material composition and process conditions simultaneously. The goal was to develop a highly active FeCoCuZr catalyst for higher alcohol synthesis (HAS) [9].

Table: Efficiency Gains in AL-Driven Catalyst Development [9]

| Metric | Traditional Approach | Active Learning Approach | Improvement / Saving |
|---|---|---|---|
| Experiments Required | Hundreds to thousands | 86 | >90% reduction |
| Optimal Catalyst | Fe79Co10Zr11 (Benchmark) | Fe65Co19Cu5Zr11 | 1.2-fold improvement over benchmark |
| Higher Alcohol Productivity | ~0.2 gHA h⁻¹ gcat⁻¹ (typical) | 1.1 gHA h⁻¹ gcat⁻¹ (stable for 150 h) | 5-fold improvement over typical yields |
| Exploration Space | Limited, intuitive | ~5 billion combinations | Systematic, data-driven navigation |

Key Finding: The integration of Bayesian optimization into the AL loop enabled the researchers to navigate a space of ~5 billion potential combinations in only 86 experiments, identifying a catalyst with a 5-fold improvement in productivity and achieving over a 90% reduction in experimental footprint and cost [9].
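The study's exact surrogate model and acquisition function are not given here, so the following is only a generic sketch of a Bayesian-optimization query step: a Gaussian-process surrogate with an RBF kernel and an expected-improvement (EI) acquisition, applied to a hypothetical one-dimensional objective with its maximum at x = 0.7. The real catalyst problem couples composition and reaction conditions in many dimensions; libraries such as scikit-learn's `GaussianProcessRegressor` provide sturdier surrogates than this hand-rolled one.

```python
import math

import numpy as np


def rbf_kernel(A, B, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel between the rows of A and B."""
    sq_dist = (A**2).sum(1)[:, None] + (B**2).sum(1)[None, :] - 2.0 * A @ B.T
    return variance * np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale**2)


def gp_posterior(X_train, y_train, X_test, length_scale=1.0, variance=1.0, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean Gaussian process."""
    K = rbf_kernel(X_train, X_train, length_scale, variance) + noise * np.eye(len(X_train))
    K_s = rbf_kernel(X_train, X_test, length_scale, variance)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.maximum(variance - (v**2).sum(0), 1e-12)
    return mean, np.sqrt(var)


def expected_improvement(mean, std, best, xi=0.01):
    """EI for maximization: expected amount by which a candidate beats `best`."""
    improvement = mean - best - xi
    z = improvement / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return improvement * cdf + std * pdf


def objective(x):
    """Hypothetical stand-in for a measured productivity; maximum at x = 0.7."""
    return -((x - 0.7) ** 2)


candidates = np.linspace(0.0, 1.0, 101).reshape(-1, 1)  # discretized design space
evaluated = [0, 50, 100]  # indices of the initial experiments
y = objective(candidates[evaluated, 0])

for _ in range(8):  # eight sequential "experiments"
    X = candidates[evaluated]
    mean, std = gp_posterior(X, y, candidates, length_scale=0.2)
    ei = expected_improvement(mean, std, best=y.max())
    ei[evaluated] = -np.inf  # never repeat an experiment
    nxt = int(np.argmax(ei))
    evaluated.append(nxt)
    y = np.append(y, objective(candidates[nxt, 0]))

best = evaluated[int(np.argmax(y))]
print(f"best x = {candidates[best, 0]:.2f} after {len(evaluated)} experiments")
```

EI handles the exploration-exploitation trade-off in a single number: the `improvement * cdf` term rewards candidates predicted to beat the current best, and the `std * pdf` term rewards candidates the surrogate is unsure about.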

Detailed Experimental Protocols

To ensure reproducibility, this section outlines the core methodologies from the cited studies.

This protocol is standard for quantitative structure-activity relationship (QSAR) modeling and materials property prediction.

- Data Partitioning: Start with a full dataset. Partition it into an initial labeled set $L$ (e.g., 5-10%), a large unlabeled pool $U$ (~70-75%), and a held-out test set (~20%) for final evaluation. The test set remains completely untouched during the AL cycles.

- Initial Model Training: Train an initial predictive model (or an AutoML system) on $L$. The model can be a random forest, a neural network, or any other regressor/classifier.

- Iterative AL Loop: a. Query: Use the current model to score all samples in $U$ based on the chosen query strategy (e.g., uncertainty measured by predictive variance). b. Select: Choose the top $k$ samples (e.g., $k = 5$) with the highest scores. c. Label: Obtain the true labels for these $k$ samples. In a simulation, this is done by revealing their held-out labels; in practice, it requires an experimental assay. d. Update: Remove the $k$ samples from $U$ and add them to $L$. Retrain (or re-optimize the hyperparameters of) the model on the updated $L$.

- Stopping: Repeat the loop for a fixed number of iterations or until model performance on a separate validation set plateaus.
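The protocol above can be simulated end to end in a few dozen lines. This sketch assumes a synthetic linear dataset in place of real descriptors and uses the disagreement of a bootstrap ensemble of least-squares models as the predictive-variance estimate; the ensemble size, split sizes, and `k = 5` are illustrative choices, not values from the cited studies.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for descriptors and a measured property (hypothetical data)
n_samples, n_features = 200, 5
X = rng.normal(size=(n_samples, n_features))
y = X @ rng.normal(size=n_features) + 0.1 * rng.normal(size=n_samples)

# Partition: 20% held-out test, 10% initial labeled set L, 70% unlabeled pool U
order = rng.permutation(n_samples)
test_idx = order[:40]
labeled = [int(i) for i in order[40:60]]
pool = [int(i) for i in order[60:]]


def fit_ensemble(X_lab, y_lab, n_models=10):
    """Fit least-squares models (with intercept) on bootstrap resamples of L."""
    design = np.column_stack([X_lab, np.ones(len(X_lab))])
    models = []
    for _ in range(n_models):
        boot = rng.integers(0, len(X_lab), size=len(X_lab))
        coef, *_ = np.linalg.lstsq(design[boot], y_lab[boot], rcond=None)
        models.append(coef)
    return np.array(models)


def predict_ensemble(models, X_query):
    """Ensemble mean and standard deviation (the uncertainty used for querying)."""
    design = np.column_stack([X_query, np.ones(len(X_query))])
    preds = design @ models.T  # shape (n_query, n_models)
    return preds.mean(axis=1), preds.std(axis=1)


k = 5
mae_history = []
for cycle in range(6):
    models = fit_ensemble(X[labeled], y[labeled])      # train on current L
    test_mean, _ = predict_ensemble(models, X[test_idx])
    mae_history.append(float(np.mean(np.abs(test_mean - y[test_idx]))))
    _, pool_std = predict_ensemble(models, X[pool])    # a. query: score U
    top = np.argsort(-pool_std)[:k]                    # b. select top-k
    chosen = {pool[i] for i in top}                    # c. label (simulated: y is revealed)
    labeled.extend(sorted(chosen))                     # d. update L ...
    pool = [i for i in pool if i not in chosen]        #    ... and U

print(f"labeled={len(labeled)}, pool={len(pool)}")
print("test MAE per cycle:", [round(m, 3) for m in mae_history])
```

Swapping the `top = ...` line for a random draw from `pool` turns this into the random-sampling baseline, which is how the benchmark-style comparisons above are typically set up.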

This advanced protocol integrates a generative model directly into the AL loop for de novo molecular design.

- Initial Training: A Variational Autoencoder (VAE) is pre-trained on a general molecular dataset (e.g., ChEMBL) and then fine-tuned on a target-specific dataset.

- Inner AL Cycle (Cheminformatics Oracle): a. Generation: The VAE is sampled to generate new molecules. b. Filtration: Generated molecules are evaluated by fast, computational oracles for drug-likeness (e.g., Lipinski's rules), synthetic accessibility (SA), and novelty (dissimilarity to training set). c. Fine-tuning: Molecules passing the thresholds are used to fine-tune the VAE, pushing it to generate more molecules with these desirable chemical properties.

- Outer AL Cycle (Physics-Based Oracle): a. After several inner cycles, accumulated molecules are evaluated by a more expensive, physics-based oracle (e.g., molecular docking). b. Molecules with favorable docking scores are added to a permanent set, and the VAE is fine-tuned on this set, guiding the generation towards high-affinity candidates.

- Candidate Selection: The final pool of candidates from the outer cycle undergoes rigorous filtration and selection via more intensive simulations (e.g., Monte Carlo, Absolute Binding Free Energy calculations) before experimental validation.
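The nested structure of this protocol can be sketched with toy stand-ins. Everything named here is hypothetical: `ToyGenerator` replaces the VAE (it mutates strings drawn from its fine-tuning set instead of decoding from a latent space), a length window plus a novelty check replaces the drug-likeness, SA, and similarity oracles, and `affinity_oracle` replaces docking. Only the control flow (inner cycles fine-tuning on a temporal set, outer cycles promoting hits to a permanent set) mirrors the description above.

```python
import random

ALPHABET = "CNO"  # toy "atoms"


class ToyGenerator:
    """Hypothetical stand-in for the fine-tunable VAE."""

    def __init__(self, training_set, rng):
        self.training_set = list(training_set)
        self.rng = rng

    def sample(self, n):
        """'Decode' n molecules by inserting one random atom into a training molecule."""
        out = []
        for _ in range(n):
            mol = list(self.rng.choice(self.training_set))
            mol.insert(self.rng.randrange(len(mol) + 1), self.rng.choice(ALPHABET))
            out.append("".join(mol))
        return out

    def fine_tune(self, molecules):
        """Stand-in for fine-tuning: bias future sampling toward these molecules."""
        self.training_set.extend(molecules)


def cheminformatics_oracle(mol, seen):
    """Cheap inner-cycle filter: size window (drug-likeness proxy) plus novelty."""
    return 3 <= len(mol) <= 10 and mol not in seen


def affinity_oracle(mol):
    """Expensive outer-cycle oracle (docking stand-in); higher is better."""
    return mol.count("N") - 0.5 * mol.count("O")


def nested_active_learning(seeds, n_outer=3, n_inner=4, batch=20, cutoff=2.0, seed=0):
    rng = random.Random(seed)
    generator = ToyGenerator(seeds, rng)
    seen = set(seeds)
    permanent = []  # permanent-specific set
    for _ in range(n_outer):
        temporal = []  # temporal-specific set for this outer cycle
        for _ in range(n_inner):
            passed = []
            for mol in generator.sample(batch):
                if cheminformatics_oracle(mol, seen):
                    seen.add(mol)
                    passed.append(mol)
            temporal.extend(passed)
            generator.fine_tune(passed)  # inner-cycle fine-tuning
        hits = [m for m in temporal if affinity_oracle(m) >= cutoff]
        permanent.extend(hits)
        generator.fine_tune(hits)  # outer-cycle fine-tuning on promising binders
    return sorted(permanent, key=affinity_oracle, reverse=True)


candidates = nested_active_learning(["CC", "CN", "CO"])
print(candidates[:5])
```

The design point the sketch preserves is cost ordering: the cheap filter runs on every generated molecule in every inner cycle, while the expensive oracle only sees molecules that have already survived it.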

The workflow for this nested protocol is visualized below.

The Scientist's Toolkit: Research Reagent Solutions

Implementing a robust active learning loop for molecular optimization requires a suite of computational and experimental tools.

Table: Essential Reagents for an AL-Driven Molecular Optimization Lab

| Tool / Reagent | Category | Primary Function | Example / Note |
|---|---|---|---|
| AutoML Platform [4] | Computational | To automatically search and optimize model architectures and hyperparameters during the model update step. | Reduces manual tuning burden; ensures model is consistently high-performing. |
| Chemistry Simulation Suite | Computational Oracle | To act as a surrogate for experimental measurement, providing labels (e.g., binding affinity, energy) for generated molecules. | Schrödinger Suite, OpenMM, AutoDock Vina. Critical for pre-screening. |
| Generative Model Architecture [6] | Computational | To create novel molecular structures from scratch within the AL loop. | Variational Autoencoder (VAE), Generative Adversarial Network (GAN), Transformers. |
| Bayesian Optimization Library [9] | Computational | To power the query strategy, especially in high-dimensional spaces involving both composition and reaction conditions. | Manages the exploration-exploitation trade-off. |
| Target Protein & Assay Kits | Wet-Lab Experimental Oracle | To provide ground-truth biological data (e.g., IC50, Ki) for compounds selected by the AL loop, closing the experimental feedback loop. | e.g., Purified CDK2 kinase and a corresponding activity assay kit. |
| Chemical Synthesis Equipment & Reagents | Wet-Lab | To physically synthesize the top-predicted compounds for experimental validation. | Standard organic chemistry lab equipment and bulk chemical reagents. |
| High-Performance Computing (HPC) Cluster | Infrastructure | To handle the computational load of training models, running simulations, and managing the iterative AL cycles. | Essential for practical timelines. |

Selecting the right query strategy is a critical determinant of success in active learning (AL) pipelines for molecular optimization. These strategies control how an algorithm selects the most informative data points from a vast pool of unlabeled candidates for costly expert labeling, which is often a quantum chemical calculation in computational chemistry. This guide provides an objective comparison of the three predominant strategies—Uncertainty Sampling, Diversity Sampling, and Query-by-Committee (QBC)—framed within the context of molecular optimization research. It summarizes experimental data, details methodologies from recent studies, and offers a toolkit for implementation to help researchers and drug development professionals make informed decisions.

Active learning is a machine learning approach where the algorithm interactively queries a human or computational "oracle" to label new data points, aiming to achieve high model performance with minimal labeling cost [1]. The core of this process is the active learning loop: an initial model is trained on a small labeled dataset and used to select valuable unlabeled points; an oracle labels these points, they are added to the training set, and the model is retrained [11] [5]. The component that decides which data points to select is the query strategy, also called the acquisition function [12] [5].

In molecular optimization, the "oracle" is often an expensive computational method like Density Functional Theory (DFT), and the "label" is a molecular property such as energy or a photophysical characteristic [13] [14]. The choice of query strategy directly impacts the efficiency of exploring the vast chemical space and the cost of discovery campaigns.

Comparative Analysis of Core Query Strategies

The table below summarizes the core principles, strengths, weaknesses, and primary use cases for the three key strategies.

Table 1: Comparison of Key Active Learning Query Strategies

| Strategy | Core Principle | Key Advantages | Key Limitations | Ideal Use Cases in Molecular Optimization |
|---|---|---|---|---|
| Uncertainty Sampling [1] [12] [5] | Selects data points where the model's prediction confidence is lowest. | Intuitive and simple to implement. Highly effective at refining decision boundaries. Computationally efficient. | Can overfocus on outliers or noisy data. Ignores data distribution, risking model bias. Requires well-calibrated model confidence scores. | Optimizing a specific molecular property (e.g., HOMO-LUMO gap) where the goal is to pinpoint candidates near a target value. |
| Diversity Sampling [1] [11] [5] | Selects a set of data points that are maximally different from each other and the existing training set. | Ensures broad exploration and coverage of the design space. Mitigates redundancy in the training data. Helps prevent model bias towards over-represented regions. | May select many easy samples that don't improve model accuracy. Can be computationally intensive for large datasets. Slower performance gains per labeled sample compared to uncertainty sampling. | Initial stages of a project to build a representative dataset, or when the chemical space is known to be highly diverse and multi-modal. |
| Query-by-Committee (QBC) [12] [15] [5] | Maintains a committee (ensemble) of models; selects points where committee members disagree the most. | Directly measures model uncertainty via disagreement. Less reliant on perfectly calibrated confidence scores from a single model. Often more robust than single-model uncertainty sampling. | Computationally expensive to train and maintain multiple models. Can be noisy if committee models are poorly tuned. Increased implementation complexity. | Scenarios requiring high model reliability and where computational resources for training multiple models are available. |
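One widely used form of diversity sampling is greedy k-center (core-set) selection: repeatedly pick the pool point farthest from its nearest labeled or already-selected point. The sketch below assumes molecules are embedded as rows of a Euclidean descriptor matrix; it is illustrative and not a method taken from the cited studies.

```python
import numpy as np


def k_center_greedy(X_pool, X_labeled, k):
    """Greedy approximation to the k-center objective over the unlabeled pool."""
    # Distance from each pool point to its nearest labeled point
    diffs = X_pool[:, None, :] - X_labeled[None, :, :]
    nearest = np.linalg.norm(diffs, axis=2).min(axis=1)
    chosen = []
    for _ in range(k):
        i = int(np.argmax(nearest))  # least-covered pool point
        chosen.append(i)
        # The newly selected point now covers its neighbours too
        nearest = np.minimum(nearest, np.linalg.norm(X_pool - X_pool[i], axis=1))
    return chosen


X_labeled = np.array([[0.0, 0.0]])
X_pool = np.array([[0.1, 0.0], [5.0, 0.0], [5.1, 0.0], [0.0, 4.0]])
print(k_center_greedy(X_pool, X_labeled, 2))  # [2, 3]
```

Point 1 is not chosen because point 2, a near-duplicate, already covers that region, which is the redundancy diversity sampling is meant to avoid.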

Quantitative Performance Comparison

Recent experimental studies in molecular optimization provide quantitative evidence of the performance trade-offs between these strategies. The following table summarizes key findings.

Table 2: Experimental Performance in Molecular Optimization Tasks

| Source & Context | Strategy Tested | Key Experimental Findings | Reported Metric |
|---|---|---|---|
| Unified AL for Photosensitizers [13] | Sequential (Diversity-first, then Uncertainty) | Outperformed static (non-AL) baselines by 15-20% in test-set Mean Absolute Error (MAE). | MAE on predicting T1/S1 energy levels |
| Enhanced Uncertainty Sampling [16] | Uncertainty Sampling (Baseline) | Traditional uncertainty sampling led to class imbalance; dock targets had higher entropy and dominated selections over buoys/lighthouses. | Qualitative analysis of sample selection entropy |
| Enhanced Uncertainty Sampling [16] | Category-Enhanced Uncertainty Sampling (Novel Method) | Achieved accuracy comparable to state-of-the-art while reducing computational overhead by up to 80%. | Computational Cost & Model Accuracy |
| QDπ Dataset Curation [15] | Query-by-Committee | The strategy was effective at avoiding redundant training information without sacrificing chemical diversity in a 1.6-million-structure dataset. | Chemical Diversity & Data Efficiency |
| PAL for ML Potentials [14] | Uncertainty-based QbC (Implied) | Enabled efficient development of machine-learned potentials, allowing MD simulations with ab initio accuracy at a fraction of the computational cost. | Computational Efficiency & Model Accuracy |

Experimental Protocols and Workflows

The following workflow diagram illustrates how the different query strategies integrate into a unified active learning framework for molecular optimization, as demonstrated in recent research [13] [14].

Diagram Title: Active Learning Workflow for Molecular Optimization

Detailed Experimental Methodology

The following protocols are synthesized from the cited studies to illustrate how these strategies are implemented and evaluated in practice.

1. Protocol for QBC in Dataset Curation (QDπ Dataset) [15]

- Objective: To prune a large molecular dataset (drawn from sources such as ANI and SPICE) without sacrificing chemical diversity, so that only informative structures are relabeled with expensive ωB97M-D3(BJ)/def2-TZVPPD calculations.

- Procedure:

- Committee Setup: Train 4 independent Machine Learning Potential (MLP) models on the current labeled dataset using different random seeds.

- Prediction & Disagreement: For each unlabeled molecule in the source pool, the committee of models predicts energies and forces. The standard deviation of these predictions is calculated.

- Selection Criteria: Molecules are selected for labeling if the standard deviation of the committee's predictions exceeds a threshold (e.g., >0.015 eV/atom for energy, >0.20 eV/Å for forces).

- Iteration: A random subset of up to 20,000 candidates meeting the criteria is selected for quantum chemical labeling per cycle. The process repeats until all molecules in the source pool are either included or excluded.

- Outcome Measurement: The resulting dataset's chemical diversity and its performance in training a robust, universal MLP.
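The committee-disagreement filter in this protocol can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the QDπ or DP-Gen code: the candidate-pool layout, the function names, and the rule that exceeding either threshold alone flags a molecule are assumptions, while the 0.015 eV/atom and 0.20 eV/Å thresholds and the 20,000-candidate cap come from the protocol above.

```python
import random
from statistics import pstdev


def committee_disagreement(energies, forces):
    """Return (energy_std, worst_force_std) across committee members.

    energies: one per-atom energy prediction per model (eV/atom).
    forces: one flattened list of force components per model (eV/Å).
    """
    energy_std = pstdev(energies)
    # zip(*forces) groups the same force component across all models;
    # the molecule is scored by its most disputed component.
    force_std = max(pstdev(component) for component in zip(*forces))
    return energy_std, force_std


def select_for_labeling(pool, energy_thresh=0.015, force_thresh=0.20,
                        max_batch=20_000, seed=0):
    """Return IDs of candidates the committee disagrees on, capped at max_batch.

    pool: dict mapping molecule ID -> (energies, forces) as described above.
    Assumption: exceeding either threshold is enough to flag a candidate.
    """
    flagged = []
    for mol_id, (energies, forces) in pool.items():
        energy_std, force_std = committee_disagreement(energies, forces)
        if energy_std > energy_thresh or force_std > force_thresh:
            flagged.append(mol_id)
    if len(flagged) > max_batch:
        # Random subset of the flagged candidates, as in the protocol.
        flagged = random.Random(seed).sample(flagged, max_batch)
    return sorted(flagged)
```

With four committee members, a molecule whose predicted energies spread by a few hundredths of an eV/atom, or whose worst force component spreads by more than 0.20 eV/Å, is sent for quantum chemical labeling; molecules on which all four models agree are skipped as redundant.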

2. Protocol for a Hybrid Sequential Strategy (Photosensitizer Design) [13]

- Objective: To optimize photosensitizer candidates for properties like T1/S1 energy levels by balancing exploration and exploitation.

- Procedure:

- Initial Phase (Diversity-Focused): In early active learning cycles, the acquisition function prioritizes molecular diversity to achieve broad coverage of the chemical space. This builds a representative base model.

- Later Phase (Uncertainty/Objective-Focused): In subsequent cycles, the strategy shifts towards uncertainty sampling or a direct optimization function to refine the model around high-performance regions of the chemical space.

- Model and Oracle: Uses a Graph Neural Network (GNN) as a surrogate model. The oracle is an ML-xTB pipeline, which provides DFT-level accuracy at ~1% of the cost.

- Outcome Measurement: Improvement in test-set Mean Absolute Error (MAE) for property prediction compared to static models and random sampling.
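The diversity-then-uncertainty schedule can be illustrated with a small sketch. Here the switch happens at a fixed cycle index, fingerprints are stored as Python sets of on-bits compared with the Tanimoto coefficient, and diversity is enforced by greedy max-min picking; all of these are illustrative assumptions rather than details of the cited study, which used a GNN surrogate and its own acquisition function.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints stored as sets of on-bits."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def diversity_pick(candidates, labeled_fps, k):
    """Greedy max-min selection: repeatedly take the candidate whose closest
    neighbour among the labeled set (and earlier picks) is least similar."""
    reference = list(labeled_fps)
    remaining = dict(candidates)
    picks = []

    def closest_similarity(mol_id):
        fp = remaining[mol_id]
        return max((tanimoto(fp, ref) for ref in reference), default=0.0)

    while remaining and len(picks) < k:
        best = min(remaining, key=closest_similarity)
        picks.append(best)
        reference.append(remaining.pop(best))
    return picks


def uncertainty_pick(uncertainties, k):
    """Take the k candidates with the largest predictive uncertainty."""
    return sorted(uncertainties, key=uncertainties.get, reverse=True)[:k]


def select_batch(cycle, switch_cycle, candidates, labeled_fps, uncertainties, k):
    """Hypothetical schedule: explore by diversity until switch_cycle, then
    switch to uncertainty sampling."""
    if cycle < switch_cycle:
        return diversity_pick(candidates, labeled_fps, k)
    return uncertainty_pick(uncertainties, k)
```

A hard switch is the simplest possible schedule; an adaptive variant could instead blend the two scores with a weight that shifts gradually from diversity to uncertainty over the cycles.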

3. Protocol for Enhanced Uncertainty Sampling (Multi-class Vision Tasks, adapted for Molecules) [16]

- Objective: To overcome the class imbalance caused by traditional uncertainty sampling.

- Procedure:

- Feature Extraction: A pre-trained model (e.g., VGG16 for images) extracts deep features from all unlabeled data points without retraining; in a molecular adaptation, a pre-trained molecular encoder would fill the same role.

- Category Identification: Category information is assigned by comparing feature similarities (e.g., using cosine similarity) to a labeled reference set.

- Integration with Uncertainty: The traditional uncertainty score (e.g., entropy) is combined with the category information. This ensures selection is not only based on uncertainty but also on achieving a balanced representation across different classes (or molecular scaffolds).

- Outcome Measurement: Final dataset balance and model performance on underrepresented classes, alongside computational cost savings.
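The protocol does not specify here exactly how category information and uncertainty are combined, so the sketch below assumes one simple scheme: assign each unlabeled point to the most cosine-similar labeled reference vector, rank points by predictive entropy within each category, and pick round-robin across categories. The function names and the round-robin rule are hypothetical.

```python
import math
from collections import defaultdict


def cosine(u, v):
    """Cosine similarity between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def entropy(probs):
    """Shannon entropy (nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def balanced_uncertainty_pick(features, probs, references, k):
    """Category-aware uncertainty sampling (hypothetical combination rule).

    features: dict sample ID -> deep feature vector from a frozen pre-trained model.
    probs: dict sample ID -> predicted class probabilities.
    references: dict category -> reference feature vector from the labeled set.
    """
    buckets = defaultdict(list)
    for sample_id, feat in features.items():
        # Category = most cosine-similar labeled reference.
        category = max(references, key=lambda c: cosine(feat, references[c]))
        buckets[category].append((entropy(probs[sample_id]), sample_id))
    for items in buckets.values():
        items.sort(reverse=True)  # most uncertain first within each category

    picks = []
    categories = sorted(buckets)
    # Round-robin over categories so no single class dominates the batch.
    while len(picks) < k and any(buckets[c] for c in categories):
        for c in categories:
            if buckets[c] and len(picks) < k:
                picks.append(buckets[c].pop(0)[1])
    return picks
```

For example, with three uncertain "dock" samples and one confident "buoy" sample, plain top-2 entropy ranking would return two dock samples, whereas this version returns one sample from each category.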

The Scientist's Toolkit: Essential Research Reagents and Solutions

The table below lists key computational tools and methodologies essential for implementing active learning in molecular optimization, as featured in the cited research.

Table 3: Essential Research Reagents & Solutions for Active Learning in Molecular Optimization

| Item Name | Function / Description | Example Use Case |
|---|---|---|
| Graph Neural Network (GNN) | Surrogate model that learns from molecular graph structures to predict properties. | Serves as the fast, trainable model in the AL loop to predict molecular energies and screen candidates [13]. |
| ML-xTB Pipeline | A quantum mechanical method that uses machine learning to achieve near-DFT accuracy at a fraction of the computational cost. | Acts as the "oracle" for labeling molecular properties like T1/S1 energies in a high-throughput manner [13]. |
| DP-Gen Software | An open-source software package specifically designed for active learning in the context of molecular dynamics and ML potentials. | Implements the query-by-committee strategy to automate the generation of training data for machine-learned potentials [15]. |
| PAL (Parallel AL Library) | An automated, modular library that parallelizes AL components using Message Passing Interface (MPI). | Manages parallel execution of exploration, labeling, and training tasks on high-performance computing clusters for efficiency [14]. |
| Query Strategy Algorithms | The core logic for data selection (e.g., Least Confidence, Margin, Entropy, Clustering). | Implemented within an AL framework to define the molecule selection policy, determining the efficiency of the discovery process [12] [5]. |

The experimental data and protocols demonstrate that there is no single "best" query strategy for all molecular optimization tasks. The choice is dictated by the project's specific stage and goals. Uncertainty Sampling is powerful for targeted optimization but risks bias. Diversity Sampling is crucial for comprehensive exploration. Query-by-Committee offers robustness at a higher computational cost.

The most effective modern approaches, as evidenced by recent research, tend to be hybrid or adaptive strategies that combine the strengths of these core methods [16] [13]. Furthermore, the field is moving towards increased automation and parallelism, as seen with tools like PAL, to fully leverage high-performance computing resources and minimize human intervention [14]. For researchers, the key is to define the chemical space and optimization objective clearly, then select and potentially combine strategies to build an efficient, data-driven discovery pipeline.

Contrasting Active Learning with Passive Learning and Reinforcement Learning

In the computationally intensive field of drug discovery, machine learning (ML) offers powerful strategies to navigate vast chemical spaces. Three predominant paradigms—active learning, passive learning, and reinforcement learning—each provide distinct approaches to optimization problems. Active learning represents a supervised machine learning approach that strategically selects the most informative data points for labeling to optimize the learning process, aiming to minimize the labeled data required while maximizing model performance [1]. This contrasts with passive learning, which relies on pre-collected labeled datasets without interactive selection, and reinforcement learning, where an agent learns optimal behaviors through environmental feedback. Within molecular optimization research, the choice among these paradigms carries significant implications for experimental resource allocation, model accuracy, and ultimately, the success of discovery campaigns. This guide provides an objective comparison of these methodologies, focusing on their application in molecular optimization to inform researchers and drug development professionals.

Conceptual Frameworks and Key Differentiators

Defining the Learning Paradigms

Active Learning operates as an iterative, human-in-the-loop process in which the algorithm selectively queries an oracle (a human annotator or, in drug discovery, often an assay or simulation) for labels on the most informative data points. By focusing on samples expected to provide the maximum information gain, active learning achieves higher data efficiency than traditional methods [1]. In drug discovery, it functions as an iterative feedback process that efficiently identifies valuable data within vast chemical spaces, even with limited initial labeled data [17].

Passive Learning, also known as batch learning, follows a conventional supervised approach where the model is trained on a fixed, pre-defined labeled dataset. The algorithm processes all available data without interacting with the user or requesting additional data to improve its accuracy [18]. This method assumes availability of comprehensive, high-quality labeled datasets before training commences.

Reinforcement Learning (RL) represents a fundamentally different approach where an agent learns optimal behaviors through interaction with an environment. The agent performs actions, receives feedback in the form of rewards or penalties, and adjusts its strategy to maximize cumulative reward [19]. RL can be further categorized into active and passive variants based on the agent's role in action selection.

Fundamental Operational Differences

Table 1: Core Characteristics Comparison of Machine Learning Paradigms

| Characteristic | Active Learning | Passive Learning | Reinforcement Learning |
|---|---|---|---|
| Learning Approach | Selective sampling of informative data points | Uses entire pre-collected dataset | Learns through environmental interaction |
| Data Interaction | Actively queries oracle/human for labels | No interaction; consumes pre-labeled data | Interacts with environment to receive rewards |
| Data Efficiency | High; minimizes labeling costs | Low; requires large labeled datasets | Variable; depends on exploration strategy |
| Human Involvement | High during iterative labeling | Primarily during initial data collection | Minimal after environment setup |
| Implementation Complexity | More complex due to interaction loops | Relatively straightforward | Highly complex due to policy optimization |
| Optimal Use Cases | Data labeling is expensive/limited | Abundant labeled data available | Sequential decision-making problems |

Active vs. Passive Learning: Experimental Comparison in Molecular Optimization

Performance and Efficiency Metrics

Experimental comparisons in drug discovery applications consistently demonstrate active learning's superior data efficiency compared to passive approaches. Research shows active learning can achieve comparable or better model performance while using only a fraction of the data required by passive methods [20].

Table 2: Experimental Performance Comparison in Drug Discovery Applications

| Application Domain | Dataset / Scope | Performance Metric | Active Learning | Passive Learning | Experimental Findings |
|---|---|---|---|---|---|
| Synergistic Drug Combination Screening [21] | 15,117 measurements (O'Neil dataset) | Synergy Detection Rate | 60% of synergistic pairs found by exploring only 10% of the combinatorial space | Required far more measurements (~8,253) for the same yield | 5-10× higher hit rates than random selection; significant resource savings |
| ADMET & Affinity Prediction [20] | Multiple public datasets (e.g., 9,982 compounds for solubility) | RMSE vs. Iterations | Faster convergence to lower error rates | Slower convergence, requiring more data | COVDROP method outperformed random selection and other baselines across datasets |
| Virtual Screening [22] | Billion-compound libraries | Hit Recovery Rate | ~70% of top-scoring hits found for 0.1% of the docking cost | Required exhaustive docking of entire libraries | Active Learning Glide achieved massive computational savings |
| Molecular Generation [6] | CDK2 and KRAS targets | Novel Active Molecules Generated | 8 of 9 synthesized CDK2 molecules showed in vitro activity | Limited by training data diversity | Successfully explored novel chemical spaces with validated experimental results |

Methodological Approaches and Experimental Protocols

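Active learning implementations vary along two axes: the sampling scenario (how unlabeled candidates are presented to the learner) and the selection criterion (how informativeness is scored). Common options include: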

- Stream-based Selective Sampling: Evaluates data points as they arrive, making immediate decisions about labeling based on informativeness measures [1]

- Pool-based Sampling: Selects the most informative examples from a large collection of unlabeled data based on criteria like uncertainty or diversity [19]

- Uncertainty Sampling: Queries points where the model shows highest prediction uncertainty

- Diversity Sampling: Selects data points that differ from existing labeled examples to improve coverage
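The difference between the two sampling scenarios is easiest to see side by side. In this sketch, `score` is any informativeness measure (for example, predictive uncertainty); a stream-based learner must accept or reject each candidate as it arrives using a threshold fixed in advance, while a pool-based learner can rank the whole unlabeled pool before choosing. The function names and the threshold rule are illustrative assumptions.

```python
def stream_select(stream, score, threshold):
    """Stream-based: decide on each candidate as it arrives, never revisiting it."""
    selected = []
    for candidate in stream:
        if score(candidate) > threshold:
            selected.append(candidate)
    return selected


def pool_select(pool, score, k):
    """Pool-based: score every unlabeled candidate first, then take the top k."""
    return sorted(pool, key=score, reverse=True)[:k]
```

The pool-based variant needs the whole candidate set in memory but controls batch size exactly; the stream-based variant works in a single pass, at the cost of a batch size that depends on how many candidates happen to clear the threshold.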

Passive Learning Protocols follow traditional supervised learning workflows:

- Collect and preprocess a comprehensive labeled dataset

- Train model on the entire dataset in a single batch

- Evaluate model performance on held-out test sets

- Deploy trained model for predictions without further learning

Molecular Optimization Experimental Setup typically involves:

- Data Representation: Molecules encoded as SMILES strings, molecular fingerprints (e.g., Morgan fingerprints), or graph representations [6] [21]

- Model Architecture: Neural networks (including graph neural networks), ensemble methods, or Bayesian models

- Evaluation Metrics: RMSE for regression tasks, precision-recall AUC for classification, scaffold novelty and diversity, and synthetic accessibility scores

- Validation: Rigorous cross-validation, temporal validation splits, and where possible, experimental confirmation of predicted activities

Active vs. Passive Reinforcement Learning: Distinct RL Variants

Conceptual Framework and Operational Differences

Within reinforcement learning, active and passive approaches represent fundamentally different interaction paradigms with the learning environment:

Active Reinforcement Learning involves an agent that actively chooses which actions to perform based on the current state of its environment [23]. The agent maintains control over its actions and can freely explore to find the optimal strategy for maximizing cumulative reward. For example, in a drug design context, an active RL agent would autonomously decide which molecular modifications to explore next.

Passive Reinforcement Learning utilizes a fixed policy that provides a predefined set of actions for the agent to execute [19]. The agent follows this predetermined policy without exploring alternative strategies, simply observing the environment and receiving feedback (rewards) for its actions without attempting to influence the environment through exploration.

Methodological Implementations in RL

Diagram 1: Active vs. Passive Reinforcement Learning Workflows. Active RL features policy updates through exploration, while passive RL follows a fixed policy.

Passive RL Techniques focus on policy evaluation:

- Direct Utility Estimation: Executes sequences of state-action transitions and estimates utility based on sample values as running averages [24]

- Adaptive Dynamic Programming (ADP): Learns a model of the environment (transition probabilities and rewards) from observed transitions, then solves for state utilities, each equal to the immediate reward plus the expected discounted utility of successor states [24]

- Temporal Difference Learning: Updates value estimates between successive states without requiring a full environment model [24]

Active RL Algorithms emphasize policy optimization:

- ADP with Exploration Function: Converts a passive agent into an active one by assigning optimistic (higher) values to actions that have rarely been tried, encouraging exploration [24]

- Q-Learning: Learns action-value functions rather than state utilities, enabling action selection without a transition model [24]
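To make the passive/active distinction concrete, the sketch below runs both kinds of agent on a hypothetical four-state chain in which entering state 3 pays reward 1. The passive agent evaluates the fixed policy "always move right" with the TD(0) update U(s) ← U(s) + α[r + γU(s') - U(s)]; the active agent chooses its own actions ε-greedily and learns action values with the Q-learning update. The environment, the hyperparameters, and placing the reward on the transition are assumptions chosen for illustration.

```python
import random

N_STATES = 4                # states 0..3; state 3 is terminal
GOAL = N_STATES - 1
ACTIONS = ("left", "right")


def step(state, action):
    """Deterministic toy chain: entering the goal state pays reward 1."""
    if action == "right":
        nxt = min(state + 1, GOAL)
    else:
        nxt = max(state - 1, 0)
    return nxt, (1.0 if nxt == GOAL else 0.0)


def passive_td(episodes=200, alpha=0.5, gamma=0.9):
    """Passive RL: evaluate the fixed policy 'always move right' with TD(0)."""
    U = [0.0] * N_STATES    # terminal utility stays 0
    for _ in range(episodes):
        s = 0
        while s != GOAL:
            s_next, r = step(s, "right")
            U[s] += alpha * (r + gamma * U[s_next] - U[s])
            s = s_next
    return U


def active_q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.3,
                      max_steps=100, seed=0):
    """Active RL: the agent chooses actions epsilon-greedily and learns Q(s, a)."""
    rng = random.Random(seed)
    Q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        s = 0
        for _ in range(max_steps):
            if s == GOAL:
                break
            if rng.random() < epsilon:
                a = rng.choice(ACTIONS)                      # explore
            else:                                            # exploit, random tie-break
                a = max(ACTIONS, key=lambda act: (Q[(s, act)], rng.random()))
            s_next, r = step(s, a)
            best_next = 0.0 if s_next == GOAL else max(Q[(s_next, b)] for b in ACTIONS)
            Q[(s, a)] += alpha * (r + gamma * best_next - Q[(s, a)])
            s = s_next
    return Q


def greedy_policy(Q):
    """Best action in each non-terminal state."""
    return [max(ACTIONS, key=lambda a: Q[(s, a)]) for s in range(GOAL)]
```

With γ = 0.9, the passive agent should recover utilities of about 0.81, 0.9, and 1.0 for states 0-2 under its fixed policy, while the active agent has to discover for itself that moving right is optimal before its Q-values for "right" converge to the same numbers.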

Integrated Workflows: Active Learning with Generative AI for Molecular Optimization

Advanced Implementation Architecture

Recent advances integrate active learning with generative AI models to create powerful molecular optimization pipelines. One notable implementation combines a variational autoencoder (VAE) with two nested active learning cycles that iteratively refine predictions using chemoinformatics and molecular modeling predictors [6].

Diagram 2: Integrated VAE-Active Learning Workflow for Molecular Generation featuring nested optimization cycles for chemical space exploration and affinity refinement [6].

Experimental Results and Validation

This integrated workflow demonstrated significant success in real-world applications:

- For CDK2 inhibitors: Generated novel scaffolds distinct from known inhibitors; from 9 synthesized molecules, 8 showed in vitro activity with one exhibiting nanomolar potency [6]

- For KRAS inhibitors: Identified 4 molecules with potential activity using in silico methods whose reliability had been validated by the CDK2 experimental results [6]

- Achieved exploration of novel chemical spaces tailored for specific targets while maintaining synthetic accessibility and drug-likeness [6]

Table 3: Essential Research Tools for Active Learning Implementation in Molecular Optimization

| Tool/Category | Specific Examples | Function/Purpose | Implementation Considerations |
|---|---|---|---|
| Active Learning Platforms | Schrödinger Active Learning Applications [22], DeepChem [20] | Provides integrated workflows for molecular screening and optimization | Commercial and open-source options available; consider integration with existing pipelines |
| Molecular Representation | Morgan Fingerprints [21], MAP4 [21], SMILES [6] | Encodes molecular structure for machine learning algorithms | Morgan fingerprints shown effective for synergy prediction; graph representations capture topology |
| Cheminformatic Oracles | Synthetic Accessibility predictors, Drug-likeness filters (e.g., Lipinski's rules) | Filters generated molecules for practical feasibility | Critical for ensuring generated molecules can be synthesized and tested |
| Physics-Based Oracles | Molecular docking (e.g., Glide [22]), FEP+ calculations [22] | Provides reliable affinity predictions using physical principles | More reliable than data-driven methods in low-data regimes; computationally intensive |
| Cellular Context Features | Gene expression profiles (e.g., from GDSC [21]) | Incorporates biological system information into predictions | Significant impact on prediction quality; as few as 10 genes were sufficient for convergence in synergy studies |
| Benchmarking Datasets | O'Neil [21], ALMANAC [21], ChEMBL [20] | Provides standardized data for model training and validation | Essential for comparative performance assessment; chronological splits reflect real-world scenarios |

The comparative analysis reveals distinct advantages and optimal applications for each learning paradigm in drug discovery contexts. Active learning demonstrates superior performance when labeled data is scarce or expensive to acquire, with experimental results showing 5-10× higher hit rates in synergistic drug combination screening and recovery of ~70% of top-scoring hits for only 0.1% of the docking cost in virtual screening [21] [22]. Passive learning remains effective when comprehensive, high-quality labeled datasets already exist, though it lacks the adaptive capabilities of active approaches. Reinforcement learning offers unique advantages for sequential decision-making problems, with active RL enabling greater exploration and adaptation in dynamic environments.

For molecular optimization research, the emerging best practice integrates active learning with generative AI models, creating self-improving cycles that simultaneously explore novel chemical spaces while focusing on molecules with higher predicted affinity and better synthetic accessibility [6]. This approach successfully addresses key challenges in drug discovery, including limited target-specific data, synthetic accessibility concerns, and the need for generalization beyond training data distributions. As the field advances, the strategic combination of these paradigms—leveraging their complementary strengths—will accelerate the discovery and optimization of therapeutic compounds across diverse target classes.

The Critical Need for Active Learning in Vast Chemical Space Exploration

The exploration of chemical space for developing new materials, electrolytes, and pharmaceuticals represents one of the most formidable challenges in modern science. The sheer scale is astronomical—estimates suggest the space of potentially drug-like molecules may encompass 10^60 to 10^100 compounds, far exceeding the number of stars in the observable universe [25]. Traditional experimental approaches, where each data point can take "weeks, months to get," are utterly infeasible for navigating such immensity [25]. This exploration bottleneck has driven the adoption of machine learning (ML). However, conventional ML faces its own constraint: its effectiveness is often dependent on massive, labeled datasets that are equally impractical to acquire. It is within this context that active learning (AL) has emerged as a transformative framework, enabling efficient navigation of chemical space by strategically selecting the most informative data points for experimentation and computation.

What is Active Learning? A Paradigm Shift in Experimentation

Active learning is a subfield of artificial intelligence characterized by an iterative feedback process. Unlike traditional "one-shot" machine learning models trained on static datasets, an AL system starts with a minimal set of labeled data, builds a model, and then uses that model to intelligently select which unlabeled data points would be most valuable to label next [26]. These newly acquired data are then fed back into the model, enhancing its performance for the next cycle [26]. This creates a closed-loop system that prioritizes learning efficiency.

This approach stands in stark contrast to passive learning, where a model simply receives a fixed, pre-selected dataset without any strategic input into which data is most useful to learn from [27] [28]. In the context of scientific discovery, passive learning corresponds to traditional high-throughput screening, where a vast library of compounds is tested in a non-adaptive manner. Active learning, by contrast, is an adaptive process that "selects informative data points for labeling on the basis of model-generated assumptions," dramatically reducing the number of experiments required to reach a desired performance level [26].

Comparative Analysis: Active Learning Versus Alternative Approaches

To objectively evaluate the performance of active learning, it is essential to compare it against other common strategies for molecular optimization. The table below summarizes key findings from multiple studies.

Table 1: Performance Comparison of Active Learning Against Alternative Screening Methods

| Study Focus | Alternative Method(s) | Active Learning Approach | Key Performance Results |
|---|---|---|---|
| Battery Electrolyte Screening [25] | Exhaustive experimental screening of one million compounds (infeasible) | Active learning starting from 58 data points with experimental feedback | Identified 4 high-performing electrolytes after testing ~70 candidates (0.007% of the space) |
| Small Molecule Affinity & ADMET Optimization [20] | Random sampling; k-means sampling; BAIT batch selection | Novel deep batch AL (COVDROP) maximizing joint entropy and diversity | Consistently lower RMSE across datasets; potential for significant savings in the number of experiments needed |
| SARS-CoV-2 Mpro Inhibitor Design [29] | Random selection from virtual library | FEgrow workflow with AL prioritization of R-groups and linkers | Identified 3 active compounds; automatically discovered designs highly similar to known hits |
| Photosensitizer Discovery [30] | Static machine learning on pre-defined datasets | Unified AL with hybrid quantum mechanics/ML and adaptive acquisition | Superior prediction of energy levels; 15-20% better test-set MAE than static baselines |

The data consistently demonstrates that active learning outperforms passive and random strategies. In drug discovery, AL "compensates the shortcomings" of both high-throughput and virtual screening by making the exploration process data-efficient and adaptive [26]. For instance, the COVDROP method developed by Sanofi researchers showed rapid performance improvement, "very quickly lead[ing] to better performance when compared to other methods" on ADMET and affinity datasets [20]. This translates directly into reduced experimental costs and accelerated project timelines.

Experimental Protocols: How Key Active Learning Studies Were Conducted

Battery Electrolyte Discovery with Minimal Data

A landmark study from the University of Chicago provides a compelling protocol for AL with minimal initial data [25].

- Objective: To identify novel battery electrolytes from a virtual space of one million candidates.

- Initial Dataset: Started with an exceptionally small set of only 58 known data points [25].

- AL Workflow: The team ran seven iterative active learning campaigns. In each cycle:

- The model suggested a batch of approximately 10 electrolyte candidates.

- Researchers actually built batteries with these electrolytes and cycled them to obtain real-world performance data (discharge capacity).

- These experimental results were fed back into the model to refine its predictions for the next cycle [25].

- Validation: The ultimate validation was experimental, confirming that the final selected electrolytes rivaled state-of-the-art performance.
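A stripped-down version of this closed loop is sketched below, reusing the study's numbers (58 initial points, seven campaigns of roughly 10 candidates each) on a toy one-dimensional design space. The k-nearest-neighbour surrogate, the purely exploitative ranking, and the `oracle` function, which stands in for building and cycling real cells, are hypothetical simplifications; the study's actual model and acquisition rule are not described here.

```python
import random


def knn_predict(x, labeled, k=3):
    """Toy surrogate: mean measured value of the k nearest labeled designs."""
    nearest = sorted(labeled, key=lambda item: abs(item[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def run_campaigns(oracle, design_space, n_initial=58, n_campaigns=7,
                  batch_size=10, seed=0):
    """Closed-loop AL: predict, pick a batch, 'measure' it, update, repeat.

    oracle: hypothetical stand-in for the real experiment (e.g. building and
    cycling cells to measure discharge capacity); higher is better.
    """
    rng = random.Random(seed)
    labeled = [(x, oracle(x)) for x in rng.sample(design_space, n_initial)]
    for _ in range(n_campaigns):
        tested = {x for x, _ in labeled}
        untested = [x for x in design_space if x not in tested]
        # Pure exploitation (an assumption): rank by predicted performance.
        # The surrogate "retrains" implicitly because it reads `labeled`.
        ranked = sorted(untested, key=lambda x: knn_predict(x, labeled), reverse=True)
        batch = ranked[:batch_size]
        labeled.extend((x, oracle(x)) for x in batch)
    return labeled
```

After seven campaigns the labeled set holds 58 + 7 × 10 = 128 measurements, which mirrors the scale of the study: roughly 70 new experiments chosen by the model out of a much larger candidate space.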

Drug Discovery: Optimizing Compounds for SARS-CoV-2 Mpro

Another study illustrates the application of AL in structure-based drug design [29].

- Objective: To design and prioritize inhibitors of the SARS-CoV-2 main protease (Mpro) from on-demand chemical libraries.

- Tools: The FEgrow software was used to build congeneric ligand series in the protein binding pocket, employing hybrid ML/molecular mechanics potential energy functions for optimization [29].

- AL Workflow:

- A virtual library of compounds was generated by combining a rigid ligand core with flexible linkers and R-groups.

- Compounds were built and scored using an expensive objective function (GNINA CNN scoring).

- These initial scores trained a machine learning model to predict the performance of the vast untested chemical space.

- The AL algorithm selected the next most promising batch of compounds for evaluation, balancing exploration and exploitation.

- The loop continued, with the model being updated iteratively [29].

- Outcome: The workflow prioritized 19 compounds for purchase and testing, of which three showed reproducible activity in a biochemical assay [29].
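The study reports that its AL step balanced exploration and exploitation, but the acquisition rule is not given here. One common way to express that balance is an upper-confidence-bound (UCB) score over an ensemble of surrogate predictions, sketched below as an assumption rather than as FEgrow's method. `kappa` controls how much predictive spread (exploration) is rewarded relative to the mean predicted score (exploitation), and higher scores are assumed to be better.

```python
from statistics import mean, pstdev


def ucb_batch(ensemble_preds, batch_size, kappa=1.0):
    """Rank candidates by mean + kappa * std of an ensemble's predicted scores.

    ensemble_preds: dict compound ID -> list of scores from independently
    trained surrogate models (higher score assumed better).
    kappa = 0 is pure exploitation; larger kappa rewards model disagreement.
    """
    def ucb(compound_id):
        preds = ensemble_preds[compound_id]
        return mean(preds) + kappa * pstdev(preds)

    return sorted(ensemble_preds, key=ucb, reverse=True)[:batch_size]
```

With `kappa=0`, a compound that every model scores consistently well is chosen first; raising `kappa` can promote a compound with a lower mean but high model disagreement, which is exactly the kind of candidate whose evaluation teaches the surrogate the most.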

The following diagram visualizes the core iterative workflow common to these active learning protocols:

Figure 1: The Active Learning Cycle for Molecular Optimization

Implementing an active learning framework for chemical discovery requires a suite of computational tools and resources. The table below details key components of the research toolkit as used in the featured studies.

Table 2: Essential Research Toolkit for Active Learning in Molecular Discovery

| Tool/Resource | Category | Primary Function | Example Use Case |
|---|---|---|---|
| FEgrow [29] | Software Package | Builds and scores congeneric ligand series in protein binding pockets. | Growing R-groups and linkers for SARS-CoV-2 Mpro inhibitors. |
| DeepChem [20] | ML Library | Provides deep learning models for atomistic systems; a foundation for building AL pipelines. | Developing and testing new batch active learning methods (COVDROP). |
| GNINA [29] | Scoring Function | A convolutional neural network used to predict protein-ligand binding affinity. | Serving as the objective function for ranking designed compounds in FEgrow. |
| RDKit [29] | Cheminformatics | A core toolkit for cheminformatics and molecular manipulation. | Handling molecule merging, conformation generation, and descriptor calculation. |
| xTB-sTDA [30] | Quantum Chemistry | Fast semi-empirical quantum method for geometry optimization and excited-state calculation. | High-throughput labeling of photophysical properties (S1/T1 energies). |
| Chemprop-MPNN [30] | Machine Learning | A message-passing neural network for accurate molecular property prediction. | Serving as the surrogate model to predict properties and uncertainties. |
| Enamine REAL Database [29] | Chemical Library | A vast database of readily synthesizable ("on-demand") compounds. | Seeding the chemical search space with synthetically tractable candidates. |

The exploration of vast chemical spaces for advanced materials and therapeutics is a defining scientific challenge of our time. The evidence from battery research, drug discovery, and materials science converges on a single conclusion: active learning is not merely a useful tool but a critical necessity. By transforming the discovery process from a static, resource-intensive endeavor into a dynamic, adaptive, and iterative loop, AL provides a practical path forward. It directly addresses the core constraints of time, cost, and data scarcity. As the field matures, the integration of more sophisticated AI, automated experimentation, and open-source frameworks will only amplify its impact, solidifying active learning as the foundational paradigm for the next generation of molecular innovation.

Advanced Active Learning Methods and Their Real-World Drug Discovery Applications

Explorative vs. Exploitative vs. Balanced Active Learning Strategies

In molecular optimization research, active learning (AL) strategies are crucial for navigating vast chemical spaces. These strategies can be categorized as explorative, exploitative, or balanced, each with distinct advantages and trade-offs. This guide provides an objective comparison of their performance, supported by experimental data and detailed protocols, to inform their application in drug discovery.

The exploration-exploitation dilemma is a fundamental challenge in decision-making. In molecular optimization, this translates to a choice between:

- Exploration: Sampling novel regions of chemical space to gather new information and discover potentially valuable, unknown scaffolds.

- Exploitation: Focusing on known, promising regions to optimize and refine existing lead compounds for specific properties like binding affinity.

A balanced strategy aims to dynamically integrate both approaches, using explorative tactics to avoid local minima and exploitative tactics to refine promising candidates [31] [32]. In drug discovery, this is often operationalized through active learning (AL), an iterative feedback process that prioritizes computational or experimental evaluation based on model-driven uncertainty or diversity criteria to maximize information gain while minimizing resource use [6].

Qualitative Comparison of Strategies

The following table summarizes the core characteristics and typical outcomes associated with each strategic approach.

| Strategy | Primary Objective | Typical Molecular Output | Key Strengths | Inherent Risks & Limitations |
|---|---|---|---|---|
| Explorative | Maximize novelty and diversity of chemical space explored [32]. | Novel scaffolds with high diversity [6]. | Discovers new chemotypes; avoids local maxima; excellent for initial discovery [6]. | High risk of generating non-viable (e.g., non-synthesizable) molecules; may miss optimization of known leads [6]. |
| Exploitative | Optimize known, high-value regions for specific properties (e.g., affinity) [32]. | Refined analogs of known lead series. | High efficiency in improving specific traits (e.g., potency); lower failure rate in synthesis and assay [6]. | High risk of getting stuck in local optima; limited chemical novelty in output [6]. |
| Balanced | Systematically balance the trade-off between novelty and optimization [6]. | Diverse, novel, and drug-like molecules with high predicted affinity [6]. | Mitigates risks of pure strategies; generates synthesizable, novel, and potent candidates [6]. | Increased algorithmic and implementation complexity [6]. |

Experimental Protocols and Workflows

Protocol for a Balanced Active Learning Strategy in Drug Design

One balanced strategy integrates a generative model with nested active learning cycles. The workflow below was tested on the CDK2 and KRAS targets, where it generated novel, drug-like molecules with high predicted affinity and synthetic accessibility [6].

1. Data Representation and Initial Training:

- Data Representation: Represent training molecules as SMILES strings, which are then tokenized and converted into one-hot encoding vectors for model input [6].

- Initial Training: A Variational Autoencoder (VAE) is first trained on a general molecular dataset to learn viable chemical structures. It is then fine-tuned on a target-specific training set to initialize its understanding of target engagement [6].

2. Nested Active Learning Cycles: The core of the balanced strategy involves two nested feedback loops [6]:

- Inner AL Cycle (Exploration & Filtering): The generated molecules are evaluated by a chemoinformatics oracle for key properties like drug-likeness, synthetic accessibility (SA), and novelty (dissimilarity from known molecules). Those passing the thresholds are used to fine-tune the VAE, guiding subsequent generation toward more viable and novel chemical space [6].

- Outer AL Cycle (Exploitation & Affinity Optimization): After several inner cycles, accumulated molecules are evaluated by an affinity oracle (e.g., molecular docking simulations). High-scoring molecules are transferred to a "permanent-specific set" and used to fine-tune the VAE, exploiting regions of chemical space with high predicted target affinity [6].

3. Candidate Selection and Validation:

- Promising candidates from the permanent set undergo more intensive molecular modeling (e.g., binding stability simulations) before final selection for synthesis and in vitro biological testing [6].
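The control flow of the two nested cycles can be sketched as follows. This is a minimal sketch under stated assumptions, not the implementation from [6]. The generator, the fine-tuning step, and both oracles are passed in as plain functions that stand in for the VAE, the chemoinformatics filters, and docking. The cycle counts, the batch size, and the affinity cutoff are arbitrary, and the affinity oracle is assumed to return a docking-style score where lower (more negative) is better.

```python
from typing import Callable, List


def nested_active_learning(
    generate: Callable[[int], List[str]],        # stand-in for sampling the VAE
    fine_tune: Callable[[List[str]], None],      # stand-in for VAE fine-tuning
    passes_chem_filters: Callable[[str], bool],  # drug-likeness / SA / novelty oracle
    affinity_score: Callable[[str], float],      # docking-style score, lower = better
    n_outer: int = 2,
    n_inner: int = 3,
    batch_size: int = 10,
    affinity_cutoff: float = -9.0,               # hypothetical threshold
) -> List[str]:
    permanent_set: List[str] = []
    for _ in range(n_outer):
        accumulated: List[str] = []
        # Inner cycles: cheap chemoinformatics filtering steers generation
        # toward viable, novel chemistry.
        for _ in range(n_inner):
            passed = [m for m in generate(batch_size) if passes_chem_filters(m)]
            accumulated.extend(passed)
            if passed:
                fine_tune(passed)
        # Outer cycle: the expensive affinity oracle only sees molecules that
        # survived the inner filters; high scorers join the permanent set.
        for m in accumulated:
            if affinity_score(m) <= affinity_cutoff and m not in permanent_set:
                permanent_set.append(m)
        if permanent_set:
            fine_tune(permanent_set)
    return permanent_set


# Toy usage: IDs "mol<k>" stand in for SMILES strings.
_counter = iter(range(10_000))


def toy_generate(n: int) -> List[str]:
    return [f"mol{next(_counter)}" for _ in range(n)]


tuned_on: List[List[str]] = []
hits = nested_active_learning(
    generate=toy_generate,
    fine_tune=lambda mols: tuned_on.append(list(mols)),
    passes_chem_filters=lambda m: int(m[3:]) % 2 == 0,  # toy filter: even IDs pass
    affinity_score=lambda m: -int(m[3:]) / 5.0,         # toy score: larger ID = "better"
)
print(hits)  # -> ['mol46', 'mol48', 'mol50', 'mol52', 'mol54', 'mol56', 'mol58']
```

The design point is cost ordering: the cheap filters run on every generated batch, while the expensive affinity evaluation runs only once per outer cycle on the pre-filtered pool.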

Comparative Experimental Findings

- Performance on CDK2: This balanced VAE-AL workflow generated molecules with novel scaffolds distinct from known CDK2 inhibitors. Of the 9 molecules selected for synthesis, 8 showed in vitro activity, one of them with nanomolar potency [6].

- Performance on KRAS: For a target with a sparsely populated chemical space, the workflow identified 4 molecules with potential activity, demonstrating its ability to explore effectively and generate viable candidates even with limited starting data [6].

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational tools and components essential for implementing the active learning strategies discussed.

| Research Reagent / Component | Function in the Workflow |
|---|---|
| Variational Autoencoder (VAE) | A generative model that learns a compressed representation (latent space) of molecular structures, enabling the generation of novel molecules [6]. |
| Active Learning (AL) Cycles | An iterative protocol that selects the most informative molecules for evaluation, maximizing learning efficiency and guiding the generative model [6]. |
| Chemoinformatics Oracle | A computational filter that predicts key properties like synthetic accessibility (SA) and drug-likeness to ensure generated molecules are viable [6]. |
| Affinity Oracle (e.g., Docking) | A physics-based or machine learning predictor that estimates target binding affinity, allowing for the prioritization of potent molecules [6]. |
| Absolute Binding Free Energy (ABFE) Simulations | A high-fidelity computational method used for final candidate validation, providing a more accurate prediction of binding strength before synthesis [6]. |
| Performance Metrics (Novelty, Diversity, Affinity) | Quantitative measures used to evaluate the success of a campaign, balancing the objectives of exploration and exploitation [6]. |

The choice between explorative, exploitative, and balanced strategies is not one-size-fits-all. Explorative strategies are most valuable in the earliest stages of a project or when seeking breakthrough innovations for undrugged targets. Exploitative strategies become critical later in the pipeline for lead optimization. However, the most robust and effective approach for a full discovery campaign is often a balanced strategy.

These results show that integrated balanced strategies, particularly those combining generative AI with active learning, can successfully manage the exploration-exploitation trade-off. They yield concrete experimental results, producing novel, diverse, and potent molecules while limiting the risk of generating non-viable compounds, thereby opening new avenues in drug discovery [6].

The application of active learning in molecular optimization represents a paradigm shift in drug discovery and materials science. This machine learning approach allows algorithms to steer iterative experimentation, accelerating and de-risking the identification of optimal molecular structures [33]. However, traditional active learning methods face significant challenges in early project stages, when training data is scarce. With limited data, models may perform poorly, and exploitative selection tends to identify close analogs with limited scaffold diversity [34]. These limitations constrain the exploration of chemical space and can cause superior molecular candidates to be overlooked.

The ActiveDelta framework emerges as an innovative solution to these challenges. Introduced by Fralish and Reker, this adaptive approach leverages paired molecular representations to predict improvements from the current best training compound, fundamentally rethinking how molecular optimization prioritizes further data acquisition [34] [35]. By focusing on relative improvements rather than absolute property predictions, ActiveDelta addresses core limitations of standard active learning implementations, particularly in low-data regimes commonly encountered in practical research settings.

Understanding the ActiveDelta Methodology

Core Conceptual Framework

ActiveDelta fundamentally reimagines the molecular optimization process by shifting from absolute property prediction to relative improvement forecasting. Where standard machine learning models predict absolute property values for individual molecules, ActiveDelta employs molecular pairing to directly learn and predict property differences between compounds [34]. This approach mirrors how experienced medicinal chemists think about molecular optimization—focusing on incremental improvements from existing lead compounds rather than evaluating each molecule in isolation.

The framework relies on a pairing strategy. During training, the training set is cross-merged with itself, so that each molecular pair is labeled with the property difference (Δ) between its two members as the learning target [34]. For prediction, the single most potent molecule in the training set is paired with every molecule in the learning set, so that the model scores each candidate's potential improvement over the current best. The compound with the greatest predicted improvement is then added to the training set for the next active learning iteration [34]. This targeted selection mechanism allows ActiveDelta to make more informed decisions with limited data.
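The pairing and selection logic can be sketched in a few lines. This is a minimal illustration that assumes NumPy, small dense feature vectors, and an ordinary-least-squares model standing in for the Chemprop and XGBoost models used in [34]. The function names are hypothetical. Including self-pairs (i = j, Δ = 0) in the cross-merge is also an assumption.

```python
import itertools

import numpy as np


def make_training_pairs(X: np.ndarray, y: np.ndarray):
    """Cross-merge the training set with itself.

    Each ordered pair (i, j) becomes one example with features [x_i, x_j]
    and target y_j - y_i (the property change from molecule i to molecule j).
    """
    pairs = list(itertools.product(range(len(y)), repeat=2))
    feats = np.array([np.concatenate([X[i], X[j]]) for i, j in pairs])
    deltas = np.array([y[j] - y[i] for i, j in pairs])
    return feats, deltas


def _with_intercept(feats: np.ndarray) -> np.ndarray:
    return np.hstack([feats, np.ones((feats.shape[0], 1))])


def fit_delta_model(feats: np.ndarray, deltas: np.ndarray) -> np.ndarray:
    """Ordinary least squares: a linear stand-in for the paired Chemprop/XGBoost models."""
    coef, *_ = np.linalg.lstsq(_with_intercept(feats), deltas, rcond=None)
    return coef


def activedelta_select(X_train: np.ndarray, y_train: np.ndarray, X_pool: np.ndarray):
    """Pair the current best training compound with every pool compound and return
    (index of the largest predicted improvement, all predicted improvements)."""
    coef = fit_delta_model(*make_training_pairs(X_train, y_train))
    best = X_train[np.argmax(y_train)]                     # most potent compound so far
    pair_feats = np.hstack([np.tile(best, (len(X_pool), 1)), X_pool])
    predicted = _with_intercept(pair_feats) @ coef         # predicted y_pool - y_best
    return int(np.argmax(predicted)), predicted


# Toy data: 3-bit "fingerprints" whose hidden potency is 1*b0 + 2*b1 + 3*b2.
X_train = np.array([[1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 0]], dtype=float)
y_train = np.array([1.0, 2.0, 3.0, 3.0])
X_pool = np.array([[0, 1, 1], [1, 0, 1], [0, 0, 0]], dtype=float)

choice, predicted = activedelta_select(X_train, y_train, X_pool)
print(choice)  # -> 0 (predicted improvement of about +2 over the current best)
```

A linear model on [x_i, x_j] can represent Δ = f(x_j) − f(x_i) exactly only when f itself is linear, as in this toy data. The non-linear learners in [34] remove that restriction while keeping the same pair-then-select structure.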

Implementation Architectures

The ActiveDelta concept has been successfully implemented across multiple machine learning architectures, demonstrating its versatility:

ActiveDelta Chemprop (AD-CP): Uses a two-molecule version of Chemprop's directed message passing neural network (D-MPNN) architecture, modified to process molecular pairs [34]. It is trained for far fewer epochs than standard Chemprop (5 versus 50). A plausible reason is that cross-merging turns n training molecules into n² pairs, so each epoch already covers many more training examples.

ActiveDelta XGBoost (AD-XGB): Uses tree-based gradient boosting on concatenated molecular fingerprints. The radial chemical fingerprint (Morgan fingerprint, radius 2, 2048 bits) of each molecule in the pair is computed, and the two fingerprints are concatenated into a single 4096-bit input vector [34].

These implementations were rigorously compared against standard active learning approaches using single-molecule Chemprop, XGBoost, and Random Forest models, all evaluated under identical experimental conditions across 99 benchmarking datasets [34].

Experimental Design & Benchmarking Protocol

Dataset Curation and Preparation

The evaluation used 99 Ki datasets from ChEMBL. These were split with the SIMPD (Simulated Medicinal Chemistry Project Data) algorithm, which produces training and test sets that mimic the time-based splits of real drug discovery projects [34]. Splits used an 80:20 training-to-test ratio, and each dataset was restricted to a single target ID, assay organism, assay category, and BioAssay Ontology (BAO) format. Duplicate molecules were systematically removed to prevent bias.

For initial active learning cycles, two random datapoints were selected from each original training dataset, with the remaining training datapoints forming the learning dataset pool [34]. This sparse initialization deliberately created challenging low-data conditions representative of early-stage discovery projects. Each active learning experiment was repeated three times with unique starting datapoint pairs to ensure statistical robustness and account for variability in initial conditions.

Active Learning Workflow

The experimental protocol followed a structured iterative process:

- Initialization: Begin with two random training compounds from the target domain

- Model Training: Train machine learning models on available training data

- Compound Selection:

  - Standard approach: Select compound with highest predicted potency

  - ActiveDelta: Select compound with greatest predicted improvement from current best

- Iteration: Add selected compound to training set and repeat

This process continued for 100 iterations, with comprehensive evaluation at each step to track performance progression as more data became available. Test sets were strictly reserved for final evaluation and never used during the active learning selection process [34].
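The initialization and loop described above can be sketched as a retrospective simulation. In this setting labels already exist but are read only once a compound has been "selected". This is a minimal sketch, not the code from [34]. The selector interface and function names are hypothetical, and a 1-nearest-neighbour regressor stands in for the Chemprop, XGBoost, and Random Forest models. Swapping in an ActiveDelta rule would only mean replacing the selector.

```python
import random
from typing import Callable, List, Sequence, Tuple

# A selector sees the labelled training indices and the unlabelled pool indices
# and returns the pool index to "measure" next.
Selector = Callable[[List[int], List[int]], int]


def unique_start_pairs(n_compounds: int, n_replicates: int = 3, seed: int = 0) -> List[Tuple[int, int]]:
    """Draw distinct random two-compound starting sets, one per replicate."""
    rng = random.Random(seed)
    pairs: List[Tuple[int, int]] = []
    while len(pairs) < n_replicates:
        pair = tuple(sorted(rng.sample(range(n_compounds), 2)))
        if pair not in pairs:
            pairs.append(pair)
    return pairs


def run_active_learning(start: Tuple[int, int], n_compounds: int,
                        select: Selector, n_iterations: int = 100) -> List[int]:
    """Return training indices in the order they were acquired."""
    train = list(start)
    pool = [i for i in range(n_compounds) if i not in train]
    for _ in range(min(n_iterations, len(pool))):
        chosen = select(train, pool)   # the model is (re)fit inside the selector
        pool.remove(chosen)
        train.append(chosen)           # its label is now "measured"
    return train


def make_greedy_1nn_selector(features: Sequence[Sequence[float]],
                             labels: Sequence[float]) -> Selector:
    """Standard greedy rule: pick the pool compound with the highest predicted potency,
    where the prediction is the label of its nearest training compound."""
    def select(train: List[int], pool: List[int]) -> int:
        def predicted(i: int) -> float:
            nearest = min(train, key=lambda t: sum((a - b) ** 2
                                                   for a, b in zip(features[i], features[t])))
            return labels[nearest]     # only training labels are ever read
        return max(pool, key=predicted)
    return select


# Toy usage on a synthetic 50-compound dataset.
rng = random.Random(42)
features = [[rng.random(), rng.random()] for _ in range(50)]
labels = [5.0 + 3.0 * f[0] - f[1] for f in features]   # hypothetical "pKi" values
selector = make_greedy_1nn_selector(features, labels)
for start in unique_start_pairs(len(labels)):
    acquired = run_active_learning(start, len(labels), selector, n_iterations=10)
    print(start, round(max(labels[i] for i in acquired), 2))
```

Keeping the selector as a separate function means every method is run through an identical loop, with the same starting pairs and iteration budget, which is the kind of like-for-like comparison the benchmark relies on.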

Figure: ActiveDelta vs. standard active learning workflow comparison

Performance Comparison: ActiveDelta vs. Standard Approaches

Compound Identification Efficacy

The core metric for evaluation was each method's ability to identify the most potent compounds—specifically those within the top ten percentile of potency in both learning and external test sets [34]. Across 99 benchmarking datasets and three independent replicates, ActiveDelta implementations consistently outperformed standard approaches.
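The hit-counting metric can be sketched as follows. This is a minimal version that assumes the top 10% is defined as the ceiling of 0.1 × n compounds by measured potency, with ties broken by index order. The exact cutoff handling in [34] may differ, and the function name is hypothetical. For an external test set, the same function can be applied to the indices a model ranks highest.

```python
import math
from typing import Iterable, Sequence


def top_fraction_hits(labels: Sequence[float], acquired: Iterable[int], fraction: float = 0.10) -> int:
    """Count how many of the top-`fraction` most potent compounds were acquired."""
    k = max(1, math.ceil(fraction * len(labels) - 1e-9))  # small epsilon guards against float fuzz
    ranked = sorted(range(len(labels)), key=lambda i: labels[i], reverse=True)
    top = set(ranked[:k])
    return len(top & set(acquired))


labels = [float(v) for v in range(20)]          # 20 compounds: top 10% = indices 18 and 19
print(top_fraction_hits(labels, [0, 5, 19]))    # -> 1
```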

Table 1: Performance Comparison in Identifying Potent Compounds

| Method | Most Potent Compounds Identified | Scaffold Diversity (Murcko Scaffolds) | External Test Set Accuracy |
|---|---|---|---|
| AD-Chemprop | Significantly higher than standard methods | Highest diversity | Most accurate identification |
| AD-XGBoost | Significantly higher than standard methods | High diversity | High accuracy |
| Standard Chemprop | Lower than AD implementations | Lower diversity | Lower accuracy |
| Standard XGBoost | Lower than AD implementations | Lower diversity | Lower accuracy |
| Random Forest | Lowest performance | Lowest diversity | Lowest accuracy |

The advantage of ActiveDelta was assessed with the non-parametric Wilcoxon signed-rank test across all replicates, indicating that the performance differences are unlikely to be due to chance [34]. This consistent outperformance supports the robustness of the molecular pairing approach across diverse target classes and chemical spaces.
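In practice this test is usually run with a library routine such as `scipy.stats.wilcoxon`. To show what the test actually computes, the sketch below works without SciPy. It drops zero differences, ranks the absolute differences with averaged ranks for ties, and sums the ranks of positive differences (W+). It then returns a two-sided p-value from the normal approximation without a tie correction, so its p-values can differ from SciPy's, especially for small samples. The per-dataset hit counts are hypothetical.

```python
import math
from typing import Sequence, Tuple


def wilcoxon_signed_rank(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float]:
    """Return (W+, two-sided p-value via normal approximation) for paired samples a, b."""
    diffs = [x - y for x, y in zip(a, b) if x != y]   # zero differences are discarded
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    # Rank |d| in ascending order, assigning the average rank to tied values.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1                    # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mean) / sd
    p_two_sided = math.erfc(abs(z) / math.sqrt(2))    # equals 2 * (1 - Phi(|z|))
    return w_plus, p_two_sided


ad_hits = [12, 9, 15, 7, 11, 14, 10, 13]    # hypothetical per-dataset hit counts
std_hits = [10, 9, 11, 6, 8, 12, 10, 9]
w_plus, p = wilcoxon_signed_rank(ad_hits, std_hits)
print(w_plus)  # -> 21.0 (all six non-zero differences favour ActiveDelta)
```

Because the test uses only the signs and ranks of the paired differences, it makes no normality assumption about per-dataset performance, which suits benchmark results pooled across heterogeneous targets.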

Chemical Diversity Assessment

Beyond pure potency identification, ActiveDelta demonstrated a critical advantage in maintaining scaffold diversity throughout the optimization process. When evaluated using Murcko scaffold analysis, ActiveDelta-selected compounds exhibited significantly greater structural variety compared to standard approaches [34]. This diversity is crucial in real-world drug discovery, where varied molecular scaffolds provide flexibility in addressing synthesis challenges, pharmacokinetic optimization, and intellectual property considerations.

The enhanced diversity follows from ActiveDelta's fundamental mechanics. By focusing on predicted improvements over the current best compound instead of absolute potency, the method naturally explores broader chemical space rather than converging on local optima represented by structural analogs [34]. This property makes ActiveDelta particularly valuable during early discovery phases where understanding structure-activity relationships across diverse chemotypes is essential.
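Scaffold diversity along an active learning trajectory can be tracked with a cumulative count of distinct scaffolds. In practice the scaffold strings would come from a cheminformatics toolkit such as RDKit (for example `MurckoScaffoldSmiles` in `rdkit.Chem.Scaffolds.MurckoScaffold`). To keep this sketch runnable without RDKit, it takes precomputed scaffold SMILES instead. The compound IDs are hypothetical, and a cumulative unique-scaffold count is only one simple diversity summary that [34] may not use in this exact form.

```python
from typing import Dict, List, Sequence


def cumulative_unique_scaffolds(acquired: Sequence[str], scaffold_of: Dict[str, str]) -> List[int]:
    """Number of distinct Murcko scaffolds seen after each acquisition step."""
    seen = set()
    curve: List[int] = []
    for compound_id in acquired:
        seen.add(scaffold_of[compound_id])
        curve.append(len(seen))
    return curve


# Hypothetical compounds with precomputed Murcko scaffold SMILES.
scaffold_of = {
    "cpd1": "c1ccccc1",             # benzene
    "cpd2": "c1ccccc1",             # another benzene-based analog
    "cpd3": "c1ccncc1",             # pyridine
    "cpd4": "c1ccc(-c2ccccc2)cc1",  # biphenyl
}
print(cumulative_unique_scaffolds(["cpd1", "cpd2", "cpd3", "cpd4"], scaffold_of))  # -> [1, 1, 2, 3]
```

A curve that flattens early indicates a method that keeps acquiring analogs of scaffolds it has already seen. A curve that keeps rising indicates broader exploration.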

Technical Implementation & Research Toolkit

Essential Research Reagents and Computational Tools

Successful implementation of ActiveDelta requires specific computational tools and chemical data resources:

Table 2: Essential Research Reagents and Tools for ActiveDelta Implementation

| Resource Type | Specific Implementation | Function in ActiveDelta Framework |
|---|---|---|
| Benchmark Data | 99 Ki datasets from ChEMBL [34] | Provides standardized benchmarking across diverse targets |
| Deep Learning Framework | Chemprop with D-MPNN [34] | Graph-based neural network for molecular pairs |
| Tree-Based Framework | XGBoost with GPU acceleration [34] | Gradient boosting with paired fingerprint inputs |
| Molecular Representation | Radial Chemical Fingerprints (Morgan, radius 2, 2048 bits) [34] | Concatenated fingerprints for paired molecular representations |
| Statistical Validation | Wilcoxon signed-rank test [34] | Non-parametric statistical analysis of performance differences |
| Diversity Metrics | Murcko scaffold analysis [34] | Quantification of chemical structural diversity |