Active Learning for Small Dataset Scenarios: Maximizing Efficiency in Drug Discovery and Biomedical Research



Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging active learning (AL) to overcome the critical challenge of small, expensive-to-label datasets. It covers the foundational principles of AL as a subfield of artificial intelligence that strategically selects the most informative data points for labeling. The content explores key methodological approaches, including query strategies and their integration into automated machine learning (AutoML) pipelines, with specific applications in virtual screening and molecular property prediction. It addresses common troubleshooting and optimization challenges, such as algorithm selection and performance variability, and provides validation through comparative benchmarks of AL strategies against random sampling. The goal is to equip scientists with the knowledge to build robust predictive models while substantially reducing data acquisition costs and time.

What is Active Learning and Why is it a Game-Changer for Data-Scarce Research?

Fundamental Concepts & FAQs

1. What is active learning in machine learning? Active learning is a supervised machine learning approach that strategically selects the most informative data points from an unlabeled pool for human annotation. Its primary objective is to minimize the labeled data required for training while maximizing the model's performance, which is particularly beneficial when labeling data is costly, time-consuming, or scarce [1].

2. How does active learning differ from passive learning? In passive learning, the model is trained on a fixed, pre-defined labeled dataset. In contrast, active learning uses a query strategy to iteratively select the most informative samples for labeling and training, making it more adaptable and data-efficient [1].

3. Why is active learning especially important for research with small datasets? In fields like materials science and drug development, acquiring labeled data is often prohibitively expensive as it requires expert knowledge, specialized equipment, and time-consuming procedures. Active learning addresses this by optimizing data acquisition, enabling the construction of robust predictive models while substantially reducing the volume of labeled data required [2].

4. What is the typical workflow for an active learning experiment? A pool-based active learning framework for a regression task typically follows this iterative process [2]:

1. Initialization: Start with a small labeled dataset, $L = \{(x_i, y_i)\}_{i=1}^{l}$, and a large pool of unlabeled data, $U = \{x_i\}_{i=l+1}^{n}$.

2. Model Training: Train an initial model on the labeled set.

3. Query Strategy: Use an active learning strategy to select the most informative sample, $x^*$, from the unlabeled pool.

4. Annotation: Obtain the label $y^*$ for the selected sample (simulated by an oracle in benchmarks).

5. Update Sets: Add the newly labeled sample to the training set, $L = L \cup \{(x^*, y^*)\}$, and remove it from $U$.

6. Model Update: Retrain the model on the expanded training set. Repeat steps 3-6 until a stopping criterion (e.g., performance plateau or budget exhaustion) is met.
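The loop above can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not the benchmark's implementation: a bootstrap ensemble of k-nearest-neighbour regressors stands in for the model (a real project would more likely use a random forest or an AutoML pipeline), and uncertainty is taken to be the variance of the ensemble's predictions. All function names are hypothetical.

```python
import random
import statistics


def knn_predict(train, x, k=3):
    """Predict y at x as the mean target of the k nearest labeled points."""
    nearest = sorted(train, key=lambda p: sum((a - b) ** 2 for a, b in zip(p[0], x)))[:k]
    return statistics.fmean(y for _, y in nearest)


def bootstrap_variance(train, x, n_models=10, k=3, seed=0):
    """Uncertainty at x: variance of kNN predictions over bootstrap resamples of L."""
    rng = random.Random(seed)
    preds = []
    for _ in range(n_models):
        boot = [rng.choice(train) for _ in train]  # resample L with replacement
        preds.append(knn_predict(boot, x, k))
    return statistics.pvariance(preds)


def active_learning_loop(labeled, unlabeled, oracle, budget):
    """Pool-based AL: repeatedly query x*, label it via the oracle, move it from U to L."""
    labeled, unlabeled = list(labeled), list(unlabeled)
    for step in range(budget):
        if not unlabeled:
            break
        # Model training + query strategy: the kNN "model" is refit implicitly from the
        # current L; one seed per step keeps the bootstrap resamples identical across
        # candidates, so their uncertainty scores are directly comparable.
        x_star = max(unlabeled, key=lambda x: bootstrap_variance(labeled, x, seed=step))
        y_star = oracle(x_star)           # annotation (simulated oracle)
        labeled.append((x_star, y_star))  # update sets: L = L ∪ {(x*, y*)}
        unlabeled.remove(x_star)          # ... and remove x* from U
    return labeled, unlabeled
```

For example, `active_learning_loop(L0, U0, oracle=lambda x: x[0] ** 2, budget=2)` labels two pool points and returns the enlarged $L$ and the shrunken $U$. Passing the oracle in as a function keeps the loop identical whether labels come from a lookup table (benchmarking) or from a real experiment.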

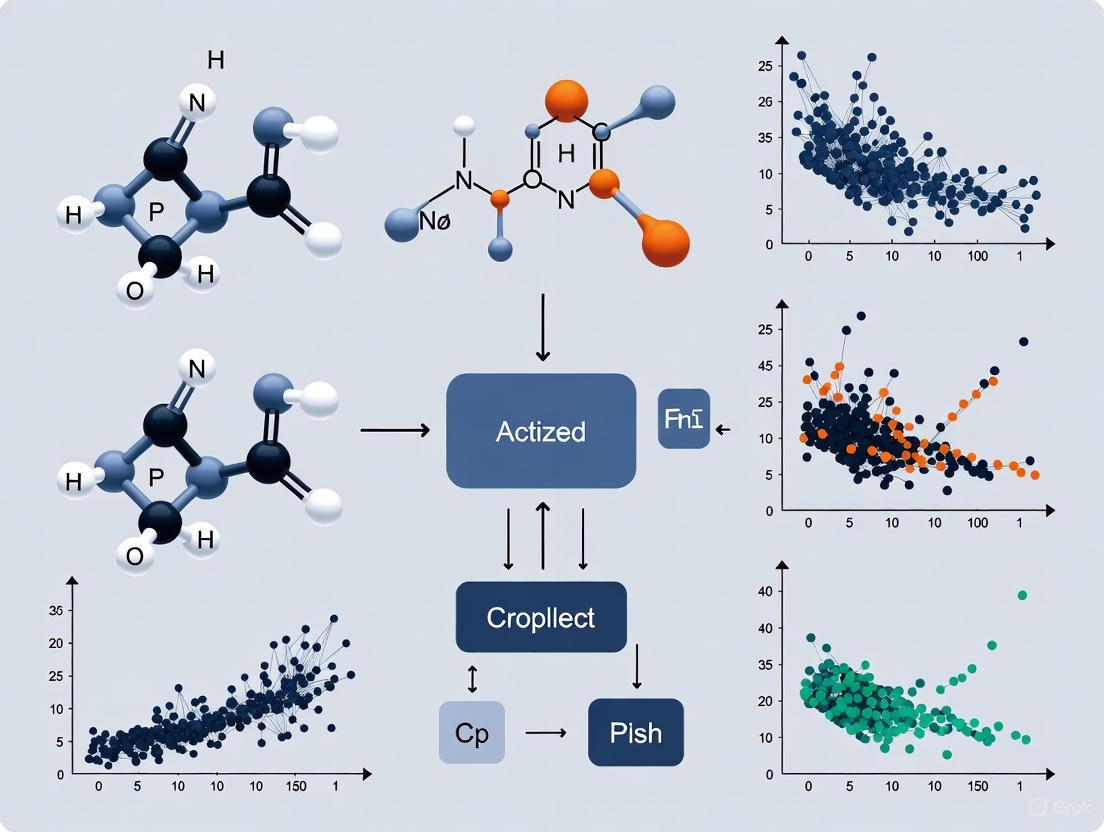

The following diagram illustrates this iterative workflow:

Troubleshooting Common Experimental Issues

1. Issue: My active learning model's performance has plateaued despite adding new samples.

- Potential Cause: The query strategy may no longer be selecting informative samples, or the model may have exhausted the "easy" gains from the initial pool.

- Solution:

- Switch Query Strategies: If you began with an uncertainty-based method, try a diversity-based or hybrid strategy to explore new regions of the feature space [2].

- Inspect Data Distribution: Analyze the characteristics of the newly selected samples. If they are very similar to existing data, the strategy may be stuck. A hybrid diversity strategy can help [2].

- Re-evaluate Stopping Criterion: Implement a formal stopping rule based on performance convergence on a held-out validation set to avoid wasting resources [2].

2. Issue: The model performance is unstable when integrated with an AutoML pipeline.

- Potential Cause: The surrogate model within the AutoML system can change between iterations (e.g., switching from a linear model to a tree-based ensemble), causing shifts in hypothesis space and uncertainty calibration. This is known as model drift [2].

- Solution:

- Benchmark Robust Strategies: Select AL strategies known to be robust under dynamic model changes. The benchmark in [2] suggests that uncertainty-driven (e.g., LCMD or Tree-based-R) and diversity-hybrid (e.g., RD-GS) strategies tend to outperform random sampling early in the acquisition process, even when the surrogate model changes between iterations.

- Standardize the Learner: If possible, configure your AutoML system to restrict the model search space to a single, consistent model family for the duration of the AL experiment to ensure stability.

3. Issue: Strong performance on the validation set does not generalize to a held-out test set.

- Potential Cause: The active learning strategy may have overfit to the validation set by selectively choosing samples that improve validation metrics without learning generally applicable patterns.

- Solution:

- Use a Separate Test Set: Ensure your test set is completely held out and not used in any part of the sample selection or model validation process [2].

- Cross-Validation: Within the AutoML workflow, use cross-validation on the labeled training set for model selection and hyperparameter tuning to get a more robust performance estimate [2].

- Analyze Out-of-Distribution Performance: If possible, test the model on a separate, challenging dataset to ensure it has not just memorized the training distribution [2].

4. Issue: My initial labeled set is too small, and the first model is performing very poorly.

- Potential Cause: The initial random sample may not be representative of the underlying data distribution, leading to a poor initial model that struggles to select useful subsequent samples.

- Solution:

- Increase Initial Sample Size: If feasible, start with a slightly larger initial labeled set to ensure a more stable base model [2].

- Use Diversity Sampling: For the initial batch, employ a diversity-based sampling strategy (like RD-GS) to maximize the coverage of the input space from the very beginning, which can help bootstrap the process more effectively than random sampling [2] [1].
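A simple way to pick a diverse initial batch is greedy max-min distance (farthest-point) selection over the feature vectors. The sketch below is an assumption-labeled illustration of that generic geometric heuristic, not RD-GS itself: it starts from one random point and repeatedly adds the candidate farthest from everything already chosen. The function name is hypothetical.

```python
import math
import random


def farthest_point_batch(pool, batch_size, seed=0):
    """Return indices of a batch chosen by greedy max-min distance in feature space."""
    rng = random.Random(seed)
    selected = [rng.randrange(len(pool))]  # random first point
    chosen = set(selected)
    # min_dist[i]: distance from pool[i] to its closest already-selected point
    min_dist = [math.dist(p, pool[selected[0]]) for p in pool]
    while len(selected) < min(batch_size, len(pool)):
        nxt = max((i for i in range(len(pool)) if i not in chosen), key=lambda i: min_dist[i])
        selected.append(nxt)
        chosen.add(nxt)
        for i, p in enumerate(pool):
            min_dist[i] = min(min_dist[i], math.dist(p, pool[nxt]))
    return selected
```

Because each new point maximizes its distance to the current batch, near-duplicates are only picked once the rest of the space is covered, which is the behaviour wanted when the first model is too weak to judge uncertainty.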

Active Learning Query Strategies & Selection Guide

The choice of query strategy is critical. The table below summarizes common strategies based on different principles [2] [1].

| Strategy Principle | Description | Best Used When... |
|---|---|---|
| Uncertainty Sampling | Selects data points where the model's prediction is most uncertain (e.g., the lowest top-class probability for classification, or the highest predictive variance for regression). | The model is somewhat reliable, and you want to quickly refine decision boundaries. Examples include LCMD and Tree-based-R [2]. |
| Diversity Sampling | Selects a set of data points that are most dissimilar to each other and to the existing labeled set. | The initial dataset is very small, and you need to explore and capture the broad structure of the data first [1]. |
| Expected Model Change | Selects data points that would cause the greatest change to the current model (e.g., the largest gradient update). | Computational resources are adequate, and you want to make the most impactful updates per iteration. |
| Query-By-Committee | Uses a committee of models and selects data points where the committee disagrees the most. | You can train multiple models and want a robust, committee-based measure of uncertainty [2]. |
| Hybrid Methods (e.g., RD-GS) | Combines multiple principles, such as selecting points that are both uncertain and diverse. | You want a balanced approach that avoids the pitfalls of any single method. This is often a robust default choice [2]. |
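To make the "uncertain and diverse" idea concrete, the sketch below scores each candidate by a weighted mix of its uncertainty and its distance to the nearest labeled point, after min-max scaling both terms to [0, 1]. This is a generic illustration under assumptions (the linear weighting, the scaling, and the name `hybrid_select` are all hypothetical), not the scoring rule of RD-GS or any specific published method.

```python
import math


def hybrid_select(candidates, uncertainty, labeled_x, weight=0.5):
    """Pick the candidate maximizing weight * uncertainty + (1 - weight) * novelty.

    uncertainty: dict mapping candidate -> uncertainty score (e.g. ensemble variance)
    novelty: distance from a candidate to its closest labeled point (diversity term)
    """
    novelty = {c: min(math.dist(c, x) for x in labeled_x) for c in candidates}

    def scaled(scores):
        lo, hi = min(scores.values()), max(scores.values())
        span = (hi - lo) or 1.0  # avoid dividing by zero when all scores are equal
        return {c: (s - lo) / span for c, s in scores.items()}

    u = scaled({c: uncertainty[c] for c in candidates})
    d = scaled(novelty)
    return max(candidates, key=lambda c: weight * u[c] + (1 - weight) * d[c])
```

Setting `weight=1.0` recovers pure uncertainty sampling and `weight=0.0` pure diversity sampling, so the single parameter makes the trade-off between the two rows above explicit.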

Based on a comprehensive 2025 benchmark study, the following table compares the early-stage performance of various strategies within an AutoML framework for small-sample regression in science [2]. This provides actionable guidance for researchers.

| Performance Tier | Strategy Type | Specific Examples | Key Findings & Recommendations |
|---|---|---|---|
| Top Performers (Early Stage) | Uncertainty-Driven & Diversity-Hybrid | LCMD, Tree-based-R, RD-GS | Clearly outperform random sampling and geometry-only heuristics early in the acquisition process. They are best for maximizing initial performance gains with minimal data [2]. |
| Weaker Performers (Early Stage) | Geometry-Only Heuristics | GSx, EGAL | Less effective at the start, when data is very scarce; they tend to select less informative samples than the top-tier strategies [2]. |
| Long-Term Performance | All Methods | All 17 methods tested | As the labeled set grows, the performance gap between different strategies narrows and eventually converges, indicating diminishing returns from active learning under AutoML [2]. |

Advanced Protocols: Benchmarking & AutoML Integration

Detailed Methodology for Benchmarking AL Strategies [2]

This protocol is adapted from recent materials science research and is applicable to other domains using small-sample regression.

Data Preparation:

- Select multiple datasets representative of your domain (e.g., 9 datasets were used in the benchmark).

- Partition data into an initial training pool (80%) and a held-out test set (20%). The test set should never be used for sample selection.

- The initial training pool is split into a very small initial labeled set ($L$, chosen via random sampling) and a large unlabeled pool ($U$).
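The partitioning step can be sketched as a seeded index split: the 20% test set is carved out first so that it can never be touched by a query strategy, and the remaining 80% pool is then divided into a small random $L$ and a large $U$. The initial labeled-set size (`n_initial=5`) and the function name are assumptions for illustration; the benchmark's exact initial size is not stated here.

```python
import random


def split_pool(n_samples, test_frac=0.2, n_initial=5, seed=42):
    """Return disjoint index lists (test_idx, labeled_idx, unlabeled_idx)."""
    rng = random.Random(seed)
    idx = list(range(n_samples))
    rng.shuffle(idx)
    n_test = round(test_frac * n_samples)
    test_idx, pool_idx = idx[:n_test], idx[n_test:]  # held-out test set vs. training pool
    labeled_idx = pool_idx[:n_initial]               # small random initial L
    unlabeled_idx = pool_idx[n_initial:]             # large unlabeled pool U
    return test_idx, labeled_idx, unlabeled_idx
```

Working with indices rather than copies of the data makes it easy to reuse the same split (same `seed`) for every strategy being compared, so all strategies start from an identical $L$.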

Experimental Setup:

- AutoML Configuration: Use an AutoML framework configured for automatic model and hyperparameter selection. Set the internal validation to 5-fold cross-validation.

- Compared Strategies: Define the AL strategies to benchmark (e.g., the 17 strategies in the original study, plus a random sampling baseline).

- Evaluation Metrics: Use Mean Absolute Error (MAE) and the Coefficient of Determination (R²) to track performance on the held-out test set.

Iterative Benchmarking Loop:

- For each AL strategy:
  - Initialize with the small labeled set $L$.
  - For a predetermined number of acquisition steps:
    - Train the AutoML model on the current $L$.
    - Evaluate the model on the held-out test set and record MAE and R².
    - Select the most informative sample $x^*$ from $U$ using the specific AL strategy.
    - Simulate annotation: remove $x^*$ from $U$ and add it, with its true label, to $L$.

Analysis:

- Plot learning curves (model performance vs. number of labeled samples) for all strategies.

- Analyze the performance of different strategies during the early (data-scarce) and late (data-rich) phases of the experiment.
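The evaluation metrics and the early/late comparison can be sketched as follows. `mae` and `r2` follow the standard definitions. `mean_curve_gap` is a hypothetical helper that averages how much lower a strategy's MAE is than the random baseline's at each acquisition step; limiting it to the first `first_n` steps isolates the data-scarce phase. This summary is an assumption for illustration, not necessarily the analysis used in the benchmark.

```python
def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true, y_pred):
    """Coefficient of determination (undefined if y_true has zero variance)."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def mean_curve_gap(strategy_mae, random_mae, first_n=None):
    """Average MAE advantage over random sampling, step by step along the learning curve.

    Positive values mean the strategy has lower error than the random baseline.
    """
    pairs = list(zip(strategy_mae, random_mae))[:first_n]
    return sum(r - s for s, r in pairs) / len(pairs)
```

Comparing `mean_curve_gap(..., first_n=k)` with the full-curve value is one simple way to quantify the pattern described above, where a large early advantage shrinks as the labeled set grows.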

The workflow for this benchmarking protocol is detailed below:

The Scientist's Toolkit: Key Research Reagents

For setting up a robust active learning experimentation environment, the following "research reagents" are essential [2] [1].

| Item / Tool | Function in Active Learning Research |
|---|---|
| AutoML Framework (e.g., auto-sklearn, TPOT) | Automates the selection of machine learning models and their hyperparameters. This is crucial for maintaining a fair benchmark, as it removes manual model-tuning bias and keeps the focus on data acquisition [2]. |
| Active Learning Library (e.g., modAL, ALiPy) | Provides pre-implemented, standardized versions of various query strategies (uncertainty, diversity, etc.), ensuring correctness and comparability in experiments [1]. |
| Pool-based Simulation Environment | A software framework that manages the initial labeled set, unlabeled pool, and test set. It orchestrates the iterative cycle of training, querying, and updating datasets, as described in the benchmarking protocol [2]. |
| Uncertainty Estimator | For regression tasks, techniques such as Monte Carlo Dropout are needed to estimate predictive uncertainty, because regression models do not output class probabilities from which uncertainty can be read directly, as in classification. This is a core component of uncertainty-based query strategies [2]. |
| Diversity Metric (e.g., based on clustering) | A computational method to quantify the dissimilarity between data points. This is the core engine for diversity-based and hybrid sampling strategies [2] [1]. |

Troubleshooting Guide: Common Active Learning Pitfalls

Q: My model's performance has plateaued despite several active learning cycles. What could be wrong? A: This can be caused by several factors. Your query strategy might be selecting redundant or noisy data points. Try switching from a pure uncertainty sampling method to a hybrid strategy that also considers diversity to ensure broad coverage of the data space [1] [3]. Also, verify that your initial labeled dataset is representative of the underlying problem; a poor initial set can hinder all subsequent learning [4].

Q: The labels I receive from human experts are inconsistent. How can I improve model stability? A: Inconsistency in human labels introduces noise that the model can learn. Implement an annotation pipeline with clear, detailed guidelines for your experts [3]. For critical tasks, use multiple annotators per sample and employ a consensus mechanism (e.g., majority vote) to determine the final label. This improves the quality and reliability of your training data [4].
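A majority-vote consensus step can be sketched with `collections.Counter`. In this illustrative version (the function name and the `min_agreement` threshold are assumptions), samples whose top label is tied, or whose agreement does not exceed the threshold, return `None` so they can be routed back for expert review instead of entering the training set.

```python
from collections import Counter


def consensus_label(votes, min_agreement=0.5):
    """Majority vote over annotator labels for one sample.

    Returns (label, agreement), where agreement is the winning share of votes,
    or (None, agreement) if the vote is tied or agreement <= min_agreement.
    """
    counts = Counter(votes).most_common()
    top_label, top_count = counts[0]
    agreement = top_count / len(votes)
    tied = len(counts) > 1 and counts[1][1] == top_count
    if tied or agreement <= min_agreement:
        return None, agreement  # flag for adjudication rather than guessing
    return top_label, agreement
```

Returning the agreement share alongside the label also gives a per-sample measure of annotation reliability that can be tracked across AL cycles.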

Q: My active learning system is too slow for my experimental workflow. How can I speed it up? A: Consider moving from a sequential (one-by-one) query mode to a batch mode, where multiple samples are selected and labeled in each cycle [5]. While this is computationally more challenging, methods that maximize joint entropy within a batch can ensure both informativeness and diversity, saving significant experimental time [5]. Also, ensure your model architecture is optimized for fast retraining.
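One way to make a batch both informative and non-redundant is to grow it greedily so that the log-determinant of the batch's predictive covariance, which equals the Gaussian joint entropy up to constants, is maximized. The sketch below is written in the spirit of the covariance-based batch methods cited in [5], but it is an assumption-labeled illustration, not their implementation. Covariances are estimated from repeated stochastic predictions (for example, MC-dropout passes), and all names are hypothetical.

```python
def covariance(samples):
    """Sample covariance between candidates.

    samples[m][i] is the m-th stochastic prediction for candidate i.
    """
    m, n = len(samples), len(samples[0])
    means = [sum(s[i] for s in samples) / m for i in range(n)]
    return [[sum((s[i] - means[i]) * (s[j] - means[j]) for s in samples) / (m - 1)
             for j in range(n)] for i in range(n)]


def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[piv] = aug[piv], aug[col]
        for r in range(col + 1, n):
            f = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= f * aug[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (aug[r][n] - sum(aug[r][c] * x[c] for c in range(r + 1, n))) / aug[r][r]
    return x


def conditional_variance(cov, batch, i, jitter=1e-9):
    """Variance of candidate i given those already in `batch` (Schur complement)."""
    if not batch:
        return cov[i][i]
    k = [cov[i][j] for j in batch]
    sub = [[cov[a][b] + (jitter if a == b else 0.0) for b in batch] for a in batch]
    w = solve(sub, k)
    return cov[i][i] - sum(kj * wj for kj, wj in zip(k, w))


def greedy_logdet_batch(cov, batch_size):
    """Greedily grow a batch that maximizes log det of its covariance submatrix.

    Adding candidate i multiplies the determinant by its conditional variance,
    so each step picks the candidate least explained by the current batch.
    """
    batch = []
    while len(batch) < min(batch_size, len(cov)):
        remaining = [i for i in range(len(cov)) if i not in batch]
        batch.append(max(remaining, key=lambda i: conditional_variance(cov, batch, i)))
    return batch
```

Two candidates whose predictions move in lockstep add almost nothing to the determinant together, so the batch naturally avoids selecting redundant near-duplicates even when both are highly uncertain.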

Q: How do I know when to stop the active learning cycle? A: Define a stopping criterion upfront. This could be a performance threshold (e.g., model accuracy >95%), a labeling budget, or a plateau in performance improvement over several consecutive cycles [6] [4]. Monitoring the reduction in model uncertainty over the unlabeled pool can also serve as a stopping signal.

Frequently Asked Questions (FAQs)

Q: What is the single most important component of an active learning system? A: The query strategy is critical, as it directly determines which data points are selected for labeling [4] [7]. A well-chosen strategy, such as uncertainty sampling or query-by-committee, ensures that every human annotation effort provides the maximum possible boost to model performance [1].

Q: Can active learning be applied to regression tasks, such as predicting molecular properties? A: Yes, but it requires different uncertainty measures. Instead of classification entropy, methods like Monte Carlo Dropout can be used to estimate the variance of a continuous prediction, which then serves as the basis for the query [2].
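The Monte Carlo Dropout idea can be sketched with a tiny one-hidden-layer network: dropout is kept active at prediction time, the same input is passed through the network many times with fresh random masks, and the variance of the outputs serves as the uncertainty score. The weights here are hypothetical placeholders standing in for a trained model; in practice this would be done with a deep-learning framework rather than plain Python.

```python
import math
import random
import statistics


def mc_dropout_predict(x, w_hidden, w_out, p_drop=0.2, n_passes=200, seed=0):
    """Mean and variance of a one-hidden-layer network's output with dropout left ON.

    w_hidden: one weight vector per hidden unit; w_out: one output weight per hidden unit
    (both hypothetical placeholders for trained parameters).
    """
    rng = random.Random(seed)
    outputs = []
    for _ in range(n_passes):
        total = 0.0
        for wh, wo in zip(w_hidden, w_out):
            h = math.tanh(sum(a * b for a, b in zip(wh, x)))
            keep = rng.random() >= p_drop          # fresh Bernoulli keep-mask per pass
            total += wo * h * keep / (1 - p_drop)  # inverted-dropout scaling
        outputs.append(total)
    return statistics.fmean(outputs), statistics.variance(outputs)
```

The returned variance can then be used directly as the score in regression uncertainty sampling, for example by querying the candidate with the largest variance.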

Q: How does active learning help with rare or imbalanced events, like finding synergistic drug pairs? A: Active learning can be particularly useful for imbalanced data. Because it prioritizes the most informative samples, it tends to gravitate towards rare, uncertain examples, which often belong to the minority class. In drug synergy screening, this means it can efficiently find the ~3% of synergistic pairs without labeling the entire combinatorial space [8] [4].

Q: What is the role of the "oracle" in this workflow? A: The oracle is the source of ground-truth labels, which is often a human domain expert, such as a biologist or chemist [1] [9]. In a drug discovery context, the oracle can also be an actual wet-lab experiment that measures a property (e.g., binding affinity) for a selected compound [6] [8].

Active Learning Workflow Visualization

The following diagram illustrates the core iterative cycle of an active learning system.

Diagram 1: The core Active Learning feedback loop.

Query Strategy Comparison

The table below summarizes common query strategies used in the sample selection step.

| Strategy Name | Core Principle | Best Used When | Key Consideration |
|---|---|---|---|
| Uncertainty Sampling [4] [7] | Selects data points where the model's prediction confidence is lowest. | The model is generally well-calibrated and you need to resolve decision boundaries. | Can be misled by noisy or outlier data points. |
| Query-by-Committee (QBC) [4] [3] | Selects points where a committee of models disagrees the most. | You want a robust measure of uncertainty and have computational resources for multiple models. | Computationally expensive; requires maintaining an ensemble. |
| Diversity Sampling [1] [3] | Selects a set of data points that are dissimilar from each other. | You need to ensure the training set broadly represents the entire input space. | May select some easy samples; often combined with uncertainty. |
| Expected Model Change [4] [3] | Selects points that would cause the largest change to the model parameters. | You want to maximize learning progress per labeled sample. | Computationally very intensive to calculate precisely. |

Quantitative Impact of Active Learning

The following table summarizes real-world efficiency gains from applying active learning in scientific domains.

| Application Domain | Key Metric | Performance with Active Learning | Context & Comparison |
|---|---|---|---|
| Drug Synergy Screening [8] | Synergistic Pair Discovery | Found 60% of synergistic pairs by exploring only 10% of the combinatorial space. | Without a strategy, finding the same number required exploring 82% more of the space. |
| Molecular Property Prediction [5] | Model Error (RMSE) | New batch methods (COVDROP) led to faster error reduction compared to random sampling and other methods. | Achieved better performance with fewer labeled samples across ADMET and affinity datasets. |
| General Model Efficiency [4] | Labeling Effort | Achieved human-comparable accuracy with up to 80% less labeling effort. | Particularly efficient for rare categories, requiring up to 8x fewer samples than passive learning. |

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in Active Learning Workflow |
|---|---|
| Unlabeled Compound Pool | The vast chemical space (e.g., from ZINC, PubChem) from which the model selects candidates for testing [6]. |
| High-Throughput Screening (HTS) Assay | Serves as the experimental "oracle" to reliably measure the property of interest (e.g., binding, permeability) for selected compounds [9]. |
| Molecular Descriptors/Features | Numerical representations of molecules (e.g., Morgan Fingerprints, MAP4) that the model uses to learn structure-property relationships [8]. |
| Cellular Feature Data | Genomic or transcriptomic data (e.g., from GDSC) that provides context on the cellular environment, crucial for accurate predictions in tasks like synergy screening [8]. |
| Automated ML (AutoML) Platform | Tools that automate model selection and hyperparameter tuning, which is especially valuable in low-data regimes to ensure optimal model performance [2]. |

Visualizing Query Strategies

The following diagram outlines the logical process for choosing a query strategy based on project goals and constraints.

Diagram 2: A logic flow for selecting an appropriate query strategy.

Frequently Asked Questions

What is active learning and how does it address high data costs? Active Learning (AL) is a supervised machine learning approach that strategically selects the most informative data points for labeling, minimizing the volume of expensive-to-acquire labeled data required to train a robust model [1]. It creates an iterative loop where a model queries a human annotator to label the samples from which it can learn the most, thereby optimizing the learning process and significantly reducing labeling costs compared to traditional passive learning on a fixed dataset [2] [1].

Which active learning strategy should I use for my regression task with materials data? For regression tasks common in materials science, your choice of strategy depends on the size of your current labeled dataset. Benchmark studies reveal that no single strategy is universally best, but performance trends can guide your selection [2]. The table below summarizes the performance of various strategies based on a comprehensive 2025 benchmark.

| Strategy Type | Example Strategies | Performance in Early Stages (Small L) | Performance in Later Stages (Large L) |
|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperforms the random-sampling baseline [2] | Converges with other strategies [2] |
| Diversity-Hybrid | RD-GS | Clearly outperforms the random-sampling baseline [2] | Converges with other strategies [2] |
| Geometry-Only | GSx, EGAL | Less effective than uncertainty-driven or diversity-hybrid methods [2] | Converges with other strategies [2] |
| All Strategies | (All 17 tested) | Performance differs by strategy type (see rows above) | Performance converges, with diminishing returns from AL [2] |

Our AI-discovered drug candidate is progressing to clinical trials. What is the success rate for AI-designed drugs? While no AI-discovered drug has received full market approval as of 2024, the clinical success rate so far is highly promising. As of December 2023, AI-developed drugs that have completed Phase I trials show a success rate of 80–90%, which is significantly higher than the traditional drug development success rate of approximately 40% [10].

Are there AI models specifically designed to perform well with small datasets in drug discovery? Yes, specific neural network architectures are engineered for this challenge. Capsule Networks (CapsNet) excel in handling small datasets by capturing spatial hierarchical relationships among features, which helps overcome the common problem of data scarcity in drug discovery [11]. Their ability to preserve spatial information makes them particularly promising for tasks like molecular property prediction.

Troubleshooting Guides

Problem: Slow model convergence and high labeling costs during materials screening.

Solution: Implement an Automated Machine Learning (AutoML) pipeline integrated with an active learning query strategy. This combination automates model selection and hyperparameter tuning while intelligently selecting the most valuable data points to label [2].

Experimental Protocol:

1. Initialization: Start with a small, randomly sampled initial labeled dataset, $L = \{(x_i, y_i)\}_{i=1}^{l}$, drawn from your larger unlabeled pool $U$ [2].

2. Model Fitting: Fit an AutoML model to the current labeled set $L$. The AutoML system will automatically handle model selection (e.g., choosing between tree-based ensembles and neural networks) and hyperparameter optimization using 5-fold cross-validation [2].

3. Query Selection: Use the trained model and a chosen AL strategy (e.g., uncertainty sampling like LCMD for early stages) to select the most informative sample $x^*$ from the unlabeled pool $U$ [2].

4. Human Annotation: Obtain the target value $y^*$ for the selected sample through experimentation or expert annotation [2].

5. Dataset Update: Expand your training set, $L = L \cup \{(x^*, y^*)\}$, and remove $x^*$ from $U$ [2].

6. Iteration: Repeat steps 2-5 until a stopping criterion is met (e.g., performance plateau or exhaustion of the labeling budget) [2].

This workflow is illustrated in the following diagram, which shows the active learning cycle with an AutoML model. The colors used provide sufficient contrast for readability according to web accessibility guidelines [12].

Problem: Choosing an ineffective query strategy for your specific data.

Solution: Diagnose the problem by comparing your strategy's learning curve against a random sampling baseline. If performance is unsatisfactory, switch strategies based on the current size of your labeled dataset and the nature of your data [2].

Diagnosis & Resolution Protocol:

- Benchmark: Run your AL experiment with multiple strategies, including a random sampling baseline, and plot model performance (e.g., MAE or R²) against the number of labeled samples [2].

- Analyze:

- If performance is poor early on, your strategy is not efficiently identifying the most informative samples. Resolution: Switch to an uncertainty-driven (e.g., LCMD) or diversity-hybrid (e.g., RD-GS) strategy [2].

- If the performance gap between strategies narrows quickly, your data may be comparatively easy to model. Resolution: A simpler, less computationally expensive strategy may be sufficient [2].

- Re-validate: Continue your experiment with the new, more appropriate strategy.

The logic for selecting a query strategy is mapped out below.

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Experiment |
|---|---|
| AutoML Framework | Automates the process of selecting the best machine learning model and its hyperparameters for a given dataset, which is crucial for robust performance in data-scarce regimes [2]. |
| Uncertainty Estimation Method | Provides a quantitative measure of a model's confidence in its predictions, which is the core of uncertainty-based active learning strategies [2]. |
| Capsule Networks (CapsNet) | A type of neural network that excels at learning from small datasets by preserving hierarchical spatial relationships in data, making it valuable for drug discovery tasks like molecular design [11]. |
| Pool-Based Sampling Framework | The computational infrastructure that manages the large pool of unlabeled data and facilitates the iterative query-label-update cycle of active learning [2]. |
| Generative AI Models (e.g., for de novo design) | Used to design novel molecular structures in silico, potentially expanding the search space for new drug candidates without immediate lab synthesis [13] [10]. |

## Quantitative Evidence: The Impact of Active Learning

The table below summarizes key performance data from various studies, demonstrating how Active Learning (AL) reduces labeling efforts while achieving high model performance.

| Domain / Application | Key Metric | Performance with Active Learning | Compared to Standard Approach |
|---|---|---|---|
| General ML Tasks (Classification, NER) [14] | Labeled Data Required | Reached target performance with 30-50% less data | Required 100% of labeled data |
| Binary Classification [14] | Data Efficiency | Achieved 90% of final performance using only 40% of labeled data | Required 100% of data for full performance |
| Named Entity Recognition (NER) [14] | Labeling Effort | Reduced the number of labeled sentences by half | Required 100% of sentences to be labeled |
| Drug Discovery (ADMET, Affinity) [5] | Experimental Efficiency | Significant potential savings in the number of experiments needed | Required full set of experiments |
| Aqueous Solubility Prediction [5] | Model Accuracy (RMSE) | COVDROP method quickly led to better performance in fewer cycles | Other methods (e.g., k-means, BAIT, Random) were slower to converge |

## Experimental Protocols for Key Active Learning Strategies

### FAQ: How do I select the most informative data points for labeling?

The core of AL lies in the query strategy. The table below details three common methodologies.

| Component | Uncertainty Sampling | Query-by-Committee (QBC) | Diversity Sampling |
|---|---|---|---|
| Objective | Select data points the current model is most uncertain about [15]. | Select data points that cause the most disagreement among a group of models [16]. | Ensure broad coverage of the data distribution by selecting dissimilar points [14]. |
| Key Procedure | 1. Use the model's prediction output (e.g., class probabilities). 2. Calculate an uncertainty score (e.g., entropy, least confidence, margin). 3. Select the samples with the highest scores for labeling [17]. | 1. Train multiple models (a "committee") on the current labeled data. 2. Have all models predict on the unlabeled data. 3. Measure disagreement (e.g., vote entropy). 4. Select the samples with the highest disagreement [15]. | 1. Use clustering (e.g., k-means) on the unlabeled data's feature space. 2. Select samples from different clusters or those farthest from existing labeled points [1] [14]. |
| Ideal Use Case | Classification problems with clear decision boundaries and well-calibrated probability scores [14]. | Situations where uncertainty is hard to measure with a single model, or where you want to exploit model diversity [14]. | Datasets with inherent repetition, or when you need to cover edge cases early in the learning process [14]. |
| Considerations | Can overfocus on outliers and noisy data [14]. Requires calibrated confidence estimates. | Computationally more intensive due to multiple models. Can be noisy if committee models are poorly tuned [14]. | May lead to slower gains in model performance than uncertainty sampling, because diverse samples are not necessarily informative ones [14]. |
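The scoring functions named in the table can be written in a few lines. The sketch below assumes each unlabeled sample comes with a list of class probabilities (for uncertainty sampling) or a list of committee votes (for QBC); the function names are illustrative rather than any particular library's API. Every score is oriented so that higher means "more worth labeling", which lets a single `top_k` helper perform the selection step.

```python
import math
from collections import Counter


def least_confidence(probs):
    """1 - probability of the most likely class."""
    return 1.0 - max(probs)


def margin(probs):
    """Negative gap between the top two classes (needs >= 2 classes)."""
    top2 = sorted(probs, reverse=True)[:2]
    return -(top2[0] - top2[1])  # smaller gap -> higher score


def entropy(probs):
    """Shannon entropy of the predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def vote_entropy(votes):
    """QBC disagreement: entropy of the committee's predicted-label distribution."""
    n = len(votes)
    return -sum((c / n) * math.log(c / n) for c in Counter(votes).values())


def top_k(scores, k):
    """Indices of the k highest-scoring unlabeled samples."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
```

For example, with pool probabilities `[[0.9, 0.1], [0.5, 0.5], [0.7, 0.3]]`, all three uncertainty measures rank the second sample first, since the model is split evenly between the two classes there.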

### FAQ: What is the standard workflow for an Active Learning cycle?

The following diagram illustrates the iterative feedback loop that is central to AL.

### FAQ: How do different query strategies relate to each other?

This diagram maps the strategic relationships between common AL query approaches to help you choose the right one.

## Troubleshooting Common Active Learning Challenges

### FAQ: My initial model is weak and selects poor samples. What can I do?

This is the "cold start" problem. Several strategies can help initialize your AL pipeline effectively [16]:

- Leverage Pre-trained Models: Use a model pre-trained on a similar, larger dataset or a related task. For example, in a project to detect squirrels, a YOLOv5 model pre-trained on the COCO dataset was used as an initial model, even though "squirrel" was not a COCO category [18].

- Train on Available Seed Data: If a domain expert has pre-labeled a small amount of data, train a smaller, simpler model on this seed data to create a sufficient starting point for the AL cycle [18].

- Incorporate Domain Knowledge: Use a "Business Value" or information density approach for the first batch, focusing on labeling data points that are known to be critical, rather than relying solely on the model's uncertain predictions [17].

### FAQ: How do we manage the time of our expensive domain experts?

Working with domain experts like dentists or radiologists requires a respectful and optimized workflow [18]:

- Plan and Calibrate Expectations: Before starting, agree on the average labeling time per sample, the hours per week the expert can dedicate, and the number of images/data points needed per AL cycle. This prevents overburdening and ensures project timelines are realistic [18].

- Optimize the Workflow: Use annotation tools that support pre-labeling, where the model provides a first draft that the expert only needs to correct, significantly speeding up the process [14].

- Address Data Sensitivity: Ensure your AL pipeline is set up with appropriate data privacy and security measures, such as private repositories and controlled access, which is especially critical for medical or corporate IP data [18].

### FAQ: When should we stop the active learning cycle?

Knowing when to stop is crucial for cost-effectiveness. Implement a clear stopping policy [15] [14]:

- Track Performance Metrics: After each iteration, evaluate the model on a held-out validation set using F1, accuracy, or task-specific metrics.

- Plot the Performance Curve: Graph the model's performance against the number of labeled samples used. The AL cycle can be stopped when this curve plateaus, indicating that additional labels are yielding diminishing returns [14].

- Set a Threshold: Define a performance target or a labeling budget in advance. Stop once the model meets the target accuracy or when the budget is exhausted [15].
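The three rules above can be combined into a single check. The sketch below is a minimal illustration, assuming a higher-is-better validation metric (e.g., F1) recorded after every iteration. The function name `should_stop` and the `patience`/`min_delta` plateau rule are hypothetical choices for illustration, not settings taken from [14] or [15].

```python
def should_stop(scores, n_labeled, budget, target=None, patience=3, min_delta=0.01):
    """Return (stop, reason) for an active learning run.

    scores:    validation metric after each AL iteration (higher is better).
    n_labeled: number of labels purchased so far.
    budget:    maximum number of labels we can afford.
    target:    optional performance target agreed in advance.
    """
    # Rule 1: the labeling budget is exhausted.
    if n_labeled >= budget:
        return True, "budget exhausted"
    # Rule 2: the pre-agreed performance target has been met.
    if target is not None and scores and scores[-1] >= target:
        return True, "target reached"
    # Rule 3 (plateau): the best score of the last `patience` rounds is not
    # meaningfully better than the best score seen before them.
    if len(scores) > patience:
        recent_best = max(scores[-patience:])
        earlier_best = max(scores[:-patience])
        if recent_best - earlier_best < min_delta:
            return True, "plateau"
    return False, "continue"
```

For example, `should_stop([0.52, 0.61, 0.68, 0.72, 0.724, 0.726, 0.727], n_labeled=140, budget=500)` reports a plateau, because the last three rounds improved on the earlier best by less than `min_delta`.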

## The Scientist's Toolkit: Essential Reagents for an Active Learning Lab

The following table lists key computational "reagents" and tools needed to set up an effective AL pipeline in a research environment.

| Tool / Resource | Function / Purpose | Key Features / Use Case |
|---|---|---|
| modAL [14] [16] | A modular, flexible AL framework for Python. | Built on scikit-learn; lightweight and easy to integrate for prototyping various query strategies. |
| DeepChem [5] | A deep-learning library for drug discovery, materials science, and quantum chemistry. | Supports molecular machine learning; the study [5] developed new AL methods compatible with it. |
| DagsHub Data Engine [18] | An end-to-end platform for managing ML projects and data. | Simplifies implementing a complete AL pipeline, including data versioning, labeling, and experiment tracking. |
| Label Studio [14] | An open-source data labeling tool. | Flexible and supports custom workflows; can be integrated with model inference to create a human-in-the-loop system. |
| MLflow [18] | An open-source platform for managing the machine learning lifecycle. | Essential for logging experiments, parameters, and models during the iterative AL process to ensure reproducibility. |
| BAIT [5] | A probabilistic batch active learning method. | Uses Fisher information to optimally select samples; was used as a benchmark in drug discovery research [5]. |
| Query Strategy (Uncertainty) | The algorithm to select the most informative data points. | Core to the AL loop; techniques include least confidence, margin, and entropy sampling [1] [15]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: In a drug discovery context with very limited labeled compounds, what is the most immediate advantage of switching from a passive to an active learning strategy?

A: The most immediate advantage is a significant reduction in labeling costs while maintaining model performance. In active learning (AL), a query strategy selectively chooses the most informative data points from your unlabeled pool for annotation [1] [19]. Benchmarks report that an accurate model can be trained with only 10% to 30% of the labeled data that passive learning would require, with savings of 70-95% in computational or labeling resources [2]. In practice, this means far fewer synthesized compounds need to be experimentally tested for properties like potency or selectivity, dramatically accelerating early-stage discovery [1] [13].

Q2: My predictive model for material properties is no longer improving as I add more data. Is this a failure of my active learning strategy?

A: Not necessarily. This is a common scenario where the strategy has successfully identified the most informative samples. The performance of different AL strategies tends to converge as the labeled set grows, indicating diminishing returns [2]. This is a sign that you should stop the AL cycle to avoid unnecessary labeling costs. At this point, the solution is to re-evaluate your model's hypothesis space or feature set, not to collect more data. Integrating AutoML can be particularly beneficial here, as it can automatically search for and switch to a more optimal model architecture as the data grows [2].

Q3: How do I choose the right query strategy for my biological dataset? I'm unsure if an uncertainty-based or diversity-based method is better.

A: The optimal strategy often depends on your specific dataset and the stage of learning. Benchmark studies suggest that early in the acquisition process when data is very scarce, uncertainty-driven strategies (like LCMD or Tree-based-R) and diversity-hybrid strategies (like RD-GS) typically outperform others [2]. These methods are designed to find the most informative or representative samples. If you are unable to benchmark strategies yourself, consider using an active learning framework like Libact, which features a meta-algorithm that can automatically select the best strategy for your dataset [20].

Q4: When implementing an active learning pipeline for a new target discovery project, what is a critical first step to ensure success?

A: A critical first step is establishing a high-quality, small set of initial labeled data. The AL process begins with this initial set, and its quality is paramount [2]. If this initial data is not representative of the broader problem space, the AL algorithm may struggle to select useful subsequent samples. Furthermore, you must define a reliable oracle—a source of ground-truth labels, which could be a wet-lab experiment, a high-fidelity simulation, or a domain expert [19]. Ensuring this oracle can provide accurate and consistent labels is essential for the iterative learning process.

Troubleshooting Common Experimental Issues

| Problem | Possible Cause | Solution |
|---|---|---|
| Model performance is unstable or degrades during AL cycles. | The query strategy is selecting outliers or noisy data points. The model is overfitting to the peculiarities of the selected samples. | Switch from a pure uncertainty-sampling strategy to a hybrid strategy that also considers diversity or representativeness. This ensures a more balanced training set [2]. |
| The AL algorithm seems to get "stuck" in a local optimum, repeatedly selecting similar data points. | Lack of diversity in the selected samples. The strategy is exploiting one area of the feature space but failing to explore others. | Implement a strategy that explicitly balances exploration and exploitation, such as those modeled as a contextual bandit problem [19]. Alternatively, incorporate a diversity-sampling method like Coreset or VAAL [20]. |
| Integrating AL with a deep learning model leads to poor performance, even with uncertainty sampling. | Deep learning models can produce overconfident probability estimates via the softmax layer, making standard uncertainty measures unreliable [20]. | Use uncertainty estimation methods designed for deep learning, such as Monte Carlo Dropout or Bayesian Active Learning by Disagreement (BALD), which provide better confidence estimates [20]. |
| The cost of querying the oracle (e.g., running a lab experiment) is still too high, even with AL. | The stream-based selective sampling approach might be inefficient, or the oracle itself is a major cost bottleneck. | Ensure you are using a pool-based sampling approach, which evaluates the entire unlabeled pool to find the single most informative sample, maximizing the value of each query [19]. Also, explore if in silico models can serve as a preliminary, cheaper oracle. |

Quantitative Data on Active Learning Performance

Table 1: Benchmark of Active Learning Query Strategies in Materials Science (Regression Tasks)

Data sourced from a 2025 benchmark study evaluating AL strategies within an AutoML framework on small-sample datasets [2].

| Strategy Category | Example Strategies | Early-Stage (Data-Scarce) Performance | Late-Stage (Data-Rich) Performance | Key Principle |
|---|---|---|---|---|
| Uncertainty-Based | LCMD, Tree-based-R | Clearly outperforms random sampling baseline | Converges with other strategies | Queries points where model prediction is most uncertain. |
| Diversity-Hybrid | RD-GS | Clearly outperforms random sampling baseline | Converges with other strategies | Selects samples to maximize coverage and diversity of the training set. |
| Geometry-Only | GSx, EGAL | Performance closer to baseline | Converges with other strategies | Selects samples based on geometric structure of the data space. |
| Random Sampling | (Baseline) | (Baseline for comparison) | (Baseline for comparison) | Selects data points at random (Traditional "Passive" approach). |

Table 2: Comparison of Active vs. Passive Learning in Machine Learning

Synthesized from multiple sources on ML theory and applications [1] [19] [20].

| Feature | Active Learning | Passive Learning (Traditional Supervised) |
|---|---|---|
| Data Selection | Strategic; algorithm selects "informative" samples [1]. | Random or pre-defined; no strategic selection. |
| Labeling Cost | Lower; aims to minimize human annotation [1] [20]. | High; requires a large, fully-labeled dataset. |
| Human Role | Human-in-the-loop (oracle) for queried labels [19]. | Labeler; typically labels a static set before training. |
| Adaptability | High; can adapt to model's needs with each query [1]. | Low; model is trained once on a static dataset. |
| Typical Workflow | Iterative loop: Train -> Query -> Label -> Update [1] [19]. | Linear: Label -> Train -> Deploy. |

Detailed Experimental Protocols

Protocol 1: Implementing a Pool-Based Active Learning Cycle for a Quantitative Structure-Activity Relationship (QSAR) Model

This protocol is ideal for building a predictive model of compound activity with minimal wet-lab testing.

1. Initialization:

- Input: A large pool of unlabeled data (U) consisting of the chemical feature vectors for thousands of compounds. A very small, initially labeled set (L) is created by randomly selecting a few dozen compounds from U and obtaining their experimental activity values (e.g., IC50).

- Model Training: An initial machine learning model (e.g., a random forest or neural network) is trained on the small labeled set L [1].

2. Iterative Active Learning Loop: The following steps are repeated until a stopping criterion is met (e.g., performance plateau or labeling budget exhausted).

- Step 1 - Prediction and Ranking: The trained model is used to predict the target property for all compounds in the unlabeled pool U.

- Step 2 - Query Strategy Application: A query strategy is applied to rank the unlabeled compounds by their "informativeness." For a QSAR model, Uncertainty Sampling is highly effective. This involves selecting the compound for which the model is most uncertain about its predicted activity [19] [20].

- Step 3 - Oracle Query: The top-ranked compound(s) are sent for experimental testing (the "oracle") to obtain the true activity value.

- Step 4 - Dataset Update: The newly labeled compound(s) are removed from U and added to L.

- Step 5 - Model Update: The machine learning model is retrained from scratch on the now-expanded labeled set L [1]. Using an AutoML tool in this step can automatically find the best model and hyperparameters for the current data [2].
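A minimal sketch of this loop in Python with scikit-learn, under stated assumptions: compounds are already featurized as numeric vectors, a random forest (50 trees) is the model, and the standard deviation of the per-tree predictions serves as the uncertainty score, which is one common choice for regression but not the only one. `oracle` is a hypothetical stand-in for the wet-lab assay, `pool_based_al` and `fit_model` are illustrative names, and no AutoML step is included.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def rf_uncertainty(model, X):
    """Spread of the per-tree predictions: one common ensemble uncertainty for regression."""
    per_tree = np.stack([tree.predict(X) for tree in model.estimators_])
    return per_tree.std(axis=0)


def fit_model(X_pool, labels, seed):
    """Retrain from scratch on the current labeled set L (Step 5)."""
    idx = sorted(labels)
    model = RandomForestRegressor(n_estimators=50, random_state=seed)
    model.fit(X_pool[idx], [labels[i] for i in idx])
    return model


def pool_based_al(X_pool, oracle, n_init=24, n_iter=10, batch_size=1, seed=0):
    """Pool-based AL loop; `oracle(i)` returns the measured activity of compound i."""
    rng = np.random.default_rng(seed)
    # Initialization: a few dozen random compounds form L; the rest stay in U.
    init = rng.choice(len(X_pool), size=n_init, replace=False)
    labels = {int(i): oracle(int(i)) for i in init}
    unlabeled = np.setdiff1d(np.arange(len(X_pool)), init)
    model = fit_model(X_pool, labels, seed)
    for _ in range(n_iter):
        # Steps 1-2: predict on U and rank compounds by uncertainty (highest first).
        sigma = rf_uncertainty(model, X_pool[unlabeled])
        picked = unlabeled[np.argsort(sigma)[::-1][:batch_size]]
        # Step 3: send the top-ranked compounds to the oracle (the assay).
        for i in picked:
            labels[int(i)] = oracle(int(i))
        # Step 4: move them from U to L. Step 5: retrain from scratch.
        unlabeled = np.setdiff1d(unlabeled, picked)
        model = fit_model(X_pool, labels, seed)
    return model, labels
```

With a synthetic activity vector, `pool_based_al(X, oracle=lambda i: activity[i], n_iter=5, batch_size=4)` finishes with 24 + 5 * 4 = 44 labeled compounds.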

Protocol 2: Benchmarking Multiple Active Learning Strategies with AutoML

This protocol is used to determine the most effective AL strategy for your specific dataset.

1. Experimental Setup:

- Dataset: Start with a fully labeled dataset. Split it into a training pool (80%) and a held-out test set (20%). The training pool is then treated as "unlabeled" by hiding all the labels.

- Initialization: Randomly select a small subset (e.g., 1-5%) from the training pool to serve as the initial labeled set L₀.

- AutoML Configuration: Configure an AutoML system (e.g., AutoSklearn, TPOT) to handle the model selection and training in each cycle, using cross-validation to avoid overfitting [2].

2. Benchmarking Loop:

- For each AL strategy (e.g., Uncertainty Sampling, Query-by-Committee, Diversity Sampling), run an independent AL cycle.

- In each cycle, the AutoML system trains a model on the current L. The model's performance is evaluated on the held-out test set and recorded.

- The AL strategy then selects the top N (e.g., N=10) samples from the "unlabeled" pool to be added to L.

- This process repeats for a fixed number of iterations.

3. Analysis:

- Plot the model performance (e.g., R² score or MAE) against the number of labeled samples used for each strategy.

- The best strategy is the one that achieves the highest performance with the fewest number of labeled samples. The benchmark study [2] indicates that uncertainty and diversity-hybrid strategies typically perform best in the early, data-scarce phase.
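The benchmarking loop can be sketched as follows. To keep the example self-contained, a fixed random forest stands in for the AutoML system, only two strategies are compared (random sampling and uncertainty sampling via per-tree variance), and R² on the held-out 20% test split is recorded after each round. `run_strategy` and its defaults are hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split


def run_strategy(strategy, X_pool, y_pool, X_test, y_test,
                 init_frac=0.05, n_iter=10, batch=10, seed=0):
    """Return a learning curve [(n_labeled, test R^2), ...] for one AL strategy."""
    rng = np.random.default_rng(seed)
    n_init = max(2, int(init_frac * len(X_pool)))
    labeled = [int(i) for i in rng.choice(len(X_pool), size=n_init, replace=False)]
    curve = []
    for it in range(n_iter + 1):
        # Stand-in for the AutoML step: fit a fixed model on the current L only.
        model = RandomForestRegressor(n_estimators=50, random_state=seed)
        model.fit(X_pool[labeled], y_pool[labeled])
        curve.append((len(labeled), r2_score(y_test, model.predict(X_test))))
        if it == n_iter:
            break
        pool = np.setdiff1d(np.arange(len(X_pool)), labeled)  # still "unlabeled"
        if strategy == "random":
            picked = rng.choice(pool, size=batch, replace=False)
        elif strategy == "uncertainty":
            per_tree = np.stack([t.predict(X_pool[pool]) for t in model.estimators_])
            picked = pool[np.argsort(per_tree.std(axis=0))[::-1][:batch]]
        else:
            raise ValueError(f"unknown strategy: {strategy}")
        labeled.extend(int(i) for i in picked)
    return curve


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.uniform(-3, 3, size=(500, 6))
    y = 3 * np.sin(X[:, 0]) + X[:, 1] ** 2 + rng.normal(0, 0.1, 500)
    # 80% training pool (labels hidden until queried), 20% held-out test set.
    X_pool, X_test, y_pool, y_test = train_test_split(X, y, test_size=0.2, random_state=0)
    for name in ("random", "uncertainty"):
        curve = run_strategy(name, X_pool, y_pool, X_test, y_test)
        print(name, [(n, round(r2, 3)) for n, r2 in curve])
```

Plotting the returned `(n_labeled, R²)` pairs for each strategy gives the learning curves described in the Analysis step; averaging over several seeds makes the comparison less sensitive to the random initial set.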

Workflow and Relationship Diagrams [figures: Active Learning Workflow; Passive vs Active Learning]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Frameworks for Active Learning Research

| Item Name | Type | Function/Benefit | Reference |
|---|---|---|---|
| modAL | Python Framework | A flexible and modular active learning framework built on scikit-learn, ideal for prototyping various query strategies with minimal code. | [20] |
| Libact | Python Framework | A package designed for pool-based active learning that implements many popular strategies and includes a meta-algorithm for automatic strategy selection. | [20] |
| ALiPy | Python Framework | A module-based framework that supports a very wide range of active learning algorithms and is designed for analyzing and evaluating their performance. | [20] |
| Monte Carlo Dropout | Algorithmic Technique | A method used to estimate prediction uncertainty in deep learning models, which is crucial for effective uncertainty sampling. | [2] [20] |
| AutoML Systems | Tool (e.g., AutoSklearn) | Automates the process of model selection and hyperparameter tuning, which is particularly useful when the optimal model may change during AL cycles. | [2] |
| Uncertainty Sampling | Query Strategy | A foundational strategy that queries the samples for which the model's current prediction is most uncertain. Highly effective for many scientific tasks. | [19] [20] |
| Diversity Sampling (Coreset) | Query Strategy | A strategy that selects a diverse subset of data to ensure the training set is representative of the entire unlabeled pool. | [20] |
| Query-by-Committee | Query Strategy | Involves maintaining a committee of models and querying samples where the committee members disagree the most. | [19] |

Implementing Active Learning: Key Strategies and Real-World Applications in Biomedicine

FAQs and Troubleshooting Guides

FAQ 1: What are the core query strategies in active learning and when should I use each one?

Answer: The three core query strategies are Uncertainty Sampling, Query-by-Committee (QBC), and Diversity Sampling. Each is suited to different experimental goals and data regimes.

- Uncertainty Sampling is most effective when you have a reasonably accurate initial model and aim to refine decision boundaries quickly. It selects instances where the model's prediction is least confident. This method is computationally efficient but can be biased towards the current model and sensitive to outliers [21] [22].

- Query-by-Committee (QBC) is ideal for mitigating the bias of a single model. It uses a committee of models and selects data points where committee members disagree the most. This is particularly useful when the initial hypothesis space is large or a single model is likely to be misled by its initial biases [23] [22].

- Diversity Sampling is crucial in low-data regimes or at the start of an active learning cycle (the "cold start" problem). It aims to select a set of instances that are representative of the overall data distribution, ensuring the model learns a broad foundation before focusing on difficult cases. It is often combined with other strategies to avoid selecting redundant or outlier samples [24] [25].

Research indicates that a hybrid approach, starting with diversity-based sampling before switching to uncertainty-based methods, often yields the strongest and most consistent performance across various labeling budgets [25].

FAQ 2: During batch selection, my Uncertainty Sampling strategy keeps selecting highly similar, redundant instances. How can I resolve this?

Answer: This is a common limitation of naive uncertainty sampling in batch-mode active learning. To resolve this, you need to incorporate a diversity measure alongside the uncertainty criterion. Below are two methodological approaches you can implement.

Approach 1: Hybrid Sampling with Clustering

- Identify Informative Candidates: First, use an uncertainty measure (e.g., margin sampling or entropy) to select a larger pool of the most uncertain instances from the unlabeled pool [21] [26].

- Ensure Diversity: Then, apply a clustering algorithm (like kernel k-means) to this pool of uncertain candidates.

- Select Final Batch: Finally, from each cluster, select one or a few instances for labeling. This ensures the final batch is both uncertain and diverse, covering different regions of the feature space [24].
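A sketch of Approach 1, assuming the classifier's predicted class probabilities for the pool are available as an `(n_samples, n_classes)` array. Margin sampling builds the uncertain shortlist, and scikit-learn's standard `KMeans` is used in place of kernel k-means for simplicity. The shortlist size (`shortlist_factor` times the batch size), the rule of taking the most uncertain member of each cluster, and the function names are illustrative assumptions.

```python
import numpy as np
from sklearn.cluster import KMeans


def margin_uncertainty(probs):
    """1 - (p_top1 - p_top2): larger means the model is less decided."""
    top2 = np.sort(probs, axis=1)[:, -2:]
    return 1.0 - (top2[:, 1] - top2[:, 0])


def uncertain_then_diverse(probs, X, batch_size, shortlist_factor=5, seed=0):
    """Return pool indices: shortlist the most uncertain points, cluster them,
    and keep one point per cluster so the batch covers different regions."""
    n_short = min(len(X), batch_size * shortlist_factor)
    # Step 1: most uncertain candidates (highest margin-based score first).
    shortlist = np.argsort(margin_uncertainty(probs))[::-1][:n_short]
    # Step 2: cluster the shortlisted candidates in feature space.
    km = KMeans(n_clusters=batch_size, n_init=10, random_state=seed).fit(X[shortlist])
    u = margin_uncertainty(probs[shortlist])
    # Step 3: from each cluster, take its most uncertain member.
    batch = []
    for c in range(batch_size):
        members = np.flatnonzero(km.labels_ == c)
        batch.append(int(shortlist[members[np.argmax(u[members])]]))
    return np.array(batch)
```

Because the clusters are disjoint, the returned batch contains no duplicates, and a larger `shortlist_factor` trades some uncertainty for more diversity.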

Approach 2: Direct Diversity Integration

- Use a combined scoring function that explicitly weights both uncertainty and representativeness. For example, the information content of a sample $x_i$ can be defined as $\mathrm{Infor}(x_i) = \alpha \cdot \mathrm{Uncertainty}(x_i) \cdot \mathrm{Rep}(x_i)$, where $\mathrm{Rep}(x_i)$ is a representativeness measure based on similarity to other unlabeled instances [24].
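The combined score in Approach 2 can be computed directly with NumPy. The definition of Rep(x_i) used here (mean cosine similarity to the other unlabeled instances, rescaled to [0, 1] so it stays non-negative) is an assumption made for illustration; [24] may define representativeness differently.

```python
import numpy as np


def representativeness(X_unlabeled):
    """Rep(x_i): mean similarity of x_i to every other unlabeled instance.

    Assumption: similarity = (1 + cosine) / 2, which lies in [0, 1] and so
    cannot flip the sign of the uncertainty term.
    """
    Z = X_unlabeled / np.linalg.norm(X_unlabeled, axis=1, keepdims=True)
    S = (1.0 + Z @ Z.T) / 2.0
    n = len(Z)
    # Exclude self-similarity (the diagonal) from the average.
    return (S.sum(axis=1) - np.diag(S)) / (n - 1)


def information_score(uncertainty, X_unlabeled, alpha=1.0):
    """Infor(x_i) = alpha * Uncertainty(x_i) * Rep(x_i)."""
    return alpha * np.asarray(uncertainty) * representativeness(X_unlabeled)
```

In this multiplicative form, α scales every score equally, so it does not change the ranking on its own; it only matters when the score is combined with other terms.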

Experimental Protocol for Validating the Solution:

- Dataset: Use a standard benchmark dataset (e.g., CIFAR-10, or a public ADMET dataset from ChEMBL for drug discovery) [23] [5].

- Comparison: Run three independent active learning experiments starting from the same initial labeled data:

- Baseline: Standard margin sampling.

- Method A: The hybrid clustering approach.

- Method B: A random sampling baseline.

- Metric: Track model accuracy (or RMSE for regression) after each batch is added to the training set. A successful method should show a steeper learning curve than the baseline.

FAQ 3: My uncertainty-based active learning is performing poorly in the initial cycles with very little labeled data. What is the cause and solution?

Answer: This problem is known as the "cold start" problem in active learning [25]. The cause is that the initial model, trained on very little data, is poor and unreliable. Its uncertainty estimates are not a good indicator of which samples are truly informative for improving a robust model; they often just reflect the model's initial biases.

Solution Strategy: Transition to a diversity-first strategy for the initial learning phases.

- Initial Phase: Begin the active learning process with a diversity-based sampling method like TypiClust. This method clusters the data in a pre-trained embedding space and selects the most typical instance from each cluster, ensuring broad coverage of the data distribution [25].

- Transition Point: After building a representative labeled set (a rule of thumb is a total budget of roughly 20 times the number of categories), switch to an uncertainty-based method like margin sampling to refine the decision boundaries [25]. This combined strategy, often called TCM (TypiClust to Margin), has been shown to effectively mitigate the cold start problem [25].
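A simplified sketch of the TypiClust-to-Margin idea, assuming `embeddings` are pre-trained feature vectors of the unlabeled pool and `probs` are the current model's class probabilities for the same points. Typicality is taken as the inverse of the mean distance to the other points in the same cluster, and the switch uses the rule of thumb of about 20 labels per category. The published TypiClust method [25] may differ in details, and `typiclust_select` and `tcm_query` are hypothetical names.

```python
import numpy as np
from sklearn.cluster import KMeans


def typicality(points):
    """Inverse of each point's mean distance to the other points in its cluster."""
    if len(points) == 1:
        return np.ones(1)
    d = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    mean_d = d.sum(axis=1) / (len(points) - 1)
    return 1.0 / (mean_d + 1e-12)


def typiclust_select(embeddings, n_select, seed=0):
    """Diversity phase: cluster the pool and take the most typical point per cluster."""
    km = KMeans(n_clusters=n_select, n_init=10, random_state=seed).fit(embeddings)
    chosen = []
    for c in range(n_select):
        members = np.flatnonzero(km.labels_ == c)
        chosen.append(int(members[np.argmax(typicality(embeddings[members]))]))
    return chosen


def tcm_query(n_labeled, n_classes, embeddings, probs, batch_size):
    """TypiClust until roughly 20 labels per class, then margin sampling."""
    if n_labeled < 20 * n_classes:
        return typiclust_select(embeddings, batch_size)
    top2 = np.sort(probs, axis=1)[:, -2:]
    margin = top2[:, 1] - top2[:, 0]  # small margin = ambiguous prediction
    return [int(i) for i in np.argsort(margin)[:batch_size]]
```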

FAQ 4: How can I implement Query-by-Committee for a deep learning model without the cost of training multiple full models?

Answer: Training multiple full deep learning models is often computationally prohibitive. Instead, you can approximate a committee using these efficient techniques:

- MC Dropout (Monte Carlo Dropout): This is the most common and efficient method. You can use a single model with dropout layers. During prediction, perform multiple forward passes on the same unlabeled instance with dropout enabled. The variation in the outputs across these stochastic passes approximates the model's epistemic uncertainty (the committee's disagreement) [26]. You can then use consensus entropy or vote entropy as your query strategy [26] [22].

- Snapshot Ensembles: Train a single model using a cyclic learning rate schedule that causes it to converge to multiple distinct local minima. Save the model parameters at the end of each cycle to create an "implicit ensemble" without the cost of training multiple models from scratch [26].

- Multi-Head Networks: A single base network can be equipped with multiple output heads (classifiers). Each head can be trained with different initializations or data orders to encourage diversity, creating a committee at a lower cost than training full independent models [26].
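For the snapshot-ensemble option, the learning-rate schedule is the only non-standard piece. The sketch assumes the cyclic cosine annealing schedule commonly used for snapshot ensembles: the rate restarts at `lr0` at the start of each cycle, anneals toward zero, and a snapshot is saved at the end of each cycle. Framework-specific training code is omitted, and the function names are hypothetical.

```python
import math


def snapshot_lr(t, total_steps, n_cycles, lr0=0.1):
    """Cyclic cosine annealing learning rate for step t (counting from 0)."""
    cycle_len = math.ceil(total_steps / n_cycles)
    pos = t % cycle_len  # position inside the current cycle
    return lr0 / 2 * (math.cos(math.pi * pos / cycle_len) + 1)


def snapshot_steps(total_steps, n_cycles):
    """Steps after which to save weights (the last step of each cycle)."""
    cycle_len = math.ceil(total_steps / n_cycles)
    return [min((k + 1) * cycle_len, total_steps) - 1 for k in range(n_cycles)]
```

In a training loop, the optimizer's learning rate would be set to `snapshot_lr(step, ...)` before each step and the weights saved at the steps returned by `snapshot_steps`; the saved models then act as the committee.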

Experimental Protocol for MC Dropout QBC:

- Model Setup: Ensure your neural network has dropout layers applied before every weight layer.

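- Committee Prediction: For each unlabeled instance x in the pool U, perform N stochastic forward passes (e.g., N = 100) with dropout enabled to get N probability distributions $P_1(y \mid x), \dots, P_N(y \mid x)$.

- Disagreement Scoring: Calculate the consensus entropy, i.e., the entropy of the averaged prediction: $U(x) = -\sum_{c} P_C(c \mid x) \log P_C(c \mid x)$, where $P_C(c \mid x) = \frac{1}{N} \sum_{n=1}^{N} P_n(c \mid x)$.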

- Query: Select the instances with the highest consensus entropy for labeling.
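Given the N stored passes as an array of softmax outputs, both disagreement scores mentioned above can be computed in a few lines of NumPy. This sketch assumes the passes are already collected (in PyTorch, for instance, by keeping the dropout modules in training mode during inference); vote entropy here counts each pass's argmax as one committee vote. Function names are illustrative.

```python
import numpy as np


def consensus_entropy(P):
    """P: (n_passes, n_samples, n_classes) softmax outputs. Entropy of the mean prediction."""
    p_c = P.mean(axis=0)
    return -(p_c * np.log(p_c + 1e-12)).sum(axis=1)


def vote_entropy(P):
    """Entropy of the distribution of hard votes (argmax) across the passes."""
    n_passes, _, n_classes = P.shape
    votes = P.argmax(axis=2)  # (n_passes, n_samples)
    counts = np.stack([(votes == c).sum(axis=0) for c in range(n_classes)], axis=1)
    v = counts / n_passes
    return -(v * np.log(v + 1e-12)).sum(axis=1)


def query_top(P, b, score=consensus_entropy):
    """Indices of the b instances with the highest disagreement score."""
    return np.argsort(score(P))[::-1][:b]
```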

Table 1: Comparison of Core Query Strategies

| Strategy | Core Principle | Advantages | Limitations | Ideal Use Case |
|---|---|---|---|---|
| Uncertainty Sampling [21] [27] [22] | Queries instances where the model is least confident in its prediction. | Computationally efficient; directly targets decision boundaries; easy to implement | Prone to outlier selection; biased towards the current model; suffers from "cold start" | Medium-to-high data regimes, rapid refinement of model boundaries. |
| Query-by-Committee (QBC) [23] [22] | Queries instances where a committee of models most disagrees. | Reduces model bias; more robust hypothesis exploration | Computationally expensive (naive implementation); requires maintaining multiple models | Scenarios with large hypothesis space or to overcome initial model bias. |
| Diversity Sampling [24] [25] | Queries a set of instances that are representative of the overall data distribution. | Mitigates "cold start" problem; avoids redundant queries; covers the input domain broadly | Does not directly target model errors; may select easy, non-informative samples | Low-data regimes ("cold start"), initial cycles of active learning. |

Table 2: Uncertainty Measures for Classification

| Measure Name | Formula | Interpretation |
|---|---|---|
| Least Confidence [21] [26] | $U(x) = 1 - P_\theta(\hat{y} \mid x)$ | Queries the instance whose most likely prediction is the least confident. |
| Margin Sampling [21] [22] | $U(x) = 1 - [P_\theta(\hat{y}_1 \mid x) - P_\theta(\hat{y}_2 \mid x)]$ | Queries the instance with the smallest difference between the top two most probable classes. |
| Entropy [21] [27] [26] | $U(x) = -\sum_i P_\theta(y_i \mid x) \log P_\theta(y_i \mid x)$ | Queries the instance with the highest average "information" or uncertainty over all classes. |
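The three measures in Table 2 translate directly into NumPy, assuming `probs` is an `(n_samples, n_classes)` array of predicted probabilities. For each function a larger value means a more uncertain instance, so the query takes the highest-scoring rows; the function names are illustrative.

```python
import numpy as np


def least_confidence(probs):
    """U(x) = 1 - P(y_hat | x), where y_hat is the most probable class."""
    return 1.0 - probs.max(axis=1)


def margin_score(probs):
    """U(x) = 1 - [P(y_hat_1 | x) - P(y_hat_2 | x)] for the top two classes."""
    top2 = np.sort(probs, axis=1)[:, -2:]
    return 1.0 - (top2[:, 1] - top2[:, 0])


def entropy_score(probs):
    """U(x) = -sum_i P(y_i | x) log P(y_i | x); epsilon guards against log(0)."""
    return -(probs * np.log(probs + 1e-12)).sum(axis=1)
```

For `probs = [[0.8, 0.1, 0.1], [0.4, 0.35, 0.25], [0.34, 0.33, 0.33]]`, all three measures rank the near-uniform third row as the most uncertain, although on other inputs they can disagree about the ordering.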

Experimental Protocols

Protocol 1: Implementing and Evaluating a Hybrid Diversity-Uncertainty Strategy

Objective: Compare the performance of a hybrid TCM-like strategy against pure uncertainty and diversity baselines [25].

- Initial Setup:

- Dataset: Select a publicly available dataset relevant to your field (e.g., a molecular property prediction dataset from DeepChem for drug discovery) [5].

- Feature Backbone: Use a self-supervised pre-trained model (e.g., DINO or SimCLR) to extract meaningful feature embeddings for all data points. This is crucial for effective diversity sampling [25].

- Labeled Pool: Start with a very small, randomly selected set of labeled data (e.g., 2% of the dataset).

- Unlabeled Pool: The remaining data.

- Active Learning Cycle - Hybrid Strategy (TCM):

- Phase 1 - Diversity: For the first k cycles (e.g., k = 3 for a tiny budget), use TypiClust for batch selection: a. Perform clustering on the unlabeled pool embeddings. b. Select the most typical instance from each cluster (typicality is the inverse of the average distance to other points in the cluster).

- Phase 2 - Uncertainty: For all subsequent cycles, switch to Margin Sampling for batch selection.

- Baseline Strategies:

- Run parallel experiments starting from the same initial labeled pool, using only Margin Sampling and only TypiClust.

- Evaluation:

- After each active learning cycle, retrain the model on the accumulated labeled set.

- Evaluate the model on a fixed, held-out test set.

- Plot the performance (e.g., accuracy, RMSE) against the number of labeled instances acquired. The hybrid strategy should outperform both baselines, especially in early and mid-stage learning.

Protocol 2: Implementing Query-by-Committee with MC Dropout

Objective: Set up a QBC strategy using MC Dropout to approximate a model committee without training multiple models [26].

- Model Configuration:

- Design your neural network with dropout layers applied before every weight layer.

- Train the initial model on the small labeled pool with dropout enabled, as is standard.

- Committee Disagreement Scoring:

- For each unlabeled instance x, perform T stochastic forward passes (e.g., T = 100) with dropout enabled, storing the output probability distribution $P_t$ for each pass.

- Calculate the consensus entropy for each x: a. Compute the average output probability distribution across the T passes: $P_C = \frac{1}{T} \sum_{t=1}^{T} P_t$. b. The acquisition score is the entropy of this consensus: $U(x) = -\sum_{c} P_C(c) \log P_C(c)$.

- Query Selection:

- Rank all unlabeled instances by their consensus entropy in descending order.

- Select the top b instances (your batch size) for labeling by an oracle.

- Model Update:

- Add the newly labeled (x, y) pairs to the training set.

- Retrain the model on the updated training set.
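An end-to-end sketch of the scoring and query-selection steps in plain NumPy. To stay self-contained it uses a hypothetical one-hidden-layer MLP whose weights are passed in as `params` (in practice they would come from training on the labeled pool), applies inverted dropout before both weight layers, and returns the top-b indices by consensus entropy. `stochastic_pass` and `mc_dropout_query` are illustrative names.

```python
import numpy as np


def stochastic_pass(X, W1, b1, W2, b2, p_drop, rng):
    """One forward pass of a 1-hidden-layer MLP with dropout kept ON (MC Dropout)."""
    keep = 1.0 - p_drop
    x = X * (rng.random(X.shape) < keep) / keep          # dropout before W1
    h = np.maximum(0.0, x @ W1 + b1)                     # ReLU hidden layer
    h = h * (rng.random(h.shape) < keep) / keep          # dropout before W2
    logits = h @ W2 + b2
    logits -= logits.max(axis=1, keepdims=True)          # numerically stable softmax
    e = np.exp(logits)
    return e / e.sum(axis=1, keepdims=True)


def mc_dropout_query(X_pool, params, T=100, b=10, p_drop=0.5, seed=0):
    """Rank the pool by consensus entropy over T passes; return top-b indices and scores."""
    rng = np.random.default_rng(seed)
    P = np.stack([stochastic_pass(X_pool, *params, p_drop, rng) for _ in range(T)])
    P_C = P.mean(axis=0)                                 # P_C = (1/T) * sum_t P_t
    U = -(P_C * np.log(P_C + 1e-12)).sum(axis=1)         # consensus entropy
    return np.argsort(U)[::-1][:b], U
```

After the oracle labels `X_pool[batch]`, the new (x, y) pairs are added to the training set and the network is retrained, as in the Model Update step.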

Workflow and Strategy Diagrams [figures: Active Learning Core Cycle; Query Strategy Decision Logic]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Active Learning

| Tool / Method | Function | Reference / Implementation |
|---|---|---|
| MC Dropout | Approximates Bayesian neural networks to estimate model uncertainty without ensembles. Enables QBC with one model. | [26] |
| modAL Library | A flexible, modular active learning framework for Python, compatible with scikit-learn. Simplifies implementation of various strategies. | [23] |
| DeepChem | A library for deep learning in drug discovery, ecosystems, and the life sciences. Useful for handling molecular datasets. | [5] |
| Self-Supervised Backbones (e.g., DINO, SimCLR) | Provides high-quality feature embeddings for data, which is critical for the effectiveness of diversity-based sampling methods. | [25] |
| Laplace Approximation | A method to approximate the posterior distribution of model parameters, used for uncertainty estimation in advanced active learning. | [5] |

Frequently Asked Questions (FAQs)

FAQ 1: What are the core components of a hybrid active learning framework for small datasets? A robust hybrid framework integrates two core components: uncertainty quantification and diversity sampling. Uncertainty sampling (e.g., using predictive entropy or Monte Carlo Dropout) identifies data points where the model is most uncertain, thereby targeting knowledge gaps. Diversity sampling (e.g., using core-sets or representative sampling) ensures the selected data points cover a broad and representative area of the input feature space. Combining these principles prevents the model from selecting repetitive, redundant data and helps build a more comprehensive model from limited samples, which is crucial in data-scarce fields like materials science and drug discovery [2] [1].

FAQ 2: How can I quantify uncertainty in a regression task for active learning? Quantifying uncertainty in regression is more complex than in classification. Common effective strategies include:

- Ensemble Methods: Training multiple models and using the variance in their predictions as an uncertainty measure [2].

- Monte Carlo (MC) Dropout: Performing multiple forward passes with dropout enabled at inference time; the variance across outputs estimates epistemic uncertainty [2] [28].

- Probabilistic Models: Using models like Gaussian processes or Bayesian Neural Networks that natively provide predictive distributions [29].

- Hybrid Architectures: Advanced frameworks like HybridFlow use a normalizing flow to model aleatoric (data) uncertainty and a separate probabilistic predictor for epistemic (model) uncertainty, providing a unified and well-calibrated uncertainty estimate [28].

FAQ 3: My model's performance has plateaued despite active learning. What could be wrong? This is a common challenge where the returns from active learning diminish as the labeled set grows [2]. To troubleshoot:

- Verify the Query Strategy: Ensure your hybrid strategy balances exploration (diversity) and exploitation (uncertainty). A strategy overly focused on uncertainty might miss important regions of the feature space.

- Check for Model Capacity: The underlying model (e.g., within an AutoML system) might be too simple to capture the remaining complexity in the data. Allowing the AutoML to explore more complex model families can help [2].

- Re-evaluate Data Quality: Investigate if the newly acquired labels are noisy. Noisy labels can corrupt the model and halt improvement. Implementing a quality control check for new annotations is recommended.

FAQ 4: How do I integrate an active learning loop into an existing AutoML workflow? The integration is an iterative process [2]:

- Initialization: Start with a very small labeled dataset L and a large pool of unlabeled data U.

- AutoML Model Training: Use your AutoML system to automatically select and train the best model on the current L.

- Query Selection: Use your hybrid uncertainty-diversity strategy to select the most informative batch of samples from U.

- Annotation & Update: The selected samples are labeled (e.g., by a human expert) and added to L.

- Iteration: Repeat the training, query selection, and annotation steps until a performance target or labeling budget is met. The key is that the surrogate model inside the active learning loop is dynamically updated and potentially changed by the AutoML controller in each cycle [2].

Troubleshooting Guides

Issue 1: Poor Model Calibration and Unreliable Uncertainty Estimates

| Symptom | Potential Cause | Solution |
|---|---|---|
| Model is consistently overconfident in its incorrect predictions [28]. | The loss function (e.g., standard NLL) may be overestimating aleatoric uncertainty to compensate for model error. | Replace the standard Negative Log-Likelihood (NLL) loss with a Beta-NLL loss, which better balances the mean squared error and the uncertainty term, leading to better calibration [28]. |
| Uncertainty scores do not correlate with actual model error, especially on out-of-distribution data. | The model architecture is not properly capturing both aleatoric and epistemic uncertainty. | Implement a hybrid framework like HybridFlow that decouples the estimation of aleatoric and epistemic uncertainty, which has been shown to improve calibration and the correlation between quantified uncertainty and model error [28]. |
| The active learner selects outliers instead of informative in-distribution samples. | The diversity component of the query strategy is too weak. | Incorporate a geometry-based or representative sampling heuristic (e.g., RD-GS) that emphasizes data coverage. This hybrid approach ensures selected samples are both uncertain and representative of the overall data structure [2]. |
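One common formulation of Beta-NLL weights each sample's Gaussian NLL by σ^(2β) (i.e., `var ** beta`) and treats that weight as a constant during backpropagation. The NumPy sketch below computes the loss value only, assuming this formulation is the one meant in [28]; in PyTorch or JAX the weight must be detached (stop-gradient) for the loss to behave as intended.

```python
import numpy as np


def gaussian_nll(mu, var, y):
    """Per-sample Gaussian negative log-likelihood (constant term dropped)."""
    return 0.5 * np.log(var) + (y - mu) ** 2 / (2.0 * var)


def beta_nll(mu, var, y, beta=0.5):
    """Beta-NLL value: per-sample NLL weighted by var**beta, averaged.

    In an autodiff framework, `weight` must be a constant (stop-gradient /
    .detach()) so it rescales gradients without being optimized itself.
    beta=0 recovers the standard NLL; beta=1 gives the mean an MSE-like gradient.
    """
    weight = var ** beta
    return np.mean(weight * gaussian_nll(mu, var, y))
```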

Experimental Protocol: Implementing a Hybrid Query Strategy

Objective: To actively learn a predictive model for a materials property or drug activity using a small initial dataset by strategically querying for new labels.

Materials: See "Research Reagent Solutions" table below.

Software: Python with libraries such as scikit-learn, PyTorch/TensorFlow (for probabilistic models), and an AutoML framework like AutoSklearn or TPOT.

Methodology:

- Data Preparation: Partition your full dataset into an initial labeled set (L) (1-5%), a large unlabeled pool (U) (~70%), and a fixed test set (T) (25%). Ensure the test set is held out and not used for query selection.

- Base Model Configuration: Configure your AutoML system for a regression task. Set it to optimize for Mean Absolute Error (MAE) or Coefficient of Determination ((R^2)) and use 5-fold cross-validation for robust validation [2].

- Define the Hybrid Query Strategy: Combine an uncertainty-based strategy with a diversity-based strategy. For example:

  - Uncertainty: Use the predictive variance from an ensemble of models or an MC Dropout network.

  - Diversity: Use a Core-Set approach, which selects points that are diverse relative to the current labeled set L by (approximately) solving a k-Center problem.

  - Hybrid: Rank the pool U by uncertainty, then select the k most diverse points from the resulting uncertain shortlist.

- Active Learning Loop:

  - a. Train the AutoML model on the current labeled set L.
  - b. Evaluate the model on the test set T and record performance metrics (MAE, R²).
  - c. Use the hybrid query strategy to select the top n (e.g., n = 10) most informative samples from U.
  - d. "Label" these samples (in simulation, use the ground truth; in practice, send them to an expert annotator).
  - e. Add the newly labeled samples to L and remove them from U.
  - f. Repeat steps a–e for a fixed number of iterations or until performance converges.
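The protocol above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the benchmark's implementation. A scikit-learn `RandomForestRegressor` stands in for the AutoML model, and the spread of its per-tree predictions serves as the ensemble uncertainty. A greedy k-center pass over an uncertainty shortlist provides the diversity step. The function names (`split_pool`, `hybrid_query`, `run_active_learning`) and the shortlist size (5 × n) are hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error, r2_score


def split_pool(n_samples, init_frac=0.05, test_frac=0.25, seed=0):
    """Shuffle indices into an initial labeled set L, unlabeled pool U, and test set T."""
    idx = np.random.default_rng(seed).permutation(n_samples)
    n_test = int(round(test_frac * n_samples))
    n_init = max(1, int(round(init_frac * n_samples)))
    test = idx[:n_test]
    labeled = idx[n_test:n_test + n_init]
    pool = idx[n_test + n_init:]
    return labeled, pool, test


def ensemble_variance(forest, X):
    """Variance of the individual trees' predictions: a simple ensemble uncertainty."""
    per_tree = np.stack([tree.predict(X) for tree in forest.estimators_])
    return per_tree.var(axis=0)


def greedy_k_center(X_cand, X_labeled, k):
    """Greedy k-center (core-set) selection: repeatedly take the candidate farthest
    from everything already labeled or selected. Returns positions into X_cand."""
    dist = np.linalg.norm(X_cand[:, None, :] - X_labeled[None, :, :], axis=2).min(axis=1)
    chosen = []
    for _ in range(min(k, len(X_cand))):
        i = int(np.argmax(dist))
        chosen.append(i)
        dist = np.minimum(dist, np.linalg.norm(X_cand - X_cand[i], axis=1))
        dist[chosen] = -np.inf  # never pick the same candidate twice
    return chosen


def hybrid_query(forest, X, labeled, pool, n=10, shortlist_factor=5):
    """Shortlist the most uncertain pool points, then pick the n most diverse of them."""
    var = ensemble_variance(forest, X[pool])
    m = min(len(pool), shortlist_factor * n)
    shortlist = pool[np.argsort(var)[::-1][:m]]
    picks = greedy_k_center(X[shortlist], X[labeled], n)
    return shortlist[picks]


def run_active_learning(X, y, n_rounds=5, n_query=10, seed=0):
    labeled, pool, test = split_pool(len(X), seed=seed)
    history = []  # (labeled-set size, test MAE, test R^2) per round
    for _ in range(n_rounds):
        # a. Train on L (a fixed-seed random forest stands in for AutoML here).
        model = RandomForestRegressor(n_estimators=100, random_state=seed)
        model.fit(X[labeled], y[labeled])
        # b. Evaluate on the held-out test set T only.
        pred = model.predict(X[test])
        history.append((len(labeled), mean_absolute_error(y[test], pred), r2_score(y[test], pred)))
        if len(pool) == 0:
            break
        # c.-e. Query, "label" (ground truth is already known in simulation), update L and U.
        new = hybrid_query(model, X, labeled, pool, n=n_query)
        labeled = np.concatenate([labeled, new])
        pool = np.setdiff1d(pool, new)
    return history
```

Swapping in a real AutoML system would only change step a. The uncertainty function would then need whatever ensemble or probabilistic output that system exposes.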

The workflow for this protocol is summarized in the following diagram:

Issue 2: Inefficient Sample Selection in Early Active Learning Rounds

| Symptom | Potential Cause | Solution |
|---|---|---|
| The model fails to improve significantly in the first few rounds of active learning. | The initial model is too poor for uncertainty estimates to be reliable. The query strategy is not suited for the cold-start problem. | Adopt stream-based selective sampling with an uncertainty threshold for the initial rounds. This allows for efficient, on-the-fly assessment of incoming data points [1]. Alternatively, use a diversity-hybrid method like RD-GS early on, which has been shown to outperform geometry-only heuristics when data is extremely scarce [2]. |
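Stream-based selective sampling inspects each arriving data point once and requests a label only if its uncertainty exceeds a threshold. The sketch below assumes a generic `uncertainty` callable, such as least-confidence from the current model. The `threshold` and `budget` parameters are hypothetical knobs you would tune.

```python
from typing import Callable, Iterable, List, TypeVar

T = TypeVar("T")


def stream_select(
    stream: Iterable[T],
    uncertainty: Callable[[T], float],
    threshold: float,
    budget: int,
) -> List[T]:
    """Query a point for labeling only if its uncertainty exceeds `threshold`,
    stopping once `budget` labels have been requested."""
    queried: List[T] = []
    for x in stream:
        if len(queried) >= budget:
            break  # annotation budget exhausted
        if uncertainty(x) > threshold:
            queried.append(x)  # send to the annotator; otherwise the point is skipped
    return queried
```

For example, with precomputed uncertainty scores `[0.1, 0.5, 0.9, 0.7, 0.95]`, a threshold of 0.6, and a budget of 2, only the third and fourth points are sent for labeling.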

Quantitative Performance Data

Table 1: Benchmarking Hybrid Active Learning Strategies in AutoML [2]

This table summarizes the performance of various strategies on small-sample regression tasks in materials science. Performance is measured by Mean Absolute Error (MAE) and the coefficient of determination (R²) at different stages of the active learning process. Entries are qualitative comparisons between strategy types.

| Strategy Type | Example Methods | Early-Stage Performance (Data-Scarce) | Late-Stage Performance (Data-Rich) |
|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Lower MAE, higher R² | Performance converges with other methods |
| Diversity-Hybrid | RD-GS | Lower MAE, higher R² | Performance converges with other methods |
| Geometry-Only | GSx, EGAL | Higher MAE, lower R² | Performance converges with other methods |
| Baseline | Random Sampling | Highest MAE, lowest R² | Performance converges with other methods |
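For reference, the two regression metrics in Table 1 are defined as follows, for n test points with true values y_i, predictions ŷ_i, and mean ȳ:

$$
\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\lvert y_i - \hat{y}_i\rvert,
\qquad
R^2 = 1 - \frac{\sum_{i=1}^{n}(y_i - \hat{y}_i)^2}{\sum_{i=1}^{n}(y_i - \bar{y})^2}
$$

Lower MAE and higher R² are better. R² = 1 is a perfect fit, and R² becomes negative when the model does worse than always predicting ȳ.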

Table 2: Clinical Impact of an Uncertainty-Aware Hybrid Framework [29]

This table shows the performance improvements of a hybrid, uncertainty-aware optimization framework for cardiovascular disease detection, demonstrating its real-world clinical utility.

| Metric | Standard AI Model | Hybrid Uncertainty-Aware Framework | Clinical Impact |
|---|---|---|---|
| AUC | 0.839 | 0.853 (+0.014) | ~50 additional correct diagnoses per 10,000 patients [29]. |
| Calibration Error | Baseline | 20% reduction | More reliable prediction confidence for clinicians [29]. |
| Robustness | Degrades under noise | AUC maintained above 0.80 | Reliable operation under realistic clinical noise and missing data [29]. |
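The table reports a 20% reduction in calibration error without naming the metric. One common choice is the expected calibration error (ECE). The sketch below assumes that metric, a binary task, and 10 equal-width probability bins, which may differ from the cited study's setup. The function name is illustrative.

```python
import numpy as np


def expected_calibration_error(y_true, p_pos, n_bins=10):
    """Binned ECE for binary predictions: the weighted average gap between the
    observed positive rate and the mean predicted probability in each bin."""
    y = np.asarray(y_true, dtype=float)
    p = np.asarray(p_pos, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    bin_id = np.digitize(p, edges[1:-1], right=True)  # bin index in 0..n_bins-1
    ece = 0.0
    for b in range(n_bins):
        in_bin = bin_id == b
        if in_bin.any():
            # Weight each bin's |observed rate - mean confidence| by its share of samples.
            ece += in_bin.mean() * abs(y[in_bin].mean() - p[in_bin].mean())
    return float(ece)
```

A model that predicts 0.9 for a group of patients of whom only half are positive scores an ECE of 0.4. A model whose 0.25 predictions match a 25% positive rate scores 0.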

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Hybrid Active Learning Pipeline

| Item | Function in the Experiment |
|---|---|
| AutoML Platform (e.g., auto-sklearn, TPOT) | Automates model selection, hyperparameter tuning, and feature preprocessing, which is essential for maintaining a robust, up-to-date surrogate model within the active learning loop [2]. |
| Probabilistic Modeling Library (e.g., Pyro, TensorFlow Probability) | Provides the tools to build models capable of quantifying predictive uncertainty, such as those using MC Dropout, ensemble methods, or Bayesian Neural Networks [2] [28]. |
| High-Quality Unlabeled Data Pool | A large, representative collection of unlabeled data from the target domain (e.g., compounds, materials formulations). This is the pool from which the most informative samples will be selected for labeling [2] [30]. |
| Expert Annotation Resource | Access to domain experts (e.g., materials scientists, medicinal chemists) who provide accurate labels for the selected samples. This is often the most costly and critical part of the workflow [1] [31]. |
| Validation Test Set | A held-out dataset with high-quality labels, used exclusively for evaluating model performance after each active learning round to track progress and determine stopping points [2]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary benefits of integrating Active Learning with an AutoML framework?

Integrating Active Learning (AL) with AutoML creates a powerful, automated system for data-efficient model development. The primary benefits include [2] [1] [32]:

- Reduced Labeling Costs: AL can reduce annotation requirements by 50–80% compared to random sampling by strategically selecting the most informative data points for labeling.

- Improved Model Performance with Small Data: This combination is particularly effective in small-data scenarios, such as materials science or drug development, where it helps build robust predictive models while substantially reducing the volume of labeled data required.

- Accelerated Experimentation Cycles: AutoML automates model selection and hyperparameter tuning, while AL minimizes data collection bottlenecks. Together, they can help models reach production quality 3–5x faster.

FAQ 2: In an AL-AutoML pipeline, my model performance has stopped improving despite continued sampling. What could be the cause?

This is a common scenario where the law of diminishing returns applies to active learning. A recent benchmark study noted that as the labeled set grows, the performance gap between different AL strategies narrows and they eventually converge, indicating diminishing returns from AL under AutoML [2]. We recommend:

- Verify Your Stopping Criterion: A plateau is a natural signal to end the AL loop. Define a clear stopping criterion upfront, such as a performance threshold (e.g., MAE < 0.2 in the units of your target) or an annotation budget.

- Re-evaluate the Query Strategy: The current strategy might be over-exploiting a specific region of the data space. Consider switching to, or incorporating, a diversity-based strategy (e.g., RD-GS) to ensure broader coverage of the data distribution [2].

- Check for Data Quality Issues: The newly sampled data points might be outliers or contain label noise that hinders learning. Implement a data validation step before adding new samples to the training set.
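The stopping rules mentioned above (a performance threshold, an annotation budget, or a plateau in performance) can be combined in a small helper. This is a sketch only. The function name and the defaults (MAE target 0.2, patience of 3 rounds, minimum improvement 1e-3) are hypothetical and should be set for your own task and units.

```python
def should_stop(mae_history, n_labeled, budget, mae_target=0.2, patience=3, min_delta=1e-3):
    """Return (stop, reason). `mae_history` holds the test MAE recorded after each round."""
    if n_labeled >= budget:
        return True, "annotation budget exhausted"
    if mae_history and mae_history[-1] < mae_target:
        return True, "performance target reached"
    if len(mae_history) > patience:
        # Plateau: the best MAE of the last `patience` rounds barely beats the earlier best.
        best_before = min(mae_history[:-patience])
        best_recent = min(mae_history[-patience:])
        if best_before - best_recent < min_delta:
            return True, "no meaningful improvement in the last rounds"
    return False, "continue"
```

Calling it once per AL round, right after evaluation on the test set, keeps the stopping decision explicit and reproducible.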

FAQ 3: My AutoML model is a "black box." How can I effectively implement uncertainty sampling for an AL query?

This challenge arises because the inner workings and uncertainty calibration of models generated by AutoML can be non-transparent. You can address this with the following strategies [2] [33]:

- Leverage Model Ensembles: AutoML often produces ensemble models as its final output. You can use the "Query-by-Committee" (QBC) strategy, which measures disagreement (e.g., using Vote Entropy or Consensus Entropy) among the models in the ensemble to quantify uncertainty [32].

- Utilize Inherent Uncertainty Metrics: Some AutoML frameworks for specific tasks provide built-in uncertainty estimates. For instance, when using AutoML for computer vision or NLP, you can sometimes access the model's confidence scores or leverage techniques like Monte Carlo Dropout to approximate predictive uncertainty [2].

- Proxy Uncertainty with AutoML Output: If direct uncertainty is not available, you can use the output of the AutoML model. For regression, you might calculate the variance of predictions from multiple cross-validation models. For classification, standard techniques like Least Confidence, Margin Sampling, or Entropy Sampling can be applied to the model's probability outputs [1] [32].
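The classification scores named above, plus vote entropy for query-by-committee, can be computed from probability outputs or hard committee votes as in this NumPy sketch. The function names are illustrative. `committee_labels` is assumed to hold the integer predictions of each ensemble member, however your AutoML tool exposes them.

```python
import numpy as np


def least_confidence(proba):
    """1 - max class probability; higher means more uncertain."""
    return 1.0 - proba.max(axis=1)


def margin(proba):
    """Gap between the two most probable classes; LOWER means more uncertain."""
    top2 = np.sort(proba, axis=1)[:, -2:]
    return top2[:, 1] - top2[:, 0]


def entropy(proba, eps=1e-12):
    """Shannon entropy (in nats) of each row's predicted class distribution."""
    return -(proba * np.log(proba + eps)).sum(axis=1)


def vote_entropy(committee_labels, n_classes):
    """QBC disagreement: entropy of the committee's hard-vote distribution.
    `committee_labels` has shape (n_members, n_samples) with integer labels."""
    votes = np.asarray(committee_labels)
    frac = np.stack([(votes == c).mean(axis=0) for c in range(n_classes)], axis=1)
    with np.errstate(divide="ignore", invalid="ignore"):
        terms = np.where(frac > 0, frac * np.log(frac), 0.0)  # treat 0*log(0) as 0
    return -terms.sum(axis=1)
```

To select queries, rank candidates by descending least-confidence, entropy, or vote entropy, but by ascending margin.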

FAQ 4: How do I design the initial labeled dataset to ensure my AL-AutoML pipeline starts effectively?

The initial seed set is critical for bootstrapping the AL cycle. A poor initial set can lead the model down a suboptimal path [32].

- Prioritize Diversity and Representativeness: The initial set doesn't need to be large, but it should broadly cover the major categories or the expected range of your data distribution. Avoid sampling from a single cluster or category.

- Use Unsupervised Pre-screening: Before starting the AL loop, perform a clustering analysis (e.g., using k-means) on the entire unlabeled pool. Select a few instances from each cluster to form your initial seed set, ensuring a representative starting point.

- Incorporate Domain Knowledge: If possible, collaborate with domain experts (e.g., drug development scientists) to curate a small, high-quality seed set that includes known critical cases or edge cases.
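The clustering-based pre-screening can be sketched with scikit-learn's `KMeans`: cluster the unlabeled pool, then take the point or points closest to each centroid as the seed set. The function name, the number of clusters, and the points taken per cluster are hypothetical parameters to adapt to your pool.

```python
import numpy as np
from sklearn.cluster import KMeans


def kmeans_seed_set(X_pool, n_clusters=10, per_cluster=1, random_state=0):
    """Cluster the unlabeled pool and return indices of the points nearest to
    each centroid, giving a seed set that touches every cluster."""
    km = KMeans(n_clusters=n_clusters, n_init=10, random_state=random_state).fit(X_pool)
    dist = km.transform(X_pool)  # (n_samples, n_clusters) distances to centroids
    seeds = []
    for c in range(n_clusters):
        members = np.flatnonzero(km.labels_ == c)
        nearest = members[np.argsort(dist[members, c])]
        seeds.extend(nearest[:per_cluster].tolist())
    return np.array(seeds)
```

Choosing centroid-nearest points favors typical members of each cluster. If you also want edge cases, domain experts can add them on top of this seed set.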

Troubleshooting Guides

Issue: High Variance in Model Performance During Iterative Retraining

Problem Description

After each AL query and AutoML retraining cycle, the model's performance metrics (e.g., MAE, R²) fluctuate significantly, making it difficult to gauge true progress.

Diagnostic Steps

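- Check AutoML Stability: Run the same AutoML job (identical data and settings) several times, first with a fixed random seed and then with different seeds, and compare the resulting models and their performance. Differences under a fixed seed point to non-determinism in the job itself; large differences across seeds point to a configuration that is overly sensitive to randomness. In either case, high variance here indicates an unstable AutoML configuration.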

- Analyze Selected Samples: Examine the characteristics of the samples selected by the AL strategy over several iterations. If the selections are highly dissimilar from one another and from the existing training data, it can cause the AutoML process to reconfigure the entire pipeline drastically each time, leading to instability.

- Validate the Validation Method: Ensure that your AutoML setup uses a robust validation method, such as 5-fold cross-validation, to evaluate candidate models. A simple train-validation split might give unreliable performance estimates on small, actively learned datasets [2].
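The stability check above can be quantified by refitting the same model on identical data under several seeds and reporting the spread of the test MAE. In this sketch `make_model` is a hypothetical factory that takes a seed, and a `RandomForestRegressor` stands in for an AutoML job. A real AutoML run may also vary for reasons a seed does not control, such as wall-clock time budgets.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error


def seed_sensitivity(make_model, X_train, y_train, X_test, y_test, seeds=(0, 1, 2, 3, 4)):
    """Refit on identical data under each seed; return (mean MAE, std of MAE)."""
    maes = []
    for s in seeds:
        model = make_model(s).fit(X_train, y_train)
        maes.append(mean_absolute_error(y_test, model.predict(X_test)))
    return float(np.mean(maes)), float(np.std(maes))


def rf_factory(seed):
    # Stand-in for "launch one AutoML job with this seed".
    return RandomForestRegressor(n_estimators=20, random_state=seed)
```

Repeating one seed (for example, `seeds=(7, 7, 7)`) should give a standard deviation of essentially zero. A non-zero value indicates non-determinism that seeding alone does not remove.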

Resolution

- Reduce Randomness: Make your AutoML setup as deterministic as possible, for example by fixing random seeds.

- Adjust the AL Query Strategy: If you are using a pure uncertainty-based method, switch to a hybrid strategy that balances uncertainty with diversity (e.g., RD-GS). This can provide a more stable and representative set of new samples in each batch [2].

- Implement a Batch Retraining Policy: Instead of retraining the model after every single query, collect a batch of queries (e.g., 5–10 samples) before triggering the AutoML retraining job. This can smooth out the learning process.

Issue: The AutoML Model Fails to Generalize Despite High Training Performance

Problem Description

The model produced by the AutoML pipeline performs well on the training and validation data but shows poor performance on a held-out test set or in production.

Diagnostic Steps

- Test for Sampling Bias: The AL strategy may have selected a non-representative subset of data, causing the AutoML model to overfit to a specific region. Check the distribution of the actively selected dataset against the distribution of the full pool or a held-out test set.

- Review AutoML Featurization: AutoML often applies automated feature engineering. Inspect the featurization steps to see if they are creating features that are too specific to the training cohort and do not generalize well.

- Check for Data Leakage: Ensure that the held-out test set is never used during the active learning cycle, not even for uncertainty estimation. The model should only interact with the unlabeled pool and the current labeled training set.
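The distribution check in the first diagnostic step can be approximated per feature with a two-sample Kolmogorov–Smirnov test (`scipy.stats.ks_2samp`). This is a rough screen under simplifying assumptions. It looks at one feature at a time, ignores correlations between features, and applies `alpha` per feature without a multiple-testing correction. The function name and threshold are illustrative.

```python
import numpy as np
from scipy.stats import ks_2samp


def feature_shift_report(X_selected, X_reference, alpha=0.01):
    """Flag features whose distribution in the actively selected set differs from
    the reference (full pool or held-out test set). Returns (feature, KS stat, p)."""
    flagged = []
    for j in range(X_selected.shape[1]):
        res = ks_2samp(X_selected[:, j], X_reference[:, j])
        if res.pvalue < alpha:
            flagged.append((j, float(res.statistic), float(res.pvalue)))
    return flagged
```

Flagged features show where the selected data over- or under-covers the reference. Those regions are worth targeting with diversity-based queries.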

Resolution

- Incorporate a Static Test Set: Always maintain a completely separate, static test set that is never used for training or querying. Use it only for the final evaluation of the model after the AL process is complete to get an unbiased performance estimate [34] [2].

- Customize Featurization in AutoML: Most AutoML frameworks (like Azure ML) allow you to customize or turn off certain featurization options. If you have domain knowledge, use it to guide the feature engineering process and prevent the creation of spurious features [34].

- Enforce Diversity in Queries: As a preventive measure, use an AL strategy that explicitly incorporates diversity to ensure the training data covers the input space more broadly. Strategies based on core-set selection or clustering are designed for this purpose [1] [32].

Experimental Protocol & Benchmarking

For researchers aiming to replicate or benchmark the integration of AL with AutoML, the following methodology, derived from a recent comprehensive study, provides a robust framework [2].

Workflow Diagram

The following diagram illustrates the iterative, closed-loop feedback system of an integrated AL-AutoML pipeline.

Quantitative Performance of AL Strategies in AutoML

The table below summarizes the performance of various AL strategies when used with AutoML on small-sample regression tasks, as benchmarked in a recent study. MAE (Mean Absolute Error) and R² (Coefficient of Determination) are key metrics for regression. The "Early Phase" refers to the data-scarce initial stages of the AL process [2].