Active Learning Glide: Revolutionizing Ultra-Large Library Docking for Drug Discovery

Abstract

This article provides a comprehensive overview for researchers and drug development professionals on leveraging Active Learning Glide for ultra-large library virtual screening. It covers the foundational principles of overcoming the computational bottlenecks of traditional docking, details the step-by-step methodology and practical applications, addresses common troubleshooting and optimization strategies, and presents rigorous validation and comparative performance data against other state-of-the-art methods. The synthesis of current research and case studies demonstrates how this powerful approach enables the efficient exploration of billion-compound libraries, significantly accelerating hit identification in early-stage drug discovery.

The Ultra-Large Library Challenge: Why Traditional Docking Falls Short

The landscape of early drug discovery has been fundamentally transformed by the emergence of ultra-large chemical libraries, which have expanded the accessible chemical space from millions to billions of readily synthesizable compounds. This expansion represents both an unprecedented opportunity and a significant computational challenge. The "make-on-demand" approach, exemplified by libraries such as Enamine REAL, leverages robust chemical reactions and available building blocks to create vast virtual collections of molecules, with over 20 billion compounds documented in recent studies [1]. Within this prodigious chemical space lies the potential to discover novel chemotypes and potent inhibitors for challenging therapeutic targets, moving beyond the constraints of traditional screening collections.

The core challenge lies in efficiently navigating this vastness. Exhaustive virtual screening of multi-billion compound libraries using physics-based molecular docking requires immense computational resources, often making such campaigns prohibitively expensive and time-consuming [2] [3]. Active learning has emerged as a powerful strategic solution to this problem, enabling intelligent, iterative exploration of chemical space by focusing computational resources on the most promising regions [4]. This document details the application of Active Learning Glide, a methodology that combines Schrödinger's high-accuracy docking tool with machine learning to achieve efficient and effective screening of ultra-large libraries.

The Scale of Modern Chemical Libraries

Ultra-large "make-on-demand" libraries have fundamentally redefined the scale of virtual screening. The following table quantifies the scope and performance of several key libraries and screening campaigns from recent literature.

Table 1: Characteristics of Ultra-Large Chemical Libraries and Screening Campaigns

| Library / Study | Size | Key Characteristics | Reported Hit Rate |
|---|---|---|---|
| Enamine REAL (2025) | >20 billion compounds | Constructed from simple building blocks via robust reactions; high synthetic accessibility [1]. | N/A |
| Lyu et al. (2019) - AmpC β-lactamase | 99 million compounds | 44 compounds synthesized; 5 were active inhibitors (11% hit rate); discovery of a novel 77 nM phenolate inhibitor [5]. | 11% |
| Lyu et al. (2019) - D4 Dopamine Receptor | 138 million compounds | 549 compounds synthesized; 81 new chemotypes discovered, 30 were sub-micromolar [5]. | Hit rate fell monotonically with docking score |
| CACHE LRRK2 WDR Optimization (2025) | ~5.5 billion compounds (screened) | Active Learning-guided RBFE calculations identified 8 novel inhibitors from 35 tested [6]. | 23% |
| RosettaVS (2024) - KLHDC2 & NaV1.7 | Multi-billion compounds | Discovered 7 hits for KLHDC2 (14% hit rate) and 4 hits for NaV1.7 (44% hit rate) [3]. | 14%-44% |

The value of screening these expansive libraries is captured by the enrichment factor (EF), which measures how strongly a method concentrates active compounds at the top of its ranked list relative to random selection. In benchmark studies, advanced scoring functions have significantly enriched for true binders; one study reported an EF of 16.72 in the top 1% of ranked molecules, meaning actives were nearly 17 times more concentrated in the top 1% than expected from random selection [3]. Strong early enrichment is critical to the feasibility of ultra-large screening, because only a small fraction of the top-ranked compounds can be synthesized and tested.
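The enrichment factor is simple to compute once a ranked list and activity labels are available. The sketch below assumes "lower score is better" (the GlideScore convention) and defines EF as the fraction of actives in the top slice divided by the fraction of actives in the whole library; the function name and toy data are illustrative only. In the toy example, 5 of the 10 compounds in the top 1% are active while actives make up 1% of the library, giving EF = 0.5 / 0.01 = 50.

```python
def enrichment_factor(scores, is_active, fraction=0.01):
    """Enrichment factor in the top `fraction` of a ranked list.

    Lower (more negative) scores rank first, as with GlideScore-style
    docking scores.
    """
    if len(scores) != len(is_active):
        raise ValueError("scores and is_active must have the same length")
    n_total = len(scores)
    n_actives = sum(is_active)
    if n_actives == 0:
        raise ValueError("at least one active is required")
    # Number of compounds in the top slice (at least one).
    n_top = max(1, round(fraction * n_total))
    ranked = sorted(range(n_total), key=lambda i: scores[i])
    actives_in_top = sum(1 for i in ranked[:n_top] if is_active[i])
    # Fraction of actives in the top slice over the fraction of actives overall.
    return (actives_in_top / n_top) / (n_actives / n_total)


# Toy example: 1,000 compounds, 10 actives, 5 of which rank in the top 1%.
scores = [float(i) for i in range(1000)]  # index 0 has the best score
is_active = [False] * 1000
for i in list(range(5)) + list(range(500, 505)):
    is_active[i] = True
print(enrichment_factor(scores, is_active, 0.01))
```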

Active Learning Glide: A Protocol for Ultra-Large Library Docking

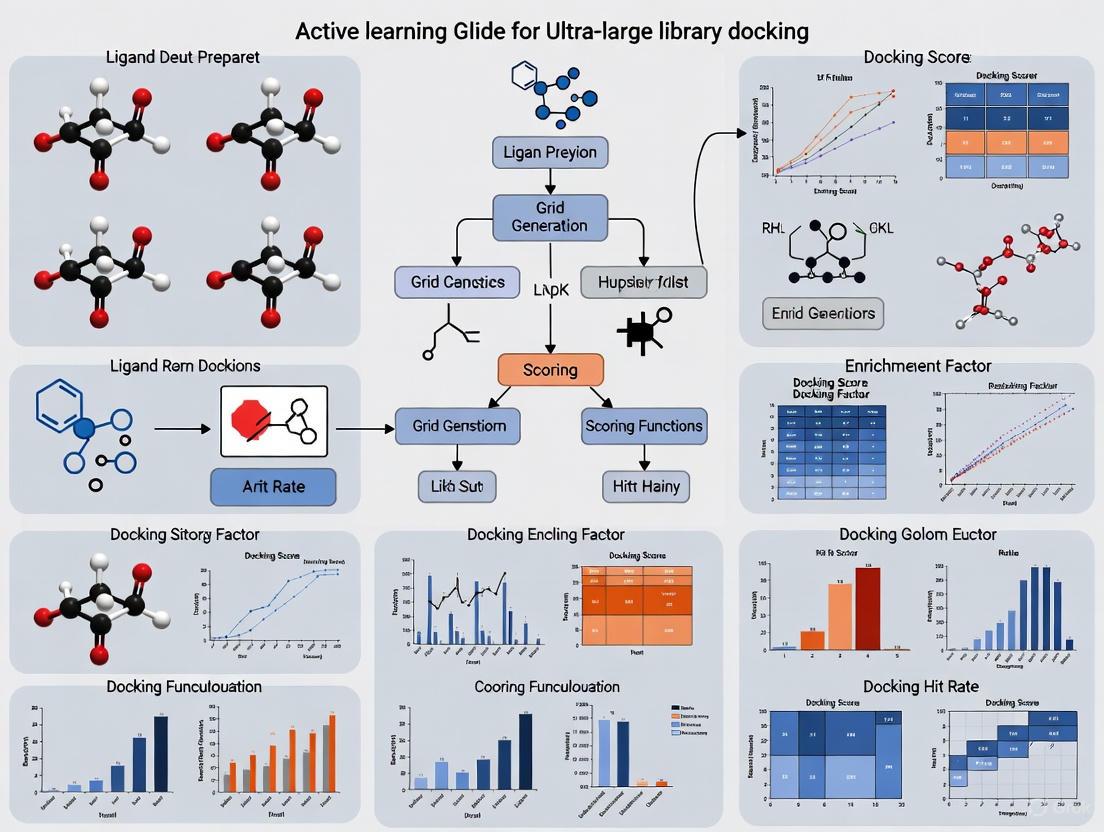

Active Learning Glide provides a structured framework to overcome the computational barrier of screening billions of compounds. The workflow iterates between machine learning prediction and molecular docking to rapidly converge on high-scoring candidates.

The following diagram illustrates the iterative cycle of the Active Learning Glide protocol.

Detailed Protocol Steps

Step 1: Library and Target Preparation

- Ligand Library: Obtain the ultra-large library in a suitable format (e.g., SMILES). The Enamine REAL library is a prime example of a make-on-demand library suitable for such campaigns [5].

- Protein Target Preparation: Prepare the protein structure using the Protein Preparation Wizard in Schrödinger's Maestro. This includes adding hydrogens, assigning bond orders, optimizing H-bond networks, and performing a restrained minimization to remove steric clashes.

Step 2: Initial Random Sampling and Docking

- Randomly select a subset of 10,000 to 50,000 compounds from the full library [2].

- Dock this initial set using Glide in Standard Precision mode (Glide SP) to generate the first set of docking scores and poses. These scores serve as the initial training data for the machine learning model.
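Drawing a uniform random subset from a multi-billion-compound file should not require loading the whole library into memory. One standard way to do this is reservoir sampling (Algorithm R), sketched below under the assumption that the library is streamed one compound per line; the file name and helper name are hypothetical, and nothing here reflects how Active Learning Glide samples internally.

```python
import random
from typing import Iterable, List


def reservoir_sample(items: Iterable[str], k: int, seed: int = 0) -> List[str]:
    """Uniform random sample of k items from a stream in one pass (Algorithm R)."""
    rng = random.Random(seed)
    reservoir: List[str] = []
    for i, item in enumerate(items):
        if i < k:
            reservoir.append(item)  # fill the reservoir with the first k items
        else:
            j = rng.randint(0, i)  # inclusive bounds: keep item with probability k/(i+1)
            if j < k:
                reservoir[j] = item
    return reservoir


# Hypothetical usage on a one-SMILES-per-line file ("library.smi" is a placeholder):
# with open("library.smi") as fh:
#     initial = reservoir_sample((line.split()[0] for line in fh if line.strip()), 10_000)
```

Memory use is proportional to k rather than to the library size, which is the property that matters at this scale.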

Step 3: Machine Learning Model Training

- Train a target-specific machine learning model (e.g., a Graph Neural Network) to predict docking scores based on the 2D molecular structures of the compounds that have already been docked [2]. The model learns to associate chemical features with favorable docking scores.

Step 4: Prediction and Acquisition of the Next Batch

- Use the trained ML model to predict the docking scores for all remaining compounds in the library.

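- Select the next batch of compounds for docking using an acquisition function. Common strategies include greedy selection (the compounds with the best predicted docking scores) and uncertainty-based selection (the compounds whose predictions are least certain).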
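Acquisition functions for this step can be sketched in a few lines. The sketch assumes the model returns a predicted docking score and a predictive standard deviation per compound (for example, from an ensemble); the greedy and uncertainty functions correspond to the strategies described above, and the lower confidence bound is a commonly used hybrid added here for illustration. All names and values are hypothetical.

```python
import heapq
from typing import Dict, List, Tuple

# Hypothetical model output: compound id -> (predicted docking score, predictive std. dev.).
# Docking scores follow the "lower is better" convention.
Predictions = Dict[str, Tuple[float, float]]


def greedy(preds: Predictions, batch_size: int) -> List[str]:
    """Exploit: compounds with the best (lowest) predicted score."""
    return heapq.nsmallest(batch_size, preds, key=lambda cid: preds[cid][0])


def most_uncertain(preds: Predictions, batch_size: int) -> List[str]:
    """Explore: compounds whose predictions have the largest spread."""
    return heapq.nlargest(batch_size, preds, key=lambda cid: preds[cid][1])


def lower_confidence_bound(preds: Predictions, batch_size: int, beta: float = 1.0) -> List[str]:
    """Balance both: optimistic estimate (mean - beta * std) for a minimization target."""
    return heapq.nsmallest(batch_size, preds, key=lambda cid: preds[cid][0] - beta * preds[cid][1])


preds = {"a": (-9.0, 0.1), "b": (-7.0, 2.0), "c": (-8.0, 0.5), "d": (-5.0, 0.2)}
print(greedy(preds, 2), most_uncertain(preds, 1), lower_confidence_bound(preds, 2))
```

Raising `beta` shifts the batch toward uncertain regions of chemical space; `beta = 0` reduces the lower confidence bound to greedy selection.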

Step 5: Iteration and Final Selection

- Dock the selected batch with Glide SP, add the new scores to the training data, and repeat Steps 3-4 until a predefined stopping criterion is met (e.g., a set number of iterations, an exhausted computational budget, or a sufficient number of high-scoring compounds identified).

- In the final stage, the top-ranking compounds from the active learning cycle are re-docked using the more rigorous Glide XP (Extra Precision) mode and an MM-GBSA correction for final ranking [7]. This step provides high-confidence predictions for experimental testing.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of an active learning-guided virtual screening campaign requires a suite of specialized software tools and compound libraries.

Table 2: Key Research Reagent Solutions for Active Learning Docking

| Tool / Resource | Type | Primary Function in Workflow |
|---|---|---|
| Schrödinger Active Learning Glide [4] | Software Platform | Core application that integrates the docking engine (Glide) with the active learning machine learning model to manage the iterative screening workflow. |
| Enamine REAL Library [1] [5] | Make-on-Demand Chemical Library | An ultra-large virtual library of synthetically accessible compounds, providing the vast chemical space to be explored. |
| Glide (Docking Engine) [8] | Molecular Docking Software | Performs the physics-based docking calculations, sampling ligand conformations and poses within the binding site and scoring them. |
| Maestro Graphical Interface [7] | User Interface | Provides the visual environment for protein preparation, workflow setup, and analysis of docking results and binding poses. |
| Protein Preparation Wizard [7] [8] | Protein Structure Tool | Prepares and refines protein structures from PDB files for docking, ensuring correct protonation states and minimized structures. |
| FEP+ Protocol Builder [7] | Free Energy Perturbation Tool | Uses active learning to generate accurate simulation protocols for subsequent lead optimization via free energy calculations. |

Case Study & Experimental Protocol: Discovering LRRK2 WDR Inhibitors

A recent study in the CACHE Challenge #1 provides a robust experimental protocol for hit optimization using an active learning workflow, yielding an impressive 23% hit rate [6].

Experimental Protocol: Active Learning-Guided Hit Optimization

Step 1: Library Filtering by Structural Motifs

- Objective: Focus the screening effort on analogs of known hit molecules.

- Procedure:

- Define SMARTS patterns based on the Murcko scaffolds of the initial hits.

- Filter a multi-billion compound library (e.g., Enamine REAL) using these patterns to create a focused set of "close analogs" and a more diverse set of "general analogs" [6].

Step 2: Multi-Stage Docking and Selection for RBFE Calculations

- Objective: Select a manageable number of compounds for computationally intensive free energy calculations.

- Procedure:

- Perform template docking for close analogs using representative protein structures from MD simulations.

- For general analogs, first perform fast docking without a template, filter by score, and then perform template docking.

- Apply filters based on docking score and RMSD to the template pose to select a final set for free energy calculations (~25,000 molecules in the cited study) [6].
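A combined score and RMSD filter like the one described above can be sketched as follows. The sketch assumes the core atoms of each docked pose are already matched, in order, to the template's core atoms and that both sit in the same receptor frame, so no superposition or symmetry handling is performed. The cutoffs (-8.0 score units, 2.0 Å) and all names are hypothetical, not the values used in the cited study.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]


def core_rmsd(pose: Sequence[Coord], template: Sequence[Coord]) -> float:
    """RMSD over matched core atoms, both already in the receptor frame (no superposition)."""
    if len(pose) != len(template) or not pose:
        raise ValueError("pose and template must list the same, non-zero number of atoms")
    sq = sum((a - b) ** 2 for p, t in zip(pose, template) for a, b in zip(p, t))
    return math.sqrt(sq / len(pose))


@dataclass
class DockedPose:
    compound_id: str
    score: float  # docking score, lower is better
    core_coords: List[Coord]  # core atoms in the same order as the template


def select_for_rbfe(poses: List[DockedPose], template: Sequence[Coord],
                    score_cutoff: float = -8.0, rmsd_cutoff: float = 2.0) -> List[str]:
    """Keep compounds that both score well and preserve the template binding mode."""
    return [
        p.compound_id
        for p in poses
        if p.score <= score_cutoff and core_rmsd(p.core_coords, template) <= rmsd_cutoff
    ]
```

Requiring both criteria matters for RBFE: a well-scoring pose that abandons the template binding mode would make the relative free energy perturbation from the parent compound unreliable.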

Step 3: Active Learning for Relative Binding Free Energy (RBFE) Calculations

- Objective: Efficiently predict binding affinities for thousands of analogs.

- Procedure:

- Initialization: Compute RBFEs using Molecular Dynamics Thermodynamic Integration (MD TI) for a small pre-AL set of molecules.

- Model Training: Train an ML model to predict Absolute Binding Free Energies (ABFEs) from molecular structures.

- Iteration:

- The ML model predicts ABFEs for the entire AL set.

- Select the top-predicted compounds for the next round of MD TI RBFE calculations.

- Re-train the ML model with the new data.

- Repeat for multiple iterations (e.g., 7-8 rounds) [6].

- Experimental Validation: Select top-ranked compounds for synthesis and experimental binding affinity validation via techniques like Surface Plasmon Resonance (SPR) [6].
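The protocol computes relative free energies (RBFEs) but trains the model on absolute values (ABFEs). One common way to bridge the two is to anchor the perturbation graph to a reference compound of known affinity and propagate the relative values outward; the source does not say how the cited study performed this conversion, so the sketch below is an assumption. It uses a simple breadth-first traversal that ignores cycle-closure errors, where a weighted least-squares fit over all edges would be more robust. Names and numbers are hypothetical.

```python
from collections import deque
from typing import Dict, List, Tuple

# Edge (a, b, ddg) means dG(b) - dG(a) = ddg, in kcal/mol.
Edge = Tuple[str, str, float]


def absolute_from_relative(edges: List[Edge], reference: str, reference_dg: float) -> Dict[str, float]:
    """Propagate relative free energies outward from one reference compound."""
    graph: Dict[str, List[Tuple[str, float]]] = {}
    for a, b, ddg in edges:
        graph.setdefault(a, []).append((b, ddg))
        graph.setdefault(b, []).append((a, -ddg))  # the reverse edge flips the sign
    dg = {reference: reference_dg}
    queue = deque([reference])
    while queue:  # breadth-first search: each compound takes the first path that reaches it
        node = queue.popleft()
        for neighbour, ddg in graph.get(node, []):
            if neighbour not in dg:
                dg[neighbour] = dg[node] + ddg
                queue.append(neighbour)
    return dg  # compounds not connected to the reference are absent


print(absolute_from_relative([("ref", "A", -1.0), ("A", "B", 0.5), ("C", "ref", 2.0)], "ref", -8.0))
```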

The paradigm of virtual screening has irrevocably shifted from million-compound to billion-compound libraries, embracing the vastness of chemical space as a route to discovering novel and potent therapeutics. Active Learning Glide stands as a critical enabling technology for this new paradigm, combining the accuracy of physics-based docking with the efficiency of machine learning. By intelligently guiding the search, it allows researchers to extract profound value from ultra-large libraries, achieving high hit rates and discovering unprecedented chemotypes at a fraction of the computational cost of exhaustive screening. This powerful synergy between physical simulation and machine intelligence is setting a new standard for efficient and effective early drug discovery.

Ultra-large, make-on-demand chemical libraries, such as the Enamine REAL Space, which contains over 37 billion compounds, present unprecedented opportunities for hit identification in structure-based drug discovery [1] [9]. The conventional approach to exploiting these libraries is virtual high-throughput screening (vHTS) with molecular docking, in which each compound is individually scored against a protein target. However, this brute-force methodology faces prohibitive computational bottlenecks when applied to ultra-large libraries [1] [9]. This Application Note quantifies the time and cost constraints of exhaustive docking and places these bottlenecks in the context of active learning Glide methodologies for efficient ultra-large library screening.

The Scale of the Challenge

Brute-force docking of ultra-large libraries requires immense computational resources, creating significant practical and economic barriers for research institutions.

Table 1: Computational Cost Estimates for Brute-Force Docking of Ultra-Large Libraries

| Library | Library Size | Estimated Docking Cost | Key Bottlenecks |
|---|---|---|---|
| Enamine REAL Space [9] | 37 billion compounds | ~$3,000,000 (AWS) | Computational time, financial cost, infrastructure |
| eMolecules eXplore [9] | 7 trillion compounds | Prohibitive (Not calculated) | Data storage, molecular preparation, scoring time |
| General Ultra-Large Library | Billions to Trillions | Extremely high, often infeasible | Full ligand & receptor flexibility, post-processing |

The fundamental challenge stems from the vastness of the chemical space. Docking each compound individually, especially with methods that account for ligand and receptor flexibility, consumes substantial computational time [1]. Although each docking calculation is independent and can be distributed across many processors, the total compute required still grows linearly with library size, so a billion-compound screen needs roughly a thousand times the resources of a million-compound screen. The financial cost of provisioning the necessary cloud computing or high-performance computing (HPC) resources therefore becomes prohibitive, as illustrated by the multi-million-dollar estimate for a single screen [9]. Finally, storing, managing, and analyzing the terabytes of data generated by billions of docking poses presents additional, non-trivial bottlenecks.
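A back-of-the-envelope estimate makes the scaling concrete. The sketch below assumes about 10 s of CPU time per compound (the approximate Glide SP figure given elsewhere in this document), perfect parallel scaling, and a hypothetical price of $0.02 per core-hour; it also derives the average per-compound cost implied by the ~$3M estimate for 37 billion compounds [9]. None of these inputs are vendor quotes.

```python
def brute_force_estimate(n_compounds: float, seconds_per_compound: float,
                         n_cores: int, usd_per_core_hour: float):
    """Return (core-hours, wall-clock days, USD) for docking every compound once."""
    core_hours = n_compounds * seconds_per_compound / 3600
    wall_days = core_hours / n_cores / 24  # assumes perfect parallel scaling
    return core_hours, wall_days, core_hours * usd_per_core_hour


# One billion compounds at ~10 s each; core count and price are hypothetical.
core_hours, days, usd = brute_force_estimate(1e9, 10.0, n_cores=10_000, usd_per_core_hour=0.02)
print(f"{core_hours:,.0f} core-hours, {days:.1f} days, ${usd:,.0f}")

# Implied average cost per compound behind the ~$3M estimate for 37 billion compounds.
print(f"${3e6 / 37e9:.2e} per compound")
```

Under these assumptions a billion-compound Glide SP screen needs roughly 2.8 million core-hours, about 12 days on 10,000 cores; every input scales the result linearly.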

Experimental Protocols for Benchmarking

To objectively evaluate docking methodologies, standardized benchmarking protocols are essential. The following sections detail common experimental setups for assessing both brute-force and accelerated screening approaches.

Protocol for Exhaustive Docking Benchmarking

This protocol is designed to establish a baseline performance metric for virtual screening campaigns [1] [9].

- Target Selection and Preparation: Select protein targets with experimentally determined structures (e.g., from the PDB). Prepare the protein structure by adding hydrogen atoms, assigning partial charges, and defining the binding site.

- Library Preparation: Obtain the library of compounds (e.g., Enamine REAL Space). Prepare ligand structures by generating tautomers and protonation states at physiological pH and performing energy minimization.

- Docking Setup: Configure the docking software (e.g., Glide, RosettaLigand).

- High-Performance Computing Execution: Distribute the docking jobs across a large-scale CPU cluster. The scale required is immense; depending on the per-compound cost of the docking method, a library of 1 million compounds may require thousands of CPU cores running for several days.

- Data Collection: Collect the top docking score (e.g., GlideScore, Rosetta Energy Units) for every compound in the library.

- Hit Identification: Rank all compounds by their docking scores and select the top-scoring molecules (e.g., top 0.001%) for subsequent analysis or experimental validation.
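Selecting the top 0.001% does not require sorting every result in memory. The sketch below assumes the results arrive as a stream of (compound ID, docking score) pairs, with lower scores being better, and keeps only the best k with a bounded heap; the function name and the file-parsing line are hypothetical.

```python
import heapq
from typing import Iterable, List, Tuple


def top_fraction(scored: Iterable[Tuple[str, float]], n_total: int,
                 fraction: float) -> List[Tuple[str, float]]:
    """Best-scoring `fraction` of a stream of (compound_id, docking_score) pairs."""
    k = max(1, round(n_total * fraction))
    # nsmallest keeps only k items in memory, so the stream is never fully materialized;
    # lower docking scores are better.
    return heapq.nsmallest(k, scored, key=lambda item: item[1])


# Hypothetical usage on a tab-separated "id<TAB>score" results file:
# with open("scores.tsv") as fh:
#     pairs = ((cid, float(s)) for cid, s in (line.split("\t") for line in fh))
#     hits = top_fraction(pairs, n_total=1_000_000, fraction=0.00001)  # top 0.001%
```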

Protocol for Active Learning Glide Screening

This protocol leverages machine learning to minimize docking computations while recovering most top-performing hits [4].

- Initialization: Dock a small, chemically diverse subset of the ultra-large library (e.g., 1% of the total compounds or a dedicated diverse library like the Hit Locator Library) against the prepared target using Glide.

- Model Training: Use the docking scores from the initial set to train a machine learning (ML) model. This model learns to predict docking scores based on the chemical features of the molecules.

- Iterative Sampling and Prediction (Active Learning Loop):

- The ML model predicts the docking scores for all remaining undocked compounds in the full library.

- Select a new batch of compounds for which the model is most uncertain or which have the best predicted docking scores.

- Dock this new, much smaller batch of compounds with Glide to get their actual scores.

- Add the new data (compounds and their actual scores) to the training set and update the ML model.

- Final Hit Selection: After several iterations, the ML model identifies the most promising compounds. The final set of top-ranked molecules is selected from the pool of compounds that were actually docked during the active learning process.
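The loop above can be sketched end to end. This is not Schrödinger's implementation: the `dock` callable is a hypothetical stand-in for a Glide job, the surrogate is a k-nearest-neighbour regressor on precomputed feature vectors (swapped in for the production ML model to keep the example dependency-free), and acquisition is greedy. As in the protocol, final hits are drawn only from compounds that were actually docked.

```python
import random
from typing import Callable, Dict, List, Sequence, Tuple

Features = Sequence[float]


def knn_predict(x: Features, train_x: List[Features], train_y: List[float], k: int = 5) -> float:
    """Surrogate model: mean docking score of the k nearest docked compounds."""
    dists = sorted(
        (sum((a - b) ** 2 for a, b in zip(x, tx)), y) for tx, y in zip(train_x, train_y)
    )
    nearest = dists[:k]
    return sum(y for _, y in nearest) / len(nearest)


def active_learning_screen(library: Dict[str, Features], dock: Callable[[str], float],
                           n_init: int, batch_size: int, n_iter: int,
                           seed: int = 0) -> List[Tuple[str, float]]:
    rng = random.Random(seed)
    ids = list(library)
    # Initialization: dock a random subset to obtain the first training labels.
    docked: Dict[str, float] = {cid: dock(cid) for cid in rng.sample(ids, n_init)}
    for _ in range(n_iter):
        undocked = [cid for cid in ids if cid not in docked]
        if not undocked:
            break
        # "Retraining" a k-NN model just means using all labels collected so far.
        train_x = [library[cid] for cid in docked]
        train_y = list(docked.values())
        preds = {cid: knn_predict(library[cid], train_x, train_y) for cid in undocked}
        # Greedy acquisition: dock the best-predicted batch (lower = better).
        for cid in sorted(undocked, key=preds.__getitem__)[:batch_size]:
            docked[cid] = dock(cid)
    # Final hits come only from compounds that were actually docked.
    return sorted(docked.items(), key=lambda item: item[1])
```

In a real campaign the linear scan over undocked compounds would be replaced by batched inference over the full library, but the control flow stays the same.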

Visualizing the Workflows

The contrasting workflows of brute-force docking and active learning Glide are depicted below, highlighting the fundamental differences in their approach to sampling chemical space.

Brute-Force Docking Workflow

Active Learning Glide Workflow

Quantitative Performance Comparison

The computational efficiency of active learning Glide, compared to a brute-force approach, is demonstrated by direct performance metrics and real-world applications.

Table 2: Performance Comparison: Brute-Force vs. Active Learning Glide

| Screening Method | Computational Efficiency | Hit Recovery Rate | Reported Case Studies |
|---|---|---|---|
| Brute-Force Docking | 100% of library docked; cost ~$3M for a 37-billion-compound library [9] | 100% of top-scoring hits identified (by definition) | Feasible only for smaller libraries (millions of compounds) |
| Active Learning Glide | ~0.1% of the brute-force cost [4] | ~70% of top-scoring hits recovered [4] | WLS inhibitor identification from a ~500M-compound library [10] |
| Other Accelerated Methods | Varies by method; e.g., HIDDEN GEM uses ~600,000 dockings [9] | Strong enrichment (up to 1000-fold) [9] | REvoLd: 869-1622x improved hit rate vs. random [1] |

Active learning Glide demonstrates a transformative improvement in efficiency. It achieves this by intelligently selecting which compounds to dock based on an iteratively refined machine learning model, focusing computational resources on the most promising regions of the chemical space [4]. This strategy has been successfully deployed in practice, for example, to identify a first-in-class small molecule inhibitor of the Wnt transporter Wntless (WLS) from a library of nearly 500 million compounds [10].

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Resources for Ultra-Large Library Screening

| Resource | Type | Function in Research | Access |
|---|---|---|---|
| Enamine REAL Space [9] | Make-on-Demand Chemical Library | Provides ultra-large, synthetically accessible chemical space for virtual screening. | Commercial (Enamine) |
| Glide [4] | Molecular Docking Software | High-accuracy ligand-receptor docking for structure-based screening. | Commercial (Schrödinger) |
| Active Learning Applications [4] | Machine Learning Platform | Accelerates ultra-large library screening by training ML models on docking data. | Commercial (Schrödinger) |
| RosettaLigand & REvoLd [1] | Flexible Docking & Evolutionary Algorithm | Enables docking with full flexibility and explores combinatorial libraries via evolutionary algorithms. | Academic (Rosetta) |
| HIDDEN GEM [9] | Integrated Workflow | Combines docking, generative modeling, and similarity searching for hit discovery. | Academic |
| Amazon Web Services (AWS) [9] | Cloud Computing Platform | Provides scalable computational resources required for large-scale docking campaigns. | Commercial |

Core Principles and Quantitative Performance of Active Learning

Active learning is a machine learning paradigm in which the learning algorithm interactively queries a human user or another information source (an "oracle") to label data with the desired outputs [11]. The approach strategically selects the most informative data points for labeling to maximize model performance while minimizing labeling costs [12]. In ultra-large library docking, the oracle is the docking calculation itself, so every compound the model does not need to dock is a direct saving in compute time.

Performance Metrics in Drug Discovery Applications

The application of Active Learning to ultra-large library screening has demonstrated remarkable performance improvements over traditional exhaustive screening methods. The table below summarizes key quantitative findings from recent implementations:

Table 1: Performance Metrics of Active Learning in Ultra-Large Library Screening

| Method/Platform | Library Size | Computational Efficiency | Hit Recovery Rate | Reference |
|---|---|---|---|---|
| Active Learning Glide (Schrödinger) | Billions of compounds | ~0.1% of brute-force cost | ~70% of top-scoring hits recovered | [4] |
| REvoLd (Evolutionary Algorithm) | 20+ billion compounds | 49,000-76,000 molecules docked per target | 869-1622x improvement in hit rates vs. random | [1] |
| Chemprop Models (on LSD Database) | 468M-1.63B molecules | Evaluated only 1% of library to find top 0.01% | High correlation (R=0.65-0.86) with docking scores | [13] |

Active Learning Query Strategies: Experimental Protocols

The efficacy of Active Learning systems depends critically on the query strategy employed to select the most informative data points. Below are detailed protocols for the primary strategies used in ultra-large library docking.

Pool-Based Selective Sampling Protocol

Pool-based sampling is the most prevalent scenario for Active Learning in virtual screening [11] [14].

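Initialization Phase:

- Randomly sample an initial subset of compounds from the unlabeled pool and dock them with the physics-based method to obtain their scores (labels).

Model Training & Iteration: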

- Train a machine learning model (e.g., Chemprop [13] or other QSAR models) on the currently labeled data.

- Use the trained model to predict docking scores for the entire pool of unlabeled compounds.

- Calculate an uncertainty metric for every prediction (e.g., predictive variance for regression models; entropy or margin for classification models) to identify the most informative candidates for the next labeling round.

- Select the top 1,000-10,000 most uncertain compounds and dock them using the physics-based method.

- Add the newly labeled compounds to the training set.

- Repeat until a stopping criterion is met (e.g., performance plateaus, budget exhausted).

Uncertainty Sampling Methodology

Uncertainty sampling selects instances for which the current model is least certain about what the correct output should be [11].

- Implementation:

- After model training, obtain prediction probabilities for all unlabeled compounds.

- For classification tasks, calculate the entropy of each prediction: $H(x) = -\sum_{i=1}^{C} P(y_i \mid x) \log P(y_i \mid x)$, where $C$ is the number of classes.

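- For regression tasks (e.g., predicting docking scores), use the predictive variance, such as the spread of predictions across an ensemble of models. Margin-based metrics apply to classification, where the margin is the gap between the probabilities of the two most likely classes.

- Prioritize compounds with the highest entropy or variance, or the lowest margin, for the next docking round.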
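For the classification case, the entropy and margin metrics can be computed directly from predicted class probabilities. The sketch assumes a hypothetical binary framing (for example, "binder" vs. "non-binder" defined by a docking-score threshold) and illustrative function names.

```python
import math
from typing import Dict, List, Sequence


def entropy(probs: Sequence[float]) -> float:
    """H(x) = -sum_i P(y_i|x) log P(y_i|x); zero-probability classes contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def margin(probs: Sequence[float]) -> float:
    """Gap between the two most probable classes; a small margin means high uncertainty."""
    first, second = sorted(probs, reverse=True)[:2]
    return first - second


def most_uncertain_by_entropy(class_probs: Dict[str, Sequence[float]], batch_size: int) -> List[str]:
    """Compounds with the highest predictive entropy, most uncertain first."""
    return sorted(class_probs, key=lambda cid: entropy(class_probs[cid]), reverse=True)[:batch_size]


# Hypothetical binder / non-binder probabilities for two compounds.
print(most_uncertain_by_entropy({"x": [0.95, 0.05], "y": [0.55, 0.45]}, 1))
```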

Query-by-Committee Protocol

This approach utilizes a committee of diverse models to select instances where committee disagreement is highest [11].

Committee Formation:

- Train multiple models (e.g., Random Forest, Neural Networks, Support Vector Machines) on the current labeled data.

- Ensure model diversity through different architectures or training subsets.

Disagreement Measurement:

- Use the committee to predict docking scores or classifications for all unlabeled compounds.

- Calculate the variance in predictions across committee members for each compound.

- Select compounds with the highest prediction variance for the next labeling round.
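The disagreement step of query-by-committee reduces to a variance calculation across members. In the sketch below each committee member is any callable that maps a compound ID to a predicted docking score; the lookup-table "models" are hypothetical placeholders for trained Random Forest, neural network, or SVM regressors.

```python
from statistics import pvariance
from typing import Callable, List, Sequence

Model = Callable[[str], float]  # hypothetical: maps a compound id to a predicted score


def query_by_committee(committee: Sequence[Model], unlabeled: Sequence[str],
                       batch_size: int) -> List[str]:
    """Return the compounds on which committee members disagree most."""
    disagreement = {cid: pvariance([model(cid) for model in committee]) for cid in unlabeled}
    return sorted(unlabeled, key=disagreement.__getitem__, reverse=True)[:batch_size]


# Placeholder committee: three "models" backed by a lookup table of predictions.
table = {"a": (-8.0, -8.0, -8.0), "b": (-5.0, -9.0, -7.0), "c": (-9.0, -8.0, -10.0)}
committee = [lambda cid, k=k: table[cid][k] for k in range(3)]
print(query_by_committee(committee, ["a", "b", "c"], 2))
```

Compound "a" receives identical predictions from every member and is never selected, however good its predicted score; pure committee disagreement favours exploration over exploitation.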

Workflow Visualization: Active Learning for Ultra-Large Library Docking

The following diagram illustrates the complete iterative workflow for applying Active Learning to ultra-large chemical libraries in virtual screening.

The Scientist's Toolkit: Computational Resources

Successful implementation of Active Learning for ultra-large library docking requires specific computational tools and resources. The table below details the essential components of the research toolkit.

Table 2: Essential Research Reagents & Computational Resources for Active Learning Docking

| Tool/Resource | Type | Primary Function | Application in Workflow |
|---|---|---|---|
| Schrödinger Active Learning Applications [4] | Commercial Software Platform | Integrates ML with physics-based docking (Glide) and free energy calculations (FEP+) | End-to-end active learning implementation for ultra-large libraries |
| REvoLd [1] | Evolutionary Algorithm (Rosetta) | Efficiently explores combinatorial make-on-demand chemical spaces without full enumeration | Protocol for screening Enamine REAL space with full ligand/receptor flexibility |
| Chemprop [13] | Machine Learning Framework | Message-passing neural network for molecular property prediction | Predicting docking scores and enriching top-ranking molecules |
| DOCK3.7/3.8 [13] | Docking Software | Physics-based molecular docking for large libraries | Generating ground-truth docking scores for ML training |
| Enamine REAL Space [1] | Make-on-Demand Chemical Library | Billions of readily available compounds constructed from robust reactions | Primary screening library for virtual screening campaigns |
| LSD Database [13] | Benchmarking Database | Provides docking scores, poses, and experimental results for 6.3B molecules across 11 targets | Training and validating machine learning models |

Advanced Implementation Considerations

Training Set Optimization Protocol

The composition of the training set significantly impacts model performance. A stratified sampling approach has proven effective:

Procedure:

- Allocate 80% of the training budget to sampling from the top-ranking 1% of molecules.

- Allocate the remaining 20% to random sampling from the entire library.

- This approach significantly improves logAUC (0.77 vs. 0.49 with random sampling) for recalling top-scoring molecules [13].
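The 80/20 allocation can be sketched as below, assuming docking scores are already available for the pool being sampled (as in a retrospective benchmark) and that lower scores are better. One deliberate choice: the random 20% is drawn from the compounds not already chosen, so no molecule is picked twice. Names and defaults are illustrative.

```python
import random
from typing import Dict, List


def stratified_training_set(scores: Dict[str, float], budget: int, top_fraction: float = 0.01,
                            top_share: float = 0.8, seed: int = 0) -> List[str]:
    """Pick `budget` training compounds: ~80% from the top 1% by score, the rest at random."""
    rng = random.Random(seed)
    ranked = sorted(scores, key=scores.__getitem__)  # lower docking score = better
    top_pool = ranked[:max(1, round(len(ranked) * top_fraction))]
    n_top = min(round(budget * top_share), len(top_pool))
    chosen = rng.sample(top_pool, n_top)
    # Random remainder drawn from the rest of the library so no compound is picked twice.
    chosen_set = set(chosen)
    rest = [cid for cid in ranked if cid not in chosen_set]
    chosen += rng.sample(rest, min(budget - n_top, len(rest)))
    return chosen
```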

Evolutionary Algorithm Hyperparameters (REvoLd):

- Population size: 200 initial ligands provide sufficient variety.

- Selection pressure: Allow top 25% of individuals to advance to next generation.

- Termination criteria: 30 generations typically balances convergence and exploration [1].
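To show how these three settings interact, here is a toy genetic algorithm over a hypothetical two-component reaction, in which each product is a pair of building-block indices. It is not REvoLd, which runs inside Rosetta with flexible docking as its fitness function; the `score` callable, the crossover and mutation operators, and the 20% mutation rate are assumptions made for illustration. Population size (200), survivor fraction (25%), and generation count (30) follow the values above.

```python
import random
from typing import Callable, Dict, List, Tuple

# Hypothetical two-component reaction: a product is a pair of building-block indices.
Individual = Tuple[int, int]


def evolve(score: Callable[[Individual], float], n_blocks: Tuple[int, int],
           population_size: int = 200, survivor_fraction: float = 0.25,
           generations: int = 30, mutation_rate: float = 0.2, seed: int = 0):
    rng = random.Random(seed)
    cache: Dict[Individual, float] = {}

    def fitness(ind: Individual) -> float:
        # Each new product would cost one docking run; cache so repeats are free.
        if ind not in cache:
            cache[ind] = score(ind)
        return cache[ind]

    population: List[Individual] = [
        (rng.randrange(n_blocks[0]), rng.randrange(n_blocks[1])) for _ in range(population_size)
    ]
    n_survivors = max(2, int(population_size * survivor_fraction))
    for _ in range(generations):
        population.sort(key=fitness)  # lower score = better
        survivors = population[:n_survivors]  # elitism: the best so far always survives
        children: List[Individual] = []
        while n_survivors + len(children) < population_size:
            p1, p2 = rng.sample(survivors, 2)
            child = (p1[0], p2[1])  # crossover: combine building blocks from two parents
            if rng.random() < mutation_rate:  # mutation: replace one building block
                if rng.random() < 0.5:
                    child = (rng.randrange(n_blocks[0]), child[1])
                else:
                    child = (child[0], rng.randrange(n_blocks[1]))
            children.append(child)
        population = survivors + children
    best = min(population, key=fitness)
    return best, fitness(best), len(cache)  # len(cache) = number of unique "dockings"
```

Counting unique evaluations, as the cache does, is the analogue of the per-target docking counts reported for REvoLd.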

Performance Validation and Model Assessment

Rigorous validation is essential for ensuring model reliability:

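Evaluation Metrics:

- logAUC for the recall of top-scoring molecules [13].

- Correlation (e.g., Pearson R) between predicted and actual docking scores [13].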

Validation Protocol:

- Hold out a representative test set (100,000+ molecules) before training.

- Ensure no overlap between training and test compounds.

- Evaluate final model performance on held-out test set only.
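One way to guarantee that no compound appears in both training and test sets is to assign each compound by hashing a canonical identifier, so duplicates always land on the same side. The sketch assumes identifiers are canonicalized upstream (for example, canonical SMILES or InChIKeys); the function names and the 10% test fraction are illustrative.

```python
import hashlib
from typing import Iterable, List, Tuple


def in_test_set(compound_key: str, test_fraction: float = 0.1) -> bool:
    """Deterministically assign a compound to the test set by hashing its key."""
    digest = hashlib.sha256(compound_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # approximately uniform on [0, 1]
    return bucket < test_fraction


def split(keys: Iterable[str], test_fraction: float = 0.1) -> Tuple[List[str], List[str]]:
    """Split keys into (train, test); identical keys always go to the same side."""
    train: List[str] = []
    test: List[str] = []
    for key in keys:
        (test if in_test_set(key, test_fraction) else train).append(key)
    return train, test
```

Because the assignment depends only on the key, the split is reproducible across runs and machines without storing an index file.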

Glide (Grid-Based Ligand Docking with Energetics) from Schrödinger is a widely used, industry-leading program for ligand-receptor docking, recognized for its accuracy in binding mode prediction and virtual screening [15]. It uses a hierarchical filtering approach to explore the conformational space of a ligand within a receptor's binding site, balancing computational speed against predictive accuracy [16]. By combining thorough sampling with empirical scoring functions, Glide has become a standard tool in structure-based drug discovery for identifying and optimizing hit compounds. This application note details Glide's protocols and benchmarked performance, with a specific focus on its application within modern active learning workflows for screening ultra-large chemical libraries.

Performance & Validation: Quantitative Benchmarking

Rigorous, unbiased benchmarking against diverse datasets is crucial for establishing the reliability of any docking program. Glide's performance has been extensively validated in both pose prediction and virtual screening enrichment, demonstrating its consistency across a wide range of target classes.

Pose Prediction Accuracy

A primary strength of Glide is its exceptional ability to reproduce experimentally observed binding geometries. In evaluations using the Astex set, a standard benchmark for docking accuracy, Glide SP successfully recapitulated the crystal complex geometry in 85% of cases with a root-mean-square deviation (RMSD) of less than 2.5 Å [16]. This high level of accuracy provides researchers with confidence in the predicted ligand poses, forming a solid foundation for subsequent lead optimization efforts.
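The pose-prediction criterion can be written out explicitly. Assuming $N$ matched ligand atoms (typically heavy atoms; how symmetry-equivalent atoms are handled varies between tools) compared in the receptor frame without re-superposition, the RMSD between a docked pose and the crystal pose, and the success rate over a benchmark set, are:

$$
\mathrm{RMSD} = \sqrt{\frac{1}{N}\sum_{i=1}^{N} \left\lVert \mathbf{x}_i^{\mathrm{dock}} - \mathbf{x}_i^{\mathrm{xtal}} \right\rVert^2},
\qquad
\text{success rate} = \frac{\#\{\text{complexes with } \mathrm{RMSD} < 2.5\ \text{Å}\}}{\#\{\text{complexes}\}}
$$

On this definition, the Astex result quoted above corresponds to a success rate of 0.85.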

Virtual Screening Enrichment

The value of docking in a drug discovery project is often measured by its ability to enrich true actives early in a ranked list that also contains many decoys. In retrospective studies using the Directory of Useful Decoys (DUD) dataset, whose decoys are matched to the actives in physical properties but are topologically dissimilar to them, Glide SP demonstrated strong enrichment [16]. It outperformed random selection in 97% of the 39 DUD targets, achieving an average area under the ROC curve (AUC) of 0.80 [16]. Early enrichment is particularly critical for reducing experimental costs, and Glide recovered a substantial proportion of known actives in the top fraction of the screened library, as shown in Table 1.

Table 1: Early Enrichment Performance of Glide SP on the DUD Dataset

| Metric | Top 0.1% | Top 1% | Top 2% | AUC |
|---|---|---|---|---|
| Average Recovery of Actives | 12% | 25% | 34% | 0.80 [16] |
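Both metrics in Table 1 can be computed from raw docking scores. The sketch below assumes lower scores are better and uses the rank-based interpretation of ROC AUC (the probability that a randomly chosen active outranks a randomly chosen decoy, with ties counted as half). The pairwise loop is O(actives × decoys), adequate for illustration but not for very large decoy sets. Function names and toy scores are hypothetical.

```python
from typing import Sequence


def roc_auc(active_scores: Sequence[float], decoy_scores: Sequence[float]) -> float:
    """P(random active ranks above random decoy); lower score = better, ties count 0.5."""
    wins = 0.0
    for a in active_scores:
        for d in decoy_scores:
            if a < d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(active_scores) * len(decoy_scores))


def recovery_at(active_scores: Sequence[float], decoy_scores: Sequence[float],
                fraction: float) -> float:
    """Fraction of all actives found in the top `fraction` of the combined ranked list."""
    labelled = sorted([(s, True) for s in active_scores] + [(s, False) for s in decoy_scores],
                      key=lambda t: t[0])
    n_top = max(1, round(fraction * len(labelled)))
    return sum(1 for _, is_active in labelled[:n_top] if is_active) / len(active_scores)


actives, decoys = [-10.0, -9.0], [-8.0, -7.0, -6.0, -9.5]
print(roc_auc(actives, decoys), recovery_at(actives, decoys, 0.2))
```

In the toy data, 7 of the 8 active-decoy pairs are ordered correctly (AUC 0.875), and the single top-ranked compound is one of the two actives (recovery 0.5).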

Glide Docking Methodologies & Protocols

Glide offers a suite of docking methodologies tailored to different screening scenarios, balancing accuracy and computational cost. The core docking process, often referred to as the "docking funnel," involves a series of hierarchical filters that progressively evaluate ligand poses with increasing levels of rigor [16].

Core Docking Methodologies

The standard Glide workflows are designed to cater to various stages of a virtual screening campaign, from initial rapid filtering to high-accuracy pose prediction.

Table 2: Glide Docking Methodologies and Their Applications

| Methodology | Sampling Strategy | Approx. Time/Compound | Primary Application |
|---|---|---|---|
| Glide HTVS | Reduced sampling for maximum speed | ~2 seconds | Initial filtering of ultra-large libraries (>1 billion compounds) [16] |
| Glide SP | Exhaustive sampling and scoring | ~10 seconds | Standard balance of speed and accuracy for virtual screening [16] [15] |
| Glide XP | Anchor-and-grow sampling; more rigorous scoring | ~2 minutes | High-accuracy pose prediction and scoring for lead optimization [16] |
| Glide CovDock | Specialized sampling for covalent bonds | Protocol Dependent | Docking of ligands that form covalent bonds with the receptor [15] |
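The per-compound times in Table 2 make it easy to estimate what a tiered HTVS → SP → XP funnel costs compared with running a single mode on everything. The retention fractions below (top 10% passed from each stage to the next) are hypothetical, and the times are the approximate table values rather than measured benchmarks.

```python
# Approximate per-compound CPU times from Table 2.
SECONDS_PER_COMPOUND = {"HTVS": 2.0, "SP": 10.0, "XP": 120.0}


def funnel_cpu_hours(n_compounds: float, stages) -> float:
    """stages: (mode, fraction of the previous stage's compounds passed to this stage)."""
    total_seconds = 0.0
    n = float(n_compounds)
    for mode, keep in stages:
        n *= keep
        total_seconds += n * SECONDS_PER_COMPOUND[mode]
    return total_seconds / 3600


# Hypothetical funnel: HTVS on everything, SP on the top 10%, XP on the top 10% of those.
funnel = funnel_cpu_hours(1_000_000, [("HTVS", 1.0), ("SP", 0.1), ("XP", 0.1)])
sp_only = funnel_cpu_hours(1_000_000, [("SP", 1.0)])
xp_only = funnel_cpu_hours(1_000_000, [("XP", 1.0)])
print(f"funnel {funnel:,.0f} h, SP only {sp_only:,.0f} h, XP only {xp_only:,.0f} h")
```

For one million compounds this works out to roughly 1,200 CPU-hours for the funnel, versus about 2,800 for SP alone and about 33,000 for XP alone.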

The scoring of protein-ligand complexes in Glide uses the Emodel scoring function to select the best pose for a given ligand and the GlideScore function to rank-order different compounds [16]. GlideScore is an empirical scoring function that incorporates terms for lipophilic interactions, hydrogen bonding, rotatable bond penalties, and metal-binding interactions, alongside terms for more complex phenomena like hydrophobic enclosure [16].

Advanced Sampling & Scoring Protocols

For challenging systems that deviate from the rigid receptor approximation, Glide integrates with advanced Schrödinger workflows.

- Induced Fit Docking (IFD) Protocol: This protocol accounts for receptor flexibility by combining Glide docking with Prime protein structure prediction. It begins by generating an ensemble of ligand poses using a softened potential, followed by side-chain refinement and minimization of the protein structure around each pose. The ligands are then re-docked into the induced-fit protein structures, and the final complexes are ranked using a composite score [16]. This is essential for targets where ligand binding induces significant side-chain or backbone movements.

- Peptide and Macrocycle Docking: Docking flexible polypeptides and macrocycles presents unique challenges due to the high number of rotatable bonds and ring conformations. Glide addresses this with specialized protocols. For peptides, the SP-peptide mode modifies sampling parameters and can achieve up to 58% accuracy in binding mode prediction when combined with MM-GBSA rescoring [16]. For macrocycles, Glide leverages a database of pre-generated ring conformations to accurately sample low-energy states of the macrocyclic ring, which is critical for correct pose prediction [16].

Application in Ultra-Large Library Docking with Active Learning

The advent of make-on-demand chemical libraries containing hundreds of millions to billions of readily synthesizable compounds has transformed virtual screening [5]. However, exhaustively docking such libraries with traditional methods remains computationally prohibitive. The integration of Glide with Active Learning (AL), a machine learning strategy, effectively overcomes this barrier.

The Active Learning Glide Workflow

Active Learning Glide uses an iterative process to intelligently screen an ultra-large library without docking every compound. A machine learning model is trained on a subset of physics-based Glide docking scores and then used to predict the best compounds in the full library, dramatically accelerating the discovery process [4].

Diagram 1: Active Learning Glide workflow for ultra-large libraries.

Performance and Efficiency Gains

This approach offers remarkable computational savings. It has been shown to recover approximately 70% of the top-scoring hits that would be identified by an exhaustive, brute-force dock of the entire ultra-large library, while requiring only 0.1% of the computational cost and time [4]. This makes screening billion-compound libraries not just feasible, but practical on moderate-sized computing clusters. For example, a project that might take 100 days and a correspondingly high compute cost with exhaustive docking can be completed in a fraction of the time and cost with Active Learning Glide [4].

Experimental Protocols for Key Applications

Protocol 1: Standard Virtual Screening with Glide SP/XP

This protocol is designed for virtual screening of libraries up to several million compounds.

- Protein Preparation: Use the Protein Preparation Wizard to correct PDB structures, assign bond orders, add hydrogens, fill in missing side chains, and optimize hydrogen bonding networks. Finally, perform a restrained minimization using the OPLS force field [16].

- Receptor Grid Generation: Define the binding site for docking. The center of the grid is typically based on the centroid of a co-crystallized ligand or key residue side chains. Grid box dimensions should be sufficient to accommodate ligand flexibility.

- Ligand Preparation: Prepare the ligand library using LigPrep to generate accurate 3D structures, possible ionization states at a physiological pH (e.g., 7.0 ± 2.0), and low-energy ring conformations using the OPLS force field [17].

- Docking Execution: Run the docking calculation using Glide SP for a standard balance of speed and accuracy, or Glide XP for more demanding pose prediction and scoring.

- Post-Processing Analysis: Analyze top-ranked poses for key interactions (hydrogen bonds, hydrophobic contacts, pi-stacking). Where experimental data are available, re-dock with constraints (e.g., core constraints, H-bond constraints) to bias poses toward known binding modes and "stay close to experiment" [16].

Protocol 2: Active Learning Glide for Ultra-Large Libraries

This protocol is for screening libraries containing hundreds of millions to billions of compounds.

- Library and Target Preparation: Curate the make-on-demand library (e.g., Enamine REAL Space) and prepare the protein target as in Protocol 1 [4] [5].

- Initial Sampling: Dock a randomly selected subset of the library (e.g., 50,000-100,000 compounds) using Glide HTVS or SP to generate initial training data [4].

- Active Learning Loop:

- Training: Train a machine learning model (e.g., a neural network) on the collected docking scores and molecular descriptors of the docked subset.

- Prediction & Selection: Use the trained model to predict the docking scores for all undocked compounds in the full library. Select the next batch of compounds (e.g., 10,000) with the highest predicted scores.

- Docking & Expansion: Dock the newly selected batch with Glide to obtain accurate physics-based scores. Add these new data to the training set.

- Iteration: Repeat the training-prediction-docking cycle until a stopping criterion is met (e.g., a fixed number of iterations or convergence in hit discovery) [4].

- Hit Validation: Synthesize and test the top-ranking, novel compounds identified across the docking cycles. Ultra-large docking can yield high hit rates: in a screen of 138 million compounds against the D4 dopamine receptor, 30 of 81 newly tested chemotypes showed sub-micromolar activity, including a 180 pM agonist [5].
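The training-prediction-docking cycle in this protocol can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the Active Learning Glide implementation: compounds are represented as hypothetical sets of 2D feature IDs, `toy_dock` stands in for a Glide calculation, and a k-nearest-neighbour regressor using Tanimoto similarity replaces the neural network as the surrogate model. Each new batch is chosen greedily by best predicted score.

```python
import random


def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two feature sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def knn_predict(train, query, k=5):
    """Surrogate model: mean score of the k most similar docked compounds."""
    neighbours = sorted(train, key=lambda item: tanimoto(item[0], query), reverse=True)[:k]
    return sum(score for _, score in neighbours) / len(neighbours)


def active_learning(library, dock, init_size, batch_size, n_cycles, seed=0):
    """Greedy loop: dock a batch, retrain, predict the rest, pick the best predicted."""
    rng = random.Random(seed)
    ids = list(library)
    batch = rng.sample(ids, init_size)          # cycle 1: random initial batch
    scores = {}                                  # compound id -> docking score
    for cycle in range(n_cycles):
        for cid in batch:                        # the expensive step (docking)
            scores[cid] = dock(library[cid])
        undocked = [cid for cid in ids if cid not in scores]
        if cycle == n_cycles - 1 or not undocked:
            break
        train = [(library[cid], s) for cid, s in scores.items()]
        preds = {cid: knn_predict(train, library[cid]) for cid in undocked}
        batch = sorted(undocked, key=preds.get)[:batch_size]  # lower = better
    return scores


GOOD_FEATURES = frozenset(range(5))  # hypothetical "pocket-complementary" features


def toy_dock(features):
    """Stand-in for Glide: more good features gives a more negative score."""
    return -float(len(features & GOOD_FEATURES))


rng = random.Random(42)
library = {f"cpd{i}": frozenset(rng.sample(range(30), 6)) for i in range(500)}
scores = active_learning(library, toy_dock, init_size=50, batch_size=25, n_cycles=5)
print(len(scores))  # 150 docked (50 + 4 x 25) out of 500
```

Replacing the `sorted(...)[:batch_size]` line with an uncertainty-aware rule changes the acquisition strategy without touching the rest of the loop.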

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software and Data Components for a Glide Docking Campaign

| Tool / Resource | Function / Description | Role in the Workflow |
|---|---|---|
| Glide | The core docking engine for pose sampling and scoring [15]. | Performs the ligand conformational sampling and scoring within the prepared receptor grid. |
| Protein Preparation Wizard | A workflow for preparing and optimizing protein structures from PDB files for molecular modeling [16]. | Ensures the input protein structure is structurally correct and energetically minimized for reliable docking results. |
| LigPrep | A tool for generating 3D ligand structures with correct chirality, ionization states, and low-energy ring conformations [17]. | Prepares the small-molecule ligands for docking, ensuring chemical accuracy and representative conformational states. |
| Schrödinger Prepared Commercial Libraries | Pre-prepared and curated databases of purchasable compounds from partners like Enamine [15]. | Provides immediate access to vast chemical space (millions to billions of compounds) that is ready for docking, saving preparation time. |
| Active Learning Applications | A machine learning tool that iteratively trains models on Glide docking data to efficiently screen ultra-large libraries [4]. | Amplifies the throughput of Glide docking, making billion-compound screens computationally feasible. |
| Prime | Schrödinger's protein structure prediction and refinement tool used in the Induced Fit protocol [16]. | Models protein flexibility and conformational changes induced by ligand binding. |
| WaterMap | A tool for analyzing the structure and energetics of water molecules in a binding site [15]. | Provides insights for rational drug design and can be used in the Glide WS rescoring workflow to improve scoring accuracy. |
| FEP+ | A physics-based method for calculating relative binding affinities with high accuracy [4]. | Used for lead optimization after docking to precisely rank and optimize the most promising hit compounds. |

Glide's extensive validation, accurate pose prediction, and strong enrichment performance have made it one of the most widely used and benchmarked docking programs. Its support for ligand classes ranging from small molecules to peptides and macrocycles makes it broadly applicable in structure-based drug design. Integrating Glide with active learning extends it to ultra-large make-on-demand chemical libraries, allowing researchers to identify novel, potent, and synthetically accessible hits while docking only a small fraction of the library, which substantially accelerates the early stages of drug discovery.

Implementing Active Learning Glide: A Step-by-Step Workflow for Screening Billions

Core Components of the Active Learning Glide Workflow

Active Learning Glide (AL-Glide) is a machine learning (ML)-augmented molecular docking workflow designed to efficiently identify high-scoring ligands from ultra-large chemical libraries containing billions of compounds. This methodology addresses the fundamental computational bottleneck of traditional brute-force docking, making the screening of vast chemical spaces feasible without a proportional increase in computational cost [4] [18]. The core principle is an iterative, supervised learning cycle in which a machine learning model is trained to become an accurate proxy for the Glide docking scoring function. This model then prioritizes which compounds to dock in subsequent cycles, focusing computational resources on the most promising regions of chemical space [18].

The workflow is a cornerstone of modern virtual screening (VS). Combined with downstream rescoring, it has enabled double-digit hit rates, a significant improvement over the 1-2% typical of traditional VS, while requiring only a fraction of the computational cost of an exhaustive screen [18]. By recovering approximately 70% of the top-scoring hits found through exhaustive docking at just 0.1% of the cost, AL-Glide has established itself as a critical tool for initial hit identification in structure-based drug design [4].

Workflow Diagram and Process

The following diagram illustrates the iterative, cyclical nature of the Active Learning Glide workflow.

Process Description

The AL-Glide workflow can be broken down into the following key stages, as shown in Figure 1:

- Library Initialization: The process begins with an ultra-large chemical library, often containing billions of purchasable compounds from sources like the Enamine REAL database [18] [10].

- Initial Batch Selection: An initial, manageable batch of compounds is selected at random from the full library for the first round of docking [18].

- Glide Docking & Scoring: The selected batch of compounds is docked against the protein target of interest using Glide, and each compound is assigned a docking score (e.g., GlideScore) [4] [18].

- Machine Learning Model Training: The compounds that were docked, along with their docking scores, are used to train a machine learning model. This model learns to approximate the relationship between a compound's features and its Glide docking score [18].

- ML-Based Library Prediction: The trained ML model is then used to rapidly predict the docking scores for the entire ultra-large library, a process that is orders of magnitude faster than actual docking [4] [18].

- Iterative Batch Prioritization: Compounds with the best predicted scores from the ML model are selected to form the next batch for actual Glide docking. The workflow then returns to the Glide Docking & Scoring stage, creating a closed-loop cycle. With each iteration, the ML model is retrained on an increasingly large and informative set of docked compounds, improving its predictive accuracy and its ability to find high-scoring ligands [18].

- Final Output: After a predetermined number of cycles, the process terminates, yielding a final, curated list of top-scoring compounds that have been validated by full Glide docking. These compounds are then typically advanced to more rigorous scoring stages in a modern virtual screening pipeline [18].

Quantitative Performance Metrics

The performance of the Active Learning Glide workflow is characterized by high computational efficiency and robust recovery of top-tier hits, as summarized in the table below.

Table 1: Performance Metrics of Active Learning Glide

| Metric | Performance | Context / Comparison |
|---|---|---|
| Computational Cost Reduction | ~99.9% cost saving [4] | Compared to brute-force docking of an ultra-large library. |
| Top-Hit Recovery Rate | ~70% of top-scoring hits recovered [4] | Compared to the hits found from an exhaustive docking screen. |
| Virtual Screening Hit Rate | Double-digit hit rates frequently achieved in modern VS workflows [18]; RosettaVS reported 14% and 44% [3] | Post-experimental validation; far exceeds traditional 1-2% rates. |
| Library Size Applicability | Billions of compounds [4] [10] | Successfully applied to screens of ~500 million compounds [10]. |

Detailed Experimental Protocol

This section provides a detailed, step-by-step protocol for setting up and running an Active Learning Glide screen, based on established methodologies [4] [18] [10].

System Preparation

Protein Target Preparation:

- Obtain a high-resolution 3D structure of the target protein (e.g., from PDB).

- Using Maestro's Protein Preparation Wizard, perform the following steps:

- Add missing hydrogen atoms.

- Assign appropriate protonation states at biological pH for residues, particularly His, Asp, Glu, and Lys.

- Optimize hydrogen bonding networks.

- Perform a restrained minimization with the OPLS4 force field to relieve steric clashes, stopping when the heavy-atom RMSD from the starting structure reaches 0.3 Å.
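The 0.3 Å threshold is a root-mean-square deviation of heavy atoms from their starting positions. A minimal sketch of that metric follows. It assumes matched atom lists in the same coordinate frame (no superposition), and the function name is illustrative rather than part of any Schrödinger tool.

```python
import math


def heavy_atom_rmsd(coords_a, coords_b):
    """RMSD in Å between two matched lists of (x, y, z) heavy-atom coordinates.

    Assumes one-to-one atom correspondence and a shared frame (no superposition),
    as when comparing a structure before and after a restrained minimization.
    """
    if not coords_a or len(coords_a) != len(coords_b):
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b)
    )
    return math.sqrt(squared / len(coords_a))


before = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0)]
after = [(0.3, 0.0, 0.0), (1.5, 0.3, 0.0), (3.0, 0.0, 0.3)]
rmsd = heavy_atom_rmsd(before, after)
print(f"RMSD = {rmsd:.2f} Å")  # each atom moved 0.3 Å, so RMSD = 0.30 Å
```

Capping the minimization at this deviation relieves clashes while keeping the model close to the experimental coordinates.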

Receptor Grid Generation:

- Within Glide, define the receptor grid for docking.

- Select the prepared protein structure.

- Define the centroid of the binding site using a co-crystallized ligand or known binding site residues.

- Set the inner box (bounding box), within which the ligand center must lie; the default is 10 Å on each side, centered on the binding-site centroid.

- Set the outer box (enclosing box), which must contain all ligand atoms; it is typically 20-30 Å on each side, depending on the range of ligand sizes expected in the library.
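The two boxes impose different constraints, which the following sketch makes explicit. It is a simplified, hypothetical check: both boxes are treated as cubes centered on the centroid of a co-crystallized ligand, the ligand center is approximated by the geometric centroid of the pose (Glide's own definition of the ligand center may differ), and the edge lengths are example values only.

```python
def centroid(coords):
    """Geometric centre of a list of (x, y, z) coordinates."""
    n = len(coords)
    return tuple(sum(atom[i] for atom in coords) / n for i in range(3))


def inside_cube(point, center, edge):
    """True if point lies in an axis-aligned cube of side `edge` around center."""
    half = edge / 2.0
    return all(abs(p - c) <= half for p, c in zip(point, center))


def pose_fits_grid(pose, center, inner_edge=10.0, outer_edge=20.0):
    """Ligand centre inside the inner box and every atom inside the outer box."""
    return inside_cube(centroid(pose), center, inner_edge) and all(
        inside_cube(atom, center, outer_edge) for atom in pose
    )


# Grid centred on a (hypothetical) co-crystallized ligand
xtal_ligand = [(10.0, 10.0, 10.0), (12.0, 10.0, 10.0), (11.0, 12.0, 10.0)]
center = centroid(xtal_ligand)

pose_in_site = [(11.0, 10.0, 10.0), (15.0, 10.0, 10.0)]
pose_outside = [(30.0, 10.0, 10.0), (32.0, 10.0, 10.0)]
print(pose_fits_grid(pose_in_site, center), pose_fits_grid(pose_outside, center))  # True False
```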

Ligand Library Preparation:

- Source a commercial ultra-large library (e.g., Enamine REAL).

- Using LigPrep, standardize the compound structures:

- Generate possible tautomers and protonation states at a pH of 7.0 ± 2.0.

- Apply specified chirality.

- Perform energy minimization using the OPLS4 force field.
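The pH window matters because ionizable groups with pKa values near 7 are substantially populated in more than one state. The sketch below uses the Henderson-Hasselbalch relation for a single basic site (pKa of its conjugate acid) to decide which states to enumerate. The 5% cut-off and the single-site treatment are simplifying assumptions; LigPrep's own ionization and tautomer handling is considerably more sophisticated.

```python
def fraction_protonated(pka, ph):
    """Henderson-Hasselbalch: fraction of a single basic site in its protonated form."""
    return 1.0 / (1.0 + 10.0 ** (ph - pka))


def states_to_enumerate(pka, ph_center=7.0, ph_window=2.0, min_fraction=0.05):
    """Keep each state that reaches min_fraction somewhere in the pH window.

    The protonated fraction falls monotonically with pH, so checking the two
    window edges is enough. min_fraction is a hypothetical cut-off.
    """
    low, high = ph_center - ph_window, ph_center + ph_window
    states = set()
    if fraction_protonated(pka, low) >= min_fraction:
        states.add("protonated")
    if 1.0 - fraction_protonated(pka, high) >= min_fraction:
        states.add("neutral")
    return states


for pka in (10.5, 7.5):
    print(pka, sorted(states_to_enumerate(pka)))
# 10.5 ['protonated']
# 7.5 ['neutral', 'protonated']
```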

Active Learning Glide Execution

Initialization:

- In the Active Learning Applications interface, load the prepared receptor grid and ligand library.

- Configure the active learning parameters:

- Initial Batch Size: Typically 50,000-100,000 compounds, selected randomly.

- Selection Size per Cycle: The number of top ML-predicted compounds to dock in each subsequent cycle (e.g., 50,000-100,000).

- Number of Cycles: Typically 10-20 iterations, or until convergence is observed in the docking scores of the selected batches.
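These parameters fix the docking budget directly. The arithmetic below assumes that cycle 1 docks the initial batch, each later cycle docks one new batch, and no compound is docked twice; the library size is an illustrative value.

```python
def docking_budget(library_size, initial_batch, batch_per_cycle, n_cycles):
    """Number and fraction of library compounds docked over an active learning run."""
    docked = initial_batch + batch_per_cycle * (n_cycles - 1)
    return docked, docked / library_size


docked, frac = docking_budget(1_000_000_000, initial_batch=100_000,
                              batch_per_cycle=100_000, n_cycles=10)
print(f"{docked:,} compounds docked = {frac:.2%} of the library")
# 1,000,000 compounds docked = 0.10% of the library
```

With a billion-compound library, 100,000 compounds per batch, and 10 cycles, about 0.1% of the library is docked, the same order of magnitude as the cost figure cited for AL-Glide; ML training and inference add overhead on top of the docking itself.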

Iterative Active Learning Cycle:

- Cycle 1: The initial random batch is docked with Glide SP, and the docking scores are recorded.

- An ML model (e.g., a directed message passing neural network) is trained on the featurized compounds and their docking scores.

- The trained model predicts scores for the entire undocked library.

- The top N compounds with the best-predicted scores are selected for the next docking cycle.

- Subsequent cycles: The newly selected batch is docked. The ML model is retrained on the accumulated set of all previously docked compounds and their scores. The process of prediction and selection repeats.

- Monitor the convergence by tracking the docking scores of the selected batches across cycles; the process can be stopped when the score distribution stabilizes.
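One way to make "the score distribution stabilizes" concrete is to track a summary statistic of each newly docked batch. The rule below is a hypothetical choice, not the criterion used by the Active Learning Applications: it stops when the mean of the best `top_k` scores in each batch has improved by less than `tol` score units for `patience` consecutive cycles.

```python
from statistics import mean


def has_converged(batch_scores, top_k=100, tol=0.05, patience=2):
    """Hypothetical stopping rule for an active learning run.

    batch_scores: one list of docking scores per cycle (lower is better).
    Returns True when the mean of each batch's best `top_k` scores has improved
    by less than `tol` for `patience` consecutive cycles.
    """
    if len(batch_scores) < patience + 1:
        return False
    top_means = [mean(sorted(scores)[:top_k]) for scores in batch_scores]
    recent = top_means[-(patience + 1):]
    # Positive improvement means the newer batch scored better (more negative)
    improvements = [older - newer for older, newer in zip(recent, recent[1:])]
    return all(delta < tol for delta in improvements)


history = [
    [-7.0, -6.5],
    [-8.0, -7.5],
    [-8.5, -8.2],
    [-8.52, -8.21],
    [-8.53, -8.2],
]
for n in range(1, len(history) + 1):
    print(n, has_converged(history[:n], top_k=2))
```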

Final Output:

- Upon completion of the specified cycles, the workflow outputs a list of the top-scoring compounds identified across all docking cycles. Full docking poses are available for these compounds.

Post-Processing and Hit Validation

Rescoring with Advanced Docking Methods:

- To improve pose prediction and initial enrichment, the top hits from AL-Glide (e.g., the best 10-100 million compounds) can be rescored using the more sophisticated Glide WS. Glide WS incorporates explicit water energetics from WaterMap, leading to a significant reduction in false positives and improved docking accuracy [15] [19] [18].

Absolute Binding Free Energy Perturbation (ABFEP+):

- A few thousand of the most promising compounds are subjected to rigorous free energy calculations using ABFEP+.

- This step provides a highly accurate prediction of binding affinity, closely correlating with experimental results, and is a linchpin for achieving high hit rates [18].

- An active learning approach can also be applied to ABFEP+ to scale the number of compounds evaluated with this expensive method [18].
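Because ABFEP+ predicts an absolute binding free energy, its output can be read directly as an affinity. The conversion below is the standard thermodynamic relation ΔG = RT ln Kd under a 1 M standard state, not anything specific to FEP+. At 298 K, about 1.36 kcal/mol corresponds to a 10-fold change in Kd.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def dg_to_kd(dg_kcal, temperature=298.15):
    """Kd in mol/L from a binding free energy in kcal/mol (1 M standard state)."""
    return math.exp(dg_kcal / (R_KCAL * temperature))


def kd_to_dg(kd_molar, temperature=298.15):
    """Inverse relation: binding free energy in kcal/mol from Kd in mol/L."""
    return R_KCAL * temperature * math.log(kd_molar)


for dg in (-8.0, -10.0, -12.0):
    print(f"dG = {dg:.1f} kcal/mol -> Kd ~ {dg_to_kd(dg) * 1e9:.1f} nM")

# RT ln 10: the free energy change for a 10-fold change in Kd at 298 K
print(f"{R_KCAL * 298.15 * math.log(10):.2f} kcal/mol per 10-fold")
```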

Experimental Validation:

- The final, computationally validated hits are acquired or synthesized and tested in biochemical or cell-based assays to confirm biological activity, as demonstrated in the identification of a first-in-class WLS inhibitor [10].

The Scientist's Toolkit: Essential Research Reagents & Solutions

The successful implementation of the AL-Glide workflow relies on a suite of software tools and chemical resources. The following table details these essential components.

Table 2: Key Research Reagents and Solutions for AL-Glide

| Item Name | Function / Description | Role in the Workflow |

|---|---|---|

| Glide | Industry-leading ligand-receptor docking software. | Performs the core high-throughput docking and scoring of compound batches; provides the "ground truth" data for ML model training [15]. |

| Active Learning Applications (Schrödinger) | A powerful tool that integrates ML with docking. | Manages the iterative active learning cycle, including ML model training, prediction on the full library, and batch selection [4]. |

| Enamine REAL Library | An ultra-large, make-on-demand combinatorial chemical library. | Serves as the primary source of billions of synthetically accessible compounds for the virtual screen [10]. |

| FEP+ | A technology for performing free energy perturbation calculations. | Used for accurate rescoring of top hits via ABFEP+ to dramatically improve hit rates prior to experimental testing [4] [18]. |

| Glide WS | An advanced docking method incorporating explicit water dynamics. | Rescores top hits from AL-Glide to improve pose prediction and enrichment, helping to reduce false positives [15] [19] [18]. |

| Maestro (Schrödinger Suite) | A unified graphical user interface for computational life sciences. | Provides the integrated platform for protein prep, grid generation, workflow setup, and results analysis [4] [15]. |

The screening of ultra-large chemical libraries, often containing billions of commercially available compounds, has become a prominent strategy for in silico hit discovery in structure-based drug design [2] [3]. The fundamental premise is that larger libraries increase the probability of identifying high-affinity ligands, a concept often summarized as "the bigger, the better" [2]. However, the computational expense of exhaustively docking billions of compounds using physics-based methods like molecular docking is prohibitive for most research groups [2] [20]. For instance, screening 1.3 billion compounds utilizing 8,000 CPUs can require 28 days and cost over $200,000 [2].
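The cost figure for the 1.3-billion-compound screen can be unpacked with simple arithmetic. The sketch only rearranges the numbers given in the text (1.3 billion compounds, 8,000 CPUs, 28 days, $200,000, which makes the per-hour rate a lower bound); the 0.1% scaling at the end is a docking-only estimate that ignores ML training and inference overhead.

```python
def campaign_stats(n_compounds, n_cpus, days, cost_usd):
    """Derive throughput and unit cost from a brute-force docking campaign."""
    cpu_hours = n_cpus * days * 24
    cpu_seconds_per_compound = cpu_hours * 3600 / n_compounds
    usd_per_cpu_hour = cost_usd / cpu_hours
    return cpu_hours, cpu_seconds_per_compound, usd_per_cpu_hour


cpu_h, sec_per_cpd, usd_per_h = campaign_stats(1.3e9, n_cpus=8000, days=28, cost_usd=200_000)
print(f"{cpu_h:,} CPU-hours, {sec_per_cpd:.1f} CPU-s per compound, ${usd_per_h:.3f} per CPU-hour")

# Docking-only estimate if an active learning run docks ~0.1% of the library
al_fraction = 0.001
print(f"~{28 * 24 * al_fraction:.1f} wall-clock hours on 8,000 CPUs, ~${200_000 * al_fraction:,.0f}")
```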

Active learning (AL), a machine learning strategy, has emerged as a powerful solution to this challenge. This iterative framework intelligently selects the most informative compounds for expensive physics-based docking, training surrogate models to predict docking scores for the entire library at a fraction of the computational cost [4] [20]. By strategically sampling the chemical space, Active Learning Glide can recover approximately 70% of the top-scoring hits that would be found through exhaustive docking while requiring only 0.1% of the computational resources [4]. This Application Note details the protocols and practical considerations for implementing iterative model training within the context of ultra-large library docking research.

Key Performance Metrics and Comparative Analysis

The efficacy of active learning docking is validated through robust benchmarking studies. The following tables summarize key quantitative data and performance comparisons.

Table 1: Key Performance Metrics from Active Learning Docking Studies

| Metric | Reported Value | Context / Method | Source |
|---|---|---|---|
| Computational Cost Reduction | 99% (screening 1% of library) | HASTEN tool recall of top-scoring compounds | [20] |
| Top-Hit Recall Rate | ~90% of top 1000 hits | HASTEN on a 1.56-billion-compound library | [20] |
| Hit Rate in Experimental Validation | 14% (KLHDC2), 44% (NaV1.7) | RosettaVS platform screening | [3] |
| Screening Enrichment (EF1%) | 16.72 | RosettaGenFF-VS on the CASF-2016 benchmark | [3] |
| Docking Time for Ultra-Large Library | < 7 days | OpenVS platform with 3000 CPUs and 1 GPU | [3] |
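The EF1% value in the table is an enrichment factor: the rate of actives in the top 1% of a ranked list divided by the rate of actives in the whole list. A minimal sketch of that common definition follows; benchmark protocols such as CASF-2016 apply it per target and average the results, and their exact bookkeeping may differ.

```python
def enrichment_factor(ranked_is_active, fraction=0.01):
    """EF at `fraction`: active rate in the top slice divided by the overall active rate.

    ranked_is_active: booleans ordered from best to worst score (True = active).
    """
    n_total = len(ranked_is_active)
    n_top = max(1, round(n_total * fraction))
    actives_total = sum(ranked_is_active)
    if actives_total == 0:
        raise ValueError("the ranked list contains no actives")
    actives_top = sum(ranked_is_active[:n_top])
    return (actives_top / n_top) / (actives_total / n_total)


# 1,000 ranked compounds with 20 actives, 5 of them in the top 10 (top 1%)
ranked = [True] * 5 + [False] * 5 + [True] * 15 + [False] * 975
print(f"EF1% = {enrichment_factor(ranked):.1f}")  # EF1% = 25.0
```

With 20 actives among 1,000 compounds, a perfect ranking would give EF1% = 50, so the attainable maximum depends on the proportion of actives in the benchmark set.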

Table 2: Comparison of Docking and Active Learning Strategies

| Method | Key Features | Advantages | Limitations |
|---|---|---|---|
| Brute-Force Docking | Exhaustive screening of entire library [20] | Maximum coverage; no bias [2] | Prohibitively high computational cost and time [2] [20] |
| Active Learning Glide | Iterative docking & ML model training; uses ensemble of RF and GCNN [4] [20] | Recovers ~70% of top hits for 0.1% cost [4] | Model performance depends on initial sampling and acquisition function [2] |
| HASTEN | Iterative docking using Chemprop neural network for score prediction [20] | 99% cost reduction; robust recall on giga-scale libraries [20] | Performance can be hampered by large numbers of failed docking compounds [20] |
| RosettaVS (OpenVS) | Open-source platform; combines VSX (fast) and VSH (high-precision) modes with active learning [3] | High accuracy; models receptor flexibility; open-source [3] | Requires substantial HPC infrastructure for ultra-large screens [3] |

Experimental Protocols for Active Learning Docking

This section provides detailed methodologies for setting up and executing an active learning virtual screening campaign.

Core Workflow of Active Learning for Molecular Docking

The following diagram illustrates the overarching iterative workflow of an active learning docking campaign.

Protocol 1: Standard Active Learning Docking Setup

This protocol is adapted from methodologies described for tools like HASTEN and Active Learning Glide [2] [20].

Step 1: Library Preparation

- Obtain the chemical library in SMILES format (e.g., Enamine REAL, ZINC).

- Standardization: Use tools like RDKit or Schrödinger LigPrep to standardize structures, generate canonical SMILES, and remove duplicates [20].

- Preparation for Docking: Prepare 3D structures with tools like LigPrep, generating relevant tautomers and stereoisomers and assigning protonation states at a physiological pH (e.g., 7.0 ± 1.5). Energy-minimize structures using a force field like OPLS_2005 [20].

Step 2: Receptor Preparation

- Obtain the 3D structure of the target protein from the PDB or via homology modeling.

- Processing: Prepare the protein structure using a tool like the Schrödinger Protein Preparation Wizard. This includes adding hydrogens, assigning bond orders, filling missing side chains, and optimizing hydrogen bonds.

- Grid Generation: Define the binding site and generate a docking grid centered on the region of interest.

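Step 3: Initial Random Sampling and Docking

- Randomly select an initial subset of the prepared library and dock it into the receptor grid to generate the first set of training scores.

Step 4: Iterative Active Learning Cycle

The core loop is detailed in the diagram below, which expands on the machine learning and acquisition steps.

Step 5: Termination and Analysis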

- Stopping Criterion: The cycle typically runs for a fixed number of iterations (e.g., 10-20) or until the top-ranked compounds stabilize and no longer change significantly.

- Output: The final output is a ranked list of top-scoring compounds from the entire library, generated from the model's predictions and the docked subsets.

Protocol 2: Handling Docking Failures and Constraints

A known challenge in ML-boosted docking is that some compounds fail to dock successfully, often due to conformational strain or the application of constraints (e.g., required hydrogen bonds) [20]. This protocol outlines mitigation strategies.

Step 1: Pre-Filtering

- Implement aggressive pre-filtering based on physicochemical properties (e.g., molecular weight, logP, rotatable bonds) to remove compounds unlikely to dock successfully or fit the binding pocket.

- Use functional group filters to remove reactive or undesirable species.
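A property pre-filter amounts to a set of range checks. The sketch assumes the properties have already been computed (for example with a cheminformatics toolkit such as RDKit), and its cut-offs are hypothetical placeholders to be tuned to the pocket and project.

```python
from typing import NamedTuple


class Props(NamedTuple):
    """Pre-computed properties for one compound (computed elsewhere)."""
    mol_wt: float
    clogp: float
    rot_bonds: int


# Hypothetical cut-offs (inclusive ranges); tune them to the pocket and project
LIMITS = {"mol_wt": (200.0, 500.0), "clogp": (-1.0, 5.0), "rot_bonds": (0, 10)}


def passes_filters(props, limits=LIMITS):
    """True if every property lies inside its allowed range."""
    return all(lo <= getattr(props, name) <= hi for name, (lo, hi) in limits.items())


library = {
    "cpd1": Props(mol_wt=350.4, clogp=2.1, rot_bonds=5),
    "cpd2": Props(mol_wt=612.7, clogp=6.3, rot_bonds=14),
    "cpd3": Props(mol_wt=180.2, clogp=0.5, rot_bonds=2),
}
kept = [cid for cid, props in library.items() if passes_filters(props)]
print(kept)  # ['cpd1']
```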

Step 2: Failed Compound Management

- During the docking steps, track and log compounds that fail to generate a valid pose or score.

- Strategy 1 (Penalization): Assign a deliberately poor score to failed compounds so that the ML model learns to deprioritize similar structures in subsequent iterations.

- Strategy 2 (Separate Classifier): Train a separate binary classifier to predict the likelihood of a compound docking successfully. Use this classifier to filter the library before the acquisition step [20].
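Both strategies start from the same docking log. The sketch below turns hypothetical results, where `None` marks a failed docking, into a regression target with a penalty score for failures (Strategy 1) and a binary docked/failed label for a success classifier (Strategy 2). Placing the penalty one unit above the worst successful score is an illustrative choice, not a recommended value.

```python
def build_training_labels(results, penalty_margin=1.0):
    """Split raw docking results into regression and classification labels.

    results: compound id -> docking score, or None if docking failed.
    Regression labels (Strategy 1): failures get a penalty score that is worse
    (higher) than every successful score, since lower scores are better.
    Classification labels (Strategy 2): 1 if docking succeeded, else 0.
    """
    successes = [score for score in results.values() if score is not None]
    if not successes:
        raise ValueError("no successful docking results to anchor the penalty")
    penalty = max(successes) + penalty_margin
    regression = {cid: penalty if s is None else s for cid, s in results.items()}
    docked_ok = {cid: int(s is not None) for cid, s in results.items()}
    return regression, docked_ok


results = {"a": -9.1, "b": None, "c": -6.4, "d": None}
regression, docked_ok = build_training_labels(results)
print(regression)
print(docked_ok)  # {'a': 1, 'b': 0, 'c': 1, 'd': 0}
```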

Step 3: Constraint-Aware Active Learning

- Be cautious when using stringent docking constraints, as they can increase failure rates and hamper ML learning [20].

- Consider applying constraints more leniently in the initial VS stage and applying them rigorously only during the final re-ranking of top hits.

Table 3: Key Research Reagent Solutions for Active Learning Docking

| Item / Resource | Function / Description | Example Tools / Sources |
|---|---|---|
| Ultra-Large Chemical Libraries | Source of purchasable or synthesizable compounds for virtual screening. | Enamine REAL, ZINC, ChemBridge [2] [20] |
| Molecular Docking Software | Physics-based program to predict protein-ligand binding poses and scores. | Glide, AutoDock Vina, RosettaVS, GOLD [4] [3] [21] |
| Active Learning Platform | Software that implements the iterative AL workflow for docking. | Schrödinger Active Learning Apps, HASTEN, OpenVS, MolPAL [4] [3] [20] |
| Machine Learning Framework | Library for building and training surrogate models for score prediction. | PyTorch, TensorFlow, Chemprop (for GNNs), Scikit-learn (for RF) [20] |
| Cheminformatics Toolkit | Library for handling molecular data, featurization, and pre-processing. | RDKit, Schrödinger LigPrep, OpenBabel [20] |
| High-Performance Computing (HPC) | Computational cluster with hundreds to thousands of CPUs and multiple GPUs. | Local HPC clusters, Cloud computing (AWS, GCP, Azure) [3] [20] |

Discussion and Technical Notes

Understanding the "Black Box": How 2D Models Predict 3D Affinity

A critical inquiry in active learning docking is how a surrogate model can accurately predict a docking score—a property rooted in 3D molecular interaction—using only 2D structural information. Analysis suggests that these models effectively memorize structural patterns that are prevalent in the high-scoring compounds sampled during the iterative process [2]. These patterns are not arbitrary; they correlate with specific three-dimensional shapes and interaction potentials (e.g., pharmacophores) that are complementary to the binding pocket. The model learns that certain molecular subgraphs or fingerprints are consistently associated with favorable docking outcomes for a specific target, enabling rapid and effective prioritization without explicit 3D calculation for every molecule [2].

Best Practices and Pitfalls

- Acquisition Function Choice: The Greedy and UCB strategies are most effective for finding top-scoring compounds, while Uncertainty sampling is better for model exploration and training [2].

- Initial Sampling: A sufficiently large and diverse initial random sample is crucial to prevent the model from getting trapped in a local optimum of chemical space.

- Validation: Always validate the final hit list by re-docking a sample of top-ranked compounds with a more precise, high-fidelity docking method (e.g., Glide SP or XP, RosettaVS VSH mode) [3].

- Generalizability: The surrogate model is highly target-specific. A model trained on one protein target cannot be directly applied to another.
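The acquisition functions named under Acquisition Function Choice differ only in how they combine a surrogate's predicted score with its uncertainty. A hedged sketch follows, assuming each compound has a predicted mean docking score (lower is better) and a standard deviation, for example the spread of an ensemble's predictions; the `beta` weight is a tunable assumption. Because lower docking scores are better, the UCB rule here is applied to the negated score.

```python
def acquisition_value(mean, std, strategy, beta=2.0):
    """Higher value = more attractive to dock next (docking scores: lower is better)."""
    if strategy == "greedy":
        return -mean                      # best predicted score only
    if strategy == "ucb":
        return -mean + beta * std         # optimistic: good and/or uncertain
    if strategy == "uncertainty":
        return std                        # most uncertain, regardless of score
    raise ValueError(f"unknown strategy: {strategy!r}")


def select_batch(predictions, batch_size, strategy, beta=2.0):
    """predictions: compound id -> (predicted mean score, predicted std)."""
    ranked = sorted(
        predictions,
        key=lambda cid: acquisition_value(*predictions[cid], strategy, beta),
        reverse=True,
    )
    return ranked[:batch_size]


predictions = {"x": (-9.0, 0.1), "y": (-8.0, 1.0), "z": (-5.0, 2.0)}
for strategy in ("greedy", "ucb", "uncertainty"):
    print(strategy, select_batch(predictions, 1, strategy))
# greedy ['x'], ucb ['y'], uncertainty ['z']
```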

In modern drug discovery, the ability to efficiently screen ultra-large chemical libraries containing billions of molecules presents both a substantial opportunity and a significant computational challenge. Active learning (AL) integrated with physics-based computational methods has emerged as a transformative solution, enabling researchers to navigate this vast chemical space with unprecedented efficiency. This application note details the implementation and synergy of two key active learning applications: Active Learning Glide (AL-Glide) for initial hit identification and Active Learning Free Energy Perturbation (AL-FEP+) for subsequent lead optimization. By framing these methodologies within a cohesive workflow, we provide researchers with a comprehensive protocol for accelerating the early drug discovery pipeline, from initial screening to optimized lead compounds.

Active Learning Glide (AL-Glide) for Hit Finding

Active Learning Glide (AL-Glide) addresses the fundamental challenge of screening ultra-large, make-on-demand chemical libraries, which can contain hundreds of millions to billions of readily available compounds [1] [10]. Traditional virtual screening methods, which rely on brute-force docking of entire libraries, become computationally prohibitive at this scale. AL-Glide combines the accuracy of the Glide docking program with cutting-edge machine learning to iteratively train a model that predicts docking scores, functioning as a rapid proxy for the more computationally expensive physics-based docking calculation [4] [18]. This approach allows for the identification of the most promising compounds for a fraction of the time and cost of an exhaustive screen.

Quantitative Performance

The performance of AL-Glide is demonstrated through its application across multiple drug discovery campaigns. The table below summarizes key quantitative benchmarks.

Table 1: Performance Metrics of AL-Glide in Ultra-Large Library Screening

| Metric | Performance | Context / Library Size | Source |
|---|---|---|---|
| Computational Efficiency | ~0.1% of the cost of exhaustive docking | Recovering ~70% of top-scoring hits | [4] |
| Hit Rate Improvement | Double-digit hit rates (e.g., >10%) achieved | Compared to 1-2% with traditional VS | [18] |
| Reported Application | Identification of a first-in-class WLS inhibitor | From ~500 million compounds | [10] |
| Comparative Docking Cost | Days | Versus months for brute-force docking | [4] |

Detailed Experimental Protocol

The following workflow delineates a standard protocol for a hit-finding campaign using AL-Glide.

Protocol Steps:

- Library and Protein Preparation: Begin with an ultra-large commercial library (e.g., Enamine REAL). Prepare the protein structure using the Schrödinger Protein Preparation Wizard, which involves adding hydrogen atoms, correcting ionization states, and performing a restrained energy minimization [22]. Generate a receptor grid file defining the binding site.

- Prefiltering: Filter the library based on desired physicochemical properties (e.g., molecular weight, lipophilicity) to remove undesirable compounds and focus on a relevant chemical space [18].

- AL-Glide Screening: Initiate the active learning cycle as depicted in the workflow diagram.

- An initial batch of compounds is selected from the library and docked with Glide.

- These docking scores are used to train a machine learning model.

- The trained model predicts the docking scores for the entire library.

- The model then selects the most informative compounds for the next round of docking and model retraining. This process iterates, with the model becoming increasingly accurate at identifying high-scoring compounds [18].

- Full Docking and Rescoring: Upon completion of the active learning cycles, the top several million compounds ranked by the ML model undergo a full Glide docking calculation. The resulting poses and scores are then refined using Glide WS (WaterScore), which explicitly models key water molecules in the binding site for more accurate scoring and pose prediction [18].

- Hit Selection and Validation: A final list of top-ranked compounds is selected for procurement and experimental validation in biochemical or cell-based assays.

Active Learning FEP+ (AL-FEP+) for Lead Optimization

Following hit identification, the lead optimization phase aims to explore diverse chemical analogs to improve potency and other drug-like properties. Active Learning FEP+ (AL-FEP+) is designed for this purpose, enabling the exploration of tens to hundreds of thousands of compounds by building machine learning models on free energy perturbation (FEP+) predicted affinities [4] [23]. FEP+ provides highly accurate, physics-based binding affinity predictions, but is computationally expensive. AL-FEP+ uses an active learning framework to minimize the number of required FEP+ calculations, using them to train an ML model that can accurately predict potency across a much larger virtual chemical space [4].
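Each AL-FEP+ round must decide which compounds receive the next, expensive FEP+ calculations, and the protocol in this section uses an explore-exploit split for this. The sketch below is one hypothetical version: half of each batch takes the best predicted affinities, and the rest takes the compounds with the highest model uncertainty. Selecting for chemical diversity instead would be an equally valid exploration rule.

```python
def explore_exploit_batch(predictions, batch_size, exploit_fraction=0.5):
    """Split the next FEP+ batch between exploitation and exploration.

    predictions: compound id -> (predicted dG in kcal/mol, model uncertainty);
    more negative dG is better. Exploit = best predicted dG; explore = highest
    uncertainty among the compounds not already chosen.
    """
    n_exploit = round(batch_size * exploit_fraction)
    by_affinity = sorted(predictions, key=lambda cid: predictions[cid][0])
    exploit = by_affinity[:n_exploit]
    chosen = set(exploit)
    remaining = [cid for cid in predictions if cid not in chosen]
    by_uncertainty = sorted(remaining, key=lambda cid: predictions[cid][1], reverse=True)
    explore = by_uncertainty[: batch_size - n_exploit]
    return exploit, explore


predictions = {
    "r1": (-11.0, 0.3), "r2": (-10.5, 0.2), "r3": (-8.0, 1.5),
    "r4": (-7.0, 0.9), "r5": (-9.0, 0.4),
}
exploit, explore = explore_exploit_batch(predictions, batch_size=4)
print("exploit:", exploit, "explore:", explore)
# exploit: ['r1', 'r2'] explore: ['r3', 'r4']
```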

Quantitative Performance

AL-FEP+ has been rigorously tested in retrospective and prospective studies, demonstrating its impact on lead optimization.

Table 2: Performance and Application of AL-FEP+ in Lead Optimization

| Aspect | Finding | Context | Source |
|---|---|---|---|
| Chemical Space Exploration | Tens to hundreds of thousands of compounds | Per design objective | [4] |
| Model Performance | Well-performing models generated within several rounds | Especially when the core is held constant | [23] |
| Impact on Hit Rates | Contributes to achieving double-digit hit rates | Within an integrated, modern virtual screening workflow | [18] |
| Protocol Development | Active learning used to develop accurate FEP+ protocols | For challenging systems, via FEP+ Protocol Builder | [4] |

Detailed Experimental Protocol

This protocol describes the use of AL-FEP+ to optimize a lead series by exploring a large, enumerated virtual library.

Protocol Steps:

- Define the Virtual Library: Start with a lead compound and generate a large virtual library (e.g., 100,000 to 1,000,000 compounds) through enumeration, exploring diverse R-groups, core changes, and bioisosteres [4].

- Initial FEP+ Calculations: Select a diverse subset of compounds from the virtual library (e.g., a few hundred) and run FEP+ calculations to obtain accurate binding affinity predictions.

- Active Learning Cycle:

  - Train ML Model: Use the FEP+ results as training data to build a quantitative structure-activity relationship (QSAR) model. Schrödinger's AutoQSAR can be used for this, computing structural descriptors and employing multiple regression algorithms (e.g., PLS, KPLS) [22].

  - Predict and Select: The trained model predicts the binding affinities for the entire enumerated library. An "explore-exploit" selection strategy is then applied. A portion of the next batch is selected from the top-predicted compounds (exploit), while another portion is chosen from chemically diverse or uncertain regions of the chemical space (explore) [23].

  - Iterate with New FEP+: The newly selected compounds are processed with FEP+, and the results are added to the training set. The model is retrained, and the cycle repeats for several iterations (typically 3-5 rounds) [23] [22].

- Final Compound Selection: After the final active learning cycle, the ML model's predictions are used to identify the most promising compounds from the virtual library. These compounds are prioritized for synthesis and experimental testing based on their predicted high potency and favorable properties.
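The "explore-exploit" batch selection in the cycle above can be sketched as follows. This is an illustrative assumption, not AutoQSAR's or AL-FEP+'s actual selection code: uncertainty is approximated by the spread of predictions across a hypothetical ensemble of surrogate models, exploitation picks the most negative predicted ΔG (kcal/mol), and exploration picks the compounds on which the ensemble disagrees most. The helper names and the 50/50 split are hypothetical.

```python
import statistics
from typing import Dict, List, Tuple


def ensemble_stats(member_preds: List[Dict[str, float]]) -> Dict[str, Tuple[float, float]]:
    """Mean and population std. dev. of each compound's predicted ΔG across ensemble members."""
    ids = member_preds[0].keys()
    return {
        c: (statistics.fmean([m[c] for m in member_preds]),
            statistics.pstdev([m[c] for m in member_preds]))
        for c in ids
    }


def explore_exploit_batch(predictions: Dict[str, Tuple[float, float]],
                          batch_size: int, exploit_fraction: float = 0.5) -> List[str]:
    """Hypothetical split: best predicted ΔG (exploit) plus highest uncertainty (explore)."""
    n_exploit = round(batch_size * exploit_fraction)
    # Exploit: most negative predicted binding free energy first.
    by_potency = sorted(predictions, key=lambda c: predictions[c][0])
    exploit = by_potency[:n_exploit]
    chosen = set(exploit)
    # Explore: among the remaining compounds, the largest ensemble disagreement first.
    remaining = [c for c in predictions if c not in chosen]
    by_uncertainty = sorted(remaining, key=lambda c: predictions[c][1], reverse=True)
    return exploit + by_uncertainty[: batch_size - n_exploit]


if __name__ == "__main__":
    # Made-up predictions from two hypothetical ensemble members (kcal/mol).
    members = [
        {"cpd-A": -10.1, "cpd-B": -9.0, "cpd-C": -6.0},
        {"cpd-A": -9.9, "cpd-B": -8.8, "cpd-C": -3.0},
    ]
    print(explore_exploit_batch(ensemble_stats(members), batch_size=2))  # ['cpd-A', 'cpd-C']
```

The selected compounds would then go to FEP+, and their results would be appended to the training set before the next round.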

The Scientist's Toolkit: Essential Research Reagents and Solutions

The successful implementation of the workflows described above relies on a suite of specialized software tools and chemical resources.

Table 3: Key Research Reagents and Computational Solutions for Active Learning-Driven Discovery

| Tool / Resource | Type | Key Function in Workflow | Relevant Application |
|---|---|---|---|
| Enamine REAL Library | Chemical Library | Source of ultra-large, make-on-demand compounds for screening. | Hit Finding with AL-Glide [1] [10] |
| Glide | Software Module | Performs high-throughput molecular docking for pose prediction and scoring. | Foundational docking in AL-Glide [4] [18] |
| Active Learning Glide | Software Module | Machine learning layer that accelerates docking of billion-compound libraries. | Core of the hit-finding protocol [4] [18] |
| FEP+ | Software Module | Provides high-accuracy, physics-based binding free energy calculations. | Foundational for lead optimization [4] [18] |
| Active Learning FEP+ | Software Module | ML-guided selection for efficient FEP+ calculations on large virtual libraries. | Core of the lead optimization protocol [4] [23] |
| Glide WS | Software Module | Advanced docking rescoring that explicitly models water molecules for accuracy. | Rescoring post-docking [18] |
| AutoQSAR | Software Module | Automates the building and application of QSAR models using ML algorithms. | ML model training in AL-FEP+ [22] |
| Protein Preparation Wizard | Software Module | Readies protein structures for simulation by correcting structures and protonation states. | Essential pre-processing step [22] |

Case Study: Discovery of the WLS Inhibitor ETC-451

The Wnt signaling pathway is a fundamental biological system regulating cellular growth, development, and tissue homeostasis in animals [24]. Dysregulation of this pathway is implicated in numerous cancers, fibrotic diseases, and degenerative disorders, making it a significant therapeutic target [25] [24]. While previous drug discovery efforts have focused on upstream components like Porcupine (PORCN) or downstream effectors such as the β-catenin-TCF4 interaction, the integral membrane transporter Wntless (WLS) had remained an undrugged target despite its essential role in Wnt secretion [24] [26].

This case study describes a structure-based drug discovery campaign that used ultra-large-scale virtual screening powered by Active Learning Glide to identify the first-in-class WLS inhibitor, ETC-451, from a library of nearly 500 million compounds [25] [24] [10]. The campaign illustrates how advanced computational methods can open up novel chemical space for challenging therapeutic targets.

Biological Context and Target Rationale

The Wnt Secretion Pathway and WLS Function

Wnt proteins are lipid-modified glycoproteins that require specialized machinery for their secretion and transport. The biosynthesis begins with the attachment of a palmitoleate moiety to Wnt by the endoplasmic reticulum-resident acyltransferase Porcupine (PORCN) [24]. This lipid modification is essential for the subsequent binding of Wnt to its dedicated transporter, Wntless (WLS), which facilitates secretion into the extracellular environment [24]. Once secreted, Wnt ligands initiate downstream signaling by binding to Frizzled receptors and co-receptors on target cells.

As the essential transporter for Wnt secretion, WLS represents a strategic bottleneck for modulating Wnt pathway activity. Genetic and preclinical data confirm that targeting WLS can effectively suppress signaling in Wnt-addicted cancers, validating its therapeutic potential [25] [24].

Target Druggability and Structural Insights

Recent advances in structural biology revealed that WLS contains a G-protein coupled receptor (GPCR) domain with a druggable binding pocket [25] [24] [10]. Cryo-EM structures of human WLS in complex with WNT8A and WNT3A show the bound palmitoleate moiety of Wnt inserted deeply into a hydrophobic cavity within the WLS transmembrane domain [24]. Structural homology searches and binding site detection analyses confirmed this pocket shares characteristics with canonical drug-binding sites in other GPCRs, suggesting susceptibility to small-molecule inhibition [24].

Diagram 1: WLS role in Wnt secretion pathway and cancer proliferation.

Computational Methodology

Virtual Screening Workflow Design

The virtual screening campaign followed a modern, multi-tiered workflow designed to efficiently navigate the ultra-large chemical space while maximizing the probability of identifying genuine hits. The protocol integrated machine learning-enhanced docking with rigorous physics-based rescoring to balance computational efficiency with predictive accuracy [18].

Key innovations included the use of Active Learning Glide (AL-Glide) to prioritize compounds for full docking calculations and the application of absolute binding free energy calculations (ABFEP+) for accurate affinity prediction across diverse chemotypes [18]. This approach addressed two fundamental limitations of traditional virtual screening: it had been constrained to smaller libraries (hundreds of thousands to a few million compounds), and its scoring-function-based rankings were often inaccurate [18].
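Because ABFEP+ predicts an absolute binding free energy, its output can be related to a dissociation constant through the standard thermodynamic relation ΔG_bind = RT ln(Kd / c°), with c° = 1 M. The sketch below shows only that textbook conversion at an assumed 298.15 K; it does not reproduce any corrections ABFEP+ itself may apply.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def kd_to_dg(kd_molar: float, temperature: float = 298.15) -> float:
    """ΔG_bind = RT ln(Kd / c°), with standard concentration c° = 1 M (kcal/mol)."""
    return R_KCAL * temperature * math.log(kd_molar)


def dg_to_kd(dg_kcal: float, temperature: float = 298.15) -> float:
    """Inverse of kd_to_dg: Kd in molar from ΔG_bind in kcal/mol."""
    return math.exp(dg_kcal / (R_KCAL * temperature))


if __name__ == "__main__":
    print(f"{kd_to_dg(1e-6):.2f} kcal/mol for Kd = 1 µM")  # about -8.19
    # One order of magnitude in Kd corresponds to RT ln 10 at 298.15 K.
    print(f"{R_KCAL * 298.15 * math.log(10):.2f} kcal/mol per 10-fold")  # about 1.36
```

The roughly 1.36 kcal/mol per 10-fold change in Kd is a useful yardstick for judging whether a predicted ΔG difference between two chemotypes is meaningful.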

Structure Preparation and Binding Site Analysis

The virtual screening campaign began with the previously solved cryo-EM structure of the WNT8A-WLS complex (PDB). The Wnt ligand was removed from the complex, and SiteMap (Schrödinger) was used to identify and characterize potential druggable binding pockets on WLS [24]. This analysis identified the GPCR-like transmembrane tunnel as the most promising binding site, designated Site 1, which is lined by partially polar residues at the top and predominantly hydrophobic residues in the lower pocket [24].

Compound Library Preparation

The screening used Enamine's REAL 350/3 Lead-Like library, a make-on-demand collection of approximately 500 million compounds with favorable physicochemical properties for drug discovery [24]. Library compounds met stringent lead-like criteria: molecular weight between 270 and 350 Da, heavy atom count between 14 and 26, SlogP ≤ 3, and no more than two aryl rings [24]. These limits were chosen so that hits would be good starting points for medicinal chemistry, leaving headroom for the increases in size and lipophilicity that typically accompany optimization.
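The lead-like criteria above translate directly into a property filter. The sketch below assumes the descriptors have already been computed (for example with RDKit's `Descriptors.MolWt`, `Mol.GetNumHeavyAtoms`, `Crippen.MolLogP` and `rdMolDescriptors.CalcNumAromaticRings`); it also assumes the bounds are inclusive and uses the aromatic ring count as a proxy for "aryl rings". Enamine's own descriptor definitions may differ, and the `Compound` record and its identifiers are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Compound:
    compound_id: str     # hypothetical identifier
    mol_weight: float    # Da
    heavy_atoms: int
    slogp: float         # calculated logP (assumed Wildman-Crippen-style SlogP)
    aromatic_rings: int  # assumption: aromatic ring count used as "aryl rings"


def is_lead_like(c: Compound) -> bool:
    """Criteria quoted for the REAL 350/3 set; inclusive bounds are an assumption."""
    return (270.0 <= c.mol_weight <= 350.0
            and 14 <= c.heavy_atoms <= 26
            and c.slogp <= 3.0
            and c.aromatic_rings <= 2)


def prefilter(compounds: Iterable[Compound]) -> List[Compound]:
    """Keep only compounds that pass every lead-like criterion."""
    return [c for c in compounds if is_lead_like(c)]


if __name__ == "__main__":
    # Made-up descriptor values for illustration only.
    library = [
        Compound("cpd-1", 301.4, 22, 2.1, 2),
        Compound("cpd-2", 362.0, 25, 1.8, 1),   # too heavy
        Compound("cpd-3", 288.3, 20, 3.6, 1),   # too lipophilic
    ]
    print([c.compound_id for c in prefilter(library)])
```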

Active Learning Glide Screening Protocol

The core screening method was AL-Glide, which combines machine learning with molecular docking to prioritize compounds from ultra-large libraries efficiently [18] [24]. This substantially reduced the computational resources required compared with brute-force docking of the entire library.

Diagram 2: Active learning Glide virtual screening workflow.

Step-by-Step AL-Glide Protocol:

- Initial Diversity Screening: A random diversity-based subset of 50,000 compounds was selected from the full library and docked against the WLS binding site using Glide SP mode [24].

- Machine Learning Model Training: The docking scores from the initial subset were used to train a DeepChem-based machine learning model, which learned to predict docking scores from chemical features [24].

- Active Learning Cycles: The trained model predicted docking scores across the entire library, and additional rounds of docking and model retraining were performed to refine predictions (Figure S1A) [24]. This iterative process continued until the machine learning model became an accurate proxy for the docking method.

- Full Docking Calculation: The top 1 million compounds ranked by the active learning model were subjected to full Glide SP docking calculations to generate precise poses and scores [24].

- Rescoring with Explicit Water: The most promising compounds from Glide SP docking were rescored using Glide WS, which incorporates explicit water information in the binding site to improve pose prediction and enrichment over standard docking alone [18] [24].
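The savings of the protocol above can be estimated with simple arithmetic. The library size (about 500 million), the 50,000-compound initial subset, and the 1 million compounds sent to full docking come from the text; the number of active learning rounds and the per-round batch size are not reported here, so the values used below are hypothetical placeholders. The count is an upper bound because it ignores overlap between compounds docked during the AL rounds and the final full-docking set, and it treats ML training and inference as negligible next to docking (an assumption).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DockingBudget:
    docked: int        # total compounds docked with Glide (upper bound)
    library_size: int

    @property
    def fraction(self) -> float:
        """Share of the library that was actually docked."""
        return self.docked / self.library_size

    @property
    def reduction_factor(self) -> float:
        """How many times fewer docking calculations than brute force."""
        return self.library_size / self.docked


def al_glide_budget(library_size: int, initial_batch: int, n_rounds: int,
                    batch_size: int, full_docking: int) -> DockingBudget:
    # Upper bound: AL-round compounds and the full-docking set may overlap.
    return DockingBudget(initial_batch + n_rounds * batch_size + full_docking, library_size)


if __name__ == "__main__":
    budget = al_glide_budget(library_size=500_000_000, initial_batch=50_000,
                             n_rounds=3, batch_size=50_000,  # hypothetical AL settings
                             full_docking=1_000_000)
    print(f"{budget.docked:,} docked, {budget.fraction:.2%} of library, "
          f"~{budget.reduction_factor:.0f}x fewer than brute force")
```

Under these placeholder settings, only about a quarter of a percent of the library is ever docked.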

Hit Selection and Compound Prioritization

Volume overlap clustering was applied to the top 10,000 ranked compounds to group them by their spatial occupancy within the binding site [24]. This analysis identified six major clusters; the largest (9,116 compounds) showed the best complementarity between ligand shape and binding-pocket architecture [24]. Additional filtering with Schrödinger's membrane permeability module and visual inspection for putative protein-ligand interactions yielded a final selection of 86 diverse compounds for synthesis and experimental testing [24].
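The idea behind volume overlap clustering can be sketched generically: represent each docked pose by the grid cells its atoms occupy, measure overlap between poses as a Jaccard (Tanimoto) ratio, and group poses with a greedy leader algorithm. This is a hypothetical reimplementation for illustration, not the Schrödinger tool used in the study; the 1 Å grid spacing, the 0.6 threshold, and all helper names are assumptions.

```python
import math
from typing import Dict, FrozenSet, List, Sequence, Tuple

Voxel = Tuple[int, ...]


def voxelize(coords: Sequence[Tuple[float, float, float]], spacing: float = 1.0) -> FrozenSet[Voxel]:
    """Map atom coordinates (Å) of a docked pose onto occupied grid cells."""
    return frozenset(tuple(math.floor(x / spacing) for x in xyz) for xyz in coords)


def volume_overlap(a: FrozenSet[Voxel], b: FrozenSet[Voxel]) -> float:
    """Jaccard (Tanimoto) overlap of two occupied-voxel sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def leader_cluster(poses: Dict[str, FrozenSet[Voxel]], scores: Dict[str, float],
                   threshold: float = 0.6) -> List[List[str]]:
    """Greedy leader clustering: each pose that matches no existing leader starts a cluster."""
    clusters: List[List[str]] = []
    leaders: List[FrozenSet[Voxel]] = []
    for pid in sorted(poses, key=scores.__getitem__):  # lowest (best) docking score first
        for members, leader in zip(clusters, leaders):
            if volume_overlap(poses[pid], leader) >= threshold:
                members.append(pid)
                break
        else:
            clusters.append([pid])
            leaders.append(poses[pid])
    return sorted(clusters, key=len, reverse=True)  # largest cluster first


if __name__ == "__main__":
    # Made-up heavy-atom coordinates for three hypothetical poses.
    poses = {
        "pose-1": voxelize([(0.2, 0.1, 0.3), (1.4, 0.2, 0.1), (2.6, 0.4, 0.2)]),
        "pose-2": voxelize([(0.5, 0.3, 0.2), (1.1, 0.6, 0.4), (2.2, 0.1, 0.8), (3.3, 0.2, 0.1)]),
        "pose-3": voxelize([(9.8, 10.2, 10.5)]),
    }
    print(leader_cluster(poses, {"pose-1": -9.1, "pose-2": -8.7, "pose-3": -7.9}))
```

Ranking clusters by size mirrors the step in the text where the largest cluster was taken as the best shape match to the pocket.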

Experimental Validation

Primary Screening and Hit Identification

Of the 86 compounds selected from virtual screening, 68 were successfully synthesized by Enamine and evaluated in a Wnt/β-catenin reporter assay using STF3A cells [24]. This cellular system consists of HEK293 cells with integrated WNT3A expression and a β-catenin-responsive SuperTOPFlash (STF) luciferase reporter, providing a sensitive readout of Wnt pathway activity [24]. After 48 hours of treatment with test compounds, luciferase measurements identified ETC-451 as a promising hit that significantly reduced Wnt reporter activity [24].
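The text does not describe how the luciferase readout was normalized. A common scheme, shown here only as an assumed sketch, expresses each well's signal as a percentage of the vehicle (DMSO) control after subtracting a background control. The choice of background control (for example fully inhibited or cell-free wells) is a hypothetical assumption, and the luminescence values are made up.

```python
import statistics
from typing import Sequence


def percent_activity(signal: float, vehicle: Sequence[float],
                     background: Sequence[float]) -> float:
    """Reporter activity as % of vehicle control after background subtraction."""
    veh = statistics.fmean(vehicle)
    bkg = statistics.fmean(background)
    return 100.0 * (signal - bkg) / (veh - bkg)


def percent_inhibition(signal: float, vehicle: Sequence[float],
                       background: Sequence[float]) -> float:
    """Complement of percent_activity: 0 = no effect, 100 = full inhibition."""
    return 100.0 - percent_activity(signal, vehicle, background)


if __name__ == "__main__":
    # Made-up relative light units for one compound well and replicate control wells.
    vehicle_rlu = [98_000.0, 102_000.0]
    background_rlu = [2_000.0, 2_400.0]
    print(f"{percent_inhibition(40_000.0, vehicle_rlu, background_rlu):.1f}% inhibition")
```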

Mechanism of Action Studies

Secondary assays confirmed that ETC-451 acts through the intended mechanism:

- WLS-WNT3A Interaction: ETC-451 effectively blocked the physical interaction between WLS and its cargo protein WNT3A, demonstrating direct target engagement [24].

- Target Gene Expression: Treatment with ETC-451 downregulated expression of endogenous Wnt target genes, confirming functional pathway inhibition at the transcriptional level [24].

- Cellular Proliferation: In Wnt-addicted pancreatic cancer cell lines, ETC-451 treatment significantly decreased cellular proliferation, validating the therapeutic potential of WLS inhibition [25] [24].

Key Research Reagents and Solutions

Table 1: Essential research reagents and solutions for WLS inhibitor discovery

| Reagent/Solution | Provider/Source | Function in Research Protocol |
|---|---|---|
| Enamine REAL 350/3 Library | Enamine | Nearly 500 million make-on-demand lead-like compounds for ultra-large virtual screening [24] |
| Schrödinger Suite | Schrödinger | Comprehensive software for molecular modeling, docking, and machine learning [18] |
| Active Learning Glide | Schrödinger | Machine learning-enhanced docking for efficient billion-compound screening [18] [24] |
| Glide WS | Schrödinger | Advanced docking with explicit water molecules for improved pose prediction [18] |
| STF3A Reporter Cell Line | Academic literature | HEK293 cells with WNT3A expression and β-catenin responsive luciferase reporter [24] |
| Wnt/β-catenin Reporter Assay | Laboratory established | Luciferase-based functional screen for Wnt pathway inhibition [24] |