Active Learning in Chemogenomics: A Strategic Guide to Accelerating Drug Discovery

Abstract

This article provides a comprehensive overview of how active learning (AL) is revolutionizing chemogenomics and drug discovery. Aimed at researchers and drug development professionals, it explores the foundational principles of AL's iterative feedback loop, which strategically selects the most informative data for experimental labeling to navigate vast chemical spaces efficiently. The piece delves into key methodological applications, including virtual screening, multi-target drug discovery, and human-in-the-loop systems, while also addressing critical challenges like model generalizability and data sparsity. Through real-world case studies and comparative analyses, it validates AL's power to significantly reduce experimental costs and increase hit rates, offering a practical roadmap for its implementation in modern pharmaceutical research.

What is Active Learning? Core Principles Solving Drug Discovery's Biggest Challenges

In chemogenomics, a primary challenge is efficiently identifying target-specific bioactive molecules in a vast chemical space, often with limited and costly experimental data. Active Learning (AL) addresses this challenge with an iterative feedback process that strategically selects the most informative data points for labeling, improving a model's performance while minimizing resource expenditure [1]. Within chemogenomics, this translates to a cycle in which a model guides the selection of which compounds to test or simulate next, based on a specific acquisition criterion, and the resulting data are used to refine the model itself [1] [2]. This approach is particularly valuable for optimizing molecular properties, predicting drug-target interactions (DTIs), and navigating complex biological fitness landscapes where experimental validation is a major bottleneck [1] [2]. The core of the AL cycle is its iterative loop of hypothesis, query, and model update, which enables continuous refinement that is both data-efficient and targeted.

The Core Components of the Active Learning Cycle

The AL cycle is a structured process comprising several key stages that work in concert to improve a model's predictive accuracy with each iteration.

Initial Model Training

The cycle begins with an initial model trained on an often limited set of labeled data, denoted \( \mathcal{D}_0 = \{(\mathbf{x}_i, y_i)\}_{i=1}^{N_0} \) [3]. In chemogenomics, \( \mathbf{x}_i \) typically represents a molecule (e.g., via a fingerprint or graph structure) and \( y_i \) its corresponding property, such as bioactivity or binding affinity [3] [4].

Hypothesis and Uncertainty Quantification

The trained model is then used to form a hypothesis about the unlabeled data in a pool, \( \mathcal{U} \). A crucial step here is Uncertainty Quantification (UQ), which assesses the model's confidence in its predictions [2]. For a given molecule \( \mathbf{x} \) in \( \mathcal{U} \), the model produces both an expected prediction \( \mathbb{E}[f_{\boldsymbol{\theta}}(\mathbf{x})] \) and an associated uncertainty \( \mathbb{V}[f_{\boldsymbol{\theta}}(\mathbf{x})] \) [2]. UQ helps identify regions of chemical space where the model is uncertain, preventing over-reliance on potentially flawed predictions [2].
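As an illustration of how the expected prediction and its variance might be estimated in practice, the sketch below uses a bootstrap ensemble. It is a minimal example under stated assumptions: the ridge regressors stand in for whatever model is actually used, the feature vectors are random toy data rather than real fingerprints, and the function names `fit_ridge` and `ensemble_predict` are hypothetical.

```python
import numpy as np

def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression weights (a bias column is appended to X)."""
    Xb = np.hstack([X, np.ones((X.shape[0], 1))])
    A = Xb.T @ Xb + alpha * np.eye(Xb.shape[1])
    return np.linalg.solve(A, Xb.T @ y)

def ensemble_predict(X_train, y_train, X_pool, n_members=20, seed=0):
    """Return the per-molecule mean and variance over a bootstrap ensemble."""
    rng = np.random.default_rng(seed)
    Xp = np.hstack([X_pool, np.ones((X_pool.shape[0], 1))])
    preds = []
    for _ in range(n_members):
        # Each member sees a different bootstrap resample of the labeled set.
        idx = rng.integers(0, len(X_train), size=len(X_train))
        w = fit_ridge(X_train[idx], y_train[idx])
        preds.append(Xp @ w)
    preds = np.stack(preds)  # shape: (n_members, n_pool)
    return preds.mean(axis=0), preds.var(axis=0)

# Toy data: 30 labeled "molecules" and a pool of 100 unlabeled ones.
rng = np.random.default_rng(1)
X_train = rng.normal(size=(30, 8))
y_train = X_train[:, 0] - 0.5 * X_train[:, 1] + 0.1 * rng.normal(size=30)
X_pool = rng.normal(size=(100, 8))
mean, var = ensemble_predict(X_train, y_train, X_pool)
```

Here `mean` plays the role of the expected prediction and `var` the role of the uncertainty for each pool molecule; disagreement between ensemble members is only one of several possible uncertainty estimates.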

The Query Strategy: Data Acquisition

An acquisition function is applied to the unlabeled pool to select the most informative candidates for the next cycle [1]. This function uses the model's hypotheses and uncertainties to prioritize data points. A prominent strategy is based on Expected Predictive Information Gain (EPIG), which selects molecules expected to provide the greatest reduction in predictive uncertainty, thereby improving the model's accuracy for subsequent predictions [3]. Other common strategies include querying by committee or selecting for maximum diversity [1].

Model Update and Iteration

The newly acquired data, now labeled (through wet-lab experiments, simulations, or human expert feedback [3] [5]), are added to the training set. The model is then retrained on this augmented dataset, \( \mathcal{D}_1 = \mathcal{D}_0 \cup \{(\mathbf{x}_{\text{new}}, y_{\text{new}})\} \) [1]. This model update completes a single cycle. The process repeats, with each iteration aiming to enhance the model's performance and expand its applicability domain, the region of chemical space where it can make reliable predictions [3] [1]. The cycle terminates when a stopping criterion is met, such as satisfactory model performance, depletion of resources, or diminishing returns on information gain [1].

Table: Core Components of an Active Learning Cycle in Chemogenomics

| Component | Description | Common Techniques/Examples |
|---|---|---|
| Initial Model | A machine learning model trained on a starting set of labeled molecules. | Graph Neural Networks (GNNs) [4], Random Forests, Support Vector Machines (SVMs) [1] |
| Hypothesis & UQ | The process of making predictions on unlabeled data and estimating the model's confidence. | Ensemble methods [2], Bayesian Neural Networks [2], Gaussian Processes [2] |
| Query Strategy | The algorithm for selecting which unlabeled data points to evaluate next. | Expected Predictive Information Gain (EPIG) [3], uncertainty sampling (e.g., highest entropy), diversity sampling [1] |
| Oracle/Labeling | The source of ground-truth labels for the selected molecules. | Wet-lab experiments [1], physics-based simulations (e.g., docking) [6] [5], human expert feedback [3] |
| Model Update | Retraining the model with the newly acquired labeled data. | Incremental learning, full model retraining [1] |

Quantitative Performance of Active Learning

Empirical studies across various drug discovery tasks demonstrate that AL can significantly accelerate model improvement compared to random selection or single-shot model training.

Table: Benchmarking Performance of Active Learning in Drug Discovery

| Dataset/Application | Key Finding | AL Method & Comparative Performance |
|---|---|---|
| Aqueous Solubility [7] | AL reached lower RMSE significantly faster than random sampling. | COVDROP method achieved superior performance with fewer labeled samples compared to k-means, BAIT, and random selection. |
| Cell Permeability (Caco-2) [7] | Clear efficiency gains were observed with an AL-guided approach. | COVDROP was the top performer, requiring fewer experiments to achieve target model accuracy. |
| Plasma Protein Binding (PPBR) [7] | AL methods successfully navigated highly imbalanced data distributions. | All methods initially struggled, but AL adapted to cover underrepresented regions, with COVDROP showing strong performance. |
| SARS-CoV-2 Mpro Inhibitor Design [5] | AL efficiently identified high-scoring compounds from a vast combinatorial space. | An AL-driven search of linker/R-group space using the FEgrow package enabled prioritization of synthesizable candidates for testing. |
| Goal-Oriented Molecule Generation [3] | Human-in-the-loop AL refined property predictors and improved oracle alignment. | Using the EPIG criterion, the approach increased the accuracy of predicted properties and the drug-likeness of top-ranked molecules. |

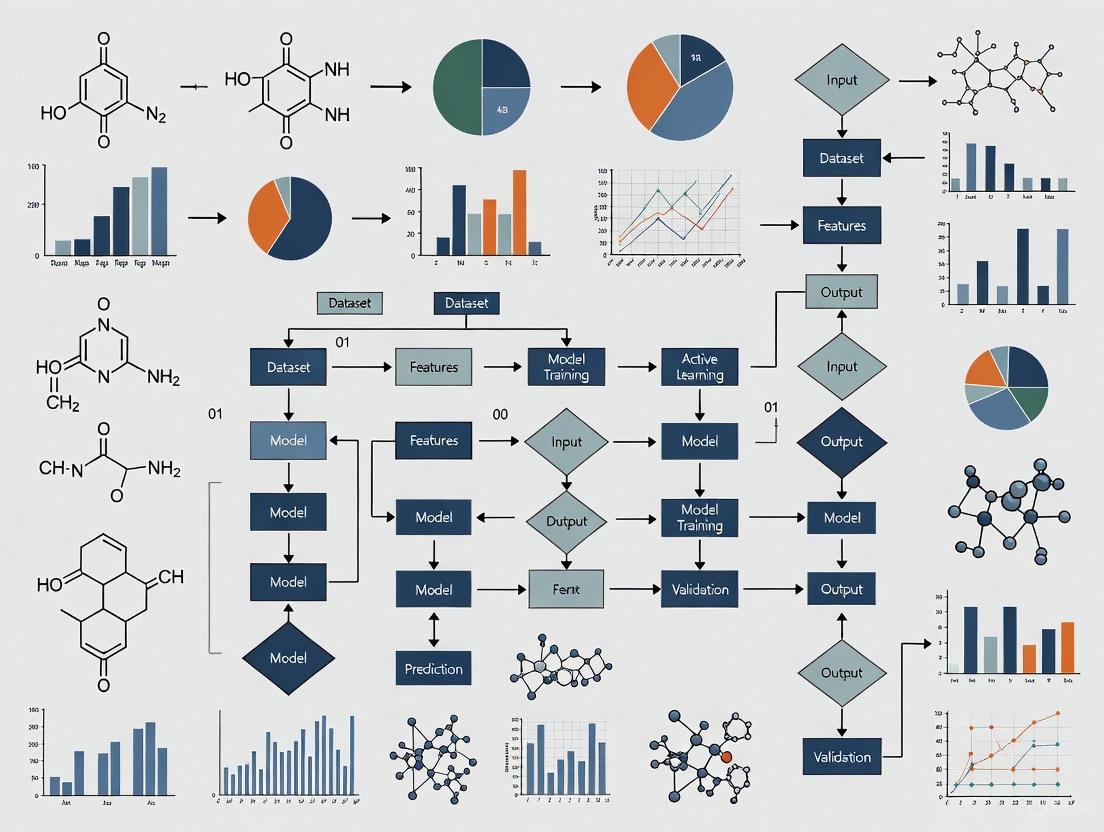

Diagram: The Active Learning Cycle. This workflow illustrates the iterative feedback loop of hypothesis generation, data query, and model refinement that defines AL in chemogenomics.

Detailed Experimental Protocol: An AL Case Study with FEgrow

The following protocol is adapted from a study that used AL to prioritize compounds from on-demand libraries targeting the SARS-CoV-2 main protease (Mpro) [5].

Objective

To efficiently search a combinatorial space of possible linkers and functional groups and identify synthesizable compounds with high predicted affinity for SARS-CoV-2 Mpro [5].

Materials and Reagents

Table: Essential Research Reagents and Tools for the FEgrow AL Protocol

| Item | Function/Description | Source/Example |
|---|---|---|
| Protein Structure | The 3D structure of the target protein used for pose optimization and scoring. | PDB ID 7BQY (SARS-CoV-2 Mpro with a bound fragment) [5] |
| Ligand Core | A fixed molecular fragment or known hit compound that serves as the base for growing new molecules. | A fragment from a crystallographic screen, placed in the binding pocket [5] |
| R-group & Linker Libraries | Libraries of chemical substituents and connecting units used to build new molecules from the core. | Distributed libraries with 2000+ linkers and 500+ R-groups [5] |
| FEgrow Software | Open-source package for building and optimizing ligands in a protein binding pocket. | https://github.com/cole-group/FEgrow [5] |
| gnina | A convolutional neural network scoring function used to predict binding affinity. | Integrated within the FEgrow workflow for scoring generated poses [5] |
| RDKit | Open-source cheminformatics toolkit used for molecular manipulation and conformer generation. | Used by FEgrow for merging, conformer generation, and filtering [5] |
| Machine Learning Model | A surrogate model trained on FEgrow outputs to predict scores for unscreened compounds. | A random forest model was used in the cited study [5] |

Step-by-Step Methodology

Initialization:

- Define the rigid protein structure, the ligand core, and the growth vector(s).

- Assemble the initial combinatorial library of linkers and R-groups.

Initial Sampling and Expensive Evaluation:

- Randomly select a small batch of (linker, R-group) combinations.

- Use FEgrow to build each full molecule into the protein binding pocket. This involves:

  - Merging the core, linker, and R-group.

  - Generating an ensemble of ligand conformers using RDKit's ETKDG algorithm.

  - Filtering out conformers that clash with the protein.

  - Optimizing the remaining conformers using a hybrid ML/MM potential energy function with a rigid protein [5].

- Score the resulting optimized poses using the gnina scoring function [5]. This score serves as the initial label (proxy for affinity) for the AL cycle.

Active Learning Loop:

- Train ML Model: Train a machine learning model (e.g., Random Forest) on the current set of evaluated molecules. The input features are representations of the (linker, R-group) pairs, and the target variable is the gnina score.

- Hypothesis & Query: Use the trained model to predict scores for all unevaluated molecules in the combinatorial library. Apply an acquisition function (e.g., selecting molecules with the highest predicted scores or those with high uncertainty) to select the next batch of promising candidates [5].

- Label: Run the selected batch of candidates through the FEgrow building and scoring pipeline (the Initial Sampling and Expensive Evaluation step above) to obtain their "expensive" gnina scores.

- Model Update: Add the newly evaluated molecules and their scores to the training set.

Termination and Validation:

- Repeat the AL loop for a predefined number of cycles or until model performance plateaus.

- Select the top-ranked molecules from the final cycle for purchase, synthesis, and experimental validation in a bioassay (e.g., a fluorescence-based Mpro activity assay) [5].
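The loop described in this protocol can be sketched in a few lines. The example below is illustrative only and does not call FEgrow, gnina, or RDKit: `expensive_score` is a hypothetical stand-in for the build-optimize-score pipeline, the library sizes are toy values, the one-hot `featurize` function is an assumed representation of (linker, R-group) pairs, and a ridge regressor replaces the random forest used in the cited study to keep the sketch dependency-light.

```python
import itertools
import numpy as np

N_LINKERS, N_RGROUPS = 20, 15  # toy sizes; the cited libraries are far larger
rng = np.random.default_rng(0)
_link_effect = rng.normal(size=N_LINKERS)
_rg_effect = rng.normal(size=N_RGROUPS)

def expensive_score(linker, rgroup):
    """Hypothetical oracle standing in for pose building and scoring (higher is better here)."""
    return _link_effect[linker] + _rg_effect[rgroup] + 0.1 * np.sin(linker * rgroup)

def featurize(pairs):
    """One-hot encode each (linker, R-group) pair."""
    X = np.zeros((len(pairs), N_LINKERS + N_RGROUPS))
    for row, (l, r) in enumerate(pairs):
        X[row, l] = 1.0
        X[row, N_LINKERS + r] = 1.0
    return X

def fit_predict(X_train, y_train, X_all, alpha=1.0):
    """Ridge surrogate: fit on evaluated pairs, predict scores for the whole library."""
    A = X_train.T @ X_train + alpha * np.eye(X_train.shape[1])
    w = np.linalg.solve(A, X_train.T @ (y_train - y_train.mean()))
    return X_all @ w + y_train.mean()

pairs = list(itertools.product(range(N_LINKERS), range(N_RGROUPS)))
X_all = featurize(pairs)

# Initial sampling: score a small random batch with the expensive oracle.
labeled = {}  # pair index -> score
for i in rng.choice(len(pairs), size=20, replace=False):
    labeled[int(i)] = expensive_score(*pairs[i])

for cycle in range(5):  # predefined number of AL cycles
    idx = np.array(sorted(labeled))
    y = np.array([labeled[i] for i in idx])
    pred = fit_predict(X_all[idx], y, X_all)
    pred[idx] = -np.inf               # never re-select evaluated pairs
    batch = np.argsort(pred)[-10:]    # greedy acquisition: top predicted scores
    for i in batch:
        labeled[int(i)] = expensive_score(*pairs[i])

best_pair = pairs[max(labeled, key=labeled.get)]
```

After five cycles only 70 of the 300 toy combinations have been scored by the oracle. Swapping the greedy line for an uncertainty-aware rule would change the exploration behavior without altering the rest of the loop.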

Integration with Broader Chemogenomics Workflows

The AL cycle is not an isolated process but is deeply integrated into modern chemogenomics and drug discovery pipelines. It is a key enabler of the Design-Build-Test-Learn (DBTL) cycle, where "Learn" directly corresponds to the model update and hypothesis steps in AL [2]. This integration is crucial for navigating complex biological fitness landscapes, which are characterized by high dimensionality, epistasis (non-additive mutational effects), and sparse regions of high fitness [2]. Furthermore, AL is increasingly combined with generative models in a symbiotic relationship. For instance, a Variational Autoencoder (VAE) can be embedded within nested AL cycles, where the generative model proposes novel molecules, and the AL cycle selects the most informative ones for expensive evaluation, using the results to fine-tune the generator [6]. This creates a powerful, self-improving system for de novo molecular design. The emerging paradigm of Human-in-the-Loop AL further enriches this workflow by incorporating feedback from chemistry experts to approve or refute model predictions, effectively acting as a cost-effective oracle to bridge gaps in training data and guide the exploration of chemical space [3].

The endeavor of drug discovery is fundamentally a search for a needle in a haystack, involving the exploration of an estimated 10^60 drug-like compounds to identify those with the desired therapeutic properties [8]. This vast chemical space presents an insurmountable challenge for traditional experimental methods, which can only screen a minuscule fraction of possible compounds due to constraints in time, cost, and resources. Furthermore, the acquisition of labeled data—molecules with experimentally determined properties—is exceptionally expensive and time-consuming, often requiring sophisticated laboratory techniques such as high-throughput screening, binding affinity assays, or toxicity tests. In this context, active learning (AL) has emerged as a powerful machine learning strategy that strategically addresses both the problem of vast chemical space and the scarcity of labeled data by iteratively selecting the most informative compounds for experimental validation, thereby accelerating the discovery process while significantly reducing costs [9] [8].

Active learning operates on a simple yet powerful premise: instead of randomly selecting compounds for testing, an AL algorithm proactively identifies which unlabeled data points would be most valuable to label, based on the current model's uncertainties or potential for improvement. This creates a human-in-the-loop paradigm where experimentalists guide both data collection and model training through targeted exploration within the vast chemical space [10]. The procedure adopts an iterative strategy of data collection, annotation, and training, using a specific set of rules to identify molecules that maximize the enhancement of model performance. By validating these molecules through wet lab experiments, active learning achieves greater improvements in model performance compared to random selection strategies, all within the same experimental annotation budget [10].

The Active Learning Workflow in Drug Discovery

Core Cycle and Implementation Strategies

The active learning framework follows an iterative cycle that integrates computational predictions with experimental validation. This process begins with a small initial set of labeled compounds used to train a preliminary machine learning model. The trained model then evaluates a much larger library of unlabeled compounds, scoring them based on a specific acquisition function. The most informative compounds are selected for experimental testing ("oracle" validation), and the newly acquired data is incorporated into the training set. The model is retrained with this expanded dataset, and the cycle repeats until a stopping criterion is met, such as achievement of target performance or exhaustion of resources [8] [10].

Figure 1: Active Learning Cycle for Drug Discovery

Several key strategies govern how compounds are selected at each iteration, balancing the exploration of diverse chemical space with the exploitation of promising regions [8] [11]:

- Greedy Selection: Chooses only the top predicted binders at every iteration step, focusing exclusively on exploitation.

- Uncertainty Sampling: Selects ligands for which the prediction uncertainty is largest, prioritizing exploration.

- Mixed Strategy: First identifies compounds with strong predicted binding affinity, then selects those with the most uncertain predictions among them, balancing exploitation and exploration.

- Narrowing Strategy: Combines broad selection in the first iterations with a subsequent switch to a greedy approach.
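The four strategies above differ only in how they rank candidates, which the following sketch makes explicit. It assumes that the model supplies a predicted score (`mean`, higher meaning a stronger predicted binder) and an uncertainty (`std`) for every unlabeled compound. The function name, the `shortlist` multiplier in the mixed strategy, and the use of uncertainty sampling as the "broad" phase of the narrowing strategy are illustrative assumptions, not details taken from the cited studies.

```python
import numpy as np

def select_batch(mean, std, batch_size, strategy, iteration=0, switch_at=3, shortlist=2):
    """Return indices of the compounds to test next."""
    if strategy == "narrowing":
        # Broad selection in early iterations, greedy afterwards.
        strategy = "uncertainty" if iteration < switch_at else "greedy"
    if strategy == "greedy":
        return np.argsort(-mean)[:batch_size]
    if strategy == "uncertainty":
        return np.argsort(-std)[:batch_size]
    if strategy == "mixed":
        # Shortlist strong predicted binders, then keep the most uncertain of them.
        top = np.argsort(-mean)[: shortlist * batch_size]
        return top[np.argsort(-std[top])[:batch_size]]
    raise ValueError(f"unknown strategy: {strategy}")

mean = np.array([0.9, 0.1, 0.8, 0.7, 0.2, 0.6])
std = np.array([0.1, 0.9, 0.3, 0.5, 0.8, 0.05])

greedy = select_batch(mean, std, 2, "greedy")            # indices 0 and 2
uncertain = select_batch(mean, std, 2, "uncertainty")    # indices 1 and 4
mixed = select_batch(mean, std, 2, "mixed")              # indices 3 and 2
late = select_batch(mean, std, 2, "narrowing", iteration=5)  # same as greedy
```

In this toy example the mixed strategy skips the top-ranked compound (index 0) because its prediction is already confident, and instead spends the budget on strong candidates whose scores are less certain.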

Quantitative Impact of Active Learning

The implementation of active learning strategies has demonstrated significant improvements in efficiency across multiple drug discovery applications. The following table summarizes key performance metrics reported in recent studies:

Table 1: Performance Metrics of Active Learning in Drug Discovery Applications

| Application Domain | Performance Improvement | Data Efficiency | Key Metric / Method | Citation |
|---|---|---|---|---|
| Mutagenicity Prediction | Competitive performance with small labeled samples | 57% reduction in training molecules required | Uncertainty-based sampling | [10] |
| Synergistic Drug Combinations | 60% of synergistic pairs found exploring only 10% of space | 82% savings in experimental materials | Precision-Recall AUC | [12] |
| Ultra-Large Library Docking | ~70% of top hits found at 0.1% of brute-force cost | 1000x cost reduction | Recall of top binders | [13] |
| Affinity Prediction (TYK2, USP7, D2R, Mpro) | Higher recall of top binders with sparse training data | Optimal batch size: 20-30 compounds | R², Spearman, F1 score | [11] |

Experimental Protocols and Methodologies

Protocol for Ligand Binding Affinity Optimization

A well-established AL protocol for optimizing ligand binding affinity involves multiple carefully designed steps that combine physics-based calculations with machine learning [8]:

Library Preparation: Generate an in silico compound library, typically through combinatorial expansion of R-groups around a core scaffold or by enumerating virtual compounds from available building blocks.

Initial Sampling: Employ weighted random selection for model initialization, where ligands are selected with probability inversely proportional to the number of similar ligands in the dataset. Similarity is determined using t-SNE embedding and 2D histogram binning.

Binding Pose Generation:

- For each ligand, identify the crystal structure with the highest Dice similarity based on RDKit topological fingerprint.

- Constrain coordinates of the largest substructure matches to the reference crystal structure.

- Generate initial guesses for remaining atoms via constrained embedding following the ETKDG algorithm.

- Refine ligand binding poses through hybrid topology molecular dynamics simulations in vacuum, morphing reference inhibitor into the ligand while lowering temperature.

Ligand Representation:

- Calculate molecular features including 2D descriptors (constitutional, electrotopological), 3D descriptors (molecular surface area), and molecular fingerprints (PLEC, MACCS).

- Generate interaction features including electrostatic and van der Waals interaction energies between ligand and each protein residue.

Active Learning Cycle:

- Train machine learning models (Gaussian Process, Random Forest, or Neural Networks) on current labeled set.

- Use selection strategy (mixed, uncertainty, or greedy) to choose the next batch of compounds for free energy calculations.

- Perform alchemical free energy calculations as the oracle for selected compounds.

- Incorporate newly labeled compounds into training set.

- Repeat for predetermined number of cycles or until performance convergence.
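The weighted random selection used for initial sampling can be illustrated as follows. This is a minimal sketch that assumes a 2D embedding of the library is already available (for example from t-SNE, which is not computed here); the function name, bin count, and toy coordinates are hypothetical.

```python
import numpy as np

def density_weighted_sample(embedding, n_select, bins=10, seed=0):
    """Sample ligands with probability inversely proportional to their 2D-bin occupancy."""
    counts, xedges, yedges = np.histogram2d(embedding[:, 0], embedding[:, 1], bins=bins)
    # Map each point to its bin; clip so points on the upper edge fall in the last bin.
    ix = np.clip(np.digitize(embedding[:, 0], xedges) - 1, 0, bins - 1)
    iy = np.clip(np.digitize(embedding[:, 1], yedges) - 1, 0, bins - 1)
    occupancy = np.maximum(counts[ix, iy], 1)  # guard against edge-rounding zeros
    probs = (1.0 / occupancy) / (1.0 / occupancy).sum()
    rng = np.random.default_rng(seed)
    chosen = rng.choice(len(embedding), size=n_select, replace=False, p=probs)
    return chosen, probs

# Toy embedding: one dense cluster of 200 ligands plus 10 scattered outliers.
rng = np.random.default_rng(42)
dense = rng.normal(0.0, 0.05, size=(200, 2))
sparse = rng.uniform(5.0, 10.0, size=(10, 2))
embedding = np.vstack([dense, sparse])
chosen, probs = density_weighted_sample(embedding, n_select=10)
```

Each outlier receives a much higher selection probability than any member of the dense cluster, so the initial training set covers the chemical space more evenly than uniform random sampling would.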

Protocol for Mutagenicity Prediction

The muTOX-AL framework demonstrates an effective AL approach for molecular mutagenicity prediction [10]:

Data Preparation:

- Curate mutagenicity dataset (e.g., TOXRIC with 7,495 compounds).

- Split data into five folds for cross-validation.

- For each fold, create initial labeled pool of 200 randomly selected samples, with remaining samples as unlabeled pool.

Feature Extraction:

- Generate molecular fingerprints (MACCS, Morgan) and molecular descriptors.

- Perform principal component analysis to visualize chemical space distribution.

Model Architecture:

- Implement feature extraction module for input processing.

- Design backbone module (neural network) for mutagenicity prediction.

- Incorporate uncertainty estimation module to quantify sample informativeness.

- Configure loss calculation module combining backbone and uncertainty losses.

Active Learning Cycle:

- Train model on current labeled pool.

- Calculate uncertainty scores for all samples in unlabeled pool.

- Select samples with highest uncertainty scores for oracle annotation.

- Add newly labeled samples to training set.

- Iterate until label budget exhausted or performance plateaus.
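The pool setup and query step of this protocol can be sketched as below. Note the substitution: muTOX-AL uses a dedicated uncertainty estimation module, whereas this example uses the binary entropy of predicted probabilities as a simple proxy. The pool size, the random probabilities standing in for model outputs, and all function names are assumptions made for illustration.

```python
import numpy as np

def split_initial_pool(n_samples, n_initial=200, seed=0):
    """Randomly split sample indices into an initial labeled pool and an unlabeled pool."""
    rng = np.random.default_rng(seed)
    perm = rng.permutation(n_samples)
    return perm[:n_initial], perm[n_initial:]

def binary_entropy(p):
    """Entropy of a Bernoulli prediction; it peaks at p = 0.5."""
    p = np.clip(p, 1e-12, 1 - 1e-12)
    return -(p * np.log(p) + (1 - p) * np.log(1 - p))

def query_most_uncertain(probs, unlabeled_idx, k):
    """Return the k unlabeled indices with the highest predictive entropy."""
    scores = binary_entropy(probs[unlabeled_idx])
    return unlabeled_idx[np.argsort(-scores)[:k]]

rng = np.random.default_rng(1)
probs = rng.uniform(size=1000)  # hypothetical predicted P(mutagenic) per compound
labeled_idx, unlabeled_idx = split_initial_pool(1000, n_initial=200)
picked = query_most_uncertain(probs, unlabeled_idx, k=50)

# Move the queried samples from the unlabeled pool to the labeled pool.
labeled_idx = np.concatenate([labeled_idx, picked])
unlabeled_idx = np.setdiff1d(unlabeled_idx, picked)
```

In a full loop, the model would be retrained on `labeled_idx` after each query and `probs` recomputed before the next selection.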

Successful implementation of active learning in drug discovery requires a combination of computational tools, molecular representations, and experimental assays. The following table details key resources mentioned in recent studies:

Table 2: Essential Research Reagents and Computational Tools for AL in Drug Discovery

| Tool/Resource | Type | Function in AL Pipeline | Examples/Implementation |
|---|---|---|---|
| Molecular Representations | Descriptors | Encode molecular structure for ML models | 2D/3D RDKit descriptors, Morgan fingerprints, MAP4, MACCS, PLEC fingerprints [8] [12] |
| Protein-Ligand Interaction Features | Descriptors | Capture binding site interactions | MedusaNet voxel grids, residue interaction energies, PLEC fingerprints [8] |
| AL Selection Algorithms | Software | Implement compound selection strategies | Mixed strategy, uncertainty sampling, greedy selection, BAIT, COVDROP, COVLAP [8] [14] |
| Free Energy Calculations | Computational Oracle | Provide accurate binding affinity predictions | Alchemical free energy calculations, FEP+ [8] [13] |
| Docking Tools | Computational Oracle | Screen large compound libraries | Glide docking, molecular docking scores [13] |
| Experimental Assays | Wet Lab Oracle | Validate computational predictions | Ames test (mutagenicity), binding assays (affinity), cell viability (synergy) [10] [12] |
| Cell Line Features | Descriptors | Incorporate cellular context in predictions | Gene expression profiles from GDSC database [12] |

Visualization of Selection Strategy Implementation

The implementation of different selection strategies follows specific logical pathways that determine how compounds are prioritized for experimental testing:

Figure 2: Compound Selection Strategies in Active Learning

Active learning represents a paradigm shift in computational drug discovery, directly addressing the fundamental challenges of vast chemical space and limited labeled data. By strategically selecting the most informative compounds for experimental validation, AL protocols achieve substantial efficiency gains: reducing the number of labeled training molecules required by 57% in mutagenicity prediction [10], identifying 60% of synergistic drug combinations while exploring only 10% of combinatorial space [12], and recovering ~70% of top-scoring hits at 0.1% of the cost of exhaustive docking [13]. The continued refinement of molecular representations, selection strategies, and integration with high-performance computing and automated experimentation platforms will further solidify AL's role as an indispensable tool in modern drug discovery. As these methodologies become more sophisticated and widely adopted, they promise to significantly accelerate the identification of novel therapeutic compounds while reducing the substantial costs associated with traditional drug discovery approaches.

Active learning (AL) has emerged as a transformative paradigm in chemogenomics, enabling researchers to navigate the vast molecular and target interaction space with unprecedented efficiency. In the context of drug discovery, chemogenomics involves modeling the compound-protein interaction space to predict bioactivity, typically for identifying or optimizing drug candidates [15]. The core challenge AL addresses is the fundamental constraint of resources: wet-lab experiments, synthesis, and biological assays are notoriously time-consuming and expensive [3]. Active learning frameworks are strategically designed to overcome this by implementing an iterative, guided process for data acquisition. The two primary and interconnected objectives are:

- Maximizing Information Gain: Each selected experiment should optimally reduce the uncertainty of the predictive model, enhancing its understanding of the structure-activity relationship across the chemical space.

- Minimizing Experimental Cost: By prioritizing the most informative compounds for testing, AL aims to achieve high model performance and identify promising candidates with a minimal number of experiments, thereby de-risking and accelerating the project timeline [16] [17].

This guide details the technical implementation of these objectives, providing a roadmap for integrating AL into modern chemogenomics research.

Foundational Principles and Acquisition Strategies

The operationalization of AL's core objectives hinges on the deployment of specific acquisition functions—algorithms that score and rank unlabeled compounds based on their potential value to the model.

Core Acquisition Strategies

- Exploitation focuses on immediately improving the desired molecular property. This strategy selects compounds that the current model predicts will have the highest value (e.g., greatest potency, binding affinity, or other target property). While this can rapidly yield high-performing candidates, it risks converging on local optima and tends to produce selections with limited chemical diversity [16].

- Exploration prioritizes the improvement of the model itself. It selects compounds where the model's predictive uncertainty is highest. By labeling these points, the model learns about previously poorly understood regions of the chemical space, expanding its applicability domain. This strategy can enhance the model's generalizability but may not directly yield the best compounds in the short term [3].

- Balanced Strategies combine exploration and exploitation to harness the benefits of both. A common framework is the Expected Predictive Information Gain (EPIG), which selects molecules expected to provide the greatest reduction in predictive uncertainty, leading to more accurate evaluations of subsequently generated molecules [3]. Another advanced balanced strategy is ActiveDelta, a paired-molecule approach that predicts the property improvement from the current best compound, directly guiding optimization while maintaining diversity [16].
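The paired-molecule idea behind ActiveDelta can be sketched as follows. This is an illustrative reading of the description above rather than the published implementation: a ridge regressor stands in for the actual learner, the feature vectors and potency values are random toy data (higher value assumed to be better), and the function names are hypothetical.

```python
import numpy as np

def make_pairs(X, y):
    """All ordered pairs (i, j): features are [x_i, x_j], target is y_j - y_i."""
    n = len(X)
    i, j = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
    i, j = i.ravel(), j.ravel()
    return np.hstack([X[i], X[j]]), y[j] - y[i]

def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression weights."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)

def pick_next(X_train, y_train, X_pool):
    """Pair the current best compound with each candidate; pick the largest predicted gain."""
    X_pairs, deltas = make_pairs(X_train, y_train)
    w = fit_ridge(X_pairs, deltas)
    best = X_train[np.argmax(y_train)]
    queries = np.hstack([np.tile(best, (len(X_pool), 1)), X_pool])
    return int(np.argmax(queries @ w))

rng = np.random.default_rng(0)
X_train = rng.normal(size=(15, 6))
y_train = X_train @ np.arange(1.0, 7.0)  # toy potency values
X_pool = rng.normal(size=(40, 6))
choice = pick_next(X_train, y_train, X_pool)
```

Pairing turns 15 labeled molecules into 225 training examples, which is one reason such an approach can be effective when labeled data are scarce.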

Batch Active Learning for Practical Workflows

In real-world drug discovery, testing compounds one at a time is impractical. Batch Active Learning addresses this by selecting an optimal set of compounds for each experimental cycle. The key challenge is avoiding redundancy within a batch. Advanced methods like COVDROP and COVLAP select batches by maximizing the joint entropy (the log-determinant) of the epistemic covariance matrix of the batch predictions. This approach explicitly balances individual uncertainty (variance) and inter-compound diversity (covariance), preventing the selection of highly correlated candidates and ensuring the batch is collectively informative [17].
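To make the log-determinant criterion concrete, the sketch below selects a batch greedily from a given covariance matrix. It assumes the epistemic covariance between candidate predictions has already been estimated (COVDROP and COVLAP obtain it from the model; here it is a hand-written toy matrix), and the greedy search is a simple approximation chosen for illustration, not necessarily the optimizer used in the cited work.

```python
import numpy as np

def greedy_logdet_batch(cov, batch_size, jitter=1e-8):
    """Greedily add the candidate that most increases the log-determinant of the batch covariance."""
    selected = []
    for _ in range(batch_size):
        best_i, best_val = None, -np.inf
        for i in range(cov.shape[0]):
            if i in selected:
                continue
            idx = selected + [i]
            sub = cov[np.ix_(idx, idx)] + jitter * np.eye(len(idx))
            sign, logdet = np.linalg.slogdet(sub)
            if sign > 0 and logdet > best_val:
                best_i, best_val = i, logdet
        if best_i is None:  # no candidate gives a positive-definite submatrix
            break
        selected.append(best_i)
    return selected

# Candidates 0 and 1 are both uncertain but highly correlated with each other.
cov = np.array([
    [1.0, 0.9, 0.0, 0.0],
    [0.9, 0.9, 0.0, 0.0],
    [0.0, 0.0, 0.5, 0.0],
    [0.0, 0.0, 0.0, 0.1],
])
batch = greedy_logdet_batch(cov, 2)            # [0, 2]
top_variance = np.argsort(-np.diag(cov))[:2]   # indices 0 and 1
```

Ranking by variance alone would pick the two correlated candidates, whereas the log-determinant criterion replaces the redundant one with an independent, moderately uncertain compound.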

Table 1: Comparison of Key Active Learning Acquisition Strategies

| Strategy | Primary Objective | Key Mechanism | Advantages | Limitations |
|---|---|---|---|---|
| Exploitation | Find high-value compounds | Selects molecules with the highest predicted property value [16]. | Rapid identification of potent leads. | Can get stuck in local optima; low scaffold diversity. |
| Exploration | Improve model accuracy | Selects molecules with the highest predictive uncertainty [3]. | Broadens model knowledge; improves generalizability. | May not directly advance primary optimization goal. |
| EPIG | Reduce predictive uncertainty | Maximizes the expected information gain for model predictions [3]. | Balances exploration and exploitation; improves predictor accuracy. | Computationally intensive. |
| ActiveDelta | Guide molecular optimization | Predicts property improvements via molecular pairing [16]. | Effective with small data; identifies diverse scaffolds. | Requires paired data representation. |
| Batch Selection (COVDROP) | Maximize batch information | Maximizes joint entropy of batch predictions [17]. | Practical for HTS; ensures diversity within a batch. | High computational complexity for large candidate pools. |

Practical Implementation and Workflows

Translating AL theory into practice requires a structured workflow and an understanding of the supporting computational infrastructure.

The Human-in-the-Loop (HITL) Framework

A powerful extension of AL integrates domain expertise directly into the loop. In this framework, a property predictor (e.g., a QSAR model) guides the generative design of molecules. An acquisition function like EPIG then identifies generated molecules that are most informative for the predictor—often those with high predicted scores but also high uncertainty. Instead of immediate wet-lab testing, these molecules are evaluated by human experts who can approve or refute the predicted properties based on their domain knowledge. This feedback is used to refine the property predictor, creating a closed-loop system that leverages human insight to efficiently navigate the chemical space and generate molecules that are both promising and synthetically tractable [3].

The FEgrow-AL Workflow for Structure-Based Design

A concrete example of an AL-driven workflow is demonstrated by the FEgrow software for de novo drug design. This workflow targets a specific protein binding pocket:

- Input: A fixed ligand core and libraries of linkers and functional groups (R-groups) are defined.

- Building & Scoring: FEgrow builds the full ligand in the protein pocket, optimizes its pose, and scores it using a function like the gnina CNN scoring function to predict binding affinity.

- Active Learning Cycle:

  - An initial subset of compounds is built and scored.

  - This data trains a machine learning model (e.g., a random forest or neural network) to predict the objective function (e.g., docking score) for the entire combinatorial space.

  - The model selects the next most promising batch of compounds (e.g., those with the best-predicted scores) for evaluation with the expensive FEgrow process.

  - The cycle repeats, iteratively improving the model and focusing resources on the most valuable regions of the chemical space [5].

Table 2: Essential Research Reagent Solutions for an AL-Driven Campaign

| Reagent / Tool Category | Specific Examples | Function in the AL Workflow |
|---|---|---|
| Cheminformatics Libraries | RDKit [5] [16], DeepChem [17] | Handles molecular I/O, fingerprint generation (e.g., Morgan fingerprints), and descriptor calculation. |
| Molecular Representations | Morgan Fingerprints (ECFP) [16], SMILES/SELFIES [18], Graph Neural Networks [17] | Encodes molecular structure for machine learning models. |
| Predictive & Generative Models | Chemprop (D-MPNN) [16], XGBoost [16], Generative Adversarial Networks (GANs) [19] | Serves as the surrogate model for property prediction or generates novel molecular structures. |
| Active Learning & Optimization Packages | FEgrow [5], BATCHIE [20], Custom implementations of COVDROP/COVLAP [17] | Orchestrates the active learning cycle, including model training, candidate ranking, and batch selection. |
| Experimental Assay Platforms | High-Throughput Screening (HTS) [21], Fluorescence-based bioassays [5] | Functions as the "oracle" to provide experimental validation and ground-truth labels for selected compounds. |

Case Studies and Experimental Validation

Retrospective and prospective validations across diverse domains underscore the real-world impact of AL in achieving its core objectives.

Small Molecule Optimization with ActiveDelta

In a benchmark study across 99 Ki datasets from ChEMBL, the ActiveDelta strategy was pitted against standard exploitative AL. ActiveDelta, which uses paired molecular representations to predict potency improvements, consistently outperformed standard methods. It identified a greater number of the most potent inhibitors and, critically, achieved this with enhanced chemical diversity as measured by Murcko scaffold analysis. This demonstrates that AL can simultaneously minimize experimental effort (by requiring fewer cycles to find potent hits) and maximize information gain (by exploring a broader chemical space) [16].

Large-Scale Combination Screening with BATCHIE

Screening for effective drug combinations faces a combinatorial explosion of possibilities. The BATCHIE platform uses a Bayesian active learning approach based on Probabilistic Diameter-based Active Learning (PDBAL) to design maximally informative batches of combination experiments. In a prospective screen of a 206-drug library across 16 pediatric cancer cell lines, BATCHIE accurately predicted unseen drug combinations and detected synergies after exploring only 4% of the 1.4 million possible experiments. This dramatic reduction in experimental cost was achieved without sacrificing information gain, as the model successfully identified a panel of effective combinations, including a clinically relevant hit [20].

In Vitro Protein Production Optimization

Beyond drug discovery, AL's principles are universally applicable. In one study, researchers sought to optimize a cell-free buffer system for protein production—a combinatorial space of over 4 million possible compositions. An AL strategy using an ensemble of neural networks and a balanced acquisition function achieved a 34-fold increase in protein yield after testing only ~1000 compositions. Furthermore, they demonstrated that a minimal set of 20 highly informative compositions was sufficient to train a model that could accurately predict optimal buffers for new lysates, showcasing a powerful "one-step" optimization method with minimal experimental overhead [22].

Table 3: Summary of Experimental Outcomes from AL Case Studies

| Case Study | Domain | Key AL Method | Reported Efficiency Gain | Performance Improvement |
|---|---|---|---|---|
| ActiveDelta [16] | Ki Potency Prediction | Molecular Pairing & Exploitation | Identified more potent and diverse inhibitors with the same data budget. | Superior performance in identifying top-potency compounds compared to standard AL. |
| BATCHIE [20] | Combination Drug Screening | Probabilistic Diameter-based AL (PDBAL) | Screened only 4% of a 1.4M-experiment space. | Accurately predicted unseen combinations; identified validated synergistic hits. |
| Cell-Free Optimization [22] | Bioprocessing | Ensemble Neural Networks | Achieved optimization after testing ~0.02% of search space. | 34-fold increase in protein production yield. |
| FEgrow [5] | Structure-Based Design | Model-based Batch Selection | Enabled efficient search of combinatorial linker/R-group space. | Identified purchasable compounds with activity against SARS-CoV-2 Mpro. |

Experimental Protocols and Best Practices

Protocol: Implementing an Exploitative ActiveDelta Cycle

This protocol is adapted from the benchmark study detailed in [16].

Initialization:

- Begin with a small, randomly selected set of labeled compounds (e.g., N=2) as the initial training data.

- Define a large pool of unlabeled compounds as the learning set.

Model Training (ActiveDelta):

- Pre-process the training data by creating all possible pairwise combinations of molecules.

- For each pair (A, B), calculate the difference in the target property (e.g., ΔKi = Ki,B - Ki,A).

- Train a machine learning model (e.g., the two-molecule version of Chemprop or a paired-fingerprint XGBoost) on these pairs to predict the property difference.

Candidate Selection:

- Identify the single best compound (e.g., lowest Ki) in the current training set. Designate this as the reference molecule.

- Pair this reference molecule with every compound in the unlabeled learning set.

- Use the trained ActiveDelta model to predict the property improvement (Δ) for each of these pairs.

- Select the compound from the learning set that is part of the pair with the highest predicted improvement.

Iteration:

- The selected compound is experimentally tested ("labeled") and added to the training dataset.

- The model is retrained on the expanded and re-paired training data.

- The cycle (Steps 2-4) repeats until the experimental budget is exhausted or a performance target is met.
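The pairing and selection steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the implementation from [16]: the function names are hypothetical, a least-squares linear model stands in for the paired Chemprop or XGBoost learner, and the property is assumed to be on a scale where higher is better (for example pKi rather than Ki), so that "improvement" means a positive predicted difference.

```python
import numpy as np

def make_pairs(X, y):
    """Build all ordered pairs (A, B): features [x_A, x_B], target y_B - y_A."""
    n = len(X)
    idx_a, idx_b = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
    idx_a, idx_b = idx_a.ravel(), idx_b.ravel()
    return np.hstack([X[idx_a], X[idx_b]]), y[idx_b] - y[idx_a]

def fit_delta_model(X_pairs, d_pairs):
    """Least-squares linear model with intercept (stand-in for the paired learner)."""
    A = np.hstack([X_pairs, np.ones((len(X_pairs), 1))])
    w, *_ = np.linalg.lstsq(A, d_pairs, rcond=None)
    return w

def predict_delta(w, X_pairs):
    """Predict the property difference for each pair."""
    return np.hstack([X_pairs, np.ones((len(X_pairs), 1))]) @ w

def active_delta_step(X_train, y_train, X_pool):
    """Return the pool index with the largest predicted improvement over the
    current best training compound (assumes higher y is better)."""
    w = fit_delta_model(*make_pairs(X_train, y_train))
    best = X_train[np.argmax(y_train)]                  # reference molecule
    pairs = np.hstack([np.tile(best, (len(X_pool), 1)), X_pool])
    return int(np.argmax(predict_delta(w, pairs)))
```

In a full cycle, the returned compound would be labeled by the assay, appended to `X_train` and `y_train`, and the step repeated until the budget is spent.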

Protocol: Setting Up a Batch AL Campaign with COVDROP

This protocol is based on the methodology described in [17].

Problem Formulation:

- Assemble a large pool of unlabeled molecules (e.g., from a virtual library).

- Define a batch size B (e.g., 30 molecules) appropriate for your experimental throughput.

Model and Uncertainty Setup:

- Choose a deep learning model (e.g., a Graph Neural Network) suitable for regression/classification of your molecular property.

- Implement an uncertainty quantification technique. For COVDROP, this is typically Monte Carlo (MC) Dropout.

- During inference, perform multiple forward passes (e.g., 100) with dropout enabled.

- The predictions from these passes form a distribution for each molecule.

Covariance Matrix Calculation:

- For all molecules in the unlabeled pool, compute the predictive covariance matrix, C.

- Each element C_ij represents the covariance between the predictive distributions of molecule i and molecule j. This captures both individual uncertainties (variances, C_ii) and similarities between molecules (covariances, C_ij with i ≠ j).

Greedy Batch Selection:

- Initialize an empty batch.

- Iteratively, for k = 1 to B:

- Find the molecule in the unlabeled pool that, when added to the current batch, maximizes the log-determinant of the resulting batch's covariance submatrix, C_B.

- This step seeks to maximize the joint entropy (total information content) of the batch.

- The final set of B selected molecules constitutes the optimal batch.

Experimental Cycle:

- The selected batch is tested experimentally.

- The model is retrained on the newly labeled data.

- The process repeats from Step 3 for the next batch.
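The covariance and greedy selection steps above can be sketched as follows. This is a hedged illustration rather than the code from [17]: it assumes the Monte Carlo dropout predictions are already available as an array of shape (passes, molecules), the function names are hypothetical, and a small diagonal jitter is added for numerical stability.

```python
import numpy as np

def predictive_covariance(mc_preds):
    """Covariance between molecules from stochastic forward passes.
    mc_preds has shape (n_passes, n_molecules)."""
    return np.cov(mc_preds, rowvar=False)

def greedy_logdet_batch(C, batch_size, jitter=1e-6):
    """Greedily add the molecule that maximizes log det of the batch submatrix."""
    selected, remaining = [], list(range(C.shape[0]))
    for _ in range(batch_size):
        values = []
        for i in remaining:
            idx = selected + [i]
            sub = C[np.ix_(idx, idx)] + jitter * np.eye(len(idx))
            sign, logdet = np.linalg.slogdet(sub)
            values.append(logdet if sign > 0 else -np.inf)
        pick = remaining[int(np.argmax(values))]
        selected.append(pick)
        remaining.remove(pick)
    return selected
```

Because the determinant shrinks when two selected molecules are strongly correlated, the greedy rule tends to skip near-duplicates of compounds already in the batch even when their individual variances are high. The naive loop recomputes each determinant from scratch; for large pools an incremental update would be needed.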

Active learning represents a fundamental shift in the approach to computational and experimental research in chemogenomics. By strategically prioritizing data acquisition, it directly attacks the core bottlenecks of cost and time. Frameworks like Human-in-the-Loop AL, ActiveDelta, and BATCHIE provide concrete methodologies to simultaneously maximize information gain and minimize experimental cost. The resulting models are not only more predictive and robust but also guide the exploration of chemical space more intelligently, leading to the discovery of potent, diverse, and novel candidates with a fraction of the traditional resource investment. As these methodologies continue to mature and integrate with cutting-edge generative AI, they are poised to become the standard operating procedure for efficient and effective drug discovery.

In chemogenomics, where researchers model the complex interactions between chemical compounds and biological targets, the quality of training data is a primary determinant of machine learning (ML) model success. The field consistently grapples with two pervasive data flaws: severe imbalance and significant redundancy. Bioactivity datasets often exhibit extreme skewness, with hit rates in high-throughput screens sometimes as low as 0.01%, creating a massive imbalance between active and inactive compounds [23]. Simultaneously, chemical libraries frequently contain clusters of structurally similar compounds, introducing redundancy that biases models and wastes computational resources. These flaws lead to ML models that appear accurate yet fail to predict the biologically important minority class (e.g., active compounds) and generalize poorly to novel chemical scaffolds.

Active learning (AL) has emerged as a powerful computational strategy to address these intrinsic data problems. AL is an iterative feedback process that intelligently selects the most informative data points for labeling and model training [1]. By prioritizing informative instances over redundant ones and strategically addressing class imbalance through intelligent sampling, AL enables the construction of highly predictive models from smaller, higher-quality datasets. Within chemogenomics, this capability allows researchers to extract maximum value from expensive experimental data, accelerating the identification of novel compound-target interactions while minimizing resource expenditure [15].

How Active Learning Tackles Data Flaws

Core Mechanisms Against Data Imbalance

Active learning counteracts data imbalance through its fundamental operating principle: uncertainty sampling. Instead of training models on entire available datasets, AL begins with a small initial training set and iteratively selects the most uncertain instances for experimental validation and inclusion in subsequent training cycles [23]. This approach automatically guides the sampling process toward the decision boundary where the model struggles most to distinguish between classes, which naturally leads to increased representation of the minority class in the training data.

Research demonstrates that this adaptive subsampling strategy significantly outperforms both training on complete datasets and using static subsampling methods. In studies across multiple molecular classification tasks, AL-based subsampling achieved performance improvements of up to 139% in Matthews Correlation Coefficient compared to models trained on full datasets [23]. The strategy proves particularly robust against label noise, maintaining performance even when significant portions of the training data contain errors, a common issue in experimental biological data.

Strategic Approaches to Reduce Redundancy

To address data redundancy, AL incorporates diversity criteria into its selection algorithms. Rather than selecting batches of compounds based solely on individual uncertainty, advanced AL methods choose sets of compounds that are collectively informative. These approaches maximize the coverage of the chemical space within each batch, ensuring that each selected compound provides unique information to the model.

Batch active learning methods specifically tackle this challenge by selecting compounds that are both uncertain and diverse. One approach uses covariance matrices to quantify the similarity between unlabeled samples, then selects batches that maximize the joint entropy (information content) by maximizing the determinant of the covariance submatrix [17]. This ensures selected compounds are non-redundant and collectively provide the maximum possible information gain, effectively eliminating the bias introduced by structurally similar compound clusters in traditional screening libraries.
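The link between joint entropy and the determinant can be made explicit under one assumption that is introduced here for illustration: that the model's joint predictive distribution over a batch B is multivariate Gaussian with covariance submatrix C_B. In that case the differential entropy is

```latex
H(\mathbf{y}_B) \;=\; \tfrac{1}{2}\log\det\!\left(2\pi e\, C_B\right)
\;=\; \tfrac{|B|}{2}\log(2\pi e) \;+\; \tfrac{1}{2}\log\det C_B .
```

For a fixed batch size |B| the first term is constant, so maximizing the joint entropy is equivalent to maximizing log det C_B. Strong correlations between selected compounds reduce the determinant, which is why redundant compounds are penalized.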

Active Learning Methodologies in Practice

Workflow and Implementation

The practical implementation of active learning in chemogenomics follows a structured, iterative cycle that integrates computational modeling with experimental validation. The standard AL workflow comprises several key stages that form a closed feedback loop, continuously refining the model with each iteration.

Diagram 1: Standard AL workflow for chemogenomics. This iterative process efficiently builds predictive models by strategically selecting the most informative compounds for experimental testing.

The process begins with a small initial training set of compound-target interactions, which may be randomly selected or chosen for diversity. A predictive model (e.g., random forest, neural network) is trained on this initial data. The trained model then evaluates all compounds in the unlabeled pool, estimating the uncertainty of each prediction. The most informative compounds are selected based on predefined criteria (typically combining uncertainty and diversity metrics) for experimental validation. The newly acquired experimental data is incorporated into the training set, and the model is retrained. This cycle continues until a stopping criterion is met, such as performance plateau or exhaustion of resources [1] [23].
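The cycle described above can be written as a generic pool-based loop. The sketch below is illustrative only: `oracle`, `fit`, and `uncertainty` are hypothetical callables supplied by the user (the assay or simulation, the model trainer, and the uncertainty estimator), and the stopping criterion is simplified to a fixed number of rounds with one compound per round.

```python
import numpy as np

def active_learning_loop(X_pool, oracle, fit, uncertainty,
                         n_init=5, n_rounds=10, seed=0):
    """Pool-based active learning with one query per round.

    oracle(i)            -> label for pool item i (the experiment)
    fit(X, y)            -> trained model
    uncertainty(model, X)-> one uncertainty score per row of X
    """
    rng = np.random.default_rng(seed)
    # Small random initial training set.
    labeled = [int(i) for i in rng.choice(len(X_pool), size=n_init, replace=False)]
    labels = {i: oracle(i) for i in labeled}
    for _ in range(n_rounds):
        model = fit(X_pool[labeled], np.array([labels[i] for i in labeled]))
        unlabeled = [i for i in range(len(X_pool)) if i not in labels]
        if not unlabeled:          # pool exhausted
            break
        scores = uncertainty(model, X_pool[unlabeled])
        pick = unlabeled[int(np.argmax(scores))]   # most informative compound
        labels[pick] = oracle(pick)                # run the experiment
        labeled.append(pick)
    return labeled, labels
```

A performance-plateau criterion could replace the fixed round count by evaluating the model on a held-out set inside the loop and breaking when the metric stops improving.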

Key Acquisition Functions for Data Selection

Acquisition functions form the mathematical core of AL systems, determining which data points are selected in each iteration. The table below summarizes the primary acquisition strategies used to combat data flaws in chemogenomics.

Table 1: Acquisition Functions for Addressing Data Flaws in Chemogenomics

| Function Type | Mechanism | Addresses | Advantages | Limitations |
|---|---|---|---|---|
| Uncertainty Sampling | Selects instances where model prediction is most uncertain | Data imbalance | Targets decision boundary; improves minority class detection | May select outliers; ignores diversity |
| Diversity Sampling | Maximizes dissimilarity between selected instances | Data redundancy | Broadly explores chemical space; reduces redundancy | May include clearly unproductive regions |
| Query-by-Committee | Selects instances with most disagreement between ensemble models | Data imbalance | Robust uncertainty estimation; reduces model bias | Computationally intensive for large ensembles |
| Expected Model Change | Selects instances causing greatest model change | Both | High information per sample; efficient learning | Computationally expensive; complex implementation |
| Batch BALD | Maximizes mutual information between batch and model parameters | Both | Optimizes batch diversity and uncertainty | High computational complexity for large batches |

In practice, advanced AL implementations often combine multiple strategies. For example, deep batch active learning methods use covariance matrices to select compounds that maximize joint entropy, simultaneously addressing both uncertainty and diversity [17]. Similarly, the "balanced-diverse" approach applies both class balancing and structural diversity criteria to create optimal training subsets [23].

Experimental Protocols and Benchmarking

Implementing AL in chemogenomics requires careful experimental design and rigorous benchmarking. The following protocol outlines a standardized approach for AL implementation in compound-target interaction prediction:

Initial Setup and Data Preparation

- Dataset Collection: Compile a comprehensive dataset of compound-target interactions, ensuring representation of both active and inactive classes. Public databases like ChEMBL are commonly used sources.

- Representation: Encode molecular structures using appropriate representations. Morgan fingerprints (radius 2, 1024 bits) implemented in RDKit provide a robust baseline representation [23].

- Splitting: Perform a 50:50 scaffold split to separate compounds into training and validation sets, ensuring structurally distinct sets for rigorous evaluation.

Active Learning Implementation

- Initialization: Randomly select one positive and one negative example from the training pool to form the initial training set.

- Model Training: Train a predictive model (e.g., Random Forest with 100 trees and Gini impurity) on the current training set.

- Uncertainty Quantification: Apply ensemble-based uncertainty estimation by calculating variance in prediction probabilities across all trees in the Random Forest.

- Compound Selection: Identify the compound with the highest predictive uncertainty from the unlabeled pool.

- Iteration: Add the selected compound to the training set and retrain the model. Repeat steps 3-5 until all compounds are exhausted or performance plateaus.

- Evaluation: Monitor performance metrics (Matthews Correlation Coefficient, F1 score, balanced accuracy) on the independent validation set throughout the process.
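The uncertainty quantification, selection, and evaluation steps above can be sketched with NumPy alone. The sketch assumes the per-tree class probabilities are already collected in an array of shape (trees, compounds); with scikit-learn these could be gathered from the fitted forest's `estimators_` attribute, but that wiring is left out here. Function names are hypothetical.

```python
import numpy as np

def tree_variance(tree_probs):
    """Variance of P(active) across trees, one value per compound.
    tree_probs has shape (n_trees, n_compounds)."""
    return np.var(tree_probs, axis=0)

def select_most_uncertain(tree_probs, labeled_mask):
    """Index of the unlabeled compound with the highest tree-level variance."""
    var = tree_variance(tree_probs).astype(float)
    var[np.asarray(labeled_mask, dtype=bool)] = -np.inf   # never re-select
    return int(np.argmax(var))

def mcc(y_true, y_pred):
    """Matthews Correlation Coefficient for binary labels (0.0 if undefined)."""
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_pred).astype(bool)
    tp = int(np.sum(y_true & y_pred))
    tn = int(np.sum(~y_true & ~y_pred))
    fp = int(np.sum(~y_true & y_pred))
    fn = int(np.sum(y_true & ~y_pred))
    denom = np.sqrt(float((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn)))
    return (tp * tn - fp * fn) / denom if denom > 0 else 0.0
```

Tracking `mcc` on the validation set after every retraining step gives the learning curve used to decide when performance has plateaued.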

This protocol has demonstrated consistent success across multiple bioactivity prediction tasks, typically achieving peak performance with only 10-25% of the total data available [15] [23].

Performance Benchmarks and Comparative Analysis

Rigorous benchmarking studies demonstrate the significant advantages of AL approaches over conventional screening and random selection strategies. The performance gains are consistent across diverse drug discovery tasks, from virtual screening to molecular property prediction.

Table 2: Performance Benchmarking of Active Learning Methods in Drug Discovery

| Application Domain | Dataset | Best Performing AL Method | Performance Gain vs. Random | Data Efficiency |
|---|---|---|---|---|
| Virtual Screening | Protein-Ligand Affinity | Covariance Dropout (COVDROP) | ~40% higher hit rate | Reaches maximum performance with 50% less data |
| Molecular Property Prediction | Aqueous Solubility | Batch Active Learning with Diversity | 30% lower RMSE | 60% fewer samples needed for same accuracy |
| Compound-Target Interaction | HIV Replication Inhibition | Ensemble-based Uncertainty Sampling | 139% higher MCC | Identifies 80% of actives with only 20% of total data |
| Toxicity Prediction | Clinical Trial Toxicity | Balanced-Diverse Sampling | 45% higher F1 score | Achieves peak performance with 25% of data |

The consistency of these results across different domains highlights the robustness of AL approaches to the data flaws prevalent in chemogenomics. Notably, AL not only achieves better final performance but does so with substantially less experimental effort, directly addressing the resource constraints common in drug discovery programs.

Advanced Implementations and Future Directions

Integration with Generative Models

Recent advances combine AL with generative artificial intelligence to create more powerful molecular design pipelines. One innovative approach integrates a variational autoencoder (VAE) with two nested AL cycles [6]. In this architecture, the VAE generates novel molecular structures, while the AL components iteratively select the most promising candidates for evaluation using both chemoinformatic predictors (drug-likeness, synthetic accessibility) and physics-based oracles (molecular docking). This synergistic combination addresses fundamental limitations of generative models, including poor target engagement and limited synthetic accessibility, while simultaneously exploring novel regions of chemical space.

This VAE-AL framework has demonstrated impressive experimental validation. When applied to CDK2 inhibitor design, the approach generated novel molecular scaffolds distinct from known inhibitors, with 8 out of 9 synthesized molecules showing biological activity, including one with nanomolar potency [6]. This success highlights how AL can guide generative models toward chemically feasible, biologically active compounds while navigating around data scarcity and quality issues.

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of AL in chemogenomics relies on a core set of computational tools and resources. The table below summarizes key components of the AL research toolkit.

Table 3: Essential Research Reagents for AL Implementation in Chemogenomics

| Tool/Resource | Type | Function | Application in AL Workflow |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular representation and manipulation | Generates molecular fingerprints (e.g., Morgan fingerprints) for compound encoding |
| DeepChem | Deep Learning Library | Molecular machine learning | Provides implementations of graph neural networks for compound property prediction |
| scikit-learn | Machine Learning Library | General-purpose ML algorithms | Supplies Random Forest and other classifiers with uncertainty estimation capabilities |
| GPy | Gaussian Process Library | Probabilistic non-parametric models | Offers built-in uncertainty quantification for regression tasks |
| ChEMBL Database | Bioactivity Database | Repository of compound-target interactions | Sources initial training data and provides ground truth for experimental validation |
| BAIT | Batch AL Implementation | Fisher information-based selection | Optimizes batch selection for deep learning models |
| GeneDisco | AL Benchmarking Suite | Benchmarking platform for AL algorithms | Evaluates and compares different AL strategies on standardized tasks |

Emerging Trends and Future Outlook

The future of AL in chemogenomics points toward increased integration with experimental automation and more sophisticated uncertainty quantification techniques. As noted in recent research, "AL-assisted design-build-test-learn cycles can quickly converge on the true landscape with just a few iterations of small-scale sampling, filtering out a significant portion of unnecessary, costly, and time-consuming validations" [2]. This is particularly valuable in genetic engineering and protein design applications, where experimental throughput continues to increase.

Future developments will likely focus on multi-objective optimization AL, which simultaneously balances multiple molecular properties (efficacy, selectivity, pharmacokinetics), and transfer AL, which leverages knowledge from related targets to jumpstart learning for novel targets with limited data [1]. Additionally, the integration of AL with foundation models pre-trained on large chemical libraries represents a promising direction for few-shot learning in chemogenomics, potentially further reducing the experimental burden required for model development.

Active learning provides a powerful, principled framework for addressing the fundamental data quality challenges—imbalance and redundancy—that persistently hamper traditional approaches in chemogenomics. By intelligently selecting the most informative compounds for experimental testing, AL systems systematically build balanced, representative training datasets that maximize predictive performance while minimizing resource expenditure. The robust performance gains demonstrated across diverse drug discovery applications, from virtual screening to molecular generation, underscore the transformative potential of AL methodologies. As chemogenomics continues to grapple with increasingly complex research questions and expanding chemical spaces, the strategic integration of active learning into the research workflow will be essential for extracting meaningful insights from imperfect data and accelerating the discovery of novel therapeutic agents.

Active Learning in Action: Key Applications from Virtual Screening to Molecule Generation

The identification of novel compound-protein interactions is a fundamental objective in drug discovery. Traditional virtual screening methods, which rely on the exhaustive computational docking of every molecule in a large virtual library, are becoming increasingly prohibitive as these libraries now routinely contain billions of compounds [24]. This creates a critical bottleneck in the early stages of drug development. Within this context, active learning has emerged as a powerful machine learning framework to dramatically increase the efficiency of virtual screening campaigns. As a core methodology in computational chemogenomics—which aims to model the compound-protein interaction space—active learning enables the construction of highly predictive models by iteratively selecting the most informative ligand-target interactions for evaluation [15]. This technical guide explores the application of active learning to structure-based virtual screening, providing a detailed examination of its performance, methodologies, and implementation to help researchers prioritize the most promising compounds for experimental testing.

Active Learning Performance and Quantitative Benchmarks

Active learning guided virtual screening has demonstrated remarkable efficiency in identifying top-scoring compounds from ultra-large libraries by evaluating only a small fraction of the total collection. The performance can be quantified using the Enrichment Factor (EF), which measures the ratio of the percentage of top-k scores found by the model-guided search to the percentage found by a random search [24]. The following table summarizes key performance metrics from recent studies:

Table 1: Performance Benchmarks of Active Learning in Virtual Screening

| Virtual Library Size | Surrogate Model | Acquisition Function | Screening Effort | Top Compounds Identified | Reference |
|---|---|---|---|---|---|
| 100 million compounds | Directed-Message Passing Neural Network (D-MPNN) | Upper Confidence Bound (UCB) | 2.4% | 94.8% of top-50,000 | [24] |
| 100 million compounds | Directed-Message Passing Neural Network (D-MPNN) | Greedy | 2.4% | 89.3% of top-50,000 | [24] |
| 99.5 million compounds | Pretrained Transformer / Graph Neural Network | Bayesian Optimization | 0.6% | 58.97% of top-50,000 | [25] |
| 10,560 compounds | Feedforward Neural Network | Greedy | 6.0% | 66.8% of top-100 (EF=11.9) | [24] |
| 10,560 compounds | Random Forest | Greedy | 6.0% | 51.6% of top-100 (EF=9.2) | [24] |
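The Enrichment Factor defined above is a simple ratio, and a short sketch makes the bookkeeping concrete. The helper names are hypothetical, and the random-search fraction is passed in explicitly because it is measured (or estimated) separately from the model-guided run.

```python
def fraction_of_top_k(found_ids, top_k_ids):
    """Fraction of the true top-k compounds that a search has recovered."""
    top = set(top_k_ids)
    return len(top & set(found_ids)) / len(top)

def enrichment_factor(frac_model, frac_random):
    """Ratio of top-k recovery by the model-guided search to that of random search."""
    return frac_model / frac_random
```

For example, if a model-guided search recovers three of four top compounds while a random search of the same size recovers one of four, `enrichment_factor(0.75, 0.25)` evaluates to 3.0.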

Beyond standard docking, the ActiveDelta approach, which leverages paired molecular representations to predict property improvements, has shown superior performance in exploitative active learning. In benchmarks across 99 Ki datasets, ActiveDelta implementations (using both Chemprop and XGBoost) consistently identified a greater number of potent inhibitors and achieved higher scaffold diversity compared to standard active learning methods [26].

Key Components of an Active Learning Workflow for Virtual Screening

An effective active learning system for virtual screening integrates several key components, each of which must be carefully selected based on the specific campaign goals.

Table 2: Key Components of an Active Learning Workflow

| Component | Description | Common Options & Examples |
|---|---|---|
| Surrogate Model | A machine learning model trained on docking results to predict scores of unscreened compounds. | Random Forest (RF): fast, works well on small data [24]; Feedforward Neural Network (NN): improved performance over RF [24]; Message Passing Neural Network (MPNN): state-of-the-art, captures graph structure [24] [26]; Pretrained Models (Transformer/GNN): high sample efficiency [25] |
| Acquisition Function | The strategy for selecting the next compounds to dock based on the surrogate model's predictions. | Greedy: selects compounds with the best-predicted score [24]; Upper Confidence Bound (UCB): balances prediction (exploitation) and uncertainty (exploration) [24]; Thompson Sampling (TS): selects based on stochastic predictions from a probabilistic model [24] |
| Objective Function | The expensive, physics-based calculation that the surrogate model approximates. | Docking Score (e.g., AutoDock Vina, Glide, RosettaVS): primary metric for binding affinity [24] [13] [27]; Free Energy Perturbation (FEP+): higher accuracy binding affinity prediction [13]; Composite Scores: can include other properties like molecular weight or specific protein-ligand interactions [5] |

The Active Learning Cycle

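The integration of these components forms an iterative cycle: the surrogate model is trained on the compounds evaluated so far, it predicts scores for the unscreened library, the acquisition function selects the next batch, the objective function evaluates that batch, and the new results are added to the training data before the next round.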

Experimental Protocols and Case Studies

Protocol 1: Bayesian Optimization with D-MPNN for Ultra-Large Libraries

This protocol, detailed by Graff et al. [24], is designed for screening libraries containing tens to hundreds of millions of compounds.

- Library Preparation: Obtain the virtual compound library (e.g., ZINC, Enamine REAL). Standardize structures and generate 3D conformers.

- Initialization: Randomly select a small initial batch of compounds (e.g., 0.1% of the library) and dock them using a program like AutoDock Vina to establish an initial training set.

- Model Training: Train a Directed-Message Passing Neural Network (D-MPNN) as the surrogate model on the accumulated docking scores. The D-MPNN operates directly on the molecular graph, learning meaningful features for prediction [24] [26].

- Acquisition and Selection:

- Use the trained model to predict the docking scores and associated uncertainties for all remaining compounds in the library.

- Apply an acquisition function, such as the Upper Confidence Bound (UCB), to select the next batch of compounds. UCB is defined as \( \text{UCB}(x) = \mu(x) + \kappa \sigma(x) \), where \( \mu(x) \) is the predicted score, \( \sigma(x) \) is the predictive uncertainty, and \( \kappa \) is a parameter balancing exploration and exploitation [24].

- Iteration: Dock the newly selected batch, add the results to the training data, and retrain the model. Repeat steps 3-5 until a predefined budget is exhausted or performance plateaus.

- Output: The top-scoring compounds identified across all iterations are prioritized for experimental testing.
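The acquisition step of this protocol reduces to ranking compounds by UCB(x) = μ(x) + κσ(x). The sketch below is a minimal illustration with hypothetical function names; it assumes the objective is expressed so that higher is better (for instance, a negated docking score) and that the surrogate already supplies a mean and an uncertainty per compound.

```python
import numpy as np

def ucb(mu, sigma, kappa=2.0):
    """Upper Confidence Bound: predicted score plus kappa times uncertainty."""
    return mu + kappa * sigma

def select_batch(mu, sigma, batch_size, kappa=2.0):
    """Indices of the batch_size compounds with the highest UCB value."""
    scores = ucb(np.asarray(mu, dtype=float), np.asarray(sigma, dtype=float), kappa)
    return np.argsort(-scores)[:batch_size]
```

Setting `kappa=0` recovers the greedy acquisition function, which ranks on the predicted score alone; larger values of `kappa` shift the batch toward compounds the surrogate is unsure about.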

Protocol 2: ActiveDelta for Potency Optimization in Low-Data Regimes

The ActiveDelta protocol is particularly effective in early project stages with limited data, as it focuses on predicting relative improvements rather than absolute binding scores [26].

- Data Pairing: Start with a small training set of compounds with known binding affinities (e.g., Ki values). Create a paired dataset where each data point consists of two molecules and the difference in their potency.

- Model Training: Train a machine learning model (e.g., a paired D-MPNN in Chemprop or a paired-fingerprint XGBoost) on this paired dataset. The model learns to predict the potency difference between any two molecules [26].

- Acquisition and Selection:

- Identify the most potent molecule, \( M_{best} \), in the current training set.

- For every molecule \( M_i \) in the learning pool, form a pair \( (M_{best}, M_i) \).

- Use the trained model to predict the potency improvement for \( M_i \) relative to \( M_{best} \).

- Select the compound with the largest predicted improvement for the next round of experimental testing or computational evaluation.

- Iteration: The newly tested compound is added to the training set, and all possible new pairs are generated for the next round of model training and selection.

Case Study: Targeting SARS-CoV-2 Mpro with FEgrow and Active Learning

A recent study by Cree et al. [5] successfully integrated active learning with the FEgrow software to design inhibitors for the SARS-CoV-2 main protease (Mpro).

- Objective: To efficiently search a combinatorial space of linkers and R-groups grown from a fixed ligand core.

- Workflow: The FEgrow software was used to build and score ligands in the protein binding pocket using a hybrid ML/MM potential. An active learning cycle was implemented where a machine learning model was trained on a subset of FEgrow results and then used to select the most promising compounds for the next evaluation round [5].

- Integration with On-Demand Libraries: The chemical space was "seeded" with purchasable compounds from the Enamine REAL database, ensuring synthetic tractability.

- Outcome: The workflow identified several novel designs, some of which showed high similarity to known inhibitors from the COVID Moonshot effort. Prospective testing of 19 purchased compounds yielded three with weak activity, validating the approach for hit identification [5].

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software Tools for Active Learning Virtual Screening

| Tool Name | Type/Function | Key Features | Reference/Link |
|---|---|---|---|
| MolPAL | Open-source active learning software | Implements various surrogate models (RF, NN, MPNN) and acquisition functions (Greedy, UCB, TS). | [24] |
| FEgrow | Open-source tool for building congeneric series | Grows ligands in protein pockets, integrates with active learning, uses hybrid ML/MM for optimization. | [5] |
| ActiveDelta | Algorithm for exploitative active learning | Uses paired molecular representations to predict property improvements; available in Chemprop. | [26] |
| Schrödinger Active Learning Applications | Commercial platform | Active Learning Glide for docking and Active Learning FEP+ for free energy calculations. | [13] |
| OpenVS | Open-source AI-accelerated virtual screening platform | Integrates RosettaVS docking with active learning for screening billion-member libraries. | [27] |
| AutoDock Vina | Molecular docking software | Fast, widely used docking engine for generating initial training data. | [24] |
| gnina | Docking with convolutional neural networks | Used as a scoring function within workflows like FEgrow. | [5] |

Active learning represents a paradigm shift in how computational scientists approach the vastness of chemical space in drug discovery. By strategically guiding the selection of compounds for expensive virtual screening evaluations, active learning frameworks can recover the vast majority of top-performing hits at a fraction of the computational cost of exhaustive screens. As virtual libraries continue to expand into the billions, the adoption of these intelligent, adaptive methodologies will be crucial for maintaining efficiency in chemogenomics research. The continued development of more accurate surrogate models, such as pretrained transformers and advanced graph neural networks, along with innovative acquisition strategies like ActiveDelta, promises to further enhance the sample efficiency and effectiveness of virtual screening campaigns, ultimately accelerating the delivery of new therapeutic compounds.

Predicting Drug-Target Interactions (DTIs) in a Multi-Target Paradigm

The drug discovery process is notoriously complex, expensive, and time-consuming, typically costing approximately $2.6 billion and taking over 10 years from concept to market approval [28]. A fundamental challenge in this process is efficiently identifying interactions between drugs and their protein targets within an enormous chemical and biological space. Chemogenomics has emerged as a powerful framework that aims to model the entire compound-protein interaction space systematically, rather than focusing on individual targets in isolation [15] [29]. This paradigm recognizes that pharmacological compounds often interact with multiple targets, and leveraging these polypharmacological relationships can accelerate drug discovery and repositioning efforts.

Active learning (AL) addresses this challenge within computational chemogenomics. As an iterative, feedback-driven machine learning process, AL strategically selects the most informative data points for labeling and model training [1]. This is particularly valuable in drug discovery, where obtaining labeled data (experimentally confirmed drug-target interactions) is both costly and time-intensive. By concentrating resources on the most valuable measurements, active learning enables highly predictive models to be built from only 10-25% of a large bioactivity dataset, dramatically reducing experimental requirements while maintaining model accuracy [15].

Active Learning Fundamentals in Chemogenomics

Core Conceptual Framework

Active learning operates on the principle that a machine learning algorithm can achieve greater accuracy with fewer training labels if it is allowed to choose which data points to learn from. In the context of drug-target interaction prediction, this translates to an iterative process where the model selectively identifies which compound-target pairs should be prioritized for experimental testing to maximally improve model performance [1].

The fundamental AL cycle consists of four key phases:

- Initial Model Training: A base model is trained on a small set of labeled drug-target interactions

- Query Strategy Application: The model evaluates unlabeled instances and selects the most informative ones according to a predefined acquisition function

- Experimental Labeling: The selected compound-target pairs are tested experimentally (e.g., via high-throughput screening)

- Model Update: Newly labeled data is incorporated into the training set, and the model is retrained

This process repeats until a stopping criterion is met, such as performance convergence or exhaustion of experimental resources [1] [17].
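
The four phases above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the surrogate is a bootstrap ensemble of linear least-squares models, the acquisition rule is plain uncertainty sampling, and `oracle` is a hypothetical callable standing in for the experiment. The function names, batch sizes, and the fixed number of rounds are all assumptions made for the example.

```python
import numpy as np


def fit_ensemble(X, y, n_models=10, rng=None):
    """Fit a bootstrap ensemble of least-squares linear models (stand-in surrogate)."""
    if rng is None:
        rng = np.random.default_rng(0)
    Xb = np.hstack([X, np.ones((len(X), 1))])  # append a bias column
    weights = []
    for _ in range(n_models):
        idx = rng.integers(0, len(X), size=len(X))  # bootstrap resample
        w, *_ = np.linalg.lstsq(Xb[idx], y[idx], rcond=None)
        weights.append(w)
    return np.array(weights)  # shape (n_models, n_features + 1)


def predict_ensemble(weights, X):
    """Return the ensemble mean and standard deviation for each row of X."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    preds = Xb @ weights.T  # shape (n_samples, n_models)
    return preds.mean(axis=1), preds.std(axis=1)


def active_learning_loop(X_pool, oracle, n_init=10, batch_size=5, n_rounds=5, seed=0):
    """Run a fixed number of train / query / label / update rounds."""
    rng = np.random.default_rng(seed)
    # Seed set: a small random sample of the pool is labeled first.
    start = rng.choice(len(X_pool), n_init, replace=False)
    labels = {int(i): oracle(X_pool[i]) for i in start}

    def train():
        idx = np.array(sorted(labels))
        y = np.array([labels[i] for i in idx])
        return fit_ensemble(X_pool[idx], y, rng=rng)

    weights = train()  # 1. initial model training
    for _ in range(n_rounds):  # stopping criterion here: a fixed labeling budget
        unlabeled = np.array([i for i in range(len(X_pool)) if i not in labels])
        if unlabeled.size == 0:
            break
        _, std = predict_ensemble(weights, X_pool[unlabeled])
        query = unlabeled[np.argsort(-std)[:batch_size]]  # 2. most uncertain first
        for i in query:
            labels[int(i)] = oracle(X_pool[i])  # 3. "experimental" labeling
        weights = train()  # 4. model update
    return weights, labels
```

In practice the surrogate would be a model suited to compound-target pairs and the oracle an assay or simulation. The loop structure stays the same when either is swapped out.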

Algorithmic Approaches and Query Strategies

Several algorithmic approaches have been developed for active learning in chemogenomics:

Query-by-Committee employs multiple models (a committee) to evaluate unlabeled instances. Structures with high disagreement among committee members are selected for labeling, as this disagreement indicates model uncertainty and potential learning value [30]. This approach has been successfully used to create diverse datasets like QDπ, which incorporates 1.6 million molecular structures while maximizing chemical diversity [30].

Uncertainty Sampling selects instances where the model's prediction confidence is lowest. For regression tasks (e.g., predicting binding affinity), this may involve selecting the compounds with the highest predictive variance [17].

Representation-based Methods focus on selecting diverse compounds that cover the chemical space efficiently. K-means clustering and related approaches ensure broad coverage of the molecular feature space [17].
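
As a rough illustration of representation-based selection, the sketch below uses greedy max-min (farthest-point) picking rather than k-means. It is a simpler relative of the clustering approaches mentioned above and is swapped in here only to keep the example short. The function name and the choice of Euclidean distance on a generic feature matrix are assumptions. With molecular fingerprints, a Tanimoto-based distance would be the more usual choice.

```python
import numpy as np


def maxmin_select(features, n_select, first=0):
    """Greedy farthest-point selection over rows of a feature matrix.

    Starting from index `first`, repeatedly add the point whose distance
    to its nearest already-selected point is largest.
    """
    features = np.asarray(features, dtype=float)
    n_select = min(n_select, len(features))
    selected = [first]
    # Distance from every point to its nearest selected point so far.
    min_dist = np.linalg.norm(features - features[first], axis=1)
    while len(selected) < n_select:
        nxt = int(np.argmax(min_dist))
        selected.append(nxt)
        dist_to_new = np.linalg.norm(features - features[nxt], axis=1)
        min_dist = np.minimum(min_dist, dist_to_new)
    return selected
```

For example, given two tight pairs of points and one isolated point, selecting three items returns one representative from each region rather than both members of a pair.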

Table 1: Common Active Learning Query Strategies in DTI Prediction

| Strategy | Mechanism | Advantages | Limitations |
|---|---|---|---|
| Greedy Acquisition | Selects compounds with highest predicted activity | Simple, computationally efficient; effective for molecular docking [31] | May get stuck in local optima; poor exploration |
| Uncertainty Sampling | Selects compounds with highest prediction uncertainty | Directly addresses model uncertainty; good for error reduction [1] | Sensitive to initial model bias |
| Upper Confidence Bound (UCB) | Balances prediction score and uncertainty | Balanced exploration-exploitation trade-off [31] | Requires tuning of balance parameter |
| Query-by-Committee | Selects compounds with highest committee disagreement | Robust; reduces model-specific bias [30] | Computationally intensive; requires multiple models |
| Diversity Sampling | Maximizes chemical space coverage | Ensures broad exploration [17] | May miss high-activity regions |
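
Three of the strategies in Table 1 reduce to simple scoring rules over a surrogate model's predicted mean and uncertainty. The sketch below assumes that a higher predicted value is better (docking scores, where lower is better, would need to be negated first). The function names and the default `beta` are illustrative choices, not values taken from the cited work.

```python
import numpy as np


def greedy_score(mean, std):
    """Exploit only: rank by predicted activity."""
    return np.asarray(mean, dtype=float)


def uncertainty_score(mean, std):
    """Explore only: rank by predictive uncertainty."""
    return np.asarray(std, dtype=float)


def ucb_score(mean, std, beta=2.0):
    """Upper confidence bound: predicted activity plus beta times uncertainty."""
    return np.asarray(mean, dtype=float) + beta * np.asarray(std, dtype=float)


def select_batch(scores, k):
    """Return the indices of the k highest-scoring candidates, best first."""
    return np.argsort(-np.asarray(scores, dtype=float))[:k]
```

The balance parameter `beta` is the tuning knob noted in the table: `beta = 0` recovers greedy acquisition, while large values approach pure uncertainty sampling.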

Experimental Design and Methodologies

Workflow Implementation

Implementing active learning for DTI prediction requires careful orchestration of computational and experimental components. The following workflow visualization captures the iterative nature of this process:

Figure: Active Learning Workflow for DTI Prediction

Protocol Details: QDπ Dataset Construction

The creation of the QDπ dataset exemplifies a rigorous active learning implementation [30]. The methodology employed four distinct strategies for incorporating molecular structures:

Direct Inclusion: Source databases with energies and forces already calculated at the ωB97M-D3(BJ)/def2-TZVPPD theory level were incorporated entirely.

Relabeling: Small databases without reference-level data were recalculated at the target theory level without geometry reoptimization.

Active Learning Pruning: For large databases, a query-by-committee approach identified non-redundant structures. The active learning cycle involved:

- Training 4 independent machine learning potential models with different random seeds

- Calculating, for each structure, the standard deviation of the predicted energies and forces across the four models

- Setting thresholds of 0.015 eV/atom for the energy standard deviation and 0.20 eV/Å for the force standard deviation

- Selecting up to 20,000 candidate structures per cycle whose deviations exceeded these thresholds

- Terminating when all structures were either included or excluded [30]

Active Learning Extension: For small databases containing only optimized structures, molecular dynamics sampling was combined with active learning to identify thermally accessible conformations.
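
The committee-based pruning step described above can be sketched as follows. The thresholds and the per-cycle cap come from the text. Everything else is an assumption made for illustration: the function names, the array layout, reducing per-atom force deviations to the worst atom, flagging a structure when either threshold is exceeded, and ranking flagged structures by force deviation when the cap applies.

```python
import numpy as np

ENERGY_STD_MAX = 0.015  # eV/atom, threshold quoted in the text
FORCE_STD_MAX = 0.20    # eV/Å, threshold quoted in the text
MAX_PER_CYCLE = 20_000  # cap on candidates per cycle quoted in the text


def committee_deviation(energies_per_atom, forces):
    """Per-structure disagreement across a committee of models.

    energies_per_atom: array (n_models, n_structures), in eV/atom.
    forces: list with one array per structure, each (n_models, n_atoms, 3), in eV/Å.
    """
    energy_std = np.std(np.asarray(energies_per_atom, dtype=float), axis=0)
    force_std = []
    for f in forces:
        f = np.asarray(f, dtype=float)
        sq_dev = ((f - f.mean(axis=0)) ** 2).sum(axis=-1)  # (n_models, n_atoms)
        per_atom = np.sqrt(sq_dev.mean(axis=0))            # (n_atoms,)
        force_std.append(per_atom.max())  # assumption: use the worst atom
    return energy_std, np.array(force_std)


def select_candidates(energy_std, force_std, max_candidates=MAX_PER_CYCLE):
    """Indices of structures exceeding either threshold, largest force deviation first."""
    flagged = np.flatnonzero((energy_std > ENERGY_STD_MAX) | (force_std > FORCE_STD_MAX))
    order = flagged[np.argsort(-force_std[flagged])]
    return [int(i) for i in order[:max_candidates]]
```

Structures that are not flagged are ones the committee already agrees on, so labeling them at the reference theory level would add little new information.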

Protocol Details: EviDTI Framework

The EviDTI framework incorporates evidential deep learning for uncertainty quantification in DTI prediction [32]. The experimental protocol includes:

Data Encoders:

- Protein feature encoder using ProtTrans pre-trained model for sequence features

- Drug feature encoder combining 2D topological graphs (via MG-BERT) and 3D spatial structures (via geometric deep learning)

- Light attention mechanism to capture local interactions at residue level

Evidence Layer:

- Concatenated protein and drug representations fed to evidential layer

- Output parameters used to calculate prediction probability and uncertainty values

- Direct learning of uncertainty without reliance on random sampling

Training Regimen:

- Benchmark datasets (DrugBank, Davis, KIBA) split 8:1:1 for training, validation, and testing

- Evaluation using seven metrics: accuracy, recall, precision, MCC, F1 score, AUC, and AUPR

- Cold-start evaluation following established practices for novel DTI prediction [32]
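
To make the training regimen concrete, the sketch below shows a random 8:1:1 index split and the seven listed metrics computed with NumPy alone. It is an illustration under stated assumptions, not the EviDTI evaluation code: the function names, the 0.5 decision threshold, and the use of average precision as the AUPR estimate are choices made for this example. Tied scores are ranked in input order, and both classes must be present.

```python
import numpy as np


def split_8_1_1(n_samples, seed=0):
    """Shuffle indices and split them 80% / 10% / 10%."""
    idx = np.random.default_rng(seed).permutation(n_samples)
    n_train, n_val = n_samples * 8 // 10, n_samples // 10
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def dti_metrics(y_true, y_score, threshold=0.5):
    """Binary classification metrics for interaction labels and predicted scores."""
    y_true = np.asarray(y_true).astype(bool)
    y_score = np.asarray(y_score, dtype=float)
    y_pred = y_score >= threshold
    tp = int(np.sum(y_pred & y_true))
    tn = int(np.sum(~y_pred & ~y_true))
    fp = int(np.sum(y_pred & ~y_true))
    fn = int(np.sum(~y_pred & y_true))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    denom = float(np.sqrt(float((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    # AUC: probability that a random positive outscores a random negative.
    diff = y_score[y_true][:, None] - y_score[~y_true][None, :]
    auc = float(np.mean((diff > 0) + 0.5 * (diff == 0)))
    # AUPR as average precision: mean precision at the rank of each positive.
    hits = y_true[np.argsort(-y_score, kind="stable")]
    precision_at_k = np.cumsum(hits) / np.arange(1, len(hits) + 1)
    aupr = float(precision_at_k[hits].mean())
    return {
        "accuracy": (tp + tn) / len(y_true), "recall": recall, "precision": precision,
        "mcc": mcc, "f1": f1, "auc": auc, "aupr": aupr,
    }
```

A cold-start evaluation would replace the random split with one that holds out whole drugs or whole targets, so that test pairs involve entities never seen during training.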

Performance Metrics and Benchmarking

Quantitative Performance Analysis

Table 2: Performance Comparison of DTI Prediction Methods on Benchmark Datasets

| Method | Dataset | Accuracy (%) | Precision (%) | MCC (%) | AUC (%) | AUPR (%) | Notes |
|---|---|---|---|---|---|---|---|
| EviDTI [32] | DrugBank | 82.02 | 81.90 | 64.29 | - | - | - |
| EviDTI [32] | Davis | 84.20 | 79.10 | 68.50 | 92.70 | 89.10 | - |
| GraphDTA [32] | Davis | 83.40 | 78.50 | 67.60 | 92.60 | 88.80 | - |
| EviDTI [32] | KIBA | 82.10 | 78.50 | 64.40 | 91.30 | 87.60 | - |
| MolTrans [32] | KIBA | 81.50 | 78.10 | 64.10 | 91.20 | 87.50 | - |
| Active Learning [15] | Chemogenomic | - | - | - | - | - | 10-25% of data required |
| COVDROP [17] | Solubility | - | - | - | - | - | 2x faster convergence |

Case Study: SARS-CoV-2 Main Protease Inhibitor Discovery

A recent application of active learning for SARS-CoV-2 Mpro inhibitor discovery demonstrates the practical utility of these approaches [5]. The implementation:

- Utilized the FEgrow software for building congeneric compound series in protein binding pockets

- Employed hybrid ML/molecular mechanics potential energy functions to optimize bioactive conformers

- Integrated active learning to efficiently search combinatorial space of linkers and functional groups

- Identified novel designs with high similarity to COVID Moonshot discoveries, using only structural information from a fragment screen

- Led to 19 compound designs being ordered and tested, three of which showed weak activity in fluorescence-based Mpro assays [5]

This case study highlights both the promise and current limitations of active-learning-driven DTI prediction, particularly the need for improved prioritization metrics for compound purchase decisions.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Resources for Active Learning in DTI Prediction

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| DP-GEN [30] | Software | Implements active learning for molecular dataset generation | QDπ dataset construction; active learning pruning/extension |
| EviDTI [32] | Framework | Evidential deep learning for DTI prediction with uncertainty | Reliable DTI prediction with confidence estimation |
| FEgrow [5] | Software | Builds congeneric series in protein binding pockets | Structure-based de novo hit expansion |
| gnina [5] | Scoring Function | Convolutional neural network for binding affinity prediction | Structure-based binding affinity estimation |
| DeepChem [17] | Library | Deep learning toolkit for drug discovery | Building and evaluating DTI prediction models |
| QDπ Dataset [30] | Data Resource | 1.6 million molecular structures with quantum mechanical properties | Training universal machine learning potentials |
| ProtTrans [32] | Protein Language Model | Protein sequence feature extraction | Encoding protein representations for DTI prediction |
| MG-BERT [32] | Molecular Graph Model | Drug 2D topological feature extraction | Encoding molecular representations for DTI prediction |

Uncertainty Quantification in DTI Prediction

A significant advancement in active learning for DTI prediction is the incorporation of explicit uncertainty quantification. Traditional deep learning models often produce overconfident predictions, which is particularly problematic in drug discovery where false positives can lead to costly experimental follow-up on inactive compounds [32].

Evidential Deep Learning approaches address this challenge by:

- Modeling epistemic uncertainty (from model parameters) and aleatoric uncertainty (from data noise) separately

- Providing well-calibrated confidence estimates for predictions

- Enabling prioritization of higher-confidence DTI predictions for experimental validation

- Reducing the risk of false positives in virtual screening campaigns [32]

The EviDTI framework demonstrates that uncertainty-aware models not only achieve competitive accuracy but also provide better-calibrated confidence estimates, allowing researchers to focus resources on the most promising drug-target pairs [32].
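
To show how an evidential output head can yield a probability and an uncertainty in a single forward pass, the sketch below uses the common Dirichlet-based formulation of evidential classification. The source does not spell out EviDTI's exact parameterization, so the softplus evidence function, the `alpha = evidence + 1` convention, and the function name are assumptions for illustration only.

```python
import numpy as np


def evidential_outputs(logits):
    """Map raw per-class outputs to class probabilities and an uncertainty score.

    logits: array (n_pairs, n_classes), e.g. two classes for
    "no interaction" / "interaction" (a hypothetical layout).
    """
    logits = np.asarray(logits, dtype=float)
    evidence = np.logaddexp(0.0, logits)   # softplus keeps evidence non-negative
    alpha = evidence + 1.0                 # Dirichlet concentration parameters
    strength = alpha.sum(axis=-1, keepdims=True)
    prob = alpha / strength                # expected class probabilities
    n_classes = alpha.shape[-1]
    uncertainty = n_classes / strength.squeeze(-1)  # in (0, 1]; 1 means no evidence
    return prob, uncertainty
```

Under this formulation a pair with strong evidence for one class gets a confident probability and low uncertainty, while a pair with almost no evidence for either class gets a probability near 0.5 and an uncertainty near 1. This is the signal that lets a screening campaign separate confident predictions from uninformed ones.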

Architectural Framework for Multi-Target Prediction

The following architecture visualization illustrates how modern DTI prediction systems integrate multiple data modalities and active learning components:

Figure: Multi-Modal DTI Prediction Architecture

Future Directions and Challenges

Despite significant progress, active learning for DTI prediction in a multi-target paradigm faces several challenges. Data sparsity and the "cold start" problem for new drugs or targets remain significant hurdles [29]. Integration of multi-omics data and sophisticated modeling of polypharmacology effects present both opportunities and computational complexities [1].

Promising research directions include: