Active Learning in Computational Chemistry: Accelerating Drug Discovery with AI


Abstract

This article provides a comprehensive overview of active learning (AL), a transformative machine learning paradigm that is reshaping computational chemistry and drug discovery. By strategically selecting the most informative data for expensive calculations or experiments, AL creates iterative feedback loops that dramatically accelerate tasks like virtual screening, molecular optimization, and property prediction. We explore the foundational concepts of AL workflows, detail its methodological applications in docking and free energy calculations, address key troubleshooting and optimization challenges, and validate its performance against traditional brute-force methods. Aimed at researchers and drug development professionals, this review synthesizes current evidence to demonstrate how AL enables more efficient navigation of vast chemical spaces, leading to faster identification of potent inhibitors and optimized materials.

What is Active Learning? The Foundational Framework Reshaping Chemical Discovery

Active learning represents a paradigm shift in machine learning, addressing the critical bottleneck of data annotation in computationally intensive fields. This section examines its core mechanism, an iterative feedback loop for intelligent data selection, within the context of computational chemistry and drug development. By enabling models to selectively query the most informative data points for labeling, active learning improves data efficiency and reduces the cost and time of experimental and simulation-based data acquisition. The section details the operational principles, query strategies, and experimental protocols underpinning successful active learning implementations, providing researchers and scientists with a framework for accelerating compound discovery and optimization.

In computational chemistry and drug discovery, the acquisition of high-quality, labeled data—such as binding affinities, solubility metrics, or toxicity profiles—often requires expensive wet-lab experiments, complex simulations, or expert annotation. This creates a fundamental constraint on the pace of research. Traditional supervised learning models require vast, pre-labeled datasets, a requirement that is often economically and logistically prohibitive.

Active learning (AL) directly confronts this challenge. It is a supervised machine learning approach that optimizes the annotation process by strategically selecting the most valuable data points to label [1]. Unlike passive learning, which uses a static, pre-defined dataset, an active learning algorithm interactively queries a human expert or an information source (the "oracle") to label new data points with the desired outputs [2]. The primary objective is to minimize the amount of labeled data required to train a model to a desired level of performance, thereby maximizing learning efficiency [1] [3].

Core Concepts and the Active Learning Workflow

The essence of active learning is an iterative cycle that prioritizes exploration of the most informative regions of chemical space. This process is governed by a query strategy that determines which unlabeled data points are selected for annotation.

The Standard Active Learning Cycle

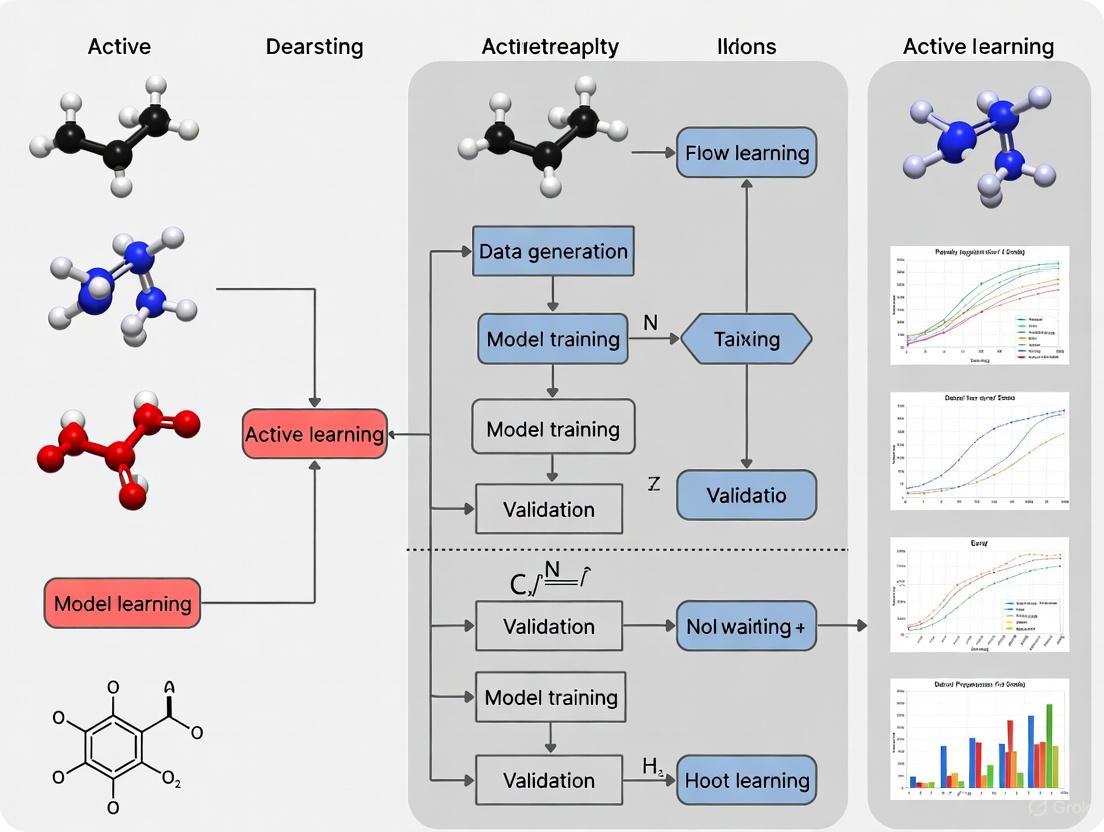

The following Graphviz diagram illustrates the foundational, model-agnostic workflow of an active learning system.

This workflow can be broken down into the following key stages [1] [4] [3]:

1. Initialization: The process begins with a small, initially labeled dataset, L.

2. Model Training: A machine learning model is trained on the current set of labeled data.

3. Prediction & Selection: The trained model is used to predict on a large pool of unlabeled data, U. A query strategy is then applied to select the most informative candidates, C, from this pool.

4. Oracle Querying: The selected candidates are presented to an oracle (e.g., a human expert, a high-throughput assay, or a high-fidelity simulation) for labeling.

5. Dataset Update: The newly labeled data is added to the training set L.

6. Model Update: The model is retrained on the expanded, updated training set.

7. Iteration: Steps 3 through 6 are repeated until a stopping criterion is met, such as satisfactory model performance, depletion of resources, or diminishing returns on model improvement.
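The stages above can be sketched as a short, model-agnostic loop. This is a minimal illustration rather than any cited implementation: the names `active_learning_loop`, `toy_oracle`, `fit_nearest_neighbor`, and `distance_to_nearest_label` are hypothetical, the "model" is a toy nearest-neighbor lookup, and the stopping criterion is simply a fixed number of rounds.

```python
import math
import random


def active_learning_loop(pool, oracle, fit, informativeness,
                         n_init=5, batch_size=3, n_rounds=4, seed=0):
    """Pool-based active learning; all callables are hypothetical stand-ins."""
    rng = random.Random(seed)
    unlabeled = list(pool)
    rng.shuffle(unlabeled)
    # Initialization: label a small random subset to form L.
    labeled = {x: oracle(x) for x in unlabeled[:n_init]}
    unlabeled = unlabeled[n_init:]
    model = fit(labeled)  # Model training on the current labeled set.
    for _ in range(n_rounds):  # Stopping criterion: fixed round budget.
        if not unlabeled:
            break
        # Prediction & selection: rank the pool U and keep the top candidates C.
        ranked = sorted(unlabeled,
                        key=lambda x: informativeness(model, x),
                        reverse=True)
        candidates = ranked[:batch_size]
        # Oracle querying and dataset update.
        for x in candidates:
            labeled[x] = oracle(x)
        unlabeled = [x for x in unlabeled if x not in labeled]
        model = fit(labeled)  # Model update on the expanded set.
    return model, labeled


def toy_oracle(x):
    """Stand-in for an expensive assay or simulation."""
    return math.sin(6.0 * x)


def fit_nearest_neighbor(labeled):
    """Toy 'model': just remembers the labeled points."""
    return dict(labeled)


def distance_to_nearest_label(model, x):
    """Toy informativeness: points far from any labeled point score higher."""
    return min(abs(x - known) for known in model)


pool = [i / 100 for i in range(101)]
model, labeled = active_learning_loop(
    pool, toy_oracle, fit_nearest_neighbor, distance_to_nearest_label)
print(len(labeled), "points labeled out of", len(pool))
```

With the defaults, the loop labels 5 initial points plus 3 per round for 4 rounds. In a real campaign the three callables would be replaced by an assay or simulation, a trained predictor, and one of the query strategies discussed in this article.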

Query Scenarios and Strategies

The implementation of the active learning cycle can vary based on how data is presented and evaluated. The three primary scenarios are:

- Pool-based sampling: The most common scenario, where the algorithm evaluates the entire pool of unlabeled data before selecting the best candidates for labeling [2]. This is memory-intensive but highly effective.

- Stream-based selective sampling: Each unlabeled data point is examined one at a time in a stream, and the model decides in real-time whether to query for its label or discard it [1] [4]. This is more computationally efficient for continuous data streams.

- Membership query synthesis: The algorithm generates new, synthetic data instances from an underlying distribution for the oracle to label [2]. This is less common in chemistry due to the challenge of generating chemically valid and meaningful structures.
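To make the contrast with pool-based sampling concrete, the following sketch shows one way a stream-based decision rule could look. It is an assumption-laden toy: `stream_selective_sampling`, `toy_predict_proba`, and `toy_oracle` are hypothetical names, and the rule (query when the margin between the top two class probabilities falls below a threshold) is just one plausible choice.

```python
import math


def stream_selective_sampling(stream, predict_proba, oracle,
                              threshold=0.2, budget=10):
    """Examine items one at a time; query the oracle only for ambiguous ones."""
    queried = []
    for x in stream:
        if len(queried) >= budget:
            break  # Labeling budget exhausted.
        probs = sorted(predict_proba(x), reverse=True)
        margin = probs[0] - probs[1]
        if margin < threshold:
            queried.append((x, oracle(x)))  # Ambiguous: ask for a label.
        # Otherwise the item is discarded without labeling.
    return queried


def toy_predict_proba(x):
    """Hypothetical two-class model: logistic function of a 1-D feature."""
    p_active = 1.0 / (1.0 + math.exp(-x))
    return [p_active, 1.0 - p_active]


def toy_oracle(x):
    """Hypothetical ground truth: active (1) when the feature is positive."""
    return int(x > 0)


stream = [-3.0, -0.1, 2.0, 0.05, 0.3, -4.0]
queried = stream_selective_sampling(stream, toy_predict_proba, toy_oracle)
print([x for x, _ in queried])
```

Only the items near the decision boundary (feature values close to zero) are sent to the oracle; confidently classified items pass through unlabeled.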

The intelligence of an active learning system is defined by its query strategy. The table below summarizes the most prominent strategies.

Table 1: Key Active Learning Query Strategies

| Strategy | Core Principle | Typical Measure | Advantage in Chemistry |

|---|---|---|---|

| Uncertainty Sampling [1] [3] | Selects data points where the model's prediction is least certain. | Least Confidence, Margin Sampling, Entropy. | Focuses experimental resources on compounds whose activity is ambiguous, refining decision boundaries. |

| Query By Committee (QBC) [3] [2] | Trains multiple models (a "committee"); selects points where committee disagreement is highest. | Vote Entropy, Kullback-Leibler (KL) Divergence. | Reduces model bias and variance by leveraging ensemble methods. |

| Diversity Sampling [1] [4] | Selects a set of data points that are representative of the entire unlabeled pool. | Clustering, Feature Space Coverage. | Ensures broad exploration of chemical space and prevents over-sampling from a single region. |

| Expected Model Change [2] | Selects data points that would cause the greatest change to the current model if their labels were known. | Gradient of the objective function. | Aims for maximum impact on model parameters per labeling effort. |

| Hybrid Approaches [3] | Combines multiple strategies, e.g., selecting data that is both uncertain and diverse. | Custom combination of above measures. | Balances exploration (diversity) and exploitation (uncertainty), often yielding superior results. |
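As an illustration of the Query By Committee row, vote entropy can be computed directly from the class labels predicted by each committee member. The sketch below uses hypothetical compound names and votes; it is not taken from any of the cited studies.

```python
import math
from collections import Counter


def vote_entropy(votes):
    """Entropy of the committee's vote distribution for one compound."""
    counts = Counter(votes)
    n = len(votes)
    return -sum((c / n) * math.log(c / n) for c in counts.values())


# Hypothetical votes (1 = active, 0 = inactive) from a four-member committee.
committee_votes = {
    "cpd_A": [1, 1, 1, 1],  # full agreement
    "cpd_B": [1, 0, 1, 0],  # maximal disagreement
    "cpd_C": [1, 1, 1, 0],  # partial disagreement
}

ranking = sorted(committee_votes,
                 key=lambda name: vote_entropy(committee_votes[name]),
                 reverse=True)
print(ranking)  # compounds ordered from most to least disputed
```

The compound on which the committee splits evenly ranks first, so it would be the first candidate sent to the oracle.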

Active Learning in Practice: Computational Chemistry Case Studies

Case Study 1: Hit Discovery for the WDR5 Protein

The ChemScreener workflow provides a compelling example of active learning's power in early drug discovery. This multi-task active learning framework was designed to navigate large, diverse chemical libraries starting from limited initial data.

Table 2: Experimental Protocol & Results for WDR5 Inhibitor Screening

| Protocol Aspect | Detailed Methodology |

|---|---|

| Target & Objective | Identify novel inhibitors of the WDR5 protein using iterative single-dose HTRF (Homogeneous Time-Resolved Fluorescence) screens. |

| Initial Data | A primary HTS (High-Throughput Screen) with a baseline hit rate of 0.49%. |

| Active Learning Setup | Used a Balanced-Ranking acquisition strategy that leveraged ensemble uncertainty to explore novel chemistry while prioritizing predicted activity. The workflow iteratively selected compounds for experimental testing. |

| Iteration & Validation | Over five iterative cycles, 1,760 compounds were selected and tested. Hit compounds were consolidated with close analogs, and 269 compounds were retested and clustered. Promising hits advanced to dose-response assays and were validated as binders by Differential Scanning Fluorimetry (DSF). |

| Key Results | The active learning approach increased the average hit rate to 5.91% (a >10x enrichment over HTS), yielding 104 hits from 1,760 compounds. It identified three novel scaffold series and three singleton scaffolds de novo as validated hits [5]. |

Case Study 2: Prioritizing Compounds for SARS-CoV-2 Mpro

A 2025 study integrated active learning with the FEgrow software package for structure-based de novo design targeting the SARS-CoV-2 main protease (Mpro) [6].

Table 3: Experimental Protocol for SARS-CoV-2 Mpro Inhibitor Design

| Protocol Aspect | Detailed Methodology |

|---|---|

| Target & Objective | Design and prioritize synthesizable compounds inhibiting SARS-CoV-2 Mpro using fragment-based structural data. |

| Core Technology | FEgrow: An open-source package that builds congeneric ligand series in protein binding pockets. It uses hybrid ML/MM (Machine Learning/Molecular Mechanics) potential energy functions to optimize bioactive conformers. |

| Active Learning Integration | 1. Build & Score: FEgrow automatically generated and scored compound designs using a gnina CNN scoring function. 2. Train ML Model: The scored compounds were used to train a machine learning model to predict the scoring function output. 3. Prioritize & Seed: The ML model predicted scores for the vast combinatorial space, prioritizing the next batch of compounds. The workflow was "seeded" with purchasable compounds from the Enamine REAL database. |

| Key Results | The active learning-driven workflow identified several novel designs with high similarity to molecules discovered by the independent COVID Moonshot effort. Of 19 compounds purchased and tested, three showed weak activity in a fluorescence-based Mpro assay, validating the approach for prospective compound prioritization [6]. |

The following Graphviz diagram maps the specific computational and experimental workflow from this case study.

Quantitative Benchmarking of Active Learning Strategies

A comprehensive 2025 benchmark study evaluated 17 different active learning strategies within an Automated Machine Learning (AutoML) framework across nine materials science regression tasks, providing critical insights into strategy selection for scientific domains [7].

Table 4: Benchmark Performance of Active Learning Strategies in Scientific Regression Tasks

| Strategy Category | Example Methods | Early-Stage (Data-Scarce) Performance | Late-Stage (Data-Rich) Performance |

|---|---|---|---|

| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperformed random sampling and geometry-based heuristics. | Performance gap narrows as all methods converge with sufficient data. |

| Diversity-Hybrid | RD-GS (combining Representativeness and Diversity) | Clearly outperformed baseline, effectively balancing exploration and exploitation. | Similarly converges with other high-performing methods. |

| Geometry-Only | GSx, EGAL | Underperformed compared to uncertainty and hybrid methods in initial phases. | Converges with other strategies with larger labeled datasets. |

| Random Sampling | (Baseline) | Served as the baseline for comparison; consistently inferior in early stages. | Matches the performance of advanced strategies once the dataset is large enough. |

The key finding is that the choice of active learning strategy is most critical under small-data conditions. Early in the process, uncertainty-driven and diversity-hybrid strategies provide significant performance gains, thereby maximizing the return on investment for each expensive data point. As the labeled set grows, the marginal benefit of intelligent selection diminishes.

For researchers implementing an active learning pipeline in computational chemistry, the following tools and resources are essential.

Table 5: Essential Research Reagents and Software Solutions for Active Learning

| Item / Resource | Function / Purpose | Relevance to Active Learning Workflow |

|---|---|---|

| FEgrow [6] | Open-source Python package for building and optimizing congeneric ligand series in a protein binding pocket. | Serves as the core "oracle" or simulation step for structure-based active learning, generating and scoring compound designs. |

| gnina [6] | A convolutional neural network-based scoring function for predicting protein-ligand binding affinity. | Used within workflows like FEgrow to provide a rapid, ML-based proxy for experimental binding affinity during the scoring phase. |

| RDKit [6] | Open-source cheminformatics and machine learning software. | Handles fundamental tasks like molecule manipulation, descriptor generation, and conformer ensemble generation (via ETKDG). |

| Enamine REAL Database [6] | A multi-billion compound catalog of readily synthesizable (on-demand) molecules. | Used to "seed" the chemical search space, ensuring that designed compounds are synthetically tractable and available for purchase and testing. |

| High-Performance Computing (HPC) Cluster [6] | Parallel computing infrastructure. | Enables the automation and parallelization of computationally intensive steps (e.g., FEgrow building/scoring) across large compound libraries. |

| Active Learning Software | Libraries like modAL (Python) or custom implementations. | Provides the framework for implementing the active learning loop, query strategies, and model management. |

Active learning establishes a powerful, iterative feedback loop for intelligent data selection, directly addressing the fundamental challenge of data scarcity in computational chemistry and drug development. By strategically guiding experimentation and simulation towards the most informative compounds, it demonstrably enriches hit rates, discovers novel chemotypes, and optimizes resource allocation. As computational power and algorithmic sophistication grow, the integration of active learning with automated workflows like AutoML and high-fidelity simulators like FEgrow is poised to become a standard paradigm, fundamentally accelerating the journey from hypothesis to validated compound.

Active learning (AL) is a machine learning paradigm that addresses a critical bottleneck in computational chemistry: the prohibitive cost of generating high-quality reference data using quantum mechanical methods. By iteratively and intelligently selecting the most valuable data points for a human or computational oracle to label, AL constructs accurate models with far fewer expensive calculations. This guide details the core cycle—query strategy, oracle, and model update—that makes this efficient exploration of chemical space possible.

The Core Active Learning Cycle

The fundamental process of active learning is an iterative loop designed to maximize model performance while minimizing oracle calls. The cycle can be broken down into three key stages, each described in the sections that follow: the query strategy, the oracle, and the model update.

The Query Strategy: Selecting Informative Data

The query strategy is the intelligence of the AL cycle, determining which unlabeled data points would be most valuable for the model to learn from next. Its goal is to find the optimal trade-off between exploration (sampling diverse regions of chemical space) and exploitation (focusing on uncertain regions relevant to the property of interest).

Primary Query Strategy Frameworks

| Framework | Core Principle | Best Use Cases in Computational Chemistry |

|---|---|---|

| Uncertainty Sampling [8] | Queries instances where the model's prediction is least confident. | Ideal for refining model predictions in specific regions of the potential energy surface (PES). |

| Query-by-Committee [9] | Trains multiple models (a committee); queries instances where the committee disagrees the most. | Reduces model bias and improves generalizability. |

| Expected Model Change | Queries instances that would cause the greatest change to the current model parameters. | Prioritizes data with high potential impact. |

| Diversity Sampling | Selects a batch of data points that are diverse from each other. | Ensures broad coverage of chemical space and prevents oversampling. |
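The diversity sampling row can be illustrated with a greedy max-min picker. The sketch below is a toy under stated assumptions: fingerprints are represented as plain Python sets of "on" bit indices, Tanimoto distance is used as the dissimilarity measure, and the names `tanimoto_distance`, `maxmin_select`, and the example compounds are all hypothetical.

```python
def tanimoto_distance(bits_a, bits_b):
    """1 - Tanimoto similarity between two sets of 'on' fingerprint bits."""
    union = len(bits_a | bits_b)
    if union == 0:
        return 0.0
    return 1.0 - len(bits_a & bits_b) / union


def maxmin_select(fingerprints, already_labeled, batch_size):
    """Greedily pick compounds farthest from everything chosen so far."""
    reference = list(already_labeled)
    candidates = [name for name in fingerprints if name not in reference]
    selected = []
    while candidates and len(selected) < batch_size:
        if reference:
            # Score each candidate by its distance to the nearest reference.
            best = max(
                candidates,
                key=lambda name: min(
                    tanimoto_distance(fingerprints[name], fingerprints[ref])
                    for ref in reference),
            )
        else:
            best = candidates[0]  # Nothing to compare against yet.
        selected.append(best)
        reference.append(best)
        candidates.remove(best)
    return selected


# Hypothetical fingerprints given as sets of 'on' bit positions.
fingerprints = {
    "seed": {1, 2, 3, 4},
    "near": {1, 2, 3, 5},
    "mid": {1, 2, 7, 8},
    "far": {9, 10, 11},
}
batch = maxmin_select(fingerprints, already_labeled=["seed"], batch_size=2)
print(batch)
```

The close analog of the labeled seed compound is left out of the batch, which is the oversampling-avoidance behavior the table describes.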

Uncertainty sampling is the most commonly applied framework, with several specific methods for quantifying uncertainty [8]:

- Least Confidence: Selects the instance where the model has the lowest probability for its most likely prediction.

- Margin Sampling: Selects the instance with the smallest difference in probability between the two most likely predictions.

- Entropy Sampling: Selects the instance with the largest entropy (i.e., the highest overall uncertainty across all possible labels).
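The three measures above are short enough to write out directly. The following sketch uses hypothetical class-probability vectors for three compounds to show that the measures do not always agree on which instance to query.

```python
import math


def least_confidence(probs):
    """1 - probability of the most likely class (higher = more uncertain)."""
    return 1.0 - max(probs)


def margin(probs):
    """Gap between the two most likely classes (lower = more uncertain)."""
    top_two = sorted(probs, reverse=True)[:2]
    return top_two[0] - top_two[1]


def entropy(probs):
    """Shannon entropy over all classes (higher = more uncertain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


# Hypothetical three-class predictions for three unlabeled compounds.
predictions = {
    "cpd_A": [0.90, 0.05, 0.05],
    "cpd_B": [0.50, 0.30, 0.20],
    "cpd_C": [0.45, 0.44, 0.11],
}

pick_least_confidence = max(predictions, key=lambda k: least_confidence(predictions[k]))
pick_margin = min(predictions, key=lambda k: margin(predictions[k]))
pick_entropy = max(predictions, key=lambda k: entropy(predictions[k]))
print(pick_least_confidence, pick_margin, pick_entropy)
```

In this toy case, least confidence and margin sampling both select the compound whose top two classes are nearly tied, while entropy sampling prefers the compound whose probability mass is spread most evenly across all three classes.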

For complex simulations like non-adiabatic molecular dynamics, more sophisticated, physics-informed uncertainty quantification is critical. This involves ensuring low errors not just in energies, but also in crucial properties like energy gaps between electronic states, which are essential for calculating hopping probabilities in surface hopping dynamics [10].

The Oracle: Source of Ground Truth

In computational chemistry, the oracle is typically a high-accuracy, computationally expensive method that provides ground-truth data. The choice of oracle is a major determinant of the cost and accuracy of the entire AL workflow.

Oracle Methods in Computational Chemistry

| Oracle Method | Description | Relative Cost | Typical Application |

|---|---|---|---|

| Coupled Cluster (CCSD(T)) | The "gold standard" of quantum chemistry [11]. | Very High | Small molecules; training highly accurate surrogate models. |

| Density Functional Theory (DFT) | Workhorse method for systems of medium size [11]. | Medium | Most material and molecular property predictions. |

| Force Fields (e.g., UFF) | Fast, classical potentials [9]. | Low | Generating initial data; testing AL protocols. |

| Molecular Docking (e.g., Glide) | Scores protein-ligand binding affinity [12] [13]. | Medium to High | Virtual screening of ultra-large chemical libraries. |

A powerful concept is the bidirectional active learning framework, where the model and oracle improve each other. In this setup, the model can also assist oracle learning by selectively transferring its prior knowledge. For instance, in a study with 252 clinicians, a model helped train the human oracles by showing them samples it found uncertain, which enhanced both oracle accuracy and final model performance [14].

The Model Update: Incorporating New Knowledge

Once the oracle provides labels for the queried data, the model must be updated efficiently. This often involves fine-tuning a pre-trained model rather than training from scratch. For example, one can start with a universal potential like M3GNet and fine-tune it on-the-fly during a molecular dynamics simulation, a process known as Active Learning MD [9].

For learning complex manifolds of electronic states, the model architecture itself is crucial. Multi-state models can learn an arbitrary number of excited states across different molecules. These models are often trained using a composite loss function, $L$, that incorporates errors in energies ($L_E$), forces ($L_F$), and critically, the energy gaps between states ($L_{\text{gap}}$) [10]:

$$L = \omega_E L_E + \omega_F L_F + \omega_{\text{gap}} L_{\text{gap}}$$

This physics-informed training ensures accurate prediction of energy gaps, which is vital for the stability of photochemical dynamics simulations [10].
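A small numerical sketch may help show how the three terms combine. The functional form of each term is an assumption here: the code uses mean squared error for every term and takes gaps between adjacent states only, which may differ from the definitions in [10]. The function names and example values are hypothetical.

```python
def mean_squared_error(predicted, reference):
    """Plain MSE over two equal-length sequences."""
    return sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference)


def adjacent_gaps(state_energies):
    """Energy gaps between neighboring electronic states (assumed definition)."""
    return [upper - lower
            for lower, upper in zip(state_energies, state_energies[1:])]


def composite_loss(pred_energies, ref_energies, pred_forces, ref_forces,
                   w_energy=1.0, w_force=1.0, w_gap=1.0):
    """Weighted sum of energy, force, and gap errors (assumed MSE terms)."""
    loss_energy = mean_squared_error(pred_energies, ref_energies)
    loss_force = mean_squared_error(pred_forces, ref_forces)
    loss_gap = mean_squared_error(adjacent_gaps(pred_energies),
                                  adjacent_gaps(ref_energies))
    return w_energy * loss_energy + w_force * loss_force + w_gap * loss_gap


# Hypothetical energies for three electronic states and two force components.
ref_energies = [0.0, 1.0, 1.5]
pred_energies = [0.1, 1.0, 1.4]
ref_forces = [0.0, -0.2]
pred_forces = [0.1, -0.2]

total = composite_loss(pred_energies, ref_energies, pred_forces, ref_forces)
print(round(total, 6))
```

Raising `w_gap` relative to the other weights penalizes gap errors more heavily, even when the individual state energies are already close to the reference values.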

Experimental Protocols & Benchmarking

Protocol: Active Learning for Virtual Screening

Active Learning Glide is a commercial implementation that exemplifies a robust protocol for drug discovery [13]:

- Initial Sampling: A small, diverse subset (e.g., 1%) of an ultra-large chemical library is docked using Glide.

- Model Training: A machine learning model is trained to predict docking scores based on molecular features.

- Iterative Cycle:

  - The model screens the entire library and predicts the top-scoring compounds.

  - A batch of these high-priority compounds (and often some diverse or uncertain ones) is selected for docking with Glide (the oracle).

  - The model is retrained on the newly acquired data.

- Final Evaluation: The process repeats until a stopping criterion is met (e.g., compute budget). The final model is used to identify the best hits.

Performance: This protocol can recover approximately 70% of the top-scoring hits found by exhaustively docking the entire library, at just 0.1% of the computational cost [13].
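The batch-selection step in the iterative cycle can be sketched as follows. This is not the Active Learning Glide implementation, whose internals are not described here; `select_docking_batch` and its parameters are hypothetical, and the sketch assumes that more negative predicted docking scores are better.

```python
def select_docking_batch(predicted_scores, uncertainties, n_greedy, n_explore):
    """Pick top-predicted compounds plus a few highly uncertain ones."""
    # Assumption: more negative docking score = stronger predicted binding.
    by_score = sorted(predicted_scores, key=predicted_scores.get)
    greedy = by_score[:n_greedy]
    remaining = [name for name in by_score if name not in greedy]
    # Exploration slots go to the least certain of the remaining compounds.
    explore = sorted(remaining, key=uncertainties.get, reverse=True)[:n_explore]
    return greedy + explore


# Hypothetical model outputs for five undocked compounds.
predicted_scores = {"a": -9.5, "b": -8.0, "c": -7.2, "d": -6.9, "e": -5.0}
uncertainties = {"a": 0.1, "b": 0.2, "c": 0.9, "d": 0.3, "e": 1.5}

batch = select_docking_batch(predicted_scores, uncertainties,
                             n_greedy=2, n_explore=1)
print(batch)
```

The batch returned would then be docked by the oracle and appended to the training set before the next round.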

Protocol: Active Learning for Molecular Dynamics

The Simple (MD) Active Learning workflow in the Amsterdam Modeling Suite provides a detailed protocol for on-the-fly training of machine learning potentials [9]:

- Input Setup: Define the initial molecular structure, reference engine (e.g., DFT, UFF), MD parameters, and the base ML model (e.g., M3GNet).

- Active Learning Loop:

  - The MD simulation runs for a segment (e.g., 10 frames).

  - A reference calculation is performed on the current structure and compared to the ML model's prediction.

  - Success Criteria: If the error in energies/forces is below a threshold, the simulation continues.

  - Retraining: If the error is too high, the simulation is rolled back, and the model is retrained including this new data point. The step is reattempted.

This protocol ensures the ML potential remains accurate throughout the simulation, even as the system explores new configurations [9].
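The control flow of this loop (run a segment, check against the reference, roll back and retrain on failure) can be sketched independently of any particular software. The code below does not use the Amsterdam Modeling Suite API; every function name is hypothetical, and the "model" is reduced to a single number marking how far along the trajectory its predictions are trusted.

```python
def active_learning_md(n_segments, model, state, run_segment, reference_error,
                       retrain, max_error=0.05, max_attempts=5):
    """Advance MD segment by segment, retraining whenever the check fails."""
    n_retrain = 0
    for _ in range(n_segments):
        for _attempt in range(max_attempts):
            trial_state = run_segment(model, state)
            error = reference_error(model, trial_state)
            if error <= max_error:
                state = trial_state  # Success: accept the segment.
                break
            # Failure: keep the old state (rollback) and retrain with the
            # newly evaluated structure, then reattempt the segment.
            model = retrain(model, trial_state)
            n_retrain += 1
        else:
            raise RuntimeError("model did not reach the error threshold")
    return state, model, n_retrain


def toy_run_segment(trusted_until, frame):
    """Hypothetical MD segment: advance the trajectory by 10 frames."""
    return frame + 10


def toy_reference_error(trusted_until, frame):
    """Hypothetical error: grows once the trajectory leaves the trusted range."""
    return 0.02 * max(0, frame - trusted_until)


def toy_retrain(trusted_until, frame):
    """Hypothetical retraining: extends the trusted range to the new frame."""
    return max(trusted_until, frame)


final_frame, final_model, n_retrain = active_learning_md(
    3, 15, 0, toy_run_segment, toy_reference_error, toy_retrain)
print(final_frame, final_model, n_retrain)
```

In this toy run the first segment passes the check, while the second and third each trigger one retraining step before being accepted.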

Benchmarking Results

Direct benchmarking across different docking engines integrated into an active learning framework (like MolPAL) reveals how the oracle impacts performance [12]:

| AL Docking Protocol | Key Performance Finding |

|---|---|

| Vina-MolPAL | Achieved the highest top-1% recovery of active compounds [12]. |

| SILCS-MolPAL | Reached comparable accuracy and recovery at larger batch sizes, while providing a more realistic membrane environment description [12]. |

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational "reagents" and tools essential for implementing active learning in computational chemistry.

| Item | Function in Active Learning |

|---|---|

| Reference Engine (e.g., ADF, DFTB, Glide) | The computational oracle; provides high-accuracy ground-truth labels (energies, forces, scores) for selected molecular structures [9] [13]. |

| Universal Potential (e.g., M3GNet-UP-2022) | A pre-trained machine learning potential used as a starting point for transfer learning, significantly accelerating convergence for new systems [9]. |

| Molecular Dynamics Engine | Propagates nuclear trajectories, generating new structures for the AL algorithm to evaluate and query [9]. |

| Chemical Library (e.g., Enamine REAL, ZINC) | The vast search space of synthesizable molecules screened in virtual screening campaigns [13]. |

| Structural Descriptors (e.g., ANI-type, E(3)-equivariant) | Mathematical representations that convert atomic coordinates into a format usable by machine learning models, crucial for capturing physical symmetries [11] [10]. |

| Active Learning Software (e.g., Schrödinger's AL Apps, AMS, MolPAL) | Integrated platforms that automate the core AL cycle, managing query selection, job submission to the oracle, and model retraining [12] [9] [13]. |

The fundamental challenge in computational chemistry is the sheer vastness of chemical space. With estimates of up to 10^60 drug-like compounds, exhaustive experimental or computational evaluation is impossible [15]. Traditional methods, which rely on screening large static libraries, become computationally prohibitive and inefficient. This creates a critical data scarcity problem: high-quality data is expensive to produce, yet essential for building accurate predictive models.

Active Learning (AL) presents a paradigm shift from this traditional approach. It is an iterative machine learning process that intelligently selects the most informative data points for evaluation, thereby maximizing knowledge gain while minimizing resource expenditure [16]. By strategically exploring chemical space, AL addresses the core data problem, making the exploration of vast chemical landscapes not only feasible but efficient. This guide examines why AL is uniquely suited for this task, providing a technical overview of its methodologies, applications, and implementations for researchers and drug development professionals.

Core Principles of Active Learning in Chemistry

At its heart, AL is a closed-loop feedback system. Instead of training a model on a static, pre-selected dataset, an AL system starts with a small initial dataset and iteratively improves the model by selecting new data points based on specific acquisition strategies [15]. The core cycle involves:

- Training a model on the current labeled dataset.

- Using the model to analyze a large pool of unlabeled data.

- Selecting the most "informative" candidates based on an acquisition function.

- Querying an "oracle" (e.g., a computationally expensive simulation or a wet-lab experiment) to get labels for the selected candidates.

- Adding the newly labeled data to the training set and repeating the process.

This iterative refinement allows the model to learn rapidly and direct resources toward the most promising regions of chemical space. In computational chemistry, the "oracle" can be a high-level quantum mechanics calculation, an alchemical free energy perturbation (FEP) calculation, or a molecular docking simulation [15] [13].

Key Acquisition Strategies

The "acquisition function" is the intelligence behind the AL loop, determining which compounds to evaluate next. Several strategies have been developed, each with distinct advantages:

- Uncertainty Sampling: Selects compounds for which the model's prediction is most uncertain, often measured by the variance in an ensemble of models (Query by Committee) [17]. This is ideal for improving model accuracy in under-explored regions.

- Greedy (or Exploitation) Sampling: Selects the compounds predicted to have the best properties (e.g., highest binding affinity) [15]. This focuses the search on optimizing for performance.

- Diversity Sampling: Selects a diverse set of compounds to ensure broad coverage of the chemical space, preventing the model from becoming overly specialized [15].

- Mixed Strategies: Hybrid approaches, such as selecting compounds that are both high-performing and uncertain, balance exploration of new areas with exploitation of known promising regions [15]. A "narrowing" strategy that begins with broad exploration before focusing on optimization has also been successfully employed [15].
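The strategies listed above differ mainly in how they rank the same ensemble output. The sketch below is illustrative only: it assumes an ensemble of models that each predict an affinity-like value where higher is better, and the function names, the `shortlist_factor` parameter, and the example predictions are hypothetical rather than taken from [15].

```python
from statistics import mean, pstdev


def summarize(ensemble_predictions):
    """Per-compound (mean, standard deviation) across ensemble members."""
    return {name: (mean(values), pstdev(values))
            for name, values in ensemble_predictions.items()}


def greedy(summary, k):
    """Exploitation: highest predicted value first (assumes higher is better)."""
    return sorted(summary, key=lambda n: summary[n][0], reverse=True)[:k]


def uncertain(summary, k):
    """Exploration: largest ensemble disagreement first."""
    return sorted(summary, key=lambda n: summary[n][1], reverse=True)[:k]


def mixed(summary, k, shortlist_factor=3):
    """Shortlist the top predictions, then keep the most uncertain of them."""
    shortlist = greedy(summary, k * shortlist_factor)
    return sorted(shortlist, key=lambda n: summary[n][1], reverse=True)[:k]


# Hypothetical predictions from a three-member ensemble.
ensemble_predictions = {
    "a": [9.0, 9.1, 8.9],
    "b": [8.5, 7.5, 9.5],
    "c": [6.0, 6.1, 5.9],
    "d": [7.0, 9.0, 5.0],
    "e": [8.0, 8.2, 7.8],
}
summary = summarize(ensemble_predictions)
print(greedy(summary, 1), uncertain(summary, 1), mixed(summary, 1))
```

Each strategy selects a different compound here: the greedy pick has the best mean, the uncertain pick has the widest spread, and the mixed pick is the most disputed compound among the top predictions.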

Active Learning in Action: Experimental Protocols and Case Studies

Protocol 1: Hit Discovery with ChemScreener

A prime example of AL's power is the ChemScreener workflow, designed for early hit discovery with limited initial data [5].

- Objective: Identify novel, diverse inhibitors of the WDR5 protein.

- Oracle: Single-dose HTRF (Homogeneous Time-Resolved Fluorescence) biochemical assay.

- Acquisition Strategy: Balanced-Ranking, leveraging ensemble uncertainty to explore novel chemistry while prioritizing predicted activity.

- Workflow:

  1. Initialization: Begin with a small set of assay data.

  2. Model Training: Train an ensemble of models to predict activity.

  3. Compound Selection: Select compounds for the next round of assay using the Balanced-Ranking strategy.

  4. Iteration: Repeat steps 2-3 for several iterative rounds of screening.

- Results: The AL-driven screen dramatically increased the hit rate from 0.49% in the primary high-throughput screen (HTS) to an average of 5.91% (ranging from 3% to 10% per round). From 1,760 compounds tested, 104 hits were identified, leading to the de novo discovery of three novel scaffold series and three singleton scaffolds [5]. This demonstrates AL's ability to efficiently find diverse chemotypes that a conventional HTS would likely miss.

Protocol 2: Lead Optimization with Alchemical Free Energy Calculations

AL is also powerfully applied in lead optimization, where accuracy is critical. This protocol uses alchemical free energy calculations as a high-accuracy oracle [15].

- Objective: Identify high-affinity Phosphodiesterase 2 (PDE2) inhibitors in a large chemical library.

- Oracle: Alchemical relative binding free energy (RBFE) calculations.

- Acquisition Strategy: Various strategies (e.g., mixed, uncertain, greedy) were probed, selecting batches of 100 ligands per iteration.

- Workflow:

  - Pose Generation: For each ligand, generate a binding pose through molecular docking and refinement via molecular dynamics.

  - Ligand Representation: Encode each ligand using fixed-size vectors (e.g., molecular fingerprints, protein-ligand interaction fingerprints, or voxelized 3D atom densities).

  - Active Learning Loop:

    a. Train a machine learning model (e.g., neural network) on the current set of compounds with FEP-calculated affinities.

    b. Use the model to predict affinities for the entire library.

    c. Select a new batch of compounds based on the chosen acquisition strategy.

    d. Run FEP calculations on the selected compounds to obtain accurate affinity labels.

    e. Add the new data to the training set and repeat.

- Results: The protocol robustly identified a large fraction of true positives by explicitly evaluating only a small subset of the library, making high-accuracy FEP screening of vast libraries computationally tractable [15].

Performance Comparison: Active Learning vs. Traditional Methods

The following table quantifies the performance gains of AL in various chemistry applications.

Table 1: Quantitative Performance of Active Learning in Chemical Discovery

| Application / Case Study | Traditional Method Performance | Active Learning Performance | Key Improvement |

|---|---|---|---|

| WDR5 Hit Discovery [5] | HTS hit rate: 0.49% | AL hit rate: 5.91% (avg.), 104 hits from 1,760 compounds | >10x increase in hit rate |

| Ultra-Large Library Docking [13] | Exhaustively dock 1 billion compounds: 100% of cost & time | Recover ~70% of the top-scoring hits at ~0.1% of the cost & time | ~1000x reduction in resource use |

| PDE2 Inhibitor Optimization [15] | FEP screening of full library: computationally prohibitive | Identified potent inhibitors with a small fraction of FEP calculations | Made high-accuracy FEP tractable for large libraries |
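The headline ratios in the table follow from simple arithmetic on the reported figures. The short check below uses only numbers quoted in this article; the variable names are illustrative.

```python
# WDR5 hit discovery: figures as reported in this article [5].
hts_hit_rate = 0.49 / 100
al_hits, al_tested = 104, 1760
al_hit_rate = al_hits / al_tested        # about 5.91%
enrichment = al_hit_rate / hts_hit_rate  # about 12-fold

# Ultra-large library docking: cost fraction as reported [13].
docking_cost_fraction = 0.1 / 100
cost_reduction = 1 / docking_cost_fraction  # about 1000-fold

print(f"AL hit rate: {al_hit_rate:.2%}")
print(f"Enrichment over HTS: {enrichment:.1f}x")
print(f"Docking cost reduction: {cost_reduction:.0f}x")
```

The roughly 12-fold enrichment is consistent with the ">10x" figure in the table, and a 0.1% cost fraction corresponds to the quoted ~1000x reduction.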

The Scientist's Toolkit: Essential Components for an AL Workflow

Implementing an AL system requires a combination of software and methodological components. The table below details key "research reagents" for building an AL pipeline in computational chemistry.

Table 2: Essential Toolkit for Implementing Active Learning in Chemistry

| Tool / Component | Category | Function in the Workflow | Example Implementations |
|---|---|---|---|
| Alchemical FEP+ | Physics-based Oracle | Provides high-accuracy binding affinity labels for training and validating ML models [13]. | Schrödinger FEP+ [13] |
| Molecular Docking (Glide) | Physics-based Oracle | Provides rapid structural binding scores; used as a cost-effective oracle for initial screening [13]. | Schrödinger Glide [13] |
| High-Dimensional NNPs | ML Model / Oracle | Learns potential energy surfaces from QM data; enables fast MD simulations [17]. | HDNNP [17] |
| AIQM1 | AI-Enhanced QM Method | Provides quantum mechanical data at high speed and accuracy for training ML models [18]. | AIQM1 method [18] |
| Variational Autoencoder (VAE) | Generative Model | Generates novel molecular structures within an AL loop for de novo design [16]. | Custom VAE architectures [16] |
| Query by Committee | Acquisition Strategy | Estimates prediction uncertainty by measuring the variance (disagreement) among an ensemble of models [17]. | Ensemble of neural networks |
| RDKit | Cheminformatics | Provides molecular fingerprinting, descriptor calculation, and basic molecular operations [15]. | RDKit toolkit [15] |

Workflow Visualization: Active Learning in Computational Chemistry

The following diagram illustrates the core iterative loop of an Active Learning system as applied to a chemical discovery problem, such as optimizing a lead compound for binding affinity.

Active Learning Cycle for Chemical Discovery - This workflow shows the iterative feedback loop where a model is repeatedly refined with strategically selected new data.

Advanced Workflow: Generative AL with Nested Cycles

For de novo molecular design, more advanced workflows integrate generative AI with AL. The following diagram details a nested AL cycle workflow that combines a Variational Autoencoder with chemoinformatic and physics-based oracles to generate and optimize novel, drug-like molecules [16].

Generative AI with Nested Active Learning - This workflow combines a VAE with nested AL cycles, using fast chemoinformatic filters and rigorous physics-based oracles to steer the generation of novel, optimized molecules [16].

Practical Considerations and Limitations

While powerful, AL is not a panacea. Successful implementation requires careful consideration of its limitations:

- Oracle Cost and Accuracy: The entire AL process is constrained by the cost and accuracy of the oracle. A slow or inaccurate oracle will lead to a slow or misdirected learning process [15].

- Initial Dataset Bias: The performance of AL can be sensitive to the initial dataset. A poor initial sampling may bias the model and cause it to overlook promising regions of chemical space [17].

- Performance vs. Random Sampling: In some cases, particularly when the relevant configurational space is well-defined, simple random sampling can perform on par with or even better than advanced AL strategies, especially when measured by test error on standard benchmarks [17]. This highlights the importance of choosing the right sampling strategy for the problem.

- Model Transferability: ML models and AL-selected training sets are often optimized for a specific task or chemical series, which can limit their transferability to related but distinct problems [19].

The immense scale of chemical space presents a fundamental data problem that traditional computational and experimental methods cannot overcome. Active Learning directly addresses this challenge by replacing brute-force screening with an intelligent, iterative, and adaptive search process. As evidenced by real-world case studies, AL dramatically accelerates hit discovery, makes high-accuracy free energy calculations tractable for large libraries, and enables the generative design of novel chemotypes. By maximizing the informational value of every computational or experimental assay, AL emerges as an indispensable paradigm for navigating the vast chemical universe, promising to reshape the future of efficient and effective molecular discovery.

Active Learning (AL) is an iterative machine learning paradigm that has transformed computational chemistry and drug discovery. It strategically selects the most informative data points for experimental validation or costly simulation, creating a continuous feedback loop between prediction and experimentation. This approach is particularly powerful in domains where data is scarce or experiments are expensive, as it minimizes resource expenditure while maximizing the discovery of novel chemical entities. By balancing exploration of unknown chemical space with exploitation of known promising regions, AL algorithms can efficiently navigate the vast combinatorial possibilities of molecules and reactions. This methodology represents a significant shift from traditional one-shot virtual screening, moving towards a more dynamic, adaptive, and efficient discovery process that closely mirrors human scientific reasoning [5] [6] [20].

The Conceptual Foundations and Early Implementation

The theoretical groundwork for AL lies in its ability to address fundamental challenges in computational chemistry. Traditional virtual screening methods, such as molecular docking, though efficient at processing large compound libraries, often struggle with accuracy in scoring binding affinities [21]. Similarly, exhaustive experimental screening is prohibitively expensive and time-consuming for most research groups [20]. AL emerged as a solution to these limitations by introducing an intelligent, sequential decision-making process.

Early implementations focused on replacing exhaustive searches with iterative cycles. A typical AL cycle begins with a small initial dataset, which is used to train a preliminary machine learning model. This model then evaluates a larger, unlabeled chemical space and prioritizes a batch of candidates for the next round of testing, whether computational (e.g., more accurate scoring) or experimental. The results from this batch are used to retrain and refine the model, gradually improving its predictive performance and guiding the search towards high-value regions [6] [22]. This process effectively recasts a "needle in a haystack" search as one in which the magnet gets smarter with each iteration [21].
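The cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation of any cited study: candidates are stand-in points on a one-dimensional descriptor axis, `oracle` is a hypothetical placeholder for the expensive evaluation, and the surrogate is a simple nearest-neighbour averager chosen only to keep the example self-contained.

```python
import random

def oracle(x):
    """Hypothetical stand-in for an expensive evaluation (docking, FEP, assay)."""
    return -(x - 0.7) ** 2  # toy score surface; the best candidate lies near x = 0.7

def knn_predict(x, labeled, k=3):
    """Surrogate model: mean score of the k nearest labeled candidates."""
    nearest = sorted(labeled, key=lambda item: abs(item[0] - x))[:k]
    return sum(score for _, score in nearest) / len(nearest)

def active_learning(pool, n_init=5, batch_size=5, n_cycles=4, seed=0):
    rng = random.Random(seed)
    unlabeled = list(pool)
    rng.shuffle(unlabeled)
    # 1. Label a small random initial batch with the oracle.
    labeled = [(x, oracle(x)) for x in unlabeled[:n_init]]
    unlabeled = unlabeled[n_init:]
    for _ in range(n_cycles):
        # 2. Rank the unlabeled pool with the surrogate built from the current labels.
        unlabeled.sort(key=lambda x: knn_predict(x, labeled), reverse=True)
        # 3. Send the top-ranked batch to the oracle and grow the training set.
        batch, unlabeled = unlabeled[:batch_size], unlabeled[batch_size:]
        labeled.extend((x, oracle(x)) for x in batch)
    return labeled

pool = [i / 199 for i in range(200)]  # toy "library" of 200 candidates
labeled = active_learning(pool)
best_x, best_score = max(labeled, key=lambda item: item[1])
print(f"evaluated {len(labeled)} of {len(pool)} candidates; best x = {best_x:.3f}")
```

Only 25 of the 200 toy candidates are ever passed to the oracle. In a real campaign the surrogate would be a trained ML model and the selection step would usually account for uncertainty as well as predicted score.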

Evolution into a Chemistry Powerhouse: Key Methodological Advances

The transformation of AL from a conceptual framework to a practical powerhouse in chemistry is marked by several key methodological developments.

Integration with Multi-fidelity Data and Experimental Automation

A significant leap forward has been the integration of AL with automated laboratory systems, creating self-driving or automated labs. The CRESt (Copilot for Real-world Experimental Scientists) platform exemplifies this advancement. It uses multimodal feedback, including data from scientific literature, experimental results, and human input, to guide a robotic system in synthesizing and testing materials. In one application, CRESt explored over 900 chemistries and conducted 3,500 tests, discovering a multi-element fuel cell catalyst with a 9.3-fold improvement in performance per dollar [23]. This demonstrates AL's ability to leverage diverse data types and control physical experiments directly.

Development of Sophisticated Acquisition Functions and Uncertainty Quantification

The core of any AL system is its acquisition function—the algorithm that decides which samples to evaluate next. Strategies have evolved beyond simple uncertainty sampling. For instance, the Balanced-Ranking strategy used in the ChemScreener workflow leverages ensemble models to quantify uncertainty and balance the exploration of novel chemistries with the exploitation of predicted activity. This approach dramatically increased hit rates in a WDR5 inhibitor screen from a baseline of 0.49% in a primary high-throughput screen to an average of 5.91% (reaching up to 10%) [5].
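As a quick consistency check, the hit-rate figures quoted for the WDR5 campaign can be reproduced from the raw counts reported for it (104 hits from 1,760 compounds) [5]. The variable names below are illustrative only.

```python
hits, tested = 104, 1760
al_hit_rate = 100 * hits / tested      # percent of AL-selected compounds that were hits
hts_hit_rate = 0.49                    # percent, primary HTS baseline [5]
enrichment = al_hit_rate / hts_hit_rate

print(f"AL hit rate: {al_hit_rate:.2f}%  enrichment over HTS: {enrichment:.1f}x")
```

The counts give a hit rate of 5.91% and an enrichment of roughly 12-fold, consistent with the ">10x" improvement stated for this case study.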

Embracing Target-Specific and Physical Property Scoring

Moving beyond generic scoring functions, AL has incorporated target-specific and learned scores for more accurate candidate prioritization. In a campaign to identify TMPRSS2 inhibitors, researchers developed a target-specific "h-score" that rewarded structural features correlated with inhibition, such as occlusion of the enzyme's S1 pocket. This custom score significantly outperformed standard docking scores, reducing the number of compounds requiring computational screening by over 10-fold and placing known inhibitors within the top few ranked positions [21]. Furthermore, this concept was extended to a learned score for trypsin-domain proteins, which achieved a high correlation (0.80) with experimental binding affinities [21].

Table 1: Impact of Advanced Scoring and Ensembles in Active Learning

| Method | Key Innovation | Performance Improvement | Application Example |
|---|---|---|---|
| Target-Specific Score (h-score) [21] | Empirical score based on structural biology insights | >200-fold reduction in experimental candidates; known inhibitors ranked in top 6 | TMPRSS2 inhibitor discovery |
| Receptor Ensemble [21] | Docking to multiple MD-derived protein conformations | Poor ranking without ensemble vs. top-tier ranking with ensemble | Improved docking pose quality and ranking |
| Learned Score [21] | Machine learning model trained on protein-ligand observables | Correlation of 0.80 with experimental binding affinities | Generalization to trypsin-domain proteins |

State-of-the-Art Workflows and Experimental Protocols

Modern AL workflows are comprehensive pipelines that integrate molecular design, simulation, and experimental validation. Below is a generalized protocol for a structure-based drug discovery campaign using AL, synthesizing elements from several recent studies [6] [21].

The following diagram illustrates the iterative cycle of a typical active learning workflow in computational chemistry.

Detailed Methodology

Step 1: Problem Initialization and Library Design

- Define the Target: Start with a protein target of interest and obtain a 3D structure (e.g., from crystallography, cryo-EM, or homology modeling).

- Generate a Receptor Ensemble: To account for protein flexibility, run molecular dynamics (MD) simulations of the apo protein or a reference complex. From the trajectory, select multiple diverse snapshots for docking. This has been shown to be critical for achieving meaningful results [21].

- Define the Chemical Space: This can be a virtual library of purchasable compounds (e.g., from ZINC or Enamine REAL) or a vast virtual space of synthetically accessible molecules defined by a core scaffold with lists of possible R-groups and linkers [6].

Step 2: Initial Sampling and Evaluation

- Select an Initial Batch: Randomly select a small batch of molecules (e.g., 1% of the library or ~20-50 compounds) from the chemical space. Alternatively, seed the initial set with known actives or fragments if available [22].

- Evaluate with High-Fidelity Method: For each candidate, perform the "expensive" evaluation. This could be:

- Molecular Docking: Dock each candidate into every structure in the receptor ensemble.

- Advanced Scoring: Score the resulting poses using a target-specific or learned scoring function (e.g., the h-score for serine proteases) [21].

- MD Refinement (Optional): Run short MD simulations from the docked pose and re-score (dynamic scoring) to improve pose stability and ranking [21].
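One way to combine docking results across a receptor ensemble is to keep each ligand's most favourable score over all snapshots. The sketch below assumes that lower scores are better and that best-of-ensemble aggregation is appropriate; both are illustrative choices, and the ligand names, snapshot names, and score values are hypothetical.

```python
def best_ensemble_score(scores_by_snapshot):
    """Return (snapshot_id, score) of the most favourable pose across the ensemble."""
    return min(scores_by_snapshot.items(), key=lambda kv: kv[1])

# Hypothetical docking scores for two ligands docked into three MD snapshots
# of the receptor (lower = more favourable in this sketch).
docking_scores = {
    "ligand_A": {"snap_01": -6.2, "snap_02": -8.9, "snap_03": -7.1},
    "ligand_B": {"snap_01": -7.4, "snap_02": -7.0, "snap_03": -7.6},
}

# Rank ligands by their best score over the whole ensemble.
ranked = sorted(
    ((lig, *best_ensemble_score(s)) for lig, s in docking_scores.items()),
    key=lambda row: row[2],
)
for ligand, snapshot, score in ranked:
    print(f"{ligand}: best score {score} in {snapshot}")
```

Here `ligand_A` would rank below `ligand_B` if only `snap_01` were used, but ranks first once all snapshots are considered. This is the kind of effect that motivates docking to an ensemble rather than to a single static structure.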

Step 3: Machine Learning Model Training

- Feature Representation: Encode the molecules in the training set (all evaluated compounds) using molecular descriptors (e.g., CDDD, Morgan fingerprints) or graph-based representations [22] [20].

- Model Training: Train a machine learning model (e.g., Multi-Layer Perceptron, Random Forest, Graph Neural Network) to predict the high-fidelity score (from Step 2) based on the molecular features.

Step 4: Prediction and Acquisition

- Model Inference: Use the trained model to predict the scores for all remaining unevaluated compounds in the chemical space.

- Candidate Selection with Acquisition Function: Apply an acquisition strategy to select the next batch for evaluation. Common strategies include:

- Exploitation: Selecting the top-K candidates with the highest predicted score.

- Exploration: Selecting candidates where the model is most uncertain (e.g., high variance in an ensemble model).

- Balanced-Ranking: A hybrid approach that considers both predicted score and uncertainty to maintain hit enrichment while exploring novel chemotypes [5].
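The three strategies above can be illustrated with a small ensemble-based sketch. The exact Balanced-Ranking formula used in ChemScreener is not given here, so the weighted rank sum below is an assumption made for illustration; `committee_stats`, `balanced_ranking`, and the `weight` parameter are hypothetical names.

```python
from statistics import mean, pstdev

def committee_stats(predictions):
    """Mean and spread (population std) of an ensemble's predictions for one compound."""
    return mean(predictions), pstdev(predictions)

def balanced_ranking(pool, batch_size, weight=0.5):
    """Pick compounds by a weighted sum of score rank and uncertainty rank.

    pool maps compound id -> list of ensemble predictions (higher = better).
    weight=1.0 is pure exploitation; weight=0.0 is pure exploration.
    """
    stats = {cid: committee_stats(p) for cid, p in pool.items()}
    by_score = sorted(stats, key=lambda c: stats[c][0], reverse=True)
    by_unc = sorted(stats, key=lambda c: stats[c][1], reverse=True)
    score_rank = {c: r for r, c in enumerate(by_score)}
    unc_rank = {c: r for r, c in enumerate(by_unc)}
    combined = {c: weight * score_rank[c] + (1 - weight) * unc_rank[c] for c in stats}
    return sorted(combined, key=combined.get)[:batch_size]

pool = {
    "cmpd_1": [0.90, 0.88, 0.91],   # high predicted score, committee agrees
    "cmpd_2": [0.20, 0.85, 0.50],   # middling score, committee disagrees
    "cmpd_3": [0.10, 0.12, 0.11],   # low predicted score, committee agrees
}
print(balanced_ranking(pool, batch_size=1, weight=1.0))  # exploitation
print(balanced_ranking(pool, batch_size=1, weight=0.0))  # exploration
```

With `weight=1.0` the confident high scorer is selected, while `weight=0.0` selects the compound on which the committee disagrees most. Intermediate weights trade the two off.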

Step 5: Iteration and Validation

- Iterate: Return to Step 2, evaluating the new batch of selected compounds with the high-fidelity method. Add this new data to the training set and repeat the cycle until a stopping criterion is met (e.g., identification of a sufficient number of hits, depletion of resources).

- Experimental Validation: Synthesize or purchase the top-ranking compounds identified after the final AL cycle and validate their activity and binding in experimental assays (e.g., HTRF, DSF, cell-based assays) [5] [21].

Quantitative Impact and Case Studies

The power of AL is best demonstrated by its quantitative success in real-world discovery campaigns. The following table summarizes key metrics from several recent applications.

Table 2: Quantitative Performance of Active Learning in Recent Chemical Discovery Campaigns

| Application / Tool | Target | Key Performance Metric | Result with Active Learning | Baseline or Traditional Method |
|---|---|---|---|---|
| ChemScreener [5] | WDR5 Inhibitor | Hit Rate | 5.91% (avg.), up to 10% | 0.49% (Primary HTS) |
| MD + AL Framework [21] | TMPRSS2 Inhibitor | Experimental Tests Needed | < 20 compounds | Vastly more (needle-in-haystack) |
| Ultra-low Data Screening [22] | General Hit Discovery | Probability of Finding 5 Top-1% Hits | 97-100% (with only 110 tests) | Impractical with random screening |
| CRESt Platform [23] | Fuel Cell Catalyst | Power Density per Dollar | 9.3-fold improvement | Baseline (Pure Pd) |
| Synergistic Drug Discovery [20] | Drug Combination Synergy | Experimental Cost Saving | Discovered 60% of synergies with 10% of tests | Required 8253 tests for same result |
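To give a sense of why random screening is described as impractical in the ultra-low data row, the chance of finding at least five top-1% compounds in 110 random picks can be estimated with a binomial model. This is a back-of-the-envelope sketch: it assumes independent picks with a fixed 1% success probability, which may not match how the baseline was defined in the cited study [22].

```python
from math import comb

def prob_at_least(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return 1.0 - sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k))

p_random = prob_at_least(k=5, n=110, p=0.01)
print(f"P(>=5 top-1% hits in 110 random picks) = {p_random:.4f}")
```

Under these assumptions the probability is roughly 0.5%, compared with the 97-100% reported for the AL approach with the same number of tests.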

Case Study: Efficient Identification of a Broad Coronavirus Inhibitor

A landmark study combined MD simulations with AL to discover a potent inhibitor of TMPRSS2, a human protease critical for the entry of SARS-CoV-2 and other coronaviruses [21]. The workflow involved docking compounds from drug libraries to an ensemble of TMPRSS2 structures generated by MD. A target-specific score was used to rank candidates. The AL cycle was able to identify all four known inhibitors in the DrugBank library by computationally screening only 262 compounds on average, a significant reduction from the 2,230 compounds needed when docking to a single static structure. This led to the identification and experimental validation of BMS-262084, a nanomolar inhibitor (IC50 = 1.82 nM) effective against multiple SARS-CoV-2 variants. This case highlights how AL, coupled with physics-based simulations, can drastically reduce both computational and experimental burdens while delivering a high-value candidate [21].

Case Study: Accelerating Infrared Spectroscopy with Machine-Learned Potentials

Beyond drug discovery, AL is accelerating materials science and spectral prediction. The PALIRS framework uses AL to efficiently train machine-learned interatomic potentials (MLIPs) for predicting infrared (IR) spectra [24]. The process starts with an initial small dataset of molecular geometries. An AL loop then runs molecular dynamics at different temperatures, selecting structures with high prediction uncertainty to be re-calculated with density functional theory (DFT) and added to the training set. This iterative process built a high-quality dataset of ~16,000 structures, enabling the MLIP to reproduce DFT-level IR spectra at a fraction of the computational cost. This demonstrates AL's utility in optimizing the construction of training data for complex scientific ML models [24].

Implementing a successful AL-driven project requires a suite of computational and experimental tools. The table below details key resources as used in the featured studies.

Table 3: Key Research Reagent Solutions for Active Learning in Computational Chemistry

| Tool / Resource Name | Type | Primary Function | Application Example |
|---|---|---|---|
| FEgrow [6] | Software | Builds and scores congeneric ligand series in a protein binding pocket. | R-group and linker exploration for SARS-CoV-2 Mpro inhibitors. |
| ChemScreener [5] | Workflow | Multi-task active learning workflow for hit discovery with balanced-ranking acquisition. | Increased hit rates for WDR5 inhibitors. |
| PALIRS [24] | Software | Active learning framework for training machine-learned interatomic potentials to predict IR spectra. | Accurate and efficient IR spectra prediction for organic molecules. |
| CRESt [23] | Platform | Multimodal AI system that integrates literature, experiments, and robotics for autonomous discovery. | Discovery of a high-performance, multi-element fuel cell catalyst. |
| Enamine REAL Database [6] | Chemical Library | Database of billions of readily synthesizable compounds for virtual screening. | Source of purchasable compounds for hit expansion and validation. |
| Gnina [6] | Software | Convolutional neural network scoring function for predicting protein-ligand binding affinity. | Used as a scoring function within the FEgrow workflow. |
| MD-Generated Receptor Ensemble [21] | Data/Protocol | A collection of protein structures from MD simulations to account for flexibility in docking. | Crucial for achieving high-ranking of true TMPRSS2 inhibitors. |
| Target-Specific Score (e.g., h-score) [21] | Method | An empirical or learned scoring function tailored to a specific protein's inhibition mechanism. | Dramatically improved prioritization of TMPRSS2 inhibitors over generic docking scores. |

Active Learning has unequivocally evolved from a theoretical concept into a cornerstone of modern computational chemistry and drug discovery. Its power stems from a fundamental shift in strategy: instead of performing all possible experiments or calculations, it uses intelligent, iterative selection to maximize information gain with minimal resource expenditure. As demonstrated by its success in discovering potent enzyme inhibitors, synergistic drug pairs, and novel functional materials, AL is particularly potent when integrated with other advanced techniques such as molecular dynamics simulations, automated robotics, and multimodal AI. The continued development of more sophisticated acquisition functions, seamless human-in-the-loop interfaces, and robust uncertainty quantification methods promises to further solidify AL's role as an indispensable powerhouse driving innovation in chemical research.

Active learning (AL) has emerged as a transformative paradigm in computational chemistry and drug discovery, directly addressing two of the field's most significant bottlenecks: the scarcity of expensively labeled data and the prohibitive computational cost of high-fidelity simulations. By implementing an iterative, feedback-driven process that intelligently selects the most informative data points for labeling, AL enables the construction of highly accurate models with far fewer data points and computational cycles than traditional approaches. This whitepaper details the core advantages, quantitative benefits, and practical methodologies of active learning, providing researchers with a guide to its application in accelerating molecular and materials design.

Quantitative Advantages of Active Learning

The following table summarizes key quantitative evidence demonstrating the efficacy of active learning in overcoming data and computational constraints.

| Application Area | Performance Metric | AL Performance | Benchmark/Control | Data Source |
|---|---|---|---|---|
| Photosensitizer Design (General Molecular Property Prediction) | Test Set Mean Absolute Error (MAE) | 15-20% lower MAE than static models | Static model baselines | [25] |
| Catalyst Discovery (Materials Screening) | Screening Acceleration | 32x acceleration over random screening | Random screening | [25] |
| COVID-19 Mpro Inhibitor Design (Structure-Based Drug Design) | Computational Cost for Candidate Prioritization | Requires evaluating only a fraction of the total chemical space | Exhaustive or random searches | [6] |
| Molecular Property Prediction (with hybrid ML/MM methods) | Data Efficiency | Effective training with small datasets | Models requiring large, pre-labeled datasets | [11] |

Core Methodologies and Experimental Protocols

The power of active learning is realized through specific iterative workflows and acquisition functions. Below are detailed protocols from leading research.

The Unified Active Learning Workflow for Molecular Design

This framework, used for the design of photosensitizers, provides a generalizable protocol for data-driven molecular discovery [25].

Workflow Diagram: The following diagram illustrates the iterative feedback loop at the heart of this active learning protocol.

Detailed Protocol Steps:

Initialization:

- Input: Prepare an initial small set of labeled data (e.g., 100-1,000 molecules with properties calculated via quantum chemistry).

- Chemical Space: Define a vast, unlabeled search space (e.g., 655,000+ candidates from public databases like QM9 or specialized libraries) [25].

Model Training:

- Surrogate Model: Train a fast-to-evaluate machine learning model, such as a Graph Neural Network (GNN), on the current labeled dataset. The GNN learns to map molecular structures to target properties (e.g., excited-state energies S1/T1) [25].

Candidate Selection & Acquisition:

- Acquisition Function: Apply a selection strategy to the unlabeled pool to identify the most valuable candidates for labeling. Key strategies include:

- Uncertainty Sampling: Selects molecules where the model's prediction is most uncertain.

- Diversity Sampling: Ensures exploration by selecting structurally diverse molecules.

- Exploitation Sampling: Selects molecules predicted to have high performance (e.g., optimal T1/S1 ratio) [25].

- Batch Selection: A hybrid approach balancing these strategies is often used to select a batch of candidates for labeling.
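The diversity component of such a batch can be sketched with greedy MaxMin picking on Tanimoto similarity. The example below is an illustration only: fingerprints are represented as plain Python sets of on-bit indices, the compound names are hypothetical, and a real pipeline would take fingerprints from a cheminformatics toolkit and typically combine this step with score- or uncertainty-based filtering.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two fingerprints stored as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def maxmin_diverse_batch(candidates, already_selected, batch_size):
    """Greedy MaxMin picking: repeatedly add the candidate least similar to the chosen set."""
    chosen = list(already_selected)
    remaining = dict(candidates)
    batch = []
    while remaining and len(batch) < batch_size:
        def nearest_similarity(cid):
            # Similarity to the closest fingerprint that is already labeled or picked.
            return max((tanimoto(remaining[cid], fp) for fp in chosen), default=0.0)
        pick = min(remaining, key=nearest_similarity)
        batch.append(pick)
        chosen.append(remaining.pop(pick))
    return batch

# Hypothetical fingerprints as sets of on-bit indices.
labeled_fps = [{1, 2, 3, 4}]
pool = {
    "near_duplicate": {1, 2, 3, 5},
    "related": {1, 2, 8, 9},
    "novel": {20, 21, 22, 23},
}
print(maxmin_diverse_batch(pool, labeled_fps, batch_size=2))
```

The near-duplicate of the already labeled compound is left out of the batch, which is the intended effect of diversity sampling.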

High-Fidelity Labeling:

- Objective Function: Perform accurate but expensive computational labeling on the selected candidates. The ML-xTB pipeline is a cost-effective method, achieving density functional theory (DFT)-level accuracy at approximately 1% of the computational cost. This pipeline involves generating a 3D conformation, optimizing geometry with xTB, and computing properties with a pre-trained ML model [25].

Iteration and Convergence:

- The newly labeled data is added to the training set.

- The surrogate model is retrained, and the cycle repeats until a stopping criterion is met (e.g., model performance plateaus or a computational budget is exhausted).
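The two stopping criteria mentioned above (a performance plateau or an exhausted budget) can be expressed as a small helper. The function name, the `patience` window, and the 1% relative-improvement threshold are assumptions chosen for illustration, not values taken from the cited work.

```python
def should_stop(error_history, labels_used, budget, patience=2, min_improvement=0.01):
    """Stop when the labeling budget is spent or validation error has plateaued.

    error_history: validation errors (e.g. MAE) after each AL cycle, oldest first.
    A plateau means none of the last `patience` cycles improved on the best
    earlier error by at least `min_improvement` (relative).
    """
    if labels_used >= budget:
        return True
    if len(error_history) <= patience:
        return False  # not enough history to judge a plateau
    best_before = min(error_history[:-patience])
    recent_best = min(error_history[-patience:])
    return recent_best > best_before * (1 - min_improvement)

print(should_stop([0.50, 0.40, 0.30], labels_used=100, budget=1000))          # still improving
print(should_stop([0.50, 0.30, 0.299, 0.2985], labels_used=100, budget=1000)) # plateaued
```

Checking the budget first guarantees termination even if the error curve is noisy and never formally plateaus.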

Active Learning for Structure-Based Drug Design with FEgrow

This protocol specifically addresses the challenge of expensive molecular docking and scoring in the context of hit expansion [6].

Workflow Diagram: The diagram below outlines the automated cycle for prioritizing compound designs targeting a specific protein.

Detailed Protocol Steps:

Compound Generation and Scoring:

- Input: Start with a known ligand core bound to a protein target and a library of possible linkers and R-groups.

- Building & Optimization: Use FEgrow to generate new compound structures by appending linkers and R-groups. The conformers are optimized in the rigid protein binding pocket using a hybrid Machine Learning/Molecular Mechanics (ML/MM) potential energy function [6].

- Scoring: The binding affinity of the designed compounds is predicted using the gnina convolutional neural network scoring function as a surrogate for expensive free energy calculations [6].

Machine Learning and Prioritization:

- The results from FEgrow (compounds and their scores) are used to train a separate machine learning model.

- This model learns to predict the scoring function output based on chemical structure, enabling it to rapidly screen millions of virtual compounds.

- The ML model's predictions are used to select the next, most promising batch of compounds for evaluation with the more expensive FEgrow workflow.

Prospective Experimental Validation:

- The final output is a prioritized list of compound designs. These top candidates can be sourced from on-demand chemical libraries (e.g., Enamine REAL) for synthesis and experimental testing, as demonstrated in the identification of SARS-CoV-2 Mpro inhibitors [6].

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table catalogues key software, datasets, and algorithms that form the essential "reagents" for implementing an active learning pipeline in computational chemistry.

| Item Name | Type | Function/Benefit |
|---|---|---|
| FEgrow [6] | Software Package | Open-source Python-based workflow for building and optimizing congeneric ligand series in a protein binding pocket. |
| ML-xTB Pipeline [25] | Computational Method | Provides quantum chemical accuracy (for properties like S1/T1 energies) at ~1% of the cost of TD-DFT, enabling high-throughput labeling. |
| Graph Neural Network (GNN) [11] [25] | Machine Learning Model | Surrogate model that naturally represents molecular graph structures for accurate property prediction. |
| Multi-task Electronic Hamiltonian Network (MEHnet) [11] | Neural Network Architecture | A single model that predicts multiple electronic properties (dipole moment, polarizability, etc.) from CCSD(T)-level data. |
| QM9, ANI-1, Materials Project [26] | Datasets | Curated quantum chemistry and materials property datasets used for pre-training or as a starting point for chemical space exploration. |
| Acquisition Functions (e.g., Uncertainty Sampling) [27] [25] | Algorithm | Core AL component that selects the most informative data points for labeling, balancing exploration and exploitation. |
| gnina [6] | Scoring Function | A convolutional neural network used for predicting protein-ligand binding affinity, serving as a fast objective function. |

Active Learning in Action: Key Methodologies and Real-World Applications

Virtual screening (VS) stands as a cornerstone technique in modern drug discovery, enabling researchers to computationally identify potentially bioactive compounds from libraries containing millions to billions of small molecules [28]. The fundamental premise involves using computational tools to predict which compounds are most likely to bind to a specific biological target, thereby enriching the selection of candidates for expensive experimental testing [28]. With the advent of ultra-large chemical libraries containing billions of readily purchasable compounds, the potential for discovering novel hits has expanded dramatically [29] [30]. Recent works have demonstrated a direct correlation between library size and hit-finding success, encapsulated by the "bigger is better" principle [29]. For instance, screening nearly 100 million compounds from the Enamine REAL library yielded a 24% experimental hit rate against the AmpC β-lactamase and D4 dopamine receptor targets [29].

However, this opportunity comes with significant computational challenges. Traditional brute-force docking of ultra-large libraries requires massive computational resources; for example, screening 1.3 billion compounds using 8,000 CPUs necessitates approximately 28 days of running time with associated costs exceeding $200,000 [29] [31]. This resource barrier has catalyzed the adoption of active learning (AL) frameworks, which iteratively combine machine learning with molecular docking to efficiently explore chemical space while dramatically reducing computational costs [29] [13]. Active learning represents a strategic approach to computational chemogenomics that adaptively incorporates minimal but informative examples for modeling, yielding compact but high-quality predictive models [32]. In the context of molecular docking, these frameworks aim to identify the highest docking-scored compounds through iterative cycles of molecular docking and machine learning model training, requiring only a fraction of the computational resources of exhaustive screening [29].

Technical Foundation: Active Learning Mechanics and Implementation

Core Active Learning Workflow

The active learning workflow for molecular docking operates through an iterative cycle of simulation, training, and selection designed to maximize the discovery of high-scoring compounds while minimizing computational expense [29] [13]. The process typically begins with an initial random sampling of ligands from the screening library, which are subjected to docking simulations against the target receptor. The resulting docking scores serve as training data for a machine learning model, typically a graph neural network (GNN), which learns to predict docking scores and associated uncertainties based on molecular structural features [29]. This trained surrogate model then screens the remaining library, and acquisition strategies select the most promising candidates for the next round of docking. The newly acquired data further refines the model in subsequent iterations, progressively improving its predictive accuracy [29].

Table 1: Key Acquisition Functions in Active Learning Docking

| Acquisition Strategy | Mathematical Formulation | Strategic Objective |
|---|---|---|
| Greedy | $a(x) = \hat{y}(x)$ | Selects compounds with the highest predicted docking scores [29] |
| Upper Confidence Bound (UCB) | $a(x) = \hat{y}(x) + 2\hat{\sigma}(x)$ | Balances score prediction and model uncertainty [29] |
| Uncertainty (UNC) | $a(x) = \hat{\sigma}(x)$ | Selects compounds where model prediction is most uncertain [29] |
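The three formulas in the table translate directly into code. The sketch below assumes the surrogate already supplies a predicted score and an uncertainty estimate for each compound and that higher predicted values are better (for example, a negated docking score); the compound ids and numbers are hypothetical.

```python
def greedy(y_hat, sigma):
    """a(x) = y_hat(x): rank purely by predicted score."""
    return y_hat

def ucb(y_hat, sigma, beta=2.0):
    """a(x) = y_hat(x) + beta * sigma(x); beta = 2 matches the table."""
    return y_hat + beta * sigma

def uncertainty(y_hat, sigma):
    """a(x) = sigma(x): rank purely by model uncertainty."""
    return sigma

def select_batch(predictions, acquisition, batch_size):
    """predictions maps compound id -> (y_hat, sigma); returns the top-scoring ids."""
    ranked = sorted(predictions, key=lambda c: acquisition(*predictions[c]), reverse=True)
    return ranked[:batch_size]

predictions = {
    "A": (9.0, 0.1),   # high score, low uncertainty
    "B": (8.0, 1.0),   # good score, moderate uncertainty
    "C": (5.0, 1.5),   # poor score, high uncertainty
}
for name, fn in [("greedy", greedy), ("UCB", ucb), ("UNC", uncertainty)]:
    print(name, select_batch(predictions, fn, batch_size=1))
```

On this toy input the three strategies each pick a different compound (A, B, and C respectively), which shows how the choice of acquisition function shifts the balance between exploitation and exploration.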

Schrödinger's implementation of Active Learning Glide exemplifies this workflow in practice. The platform trains machine learning models on physics-based docking data iteratively sampled from full libraries [13]. These trained models can rapidly generate predictions for new molecules and identify the highest-scoring compounds in ultra-large libraries at a fraction of the cost and time of brute-force methods [13]. This approach demonstrates how active learning creates a virtuous cycle where each iteration enhances the model's understanding of the chemical space most relevant to the target.

Architectural Considerations and Sampling Strategies

The efficacy of active learning protocols depends critically on several architectural considerations. Numerous studies have attempted to enhance these protocols' efficiency by implementing strategies such as pruning poor-performing candidates to reduce computational costs without compromising performance [29]. Furthermore, research has examined diverse acquisition methods under noisy conditions, affirming the robustness of greedy acquisition approaches in such environments [29]. While simple strategies like greedy acquisition demonstrate effectiveness for straightforward datasets, more sophisticated approaches leveraging predictive uncertainty, such as expected improvement (EI) acquisition, may be preferable for challenging targets [29].
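For reference, expected improvement can be written in closed form when the surrogate's prediction is treated as Gaussian. The sketch below uses the standard textbook expression for a maximization problem; the exact variant used in the cited study is not specified here, so this should be read as an illustration rather than a reproduction.

```python
from math import erf, exp, pi, sqrt

def expected_improvement(y_hat, sigma, best_so_far):
    """Gaussian expected improvement over the best score seen so far (maximization)."""
    if sigma <= 0.0:
        # No uncertainty: the improvement is deterministic.
        return max(y_hat - best_so_far, 0.0)
    z = (y_hat - best_so_far) / sigma
    cdf = 0.5 * (1.0 + erf(z / sqrt(2.0)))        # standard normal CDF at z
    pdf = exp(-0.5 * z * z) / sqrt(2.0 * pi)      # standard normal PDF at z
    return (y_hat - best_so_far) * cdf + sigma * pdf

# Two candidates predicted below the current best (9.0): the more uncertain
# one has the larger expected improvement.
print(expected_improvement(8.0, 2.0, best_so_far=9.0))
print(expected_improvement(8.0, 0.5, best_so_far=9.0))
```

Unlike greedy acquisition, EI gives credit to uncertain candidates that might exceed the current best, which is why it can help on targets where the surrogate is initially unreliable.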

A critical insight from recent investigations is that surrogate models in active learning often predict docking scores using only 2D ligand structural features without explicit receptor information [29]. Despite this apparent limitation, these models demonstrate remarkable effectiveness by memorizing structural patterns prevalent in high-scoring compounds, which likely originate from shared shape and interaction patterns specific to binding pockets [29]. This capability enables the models to generalize effectively across diverse chemical spaces while maintaining computational efficiency.

AL Glide: Implementation and Performance Benchmarks

Integration with Schrödinger's Glide Platform

Schrödinger's Active Learning Glide represents a sophisticated implementation of these principles, integrated within the industry-leading Glide docking solution [31] [13]. Glide itself provides high docking accuracy across diverse receptor types and ligand classes, including small molecules, peptides, and macrocycles, and offers customizable constraints to bias docking calculations toward desired chemical spaces [31]. The platform includes multiple scoring workflows, notably Glide SP for high-throughput virtual screens and Glide WS, which incorporates explicit water dynamics from WaterMap for improved sampling and scoring [31].

Active Learning Glide augments these capabilities by training machine learning models on Glide docking scores iteratively sampled from full libraries [13]. This integration creates a powerful synergy where the physics-based accuracy of Glide docking informs efficient machine learning models that can rapidly explore ultra-large chemical spaces. The implementation includes specialized workflows for different stages of drug discovery, including hit identification in ultra-large libraries and lead optimization through Active Learning FEP+ for exploring diverse chemical space against multiple hypotheses [13].

Quantitative Performance Assessment

The performance advantages of Active Learning Glide are substantial and well-documented. Schrödinger reports that the platform can recover approximately 70% of the same top-scoring hits that would be found through exhaustive docking of ultra-large libraries with Glide, while requiring only 0.1% of the computational cost [13]. This dramatic efficiency gain transforms the practical feasibility of screening billion-compound libraries.

Table 2: Computational Efficiency Comparison: Brute-Force vs. Active Learning Docking

| Screening Approach | Library Size | Compute Time | Estimated Cost | Hit Recovery |
|---|---|---|---|---|
| Glide (Dock All Compounds) | 1 Billion | ~60 days | ~$1,440,000 | Reference (100%) |
| Active Learning Glide | 1 Billion | ~6 days | ~$1,440 | ~70% of top hits |

Note: Cost estimates based on $0.06 per CPU hour and $0.35 per GPU hour. License costs not included. Adapted from Schrödinger's Active Learning Calculator [13].
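The figures in Table 2 can be unpacked with some back-of-the-envelope arithmetic. The sketch below assumes, purely for illustration, that the exhaustive-docking cost is billed entirely at the quoted CPU rate. The source does not state how the active learning cost is split between CPU and GPU hours, so that figure is not decomposed.

```python
CPU_RATE_USD = 0.06            # USD per CPU hour (from the table note)
BRUTE_FORCE_COST = 1_440_000   # USD, exhaustive Glide docking (Table 2)
AL_COST = 1_440                # USD, Active Learning Glide (Table 2)
LIBRARY_SIZE = 1_000_000_000   # compounds
BRUTE_FORCE_DAYS = 60          # wall-clock time (Table 2)

# Assumption: the whole brute-force bill is CPU time at the quoted rate.
cpu_hours = BRUTE_FORCE_COST / CPU_RATE_USD
seconds_per_compound = cpu_hours * 3600 / LIBRARY_SIZE
implied_parallel_cpus = cpu_hours / (BRUTE_FORCE_DAYS * 24)
cost_ratio = AL_COST / BRUTE_FORCE_COST

print(f"implied CPU hours:        {cpu_hours:,.0f}")
print(f"CPU seconds per compound: {seconds_per_compound:.1f}")
print(f"implied parallel CPUs:    {implied_parallel_cpus:,.0f}")
print(f"AL cost / brute-force:    {cost_ratio:.1%}")
```

Under that assumption, the exhaustive screen corresponds to roughly 24 million CPU hours, or about 86 CPU seconds per compound, spread over something like 16,700 cores to finish in 60 days. The cost ratio works out to 0.1%, consistent with the figure quoted above.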

These efficiency gains are corroborated by independent research. Studies have demonstrated that through iterative docking, training, and inference with appropriate acquisition strategies, active learning methodologies can discover top-docking-scored compounds with success rates exceeding 90%, while requiring less than 10% of the simulation time needed for docking the entire library [29]. This performance advantage extends across diverse target types and chemical spaces, making it a versatile approach for various drug discovery applications.
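Recovery figures of this kind are typically computed retrospectively, on a library that has also been docked exhaustively so that the true top-scoring set is known. A minimal sketch of such a metric follows, assuming lower scores are better. The function name and the toy scores are hypothetical.

```python
def top_k_recovery(true_scores, docked_ids, k):
    """Fraction of the true top-k compounds (lowest scores) that the campaign docked.

    true_scores : dict mapping compound id -> exhaustive docking score
    docked_ids  : ids of the compounds the active learning run actually docked
    k           : size of the reference "top hits" set
    """
    ranked = sorted(true_scores, key=true_scores.get)   # most negative score first
    top_k = set(ranked[:k])
    return len(top_k & set(docked_ids)) / k

# Toy retrospective benchmark: six compounds, three of them docked by the AL run.
true_scores = {"c1": -10.1, "c2": -9.8, "c3": -9.5, "c4": -7.0, "c5": -6.2, "c6": -5.0}
docked_ids = ["c1", "c3", "c5"]

recovery = top_k_recovery(true_scores, docked_ids, k=3)
fraction_docked = len(docked_ids) / len(true_scores)
print(f"recovered {recovery:.0%} of the top 3 after docking {fraction_docked:.0%} of the library")
```

Because the metric needs exhaustive scores as a reference, it is available only in benchmark settings. In a prospective billion-compound screen, the true top-k set is unknown by construction.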

Practical Implementation: Workflows and Protocols

Experimental Setup and Library Preparation

Successful implementation of Active Learning Glide begins with careful experimental setup and library preparation. The initial step involves thorough bibliographic research on the target receptor, considering aspects such as biological function, natural ligands, catalytic mechanism, and involvement in pathological processes [28]. Subsequently, researchers should collect available activity and structural data, including known inhibitors and crystallographic structures of the receptor, validating the reliability of binding site coordinates when using PDB files [28].

Library preparation is equally critical. Most commercial compounds are available in 2D format, but docking requires 3D molecular conformations [28]. Conformational sampling must generate a sufficiently broad set of conformations for each compound to cover its conformational space while avoiding high-energy conformations with low probability of adoption at room temperature [28]. Software such as OMEGA and ConfGen have demonstrated high performance in systematic conformer generation, while RDKit's distance geometry algorithm provides a robust free alternative [28]. Additionally, proper preparation must address molecular charges, protonation states at relevant pH, tautomeric states, stereochemistry, and the presence of salt and solvent fragments [28].
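The rationale for discarding high-energy conformers follows from Boltzmann statistics: a conformer's population falls off exponentially with its energy above the minimum. The sketch below illustrates this at room temperature. The relative energies and the 3 kcal/mol window are hypothetical choices for illustration, not settings taken from OMEGA, ConfGen, or RDKit.

```python
import math

R_KCAL = 0.0019872  # gas constant in kcal/(mol*K)

def boltzmann_populations(rel_energies, temperature=298.15):
    """Relative populations of conformers from their energies in kcal/mol."""
    rt = R_KCAL * temperature                      # about 0.59 kcal/mol at 298 K
    lowest = min(rel_energies)
    weights = [math.exp(-(e - lowest) / rt) for e in rel_energies]
    total = sum(weights)
    return [w / total for w in weights]

def filter_by_energy_window(rel_energies, window=3.0):
    """Indices of conformers within `window` kcal/mol of the lowest-energy one."""
    lowest = min(rel_energies)
    return [i for i, e in enumerate(rel_energies) if e - lowest <= window]

# Hypothetical conformer energies (kcal/mol) relative to the global minimum.
energies = [0.0, 0.5, 1.2, 3.5, 6.0]
populations = boltzmann_populations(energies)
kept = filter_by_energy_window(energies, window=3.0)

for e, p in zip(energies, populations):
    print(f"dE = {e:4.1f} kcal/mol -> population {p:.4f}")
print("conformers kept:", kept)
```

In this toy ensemble, the conformers at 3.5 and 6.0 kcal/mol together account for well under 1% of the population, which is why an energy window is a common pruning heuristic. Note that a bound ligand may adopt a conformation that is not the solution-phase minimum, so overly tight windows can be counterproductive.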

Detailed Active Learning Protocol

The core active learning protocol for molecular docking follows a systematic, iterative process:

1. Initialization: Randomly sample a starting set of compounds (e.g., 10,000 ligands) from the screening library and conduct docking simulations against the target receptor using Glide [29].

2. Surrogate Model Training: Train a graph neural network to predict docking scores and heteroscedastic aleatoric uncertainty based on input molecular graphs using the initially docked compounds as training data [29].

3. Compound Acquisition: Use the trained surrogate model to screen undocked compounds and select additional candidates for docking based on acquisition functions (Greedy, UCB, or Uncertainty) [29].

4. Iterative Refinement: Conduct docking simulations on the newly selected compounds, add the resulting data to the training set, and retrain the surrogate model [29].

5. Termination: Repeat steps 3-4 for a predetermined number of iterations (typically 9-10 cycles) or until performance metrics plateau [29].
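A model that predicts both a score and a heteroscedastic (input-dependent) aleatoric uncertainty is commonly trained with a Gaussian negative log-likelihood loss. Whether the cited study uses exactly this objective is an assumption here. The sketch shows the per-sample loss for a network that outputs a mean and a log-variance.

```python
import math

def gaussian_nll(y_true, mu, log_var):
    """Negative log-likelihood of y_true under N(mu, exp(log_var)).

    Predicting log-variance rather than variance keeps the variance positive
    without constraining the network output.
    """
    return 0.5 * (log_var + (y_true - mu) ** 2 / math.exp(log_var) + math.log(2.0 * math.pi))

# Large prediction error: admitting more uncertainty lowers the loss.
big_error_confident = gaussian_nll(y_true=-8.0, mu=-6.0, log_var=math.log(1.0))
big_error_uncertain = gaussian_nll(y_true=-8.0, mu=-6.0, log_var=math.log(4.0))

# Small prediction error: a tight variance is rewarded instead.
small_error_wide = gaussian_nll(y_true=-8.0, mu=-7.9, log_var=math.log(1.0))
small_error_tight = gaussian_nll(y_true=-8.0, mu=-7.9, log_var=math.log(0.04))

print(big_error_confident > big_error_uncertain, small_error_wide > small_error_tight)
```

The loss penalizes overconfidence where the model is wrong and underconfidence where it is right, so the predicted variance learns to track how noisy the docking score is for a given kind of compound.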

Throughout this process, the surrogate model becomes increasingly adept at identifying structural features associated with high docking scores, progressively focusing computational resources on the most promising regions of chemical space [29] [13].
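The protocol above can be sketched end to end in a few dozen lines. This is a toy illustration under strong simplifying assumptions: each compound is reduced to a single hypothetical descriptor, `mock_dock` stands in for a Glide run, and a nearest-neighbour average replaces the graph neural network. Only the control flow (dock, train, acquire, repeat) mirrors the described workflow.

```python
import random
import statistics

def mock_dock(x):
    """Stand-in for an expensive docking run (hypothetical landscape, best score at x = 0.7)."""
    return -10.0 + 8.0 * abs(x - 0.7)

def fit_surrogate(library, docked):
    """Toy surrogate, NOT a graph neural network: mean score of the 5 nearest docked
    compounds, with the distance to the nearest one as a crude uncertainty proxy."""
    items = list(docked.items())  # snapshot of (library index, score) pairs
    def predict(x):
        nearest = sorted(items, key=lambda kv: abs(library[kv[0]] - x))[:5]
        mu = statistics.fmean([score for _, score in nearest])
        sigma = abs(library[nearest[0][0]] - x)
        return mu, sigma
    return predict

def acquire(predict, pool, library, batch_size, strategy="greedy", beta=2.0):
    """Return the batch_size pool indices that rank best under the chosen strategy."""
    def key(i):
        mu, sigma = predict(library[i])
        if strategy == "greedy":
            return mu                  # lowest predicted score first
        if strategy == "ucb":
            return mu - beta * sigma   # optimistic bound when minimising
        if strategy == "uncertainty":
            return -sigma              # most uncertain first
        raise ValueError(f"unknown strategy: {strategy}")
    return sorted(pool, key=key)[:batch_size]

def run_active_learning(library, n_init=20, batch_size=20, n_cycles=5,
                        strategy="greedy", seed=0):
    rng = random.Random(seed)
    # Initialization: dock a random starting set.
    docked = {i: mock_dock(library[i]) for i in rng.sample(range(len(library)), n_init)}
    for _ in range(n_cycles):                       # Termination: fixed number of cycles
        predict = fit_surrogate(library, docked)    # (Re)train the surrogate
        pool = [i for i in range(len(library)) if i not in docked]
        for i in acquire(predict, pool, library, batch_size, strategy):  # Acquisition
            docked[i] = mock_dock(library[i])       # Refinement: dock the new picks
    return docked

lib_rng = random.Random(1)
library = [lib_rng.random() for _ in range(2000)]   # hypothetical 1-D descriptors
docked = run_active_learning(library)
true_top = set(sorted(range(len(library)), key=lambda i: mock_dock(library[i]))[:50])
recovered = len(true_top & set(docked)) / len(true_top)
print(f"docked {len(docked)} of {len(library)} compounds; top-50 recovery = {recovered:.0%}")
```

Swapping `strategy` between `"greedy"`, `"ucb"`, and `"uncertainty"` changes only the ranking key, which is why acquisition functions are straightforward to compare within a single protocol. In a real campaign the surrogate inference over the undocked pool, not the ranking, dominates the per-cycle cost.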

Diagram 1: Active Learning Docking Workflow. This diagram illustrates the iterative process of AL-guided docking, showing the cycle of docking, model training, and compound selection.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Active Learning Virtual Screening

| Tool Category | Representative Software | Primary Function |
|---|---|---|
| Docking Platform | Schrödinger Glide [31] | Industry-leading ligand-receptor docking solution |
| Active Learning Framework | Schrödinger Active Learning Applications [13] | Machine learning-guided compound selection |
| Commercial Compound Libraries | Enamine REAL, ZINC [29] [28] | Sources of ultra-large screening collections |
| Molecule Standardization | LigPrep, Standardizer, MolVS [28] | Preparation of molecular structures |
| Conformer Generation | OMEGA, ConfGen, RDKit [28] | 3D conformational sampling |
| Structure Visualization | Maestro, Flare, VIDA [28] | Analysis of docking poses and interactions |
| Free Energy Calculations | FEP+ [13] | High-performance binding affinity prediction |

Case Studies and Experimental Validation

Successful Applications in Hit Discovery

The practical efficacy of active learning approaches for molecular docking is demonstrated through multiple successful applications. In one case study involving the leucine-rich repeat kinase 2 (LRRK2) WDR domain—a target with no known inhibitors prior to the CACHE Challenge—researchers employed an active learning workflow based on optimized free-energy molecular dynamics simulations [33]. This approach identified 8 experimentally verified novel inhibitors out of 35 tested, representing a 23% hit rate, and demonstrated the ability to efficiently explore large chemical spaces while minimizing expensive simulations [33].

In another application targeting TMPRSS2, a human serine protease relevant to coronavirus entry, researchers developed a framework combining molecular dynamics simulations with active learning [21]. This approach reduced the number of compounds requiring experimental testing to fewer than 10 while cutting computational costs by approximately 29-fold, ultimately discovering BMS-262084 as a potent inhibitor with an IC50 of 1.82 nM [21]. These results highlight how target-specific scoring combined with active learning can efficiently address the "needle-in-a-haystack" problem of drug discovery.

Performance Across Diverse Targets

Benchmark studies across multiple receptor targets provide further validation of active learning methodologies. Research encompassing six receptor targets and compound pools from the Enamine HTS and Enamine REAL libraries confirmed that surrogate model-based score rankings agree with docking-based rankings primarily among samples possessing high docking scores [29]. Furthermore, these studies revealed that top-scored compounds demonstrate substantial three-dimensional shape similarities, where similar structural patterns relate to shape and interaction patterns specific to binding pockets [29].

Despite the tendency of surrogate models to memorize structural patterns prevalent in high-docking-scored compounds obtained during acquisition steps, these models demonstrate significant utility in virtual screening, successfully identifying actives from the DUD-E dataset and high-docking-scored compounds from the 100-million-compound Enamine REAL library [29]. This performance across diverse targets and libraries underscores the robustness and general applicability of active learning approaches in practical virtual screening campaigns.

Emerging Trends and Methodological Advancements

The field of active learning for virtual screening continues to evolve with several promising directions. One significant trend involves the integration of more sophisticated uncertainty quantification methods to better guide the acquisition process [29] [21]. Additionally, there is growing interest in combining active learning with free energy perturbation calculations (Active Learning FEP+) to enhance the accuracy of binding affinity predictions during lead optimization [13] [33].

Another emerging area is the development of open-source active learning platforms, such as OpenVS, which aim to make these advanced methodologies more accessible to the broader research community [30]. These platforms typically incorporate receptor flexibility through molecular dynamics simulations, enhancing their ability to model induced fit and conformational selection mechanisms that play crucial roles in molecular recognition [30] [21].

Active learning represents a transformative approach to ultra-large virtual screening, effectively addressing the computational bottlenecks associated with billion-compound libraries while maintaining high hit identification rates. By iteratively combining machine learning with physics-based docking methods like Glide, these protocols enable researchers to explore unprecedented regions of chemical space with practical computational resources. The documented success across diverse targets, coupled with ongoing methodological advancements, positions active learning as an indispensable component of the modern computational drug discovery toolkit. As the field progresses, further integration with advanced sampling methods, improved uncertainty quantification, and more sophisticated molecular representations promises to enhance both the efficiency and effectiveness of these approaches, accelerating the discovery of novel therapeutic agents.

Diagram 2: AL Screening Applications and Evolution. This diagram shows the key applications, advantages, and future directions of active learning in virtual screening.

In the competitive landscape of computer-aided drug design, lead optimization presents a critical bottleneck. The process of refining an initial "hit" compound into a potent, selective, and developable drug candidate requires the evaluation of thousands of chemical analogs, a task that is both time-consuming and resource-intensive. While Relative Binding Free Energy (RBFE) calculations, such as those implemented in FEP+, provide a gold-standard, physics-based method for predicting binding affinity, their high computational cost has traditionally limited their application to small, congeneric series. Active Learning (AL), a machine learning paradigm, is changing this. It acts as an intelligent, iterative feedback system that dramatically amplifies the scope and efficiency of physics-based simulations. This guide details how the integration of Active Learning with FEP+ is revolutionizing lead optimization by enabling the rapid and systematic exploration of vast chemical spaces to enhance compound potency.

The Synergy of Active Learning and FEP+

What is Active Learning FEP+?

Active Learning FEP+ is a hybrid workflow that combines the high accuracy of FEP+ calculations with the efficiency of machine learning to prioritize computational resources [34]. The core concept is an iterative cycle where a machine learning model is trained on a limited set of FEP+ results and then used to intelligently select the most informative or promising compounds for the next round of FEP+ calculations [35] [36]. This creates a powerful feedback loop that continuously improves the model's understanding of the structure-activity relationship (SAR) for the target.

This approach directly addresses two key challenges:

- The Accuracy-Scale Trade-off: FEP+ provides accuracy approaching that of experimental methods (≈1 kcal/mol) but is computationally expensive. Ligand-based QSAR models can screen millions of compounds quickly but are less accurate and are limited by the data they are trained on [35] [34]. AL-FEP+ bridges this gap.

- Exploration vs. Exploitation: An optimal search must balance exploring new, uncertain regions of chemical space ("exploration") with refining known, promising areas ("exploitation"). The acquisition functions in an AL workflow are designed to manage this balance, preventing the search from becoming trapped in local minima [34] [37].
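One simple way to manage the exploration-exploitation balance is to split each batch between the two goals. The sketch below is an illustrative heuristic, not the acquisition function used by Active Learning FEP+. The compound identifiers, predicted binding free energies, and the `explore_fraction` parameter are all hypothetical.

```python
def select_mixed_batch(predictions, batch_size, explore_fraction=0.25):
    """Split a batch between exploitation and exploration.

    predictions      : dict compound id -> (predicted dG in kcal/mol, uncertainty)
    batch_size       : number of compounds to send to the expensive calculation
    explore_fraction : share of the batch reserved for the most uncertain compounds
    """
    n_explore = int(round(batch_size * explore_fraction))
    n_exploit = batch_size - n_explore

    # Exploitation: most favourable (most negative) predicted binding free energy.
    by_affinity = sorted(predictions, key=lambda c: predictions[c][0])
    exploit = by_affinity[:n_exploit]

    # Exploration: highest model uncertainty among the compounds not yet chosen.
    remaining = [c for c in predictions if c not in exploit]
    by_uncertainty = sorted(remaining, key=lambda c: predictions[c][1], reverse=True)
    explore = by_uncertainty[:n_explore]
    return exploit, explore

# Hypothetical surrogate output for six virtual analogs.
predictions = {
    "a": (-11.0, 0.3), "b": (-10.5, 0.2), "c": (-9.0, 1.8),
    "d": (-8.0, 0.4),  "e": (-7.5, 2.1),  "f": (-10.0, 0.5),
}
exploit, explore = select_mixed_batch(predictions, batch_size=4, explore_fraction=0.5)
print("exploit:", exploit, "| explore:", explore)
```

Setting `explore_fraction` to zero recovers purely greedy selection, while higher values spend more of the budget on regions where the model is least certain. A common pattern is to explore more in early cycles and shift toward exploitation as the model matures.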

The Core Workflow of Active Learning FEP+

The following diagram illustrates the iterative cycle of an Active Learning FEP+ campaign.

Diagram 1: The Active Learning FEP+ iterative cycle. The process begins with a small initial set of FEP+ data, which is used to train a machine learning (ML) model. This model then predicts binding affinities for a large virtual library. An acquisition function intelligently selects the next batch of compounds for accurate FEP+ calculation, whose results are used to retrain the ML model, continuing the cycle [35] [34] [6].

Performance and Impact: A Data-Driven Approach

The efficacy of Active Learning FEP+ is not merely theoretical; it is backed by robust quantitative studies and prospective applications that demonstrate its transformative potential in real-world drug discovery campaigns.