Active Learning in Drug Discovery: A Comparative Guide to Efficient Hit Identification Strategies

Abstract

This article provides a comprehensive comparison of active learning (AL) strategies for hit discovery in drug development. Aimed at researchers and scientists, it explores the foundational principles of AL as a solution to the high costs and inefficiencies of traditional high-throughput screening. The content details various methodological approaches, including uncertainty sampling, diversity-based selection, and hybrid models, supported by recent case studies across diverse targets like WDR5, SARS-CoV-2 Mpro, and CDK2. It further offers practical guidance on troubleshooting common challenges, optimizing AL workflows, and validating model performance. By synthesizing evidence from current literature, this guide serves as a strategic resource for implementing efficient, AL-driven hit discovery campaigns that significantly enrich hit rates and reduce experimental burden.

What is Active Learning and Why Does it Revolutionize Hit Discovery?

In the high-stakes field of drug discovery, the transition from traditional passive screening to intelligent, iterative active learning represents a fundamental paradigm shift in research methodology. Active learning (AL) is a machine learning framework that strategically selects the most informative data points for experimental testing, thereby compressing discovery timelines and optimizing resource allocation in hit identification [1]. Unlike passive approaches that rely on static datasets and predetermined screening libraries, active learning creates a dynamic, self-improving cycle where each experimental result informs the selection of subsequent experiments [2]. This methodological evolution is particularly critical in early-stage research where the chemical search space is vast and experimental resources are constrained. By prioritizing compounds that maximize information gain, active learning systems efficiently navigate multidimensional optimization landscapes to identify promising hit candidates with fewer experimental iterations [3]. This guide provides a comprehensive comparison of active learning strategies, delivering quantitative performance assessments and implementable experimental protocols for drug discovery researchers seeking to adopt these transformative approaches.

Active Learning Fundamentals: Core Strategies and Mechanisms

Defining the Active Learning Workflow

Active learning operates through an iterative feedback loop that progressively refines predictive models by incorporating strategically selected experimental data. The fundamental AL cycle begins with an initial, often small, labeled dataset used to train a preliminary machine learning model. This model then evaluates a larger pool of unlabeled candidate compounds, selecting the most "informative" samples for experimental validation based on specific query strategies [2]. Newly acquired experimental data is incorporated into the training set, updating the model for the next cycle. This continuous process of model prediction, strategic experimentation, and knowledge integration enables researchers to rapidly converge toward high-potential chemical regions while avoiding redundant testing.
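The loop described above can be sketched in a few lines. This is a minimal illustration rather than a reference implementation: the bootstrap ensemble of linear models, the `oracle` function standing in for a wet-lab assay, and all parameter values (`n_init`, `batch_size`, `n_cycles`) are assumptions chosen only to keep the example self-contained.

```python
import numpy as np

rng = np.random.default_rng(0)

def fit_ensemble(X, y, n_models=10):
    """Fit bootstrap-resampled linear models (a stand-in for any ensemble learner)."""
    weights = []
    for _ in range(n_models):
        idx = rng.integers(0, len(X), size=len(X))       # bootstrap resample
        A = np.c_[X[idx], np.ones(len(idx))]             # add intercept column
        w, *_ = np.linalg.lstsq(A, y[idx], rcond=None)
        weights.append(w)
    return np.array(weights)                             # (n_models, n_features + 1)

def predict(weights, X):
    """Return ensemble mean and variance for each row of X."""
    preds = np.c_[X, np.ones(len(X))] @ weights.T        # (n_samples, n_models)
    return preds.mean(axis=1), preds.var(axis=1)

def run_active_learning(X_pool, oracle, n_init=20, batch_size=10, n_cycles=5):
    """Iteratively label the pool compounds the ensemble is least sure about."""
    labeled = [int(i) for i in rng.choice(len(X_pool), size=n_init, replace=False)]
    labels = {i: oracle(X_pool[i]) for i in labeled}     # initial "experiments"
    for _ in range(n_cycles):
        y = np.array([labels[i] for i in labeled])
        weights = fit_ensemble(X_pool[labeled], y)       # (re)train the model
        unlabeled = np.setdiff1d(np.arange(len(X_pool)), labeled)
        _, variance = predict(weights, X_pool[unlabeled])
        picks = unlabeled[np.argsort(variance)[::-1][:batch_size]]  # query step
        for i in picks:
            labels[int(i)] = oracle(X_pool[i])           # run the "assay"
        labeled.extend(int(i) for i in picks)            # grow the training set
    return labeled, labels

# Toy usage: 200 hypothetical compounds with 4 descriptors and a made-up assay.
X_pool = rng.normal(size=(200, 4))
oracle = lambda x: float(x @ np.array([1.0, -2.0, 0.5, 0.0]) + rng.normal(scale=0.1))
labeled, labels = run_active_learning(X_pool, oracle)
```

In a real campaign the oracle call would be replaced by a screening batch, and the linear ensemble by whichever model suits the assay data.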

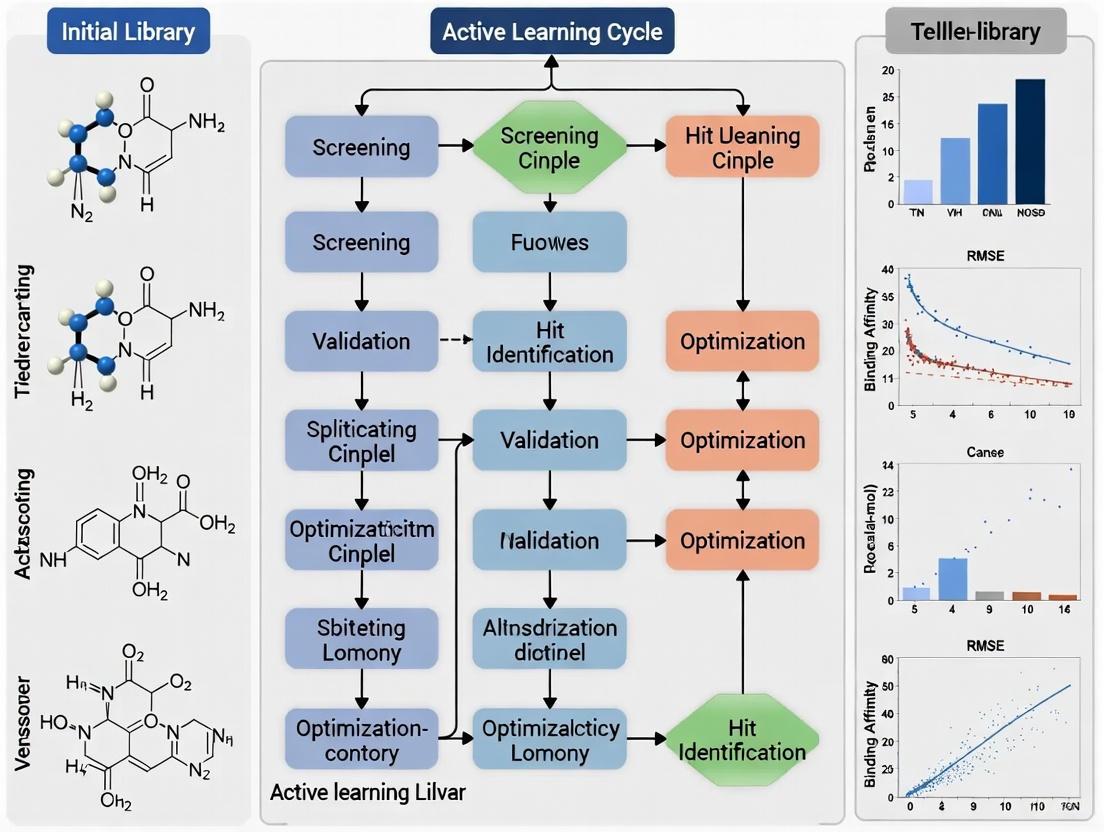

The diagram below illustrates this continuous, iterative workflow:

Key Query Strategies for Hit Discovery

Active learning strategies employ various mathematical frameworks to identify which experiments will yield the maximum information gain, with the optimal approach often dependent on specific research goals and dataset characteristics:

- Uncertainty Sampling: Selects compounds where the model's prediction confidence is lowest, effectively targeting decision boundaries where clarification most improves model accuracy. In regression tasks for property prediction, methods like Monte Carlo dropout provide variance-based uncertainty estimates [2].

- Diversity Sampling: Prioritizes compounds that differ substantially from already tested examples, ensuring broad exploration of chemical space and preventing oversampling of similar molecular scaffolds.

- Expected Model Change: Selects data points that would most significantly alter the current model parameters if their labels were known, favoring instances with maximum potential influence.

- Hybrid Approaches: Combine multiple criteria, such as uncertainty and diversity, to balance exploration of unknown regions with refinement of promising areas [2]. The RD-GS strategy exemplifies this approach, demonstrating superior early-phase performance in benchmark studies [2].
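To make the hybrid idea concrete, the sketch below greedily builds a batch by mixing a normalized uncertainty score with the distance to the nearest already-covered compound. It is a generic illustration and not the RD-GS algorithm cited above; the function name `hybrid_select`, the `weight` parameter, and the Euclidean distance on descriptor vectors are all assumptions.

```python
import numpy as np

def hybrid_select(X_pool, uncertainty, X_labeled, batch_size, weight=0.5):
    """Greedy batch selection: (1 - weight) * uncertainty + weight * diversity."""
    # Scale uncertainty to [0, 1] so it is comparable with the distance term.
    u = (uncertainty - uncertainty.min()) / (np.ptp(uncertainty) + 1e-12)
    # Distance from each candidate to its nearest labeled compound.
    diffs = X_pool[:, None, :] - X_labeled[None, :, :]
    min_dist = np.linalg.norm(diffs, axis=2).min(axis=1)
    chosen = []
    for _ in range(batch_size):
        d = min_dist / (min_dist.max() + 1e-12)
        score = (1 - weight) * u + weight * d
        if chosen:
            score[chosen] = -np.inf                  # never pick a compound twice
        best = int(np.argmax(score))
        chosen.append(best)
        # The new pick now counts as "covered" for later diversity scoring.
        min_dist = np.minimum(min_dist, np.linalg.norm(X_pool - X_pool[best], axis=1))
    return chosen

# Toy usage with random descriptors and random uncertainties.
rng = np.random.default_rng(1)
X_pool, X_labeled = rng.normal(size=(50, 8)), rng.normal(size=(5, 8))
uncertainty = rng.random(50)
batch = hybrid_select(X_pool, uncertainty, X_labeled, batch_size=3)
```

Setting `weight=0.0` reduces this to pure uncertainty sampling, and `weight=1.0` to pure diversity sampling, which makes the trade-off easy to tune per campaign.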

Comparative Analysis of Active Learning Platforms and Performance

Quantitative Benchmarking of Active Learning Strategies

Recent comprehensive benchmarking studies reveal significant performance differences among active learning strategies when applied to materials and drug discovery problems. These evaluations typically measure how rapidly models achieve target accuracy levels as the labeled dataset grows, providing crucial insights for strategy selection.

Table 1: Performance Benchmark of Active Learning Strategies in Regression Tasks

| AL Strategy Category | Representative Methods | Early-Stage Performance | Data Efficiency | Key Advantages |
|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperforms baseline | High | Targets knowledge gaps effectively |
| Diversity-Hybrid | RD-GS | Superior to geometry-only | High | Balances exploration & exploitation |
| Geometry-Only | GSx, EGAL | Underperforms early on | Moderate | Simple implementation |
| Random Sampling | N/A | Baseline for comparison | Low | No computational overhead |

The benchmark analysis demonstrates that uncertainty-driven and diversity-hybrid strategies provide substantial early advantages, selecting more informative samples that accelerate model improvement [2]. As the labeled set grows, performance gaps between strategies typically narrow, indicating diminishing returns from advanced AL approaches under conditions of abundant data [2].

Leading AI-Driven Discovery Platforms Implementing Active Learning

Several established and emerging platforms have successfully integrated active learning principles into end-to-end drug discovery workflows, with documented progression of AI-designed candidates to clinical stages.

Table 2: AI-Driven Drug Discovery Platforms with Active Learning Components

| Platform/Company | Core AL Approach | Therapeutic Area | Clinical Stage | Key Achievement |
|---|---|---|---|---|
| Insilico Medicine | Generative chemistry + target discovery | Idiopathic pulmonary fibrosis | Phase IIa | First AI-generated drug to clinical trials (18-month discovery) [4] |
| Exscientia | Automated precision chemistry | Oncology, Immuno-oncology | Phase I/II | AI-designed candidates with ~70% faster design cycles [4] |
| Schrödinger | Physics-enabled ML design | Autoimmune diseases | Phase III | TYK2 inhibitor (zasocitinib) advancing to late-stage trials [4] |
| Recursion | Phenomics-first screening | Multiple disease areas | Multiple phases | Integrated AL with automated chemistry post-merger [4] |
| Atomwise | Structure-based deep learning | Multi-target | Preclinical | Screens billions of compounds via AtomNet architecture [5] |

These platforms exemplify the translation of active learning methodologies from theoretical concepts to practical drug discovery engines. The merger of Recursion and Exscientia in 2024 exemplifies the strategic trend toward integrating complementary AL capabilities—combining phenomic screening with generative chemistry into a unified active learning framework [4].

Experimental Protocols for Active Learning Implementation

Protocol 1: High-Throughput Screening with Active Learning

This protocol outlines the methodology for implementing active learning in high-throughput screening campaigns, optimized for identifying novel hit compounds against specific therapeutic targets.

Step 1: Initial Library Design and Feature Representation

- Procedure: Curate diverse compound library representing broad chemical space. Calculate molecular descriptors (fingerprints, molecular weight, logP, topological polar surface area) and store in structured database.

- Quality Control: Apply drug-like filters (Lipinski's Rule of Five, PAINS exclusion) to remove problematic compounds. Standardize representation using IUPAC conventions.

- Tools: RDKit or OpenBabel for descriptor calculation, KNIME or Pipeline Pilot for workflow automation.
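As an illustration of the quality-control step, the following sketch applies Lipinski's Rule of Five to descriptors that have already been computed (for example with RDKit). The `Descriptors` class, the `max_violations=1` tolerance, and the example values are assumptions for demonstration; PAINS filtering is not covered here.

```python
from dataclasses import dataclass

@dataclass
class Descriptors:
    """Precomputed properties for one compound (hypothetical container)."""
    mol_weight: float   # g/mol
    logp: float         # octanol-water partition coefficient
    h_donors: int       # hydrogen-bond donors
    h_acceptors: int    # hydrogen-bond acceptors

def lipinski_violations(d: Descriptors) -> int:
    """Count how many of the four Rule-of-Five criteria are violated."""
    return sum([
        d.mol_weight > 500,
        d.logp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])

def passes_rule_of_five(d: Descriptors, max_violations: int = 1) -> bool:
    """A common convention tolerates one violation; tighten to 0 if desired."""
    return lipinski_violations(d) <= max_violations

# Illustrative values only, not measured data.
small_polar = Descriptors(mol_weight=180.2, logp=1.2, h_donors=1, h_acceptors=4)
large_greasy = Descriptors(mol_weight=812.0, logp=6.3, h_donors=2, h_acceptors=12)
library = [c for c in (small_polar, large_greasy) if passes_rule_of_five(c)]
```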

Step 2: Baseline Model Training

- Procedure: Randomly select 0.5-1% of the library (500-1000 compounds for a library of about 100,000) for initial screening. Train an ensemble model (random forest or gradient boosting) using 5-fold cross-validation.

- Validation: Establish performance benchmarks (R², MAE) against held-out test set of 20% of initial data.

- Tools: Scikit-learn, AutoML frameworks for automated model selection [2].

Step 3: Iterative Active Learning Cycle

- Procedure:

  - Use uncertainty sampling (predictive variance) to identify 384 compounds for next screening batch.

  - Conduct high-throughput assays (fluorescence, absorbance, or luminescence-based).

  - Incorporate results into training dataset.

  - Retrain model with expanded dataset.

  - Repeat for 5-10 cycles or until performance metrics plateau.

- Batch Size: Optimize based on screening capacity (typically 384-well format).

- Stopping Criterion: Model performance plateau (ΔMAE < 5% between cycles) or maximum budget allocation.
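The stopping criterion in Step 3 can be expressed as a small helper. This sketch assumes that "ΔMAE < 5%" means a relative change between consecutive cycles and that the budget is expressed as a maximum cycle count; both readings, and the name `should_stop`, are assumptions.

```python
def should_stop(mae_history, rel_tol=0.05, max_cycles=10):
    """Return True when MAE has plateaued or the cycle budget is exhausted.

    mae_history: one held-out MAE value per completed cycle, oldest first.
    """
    if len(mae_history) >= max_cycles:
        return True                       # budget spent
    if len(mae_history) < 2:
        return False                      # need two cycles to measure a change
    previous, current = mae_history[-2], mae_history[-1]
    if previous == 0:
        return True                       # already perfect, nothing left to gain
    return abs(previous - current) / previous < rel_tol

# Example: a 20% improvement continues, a 2% improvement stops.
keep_going = should_stop([1.00, 0.80])
plateaued = should_stop([0.50, 0.49])
```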

Step 4: Hit Validation and Triaging

- Procedure: Confirm actives from final model predictions using orthogonal assay methods. Apply medicinal chemistry filters for lead-like properties.

- Counter-Screening: Test against related targets to assess selectivity.

- Dose-Response: Determine IC₅₀ values for confirmed hits using 10-point concentration series.

This protocol typically reduces experimental requirements by 40-70% compared to conventional high-throughput screening while maintaining comparable hit rates [2] [3].

Protocol 2: Reaction Condition Optimization Using Active Learning

This methodology applies active learning to optimize chemical reaction conditions for parallel synthesis, particularly valuable for building compound libraries or improving synthetic routes for hit-to-lead optimization.

Step 1: Experimental Design Space Definition

- Procedure: Identify critical reaction parameters (catalyst, solvent, temperature, concentration, ligand) and define plausible ranges for each variable.

- Experimental Units: Design microtiter plates with 96-384 reaction wells covering parameter combinations.

- Objective Function: Define optimization criteria (yield, purity, enantioselectivity).

Step 2: Bayesian Optimization Implementation

- Procedure:

  - Use Gaussian process regression to model reaction outcome as function of parameters.

  - Employ expected improvement acquisition function to select next experiment batch.

  - Run reactions using liquid handling automation.

  - Analyze outcomes via UPLC-MS/HPLC.

  - Update model with results.

- Parallelization: Evaluate 4-8 parameter combinations simultaneously in plate format.
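For reference, the expected improvement acquisition used in Step 2 has a closed form under a Gaussian posterior. The sketch below implements the standard formula for a maximization objective such as yield; it takes the posterior mean and standard deviation as given (the Gaussian process fit itself is omitted), and the candidate values and the `xi` exploration margin are illustrative assumptions.

```python
import math

def expected_improvement(mu, sigma, best_so_far, xi=0.0):
    """EI for maximization: E[max(f - best_so_far - xi, 0)] with f ~ N(mu, sigma^2)."""
    improvement = mu - best_so_far - xi
    if sigma <= 0:
        return max(improvement, 0.0)      # no uncertainty: improvement is deterministic
    z = improvement / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))            # standard normal CDF
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)     # standard normal PDF
    return improvement * cdf + sigma * pdf

# Hypothetical posterior (mean yield %, std) for four reaction conditions.
candidates = {"A": (62.0, 1.0), "B": (58.0, 9.0), "C": (65.0, 0.5), "D": (40.0, 2.0)}
best_yield = 64.0
ranked = sorted(candidates,
                key=lambda k: expected_improvement(*candidates[k], best_yield),
                reverse=True)
next_batch = ranked[:2]                   # conditions to run in the next plate
```

Note how condition B, with a lower mean but high uncertainty, can outrank a condition whose mean is closer to the current best; this is the exploration behavior the acquisition function is meant to provide.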

Step 3: Complementary Condition Identification

- Procedure: Apply maximum uncertainty sampling to identify diverse, high-performing condition sets that cover broader substrate scope [3].

- Validation: Test complementary condition sets across diverse substrate panels to verify generality.

Research demonstrates that this active learning approach identifies high-coverage reaction condition sets with 60% fewer experiments than traditional grid searches while achieving broader substrate compatibility [3].

The strategic relationships and workflow for this protocol are illustrated below:

Essential Research Reagent Solutions for Active Learning Implementation

Successful implementation of active learning workflows requires specific reagent systems and instrumentation to enable rapid iteration between computational prediction and experimental validation.

Table 3: Essential Research Reagents and Platforms for Active Learning

| Reagent/Platform Category | Specific Examples | Function in AL Workflow | Key Considerations |
|---|---|---|---|
| Compound Libraries | Diversity-oriented synthesis libraries, DNA-encoded libraries | Provides chemical space for AL exploration | Library size, diversity, drug-like properties |
| Biochemical Assay Kits | Kinase-Glo, ADP-Glo assays, fluorescence polarization kits | Enables high-throughput target-based screening | Sensitivity, dynamic range, DMSO tolerance |
| Cell-Based Assay Systems | Reporter gene assays, viability assays (CellTiter-Glo) | Provides phenotypic context for hit validation | Relevance to disease physiology, reproducibility |
| Automated Liquid Handlers | Tecan Veya, Eppendorf Research 3 neo pipette | Enables reproducible compound transfer and assay assembly | Precision, throughput, integration capabilities [6] |
| 3D Cell Culture Systems | mo:re MO:BOT platform, organoid technologies | Enhances biological relevance of phenotypic data | Reproducibility, scalability, physiological accuracy [6] |
| Protein Production Systems | Nuclera eProtein Discovery System | Rapid generation of protein targets for screening | Speed, yield, membrane protein capability [6] |
| Data Integration Platforms | Cenevo, Sonrai Analytics Discovery Platform | Unifies experimental data for AL model training | Metadata capture, interoperability, AI readiness [6] |

The transition from passive screening to intelligent, iterative active learning represents a fundamental advancement in hit discovery methodology. The comparative data presented in this guide demonstrates that uncertainty-driven and hybrid active learning strategies consistently outperform both passive approaches and random sampling, particularly during early phases of discovery campaigns where data scarcity presents the greatest challenge. Implementation success depends on selecting AL strategies aligned with specific project objectives—uncertainty sampling for rapid hit identification versus diversity-based approaches for comprehensive chemical space exploration. The integration of automated experimental systems with robust data capture infrastructure emerges as a critical enabler, allowing the full potential of active learning cycles to be realized. As AI-driven platforms continue to advance, adopting these iterative approaches will become increasingly essential for maintaining competitive advantage in drug discovery.

High-Throughput Screening (HTS) has long been the established cornerstone of early drug discovery, relying on the automated experimental testing of hundreds of thousands of physical compounds to identify initial "hits" [7]. However, this brute-force approach carries immense and often prohibitive financial and temporal costs. A single HTS campaign can cost hundreds of thousands of dollars and requires significant investments in specialized infrastructure: miniaturized assay formats (e.g., 384- or 1536-well plates), sophisticated robotics for liquid handling, and high-capacity plate readers [8] [7]. Furthermore, the hit rate in a typical HTS is notoriously low, often less than 1%, meaning vast resources are expended to find a very small number of useful starting points [8]. This high-cost, low-efficiency problem inherent to traditional HTS powerfully justifies the shift toward Artificial Intelligence (AI)-driven Active Learning (AL) strategies.

Active Learning describes a machine learning paradigm in which the algorithm intelligently selects the most informative data points to test next, creating an iterative "design-make-test-analyze" loop [1]. By prioritizing experiments that maximize learning and minimize redundancy, AL aims to drastically reduce the number of experiments and compounds required to identify high-quality hits. This guide provides an objective comparison of these two approaches, presenting experimental data and protocols to help researchers evaluate their relative merits.

Comparative Performance: HTS vs. Active Learning

The following tables summarize key performance metrics and characteristics of HTS and AL, compiled from recent large-scale studies.

Table 1: Quantitative Comparison of Screening Performance Between HTS and AI/AL Approaches

| Performance Metric | Traditional HTS | AI/Active Learning | Key Findings from Experimental Data |
|---|---|---|---|
| Typical Hit Rate | 0.001% - 0.15% [9] [8] | ~6.7% - 7.6% (AtomNet study) [9] | A 318-target study showed AI consistently achieved hit rates orders of magnitude higher than HTS benchmarks [9]. |
| Active Compound Recovery | Requires screening >99% of library | 70-90% of actives found screening only 35-50% of library [8] | Iterative screening recovers the vast majority of active compounds while testing a fraction of the collection [8]. |
| Campaign Cost | "Hundreds of thousands of dollars" per campaign [8] | Significantly lower physical testing costs; higher computational cost | AL reduces the primary cost driver: the number of physical compounds that must be synthesized and tested [8] [9]. |
| Chemical Space Explored | Limited to existing physical libraries (10^5 - 10^6 compounds) | Access to virtual, synthesis-on-demand libraries (10^9 - 10^12 compounds) [9] | AI screens a chemical space thousands of times larger than HTS, accessing novel scaffolds not in any physical library [9]. |

Table 2: Characteristics and Resource Requirements of HTS vs. Active Learning

| Characteristic | Traditional HTS | Active Learning |
|---|---|---|
| Primary Approach | Experimental, brute-force screening of a full static library | Computational, iterative selection of informative subsets |
| Automation Focus | Liquid handling robotics, plate readers | Algorithmic selection and model retraining |
| Key Assay Metric | Z'-factor (0.5-1.0 indicates excellent assay) [7] | Model performance (e.g., F1 score, predictive accuracy for hit identification) [10] |
| Data Utilization | Single-use for a single campaign; often underutilized | Cumulative; each experiment improves the model for subsequent cycles |
| Scaffold Novelty | Limited to known and available chemotypes | High; capable of generating novel, drug-like scaffolds not based on known bioactives [9] |
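The Z'-factor listed as the key HTS assay metric is computed from positive and negative control wells as Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The short sketch below shows the calculation; the control readings are invented values used only for illustration.

```python
from statistics import mean, stdev

def z_prime(positive_controls, negative_controls):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive_controls) - mean(negative_controls))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    spread = stdev(positive_controls) + stdev(negative_controls)
    return 1.0 - 3.0 * spread / separation

# Illustrative plate-reader signals (arbitrary units), not real assay data.
pos = [100.0, 102.0, 98.0]
neg = [10.0, 12.0, 8.0]
quality = z_prime(pos, neg)   # values of 0.5 or above indicate an excellent assay
```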

Experimental Evidence for Active Learning Efficiency

Protocol: Iterative Screening for Hit Finding

A seminal study demonstrated a practical AL protocol for hit identification, which can be implemented with standard computational resources [8].

- Step 1: Initial Diverse Set Screening. The process begins by screening a small, diverse subset (e.g., 10-15%) of the compound library, selected using a MaxMin algorithm to ensure broad chemical coverage [8].

- Step 2: Model Training. The results (active/inactive labels) from this initial batch are used to train a machine learning model. The study found Random Forest (RF) algorithms performed best, even on standard desktops [8].

- Step 3: Exploitation and Exploration. The trained model predicts hit probabilities for all remaining unscreened compounds. The next batch for testing is selected using an 80/20 rule: 80% of the batch is filled with compounds ranked highest for predicted activity (exploitation), while 20% are chosen randomly from the remainder to explore under-sampled chemical space and improve the model (exploration) [8].

- Step 4: Iterative Model Refinement. Steps 2 and 3 are repeated for a small number of iterations (e.g., 3-6 cycles). With each iteration, the model becomes more accurate at predicting active compounds [8].
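The MaxMin selection in Step 1 can be sketched as follows: starting from a seed compound, repeatedly add the compound whose nearest already-picked neighbor is farthest away. This is a plain-Python illustration that assumes fingerprints are represented as sets of on-bit indices and uses Tanimoto distance; the function names and the fixed seed index are assumptions, and production workflows would typically use a cheminformatics toolkit instead.

```python
def tanimoto_distance(a, b):
    """1 - |a & b| / |a | b| for fingerprints stored as sets of on-bits."""
    union = len(a | b)
    return 0.0 if union == 0 else 1.0 - len(a & b) / union

def maxmin_pick(fingerprints, n_pick, seed_index=0):
    """Greedy MaxMin diversity selection; returns indices in pick order."""
    picked = [seed_index]
    # Distance from every compound to its nearest picked compound so far.
    min_dist = [tanimoto_distance(fp, fingerprints[seed_index]) for fp in fingerprints]
    while len(picked) < min(n_pick, len(fingerprints)):
        already = set(picked)
        nxt = max((i for i in range(len(fingerprints)) if i not in already),
                  key=lambda i: min_dist[i])
        picked.append(nxt)
        min_dist = [min(d, tanimoto_distance(fp, fingerprints[nxt]))
                    for d, fp in zip(min_dist, fingerprints)]
    return picked

# Toy fingerprints: compounds 0, 1 and 3 are near-duplicates, 2 is distinct.
fps = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}, {1, 2, 3, 4}]
initial_set = maxmin_pick(fps, n_pick=3)
```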

Results: This workflow, screening just 35% of the total library over three iterations, recovered a median of 70% of all active compounds. Increasing the screened portion to 50% raised the median recovery to 80% of actives [8]. This demonstrates a massive reduction in experimental effort for a minimal loss in potential hits.
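The 80/20 batch composition from Step 3 is straightforward to express in code. In this sketch, `pred_prob` is a hypothetical mapping from compound identifier to the model's predicted hit probability for compounds not yet screened; the function name and the fixed random seed are assumptions.

```python
import random

def select_batch(pred_prob, batch_size, exploit_frac=0.8, seed=0):
    """Split a batch into top-ranked picks (exploitation) and random picks (exploration)."""
    rng = random.Random(seed)
    n_exploit = round(batch_size * exploit_frac)
    ranked = sorted(pred_prob, key=pred_prob.get, reverse=True)
    exploit = ranked[:n_exploit]                       # highest predicted activity
    remainder = ranked[n_exploit:]
    n_explore = min(batch_size - n_exploit, len(remainder))
    explore = rng.sample(remainder, n_explore)         # uniform random from the rest
    return exploit, explore

# Toy usage: 100 unscreened compounds with made-up probabilities.
pred_prob = {f"cpd{i}": i / 100 for i in range(100)}
exploit, explore = select_batch(pred_prob, batch_size=10)
```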

Protocol: Large-Scale Virtual Screening with the AtomNet Model

A 2024 study involving 318 targets provides robust, large-scale evidence for replacing initial HTS with convolutional neural networks (CNNs) [9].

- Step 1: Virtual Screening. The AtomNet CNN, a structure-based deep learning model, was used to score protein-ligand interactions for billions of compounds in a virtual, synthesis-on-demand library [9].

- Step 2: Algorithmic Compound Selection. The top-ranked molecules were clustered to ensure diversity. The highest-scoring exemplars from each cluster were selected algorithmically, without manual cherry-picking [9].

- Step 3: Experimental Validation. Selected compounds were synthesized and tested in biochemical or cell-based assays at contract research organizations (CROs). Assays included standard additives (e.g., Tween-20, DTT) to mitigate common interference artifacts [9].
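The algorithmic selection in Step 2 (one highest-scoring exemplar per cluster, no manual picking) can be illustrated with the sketch below. It assumes that clustering has already been done, that higher scores are better, and that `pick_cluster_exemplars` is a hypothetical helper; it is not the procedure used in the cited study.

```python
def pick_cluster_exemplars(scores, cluster_ids, n_select):
    """Keep the best-scoring compound of each cluster, then take the top n_select."""
    best_in_cluster = {}
    for idx, (score, cluster) in enumerate(zip(scores, cluster_ids)):
        current = best_in_cluster.get(cluster)
        if current is None or score > scores[current]:
            best_in_cluster[cluster] = idx
    exemplars = sorted(best_in_cluster.values(), key=lambda i: scores[i], reverse=True)
    return exemplars[:n_select]

# Toy usage: five compounds in three clusters.
scores = [0.90, 0.80, 0.95, 0.40, 0.70]
cluster_ids = [0, 0, 1, 2, 1]
selected = pick_cluster_exemplars(scores, cluster_ids, n_select=2)
```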

Results: Across 22 internal drug discovery projects, the approach achieved a 91% success rate in identifying reconfirmed hits. The average dose-response hit rate was 6.7%, vastly exceeding typical HTS hit rates. Crucially, this success was demonstrated for targets without known binders, high-quality X-ray structures, or both, addressing a historical limitation of computational methods [9].

Visualizing the Workflows

The fundamental difference between HTS and AL lies in their workflow structure. The diagrams below illustrate the linear, resource-heavy nature of HTS versus the adaptive, efficient cycle of AL.

HTS Linear Process - Figure 1: The traditional HTS process is a linear, single-pass workflow that requires screening an entire library before any analysis, leading to high upfront costs.

AL Iterative Cycle - Figure 2: The Active Learning workflow is an iterative cycle where experimental data continuously refines a model, which then intelligently selects the next most valuable experiments, dramatically increasing efficiency.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Successful implementation of an AL strategy, particularly the experimental validation phase, relies on key reagents and tools. The following table details essential components for setting up the necessary screening assays.

Table 3: Key Research Reagent Solutions for Screening Assays

| Reagent / Solution | Function in Screening | Application Notes |
|---|---|---|
| Transcreener ADP2 Assay | Universal biochemical assay for detecting ADP production; applicable to kinases, ATPases, GTPases, and other enzymes. | Flexible detection: can use Fluorescence Polarization (FP), Fluorescence Intensity (FI), or TR-FRET readouts. Enables potency (IC50) and residence time measurements [7]. |
| Miniaturized Assay Plates (384-/1536-well) | High-density microplates that minimize reagent consumption and enable automated, high-throughput testing. | Standard format for modern HTS and follow-up screening. Requires compatible liquid handling robotics and plate readers [7]. |
| Assay Interference Mitigants | Reagents like Tween-20, Triton X-100, and Dithiothreitol (DTT) added to assays to reduce false positives. | Counteract common compound interference mechanisms such as aggregation, promiscuous inhibition, and oxidation [9]. |
| Target-Specific Biochemical Kits | Pre-optimized assay systems for specific target classes (e.g., kinases, proteases, epigenetic targets). | Reduce development time and ensure robust performance (high Z'-factor) for primary screening [7]. |
| Cell-Based Assay Reagents | Reagents for cell viability (e.g., CellTiter-Glo), reporter gene assays, and high-content imaging. | Critical for phenotypic screening and assessing compound activity in a more physiologically relevant context [8] [7]. |

The empirical data and comparative analysis presented in this guide build a compelling case. The high-cost problem of Traditional HTS—characterized by low hit rates, immense physical screening costs, and limited chemical exploration—is no longer a necessary burden in hit discovery. Active Learning and other AI-driven approaches offer a validated, efficient, and powerful alternative. By adopting an iterative, intelligence-guided strategy, researchers can substantially reduce costs and timelines while accessing richer, more novel chemical space, ultimately accelerating the journey from concept to clinical candidate.

In the resource-intensive field of drug discovery, active learning has emerged as a powerful strategy to accelerate hit identification. This guide compares two advanced active learning frameworks—one leveraging generative AI and another employing a multi-task balanced-ranking strategy—against non-iterative high-throughput screening (HTS), providing the experimental data and protocols to underpin your research decisions.

Performance Comparison: Active Learning Strategies vs. Traditional HTS

The table below summarizes the key performance metrics of two distinct active learning methodologies compared to a primary HTS screen, demonstrating the significant efficiency gains of an iterative approach.

Table 1: Experimental Performance Metrics Across Discovery Strategies

| Strategy / Framework Name | Core Approach | Target Protein(s) | Hit Rate | Key Experimental Validation | Reference |
|---|---|---|---|---|---|
| Generative AI with Active Learning | VAE with nested AL cycles guided by chemoinformatic & physics-based oracles [11] | CDK2, KRAS | 8 of 9 synthesized molecules showed in vitro activity (one with nanomolar potency) [11] | Synthesis & bioassay of generated molecules; CDK2: 8/9 active; KRAS: 4 in silico actives identified [11] | [11] |
| ChemScreener | Multi-task active learning with Balanced-Ranking acquisition [12] | WDR5 | Average 5.91% (Range: 3-10%) [12] | 44 hits advanced to dose-response; over 50% of top hits validated as binders by DSF; 3 novel scaffold series identified [12] | [12] |
| Primary HTS (Baseline) | Non-iterative screening of a large compound library [12] | WDR5 | 0.49% [12] | N/A (Baseline for comparison) | [12] |

Detailed Experimental Protocols

To ensure reproducibility and provide depth for scientific evaluation, here are the detailed methodologies for the two featured active learning frameworks.

Protocol 1: Generative AI with Nested Active Learning Cycles

This protocol is designed for de novo molecular generation and optimization for a specific target [11].

- 1. Data Representation & Initial Training: Represent training molecules as tokenized SMILES strings, converted into one-hot encoding vectors. The Variational Autoencoder (VAE) is first trained on a general molecular dataset to learn viable chemistry, then fine-tuned on a target-specific initial training set [11].

- 2. Workflow Execution (Nested Active Learning): The core of the method involves two nested cycles [11]:

- Inner AL Cycle (Chemical Optimization): The VAE generates new molecules. An oracle comprising chemoinformatic predictors evaluates them for drug-likeness, synthetic accessibility (SA), and dissimilarity from the training set. Molecules passing these filters are added to a "temporal-specific set" used to fine-tune the VAE. This cycle repeats, iteratively improving chemical properties [11].

- Outer AL Cycle (Affinity Optimization): After several inner cycles, the accumulated molecules in the temporal-specific set are evaluated by a physics-based affinity oracle (e.g., molecular docking simulations). Molecules with favorable docking scores are promoted to a "permanent-specific set," which is used for the next round of VAE fine-tuning, initiating a new outer cycle with nested inner cycles [11].

- 3. Candidate Selection & Validation: After multiple outer AL cycles, the most promising molecules from the permanent-specific set undergo rigorous filtration. This includes advanced molecular modeling (e.g., PELE simulations for binding pose refinement) and Absolute Binding Free Energy (ABFE) calculations. Top candidates are then selected for chemical synthesis and in vitro bioassay validation [11].
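The control flow of the nested cycles can be outlined as below. This is a structural sketch only: the four callables (`generate`, `passes_chem_filters`, `docking_score`, `fine_tune`) are hypothetical placeholders for the VAE sampler, the chemoinformatic oracle, the affinity oracle, and the fine-tuning step, and the choices to reset the temporal set each outer cycle and to treat lower docking scores as better are assumptions not confirmed by the source.

```python
from itertools import count

def nested_active_learning(generate, passes_chem_filters, docking_score, fine_tune,
                           n_outer=3, n_inner=4, score_cutoff=-8.0):
    """Run inner (chemical) cycles nested inside outer (affinity) cycles."""
    permanent_set = []
    for _ in range(n_outer):
        temporal_set = []                                  # assumption: reset per outer cycle
        for _ in range(n_inner):
            candidates = generate()                        # sample new molecules
            temporal_set.extend(m for m in candidates if passes_chem_filters(m))
            fine_tune(temporal_set)                        # inner-cycle fine-tuning
        promoted = [m for m in temporal_set if docking_score(m) <= score_cutoff]
        permanent_set.extend(promoted)                     # affinity-filtered molecules
        fine_tune(permanent_set)                           # outer-cycle fine-tuning
    return permanent_set

# Toy stand-ins: "molecules" are integers, so the flow can be traced by hand.
counter = count()
fine_tune_calls = []
result = nested_active_learning(
    generate=lambda: [next(counter) for _ in range(3)],
    passes_chem_filters=lambda m: m % 2 == 0,              # keep even numbers
    docking_score=lambda m: -float(m),                     # larger m scores better
    fine_tune=lambda molecules: fine_tune_calls.append(len(molecules)),
    n_outer=2, n_inner=2,
)
```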

Protocol 2: ChemScreener's Balanced-Ranking for HTS Follow-Up

This protocol is designed for efficiently screening large, diverse chemical libraries after a primary HTS, using iterative single-dose assays [12].

- 1. Primary HTS & Model Initialization: Conduct a primary high-throughput screen to establish a baseline hit rate and gather initial activity data. Use this data to initialize a multi-task predictive model [12].

- 2. Iterative Screening & Model Updating: For each iterative cycle [12]:

- Prediction: The model predicts activity for all compounds not yet tested.

- Selection (Balanced-Ranking): A subset of compounds is selected for experimental testing based on an acquisition function that balances exploration (prioritizing compounds with high model uncertainty, often calculated via ensemble methods) and exploitation (prioritizing compounds with high predicted activity). This strategy enriches hit rates while exploring novel chemistry [12].

- Model Refinement: The selected compounds are tested in a single-dose assay (e.g., HTRF). The new experimental data (activity labels) is added to the training set, and the model is retrained before the next cycle [12].

- 3. Hit Validation & Characterization: Consolidate all hits from the iterative cycles and retest them alongside close analogs in a full dose-response assay. Confirm binding through orthogonal biophysical methods (e.g., Differential Scanning Fluorimetry - DSF) and cluster confirmed hits to identify novel scaffold series [12].
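One simple way to balance exploitation and exploration in the selection step is to combine two rankings, one by predicted activity and one by ensemble disagreement. The sketch below is a generic illustration of that idea and should not be read as ChemScreener's actual Balanced-Ranking acquisition function, whose details are not given here; `balanced_rank_select` and `exploit_weight` are hypothetical names.

```python
import numpy as np

def balanced_rank_select(ensemble_preds, batch_size, exploit_weight=0.5):
    """Pick compounds with the best weighted sum of activity rank and uncertainty rank.

    ensemble_preds: array of shape (n_models, n_compounds) with predicted activity.
    """
    mean = ensemble_preds.mean(axis=0)                     # predicted activity
    std = ensemble_preds.std(axis=0)                       # ensemble disagreement
    # Double argsort converts scores to ranks; rank 0 is the most desirable.
    activity_rank = np.argsort(np.argsort(-mean, kind="stable"), kind="stable")
    uncertainty_rank = np.argsort(np.argsort(-std, kind="stable"), kind="stable")
    combined = exploit_weight * activity_rank + (1 - exploit_weight) * uncertainty_rank
    return np.argsort(combined, kind="stable")[:batch_size]

# Toy usage: 3 ensemble members, 4 untested compounds.
preds = np.array([
    [0.90, 0.10, 0.50, 0.35],
    [0.80, 0.10, 0.10, 0.05],
    [1.00, 0.10, 0.90, 0.20],
])
batch = balanced_rank_select(preds, batch_size=2)
```

In this toy case, compound 2 is selected first because it is both fairly active and highly uncertain, while compound 0 follows as the most confidently active prediction.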

Workflow Visualization

The following diagrams illustrate the logical structure of the two core active learning workflows.

Generative AI Active Learning Workflow

Balanced-Ranking Active Learning Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for Active Learning-Driven Hit Discovery

| Item | Function / Application in the Workflow |
|---|---|
| WDR5 Protein | The target protein used in the HTRF-based iterative screening campaign to identify potential inhibitors [12]. |
| CDK2 / KRAS Proteins | Oncology-related target proteins used for benchmarking the generative AI workflow, involving docking and bioassays [11]. |
| HTRF Assay Kit | Used for the primary and iterative single-dose screens (e.g., for WDR5) to rapidly quantify compound activity and provide data for model refinement [12]. |
| Differential Scanning Fluorimetry (DSF) | An orthogonal, biophysical method used post-screening to validate true binding of identified hits to the target protein (e.g., WDR5), countering assay artifacts [12]. |
| Molecular Docking Software | Serves as the physics-based affinity oracle in the generative AI workflow, providing a computationally efficient estimate of binding potential to prioritize compounds [11]. |
| VAE & Predictive Models | The core computational engines. The VAE generates novel molecules, while the predictive models (e.g., Random Forest, Deep Learning) forecast activity and uncertainty for the screening library [12] [11]. |

In the face of vast chemical space and constrained research budgets, active learning (AL) has emerged as a transformative machine learning approach for hit discovery. AL operates through an iterative feedback process that selectively identifies the most valuable data points for experimental testing, effectively optimizing resource allocation [13]. This methodology stands in stark contrast to traditional high-throughput screening (HTS), which treats all compounds equally and often wastes resources on testing chemically redundant or uninformative molecules. The fundamental premise of AL is that by prioritizing uncertainty and diversity in compound selection, models can learn more efficiently, requiring far fewer experimental cycles to identify promising hit compounds [14].

The strategic implementation of AL addresses three critical challenges in modern drug discovery: the exponentially expanding chemical space that exceeds practical testing capacity, the high costs and time requirements of wet-lab experimentation, and the scarcity of labeled bioactivity data for model training [13]. By framing hit discovery as an iterative, model-guided exploration rather than a one-shot screening endeavor, AL enables research teams to accelerate project timelines, significantly enrich hit rates from chemical libraries, and substantially reduce operational costs associated with compound acquisition and testing.

Quantitative Performance Comparison

Empirical studies across multiple drug discovery campaigns demonstrate that active learning strategies consistently outperform traditional screening approaches across key performance metrics. The following tables synthesize quantitative results from recent implementations, highlighting the significant advantages of AL in hit discovery.

Table 1: Comparative Performance of Active Learning vs. Traditional Screening

| Screening Approach | Average Hit Rate | Hit Rate Improvement | Number of Hits Identified | Library Size | Reference |

|---|---|---|---|---|---|

| Primary HTS Screen | 0.49% | Baseline | Not specified | Not specified | [12] |

| ChemScreener AL | 5.91% (avg: 3-10%) | 12x increase | 104 hits | 1,760 compounds | [12] |

| Transcriptomics AL | Not specified (reported only as a relative gain) | 13-17x increase | Not specified | Not specified | [15] |

| Random Sampling | Baseline | Baseline | Baseline | Various | [16] |

| Active Learning (various strategies) | Higher than random (not quantified) | 30-70% fewer labels needed | Increased | Various | [16] [14] |

Table 2: Efficiency Gains of Active Learning Strategies

| Performance Metric | Traditional Screening | Active Learning | Improvement | Application Context |

|---|---|---|---|---|

| Labeling efficiency | Baseline | 30-70% reduction in labels needed | Significant | General ML [14] |

| Hit identification speed | Baseline | Much earlier identification | Substantial | Anti-cancer drug screening [16] |

| Model performance gain | Baseline | Faster improvement per labeled sample | Significant | Drug response prediction [16] |

| Experimental cost | High | Reduced through focused experimentation | Substantial | Virtual screening [13] |

The data reveal that AL implementations achieve substantially higher hit rates compared to conventional methods. The ChemScreener workflow demonstrated particularly impressive results, increasing hit rates from a baseline of 0.49% in primary HTS to an average of 5.91% (ranging from 3-10% across cycles) [12]. This represents an approximate 12-fold enrichment in hit discovery efficiency. Similarly, an AL framework leveraging transcriptomics for phenotypic screening outperformed state-of-the-art models, translating to a 13-17x increase in phenotypic hit-rate across two hematological discovery campaigns [15].
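The enrichment figure above can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted in the text and in Table 1; the function name `fold_enrichment` is an illustrative choice, not something taken from the cited study.

```python
def fold_enrichment(al_hit_rate, baseline_hit_rate):
    """Ratio of the AL hit rate to the baseline hit rate (same units for both)."""
    if baseline_hit_rate <= 0:
        raise ValueError("baseline hit rate must be positive")
    return al_hit_rate / baseline_hit_rate


# Figures quoted for the ChemScreener WDR5 campaign [12].
enrichment = fold_enrichment(5.91, 0.49)   # percent vs. percent
hit_rate_from_counts = 104 / 1760 * 100    # 104 hits among 1,760 compounds, in percent
```

The hit rate recomputed from the counts in Table 1 agrees with the quoted 5.91% average, and the ratio to the 0.49% baseline comes out at roughly 12.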

Beyond hit rate enrichment, AL methodologies demonstrate remarkable efficiency in resource utilization. Research indicates that well-designed AL pipelines can reduce labeling requirements by 30-70% while maintaining or improving model performance compared to exhaustive screening approaches [14]. This efficiency translates directly to cost savings through reduced compound testing, smaller library requirements, and shorter discovery timelines.

Experimental Protocols and Methodologies

ChemScreener Workflow for WDR5 Inhibitor Discovery

The ChemScreener experimental protocol exemplifies a sophisticated implementation of active learning for hit discovery. The methodology employed a multi-task active learning workflow designed for early drug discovery across large, diverse chemical libraries [12]. The process commenced with an initial training set of known bioactive compounds, followed by iterative cycles of model prediction and experimental validation.

Balanced-Ranking Acquisition Strategy: ChemScreener's core innovation lies in its acquisition function, which leverages ensemble uncertainty to balance exploration of novel chemistry with exploitation of predicted activity [12]. This strategy simultaneously prioritizes compounds with high predicted activity against WDR5 while ensuring chemical diversity by selecting structures from underrepresented regions of chemical space. The ensemble model generated multiple predictions for each compound, with disagreement among models serving as a proxy for uncertainty.
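One way to picture a rank-based trade-off between predicted activity and ensemble disagreement is sketched below. This is an illustrative assumption, not ChemScreener's published acquisition function: the function names, the use of the ensemble standard deviation as the disagreement measure, and the `weight` parameter are all hypothetical.

```python
from statistics import mean, pstdev


def rank_descending(values):
    """Return the rank of each entry, where rank 0 is the largest value."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    ranks = [0] * len(values)
    for position, index in enumerate(order):
        ranks[index] = position
    return ranks


def balanced_rank_select(ensemble_preds, batch_size, weight=0.5):
    """Pick compounds by a weighted sum of activity rank and uncertainty rank.

    ensemble_preds[m][i] is the prediction of ensemble member m for compound i.
    weight=1.0 is pure exploitation; weight=0.0 is pure exploration (assumed scheme).
    """
    n_compounds = len(ensemble_preds[0])
    per_compound = [[member[i] for member in ensemble_preds] for i in range(n_compounds)]
    activity = [mean(preds) for preds in per_compound]
    disagreement = [pstdev(preds) for preds in per_compound]  # proxy for uncertainty
    activity_rank = rank_descending(activity)
    uncertainty_rank = rank_descending(disagreement)
    combined = [weight * a + (1 - weight) * u
                for a, u in zip(activity_rank, uncertainty_rank)]
    # Lower combined rank is better; ties keep the original compound order.
    return sorted(range(n_compounds), key=lambda i: combined[i])[:batch_size]
```

Working on ranks rather than raw scores keeps the two criteria on a comparable scale without having to normalise predicted activity against ensemble spread.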

Experimental Validation Cycle: Each AL iteration consisted of several key steps: (1) model training on existing bioactivity data; (2) prediction on untested compounds in the library; (3) selection of compounds for testing using the Balanced-Ranking strategy; (4) experimental testing via HTRF assays; and (5) model updating with new experimental results [12]. This cycle repeated five times in the WDR5 case study, with each iteration refining the model's understanding of structure-activity relationships.
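The five steps above can be sketched as a generic loop. Everything here is a toy stand-in chosen for illustration: the `oracle` callable plays the role of the wet-lab assay, `train_nearest_neighbor` replaces the real predictive model, and `select_top` is a simple greedy selector rather than the Balanced-Ranking strategy.

```python
import random


def active_learning_loop(pool, oracle, train, select, n_cycles, batch_size,
                         seed_size=5, seed=0):
    """Run a pool-based AL campaign and return {compound: measured label}."""
    rng = random.Random(seed)
    labeled = {item: oracle(item) for item in rng.sample(pool, seed_size)}
    for _ in range(n_cycles):
        model = train(labeled)                              # (1) train on known data
        untested = [x for x in pool if x not in labeled]
        if not untested:
            break
        scores = {x: model(x) for x in untested}            # (2) predict on the library
        batch = select(scores, batch_size)                  # (3) choose compounds
        for item in batch:
            labeled[item] = oracle(item)                    # (4) run the experiment
        # (5) the new results are used when the model is retrained next cycle
    return labeled


def train_nearest_neighbor(labeled):
    """Toy model: predict the label of the closest already-tested compound."""
    points = list(labeled.items())

    def predict(x):
        return min(points, key=lambda p: abs(p[0] - x))[1]

    return predict


def select_top(scores, batch_size):
    """Greedy selection of the highest-scoring untested compounds."""
    return sorted(scores, key=scores.get, reverse=True)[:batch_size]


def toy_assay(x):
    """Hypothetical assay: compounds 40-49 on a 1-D 'chemical axis' are active."""
    return 1 if 40 <= x < 50 else 0
```

A call such as `active_learning_loop(list(range(100)), toy_assay, train_nearest_neighbor, select_top, n_cycles=5, batch_size=10)` mirrors the five-cycle structure described for the WDR5 campaign, with 55 compounds tested in total.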

Hit Confirmation Protocol: Promising hits from single-dose HTRF screens underwent rigorous validation through multiple orthogonal assays. The confirmation workflow included: (1) compound consolidation with close analogs; (2) retesting in dose-response format; (3) counter-screening in HTRF assays to exclude artifacts; and (4) validation of binding via differential scanning fluorimetry (DSF) [12]. This comprehensive approach ensured that identified hits represented genuine binders rather than assay artifacts.

Anti-Cancer Drug Response Prediction Framework

A comprehensive investigation of AL strategies for anti-cancer drug response prediction provides another exemplary protocol. This study focused on constructing drug-specific response prediction models for cancer cell lines, with the dual objectives of improving prediction model performance and efficiently identifying effective treatments [16].

Cell Line Selection Strategies: The researchers implemented and compared multiple AL approaches for selecting cell lines for screening, including: (1) random sampling (baseline); (2) greedy sampling (selecting cell lines with highest predicted sensitivity); (3) uncertainty sampling (prioritizing predictions with highest model uncertainty); (4) diversity sampling (maximizing representation of different cancer types); and (5) hybrid approaches combining uncertainty and diversity criteria [16].
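The five selection strategies can be expressed as small functions over a candidate list. The sketch below is a simplified reading of the descriptions above and not the code from the cited study: the dictionary inputs (`predicted`, `uncertainty`, `group_of`) and the round-robin scheme used for diversity are assumptions.

```python
import random
from collections import defaultdict


def random_sampling(candidates, k, rng):
    """Baseline: choose k cell lines uniformly at random."""
    return rng.sample(candidates, k)


def greedy_sampling(candidates, k, predicted):
    """Choose the cell lines with the highest predicted sensitivity."""
    return sorted(candidates, key=lambda c: predicted[c], reverse=True)[:k]


def uncertainty_sampling(candidates, k, uncertainty):
    """Choose the cell lines whose predictions are least certain."""
    return sorted(candidates, key=lambda c: uncertainty[c], reverse=True)[:k]


def diversity_sampling(candidates, k, group_of, priority=None):
    """Round-robin over groups (e.g. cancer types) so each is represented.

    Within a group, candidates are taken in descending `priority` when given.
    """
    buckets = defaultdict(list)
    for c in candidates:
        buckets[group_of[c]].append(c)
    if priority is not None:
        for members in buckets.values():
            members.sort(key=lambda c: priority[c], reverse=True)
    picked = []
    while len(picked) < k and any(buckets.values()):
        for group in sorted(buckets):
            if buckets[group] and len(picked) < k:
                picked.append(buckets[group].pop(0))
    return picked


def hybrid_sampling(candidates, k, uncertainty, group_of):
    """Most uncertain candidate from each group in turn (uncertainty + diversity)."""
    return diversity_sampling(candidates, k, group_of, priority=uncertainty)
```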

Data Sources and Processing: The analysis utilized the Cancer Therapeutics Response Portal v2 (CTRP) dataset, encompassing 494 drugs, 812 cell lines, and over 318,000 dose-response experiments [16]. Cell lines were represented by multi-omic features, including gene expression, mutations, and copy number variations, while drugs were encoded using molecular fingerprints and descriptors.

Evaluation Metrics: Performance was assessed using two primary metrics: (1) the number of identified hits (validated responsive treatments) selected during the AL process, and (2) the performance of response prediction models trained on the data selected by each strategy [16]. The results demonstrated that most AL strategies significantly outperformed random selection for identifying effective treatments, with hybrid approaches generally showing the most robust performance across diverse drug classes.

Workflow Visualization

Active Learning Cycle for Hit Discovery

The following diagram illustrates the iterative feedback loop that forms the core of active learning methodologies in drug discovery:

[Figure: Active Learning Cycle for Hit Discovery]

Balanced-Ranking Acquisition Strategy

ChemScreener's innovative Balanced-Ranking strategy combines exploration and exploitation through the following decision process:

[Figure: Balanced-Ranking Acquisition Strategy]

Research Reagent Solutions Toolkit

Successful implementation of active learning for hit discovery requires specialized research tools and reagents. The following table details essential components used in the featured studies:

Table 3: Essential Research Reagents and Tools for Active Learning-Driven Hit Discovery

| Reagent/Tool | Function in Workflow | Application Example |

|---|---|---|

| HTRF Assay Kits | Measure compound-protein interaction in high-throughput format | Primary and iterative single-dose WDR5 screens in the ChemScreener study [12] |

| Cancer Cell Line Panels | Provide diverse biological context for compound screening | CTRP database with 812 cell lines for response prediction [16] |

| Molecular Fingerprints | Encode chemical structures for machine learning models | Extended-connectivity fingerprints for structure-activity modeling [13] |

| Differential Scanning Fluorimetry (DSF) | Validate binding through thermal stability shifts | Orthogonal confirmation of WDR5 binders [12] |

| Transcriptomics Profiling | Generate gene expression features for cell line characterization | Predictive features for phenotypic screening [15] |

| Automated Screening Systems | Enable high-throughput experimental testing | Implementation of iterative AL cycles [12] |

| Ensemble Modeling Software | Generate predictions with uncertainty estimates | Balanced-ranking acquisition in ChemScreener [12] |

Comparative Analysis of Active Learning Strategies

The implementation details of AL strategies significantly impact their performance in hit discovery applications. Different sampling approaches offer distinct advantages and limitations:

Uncertainty Sampling prioritizes compounds where the model shows highest prediction uncertainty, typically targeting decision boundary regions [14]. This approach efficiently improves model accuracy but may overfocus on outliers or noisy data points. In anti-cancer drug response prediction, uncertainty sampling demonstrated particular effectiveness for early identification of responsive treatments [16].

Diversity Sampling selects compounds that maximize structural or functional diversity in the training set, ensuring broad coverage of chemical space [14]. This approach mitigates the redundancy inherent in large chemical libraries but may deliver slower improvements in hit rates compared to uncertainty-focused methods.
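A common way to realise diversity sampling is greedy MaxMin picking on Tanimoto distance. The sketch below assumes fingerprints are represented as Python sets of "on" bit indices; it is a generic illustration and not the selection procedure of any study cited here.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def maxmin_pick(fingerprints, k, first=0):
    """Greedy MaxMin: repeatedly add the compound farthest from those already picked."""
    picked = [first]
    # Distance from every compound to its nearest picked compound.
    min_dist = [1.0 - tanimoto(fp, fingerprints[first]) for fp in fingerprints]
    while len(picked) < min(k, len(fingerprints)):
        candidates = [i for i in range(len(fingerprints)) if i not in picked]
        nxt = max(candidates, key=lambda i: min_dist[i])
        picked.append(nxt)
        for i, fp in enumerate(fingerprints):
            min_dist[i] = min(min_dist[i], 1.0 - tanimoto(fp, fingerprints[nxt]))
    return picked
```

Because each pick is the compound farthest from everything already chosen, close analogs of earlier picks are selected last, which is the redundancy-avoiding behaviour described above.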

Hybrid Approaches combine multiple criteria to balance competing objectives. The Balanced-Ranking strategy used in ChemScreener exemplifies this category, simultaneously considering predicted activity (exploitation) and model uncertainty (exploration) [12]. Similarly, research in other domains has successfully combined uncertainty sampling with clustering to ensure diverse selection of informative samples [14]. These hybrid methods generally demonstrate more robust performance across diverse drug targets and chemical libraries.

Committee-Based Strategies employ multiple models to quantify disagreement as a measure of uncertainty [14]. While computationally intensive, this approach can yield more reliable uncertainty estimates than single-model methods, particularly for complex structure-activity relationships.

The comparative performance of these strategies depends on factors including target biology, chemical library diversity, and available training data. The research consistently indicates that most AL strategies significantly outperform random selection, with hybrid approaches generally delivering the most balanced performance across multiple optimization objectives [16].

The accumulated evidence from recent studies firmly establishes active learning as a transformative methodology for hit discovery in drug development. Through strategic compound selection and iterative model refinement, AL implementations consistently achieve substantial hit rate enrichment, significant timeline acceleration, and meaningful cost reduction compared to traditional screening approaches.

The case studies examined demonstrate that AL can increase hit rates by an order of magnitude, from under 0.5% in conventional HTS to 3-10% in AL-guided campaigns [12], while general machine learning studies report that AL can cut labeling requirements by 30-70% [14]. These improvements directly address the fundamental challenges of modern drug discovery: navigating vast chemical spaces with constrained resources.

The successful application of AL across diverse target classes (including WDR5 and various anti-cancer targets) and screening methodologies (binding assays, phenotypic screens) underscores its versatility and generalizability [12] [16] [15]. As drug discovery continues to confront increasingly challenging targets and growing chemical spaces, the strategic implementation of active learning methodologies will become increasingly essential for maintaining research productivity and therapeutic innovation.

A Practical Guide to Active Learning Strategies and Their Real-World Applications

In the high-stakes field of drug discovery, researchers face the monumental challenge of identifying potential therapeutic compounds from libraries containing billions of molecules. Traditional high-throughput screening methods are prohibitively expensive and time-consuming, often requiring substantial resources to evaluate even a fraction of available chemical space. Active learning has emerged as a powerful strategy to address this inefficiency by enabling iterative, data-driven selection of the most informative compounds for experimental testing. Within this paradigm, uncertainty sampling represents a foundational approach that prioritizes compounds for which the current predictive model exhibits maximum uncertainty, thereby targeting samples most likely to improve model performance with each iteration.

The application of uncertainty sampling in drug discovery is particularly valuable in scenarios characterized by extreme class imbalance, such as synergistic drug combination screening where synergistic pairs represent only 1.47-3.55% of all possible combinations [17]. In such contexts, random sampling strategies waste significant resources on non-informative examples, while well-designed uncertainty sampling methods can discover 60% of synergistic drug pairs by exploring only 10% of the combinatorial space [17]. This efficiency gain translates directly to reduced experimental costs and accelerated research timelines, making uncertainty sampling an indispensable tool for modern drug development pipelines.

Theoretical Foundations of Uncertainty Sampling

Core Principles and Mechanisms

Uncertainty sampling operates on a fundamentally simple yet powerful principle: in pool-based active learning, an algorithm sequentially queries the labels of those instances for which its current prediction model is maximally uncertain [18] [19]. This approach stands in contrast to other active learning strategies that might prioritize representativeness or diversity. The underlying assumption is that by resolving the model's areas of greatest uncertainty, each newly acquired data point will provide maximum information gain, leading to more efficient model improvement with fewer labeled examples.

The effectiveness of uncertainty sampling hinges on properly defining and quantifying "uncertainty" within the specific context of the prediction task and loss function [19]. Traditional probabilistic measures include:

- Least confidence sampling: Selecting instances for which the highest probability the model assigns to any class is lowest

- Margin sampling: Choosing examples with the smallest difference between the two highest class probabilities

- Entropy sampling: Prioritizing instances with maximum class probability entropy
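The three measures listed above can be written directly from their definitions. In this minimal sketch each function takes one instance's class-probability vector; the helper `most_uncertain` and its `larger_is_uncertain` flag are illustrative names introduced here.

```python
import math


def least_confidence(probs):
    """1 minus the top class probability; larger means more uncertain."""
    return 1.0 - max(probs)


def margin(probs):
    """Gap between the two largest class probabilities; smaller means more uncertain."""
    top_two = sorted(probs, reverse=True)[:2]
    return top_two[0] - top_two[1]


def entropy(probs):
    """Shannon entropy in nats; larger means more uncertain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def most_uncertain(pool_probs, k, score=entropy, larger_is_uncertain=True):
    """Indices of the k most uncertain pool instances under the chosen measure."""
    order = sorted(range(len(pool_probs)), key=lambda i: score(pool_probs[i]),
                   reverse=larger_is_uncertain)
    return order[:k]
```

For binary problems the three measures produce the same ranking; they can differ once there are three or more classes, because margin looks only at the top two probabilities while entropy uses the full distribution.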

Recent theoretical work has established that uncertainty sampling essentially optimizes against an "equivalent loss" that depends on both the chosen uncertainty measure and the original loss function [19]. This perspective provides a mathematical foundation for understanding the behavior and performance of different uncertainty sampling variants.

Uncertainty Types and Their Implications

A critical advancement in uncertainty sampling theory recognizes that not all uncertainty is equivalent. Modern approaches distinguish between epistemic uncertainty (reducible uncertainty stemming from limited training data) and aleatoric uncertainty (irreducible uncertainty inherent in the data itself) [18]. This distinction is particularly relevant in drug discovery, where epistemic uncertainty might indicate promising exploration areas for model improvement, while high aleatoric uncertainty might signal inherently noisy or unpredictable biological systems.
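One widely used way to separate the two uncertainty types, assuming an ensemble of classifiers is available, is the entropy decomposition sketched below: total uncertainty is the entropy of the averaged prediction, the aleatoric part is the average entropy of the individual members, and the epistemic part is the difference between them. This is a generic illustration and not necessarily the formulation used in [18].

```python
import math


def entropy(probs):
    """Shannon entropy in nats."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def decompose_uncertainty(member_probs):
    """Split predictive uncertainty for one instance into (total, aleatoric, epistemic).

    member_probs[m] is the class-probability vector from ensemble member m.
    """
    n_members = len(member_probs)
    n_classes = len(member_probs[0])
    mean_probs = [sum(m[c] for m in member_probs) / n_members for c in range(n_classes)]
    total = entropy(mean_probs)                                  # entropy of the average
    aleatoric = sum(entropy(m) for m in member_probs) / n_members  # average entropy
    epistemic = total - aleatoric                                # member disagreement
    return total, aleatoric, epistemic
```

When all members agree on a 50/50 prediction the uncertainty is entirely aleatoric, whereas members that are individually confident but contradict each other produce purely epistemic uncertainty, which is the kind additional labels can reduce.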

Evidential Deep Learning (EDL) represents one approach to separately modeling these uncertainty types. As implemented in the EviDTI framework for drug-target interaction prediction, EDL provides direct uncertainty quantification without relying on computationally expensive random sampling [20]. This capability allows researchers to not only identify uncertain predictions but also understand the nature of that uncertainty, enabling more informed decision-making about which compounds to prioritize for experimental validation.

Comparative Analysis of Uncertainty Sampling Strategies

Standard Uncertainty Sampling Methods

Traditional uncertainty sampling methods form the foundation upon which more advanced techniques are built. These approaches typically rely on the probabilistic outputs of classification models to identify uncertain instances. In the context of drug discovery, these methods have been applied to various prediction tasks including drug-target interactions, synergy prediction, and molecular property estimation.

The fundamental limitation of these standard approaches lies in their potential to create sample imbalance in multi-class scenarios, where high-frequency or high-complexity classes become overrepresented while low-frequency classes suffer from insufficient representation [21]. This distributional imbalance can severely constrain model performance, resulting in significantly diminished predictive capability for underrepresented molecular classes and ultimately affecting overall accuracy. Despite this limitation, standard uncertainty sampling remains widely used due to its computational efficiency and straightforward implementation.

Evidential Deep Learning for Uncertainty Quantification

The EviDTI framework represents a significant advancement in uncertainty-aware modeling for drug-target interaction prediction [20]. By employing evidential deep learning, EviDTI addresses a critical challenge in traditional deep learning models: the tendency to produce overconfident and incorrect predictions for novel, unseen drug-target interactions. The framework integrates multiple data dimensions, including drug 2D topological graphs, 3D spatial structures, and target sequence features, through a specialized architecture comprising protein feature encoders, drug feature encoders, and an evidential layer.

Experimental results demonstrate EviDTI's competitive performance against 11 baseline models across three benchmark datasets: DrugBank, Davis, and KIBA [20]. On the challenging KIBA dataset, characterized by significant class imbalance, EviDTI outperformed the best baseline model by 0.6% in accuracy, 0.4% in precision, 0.3% in Matthews correlation coefficient, and 0.4% in F1 score [20]. More importantly, the well-calibrated uncertainty estimates provided by EviDTI's evidential approach enable prioritization of drug-target interactions with higher confidence predictions for experimental validation, potentially enhancing the efficiency of drug discovery pipelines.

Category-Enhanced Uncertainty Sampling

To address the class imbalance limitations of traditional uncertainty sampling, Wang et al. proposed an enhanced approach that integrates category information with uncertainty measures [21]. This method employs a pre-trained VGG16 architecture and cosine similarity metrics to efficiently extract category features without requiring additional model training. The framework combines these features with traditional uncertainty measures to ensure balanced sampling across classes while maintaining computational efficiency.

In object detection tasks, this category-enhanced approach achieves competitive mean average precision scores while ensuring balanced category representation [21]. For image classification, the method achieves accuracy comparable to state-of-the-art approaches while reducing computational overhead by up to 80% [21]. Although developed for computer vision applications, the underlying principle of incorporating category information to mitigate sampling bias has direct relevance to drug discovery, particularly in scenarios involving multiple target classes or therapeutic areas.

Calibrated Uncertainty Sampling

A recently proposed innovation addresses the critical issue of miscalibrated uncertainty estimates in deep neural networks [22]. Standard uncertainty sampling approaches can be misled when a model's uncertainty estimates are poorly calibrated on unlabeled data, leading to suboptimal sample selection and reduced active learning performance. The calibrated uncertainty sampling method estimates calibration errors and queries samples with the highest calibration error before leveraging the model's uncertainty estimates.

Theoretical analysis shows that active learning with this acquisition function eventually leads to a bounded calibration error on both the unlabeled pool and unseen test data [22]. Empirically, the approach surpasses other acquisition function baselines by achieving lower calibration and generalization errors across pool-based active learning settings. This focus on calibration is particularly relevant to drug discovery, where reliable confidence estimates are essential for making costly decisions about which compounds to synthesize and test experimentally.
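To make the notion of calibration error concrete, the sketch below computes the standard binned expected calibration error (ECE) on a labeled set. It illustrates the metric only; the estimator that [22] uses to score unlabeled samples is not described here, and this code does not attempt to reproduce it.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Binned ECE: weighted average gap between mean confidence and accuracy per bin.

    confidences: predicted probability of the chosen class for each sample (0..1).
    correct: booleans saying whether each prediction was right.
    """
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[index].append((conf, ok))
    n_samples = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += len(bucket) / n_samples * abs(avg_conf - accuracy)
    return ece
```

A model that reports 90% confidence and is right nine times out of ten scores an ECE near zero, while one that reports 100% confidence and is right half the time scores 0.5.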

Thompson Sampling and Roulette Wheel Selection

For screening ultralarge combinatorial libraries, Thompson sampling has emerged as a valuable probabilistic search method that operates in reagent space rather than product space [23]. This approach associates each chemical building block with a probability distribution, sampling promising building blocks more frequently to guide the search toward productive areas of chemical space. Recent enhancements to this method introduce a roulette wheel selection approach combined with thermal cycling to balance greedy search and diversity-driven exploration.

In extensive benchmarking involving 2.18 billion evaluations (109 shape-based virtual screens run against each of 20 reaction-based libraries of 1 million compounds), the enhanced Thompson sampling approach matched greedy scheme performance on two-component libraries and outperformed it on most three-component libraries [23]. The method parallelizes with approximately linear scaling, enabling practical screening of ultralarge combinatorial spaces containing billions or trillions of compounds. This capability is particularly valuable for hit expansion in early drug discovery, where efficiently navigating vast chemical spaces is essential.

Table 1: Performance Comparison of Uncertainty Sampling Methods in Drug Discovery Applications

| Method | Key Innovation | Application Context | Performance Advantages | Limitations |

|---|---|---|---|---|

| Standard Uncertainty Sampling | Querying by model uncertainty | General classification tasks | Computational efficiency, straightforward implementation | Prone to class imbalance, uncalibrated uncertainties |

| EviDTI (Evidential Deep Learning) | Integrated uncertainty quantification | Drug-target interaction prediction | Competitive accuracy (82.02% on DrugBank), well-calibrated uncertainty estimates | Architectural complexity, computational requirements |

| Category-Enhanced Sampling | Integration of category information | Multi-class scenarios | Reduces computational overhead by up to 80%, mitigates class imbalance | Requires category feature extraction |

| Calibrated Uncertainty Sampling | Explicit calibration error estimation | Pool-based active learning | Lower calibration and generalization errors | Additional computation for calibration estimation |

| Enhanced Thompson Sampling | Roulette wheel selection with thermal cycling | Ultralarge combinatorial library screening | Identifies >90% of top molecules with 0.1-1% library evaluation, linear scaling | Performance varies by library composition |

Experimental Protocols and Methodologies

EviDTI Framework Implementation

The EviDTI framework employs a multi-modal architecture that integrates diverse molecular representations for enhanced drug-target interaction prediction [20]. The implementation comprises three main components:

Protein Feature Encoder: This module utilizes the protein language pre-trained model ProtTrans as the initial encoder to generate target representations. These representations undergo further feature extraction through a light attention module, providing insights into local interactions at the residue level.

Drug Feature Encoder: This component processes both 2D topological information and 3D structural information of drug molecules. For 2D representations, the pre-trained model MG-BERT generates initial encodings that are subsequently processed by a 1D convolutional neural network. For 3D structural information, the spatial structure is converted into an atom-bond graph and a bond-angle graph, with representations obtained through a GeoGNN module.

Evidential Layer: The concatenated target and drug representations are fed into this layer, which outputs the parameter α used to calculate both prediction probability and corresponding uncertainty value.
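In the standard Dirichlet formulation of evidential deep learning, the parameter vector α is turned into class probabilities and a single uncertainty value as sketched below. Whether EviDTI uses exactly these expressions is an assumption; the function names are illustrative.

```python
def evidence_to_alpha(evidence):
    """Dirichlet parameters from non-negative evidence: alpha_k = e_k + 1."""
    return [e + 1.0 for e in evidence]


def dirichlet_prediction(alpha):
    """Return (class probabilities, uncertainty) for one drug-target pair.

    probability_k = alpha_k / S and uncertainty = K / S, where S = sum(alpha)
    and K is the number of classes. Uncertainty is 1 when there is no evidence.
    """
    strength = sum(alpha)
    probs = [a / strength for a in alpha]
    uncertainty = len(alpha) / strength
    return probs, uncertainty
```

With no evidence (α = [1, 1]) the prediction is 50/50 with uncertainty 1, and as evidence for one class accumulates the uncertainty shrinks toward zero. This is how a single forward pass can yield both a prediction and a confidence estimate without repeated sampling.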

Experimental validation followed rigorous protocols using three benchmark datasets: DrugBank, Davis, and KIBA [20]. These datasets were randomly divided into training, validation, and test sets in a ratio of 8:1:1. Performance was evaluated using seven metrics: accuracy, recall, precision, Matthews correlation coefficient, F1 score, area under the ROC curve, and area under the precision-recall curve.
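The 8:1:1 split and the threshold-based metrics can be sketched as follows. This is a generic illustration rather than the EviDTI evaluation code: the function names and the fixed seed are assumptions, and the two ranking metrics (area under the ROC and precision-recall curves) are left out because they need full score lists rather than a confusion matrix.

```python
import math
import random


def split_8_1_1(items, seed=0):
    """Shuffle and split into train/validation/test sets in an 8:1:1 ratio."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = len(shuffled) * 8 // 10
    n_val = len(shuffled) // 10
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])


def binary_metrics(tp, fp, tn, fn):
    """Accuracy, precision, recall, F1 and MCC from confusion-matrix counts."""
    def ratio(numerator, denominator):
        return numerator / denominator if denominator else 0.0

    mcc_denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": ratio(tp + tn, tp + fp + tn + fn),
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        "f1": ratio(2 * tp, 2 * tp + fp + fn),
        "mcc": ratio(tp * tn - fp * fn, mcc_denominator),
    }
```

The Matthews correlation coefficient uses all four cells of the confusion matrix, which is why it is often reported alongside accuracy on imbalanced datasets such as KIBA.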

Active Learning for Synergistic Drug Combination Discovery

The experimental protocol for active learning in synergistic drug discovery involves several key components [17]. Researchers typically use the Oneil dataset, which contains 15,117 measurements comprising 38 drugs and 29 cell lines with 3.55% synergistic drug pairs (defined as Loewe synergy score >10). The active learning process proceeds iteratively through multiple batches, with model updates between batches incorporating newly acquired experimental data.

Critical protocol parameters include:

- Batch size: Smaller batch sizes (typically 1-5% of total samples) yield higher synergy discovery rates

- Molecular features: Morgan fingerprints with addition operations demonstrated highest prediction performance

- Cellular features: Gene expression profiles from GDSC database significantly improve prediction quality

- AI algorithms: Ranging from parameter-light (logistic regression) to parameter-heavy (transformers)

This protocol demonstrated that 1,488 measurements scheduled with active learning recovered 60% (300 out of 500) synergistic combinations, saving 82% of experimental resources compared to random screening (which would require 8,253 measurements to obtain the same number of synergies) [17].
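The headline figures in this paragraph follow from simple ratios of the quoted counts. The short check below uses only the numbers stated above; the function name is illustrative.

```python
def resource_saving(al_measurements, random_measurements):
    """Fraction of measurements avoided by AL relative to random screening."""
    return 1.0 - al_measurements / random_measurements


saving = resource_saving(1488, 8253)   # about 0.82
recovered_fraction = 300 / 500         # 0.6 of the synergistic combinations
explored_fraction = 1488 / 15117       # about 0.10 of the Oneil measurements
```

The last ratio is what links this protocol to the earlier statement that roughly 60% of synergistic pairs can be found by exploring about 10% of the combinatorial space.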

Enhanced Thompson Sampling for Library Screening

The experimental methodology for enhanced Thompson sampling with roulette wheel selection involves several stages [23]:

Warmup Cycle: Reagents from each reaction component are placed in a matrix, and each reagent is sampled in at least a minimum number of product molecules. This stage establishes initial probability distributions for building blocks.

Search Cycle: The method samples random scores from probability distributions of each building block, selects building blocks with highest sampled scores, combines them to produce virtual reaction products, and evaluates these products using 3D similarity searches.

Probability Update: Evaluation results are used to adjust probability distributions of related building blocks using a Boltzmann-weighted average rather than arithmetic mean.
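The three stages can be sketched in a toy form as follows. This is a heavily simplified illustration under stated assumptions, not the published method: the Gaussian belief with spread `1/sqrt(n)`, the class and function names, and the `temperature` default are all hypothetical, higher scores are taken to be better, and the roulette wheel selection and thermal cycling mentioned earlier are left out.

```python
import math
import random


class ReagentBelief:
    """Scores observed for products that contain one building block."""

    def __init__(self):
        self.scores = []

    def add(self, score):
        self.scores.append(score)

    def boltzmann_mean(self, temperature=1.0):
        """Average that weights each score by exp(score / temperature)."""
        best = max(self.scores)
        weights = [math.exp((s - best) / temperature) for s in self.scores]
        return sum(w * s for w, s in zip(weights, self.scores)) / sum(weights)

    def sample(self, rng, temperature=1.0):
        """Draw a plausible score; the spread narrows as observations accumulate."""
        spread = 1.0 / math.sqrt(len(self.scores))
        return rng.gauss(self.boltzmann_mean(temperature), spread)


def thompson_search(components, score_fn, n_warmup=3, n_search=50, seed=0):
    """Search reagent space; returns {reagent combination: score}. Needs n_warmup >= 1."""
    rng = random.Random(seed)
    beliefs = [{r: ReagentBelief() for r in reagents} for reagents in components]
    evaluated = {}

    def evaluate(combo):
        if combo in evaluated:          # never score the same product twice
            return
        evaluated[combo] = score_fn(combo)
        for belief_map, reagent in zip(beliefs, combo):
            belief_map[reagent].add(evaluated[combo])   # probability update

    # Warmup: pair every reagent with random partners from the other components.
    for position, reagents in enumerate(components):
        for reagent in reagents:
            for _ in range(n_warmup):
                combo = tuple(reagent if i == position else rng.choice(options)
                              for i, options in enumerate(components))
                evaluate(combo)

    # Search: per component, keep the reagent with the highest sampled score.
    for _ in range(n_search):
        combo = tuple(max(belief_map, key=lambda r: belief_map[r].sample(rng))
                      for belief_map in beliefs)
        evaluate(combo)
    return evaluated
```

Compared with an arithmetic mean, the Boltzmann weighting lets a building block's few best products dominate its estimate, so a reagent that occasionally yields an excellent product is not penalised for its mediocre combinations.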

The benchmarking process involved 109 queries against twenty distinct 1-million-compound libraries using ROCS (Rapid Overlay of Chemical Structures) for shape-based similarity assessment [23]. This extensive evaluation encompassed 2.18 billion assessments to validate method performance across diverse chemical spaces.

Table 2: Experimental Results Across Different Uncertainty Sampling Applications

| Application Domain | Dataset | Baseline Performance | Uncertainty Sampling Performance | Key Metric |

|---|---|---|---|---|

| Drug-Target Interaction Prediction | DrugBank | Varies by baseline model | Accuracy: 82.02%, Precision: 81.90% | MCC: 64.29% [20] |

| Drug-Target Interaction Prediction | Davis | Varies by baseline model | Exceeds best baseline by 0.8% in accuracy, 0.6% in precision | MCC: +0.9%, F1: +2% [20] |

| Drug-Target Interaction Prediction | KIBA | Varies by baseline model | Outperforms best baseline by 0.6% in accuracy, 0.4% in precision | MCC: +0.3%, F1: +0.4% [20] |

| Synergistic Drug Discovery | Oneil | Random sampling requires 8,253 measurements for 300 synergies | Active learning finds 300 synergies in 1,488 measurements | 82% resource saving [17] |

| Combinatorial Library Screening | 20 virtual libraries | Exhaustive screening required | Identifies >90% of top 100 molecules with 0.1-1% evaluation | Linear scaling with CPUs [23] |

Workflow Visualization: Uncertainty Sampling in Drug Discovery

The following diagram illustrates the generalized workflow for uncertainty sampling in drug discovery applications, integrating elements from the EviDTI framework, synergistic combination discovery, and combinatorial library screening:

[Figure: Uncertainty Sampling Workflow in Drug Discovery]

Table 3: Key Research Reagents and Computational Tools for Uncertainty Sampling Implementation

| Resource Category | Specific Tool/Resource | Function in Uncertainty Sampling | Application Context |

|---|---|---|---|

| Bioactivity Datasets | DrugBank Database | Provides known drug-target interactions for model training and validation | Drug-target interaction prediction [20] |

| Bioactivity Datasets | Davis Dataset | Contains kinase inhibition data for evaluation | Drug-target interaction benchmarking [20] |

| Bioactivity Datasets | KIBA Dataset | Provides kinase inhibitor bioactivity scores | Method validation on unbalanced data [20] |

| Bioactivity Datasets | Oneil Dataset | Contains drug combination synergy measurements | Synergistic drug discovery [17] |

| Computational Tools | ProtTrans | Protein language model for sequence feature extraction | Protein representation learning [20] |

| Computational Tools | MG-BERT | Molecular graph pre-training model for 2D structure encoding | Drug representation learning [20] |

| Computational Tools | GeoGNN | Geometric deep learning for 3D molecular structure processing | 3D drug representation [20] |

| Computational Tools | ROCS (Rapid Overlay of Chemical Structures) | 3D shape-based similarity screening | Combinatorial library screening [23] |

| Experimental Platforms | High-throughput screening systems | Automated experimental validation of selected compounds | All experimental applications |

| Cellular Model Systems | GDSC (Genomics of Drug Sensitivity in Cancer) | Provides gene expression profiles for cellular context | Synergy prediction in specific environments [17] |

Uncertainty sampling strategies represent powerful approaches for optimizing compound selection in drug discovery, offering significant efficiency improvements over traditional screening methods. The comparative analysis presented in this guide demonstrates that while standard uncertainty sampling provides a solid foundation, specialized approaches such as evidential deep learning, category-enhanced sampling, and enhanced Thompson sampling address specific limitations and application scenarios.

The experimental data show that well-implemented sampling strategies can recover 60% of synergistic drug pairs after exploring about 10% of the combinatorial space [17] and more than 90% of the top-scoring molecules after evaluating 0.1-1% of a combinatorial library [23]. This efficiency gain translates directly to reduced research costs and accelerated timelines, making uncertainty sampling an increasingly essential component of modern drug discovery pipelines.

Future developments in uncertainty sampling will likely focus on improved uncertainty calibration, better integration of multi-modal data sources, and enhanced handling of extreme class imbalance scenarios. As these methods continue to evolve, their integration with emerging technologies such as hybrid AI-quantum computing approaches [24] promises to further expand their capabilities and applications in pharmaceutical research.

The concept of chemical space, a theoretical framework for organizing molecular diversity, is foundational to modern cheminformatics and drug discovery. With an estimated 10^60 or more potential drug-like molecules, the chemical universe is too vast to explore exhaustively [25]. This makes the strategic selection of diverse molecular subsets a critical task for discovering novel bioactive compounds and functional materials. Diversity-based selection aims to ensure that screened compounds are not just numerous but also broadly representative of unexplored chemical territories, thereby maximizing the probability of identifying new hits with unique properties and mechanisms of action.

In hit discovery research, diversity-based strategies are often evaluated alongside other active learning (AL) approaches, which iteratively select compounds for screening based on model predictions. The core thesis of this guide is that while all AL strategies offer efficiency gains over random screening, diversity-based methods provide a unique and essential advantage by systematically promoting exploration over exploitation. This ensures that molecular libraries do not merely grow in size but expand meaningfully in their coverage of chemical space, a distinction highlighted by recent studies questioning whether the rapid increase in database size directly translates to increased diversity [26].

This guide provides a comparative analysis of diversity-based selection against other prominent active learning strategies, supported by quantitative performance data and detailed experimental protocols.

Comparative Analysis of Active Learning Strategies

Active learning strategies for drug screening aim to optimize the experimental selection process to achieve one or both of two primary objectives: improving the performance of drug response prediction models and efficiently identifying effective treatments (hits) [27]. The table below summarizes the core operational principles of key strategies.

Table 1: Key Active Learning Strategies for Hit Discovery

| Strategy | Primary Selection Principle | Main Advantage | Typical Use Case |
|---|---|---|---|
| Diversity-Based | Selects compounds that are most dissimilar to previously tested molecules or to each other [25]. | Maximizes exploration and broad coverage of chemical space. | Early-stage discovery when little is known about the structure-activity relationship. |
| Uncertainty Sampling | Selects compounds for which the prediction model is most uncertain [27]. | Rapidly improves model accuracy in local regions around decision boundaries. | When a preliminary model exists and needs refinement. |
| Greedy Sampling | Selects compounds predicted to be most active (e.g., lowest predicted IC50, equivalently highest predicted pIC50) [27]. | Directly maximizes the short-term yield of confirmed hits. | Hit confirmation stages after initial active regions are identified. |
| Hybrid (e.g., Uncertainty + Diversity) | Combines multiple criteria, such as uncertainty and diversity, in the selection process [27]. | Balances exploration (diversity) and exploitation (uncertainty/greedy). | A robust default choice for balanced campaign performance. |
| Random Sampling | Selects compounds randomly from the library. | Provides an unbiased baseline; simple to implement. | Baseline for comparing the performance of other strategies. |

Quantitative comparisons from a comprehensive investigation of anti-cancer drug screening reveal the relative performance of these strategies. The study evaluated approaches based on two key metrics: the number of identified hits (treatments experimentally validated as responsive) and the performance of the drug response prediction model built on the acquired data [27].

Table 2: Performance Comparison of Active Learning Strategies in Anti-Cancer Drug Screening [27]

| Strategy | Hit Identification (Relative to Random) | Model Performance | Overall Efficacy |
|---|---|---|---|
| Diversity-Based | Significant Improvement | Good, improves for some drugs | High for broad exploration |
| Uncertainty Sampling | Significant Improvement | Good, improves for some drugs | High for model refinement |
| Greedy Sampling | Moderate Improvement | Limited improvement | Medium, risks early convergence |
| Hybrid Approaches | Significant Improvement | Good, more consistent improvement | Very High, balanced performance |
| Random Sampling | (Baseline) | (Baseline) | Low |

A key real-world application is the ChemScreener workflow, which employs a Balanced-Ranking acquisition strategy. This multi-task active learning approach leverages ensemble uncertainty to explore novel chemistry while maintaining hit rate enrichment. In an iterative screen targeting the WDR5 protein, ChemScreener achieved an average hit rate of 5.91% (with cycles reaching 3–10%), a substantial increase from the primary HTS screen baseline of 0.49%. This demonstrates the power of combining exploration with targeted activity prediction, leading to the identification of multiple novel scaffold series [12].
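The enrichment implied by these two hit rates can be made explicit with a short calculation. The sketch below is illustrative only: `enrichment_factor` is a hypothetical helper name, and the inputs are simply the two percentages quoted above.

```python
def enrichment_factor(al_hit_rate: float, baseline_hit_rate: float) -> float:
    """Fold enrichment of an active-learning hit rate over a baseline hit rate."""
    if baseline_hit_rate <= 0:
        raise ValueError("baseline hit rate must be positive")
    return al_hit_rate / baseline_hit_rate


# Hit rates quoted in the text, both expressed as percentages.
chemscreener_rate = 5.91   # average hit rate across ChemScreener cycles
hts_baseline_rate = 0.49   # primary HTS screen

fold = enrichment_factor(chemscreener_rate, hts_baseline_rate)
print(f"Fold enrichment over primary HTS: {fold:.1f}x")  # roughly 12x
```

On these figures the iterative screen yields roughly a twelve-fold enrichment over the primary HTS baseline.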

Essential Tools and Metrics for Diversity Assessment

The Scientist's Toolkit: Research Reagent Solutions

Implementing a diversity-based selection strategy requires a suite of computational tools and metrics. The following table details the essential components of the research toolkit.

Table 3: Essential Research Reagents and Tools for Diversity Analysis

| Tool / Descriptor | Type | Primary Function | Relevance to Diversity |
|---|---|---|---|
| Molecular Fingerprints (e.g., ECFP, MACCS) [26] [25] | Structural Descriptor | Encodes molecular structure as a binary bit string. | Serves as the foundational representation for calculating structural similarity and diversity. |
| Tanimoto Coefficient [25] | Similarity Metric | Calculates the similarity between two fingerprint vectors. | The most common metric for pairwise similarity; 1 - Tanimoto is used as a distance/dissimilarity measure. |
| iSIM (instant similarity) [26] | Computational Framework | Efficiently calculates the average pairwise similarity within a massive library in O(N) time. | Provides a global, single-value metric (iT) for a library's internal diversity, enabling comparison of entire databases. |
| Graph Neural Networks (GNNs) [25] | Machine Learning Model | Learns vector representations of molecules that capture both structural and property information. | Generates rich molecular descriptors that can be used for property-aware diversity selection. |
| BitBIRCH Algorithm [26] | Clustering Algorithm | Efficiently groups extremely large numbers of molecular fingerprints. | Enables "granular" analysis of chemical space by identifying natural clusters within a library. |
| Submodular Functions (e.g., Log-Determinant) [25] | Mathematical Framework | Quantifies the diversity of a set of molecules (e.g., as the volume spanned by their vectors). | Allows for efficient, near-optimal diverse subset selection with mathematical performance guarantees. |
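To make the log-determinant entry in Table 3 concrete, the following is a minimal sketch of greedy diverse-subset selection. It assumes each molecule is already represented by a numeric vector (for example a learned embedding) and uses the function f(S) = log det(I + X_S X_S^T), which is one common monotone submodular choice. It is not the SubMo-GNN implementation from [25]; the function names and the toy vectors are hypothetical.

```python
import math


def log_det_spd(matrix):
    """Log-determinant of a symmetric positive-definite matrix (Cholesky-based)."""
    n = len(matrix)
    chol = [[0.0] * n for _ in range(n)]
    total = 0.0
    for i in range(n):
        for j in range(i + 1):
            s = sum(chol[i][k] * chol[j][k] for k in range(j))
            if i == j:
                pivot = matrix[i][i] - s          # squared diagonal entry of the factor
                chol[i][j] = math.sqrt(pivot)
                total += math.log(pivot)
            else:
                chol[i][j] = (matrix[i][j] - s) / chol[j][j]
    return total


def diversity_score(vectors, subset):
    """f(S) = log det(I + X_S X_S^T); defined as zero for the empty set."""
    if not subset:
        return 0.0
    gram = [
        [(1.0 if r == c else 0.0) + sum(x * y for x, y in zip(vectors[a], vectors[b]))
         for c, b in enumerate(subset)]
        for r, a in enumerate(subset)
    ]
    return log_det_spd(gram)


def greedy_diverse_subset(vectors, k):
    """Greedily add the molecule that yields the largest diversity score."""
    selected, remaining = [], set(range(len(vectors)))
    for _ in range(min(k, len(vectors))):
        best = max(sorted(remaining),
                   key=lambda i: diversity_score(vectors, selected + [i]))
        selected.append(best)
        remaining.remove(best)
    return selected


# Toy embeddings (hypothetical): molecules 0, 1 and 4 are near-duplicates.
vectors = [
    [1.0, 0.0, 0.0],
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.95, 0.05, 0.0],
]
selected = greedy_diverse_subset(vectors, 3)
print(sorted(selected))  # one molecule per direction; near-duplicates are skipped
```

The greedy loop recomputes the determinant for every candidate, which is fine for a toy example; at library scale, incremental updates or lazy evaluation would be needed.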

Quantifying Diversity and Strategy Performance

The performance of hit discovery strategies is measured using a range of metrics, tailored to the specific goal, whether it is classification or regression.

- For Hit Identification (Classification):

  - Hit Rate: The percentage of screened compounds that are confirmed as active. This is a direct measure of screening efficiency [12].

  - Scaffold Diversity: The number of distinct molecular frameworks or core structures identified among the hits. A higher count indicates broader exploration success [12].

- For Model Performance (Regression/Prediction):

  - Root Mean Squared Error (RMSE): The square root of the mean squared difference between predicted and actual activity values. It is in the same units as the target variable, making it interpretable [28].

  - R-Squared (R²): Represents the proportion of variance in the response variable that is explained by the model. A higher value indicates a better fit [28].
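The four metrics above can be computed in a few lines. The sketch below uses only the Python standard library. The scaffold labels are assumed to be precomputed strings (in practice they would come from a cheminformatics toolkit), and all data values are invented for illustration.

```python
import math


def hit_rate(is_hit):
    """Percentage of screened compounds confirmed as active."""
    return 100.0 * sum(is_hit) / len(is_hit)


def scaffold_diversity(scaffolds, is_hit):
    """Number of distinct scaffolds among the confirmed hits."""
    return len({s for s, hit in zip(scaffolds, is_hit) if hit})


def rmse(y_true, y_pred):
    """Root mean squared error, in the units of the target variable."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Proportion of variance in y_true explained by the predictions."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Invented screening outcome: 8 compounds tested, 3 confirmed hits.
is_hit = [True, False, True, False, True, False, False, False]
scaffolds = ["indole", "indole", "quinoline", "pyrazole",
             "indole", "pyrazole", "quinoline", "indole"]

# Invented measured vs. predicted activities (e.g., pIC50).
y_true = [5.0, 6.0, 7.0, 8.0]
y_pred = [5.5, 5.5, 7.5, 7.5]

print(hit_rate(is_hit))                       # 37.5
print(scaffold_diversity(scaffolds, is_hit))  # 2
print(rmse(y_true, y_pred))                   # 0.5
print(r_squared(y_true, y_pred))              # 0.8
```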

Experimental Protocols for Strategy Evaluation

Protocol 1: Time-Evolution Analysis of Chemical Library Diversity

This protocol, derived from recent research, assesses how the chemical diversity of public libraries has evolved over time, independent of a specific screening campaign [26].

- Data Collection: Obtain sequential historical releases of a chemical database (e.g., ChEMBL releases 1 through 33).

- Molecular Representation: Standardize molecular structures and encode them using one or more fingerprint types (e.g., ECFP4).

- Global Diversity Calculation: For each library release, calculate the iT (iSIM Tanimoto) value. This metric represents the average pairwise Tanimoto similarity within the library, where a lower iT indicates greater diversity [26].

- Granular Cluster Analysis: Apply the BitBIRCH clustering algorithm to each release to identify and track the formation and growth of distinct molecular clusters over time [26].

- Complementary Similarity Analysis: For each molecule in a release, calculate its complementary similarity (the iT of the library after its removal). This identifies "medoid" molecules (low complementary similarity, central to the library) and "outlier" molecules (high complementary similarity, on the periphery) [26].

- Trend Interpretation: Analyze trends in iT, cluster count and size, and the diversity of medoid vs. outlier regions to determine if library growth corresponds with true diversity expansion.
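The global diversity and complementary similarity steps can be sketched as follows. This is a minimal illustration under stated assumptions, not the reference iSIM code from [26]: it assumes fingerprints are equal-length lists of 0/1 integers and uses a column-sum formulation of iT, in which shared on-bits and mismatched bits are pooled over all pairs. A value computed this way may differ slightly from the arithmetic mean of the individual pairwise Tanimoto values. All function names and the toy library are hypothetical.

```python
from itertools import combinations


def _it_from_columns(column_sums, n):
    """iT from per-bit on-counts k_i for a library of n fingerprints."""
    both_on = sum(k * (k - 1) / 2 for k in column_sums)  # pairs sharing an on-bit
    mismatch = sum(k * (n - k) for k in column_sums)     # pairs differing at a bit
    return both_on / (both_on + mismatch)                # assumes at least one on-bit


def isim_tanimoto(fingerprints):
    """Library-level iT from column sums: linear in N, no pairwise loop."""
    column_sums = [sum(col) for col in zip(*fingerprints)]
    return _it_from_columns(column_sums, len(fingerprints))


def complementary_similarity(fingerprints):
    """iT of the library after removing each molecule in turn."""
    n = len(fingerprints)
    column_sums = [sum(col) for col in zip(*fingerprints)]
    return [
        _it_from_columns([k - bit for k, bit in zip(column_sums, fp)], n - 1)
        for fp in fingerprints
    ]


def pooled_pairwise_tanimoto(fingerprints):
    """Brute-force O(N^2) check: summed intersections over summed unions."""
    inter = union = 0
    for a, b in combinations(fingerprints, 2):
        inter += sum(x & y for x, y in zip(a, b))
        union += sum(x | y for x, y in zip(a, b))
    return inter / union


# Toy library (hypothetical): four related molecules and one outlier.
library = [
    [1, 1, 1, 0, 0, 0],
    [1, 1, 0, 0, 0, 0],
    [1, 1, 1, 1, 0, 0],
    [1, 0, 1, 0, 0, 0],
    [0, 0, 0, 0, 1, 1],
]
it_value = isim_tanimoto(library)
comp = complementary_similarity(library)
outlier = comp.index(max(comp))      # highest complementary similarity
medoid_like = comp.index(min(comp))  # lowest complementary similarity
print(it_value, outlier, medoid_like)
```

In this toy library, removing the last fingerprint raises the library's iT the most, which flags it as an outlier, matching the interpretation given in the complementary similarity step.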

Protocol 2: Benchmarking Active Learning Strategies for a Specific Target

This protocol outlines a head-to-head comparison of active learning strategies for a specific drug screening project.

- Initialization:

  - Define Compound Library: Select an ultra-large library (e.g., >10^7 compounds) for screening [26].

  - Establish Training Data: Start with a small, initial set of compounds with known activity against the target (e.g., WDR5).

- Iterative Active Learning Cycle: Repeat for a predetermined number of cycles or until a performance plateau is reached.

  - Model Training: Train a predictive model (e.g., a Graph Neural Network) on all data acquired so far [25].

  - Compound Selection: Apply each AL strategy in parallel to select a batch of compounds from the unexplored library.

    - Diversity: Use a method like SubMo-GNN, which employs a submodular function (e.g., log-determinant) on GNN-generated molecular vectors to select a maximally diverse subset [25].

    - Uncertainty: Select compounds where the ensemble of models shows the highest prediction variance [27].

    - Greedy: Select compounds with the highest predicted activity.

    - Hybrid: Use a balanced ranking that combines criteria (e.g., α * Uncertainty_Score + (1-α) * Diversity_Score).

  - Experimental Testing: Acquire and test the selected compounds in a robust assay (e.g., HTRF, DSF).

  - Data Integration: Add the new experimental results to the training data.

- Performance Evaluation: After the final cycle, compare the strategies based on the cumulative number of hits identified, the diversity of the hit scaffolds, and the predictive performance (e.g., RMSE) of the models they built.
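The compound selection step of Protocol 2 can be sketched as a set of scoring functions over the untested pool. The example below is a simplified illustration under several assumptions:

- Predictions are expressed on a scale where higher means more active (e.g., pIC50).
- Uncertainty is the variance across an ensemble's predictions.
- Diversity is approximated by the minimum Tanimoto distance to already-tested compounds, which stands in for the submodular SubMo-GNN selection.
- Both scores are min-max scaled before being combined with weight α.

All names and data are hypothetical.

```python
from statistics import mean, pvariance


def tanimoto_distance(a: set, b: set) -> float:
    """1 - Tanimoto similarity between two sets of on-bit indices."""
    union = len(a | b)
    return 0.0 if union == 0 else 1.0 - len(a & b) / union


def min_max_scale(values):
    """Rescale scores to [0, 1] so they can be mixed on a common scale."""
    lo, hi = min(values), max(values)
    return [0.0 if hi == lo else (v - lo) / (hi - lo) for v in values]


def score_candidates(ensemble_preds, candidate_bits, tested_bits, alpha=0.5):
    """Per-strategy scores for each candidate; higher means selected earlier."""
    greedy = [mean(p) for p in ensemble_preds]            # predicted activity
    uncertainty = [pvariance(p) for p in ensemble_preds]  # ensemble disagreement
    diversity = [min(tanimoto_distance(c, t) for t in tested_bits)
                 for c in candidate_bits]                 # distance to tested set
    u, d = min_max_scale(uncertainty), min_max_scale(diversity)
    hybrid = [alpha * ui + (1 - alpha) * di for ui, di in zip(u, d)]
    return {"greedy": greedy, "uncertainty": uncertainty,
            "diversity": diversity, "hybrid": hybrid}


def select_batch(scores, batch_size):
    """Indices of the top-scoring candidates."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:batch_size]


# Hypothetical pool of four candidates, each scored by a three-model ensemble.
ensemble_preds = [
    [7.9, 8.1, 8.0],  # high predicted activity, models agree
    [5.0, 7.0, 6.0],  # models disagree strongly
    [6.0, 6.1, 5.9],  # moderate activity, structurally novel
    [4.0, 4.2, 4.1],  # low predicted activity
]
candidate_bits = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}, {1, 2, 3, 4}]
tested_bits = [{1, 2, 3, 5}, {1, 2, 4, 5}]

scores = score_candidates(ensemble_preds, candidate_bits, tested_bits, alpha=0.5)
for name, values in scores.items():
    print(name, select_batch(values, batch_size=2))
```

With these toy inputs each pure strategy ranks a different candidate first, while the hybrid score favors the two candidates that are either highly uncertain or structurally novel. Moving α toward 1 shifts the balance toward uncertainty, and toward 0 shifts it toward diversity.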

The following workflow diagram illustrates the key steps and decision points in Protocol 2.

Visualizing the Chemical Space Exploration Logic

The strategic rationale for employing diversity-based selection, especially in contrast to other methods, can be visualized as a decision-making logic for navigating chemical space. The following diagram maps this high-level logic, showing how diversity-based methods prioritize broad exploration to mitigate the risk of overlooking promising regions.

The comparative data indicate that diversity-based, uncertainty-based, and hybrid strategies all outperform random sampling and, by a smaller margin, greedy sampling. Within this group, diversity-based selection holds a unique and critical role in the hit discovery pipeline. Its primary strength lies in systematically ensuring broad exploration of chemical space, which is a fundamental safeguard against premature convergence on local optima, a common pitfall of purely exploitation-driven strategies.

For researchers and drug development professionals, the strategic implication is that diversity-based methods are indispensable in the early stages of discovery when the goal is to map the structure-activity landscape and identify novel chemotypes. As campaigns progress, hybrid approaches that balance diversity with model uncertainty or predicted activity often provide the most robust performance, efficiently expanding the chemical frontier while deepening the understanding of promising regions [12] [27]. The ongoing development of sophisticated tools like iSIM, BitBIRCH, and SubMo-GNN provides the computational rigor needed to move beyond simple compound counting and towards a truly strategic, diversity-driven expansion of the explored chemical universe [26] [25].