Active Learning Strategies for Data-Scarce Chemical Problems: A Guide for Efficient Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging active learning (AL) to overcome data scarcity in chemical and materials science. It covers the foundational principles of AL, detailing how this machine learning paradigm strategically selects the most informative experiments to minimize labeling costs and accelerate discovery. The piece explores key methodological strategies and their successful applications in areas like drug discovery and materials design, while also addressing common challenges such as imbalanced data and computational cost. Finally, it presents a comparative analysis of different AL approaches based on recent benchmark studies, offering evidence-based recommendations for implementing these techniques to optimize ADMET properties, discover novel materials, and enhance predictive modeling in biomedical research.

What is Active Learning and Why is it a Game-Changer for Data-Scarce Chemistry?

Frequently Asked Questions (FAQs)

FAQ 1: What is active learning and why is it critical for data-scarce problems in chemical research? Active learning is a machine learning paradigm in which the algorithm strategically selects the most informative data points for experimental testing, rather than passively consuming large, pre-existing datasets [1]. This is crucial for chemical and drug discovery research because generating experimental data is often costly and time-consuming, and the phenomena of interest (such as synergistic drug pairs or successful reaction conditions) can be rare [2] [3]. By guiding experiments toward the most promising areas of chemical space, active learning minimizes resource consumption and accelerates discovery [4] [5].

FAQ 2: How does active learning fundamentally differ from traditional machine learning? Traditional machine learning models are typically trained on static, large-scale datasets and act as passive predictors. In contrast, active learning operates in a closed-loop fashion [5] [3]. It starts with an initial dataset, a model is trained to make predictions and quantify uncertainty, and an acquisition function uses this information to select the next most informative experiments. The results from these targeted experiments are then used to retrain and improve the model, creating an iterative cycle of learning and discovery [4] [6].

FAQ 3: What are the main strategies for selecting experiments in active learning? The selection process is governed by the exploration-exploitation trade-off [4] [2]. The specific strategy is implemented through an acquisition function. Common philosophies include:

- Exploration: Prioritizing experiments in regions of high uncertainty to broaden the model's understanding of the chemical space.

- Exploitation: Prioritizing experiments in regions predicted to have high performance (e.g., high yield, strong synergy) to refine and confirm the best candidates.

- Balanced/Hybrid: Modern frameworks, like the Confidence-Adjusted Surprise (CAS), dynamically balance exploration and exploitation by amplifying surprises in regions where the model is more confident, preventing wasted resources on inherently noisy areas [4].
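
The trade-off above can be made concrete with the Upper Confidence Bound (UCB) acquisition function, which adds a weighted uncertainty term to the predicted performance. The sketch below is a minimal illustration, not any cited framework's implementation: the candidate names, predicted yields, standard deviations, and the weight `kappa` are all hypothetical values chosen to show how the ranking flips between exploitation and exploration.

```python
def upper_confidence_bound(mean, std, kappa=1.0):
    """Score = predicted performance + kappa * predictive uncertainty."""
    return mean + kappa * std


# Hypothetical candidates: (name, predicted yield, predictive std).
candidates = [
    ("A", 0.80, 0.02),  # high prediction, well understood
    ("B", 0.55, 0.30),  # mediocre prediction, poorly understood
    ("C", 0.70, 0.10),
]


def rank(candidates, kappa):
    """Return candidate names ordered from highest to lowest UCB score."""
    ordered = sorted(
        candidates,
        key=lambda c: upper_confidence_bound(c[1], c[2], kappa),
        reverse=True,
    )
    return [name for name, _, _ in ordered]


exploit_order = rank(candidates, kappa=0.0)  # uncertainty ignored
explore_order = rank(candidates, kappa=2.0)  # uncertainty weighted heavily
print(exploit_order, explore_order)
```

With `kappa=0` the ranking follows the predictions alone; raising `kappa` moves the poorly understood candidate to the top.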

FAQ 4: My active learning model is stuck and keeps selecting similar, unproductive experiments. How can I escape this local optimum? This is a common challenge. Several strategies can help:

- Adjust the Acquisition Function: If you are using a purely exploitative strategy, switch to one that encourages more exploration or that balances the two dynamically, such as the Confidence-Adjusted Surprise used in CA-SMART [4].

- Incorporate Prior Knowledge via Transfer Learning: Use a model pre-trained on a related, larger source dataset (e.g., a public reaction database) to initialize your active learning loop. This "warms up" the model, providing better initial guidance and helping it avoid unproductive regions from the start [7] [6].

- Reduce Batch Size: In batch active learning, using smaller batch sizes has been shown to increase the discovery yield of rare events, as the model can adapt more frequently to new information [2].

- Re-evaluate Feature Space: Ensure your molecular or reaction descriptors are relevant to the problem. For drug synergy, for instance, cellular environment features (e.g., gene expression) can be more critical than the specific molecular encoding [2].

FAQ 5: How do I choose the right machine learning model for my active learning campaign? The choice depends on your data and problem domain:

- Tree-based models (e.g., Random Forest) are often effective for structured reaction condition data, are computationally efficient, and provide a good baseline [5] [6].

- Geometric Graph Neural Networks are powerful for predicting reaction outcomes and regioselectivity, as they naturally incorporate the 3D structure and symmetry of molecules [5].

- Bayesian Optimization (BO) with a Gaussian Process surrogate model is ideal for optimizing continuous variables (e.g., reaction temperature, concentration) and naturally provides uncertainty estimates [4].

The key is to prioritize models that are data-efficient and can provide reliable uncertainty estimates to guide the acquisition function [2].

Troubleshooting Guides

Issue 1: Poor Model Performance Despite Iterative Sampling

Symptoms

- The model's predictive accuracy does not improve with new experimental data.

- The acquisition function selects data points that do not lead to the discovery of high-performing candidates.

Diagnosis and Resolution Steps

| Step | Action | Diagnostic Check | Resolution |

|---|---|---|---|

| 1 | Verify Data Quality & Relevance | Check for consistent experimental protocols and accurate outcome measurement. | Re-standardize experimental procedures; re-evaluate outcome labels (e.g., yield, synergy score thresholds). |

| 2 | Audit Feature Set | Ensure input features (e.g., molecular fingerprints, cellular context) are informative for the task. | Incorporate more relevant descriptors; for drug synergy, confirm inclusion of genomic features from the target cell line [2]. |

| 3 | Analyze Acquisition Function | Determine whether the function is over-exploring (repeatedly selecting high-uncertainty points) or over-exploiting (repeatedly selecting low-uncertainty points with high predicted performance). | Switch to a balanced acquisition function like Confidence-Adjusted Surprise (CAS) [4] or adjust the balance parameter. |

| 4 | Implement Transfer Learning | Assess whether a model trained only on your small target dataset generalizes poorly. | Pre-train your model on a larger, related source dataset, then fine-tune it on the target data before starting the active learning cycle [7] [6]. |

Issue 2: Inefficient Resource Use and Slow Discovery

Symptoms

- An excessive number of experimental rounds are needed to find a viable candidate.

- The cost and time of the campaign are prohibitively high.

Diagnosis and Resolution Steps

| Step | Action | Diagnostic Check | Resolution |

|---|---|---|---|

| 1 | Optimize Batch Size | Evaluate the discovery yield per batch (e.g., synergistic pairs found per batch). | Reduce the batch size. Smaller batches allow the model to update more frequently, which can significantly increase the discovery rate of rare events [2]. |

| 2 | Simplify the Model | Check if the model is overly complex (e.g., too many parameters). | Use simpler models (e.g., shallow Random Forests). Simple models with limited tree depths can secure better generalizability and performance in low-data regimes [6]. |

| 3 | Leverage Prior Knowledge | Check if you are starting from a random or uninformed initial dataset. | Start the campaign with a source model trained on literature or public database information to make informed first suggestions [7] [6]. |

Experimental Protocols

Protocol 1: General Closed-Loop Active Learning Workflow for Reaction Optimization

This protocol outlines a generalized procedure for using active learning to optimize chemical reactions, such as predicting regioselectivity or reaction yields [5] [6].

1. Initialization Phase

- Objective: Define the goal (e.g., maximize yield, predict regioselectivity).

- Acquire Initial Dataset: Compile a small, relevant set of experimental data (target domain). If available, gather a larger, related dataset (source domain) for transfer learning [7].

- Featurize Data: Convert chemical structures and reaction conditions into numerical descriptors (e.g., molecular fingerprints, geometric graph features) [5].

2. Model Training & Prediction

- Train Model: Train a predictive model (e.g., Geometric Graph Neural Network, Random Forest) on the current dataset. If using transfer learning, pre-train on the source domain first, then fine-tune on the target data [5] [7].

- Predict and Quantify Uncertainty: Use the trained model to predict outcomes for all candidate experiments in the predefined search space. The model should also estimate its uncertainty for each prediction [4].

3. Strategic Experiment Selection

- Apply Acquisition Function: Rank all candidate experiments using an acquisition function (e.g., Upper Confidence Bound, Confidence-Adjusted Surprise) that balances predicted performance and uncertainty [4].

- Select Batch: Choose the top-ranked candidates for experimental testing.

4. Iteration and Model Update

- Conduct Experiments: Perform the selected experiments in the laboratory.

- Update Dataset: Add the new experimental results (both successes and failures) to the training dataset.

- Retrain Model: Update the model with the expanded dataset.

- Loop: Repeat steps 2-4 until the performance goal is met or the experimental budget is exhausted.
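
The four steps above can be sketched as a single loop. This is a toy illustration under stated assumptions, not the protocol from the cited studies: `run_experiment` is a hypothetical stand-in for the laboratory measurement, the surrogate is a deliberately crude nearest-neighbour predictor (standing in for a Random Forest or graph neural network) that uses distance to the nearest tested point as an uncertainty proxy, and the acquisition function is a plain Upper Confidence Bound.

```python
import math


def run_experiment(temp_c):
    """Hypothetical lab measurement: yield peaks near 85 C."""
    return math.exp(-((temp_c - 85.0) / 20.0) ** 2)


def predict(temp_c, labeled):
    """Toy surrogate: return the yield of the nearest tested point and
    use the distance to that point as a crude uncertainty proxy."""
    nearest_t, nearest_y = min(labeled, key=lambda p: abs(p[0] - temp_c))
    return nearest_y, abs(nearest_t - temp_c) / 100.0


def active_learning_loop(search_space, initial, budget, batch_size=2, kappa=1.0):
    # Step 1: initial dataset.
    labeled = [(t, run_experiment(t)) for t in initial]
    for _ in range(budget):
        tested = {t for t, _ in labeled}
        pool = [t for t in search_space if t not in tested]
        if not pool:
            break

        # Steps 2-3: predict, score with UCB, take the top-ranked batch.
        def score(t):
            mean, uncertainty = predict(t, labeled)
            return mean + kappa * uncertainty

        batch = sorted(pool, key=score, reverse=True)[:batch_size]
        # Step 4: run the experiments and keep every result, good or bad.
        labeled.extend((t, run_experiment(t)) for t in batch)
    return labeled


search_space = list(range(20, 151, 5))  # candidate temperatures, 20-150 C
labeled = active_learning_loop(search_space, initial=[20, 150], budget=5)
best_temp, best_yield = max(labeled, key=lambda p: p[1])
print(f"tested {len(labeled)} of {len(search_space)} conditions; "
      f"best yield {best_yield:.2f} at {best_temp} C")
```

In a real campaign the surrogate would be retrained on the expanded dataset at each pass; the toy predictor here reads `labeled` directly, so no separate retraining call appears.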

Protocol 2: CA-SMART Framework for Material Discovery under Constraints

This protocol details the application of the Confidence-Adjusted Surprise Measure for Active Resourceful Trials (CA-SMART), a Bayesian active learning framework tailored for resource-constrained discovery, such as predicting material fatigue strength [4].

1. Framework Setup

- Define Surrogate Model: Typically, a Gaussian Process (GP) is used as the surrogate model to approximate the underlying black-box function.

- Specify Search Space: Define the high-dimensional design space (e.g., composition, processing parameters).

2. Iterative CA-SMART Cycle

- Model Belief: The GP provides a posterior distribution (mean and variance) over the search space, representing the model's current belief and confidence.

- Evaluate Surprise: For a candidate data point, observe its outcome and compute the Confidence-Adjusted Surprise (CAS). CAS amplifies surprises (divergence between expected and observed outcomes) in regions where the model is more confident, and discounts surprises in highly uncertain regions.

- Update Model: Use the surprising observations to update the GP surrogate model, prioritizing data points that provide high-information gain relative to the model's current confidence.

- Loop: Repeat until convergence to an optimal material candidate with minimal experimental trials.
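
The exact CAS formula is not given in this guide, so the sketch below does not attempt to reproduce it. Instead it uses an assumed stand-in, the absolute prediction error divided by the predictive standard deviation, purely to illustrate the qualitative behavior described above: the same raw error counts as a larger surprise where the model is confident and a smaller one where it is uncertain. The fatigue-strength numbers are hypothetical.

```python
def standardized_surprise(observed, mean, std, eps=1e-9):
    """Assumed stand-in for a confidence-adjusted surprise:
    |observed - predicted| scaled by the predictive standard deviation.
    This is NOT the published CAS definition."""
    return abs(observed - mean) / (std + eps)


# Same 20-unit miss, two different confidence levels (hypothetical values).
confident_region = standardized_surprise(observed=520.0, mean=500.0, std=5.0)
uncertain_region = standardized_surprise(observed=520.0, mean=500.0, std=50.0)
print(confident_region, uncertain_region)
```

A miss of this size is far more informative in the confident region, which is the intuition behind prioritizing such observations when updating the surrogate.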

Quantitative Performance Data

Table 1: Benchmarking Active Learning Performance in Drug Synergy Discovery

Data adapted from a study benchmarking active learning for synergistic drug combination screening, showing its high efficiency [2].

| Metric | Random Screening | Active Learning | Performance Gain |

|---|---|---|---|

| Experiments to find 300 synergistic pairs | 8,253 | 1,488 | 82% reduction in experiments |

| Synergistic pairs found (exploring 10% of space) | Not specified | 60% | Highly efficient discovery |

| Impact of Batch Size | N/A | Higher yield with smaller batches | Key parameter for optimization |
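
As a quick check of the first row of Table 1, the reported reduction follows directly from the two experiment counts. The short calculation below uses only the figures in the table; the variable names are illustrative.

```python
random_screening = 8253  # experiments needed with random screening (Table 1)
active_learning = 1488   # experiments needed with active learning (Table 1)

saved = random_screening - active_learning
reduction_pct = 100.0 * saved / random_screening
fold_fewer = random_screening / active_learning

print(f"{saved} experiments saved ({reduction_pct:.0f}% reduction, "
      f"{fold_fewer:.1f}x fewer experiments)")
```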

Table 2: Transfer Learning Efficacy for Reaction Condition Prediction

Data from a study on predicting Pd-catalyzed cross-coupling reaction conditions, demonstrating the value of transfer learning between related nucleophiles [6]. Performance is measured by ROC-AUC, where 1.0 is perfect and 0.5 is random.

| Source Nucleophile | Target Nucleophile | Model Performance (ROC-AUC) | Interpretation |

|---|---|---|---|

| Benzamide | Phenyl Sulfonamide | 0.928 | Excellent transfer (mechanistically similar) |

| Benzamide | Pinacol Boronate Ester | 0.133 | Poor transfer (mechanistically different) |

| Sulfonamide | Benzamide | 0.880 | Excellent transfer (mechanistically similar) |
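
To help read the ROC-AUC values in Table 2, the sketch below computes ROC-AUC from its ranking definition: the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The labels and scores are hypothetical toy data, not values from the cited study. Note that a score below 0.5, such as the 0.133 reported for the boronate ester target, means the model ranks outcomes worse than chance, that is, its ranking is largely inverted.

```python
def roc_auc(labels, scores):
    """Probability that a random positive outscores a random negative
    (ties count as half); this equals the area under the ROC curve."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


labels = [1, 1, 0, 0]  # hypothetical: 1 = reaction condition worked
good_transfer = roc_auc(labels, [0.9, 0.8, 0.3, 0.1])  # positives ranked first
uninformative = roc_auc(labels, [0.5, 0.5, 0.5, 0.5])  # no ranking signal
inverted = roc_auc(labels, [0.1, 0.3, 0.8, 0.9])       # negatives ranked first
print(good_transfer, uninformative, inverted)
```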

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Components for an Active Learning Drug Discovery Campaign

| Item | Function in Active Learning | Example/Note |

|---|---|---|

| Molecular Descriptors | Numerical representation of chemical compounds for model input. | Morgan Fingerprints, MAP4, MACCS keys, Graph-based representations [2]. |

| Cellular Context Features | Provides biological environment information, critical for accurate predictions in cell-based assays. | Gene expression profiles of target cell lines (e.g., from GDSC database) [2]. |

| Source Domain Dataset | A large, public dataset for pre-training models via transfer learning to boost initial performance. | DrugComb, ChEMBL, or public reaction databases [7] [2]. |

| Acquisition Function | The core algorithm that selects the next experiments based on model predictions. | Upper Confidence Bound (UCB), Expected Improvement (EI), Confidence-Adjusted Surprise (CAS) [4]. |

| High-Throughput Screening Platform | Enables rapid experimental validation of the selected candidate compounds or conditions. | Automated platforms for performing hundreds to thousands of experiments in parallel [2]. |

In chemical research and drug development, the acquisition of high-quality experimental data through synthesis and characterization represents a significant bottleneck. The process is hindered by prohibitively high costs, extensive time requirements, and inherent practical limitations. The direct financial burden of data acquisition is substantial; for complex tasks like semantic segmentation of images, annotation costs can range from $0.84 to $3.00 or more per image [8]. Furthermore, the "compliance tax" associated with data privacy in regulated sectors adds millions in overhead, while traditional anonymization techniques can degrade data utility by 30% to 50% [8]. This data scarcity critically impedes the application of data-hungry artificial intelligence (AI) models in chemistry and drug discovery [9]. This technical support center outlines strategies, particularly Active Learning (AL) and data synthesis, to overcome these challenges, providing practical guidance for researchers navigating data-scarce environments.

Frequently Asked Questions (FAQs) on Data Scarcity Solutions

1. What are the primary strategies for dealing with scarce chemical data in AI projects? Several core strategies exist for handling inadequate data in AI-driven chemical research. The most prominent include:

- Active Learning (AL): An iterative process where a model selectively queries an expert to label the most informative data points, maximizing performance with minimal labeling cost [9] [10].

- Data Synthesis (DS): The generation of artificial data that replicates the statistical properties and patterns of real-world experimental data, creating a virtually unlimited supply of training data [9].

- Transfer Learning (TL): A technique that leverages knowledge from a model pre-trained on a large, general dataset (even from a different domain) to jumpstart learning on a small, specific chemical dataset [9].

- Federated Learning (FL): A collaborative method that enables model training across multiple institutions without sharing the raw data itself, thus overcoming data silos and privacy concerns [9].

2. How does synthetic data address the high cost of data acquisition, and what are its limitations? Synthetic data acts as a direct economic solution. It is pre-labeled, eliminating the need for expensive manual annotation, and can be generated in unlimited quantities, drastically reducing both time and monetary costs [11]. It also sidesteps privacy regulations, as it contains no real personally identifiable information [8]. However, its major limitation is a potential lack of realism; it may not fully capture the subtle nuances and complexity of real-world chemical systems, which can reduce model performance in high-stakes applications [11]. The quality of synthetic data is also entirely dependent on the quality and representativeness of the real data used to create the generator model [11].

3. In an Active Learning framework, how does the model decide which data points are most "informative"? The selection is guided by a query strategy. Common strategies include [10]:

- Uncertainty Sampling: Selecting data points for which the model's current prediction is most uncertain.

- Margin Sampling: Choosing points where the difference between the top two predicted probabilities is smallest.

- Entropy Sampling: Selecting points where the entropy of the predicted probability distribution across all possible labels is highest (i.e., the distribution is closest to uniform).
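
The three query strategies above reduce to simple scores on a model's predicted class probabilities. The sketch below uses the standard textbook definitions; the molecule names and probability vectors are hypothetical and were chosen so that the strategies do not all agree.

```python
import math


def least_confidence(probs):
    """Uncertainty sampling score: higher means less confident."""
    return 1.0 - max(probs)


def margin(probs):
    """Gap between the two most likely labels: smaller means more informative."""
    top, second = sorted(probs, reverse=True)[:2]
    return top - second


def entropy(probs):
    """Shannon entropy of the predicted distribution: higher means more uniform."""
    return -sum(p * math.log(p) for p in probs if p > 0)


# Hypothetical predicted probabilities over three activity classes.
pool = {
    "mol_1": [0.98, 0.01, 0.01],
    "mol_2": [0.45, 0.45, 0.10],
    "mol_3": [0.40, 0.30, 0.30],
}

by_uncertainty = max(pool, key=lambda m: least_confidence(pool[m]))
by_margin = min(pool, key=lambda m: margin(pool[m]))
by_entropy = max(pool, key=lambda m: entropy(pool[m]))
print(by_uncertainty, by_margin, by_entropy)
```

Here margin sampling prefers the molecule whose top two classes are tied, while least-confidence and entropy sampling prefer the one whose probability mass is spread most evenly.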

4. Can these strategies be combined for greater effect? Yes, hybrid approaches are often most effective. For instance, a stacking ensemble model (which combines multiple base models) can be integrated with strategic data sampling and an AL framework to tackle severe class imbalance and data scarcity simultaneously. This has been shown to achieve high performance while requiring up to 73.3% less labeled data [10].

Troubleshooting Guides for Data-Scarce Chemical Problems

Problem 1: Poor Model Performance Due to Insufficient Training Data

Symptoms:

- Your AI model exhibits high error rates in predicting chemical properties or activities.

- Model performance plateaus quickly despite efforts to tune hyperparameters.

- The model fails to generalize to new, unseen chemical compounds.

Solution Guide:

| Step | Action | Protocol & Methodology |

|---|---|---|

| 1 | Diagnose Data Scarcity | Quantify your dataset size and class distribution. Compare it to the complexity of the problem. Data-hungry deep learning models typically require large datasets [9]. |

| 2 | Evaluate Strategy Feasibility | Assess if you have a large pool of unlabeled data and a domain expert for labeling. If yes, proceed with Active Learning. If not, consider Data Synthesis or Transfer Learning [9]. |

| 3 | Implement Active Learning Cycle | 1. Train Initial Model: Start with a small, randomly selected labeled dataset. 2. Predict on Unlabeled Pool: Use the current model to make predictions on the large unlabeled dataset. 3. Query for Labels: Apply a selection strategy (e.g., Uncertainty Sampling) to choose the most informative data points. 4. Expert Labeling: Have a domain expert label the selected data points. 5. Update Model: Retrain the model on the expanded labeled dataset. Repeat from Step 2 [10]. |

| 4 | Validate and Iterate | Continuously evaluate model performance on a held-out test set. Monitor the rate of performance improvement versus the number of new labels acquired. |

Problem 2: High Costs of Experimental Characterization and Labeling

Symptoms:

- Project budgets are exhausted by the costs of analytical instrumentation and technician time.

- Data acquisition is the primary bottleneck, slowing down research cycles.

- Highly paid researchers (e.g., data scientists, senior chemists) are spending significant time on manual data annotation tasks [8].

Solution Guide:

| Step | Action | Protocol & Methodology |

|---|---|---|

| 1 | Quantify Costs | Calculate the Total Cost of Ownership (TCO) for your real-world data, including direct acquisition, labeling, and compliance overhead [8]. |

| 2 | Adopt a Hybrid Data Approach | Use a small amount of high-quality, real experimental data to seed and validate your models. Generate a larger volume of synthetic data for training at scale. This combines real-world fidelity with synthetic scalability [11]. |

| 3 | Generate and Validate Synthetic Data | Methodology: Use generative AI models (e.g., GANs, VAEs) trained on your existing real data to create synthetic datasets. Critical Validation: The synthetic data must be rigorously checked for (a) Statistical Fidelity: it must preserve univariate and multivariate distributions of the real data; (b) Model Utility: a model trained on synthetic data must perform as well as one trained on real data when tested on a real-world holdout set; (c) Privacy Preservation: the data must be truly anonymous and resistant to re-identification attacks [8]. |

| 4 | Utilize Transfer Learning | Protocol: Select a pre-trained model from a related domain with abundant data (e.g., a general biochemical model). Fine-tune the last few layers of this model using your small, specific dataset. This transfers generalized knowledge to your specific task, reducing the need for vast amounts of new data [9]. |
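
The statistical-fidelity check in step 3 of the table above can be approximated, for a single variable, with the two-sample Kolmogorov-Smirnov statistic. The sketch below is a minimal pure-Python version with hypothetical property values; it covers only the univariate part of the check and says nothing about multivariate structure, model utility, or privacy, which need their own tests.

```python
def ks_statistic(real, synthetic):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between
    the two empirical CDFs (0 = identical, 1 = fully separated)."""
    largest_gap = 0.0
    for x in sorted(set(real) | set(synthetic)):
        cdf_real = sum(v <= x for v in real) / len(real)
        cdf_synth = sum(v <= x for v in synthetic) / len(synthetic)
        largest_gap = max(largest_gap, abs(cdf_real - cdf_synth))
    return largest_gap


# Hypothetical measured property values and two synthetic sets.
real = [1.2, 2.3, 2.9, 3.4, 4.1]
faithful = [1.3, 2.2, 3.0, 3.5, 4.0]  # tracks the real distribution
shifted = [6.2, 7.3, 7.9, 8.4, 9.1]   # same shape, wrong range

ks_faithful = ks_statistic(real, faithful)
ks_shifted = ks_statistic(real, shifted)
print(ks_faithful, ks_shifted)
```

A small statistic is necessary but not sufficient evidence of fidelity; the acceptance threshold is a project-specific choice.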

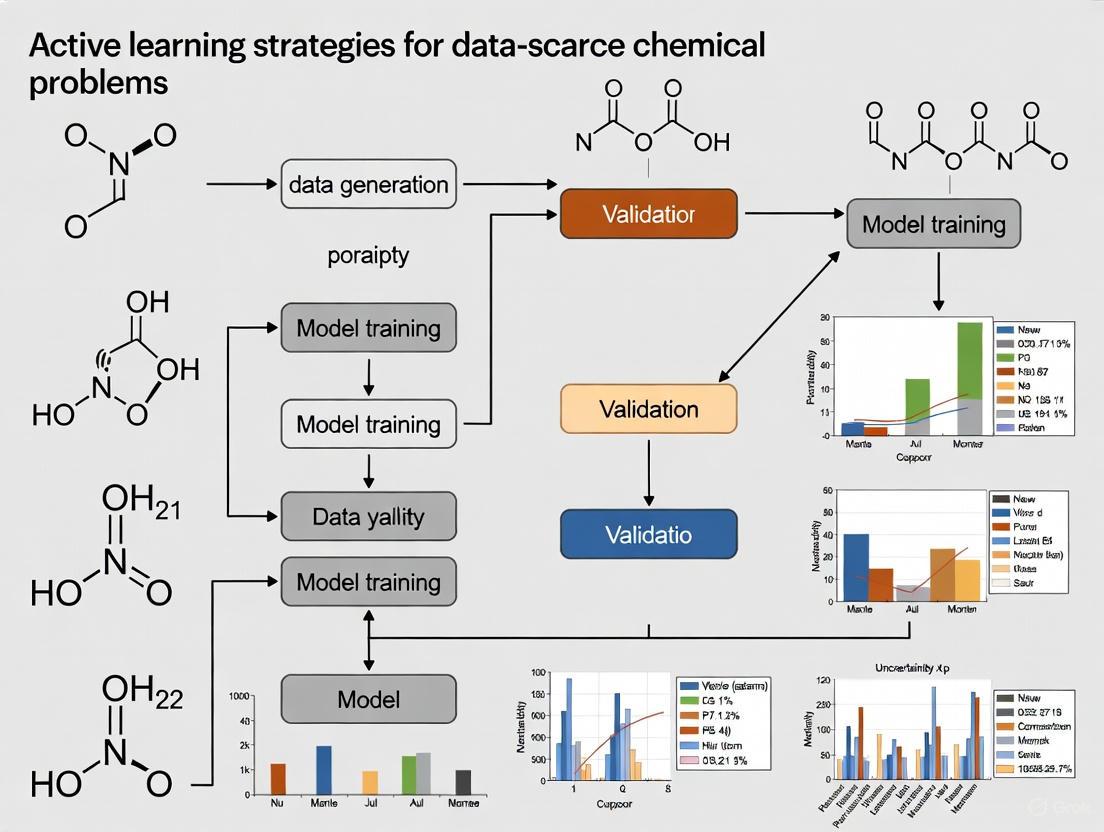

Workflow Visualization

Diagram 1: Active Learning Cycle for Chemical Data Acquisition

Diagram 2: Synthetic Data Generation and Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and strategic "reagents" essential for implementing the discussed data-scarcity solutions.

| Research Reagent | Function & Explanation |

|---|---|

| Active Learning Query Strategy | The algorithm that decides which unlabeled data points would be most valuable for a model to learn from next, optimizing the labeling budget [10]. |

| Generative Model (e.g., GAN) | The engine for synthetic data generation. It learns the underlying probability distribution of real chemical data and can sample new, artificial data points from it [8] [9]. |

| Pre-trained Foundation Model | A large, general-purpose AI model (e.g., trained on vast public chemical databases) that serves as a starting point for Transfer Learning, providing a robust feature extractor for specific, small-scale tasks [9]. |

| Stacking Ensemble Model | A meta-model that combines predictions from multiple base learning algorithms (e.g., CNN, BiLSTM) to improve overall generalization and performance, particularly effective when integrated with AL [10]. |

| Molecular Fingerprints | Numerical representations of chemical structure that convert molecules into a format suitable for machine learning algorithms, enabling the model to learn structure-activity relationships [10]. |

| Validation Framework | A set of standardized tests and metrics used to ensure that generated synthetic data is statistically sound, useful for model training, and free of privacy violations before deployment [8]. |

What is the fundamental principle behind an Active Learning workflow? Active learning is a supervised machine learning approach that strategically selects the most informative data points for labeling to optimize the learning process. Unlike traditional methods that use a static, pre-defined dataset, active learning iteratively selects data points that are expected to provide the most valuable information, minimizing the amount of labeled data required while maximizing model performance [12].

How does the core Active Learning cycle function? The workflow operates through a repeated cycle of model training, querying, and labeling [12]:

- Initialization: Begin with a small, initially labeled dataset.

- Model Training: Train a machine learning model on the current labeled data.

- Query Strategy: Use a selection strategy (e.g., uncertainty sampling) to identify the most informative unlabeled data points from a pool.

- Human Annotation (Human-in-the-Loop): A human expert (oracle) labels the selected data points.

- Model Update: Incorporate the newly labeled data into the training set and retrain the model.

This loop repeats until a performance plateau is reached or labeling resources are exhausted [12].
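
The two stopping conditions for the loop, a performance plateau or an exhausted labeling budget, can be expressed as a small check run after each round. The sketch below is one possible rule, not a prescribed one: the function name, the `patience` window, the `min_gain` threshold, and the score history are all hypothetical.

```python
def should_stop(scores, labels_used, budget, patience=3, min_gain=0.005):
    """Stop when the label budget is spent, or when the validation score
    has improved by less than `min_gain` over the last `patience` rounds."""
    if labels_used >= budget:
        return True
    if len(scores) <= patience:
        return False  # not enough history to judge a plateau
    return scores[-1] - scores[-1 - patience] < min_gain


# Hypothetical validation scores after each active learning round.
history = [0.61, 0.70, 0.76, 0.79, 0.791, 0.792, 0.792]

still_improving = should_stop(history[:4], labels_used=40, budget=200)
plateaued = should_stop(history, labels_used=70, budget=200)
out_of_budget = should_stop(history[:2], labels_used=200, budget=200)
print(still_improving, plateaued, out_of_budget)
```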

Query Strategy Selection and Optimization

How do I choose the right query strategy for my chemical data? The optimal query strategy depends on your specific dataset and project goals. The table below summarizes common strategies and their applications, particularly in chemical research.

| Strategy | Core Principle | Best-Suited For | Example Chemical Research Application |

|---|---|---|---|

| Uncertainty Sampling [12] [13] | Selects data points where the model's prediction confidence is lowest. | Rapidly improving model accuracy on ambiguous cases. | Identifying molecules with borderline predicted binding affinity for further free energy calculation [14]. |

| Diversity Sampling [12] | Selects a diverse set of data points to cover the feature space. | Ensuring the model learns from a broad range of chemical structures. | Exploring diverse scaffolds in early-stage drug discovery to avoid local minima [14]. |

| Mixed Strategy [14] | Combines multiple approaches (e.g., first shortlists high-affinity candidates, then picks the most uncertain among them). | Balancing exploration of the chemical space with exploitation of promising leads. | Lead optimization: focusing on the most promising and informative compounds from a large library [14]. |

| Stream-Based Selective Sampling [12] [13] | Evaluates data points one-by-one against a confidence threshold, labeling only those below the threshold. | Scenarios with a continuous, real-time stream of data or where immediate labeling decisions are needed. | Real-time analysis of reaction products or high-throughput screening data streams. |

| Greedy Strategy [14] | Selects only the top predicted binders or performers at every iteration. | Pure exploitation; rapidly finding the highest-scoring candidates when the model is already reliable. | Late-stage lead optimization to refine the most potent compounds [14]. |
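
The greedy and mixed strategies in the table above differ only in how they use the model's uncertainty. The sketch below illustrates both on a handful of hypothetical compounds; the field names (`pred` for predicted affinity, `std` for predictive uncertainty), the values, and the shortlist size are assumptions made for illustration.

```python
def greedy_select(predictions, batch_size):
    """Pure exploitation: take the candidates with the best predicted score."""
    ranked = sorted(predictions, key=lambda c: c["pred"], reverse=True)
    return [c["id"] for c in ranked[:batch_size]]


def mixed_select(predictions, batch_size, shortlist_size):
    """Shortlist the best predicted candidates, then keep the most
    uncertain ones among them."""
    shortlist = sorted(predictions, key=lambda c: c["pred"], reverse=True)
    shortlist = shortlist[:shortlist_size]
    ranked = sorted(shortlist, key=lambda c: c["std"], reverse=True)
    return [c["id"] for c in ranked[:batch_size]]


# Hypothetical predictions: higher "pred" means stronger predicted binding.
predictions = [
    {"id": "cpd_1", "pred": 9.1, "std": 0.1},
    {"id": "cpd_2", "pred": 8.7, "std": 0.9},
    {"id": "cpd_3", "pred": 8.5, "std": 0.2},
    {"id": "cpd_4", "pred": 8.2, "std": 1.1},
    {"id": "cpd_5", "pred": 5.0, "std": 2.0},
]

greedy_batch = greedy_select(predictions, batch_size=2)
mixed_batch = mixed_select(predictions, batch_size=2, shortlist_size=4)
print(greedy_batch, mixed_batch)
```

The mixed strategy skips the most uncertain compound overall (`cpd_5`) because its predicted affinity keeps it off the shortlist, which is what separates it from plain uncertainty sampling.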

We are dealing with a large, multi-parameter chemical space. What advanced strategy can we use? For complex regression tasks common in materials science and chemistry (e.g., predicting binding affinity or material properties), consider Density-Aware Greedy Sampling (DAGS). This advanced Active Learning method integrates uncertainty estimation with data density, ensuring selected points are both informative and representative of the overall data distribution. It has been shown to outperform random sampling and other state-of-the-art techniques in training regression models with limited data points [15].

Practical Implementation and Troubleshooting

We have limited computational budget for our "oracle" (e.g., FEP+ calculations). How can we maximize its impact? Implement a narrowing strategy. Begin with broad exploration using less expensive models (e.g., QSAR, docking) and diverse selection to map the chemical space. After a few iterations, switch to a greedy or mixed strategy that focuses the computationally expensive oracle on the most promising regions identified initially. This approach efficiently navigates large chemical libraries for a fraction of the cost of exhaustive screening [14] [16].
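
A narrowing schedule like the one described above can be sketched as a selector that switches mode after a fixed number of rounds. This is an illustrative sketch only: the max-min (farthest-point) rule is one common way to pick a diverse batch and is swapped in here as an assumption, and the descriptor vectors, scores, and `explore_rounds` cutoff are hypothetical.

```python
import math


def diverse_select(pool, batch_size):
    """Max-min (farthest-point) selection over descriptor vectors."""
    if not pool:
        return []
    chosen = [pool[0]]  # arbitrary seed
    while len(chosen) < min(batch_size, len(pool)):
        remaining = [c for c in pool if c not in chosen]
        chosen.append(
            max(
                remaining,
                key=lambda c: min(math.dist(c["x"], s["x"]) for s in chosen),
            )
        )
    return chosen


def greedy_select(pool, batch_size):
    """Take the candidates with the best predicted score."""
    return sorted(pool, key=lambda c: c["pred"], reverse=True)[:batch_size]


def narrowing_select(pool, iteration, batch_size, explore_rounds=3):
    """Explore broadly in early rounds, then focus on the best predictions."""
    if iteration < explore_rounds:
        return diverse_select(pool, batch_size)
    return greedy_select(pool, batch_size)


# Hypothetical 2D descriptors and predicted scores.
pool = [
    {"id": "a", "x": (0.0, 0.0), "pred": 0.2},
    {"id": "b", "x": (0.1, 0.0), "pred": 0.9},
    {"id": "c", "x": (1.0, 1.0), "pred": 0.8},
    {"id": "d", "x": (0.0, 1.0), "pred": 0.1},
]

early_ids = [c["id"] for c in narrowing_select(pool, iteration=0, batch_size=2)]
late_ids = [c["id"] for c in narrowing_select(pool, iteration=5, batch_size=2)]
print(early_ids, late_ids)
```

Only the batches returned in the later rounds would be sent to the expensive oracle at full scale; the switch point is a budget decision rather than a fixed rule.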

Our model performance has plateaued despite continued labeling. What could be the cause? This is a classic sign of diminishing returns in Active Learning [13]. Possible causes and solutions include:

- Strategy Exhaustion: Your current query strategy may no longer be selecting sufficiently informative data. Consider switching strategies (e.g., from uncertainty to diversity sampling) to "jump" to a new region of the chemical space.

- Oracle Bottleneck: The oracle's accuracy might be limiting the model. Verify the consistency and accuracy of your human annotators or computational oracle.

- Data Drift: The distribution of the incoming, unlabeled data may have shifted from the initial training set. Regularly sample and inspect new data to ensure its representativeness [17] [13].

How do we effectively integrate a human expert (oracle) into the loop for chemical data? The human expert's role is to provide high-quality labels for the queried data. In chemistry, this could involve:

- Curation and Validation: Expert chemists can curate generated structures for synthesizability and validate machine-generated annotations [18].

- Complex Annotation: Interpreting results from complex assays or spectral data that are not easily automated.

To minimize fatigue and error, use tools that present the expert with clear, contextualized data and pre-populated labels for quick verification [12] [13].

Application in Data-Scarce Chemical Problems

What are the key "Research Reagent Solutions" or components for setting up an Active Learning experiment in drug discovery?

| Component | Function & Explanation |

|---|---|

| Initial Labeled Set | A small set of molecules with known properties (e.g., binding affinity). This "seeds" the model and should be as representative as possible of the broader chemical space of interest [12] [14]. |

| Large Unlabeled Library | A vast virtual or physical compound library (e.g., Enamine REAL space). This is the chemical "haystack" from which the Active Learning algorithm will selectively sample [14] [16]. |

| Computational Oracle | A high-accuracy, computationally expensive simulation used to generate training labels. Alchemical free energy calculations (e.g., FEP+) or molecular docking (e.g., Glide) are common examples that provide reliable affinity predictions [14] [16]. |

| Ligand Representation | A fixed-size vector encoding a molecule's structural and chemical features. Common examples include PLEC fingerprints (protein-ligand interaction contacts) or 3D voxel grids (e.g., MedusaNet), which inform the model about the molecular context [14]. |

| Active Learning Platform | Software that automates the iterative cycle. Platforms like Schrödinger's Active Learning Applications or custom pipelines manage model training, query selection, and job submission to the oracle [16]. |

Can Active Learning truly accelerate a real-world drug discovery project? Yes. A prospective study searching for Phosphodiesterase 2 (PDE2) inhibitors demonstrated this effectively. An Active Learning protocol that combined alchemical free energy calculations as an oracle with a machine learning model was able to identify high-affinity binders by explicitly evaluating only a small subset of a large chemical library. This provided a robust and efficient protocol for lead optimization [14].

How does Active Learning help with multi-parameter optimization in lead optimization? Active Learning frameworks, such as those combined with FEP+, can explore tens to hundreds of thousands of compounds against multiple hypotheses (e.g., potency against a primary target and selectivity against anti-targets) simultaneously. This allows researchers to quickly identify compounds that maintain or improve primary potency while achieving other critical design objectives [16].

Active Learning Technical Support Center

Troubleshooting Guides

Issue 1: Poor Model Performance with Limited Labeled Data

Problem: Model accuracy remains low despite multiple active learning cycles, particularly with highly imbalanced datasets or high-dimensional optimization spaces.

Diagnosis: This typically occurs when the acquisition function fails to properly balance exploration and exploitation, or when batch selections lack diversity, leading to redundant information.

Solutions:

- Implement GandALF Framework: Combine Gaussian processes with clustering to ensure selection of informative, representative, and diverse experiments. This approach has demonstrated a 33% reduction in required experiments for catalytic pyrolysis yield prediction [19].

- Apply DANTE for High-Dimensional Problems: Use Deep Active Optimization with Neural-Surrogate-Guided Tree Exploration for complex systems with up to 2,000 dimensions. This method introduces conditional selection and local backpropagation mechanisms to escape local optima [20].

- Leverage Covariance-Based Batch Selection: For drug discovery applications, use methods that maximize joint entropy by selecting batches with maximal determinant of the epistemic covariance matrix (COVDROP/COVLAP). This ensures both uncertainty and diversity in batch selection [21].
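
The idea behind covariance-based batch selection, choosing a batch whose covariance submatrix has a large determinant, can be illustrated with a simple greedy procedure. The sketch below is not the COVDROP or COVLAP implementation: it assumes a symmetric epistemic covariance matrix is already available (the 3x3 matrix here is hypothetical) and uses the standard greedy rule of repeatedly picking the candidate with the largest variance conditioned on those already chosen.

```python
def greedy_max_det_batch(cov, batch_size):
    """Greedily grow a batch whose covariance submatrix has a large
    determinant: at each step take the candidate with the largest
    variance conditioned on the candidates already chosen."""
    n = len(cov)
    residual = [row[:] for row in cov]  # work on a copy
    chosen = []
    for _ in range(min(batch_size, n)):
        j = max(
            (i for i in range(n) if i not in chosen),
            key=lambda i: residual[i][i],
        )
        if residual[j][j] <= 1e-12:  # nothing informative left
            break
        chosen.append(j)
        pivot = residual[j][j]
        col = [residual[i][j] for i in range(n)]
        # Schur complement: remove what candidate j already explains.
        for a in range(n):
            for b in range(n):
                residual[a][b] -= col[a] * col[b] / pivot
    return chosen


# Hypothetical covariance: candidates 0 and 1 are uncertain but highly
# correlated; candidate 2 is less uncertain but independent of both.
cov = [
    [1.0, 0.9, 0.0],
    [0.9, 0.9, 0.0],
    [0.0, 0.0, 0.5],
]

top_variance = sorted(range(3), key=lambda i: cov[i][i], reverse=True)[:2]
batch = greedy_max_det_batch(cov, batch_size=2)
print(top_variance, batch)
```

Ranking by variance alone would pick the two correlated candidates, whose results largely duplicate each other; the determinant-based choice trades a little individual uncertainty for a more diverse batch.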

Verification: Monitor model improvement per batch. Effective active learning should show steeper learning curves than random sampling. For fair-learning applications, use the 15-20% improvement in fairness metrics at maintained accuracy reported for FAL-CUR as a reference point [22].

Issue 2: Inefficient Experimental Design for Chemical Systems

Problem: Traditional Design of Experiments (DoE) methods require excessive experimental iterations to map complex chemical reaction spaces, increasing time and resource costs.

Diagnosis: Standard DoE techniques often rely on fixed models and preliminary process knowledge that may not accurately represent the studied reaction system.

Solutions:

- Adopt Gaussian Process-Based Active Learning: Use GandALF for kinetic modeling of chemical processes where the relationship between variables and output can be represented by Gaussian processes. This requires no preliminary knowledge and uses kernel-based approaches to describe processes with minimal information [19].

- Integrate Deep Neural Surrogates: For critical point calculations of fluid mixtures, employ DNN models trained on various compositions to initialize analytical calculations, reducing required iterations by 50-90% [23].

- Utilize ChemXploreML for Property Prediction: Implement this user-friendly desktop application for predicting molecular properties like boiling points, melting points, and vapor pressure without requiring advanced programming skills [24].

Verification: Compare the information gain per experiment between traditional DoE and active learning approaches. Experiments selected with active learning should be significantly more informative for reaction modeling [19].

Issue 3: Algorithm Convergence to Local Optima

Problem: Active learning cycles stagnate as the algorithm repeatedly selects similar data points from local regions of the search space, missing global optima.

Diagnosis: This occurs when acquisition functions over-emphasize exploitation over exploration, or when the surrogate model cannot adequately capture the global structure of the objective function.

Solutions:

- Enable Conditional Selection in DANTE: Implement mechanisms that compare DUCB (Data-driven Upper Confidence Bound) values between root and leaf nodes, encouraging selection of higher-value nodes and preventing value deterioration [20].

- Apply Local Backpropagation: Update visitation data only between root and selected leaf nodes to prevent irrelevant nodes from influencing decisions, creating local DUCB gradients that help escape local optima [20].

- Incorporate Fair Clustering: Use FAL-CUR approach that combines fair clustering with acquisition functions based on uncertainty and representativeness to ensure diverse sampling across the entire data distribution [22].

Verification: Track the exploration of diverse chemical space regions - effective methods should demonstrate progressive escape from local maxima and coverage of underrepresented regions [20].

Frequently Asked Questions

Q: How does active learning specifically reduce labeling costs in chemical engineering applications? A: Active learning reduces labeling costs by strategically selecting the most informative experiments rather than using random or grid-based approaches. In catalytic pyrolysis of plastic waste, active learning achieved a 33% reduction in required experiments while maintaining model accuracy. For drug discovery applications, active learning methods have shown significant potential savings in the number of experiments needed to reach the same model performance [19] [21].

Q: What are the key differences between active learning and Bayesian optimization? A: Active learning explores the entire reaction space to build an accurate global model, while Bayesian optimization focuses on finding optimal reaction conditions for a particular objective. Active learning aims to model a black-box function as accurately as possible with minimum measurements, whereas Bayesian optimization uses uncertainty-based acquisition to find optimal candidates [19] [20].
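To make the contrast concrete, the minimal sketch below applies two acquisition rules to the same surrogate predictions. It assumes the surrogate returns a mean and a standard deviation per candidate. The function names, the numbers, and the choice of an upper-confidence-bound rule with weight `kappa` for Bayesian optimization are illustrative assumptions, not taken from [19] or [20].

```python
def pick_for_active_learning(stds):
    """Return the index with the largest predictive standard deviation."""
    return max(range(len(stds)), key=lambda i: stds[i])


def pick_for_bayesian_optimization(means, stds, kappa=1.0):
    """Return the index maximizing the upper confidence bound mean + kappa * std."""
    return max(range(len(means)), key=lambda i: means[i] + kappa * stds[i])


# Hypothetical surrogate predictions for three candidate reaction conditions.
means = [0.20, 0.90, 0.50]
stds = [0.30, 0.05, 0.10]

al_choice = pick_for_active_learning(stds)               # least-known region
bo_choice = pick_for_bayesian_optimization(means, stds)  # most promising region
print(al_choice, bo_choice)
```

With these numbers the two rules disagree: the model-building rule samples where the surrogate knows least, while the optimization rule samples where a high value is most plausible.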

Q: How can we ensure fairness in active learning for chemical and drug discovery applications? A: Implement FAL-CUR (Fair Active Learning using fair Clustering, Uncertainty, and Representativeness), which applies fair clustering to group uncertain samples while maintaining fairness constraints, then selects samples based on representativeness and uncertainty scores within these fair clusters. This approach has demonstrated a 15-20% improvement in fairness metrics such as equalized odds while maintaining stable accuracy [22].

Q: What computational resources are typically required for implementing active learning in chemical research? A: Requirements vary by method. GandALF using Gaussian processes is suitable for moderate-dimensional problems, while DANTE with deep neural surrogates can handle up to 2,000 dimensions but requires more computational resources. For large-scale problems, quantum-inspired algorithms and high-performance computing architectures can be integrated [19] [20] [25].

Q: How do we handle highly imbalanced datasets in active learning for drug discovery? A: For datasets with extreme imbalances (e.g., PPBR dataset), use covariance-based batch selection methods (COVDROP/COVLAP) that maximize joint entropy and ensure diversity. These methods help the model gain insight into underrepresented regions by selectively sampling from these areas while maintaining overall batch diversity [21].

Quantitative Performance Data

Table 1: Active Learning Performance Across Applications

| Application Domain | Method | Performance Improvement | Data Reduction | Key Metric |
|---|---|---|---|---|
| Catalytic Pyrolysis | GandALF | 33% reduction in experiments [19] | 18 experiments vs. traditional DoE [19] | Olefin yield prediction |
| Drug Discovery - Solubility | COVDROP | Faster convergence [21] | Significant batch reduction [21] | RMSE improvement |
| High-Dimensional Optimization | DANTE | 10-20% improvement over SOTA [20] | 500 data points for 2,000 dimensions [20] | Global optimum finding |
| Fair Active Learning | FAL-CUR | 15-20% fairness improvement [22] | Maintains accuracy with fairness [22] | Equalized odds |
| Critical Point Calculations | DNN Initialization | 50-90% iteration reduction [23] | Faster convergence [23] | Computation time |

Table 2: Dataset Characteristics for Active Learning Validation

| Dataset Type | Size | Dimensions/Features | Active Learning Method | Validation Results |
|---|---|---|---|---|
| Hydrocracking Modeling | Virtual data | Multiple process variables | GandALF vs. EMOC/clustering | 33% improvement in data efficiency [19] |
| ADMET Properties | 906-9,982 compounds [21] | Molecular descriptors | COVDROP/COVLAP | Superior to k-means, BAIT, random [21] |
| Synthetic Functions | 20-2,000 dimensions [20] | High-dimensional space | DANTE | 80-100% global optimum success [20] |
| Real-World Fairness | 4 datasets [22] | With sensitive attributes | FAL-CUR | Stable accuracy + fairness [22] |
| Fluid Mixtures | Various compositions [23] | Thermodynamic parameters | DNN initialization | 50-90% iteration reduction [23] |

Experimental Protocols

Protocol 1: GandALF for Catalytic Pyrolysis Yield Prediction

Objective: Predict yield of light olefins (C2-C4) from catalytic pyrolysis of LDPE using minimal experiments.

Materials:

- Tandem reactor system for ex-situ catalytic pyrolysis

- LDPE plastic waste feedstock

- Various catalyst materials

- Temperature control system (variable 400-600°C)

- Space time variation capability

Methodology:

1. Define Design Space: Identify variables (temperature, space time, catalyst type) and output (olefin yield)

2. Initial Experiments: Perform small set of initial experiments (~5-10% of total budget)

3. Train Gaussian Process: Use initial data to train GP surrogate model

4. Cluster Uncertain Regions: Apply k-means clustering to uncertain regions identified by GP

5. Select Experiments: Choose experiments from largest empty clusters using centroid selection

6. Iterate: Repeat steps 3-5 until experimental budget exhausted or target accuracy achieved

Validation: Compare models trained on active learning-selected experiments versus traditional DoE using mean squared error and information gain metrics [19].

Protocol 2: COVDROP for Drug Discovery Applications

Objective: Optimize ADMET properties and affinity predictions using deep batch active learning.

Materials:

- Molecular compound libraries (e.g., ChEMBL, internal datasets)

- Deep neural network architecture (graph neural networks preferred)

- Computational resources for model training

- Batch selection infrastructure

Methodology:

- Model Setup: Initialize deep learning model with appropriate architecture for molecular data

- Uncertainty Quantification: Use Monte Carlo dropout to estimate model uncertainty

- Covariance Calculation: Compute covariance matrix between predictions on unlabeled samples

- Batch Selection: Iteratively select submatrix with maximal determinant to ensure diversity

- Experimental Testing: Submit selected batch for experimental validation

- Model Update: Incorporate new labeled data and retrain model

- Cycle Continuation: Repeat until desired performance achieved [21]
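The covariance and batch-selection steps above can be sketched in a few lines. This is a minimal illustration, not the implementation from [21]: it assumes the stochastic forward passes (for example, Monte Carlo dropout) have already been collected, and it uses a simple greedy rule that adds, at each step, the candidate with the largest variance conditional on the candidates already chosen. That choice is the one that most increases the determinant of the selected submatrix. All function names and numbers are hypothetical.

```python
def covariance_from_samples(samples):
    """Sample covariance between candidates from stochastic forward passes.

    samples[t][i] is the prediction for candidate i on pass t
    (e.g. one Monte Carlo dropout pass).
    """
    n_passes, n_cand = len(samples), len(samples[0])
    means = [sum(s[i] for s in samples) / n_passes for i in range(n_cand)]
    return [
        [
            sum((s[i] - means[i]) * (s[j] - means[j]) for s in samples) / (n_passes - 1)
            for j in range(n_cand)
        ]
        for i in range(n_cand)
    ]


def greedy_max_determinant(cov, batch_size):
    """Greedily pick indices whose covariance submatrix has a large determinant."""
    n = len(cov)
    cond_var = [cov[i][i] for i in range(n)]  # variance given the selected set
    chol_rows = [[] for _ in range(n)]        # incremental Cholesky factors
    selected = []
    for _ in range(batch_size):
        candidates = [i for i in range(n) if i not in selected]
        if not candidates:
            break
        j = max(candidates, key=lambda i: cond_var[i])
        if cond_var[j] <= 1e-12:  # nothing informative left to add
            break
        selected.append(j)
        pivot = cond_var[j] ** 0.5
        for i in candidates:
            if i == j:
                continue
            dot = sum(a * b for a, b in zip(chol_rows[j], chol_rows[i]))
            e = (cov[j][i] - dot) / pivot
            chol_rows[i].append(e)
            cond_var[i] -= e * e  # shrink variance explained by candidate j
    return selected


# Hypothetical epistemic covariance: candidates 0 and 1 are near-duplicates.
cov = [
    [1.0, 0.9, 0.0],
    [0.9, 1.0, 0.0],
    [0.0, 0.0, 0.5],
]
batch = greedy_max_determinant(cov, batch_size=2)
print(batch)
```

Ranking by variance alone would pick the two near-duplicate candidates. The determinant criterion skips the second one because most of its uncertainty is already explained by the first.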

Workflow Diagrams

[Figure: Active Learning Workflow for Chemical Problems]

[Figure: Troubleshooting Decision Framework]

Research Reagent Solutions

Table 3: Essential Computational Tools for Active Learning Implementation

| Tool/Method | Application Context | Key Function | Implementation Requirements |
|---|---|---|---|
| GandALF | Kinetic modeling, catalytic pyrolysis | Combines Gaussian processes with clustering for data-scarce applications | Python, GP libraries, clustering algorithms [19] |
| DANTE | High-dimensional optimization (up to 2,000D) | Neural-surrogate-guided tree exploration for complex systems | Deep learning frameworks, tree search implementation [20] |
| COVDROP/COVLAP | Drug discovery, ADMET optimization | Covariance-based batch selection with uncertainty quantification | Deep neural networks, covariance calculations [21] |
| FAL-CUR | Fair active learning applications | Fair clustering with uncertainty and representativeness | Fair clustering algorithms, fairness metrics [22] |
| ChemXploreML | Molecular property prediction | User-friendly ML without programming expertise | Desktop application, molecular embedders [24] |
| Quantum-Inspired Algorithms | Large-scale optimization problems | Quantum genetic algorithms for complex search spaces | Quantum computing principles, HPC integration [25] |

Core Active Learning Strategies and Their Real-World Chemical Applications

Uncertainty sampling is a core component of active learning, a methodology designed to reduce the amount of labeled data required to train machine learning models. For researchers tackling data-scarce chemical problems, this approach is particularly valuable. It works by prioritizing the labeling of data points about which the current model is most uncertain, thereby maximizing the informational gain from each expensive experimental measurement [26]. By iteratively querying an expert (or "oracle") to label only the most ambiguous instances, active learning can significantly accelerate research in areas like catalyst discovery, electrolyte development, and molecular property prediction, where data is limited and experimental resources are precious [27] [28].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental intuition behind uncertainty sampling? The core idea is that not all data points contribute equally to improving a model's performance. A machine learning algorithm can achieve greater accuracy with fewer training labels if it is allowed to choose the data from which it learns. Uncertainty sampling identifies the examples that the model is most "confused" about, as clarifying these ambiguous cases provides the most information about the underlying class boundaries or function mappings [26].

Q2: In a chemical context, what do "aleatoric" and "epistemic" uncertainty represent? In molecular property prediction, aleatoric uncertainty refers to the inherent noise in the data, often due to experimental measurement errors or the intrinsic stochasticity of a process. It is generally considered irreducible. Epistemic uncertainty, on the other hand, stems from a lack of knowledge in the model, often because the query molecule is structurally different from those in the training data. This type of uncertainty is reducible by collecting more relevant data [29] [30]. For a researcher, a high epistemic uncertainty indicates that the model is venturing into uncharted chemical space.

Q3: My model's uncertainty estimates seem unreliable. How can I evaluate their quality? Evaluating the calibration of uncertainty estimates is crucial. Simple ranking metrics like Spearman's correlation can be sensitive to test set design. A more robust method is error-based calibration, which assesses whether the predicted uncertainties statistically match the observed errors. In a well-calibrated model, for any subset of predictions with a given predicted variance, the root mean square error (RMSE) of those predictions is approximately equal to the square root of that variance, i.e., the predicted standard deviation [31].
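A minimal sketch of an error-based calibration check follows. It assumes you already have, for a held-out set, the prediction errors and the predicted variances. Predictions are sorted by predicted variance and split into bins; within each bin the RMSE is compared with the root of the mean predicted variance, so both quantities are in the units of the target property. The function name, the binning scheme, and the numbers are illustrative assumptions.

```python
import math


def error_based_calibration(errors, predicted_variances, n_bins=2):
    """Return (root-mean-variance, RMSE) pairs, one per bin of predicted variance."""
    pairs = sorted(zip(predicted_variances, errors))
    bin_size = math.ceil(len(pairs) / n_bins)
    curve = []
    for start in range(0, len(pairs), bin_size):
        chunk = pairs[start:start + bin_size]
        rmv = math.sqrt(sum(v for v, _ in chunk) / len(chunk))
        rmse = math.sqrt(sum(e * e for _, e in chunk) / len(chunk))
        curve.append((rmv, rmse))
    return curve


# Hypothetical held-out results: errors = prediction - measurement.
errors = [1.0, -1.0, 2.0, -2.0]
predicted_variances = [1.0, 1.0, 4.0, 4.0]

curve = error_based_calibration(errors, predicted_variances)
for rmv, rmse in curve:
    print(f"predicted std {rmv:.2f}  observed RMSE {rmse:.2f}")
```

Points close to the diagonal (RMSE equal to predicted standard deviation) indicate good calibration. Bins where RMSE is well above the predicted value indicate overconfidence.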

Q4: How can I implement a basic uncertainty sampling loop for my chemical dataset? A standard pool-based active learning loop involves these steps [26]:

1. Initialization: Start with a very small, labeled subset of your data.

2. Model Training: Train your initial model on this small labeled set.

3. Prediction & Scoring: Use the trained model to predict all unlabeled data points. Calculate an uncertainty score (e.g., entropy, least confidence) for each prediction.

4. Querying: Select the top k most uncertain instances and query their labels from an expert (oracle).

5. Iteration: Add the newly labeled data to the training set, retrain the model, and repeat from step 3 until a performance goal or labeling budget is reached.
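The loop above can be sketched end to end on a toy problem. Everything specific here is an assumption made for illustration: the one-dimensional pool, the `oracle` function standing in for an experiment, and the kernel-weighted vote used as the model (chosen only because it needs no external library). In practice you would substitute your own featurization, model, and labeling procedure.

```python
import math
import random


def oracle(x):
    """Hypothetical stand-in for an experiment: class 1 if x exceeds 0.6."""
    return 1 if x > 0.6 else 0


def predict_proba(x, labeled, bandwidth=0.1):
    """Toy model: kernel-weighted vote of labeled neighbours for class 1."""
    weights = [math.exp(-(((x - xi) / bandwidth) ** 2)) for xi, _ in labeled]
    eps = 1e-9  # pulls the estimate toward 0.5 far from any labeled point
    weighted_votes = sum(w * y for w, (_, y) in zip(weights, labeled))
    return (weighted_votes + 0.5 * eps) / (sum(weights) + eps)


def least_confidence(x, labeled):
    p1 = predict_proba(x, labeled)
    return 1.0 - max(p1, 1.0 - p1)


def run_active_learning(pool, n_initial=4, batch_size=3, n_rounds=5, seed=0):
    rng = random.Random(seed)
    unlabeled = list(pool)
    rng.shuffle(unlabeled)
    # Step 1: small labeled seed set.
    labeled = [(x, oracle(x)) for x in unlabeled[:n_initial]]
    unlabeled = unlabeled[n_initial:]
    for _ in range(n_rounds):
        # Steps 2-3: the toy model needs no explicit fit; score every unlabeled point.
        ranked = sorted(unlabeled, key=lambda x: least_confidence(x, labeled), reverse=True)
        # Step 4: query the k most uncertain points.
        queried = ranked[:batch_size]
        labeled.extend((x, oracle(x)) for x in queried)
        # Step 5: remove queried points from the pool and repeat.
        unlabeled = [x for x in unlabeled if x not in queried]
    return labeled, unlabeled


pool = [i / 50 for i in range(51)]
labeled, unlabeled = run_active_learning(pool)
print(len(labeled), len(unlabeled))
```

The stopping rule here is a fixed number of rounds. A performance-based criterion on a held-out set is the other option the steps mention.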

Troubleshooting Common Experimental Issues

Problem: The model gets stuck querying outliers or noisy data.

- Potential Cause: The uncertainty measure is dominated by aleatoric uncertainty (inherent noise) rather than epistemic uncertainty (model ignorance).

- Solution: Employ uncertainty measures that explicitly separate or prioritize epistemic uncertainty. Techniques like evidential deep learning or ensemble-based methods can help achieve this [29] [32]. Furthermore, incorporating diversity sampling can mitigate this by ensuring that the selected batch of queries is both uncertain and representative of the overall unlabeled pool structure [32].

Problem: The model performance is unstable during the early (cold-start) stages of active learning.

- Cause: With a very small initial labeled dataset, the model is poorly initialized, leading to unreliable uncertainty estimates.

- Solution: Utilize self-supervised learning (SSL) or transfer learning to pre-train the model's feature extractor on abundant unlabeled data before starting the active learning cycle. This provides a more stable and informative initial representation, alleviating the cold-start problem [32].

Problem: My uncertainty estimates are poorly calibrated.

- Cause: The model's predicted probabilities do not reflect the true likelihood of correctness.

- Solution: Apply post-hoc calibration techniques. For example, for ensemble models, you can fine-tune the weights of selected layers on a separate validation set to better align the predicted variance with the actual observed errors [30].

Comparison of Popular Uncertainty Measures

The table below summarizes common uncertainty measures used in classification tasks, which can be applied to categorical chemical properties (e.g., catalyst class, reaction outcome).

| Measure Name | Formula | Interpretation | Query Strategy |
|---|---|---|---|
| Least Confidence [26] [33] | `1 - P(ŷ \| x)`, where `ŷ` is the most likely class. | How unsure the model is about its top prediction. | Select instance with highest value. |
| Classification Margin [26] [33] | `P(ŷ₁ \| x) - P(ŷ₂ \| x)`, where `ŷ₁` and `ŷ₂` are the top two predictions. | The difference in confidence between the top two candidates. | Select instance with smallest value. |
| Classification Entropy [26] [33] | `-Σ pₖ log(pₖ)` across all classes k. | The overall unpredictability of the class distribution. | Select instance with highest value. |
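The three measures in the table can be computed directly from a vector of predicted class probabilities. The sketch below is a plain-Python illustration; the function names and the example probabilities are assumptions, and the entropy uses the natural logarithm.

```python
import math


def least_confidence(probs):
    """1 - P(most likely class); higher means more uncertain."""
    return 1.0 - max(probs)


def classification_margin(probs):
    """Gap between the two most likely classes; smaller means more uncertain."""
    top_two = sorted(probs, reverse=True)[:2]
    return top_two[0] - top_two[1]


def classification_entropy(probs):
    """Shannon entropy of the class distribution; higher means more uncertain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


# Hypothetical predicted probabilities over three reaction outcomes.
probs = [0.5, 0.3, 0.2]
print(least_confidence(probs))
print(classification_margin(probs))
print(classification_entropy(probs))
```

Note the opposite query directions: least confidence and entropy select the highest score, while margin selects the lowest.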

Experimental Protocol: Implementing an Active Learning Cycle

This protocol outlines the steps for a pool-based active learning experiment, as used in screening electrolyte solvents for anode-free lithium metal batteries [28].

1. Define the Problem and Search Space:

- Objective: Maximize a target property (e.g., normalized discharge capacity at the 20th cycle).

- Search Space: Define the virtual chemical space (e.g., ~1 million solvent candidates filtered from databases like PubChem).

2. Initialize the Training Data:

- Assemble a small, initial labeled dataset (e.g., 58 data points from in-house cycling tests).

3. Select and Configure the Model:

- Choose a model suitable for small data and uncertainty quantification, such as Gaussian Process Regression (GPR) or an Evidential Deep Learning model.

- To combat overfitting with small data, use Bayesian Model Averaging (BMA) to combine predictions from models with different kernels or initializations [28].

4. Execute the Active Learning Loop:

- For each campaign (batch of experiments) within the budget:

- Train the model on the current labeled dataset.

- Use the model to predict the target property and its uncertainty for all candidates in the unlabeled pool.

- Calculate an acquisition function (e.g., an uncertainty measure) for each candidate.

- Select the top n candidates (e.g., 10) with the highest acquisition score.

- Query the "oracle" (i.e., perform the experiment) to get the true label for these candidates.

- Add the newly labeled data to the training set.

5. Analyze Results and Validate:

- Track the model's performance and the quality of the discovered candidates over successive campaigns.

- Experimentally validate the top-performing candidates identified by the process.

The following diagram illustrates this iterative workflow.
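As a rough sketch of the model-averaging and selection steps in this protocol, the code below combines predictions from several models with assumed posterior weights and then picks the candidates with the highest acquisition score. It is not the pipeline from [28]: the candidate names, predictions, weights, and the use of the averaged predictive standard deviation as the acquisition function are all hypothetical. The combined variance follows the law of total variance for a mixture (within-model variance plus between-model spread).

```python
import math


def bayesian_model_average(means, variances, weights):
    """Combine per-model predictions for one candidate into a mean and variance."""
    total = sum(weights)
    w = [wi / total for wi in weights]  # normalize the model weights
    mean = sum(wi * m for wi, m in zip(w, means))
    second_moment = sum(wi * (v + m * m) for wi, v, m in zip(w, variances, means))
    return mean, max(second_moment - mean * mean, 0.0)


def select_top_n(names, scores, n):
    """Return the n names with the highest scores."""
    ranked = sorted(zip(scores, names), reverse=True)
    return [name for _, name in ranked[:n]]


# Hypothetical predictions from two models (e.g. GPR with different kernels).
candidates = {
    "solvent_A": {"means": [0.80, 0.82], "variances": [0.01, 0.01]},
    "solvent_B": {"means": [0.40, 0.90], "variances": [0.02, 0.03]},
    "solvent_C": {"means": [0.60, 0.65], "variances": [0.05, 0.04]},
}
model_weights = [0.6, 0.4]  # assumed posterior model probabilities

scores = {}
for name, pred in candidates.items():
    mean, var = bayesian_model_average(pred["means"], pred["variances"], model_weights)
    scores[name] = math.sqrt(var)  # uncertainty-based acquisition score

next_batch = select_top_n(list(scores), list(scores.values()), n=2)
print(next_batch)
```

Here the candidate on which the two models disagree most is ranked first, even though each model alone reports a small variance for it.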

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational "reagents" and their roles in building an effective active learning system for data-scarce chemical research.

| Tool / Technique | Function | Application Example |
|---|---|---|
| Gaussian Process Regression (GPR) | A Bayesian non-parametric model that naturally provides uncertainty estimates (standard deviation) alongside predictions. | Ideal for modeling continuous chemical properties when data is scarce [28]. |
| Deep Ensembles | Trains multiple neural networks with different initializations; model variance indicates epistemic uncertainty. | Predicting molecular properties with explainable, atom-attributed uncertainties [30]. |
| Evidential Deep Learning | Modifies the neural network to output parameters of a higher-order distribution, explicitly modeling aleatoric and epistemic uncertainty. | Efficiently generating calibrated predictive uncertainties in low-budget fault diagnosis [32]. |
| Self-Supervised Learning (SSL) | Pre-trains a model on unlabeled data to learn meaningful latent representations without using labels. | Stabilizing model initialization (warm-start) to overcome the cold-start problem in active learning [32]. |
| Bayesian Model Averaging (BMA) | Combines predictions from multiple models, weighted by their posterior model probabilities. | Mitigating the risk of model overfitting and improving prediction robustness on small datasets [28]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary goal of diversity sampling in chemical library design? The main objective is to select a representative and diverse subset of compounds from a larger collection. This ensures that the selected subset broadly spans the entire descriptor space, maximizing the chances of identifying hits with desired biological activities during screening, which is a crucial step in pre-clinical drug discovery and High Throughput Screening (HTS) [34].

FAQ 2: My dataset has descriptor values with very different numerical ranges. How do I prevent this from biasing the diversity calculation? It is recommended to use data normalization, which scales the data using the mean and standard deviation. Without normalization, Euclidean distance calculations can be unfairly biased toward descriptors with large real number values compared to those with ranges between 0 and 1. Many tools, like DivCalc, offer data normalization as a selectable option [34].

FAQ 3: Does a rapid increase in the number of compounds in my library guarantee an increase in its chemical diversity? Not necessarily. Quantitative studies on the time-evolution of chemical libraries show that an increasing number of molecules cannot be directly translated to increased diversity. The chemical diversity must be assessed using specific metrics, as new compounds may populate already well-represented regions of the chemical space rather than exploring new ones [35] [36].

FAQ 4: How can I efficiently visualize the chemical space of a very large library? For large libraries, using chemical satellites and methods like ChemMaps is an efficient approach. This involves selecting a representative subset of compounds (satellites) whose similarities to the rest of the library can be used to generate an approximate yet reliable visualization of the entire chemical space using principal component analysis (PCA), reducing the amount of high-dimensional data that needs to be processed [37].

FAQ 5: What is an efficient way to select diverse compounds when working with a very large dataset? You can use algorithms that identify the most dissimilar compounds. One common method involves:

- Finding the compound farthest from the data centroid.

- Selecting the compound farthest from the first selected compound.

- Iteratively selecting the unselected compound whose minimum distance to any already selected compound is the largest [34].

For even greater efficiency with ultra-large libraries, consider tools with O(N) scaling, such as those using the iSIM framework or the BitBIRCH clustering algorithm [35] [36].

Troubleshooting Guides

Issue 1: Suboptimal Compound Selection for Screening

Problem: The selected diverse subset of compounds does not yield the expected hit rates or coverage of chemical space during screening.

Solution: Verify the diversity sampling protocol.

- Check Descriptor Choice: Ensure the molecular descriptors (e.g., one-, two-, or three-dimensional) are relevant to your property of interest. Diversity analysis often uses one- and two-dimensional descriptors [34].

- Confirm Data Preprocessing: Apply data normalization to prevent descriptors with large numerical ranges from dominating the distance calculations [34].

- Validate Sampling Method: Use a proven algorithm, such as the DISSIM algorithm, which is designed to select maximally dissimilar compounds [34].

- Assess Sample Size: Be aware that as the size of your selected subset increases, the dissimilarity of newly added compounds to those already selected decreases rapidly. There may be an ideal sample size for your specific library [34].

Issue 2: Inefficient Analysis of Large Libraries

Problem: Standard diversity analysis and clustering tools are too slow or run into memory issues with libraries containing hundreds of thousands or millions of compounds.

Solution: Implement advanced frameworks designed for scalability.

- Use Linear-Scaling Tools: Employ methods like the iSIM framework, which calculates the average pairwise Tanimoto similarity for an entire set with O(N) complexity instead of the traditional O(N²), making it feasible for large libraries [35] [36].

- Apply Efficient Clustering: Utilize clustering algorithms like BitBIRCH, which is designed to handle binary fingerprint data and Tanimoto similarity efficiently, enabling the dissection of chemical space in large datasets [35] [36].

- Leverage Complementary Similarity: Use this metric to quickly identify molecules in high-density (medoid) and low-density (outlier) regions of your library's chemical space, facilitating a more granular analysis [37].

Issue 3: Poor Integration of Diversity Sampling with Active Learning

Problem: Difficulty in effectively using diversity sampling to guide an active learning cycle for data-scarce chemical problems.

Solution: Establish a closed-loop computational search.

- Generative Phase: Use a generative chemical model to propose new candidate structures with targeted properties.

- Diversity Sampling Phase: Apply diversity sampling to select the most informative and diverse candidates from the generated set for the subsequent "testing" step.

- Predictive Phase: Characterize the selected compounds using a predictive model (e.g., for a specific bioactivity).

- Iterative Refinement: Use the newly characterized compounds to retrain the predictive and generative models. This active learning loop allows the model to gradually learn the chemistries needed to explore the target regions of chemical space by actively suggesting the data it needs [18] [38].

Experimental Protocols & Data

Table 1: Key Software Tools for Diversity Analysis

| Tool Name | Primary Function | Key Algorithm/Feature | Scalability & Limitations |
|---|---|---|---|
| DivCalc [34] | Selects diverse subsets from a compound library | DISSIM algorithm (Euclidean distance) | Limited to ~25,000 data points; Windows OS. |
| iSIM Framework [35] [36] | Quantifies intrinsic diversity of a library | Calculates average pairwise Tanimoto in O(N) | Efficient for large libraries (linear scaling). |
| BitBIRCH [35] [36] | Clustering of large chemical libraries | Adapted BIRCH algorithm for binary fingerprints | Suitable for clustering large libraries. |
| ChemMaps [37] | Visualization of chemical space | Uses satellite compounds and PCA | Reduces data needed for visualization. |

Table 2: Core Components of a Diversity Sampling Workflow

| Component | Function | Example/Note |
|---|---|---|
| Molecular Descriptors | Numerical representation of chemical structures | 1D, 2D, or 3D descriptors; calculated by software like Dragon [34]. |
| Distance/Similarity Metric | Quantifies the (dis)similarity between two compounds | Euclidean distance; Tanimoto similarity [34] [35]. |
| Sampling Algorithm | Selects the final diverse subset | DISSIM; Medoid/Outlier sampling based on complementary similarity [34] [37]. |
| Data Preprocessing | Prepares data for robust analysis | Data normalization (scaling using mean and standard deviation) [34]. |

Protocol 1: Selecting a Diverse Subset using a Dissimilarity Algorithm

This protocol is based on the DISSIM method implemented in tools like DivCalc [34].

Input: A space-delimited data file containing molecular descriptors for all compounds.

1. Data Preprocessing: Load the data. Apply data normalization to all descriptor values to prevent bias.

2. Centroid Calculation: Calculate the centroid (average point) of the entire input dataset in the descriptor space.

3. Select First Compound: Identify the compound with the maximum Euclidean distance from the centroid. This is your first selected compound.

4. Select Second Compound: Identify the unselected compound with the maximum Euclidean distance from the first selected compound.

5. Iterative Selection: For all subsequent selections, from the pool of unselected compounds, choose the compound whose minimum distance to any of the already selected compounds is the largest.

6. Output: Repeat step 5 until the desired number or percentage of compounds has been selected. The output is a ranked list of compounds from most to least diverse.
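The selection procedure above can be sketched as follows. This is an illustrative re-implementation of the described steps in plain Python, not DivCalc's code. The function names and descriptor values are hypothetical, and it assumes Python 3.8 or later for `math.dist`. Choosing the second compound is handled by the same max-min rule as later selections, since with one compound selected the minimum distance is simply the distance to that compound.

```python
import math


def normalize(rows):
    """Z-score each descriptor column using its mean and standard deviation."""
    n = len(rows)
    cols = list(zip(*rows))
    means = [sum(c) / n for c in cols]
    stds = [
        math.sqrt(sum((v - m) ** 2 for v in c) / n) or 1.0  # guard constant columns
        for c, m in zip(cols, means)
    ]
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]


def select_diverse(rows, n_select):
    """Return compound indices ranked by max-min dissimilarity."""
    data = normalize(rows)
    centroid = [sum(c) / len(data) for c in zip(*data)]
    # First pick: farthest compound from the centroid.
    first = max(range(len(data)), key=lambda i: math.dist(data[i], centroid))
    selected = [first]
    # min_dist[i]: distance from compound i to its nearest selected compound.
    min_dist = [math.dist(row, data[first]) for row in data]
    while len(selected) < min(n_select, len(data)):
        remaining = [i for i in range(len(data)) if i not in selected]
        nxt = max(remaining, key=lambda i: min_dist[i])
        selected.append(nxt)
        min_dist = [min(d, math.dist(row, data[nxt])) for d, row in zip(min_dist, data)]
    return selected


# Hypothetical descriptors on very different scales (e.g. logP-like and mass-like).
descriptors = [
    [0.0, 0.0],
    [1.0, 100.0],
    [2.0, 200.0],
    [10.0, 1000.0],
]
ranking = select_diverse(descriptors, n_select=3)
print(ranking)
```

Keeping a running `min_dist` list avoids recomputing all pairwise distances at each step, so the cost is one pass over the library per selected compound.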

Protocol 2: Assessing Library Diversity and Evolution with iSIM

This protocol uses the iSIM framework to analyze the intrinsic diversity of a compound library over time [35] [36].

Input: Molecular fingerprints (e.g., ECFP4) for all compounds in each release of a library.

1. Fingerprint Matrix Assembly: For a given library release, arrange all N molecular fingerprints into a matrix.

2. Column Sum Calculation: Sum the elements in each column of the fingerprint matrix to produce a vector, K = [k₁, k₂, …, kₘ], where kᵢ is the number of "1"s in the i-th column.

3. iT Calculation: Calculate the intrinsic Tanimoto (iT) index, which represents the average of all possible pairwise Tanimoto similarities in the set, using the formula below, where both sums run over the columns i = 1, …, m:

   iT = Σ [kᵢ(kᵢ - 1)] / Σ [ kᵢ(kᵢ - 1) + 2kᵢ(N - kᵢ) ]

   A lower iT value indicates a more diverse library.

4. Time-Evolution Analysis: Repeat steps 1-3 for each historical release of the library (e.g., subsequent versions of ChEMBL or DrugBank). Plot the iT value against time or release number to assess whether the library's diversity is increasing.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Diversity-Oriented Experiments

| Item | Function in Diversity Analysis |
|---|---|
| Descriptor Calculation Software | Generates numerical representations (descriptors) of chemical structures from their molecular structures. Examples include Dragon software [34]. |
| Curated Chemical Database | Provides a source of compounds with associated biological and chemical data for analysis. Examples include ChEMBL, DrugBank, and PubChem [35] [36]. |
| High-Performance Computing (HPC) Cluster | Provides the computational power needed for descriptor calculation, diversity analysis, and clustering of large (10⁷+ compounds) and ultra-large (10⁹+ compounds) libraries [35]. |
| Standardized Natural Product Libraries | Collections of purified natural products or fractions used for screening. These provide biologically relevant chemical diversity and are a historical source of drugs and tool compounds [39]. |

Workflow Diagrams

[Figure: Diversity Sampling Core Algorithm]

[Figure: Active Learning with Diversity Sampling]

Frequently Asked Questions

1. Why is my committee in agreement on most data points, making it hard to find informative queries? This is often a sign of committee collapse, where models become too similar. This can happen if the committee members are of the same type or if the initial training data is not diverse enough.

- Solution: Ensure committee diversity by using different model types (e.g., Random Forest, Support Vector Machines) or the same model type with different initializations or subsets of features [40] [41]. Increasing the initial number of labeled data points can also help establish a more robust version space.

2. My QBC process is computationally expensive and slow. How can I improve its efficiency? The need to maintain and retrain multiple models is inherently costly [41].

- Solution: Consider implementing a batch active learning strategy. Instead of querying one instance at a time, select a batch of the most informative instances simultaneously. This reduces the number of retraining cycles [41]. Additionally, using simpler base learners or employing stochastic sampling methods to approximate the version space can speed up the process [42] [41].

3. What does it mean if the oracle "abstains" from labeling a point, and how should I handle it? In some frameworks, the oracle (e.g., a human expert or a costly experiment) can abstain from providing a label, often for the most uncertain data points [43].

- Solution: Abstention can be a signal that a data point is an outlier or too ambiguous to be labeled reliably. Implement a strategy that queries points that are highly uncertain but not extremely so. This avoids wasting resources on outliers that do not follow the underlying data distribution and ensures you select informative samples from representative regions [43].

4. My model's performance is not improving with successive queries. What is wrong? This could be due to poorly calibrated models or a poorly chosen disagreement measure.

- Solution: Verify that your model's confidence scores are well-calibrated. A model that is overconfident in its wrong predictions will misguide the query strategy [44]. Also, experiment with different disagreement measures, such as switching from vote entropy to average KL divergence, to see if it better captures the committee's disagreement for your specific data [45] [41].
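As a hedged illustration of the alternative disagreement measure mentioned in the last answer, the sketch below computes the average KL divergence of each committee member's predicted class distribution from the committee consensus (the mean distribution). The function name and the example probabilities are illustrative assumptions, not taken from the cited sources. The first example is a case where both members would cast the same hard vote, so a purely vote-based measure sees no disagreement, yet their confidence differs.

```python
import math

def mean_kl_to_consensus(member_probs):
    """Average KL(P_member || P_consensus) for one instance.

    member_probs: list of per-member class-probability lists (each sums to 1).
    """
    n_members = len(member_probs)
    n_classes = len(member_probs[0])
    # Consensus = mean of the members' probability vectors.
    consensus = [sum(p[k] for p in member_probs) / n_members
                 for k in range(n_classes)]
    total = 0.0
    for p in member_probs:
        # Terms with p_k == 0 contribute nothing to the KL sum.
        total += sum(pk * math.log(pk / ck)
                     for pk, ck in zip(p, consensus) if pk > 0)
    return total / n_members

# Hypothetical two-member committee on a binary task.
same_vote_different_confidence = [[0.9, 0.1], [0.6, 0.4]]
identical_members = [[0.7, 0.3], [0.7, 0.3]]

kl_soft = mean_kl_to_consensus(same_vote_different_confidence)
kl_none = mean_kl_to_consensus(identical_members)
```

Here `kl_soft` is positive even though both members predict the first class, while `kl_none` is zero. Whether this extra sensitivity helps depends on how well calibrated the members' probabilities are.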

Troubleshooting Guide

| Problem | Possible Causes | Recommended Actions |
|---|---|---|
| Low Model Diversity | Committee members are identical in type and initialization. | Use heterogeneous models [40] or enforce diversity via bootstrapping or different feature sets. |
| High Computational Load | Retraining a large committee after every query; large pool of unlabeled data. | Adopt batch querying [41]; use efficient classifiers; implement a dynamic stopping criterion [42]. |
| Poor Performance Gain | Poorly calibrated models; uninformative data pool. | Apply calibration techniques (e.g., Platt scaling) [44]; review and pre-process the unlabeled data pool. |
| Oracle Abstention | Queried points are outliers or too noisy for reliable labeling. | Filter the unlabeled pool to remove suspected outliers; adjust the query strategy to avoid regions of extreme uncertainty [43]. |
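Two of the recommended actions above, batch querying and avoiding regions of extreme uncertainty, can be combined in a few lines. The following is a minimal sketch, assuming disagreement scores have already been computed for every pool instance. The `skip_fraction` parameter is a hypothetical, crude stand-in for a proper outlier filter and is not taken from the cited sources.

```python
def select_batch(scores, batch_size, skip_fraction=0.0):
    """Return indices of a query batch ranked by disagreement score.

    skip_fraction: share of the pool, taken from the most uncertain end,
    that is treated as suspected outliers and not queried (an assumption
    of this sketch; tune it or replace it with a real outlier filter).
    """
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    n_skip = int(len(ranked) * skip_fraction)
    return ranked[n_skip:n_skip + batch_size]

# Hypothetical disagreement scores for a pool of ten candidates.
scores = [0.05, 0.40, 0.99, 0.62, 0.10, 0.55, 0.30, 0.97, 0.20, 0.58]

plain_batch = select_batch(scores, batch_size=3)
bounded_batch = select_batch(scores, batch_size=3, skip_fraction=0.2)
```

With a batch size of 3, the committee is retrained once per three labels instead of once per label. Note that this simple top-k rule can pick near-duplicate points within a batch; adding a diversity criterion is a common refinement.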

Experimental Protocol: Implementing QBC for a Classification Task

This protocol provides a step-by-step methodology for setting up a Query-by-Committee experiment, using a toy dataset as a reference [40].

1. Initial Setup and Data Preparation

- Objective: To actively learn a classification model for the Iris dataset by strategically querying labels for the most informative data points.

- Committee Members: 2

- Base Learner: Random Forest Classifier

- Initial Training Data: 2 randomly selected instances per committee member.

- Disagreement Measure: Vote Entropy [45]

- Software/Packages: Python, scikit-learn, modAL library [40].

2. Required Research Reagents and Materials

Table: Essential Components for the QBC Experiment

| Component | Function in the Experiment |
|---|---|
| Iris Dataset | A standard benchmark dataset for multi-class classification tasks [40]. |
| RandomForestClassifier (from scikit-learn) | Serves as the base estimator for each active learner in the committee [40]. |
| Committee (from modAL.models) | The core object that assembles individual active learners and manages the QBC process [40]. |
| PCA (for visualization) | Used to reduce the data to 2 dimensions for visualizing predictions and performance [40]. |

3. Step-by-Step Workflow

[Diagram: QBC Active Learning Loop, illustrating the core active learning loop in a QBC setup]

Initialize the Committee:

- From the entire dataset (`X_pool`, `y_pool`), randomly select `n_initial` instances (e.g., 2) for each committee member without replacement [40].

- Create an `ActiveLearner` object for each member, providing the base estimator (`RandomForestClassifier()`) and its initial training data [40].

- Assemble the learners into a `Committee` [40].

The Active Learning Loop: Repeat for a predefined number of queries or until a stopping criterion is met [42]:

- Query: Use `committee.query()` to select the instance `x` from `X_pool` with the highest disagreement, as measured by vote entropy [40] [45]. The index `i` of this instance is returned.

- Oracle: Request the true label `y` for `x` from the oracle (in this case, from the held-out `y_pool`).

- Teach: Use `committee.teach()` to retrain the committee on the new labeled instance `(x, y)` [40].

- Remove: Delete the newly labeled instance `(x, y)` from the unlabeled pool `X_pool` and `y_pool` [40].

- Evaluate & Check: Periodically score the committee on a held-out test set and record the performance. Check if a dynamic stopping criterion (e.g., low prediction variance across the committee) is met [42].
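The workflow above can be sketched end to end. To stay self-contained, the example below re-implements the query, teach, and remove steps by hand with NumPy and scikit-learn rather than calling modAL, so it should be read as an approximation of the protocol and not as the modAL API. The number of queries, the number of trees, the random seeds, and the 70/30 split are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X, y = load_iris(return_X_y=True)
classes = np.unique(y)
X_pool, X_test, y_pool, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

n_members, n_initial, n_queries = 2, 2, 20

# Initialize: each member gets its own small seed set, removed from the pool.
train_sets = []
for _ in range(n_members):
    idx = rng.choice(len(X_pool), size=n_initial, replace=False)
    train_sets.append([X_pool[idx], y_pool[idx]])
    X_pool, y_pool = np.delete(X_pool, idx, axis=0), np.delete(y_pool, idx)

def fit_committee(train_sets):
    # A different seed per member keeps the forests from being identical.
    return [RandomForestClassifier(n_estimators=50, random_state=k).fit(Xm, ym)
            for k, (Xm, ym) in enumerate(train_sets)]

def vote_entropy(votes):
    # votes has shape (n_members, n_pool); entropy of vote fractions per column.
    entropy = np.zeros(votes.shape[1])
    for c in classes:
        frac = (votes == c).mean(axis=0)
        nonzero = frac > 0
        entropy[nonzero] -= frac[nonzero] * np.log(frac[nonzero])
    return entropy

committee = fit_committee(train_sets)
accuracy_history = []
for _ in range(n_queries):
    votes = np.array([m.predict(X_pool) for m in committee])
    i = int(np.argmax(vote_entropy(votes)))          # Query (first index on ties)
    x_new, y_new = X_pool[i:i + 1], y_pool[i:i + 1]  # Oracle (held-out label)
    for ts in train_sets:                            # Teach every member
        ts[0] = np.vstack([ts[0], x_new])
        ts[1] = np.concatenate([ts[1], y_new])
    committee = fit_committee(train_sets)
    X_pool = np.delete(X_pool, i, axis=0)            # Remove from pool
    y_pool = np.delete(y_pool, i)
    # Evaluate: mean test accuracy of the individual members.
    accuracy_history.append(
        float(np.mean([m.score(X_test, y_test) for m in committee])))
```

With only two members, vote entropy takes just two values (agreement or disagreement), so many pool instances tie; a larger committee gives a finer-grained ranking at higher retraining cost.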

4. Key Quantitative Metrics to Track

Table: Performance Metrics for the QBC Experiment

| Metric | Formula/Description | Purpose |
|---|---|---|
| Vote Entropy [45] | $ -\frac{1}{\log C} \sum_i \frac{V(y_i)}{\lvert C \rvert} \log \left( \frac{V(y_i)}{\lvert C \rvert} \right) $ | Measures the disagreement among committee members for a given instance. The instance with the highest entropy is selected for querying. |
| Classification Accuracy | $ \frac{\text{Number of correct predictions}}{\text{Total predictions}} $ | Tracks the model's performance improvement on a held-out test set over the active learning cycles [40]. |
| Committee Prediction Variance | Variance in the predictions (or probabilities) made by different committee members. | Can be used to define a dynamic stopping criterion; low variance indicates consensus and reduced model uncertainty [42]. |
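To make the vote entropy metric concrete, here is a minimal worked example in plain Python. It takes the fraction of committee votes each label receives and computes the entropy of that distribution. The sketch omits the normalizing prefactor from the table, which rescales every score by the same constant and therefore does not change which instance ranks highest. The four-member committee and its votes are hypothetical.

```python
import math
from collections import Counter

def vote_entropy(votes):
    """Entropy of the committee's vote distribution for one instance."""
    n_members = len(votes)
    counts = Counter(votes)
    return -sum((c / n_members) * math.log(c / n_members)
                for c in counts.values())

# Each row: labels predicted by a four-member committee for one pool instance.
committee_votes = [
    ["A", "A", "A", "A"],  # full agreement
    ["A", "A", "B", "B"],  # even split
    ["A", "A", "A", "B"],  # mild disagreement
]

scores = [vote_entropy(v) for v in committee_votes]
# The instance with the highest vote entropy is the one to query next.
query_index = max(range(len(scores)), key=scores.__getitem__)
```

The evenly split instance scores highest and would be queried first, while the unanimous instance scores zero.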

In data-scarce chemical research, active learning provides a powerful framework for intelligently selecting the most informative experiments, thereby accelerating discovery while minimizing resource consumption. Among the most effective approaches are hybrid strategies that balance two key principles: uncertainty sampling, which selects data points where the model's predictions are least reliable, and diversity sampling, which ensures exploration of the broad chemical space. This technical support center provides practical guidance for researchers implementing these advanced methodologies in drug development and materials science.

FAQs: Implementing Hybrid Sampling Strategies

What is the fundamental advantage of a hybrid sampling strategy over using either uncertainty or diversity alone?

A hybrid strategy overcomes the individual limitations of pure uncertainty or diversity sampling. Uncertainty-based methods can sometimes lead to selecting outliers that are not truly informative, while diversity-based methods might waste resources on already well-understood regions of chemical space. By combining them, you ensure that experiments are both informative for the model and representative of unexplored territories.

- Synergistic Effect: The hybrid approach leverages the complementarity between different uncertainty quantification methods. For instance, combining distance-based methods (which act as a proxy for distributional uncertainty) with Bayesian approaches (which quantify model and data uncertainty) can provide more robust uncertainty estimates for out-of-distribution samples [46].

- Practical Impact: In anti-cancer drug response prediction, hybrid active learning strategies have been shown to significantly outperform random and greedy sampling methods in the early identification of responsive treatments [47].

How do I choose which uncertainty estimation method to use in my hybrid sampling framework?

The choice depends on your model architecture, computational resources, and the specific nature of your chemical problem. There is no single best method that outperforms others in all scenarios [48].

The table below summarizes common uncertainty quantification (UQ) methods used in active learning for chemical problems:

| Method Category | Key Principle | Typical Use Case in Chemistry | Pros and Cons |
|---|---|---|---|
| Ensemble Methods [48] [49] | Trains multiple models; uncertainty is the variance of their predictions. | Interatomic potential development [49], QSAR modeling [46]. | Pro: High accuracy, theoretically straightforward. Con: Computationally expensive. |
| Monte Carlo Dropout (MCDO) [48] | Approximates Bayesian inference by applying dropout during inference. | Molecular property prediction with graph neural networks. | Pro: Computationally cheaper than ensembles. Con: Can be less accurate than full ensembles. |
| Mean-Variance Estimation (MVE) [46] | Model is trained to predict both the mean and variance of its output. | Quantifying aleatoric (data) uncertainty in QSAR regression tasks [46]. | Pro: Directly models data noise. Con: Requires specialized loss function. |
| Distance-Based Methods [46] [48] | Measures similarity (distance) of a new sample to the training set. | Defining the Applicability Domain (AD) of a QSAR model [46]. | Pro: Intuitive, model-agnostic. Con: Depends on the choice of distance metric and representation. |
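Two of the simpler entries in the table, ensemble spread and distance to the training set, can be illustrated without any modeling library. The sketch below assumes the ensemble's predictions and the feature vectors are already available as plain Python lists; all function names and numbers are hypothetical, and Euclidean distance is only one possible choice of metric.

```python
import math
import statistics

def ensemble_uncertainty(predictions_per_model):
    """Return (mean, std) across models for every candidate.

    predictions_per_model[m][i] is model m's prediction for candidate i.
    """
    per_candidate = zip(*predictions_per_model)
    return [(statistics.fmean(p), statistics.pstdev(p)) for p in per_candidate]

def nearest_training_distance(candidate, training_set):
    """Euclidean distance from a candidate to its closest training point."""
    return min(math.dist(candidate, t) for t in training_set)

# Hypothetical predictions of three models for two candidates.
predictions = [
    [1.0, 2.0],
    [1.0, 4.0],
    [1.0, 3.0],
]
summary = ensemble_uncertainty(predictions)

# Hypothetical 2-D descriptors: one candidate against two training points.
distance = nearest_training_distance((0.0, 0.0), [(3.0, 4.0), (1.0, 0.0)])
```

The first candidate has zero spread because all models agree; the second has a nonzero standard deviation and would rank as more uncertain. The distance value acts as a rough proxy for how far a candidate lies from the data the model has seen.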

My hybrid active learning loop seems to be stuck, failing to discover new high-performance candidates. What could be wrong?

This is a common issue, often referred to as a "feedback trap," where the model reinforces its existing knowledge. Here are key troubleshooting steps:

- Audit Your Diversity Component: Ensure your diversity metric is effective. For molecular data, a latent space distance in a well-trained graph neural network often outperforms simple fingerprint-based distances [46]. If the diversity sampling is too weak, the algorithm will keep sampling from a narrow, already-known region.

- Check for Representation Collapse: In generative active learning frameworks, if the generative model lacks diversity in its outputs, the entire active learning loop will suffer. Monitor the diversity of the candidate compounds generated in each iteration [50].

- Re-calibrate the Exploration-Exploitation Balance: The ranking criterion used to select candidates might be over-prioritizing exploitation (high predicted performance) over exploration (high uncertainty). Adjust the weighting in your acquisition function to favor uncertainty more strongly, especially in early iterations [50].

- Validate on a Held-Out Set: Continuously monitor the model's performance on a fixed, diverse test set. If performance plateaus while the uncertainty on the selected candidates decreases, it's a strong indicator that the loop is stuck [47].
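One way to re-balance exploration and exploitation is an upper-confidence-bound style score, predicted performance plus a weight times uncertainty, with the weight decaying over iterations. This is a common pattern offered here as an assumption; it is not necessarily the acquisition function used in the cited work, and the decay schedule, default values, and example numbers are hypothetical.

```python
def acquisition_scores(predicted, uncertainty, beta):
    """Score = predicted performance + beta * uncertainty (UCB-style)."""
    return [mu + beta * sigma for mu, sigma in zip(predicted, uncertainty)]

def exploration_weight(iteration, beta_start=2.0, decay=0.5, beta_min=0.1):
    """Large weight on uncertainty early on, shrinking toward beta_min."""
    return max(beta_min, beta_start * decay ** iteration)

# Hypothetical model outputs for three candidates.
predicted = [0.90, 0.50, 0.70]
uncertainty = [0.05, 0.60, 0.20]

def pick(iteration):
    beta = exploration_weight(iteration)
    scores = acquisition_scores(predicted, uncertainty, beta)
    return max(range(len(scores)), key=scores.__getitem__)

early_choice = pick(0)  # exploration-heavy: favors the uncertain candidate
late_choice = pick(6)   # exploitation-heavy: favors the best prediction
```

If the loop appears stuck, raising `beta_start` or slowing the decay pushes selection back toward high-uncertainty candidates.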

How can I handle highly imbalanced data across different chemical processes or conditions?

This is a key challenge in materials science, where data for a simple process (e.g., gravity casting) may be abundant, while data for a complex one (e.g., hot extrusion) is scarce. A process-synergistic framework can be highly effective.

- Leverage Conditional Models: Use a conditional generative model, like a conditional Wasserstein Autoencoder (c-WAE), which encodes the processing route as a conditional variable. This allows the model to learn a shared latent representation across all processes, leveraging data from abundant processes to improve predictions for data-scarce ones [50].

- Multi-Task Learning: Train a single model to predict properties for multiple processes simultaneously. The shared parameters learn general features of the composition-property relationship, which benefits the predictions for the process with limited data [50].

Experimental Protocols

Protocol 1: Implementing a Simple Hybrid Sampling Strategy for Drug Response Prediction

This protocol is adapted from a comprehensive investigation of active learning for anti-cancer drug response prediction [47].

1. Problem Setup: The goal is to build a drug-specific model to predict the response (e.g., IC50) of various cancer cell lines to a specific drug. You start with a large pool of uncharacterized cell lines.

2. Initialization:

- Train an initial drug response prediction model (e.g., a Graph Neural Network or a descriptor-based model) on a small, randomly selected seed set of cell lines.

- Define your candidate pool as the remaining unlabeled cell lines.

3. Active Learning Loop: Repeat for a predetermined number of iterations or until a performance goal is met.

- Step 1: Prediction and Uncertainty Estimation. Use the current model to predict the response for all cell lines in the candidate pool. Obtain an uncertainty estimate for each prediction using a chosen UQ method (e.g., an ensemble [47]).

- Step 2: Diversity Sampling. Cluster the candidate pool cell lines based on their genomic features (e.g., gene expression profiles) into k clusters.

- Step 3: Hybrid Selection. Within each cluster, select the cell line with the highest uncertainty estimate. This ensures that you pick the most informative sample from each diverse region of the chemical (genomic) space.

- Step 4: Experimental Validation. Send the selected cell lines for experimental testing to obtain the ground-truth drug response.

- Step 5: Model Update. Add the new data to the training set and retrain the predictive model.

4. Evaluation:

- Track the cumulative number of "hits" (responsive treatments) identified over iterations.

- Monitor the model's prediction performance (e.g., R², RMSE) on a held-out test set.
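The hybrid selection step of this protocol (Step 3) reduces to picking the most uncertain candidate within each cluster. The following minimal sketch assumes cluster assignments (for example, from k-means on gene expression profiles) and per-candidate uncertainty estimates have already been computed; the function name and the example values are hypothetical.

```python
def hybrid_select(cluster_labels, uncertainties):
    """Pick, for each cluster, the index of its most uncertain candidate."""
    best = {}
    for idx, (cluster, u) in enumerate(zip(cluster_labels, uncertainties)):
        if cluster not in best or u > uncertainties[best[cluster]]:
            best[cluster] = idx
    return sorted(best.values())

# Hypothetical pool of seven cell lines grouped into three clusters.
cluster_labels = [0, 0, 1, 1, 1, 2, 2]
uncertainties = [0.10, 0.35, 0.80, 0.20, 0.75, 0.05, 0.15]

selected = hybrid_select(cluster_labels, uncertainties)
```

Note that candidate 4 (uncertainty 0.75) is skipped in favor of candidate 6 (0.15): the diversity constraint forces one pick per cluster, even when a cluster's best candidate is less uncertain than the runner-up elsewhere. The number of clusters therefore sets the batch size.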

Protocol 2: Uncertainty-Driven Dynamics (UDD) for Conformational Sampling

This advanced protocol uses a bias potential to drive molecular dynamics (MD) simulations towards high-uncertainty regions, efficiently exploring conformational space for interatomic potential development [49].

1. Prerequisites:

- An ensemble of Neural Network (NN) interatomic potentials (e.g., 5-10 models) trained on an initial dataset.

- A starting molecular structure.

2. UDD-AL Simulation Loop: