Active Learning with Uncertainty Quantification: A Practical Framework for Accelerating Drug Discovery


Abstract

This article provides a comprehensive overview of the integration of active learning (AL) and uncertainty quantification (UQ) to address critical challenges in modern drug discovery. Aimed at researchers and development professionals, it explores the foundational principles that make AL/UQ essential for navigating vast chemical spaces and rare-event problems, such as synergistic drug combination discovery. The piece details cutting-edge methodological frameworks, including nested AL cycles and UQ-enhanced graph neural networks, and offers practical strategies for overcoming implementation hurdles like data scarcity and model generalization. Finally, it synthesizes evidence from recent successful applications and benchmarking studies, demonstrating how these techniques can significantly compress discovery timelines, reduce experimental costs, and improve the reliability of AI-driven molecular design.

Why Active Learning and Uncertainty Quantification are Revolutionizing Drug Discovery

Traditional drug discovery is a high-risk endeavor, characterized by an average cost of $2.6 billion per approved drug and a timeline of 10-15 years [1]. A staggering 90% of drug candidates that enter clinical trials never reach patients, with the phase II clinical trial stage being the most significant hurdle, often called the 'graveyard' of drug development due to a nearly 70% failure rate [1] [2]. This inefficiency stems from the challenge of navigating an immense chemical space, estimated to contain over 10⁶⁰ drug-like molecules, with limited experimental throughput [1] [3].

Active Learning (AL) coupled with Uncertainty Quantification (UQ) presents a paradigm shift. This AI-driven approach creates an iterative, data-driven workflow in which machine learning models guide experimental design. By identifying the most informative compounds to test next, based on both predicted properties and the model's own uncertainty, researchers can significantly accelerate the exploration of chemical space, reduce costs, and mitigate late-stage attrition [4].

Table: The Drug Development Gauntlet - Key Statistics

| Development Stage | Average Duration | Primary Reason for Failure | Probability of Success |
|---|---|---|---|
| Discovery & Preclinical | 2-4 years | Toxicity, lack of effectiveness in models | ~0.01% (to approval) |
| Phase I Clinical Trial | ~2.3 years | Unmanageable toxicity/safety in humans | ~52% - 70% |
| Phase II Clinical Trial | ~3.6 years | Lack of clinical efficacy in patients | ~29% - 40% |
| Phase III Clinical Trial | ~3.3 years | Insufficient efficacy or safety in large groups | ~58% - 65% |
| FDA Review | ~1.3 years | Safety/efficacy concerns in submitted data | ~91% |

Technical Support Center: FAQs & Troubleshooting

This section addresses common computational and experimental challenges encountered when implementing active learning frameworks in drug discovery.

FAQ: Core Concepts

Q1: What is the difference between aleatoric and epistemic uncertainty, and why does it matter for my assay?

Uncertainty in machine learning predictions can be separated into two primary sources [5]:

- Aleatoric Uncertainty: This is the inherent stochastic variability or "noise" within your experiments. It is often considered irreducible because it cannot be mitigated by additional data alone. In drug discovery, this can reflect biological stochasticity or human intervention during assay execution. Proper quantification helps in better risk management.

- Epistemic Uncertainty: This encompasses uncertainties related to the model's lack of knowledge, stemming from insufficient training data or model limitations. Unlike aleatoric uncertainty, it can be reduced by acquiring additional data in specific regions of the chemical space. Understanding this allows researchers to strategically guide data collection efforts in an active learning loop.
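One common way to separate the two sources in practice is to train an ensemble whose members each predict a mean and a noise variance. The sketch below assumes that setup (the function name and the example numbers are hypothetical): the average predicted noise variance is read as the aleatoric part, and the spread of the member means as the epistemic part.

```python
from statistics import fmean, pvariance


def decompose_uncertainty(member_means, member_variances):
    """Split the predictive variance for one compound into two parts.

    member_means:     predicted mean from each ensemble member
    member_variances: predicted noise variance from each ensemble member
    """
    aleatoric = fmean(member_variances)   # average predicted assay noise
    epistemic = pvariance(member_means)   # disagreement between members
    return aleatoric, epistemic, aleatoric + epistemic


# Hypothetical pIC50 predictions from a 4-member ensemble.
familiar = decompose_uncertainty([6.1, 6.0, 6.2, 6.1], [0.30, 0.28, 0.32, 0.30])
novel = decompose_uncertainty([5.0, 6.5, 7.2, 5.8], [0.30, 0.28, 0.32, 0.30])

print("familiar scaffold:", familiar)
print("novel scaffold:   ", novel)
```

Both compounds carry the same predicted assay noise, but the members disagree far more on the novel scaffold, which is the signal an active learning loop would act on.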

Q2: My team has decades of historical assay data. Can we use it to build an effective active learning model?

While historical data is valuable, it often comes with significant challenges for building robust models [6]. Key issues include:

- Assay Drift: Over many years, machines, operators, and software change, yet IC50 values are often assumed to be comparable.

- Lack of Metadata: Databases often contain only summarized values (e.g., a single IC50), not the underlying raw measurement values or the control values from the same experiment. This makes proper statistical estimation nearly impossible.

- Solution: Implement "statistical discipline in statistical systems." This means systematically logging all hyperparameters, software versions, and operator information to create a traceable data lineage. Leadership must prioritize baking this traceability into data systems from the start [6].

Q3: What is a "censored label" in my experimental data, and how can I use it?

Censored labels arise when an experiment's measurement range is exceeded, and the exact value cannot be recorded [7] [5]. For instance, if no biological response is observed within the tested range of compound concentrations, the experiment may only indicate that the true activity value lies above or below a certain threshold. Standard regression models ignore this partial information. However, by adapting models using techniques from survival analysis (like the Tobit model), you can incorporate these censored labels. This utilizes all available experimental information, leading to more accurate predictions and superior uncertainty estimation, which is crucial for effective active learning [7] [5].

Troubleshooting Guides

Issue 1: Lack of Assay Window in TR-FRET-Based Screening

- Problem: You are running a TR-FRET assay (e.g., LanthaScreen) and observe no difference between positive and negative controls.

- Investigation & Resolution:

- Instrument Setup: The most common reason for a complete lack of window is that the instrument was not set up properly. Confirm that the exact recommended emission filters for your specific microplate reader are being used. Unlike other fluorescence assays, the filter choice can make or break a TR-FRET assay [8].

- Reagent Testing: Before beginning any assay, test your microplate reader's TR-FRET setup using reagents you have already purchased. Refer to the Terbium (Tb) or Europium (Eu) Assay Application Notes for specific plate reader setup instructions [8].

- Data Analysis: Ensure you are using ratiometric data analysis. Calculate the emission ratio by dividing the acceptor signal by the donor signal (e.g., 520 nm/495 nm for Tb). This ratio accounts for pipetting variances and lot-to-lot reagent variability [8].

Issue 2: Inconsistent IC50/EC50 Values Between Labs or Replicates

- Problem: Different labs, or even different replicates in the same lab, report significantly different EC50 or IC50 values for the same compound.

- Investigation & Resolution:

- Stock Solution Preparation: The primary reason for such differences is often variations in the preparation of stock solutions, typically at the 1 mM stage. Ensure standardized protocols and verification methods for stock solution preparation across all teams [8].

- Data Quality Assessment: Do not rely on the assay window alone. Calculate the Z'-factor, a key metric that considers both the assay window size and the data variability (standard deviation). Assays with a Z'-factor > 0.5 are considered suitable for screening. A large window with high noise can have a worse Z'-factor than a small window with low noise [8].
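The Z'-factor is conventionally computed as 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg| from the positive and negative control wells. The following sketch uses made-up control readings to show how a small, quiet window can outscore a large, noisy one.

```python
from statistics import fmean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor from replicate control readings (e.g., emission ratios)."""
    spread = 3 * (stdev(positive_controls) + stdev(negative_controls))
    window = abs(fmean(positive_controls) - fmean(negative_controls))
    return 1 - spread / window


# Illustrative readings only: a wide but noisy window...
large_noisy = z_prime([10, 14, 6, 12, 8], [2, 4, 0, 3, 1])
# ...versus a narrow but tight one.
small_quiet = z_prime([2.00, 2.02, 1.98, 2.01, 1.99],
                      [1.00, 1.02, 0.98, 1.01, 0.99])

print(f"large window, high noise: Z' = {large_noisy:.2f}")
print(f"small window, low noise:  Z' = {small_quiet:.2f}")
```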

Issue 3: High Uncertainty in AI Model Predictions for Novel Chemotypes

- Problem: Your molecular property prediction model works well on molecules similar to its training set but returns high uncertainty for novel chemical scaffolds, making active learning selection difficult.

- Investigation & Resolution:

- Evaluate UQ Method Performance: No single UQ method consistently outperforms others. Benchmark methods like ensemble models, Monte Carlo Dropout, and density-estimation approaches on your specific data. Note that many standard UQ methods fail to perform well on out-of-distribution (OOD) molecules, so your choice of UQ method is critical [4].

- Incorporate Censored Data: If you have excluded compounds with censored labels (e.g., "IC50 > 10 µM") from model training, re-train your model using methods that can incorporate this partial information. This has been shown to enhance uncertainty quantification in real pharmaceutical settings [7] [5].

- Temporal Validation: Avoid only using random or scaffold-based data splits for evaluation. Implement a temporal split (training on older data, validating/testing on newer data) to best approximate the model's real-world predictive performance and uncertainty calibration over time [5].
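A temporal split can be as simple as sorting by measurement date and cutting the ordered list. The sketch below assumes each record is a dictionary with a hypothetical `measured_on` field and uses an illustrative 60/20/20 split; the records themselves are invented.

```python
from datetime import date


def chronological_split(records, train_frac=0.6, valid_frac=0.2):
    """Oldest records go to training, the newest to the test set."""
    ordered = sorted(records, key=lambda r: r["measured_on"])
    n_train = round(len(ordered) * train_frac)
    n_valid = round(len(ordered) * valid_frac)
    train = ordered[:n_train]
    valid = ordered[n_train:n_train + n_valid]
    test = ordered[n_train + n_valid:]
    return train, valid, test


# Ten invented measurements, deliberately listed newest-first.
records = [
    {"compound_id": f"CPD-{i}", "pic50": 5.0 + 0.2 * i,
     "measured_on": date(2024 - i, 1, 15)}
    for i in range(10)
]

train, valid, test = chronological_split(records)
print(len(train), len(valid), len(test))
print("newest training date:", train[-1]["measured_on"])
print("oldest test date:    ", test[0]["measured_on"])
```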

Experimental Protocols for Active Learning with Uncertainty Quantification

Protocol 1: Implementing an Active Learning Cycle for Virtual Screening

This protocol details the steps to set up a closed-loop system where a model guides the selection of compounds for subsequent experimental testing.

1. Hypothesis & Model Initialization:

- Objective: Identify novel hit compounds for a specific protein target from a large virtual library (e.g., 1 million compounds).

- Initial Training Set: Start with a diverse but small set of compounds (e.g., 200) with experimentally measured IC50 values from historical data.

- Model Selection: Choose a graph neural network (GNN) or a molecular descriptor model capable of uncertainty quantification.

2. Experimental Design & UQ Strategy:

- Uncertainty Estimation: Apply an ensemble-based method (e.g., training 10 models with different random seeds) to generate both a mean prediction (pIC50) and a standard deviation (uncertainty) for every compound in the virtual library [4].

- Acquisition Function: Use an uncertainty-based sampling strategy. Rank all untested compounds by their predicted uncertainty (standard deviation). The top N (e.g., 50) most uncertain compounds are selected for the next round of testing.

- Rationale: This strategy prioritizes compounds where the model is least confident, thereby maximizing information gain with each experimental cycle and helping the model generalize to new regions of chemical space more rapidly [4].

3. Key Procedures:

- Iterative Loop:

- Step 1: The model screens the virtual library and proposes the 50 most uncertain compounds.

- Step 2: These compounds are procured or synthesized and tested in the biochemical assay.

- Step 3: The new experimental data (IC50 values, including any censored labels for inactive compounds) is added to the training set.

- Step 4: The model is re-trained on the expanded dataset.

- Repeat until a predefined number of hits are identified or the budget is exhausted.

4. Data Analysis:

- Monitor the model's performance on a held-out test set over iterations.

- Track the hit rate (number of active compounds found / number tested) in each cycle. A successful AL campaign should show a higher hit rate compared to random selection.
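The loop in this protocol can be sketched end to end on a toy problem. Everything here is a stand-in chosen to keep the example self-contained: compounds are single numbers, `oracle` plays the role of the assay, and the "ensemble" is a set of 1-nearest-neighbour predictors fit on bootstrap resamples rather than a GNN. Only the control flow (score, rank by uncertainty, test, retrain) mirrors the protocol.

```python
import random
from statistics import fmean, pstdev


def oracle(x):
    """Hypothetical stand-in for the assay: maps a 1-D descriptor to pIC50."""
    return 5.0 + 3.0 * x * x


def fit_member(train, rng):
    """One ensemble member: 1-nearest-neighbour on a bootstrap resample."""
    sample = [rng.choice(train) for _ in train]
    return lambda x: min(sample, key=lambda pair: abs(pair[0] - x))[1]


def score_pool(train, pool, n_members, rng):
    """Return (x, mean prediction, std across members) per pool compound."""
    members = [fit_member(train, rng) for _ in range(n_members)]
    scored = []
    for x in pool:
        preds = [member(x) for member in members]
        scored.append((x, fmean(preds), pstdev(preds)))
    return scored


def run_campaign(train, pool, rounds, batch_size, hit_threshold=7.0,
                 n_members=10, seed=0):
    rng = random.Random(seed)
    train, pool = list(train), list(pool)
    hit_rates = []
    for _ in range(rounds):
        if not pool:
            break
        scored = score_pool(train, pool, n_members, rng)
        scored.sort(key=lambda row: row[2], reverse=True)  # most uncertain first
        batch = [x for x, _, _ in scored[:batch_size]]
        results = [(x, oracle(x)) for x in batch]          # "run the assay"
        hits = sum(y >= hit_threshold for _, y in results)
        hit_rates.append(hits / len(results))
        train.extend(results)                              # grow the training set
        pool = [x for x in pool if x not in batch]
    return train, pool, hit_rates


initial = [(0.0, oracle(0.0)), (0.1, oracle(0.1))]
library = [i / 20 for i in range(3, 21)]
train, pool, hit_rates = run_campaign(initial, library, rounds=3, batch_size=3)
print("training set size:", len(train))
print("hit rate per cycle:", hit_rates)
```

Because the members are rebuilt from the enlarged training set at the start of every round, the retraining step is implicit in `score_pool`. In a real campaign the per-cycle hit rate would be compared against a random-selection baseline run with the same budget.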

Protocol 2: Enhancing QSAR Models with Censored Regression Data

This protocol adapts standard machine learning models to learn from censored experimental labels, providing a more accurate view of uncertainty.

1. Hypothesis:

- Incorporating censored data (e.g., "IC50 > 10 µM") into QSAR model training will improve prediction accuracy and uncertainty quantification for inactive compounds.

2. Experimental Design:

- Data Preparation: Compile a dataset containing both precise IC50 values and censored labels. Censored labels should be assigned a threshold value and a direction (e.g., left-censored for IC50 < X, right-censored for IC50 > Y).

- Model Adaptation: Adapt ensemble-based, Bayesian, or Gaussian models to learn from censored labels using the Tobit model from survival analysis. This involves modifying the loss function (e.g., Gaussian Negative Log-Likelihood) to account for the probability of a data point being censored [7] [5].
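As a rough illustration of the loss modification, a Tobit-style negative log-likelihood for a single Gaussian prediction might look like the sketch below. The function name and the `censoring` encoding (0, +1, -1) are assumptions made for this example, not the interface of any particular package. Note that the direction flips on a log scale: "IC50 > 10 µM" corresponds to "pIC50 < 5", i.e., a left-censored pIC50 label.

```python
import math
from statistics import NormalDist


def tobit_nll(mu, sigma, label, censoring=0):
    """Negative log-likelihood of one label under N(mu, sigma^2).

    censoring =  0: label is an exact measurement
    censoring = +1: right-censored, true value lies above `label`
    censoring = -1: left-censored, true value lies below `label`
    """
    dist = NormalDist(mu, sigma)
    if censoring == 0:
        likelihood = dist.pdf(label)        # density at the observed value
    elif censoring > 0:
        likelihood = 1.0 - dist.cdf(label)  # P(true value > threshold)
    else:
        likelihood = dist.cdf(label)        # P(true value < threshold)
    return -math.log(max(likelihood, 1e-300))  # guard against log(0)


# "IC50 > 10 µM" expressed as a left-censored pIC50 label at 5.0.
print(tobit_nll(mu=4.0, sigma=0.5, label=5.0, censoring=-1))  # consistent, small loss
print(tobit_nll(mu=6.5, sigma=0.5, label=5.0, censoring=-1))  # contradicts label, large loss
```

Summing this quantity over exact and censored labels gives a training objective in which a censored compound is penalized only when the predicted distribution puts mass on the wrong side of its threshold.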

3. Key Procedures:

- Baseline Model Training: Train a standard model (e.g., GNN) using only the precise IC50 values, ignoring censored data.

- Censored Model Training: Train an identical model architecture using the adapted loss function that incorporates both precise and censored labels.

- Evaluation: Perform a temporal evaluation by training both models on data available up to a certain date and testing on data generated after that date.

4. Data Analysis:

- Compare the Root Mean Square Error (RMSE) and calibration of uncertainty estimates (e.g., via negative log-likelihood) of the two models on the temporal test set.

- A successful implementation will show that the model trained with censored labels provides better-calibrated uncertainties and more accurate predictions, especially for inactive compounds [5].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Reagents for Key Drug Discovery Assays

| Reagent / Material | Function / Application | Key Considerations |
|---|---|---|
| RNAscope Control Probes (PPIB, POLR2A, dapB) | Validate sample RNA quality and assay performance in RNAscope ISH assays. PPIB and POLR2A are positive controls; dapB is a negative bacterial control. | Successful PPIB staining should generate a score ≥2. Samples should display a dapB score of <1, indicating low background [9]. |
| LanthaScreen Eu/Tb Kinase Binding Assay Reagents | Enable TR-FRET-based kinase activity and binding assays. The lanthanide donor provides a long-lived fluorescence signal, allowing time-gated detection to reduce background. | Using the correct emission filters for your microplate reader is critical. Always use ratiometric data (acceptor/donor) for analysis to normalize for pipetting and reagent variability [8]. |
| Z'-LYTE Kinase Assay Kit | A fluorescence-based, coupled-enzyme format for screening kinase inhibitors. Protease cleavage of the substrate is correlated with kinase activity. | The output is a blue/green emission ratio. The 0% phosphorylation (100% inhibition) control should yield the maximum ratio. A 10-fold difference in ratio between 100% and 0% phosphorylated controls is typical [8]. |
| Superfrost Plus Microscope Slides | Used for tissue sectioning and staining in assays like RNAscope ISH. | These slides are required for RNAscope assays. Other slide types may result in tissue detachment during the rigorous protocol [9]. |
| ImmEdge Hydrophobic Barrier Pen | Used to draw a barrier around tissue sections on slides to maintain reagent coverage and prevent drying. | This is the only barrier pen recommended for the RNAscope procedure, as it will maintain a hydrophobic barrier throughout the entire process [9]. |

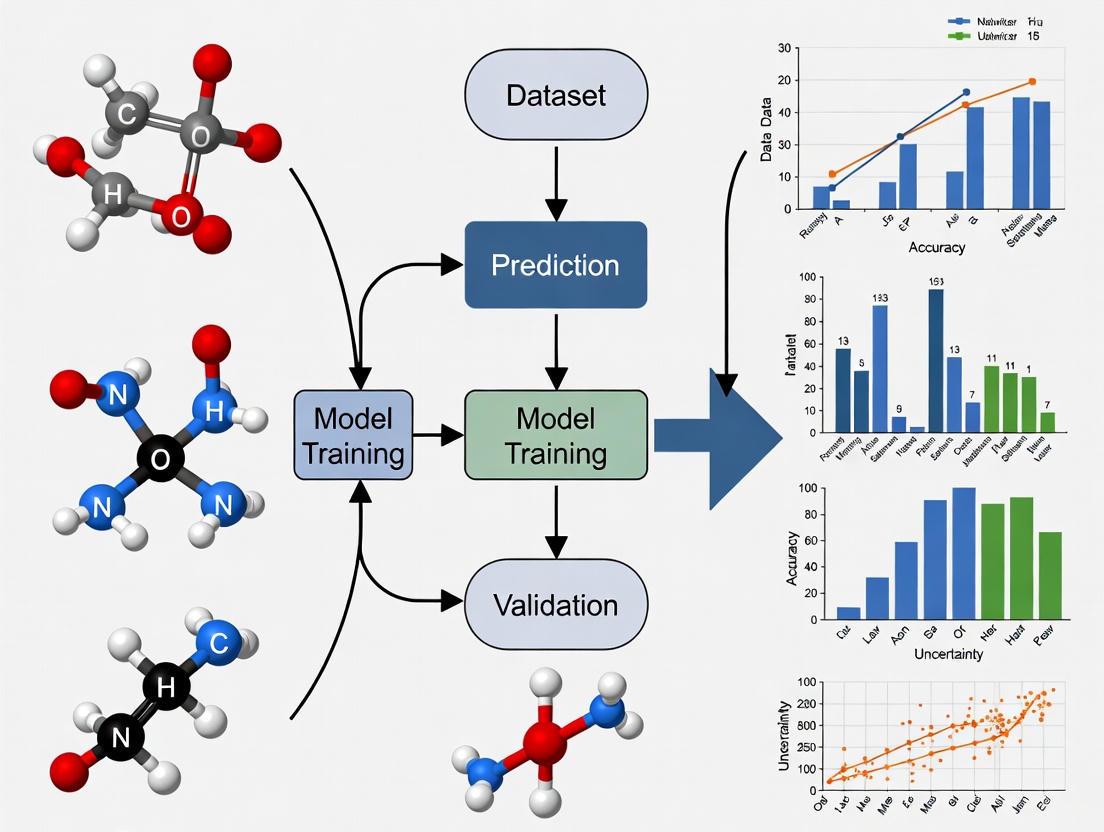

Workflow Visualization

[Figure: Active Learning Cycle with UQ]

[Figure: Censored Data in Model Training]

FAQs on Active Learning in Drug Discovery

What is active learning and how does it accelerate drug discovery?

Active learning is a machine learning paradigm that operates as an iterative feedback loop designed for optimal experimental design. In drug discovery, it addresses the challenge of exploring vast molecular search spaces where experiments are time-consuming and expensive. The core process involves a surrogate model making predictions about molecular properties, which are then used by a utility function to prioritize the most informative next experiments based on their uncertainty and potential value [7] [10]. This approach systematically guides experiments toward compounds with desired properties, significantly reducing the time and cost of discovery compared to traditional trial-and-error methods [10].

Why is Uncertainty Quantification (UQ) critical in this loop?

Uncertainty Quantification (UQ) is fundamental because it assesses the reliability of the model's predictions. In drug discovery, data-driven models often fail when predicting properties for molecules outside their training domain [11]. UQ helps identify these situations, allowing the system to prioritize experiments that reduce model uncertainty. This leads to more robust exploration of chemical space and prevents misleading conclusions from overconfident but incorrect predictions [7] [11]. Techniques for UQ include ensemble models, Bayesian models, and Gaussian processes [7].

How do I handle censored experimental data in my models?

Censored labels provide threshold information (e.g., "activity > X") rather than precise values and are common in pharmaceutical data, with approximately one-third or more of experimental labels often being censored [7]. Standard UQ methods cannot fully utilize this information. You can adapt ensemble-based, Bayesian, and Gaussian models to learn from censored labels by integrating the Tobit model from survival analysis [7]. This adaptation is essential for reliable uncertainty estimation with real-world, sparse experimental data.

Which model should I choose for my active learning pipeline?

The choice depends on your data size and UQ needs. The table below compares common approaches:

| Model Type | Best For | UQ Strengths | Considerations |
|---|---|---|---|
| Gaussian Process (GP) [11] | Smaller datasets, high-precision UQ | Provides natural, well-calibrated uncertainty estimates. | Computational cost scales poorly (O(n³)) with large datasets. |
| Graph Neural Networks (GNNs) [11] | Large, complex molecular datasets | Scalable with fixed parameters regardless of dataset size. | UQ under domain shift can be challenging; requires specific methods like ensemble or Bayesian learning. |
| Ensemble Models [7] | General-purpose, robust UQ | Simple to implement, effective uncertainty estimates. | Can be computationally expensive as it requires training multiple models. |

What are common utility functions and when should I use them?

The utility function is critical for decision-making. Here are key types:

| Utility Function | Primary Goal | Mechanism | Use Case Example |
|---|---|---|---|
| Probabilistic Improvement (PIO) [11] | Meet specific property thresholds. | Selects candidates based on the probability of exceeding a target value. | Optimizing a molecule to achieve a potency IC50 < 10 nM. |
| Expected Improvement (EI) [10] [11] | Find the best possible property value. | Balances the potential magnitude of improvement and its probability. | Maximizing the binding affinity of a drug candidate. |
| Variance Reduction | Improve overall model accuracy. | Selects points where uncertainty (variance) is highest. | Initial exploration of a poorly characterized chemical space. |
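If the surrogate's prediction for a candidate is treated as Gaussian with mean μ and standard deviation σ > 0, the first two utility functions have closed forms. The sketch below uses the standard probability-of-improvement and expected-improvement expressions for a property that should be maximized. Treating PIO as this probability-of-exceeding-a-threshold form is an assumption based on the table's description, and the candidate values are invented.

```python
from statistics import NormalDist

_STANDARD_NORMAL = NormalDist()


def probability_of_improvement(mu, sigma, threshold):
    """P(property > threshold) under a Gaussian prediction (sigma > 0)."""
    return 1.0 - NormalDist(mu, sigma).cdf(threshold)


def expected_improvement(mu, sigma, best_so_far):
    """E[max(property - best_so_far, 0)] under a Gaussian prediction."""
    z = (mu - best_so_far) / sigma
    return ((mu - best_so_far) * _STANDARD_NORMAL.cdf(z)
            + sigma * _STANDARD_NORMAL.pdf(z))


# IC50 < 10 nM corresponds to pIC50 > 8 (hypothetical candidates).
candidates = {"confident": (7.9, 0.1), "uncertain": (7.6, 0.8)}
for name, (mu, sigma) in candidates.items():
    pi = probability_of_improvement(mu, sigma, threshold=8.0)
    ei = expected_improvement(mu, sigma, best_so_far=7.8)
    print(f"{name}: PI = {pi:.3f}, EI = {ei:.3f}")
```

A variance-reduction strategy needs no extra formula: it ranks candidates by σ alone.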

Troubleshooting Guides

Problem: My Active Learning Loop Is Not Finding Better Candidates

Possible Causes and Solutions:

Insufficient Exploration: The utility function is too greedy, focusing only on the most promising areas and getting stuck.

- Solution: Incorporate more exploration in your utility function. For example, mix in some random sampling or use a function like Upper Confidence Bound (UCB) that explicitly balances exploring uncertain regions with exploiting known promising ones [10].

Poor Surrogate Model Performance: The model's predictions are inaccurate, leading the loop in the wrong direction.

Inadequate UQ: The model's uncertainty estimates are poorly calibrated, making the utility function's decisions unreliable.

- Solution: For GNNs, implement ensemble methods or Monte Carlo Dropout to improve UQ [11]. Benchmark different UQ methods on your specific dataset.
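For the exploration fix described above, one simple option is to rank most of the batch by an Upper Confidence Bound score, μ + κσ, and fill the remainder with random picks. The sketch below is illustrative only: `kappa`, `random_fraction`, and the candidate tuples are assumptions for this example.

```python
import random


def ucb_score(mu, sigma, kappa=1.0):
    """Upper Confidence Bound: larger kappa rewards uncertainty more."""
    return mu + kappa * sigma


def select_batch(candidates, batch_size, kappa=1.0, random_fraction=0.2, seed=0):
    """candidates: list of (name, predicted mean, predicted std)."""
    rng = random.Random(seed)
    n_random = round(batch_size * random_fraction)
    n_ucb = batch_size - n_random
    ranked = sorted(candidates,
                    key=lambda c: ucb_score(c[1], c[2], kappa),
                    reverse=True)
    chosen = ranked[:n_ucb]                      # exploit/explore via UCB
    remainder = ranked[n_ucb:]
    chosen += rng.sample(remainder, min(n_random, len(remainder)))  # pure exploration
    return [name for name, _, _ in chosen]


candidates = [("A", 7.5, 0.1), ("B", 7.0, 1.0), ("C", 6.0, 0.2),
              ("D", 5.0, 2.0), ("E", 6.5, 0.5)]
print(select_batch(candidates, batch_size=1, kappa=0.0, random_fraction=0.0))
print(select_batch(candidates, batch_size=1, kappa=3.0, random_fraction=0.0))
print(select_batch(candidates, batch_size=3, kappa=1.0, random_fraction=0.34))
```

With `kappa=0` the rule is purely greedy on the predicted value; raising it shifts the pick toward uncertain candidates.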

Problem: My Model Cannot Learn from Censored Data Effectively

Solution: Implement the Tobit model framework for censored regression [7]. This involves adapting the loss function of your chosen model (e.g., ensemble, Bayesian) to account for the fact that for censored data points, we only know that the true value lies beyond a certain threshold. This allows the model to learn from the partial information in censored labels, which is crucial for reliable uncertainty estimation in real-world pharmaceutical settings [7].

Problem: The Computational Cost of UQ Is Too High

Solution: Choose a scalable UQ method appropriate for your data size.

- For large datasets, avoid exact Gaussian Processes and instead use parametric models like GNNs with ensemble UQ: their parameter count is fixed regardless of dataset size, so training cost grows roughly linearly with the data rather than cubically [11].

- If using GPs is necessary, employ approximation strategies like inducing-point methods or random Fourier features to reduce computational complexity [11].

Experimental Protocols

Protocol 1: Implementing an Active Learning Loop with D-MPNN and UQ

This protocol uses Graph Neural Networks for molecular property prediction [11].

1. Dataset Preparation:

- Input Data: Collect molecular structures (e.g., as SMILES strings) and their corresponding experimental activity data.

- Censored Data Handling: If censored data exists (e.g., "IC50 > 10μM"), label them appropriately for the Tobit model adaptation [7].

2. Surrogate Model Training (D-MPNN with UQ):

- Tool: Use the Chemprop package, which implements D-MPNNs [11].

- UQ Method: Train an ensemble of D-MPNNs. The uncertainty can be quantified as the variance or standard deviation across the ensemble's predictions [11].

- Training: Split data into training/validation sets. Train multiple D-MPNN models with different random initializations on the training set.

3. Candidate Selection & Iteration:

- Acquisition Function: Calculate the Probabilistic Improvement (PIO) for all candidates in the unlabeled pool. PIO quantifies the likelihood a candidate exceeds a property threshold [11].

- Selection: Rank candidates by PIO and select the top ones for the next round of "experiments."

- Iterate: Add the new data (real or simulated) to the training set and retrain the model ensemble. Repeat until a candidate meets the target criteria.

Protocol 2: Benchmarking Active Learning Strategies

Use this protocol to compare different utility functions or UQ methods [11].

1. Benchmark Setup:

- Platforms: Use open-source molecular design platforms like Tartarus or GuacaMol for predefined tasks and datasets [11].

- Tasks: Select both single-objective (e.g., optimize solubility) and multi-objective (e.g., optimize potency and metabolic stability) tasks.

2. Experimental Procedure:

- Baseline: Run an active learning loop with a simple, uncertainty-agnostic strategy (e.g., selecting candidates with the highest predicted value).

- Test Strategy: Run the same loop with your UQ-enhanced strategy (e.g., using PIO or Expected Improvement).

- Metric: Track the number of iterations or total experiments required to find a candidate satisfying the target. For multi-objective tasks, measure the success rate of finding candidates that meet all objectives simultaneously [11].

3. Analysis:

- Compare the performance curves of the different strategies. A more efficient strategy will find successful candidates in fewer iterations.

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Function in Active Learning | Example Use |
|---|---|---|
| Chemprop [11] | A software package implementing Directed Message Passing Neural Networks (D-MPNNs) for molecular property prediction. | Serving as the surrogate model to predict activity from molecular structure, with built-in support for uncertainty quantification. |
| Tartarus Benchmarking Platform [11] | A suite of computational benchmarks that simulate real-world molecular design challenges (e.g., optimizing organic photovoltaics, protein ligands). | Evaluating and comparing the performance of different active learning and UQ strategies in a simulated, cost-effective environment. |
| Tobit Model [7] | A statistical model from survival analysis adapted for regression with censored data. | Enabling the surrogate model to learn from experimental labels that are incomplete (e.g., "activity > 10μM"), which is common in early drug screening. |
| Probabilistic Improvement (PIO) [11] | An acquisition function that selects experiments based on the probability of exceeding a target property threshold. | Guiding the search for molecules that need to meet a specific minimum efficacy or safety threshold, rather than just maximizing a value. |

Active Learning Workflow and Signaling

Active Learning Loop for Drug Discovery

UQ-Enhanced Molecular Optimization

Uncertainty Quantification (UQ) is a critical process in artificial intelligence that evaluates the reliability of model predictions by estimating their confidence levels. In drug discovery research, where decisions guide expensive and time-consuming laboratory experiments, accurately quantifying uncertainty enables researchers to distinguish between high-confidence and speculative predictions, optimizing resource allocation [7] [5].

UQ disentangles two primary types of uncertainty: aleatoric uncertainty (inherent noise in the data that cannot be reduced with more data) and epistemic uncertainty (stemming from the model's lack of knowledge, which can be reduced with additional training data) [5]. For active learning frameworks in drug discovery, this distinction is crucial—epistemic uncertainty helps identify which compounds would be most informative to test next in the laboratory [5] [11].

FAQs on Uncertainty Quantification in Drug Discovery

1. Why is uncertainty quantification especially important in AI-driven drug discovery?

Drug discovery experiments are both time-consuming and costly. Uncertainty quantification provides a measure of confidence in AI predictions, allowing researchers to prioritize experiments more likely to succeed and avoid being misled by overconfident but incorrect model outputs. This builds trust in AI models and optimizes resource allocation [7] [12]. Furthermore, in active learning settings, UQ guides the selection of the most valuable data points to test experimentally next, thereby improving the model efficiently with fewer experiments [11].

2. What is the difference between aleatoric and epistemic uncertainty?

- Aleatoric uncertainty captures the inherent stochasticity or noise in the experimental process itself. It is often considered irreducible because it cannot be eliminated by collecting more data. In drug discovery, this might reflect biological variability or measurement error [5].

- Epistemic uncertainty arises from a lack of knowledge in the model, often due to insufficient training data in certain regions of the chemical space. This type of uncertainty can be reduced by gathering more relevant data, making it a key target for active learning strategies [5].

3. How can I use UQ to improve my active learning cycle?

In an active learning cycle for drug discovery, UQ is used as a criterion for selecting the next compounds for experimental testing. After training an initial model on available data, you would:

- Use the model to predict properties and their associated uncertainties for a large library of untested compounds.

- Prioritize compounds where the model exhibits high epistemic uncertainty (indicating it is operating outside its knowledge domain) or those that are predicted to have high desired properties but with some uncertainty.

- Conduct experiments on these selected compounds.

- Add the new experimental results to the training data and retrain the model. This iterative process, guided by UQ, helps expand the model's knowledge base more efficiently than random selection [5] [11].

4. My experimental data contains "censored labels" (e.g., compound potency reported as ">10μM"). Can UQ methods use this information?

Yes. Standard UQ methods cannot fully utilize censored labels, but recent adaptations allow models to learn from this partial information. By applying techniques from survival analysis, such as the Tobit model, ensemble-based, Bayesian, and Gaussian models can be extended to incorporate censored regression labels. This leads to more reliable uncertainty estimates, especially in real-world pharmaceutical settings where a significant portion of experimental data may be censored [7] [5].

5. What are common UQ methods I can implement?

The table below summarizes common UQ methods used in drug discovery research.

Table 1: Common Uncertainty Quantification Methods

| Method Category | Key Examples | Brief Description | Strengths |
|---|---|---|---|
| Ensemble Methods | Deep Ensembles [13], MC-Dropout [13] | Trains multiple models (or uses dropout at inference) and measures the variance in their predictions. | Simple to implement; strong empirical performance. |
| Bayesian Methods | Bayesian Neural Networks [13] [14] | Treats model weights as probability distributions, naturally capturing uncertainty. | Principled probabilistic framework. |
| Gaussian Methods | Mean-Variance Estimation (MVE) [13], Gaussian Ensemble [13] | The model is trained to directly predict both a mean and a variance for each input. | Directly estimates aleatoric uncertainty. |
| Evidential Methods | Deep Evidential Regression [13] | The model is trained to place a higher-order distribution over the predictions, yielding both aleatoric and epistemic uncertainty. | Can capture both uncertainty types without ensembles. |

Troubleshooting Common UQ Issues

Problem: My model is overconfident on new, unseen types of molecules.

- Cause: This is often a sign of underestimated epistemic uncertainty. The model has not encountered similar molecules during training and fails to recognize its own ignorance.

- Solutions:

- Implement Temporal Validation: Split your data temporally, training on older compounds and validating on newer ones. This better simulates real-world deployment and reveals overconfidence on novel chemotypes [7] [5].

- Use Ensemble Methods: Switch from a single model to an ensemble of models. The variance in the ensemble's predictions is a good indicator of epistemic uncertainty [13] [15].

- Apply Conformal Prediction: Use this distribution-free method to create prediction sets (e.g., confidence intervals) that have guaranteed coverage properties under certain assumptions, providing a more realistic view of model performance on new data [16].
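For regression, the simplest variant is split conformal prediction: absolute residuals on a held-out calibration set determine a single interval half-width. The sketch below assumes that variant, with invented calibration values. Its coverage guarantee relies on calibration and test compounds being exchangeable, which a strong temporal or scaffold shift can violate.

```python
import math


def conformal_halfwidth(calib_true, calib_pred, alpha=0.1):
    """Half-width giving roughly (1 - alpha) coverage under exchangeability."""
    residuals = sorted(abs(t - p) for t, p in zip(calib_true, calib_pred))
    n = len(residuals)
    rank = math.ceil((n + 1) * (1 - alpha))  # finite-sample corrected quantile
    if rank > n:
        return math.inf                      # too few calibration points
    return residuals[rank - 1]


def conformal_interval(prediction, halfwidth):
    return prediction - halfwidth, prediction + halfwidth


# Invented calibration set: measured vs. predicted pIC50.
calib_true = [5.0, 6.0, 7.0, 5.5, 6.5, 7.5, 5.2, 6.2, 7.2]
calib_pred = [5.1, 5.8, 7.3, 5.1, 7.0, 6.9, 5.9, 5.4, 8.1]

q = conformal_halfwidth(calib_true, calib_pred, alpha=0.1)
print("half-width:", q)
print("interval for a new prediction of 6.4:", conformal_interval(6.4, q))
```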

Problem: The estimated uncertainty values are poorly calibrated (e.g., 90% confidence intervals only contain the true value 50% of the time).

- Cause: The model's uncertainty estimates do not match the true frequency of correct predictions.

- Solutions:

- Recalibration: Apply post-processing calibration techniques. For regression, use a held-out validation set to fit a linear regression that maps your estimated uncertainties to more accurate ones. For classification, Platt scaling or Venn-ABERS predictors can be effective [13].

- Test Calibration: Use metrics like the Expected Normalized Calibration Error (ENCE) to quantitatively assess calibration and track improvements [13].
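As a minimal illustration of post-hoc recalibration for regression, the sketch below fits a single multiplicative factor for the predicted standard deviations on a validation set. This is a deliberate simplification of the linear mapping mentioned above (slope only, no intercept), and the function names and numbers are hypothetical.

```python
import math
from statistics import fmean


def fit_sigma_scale(y_true, y_pred, sigma):
    """Scale s such that errors / (s * sigma) have unit mean square."""
    z_squared = [((t - p) / s) ** 2 for t, p, s in zip(y_true, y_pred, sigma)]
    return math.sqrt(fmean(z_squared))


def recalibrate(sigma, scale):
    """Apply the fitted factor to new uncertainty estimates."""
    return [scale * s for s in sigma]


# Validation set where the model's errors are twice its stated sigma.
y_true = [5.0, 6.0, 7.0, 8.0]
y_pred = [5.2, 5.8, 7.2, 7.8]
sigma = [0.1, 0.1, 0.1, 0.1]

scale = fit_sigma_scale(y_true, y_pred, sigma)
print("fitted scale:", round(scale, 3))
print("recalibrated sigma:", recalibrate([0.1, 0.3], scale))
```

A scale well above 1 indicates overconfidence. The factor should be fit on validation data only and then applied unchanged to the test set.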

Problem: My UQ method is too computationally expensive for my large dataset.

- Cause: Some methods, like deep ensembles or Gaussian Processes, scale poorly with data size.

- Solutions:

- For Ensembles: Reduce the number of models in the ensemble or use smaller, more efficient base models.

- Consider Alternative Methods: Use MC-Dropout as a more lightweight approximation of a Bayesian neural network [13].

- Leverage Scalable Models: Graph Neural Networks (GNNs) with UQ provide a scalable parametric alternative to non-parametric methods like Gaussian Processes, enabling UQ on larger datasets [11].

Experimental Protocols for UQ Evaluation

Protocol 1: Temporal Evaluation of UQ Methods

Objective: To benchmark UQ methods under realistic conditions that simulate the temporal evolution of a drug discovery project.

Materials:

- Dataset: Internal pharmaceutical assay data with timestamps (e.g., IC50/EC50 values from target-based or ADME-T assays) [7] [5].

- Software: UQ4DD package [13], PyTorch, Scikit-learn.

- Models: Ensemble of D-MPNNs, Bayesian Neural Networks, Gaussian Mean-Variance Estimators [13] [11].

Procedure:

- Collect data from relevant biological assays, ensuring each data point has a reliable experimental date.

- Sort the entire dataset chronologically and split it into training (e.g., first 60%), validation (e.g., next 20%), and test (e.g., last 20%) sets [7] [5].

- Train multiple UQ models (e.g., ensemble, Bayesian, Gaussian) on the training set. If available, incorporate censored labels using adapted loss functions [7].

- Use the trained models to make predictions and quantify uncertainties (both aleatoric and epistemic) on the held-out test set.

- Evaluate the quality of the uncertainties using metrics such as:

- Negative Log-Likelihood (NLL): Measures how likely the true data is under the predicted probability distribution (lower is better).

- ENCE: Measures the calibration of the uncertainty intervals (lower is better) [13].

- AUC: Measures how well the uncertainty score performs on downstream ranking tasks, such as identifying prediction errors or active compounds (higher is better).
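
The chronological split and the NLL metric from this protocol can be sketched as follows. This is a minimal illustration that assumes each record carries a `date` field and that the model outputs a Gaussian mean and standard deviation per compound; the record layout and compound IDs are hypothetical.

```python
import math
from datetime import date

def temporal_split(records, train_frac=0.6, valid_frac=0.2):
    """Sort records by date and cut them into train/validation/test blocks."""
    ordered = sorted(records, key=lambda r: r["date"])
    n_train = round(len(ordered) * train_frac)
    n_valid = round(len(ordered) * valid_frac)
    return (ordered[:n_train],
            ordered[n_train:n_train + n_valid],
            ordered[n_train + n_valid:])

def gaussian_nll(y_true, mean, std):
    """Average negative log-likelihood of y_true under N(mean, std^2)."""
    total = 0.0
    for y, m, s in zip(y_true, mean, std):
        total += 0.5 * math.log(2 * math.pi * s * s) + (y - m) ** 2 / (2 * s * s)
    return total / len(y_true)

# Ten hypothetical compounds, deliberately listed newest first.
records = [{"id": f"cmpd-{i}", "date": date(2015 + i, 1, 1)} for i in reversed(range(10))]
train, valid, test = temporal_split(records)
print([r["id"] for r in test])  # the two most recent compounds

# An overconfident std is penalised heavily when the error is large.
print(gaussian_nll([3.0], [0.0], [0.5]), gaussian_nll([3.0], [0.0], [3.0]))
```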

Protocol 2: Active Learning Cycle with UQ-Based Selection

Objective: To iteratively improve a predictive model by selectively acquiring new experimental data based on UQ.

Materials:

- Initial Data: A small, labeled dataset of compounds with assay results.

- Candidate Pool: A large, diverse virtual library of compounds without assay data.

- UQ-Capable Model: A model such as a GNN ensemble or Bayesian neural network [11].

Procedure:

- Initial Training: Train your chosen UQ model on the initial labeled dataset.

- Prediction & Selection:

- Use the model to predict the target property and its associated epistemic uncertainty for all compounds in the candidate pool.

- Rank the candidates based on a selection criterion. Common criteria include:

- Uncertainty Sampling: Select the compounds with the highest epistemic uncertainty.

- Probabilistic Improvement Optimization (PIO): Select compounds with the highest probability of exceeding a desired property threshold, which balances predicted performance and uncertainty [11].

- Experimental Loop:

- Send the top-ranked compounds (e.g., top 10-50) for experimental testing.

- Add the new experimental results to the training data.

- Retrain the model on the expanded dataset.

- Iteration: Repeat the Prediction & Selection and Experimental Loop steps for multiple cycles until a performance goal is met or the budget is exhausted.
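
The two selection criteria in this protocol can be sketched as below, assuming the model returns a Gaussian predictive mean and standard deviation for every candidate. The compound IDs, predicted values, and the threshold of 7.0 are hypothetical, and the `"pio"` label simply denotes the probability of exceeding the threshold.

```python
from statistics import NormalDist

def probability_of_improvement(mean, std, threshold):
    """P(y > threshold) under a Gaussian predictive distribution N(mean, std^2)."""
    return 1.0 - NormalDist(mu=mean, sigma=std).cdf(threshold)

def select_batch(candidates, threshold, batch_size, criterion="pio"):
    """Rank candidates given as (id, mean, std) and return the ids of the top batch."""
    if criterion == "uncertainty":
        def score(c):
            return c[2]  # highest epistemic uncertainty first
    else:
        def score(c):
            return probability_of_improvement(c[1], c[2], threshold)
    ranked = sorted(candidates, key=score, reverse=True)
    return [c[0] for c in ranked[:batch_size]]

# Hypothetical candidate pool: (compound id, predicted value, predicted std).
pool = [
    ("cmpd-A", 6.8, 0.2),
    ("cmpd-B", 6.5, 1.0),
    ("cmpd-C", 7.4, 0.3),
    ("cmpd-D", 5.0, 1.5),
]

print(select_batch(pool, threshold=7.0, batch_size=2, criterion="pio"))
print(select_batch(pool, threshold=7.0, batch_size=2, criterion="uncertainty"))
```

The two criteria pick different batches here: pure uncertainty sampling favors the poorly understood but unpromising cmpd-D, while the threshold-based criterion favors compounds that are plausibly above the target.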

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Computational Tools for UQ in Drug Discovery

| Tool / Solution | Function | Application Context |

|---|---|---|

| UQ4DD Python Package [13] | Provides implementations of ensemble, Bayesian, and Gaussian UQ methods adapted for censored data. | Benchmarking and applying UQ methods to molecular property prediction tasks. |

| Chemprop with D-MPNN [11] | A Graph Neural Network that directly learns from molecular structures and can be extended for UQ. | Building accurate surrogate models for molecular optimization with built-in UQ capabilities. |

| Therapeutics Data Commons (TDC) [13] | A collection of public datasets for drug discovery. | Accessing benchmark datasets for training and evaluating models when internal data is limited. |

| Scikit-learn [13] | A core machine learning library with tools for cross-validation and baseline models (e.g., Random Forest). | Implementing baseline ensemble models and standard evaluation procedures. |

| Conformal Prediction Frameworks [16] | Provides distribution-free methods for creating statistically valid prediction intervals. | Adding rigorous, model-agnostic prediction intervals to predictions from any model. |

Technical Support Center: Troubleshooting AL/UQ in Drug Discovery

This guide provides practical solutions for researchers implementing Active Learning (AL) with Uncertainty Quantification (UQ) in drug discovery projects. It addresses common pitfalls and offers standardized protocols to ensure robust and efficient discovery cycles.

Frequently Asked Questions (FAQs)

1. My AL model fails to generalize to new molecular scaffolds. What is wrong? This is a common issue where the model's uncertainty estimates are not effectively identifying truly informative out-of-domain (OOD) samples. Many standard UQ methods, like those relying solely on prediction variance, perform poorly on OOD data [17]. To improve generalization:

- Action: Incorporate density-based UQ methods, such as Kernel Density Estimation (KDE), which have been shown to outperform other approaches in identifying OOD molecules and accelerating model generalization [17].

- Action: Use the UNIQUE framework to benchmark your UQ methods. Evaluate metrics like DiffkNN, which measures the absolute difference between a test sample's UQ metric and that of its nearest neighbors in the training set, as it is specifically designed to detect distribution shifts [18].
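
To make the density-based idea concrete, here is a minimal Gaussian KDE score written with the standard library. It is a sketch under simplifying assumptions: molecules are represented by short hypothetical descriptor vectors, and the bandwidth of 1.0 is arbitrary and would need tuning on real fingerprints or embeddings.

```python
import math

def kde_log_density(x, train, bandwidth=1.0):
    """Log of a Gaussian kernel density estimate at x, fitted on the training vectors."""
    d = len(x)
    log_norm = -0.5 * d * math.log(2 * math.pi * bandwidth ** 2)
    log_kernels = []
    for t in train:
        sq_dist = sum((a - b) ** 2 for a, b in zip(x, t))
        log_kernels.append(log_norm - sq_dist / (2 * bandwidth ** 2))
    m = max(log_kernels)  # log-sum-exp trick for numerical stability
    return m + math.log(sum(math.exp(v - m) for v in log_kernels)) - math.log(len(train))

# Hypothetical 2-D descriptors for the training set and two query molecules.
train_vectors = [(0.0, 0.0), (0.5, 0.2), (-0.3, 0.4), (0.1, -0.5)]
in_domain = (0.1, 0.1)
out_of_domain = (6.0, 6.0)

print(kde_log_density(in_domain, train_vectors))
print(kde_log_density(out_of_domain, train_vectors))  # much lower: likely OOD
```

A low log-density flags a molecule as far from the training distribution, which can serve either as a warning on the prediction or as a signal to prioritize it for exploration.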

2. How should I handle experimental data where many activity values are reported as thresholds (e.g., IC50 > 10μM)? Standard UQ models cannot utilize this "censored" data, leading to information loss. You can adapt your UQ models to learn from these censored labels.

- Action: Integrate tools from survival analysis, specifically the Tobit model, into your ensemble, Bayesian, or Gaussian models. This adaptation allows the model to learn from censored regression labels, which is essential for reliable uncertainty estimation when a significant portion (e.g., one-third or more) of experimental labels are censored [7].

3. How can I determine which UQ metric is best for my specific drug discovery project? There is no single best UQ metric; the optimal choice depends on the downstream application [17] [18].

- Action: Clearly define your goal and use the following table as a guide:

| Your Goal | Recommended UQ Approach | Reasoning |

|---|---|---|

| Identifying the Model's Applicability Domain (AD) | Error Models (e.g., Random Forest predicting L1 error) or Data-based metrics (e.g., distance to training set) [19]. | These methods directly link uncertainty to the feature space of your training data, helping to identify when a molecule is too dissimilar to be trusted. |

| Estimating Prediction Intervals for Confidence Estimation | Sum of model-based and data-based variances [19] or Ensemble methods [20]. | Combining variance sources provides a more robust estimate of the total prediction interval. |

| Selecting compounds for Active Learning | Density-based methods (KDE) or Transformed UQ metrics (DiffkNN) [17] [18]. | These are more effective at quantifying changes in model uncertainty and identifying informative OOD samples for experimental follow-up. |

4. My UQ method seems miscalibrated, providing overconfident false predictions. How can I fix this? This occurs when the estimated uncertainty does not accurately reflect the actual prediction error. This is a known limitation, especially under data distribution shifts [18].

- Action: Implement calibration techniques. Use a held-out calibration set to adjust your uncertainty estimates. Libraries like Fortuna and MAPIE are designed for this purpose and can provide better-calibrated uncertainty estimates and conformal prediction sets [18].

Troubleshooting Guides

Problem: Poor Performance of Uncertainty-Based Active Learning

Symptoms: The model's performance does not improve efficiently with new data acquisitions, or it fails to generalize to new regions of chemical space.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inadequate UQ for OOD Data | Check the correlation between your UQ scores and prediction errors on a held-out OOD test set. A low Spearman correlation indicates poor ranking ability [21] [17]. | Switch to a UQ method proven effective for OOD detection, such as Kernel Density Estimation (KDE) or other density-based methods [17]. |

| Over-exploitation | Analyze the diversity of molecules selected by the AL cycle. If they are all structurally similar, the system is over-exploiting. | Modify the acquisition function to balance exploration and exploitation. Incorporate a diversity measure or use a UQ metric like DiffkNN that explicitly probes for novelty [18]. |

| Miscalibrated Uncertainty | Use the UNIQUE framework to perform a calibration-based evaluation of your UQ metrics. A miscalibrated metric will not accurately reflect the true error distribution [18]. | Re-calibrate your UQ metrics using a held-out calibration dataset or employ a library like Fortuna that includes calibration functions [18]. |

Problem: Unreliable Predictions Despite High Training Accuracy

Symptoms: The model performs well on validation data drawn from the same distribution as the training set but fails on real-world candidate molecules.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Ignoring the Applicability Domain (AD) | Calculate the distance (e.g., Euclidean, Tanimoto) of the failing molecules to the training set. If they are distant, they are outside the model's AD [21]. | Implement an AD filter. Reject predictions for molecules where the data-based UQ metric (e.g., distance to k-NN) exceeds a predefined threshold [21] [18]. |

| Confusing Epistemic and Aleatoric Uncertainty | Diagnose the source of uncertainty. Epistemic uncertainty is high in data-scarce regions and can be reduced with more data. Aleatoric uncertainty is high due to data noise and is irreducible [21]. | Use UQ methods that can decompose uncertainty. For example, Bayesian models or deep ensembles can often separate epistemic (model) uncertainty from aleatoric (data) uncertainty, guiding whether to collect more data or improve assay protocols [21]. |
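
For an ensemble whose members each predict a mean and a noise variance, one common decomposition follows the law of total variance: the average predicted variance is treated as aleatoric, and the spread of the member means as epistemic. The sketch below assumes that setup; the numbers are hypothetical predictions for two molecules.

```python
from statistics import fmean, pvariance

def decompose_uncertainty(member_means, member_variances):
    """Split the predictive variance for one molecule into aleatoric and epistemic parts."""
    aleatoric = fmean(member_variances)  # average noise variance predicted by the members
    epistemic = pvariance(member_means)  # disagreement between the members' means
    return aleatoric, epistemic, aleatoric + epistemic

# Molecule 1: members agree, but each predicts a noisy assay (aleatoric dominates).
print(decompose_uncertainty([6.0, 6.1, 5.9, 6.0], [0.25, 0.25, 0.25, 0.25]))

# Molecule 2: members disagree strongly (epistemic dominates, more data may help).
print(decompose_uncertainty([4.0, 6.0, 8.0, 6.0], [0.04, 0.04, 0.04, 0.04]))
```

When the epistemic term dominates, acquiring data near that molecule is likely to help; when the aleatoric term dominates, improving the assay protocol is the more promising lever.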

Detailed Experimental Protocols

Protocol 1: Benchmarking UQ Methods with the UNIQUE Framework

Objective: To systematically evaluate and select the best Uncertainty Quantification method for a specific molecular property prediction task.

Materials:

- Dataset: A curated set of molecules with experimental property data (e.g., solubility, bioactivity).

- Data Splits: Training, Calibration, and Test sets. The test set should include both in-domain and out-of-domain molecules.

- Software: Python with the UNIQUE library installed.

Methodology:

- Train a Base Model: Train your chosen machine learning model (e.g., Random Forest, GNN) on the training set.

- Generate Predictions and Inputs: Run inference on the calibration and test sets. Create an input file containing:

- Molecule IDs

- True labels and model predictions

- Data split designation for each sample

- Data features (e.g., molecular fingerprints)

- Model-based UQ metrics (e.g., prediction variance from an ensemble)

- Configure the UNIQUE Pipeline: Specify in a YAML configuration file which UQ metrics to benchmark. These can include:

- Data-based: k-NN distances (Euclidean, Manhattan, Tanimoto), KDE.

- Model-based: Prediction variance.

- Transformed: Sum of variances, DiffkNN.

- Error Models: Train a Random Forest or Lasso model to predict the error.

- Run Evaluation: Execute the UNIQUE pipeline. It will automatically calculate all specified UQ metrics and evaluate them using:

- Ranking-based metrics: Spearman correlation between UQ scores and actual errors.

- Calibration-based metrics: How well the estimated prediction intervals match the observed error distribution.

- Proper scoring rules: Negative Log-Likelihood (NLL).

- Select Best Metric: Choose the UQ metric that performs best on the evaluation criteria most relevant to your application (e.g., OOD detection vs. prediction interval estimation) [18] [19].
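
The ranking-based evaluation can be reproduced by hand for a quick sanity check. The sketch below computes the Spearman correlation between UQ scores and absolute errors with the standard library; it is not the UNIQUE implementation, and the five example values are made up.

```python
import math

def rank(values):
    """Average 1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average
        i = j + 1
    return ranks

def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def spearman(a, b):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(rank(a), rank(b))

uq_scores = [0.1, 0.4, 0.2, 0.9, 0.5]       # hypothetical uncertainty estimates
abs_errors = [0.05, 0.30, 0.25, 1.10, 0.20]  # hypothetical absolute prediction errors
rho = spearman(uq_scores, abs_errors)
print(f"Spearman correlation: {rho:.2f}")
```

A value close to 1 means the UQ metric ranks predictions by error reliably, which is what matters most when the metric is used to prioritize or filter compounds.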

The workflow for this benchmarking process is standardized to ensure consistent evaluation:

Protocol 2: Implementing a Censored Data-Aware UQ Model

Objective: To train a UQ model that effectively learns from censored experimental data (e.g., IC50 > 10μM).

Materials:

- Dataset: Pharmaceutical assay data containing both precise and censored activity values.

- Software: Python with PyTorch/TensorFlow and custom code implementing the Tobit loss (Code available at: https://github.com/MolecularAI/uq4dd) [7].

Methodology:

- Data Preparation: Annotate each data point, specifying whether it is a precise measurement or a left/right-censored value.

- Model Selection: Choose a base UQ model (e.g., an ensemble of neural networks, a Bayesian neural network, or a Gaussian process).

- Adapt the Loss Function: Modify the model's loss function to use the Tobit loss, which is the negative log-likelihood for a censored normal distribution. This allows the model to incorporate information from both precise and censored labels during training.

- Model Training: Train the model using the adapted loss function. The model will learn to predict the underlying uncensored activity values and quantify the associated uncertainty, even for censored data points.

- Validation: Evaluate the model on a test set with precise measurements. The original study reports that this approach is essential for reliable uncertainty estimation when a significant portion of the data is censored [7].
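
The per-sample Tobit loss described in the methodology can be written compactly as below. This is an illustrative standard-library sketch, not the UQ4DD implementation: precise labels use the Gaussian negative log-likelihood, while censored labels use the log-probability that the true value lies beyond the reported threshold. The `censoring` flag values are hypothetical names.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal, used for its CDF

def tobit_nll(y, mean, std, censoring="none"):
    """Negative log-likelihood of one label under N(mean, std^2) with optional censoring.

    censoring: "none"  -> y is a precise measurement
               "right" -> the true value is only known to be greater than y
               "left"  -> the true value is only known to be less than y
    """
    z = (y - mean) / std
    if censoring == "none":
        return 0.5 * math.log(2 * math.pi * std * std) + 0.5 * z * z
    if censoring == "right":
        return -math.log(_STD_NORMAL.cdf(-z))  # P(value > y) = 1 - Phi(z) = Phi(-z)
    if censoring == "left":
        return -math.log(_STD_NORMAL.cdf(z))   # P(value < y) = Phi(z)
    raise ValueError(f"unknown censoring type: {censoring}")

# A right-censored label "> 5.0": predicting above the threshold costs little,
# predicting far below it is penalised.
print(tobit_nll(5.0, mean=7.0, std=1.0, censoring="right"))
print(tobit_nll(5.0, mean=3.0, std=1.0, censoring="right"))
```

Note that the censoring direction depends on the scale: a label such as IC50 > 10 µM is right-censored in concentration units but left-censored once converted to pIC50. For extreme z values the CDF can underflow to zero, so a production loss would typically use a numerically stable log-CDF.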

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key computational tools and their functions for implementing robust AL/UQ pipelines.

| Item | Function / Application | Key Features |

|---|---|---|

| UNIQUE Python Library [18] [19] | A unified framework for benchmarking UQ metrics. | Model-agnostic; supports data- and model-based UQ metrics, error models, and comprehensive evaluation. |

| ML Uncertainty Package [20] | Estimates prediction intervals for classical ML models like Linear Regression and Random Forests. | Intuitive interface; exploits statistical properties of models; computationally efficient. |

| UQ4DD Codebase [7] | Provides implementations for handling censored data in UQ models. | Includes adaptations of ensemble, Bayesian, and Gaussian models with Tobit loss for censored regression. |

| Therapeutics Data Commons [7] | A resource for public molecular property data. | Useful for building benchmark datasets and testing protocols when proprietary data is unavailable. |

| Error Model (Lasso/RF) [18] | A meta-model that predicts the error of the primary ML model. | Uses features and model outputs to forecast prediction errors, acting as a powerful UQ metric. |

| Kernel Density Estimation (KDE) [17] [18] | A data-based UQ method for estimating the probability density of the training data. | Particularly effective at identifying out-of-domain samples and guiding exploration in AL. |

The interplay of these tools and data types within an AL cycle creates a robust system for efficient discovery, as visualized in the following workflow:

Frequently Asked Questions (FAQs)

Q1: What are the main types of uncertainty in AI-driven drug discovery, and why do they matter? Uncertainty is categorized into two main types, each with different implications for your experiments [21]:

- Aleatoric uncertainty stems from inherent noise or randomness in experimental data (e.g., variations in biological assays or measurement errors). It cannot be reduced by collecting more data.

- Epistemic uncertainty arises from a lack of knowledge or data in certain regions of the chemical space. It can be reduced by strategically acquiring more experimental data in these under-explored areas.

Properly quantifying these uncertainties helps prioritize experiments, improve model reliability, and guide resource allocation.

Q2: Our active learning model performs well on validation data but fails to select promising synergistic combinations in real-world testing. What could be wrong? This common issue often relates to the batch selection strategy and model generalization [22] [23]. Key factors to check:

- Batch Size: Smaller batch sizes often yield higher synergy discovery rates. Dynamic tuning of the exploration-exploitation balance is crucial.

- Cellular Context: Ensure your model incorporates relevant cellular environment features (e.g., gene expression profiles), as these significantly enhance prediction quality compared to molecular features alone [22].

- Data Scarcity: Synergy is a rare event (often 1.5-3.5% of pairs). Implement techniques like data augmentation or consider the joint modeling approach of Hyformer to improve robustness in low-data regimes [22] [24].

Q3: How can we trust AI predictions for molecules that are very different from our training set? This is a fundamental challenge of model applicability domain. Solutions include [21]:

- Uncertainty Quantification (UQ): Deploy ensemble methods, Bayesian models, or similarity-based approaches to assign a confidence score to each prediction.

- Similarity Assessment: If a test molecule is too dissimilar from all training set molecules, its prediction should be treated with caution.

- Censored Data: Use models adapted for censored regression labels, which can learn from incomplete data or activity thresholds common in early drug discovery [7].
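
The similarity assessment can be sketched as a simple nearest-neighbor check. The example assumes fingerprints are available as sets of on-bit indices (for instance, exported from a cheminformatics toolkit); the bit sets and the 0.4 cut-off are hypothetical and would need to be chosen per project.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / len(a | b)

def in_applicability_domain(query, training_fps, min_similarity=0.4):
    """Return (is_inside, nearest_similarity) for a query fingerprint."""
    nearest = max(tanimoto(query, fp) for fp in training_fps)
    return nearest >= min_similarity, nearest

# Hypothetical training fingerprints and two query molecules.
training_fps = [{1, 2, 3, 4}, {10, 11, 12}]
print(in_applicability_domain({1, 2, 3, 5}, training_fps))  # close to a training molecule
print(in_applicability_domain({20, 21}, training_fps))      # shares no bits: flag as unreliable
```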

Q4: What is the role of active learning in optimizing molecular properties like solubility or permeability? Active learning accelerates the multi-parameter optimization of ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties. Novel batch active learning methods (e.g., COVDROP, COVLAP) select the most informative molecules for testing by maximizing both the uncertainty and diversity of the batch. This can lead to significant savings in the number of experiments needed to achieve the same model performance [23].

Troubleshooting Guides

Problem: Poor Assay Window or No Signal in TR-FRET Assays

Symptoms:

- No difference in signal between positive and negative controls.

- Low Z'-factor, indicating an unreliable assay.

Recommended Actions [8]:

- Verify Instrument Setup: The most common reason for no assay window is improper instrument configuration. Confirm that the correct emission filters for your TR-FRET reagent (Terbium or Europium) are installed precisely as recommended for your microplate reader.

- Check Reagent Preparation: Ensure stock solutions are prepared correctly. Inconsistent compound dissolution is a primary cause of EC50/IC50 variability between labs.

- Use Ratiometric Data Analysis: Always calculate an emission ratio (Acceptor signal / Donor signal, e.g., 520 nm/495 nm for Tb). This ratio corrects for pipetting variances and lot-to-lot reagent variability. Do not rely on raw RFU values.

- Assess Data Quality with Z'-factor: Do not rely on assay window size alone. Calculate the Z'-factor, which incorporates both the assay window and the data variability. An assay with a Z'-factor > 0.5 is considered suitable for screening [8].
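
The Z'-factor is computed from the control wells as 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. A minimal sketch, applied to hypothetical emission ratios for positive and negative controls:

```python
from statistics import fmean, stdev

def z_prime(positive, negative):
    """Z'-factor from positive- and negative-control readouts (e.g., emission ratios)."""
    window = abs(fmean(positive) - fmean(negative))
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / window

# Hypothetical acceptor/donor emission ratios for control wells.
positive_controls = [2.0, 2.1, 1.9, 2.0]
negative_controls = [0.50, 0.55, 0.45, 0.50]

score = z_prime(positive_controls, negative_controls)
print(f"Z'-factor: {score:.2f}")  # above 0.5, so suitable for screening by the rule above
```

A large assay window with noisy controls can still yield a poor Z'-factor, which is why the window size alone is not a sufficient quality check.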

Problem: Low Hit Rate in Synergistic Drug Combination Screening

Symptoms:

- Despite AI predictions, the experimentally validated rate of synergistic combinations is low.

- Model predictions do not correlate well with subsequent experimental results.

Recommended Actions [22] [25]:

- Benchmark Model Data Efficiency: Test your AI algorithm's performance using only a small fraction (e.g., 10%) of your available training data. Models that are not data-efficient will struggle in real-world active learning scenarios where data is initially scarce.

- Incorporate Cellular Features: Use genomic features like gene expression profiles of the target cell lines. Research shows this can lead to a significant gain (0.02–0.06 in PR-AUC) in prediction performance compared to using molecular features alone [22].

- Optimize the Exploration-Exploitation Trade-off: An overly greedy strategy that only selects the most promising pairs can miss novel synergies. Implement selection criteria that balance picking high-scoring pairs with exploring uncertain regions of the chemical space.

- Validate with a Pilot Screen: Before a full-scale campaign, run a smaller active learning cycle to benchmark the entire workflow—from feature calculation and model prediction to experimental validation and model retraining.

Problem: Overconfident Predictions on Novel Molecular Scaffolds

Symptoms:

- The model assigns high confidence (e.g., high Softmax probability) to incorrect predictions for out-of-distribution molecules.

- This leads to wasted resources on synthesizing and testing ineffective compounds.

Recommended Actions [24] [21]:

- Implement Uncertainty Quantification: Replace models that output only a single prediction with those that provide uncertainty estimates. Ensemble methods and Bayesian neural networks are widely used for this.

- Use Joint Models: Consider architectures like Hyformer that unify generative and predictive tasks. These models have shown benefits in robust property prediction for out-of-distribution samples and in conditional generation [24].

- Define the Applicability Domain: Use simple similarity-based methods (e.g., Tanimoto similarity to the training set) to flag predictions for molecules that are highly dissimilar to the model's training data. Predictions for these molecules should be considered less reliable.

- Calibrate Model Outputs: Ensure that the predicted probabilities of classification models are calibrated to reflect the true likelihood of correctness. A model is well-calibrated if, for example, of the molecules to which it assigns an 80% confidence, roughly 80% are actually correct.
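
One common way to check the calibration property described above is the expected calibration error (ECE), which bins predictions by confidence and compares the average confidence with the observed accuracy in each bin. The sketch below is a plain implementation with equal-width bins; the example inputs are hypothetical.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between mean confidence and accuracy over equal-width bins."""
    bins = [[] for _ in range(n_bins)]
    for conf, is_correct in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes to the last bin
        bins[index].append((conf, is_correct))
    n = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(ok for _, ok in members) / len(members)
        ece += len(members) / n * abs(mean_conf - accuracy)
    return ece

# Ten predictions at 80% confidence: 8 correct is well calibrated, 4 correct is overconfident.
well_calibrated = expected_calibration_error([0.8] * 10, [1] * 8 + [0] * 2)
overconfident = expected_calibration_error([0.8] * 10, [1] * 4 + [0] * 6)
print(f"{well_calibrated:.2f} vs {overconfident:.2f}")
```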

Table 1: Active Learning Performance in Drug Synergy Screening

This table summarizes key performance metrics from a study on using active learning for synergistic drug combination discovery [22].

| Metric | Performance with Active Learning | Performance with Random Screening |

|---|---|---|

| Combinatorial Space Exploration | 10% explored | 100% required for exhaustive search |

| Synergistic Pairs Discovered | 60% (300 out of 500) | Required 8253 measurements to find 300 pairs |

| Experimental Savings | 82% saving in time and materials | Baseline (0% saving) |

| Key Influencing Factor | Smaller batch sizes increase synergy yield | N/A |

Table 2: Performance Comparison of Uncertainty Quantification Methods

This table compares different UQ approaches based on their core ideas and applications in drug discovery [21].

| UQ Method | Core Idea | Example Applications in Drug Discovery |

|---|---|---|

| Similarity-Based | Predictions are unreliable if a test sample is too dissimilar to training samples. | Virtual screening; Toxicity prediction; SARS-CoV-2 inhibitor prediction. |

| Ensemble-Based | The consistency of predictions from multiple models estimates confidence. | Solubility prediction; Bioactivity prediction; ADMET property forecasting. |

| Bayesian | Model parameters and outputs are treated as random variables, and predictions include a measure of uncertainty. | Molecular property prediction; Protein-ligand interaction prediction; Virtual screening. |

Detailed Experimental Protocols

Protocol 1: Implementing an Active Learning Cycle for Synergy Screening

Objective: To iteratively discover synergistic drug combinations using a closed-loop active learning process that integrates AI prediction and experimental validation.

- Initialization:

- Start with a small, initial dataset of experimentally measured drug combinations (this could be a public dataset or a small in-house pilot screen).

- Train an initial AI model (e.g., a neural network like DeepSynergy or a graph convolutional network) on this data. Use molecular fingerprints (e.g., Morgan fingerprint) and cellular features (e.g., gene expression from GDSC) as input.

- Active Learning Loop:

- Prediction & Prioritization: Use the trained model to predict synergy scores for all unexplored drug pairs in the library. Prioritize a batch of pairs for testing based on a selection criterion that balances high predicted synergy (exploitation) and high model uncertainty (exploration).

- Experimental Validation: Test the selected batch of drug combinations in a wet-lab assay (e.g., cell viability assay in PANC-1 cells for cancer research). Use a validated synergy metric like the Gamma score or Bliss score [25].

- Model Retraining: Add the new experimental data to the training set. Retrain or fine-tune the AI model on this updated, larger dataset.

- Repeat the loop until the desired number of synergistic pairs is found or the experimental budget is exhausted.

Key Considerations:

- Batch Size: Smaller batches (e.g., 30 combinations) allow for more frequent model updates and can improve performance [23].

- Model Choice: Ensure the base AI model is data-efficient, meaning it can learn effectively from a small amount of data [22].
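
The prioritization step of the loop can be sketched with a simple upper-confidence-bound style score, predicted synergy plus a weighted uncertainty term. This is one of several possible criteria and not necessarily the one used in the cited studies; the `beta` weight, drug names, and predicted values are hypothetical.

```python
def select_pairs(predictions, batch_size, beta=1.0):
    """Pick the drug pairs with the highest (predicted synergy + beta * uncertainty).

    predictions: dict mapping (drug_a, drug_b) -> (predicted synergy, uncertainty).
    beta = 0 is purely exploitative; larger beta puts more weight on exploration.
    """
    def score(pair):
        synergy, uncertainty = predictions[pair]
        return synergy + beta * uncertainty
    return sorted(predictions, key=score, reverse=True)[:batch_size]

# Hypothetical model outputs for four unexplored pairs.
predictions = {
    ("drug-A", "drug-B"): (0.9, 0.05),
    ("drug-A", "drug-C"): (0.6, 0.50),
    ("drug-B", "drug-C"): (0.2, 0.10),
    ("drug-B", "drug-D"): (0.5, 0.05),
}

print(select_pairs(predictions, batch_size=1, beta=0.0))  # greedy choice
print(select_pairs(predictions, batch_size=1, beta=1.0))  # uncertainty-aware choice
```

Adjusting `beta` between cycles is one simple way to tune the exploration-exploitation balance as the model matures.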

Protocol 2: Quantifying Prediction Uncertainty for Molecular Property Optimization

Objective: To estimate the reliability of model predictions for ADMET properties, enabling better decision-making and guiding experimental efforts.

- Model Selection:

- Choose an uncertainty-aware model architecture. Two effective options are:

- Deep Ensemble: Train multiple instances of the same model architecture (e.g., a Graph Neural Network like ChemProp) with different random initializations.

- Bayesian Neural Network: Use a network whose weights are represented by probability distributions, or approximate one with Monte Carlo Dropout by keeping dropout active at inference time.

- Training:

- Train the chosen model on your labeled dataset of molecules and their properties (e.g., solubility, permeability).

- Inference and Uncertainty Calculation:

- For a new molecule, make predictions with all models in the ensemble (or multiple stochastic forward passes for Bayesian NN).

- Calculate the mean of the predictions as the final predicted value.

- Calculate the variance or standard deviation of the predictions as the quantitative measure of epistemic uncertainty.

- Application:

- Use the predicted uncertainty to prioritize molecules for testing. Molecules with high predicted property values and high uncertainty are prime candidates for experimental validation, as they can maximally improve the model.

Key Considerations:

- Calibration: Periodically check if the predicted uncertainties are well-calibrated (e.g., 90% of the time, the true value lies within the 90% prediction interval).

- Censored Data: If your experimental data has limits of detection (e.g., "IC50 > 10 µM"), use models adapted for censored regression to incorporate this information and improve uncertainty estimates [7].
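
The periodic calibration check can be as simple as measuring the empirical coverage of the prediction intervals on held-out data. The sketch below assumes Gaussian predictive distributions and uses synthetic data in place of real model outputs.

```python
import random
from statistics import NormalDist

def interval_coverage(y_true, mean, std, level=0.9):
    """Fraction of true values inside the central `level` Gaussian prediction interval."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.645 for a 90% interval
    inside = sum(abs(y - m) <= z * s for y, m, s in zip(y_true, mean, std))
    return inside / len(y_true)

# Synthetic held-out set: true values scatter around the predictions with std 1.0.
rng = random.Random(2)
n = 5000
y_true = [rng.gauss(0, 1) for _ in range(n)]
pred_mean = [0.0] * n

calibrated = interval_coverage(y_true, pred_mean, [1.0] * n)     # honest uncertainty
overconfident = interval_coverage(y_true, pred_mean, [0.3] * n)  # uncertainty too small
print(f"calibrated: {calibrated:.2f}, overconfident: {overconfident:.2f}")
```

Coverage well below the nominal level indicates overconfidence, and coverage well above it indicates intervals that are wider than necessary.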

Experimental Workflow and Pathway Diagrams

Diagram 1: Active Learning Cycle with Uncertainty Quantification

Active Learning Workflow for Drug Discovery

Diagram 2: Uncertainty-Informed Decision Pathway

Decision Logic for Experimental Prioritization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Guided Drug Discovery Experiments

| Reagent / Material | Function / Application | Technical Notes |

|---|---|---|

| TR-FRET Assay Kits (e.g., LanthaScreen) | Used for high-throughput screening assays to study biomolecular interactions (e.g., kinase activity). | Use ratiometric data analysis (Acceptor/Donor). Correct emission filter selection is critical for success [8]. |

| Cell Line Panels (e.g., PANC-1, other cancer cell lines) | Provide the cellular context for testing drug combinations or single-agent efficacy. | Genomic data (e.g., gene expression from GDSC) for these lines should be incorporated into AI models for improved predictions [22] [25]. |

| Compound Libraries (e.g., NCATS, ChEMBL) | Source of small molecules for screening and for building training data for AI models. | Libraries should be diverse and well-annotated with structures (SMILES) and known activities (IC50) [25]. |

| Gene Expression Datasets (e.g., GDSC - Genomics of Drug Sensitivity in Cancer) | Provide cellular feature inputs for AI models, significantly boosting synergy prediction accuracy. | As few as 10 carefully selected genes can be sufficient for accurate predictions in some contexts [22]. |

| Molecular Descriptors & Fingerprints (e.g., Morgan Fingerprints, MAP4, MACCS) | Numerical representations of molecules used as input for machine learning models. | Morgan fingerprints with simple addition operations have been shown to be highly effective and data-efficient [22]. |

Implementing AL/UQ Frameworks: From Theory to Practice

Troubleshooting Common Active Learning Issues

FAQ: My active learning model's performance has plateaued. What could be wrong? This is often caused by a poor exploration-exploitation balance. If your query strategy ranks samples by a single score only (for example, the highest model uncertainty or the highest predicted activity), it tends to pick batches of near-duplicate samples from one region and may miss important, diverse regions of the data space. Incorporate diversity sampling methods, such as clustering-based sampling, so that selected batches represent the entire unlabeled pool. Dynamic tuning of this balance, often influenced by batch size, can further enhance performance [22].

FAQ: How do I handle highly imbalanced data in drug synergy screening? When synergistic pairs are rare (e.g., 1.47-3.55% in common datasets), standard uncertainty sampling can struggle. Consider using the Precision-Recall Area Under Curve (PR-AUC) score to quantify detection performance instead of metrics like accuracy. Furthermore, actively querying for the rare class or using algorithms benchmarked for data efficiency can significantly improve results [22].

FAQ: My uncertainty estimates are unreliable, leading to poor sample selection. How can I improve them? Unreliable uncertainty quantification (UQ) is a common challenge, especially under domain shift. You can:

- Use Ensemble Models: Train multiple models and measure disagreement (e.g., using vote entropy or KL divergence) [26] [27].

- Integrate Censored Data: In drug discovery, many experimental results are censored (e.g., values reported as ">" or "<" a threshold). Adapt your UQ methods to learn from these censored labels, which provides more information for uncertainty estimation [7].

- Employ Advanced UQ Methods: For graph-based models, batch selection methods such as COVDROP (based on Monte Carlo Dropout) and COVLAP (based on the Laplace approximation) use the model's uncertainty estimates to choose batches that maximize joint entropy, which favors molecules that are both uncertain and diverse [28] [11].
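
As an example of the ensemble disagreement measures mentioned in the first bullet, vote entropy for a classification ensemble can be computed as follows. The class labels and votes are hypothetical.

```python
import math
from collections import Counter

def vote_entropy(votes):
    """Entropy (in nats) of the class votes cast by ensemble members for one sample."""
    counts = Counter(votes)
    total = len(votes)
    return -sum((c / total) * math.log(c / total) for c in counts.values())

# Votes from a hypothetical four-member ensemble on three drug pairs.
print(vote_entropy(["synergistic"] * 4))                          # full agreement
print(vote_entropy(["synergistic"] * 3 + ["non-synergistic"]))    # mild disagreement
print(vote_entropy(["synergistic", "non-synergistic"] * 2))       # maximal disagreement
```

Samples with the highest vote entropy are those on which the ensemble members disagree most, making them natural candidates for the next batch.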

FAQ: What is the impact of batch size in an active learning cycle? Batch size is a critical hyperparameter. Smaller batch sizes generally lead to a higher synergy yield ratio (more positive hits per experiment) because the model can adapt more frequently. However, very small batches may not fully capture data diversity. One study found that active learning with 1,488 measurements could recover 60% of synergistic combinations, saving 82% of experimental resources compared to an unguided approach [22].

Essential Experimental Protocols

Protocol: Benchmarking Data-Efficient AL Algorithms

Objective: To identify the most suitable machine learning algorithm for an active learning campaign starting with limited data.

Methodology:

- Data Splitting: Divide your dataset (e.g., the O'Neil drug combination dataset) into three parts: a small training set (e.g., 10% of the data), a validation set (10%), and a large, initially unlabeled pool (the remaining 80%).

- Algorithm Training: Train a variety of algorithms on the small labeled set. This should include:

- Parameter-light: Logistic Regression, XGBoost.

- Parameter-medium: A standard Multi-Layer Perceptron (MLP) neural network.

- Parameter-heavy: Advanced deep learning models like Graph Neural Networks (GNNs) or Transformers (e.g., DTSyn) [22].

- Performance Evaluation: Use the PR-AUC metric on the validation set to evaluate each algorithm's ability to identify the rare, synergistic pairs.

- Selection: The best-performing algorithm is then deployed in the active learning cycle.
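
PR-AUC is often estimated with average precision, the mean of the precision values at the rank of each true positive. The sketch below is a minimal standard-library version for comparing algorithms on a validation set; it ignores tied scores, and the labels and scores are hypothetical.

```python
def average_precision(labels, scores):
    """Average precision: mean precision at the rank of each positive (1 = synergistic)."""
    n_positive = sum(labels)
    if n_positive == 0:
        raise ValueError("average precision is undefined without positive labels")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = 0
    total = 0.0
    for position, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / position  # precision at this positive's rank
    return total / n_positive

# Hypothetical validation labels and model scores for five drug pairs.
labels = [1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.2, 0.1]
print(f"average precision: {average_precision(labels, scores):.3f}")
```

For a random ranking, average precision is close to the fraction of positives, so with only a few percent of synergistic pairs even modest absolute values can represent a large improvement over chance.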

Protocol: Evaluating Molecular and Cellular Features for Synergy Prediction

Objective: To determine the most informative numerical representations (features) of drugs and cells for predicting synergy.

Methodology:

- Fix the Model: Use a standard model architecture, such as an MLP.

- Benchmark Molecular Features: Test different drug representations while keeping cellular features constant. Common features include:

  - Morgan Fingerprints: A circular fingerprint representing molecular structure.

  - MAP4: MinHashed atom-pair fingerprint.

  - MACCS: A fingerprint based on a predefined set of structural keys.

  - ChemBERTa: A pre-trained representation from a transformer model [22].

- Benchmark Cellular Features: Test different cell line representations using the best molecular feature.

  - Compare a learned (trained) cell line representation against gene expression profiles from databases like GDSC.

  - Perform feature selection on the gene expression profiles to identify the minimal set of genes needed for accurate predictions [22].

- Analysis: The optimal feature set is the one that yields the highest PR-AUC score on the validation set with the given training data size.

Performance Data & Research Reagents

Table 1: Active Learning Performance in Drug Discovery Benchmarks

This table summarizes key quantitative results from recent studies, highlighting the efficiency gains from active learning.

| Application / Dataset | Key Finding | Performance Metric | Result with Active Learning | Result Without Guidance |
|---|---|---|---|---|
| Drug Synergy Screening (O'Neil dataset) | Synergistic pairs recovered | % of Synergistic Pairs Found | 60% found after screening 10% of space [22] | Required screening ~55% of space for similar yield [22] |
| General Drug Discovery (Various ADMET/Affinity datasets) | Model accuracy over iterations | Root Mean Square Error (RMSE) | Lower RMSE achieved in fewer iterations using methods like COVDROP [28] | Higher RMSE for the same number of training samples [28] |
| Molecular Optimization (Tartarus/GuacaMol benchmarks) | Success in multi-objective optimization | Optimization Success Rate | Substantially improved success using Probabilistic Improvement (PIO) [11] | Lower success rate with uncertainty-agnostic approaches [11] |

This table lists essential computational tools and data resources for building active learning pipelines in drug discovery.

| Item Name | Function / Explanation | Example Source / Implementation |
|---|---|---|
| Morgan Fingerprints | A numerical representation of a molecule's structure, used as input features for ML models. | RDKit (Open-source Cheminformatics) [22] |
| Gene Expression Profiles | Genomic features of the target cell line, crucial for context-specific predictions like drug synergy. | Genomics of Drug Sensitivity in Cancer (GDSC) database [22] |
| Directed-MPNN (D-MPNN) | A type of Graph Neural Network that operates directly on molecular graphs, capturing structural information. | Chemprop (Open-source Python Library) [11] |
| Censored Regression Labels | Experimental data points where the precise value is unknown but known to be above/below a threshold. | Internal pharmaceutical data; can be modeled with the Tobit model [7] |
| DeepBatch Active Learning (COVDROP/COVLAP) | Advanced batch selection methods that maximize joint entropy (uncertainty + diversity) for deep learning models. | Sanofi research (Methods applicable in frameworks like DeepChem) [28] |

Workflow Visualization

[Figure: Active Learning Cycle for Drug Discovery]

[Figure: Uncertainty-Aware Molecular Optimization]

Uncertainty Quantification Techniques for Deep Learning Models in Cheminformatics

Frequently Asked Questions (FAQs)

Q1: My model's uncertainty estimates are poorly calibrated, especially for new molecular structures. How can I improve this? Poor calibration often occurs when the model encounters out-of-domain structures or when aleatoric uncertainty is not properly modeled. Implement a post-hoc calibration method that fine-tunes the weights of selected layers in your ensemble models. This approach refines the aleatoric uncertainty calculated by Deep Ensembles for better confidence interval estimates. Additionally, consider using explainable uncertainty quantification that attributes uncertainties to specific atoms in the molecule, helping you diagnose which chemical components introduce uncertainty to the prediction [29].

Q2: How can I effectively incorporate censored experimental data (e.g., activity thresholds instead of precise values) into uncertainty quantification? Standard UQ methods cannot fully utilize censored labels. Adapt ensemble-based, Bayesian, and Gaussian models with tools from survival analysis, specifically the Tobit model, to learn from censored regression labels. This approach is particularly valuable in real pharmaceutical settings where approximately one-third or more of experimental labels may be censored, leading to more reliable uncertainty estimates [7].

Q3: When using active learning for molecular optimization, my model struggles to explore diverse chemical spaces. What UQ strategy can help? Integrate Probabilistic Improvement Optimization (PIO) with your graph neural networks. This uncertainty-aware acquisition function quantifies the likelihood that candidate molecules will exceed predefined property thresholds, which is more effective for practical applications than seeking extreme property values. PIO has demonstrated particularly strong performance in multi-objective optimization tasks, better balancing competing objectives than uncertainty-agnostic approaches [11].
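As a rough illustration of the idea behind Q3, the probability that a candidate exceeds a property threshold can be computed in closed form if the model's predictive distribution is treated as Gaussian. The sketch below makes that assumption. It also assumes, purely for illustration, that multiple objectives are independent so their probabilities can be multiplied. The function names are hypothetical, and the exact formulation used in [11] may differ.

```python
import math

def probability_of_improvement(mean, std, threshold):
    """P(y >= threshold) when the prediction is modeled as N(mean, std^2)."""
    if std <= 0:
        # Degenerate case: no predictive uncertainty, so the answer is 0 or 1.
        return 1.0 if mean >= threshold else 0.0
    z = (mean - threshold) / std
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def joint_probability(means, stds, thresholds):
    """Multi-objective score: product of per-property probabilities.

    Assumes the properties are independent, which is a simplification
    made for this sketch.
    """
    p = 1.0
    for m, s, t in zip(means, stds, thresholds):
        p *= probability_of_improvement(m, s, t)
    return p
```

Ranking candidates by this score favors molecules that are likely to clear every threshold, rather than molecules with one extreme predicted value and high uncertainty elsewhere.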

Q4: My uncertainty-based active learning performs poorly with high-dimensional molecular descriptors. Why does this happen and how can I fix it? Uncertainty-based active learning efficiency strongly depends on descriptor dimensions. With high-dimensional descriptors like Morgan fingerprints (2048 dimensions), the input distribution becomes unbalanced in feature space. Reduce descriptor dimensions through feature selection or use graph-based representations that directly operate on molecular structure. Studies show AL works best with lower-dimensional descriptors, and performance degrades significantly as dimensionality increases [30].

Q5: How can I implement batch active learning for drug discovery while ensuring diversity in selected compounds? Use joint entropy maximization approaches that select batches by maximizing the log-determinant of the epistemic covariance of batch predictions. Methods like COVDROP compute a covariance matrix between predictions on unlabeled samples and iteratively select a submatrix with maximal determinant. This enforces batch diversity by rejecting highly correlated molecules and has shown significant improvements over random selection and other active learning methods in ADMET optimization tasks [31].
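The selection step described in Q5 can be approximated with a greedy procedure. The sketch below assumes you already have repeated stochastic predictions for each unlabeled molecule (for example from Monte Carlo dropout passes), estimates their covariance, and greedily adds the molecule that most increases the log-determinant of the selected submatrix. It illustrates the principle and is not the reference COVDROP implementation from [31]. The function name and the `jitter` parameter are assumptions.

```python
import numpy as np

def greedy_logdet_batch(pred_samples, batch_size, jitter=1e-6):
    """Greedily pick a batch with a large covariance log-determinant.

    pred_samples: array of shape (n_draws, n_candidates), one row per
    stochastic forward pass over the unlabeled pool.
    Returns the list of selected candidate indices.
    """
    pred_samples = np.asarray(pred_samples, dtype=float)
    n = pred_samples.shape[1]
    # Covariance between candidates; jitter keeps it numerically invertible.
    residual = np.cov(pred_samples, rowvar=False) + jitter * np.eye(n)
    chosen = np.zeros(n, dtype=bool)
    selected = []
    for _ in range(min(batch_size, n)):
        # The diagonal holds each candidate's variance conditioned on the
        # batch so far; the largest value gives the largest log-det gain.
        cond_var = np.diag(residual).copy()
        cond_var[chosen] = -np.inf
        j = int(np.argmax(cond_var))
        selected.append(j)
        chosen[j] = True
        # Schur complement update: condition all candidates on candidate j.
        col = residual[:, j].copy()
        residual = residual - np.outer(col, col) / col[j]
    return selected
```

Because conditioning on a selected molecule shrinks the remaining variance of anything highly correlated with it, near-duplicates of an already chosen compound are rarely picked again. This is the diversity effect the answer describes.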

Troubleshooting Guides

Issue 1: Poor Uncertainty Calibration in Deep Ensemble Models

Symptoms:

- Confidence intervals do not match empirical error rates

- Underestimation of uncertainty for novel molecular structures

- Poor performance in out-of-domain applications

Diagnosis Steps:

- Calculate calibration curves comparing predicted confidence levels to actual coverage rates

- Test calibration separately on in-domain and out-of-domain molecular structures

- Check whether the issue affects both aleatoric and epistemic uncertainty components
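The first two diagnosis steps can be sketched as a coverage check. The example below assumes Gaussian predictive distributions (a predicted mean and standard deviation per molecule) and measures how often the true value falls inside the central interval at each nominal confidence level. The function name is illustrative.

```python
import numpy as np
from statistics import NormalDist

def coverage_curve(y_true, mean, std, levels=(0.5, 0.8, 0.9, 0.95)):
    """Empirical coverage of central Gaussian intervals at each nominal level.

    For a well-calibrated model the returned coverage is close to the level.
    Coverage below the level indicates overconfidence (intervals too narrow).
    """
    y_true = np.asarray(y_true, dtype=float)
    mean = np.asarray(mean, dtype=float)
    std = np.asarray(std, dtype=float)
    result = {}
    for level in levels:
        # Half-width of the central interval in units of std.
        z = NormalDist().inv_cdf(0.5 + level / 2.0)
        inside = np.abs(y_true - mean) <= z * std
        result[level] = float(inside.mean())
    return result
```

To compare in-domain and out-of-domain behavior, call the function twice on the corresponding subsets, for example with a boolean mask that marks molecules whose scaffolds were absent from training.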

Solutions:

- Implement post-hoc calibration: Fine-tune selected layers of your trained ensemble models specifically for better uncertainty calibration [29]

- Separate uncertainty types: Use methods that separately quantify aleatoric and epistemic uncertainties to identify the source of poor calibration [29]

- Atomic attribution: Analyze which atoms contribute most to uncertainty to identify problematic chemical motifs [29]

Issue 2: Inefficient Active Learning with High-Dimensional Descriptors

Symptoms:

- Active learning performs worse than random sampling

- Slow convergence of model accuracy

- Selected samples cluster in specific regions of chemical space

Diagnosis Steps:

- Compare AL performance against random sampling baseline

- Analyze the distribution of selected samples in descriptor space using dimensionality reduction (e.g., UMAP)

- Check the intrinsic dimensionality of your molecular representations
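One simple proxy for the intrinsic dimensionality check is the number of principal components needed to explain most of the variance in the descriptor matrix. The sketch below uses this linear PCA estimate. It is only one of several possible estimators and is not taken from [30]. The function name and the 95% cutoff are assumptions.

```python
import numpy as np

def pca_effective_dim(X, variance_kept=0.95):
    """Number of principal components needed to retain `variance_kept`
    of the total variance of the descriptor matrix X (rows = molecules)."""
    X = np.asarray(X, dtype=float)
    centered = X - X.mean(axis=0)
    # Squared singular values are proportional to per-component variance.
    singular_values = np.linalg.svd(centered, compute_uv=False)
    variance = singular_values ** 2
    cumulative = np.cumsum(variance) / variance.sum()
    return int(np.searchsorted(cumulative, variance_kept) + 1)
```

A result far below the nominal descriptor length (for example, a few dozen components for a 2048-bit fingerprint) suggests that much of the feature space is redundant and that dimensionality reduction or feature selection is worth trying.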

Solutions:

- Descriptor selection: Switch to lower-dimensional descriptors or use feature selection methods [30]

- Graph representations: Use graph neural networks that operate directly on molecular structures rather than predefined descriptors [11]

- Alternative acquisition functions: Experiment with Thompson sampling or hybrid approaches that balance exploration and exploitation [30]

Issue 3: Handling Censored Data in Uncertainty Quantification

Symptoms:

- Model cannot incorporate threshold-based experimental results

- Wasted experimental information from activity limits (e.g., ">10μM")

- Biased uncertainty estimates in regions with abundant censored data

Diagnosis Steps:

- Audit your dataset to identify the percentage of censored labels

- Check for systematic patterns in which data points are censored

- Evaluate how current models handle censored versus precise measurements

Solutions:

- Tobit model integration: Adapt ensemble, Bayesian, and Gaussian models with censored regression capabilities [7]

- Multiple imputation: Generate possible values for censored observations based on distribution assumptions

- Specialized loss functions: Modify training objectives to properly handle interval-censored data [7]
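The Tobit idea behind these solutions can be written as a per-sample negative log-likelihood. Exact measurements contribute the usual Gaussian density, while a censored measurement contributes the probability mass on the side of the threshold where the true value is known to lie. The sketch below is the textbook Tobit likelihood for a Gaussian predictive distribution. How [7] integrates it into ensemble, Bayesian, or Gaussian models is not reproduced here, and the function names and the `censor` encoding are assumptions.

```python
import math

def _norm_logpdf(z):
    """Log density of the standard normal at z."""
    return -0.5 * z * z - 0.5 * math.log(2.0 * math.pi)

def _norm_cdf(z):
    """Cumulative distribution function of the standard normal."""
    return 0.5 * math.erfc(-z / math.sqrt(2.0))

def tobit_nll(y, mean, std, censor):
    """Negative log-likelihood of one label under N(mean, std^2).

    censor =  0: y is an exact measurement
    censor = +1: right-censored, true value is above y (reported as ">y")
    censor = -1: left-censored, true value is below y (reported as "<y")
    """
    z = (y - mean) / std
    if censor == 0:
        return -(_norm_logpdf(z) - math.log(std))
    # Probability that the true value lies on the reported side of y.
    p = _norm_cdf(-z) if censor > 0 else _norm_cdf(z)
    return -math.log(max(p, 1e-300))  # floor avoids log(0)

def mean_tobit_nll(labels, means, stds, censors):
    """Average loss over a dataset of mixed exact and censored labels."""
    losses = [tobit_nll(y, m, s, c)
              for y, m, s, c in zip(labels, means, stds, censors)]
    return sum(losses) / len(losses)
```

For a label reported as ">10", a prediction of 12 is penalized much less than a prediction of 5, so the threshold still informs the model even though no precise value was measured.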

Uncertainty Quantification Performance Comparison

Table 1: Comparison of Uncertainty Quantification Methods in Cheminformatics

| Method | Uncertainty Types Captured | Best For | Key Advantages | Limitations |
|---|---|---|---|---|
| Deep Ensembles [29] | Aleatoric, Epistemic | General molecular property prediction | Simple implementation, strong empirical performance | Computationally expensive, may need post-hoc calibration |
| Monte Carlo Dropout [29] | Epistemic | High-dimensional data, limited compute | Computational efficiency, easy to implement | Primarily captures epistemic uncertainty |
| Gaussian Processes [30] | Aleatoric, Epistemic | Small datasets, well-defined kernels | Naturally provides uncertainty estimates | Poor scalability to large datasets (O(n³)) |
| Graph Neural Networks with UQ [11] | Aleatoric, Epistemic | Molecular optimization tasks | Direct operation on molecular graphs | Complex implementation, training intensive |
| Censored Regression Models [7] | Aleatoric (with censored data) | Pharmaceutical data with thresholds | Utilizes partially informative experimental data | Specialized for censored data scenarios |

Table 2: Active Learning Performance Across Molecular Representations

| Representation Type | Descriptor Dimension | AL Efficiency vs. Random | Recommended Use Cases |
|---|---|---|---|
| Composition-based descriptors [30] | Low (~45 dimensions) | Significant improvement | Ternary systems, inorganic materials |
| Morgan Fingerprints [30] | High (2048 dimensions) | Occasionally inefficient | Small molecule drug discovery |
| Graph Representations [11] | Structure-dependent | Good with UQ integration | Molecular optimization tasks |
| Matminer descriptors [30] | Medium (~145 dimensions) | Variable performance | Crystalline materials properties |

Experimental Protocols

Protocol 1: Explainable Uncertainty Quantification with Deep Ensembles

Purpose: To implement and calibrate an explainable uncertainty quantification method that separates aleatoric and epistemic uncertainties and attributes them to specific atoms in molecules.

Materials and Methods:

- Model Architecture: Deep Ensemble with multiple networks trained with different initializations [29]

- Training Data: Molecular structures with corresponding property measurements

- Software: Python, PyTorch or TensorFlow, RDKit for molecular processing

Procedure:

- Ensemble Training: Train multiple neural networks with different random initializations on the same molecular dataset. Modify the last layer to output both mean (μ(x)) and variance (σ²(x)) for Gaussian uncertainty estimation [29]

- Uncertainty Decomposition: Calculate epistemic uncertainty as the variance between ensemble predictions, and aleatoric uncertainty as the mean of predicted variances [29]

- Atomic Attribution: Use gradient-based methods to attribute the estimated uncertainties to individual atoms in the molecular structure [29]

- Post-hoc Calibration: Fine-tune selected layers of the trained ensemble models using a separate calibration dataset to improve uncertainty quantification [29]

- Validation: Evaluate calibration using confidence interval coverage plots and analyze atomic uncertainty patterns for chemical interpretability [29]
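The training objective and the uncertainty decomposition from this procedure can be sketched with NumPy, independent of the deep learning framework. The example assumes each ensemble member has already produced a mean and a variance per molecule. The function names are illustrative, and the atomic attribution and post-hoc calibration steps are not covered.

```python
import numpy as np

def gaussian_nll(y, mu, var):
    """Per-sample Gaussian negative log-likelihood, the usual loss for a
    network head that outputs both a mean mu(x) and a variance sigma^2(x)."""
    y, mu, var = (np.asarray(a, dtype=float) for a in (y, mu, var))
    return 0.5 * (np.log(2.0 * np.pi * var) + (y - mu) ** 2 / var)

def decompose_uncertainty(means, variances):
    """Split ensemble uncertainty into aleatoric and epistemic parts.

    means, variances: arrays of shape (n_members, n_molecules) holding
    each member's predicted mean and predicted variance.
    Returns (prediction, aleatoric, epistemic, total), one value per molecule.
    """
    means = np.asarray(means, dtype=float)
    variances = np.asarray(variances, dtype=float)
    prediction = means.mean(axis=0)
    epistemic = means.var(axis=0)       # disagreement between members
    aleatoric = variances.mean(axis=0)  # average predicted data noise
    return prediction, aleatoric, epistemic, aleatoric + epistemic
```

With two members predicting means of 1 and 3 for a molecule, each with variance 0.5, the ensemble prediction is 2, the epistemic variance is 1, and the aleatoric variance is 0.5. High epistemic variance points to molecules where more training data would help, whereas high aleatoric variance points to noisy measurements.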

Expected Outcomes:

- Separately quantified aleatoric and epistemic uncertainties

- Atom-level uncertainty attributions for chemical insight

- Better calibrated confidence intervals for molecular property predictions

Protocol 2: Uncertainty-Guided Active Learning for Molecular Optimization