Adam Optimization in Deep Learning: Accelerating Drug Discovery and Molecular Design


Abstract

This comprehensive review explores the transformative role of the Adam (Adaptive Moment Estimation) optimizer in deep learning applications for chemistry and drug discovery. It examines how Adam's core mechanism, the combination of momentum and per-parameter adaptive learning rates, enables efficient training of complex neural networks on high-dimensional chemical data. The article details practical implementations for molecular property prediction and generative molecule design, discusses common optimization challenges, and compares Adam's performance against alternative optimizers. Supported by recent case studies, including anti-cocaine-addiction drug development, this resource provides chemists and researchers with actionable strategies for using the Adam optimizer in accelerated, data-driven molecular innovation.

Understanding Adam Optimizer: Core Principles and Chemical Context

The Fundamental Mechanics of Adam Optimization

Troubleshooting Guide: Common Adam Optimizer Issues

Why does my training loss suddenly explode or become unstable after a period of steady decrease?

This is a recognized instability issue with the Adam optimizer, particularly in later training stages. The problem often stems from the denominator term in the update rule becoming too small when gradients are minimal, causing parameter updates to blow up and the loss to spike [1].

Recommended Solution: Implement the AMSGrad variant of Adam. AMSGrad modifies the second moment estimate to use the maximum of past squared gradients rather than an exponential average, preventing uncontrolled growth of the effective learning rate [1] [2].

Implementation:
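A minimal pure-Python sketch of a single AMSGrad step for one scalar parameter, to make the "maximum of past squared gradients" idea concrete. The helper name `amsgrad_step` and the `state` dictionary are illustrative assumptions, not part of any library, and bias correction is left out to keep the sketch short. In PyTorch, the corresponding switch is the `amsgrad=True` argument of `torch.optim.Adam`.

```python
import math

def amsgrad_step(theta, grad, state, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One AMSGrad update for a scalar parameter (illustrative helper)."""
    state["m"] = beta1 * state["m"] + (1 - beta1) * grad
    state["v"] = beta2 * state["v"] + (1 - beta2) * grad ** 2
    # Key difference from Adam: the denominator uses the running maximum of v,
    # so it cannot shrink when recent gradients become tiny.
    state["v_max"] = max(state["v_max"], state["v"])
    return theta - lr * state["m"] / (math.sqrt(state["v_max"]) + eps)

state = {"m": 0.0, "v": 0.0, "v_max": 0.0}
theta = 1.0
# One large gradient followed by many tiny ones: v decays, v_max does not.
for grad in [10.0] + [1e-4] * 200:
    theta = amsgrad_step(theta, grad, state)
```

After the loop, `state["v"]` has decayed below its first-step value while `state["v_max"]` still holds it, which is what keeps the effective step size from growing.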

Additional Stabilization Techniques:

- Gradient Clipping: Cap the gradient values to a maximum magnitude before the optimizer uses them [1].

- Learning Rate Scheduling: Gradually reduce the learning rate as performance improves. One user reported success by "linearly reducing the learning rate at perfect performance to 1/10th the original LR" [1].

- Batch Normalization: Helps stabilize the training dynamics and has been used in combination with gradient clipping to prevent this issue [1].
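As a rough illustration of gradient clipping, the sketch below rescales a list of gradient values so that their combined L2 norm does not exceed a threshold. Clipping by global norm is only one common variant (clipping each value individually is another), and `clip_by_global_norm` is a hypothetical helper name. Deep learning frameworks ship their own utilities for this, for example `torch.nn.utils.clip_grad_norm_` in PyTorch.

```python
import math

def clip_by_global_norm(grads, max_norm):
    """Rescale gradient values so their L2 norm is at most max_norm."""
    total_norm = math.sqrt(sum(g * g for g in grads))
    if total_norm <= max_norm:
        return list(grads), total_norm
    scale = max_norm / total_norm
    return [g * scale for g in grads], total_norm

# A gradient of norm 5 is scaled down to norm 1; its direction is unchanged.
clipped, norm_before = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
```

The clipped gradients are then passed to the optimizer in place of the raw ones.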

How can I address poor generalization performance when using Adam?

While Adam often converges quickly, its final performance on test data can sometimes be worse than simple Stochastic Gradient Descent (SGD). This is a known generalization gap [3].

Recommended Solution: Consider a hybrid optimization strategy like SWATS. This approach begins training with Adam for fast initial convergence but switches to SGD once learning plateaus, combining the strengths of both methods [4] [3].

What should I do if my model fails to learn anything at all?

This can be caused by various implementation bugs that are common in deep learning [5].

Debugging Protocol:

- Overfit a single batch: Try to drive the training error on a single, small batch of data arbitrarily close to zero. This heuristic can catch many bugs [5].

- If the error increases, check for a flipped sign in your loss function or gradient calculation [5].

- If the error explodes, this could be a numerical instability issue or a learning rate that is too high [5].

- If the error oscillates, try lowering the learning rate and inspecting your data for mislabeled examples [5].

- If the error plateaus, try increasing the learning rate and temporarily removing any regularization [5].

- Compare to a known result: Reproduce the results of an official model implementation on a benchmark dataset, checking your code line-by-line to ensure matching outputs [5].
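The "overfit a single batch" check can be sketched without any framework. The example below is a toy stand-in, assuming a two-parameter linear model and four hand-made data points; in practice the same loop would wrap your real model and one real batch. If the final loss does not approach zero in a setting this simple, the bug is likely in the loss, gradient, or update code.

```python
import math

# One tiny "batch": four points on the line y = 2x + 1 (toy data for illustration).
xs, ys = [0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0]

def loss_and_grads(w, b):
    """Mean squared error of the model w*x + b and its gradients w.r.t. w and b."""
    n = len(xs)
    errs = [w * x + b - y for x, y in zip(xs, ys)]
    loss = sum(e * e for e in errs) / n
    return loss, 2 * sum(e * x for e, x in zip(errs, xs)) / n, 2 * sum(errs) / n

params, m, v = [0.0, 0.0], [0.0, 0.0], [0.0, 0.0]
lr, beta1, beta2, eps = 0.01, 0.9, 0.999, 1e-8
initial_loss, _, _ = loss_and_grads(*params)

for t in range(1, 5001):
    _, gw, gb = loss_and_grads(*params)
    for i, g in enumerate((gw, gb)):
        m[i] = beta1 * m[i] + (1 - beta1) * g
        v[i] = beta2 * v[i] + (1 - beta2) * g * g
        m_hat, v_hat = m[i] / (1 - beta1 ** t), v[i] / (1 - beta2 ** t)
        params[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)

final_loss, _, _ = loss_and_grads(*params)
```

Comparing `final_loss` with `initial_loss` gives the pass/fail signal: the loss should fall by several orders of magnitude on a batch this small.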

Frequently Asked Questions (FAQs)

What is the Adam optimizer and why is it so popular?

Adam (Adaptive Moment Estimation) is an iterative optimization algorithm that minimizes the loss function during neural network training. It is popular because it combines the advantages of two other powerful optimizers: Momentum (which accelerates convergence by smoothing gradient directions) and RMSProp (which adapts the learning rate for each parameter based on recent gradient magnitudes) [6] [7] [8]. This combination leads to:

- Faster convergence than standard SGD [6] [4].

- Adaptive learning rates for each parameter, reducing the need for extensive manual tuning [8] [4].

- Efficiency in terms of both computation and memory [6] [8].

What are the default hyperparameters for Adam, and when should I tune them?

The following table summarizes the default values and roles of Adam's key hyperparameters [6] [8]:

Table: Adam Optimizer Hyperparameters and Defaults

| Hyperparameter | Default Value | Description | Tuning Guidance |

|---|---|---|---|

| α (Learning Rate) | 0.001 | The step size for updates. | The most common parameter to tune. Start with the default and adjust if convergence is slow or unstable. |

| β₁ | 0.9 | Decay rate for the first moment (mean of gradients). | Typically left at default. Controls how much past gradient history is remembered. |

| β₂ | 0.999 | Decay rate for the second moment (uncentered variance of gradients). | Typically left at default. Controls the adaptation to gradient steepness. |

| ε (epsilon) | 1e-8 | A small constant to prevent division by zero. | Usually kept default. In some cases (e.g., training Inception on ImageNet), values like 1.0 or 0.1 have been used [8]. |

How does Adam's update rule work mathematically?

The algorithm can be broken down into the following steps [7] [2]:

- Initialize the first moment vector \(m_0 \leftarrow 0\) and the second moment vector \(v_0 \leftarrow 0\).

- At each time step \(t\), compute the gradient \(g_t\) of the objective function with respect to the parameters.

- Update biased first moment estimate: \(m_t \leftarrow \beta_1 \cdot m_{t-1} + (1 - \beta_1) \cdot g_t\)

- Update biased second moment estimate: \(v_t \leftarrow \beta_2 \cdot v_{t-1} + (1 - \beta_2) \cdot g_t^2\)

- Compute bias-corrected first moment estimate: \(\hat{m}_t \leftarrow \frac{m_t}{1 - \beta_1^t}\)

- Compute bias-corrected second moment estimate: \(\hat{v}_t \leftarrow \frac{v_t}{1 - \beta_2^t}\)

- Update parameters: \(\theta_t \leftarrow \theta_{t-1} - \alpha \cdot \frac{\hat{m}_t}{\sqrt{\hat{v}_t} + \epsilon}\)

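The bias correction is a critical step: because the moving averages are initialized at zero, they are biased toward zero during the first steps, and dividing by \(1 - \beta_1^t\) and \(1 - \beta_2^t\) counteracts this. The correction matters most in the early stages of training [7].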
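The update rule can be written as a short pure-Python function. This is a sketch for illustration rather than a reference implementation: the function name `adam_update`, the list-based parameter layout, and the toy objective are all assumptions made here.

```python
import math

def adam_update(theta, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """Apply one Adam step to a list of parameters; t is the 1-based step count."""
    new_theta, new_m, new_v = [], [], []
    for p, g, m_i, v_i in zip(theta, grad, m, v):
        m_i = beta1 * m_i + (1 - beta1) * g      # biased first moment
        v_i = beta2 * v_i + (1 - beta2) * g * g  # biased second moment
        m_hat = m_i / (1 - beta1 ** t)           # bias-corrected first moment
        v_hat = v_i / (1 - beta2 ** t)           # bias-corrected second moment
        new_theta.append(p - lr * m_hat / (math.sqrt(v_hat) + eps))
        new_m.append(m_i)
        new_v.append(v_i)
    return new_theta, new_m, new_v

# Toy objective (an assumption for illustration): f(x, y) = x^2 + 10 * y^2
theta, m, v = [1.0, -1.0], [0.0, 0.0], [0.0, 0.0]
for t in range(1, 4):
    grad = [2 * theta[0], 20 * theta[1]]
    theta, m, v = adam_update(theta, grad, m, v, t)
```

On the very first step the bias-corrected ratio reduces to the sign of the gradient, so each parameter moves by roughly the learning rate regardless of how large its gradient is.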

Are there improved variants of Adam for more advanced applications?

Yes, several variants have been proposed to address specific limitations of the original Adam algorithm. The following table compares some notable ones:

Table: Advanced Variants of the Adam Optimizer

| Variant | Key Mechanism | Primary Advantage | Potential Application in Chemistry Research |

|---|---|---|---|

| AMSGrad [2] [1] | Uses the running maximum of past \(v_t\) in the denominator so that the effective learning rate cannot increase. | Theoretical convergence guarantees; prevents training instability and loss spikes. | Training stable models for long-term molecular dynamics simulations. |

| AdamW [2] | Decouples weight decay from gradient-based updates. | Improved generalization and more correct weight decay implementation. | Regularizing complex QSAR (Quantitative Structure-Activity Relationship) models. |

| BDS-Adam [2] | Dual-path framework with nonlinear gradient mapping and adaptive smoothing. | Addresses biased gradient estimation and early-training instability. | Optimizing high-dimensional kinetic parameters in reaction models (e.g., as in DeePMO [9]). |

| HN_Adam [3] | Automatically adjusts step size based on the norm of parameter updates. | Aims to combine fast convergence of Adam with good generalization of SGD. | Image-based analysis in pathology or high-throughput screening. |

Experimental Protocol: Benchmarking Adam in a Chemistry Research Context

This protocol outlines how to evaluate the performance of Adam and its variants against other optimizers when training a deep learning model on a chemistry-relevant dataset.

Objective: To compare the convergence speed and final performance of different optimizers on a chemical property prediction task.

Materials and Setup:

- Dataset: Use a standard public dataset like QM9 (molecular properties) or a proprietary dataset of reaction kinetics.

- Model: A Graph Neural Network (GNN) suitable for molecular graph data or a Multilayer Perceptron (MLP) for pre-computed molecular descriptors.

- Optimizers to Compare:

- Adam

- AdamW

- SGD with Momentum

- RMSprop

- (Optional) A modern variant like BDS-Adam or HN_Adam.

- Evaluation Metrics:

- Training Loss (e.g., Mean Squared Error) over epochs to track convergence speed.

- Validation Accuracy/MSE to assess generalization.

- Wall-clock time to reach a specific validation performance threshold.

Procedure:

- Baseline Establishment: Train the model with the default Adam optimizer (α=0.001, β₁=0.9, β₂=0.999, ε=1e-8) for a fixed number of epochs. Record the loss and accuracy curves.

- Variant Comparison: Train the same model architecture on the same data split using the other selected optimizers. Use their recommended default hyperparameters.

- Hyperparameter Sensitivity (Optional): Perform a small grid search around the learning rate (α) for the top-performing optimizers to ensure a fair comparison.

- Analysis: Plot the training loss and validation accuracy versus epochs (and versus time) for all optimizers. Note the stability of the training curves and the final achieved performance.

Workflow and Schematic Diagrams

Adam Optimization Algorithm Steps

Troubleshooting Decision Tree

Table: Essential Components for Optimizing Deep Learning Models in Chemistry

| Item / Resource | Function / Purpose | Example / Notes |

|---|---|---|

| Deep Learning Framework | Provides the computational backbone for building and training models. | PyTorch [6] [1], TensorFlow/Keras [4]. |

| Adam Optimizer (Default) | A robust, general-purpose starting point for training most deep neural networks. | Use torch.optim.Adam or tf.keras.optimizers.Adam with default parameters [6] [8]. |

| Adam Variants (AMSGrad, AdamW) | Address specific failure modes like instability and poor generalization. | amsgrad=True in PyTorch's Adam [1]. AdamW for better weight decay [2]. |

| Gradient Clipping | Prevents exploding gradients by capping their maximum value. | A standard utility in all major frameworks. Crucial for training RNNs and Transformers. |

| Learning Rate Scheduler | Systematically reduces the learning rate during training to refine convergence. | StepLR, ReduceLROnPlateau in PyTorch. Helps improve final accuracy [1]. |

| Benchmark Chemistry Datasets | Standardized data for fair evaluation and benchmarking of new models and optimizers. | QM9, MD17 for molecular property prediction; custom kinetic datasets like those used in DeePMO [9]. |

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind the Adam optimizer's "dual-path" approach, and why is it beneficial for training deep learning models in chemistry? Adam's dual-path approach separately calculates the first moment (the mean of past gradients, acting as momentum) and the second moment (the uncentered variance of past gradients, for adaptive learning rates) [10] [11]. These two paths are then combined for parameter updates. Momentum accelerates convergence in directions of persistent gradient descent, while the adaptive learning rate stabilizes training by adjusting the step size for each parameter individually [10]. This is particularly beneficial in chemistry for handling sparse or noisy data from molecular datasets and navigating the complex, high-dimensional optimization landscapes common in tasks like molecular property prediction [12].

Q2: My model's training loss is oscillating or diverging during early training. What could be the cause related to Adam? This is a known "cold-start" instability issue with Adam, often caused by biased gradient estimates early in training when the moving averages are initialized to zero [2]. The second moment estimate (\(v_t\)) can be too small, leading to excessively large parameter updates [2]. To mitigate this, ensure you are using the bias-corrected versions of the first and second moments (\(\hat{m}_t\) and \(\hat{v}_t\)) as outlined in the standard algorithm [13]. Furthermore, consider using a variant like BDS-Adam, which incorporates an adaptive second-order moment correction specifically designed to counter these cold-start effects [2].
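A small numeric check makes the cold-start effect visible. The sketch below compares the size of the very first update with and without bias correction, using the default decay rates; the gradient value 0.5 is an arbitrary choice for illustration.

```python
import math

beta1, beta2, eps, lr = 0.9, 0.999, 1e-8, 1e-3
g = 0.5  # first gradient seen by a parameter (arbitrary illustrative value)

# Moving averages after the first step, starting from m_0 = v_0 = 0.
m1 = (1 - beta1) * g
v1 = (1 - beta2) * g ** 2

# Without bias correction the step scales with (1 - beta1) / sqrt(1 - beta2).
step_uncorrected = lr * m1 / (math.sqrt(v1) + eps)

# With bias correction m_hat = g and v_hat = g^2, so the step is about lr.
m_hat = m1 / (1 - beta1 ** 1)
v_hat = v1 / (1 - beta2 ** 1)
step_corrected = lr * m_hat / (math.sqrt(v_hat) + eps)

ratio = step_uncorrected / step_corrected
```

With these defaults the uncorrected first step is roughly three times larger than the corrected one, because the second-moment average starts out proportionally smaller than the first-moment average.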

Q3: How does Adam handle the problem of pathological curvature in loss landscapes, a challenge in complex molecular optimization? Pathological curvature, characterized by steep slopes in one dimension and gentle slopes in another, causes simple SGD to bounce off the walls of the "ravine" rather than moving quickly along the bottom towards the minimum [11]. Adam's dual-path approach addresses this effectively. The momentum component helps to speed up progress along the shallow, consistent direction (the bottom of the ravine), while the adaptive learning rate (from RMSProp) dampens the updates in the steep, oscillating direction (the walls of the ravine), leading to a more direct and faster path to the minimum [11].
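To illustrate the ravine argument, the sketch below compares the first update of plain SGD and of Adam at a point where the gradient is 100 times steeper in one coordinate than in the other. The gradient values are invented for illustration, and only the first step is shown; over many steps the moving averages change the exact numbers, but the per-coordinate normalization remains.

```python
import math

lr, beta1, beta2, eps = 1e-3, 0.9, 0.999, 1e-8

# Gradient on a ravine wall: steep in x, shallow in y (illustrative values).
grad = {"x": 100.0, "y": 1.0}

# Plain SGD: the step is proportional to the raw gradient.
sgd_step = {k: lr * g for k, g in grad.items()}

# First Adam step (t = 1) from zero-initialised moments.
adam_step = {}
for k, g in grad.items():
    m_hat = ((1 - beta1) * g) / (1 - beta1)
    v_hat = ((1 - beta2) * g * g) / (1 - beta2)
    adam_step[k] = lr * m_hat / (math.sqrt(v_hat) + eps)
```

SGD takes a step 100 times larger across the ravine than along it, while Adam's two steps are nearly equal in size, which is the damping of the steep direction described above.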

Q4: Are there Adam variants that offer improved performance for specific challenges in drug discovery? Yes, several advanced variants have been developed to address specific limitations. The table below summarizes key Adam variants and their relevance to drug discovery research.

Table: Advanced Adam Optimizer Variants for Drug Discovery Research

| Optimizer Variant | Key Innovation | Relevance to Drug Discovery Challenges |

|---|---|---|

| BDS-Adam [2] | Integrates nonlinear gradient mapping and adaptive momentum smoothing; features adaptive variance correction. | Enhances training stability and convergence speed for complex molecular data; mitigates early training instability. |

| AdamZ [14] | Dynamically adjusts learning rate by detecting overshooting and stagnation. | Improves precision in loss minimization, critical for accurate molecular property prediction and QSAR modeling. |

| AdamW [14] | Decouples weight decay from the gradient-based update. | Provides better regularization, reducing overfitting in over-parametrized models common in graph neural networks (GNNs) for molecular structures. |

| RAdam [2] | Rectifies the variance of the adaptive learning rate during the first training steps, acting like an automatic warm-up. | Addresses convergence issues in the volatile early stages of training generative models for de novo molecular design. |

Q5: What are the recommended best practices for tuning Adam's hyperparameters in cheminformatics applications? While Adam is fairly robust at its default hyperparameter settings, fine-tuning can yield significant performance gains [10]. Key recommendations include:

- Learning Rate (\(\alpha\)): This is the most critical parameter. Start with the default of 0.001, but be prepared to perform a grid or random search around this value [15]. For tasks involving Graph Neural Networks (GNNs) on molecular data, systematic hyperparameter optimization (HPO) is highly recommended [16].

- Momentum Decays (\(\beta_1, \beta_2\)): The common defaults of \(\beta_1 = 0.9\) and \(\beta_2 = 0.999\) are often sufficient. \(\beta_1\) can be annealed from 0.5 to 0.99 during training for some tasks [11].

- Epsilon (\(\epsilon\)): This is a stability constant to prevent division by zero. The default value of 1e-8 is typically used and should not be changed without good reason [13].

- Batch Size: The choice of batch size influences gradient noise. Smaller batches provide a regularizing effect but can be noisy, while larger batches offer more stable gradient estimates [15].

Troubleshooting Guides

Issue: Training Instability and Non-Convergence

Symptoms: The training loss fails to decrease consistently, shows large oscillations, or becomes NaN.

Diagnosis and Resolution Protocol:

- Verify Bias Correction: Ensure your implementation uses the bias-corrected first and second moments (\(\hat{m}_t\) and \(\hat{v}_t\)). This is crucial for stability in the early stages of training [2] [13].

- Lower the Learning Rate: A high learning rate is a primary cause of instability and divergence. Reduce the learning rate by an order of magnitude (e.g., from 0.001 to 0.0001) and observe the training curve [15].

- Check Gradient Explosion: Implement gradient clipping to cap the magnitude of gradients during backpropagation. This prevents parameter updates from becoming destructively large.

- Tune Hyperparameters Systematically: For critical applications like molecular design, employ formal HPO techniques such as Bayesian optimization or grid search over the learning rate and decay parameters [16].

- Consider an Advanced Variant: Switch to a more stable optimizer variant like BDS-Adam, which is explicitly designed to suppress abrupt parameter updates and mitigate cold-start effects through its dual-path architecture and adaptive correction techniques [2].

Issue: Model Overfitting

Symptoms: The model performs excellently on training data but poorly on validation or test data (e.g., predicts well on known molecules but fails on novel scaffolds).

Diagnosis and Resolution Protocol:

- Apply Regularization: Integrate robust regularization techniques such as Dropout or L2 regularization.

- Use AdamW: Replace standard Adam with AdamW, which correctly decouples weight decay from the adaptive learning rate mechanism. This often leads to better generalization performance [14].

- Monitor Validation Loss: Implement early stopping by halting training when the validation loss stops improving for a predefined number of epochs.

- Increase Dataset Size and Diversity: In molecular AI, this could involve augmenting your dataset with more diverse chemical structures or employing data augmentation techniques specific to molecular graphs [17].
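The difference between L2 regularization inside Adam and the decoupled weight decay of AdamW can be seen in a single step. The sketch below is illustrative only: both helper functions are hypothetical names written for this comparison, and the weight, gradient, and decay coefficient are arbitrary values.

```python
import math

def adam_l2_step(p, g, m, v, t, lr, wd, beta1=0.9, beta2=0.999, eps=1e-8):
    """Adam with L2 regularization: the decay term is folded into the gradient."""
    g = g + wd * p
    m = beta1 * m + (1 - beta1) * g
    v = beta2 * v + (1 - beta2) * g * g
    m_hat, v_hat = m / (1 - beta1 ** t), v / (1 - beta2 ** t)
    return p - lr * m_hat / (math.sqrt(v_hat) + eps), m, v

def adamw_step(p, g, m, v, t, lr, wd, beta1=0.9, beta2=0.999, eps=1e-8):
    """AdamW-style step: weight decay is applied directly to the parameter."""
    m = beta1 * m + (1 - beta1) * g
    v = beta2 * v + (1 - beta2) * g * g
    m_hat, v_hat = m / (1 - beta1 ** t), v / (1 - beta2 ** t)
    return p - lr * m_hat / (math.sqrt(v_hat) + eps) - lr * wd * p, m, v

# Same weight, same large gradient, same decay coefficient.
p_l2, _, _ = adam_l2_step(2.0, 50.0, 0.0, 0.0, 1, lr=1e-3, wd=0.1)
p_w, _, _ = adamw_step(2.0, 50.0, 0.0, 0.0, 1, lr=1e-3, wd=0.1)
```

In the L2 version the decay term passes through the adaptive normalization and is effectively absorbed into a step of about `lr`. In the AdamW version the weight additionally shrinks by `lr * wd * p`, independent of the gradient's magnitude.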

Experimental Protocols

Protocol: Benchmarking Adam Optimizer Performance in a Molecular Property Prediction Task

Objective: To empirically compare the convergence speed and generalization performance of standard Adam against its variants (e.g., AdamW, BDS-Adam) on a quantitative structure-activity relationship (QSAR) dataset.

Materials and Dataset:

- Dataset: Curated bioactivity data from a public repository like ChEMBL or DrugBank [12].

- Model Architecture: A Graph Neural Network (GNN), such as a Graph Convolutional Network (GCN), to directly process molecular graphs [16].

- Optimizers: Adam, AdamW, BDS-Adam (or another selected variant).

- Framework: PyTorch or TensorFlow with appropriate cheminformatics libraries (e.g., RDKit, DeepChem).

Methodology:

- Data Preprocessing: Standardize the dataset. Split it into training, validation, and test sets using scaffold splitting to assess generalization to novel chemotypes.

- Hyperparameter Setup: Use consistent model architecture and initial weights. For each optimizer, perform a limited search to find a near-optimal learning rate.

- Training: Train the model with each optimizer, logging the training loss and validation accuracy at regular intervals.

- Evaluation: Compare optimizers based on key metrics: final validation accuracy, time to convergence (number of epochs to reach a target loss), and training stability (smoothness of the loss curve).

Table: Key Research Reagent Solutions for Optimizer Experiments

| Item | Function in Experiment |

|---|---|

| GNN Architecture (e.g., GCN, GIN) | Learns representations from molecular graph structures for property prediction [16]. |

| Optimizers (Adam, AdamW, BDS-Adam) | The core algorithms being tested, responsible for updating model parameters to minimize loss [2] [14]. |

| Hyperparameter Optimization (HPO) Search Space | Defines the range of values (e.g., for learning rate) to be explored to find the optimal configuration for a given task [16]. |

| Validation Metric (e.g., AUC-ROC, RMSE) | A quantitative measure used to evaluate and compare the performance of different optimized models objectively [12]. |

Visualizations

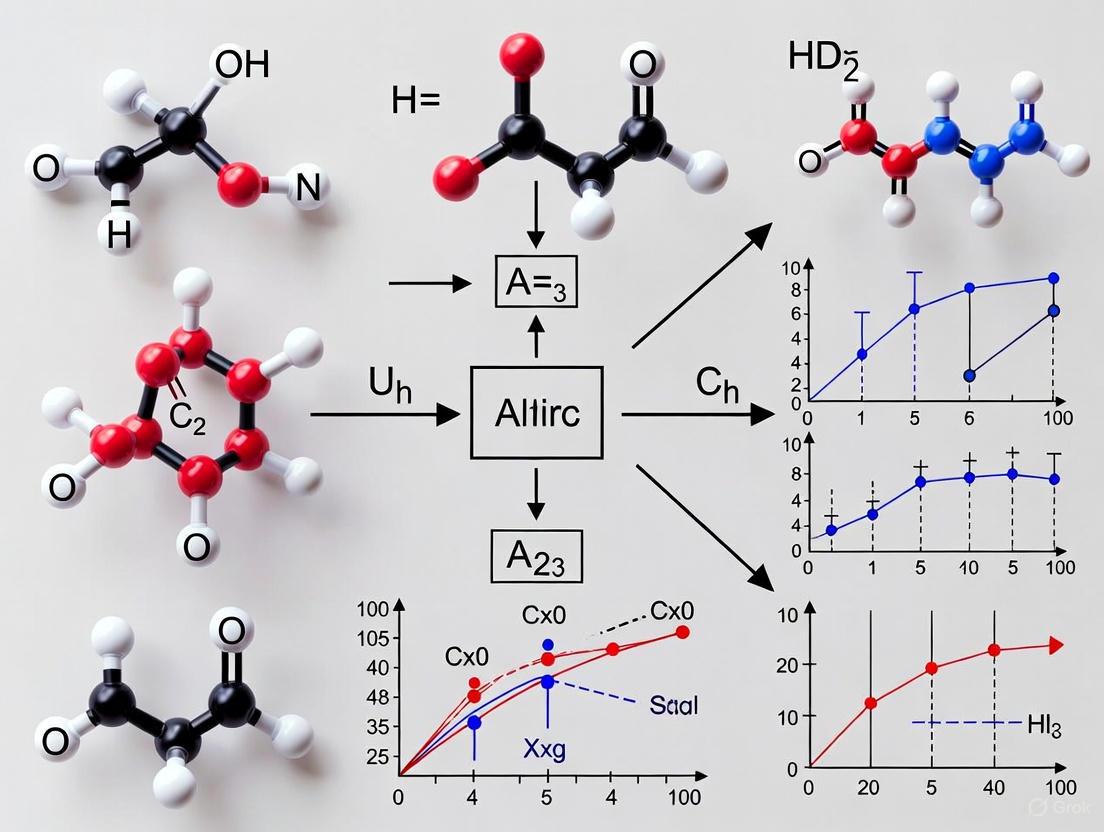

Adam's Dual-Path Update Mechanism

This diagram illustrates the core dual-pathway architecture of the Adam optimizer, showing how gradients flow separately to compute momentum and adaptive learning rates before being fused for the parameter update.

Optimizer Benchmarking Workflow

This flowchart outlines the experimental procedure for systematically comparing the performance of different optimization algorithms on a specific dataset and model.

Why Adam Excels with Sparse, High-Dimensional Chemical Data

Frequently Asked Questions

FAQ 1: Why is Adam particularly well-suited for handling sparse chemical data?

Adam is an adaptive learning rate algorithm, which means it calculates a unique, adaptive step size for each model parameter. In sparse datasets, many features (like specific molecular descriptors) are zero most of the time, so the parameters tied to them receive gradients only occasionally. Because Adam divides each update by the square root of that parameter's accumulated squared gradients, parameters associated with infrequent features receive relatively larger updates when a gradient does arrive, ensuring they are effectively learned and do not get overlooked during training. This makes it more robust than non-adaptive optimizers for datasets with high sparsity [18] [19].
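The effect on infrequent features can be checked with a toy experiment. The sketch below feeds Adam two synthetic gradient streams, one active on every step and one active on one step in ten, and measures how far the parameter moves per nonzero gradient. The streams and the helper `adam_total_movement` are assumptions made for illustration; the measured movement includes the carry-over from momentum on steps where the gradient is zero.

```python
import math

def adam_total_movement(grads, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """Sum of absolute Adam updates for one scalar parameter over a gradient sequence."""
    m = v = 0.0
    moved = 0.0
    for t, g in enumerate(grads, start=1):
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        m_hat = m / (1 - beta1 ** t)
        v_hat = v / (1 - beta2 ** t)
        moved += abs(lr * m_hat / (math.sqrt(v_hat) + eps))
    return moved

steps = 2000
dense = [1.0] * steps                                         # active on every step
sparse = [1.0 if t % 10 == 0 else 0.0 for t in range(steps)]  # active on 1 step in 10

dense_per_event = adam_total_movement(dense) / sum(dense)
sparse_per_event = adam_total_movement(sparse) / sum(sparse)
```

The parameter driven by the sparse stream moves noticeably further per gradient event than the one driven by the dense stream, even though each individual gradient has the same magnitude.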

FAQ 2: My model is training slowly on a large, high-dimensional molecular graph dataset. Can Adam help?

Yes. Adam combines the benefits of momentum, which helps accelerate convergence in relevant directions, and adaptive learning rates, which help navigate the complex, high-dimensional loss landscapes common in deep learning models for chemistry, such as Graph Neural Networks (GNNs) [18] [20]. Its efficiency in handling large-scale data has made it a cornerstone in the field [21].

FAQ 3: I've observed training instability with Adam on my complex GNN. What could be the cause?

While Adam is powerful, its standard form may not fully account for global factors like overall model complexity. It has been observed that increasing model complexity can lead to larger fluctuations and instability in the training loss [22]. Furthermore, Adam can be sensitive to its hyperparameters (β₁, β₂) and may sometimes generalize worse than SGD with momentum on certain tasks [19]. Using a lower learning rate or exploring advanced variants like AMC or BDS-Adam, which are designed to improve stability, can be beneficial [2] [22].

FAQ 4: What are the latest advancements in Adam optimizers for scientific applications?

Recent research has focused on addressing Adam's limitations, such as biased gradient estimation and early-training instability. New variants have been proposed:

- BDS-Adam: Integrates a dual-path framework with nonlinear gradient mapping and adaptive momentum smoothing to stabilize training and improve convergence [2].

- AMC (Adam with Model Complexity): Dynamically adjusts the learning rate based on the model's complexity (measured by its Frobenius norm), automatically using a more cautious learning rate for highly complex models [22].

Troubleshooting Guides

Issue 1: Poor Generalization Performance (Overfitting)

Symptoms: Validation accuracy is significantly lower than training accuracy.

Potential Solutions:

- Use AdamW: Switch from standard Adam to AdamW, which decouples weight decay from the gradient update and often leads to better generalization [22].

- Add Regularization: Incorporate L2 regularization or dropout into your model.

- Fine-tune Hyperparameters: Systematically tune the learning rate and decay rates (β₁, β₂). A lower learning rate can sometimes help.

- Consider a Hybrid Approach: Use Adam for initial fast convergence, then switch to SGD for fine-tuning to potentially achieve a better minimum [19].

Issue 2: Unstable or Oscillating Training Loss

Symptoms: The training loss curve shows large fluctuations.

Potential Solutions:

- Lower the Learning Rate: This is the most common fix to reduce the step size of updates.

- Adjust Momentum Parameters: Increase β₁ (e.g., to 0.99) to rely more on a smoother average of past gradients.

- Use a Learning Rate Schedule: Implement a schedule to gradually decrease the learning rate over time.

- Explore Advanced Variants: Implement an optimizer like BDS-Adam, which explicitly incorporates an adaptive momentum smoothing controller to suppress abrupt parameter updates [2].
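One simple form of learning rate schedule is step decay, sketched below in plain Python. The function name `step_decay_lr` and the values `step_size=30` and `gamma=0.1` are illustrative assumptions, not recommendations from the cited sources; PyTorch's `StepLR` scheduler follows the same idea.

```python
def step_decay_lr(base_lr, epoch, step_size=30, gamma=0.1):
    """Learning rate after `epoch` epochs: multiplied by `gamma` every `step_size` epochs."""
    return base_lr * gamma ** (epoch // step_size)

# Learning rate at a few selected epochs, starting from Adam's default of 1e-3.
schedule = [step_decay_lr(1e-3, epoch) for epoch in (0, 29, 30, 60)]
```

The rate stays at its base value for the first 30 epochs and then drops by a factor of ten at each subsequent boundary.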

Issue 3: Slow Convergence

Symptoms: Training loss decreases very slowly.

Potential Solutions:

- Increase the Learning Rate: If the loss is decreasing steadily but slowly, a larger learning rate may help.

- Check for Gradient Vanishing: Ensure your model architecture (e.g., choice of activation functions) is appropriate.

- Verify Data Preprocessing: Ensure your input data is correctly normalized.

- Utilize a Complexity-Aware Optimizer: For complex models, using AMC can help by dynamically optimizing the learning rate based on the model's complexity [22].

Experimental Data & Performance

The following table summarizes quantitative results from empirical evaluations comparing Adam and its variants across different benchmark tasks.

Table 1: Optimizer Performance on Benchmark Tasks

| Optimizer | Test Dataset | Key Metric (Accuracy) | Notes |

|---|---|---|---|

| Adam | CIFAR-10 | Baseline | Widely used for its adaptive learning rates and handling of sparse gradients [18] [19]. |

| BDS-Adam | CIFAR-10 | +9.27% vs Adam | Dual-path framework improves stability and convergence [2]. |

| BDS-Adam | MNIST | +0.08% vs Adam | Demonstrates robustness even on simpler datasets [2]. |

| BDS-Adam | Gastric Pathology | +3.00% vs Adam | Effective in specialized, complex biomedical tasks [2]. |

| AMC | Multiple Benchmarks | Faster Convergence & Better Stability | Dynamically adjusts learning rate based on model complexity, especially beneficial for complex models [22]. |

Experimental Protocol: Benchmarking Optimizers on a Molecular Property Prediction Task

This protocol outlines a methodology for comparing the performance of different optimizers on a molecular property prediction task using Graph Neural Networks (GNNs).

1. Objective: To evaluate and compare the convergence speed, stability, and final performance of Adam, BDS-Adam, and AMC optimizers.

2. Materials and Dataset:

- Dataset: Use a standard molecular dataset like those from MoleculeNet (e.g., HIV, FreeSolv) or a custom cheminformatics dataset [16] [23].

- Model: A standard GNN architecture (e.g., MPNN, GIN) [16].

- Software: Python with deep learning frameworks (PyTorch, TensorFlow), and libraries for molecular processing (RDKit).

3. Procedure:

- Step 1: Data Preparation

- Split the dataset into training, validation, and test sets (e.g., 80/10/10).

- Standardize the features and convert molecules into graph representations (nodes for atoms, edges for bonds).

- Step 2: Model & Optimizer Setup

- Initialize the GNN model with the same architecture and random weights for each experimental run.

- Configure each optimizer to be tested (Adam, BDS-Adam, and AMC).

- Step 3: Training Loop

- Train the model for a fixed number of epochs.

- For each mini-batch, compute the gradients and update the model parameters using the respective optimizer.

- Record the training loss and validation accuracy at regular intervals.

- Step 4: Evaluation

- Evaluate the final model on the held-out test set to report accuracy, ROC-AUC, or other relevant metrics.

- Plot the training loss and validation accuracy curves to visualize convergence and stability.

The workflow for this experiment is outlined below.

The Scientist's Toolkit: Key Research Reagents

Table 2: Essential Computational Tools for Optimizer Experiments in Cheminformatics

| Item / Reagent | Function / Explanation |

|---|---|

| Graph Neural Network (GNN) | The primary model architecture used to learn directly from molecular graph structures [16] [24]. |

| Molecular Graph Dataset | A collection of molecules represented as graphs (e.g., from MoleculeNet). Provides the sparse, high-dimensional data for training and evaluation [16]. |

| Hyperparameters (lr, β1, β2) | The core settings that control the optimizer's behavior. Tuning them is critical for performance [18] [20]. |

| Bias Correction Terms | Mathematical corrections in Adam that counteract the initial zero-initialization of moment vectors, crucial for stability in early training [2] [20]. |

| Frobenius Norm | A measure of model complexity used by the AMC optimizer to dynamically scale the learning rate [22]. |

| Gradient Fusion Mechanism | A component of BDS-Adam that combines smoothed and non-linearly transformed gradients to produce more stable and geometry-aware parameter updates [2]. |

Advanced Optimizer Mechanisms

To understand why advanced variants like BDS-Adam are effective, it is useful to visualize their internal mechanics, which address specific flaws in the original Adam algorithm.

Diagram Explanation: The BDS-Adam optimizer processes raw gradients through a dual-path framework. One path applies a nonlinear transformation (e.g., hyperbolic tangent) to better capture local geometry, while the other path applies adaptive smoothing based on real-time gradient variance to suppress noise. A fusion mechanism combines these outputs, and an adaptive variance correction module mitigates cold-start effects, leading to a more stable and effective parameter update [2].

Technical Support Center

This guide provides targeted support for researchers using the Adam optimizer in deep learning for chemical applications. The adaptive learning rates of Adam make it particularly suitable for navigating the complex, high-dimensional, and often noisy optimization landscapes found in computational chemistry, from molecular property prediction to kinetic model optimization [6] [25].

Frequently Asked Questions (FAQs)

FAQ 1: What are the roles of the key hyperparameters β₁, β₂, and ε in the Adam optimizer?

Adam (Adaptive Moment Estimation) combines the concepts of momentum and adaptive learning rates. The hyperparameters β₁ and β₂ control the decay rates for these two components [6] [25].

- β₁ (Beta1): This is the decay rate for the first moment (the mean) of the gradients. It acts similarly to momentum in traditional Stochastic Gradient Descent (SGD), helping to accelerate convergence by smoothing out the gradient updates. A high value (like the default of 0.9) means the optimizer has a longer "memory" for past gradients, which helps in navigating areas of noisy or oscillating gradients commonly encountered in chemical loss landscapes [6] [26].

- β₂ (Beta2): This is the decay rate for the second moment (the uncentered variance) of the gradients. It is used to adapt the learning rate for each parameter individually. A very high value (like the default of 0.999) provides a stable estimate of the variance of gradients over time, which is crucial for stable parameter updates, especially when dealing with sparse or varying gradient signals from heterogeneous chemical data [6] [26] [2].

- ε (Epsilon): This is a small constant added to prevent division by zero in the parameter update rule. While it seems minor, its value can influence numerical stability, particularly in the early stages of training when the second-moment estimate (vₜ) is very close to zero. It is typically set to a very small value like 1e-8 [6] [2].

The following table summarizes their functions and default values:

Table 1: Key Hyperparameters of the Adam Optimizer

| Hyperparameter | Function | Default Value | Chemical Relevance |

|---|---|---|---|

| β₁ | Controls momentum of gradient history | 0.9 | Smoothens updates across noisy chemical data landscapes [6]. |

| β₂ | Controls scaling of learning rate per parameter | 0.999 | Adapts step sizes for diverse parameters (e.g., atomic weights, energy terms) [6]. |

| ε | Ensures numerical stability in updates | 1e-8 | Prevents failure in early training steps [6]. |
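The "memory" of each moving average can be made concrete with a rule of thumb: an exponential moving average with decay rate β is dominated by roughly the last 1/(1 − β) steps. The sketch below computes this for the default values; the helper names are hypothetical and the window is an approximation, not a hard cutoff.

```python
def ema_window(beta):
    """Approximate number of recent steps that dominate an EMA with decay rate beta."""
    return 1.0 / (1.0 - beta)

def weight_of_gradient(beta, age):
    """Weight an EMA places on a gradient observed `age` steps ago."""
    return (1.0 - beta) * beta ** age

window_m = ema_window(0.9)     # first moment: about 10 steps
window_v = ema_window(0.999)   # second moment: about 1000 steps
```

This is why the second moment reacts far more slowly than the first: with the defaults it averages over about a hundred times more history.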

FAQ 2: How do I troubleshoot unstable training or poor convergence when using Adam for molecular property prediction?

Instability during training can often be traced to misconfigured hyperparameters. Below is a troubleshooting guide for common issues.

Table 2: Troubleshooting Guide for Adam in Chemical Models

| Symptom | Potential Cause | Corrective Action |

|---|---|---|

| Training loss oscillates wildly | Learning rate is too high; β₂ is too low, causing unstable second-moment estimates. | Decrease the learning rate (η). Consider increasing β₂ closer to 0.999 for a more stable variance estimate [26]. |

| Convergence is slow | Learning rate is too low; β₁ is too low, reducing momentum benefits. | Increase the learning rate. Consider increasing β₁ to 0.99 to build more momentum in consistent directions [26]. |

| Model fails to converge or produces NaNs | Extremely high gradients or ε is too small, leading to numerical instability. | Use gradient clipping. Verify ε is set correctly (e.g., 1e-8) [6]. In some chemistry applications, β₁=0 can help (see FAQ 3) [27]. |

| Poor generalization despite good training loss | Over-adaptation to training data; default β₁/β₂ not optimal for final convergence. | Use a lower β₂ (e.g., 0.99) or switch to SGD fine-tuning (SWATS method) [3]. Try AdamW for better weight decay [28]. |

FAQ 3: Are there documented cases where deviating from the default β₁ and β₂ values is beneficial in scientific deep learning?

Yes, significant deviations are sometimes used. A notable example comes from training Generative Adversarial Networks (GANs). The Progressive GAN and StyleGAN2 implementations, which are image-generation models, set β₁ = 0 and β₂ = 0.99 [27], and the same configuration can be carried over to GANs for tasks like molecular structure generation.

- Reasoning: With β₁ = 0 the first-moment average reduces to the current gradient, so this configuration is effectively RMSprop with bias correction. In the context of GANs, where the loss landscape is highly complex and non-stationary, momentum can sometimes be detrimental. This setup prioritizes rapid adaptation via the second moment alone, which can improve stability and sample quality in adversarial training [27].

- Chemical Relevance: When training generative models for de novo molecular design, this hyperparameter strategy can be a valuable alternative if standard Adam leads to training instability.
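The relationship between Adam with β₁ = 0 and RMSprop can be checked numerically. The sketch below assumes an RMSprop variant that applies the same bias correction to its squared-gradient average; the helper names and the sample gradients are hypothetical.

```python
import math

def adam_update(grad, m, v, t, lr, beta1, beta2, eps=1e-8):
    """Return (step, m, v) for one bias-corrected Adam update of a scalar parameter."""
    m = beta1 * m + (1.0 - beta1) * grad
    v = beta2 * v + (1.0 - beta2) * grad * grad
    m_hat = m / (1.0 - beta1 ** t)
    v_hat = v / (1.0 - beta2 ** t)
    return lr * m_hat / (math.sqrt(v_hat) + eps), m, v

def rmsprop_update(grad, v, t, lr, beta2, eps=1e-8):
    """RMSprop-style update with bias correction on the squared-gradient average."""
    v = beta2 * v + (1.0 - beta2) * grad * grad
    v_hat = v / (1.0 - beta2 ** t)
    return lr * grad / (math.sqrt(v_hat) + eps), v

def max_step_difference(grads, lr=1e-3, beta2=0.99):
    """Largest gap between the two update sizes over a gradient sequence."""
    m, v_adam, v_rms = 0.0, 0.0, 0.0
    worst = 0.0
    for t, g in enumerate(grads, start=1):
        step_a, m, v_adam = adam_update(g, m, v_adam, t, lr, beta1=0.0, beta2=beta2)
        step_r, v_rms = rmsprop_update(g, v_rms, t, lr, beta2)
        worst = max(worst, abs(step_a - step_r))
    return worst
```

With β₁ = 0 the first moment equals the current gradient at every step, so the two updates coincide.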

FAQ 4: How do the β₁ and β₂ hyperparameters interact with other experimental choices in chemical deep learning?

The effectiveness of β₁ and β₂ is interdependent with other key experimental design choices. The diagram below illustrates the logical relationship between these factors and their collective impact on model performance.

Diagram 1: Hyperparameter Interaction Logic

The following table outlines key reagents and computational tools for building and training deep learning models in chemistry.

Table 3: Research Reagent Solutions for Chemical Deep Learning

| Category | Item | Function in Experiment |

|---|---|---|

| Software & Libraries | PyTorch / TensorFlow | Provides the implementation of the Adam optimizer and deep neural network components [6]. |

| Optimization Algorithms | Adam / AdamW / HN_Adam | Core algorithm for minimizing the loss function. AdamW decouples weight decay, often improving generalization [28] [3]. |

| Chemical Data Representation | Molecular Descriptors / Graph Embeddings | Represents chemical structures (e.g., from QM7 dataset) as input features (xᵢ) for the model [25]. |

| Target Property | Molecular Properties (e.g., Atomization Energy, Solubility) | The true label (yᵢ) the model is trained to predict [25]. |

Experimental Protocols

Protocol 1: Implementing Adam in a PyTorch Training Loop for Property Prediction

This protocol details a standard workflow for implementing Adam to train a model that predicts molecular properties.

Code Example:

Source: Adapted from [6]
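A minimal sketch of such a training loop is given here; it is not the listing from [6]. It assumes PyTorch is installed and uses synthetic tensors as a stand-in for featurized molecules. The 32-descriptor input, the layer sizes, the function name `train_property_model`, and the optional gradient clipping are all illustrative choices.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

def train_property_model(num_epochs=20, seed=0):
    torch.manual_seed(seed)
    # Synthetic stand-in for featurised molecules: 256 samples, 32 descriptors each.
    x = torch.randn(256, 32)
    true_w = torch.randn(32, 1)
    y = x @ true_w + 0.05 * torch.randn(256, 1)   # hypothetical scalar property
    loader = DataLoader(TensorDataset(x, y), batch_size=32, shuffle=True)

    model = nn.Sequential(nn.Linear(32, 64), nn.ReLU(), nn.Linear(64, 1))
    loss_fn = nn.MSELoss()
    # Adam with its default moment decay rates and epsilon written out explicitly.
    optimizer = torch.optim.Adam(model.parameters(), lr=1e-3, betas=(0.9, 0.999), eps=1e-8)

    epoch_losses = []
    for _ in range(num_epochs):
        running = 0.0
        for xb, yb in loader:
            optimizer.zero_grad()                  # clear gradients from the previous batch
            loss = loss_fn(model(xb), yb)
            loss.backward()                        # backpropagate
            nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0)  # optional safeguard
            optimizer.step()                       # Adam parameter update
            running += loss.item() * xb.size(0)
        epoch_losses.append(running / len(x))      # mean training loss for the epoch
    return epoch_losses
```

In a real experiment the synthetic tensors would be replaced by descriptors or graph embeddings, and a held-out validation set would be evaluated after each epoch.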

Protocol 2: Systematic Hyperparameter Tuning for Kinetic Model Optimization (DeePMO Framework)

For complex tasks like optimizing high-dimensional kinetic parameters, a more systematic approach is required. The DeePMO (Deep learning-based kinetic model optimization) framework employs an iterative strategy [9].

Workflow Overview:

- Sampling: Explore the high-dimensional kinetic parameter space (e.g., for methane, n-heptane, or ammonia fuels).

- Learning: Train a hybrid Deep Neural Network (DNN) that maps kinetic parameters to performance metrics (e.g., ignition delay time, laminar flame speed).

- Inference: Use the trained DNN to guide the selection of the next promising set of parameters to sample.

- Iteration: Repeat the sampling-learning-inference cycle to efficiently converge on an optimal solution [9].

Diagram 2: Iterative Optimization Workflow

The Evolution from SGD to Adam in Chemical Deep Learning

A Technical Support Center for Molecular Deep Learning

This guide provides troubleshooting support for researchers applying deep learning to molecular property prediction. The following FAQs address common optimizer-related challenges encountered in real-world chemistry experiments.

FAQ 1: My Model Isn't Converging. Is the Optimizer the Problem?

Answer: Optimizer choice significantly impacts convergence. In molecular property prediction, adaptive optimizers like Adam, AdamW, and AMSGrad often demonstrate superior convergence stability and speed compared to basic SGD [29]. The adaptive learning rates in Adam help navigate the complex, often noisy, loss landscapes common in chemical data [18] [6].

Troubleshooting Protocol:

- Initial Check: Start with the Adam optimizer (default parameters: lr=0.001, β1=0.9, β2=0.999) as a robust baseline [6].

- Stability Diagnosis: If convergence is unstable (loss oscillates wildly), reduce the learning rate by an order of magnitude (e.g., from 0.001 to 0.0001).

- Advanced Tuning: For tasks requiring high precision or if generalization is poor, try AdamW or AMSGrad, which can offer better convergence guarantees [29].

- SGD Validation: Compare against SGD with Momentum. While often slower to converge, a well-tuned SGD can sometimes achieve better generalization on smaller chemical datasets [30] [31].

FAQ 2: SGD vs. Adam: Which Should I Choose for My Molecular Dataset?

Answer: The choice involves a trade-off between speed of convergence and final generalization performance [30].

- Use Adam for: Large-scale molecular datasets (e.g., ChEMBL), deep Graph Neural Networks (GNNs), and when you need rapid prototyping [29] [18]. Its adaptive learning rates require less hyperparameter tuning.

- Consider SGD for: Smaller, dense datasets or when you have the computational resources for extensive hyperparameter optimization. A well-tuned SGD with a learning rate schedule can sometimes achieve a lower final validation error [30] [31].

The table below summarizes typical performance characteristics observed in molecular classification tasks [29] [25].

| Optimizer | Convergence Speed | Stability | Generalization | Typical Use Case in Chemistry |

|---|---|---|---|---|

| SGD | Slow | Low | Variable, can be high | Small datasets; well-tuned final models |

| SGD + Momentum | Medium | Medium | High | Handling noisy gradients in QSAR models |

| Adam | Fast | High | Good (default) | Default for most MPNNs; large-scale screening |

| AdamW/AMSGrad | Fast | Very High | Very Good | Tasks requiring robust convergence and generalization |

FAQ 3: How Do I Replicate Optimizer Comparisons in My Own Experiments?

Answer: To ensure fair and reproducible comparisons between optimizers, follow this experimental protocol, adapted from systematic studies [29] [32].

Detailed Methodology:

- Data Preparation: Use a standard benchmark dataset (e.g., BACE or NCI-1). Partition into training/validation/test sets (e.g., 80/10/10) and ensure the dataset is thoroughly shuffled to avoid biases from feature ordering [31].

- Model Initialization: Use the same model architecture (e.g., a Message Passing Neural Network) for all tests. Crucially, reset the model to the same random initialization before training with each different optimizer [32].

- Training Loop: For each optimizer in your test set (e.g., SGD, Adam, RMSprop):

- Initialize the optimizer.

- Train the model for a fixed number of epochs (e.g., 100).

- Track loss and relevant metrics (e.g., AUC, accuracy) on both training and validation sets.

- Hyperparameters: Use consistent training hyperparameters where possible (e.g., batch size, weight decay). The learning rate can be tuned per-optimizer for a fair comparison [29].

- Reporting: Run each experiment multiple times (e.g., 5 runs) with different random seeds and report mean and standard deviation of the performance metrics.
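The protocol above can be sketched on a toy problem to show the bookkeeping: identical initialization per seed, per-optimizer learning rates, and mean and standard deviation over several seeds. The quadratic loss, the noise level, and the learning rates in `LEARNING_RATES` are illustrative assumptions standing in for a real MPNN and dataset.

```python
import random
import statistics

# Learning rates tuned per optimizer (illustrative values only).
LEARNING_RATES = {"sgd": 0.05, "momentum": 0.01, "adam": 0.05}

def loss(w):
    """Toy ill-conditioned quadratic standing in for a validation loss."""
    return 0.5 * (10.0 * w[0] ** 2 + w[1] ** 2)

def noisy_grad(w, rng, noise=0.1):
    """Gradient of the toy loss plus Gaussian noise, mimicking mini-batch noise."""
    return [10.0 * w[0] + rng.gauss(0.0, noise), w[1] + rng.gauss(0.0, noise)]

def run(name, seed, steps=200):
    rng = random.Random(seed)
    # Same seed gives the same initial weights and noise stream for every optimizer.
    w = [rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)]
    lr = LEARNING_RATES[name]
    m, v = [0.0, 0.0], [0.0, 0.0]
    for t in range(1, steps + 1):
        g = noisy_grad(w, rng)
        for i in range(2):
            if name == "sgd":
                step = lr * g[i]
            elif name == "momentum":
                m[i] = 0.9 * m[i] + g[i]
                step = lr * m[i]
            else:  # "adam"
                m[i] = 0.9 * m[i] + 0.1 * g[i]
                v[i] = 0.999 * v[i] + 0.001 * g[i] ** 2
                step = lr * (m[i] / (1 - 0.9 ** t)) / ((v[i] / (1 - 0.999 ** t)) ** 0.5 + 1e-8)
            w[i] -= step
    return loss(w)

def compare(optimizers=("sgd", "momentum", "adam"), seeds=(0, 1, 2, 3, 4)):
    """Return {optimizer: (mean final loss, standard deviation)} across seeds."""
    results = {}
    for name in optimizers:
        finals = [run(name, s) for s in seeds]
        results[name] = (statistics.mean(finals), statistics.stdev(finals))
    return results
```

Calling `compare()` yields one (mean, standard deviation) pair per optimizer, which is the form of result the reporting step asks for.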

The Scientist's Toolkit: Essential Research Reagents

The table below details key computational "reagents" used in optimizer experiments for molecular deep learning.

| Item Name | Function / Explanation |

|---|---|

| BACE Dataset | A benchmark dataset containing molecular structures and binary binding outcomes for inhibitors of the β-secretase 1 enzyme. Used for classification task validation [29]. |

| NCI-1 Dataset | A benchmark dataset from the National Cancer Institute with ~3,466 chemical compounds categorized as active or inactive against cancer. Used for graph classification tasks [29]. |

| Message Passing Neural Network (MPNN) | A core Graph Neural Network architecture that learns molecular representations by iteratively passing messages between connected atoms (nodes), effectively capturing molecular structure [29]. |

| Binary Cross-Entropy Loss | The standard loss function used for binary molecular classification tasks (e.g., active/inactive). The optimizer's job is to minimize this value [29]. |

| Graphviz (DOT language) | A tool used to create diagrams of experimental workflows and model architectures, ensuring clarity and reproducibility in research publications. |

Experimental Workflow and Optimizer Comparison

The following diagram illustrates the typical workflow for a systematic optimizer comparison in a molecular property prediction task.

The conceptual evolution of optimizers, from simple SGD to adaptive methods like Adam, has equipped deep learning models with more sophisticated "navigation" tools for complex molecular loss landscapes, as shown below.

Implementing Adam for Molecular Modeling and Drug Discovery

Molecular Property Prediction with Adam-Optimized Networks

Troubleshooting Guides

Why is my model failing to converge or showing unstable training?

Problem: The training loss does not decrease consistently, shows large oscillations, or the model fails to converge to a good solution.

Diagnosis: This is a known issue with adaptive optimizers like Adam. Because the second-moment estimate is an exponential moving average of past squared gradients, it can shrink quickly after a run of small gradients, and Adam can then converge to suboptimal solutions, particularly in the non-convex settings common in molecular property prediction [33]. In effect, the adaptive learning rates become excessively large or small based on noisy gradient estimates.

Solutions:

- Enable AMSGrad: Use the amsgrad=True flag in your optimizer. This variant uses the maximum of past squared gradients rather than the exponential average, which can lead to more stable and consistent convergence [34].

- Tune Beta Parameters: Adjust the moment decay rates. For problems with high gradient noise, slightly reducing beta2 (e.g., from 0.999 to 0.99) can make the optimizer more responsive to recent gradients [34] [2].

- Implement Gradient Mapping and Smoothing: Inspired by advanced variants like BDS-Adam, incorporate a gradient smoothing controller. This adaptively suppresses abrupt parameter updates based on real-time gradient variance to stabilize training [2].
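The effect of the AMSGrad modification can be seen in a scalar sketch. The version below follows the original formulation without bias correction; library implementations, such as the amsgrad=True option of PyTorch's Adam, may differ in such details. The function names and the gradient sequence are hypothetical.

```python
import math

def amsgrad_step(theta, grad, state, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One AMSGrad update for a scalar parameter; `state` holds m, v and v_max."""
    state["m"] = beta1 * state["m"] + (1 - beta1) * grad
    state["v"] = beta2 * state["v"] + (1 - beta2) * grad * grad
    state["v_max"] = max(state["v_max"], state["v"])   # the denominator never shrinks
    return theta - lr * state["m"] / (math.sqrt(state["v_max"]) + eps)

def run_amsgrad(grads, theta=0.0):
    """Apply AMSGrad to a sequence of gradients and return the final parameter and state."""
    state = {"m": 0.0, "v": 0.0, "v_max": 0.0}
    for g in grads:
        theta = amsgrad_step(theta, g, state)
    return theta, state
```

After one large gradient followed by many small ones, the plain average v decays while v_max stays at its peak, so the effective learning rate cannot grow back uncontrolled.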

How can I improve model performance with very limited labeled data?

Problem: Predictive accuracy is poor due to a small number of labeled molecules for a specific property (the "ultra-low data regime").

Diagnosis: Standard single-task learning struggles to learn meaningful representations from scarce data. This is a fundamental challenge in molecular property prediction where data collection is expensive [35].

Solution: Implement Adaptive Checkpointing with Specialization (ACS) via Meta-Learning.

This methodology uses a multi-task learning framework to leverage correlations among various molecular properties, sharing knowledge across tasks to improve performance on the low-data target task [36] [35].

Experimental Protocol:

- Model Architecture: Employ a shared graph neural network (GNN) backbone (e.g., a Message Passing Neural Network) to learn general molecular representations. Attach separate task-specific multi-layer perceptron (MLP) heads for each property [35].

- Training Procedure:

- Inner Loop (Task-Specific): For each task (including your low-data target), update the parameters of its specific MLP head. This allows the model to specialize for each property [36].

- Outer Loop (Shared): Jointly update all parameters of the shared GNN backbone. This step transfers learned features across all tasks [36].

- Checkpointing: During training, continuously monitor the validation loss for every task. Save a checkpoint of the shared backbone and the task-specific head each time a task achieves a new minimum validation loss. This "adaptive checkpointing" protects tasks from negative transfer—where updates from one task degrade performance on another [35].
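The checkpointing step can be sketched independently of any particular GNN library. The class below is a hypothetical helper, not the implementation from [35]; it assumes parameters are passed as plain dictionaries (for PyTorch, the output of `state_dict()` would play this role).

```python
import copy

class AdaptiveCheckpointer:
    """Keeps, for every task, the backbone/head snapshot with the lowest validation loss so far."""

    def __init__(self, task_names):
        self.best_loss = {name: float("inf") for name in task_names}
        self.best_state = {name: None for name in task_names}

    def update(self, task, val_loss, backbone_state, head_state):
        """Store a deep copy of the current parameters if `task` reached a new minimum."""
        if val_loss < self.best_loss[task]:
            self.best_loss[task] = val_loss
            # Deep copy so later training updates cannot alter the saved snapshot.
            self.best_state[task] = copy.deepcopy(
                {"backbone": backbone_state, "head": head_state}
            )
            return True
        return False
```

Because each task keeps its own best snapshot of the shared backbone, a later update that helps one task but hurts another does not overwrite the checkpoint of the task that was harmed.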

Diagram 1: ACS Meta-Learning Workflow

How do I select the optimal hyperparameters for Adam in my experiments?

Problem: Model performance is highly sensitive to the choice of optimizer hyperparameters, making reproducible results difficult.

Diagnosis: The default parameters of Adam are a good starting point but are not optimal for all problems, especially in specialized domains like molecular machine learning [34] [6].

Solution: Adopt a structured tuning strategy. The following table summarizes the core hyperparameters and a tuning strategy.

Table 1: Adam Hyperparameter Tuning Guide

| Hyperparameter | Default Value | Function | Tuning Advice |

|---|---|---|---|

| Learning Rate (α) | 0.001 | Controls the step size of parameter updates. | The most critical to tune. Search on a logarithmic scale over a range such as 0.1 to 1e-5. Use a learning rate scheduler to reduce it during training [34]. |

| Beta1 (β₁) | 0.9 | Decay rate for the first moment (mean of gradients). | Controls momentum. Keeping it close to 0.9 is usually effective [34] [6]. |

| Beta2 (β₂) | 0.999 | Decay rate for the second moment (uncentered variance of gradients). | Stabilizes learning. For noisy problems, try 0.99. Values closer to 1 provide a longer-term memory of squared gradients [34] [6]. |

| Epsilon (ε) | 1e-8 | Small constant to prevent division by zero. | Generally safe at default. Tune if using half-precision computations or to avoid NaN errors [34]. |

| Weight Decay | 0 | L2 regularization penalty. | Add a small value (e.g., 1e-4) to prevent overfitting and improve generalization [34]. |

| Amsgrad | False | Uses max of past squared gradients for convergence stability. | Set to True if you encounter convergence issues [34]. |

Experimental Protocol for Tuning:

- Start with Defaults: Begin with the default Adam parameters.

- Tune Learning Rate: Perform a coarse-to-fine search over the learning rate while keeping other parameters fixed.

- Adjust Beta2 and Weight Decay: If performance is unstable or plateaus, adjust beta2 and weight_decay next.

- Validate Performance: Always use a held-out validation set (not the test set) to evaluate the effect of hyperparameter changes [34].
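The coarse-to-fine learning rate search in the protocol above might look like the following sketch. The function `final_loss` is a hypothetical stand-in for "train the model and return its validation loss"; here it simply runs Adam on a toy quadratic so the example stays self-contained.

```python
import math

def final_loss(lr, steps=100):
    """Stand-in for a training run: Adam on f(w) = (w - 2)^2, returning the final loss."""
    w, m, v = 0.0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = 2.0 * (w - 2.0)
        m = 0.9 * m + 0.1 * g
        v = 0.999 * v + 0.001 * g * g
        w -= lr * (m / (1 - 0.9 ** t)) / (math.sqrt(v / (1 - 0.999 ** t)) + 1e-8)
    return (w - 2.0) ** 2

def log_grid(low_exp, high_exp, num):
    """num learning rates spaced evenly in log10 between 10**low_exp and 10**high_exp."""
    step = (high_exp - low_exp) / (num - 1)
    return [10 ** (low_exp + i * step) for i in range(num)]

def coarse_to_fine(evaluate, low_exp=-5.0, high_exp=-1.0):
    """Pick the best learning rate per decade, then refine around it."""
    coarse = log_grid(low_exp, high_exp, 5)          # one candidate per decade
    best = min(coarse, key=evaluate)
    centre = math.log10(best)
    fine = log_grid(centre - 0.5, centre + 0.5, 5)   # half a decade either side
    return min(fine, key=evaluate)
```

A call such as `coarse_to_fine(final_loss)` returns the selected learning rate; in practice `evaluate` should report loss on the held-out validation set, not the test set.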

Frequently Asked Questions (FAQs)

Q1: What are the theoretical convergence guarantees of Adam for inverse problems like molecular property prediction?

A1: Recent theoretical work has established convergence rates for Adam when applied to linear inverse problems. Under specific conditions, the algorithm achieves a sub-exponential convergence rate in the absence of noise. When noise is present, the error consists of a decaying term and a noise term that eventually saturates, requiring a stopping criterion to avoid overfitting to noise [37]. This analysis is performed by constructing Lyapunov functions, treating the optimization process as a dynamical system.

Q2: Beyond standard Adam, what are some advanced variants recommended for chemistry applications?

A2: Several variants have been developed to address Adam's limitations:

- AMSGrad: Recommended to address the convergence failures that Adam can exhibit, which have been demonstrated even in simple convex settings [33] [6].

- BDS-Adam: A newer variant that integrates a nonlinear gradient mapping module and an adaptive variance correction to address biased estimation and early-training instability, potentially leading to higher test accuracy [2].

- NAdam: Incorporates Nesterov momentum into Adam, which can sometimes provide better performance than vanilla Adam [6].

Q3: My model performs well on the training set but poorly on the test set. How can I improve generalization?

A3:

- Apply Regularization: Use Weight Decay (L2 regularization) in your Adam optimizer. This is crucial to penalize large weights and reduce overfitting [34].

- Incorporate 3D Geometric Information: For molecular properties dependent on spatial structure, use geometric deep learning. Featurize your molecular graphs with 3D atomic coordinates and use a geometric GNN (e.g., a D-MPNN that handles 3D information) to achieve "chemical accuracy" [38].

- Use Transfer Learning: Pre-train your model on a large, diverse molecular dataset (even with lower-accuracy labels). Then, fine-tune the model on your small, high-accuracy target dataset. This Δ-ML approach can strongly enhance prediction reliability [38].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Molecular Property Prediction Experiments

| Resource / Tool | Type | Function & Application |

|---|---|---|

| PyTorch | Software Library | Primary deep learning framework for implementing GNNs, the Adam optimizer, and custom training loops [34]. |

| Directed-MPNN (D-MPNN) | Algorithm/Architecture | A robust graph neural network architecture that avoids unnecessary loops during message passing, commonly used as the backbone model for molecular graphs [35] [38]. |

| MoleculeNet | Data Benchmark | A standard benchmark collection for molecular machine learning, containing datasets like Tox21, SIDER, and ClinTox for model validation and comparison [35]. |

| ThermoG3 / ThermoCBS | Data Benchmark | Novel quantum chemical databases with over 50,000 structures each, providing high-accuracy thermochemical property data for training models on industrially-relevant molecules [38]. |

| Adaptive Checkpointing (ACS) | Methodology | A meta-learning training scheme that mitigates negative transfer in multi-task learning, essential for operating in ultra-low data regimes (e.g., with only 29 samples) [35]. |

FAQs: Optimizer Performance and Convergence

Q1: Does the Adam optimizer provably converge in molecular design tasks?

The convergence of Adam is a nuanced topic. While it is known that Adam may not converge for certain problem configurations, recent theoretical work has shown that it can converge under specific conditions relevant to molecular design. A key factor is the hyperparameter β₂ (the second-moment decay rate). Theoretical results indicate that Adam converges when β₂ is large enough (close to 1), but the minimal β₂ that ensures convergence is problem-dependent [39]. In practice, default values like β₂=0.999 in PyTorch are set to promote stability. For the finite-sum problems common in chemistry (e.g., optimizing over a dataset of molecular structures), Adam with a decaying step size can be shown to converge to a bounded region under standard smoothness and growth conditions, provided β₂ is sufficiently large and β₁ is small relative to β₂ (the analysis requires a condition of the form β₁ < √β₂) [39].

Q2: What are the common failure modes of GANs in molecular generation, and how can they be addressed?

GANs are powerful but can suffer from several common issues during training for molecular design:

- Vanishing Gradients: This occurs if the discriminator becomes too good, leaving the generator with no useful gradient signal. Remedies include using Wasserstein loss or a modified minimax loss [40].

- Mode Collapse: The generator produces a limited diversity of molecules, often getting stuck on a few outputs. Solutions involve employing Wasserstein loss or Unrolled GANs, which prevent the generator from over-optimizing for a single, fixed discriminator [40].

- Failure to Converge: GAN training can be unstable. Regularization techniques, such as adding noise to the discriminator's inputs or penalizing its weights, can help stabilize training [40].

Q3: My VAE training seems stuck; the KL loss is near zero and reconstruction loss is high. What could be wrong?

This is a common problem where the VAE ignores the latent space (resulting in a negligible KL divergence) and performs poorly on reconstruction. This is often a sign of an imbalance between the two components of the VAE loss function. Troubleshooting steps include [41]:

- Checking Gradients: Investigate if gradients are vanishing during backpropagation, which can halt learning even in moderately deep networks.

- Architecture Review: Ensure the neural network architecture (typically 6-7 layers) is suitable and that the latent space dimension is appropriately sized for your molecular data.

- Loss Function Tuning: The VAE loss is a sum of the reconstruction loss and the KL divergence. You may need to introduce a weighting parameter (β) to better balance these terms, encouraging the model to utilize the latent space effectively.

Q4: Are there enhanced versions of Adam that perform better in molecular optimization?

Yes, researchers have developed improved variants to address Adam's limitations, such as biased gradient estimation and early-training instability. One recently proposed variant is BDS-Adam [2]. It features a dual-path framework:

- A nonlinear gradient mapping path that uses a hyperbolic tangent function to reshape gradients for better local geometry capture.

- An adaptive momentum smoothing path that controls updates based on real-time gradient variance to suppress instability. These paths are combined via a gradient fusion mechanism. Empirical evaluations show that BDS-Adam can improve test accuracy on benchmark datasets compared to standard Adam [2].

Troubleshooting Guides

Guide: Diagnosing and Resolving VAE Training Failure

Problem: The KL divergence loss is very low (e.g., ~1e-10) and does not increase, while the reconstruction loss (e.g., MSE) remains high and stagnant [41].

Diagnosis: This typically indicates that the VAE is failing to use the latent space for meaningful representation, a phenomenon known as "posterior collapse." The encoder is not learning to map inputs to a structured distribution in the latent space.

Resolution Protocol:

- Verify Gradient Flow: Use debugging tools to track gradients through your network. Ensure that gradients are flowing back to the encoder layers and are not vanishing. This is a primary suspect even in networks that are not extremely deep [41].

- Adjust the Loss Weighting: Implement a β-VAE-style weighted loss. Introduce a coefficient β on the KL divergence term in the total loss function: Total Loss = Reconstruction Loss + β * KL Loss. When the KL term has collapsed to near zero, use β < 1 so that the encoder is penalized less for encoding information in the latent space; β > 1 strengthens the pull toward the prior and tends to make collapse worse.

- Review Latent Space Dimensionality: A latent space that is too large can be easy to ignore. Experiment with reducing the dimensionality of the latent vector z.

- Warm-up Schedule: Implement a training schedule where the weight of the KL loss (β) is gradually increased from 0 to its final value over the first several epochs. This allows the decoder to learn meaningful reconstruction first before being constrained by the latent space structure.
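The weighted loss and the warm-up schedule from the protocol above can be combined in a few lines. This is a sketch assuming a linear ramp; the function names, the 10-epoch default, and the final weight of 1.0 are illustrative choices.

```python
def kl_weight(epoch, warmup_epochs=10, beta_final=1.0):
    """Linear KL warm-up: 0 at epoch 0, beta_final from epoch `warmup_epochs` onward."""
    if warmup_epochs <= 0:
        return beta_final
    return beta_final * min(1.0, epoch / warmup_epochs)

def total_loss(reconstruction_loss, kl_loss, epoch, warmup_epochs=10, beta_final=1.0):
    """Total Loss = Reconstruction Loss + beta(epoch) * KL Loss."""
    return reconstruction_loss + kl_weight(epoch, warmup_epochs, beta_final) * kl_loss
```

During the first epochs the KL term contributes little, so the decoder can learn to reconstruct before the latent distribution is pulled toward the prior.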

Guide: Addressing GAN Mode Collapse in Molecular Generation

Problem: The generator produces a very limited variety of molecular structures, often repeating the same or similar outputs, regardless of the random input vector [40].

Diagnosis: This is a classic case of mode collapse. The generator has found a few outputs that temporarily fool the discriminator and over-optimizes for them, while the discriminator fails to learn its way out of this local minimum.

Resolution Protocol:

- Switch to a More Robust Loss Function: Replace the standard minimax loss with Wasserstein loss with Gradient Penalty (WGAN-GP). This provides more stable gradients and helps the discriminator (critic) become optimal without breaking the generator's learning process [40].

- Use Unrolled GANs: Implement an Unrolled GAN. This technique modifies the generator's loss to incorporate the outputs of future discriminator versions. By "looking ahead," the generator cannot over-optimize for a single, static discriminator and is encouraged to produce diverse outputs [40].

- Apply Regularization: Add regularization to the discriminator, such as weight penalty or adding noise to its inputs, to prevent it from becoming too powerful too quickly, which can trigger mode collapse [40].

- Monitor Diversity Metrics: During training, track metrics beyond loss, such as the uniqueness and novelty of generated molecular structures, to quantitatively detect mode collapse early.
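The diversity metrics in the last step can be computed with set operations. The sketch below compares raw strings, which assumes the SMILES have already been canonicalized (for example with a cheminformatics toolkit); the function names and example molecules are hypothetical.

```python
def uniqueness(generated):
    """Fraction of generated strings that are distinct."""
    return len(set(generated)) / len(generated) if generated else 0.0

def novelty(generated, training_set):
    """Fraction of distinct generated strings that do not appear in the training set."""
    unique = set(generated)
    if not unique:
        return 0.0
    return len(unique - set(training_set)) / len(unique)
```

A uniqueness value that falls steadily over training epochs is an early, quantitative sign of mode collapse, even when the adversarial losses look stable.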

Guide: Mitigating Adam Instability in Non-Convex Landscapes

Problem: Training loss oscillates wildly or fails to decrease consistently when using Adam to optimize a deep neural network for molecular property prediction.

Diagnosis: The adaptive learning rates in Adam can become unstable in the highly non-convex optimization landscapes common in deep learning, especially during the early "cold-start" phase where moment estimates are biased [2].

Resolution Protocol:

- Tune β₂ Hyperparameter: Theoretical and empirical evidence suggests that a larger β₂ (e.g., 0.99, 0.999, or even higher) promotes convergence [39]. Treat the minimal-convergence-ensuring β₂ as a problem-dependent parameter that may need tuning for your specific chemistry dataset.

- Consider an Advanced Variant: Employ an enhanced optimizer like BDS-Adam, which is explicitly designed to counter early-training instability and biased estimation through adaptive variance correction and gradient smoothing [2].

- Implement Learning Rate Decay: Use a scheduled decay for the global learning rate η (e.g., ηₖ = η₁/√k). This is a standard technique to reduce the size of parameter updates as training progresses, promoting convergence to a minimum [39].

- Apply Gradient Clipping: Cap the norm of the gradients before they are used in the Adam update rule. This prevents explosively large parameter updates that can derail training.
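Two of the steps above, learning rate decay and gradient clipping, are simple enough to write out directly. This is a framework-independent sketch with hypothetical function names; in PyTorch, `torch.nn.utils.clip_grad_norm_` performs the clipping on model parameters.

```python
import math

def clip_by_global_norm(grads, max_norm):
    """Rescale the gradient vector so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm or norm == 0.0:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]

def decayed_lr(base_lr, k):
    """eta_k = eta_1 / sqrt(k) for the 1-based step index k."""
    return base_lr / math.sqrt(k)
```

Clipping preserves the gradient direction and only limits its length, while the decay schedule shrinks every update as the step index k grows.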

Table 1: Empirical Performance of BDS-Adam vs. Standard Adam on Benchmark Datasets [2]

| Dataset | Task Type | Test Accuracy (Adam) | Test Accuracy (BDS-Adam) | Improvement |

|---|---|---|---|---|

| CIFAR-10 | Image Classification | Baseline | Baseline +9.27% | +9.27% |

| MNIST | Image Classification | Baseline | Baseline +0.08% | +0.08% |

| Gastric Pathology | Medical Image Diagnosis | Baseline | Baseline +3.00% | +3.00% |

Table 2: Common GAN Problems and Proposed Solutions [40]

| Failure Mode | Description | Recommended Solutions |

|---|---|---|

| Vanishing Gradients | Optimal discriminator provides no usable gradient for the generator. | Wasserstein loss, Modified minimax loss |

| Mode Collapse | Generator produces low diversity of outputs. | Wasserstein loss, Unrolled GANs |

| Failure to Converge | Training process is unstable and oscillates. | Input noise (Discriminator), Weight penalty (Discriminator) |

Experimental Protocols

Protocol: Training a VAE for Molecular Representation Learning

This protocol outlines the steps for training a Variational Autoencoder (VAE) to learn latent representations of molecular structures, a common step in generative molecular design [42].

Workflow Diagram: VAE for Molecular Representation

Methodology:

- Input Representation: Convert molecular structures into a machine-readable format. Common choices are extended-connectivity fingerprints (ECFPs) or SMILES strings [42].

- Encoder Network: Pass the input through an encoder network, f_θ(x). This is typically a fully connected (FC) network with 2-3 hidden layers (e.g., 512 units each) using ReLU activation. The output layer is split into two separate dense layers that output the mean μ and log-variance log(σ²) of the latent distribution q(z|x) = N(z|μ(x), σ²(x)) [42].

- Latent Sampling: Sample a latent vector z using the reparameterization trick: z = μ + σ ⋅ ε, where ε ~ N(0, I). This makes the sampling step differentiable.

- Decoder Network: Pass the latent vector z through a decoder network, g_φ(z). The decoder is a mirror of the encoder, with FC layers and ReLU activation. The final output layer uses a sigmoid or softmax activation to reconstruct the original molecular input x̂ [42].

- Loss Calculation and Training: The model is trained by minimizing the VAE loss function, which is the negative of the β-weighted evidence lower bound:

ℒ_VAE = -𝔼_{q_θ(z|x)}[log p_φ(x|z)] + β * D_KL[q_θ(z|x) || p(z)]

- Reconstruction Loss: -𝔼[log p_φ(x|z)] measures the fidelity of the reconstruction (e.g., using binary cross-entropy for fingerprints).

- KL Divergence: D_KL[...] regularizes the latent space by penalizing deviation from a prior p(z) (typically a standard normal distribution).

- Weighting Parameter β: A coefficient β can be used to control the strength of the regularization [42].

Training proceeds with a stochastic gradient-based optimizer like Adam.
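The sampling step and the loss terms from this methodology can be sketched in plain Python, written as a quantity to minimize (negative log-likelihood plus β-weighted KL). The sketch assumes a diagonal Gaussian posterior, a standard normal prior, and binary fingerprint inputs; the function names are hypothetical.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """z = mu + sigma * eps with eps ~ N(0, I); sigma = exp(0.5 * log_var)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """Closed-form D_KL[N(mu, sigma^2) || N(0, I)] for a diagonal Gaussian."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, log_var))

def bernoulli_nll(x, x_hat, tiny=1e-12):
    """Binary cross-entropy between fingerprint bits x and reconstructed probabilities x_hat."""
    return -sum(
        xi * math.log(xh + tiny) + (1 - xi) * math.log(1 - xh + tiny)
        for xi, xh in zip(x, x_hat)
    )

def vae_loss(x, x_hat, mu, log_var, beta=1.0):
    """Reconstruction loss plus beta-weighted KL divergence."""
    return bernoulli_nll(x, x_hat) + beta * kl_to_standard_normal(mu, log_var)
```

Predicting log(σ²) instead of σ keeps the variance positive without constraining the encoder output, which is why the encoder emits log-variance.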

Protocol: Integrated VAE-GAN (VGAN) Framework for Drug-Target Interaction Prediction

This protocol describes a generative framework that combines VAEs and GANs for enhanced drug-target interaction (DTI) prediction, a critical task in drug discovery [42].

Workflow Diagram: VGAN-DTI Framework

Methodology:

- VAE for Latent Space Learning: Train a VAE (as described in the preceding protocol) on a database of known drug-like molecules. The VAE learns a smooth, continuous latent space z that encodes the fundamental features of molecular structures. It also refines molecular representations and can generate novel molecules by decoding random samples from the prior p(z) [42].

- GAN for Adversarial Generation:

- Generator (G): The generator of the GAN uses the VAE's decoder or a separate network that takes a random latent vector z and outputs a generated molecular structure G(z) [42].

- Discriminator (D): The discriminator is a binary classifier that takes a molecular structure (either real from the dataset or fake from the generator) and outputs a probability D(x) of it being real. The two networks are trained adversarially, each minimizing its own loss [42]:

- Discriminator Loss: ℒ_D = -(𝔼[log D(x_real)] + 𝔼[log(1 - D(G(z)))])

- Generator Loss: ℒ_G = -𝔼[log D(G(z))]

This process encourages the GAN to generate diverse and realistic molecular structures.

- MLP for Interaction Prediction: A Multilayer Perceptron (MLP) is used for the final DTI prediction. The input to the MLP is a combined feature vector representing the drug molecule (either real or generated) and the target protein. The MLP, typically with 2-3 hidden layers and ReLU activation, processes these features to predict a scalar value indicating the probability or strength of binding interaction [42]. The overall framework allows for the generation of novel molecules and the simultaneous prediction of their biological activity.
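The adversarial losses from the GAN step can be evaluated directly from discriminator outputs. The sketch below writes both as quantities to minimize, so the discriminator objective appears with a leading minus sign, and uses the non-saturating generator loss. The function names and the small constant `tiny` (added to avoid log(0)) are assumptions.

```python
import math

def discriminator_loss(d_real, d_fake, tiny=1e-12):
    """Negative of mean[log D(x_real)] + mean[log(1 - D(G(z)))]; lower is better for D."""
    real_term = sum(math.log(p + tiny) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p + tiny) for p in d_fake) / len(d_fake)
    return -(real_term + fake_term)

def generator_loss(d_fake, tiny=1e-12):
    """Non-saturating generator loss: -mean[log D(G(z))]."""
    return -sum(math.log(p + tiny) for p in d_fake) / len(d_fake)
```

When the discriminator outputs 0.5 for every sample, its loss equals 2·ln 2 and the generator loss equals ln 2, a useful reference point when reading training curves.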

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Components for Generative Molecular Design

| Research Reagent (Component) | Function in the Experiment | Example & Context |

|---|---|---|

| BindingDB Dataset | A public repository of drug-target interaction data. | Used as the labeled dataset for training and evaluating MLP DTI prediction models [42]. |

| SMILES Strings | A line notation system for representing molecular structures as text. | Serves as a common input representation for molecular VAEs and GANs [42]. |

| Molecular Fingerprints (e.g., ECFP) | A bit vector representation of molecular structure and features. | Used as an alternative input feature vector for molecular encoders in VAEs [42]. |

| DeePMO Framework | A deep learning-based kinetic model optimization tool. | Validated for optimizing kinetic parameters across multiple fuel models; demonstrates the application of DNNs in combustion chemistry, a related domain [9]. |

| Nonlinear Gradient Mapping (tanh) | A module that adaptively reshapes raw gradients. | A core component of the BDS-Adam optimizer, enabling it to better capture local geometric structures in the loss landscape [2]. |

| Adaptive Momentum Smoothing Controller | A module that dynamically adjusts momentum based on gradient variance. | Another key component of BDS-Adam, used to suppress abrupt parameter updates and stabilize early training [2]. |

The application of the Adam optimizer in deep neural networks has become a cornerstone of modern computational chemistry research, particularly in the high-stakes field of drug discovery. This case study examines the role of Adam within a specific research project aimed at developing anti-cocaine addiction drugs, showcasing how this optimization algorithm enables researchers to efficiently train complex models that generate and evaluate potential therapeutic molecules. The adaptive learning rate capabilities of Adam make it particularly valuable for navigating the complex, high-dimensional optimization landscapes encountered in molecular property prediction and generative chemistry.

Technical FAQs: Adam Optimizer in Drug Discovery

Q1: What specific advantages does the Adam optimizer offer for deep learning projects in drug discovery, such as the anti-cocaine addiction project?

Adam provides several distinct advantages that make it well-suited for drug discovery applications:

- Adaptive Learning Rates: Unlike traditional stochastic gradient descent, Adam maintains separate adaptive learning rates for each parameter, which is crucial when working with sparse molecular data and complex neural architectures like Directed Message Passing Neural Networks (D-MPNNs) used in molecular property prediction [43] [44] [45].

- Efficient Convergence: The combination of momentum and RMSProp concepts enables faster convergence toward minima, which is valuable when training computationally expensive models on large molecular datasets [43].

- Handling Sparse Gradients: When models treat SMILES strings as token sequences or operate on molecular graph representations, many parameters receive gradients only occasionally. Adam's per-parameter scaling keeps updates for these rarely updated parameters meaningful, which makes it effective in the sparse-gradient scenarios common in chemical informatics [44].
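The per-parameter adaptation described above can be seen in a single update step. The following is a minimal sketch of the standard Adam update in pure Python, with a hypothetical function name and toy gradient values. It is meant to illustrate the mechanism, not to replace a framework optimizer.

```python
import math


def adam_step(params, grads, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """Apply one Adam update in place; t is the 1-based step count."""
    for i, g in enumerate(grads):
        m[i] = beta1 * m[i] + (1 - beta1) * g        # first moment (momentum)
        v[i] = beta2 * v[i] + (1 - beta2) * g * g    # second moment (squared gradients)
        m_hat = m[i] / (1 - beta1 ** t)              # bias correction
        v_hat = v[i] / (1 - beta2 ** t)
        params[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return params


# Two parameters whose gradients differ by five orders of magnitude.
params = [0.0, 0.0]
grads = [100.0, 0.001]
m, v = [0.0, 0.0], [0.0, 0.0]
adam_step(params, grads, m, v, t=1)
steps = [abs(p) for p in params]
```

On the first step both parameters move by roughly the learning rate despite the very different gradient magnitudes, which is the behavior that helps parameters with small or infrequent gradients keep learning.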

Q2: Our research team is experiencing slow convergence when training molecular property prediction models with Adam. What hyperparameter adjustments should we prioritize?

Slow convergence often indicates suboptimal hyperparameter configuration. Based on successful implementations in chemical deep learning, consider these adjustments:

Table: Adam Hyperparameter Tuning Recommendations for Molecular Property Prediction

| Hyperparameter | Default Value | Recommended Range for Chemistry | Impact on Training |

|---|---|---|---|

| Learning Rate (α) | 0.001 | 0.0001 - 0.01 | Critical; too high causes divergence, too low slows convergence [43] |

| β₁ (First Moment Decay) | 0.9 | 0.8 - 0.95 | Controls momentum; lower values may help with noisy molecular data [43] |

| β₂ (Second Moment Decay) | 0.999 | 0.99 - 0.999 | Higher values (closer to 1) improve stability [39] |

| Weight Decay | 0 | 1e-5 - 1e-3 | Prevents overfitting on limited chemical datasets [44] |

| Epsilon (ε) | 1e-8 | 1e-8 - 1e-7 | Prevents division by zero; minor impact on convergence [43] |

Additionally, implementing learning rate decay schedules can further improve convergence as parameters approach optimal solutions [43].
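One simple decay schedule is exponential decay per epoch. The sketch below assumes a starting rate of 0.001 and a hypothetical decay factor of 0.9 per epoch. Neither the factor nor the function name comes from the cited sources, and other schedules (step, cosine) may suit a given dataset better.

```python
def exponential_decay(initial_lr, decay_rate, epoch):
    """Learning rate after `epoch` epochs of multiplicative decay."""
    return initial_lr * decay_rate ** epoch


# Learning rate for each of 30 epochs, starting from the Adam default.
schedule = [exponential_decay(1e-3, 0.9, epoch) for epoch in range(30)]
```

The resulting list can be passed to the training loop so that the optimizer's learning rate is updated at the start of each epoch.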

Q3: Why does our PyTorch implementation of Adam yield different results compared to TensorFlow when reproducing the anti-cocaine addiction drug discovery paper?

This is a known issue that researchers have reported even when using identical hyperparameters and initial weights [46]. The differences stem from:

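- Implementation Variations: Despite following the same algorithm, underlying numerical computations and default settings differ between frameworks. For example, PyTorch's Adam defaults to ε = 1e-8, whereas the Keras Adam optimizer defaults to ε = 1e-7, so a port that relies on defaults is not using identical hyperparameters.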

- Random Number Generation: Even with identical seeds, different frameworks may produce different random sequences during training.

- Numerical Precision: Subtle differences in floating-point operations accumulate over training epochs.

To ensure reproducibility:

- Document exact framework versions and hardware configurations

- Use the same weight initialization method across implementations

- Consider averaging results across multiple runs with different seeds

- Validate with identical forward passes before beginning optimization [46]
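Two of the checks above can be sketched in a few lines. The example below is a pure-Python illustration under stated assumptions. It uses the textbook Adam update with ε added outside the square root, and it compares the PyTorch default ε = 1e-8 with the Keras default ε = 1e-7. The helper names and the tolerance values are hypothetical.

```python
import math


def first_adam_update(grad, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """Size of the first Adam update (t = 1) for a single parameter."""
    m_hat = (1 - beta1) * grad / (1 - beta1)
    v_hat = (1 - beta2) * grad ** 2 / (1 - beta2)
    return lr * m_hat / (math.sqrt(v_hat) + eps)


def outputs_match(a, b, rel_tol=1e-5, abs_tol=1e-8):
    """True if two lists of forward-pass outputs agree within tolerance."""
    return len(a) == len(b) and all(
        math.isclose(x, y, rel_tol=rel_tol, abs_tol=abs_tol) for x, y in zip(a, b)
    )


# A very small gradient makes the choice of epsilon visible.
tiny_grad = 1e-7
update_eps_1e8 = first_adam_update(tiny_grad, eps=1e-8)
update_eps_1e7 = first_adam_update(tiny_grad, eps=1e-7)
relative_gap = abs(update_eps_1e8 - update_eps_1e7) / update_eps_1e8
```

For gradients near the size of ε the two settings give noticeably different updates, while for gradients of order 1 the difference is negligible. Setting ε explicitly in both frameworks removes this source of divergence, and `outputs_match` can be applied to model outputs on a fixed batch before any optimizer step is taken.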

Q4: How critical is the β₂ hyperparameter for training stability in molecular generation tasks, and what values are recommended?

β₂ strongly affects training stability because it controls the decay rate of the second-moment estimate (the running average of squared gradients). Theoretical analysis indicates that:

- Convergence Dependency: Adam with small β₂ may diverge, while sufficiently large β₂ (typically ≥ 0.99) ensures convergence under standard assumptions [39].

- Problem-Specific Optimal Values: The minimal β₂ that ensures convergence is problem-dependent rather than a universal constant [39].

- Default Success: For molecular generation and property prediction tasks, the PyTorch default of β₂=0.999 generally provides good stability and is recommended as a starting point [43] [39].
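The effect of β₂ on the second-moment estimate can be illustrated with a synthetic gradient sequence. The sketch below is a toy example, not a reproduction of the analysis in [39]. The gradient values and function name are assumptions chosen so that one large gradient is followed by many small ones.

```python
def second_moment_estimate(grads, beta2):
    """Bias-corrected second-moment estimate after the last gradient."""
    v = 0.0
    for g in grads:
        v = beta2 * v + (1 - beta2) * g * g
    return v / (1 - beta2 ** len(grads))


# One large gradient followed by 50 small ones (synthetic, for illustration).
grads = [1.0] + [0.01] * 50
v_short_memory = second_moment_estimate(grads, beta2=0.9)
v_long_memory = second_moment_estimate(grads, beta2=0.999)

# Adam divides by sqrt(v), so a smaller v means a larger effective step.
step_scale_ratio = (v_long_memory / v_short_memory) ** 0.5
```

With β₂ = 0.9 the large gradient is forgotten quickly, the denominator shrinks, and the effective step becomes several times larger than with β₂ = 0.999. This kind of sudden step growth is one plausible route to the instability that larger β₂ values help avoid.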

Q5: What enhanced Adam variants show promise for addressing the challenges of early training instability in molecular property prediction?

Recent research has developed enhanced Adam variants that address common limitations:

- BDS-Adam: This variant incorporates a dual-path framework with nonlinear gradient mapping and adaptive momentum smoothing to address biased gradient estimation and early training instability [2].

- Theoretical Improvements: BDS-Adam includes adaptive variance correction to mitigate "cold-start" effects caused by inaccurate variance estimates in early iterations, which is particularly valuable when working with limited chemical data [2].

- Empirical Results: In benchmark evaluations, BDS-Adam demonstrated test accuracy improvements of up to 9.27% on CIFAR-10 and 3.00% on medical image datasets compared to standard Adam, suggesting potential for similar gains in chemical informatics applications [2].

Troubleshooting Common Experimental Issues

Problem: Training Loss Oscillations During Molecular Embedding Learning

Symptoms: Erratic and non-decreasing loss values during training of molecular graph neural networks.

Solutions:

- Reduce Learning Rate: Decrease the learning rate by factors of 10 (try 0.0001, 0.00001) until oscillations stabilize [43].

- Increase β₂: Adjust β₂ closer to 1 (e.g., 0.999, 0.9999) to smooth out second-moment estimates [39].

- Gradient Clipping: Implement gradient clipping to prevent exploding gradients during message passing in graph neural networks [45].

- Increase Batch Size: Larger batch sizes provide more stable gradient estimates, though this increases computational requirements.
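Gradient clipping by global norm, one of the remedies listed above, can be sketched as follows. This is a framework-independent illustration with a hypothetical function name. Deep learning frameworks provide their own clipping utilities, which should be preferred in practice.

```python
import math


def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale grads so their L2 norm does not exceed max_norm.

    Returns the (possibly rescaled) gradients and the original norm.
    """
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads), norm
    scale = max_norm / norm
    return [g * scale for g in grads], norm


# A gradient of norm 5 is rescaled to norm 1; its direction is preserved.
clipped, original_norm = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
```

Clipping is applied after the backward pass and before the optimizer step. Logging `original_norm` during training also helps show whether oscillations coincide with gradient spikes.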

Problem: Poor Generalization to Unseen Molecular Structures

Symptoms: Model performs well on training data but poorly on validation/test sets of novel molecular scaffolds.

Solutions:

- Implement Weight Decay: Add L2 regularization with values between 1e-5 and 1e-3 to prevent overfitting [44].

- Adjust Architecture: Reduce the capacity of the D-MPNN (in Chemprop) by lowering the hidden size (from the default 300 to 150-200), and tune the number of message passing steps (default 3) within the range 2-4 [45].

- Ensemble Methods: Train multiple models with different initializations and average predictions, as implemented in Chemprop's standard workflow [45].

- Data Augmentation: Employ SMILES augmentation or molecular tautomer generation to increase structural diversity in training data.

Research Reagent Solutions

Table: Essential Computational Tools for AI-Driven Drug Discovery

| Research Reagent | Function | Application in Anti-Cocaine Addiction Study |

|---|---|---|

| Chemprop | Directed Message Passing Neural Network implementation for molecular property prediction | Predicts binding affinities to dopamine transporter (DAT), serotonin transporter (SERT), and norepinephrine transporter (NET) targets [45] |

| Stochastic Generative Network Complex (SGNC) | Molecular generation platform integrating Langevin dynamics | Generates novel multi-target anti-cocaine addiction leads [47] [48] |

| D-MPNN Architecture | Graph convolutional neural network for molecular graphs | Learns atomic embeddings from molecular structure for property prediction [45] |

| Langevin Equation | Stochastic differential equation for optimization | Modifies latent space vectors in molecular generators to explore chemical space [47] [48] |

| Binding Affinity Predictors | Machine learning models for protein-ligand interaction | Estimates potential lead affinities to DAT, NET, and SERT simultaneously [47] |

Experimental Protocol: Implementing Adam in Anti-Cocaine Addiction Drug Discovery

This protocol outlines the methodology for reproducing the key experiments from the anti-cocaine addiction drug discovery case study [47] [48].

Phase 1: Molecular Property Prediction with Adam-Optimized D-MPNN

Data Preparation:

- Collect inhibition data for DAT, SERT, and NET targets from public databases and literature

- Standardize molecular representations using RDKit to convert SMILES to molecular graphs

- Split data using scaffold-based splitting to ensure generalization to novel chemotypes
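The scaffold-based split in the last step can be sketched without any chemistry library if scaffold keys are already available. The example below assumes one precomputed scaffold identifier per molecule, for instance a Bemis-Murcko scaffold SMILES computed with RDKit. The keys "A" to "D", the function name, and the greedy assignment rule are illustrative assumptions, not the exact procedure of the case study.

```python
from collections import defaultdict


def scaffold_split(scaffolds, train_fraction=0.8):
    """Split molecule indices so that no scaffold appears in both sets.

    `scaffolds` holds one precomputed scaffold key per molecule.
    """
    groups = defaultdict(list)
    for index, key in enumerate(scaffolds):
        groups[key].append(index)

    # Greedy fill: largest scaffold groups are placed first.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train, test = [], []
    train_cutoff = train_fraction * len(scaffolds)
    for members in ordered:
        if len(train) + len(members) <= train_cutoff:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Hypothetical scaffold keys for ten molecules.
scaffolds = ["A", "A", "A", "A", "B", "B", "B", "C", "C", "D"]
train_idx, test_idx = scaffold_split(scaffolds)
```

Because whole scaffold groups are assigned to one side, test-set performance reflects generalization to chemotypes the model has not seen during training.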

Model Configuration:

- Implement D-MPNN architecture using Chemprop with default hidden size (300) and message passing steps (3)

- Configure Adam optimizer with learning rate=0.001, β₁=0.9, β₂=0.999, ε=1e-8

- Set training for 30 epochs with batch size 50 and early stopping patience of 5 epochs

Training Procedure:

- Initialize ensemble of 5 models with different random seeds

- Train each model with Adam optimizer using mean squared error loss

- Monitor training and validation loss for signs of overfitting or instability

- Save model weights for prediction phase
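The early stopping rule from the model configuration (patience of 5 epochs) can be expressed as a small helper that is independent of the training framework. The function name and the validation-loss values below are hypothetical.

```python
def best_epoch_with_early_stopping(val_losses, patience=5):
    """Return (best_epoch, stop_epoch), both 0-based.

    Training stops once `patience` epochs pass without a new best loss.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1


# Synthetic validation curve: improves until epoch 2, then drifts upward.
val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.74, 0.75, 0.76, 0.77]
best_epoch, stop_epoch = best_epoch_with_early_stopping(val_losses, patience=5)
```

For this curve the best epoch is 2 and training stops at epoch 7. The weights saved for the prediction phase should be those from the best epoch, not the final one, and the same rule is applied independently to each of the five ensemble members.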

Phase 2: Molecular Generation with Stochastic Optimization

Generative Model Setup:

- Initialize Stochastic Generative Network Complex (SGNC) architecture

- Configure Langevin dynamics for latent space exploration

- Set multi-objective optimization for DAT, SERT, and NET affinity

Optimization Protocol:

- Implement Adam optimizer with reduced learning rate (0.0005) for training stability

- Use β₁=0.8 and β₂=0.999 to handle noisy gradient estimates

- Apply gradient clipping at norm 1.0 to prevent instability

Lead Compound Evaluation:

Advanced Optimization Strategies

Integrating Adam with Stochastic Methods for Molecular Generation

The anti-cocaine addiction case study integrated Adam with stochastic methods to enhance molecular generation [47] [48]. This hybrid approach combines the adaptive learning rates of Adam, used to train the networks, with the exploration benefits of stochastic sampling in the latent space:

Key Integration Benefits:

- Enhanced Exploration: Stochastic methods enable broader exploration of chemical space while Adam efficiently optimizes toward promising regions

- Stable Training: Adam's adaptive learning rates help stabilize training when combined with noisy stochastic gradients

- Multi-Objective Optimization: The combined approach successfully generated molecules with desired multi-target profiles (DAT, SERT, NET) [47]
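The exploration side of this hybrid can be illustrated with a generic overdamped Langevin update on a latent vector. The sketch below is an assumption-laden toy: the exact equation used in the SGNC is not given here, the quadratic objective stands in for a learned property loss, and the step size, temperature, and names are all hypothetical.

```python
import math
import random


def langevin_step(z, grad, step_size, temperature, rng):
    """One update: z <- z - step_size * grad + sqrt(2 * step_size * T) * noise."""
    noise_scale = math.sqrt(2.0 * step_size * temperature)
    return [
        zi - step_size * gi + noise_scale * rng.gauss(0.0, 1.0)
        for zi, gi in zip(z, grad)
    ]


def toy_gradient(z, target):
    """Gradient of 0.5 * ||z - target||^2, a stand-in for a property loss."""
    return [zi - ti for zi, ti in zip(z, target)]


rng = random.Random(0)
target = [1.0, -2.0]   # hypothetical latent point with the desired profile
z = [0.0, 0.0]
for _ in range(200):
    z = langevin_step(z, toy_gradient(z, target), step_size=0.1,
                      temperature=0.01, rng=rng)
distance = math.dist(z, target)
```

The gradient term pulls the latent vector toward regions with the desired properties, while the noise term keeps it from collapsing onto a single point, so decoding successive values of `z` would yield related but distinct candidate molecules.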

Performance Optimization and Validation

Quantitative Results from Anti-Cocaine Addiction Study

Table: Experimental Outcomes of AI-Driven Anti-Cocaine Addiction Drug Discovery

| Metric | Performance | Significance |

|---|---|---|

| Generated Leads | 15 promising multi-target candidates | Demonstrated practical utility of Adam-optimized generative models [47] |

| Target Coverage | Simultaneous prediction for DAT, SERT, NET | Enabled multi-target optimization approach [47] [48] |

| Architecture | Stochastic Generative Network Complex (SGNC) | Integrated stochastic methods with deep learning [47] |

| Validation Method | Cross-referencing with literature and expertise | Ensured reliability of AI-generated suggestions [47] [48] |

Best Practices for Validation and Reproducibility:

Implement Rigorous Verification:

Optimize Hyperparameters Systematically:

- Use Bayesian optimization or random search for hyperparameter tuning

- Conduct sensitivity analysis on critical parameters (learning rate, β₂)

- Document all hyperparameter settings for reproducibility
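A random search over the ranges recommended in the hyperparameter table (Q2) can be sketched as follows. Sampling the learning rate and weight decay on a log scale is a common convention assumed here, not something prescribed by the cited sources. The dictionary layout and function name are hypothetical.

```python
import math
import random

# Ranges taken from the hyperparameter table; the scale choice is an assumption.
SEARCH_SPACE = {
    "learning_rate": (1e-4, 1e-2, "log"),
    "beta1": (0.8, 0.95, "linear"),
    "beta2": (0.99, 0.999, "linear"),
    "weight_decay": (1e-5, 1e-3, "log"),
}


def sample_config(rng):
    """Draw one hyperparameter configuration from SEARCH_SPACE."""
    config = {}
    for name, (low, high, scale) in SEARCH_SPACE.items():
        if scale == "log":
            config[name] = math.exp(rng.uniform(math.log(low), math.log(high)))
        else:
            config[name] = rng.uniform(low, high)
    return config


rng = random.Random(42)  # fixed seed so the search itself is reproducible
trials = [sample_config(rng) for _ in range(20)]
```

Each sampled configuration is then trained and scored on the validation set. Storing `trials` alongside the results covers the documentation requirement in the last bullet.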