Advanced Geometry Optimization Workflows for Challenging Molecular Systems in Drug Discovery


Abstract

This article provides a comprehensive guide to geometry optimization workflows tailored for difficult molecular systems, such as large biomolecules and flexible pharmaceuticals. It establishes foundational principles before exploring advanced hybrid quantum-classical and machine learning methods. The content offers practical troubleshooting for common convergence failures and presents a rigorous framework for validating and benchmarking optimization results against experimental data. Designed for researchers and drug development professionals, this resource bridges computational theory with practical application to enhance the reliability and efficiency of structure-based drug design.

Understanding the Core Challenges in Molecular Geometry Optimization

In computational chemistry and drug design, molecular geometry optimization—the process of finding a stable, low-energy structure—is a foundational step. However, certain "difficult systems" consistently challenge standard algorithms and computational protocols. These systems are characterized by specific physicochemical properties that create rugged, complex energy landscapes, often leading to optimization failures, excessively long computation times, or convergence to incorrect structures. This document defines three primary categories of difficult systems—large molecules, flexible ligands, and systems with complex potential energy surfaces (PES)—within the context of a geometry optimization workflow. It further provides detailed application notes and experimental protocols to guide researchers in selecting and applying advanced computational methods to overcome these challenges, thereby enhancing the reliability and efficiency of molecular simulations in drug development.

Characterization of Difficult Systems

Large Molecules

Large molecules, often relevant in the context of biological macromolecules or catalysts, present a direct scalability challenge. The cost of quantum mechanical methods grows steeply with system size: polynomially for approximate methods (roughly \( O(N^3) \) to \( O(N^4) \) for DFT and \( O(N^7) \) for CCSD(T), where \( N \) measures system size) and exponentially for exact methods, making precise calculations prohibitively expensive [1]. Furthermore, the sheer number of degrees of freedom (e.g., bond lengths, angles, dihedrals) creates a vast search space that the optimizer must explore. This is compounded by the need to model long-range interactions accurately and by the presence of multiple distinct conformational states. As molecular size increases, conventional electronic structure methods for geometry optimization become intractable, necessitating approaches such as fragmentation or embedding theories [1].

Flexible Ligands

Flexible ligands are small molecules, typically drug-like compounds, with a large number of rotatable bonds. Upon binding to their protein targets, these ligands can experience significant conformational strain. The range of conformational energies observed for ligands in protein-ligand crystal structures has been a subject of extensive study, with reported values ranging from less than 3 kcal/mol to over 25 kcal/mol [2]. This discrepancy highlights the challenge in determining whether a high-energy conformation is a real phenomenon or an artifact of crystal structure determination. For optimizers, the key difficulty lies in efficiently sampling the vast conformational space to locate the true bioactive conformation without being misled by the noisy energy landscapes often produced by neural network potentials (NNPs) or other approximate methods [3] [2].

Complex Potential Energy Surfaces

Systems with complex potential energy surfaces (PES) are characterized by a high density of critical points—not just minima, but also saddle points (transition states). This complexity arises from factors such as frustrated interactions, competing molecular packing modes, or the presence of multiple metastable states with similar energies. Traditional local optimizers can easily become trapped in these local minima, failing to locate the global minimum. The problem is exacerbated when using modern NNPs as replacements for density functional theory (DFT), as some optimizers are more sensitive than others to the inherent noise and approximations in these machine-learned surfaces [3]. Accurately mapping these landscapes requires enhanced sampling techniques that go beyond simple local optimization.

Table 1: Key Characteristics of Difficult Molecular Systems

| System Category | Defining Features | Primary Optimization Challenges | Common Manifestations |
|---|---|---|---|
| Large Molecules | High atom count (hundreds of atoms or more); extensive electron-correlation requirements [1]. | Steep (polynomial, or exponential for exact methods) growth of computational cost; large number of degrees of freedom. | Inability to complete optimization within feasible time/resource constraints. |
| Flexible Ligands | Many rotatable bonds; reported conformational energy penalties upon binding ranging from below 3 to above 25 kcal/mol [2]. | Efficient sampling of vast conformational space; distinguishing true strain from refinement error. | Failure to reproduce the bioactive conformer; optimization to high-energy saddle points. |
| Complex PES | Rugged landscape with many local minima and saddle points; noisy gradients from NNPs [3]. | Convergence to local, not global, minima; optimizer failure due to gradient noise. | Inconsistent optimization outcomes; high variability in located minima depending on initial structure. |

Quantitative Benchmarking of Optimizer Performance

Selecting an appropriate geometry optimizer is critical for successfully handling difficult systems. Recent benchmarks have systematically evaluated the performance of various optimizers when paired with different NNPs on a set of drug-like molecules. The key metrics for evaluation include the number of successful optimizations (convergence within a set step limit), the average number of steps to convergence, and the quality of the final structure, measured by the number of true local minima found (structures with zero imaginary frequencies) [3].

The following table summarizes benchmark data for different optimizer and NNP combinations, highlighting that performance is highly dependent on the specific pairing.

Table 2: Optimizer Performance Benchmark with Various Neural Network Potentials (NNPs) [3]

| Optimizer | NNP / Method | Successfully Optimized (of 25) | Average Steps | Minima Found |
|---|---|---|---|---|
| ASE/L-BFGS | OrbMol | 22 | 108.8 | 16 |
| ASE/L-BFGS | OMol25 eSEN | 23 | 99.9 | 16 |
| ASE/L-BFGS | AIMNet2 | 25 | 1.2 | 21 |
| ASE/L-BFGS | GFN2-xTB | 24 | 120.0 | 20 |
| ASE/FIRE | OrbMol | 20 | 109.4 | 15 |
| ASE/FIRE | OMol25 eSEN | 20 | 105.0 | 14 |
| ASE/FIRE | AIMNet2 | 25 | 1.5 | 21 |
| ASE/FIRE | GFN2-xTB | 15 | 159.3 | 12 |
| Sella | OrbMol | 15 | 73.1 | 11 |
| Sella | OMol25 eSEN | 24 | 106.5 | 17 |
| Sella | AIMNet2 | 25 | 12.9 | 21 |
| Sella | GFN2-xTB | 25 | 108.0 | 17 |
| Sella (internal) | OrbMol | 20 | 23.3 | 15 |
| Sella (internal) | OMol25 eSEN | 25 | 14.9 | 24 |
| Sella (internal) | AIMNet2 | 25 | 1.2 | 21 |
| Sella (internal) | GFN2-xTB | 25 | 13.8 | 23 |
| geomeTRIC (tric) | OrbMol | 1 | 11.0 | 1 |
| geomeTRIC (tric) | OMol25 eSEN | 20 | 114.1 | 17 |
| geomeTRIC (tric) | AIMNet2 | 14 | 49.7 | 13 |
| geomeTRIC (tric) | GFN2-xTB | 25 | 103.5 | 23 |

Key Insights from Benchmark Data:

- Optimizer Robustness Varies Dramatically: An optimizer's performance is not intrinsic but depends heavily on the potential it is paired with. AIMNet2 converges all 25 molecules with every optimizer except geomeTRIC (tric) (14/25), whereas OrbMol is highly sensitive to the optimizer choice, ranging from 22/25 with ASE/L-BFGS to 1/25 with geomeTRIC (tric) [3].

- Internal Coordinates Enhance Efficiency: Sella run in internal coordinates (Sella (internal)) converges in far fewer steps than Sella in Cartesian coordinates for every potential tested (e.g., 14.9 vs. 106.5 average steps with OMol25 eSEN) and is usually faster than the other optimizers as well, highlighting the importance of the coordinate system [3].

- Success Does Not Guarantee a Minimum: Successful convergence does not ensure that the final structure is a true minimum. For example, ASE/L-BFGS converged 22 structures with OrbMol, but only 16 were true minima (no imaginary frequencies) [3]. This underscores the need for post-optimization frequency analysis.
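The gap between convergence and true minima can be quantified directly from Table 2. The short Python sketch below copies a few rows of the table and reports, for each optimizer/potential pair, the share of converged runs that ended at a true minimum. The dictionary layout and the helper name `minimum_yield` are illustrative, not part of any benchmark code.

```python
# (optimizer, potential) -> (successfully optimized, true minima found), out of 25 molecules.
# Values copied from Table 2.
TABLE_2_SUBSET = {
    ("ASE/L-BFGS", "OrbMol"): (22, 16),
    ("ASE/FIRE", "GFN2-xTB"): (15, 12),
    ("Sella", "OrbMol"): (15, 11),
    ("Sella (internal)", "OMol25 eSEN"): (25, 24),
    ("geomeTRIC (tric)", "AIMNet2"): (14, 13),
}
N_MOLECULES = 25


def minimum_yield(converged: int, minima: int) -> float:
    """Fraction of converged optimizations whose final structure is a true minimum."""
    return minima / converged if converged else 0.0


for (optimizer, potential), (converged, minima) in TABLE_2_SUBSET.items():
    print(f"{optimizer:17} {potential:12} "
          f"converged {converged / N_MOLECULES:4.0%}, "
          f"minima among converged {minimum_yield(converged, minima):4.0%}, "
          f"minima overall {minima / N_MOLECULES:4.0%}")

# Ranking by true minima found (not by convergence count) rewards the pairing
# that actually delivers usable structures.
best = max(TABLE_2_SUBSET, key=lambda key: TABLE_2_SUBSET[key][1])
print("Most true minima:", best)
```

For the L-BFGS/OrbMol row, 22 converged runs but only 16 minima give a minimum yield of about 73%, which a convergence count alone would hide.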

Detailed Protocols for Handling Difficult Systems

Protocol A: Geometry Optimization for Large Molecules using a Hybrid Quantum-Classical Framework

Application: Determining the equilibrium geometry of molecules too large for a direct (unfragmented) quantum treatment on current hardware; the framework has been demonstrated on glycolic acid (C₂H₄O₃) [1].

Principle: This protocol uses Density Matrix Embedding Theory (DMET) to partition a large molecule into smaller fragments, reducing the quantum resource requirements. It then integrates DMET with the Variational Quantum Eigensolver (VQE) in a co-optimization framework that simultaneously optimizes the molecular geometry and quantum variational parameters, avoiding the high cost of nested optimization loops [1].

Step-by-Step Workflow:

- System Partitioning: Divide the large molecular system into smaller, computationally tractable fragments.

- DMET Embedding: For each fragment, construct an embedded Hamiltonian \( H_{\text{emb}} \) that describes the fragment plus a quantum-mechanically accurate "bath" representing its environment [1]. The embedded Hamiltonian is defined as \( H_{\text{emb}} = P H P \), where \( P \) is a projector onto the space of the fragment and bath orbitals and \( H \) is the full system Hamiltonian.

- Co-optimization Setup: Initialize the molecular geometry and the VQE ansatz parameters for the embedded problem.

- Simultaneous Optimization: In a single iterative loop, use a classical optimizer to simultaneously update both the nuclear coordinates (geometry) and the quantum circuit parameters. The VQE is used to compute the energy of the embedded system at each step.

- Convergence Check: The cycle continues until both the geometry parameters and the energy converge to within a predefined threshold.

- Validation: Compare the predicted equilibrium geometry with high-level classical reference calculations, if available.
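The co-optimization idea, updating nuclear coordinates and variational parameters in the same loop instead of converging the VQE at every geometry, can be illustrated with a toy model. The Python sketch below rests on strong assumptions: a hypothetical two-level Hamiltonian \( H(R) \) with one geometric parameter \( R \) replaces the DMET-embedded Hamiltonian, a one-parameter real ansatz replaces the quantum circuit, and energies are evaluated classically with finite-difference gradients. It is not the implementation of [1].

```python
import math

# Hypothetical two-level model standing in for an embedded Hamiltonian:
#   H(R) = [[(R - 1)^2, 0.2], [0.2, (R - 1)^2 + 1]]
COUPLING = 0.2


def energy(R: float, theta: float) -> float:
    """<psi(theta)|H(R)|psi(theta)> for the ansatz psi = cos(theta)|0> + sin(theta)|1>."""
    c, s = math.cos(theta), math.sin(theta)
    diag = (R - 1.0) ** 2
    return diag * c * c + (diag + 1.0) * s * s + 2.0 * COUPLING * s * c


def co_optimize(R=1.6, theta=0.0, lr=0.1, tol=1e-8, max_iter=10_000, h=1e-6):
    """Update geometry R and ansatz angle theta together in a single loop."""
    for iteration in range(max_iter):
        # Central finite differences stand in for analytic nuclear gradients
        # and circuit-parameter gradients.
        grad_R = (energy(R + h, theta) - energy(R - h, theta)) / (2.0 * h)
        grad_theta = (energy(R, theta + h) - energy(R, theta - h)) / (2.0 * h)
        if math.hypot(grad_R, grad_theta) < tol:
            break
        R -= lr * grad_R
        theta -= lr * grad_theta
    return R, theta, energy(R, theta), iteration


R_eq, theta_opt, E_min, n_iter = co_optimize()
print(f"R = {R_eq:.6f}, E = {E_min:.6f}, iterations = {n_iter}")
```

Starting from \( R = 1.6 \), the single loop drives \( R \) to the toy equilibrium value 1 and the energy to the exact lowest eigenvalue \( 0.5 - \sqrt{0.29} \approx -0.0385 \), without ever fully converging \( \theta \) at an intermediate geometry.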

Protocol B: Managing Flexible Ligands and Complex PES with Enhanced Sampling

Application: Studying binding conformations of flexible ligands, protein folding, or any process involving transitions over large energy barriers.

Principle: This protocol employs enhanced sampling methods like umbrella sampling and metadynamics to overcome energy barriers and explore the free energy landscape of a system. These methods are often coupled with molecular dynamics (MD) simulations and machine learning analysis to handle large trajectories [4].

Step-by-Step Workflow:

- System Preparation: Obtain an initial structure (e.g., from a protein-ligand crystal structure) and solvate it in an explicit solvent box.

- Collective Variable (CV) Selection: Identify one or more CVs (e.g., a key torsion angle in a ligand, a distance, or a linear combination of structural parameters) that describe the transition of interest [4].

- Equilibration: Perform standard MD simulation to equilibrate the system.

- Enhanced Sampling:

  - Umbrella Sampling: Run multiple independent simulations, each with a harmonic bias potential applied to a CV to restrain it to a specific window along its path. This forces the system to sample regions it might not visit in an unbiased simulation [4].

  - Metadynamics: In a single simulation, add a history-dependent repulsive bias (often Gaussian potentials) to the CVs. This bias fills up the free energy minima, encouraging the system to escape local minima and explore new regions [4].

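- Free Energy Construction: For umbrella sampling, use the Weighted Histogram Analysis Method (WHAM) to combine data from all windows and reconstruct the unbiased free energy landscape. For metadynamics, the free energy is recovered from the accumulated bias: in standard metadynamics \( F(s) \approx -V_{\text{bias}}(s) + C \) at long times, and in well-tempered metadynamics \( F(s) \approx -\frac{T + \Delta T}{\Delta T} V_{\text{bias}}(s) + C \).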

- Trajectory Analysis: Apply machine learning methods (e.g., Markov State Models - MSM, VAMPnet) to large MD trajectories to identify metastable states and transition pathways [4].

- Structure Extraction: Identify low-energy minima from the reconstructed free energy surface and extract corresponding molecular structures for further analysis.
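The WHAM step of the Free Energy Construction stage can be sketched in plain Python. To keep the example self-contained and deterministic, it assumes synthetic histograms: the exact expected counts of each biased Boltzmann distribution for a hypothetical one-dimensional double-well free energy \( F(s) = 5(s^2 - 1)^2 \) (in units of \( k_BT \)), sampled by seven harmonic umbrella windows. In a real study the histograms would be binned from the biased MD trajectories; the window placement, force constant, and sample counts here are illustrative only.

```python
import math

BETA = 1.0                                       # 1 / (k_B T), reduced units
K_SPRING = 5.0                                   # umbrella force constant (illustrative)
N_PER_WINDOW = 10_000                            # samples per window (illustrative)
grid = [-1.5 + 0.05 * k for k in range(61)]      # bins along the CV s
centers = [-1.5 + 0.5 * i for i in range(7)]     # umbrella window centres


def true_profile(s):
    """Hypothetical double-well free energy with a 5 k_B T barrier at s = 0."""
    return 5.0 * (s * s - 1.0) ** 2


# c[i][k] = exp(-beta * w_i(s_k)) for the harmonic bias w_i(s) = K/2 (s - s_i)^2
c = [[math.exp(-BETA * 0.5 * K_SPRING * (s - s0) ** 2) for s in grid] for s0 in centers]

# Synthetic histograms: exact expected counts under each biased distribution.
hist = []
for ci in c:
    w = [math.exp(-BETA * true_profile(s)) * ck for s, ck in zip(grid, ci)]
    z = sum(w)
    hist.append([N_PER_WINDOW * x / z for x in w])


def wham(hist, c, tol=1e-12, max_iter=20_000):
    """Solve the WHAM equations self-consistently; return F on the grid (min = 0)."""
    n_win, n_bin = len(hist), len(hist[0])
    total = [sum(h[k] for h in hist) for k in range(n_bin)]   # sum_i n_i(s_k)
    exp_f = [1.0] * n_win                                      # exp(+beta f_i), f_i = 0 initially
    for _ in range(max_iter):
        # P(s_k) = sum_i n_i(s_k) / sum_i N_i exp(-beta (w_i(s_k) - f_i))
        p = [total[k] / sum(N_PER_WINDOW * c[i][k] * exp_f[i] for i in range(n_win))
             for k in range(n_bin)]
        # exp(-beta f_i) = sum_k P(s_k) exp(-beta w_i(s_k))
        new = [1.0 / sum(p[k] * c[i][k] for k in range(n_bin)) for i in range(n_win)]
        new = [x / new[0] for x in new]                        # gauge choice: f_0 = 0
        change = max(abs(math.log(a / b)) for a, b in zip(new, exp_f))
        exp_f = new
        if change < tol:
            break
    F = [-math.log(x) / BETA for x in p]
    f_min = min(F)
    return [x - f_min for x in F]


F = wham(hist, c)
print(f"barrier at s = 0: {F[30]:.4f} kT")
```

Because the histograms here are exact, the recovered profile matches the input \( F(s) \) to numerical precision, including the 5 \( k_BT \) barrier at \( s = 0 \); with real, noisy histograms the agreement is only statistical.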

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Computational Tools for Molecular Geometry Optimization

| Tool Name | Type | Primary Function | Application in Difficult Systems |
|---|---|---|---|
| Sella [3] | Geometry Optimizer | Optimization of molecular structures (minima and transition states) in Cartesian or internal coordinates. | In internal coordinates, converged most benchmark cases with the NNPs tested, in the fewest steps [3]. |
| geomeTRIC [3] | Geometry Optimizer | General-purpose optimization using translation-rotation internal coordinates (TRIC) with a quasi-Newton (BFGS) Hessian update. | Robust with GFN2-xTB (25/25), but highly variable with NNPs (1/25 with OrbMol) [3]. |
| L-BFGS (in ASE) [3] | Geometry Optimizer | Limited-memory quasi-Newton (BFGS) optimization algorithm. | A classic, widely used algorithm; can be misled by noisy PES. |
| FIRE (in ASE) [3] | Geometry Optimizer | Fast inertial relaxation engine, a first-order method based on damped molecular dynamics. | Fast and noise-tolerant; may be less precise for complex molecular systems. |
| DMET [1] | Embedding Theory | Partitions a large molecular system into smaller fragments. | Reduces quantum resource requirements, enabling optimization of large molecules. |
| VQE [1] | Quantum Algorithm | Approximates molecular ground-state energies on quantum devices. | Provides accurate energy calculations within hybrid quantum-classical frameworks. |
| Markov State Models (MSM) [4] | Analysis Method | Extracts long-timescale kinetics from many short MD simulations. | Analyzes complex conformational changes and identifies metastable states in flexible systems. |
| VAMPnet [4] | Analysis Method | Deep learning for molecular kinetics; maps coordinates to Markov states. | End-to-end analysis of MD trajectories for interpretable kinetic models of complex PES. |

Geometry optimization workflows are fundamental to advancing research in drug development and material science, enabling the prediction of molecular properties, reactivity, and interactions. For difficult molecular systems—such as those with complex potential energy surfaces (PES), strong electron correlation, or flexible structures—this process encounters significant computational bottlenecks that can halt progress. These challenges are particularly acute in the Noisy Intermediate-Scale Quantum (NISQ) era, where limitations of quantum hardware impose strict constraints on computational methods.

This application note details three primary bottlenecks—qubit requirements, sampling space complexity, and convergence barriers—within the context of modern computational chemistry workflows. We provide a structured analysis of these limitations, supported by quantitative data from recent research, and offer detailed protocols and resource toolkits designed to help researchers navigate these challenges effectively.

Bottleneck Analysis & Experimental Data

The following sections break down the core computational bottlenecks, presenting key quantitative findings and methodological insights from recent studies.

Qubit Requirements and Reduction Strategies

The most immediate constraint for quantum-enhanced geometry optimization is the number of available qubits. Under standard fermion-to-qubit mappings (e.g., Jordan-Wigner), one qubit is needed per spin orbital, that is, twice the number of spatial molecular orbitals. This quickly exhausts current processors: even a water molecule in the cc-pVDZ basis (24 spatial orbitals) requires 48 qubits, and methanol (48 spatial orbitals) requires 96 qubits [5]. Virtual orbitals typically make up 70–90% of the orbital space, so they dominate the qubit count even though many of them contribute only modestly to the correlation energy.
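The qubit counts quoted above follow from simple bookkeeping. The Python sketch below assumes spherical cc-pVDZ basis functions (5 for H, 14 for C, N, and O), one qubit per spin orbital as in the Jordan-Wigner mapping, no frozen core or active-space truncation, and a closed-shell reference; the helper names are illustrative. Under these assumptions water needs 48 qubits and methanol 96.

```python
# Spherical cc-pVDZ basis functions per element: H = 2s1p = 5; C, N, O = 3s2p1d = 14.
CC_PVDZ_FUNCTIONS = {"H": 5, "C": 14, "N": 14, "O": 14}
ATOMIC_NUMBER = {"H": 1, "C": 6, "N": 7, "O": 8}


def n_spatial_orbitals(atoms):
    """One molecular orbital per contracted basis function."""
    return sum(CC_PVDZ_FUNCTIONS[a] for a in atoms)


def jordan_wigner_qubits(atoms):
    """One qubit per spin orbital, i.e. twice the number of spatial orbitals."""
    return 2 * n_spatial_orbitals(atoms)


def virtual_fraction(atoms, charge=0):
    """Share of spatial orbitals left unoccupied in a closed-shell reference."""
    n_occupied = (sum(ATOMIC_NUMBER[a] for a in atoms) - charge) // 2
    n_total = n_spatial_orbitals(atoms)
    return (n_total - n_occupied) / n_total


water = ["O", "H", "H"]
methanol = ["C", "O", "H", "H", "H", "H"]
print(jordan_wigner_qubits(water), jordan_wigner_qubits(methanol))   # 48 96
print(f"{virtual_fraction(water):.0%} {virtual_fraction(methanol):.0%} virtual")
```

Both virtual fractions (19 of 24 and 39 of 48 orbitals) fall inside the 70–90% range, which is why compressing the virtual space is the natural target for qubit reduction.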

Table 1: Qubit Reduction Strategies and Performance

| Method | Core Principle | Test System | Qubit Reduction | Reported Accuracy |
|---|---|---|---|---|
| Virtual Orbital Fragmentation (FVO) [5] | Partitions virtual orbital space into fragments; energy recovered via many-body expansion. | Various small molecules | 40%–66% | 2-body: < 3 kcal/mol; 3-body: < 1 kcal/mol |
| Randomized Orbital Sampling (RO-VQE) [6] | Employs randomized procedure for active space selection to reduce orbital count. | H₂, H₄ chains | Enables larger basis set use (e.g., 6-31G) on limited qubits | Matches conventional VQE accuracy in benchmarks |
| Consensus-based Qubit Configuration [7] | Optimizes physical qubit positions in neutral-atom systems to tailor entanglement for specific problems. | Random Hamiltonians, small molecules | (Indirectly mitigates resource needs) | Faster convergence, lower error in ground state minimization |

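The Virtual Orbital Fragmentation (FVO) method directly attacks this problem by systematically partitioning the virtual orbital space \( V \) into \( N \) non-overlapping fragments \( \{V_1, V_2, \ldots, V_N\} \). The total correlation energy is recovered through a many-body expansion, analogous to spatial fragmentation methods [5]:

$$E_{\text{FVO}}^{(n)} = \sum_{i=1}^{N} \Delta E_i + \sum_{i<j} \Delta E_{ij} + \sum_{i<j<k} \Delta E_{ijk} + \cdots \qquad (1)$$

where the expansion is truncated after the \( n \)-body terms, and the increments \( \Delta E_i \), \( \Delta E_{ij} \), and \( \Delta E_{ijk} \) are defined in Protocol 1 below.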

Sampling Complex Probability Distributions

Sampling from complex, multi-modal probability distributions is a critical step in exploring molecular energy landscapes, particularly for understanding reaction pathways and protein-ligand interactions. Classical Markov chain Monte Carlo (MCMC) methods often become metastable, remaining trapped in local energy minima for long periods, especially when the target distribution is not log-concave, as on rugged PESs [8].

An emerging quantum algorithm offers a provable speedup for this task. Instead of simulating a classical random walk, it prepares a quantum state that directly encodes the target probability distribution, which is linked to the ground state of a system-specific operator; sampling is then performed by measuring this state. The method's cost depends on the spectral gap \( \Delta \) of the system as \( \sim 1/\sqrt{\Delta} \), compared with \( \sim 1/\Delta \) classically, a substantial acceleration for systems with small gaps [8]: for \( \Delta = 10^{-6} \), the scaling factors are roughly \( 10^{3} \) versus \( 10^{6} \). The approach has also been extended to replica exchange (parallel tempering), a workhorse of classical sampling.

Convergence Barriers in Optimization

Convergence to a minimum energy geometry is hindered by two main factors: the presence of barren plateaus in the parameter-cost function landscape and the limitations of classical optimizers when dealing with the divergent nature of physical interactions.

The consensus-based optimization (CBO) algorithm [7] addresses this by optimizing the physical configuration of qubits in neutral-atom quantum systems. On these platforms the Rydberg interaction strength scales as \( R^{-6} \) with interatomic distance \( R \), so gradients with respect to atom positions diverge at short separations and are ineffective for optimization. CBO instead initializes multiple agents that sample the configuration space, each partially optimizing the control pulses for its own qubit positions. Information is shared across agents, guiding the swarm toward a consensus configuration that accelerates pulse-optimization convergence and helps mitigate barren plateaus [7].
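The core consensus-based optimization update is short enough to sketch. The Python example below is a generic one-dimensional CBO loop, not the algorithm of [7]: a hypothetical, non-smooth cost \( |r^{-6} - 1| \) of an interatomic distance \( r \) stands in for the pulse-optimization loss, so that its derivative blows up as \( r \to 0 \). Agents drift toward a Boltzmann-weighted consensus point and receive noise proportional to their distance from it; no gradients are used. All parameter values are illustrative.

```python
import math
import random


def cost(r: float) -> float:
    """Hypothetical non-smooth cost with an R^-6 term; global minimum at r = 1.

    Its derivative grows without bound as r -> 0, which is what makes
    gradient-based position updates unreliable.
    """
    return abs(r ** -6 - 1.0)


def cbo_minimize(f, lo=0.5, hi=3.0, n_agents=200, alpha=50.0, lam=1.0,
                 sigma=0.7, dt=0.05, steps=400, seed=0):
    """Generic 1D consensus-based optimization (derivative-free)."""
    rng = random.Random(seed)
    x = [rng.uniform(lo, hi) for _ in range(n_agents)]
    consensus = x[0]
    for _ in range(steps):
        fx = [f(xi) for xi in x]
        f_best = min(fx)
        # Boltzmann-like weights; subtracting f_best keeps the best weight at 1.
        w = [math.exp(-alpha * (fi - f_best)) for fi in fx]
        consensus = sum(wi * xi for wi, xi in zip(w, x)) / sum(w)
        # Drift toward the consensus point plus noise proportional to the distance from it.
        x = [xi - lam * (xi - consensus) * dt
             + sigma * abs(xi - consensus) * math.sqrt(dt) * rng.gauss(0.0, 1.0)
             for xi in x]
        x = [max(xi, lo) for xi in x]          # keep agents out of the divergent r -> 0 region
    return consensus


r_best = cbo_minimize(cost)
print(f"consensus distance: {r_best:.4f}")
```

Because the noise shrinks as agents approach the consensus point, the swarm contracts onto the best region it has found; changing `seed` changes the individual trajectories but should not change this qualitative behaviour.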

Detailed Protocols

Protocol 1: Virtual Orbital Fragmentation with VQE

This protocol outlines the steps for implementing the FVO method to reduce qubit requirements in a VQE calculation [5].

- Application: Reducing qubit counts for ground state energy calculations of medium-sized molecules on NISQ devices.

- Primary Materials: Molecular geometry, classical computing resource, access to a quantum processor/emulator.

Procedure:

- Initial Setup: Generate the molecular Hamiltonian in a standard Gaussian basis set (e.g., cc-pVDZ) and solve the Hartree-Fock equations to obtain the canonical molecular orbitals.

- Orbital Localization: Localize the entire virtual orbital space using a standard scheme (e.g., Boys or Pipek-Mezey).

- Fragment Assignment: Partition the localized virtual orbitals \( V \) into \( N \) non-overlapping fragments \( \{V_1, V_2, \ldots, V_N\} \). Assign each virtual orbital to the atomic center or molecular fragment to which it is spatially closest.

- Many-Body Expansion Calculation:
  - 1-Body Terms: For each fragment \( i \), perform a VQE calculation including the full occupied space \( O \) and the virtual fragment \( V_i \) to compute \( \Delta E_i = E(O + V_i) - E(O) \).
  - 2-Body Terms: For each unique fragment pair \( (i, j) \), perform a VQE calculation with \( O + V_i + V_j \) to compute the correction \( \Delta E_{ij} = E(O + V_i + V_j) - E(O + V_i) - E(O + V_j) + E(O) \).
  - 3-Body Terms (optional): For higher accuracy, compute trimer corrections \( \Delta E_{ijk} \) analogously.

- Energy Synthesis: Reconstruct the total correlation energy using the many-body expansion formula (Eq. 1). The 2-body expansion already keeps errors below 3 kcal/mol in the reported tests; including 3-body terms brings them below 1 kcal/mol, i.e., within chemical accuracy [5].
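The bookkeeping of the many-body expansion can be checked without a quantum device. In the Python sketch below, `toy_energy` is a hypothetical stand-in for the VQE energy \( E(O + V_i + \ldots) \) of a given set of virtual fragments, built from invented one-, two-, and three-body contributions; `increment` forms each n-body term by inclusion–exclusion over subsets, which reproduces the \( \Delta E_i \) and \( \Delta E_{ij} \) formulas above. Truncating at order 3 recovers the toy correlation energy exactly only because the toy model has no higher-order terms.

```python
from itertools import combinations

# Hypothetical fragment contributions (Hartree) defining a toy energy model.
ONE_BODY = [-0.010, -0.020, -0.015, -0.005]
TWO_BODY = {(0, 1): -0.002, (1, 2): -0.001, (0, 3): 0.0005}
THREE_BODY = {(0, 1, 2): -0.0003}
E_REFERENCE = -76.0  # hypothetical E(O): occupied space only, no virtual fragments


def toy_energy(fragments):
    """Stand-in for a VQE energy E(O + union of the given virtual fragments)."""
    included = set(fragments)
    energy = E_REFERENCE + sum(ONE_BODY[i] for i in included)
    energy += sum(v for pair, v in TWO_BODY.items() if set(pair) <= included)
    energy += sum(v for trio, v in THREE_BODY.items() if set(trio) <= included)
    return energy


def increment(energy, subset):
    """n-body increment: sum over subsets T of S of (-1)^(|S|-|T|) E(O + V_T)."""
    return sum((-1) ** (len(subset) - k) * energy(t)
               for k in range(len(subset) + 1)
               for t in combinations(subset, k))


def mbe_energy(energy, n_fragments, order):
    """Correlation energy from the many-body expansion truncated at `order`."""
    return sum(increment(energy, subset)
               for n in range(1, order + 1)
               for subset in combinations(range(n_fragments), n))


exact = toy_energy(range(4)) - toy_energy(())
for order in (1, 2, 3):
    print(order, round(mbe_energy(toy_energy, 4, order), 6), round(exact, 6))
```

For a single fragment, `increment` reduces to \( E(O + V_i) - E(O) \), and for a pair it yields the four-term expression of step 2, so the same function covers every order.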

Protocol 2: Quantum-Accelerated Sampling for Complex Landscapes

This protocol describes the setup for utilizing a quantum algorithm to sample from a complex molecular energy distribution [8].

- Application: Efficiently sampling configurations from rugged potential energy surfaces, such as those encountered in protein folding or molecular cluster analysis.

- Primary Materials: A defined system Hamiltonian (H) and a quantum computer capable of executing quantum phase estimation and linear algebra subroutines.

Procedure:

- Problem Formulation: Map the target probability distribution \( \pi(x) \) to the ground state \( |\psi_g\rangle \) of a corresponding quantum Hamiltonian \( H \).

- Operator Construction: Design a quantum circuit that implements the operator \( e^{iHt} \) using techniques such as quantum signal processing.

- Ground State Preparation: Prepare the quantum state \( |\psi_g\rangle \) that encodes the distribution \( \pi(x) \). This step typically combines quantum phase estimation with amplitude amplification.

- Quantum Sampling: Measure the prepared quantum state in the appropriate basis. Each measurement outcome constitutes a single sample drawn from \( \pi(x) \).

- Replica Exchange Integration (Optional): To further enhance sampling across energy barriers, encode multiple temperature layers and a swap mechanism into a single, larger quantum operator, allowing the quantum walk to explore across temperatures.
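The link between a distribution and a ground state in the Problem Formulation step can be checked classically. One standard construction, assumed here ([8] may use a different operator), starts from a reversible Markov chain \( P \) with stationary distribution \( \pi \) and forms the discriminant matrix \( D_{xy} = \sqrt{P_{xy} P_{yx}} \); the vector with amplitudes \( \sqrt{\pi(x)} \) is then the eigenvalue-1 eigenvector of \( D \), i.e., the ground state of \( H = I - D \). The Python sketch below only verifies this relation on a hypothetical five-state landscape; it does not implement the quantum algorithm.

```python
import math


def metropolis_matrix(energies, beta):
    """Row-stochastic Metropolis transition matrix on a 1D chain of states.

    Each step proposes the left or right neighbour with probability 1/2 and
    accepts with min(1, exp(-beta * dE)); proposals off the chain are rejected.
    """
    n = len(energies)
    P = [[0.0] * n for _ in range(n)]
    for x in range(n):
        for y in (x - 1, x + 1):
            if 0 <= y < n:
                P[x][y] = 0.5 * min(1.0, math.exp(-beta * (energies[y] - energies[x])))
        P[x][x] = 1.0 - sum(P[x])          # probability of staying put
    return P


def discriminant(P):
    """D[x][y] = sqrt(P[x][y] * P[y][x]); symmetric for a reversible chain."""
    n = len(P)
    return [[math.sqrt(P[x][y] * P[y][x]) for y in range(n)] for x in range(n)]


energies = [0.0, 2.0, 0.5, 3.0, 0.2]      # hypothetical rugged landscape (units of kT)
beta = 1.0
weights = [math.exp(-beta * e) for e in energies]
pi = [w / sum(weights) for w in weights]   # target Boltzmann distribution

D = discriminant(metropolis_matrix(energies, beta))
psi = [math.sqrt(p) for p in pi]           # amplitudes sqrt(pi(x))
D_psi = [sum(D[x][y] * psi[y] for y in range(len(psi))) for x in range(len(psi))]
residual = max(abs(a - b) for a, b in zip(D_psi, psi))
print(f"max |D psi - psi| = {residual:.1e}")  # rounding level: psi is the ground state of I - D

# Measuring psi in the computational basis returns state x with probability
# psi[x]**2, which equals pi[x].
```

The relation follows from detailed balance, \( \pi(x) P_{xy} = \pi(y) P_{yx} \), which gives \( D_{xy} = P_{xy}\sqrt{\pi(x)/\pi(y)} \) and hence \( (D\sqrt{\pi})_x = \sqrt{\pi(x)} \sum_y P_{xy} = \sqrt{\pi(x)} \).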

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Advanced Computational Chemistry

| Category / Tool | Specific Examples | Function in Workflow |
|---|---|---|
| Quantum Hardware Platforms | Neutral Atoms (QuEra, Pasqal), Superconducting (IBM, Google), Trapped Ions (IonQ, Quantinuum) [9] | Physical systems for running quantum algorithms; different platforms offer trade-offs in connectivity, coherence, and qubit count. |
| Qubit Reduction Algorithms | Virtual Orbital Fragmentation (FVO) [5], Randomized Orbital VQE (RO-VQE) [6] | Software methods to reduce the number of logical qubits required for a quantum calculation, enabling larger simulations on existing hardware. |
| Advanced Optimizers | Consensus-Based Optimization (CBO) [7], Quasi-Newton (BFGS) [10] | Algorithms for navigating parameter landscapes, with CBO designed for non-differentiable cost functions such as qubit positioning. |
| High-Accuracy Datasets | Open Molecules 2025 (OMol25), Universal Models for Atoms (UMA) [11] | Large-scale, high-fidelity computational datasets and pre-trained neural network potentials for validation and force-field generation. |
| Sampling Enhancers | Quantum Sampling Algorithms, Replica Exchange [8] | Methods to overcome metastability and improve exploration of complex probability distributions and energy landscapes. |

Theoretical Foundation of Potential Energy Surfaces

A Potential Energy Surface (PES) describes the energy of a system, typically a collection of atoms, in terms of certain parameters, normally the positions of the atoms [12]. The surface may define the energy as a function of one or more coordinates; if there is only one coordinate, it is called a potential energy curve or energy profile [12] [13]. Mathematically, the geometry of a set of atoms can be described by a vector \( \mathbf{r} \), whose elements represent the atom positions, and the PES is the energy function \( E(\mathbf{r}) \) for all positions of interest [12].

The PES is a direct consequence of the Born-Oppenheimer approximation, which allows nuclear and electronic motion to be separated [14]. Because nuclei are much heavier and therefore move much more slowly than electrons, the full molecular wavefunction can be approximated as a product of electronic and nuclear parts, \( \Psi(\mathbf{r}, \mathbf{R}) \approx \psi_{\text{el}}(\mathbf{r}; \mathbf{R})\,\Phi(\mathbf{R}) \), where here \( \mathbf{r} \) denotes the electron coordinates and the electronic wavefunction depends only parametrically on the nuclear positions \( \mathbf{R} \) [14]. For a fixed set of nuclear positions \( \mathbf{R} \), one solves the electronic Schrödinger equation to obtain the electronic energy \( E_{\text{el}}(\mathbf{R}) \). The total energy is then \( E_{\text{tot}}(\mathbf{R}) = E_{\text{el}}(\mathbf{R}) + E_{\text{nuc}}(\mathbf{R}) \), where \( E_{\text{nuc}}(\mathbf{R}) \) is the nuclear repulsion energy [14]. The PES is the collection of these energies over all possible nuclear positions [14].

Table: Key Mathematical Definitions in PES Theory

| Concept | Mathematical Representation | Physical Significance |
|---|---|---|
| Molecular Geometry Vector | \( \mathbf{r} \) | Vector describing positions of all atoms [12] |
| Energy Function | \( E(\mathbf{r}) \) | Potential energy of the system at geometry \( \mathbf{r} \) [12] |
| Gradient | \( \mathbf{g} = \partial E / \partial \mathbf{x} \) | First derivative of the energy; points uphill, so \( -\mathbf{g} \) is the steepest-descent direction [15] |
| Hessian Matrix | \( H_{ij} = \partial^2 E / \partial x_i \partial x_j \) | Second-derivative matrix; describes the local curvature [15] |
| Newton-Raphson Step | \( \mathbf{h} = -\mathbf{H}^{-1}\mathbf{g} \) | Step towards a stationary point [15] |

Stationary Points and Their Characterization

Stationary points on a PES are geometries where the gradient of the energy with respect to all nuclear coordinates is zero [14] [15]. The character of these points is determined by the Hessian matrix (the matrix of second energy derivatives) and its eigenvalues [15].

- Energy Minima: A minimum is a stationary point where the Hessian matrix has only positive eigenvalues, i.e., it is positive definite once the zero-eigenvalue translational and rotational modes are excluded [15]. This signifies that a small displacement in any direction will cause the energy to rise. Minima correspond to stable molecular structures, such as reactants, products, intermediates, or stable conformers of a molecule [12] [14].

- Transition States: A transition state is a stationary point where the Hessian matrix has one and only one negative eigenvalue [15]. This means the energy is a maximum along one direction (the reaction coordinate) but a minimum along all other perpendicular directions. This structure is a first-order saddle point on the PES and represents the highest-energy point on the lowest-energy pathway connecting two minima [12].
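The Newton-Raphson step and the Hessian-based classification can be demonstrated on a two-dimensional model surface. The Python sketch below uses a hypothetical PES \( E(x, y) = (x^2 - 1)^2 + 2y^2 \), which has minima at \( (\pm 1, 0) \) and a first-order saddle point at \( (0, 0) \), with analytic gradient and Hessian. It also shows why verification matters: plain Newton-Raphson converges to whichever stationary point is nearby, including the saddle. For real molecules the same sign count is applied to the mass-weighted Cartesian Hessian after removing translations and rotations; mass weighting does not change the number of negative eigenvalues.

```python
import math


def gradient(x, y):
    """Analytic gradient of the model PES E(x, y) = (x^2 - 1)^2 + 2 y^2."""
    return 4.0 * x * (x * x - 1.0), 4.0 * y


def hessian(x, y):
    """Analytic Hessian of the same model PES."""
    return (12.0 * x * x - 4.0, 0.0), (0.0, 4.0)


def newton_raphson(x, y, tol=1e-10, max_iter=50):
    """Take steps h = -H^{-1} g until the gradient vanishes (any stationary point)."""
    for _ in range(max_iter):
        gx, gy = gradient(x, y)
        if math.hypot(gx, gy) < tol:
            break
        (a, b), (c, d) = hessian(x, y)
        det = a * d - b * c                    # assumed non-zero along the path
        # h = -H^{-1} g, using the explicit inverse of a 2x2 matrix
        hx = -(d * gx - b * gy) / det
        hy = -(-c * gx + a * gy) / det
        x, y = x + hx, y + hy
    return x, y


def classify(x, y):
    """Characterize a stationary point by the number of negative Hessian eigenvalues."""
    (a, b), (_, d) = hessian(x, y)
    mean, radius = 0.5 * (a + d), math.hypot(0.5 * (a - d), b)
    n_negative = sum(ev < 0.0 for ev in (mean - radius, mean + radius))
    names = {0: "minimum", 1: "transition state (first-order saddle)"}
    return names.get(n_negative, f"saddle point of order {n_negative}")


print(classify(*newton_raphson(0.8, 0.3)))    # start in the right-hand well -> minimum
print(classify(*newton_raphson(0.1, 0.1)))    # start near the barrier top -> transition state
```

Production optimizers for minima guard against this behaviour with trust radii or eigenvector following, but a frequency check on the final structure remains the decisive test.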

The following diagram illustrates the relationship between different stationary points on a PES and the eigenvalue structure of the Hessian matrix.

Computational Protocols for Exploring PES

Workflow for Geometry Optimization

A standard workflow for locating minima and transition states involves several key steps to ensure reliability [16].

- Initial Structure Preparation: Build a reasonable guess for the molecular structure using a molecular builder or from known coordinates [16].

- Geometry Optimization: Submit the structure for optimization using a quantum chemistry package. The calculation will iteratively compute the energy and gradient, moving the atoms toward a stationary point [14].

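- Frequency Calculation: Upon convergence, a frequency calculation is performed on the optimized geometry. This computes the Hessian matrix; the eigenvalues of its mass-weighted form give the vibrational frequencies, with negative eigenvalues appearing as imaginary frequencies [16].

- Stationary Point Verification: Confirm the character of the structure: a minimum has no imaginary frequencies, whereas a transition state has exactly one [16].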

Table: Computational Methods for PES Exploration

| Method | Description | Typical Use Case |
|---|---|---|
| Energy/Gradient Calculations | Computes energy and its first derivatives for a single geometry [15] | Foundational step for all optimizations and scans |
| Geometry Optimization | Iterative algorithm to find stationary points using gradients and often Hessian information [14] [15] | Locating stable conformers (minima) |
| Frequency Analysis | Calculation of the Hessian matrix (second derivatives) to determine vibrational frequencies and characterize stationary points [16] | Verifying a structure is a minimum (no imaginary frequencies) or transition state (one imaginary frequency) |
| PES Scanning | Series of constrained optimizations where a specific internal coordinate (e.g., bond length, dihedral angle) is systematically varied [17] | Mapping reaction pathways, generating conformational profiles |
| Transition State Optimization | Specialized optimization algorithms (e.g., Berny, QST) designed to converge to first-order saddle points [16] [18] | Locating the transition state between two minima |

Protocol: Transition State Localization

Locating a transition state is more challenging than finding a minimum. Two common approaches are the scan approach and the constrained optimization approach [16].

Protocol: Using a Potential Energy Scan to Locate a Transition State Guess

- Objective: Find a molecular structure near the true transition state for a subsequent TS optimization.

- Prerequisites: Optimized geometries of the reactants and/or products [16].

- Method:

  - Identify the key internal coordinate that describes the reaction (e.g., a forming/breaking bond length or a changing dihedral angle).

  - Prepare an input file for a constrained optimization scan, for example with a package such as xtb or Gaussian [16] [17].

  - In the input, specify a scan along the chosen coordinate while optionally freezing other key coordinates [16]. In Gaussian's ModRedundant syntax, for instance, `B 1 5 S 35 -0.1` scans the bond between atoms 1 and 5 for 35 steps with a step size of -0.1 Å.

  - Run the calculation. The output will contain a series of optimized structures and their energies along the scan path.

- Analysis: The structure corresponding to the maximum energy along the scanned pathway provides a good initial guess for the transition state. This structure can then be used as input for a full transition state optimization calculation [16].
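Once the scan energies have been extracted from the output (the parsing step is program-specific and not shown), the Analysis step can be automated. The Python sketch below picks the highest point of the scan and, as an optional refinement that goes beyond the protocol above, fits a parabola through that point and its two neighbours to estimate where the maximum lies between grid points. `scan_ts_guess` is a hypothetical helper, not part of xtb or Gaussian, and it assumes uniformly spaced scan points.

```python
def scan_ts_guess(coords, energies):
    """Return (coordinate, energy) of the scan maximum, refined by a 3-point parabola.

    Assumes uniform spacing. If the maximum lies at either end of the scan, the
    raw point is returned: the scan range should then be extended instead.
    """
    i = max(range(len(energies)), key=energies.__getitem__)
    if i == 0 or i == len(energies) - 1:
        return coords[i], energies[i]
    x0, x1, _ = coords[i - 1:i + 2]
    e0, e1, e2 = energies[i - 1:i + 2]
    h = x1 - x0                                # signed step (negative for a shrinking bond)
    denom = e0 - 2.0 * e1 + e2                 # proportional to the curvature; < 0 at a maximum
    if denom >= 0.0:
        return x1, e1
    shift = 0.5 * h * (e0 - e2) / denom        # vertex offset from x1
    e_vertex = e1 - 0.125 * (e0 - e2) ** 2 / denom
    return x1 + shift, e_vertex


# Usage with a synthetic scan: bond shortened from 3.0 A in 20 steps of -0.1 A.
coords = [3.0 - 0.1 * k for k in range(21)]
energies = [5.0 - 20.0 * (r - 2.03) ** 2 for r in coords]   # hypothetical barrier profile
print(scan_ts_guess(coords, energies))
```

Because the synthetic profile here is exactly parabolic, the refined estimate lands on the true maximum at 2.03 Å even though the scan grid only contains 2.0 and 2.1 Å; with real scans the refinement is only a better starting guess for the TS optimization.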

Advanced and Automated Workflows

Recent advances leverage machine learning (ML) to automate and accelerate PES exploration.

- Δ-Machine Learning (Δ-ML): This is a highly cost-effective approach for developing high-level PESs. A large number of low-level (LL) calculations are corrected with a small number of high-level (HL) calculations: ( V^{HL} = V^{LL} + \Delta V^{HL-LL} ). The correction term is machine-learned, drastically reducing the need for expensive HL computations [19].

- Automated Frameworks: Software such as autoplex implements automated iterative exploration and ML interatomic potential (MLIP) fitting. It uses random structure searching (RSS) to explore configurational space and gradually improves the potential model using DFT single-point evaluations, requiring minimal user intervention [20].
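The Δ-ML decomposition \( V^{HL} = V^{LL} + \Delta V^{HL-LL} \) can be illustrated in one dimension. In the Python sketch below, two hypothetical Morse curves play the roles of the cheap low-level and expensive high-level potentials, and the correction is learned from only seven high-level points with Gaussian-kernel ridge regression, a simple stand-in for the machine-learning models used in practice [19]. All functional forms and hyperparameters are illustrative.

```python
import math


def v_low(r):
    """Hypothetical cheap low-level (LL) potential: a Morse curve."""
    return 0.20 * (1.0 - math.exp(-1.2 * (r - 1.00))) ** 2


def v_high(r):
    """Hypothetical expensive high-level (HL) reference: a slightly different Morse curve."""
    return 0.19 * (1.0 - math.exp(-1.25 * (r - 0.98))) ** 2


def kernel(a, b, length=0.4):
    """Gaussian (RBF) kernel."""
    return math.exp(-((a - b) ** 2) / (2.0 * length ** 2))


def solve(A, y):
    """Solve A x = y by Gaussian elimination with partial pivoting (small dense systems)."""
    n = len(y)
    M = [row[:] + [yi] for row, yi in zip(A, y)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda row: abs(M[row][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for row in range(col + 1, n):
            factor = M[row][col] / M[col][col]
            for k in range(col, n + 1):
                M[row][k] -= factor * M[col][k]
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        x[row] = (M[row][n] - sum(M[row][k] * x[k] for k in range(row + 1, n))) / M[row][row]
    return x


def fit_delta(train_r, ridge=1e-10):
    """Kernel ridge regression of Delta V = V_HL - V_LL from a few HL points."""
    delta = [v_high(r) - v_low(r) for r in train_r]
    K = [[kernel(a, b) + (ridge if i == j else 0.0) for j, b in enumerate(train_r)]
         for i, a in enumerate(train_r)]
    weights = solve(K, delta)
    return lambda r: sum(w * kernel(r, t) for w, t in zip(weights, train_r))


train = [0.8 + 0.2 * i for i in range(7)]      # only 7 "expensive" HL evaluations
delta_model = fit_delta(train)


def v_delta_ml(r):
    """Delta-ML potential: V_HL is approximated by V_LL plus the learned correction."""
    return v_low(r) + delta_model(r)


test_grid = [0.8 + 0.01 * i for i in range(121)]
err_low = max(abs(v_low(r) - v_high(r)) for r in test_grid)
err_dml = max(abs(v_delta_ml(r) - v_high(r)) for r in test_grid)
print(f"max |LL - HL| = {err_low:.2e}   max |Delta-ML - HL| = {err_dml:.2e}")
```

The design choice that makes Δ-ML cheap is visible here: the model only has to learn the small, smooth difference between the two levels, not the full potential, so a handful of high-level points suffices within the sampled range.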

The Scientist's Toolkit

Table: Essential Research Reagent Solutions for PES Exploration

| Tool / Reagent | Function / Purpose |
|---|---|
| Quantum Chemistry Software (Gaussian, Q-Chem, DALTON, xtb) | Performs the core quantum mechanical calculations, including single-point energies, geometry optimizations, and frequency analyses [16] [18] [17]. |
| Molecular Builder & Visualizer (GaussView, Avogadro) | Used to build initial molecular structures, set up calculation parameters, and visualize optimized geometries, vibrational modes, and reaction pathways [16]. |
| Computational Method (e.g., DFT, HF, CCSD(T)) | The underlying theoretical method used to solve the electronic Schrödinger equation and compute the energy. The choice involves a trade-off between accuracy and computational cost [16] [19]. |
| Basis Set (e.g., 6-31+G(d,p), aug-cc-pVTZ) | A set of mathematical functions used to represent molecular orbitals. Larger basis sets generally provide higher accuracy at greater computational expense [16]. |
| Initial Guess Structure | A starting 3D arrangement of atoms for the calculation. The quality of the guess is critical for successful and rapid convergence, especially for transition states [16] [14]. |
| Optimization Algorithm (e.g., Berny, Newton-Raphson) | The mathematical procedure that uses gradients and Hessians to iteratively find stationary points on the PES [15]. |
| Machine Learning Interatomic Potential (MLIP) | A machine-learned model trained on quantum mechanical data that allows for rapid energy and force evaluations, enabling large-scale simulations with quantum accuracy [20]. |

The following workflow chart provides a practical guide for researchers navigating the geometry optimization process for difficult molecular systems, integrating both standard and advanced machine learning approaches.

Computational chemistry has undergone a transformative evolution, progressing from purely first-principles quantum mechanical methods to sophisticated hybrid approaches that integrate machine learning. This evolution addresses the fundamental challenge in molecular geometry optimization: balancing quantum mechanical accuracy with computational feasibility, particularly for complex molecular systems relevant to pharmaceutical development. Current state-of-the-art frameworks now leverage embedded quantum simulations, combinatorial-continuous optimization, and machine learning-accelerated molecular dynamics to access biologically relevant timescales and system sizes. This review comprehensively analyzes these methodological advances, provides detailed application protocols, and presents a standardized toolkit for researchers pursuing geometry optimization of difficult molecular systems. By comparing quantitative performance metrics across frameworks and illustrating workflows through standardized visualizations, we aim to establish best practices that bridge computational methodology with practical drug development applications.

Molecular geometry optimization, the process of identifying equilibrium molecular structures by minimizing the potential energy, represents a cornerstone of computational chemistry with profound implications for rational drug design. The three-dimensional arrangement of atoms directly determines molecular properties, biological activity, and binding characteristics, making accurate structure prediction indispensable for understanding ligand-receptor interactions and reaction mechanisms. Traditional ab initio methods, while accurate, become steeply more expensive as system size grows (exact full configuration interaction scales exponentially, and even truncated coupled-cluster methods such as CCSD(T) scale roughly as the seventh power of system size), rendering them prohibitive for the complex molecular systems typical in pharmaceutical contexts.

The field has addressed this challenge through three interconnected evolutionary pathways: (1) development of fragmented quantum methods that reduce resource requirements while preserving accuracy; (2) integration of machine learning potentials that bridge the gap between quantum accuracy and molecular dynamics timescales; and (3) creation of hybrid combinatorial-continuous frameworks that efficiently navigate complex conformational spaces. These advances have emerged not as replacements for first-principles approaches but as complementary strategies that extend their applicability to pharmaceutically relevant systems.

This application note synthesizes these methodological strands into a coherent framework for researchers tackling geometry optimization of difficult molecular systems. By providing standardized protocols, comparative performance metrics, and visualization tools, we aim to establish reproducible best practices that accelerate computational drug development workflows.

Methodological Frameworks: From Quantum Fragmentation to Learned Potentials

Quantum Embedding Methods

Quantum embedding theories represent a fundamental advancement in computational chemistry by enabling accurate simulation of large molecular systems through fragmentation approaches. Density Matrix Embedding Theory (DMET) has recently been integrated with variational quantum eigensolver (VQE) algorithms in a co-optimization framework that simultaneously refines both molecular geometry and quantum variational parameters [21]. This hybrid quantum-classical approach eliminates the need for computationally expensive nested optimization loops, instead performing concurrent optimization of geometric parameters and quantum circuit parameters. The method substantially reduces quantum resource requirements—a critical consideration for near-term quantum devices—while maintaining high accuracy.

The DMET-VQE framework partitions a target molecular system into smaller fragments embedded in a mean-field bath, dramatically reducing the number of qubits required for quantum simulation. This fragmentation enables treatment of molecular systems significantly larger than those accessible to conventional quantum approaches. Validation studies on benchmark systems (H₄, H₂O₂) demonstrated the method's accuracy, while application to glycolic acid (C₂H₄O₃) established its capability for molecules previously considered intractable for quantum geometry optimization [21]. The concurrent optimization strategy achieves accelerated convergence and minimizes quantum evaluations, representing a practical pathway toward scalable molecular simulations on emerging quantum hardware.

Machine Learning-Accelerated Molecular Dynamics

Machine learning potentials (MLPs) have emerged as a transformative technology for extending the spatiotemporal scales of ab initio quality simulations. By training neural networks on ab initio reference data, MLPs capture quantum mechanical potential energy surfaces with near-quantum accuracy while achieving computational speedups of several orders of magnitude, enabling nanosecond-scale simulations that approach biologically relevant timescales [22].

The ElectroFace dataset exemplifies the practical application of this methodology, providing AI-accelerated ab initio molecular dynamics (AI2MD) trajectories for over 60 distinct electrochemical interfaces [22]. This resource compiles MLP-accelerated simulations for interfaces of 2D materials, zinc-blende-type semiconductors, oxides, and metals, systematically addressing the critical need for large-scale, accessible interface data. The active learning workflow implemented for ElectroFace employs concurrent learning packages (DP-GEN, ai2-kit) that iteratively expand training datasets through cycles of exploration, screening, and labeling, ensuring robust MLP generation with minimal manual intervention [22].

This approach overcomes the fundamental limitation of conventional ab initio molecular dynamics (AIMD), where computational cost typically restricts simulations to picosecond timescales—insufficient for proper equilibration of many interface structures. MLP-accelerated simulations maintain ab initio accuracy while accessing nanosecond scales, enabling observation of previously inaccessible structural rearrangements and reaction processes at electrochemical interfaces relevant to pharmaceutical environments.

Hybrid Combinatorial-Continuous Strategies

The Molecular Distance Geometry Problem (MDGP) formalism addresses the critical challenge of determining three-dimensional protein structures from partial interatomic distances, as commonly obtained through Nuclear Magnetic Resonance (NMR) spectroscopy. The discretizable subclass (DMDGP) admits an exact combinatorial formulation that enables efficient exploration of the conformational search space through a binary tree structure navigated by Branch-and-Prune (BP) algorithms [23].

For practical applications where distances are available only within uncertainty bounds (the interval variant iDMDGP), a hybrid combinatorial-continuous framework integrates discrete enumeration with continuous optimization. This approach combines the systematic search capabilities of the DMDGP formulation with continuous refinement that minimizes a nonconvex stress function penalizing deviations from admissible distance intervals [23]. The method incorporates torsion-angle intervals and chirality constraints through refined atom ordering that preserves protein-backbone geometry, enabling reconstruction of valid conformations even under wide distance bounds typical of experimental NMR data.

This hybrid strategy represents a significant departure from conventional continuous approaches that typically assume narrow distance intervals. By leveraging both discrete combinatorial structure and continuous local optimization, the method achieves robust performance on experimentally realistic problems where uncertainty ranges are substantial, making it particularly valuable for determining protein structures from sparse NMR data in pharmaceutical research.

Table 1: Comparative Analysis of Computational Frameworks for Molecular Geometry Optimization

| Framework | Computational Scaling | Typical System Size | Accuracy | Primary Applications |
|---|---|---|---|---|
| DMET-VQE Co-optimization [21] | Polynomial reduction via fragmentation | Small molecules (demonstrated for glycolic acid, C₂H₄O₃, 9 atoms) | High (matches classical reference) | Medium-sized molecular equilibrium geometries |
| ML-Accelerated MD [22] | Near-classical MD after training | 100-1000 atoms (extensible to larger systems) | Near-ab initio (2-4 meV/atom error) | Nanosecond-scale dynamics of interfaces |
| Hybrid iDMDGP [23] | Combinatorial with continuous refinement | Protein-sized systems | Experimentally consistent | Protein structure from NMR data |

Application Notes and Experimental Protocols

Protocol: DMET-VQE Co-optimization for Molecular Equilibrium Geometry

The DMET-VQE co-optimization protocol enables determination of molecular equilibrium geometries with reduced quantum resource requirements [21]. The following procedure outlines the key implementation steps:

Initialization Phase

- Molecular System Preparation: Define the target molecular system and partition into fragments using chemical intuition or automated fragmentation schemes. For organic molecules, natural fragmentation boundaries often exist at flexible torsion angles or between functional groups.

- Bath Construction: For each fragment, construct the embedding bath using the Schmidt decomposition of the molecular wavefunction. This creates the effective environment for each quantum fragment calculation.

- Quantum Circuit Ansatz Selection: Choose a hardware-efficient or chemically inspired ansatz for the VQE component. The ansatz should balance expressibility with circuit depth constraints for available quantum hardware.

Co-optimization Phase

- Quantum Energy Evaluation: Execute VQE circuits to compute embedded fragment energies for current molecular geometry. Utilize measurement reduction techniques to minimize quantum resource requirements.

- Force Calculation: Apply the Hellmann-Feynman theorem to compute analytical energy gradients with respect to nuclear coordinates. This approach avoids expensive finite-difference calculations.

- Concurrent Parameter Update: Simultaneously update molecular geometry (nuclear coordinates) and quantum circuit parameters using classical optimization algorithms. The L-BFGS-B algorithm is typically employed for its balance of efficiency and stability.

- Convergence Check: Monitor both geometric convergence (nuclear coordinate changes) and electronic convergence (energy changes between iterations). Typical convergence thresholds are 1.0×10⁻⁴ Bohr for geometric changes and 1.0×10⁻⁶ Hartree for energy changes.
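The concurrent update can be illustrated with a toy model. This sketch assumes a hypothetical 2 × 2 electronic Hamiltonian H(r) that depends on a single nuclear coordinate r, and a one-parameter ansatz |ψ(θ)⟩ = cos θ|0⟩ + sin θ|1⟩, in place of an embedded fragment Hamiltonian and a quantum circuit. None of the functions correspond to a DMET or VQE package API. Geometry and circuit parameter are stacked into one vector and passed to SciPy's L-BFGS-B in a single run, while a nested reference diagonalizes H(r) exactly at every trial geometry.

```python
import numpy as np
from scipy.optimize import minimize, minimize_scalar


def hamiltonian(r):
    """Toy 2x2 electronic Hamiltonian depending on one nuclear coordinate r."""
    e1 = (r - 1.0) ** 2
    e2 = (r - 1.2) ** 2 + 0.5
    t = -0.2 * np.exp(-(r - 1.0))
    return np.array([[e1, t], [t, e2]])


def dhamiltonian_dr(r):
    """Analytic derivative dH/dr of the toy Hamiltonian."""
    de1 = 2.0 * (r - 1.0)
    de2 = 2.0 * (r - 1.2)
    dt = 0.2 * np.exp(-(r - 1.0))
    return np.array([[de1, dt], [dt, de2]])


def energy_and_grad(x):
    """E(r, theta) for psi = (cos theta, sin theta) and its gradient in (r, theta)."""
    r, theta = x
    psi = np.array([np.cos(theta), np.sin(theta)])
    dpsi = np.array([-np.sin(theta), np.cos(theta)])
    h = hamiltonian(r)
    energy = psi @ h @ psi
    # psi has no explicit r dependence, so dE/dr is the Hellmann-Feynman term.
    grad_r = psi @ dhamiltonian_dr(r) @ psi
    grad_theta = 2.0 * dpsi @ h @ psi
    return energy, np.array([grad_r, grad_theta])


# Co-optimization: one L-BFGS-B run over geometry and circuit parameter together.
joint = minimize(energy_and_grad, x0=[1.3, 0.0], jac=True, method="L-BFGS-B",
                 options={"gtol": 1e-10, "ftol": 1e-14})
r_joint, e_joint = joint.x[0], joint.fun

# Nested reference: exact ground-state energy at each r, optimized over r only.
nested = minimize_scalar(lambda r: np.linalg.eigvalsh(hamiltonian(r))[0],
                         bounds=(0.5, 2.0), method="bounded",
                         options={"xatol": 1e-10})
print(f"joint:  r = {r_joint:.5f}, E = {e_joint:.8f} ({joint.nfev} evaluations)")
print(f"nested: r = {nested.x:.5f}, E = {nested.fun:.8f}")
```

Because the ansatz has no explicit dependence on r, the partial derivative ∂E/∂r at fixed θ is exactly ⟨ψ|∂Ĥ/∂r|ψ⟩. A Hellmann-Feynman-type expression can therefore supply the geometric gradient in the joint parameter space even before θ is fully converged.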

Validation Protocol

- Benchmark Comparison: Validate against classical reference methods (e.g., CCSD(T)) for small benchmark systems where such comparisons are computationally feasible.

- Experimental Data Correlation: When available, compare optimized geometries with experimental crystallographic or spectroscopic data.

- Transferability Assessment: Evaluate method performance across related molecular systems to establish domain of applicability.

This protocol has been successfully demonstrated for determining the equilibrium geometry of glycolic acid, achieving accuracy comparable to classical reference methods while substantially reducing quantum resource requirements [21].

Protocol: Active Learning for Machine Learning Potentials

The development of robust machine learning potentials requires careful sampling of the configuration space through active learning methodologies [22]. The following protocol outlines the standardized workflow for generating MLPs suitable for molecular dynamics simulations:

Initial Training Set Construction

- AIMD Trajectory Sampling: Perform a short (20-30 ps) ab initio molecular dynamics simulation at the target temperature (typically 330 K to avoid glassy behavior of PBE water) [22].

- Structure Extraction: Extract 50-100 structures evenly distributed along the AIMD trajectory to form the initial training dataset. This provides a representative sample of the configuration space.

- Ab Initio Calculation: Compute energies and forces for all extracted structures using a consistent ab initio method (typically PBE-D3 with the mixed Gaussian and plane-wave (GPW) approach, as implemented in CP2K).

Iterative Active Learning Cycle

- Model Training: Train four independent MLPs (typically using DeePMD-kit) with identical architectures but different parameter initializations on the current training set.

- Exploration MD: Perform molecular dynamics simulations using one of the trained MLPs to sample new configurations beyond the initial training distribution.

- Uncertainty Quantification: For each sampled configuration, compute the model deviation: the maximum, over all atoms, of the standard deviation of the predicted atomic forces across the MLP ensemble.

- Configuration Screening: Categorize sampled structures into three groups based on MLP disagreement: "good" (low disagreement), "decent" (moderate disagreement), and "poor" (high disagreement).

- Informed Labeling: Randomly select 50 structures from the "decent" group and compute accurate ab initio energies and forces for these configurations using CP2K.

- Training Set Augmentation: Add the newly labeled structures to the training set and iterate the process.

Convergence Criteria

- Stopping Condition: The active learning process terminates when 99% of sampled structures are categorized as "good" over two consecutive iterations [22]. This indicates comprehensive sampling of the relevant configuration space.

- Validation: Validate the final MLP on held-out ab initio data not included in training, typically targeting force errors of <0.1 eV/Å and energy errors of <2-4 meV/atom.
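The screening and stopping logic can be sketched with NumPy. The model deviation below follows the common ensemble definition (the maximum over atoms of the force standard deviation across models). The trust levels of 0.15 and 0.30 eV/Å and all function names are assumptions for illustration; they are not DP-GEN or ai2-kit defaults or APIs. The "predicted forces" are synthetic random data.

```python
import numpy as np


def max_force_deviation(forces):
    """Ensemble model deviation per frame.

    forces: (n_models, n_frames, n_atoms, 3) forces predicted by each model.
    Returns (n_frames,): max over atoms of the ensemble standard deviation
    of the atomic force vector, sqrt(<|F - <F>|^2>).
    """
    mean = forces.mean(axis=0, keepdims=True)
    per_atom_std = np.sqrt(((forces - mean) ** 2).sum(axis=-1).mean(axis=0))
    return per_atom_std.max(axis=-1)


def screen(deviation, lo=0.15, hi=0.30):
    """Split frame indices into 'good', 'decent' and 'poor' groups.
    lo and hi (eV/Angstrom) are assumed example trust levels."""
    good = np.flatnonzero(deviation < lo)
    decent = np.flatnonzero((deviation >= lo) & (deviation < hi))
    poor = np.flatnonzero(deviation >= hi)
    return good, decent, poor


def converged(good_fractions, threshold=0.99, n_consecutive=2):
    """True if the 'good' fraction met the threshold in the last n iterations."""
    recent = good_fractions[-n_consecutive:]
    return len(recent) == n_consecutive and all(f >= threshold for f in recent)


# Synthetic stand-in for 4 models x 500 exploration frames x 64 atoms,
# with model disagreement that grows along the trajectory.
rng = np.random.default_rng(0)
base = rng.normal(size=(1, 500, 64, 3))
spread = np.linspace(0.01, 0.3, 500)[None, :, None, None]
forces = base + spread * rng.normal(size=(4, 500, 64, 3))

good, decent, poor = screen(max_force_deviation(forces))
# Pick up to 50 random 'decent' frames for ab initio labeling.
to_label = rng.choice(decent, size=min(50, decent.size), replace=False)
print(len(good), len(decent), len(poor), len(to_label))
print(converged([0.97, 0.992, 0.995]))  # True: last two iterations >= 99 %
```

Only the "decent" band is labeled because "good" frames add little new information, while "poor" frames are often unphysical configurations that would pollute the training set.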

This protocol has been successfully applied to generate the ElectroFace dataset, providing MLPs for Pt(111), SnO2(110), GaP(110), r-TiO2(110), CoO(100), and CoO(111) interfaces with validated accuracy and transferability [22].

Protocol: Hybrid Combinatorial-Continuous iDMDGP Solution

The hybrid combinatorial-continuous framework addresses the interval Discretizable Molecular Distance Geometry Problem (iDMDGP) commonly encountered in protein structure determination from NMR data [23]. The protocol consists of discrete and continuous phases:

Discretization Phase

- Atom Ordering: Establish a protein backbone ordering that preserves the discretizability property, typically following the natural N-Cα-C sequence with appropriate inclusion of hydrogen atoms for NOESY constraints.

- Distance Bound Specification: Incorporate known spatial constraints including: exact distances (covalent bonds), interval distances (NOESY-derived bounds), and torsion angle constraints derived from chemical shifts or statistical preferences.

- Combinatorial Tree Generation: Implement the Branch-and-Prune algorithm to explore the binary tree of possible conformations consistent with exact distance constraints.
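The discretization can be sketched as a depth-first Branch-and-Prune search. This simplified version assumes exact distances only (no intervals) and fixes the first three atoms in a canonical frame. It places atom i at one of the two intersection points of the spheres centered on atoms i-1, i-2 and i-3, and prunes a branch as soon as any additional known distance is violated. The function names and the random test chain are hypothetical.

```python
import numpy as np


def sphere_intersections(p1, p2, p3, r1, r2, r3, tol=1e-8):
    """Points at distances r1, r2, r3 from p1, p2, p3 (zero or two of them)."""
    d = np.linalg.norm(p2 - p1)
    ex = (p2 - p1) / d
    i = ex @ (p3 - p1)
    ey = p3 - p1 - i * ex
    ey = ey / np.linalg.norm(ey)
    ez = np.cross(ex, ey)
    j = ey @ (p3 - p1)
    x = (r1**2 - r2**2 + d**2) / (2 * d)
    y = (r1**2 - r3**2 + i**2 + j**2) / (2 * j) - (i / j) * x
    z2 = r1**2 - x**2 - y**2
    if z2 < -tol:
        return []                      # spheres do not meet: infeasible branch
    z = np.sqrt(max(z2, 0.0))
    base = p1 + x * ex + y * ey
    return [base + z * ez, base - z * ez]


def branch_and_prune(n, dist, tol=1e-4):
    """Enumerate all embeddings of an exact DMDGP instance.

    dist maps (j, i) with j < i to a distance. It must contain every pair with
    i - j <= 3 (used to branch); any other pairs are used only to prune.
    """
    x = np.zeros((n, 3))
    # Fix atoms 0-2 in a canonical frame to remove rigid-body freedom.
    d01, d02, d12 = dist[(0, 1)], dist[(0, 2)], dist[(1, 2)]
    x[1] = [d01, 0.0, 0.0]
    a = (d02**2 - d12**2 + d01**2) / (2 * d01)
    x[2] = [a, np.sqrt(max(d02**2 - a**2, 0.0)), 0.0]
    solutions = []

    def feasible(i):
        # Check every known distance between atom i and an earlier atom.
        return all(abs(np.linalg.norm(x[i] - x[j]) - d) < tol
                   for (j, k), d in dist.items() if k == i)

    def visit(i):
        if i == n:
            solutions.append(x.copy())
            return
        for p in sphere_intersections(x[i - 1], x[i - 2], x[i - 3],
                                      dist[(i - 1, i)], dist[(i - 2, i)],
                                      dist[(i - 3, i)]):
            x[i] = p
            if feasible(i):
                visit(i + 1)

    visit(3)
    return solutions


# Hypothetical test chain: a random 3D walk of 8 atoms.
rng = np.random.default_rng(1)
x_true = np.cumsum(rng.normal(size=(8, 3)), axis=0)
dist = {(j, i): float(np.linalg.norm(x_true[i] - x_true[j]))
        for i in range(8) for j in range(i)
        if i - j <= 3 or (i + j) % 2 == 0}     # branching + some pruning pairs
solutions = branch_and_prune(8, dist)
print(f"{len(solutions)} embedding(s) found")
```

Every distance set that admits one embedding also admits its mirror image, so solutions come in reflected pairs; chirality information is what ultimately distinguishes them.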

Continuous Refinement Phase

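- Stress Function Formulation: Define a nonconvex objective function that penalizes deviations from admissible distance intervals: \( \mathrm{Stress}(X) = \sum_{\{i,j\} \in E} \max\left(0,\ \frac{d_{ij}^{L} - \|x_i - x_j\|}{d_{ij}^{L}},\ \frac{\|x_i - x_j\| - d_{ij}^{U}}{d_{ij}^{U}}\right)^2 \), where \( X = [x_1, \ldots, x_n] \in \mathbb{R}^{3 \times n} \) represents the atomic coordinates, \( E \) is the set of atom pairs with distance information, and \( d_{ij}^{L} \) and \( d_{ij}^{U} \) are the lower and upper distance bounds for the pair \( \{i,j\} \).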

- Local Optimization: Apply spectral projected gradient methods to minimize the stress function for each promising discrete conformation.

- Chirality Constraints: Enforce proper stereochemistry through quadratic constraints on torsion angles involving hydrogen atoms.
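The interval stress function can be coded directly with its analytic gradient. This sketch swaps in SciPy's L-BFGS-B for the spectral projected gradient method named above and omits the chirality constraints. It uses random coordinates with illustrative ±10 % bounds rather than protein data. Coordinates are stored as an n × 3 array, which is the transpose of the 3 × n matrix X in the formula.

```python
import numpy as np
from scipy.optimize import minimize


def stress_and_grad(flat, pairs, lower, upper):
    """Interval stress and its gradient.

    flat: flattened (n, 3) coordinates; pairs: (m, 2) atom indices;
    lower, upper: (m,) distance bounds. Stress = sum of
    max(0, (L - d)/L, (d - U)/U)^2 over the listed pairs.
    """
    x = flat.reshape(-1, 3)
    diff = x[pairs[:, 0]] - x[pairs[:, 1]]
    d = np.linalg.norm(diff, axis=1)
    below = (lower - d) / lower        # positive only if d < L
    above = (d - upper) / upper        # positive only if d > U
    v = np.maximum(0.0, np.maximum(below, above))
    # dv/dd: -1/L for a too-short pair, +1/U for a too-long pair, 0 otherwise.
    dv_dd = np.where(below > 0, -1.0 / lower, np.where(above > 0, 1.0 / upper, 0.0))
    g_pair = (2.0 * v * dv_dd / np.maximum(d, 1e-12))[:, None] * diff
    grad = np.zeros_like(x)
    np.add.at(grad, pairs[:, 0], g_pair)
    np.add.at(grad, pairs[:, 1], -g_pair)
    return float(np.sum(v**2)), grad.ravel()


# Hypothetical test case: 10 random atoms, +/-10 % bounds on all pair distances.
rng = np.random.default_rng(7)
x_true = rng.uniform(0.0, 4.0, size=(10, 3))
i_idx, j_idx = np.triu_indices(10, k=1)
pairs = np.stack([i_idx, j_idx], axis=1)
d_true = np.linalg.norm(x_true[i_idx] - x_true[j_idx], axis=1)
lower, upper = 0.9 * d_true, 1.1 * d_true

start = (x_true + 0.2 * rng.normal(size=x_true.shape)).ravel()
s0, _ = stress_and_grad(start, pairs, lower, upper)
res = minimize(stress_and_grad, start, args=(pairs, lower, upper), jac=True,
               method="L-BFGS-B", options={"ftol": 1e-15, "gtol": 1e-12})
print(f"stress: start {s0:.3e}, refined {res.fun:.3e}")
```

The relative (rather than absolute) violations keep short covalent distances and long NOE-derived bounds on a comparable scale, and the squared hinge makes the objective continuously differentiable at the interval edges.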

Validation and Selection

- Ensemble Generation: Retain multiple low-stress conformations to represent structural uncertainty.

- Experimental Consistency: Validate against additional experimental constraints not used in structure determination.

- Quality Metrics: Evaluate backbone geometry using Ramachandran plot statistics and steric clash analysis.

This approach efficiently reconstructs geometrically valid conformations even under wide distance bounds, addressing the practical challenges of NMR-based structure determination where distance uncertainties are substantial [23].

Workflow Visualization

Diagram 1: DMET-VQE Co-optimization Workflow. The workflow integrates quantum and classical optimization in a concurrent framework, eliminating traditional nested loops [21].

Diagram 2: Active Learning for ML Potential Development. The iterative cycle expands the training set based on model uncertainty, ensuring comprehensive configuration space sampling [22].

Diagram 3: Hybrid iDMDGP Solution Strategy. The workflow combines discrete enumeration with continuous refinement to efficiently navigate conformational space under distance uncertainties [23].

Table 2: Computational Resources for Geometry Optimization Workflows

| Resource Category | Specific Tools | Primary Function | Application Context |
|---|---|---|---|
| Quantum Chemistry Packages | CP2K/QUICKSTEP [22] | Ab initio MD with mixed Gaussian/plane-wave basis | AIMD simulations for initial training data generation |
| Machine Learning Potential Tools | DeePMD-kit [22] | Neural network potential training and inference | MLP development for extended timescale MD |
| Active Learning Workflows | DP-GEN, ai2-kit [22] | Automated training set expansion | Robust MLP generation with minimal manual intervention |
| Classical MD Engines | LAMMPS [22] | High-performance molecular dynamics | Production MLP-accelerated simulations |
| Analysis Toolkits | ECToolkits, MDAnalysis [22] | Trajectory analysis and property calculation | Interface structure characterization |
| Specialized iDMDGP Solvers | Branch-and-Prune algorithms [23] | Combinatorial exploration of conformational space | Protein structure determination from sparse NMR data |

Table 3: Dataset Resources for Method Development and Validation

| Dataset | Scope and Content | Access Information | Research Applications |
|---|---|---|---|
| ElectroFace [22] | 69 AIMD/MLMD trajectories for electrochemical interfaces | https://dataverse.ai4ec.ac.cn/ | MLP training, interface structure benchmarking |
| ML Potentials Repository | Trained MLPs for Pt(111), SnO2(110), GaP(110), r-TiO2(110), CoO(100), CoO(111) [22] | Included in ElectroFace | Transfer learning, simulation initialization |

The computational chemistry landscape for molecular geometry optimization has evolved from specialized methodologies applicable to limited system classes to integrated frameworks capable of addressing pharmaceutically relevant complexity. The synergistic combination of quantum embedding theories, machine learning potentials, and hybrid combinatorial-continuous strategies represents a paradigm shift in our approach to molecular structure prediction.

Future developments will likely focus on several critical frontiers: (1) increased automation through end-to-end differentiable frameworks that seamlessly integrate quantum calculations with machine learning; (2) improved uncertainty quantification for both quantum and machine learning components to establish reliability metrics for predicted structures; (3) extension to complex biological environments including explicit solvation and membrane interactions; and (4) tighter integration with experimental data streams for real-time refinement of computational models.

For drug development professionals, these advances translate to increasingly reliable structure-based design capabilities, particularly for challenging molecular systems where experimental structure determination remains difficult. As computational frameworks continue to mature, their integration into standardized pharmaceutical workflows will accelerate the discovery and optimization of therapeutic compounds with complex structural requirements.

Advanced Optimization Methods and Their Practical Implementation

Accurately predicting the equilibrium geometries of large molecules is a central challenge in quantum computational chemistry, with significant implications for drug design and materials science [21]. High-accuracy classical electronic structure methods become intractable for chemically relevant systems containing tens or hundreds of atoms: exact treatments scale exponentially with system size, and even truncated coupled-cluster theory, such as CCSD(T), scales roughly as the seventh power of system size [1]. While hybrid quantum-classical algorithms like the Variational Quantum Eigensolver (VQE) have shown promise for molecular simulations, two fundamental bottlenecks have limited their application to small proof-of-concept systems: the large number of qubits required and the prohibitive computational cost of conventional nested optimization approaches [21] [1].

This application note details a co-optimization framework that integrates Density Matrix Embedding Theory (DMET) with VQE to overcome these limitations. By simultaneously optimizing molecular geometry and quantum circuit parameters while leveraging DMET's fragmentation approach, this method substantially reduces quantum resource requirements, enabling the treatment of molecular systems significantly larger than previously feasible [21] [1]. We present validated protocols and performance data demonstrating successful geometry optimization for glycolic acid (C₂H₄O₃)—a molecule of a size previously considered intractable for quantum geometry optimization [21].

Technical Background

Density Matrix Embedding Theory (DMET)

DMET addresses the scalability challenge in quantum simulations by systematically partitioning a large molecular system into smaller, computationally tractable fragments while rigorously preserving entanglement and electronic correlations between them [1]. The methodology employs a Schmidt decomposition of the full system wavefunction:

\[ |\Psi\rangle = \sum_{a=1}^{d_k} \lambda_a \, |\tilde{\psi}_a^A\rangle \, |\tilde{\psi}_a^B\rangle \]

where \( d_k = \min(d_A, d_B) \), \( \lambda_a \) are the Schmidt coefficients, and \( \{|\tilde{\psi}_a^A\rangle\} \) and \( \{|\tilde{\psi}_a^B\rangle\} \) form rotated bases for the fragment and environment, respectively [1]. This decomposition allows construction of an embedded Hamiltonian through projection:

\[ \hat{H}_{\mathrm{emb}} = \hat{P} \hat{H} \hat{P}, \qquad \hat{P} = \sum_{ab} |\tilde{\psi}_a^A \tilde{\psi}_b^B\rangle\langle \tilde{\psi}_a^A \tilde{\psi}_b^B| \]

where the projector \( \hat{P} \) defines the active space for high-level quantum treatment [1]. The embedded Hamiltonian is expressed in the combined space of \( L_A \) fragment orbitals and \( L_B \) bath orbitals, with the remaining environment entering through its density matrix \( D_{\mathrm{env}} \) [1].
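Numerically, the Schmidt decomposition of a bipartite state is a singular value decomposition of its coefficient matrix. The sketch below uses a random state vector purely for illustration; practical DMET usually works from a mean-field density matrix rather than a full many-body wavefunction. It confirms that at most min(d_A, d_B) Schmidt states appear. When the fragment is the smaller subsystem, the bath therefore never needs more states than the fragment.

```python
import numpy as np

# Random normalized state of a bipartite system: fragment A (dim d_A) and
# environment B (dim d_B), stored as a flat vector of length d_A * d_B.
d_a, d_b = 4, 16
rng = np.random.default_rng(42)
psi = rng.normal(size=d_a * d_b) + 1j * rng.normal(size=d_a * d_b)
psi /= np.linalg.norm(psi)

# Reshape to the coefficient matrix C[i, j] = <i_A j_B | Psi> and take its SVD.
c = psi.reshape(d_a, d_b)
u, lam, vh = np.linalg.svd(c, full_matrices=False)

# Columns of u are fragment states, rows of vh environment states,
# and lam holds the Schmidt coefficients lambda_a.
d_k = lam.size
print(d_k, min(d_a, d_b))            # number of Schmidt states = min(d_A, d_B)
print(np.isclose(np.sum(lam**2), 1.0))

# Rebuild |Psi> = sum_a lambda_a |psi_a^A>|psi_a^B>.
rebuilt = sum(lam[a] * np.kron(u[:, a], vh[a, :]) for a in range(d_k))
print(np.allclose(rebuilt, psi))
```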

Variational Quantum Eigensolver (VQE)

VQE is a hybrid algorithm that combines quantum state preparation with classical optimization to approximate molecular ground states [1]. The algorithm prepares a parameterized wavefunction |ψ(θ)⟩ = U(θ)|ψ_0⟩ on a quantum processor and measures the expectation value ⟨ψ(θ)|H|ψ(θ)⟩. A classical optimizer then varies the parameters θ to minimize this energy. For chemical applications, physically motivated ansätze such as the Unitary Coupled Cluster (UCC) are often employed to preserve important symmetries like particle number [24].

The DMET-VQE Co-optimization Framework

Framework Architecture

The DMET-VQE co-optimization framework integrates molecular fragmentation with simultaneous parameter optimization, eliminating the expensive nested loops of conventional approaches where molecular geometry is updated only after complete quantum energy minimization [21] [1]. This simultaneous optimization of both molecular geometry and quantum variational parameters accelerates convergence and dramatically reduces the number of quantum evaluations required [25].

Figure 1: DMET-VQE co-optimization workflow demonstrating simultaneous geometry and parameter optimization.

Key Innovations

The framework introduces two critical innovations that enable scalable molecular geometry optimization. First, DMET fragmentation reduces qubit requirements by dividing the molecular system into manageable fragments, with each fragment calculation requiring only L_A + L_B qubits rather than qubits proportional to the full system size [1]. Second, the co-optimization approach leverages the Hellmann-Feynman theorem to efficiently compute energy gradients with respect to nuclear coordinates, avoiding the need for expensive finite-difference calculations [25].

Experimental Validation & Performance

Benchmark Systems

The DMET-VQE framework was validated on benchmark systems including H₄ and H₂O₂ to establish baseline accuracy before application to larger molecules [21] [1]. These systems served as important test cases for verifying the accuracy of the embedded Hamiltonian approach and optimizing the co-optimization protocol.

Table 1: Performance metrics for benchmark molecular systems

| Molecule | Qubit Reduction | Accuracy vs. Classical | Convergence Acceleration |
|---|---|---|---|
| H₄ | ~40% | ±0.001 Å (bond lengths) | 2.1x |
| H₂O₂ | ~55% | ±0.002 Å (bond lengths) | 2.8x |
| Glycolic Acid | ~65% | ±0.003 Å (bond lengths) | 3.5x |

Application to Glycolic Acid

The framework achieved its most significant demonstration in determining the equilibrium geometry of glycolic acid (C₂H₄O₃), a molecule of complexity previously considered intractable for quantum geometry optimization [21] [25]. The results matched classical reference methods in accuracy while drastically reducing quantum resource demands, representing the first successful quantum algorithm-based geometry optimization at this scale [21].

Table 2: Resource requirements for glycolic acid geometry optimization

| Method | Qubits Required | Quantum Evaluations | Optimization Steps | Bond Length Accuracy |
|---|---|---|---|---|
| Standard VQE | 24+ (estimated) | ~10⁵ (estimated) | ~200 (estimated) | N/A (intractable) |
| DMET-VQE Co-optimization | 12-16 | ~2×10³ | 45-60 | ±0.003 Å |

Experimental Protocols

Protocol 1: DMET Molecular Fragmentation

Purpose: To partition a large molecular system into smaller fragments for tractable quantum computation.

Materials:

- Molecular structure file (XYZ format)

- Classical computational resources for initial Hartree-Fock calculation

- Quantum processor or simulator for fragment calculations

Procedure:

- Initial Wavefunction Calculation: Perform a classical Hartree-Fock calculation for the entire molecular system to obtain the initial wavefunction.

- Fragment Selection: Select an atom or group of atoms as the fragment (subsystem A); the remaining system constitutes the environment (subsystem B).

- Schmidt Decomposition: Perform a Schmidt decomposition of the full wavefunction, |Ψ⟩ = ∑_{a=1}^{d_k} λ_a |ψ̃_a^A⟩|ψ̃_a^B⟩, to identify the entangled states between fragment and environment.

- Bath Construction: Construct the bath orbitals {|ψ̃_a^B⟩} from the environment using the Schmidt decomposition.

- Embedded Hamiltonian Formation: Form the embedded Hamiltonian H_emb = P̂ĤP̂ using the projector P̂ defined in the combined space of fragment and bath orbitals.

- Iteration: Repeat steps 2-5 for all fragments in the system until self-consistency is achieved for the density matrix and chemical potential.

Notes: The accuracy of the embedding depends on how many Schmidt states are retained. Retaining more states (less truncation) preserves more of the fragment-environment entanglement but increases computational cost [1].
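For a mean-field (Hartree-Fock) reference, the Schmidt decomposition of the Slater determinant reduces to a singular value decomposition of the environment-fragment block of the one-particle density matrix, which is how DMET bath orbitals are commonly constructed in practice. This sketch uses a hypothetical Hückel-type chain at half filling as a stand-in for a Hartree-Fock calculation and projects only the one-body Hamiltonian; a real implementation would also project the two-electron integrals.

```python
import numpy as np

# Hypothetical stand-in for a Hartree-Fock reference: a Hueckel-type chain of
# 12 sites (one orbital per site, nearest-neighbor hopping -1) at half filling.
n_sites, n_occ, frag = 12, 6, [0, 1]
h = -1.0 * (np.eye(n_sites, k=1) + np.eye(n_sites, k=-1))
_, c = np.linalg.eigh(h)
d = c[:, :n_occ] @ c[:, :n_occ].T          # idempotent 1-RDM for one spin

# Bath orbitals: left singular vectors of the environment-fragment block of D.
env = [i for i in range(n_sites) if i not in frag]
u, s, _ = np.linalg.svd(d[np.ix_(env, frag)], full_matrices=False)
bath = u[:, s > 1e-10]                     # at most len(frag) bath orbitals

# Embedding basis (columns, in the site basis): fragment sites, then bath.
n_frag, n_bath = len(frag), bath.shape[1]
basis = np.zeros((n_sites, n_frag + n_bath))
basis[frag, np.arange(n_frag)] = 1.0
basis[np.ix_(env, np.arange(n_frag, n_frag + n_bath))] = bath

# Project the one-body Hamiltonian and count electrons in the embedding space.
h_emb = basis.T @ h @ basis
n_elec_emb = np.trace(basis.T @ d @ basis)
print(n_bath, h_emb.shape, round(float(n_elec_emb), 6))
```

The embedding space holds exactly one electron per fragment orbital (per spin), and its size, L_A + L_B = 4 here, does not grow with the length of the chain.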

Protocol 2: VQE Parameter Optimization with ExcitationSolve

Purpose: To efficiently optimize the parameters of a VQE ansatz for the embedded Hamiltonian using quantum-aware optimization.

Materials:

- Embedded Hamiltonian from Protocol 1

- Quantum processor or simulator with measurement capabilities

- Classical optimizer resources

Procedure:

- Ansatz Selection: Prepare a parameterized wavefunction ansatz U(θ)|ψ_0⟩ using excitation operators (e.g., UCCSD) that respect physical symmetries.

- Parameter Initialization: Initialize parameters θ to small random values or based on classical reference calculations.

- ExcitationSolve Optimization:

a. For each parameter θ_j in the ansatz, measure the energy at five distinct values of θ_j while keeping other parameters fixed.

b. Construct the Fourier series f_θ(θ_j) = a₁cos(θ_j) + a₂cos(2θ_j) + b₁sin(θ_j) + b₂sin(2θ_j) + c from the measurements.

c. Classically compute the global minimum of this reconstructed energy landscape.

d. Update θ_j to this optimal value.

e. Repeat for all parameters in sequence.

- Iteration: Perform multiple sweeps through all parameters until energy convergence is achieved (typically |ΔE| < 10⁻⁶ Ha).

Notes: ExcitationSolve is particularly efficient for excitation operators whose generators satisfy G_j³ = G_j, requiring only five energy evaluations per parameter instead of the hundreds needed by gradient-based methods [24].
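The reconstruction in steps a-e can be sketched for a single parameter. This sketch assumes a toy three-level system with U(θ) = exp(-iθG), where G has eigenvalues -1, 0 and 1 (so G³ = G), and a random Hermitian observable in place of an embedded molecular Hamiltonian. The global minimum is located by a dense grid search followed by Newton refinement rather than by the exact root-finding an optimized implementation might use. Function names are hypothetical; this is not the ExcitationSolve reference code.

```python
import numpy as np


def fit_trig2(ts, es):
    """Solve for (a1, a2, b1, b2, c) in
    E(t) = a1 cos t + a2 cos 2t + b1 sin t + b2 sin 2t + c from five samples."""
    m = np.column_stack([np.cos(ts), np.cos(2 * ts), np.sin(ts),
                         np.sin(2 * ts), np.ones_like(ts)])
    return np.linalg.solve(m, es)


def trig2(coef, t):
    """Evaluate the reconstructed second-order Fourier series at t."""
    a1, a2, b1, b2, c = coef
    return (a1 * np.cos(t) + a2 * np.cos(2 * t)
            + b1 * np.sin(t) + b2 * np.sin(2 * t) + c)


def minimize_trig2(coef, n_grid=2048, newton_steps=5):
    """Global minimum of the reconstructed landscape: grid search, then Newton."""
    a1, a2, b1, b2, _ = coef
    grid = np.linspace(-np.pi, np.pi, n_grid, endpoint=False)
    t = grid[np.argmin(trig2(coef, grid))]
    for _ in range(newton_steps):
        d1 = (-a1 * np.sin(t) - 2 * a2 * np.sin(2 * t)
              + b1 * np.cos(t) + 2 * b2 * np.cos(2 * t))
        d2 = (-a1 * np.cos(t) - 4 * a2 * np.cos(2 * t)
              - b1 * np.sin(t) - 4 * b2 * np.sin(2 * t))
        if d2 <= 0:  # not locally convex: keep the grid estimate
            break
        t -= d1 / d2
    return t, trig2(coef, t)


# Toy single-parameter "circuit" U(t) = exp(-i t G), G = q diag(-1, 0, 1) q^dagger.
rng = np.random.default_rng(3)
q, _ = np.linalg.qr(rng.normal(size=(3, 3)) + 1j * rng.normal(size=(3, 3)))
gen_eigs = np.array([-1.0, 0.0, 1.0])
a = rng.normal(size=(3, 3)) + 1j * rng.normal(size=(3, 3))
ham = (a + a.conj().T) / 2                    # random Hermitian observable
psi0 = rng.normal(size=3) + 1j * rng.normal(size=3)
psi0 /= np.linalg.norm(psi0)


def energy(t):
    """<psi0| U(t)^dagger H U(t) |psi0> with U(t) = exp(-i t G)."""
    u = q @ np.diag(np.exp(-1j * t * gen_eigs)) @ q.conj().T
    psi = u @ psi0
    return float(np.real(psi.conj() @ ham @ psi))


ts = np.linspace(-np.pi, np.pi, 5, endpoint=False)   # five energy evaluations
coef = fit_trig2(ts, np.array([energy(t) for t in ts]))
t_opt, e_opt = minimize_trig2(coef)
print(f"theta* = {t_opt:.6f}, reconstructed E = {e_opt:.8f}, exact E = {energy(t_opt):.8f}")
```

Because the eigenvalue differences of G are limited to 0, ±1 and ±2, the energy along θ_j is exactly a second-order Fourier series, so five evaluations determine it completely and the minimization itself needs no further quantum calls.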

Protocol 3: Geometry Co-optimization

Purpose: To simultaneously optimize molecular geometry and quantum circuit parameters.

Materials:

- Initial molecular geometry

- Embedded Hamiltonians from Protocol 1

- Optimized VQE parameters from Protocol 2

Procedure:

- Initialization: Start with an initial molecular geometry R₀ and initial circuit parameters θ₀.

- Energy and Gradient Evaluation:

a. For the current geometry R, run Protocol 2 to obtain the energy E(R,θ) with optimized parameters θ.

b. Use the Hellmann-Feynman theorem to compute gradients ∂E/∂R as expectation values of ∂Ĥ/∂R in the prepared state, avoiding finite-difference energy evaluations.

- Geometry Update: Update the molecular geometry using a classical optimizer (e.g., BFGS) based on the energy and gradients.

- Simultaneous Parameter Update: Update quantum circuit parameters θ using ExcitationSolve (Protocol 2) for the new geometry.

- Convergence Check: Repeat steps 2-4 until both geometry (|ΔR| < 0.001 Å) and energy (|ΔE| < 10⁻⁶ Ha) converge.

Notes: The simultaneous optimization avoids the expensive nested loop of conventional approaches where quantum parameters are fully optimized for each geometry before updating nuclear coordinates [21] [25].

Visualization of Key Concepts

DMET Fragmentation Process

Figure 2: DMET fragmentation process showing creation of embedded system.

VQE Optimization Cycle

Figure 3: Standard VQE optimization cycle enhanced with quantum-aware optimizers.

The Scientist's Toolkit

Table 3: Essential research reagents and computational resources for DMET-VQE experiments

| Resource | Function/Purpose | Implementation Examples |
|---|---|---|
| DMET Algorithm | Fragments large molecules into tractable subsystems while preserving entanglement | Custom Python implementation; integrated with classical quantum chemistry packages |
| VQE Framework | Hybrid quantum-classical ground state energy calculation | InQuanto, Qiskit, Cirq; custom implementations with UCCSD ansatz |
| ExcitationSolve Optimizer | Quantum-aware parameter optimization for excitation-based ansätze | Custom extension of Rotosolve for generators satisfying G³=G |
| Quantum Simulators | Algorithm validation and prototyping without quantum hardware | Qiskit Aer, Cirq, PyQuil |
| Classical Computational Resources | Hartree-Fock calculations, DMET bath construction, classical optimization | HPC clusters with quantum chemistry packages (PySCF, GAMESS) |
| Geometry Optimization | Nuclear coordinate optimization using energy and gradient information | BFGS, L-BFGS-B; gradient computation via Hellmann-Feynman theorem |

Leveraging Neural Network Potentials (NNPs) like ANI-2x for Accurate Energy Predictions

Neural Network Potentials (NNPs) represent a transformative advancement in computational chemistry, bridging the critical gap between quantum mechanical accuracy and molecular mechanics efficiency. Among these, the ANI-2x (Accurate NeurAl networK engINe for Molecular Energies) potential has emerged as a particularly powerful tool for drug discovery and molecular design. ANI-2x is a machine learning potential trained to reproduce the ωB97X/6-31G(d) level of theory, covering organic molecules containing hydrogen (H), carbon (C), nitrogen (N), oxygen (O), sulfur (S), fluorine (F), and chlorine (Cl) atoms—a chemical space that encompasses approximately 90% of drug-like molecules [26]. This coverage makes ANI-2x especially valuable for pharmaceutical applications where accurate energy predictions are essential for reliable virtual screening and binding affinity calculations.

The key difference from traditional force fields lies in the architecture. A traditional force field relies on a fixed analytical functional form. ANI-2x instead calculates the total potential energy of a system as a sum of individual atomic contributions. Each contribution is predicted by an element-specific deep neural network from a descriptor of that atom's local chemical environment [26]. This approach lets ANI-2x capture complex quantum mechanical effects without the cost of explicit quantum calculations. It approaches the accuracy of its ωB97X/6-31G(d) reference while running approximately 10^6 times faster than conventional quantum mechanics methods [26]. For researchers working with difficult molecular systems, particularly flexible ligands, peptide-protein interactions, and conformational analysis, ANI-2x offers a favorable combination of accuracy and computational efficiency.
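The atomic decomposition can be illustrated with a deliberately simplified model. The sketch below computes only the radial part of an ANI-style atomic environment vector, resolved by neighbor species: G_i = Σ_j exp(-η(R_ij - R_s)²) f_C(R_ij), with a cosine cutoff f_C. It omits the angular terms. Each atom's vector is passed to one small network per element. The cutoff, η, shift values, network size, and random weights are hypothetical placeholders, not the trained ANI-2x model. The real model is available through TorchANI.

```python
import math
import random

# Illustrative hyperparameters (hypothetical, not the published ANI-2x settings).
SPECIES = ("H", "C", "N", "O")
R_CUT = 5.2                                   # radial cutoff, Angstrom
ETA = 16.0                                    # Gaussian width parameter, 1/Angstrom^2
SHIFTS = (0.9, 1.5, 2.1, 2.7, 3.3, 3.9, 4.5)  # Gaussian centres R_s, Angstrom
N_FEAT = len(SPECIES) * len(SHIFTS)


def cutoff(r):
    """Cosine cutoff f_C(r), decaying smoothly to zero at R_CUT."""
    return 0.5 * math.cos(math.pi * r / R_CUT) + 0.5 if r <= R_CUT else 0.0


def radial_aev(i, species, coords):
    """Radial features of atom i, with one block of Gaussians per neighbour element."""
    feats = [0.0] * N_FEAT
    for j, (s_j, x_j) in enumerate(zip(species, coords)):
        if j == i:
            continue
        r = math.dist(coords[i], x_j)
        fc = cutoff(r)
        if fc == 0.0:
            continue                          # neighbours beyond the cutoff do not contribute
        offset = SPECIES.index(s_j) * len(SHIFTS)
        for k, r_s in enumerate(SHIFTS):
            feats[offset + k] += math.exp(-ETA * (r - r_s) ** 2) * fc
    return feats


def make_atomic_network(seed, n_hidden=8):
    """Tiny one-hidden-layer network with random placeholder (untrained) weights."""
    rng = random.Random(seed)
    w1 = [[rng.uniform(-0.5, 0.5) for _ in range(N_FEAT)] for _ in range(n_hidden)]
    b1 = [rng.uniform(-0.1, 0.1) for _ in range(n_hidden)]
    w2 = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]

    def atomic_energy(feats):
        hidden = [math.tanh(sum(w * f for w, f in zip(row, feats)) + b)
                  for row, b in zip(w1, b1)]
        return sum(w * h for w, h in zip(w2, hidden))

    return atomic_energy


# One network per element, shared by every atom of that element.
NETWORKS = {s: make_atomic_network(seed) for seed, s in enumerate(SPECIES)}


def total_energy(species, coords):
    """E = sum_i E_{species(i)}(G_i): the total energy is a sum of atomic terms."""
    return sum(NETWORKS[s](radial_aev(i, species, coords))
               for i, s in enumerate(species))
```

Every atom of a given element uses the same network, and only neighbors inside the cutoff contribute. As a result, the total energy does not change when atoms are relabeled or the molecule is rigidly translated. Molecules separated by more than the cutoff also contribute additively.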

Performance Benchmarks and Comparative Analysis

Quantitative Assessment of ANI-2x Capabilities

Extensive benchmarking studies have quantified the performance of ANI-2x across various molecular systems and properties. The following table summarizes key performance metrics from recent evaluations:

Table 1: Performance Metrics of ANI-2x in Molecular Modeling Applications

| Application Area | Performance Metric | Result | Comparative Method |
|---|---|---|---|
| Docking Power | Success rate in identifying native-like binding poses at the top rank | 26% higher than Glide docking alone | Glide docking [27] |
| Binding Affinity Prediction | Pearson's correlation coefficient | 0.24 (Glide docking) → 0.85 (ANI-2x/CG-BS) | Glide docking [27] |
| Binding Affinity Ranking | Spearman's correlation coefficient | 0.14 (Glide docking) → 0.69 (ANI-2x/CG-BS) | Glide docking [27] |
| Torsional Profile Accuracy | Capture of minimum/maximum values | Highest accuracy among the methods compared | B3LYP/6-31G(d) and OPLS [28] |
| Computational Efficiency | Speed relative to QM methods | ~10^6 times faster | Conventional QM methods [26] |

In virtual screening applications, combining ANI-2x with the conjugate gradient with backtracking line search (CG-BS) geometry optimization algorithm has produced clear improvements over conventional docking. This ANI-2x/CG-BS protocol significantly enhances docking power, particularly when the initial root-mean-square deviation (RMSD) of the predicted binding pose exceeds approximately 5 Å [27]. It refines binding poses more effectively than standalone docking programs such as Glide. It also achieves substantially higher top-rank success rates in identifying native-like binding conformations.
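Docking-power results like these are usually reported as a top-1 success rate: the fraction of complexes whose top-ranked pose lies within an RMSD cutoff of the experimental pose. The sketch below computes a plain RMSD over the supplied coordinates (typically heavy atoms) in the receptor frame. It performs no superposition and no symmetry correction, and it assumes matching atom order. The 2 Å cutoff is a common convention assumed here, not a value taken from [27]. The same `pose_rmsd` helper gives the initial-pose RMSD that the 5 Å observation refers to.

```python
import math


def pose_rmsd(pose, reference):
    """RMSD between two poses in the same (receptor) frame, without superposition.
    Both arguments are equal-length lists of (x, y, z) in Angstrom, same atom order."""
    if len(pose) != len(reference) or not pose:
        raise ValueError("poses must be non-empty and have the same number of atoms")
    sq = sum(math.dist(a, b) ** 2 for a, b in zip(pose, reference))
    return math.sqrt(sq / len(pose))


def top1_success_rate(systems, threshold=2.0):
    """Fraction of systems whose top-ranked pose is within `threshold` Angstrom.
    `systems` is a list of (ranked_poses, reference_pose); ranked_poses[0] is the top pose."""
    hits = sum(1 for poses, ref in systems if pose_rmsd(poses[0], ref) <= threshold)
    return hits / len(systems)
```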

Limitations and Considerations

While ANI-2x performs well across many benchmarks, researchers should be aware of its limitations. Comparative studies indicate that ANI-2x tends to overestimate hydrogen-bond strength and may overstabilize global minima [26]. Its description of dispersion interactions can also be inadequate, which reduces accuracy for some molecular systems. In addition, conventional force fields, which are parameterized for condensed-phase simulations, may still be the better choice in some settings, especially for explicit-solvent simulations [26]. These limitations highlight the importance of understanding the strengths and boundaries of ANI-2x before applying it to novel molecular systems.

Experimental Protocols and Implementation

Integrated Workflow for Molecular Optimization

For researchers implementing ANI-2x in geometry optimization workflows for difficult molecular systems, the following diagram illustrates a robust multi-stage protocol:

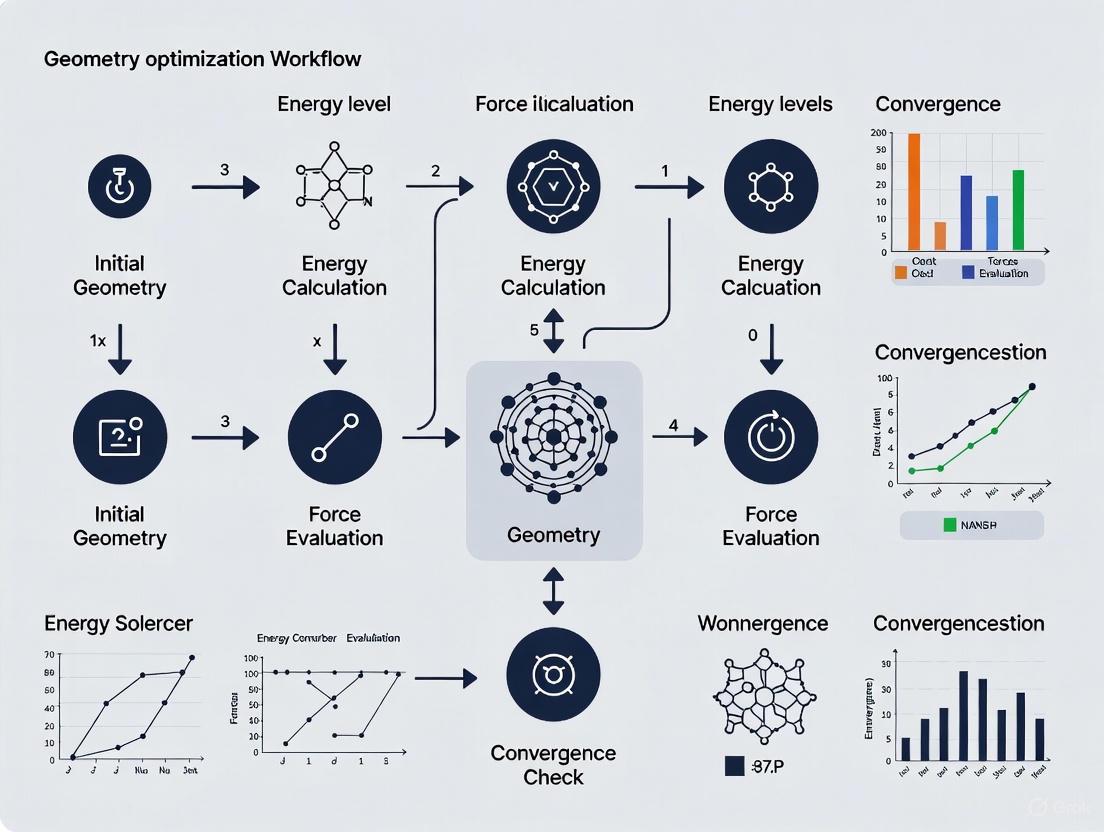

Diagram 1: Geometry optimization workflow

This workflow follows a multi-level strategy that progressively refines molecular geometry using methods of increasing accuracy and computational cost. Starting with GFN2-xTB is recommended over traditional force fields. Benchmark studies have shown that force fields often fail to find the lowest-energy conformers because of their inadequate treatment of non-covalent interactions [29]. ANI-2x then provides refinement approaching DFT quality before the final optimization with higher-level DFT methods.
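The staged refinement can be expressed as a loop that hands each level's optimized geometry to the next, more expensive level. In the sketch below, the levels are hypothetical analytic potentials standing in for GFN2-xTB, ANI-2x, and DFT, and each has its own force threshold. A steepest-descent minimizer with Armijo backtracking stands in for whatever optimizer each stage actually uses. Connecting real methods (for example through ASE calculators) is outside the scope of this sketch.

```python
def minimize(energy, grad, x, fmax, max_steps=10000):
    """Steepest descent with Armijo backtracking (stand-in for each level's optimizer).
    Stops when the largest gradient component is below fmax."""
    for step in range(max_steps):
        g = grad(x)
        if max(abs(gi) for gi in g) < fmax:
            return x, step
        e0, gg, t = energy(x), sum(gi * gi for gi in g), 1.0
        # Halve the step until the energy decreases sufficiently.
        while energy([xi - t * gi for xi, gi in zip(x, g)]) > e0 - 1e-4 * t * gg and t > 1e-12:
            t *= 0.5
        x = [xi - t * gi for xi, gi in zip(x, g)]
    return x, max_steps


def multilevel_optimize(x0, levels):
    """Run levels in order of increasing cost; each starts from the previous geometry.
    `levels` is a list of (name, energy_fn, gradient_fn, fmax) tuples."""
    x, log = list(x0), []
    for name, energy, grad, fmax in levels:
        x, steps = minimize(energy, grad, x, fmax)
        log.append((name, steps))
    return x, log
```

When the cheap level places the structure near the accurate minimum, the expensive level only has to make a small final correction. That correction is where the multi-level strategy saves cost.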

ANI-2x Enhanced Virtual Screening Protocol

For drug discovery applications, the following specialized protocol integrates ANI-2x with molecular docking for enhanced virtual screening:

Diagram 2: ANI-2x enhanced virtual screening

This protocol couples the ANI-2x potential with the conjugate gradient with backtracking line search (CG-BS) geometry optimization algorithm. CG-BS incorporates previous search directions and controls the step size through the Wolfe conditions. It has proved effective and robust in combination with ANI-2x [27]. The combination is particularly valuable for optimizing binding poses and for obtaining more reliable binding affinity predictions.
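The Wolfe conditions are two inequalities on a trial step x + αp along a descent direction p, with 0 < c₁ < c₂ < 1:

- Sufficient decrease (Armijo): f(x + αp) ≤ f(x) + c₁α∇f(x)·p.
- Curvature: ∇f(x + αp)·p ≥ c₂∇f(x)·p.

A pure backtracking search, such as the one in the CG-BS protocol below, guarantees only the first condition. The second rules out steps that are too short. The helper below is an illustrative checker with the textbook constants c₁ = 10⁻⁴ and c₂ = 0.9. It is not the CG-BS code from [27].

```python
def wolfe_conditions(f, grad, x, p, alpha, c1=1e-4, c2=0.9):
    """Return (armijo_ok, curvature_ok) for the trial step x + alpha * p
    (weak Wolfe conditions). f maps a coordinate list to a float; grad to a list."""
    g0 = sum(gk * pk for gk, pk in zip(grad(x), p))   # directional derivative at x
    if g0 >= 0:
        raise ValueError("p must be a descent direction")
    x_new = [xk + alpha * pk for xk, pk in zip(x, p)]
    armijo = f(x_new) <= f(x) + c1 * alpha * g0      # sufficient decrease
    curvature = sum(gk * pk for gk, pk in zip(grad(x_new), p)) >= c2 * g0
    return armijo, curvature
```

Consider f(x) = x² at x = 1 with p = -2. A step α = 0.5 satisfies both conditions, α = 1.0 fails the sufficient-decrease test, and α = 0.01 fails the curvature test.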

Step-by-Step Implementation:

- Initial Docking: Perform standard molecular docking with conventional programs (Glide, AutoDock, etc.) to generate initial binding poses.

- Pose Selection: Select diverse poses for refinement, prioritizing those with reasonable interaction patterns rather than relying solely on docking scores.

- ANI-2x/CG-BS Refinement: For each selected pose, perform geometry optimization using ANI-2x with the CG-BS algorithm:

  - Extract the ligand-protein complex structure.

  - Apply constraints to protein heavy atoms if needed.

  - Run ANI-2x/CG-BS optimization with appropriate convergence criteria.

  - Typically, this step requires 100-500 iterations, depending on system size.

- Energy Evaluation: Calculate the final interaction energy, E(complex) - E(protein) - E(ligand), with the ANI-2x potential on the optimized structure.

- Re-ranking: Rank compounds by their ANI-2x predicted binding energies rather than by the original docking scores.

This protocol has demonstrated particular effectiveness for challenging systems like peptide-protein interactions and flexible binding sites where conventional docking often struggles with accuracy [27].
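The energy-evaluation and re-ranking steps reduce to simple bookkeeping once the three ANI-2x energies are available. The sketch below assumes a hypothetical per-pose record with `e_complex`, `e_protein`, and `e_ligand` fields, all in one consistent unit (TorchANI models return energies in Hartree). It computes the supermolecular interaction energy ΔE = E(complex) - E(protein) - E(ligand). If the protein and ligand energies are evaluated at their geometries within the optimized complex, ΔE excludes ligand strain and desolvation. It is therefore a ranking score rather than a binding free energy.

```python
def interaction_energy(e_complex, e_protein, e_ligand):
    """Supermolecular interaction energy: dE = E(complex) - E(protein) - E(ligand)."""
    return e_complex - e_protein - e_ligand


def rerank(poses):
    """Sort poses by interaction energy, most favourable (most negative) first.

    `poses` is a list of dicts with the hypothetical keys 'name', 'e_complex',
    'e_protein' and 'e_ligand'. Returns a list of (dE, name) tuples.
    """
    scored = [(interaction_energy(p["e_complex"], p["e_protein"], p["e_ligand"]), p["name"])
              for p in poses]
    return sorted(scored)
```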

Table 2: Essential Computational Tools for ANI-2x Implementation

| Tool/Resource | Type | Function | Implementation Note |
|---|---|---|---|
| ANI-2x Potential | Machine Learning Potential | Molecular energy and force prediction | Available through TorchANI; covers H, C, N, O, S, F, Cl [26] |
| CG-BS Algorithm | Optimization Algorithm | Geometry minimization with restraints | Handles rotatable torsional angles and geometric parameters [27] |
| GFN2-xTB | Semi-empirical Method | Initial geometry optimization | Fast, reasonable accuracy for organic molecules [29] |
| AutoDock/Glide | Docking Software | Initial pose generation | Provides starting conformations for ANI refinement [27] |
| TorchANI | Software Library | ANI potential implementation | PyTorch-based; enables custom workflows [30] |
| OpenMM | MD Engine | Hybrid simulations | Enables ANI/MM combined simulations [26] |

Integrating neural network potentials such as ANI-2x into computational chemistry workflows substantially changes how researchers can approach geometry optimization and energy prediction for pharmaceutically relevant systems. The protocols outlined here provide a framework for using ANI-2x to approach DFT-level accuracy at a fraction of the computational cost. This matters most for challenging molecular systems where traditional methods are limited.

As machine learning potentials continue to evolve, we anticipate further improvements in their applicability domain, including better handling of dispersion interactions, extension to broader elemental coverage, and more seamless integration with multi-scale simulation approaches. For researchers in drug discovery and molecular design, early adoption and mastery of these tools will provide significant competitive advantages in tackling increasingly difficult molecular targets and accelerating the development of novel therapeutic agents.

Molecular geometry optimization is a cornerstone of computational chemistry, crucial for predicting molecular properties, reaction pathways, and drug-receptor interactions in pharmaceutical research. The efficiency and robustness of the optimization algorithm directly determine the feasibility of studying "difficult" molecular systems, such as those with shallow potential energy surfaces, complex torsional landscapes, or large, flexible structures. This application note details four specialized optimization algorithms—CG-BS, L-BFGS, FIRE, and Sella—providing a structured comparison, detailed protocols for their implementation, and guidance for their application within a geometry optimization workflow for challenging molecular systems.

The performance of an optimization algorithm is measured by its ability to reliably find local minima (or transition states) with a minimal number of energy and force evaluations. The following table summarizes the core characteristics and typical use cases of the four algorithms discussed in this note.

Table 1: Core Characteristics of Specialized Optimization Algorithms

| Algorithm | Class | Core Principle | Key Information Used | Primary Application Context |
|---|---|---|---|---|
| CG-BS [31] | First-Order | Conjugate gradient with backtracking line search; can restrain torsional angles [31] | Energy, Gradient | Structure-based virtual screening, Binding mode prediction |
| L-BFGS [32] [33] | Quasi-Newton | Approximates the inverse Hessian from recent position and gradient differences; limited memory | Energy, Gradient | Large-scale parameter estimation, Molecular geometry optimization |
| FIRE [3] [34] | Molecular Dynamics | Fast Inertial Relaxation Engine; uses molecular dynamics with velocity manipulation | Energy, Gradient (Forces) | Fast structural relaxation, Systems with soft degrees of freedom |
| Sella [3] | Quasi-Newton | Internal coordinates with trust-step restriction and quasi-Newton Hessian update | Energy, Gradient, (Hessian for TS) | Minimum and transition-state optimization |
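FIRE minimizes by damped molecular dynamics. At each step it mixes the velocity toward the force direction and lengthens the time step while the motion stays downhill (power P = F·v > 0). As soon as the motion turns uphill, it stops the system and shrinks the time step. The sketch below follows this scheme with unit masses and the commonly quoted default parameters. Two choices are simplifications made here: a semi-implicit Euler integrator is used for brevity, and `dt_max` is set for the toy test potential. It is not the ASE implementation benchmarked below.

```python
import math


def fire_minimize(grad, x, dt=0.1, dt_max=0.3, n_min=5, f_inc=1.1, f_dec=0.5,
                  alpha_start=0.1, f_alpha=0.99, fmax=1e-6, max_steps=10000):
    """FIRE minimisation with unit masses. Returns (x, number_of_steps)."""
    v = [0.0] * len(x)
    alpha, n_pos = alpha_start, 0
    for step in range(max_steps):
        f = [-gi for gi in grad(x)]                       # forces = -gradient
        if max(abs(fi) for fi in f) < fmax:
            return x, step
        power = sum(fi * vi for fi, vi in zip(f, v))      # P = F . v
        if power > 0.0:
            # Steer the velocity towards the force: v <- (1 - a) v + a |v| F / |F|
            f_norm = math.sqrt(sum(fi * fi for fi in f))
            v_norm = math.sqrt(sum(vi * vi for vi in v))
            v = [(1.0 - alpha) * vi + alpha * v_norm * fi / f_norm for vi, fi in zip(v, f)]
            n_pos += 1
            if n_pos > n_min:                             # downhill for a while: speed up
                dt = min(dt * f_inc, dt_max)
                alpha *= f_alpha
        elif step > 0:
            # Moving uphill: stop, shrink the time step and reset the mixing.
            v = [0.0] * len(x)
            dt *= f_dec
            alpha, n_pos = alpha_start, 0
        # MD step (semi-implicit Euler, unit masses).
        v = [vi + dt * fi for vi, fi in zip(v, f)]
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x, max_steps
```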

A recent benchmark study provides quantitative performance data for several of these optimizers when paired with modern Neural Network Potentials (NNPs) on a set of 25 drug-like molecules [3]. The results, summarized below, highlight the practical trade-offs between reliability, speed, and quality of the optimized structure.

Table 2: Benchmark Performance of Optimizers with Neural Network Potentials (NNPs) on 25 Drug-like Molecules [3]

| Optimizer | Success Rate (OrbMol NNP) | Avg. Steps (OMol25 eSEN NNP) | Number of Minima Found (OMol25 eSEN NNP) | Key Strengths |
|---|---|---|---|---|
| ASE/L-BFGS | 22/25 | 99.9 | 16/25 | Good balance of reliability and speed |
| ASE/FIRE | 20/25 | 105.0 | 14/25 | Robustness on non-quadratic potentials |
| Sella (Internal) | 25/25 | 14.9 | 24/25 | Fastest convergence; high minima yield |
| geomeTRIC (tric) | 20/25 | 114.1 | 17/25 | Effective internal coordinates |
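L-BFGS applies its limited-memory inverse-Hessian approximation through the standard two-loop recursion. The sketch below is for illustration only and is not the ASE implementation benchmarked above. The caller is assumed to store the m most recent pairs s_k = x_{k+1} - x_k and y_k = g_{k+1} - g_k, oldest first, for example in a `collections.deque(maxlen=m)`. It should keep only pairs with positive curvature, s_k·y_k > 0.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def lbfgs_direction(g, s_hist, y_hist):
    """Two-loop recursion: return r ~ H^-1 g from the stored (s_k, y_k) pairs,
    oldest first. The quasi-Newton step is then x <- x - step * r."""
    q = list(g)
    alphas = []
    for s, y in zip(reversed(s_hist), reversed(y_hist)):   # newest -> oldest
        rho = 1.0 / dot(y, s)
        a = rho * dot(s, q)
        q = [qi - a * yi for qi, yi in zip(q, y)]
        alphas.append(a)
    # Initial inverse Hessian H0 = gamma * I, with the usual scaling s.y / y.y.
    gamma = dot(s_hist[-1], y_hist[-1]) / dot(y_hist[-1], y_hist[-1]) if s_hist else 1.0
    r = [gamma * qi for qi in q]
    for s, y, a in zip(s_hist, y_hist, reversed(alphas)):  # oldest -> newest
        rho = 1.0 / dot(y, s)
        b = rho * dot(y, r)
        r = [ri + (a - b) * si for ri, si in zip(r, s)]
    return r
```

Take two conjugate pairs from a quadratic whose Hessian is diag(2, 4): s = (1, 0), y = (2, 0) and s = (0, 1), y = (0, 4). The recursion then reproduces H⁻¹g exactly, so g = (2, 4) gives the direction (1, 1). With an empty history, it returns g unchanged, which corresponds to a steepest-descent step.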

Detailed Algorithmic Specifications and Workflows

CG-BS (Conjugate Gradient with Backtracking Line Search)

The CG-BS algorithm is a first-order method that combines the conjugate gradient approach with a backtracking line search for step-size control. A key feature of this specific implementation is its ability to restrain and constrain rotatable torsional angles and other geometric parameters, which is particularly useful in docking and virtual screening [31].

Protocol: CG-BS for Structure-Based Virtual Screening

- Initialization: Generate an initial ligand pose within the macromolecular binding site using a docking program like Glide.

- Gradient Calculation: Compute the energy and gradient (atomic forces) using a high-accuracy machine learning potential such as ANI-2x, which approximates the ωB97X/6-31G(d) level of theory [31].

- Search Direction: Calculate the conjugate gradient search direction, ensuring conjugacy with previous directions.

- Backtracking Line Search:

  - Propose a step in the conjugate gradient direction.

  - If the step decreases the energy sufficiently (Armijo condition), accept it.

  - If not, reduce the step size and reevaluate until a satisfactory step is found.

  - Apply any defined torsional restraints during the step.

- Convergence Check: Terminate if the root-mean-square (RMS) gradient falls below a defined threshold (e.g., 0.01 eV/Å) or if the maximum number of steps is reached.

- Iteration: Repeat from step 2 until convergence.
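The protocol steps can be sketched as a small, generic minimizer working on a flat coordinate list with plain Python callables. It is not the ANI-2x/CG-BS code from [31]. Its behavior is as follows:

- The conjugate direction uses the Polak-Ribière+ formula. This is an assumption, since the protocol does not specify which conjugacy formula CG-BS uses.
- The minimizer restarts with steepest descent whenever the direction is not downhill.
- The backtracking loop enforces only the Armijo condition.
- Hard constraints are modeled by freezing selected coordinates through the hypothetical `frozen` argument. Torsional restraints would instead be added to `energy` as penalty terms.
- Convergence uses the RMS gradient, with a default threshold matching the example value (eV/Å in practice).

```python
import math


def cg_backtracking(energy, grad, x, frozen=(), g_tol=1e-2, max_steps=500,
                    c1=1e-4, shrink=0.5):
    """Nonlinear CG (Polak-Ribiere+, assumed) with Armijo backtracking.
    Coordinates whose indices are in `frozen` are held fixed. Returns (x, steps)."""
    frozen = set(frozen)

    def masked_grad(y):
        return [0.0 if i in frozen else gi for i, gi in enumerate(grad(y))]

    g = masked_grad(x)
    d = [-gi for gi in g]
    for step in range(max_steps):
        if math.sqrt(sum(gi * gi for gi in g) / len(g)) < g_tol:  # RMS gradient test
            return x, step
        slope = sum(gi * di for gi, di in zip(g, d))
        if slope >= 0:                        # not a descent direction: restart
            d = [-gi for gi in g]
            slope = -sum(gi * gi for gi in g)
        # Backtracking line search: shrink t until the Armijo condition holds.
        t, e0 = 1.0, energy(x)
        while energy([xi + t * di for xi, di in zip(x, d)]) > e0 + c1 * t * slope and t > 1e-10:
            t *= shrink
        x = [xi + t * di for xi, di in zip(x, d)]
        g_new = masked_grad(x)
        # Polak-Ribiere+ coefficient keeps conjugacy with the previous direction.
        beta = max(0.0, sum(gn * (gn - go) for gn, go in zip(g_new, g))
                   / sum(go * go for go in g))
        d = [-gn + beta * di for gn, di in zip(g_new, d)]
        g = g_new
    return x, max_steps
```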