Advanced Neural Network Architectures for Molecular Property Prediction: A 2025 Guide for Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on tuning neural network architectures for molecular property prediction (MPP). It explores the foundational shift from traditional feature engineering to end-to-end deep learning models, particularly Graph Neural Networks (GNNs). The content details cutting-edge methodological advances, including the integration of large language models (LLMs) for knowledge extraction, novel architectures like Kolmogorov-Arnold GNNs, and innovative generative and optimization techniques. It further addresses critical troubleshooting and optimization challenges such as data scarcity, model generalizability, and computational efficiency. Finally, the article offers a rigorous framework for the validation and comparative analysis of different models, emphasizing the importance of high-fidelity datasets and robust benchmarking to translate computational predictions into real-world drug discovery success.

From Feature Engineering to Deep Learning: Core Concepts in Molecular Property Prediction

The Critical Role of Molecular Property Prediction in Accelerating Drug Discovery

Molecular property prediction (MPP) has emerged as a cornerstone of modern computational drug discovery, fundamentally transforming how researchers identify and optimize candidate therapeutics. By leveraging artificial intelligence (AI) to predict key molecular characteristics, MPP enables more informed decisions early in the drug development pipeline, significantly reducing the time and cost associated with traditional experimental approaches [1] [2]. The integration of advanced neural network architectures has been particularly transformative, allowing models to learn complex structure-property relationships directly from molecular data, moving beyond the limitations of manual feature engineering [1] [3].

The drug discovery process traditionally faces a fundamental challenge: the chemical space of potential drug-like molecules is astronomically large, commonly estimated at around 10^60 compounds, while experimental evaluation remains resource-intensive and time-consuming [4] [2]. Molecular property prediction addresses this bottleneck by computationally screening virtual compounds for desirable pharmacological profiles and potential safety issues before synthesis and testing [5] [2]. This approach has become indispensable for predicting Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties, biological activity against specific targets, and physicochemical characteristics [6] [5].

Recent advancements in deep learning, particularly graph neural networks (GNNs) and transformer architectures, have dramatically enhanced our ability to represent and learn from molecular structures [1] [7]. These AI-driven methods have demonstrated superior performance compared to traditional quantitative structure-activity relationship (QSAR) models, especially when applied to complex biological properties that challenge conventional approaches [2]. The ongoing refinement of neural network architectures through techniques such as transfer learning, multi-task training, and few-shot learning continues to push the boundaries of predictive accuracy and applicability across diverse drug discovery scenarios [8] [9] [4].

Molecular Representation Methods

The foundation of effective molecular property prediction lies in representing chemical structures in formats suitable for computational analysis. Molecular representation methods have evolved significantly from traditional rule-based approaches to modern AI-driven techniques that automatically learn informative features from data [1].

Traditional Representation Approaches

Traditional methods rely on explicit, human-defined schemes to encode molecular information:

String-Based Representations: The Simplified Molecular-Input Line-Entry System (SMILES) provides a compact string-based encoding of chemical structures that remains widely used despite limitations in capturing molecular complexity [1]. International Chemical Identifier (InChI) offers a standardized representation but cannot guarantee decoding back to original molecular graphs [1].

Molecular Descriptors: These quantitative features describe physicochemical properties (e.g., molecular weight, hydrophobicity) and topological indices derived from molecular structure [1] [3]. While interpretable, they often struggle to capture intricate structure-function relationships [1].

Molecular Fingerprints: Binary vectors encoding substructural information, such as Extended-Connectivity Fingerprints (ECFP), enable similarity searching and clustering [1]. They efficiently represent local atomic environments but rely on predefined structural patterns [1].
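
Similarity searching over fingerprints usually reduces to a set-overlap score. The sketch below computes the Tanimoto coefficient for two fingerprints stored as sets of on-bit indices. The function name `tanimoto` and the bit indices are illustrative assumptions; a real ECFP would be generated by a cheminformatics toolkit rather than typed by hand.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 1.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Hypothetical on-bit indices standing in for two hashed fingerprints.
fp_query = {3, 17, 42, 128, 511}
fp_candidate = {3, 17, 42, 300}

print(tanimoto(fp_query, fp_candidate))  # 3 shared bits / 6 distinct bits -> 0.5
```

Storing only the on-bit indices keeps the example short; a fixed-length bit vector gives the same score.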

AI-Driven Representation Learning

Modern deep learning approaches automatically learn molecular representations directly from data:

Graph-Based Representations: Molecules naturally map to graph structures with atoms as nodes and bonds as edges [1] [3]. Graph neural networks (GNNs) process these representations to capture both local and global structural patterns [1] [8].

Language Model-Based Approaches: Inspired by natural language processing, these methods treat molecular sequences (e.g., SMILES) as a specialized chemical language [1]. Transformer architectures learn contextual embeddings by processing tokenized molecular strings [1] [7].

Multimodal and 3D Representations: Advanced frameworks incorporate three-dimensional conformational information alongside structural data to better capture spatial relationships critical to molecular function [8]. For example, the self-conformation-aware graph transformer (SCAGE) integrates 2D atomic distance prediction and 3D bond angle prediction to learn comprehensive molecular semantics [8].
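
Language-model approaches start by splitting a SMILES string into chemically meaningful tokens. The following is a minimal sketch of such a tokenizer using a simplified regular expression. The pattern and the name `tokenize_smiles` are assumptions made for illustration; the pattern covers common tokens only and is not a SMILES validator.

```python
import re

# Simplified token pattern (an assumption, not a full SMILES grammar).
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]"            # bracket atoms such as [NH3+] or [C@@H]
    r"|Br|Cl"                # two-letter atoms of the organic subset
    r"|%\d{2}"               # two-digit ring-closure labels
    r"|[A-Za-z]"             # single-letter atoms, aliphatic or aromatic
    r"|\d"                   # single-digit ring closures
    r"|[=#$:/\\\-+().@*~]"   # bonds, branches, dots, and other symbols
)


def tokenize_smiles(smiles):
    """Split a SMILES string into tokens; fail if any character is skipped."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


print(tokenize_smiles("ClCCBr"))  # ['Cl', 'C', 'C', 'Br']
```

Matching `Br` and `Cl` before single letters keeps halogens as single tokens, which matters because a transformer's vocabulary is built from these units.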

Table 1: Comparison of Molecular Representation Methods

| Representation Type | Key Examples | Advantages | Limitations |
|---|---|---|---|
| String-Based | SMILES, SELFIES | Compact, human-readable | Limited structural complexity capture |
| Molecular Descriptors | alvaDesc, Mordred | Interpretable, physically meaningful | Manual engineering, incomplete coverage |
| Molecular Fingerprints | ECFP, FCFP | Computational efficiency, similarity search | Predefined patterns, limited flexibility |
| Graph Neural Networks | GCN, GAT, GIN | Natural structure representation, end-to-end learning | Data hunger, computational complexity |
| Language Models | ChemBERTa, SMILES-BERT | Contextual understanding, transfer learning | SMILES syntax constraints |
| 3D Representations | SCAGE, Uni-Mol | Spatial relationship capture | Conformational computation cost |

Neural Network Architectures for Molecular Property Prediction

The architecture of neural networks plays a pivotal role in determining their ability to capture complex relationships between molecular structure and biological activity. Several specialized architectures have emerged as particularly effective for molecular property prediction tasks.

Graph Neural Networks

GNNs have become a dominant architecture for MPP due to their natural alignment with molecular graph structure [3]. These networks operate by passing messages between connected atoms (nodes) and bonds (edges), iteratively updating atomic representations to capture both local chemical environments and global molecular topology [1] [3]. Variants such as Graph Convolutional Networks (GCNs), Graph Attention Networks (GATs), and Graph Isomorphism Networks (GINs) introduce specialized mechanisms to weight neighbor contributions or enhance expressive power [5] [10]. Industrial applications demonstrate that GNN-based predictions remain stable over time and provide valuable guidance for structure-activity relationship (SAR) exploration [6].
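
To make the message-passing idea concrete, here is a minimal sketch of one round of sum aggregation on a toy molecular graph, written in plain Python. It is deliberately simplified: real GNN layers apply learned transformations after aggregation, and the feature scheme and the name `message_passing_step` are assumptions for illustration.

```python
def message_passing_step(node_feats, edges):
    """One round of sum aggregation: h_v <- h_v + sum of neighbor features."""
    dim = len(next(iter(node_feats.values())))
    aggregated = {v: [0.0] * dim for v in node_feats}
    for u, v in edges:  # bonds are undirected, so messages flow both ways
        for k in range(dim):
            aggregated[v][k] += node_feats[u][k]
            aggregated[u][k] += node_feats[v][k]
    return {
        v: [h + m for h, m in zip(node_feats[v], aggregated[v])]
        for v in node_feats
    }


# Heavy atoms of ethanol (C-C-O) with toy one-hot features [is_carbon, is_oxygen].
atoms = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}
bonds = [(0, 1), (1, 2)]

updated = message_passing_step(atoms, bonds)
# A sum readout turns the per-atom vectors into one molecule-level vector.
molecule_vector = [sum(column) for column in zip(*updated.values())]

print(updated[1])        # [2.0, 1.0]: the central carbon now sees C and O
print(molecule_vector)   # [5.0, 2.0]
```

After one round each atom encodes its immediate neighborhood; stacking more rounds widens the receptive field by one bond per round.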

Transformer and Attention-Based Architectures

The attention mechanism, particularly as implemented in transformer architectures, has revolutionized molecular representation learning by enabling models to focus on structurally significant regions [7]. Self-attention graph transformers extend this capability to molecular graphs, dynamically weighting the importance of different atoms and substructures for specific property predictions [8] [7]. Frameworks like SCAGE incorporate multitask pretraining with molecular fingerprint prediction, functional group identification, and spatial relationship learning to develop comprehensive molecular representations [8]. The Attentive FP algorithm exemplifies how attention mechanisms can highlight atoms critical to properties like hERG toxicity, providing interpretable insights alongside accurate predictions [5].
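
The way attention yields atom-level importance can be sketched as a softmax over per-atom scores followed by a weighted sum. The example below is a generic attention readout, not the Attentive FP or SCAGE implementation; the scores would normally come from a learned layer and are hard-coded here as an assumption.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of raw scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_readout(atom_embeddings, atom_scores):
    """Pool atom embeddings into one vector, weighted by softmax(atom_scores)."""
    weights = softmax(atom_scores)
    dim = len(atom_embeddings[0])
    pooled = [
        sum(w * h[k] for w, h in zip(weights, atom_embeddings))
        for k in range(dim)
    ]
    return pooled, weights


# Toy embeddings for three atoms and hypothetical raw attention scores.
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
scores = [0.2, 2.5, 0.1]

pooled, weights = attention_readout(embeddings, scores)
top_atom = max(range(len(weights)), key=weights.__getitem__)
print(top_atom)  # 1: the atom with the highest score dominates the readout
```

The `weights` list is what an interpretability view would map back onto the atoms of the molecule.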

Specialized Architectural Innovations

Recent architectural innovations address specific challenges in molecular property prediction:

Multi-Task Learning: Networks trained simultaneously on multiple related properties leverage shared representations to improve generalization, particularly valuable with limited data for individual endpoints [9]. Controlled experiments demonstrate that multi-task learning outperforms single-task models, especially when augmenting small, sparse datasets with additional molecular data [9].

Few-Shot Learning Architectures: For low-data scenarios, frameworks like Context-informed Few-shot Molecular Property Prediction via Heterogeneous Meta-Learning (CFS-HML) employ dual encoders to separate property-shared and property-specific knowledge [10]. This approach enables effective learning from just a few examples by leveraging transferable molecular commonalities while maintaining sensitivity to task-specific contexts [10].

Knowledge-Enhanced Models: Emerging architectures integrate external knowledge sources, including large language models (LLMs), to complement structural information [3]. These frameworks prompt LLMs to generate domain-relevant knowledge and executable code for molecular vectorization, fusing the resulting features with structural representations from pre-trained molecular models [3].

Experimental Protocols and Methodologies

Robust experimental design is crucial for developing and validating molecular property prediction models. Below are detailed protocols for key methodologies referenced in recent literature.

Protocol 1: Multi-Task Graph Neural Network Training

This protocol outlines the procedure for training multi-task GNNs as described in recent systematic evaluations [9].

Research Reagent Solutions:

- Molecular Datasets: QM9 dataset (134k stable small organic molecules) [9]

- Software Framework: PyTorch Geometric with RDKit cheminformatics toolkit

- Model Architecture: Graph Isomorphism Network (GIN) with multi-task output heads

- Training Infrastructure: GPU acceleration (NVIDIA V100 or equivalent)

Procedure:

- Data Preparation:
  - Curate molecular datasets with multiple property annotations
  - Apply scaffold splitting to separate structurally distinct molecules across training, validation, and test sets [9]
  - Standardize molecular structures and generate graph representations with atom and bond features
- Model Configuration:
  - Implement GIN architecture with 5 message-passing layers
  - Initialize atom embeddings with 300-dimensional vectors
  - Add separate output heads for each molecular property task
  - Employ shared hidden layers (256 units) before task-specific layers
- Training Protocol:
  - Utilize Adam optimizer with initial learning rate of 0.001
  - Implement learning rate reduction on plateau (factor=0.5, patience=10 epochs)
  - Apply gradient clipping with maximum norm of 1.0
  - Use balanced sampling for tasks with imbalanced data distributions
- Evaluation:
  - Assess performance on held-out test set using task-appropriate metrics (AUROC for classification, RMSE for regression)
  - Compare against single-task baselines trained on identical data subsets
  - Perform statistical significance testing across multiple random seeds
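
The scaffold-splitting step in the data preparation stage can be sketched as follows. This is a minimal illustration that assumes a scaffold string has already been computed for every molecule (for example, a Murcko scaffold from a cheminformatics toolkit); the greedy largest-group-first assignment and the name `scaffold_split` are assumptions, not the procedure of the cited evaluation.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Assign whole scaffold groups to train/valid/test, largest groups first."""
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)
    # Sort by descending size; ties are broken by first index for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(scaffolds)
    train_cap = frac_train * n
    valid_cap = (frac_train + frac_valid) * n
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= train_cap:
            train.extend(group)
        elif len(train) + len(valid) + len(group) <= valid_cap:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


# Hypothetical scaffold labels for ten molecules.
scaffolds = ["benzene"] * 5 + ["pyridine"] * 3 + ["indole", "acyclic"]
train_idx, valid_idx, test_idx = scaffold_split(scaffolds)
print(len(train_idx), len(valid_idx), len(test_idx))
```

Because groups are never divided, no scaffold appears in more than one subset, which is the property that makes the evaluation harder than a random split.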

Protocol 2: Self-Conformation-Aware Pretraining (SCAGE Framework)

This protocol details the multitask pretraining approach used in the SCAGE framework to learn conformation-aware molecular representations [8].

Research Reagent Solutions:

- Data Source: ~5 million drug-like compounds from public and proprietary sources

- Conformation Generation: Merck Molecular Force Field (MMFF) for stable conformation sampling

- Model Architecture: Modified graph transformer with Multiscale Conformational Learning (MCL) module

- Pretraining Tasks: Molecular fingerprint prediction, functional group prediction, 2D atomic distance prediction, 3D bond angle prediction

Procedure:

- Conformational Analysis:
  - Generate molecular conformations using MMFF force field
  - Select lowest-energy conformation as most stable state
  - Compute 2D atomic distances and 3D bond angles from optimized structures
- Functional Group Annotation:
  - Apply algorithmic functional group assignment to each atom
  - Implement hierarchical classification of chemical motifs
  - Encode group membership as auxiliary node features
- Multitask Pretraining:
  - Implement four pretraining tasks simultaneously:
    - Molecular fingerprint prediction (binary classification)
    - Functional group prediction (multi-label classification)
    - 2D atomic distance prediction (regression)
    - 3D bond angle prediction (regression)
  - Employ Dynamic Adaptive Multitask Learning to balance task losses
  - Train for 100 epochs with batch size of 32
- Downstream Finetuning:
  - Initialize property prediction models with pretrained weights
  - Finetune on target molecular property datasets
  - Evaluate on molecular property and activity cliff benchmarks

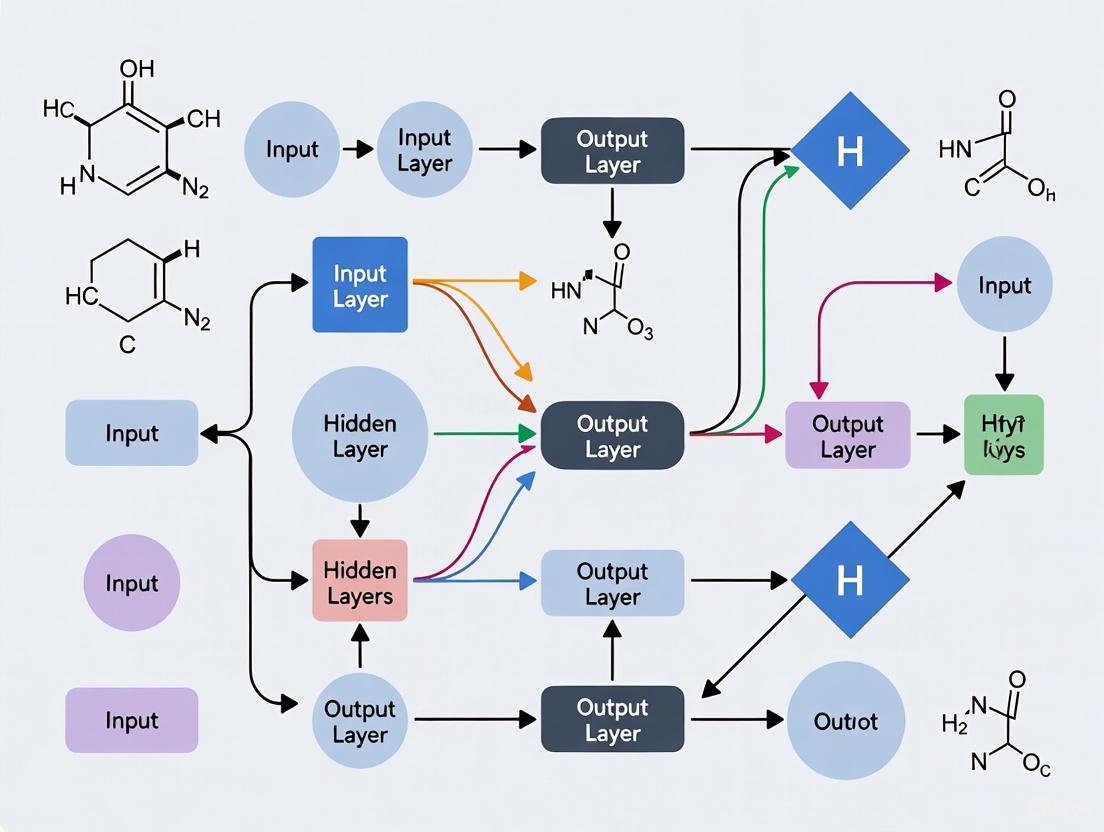

Diagram 1: SCAGE Framework Pretraining and Finetuning Workflow. This illustrates the complete pipeline from molecular input to property prediction.
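
The geometric regression targets in this protocol are simple functions of atomic coordinates. The sketch below shows how an interatomic distance and a bond angle can be computed from a conformer's 3D positions. It uses toy coordinates and hypothetical atom labels, and it does not reproduce SCAGE's own target construction.

```python
import math


def distance(a, b):
    """Euclidean distance between two points given as coordinate tuples."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def bond_angle(a, center, b):
    """Angle a-center-b in degrees, from the vectors center->a and center->b."""
    v1 = [x - c for x, c in zip(a, center)]
    v2 = [x - c for x, c in zip(b, center)]
    dot = sum(p * q for p, q in zip(v1, v2))
    cos_theta = dot / (distance(a, center) * distance(b, center))
    cos_theta = max(-1.0, min(1.0, cos_theta))  # guard against rounding error
    return math.degrees(math.acos(cos_theta))


# Toy coordinates (in angstroms) for a central atom with two bonded neighbors.
coords = {"center": (0.0, 0.0, 0.0), "n1": (1.5, 0.0, 0.0), "n2": (0.0, 1.5, 0.0)}

angle = bond_angle(coords["n1"], coords["center"], coords["n2"])
separation = distance(coords["n1"], coords["n2"])
print(round(angle, 3), round(separation, 3))  # 90.0 2.121
```

In a pretraining setup these values would be computed once from the selected conformer and stored as labels for the regression heads.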

Protocol 3: Few-Shot Molecular Property Prediction with Meta-Learning

This protocol describes the heterogeneous meta-learning approach for few-shot molecular property prediction [10].

Research Reagent Solutions:

- Model Architecture: Dual-encoder framework with GIN-based property-specific encoder and self-attention property-shared encoder

- Meta-Learning Framework: Model-Agnostic Meta-Learning (MAML) with heterogeneous optimization

- Task Formation: 2-way K-shot classification tasks with support and query sets

Procedure:

- Task Construction:
  - Sample molecular properties with limited labeled examples
  - Formulate 2-way K-shot classification tasks (typically K=1, 5, or 10)
  - Divide each task into a support set (labeled examples used for adaptation) and a query set (examples whose labels are withheld during adaptation and used only for evaluation)
- Dual Molecular Encoding:
  - Process molecules through property-specific encoder (GIN-based)
  - Extract property-shared representations using self-attention mechanism
  - Concatenate both representations for comprehensive molecular embedding
- Meta-Training:
  - Outer loop: Update all parameters across diverse property prediction tasks
  - Inner loop: Rapid adaptation using only property-specific parameters
  - Employ relation network to propagate labels through molecular similarity graph
- Evaluation:
  - Test on held-out molecular properties unseen during training
  - Compare against standard few-shot learning baselines
  - Analyze performance as function of available training examples
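
The task-construction step can be sketched as an episode sampler that draws a 2-way K-shot support set and a disjoint query set for one property. The function name `sample_episode`, the molecule identifiers, and the query size are illustrative assumptions rather than details taken from the cited framework.

```python
import random


def sample_episode(labels, k_shot, n_query, rng):
    """Draw a 2-way K-shot episode from a dict of molecule id -> binary label.

    Returns (support, query) as lists of (molecule id, label). Query labels are
    returned for scoring only and should be hidden during adaptation.
    """
    support, query = [], []
    for cls in (0, 1):
        pool = sorted(m for m, y in labels.items() if y == cls)
        if len(pool) < k_shot + n_query:
            raise ValueError(f"class {cls} has too few molecules for this episode")
        picked = rng.sample(pool, k_shot + n_query)
        support += [(m, cls) for m in picked[:k_shot]]
        query += [(m, cls) for m in picked[k_shot:]]
    return support, query


# Hypothetical property with 10 inactive (0) and 10 active (1) molecules.
property_labels = {f"mol{i}": i % 2 for i in range(20)}
rng = random.Random(0)  # fixed seed so episodes are reproducible

support_set, query_set = sample_episode(property_labels, k_shot=5, n_query=3, rng=rng)
print(len(support_set), len(query_set))  # 10 6
```

Sampling per class guarantees exactly K labeled examples of each class in the support set, which is what "2-way K-shot" requires.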

Performance Benchmarking

Rigorous evaluation across diverse molecular properties establishes the comparative performance of different architectural approaches. The following tables summarize key benchmarking results from recent literature.

Table 2: Performance Comparison of Molecular Property Prediction Models on Benchmark Tasks

| Model Architecture | BBBP (AUROC) | Tox21 (AUROC) | ClinTox (AUROC) | ESOL (RMSE) | FreeSolv (RMSE) |
|---|---|---|---|---|---|
| Random Forest (Descriptors) | 0.724 | 0.803 | 0.713 | 1.054 | 2.012 |
| Graph Convolutional Network | 0.792 | 0.831 | 0.844 | 0.876 | 1.403 |
| Attentive FP | 0.854 | 0.856 | 0.901 | 0.685 | 1.155 |
| GROVER | 0.893 | 0.879 | 0.942 | 0.589 | 0.982 |
| Uni-Mol | 0.912 | 0.891 | 0.961 | 0.512 | 0.874 |
| SCAGE | 0.928 | 0.903 | 0.973 | 0.498 | 0.826 |
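
For readers reproducing these metrics, the sketch below gives plain-Python definitions of AUROC (as the fraction of positive-negative pairs ranked correctly, with ties counted as half) and RMSE. The pairwise AUROC is quadratic in dataset size and is meant for clarity, not speed; the toy labels and scores are made up for illustration.

```python
import math


def auroc(y_true, y_score):
    """Probability that a random positive is scored above a random negative."""
    positives = [s for y, s in zip(y_true, y_score) if y == 1]
    negatives = [s for y, s in zip(y_true, y_score) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


def rmse(y_true, y_pred):
    """Root-mean-square error between targets and predictions."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


print(auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 3 of 4 pairs ordered -> 0.75
print(round(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]), 4))  # sqrt(4/3) -> 1.1547
```

Higher is better for AUROC and lower is better for RMSE, which is why the two groups of columns in the table move in opposite directions.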

Table 3: Few-Shot Learning Performance on Molecular Property Prediction (AUROC)

| Model | 1-Shot | 5-Shot | 10-Shot | Full Data |
|---|---|---|---|---|
| Matching Network | 0.612 | 0.698 | 0.734 | 0.812 |
| Prototypical Network | 0.634 | 0.721 | 0.759 | 0.829 |
| IterRefLSTM | 0.658 | 0.752 | 0.791 | 0.853 |
| PAR Network | 0.681 | 0.773 | 0.812 | 0.869 |
| CFS-HML | 0.723 | 0.804 | 0.839 | 0.891 |

Applications in Drug Discovery Workflow

Molecular property prediction integrates throughout the drug discovery pipeline, from target identification to lead optimization.

ADMET Profiling

Accurate prediction of ADMET properties represents one of the most valuable applications of MPP, addressing a major cause of clinical-stage attrition [5] [2]. Models like AttenhERG achieve state-of-the-art accuracy in predicting hERG channel toxicity while identifying atoms contributing most to toxicity risk [5]. Similarly, frameworks like FP-ADMET and MapLight combine molecular fingerprints with machine learning to establish robust prediction frameworks for a wide range of ADMET properties [1]. StreamChol provides specialized prediction of drug-induced liver injury via cholestasis, enabling early identification of this complex toxicity endpoint [5].

Activity Cliff Prediction

Activity cliffs occur when small structural modifications cause dramatic changes in molecular potency, presenting significant challenges in lead optimization [8]. Advanced MPP models like SCAGE demonstrate improved performance on structure-activity cliff benchmarks, accurately identifying critical functional groups associated with molecular activity [8]. Case studies on targets like BACE show close alignment between model attention patterns and molecular docking results, validating their utility in quantitative structure-activity relationship (QSAR) analysis [8].

Scaffold Hopping and Molecular Generation

MPP enables scaffold hopping—identifying structurally distinct compounds with similar biological activity—by capturing essential pharmacophoric features beyond specific structural frameworks [1]. Traditional methods utilizing molecular fingerprints and similarity searches have been supplemented by AI-driven approaches that learn continuous molecular embeddings capturing non-linear structure-activity relationships [1]. Generative models including variational autoencoders (VAEs) and generative adversarial networks (GANs) design novel scaffolds absent from existing chemical libraries while tailoring molecules for desired properties [1].

Diagram 2: Transfer Learning Process for Molecular Property Prediction. This illustrates knowledge transfer from data-rich source tasks to data-poor target tasks.

Implementation Considerations

Successful deployment of molecular property prediction models requires careful attention to several practical considerations.

Data Quality and Splitting Strategies

Model performance depends critically on data quality and appropriate dataset partitioning [5]. Scaffold splitting, which separates molecules based on core structural frameworks, provides more realistic evaluation than random splitting by ensuring models generalize to novel chemotypes [8] [5]. The Uniform Manifold Approximation and Projection (UMAP) split offers an even more challenging benchmark that better reflects real-world scenarios [5]. Data imbalance remains a significant challenge, with techniques like focal loss and artificial data augmentation showing promise in addressing unequal class distributions [5].
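
As an illustration of how focal loss addresses class imbalance, the sketch below implements the binary form, which down-weights examples the model already classifies confidently. The defaults `gamma=2.0` and `alpha=0.25` are commonly used values and are assumptions here, not settings reported by the cited studies.

```python
import math


def focal_loss(prob, label, gamma=2.0, alpha=0.25):
    """Binary focal loss for one example.

    prob  -- predicted probability of the positive class
    label -- ground truth, 0 or 1
    """
    eps = 1e-12
    prob = min(max(prob, eps), 1.0 - eps)  # keep log() finite
    p_t = prob if label == 1 else 1.0 - prob
    alpha_t = alpha if label == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


easy = focal_loss(0.95, 1)   # confident and correct: contributes very little
hard = focal_loss(0.30, 1)   # wrong side of 0.5: dominates the batch loss
print(easy < hard)  # True
```

Setting `gamma=0` removes the modulating factor and recovers class-weighted binary cross-entropy, which is a useful sanity check when tuning.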

Hyperparameter Optimization and Overfitting

Molecular datasets often feature limited labeled examples, creating vulnerability to overfitting during hyperparameter optimization [5]. Studies suggest that using preselected hyperparameter sets can produce models with similar or better accuracy than extensive grid search, particularly for small datasets [5]. Methods like fastprop provide efficient descriptor-based modeling with minimal hyperparameter tuning, achieving competitive performance with significantly reduced computation time [5].

Interpretation and Explainability

Model interpretability remains crucial for building trust and extracting chemical insights [6] [5]. Attention mechanisms naturally provide atom-level importance scores, while specialized approaches like group graphs enable unambiguous interpretation of substructure contributions [5]. Case studies demonstrate that interpretation methods can identify functional groups closely associated with molecular activity, with results consistent with experimental structural-activity relationships [8].

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools for Molecular Property Prediction

| Tool/Category | Specific Examples | Function | Application Context |
|---|---|---|---|
| Molecular Representation | SMILES, SELFIES, Graph Representation | Encode molecular structure for computational processing | Input format for all molecular modeling tasks |
| Feature Generation | RDKit, alvaDesc, Mordred | Compute molecular descriptors and fingerprints | Traditional QSAR and descriptor-based machine learning |
| Deep Learning Frameworks | PyTorch Geometric, Deep Graph Library | Implement GNN architectures | Graph-based molecular property prediction |
| Pretrained Models | ChemBERTa, GROVER, SCAGE | Provide transferable molecular representations | Few-shot learning and transfer learning scenarios |
| Property Prediction Platforms | ADMET Predictor, Chemprop | Specialized endpoints for drug discovery | ADMET optimization and safety assessment |
| Validation Tools | Model confidence estimation, applicability domain assessment | Evaluate model reliability and limitations | Lead optimization decision support |

Future Directions

The field of molecular property prediction continues to evolve rapidly, with several promising research directions emerging. Integration of large language models (LLMs) represents a frontier approach, leveraging their encoded chemical knowledge to complement structural information [3]. Methods like LLM4SD demonstrate that knowledge extracted from LLMs can outperform structure-only models for certain properties, while hybrid approaches that fuse LLM-derived knowledge with structural features show particular promise [3].

Geometric deep learning incorporating 3D molecular information continues to advance, with frameworks like SCAGE demonstrating the value of explicitly modeling conformational relationships [8]. As quantum chemistry datasets expand, neural network potentials may eventually replace traditional quantum mechanical calculations for certain applications, offering dramatic speed improvements while maintaining accuracy [5].

In industrial settings, transfer learning with graph neural networks has shown significant promise for leveraging data across the drug discovery funnel [4]. By transferring knowledge from large, easily generated early-stage data to improve predictions for expensive, information-rich later-stage assays, this approach addresses fundamental resource constraints in pharmaceutical research [4].

Molecular property prediction has thus evolved from a supplementary tool to a central technology in modern drug discovery, with continued architectural innovation expanding its capabilities and applications. As models become more accurate, interpretable, and data-efficient, their integration into automated discovery platforms promises to further accelerate the identification and optimization of novel therapeutics.

The evolution of molecular representation has fundamentally transformed computational chemistry and drug discovery, progressing from manual feature engineering to automated end-to-end deep learning models. This shift has enhanced predictive accuracy and enabled more efficient exploration of chemical space. Framed within the context of neural network architecture tuning for molecular property prediction, this article details the critical transition, providing application notes and experimental protocols that empower researchers to leverage these advancements. We summarize benchmark results, provide detailed methodologies for key experiments, and visualize complex workflows and relationships to serve as a practical toolkit for scientists and drug development professionals.

Molecular representation serves as the foundational bridge between chemical structures and their predicted biological or physicochemical properties. The journey from expert-crafted features to end-to-end learning represents a fundamental paradigm shift in computational approaches to drug discovery. Traditional methods relied heavily on manual feature engineering, requiring deep domain expertise to translate molecular structures into fixed, human-interpretable numerical vectors or fingerprints. While effective for specific tasks, these approaches were often brittle and limited by human preconceptions of what features were relevant.

The advent of deep learning introduced models capable of learning optimal representations directly from raw molecular data, significantly reducing reliance on manual feature engineering. This evolution has been particularly impactful in neural network architecture tuning for molecular property prediction, where graph neural networks (GNNs) and transformer-based architectures now automatically discover complex structure-property relationships. The tuning of these architectures has become a critical research focus, as their performance is highly sensitive to hyperparameters and architectural choices [11]. Modern approaches increasingly integrate diverse data modalities, including structural, textual, and functional group information, to create more robust and predictive models, even in ultra-low data regimes commonly encountered in real-world drug discovery pipelines [12] [13] [1].

Quantitative Benchmarking of Representation Approaches

The performance of different molecular representation methods varies significantly across datasets, tasks, and data availability regimes. The following tables summarize key quantitative benchmarks from recent literature, providing a basis for selecting appropriate modeling strategies.

Table 1: Performance comparison of multi-task learning schemes on MoleculeNet benchmarks (Average AUC-ROC in %)

| Method | ClinTox | SIDER | Tox21 | Average |
|---|---|---|---|---|
| STL (Single-Task Learning) | 84.7 | 70.3 | 83.1 | 79.4 |
| MTL (Multi-Task Learning) | 89.2 | 72.1 | 84.5 | 82.0 |
| MTL-GLC | 89.6 | 72.5 | 84.9 | 82.3 |
| ACS (Proposed) | 95.3 | 74.8 | 86.2 | 85.4 |

Table 2: Generalization performance of Handcrafted (HC) Features vs. Deep Learning (DL) across data regimes

| Setting | HC Features | Deep Learning | Hybrid (HC+DL) |
|---|---|---|---|
| In-Distribution (ID) | 85.1% | 99.8% | 98.5% |
| Out-of-Distribution (OOD) - Small Sample | 85.4% | 70.2% | 84.3% |
| Out-of-Distribution (OOD) - Large Sample | 86.1% | 84.3% | 89.7% |

Experimental Protocols for Key Methodologies

Protocol: Adaptive Checkpointing with Specialization (ACS) for Multi-Task GNNs

Application: This protocol is designed for molecular property prediction in scenarios with significant task imbalance or limited labeled data, mitigating negative transfer in multi-task learning [13].

Materials:

- Dataset: Multi-task molecular dataset (e.g., ClinTox, SIDER, Tox21 from MoleculeNet).

- Software: Python 3.8+, PyTorch or TensorFlow, Deep Graph Library (DGL) or PyTorch Geometric.

- Model Architecture: A shared GNN backbone (e.g., MPNN) with task-specific Multi-Layer Perceptron (MLP) heads.

Procedure:

- Data Preparation: Partition data using a Murcko-scaffold split to ensure generalization to novel molecular scaffolds. Apply loss masking for tasks with missing labels.

- Model Initialization: Initialize a shared GNN backbone and separate MLP heads for each prediction task. The GNN should be configured to output a general-purpose latent representation for each molecule.

- Training Loop:
  - For each training epoch, iterate through the batched data.
  - For each task with available labels, compute the loss (e.g., Binary Cross-Entropy) between the prediction (from the shared backbone + task-specific head) and the ground truth.
  - Backpropagate the combined, masked loss to update the shared GNN parameters and the relevant task-specific head.
- Validation and Checkpointing:
  - After each epoch, compute the validation loss for every task independently.
  - For each task, if its current validation loss is the lowest observed, checkpoint the entire model state (shared backbone parameters + the specific task's head parameters) to a dedicated file for that task.

- Specialization: Upon completion of training, for each task, load the corresponding best checkpoint to obtain a specialized model tailored to that specific property.
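
The masking and per-task checkpointing logic of this protocol can be sketched without any deep learning framework. In the example below, model parameters are stood in for by plain dictionaries and validation losses are simulated. The names `masked_bce` and `TaskCheckpointer` are hypothetical, and the snapshots are kept in memory rather than written to files, so this is an outline of the control flow rather than the published implementation.

```python
import copy
import math


def masked_bce(probs, labels):
    """Mean binary cross-entropy over entries whose label is present (not None)."""
    terms = [
        -(y * math.log(p) + (1 - y) * math.log(1 - p))
        for p, y in zip(probs, labels)
        if y is not None
    ]
    return sum(terms) / len(terms) if terms else 0.0


class TaskCheckpointer:
    """Keeps, for every task, the model snapshot with the lowest validation loss."""

    def __init__(self, tasks):
        self.best_loss = {t: float("inf") for t in tasks}
        self.best_epoch = {t: None for t in tasks}
        self.best_state = {t: None for t in tasks}

    def update(self, epoch, val_losses, backbone, heads):
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_epoch[task] = epoch
                # Snapshot the shared backbone together with this task's head.
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone),
                    "head": copy.deepcopy(heads[task]),
                }


tasks = ["ClinTox", "SIDER"]
checkpointer = TaskCheckpointer(tasks)
backbone = {"step": 0}                      # stand-in for shared GNN weights
heads = {t: {"step": 0} for t in tasks}     # stand-ins for task-specific heads

# Simulated per-epoch validation losses; the two tasks peak at different epochs.
simulated_val = [
    {"ClinTox": 0.70, "SIDER": 0.65},
    {"ClinTox": 0.55, "SIDER": 0.60},
    {"ClinTox": 0.60, "SIDER": 0.58},
]
for epoch, val_losses in enumerate(simulated_val):
    backbone["step"] = epoch                # stand-in for a training update
    for t in tasks:
        heads[t]["step"] = epoch
    checkpointer.update(epoch, val_losses, backbone, heads)

print(checkpointer.best_epoch)  # {'ClinTox': 1, 'SIDER': 2}
```

Deep-copying the backbone for each task is what allows specialization: each task ends up with the shared parameters from the epoch that suited it best, even after later epochs have moved on.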

Protocol: Integrating LLM-Generated Knowledge with Structural GNNs

Application: Enhances molecular property prediction by fusing domain knowledge extracted from Large Language Models (LLMs) with structural features from pre-trained molecular models [12].

Materials:

- LLM Access: API or local access to state-of-the-art LLMs (e.g., GPT-4o, GPT-4.1, DeepSeek-R1).

- Structural Model: A pre-trained molecular GNN or Transformer (e.g., ChemBERTa, Pre-trained GNN on PubChem).

- Fusion Model: A downstream classifier (e.g., Random Forest, MLP) capable of processing fused feature vectors.

Procedure:

- Knowledge Extraction via Prompting:
  - Design prompts to elicit two types of information from the LLM: a) Domain-relevant knowledge about the molecular property of interest, and b) Executable code for generating molecular descriptors from SMILES strings.
  - Execute the prompts and extract the textual knowledge and generated code. Validate the code for safety and functionality before execution.
- Molecular Vectorization:
  - Run the LLM-generated or standard descriptor calculation code (e.g., using RDKit) on the SMILES representations of the molecules to produce a knowledge-based feature vector.
- Structural Feature Extraction:
  - Pass the molecular graphs through a pre-trained GNN to obtain a structural feature embedding.
- Feature Fusion:
  - Concatenate the knowledge-based feature vector and the structural feature vector to form a unified representation for each molecule.
- Property Prediction:
  - Train the chosen fusion model on the unified representations to predict the target molecular property.
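
The feature fusion step amounts to concatenating two vectors per molecule. The sketch below adds per-column standardization before concatenation, which is an assumption made here because descriptor values and learned embeddings often live on very different scales; the protocol itself only specifies concatenation. All feature values and function names are hypothetical.

```python
import math


def standardize_columns(rows):
    """Z-score every column; constant columns are left at zero."""
    n, dim = len(rows), len(rows[0])
    means = [sum(r[k] for r in rows) / n for k in range(dim)]
    stds = [
        math.sqrt(sum((r[k] - means[k]) ** 2 for r in rows) / n) or 1.0
        for k in range(dim)
    ]
    return [[(r[k] - means[k]) / stds[k] for k in range(dim)] for r in rows]


def fuse_features(knowledge_rows, structural_rows):
    """Concatenate standardized knowledge-based and structural features per molecule."""
    if len(knowledge_rows) != len(structural_rows):
        raise ValueError("both feature sets must describe the same molecules")
    knowledge = standardize_columns(knowledge_rows)
    structural = standardize_columns(structural_rows)
    return [k + s for k, s in zip(knowledge, structural)]


# Hypothetical descriptor vectors and GNN embeddings for two molecules.
knowledge_features = [[1.0, 10.0], [3.0, 10.0]]
structural_features = [[0.0], [2.0]]

fused = fuse_features(knowledge_features, structural_features)
print(fused)  # [[-1.0, 0.0, -1.0], [1.0, 0.0, 1.0]]
```

In a real pipeline the means and standard deviations would be estimated on the training split only and then reused for validation and test molecules, to avoid leaking information.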

Protocol: Benchmarking HC Features vs. Deep Learning for OOD Generalization

Application: Systematically evaluates the robustness of handcrafted features against deep learning representations when test data distribution differs from training data [14] [15].

Materials:

- Datasets: Multiple homogenized datasets with common label spaces (e.g., public HAR datasets adapted for molecular tasks or molecular datasets from different sources).

- Feature Extractors: Tool for HC feature generation (e.g., TSFEL for time-series, RDKit for molecular descriptors) and a standard 1D-CNN or GNN for deep features.

Procedure:

- OOD Setting Definition: Define the Out-of-Distribution scenario (e.g., test on different molecular scaffolds, different assay conditions, or different sensor positions).

- Feature Generation (HC):
  - For the HC approach, extract a comprehensive set of pre-defined features (e.g., Gaussian mixture models, Euler characteristic curves, topological indices) from the preprocessed data.
- Feature Learning (DL):
  - For the DL approach, train a 1D-CNN or GNN end-to-end on the raw or minimally preprocessed input data from the training set.

- Classifier Training: Train a standard classifier (e.g., Random Forest) on the HC features. For the DL approach, the network itself is the classifier.

- Evaluation: Test both trained models on the held-out OOD test set. Compare performance metrics (e.g., accuracy, AUC-ROC) to determine which representation generalizes better.
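One common way to define the OOD scenario in the first step is a group-wise split, in which every molecule sharing a scaffold lands on the same side of the train/test boundary. The sketch below assumes the scaffold key has already been computed for each molecule (for example with a cheminformatics toolkit). The function name `group_split`, the toy SMILES, and the hand-assigned scaffold labels are illustrative only.

```python
from collections import defaultdict


def group_split(items, group_of, test_fraction=0.2):
    """Split items so that no group (e.g. scaffold) appears in both train and test."""
    groups = defaultdict(list)
    for item in items:
        groups[group_of(item)].append(item)

    # Largest groups fill the training set first; rarer groups fall into the test set.
    ordered = sorted(groups.values(), key=len, reverse=True)
    n_train_max = len(items) - int(len(items) * test_fraction)

    train, test = [], []
    for members in ordered:
        if len(train) + len(members) <= n_train_max:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Toy data: (SMILES, hand-assigned scaffold label). Labels are illustrative.
molecules = [
    ("c1ccccc1C", "benzene"), ("c1ccccc1O", "benzene"), ("c1ccccc1N", "benzene"),
    ("c1ccccc1Cl", "benzene"), ("c1ccccc1F", "benzene"),
    ("c1ccncc1C", "pyridine"), ("c1ccncc1O", "pyridine"), ("c1ccncc1N", "pyridine"),
    ("C1CCCCC1C", "cyclohexane"), ("C1CCCCC1O", "cyclohexane"),
]

train, test = group_split(molecules, group_of=lambda m: m[1])
print(len(train), len(test))
print({m[1] for m in train} & {m[1] for m in test})
```

Both the HC pipeline and the DL pipeline should be trained and evaluated on the same split so that the comparison reflects the representation rather than the partition.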

Visualization of Workflows and Relationships

Diagram: ACS Training Logic Flow - ACS training and specialization logic.

Diagram: Evolution of Molecular AI - Molecular representation evolution pathway.

Diagram: LLM and GNN Feature Fusion - LLM and GNN feature fusion architecture.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key software and methodological "reagents" for modern molecular property prediction

| Item Name | Type | Primary Function | Example/Reference |

|---|---|---|---|

| Directed MPNN (D-MPNN) | Graph Neural Network | End-to-end learning from molecular graphs; reduces redundant updates. | Chemprop [16] [11] |

| Adaptive Checkpointing (ACS) | Training Scheme | Mitigates negative transfer in multi-task learning with imbalanced data. | [13] |

| Functional Group Benchmarks (FGBench) | Dataset & Benchmark | Provides fine-grained, localized FG data for interpretable, structure-aware LLMs. | [17] |

| Multi-Task Graph Networks | Model Architecture | Leverages correlations between molecular properties to improve data efficiency. | [13] |

| LLM for Knowledge Extraction | Feature Extractor | Generates domain knowledge and molecular descriptors from chemical text. | [12] |

| Neural Architecture Search (NAS) | Optimization | Automates the design of high-performing GNN architectures for given datasets. | [18] [11] |

Graph Neural Networks (GNNs) as a Natural Framework for Molecular Data

In computational drug discovery and materials science, the accurate prediction of molecular properties is a fundamental challenge. Graph Neural Networks (GNNs) have emerged as a powerful framework for this task by naturally aligning with the structural representation of molecules. In a molecular graph, atoms correspond to nodes, and chemical bonds form the edges, creating an inherent graph structure that GNNs can process directly. This structural congruence allows GNNs to outperform traditional Multilayer Perceptrons (MLPs) by leveraging topological information, with theoretical analyses quantifying that GNNs enhance the regime of low test error over MLPs by a factor of \(D^{q-2}\), where \(D\) represents a node's expected degree and \(q\) is the power of the ReLU activation function with \(q>2\) [19]. The integration of GNNs into molecular property prediction has revolutionized various aspects of drug design, from initial lead discovery to optimization, significantly accelerating the discovery process while reducing costs and late-stage failures [20].

Advanced GNN Architectures for Molecular Property Prediction

Kolmogorov-Arnold Graph Neural Networks (KA-GNNs)

A recent breakthrough in GNN architecture is the development of Kolmogorov-Arnold Graph Neural Networks (KA-GNNs), which integrate Kolmogorov-Arnold networks (KANs) into the fundamental components of GNNs: node embedding, message passing, and readout [21]. Unlike conventional GNNs that use fixed activation functions, KA-GNNs employ learnable univariate functions on edges, offering improved expressivity, parameter efficiency, and interpretability. The framework introduces Fourier-series-based univariate functions within KAN layers to enhance function approximation by effectively capturing both low-frequency and high-frequency structural patterns in molecular graphs [21].

Two primary architectural variants have been developed: KA-Graph Convolutional Networks (KA-GCN) and KA-augmented Graph Attention Networks (KA-GAT). In KA-GCN, node embeddings are initialized by processing atomic features and neighboring bond features through a KAN layer, while message-passing layers follow the GCN scheme with node features updated via residual KANs. KA-GAT extends this approach by incorporating edge embeddings, where both node and edge features are initialized using KAN layers [21]. Experimental results across seven molecular benchmarks demonstrate that KA-GNNs consistently outperform conventional GNNs in both prediction accuracy and computational efficiency [21].
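To make the idea of a Fourier-series-based KAN layer concrete, the following minimal sketch implements a single layer in plain Python. Each input-output pair carries its own univariate function built from sines and cosines. The class name `FourierKANLayer`, the number of harmonics, and the initialization scale are assumptions made for illustration. The exact parameterization in [21] may differ (for example in bias terms or normalization), and the coefficient training step is omitted.

```python
import math
import random


class FourierKANLayer:
    """Output j is sum_i phi_ji(x_i), where
    phi_ji(x) = sum_{k=1..K} a[j][i][k] * cos(k x) + b[j][i][k] * sin(k x)."""

    def __init__(self, in_dim, out_dim, num_harmonics=3, seed=0):
        rng = random.Random(seed)
        scale = 1.0 / math.sqrt(in_dim * num_harmonics)

        def init():
            return [[[rng.gauss(0.0, scale) for _ in range(num_harmonics)]
                     for _ in range(in_dim)] for _ in range(out_dim)]

        self.in_dim, self.out_dim, self.num_harmonics = in_dim, out_dim, num_harmonics
        self.cos_coeff = init()  # coefficients a, indexed [output][input][harmonic]
        self.sin_coeff = init()  # coefficients b, same layout

    def forward(self, x):
        if len(x) != self.in_dim:
            raise ValueError("expected {} inputs, got {}".format(self.in_dim, len(x)))
        out = []
        for j in range(self.out_dim):
            total = 0.0
            for i, x_i in enumerate(x):
                for k in range(1, self.num_harmonics + 1):
                    total += (self.cos_coeff[j][i][k - 1] * math.cos(k * x_i)
                              + self.sin_coeff[j][i][k - 1] * math.sin(k * x_i))
            out.append(total)
        return out


layer = FourierKANLayer(in_dim=4, out_dim=2)
atom_features = [0.1, 0.2, 0.3, 0.4]  # toy input vector
y = layer.forward(atom_features)
print(y)
```

Low harmonics (small k) capture smooth trends in the input, while higher harmonics capture rapid variation, which is the intuition behind using a Fourier basis for both low- and high-frequency patterns.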

Activity-Cliff-Explanation-Supervised GNN (ACES-GNN)

Activity cliffs (ACs), defined as pairs of structurally similar molecules with significant potency differences, present a particular challenge for predictive models. The ACES-GNN framework addresses this by integrating explanation supervision for activity cliffs directly into the GNN training objective [22]. This approach aligns model attributions with chemist-friendly interpretations, forcing the model to focus on the minor structural differences that cause major property changes. Validated across 30 pharmacological targets, ACES-GNN consistently enhances both predictive accuracy and attribution quality for activity cliffs compared to unsupervised GNNs [22].
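The definition of an activity cliff can be illustrated with a short pair-finding routine. The sketch below assumes fingerprints are given as sets of on-bit indices and potencies on a log scale. The similarity threshold (0.9) and potency gap (1.0 log unit) are illustrative choices, not the criteria used in [22].

```python
from itertools import combinations


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def find_activity_cliffs(molecules, sim_threshold=0.9, potency_gap=1.0):
    """molecules: list of (name, fingerprint_bits, potency on a log scale)."""
    cliffs = []
    for (name_a, fp_a, p_a), (name_b, fp_b, p_b) in combinations(molecules, 2):
        similar = tanimoto(fp_a, fp_b) >= sim_threshold
        if similar and abs(p_a - p_b) >= potency_gap:
            cliffs.append((name_a, name_b))
    return cliffs


# Toy fingerprints and potencies, invented for illustration.
base = set(range(1, 11))
toy_molecules = [
    ("A", base, 8.2),
    ("B", base | {11}, 6.0),         # near-identical to A, much less potent
    ("C", {1, 2, 3, 20, 21}, 5.0),   # structurally dissimilar
    ("D", base | {12}, 8.0),         # near-identical to A, similar potency
]

cliff_pairs = find_activity_cliffs(toy_molecules)
print(cliff_pairs)
```

Pairs identified this way are the cases where explanation supervision is meant to direct the model's attributions toward the small structural difference between the two molecules.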

Table 1: Performance Comparison of Advanced GNN Architectures on Molecular Property Prediction

| Architecture | Key Innovation | Theoretical Foundation | Reported Advantages |

|---|---|---|---|

| KA-GNN [21] | Integration of Kolmogorov-Arnold networks (KANs) with Fourier-series basis functions | Kolmogorov-Arnold representation theorem; Fourier analysis using Carleson’s theorem [21] | Superior accuracy & computational efficiency; Improved interpretability by highlighting chemically meaningful substructures [21] |

| ACES-GNN [22] | Supervision of both predictions and model explanations for activity cliffs | Explanation-guided learning [22] | Improved predictive accuracy for activity cliffs; Generates chemically intuitive explanations [22] |

| Knowledge-Enhanced GNN [23] [24] | Integration of global chemical knowledge (e.g., from SMILES) that GNNs struggle to learn | Not specified | Enhanced accuracy compared to pure GNN approach; Better explainability via node-level prediction [23] [24] |

Experimental Protocols and Workflows

Protocol: Implementing a KA-GNN for Molecular Property Prediction

Objective: To predict molecular properties using a KA-GNN architecture that integrates Fourier-based KAN modules.

Materials: Molecular dataset (e.g., QM9 [25]), Python, a deep learning framework (e.g., PyTorch), and a cheminformatics library (e.g., RDKit).

Data Preprocessing:

- Convert molecular structures (e.g., from SMILES strings) into graph representations. Each atom becomes a node, and each bond becomes an edge.

- Construct node features (e.g., atomic number, hybridization) and edge features (e.g., bond type, bond length).

- Split the dataset into training, validation, and test sets.

Model Architecture Setup (KA-GCN Variant):

- Node Embedding Initialization: Pass the concatenation of a node's atomic features and the averaged features of its neighboring bonds through a Fourier-based KAN layer [21].

- Message Passing: Implement graph convolutional layers. The message from neighboring nodes is aggregated and passed through a KAN layer for feature updating. Residual KAN connections can be used to stabilize training [21].

- Readout/Global Pooling: Generate a graph-level representation by pooling all node embeddings (e.g., using sum or mean) after the final message-passing layer. Pass this representation through a final KAN layer for the property prediction [21].
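The message-passing and readout steps above can be sketched on a toy graph without any deep learning framework. In the example below, the `update` argument is a placeholder for the KAN layer described in the protocol. An elementwise `tanh` is used only to keep the sketch self-contained, and the mean aggregation and residual form are simplifying assumptions.

```python
import math


def message_passing_step(node_feats, neighbors, update):
    """One round: average neighbor features, transform them, add a residual."""
    new_feats = []
    for v, h_v in enumerate(node_feats):
        nbrs = neighbors[v]
        dim = len(h_v)
        if nbrs:
            agg = [sum(node_feats[u][d] for u in nbrs) / len(nbrs) for d in range(dim)]
        else:
            agg = [0.0] * dim
        msg = update(agg)
        # Residual connection: keep the node's own state and add the transformed message.
        new_feats.append([h + m for h, m in zip(h_v, msg)])
    return new_feats


def sum_readout(node_feats):
    """Graph-level vector: sum of all node embeddings, feature by feature."""
    return [sum(col) for col in zip(*node_feats)]


# Heavy atoms of ethanol (C-C-O) with one-hot features [is_carbon, is_oxygen].
node_feats = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
neighbors = {0: [1], 1: [0, 2], 2: [1]}


def placeholder_update(vec):
    # Stand-in for a KAN layer; NOT the update used in the cited work.
    return [math.tanh(x) for x in vec]


hidden = message_passing_step(node_feats, neighbors, placeholder_update)
graph_vector = sum_readout(hidden)
print(graph_vector)
```

Stacking several such steps lets information travel further along the bond graph before the readout collapses the node embeddings into one vector per molecule.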

Training Loop:

- Initialize the model and optimizer (e.g., Adam).

- For each batch of molecular graphs in the training set:

- Perform a forward pass to obtain predictions.

- Calculate the loss between predictions and ground-truth labels (e.g., using Mean Squared Error for regression).

- Perform backpropagation and update model parameters.

- Validate the model on the validation set after each epoch to monitor for overfitting.

Evaluation:

- Evaluate the final model on the held-out test set using relevant metrics (e.g., Mean Absolute Error for regression, AUC-ROC for classification).

The following workflow diagram illustrates the KA-GNN architecture and process.

Protocol: Molecular Generation via Direct Inverse Design with a GNN

Objective: To generate novel molecular structures with desired properties by directly optimizing the input to a pre-trained GNN predictor.

Materials: A pre-trained GNN property predictor, constraint functions for molecular validity.

Pre-train a Property Predictor: Train a GNN model to accurately predict the target property (e.g., HOMO-LUMO gap) on a large dataset like QM9 [25]. Freeze the weights of this model.

Initialize a Starting Graph:

- Begin with a random graph or an existing molecular structure.

Construct Input Matrices with Constraints:

- Adjacency Matrix (A): Constructed from a weight vector to ensure symmetry and zero trace. Use a sloped rounding function, \([x]_{\text{sloped}} = [x] + a(x - [x])\), where \([x]\) is \(x\) rounded to the nearest integer and \(a\) is a small slope, to allow gradient flow through the rounding operation [25].

- Feature Matrix (F): The atom types are defined by the valence (sum of bond orders) of each node, derived from the adjacency matrix. A weight matrix is used to differentiate between elements with the same valence [25].
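A minimal sketch of the adjacency construction follows, assuming the sloped rounding takes the form [x] + a(x - [x]) with [x] the nearest integer. The function names, the slope value, and the upper-triangle layout of the weight vector are assumptions for illustration. In an autodiff framework the gradient would be obtained automatically rather than written by hand.

```python
import math


def sloped_round(x, a=0.1):
    """Nearest integer plus a small linear term, so the slope is a instead of zero."""
    nearest = math.floor(x + 0.5)
    return nearest + a * (x - nearest)


def sloped_round_grad(x, a=0.1):
    """Derivative with respect to x away from the jump points: the constant a."""
    return a


def adjacency_from_weights(w_adj, n_atoms, a=0.1):
    """Fill the upper triangle from w_adj, mirror it, and leave the diagonal at zero."""
    expected = n_atoms * (n_atoms - 1) // 2
    if len(w_adj) != expected:
        raise ValueError("need {} weights for {} atoms".format(expected, n_atoms))
    A = [[0.0] * n_atoms for _ in range(n_atoms)]
    idx = 0
    for i in range(n_atoms):
        for j in range(i + 1, n_atoms):
            A[i][j] = A[j][i] = sloped_round(w_adj[idx], a)
            idx += 1
    return A


w_adj = [1.2, 0.1, 0.9]  # toy weights for the pairs (0,1), (0,2), (1,2)
A = adjacency_from_weights(w_adj, n_atoms=3)
for row in A:
    print(row)
```

Because only the upper triangle is parameterized, symmetry and a zero diagonal hold by construction and never need to be enforced by a penalty term.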

Gradient-Based Optimization:

- Perform a forward pass of the constructed graph through the pre-trained GNN to get a property prediction.

- Calculate the loss (e.g., squared difference from the target property). Add a penalty for chemical violations (e.g., valence exceeding 4).

- Perform backpropagation to calculate gradients of the loss with respect to the underlying weight vectors (w_adj, w_fea) that define the graph, not the GNN weights.

- Update the graph's weight vectors to minimize the loss.

Valence Enforcement: During optimization, if an atom's valence reaches 4, block gradients that would push it higher [25].

Termination: The process stops when the graph satisfies basic chemical valence rules and its predicted property is within a specified range of the target [25].
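The valence penalty and the gradient-blocking rule can be illustrated as follows. This sketch is a simplification: it acts directly on adjacency entries rather than on the underlying weight vectors, it assumes the update rule A <- A - lr * grad (so a negative gradient raises a bond order), and the quadratic penalty form is an assumption rather than the formulation in [25].

```python
MAX_VALENCE = 4


def valences(A):
    """Valence of each atom: the sum of bond orders in its row."""
    return [sum(row) for row in A]


def valence_penalty(A, max_valence=MAX_VALENCE):
    """Quadratic penalty on any valence above the allowed maximum."""
    return sum(max(0.0, v - max_valence) ** 2 for v in valences(A))


def block_saturated_gradients(A, grad_A, max_valence=MAX_VALENCE):
    """Zero gradient entries that would raise a bond on an already saturated atom."""
    vals = valences(A)
    masked = [row[:] for row in grad_A]
    n = len(A)
    for i in range(n):
        for j in range(n):
            saturated = vals[i] >= max_valence or vals[j] >= max_valence
            # Under A <- A - lr * grad, a negative gradient increases A[i][j].
            if saturated and grad_A[i][j] < 0:
                masked[i][j] = 0.0
    return masked


# Atom 0 is saturated (valence 4); atoms 1 and 2 are not.
A_ok = [[0.0, 2.0, 2.0], [2.0, 0.0, 0.0], [2.0, 0.0, 0.0]]
grad = [[0.0, -1.0, 1.0], [-1.0, 0.0, -1.0], [1.0, -1.0, 0.0]]
masked_grad = block_saturated_gradients(A_ok, grad)

# Atom 0 exceeds the limit here (valence 5), so the penalty is positive.
A_bad = [[0.0, 3.0, 2.0], [3.0, 0.0, 0.0], [2.0, 0.0, 0.0]]
print(valence_penalty(A_ok), valence_penalty(A_bad))
print(masked_grad)
```

Gradients that would lower a bond order on a saturated atom are left untouched, so the optimizer can still move away from the limit.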

The following diagram illustrates this molecular generation process.

The Scientist's Toolkit: Research Reagents & Datasets

Table 2: Essential Resources for GNN-based Molecular Property Prediction

| Resource Name | Type | Primary Function | Example Use-Case |

|---|---|---|---|

| QM9 Dataset [25] | Molecular Dataset | A comprehensive dataset of small organic molecules with quantum mechanical (DFT) properties. | Training and benchmarking GNNs for predicting quantum mechanical properties like HOMO-LUMO gap [25]. |

| Activity Cliff (AC) Datasets [22] | Benchmark Dataset | Curated datasets of molecular pairs with high structural similarity but large potency differences. | Training and evaluating explainable GNN models (e.g., ACES-GNN) to improve prediction and interpretation of challenging cases [22]. |

| Molecular Graphs | Data Representation | A graph object where nodes are atoms and edges are chemical bonds, annotated with features. | The fundamental input representation for a GNN, encoding a molecule's structure for the model to process [21] [25]. |

| Fourier-KAN Layer [21] | Neural Network Layer | A layer using Fourier series (sines, cosines) as its learnable, univariate activation functions. | Replacing standard MLP layers in a GNN to enhance expressivity and capture periodic patterns in molecular data [21]. |

| Sloped Rounding Function [25] | Algorithm | A constrained rounding function that allows gradients to flow backwards, essential for graph generation. | Enforcing integer bond orders in the adjacency matrix during gradient-based molecular generation [25]. |

| Attribution Methods (e.g., Integrated Gradients, GNNExplainer) | Explainability Tool | Techniques to assign importance scores to input features (atoms/bonds) for a model's prediction. | Interpreting a trained GNN's decisions by highlighting chemically relevant substructures [26] [22]. |

GNNs provide a natural and powerful framework for modeling molecular data, directly mirroring the graph structure of chemical compounds. The ongoing evolution of GNN architectures, including the integration of Kolmogorov-Arnold networks and explanation-guided learning paradigms, continues to push the boundaries of predictive accuracy, computational efficiency, and model interpretability. Furthermore, because these networks are differentiable with respect to their inputs, a trained predictor can be run in reverse by optimizing the input graph, which opens up possibilities for the direct generation of novel molecular structures with targeted properties. As these methodologies mature, supported by robust benchmarks and standardized protocols, they are poised to become an indispensable tool in the computational scientist's arsenal, significantly accelerating discovery in drug development and materials science.

The accurate prediction of molecular properties is a critical task in drug discovery, where traditional computational methods often face a trade-off between leveraging data-driven structural models and incorporating valuable human prior knowledge. While Graph Neural Networks (GNNs) have demonstrated remarkable success in learning directly from molecular structures in an end-to-end fashion, their performance is often constrained by the limited availability of labeled experimental data and their inherent "black-box" nature [12] [27]. Simultaneously, the emergence of Large Language Models (LLMs) trained on vast scientific corpora offers unprecedented access to encoded human expertise, though they suffer from knowledge gaps and hallucinations, particularly for less-studied molecular properties [12] [3].

This Application Note outlines emerging frameworks that synergistically combine structural and knowledge-based approaches. We detail protocols for integrating LLM-derived knowledge features with structure-based representations from pre-trained molecular models, enabling enhanced predictive accuracy and improved generalization, particularly in small-data regimes [12] [27]. These methodologies are contextualized within the broader scope of neural network architecture tuning for molecular property research, providing researchers with practical tools for implementing these hybrid paradigms.

Quantitative Comparison of Integrated Approaches

The table below summarizes the core methodologies and reported advantages of three key integrated paradigms discussed in this note.

Table 1: Comparison of Integrated Knowledge-Structure Approaches for Molecular Property Prediction

| Paradigm | Core Methodology | Key Advantages | Representative Models/References |

|---|---|---|---|

| LLM Knowledge Infusion | Extracts knowledge features by prompting LLMs (e.g., GPT-4o, DeepSeek-R1); fuses them with structural features from pre-trained GNNs [12] [3]. | Mitigates LLM hallucinations; leverages both human expertise and structural data; outperforms standalone models [12]. | Framework by Zhou et al. [12] [3] |

| Knowledge-Embedded GNNs | Incorporates explicit human knowledge annotations (e.g., atom-level effect on property) directly into the message-passing mechanism [27]. | Improves accuracy with small training data; enhances model interpretability and physical consistency [27]. | KEMPNN [27] |

| Kolmogorov-Arnold GNNs | Replaces standard MLP components in GNNs with Kolmogorov-Arnold Networks (KANs) using learnable Fourier-series-based functions [21]. | Superior parameter efficiency; enhanced expressivity and interpretability; captures complex functional relationships [21]. | KA-GNN, KA-GCN, KA-GAT [21] |

Experimental Protocols

Protocol 1: LLM-Driven Knowledge Extraction and Fusion with Structural Features

This protocol describes the process of using LLMs to generate knowledge-based molecular features and integrating them with features from a pre-trained structural model for property prediction.

Materials and Reagents

Table 2: Research Reagent Solutions for LLM-Structure Fusion

| Item Name | Function / Description | Example / Specification |

|---|---|---|

| LLM API | Generates domain knowledge and executable code for molecular vectorization based on task-specific prompts. | GPT-4o, GPT-4.1, or DeepSeek-R1 [12] [3]. |

| Pre-trained Molecular Model | Provides foundational structural representations of molecules from graph or 3D data. | Models pre-trained on large datasets (e.g., OMol25 [28]). |

| Molecular Dataset | Contains SMILES strings and corresponding property labels for training and evaluation. | Benchmark datasets from MoleculeNet (e.g., ESOL, FreeSolv) [27]. |

| Feature Fusion Layer | A neural network layer that combines knowledge embeddings with structural embeddings. | A simple concatenation layer or a more complex cross-attention module. |

| Prediction Head | Maps the fused representation to the final property prediction. | A fully-connected layer or a Random Forest classifier/regressor [3]. |

Methodological Procedure

Knowledge Feature Generation:

- Input Preparation: For a given molecular property prediction task, prepare a prompt that includes the target property and a set of relevant molecular samples (SMILES strings) to provide context [3].

- LLM Querying: Prompt the LLM to generate two outputs: a) Domain-relevant knowledge about the property-molecule relationship. b) Executable Python code that defines a function to convert a SMILES string into a numerical vector based on the inferred rules [12] [3].

- Feature Extraction: Execute the generated code for each molecule in the dataset to obtain the knowledge-based feature vector, K.

Structural Feature Extraction:

- Input Encoding: Convert the SMILES string of a molecule into its graph representation G (atoms as nodes, bonds as edges).

- Forward Pass: Process the molecular graph G through a pre-trained GNN to obtain a structural graph-level embedding, S [12].

Feature Fusion:

- Combination: Combine the knowledge vector K and the structure vector S. The simplest method is concatenation: F = CONCAT(S, K) [12].

- Optional Projection: Pass the fused vector F through a non-linear projection layer to reduce dimensionality and facilitate better interaction between features.
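The concatenation step F = CONCAT(S, K) can be sketched in a few lines. The per-column standardization applied before concatenation is an added assumption here, intended to keep one feature source from dominating purely because of its scale. The function names and toy values are illustrative.

```python
import math


def standardize_columns(rows):
    """Z-score each column across molecules; constant columns are left centered at zero."""
    n = len(rows)
    cols = list(zip(*rows))
    means = [sum(c) / n for c in cols]
    stds = [math.sqrt(sum((x - m) ** 2 for x in c) / n) or 1.0
            for c, m in zip(cols, means)]
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]


def fuse(structural, knowledge):
    """Return one fused vector per molecule: standardized S followed by standardized K."""
    if len(structural) != len(knowledge):
        raise ValueError("S and K must describe the same molecules")
    S = standardize_columns(structural)
    K = standardize_columns(knowledge)
    return [s + k for s, k in zip(S, K)]


# Toy embeddings for three molecules: 2 structural dims, 1 knowledge dim.
S_raw = [[0.1, 2.0], [0.3, 4.0], [0.5, 6.0]]
K_raw = [[1.0], [0.0], [1.0]]

F = fuse(S_raw, K_raw)
for row in F:
    print(row)
```

In practice the standardization statistics should be computed on the training split only and reused for validation and test molecules.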

Property Prediction:

- Feed the final fused representation into a task-specific prediction head (e.g., a fully-connected layer for regression) to obtain the predicted property value ŷ.

- The model is trained end-to-end using a loss function (e.g., Mean Squared Error) that minimizes the difference between predictions ŷ and true labels y.

The following workflow diagram illustrates this multi-stage process:

Protocol 2: Knowledge-Embedded Message Passing Neural Networks (KEMPNN)

This protocol details the integration of explicit, human-annotated knowledge directly into the message-passing and readout phases of a GNN [27].

Materials and Reagents

- Knowledge Annotations: Per-atom real-value annotations kᵥ (e.g., +1 for positive effect, -1 for negative effect, 0 for no effect on the target property) [27]. These can be manual or rule-based (using SMARTS patterns).

- Standard GNN Backbone: A base GNN architecture such as a Message Passing Neural Network (MPNN) or Graph Attention Network (GAT).

- Knowledge-Guided Attention Mechanism: An attention layer that is explicitly trained to align with the provided knowledge annotations.

Methodological Procedure

Graph and Knowledge Representation:

- Represent the molecule as a graph G(V, E) with node features x_v and edge features e_vw.

- For each atom v ∈ V, assign a knowledge annotation kᵥ.

Knowledge-Embedded Message Passing:

- The standard message-passing and node update functions are augmented with a knowledge-supervised attention mechanism.

- During each message-passing step, an attention weight a_vw is computed for each edge. This weight is trained to be consistent with the knowledge annotations of the connected nodes.

- The loss function includes a Knowledge Supervision Loss term (e.g., Mean Squared Error) that penalizes deviations between the model's attention scores and the ground-truth knowledge labels kᵥ [27].

Readout and Prediction:

- After T message-passing layers, a graph-level representation is generated via a readout function (e.g., sum or mean of final node embeddings).

- This representation is passed to an output layer for the final property prediction, which is trained with the standard Prediction Loss.

Multi-Task Training:

- The total loss for training KEMPNN is: L_total = L_prediction + α * L_knowledge, where α is a hyperparameter balancing the two objectives [27]. This joint training explicitly encourages the model to learn representations that are predictive of the property and consistent with human knowledge.
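The combined objective L_total = L_prediction + α * L_knowledge can be written out directly. The sketch below uses mean squared error for both terms and treats the attention output as one score per atom compared against the annotation for that atom. That per-atom alignment and the value of `alpha` are simplifying assumptions, not details confirmed by [27].

```python
def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    if len(pred) != len(target):
        raise ValueError("length mismatch")
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def kempnn_total_loss(y_pred, y_true, attention, knowledge, alpha=0.5):
    """Return (total, prediction loss, knowledge loss)."""
    l_pred = mse(y_pred, y_true)
    l_know = mse(attention, knowledge)
    return l_pred + alpha * l_know, l_pred, l_know


# Toy batch: two property predictions, three per-atom attention scores.
y_pred, y_true = [1.0, 2.0], [1.5, 2.5]
attention = [0.8, -0.6, 0.0]      # model attention scores
knowledge = [1.0, -1.0, 0.0]      # annotations: +1, -1, 0

total, l_pred, l_know = kempnn_total_loss(y_pred, y_true, attention, knowledge)
print(total, l_pred, l_know)
```

Setting `alpha` to zero recovers an ordinary property-prediction loss, which makes it a convenient ablation for measuring how much the knowledge term contributes.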

The logical flow of the KEMPNN architecture is shown below:

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Resources for Integrated Molecular Property Prediction Research

| Category | Item | Function / Application |

|---|---|---|

| Datasets & Benchmarks | MoleculeNet [27] | Standardized benchmark suites (ESOL, FreeSolv, Lipophilicity) for evaluating MPP performance. |

| Datasets & Benchmarks | Open Molecules 2025 (OMol25) [28] | Massive dataset of high-accuracy computational chemistry calculations for pre-training. |

| Computational Models | Pre-trained GNNs [12] | Provide robust structural feature extraction; can be fine-tuned on downstream tasks. |

| Computational Models | Large Language Models (LLMs) [12] [3] | Source of prior knowledge; used for feature generation via prompting (GPT-4o, DeepSeek-R1). |

| Software & Libraries | Graph Neural Network Libraries (e.g., PyTorch Geometric, DGL) | Facilitate the implementation and training of custom GNN architectures like KEMPNN and KA-GNN. |

| Software & Libraries | Hyperparameter Optimization (HPO) Tools [11] | Automate the search for optimal model configurations, crucial for tuning complex integrated models. |

Cutting-Edge Architectures and Practical Applications in 2025

Leveraging Large Language Models (LLMs) for Knowledge-Driven Feature Extraction

The prediction of molecular properties is a critical task in drug discovery and materials science, traditionally reliant on expert-crafted features or graph-based deep learning models. While Graph Neural Networks (GNNs) have advanced the field by learning directly from molecular structures, they often overlook decades of accumulated semantic and contextual knowledge. The integration of Large Language Models (LLMs) offers a transformative approach by extracting and encoding this human prior knowledge into molecular representations. This paradigm shift leverages the vast scientific knowledge embedded in LLMs to complement structural information, enabling more robust and accurate predictive models. By framing molecular feature extraction as a knowledge-driven process, researchers can overcome limitations of traditional methods, such as reliance on manual feature engineering and insufficient utilization of domain knowledge [12] [3].

The core premise of knowledge-driven feature extraction lies in harnessing LLMs' remarkable reasoning capabilities and scientific knowledge acquired during pre-training on massive text corpora. These models can generate rich molecular representations by interpreting molecular structures through multiple conceptual views, including structural characteristics, task-specific requirements, and chemical rules. This approach is particularly valuable for molecular property prediction (MPP), where integrating knowledge-based features with structural representations has demonstrated significant performance improvements across diverse benchmarks [29] [3].

Comparative Analysis of LLM Approaches for Molecular Feature Extraction

Table 1: Performance Comparison of LLM-Based Molecular Feature Extraction Frameworks

| Framework | LLMs Utilized | Key Methodology | Reported Performance Advantages | Knowledge Integration Approach |

|---|---|---|---|---|

| LLM-Knowledge Fusion [12] [3] | GPT-4o, GPT-4.1, DeepSeek-R1 | Extracts domain knowledge and generates executable code for molecular vectorization; fuses knowledge features with structural features from pre-trained models | Outperforms existing GNN and LLM-only approaches on multiple MPP benchmarks | Direct knowledge extraction via prompting + structural feature fusion |

| M²LLM [29] | Not Specified | Multi-view representation learning integrating structure, task, and rules views; dynamic view fusion | State-of-the-art performance on multiple benchmarks across classification and regression tasks | Perspective-based reasoning with adaptive view weighting |

| MolLLMKD [30] | ChatGPT-4 | Generates descriptive prompts via structured templates; employs multi-level knowledge distillation with HMPNN encoder | Achieves SOTA on 12 benchmark datasets; improved robustness and interpretability | Template-controlled semantic prompting to avoid hallucinations |

| LLM-Prop [31] | T5 (encoder-only) | Processes textual crystal descriptions with specialized preprocessing; linear prediction head on encoder outputs | Outperforms GNN-based methods by ~8% on band gap prediction; 65% on unit cell volume prediction | Textual representation of materials with numerical token replacement |

Table 2: Analysis of Technical Strengths and Implementation Considerations

| Framework | Technical Strengths | Implementation Complexity | Domain Specialization Requirements | Consistency Challenges |

|---|---|---|---|---|

| LLM-Knowledge Fusion [12] [3] | Mitigates LLM hallucinations through structural grounding; compatible with multiple SOTA LLMs | High (requires integration of multiple components) | General molecular properties | Not explicitly addressed |

| M²LLM [29] | Dynamic view adaptation to task requirements; leverages advanced reasoning capabilities | Medium-High (complex view integration) | General molecular properties | Not explicitly addressed |

| MolLLMKD [30] | Explicitly addresses hallucination via templates; multi-level distillation improves generalization | High (multiple distillation levels + HMPNN) | Specific molecular properties | Improved via structured templates |

| LLM-Prop [31] | Effective for crystalline materials; specialized numerical tokenization | Medium (focused on textual representations) | Crystalline materials | Not explicitly addressed |

| General LLMs [32] | Broad knowledge base; strong zero-shot capabilities | Low (direct API usage) | Multiple domains | Low consistency (≤1% across representations) |

Experimental Protocols for Knowledge-Driven Feature Extraction

Protocol 1: LLM-Knowledge Fusion for Molecular Property Prediction

This protocol describes the methodology for integrating knowledge extracted from LLMs with structural features from pre-trained molecular models, adapted from Zhou et al. [12] [3].

Materials and Reagents:

- Molecular Dataset: Collection of SMILES strings with corresponding property labels

- LLM API Access: GPT-4o, GPT-4.1, or DeepSeek-R1 with appropriate authentication

- Computational Environment: Python 3.8+ with PyTorch/TensorFlow, RDKit, and molecular representation libraries

- Pre-trained Molecular Models: Structurally pre-trained GNNs (e.g., on ZINC15 or ChEMBL)

Procedure:

- Task Analysis and Prompt Design:

- Analyze target molecular properties and identify relevant domain knowledge requirements

- Design structured prompts that request: (a) domain-relevant knowledge about the target property, and (b) executable Python code for molecular vectorization based on this knowledge

- Example prompt structure: "For molecular property [PROPERTY_NAME], provide: 1. Key molecular features influencing this property 2. Python function that takes a SMILES string and returns a feature vector based on these features"

Knowledge Extraction via LLM Prompting:

- Input SMILES representations through the designed prompts to the selected LLM

- Execute the generated code to produce knowledge-based molecular features

- Validate code functionality with a subset of molecules before full deployment

Structural Feature Extraction:

- Process molecular graphs through pre-trained GNNs (e.g., using message-passing neural networks)

- Extract molecular representations from the final graph embedding layer

- Normalize structural features to match the scale of knowledge-based features

Feature Fusion and Model Training:

- Concatenate knowledge-based features with structural representations

- Apply feature weighting or attention mechanisms to balance contribution sources

- Train prediction heads (e.g., MLP) on fused representations for target properties

- Validate performance on held-out test sets comparing against baseline methods

Troubleshooting:

- If LLM-generated code fails execution, implement code validation with try-except blocks

- For feature dimension mismatch, apply dimensionality reduction techniques (PCA, autoencoders)

- If performance improvement is minimal, adjust the knowledge-structure fusion ratio through weighted concatenation
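The first two troubleshooting items can be combined in a small wrapper that runs a generated featurizer defensively. The sketch below assumes the LLM-generated function has already been loaded as a Python callable. The wrapper name `safe_featurize`, the zero-vector fallback, and the toy featurizer are hypothetical and chosen only for illustration.

```python
def safe_featurize(featurize, smiles_list, expected_dim):
    """Run a (possibly buggy) featurizer on each SMILES; record failures instead of crashing."""
    features, failures = [], []
    for smiles in smiles_list:
        try:
            vec = [float(x) for x in featurize(smiles)]
            if len(vec) != expected_dim:
                raise ValueError(
                    "expected {} features, got {}".format(expected_dim, len(vec)))
        except Exception as err:
            failures.append((smiles, repr(err)))
            # Neutral fallback so downstream shapes stay consistent (an assumption).
            vec = [0.0] * expected_dim
        features.append(vec)
    return features, failures


def generated_featurize(smiles):
    # Stand-in for LLM-generated code; it divides by zero on an empty string.
    return [len(smiles), smiles.count("O") / len(smiles)]


smiles_list = ["CCO", "", "c1ccccc1"]
features, failures = safe_featurize(generated_featurize, smiles_list, expected_dim=2)
print(features)
print(failures)
```

Logging the failing inputs, rather than silently dropping them, makes it easier to decide whether to regenerate the code with a revised prompt or to exclude those molecules.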

Protocol 2: Multi-View Molecular Representation Learning (M²LLM)

This protocol implements the multi-view framework for molecular representation learning, adapted from Ju et al. [29].

Materials and Reagents:

- Multi-task Molecular Dataset: Benchmarks with diverse property annotations (e.g., MoleculeNet)

- LLM with Reasoning Capabilities: Model capable of complex reasoning (e.g., GPT-4, Claude)

- Graph Neural Network Framework: PyTorch Geometric or DGL with pre-trained GNN weights

Procedure:

- View-Specific Prompt Design:

- Molecular Structure View: "Describe the structural characteristics of [SMILES] including functional groups, ring systems, and stereochemistry"

- Molecular Task View: "What features are most relevant for predicting [PROPERTY_NAME] of this molecule?"

- Molecular Rules View: "What chemical principles or rules govern the [PROPERTY_NAME] of molecules like [SMILES]?"

View-Specific Representation Generation:

- Generate responses for each molecule across all three views using the LLM

- Encode textual responses using sentence transformers (e.g., all-MiniLM-L6-v2) to create view-specific embeddings

- Process molecular graphs through GNN to obtain structural embeddings

Dynamic View Fusion:

- Implement attention mechanisms to compute importance weights for each view based on the target task

- Combine view representations using computed weights:

F_fused = ∑_i (w_i * F_view_i)

- Fine-tune fusion parameters during model training on downstream tasks
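The weighted combination of view embeddings can be sketched as a softmax over per-view relevance scores followed by a weighted sum. In a trained model the scores would come from a learned attention module. Here they are supplied by hand, and the function names and toy embeddings are illustrative assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax: weights are positive and sum to one."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_views(view_embeddings, view_scores):
    """F_fused = sum_i w_i * F_view_i, with w = softmax(view_scores)."""
    if len(view_embeddings) != len(view_scores):
        raise ValueError("one score per view is required")
    weights = softmax(view_scores)
    dim = len(view_embeddings[0])
    fused = [sum(w * emb[d] for w, emb in zip(weights, view_embeddings))
             for d in range(dim)]
    return fused, weights


# Toy embeddings for the structure, task, and rules views of one molecule.
views = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
scores = [2.0, 0.5, 0.5]  # hand-set relevance scores favoring the structure view

fused, weights = fuse_views(views, scores)
print(weights)
print(fused)
```

Inspecting `weights` per task is one way to carry out the view-importance analysis listed under validation.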

Multi-Task Optimization:

- Employ task-specific prediction heads sharing the fused representation backbone

- Optimize using weighted sum of task-specific losses with gradient balancing

Validation:

- Perform ablation studies to measure contribution of each view

- Evaluate cross-task generalization capabilities

- Analyze attention weights to interpret view importance for different property types

Research Reagent Solutions

Table 3: Essential Research Reagents for LLM-Driven Molecular Feature Extraction

| Reagent Category | Specific Tools/Resources | Function/Purpose | Implementation Considerations |

|---|---|---|---|

| LLM APIs | GPT-4o, GPT-4.1, DeepSeek-R1, Claude 3 Opus | Knowledge extraction and reasoning across molecular representations | API cost management; rate limiting; prompt engineering optimization |

| Domain-Specific LLMs | BioBERT, PubMedBERT, BioGPT, MatBERT | Specialized understanding of chemical and biological terminology | Required for advanced domain tasks; reduced hallucination risk |

| Molecular Representation Libraries | RDKit, OpenBabel, DeepChem | SMILES parsing; molecular graph conversion; fingerprint generation | Essential for structural feature extraction and validation |

| GNN Frameworks | PyTorch Geometric, Deep Graph Library (DGL), Spektral | Graph-based molecular representation learning | Pre-trained model availability; scalability to large molecular datasets |

| Feature Fusion Modules | Custom attention mechanisms; concatenation layers; transformer encoders | Integration of knowledge and structural features | Critical for performance; requires careful balancing of feature sources |

| Evaluation Benchmarks | MoleculeNet, TextEdge (for crystals) | Standardized performance assessment across diverse molecular tasks | Ensures comparable results; requires strict data splitting protocols |

Workflow Visualization

Diagram 1: Knowledge-Driven Feature Extraction Workflow - This diagram illustrates the integrated pipeline for extracting molecular features using LLMs, combining knowledge-based and structural approaches for property prediction.

Diagram 2: Multi-View Molecular Representation Framework - This diagram shows the multi-view learning approach where LLMs generate complementary molecular representations that are dynamically fused for property prediction.

Challenges and Limitations

Despite the promising results of LLM-driven feature extraction, several significant challenges remain. A critical limitation is the consistency problem: LLMs often produce different predictions for chemically equivalent molecular representations (e.g., SMILES vs. IUPAC), with state-of-the-art models exhibiting strikingly low consistency rates (≤1%) [32]. This indicates that models may rely on surface-level textual patterns rather than on an understanding of the underlying chemistry. Even with consistency-enhancing interventions such as sequence-level KL divergence regularization, improvements in consistency do not necessarily translate into improved accuracy, suggesting that the two may be orthogonal concerns in molecular representation learning [32].
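To make the notion of a consistency rate concrete, the sketch below counts how often a model returns the same prediction for two equivalent representations of the same molecule. This exact-match definition and the example labels are assumptions for illustration; the metric used in [32] may be defined differently.

```python
def consistency_rate(preds_a, preds_b):
    """Fraction of molecules with identical predictions under two representations."""
    if len(preds_a) != len(preds_b):
        raise ValueError("prediction lists must be aligned per molecule")
    if not preds_a:
        return 0.0
    agree = sum(a == b for a, b in zip(preds_a, preds_b))
    return agree / len(preds_a)

# Hypothetical model outputs for the same four molecules.
smiles_preds = ["toxic", "non-toxic", "toxic", "toxic"]
iupac_preds = ["toxic", "toxic", "non-toxic", "toxic"]

rate = consistency_rate(smiles_preds, iupac_preds)
```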

Additional limitations include the knowledge gap problem for less-studied molecular properties where LLMs lack sufficient training data, and the persistent issue of hallucination where models generate plausible but incorrect chemical information [12] [3]. Computational efficiency also remains a concern, as LLM inference introduces significant overhead compared to traditional molecular machine learning approaches. Future research directions should focus on developing more chemically-grounded LLM training methodologies, improved consistency regularization techniques, and hybrid approaches that better integrate symbolic reasoning with neural representation learning.

The accurate prediction of molecular and material properties is a cornerstone of modern scientific discovery, accelerating advancements in drug development and materials science. Traditional computational methods, though reliable, are often prohibitively slow for large-scale screening. Recently, geometric deep learning has emerged as a transformative solution. Among the most significant developments are Kolmogorov-Arnold Graph Neural Networks (KA-GNNs), which integrate novel learnable activation functions for enhanced interpretability and accuracy on molecular graphs; the equivariant Smooth Energy Network (eSEN), a model designed for learning conservative, smooth interatomic potentials that reliably conserve energy in molecular dynamics simulations; and the Universal Models for Atoms (UMA) family, which leverages massive, cross-domain datasets and a Mixture of Linear Experts (MoLE) architecture to create a single, highly generalizable model for diverse atomic systems. This application note details the protocols for implementing these architectures, providing researchers with the methodologies to leverage their unique strengths for molecular property prediction.

The following table summarizes the core attributes, strengths, and primary applications of the three featured architectures.

Table 1: Comparative Overview of Innovative GNN Architectures

| Architecture | Core Innovation | Key Strength | Primary Application Domain |

|---|---|---|---|

| KA-GNN [21] [33] | Integration of Kolmogorov-Arnold Networks (KANs) with learnable activation functions (e.g., B-splines, Fourier series) into GNN components (node embedding, message passing, readout). | Superior interpretability and parameter efficiency; ability to capture both low and high-frequency patterns in graph data. | Molecular property prediction on static graph representations (e.g., solubility, toxicity). |

| eSEN [34] [35] | An equivariant architecture enforcing strict energy conservation and smooth potential energy surfaces (PES) through conservative forces and specific design choices (e.g., polynomial envelope functions). | High reliability in molecular dynamics (MD) and tasks requiring higher-order derivatives of the PES (e.g., phonon calculations). | Energy-conserving MD simulations, geometry optimization, thermal conductivity, and phonon spectrum prediction. |

| UMA [36] [37] [28] | A universal model trained on massive, diverse datasets (e.g., OMol25) using a Mixture of Linear Experts (MoLE) architecture to efficiently scale parameters. | Unprecedented generalization across chemical domains (molecules, materials, catalysts) without task-specific fine-tuning. | Broad-spectrum property prediction across materials, biomolecules, and catalysts within a single model. |

Quantitative benchmarks further highlight the performance of these models. The table below summarizes key results reported across various molecular and material benchmarks.

Table 2: Summary of Reported Model Performance on Key Benchmarks

| Model | Benchmark | Reported Performance | Notes | Source |

|---|---|---|---|---|

| KA-GNN | Multiple Molecular Benchmarks | Consistently outperforms conventional GNNs (e.g., GCN, GAT) in prediction accuracy and computational efficiency. | Two variants, KA-GCN and KA-GAT, were evaluated across seven molecular benchmarks. | [21] |

| eSEN | Matbench-Discovery | F1 score: 0.831 (compliant), 0.925 (non-compliant); ( \kappa_{\mathrm{SRME}} ): 0.340 (compliant), 0.170 (non-compliant). | Achieves state-of-the-art results on materials stability prediction. | [35] |

| eSEN | MDR Phonon Benchmark | State-of-the-art results. | Excels in predicting phonon properties, which require accurate second and third-order derivatives. | [35] |

| UMA | Diverse Cross-Domain Tasks | Performs similarly to or better than specialized models without fine-tuning. | Demonstrated on a wide range of applications across molecules, materials, and catalysts. | [36] [37] |

| eSEN / UMA | Molecular Energy Accuracy (e.g., GMTKN55) | Reported to closely match high-accuracy DFT reference energies. | Models trained on the OMol25 dataset show remarkable accuracy. | [28] |

Application Protocols

Protocol for KA-GNN Implementation in Molecular Property Prediction

KA-GNNs replace the static, fixed activation functions in standard GNNs with learnable univariate functions based on the Kolmogorov-Arnold representation theorem. This protocol outlines the steps for implementing a KA-Graph Convolutional Network (KA-GCN) for a graph-level prediction task, such as predicting molecular solubility.

1. Molecular Graph Representation:

- Input Feature Engineering: Represent each molecule as a graph where atoms are nodes and bonds are edges.

- Node Features: Encode atom-level information (e.g., atomic number, hybridization, formal charge) into a feature vector for each node.

- Edge Features: Encode bond-level information (e.g., bond type, bond length) into a feature vector for each edge.

2. KA-GNN Model Initialization:

- Core Layer Construction: Implement KA-GNN layers where the transformation functions are parameterized as learnable splines (e.g., B-splines) or Fourier series [21] [33].

- A KA-GNN layer can be formulated as: [ h_i^{(l+1)} = \sum_{j \in \mathcal{N}(i)} \phi^{(l)}\left(h_i^{(l)}, h_j^{(l)}, e_{ij}\right) ] where ( \phi^{(l)} ) is a KAN-based function instead of a simple linear transform followed by a fixed activation.

- Data-Aligned Spline Initialization: Initialize the spline functions' control points based on the input feature distribution to stabilize early training [33].

3. Model Training & Interpretation:

- Training Loop: Train the model using standard backpropagation and an appropriate optimizer (e.g., Adam) to minimize a task-specific loss function, such as Mean Squared Error for regression.

- Interpretation of Learned Functions: After training, visualize the learned univariate functions ( \phi ) in the KAN layers. These often reveal intuitive, human-understandable transformations of the input features, highlighting chemically meaningful substructures [21].
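The graph construction and KA-GNN layer steps of this protocol can be sketched in NumPy as follows. This is a forward pass only, under several assumptions: the ethanol graph and its features are written by hand (in practice a toolkit such as RDKit would supply them), ( \phi ) is parameterized as a sum of univariate Fourier series with randomly initialized coefficients, and the class and function names are hypothetical. It is not the reference implementation from [21] or [33].

```python
import numpy as np

# Hand-written heavy-atom graph of ethanol (C-C-O); illustrative values only.
atoms = [(6, 0, "sp3"), (6, 0, "sp3"), (8, 0, "sp3")]  # (Z, formal charge, hybridization)
bonds = [(0, 1, "single"), (1, 2, "single")]
HYBRIDIZATIONS = ["sp", "sp2", "sp3"]
BOND_TYPES = ["single", "double", "triple", "aromatic"]

def one_hot(value, choices):
    return [1.0 if value == c else 0.0 for c in choices]

# Node features: scaled atomic number, formal charge, one-hot hybridization.
h = np.array([[z / 10.0, q, *one_hot(hyb, HYBRIDIZATIONS)] for z, q, hyb in atoms])

# Each undirected bond becomes two directed edges.
src, dst, edge_attr = [], [], []
for i, j, bond_type in bonds:
    for s, d in ((i, j), (j, i)):
        src.append(s)
        dst.append(d)
        edge_attr.append(one_hot(bond_type, BOND_TYPES))
src, dst, edge_attr = np.array(src), np.array(dst), np.array(edge_attr)

class FourierKAN:
    """phi(z)_o = sum_d sum_k a[o,d,k] cos(k z_d) + b[o,d,k] sin(k z_d)."""
    def __init__(self, in_dim, out_dim, n_harmonics=3, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(in_dim * n_harmonics)
        self.a = rng.normal(scale=scale, size=(out_dim, in_dim, n_harmonics))
        self.b = rng.normal(scale=scale, size=(out_dim, in_dim, n_harmonics))
        self.k = np.arange(1, n_harmonics + 1)

    def __call__(self, z):
        angles = z[:, :, None] * self.k  # (n, in_dim, n_harmonics)
        return (np.einsum("ndk,odk->no", np.cos(angles), self.a)
                + np.einsum("ndk,odk->no", np.sin(angles), self.b))

def ka_gnn_layer(h, src, dst, edge_attr, phi):
    """h_i' = sum over neighbors j of phi(h_i, h_j, e_ij)."""
    z = np.concatenate([h[dst], h[src], edge_attr], axis=1)
    messages = phi(z)
    out = np.zeros((h.shape[0], messages.shape[1]))
    np.add.at(out, dst, messages)  # sum messages arriving at each node
    return out

phi = FourierKAN(in_dim=2 * h.shape[1] + edge_attr.shape[1], out_dim=8)
h_next = ka_gnn_layer(h, src, dst, edge_attr, phi)
graph_embedding = h_next.sum(axis=0)  # simple sum readout for graph-level tasks
```

Because each input dimension passes through its own univariate function, the coefficients `a` and `b` can be plotted per dimension after training, which is the basis of the interpretation step.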

Protocol for Energy-Conserving Simulations with eSEN

The eSEN model is architected to produce a smooth and physically realistic Potential Energy Surface (PES), which is critical for stable and accurate molecular dynamics simulations. This protocol describes its use for running energy-conserving NVE-MD simulations.

1. Model and System Setup:

- Model Selection: Utilize a pre-trained eSEN model with conservative forces, where forces ( \mathbf{F} ) are strictly defined as the negative gradient of the predicted energy ( E ): ( \mathbf{F} = -\nabla_{\mathbf{r}} E ) [34] [35]. Avoid direct-force prediction models for this application.

- Initial Structure and Velocities: Prepare the initial atomic configuration ( \mathbf{r}(t=0) ) and assign initial atomic velocities ( \mathbf{v}(t=0) ) from a Maxwell-Boltzmann distribution corresponding to the desired temperature.

2. Molecular Dynamics Integration:

- Force and Energy Evaluation: At each time step ( t ), compute the potential energy ( E(t) ) and atomic forces ( \mathbf{F}(t) ) using the eSEN model.

- Numerical Integration: Update the atomic positions and velocities using a time-reversible and symplectic integrator like Velocity Verlet [35]: [ \begin{aligned} \mathbf{v}\left(t + \frac{\Delta t}{2}\right) &= \mathbf{v}(t) + \frac{\mathbf{F}(t)}{m} \frac{\Delta t}{2} \\ \mathbf{r}(t + \Delta t) &= \mathbf{r}(t) + \mathbf{v}\left(t + \frac{\Delta t}{2}\right) \Delta t \\ \mathbf{F}(t + \Delta t) &= -\nabla E(\mathbf{r}(t + \Delta t)) \\ \mathbf{v}(t + \Delta t) &= \mathbf{v}\left(t + \frac{\Delta t}{2}\right) + \frac{\mathbf{F}(t + \Delta t)}{m} \frac{\Delta t}{2} \end{aligned} ]

- Energy Conservation Monitoring: Track the total energy ( E_{total} = E_{kinetic} + E_{potential} ) throughout the simulation. A well-behaved, conservative model will show only minimal energy drift.

3. Efficient Training Strategy (Optional):

- For training a new eSEN model, employ the two-stage strategy: first, pre-train a model with a direct-force prediction head for speed; then, replace the head and fine-tune the model using conservative force prediction (i.e., computing forces from the energy gradient) [35] [28]. This reduces total training time by approximately 40%.
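The integration and energy-monitoring steps of this protocol can be sketched as below. A toy harmonic potential stands in for the eSEN model so that the example runs without any pre-trained weights; the function names, unit masses, and time step are illustrative assumptions. The only property the sketch relies on is the one the protocol requires: forces are the exact negative gradient of the energy.

```python
import numpy as np

def harmonic_potential(r, k=1.0):
    """Stand-in for a conservative ML potential: E = 0.5 k |r|^2, F = -dE/dr."""
    energy = 0.5 * k * np.sum(r ** 2)
    forces = -k * r
    return energy, forces

def run_nve(r, v, m, dt, n_steps, potential):
    """Velocity Verlet integration; returns final state and total-energy trace."""
    e_pot, f = potential(r)
    totals = [0.5 * np.sum(m * v ** 2) + e_pot]
    for _ in range(n_steps):
        v_half = v + 0.5 * dt * f / m      # half-step velocity update
        r = r + dt * v_half                # full-step position update
        e_pot, f = potential(r)            # forces at the new positions
        v = v_half + 0.5 * dt * f / m      # second half-step velocity update
        totals.append(0.5 * np.sum(m * v ** 2) + e_pot)
    return r, v, np.array(totals)

rng = np.random.default_rng(0)
r0 = rng.normal(size=(4, 3))   # 4 atoms, 3D positions (arbitrary units)
v0 = rng.normal(size=(4, 3))   # a Maxwell-Boltzmann draw would be used in practice
m = np.ones((4, 1))            # unit masses, broadcast over x, y, z

r, v, totals = run_nve(r0, v0, m, dt=0.01, n_steps=1000, potential=harmonic_potential)
relative_drift = np.max(np.abs(totals - totals[0])) / abs(totals[0])
```

With a conservative potential, `relative_drift` should stay small and bounded; a direct-force model that is not a true gradient would typically show a systematic drift in `totals` instead.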

Protocol for Cross-Domain Prediction with UMA

UMA models are designed as generalists, capable of high performance across diverse chemical domains without task-specific fine-tuning. This protocol covers using a pre-trained UMA model for property prediction on a new material or molecule.

1. Input Preparation for Universal Representation:

- Structure Definition: For a given atomic system (molecule, crystal, surface), define the 3D atomic coordinates ( \mathbf{r} ), atomic numbers ( \mathbf{a} ), and, for periodic systems, the lattice parameters ( \mathbf{l} ).

- Data Wrangling: Ensure the input format is compatible with the UMA model's expected input schema, which typically handles a wide variety of elements and structures from its multi-dataset training.

2. Model Inference:

- Leveraging Mixture of Linear Experts (MoLE): Feed the prepared structure into the UMA model. Internally, the MoLE architecture will selectively activate a subset of parameters (e.g., ~50M out of 1.4B total in UMA-medium) based on the input, allowing for efficient inference while maintaining a massive knowledge base [36] [28].

- Property Output: The model will directly output the desired properties, such as the total potential energy, per-atom forces, and stress tensors for periodic systems.

3. Validation and Integration:

- Benchmarking: Validate the model's predictions on a small set of known results for your specific system, if available, to establish confidence.

- Workflow Integration: Integrate the UMA model into larger computational workflows, such as high-throughput virtual screening of material databases or as a force provider for geometry optimization of novel catalytic systems.
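The MoLE inference step in this protocol can be illustrated with a toy layer. The sketch assumes that routing coefficients are computed once per system from a global context vector, so that the experts collapse into a single merged weight matrix before the layer is applied. This is one reading of the MoLE description and is not confirmed by the text here; the class name, the router, and all dimensions are hypothetical.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()

class MixtureOfLinearExperts:
    """Toy MoLE layer: W_merged = sum_k alpha_k * W_k, then y = x @ W_merged.T."""
    def __init__(self, n_experts, in_dim, out_dim, ctx_dim, seed=0):
        rng = np.random.default_rng(seed)
        self.experts = rng.normal(scale=1.0 / np.sqrt(in_dim),
                                  size=(n_experts, out_dim, in_dim))
        self.router = rng.normal(size=(n_experts, ctx_dim))

    def merge(self, context):
        """Compute routing weights from a system-level context and merge experts."""
        alpha = softmax(self.router @ context)
        merged = np.tensordot(alpha, self.experts, axes=1)  # (out_dim, in_dim)
        return merged, alpha

    def __call__(self, x, context):
        merged, _ = self.merge(context)
        return x @ merged.T

rng = np.random.default_rng(1)
mole = MixtureOfLinearExperts(n_experts=8, in_dim=6, out_dim=4, ctx_dim=5)
context = rng.normal(size=5)   # e.g. a composition or task descriptor (assumed)
x = rng.normal(size=(10, 6))   # per-atom features for a 10-atom system
y = mole(x, context)
w, alpha = mole.merge(context)
```

Because the layer is linear, merging the experts first costs the same at inference as a single dense layer, while the total parameter count grows with the number of experts.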

Workflow Visualization

The following diagram illustrates the high-level experimental workflow for implementing and utilizing the three featured architectures, highlighting their primary pathways and applications.

The logical flow of the KA-GNN architecture, specifically detailing the integration of KAN layers within the message-passing framework, is shown below.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Datasets, Models, and Tools for Molecular AI Research

| Reagent / Resource | Type | Function & Application | Access Information |

|---|---|---|---|

| OMol25 Dataset [28] | Dataset | A massive, high-accuracy dataset of over 100M quantum chemical calculations on diverse systems (biomolecules, electrolytes, metal complexes). Serves as the foundational training data for next-generation models. | Details available via Meta FAIR publications. |