Assessing Active Learning Model Generalization: Strategies and Benchmarks for Biomedical and Drug Development



Abstract

This article provides a comprehensive framework for assessing the generalization capabilities of active learning (AL) models, a critical challenge for their reliable application in data-scarce domains like drug development. It explores the foundational principles defining generalization in AL, details methodological approaches and real-world applications in biomedical research, presents troubleshooting strategies for common optimization pitfalls, and establishes rigorous validation and comparative benchmarking protocols. Aimed at researchers and scientists, the content synthesizes the latest studies and benchmarks to offer actionable guidance for building robust, generalizable, and data-efficient predictive models.

Defining Generalization in Active Learning: Core Concepts and the Data Efficiency Imperative

In scientific machine learning, a model's performance on a static benchmark is often a poor predictor of its real-world utility. The true test, and the most frequent point of failure, is generalization—the ability to perform reliably on new, unseen data that often comes from a different distribution than the training set. This challenge is particularly acute in fields like materials science and drug development, where data is scarce, expensive to acquire, and inherently multi-modal. This guide objectively compares how different machine learning strategies, with a focus on active learning, address this fundamental bottleneck.

Understanding the Generalization Bottleneck

Generalization is the cornerstone of scientific machine learning. A model that has merely memorized its training data is scientifically useless; the goal is to uncover underlying principles that hold true in novel situations. The core of the problem is distribution shift, where the model encounters data during deployment that differs from what it was trained on. In science, this shift is not an anomaly but a constant, arising from several sources:

- Data Scarcity and Imbalance: In fields like materials science, acquiring labeled data through experimentation is costly and time-consuming. This results in small, sparse datasets that cannot adequately represent the vast, complex design space of possible compositions or structures [1] [2]. Furthermore, data is often imbalanced, with abundant examples for common processes but very few for complex, high-performance ones [3].

- The "Benchmark Crisis" in Machine Learning: Competitive pressure on standardized benchmarks can lead to overfitting, where models learn to exploit statistical artifacts of the test set rather than developing robust underlying capabilities [4]. As one researcher notes, this confounds evaluation and can deceive us when comparing human and machine performance [4]. A model's high score on a benchmark does not guarantee it will function as a reliable scientific tool.

Active Learning as a Strategic Framework for Enhanced Generalization

Active Learning (AL) is a supervised machine learning approach that strategically selects the most informative data points for labeling, thereby optimizing the learning process and reducing annotation costs [5]. It directly targets the generalization bottleneck by focusing resources on data that most efficiently expands the model's understanding.

How Active Learning Works: An Iterative Feedback Loop

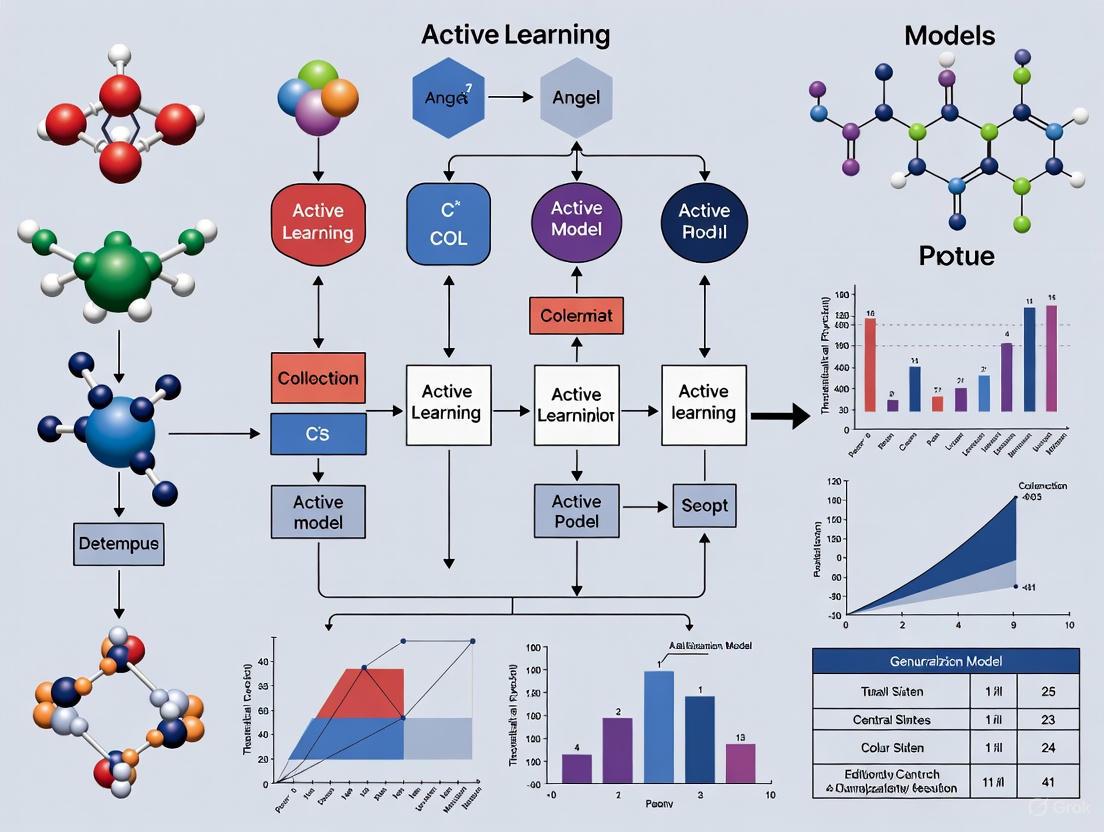

The following diagram illustrates the core, iterative workflow of an active learning system designed for scientific discovery.

This process involves several key stages, each with specific methodological choices:

- Initialization: The process begins with a small, often randomly selected, set of labeled data $L = \{(x_i, y_i)\}_{i=1}^{l}$ [1].

- Model Training: A surrogate model (e.g., a neural network or tree-based ensemble) is trained on the current labeled set.

- Query Strategy: The trained model is used to evaluate a large pool of unlabeled data $U = \{x_i\}_{i=l+1}^{n}$. A query strategy selects the most informative candidates $x^*$ for experimental validation [1]. Common strategies include:

  - Uncertainty Sampling: Selects points where the model's prediction is least confident.

  - Diversity Sampling: Selects points that are most different from the existing labeled set.

  - Expected Model Change: Selects points that would cause the greatest change to the current model.

- Experimental Validation & Update: The selected candidates are synthesized, characterized, or otherwise labeled by experiment, and the new data $(x^*, y^*)$ is added to the training set. The model is then retrained, and the cycle repeats.
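
The four stages above can be sketched as a short loop. This is a minimal illustration rather than the implementation used in any cited study: the function names, the 1-D toy inputs, the nearest-neighbour surrogate, and the distance-based acquisition score are all assumptions chosen to keep the example self-contained.

```python
from typing import Callable, List, Tuple

Labeled = List[Tuple[float, float]]          # (x_i, y_i) pairs
Model = Callable[[float], float]             # maps x to a predicted y


def active_learning_loop(
    labeled: Labeled,
    pool: List[float],
    oracle: Callable[[float], float],
    fit: Callable[[Labeled], Model],
    acquisition: Callable[[Model, Labeled, float], float],
    n_rounds: int,
) -> Tuple[Model, Labeled, List[float]]:
    """Repeat: train, score the pool, query the best candidate, label, update."""
    labeled = list(labeled)                  # work on copies of L and U
    pool = list(pool)
    model = fit(labeled)                     # model training on the initial set
    for _ in range(n_rounds):
        if not pool:
            break
        # Query strategy: choose x* with the highest acquisition score.
        x_star = max(pool, key=lambda x: acquisition(model, labeled, x))
        # Experimental validation: the oracle stands in for the experiment.
        y_star = oracle(x_star)
        labeled.append((x_star, y_star))     # add (x*, y*) to L
        pool.remove(x_star)                  # remove x* from U
        model = fit(labeled)                 # retrain and repeat
    return model, labeled, pool


def fit_nearest_neighbour(data: Labeled) -> Model:
    """Toy surrogate (an assumption): predict the label of the closest labeled x."""
    def predict(x: float) -> float:
        return min(data, key=lambda pair: abs(pair[0] - x))[1]
    return predict


def distance_to_labeled(model: Model, labeled: Labeled, x: float) -> float:
    """Toy acquisition (an assumption): distance to the nearest labeled point."""
    return min(abs(x - xi) for xi, _ in labeled)
```

Any surrogate and query strategy can be substituted through the `fit` and `acquisition` arguments; the loop itself does not depend on the toy choices shown here.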

Key Query Strategies for Scientific Discovery

The choice of query strategy is critical for balancing exploration of the unknown design space with exploitation of promising regions.

Table: Comparison of Active Learning Query Strategies

| Strategy | Core Principle | Advantages | Disadvantages | Best for Scientific Generalization When... |
|---|---|---|---|---|
| Uncertainty Sampling [5] | Selects data points where the model's predictive uncertainty is highest. | Simple to implement; directly targets the model's weaknesses. | Can be myopic; may select outliers. | The design space is well-defined, and the primary goal is to refine a model for a specific region. |
| Diversity Sampling [5] | Selects data points that are most dissimilar to the existing training set. | Broadly explores the design space; improves coverage. | May select points that are not relevant to the performance objective. | The initial dataset is very small, and a broad understanding of the compositional space is needed. |
| Query-by-Committee (QBC) [1] | Uses an ensemble of models; selects points where the models disagree the most. | Reduces model-specific bias; robust uncertainty estimation. | Computationally more expensive. | Dealing with small, noisy datasets where a single model may be unreliable. |
| Hybrid (Uncertainty + Diversity) | Combines multiple principles, e.g., selecting points that are both uncertain and diverse. | Balances exploration and exploitation; often leads to superior performance [1]. | More complex to tune. | The general case for optimizing complex, multi-dimensional scientific properties. |
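
To make the table concrete, the sketch below gives one common scoring function for each row. These are illustrative choices, not the exact formulations from the cited studies: entropy is one of several uncertainty measures, prediction variance is a regression-style stand-in for committee disagreement, and the weighting parameter `alpha` in the hybrid score is a hypothetical tuning knob.

```python
import math
from statistics import pvariance
from typing import Sequence


def entropy_uncertainty(probs: Sequence[float]) -> float:
    """Shannon entropy of a predicted class distribution (higher = less confident)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def min_distance_diversity(
    x: Sequence[float], labeled: Sequence[Sequence[float]]
) -> float:
    """Euclidean distance from x to its nearest labeled point (higher = more novel)."""
    return min(math.dist(x, z) for z in labeled)


def committee_disagreement(predictions: Sequence[float]) -> float:
    """Variance of the committee members' predictions for one candidate."""
    return pvariance(predictions)


def hybrid_score(uncertainty: float, diversity: float, alpha: float = 0.5) -> float:
    """Weighted blend of two scores; both inputs should be on comparable scales."""
    return alpha * uncertainty + (1 - alpha) * diversity
```

In each case the candidate with the highest score would be queried next. Rescaling the uncertainty and diversity scores to a common range before blending is usually necessary, since entropy and Euclidean distance have different units.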

Comparative Performance in Scientific Applications

The efficacy of Active Learning is not theoretical; it is demonstrated through accelerated discovery and improved model robustness across scientific domains. The table below summarizes quantitative results from recent peer-reviewed studies.

Table: Experimental Performance of Active Learning in Scientific Discovery

| Field / Application | Experimental Protocol & Benchmark | Key Results & Performance | Implication for Generalization |
|---|---|---|---|
| Materials Science: High-Strength Al-Si Alloys [3] | Framework: Process-Synergistic Active Learning (PSAL). Model: Conditional Wasserstein Autoencoder (c-WAE) + Ensemble Surrogate. Evaluation: Experimental validation of ultimate tensile strength (UTS). | Achieved 459.8 MPa (Gravity Casting + T6) in 3 iterations. Achieved 220.5 MPa (Gravity Casting + Hot Extrusion) in 1 iteration. Outperformed single-process models by leveraging data synergies. | Effectively generalizes across multiple, data-imbalanced processing routes, capturing complex composition-process-property relationships. |
| Materials Informatics [1] | Framework: AutoML integrated with 17 different AL strategies. Model: Automatically optimized model families and hyperparameters. Evaluation: Performance (MAE, R²) on 9 materials formulation datasets with small-sample constraints. | Uncertainty-driven (LCMD, Tree-based-R) and diversity-hybrid (RD-GS) strategies clearly outperformed random sampling and geometry-only methods early in the acquisition process. All methods converged as labeled data grew, highlighting AL's value in data-scarce regimes. | Maximizes data efficiency; the model generalizes better with fewer data points by focusing on the most informative samples. |
| COVID-19 Forecasting [6] | Framework: AI-powered empirical software using tree-search and LLMs. Model: Automatically generated forecasting models. Evaluation: Weighted Interval Score (WIS) on the U.S. COVID-19 Forecast Hub. | The system generated 14 models that outperformed the official CovidHub Ensemble, the gold-standard ensemble of expert-led teams. | Creates robust models that generalize effectively to real-world, dynamic public health data. |

The Scientist's Toolkit: Research Reagent Solutions

Implementing a successful active learning pipeline for scientific discovery requires a suite of computational "reagents." The table below details the key components and their functions.

Table: Essential Components for an Active Learning Pipeline in Science

| Component / "Reagent" | Function & Description | Examples & Notes |
|---|---|---|
| Surrogate Model | The machine learning model that makes property predictions and guides the search. | Neural Networks: Capture complex, non-linear relationships [3]. Gradient Boosting (XGBoost): Effective for tabular data; provides feature importance [1] [3]. Ensemble Models: Combine multiple models for improved robustness and uncertainty quantification [3]. |
| Query Strategy | The algorithm that decides which experiments to perform next. | Uncertainty Sampling, Diversity Sampling, QBC (see "Key Query Strategies for Scientific Discovery" above). The choice is critical for data efficiency [5]. |
| Generative Model | Explores the vast design space by generating novel, valid candidate structures or compositions. | Conditional Wasserstein Autoencoder (c-WAE): Used in PSAL to generate compositions conditioned on desired processing routes [3]. |
| Automated ML (AutoML) | Automates the selection and optimization of surrogate models and their hyperparameters. | Essential for maintaining a robust learning loop, especially as the underlying data distribution evolves with each AL cycle [1]. |
| Experimental Validation Loop | The physical (or computational) process of testing the AL-selected candidates and returning quantitative results. | This is the crucial link to the real world, providing the ground-truth data that prevents the model from operating on a purely theoretical, and potentially biased, plane. |

Generalization remains the central bottleneck in deploying machine learning for science, but it is not an insurmountable one. As the experimental data shows, Active Learning provides a powerful, data-efficient framework for building models that generalize beyond their initial training sets. By strategically guiding experimentation, AL directly addresses the challenges of data scarcity and distribution shift. The most successful approaches, like the Process-Synergistic Active Learning framework, demonstrate that generalization can be engineered by consciously designing systems that leverage domain knowledge, manage data imbalance, and tirelessly explore the most promising regions of complex scientific landscapes. For researchers in fields from drug development to materials science, integrating these active learning principles is no longer just an optimization—it is a necessity for achieving robust and reliable discovery.

In the fields of machine learning and scientific research, the efficiency of data utilization is a critical factor in accelerating discovery and innovation. This is particularly true in domains like drug development, where the cost of data acquisition is exceptionally high. The paradigm of active learning, a sub-field of machine learning where the algorithm strategically selects which data points to label, stands in contrast to passive learning, where the model learns from a statically provided, pre-labeled dataset. This guide provides an objective comparison of these two approaches, focusing on their data efficiency, performance, and practical applications within scientific research. The core thesis is that active learning methods, by optimizing the use of informative data, offer superior generalization and performance with significantly less labeled data, thereby creating a more efficient research pipeline.

Core Concepts and Definitions

What is Active Learning?

Active learning is a supervised machine learning approach designed to optimize data annotation and model training. Its primary objective is to minimize the amount of labeled data required for training while maximizing the model's performance [5]. This is achieved through an iterative, human-in-the-loop process where the algorithm itself queries a human annotator to label the most informative data points from a pool of unlabeled data [7] [5]. Instead of learning from a random sample, the active learner intelligently selects samples that are expected to provide the most valuable information, such as those where the model is most uncertain or which represent areas of the feature space not yet explored [5].

What is Passive Learning?

In contrast, passive learning follows the traditional supervised learning paradigm. A model is trained on a fixed, pre-defined, and randomly selected labeled dataset [5]. The learning process is essentially a one-off event; once the model is trained on this static dataset, the process is complete. There is no iterative selection of new data based on the model's current state of knowledge. This approach often requires large volumes of labeled data to achieve high performance, as it does not strategically prioritize data points that could be more beneficial for learning [8] [5].

Comparative Performance and Data Efficiency

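Research in both education and machine learning reports that active approaches outperform passive ones. The term has different meanings in the two fields: in education it refers to interactive teaching methods, whereas in machine learning it refers to a model selecting which data points to label. The educational results below are therefore best read as an analogy rather than as direct evidence about machine learning models.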

Performance Metrics in Educational Contexts

Studies in educational settings provide clear evidence of active learning's effectiveness. The following table summarizes key findings from empirical research:

| Metric | Active Learning Performance | Passive Learning Performance | Source |
|---|---|---|---|
| Test Scores | 54% higher on average | Baseline | [8] |
| Average Test Score | 70% | 45% | [8] |
| Failure Rate | 1.5x less likely to fail | Baseline failure rate | [8] |
| Normalized Learning Gains | 2x higher | Baseline gains | [8] |
| Course Performance | Improved by half a letter grade | Baseline performance | [8] |
| Knowledge Retention | 93.5% retained | 79% retained | [8] |

A study in psychology courses found that although students in active learning sessions felt they learned less, a repeated measures ANOVA showed significant exam performance improvements for the active group that were not observed in the passive learning group [9]. This highlights a common perception gap: the greater cognitive effort that active learning demands can be misread as less effective learning.

Data Efficiency in Machine Learning

In machine learning, the benefits of active learning are measured in terms of data utilization and model accuracy.

| Metric | Active Learning | Passive Learning |
|---|---|---|
| Labeling Cost | Reduced through selective labeling | High, as all data must be labeled |
| Data Selection | Strategic, using query strategies | Random or pre-defined |
| Convergence Speed | Faster due to focus on informative samples | Slower, requires more data and time |
| Model Adaptability | More adaptable to dynamic datasets | Less adaptable |
| Performance with Limited Data | Higher accuracy with fewer labels | Requires large volumes of data for comparable results |

Active learning algorithms achieve improved accuracy and faster convergence by focusing on the most informative samples, which are those expected to reduce model uncertainty the most [5]. Furthermore, by selecting diverse samples, active learning helps models generalize better to new, unseen data and can improve robustness to noise in the dataset [5].

Experimental Protocols and Methodologies

To validate the comparative performance of active and passive learning, researchers employ rigorous experimental designs. Below is a detailed methodology that can be applied across domains.

Generalized Experimental Workflow

The following diagram illustrates the core iterative workflow of an active learning system, which contrasts with the linear process of passive learning.

Detailed Methodology

Dataset Partitioning: A large dataset is divided into three parts:

- Initial Training Set: A small, randomly selected set of labeled data (e.g., 1-5% of the total).

- Unlabeled Pool: A large pool of unlabeled data from which the active learner can query.

- Test Set: A held-out, fully labeled set used exclusively for evaluating model performance.
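
The three-way partition can be sketched as follows. The 5% initial fraction follows the range given above; the 20% test fraction, the fixed seed, and the function name are assumptions made for illustration.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def partition_dataset(
    items: Sequence[T],
    test_fraction: float = 0.2,      # assumed value, not taken from the text
    initial_fraction: float = 0.05,  # upper end of the 1-5% range
    seed: int = 0,
) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle once, then carve out the test set, initial labeled set, and pool."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)        # reproducible shuffle
    n_test = int(round(test_fraction * len(shuffled)))
    n_initial = max(1, int(round(initial_fraction * len(shuffled))))
    test = shuffled[:n_test]                     # held out for evaluation only
    initial = shuffled[n_test:n_test + n_initial]
    pool = shuffled[n_test + n_initial:]         # labels hidden until queried
    return initial, pool, test
```

Fixing the test set before any querying begins matters: if test points could leak into the pool, the active learner's selections would bias the evaluation.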

Model Training (Initialization):

- A machine learning model (e.g., a Random Forest classifier or a neural network) is trained on the initial small labeled training set. This model serves as the starting point.

Active Learning Cycle (Iterative Process):

- Query Strategy: The trained model is used to evaluate the unlabeled data pool. A query strategy is applied to select the most informative data points. Common strategies include:

  - Uncertainty Sampling: Selects instances where the model's prediction confidence is lowest (e.g., highest entropy).

  - Diversity Sampling: Selects a batch of samples that are diverse from each other to cover the input space.

  - Query-by-Committee: Uses a committee of models and selects instances they disagree on the most.

- Human Annotation (Oracle): The selected data points are sent to a human annotator (the "oracle") to be labeled. This step simulates the cost and effort of data labeling.

- Model Update: The newly labeled data is added to the training set, and the model is retrained (or fine-tuned) on this augmented dataset.


Passive Learning Baseline:

- For comparison, a model is trained using a standard passive learning approach on a training set that grows in size through random selection from the unlabeled pool, rather than strategic querying.

Evaluation and Stopping:

- At the end of each active learning cycle, the updated model's performance is evaluated on the fixed test set. Metrics such as accuracy, F1-score, and area under the curve (AUC) are recorded.

- The cycle repeats until a stopping criterion is met, such as a performance plateau, a predefined budget (number of queries) is exhausted, or a target performance level is achieved.
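
The three stopping criteria listed above can be combined into a single check, sketched below. The `patience` and `min_delta` parameters that define a plateau are hypothetical defaults, and the function assumes a higher-is-better metric such as accuracy, F1-score, or AUC.

```python
from typing import Optional, Sequence


def should_stop(
    scores: Sequence[float],
    queries_used: int,
    budget: int,
    target: Optional[float] = None,
    patience: int = 3,
    min_delta: float = 0.005,
) -> bool:
    """Return True when the budget is spent, the target is reached, or scores plateau.

    `scores` holds one higher-is-better test metric per completed cycle.
    """
    if queries_used >= budget:
        return True                                  # budget exhausted
    if target is not None and scores and scores[-1] >= target:
        return True                                  # target performance reached
    if len(scores) > patience:
        # Plateau: the best of the last `patience` cycles improves on the
        # best earlier score by less than `min_delta`.
        best_recent = max(scores[-patience:])
        best_before = max(scores[:-patience])
        return best_recent - best_before < min_delta
    return False
```

Calling this once per cycle, after the test-set evaluation, gives the same stopping behaviour for the active learner and the passive baseline, which keeps the comparison fair.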

Application in Drug Discovery: A Case Study

The application of active learning in drug discovery powerfully demonstrates its real-world value. A key challenge in this field is predicting Blood-Brain Barrier (BBB) permeability for central nervous system drug candidates.

Experimental Workflow for BBB Permeability Prediction

The following diagram outlines the specific workflow for applying active learning to this problem, from data preparation to model deployment.

Research Reagent Solutions for In Silico Prediction

The following table details the key computational "reagents" and tools used in such a study.

| Research Reagent / Tool | Function in the Experiment | Source / Example |
|---|---|---|
| BBBP Dataset | A benchmark dataset containing 1,955 compounds annotated as permeable (BBB+) or non-permeable (BBB-) for model training and testing. | MoleculeNet [10] |
| RDKit Descriptors | Software that generates molecular descriptors (e.g., molecular weight, lipophilicity) which serve as numerical features for the machine learning models. | RDKit [10] |
| Morgan Fingerprints | A method for representing the structure of a molecule as a bit vector, capturing the presence of specific substructures. | RDKit [10] |
| Resampling Algorithms (SMOTE) | A technique to address class imbalance in datasets by generating synthetic samples for the minority class (e.g., BBB- compounds). | SMOTE, Borderline SMOTE [10] |
| Random Forest Classifier | An ensemble machine learning model that was identified as providing an optimal balance between accuracy and generalizability for this task. | Scikit-learn [10] |

Key Findings and Comparative Outcome

In the BBB permeability study, researchers evaluated multiple machine learning models. Random Forest models demonstrated an optimal balance between accuracy and generalizability, outperforming more complex models that were prone to overfitting [10]. Feature analysis identified that reduced hydrogen bond donor (NH/OH count) and heteroatom counts were key determinants enhancing permeability [10].

When framed as an active learning problem, the process would begin with a small labeled subset of the BBBP dataset. The model would then iteratively query the labels of the compounds it was most uncertain about. Compared with a passive approach, in which compounds are tested at random or exhaustively, this could substantially reduce the number of compounds that need experimental testing to reach a high-accuracy model. The expected benefits are lower labeling costs and faster convergence on a reliable predictive model, accelerating the early stages of drug discovery [5] [10].

The body of evidence from educational research, machine learning theory, and practical applications in fields like drug development presents a consistent narrative: active learning systematically outperforms passive learning in terms of data efficiency and final performance. While the initial implementation may be more complex than passive approaches, the substantial reductions in data labeling costs, coupled with improvements in model accuracy, generalization, and robustness, make it an indispensable strategy for modern research and development. For scientists and engineers working under constraints of data, time, and budget, the adoption of active learning frameworks is not merely an optimization but a fundamental shift towards a more intelligent and efficient research paradigm.

In data-scarce domains like drug discovery and materials science, the ultimate test of a machine learning model is not its performance on held-out test sets, but its ability to maintain predictive accuracy when deployed in real-world scenarios on genuinely novel data. This capability, known as generalization, represents the critical bridge between theoretical model performance and practical utility. Active learning (AL) has emerged as a powerful framework for addressing the fundamental challenge of generalization under constrained labeling budgets by strategically selecting the most informative data points for annotation [11].

The generalization problem is particularly acute in scientific fields where data acquisition costs are prohibitive. In drug discovery, for instance, obtaining labeled data for compound-target interactions requires expensive experimental synthesis and characterization, often requiring expert knowledge, specialized equipment, and time-consuming procedures [1]. Similarly, in materials science, characterization of material properties demands meticulous synthesis, precise environmental control, and advanced instrumentation [1]. In these contexts, active learning's value proposition lies in its ability to strategically guide experimental resources toward collecting data that most efficiently improves model performance on unseen examples, thereby accelerating scientific discovery while reducing costs.

This article examines the key metrics and methodologies for evaluating the generalization capabilities of active learning models across scientific domains, with particular emphasis on drug discovery applications where generalization performance directly impacts research productivity and resource allocation.

Key Quantitative Metrics for Assessing Generalization

Generalization assessment requires moving beyond traditional training accuracy metrics to measures that capture how well models perform on challenging, real-world data distributions. The most informative metrics reveal a model's capacity to handle the variability and complexity encountered in practical applications.

Table 1: Core Metrics for Evaluating Model Generalization

| Metric Category | Specific Metric | Interpretation | Domain Application |
|---|---|---|---|
| Performance Disparity | Train-Test Performance Gap | Measures overfitting; smaller gaps indicate better generalization | General across all domains |
| Performance Disparity | Out-of-Distribution (OOD) Performance | Accuracy on data from different distributions than training | Critical for new chemical spaces [12] |
| Data Efficiency | Learning Curve Area Under Curve (AUC) | Speed of performance improvement with additional data | Materials science, drug discovery [1] |
| Data Efficiency | Sample Efficiency Ratio | Performance achieved relative to data used | Virtual screening [11] |
| Task-Specific Generalization | Success Rate for Rare Classes | Performance on underrepresented categories | Synergistic drug pair detection [13] |
| Task-Specific Generalization | Cross-Domain Transfer Accuracy | Performance when applied to new domains | New cell lines or protein targets [13] |
| Uncertainty Calibration | Expected Calibration Error | Agreement between predicted probabilities and actual correctness | Molecular property prediction [11] |
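
Two of the metrics in Table 1 have simple closed forms, sketched below. The Expected Calibration Error uses the standard equal-width binning definition; the learning-curve AUC is normalized by the range of labeled-set sizes, which is an assumption made here so that curves of different lengths can be compared.

```python
from typing import List, Sequence, Tuple


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """Average |accuracy - confidence| over confidence bins, weighted by bin size."""
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 joins the last bin
        bins[index].append((conf, hit))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, h in members if h) / len(members)
        ece += len(members) / total * abs(accuracy - mean_conf)
    return ece


def learning_curve_auc(n_labeled: Sequence[float], scores: Sequence[float]) -> float:
    """Trapezoidal area under score vs. labeled-set size, divided by the size range."""
    area = 0.0
    for i in range(1, len(n_labeled)):
        width = n_labeled[i] - n_labeled[i - 1]
        area += width * (scores[i] + scores[i - 1]) / 2
    return area / (n_labeled[-1] - n_labeled[0])
```

For example, a model that predicts with 90% confidence but is right only 75% of the time has an ECE of 0.15. For a higher-is-better metric, a strategy whose learning curve rises earlier yields a larger normalized AUC than one that reaches the same final score later.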

In drug discovery applications, several specialized metrics have proven particularly valuable for assessing generalization. The Synergy Yield Ratio measures the proportion of truly synergistic drug pairs identified through active learning compared to random selection, with studies achieving 60% detection of synergistic pairs while exploring only 10% of the combinatorial space [13]. Out-of-Scope Prediction Accuracy quantifies a model's ability to generalize to new molecular scaffolds or protein targets not represented in training data, a critical capability for practical deployment [12]. Normalized Learning Gains enable comparison of learning efficiency across different dataset sizes and complexity levels; the 2x higher normalized learning gains reported in [8] come from studies of interactive engagement in education rather than from machine learning experiments.
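
One way to read the 60% and 10% figures is as an enrichment over random selection. Under uniform random sampling, exploring 10% of the space would be expected to recover about 10% of the synergistic pairs, so the reported result corresponds to roughly a sixfold enrichment. The helper below encodes that arithmetic; the function name and this particular reading of the Synergy Yield Ratio are assumptions, not definitions taken from [13].

```python
def enrichment_over_random(found_fraction: float, explored_fraction: float) -> float:
    """Hits recovered by AL relative to hits expected from equal random exploration."""
    if not 0 < explored_fraction <= 1:
        raise ValueError("explored_fraction must be in (0, 1]")
    # Uniform random selection recovers, in expectation, the same fraction
    # of hits as the fraction of the space it explores.
    return found_fraction / explored_fraction


ratio = enrichment_over_random(found_fraction=0.60, explored_fraction=0.10)
```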

Experimental Protocols for Generalization Assessment

Rigorous experimental design is essential for accurately evaluating the generalization capabilities of active learning models. The following protocols represent established methodologies across different scientific domains.

Pool-Based Active Learning for Regression Tasks

This protocol, commonly employed in materials science and chemistry, evaluates how efficiently AL strategies improve model performance on unseen data with limited labeling budgets [1].

Workflow:

- Initial Dataset Partitioning: Hold out a test set using an 80:20 train-test split, then divide the training portion into a small labeled set $L = \{(x_i, y_i)\}_{i=1}^{l}$ and an unlabeled pool $U = \{x_i\}_{i=l+1}^{n}$

- Model Initialization: Train initial model on small labeled subset (typically <5% of total data)

- Active Learning Cycle:

  - Use query strategy (uncertainty, diversity, or hybrid) to select most informative samples from U

  - Obtain labels for selected samples through human annotation or experimentation

  - Update training set: $L = L \cup \{(x^*, y^*)\}$, where $(x^*, y^*)$ are the newly labeled samples

  - Retrain model on expanded dataset

  - Evaluate on held-out test set

- Performance Tracking: Record test performance (MAE, R²) after each AL iteration

- Termination: Continue until stopping criterion met (performance plateau or budget exhaustion)
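
The performance-tracking step relies on two regression metrics, which can be computed as sketched below. The `record_iteration` helper and its dictionary layout are hypothetical conveniences for logging one row per AL iteration; `predict` stands for whatever surrogate model the loop has just retrained.

```python
from typing import Callable, Dict, List, Sequence


def mean_absolute_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """MAE: average absolute difference between truth and prediction."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2_score(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination; assumes y_true is not constant."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def record_iteration(
    history: List[Dict[str, float]],
    n_labeled: int,
    predict: Callable[[float], float],
    x_test: Sequence[float],
    y_test: Sequence[float],
) -> None:
    """Append the current test-set MAE and R² to the learning-curve history."""
    y_pred = [predict(x) for x in x_test]
    history.append({
        "n_labeled": n_labeled,
        "mae": mean_absolute_error(y_test, y_pred),
        "r2": r2_score(y_test, y_pred),
    })
```

Logging against the size of the labeled set, rather than the iteration index, makes curves from strategies with different batch sizes directly comparable.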

This protocol emphasizes early performance gains, as uncertainty-driven strategies (LCMD, Tree-based-R) and diversity-hybrid approaches (RD-GS) significantly outperform random sampling during initial acquisition phases [1].

Figure 1: Active Learning Workflow for Generalization Assessment

Cross-Domain Generalization in Drug Discovery

This protocol specifically tests a model's ability to generalize to novel chemical spaces or biological contexts, a critical requirement for practical drug discovery applications [13] [12].

Workflow:

- Domain Definition: Identify source domains (e.g., known chemical scaffolds, well-characterized protein targets) and target domains (novel scaffolds, uncharacterized targets)

- Baseline Establishment: Train and evaluate model performance on source domains using cross-validation

- Progressive Domain Expansion:

  - Gradually introduce data from target domains using AL strategies

  - Measure performance on held-out target domain data

  - Track sample efficiency (performance gain per additional sample)

- Generalization Assessment: Compare target domain performance to source domain baseline

- Feature Importance Analysis: Identify features most predictive of cross-domain performance
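
The domain-definition and generalization-assessment steps depend on keeping whole domains out of training. A minimal sketch, assuming each record carries a domain key such as a scaffold identifier or target name (the record layout and function names are hypothetical):

```python
from typing import Hashable, List, Sequence, Set, Tuple


def split_by_domain(
    records: Sequence[Tuple[Hashable, object]],
    target_domains: Set[Hashable],
) -> Tuple[List[object], List[object]]:
    """Route every record whose domain key is in target_domains to the target split."""
    source: List[object] = []
    target: List[object] = []
    for domain, item in records:
        # Whole domains move together, so no target scaffold leaks into training.
        (target if domain in target_domains else source).append(item)
    return source, target


def generalization_gap(source_score: float, target_score: float) -> float:
    """Positive values mean the model does worse on the held-out target domain."""
    return source_score - target_score
```

Splitting by group rather than by individual sample is what distinguishes this protocol from an ordinary random split: a random split would place near-duplicates of the same scaffold on both sides and overstate cross-domain performance.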

In Ni/photoredox-catalyzed cross-coupling reaction prediction, this approach expanded the model's coverage from 22,240 to 33,312 compounds by adding data on just 24 building blocks (fewer than 100 additional reactions) [12].

Comparative Performance of Active Learning Strategies

Different AL strategies exhibit varying generalization capabilities across domains and data regimes. Understanding these performance characteristics is essential for selecting appropriate methods for specific applications.

Table 2: Performance Comparison of Active Learning Strategies Across Domains

| AL Strategy | Core Principle | Early-Stage Performance | Late-Stage Performance | Domain Effectiveness |
|---|---|---|---|---|
| Uncertainty Sampling | Selects predictions with highest uncertainty | 13-54% improvement over random [8] [5] | Diminishing returns with more data [1] | Drug-target interaction prediction [11] |
| Diversity Sampling | Maximizes coverage of feature space | Moderate improvement | Sustained benefits | Materials formulation [1] |
| Query-by-Committee | Selects points with maximal model disagreement | 30-70% data reduction for parity [1] | Consistent performance | Virtual screening [11] |
| Density-Weighted | Combines uncertainty with representativeness | Strong in low-data regimes [1] | Gradual convergence | Molecular property prediction [12] |
| Hybrid (RD-GS) | Combines diversity and uncertainty | Outperforms single-method | Similar convergence | Small-sample regression [1] |
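
The density-weighted row can be illustrated with an information-density style score, in which each candidate's uncertainty is scaled by its average similarity to the rest of the pool. The similarity function `1 / (1 + distance)` and the exponent `beta` are assumptions chosen for simplicity; the cited studies may use different formulations.

```python
import math
from typing import List, Sequence


def density_weighted_scores(
    pool: Sequence[Sequence[float]],
    uncertainty: Sequence[float],
    beta: float = 1.0,
) -> List[float]:
    """Scale each candidate's uncertainty by its mean similarity to the pool."""
    scores = []
    for x, u in zip(pool, uncertainty):
        # Similarity lies in (0, 1]: 1 for identical points, decaying with distance.
        density = sum(1.0 / (1.0 + math.dist(x, z)) for z in pool) / len(pool)
        scores.append(u * density ** beta)
    return scores


pool = [[0.0, 0.0], [0.1, 0.0], [10.0, 10.0]]
uncertainty = [0.5, 0.5, 0.6]
scores = density_weighted_scores(pool, uncertainty)
```

In this toy pool the isolated third point has the highest raw uncertainty but receives the lowest weighted score, which shows how density weighting counteracts the tendency of plain uncertainty sampling to pick outliers. Setting `beta` to 0 recovers plain uncertainty sampling.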

The generalization performance of AL strategies is significantly influenced by the machine learning models they operate with. In Automated Machine Learning (AutoML) environments where model architectures may change during learning, uncertainty-driven strategies (LCMD, Tree-based-R) and diversity-hybrid approaches (RD-GS) demonstrate superior robustness early in the acquisition process [1]. As the labeled set grows, the performance gap between different strategies narrows, indicating diminishing returns from specialized AL under conditions of adequate data [1].

In drug synergy prediction, the choice of molecular encoding has limited impact on generalization performance, while cellular environment features significantly enhance predictions of synergistic drug pairs across novel cell lines [13]. This highlights the importance of domain-specific feature selection for effective generalization.

Research Reagent Solutions for Active Learning Experiments

Implementing effective active learning for generalization requires both computational tools and experimental resources. The following table details essential components for constructing AL experimental pipelines.

Table 3: Essential Research Reagents and Tools for Active Learning Experiments

| Reagent/Tool | Function | Example Applications | Implementation Considerations |
|---|---|---|---|
| Molecular Encoders | Convert molecular structures to numerical features | Morgan fingerprints, ChemBERTa [13] | Limited impact on synergy prediction [13] |
| Cellular Feature Sets | Characterize biological context | Gene expression profiles from GDSC [13] | Crucial for generalization; 10 genes may suffice [13] |
| DFT Feature Generators | Compute quantum chemical properties | AutoQchem [12] | Provides mechanism-relevant features (e.g., LUMO energy) [12] |
| AL Frameworks | Implement query strategies | Custom Python implementations | Uncertainty estimation challenging for regression [1] |
| Validation Assays | Experimental confirmation | UPLC-MS with CAD detection [12] | ±27% concentration variance requires calibration curves [12] |

Evaluating active learning systems requires moving beyond traditional training accuracy to metrics that capture real-world generalization capabilities. The most effective approaches combine quantitative metrics like out-of-distribution performance and sample efficiency with rigorous experimental protocols that test models under realistic conditions. As active learning continues to evolve in scientific domains, developing standardized benchmarks for generalization will be essential for meaningful comparison across methods and applications.

The evidence consistently shows that uncertainty-driven and hybrid active learning strategies significantly accelerate convergence to generalizable models, particularly in data-scarce regimes common in drug discovery and materials science. By strategically guiding experimental resources toward the most informative data points, these approaches can reduce labeling costs by 30-70% while achieving performance parity with full-data baselines [1]. Future work should focus on developing more robust uncertainty quantification methods, especially for regression tasks, and creating AL strategies that maintain their effectiveness when combined with modern AutoML systems that dynamically change model architectures during learning.

In the high-stakes field of drug discovery, machine learning models promise to accelerate the identification and optimization of candidate molecules. Active learning (AL), an iterative feedback process that efficiently identifies valuable data within vast chemical spaces, has emerged as a particularly valuable approach in this domain [14]. However, a critical challenge persists: the disconnect between a model's internal confidence and its actual performance on real-world generalization tasks. This guide objectively compares how different active learning strategies manage this confidence-performance gap, providing researchers with experimental data and methodologies to inform their computational approaches.

Experimental Protocols & Methodologies

To evaluate the relationship between model confidence and generalization performance, we focus on benchmark studies that employ rigorous, reproducible experimental designs in drug discovery applications.

Deep Batch Active Learning for Drug Discovery

This study introduced two novel batch selection methods (COVDROP and COVLAP) designed for use with advanced neural network models, comparing them against established baselines [15].

- Objective: To optimize ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) and affinity properties of small molecules by selecting the most informative batches of samples for experimental testing.

- Model Architecture: The methods are built on the Bayesian deep regression paradigm, where model uncertainty is estimated by obtaining the posterior distribution of model parameters. The sampling strategies used to approximate this posterior require no extra model training.

- Active Learning Strategy: The core algorithm selects batches that maximize the joint entropy (i.e., the log-determinant of the epistemic covariance of the batch predictions). This approach explicitly balances "uncertainty" (variance of each sample) and "diversity" (covariance between samples) to reject highly correlated batches [15].

- Benchmarking: Methods were evaluated on public datasets for cell permeability (906 drugs), aqueous solubility (9,982 molecules), and lipophilicity (1,200 molecules), alongside internal affinity datasets. Performance was measured by the reduction in the number of experiments required to achieve a target model performance.
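The batch-selection idea described above (prefer batches whose predictions have a large log-determinant covariance) can be illustrated with a minimal sketch. This is not the COVDROP or COVLAP implementation from [15]: the greedy search, the function name `greedy_logdet_batch`, the `jitter` term, and the hand-written toy covariance matrix are all assumptions made for illustration.

```python
import numpy as np

def greedy_logdet_batch(cov: np.ndarray, batch_size: int, jitter: float = 1e-6) -> list[int]:
    """Greedily grow a batch whose covariance submatrix has a large log-determinant.

    cov is an (n, n) epistemic covariance between candidate predictions.
    The greedy search is an illustrative assumption, not the method of [15].
    """
    n = cov.shape[0]
    selected: list[int] = []
    for _ in range(batch_size):
        best_idx, best_val = -1, -np.inf
        for i in range(n):
            if i in selected:
                continue
            idx = selected + [i]
            # Small diagonal jitter keeps the submatrix numerically positive definite.
            sub = cov[np.ix_(idx, idx)] + jitter * np.eye(len(idx))
            sign, logdet = np.linalg.slogdet(sub)
            if sign > 0 and logdet > best_val:
                best_idx, best_val = i, logdet
        selected.append(best_idx)
    return selected

# Toy covariance: candidates 0 and 1 are highly uncertain but almost perfectly
# correlated; candidate 2 is less uncertain but independent of both.
cov = np.array([
    [1.00, 0.99, 0.00],
    [0.99, 1.00, 0.00],
    [0.00, 0.00, 0.50],
])
batch = greedy_logdet_batch(cov, batch_size=2)
print(batch)  # [0, 2]
```

In this toy case the second pick is candidate 2 rather than candidate 1, even though candidate 1 has the larger variance, because adding a nearly duplicate sample barely increases the determinant. That is the sense in which the criterion trades off variance against covariance.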

Stopping Rule Framework for Drug-Target Interaction Prediction

This research addressed the critical question of when to stop the active learning process, a decision directly tied to confidence in the model's generalizations [16].

- Objective: To develop reliable stopping criteria for active learning applied to drug-target interaction prediction.

- Model Architecture: The framework uses Kernelized Bayesian Matrix Factorization (KBMF). KBMF projects drugs and targets into a common subspace using drug-kernel and target-kernel matrices, which encode prior similarity information [16].

- Active Learning & Stopping Strategy:

  - Initialization: Start with a partially observed drug-target interaction matrix.

  - Iteration:

    - Model Training: Train KBMF on known interactions.

    - Prediction: Predict all unknown drug-target interactions.

    - Query: Use an active learning strategy to select the most informative batch of experiments from the unlabeled pool.

    - Experiment: "Perform" the experiments (simulated by revealing labels from the held-out data).

  - Stopping: The accuracy of the active learner is predicted after each round by comparing the learning trajectory to a regression model trained on simulated data. Experimentation stops when the predicted accuracy meets a pre-defined threshold [16].

- Evaluation: The method was validated on four historical drug-effect datasets, measuring the total experiments saved compared to a random selection strategy.
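The control flow of such a stopping rule can be sketched as follows. This is a simplified illustration, not the procedure of [16]: the accuracy predictor there is a regression model trained on simulated data, whereas `toy_predictor` below is a hypothetical moving average, and `toy_round` is a hypothetical stand-in for one full train, predict, query, and experiment round.

```python
from typing import Callable, Sequence

def run_until_predicted_accuracy(
    run_round: Callable[[int], float],
    predict_accuracy: Callable[[Sequence[float]], float],
    threshold: float,
    max_rounds: int,
) -> tuple[int, list[float]]:
    """Run active learning rounds until the predicted accuracy reaches the threshold."""
    trajectory: list[float] = []
    for round_idx in range(1, max_rounds + 1):
        # One round: train, predict, query, and "perform" the selected experiments.
        trajectory.append(run_round(round_idx))
        if predict_accuracy(trajectory) >= threshold:
            return round_idx, trajectory
    return max_rounds, trajectory

# Hypothetical stand-ins so the sketch runs end to end.
def toy_round(round_idx: int) -> float:
    # Observable signal per round: 0.5, 0.75, 0.875, 0.9375, ...
    return 1.0 - 0.5 ** round_idx

def toy_predictor(trajectory: Sequence[float]) -> float:
    # Moving average over the last three signals (an assumption, not the model in [16]).
    recent = trajectory[-3:]
    return sum(recent) / len(recent)

rounds_used, trajectory = run_until_predicted_accuracy(
    toy_round, toy_predictor, threshold=0.85, max_rounds=20
)
print(rounds_used)  # 4
```

Separating `run_round` from `predict_accuracy` keeps the stopping decision independent of the query strategy, so the same loop can wrap any acquisition function.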

The following workflow diagram illustrates the core active learning cycle and the integration of the stopping rule:

Comparative Performance Analysis

The following tables summarize quantitative results from the evaluated studies, highlighting the effectiveness of different strategies in closing the generalization gap.

Table 1: Performance of Deep Batch Active Learning Methods on ADMET Datasets (Adapted from [15])

| Dataset | Metric | Random | k-Means | BAIT | COVDROP | COVLAP |

|---|---|---|---|---|---|---|

| Aqueous Solubility | RMSE at 20% Data | 2.1 | 1.9 | 1.8 | 1.4 | 1.5 |

| Cell Permeability | RMSE at 15% Data | 0.48 | 0.45 | 0.43 | 0.38 | 0.39 |

| Lipophilicity | RMSE at 25% Data | 0.95 | 0.91 | 0.89 | 0.82 | 0.84 |

| General Trend | Experiments Saved | Baseline | ~10% | ~15% | ~30-40% | ~25-30% |

Table 2: Efficacy of Stopping Rules in Drug-Target Interaction Prediction (Adapted from [16])

| Dataset | Target Accuracy | Experiments (Random) | Experiments (AL + Stopping Rule) | Savings |

|---|---|---|---|---|

| GPCR | 90% | 12,500 | 7,500 | 40% |

| Ion Channel | 85% | 8,200 | 5,500 | 33% |

| Enzyme | 92% | 15,000 | 10,200 | 32% |

| Nuclear Receptor | 88% | 3,100 | 2,200 | 29% |
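The Savings column in Table 2 follows from the two experiment counts as (random − AL) / random. A short check, using only the figures in the table (the dictionary layout and function name are illustrative):

```python
# (experiments with random selection, experiments with AL + stopping rule), from Table 2
experiments = {
    "GPCR": (12_500, 7_500),
    "Ion Channel": (8_200, 5_500),
    "Enzyme": (15_000, 10_200),
    "Nuclear Receptor": (3_100, 2_200),
}

def savings_percent(random_n: int, al_n: int) -> float:
    """Relative reduction in experiments, in percent."""
    return 100.0 * (random_n - al_n) / random_n

savings = {name: round(savings_percent(r, a)) for name, (r, a) in experiments.items()}
print(savings)
# {'GPCR': 40, 'Ion Channel': 33, 'Enzyme': 32, 'Nuclear Receptor': 29}
```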

Key Findings:

- Advanced AL methods directly impact efficiency: As shown in Table 1, methods like COVDROP and COVLAP, which explicitly model prediction uncertainty and batch diversity, achieve lower error rates with significantly less data, directly mitigating overconfidence on sparse data [15].

- Stopping rules are critical for savings: Table 2 demonstrates that without a principled stopping criterion, an AL system may continue experimenting beyond the point of meaningful performance gains. The proposed accuracy-prediction method resulted in substantial cost savings [16].

- The confidence-performance gap is manageable: The combination of robust AL batch selection and reliable stopping rules provides a systematic method to ensure that reported model confidence aligns with actual generalizable performance.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and resources essential for implementing active learning frameworks in drug discovery.

Table 3: Essential Research Reagents & Tools for Active Learning in Drug Discovery

| Item / Resource | Function & Application | Example / Source |

|---|---|---|

| DeepChem | An open-source toolkit for deep learning in drug discovery, providing a foundation for building and testing molecular models. | dee (2016) [15] |

| GeneDisco | An open-source library containing benchmark datasets for evaluating active learning algorithms, particularly in transcriptomics. | Mehrjou et al. (2021) [15] |

| KBMF Codebase | Implementation of Kernelized Bayesian Matrix Factorization for drug-target interaction prediction with active learning. | Gönen (2012) [16] |

| Public ADMET Datasets | Curated experimental data used for training and benchmarking predictive models (e.g., solubility, permeability). | PubChem, ChEMBL [15] |

| Similarity Kernels (SIMCOMP) | Computes the structural similarity between two drug molecules, used to build the drug kernel matrix for KBMF. | Hattori et al. (2010) [16] |

| Similarity Kernels (Smith-Waterman) | Computes the sequence similarity between two target proteins, used to build the target kernel matrix for KBMF. | Smith & Waterman (1981) [16] |

Visualizing the Generalization Gap

The following diagram conceptualizes the relationship between model confidence, active learning strategies, and the ultimate goal of generalizable performance. It illustrates how different components interact to bridge the gap.

The disconnect between model confidence and actual performance presents a significant barrier to the reliable deployment of active learning in drug discovery. Evidence demonstrates that this gap can be effectively narrowed by employing AL strategies that prioritize both uncertainty and diversity in batch selection, complemented by rigorous statistical frameworks for predicting accuracy and determining optimal stopping points. The continued development and benchmarking of such methods are paramount for building trust in AI-driven discovery platforms and achieving genuine cost and time savings in the development of new therapeutics.

Methodologies and Real-World Applications: Building Generalizable Models with Active Learning

In scientific fields, particularly in drug development and materials science, the acquisition of high-quality labeled data is a major bottleneck. Experimental synthesis and characterization often require expert knowledge, expensive equipment, and time-consuming procedures [17]. Active Learning (AL) has emerged as a powerful paradigm to address this challenge by enabling machine learning models to strategically select the most informative data points for labeling, thereby maximizing model performance while minimizing labeling costs [5]. The core of any AL system is its query strategy—the algorithm that decides which unlabeled samples to annotate next. These strategies primarily fall into three categories: uncertainty-based, diversity-based, and hybrid approaches that seek to combine the strengths of both. Understanding the performance characteristics of these strategies is crucial for their application in critical research and development pipelines. This guide provides a comparative analysis of these fundamental query strategies, underpinned by experimental data and structured within the context of active learning model generalization assessment research.

Core Query Strategies: Mechanisms and Methodologies

Uncertainty-Based Sampling

Uncertainty-based sampling operates on a simple yet powerful principle: select the data points for which the current model is most uncertain about its prediction [18]. This approach aims to refine the model's decision boundaries by focusing on the most challenging cases.

- Least Confidence: Selects instances where the model's highest predicted probability is the lowest. The score is computed as ( U(\mathbf{x}) = 1 - P_\theta(\hat{y} \vert \mathbf{x}) ), where ( \hat{y} ) is the most likely prediction [18].

- Margin Sampling: Focuses on the difference between the model's top two most probable classes. The score is ( U(\mathbf{x}) = P_\theta(\hat{y}_1 \vert \mathbf{x}) - P_\theta(\hat{y}_2 \vert \mathbf{x}) ), where ( \hat{y}_1 ) and ( \hat{y}_2 ) are the most and second most likely predictions. A small margin indicates high uncertainty [18].

- Entropy: Measures the average information contained in the predictive distribution, selecting points with the highest entropy. The score is ( U(\mathbf{x}) = - \sum_{y \in \mathcal{Y}} P_\theta(y \vert \mathbf{x}) \log P_\theta(y \vert \mathbf{x}) ) [18].

- Monte Carlo Dropout (MCDO): A popular technique for estimating model (epistemic) uncertainty in deep learning. By performing multiple forward passes with dropout enabled during inference, it generates a distribution of predictions. The variance of these predictions serves as the uncertainty measure [18] [19].
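The four scores above can be sketched in a few lines of NumPy. The function names, the toy probability matrix, and the tiny bias-free two-layer network used for Monte Carlo dropout are assumptions for illustration; in practice the probabilities and the dropout passes would come from the actual model.

```python
import numpy as np

def least_confidence(probs: np.ndarray) -> np.ndarray:
    """1 - max class probability; higher means more uncertain."""
    return 1.0 - probs.max(axis=1)

def margin(probs: np.ndarray) -> np.ndarray:
    """Top-1 minus top-2 probability; lower means more uncertain."""
    top2 = np.sort(probs, axis=1)[:, -2:]
    return top2[:, 1] - top2[:, 0]

def entropy(probs: np.ndarray, eps: float = 1e-12) -> np.ndarray:
    """Shannon entropy of the predictive distribution; higher means more uncertain."""
    return -(probs * np.log(probs + eps)).sum(axis=1)

def mc_dropout_variance(x, w1, w2, p_drop=0.5, n_passes=100, seed=0):
    """Prediction variance over stochastic forward passes of a toy ReLU network."""
    rng = np.random.default_rng(seed)
    preds = []
    for _ in range(n_passes):
        hidden = np.maximum(x @ w1, 0.0)
        mask = rng.random(hidden.shape) >= p_drop       # dropout stays on at inference
        preds.append((hidden * mask / (1.0 - p_drop)) @ w2)
    return np.var(np.stack(preds), axis=0)

# Toy predictive distributions for three samples over three classes.
probs = np.array([
    [0.90, 0.05, 0.05],
    [0.40, 0.35, 0.25],
    [0.50, 0.49, 0.01],
])
print(int(np.argmax(least_confidence(probs))))  # 1
print(int(np.argmin(margin(probs))))            # 2
print(int(np.argmax(entropy(probs))))           # 1

rng = np.random.default_rng(0)
w1, w2 = rng.normal(size=(3, 16)), rng.normal(size=(16, 1))
variance = mc_dropout_variance(rng.normal(size=(5, 3)), w1, w2)
```

The toy example shows that the criteria need not agree: margin sampling picks the sample whose top two classes are nearly tied, while least confidence and entropy pick the sample whose probability mass is spread over all three classes.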

Diversity-Based Sampling

Diversity-based strategies, also known as representative sampling, prioritize selecting a set of data points that best represent the overall distribution of the unlabeled pool. This ensures broad coverage of the feature space and helps the model generalize better [18].

- Coreset: This method aims to select points such that every unlabeled point in the feature space is close to a labeled point. It often frames the selection as a facility location or k-center problem, seeking a set of points that minimizes the maximum distance from any unlabeled point to its nearest labeled center [20].

- TypiClust: A clustering-based approach that first groups the unlabeled data in the embedding space. It then selects the most "typical" sample from each cluster—defined as the point with the smallest average distance to all other points in the cluster—ensuring diverse and representative coverage [20].
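The k-center view of Coreset selection is commonly approximated with a greedy farthest-point rule: repeatedly label the point that is currently farthest from every labeled point. The sketch below shows that greedy approximation on toy 2-D features; the function name and data are illustrative, and real pipelines would use model embeddings rather than raw coordinates.

```python
import numpy as np

def k_center_greedy(features: np.ndarray, labeled_idx: list[int], budget: int) -> list[int]:
    """Greedy k-center: repeatedly pick the point farthest from its nearest center."""
    # Distance from every point to its nearest already-labeled point.
    diffs = features[:, None, :] - features[labeled_idx][None, :, :]
    dists = np.linalg.norm(diffs, axis=2).min(axis=1)
    chosen: list[int] = []
    for _ in range(budget):
        nxt = int(np.argmax(dists))
        chosen.append(nxt)
        # The new center can only shrink each point's nearest-center distance.
        dists = np.minimum(dists, np.linalg.norm(features - features[nxt], axis=1))
    return chosen

features = np.array([
    [0.0, 0.0], [0.1, 0.0],    # tight group around the labeled seed
    [5.0, 5.0], [5.1, 5.0],    # second group
    [10.0, 0.0],               # isolated point
])
chosen = k_center_greedy(features, labeled_idx=[0], budget=2)
print(chosen)  # [4, 2]
```

The isolated point is selected first and one member of the second group next, while the near-duplicate of the seed is never chosen, which is the coverage behavior the bullet above describes.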

Hybrid Sampling

Hybrid strategies aim to leverage the complementary strengths of uncertainty and diversity sampling. They seek to select data that is individually challenging for the model and collectively representative of the data distribution.

- TCM (TypiClust and Margin): A straightforward yet effective heuristic that begins with diversity sampling using TypiClust to address the "cold start" problem and, after a predetermined number of steps, switches to uncertainty sampling using the Margin method [20].

- DUAL (Diversity and Uncertainty Active Learning): An algorithm designed for text summarization that scores samples based on their similarity to the unlabeled set and dissimilarity to the labeled set, effectively balancing representativeness and exploration of new regions [21].

- RD-GS: A hybrid method that combines representativeness and diversity with a geometry-inspired sampling strategy, as identified in a large-scale benchmark study [17].
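A TCM-style schedule can be sketched as a single query function that switches criterion after a fixed round. This is a simplified illustration rather than the implementation from [20]: typicality follows the description given earlier (smallest average distance to the other points in the cluster), cluster assignments are assumed to be precomputed, and `switch_round` is a hypothetical parameter name.

```python
import numpy as np

def most_typical_per_cluster(features: np.ndarray, cluster_ids: np.ndarray) -> list[int]:
    """Per cluster, pick the point with the smallest mean distance to the other members."""
    picks = []
    for c in np.unique(cluster_ids):
        members = np.flatnonzero(cluster_ids == c)
        pts = features[members]
        pairwise = np.linalg.norm(pts[:, None, :] - pts[None, :, :], axis=2)
        mean_dist = pairwise.sum(axis=1) / max(len(members) - 1, 1)
        picks.append(int(members[np.argmin(mean_dist)]))
    return picks

def smallest_margin(probs: np.ndarray, batch_size: int) -> list[int]:
    """Indices of the samples with the smallest top-1 minus top-2 probability."""
    top2 = np.sort(probs, axis=1)[:, -2:]
    margins = top2[:, 1] - top2[:, 0]
    return [int(i) for i in np.argsort(margins)[:batch_size]]

def tcm_style_query(round_idx, switch_round, features, cluster_ids, probs, batch_size):
    """Diversity sampling before switch_round, margin-based uncertainty afterwards."""
    if round_idx < switch_round:
        return most_typical_per_cluster(features, cluster_ids)[:batch_size]
    return smallest_margin(probs, batch_size)

features = np.array([[0.0], [1.0], [2.0], [10.0], [10.5], [14.0]])
cluster_ids = np.array([0, 0, 0, 1, 1, 1])
probs = np.array([[0.9, 0.1], [0.8, 0.2], [0.55, 0.45],
                  [0.1, 0.9], [0.3, 0.7], [0.48, 0.52]])

early = tcm_style_query(0, 2, features, cluster_ids, probs, batch_size=2)
late = tcm_style_query(2, 2, features, cluster_ids, probs, batch_size=2)
print(early, late)  # [1, 4] [5, 2]
```

Early rounds return one central point per cluster, which does not depend on model predictions and so sidesteps the cold-start problem; later rounds return the points nearest the decision boundary.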

The following workflow diagram illustrates how these different query strategies are integrated into a standard pool-based active learning cycle.

Comparative Performance Analysis

To objectively evaluate the effectiveness of different query strategies, we present quantitative results from recent benchmark studies across various domains, including materials science and computer vision.

Benchmark Results in Materials Science

A comprehensive benchmark study evaluated 17 active learning strategies within an Automated Machine Learning (AutoML) framework on 9 materials science regression tasks. The performance, measured by Mean Absolute Error (MAE) at different dataset sizes, is summarized below for a selection of prominent strategies [17].

Table 1: Performance Comparison (Mean Absolute Error) of AL Strategies in Materials Science [17]

| Strategy Type | Specific Strategy | MAE (Small Dataset) | MAE (Medium Dataset) | MAE (Large Dataset) |

|---|---|---|---|---|

| Uncertainty | LCMD (Largest Cluster Maximum Distance) | 0.48 | 0.38 | 0.31 |

| Uncertainty | Tree-based Uncertainty (Tree-based-R) | 0.49 | 0.37 | 0.30 |

| Hybrid | RD-GS (Representativeness-Diversity) | 0.50 | 0.38 | 0.29 |

| Diversity | GSx (Geometry-based) | 0.55 | 0.41 | 0.31 |

| Diversity | EGAL (Geometry-based) | 0.59 | 0.43 | 0.32 |

| Baseline | Random Sampling | 0.61 | 0.45 | 0.32 |

Key Findings from Materials Science Benchmark:

- Early-Stage Advantage: Uncertainty-driven (LCMD, Tree-based-R) and some hybrid (RD-GS) strategies significantly outperformed diversity-only and random sampling when the labeled dataset was very small [17].

- Convergence with More Data: As the volume of labeled data increased, the performance gap between different strategies narrowed, with all methods eventually converging, indicating diminishing returns from active learning under AutoML [17].

- Hybrid Effectiveness: The RD-GS hybrid strategy demonstrated strong and consistent performance, achieving the best result on the large dataset, which underscores the value of balancing different sampling principles [17].

Benchmark Results in Computer Vision

The TCM (TypiClust + Margin) hybrid strategy has been rigorously evaluated on standard image classification datasets against its constituent methods and other baselines, demonstrating the practical benefit of a hybrid approach.

Table 2: Accuracy (%) of TCM vs. Baselines on CIFAR-10 and CIFAR-100 [20]

| Labeling Budget | Strategy | CIFAR-10 | CIFAR-100 |

|---|---|---|---|

| Low Budget (5% of data) | Random Sampling | 64.1 | 32.5 |

| Low Budget (5% of data) | TypiClust (Diversity) | 75.2 | 41.8 |

| Low Budget (5% of data) | Margin (Uncertainty) | 68.5 | 35.1 |

| Low Budget (5% of data) | TCM (Hybrid) | 78.9 | 45.3 |

| High Budget (30% of data) | Random Sampling | 85.5 | 58.9 |

| High Budget (30% of data) | TypiClust (Diversity) | 88.1 | 62.4 |

| High Budget (30% of data) | Margin (Uncertainty) | 89.3 | 64.0 |

| High Budget (30% of data) | TCM (Hybrid) | 90.5 | 65.8 |

Key Findings from Computer Vision Benchmark:

- Cold Start Superiority: In very low-data regimes, the diversity-based TypiClust method strongly outperformed the uncertainty-based Margin method, highlighting the "cold start" problem of uncertainty sampling [20].

- Consistent Hybrid Performance: The TCM hybrid strategy matched or exceeded the performance of its component strategies at every stage of the learning process, providing a robust and consistently high-performing solution [20].

Experimental Protocols for Generalization Assessment

For researchers aiming to reproduce or build upon these findings, a rigorous experimental protocol is essential. The following methodology is adapted from the benchmark studies cited in this guide.

Standard Pool-Based Active Learning Workflow

1. Data Partitioning: Begin by splitting the entire dataset into an initial small labeled set (L), a large unlabeled pool (U), and a held-out test set for final model evaluation. A common split is 80:20 for training+pool vs. test, with the initial L being a small fraction (e.g., 2-5%) of the training data [17].

2. Model Training & Baseline: Train an initial model on L and evaluate its performance on the test set to establish a baseline.

3. Active Learning Cycle:

   a. Query: Use the chosen acquisition function (e.g., Entropy, TypiClust, TCM) to select a batch of samples from U.

   b. Annotation: The selected samples are considered "labeled" (in simulation) or sent for human annotation.

   c. Update: Add the newly labeled samples to L and remove them from U.

   d. Retrain & Evaluate: Retrain the model on the updated L and evaluate its performance on the fixed test set. It is critical to use an AutoML system or carefully control hyperparameter tuning to ensure fair comparisons across cycles [17].

4. Iteration & Analysis: Repeat steps 3a-3d until a pre-defined labeling budget is exhausted. The performance trajectory across the budget range is the primary metric for comparing strategies.
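The workflow above can be simulated end to end with a small, self-contained sketch. Everything specific here is an assumption chosen to keep the example short: the synthetic two-class Gaussian data, the nearest-centroid classifier standing in for the real model, entropy as the acquisition function, and the batch size and number of rounds.

```python
import numpy as np

def fit_centroids(x, y, n_classes):
    """Toy model: one centroid per class."""
    return np.stack([x[y == c].mean(axis=0) for c in range(n_classes)])

def predict_proba(centroids, x):
    """Softmax over negative distances to the class centroids."""
    logits = -np.linalg.norm(x[:, None, :] - centroids[None, :, :], axis=2)
    logits -= logits.max(axis=1, keepdims=True)
    e = np.exp(logits)
    return e / e.sum(axis=1, keepdims=True)

def entropy(p, eps=1e-12):
    return -(p * np.log(p + eps)).sum(axis=1)

def run_active_learning(x_pool, y_pool, x_test, y_test, init_idx, batch_size, n_rounds):
    labeled = list(init_idx)
    labeled_set = set(labeled)
    unlabeled = [i for i in range(len(x_pool)) if i not in labeled_set]
    history = []  # (number of labels, test accuracy) after each retraining
    for round_idx in range(n_rounds + 1):
        centroids = fit_centroids(x_pool[labeled], y_pool[labeled], n_classes=2)
        acc = (predict_proba(centroids, x_test).argmax(axis=1) == y_test).mean()
        history.append((len(labeled), float(acc)))
        if round_idx == n_rounds or not unlabeled:
            break
        # Query: highest-entropy pool samples; labels are revealed from y_pool (simulation).
        scores = entropy(predict_proba(centroids, x_pool[unlabeled]))
        new = [unlabeled[int(i)] for i in np.argsort(scores)[-batch_size:]]
        labeled.extend(new)
        new_set = set(new)
        unlabeled = [i for i in unlabeled if i not in new_set]
    return history

rng = np.random.default_rng(0)
x = np.vstack([rng.normal(-1.0, 1.0, (100, 2)), rng.normal(1.0, 1.0, (100, 2))])
y = np.array([0] * 100 + [1] * 100)
order = rng.permutation(200)
x, y = x[order], y[order]
x_pool, y_pool, x_test, y_test = x[:160], y[:160], x[160:], y[160:]   # 80:20 split
# Seed set: two samples per class (2.5% of the pool).
init_idx = [int(i) for c in (0, 1) for i in np.flatnonzero(y_pool == c)[:2]]

history = run_active_learning(x_pool, y_pool, x_test, y_test, init_idx, batch_size=5, n_rounds=5)
for n_labels, acc in history:
    print(n_labels, round(acc, 3))
```

The test set is fixed before the loop starts and is never queried, so the recorded trajectory of test accuracy against label count is comparable across strategies; swapping `entropy` for another scoring function changes only the query step.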

Evaluating Generalization

- In-Distribution (ID) Performance: Standard evaluation on a randomly held-out test set from the same data distribution as the initial L and U.

- Out-of-Distribution (OOD) Performance: To truly assess generalization, models should also be evaluated on data from a different distribution (e.g., different scene types in 3D detection [22], or new types of molecules in materials science [19]). A key finding is that while active learning improves ID performance, its ability to enhance OOD generalization can be more modest and is highly dependent on the query strategy [19].

The successful implementation of active learning requires both computational tools and methodological components. The table below details essential "research reagents" for building an active learning pipeline.

Table 3: Essential Research Reagents for Active Learning Experiments

| Reagent / Resource | Type | Function / Description |

|---|---|---|

| AutoML Framework | Software Tool | Automates the process of model selection and hyperparameter tuning during each AL cycle, which is critical for robust and reproducible benchmarking [17]. |

| Pre-trained Model Backbone | Model Component | A model (e.g., SimCLR, DINO) pre-trained via self-supervised learning on a large dataset. It provides high-quality feature embeddings from the start, dramatically improving the effectiveness of both diversity and uncertainty sampling strategies [20]. |

| Monte Carlo Dropout | Algorithmic Component | A technique used to estimate model uncertainty by performing multiple stochastic forward passes. It is a computationally efficient alternative to training a full model ensemble [18] [19]. |

| Molecular Descriptors / Fingerprints | Data Representation | In drug development and materials science, these are numerical representations of molecular structure (e.g., Morgan fingerprints, RDKit descriptors) that serve as the feature input for predictive models [19]. |

| Validation Dataset with OOD Samples | Benchmarking Resource | A carefully curated dataset that includes samples from a different distribution than the primary training pool. It is essential for evaluating the true generalization capability of models trained via active learning [19]. |

The empirical evidence clearly demonstrates that there is no single "best" active learning strategy for all scenarios. The optimal choice is contingent on the data regime, the task, and the ultimate goal—whether it is maximizing in-distribution accuracy or improving out-of-distribution generalization. Uncertainty-based methods excel at refining models efficiently, while diversity-based methods are crucial for overcoming the cold-start problem and ensuring broad coverage. Hybrid approaches, such as TCM and RD-GS, offer a robust compromise by dynamically leveraging the strengths of both paradigms.

Future research in active learning model generalization assessment will likely focus on developing more adaptive and context-aware hybrid strategies, improving uncertainty quantification for complex models like Graph Neural Networks in molecular tasks [19], and creating more standardized benchmarks for evaluating OOD performance. As these methodologies mature, their integration into scientific workflows promises to significantly accelerate discovery in data-constrained fields like drug development.

In the high-stakes fields of scientific research and drug development, the acquisition of labeled data often presents a major bottleneck, requiring expert knowledge, specialized equipment, and time-consuming procedures [1]. Active learning (AL) has emerged as a powerful machine learning paradigm that strategically addresses this challenge by optimizing the data annotation process. Unlike traditional supervised learning that relies on static, pre-labeled datasets, active learning operates through an iterative, human-in-the-loop process that selectively identifies the most informative data points for labeling, thereby maximizing model performance while minimizing labeling costs [5] [23]. This approach is particularly valuable in domains like pharmaceutical research where labeling costs are prohibitive; for instance, in materials science, experimental synthesis and characterization often demand expert knowledge and expensive equipment, making data-driven modeling efforts difficult to scale [1].

The fundamental objective of active learning is to minimize the labeled data required for training while maximizing the model's performance through intelligent data selection [5]. By focusing human annotation efforts on the most valuable data points, active learning algorithms can achieve better learning efficiency and performance than passive learning approaches, often reaching performance parity with full-data baselines while using 30% of the data or less, which corresponds to the reported 70–95% savings in computational or labeling resources [1]. This efficiency is driven by the core principle that not all data points contribute equally to model learning, and that by strategically selecting which instances to label, models can converge faster and generalize better with significantly less labeled data [5] [1].

The Architecture of the Iterative Active Learning Loop

Core Components and Workflow

The iterative active learning loop operates through a structured, cyclical process that interleaves model training, data selection, human annotation, and model updating. This process can be formally described as a sequence of interconnected stages:

1. Initialization: The process begins with a small set of labeled data points, ( L = \{(x_i, y_i)\}_{i=1}^{l} ), which serves as the starting point for training the initial model. This seed dataset must be representative enough to bootstrap the learning process [5] [1].

2. Model Training: A machine learning model is trained using the current labeled dataset. This model forms the basis for evaluating and selecting unlabeled data points in subsequent steps [5].

3. Query Strategy Application: Using a predefined acquisition function, the model selects the most informative unlabeled data points from a pool ( U = \{x_i\}_{i=l+1}^{n} ). This selection is guided by strategies such as uncertainty sampling, diversity sampling, or hybrid approaches [5] [1] [23].

4. Human Annotation: The selected data points are presented to human experts (or an "oracle") for labeling, providing the ground truth labels for these instances. This step incorporates domain expertise directly into the training process [5] [23].

5. Model Update: The newly labeled instances are incorporated into the training set, expanding it to ( L = L \cup \{(x^*, y^*)\} ), and the model is retrained using this augmented dataset [5] [1].

6. Iteration: Steps 2-5 are repeated iteratively, with the model continuously selecting and learning from the most informative data points until a stopping criterion is met, such as performance convergence, depletion of the unlabeled pool, or exhaustion of the labeling budget [5] [1].

This cyclical process creates a self-improving system: each iteration enhances the model's ability to identify increasingly valuable data points for the next round [24].

Visualizing the Active Learning Workflow

The following diagram illustrates the sequential flow and feedback mechanisms inherent in the iterative active learning loop:

Comparative Analysis of Active Learning Query Strategies

Taxonomy of Acquisition Functions

Active learning strategies employ various acquisition functions to identify the most valuable data points for annotation. These strategies can be broadly categorized into several fundamental approaches, each with distinct mechanisms and suitability for different data environments:

Uncertainty Sampling: This approach selects instances where the model is least confident in its predictions [23]. For classification tasks, uncertainty can be quantified using metrics such as least confident (lowest predicted probability for the top class), margin (smallest difference between the top two class probabilities), or entropy (highest entropy of the predicted class distribution) [23]. In regression tasks, uncertainty estimation is more challenging but can be implemented using techniques like Monte Carlo dropout, which performs multiple forward passes with dropout enabled to produce a distribution of outputs whose variance indicates uncertainty [1].

Query-by-Committee (QBC): This method leverages multiple models (a "committee") to select instances where committee members exhibit the highest disagreement in their predictions [23]. Disagreement can be measured using vote entropy (how evenly committee votes are split among classes) or Kullback-Leibler divergence between model predictions [23]. QBC can be more robust than single-model uncertainty sampling as it captures model uncertainty arising from different decision boundaries, though it incurs higher computational costs [23].

Diversity Sampling: Also known as representativeness-based sampling, this approach selects data points that broadly cover the underlying distribution of the unlabeled pool [23]. Techniques include clustering the unlabeled data and selecting representatives from each cluster, or choosing points that maximize coverage of the feature space [23]. Diversity sampling helps prevent redundancy in the selected batch and ensures the model encounters a wide variety of data patterns.

Expected Model Change: This strategy selects instances that would cause the greatest change to the current model parameters if their labels were known [23]. It can be implemented by computing the gradient that the model would take on an unlabeled sample for each possible label and selecting samples with the highest expected gradient magnitude [23]. This approach directly targets samples that would most impact the model's learning.

Hybrid Strategies: Many practical implementations combine multiple principles, such as selecting samples that are both uncertain and diverse [23]. These hybrids aim to balance exploration (discovering new patterns) with exploitation (refining decision boundaries in ambiguous regions) [1].
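Of the disagreement measures mentioned for Query-by-Committee, vote entropy is the simplest to compute: count the committee's votes per class for each sample and take the entropy of the vote fractions. A minimal sketch, with a hand-written vote matrix standing in for real committee predictions:

```python
import numpy as np

def vote_entropy(votes: np.ndarray, n_classes: int) -> np.ndarray:
    """Entropy of committee vote fractions per sample.

    votes has shape (n_members, n_samples) and holds predicted class labels.
    """
    n_members = votes.shape[0]
    counts = np.stack([(votes == c).sum(axis=0) for c in range(n_classes)], axis=1)
    frac = counts / n_members
    # Classes with zero votes contribute nothing: log(1) = 0 avoids log(0).
    safe = np.where(frac > 0, frac, 1.0)
    return -(frac * np.log(safe)).sum(axis=1)

# Four committee members voting on three samples (binary task).
votes = np.array([
    [0, 0, 0],
    [0, 0, 0],
    [0, 0, 1],
    [0, 1, 1],
])
scores = vote_entropy(votes, n_classes=2)
print(int(np.argmax(scores)))  # 2
```

The unanimous sample scores zero, the 3-to-1 split scores higher, and the 2-to-2 split scores highest at log 2, so it would be queried first.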

Performance Benchmarking Across Domains

Recent comprehensive benchmarking studies have evaluated the performance of various active learning strategies across different domains and data conditions. The table below summarizes key quantitative findings from empirical studies:

Table 1: Performance Comparison of Active Learning Strategies Across Domains

| Domain | Strategy | Performance Gain | Data Efficiency | Key Findings |

|---|---|---|---|---|

| Materials Science [1] | Uncertainty-driven (LCMD, Tree-based-R) | Early phase: Significant MAE reduction vs. random | High in early acquisition | Outperforms geometry-only heuristics (GSx, EGAL) and baseline |

| Materials Science [1] | Diversity-hybrid (RD-GS) | Early phase: Significant MAE reduction vs. random | High in early acquisition | Clear outperformance early; gap narrows as labeled set grows |

| Cybersecurity [25] | AL-assisted Autoencoder | Significant improvement in APT detection rates | Minimizes manual labeling | Better performance with smaller datasets compared to existing approaches |

| Education [8] | Active vs. Passive Learning | 54% higher test scores | 1.5x less likely to fail | 70% vs. 45% average test scores |

| Clinical AI [26] | DRL with Scope Loss | 92-93.7% F-measure for Alzheimer's detection | Reduced labeled samples | Superior performance with fewer labeled MRI scans |

The convergence behavior of different strategies reveals an important pattern: during early iterations when labeled data is scarce, uncertainty-driven and diversity-hybrid strategies clearly outperform random sampling and geometry-only heuristics [1]. However, as the labeled set grows, the performance gap narrows and all strategies eventually converge, indicating diminishing returns from active learning under conditions of abundant data [1].

Experimental Protocols and Implementation Frameworks

Standardized Benchmarking Methodology

To ensure rigorous evaluation and comparison of active learning strategies, researchers have established standardized benchmarking protocols. The typical experimental setup follows these key steps:

Dataset Partitioning: The complete dataset is divided into training and test sets with an 80:20 ratio. The training set is further split into an initial labeled set and a larger unlabeled pool [1].

Initialization: A small number of samples, ( n_{init} ), are randomly selected from the unlabeled dataset to form the initial labeled dataset [1].

Iterative Sampling: In each active learning cycle:

- An AutoML model or specified base learner is trained on the current labeled set

- The acquisition function selects the most informative sample(s) from the unlabeled pool

- The selected samples are "labeled" (using ground truth in benchmark settings) and added to the labeled set

- The model is retrained on the expanded dataset [1]

Performance Validation: Model performance is evaluated with task-appropriate metrics: Mean Absolute Error (MAE) and the coefficient of determination (R²) for regression, and accuracy and F-measure for classification. Evaluation typically uses 5-fold cross-validation [1] [26].

Stopping Criterion: The process continues until a predefined stopping condition is met, such as depletion of the unlabeled pool, exhaustion of the labeling budget, or performance convergence [1].

This standardized approach enables fair comparison across different active learning strategies and provides insights into their relative performance throughout the learning curve, particularly during the critical early phase when data is scarce [1].
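The protocol above can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not the pipeline used in [1]: the synthetic data from `make_data`, the k-nearest-neighbour base learner, and the bootstrap-committee variance used as the uncertainty score are all hypothetical stand-ins, and a single held-out test set replaces 5-fold cross-validation to keep the example short.

```python
import math
import random
import statistics


def make_data(n=200, seed=1):
    """Synthetic 1-D regression data standing in for a real benchmark set."""
    rng = random.Random(seed)
    xs = [rng.uniform(0.0, 3.0) for _ in range(n)]
    return [(x, math.sin(3.0 * x) + rng.gauss(0.0, 0.1)) for x in xs]


def knn_predict(train, x, k=3):
    """Average the targets of the k nearest labeled points."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def committee_variance(train, x, rng, n_members=5):
    """Uncertainty proxy: disagreement among bootstrap-resampled k-NN models."""
    preds = []
    for _ in range(n_members):
        boot = [rng.choice(train) for _ in train]
        preds.append(knn_predict(boot, x))
    return statistics.pvariance(preds)


def mae(train, test):
    """Mean absolute error of the k-NN learner on the held-out test set."""
    return sum(abs(knn_predict(train, x) - y) for x, y in test) / len(test)


def run_benchmark(data, strategy, n_init=5, budget=20, seed=0):
    """Return the MAE learning curve for one acquisition strategy."""
    rng = random.Random(seed)
    data = data[:]
    rng.shuffle(data)
    split = int(0.8 * len(data))                    # 80:20 train/test partition
    pool, test = data[:split], data[split:]
    labeled = [pool.pop() for _ in range(n_init)]   # random initial labeled set
    curve = [mae(labeled, test)]
    for _ in range(budget):                         # stop when the budget is spent
        if not pool:                                # or the pool is depleted
            break
        if strategy == "uncertainty":
            idx = max(range(len(pool)),
                      key=lambda i: committee_variance(labeled, pool[i][0], rng))
        else:                                       # random-sampling baseline
            idx = rng.randrange(len(pool))
        labeled.append(pool.pop(idx))               # "label" using ground truth
        curve.append(mae(labeled, test))            # k-NN refits implicitly
    return curve


data = make_data()
for name in ("uncertainty", "random"):
    curve = run_benchmark(data, name)
    print(f"{name:12s} MAE: start={curve[0]:.3f}, end={curve[-1]:.3f}")
```

Both strategies share the same seed, so they start from the same initial labeled set and the same first MAE value. Any later difference between the two curves therefore comes from the acquisition rule alone.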

Advanced Framework: Deep Reinforcement Learning for Adaptive Sampling

Recent research has introduced more sophisticated active learning frameworks that address limitations of static query strategies. One advanced approach combines Deep Reinforcement Learning (DRL) with active learning to create an adaptive sampling strategy that dynamically evolves with model learning [26]. The following diagram illustrates this integrated framework:

The DRL-based framework incorporates several innovative components:

State Representation: The state at each decision point combines feature vectors from CNNs (\(f_i\)) with predicted scores (\(f(x_i)\)), formally represented as \(S = \{s_i^t\}\) where \(s_i^t = (f_i^t, f(x_i^t))\) and \(t\) represents the specific time point [26].

Action Space: The Q-network decides whether a human should label a sample, with actions \(A = \{0, 1\}\). When \(a_i^t = 1\), sample \(x_i^t\) is sent for human annotation and incorporated into the labeled dataset [26].

Reward Structure: The framework employs a balanced reward system that includes a minor penalty (-0.2) for annotation actions to discourage excessive labeling and conserve resources, while for non-annotation actions, it calculates entropy loss using centroids of feature vectors to promote informative selection [26].

Scope Loss Function (SLF): This component dynamically balances exploration (labeling new, diverse samples) and exploitation (focusing on familiar informative patterns), preventing premature convergence to suboptimal policies [26].

Differential Evolution (DE): An advanced optimization algorithm with mutation, crossover, and selection phases that systematically fine-tunes model hyperparameters, enhancing genetic diversity and preventing stagnation in the optimization process [26].
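To make the mutation, crossover, and selection phases concrete, the sketch below implements the common DE/rand/1/bin variant in plain Python. It is an illustration only: the variant, the control parameters `F` and `CR`, and the `toy_validation_loss` objective (a hypothetical stand-in for a real validation loss over a log learning rate and a dropout rate) are assumptions, not the configuration reported in [26].

```python
import random


def differential_evolution(objective, bounds, pop_size=20, F=0.8, CR=0.9,
                           generations=100, seed=0):
    """Minimize `objective` over box `bounds` with DE/rand/1/bin."""
    rng = random.Random(seed)
    dim = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    scores = [objective(ind) for ind in pop]
    for _ in range(generations):
        for i in range(pop_size):
            # Pick three distinct population members other than the target i.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)   # guarantees one mutated coordinate
            trial = []
            for d, (lo, hi) in enumerate(bounds):
                if rng.random() < CR or d == j_rand:
                    # Mutation: base vector plus a scaled difference vector.
                    v = pop[a][d] + F * (pop[b][d] - pop[c][d])
                    v = min(max(v, lo), hi)   # clip to the search box
                else:
                    v = pop[i][d]             # crossover keeps the parent gene
                trial.append(v)
            trial_score = objective(trial)
            if trial_score <= scores[i]:      # selection: keep the better one
                pop[i], scores[i] = trial, trial_score
    best = min(range(pop_size), key=scores.__getitem__)
    return pop[best], scores[best]


def toy_validation_loss(params):
    """Hypothetical loss surface with its minimum at log10(lr)=-3, dropout=0.3."""
    log_lr, dropout = params
    return (log_lr + 3.0) ** 2 + (dropout - 0.3) ** 2


best, score = differential_evolution(toy_validation_loss,
                                     [(-5.0, -1.0), (0.0, 0.6)])
print("best hyperparameters:", best, "loss:", score)
```

In a real pipeline the objective would train and validate the model for each candidate, so the population size and generation count would be chosen to fit the compute budget.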

This integrated approach has demonstrated significant improvements in application domains such as Alzheimer's disease detection, achieving F-measures of 92.044% on OASIS and 93.685% on ADNI datasets while reducing labeling requirements [26].

Successful implementation of active learning in research and drug development requires both computational frameworks and domain-specific resources. The following table catalogs key solutions referenced in recent literature:

Table 2: Essential Research Reagents and Computational Solutions for Active Learning

| Resource Type | Specific Tool/Platform | Function | Application Context |

|---|---|---|---|

| AutoML Frameworks [1] | Automated ML Pipelines | Automates model selection & hyperparameter optimization | Materials science regression tasks |

| Annotation Pipelines [23] | Custom Web Interfaces | Enables human feedback collection & preference ranking | LLM alignment via RLHF |

| Data Augmentation [25] | Generative Adversarial Networks (GANs) | Generates synthetic data resembling labeled normal points | Cybersecurity anomaly detection |

| Uncertainty Estimation [1] | Monte Carlo Dropout | Estimates prediction uncertainty via multiple forward passes | Regression tasks in materials informatics |

| Representation Learning [25] | Attention-based Adversarial Dual AutoEncoder (ADAEN) | Learns low-dimensional representations of normal activity | APT detection in cybersecurity |

| Hyperparameter Optimization [26] | Differential Evolution (DE) Algorithm | Global optimization of hyperparameters via mutation & selection | Medical imaging (Alzheimer's detection) |

| Reinforcement Learning [26] | Deep Q-Network with Scope Loss | Dynamic sample selection balancing exploration/exploitation | Medical image classification |

These tools collectively enable researchers to implement sophisticated active learning pipelines that adapt to their specific domain requirements. For instance, in drug discovery, AI-powered platforms such as CODE-AE have demonstrated the ability to predict patient-specific responses to novel compounds, dramatically advancing the feasibility of personalized therapeutics [27]. Similarly, in materials science, AutoML frameworks have proven invaluable for automating the repetitive work in model design and parameterization, which is particularly important given the time- and resource-intensive nature of experimentation and characterization [1].

The iterative active learning loop represents a paradigm shift in how machine learning models are trained in data-scarce environments, strategically minimizing labeling costs while maximizing model performance through intelligent data selection. As benchmark studies have consistently demonstrated, uncertainty-driven and diversity-hybrid strategies typically outperform random sampling and static heuristics, particularly during the critical early stages of learning when labeled data is most limited [1]. The convergence of various strategies as labeled sets grow larger underscores the diminishing returns of active learning under conditions of abundant data, highlighting its primary value in resource-constrained scenarios.

Looking ahead, several emerging trends are shaping the future of active learning research and applications. The integration of deep reinforcement learning for adaptive sample selection addresses key limitations of static query strategies, creating more dynamic and responsive learning systems [26]. Similarly, the combination of active learning with automated machine learning (AutoML) pipelines presents promising avenues for further reducing human intervention while maintaining model performance [1]. In specialized domains like drug discovery, active learning frameworks are increasingly being integrated with multi-omics data and digital twin simulations to enable more personalized therapeutic development [27]. As these methodologies continue to evolve, they hold the potential to dramatically accelerate scientific discovery and innovation across research-intensive fields.

Table of Contents

- Introduction: The Vast Landscape of Chemical Space

- Comparative Analysis of Reaction Mapping Platforms

- Experimental Protocol: Robotic Hyperspace Mapping

- Workflow Visualization: From Reaction to Analysis

- The Scientist's Toolkit: Essential Research Reagents & Solutions

- Conclusion & Future Outlook

The exploration of chemical space, estimated to contain up to 10^60 drug-like molecules, represents one of the most significant challenges in modern drug discovery [28]. Traditional, intuition-driven experimentation can only probe a minuscule fraction of this space, potentially missing optimal drug candidates and efficient synthetic routes. The ability to rapidly and systematically map chemical reaction spaces—understanding how yields and by-products change across thousands of conditions—is thus a critical capability for accelerating research and development [29]. This case study examines a pioneering robotic platform for chemical hyperspace mapping and positions its performance within the broader ecosystem of drug discovery technologies, framing the discussion around the imperative of assessing active learning model generalization for robust and reliable outcomes.

Comparative Analysis of Reaction Mapping Platforms

The following table provides a comparative overview of the featured robotic hyperspace mapping platform against other common approaches in the field. The quantitative data highlights the distinct advantages in throughput and cost for large-scale reaction condition screening [29] [30].

Table 1: Performance comparison of approaches for mapping chemical reactions.

| Platform / Approach | Primary Function | Key Metric | Performance / Capacity | Notable Features |

|---|---|---|---|---|

| Robotic Hyperspace Mapper [29] | High-throughput reaction condition screening | Daily Reaction Throughput | ~1,000 reactions/day | Optical (UV-Vis) yield quantification; minimal cost per condition |

| | | Yield Quantification Cost | "Cents per sample" | |

| | | Yield Estimate Accuracy | Within 5% (e.g., 20% yield has 19-21% spread) | |

| AI-Driven Drug Discovery (e.g., Exscientia) [30] | De novo molecular design & optimization | Design Cycle Speed | ~70% faster than industry norms | Generative AI; integrated patient biology |

| | | Compounds Synthesized | Requires ~10x fewer compounds than industry norms | |

| Traditional Manual Exploration | Limited condition testing | N/A | Limited by synthetic and analytical throughput | Relies heavily on chemist intuition and experience |

Experimental Protocol: Robotic Hyperspace Mapping

The core methodology for the large-scale mapping of reaction spaces involves a tightly integrated sequence of automation, analysis, and computational validation [29].

1. Robotic Reaction Setup and Spectral Acquisition: A robotic platform built in-house executes reactions across an N-dimensional grid of conditions (e.g., varying concentrations, temperatures, and solvents). For each condition, the robot acquires a UV-Vis absorption spectrum of the crude reaction mixture. This process is highly parallelized, enabling the characterization of up to 1,000 reactions per day [29].

2. Identification of the Spectral "Basis Set": In a crucial step, the crude mixtures from all explored hyperspace points are combined and subjected to traditional chromatographic separation and spectroscopic analysis (NMR, MS). This identifies the complete "basis set" of all major products and by-products formed anywhere in the hyperspace. Pure samples of these components are used to obtain reference UV-Vis spectra and calibration curves [29].

3. Spectral Unmixing for Yield Quantification: The complex UV-Vis spectrum from each crude reaction mixture is computationally decomposed (or "unmixed") via vector decomposition. The algorithm fits the experimental spectrum as a linear combination of the reference spectra from the basis set. This fitting is constrained by reaction stoichiometry to ensure physically meaningful results. This process yields an estimate of the concentration (and thus yield) for every reaction component at every condition [29].

4. Anomaly Detection and Model Validation: The protocol incorporates a robust validation step. The residual difference between the experimental spectrum and the fitted spectrum is analyzed. A significant, systematic residual indicates the formation of a product not included in the original basis set—an "anomalous outcome." This triggers further investigation, ensuring the model remains accurate and can generalize to unexpected reactivity discovered during the screening process [29].
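Steps 3 and 4 can be illustrated with a small standard-library sketch: fit a measured spectrum as a linear combination of reference spectra, then flag the condition when the unexplained residual is large. Everything specific here is an assumption made for illustration: the Gaussian band shapes, the wavelength grid, the 5% relative-residual threshold, and the use of unconstrained least squares. The stoichiometric constraints described in [29] are omitted.

```python
import math


def band(center, width, grid):
    """Hypothetical Gaussian absorption band sampled on a wavelength grid."""
    return [math.exp(-((w - center) ** 2) / (2.0 * width ** 2)) for w in grid]


def least_squares(refs, spectrum):
    """Solve the normal equations G c = b by Gaussian elimination."""
    n = len(refs)
    G = [[sum(p * q for p, q in zip(refs[i], refs[j])) for j in range(n)]
         for i in range(n)]
    b = [sum(p * s for p, s in zip(refs[i], spectrum)) for i in range(n)]
    M = [row + [rhs] for row, rhs in zip(G, b)]      # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]          # partial pivoting
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= f * M[col][k]
    c = [0.0] * n
    for i in reversed(range(n)):                     # back substitution
        c[i] = (M[i][n] - sum(M[i][k] * c[k] for k in range(i + 1, n))) / M[i][i]
    return c


def unmix(refs, spectrum, threshold=0.05):
    """Return (coefficients, relative residual, anomaly flag)."""
    coeffs = least_squares(refs, spectrum)
    fitted = [sum(c * r[i] for c, r in zip(coeffs, refs))
              for i in range(len(spectrum))]
    resid = math.sqrt(sum((s - f) ** 2 for s, f in zip(spectrum, fitted)))
    rel = resid / math.sqrt(sum(s * s for s in spectrum))
    return coeffs, rel, rel > threshold


grid = list(range(250, 605, 5))                      # wavelengths in nm
refs = [band(300, 20, grid), band(400, 20, grid)]    # product, by-product
clean = [0.6 * p + 0.2 * q for p, q in zip(refs[0], refs[1])]
unknown = band(500, 20, grid)                        # species outside the basis set
anomalous = [s + 0.3 * u for s, u in zip(clean, unknown)]

for label, spectrum in (("clean", clean), ("anomalous", anomalous)):
    coeffs, rel, flagged = unmix(refs, spectrum)
    print(label, [round(c, 3) for c in coeffs], f"residual={rel:.3f}", flagged)
```

The fitted coefficients are relative amounts. Converting them to concentrations and yields would require the calibration curves obtained from the pure reference samples in step 2.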

Workflow Visualization: From Reaction to Analysis

The end-to-end process for robotic hyperspace mapping, from experimental setup to data analysis, is summarized in the following workflow diagram.

Figure 1: The robotic hyperspace mapping workflow integrates high-throughput experimentation with computational analysis to efficiently map reaction outcomes.

This empirical data generation process is a critical component for training and assessing active learning models. The reliability of the yield quantification data directly impacts model generalization. The broader active learning cycle, which this experiment feeds into, is shown below.

Figure 2: The active learning cycle for chemical discovery. The model iteratively selects the most informative experiments to run, optimizing the use of experimental resources [1] [5].

The Scientist's Toolkit: Essential Research Reagents & Solutions

Successful execution of high-throughput reaction mapping and the development of predictive models rely on a suite of specialized tools and computational resources.

Table 2: Key research reagents and solutions for chemical reaction mapping and analysis.

| Category | Item / Solution | Function / Application |

|---|---|---|

| Automation & Synthesis | Automated Robotic Platform | Executes thousands of reactions in parallel with high precision in liquid handling. [29] |

| | Reagent Capsules/Tablets | Pre-measured, easy-to-handle reagent formats for automated synthesis systems. [31] |

| Analytical & Data Analysis | UV-Vis Spectrophotometer | High-speed, low-cost quantification of reaction components in crude mixtures. [29] |

| | Spectral Unmixing Algorithms | Decomposes complex spectral data into individual component concentrations. [29] |

| | RDKit | Open-source toolkit for cheminformatics, used for molecular representation, descriptor calculation, and similarity analysis. [32] |

| Cheminformatics & Modeling | SOAP (Smooth Overlap of Atomic Positions) | A molecular representation used in kernel-based ML models to predict molecular properties and reaction energies. [28] |

| | Active Learning Query Strategies (e.g., Uncertainty Sampling) | Computational methods (e.g., entropy-based) to identify the most informative data points for labeling, maximizing model performance with minimal data. [1] [5] [33] |
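As a brief illustration of the entropy-based query strategy listed in the table, the sketch below ranks unlabeled candidates by the Shannon entropy of their predicted class probabilities and returns the most uncertain ones. The function names and the example probabilities are hypothetical; in practice the probabilities would come from whatever classifier is in use.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_most_uncertain(pool_probs, batch_size=1):
    """Indices of the pool items whose predictions have the highest entropy."""
    ranked = sorted(range(len(pool_probs)),
                    key=lambda i: entropy(pool_probs[i]), reverse=True)
    return ranked[:batch_size]


# Hypothetical predicted probabilities for three unlabeled candidates.
pool_probs = [[0.9, 0.1], [0.5, 0.5], [0.7, 0.3]]
print(select_most_uncertain(pool_probs, batch_size=2))
```

The candidate with an even 0.5/0.5 prediction ranks first, since a uniform distribution maximizes entropy. A confident 0.9/0.1 prediction ranks last.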