AUC and Concordance Index Calculation: A Comprehensive Guide for Pharmaceutical Researchers

This comprehensive guide explores the calculation, application, and interpretation of Area Under the Curve (AUC) and Concordance Index (C-index) for researchers and drug development professionals.

AUC and Concordance Index Calculation: A Comprehensive Guide for Pharmaceutical Researchers

Abstract

This comprehensive guide explores the calculation, application, and interpretation of Area Under the Curve (AUC) and Concordance Index (C-index) for researchers and drug development professionals. Covering foundational concepts to advanced methodologies, it addresses AUC calculation methods in pharmacokinetics, C-index implementation for survival analysis, troubleshooting common pitfalls, and comparative validation approaches. With practical examples from recent studies and regulatory perspectives, this resource provides the essential knowledge needed to accurately apply these critical metrics in biomedical research, clinical trials, and therapeutic drug monitoring.

Understanding AUC and Concordance Index: Core Concepts and Significance in Biomedical Research

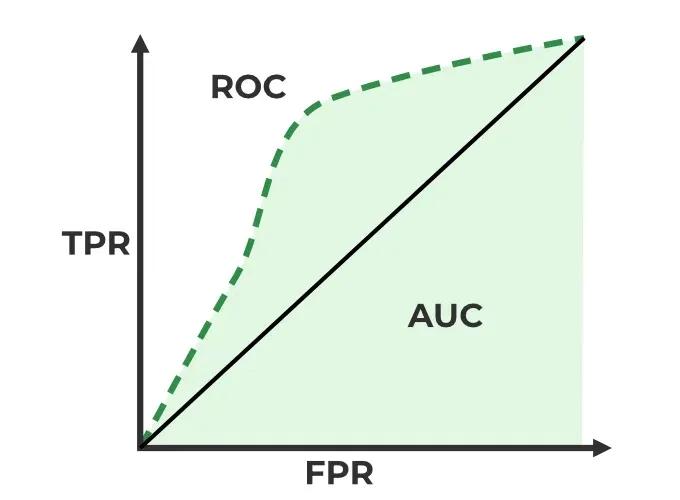

Area Under the Curve (AUC) serves as a fundamental quantitative metric across biomedical research, providing crucial insights in two primary domains: quantifying systemic drug exposure in pharmacokinetics and evaluating diagnostic performance in biomarker and model validation. In pharmacokinetics, AUC represents the total integrated drug concentration in the bloodstream over time, serving as a definitive measure of overall systemic exposure following drug administration [1]. For diagnostic applications, the AUC derived from Receiver Operating Characteristic (ROC) curves measures a binary classifier's ability to distinguish between classes, with an AUC of 1.0 representing perfect discrimination and 0.5 representing no discriminative capacity beyond chance [2]. This dual application makes AUC an indispensable tool for researchers, scientists, and drug development professionals requiring robust, quantitative assessments of biological responses and model performance.

The calculation and interpretation of AUC varies significantly between these contexts. In pharmacokinetics, researchers calculate AUC from experimentally measured concentration-time data using integration methods, while in diagnostic medicine, AUC is computed from the ROC curve generated by plotting sensitivity against 1-specificity across all possible classification thresholds [2]. Despite these methodological differences, both applications rely on AUC as a single quantitative measure that summarizes complex biological or diagnostic data, enabling comparative assessments and decision-making in research and clinical applications.

AUC in Pharmacokinetics: Quantifying Drug Exposure

Core Concept and Calculation Methods

In pharmacokinetics, the Area Under the Curve (AUC) of a drug concentration-time profile represents the total integrated drug exposure to which a subject is subjected following administration. This metric is fundamental for establishing dosage regimens, assessing bioavailability, and understanding exposure-response relationships in drug development [1]. The accuracy of AUC estimation directly impacts critical development decisions, including dose selection for late-stage clinical trials.

Two primary methodological approaches exist for estimating AUC from graphically extracted data when raw participant-level data are unavailable:

Trapezoidal Integration Method: This standard approach applies the trapezoidal rule directly to group-level means extracted from published response curves. To approximate uncertainty, AUC bounds are estimated by computing the trapezoidal rule on the mean ± standard deviation at each timepoint, yielding a confidence range for the AUC estimate [1].

Monte Carlo Method: This advanced approach samples plausible response curves and integrates over their posterior distribution. The method involves sampling synthetic observations from distributions defined by group means and standard deviations at each timepoint, fitting interpolating splines through the sampled values, and calculating AUC for each simulated curve to generate a full posterior distribution of plausible AUC values [1].

Recent large-scale benchmarking across 3,920 synthetic datasets derived from seven functional response types common in biomedical research demonstrated that the Monte Carlo method produced near-unbiased AUC estimates with tighter alignment to known values compared to the standard trapezoidal approach, which consistently underestimated true AUC, particularly in curves with skewed or long-tailed structures [1].

Experimental Protocol: AUC Estimation via Monte Carlo Method

Purpose: To accurately estimate Area Under the Curve (AUC) and its uncertainty from graphically extracted pharmacokinetic data when raw data are unavailable.

Materials and Equipment:

- Digitized concentration-time data (means and standard errors/extracted from published figures)

- Computational software with statistical capabilities (R, Python, etc.)

- Figure digitization software (e.g., PlotDigitizer)

Procedure:

- Data Extraction: Extract group-level means and measures of variance (standard deviation or standard error) at each timepoint from published concentration-time curves using figure digitization software.

- Parameter Setup: Define the number of synthetic datasets to generate (typically 1,000 iterations for stable estimates).

- Monte Carlo Simulation: For each iteration:

- Sample synthetic response values at each timepoint from a normal distribution defined by the extracted mean and standard deviation.

- Fit a smooth interpolating spline through the sampled values over the original timepoints.

- Generate a fine-resolution time grid (e.g., 1,000 points) spanning the original time range.

- Evaluate the interpolated curve on this fine grid.

- Calculate the AUC of the interpolated curve using numerical integration (e.g., trapezoidal rule).

- Store the calculated AUC value.

- Result Compilation: After all iterations, compile all stored AUC values into a distribution.

- Summary Statistics: Calculate the mean and standard deviation of the simulated AUC distribution as the final estimate and its uncertainty [1].

Validation Notes: This method has demonstrated robust performance even under sparse sampling conditions (4-10 timepoints) and small cohort sizes (5-40 participants), maintaining accuracy across various pharmacokinetic curve shapes including skewed Gaussian, biexponential decay, and Bateman functions [1].

Comparative Performance of AUC Estimation Methods

Table 1: Performance comparison of AUC estimation methods across 3,920 synthetic datasets [1]

| Method | Bias | Precision | Conditions Favoring Use | Limitations |

|---|---|---|---|---|

| Trapezoidal Integration | Consistent underestimation, especially for skewed/long-tailed curves | Moderate | Initial screening, computational efficiency | Fails to capture true AUC in complex curve shapes |

| Monte Carlo Method | Near-unbiased across all curve types | High | Meta-analyses, regulatory submissions, sparse data | Computationally intensive, requires programming expertise |

| Key Finding: Monte Carlo approach demonstrated superior accuracy and uncertainty quantification across all tested conditions, including varying timepoints (4-10) and participant sizes (5-40). |

AUC in Diagnostic Accuracy: The ROC Curve

Core Principles and Interpretation

In diagnostic medicine, the Area Under the Receiver Operating Characteristic (ROC) Curve (AUC-ROC) quantifies the overall ability of a binary classifier to distinguish between two classes across all possible classification thresholds. The ROC curve itself plots the True Positive Rate (Sensitivity) against the False Positive Rate (1-Specificity) at various threshold settings [2]. The resulting AUC value provides a single measure of diagnostic performance that is threshold-independent, unlike sensitivity or specificity alone.

The interpretation of AUC values follows established standards:

- AUC = 0.5: Indicates no discriminative ability, equivalent to random guessing

- 0.5 < AUC < 0.7: Considered poor discriminative ability

- 0.7 ≤ AUC < 0.8: Acceptable discrimination

- 0.8 ≤ AUC < 0.9: Excellent discrimination

- AUC ≥ 0.9: Outstanding discrimination [2]

An AUC of 1.0 represents perfect classification, where the model achieves 100% sensitivity and 100% specificity simultaneously. The AUC equivalent to 0.5 indicates the classifier performs no better than chance in distinguishing between positive and negative cases.

Experimental Protocol: ROC Curve Generation and AUC Calculation

Purpose: To generate a Receiver Operating Characteristic (ROC) curve and calculate the Area Under the Curve (AUC) to evaluate the performance of a binary classification model.

Materials and Equipment:

- Dataset with known ground truth labels (positive/negative)

- Classification model producing probability scores

- Computational environment with statistical libraries (scikit-learn, pROC, etc.)

Procedure:

- Model Prediction: Obtain predicted probability scores for the positive class from your classification model on the test dataset.

- Threshold Definition: Define a series of classification thresholds ranging from 0 to 1 (typically 100+ increments).

- Classification at Thresholds: For each threshold:

- Convert probability scores to binary predictions (1 if ≥ threshold, 0 otherwise).

- Calculate True Positives (TP), False Positives (FP), True Negatives (TN), False Negatives (FN).

- Compute True Positive Rate: TPR = TP / (TP + FN).

- Compute False Positive Rate: FPR = FP / (FP + TN).

- ROC Plotting: Plot TPR against FPR for all thresholds to generate the ROC curve.

- AUC Calculation: Calculate the area under the ROC curve using numerical integration methods (e.g., trapezoidal rule) or dedicated functions (e.g.,

roc_auc_scorein scikit-learn) [2]. - Validation: Apply statistical methods to estimate confidence intervals for the AUC, typically through bootstrapping or DeLong's test for correlated ROC curves.

Implementation Note: Most statistical software packages provide built-in functions for ROC curve generation and AUC calculation. For example, Python's scikit-learn library includes roc_curve() and roc_auc_score() functions that automate steps 2-5 [2].

Diagnostic Performance of Biomarkers and Imaging Modalities

Table 2: Diagnostic accuracy of biomarkers and imaging modalities for various clinical conditions [3] [4]

| Biomarker/Modality | Clinical Application | Sensitivity (95% CI) | Specificity (95% CI) | AUC | Evidence Quality |

|---|---|---|---|---|---|

| Interleukin-6 (IL-6) | Late-onset neonatal sepsis | 85.2% (80.0-89.3%) | 84.1% (77.5-89.0%) | 0.91 | Moderate (GRADE) |

| Fecal Calprotectin (<50 μg/g) | Crohn's disease recurrence | 76% (70-82%) | 66% (56-75%) | 0.83* | Moderate |

| CT/MR Enterography | Crohn's disease recurrence | 89% (73-96%) | 65% (43-82%) | 0.87* | Moderate |

| Intestinal Ultrasound | Crohn's disease recurrence | 92% (75-96%) | 76% (52-90%) | 0.92* | Moderate |

| Note: AUC values marked with * are estimated from reported sensitivity and specificity values. IL-6 demonstrates excellent diagnostic accuracy (AUC 0.91) for late-onset neonatal sepsis, while cross-sectional imaging shows high sensitivity for detecting Crohn's disease recurrence. |

The Concordance Index (C-index) in Prognostic Research

Relationship Between AUC and C-index

The Concordance Index (C-index) represents an extension of the AUC principle to time-to-event data, making it particularly valuable for evaluating prognostic models in clinical research, especially in oncology. While standard AUC assesses discrimination in binary classification, the C-index measures the concordance between predicted risk scores and observed survival times, evaluating whether patients with higher risk scores experience events sooner than those with lower scores [5] [6] [7].

In practical applications, the C-index ranges from 0 to 1, with 0.5 indicating no predictive discrimination and 1.0 indicating perfect discrimination. Well-validated nomograms for cancer prognosis typically demonstrate C-index values between 0.70 and 0.85, reflecting moderate to strong predictive accuracy [5] [6] [7]. For example, a nomogram for early-stage cervical cancer achieved a C-index of 0.79 in the development cohort and 0.84 in the validation cohort for predicting disease-free survival [5], while a male breast cancer nomogram reported C-indices of 0.72-0.75 in internal validation and 0.98 in external validation [7].

Experimental Protocol: C-index Calculation for Prognostic Models

Purpose: To calculate the Concordance Index (C-index) for evaluating the discriminative ability of a prognostic model with time-to-event data.

Materials and Equipment:

- Dataset with observed survival times and event status

- Predicted risk scores from prognostic model

- Statistical software with survival analysis capabilities (R, Python, SPSS)

Procedure:

- Data Preparation: Compile dataset containing observed survival times, event indicators (1 for event, 0 for censored), and predicted risk scores for all subjects.

- Form All Comparable Pairs: Identify all possible pairs of subjects where the subject with shorter observed time experienced an event (i.e., not censored).

- Evaluate Concordance: For each comparable pair:

- Determine if the subject with higher risk score had the event first.

- Count the pair as concordant if higher risk score corresponds to earlier event.

- Count the pair as discordant if higher risk score corresponds to later event or no event.

- Count the pair as tied if risk scores are equal.

- Calculate C-index: Compute the C-index as (number of concordant pairs + 0.5 × number of tied pairs) / total number of comparable pairs.

- Validation: Assess statistical significance and calculate confidence intervals through bootstrapping or other resampling methods.

Implementation Note: Most statistical packages provide built-in functions for C-index calculation. In R, the coxph() function automatically computes the C-index for Cox models, while the concordance.index() function in various packages offers general calculation capabilities. Similar functionality exists in Python's lifelines library [5] [6] [7].

Research Reagent Solutions and Computational Tools

Table 3: Essential tools and resources for AUC and C-index research

| Tool/Resource | Primary Function | Application Context | Key Features |

|---|---|---|---|

| PlotDigitizer | Figure data extraction | Meta-analysis of published curves | Converts graph images to numerical data |

| R Statistical Software | Data analysis and modeling | AUC estimation, ROC analysis, C-index calculation | Comprehensive statistical packages (survival, rms, pROC) |

| Python Scikit-learn | Machine learning and evaluation | ROC curve generation, AUC calculation | roc_curve(), roc_auc_score() functions |

| SEER*Stat Software | Cancer database access | Prognostic model development | Population-based cancer incidence and survival data |

| X-tile Software | Cutpoint optimization | Risk stratification in prognostic models | Determines optimal cutoff values for continuous variables |

| PMC Literature Database | Scientific literature access | Methodological reference | Open-access biomedical literature |

| Note: These tools represent essential resources for researchers conducting AUC-related analyses, from data extraction to model development and validation. |

Area Under the Curve serves as a versatile quantitative metric with critical applications spanning pharmacokinetics and diagnostic medicine. In drug development, accurate AUC estimation through advanced methods like Monte Carlo simulation provides reliable quantification of drug exposure essential for dosage determination [1]. In diagnostic and prognostic research, AUC-ROC and C-index offer robust measures of discriminatory accuracy for classification models and survival predictions [5] [2] [6]. The methodological frameworks and experimental protocols presented in this article provide researchers with standardized approaches for implementing these analyses across diverse research contexts, ensuring rigorous quantitative assessment of biological responses and model performance.

Survival analysis, or time-to-event analysis, is a statistical method for analyzing the time until an event of interest occurs. A unique characteristic of survival data is censoring, where the event of interest is not observed for some subjects during the study period, meaning their true event times are only partially known [8] [9]. Evaluating predictive models in this context requires specialized metrics that account for this censoring, with the Concordance Index (C-index) emerging as the most commonly used metric for assessing the discriminatory power of survival models [8] [10].

The C-index measures a model's ability to produce a reliable ranking of subjects by their risk of experiencing an event. It represents the rank correlation between the predicted risk scores and the observed event times, quantifying the probability that the model orders any two comparable subjects correctly [8] [11] [10]. Unlike absolute accuracy measures which assess how close predictions are to actual values, the C-index evaluates ranking accuracy, making it particularly suitable for survival analysis where accurately identifying higher-risk versus lower-risk individuals is often the primary objective [12] [13].

Theoretical Foundations of the Concordance Index

Core Conceptual Framework

The fundamental intuition behind the C-index is that a good predictive model should assign higher risk scores to subjects who experience the event earlier than to those who experience it later or not at all [10]. Formally, for a pair of subjects (i, j), if subject i has a shorter observed survival time than subject j and also receives a higher risk score from the model, this pair is considered concordant. If the model assigns a lower risk score to the subject with the shorter survival time, the pair is discordant [8] [10].

The C-index is calculated as the ratio of concordant pairs to all comparable pairs [10]:

\begin{equation} C = \frac{\text{Number of concordant pairs} + \frac{1}{2} \times \text{Number of tied risk pairs}}{\text{Total number of comparable pairs}} \end{equation}

Ties in risk scores are typically counted as half-concordant [13]. The resulting value ranges from 0 to 1, where 0.5 indicates predictions no better than random chance, and 1 represents perfect discrimination [10].

Handling Censored Data

A particular challenge in survival analysis is determining which pairs of subjects are comparable given the presence of censoring [8] [10]. The handling of different types of pairs is summarized below:

Table 1: Handling of Different Types of Subject Pairs in C-index Calculation

| Pair Type | Description | Treatment in C-index |

|---|---|---|

| Both subjects experienced event | Known ordering of event times | Always comparable |

| One censored, one with event | Comparable only if event time < censoring time | Included only if ordering is known |

| Both subjects censored | Unknown which would experience event first | Not comparable (excluded) |

| Tied risk scores | Model assigns equal risk to both subjects | Counted as half-concordant |

[8] [10] [13] provides a clear example: if a subject experienced an event at time t = 3 years, and another subject was censored at t = 5 years, we know the first subject experienced the event first, making this pair comparable. If instead the censoring occurred at t = 2 years, we cannot determine who would have experienced the event first, making the pair non-comparable [13].

Quantitative Comparison of Concordance Index Variants

Key C-index Estimators and Their Properties

Several statistical estimators have been developed to calculate the C-index, each with different properties and suitability for various research contexts.

Table 2: Comparison of Major C-index Estimators in Survival Analysis

| Estimator | Key Principle | Advantages | Limitations | Suitable Contexts |

|---|---|---|---|---|

| Harrell's C-index [14] [10] [9] | Direct comparison of comparable pairs | Intuitive; easy to compute; widely used | Optimistic bias with high censoring; depends on censoring distribution | Low censoring rates; preliminary analysis |

| Uno's C-index [8] [14] | Inverse probability of censoring weighting (IPCW) | Less biased with high censoring; robust to independent censoring | Requires correct censoring model; still biased with dependent censoring | High censoring rates with independent censoring |

| Gerds' C-index [14] | IPCW with covariate-dependent censoring | Handles policy-related dependent censoring; more appropriate for policy evaluation | Complex implementation; requires modeling censoring distribution | Policy evaluations; dependent censoring scenarios |

Impact of Censoring on Different Estimators

The performance of these estimators varies significantly based on the censoring mechanism and rate. Simulation studies have demonstrated that Harrell's C-index becomes increasingly optimistic as censoring rates increase, while Uno's estimator remains more stable under independent censoring [8]. In policy-sensitive contexts where censoring depends on risk scores (e.g., patients with higher scores receive interventions and become censored), only Gerds' C-index appropriately accounts for this dependency [14].

Experimental Protocols for C-index Evaluation

Standard Protocol for Calculating Harrell's C-index

Objective: To evaluate the performance of a survival prediction model using Harrell's C-index.

Materials and Reagents:

- Dataset: Survival data containing observed times and event indicators for all subjects

- Software: Statistical software with survival analysis capabilities (e.g., R survival package, scikit-survival, lifelines, PySurvival)

- Model: Trained survival prediction model capable of generating risk scores

Procedure:

- Generate risk scores: Use the trained model to compute a risk score for each subject in the dataset

- Identify comparable pairs: For each unique pair of subjects (i, j), determine if they are comparable based on event times and censoring status

- Classify pairs: For each comparable pair:

- If risk score i > risk score j and time i < time j → Concordant pair

- If risk score i < risk score j and time i < time j → Discordant pair

- If risk score i = risk score j → Tied risk pair

- Calculate C-index: Apply the formula to compute the final concordance statistic

- Interpret results: Values closer to 1.0 indicate better discrimination performance

Protocol for Handling Dependent Censoring with Gerds' C-index

Objective: To evaluate model performance when censoring is dependent on risk scores.

Additional Materials:

- Censoring model: Methodology to estimate censoring probabilities based on covariates

Procedure:

- Model the censoring distribution: Estimate probabilities of being censored at each time point using a regression model that incorporates risk scores and other relevant covariates

- Calculate inverse probability weights: Compute weights for each subject based on the estimated probability of remaining uncensored

- Apply weighted comparison: Compare subject pairs using these weights to account for differential censoring patterns

- Compute weighted C-index: Calculate the concordance statistic using the weighted contributions of each comparable pair

Validation Protocol for C-index Stability

Objective: To assess the stability of C-index estimates across different censoring patterns.

Procedure:

- Apply synthetic censoring: Artificially introduce additional censoring into the dataset using specified mechanisms (independent, risk-dependent)

- Compute multiple C-indices: Calculate Harrell's, Uno's, and Gerds' C-indices on the synthetically censored data

- Compare results: Analyze how each estimator behaves under different censoring scenarios

- Assess robustness: Determine which estimator provides the most stable performance across censoring patterns

Visualization of C-index Concepts and Workflows

Logical Flow for Concordance Assessment

Diagram 1: C-index Calculation Workflow - This diagram illustrates the logical decision process for classifying subject pairs when calculating the C-index, showing how comparable pairs are identified and classified as concordant, discordant, or tied.

Experimental Framework for Method Comparison

Diagram 2: C-index Comparison Protocol - This workflow shows the experimental process for comparing different C-index estimators on the same dataset and model, highlighting the parallel calculation of different variants.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for C-index Research

| Tool/Reagent | Function | Implementation Considerations |

|---|---|---|

| scikit-survival [8] [13] | Python library for survival analysis | Provides concordanceindexcensored(), concordanceindexipcw(); uses predicted risks |

| lifelines [13] | Python survival analysis library | Concordance index based on predicted event times rather than risks |

| PySurvival [11] [13] | Python survival modeling framework | concordance_index() function requires model object input |

| survival (R package) [10] [15] | Comprehensive survival analysis in R | Standard for statistical validation; widely cited in literature |

| Simulated datasets [8] | Method validation | Generate data with known censoring mechanisms to test estimator robustness |

A critical note for researchers: different software packages may implement the C-index with subtle variations. For instance, scikit-survival expects predicted risks (higher value = higher risk), while lifelines uses predicted event times (higher value = longer survival). This means that for the same model, the C-index in scikit-survival will typically equal 1 - C-index in lifelines [13]. PySurvival additionally counts subject pairs in both directions (both (i,j) and (j,i)), effectively doubling the number of pairs compared to other implementations [13]. These differences must be accounted for when comparing results across studies or software platforms.

Advanced Applications and Recent Methodological Developments

C-index Decomposition for Model Insight

Recent research has proposed decomposing the C-index into components that provide deeper insights into model performance. The overall C-index can be expressed as a weighted harmonic mean of two quantities:

- CI~ee~: Ranking of observed events versus other observed events

- CI~ec~: Ranking of observed events versus censored cases [9]

This decomposition reveals that different models may perform differently on these two aspects, explaining why some models maintain stable performance across censoring levels while others deteriorate. Deep learning models, for instance, have been shown to utilize observed events more effectively than classical methods, maintaining stable C-indices across different censoring levels [9].

Time-Dependent Extensions

For applications where predictive performance within specific time horizons is important, time-dependent extensions of the C-index have been developed. These are closely related to time-dependent ROC curves and evaluate how well a model distinguishes between subjects who experience an event by a given time from those who do not [8] [16]. The cumulative/dynamic AUC implemented in scikit-survival's cumulative_dynamic_auc() function addresses this need for time-specific discrimination assessment [8].

The Concordance Index remains a fundamental metric for evaluating predictive performance in survival analysis, with multiple estimators available to address different research contexts and censoring mechanisms. Proper application requires understanding the censoring mechanisms in the data, selecting the appropriate estimator, and being aware of implementation differences across software platforms. Recent methodological developments, including decomposition approaches and time-dependent extensions, continue to enhance the depth of insight that can be gained from this versatile metric. As survival modeling increasingly incorporates machine learning approaches, appropriate use of the C-index and its variants will remain essential for rigorous model evaluation and comparison.

The Fundamental Relationship Between AUC and Harrell's C-index

Within the realms of machine learning, medical statistics, and survival analysis, researchers and drug development professionals frequently require robust metrics to evaluate the performance of predictive models. For binary classification tasks, the Area Under the Receiver Operating Characteristic Curve (AUC) is the standard measure of a model's ability to discriminate between classes [17]. In time-to-event analyses, which are crucial for clinical trials and drug development, Harrell's C-index (or concordance index) is the predominant metric for assessing a model's ability to rank survival times [10]. A clear understanding of the fundamental relationship between these two metrics is essential for the proper validation of prognostic models. This application note delineates this relationship, provides protocols for their computation, and discusses their appropriate application within a research context, particularly for drug development.

Theoretical Foundations and Definitions

Area Under the Curve (AUC)

The AUC is a performance measurement for classification problems at various threshold settings. It is derived from the Receiver Operating Characteristic (ROC) curve, which plots the True Positive Rate (TPR or Sensitivity) against the False Positive Rate (FPR or 1-Specificity) across all possible classification thresholds [17].

- Interpretation: The AUC represents the probability that a randomly selected positive instance will be ranked higher than a randomly selected negative instance by the model. An AUC of 1.0 signifies perfect discrimination, 0.5 indicates performance no better than random chance, and values below 0.5 suggest worse-than-chance performance [17].

- Formula Context: For a binary outcome ( D ) and a model score ( M ), the AUC is defined as ( P(M{diseased} > M{non-diseased}) ) [16].

Harrell's C-index

Harrell's C-index evaluates a model's ability to produce a risk score that correctly orders subjects by their time until an event [10]. It is essential for censored survival data, where the exact event time is not known for all subjects.

- Core Concept: The C-index is the proportion of all usable pairs of subjects where the model's predictions and the actual outcomes are concordant [10].

- Permissible Pairs: A pair of subjects is considered "permissible" or "usable" only if the order of their event times can be unequivocally determined. This typically excludes pairs where both subjects are censored or where a censored subject's event time precedes the observed event time of another [10] [18].

- Calculation: The simplified C-index is calculated as: ( C = \frac{\text{Number of Concordant Pairs}}{\text{Number of Permissible Pairs}} ) Tied risk scores are often accounted for by adding half the number of tied pairs to the numerator [18].

- Interpretation: A C-index of 1 indicates perfect concordance, 0.5 suggests no better than random ordering, and 0 indicates perfect discordance [10].

The following diagram illustrates the logical relationship between AUC and the C-index, and the workflow for calculating the C-index.

The Fundamental Relationship

The relationship between AUC and Harrell's C-index is one of conceptual generalization.

For a binary outcome, the C-index is mathematically equivalent to the AUC [19] [20]. In this specific scenario, the "positive" and "negative" instances form the permissible pairs, and concordance is achieved when the positive instance receives a higher risk score.

Harrell's C-index generalizes the concept of the AUC to survival data, where outcomes are time-to-event and subject to censoring [20]. While the standard AUC is static, the C-index dynamically accounts for whether a subject with a higher risk score experiences the event before a subject with a lower risk score, considering the complexities introduced by censored observations. The concordance matrix used to compute the C-index for a binary classifier directly corresponds to the ROC curve, and the area under this curve is the AUC [20].

Table 1: Key Characteristics of AUC and Harrell's C-index

| Feature | AUC (for Binary Outcomes) | Harrell's C-index (for Survival Outcomes) |

|---|---|---|

| Outcome Type | Binary (e.g., disease/no disease) | Time-to-event with censoring |

| Core Question | Does the model rank a random positive higher than a random negative? | Does the model rank a random shorter survivor higher than a random longer survivor? |

| Pair Usage | All case-vs-control pairs are used [18] | Only "permissible" pairs are used (handles censoring) [10] |

| Theoretical Relationship | Base metric for binary classification | A generalization of AUC to survival data [20] |

Calculation Protocols

Protocol for Calculating AUC

This protocol outlines the steps for calculating the AUC for a binary classifier.

1. Problem Definition: Define a binary classification task (e.g., predicting responders vs. non-responders to a drug therapy).

2. Model and Scores: Train a predictive model (e.g., logistic regression, random forest) that outputs a continuous score or probability for the positive class for each subject.

3. Vary Thresholds: Systematically vary the classification threshold from the minimum to the maximum predicted score.

4. Calculate TPR and FPR: At each threshold, calculate the True Positive Rate (TPR) and False Positive Rate (FPR) using the confusion matrix.

- ( TPR = \frac{TP}{TP + FN} )

- ( FPR = \frac{FP}{FP + TN} )

5. Plot ROC Curve: Graph the resulting (FPR, TPR) pairs.

6. Calculate AUC: Compute the area under the plotted ROC curve using a numerical integration method such as the trapezoidal rule [17].

Protocol for Calculating Harrell's C-index

This protocol is designed for evaluating a Cox proportional hazards or other survival model in a clinical study setting.

1. Study Data Preparation: Collect time-to-event data, including covariates, observed time ((Xi)), and event indicator ((\Deltai)).

2. Survival Model Fitting: Fit a survival model (e.g., Cox PH model) to the data to obtain a risk score ((\etai = \mathbf{Zi}^\top \boldsymbol{\beta})) for each subject.

3. Identify All Pairs: Enumerate all possible pairs of subjects ((i, j)).

4. Classify Pairs: For each pair, determine if it is permissible and, if so, whether it is concordant. The decision logic is summarized in the table below.

Table 2: Classification of Subject Pairs for Harrell's C-index

| Case | Subject i | Subject j | Permissible? | Concordant if |

|---|---|---|---|---|

| 1 | Event at (T_i) | Event at (T_j) | Yes | ( \etai > \etaj ) and ( Ti < Tj ) [10] |

| 2 | Censored at (T_i) | Censored at (T_j) | No | - |

| 3 | Event at (T_i) | Censored at (T_j) | Only if ( Ti < Tj ) [10] | ( \etai > \etaj ) |

| 4 | Censored at (T_i) | Event at (T_j) | Only if ( Tj < Ti ) [10] | ( \etaj > \etai ) |

5. Compute C-index: Tally the total number of concordant pairs and permissible pairs. Apply the formula: ( C = \frac{\text{Number of Concordant Pairs} + 0.5 \times \text{Number of Tied Risk Pairs}}{\text{Number of Permissible Pairs}} ) [18].

The following workflow diagram visualizes the computational steps for Harrell's C-index.

The Scientist's Toolkit: Key Reagents and Computational Solutions

Table 3: Essential Materials and Tools for AUC and C-index Research

| Item / Solution | Function / Description | Example / Note |

|---|---|---|

| Time-to-Event Dataset | The fundamental input for survival model development and C-index validation. Must include time-to-event, censoring indicator, and covariates. | Clinical trial data with overall survival (OS) or progression-free survival (PFS) endpoints. |

| Binary Outcome Dataset | The fundamental input for binary classifier development and AUC calculation. | Data from a diagnostic test study with confirmed disease status. |

| Statistical Software (R/Python) | Provides environments with comprehensive packages for calculating both AUC and C-index. | R: survival package (concordance), pROC package (AUC). Python: scikit-survival (C-index), scikit-learn (AUC). |

| Phoenix WinNonlin | A commercial software platform used in pharmacokinetics/pharmacodynamics (PK/PD) for non-compartmental analysis (NCA), which calculates AUC for drug concentration-time curves [21]. | Uses methods like Linear-Log Trapezoidal for calculating exposure metrics like AUC0-inf [21] [22]. |

| Inverse Probability Weighting (IPW) | A statistical technique used to make C-index estimates more robust to censoring patterns, ensuring they are less dependent on the study-specific censoring distribution [23]. | Used in advanced C-statistics to create estimators that are consistent for a population parameter free of censoring [23]. |

Advanced Considerations and Limitations

While Harrell's C-index is immensely useful, researchers must be aware of its limitations. The standard C-index can be sensitive to the study-specific censoring distribution [23]. Modifications, such as Uno's C-index or IPW-based estimators, have been developed to provide a measure that is less dependent on the censoring pattern [23]. Furthermore, the C-index has been criticized for its insensitivity to the addition of new, significant predictors to a model and for its focus on the ranking of pairs rather than the absolute accuracy of predictions [18]. For a more granular assessment, time-dependent AUC methods can evaluate discrimination at specific time points (e.g., 1-year, 5-year) and can be connected to the C-index as a weighted average of these time-specific AUCs [16].

Pharmacokinetic Studies: Quantifying Drug Exposure

Core Principles and Calculation Methods

The Area Under the Curve (AUC) in pharmacokinetics (PK) represents the integral of a substance's plasma concentration over time, serving as a crucial indicator of total drug exposure within the body [24]. Expressed in units such as mg·h/L, AUC is derived from concentration-time data established during pharmacokinetic studies and is essential for evaluating medication bioavailability [24]. This metric quantifies how much of a substance reaches systemic circulation and its potential therapeutic effects, making it fundamental for dose selection and therapeutic monitoring [24].

Table 1: AUC Calculation Methods in Pharmacokinetics

| Method | Formula | Application Context | Advantages/Limitations |

|---|---|---|---|

| Linear Trapezoidal | AUC = Σ [0.5 × (C₁ + C₂) × (t₂ - t₁)] |

General use; increasing concentrations | Simple calculation; may overestimate AUC during elimination phase [21] |

| Logarithmic Trapezoidal | AUC = (t₂ - t₁) × (C₁ - C₂)/ln(C₁/C₂) |

Decreasing concentrations (elimination phase) | More accurate for exponential elimination; assumes C₁ > C₂ [21] [22] |

| Linear-Log Trapezoidal (Linear-Up Log-Down) | Combination: Linear for increasing concentrations, Log for decreasing concentrations | Complete concentration-time profiles | Most accurate overall; appropriate for both absorption and elimination phases [21] |

| AUC Extrapolation to Infinity | AUC₀‑inf = AUC₀‑last + Cₚₜ/Kₑₗ |

Complete exposure estimation | Provides total drug exposure; requires accurate determination of elimination rate constant (Kₑₗ) [22] |

Advanced Considerations: Accounting for Variable Baselines

In specialized applications such as gene expression data or pharmacodynamic responses, the initial condition for the response of interest is often not zero, creating uncertainty in the true baseline value [25]. This necessitates calculating AUC relative to a variable baseline, which accounts for inherent uncertainty and variability in baseline measurements [25]. The algorithm involves:

Estimating Baseline and Its Error: Depending on experimental design, baseline can be estimated from:

- Measurements at only t=0 (for chronic dosing scenarios)

- Measurements at t=0 and t=Tₗₐₛₜ (for acute dosing with return to baseline)

- Separate control group measurements at each time point (for circadian rhythms or other variable baselines) [25]

Estimating AUC and Its Error: Using bootstrapping approaches with the trapezoidal rule applied to resampled data [25].

Handling Biphasic Responses: Calculating positive and negative AUC components separately to identify multiphasic responses where values deviate both above and below baseline [25].

AUC Calculation Decision Framework

Experimental Protocol: AUC Determination in Preclinical PK Studies

Objective: To determine the pharmacokinetic profile and total exposure of a novel compound following intravenous administration to rat models.

Materials and Reagents:

- Test compound (≥95% purity)

- Sprague-Dawley rats (250-300 g, n=6 per group)

- Heparinized blood collection tubes

- LC-MS/MS system for compound quantification

- Phoenix WinNonlin software for PK analysis

Procedure:

- Dose Administration: Administer 5 mg/kg test compound via tail vein injection.

- Blood Sampling: Collect serial blood samples (0.3 mL) at pre-dose, 0.08, 0.25, 0.5, 1, 2, 4, 8, 12, and 24 hours post-dose.

- Sample Processing: Immediately centrifuge samples at 4°C, 3000 × g for 10 minutes. Transfer plasma to clean tubes and store at -80°C until analysis.

- Bioanalysis: Quantify compound concentrations using validated LC-MS/MS method with calibration standards (1-1000 ng/mL).

- Data Analysis:

- Calculate mean concentration at each time point

- Apply linear-up log-down trapezoidal method to calculate AUC₀‑t

- Determine terminal elimination rate constant (Kₑₗ) from log-linear regression of the last 4-5 time points

- Calculate AUC₀‑inf = AUC₀‑t + Cₗₐₛₜ/Kₑₗ

- Report mean ± SD AUC values across subjects

Quality Control: Include quality control samples at low, medium, and high concentrations during bioanalysis with acceptance criteria of ±15% deviation from nominal values.

Bioequivalence Trials: Establishing Therapeutic Equivalence

Regulatory Framework and Acceptance Criteria

Bioequivalence (BE) trials are abbreviated clinical studies that evaluate whether a generic drug or new formulation is equivalent to a previously approved reference product [26]. These trials rely primarily on AUC and Cₘₐₓ (maximum plasma concentration) as key parameters for comparing the extent and rate of absorption, respectively [27]. The current FDA guidelines declare products average bioequivalent if the difference in their population means on the log-transformed scale falls within the regulatory limit of ±0.223, corresponding to the 80-125% equivalence range in the original scale [26].

Table 2: Bioequivalence Assessment Approaches

| Approach | Statistical Criteria | Application Context | Regulatory Requirements |

|---|---|---|---|

| Average Bioequivalence (ABE) | 90% CI for GMR (T/R) must fall within 80-125% [27] | Standard drugs with low to moderate variability | Standard 2x2 crossover design; 12-24 subjects typically |

| Reference-Scaled Average Bioequivalence (RSABE) | Acceptance range widens based on reference variability: [exp(∓k × sWR)] where k=0.294 (EMA) or 0.25 (FDA) [27] |

Highly variable drugs (CV ≥30%) [27] | Replicated crossover design; reference administered at least twice; ≥24 subjects (FDA) |

| Population Bioequivalence | Comparison of total variability (within + between subject) | Ensuring switchability between populations | More complex study designs; not routinely required |

| Individual Bioequivalence | Comparison within-subject variances | Assessing switchability for individuals | Most complex; rarely required in practice |

Advanced Methodology: RSABE for Highly Variable Drugs

For highly variable drugs (HVDs) with within-subject coefficient of variation ≥30%, the Reference-Scaled Average Bioequivalence approach has been adopted to address the challenge of demonstrating bioequivalence [27]. The high intra-subject variability can obscure real similarities between products, making traditional ABE approaches impractical due to the excessively large sample sizes that would be required [27].

The RSABE methodology scales the bioequivalence limits according to the within-subject variability of the reference product:

EMA RSABE Equation:

-ln(1.25) × (SWR/0.294) ≤ μT - μR ≤ ln(1.25) × (SWR/0.294)

FDA RSABE Equation:

-ln(1.25) × (SWR/0.25) ≤ μT - μR ≤ ln(1.25) × (SWR/0.25)

Where SWR is the within-subject standard deviation of the reference product, and μT - μR is the difference between logarithmic means of test and reference products [27].

Table 3: RSABE Acceptance Range at Different Variability Levels

| Within-Subject CV (%) | SWR | EMA RSABE Limits | FDA RSABE Limits |

|---|---|---|---|

| 30 | 0.294 | 80.00 - 125.00 | 76.94 - 129.97 |

| 40 | 0.385 | 74.62 - 134.02 | 70.89 - 141.06 |

| 50 | 0.472 | 69.84 - 143.19 | 65.58 - 152.48 |

| 60 | 0.555 | 69.84 - 143.19 (max) | 60.95 - 164.08 |

Experimental Protocol: Two-Period Crossover Bioequivalence Study

Objective: To demonstrate bioequivalence between a test formulation and reference listed drug.

Study Design: Randomized, two-period, two-sequence, single-dose crossover with ≥14-day washout period.

Subjects: Healthy adult volunteers (n=24), 18-55 years, BMI 18.5-30 kg/m², confirmed health status through medical history, physical examination, and laboratory tests.

Procedures:

- Screening: Informed consent; eligibility assessment within 21 days prior to dosing.

- Period 1: Overnight fasting (≥10 hours); administer single dose with 240 mL water; standardized meals at 4 and 10 hours post-dose.

- Blood Sampling: Pre-dose and at 0.25, 0.5, 0.75, 1, 1.5, 2, 2.5, 3, 4, 6, 8, 12, 16, 24, 36, and 48 hours post-dose.

- Washout: 14-day period based on 5× terminal half-life of reference drug.

- Period 2: Repeat procedures with alternate formulation.

Bioanalytical Method:

- Validated LC-MS/MS method per FDA guidance

- Calibration range covering expected concentrations

- Quality controls (low, medium, high) with ≤15% deviation

Statistical Analysis:

- Calculate AUC₀‑t, AUC₀‑inf, and Cₘₐₓ for both formulations

- ANOVA on log-transformed parameters including sequence, period, and treatment effects

- Construct 90% confidence intervals for geometric mean ratio (Test/Reference)

- Conclude bioequivalence if 90% CI falls within 80-125% for all primary parameters

Survival Model Evaluation: Moving Beyond the C-index

Limitations of Concordance Index in Survival Analysis

The concordance index (C-index) measures the rank correlation between predicted risk scores and observed event times, representing the ratio of correctly ordered pairs to comparable pairs [8]. Despite its widespread use (employed in over 80% of survival analysis studies in leading statistical journals), the C-index has significant limitations [28]:

- Insensitivity to clinically significant predictors: The C-index may show minimal improvement even when statistically and clinically significant covariates are added to models [28].

- Dependence only on ranks: Models with inaccurate survival predictions can have higher C-indices than models with more accurate predictions due to the metric's reliance solely on ranking [28].

- Optimistic bias with high censoring: Harrell's C-index has been shown to be overly optimistic with increasing amounts of censored data [8].

- Limited clinical relevance: In low-risk populations, the C-index often compares patients with very similar risk probabilities, providing little practical value to clinicians [28].

Comprehensive Survival Model Evaluation Framework

A robust evaluation strategy for survival models should incorporate multiple metrics addressing different aspects of model performance:

Table 4: Survival Model Evaluation Metrics

| Metric | Formula/Calculation | Interpretation | Strengths/Limitations |

|---|---|---|---|

| Harrell's C-index | C = (Concordant Pairs + 0.5 × Tied Pairs)/Comparable Pairs |

Probability that predictions correctly rank order survival times | Intuitive; widely used; but optimistic with high censoring [8] |

| IPCW C-index | Inverse Probability of Censoring Weighting | Less biased estimate of concordance | Addresses censoring bias; more appropriate with high censoring [8] |

| Integrated Brier Score | IBS = 1/τ × ∫₀τ BS(t) dt where BS(t) = 1/N × Σ[(0 - S(t⎪x))² × I(t ≤ y, δ=1) + (1 - S(t⎪x))² × I(t > y)] |

Overall measure of prediction error (0-1 scale) | Assesses both discrimination and calibration; lower values indicate better performance [8] |

| Restricted AUC | AUC = ∫₀τ S(t) dt where S(t) is survival function |

Mean survival time up to time τ | Captures survival plateau; model-independent calculation [29] |

| Time-Dependent AUC | AUC(t) = P(Ŝᵢ(t) < Ŝⱼ(t) ⎪ Tᵢ ≤ t, Tⱼ > t) |

Discrimination at specific time points | Identifies time-varying discrimination performance [8] |

The AUC Method for Survival Curve Analysis

In survival analysis, the area under the survival curve provides a valuable alternative to median survival, particularly for detecting survival plateaus that often occur with immunotherapies and other novel cancer treatments [29]. The AUC method essentially represents a rearrangement of traditional mean lifetime survival measures [29].

Key Applications:

- Randomized Controlled Trials: The ratio between AUC values in treatment and control groups provides information similar to the hazard ratio while better capturing long-term survival differences [29].

- Single-Arm Trials: The ratio between AUC and median survival identifies long-term survival plateaus (ratio >1 indicates presence of plateau) [29].

Calculation Method:

- Restricted AUC (rAUC): Calculated from time zero to the last observed time-point using the trapezoidal rule applied to the Kaplan-Meier survival curve [29].

- Unrestricted AUC: Extrapolated to infinity using model-based approaches (e.g., Weibull, Gompertz distributions) [29].

Survival Model Evaluation Framework

Experimental Protocol: Comprehensive Survival Model Validation

Objective: To evaluate the performance of a novel survival prediction model for overall survival in advanced non-small cell lung cancer patients.

Data:

- Training set: n=1,500 patients with clinical, genomic, and treatment data

- Validation set: n=500 patients from different institutions

- Primary endpoint: Overall survival from diagnosis to death from any cause

Evaluation Procedure:

- Model Training: Develop Cox proportional hazards model with regularization to predict survival distributions.

- Discrimination Assessment:

- Calculate Harrell's C-index and IPCW C-index on validation set

- Compute time-dependent AUC at 1, 2, and 3 years

- Compare concordance between risk groups

- Calibration Assessment:

- Calculate integrated Brier score over observed time range

- Create calibration plots comparing predicted vs observed survival at 1, 2, and 3 years

- Perform Hosmer-Lemeshow-type tests for survival models

- Clinical Utility Assessment:

- Calculate restricted AUC (rAUC) up to 5 years for each treatment group

- Perform milestone analysis at 2 and 3 years

- Compare AUC/median ratios to identify survival plateaus

- Comparison to Benchmarks:

- Compare all metrics against established clinical prognostic scores

- Perform statistical tests for differences in performance metrics

Reporting: Present all metrics with confidence intervals and clinical interpretations of observed differences.

Research Reagent Solutions

Table 5: Essential Tools for AUC and Survival Analysis

| Category | Specific Tools/Software | Primary Application | Key Features |

|---|---|---|---|

| PK/PD Analysis Software | Phoenix WinNonlin [21] [27] | Noncompartmental analysis for bioavailability studies | Implements multiple AUC methods; RSABE templates; regulatory-compliant output |

| Statistical Programming | R Survival package; scikit-survival [8] | Survival model development and evaluation | Implements C-index, IPCW C-index, time-dependent AUC, Brier score |

| Bioanalytical Instruments | LC-MS/MS systems with validated methods | Drug concentration quantification | High sensitivity and specificity for PK studies; required for BE trials |

| Clinical Data Management | Electronic Data Capture (EDC) systems | BE trial and survival study data collection | 21 CFR Part 11 compliance; audit trails; data integrity |

| Study Design Templates | FDA/EMA RSABE project templates [27] | Highly variable drug bioequivalence studies | Predefined analysis workflows for regulatory submissions |

Area Under the Curve (AUC) serves as a fundamental pharmacokinetic (PK) parameter that quantifies total systemic drug exposure over time [21]. In drug development, accurate AUC calculation is critical for assessing bioavailability, determining dosing regimens, and establishing bioequivalence between drug formulations [21]. The implementation of robust AUC methodologies must align with regulatory standards, particularly the ICH Q2(R2) guideline on analytical procedure validation, which emphasizes parameters such as accuracy, precision, specificity, and linearity in analytical measurements [30]. This framework ensures that the AUC data generated throughout drug development possesses the necessary quality and reliability to support regulatory submissions and clinical decision-making.

Beyond traditional pharmacokinetics, AUC has significant applications in machine learning for evaluating classification models [17] and in survival analysis through related concordance indices [31] [28]. The convergence of these methodologies in modern drug development requires researchers to understand both the computational techniques and appropriate contexts for their application. This document provides comprehensive application notes and experimental protocols for AUC implementation within this broad regulatory and methodological context, serving the needs of researchers, scientists, and drug development professionals engaged in quantitative analysis.

AUC Calculation Methods: Theory and Applications

Fundamental Trapezoidal Methods for AUC Calculation

The trapezoidal family of methods forms the foundation for numerical AUC estimation in pharmacokinetic analysis, each with distinct mathematical approaches and applications.

Linear Trapezoidal Method: This approach applies linear interpolation between concentration-time data points, forming trapezoids whose areas are summed to calculate total AUC [21]. For a time interval (t₁ to t₂), the AUC is calculated as: AUC = (C₁ + C₂)/2 × (t₂ - t₁), where C₁ and C₂ are consecutive concentration measurements [21]. While mathematically straightforward, this method can overestimate AUC during the elimination phase because it does not account for the exponential nature of drug concentration decline [21].

Logarithmic Trapezoidal Method: This method uses logarithmic interpolation between concentration-time points, making it particularly suitable for decreasing concentrations that follow first-order elimination kinetics [21]. The formula for a given interval is: AUC = (C₁ - C₂)/(ln(C₁) - ln(C₂)) × (t₂ - t₁) [21]. This approach more accurately captures the exponential decay characteristic of drug elimination but may underestimate AUC during absorption phases [21].

Linear-Log Trapezoidal Method (Linear-Up/Log-Down): This hybrid approach applies the linear trapezoidal method when concentrations are increasing (absorption phase) and the logarithmic method when concentrations are decreasing (elimination phase) [21]. Recognized as one of the most accurate numerical methods for AUC estimation, it effectively models both the ascending and descending portions of the concentration-time profile [21]. Phoenix WinNonlin implements this as the "Linear Up Log Down" method, which does not depend solely on Cmax, making it suitable for profiles with secondary peaks [21].

Table 1: Comparison of Primary AUC Calculation Methods

| Method | Mathematical Basis | Optimal Application Phase | Advantages | Limitations |

|---|---|---|---|---|

| Linear Trapezoidal | Linear interpolation | Absorption phase | Simple implementation; intuitive calculation | Overestimates elimination phase AUC |

| Logarithmic Trapezoidal | Logarithmic interpolation | Elimination phase | Accurate for exponential decay | Underestimates absorption phase AUC |

| Linear-Log Trapezoidal (Linear-Up/Log-Down) | Combines linear and logarithmic approaches | Entire concentration-time profile | Most accurate overall; adapts to curve shape | More complex implementation |

| Bayesian Estimation | Population PK with Bayesian priors | Early therapy; sparse sampling | Reduces sampling burden; enables early optimization | High cost; model variability [32] |

Advanced AUC Estimation Methods

Beyond traditional trapezoidal methods, advanced approaches have been developed for specific applications:

First-Order Pharmacokinetic Equations: These methods utilize timed post-infusion drug levels to estimate patient-specific PK parameters and AUC [32]. The trapezoidal PK equation approach computes daily AUC (AUC₂₄) using the formula: AUC₂₄ = (AUCᵢₙf + AUCₑₗᵢₘ) × (24/Tau), where AUCᵢₙf represents the area under the infusion curve (Tᵢₙf × 0.5 × (Cmax + Cmin)) and AUCₑₗᵢₘ describes the area under the elimination curve ((Cmax - Cmin)/Kₑₗ) [32]. This method provides accurate, patient-specific AUC estimates with minimal assumptions but offers static estimates that require new concentration measurements when patient physiology changes [32].

Bayesian Methods: These approaches apply Bayesian statistical theory to integrate population pharmacokinetic data (prior) with patient-specific drug levels and clinical parameters (posterior) [32]. This iterative mathematical approach provides refined AUC estimates and dosing recommendations, with the significant advantage of enabling early AUC optimization using pre-steady-state levels and reducing sampling burden [32]. Limitations include high implementation costs and variability in accuracy between different commercially available software platforms [32].

Concordance Index Research and Applications

Concordance Index Fundamentals

The Concordance Index (C-index) serves as a crucial performance metric in survival analysis and risk prediction models, evaluating a model's ability to correctly rank order survival times [28]. In essence, the C-index measures the probability that, for two randomly selected patients, the patient with higher predicted risk will experience the event first [28]. This ranking metric has become particularly important in healthcare applications such as evaluating risk prediction models for hospital readmission, cardiovascular disease, and treatment-related complications [31].

Despite its widespread adoption, with over 80% of survival analysis studies in leading statistical journals using C-index as their primary evaluation metric, significant limitations have been identified [28]. The C-index measures only discriminative ability (ranking) without assessing the accuracy of time-to-event predictions or the calibration of probabilistic estimates [28]. It demonstrates insensitivity to the addition of clinically significant covariates and can provide misleadingly high values in low-risk populations where patients have similar risk profiles [28].

Advanced Concordance Index Methodologies

Recent research has addressed limitations in traditional concordance metrics through methodological refinements:

C-index Decomposition: A novel approach decomposes the C-index into a weighted harmonic mean of two components: (1) the C-index for ranking observed events versus other observed events, and (2) the C-index for ranking observed events versus censored cases [33]. This decomposition enables finer-grained analysis of model performance, revealing that deep learning models utilize observed events more effectively than classical methods, maintaining stable C-index performance across varying censoring levels [33].

Gerds' Weighting: This advanced weighting scheme addresses deficiencies in standard C-index methodologies under policy-related dependent censoring [31]. In comparative studies of liver failure patients, the concordance metric based on Gerds' weighting demonstrated different performance characteristics (0.864, 95% CI: 0.840-0.888) compared to Harrell's C-Index (0.854, 95% CI: 0.844-0.864) and Uno's C-Index (0.832, 95% CI: 0.819-0.844) when evaluating Model for End-Stage Liver Disease (MELD) scores [31]. This highlights the importance of selecting appropriate weighting schemes to avoid bias in policy evaluations.

Table 2: Comparison of Concordance Index Methodologies

| Metric | Statistical Basis | Censoring Handling | Applications | Key Considerations |

|---|---|---|---|---|

| Harrell's C-Index | Proportional hazards assumptions | Handles right-censoring | General survival analysis | Standard approach; may be insensitive to new covariates [28] |

| Uno's C-Index | Inverse probability weighting | More robust to censoring distribution | Studies with non-random censoring | Improved performance with informative censoring |

| Gerds' Weighting | Inverse probability of censoring weights | Addresses dependent censoring | Policy evaluation; healthcare applications | Reduces bias in policy-related censoring [31] |

| C-index Decomposition | Harmonic mean of components | Separates events vs events and events vs censored | Model development and refinement | Provides granular performance insights [33] |

Experimental Protocols for AUC Implementation

Protocol 1: Vancomycin AUC Monitoring Using First-Order PK Equations

Therapeutic drug monitoring of vancomycin exemplifies the practical application of AUC calculations in clinical practice, with consensus guidelines recommending an AUC target of 400-600 mg×h/L for optimizing efficacy while minimizing toxicity [32].

Research Reagent Solutions and Materials:

Table 3: Essential Materials for Vancomycin AUC Protocol

| Item | Specifications | Function/Purpose |

|---|---|---|

| Vancomycin standard solutions | Certified reference material, known concentrations | Calibration curve establishment |

| Biological matrix | Human plasma/serum, drug-free | Matrices for standard and quality control samples |

| Sample preparation reagents | Protein precipitation reagents (e.g., acetonitrile, methanol), buffers | Sample clean-up and preparation |

| Analytical instrument | LC-MS/MS system with validated bioanalytical method | Quantitative vancomycin concentration measurement |

| Calculation software | Phoenix WinNonlin, Excel with custom templates | PK parameter and AUC calculation |

Experimental Workflow:

Drug Administration and Sampling: Administer vancomycin dose via intravenous infusion (typically over 1-2 hours). Collect two post-infusion blood samples within the same dosing interval, typically after the 4th or 5th dose to approach steady-state conditions [32]. Optimal sampling times include one sample at the end of infusion (peak) and another immediately before the next dose (trough).

Sample Analysis: Process samples using a validated bioanalytical method (e.g., LC-MS/MS) following ICH Q2(R2) validation parameters [30]. Establish a calibration curve using quality controls to ensure accuracy and precision of concentration measurements.

PK Parameter Calculation: Calculate the elimination rate constant (Kₑₗ) using the two concentration measurements: Kₑₗ = (ln(C₁) - ln(C₂))/(t₂ - t₁), where C₁ and C₂ are consecutive concentrations at times t₁ and t₂ [32].

AUC Calculation Using Trapezoidal Method: Apply the trapezoidal method to compute AUC over the dosing interval: Calculate AUCᵢₙf (area under infusion) as Tᵢₙf × 0.5 × (Cmax + Cmin) and AUCₑₗᵢₘ (area under elimination) as (Cmax - Cmin)/Kₑₗ. Sum these to obtain AUC for the dosing interval: AUCτ = AUCᵢₙf + AUCₑₗᵢₘ [32]. Calculate daily AUC (AUC₂₄) by multiplying by the number of dosing intervals in 24 hours: AUC₂₄ = AUCτ × (24/Tau).

Dose Adjustment: Compare calculated AUC₂₄ to target range of 400-600 mg×h/L. Adjust subsequent doses proportionally to achieve target exposure, considering patient-specific factors such as renal function and clinical status.

Diagram 1: Vancomycin AUC Monitoring Workflow

Protocol 2: Survival Model Evaluation Using Concordance Indices

Experimental Workflow for Survival Model Validation:

Data Preparation: Structure right-censored survival dataset with triplets (xᵢ, tᵢ, δᵢ) for i=1...N subjects, where xᵢ represents feature vectors, tᵢ observed times, and δᵢ event indicators (δᵢ=1 for observed events, δᵢ=0 for censored observations) [28].

Model Training: Implement survival models ranging from traditional Cox proportional hazards to advanced deep learning approaches that generate individual survival distributions (ISDs). Ensure models output risk scores or survival functions for each subject.

Concordance Index Calculation: Apply appropriate C-index methodology based on research question and censoring patterns:

- For standard evaluation, implement Harrell's C-index: CI = ΣᵢΣⱼ I(Tᵢ < Tⱼ) × I(ηᵢ > ηⱼ) × δᵢ / ΣᵢΣⱼ I(Tᵢ < Tⱼ) × δᵢ, where η represents predicted risk scores [28].

- For policy evaluations with potential dependent censoring, implement Gerds' weighting scheme [31].

- For detailed performance analysis, compute the C-index decomposition into events-versus-events and events-versus-censored components [33].

Comprehensive Model Evaluation: Supplement C-index with additional metrics addressing different aspects of model performance:

- Calibration measures (plotting observed versus predicted probabilities)

- Time-dependent discrimination metrics

- Overall prediction error measures (e.g., Brier score)

Sensitivity Analysis: Assess model performance stability under varying censoring levels using synthetic censoring approaches to evaluate robustness [33].

Diagram 2: Survival Model Evaluation Workflow

Regulatory and Implementation Considerations

ICH Q2(R2) Validation Framework for AUC Methods

The ICH Q2(R2) guideline provides a comprehensive framework for validating analytical procedures used in pharmaceutical analysis, including those supporting AUC determinations [30]. When implementing AUC calculation methods, several key validation parameters require consideration:

Accuracy: Demonstrate that AUC calculation methods produce results close to true values. For trapezoidal methods, this involves comparison against known theoretical AUC values for reference curves with defined mathematical properties.

Precision: Evaluate repeatability (same analyst, same conditions) and intermediate precision (different days, different analysts) of AUC calculations applied to standardized concentration-time data.

Linearity: Establish that the AUC calculation methodology produces results proportional to drug concentration across the specified range, particularly important for methods incorporating logarithmic transformations.

Range: Confirm that the interval between upper and lower concentration values provides suitable accuracy, precision, and linearity for AUC estimation.

Implementation of Bayesian AUC estimation methods requires additional validation considerations, including assessment of population model appropriateness, evaluation of prior distribution influence, and demonstration of robustness across patient subpopulations [32].

Appropriate Use Criteria (AUC) Program Context

While the Centers for Medicare & Medicaid Services (CMS) paused the Appropriate Use Criteria (AUC) Program for advanced diagnostic imaging in 2024, rescinding current regulations [34] [35], the underlying legislative mandate remains. The program was established to promote evidence-based imaging through consultation of AUC via qualified clinical decision support mechanisms (CDSMs) [36] [35]. Although CMS has removed AUC-related coding requirements from Medicare claims processing [34], the conceptual framework of ensuring appropriate utilization through evidence-based criteria remains relevant for drug development professionals.

Healthcare organizations are encouraged to maintain voluntary compliance readiness, as future iterations of the program are anticipated [36]. This includes continued implementation of CDSMs, staff training on evidence-based imaging principles, and documentation of compliance efforts. The pause provides an opportunity for refinement of implementation strategies without immediate penalty pressure [36].

The calculation and interpretation of Area Under the Curve and related concordance indices represent critical competencies for drug development professionals. Implementation of these methodologies requires careful consideration of mathematical foundations, appropriate application contexts, and alignment with regulatory standards including ICH Q2(R2). The experimental protocols outlined provide practical frameworks for applying these concepts in both pharmacokinetic analysis and survival model evaluation. As quantitative methods continue to evolve in pharmaceutical development, maintaining rigor in AUC implementation ensures reliable decision-making throughout the drug development lifecycle.

Practical Calculation Methods: Step-by-Step Implementation for AUC and C-index

In non-compartmental analysis (NCA), the area under the concentration-time curve (AUC) is a fundamental parameter for quantifying total drug exposure over time [21]. Alongside Cmax, AUC serves as a cornerstone for assessing systemic drug exposure and is critically important for formulation comparisons in pharmacokinetic studies and bioequivalence trials [21]. The accurate calculation of AUC is essential for understanding key pharmacokinetic parameters such as bioavailability, clearance, and volume of distribution. While the mathematical principles behind AUC calculation are straightforward, the choice of computational method introduces important nuances that significantly impact result interpretation [21]. This application note details the primary numerical methods for AUC estimation—linear trapezoidal, logarithmic trapezoidal, and linear-log trapezoidal—providing researchers with structured protocols for their implementation in drug development.

Trapezoidal Methods for AUC Calculation

Linear Trapezoidal Method

The linear trapezoidal method represents the simplest approach for AUC estimation, applying linear interpolation between consecutive concentration-time data points [21]. This method connects adjacent concentrations with straight lines, forming trapezoids whose collective area equals the total AUC [21]. For any time interval (t1, t2) with corresponding concentrations (C1, C2), the partial AUC segment is calculated as:

AUClinear = (t2 - t1) × (C1 + C2)/2 [21] [37]

The linear trapezoidal method utilizes the arithmetic mean of concentrations across each time interval. While computationally simple and historically significant, this method systematically overestimates AUC during elimination phases because it does not account for the exponential nature of drug concentration decline [21] [38]. The algorithm assumes first-order elimination follows a straight-line decline rather than its actual curvilinear trajectory, leading to positive bias particularly pronounced with widely spaced sampling points [21].

Logarithmic Trapezoidal Method

The logarithmic trapezoidal method addresses the limitation of the linear approach during decreasing concentration phases by employing logarithmic interpolation between data points [21]. This method is particularly appropriate for elimination phases where drug concentrations typically follow exponential decay, which appears linear when plotted on a logarithmic scale [21]. For a time interval (t1, t2) with decreasing concentrations (C1 > C2), the partial AUC segment is calculated as:

AUClog = (t2 - t1) × (C2 - C1) / ln(C2/C1) [21] [38] [37]

This approach uses the geometric mean rather than the arithmetic mean of concentrations, providing superior accuracy for decreasing concentrations [38]. However, the method cannot be applied when concentrations are equal (C1 = C2) or when any concentration is zero, as the logarithm of zero or equal ratios becomes undefined [38]. In practice, software implementations typically default to the linear trapezoidal method in these scenarios [38].

Linear-Log Trapezoidal Method (Linear-Up/Log-Down)

The linear-log trapezoidal method, also known as "linear-up/log-down," combines both approaches by applying the linear trapezoidal method during increasing concentrations (absorption phase) and the logarithmic trapezoidal method during decreasing concentrations (elimination phase) [21]. This hybrid approach is widely considered the most accurate for typical pharmacokinetic profiles because it matches the appropriate mathematical model to each physiological phase [21]. The decision logic for this method follows:

AUCii+1 =

- (ti+1 - ti) × (Ci+1 + Ci)/2 if Ci+1 ≥ Ci (increasing concentrations)

- (ti+1 - ti) × (Ci+1 - Ci) / ln(Ci+1/Ci) if Ci+1 < Ci (decreasing concentrations) [38]

This method automatically selects the appropriate interpolation technique based on whether concentrations are rising or falling, without dependence on tmax identification, making it particularly valuable for profiles with secondary peaks or complex absorption patterns [21].

Table 1: Comparison of Trapezoidal Methods for AUC Calculation

| Method | Mathematical Formula | Best Application Phase | Advantages | Limitations |

|---|---|---|---|---|

| Linear Trapezoidal | AUC = (t₂-t₁)×(C₁+C₂)/2 |

Absorption phase | Simple calculation; handles zero/equal concentrations | Overestimates elimination phase AUC |

| Logarithmic Trapezoidal | AUC = (t₂-t₁)×(C₂-C₁)/ln(C₂/C₁) |

Elimination phase | Accurate for exponential decay; reduced bias | Fails with C=0 or C₁=C₂; complex computation |

| Linear-Log Trapezoidal | Combination of above based on concentration trend | Complete profile (default choice) | Optimal accuracy; matches physiology | Requires trend detection; implementation complexity |

Table 2: Impact of Sampling Frequency on Method Selection

| Sampling Density | Linear Trapezoidal | Logarithmic Trapezoidal | Linear-Log Trapezoidal |

|---|---|---|---|

| Frequent sampling (closely spaced) | Minimal advantage | Minimal advantage | Recommended (optimal accuracy) |

| Sparse sampling (widely spaced) | Significant overestimation during elimination | Potential underestimation during absorption | Substantial improvement over single methods |

| Practical recommendation | Limited to absorption phase or when simplicity required | Limited to elimination phase with adequate concentration decline | Default choice for complete profiles |

Experimental Protocols for AUC Calculation

Protocol 1: Implementing Linear-Log Trapezoidal Method

Purpose: To calculate total AUC from concentration-time data using the most accurate hybrid method.

Materials:

- Plasma/serum concentration-time data

- Statistical software (e.g., Phoenix WinNonlin, PumasCP) or computational environment

Procedure:

- Data Preparation: Organize concentration-time data in chronological order with time in consistent units

- Concentration Trend Analysis: For each consecutive pair (Ci, ti) and (Ci+1, ti+1):

- Calculate concentration difference: ΔC = Ci+1 - Ci

- Method Selection:

- If ΔC ≥ 0 (increasing or equal concentrations): Apply linear trapezoidal rule

- If ΔC < 0 (decreasing concentrations): Apply logarithmic trapezoidal rule

- Partial AUC Calculation:

- For linear segments: AUCi = (ti+1 - ti) × (Ci+1 + Ci)/2

- For logarithmic segments: AUCi = (ti+1 - ti) × (Ci+1 - Ci) / ln(Ci+1/Ci)

- Total AUC Determination: Sum all partial AUC values

Validation: Compare results against known standard values; ensure logarithmic calculations are not applied to zero or equal concentrations.

Protocol 2: Handling Below Limit of Quantitation (BLQ) Values

Purpose: To manage BLQ values appropriately in AUC calculations without introducing bias.

Materials:

- Complete concentration-time dataset with BLQ values identified

- Regulatory guidance on BLQ handling

Procedure:

- Categorize BLQ Values:

- First timepoints (pre-Tmax): Consider "Keep," "Drop," or "Replace with 0" based on study objectives

- Middle timepoints (around Tmax): Typically replace with 0 or impute using scientific judgment

- Last timepoints (terminal phase): Critical for accurate elimination rate estimation; apply scientific rationale

- Document Handling Method: Justify selected approach in study documentation

- Consistency Application: Apply identical method across all study subjects

- Sensitivity Analysis: Compare AUC results with alternative BLQ handling methods

Note: The specific handling method should be pre-specified in the statistical analysis plan and consistently applied [37].

Protocol 3: Calculating AUC with Variable Baselines