Basis Sets and SCF Convergence: A Practical Guide for Computational Drug Discovery


Abstract

This article provides a comprehensive analysis of the critical role basis sets play in Self-Consistent Field (SCF) convergence, a fundamental process in computational chemistry. Tailored for researchers and drug development professionals, we explore the foundational principles behind basis set selection, practical methodologies for robust SCF procedures, advanced troubleshooting techniques for challenging systems like transition metal complexes, and validation strategies for ensuring reliable results. By synthesizing current research and software-specific guidelines, this guide aims to equip practitioners with the knowledge to optimize their computational workflows for accurate and efficient modeling of biomolecular systems and drug-receptor interactions.

Understanding the Foundation: How Basis Set Choice Directly Governs SCF Convergence

In theoretical and computational chemistry, the choice of basis set is a foundational step that directly controls the accuracy and feasibility of solving the electronic Schrödinger equation. A basis set is a set of mathematical functions, termed basis functions, used to represent the electronic wave function within methods like Hartree-Fock (HF) or density-functional theory (DFT). This representation transforms the complex partial differential equations into algebraic equations suitable for efficient computation [1]. The core approximation lies in representing molecular orbitals as a linear combination of atomic orbitals (LCAO), where the quality of this representation hinges on the completeness and flexibility of the underlying basis set [1]. The pursuit of the complete basis set (CBS) limit, where the finite set expands towards an infinite, complete set of functions, represents the ideal of a fully converged calculation free from basis set error [1].

The structure and composition of the basis set are intrinsically linked to the Fock matrix, a central construct in self-consistent field (SCF) theory. The Fock matrix, defined as F = T + V + J + K (encompassing kinetic energy, external potential, Coulomb, and exchange matrices), depends on the density matrix, which is itself built from the molecular orbital coefficients [2]. These coefficients are solved for through the Roothaan-Hall equation, F C = S C E, where S is the overlap matrix of the atomic orbitals and E is the orbital energy matrix [2]. Consequently, the basis set dictates the dimension and structure of the Fock and overlap matrices. A more extensive and flexible basis set provides a better representation of the electron density but increases the computational cost and complexity of building and diagonalizing the Fock matrix at each SCF cycle. Therefore, understanding the hierarchy of basis sets—from minimal to augmented—is crucial for controlling the cost-accuracy trade-off in SCF convergence research.

Basis Set Hierarchy and Composition

The evolution from minimal to highly extended basis sets is driven by the need to accurately model the electronic structure of atoms within a molecular environment. The following sections detail this standard hierarchy.

Minimal Basis Sets

Minimal basis sets represent the simplest starting point, employing the minimum number of basis functions required to represent all the electrons in a free atom. For atoms in the second period (Li to Ne), this typically translates to five functions: two s-type and three p-type orbitals [1] [3]. The most common family is the STO-nG basis sets, where 'n' (e.g., 3, 4, or 6) indicates the number of primitive Gaussian-type orbitals (GTOs) used to approximate a single Slater-type orbital (STO) for both core and valence orbitals [1]. While computationally inexpensive, minimal basis sets lack the flexibility to adjust to different molecular bonding environments and yield results that are generally too rough for research-quality publications [1] [3].

Split-Valence and Polarized Basis Sets

Recognizing that valence electrons are primary participants in chemical bonding, split-valence basis sets represent valence orbitals by multiple basis functions, offering flexibility to model the changing spatial extent of electron density in molecules.

- Pople-style basis sets: These are denoted by notations like `X-YZg`. Here, `X` is the number of GTOs representing each core atomic orbital. `Y` and `Z` signify that valence orbitals are split into two basis functions, composed of `Y` and `Z` primitive GTOs, respectively. For example, in `6-31G`, core orbitals are a contraction of 6 GTOs, while the valence orbitals are split into two, described by 3 and 1 primitive GTOs [1].

- Polarization functions: To account for the deformation of electron density away from the atomic nuclei in molecules, polarization functions are added. These are functions of higher angular momentum than required for the ground-state atom (e.g., d-functions on second-period atoms, p-functions on hydrogen) [1]. In Pople's notation, a `*` indicates d-type polarization functions on heavy atoms (e.g., `6-31G*`), while `**` also adds p-functions on hydrogen (`6-31G**`). A more explicit modern notation specifies the functions in parentheses, such as `(d,p)` [1].

- Correlation-consistent basis sets: Developed by Dunning and coworkers, these sets (e.g., `cc-pVXZ`, where X = D, T, Q, 5, 6) are systematically designed to converge post-Hartree-Fock correlation energies to the CBS limit [1] [4]. They recover correlation energy systematically by adding shells of higher angular momentum functions [4].
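To make the notation concrete, the following sketch counts contracted basis functions for hydrogen and second-period atoms in a few Pople-style sets. The per-atom counts are written out by hand from the definitions above, assuming six Cartesian d functions for the polarized sets; the dictionary and function names are illustrative only.

```python
# Contracted basis functions per atom, worked out by hand from the notation.
# Assumption: the polarized Pople sets use 6 Cartesian d functions.
FUNCTIONS_PER_ATOM = {
    "STO-3G":  {"H": 1, "heavy": 5},    # 1s | 1s, 2s, 2p(x3)
    "6-31G":   {"H": 2, "heavy": 9},    # split valence: 2 s | 1 core s + 2 s + 2x3 p
    "6-31G*":  {"H": 2, "heavy": 15},   # adds 6 d functions on heavy atoms
    "6-31G**": {"H": 5, "heavy": 15},   # additionally adds 3 p functions on H
}

SECOND_PERIOD = {"Li", "Be", "B", "C", "N", "O", "F", "Ne"}


def count_basis_functions(atoms, basis):
    """Total number of contracted basis functions for a list of element symbols."""
    per_atom = FUNCTIONS_PER_ATOM[basis]
    total = 0
    for symbol in atoms:
        if symbol == "H":
            total += per_atom["H"]
        elif symbol in SECOND_PERIOD:
            total += per_atom["heavy"]
        else:
            raise ValueError(f"element {symbol!r} is outside this small sketch")
    return total


water = ["O", "H", "H"]
for basis in FUNCTIONS_PER_ATOM:
    print(f"{basis:8s} {count_basis_functions(water, basis)} functions")
```

For water this grows from 7 functions with STO-3G to 25 with 6-31G**, which is the dimension N of the Fock and overlap matrices discussed earlier.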

Augmented (Diffuse) and Specialized Basis Sets

For specific electronic properties, standard valence and polarized basis sets require further augmentation.

- Diffuse functions: These are Gaussian functions with small exponents, granting them a more extended spatial range. They are essential for accurately modeling anions, Rydberg states, dipole moments, and weak intermolecular interactions (e.g., van der Waals complexes), as they better describe the electron density "tail" far from the nucleus [1] [5]. In Pople basis sets, they are denoted by a `+` for heavy atoms (`6-31+G`) or `++` for all atoms [1]. For correlation-consistent sets, the prefix `aug-` (e.g., `aug-cc-pVDZ`) is used [4].

- Specialized and decontracted basis sets: Properties like chemical shifts, spin-spin couplings, and electric field gradients often require specialized basis sets or the decontraction of standard sets [6]. Decontraction increases the variational flexibility of the basis set by breaking the fixed linear combinations of primitive GTOs, which can be crucial for high-precision property calculations [6].
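To see why a small exponent means a spatially extended function, the sketch below evaluates the distance at which the bare Gaussian factor exp(-αr²) has decayed to 1% of its value at the nucleus. The two exponents are illustrative values chosen for contrast, not taken from any published basis set, and angular and normalization factors are ignored.

```python
import math


def decay_radius(exponent, fraction=0.01):
    """Distance (bohr) where exp(-exponent * r**2) falls to `fraction` of its r = 0 value."""
    return math.sqrt(-math.log(fraction) / exponent)


# Hypothetical exponents: one valence-like, one ten times smaller (diffuse-like).
for label, exponent in [("valence-like", 0.30), ("diffuse-like", 0.03)]:
    print(f"{label:13s} alpha = {exponent:4.2f}  1% radius = {decay_radius(exponent):5.2f} bohr")
```

Because the radius scales as 1/√α, reducing the exponent tenfold extends the function by a factor of about 3.2.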

Table 1: Common Basis Set Families and Their Characteristics

| Basis Set Family | Key Examples | Notation Meaning | Typical Use Case |
|---|---|---|---|
| Minimal | STO-3G, STO-4G | STO-nG: n GTOs per STO | Initial scans, education (low cost) |
| Split-Valence (Pople) | 3-21G, 6-31G, 6-311G | X-YZg or X-YZWVg for valence splitting | General purpose molecular calculations |
| Polarized (Pople) | `6-31G*`, `6-31G**` | `*` = d on heavy atoms; `**` = additionally p on hydrogen | Improved geometries, molecular properties |
| Correlation-Consistent | cc-pVDZ, cc-pVTZ | cc-pVXZ: X=D(double), T(triple), etc. | High-accuracy correlated (post-HF) methods |
| Augmented | 6-31+G, aug-cc-pVDZ | +/aug- adds diffuse functions | Anions, excited states, weak interactions |

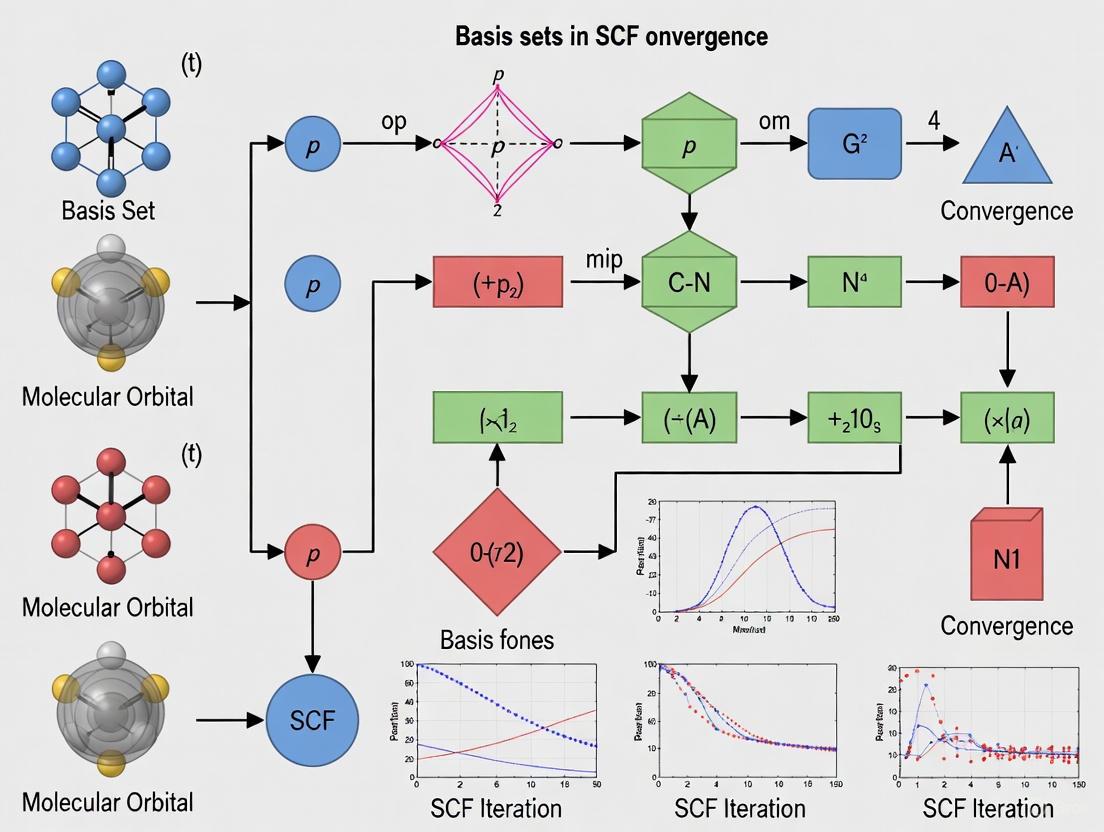

The following diagram illustrates the logical hierarchy and composition of standard basis sets.

Mathematical Foundation: Basis Sets and the Fock Matrix

The SCF procedure is an iterative algorithm to find a solution where the Fock matrix and the density matrix are consistent. The basis set is the stage upon which this process unfolds.

The electronic wavefunction is constructed from molecular orbitals (|ψᵢ⟩), each expressed as a linear combination of basis functions (|μ⟩), the atomic orbitals: |ψᵢ⟩ ≈ ∑μ cμi |μ⟩ [1]. The coefficients cμi are determined by solving the generalized eigenvalue problem, the Roothaan-Hall equation: F C = S C E [2]. Here, F is the Fock matrix, S is the overlap matrix (with elements Sμν = ⟨μ|ν⟩), C is the matrix of molecular orbital coefficients, and E is a diagonal matrix of orbital energies [2].
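For intuition, the Roothaan-Hall equation can be solved by hand when only two normalized basis functions are present, since det(F - eS) = 0 reduces to a quadratic in the orbital energy e. The sketch below does exactly that; the matrix elements in the example are made-up numbers in hartree, and the function name is hypothetical.

```python
import math


def solve_two_function_roothaan_hall(f11, f22, f12, s12):
    """Orbital energies of F C = S C E for two normalized basis functions.

    With S11 = S22 = 1, det(F - e S) = 0 becomes
    (1 - s12**2) e**2 - (f11 + f22 - 2 s12 f12) e + (f11 f22 - f12**2) = 0.
    """
    if not -1.0 < s12 < 1.0:
        raise ValueError("overlap must satisfy |s12| < 1")
    a = 1.0 - s12 ** 2
    b = -(f11 + f22 - 2.0 * s12 * f12)
    c = f11 * f22 - f12 ** 2
    # Guard against a tiny negative discriminant from round-off.
    root = math.sqrt(max(b * b - 4.0 * a * c, 0.0))
    return (-b - root) / (2.0 * a), (-b + root) / (2.0 * a)


# Hypothetical symmetric case (two equivalent atoms), values in hartree.
bonding, antibonding = solve_two_function_roothaan_hall(-0.5, -0.5, -0.6, 0.5)
print(f"bonding = {bonding:.4f}, antibonding = {antibonding:.4f}")
```

In the symmetric case the roots reduce to (f11 + f12)/(1 + s12) and (f11 - f12)/(1 - s12), which shows how the overlap matrix enters the orbital energies directly.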

The Fock matrix itself is a function of the density matrix D. For a closed-shell system, the Fock matrix element Fμν is:

Fμν = Hμν(core) + ∑λσ Dλσ [ 2(μν|λσ) - (μλ|νσ) ]

where Hμν(core) is the core Hamiltonian matrix element, and (μν|λσ) are the two-electron repulsion integrals [1]. The dimensions of these matrices (F, S, D) are N x N, where N is the number of basis functions in the set. Consequently, the size and quality of the basis set directly determine the computational cost.

- One-electron operators (like the core Hamiltonian) are represented as matrices (rank-two tensors).

- Two-electron operators correspond to rank-four tensors, and the number of two-electron integrals scales approximately as N⁴, a primary driver of computational expense [1].

Larger basis sets reduce the error in representing the wavefunction but increase the size of N, leading to larger matrices and a dramatic increase in the number of integrals to compute. This expansion directly impacts the cost and memory requirements of each SCF iteration and can introduce challenges in achieving SCF convergence.
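The growth in integral count can be estimated with a few lines of arithmetic. The sketch below compares the naive N⁴ count with the number of symmetry-unique integrals for real basis functions (eightfold permutational symmetry), and converts the latter to memory assuming 8-byte floats. The basis sizes are arbitrary illustrative values.

```python
def two_electron_integral_counts(n_basis):
    """Return (naive N**4 count, symmetry-unique count) of two-electron integrals."""
    naive = n_basis ** 4
    pairs = n_basis * (n_basis + 1) // 2    # unique (mu nu) index pairs
    unique = pairs * (pairs + 1) // 2       # unique pairs of pairs
    return naive, unique


for n_basis in (7, 25, 100, 500):
    naive, unique = two_electron_integral_counts(n_basis)
    gigabytes = unique * 8 / 1e9            # assumes 8-byte floats
    print(f"N = {n_basis:4d}  N^4 = {naive:.3e}  unique = {unique:.3e}  ~{gigabytes:.3g} GB")
```

Symmetry saves roughly a factor of eight, but the quartic growth remains, which is why production codes rely on integral screening, direct SCF, or density fitting rather than storing every integral.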

Impact on SCF Convergence and Advanced Algorithms

The choice of basis set profoundly influences the stability, speed, and very possibility of achieving SCF convergence. Poor or ill-suited basis sets can lead to oscillations or divergence in the SCF procedure.

SCF Convergence Challenges and Techniques

Achieving SCF convergence can be problematic, especially for systems with small HOMO-LUMO gaps, metallic character, or when using large, diffuse basis sets [7] [2] [5]. Several algorithms have been developed to accelerate and stabilize convergence:

- DIIS (Direct Inversion in the Iterative Subspace): This is the most widely used method. It extrapolates a new Fock matrix as a linear combination of Fock matrices from previous iterations by minimizing the norm of the commutator [F, PS], where P is the density matrix and S is the overlap matrix [7] [2].

- EDIIS (Energy-DIIS) and ADIIS (Augmented DIIS): These are variants of DIIS. EDIIS minimizes a quadratic energy function, while ADIIS uses the augmented Roothaan-Hall (ARH) energy function to obtain the linear coefficients for the Fock/density matrix combination. These methods can be more robust, and a combination "ADIIS+DIIS" is often highly reliable [7].

- Damping and Level Shifting: Simple damping mixes the newly computed density with the old one (D_mixed = α D_old + (1-α) D_new) and uses the mixture in the next cycle. Level shifting increases the energy gap between occupied and virtual orbitals, stabilizing the update [2].

- Second-Order SCF (SOSCF): Methods like Newton-Raphson or the co-iterative augmented Hessian (CIAH) method can achieve quadratic convergence but have a higher computational cost per iteration [2].
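The effect of damping can be illustrated with a deliberately simple scalar fixed-point problem. The `toy_update` function below is a hypothetical stand-in for the "build Fock matrix, diagonalize, form new density" step, not a real SCF; it is chosen so that the undamped iteration oscillates with growing amplitude, mimicking a difficult case.

```python
def iterate(update, x0, damping=0.0, tol=1e-10, max_cycles=200):
    """Fixed-point iteration x <- damping * x_old + (1 - damping) * update(x_old).

    Returns (x, cycles_used, converged).
    """
    x = x0
    for cycle in range(1, max_cycles + 1):
        x_new = damping * x + (1.0 - damping) * update(x)
        if abs(x_new - x) < tol:
            return x_new, cycle, True
        x = x_new
    return x, max_cycles, False


def toy_update(x):
    # Hypothetical model map with fixed point x = 0.4 and slope -1.5 there,
    # so each undamped step overshoots and the oscillation grows.
    return 1.0 - 1.5 * x


_, cycles, ok = iterate(toy_update, 0.0)
print(f"undamped:          converged = {ok} after {cycles} cycles")

x, cycles, ok = iterate(toy_update, 0.0, damping=0.5)
print(f"damping alpha=0.5: converged = {ok} after {cycles} cycles, x = {x:.6f}")
```

Mixing in half of the old value turns the effective slope from -1.5 into -0.25, so the same map now contracts toward its fixed point. Overdamping would push the slope toward +1 and slow convergence, which matches the usual advice to reduce or switch off damping once the oscillations subside.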

Basis Set Normalization and Numerical Stability

Recent research highlights that the internal normalization of atomic orbitals (AOs) by quantum chemistry packages can impact results. AOs are typically normalized, but internal reduction procedures (pruning of primitive Gaussians) can cause the norm of contracted basis functions to deviate from unity [8]. This norm loss can affect sensitive molecular properties like Raman intensities and J-coupling constants. Studies show that different normalization approaches (e.g., preventing automatic reduction or renormalizing while retaining both positive and negative contraction components) can lead to non-negligible shifts in computed properties [8]. This underscores that basis set implementation is not just a numerical detail but can be a physically impactful step in SCF calculations.
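A minimal numerical illustration of norm loss: the sketch below computes the norm of a contracted s-type function from the analytic overlap of normalized s primitives, then drops one primitive without renormalizing. It uses the standard STO-3G hydrogen 1s contraction; removing the tightest primitive is a deliberately exaggerated form of pruning chosen for clarity and is not the specific reduction procedure studied in [8].

```python
import math


def s_primitive_overlap(a, b):
    """Overlap of two normalized s-type Gaussians on the same center."""
    return (2.0 * math.sqrt(a * b) / (a + b)) ** 1.5


def contracted_norm(exponents, coefficients):
    """Norm of sum_i c_i g_i, where each g_i is a normalized s primitive."""
    total = 0.0
    for a, ca in zip(exponents, coefficients):
        for b, cb in zip(exponents, coefficients):
            total += ca * cb * s_primitive_overlap(a, b)
    return math.sqrt(total)


# STO-3G hydrogen 1s contraction (standard published values).
H_EXPONENTS = [3.42525091, 0.62391373, 0.16885540]
H_COEFFICIENTS = [0.15432897, 0.53532814, 0.44463454]

full_norm = contracted_norm(H_EXPONENTS, H_COEFFICIENTS)
pruned_norm = contracted_norm(H_EXPONENTS[1:], H_COEFFICIENTS[1:])
renormalized = [c / pruned_norm for c in H_COEFFICIENTS[1:]]

print(f"full contraction norm:   {full_norm:.6f}")
print(f"pruned, not renormalized: {pruned_norm:.6f}")
print(f"pruned, renormalized:     {contracted_norm(H_EXPONENTS[1:], renormalized):.6f}")
```

Whether a program renormalizes after such a reduction, and how, is exactly the implementation detail the paragraph above flags as potentially affecting sensitive properties.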

Table 2: SCF Convergence Accelerators and Their Mechanisms

| Method | Key Mechanism | Advantages & Challenges |
|---|---|---|
| DIIS [7] [2] | Minimizes the commutator [F, PS] to extrapolate a new Fock matrix. | Fast and efficient in most cases; can oscillate or diverge for difficult cases. |
| EDIIS/ADIIS [7] | Minimizes an approximate energy function (EDIIS: quadratic; ADIIS: ARH) to combine Fock/Density matrices. | More robust than DIIS for difficult convergence; "ADIIS+DIIS" is a powerful combination. |
| Damping [2] | Mixes a fraction of the old density matrix with the new one. | Simple to implement; can slow down convergence if overused. |
| Level Shifting [2] | Artificially increases the HOMO-LUMO gap in the Fock matrix. | Very effective for systems with small gaps; too large a shift slows convergence, while the converged energy itself should not depend on the shift. |
| SOSCF [2] | Uses second-order orbital optimization (e.g., CIAH algorithm). | Quadratic convergence near solution; higher computational cost per iteration. |

The workflow below outlines the core SCF procedure and highlights key points where basis set choice and convergence accelerators intervene.

A Practical Guide for Researchers

Selecting an appropriate basis set requires balancing accuracy, computational cost, and the specific chemical problem.

Basis Set Selection and Error Management

General recommendations for researchers, particularly in fields like drug development, include:

- Rule of Thumb: For DFT calculations of energies and geometries, a balanced polarized triple-zeta basis set (e.g., `def2-TZVP`) is often sufficiently converged. Post-HF methods converge more slowly and may require quadruple-zeta basis sets or basis set extrapolation techniques for high accuracy [6].

- Initial Workflows: Use double-zeta basis sets (e.g., `def2-SVP`, `6-31G*`) for initial geometry optimizations of organic molecules, but do not trust final energies or sensitive properties with such small sets [6].

- Handling Weak Interactions: Calculating accurate weak interaction energies is challenging due to basis set superposition error (BSSE). While the counterpoise (CP) correction is standard, recent research shows that exponential-square-root basis set extrapolation from double-zeta (`def2-SVP`) to triple-zeta (`def2-TZVPP`) can achieve near-CBS accuracy for interaction energies without CP correction, reducing computational cost and SCF issues [5].

- Anions and Diffuse Functions: For anions or Rydberg states, diffuse functions are critical. Minimally augmented basis sets (e.g., `ma-TZVP`) provide an economical and stable alternative to fully augmented sets [6] [5].

- Consistency: Use one family of basis sets (e.g., the Ahlrichs `def2` series) for all elements in a system to ensure a balanced description and avoid problems [6].

The Scientist's Toolkit: Essential Computational Reagents

Table 3: Key "Research Reagent" Basis Sets and Their Functions

| Basis Set / Tool | Function / Purpose | Typical Application Context |
|---|---|---|
| STO-3G | Provides a minimal, computationally cheap representation of atomic orbitals. | Initial molecular geometry scans, educational purposes, very large systems. |
| 6-31G* | A standard split-valence polarized double-zeta basis set. | General-purpose geometry optimization for organic/main-group molecules. |
| def2-SVP | A modern, efficient split-valence polarized double-zeta basis set. | Default for initial geometry optimizations; part of the comprehensive def2 family. |
| def2-TZVP | A modern, efficient split-valence polarized triple-zeta basis set. | Recommended for final DFT single-point energies, optimizations, and frequencies. |
| cc-pVDZ / cc-pVTZ | Correlation-consistent double- and triple-zeta basis sets. | High-accuracy correlated wavefunction theory (e.g., MP2, CCSD(T)) calculations. |
| aug-cc-pVDZ | Correlation-consistent basis set with added diffuse functions. | Anions, excited states, weak interactions, and accurate molecular properties. |
| ma-TZVP | Minimally augmented def2-TZVP; adds a single diffuse s/p set economically. | Weak interaction calculations with DFT, reducing linear dependence vs. full augmentation. |
| Counterpoise (CP) Correction [5] | A method to correct for Basis Set Superposition Error (BSSE). | Essential for accurate intermolecular interaction energies with small-to-medium basis sets. |
| Basis Set Extrapolation [5] | A technique to estimate the Complete Basis Set (CBS) limit using results from two basis sets. | Achieving near-CBS accuracy for energies without the cost of a very large basis set. |

The development and application of basis sets continue to evolve, driven by new computational challenges and hardware paradigms.

Emerging Trends and Quantum Computing

- Quantum Computational Chemistry: A frontier in electronic structure theory is the adaptation of methods for quantum computers. Recent work has enabled solving chemistry problems in the first quantization with any basis set, not just plane waves, using techniques like linear-combination-of-unitaries (LCU) decomposition [9] [10]. This allows quantum algorithms to leverage efficient, compact quantum chemistry basis sets like atomic orbitals, potentially offering polynomial speedups [9]. Furthermore, methods like the unitary projector augmented-wave (UPAW) are being adapted for quantum computers to handle electron-nucleus interactions efficiently [10].

- High-Precision Normalization: As computational accuracy demands increase, the precise treatment of basis sets themselves is being re-examined. Tools like `BasisSculpt` are being developed to provide controlled, reproducible AO normalization, acknowledging that default procedures in classical software can impact properties sensitive to the electron density distribution [8].

Basis sets form the fundamental, non-physical approximation in most electronic structure calculations, directly governing the accuracy of the resulting wavefunction and all derived properties. Their composition, from the minimal STO-3G to augmented correlation-consistent sets, dictates the structure and properties of the Fock matrix and the subsequent path and success of the SCF convergence process. Challenges in SCF convergence, often exacerbated by poor basis set choices, are addressed by robust algorithms like ADIIS+DIIS and level shifting. For the practicing computational researcher, a deep understanding of the basis set hierarchy and its impact is indispensable for designing reliable computational protocols, especially in demanding fields like drug development where accurate energies and properties are paramount. The future of basis set technology is tightly linked to new computational paradigms, including the quest for high-precision normalization in classical computing and the novel formulation of basis sets for emerging quantum algorithms.

Self-Consistent Field (SCF) convergence is a foundational process in computational chemistry for determining molecular electronic structure. This technical guide examines the core convergence criteria—electronic energy change, density matrix change, and orbital gradients—exploring their theoretical interrelationships and practical implementation across major quantum chemistry packages. The analysis is framed within the critical context of basis set selection, highlighting how the choice and quality of the basis set directly influence the convergence behavior and the reliability of the resultant wavefunction. Furthermore, we detail experimental protocols for benchmarking convergence behavior and provide a "Scientist's Toolkit" of essential computational reagents, empowering researchers to diagnose and resolve SCF convergence failures, a common bottleneck in drug development pipelines.

The Self-Consistent Field (SCF) method is an iterative procedure central to Hartree-Fock theory and Kohn-Sham Density Functional Theory (DFT). Its goal is to find a set of molecular orbitals that are eigenfunctions of the Fock or Kohn-Sham operator, which itself depends on the resulting electron density. This cyclic dependency necessitates an iterative process, starting from an initial guess and proceeding until the solution is self-consistent [2].

The convergence of this process is not guaranteed and can be adversely affected by several factors, including the initial guess, the molecular system's electronic structure (e.g., small HOMO-LUMO gaps in transition metal complexes or open-shell systems), and notably, the choice of the atomic orbital basis set [11] [12]. The completeness and diffuseness of the basis set are critical; large, diffuse basis sets, while often essential for accuracy in properties like non-covalent interactions, can severely hamper the sparsity of the one-particle density matrix (1-PDM) and introduce convergence difficulties [13] [5]. This creates a fundamental tension between accuracy and computational tractability, placing the convergence criterion at the heart of effective computational research.

Core Convergence Metrics and Their Theoretical Linkages

A converged SCF solution corresponds to a stationary point on the energy surface of orbital rotations. Several metrics can quantify the proximity to this solution, each with distinct mathematical and practical significance.

The Change in Total Electronic Energy

The most intuitive convergence metric is the change in the total electronic energy between successive SCF cycles, \( \Delta E = |E_{i} - E_{i-1}| \). When this change falls below a predefined threshold, the energy is considered converged.

- Advantage: It has direct physical relevance, as the total energy is often the primary quantity of interest.

- Disadvantage: The energy is a scalar quantity that can exhibit false convergence, where the energy change becomes deceptively small even though the wavefunction or density has not yet reached a stationary point. This is particularly risky for subsequent property calculations that depend critically on the quality of the density matrix [14].

The Change in the Density Matrix

The root-mean-square (RMS) and maximum (Max) change in the density matrix (P) between cycles are widely used metrics. The change is defined as \( \Delta P = P_{i} - P_{i-1} \).

- Theoretical Basis: In the simplest terms, the Fock matrix (F) is a function of the density matrix, \( F = F(P) \). A stationary solution requires that the density matrix used to build the Fock matrix is the same as the one that results from its diagonalization. Thus, a negligible change in P signifies self-consistency.

- Advantage: Monitoring the change in P provides a more robust guarantee of true self-consistency than the energy alone [14]. A converged density is often a prerequisite for accurate post-SCF calculations like coupled cluster or CI [14].

The Orbital Gradient

The most rigorous convergence metric is the orbital gradient, which is the occupied-virtual block of the Fock matrix in the molecular orbital basis. The DIIS (Direct Inversion in the Iterative Subspace) method, a dominant SCF acceleration technique, specifically aims to minimize the norm of an error vector, often related to the commutator \( [\mathbf{F}, \mathbf{PS}] \), which is a proxy for the orbital gradient [11] [2].

- Theoretical Basis: From an optimization perspective, the SCF process is a minimization of the energy with respect to orbital rotation parameters. The orbital gradient is the direct mathematical derivative of the energy with respect to these parameters. A zero gradient is the definitive condition for a stationary point (minimum or saddle point) [14].

- Advantage: This is the fundamental quantity to minimize. Convergence based on a small orbital gradient ensures the solution is a true stationary point.

- Relationship to Density Change: A zero orbital gradient implies no further change in the density matrix, and vice versa. However, the numerical thresholds for these two metrics are on different scales [14].
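The three metrics can be combined into a single check, as sketched below. The threshold values and the function names are placeholders chosen for illustration and do not correspond to the defaults of any particular package; matrices are plain nested lists to keep the example dependency-free.

```python
import math


def density_change(p_new, p_old):
    """Return (RMS, max absolute) element-wise change between two matrices."""
    diffs = [a - b
             for row_new, row_old in zip(p_new, p_old)
             for a, b in zip(row_new, row_old)]
    rms = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return rms, max(abs(d) for d in diffs)


def is_converged(e_new, e_old, p_new, p_old, gradient_norm,
                 tol_e=1e-8, tol_rms_p=1e-8, tol_max_p=1e-6, tol_grad=1e-5):
    """Require energy, density, and orbital-gradient criteria simultaneously.

    All tolerances are hypothetical placeholders.
    """
    rms_p, max_p = density_change(p_new, p_old)
    checks = {
        "energy": abs(e_new - e_old) < tol_e,
        "rms_density": rms_p < tol_rms_p,
        "max_density": max_p < tol_max_p,
        "gradient": gradient_norm < tol_grad,
    }
    return all(checks.values()), checks


# Made-up numbers showing false convergence: the energy has stalled,
# but the density is still moving and the gradient is not small.
ok, checks = is_converged(
    e_new=-1.0, e_old=-1.0 + 1e-10,
    p_new=[[1.00, 0.0], [0.0, 0.00]],
    p_old=[[0.99, 0.0], [0.0, 0.01]],
    gradient_norm=1e-3,
)
print(ok, checks)
```

Reporting the individual checks, rather than a single boolean, makes it easier to see which criterion is holding up a difficult calculation.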

The logical and mathematical relationships between these three core metrics are summarized in the following diagram.

A Comparative Analysis of Convergence Criteria in Quantum Chemistry Software

Different quantum chemistry packages implement SCF convergence criteria with varying defaults and philosophies. The table below synthesizes the default convergence settings and key algorithms for several prominent software packages, illustrating the diversity of approaches.

Table 1: Default SCF Convergence Criteria and Algorithms in Select Quantum Chemistry Software

| Software Package | Default Convergence Metric(s) | Default Threshold(s) | Primary SCF Algorithm(s) | Key Reference |
|---|---|---|---|---|
| Q-Chem [11] | Wavefunction error (Max DIIS error) | 1.0×10⁻⁵ (Single point) | DIIS (Default), GDM, ADIIS | SCF_CONVERGENCE & SCF_ALGORITHM |
| ORCA [12] | Energy change & one-electron energy change | TolE: 3.0×10⁻⁷ (StrongSCF) | DIIS, TRAH | TolE, TolRMSP, TolMaxP |
| Gaussian [15] | RMS and Max density change | ~1.0×10⁻⁸ (TightSCF) | Combination of EDIIS and CDIIS | SCF=Conver=N (RMS=10⁻ᴺ, Max=10⁻⁽ᴺ⁻²⁾) |
| PySCF [2] | Energy and orbital gradient | Not explicitly stated | DIIS, SOSCF (Second-Order) | conv_tol & conv_tol_grad |

This comparative analysis reveals two primary philosophies. Packages like Gaussian and Q-Chem prioritize density-based or orbital gradient-based error metrics, which provide a more direct check for a self-consistent solution. In contrast, ORCA's default emphasizes the change in the total and one-electron energies [12] [14]. The one-electron energy check acts as a proxy for density convergence, as a changing density will necessarily alter the one-electron energy [14]. For critical applications, especially those feeding into post-SCF methods, it is considered best practice to enforce convergence on both the energy and the density/orbital gradient [14].

The Pivotal Role of Basis Sets in SCF Convergence

The atomic orbital basis set is not a passive component but an active determinant of SCF convergence behavior. Its influence is twofold, creating a "conundrum" for computational scientists [13].

The Blessing and Curse of Diffuse Functions

- The Blessing of Accuracy: Diffuse basis functions (e.g., in aug-cc-pVXZ or def2-TZVPPD sets) are essential for an accurate description of electron density in regions far from the nucleus. This is critical for properties such as non-covalent interaction energies, anion binding, and excited states [13] [5]. For example, the accuracy for non-covalent interactions improves dramatically with augmented triple-zeta basis sets (e.g., def2-TZVPPD or aug-cc-pVTZ) compared to their non-augmented counterparts [13].

- The Curse of Sparsity and Convergence: The addition of diffuse functions has a "detrimental impact on the sparsity of the one-particle density matrix" [13]. This reduces the locality of the electronic structure, causing significant numerical challenges and often leading to severe SCF convergence issues [13] [5]. This "curse of sparsity" counterintuitively worsens with larger, more accurate basis sets [13].
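One simple way to see the loss of locality is to look at how far the overlap between two s-type Gaussians extends. The sketch below counts, for a hypothetical linear chain of equally spaced centers, how many neighbors on one side overlap with a given function above a numerical threshold. The exponents, spacing, and threshold are illustrative assumptions, and overlap is only a rough proxy for the density-matrix sparsity discussed in [13].

```python
import math


def s_overlap_equal_exponents(exponent, distance):
    """Overlap of two normalized s Gaussians with the same exponent, separated by `distance` (bohr)."""
    return math.exp(-0.5 * exponent * distance ** 2)


def significant_neighbors(exponent, spacing=2.5, threshold=1e-6, max_neighbors=1000):
    """Number of centers on one side of a chain whose overlap exceeds `threshold`."""
    count = 0
    for n in range(1, max_neighbors + 1):
        if s_overlap_equal_exponents(exponent, n * spacing) <= threshold:
            break
        count += 1
    return count


# Hypothetical exponents: valence-like versus diffuse-like.
for label, exponent in [("valence-like", 0.30), ("diffuse-like", 0.03)]:
    print(f"{label:13s} alpha = {exponent:4.2f}  neighbors above threshold: "
          f"{significant_neighbors(exponent)}")
```

With these assumed values the diffuse-like function couples to four times as many neighbors per direction, and in three dimensions the number of non-negligible matrix elements grows much faster than that.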

Basis Set Superposition Error (BSSE) and Counterpoise Correction

The use of finite, localized basis sets introduces Basis Set Superposition Error (BSSE), an artificial lowering of the energy of interacting fragments in a complex. The standard correction is the Counterpoise (CP) method, which requires multiple SCF calculations and can itself be a source of convergence problems [5]. Basis set extrapolation schemes have been proposed as an alternative that can alleviate the need for CP correction while also reducing SCF convergence issues associated with large, diffuse basis sets [5].
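The bookkeeping behind the counterpoise correction is straightforward once the individual energies are available. The sketch below follows the usual Boys-Bernardi scheme, assuming both monomers are kept at their in-complex geometries; all energies in the example are invented numbers in hartree, and the function names are illustrative.

```python
def uncorrected_interaction_energy(e_ab, e_a_own_basis, e_b_own_basis):
    """E_int = E(AB) - E(A in A basis) - E(B in B basis)."""
    return e_ab - e_a_own_basis - e_b_own_basis


def bsse_estimate(e_a_own_basis, e_a_dimer_basis, e_b_own_basis, e_b_dimer_basis):
    """Energy lowering each monomer gains from the partner's (ghost) basis functions."""
    return (e_a_own_basis - e_a_dimer_basis) + (e_b_own_basis - e_b_dimer_basis)


def counterpoise_interaction_energy(e_ab, e_a_dimer_basis, e_b_dimer_basis):
    """E_int(CP) = E(AB) - E(A in AB basis) - E(B in AB basis)."""
    return e_ab - e_a_dimer_basis - e_b_dimer_basis


# Invented energies (hartree) for a hypothetical dimer.
E_AB = -152.5100
E_A_OWN, E_B_OWN = -76.2500, -76.2500
E_A_GHOST, E_B_GHOST = -76.2512, -76.2508   # monomers computed in the full dimer basis

raw = uncorrected_interaction_energy(E_AB, E_A_OWN, E_B_OWN)
bsse = bsse_estimate(E_A_OWN, E_A_GHOST, E_B_OWN, E_B_GHOST)
corrected = counterpoise_interaction_energy(E_AB, E_A_GHOST, E_B_GHOST)
print(f"uncorrected = {raw:.4f}  BSSE = {bsse:.4f}  CP-corrected = {corrected:.4f}")
```

The scheme needs five SCF calculations for a dimer (the complex, each monomer in its own basis, and each monomer in the dimer basis), and every one of them is a separate opportunity for a convergence failure.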

Experimental Protocols for Investigating SCF Convergence

To systematically study the impact of basis sets and convergence criteria, researchers can adopt the following benchmark protocol.

Protocol: Benchmarking Convergence Behavior

Objective: To quantitatively assess the convergence profile (number of cycles, stability) of a target molecular system across different basis sets and convergence criteria.

- System Selection: Choose a representative molecule relevant to your research (e.g., a drug fragment, a transition metal catalyst). Systems with known convergence challenges (e.g., open-shell singlets, small-gap systems) are ideal.

- Basis Set Series: Select a hierarchy of basis sets. A recommended series is `def2-SVP` → `def2-TZVP` → `def2-TZVPP` → `def2-TZVPPD` (or `cc-pVDZ` → `cc-pVTZ` → `aug-cc-pVTZ`).

- Computational Level: Fix the theoretical method (e.g., `B3LYP-D3(BJ)`) and other computational parameters (integration grid, memory) across all calculations.

- Convergence Criteria: Perform calculations for each basis set using a range of convergence criteria (e.g., `LooseSCF`, `StrongSCF`, `TightSCF` in ORCA [12], or by tightening `SCF_CONVERGENCE` in Q-Chem [11]).

- Data Collection: For each run, record: a) the number of SCF cycles to convergence, b) the final total energy, c) the final density/energy/gradient change values, and d) whether the calculation converged successfully.

- Analysis: Plot the number of cycles versus basis set size and tightness. Analyze the sensitivity of the final energy to the convergence threshold for each basis set.
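Setting up the grid of calculations is easy to script. The sketch below generates one ORCA input per combination of basis set and SCF keyword from the protocol; the geometry file name `molecule.xyz`, the charge and multiplicity, and the output file naming scheme are placeholders to adapt to the system under study.

```python
from itertools import product

BASIS_SETS = ["def2-SVP", "def2-TZVP", "def2-TZVPP", "def2-TZVPPD"]
SCF_CRITERIA = ["LooseSCF", "StrongSCF", "TightSCF"]


def build_orca_input(basis, scf_keyword, xyz_file="molecule.xyz",
                     charge=0, multiplicity=1):
    """Return ORCA input text with a fixed method and a variable basis/SCF keyword."""
    return (
        f"! B3LYP D3BJ {basis} {scf_keyword}\n"
        f"* xyzfile {charge} {multiplicity} {xyz_file}\n"
    )


def build_benchmark_inputs():
    """Map a (hypothetical) file name to input text for every basis/criterion pair."""
    return {
        f"{basis}_{keyword}.inp": build_orca_input(basis, keyword)
        for basis, keyword in product(BASIS_SETS, SCF_CRITERIA)
    }


inputs = build_benchmark_inputs()
print(f"{len(inputs)} inputs prepared")
print(inputs["def2-TZVPPD_TightSCF.inp"])
```

Writing the files and parsing cycle counts from the outputs are left out on purpose, since those steps depend on the local queueing setup and the ORCA version in use.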

The workflow for this protocol is visualized below.

Protocol: Basis Set Extrapolation for Weak Interactions

As explored in recent research [5], an optimized exponential-square-root extrapolation scheme can be used to achieve near-complete-basis-set (CBS) accuracy for weak interaction energies while avoiding the SCF challenges of large, diffuse basis sets.

- Calculation: Perform single-point energy calculations on the complex and its monomers using two smaller basis sets (e.g., `def2-SVP` and `def2-TZVPP`).

- Extrapolation: Apply the two-point extrapolation formula \( E_{\mathrm{CBS}} = E_X - A \cdot e^{-\alpha \sqrt{X}} \), where \( X \) is the basis set cardinal number (2 for DZ, 3 for TZ). Use an optimized parameter (e.g., \( \alpha = 5.674 \) for `B3LYP-D3(BJ)` with the def2 series) [5].

- Interaction Energy: Compute the CP-uncorrected interaction energy using the extrapolated CBS energies for the complex and monomers.

This protocol has been shown to yield results comparable to CP-corrected calculations with larger basis sets, but with significantly reduced computational cost and SCF convergence issues [5].
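Writing the extrapolation formula for X = 2 and X = 3 gives two equations in the two unknowns A and the CBS energy, so A can be eliminated analytically. The sketch below implements that elimination; the value of α is the one quoted above, while the function names and the synthetic energies used to check the algebra are assumptions made for illustration.

```python
import math


def extrapolate_cbs(e_dz, e_tz, alpha=5.674, x_dz=2, x_tz=3):
    """Two-point exponential-square-root extrapolation.

    Solves E_X = E_CBS + A * exp(-alpha * sqrt(X)) for E_CBS using X = x_dz, x_tz.
    """
    w_dz = math.exp(-alpha * math.sqrt(x_dz))
    w_tz = math.exp(-alpha * math.sqrt(x_tz))
    amplitude = (e_dz - e_tz) / (w_dz - w_tz)
    return e_tz - amplitude * w_tz


def extrapolated_interaction_energy(complex_energies, monomer_a_energies, monomer_b_energies):
    """CP-uncorrected interaction energy from (E_DZ, E_TZ) pairs for each species."""
    return (extrapolate_cbs(*complex_energies)
            - extrapolate_cbs(*monomer_a_energies)
            - extrapolate_cbs(*monomer_b_energies))


# Consistency check on synthetic data generated from a known limit (hartree).
TRUE_CBS, TRUE_A, ALPHA = -100.0, 50.0, 5.674
e_dz = TRUE_CBS + TRUE_A * math.exp(-ALPHA * math.sqrt(2))
e_tz = TRUE_CBS + TRUE_A * math.exp(-ALPHA * math.sqrt(3))
print(f"E(DZ) = {e_dz:.6f}  E(TZ) = {e_tz:.6f}  extrapolated = {extrapolate_cbs(e_dz, e_tz):.6f}")
```

The check only confirms that the algebra inverts the model exactly; how well real energies follow the exponential-square-root form is the empirical question addressed in [5].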

The Scientist's Toolkit: Essential Research Reagents

Successfully navigating SCF convergence challenges requires a toolkit of both methodological strategies and computational "reagents." The following table details key solutions.

Table 2: Research Reagent Solutions for SCF Convergence

| Reagent / Solution | Function & Purpose | Example Usage / Keyword |
|---|---|---|
| Initial Guess Variants | Provides a starting point for the SCF iteration. A good guess is crucial for fast and stable convergence. | init_guess = 'minao' (PySCF) [2], SCF_GUESS = ATOM (Q-Chem). |
| DIIS & GDM Algorithms | Accelerates convergence by extrapolating new Fock matrices from previous iterations (DIIS) or via robust direct minimization (GDM). | SCF_ALGORITHM = DIIS_GDM (Q-Chem) [11], .newton() (PySCF SOSCF) [2]. |
| Level Shifting | Increases the energy gap between occupied and virtual orbitals, stabilizing the SCF process in small-gap systems. | LEVEL_SHIFT = [value] (Various), SCF=VShift (Gaussian) [15]. |
| Damping | Mixes a fraction of the previous Fock/density with the new one to prevent large oscillations in early iterations. | DAMP = [value] (Various), mf.damp = 0.5 (PySCF) [2]. |
| Fermi Smearing | Uses fractional orbital occupations at a finite electronic temperature to help converge metallic systems or those with near-degeneracies. | SCF=Fermi (Gaussian) [15], Smearing (PySCF) [2]. |
| Stability Analysis | Checks if the converged wavefunction is a true minimum or an unstable saddle point, guiding restarts with a broken-symmetry guess. | ! Stable (ORCA), mf.stability() (PySCF) [2]. |
| Compact Basis Sets | Reduces SCF convergence issues by avoiding diffuse functions, potentially at the cost of accuracy for certain properties. | def2-SVP, def2-TZVP [13]. |
| Complementary Auxiliary Basis Sets (CABS) | A proposed solution to achieve high accuracy with compact, low angular momentum basis sets, mitigating the "curse of sparsity" [13]. | CABS singles correction [13]. |
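The effect of damping listed in the table can be seen in a toy fixed-point iteration. The sketch below is not an SCF code: a single number stands in for the density, and `toy_update` is a hypothetical map chosen so that the undamped iteration oscillates with growing amplitude. The mixing rule, however, is the same one the table describes: keep a fraction `damp` of the previous iterate.

```python
def iterate(update, x0, damp=0.0, tol=1e-10, max_cycle=50):
    """Fixed-point iteration x <- (1 - damp) * update(x) + damp * x."""
    x = x0
    for cycle in range(1, max_cycle + 1):
        x_new = (1.0 - damp) * update(x) + damp * x
        if abs(x_new - x) < tol:
            return x_new, cycle, True
        x = x_new
    return x, max_cycle, False


def toy_update(x):
    """Hypothetical update with slope -1.8, so plain iteration overshoots."""
    return 1.0 - 1.8 * x


plain = iterate(toy_update, x0=0.0)
damped = iterate(toy_update, x0=0.0, damp=0.5)

print("undamped converged:", plain[2])
print("damped converged:  ", damped[2], "after", damped[1], "cycles")
```

With `damp=0.5` the effective slope of the iteration drops from -1.8 to -0.4, which is why the damped run settles while the plain one does not. Real SCF damping acts on Fock or density matrices, but the stabilizing mechanism is the same.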

The choice of SCF convergence criterion is a critical decision that balances computational cost with the required reliability of the electronic wavefunction. While the change in total energy is a simple metric, convergence based on the density matrix or the orbital gradient provides a more robust guarantee of a self-consistent solution, which is paramount for subsequent property calculations. This entire convergence landscape is profoundly shaped by the selection of the atomic orbital basis set. The researcher must therefore navigate the inherent trade-off: diffuse-rich basis sets offer a "blessing" of accuracy for key chemical phenomena but introduce a "curse" of numerical instability and poor convergence. By leveraging the experimental protocols and toolkit items outlined in this guide—such as systematic benchmarking, basis set extrapolation, and advanced algorithms like GDM and SOSCF—computational chemists and drug developers can strategically overcome SCF convergence barriers, ensuring efficient and reliable electronic structure modeling in their research.

The self-consistent field (SCF) method serves as the foundational computational procedure for solving electronic structure problems in quantum chemistry, from which more advanced correlated methods are built. The choice of atomic orbital basis set is a critical determinant of the accuracy, efficiency, and reliability of these calculations. Within this context, diffuse basis functions—characterized by their small exponents and spatially extended electron densities—present a particularly complex tradeoff for computational chemists. These functions are essential for describing subtle electronic phenomena but introduce significant numerical challenges that can impede SCF convergence.

This technical guide examines the dual nature of diffuse functions within the broader research landscape of SCF convergence. While the accuracy benefits of diffuse functions for properties like non-covalent interactions and excited states are well-established, their impact on convergence stability and computational tractability deserves equal attention. We explore the mechanistic origins of these challenges, present quantitative assessments of their effects, and provide structured protocols for their mitigation, offering researchers a comprehensive framework for making informed basis set selections.

The Critical Role of Basis Sets in SCF Theory

The SCF procedure requires the iterative solution of the Hartree-Fock or Kohn-Sham equations until a consistent electronic configuration is found. The basis set forms the mathematical foundation for this process, representing molecular orbitals as linear combinations of atomic-centered functions. The completeness and quality of this basis directly control the accuracy of the resulting wavefunction and energy.

Atom-centered basis sets, particularly Gaussian-type orbitals (GTOs), are widely used because their integrals can be evaluated efficiently and because atom-centered functions describe the region around each nucleus with relatively few functions [16]. The standard hierarchy progresses from minimal (SZ) to double-zeta (DZ), triple-zeta (TZ), and quadruple-zeta (QZ) quality, each step adding more flexibility to the description of the valence electron distribution. Polarization functions (denoted by 'P') of higher angular momentum are then added to describe orbital distortion, while diffuse functions (often denoted by 'aug-' or '+') with small exponents are included to capture the electron density far from atomic nuclei.

The SCF convergence process is particularly sensitive to the numerical conditioning of the overlap and Fock matrices. As basis sets grow larger and more diffuse, these matrices can become ill-conditioned, leading to the oscillatory behavior and convergence failure observed in practice [16] [17]. Understanding this fundamental relationship between basis set structure and SCF stability is essential for effective computational research.

The Blessing: Accuracy for Weak Interactions

Quantitative Accuracy Improvements

Diffuse functions are indispensable for achieving chemical accuracy in properties that depend on an accurate description of the distant electron density. The table below summarizes the dramatic improvement diffuse functions provide for non-covalent interaction (NCI) energies, using the ωB97X-V functional and the ASCDB benchmark [13].

Table 1: Basis Set Errors for Non-Covalent Interactions (RMSD in kJ/mol)

| Basis Set | NCI RMSD (Basis Error Only) | NCI RMSD (Method + Basis Error) |
|---|---|---|
| def2-SVP | 31.33 | 31.51 |
| def2-TZVP | 7.75 | 8.20 |
| def2-TZVPPD | 0.73 | 2.45 |
| cc-pVDZ | 30.17 | 30.31 |
| cc-pVTZ | 12.46 | 12.73 |
| aug-cc-pVDZ | 4.32 | 4.83 |
| aug-cc-pVTZ | 1.23 | 2.50 |

The data show that unaugmented basis sets, even of triple-zeta quality, introduce basis-set errors above 7 kJ/mol in NCIs, well beyond the roughly 4 kJ/mol (1 kcal/mol) conventionally taken as chemical accuracy. Adding diffuse functions reduces the basis-set error to below 1.3 kJ/mol for the augmented triple-zeta sets (def2-TZVPPD and aug-cc-pVTZ), making them essential for reliable supramolecular and drug design applications.

Physical Origins of Accuracy Improvements

The accuracy improvements afforded by diffuse functions stem from their ability to model key physical phenomena:

Electron Density Tails: Diffuse functions provide the mathematical flexibility to describe the exponentially decaying electron density at large distances from atomic nuclei, which is poorly represented by standard compact basis functions.

Intermolecular Overlap: Non-covalent interactions arise from subtle electron density overlaps between separated molecules. Diffuse functions capture these weak interactions by providing non-zero overlap in the critical regions between molecules.

Polarization Responses: External fields or nearby charges induce polarization that extends electron density away from nuclear centers. Diffuse basis functions are essential for modeling these response properties.

Rydberg and Excited States: Electronic excitations to diffuse states require basis functions with extended spatial characteristics, making diffuse functions mandatory for accurate excited-state calculations [18].

The Curse: Convergence and Sparsity Challenges

The SCF Convergence Problem

Despite their accuracy benefits, diffuse functions introduce severe numerical challenges that manifest directly in SCF convergence difficulties. Researchers report that calculations converging readily with standard basis sets (e.g., def2-TZVP) become "really noisy and weird" and fail to converge when diffuse functions are added [17]. These convergence failures appear as large oscillations in the SCF energy and density between iterations, preventing the solution from stabilizing.

The root causes of these convergence issues include:

Ill-Conditioned Overlap Matrices: Diffuse functions on adjacent atoms exhibit significant linear dependence, causing the overlap matrix to become nearly singular [19].

Extended Orbital Relaxation: The SCF process requires more iterations to achieve self-consistency when the wavefunction has flexible diffuse components.

Energy Level Crowding: Diffuse functions introduce virtual orbitals with similar energies, creating near-degeneracies that challenge diagonalization algorithms.

The Sparsity Destruction Effect

Beyond convergence issues, diffuse functions dramatically reduce sparsity in the one-particle density matrix (1-PDM), which is crucial for linear-scaling electronic structure methods [13]. The table below quantifies this effect for a DNA fragment (1,052 atoms) using different basis sets.

Table 2: Impact of Basis Sets on 1-PDM Sparsity and Computation Time

| Basis Set | 1-PDM Sparsity | Relative Computation Time | Typical Application |
|---|---|---|---|
| STO-3G | High | 1× | Preliminary screening |
| def2-SVP | Moderate | ~3× | Geometry optimization |
| def2-TZVP | Reduced | ~9× | Single-point energy |
| def2-TZVPPD | Very Low | ~28× | Non-covalent interactions |
| aug-cc-pVTZ | Very Low | ~53× | High-accuracy properties |

This "curse of sparsity" occurs because the inverse overlap matrix (S⁻¹) becomes significantly less sparse with diffuse functions, causing the 1-PDM to have important elements between spatially distant basis functions, despite the underlying electronic structure being local [13]. This effect is particularly pronounced for small, diffuse basis sets, creating a paradoxical situation where larger basis sets eventually recover better sparsity in the asymptotic limit, though at tremendous computational cost.

Mechanistic Insights: Mathematical Origins

Linear Dependence and Numerical Conditioning

The fundamental mathematical challenge with diffuse functions arises from linear dependence in the basis set. When diffuse functions on adjacent atoms overlap significantly, they become nearly linearly dependent, causing the eigenvalues of the overlap matrix to approach zero. The condition number of the overlap matrix (ratio of largest to smallest eigenvalue) increases dramatically, amplifying numerical errors in the matrix inversion and diagonalization steps within the SCF procedure.

This effect is particularly pronounced in molecular systems with many atoms in close proximity, where diffuse functions from multiple centers create an overcomplete representation of the space. The transformation required to remove these linear dependencies mixes functions of different angular momenta, further complicating the physical interpretation of results [19].
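The link between small exponents and an ill-conditioned overlap matrix can be checked with the closed-form overlap of two normalized s-type Gaussian primitives. The sketch below is a minimal illustration: two identical functions on centers a fixed distance apart, so the overlap matrix is [[1, s], [s, 1]] with eigenvalues 1 − s and 1 + s. The 2.0 bohr separation and the list of exponents are arbitrary choices for illustration.

```python
import math


def s_gaussian_overlap(alpha, beta, distance):
    """Overlap of two normalized s-type Gaussian primitives (atomic units)."""
    p = alpha + beta
    prefactor = (2.0 * math.sqrt(alpha * beta) / p) ** 1.5
    return prefactor * math.exp(-alpha * beta * distance**2 / p)


def two_function_condition_number(overlap):
    """Condition number of [[1, s], [s, 1]]: (1 + s) / (1 - s)."""
    return (1.0 + overlap) / (1.0 - overlap)


DISTANCE = 2.0  # bohr; arbitrary, bond-length-like separation

report = {}
for exponent in (1.0, 0.1, 0.01, 0.001):
    s = s_gaussian_overlap(exponent, exponent, DISTANCE)
    report[exponent] = (s, two_function_condition_number(s))
    print(f"exponent={exponent:<6} overlap={s:.6f} cond={report[exponent][1]:.1f}")
```

As the exponent shrinks, the overlap approaches 1 and the smaller eigenvalue 1 − s approaches zero, so the condition number grows without bound. In a real molecule many such pairs interact at once, which compounds the effect.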

Real-Space Representation Analysis

The paradoxical sparsity destruction can be understood by examining the real-space representation of the density matrix. For insulating systems with significant HOMO-LUMO gaps, the matrix elements of the 1-PDM are expected to decay exponentially with increasing real-space distance from the diagonal [13]. However, when represented in a diffuse atomic orbital basis, this decay is masked by the non-locality of the contra-variant basis functions obtained through the inverse overlap matrix.

In mathematical terms, while the co-variant Bloch functions (the original basis) may be local, their contra-variant duals are necessarily non-local, and this non-locality becomes more severe with diffuse functions. This explains why the sparsity problem persists even when projecting the 1-PDM onto a real-space grid—it is an intrinsic property of the basis set representation rather than the underlying physics.

Model System Analysis

Analysis of an infinite non-interacting chain of helium atoms reveals that the exponential decay rate of the 1-PDM is proportional to both the diffuseness and local incompleteness of the basis set [13]. This simple model shows that non-locality can arise even in systems with highly local electronic structures and basis sets that consider only nearest-neighbor overlap. The key insight is that small, diffuse basis sets are affected most severely, creating the worst-case scenario for sparsity.
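A toy calculation makes the nearest-neighbor argument concrete. The sketch below is not the helium-chain model of [13]. It only assumes a chain of basis functions with unit self-overlap and a single nearest-neighbor overlap `s`, then measures how quickly the elements of S⁻¹ decay away from the diagonal. For an infinite chain the ratio of successive elements has the closed form (1 − √(1 − 4s²)) / (2s), which the numerical inverse should reproduce near the chain center.

```python
import numpy as np


def inverse_overlap_decay(s, n_sites=81):
    """Ratio |S^-1[c, c+1] / S^-1[c, c]| at the center of a finite chain."""
    S = np.eye(n_sites) + s * (np.eye(n_sites, k=1) + np.eye(n_sites, k=-1))
    S_inv = np.linalg.inv(S)
    center = n_sites // 2
    return abs(S_inv[center, center + 1] / S_inv[center, center])


def analytic_decay(s):
    """Infinite-chain decay ratio; requires 0 < s < 0.5 for a positive-definite S."""
    return (1.0 - np.sqrt(1.0 - 4.0 * s**2)) / (2.0 * s)


decay = {s: inverse_overlap_decay(s) for s in (0.10, 0.30, 0.45)}
for s, ratio in decay.items():
    print(f"s={s:.2f}  numerical={ratio:.6f}  analytic={analytic_decay(s):.6f}")
```

Although S itself is strictly tridiagonal, its inverse is dense, and a ratio closer to 1 means slower decay. Larger neighbor overlap, the hallmark of diffuse functions, therefore produces a less sparse S⁻¹ even in this minimal model.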

Experimental Protocols and Methodologies

Basis Set Optimization for Strong Field Ionization

Studies of strong field ionization using time-dependent configuration interaction with complex absorbing potential (TDCI-CAP) require carefully designed diffuse basis sets. The following protocol has been established for constructing effective basis sets [19]:

Start with Standard Molecular Basis: Begin with a standard basis set such as aug-cc-pVTZ as the foundation.

Add Even-Tempered Diffuse Functions: Augment with diffuse s, p, d, and f Gaussian functions using even-tempered exponents of the form 0.0001 × 2ⁿ placed on each atom.

Evaluate Radial Distribution: Ensure the largest contribution to the ionization rate comes from functions with a maximum in their radial distribution near the onset of the complex absorbing potential (typically 3.5 times the van der Waals radius for each atom).

Assess Angular Dependence: Verify that the basis set reproduces the rate and shape of the angular dependence of strong field ionization.

Special Cases for Electronegative Atoms: For electronegative atoms like oxygen, include additional f functions with tighter exponents (0.0512 and 0.1024) beyond the standard diffuse functions.

The optimal exponents for the most diffuse functions in this application are 0.0032 (s, p) and 0.0064 (d, f), or smaller [19].
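The even-tempered series in this protocol is easy to generate programmatically. The sketch below assumes the 0.0001 × 2ⁿ form quoted above; the function name and the range of n are illustrative choices, not part of the published protocol.

```python
import math


def even_tempered_exponents(n_min, n_max, base=0.0001, ratio=2.0):
    """Return the exponents base * ratio**n for n = n_min .. n_max."""
    return [base * ratio**n for n in range(n_min, n_max + 1)]


exponents = even_tempered_exponents(0, 10)
for n, value in enumerate(exponents):
    print(f"n={n:2d}  exponent={value:.4f}")

# The values quoted in the text correspond to specific members of the series.
s_p_most_diffuse = exponents[5]   # 0.0001 * 2**5
d_f_most_diffuse = exponents[6]   # 0.0001 * 2**6
tight_f_pair = exponents[9:11]    # 0.0001 * 2**9 and 0.0001 * 2**10
```

Indexing the list by n shows where the quoted exponents sit: 0.0032 and 0.0064 are the n = 5 and n = 6 members, and the tighter f functions for electronegative atoms are the n = 9 and n = 10 members.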

Convergence Troubleshooting Protocol

When facing SCF convergence failures with diffuse basis sets, employ this systematic protocol [17]:

Initial Assessment:

- Verify convergence with a standard basis set (e.g., def2-TZVP)

- Introduce diffuse functions incrementally

SCF Algorithm Adjustments:

- Implement level shifting to stabilize initial cycles

- Experiment with different convergence accelerators (DIIS, EDIIS, KDIIS)

- Adjust SCF convergence thresholds gradually

Basis Set Modification:

- Remove the most diffuse functions (smallest exponents) first

- Consider basis set projection techniques

- Evaluate linear dependence via overlap matrix condition number

Advanced Techniques:

Figure 1: SCF Convergence Troubleshooting Protocol
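The level-shifting step of this protocol can be illustrated on a small model Fock matrix. The sketch below assumes an orthonormal basis and builds the occupied projector from the eigenvectors of the same matrix, so the virtual orbital energies move up by exactly the shift. In a real SCF cycle the projector comes from the previous iteration's density and the basis is non-orthogonal, so the separation is only approximate. The matrix entries and the 0.5 hartree shift are arbitrary illustrative values.

```python
import numpy as np


def level_shift(F, n_occ, shift):
    """Return F + shift * (I - P_occ) for an orthonormal basis."""
    _, C = np.linalg.eigh(F)
    C_occ = C[:, :n_occ]
    P_occ = C_occ @ C_occ.T  # projector onto the occupied space
    return F + shift * (np.eye(F.shape[0]) - P_occ)


def homo_lumo_gap(F, n_occ):
    """Gap between the highest occupied and lowest virtual eigenvalue."""
    eps = np.linalg.eigvalsh(F)
    return eps[n_occ] - eps[n_occ - 1]


# Model Fock matrix with two nearly degenerate levels around -0.5.
F = np.array([[-1.00, 0.10, 0.05],
              [0.10, -0.52, 0.08],
              [0.05, 0.08, -0.50]])

gap_before = homo_lumo_gap(F, n_occ=2)
gap_after = homo_lumo_gap(level_shift(F, n_occ=2, shift=0.5), n_occ=2)
print(f"gap before: {gap_before:.4f}  gap after: {gap_after:.4f}")
```

A wider gap reduces the mixing between occupied and virtual orbitals from one iteration to the next, which is why level shifting stabilizes small-gap cases at the price of slower convergence.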

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Managing Diffuse Function Challenges

| Tool Category | Specific Examples | Function/Purpose |
|---|---|---|
| Standard Basis Sets | def2-SVP, def2-TZVP, cc-pVDZ, cc-pVTZ | Provide foundation for calculations; establish baseline convergence behavior |
| Diffuse-Augmented Sets | def2-SVPD, def2-TZVPPD, aug-cc-pVXZ | Add diffuse functions for accuracy in NCIs, excited states, and anion properties |
| Even-Tempered Sets | ET-pVQZ, ET-QZ3P-1DIFFUSE, ET-QZ3P-2DIFFUSE | Enable systematic approach to basis set limit with controlled diffuse augmentation |
| Specialized Sets | ZORA/TZ2P, Corr/TZ3P, AUG/ADZP | Address specific needs: relativity, correlation, response properties |
| Algorithmic Tools | DIIS, Level shifting, Multigrid preconditioning | Stabilize SCF convergence with challenging basis sets |
| Analysis Frameworks | CABS correction, Density matrix purification | Recover accuracy or sparsity compromised by diffuse functions |

Emerging Solutions and Future Directions

Discontinuous Galerkin Frameworks

Novel discontinuous Galerkin (DG) frameworks show promise for addressing the challenges of diffuse basis sets by allowing basis functions to be discontinuous across element interfaces [16]. This approach provides several advantages:

Improved Numerical Conditioning: DG basis sets maintain favorable numerical conditioning even as basis set size increases, avoiding the ill-conditioning that plagues traditional diffuse-augmented basis sets.

Structured Sparsity: The method induces structured sparsity in one- and two-electron integrals, potentially mitigating the "curse of sparsity" associated with diffuse functions.

Adaptive Preconditioning: DG frameworks naturally support adaptive multigrid preconditioning for the linear eigensolvers used in SCF calculations, significantly improving convergence behavior.

CABS Singles Correction

The complementary auxiliary basis set (CABS) singles correction offers an alternative approach by combining compact, low angular-momentum basis sets with auxiliary functions to recover the accuracy typically requiring diffuse functions [13]. This strategy addresses the sparsity conundrum by:

Preserving Sparsity: Using a compact primary basis maintains sparsity in the 1-PDM while achieving accuracy through corrections.

Systematic Improvement: The approach provides a well-defined path toward the complete basis set limit without the severe numerical penalties of traditional diffuse augmentation.

Computational Efficiency: Calculations demonstrate promising results for non-covalent interactions with significantly reduced computational resources compared to traditional diffuse-augmented basis sets.

Figure 2: Emerging Solutions for Diffuse Function Challenges

Diffuse basis functions remain a double-edged sword in electronic structure calculations—indispensable for achieving target accuracy in non-covalent interactions, excited states, and response properties, yet problematic for SCF convergence and computational efficiency. The mechanistic understanding of these challenges, rooted in the mathematical properties of the basis set representation rather than the underlying physics, provides a foundation for developing effective mitigation strategies.

As computational chemistry continues to address increasingly complex systems in drug design and materials science, the intelligent application of diffuse functions—balanced with emerging approaches like discontinuous Galerkin methods and CABS corrections—will be essential for combining accuracy with computational tractability. By understanding both the blessings and curses of these basis set components, researchers can make informed decisions that advance both scientific knowledge and practical applications.

In the pursuit of chemical accuracy in quantum chemical simulations, researchers often employ increasingly large and diffuse basis sets to approach the complete basis set (CBS) limit. While this strategy generally improves the description of molecular systems, particularly for properties involving electron correlation, weak interactions, or excited states, it introduces significant numerical challenges that can compromise computational outcomes. The phenomenon of linear dependency represents a critical instability that emerges when basis functions become excessively similar or redundant, leading to a precipitous decline in the conditioning of the mathematical problem. Within the context of self-consistent field (SCF) convergence research, understanding and mitigating linear dependency is paramount, as it directly impacts the reliability, efficiency, and predictive power of computational chemistry across scientific domains, including rational drug design where accurate molecular properties are non-negotiable.

The fundamental paradox lies in the competing demands of accuracy and stability. Large, diffuse basis sets (such as aug-cc-pVQZ or ma-def2-TZVPP) provide the flexibility needed to describe subtle electronic effects, polarization, and dispersion interactions [20] [5]. However, this very flexibility increases the risk of numerical redundancy. When the overlap between different basis functions approaches unity, the overlap matrix S in the Roothaan-Hall equations FC = SCE becomes nearly singular [2]. This ill-conditioning manifests as convergence failures in the SCF procedure, unphysical oscillations in calculated properties, and ultimately, unreliable scientific conclusions. This technical guide examines the genesis of linear dependency, its computational signatures, and robust mitigation strategies essential for maintaining scientific rigor in advanced quantum chemical applications.

The Mathematical Foundation of Linear Dependency

Conceptual Underpinnings in LCAO-MO Theory

The Linear Combination of Atomic Orbitals (LCAO) approach to Molecular Orbital (MO) theory constructs molecular wavefunctions from a basis of atom-centered functions. Mathematically, this requires solving a generalized eigenvalue problem of the form:

FC = SCE

where F is the Fock matrix, S is the overlap matrix between basis functions, C contains the molecular orbital coefficients, and E is the diagonal matrix of orbital energies [2]. The overlap matrix S is central to understanding linear dependency. Its elements Sμν = ⟨χμ|χν⟩ are the overlap integrals between basis functions χμ and χν.

A basis set is considered linearly independent if the determinant of the overlap matrix is non-zero. However, as basis sets grow larger—particularly with the addition of multiple diffuse and high-angular momentum functions—the individual basis functions on different atoms can become increasingly similar. When the overlap between functions approaches unity, the overlap matrix becomes numerically singular (its determinant approaches zero), creating an ill-conditioned eigenvalue problem that standard diagonalization routines cannot reliably solve [21] [2].

Mechanisms Leading to Numerical Instability

Several specific mechanisms drive the emergence of linear dependency in expanded basis sets:

Diffuse Function Overlap: Diffuse functions (characterized by small exponents) exhibit slow exponential decay, causing them to remain significant over longer distances. In molecular systems with multiple atoms in close proximity, the diffuse functions on different centers develop substantial overlap, creating near-redundancies in the basis [20] [5].

Basis Set Saturation: With increasing basis set quality (e.g., moving from triple-zeta to quadruple-zeta), the additional functions provide diminishing returns in terms of energy lowering while raising both the computational cost and the risk of linear dependencies. The table below illustrates how computational cost escalates with basis set size while the potential for instability grows:

Table 1: Computational Trade-offs with Increasing Basis Set Quality

| Basis Set | Energy Error (eV/atom)* | CPU Time Ratio* | Risk of Linear Dependency |
|---|---|---|---|
| SZ | 1.8 | 1.0 | Low |
| DZ | 0.46 | 1.5 | Low |
| DZP | 0.16 | 2.5 | Moderate |
| TZP | 0.048 | 3.8 | Moderate |
| TZ2P | 0.016 | 6.1 | High |
| QZ4P | reference | 14.3 | Very High |

*Data adapted from BAND documentation benchmarking on a carbon nanotube system [22]

- System-Specific Vulnerabilities: Linear dependency problems are particularly acute in certain chemical systems:

- Third-row and heavier elements where core-valence separation becomes crucial [20]

- Anionic systems and radical anions that require diffuse functions for accurate description [21]

- Extended π-systems and metal clusters with delocalized electronic structures [21]

- Supramolecular complexes and weakly interacting systems where large, diffuse basis sets are essential for capturing dispersion interactions [5]

The following diagram illustrates the conceptual relationship between basis set size, completeness, and the emergence of linear dependency:

Computational Manifestations and Diagnostic Protocols

Recognizing Symptoms in SCF Calculations

Linear dependency manifests through distinct computational signatures during quantum chemical calculations. Researchers should remain vigilant for these symptoms when employing large, diffuse basis sets:

SCF Convergence Failures: The self-consistent field procedure exhibits oscillatory behavior, stagnation, or complete divergence despite otherwise appropriate convergence settings. The SCF may cycle indefinitely without achieving the specified energy or density convergence criteria [21] [12]. In ORCA, for instance, this may trigger warnings about "huge, unreliable steps" in SOSCF procedures or necessitate specialized convergence algorithms like TRAH (Trust Radius Augmented Hessian) [21].

Warning Messages and Errors: Explicit warnings about linear dependency or matrix singularity often appear in program output. The ADF software suite generates warnings such as "Virtuals almost lin. dependent" with the recommendation to "Consider using keyword DEPENDENCY" [23]. Similar messages in other codes may reference problems with matrix diagonalization or overlap matrix conditioning.

Unphysical Results and Discontinuities: Calculated molecular properties exhibit erratic behavior or discontinuities with slight geometric perturbations. For example, NMR shielding constants of third-row elements show irregular convergence patterns with increasing basis set size, as observed in phosphorus mononitride (PN) where "σiso calculated with the aug-cc-pVXZ basis sets were scattered, evincing nonstandard convergence with increasing basis set size" [20].

Numerical Overflow and Underflow: Extreme cases may produce floating-point exceptions during integral evaluation or matrix diagonalization, particularly when solving the generalized eigenvalue problem FC = SCE [2].

Quantitative Diagnostic Methods

Beyond observational symptoms, rigorous diagnostic protocols enable researchers to quantitatively assess linear dependency:

Overlap Matrix Eigenvalue Analysis: The most direct mathematical approach involves diagonalizing the overlap matrix S and examining its eigenvalues. The condition number of S (ratio of largest to smallest eigenvalue) provides a quantitative measure of stability. As noted for ADF [23], linear dependency becomes a significant concern "when the smallest eigenvalue of the virtual SFO overlap matrix is smaller than 1e-5". Practical thresholds for diagnostic intervention are summarized below:

Table 2: Diagnostic Thresholds for Linear Dependency Assessment

| Diagnostic Metric | Stable Range | Concerning Range | Critical Range | Remedial Action |
|---|---|---|---|---|
| Smallest Overlap Eigenvalue | >10⁻⁵ | 10⁻⁷ to 10⁻⁵ | <10⁻⁷ | Basis set pruning essential |
| Overlap Matrix Condition Number | <10³ | 10³ to 10⁶ | >10⁶ | Immediate intervention required |
| SCF Convergence Cycles | <30 | 30-100 | >100 | Modify algorithm or basis |
| Energy Oscillation (ΔE) | Monotonic decrease | <10× tolerance | >10× tolerance | Increase damping/level shift |

Basis Set Quality Assessment: Monitoring the convergence of target properties with systematically improved basis sets provides indirect evidence of stability. Irregular oscillations in properties like NMR shieldings with increasing basis set cardinal number X signal underlying linear dependency issues. Research demonstrates that "the use of Dunning core-valence or Jensen basis sets effectively reduces the scatter of theoretical NMR results and leads to their exponential-like convergence to CBS" compared to the irregular convergence with standard polarized-valence sets [20].

Wavefunction Stability Analysis: Most quantum chemistry packages, including PySCF, implement formal stability analysis to detect whether the converged solution represents a true minimum or a saddle point on the electronic energy surface [2]. While not exclusively diagnostic of linear dependency, unstable wavefunctions in conjunction with large basis sets often indicate underlying numerical issues.
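The eigenvalue and condition-number thresholds from Table 2 translate directly into a small diagnostic helper. The sketch below is a hypothetical convenience function, not part of any quantum chemistry package. It assumes the overlap eigenvalues have already been obtained (for example from a package's overlap matrix) and are all positive. How the exact boundary values are classified is an arbitrary choice made here.

```python
def classify_smallest_eigenvalue(value):
    """Stable above 1e-5, concerning from 1e-7 to 1e-5, critical below 1e-7."""
    if value > 1e-5:
        return "stable"
    if value >= 1e-7:
        return "concerning"
    return "critical"


def classify_condition_number(value):
    """Stable below 1e3, concerning from 1e3 to 1e6, critical above 1e6."""
    if value < 1e3:
        return "stable"
    if value <= 1e6:
        return "concerning"
    return "critical"


def diagnose(overlap_eigenvalues):
    """Classify a list of (positive) overlap-matrix eigenvalues."""
    smallest = min(overlap_eigenvalues)
    largest = max(overlap_eigenvalues)
    return {
        "smallest_eigenvalue": classify_smallest_eigenvalue(smallest),
        "condition_number": classify_condition_number(largest / smallest),
    }


example = diagnose([2.1, 0.9, 3.0e-6])
print(example)
```

The two metrics can disagree near the boundaries, so reporting both is more informative than collapsing them into a single verdict.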

Mitigation Strategies and Computational Protocols

Basis Set Selection and Modification

Strategic basis set selection provides the first line of defense against linear dependency:

Hierarchical Basis Set Selection: Adopt a systematic approach to basis set improvement rather than immediately selecting the largest available basis. The BAND documentation recommends the hierarchy "SZ < DZ < DZP < TZP < TZ2P < QZ4P" [22], where TZP typically offers the optimal balance between accuracy and stability for most applications.

Specialized Basis Sets for Specific Properties: Employ property-optimized basis sets rather than universally large ones. For NMR calculations, Jensen's aug-pcSseg-n family "were designed and used for efficient predictions of nuclear shieldings" with better convergence behavior than standard energy-optimized sets [20]. Similarly, for weak interactions, "minimal augmentation" basis sets like ma-def2-TZVPP provide improved stability compared to fully augmented counterparts [5].

Core-Valence Basis Sets for Heavy Elements: For third-row and heavier elements, core-valence basis sets (e.g., aug-cc-pCVXZ) provide more regular convergence than standard valence-only sets. Research shows that "the use of Dunning core-valence basis set family produced regularly converging ³¹P NMR parameters in PN" compared to the scattered results with aug-cc-pVXZ sets [20].

Program-Specific Remedies and Algorithms

Most quantum chemistry packages implement specific functionality to address linear dependency:

Dependency Removal Directives: Explicit keywords trigger automatic basis set pruning when near-linear dependencies are detected. In ADF, the "Dependency" keyword addresses the warning "Virtuals almost lin. dependent" [23]. Similar functionality exists in other codes through keywords like %maxsubspace or IOp(3/12) in Gaussian.

Forced Orthogonalization Techniques: Programs often employ built-in orthogonalization schemes (e.g., Löwdin orthogonalization) to transform the basis to an orthogonal set before solving the SCF equations, mitigating the impact of mild linear dependencies.

Advanced SCF Algorithms: Second-order SCF methods and trust-region approaches can improve stability in problematic cases. ORCA's "Trust Radius Augmented Hessian (TRAH)" approach represents "a robust second-order converger" that automatically activates when standard DIIS-based approaches struggle [21]. Similarly, PySCF implements second-order SCF through the ".newton()" decorator [2].
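Dependency removal and orthogonalization can be combined in a single step known as canonical orthogonalization. The sketch below is a generic textbook version, not the implementation of ADF, ORCA, or any other package named above. It diagonalizes S, discards eigenvectors whose eigenvalues fall below a threshold, and solves FC = SCE in the reduced orthonormal space. The 1e-7 threshold, the function name, and the three-function model problem are illustrative assumptions.

```python
import numpy as np


def solve_roothaan_hall(F, S, threshold=1e-7):
    """Solve F C = S C E after dropping near-dependent combinations of basis functions."""
    s_vals, U = np.linalg.eigh(S)
    keep = s_vals > threshold
    X = U[:, keep] / np.sqrt(s_vals[keep])  # X.T @ S @ X is the identity
    energies, C_ortho = np.linalg.eigh(X.T @ F @ X)
    return energies, X @ C_ortho, int(np.sum(~keep))


# Model basis: three functions, the third almost identical to the second.
angle = 1e-5
basis = np.array([[1.0, 0.0, 0.0],
                  [0.0, 1.0, 0.0],
                  [0.0, np.cos(angle), np.sin(angle)]])
H = np.diag([-1.0, -0.5, 0.3])  # model one-electron operator

S = basis @ basis.T
F = basis @ H @ basis.T

energies, C, n_dropped = solve_roothaan_hall(F, S)
print("dropped combinations:", n_dropped)
print("orbital energies:", energies)
```

The near-duplicate function contributes an overlap eigenvalue of about 5 × 10⁻¹¹, so one combination is removed and the remaining two orbitals stay orthonormal with respect to S. The price is that the discarded direction, here the one reaching the 0.3 level, is no longer available to the wavefunction.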

The following workflow diagram provides a systematic protocol for diagnosing and addressing linear dependency issues:

Table 3: Research Reagent Solutions for Linear Dependency Challenges

| Tool/Resource | Function/Purpose | Implementation Example |
|---|---|---|
| Dependency Keyword | Automatically removes linearly dependent basis functions | ADF: Dependency [23] |
| Core-Valence Basis Sets | Provides balanced description of core and valence electrons for heavy elements | aug-cc-pCVXZ (X=D,T,Q,5) [20] |
| Property-Optimized Basis | Basis sets designed for specific molecular properties | Jensen: aug-pcSseg-n for NMR [20] |
| Minimal Augmentation | Adds only essential diffuse functions to reduce BSSE and linear dependency | ma-def2-TZVPP for weak interactions [5] |
| TRAH Algorithm | Robust second-order SCF convergence for difficult cases | ORCA: ! TRAH or automatic activation [21] |
| Second-Order SCF | Newton-Raphson solver with quadratic convergence | PySCF: scf.RHF(mol).newton() [2] |
| Level Shifting | Increases HOMO-LUMO gap to improve SCF stability | PySCF: mf.level_shift = 0.5 [2] |
| Basis Set Extrapolation | Achieves CBS accuracy without largest basis sets | Exponential-square-root formula [5] |

Linear dependency represents a fundamental computational challenge inextricably linked to the pursuit of complete basis set limits in quantum chemical simulations. As research progresses toward increasingly complex molecular systems and higher accuracy requirements, the intelligent management of this trade-off becomes essential. The strategies outlined in this work—judicious basis set selection, systematic diagnostic protocols, and targeted algorithmic interventions—provide a framework for maintaining numerical stability without sacrificing chemical accuracy.

The broader context of SCF convergence research must acknowledge that mathematical robustness underpins physical meaningfulness. Future developments in basis set design, particularly those incorporating system-adaptive approaches and integration with solver algorithms, promise to push the boundaries of this frontier. For researchers in computational drug development and materials science, where reliable predictions guide expensive experimental work, mastering these numerical aspects remains as crucial as understanding the underlying quantum chemistry.

The pursuit of reliable and accurate electronic structure calculations necessitates a deep understanding of the interplay between three core components: the chosen basis set, the resulting molecular orbital structure, and the Self-Consistent Field (SCF) convergence procedure. The size and composition of the basis set directly influence the calculated energy and spatial characteristics of the frontier molecular orbitals—the Highest Occupied Molecular Orbital (HOMO) and the Lowest Unoccupied Molecular Orbital (LUMO). The energy difference between these orbitals, the HOMO-LUMO gap, is not merely a chemical descriptor of stability and reactivity but is also a critical numerical factor governing the stability and convergence of the SCF cycle. This technical guide examines the role of the HOMO-LUMO gap in SCF stability, framing it within the context of basis set selection for computational research. A foundational understanding of this relationship is essential for researchers aiming to perform robust calculations on large molecular systems, such as in drug development, where navigating the trade-off between computational cost and accuracy is paramount.

Theoretical Foundations: Molecular Orbitals, Basis Sets, and the SCF Cycle

Molecular Orbital Theory and Frontier Orbitals

Molecular orbitals (MOs) are mathematical functions that describe the wave-like behavior of an electron in a molecule, formed through the quantum mechanical combination of atomic orbitals (AOs) [24]. According to the Linear Combination of Atomic Orbitals (LCAO) approach, n atomic orbitals combine to form n molecular orbitals [25]. These MOs are categorized as bonding (stabilizing, lower energy), antibonding (destabilizing, higher energy), or non-bonding [24]. The Highest Occupied Molecular Orbital (HOMO) and the Lowest Unoccupied Molecular Orbital (LUMO), collectively known as the frontier orbitals, define the boundary between occupied and unoccupied electron states [26] [27]. They are critically important because they largely determine a molecule's chemical reactivity and optical properties [26]. The energy difference between them is known as the HOMO-LUMO gap.
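As a small illustration of the definition above, the gap can be read directly from a sorted list of orbital energies once the number of electrons is known. The sketch below assumes a closed-shell system (two electrons per occupied orbital) and energies in Hartree; the function name and the example energies are hypothetical, not taken from any particular program.

```python
HARTREE_TO_EV = 27.211386  # conversion factor, 1 Hartree in eV

def homo_lumo_gap(orbital_energies, n_electrons):
    """Return (HOMO, LUMO, gap) for a closed-shell system.

    orbital_energies: iterable of orbital energies (Hartree), any order.
    n_electrons: even number of electrons, two per occupied orbital.
    """
    if n_electrons % 2 != 0:
        raise ValueError("this sketch only handles closed-shell systems")
    energies = sorted(orbital_energies)
    n_occ = n_electrons // 2
    if n_occ < 1 or n_occ >= len(energies):
        raise ValueError("need at least one occupied and one virtual orbital")
    homo = energies[n_occ - 1]   # highest occupied orbital
    lumo = energies[n_occ]       # lowest unoccupied orbital
    return homo, lumo, lumo - homo

# Hypothetical orbital energies for a four-electron system
homo, lumo, gap = homo_lumo_gap([-0.5, -0.3, 0.1, 0.4], n_electrons=4)
gap_ev = gap * HARTREE_TO_EV
```

For open-shell systems the alpha and beta spin channels would need separate treatment, which this sketch does not attempt.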

Basis Sets in Electronic Structure Calculations

A basis set is a set of mathematical functions, centered on atoms, used to represent the molecular orbitals of a system [22]. The choice of basis set profoundly impacts the accuracy, CPU time, and memory requirements of a calculation [22]. Basis sets are hierarchically categorized by their level of completeness:

- Minimal/Single Zeta (SZ): The smallest basis sets, generally considered unreliable for quantitative work and useful only for exploratory calculations [22] [28].

- Double Zeta (DZ): More flexible than SZ, but the lack of polarization functions often leads to a poor description of the virtual orbital space (e.g., the LUMO) [22].

- Polarized Basis Sets (e.g., DZP, TZVP): These include higher angular momentum functions (e.g., p-functions on hydrogen, d-functions on carbon), which allow orbitals to change their shape and provide a better description of electron distribution. The TZP (Triple Zeta plus Polarization) basis set is often recommended as offering the best balance between performance and accuracy [22] [28].

- Augmented/Diffuse Functions (e.g., aug-cc-pVXZ): These basis sets include functions with very small exponents, which decay slowly and are essential for accurately describing anions, excited states, and other systems with diffuse electron densities [29] [28].

Table 1: Common Basis Set Types and Their Characteristics

| Basis Set Type | Abbreviation | Description | Typical Use Case |
|---|---|---|---|
| Single Zeta | SZ | Minimal basis set; one function per atomic orbital. | Quick test calculations; computationally efficient but inaccurate [22]. |
| Double Zeta | DZ | Two functions per atomic orbital. | Pre-optimization of structures [22]. |
| Double Zeta + Polarization | DZP | DZ plus higher angular momentum functions. | Geometry optimizations of organic systems [22]. |
| Triple Zeta + Polarization | TZP | Three functions per atomic orbital plus polarization. | Recommended for a good balance of accuracy and performance [22] [28]. |
| Augmented | e.g., aug-cc-pVXZ | Includes diffuse functions. | Anions, Rydberg states, and properties like electron affinity [29] [28]. |

The Self-Consistent Field (SCF) Procedure

The SCF method is an iterative procedure for solving the electronic Schrödinger equation. An initial guess of the molecular orbitals is used to build the Fock matrix, from which new orbitals are derived. This process repeats until the input and output orbitals (or densities) converge according to predefined thresholds [12]. The stability of this iterative process is highly sensitive to the electronic structure of the molecule. A small HOMO-LUMO gap can lead to near-instability in the SCF process, making convergence difficult or causing it to settle into an unphysical solution [12]. This is because a small gap implies a low energy cost for electronic excitations, which can destabilize the single-determinant reference wavefunction.
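The iterative loop and its sensitivity to the gap can be illustrated with a deliberately simple toy model. The sketch below is not a quantum chemistry code: it assumes a hypothetical two-site, two-electron mean-field model in which the Fock matrix depends on the site occupations through an on-site repulsion `U`, and the hopping `t` sets the gap of the non-interacting problem (roughly `2*t`). All names and parameter values are illustrative assumptions.

```python
import numpy as np

def toy_scf(t, delta=0.1, U=1.0, mix=1.0, tol=1e-8, max_iter=200):
    """Fixed-point SCF for a two-site, two-electron mean-field toy model.

    t:     hopping between the sites (larger t gives a larger gap)
    delta: site-energy offset that breaks the symmetry
    U:     on-site repulsion coupling the Fock matrix to the density
    mix:   fraction of the new density used per step (1.0 = no damping)
    Returns (converged, iterations, final_gap).
    """
    h = np.array([[0.0, -t], [-t, delta]])   # one-electron part

    def density_and_gap(F):
        eps, C = np.linalg.eigh(F)
        c = C[:, 0]                            # doubly occupied lowest orbital
        return 2.0 * np.outer(c, c), eps[1] - eps[0]

    P, gap = density_and_gap(h)                # core-Hamiltonian initial guess
    for iteration in range(1, max_iter + 1):
        # Mean-field Fock matrix: each site energy shifts with its occupation
        F = h + 0.5 * U * np.diag(np.diag(P))
        P_out, gap = density_and_gap(F)
        if np.max(np.abs(P_out - P)) < tol:    # input and output densities agree
            return True, iteration, gap
        P = (1.0 - mix) * P + mix * P_out      # simple density mixing
    return False, max_iter, gap

large_gap = toy_scf(t=2.0)             # gap of about 4 compared with U = 1
small_gap = toy_scf(t=0.2)             # gap of about 0.4 compared with U = 1
small_gap_damped = toy_scf(t=0.2, mix=0.3)
```

In this model the plain iteration converges when the gap is large compared with `U`, oscillates without converging when the gap is small, and recovers once the density is damped. Production codes use more elaborate accelerators such as DIIS, but the underlying sensitivity to the gap is the same.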

The Interplay Between Basis Sets, the HOMO-LUMO Gap, and SCF Convergence

How Basis Set Size and Quality Affect the HOMO-LUMO Gap

The choice of basis set directly controls the accuracy of the computed HOMO-LUMO gap. Small basis sets (e.g., SZ, DZ) lack the flexibility to describe the tails of electron distributions and the shapes of excited states, typically resulting in an inaccurate, often overestimated, HOMO-LUMO gap [22]. As the basis set is enlarged, the description of both occupied and virtual orbitals improves, and the HOMO-LUMO gap systematically converges toward its complete basis set limit [29]. The convergence of the virtual orbital space (the LUMO and higher unoccupied orbitals) is particularly sensitive to the inclusion of polarization and diffuse functions. For instance, a DZ basis without polarization functions provides a "very poor" description of the virtual orbital space, while a TZP basis captures band gap trends well [22]. This has a direct and critical consequence for SCF stability.

The HOMO-LUMO Gap as a Determinant of SCF Stability

The HOMO-LUMO gap is a primary factor governing the convergence and stability of the SCF procedure. The underlying mathematical reason is that a small energy difference between the HOMO and LUMO makes the electron density susceptible to large, oscillatory changes during the SCF iteration. The system becomes "soft" with respect to electronic perturbations. In contrast, a large HOMO-LUMO gap indicates a stable, closed-shell electronic configuration that is numerically easier to converge. This is a fundamental link between a molecule's electronic structure and the computational algorithm.
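One way to make this concrete is standard first-order perturbation theory, shown here as a sketch rather than as the derivation used in the cited work. If the Fock matrix changes by $\delta F$ between iterations, the occupied orbital $i$ acquires an admixture of each virtual orbital $a$ whose size is inversely proportional to the orbital energy difference (real orbitals, closed shell, and neglect of the self-consistent feedback of $\delta P$ on $F$ are all assumed):

$$
\delta c_{ai} = -\frac{\delta F_{ai}}{\varepsilon_a - \varepsilon_i},
\qquad
\delta P = 2 \sum_{i}^{\mathrm{occ}} \sum_{a}^{\mathrm{virt}} \delta c_{ai} \left( \mathbf{c}_a \mathbf{c}_i^{T} + \mathbf{c}_i \mathbf{c}_a^{T} \right)
$$

The smallest denominator is the HOMO-LUMO gap, $\varepsilon_{\mathrm{LUMO}} - \varepsilon_{\mathrm{HOMO}}$, so as the gap shrinks a given error in the Fock matrix produces a proportionally larger change in the density, which is the origin of the oscillatory behavior described above.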

The addition of diffuse functions in augmented basis sets, while necessary for accuracy in many cases, introduces a specific numerical challenge: it can lead to a reduction of the HOMO-LUMO gap and an increase in the basis set's linear dependence [29] [28]. Diffuse functions have large spatial extents, leading to significant overlap between basis functions on different atoms. This can cause the eigenvalues of the overlap matrix to become very small, resulting in a high condition number [29]. A high condition number indicates near-linear dependence within the basis set, which makes the SCF equations ill-conditioned and can cause severe convergence difficulties, forcing the use of tighter integral thresholds and more sophisticated SCF algorithms [29] [28].
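The effect of diffuse functions on the overlap matrix can be reproduced with a small numerical experiment. The sketch below assumes a hypothetical linear chain of six centers spaced 1.4 bohr apart, each carrying normalized s-type Gaussians, and uses the analytic overlap between two such functions. The exponents are illustrative and do not correspond to any published basis set.

```python
import numpy as np

def build_basis(centers, exponents):
    """Place the same set of s-type exponents on every center."""
    centers = np.asarray(centers, dtype=float)
    exps = np.tile(np.asarray(exponents, dtype=float), len(centers))
    pos = np.repeat(centers, len(exponents), axis=0)
    return exps, pos

def overlap_matrix(exps, pos):
    """Overlap of normalized s-type Gaussians exp(-a |r - R|^2)."""
    a = exps[:, None]
    b = exps[None, :]
    r2 = np.sum((pos[:, None, :] - pos[None, :, :]) ** 2, axis=-1)
    prefactor = (2.0 * np.sqrt(a * b) / (a + b)) ** 1.5
    return prefactor * np.exp(-a * b / (a + b) * r2)

def condition_number(S):
    """Ratio of largest to smallest eigenvalue of the overlap matrix."""
    eigenvalues = np.linalg.eigvalsh(S)   # ascending order
    return eigenvalues[-1] / eigenvalues[0]

# Hypothetical chain of six centers along x, 1.4 bohr apart
centers = [[1.4 * i, 0.0, 0.0] for i in range(6)]

S_compact = overlap_matrix(*build_basis(centers, [1.0, 0.3]))
S_diffuse = overlap_matrix(*build_basis(centers, [1.0, 0.3, 0.05]))

cond_compact = condition_number(S_compact)
cond_diffuse = condition_number(S_diffuse)
```

Because the compact basis is a subset of the augmented one, adding the diffuse exponent can only lower the smallest overlap eigenvalue and raise the largest, so the condition number grows. Monitoring the smallest eigenvalue in this way is a common diagnostic before deciding whether near-dependent combinations should be removed.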

Quantitative Benchmarks: Basis Set Performance and Accuracy

The trade-off between basis set size, computational cost, and accuracy can be quantitatively benchmarked. The following table summarizes typical performance data for a range of basis sets applied to a system such as a carbon nanotube.

Table 2: Benchmark of Basis Set Accuracy and Computational Cost [22]

| Basis Set | Energy Error (eV/atom) | CPU Time Ratio | Recommended Application |
|---|---|---|---|
| SZ | 1.8 (High) | 1.0 (Reference) | Very quick test calculations only [22] [28]. |
| DZ | 0.46 | 1.5 | Pre-optimization; poor for properties relying on virtual space [22]. |
| DZP | 0.16 | 2.5 | Reasonable for geometry optimizations in organic systems [22]. |
| TZP | 0.048 | 3.8 | Recommended for a good balance of accuracy and performance [22] [28]. |
| TZ2P | 0.016 | 6.1 | Accurate; good for virtual space properties [22]. |
| QZ4P | Reference | 14.3 | Benchmarking for highest accuracy [22]. |
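The figures in Table 2 lend themselves to a simple selection rule: pick the cheapest basis set whose energy error falls within a chosen tolerance. The sketch below encodes the table as a dictionary and treats the QZ4P reference as having zero error by definition, which is an assumption of this example. The function name and the tolerance values are hypothetical.

```python
# (energy error in eV/atom, CPU time relative to SZ), copied from Table 2.
# QZ4P is the reference, so its error is taken as 0.0 here by definition.
BENCHMARK = {
    "SZ":   (1.8,   1.0),
    "DZ":   (0.46,  1.5),
    "DZP":  (0.16,  2.5),
    "TZP":  (0.048, 3.8),
    "TZ2P": (0.016, 6.1),
    "QZ4P": (0.0,   14.3),
}

def cheapest_basis(max_error_ev_per_atom):
    """Return the lowest-cost basis whose error is within the tolerance."""
    candidates = [
        (cost, name)
        for name, (error, cost) in BENCHMARK.items()
        if error <= max_error_ev_per_atom
    ]
    if not candidates:
        raise ValueError("no basis set in the table meets this tolerance")
    return min(candidates)[1]

def cost_per_accuracy_step(smaller, larger):
    """Error reduction factor and cost increase factor between two basis sets."""
    err_small, cost_small = BENCHMARK[smaller]
    err_large, cost_large = BENCHMARK[larger]
    return err_small / err_large, cost_large / cost_small

choice_loose = cheapest_basis(0.2)     # tolerance of 0.2 eV/atom
choice_tight = cheapest_basis(0.05)    # tolerance of 0.05 eV/atom
error_gain, cost_factor = cost_per_accuracy_step("DZP", "TZP")
```

Read this way, moving from DZP to TZP cuts the error by roughly a factor of three for about one and a half times the cost. These numbers come from a single benchmark system and should not be assumed to transfer unchanged to other molecules.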

Specialized basis sets have been developed to address these challenges. For example, the recently introduced augmented MOLOPT basis sets are optimized for excited-state calculations on large molecules and crystals. They are designed to achieve fast convergence of GW gaps and Bethe-Salpeter excitation energies while maintaining low condition numbers of the overlap matrix to ensure numerical stability [29]. For instance, the double-zeta version of this family yields a mean absolute deviation of only 60 meV from the complete basis set limit for GW HOMO-LUMO gaps [29].

Computational Protocols and Methodologies

A Standard Protocol for Assessing SCF Stability with Different Basis Sets

For researchers investigating new molecular systems, the following workflow provides a robust methodology for navigating basis set selection and ensuring SCF stability.

Diagram 1: SCF Convergence Protocol

Step-by-Step Protocol:

- Initial Calculation with a Small Basis Set: Begin with a computationally efficient, small split-valence basis set like def2-SV(P) [28]. Use default or LooseSCF convergence criteria to quickly generate an initial wavefunction.

- Geometry Optimization: Use the wavefunction from Step 1 as an initial guess for a geometry optimization. This can be done with a DZP or TZP basis set to obtain a reasonable molecular structure [22].

- Single-Point Energy with a Larger Basis Set: Using the optimized geometry, perform a single-point energy calculation with a larger, more accurate basis set such as def2-TZVP or def2-TZVPP. At this stage, tighter convergence criteria (e.g., TightSCF) should be employed [12] [28].

- SCF Stability Analysis: Following the converged SCF calculation, perform a formal stability analysis [12]. This checks if the solution is a true minimum on the surface of orbital rotations or if it can collapse to a lower-energy solution. Most quantum chemistry software packages (e.g., ORCA, Gaussian) have built-in procedures for this.

- Addressing Instabilities: If the wavefunction is found to be unstable:

- Final High-Accuracy Calculation: Once a stable solution is obtained, it can be used as a guess for a final single-point calculation with an even larger basis set (e.g., def2-QZVPP) or for correlated methods beyond DFT (e.g., MP2, CCSD(T)) if required [28].
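The staged protocol above can be sketched as a small script that writes one ORCA-style input file per stage. This is an illustration under stated assumptions, not a validated workflow: the functional (B3LYP), the use of def2-SVP for the optimization stage, and all file names are hypothetical choices. The keywords shown (LooseSCF, TightSCF, Opt, MORead, `%moinp`, `STABPerform`) should be checked against the manual of the ORCA version in use.

```python
def orca_input(keywords, xyz_file, charge=0, mult=1,
               guess_gbw=None, stability=False):
    """Assemble the text of a minimal ORCA-style input file.

    keywords:  simple-input keywords placed on the '!' line
    xyz_file:  geometry file read via '* xyzfile'
    guess_gbw: optional orbital file from an earlier stage (MORead)
    stability: request an SCF stability analysis after convergence
    """
    header = "! " + " ".join(keywords)
    lines = []
    if guess_gbw:
        header += " MORead"
        lines.append(f'%moinp "{guess_gbw}"')
    if stability:
        lines += ["%scf", "  STABPerform true", "end"]
    lines.append(f"* xyzfile {charge} {mult} {xyz_file}")
    return "\n".join([header] + lines) + "\n"

FUNCTIONAL = "B3LYP"  # assumed functional; the protocol does not prescribe one

stages = {
    # Step 1: cheap initial wavefunction
    "stage1": orca_input([FUNCTIONAL, "def2-SV(P)", "LooseSCF"], "mol.xyz"),
    # Step 2: geometry optimization seeded with the Step 1 orbitals
    "stage2": orca_input([FUNCTIONAL, "def2-SVP", "Opt"], "mol.xyz",
                         guess_gbw="stage1.gbw"),
    # Steps 3-4: tighter single point plus stability analysis
    "stage3": orca_input([FUNCTIONAL, "def2-TZVP", "TightSCF"], "stage2.xyz",
                         guess_gbw="stage2.gbw", stability=True),
    # Final step: larger basis, seeded with the stable solution
    "stage4": orca_input([FUNCTIONAL, "def2-QZVPP", "TightSCF"], "stage2.xyz",
                         guess_gbw="stage3.gbw"),
}
```

Each entry in `stages` can be written to a file such as `stage1.inp` and run in order. The chain of orbital files is the important design choice: every stage starts from the converged orbitals of the previous one, so the larger basis sets never start from a poor guess.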

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Essential Computational Tools for SCF Stability Research

| Tool / "Reagent" | Function / Purpose | Technical Notes |
|---|---|---|
| Basis Sets (def2-series) | A consistent family of Gaussian basis sets for non-relativistic calculations across the periodic table [28]. | def2-SV(P) for initial scans; def2-TZVP for good accuracy; def2-TZVPP/def2-QZVPP for final energies. |
| Augmented MOLOPT | A family of all-electron Gaussian basis sets optimized for excited-state calculations on large systems [29]. | Designed for low condition numbers and fast convergence of GW/BSE energies. Mitigates instability from diffuse functions. |
| RIJCOSX Approximation | Speeds up HF exchange calculation in hybrid DFT by combining Resolution of the Identity (RI) and Chain-of-Spheres (COSX) [28]. | Crucial for making large basis set calculations with hybrid functionals computationally feasible. |
| Stability Analysis | A numerical procedure to verify that an SCF solution is a local minimum and not a saddle point [12]. | Critical for open-shell systems and species with small HOMO-LUMO gaps to ensure the validity of the result. |
| TRAH SCF Algorithm | The "Trust Region Augmented Hessian" SCF algorithm [12]. | More robust and reliable than standard DIIS for problematic convergence, especially with large, diffuse basis sets. |

Advanced Topics and Specialized Applications

Frontier Orbital Engineering in Materials Science

The deliberate manipulation of the HOMO-LUMO gap, known as HOMO-LUMO gap engineering, is a key strategy in materials science for designing organic semiconductors with tailored properties. Research on tetraoxa[8]circulenes has demonstrated that conjugated polymers can be designed with HOMO-LUMO gaps as low as ~1.66 eV for 1D polymers and ~2.0 eV for 2D polymers, classifying them as organic semiconductors [30]. This is achieved by extending the π-conjugation, which reduces the gap. However, this very reduction can introduce challenges for computational characterization, requiring careful basis set selection to accurately model the delocalized electronic structure without encountering SCF convergence issues.

The Dual-Basis Approach for Enhanced Efficiency

A powerful technique to mitigate the high computational cost of large basis sets is the dual-basis method [31]. This approach performs an initial SCF calculation with a small basis set and then projects the resulting density matrix onto a larger basis set. A single Fock-matrix build in the large basis set then yields a significantly improved total energy. Analytic energy gradients are available for this method, enabling efficient geometry optimizations and molecular dynamics simulations with large basis sets that would otherwise be prohibitively expensive [31]. This is particularly useful for pre-optimizing structures before a final, highly accurate single-point energy calculation.
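The energy expression behind this scheme can be sketched as follows. The notation is an assumption of this example, since the text above does not give working equations. Let $P$ be the converged small-basis density projected into the large basis, $F[P]$ the Fock matrix built from it in the large basis, and $P'$ the density obtained by diagonalizing $F[P]$ once. The dual-basis energy is then commonly written as a first-order correction to the small-basis result:

$$
E_{\mathrm{DB}} \approx E[P] + \mathrm{Tr}\!\left[ \left( P' - P \right) F[P] \right]
$$

The correction term requires only the single large-basis Fock build mentioned above, which is why the method recovers much of the large-basis energy at a fraction of the cost of a fully self-consistent calculation in that basis.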

The HOMO-LUMO gap serves as a critical nexus linking the electronic structure of a molecule, the choice of computational basis set, and the numerical stability of the SCF procedure. Small basis sets offer computational efficiency but risk inaccurate results, while large, diffuse basis sets—essential for chemical accuracy—can shrink the HOMO-LUMO gap and introduce numerical instabilities that hinder SCF convergence. A deep understanding of this triad allows computational researchers and drug development scientists to design robust computational protocols. By strategically navigating the hierarchy of basis sets, utilizing stability analyses, and employing advanced algorithms, it is possible to achieve chemically meaningful and numerically stable results, even for challenging systems with small frontier orbital gaps. This systematic approach is fundamental to the reliable application of computational chemistry in cutting-edge scientific research.