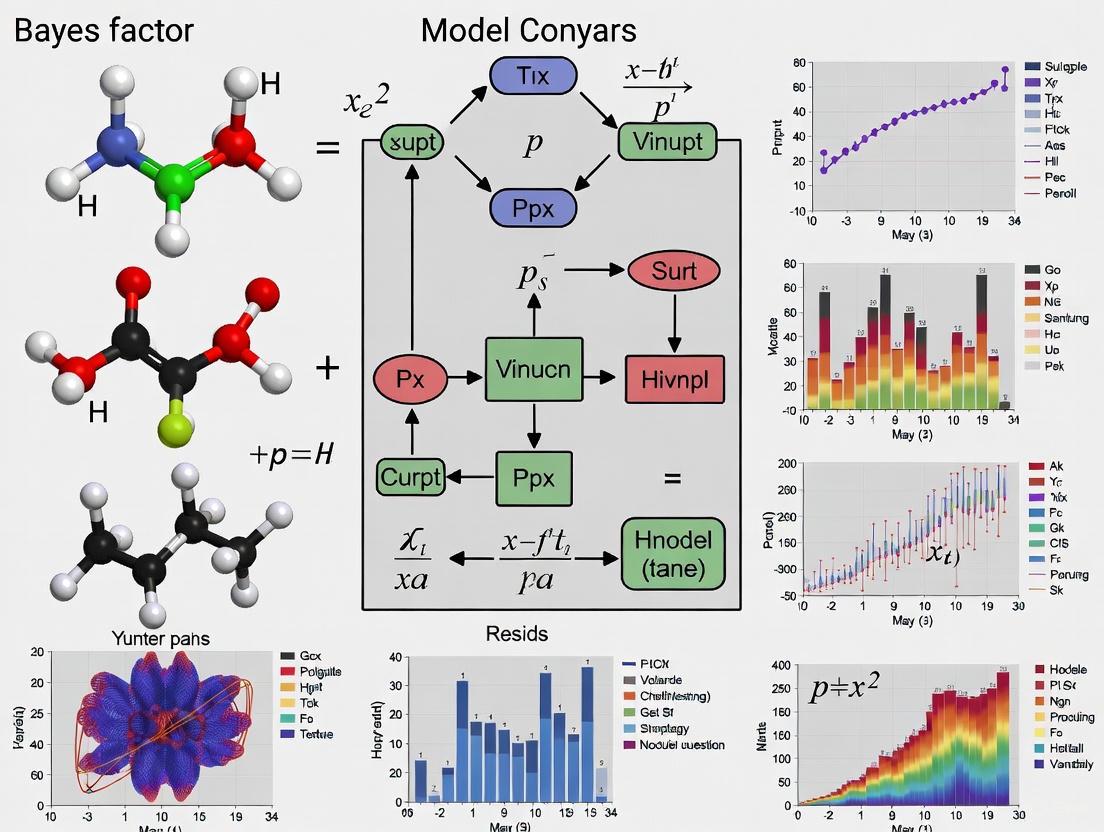

Bayes Factor Model Comparison: A Computational Guide for Biomedical Research and Drug Development

This article provides a comprehensive guide to Bayes Factor model comparison for researchers, scientists, and professionals in computational fields and drug development.


Abstract

This article provides a comprehensive guide to Bayes Factor model comparison for researchers, scientists, and professionals in computational fields and drug development. It covers foundational concepts, practical implementation using modern computational tools, and addresses common challenges like prior sensitivity and statistical power. The scope includes methodological applications in epidemiology and clinical trial analysis, troubleshooting of widespread interpretation errors, and comparative analysis with information criteria. Designed to bridge theory and practice, this guide emphasizes robust computational workflows to enhance model selection reliability in biomedical research.

Understanding Bayes Factors: From Theoretical Foundations to Practical Interpretation

Defining Bayes Factors as Relative Evidence and Updated Odds

Bayes Factors (BFs) are indices of relative evidence used in Bayesian statistics to quantify the support for one statistical model over another based on observed data [1]. In the context of model comparison, they serve a role analogous to p-values in frequentist hypothesis testing but with a critical advantage: they allow researchers to evaluate evidence in favor of a null hypothesis rather than only being able to reject it [2]. The core principle of a Bayes Factor is to compare the predictive performance of two competing models by assessing how well each explains the observed data [3]. This makes them particularly valuable for computational research where models of varying complexity must be objectively compared.

The mathematical definition of the Bayes Factor is rooted in Bayes' theorem. Given two models, M1 and M2, the Bayes Factor is the ratio of their marginal likelihoods, that is, the probability of the data under each model [2]. Formally, this is expressed as:

\[ BF_{12} = \frac{\Pr(D \mid M_1)}{\Pr(D \mid M_2)} \]

where \( \Pr(D \mid M) \) represents the marginal likelihood of the data D under model M [1]. This ratio can be intuitively understood as the factor by which our prior beliefs about the relative credibility of two models are updated after observing data, moving us to our posterior beliefs [1].

Mathematical Foundation: From Prior Odds to Posterior Odds

The Bayes Factor provides a direct link between prior and posterior model probabilities. This relationship is derived from the standard form of Bayes' theorem applied to model comparison [2]:

\[ \underbrace{\frac{P(M_1 \mid D)}{P(M_2 \mid D)}}_{\text{Posterior Odds}} = \underbrace{\frac{P(D \mid M_1)}{P(D \mid M_2)}}_{\text{Bayes Factor}} \times \underbrace{\frac{P(M_1)}{P(M_2)}}_{\text{Prior Odds}} \]

This equation reveals that the posterior odds (the relative belief in M1 versus M2 after seeing the data) equal the prior odds (the initial relative belief) multiplied by the Bayes Factor [1]. The Bayes Factor therefore represents the evidence provided by the data itself, quantifying how much our beliefs should shift due to the empirical evidence. When prior odds are equal, the Bayes Factor is identical to the posterior odds [2].
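As a small worked illustration of this odds-updating identity, the sketch below converts a Bayes Factor and a prior model probability into a posterior model probability. The function name `posterior_model_probability` is introduced here purely for illustration and does not come from the cited sources.

```python
def posterior_model_probability(bayes_factor_12: float, prior_prob_m1: float = 0.5) -> float:
    """Return P(M1 | D) from BF_12 and the prior probability P(M1), assuming only two models."""
    if not 0.0 < prior_prob_m1 < 1.0:
        raise ValueError("prior_prob_m1 must lie strictly between 0 and 1")
    prior_odds = prior_prob_m1 / (1.0 - prior_prob_m1)
    posterior_odds = bayes_factor_12 * prior_odds  # posterior odds = BF x prior odds
    return posterior_odds / (1.0 + posterior_odds)


# Equal prior odds: the posterior odds equal the Bayes Factor (10 to 1).
print(round(posterior_model_probability(10.0), 3))       # 0.909
# A sceptical prior, P(M1) = 0.1: the same data shift the odds from 1:9 to 10:9.
print(round(posterior_model_probability(10.0, 0.1), 3))  # 0.526
```

The second call shows why a Bayes Factor should be reported separately from the posterior probability: the same evidence leads to different posterior beliefs under different prior odds.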

Key Properties and Advantages

- Evidence for the Null: Unlike traditional significance testing, Bayes factors can provide evidence for a null model, not just against it [2].

- Model Complexity Penalization: The marginal likelihood automatically penalizes model complexity because it integrates over the entire parameter space. More complex models must demonstrate sufficiently improved fit to be justified [3].

- Theoretical Flexibility: Bayes factors can compare non-nested models with different functional forms, unlike classical likelihood ratio tests that typically require nested models [2].

Interpreting Bayes Factors: Scales of Evidence

To standardize interpretation, several scales have been proposed to categorize the strength of evidence provided by Bayes Factors. The following table summarizes two widely cited interpretation scales:

Table 1: Interpretation Scales for Bayes Factors

| Bayes Factor (BF₁₂) | log₁₀(BF₁₂) | Jeffreys' Scale Terminology | Kass & Raftery (1995) Terminology |
|---|---|---|---|
| 1 to 3.2 | 0 to 0.5 | Barely worth mentioning | Not worth more than a bare mention |
| 3.2 to 10 | 0.5 to 1 | Substantial evidence | Substantial evidence |
| 10 to 100 | 1 to 2 | Strong evidence | Strong evidence |
| > 100 | > 2 | Decisive evidence | Decisive evidence |

Sources: [2]

Jeffreys also provided a more detailed scale that includes ranges for evidence supporting M2 over M1 (when BF₁₂ < 1), creating a symmetrical interpretation framework [2]. For example, a BF₁₂ of 0.1 provides the same strength of evidence for M2 as a BF₁₂ of 10 provides for M1.

Methodological Approaches and Experimental Protocols

Computational Methods for Bayes Factor Calculation

Computing Bayes Factors requires calculating the marginal likelihood, which involves integrating over parameter spaces. This integration is often challenging, and several computational techniques have been developed:

Table 2: Methods for Bayes Factor Computation

| Method | Key Principle | Applications | Considerations |
|---|---|---|---|
| Thermodynamic Integration (TI) | Uses a path sampling approach between prior and posterior [4] | Hydrological model selection [4] | High computational cost but accurate for complex models |
| Savage-Dickey Density Ratio | Compares posterior and prior densities at the null value [1] [2] | Testing point-null hypotheses | Only applicable to nested models with specific constraints |
| Bridge Sampling | Uses a bridge function to connect two distributions [4] | General model comparison | Requires careful choice of bridge function |
| Chib's Method | Estimates marginal likelihood from posterior samples [4] [2] | General Bayesian inference | Can underestimate for multimodal distributions [4] |
| Importance Sampling | Uses proposal distribution to approximate integral | General purpose | Performance depends heavily on proposal distribution choice |
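To make the Savage-Dickey row concrete, here is a minimal sketch under stated assumptions: normally distributed data with known standard deviation `sigma`, a point null of zero mean, and a N(0, `tau`²) prior on the mean under the alternative. Because this model is conjugate, both densities are available in closed form; in realistic models the posterior density at the null value would have to be estimated from MCMC draws. The function names and the simulated data are illustrative only.

```python
import math
import random
from statistics import NormalDist


def savage_dickey_bf01(y, sigma=1.0, tau=1.0):
    """BF_01 as posterior density over prior density, both evaluated at mu = 0."""
    n = len(y)
    post_var = 1.0 / (n / sigma**2 + 1.0 / tau**2)  # conjugate normal update
    post_mean = post_var * sum(y) / sigma**2
    posterior = NormalDist(post_mean, math.sqrt(post_var))
    prior = NormalDist(0.0, tau)
    return posterior.pdf(0.0) / prior.pdf(0.0)


def direct_bf01(y, sigma=1.0, tau=1.0):
    """BF_01 as a ratio of marginal likelihoods of the sample mean (a sufficient statistic)."""
    n = len(y)
    ybar = sum(y) / n
    evidence_h0 = NormalDist(0.0, sigma / math.sqrt(n)).pdf(ybar)
    evidence_h1 = NormalDist(0.0, math.sqrt(tau**2 + sigma**2 / n)).pdf(ybar)
    return evidence_h0 / evidence_h1


rng = random.Random(1)
y = [rng.gauss(0.3, 1.0) for _ in range(40)]  # simulated data, true mean 0.3
bf_sd = savage_dickey_bf01(y)
bf_direct = direct_bf01(y)
print(f"Savage-Dickey BF01 = {bf_sd:.4f}, direct BF01 = {bf_direct:.4f}")
```

The two routes agree to floating-point precision in this setting, which is the point of the method: for a nested point null, no separate marginal likelihood computation is needed.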

Protocol: Model Comparison for Epidemics with Super-Spreading

A recent study demonstrates a complete Bayesian workflow for comparing epidemic models with different transmission mechanisms [5]:

1. Model Specification: Define five competing stochastic branching-process models representing homogeneous transmission, unimodal/bimodal super-spreading events, and unimodal/bimodal super-spreading individuals.

2. Prior Selection: Choose appropriate prior distributions for parameters such as the basic reproduction number (R₀) based on domain knowledge.

3. Posterior Inference: Use Markov Chain Monte Carlo (MCMC) methods, particularly Hamiltonian Monte Carlo (HMC) or its variants, to sample from posterior distributions of model parameters.

4. Marginal Likelihood Estimation: Apply importance sampling to compute marginal likelihoods, selected for its "consistency and lower variance compared to alternatives" [5].

5. Model Selection: Calculate Bayes Factors from the marginal likelihoods to identify the best-supported model. The framework accurately identified the true data-generating model in most simulations and produced estimates consistent with previous studies when applied to SARS and COVID-19 data [5].
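The marginal likelihood estimation step can be sketched on a deliberately simplified stand-in for the homogeneous-transmission model. The assumptions here are not taken from [5]: secondary-case counts are treated as Poisson with mean R₀, the prior on R₀ is Gamma(`a`, `b`) with `b` a rate, the proposal is an overdispersed gamma centred on the posterior mean, and the counts are hypothetical. This toy model has a closed-form marginal likelihood, which lets the estimate be checked.

```python
import math
import random


def log_gamma_pdf(x, shape, rate):
    return shape * math.log(rate) - math.lgamma(shape) + (shape - 1) * math.log(x) - rate * x


def log_poisson_lik(counts, r0):
    return sum(z * math.log(r0) - r0 - math.lgamma(z + 1) for z in counts)


def log_marginal_exact(counts, a, b):
    """Closed-form log evidence for the Poisson likelihood with a Gamma(a, b) prior."""
    n, s = len(counts), sum(counts)
    return (a * math.log(b) - math.lgamma(a) + math.lgamma(a + s)
            - (a + s) * math.log(b + n) - sum(math.lgamma(z + 1) for z in counts))


def log_marginal_importance(counts, a, b, n_draws=20_000, seed=0):
    """Importance-sampling estimate of the log evidence."""
    rng = random.Random(seed)
    n, s = len(counts), sum(counts)
    # Proposal: same mean as the posterior but half the shape, so heavier tails.
    q_shape, q_rate = (a + s) / 2.0, (b + n) / 2.0
    log_w = []
    for _ in range(n_draws):
        r0 = rng.gammavariate(q_shape, 1.0 / q_rate)  # second argument is a scale
        log_w.append(log_poisson_lik(counts, r0) + log_gamma_pdf(r0, a, b)
                     - log_gamma_pdf(r0, q_shape, q_rate))
    top = max(log_w)  # log-sum-exp for numerical stability
    return top + math.log(sum(math.exp(lw - top) for lw in log_w) / n_draws)


counts = [0, 1, 0, 3, 2, 0, 1, 5, 0, 2]  # hypothetical secondary-case counts
log_z_is = log_marginal_importance(counts, a=2.0, b=1.0)
log_z_exact = log_marginal_exact(counts, a=2.0, b=1.0)
print(f"importance sampling: {log_z_is:.3f}, exact: {log_z_exact:.3f}")
```

Repeating the estimate for a second model and exponentiating the difference of the two log evidences gives the Bayes Factor used in the model selection step.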

Bayesian Model Comparison Workflow

Protocol: Bayesian Updating for Sequential Analysis

Bayesian updating provides an alternative to fixed sample size designs, particularly useful when data collection is ongoing:

1. Initial Setup: Define competing hypotheses and specify prior distributions. Begin with an initial sample size.

2. Sequential Analysis: After collecting the initial data, compute the Bayes Factor comparing hypotheses of interest.

3. Decision Framework: If the Bayes Factor reaches a pre-specified threshold (e.g., 10 for strong evidence), stop data collection. Otherwise, continue collecting data.

4. Iterative Updating: Repeat steps 2-3 until sufficient evidence is achieved or a maximum sample size is reached.

This approach is particularly valuable in studies "where additional subjects can be recruited easily and data become available in a limited amount of time" [6]. Simulation studies are recommended to understand expected sample sizes and error rates under different effect sizes [6].
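The kind of simulation study recommended above can be sketched as follows. The setting is an assumption chosen for tractability: normal data with known unit variance, a point null of zero mean, and a N(0, `tau`²) prior under the alternative, so the Bayes Factor has a closed form. The symmetric stopping rule (stop at BF₁₀ ≥ 10 or BF₁₀ ≤ 1/10), the batch size, and all function names are illustrative choices rather than settings from [6].

```python
import math
import random


def log_bf10_normal_mean(y, sigma=1.0, tau=1.0):
    """Closed-form log BF_10 for H0: mu = 0 versus H1: mu ~ N(0, tau^2)."""
    n = len(y)
    ybar = sum(y) / n
    var_h0 = sigma**2 / n       # variance of the sample mean under H0
    var_h1 = tau**2 + var_h0    # variance of the sample mean under H1
    return 0.5 * math.log(var_h0 / var_h1) + 0.5 * ybar**2 * (1.0 / var_h0 - 1.0 / var_h1)


def sequential_bf_trial(true_mean, rng, n_start=10, n_max=200, batch=5, threshold=10.0):
    """Collect data in batches until the evidence threshold or n_max is reached."""
    log_threshold = math.log(threshold)
    y = [rng.gauss(true_mean, 1.0) for _ in range(n_start)]
    while True:
        log_bf = log_bf10_normal_mean(y)
        if abs(log_bf) >= log_threshold or len(y) >= n_max:
            return len(y), log_bf
        y.extend(rng.gauss(true_mean, 1.0) for _ in range(batch))


rng = random.Random(42)
results = [sequential_bf_trial(0.8, rng) for _ in range(200)]
stopped_for_h1 = sum(log_bf >= math.log(10.0) for _, log_bf in results) / len(results)
mean_n = sum(n for n, _ in results) / len(results)
print(f"share stopping with BF10 >= 10: {stopped_for_h1:.2f}, mean sample size: {mean_n:.1f}")
```

Rerunning the loop with `true_mean = 0.0` gives the corresponding picture under the null, including how often the design stops with misleading evidence for the alternative.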

Applications Across Research Domains

Pharmaceutical Development and Drug Discovery

In pharmaceutical development, Bayesian approaches including Bayes Factors are increasingly used to incorporate prior information, potentially reducing the time and cost of bringing new medicines to patients [7] [8]. The FDA has issued formal guidance on using Bayesian statistics in medical device clinical trials, acknowledging their value when good prior information exists [9]. Specific applications include:

- Clinical Trial Design: Using prior data to inform trial designs, potentially reducing required sample sizes [9]

- Adaptive Trials: Modifying trials based on accumulating evidence while controlling error rates [9]

- Process Optimization: Applying Bayesian optimization to pharmaceutical manufacturing processes to reduce experimental burden [8]

Computational Psychiatry and Behavioral Modeling

A Bayesian workflow for generative modeling in computational psychiatry demonstrates how Bayes Factors can identify optimal models of behavioral processes [10]. The approach uses Hierarchical Gaussian Filter (HGF) models equipped with multivariate response models that simultaneously analyze binary responses and continuous response times, improving parameter identifiability and model robustness [10].

Hydrological Model Selection

Recent research has developed sophisticated methods for Bayes Factor computation in hydrological applications. The REpHMC + TI method combines:

- Replica-exchange preconditioned Hamiltonian Monte Carlo (REpHMC) for efficient sampling of potentially multimodal posteriors

- Thermodynamic Integration (TI) for marginal likelihood estimation

- Automatic differentiation for gradient calculations through ordinary differential equation systems [4]

This approach enables robust model comparison for conceptual rainfall-runoff models with moderate-dimensional, strongly correlated parameter spaces [4].

Research Reagent Solutions: Computational Tools

Table 3: Essential Computational Tools for Bayes Factor Research

| Tool/Technique | Function | Application Context |
|---|---|---|
| Markov Chain Monte Carlo (MCMC) | Posterior sampling for complex models [9] | General Bayesian inference |
| Hamiltonian Monte Carlo (HMC) | Efficient sampling of high-dimensional parameter spaces [4] | Models with correlated parameters |
| Replica-Exchange Monte Carlo | Sampling multimodal distributions [4] | Complex hydrological models |
| TensorFlow Probability | Differentiable programming for automatic differentiation [4] | Models formulated as ODE systems |
| R package bayestestR | User-friendly Bayes Factor computation [1] | General statistical modeling |
| Thermodynamic Integration | Accurate marginal likelihood estimation [4] | High-dimensional model comparison |

Bayes Factor Calculation and Interpretation Process

Comparative Performance in Model Selection

Bayes Factors have distinct advantages and limitations compared to alternative model comparison methods:

Comparison with Posterior Predictive Methods

Research demonstrates that Bayes Factors outperform posterior predictive methods like WAIC (Watanabe-Akaike Information Criterion) when evaluating models with order constraints or nested structures [3]. In cases where a constrained model is nested within a more general unconstrained model, posterior predictive methods fail to favor the constrained model even when data strongly support the constraints [3]. Bayes Factors appropriately apply Occam's razor by rewarding simpler models that fit the data equally well.

Comparison with Information Criteria

While information criteria like AIC and BIC are more computationally tractable, they rely on asymptotic approximations and explicitly penalize model complexity based on parameter counts [4] [2]. Bayes Factors provide an exact finite-sample comparison that automatically balances fit and complexity without requiring explicit penalty terms [4]. Bayes Factors are also invariant to parameter transformations, provided the prior is transformed along with the parameters, which makes them robust to different model parameterizations [4].
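The relationship between BIC and the Bayes Factor can be illustrated with the standard approximation BF₁₀ ≈ exp(−ΔBIC/2). The sketch below is an illustration under assumptions that do not come from the cited sources: normal data with known standard deviation, a point null of zero mean, and a unit-information prior (`tau` equal to `sigma`) for the exact Bayes Factor. The summary statistics are hypothetical.

```python
import math


def bf10_from_bic(n, ybar, sigma=1.0):
    """BIC-based approximation to BF_10 for H0: mu = 0 versus H1: mu free."""
    # BIC_1 - BIC_0 = log(n) - n * ybar^2 / sigma^2 (one extra parameter under H1).
    delta_bic = math.log(n) - n * ybar**2 / sigma**2
    return math.exp(-0.5 * delta_bic)


def bf10_exact(n, ybar, sigma=1.0, tau=1.0):
    """Exact BF_10 for H0: mu = 0 versus H1: mu ~ N(0, tau^2)."""
    var_h0 = sigma**2 / n
    var_h1 = tau**2 + var_h0
    log_bf = 0.5 * math.log(var_h0 / var_h1) + 0.5 * ybar**2 * (1.0 / var_h0 - 1.0 / var_h1)
    return math.exp(log_bf)


for n in (20, 100, 500):
    approx, exact = bf10_from_bic(n, ybar=0.1), bf10_exact(n, ybar=0.1)
    print(f"n = {n:3d}: BIC approximation = {approx:.4f}, exact = {exact:.4f}")
```

The two columns converge as the sample size grows, which reflects the asymptotic nature of BIC. With a different prior scale `tau`, the exact Bayes Factor moves while the BIC approximation does not.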

Bayes Factors provide a mathematically rigorous framework for model comparison that quantifies relative evidence as the updating factor from prior to posterior odds. Their ability to incorporate prior knowledge, evaluate evidence for null hypotheses, and automatically balance model complexity against goodness-of-fit makes them particularly valuable for computational research across diverse domains from epidemiology to pharmaceutical development. While computational challenges remain, recent advances in sampling algorithms and marginal likelihood estimation continue to expand their applicability to increasingly complex models, establishing Bayes Factors as a fundamental tool in modern statistical inference.

The Mathematics of Marginal Likelihoods and Model Evidence

In Bayesian statistics, model selection identifies which mathematical representation best describes the observed data. Central to this process is the marginal likelihood, also known as the model evidence: the likelihood of the data averaged over the model's parameters, with the prior acting as the weight. This guide compares the primary computational methods for estimating marginal likelihoods, focusing on their application within Bayesian model comparison and drug development research.

Core Concepts: Marginal Likelihood and Bayes Factor

The marginal likelihood for a model \( M \) is the probability of the observed data \( D \) given that model, integrating over all the model's parameters \( \theta \). It is expressed as:

\[ p(D \mid M) = \int p(D \mid \theta, M) \, p(\theta \mid M) \, d\theta \]

This integral represents the average fit of the model to the data, penalized for model complexity, an embodiment of the Occam's razor principle [11]. For comparing two models, \( M_1 \) and \( M_0 \), Bayesian statisticians use the Bayes Factor (BF), which is the ratio of their marginal likelihoods:

\[ BF_{10} = \frac{p(D \mid M_1)}{p(D \mid M_0)} \]

A Bayes Factor greater than 1 favors model \( M_1 \), while a value less than 1 favors \( M_0 \). The strength of this evidence is often interpreted using established scales, such as the Kass and Raftery scale [12].
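For a one-parameter model the integral can be evaluated directly, which gives a useful reference point before turning to sampling-based estimators. The sketch below assumes a binomial example that is not from the cited sources: the null model fixes the success probability at 0.5, the alternative places a uniform prior on it, and the data (15 successes in 20 trials) are hypothetical. Under the uniform prior the evidence has the closed form 1/(n + 1), so the quadrature can be checked.

```python
import math


def binomial_likelihood(theta, k, n):
    return math.comb(n, k) * theta**k * (1.0 - theta) ** (n - k)


def evidence_uniform_prior(k, n, grid=10_000):
    """Midpoint-rule approximation of the integral of likelihood x prior over [0, 1]."""
    h = 1.0 / grid
    # The uniform prior density is 1 on [0, 1], so only the likelihood appears.
    return h * sum(binomial_likelihood((i + 0.5) * h, k, n) for i in range(grid))


k, n = 15, 20  # hypothetical data: 15 successes in 20 trials
evidence_m1 = evidence_uniform_prior(k, n)     # theta free, uniform prior
evidence_m0 = binomial_likelihood(0.5, k, n)   # theta fixed at 0.5, nothing to integrate
bf10 = evidence_m1 / evidence_m0
print(f"p(D | M1) = {evidence_m1:.6f} (closed form {1.0 / (n + 1):.6f})")
print(f"BF10 = {bf10:.2f}")  # about 3.22
```

Grid quadrature of this kind stops being practical beyond a handful of parameters, which is what motivates the estimators compared in the rest of this section.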

Computational Methods: A Comparative Analysis

Calculating the marginal likelihood is challenging as it requires solving a multidimensional integral, often intractable with exact methods. Several computational techniques have been developed to address this, each with distinct strengths, weaknesses, and optimal use cases, as summarized in the table below.

Table 1: Comparison of Marginal Likelihood Estimation Methods

| Method | Core Principle | Computational Requirements | Best Suited For | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Sequential Neural Likelihood Estimation (SNLE) [13] | Uses neural density estimators to approximate the likelihood function iteratively. | High (neural network training, sequential simulations) | Models with intractable likelihoods but available simulators. | Amortized inference; focuses on relevant parameter regions. | Sensitive to model misspecification; requires careful tuning. |
| Likelihood Level Adapted Methods [11] | Transforms the multidimensional integral into a 1D integral over likelihood levels. | Moderate to High (adaptive sampling) | High-dimensional problems with complex, multi-modal posteriors. | High accuracy in low & high dimensions; flexible sampling. | Implementation complexity of adaptive levels. |
| Nested Sampling [11] | Transforms the multidimensional integral into a 1D integral over the prior mass. | Moderate (sampling from constrained prior) | General-purpose use, particularly for multi-modal posteriors. | Conceptually straightforward; provides evidence directly. | Can be inefficient in very high-dimensional spaces. |
| Sequential Monte Carlo (SMC) [11] | Samples from a sequence of distributions, from prior to posterior. | High (managing multiple particles and temperatures) | High-dimensional and/or multi-modal posterior distributions. | Robust and flexible; provides an estimate of the evidence. | Can be computationally intensive. |
| Power Posterior / Thermodynamic Integration [11] | Estimates evidence by integrating over a path from prior to posterior. | High (MCMC sampling at multiple temperatures) | Models where a continuous path from prior to posterior is feasible. | Provides a robust estimate for a wide range of models. | Very computationally expensive. |
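The power posterior row can be made concrete with a small sketch of thermodynamic integration, which uses the identity that the log evidence equals the integral over t from 0 to 1 of the expected log-likelihood under the power posterior (likelihood raised to the power t, times the prior). The assumptions are illustrative: a normal model with known variance and a normal prior on the mean, chosen because each power posterior is then itself normal and can be sampled exactly. In realistic models these draws would come from MCMC at each temperature. The power-law temperature ladder and all names are assumptions of this sketch.

```python
import math
import random


def exact_log_evidence(y, sigma=1.0, tau=1.0):
    """Closed-form log evidence for y_i ~ N(theta, sigma^2) with theta ~ N(0, tau^2)."""
    n, s, ss = len(y), sum(y), sum(v * v for v in y)
    log_det = (n - 1) * math.log(sigma**2) + math.log(sigma**2 + n * tau**2)
    quad = (ss - tau**2 * s**2 / (sigma**2 + n * tau**2)) / sigma**2
    return -0.5 * (n * math.log(2.0 * math.pi) + log_det + quad)


def ti_log_evidence(y, sigma=1.0, tau=1.0, n_temps=40, n_draws=2000, seed=0):
    """Thermodynamic integration with exact draws from each power posterior."""
    rng = random.Random(seed)
    n, s, ss = len(y), sum(y), sum(v * v for v in y)
    temps = [(j / n_temps) ** 5 for j in range(n_temps + 1)]  # dense near t = 0
    expected_log_lik = []
    for t in temps:
        precision = t * n / sigma**2 + 1.0 / tau**2  # power posterior is normal
        mean, sd = (t * s / sigma**2) / precision, math.sqrt(1.0 / precision)
        total = 0.0
        for _ in range(n_draws):
            theta = rng.gauss(mean, sd)
            sq_err = ss - 2.0 * theta * s + n * theta**2  # sum of (y_i - theta)^2
            total += -0.5 * n * math.log(2.0 * math.pi * sigma**2) - 0.5 * sq_err / sigma**2
        expected_log_lik.append(total / n_draws)
    # Trapezoidal rule over the temperature ladder.
    return sum(0.5 * (expected_log_lik[j] + expected_log_lik[j + 1]) * (temps[j + 1] - temps[j])
               for j in range(n_temps))


data_rng = random.Random(7)
y = [data_rng.gauss(0.5, 1.0) for _ in range(20)]  # simulated data
log_z_ti = ti_log_evidence(y)
log_z_exact = exact_log_evidence(y)
print(f"thermodynamic integration: {log_z_ti:.3f}, exact: {log_z_exact:.3f}")
```

The cost noted in the table is visible even here: every temperature needs its own set of posterior draws, so the sampling effort scales with the length of the ladder.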

Experimental Protocols and Workflows

To ensure reproducible and reliable estimation of marginal likelihoods, researchers should follow structured experimental protocols. Below are detailed workflows for two prominent methods.

Protocol 1: Sequential Neural Likelihood Estimation (SNLE)

This protocol is designed for simulation-based inference where the likelihood function is not directly available [13].

1. Problem Formulation:
   * Define the generative model \( M: \theta \rightarrow x \), which can simulate data \( x \) from parameters \( \theta \).
   * Specify a proper prior distribution \( \pi(\theta) \) for the parameters.
   * Define the observed dataset \( \mathbf{x}^* \).

2. Algorithm Initialization:
   * Choose a neural density estimator (e.g., a normalizing flow) to act as the surrogate likelihood \( q(\mathbf{x} \mid \theta) \).
   * Set the number of sequential rounds \( L \) and the number of simulations per round \( N \).

3. Sequential Training: for round \( \ell = 1 \) to \( L \):
   * Proposal: If \( \ell = 1 \), sample parameters \( \{ \theta_i \} \) from the prior \( \pi(\theta) \). Otherwise, sample from the current approximate posterior (e.g., via MCMC).
   * Simulation: For each \( \theta_i \), simulate a dataset \( \mathbf{x}_i \sim p(\cdot \mid \theta_i) \).
   * Training: Update the neural surrogate \( q^{(\ell)}(\mathbf{x} \mid \theta) \) on the aggregated set of all parameter-data pairs \( \{ (\theta_i, \mathbf{x}_i) \} \) from all rounds.

4. Estimation & Output:
   * The final surrogate likelihood \( q^{(L)}(\mathbf{x}^* \mid \theta) \) and the prior \( \pi(\theta) \) together form an unnormalized posterior.
   * Use Sequential Importance Sampling (SIS) or MCMC on this unnormalized posterior to generate samples and compute the final marginal likelihood estimate \( C_L \) [13].

The following diagram illustrates the iterative, sequential nature of the SNLE workflow:

Protocol 2: Likelihood Level Adapted Estimation

This method is highly effective for complex models in computational mechanics and related fields [11].

1. Problem Setup:
   * Define the parametric model with likelihood \( p(D \mid \theta) \) and prior \( p(\theta) \).

2. Probability Integral Transformation:
   * The key insight is to transform the multidimensional parameter space integral into a one-dimensional integral over the likelihood value.
   * Define \( \xi = p(D \mid \theta) \) as the likelihood value.
   * The marginal likelihood becomes \( p(D) = \int_0^{\xi^*} \xi \, P(\xi) \, d\xi \), where \( P(\xi) \) is the probability density of the likelihood value \( \xi \) induced by the prior and \( \xi^* \) is the upper limit of the likelihood values.

3. Adaptive Level Selection:
   * A sequence of increasing likelihood levels \( \xi_1 < \xi_2 < \dots < \xi_n \) is chosen adaptively. The goal is to select levels that efficiently traverse the range from low to high likelihood regions.

4. Probability Mass Estimation (at each level \( \xi_t \)): one of three algorithms is used to estimate the probability mass between levels \( \xi_{t-1} \) and \( \xi_t \):
   * Importance Sampling: Uses samples from previous levels to build an importance distribution.
   * Stratified Sampling: Divides the parameter space into strata for efficient exploration.
   * MCMC Sampling: Runs Markov chains from samples at the previous level to generate new samples at the current level.

5. Numerical Integration:
   * The final estimate of the marginal likelihood is computed by summing the products of the estimated probability masses and their corresponding likelihood values (e.g., using a quadrature rule).

The logical flow of this adaptive approach is visualized below:

The Scientist's Toolkit: Research Reagent Solutions

Successfully implementing these computational methods requires a suite of software "reagents." The table below lists essential tools and their functions in the computational workflow.

Table 2: Essential Computational Tools for Bayesian Model Evidence

| Tool Category | Example Implementations | Primary Function in Workflow |
|---|---|---|
| Probabilistic Programming Frameworks | PyMC3, Stan, Pyro, TensorFlow Probability | Provides high-level language to specify complex Bayesian models and automates posterior inference via MCMC and variational inference. |
| Simulation-Based Inference (SBI) Libraries | sbi (Python toolbox) | Specifically implements methods like SNLE, SNPE, and SNRE for models where the likelihood is intractable but simulations are possible [13]. |
| Neural Density Estimators | Normalizing Flows (e.g., MAF, NSF), Mixture Density Networks | Used within SBI methods to flexibly approximate the likelihood or posterior distribution [13]. |
| Nested Sampling Software | MultiNest, dynesty | Efficiently computes the marginal likelihood and explores multi-modal posteriors using the nested sampling algorithm [11]. |
| High-Performance Computing (HPC) | CPU Clusters, GPU Accelerators | Accelerates computationally intensive tasks like large-scale parallel simulation, training of neural networks, and running many MCMC chains. |

Application in Drug Development and Pharmaceutical Processes

Bayesian methods, including model selection via marginal likelihoods, are increasingly vital in pharmaceutical development. They help quantify uncertainty and guide decision-making, potentially speeding up the process and reducing experimental burdens [8].

- Adaptive Clinical Trials: The Bayesian framework facilitates trials that use accumulating data to modify aspects of the study (e.g., sample size, randomization ratios) according to a pre-specified plan. Bayes factors can inform interim decisions on efficacy or futility [14] [9].

- Phase I Dose-Finding: Methods like the Continual Reassessment Method (CRM) use Bayesian inference to determine the maximum tolerated dose (MTD) by combining prior information with data from previously dosed subjects [14].

- Pharmaceutical Process Development: Bayesian data-driven models and Bayesian optimization are used to efficiently invent and optimize chemical synthesis routes and process conditions, reducing the number of costly lab experiments required [8].

- Post-Marketing Surveillance: The Bayesian paradigm naturally allows for continuous learning. The posterior distribution from pre-marketing studies becomes the prior for analyzing post-marketing data, updating the understanding of a device's or drug's safety and effectiveness in a larger population [14] [9].

Bayes factors have emerged as a cornerstone of Bayesian hypothesis testing and model comparison, providing a rigorous statistical framework for evaluating the relative evidence for competing models [15]. In computational research, particularly in fields as critical as drug development and disease modeling, the Bayes factor quantifies how strongly observed data support one statistical model over another [5]. Mathematically, the Bayes factor is defined as the ratio of two marginal likelihoods: the likelihood of the data under the alternative hypothesis (H1) to the likelihood of the data under the null hypothesis (H0) [15]. This fundamental definition, expressed as BF10 = p(D|H1)/p(D|H0), provides a coherent mechanism for updating prior beliefs in light of new evidence [15] [16].

Unlike frequentist p-values, which measure the probability of observing data as extreme as, or more extreme than, the actual data assuming the null hypothesis is true, Bayes factors directly quantify the evidence for one hypothesis relative to another [7] [17]. This distinction is crucial for computational researchers who need to make informed decisions based on the weight of evidence rather than arbitrary significance thresholds. The Bayesian approach is particularly valuable in drug development, where it enables more efficient trial designs and formal incorporation of existing knowledge [7] [18]. As Bayesian methods continue to gain traction across scientific disciplines, understanding how to properly interpret Bayes factor values has become an essential skill for researchers, scientists, and drug development professionals engaged in model comparison.

Established Interpretation Scales for Bayes Factors

Comparative Analysis of Interpretation Frameworks

Several interpretation scales have been proposed to translate quantitative Bayes factor values into qualitative evidence assessments. Table 1 summarizes three widely cited frameworks from Jeffreys (1939), Lee and Wagenmakers (2014), and Kass and Raftery (1995) [19].

Table 1: Comparative Interpretation Scales for Bayes Factors

| Bayes Factor Value | Jeffreys (1939) Interpretation | Lee & Wagenmakers (2014) Interpretation | Kass & Raftery (1995) Interpretation |
|---|---|---|---|
| 1-3 | Barely worth mentioning | Anecdotal evidence | Not worth more than a bare mention |
| 3-10 | Substantial evidence | Moderate evidence | Positive evidence |
| 10-30 | Strong evidence | Strong evidence | Strong evidence |
| 30-100 | Very strong evidence | Very strong evidence | Very strong evidence |
| >100 | Decisive evidence | Extreme evidence | Decisive evidence |

Jeffreys' original scale, developed in 1939, established the foundational categories for evidence interpretation [19]. Kass and Raftery later simplified the scale by eliminating one category and adjusting thresholds, while Lee and Wagenmakers modified the verbal labels to better reflect modern terminology, changing "substantial" to "moderate" as they believed the original sounded too decisive [19] [20]. These scales serve as rough descriptive guides rather than rigid calibration standards, acknowledging that the interpretation should consider context and prior knowledge [21].

Detailed Interpretation Guidelines

For values falling between established categories, researchers can refer to more granular interpretation guidelines. Table 2 provides an expanded view of evidence classifications based on contemporary usage across scientific literature [15] [20] [22].

Table 2: Detailed Bayes Factor Interpretation Guidelines

| Bayes Factor | Evidence Category | Interpretation in Research Context |
|---|---|---|
| >100 | Extreme evidence | Decisive support for H1 over H0 |
| 30-100 | Very strong evidence | Strong empirical support for H1 |
| 10-30 | Strong evidence | Substantial support for H1 |
| 3-10 | Moderate evidence | Positive but not definitive evidence |
| 1-3 | Anecdotal evidence | Minimal evidence for H1 |
| 1 | No evidence | Models equally supported |
| 1/3-1 | Anecdotal evidence | Minimal evidence for H0 |
| 1/10-1/3 | Moderate evidence | Positive evidence for H0 |
| 1/30-1/10 | Strong evidence | Substantial support for H0 |
| 1/100-1/30 | Very strong evidence | Strong empirical support for H0 |
| <1/100 | Extreme evidence | Decisive support for H0 over H1 |

These classifications provide researchers with a common vocabulary for communicating statistical evidence. However, it's important to recognize that what constitutes "strong" evidence may vary by field and context [21]. Extraordinary claims may require higher thresholds of evidence, while replication of established findings might be accepted with more moderate Bayes factors [16].
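For reporting pipelines, the evidence categories in Table 2 can be encoded as a small lookup. This is a minimal sketch: the function name is hypothetical, and because the table leaves the band edges ambiguous, assigning a boundary value such as exactly 3 to the higher category is an assumption of this sketch rather than part of any published scale.

```python
def classify_bayes_factor(bf10: float) -> str:
    """Map BF_10 to the verbal categories of Table 2 (symmetric around 1)."""
    if bf10 <= 0.0:
        raise ValueError("a Bayes Factor must be positive")
    if bf10 == 1.0:
        return "No evidence"
    favoured = "H1" if bf10 > 1.0 else "H0"
    strength = bf10 if bf10 > 1.0 else 1.0 / bf10  # BF_01 when the data favour H0
    bands = [(3.0, "Anecdotal"), (10.0, "Moderate"), (30.0, "Strong"), (100.0, "Very strong")]
    for upper, label in bands:
        if strength < upper:  # assumption: boundary values fall in the higher band
            return f"{label} evidence for {favoured}"
    return f"Extreme evidence for {favoured}"


print(classify_bayes_factor(14.6))  # Strong evidence for H1
print(classify_bayes_factor(0.05))  # Strong evidence for H0
print(classify_bayes_factor(2.0))   # Anecdotal evidence for H1
```

A label produced this way is a reporting convenience only. The numerical Bayes Factor should always be reported alongside it, since the thresholds are conventions and vary by field.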

Methodological Protocols for Bayes Factor Application

Experimental Design Considerations

Implementing Bayes factors effectively in computational research requires careful methodological planning. The Bayes Factor Design Analysis (BFDA) framework provides a structured approach for designing experiments that balance informativeness and efficiency [15]. BFDA allows researchers to determine appropriate sample sizes for both fixed-N designs (where sample size is determined in advance) and sequential designs (where data collection depends on interim evidence assessments) [15].

The experimental workflow for implementing Bayes factors in model comparison research involves several critical stages, from prior specification to evidence interpretation. The following diagram illustrates this sequential process:

For fixed-N designs, researchers determine sample size in advance through simulation studies that estimate the expected strength of evidence for plausible effect sizes [15]. For sequential designs, researchers specify stopping thresholds based on Bayes factor values, allowing data collection to continue until reaching a target evidence level or maximum sample size [15]. This approach is particularly valuable in drug development, where ethical and efficiency considerations favor designs that can reach conclusions with minimal participant exposure to potentially ineffective treatments [7] [18].

Calculation Methods and Technical Implementation

The computational implementation of Bayes factors requires careful attention to the calculation of marginal likelihoods. Several methods have been developed for this purpose, each with distinct strengths and considerations [5] [16].

In practical applications, researchers can utilize specialized software packages and online calculators to compute Bayes factors [20]. The Bayesian approach has been successfully implemented in diverse research contexts, including infectious disease modeling [5], addiction research [20], and rare disease drug development [18]. For complex models where direct calculation of marginal likelihoods is challenging, methods such as importance sampling provide consistent estimators with lower variance compared to alternatives [5].

When calculating Bayes factors, researchers must specify prior distributions for parameters, which should reflect reasonable expectations about effect sizes based on previous research or theoretical considerations [15] [20]. Sensitivity analyses are recommended to assess how conclusions might change under different plausible prior specifications [20].
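A prior sensitivity analysis of the kind recommended here can be as simple as recomputing the Bayes Factor over a grid of prior scales. The sketch below assumes a setting that is not from the cited sources: normal data with known unit variance summarized by a sample size and sample mean, a point null of zero effect, and a N(0, `tau`²) prior on the effect under the alternative. The summary statistics and the grid of `tau` values are hypothetical.

```python
import math


def bf10_from_summary(n, ybar, tau, sigma=1.0):
    """Closed-form BF_10 for H0: mu = 0 versus H1: mu ~ N(0, tau^2)."""
    var_h0 = sigma**2 / n
    var_h1 = tau**2 + var_h0
    log_bf = 0.5 * math.log(var_h0 / var_h1) + 0.5 * ybar**2 * (1.0 / var_h0 - 1.0 / var_h1)
    return math.exp(log_bf)


n, ybar = 50, 0.3  # hypothetical summary statistics
prior_scales = [0.1, 0.25, 0.5, 1.0, 2.0, 10.0]
sensitivity = {tau: bf10_from_summary(n, ybar, tau) for tau in prior_scales}
for tau, bf in sensitivity.items():
    print(f"prior sd tau = {tau:5.2f} -> BF10 = {bf:.3f}")
```

For these inputs the Bayes Factor favours the alternative under moderately informative priors and favours the null once the prior becomes very diffuse. A conclusion that flips across plausible priors in this way should be reported as prior-dependent.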

Applications in Scientific Research and Drug Development

Evidence from Clinical Research and Drug Development

Bayes factors have demonstrated particular utility in clinical research and drug development, where they help address complex evidential questions. Table 3 summarizes key applications and findings from recent studies employing Bayes factor analysis.

Table 3: Bayes Factor Applications in Clinical Research and Drug Development

| Research Context | Bayes Factor Value | Interpretation | Research Impact |
|---|---|---|---|
| Addiction Medicine RCTs [20] | 3-10 (20% of non-significant results) | Moderate evidence for experimental hypothesis | Provided evidence for effects where p-values were non-significant |
| Paclitaxel-Eluting Device Safety [22] | 14.6 (3-5 year mortality) | Moderate evidence for increased mortality | Highlighted safety signal requiring further investigation |
| Rare Disease Trial Design [18] | N/A (design stage) | Informed efficient trial designs | Reduced required sample size while maintaining evidential standards |
| Progressive Supranuclear Palsy Trial [18] | N/A (design stage) | Enabled incorporation of historical data | Reduced placebo arm participants through Bayesian priors |

In the addiction medicine context, a systematic review of randomized controlled trials found that 20% of non-significant findings (p>0.05) actually showed moderate evidence for the experimental hypothesis when evaluated using Bayes factors [20]. This demonstrates how Bayes factors can provide more nuanced interpretations than traditional p-value thresholds, particularly for non-significant results that might otherwise be misread as evidence for the null hypothesis.

The application of Bayes factors in drug safety assessment is illustrated by research on paclitaxel-eluting devices, where a Bayes factor of 14.6 for increased mortality at 3-5 years provided moderate but not definitive evidence of harm [22]. This nuanced interpretation appropriately reflected the uncertainty in the findings and helped contextualize the potential risk without overstating the evidence.

Research Reagent Solutions for Bayes Factor Implementation

Successfully implementing Bayes factor analysis requires specific computational tools and methodological approaches. Table 4 catalogues essential "research reagents" for scientists engaged in Bayes factor model comparison studies.

Table 4: Research Reagent Solutions for Bayes Factor Implementation

| Tool Category | Specific Solution | Function | Implementation Considerations |

|---|---|---|---|

| Calculation Tools | Online Bayes Factor Calculators [20] | User-friendly interface for basic Bayes factor computation | Accessible for researchers with limited programming experience |

| | R Packages (BayesFactor, rstan) [20] | Advanced Bayesian computation and model comparison | Requires programming proficiency but offers greater flexibility |

| | Importance Sampling Algorithms [5] | Marginal likelihood estimation for complex models | Provides consistent estimators with lower variance |

| Methodological Frameworks | Bayes Factor Design Analysis (BFDA) [15] | Prospective design of informative and efficient studies | Helps balance evidence strength with resource constraints |

| | Informed Prior Specification [15] | Incorporation of existing knowledge into analysis | Requires careful justification and sensitivity analysis |

| | Sequential Analysis Designs [15] | Adaptive data collection based on accumulating evidence | More efficient than fixed-N designs but requires additional planning |

| Interpretation Guides | Jeffreys' Scale [19] | Qualitative evidence categorization | Established standard but may need contextual adaptation |

| | Kass & Raftery Framework [19] | Simplified evidence categorization | Combines categories for more straightforward interpretation |

These research reagents provide the essential components for implementing Bayes factor analysis across diverse research contexts. The choice of specific tools depends on factors such as research question complexity, available computational resources, and researcher expertise. For regulatory applications in drug development, additional considerations include transparency in prior specification and demonstration of operating characteristics [7] [18].

Critical Considerations and Methodological Challenges

Addressing Common Misinterpretations and Errors

Despite their theoretical advantages, Bayes factors are susceptible to misinterpretations that can undermine their appropriate application in research. A significant concern documented in recent literature is the conversion of Bayes factors to equivalent "sigma" significance levels using invalid formulas [16]. This approach overestimates evidence strength and misrepresents Bayesian results within a frequentist framework, potentially leading to overstated conclusions [16].

The relationship between Bayes factors and prior distributions presents another challenge. Bayes factors can be sensitive to prior choices, particularly with small sample sizes [15] [16]. This sensitivity necessitates transparency in prior specification and thorough sensitivity analyses to establish the robustness of findings [20]. Researchers should clearly report the priors used and consider how alternative plausible specifications might affect conclusions.

The sequential use of Bayes factors maintains correct interpretation regardless of analysis frequency or stopping rule, unlike p-values which require adjustment for multiple looks at data [17]. This property makes Bayes factors particularly suitable for adaptive trial designs common in drug development [7] [18].
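Sequential monitoring of this kind can be sketched as follows. This is a simplified illustration, not a trial design: it assumes normally distributed outcomes with known standard deviation, a Normal(0, tau^2) prior on the effect under H1, and hypothetical stopping thresholds of 10 and 1/10. All names and default values are assumptions made for the example.

```python
import math
import random

def log_bf10(ybar, n, sigma, tau):
    """log BF10 for H1: mu ~ Normal(0, tau^2) vs H0: mu = 0, known sigma."""
    v0 = sigma ** 2 / n            # variance of the sample mean under H0
    v1 = tau ** 2 + v0             # marginal variance of the sample mean under H1
    return 0.5 * math.log(v0 / v1) + 0.5 * ybar ** 2 * (1.0 / v0 - 1.0 / v1)

def sequential_bf(true_mu, sigma=1.0, tau=0.5, upper=10.0, lower=0.1,
                  max_n=500, seed=1):
    """Add one simulated observation at a time and recompute BF10.

    Stops as soon as BF10 reaches `upper` (evidence for H1) or `lower`
    (evidence for H0), or when max_n observations have been collected.
    """
    rng = random.Random(seed)
    total = 0.0
    bf = 1.0
    for n in range(1, max_n + 1):
        total += rng.gauss(true_mu, sigma)
        bf = math.exp(log_bf10(total / n, n, sigma, tau))
        if bf >= upper or bf <= lower:
            return n, bf
    return max_n, bf

n_stop, bf_stop = sequential_bf(true_mu=0.5)
print(f"stopped at n = {n_stop} with BF10 = {bf_stop:.2f}")
```

The Bayes factor at the stopping point is computed exactly as it would be for a fixed sample of the same size; only the decision of when to stop depends on the accumulating evidence.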

Contextual Interpretation and Evidential Standards

Verbal categories for Bayes factor interpretation provide helpful guidance but should not be applied mechanistically [21]. The practical significance of a specific Bayes factor value depends on contextual factors including:

- Prior plausibility: Extraordinary claims require more substantial evidence [21]

- Decision consequences: Higher thresholds may be appropriate for high-stakes decisions [7]

- Field-specific standards: Different disciplines may establish conventional evidence thresholds [16]

- Research phase: Exploratory research may accept lower evidence levels than confirmatory studies [18]

Rather than relying solely on categorical labels, researchers should interpret Bayes factors as continuous measures of evidence strength within their specific research context [21]. Reporting actual Bayes factor values alongside verbal classifications allows for more nuanced interpretation and facilitates meta-scientific evaluation [20] [22].
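The role of prior plausibility can be made concrete by converting a Bayes factor into a posterior probability, using the relation posterior odds = Bayes factor × prior odds. The helper below is a minimal sketch; the function name and the prior probabilities are hypothetical choices for illustration.

```python
def posterior_prob_h1(bf10, prior_prob_h1):
    """Posterior probability of H1 from a Bayes factor and a prior probability."""
    if not 0.0 < prior_prob_h1 < 1.0:
        raise ValueError("prior_prob_h1 must be strictly between 0 and 1")
    prior_odds = prior_prob_h1 / (1.0 - prior_prob_h1)
    posterior_odds = bf10 * prior_odds       # Bayes' rule in odds form
    return posterior_odds / (1.0 + posterior_odds)

# The same BF10 = 10 applied to increasingly implausible hypotheses.
for prior in (0.5, 0.1, 0.01):
    print(f"prior P(H1) = {prior:<4}: posterior P(H1) = "
          f"{posterior_prob_h1(10.0, prior):.3f}")
```

A Bayes factor of 10 leaves a hypothesis with a 1% prior probability still unlikely after the data, which illustrates why a fixed evidence threshold cannot serve every research context.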

The relationship between statistical evidence and decision-making is complex, particularly in regulated environments like drug development. While Bayes factors quantify evidence between hypotheses, actual decisions incorporate additional factors such as clinical significance, safety considerations, and cost-effectiveness [7] [17]. Bayesian decision theory provides a formal framework for integrating these elements, though practical implementation often involves qualitative judgment alongside quantitative evidence [17].

Common Misconceptions and Widespread Interpretation Errors in Scientific Literature

Within computational research, particularly in fields employing Bayesian model selection, numerous statistical misconceptions persist that undermine the validity and interpretability of scientific findings. These errors range from fundamental misunderstandings of statistical measures to the misapplication of complex model comparison techniques. In the context of Bayes factor model comparison—a method increasingly used to evaluate competing computational theories—these misconceptions can lead to flawed inferences, reduced research reproducibility, and ultimately, misguided scientific conclusions. This guide objectively examines these common pitfalls, provides structured experimental data comparing different methodological approaches, and offers practical protocols to enhance statistical practice.

Common Statistical Misconceptions in Scientific Literature

Fundamental Statistical Misunderstandings

Several foundational statistical concepts are frequently misinterpreted in scientific literature, creating a weak basis for more advanced analytical techniques including Bayesian model comparison.

The P-Value Misinterpretation: Perhaps the most persistent error is the misinterpretation of p-values as the probability that the null hypothesis is true. In reality, a p-value represents the probability of observing data at least as extreme as the current data, assuming the null hypothesis is correct [23]. This misconception dangerously inverts the actual conditional probability and overstates evidence against null hypotheses.

Non-Significant Equals No Effect: Many researchers incorrectly assume that a non-significant result (typically p > 0.05) definitively demonstrates the absence of an effect. This overlooks the critical role of statistical power; a non-significant finding may simply indicate insufficient data or study design limitations to detect a true effect [23]. Proper interpretation requires consideration of confidence intervals and effect sizes rather than binary significance testing.

Single-Study Overreliance: The perception that a single statistical test can conclusively prove a finding remains widespread. This neglects the probabilistic nature of statistical inference and the need for replication across different samples and contexts to establish robust findings [23].

Model Comparison and Selection Errors

Within computational modeling research, specific misconceptions arise around model comparison techniques, particularly concerning Bayes factors and alternative methods.

Neglecting Model Specification Principles: A critical oversight occurs when researchers prioritize readily available statistical models over those specifically tailored to their scientific questions. This violates the "specification-first principle," which holds that model specification should be primary, with statistical inference secondary to scientific inference [3]. Methods that force researchers into particular model specifications potentially sacrifice scientific relevance for computational convenience.

Overlooking Statistical Power in Model Selection: There is a widespread failure to recognize that statistical power for model selection decreases as the model space expands. While power typically increases with sample size for simple hypothesis tests, in model selection contexts, considering more candidate models requires larger samples to maintain the same power for correct model identification [24]. This underappreciated relationship leads to underpowered model comparison studies across psychology and neuroscience.

Misunderstanding Posterior Predictive Methods: Researchers often incorrectly assume posterior predictive methods like WAIC (Watanabe-Akaike information criterion) and LOOCV (leave-one-out cross-validation) can adequately handle nested model comparisons. In reality, these methods struggle when comparing constrained versus unconstrained models, often failing to favor more constrained models even when data strongly support the constraints [3].

Table 1: Common Model Comparison Misconceptions and Their Implications

| Misconception | Correct Interpretation | Field Most Affected |

|---|---|---|

| Bigger datasets always improve model selection | Data quality and relevance matter more than quantity; larger datasets can introduce bias | Computational psychology, neuroscience |

| Fixed-effects approaches suffice for group studies | Random-effects methods better account for between-subject variability in model validity | Cognitive science, neuroimaging |

| Posterior predictive methods handle all constraint types | Bayes factors better accommodate overlapping models with theoretical constraints | Psychological science |

| Model selection consistency is guaranteed with large samples | The "true model" may not be selected even with sufficient data if it doesn't yield best predictions | All computational fields |

Quantitative Evidence: Power Deficiencies in Model Selection

The Statistical Power Framework for Model Selection

Statistical power in model selection contexts has unique properties that differ dramatically from conventional hypothesis testing. A formal power analysis framework for Bayesian model selection reveals two critical relationships: power increases with sample size but decreases as more models are considered [24]. This creates a fundamental trade-off where expanding the model space to include more theoretical alternatives requires substantially larger samples to maintain identification accuracy.

The mathematical formalization of this relationship shows that for a model space of size K and sample size N, the probability of correctly identifying the true model depends on both factors simultaneously. This framework demonstrates that many current studies in psychology and human neuroscience operate with critically low statistical power for model selection, with 41 of 52 reviewed studies having less than 80% probability of correctly identifying the true model [24].

Empirical Evidence from Clinical Trial Applications

Recent large-scale analyses of clinical trial data demonstrate the practical consequences of these statistical issues. When applying Bayes factor analyses to 71,126 results from ClinicalTrials.gov, researchers found that a substantial proportion of findings with statistically significant p-values (p ≤ α) showed contradictory evidence when evaluated using Bayes factors [25]. Specifically, the proportion of findings with p ≤ α yet Bayes factor values favoring the null hypothesis closely tracked the significance level α, suggesting these contradictions likely represent Type I errors that would be missed with conventional testing.

Table 2: Analysis of 71,126 Clinical Trial Findings Using Bayes Factors

| Finding Category | Percentage | Interpretation |

|---|---|---|

| Studies setting α ≥ .05 as evidence threshold | 75% | Majority use conventional significance thresholds |

| Significant results (α = .05) with only anecdotal Bayes factor evidence | 35.5% | Over one-third of "significant" results provide weak evidence |

| Candidate Type I errors identified | 4,088 instances | Potential false positives in literature |

| Jeffreys-Lindley paradox instances | 487 identifications | Cases where p-values and Bayes factors strongly disagree |

Methodological Comparisons: Bayes Factors vs. Posterior Predictive Approaches

Theoretical Foundations and Differences

Bayes factors and posterior predictive methods represent two distinct Bayesian perspectives on model comparison with fundamentally different theoretical underpinnings:

Bayes Factors (Prior Predictive Perspective): The Bayes factor examines how well the model (prior and likelihood) explains the observed data based on the prior predictive distribution [26]. It represents a "cruel realist" perspective that penalizes models for not having the best possible prior information about parameters [26].

Posterior Predictive Methods (Cross-Validation): Approaches like cross-validation assess how well a model fit to training data can predict held-out validation data [26]. This represents a "fair judge" perspective that gives each model the best possible prior probability for its parameters to evaluate its optimal performance [26].

The critical distinction lies in their treatment of priors: Bayes factors evaluate the probability of the observed data under the prior assumptions, while posterior predictive methods are less dependent on priors because the priors are updated by the likelihood before any predictions are made [26].

Performance on Constrained Model Comparison

The theoretical differences between these approaches manifest practically when comparing models with theoretical constraints, particularly in cases where a constrained model is nested within a more general unconstrained model [3].

For example, when testing an order constraint (e.g., θ > 0 representing a positive treatment effect) against an unconstrained alternative, posterior predictive methods like WAIC fail to appropriately favor the constrained model even when data strongly support the constraint [3]. This occurs because when data are compatible with both models, posteriors under both are approximately equal, leading posterior predictive methods to treat the models as equivocal regardless of the constraint.

In contrast, Bayes factors appropriately incorporate the a priori prediction of the constraint through the prior distribution, applying Occam's razor to favor the constrained model when data support it [3]. This capacity makes Bayes factors particularly useful for assessing ordinal constraints, which are common in psychological science [3].
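One common way to compute such a Bayes factor is the encompassing-prior identity: when the constrained model uses the unconstrained prior truncated to the constraint region, the Bayes factor equals the posterior mass inside the constraint divided by the prior mass inside it. The sketch below applies this under assumptions that are not from the source: a normal mean with known standard deviation and a Normal(0, tau^2) prior. The function names and data are hypothetical.

```python
import math

def normal_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * math.erfc(-x / math.sqrt(2.0))

def bf_order_constraint(ybar, n, sigma, tau):
    """Bayes factor for M_c: theta > 0 versus the unconstrained model M_u.

    Assumes theta ~ Normal(0, tau^2) under M_u, known sigma, and that M_c
    uses the same prior truncated to theta > 0.
    """
    post_precision = 1.0 / tau ** 2 + n / sigma ** 2
    post_mean = (n * ybar / sigma ** 2) / post_precision
    post_sd = math.sqrt(1.0 / post_precision)
    post_mass = normal_cdf(post_mean / post_sd)   # P(theta > 0 | data, M_u)
    prior_mass = 0.5                              # P(theta > 0 | M_u), symmetric prior
    return post_mass / prior_mass

# Hypothetical data: n = 40 observations, sigma = 1, prior sd tau = 1.
for ybar in (0.4, 0.0, -0.4):
    print(f"ybar = {ybar:+.1f}: BF(constrained vs unconstrained) = "
          f"{bf_order_constraint(ybar, 40, 1.0, 1.0):.3f}")
```

Under these assumptions the Bayes factor cannot exceed 2, because the constraint covers half of the prior mass. The constrained model is rewarded for making the riskier prediction when the data agree with it and penalized sharply when they do not.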

Table 3: Comparative Performance of Model Comparison Methods

| Method Characteristic | Bayes Factor | Posterior Predictive Methods |

|---|---|---|

| Theoretical basis | Prior predictive distribution | Posterior predictive distribution |

| Handling of priors | Highly sensitive to prior choice | Less dependent on priors (with sufficient data) |

| Performance with constraints | Appropriately favors constrained models when supported by data | Fails to favor constrained models even with supporting data |

| Model specification flexibility | Honors specification-first principle | Forces certain model specifications |

| Computational requirements | Often computationally challenging | Generally more computationally tractable |

| Interpretation perspective | "Cruel realist" - penalizes poor priors | "Fair judge" - evaluates optimal performance |

Experimental Protocols for Robust Model Comparison

Power Analysis for Bayesian Model Selection

Before conducting model comparison studies, researchers should implement formal power analysis to ensure adequate sample sizes. The protocol involves:

Define Model Space: Explicitly specify all candidate models (K) to be compared, ensuring they represent distinct theoretical positions relevant to the research question.

Specify Data-Generating Process: Identify the presumed true data-generating model and its parameters based on pilot data or literature review.

Simulate Synthetic Datasets: Generate multiple synthetic datasets across a range of sample sizes (N) using the identified data-generating process.

Compute Model Evidence: Apply Bayesian model selection to each synthetic dataset, calculating model evidence for all candidate models.

Estimate Identification Rate: Compute the proportion of simulations where the true data-generating model is correctly identified as the best model.

Determine Target Sample Size: Identify the sample size required to achieve acceptable power (typically ≥ 80%) for correct model identification.

This protocol directly addresses the underappreciated relationship between model space size and statistical power, helping researchers avoid underpowered model comparison studies [24].
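The protocol can be sketched as a small simulation. To keep the example self-contained, it assumes that each candidate model is a normal distribution with a fixed mean and unit variance, so that the model evidence is simply the likelihood; real applications would substitute a marginal likelihood estimate. The candidate means, the sample size grid, and the number of simulations are hypothetical.

```python
import math
import random

def log_evidence(data, mu, sigma=1.0):
    """Log evidence of a point model Normal(mu, sigma^2).

    With no free parameters, the marginal likelihood equals the likelihood.
    """
    const = -0.5 * math.log(2.0 * math.pi * sigma ** 2)
    return sum(const - 0.5 * ((x - mu) / sigma) ** 2 for x in data)

def identification_rate(model_means, true_index, n, n_sims=200, seed=0):
    """Share of simulated datasets in which the true model has the highest evidence."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sims):
        data = [rng.gauss(model_means[true_index], 1.0) for _ in range(n)]
        scores = [log_evidence(data, mu) for mu in model_means]
        if scores.index(max(scores)) == true_index:
            hits += 1
    return hits / n_sims

def required_n(model_means, true_index, target=0.80,
               grid=(10, 20, 40, 80, 160, 320, 640)):
    """Smallest sample size on the grid that reaches the target rate, or None."""
    for n in grid:
        if identification_rate(model_means, true_index, n) >= target:
            return n
    return None

small_space = [0.0, 0.3]                     # K = 2 candidate models
large_space = [0.0, 0.15, 0.3, 0.45, 0.6]    # K = 5, same truth, more rivals
n_small = required_n(small_space, true_index=1)
n_large = required_n(large_space, true_index=2)
print("required N with K = 2:", n_small)
print("required N with K = 5:", n_large)
```

The data-generating model is the same in both runs (mean 0.3); only the number of competing models changes, and with it the sample size needed to reach the target identification rate.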

Random Effects Bayesian Model Selection Protocol

For studies involving multiple participants, the fixed effects approach to model selection—which assumes a single model generates all subjects' data—should be replaced with random effects methods that account for between-subject variability in model validity [24]. The experimental protocol includes:

Model Evidence Calculation: For each participant n and model k, compute the model evidence ( \ell_{nk} = p(X_n \mid M_k) ) by marginalizing over model parameters.

Dirichlet Prior Specification: Assume model probabilities follow a Dirichlet distribution ( p(m) = \mathrm{Dir}(m \mid c) ) with concentration parameters typically set to ( c = 1 ) for equal prior probability.

Multinomial Data Generation: Assume each participant's data are generated by exactly one model, with model k expressed with probability ( m_k ).

Posterior Probability Estimation: Estimate the posterior probability distribution over the model space m given the model evidence values across all participants.

This random effects approach acknowledges the inherent variability in human populations and provides more nuanced inferences about cognitive processes and neural mechanisms [24].
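The source does not specify how the posterior over model frequencies is estimated. One widely used option is a variational scheme that alternates between assigning each participant fractionally to models and updating the Dirichlet parameters; the sketch below implements that scheme as an assumption, using only the standard library (including a hand-written digamma approximation). The log evidence values are hypothetical.

```python
import math

def digamma(x):
    """Digamma function via recurrence plus an asymptotic series (x > 0)."""
    result = 0.0
    while x < 6.0:                 # shift x upward until the series is accurate
        result -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    series = inv2 * (1.0 / 12.0 - inv2 * (1.0 / 120.0 - inv2 / 252.0))
    return result + math.log(x) - 0.5 / x - series

def random_effects_bms(log_evidence, c=1.0, n_iter=200, tol=1e-8):
    """Variational estimate of the Dirichlet posterior over model frequencies.

    log_evidence[n][k] holds log p(X_n | M_k) for participant n and model k.
    Returns the posterior Dirichlet parameters alpha, one per model.
    """
    n_models = len(log_evidence[0])
    alpha = [c] * n_models
    for _ in range(n_iter):
        psi_sum = digamma(sum(alpha))
        counts = [0.0] * n_models
        for row in log_evidence:
            # Unnormalised log responsibility of each model for this participant.
            logs = [row[k] + digamma(alpha[k]) - psi_sum for k in range(n_models)]
            top = max(logs)        # subtract the maximum for numerical stability
            weights = [math.exp(v - top) for v in logs]
            total = sum(weights)
            for k in range(n_models):
                counts[k] += weights[k] / total
        new_alpha = [c + counts[k] for k in range(n_models)]
        converged = max(abs(a - b) for a, b in zip(alpha, new_alpha)) < tol
        alpha = new_alpha
        if converged:
            break
    return alpha

# Hypothetical log evidences: 8 participants favour model 0, 2 favour model 1.
log_ev = [[-10.0, -13.0]] * 8 + [[-12.0, -9.0]] * 2
alpha = random_effects_bms(log_ev)
expected_freq = [a / sum(alpha) for a in alpha]
print("posterior Dirichlet parameters:", [round(a, 2) for a in alpha])
print("expected model frequencies:", [round(f, 2) for f in expected_freq])
```

Unlike a fixed-effects analysis, which would sum log evidences across participants and declare a single winner, this output describes how prevalent each model is estimated to be in the population.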

Research Reagent Solutions for Bayesian Model Comparison

Table 4: Essential Computational Tools for Robust Model Comparison

| Research Reagent | Function | Implementation Examples |

|---|---|---|

| Power Analysis Frameworks | Calculate required sample sizes for target model identification rates | Custom simulation pipelines based on [24] |

| Bridge Sampling Methods | Compute marginal likelihoods for Bayes factor calculation | bridgesampling R package [27] |

| Cross-Validation Tools | Approximate predictive accuracy for model comparison | loo R package for PSIS-LOO-CV [26] [27] |

| Random Effects BMS | Account for between-subject variability in model expression | SPM software for neuroimaging; custom implementations [24] |

| Generalized Bayes Factor Approximations | Enable Bayes factor calculation from p-values in meta-analyses | eJAB method for clinical trial reanalysis [25] |

| Model Stacking Algorithms | Combine predictions from multiple models without selection | Bayesian model stacking via loo package [27] |

The landscape of scientific inference is increasingly dependent on sophisticated statistical approaches like Bayesian model comparison, making the identification and correction of common misconceptions essential for research progress. The evidence presented demonstrates that fundamental errors in interpreting p-values, underestimating power requirements for model selection, and misapplying posterior predictive methods to constrained theoretical comparisons significantly impact research validity. By adopting the rigorous experimental protocols and computational tools outlined here, researchers can enhance the robustness of their findings, particularly in computational research that relies on Bayes factor model comparison. The move toward methods that honor the specification-first principle, properly account for between-subject variability, and maintain adequate statistical power will strengthen scientific inference across multiple disciplines, ultimately leading to more reproducible and meaningful research outcomes.

The landscape of statistical inference is undergoing a profound transformation, moving from the long-dominant frequentist paradigm toward Bayesian approaches. This shift centers on the replacement of traditional p-values with Bayes Factors (BF), representing not merely a technical change but a fundamental philosophical reorientation in how evidence is quantified. This guide objectively compares these methodologies, examining their performance characteristics, computational requirements, and practical implications for research in computational biology and drug development.

Statistical inference forms the backbone of scientific discovery, particularly in fields like clinical research and drug development where decisions have profound consequences. For nearly a century, the frequentist approach with its cornerstone p-value has dominated scientific practice. However, concerns about p-value misuse and misinterpretation have stimulated a seismic shift toward Bayesian alternatives, particularly Bayes Factors [28] [29].

The p-value represents the probability of obtaining results at least as extreme as the observed data, assuming the null hypothesis (H₀) is true [28] [30]. In contrast, the Bayes Factor directly quantifies the evidence for one hypothesis relative to another by comparing how likely the data are under each hypothesis [28] [31]. This distinction is more than a mathematical technicality: it embodies a fundamental philosophical divergence in how we conceptualize evidence, uncertainty, and the very nature of statistical reasoning.

Conceptual Foundations and Interpretation

The Frequentist Framework: P-Values and Significance

The p-value operates under a fixed-threshold binary decision framework. A result is deemed "statistically significant" when the p-value falls below a conventional cutoff (typically 0.05), indicating that the observed data would be unusual if the null hypothesis were true [28] [30]. However, this approach has critical limitations:

- Indirect Evidence: P-values assess the probability of data given a hypothesis, not the probability of hypotheses given data [31] [29].

- Sample Size Sensitivity: In very large samples, trivial effects can yield significant p-values, while in small samples, important effects may fail to achieve significance [28] [30].

- Misinterpretation Risk: The p-value is often mistaken as the probability that the null hypothesis is true, leading to overstatement of evidence against H₀ [29].

The Bayesian Framework: Bayes Factors and Evidence Gradation

The Bayes Factor offers a different proposition—a continuous measure of evidence that directly compares how well two hypotheses predict the observed data [28] [31]. The BF₁₀ represents the ratio of the probability of the data under the alternative hypothesis (H₁) to its probability under the null hypothesis (H₀). This framework provides several conceptual advantages:

- Direct Hypothesis Comparison: BF quantifies which hypothesis better predicts the observed data [28] [29].

- Evidence Continuum: Unlike binary significance, BF provides graded evidence on a continuous scale [28].

- Prior Incorporation: BF formally incorporates prior knowledge through the prior distribution, though this introduces sensitivity to prior choice [28] [32].

Table 1: Interpretation Guidelines for Bayes Factors and P-Values

| Bayes Factor (BF₁₀) Value | Interpretation (Bayes Factor) | P-Value Equivalent | Interpretation (P-Value) |

|---|---|---|---|

| > 100 | Strong to very strong evidence for H₁ | < 0.01 | Strong evidence against H₀ |

| 30 - 100 | Strong evidence for H₁ | 0.01 - 0.05 | Moderate to strong evidence against H₀ |

| 10 - 30 | Moderate to strong evidence for H₁ | - | - |

| 3 - 10 | Weak to moderate evidence for H₁ | 0.05 - 0.1 | Weak or no evidence against H₀ |

| 1 - 3 | Negligible evidence for H₁ | > 0.1 | Little to no evidence against H₀ |

| 1 | No evidence | - | - |

| 0.33 - 1 | Negligible evidence for H₀ | - | - |

| 0.1 - 0.33 | Weak to moderate evidence for H₀ | - | - |

| 0.03 - 0.1 | Moderate to strong evidence for H₀ | - | - |

| 0.01 - 0.03 | Strong evidence for H₀ | - | - |

| < 0.01 | Strong to very strong evidence for H₀ | - | - |

Source: Adapted from Fordellone et al. (2025) [28]
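The Bayes factor column of Table 1 can be encoded as a small lookup so that verbal labels are applied consistently. This is a sketch only: the function name is hypothetical, values below 1 are classified through the reciprocal BF₀₁ = 1/BF₁₀ (so the cut-offs 0.33 and 0.03 in the table become 1/3 and 1/30), and each band is assumed to include its lower edge because the table does not say where boundary values belong.

```python
def describe_bf10(bf10):
    """Return the Table 1 verbal label for a BF10 value.

    Boundary handling and the use of exact reciprocals for values below 1
    are assumptions made for this illustration.
    """
    if bf10 <= 0:
        raise ValueError("a Bayes factor must be positive")
    if bf10 == 1:
        return "No evidence"
    # Evidence for H0 is read off the reciprocal, BF01 = 1 / BF10.
    favoured, strength = ("H1", bf10) if bf10 > 1 else ("H0", 1.0 / bf10)
    bands = [
        (100, "Strong to very strong evidence"),
        (30, "Strong evidence"),
        (10, "Moderate to strong evidence"),
        (3, "Weak to moderate evidence"),
        (1, "Negligible evidence"),
    ]
    for lower, label in bands:
        if strength >= lower:
            return f"{label} for {favoured}"

for value in (14.6, 2.0, 1, 0.2, 0.005):
    print(f"BF10 = {value}: {describe_bf10(value)}")
```

Reporting the numeric value next to the label keeps the continuous information that the label discards.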

Quantitative Performance Comparison

Simulation Studies: Sensitivity to Sample Size and Effect Size

Simulation studies directly comparing p-values and Bayes Factors reveal critical performance differences, particularly regarding sensitivity to sample size and effect size [28]. In a two-sample t-test simulation designed to evaluate these behaviors:

- P-values demonstrated higher sensitivity to variations in both sample size and effect size compared to Bayes Factors [28].

- Bayes Factors showed more caution in declaring evidence for the alternative hypothesis, especially with moderate effect sizes. For instance, with an effect size of 0.5 and sample size of 150, p-values reached extremely low values while Bayes Factors indicated only moderate evidence for H₁ [28].

- Sample size impact differed: P-values were primarily sensitive to sample size when the null hypothesis was false, whereas Bayes Factors were affected by sample size regardless of the ground truth [28].

Table 2: Comparative Performance in Simulation Studies

| Condition | P-Value Behavior | Bayes Factor Behavior | Practical Implication |

|---|---|---|---|

| Large sample size with small effect | Often significant (p < 0.05) | Often shows only weak evidence (BF < 3) | BF reduces false positives for trivial effects |

| Small sample size with moderate effect | May not reach significance | Can show moderate evidence with appropriate prior | BF can be more efficient with limited data |

| Very large effect (d = 0.8+) | Highly significant (p < 0.001) | Shows strong evidence (BF > 30) | Both methods agree on strong effects |

| True null hypothesis | Correctly non-significant ~95% of time (α = 0.05) | Shows evidence for H₀ based on prior and data | BF can provide positive evidence for null |

Source: Adapted from Fordellone et al. (2025) and Assaf et al. (2018) [28] [29]
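The first row of Table 2 can be reproduced with a simple calculation. The sketch below is not the simulation design used in the cited studies: it assumes a z-test on a standardized effect with a Normal(0, tau^2) prior under H₁, holds the test statistic fixed at z = 2, and lets the sample size grow. Function names and parameter values are hypothetical.

```python
import math

def two_sided_p(z):
    """Two-sided p-value for a z statistic."""
    return math.erfc(abs(z) / math.sqrt(2.0))

def bf10_z_test(z, n, tau=1.0):
    """BF10 for H1: delta ~ Normal(0, tau^2) versus H0: delta = 0.

    delta is a standardized effect (sigma = 1), so z = sqrt(n) * sample mean.
    """
    shrink = 1.0 + n * tau ** 2
    return math.exp(0.5 * z * z * (n * tau ** 2) / shrink) / math.sqrt(shrink)

z = 2.0   # the same "significant" test statistic at every sample size
for n in (20, 200, 2000, 20000):
    print(f"n = {n:>5}: p = {two_sided_p(z):.4f}, BF10 = {bf10_z_test(z, n):.3f}")
```

The p-value is identical in every row, while the Bayes factor moves steadily toward the null: a fixed z at a larger sample size corresponds to a smaller observed effect, which the point null predicts better than a diffuse alternative does.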

Meta-Analytic Comparisons: Real-World Applications

Comparative performance extends beyond simulations to real-world research applications. A meta-analytic comparison in colorectal research reanalyzed two previously published meta-analyses using both frequentist and Bayesian approaches [31]:

- Trans-anastomotic tubes (TAT) analysis: The frequentist approach yielded an odds ratio (OR) of 0.670 with 95% CI [0.386, 1.162] and p = 0.15, while the Bayesian approach produced a similar OR of 0.719 with 95% CrI [0.43, 1.17] but with BF₁₀ = 0.681, indicating the data were about 1.47 times more likely under the null hypothesis than under the alternative (1/0.681 ≈ 1.47) [31].

- Indocyanine green (ICG) analysis: Both methods supported the intervention, but the Bayesian BF₁₀ of 18.93 indicated the data were about 19 times more likely under H₁ than under H₀, a direct statement of relative evidence that p-values alone cannot provide [31].

- Sequential analysis advantages: Bayesian approaches naturally accommodate sequential updating, with increasing confidence in the alternative hypothesis as more studies are added, without requiring multiple testing corrections [31].

Methodological Protocols and Workflows

Experimental Design and Data Collection Protocols

The integration of Bayesian methods necessitates modified experimental protocols, particularly in clinical trial design:

- Adaptive Designs: Bayesian approaches enable mid-trial modifications to the design, including sample size re-estimation, arm dropping, and randomization probability adjustments [33]. When properly implemented, these adaptations can improve the ethics and efficiency of a trial without compromising type I error control [33].

- Platform Trials: Designs like I-SPY 2 use Bayesian adaptive methodologies to evaluate multiple treatments simultaneously across patient subgroups, dramatically increasing trial efficiency [33].

- Active Learning Frameworks: Methods like BATCHIE (Bayesian Active Treatment Combination Hunting via Iterative Experimentation) use information theory and probabilistic modeling to design maximally informative experiments sequentially, particularly valuable in large-scale combination drug screens [34].

Computational Implementation and Analysis Workflow

Bayesian analysis requires specialized computational workflows distinct from traditional frequentist approaches:

Diagram 1: Bayesian analysis workflow

- Prior Elicitation: Formally incorporating prior knowledge through probability distributions, with approaches ranging from non-informative/reference priors to strongly informative priors based on existing literature [32] [35].

- Posterior Computation: Utilizing Markov Chain Monte Carlo (MCMC) methods, including Metropolis-Hastings, Gibbs Sampling, and Hamiltonian Monte Carlo (HMC) to approximate posterior distributions [35].

- Convergence Diagnostics: Assessing MCMC algorithm performance using trace plots, autocorrelation diagnostics, Gelman-Rubin statistics (R-hat), and effective sample size (ESS) calculations [35].

- Model Checking: Employing posterior predictive checks and Bayesian model comparison techniques to evaluate model adequacy [29] [35].
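The posterior computation and convergence steps can be illustrated with a toy example. The sketch below is a minimal random-walk Metropolis sampler with a Gelman-Rubin check, written with the standard library only; in practice, tools such as Stan or JAGS handle both steps. The model (a normal mean with known variance and a wide normal prior), the simulated data, and all tuning values are assumptions for illustration.

```python
import math
import random
import statistics

def log_posterior(mu, data, sigma=1.0, prior_sd=10.0):
    """Unnormalised log posterior for a normal mean with a Normal(0, prior_sd^2) prior."""
    log_prior = -0.5 * (mu / prior_sd) ** 2
    log_lik = -0.5 * sum(((x - mu) / sigma) ** 2 for x in data)
    return log_prior + log_lik

def metropolis(data, start, n_samples=2000, burn_in=500, step=0.5, seed=0):
    """Random-walk Metropolis sampler; returns the post-burn-in draws of mu."""
    rng = random.Random(seed)
    current, current_lp = start, log_posterior(start, data)
    draws = []
    for i in range(n_samples + burn_in):
        proposal = current + rng.gauss(0.0, step)
        proposal_lp = log_posterior(proposal, data)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, proposal_lp - current_lp)):
            current, current_lp = proposal, proposal_lp
        if i >= burn_in:
            draws.append(current)
    return draws

def r_hat(chains):
    """Gelman-Rubin potential scale reduction factor for equal-length chains."""
    n = len(chains[0])
    chain_means = [statistics.fmean(c) for c in chains]
    within = statistics.fmean([statistics.variance(c) for c in chains])
    between = n * statistics.variance(chain_means)
    pooled = (n - 1) / n * within + between / n
    return math.sqrt(pooled / within)

data_rng = random.Random(42)
data = [data_rng.gauss(1.0, 1.0) for _ in range(30)]     # simulated observations
starts = (-3.0, 0.0, 3.0, 6.0)                           # dispersed starting points
chains = [metropolis(data, start=s, seed=i) for i, s in enumerate(starts)]
rhat = r_hat(chains)
pooled_mean = statistics.fmean([d for c in chains for d in c])
print(f"R-hat = {rhat:.3f}, posterior mean of mu = {pooled_mean:.3f}")
```

Starting the chains from dispersed values is what gives the R-hat statistic its diagnostic value: it compares variation between chains with variation within them, and values close to 1 indicate that the chains have reached the same region.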

Table 3: Essential Computational Tools for Bayesian Analysis

| Tool/Resource | Function | Application Context |

|---|---|---|

| Stan | Probabilistic programming language | General Bayesian modeling, uses HMC/NUTS sampling |

| JAGS | Gibbs sampler for Bayesian analysis | Standard regression models, conjugate priors |

| R packages (BayesFactor, metaBMA) | Specific BF calculation and meta-analysis | Hypothesis testing, evidence synthesis |

| BATCHIE platform | Active learning for combination screens | Adaptive drug screening experimental design |

| Power Prior Methods | Historical data incorporation | Clinical trials with previous study data |

| Calibrated Bayes Factor | Prior weight parameter elicitation | Robust Bayesian analysis with prior-data conflict |

Source: Compiled from multiple sources [31] [32] [35]
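As an illustration of the power prior entry in Table 3, the conjugate binomial case has a closed form: historical data enter the analysis with their likelihood raised to a discount power a0 between 0 and 1. The sketch below assumes a Beta(1, 1) initial prior and hypothetical response counts; the function name is also an assumption.

```python
def power_prior_posterior(x, n, x0, n0, a0, a=1.0, b=1.0):
    """Beta posterior for a response rate with discounted historical data.

    The initial prior is Beta(a, b). Historical data (x0 responders of n0)
    enter with their likelihood raised to the power a0 in [0, 1]: a0 = 0
    ignores them, a0 = 1 pools them fully with the current data (x of n).
    """
    if not 0.0 <= a0 <= 1.0:
        raise ValueError("a0 must lie in [0, 1]")
    post_a = a + a0 * x0 + x
    post_b = b + a0 * (n0 - x0) + (n - x)
    return post_a, post_b

# Hypothetical placebo arms: 6/20 responders now, 30/100 in a previous trial.
for a0 in (0.0, 0.5, 1.0):
    pa, pb = power_prior_posterior(6, 20, 30, 100, a0)
    print(f"a0 = {a0}: Beta({pa:g}, {pb:g}), mean = {pa / (pa + pb):.3f}, "
          f"effective sample size = {pa + pb:g}")
```

The discount power controls how much the historical arm adds to the effective sample size, which is the mechanism by which borrowing can reduce the number of concurrent control participants.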

Comparative Strengths and Limitations in Research Applications

Advantages of Bayes Factors

- Evidence Continuum: BF provides graded evidence strength rather than binary significance, better reflecting scientific reasoning [28] [29].

- Direct Probability Statements: BF quantifies relative evidence for competing hypotheses and, once prior odds are specified, enables statements about posterior hypothesis probabilities [31].

- Explicit Prior Incorporation: Formalizes the use of existing knowledge while allowing sensitivity analysis [28] [35].

- Sample Size Adaptability: Can provide meaningful evidence with appropriate priors even in smaller samples [31] [35].

- Sequential Analysis Compatibility: Naturally accommodates interim analysis and accumulating evidence without multiple testing penalties [31] [33].

Limitations and Considerations

- Computational Intensity: Requires specialized software and computational resources for all but the simplest models [35].

- Prior Sensitivity: Results can be sensitive to prior choice, particularly with limited data [28] [32].

- Implementation Complexity: Requires additional methodological expertise compared to standard frequentist tests [29] [35].

- Regulatory Acceptance: While growing, Bayesian methods still face more scrutiny in some regulatory contexts [33].

Advantages of P-Values

- Computational Simplicity: Easily calculated for standard analyses with widespread software support [28] [29].

- Familiarity: Well-established interpretation (despite common misunderstandings) throughout scientific literature [29].

- Regulatory Precedent: Long history of acceptance in regulatory submissions [33].

Limitations of P-Values

- Binary Decision Framework: Forces continuous evidence into significant/non-significant dichotomy [28] [30].

- Sample Size Dependence: With very large samples, negligible effects can reach statistical significance; with small samples, meaningful effects can fail to reach it [28].

- Misinterpretation Vulnerability: Commonly mistaken for the probability that H₀ is true [29].

- Multiple Testing Problems: Requires adjustments for sequential and multiple analyses [33].

The comparison between Bayes Factors and traditional p-values reveals a landscape in transition. While p-values offer simplicity and familiarity, Bayes Factors provide a more nuanced and direct framework for weighing scientific evidence. The performance data indicate that BFs offer particular advantages in contexts requiring graded evidence interpretation, sequential analysis, and explicit incorporation of prior knowledge.

For computational researchers and drug development professionals, the shift toward Bayesian methods is more than a statistical technicality: it enables more adaptive, efficient, and evidentially transparent research practices. As computational power increases and methodological tools mature, Bayesian methods are positioned to address several long-standing limitations of traditional significance testing.

Computational Implementation and Real-World Applications Across Disciplines

Bayesian model comparison is a fundamental tool for researchers, scientists, and drug development professionals engaged in computational research. At the heart of this framework lies the Bayes factor, which quantifies the evidence that data provides for one model over another. This factor is calculated as the ratio of the marginal likelihoods (also known as model evidence) of competing models [36] [37]. The marginal likelihood, represented as Z = P(D|M) in Bayes' theorem, is the probability of observing the data given a model, obtained by integrating over all model parameters [38] [39]. Despite its conceptual elegance, computing this high-dimensional integral is analytically intractable for nearly all realistic models, necessitating sophisticated computational approximation techniques [37].
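To make the marginal likelihood concrete, the following sketch uses one of the few cases where the integral has a closed form: binomial data with a Beta prior. It compares a point-null model (θ fixed at 0.5) with a model that places a uniform Beta(1, 1) prior on θ, and cross-checks the closed form against a naive Monte Carlo average of the likelihood over prior draws. The data (14 successes in 20 trials), the function names, and the prior settings are illustrative assumptions, not values from the cited studies.

```python
import math
import random


def log_beta(a, b):
    """Log of the Beta function B(a, b)."""
    return math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)


def log_evidence_point(k, n, theta0=0.5):
    """Log marginal likelihood when theta is fixed (no free parameters)."""
    return (math.log(math.comb(n, k))
            + k * math.log(theta0) + (n - k) * math.log(1 - theta0))


def log_evidence_beta(k, n, a=1.0, b=1.0):
    """Closed-form log marginal likelihood under a Beta(a, b) prior on theta."""
    return math.log(math.comb(n, k)) + log_beta(k + a, n - k + b) - log_beta(a, b)


def mc_evidence_beta(k, n, a=1.0, b=1.0, draws=20000, seed=0):
    """Naive Monte Carlo estimate: average the likelihood over prior draws."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(draws):
        theta = rng.betavariate(a, b)
        total += math.comb(n, k) * theta**k * (1 - theta) ** (n - k)
    return total / draws


k, n = 14, 20  # illustrative data
log_bf_10 = log_evidence_beta(k, n) - log_evidence_point(k, n)
print("analytic Z (Beta prior):", math.exp(log_evidence_beta(k, n)))
print("Monte Carlo Z:          ", mc_evidence_beta(k, n))
print("BF_10:                  ", math.exp(log_bf_10))  # about 1.29
```

Averaging over prior draws works here only because the model has a single parameter. In higher dimensions most prior draws land where the likelihood is negligible, which is the practical reason the specialized samplers compared in this guide are needed.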

This guide provides an objective comparison of the three primary sampling algorithms used for evidence approximation: Markov Chain Monte Carlo (MCMC), Sequential Monte Carlo (SMC), and Nested Sampling. We evaluate their theoretical foundations, performance characteristics, and practical implementation, with a specific focus on their use in computing Bayes factors for model comparison. By synthesizing current experimental data and methodological insights, we aim to equip researchers with the knowledge needed to select appropriate algorithms for their specific evidence approximation challenges.

Algorithmic Foundations and Evidence Estimation

Markov Chain Monte Carlo (MCMC)

MCMC methods construct a reversible Markov chain that explores the parameter space, with the chain's equilibrium distribution matching the target posterior distribution [40] [41]. The Metropolis-Hastings algorithm, the canonical MCMC method, operates through a propose-evaluate-accept/reject cycle: it generates a candidate parameter value from a proposal distribution, computes the acceptance probability from the ratio of posterior densities (corrected by the ratio of proposal densities when the proposal is asymmetric), and then probabilistically accepts or rejects this candidate [37]. While MCMC efficiently generates correlated samples from the posterior distribution, it faces significant challenges for evidence computation. The primary limitation is that MCMC does not directly estimate the marginal likelihood, requiring additional techniques such as importance sampling or bridge sampling to approximate Z from posterior samples [37].
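The propose-evaluate-accept/reject cycle can be sketched in a few lines. The example below is a random-walk Metropolis sampler (a special case of Metropolis-Hastings with a symmetric proposal, so the proposal ratio cancels) for the mean of Gaussian data with unit variance and a zero-mean Gaussian prior. The toy data, the prior standard deviation, the step size, and all function names are assumptions chosen for illustration.

```python
import math
import random


def log_posterior_unnorm(theta, data, prior_sd=2.0):
    """Unnormalized log-posterior: Gaussian prior times Gaussian likelihood."""
    log_prior = -0.5 * (theta / prior_sd) ** 2
    log_lik = -0.5 * sum((y - theta) ** 2 for y in data)
    return log_prior + log_lik


def metropolis_hastings(data, n_steps=5000, step_sd=0.8, seed=1):
    rng = random.Random(seed)
    theta = 0.0
    current_lp = log_posterior_unnorm(theta, data)
    samples, accepted = [], 0
    for _ in range(n_steps):
        candidate = theta + rng.gauss(0.0, step_sd)        # propose
        cand_lp = log_posterior_unnorm(candidate, data)    # evaluate
        # Accept with probability min(1, posterior ratio); the proposal is
        # symmetric, so no Hastings correction is needed here.
        if math.log(1.0 - rng.random()) < cand_lp - current_lp:
            theta, current_lp = candidate, cand_lp
            accepted += 1
        samples.append(theta)  # a rejected move repeats the current state
    return samples, accepted / n_steps


data = [1.2, 0.7, 1.9, 1.4, 0.8, 1.6]  # illustrative observations
samples, acc_rate = metropolis_hastings(data)
kept = samples[500:]  # discard burn-in
print("posterior mean estimate:", sum(kept) / len(kept))
print("acceptance rate:", acc_rate)
```

Note that the sampler only ever uses differences of unnormalized log-posterior values. The normalizing constant Z cancels in every acceptance ratio, which is exactly why posterior samples alone do not deliver the marginal likelihood.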

Sequential Monte Carlo (SMC)

SMC methods are population-based algorithms that propagate a collection of weighted particles through a sequence of intermediate distributions, gradually transitioning from a tractable reference distribution (often the prior) to the complex target distribution (the posterior) [38] [37]. The algorithm iterates through three core steps: reweighting (adjusting particle weights via importance sampling), resampling (selectively replicating high-weight particles and discarding low-weight ones), and moving (applying MCMC kernels to diversify particles) [38]. A key advantage of SMC for evidence approximation is that it directly computes the marginal likelihood as a natural byproduct of the annealing process: the estimate is the product, across iterations, of the weighted averages of the incremental importance weights [37]. This provides SMC with a significant practical advantage for model comparison tasks.
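The reweight, resample, and move steps can be sketched for a one-parameter conjugate Gaussian model whose evidence is known in closed form, so the SMC estimate can be checked directly. This is a minimal illustration, not a production sampler: the linear temperature schedule, resampling at every iteration, the single random-walk move per particle, the toy data, and all function names are assumptions.

```python
import math
import random
import statistics


def log_likelihood(theta, data):
    """Gaussian log-likelihood with unit observation variance."""
    n = len(data)
    return -0.5 * n * math.log(2 * math.pi) - 0.5 * sum((y - theta) ** 2 for y in data)


def log_prior(theta, prior_sd):
    """Zero-mean Gaussian log-prior density."""
    return -0.5 * math.log(2 * math.pi * prior_sd**2) - 0.5 * (theta / prior_sd) ** 2


def analytic_log_evidence(data, prior_sd):
    """Closed-form log Z for this conjugate model, used as ground truth."""
    n, s, ss = len(data), sum(data), sum(y * y for y in data)
    tau2 = prior_sd**2
    quad = ss - tau2 * s * s / (1 + n * tau2)
    return -0.5 * n * math.log(2 * math.pi) - 0.5 * math.log(1 + n * tau2) - 0.5 * quad


def smc_log_evidence(data, prior_sd=2.0, n_particles=500, n_temps=20, seed=2):
    rng = random.Random(seed)
    particles = [rng.gauss(0.0, prior_sd) for _ in range(n_particles)]  # prior draws
    betas = [t / n_temps for t in range(n_temps + 1)]  # linear schedule (assumption)
    log_z = 0.0
    for beta_prev, beta in zip(betas[:-1], betas[1:]):
        # Reweight: the incremental weight is likelihood^(beta - beta_prev).
        log_w = [(beta - beta_prev) * log_likelihood(th, data) for th in particles]
        max_w = max(log_w)
        weights = [math.exp(lw - max_w) for lw in log_w]
        # Evidence update: add the log of the mean incremental weight.
        log_z += max_w + math.log(sum(weights) / n_particles)
        # Resample (multinomial), which resets all particle weights to equal.
        particles = rng.choices(particles, weights=weights, k=n_particles)
        # Move: one random-walk Metropolis step targeting prior * likelihood^beta.
        step = max(statistics.pstdev(particles), 1e-3)
        for i, th in enumerate(particles):
            cand = th + rng.gauss(0.0, step)
            log_ratio = (log_prior(cand, prior_sd) + beta * log_likelihood(cand, data)
                         - log_prior(th, prior_sd) - beta * log_likelihood(th, data))
            if math.log(1.0 - rng.random()) < log_ratio:
                particles[i] = cand
    return log_z


data = [1.2, 0.7, 1.9, 1.4, 0.8, 1.6]  # illustrative observations
print("SMC log Z:     ", smc_log_evidence(data))
print("analytic log Z:", analytic_log_evidence(data, prior_sd=2.0))
```

Because the particles carry equal weights after each resampling step, the weighted average of the incremental weights reduces to a plain mean, and the running sum of its logarithm is the log-evidence estimate.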

Nested Sampling

Nested Sampling takes a fundamentally different approach by transforming the multidimensional evidence integral into a one-dimensional integral over prior volume [36] [39]. The algorithm maintains a set of live points that explore the parameter space, iteratively discarding the point with the lowest likelihood and replacing it with a new point drawn from the prior subject to a higher likelihood constraint [36]. As the algorithm progresses, the prior volume shrinks exponentially, and the evidence is computed by summing the product of likelihoods and prior volumes associated with discarded points [39]. This design makes Nested Sampling uniquely specialized for evidence computation as a primary objective, rather than treating it as a secondary byproduct.
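A bare-bones version of this procedure is sketched below for a one-parameter Gaussian model with a closed-form evidence. Replacement points are found by plain rejection sampling from the prior, which is workable only in very low dimensions; practical implementations use the constrained samplers discussed later in this guide. The deterministic volume shrinkage X_i = exp(-i / n_live), the fixed iteration count, the toy data, and the function names are all simplifying assumptions.

```python
import math
import random


def log_likelihood(theta, data):
    """Gaussian log-likelihood with unit observation variance."""
    n = len(data)
    return -0.5 * n * math.log(2 * math.pi) - 0.5 * sum((y - theta) ** 2 for y in data)


def analytic_log_evidence(data, prior_sd):
    """Closed-form log Z for a zero-mean Gaussian prior (ground truth)."""
    n, s, ss = len(data), sum(data), sum(y * y for y in data)
    tau2 = prior_sd**2
    quad = ss - tau2 * s * s / (1 + n * tau2)
    return -0.5 * n * math.log(2 * math.pi) - 0.5 * math.log(1 + n * tau2) - 0.5 * quad


def log_add(a, b):
    """Numerically stable log(exp(a) + exp(b))."""
    if a == -math.inf:
        return b
    if b == -math.inf:
        return a
    m = max(a, b)
    return m + math.log(math.exp(a - m) + math.exp(b - m))


def nested_sampling_log_evidence(data, prior_sd=2.0, n_live=200, n_iter=1200, seed=3):
    rng = random.Random(seed)
    live = [rng.gauss(0.0, prior_sd) for _ in range(n_live)]
    live_ll = [log_likelihood(th, data) for th in live]
    log_z = -math.inf
    log_x_prev = 0.0  # log prior volume, starting from X = 1
    for i in range(1, n_iter + 1):
        # Discard the live point with the lowest likelihood.
        worst = min(range(n_live), key=live_ll.__getitem__)
        ll_min = live_ll[worst]
        # Expected shrinkage of the enclosed prior volume.
        log_x = -i / n_live
        log_width = math.log(math.exp(log_x_prev) - math.exp(log_x))
        log_z = log_add(log_z, ll_min + log_width)  # likelihood x volume shell
        log_x_prev = log_x
        # Replace it with a prior draw satisfying the likelihood constraint.
        while True:
            cand = rng.gauss(0.0, prior_sd)
            cand_ll = log_likelihood(cand, data)
            if cand_ll > ll_min:
                break
        live[worst], live_ll[worst] = cand, cand_ll
    # Add the contribution of the remaining live points.
    max_ll = max(live_ll)
    log_mean_live = max_ll + math.log(sum(math.exp(ll - max_ll) for ll in live_ll) / n_live)
    return log_add(log_z, log_x_prev + log_mean_live)


data = [1.2, 0.7, 1.9, 1.4, 0.8, 1.6]  # illustrative observations
print("nested sampling log Z:", nested_sampling_log_evidence(data))
print("analytic log Z:       ", analytic_log_evidence(data, prior_sd=2.0))
```

The rejection loop becomes slower as the run progresses, because the fraction of prior mass satisfying the constraint shrinks with the prior volume. This is the efficiency bottleneck that more sophisticated constrained-sampling schemes are designed to address.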

Table 1: Core Methodological Approaches to Evidence Approximation

| Algorithm | Primary Mechanism | Evidence Estimation | Theoretical Basis |
|---|---|---|---|
| MCMC | Markov chain exploration of parameter space | Indirect (requires additional methods) | Stationary distribution of constructed chain [40] |
| SMC | Population evolution through intermediate distributions | Direct (natural byproduct) | Sequential Importance Sampling/Resampling [38] [37] |
| Nested Sampling | Prior volume integration constrained by likelihood | Direct (primary objective) | Transformation of evidence integral [36] [39] |

Performance Comparison and Experimental Data

Computational Efficiency and Scalability

Recent experimental comparisons clarify how these algorithms behave in practice. In Bayesian deep learning applications, parallel implementations of both MCMC (MCMC∥) and SMC (SMC∥) have been systematically evaluated on benchmarks including MNIST, CIFAR, and IMDb datasets [42]. Both parallel methods performed comparably to their non-parallel counterparts, at similar total cost, when run for sufficient durations, and both suffered from "catastrophic non-convergence" if terminated prematurely [42].

In high-dimensional multimodal sampling problems from lattice field theory, which serve as important benchmarks for complex posterior landscapes, GPU-accelerated particle methods (SMC and Nested Sampling) have demonstrated competitive performance against state-of-the-art neural samplers [43]. Simple particle-based methods with minimal tuning achieved strong results on challenging bimodal distributions, matching or outperforming more complex neural approaches in both sample quality and wall-clock time while simultaneously estimating the partition function [43].

Evidence Approximation Accuracy

The accuracy of marginal likelihood estimation is particularly crucial for reliable Bayes factor computation. SMC methods demonstrate an advantage here: recent methodological improvements such as Persistent Sampling (PS), an SMC extension that retains particles from previous iterations, show significantly reduced variance in marginal likelihood estimates compared to standard approaches [38]. This enhancement addresses particle impoverishment and mode collapse, resulting in more accurate posterior approximations and more reliable model comparison [38].

Nested Sampling's direct focus on evidence computation naturally provides robust estimates, though its performance depends heavily on the efficiency of generating new samples satisfying the likelihood constraint [36]. The development of dynamic Nested Sampling algorithms has further improved computational efficiency by dynamically adjusting how samples are allocated across different regions of the parameter space [36].

Table 2: Empirical Performance Characteristics in Benchmark Studies

| Algorithm | Multimodal Handling | Marginal Likelihood Estimation | Parallelization Efficiency | Wall-Clock Performance |
|---|---|---|---|---|
| MCMC | Struggles with poorly mixing chains [37] | Requires additional computations [37] | Parallel chains require careful bias control [42] | Varies with model complexity and tuning |
| SMC | Effective through particle diversity [37] | Low-variance, direct estimates [38] | High (natural parallelizability) [42] [37] | Competitive with state-of-the-art alternatives [43] |
| Nested Sampling | Good with appropriate sampling [36] | Direct, specialized computation [36] | Moderate (live points can be parallelized) | Efficient for evidence-focused tasks [43] |

Implementation Protocols and Diagnostic Approaches

Experimental Methodology for Algorithm Evaluation

Systematic evaluation of sampling algorithms requires standardized methodologies. For parallel implementations, researchers should run multiple independent chains (for MCMC∥) or islands (for SMC∥) and monitor convergence using diagnostic measures such as potential scale reduction factors [42]. Computational cost should be assessed in terms of both total computational cost and wall-clock time, acknowledging that SMC's inherent parallelizability can provide practical time savings despite similar total computational requirements [42].

Benchmarking should include both well-characterized synthetic problems where ground truth is known and real-world datasets relevant to the target application domain [43]. For evidence approximation specifically, algorithms should be evaluated on models with analytically computable marginal likelihoods to verify estimation accuracy before proceeding to more complex models [37].

Diagnostic Frameworks and Convergence Assessment

Robust diagnostics are essential for verifying algorithm performance. For Nested Sampling, dedicated diagnostics include the U-test for verifying that the rank of the likelihood of replacement points follows the expected uniform distribution, as well as consistency checks across independent runs [36]. For SMC methods, monitoring the effective sample size (ESS) throughout iterations provides a quantitative measure of particle degeneracy and triggers resampling when diversity drops too low [44].
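The ESS check for SMC can be sketched as follows. The effective sample size is computed from the normalized weights as 1 / Σ wᵢ², and resampling is triggered when it falls below a fraction of the particle count. The 50% threshold, the example weights, and the function names are assumptions for illustration; systematic resampling is shown as one common scheme among several.

```python
import random


def effective_sample_size(weights):
    """ESS = 1 / sum of squared normalized weights (ranges from 1 to N)."""
    total = sum(weights)
    return 1.0 / sum((w / total) ** 2 for w in weights)


def systematic_resample(weights, rng):
    """Return resampled particle indices using a single uniform offset."""
    n = len(weights)
    total = sum(weights)
    # Evenly spaced positions in [0, 1) sharing one random offset.
    positions = [(rng.random() + i) / n for i in range(n)]
    indices, j = [], 0
    cumulative = weights[0] / total
    for pos in positions:
        # Advance to the particle whose cumulative weight covers this position.
        while pos >= cumulative and j < n - 1:
            j += 1
            cumulative += weights[j] / total
        indices.append(j)
    return indices


rng = random.Random(4)
weights = [0.70, 0.10, 0.10, 0.05, 0.05]  # illustrative, strongly uneven weights
ess = effective_sample_size(weights)
print("ESS:", round(ess, 2), "of", len(weights), "particles")

# Resample only when the ESS drops below half the particle count (assumed threshold).
if ess < 0.5 * len(weights):
    print("resampled indices:", systematic_resample(weights, rng))
```

Resampling adaptively, rather than at every iteration, avoids injecting unnecessary Monte Carlo noise when the weights are still reasonably balanced.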

MCMC diagnostics are more established, including trace plot examination, calculation of Gelman-Rubin statistics for multiple chains, and assessment of autocorrelation to ensure sufficient chain mixing and convergence [41] [37]. For all algorithms, simulation-based calibration provides a general framework for verifying that inference procedures are working correctly [36].
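As an illustration of the Gelman-Rubin statistic, the sketch below computes the classic potential scale reduction factor from several equal-length chains by comparing between-chain and within-chain variance. It uses the original, non-split formulation; the synthetic chains and the function name `gelman_rubin` are assumptions, and commonly quoted cut-offs such as 1.1 or 1.01 are rules of thumb rather than guarantees of convergence.

```python
import random
import statistics


def gelman_rubin(chains):
    """Potential scale reduction factor for equal-length chains of one parameter."""
    n = len(chains[0])
    chain_means = [statistics.fmean(c) for c in chains]
    chain_vars = [statistics.variance(c) for c in chains]
    within = statistics.fmean(chain_vars)            # W: mean within-chain variance
    between = n * statistics.variance(chain_means)   # B: between-chain variance
    pooled = (n - 1) / n * within + between / n      # pooled variance estimate
    return (pooled / within) ** 0.5


rng = random.Random(5)

# Four synthetic chains that all sample the same distribution.
mixed = [[rng.gauss(0.0, 1.0) for _ in range(500)] for _ in range(4)]

# Four synthetic chains stuck around different locations.
stuck = [[rng.gauss(2.0 * k, 1.0) for _ in range(500)] for k in range(4)]

print("R-hat, well-mixed chains:", round(gelman_rubin(mixed), 3))
print("R-hat, stuck chains:     ", round(gelman_rubin(stuck), 3))
```

Values close to 1 indicate that the chains agree with one another. That is a necessary condition for convergence, not a sufficient one, which is why it is normally read alongside trace plots and autocorrelation.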

Essential Computational Materials

Successful implementation of these sampling algorithms requires both theoretical understanding and practical tools. The key algorithmic components for evidence approximation include:

- Reference Distributions: Well-chosen prior distributions that encapsulate domain knowledge while remaining tractable for initial sampling [38] [37].

- Transition Kernels: MCMC mutation steps (e.g., Metropolis-Hastings, Hamiltonian Monte Carlo) that maintain detailed balance while efficiently exploring parameter spaces [38] [43].

- Tempering Schedules: Sequences of intermediate distributions that gradually transition from prior to posterior, typically constructed via likelihood tempering: \( p_t(\theta) \propto \mathcal{L}(\theta)^{\beta_t} \, \pi(\theta) \) with \( 0 = \beta_1 < \dots < \beta_T = 1 \) [38] [37].

- Resampling Schemes: Algorithms (multinomial, stratified, systematic) for replenishing particle populations while maintaining asymptotic correctness [44].

- Proposal Distributions: Parameterized distributions for generating new candidates, often adapted using particle covariance estimates [43].

Several sophisticated software packages implement these algorithms:

- Blackjax: Provides GPU-accelerated implementations of SMC samplers and Nested Sampling, particularly useful for high-dimensional problems [43].

- MultiNest: Implements Nested Sampling with multiple ellipsoidal rejection sampling, effective for multimodal posteriors [36].

- PolyChord: Nested Sampling software that scales efficiently with parameter dimension using slice sampling [36].

- Dynesty: Python implementation of dynamic Nested Sampling that adaptively allocates samples [36].

- BUGS/JAGS: Historical standards for MCMC implementation, though increasingly supplemented with more modern alternatives.

Algorithm Workflows and Logical Relationships

The fundamental processes of the three sampling algorithms can be visualized through their characteristic workflows. The following diagram illustrates the logical sequence of operations for MCMC, SMC, and Nested Sampling methods, highlighting their distinct approaches to evidence approximation: