Bayesian Models for Chemical Probe Quality Prediction: Advancing Drug Discovery with Machine Learning

Abstract

This article explores the transformative role of Bayesian statistical models in predicting the quality and viability of chemical probes for drug discovery. It covers foundational concepts, demonstrating how Bayesian methods leverage prior knowledge and existing data to make probabilistic assessments. The review details key methodological implementations, including Naïve Bayesian classifiers and active learning frameworks, for evaluating molecular properties and identifying undesirable compounds. It further addresses practical challenges in model optimization and uncertainty quantification, and provides a comparative analysis of Bayesian approaches against traditional filtering rules. Aimed at researchers and drug development professionals, this synthesis offers critical insights for integrating robust, data-driven Bayesian strategies into the early stages of pharmaceutical research to improve efficiency and success rates.

The Bayesian Revolution in Chemical Probe Assessment: From Foundational Principles to Practical Need

The Chemical Probe Quality Problem

Chemical probes are small molecules used to investigate protein function in biological systems, serving as essential tools for target validation and basic research. A significant challenge, however, is that many investigational compounds used in scientific literature are weak, non-selective, or generate artifactual results, leading to erroneous biological conclusions [1]. The National Institutes of Health (NIH) invested heavily in high-throughput screening efforts through the Molecular Libraries Program, producing over 300 chemical probes. A critical evaluation found that over 20% of these probes were undesirable based on criteria including potential chemical reactivity, overly extensive literature references suggesting promiscuity, or uncertain biological quality [2]. This high failure rate underscores the critical need for robust methods to evaluate chemical probe quality before their use in research.

Defining High-Quality Chemical Probes

Consensus criteria have been established to define high-quality chemical probes. These molecules must demonstrate:

- High potency: Half-maximal inhibitory concentration (IC50) or dissociation constant (Kd) < 100 nM in biochemical assays; half-maximal effective concentration (EC50) < 1 μM in cellular assays [1]

- Excellent selectivity: >30-fold selectivity within the protein target family, with extensive profiling against off-targets outside the primary target family [1]

- Evidence of on-target engagement: Demonstration of direct target modulation in cellular and organismal models according to the Pharmacological Audit Trail concept [1]

- Avoidance of undesirable mechanisms: Compounds should not be nonspecific electrophiles, redox cyclers, chelators, or colloidal aggregators that promiscuously modulate biological targets through artifactual mechanisms [1]
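
The numeric thresholds in the list above can be written as a simple screening check. The sketch below is illustrative only: the `ProbeProfile` fields and the `failed_criteria` helper are hypothetical names, and real probe assessment also depends on expert review of selectivity panels and target-engagement data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ProbeProfile:
    # Hypothetical field names, chosen for this illustration.
    biochemical_potency_nm: float   # IC50 or Kd in nM
    cellular_ec50_um: float         # EC50 in micromolar
    family_selectivity_fold: float  # fold selectivity within the target family
    on_target_engagement: bool      # evidence of target modulation in cells
    artifact_mechanism: bool        # e.g. redox cycler, chelator, aggregator


def failed_criteria(p: ProbeProfile) -> List[str]:
    """Return the consensus criteria that a probe does not meet."""
    failures = []
    if not p.biochemical_potency_nm < 100:
        failures.append("biochemical potency")
    if not p.cellular_ec50_um < 1:
        failures.append("cellular potency")
    if not p.family_selectivity_fold > 30:
        failures.append("selectivity")
    if not p.on_target_engagement:
        failures.append("on-target engagement")
    if p.artifact_mechanism:
        failures.append("undesirable mechanism")
    return failures


example = ProbeProfile(35.0, 0.4, 50.0, True, False)
print(failed_criteria(example))  # an empty list means every check passed
```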

Quantitative Analysis of Probe Characteristics

Analysis of molecular properties for NIH probes classified as desirable versus undesirable revealed distinct trends. Desirable compounds tended to exhibit higher pKa, molecular weight, heavy atom count, and rotatable bond numbers [2]. The following table summarizes key molecular properties analyzed in chemical probe assessment:

Table 1: Molecular Properties in Chemical Probe Quality Assessment

| Molecular Property | Impact on Probe Quality | Analysis Method |
|---|---|---|
| pKa | Higher pKa observed in desirable probes [2] | Marvin suite (ChemAxon) [2] |
| Molecular Weight | Higher molecular weight observed in desirable probes [2] | Calculated from structure [2] |
| Heavy Atom Count | Higher heavy atom count observed in desirable probes [2] | Calculated from structure [2] |
| Rotatable Bond Number | Higher rotatable bond number observed in desirable probes [2] | Calculated from structure [2] |
| Lipinski Score | Assesses drug-likeness based on multiple properties [2] | Calculated using standard rules [2] |
| Polar Surface Area | Influences cell permeability [2] | Marvin suite (ChemAxon) [2] |

Established Filtering Methods for Probe Assessment

Several computational methods have been developed to flag problematic compounds:

- PAINS (Pan Assay INterference compoundS): A set of filters identifying chemical substructures associated with frequent hitting in high-throughput screens [2]

- REOS (Rapid Elimination of Swill): Vertex-developed filters for flagging molecules that may be false positives [2]

- QED (Quantitative Estimate of Drug-likeness): A desirability-based measure of drug-likeness that avoids molecular property inflation [2]

- BadApple: A promiscuity prediction method that learns from "frequent hitters" in screening data [2]

Table 2: Computational Methods for Assessing Chemical Probes

| Method | Primary Function | Key Features |
|---|---|---|
| PAINS Filters | Identifies promiscuous assay interference compounds [2] | Uses >400 substructural features; implemented in FAFDrugs2 program [2] |
| QED | Quantifies drug-likeness [2] | Based on concept of desirability; uses open source software from SilicosIt [2] |
| BadApple | Predicts compound promiscuity [2] | Scaffold-based prediction using public screening data [2] |
| Ligand Efficiency | Measures binding efficiency relative to molecular size [2] | Integrates binding affinity with molecular properties [2] |
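
Of the methods in Table 2, ligand efficiency is the simplest to compute by hand. A minimal sketch follows, assuming the commonly used definition (binding free energy per heavy atom, in kcal/mol); the source does not state which variant of the metric was applied, and the function name and example values are illustrative.

```python
import math

R_KCAL_PER_MOL_K = 1.987e-3  # gas constant in kcal/(mol*K)


def ligand_efficiency(kd_molar: float, heavy_atoms: int, temp_k: float = 298.15) -> float:
    """Binding free energy per heavy atom: LE = -RT ln(Kd) / N_heavy."""
    delta_g = R_KCAL_PER_MOL_K * temp_k * math.log(kd_molar)  # negative when Kd < 1 M
    return -delta_g / heavy_atoms


# Illustrative compound: Kd = 10 nM, 25 heavy atoms.
le = ligand_efficiency(10e-9, 25)
print(f"LE = {le:.2f} kcal/mol per heavy atom")
```

Larger molecules need proportionally stronger binding to reach the same value, which is why the metric is read alongside potency rather than in place of it.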

Bayesian Models for Probe Quality Prediction

Bayesian modeling approaches offer a powerful computational framework for predicting chemical probe quality by learning from expert medicinal chemistry evaluations. These methods can capture the complex decision-making processes of experienced chemists who assess compounds based on multiple criteria including literature profiles and chemical reactivity [2].

Bayesian Model Development Protocol

Objective: Develop a Bayesian classification model to predict medicinal chemists' assessments of chemical probe quality.

Dataset Preparation:

- Source: NIH chemical probes compiled from PubChem using NIH PubChem Compound Identifier as the defining field [2]

- Chiral Compounds: Two-dimensional depictions searched in CAS SciFinder with associated references defining intended structures [2]

- Expert Scoring: Experienced medicinal chemist evaluates each probe based on established criteria [2]

- Undesirable Probes: Compounds scoring 0 meet any of these criteria:

  - More than 150 references to biological activity (suggesting low selectivity)

  - Zero literature references for probes not of recent vintage

  - No CAS RegNo with chemistry unexplored in drugs

  - Predicted chemical reactivity [2]

- Desirable Probes: All other probes score 1 [2]

- Data Availability: Data and molecular structures publicly available in CDD Public database [2]
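
The binary labeling rule described above can be encoded as a small function. This is a simplified sketch: in the source the score comes from an experienced chemist's judgment, so the `ProbeRecord` fields below (all hypothetical names) stand in for information the expert gathered manually, and a single reference count is assumed to serve for both reference criteria.

```python
from dataclasses import dataclass


@dataclass
class ProbeRecord:
    # Hypothetical fields standing in for the expert's literature review.
    n_references: int                  # literature references to biological activity
    is_recent: bool                    # probe is of recent vintage
    has_cas_regno: bool                # a CAS Registry Number exists
    chemistry_explored_in_drugs: bool  # chemotype has precedent in drugs
    predicted_reactive: bool           # flagged for chemical reactivity


def expert_score(p: ProbeRecord) -> int:
    """Return 0 (undesirable) if any listed criterion is met, else 1 (desirable)."""
    undesirable = (
        p.n_references > 150
        or (p.n_references == 0 and not p.is_recent)
        or (not p.has_cas_regno and not p.chemistry_explored_in_drugs)
        or p.predicted_reactive
    )
    return 0 if undesirable else 1


print(expert_score(ProbeRecord(12, False, True, True, False)))   # desirable
print(expert_score(ProbeRecord(400, False, True, True, False)))  # too many references
```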

Descriptor Calculation:

- Remove salts from molecules prior to calculation [2]

- Calculate molecular properties using Marvin suite: molecular weight, logP, H-bond donors, H-bond acceptors, Lipinski score, pKa, heavy atom count, polar surface area, rotatable bond number [2]

- Calculate additional descriptors using Discovery Studio: AlogP, number of rings, aromatic rings, molecular fractional polar surface area [2]

Model Training:

- Use sequential Bayesian model building with iterative testing as additional probes are included [2]

- Apply Naïve Bayesian classification for modeling [2]

- Compare different machine learning methods and validate externally [2]

- Compare results with PAINS, QED, BadApple, and ligand efficiency metrics [2]
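
To make the classification step concrete, the sketch below implements a plain Bernoulli Naïve Bayes classifier with add-one smoothing over binary fingerprint bits. It is a stand-in for illustration, not the implementation used in the cited study: the class name, the three-bit toy fingerprints, and the labels are all invented, and commercial tools may use a different smoothing scheme.

```python
import math
from typing import Dict, List, Sequence


class BernoulliNaiveBayes:
    """Naive Bayes over binary features with add-one (Laplace) smoothing."""

    def fit(self, X: Sequence[Sequence[int]], y: Sequence[int]) -> "BernoulliNaiveBayes":
        self.classes = sorted(set(y))
        n_features = len(X[0])
        self.log_prior: Dict[int, float] = {}
        self.log_on: Dict[int, List[float]] = {}   # log P(bit = 1 | class)
        self.log_off: Dict[int, List[float]] = {}  # log P(bit = 0 | class)
        for c in self.classes:
            rows = [x for x, label in zip(X, y) if label == c]
            self.log_prior[c] = math.log(len(rows) / len(X))
            p_on = [(sum(r[j] for r in rows) + 1) / (len(rows) + 2)
                    for j in range(n_features)]
            self.log_on[c] = [math.log(p) for p in p_on]
            self.log_off[c] = [math.log(1 - p) for p in p_on]
        return self

    def predict_proba(self, x: Sequence[int]) -> Dict[int, float]:
        # Sum log-probabilities per class, then normalize in a stable way.
        scores = {
            c: self.log_prior[c] + sum(
                self.log_on[c][j] if bit else self.log_off[c][j]
                for j, bit in enumerate(x))
            for c in self.classes
        }
        top = max(scores.values())
        norm = sum(math.exp(s - top) for s in scores.values())
        return {c: math.exp(s - top) / norm for c, s in scores.items()}


# Toy fingerprints (3 bits) with labels: 1 = desirable, 0 = undesirable.
X = [[1, 0, 1], [1, 1, 1], [0, 0, 1], [0, 1, 0], [0, 0, 0], [1, 1, 0]]
y = [1, 1, 1, 0, 0, 0]
model = BernoulliNaiveBayes().fit(X, y)
proba = model.predict_proba([1, 0, 1])
print(f"P(desirable) = {proba[1]:.2f}")
```

The independence assumption between bits is what makes the model "naïve"; it keeps training to simple counting, which suits the sequential rebuilding described above as new probes are added.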

Implementation Considerations:

- Use function class fingerprints for structure representation [2]

- Employ Bayesian Model Selection and Averaging to enhance prediction accuracy and evaluate model uncertainty [3]

- The approach achieves accuracy comparable to other measures of drug-likeness and filtering rules [2]

Advanced Bayesian Applications in Drug Discovery

Beyond chemical probe assessment, Bayesian methods are advancing multiple drug discovery domains. Bayesian active learning platforms enable efficient large-scale combination screens by dynamically designing experiments to be maximally informative based on previous results [4]. The BATCHIE platform uses Probabilistic Diameter-based Active Learning to select optimal drug combination experiments, significantly reducing the experimental burden required to identify effective combinations [4].

In pharmaceutical process development, Bayesian approaches quantify uncertainty to enable faster decision-making across route and process invention, optimization, and characterization stages [5]. These methods help select optimal process conditions with fewer experiments by incorporating uncertainty associated with each outcome [5].

Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for Bayesian Chemical Probe Assessment

| Reagent/Tool | Function | Application Notes |
|---|---|---|
| CDD Vault | Public database hosting chemical structures and associated data [2] | Contains published NIH probe data for model development |
| Marvin Suite | Calculates molecular properties and descriptors [2] | Used for MW, logP, H-bond donors/acceptors, pKa, PSA |
| FAFDrugs2 | Implements PAINS filtering and other structural alerts [2] | Flags potential assay interference compounds |
| SilicosIt QED | Computes quantitative estimate of drug-likeness [2] | Open source tool for desirability assessment |
| CAS SciFinder | Provides literature references and CAS RegNo data [2] | Critical for assessing probe publication history |
| BATCHIE Platform | Bayesian active learning for combination screens [4] | Open source tool for adaptive experimental design |
| Bayesian Tensor Factorization | Models drug combination responses [4] | Captures individual drug and interaction effects |

The critical challenge of chemical probe quality in drug discovery represents a significant bottleneck in biomedical research. Bayesian modeling approaches offer a powerful computational framework for predicting probe quality by learning from expert medicinal chemistry evaluations. These methods successfully capture complex expert decision-making processes and achieve accuracy comparable to established filtering rules, providing researchers with valuable tools for prioritizing high-quality chemical probes. As Bayesian methods continue to evolve through active learning platforms and uncertainty quantification approaches, they promise to further enhance the efficiency and reliability of chemical probe selection and development.

In pharmaceutical science, the choice of a statistical framework is not merely a technical decision but a foundational one that shapes every aspect of drug development, from trial design to regulatory submission. The two dominant paradigms—Frequentist and Bayesian statistics—offer fundamentally different approaches to inference, probability, and decision-making. The Frequentist approach, with its roots in the early 20th-century work of Ronald Fisher, Jerzy Neyman, and Egon Pearson, interprets probability as the long-run frequency of events across repeated trials and treats parameters as fixed, unknown constants [6]. This approach forms the backbone of traditional clinical trial analysis through null hypothesis significance testing (NHST), p-values, and confidence intervals. In contrast, the Bayesian approach, named after Thomas Bayes and refined by statisticians like Bruno de Finetti and Leonard Savage, views probability as a degree of belief and treats parameters as random variables with associated probability distributions [6] [7]. This philosophical difference manifests practically in how evidence is accumulated, with Bayesian methods formally incorporating prior knowledge through prior distributions that are updated with new data to form posterior distributions.

The pharmaceutical industry is witnessing a paradigm shift, with Bayesian methods gaining traction in areas where traditional Frequentist approaches face limitations. The U.S. Food and Drug Administration (FDA) has recognized this potential, noting that "Bayesian statistics can be used in practically all situations in which traditional statistical approaches are used and may have advantages" [8]. Specifically, the FDA highlights situations where high-quality, relevant external information exists, allowing studies to "be completed more quickly and with fewer participants" while making it "easier to adapt the design of a Bayesian trial based on the accumulated information compared with a traditional trial" [8]. This review systematically contrasts these two statistical paradigms within pharmaceutical contexts, providing application notes, experimental protocols, and practical frameworks for implementation in drug development programs.

Core Philosophical and Methodological Differences

Foundational Principles

The distinction between Frequentist and Bayesian statistics originates from their contrasting interpretations of probability. The Frequentist paradigm defines probability objectively as the limit of an event's relative frequency after many trials [6] [7]. Within this framework, parameters representing treatment effects or population characteristics are considered fixed, unknown quantities. Inference relies entirely on the observed data, with procedures designed to have desirable long-run frequency properties. For example, a 95% confidence interval implies that if the same study were repeated infinitely, 95% of the calculated intervals would contain the true parameter value [6]. This approach deliberately excludes prior beliefs or external evidence, aiming for objectivity through standardized procedures like hypothesis testing and confidence interval estimation.

The Bayesian paradigm offers a more subjective interpretation, defining probability as a degree of belief about an event or parameter [7]. This perspective naturally accommodates the incorporation of prior knowledge through Bayes' Theorem, which provides a formal mechanism for updating beliefs in light of new evidence. The theorem is mathematically expressed as P(θ|D) = [P(D|θ) × P(θ)] / P(D), where P(θ) represents the prior distribution of parameters, P(D|θ) is the likelihood of observed data, P(D) serves as a normalizing constant, and P(θ|D) is the posterior distribution representing updated beliefs [7]. This sequential updating process is particularly suited to pharmaceutical development, where knowledge accumulates across preclinical, clinical, and post-marketing phases.
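
A conjugate Beta-Binomial model gives the simplest worked instance of this sequential updating. In the sketch below, the prior Beta(2, 2) and the two batches of responder counts are invented for illustration; the point is only that updating batch by batch gives the same posterior as updating once on the pooled data.

```python
from typing import Tuple


def beta_binomial_update(a: float, b: float, successes: int, failures: int) -> Tuple[float, float]:
    """Posterior Beta(a', b') after observing binomial data under a Beta(a, b) prior."""
    return a + successes, b + failures


def beta_mean(a: float, b: float) -> float:
    return a / (a + b)


# Illustrative prior on a response rate, then two stages of data.
a, b = 2.0, 2.0
stages = [(3, 7), (5, 5)]  # (responders, non-responders) per stage
for successes, failures in stages:
    a, b = beta_binomial_update(a, b, successes, failures)
    print(f"posterior Beta({a:.0f}, {b:.0f}), mean = {beta_mean(a, b):.3f}")

# Updating once on the pooled data gives the same posterior.
pooled = beta_binomial_update(2.0, 2.0, 8, 12)
```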

Key Methodological Distinctions

Table 1: Core Methodological Differences Between Frequentist and Bayesian Approaches

| Aspect | Frequentist Approach | Bayesian Approach |
|---|---|---|
| Probability Interpretation | Long-run frequency of events [6] | Degree of belief or uncertainty [7] |
| Parameters | Fixed, unknown constants [7] | Random variables with distributions [7] |
| Inference Basis | Likelihood of observed data under null hypothesis [9] | Combination of prior beliefs and observed data [7] |
| Interval Estimation | Confidence intervals (long-run coverage properties) [6] | Credible intervals (direct probability statements) [7] |
| Hypothesis Testing | p-values, significance tests [9] | Bayes factors, posterior probabilities [9] |
| Prior Information | Not formally incorporated [6] | Explicitly incorporated via prior distributions [8] |
| Computational Demands | Generally lower; closed-form solutions [7] | Generally higher; often requires MCMC sampling [6] [7] |

The methodological distinctions extend to how evidence is quantified and interpreted. Frequentist hypothesis testing revolves around p-values, which measure the probability of observing data as extreme as, or more extreme than, the actual data, assuming the null hypothesis is true [9]. This indirect approach to evidence has been frequently misunderstood, with p-values often misinterpreted as the probability that the null hypothesis is true [6]. Bayesian hypothesis testing typically employs Bayes factors, which quantify how much the observed data should alter prior beliefs by comparing the probability of the data under competing hypotheses [9]. This provides a more direct assessment of hypothesis support.
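
The contrast can be seen on a single binomial data set. The sketch below assumes a point null (response rate 0.5) against an alternative with a uniform prior on the rate, for which the marginal likelihood has the closed form 1/(n + 1); the counts (15 responders out of 20) are invented, and other priors would give different Bayes factors.

```python
from math import comb


def one_sided_p_value(k: int, n: int, p0: float = 0.5) -> float:
    """P(X >= k) under the null hypothesis X ~ Binomial(n, p0)."""
    return sum(comb(n, i) * p0**i * (1 - p0) ** (n - i) for i in range(k, n + 1))


def bayes_factor_10(k: int, n: int, p0: float = 0.5) -> float:
    """Bayes factor for H1 (rate ~ Uniform(0, 1)) against H0 (rate = p0)."""
    marginal_h0 = comb(n, k) * p0**k * (1 - p0) ** (n - k)
    marginal_h1 = 1.0 / (n + 1)  # binomial likelihood integrated over a uniform prior
    return marginal_h1 / marginal_h0


p_val = one_sided_p_value(15, 20)
bf = bayes_factor_10(15, 20)
print(f"p-value = {p_val:.4f}, BF10 = {bf:.2f}")
```

With these numbers the p-value falls below 0.05 while the Bayes factor indicates the data are only about three times more probable under the alternative, which illustrates why the two quantities should not be read interchangeably.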

Similarly, interval estimation differs substantially between paradigms. A Frequentist 95% confidence interval indicates that in repeated sampling, 95% of similarly constructed intervals would contain the true parameter [6]. This property relates to the procedure, not any specific interval. In contrast, a Bayesian 95% credible interval means there is a 95% probability that the parameter lies within the specified interval, given the observed data and prior [7]. This direct probability statement often aligns more naturally with how researchers and decision-makers interpret intervals.
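
The two kinds of interval can be computed side by side for the same data. This sketch assumes a uniform Beta(1, 1) prior and a Wald (normal-approximation) confidence interval, and it obtains the credible interval by Monte Carlo sampling so that only the standard library is needed; the counts are illustrative.

```python
import random
from statistics import NormalDist
from typing import Tuple


def beta_credible_interval(a: float, b: float, level: float = 0.95,
                           draws: int = 50_000, seed: int = 0) -> Tuple[float, float]:
    """Equal-tailed credible interval of Beta(a, b), estimated from sorted samples."""
    rng = random.Random(seed)
    samples = sorted(rng.betavariate(a, b) for _ in range(draws))
    lo_idx = int((1 - level) / 2 * draws)
    hi_idx = int((1 + level) / 2 * draws) - 1
    return samples[lo_idx], samples[hi_idx]


def wald_confidence_interval(successes: int, n: int, level: float = 0.95) -> Tuple[float, float]:
    """Normal-approximation confidence interval for a proportion."""
    p_hat = successes / n
    z = NormalDist().inv_cdf((1 + level) / 2)
    half_width = z * (p_hat * (1 - p_hat) / n) ** 0.5
    return p_hat - half_width, p_hat + half_width


successes, n = 12, 40
# Uniform prior Beta(1, 1) gives the posterior Beta(1 + successes, 1 + failures).
cred = beta_credible_interval(1 + successes, 1 + n - successes)
conf = wald_confidence_interval(successes, n)
print(f"95% credible interval:   {cred[0]:.3f} to {cred[1]:.3f}")
print(f"95% confidence interval: {conf[0]:.3f} to {conf[1]:.3f}")
```

The numbers are often close when the prior is weak; the difference lies in what each interval licenses the reader to say about the parameter.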

Quantitative Comparison in Pharmaceutical Applications

Performance in Personalized Randomized Controlled Trials

Recent research has directly compared these paradigms in innovative trial designs relevant to pharmaceutical science. Jackson et al. (2025) evaluated both approaches within the context of a Personalised Randomised Controlled Trial (PRACTical) design, which addresses scenarios where multiple treatment options exist without a single standard of care [10] [11]. Their simulation study compared four targeted antibiotic treatments for multidrug resistant bloodstream infections across four patient subgroups, with the primary outcome being 60-day mortality [10].

Table 2: Performance Comparison of Frequentist and Bayesian Approaches in PRACTical Design Simulation

| Performance Measure | Frequentist Model | Bayesian Model (Strong Informative Prior) |
|---|---|---|
| Probability of Predicting True Best Treatment | ≥80% (Pbest) [10] | ≥80% (Pbest) [10] |
| Maximum Probability of Interval Separation | 96% (PIS) [10] | Comparable to Frequentist approach [10] |
| Probability of Incorrect Interval Separation | <0.05 (PIIS) across all sample sizes (N=500-5000) in null scenarios [10] | <0.05 (PIIS) across all sample sizes (N=500-5000) in null scenarios [10] |
| Sample Size Required for PIS ≥80% | N=1500-3000 [10] | Similar to Frequentist approach [10] |
| Sample Size Required for Pbest ≥80% | N≤500 [10] | Similar to Frequentist approach [10] |
| Key Finding | Utilising uncertainty intervals highly conservative; limits applicability to large pragmatic trials [10] | Performed similarly to Frequentist approach in predicting true best treatment [10] |

The PRACTical design simulation revealed that both approaches demonstrated comparable performance in identifying the optimal treatment, with the Frequentist model and Bayesian model using strong informative priors both achieving a probability of predicting the true best treatment (Pbest) of at least 80% [10]. Similarly, both maintained a low probability of incorrect interval separation (PIIS) below 0.05 across all sample sizes in null scenarios [10]. The research highlighted that utilizing uncertainty intervals for treatment coefficient estimates was "highly conservative, limiting applicability to large pragmatic trials," with sample sizes of 1500-3000 patients required for the probability of interval separation to reach 80%, compared to only 500 patients needed for the probability of predicting the true best treatment to reach 80% [10].

Application Across Drug Development Domains

The FDA has identified several pharmaceutical development areas where Bayesian approaches offer particular advantages, including pediatric drug development, dose-finding trials, and ultra-rare diseases [8]. In pediatric drug development, where efficacy is often extrapolated from adult populations, "Bayesian statistics can incorporate the information from adults that can be considered in understanding the effects of a drug in children" [8]. This approach was exemplified in an asthma product evaluation where "Bayesian methods allowed us to borrow variable amounts of information obtained from adults and to evaluate the dependence of the results on the amount borrowed and to ultimately make more informed decisions" [8].

In oncology dose-finding, Bayesian designs provide "much more flexibility in the design and dosing in the trial and can improve the accuracy with which the MTD is estimated and the efficiency of the study by linking the estimation of toxicities across doses" [8]. For ultra-rare diseases with extremely limited patient populations, "Bayesian methods provide two key advantages: the ability to incorporate prior information and the ability to adapt the design more easily" [8]. Additionally, Bayesian "hierarchical models are particularly useful for assessing how well a drug works in particular subgroups of patients" because they "can provide estimates of drug effects in these subgroups that are generally more accurate than the estimates one obtains by analyzing each subgroup in isolation" [8].

Experimental Protocols and Implementation Frameworks

Protocol for Bayesian Adaptive Dose-Finding Trial

Objective: To identify the maximum tolerated dose (MTD) of a novel oncology therapeutic using a Bayesian adaptive design.

Background: Traditional 3+3 dose escalation designs have limitations in accuracy and efficiency. Bayesian approaches model the dose-toxicity relationship explicitly, allowing more precise MTD identification.

Materials and Reagents:

Table 3: Research Reagent Solutions for Bayesian Adaptive Dose-Finding

| Reagent/Solution | Function | Specifications |
|---|---|---|
| Probabilistic Programming Framework | Implements Bayesian model computation | PyMC3, Stan, or Edward software platforms [7] |
| Prior Distribution Specifications | Encapsulates pre-trial belief about dose-toxicity relationship | Based on preclinical data, similar compounds, or expert elicitation [8] |
| Adaptive Randomization Algorithm | Allocates patients to doses with optimal information gain | Bayesian logistic regression model with continuous monitoring [8] |
| Toxicity Assessment Scale | Standardizes dose-limiting toxicity (DLT) evaluation | NCI CTCAE criteria with predefined DLT definition |
| Decision Rule Framework | Determines dose escalation/de-escalation | Predefined posterior probability thresholds (e.g., escalate if the posterior probability that the DLT rate is below 0.33 exceeds 0.9) |

Procedure:

Prior Elicitation: Define prior distributions for dose-toxicity model parameters based on preclinical data and clinical expertise. Consider using skeptical priors to conservatively guard against overdosing.

Dose-Toxicity Modeling: Implement a Bayesian logistic regression model relating dose to probability of dose-limiting toxicity (DLT). The model structure follows: logit(P(DLT)) = α + β×dose, with priors placed on α and β.

Patient Cohort Evaluation: After each cohort (typically 1-3 patients), update the posterior distribution of model parameters using observed DLT data.

Dose Selection: Calculate the posterior probability of DLT for each dose level. Identify the dose with DLT probability closest to the target (e.g., 0.25-0.33) while considering precision of estimate.

Adaptive Randomization: Allocate patients to doses with the highest information value, typically those with estimated DLT rates near the target, while maintaining adequate patient safety.

Stopping Rules: Predefine stopping criteria based on posterior precision (e.g., when the credible interval for MTD falls below a specified width) or when maximum sample size is reached.

Model Checking: Conduct posterior predictive checks to verify model adequacy throughout the trial.

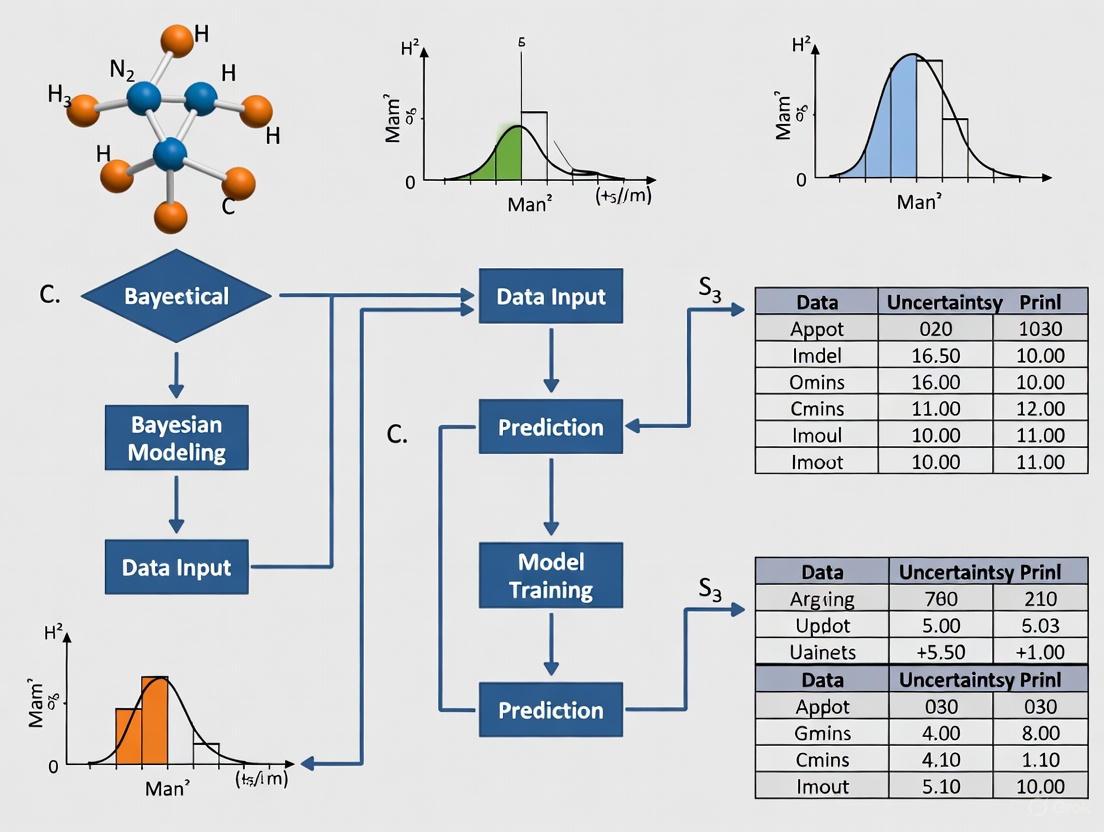

Diagram Title: Bayesian Adaptive Dose-Finding Workflow
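
The modeling, updating, and dose-selection steps of the procedure can be sketched with a grid approximation in place of MCMC. Everything numeric below is an assumption for illustration: the standardized dose levels, the cohort data, the priors (alpha ~ Normal(-2, 2), beta ~ Normal(1, 1) restricted to non-negative values), and the grid ranges. A real trial would use a validated design and software, plus explicit overdose-control rules.

```python
import math
from typing import List, Sequence, Tuple


def logistic(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def dose_posterior(doses: Sequence[float], n_treated: Sequence[int],
                   n_dlt: Sequence[int], threshold: float = 0.33,
                   steps: int = 61) -> List[Tuple[float, float]]:
    """Grid posterior for logit(P(DLT)) = alpha + beta * dose.

    Returns, for each dose, (posterior mean DLT rate, P(DLT rate < threshold | data)).
    """
    alphas = [-6.0 + 8.0 * i / (steps - 1) for i in range(steps)]  # alpha in [-6, 2]
    betas = [4.0 * i / (steps - 1) for i in range(steps)]          # beta in [0, 4]
    grid = []
    for a in alphas:
        for b in betas:
            # Log prior (assumed): alpha ~ N(-2, sd 2), beta ~ N(1, sd 1) with beta >= 0.
            log_w = -0.5 * ((a + 2.0) / 2.0) ** 2 - 0.5 * (b - 1.0) ** 2
            # Binomial log likelihood of the observed DLT counts.
            for d, n, y in zip(doses, n_treated, n_dlt):
                p = logistic(a + b * d)
                log_w += y * math.log(p) + (n - y) * math.log(1.0 - p)
            grid.append((a, b, log_w))
    top = max(w for _, _, w in grid)
    weights = [(a, b, math.exp(w - top)) for a, b, w in grid]
    total = sum(w for _, _, w in weights)
    summary = []
    for d in doses:
        mean_p = sum(w * logistic(a + b * d) for a, b, w in weights) / total
        p_below = sum(w for a, b, w in weights if logistic(a + b * d) < threshold) / total
        summary.append((mean_p, p_below))
    return summary


def recommend_dose(doses: Sequence[float], summary: Sequence[Tuple[float, float]],
                   target: float = 0.25) -> float:
    """Pick the dose whose posterior mean DLT rate is closest to the target."""
    best = min(range(len(doses)), key=lambda i: abs(summary[i][0] - target))
    return doses[best]


doses = [-1.0, 0.0, 1.0, 2.0]  # standardized dose levels (assumed)
n_treated = [3, 3, 3, 0]       # patients treated so far at each level
n_dlt = [0, 0, 1, 0]           # DLTs observed at each level
summary = dose_posterior(doses, n_treated, n_dlt)
for d, (mean_p, p_below) in zip(doses, summary):
    print(f"dose {d:+.1f}: mean P(DLT) = {mean_p:.2f}, P(rate < 0.33) = {p_below:.2f}")
print("next dose:", recommend_dose(doses, summary))
```

Because the model links toxicity across doses, the untested highest level still receives a posterior estimate, which is the "borrowing across doses" that a 3+3 design lacks.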

Protocol for Frequentist Multi-Arm Trial with Fixed Design

Objective: To compare multiple doses of an investigational drug against a control using a fixed-sample, multi-arm parallel group design.

Background: Fixed designs with pre-specified sample sizes and analysis plans remain the standard for confirmatory trials in regulatory submissions, providing straightforward interpretation and controlled type I error rates.

Materials and Reagents:

Table 4: Research Reagent Solutions for Frequentist Multi-Arm Trial

| Reagent/Solution | Function | Specifications |
|---|---|---|
| Sample Size Calculation Software | Determines required sample size for target power | nQuery, PASS, or R/pwr package |
| Randomization System | Allocates patients to treatment arms | Interactive Web Response System (IWRS) |
| Statistical Analysis Plan | Pre-specifies analysis methods and decision rules | Detailed document including primary endpoint, covariates, multiplicity adjustments |
| Hypothesis Testing Framework | Tests pre-specified null hypotheses | Analysis of covariance (ANCOVA) or mixed models for repeated measures |
| Multiple Comparison Procedure | Controls family-wise error rate | Bonferroni, Hochberg, or gatekeeping procedures |

Procedure:

Sample Size Calculation: Determine required sample size based on pre-specified effect size, power (typically 80-90%), and significance level (α=0.05, potentially adjusted for multiple comparisons).

Randomization: Implement balanced randomization to each treatment arm, potentially stratified by important prognostic factors.

Interim Analysis (if planned): Conduct pre-specified interim analyses with α-spending functions to control type I error. Consider independent Data Monitoring Committee for blinded review.

Database Lock: Finalize database after all patient data collection complete and all queries resolved.

Primary Analysis: Conduct analysis according to pre-specified statistical analysis plan. For continuous endpoints, typically use ANCOVA with baseline adjustment. For binary endpoints, use logistic regression.

Multiplicity Adjustment: Apply pre-specified multiple comparison procedures to control family-wise error rate across multiple doses and endpoints.

Sensitivity Analyses: Conduct supporting analyses to assess robustness of primary findings (e.g., different covariate adjustments, missing data approaches).

Interpretation and Reporting: Interpret results in context of pre-specified decision rules and clinical significance.

Diagram Title: Frequentist Multi-Arm Trial Workflow
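
The sample size step of this procedure can be illustrated with the standard normal-approximation formula for comparing two means, with a Bonferroni split of the significance level across dose-versus-control comparisons. The effect size, standard deviation, and number of comparisons below are placeholders, and dedicated software would also account for dropout and covariate adjustment.

```python
import math
from statistics import NormalDist


def n_per_arm(delta: float, sigma: float, power: float = 0.9,
              alpha: float = 0.05, n_comparisons: int = 1) -> int:
    """Patients per arm for a two-sided, two-sample z-test on a continuous endpoint.

    Uses n = 2 * ((z_{1 - alpha/2} + z_{power}) * sigma / delta)^2, with alpha
    divided by the number of comparisons (Bonferroni).
    """
    alpha_adj = alpha / n_comparisons
    z_alpha = NormalDist().inv_cdf(1 - alpha_adj / 2)
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) * sigma / delta) ** 2)


single = n_per_arm(delta=5.0, sigma=10.0)                     # one comparison
three_doses = n_per_arm(delta=5.0, sigma=10.0, n_comparisons=3)
print(f"per arm, 1 comparison:  {single}")
print(f"per arm, 3 comparisons: {three_doses}")
```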

Application to Chemical Probe Quality Prediction

Bayesian Hierarchical Modeling for Probe Assessment

In chemical probe development, where multiple related compounds are evaluated across various assays, Bayesian hierarchical models offer distinct advantages for quality prediction. These models naturally accommodate the complex data structures inherent in probe characterization while providing principled uncertainty quantification.

Implementation Framework:

Model Specification: Construct a hierarchical model that shares information across related chemical probes while allowing for probe-specific variations. The model structure incorporates assay-level parameters, probe-level parameters, and overarching hyperparameters.

Prior Distributions: Specify weakly informative priors for hyperparameters based on historical data from similar chemical classes or domain expertise. Consider heavy-tailed distributions to robustify against prior misspecification.

Posterior Computation: Implement Markov Chain Monte Carlo (MCMC) sampling using probabilistic programming tools (Stan, PyMC3) to approximate the joint posterior distribution of all parameters.

Probe Ranking: Calculate posterior probabilities for each probe exceeding predefined quality thresholds across multiple assay dimensions. Generate rank probabilities to quantify uncertainty in probe prioritization.

Decision Support: Utilize posterior predictive distributions to estimate the probability of success in subsequent validation experiments, informing resource allocation decisions.

Diagram Title: Bayesian Hierarchical Model Structure
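
The partial pooling at the center of this framework has a closed form in the simplest case. The sketch below assumes a normal-normal model with the population mean `mu` and between-probe standard deviation `tau` treated as known; in the full hierarchical model those hyperparameters would receive priors and be estimated by MCMC. The pIC50-like values are invented.

```python
from typing import List, Sequence, Tuple


def partial_pool(probe_means: Sequence[float], probe_ses: Sequence[float],
                 mu: float, tau: float) -> List[Tuple[float, float]]:
    """Posterior (mean, sd) of each probe-level effect.

    Model: theta_i ~ Normal(mu, tau^2), observed mean_i ~ Normal(theta_i, se_i^2).
    """
    results = []
    for y, se in zip(probe_means, probe_ses):
        weight = tau**2 / (tau**2 + se**2)  # share of weight on the probe's own data
        post_mean = weight * y + (1 - weight) * mu
        post_sd = (weight * se**2) ** 0.5   # equals sqrt(1 / (1/se^2 + 1/tau^2))
        results.append((post_mean, post_sd))
    return results


# Illustrative potency estimates: the second probe has few replicates (large se).
observed = [8.0, 8.0]
std_errors = [0.2, 1.0]
pooled = partial_pool(observed, std_errors, mu=6.0, tau=1.0)
for (m, s), se in zip(pooled, std_errors):
    print(f"se = {se:.1f}: posterior mean = {m:.2f}, sd = {s:.2f}")
```

The poorly measured probe is pulled halfway toward the group mean while the well-measured one barely moves, which is the behavior referred to as sharing information across related probes.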

Comparative Performance Metrics

When applying these statistical approaches to chemical probe quality prediction, several performance metrics should be evaluated:

Calibration: How well do predicted probabilities of probe success align with observed frequencies? Bayesian methods typically demonstrate superior calibration through direct probability statements.

Discrimination: How effectively do models distinguish high-quality from low-quality probes? Both approaches can achieve strong discrimination with appropriate model specification.

Information Borrowing: How efficiently does the model leverage information across related probes? Bayesian hierarchical models excel at partial pooling, improving precision for probes with limited data.

Computational Efficiency: What are the runtime requirements for model fitting and prediction? Frequentist approaches generally offer faster computation, though modern Bayesian software has substantially closed this gap.

Decision Support: How intuitively do model outputs inform go/no-go decisions? Bayesian posterior probabilities and predictive distributions often provide more direct decision support than p-values and confidence intervals.
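
Calibration and discrimination from the list above are straightforward to quantify once a model outputs probabilities. A minimal sketch follows, using the Brier score as a calibration-sensitive measure and the rank-based ROC AUC for discrimination; the predicted probabilities and labels are made up.

```python
from typing import Sequence


def brier_score(probs: Sequence[float], labels: Sequence[int]) -> float:
    """Mean squared difference between predicted probability and outcome (lower is better)."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(labels)


def roc_auc(probs: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a random positive is scored above a random negative (ties count 0.5)."""
    pos = [p for p, y in zip(probs, labels) if y == 1]
    neg = [p for p, y in zip(probs, labels) if y == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Predicted P(high quality) for five probes and their observed labels.
probs = [0.9, 0.8, 0.35, 0.6, 0.2]
labels = [1, 1, 1, 0, 0]
print(f"Brier score = {brier_score(probs, labels):.4f}")
print(f"ROC AUC     = {roc_auc(probs, labels):.3f}")
```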

Regulatory Landscape and Implementation Considerations

The regulatory environment for Bayesian methods in pharmaceutical development has evolved significantly, with the FDA actively promoting their use through various initiatives. The Complex Innovative Designs (CID) Paired Meeting Program, established under PDUFA VI, offers sponsors "increased interaction with FDA staff to discuss their proposed CID approach" [8]. Notably, "thus far, the selected submissions in the CID Paired Meeting Program have all utilized a Bayesian framework," reflecting the method's suitability for "flexibility in the design and analysis of a trial" and appropriateness "in settings where multiple sources of evidence are considered" [8]. The FDA anticipates "publishing draft guidance on the use of Bayesian methodology in clinical trials of drugs and biologics" by the end of FY 2025 [8].

When implementing Bayesian approaches, several practical considerations emerge:

Prior Specification: Selecting appropriate prior distributions requires careful consideration. Informative priors should be justified with historical data or scientific rationale, while weakly informative priors can safeguard against undue influence.

Computational Infrastructure: Bayesian analysis often requires substantial computational resources, particularly for complex models. Modern probabilistic programming frameworks (Stan, PyMC3, JAGS) have improved accessibility but still require statistical expertise.

Model Validation: Bayesian models necessitate rigorous checking through posterior predictive checks, convergence diagnostics (Gelman-Rubin statistic, trace plots), and sensitivity analyses to assess prior influence.

Interdisciplinary Collaboration: Successful implementation requires collaboration between statisticians, clinical scientists, and regulatory affairs professionals to ensure designs address scientific questions while meeting regulatory standards.

Education and Interpretation: Bayesian outputs (posterior distributions, credible intervals, Bayes factors) require different interpretation than their Frequentist counterparts. Team education is essential for appropriate decision-making.
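
Among the convergence diagnostics mentioned under Model Validation, the Gelman-Rubin statistic is simple enough to compute directly. The sketch below implements the basic (non-split) form for one scalar parameter and checks it on simulated chains; current software typically reports a refined split, rank-normalized version, so treat this as a teaching aid.

```python
import random
from statistics import mean, variance
from typing import List, Sequence


def gelman_rubin(chains: Sequence[Sequence[float]]) -> float:
    """Basic potential scale reduction factor for equal-length chains."""
    n = len(chains[0])
    chain_means = [mean(c) for c in chains]
    within = mean([variance(c) for c in chains])  # W: average within-chain variance
    between = n * variance(chain_means)           # B: between-chain variance
    var_hat = (n - 1) / n * within + between / n  # pooled variance estimate
    return (var_hat / within) ** 0.5


def simulate_chain(rng: random.Random, center: float, n: int = 1000) -> List[float]:
    """Stand-in for MCMC output: independent normal draws around a center."""
    return [rng.gauss(center, 1.0) for _ in range(n)]


rng = random.Random(0)
mixed = [simulate_chain(rng, 0.0) for _ in range(4)]          # chains agree
stuck = [simulate_chain(rng, 0.0), simulate_chain(rng, 3.0)]  # chains disagree
print(f"R-hat, agreeing chains:    {gelman_rubin(mixed):.3f}")
print(f"R-hat, disagreeing chains: {gelman_rubin(stuck):.3f}")
```

Values near 1 are consistent with chains sampling the same distribution; values well above 1 indicate that at least one chain has not converged to it.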

For chemical probe quality prediction specifically, Bayesian approaches offer compelling advantages through their ability to formally incorporate structural relationships between probes, share information across assays, and provide direct probabilistic statements about probe quality that directly inform development decisions.

In the field of chemical probe and drug discovery, the ability to make accurate predictions from limited experimental data is paramount. Bayesian models provide a powerful statistical framework for this purpose by formally incorporating prior knowledge with new experimental data to produce probabilistic predictions and quantify uncertainty [2] [5]. This approach is particularly valuable for assessing chemical probe quality, where researchers must evaluate multiple complex criteria to identify compounds with desired bioactivity and minimal cytotoxicity [2] [12]. The Bayesian paradigm transforms raw data into actionable insights, enabling more efficient resource allocation in pharmaceutical development [5] [13].

Core Principles and Theoretical Foundation

Bayes' Theorem as a Foundational Framework

At the heart of Bayesian methodology lies Bayes' theorem, which describes the conditional relationship between two events and enables the updating of beliefs based on new evidence [14]. The theorem is mathematically expressed as:

P(A|B) = [P(B|A) × P(A)] / P(B)

Where P(A|B) is the posterior probability of event A given event B, P(B|A) is the likelihood of observing B given A, P(A) is the prior probability of A, and P(B) is the marginal probability of B [14]. In the context of chemical probe quality prediction, this framework allows researchers to systematically update their beliefs about a compound's quality as new experimental data becomes available.
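
To make the update concrete, the following minimal sketch applies the theorem to a single piece of evidence: a reactive-group alert on a compound. All probabilities are hypothetical values chosen for illustration, and the function name `posterior` is an assumption of this example rather than part of any cited method.

```python
def posterior(prior, p_evidence_given_h, p_evidence_given_not_h):
    """Return P(H | E) from Bayes' theorem for a binary hypothesis H."""
    # P(E) by the law of total probability
    evidence = (p_evidence_given_h * prior
                + p_evidence_given_not_h * (1.0 - prior))
    return p_evidence_given_h * prior / evidence

# Hypothetical numbers, for illustration only
prior_desirable = 0.80          # P(A): share of probes judged desirable
p_alert_if_desirable = 0.05     # P(B|A): alert seen among desirable probes
p_alert_if_undesirable = 0.60   # P(B|not A): alert seen among undesirable probes

p = posterior(prior_desirable, p_alert_if_desirable, p_alert_if_undesirable)
print(f"P(desirable | alert) = {p:.2f}")
```

Under these assumed inputs, observing the alert lowers the probability that the compound is desirable from 0.80 to 0.25.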

Key Components of Bayesian Modeling

Table 1: Core Components of Bayesian Models for Chemical Probe Quality Assessment

| Component | Description | Role in Chemical Probe Assessment |

|---|---|---|

| Prior Probability (P(A)) | Initial belief about parameter values before seeing new data | Based on historical data of chemical probe performance, molecular properties, or expert evaluation [2] |

| Likelihood (P(B|A)) | Probability of observing data given specific parameters | Derived from experimental results of bioactivity, cytotoxicity, or other quality metrics [12] |

| Posterior Probability (P(A|B)) | Updated belief after incorporating new evidence | Final assessment of chemical probe quality combining prior knowledge with new data [2] [12] |

| Uncertainty Quantification | Natural output of posterior distribution | Credible intervals for predictions of probe efficacy and toxicity [5] [13] |
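
The uncertainty quantification row can be illustrated with a simple conjugate example. The sketch below estimates a hit rate from a hypothetical screen and reports an equal-tailed 95% credible interval by sampling the Beta posterior. The uniform Beta(1, 1) prior, the counts, and the helper name `beta_credible_interval` are all assumptions made for this example.

```python
import random

def beta_credible_interval(successes, trials, level=0.95, draws=20000, seed=0):
    """Monte Carlo equal-tailed credible interval under a Beta(1, 1) prior."""
    rng = random.Random(seed)
    a = 1 + successes               # posterior Beta parameters
    b = 1 + trials - successes
    samples = sorted(rng.betavariate(a, b) for _ in range(draws))
    lo_idx = int((1 - level) / 2 * draws)
    hi_idx = int((1 + level) / 2 * draws) - 1
    post_mean = a / (a + b)
    return post_mean, samples[lo_idx], samples[hi_idx]

# Hypothetical screen: 14 confirmed hits among 100 tested compounds
post_mean, lower, upper = beta_credible_interval(14, 100)
print(f"posterior mean {post_mean:.3f}, 95% interval ({lower:.3f}, {upper:.3f})")
```

Unlike a frequentist confidence interval, this interval can be read directly as a probability statement about the hit rate, given the prior and the observed data.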

Application Note: Bayesian Classification for Chemical Probe Evaluation

Background and Challenge

The National Institutes of Health (NIH) invested over half a billion dollars in high-throughput screening efforts that identified more than 300 chemical probes through the Molecular Libraries Screening Centers Network (MLSCN) and the Molecular Libraries Probe Production Centers Network (MLPCN) [2]. A critical challenge emerged: how to efficiently evaluate the chemistry quality of these probes against multiple criteria, including literature references, chemical reactivity, and selectivity. Traditional evaluation required extensive expert review, creating bottlenecks in probe development and validation [2].

Bayesian Solution Implementation

Researchers implemented a Bayesian classification approach to predict the evaluations of an experienced medicinal chemist who assessed chemical probes based on established quality criteria [2]. The methodology employed sequential Bayesian model building and iterative testing, incorporating additional probes as the model developed. The Bayesian classifier was trained to recognize molecular features associated with desirable versus undesirable probe characteristics, achieving accuracy comparable to other established drug-likeness measures and filtering rules [2].

Table 2: Performance Metrics of Bayesian Classification for Chemical Probe Evaluation

| Evaluation Metric | Performance Result | Comparative Advantage |

|---|---|---|

| Accuracy | Comparable to other drug-likeness measures and filtering rules | Matches established medicinal chemistry consensus [2] |

| Molecular Features Identified | Higher pKa, molecular weight, heavy atom count, rotatable bond number | Identifies key structural properties of desirable probes [2] |

| Undesirable Probe Detection | Flagged over 20% of NIH probes as undesirable | Effective identification of problematic chemistry [2] |

| Validation Method | External validation with different machine learning methods | Robust performance across validation frameworks [2] |

Experimental Outcomes

Analysis of molecular properties of compounds scored as desirable revealed distinctive characteristics, including higher pKa, molecular weight, heavy atom count, and rotatable bond number [2]. The Bayesian model successfully identified problematic probes that exhibited potential chemical reactivity or lacked sufficient literature evidence of biological activity, providing a computational approach to replicate expert medicinal chemistry due diligence [2].

Protocol: Building a Dual-Event Bayesian Model for Anti-Tuberculosis Compound Screening

Research Reagent Solutions

Table 3: Essential Materials for Bayesian Model Development and Validation

| Reagent/Material | Specification | Function in Protocol |

|---|---|---|

| Training Dataset | Public HTS data for M. tuberculosis (e.g., MLSMR dose response data) [12] | Provides baseline bioactivity information for model training |

| Cytotoxicity Data | Vero cell CC50 measurements [12] | Supplies cytotoxicity information for dual-event modeling |

| Commercial Compound Library | Asinex library (>25,000 compounds) [12] | Serves as source for prospective validation compounds |

| Software Tools | Bayesian modeling software (e.g., CDD Vault, Python libraries) [14] | Enables model construction and compound scoring |

| Validation Assays | M. tuberculosis growth inhibition (IC50) and mammalian cell cytotoxicity (CC50) [12] | Confirms model predictions experimentally |

Step-by-Step Methodology

Step 1: Data Preparation and Curation

- Compile bioactivity data (IC90 values) for M. tuberculosis growth inhibition from public high-throughput screening sources [12]

- Collect corresponding cytotoxicity data (CC50 values) for the same compounds in mammalian cell lines (e.g., Vero cells) [12]

- Define activity criteria: active compounds (IC90 < 10 μg/mL) and non-cytotoxic compounds (Selectivity Index SI = CC50/IC90 > 10) [12]

- Remove salts and normalize molecular structures prior to descriptor calculation [2]
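
The activity criteria in Step 1 can be sketched as a small labeling function. The thresholds come from the text above; the `Compound` class, the function name, and the three example records are hypothetical and do not represent real screening data.

```python
from dataclasses import dataclass

@dataclass
class Compound:
    name: str
    ic90_ug_ml: float   # M. tuberculosis growth inhibition
    cc50_ug_ml: float   # Vero cell cytotoxicity

def label_dual_event(c, ic90_cutoff=10.0, si_cutoff=10.0):
    """Return (is_active, is_dual_event_positive) using the Step 1 thresholds."""
    is_active = c.ic90_ug_ml < ic90_cutoff
    selectivity_index = c.cc50_ug_ml / c.ic90_ug_ml   # SI = CC50 / IC90
    return is_active, is_active and selectivity_index > si_cutoff

# Hypothetical records, not real screening data
compounds = [
    Compound("cpd-A", ic90_ug_ml=1.0, cc50_ug_ml=40.0),    # active, SI = 40
    Compound("cpd-B", ic90_ug_ml=5.0, cc50_ug_ml=20.0),    # active, SI = 4
    Compound("cpd-C", ic90_ug_ml=25.0, cc50_ug_ml=500.0),  # inactive
]
labels = {c.name: label_dual_event(c) for c in compounds}
print(labels)
```

Only compounds that are both active and selective receive the positive dual-event label, which is the class the dual-event model is trained to recognize.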

Step 2: Descriptor Calculation and Feature Generation

- Calculate molecular descriptors using cheminformatics tools (e.g., ChemAxon Marvin Suite, Discovery Studio) [2]

- Include key descriptors: molecular weight, logP, hydrogen bond donors/acceptors, pKa, heavy atom count, polar surface area, rotatable bond count [2]

- Generate structural fingerprints (e.g., Function Class Fingerprints) to capture relevant molecular features [2]

Step 3: Model Training and Validation

- Implement Naïve Bayesian classification using the compiled training data [2] [12]

- For dual-event model: Integrate both bioactivity and cytotoxicity information into a unified Bayesian framework [12]

- Employ leave-one-out cross-validation to assess model performance [12]

- Calculate performance metrics, including the area under the Receiver Operating Characteristic (ROC) curve, where values approaching 1.0 indicate near-perfect ranking of actives above inactives [12]

- Validate model against known first- and second-line TB drugs to ensure predictive capability for clinically relevant compounds [12]
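
For readers unfamiliar with the ROC metric used in Step 3, the sketch below computes the area under the ROC curve directly from its pairwise-ranking definition. The labels and scores are hypothetical, and the cross-validation loop itself is omitted for brevity.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random active outranks a random inactive."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one active and one inactive compound")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5   # ties count as half a win
    return wins / (len(pos) * len(neg))

# Hypothetical held-out labels (1 = active) and Bayesian scores
y_true = [1, 1, 1, 0, 0, 0, 0]
y_score = [12.1, 4.0, -3.5, 5.2, -8.0, -11.3, -20.4]
auc = roc_auc(y_true, y_score)
print(f"ROC AUC = {auc:.2f}")
```

In this toy case 10 of the 12 active/inactive pairs are ranked correctly, giving an AUC of about 0.83; a value of 0.5 would correspond to random ranking.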

Step 4: Prospective Screening and Experimental Validation

- Virtually screen commercial compound libraries (e.g., Asinex) using the trained Bayesian model [12]

- Rank compounds by Bayesian score (range observed: -28.4 to 15.3), where more positive values indicate higher probability of activity [12]

- Select top-scoring compounds (e.g., Bayesian score 9.4 to 15.3) for experimental testing [12]

- Validate selected compounds through in vitro assays for Mtb growth inhibition and mammalian cell cytotoxicity [12]

- Confirm hit rates and compare against traditional HTS performance metrics [12]

Application Note: Dual-Event Bayesian Models for Tuberculosis Drug Discovery

Advanced Model Architecture

Traditional Bayesian models focused solely on bioactivity endpoints, potentially overlooking cytotoxicity concerns [12]. The dual-event Bayesian model represents a significant advancement by simultaneously incorporating both bioactivity and cytotoxicity information [12]. This approach learns molecular features associated with both Mycobacterium tuberculosis growth inhibition and low mammalian cell cytotoxicity, creating a more comprehensive assessment framework for identifying promising chemical probes with favorable safety profiles [12].

Experimental Validation and Outcomes

In prospective validation, a dual-event Bayesian model achieved a 14% hit rate when applied to a commercial library of >25,000 compounds, an improvement of one to two orders of magnitude over typical high-throughput screening results [12]. The model identified novel antitubercular hits with whole-cell activity and low mammalian cell cytotoxicity, including a promising pyrazolo[1,5-a]pyrimidine class compound (SYN 22269076) exhibiting an IC50 of 1.1 μg/mL (3.2 μM) against Mtb [12].

The dual-event model demonstrated superior predictive power compared to single-event models that excluded cytotoxicity information, with leave-one-out cross-validation yielding an ROC value of 0.86 [12]. When applied to a published library of antimalarial hits, the model successfully identified compounds with antitubercular activity and acceptable safety profiles, including a potent small molecule TB drug lead showing nanomolar growth inhibition of cultured mycobacteria with acceptable in vitro and in vivo mouse safety [12].

Protocol: Bayesian Optimization for Chemical Synthesis Conditions

Workflow Implementation

Bayesian optimization provides a sample-efficient global optimization strategy for chemical synthesis parameter tuning, particularly valuable when experiments are resource-intensive or time-consuming [15]. The methodology employs probabilistic surrogate models (typically Gaussian Processes) and acquisition functions to systematically balance exploration and exploitation in the chemical search space [15] [14].

Implementation Guidelines

Step 1: Define Search Space and Objective

- Identify critical reaction parameters: temperature, reaction time, solvent selection, catalyst, concentration, stoichiometry [15]

- Define objective function: yield, selectivity, space-time yield, E-factor, or multiple objectives for Pareto optimization [15]

- Establish constraints: safety limits, equipment capabilities, chemical compatibility [15]

Step 2: Establish Initial Dataset

- Select initial experiments using design of experiments (DoE) or random sampling across parameter space [15]

- Perform initial experiments and measure objective function values [15]

- Document all experimental conditions and outcomes systematically [15]

Step 3: Configure Bayesian Optimization Parameters

- Select surrogate model: Gaussian Process with appropriate kernel function for chemical space [15] [14]

- Choose acquisition function: Expected Improvement (EI), Upper Confidence Bound (UCB), Thompson Sampling (TS), or q-Noisy Expected Hypervolume Improvement (qNEHVI) for multi-objective optimization [15]

- Set convergence criteria: maximum iterations, performance thresholds, or resource limitations [15]
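
Two of the acquisition functions listed in Step 3 have simple closed forms once the surrogate supplies a predictive mean and standard deviation. The sketch below shows EI and UCB for a maximization problem; the predicted yields and the `xi` and `beta` defaults are hypothetical values chosen for illustration.

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best, xi=0.0):
    """EI for maximization, given the surrogate's predictive mean and std."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * normal_cdf(z) + sigma * normal_pdf(z)

def upper_confidence_bound(mu, sigma, beta=2.0):
    """UCB: a larger beta favours exploring uncertain conditions."""
    return mu + beta * sigma

# Hypothetical predicted yields (%) for two conditions; best observed so far is 70
ei_safe = expected_improvement(mu=71.0, sigma=1.0, best=70.0)
ei_risky = expected_improvement(mu=68.0, sigma=8.0, best=70.0)
print(f"EI safe = {ei_safe:.2f}, EI risky = {ei_risky:.2f}")
```

With these inputs the uncertain condition receives the larger EI even though its predicted mean is lower, which is how the acquisition function balances exploration against exploitation.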

Step 4: Execute Optimization Cycle

- Train surrogate model on current dataset [15] [14]

- Calculate acquisition function across parameter space [15]

- Select next experiment(s) that maximize acquisition function [15] [14]

- Perform experiment(s) and measure objective function [15]

- Update dataset with new results [15]

- Repeat until convergence criteria met [15]
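
The optimization cycle above can be sketched end to end on a one-dimensional toy problem. This is a minimal illustration assuming NumPy is available; the objective `run_experiment` is a hypothetical stand-in for a real reaction, and the kernel length scale, noise level, grid, and iteration count are arbitrary choices rather than recommended settings. Dedicated packages offer far more complete implementations.

```python
import math
import numpy as np

def rbf(a, b, length=0.2):
    """Squared-exponential kernel between two 1-D arrays of inputs."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length) ** 2)

def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """GP posterior mean and std at x_query (targets are centred first)."""
    y_mean = y_train.mean()
    k = rbf(x_train, x_train) + noise * np.eye(len(x_train))
    k_s = rbf(x_train, x_query)
    mu = y_mean + k_s.T @ np.linalg.solve(k, y_train - y_mean)
    var = 1.0 - np.sum(k_s * np.linalg.solve(k, k_s), axis=0)
    return mu, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization, evaluated element-wise."""
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf

def run_experiment(x):
    """Hypothetical stand-in for a real reaction: response peaks near x = 0.65."""
    return float(np.exp(-((x - 0.65) ** 2) / 0.02))

grid = np.linspace(0.0, 1.0, 101)           # normalized reaction parameter
x_obs = np.array([0.1, 0.5, 0.9])           # initial design (Step 2)
y_obs = np.array([run_experiment(x) for x in x_obs])

for _ in range(10):                          # optimization cycle (Step 4)
    mu, sigma = gp_posterior(x_obs, y_obs, grid)      # train surrogate
    ei = expected_improvement(mu, sigma, y_obs.max())  # score candidates
    x_next = grid[int(np.argmax(ei))]                  # pick next experiment
    x_obs = np.append(x_obs, x_next)                   # update dataset
    y_obs = np.append(y_obs, run_experiment(x_next))

best_x, best_y = x_obs[int(np.argmax(y_obs))], y_obs.max()
print(f"best condition x = {best_x:.2f}, response = {best_y:.3f}")
```

In practice the fixed iteration count would be replaced by the convergence criteria chosen in Step 3, and each call to `run_experiment` would correspond to a physical experiment.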

Advanced Applications

The Bayesian optimization framework has been successfully applied to diverse chemical synthesis challenges, including multi-objective optimization of nanomaterial synthesis (e.g., antimicrobial ZnO and p-cymene) [15], ultra-fast lithium-halogen exchange reactions with sub-second residence time control [15], and optimization of pressure swing adsorption processes through hybrid frameworks (e.g., TSEMO + DyOS) [15]. The Summit framework developed by the Lapkin group provides a comprehensive implementation of these methods, demonstrating performance advantages over traditional optimization approaches across multiple chemical reaction benchmarks [15].

Bayesian principles provide a robust foundation for chemical probe quality assessment by formally integrating prior knowledge with experimental data to generate probabilistic predictions. The methodologies outlined in these application notes and protocols demonstrate significant improvements in efficiency and accuracy for chemical probe evaluation, tuberculosis drug discovery, and synthesis optimization. By embracing these Bayesian approaches, researchers can accelerate the identification of high-quality chemical probes while effectively quantifying prediction uncertainty, ultimately enhancing decision-making in pharmaceutical development.

Within chemical biology and drug discovery, chemical probes are essential, high-quality small molecules used to modulate and understand the function of specific proteins in biomedical research [16]. The robustness of experimental findings using these tools is highly dependent on their appropriate selection and application. Misuse of inadequate compounds has been identified as a significant factor contributing to irreproducible results, highlighting a critical "robustness crisis" in the literature [17] [16]. This application note details the expert-derived criteria that define a high-quality chemical probe, framing these guidelines within the context of developing predictive Bayesian models for probe quality assessment. We summarize quantitative data into structured tables and provide detailed protocols for implementing these evaluations.

Expert-Defined Criteria for Probe Desirability

Expert panels, such as the Scientific Expert Review Panel (SERP) for the Chemical Probes Portal, evaluate compounds based on a consensus set of "fitness factors" [16]. The criteria below form the foundational definition of probe desirability.

Core Fitness Factors

- Potency: A chemical probe should demonstrate high in vitro potency against its primary intended target, typically with an IC50 or EC50 of less than 100 nM [17]. This ensures strong engagement of the target protein; specificity is addressed separately by the selectivity criterion below.

- Selectivity: The probe must be selective for the target over other related proteins. A common guideline is a minimum 30-fold selectivity against closely related proteins, particularly those within the same family, to minimize off-target effects that could confound experimental interpretation [17].

- Cellular Activity: The probe must engage its intended target within a cellular environment. The ideal probe exhibits on-target cellular activity at concentrations preferably below 1 μM, ensuring the observed phenotypic effects are mechanism-based [17].

Supporting Evidence and Structural Attributes

Beyond the core factors, several other considerations inform expert evaluations:

- Availability of Controls: A critical criterion is the availability of a structurally matched, target-inactive control compound [18] [17]. This control, which is synthetically accessible and ideally differs from the active probe by only a few atoms, is essential for confirming that observed phenotypic effects are due to the inhibition of the intended target and not to non-specific effects.

- Orthogonal Probes: Confidence in biological findings is significantly increased when multiple, structurally distinct chemical probes (orthogonal probes) for the same target yield similar phenotypic outcomes [17].

- Literature Validation and Chemical Reactivity: Experts assess the breadth of biological literature references associated with a compound. A very high number of references may indicate promiscuous behavior, while a complete lack of literature for an older probe may suggest underlying problems [2]. Furthermore, probes containing functional groups with predicted chemical reactivity (e.g., moieties that can act as thiol traps, Michael acceptors, or redox-active compounds) are often flagged as undesirable due to the risk of non-specific effects [2].

- Molecular Properties: Analysis of NIH-funded chemical probes revealed that compounds deemed "desirable" by a medicinal chemist expert tended to have distinct molecular properties, including higher pKa, molecular weight, heavy atom count, and rotatable bond number compared to those flagged as undesirable [2] [19].

Table 1: Quantitative Criteria for a High-Quality Chemical Probe

| Criterion | Quantitative Guideline or Requirement | Rationale |

|---|---|---|

| In Vitro Potency | IC50/EC50 < 100 nM | Ensures strong, effective target engagement. |

| Selectivity | ≥ 30-fold against related family proteins | Minimizes confounding off-target effects. |

| Cellular Activity | On-target activity ≤ 1 μM | Confirms target engagement in a physiological context. |

| Control Compound | Structurally matched inactive analogue available | Controls for non-specific and off-target effects. |

| Orthogonal Probes | At least one additional, structurally distinct probe available | Increases confidence that phenotype is target-related. |

| Undesirable Groups | Lacks reactive or promiscuity-associated substructures | Reduces risk of assay interference and false positives. |
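
The criteria in Table 1 lend themselves to a simple programmatic check. The sketch below encodes the thresholds from the table; the `ProbeProfile` fields, the function name, and the example compound are hypothetical and are meant only to show how such a screen might be structured.

```python
from dataclasses import dataclass

@dataclass
class ProbeProfile:
    potency_nm: float             # in vitro IC50/EC50 against the primary target
    fold_selectivity: float       # versus the closest related family member
    cellular_activity_um: float   # on-target cellular activity
    has_inactive_control: bool
    orthogonal_probe_count: int
    has_reactive_alert: bool

def failed_criteria(p):
    """Return the names of the Table 1 criteria that the profile does not meet."""
    checks = {
        "in vitro potency": p.potency_nm < 100.0,
        "selectivity": p.fold_selectivity >= 30.0,
        "cellular activity": p.cellular_activity_um <= 1.0,
        "control compound": p.has_inactive_control,
        "orthogonal probes": p.orthogonal_probe_count >= 1,
        "undesirable groups": not p.has_reactive_alert,
    }
    return [name for name, ok in checks.items() if not ok]

# Hypothetical compound profile
candidate = ProbeProfile(25.0, 12.0, 0.4, True, 0, False)
issues = failed_criteria(candidate)
print(issues)
```

For this made-up profile the check reports the selectivity and orthogonal-probe criteria as unmet. A rule-based screen of this kind complements, rather than replaces, expert review.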

The "Rule of Two" for Best-Practice Application

To ensure robust experimental design, a recent systematic review of the literature proposed "the rule of two" [17]. This guideline stipulates that every cell-based study should employ:

- At least two chemical probes: either a pair of orthogonal target-engaging probes, a chemical probe combined with its matched target-inactive control, or both.

- Recommended concentrations: every probe is used within its recommended concentration range [17].

Alarmingly, a 2023 review found that only 4% of the publications analyzed adhered to all of these best-practice principles, underscoring the need for clearer guidelines and tools [17].

Bayesian Models for Predicting Probe Quality

The expert criteria for desirability provide a labeled dataset upon which computational models can be trained to predict the quality of novel compounds.

Model Foundation and Workflow

Bayesian models are particularly suited for this task as they can learn the complex relationships between a compound's structural features, physicochemical properties, and its expert-assigned quality rating [2] [14]. The process is a sequential, model-based global optimization.

Diagram 1: Bayesian model optimization cycle for evaluating chemical probes. The model iteratively improves its predictions by incorporating new expert-validated data.

The core of this approach relies on Bayes' theorem, which updates the probability for a hypothesis (a compound being "desirable") as more evidence or data becomes available [14]. The key components are:

- Surrogate Model: Typically a Gaussian Process (GP) or Naïve Bayesian classifier, used to estimate the unknown objective function (expert desirability) across the chemical space based on initial training data [2] [14] [20].

- Acquisition Function: A utility function that uses the surrogate model's predictions (mean and variance) to decide which compound to evaluate next, balancing exploration of uncertain chemical space with exploitation of known desirable areas [14].

Advanced Bayesian Optimization: The Dual-GP Workflow

For complex, real-world experimental data, a Dual-GP approach enhances traditional Bayesian optimization. This method introduces a second surrogate model to act as a quality controller for the raw data used in the optimization loop [20].

Diagram 2: Dual-GP workflow for robust probe optimization. A second GP model assesses data quality, dynamically constraining the primary GP to focus on reliable experimental regions.

This Dual-GP method is especially valuable when dealing with high-dimensional or noisy experimental readouts (e.g., spectroscopy), where a pre-defined function must convert raw data into a scalar value for the primary model. The second GP assesses the compatibility between the raw data and the scalarizer function, assigning a quality score that dynamically constrains the optimization to regions of the chemical space more likely to produce meaningful data [20].

Experimental Protocols

Protocol 1: Implementing Expert Criteria for Probe Evaluation

This protocol provides a step-by-step methodology for manually assessing a compound's suitability as a chemical probe, mirroring the process used by expert panels.

Key Research Reagent Solutions:

- Public Probe Databases: Chemical Probes Portal [21], Probe Miner [22], Structural Genomics Consortium Probes [16].

- Literature Search Tools: CAS SciFinder, PubMed.

- Chemical Structure Analysis: Software suites like ChemAxon's JChem or Biovia's Discovery Studio for calculating molecular descriptors and visualizing structures.

- Selectivity & Promiscuity Profiling: Tools like BadApple for predicting promiscuity based on public data [2] and PAINS (Pan Assay Interference Compounds) filters to identify problematic substructures [2].

Procedure:

- Compound Identification: Unambiguously define the compound structure using a standard identifier (e.g., PubChem CID, SMILES).

- Literature Review:

- Query the primary literature for the number of biological activity references associated with the compound. Exercise caution with compounds exhibiting an extremely high (>150) or zero count of references, as this may indicate promiscuity or underlying issues, respectively [2].

- Identify the primary publication(s) disclosing the probe.

- Control & Orthogonal Probe Check:

- Structural Interrogation:

- Data Integration & Scoring:

- Synthesize all information. A high-quality probe (typically ≥3 stars on the Chemical Probes Portal) will satisfy the core fitness factors and have available controls [18] [16].

- For in-cell use, apply the "rule of two": use at least two probes (probe+control or two orthogonal probes) at their recommended concentrations [17].

Protocol 2: Building a Bayesian Predictive Model for Probe Desirability

This protocol outlines the methodology for constructing a computational model to predict an expert's evaluation of chemical probes, as described in prior research [2].

Key Research Reagent Solutions:

- Modeling Software: Bayesian optimization packages (e.g., BoTorch, Ax, Scikit-optimize) [14].

- Descriptor Calculation: Cheminformatics toolkits (e.g., RDKit, ChemAxon Marvin, Discovery Studio).

- Training Data: Publicly available expert-curated datasets, such as the NIH chemical probes collection with expert desirability scores [2] [23].

Procedure:

- Dataset Curation:

- Obtain a set of chemical probes with binary expert evaluations ("desirable" = 1, "undesirable" = 0). The initial dataset can comprise several hundred compounds [2].

- Remove salts and standardize structures to ensure consistency.

- Descriptor Generation:

- Calculate a set of molecular descriptors for each compound. Relevant descriptors include, but are not limited to: molecular weight, logP, H-bond donors/acceptors, rotatable bond count, heavy atom count, polar surface area, pKa, and fingerprints capturing structural features (e.g., Function Class Fingerprints) [2].

- Model Training:

- Implement a Naïve Bayesian classifier or a Gaussian Process (GP) model.

- Use the molecular descriptors as input features (X) and the expert's binary evaluation as the target label (y).

- Split the data into training and test sets to validate model performance.

- Model Validation & Iteration:

- Validate the model's accuracy on the held-out test set. Compare its performance against other measures of drug-likeness (e.g., QED, Ligand Efficiency) and filtering rules [2].

- Deploy the model prospectively to score new, unrated compounds. Integrate expert feedback on these predictions in an iterative loop to refine the model, as shown in Diagram 1 [2] [14].

Table 2: Molecular Properties from Expert-Evaluated NIH Probes

| Molecular Property | Trend in 'Desirable' Probes | Software/Tool for Calculation |

|---|---|---|

| pKa | Higher | ChemAxon Marvin, JChem |

| Molecular Weight | Higher | Standard cheminformatics toolkit |

| Heavy Atom Count | Higher | Standard cheminformatics toolkit |

| Rotatable Bond Number | Higher | Standard cheminformatics toolkit |

| Undesirable Substructures | Absence of PAINS/REOS | FAF-Drugs, Custom substructure filters |

In the field of medicinal chemistry, the expertise of seasoned chemists is a precious, yet scarce, resource. The intricate process of evaluating chemical probes—assessing their potential for reactivity, promiscuity, and overall quality—has traditionally relied on this human intuition and experience [2]. This manual approach, however, is fundamentally limited when confronting the scale of modern chemical libraries, which now contain tens of billions of "make-on-demand" molecules [24]. The central challenge is to scale this critical, expert-level due diligence to keep pace with the vastness of chemical space. Computational prediction, particularly through Bayesian models, emerges as the essential solution to this problem, offering a data-driven framework to augment and amplify expert judgment [2].

Bayesian Models: A Primer for Chemical Prediction

Bayesian models are a class of probabilistic models that are exceptionally well-suited for learning from data and quantifying predictive uncertainty. In the context of medicinal chemistry, they can learn the complex relationships between a molecule's structural features and its biological desirability as judged by an expert.

A foundational application is the use of Naïve Bayesian classification to predict an expert's evaluation of chemical probes [2]. These models can process a variety of molecular descriptors, including:

- Molecular Properties: Molecular weight, logP, heavy atom count, rotatable bonds, and polar surface area [2].

- Structural Fingerprints: Function class fingerprints (FCFP) that encode molecular substructures [2].

- pKa and Charge: The acid dissociation constant and the average charge at a physiological pH of 7.4 [2].

The model operates on Bayes' theorem, updating the prior probability of a compound being "desirable" with the likelihood of observing its specific features to compute a posterior probability. This posterior probability provides a quantitative, probabilistic score of chemical probe quality, directly capturing the pattern recognition heuristics of an experienced medicinal chemist [2].
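
The posterior calculation described above can be sketched with a generic Bernoulli naive Bayes classifier over fingerprint bits. This uses add-one smoothing and is not necessarily the variant used in the cited study; the toy fingerprints, the labels, and the convention that bit 3 marks a reactive substructure are hypothetical.

```python
import math

def train_bernoulli_nb(fingerprints, labels, n_bits):
    """Fit per-class bit frequencies with add-one (Laplace) smoothing."""
    model = {}
    for cls in (0, 1):
        rows = [fp for fp, y in zip(fingerprints, labels) if y == cls]
        prior = len(rows) / len(labels)
        bit_prob = [
            (sum(1 for fp in rows if bit in fp) + 1) / (len(rows) + 2)
            for bit in range(n_bits)
        ]
        model[cls] = (prior, bit_prob)
    return model

def posterior_desirable(model, fp, n_bits):
    """Return P(desirable | fingerprint) under the naive independence assumption."""
    log_joint = {}
    for cls, (prior, bit_prob) in model.items():
        total = math.log(prior)
        for bit in range(n_bits):
            p = bit_prob[bit]
            total += math.log(p if bit in fp else 1.0 - p)
        log_joint[cls] = total
    # Normalize in log space for numerical stability
    top = max(log_joint.values())
    weights = {c: math.exp(v - top) for c, v in log_joint.items()}
    return weights[1] / (weights[0] + weights[1])

# Hypothetical toy data: each fingerprint is the set of "on" bit indices
train_fps = [{0, 1}, {0, 2}, {1, 2}, {0, 1, 2}, {3}, {1, 3}]
train_y = [1, 1, 1, 1, 0, 0]    # 1 = desirable, 0 = undesirable
nb = train_bernoulli_nb(train_fps, train_y, n_bits=4)

p_clean = posterior_desirable(nb, {0, 2}, n_bits=4)
p_alert = posterior_desirable(nb, {2, 3}, n_bits=4)
print(f"P(desirable): clean = {p_clean:.2f}, with alert bit = {p_alert:.2f}")
```

The compound carrying the alert bit receives a much lower posterior probability of being desirable, mirroring how the classifier translates substructure patterns into a quality score.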

Application Note: Implementing a Bayesian Classifier for Probe Quality

This protocol details the steps for building and validating a Bayesian classifier to predict the desirability of small molecule chemical probes, based on the methodology validated in prior research [2]. The process encompasses data curation, feature calculation, model training, and validation.

Experimental Protocol

Step 1: Dataset Curation and Expert Labeling

- Source: Begin with a collection of known chemical probes, such as those from the NIH's Molecular Libraries Program [2].

- Curation: Remove salts and complex molecular mixtures to ensure structures are normalized for analysis [2].

- Expert Evaluation: A medicinal chemist evaluates each probe against defined criteria:

- Literature References: Probes with >150 biological activity references may lack selectivity; those with zero references may have uncertain quality [2].

- Chemical Reactivity: Flag compounds with structural alerts indicating potential chemical reactivity (e.g., thiol traps, Michael acceptors) [2].

- Patent Presence: A high frequency across many patents can be an indicator of promiscuous activity, though this requires careful examination [2].

- Labeling: Assign a binary label: 1 for "desirable" and 0 for "undesirable" based on the evaluation above [2].
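
As a simplified sketch of the labeling logic in Step 1, the function below turns the literature and reactivity criteria into a binary label with the reasons attached. It is only an approximation: the expert's judgment is more nuanced than fixed rules, the patent criterion is omitted because it requires case-by-case examination, and the function and argument names are hypothetical.

```python
def expert_style_label(n_bioactivity_refs, has_reactivity_alert, max_refs=150):
    """Return (label, reasons): label 1 = desirable, 0 = undesirable."""
    reasons = []
    if n_bioactivity_refs > max_refs:
        reasons.append("many literature references: possible promiscuity")
    if n_bioactivity_refs == 0:
        reasons.append("no literature references: uncertain quality")
    if has_reactivity_alert:
        reasons.append("structural alert: potential chemical reactivity")
    return (0 if reasons else 1), reasons

# Hypothetical probes
print(expert_style_label(42, False))    # no flags raised
print(expert_style_label(0, False))     # flagged: no literature
print(expert_style_label(300, True))    # flagged: promiscuity and reactivity
```

Recording the reasons alongside the label keeps the training set auditable when the expert later revisits borderline cases.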

Step 2: Molecular Descriptor Calculation

With the labeled dataset, calculate a set of molecular descriptors and features for each compound.

- Software Tools: Utilize chemoinformatics toolkits such as the Marvin Suite (ChemAxon) or Discovery Studio (Biovia) [2].

- Key Descriptors to Calculate:

- Physicochemical Properties: Molecular weight, AlogP, hydrogen bond donors/acceptors, rotatable bond count, polar surface area.

- Acid-Base Properties: pKa and the distribution of major microspecies at pH 7.4 [2].

- Structural Fingerprints: Generate Function Class Fingerprints (FCFP_6) to capture relevant chemical substructures [2].

Step 3: Model Training with Sequential Bayesian Learning

- Algorithm: Employ a Naïve Bayesian classifier.

- Process: Use a process of sequential Bayesian model building and iterative testing, adding probes to the training set incrementally to monitor performance [2].

- Software: Implement the model using available data science libraries in Python (e.g., Scikit-learn) or leverage specialized platforms like the CDD Vault for collaborative drug discovery [2].

Step 4: External Validation and Benchmarking

- Validation Set: Evaluate the trained model's performance on a held-out set of probes not used in training.

- Benchmarking: Compare the Bayesian model's predictions against other established metrics and rules, including:

- PAINS (Pan Assay Interference Compounds): Filter out compounds with substructures known to cause false-positive assay results [2].

- QED (Quantitative Estimate of Drug-likeness): Measure a compound's overall drug-likeness based on desirability of key properties [2].

- Ligand Efficiency: Assess the binding energy per heavy atom of a molecule [2].
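
Of the benchmarks listed, ligand efficiency is simple enough to compute by hand. The sketch below uses the common approximation that treats IC50 as a proxy for the dissociation constant, which is an assumption and not always appropriate; the example potency and heavy atom count are hypothetical.

```python
import math

def ligand_efficiency(ic50_nm, heavy_atoms, temperature_k=298.15):
    """Approximate LE in kcal/mol per heavy atom, using IC50 as a proxy for Kd."""
    gas_constant = 1.987204e-3                  # kcal/(mol*K)
    ic50_molar = ic50_nm * 1e-9
    # Binding free energy: dG = RT ln(Kd), negative for Kd below 1 M
    delta_g = gas_constant * temperature_k * math.log(ic50_molar)
    return -delta_g / heavy_atoms

# Hypothetical compound: IC50 of 100 nM with 25 heavy atoms
le = ligand_efficiency(ic50_nm=100.0, heavy_atoms=25)
print(f"LE = {le:.2f} kcal/mol per heavy atom")
```

For this example the result is about 0.38 kcal/mol per heavy atom. Larger molecules need proportionally higher potency to reach the same efficiency.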

Table 1: Key Molecular Descriptors for Bayesian Modeling of Chemical Probes

| Descriptor | Description | Role in Probe Quality Assessment |

|---|---|---|

| pKa / Charge at pH 7.4 | Measure of acidity/basicity under physiological conditions. | Higher pKa was associated with desirable probes [2]. |

| Molecular Weight | Mass of the molecule. | Higher molecular weight was associated with desirable probes [2]. |

| Heavy Atom Count | Number of non-hydrogen atoms. | Higher heavy atom count was associated with desirable probes [2]. |

| Rotatable Bond Count | Number of bonds that allow free rotation. | Higher rotatable bond number was associated with desirable probes [2]. |

| FCFP Fingerprints | Structural fingerprints encoding molecular features. | Captures essential substructural patterns linked to expert desirability [2]. |

Results and Interpretation

In a seminal study, this approach demonstrated that computational Bayesian models could achieve accuracy comparable to other measures of drug-likeness and filtering rules [2]. The model successfully learned the complex decision-making pattern of an expert chemist, identifying molecular properties that were statistically associated with desirable probes, as summarized in Table 1.

The following diagram illustrates the sequential workflow for building and validating the Bayesian classifier for chemical probe quality.

Scaling Up: Bayesian Active Learning for Combination Screens

The principle of using Bayesian methods to guide experimental design can be scaled from single-molecule evaluation to the immensely complex problem of large-scale combination drug screens. The number of possible drug-dose-cell line combinations quickly becomes intractable for exhaustive testing (e.g., 1.4 million possibilities for a 206-drug library on 16 cell lines) [4].

The BATCHIE (Bayesian Active Treatment Combination Hunting via Iterative Experimentation) platform addresses this by using a Bayesian active learning strategy [4]. The core of the method is the Probabilistic Diameter-based Active Learning (PDBAL) criterion, which selects experiments that are expected to most efficiently reduce the model's uncertainty across the entire experimental space [4].

Protocol for Bayesian Active Learning in Drug Screening

Step 1: Initial Batch Design

- Use a design of experiments approach to select an initial batch of combinations that efficiently covers the drug and cell line space [4].

Step 2: Model Training

- Train a hierarchical Bayesian tensor factorization model on the collected experimental results.

- The model decomposes a combination's effect into cell-line-specific effects, individual drug-dose effects, and interaction terms, providing a posterior distribution over all unobserved combination responses [4].

Step 3: Adaptive Batch Design via PDBAL

- For each subsequent batch, use the model's posterior to simulate outcomes of candidate experiments.

- Calculate the expected information gain for each candidate by how much it would reduce the "diameter" (disagreement) between different posterior samples.

- Select a batch of experiments that collectively provides the maximum information gain [4].
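The diameter idea in Step 3 can be sketched in a few lines. This is a simplified illustration, not the BATCHIE implementation. It assumes the posterior is represented by a small set of samples, each of which predicts a response for every candidate experiment. It also assumes Gaussian measurement noise with a hypothetical `noise_sd`, and it selects the batch greedily rather than jointly. The function names and the toy predictions are invented for this example.

```python
import math

def sample_distance(f_s, f_t):
    """Mean squared disagreement between two posterior samples over all candidates."""
    return sum((a - b) ** 2 for a, b in zip(f_s, f_t)) / len(f_s)

def expected_diameter(preds, noise_sd=0.1):
    """preds[s][j] is the response predicted by posterior sample s for candidate j.
    Returns, for each candidate, the expected disagreement left after measuring it."""
    n_samples, n_candidates = len(preds), len(preds[0])
    dist = [[sample_distance(preds[s], preds[t]) for t in range(n_samples)]
            for s in range(n_samples)]
    scores = []
    for j in range(n_candidates):
        total = 0.0
        for k in range(n_samples):
            # Simulate the outcome of experiment j as if sample k were the truth.
            y = preds[k][j]
            # Reweight every sample by how well it explains the simulated outcome.
            log_w = [-0.5 * ((y - preds[s][j]) / noise_sd) ** 2 for s in range(n_samples)]
            top = max(log_w)
            w = [math.exp(v - top) for v in log_w]
            z = sum(w)
            w = [v / z for v in w]
            # Diameter: expected distance between two draws from the reweighted posterior.
            total += sum(w[s] * w[t] * dist[s][t]
                         for s in range(n_samples) for t in range(n_samples))
        scores.append(total / n_samples)
    return scores

def select_batch(preds, batch_size, noise_sd=0.1):
    """Greedy simplification: take the candidates with the smallest expected diameter."""
    scores = expected_diameter(preds, noise_sd)
    return sorted(range(len(scores)), key=scores.__getitem__)[:batch_size]

# Toy posterior: four samples, three candidate experiments (invented numbers).
# The samples disagree strongly about candidate 0 and agree about candidates 1 and 2.
preds = [
    [0.0, 1.0, 0.5],
    [0.1, 1.0, 0.5],
    [5.0, 1.0, 0.5],
    [5.1, 1.0, 0.5],
]
scores = expected_diameter(preds)
batch = select_batch(preds, batch_size=1)
print(scores, batch)
```

In this toy case only candidate 0 separates the two groups of posterior samples, so measuring it leaves far less disagreement than measuring either of the other two, and it is selected first. A joint batch criterion would additionally penalize redundant experiments within the same batch, which this greedy sketch does not attempt.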

Step 4: Iteration and Validation

- Run the newly designed batch of experiments.

- Update the Bayesian model with the new data.

- Repeat steps 3 and 4 until the experimental budget is exhausted or model convergence is achieved.

- Use the final, optimally trained model to predict and prioritize highly effective and synergistic combinations for validation [4].

Table 2: BATCHIE Platform Performance in a Prospective Pediatric Cancer Screen

| Screen Metric | Value | Interpretation and Impact |
|---|---|---|
| Possible Combinations | 1.4 Million | The scale of the exhaustive screen, making it practically intractable. |
| Combinations Explored | ~4% | The fraction of the total space tested using the BATCHIE adaptive design. |
| Outcome | Accurate prediction of unseen combinations and detection of synergies. | Demonstrated efficiency and predictive power of the active learning approach. |
| Validated Hit | PARP inhibitor + Topoisomerase I inhibitor | A rational, translatable combination, now in Phase II clinical trials for Ewing sarcoma. |

The workflow for this scalable, adaptive screening platform is depicted below.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The successful implementation of these computational protocols relies on a suite of software tools and data resources.

Table 3: Essential Research Reagents and Computational Tools

| Tool / Resource | Type | Function in Computational Prediction |
|---|---|---|
| CDD Vault | Software Platform | Collaborative database for managing chemical and biological data; used for descriptor calculation and model building [2]. |
| Marvin Suite (ChemAxon) | Chemoinformatics Toolkit | Calculates key molecular descriptors (e.g., pKa, logP, molecular weight) essential for feature extraction [2]. |
| Python (Scikit-learn) | Programming Language / Library | Provides libraries for implementing Naïve Bayesian classifiers and other machine learning models. |
| BATCHIE | Open-Source Software | Platform for implementing Bayesian active learning in combination drug screens [4]. |
| NIH PubChem / MLSCN Data | Public Data Repository | Source of known chemical probes and associated bioactivity data for training and validation [2]. |
| FAFDrugs2 | Filtering Software | Applies PAINS and other substructure filters to flag potentially problematic compounds [2]. |

The imperative for computational prediction in medicinal chemistry is clear. The scaling of expert knowledge is no longer a luxury but a necessity in the big-data era of drug discovery [24]. Bayesian models provide a robust, probabilistic framework to achieve this, enabling researchers to systematize expert intuition, guide resource-efficient experimentation, and navigate vast chemical and biological spaces with unprecedented speed and confidence. From predicting the quality of a single chemical probe to orchestrating million-combination drug screens, these methods are fundamentally expanding the scope and precision of medicinal chemistry.

Building Predictive Bayesian Models: Methodologies and Real-World Applications

The evaluation of chemical probes (small molecules used to modulate and study biological systems) is a critical step in chemical biology and early drug discovery. Naïve Bayesian classifiers provide a robust, data-driven framework for objectively assessing the quality and utility of these probes [2], offering a systematic alternative to subjective, heuristic-based assessments. Bayesian models are particularly suited to this task because they can integrate prior knowledge with new experimental data as it arrives, a process known as sequential learning [25] [26]. This is essential in a field where data accumulates progressively from high-throughput screening (HTS) campaigns. The "naïve" assumption of feature independence simplifies model construction, making it practical to handle the high-dimensional data typical of chemical probes (e.g., molecular weight, potency, solubility, selectivity) while maintaining strong predictive performance [27] [28].

The National Institutes of Health (NIH) Molecular Libraries Probe Production Centers Network (MLPCN) initiative, which produced hundreds of chemical probes, highlighted the need for objective, quantitative assessment methods [2] [29]. Traditional evaluation by medicinal chemists, while valuable, can be variable and subjective. Computational models, especially Naïve Bayesian classifiers, have been successfully developed to predict the evaluations of an experienced medicinal chemist, achieving accuracy comparable to other established drug-likeness measures [2]. This demonstrates the potential of Bayesian classification to formalize expert knowledge and create scalable, reproducible tools for the research community. By leveraging publicly available medicinal chemistry data, these models empower researchers to make informed decisions on probe selection, ultimately accelerating biomedical research [22].

Theoretical Foundation of Naïve Bayesian Classifiers

Core Principles and Bayes' Theorem

The Naïve Bayesian classifier is a probabilistic classification model grounded in Bayes' Theorem. It calculates the probability of a data point belonging to a particular class based on its features [27] [28]. For chemical probe evaluation, a probe can be classified as "Desirable" or "Undesirable" given its molecular properties. Bayes' Theorem is expressed as:

P(y|X) = [P(X|y) * P(y)] / P(X) [27]

Where:

- P(y|X) is the posterior probability: the probability of a probe being in class y (e.g., "Desirable") given its feature set X.

- P(X|y) is the likelihood: the probability of observing the feature set X among probes of class y.

- P(y) is the prior probability: the initial probability of a probe belonging to class y, based on the overall distribution in the training data.

- P(X) is the evidence: the overall probability of the feature set X across all classes. This term is a normalizing constant often ignored for classification, as it does not depend on the class [28].
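As a quick numerical illustration of these four terms, the sketch below applies Bayes' Theorem to a single binary feature. All numbers (an 80/20 class prior and the two likelihoods) are hypothetical values chosen for easy arithmetic, not figures from the cited study.

```python
# Hypothetical inputs: class priors P(y) and the likelihood P(X|y) of observing
# one binary feature (e.g., a flagged substructure) within each class.
prior = {"Desirable": 0.8, "Undesirable": 0.2}
likelihood = {"Desirable": 0.3, "Undesirable": 0.6}

# Evidence P(X): total probability of the feature across both classes.
evidence = sum(prior[c] * likelihood[c] for c in prior)

# Posterior P(y|X) for each class.
posterior = {c: prior[c] * likelihood[c] / evidence for c in prior}

for c, p in posterior.items():
    print(f"P({c} | feature present) = {p:.3f}")
```

With these assumed inputs, observing the feature lowers the probability of "Desirable" from the prior of 0.80 to about 0.67, because the feature is twice as common among undesirable probes.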

The "naïve" conditional independence assumption simplifies the calculation of the likelihood P(X|y). It assumes that each feature in the set X contributes independently to the probability of the class y, given that class. Thus, the complex joint likelihood P(X|y) is decomposed into the product of individual, simpler probabilities [27] [28]:

P(X|y) = P(x₁|y) * P(x₂|y) * ... * P(xₙ|y)

The Classification Decision Rule

For a given chemical probe with features X, the classifier calculates the posterior probability for each potential class. The class with the highest probability is assigned as the prediction [28]. This is known as the Maximum A Posteriori (MAP) decision rule:

ŷ = argmaxᵧ P(y) * Π P(xᵢ|y)

In practice, to avoid numerical underflow from multiplying many small probabilities, calculations are often performed in the log space, which converts the product into a sum without changing the argmax result [28]:

ŷ = argmaxᵧ [ log(P(y)) + Σ log(P(xᵢ|y)) ]

This framework allows the model to handle a large number of features, making it highly suitable for chemical data where each molecular descriptor or property can be treated as an individual feature.
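The underflow problem and its log-space remedy can be demonstrated directly. The sketch below is illustrative only: the 2,000 per-feature likelihoods are randomly generated stand-ins for fingerprint-bit probabilities, and the class priors are hypothetical.

```python
import math
import random

random.seed(0)
n_features = 2000  # assumed size, on the order of a hashed fingerprint

# Hypothetical per-feature likelihoods P(x_i | y) for a single compound.
lik = {
    "Desirable": [random.uniform(0.05, 0.5) for _ in range(n_features)],
    "Undesirable": [random.uniform(0.05, 0.5) for _ in range(n_features)],
}
prior = {"Desirable": 0.8, "Undesirable": 0.2}

# Direct product: every factor is at most 0.5, so the result underflows to 0.0.
direct = {c: prior[c] * math.prod(lik[c]) for c in prior}

# Log space: the product becomes a sum and stays within floating-point range.
log_score = {
    c: math.log(prior[c]) + sum(math.log(p) for p in lik[c]) for c in prior
}

# MAP prediction; the log transform does not change the argmax.
y_hat = max(log_score, key=log_score.get)

# Normalized posterior via the log-sum-exp trick (optional for classification).
top = max(log_score.values())
log_evidence = top + math.log(sum(math.exp(s - top) for s in log_score.values()))
posterior = {c: math.exp(s - log_evidence) for c, s in log_score.items()}

print(direct)
print(y_hat, posterior)
```

The direct products are both exactly zero and therefore cannot be compared, whereas the log scores remain finite and still yield a well-defined prediction.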

Protocol for Constructing a Bayesian Classifier for Probe Evaluation

This protocol provides a step-by-step methodology for building and validating a Naïve Bayesian classifier to predict chemical probe quality, based on proven approaches from the literature [2].

Phase 1: Data Collection and Curation

- Step 1: Assemble a Reference Set of Chemical Probes. Compile a dataset of known chemical probes with established quality ratings. Public resources like the NIH PubChem database and the CDD Public database are invaluable starting points [2] [29].

- Step 2: Define a Binary Classification. Assign a binary label to each probe in the dataset based on expert assessment. For example, based on criteria such as literature references, chemical reactivity, and selectivity, probes can be classified as "Desirable" (score of 1) or "Undesirable" (score of 0) [2].

- Step 3: Calculate Molecular Properties and Descriptors. For each compound, calculate a set of relevant molecular descriptors. These will form the feature set (X) for the model. Essential properties include [2]:

  - Molecular weight

  - Calculated logP (AlogP)

  - Number of hydrogen bond donors and acceptors

  - Number of rotatable bonds

  - Heavy atom count

  - Polar surface area

  - pKa (and average charge at pH 7.4)

- Step 4: Generate Structural Fingerprints. Beyond simple properties, compute binary structural fingerprints (e.g., functional-class fingerprints, FCFP, or extended-connectivity fingerprints, ECFP) that encode the presence or absence of specific chemical substructures. These are well-suited for the Bernoulli Naïve Bayes model [2].

Phase 2: Feature Engineering and Model Training

- Step 5: Preprocess and Discretize Continuous Features. Continuous features (like molecular weight) can be discretized into bins and treated as categorical, or modeled directly with Gaussian Naïve Bayes, which assumes each feature is normally distributed within each class. In the Gaussian case, the mean (μ) and variance (σ²) of each feature are calculated separately for the "Desirable" and "Undesirable" classes [27] [28]. The likelihood for a new value xᵢ is then calculated using the Gaussian probability density function.

- Step 6: Train the Naïve Bayesian Classifier. Using the training data, compute the following model parameters [27]:

  - Class Priors (P(y)): The proportion of "Desirable" and "Undesirable" probes in the training set.

  - Conditional Probabilities (P(xᵢ|y)): For each feature xᵢ and each class y, calculate the likelihood. For binary fingerprint features, this is the frequency of a feature being present in a class. For continuous features, it is the Gaussian PDF for the class-specific μ and σ².
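Steps 5 and 6 can be sketched from scratch using only the Python standard library. This is a minimal illustration under stated assumptions, not the implementation used in the cited study. The toy descriptors, fingerprint bits, and labels are invented, and the Laplace smoothing constant `alpha` and the variance floor `var_floor` are added here for numerical safety. For real work, scikit-learn's `GaussianNB` and `BernoulliNB` provide tested implementations of each likelihood.

```python
import math
from statistics import mean, pvariance

def fit(cont, bits, labels, alpha=1.0, var_floor=1e-9):
    """Estimate class priors, Gaussian parameters, and smoothed bit frequencies."""
    model = {}
    for c in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == c]
        # Class-specific mean and variance for each continuous descriptor.
        gauss = [(mean(col), pvariance(col) + var_floor)
                 for col in zip(*(cont[i] for i in idx))]
        # Laplace-smoothed frequency of each fingerprint bit being set.
        bern = [(sum(col) + alpha) / (len(idx) + 2 * alpha)
                for col in zip(*(bits[i] for i in idx))]
        model[c] = {"log_prior": math.log(len(idx) / len(labels)),
                    "gauss": gauss, "bern": bern}
    return model

def predict_proba(model, x_cont, x_bits):
    """Posterior class probabilities, computed in log space."""
    scores = {}
    for c, m in model.items():
        s = m["log_prior"]
        for x, (mu, var) in zip(x_cont, m["gauss"]):
            s += -0.5 * math.log(2 * math.pi * var) - (x - mu) ** 2 / (2 * var)
        for b, p in zip(x_bits, m["bern"]):
            s += math.log(p if b else 1.0 - p)
        scores[c] = s
    top = max(scores.values())
    z = sum(math.exp(s - top) for s in scores.values())
    return {c: math.exp(s - top) / z for c, s in scores.items()}

# Invented toy data: [molecular weight, rotatable bonds] plus three fingerprint bits.
cont = [[420.0, 6.0], [455.0, 7.0], [390.0, 5.0], [470.0, 8.0],
        [210.0, 1.0], [250.0, 2.0], [230.0, 2.0], [280.0, 3.0]]
bits = [[1, 0, 1], [1, 0, 0], [1, 1, 1], [1, 0, 1],
        [0, 1, 0], [0, 1, 1], [0, 1, 0], [1, 1, 0]]
labels = ["Desirable"] * 4 + ["Undesirable"] * 4

model = fit(cont, bits, labels)
proba = predict_proba(model, [430.0, 6.0], [1, 0, 1])
print(proba)
```

Mixing Gaussian and Bernoulli likelihoods in one log score is valid under the naïve independence assumption, because each feature contributes its own additive term regardless of its type.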

The following workflow diagram illustrates the key stages of this protocol.

Phase 3: Model Validation and Application

- Step 7: Validate Model Performance. Use a held-out test set or cross-validation to assess the classifier's accuracy. Compare its predictions against the expert-derived classifications. Performance can be benchmarked against other methods like PAINS filters, QED, or BadApple [2].

- Step 8: Deploy the Model for Prospective Prediction. The trained model can now be used to score new chemical probes. The feature set for a new compound is calculated, and the model computes the posterior probability for the "Desirable" class. A probability above a predefined threshold (e.g., 0.5) yields a "Desirable" prediction; raising the threshold restricts the "Desirable" label to higher-confidence cases at the cost of rejecting more borderline probes.

- Step 9: Interpret the Model. Analyze which features contribute most strongly to the classification of a probe as "Desirable" or "Undesirable." This provides interpretable insights into the molecular properties and structural motifs that influence probe quality.
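The comparison against expert labels in Step 7 and the threshold choice in Step 8 can be illustrated together. The sketch below assumes hypothetical expert labels and hypothetical model probabilities for eight held-out probes. The helper name `evaluate` is invented for this example.

```python
def evaluate(y_true, p_desirable, threshold=0.5):
    """Compare thresholded predictions with expert labels (1 = Desirable)."""
    y_pred = [1 if p >= threshold else 0 for p in p_desirable]
    tp = sum(1 for t, q in zip(y_true, y_pred) if t == 1 and q == 1)
    tn = sum(1 for t, q in zip(y_true, y_pred) if t == 0 and q == 0)
    fp = sum(1 for t, q in zip(y_true, y_pred) if t == 0 and q == 1)
    fn = sum(1 for t, q in zip(y_true, y_pred) if t == 1 and q == 0)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "sensitivity": tp / (tp + fn),  # fraction of desirable probes recovered
        "specificity": tn / (tn + fp),  # fraction of undesirable probes rejected
    }

# Hypothetical held-out set: expert labels and model posterior probabilities.
expert = [1, 1, 1, 1, 1, 0, 0, 0]
p_model = [0.95, 0.80, 0.62, 0.55, 0.40, 0.58, 0.30, 0.10]

default = evaluate(expert, p_model, threshold=0.5)
strict = evaluate(expert, p_model, threshold=0.6)
print(default)
print(strict)
```

On this invented data both thresholds give the same accuracy (0.75), but the stricter threshold trades sensitivity (0.80 down to 0.60) for specificity (0.67 up to 1.00). This is why the threshold should be chosen according to whether missed good probes or accepted bad probes are more costly.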

Case Study: Bayesian Evaluation of NIH Chemical Probes

A seminal study demonstrated the practical application of Naïve Bayesian classifiers to evaluate NIH chemical probes [2]. The research aimed to computationally predict the "desirability" assessments of an experienced medicinal chemist who had evaluated over 300 NIH probes.

- Dataset: The training data consisted of NIH probe compounds classified by the expert as "Desirable" or "Undesirable" based on criteria including excessive literature references (potential promiscuity), zero literature references (uncertain biological quality), and predicted chemical reactivity [2].

- Model Construction: The researchers used a process of sequential Bayesian model building and iterative testing. Molecular properties and structural fingerprints were used as features. The study highlighted that probes scored as "Desirable" tended to have distinct molecular property profiles.

- Key Findings: The analysis revealed that "Desirable" probes were associated with higher pKa, molecular weight, heavy atom count, and rotatable bond number [2]. The resulting Bayesian models achieved accuracy comparable to other measures of drug-likeness and filtering rules, validating this approach as a powerful tool for probe evaluation.

Table 1: Molecular Properties Associated with Desirable vs. Undesirable Chemical Probes in a Bayesian Classification Study [2]

| Molecular Property | Trend in Desirable Probes | Notes / Implication |

|---|---|---|

| pKa | Higher | Suggests a preference for basic compounds in the studied dataset. |

| Molecular Weight | Higher | Indicates a potential bias towards larger molecules in desirable probes. |