Bayesian Optimization for Chemical Hyperparameter Tuning: A Machine Learning Framework for Accelerated Drug Discovery


Abstract

This article provides a comprehensive guide to Bayesian Optimization (BO), a powerful machine learning strategy for efficiently tuning hyperparameters in chemical and drug discovery applications. Tailored for researchers and drug development professionals, it covers the foundational principles of BO, including surrogate models and acquisition functions. The content explores methodological implementations for optimizing reaction parameters, molecular properties, and pharmaceutical formulations, alongside advanced techniques for troubleshooting noisy, multi-objective problems. Finally, it presents rigorous validation strategies and comparative performance analyses against traditional optimization methods, demonstrating BO's capacity to reduce experimental costs and accelerate the development of new therapeutics.

Beyond Trial and Error: Foundational Principles of Bayesian Optimization

Frequently Asked Questions (FAQs)

Q1: What are the main limitations of traditional One-Factor-At-a-Time (OFAT) optimization that Bayesian optimization addresses?

OFAT approaches explore only a limited subset of fixed combinations in the reaction space and often miss important regions of the chemical landscape, especially as additional reaction parameters multiplicatively expand the space of possible experimental configurations [1]. Bayesian optimization addresses this by using machine learning to balance exploration of new materials with exploitation of existing knowledge, guiding the search toward optimal materials with far greater efficiency [2] [1].

Q2: How can I handle categorical variables like solvents and catalysts in Bayesian optimization?

Categorical variables can be represented by converting molecular entities into numerical descriptors [1]. In one pharmaceutical optimization study, researchers successfully handled parameters including solvent (11 options), iodine source (5 options), and catalyst (3 options) by representing the reaction condition space as a discrete combinatorial set of potential conditions [3]. The platform automatically filtered impractical conditions like unsafe combinations or temperatures exceeding solvent boiling points [1].
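A minimal sketch of such a discrete combinatorial space with constraint filtering is shown below. The solvent, catalyst, and temperature options are hypothetical placeholders rather than the conditions used in the cited study, and the boiling points are approximate literature values.

```python
from itertools import product

# Hypothetical options; boiling points (degrees Celsius) are approximate.
SOLVENT_BOILING_POINT_C = {"THF": 66, "toluene": 111, "DMF": 153}
CATALYSTS = ["cat_A", "cat_B"]          # placeholder catalyst labels
TEMPERATURES_C = [40, 80, 120]


def is_practical(solvent, catalyst, temperature_c):
    """Reject conditions whose temperature exceeds the solvent boiling point."""
    return temperature_c <= SOLVENT_BOILING_POINT_C[solvent]


def build_search_space():
    """Enumerate every combination, then keep only the practical ones."""
    all_conditions = product(SOLVENT_BOILING_POINT_C, CATALYSTS, TEMPERATURES_C)
    return [cond for cond in all_conditions if is_practical(*cond)]


space = build_search_space()
print(f"{len(space)} practical conditions out of "
      f"{len(SOLVENT_BOILING_POINT_C) * len(CATALYSTS) * len(TEMPERATURES_C)}")
```

Further rules (for example, unsafe reagent pairings) could be added as extra checks inside `is_practical`, so the optimizer only ever proposes conditions from the filtered list.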

Q3: My optimization is stuck in local optima. What advanced BO techniques can help?

Several advanced approaches address this challenge:

- Feature Adaptive BO (FABO): Dynamically adapts material representations throughout optimization cycles [2]

- Reasoning BO: Leverages LLMs' inference abilities to generate scientific hypotheses and avoid local optima [4]

- Multi-task BO: Transfers knowledge from previous optimization campaigns to accelerate new ones [5]

- Parallel Bayesian Optimization: Uses scalable acquisition functions like q-NParEgo and TS-HVI for highly parallel HTE applications [1]

Q4: How much experimental data do I need to start benefiting from Bayesian optimization?

Bayesian optimization is particularly valuable in the small-data regime. For novel tasks, you can start with algorithmic quasi-random Sobol sampling to select initial experiments that diversely cover the reaction space [1]. For related tasks, multi-task Bayesian optimization can leverage data from previous campaigns—one study successfully used 96 data points from auxiliary tasks to significantly accelerate optimization of new reactions [5].

Troubleshooting Guides

Issue 1: Poor Performance in High-Dimensional Search Spaces

Symptoms:

- Slow convergence despite many experiments

- Algorithm fails to identify promising regions of chemical space

- Inconsistent performance across similar substrates

Solutions:

- Implement Feature Adaptive Bayesian Optimization (FABO) which automatically identifies the most relevant molecular features during optimization [2]

- Use maximum relevancy minimum redundancy (mRMR) or Spearman ranking for feature selection to reduce dimensionality [2]

- Start with a complete feature set including both chemical and geometric characteristics, then allow the algorithm to adapt representations [2]

- For reaction optimization with many categorical variables, employ a discrete combinatorial representation with constraint checking [1]

Validation: In MOF discovery tasks, FABO effectively reduced feature space dimensionality and accelerated identification of top-performing materials across CO₂ adsorption and band gap optimization tasks [2].

Issue 2: Inefficient Optimization of Multiple Objectives

Symptoms:

- Conditions that improve yield reduce selectivity

- Difficulty balancing economic, environmental, and performance objectives

- Inability to identify Pareto-optimal conditions

Solutions:

- Implement scalable multi-objective acquisition functions like q-NParEgo, Thompson sampling with hypervolume improvement (TS-HVI), or q-Noisy Expected Hypervolume Improvement (q-NEHVI) for large batch sizes [1]

- Use the hypervolume metric to quantify performance in multi-objective space, considering both convergence toward optimal objectives and diversity [1]

- For pharmaceutical applications, simultaneously optimize yield, selectivity, and process safety considerations [1]

Case Study: In pharmaceutical process development, a multi-objective approach successfully identified multiple reaction conditions achieving >95 area percent yield and selectivity for both Ni-catalyzed Suzuki coupling and Pd-catalyzed Buchwald-Hartwig reactions [1].
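To make the hypervolume metric concrete, the sketch below computes it for two maximized objectives (for example, yield and selectivity) relative to a reference point. It is an illustrative two-objective calculation with made-up numbers, not the implementation used in the cited work; the function name `hypervolume_2d` is an assumption.

```python
def hypervolume_2d(points, reference):
    """Area dominated by `points` (both objectives maximized) above `reference`."""
    ref_x, ref_y = reference
    # Keep points that beat the reference in both objectives,
    # then sweep from the largest first objective downward.
    candidates = sorted(
        (p for p in points if p[0] > ref_x and p[1] > ref_y),
        key=lambda p: -p[0],
    )
    area, best_y = 0.0, ref_y
    for x, y in candidates:
        if y > best_y:  # only non-dominated points add new area
            area += (x - ref_x) * (y - best_y)
            best_y = y
    return area


# Made-up (yield %, selectivity %) results; (60, 70) is dominated by (70, 80).
results = [(90, 60), (70, 80), (50, 95), (60, 70)]
print(hypervolume_2d(results, reference=(0, 0)))
```

A larger hypervolume means the set of conditions found so far is both closer to the ideal corner and more spread out along the trade-off curve.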

Issue 3: Transferring Knowledge Between Related Optimization Tasks

Symptoms:

- Repeatedly starting from scratch for similar reactions

- Inability to leverage historical optimization data

- Wasted resources on preliminary exploration

Solutions:

- Implement Multi-Task Bayesian Optimization (MTBO) using multitask Gaussian processes that learn correlations between different tasks [5]

- For medicinal chemistry applications, leverage data from previous C–H activation optimizations to accelerate new substrate optimization [5]

- When multiple auxiliary tasks are available, MTBO performance improves significantly—one study showed optimal conditions found in fewer than five experiments when using four auxiliary tasks [5]

Performance Data: In experimental C–H activation reactions with pharmaceutical intermediates, MTBO demonstrated large potential cost reductions compared to industry-standard process optimization techniques [5].
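One common way to let a GP share information between tasks is to multiply an input kernel by a task-to-task covariance matrix (the intrinsic coregionalization form). The sketch below assumes that form and uses NumPy; the source does not state which multitask kernel was used, and the task covariance values here are invented for illustration.

```python
import numpy as np


def rbf(x1, x2, lengthscale=1.0):
    """RBF covariance between two 1-D arrays of inputs."""
    return np.exp(-0.5 * (x1[:, None] - x2[None, :]) ** 2 / lengthscale**2)


def multitask_kernel(x1, tasks1, x2, tasks2, task_cov, lengthscale=1.0):
    """Covariance = (task-to-task covariance) * (input covariance)."""
    return task_cov[np.ix_(tasks1, tasks2)] * rbf(x1, x2, lengthscale)


# Assumed task covariance: task 0 (auxiliary) and task 1 (new) are strongly related.
task_cov = np.array([[1.0, 0.9], [0.9, 1.0]])
x_aux, t_aux = np.array([0.2, 0.8]), [0, 0]   # historical experiments
x_new, t_new = np.array([0.2]), [1]           # candidate for the new reaction

k = multitask_kernel(x_new, t_new, x_aux, t_aux, task_cov)
print(k)  # nonzero entries mean auxiliary data informs the new task
```

If the off-diagonal entries of `task_cov` were zero, the auxiliary data would contribute nothing to predictions for the new task, which is consistent with the observation that MTBO helps most when the tasks have similar reactivity.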

Experimental Protocols & Methodologies

Protocol 1: Feature Adaptive Bayesian Optimization (FABO) for Materials Discovery

Application: Discovering high-performing metal-organic frameworks (MOFs) for specific applications [2]

Workflow:

- Initialization: Begin with a complete, high-dimensional representation of each material (chemical + pore geometric characteristics)

- Feature Representation:

- Represent chemistry using Revised Autocorrelation Calculations (RACs)

- Include stoichiometric feature sets

- Compute RACs over the crystal graph of the material

- Adaptive Cycle (repeated each BO cycle):

- Perform feature selection using mRMR or Spearman ranking

- Select 5-40 features based on the specific task

- Update surrogate model with selected features

- Select next experiment using acquisition function (EI or UCB)

Materials: QMOF database (8,437 materials with DFT-calculated band gaps) or CoRE-2019 database (9,525 materials with gas adsorption data) [2]

Table 1: FABO Performance Across MOF Optimization Tasks

| Target Property | Database | Key Influencing Factors | FABO Performance |

|---|---|---|---|

| CO₂ Adsorption (16 bar) | CoRE-2019 | Primarily pore geometry | Outperformed fixed representations |

| CO₂ Adsorption (0.15 bar) | CoRE-2019 | Geometry + chemistry | Identified expert-aligned features |

| Electronic Band Gap | QMOF | Material chemistry | Efficient high-dimensional optimization |

Protocol 2: Highly Parallel Multi-Objective Reaction Optimization

Application: Pharmaceutical reaction optimization with 96-well HTE platforms [1]

Workflow:

- Experimental Design:

- Define reaction condition space as discrete combinatorial set

- Include categorical (solvent, catalyst, ligand) and continuous parameters (temperature, concentration)

- Implement constraint checking for impractical conditions

- Initial Sampling: Use Sobol sampling for initial batch to maximize reaction space coverage

- Optimization Cycle:

- Train Gaussian Process regressor on experimental data

- Use scalable acquisition function (q-NParEgo, TS-HVI, or q-NEHVI)

- Select batch of experiments balancing exploration and exploitation

- Run experiments using automated platform

- Update model and repeat for desired iterations

Validation: In nickel-catalyzed Suzuki reaction optimization (88,000 possible conditions), this approach identified conditions with 76% yield and 92% selectivity where traditional HTE plates failed [1].

Protocol 3: Multi-Task Bayesian Optimization for Medicinal Chemistry

Application: Accelerating optimization of precious intermediate reactions in drug discovery [5]

Workflow:

- Data Preparation:

- Collect historical optimization data from related reactions

- Align parameter spaces across different substrates

- Model Setup:

- Replace standard Gaussian Process with multitask GP

- Model learns correlations between different reaction tasks

- Optimization Execution:

- Leverage auxiliary task data (typically 96 data points)

- Multitask GP uses covariance between tasks to improve predictions

- Balance exploration with knowledge transfer from similar reactions

Case Study Results: For Suzuki couplings, MTBO achieved better and faster results than single-task BO when auxiliary tasks had similar reactivity, determining optimal conditions in fewer than five experiments when using multiple auxiliary tasks [5].

Research Reagent Solutions

Table 2: Essential Components for Bayesian Optimization Workflows

| Reagent/Component | Function | Application Example |

|---|---|---|

| Gaussian Process Regressor | Probabilistic surrogate model for predicting reaction outcomes with uncertainty quantification | Core model in FABO for MOF discovery [2] |

| mRMR Feature Selection | Maximum Relevancy Minimum Redundancy feature selection to balance relevance and redundancy | Dimensionality reduction in molecular representation [2] |

| Sobol Sequences | Quasi-random sampling for initial space-filling experimental design | Initial batch selection in parallel optimization [1] |

| Multi-task Gaussian Processes | Transfer learning between related optimization tasks | Leveraging historical C–H activation data for new substrates [5] |

| Scalable Acquisition Functions (q-NParEgo, TS-HVI) | Guide batch experiment selection in multi-objective optimization | 96-well plate optimization in pharmaceutical development [1] |

| Knowledge Graphs | Structured storage of domain knowledge and experimental results | Reasoning BO framework for storing chemical insights [4] |

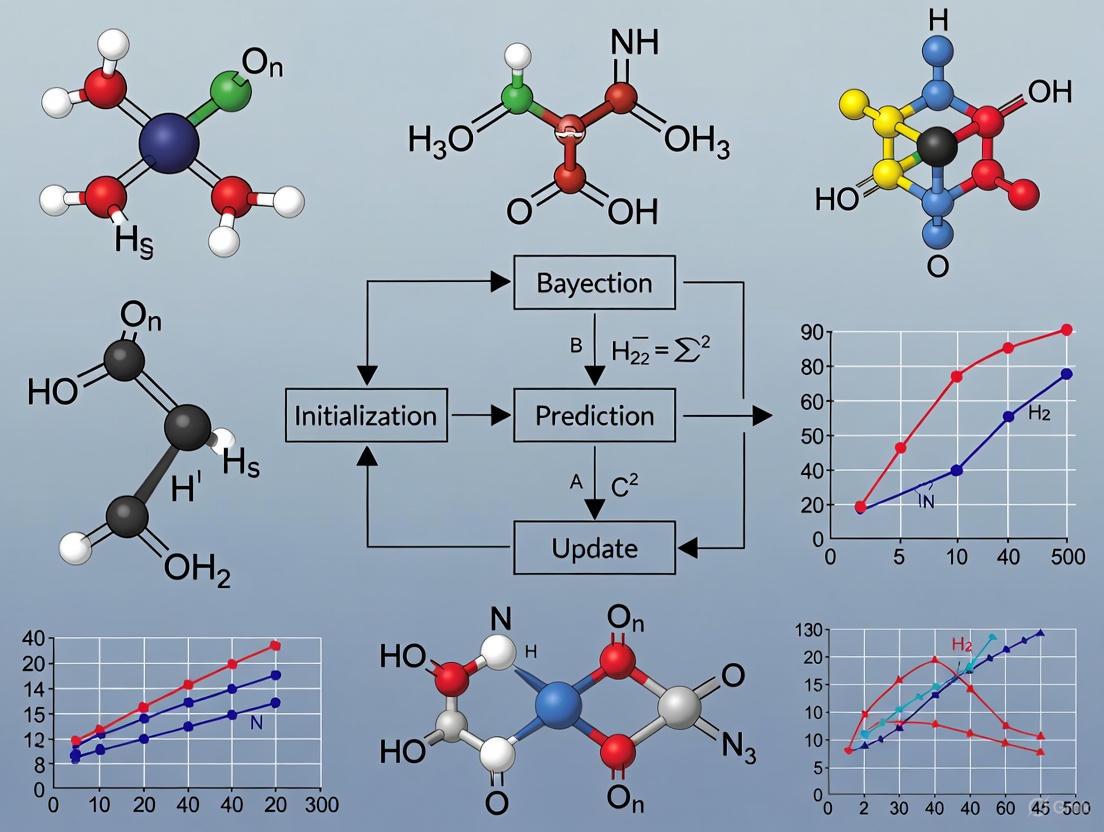

Workflow Visualization

Bayesian Optimization Core Cycle

Feature Adaptive BO (FABO) Workflow

Multi-Task Bayesian Optimization Framework

Core Components of the Bayesian Optimization Framework

Frequently Asked Questions (FAQs)

1. What are the two essential components of the Bayesian Optimization framework? The Bayesian Optimization (BO) framework consists of two core components: a probabilistic surrogate model used to emulate the expensive objective function, and an acquisition function that guides the selection of the next point to evaluate by balancing exploration and exploitation [6] [7] [8]. The surrogate model, often a Gaussian Process (GP), provides a posterior distribution of the function, while the acquisition function uses this information to decide where to sample next [9] [10].

2. Why is a Gaussian Process commonly chosen as the surrogate model? Gaussian Processes (GPs) are a common choice for the surrogate model because they are flexible, non-parametric models that provide not only a mean prediction for the objective function at any point but also a measure of uncertainty (variance) around that prediction [8] [11]. This uncertainty quantification is essential for the acquisition function to effectively balance exploring regions with high uncertainty and exploiting regions with promising mean predictions [9] [6].

3. What is the difference between the Probability of Improvement (PI) and Expected Improvement (EI) acquisition functions?

The Probability of Improvement (PI) acquisition function selects the next point based on the highest probability of achieving any improvement over the current best observation [9] [11]. In contrast, the Expected Improvement (EI) acquisition function considers both the probability of improvement and the magnitude of that potential improvement, making it a popular and often more effective choice [9] [6] [10]. EI is defined as EI(x) = E[max(f(x) - f(x*), 0)], where f(x*) is the current best value [6].
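The difference can be seen numerically. The sketch below evaluates the standard closed-form PI and EI for a Gaussian posterior under a maximization convention; the two candidates and their predicted means and standard deviations are made up for illustration.

```python
import math


def norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def probability_of_improvement(mu, sigma, best):
    """P(f(x) > best) for a Gaussian posterior N(mu, sigma^2)."""
    if sigma == 0.0:
        return 1.0 if mu > best else 0.0
    return norm_cdf((mu - best) / sigma)


def expected_improvement(mu, sigma, best):
    """E[max(f(x) - best, 0)] for a Gaussian posterior N(mu, sigma^2)."""
    if sigma == 0.0:
        return max(mu - best, 0.0)
    z = (mu - best) / sigma
    return (mu - best) * norm_cdf(z) + sigma * norm_pdf(z)


best_yield = 0.80
safe = (0.82, 0.01)    # slightly better mean, very low uncertainty
risky = (0.78, 0.15)   # slightly worse mean, high uncertainty

for name, (mu, sigma) in {"safe": safe, "risky": risky}.items():
    print(name,
          round(probability_of_improvement(mu, sigma, best_yield), 3),
          round(expected_improvement(mu, sigma, best_yield), 4))
```

In this toy case PI prefers the "safe" candidate because a small gain is almost certain, whereas EI prefers the "risky" one because its possible gain is much larger.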

4. My optimization seems stuck in a local minimum. How can I encourage more exploration? This is a classic sign of overexploitation. You can address this by:

- Adjusting the acquisition function: If using Upper Confidence Bound (UCB), increase the κ parameter to weight the uncertainty term more heavily, encouraging exploration [8] [11]. If using Probability of Improvement (PI), increasing the ε parameter can force the algorithm to look beyond the immediate vicinity of the current best point [9].

- Using "plus" acquisition functions: Some software implementations offer acquisition functions like 'expected-improvement-plus' that automatically detect overexploitation and modify the model to encourage exploration [10].

5. Why does the optimization become slow as the number of trials increases, and what can I do?

The computational cost of refitting the Gaussian Process surrogate model grows cubically (O(n³)) with the number of observations n [12]. For high-dimensional problems or long runs, consider:

- Using a different surrogate model like Random Forests, which can be more scalable for certain problems [12] [11].

- Leveraging parallel Bayesian Optimization algorithms that can suggest multiple points to evaluate simultaneously, thus making better use of computational resources [13] [10].

Troubleshooting Common Experimental Issues

Issue 1: Poor Convergence or Unphysical Suggestions in Chemical Design

Problem: The algorithm fails to find good candidates or suggests parameter combinations that are chemically impossible or unstable [12].

Diagnosis and Solution:

| Diagnostic Step | Solution |

|---|---|

| Check the feasibility of the suggested points against known chemical rules. | Incorporate hard constraints into the BO algorithm to explicitly rule out invalid regions of the search space [12]. |

| Analyze if the problem has a highly discontinuous or complex search space that a standard GP with a smooth kernel cannot model well. | Use a Random Forest surrogate model, which can handle discontinuities more effectively and can be integrated with domain knowledge [12]. |

| Verify the initial dataset. A poorly chosen initial set of points can lead the model to form incorrect beliefs about the objective function. | Use space-filling designs like Latin Hypercube Sampling for the initial points to ensure the space is well-covered from the start [8]. |
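As an illustration of the space-filling design mentioned in the table, here is a small standard-library sketch of Latin Hypercube Sampling. The parameter bounds (temperature and concentration) are hypothetical, and a production workflow would more likely rely on an established library implementation.

```python
import random


def latin_hypercube(n_samples, bounds, seed=0):
    """Return n_samples points with exactly one point per stratum in every dimension."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One jittered value inside each of n_samples equal-width strata of [0, 1).
        unit = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(unit)  # decouple the strata across dimensions
        columns.append([low + u * (high - low) for u in unit])
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


# Hypothetical search space: temperature (degrees C) and concentration (M).
initial_design = latin_hypercube(5, [(20.0, 100.0), (0.1, 1.0)])
for temperature, concentration in initial_design:
    print(f"{temperature:6.1f} C  {concentration:.2f} M")
```

Because each dimension is split into as many strata as there are samples, no region of any single parameter range is left unsampled in the initial batch.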

Issue 2: Handling Noisy or Failed Experimental Evaluations

Problem: In real-world chemistry experiments, evaluations can be noisy, or a suggested experiment might fail to return a valid result (e.g., a failed synthesis) [7] [10].

Diagnosis and Solution:

| Diagnostic Step | Solution |

|---|---|

| Determine if the objective function is stochastic (noisy) or if some evaluations result in errors. | For noisy measurements, ensure your GP model includes a Gaussian noise term (likelihood) during fitting, which is a standard feature in most GP implementations [11] [10]. |

| If experiments occasionally fail, the data contains "objective function errors." | Use a BO algorithm that can handle such errors. For instance, the bayesopt function in MATLAB can model the probability of constraint satisfaction and integrate it into the acquisition function [10]. |
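The role of the noise term can be sketched with a small NumPy Gaussian Process. This is a textbook-style posterior computation with an RBF kernel and fixed, hand-picked hyperparameters, shown under those assumptions only; real workflows would fit the hyperparameters and use a maintained GP library.

```python
import numpy as np


def rbf_kernel(a, b, lengthscale=1.0, amplitude=1.0):
    """Squared-exponential covariance between 1-D input arrays a and b."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return amplitude**2 * np.exp(-0.5 * sq_dist / lengthscale**2)


def gp_posterior(x_train, y_train, x_test, noise_std=0.1):
    """Posterior mean and standard deviation of the latent function at x_test."""
    # The noise variance on the diagonal is the Gaussian likelihood term.
    k_train = rbf_kernel(x_train, x_train) + noise_std**2 * np.eye(len(x_train))
    k_cross = rbf_kernel(x_train, x_test)
    chol = np.linalg.cholesky(k_train)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_cross.T @ alpha
    v = np.linalg.solve(chol, k_cross)
    var = np.diag(rbf_kernel(x_test, x_test)) - np.sum(v**2, axis=0)
    return mean, np.sqrt(np.maximum(var, 0.0))


x_train = np.array([0.0, 1.0, 2.0])   # made-up scaled conditions
y_train = np.array([0.2, 0.8, 0.5])   # made-up noisy yields
x_test = np.array([1.0, 10.0])        # one observed point, one far away

mean, std = gp_posterior(x_train, y_train, x_test)
print(mean, std)
```

With a nonzero `noise_std` the model no longer interpolates the observations exactly, and the predicted uncertainty stays small near the data while reverting to the prior far from it.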

Issue 3: Performance and Scalability in High-Dimensional Spaces

Problem: The optimization is prohibitively slow, or performance degrades when tuning a large number of hyperparameters (e.g., >20) [12].

Diagnosis and Solution:

| Diagnostic Step | Solution |

|---|---|

| Assess the dimensionality of your search space. Standard BO with GP is known to struggle in high-dimensional spaces (>20 dimensions) [12]. | Consider using a scalable surrogate model like a Random Forest or employing dimensionality reduction techniques before optimization [12]. |

| Evaluate if all parameters are equally important. | Perform a sensitivity analysis to identify less influential parameters and fix them to reasonable values, thereby reducing the effective dimensionality of the problem [14]. |

Bayesian Optimization Workflow

The following diagram illustrates the iterative cycle of the Bayesian Optimization framework.

Research Reagent Solutions

The following table details the essential "research reagents" or core components needed to implement a Bayesian Optimization experiment in chemical tuning.

| Item | Function & Application |

|---|---|

| Gaussian Process (GP) | The core surrogate model. It uses a prior distribution over functions and updates it with data to produce a posterior that predicts the objective and quantifies uncertainty [9] [6] [11]. |

| Expected Improvement (EI) | A widely used acquisition function. It suggests the next experiment by calculating the expected value of improvement over the current best result, naturally balancing exploration and exploitation [6] [10]. |

| ARD Matérn 5/2 Kernel | A common covariance function for the GP. It defines how the objective function values at different points are correlated, and Automatic Relevance Determination (ARD) helps handle different input scales [10]. |

| Latin Hypercube Sampling | A method for selecting the initial set of experiments. It ensures good coverage of the entire parameter space with a minimal number of points, providing a solid starting point for the surrogate model [8]. |

| Software Library (e.g., Ax/BoTorch) | The experimental platform. These specialized libraries provide robust, tested implementations of the BO loop, including various models and acquisition functions, allowing researchers to focus on their domain problem [13] [6]. |

Troubleshooting Guides and FAQs

FAQ: Why is my Gaussian Process (GP) model providing poor uncertainty estimates despite having a good mean prediction, and how can I improve it?

For reliability and safety assessments in drug development, the quality of the entire predictive distribution is crucial, not just the mean prediction. Poor uncertainty quantification often stems from non-robust estimation of the GP's hyperparameters. Standard methods like Maximum Likelihood Estimation (MLE) can sometimes produce inaccurate predictive distributions.

Solution: Implement a robust hyperparameter estimation algorithm that jointly optimizes for both data likelihood and the empirical coverage of the prediction intervals. This ensures the uncertainty bounds are reliable. A recent algorithm proposes maximizing the likelihood while also maximizing a Coverage Function (CF), which measures the accuracy of the prediction intervals, under the constraint that the model's predictive power (e.g., Q2 score) does not degrade [15].

FAQ: How can I perform Bayesian Optimization (BO) for a novel chemical task when I don't know the best molecular representation to use?

Choosing a fixed, high-dimensional molecular representation can lead to poor BO performance due to the curse of dimensionality. However, for novel optimization tasks, prior knowledge or large labeled datasets to select the best features are often unavailable [2].

Solution: Use a framework that integrates feature selection directly into the BO loop. One such method is Feature Adaptive Bayesian Optimization (FABO). It starts with a complete, high-dimensional feature set and dynamically refines it at each optimization cycle using efficient feature selection methods (like mRMR or Spearman ranking) on the data acquired during the campaign. This automatically identifies the most informative features for your specific task without requiring prior knowledge [2].

FAQ: My Bayesian Optimization gets stuck in local optima when tuning reaction parameters. How can I guide it towards better regions?

Traditional BO relies solely on the acquisition function and can lack the global, heuristic perspective needed to escape local optima. It also does not naturally incorporate domain knowledge, such as chemical reaction rules [4].

Solution: Integrate large language models (LLMs) with reasoning capabilities into the BO loop. In a Reasoning BO framework, an LLM can evaluate candidates proposed by the standard BO algorithm. Leveraging domain knowledge and historical data, the LLM generates scientific hypotheses and assigns confidence scores, helping to filter out implausible suggestions and guide the search toward more promising, globally optimal regions [4].

Detailed Experimental Protocols

Protocol 1: Hyperparameter Tuning with Feature Adaptive Bayesian Optimization (FABO)

This protocol is designed for optimizing chemical reactions or molecular properties when the optimal feature representation is unknown [2].

- Define Search Space: Start with a large, comprehensive set of numerical features (e.g., for molecules, this could include chemical descriptors such as RACs and geometric properties).

- Initial Sampling: Select an initial small set of experiments (e.g., 5-10 points) using a space-filling design like Latin Hypercube Sampling (LHS).

- Run Experiments: Execute the initial experiments (simulations or lab work) to obtain the target property values (e.g., reaction yield).

- FABO Loop: Iterate through the following cycle until a performance target or budget is met:

- Feature Selection: Using only the data collected so far, apply a feature selection method (e.g., Maximum Relevancy Minimum Redundancy - mRMR) to select the top k most relevant features for the current task.

- Build Surrogate Model: Construct a Gaussian Process surrogate model using the adapted (reduced) feature set.

- Propose Next Experiment: Use an acquisition function (e.g., Expected Improvement - EI) on the surrogate model to select the most promising parameter set to test next.

- Run and Update: Run the proposed experiment and add the new {parameters, result} pair to the dataset.
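The feature selection step of the loop can be sketched with Spearman ranking, one of the two selection methods named for FABO. The example below is a simplified standard-library illustration, not the FABO implementation; the feature names and values are hypothetical.

```python
import math


def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result


def pearson(a, b):
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return 0.0 if var_a == 0 or var_b == 0 else cov / math.sqrt(var_a * var_b)


def spearman(a, b):
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(ranks(a), ranks(b))


def select_top_k(features, target, k):
    """Keep the k features with the largest absolute Spearman correlation."""
    scores = {name: abs(spearman(col, target)) for name, col in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Hypothetical data gathered so far in a campaign.
features = {
    "pore_diameter": [1, 2, 3, 4, 5],
    "density": [5, 4, 3, 2, 1],
    "unrelated": [3, 1, 4, 1, 5],
}
target = [0.1, 0.4, 0.5, 0.9, 1.2]
print(select_top_k(features, target, k=2))
```

In an adaptive loop this selection would be rerun on the growing dataset at each cycle, so the retained features can change as more measurements arrive.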

Protocol 2: Robust Gaussian Process Surrogate Modeling for Reliability Analysis

This protocol ensures the GP model provides a reliable predictive distribution, which is critical for risk assessment and failure probability analysis in critical systems [15].

- Generate Learning Sample: Collect n input-output data points (Xs, Ys) from the expensive computational model (e.g., a pharmacokinetic simulation).

- Standardize Data: Center and scale the output data Ys to have a mean of zero and a standard deviation of one.

- Define Kernel and Estimation Criteria: Select a kernel (e.g., Matérn 5/2) and define the two objectives for estimation:

- Likelihood: The standard log-likelihood of the data given the hyperparameters.

- Coverage Function (CF): A function that measures how well the 90% predictive intervals from the GP match the empirical coverage from the data.

- Estimate Hyperparameters: Instead of simple MLE, use a multi-objective optimization algorithm to find hyperparameters that jointly maximize the likelihood and the coverage function, while ensuring the model's Q2 predictivity coefficient remains above an acceptable threshold (e.g., 0.7).

- Validate Predictive Distribution: Rigorously validate the final GP model using various criteria beyond mean-error metrics, including the reliability of its prediction intervals across the input space.
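Two of the quantities used in this protocol can be checked on held-out data with a few lines of code. The sketch below computes the empirical coverage of 90% Gaussian prediction intervals and the Q2 predictivity coefficient; it is a simplified validation check, not the Coverage Function defined in [15], and the numbers are invented.

```python
Z_90 = 1.6448536269514722  # normal quantile for a two-sided 90% interval


def empirical_coverage(y_true, pred_mean, pred_std, z=Z_90):
    """Fraction of observations falling inside mean +/- z * std."""
    inside = sum(
        abs(y - m) <= z * s for y, m, s in zip(y_true, pred_mean, pred_std)
    )
    return inside / len(y_true)


def q2_score(y_true, pred_mean):
    """Q2 = 1 - (sum of squared prediction errors) / (total sum of squares)."""
    y_bar = sum(y_true) / len(y_true)
    ss_res = sum((y - m) ** 2 for y, m in zip(y_true, pred_mean))
    ss_tot = sum((y - y_bar) ** 2 for y in y_true)
    return 1.0 - ss_res / ss_tot


# Invented validation data: good mean predictions, overconfident intervals.
y_true = [1.0, 2.0, 3.0, 4.0]
pred_mean = [1.1, 1.9, 3.5, 4.0]
pred_std = [0.1, 0.1, 0.1, 0.1]

print("coverage:", empirical_coverage(y_true, pred_mean, pred_std))
print("Q2:", round(q2_score(y_true, pred_mean), 3))
```

Here Q2 is high while the coverage falls short of the nominal 90%, which is the situation the protocol is meant to detect: accurate means paired with intervals that are too narrow.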

Structured Data for Comparison

Table 1: Comparison of Key Hyperparameter Estimation Methods for Gaussian Processes

| Estimation Method | Key Principle | Advantages | Limitations | Best Suited For |

|---|---|---|---|---|

| Maximum Likelihood (MLE) | Finds parameters that make the observed data most probable [15]. | Conceptually straightforward, widely used, theoretical guarantees. | Can produce poor predictive distributions; sensitive to optimization [15]. | Initial modeling, cases where only mean prediction is needed. |

| Coverage-based Algorithm | Jointly maximizes likelihood and empirical accuracy of prediction intervals [15]. | Provides more reliable predictive uncertainty, robust for safety/reliability studies. | More computationally intensive than MLE. | Risk assessment, failure probability estimation, robust optimization. |

| Bayesian Approaches | Places a prior distribution on hyperparameters and computes the posterior [15]. | Accounts for uncertainty in hyperparameters, regularizes the solution. | High computational cost; requires expertise to define priors [15]. | Problems with limited data where prior knowledge is available and quantifiable. |

Table 2: Bayesian Optimization Frameworks for Chemical Synthesis

| Framework/Method | Core Innovation | Handles Novelty | Key Application in Chemistry | Reference |

|---|---|---|---|---|

| FABO | Dynamically adapts material/molecular representations during BO. | Excellent for novel tasks with no prior feature knowledge. | MOF discovery, organic molecule optimization. | [2] |

| Reasoning BO | Integrates LLMs for hypothesis generation and knowledge-guided search. | Uses domain knowledge to avoid local optima and implausible regions. | Chemical reaction yield optimization (e.g., Direct Arylation). | [4] |

| TSEMO | Uses Thompson sampling for efficient multi-objective optimization. | Requires a fixed parameter space. | Multi-objective optimization of nanomaterial synthesis and flow chemistry. | [16] |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key "Research Reagent Solutions" for Gaussian Process-based Bayesian Optimization

| Item / "Reagent" | Function / "Role in the Experiment" | Examples / "Specifications" |

|---|---|---|

| Surrogate Model | A cheap-to-evaluate statistical model that approximates the expensive computational or experimental process [17] [18]. | Gaussian Process (GP), Random Forest, Neural Networks. |

| Acquisition Function | A utility function that guides the selection of the next experiment by balancing exploration (high uncertainty) and exploitation (high promise) [16] [2]. | Expected Improvement (EI), Upper Confidence Bound (UCB). |

| Kernel / Covariance Function | The core component of a GP that defines the covariance between data points, thereby specifying the expected smoothness and patterns of the function being modeled [19]. | Matérn, Radial Basis Function (RBF). |

| Design of Experiments (DOE) | A systematic method for planning the initial set of experiments to efficiently sample the parameter space [18] [17]. | Latin Hypercube Sampling (LHS), Sobol sequence. |

| Feature Selection Method | Identifies the most relevant input features from a large pool, improving model interpretability and BO efficiency in high-dimensional spaces [2]. | mRMR (Maximum Relevancy Minimum Redundancy), Spearman ranking. |

Workflow and System Diagrams

FABO Workflow

Robust GP Estimation

Frequently Asked Questions

What is an acquisition function and why is it crucial in Bayesian Optimization?

An acquisition function is a decision-making tool that guides Bayesian Optimization (BO) by selecting the next experiment to evaluate. It uses the surrogate model's predictions (mean, μ) and uncertainty estimates (standard deviation, σ) to balance exploring new, uncertain regions of the search space against exploiting areas known to yield good results. This balance is vital for efficiently finding the global optimum of expensive black-box functions, such as chemical reaction yields, with a limited experimental budget [16] [20].

My BO algorithm seems stuck in a local optimum. How can I encourage more exploration? This common problem often stems from an over-exploitative acquisition function. Solutions include:

- Tuning the exploration parameter: For the Upper Confidence Bound (UCB) function α(x) = μ(x) + λσ(x), increase the value of λ to give more weight to uncertain regions [21] [20].

- Switching the acquisition function: Consider using Expected Improvement (EI), which naturally balances the probability and magnitude of improvement. If you are using Probability of Improvement (PI), switching to EI is often recommended, as PI does not account for the magnitude of improvement and can be overly greedy [22] [20].

- Checking hyperparameters: An incorrectly tuned surrogate model, such as a Gaussian Process with a too-long lengthscale, can cause over-smoothing and premature convergence. Ensure your model's hyperparameters are properly specified [22] [23].
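The effect of the exploration parameter can be illustrated with two made-up candidates scored by the UCB expression μ(x) + λσ(x). The candidate names and posterior values are hypothetical.

```python
def ucb(mu, sigma, lam):
    """Upper Confidence Bound: predicted mean plus lam times predicted std."""
    return mu + lam * sigma


# Hypothetical posterior (mean, std) for two candidate conditions.
candidates = {
    "near_current_best": (0.82, 0.01),
    "unexplored_region": (0.70, 0.10),
}


def pick(lam):
    """Return the candidate with the highest UCB score for a given lam."""
    return max(candidates, key=lambda name: ucb(*candidates[name], lam))


for lam in (0.5, 2.0):
    print(lam, pick(lam))
```

With a small λ the score is dominated by the mean and the optimizer keeps sampling near the incumbent; raising λ shifts the choice to the uncertain region.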

How do I choose the right acquisition function for my chemical optimization problem? The choice depends on your primary goal. The table below summarizes common functions and their typical use cases.

| Acquisition Function | Mathematical Formulation | Best For | Chemical Application Example |

|---|---|---|---|

| Probability of Improvement (PI) | PI(x) = Φ((μ(x) - f(x*)) / σ(x)) | Quick, initial search for improvement; can get stuck in local optima [22]. | Initial screening of catalyst candidates. |

| Expected Improvement (EI) | EI(x) = (μ(x) - f(x*))Φ(Z) + σ(x)φ(Z), where Z = (μ(x) - f(x*)) / σ(x) | A robust, general-purpose choice that balances the probability and size of improvement [22] [20]. | Optimizing reaction temperature and time for yield. |

| Upper Confidence Bound (UCB) | UCB(x) = μ(x) + λσ(x) | Explicit control over exploration vs. exploitation via the λ parameter [21] [20]. | High-risk screening of novel solvent combinations. |

| Thompson Sampling (TS) | Samples a function from the posterior surrogate model and maximizes it [16]. | Multi-objective optimization problems and scenarios favoring random exploration [16]. | Simultaneously optimizing for yield and E-factor (environmental impact) [16]. |

The optimization suggestions from my BO framework seem scientifically implausible. What could be wrong? This could indicate a problem with "hallucinated" suggestions, especially if you are using a language model-enhanced BO framework. Modern frameworks like "Reasoning BO" address this by incorporating domain knowledge. Ensure your setup includes:

- Confidence-based filtering: The framework should assign confidence scores to suggestions and filter out low-confidence, implausible ones [4].

- Knowledge integration: Use a dynamic knowledge management system that incorporates structured domain rules (e.g., chemical reaction rules) to keep suggestions scientifically grounded [4].

Troubleshooting Guides

Problem: Inconsistent or Poor Optimization Performance

Diagnosis: Poor performance can arise from an incorrect prior width in the surrogate model, over-smoothing, or inadequate maximization of the acquisition function itself [22].

Resolution:

- Verify Surrogate Model Hyperparameters: For a Gaussian Process, check the kernel amplitude (σ) and lengthscale (ℓ). An inappropriate lengthscale can cause the model to over- or under-fit the data. Use marginal likelihood maximization or a validation set to tune these [22].

- Ensure Thorough AF Maximization: The acquisition function must be maximized effectively to find the best next experiment. Use a robust optimizer (e.g., L-BFGS-B or multi-start stochastic optimization) for this inner loop and avoid premature convergence [22].

- Implement a Hybrid Strategy: For chemical spaces with both continuous (temperature, concentration) and categorical (solvent, catalyst) variables, consider a hybrid approach. The TSEMO + DyOS framework has been successfully applied to complex chemical reactions, demonstrating precise control and efficient Pareto front development [16].
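As an illustration of the hyperparameter check described above, the following sketch scores a handful of candidate lengthscales by the Gaussian Process log marginal likelihood and keeps the best one. It assumes a zero-mean GP with an RBF kernel, a fixed amplitude, and a small fixed noise level; the toy data and the candidate grid are invented for the example. In practice a gradient-based optimizer would replace the grid.

```python
import numpy as np


def rbf_kernel(xa, xb, amplitude, lengthscale):
    """RBF kernel: amplitude^2 * exp(-0.5 * (x - x')^2 / lengthscale^2)."""
    sq_dist = (xa[:, None] - xb[None, :]) ** 2
    return amplitude**2 * np.exp(-0.5 * sq_dist / lengthscale**2)


def log_marginal_likelihood(x, y, amplitude, lengthscale, noise_std=1e-2):
    """Log evidence of a zero-mean GP; higher means a better-supported fit."""
    k = rbf_kernel(x, x, amplitude, lengthscale) + noise_std**2 * np.eye(len(x))
    chol = np.linalg.cholesky(k)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y))
    data_fit = -0.5 * float(y @ alpha)
    complexity = -float(np.log(np.diag(chol)).sum())
    constant = -0.5 * len(x) * np.log(2.0 * np.pi)
    return data_fit + complexity + constant


# Toy 1D response (hypothetical), centered to match the zero-mean assumption.
x = np.linspace(0.0, 1.0, 12)
y = np.sin(2.0 * np.pi * x)
y = y - y.mean()

candidate_lengthscales = [0.01, 0.03, 0.1, 0.3, 1.0, 3.0]
scores = {
    ls: log_marginal_likelihood(x, y, amplitude=1.0, lengthscale=ls)
    for ls in candidate_lengthscales
}
best_lengthscale = max(scores, key=scores.get)
print(best_lengthscale, scores[best_lengthscale])
```

A very short lengthscale treats every observation as independent (under-smoothing), while a very long one cannot follow the data (over-smoothing); the marginal likelihood penalizes both.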

The following workflow diagram illustrates a robust Bayesian Optimization cycle that incorporates these troubleshooting principles.

Bayesian Optimization Troubleshooting Workflow

Problem: Optimizing for Multiple, Conflicting Objectives

Diagnosis: In chemical synthesis, you often need to optimize for multiple objectives simultaneously, such as maximizing yield while minimizing cost or environmental impact (E-factor). Standard BO for single objectives is insufficient.

Resolution: Adopt a Multi-Objective Bayesian Optimization (MOBO) framework.

- Define Pareto Front: The goal shifts from finding a single optimum to identifying a set of non-dominated solutions known as the Pareto front.

- Use Multi-Objective Acquisition Functions: Implement algorithms like Thompson Sampling Efficient Multi-Objective (TSEMO) or q-Noisy Expected Hypervolume Improvement (q-NEHVI) [16].

- Experimental Protocol: A case study on optimizing a reaction for Space-Time Yield (STY) and E-factor used TSEMO. The surrogate model was constructed from initial data, and the acquisition function proposed new experiments until the Pareto frontier was developed after 68-78 iterations [16].
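The notion of a Pareto front used above can be sketched in a few lines. The example assumes two objectives, Space-Time Yield (maximized) and E-factor (minimized); the experiment values are made up for illustration and are not data from the cited case study.

```python
def pareto_front(points):
    """Return the non-dominated (sty, e_factor) pairs.

    A point is dominated if another point is at least as good in both
    objectives (higher STY, lower E-factor) and strictly better in one.
    """
    front = []
    for i, (sty_i, ef_i) in enumerate(points):
        dominated = any(
            sty_j >= sty_i and ef_j <= ef_i and (sty_j > sty_i or ef_j < ef_i)
            for j, (sty_j, ef_j) in enumerate(points)
            if j != i
        )
        if not dominated:
            front.append((sty_i, ef_i))
    return front


# Hypothetical (STY, E-factor) results from five experiments.
experiments = [(2.0, 5.0), (3.5, 6.0), (3.0, 4.0), (1.0, 3.0), (3.4, 7.0)]
print(pareto_front(experiments))
```

Here `(2.0, 5.0)` is dominated by `(3.0, 4.0)` and `(3.4, 7.0)` is dominated by `(3.5, 6.0)`, so three trade-off solutions remain.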

The logic of how an acquisition function like UCB balances exploration and exploitation for a single decision is shown below.

Acquisition Function Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and experimental "reagents" essential for implementing Bayesian Optimization in chemical research.

| Tool / Reagent | Function / Explanation | Application Note |

|---|---|---|

| Gaussian Process (GP) | A probabilistic model that serves as the surrogate function, providing predictions and uncertainty estimates for unexplored reaction conditions [22] [16]. | The RBF kernel is common. Proper tuning of the lengthscale (ℓ) and amplitude (σ) is critical to avoid under/over-fitting [22]. |

| Expected Improvement (EI) | An acquisition function that selects the next experiment by considering the expected value of improvement over the current best result [20]. | A robust, general-purpose choice. Recommended over Probability of Improvement (PI) as it accounts for the magnitude of improvement [22]. |

| TSEMO Algorithm | A multi-objective acquisition function (Thompson Sampling Efficient Multi-Objective) used for optimizing several conflicting objectives at once [16]. | Successfully used for simultaneously optimizing chemical reaction yield and environmental E-factor [16]. |

| Knowledge Graph | A structured database of domain knowledge (e.g., chemical reaction rules) integrated into frameworks like "Reasoning BO" to keep optimization suggestions scientifically plausible [4]. | Helps prevent the LLM component from suggesting invalid or dangerous experiments, enhancing safety and trustworthiness [4]. |

| Summit Framework | A Python software toolkit specifically designed for chemical reaction optimization using BO and other self-optimization strategies [16]. | Provides implementations of various algorithms (including TSEMO) and benchmarks for comparing optimization strategies [16]. |

Bayesian Optimization (BO) is a powerful, sequential design strategy for globally optimizing expensive-to-evaluate black-box functions. This approach is particularly valuable in chemical synthesis and drug development, where experiments are costly and time-consuming, and the underlying functional relationships between variables and outcomes are complex and unknown [24] [16]. The core BO cycle operates by building a probabilistic surrogate model of the objective function and using an acquisition function to intelligently select the next experiment to perform, thereby balancing the exploration of unknown regions of the search space with the exploitation of known promising areas [9].

The sequential nature of this process—iteratively updating the model with new data and selecting new points—makes it exceptionally sample-efficient. This article details the step-by-step workflow of the sequential BO cycle, provides a real-world chemical application, and offers a technical support guide to address common implementation challenges faced by researchers.

The Step-by-Step Workflow

The Sequential Bayesian Optimization cycle consists of four key steps that are repeated until a stopping criterion is met, such as convergence or the exhaustion of an experimental budget. The workflow is illustrated in the diagram below.

Diagram 1: The Sequential Bayesian Optimization Cycle

- Step 1: Build/Update the Surrogate Model. The cycle begins with an initial, often small, dataset of performed experiments. A probabilistic surrogate model, most commonly a Gaussian Process (GP), is trained on this data. The GP models the unknown objective function by providing a posterior distribution, that is, a mean prediction and an uncertainty estimate (variance) for every point in the search space [16] [9]. In the first cycle, the model is conditioned on the initial dataset alone; in subsequent cycles, it is updated with all available data.

- Step 2: Optimize the Acquisition Function. The surrogate model is used to construct an acquisition function, which guides the selection of the next experiment. This function quantifies the utility of evaluating any given point, balancing the goal of improving the model (exploring regions of high uncertainty) with the goal of finding the optimum (exploiting regions of high predicted performance). Common acquisition functions include Expected Improvement (EI), Probability of Improvement (PI), and Upper Confidence Bound (UCB) [24] [9].

- Step 3: Execute the New Experiment. The point that maximizes the acquisition function is selected as the next experiment to run. This is the key step where the algorithm interacts with the real world—for instance, by synthesizing a new catalyst or running a chemical reaction under the proposed conditions [25] [16].

- Step 4: Update the Dataset. The outcome of the new experiment (e.g., reaction yield) is measured and added to the growing dataset. The cycle then returns to Step 1, where the surrogate model is updated with this new data point. This closed-loop process continues, with each experiment intelligently informing the next, until the global optimum is located or the experimental budget is spent [25] [9].
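The four steps above can be sketched as a compact loop. This is a toy illustration under stated assumptions: a 1D search space scaled to [0, 1], a GP with a fixed RBF lengthscale and unit prior variance, Expected Improvement as the acquisition function, and a hypothetical `run_experiment` function standing in for a real lab measurement.

```python
import math
import numpy as np


def rbf(xa, xb, lengthscale=0.15):
    return np.exp(-0.5 * (xa[:, None] - xb[None, :]) ** 2 / lengthscale**2)


def gp_posterior(x_train, y_train, x_query, jitter=1e-3):
    """Posterior mean and std of a GP with constant mean and unit prior variance."""
    k = rbf(x_train, x_train) + jitter * np.eye(len(x_train))
    k_star = rbf(x_train, x_query)
    y_mean = y_train.mean()
    weights = np.linalg.solve(k, k_star)              # K^-1 K*
    mu = y_mean + weights.T @ (y_train - y_mean)
    var = 1.0 - np.sum(k_star * weights, axis=0)
    return mu, np.sqrt(np.clip(var, 1e-12, None))


def expected_improvement(mu, sigma, best):
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf


def run_experiment(x):
    """Hypothetical stand-in for a measured yield with two local maxima."""
    return float(np.exp(-((x - 0.7) ** 2) / 0.02) + 0.5 * np.exp(-((x - 0.2) ** 2) / 0.01))


candidates = np.linspace(0.0, 1.0, 201)               # discretized search space
x_obs = np.array([0.05, 0.5, 0.95])                   # small initial dataset
y_obs = np.array([run_experiment(x) for x in x_obs])

for _ in range(12):                                   # experimental budget
    mu, sigma = gp_posterior(x_obs, y_obs, candidates)        # Step 1: surrogate
    ei = expected_improvement(mu, sigma, y_obs.max())         # Step 2: acquisition
    x_next = candidates[int(np.argmax(ei))]
    y_next = run_experiment(x_next)                           # Step 3: experiment
    x_obs = np.append(x_obs, x_next)                          # Step 4: update data
    y_obs = np.append(y_obs, y_next)

best_x = float(x_obs[int(np.argmax(y_obs))])
print(best_x, float(y_obs.max()))
```

The kernel hyperparameters are held fixed here to keep the sketch short; a real campaign would refit them at each iteration.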

Example Protocol: Optimizing an Organic Photoredox Catalyst

A study in Nature Chemistry provides a clear protocol for using sequential BO to discover and optimize organic molecular metallophotocatalysts for a decarboxylative cross-coupling reaction [25]. The following table summarizes the key reagents and their functions in this experiment.

Table 1: Research Reagent Solutions for Metallophotocatalysis

| Reagent | Function / Role in the Experiment |

|---|---|

| CNP-based OPCs (Cyanopyridine core) | Organic photoredox catalyst (PC) that absorbs light and facilitates single-electron transfer (SET) processes. |

| NiCl₂·glyme | Source of nickel, the transition-metal catalyst that operates in a synergistic cycle with the photocatalyst. |

| dtbbpy (4,4′-di-tert-butyl-2,2′-bipyridine) | Ligand that coordinates to the nickel center, modulating its reactivity and stability. |

| Cs₂CO₃ | Base, essential for facilitating the decarboxylation step in the reaction mechanism. |

| DMF solvent | Reaction medium. |

| Blue LED irradiation | Light source required to photoexcite the photoredox catalyst and initiate its catalytic cycle. |

Experimental Methodology

The research employed a two-step, sequential closed-loop BO workflow [25]:

Catalyst Discovery:

- Virtual Library: A virtual library of 560 synthesizable cyanopyridine (CNP) molecules was designed.

- Molecular Encoding: Each catalyst candidate was encoded using 16 molecular descriptors capturing key thermodynamic, optoelectronic, and excited-state properties.

- BO Setup: A batched Bayesian optimization was set up to maximize the reaction yield. The algorithm was initialized with 6 diverse candidates selected via the Kennard-Stone algorithm.

- Iterative Loop: The BO sequentially selected batches of 12 new catalysts to synthesize and test. After evaluating only 55 molecules (9.8% of the library), it identified a catalyst (CNP-129) achieving a 67% yield.

Reaction Condition Optimization:

- Expanded Search Space: The best-performing catalysts from the first step were used to optimize reaction conditions, varying the catalyst, nickel catalyst concentration, and ligand concentration—a space of 4,500 possible conditions.

- Second BO Loop: A second BO was run to navigate this multi-dimensional space.

- Result: After evaluating only 107 conditions (2.4% of the total space), the optimization discovered a set of conditions that delivered an 88% reaction yield, making it competitive with expensive iridium-based catalysts [25].
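The Kennard-Stone initialization mentioned in the catalyst discovery step can be sketched as follows: start from the two most distant candidates, then repeatedly add the candidate whose nearest already-selected neighbor is farthest away. The descriptor matrix below is random synthetic data with the same shape as the study's library (560 candidates, 16 descriptors); it is a placeholder, not the published descriptors.

```python
import numpy as np


def kennard_stone(features, n_select):
    """Return indices of n_select (>= 2) rows chosen by the Kennard-Stone rule."""
    dist = np.linalg.norm(features[:, None, :] - features[None, :, :], axis=-1)
    first, second = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(first), int(second)]
    while len(selected) < n_select:
        # Distance from every candidate to its closest selected point.
        min_dist = dist[:, selected].min(axis=1)
        min_dist[selected] = -1.0                  # never re-select a point
        selected.append(int(np.argmax(min_dist)))
    return selected


rng = np.random.default_rng(0)
descriptors = rng.normal(size=(560, 16))           # synthetic stand-in library
initial_candidates = kennard_stone(descriptors, 6)
print(initial_candidates)
```

In practice the descriptors are usually standardized first so that no single property dominates the Euclidean distance.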

Troubleshooting Guide and FAQs

Q1: My BO convergence is slow or gets stuck in a local optimum. What can I do?

- Symptom: The algorithm fails to find significantly better results after several iterations.

- Possible Causes & Solutions:

- Inadequate Acquisition Function Tuning: The balance between exploration and exploitation is off. Solution: Adjust the parameters of your acquisition function. For example, increase the ϵ parameter in the Probability of Improvement (PI) function to force more exploration [9]. Consider switching to Expected Improvement (EI), which accounts for both the probability and magnitude of improvement [9].

- Poor Initial Sampling: The initial dataset is too small or not representative enough. Solution: Use space-filling designs like the Kennard-Stone algorithm or Latin Hypercube Sampling to select a better initial set of points [25].

- Suboptimal Representation: The features used to describe your chemicals or materials may not be relevant to the task. Solution: Implement adaptive representation techniques like FABO (Feature Adaptive Bayesian Optimization), which dynamically identifies the most informative molecular or material descriptors during the BO campaign [2].

Q2: The surrogate model performance is poor or training becomes computationally expensive.

- Symptom: Model predictions are inaccurate, or the time to update the model becomes prohibitive.

- Possible Causes & Solutions:

- Large Datasets and High-Dimensional Search Spaces: Exact GP inference scales cubically, O(n³), with the number of data points, and high-dimensional spaces typically need more data to model well. Solution: For larger datasets (>1000 points), consider surrogate models with lower computational complexity, such as Random Forests or Bayesian neural networks [16].

- Noisy Data: Experimental noise can overwhelm the signal. Solution: Explicitly model noise in the GP by specifying a noise likelihood. Use acquisition functions that are robust to noise, such as the q-Noisy Expected Hypervolume Improvement (q-NEHVI) for multi-objective problems [16].

Q3: How can I incorporate my domain knowledge or interpret the BO process?

- Symptom: The "black-box" nature of BO is a barrier to adoption and trust.

- Possible Causes & Solutions:

- Lack of Interpretability: Traditional BO provides limited insight into its decision-making. Solution: Integrate large language models (LLMs) into the loop. Frameworks like Reasoning BO use LLMs to generate and evolve scientific hypotheses, provide confidence scores for proposed experiments, and maintain a dynamic knowledge graph, making the optimization process more interpretable and trustworthy [4].

Q4: How do I handle both continuous and categorical variables (like catalyst types and solvents)?

- Symptom: The search space contains a mix of variable types.

- Possible Causes & Solutions:

- Standard Kernels are for Continuous Space: Common GP kernels (e.g., RBF) are designed for continuous inputs. Solution: Use specialized kernels that can handle categorical variables, such as the Hamming kernel, or one-hot encode categorical variables. Advanced BO platforms like Summit are specifically designed to handle such mixed-variable optimization common in chemical reactions [16].
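A minimal sketch of the two options named above: one-hot encoding and a Hamming-distance kernel for the categorical part, multiplied by an RBF kernel for the continuous part. The exponential form `exp(-d_H / scale)` is one common choice and is an assumption here, as are the variable names and default scales.

```python
import math

SOLVENTS = ["DMF", "DMSO", "MeOH"]


def one_hot(value, categories):
    """Encode a category as a 0/1 vector."""
    return [1.0 if value == c else 0.0 for c in categories]


def hamming_distance(a, b):
    """Number of categorical positions in which a and b differ."""
    return sum(1 for u, v in zip(a, b) if u != v)


def mixed_kernel(x1, x2, lengthscale=10.0, cat_scale=1.0):
    """Similarity of two conditions given as (temperature_C, solvent, catalyst).

    RBF on the continuous temperature times an exponential Hamming kernel
    on the categorical choices; a product of valid kernels is a valid kernel.
    """
    (t1, *cats1), (t2, *cats2) = x1, x2
    k_continuous = math.exp(-0.5 * ((t1 - t2) / lengthscale) ** 2)
    k_categorical = math.exp(-hamming_distance(cats1, cats2) / cat_scale)
    return k_continuous * k_categorical


a = (60.0, "DMF", "AcOH")
b = (60.0, "DMSO", "AcOH")
c = (80.0, "DMSO", "none")
print(one_hot("DMSO", SOLVENTS), mixed_kernel(a, b), mixed_kernel(a, c))
```

With one-hot inputs, a standard RBF kernel yields the same similarity structure up to a rescaling of the lengthscale, because the squared Euclidean distance between two one-hot vectors is twice their Hamming distance.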

Table 2: Common Acquisition Functions and Their Use Cases

| Acquisition Function | Key Principle | Best For |

|---|---|---|

| Probability of Improvement (PI) | Selects the point with the highest probability of being better than the current best. | Quick convergence when the optimum region is roughly known; can be sensitive to the ϵ parameter [9]. |

| Expected Improvement (EI) | Selects the point with the highest expected improvement over the current best. | The most widely used strategy; offers a good balance between exploration and exploitation [24] [9]. |

| Upper Confidence Bound (UCB) | Selects the point where the upper confidence bound (mean + κ * standard deviation) is highest. | Explicit control of the explore/exploit trade-off via the κ parameter [2] [24]. |

Table 3: Comparison of Optimization Methods in Chemical Synthesis

| Method | Key Advantage | Key Limitation |

|---|---|---|

| Trial-and-Error / OFAT | Simple to implement, intuitive. | Highly inefficient, ignores variable interactions, prone to missing global optimum [16]. |

| Design of Experiments (DoE) | Systematically accounts for variable interactions. | Requires relatively large initial data; efficiency drops with high dimensionality [16]. |

| Bayesian Optimization (BO) | Highly sample-efficient; ideal for expensive experiments. | Computational cost of model training; can be sensitive to initial data and hyperparameters [16]. |

Visualization of Acquisition Function Behavior

The following diagram illustrates how different acquisition functions make decisions based on the same surrogate model state, highlighting their exploration-exploitation trade-offs.

Diagram 2: Decision Logic of Different Acquisition Functions

From Theory to Lab Bench: Methodological Implementation and Drug Discovery Applications

Frequently Asked Questions (FAQs)

FAQ 1: My Bayesian Optimization seems to get stuck in a local optimum. How can I improve its global search? This is a common challenge, often related to the balance between exploration and exploitation. The acquisition function is key to managing this balance.

- Solution: Use acquisition functions that are more exploration-biased, especially in early optimization rounds. Upper Confidence Bound (UCB) explicitly handles this with a tunable parameter to control the exploration-exploitation trade-off [16] [26]. Alternatively, consider algorithms like Thompson Sampling Efficient Multi-Objective (TSEMO), which has demonstrated strong performance in navigating complex chemical spaces and avoiding local optima [16].

FAQ 2: How do I effectively include categorical variables, like solvent or catalyst type, in my continuous BO framework? Categorical variables require special handling as they have no natural order. Standard Gaussian Process kernels assume continuous, ordered inputs.

- Solution: Encode categorical variables using numerical descriptors. The optimization pipeline can represent the reaction condition space as a discrete combinatorial set, automatically filtering out impractical conditions (e.g., a reaction temperature above a solvent's boiling point) [1]. Another workaround for a limited number of candidates is to transform the problem into optimizing the composition of a mixture (e.g., a binary or ternary solvent mixture) [27].
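The idea of a discrete combinatorial condition set with impractical entries filtered out can be sketched like this. The temperature grid and catalyst list are illustrative assumptions; the boiling points are standard literature values at atmospheric pressure.

```python
from itertools import product

# Normal boiling points in degrees Celsius.
BOILING_POINT_C = {"DMF": 153.0, "DMSO": 189.0, "MeOH": 64.7}

temperatures_c = [25, 60, 95]      # hypothetical temperature grid
catalysts = ["AcOH", "none"]       # hypothetical catalyst options


def build_search_space(temperatures, boiling_points, catalyst_options):
    """Enumerate all conditions, dropping those above the solvent's boiling point."""
    return [
        {"temperature_c": t, "solvent": s, "catalyst": c}
        for t, s, c in product(temperatures, boiling_points, catalyst_options)
        if t < boiling_points[s]
    ]


search_space = build_search_space(temperatures_c, BOILING_POINT_C, catalysts)
print(len(search_space))
```

Of the 18 raw combinations, the two methanol conditions at 95 °C are removed, leaving 16 candidates for the optimizer to rank.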

FAQ 3: My experimental measurements are very noisy. Is BO still suitable? Yes, Bayesian Optimization is particularly well-suited for noisy environments. Its probabilistic nature allows it to model and account for uncertainty.

- Solution: Ensure your surrogate model is configured to handle noise. Gaussian Processes can use kernels that include a white noise term to explicitly model experimental noise [26]. For more complex, non-constant noise (heteroscedastic noise), specialized frameworks like BioKernel offer heteroscedastic noise modeling to improve accuracy [26].

FAQ 4: How many initial experiments are needed to start a BO campaign? There is no fixed number, but the initial dataset should be diverse enough to allow the surrogate model to build a preliminary map of the landscape.

- Solution: Use space-filling designs for your initial experiments. Methods like Sobol sampling or Latin Hypercube Sampling are designed to maximize the coverage of your parameter space with a relatively small number of points, increasing the likelihood of discovering promising regions [1] [28]. For instance, some successful chemical reaction optimizations have started with as few as 9 initial data points [29].
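As a sketch of a space-filling initial design, the following hand-rolled Latin Hypercube sampler splits each parameter range into as many equal strata as there are samples and places exactly one sample in each stratum. The parameter bounds and the choice of 9 points are illustrative; library implementations (for example in SciPy) would normally be used instead.

```python
import random


def latin_hypercube(n_samples, bounds, seed=0):
    """Return n_samples points; each dimension has one point per stratum."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)                       # decouple the dimensions
        columns.append(
            [low + (s + rng.random()) / n_samples * (high - low) for s in strata]
        )
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


# Hypothetical bounds: temperature (deg C) and reagent equivalents.
bounds = [(25.0, 95.0), (0.1, 2.0)]
design = latin_hypercube(9, bounds)
for temperature, equivalents in design:
    print(round(temperature, 1), round(equivalents, 2))
```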

Troubleshooting Guides

Issue 1: Slow Optimization Convergence in High-Dimensional Spaces

Problem: The optimization takes too many iterations to find a good solution, especially when tuning more than just a few parameters (e.g., temperature, time, concentration, catalyst loading, solvent).

Diagnosis and Solutions:

- Diagnosis: The "curse of dimensionality" makes the search space exponentially larger. Traditional sequential BO methods may be too slow for practical use.

- Solution 1: Use Highly Parallel BO. Scale up by using large batch sizes. Frameworks like Minerva are specifically designed for high-throughput experimentation (HTE) and can handle batch sizes of 24, 48, or even 96 experiments at a time. This leverages automated platforms to explore the space much more rapidly [1].

- Solution 2: Choose Scalable Acquisition Functions. For multi-objective optimization in large batches, use acquisition functions that scale efficiently, such as:

- q-NParEgo

- Thompson Sampling with Hypervolume Improvement (TS-HVI)

- q-Noisy Expected Hypervolume Improvement (q-NEHVI) [1]

These functions have better computational complexity for large parallel batches compared to alternatives.

Issue 2: Handling Multiple, Competing Objectives

Problem: You need to optimize for several objectives simultaneously (e.g., maximize yield AND minimize cost), but improving one objective often worsens another.

Diagnosis and Solutions:

- Diagnosis: Single-objective BO is not suitable; a multi-objective approach is required to find a set of optimal trade-offs (the Pareto front).

- Solution: Implement Multi-Objective Bayesian Optimization (MOBO). The goal is to maximize the hypervolume of the Pareto front, which measures both the quality and diversity of the solutions found [16] [1].

- Recommended Algorithms: TSEMO or q-NEHVI [16] [1].
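To make the hypervolume criterion mentioned above concrete, here is a minimal sketch for two objectives. It assumes both objectives are expressed so that larger is better (a minimized quantity such as cost can be negated) and that a reference point worse than all solutions of interest is supplied by the user; the numbers are invented.

```python
def hypervolume_2d(points, reference):
    """Area dominated by `points` and bounded by `reference` (both maximized)."""
    ref_x, ref_y = reference
    candidates = sorted(
        (p for p in points if p[0] > ref_x and p[1] > ref_y),
        key=lambda p: p[0],
        reverse=True,
    )
    area, best_y = 0.0, ref_y
    for x, y in candidates:
        if y > best_y:                      # dominated points add nothing
            area += (x - ref_x) * (y - best_y)
            best_y = y
    return area


# Hypothetical (yield, -cost) trade-offs, already shifted to positive values.
front = [(3.0, 1.0), (2.0, 2.0), (1.0, 3.0)]
print(hypervolume_2d(front, reference=(0.0, 0.0)))
print(hypervolume_2d(front + [(2.5, 2.5)], reference=(0.0, 0.0)))
```

A new experiment increases the hypervolume only if it extends the Pareto front, which is what hypervolume-based acquisition functions try to anticipate.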

Issue 3: Translating BO Results to Practical, Scalable Processes

Problem: Optimal conditions found in small-scale, automated BO campaigns fail to perform well when scaled up to industrial production.

Diagnosis and Solutions:

- Diagnosis: The optimization did not account for scale-dependent variables or physical constraints of larger reactors.

- Solution 1: Incorporate Physical Constraints. Use physics-guided frameworks that integrate knowledge about the system (e.g., thermodynamics, mass transfer) into the BO process. For example, Gaussian Process Port-Hamiltonian Systems (GP-PHS) can embed physical laws as priors, leading to more physically realistic and scalable optimization results [30].

- Solution 2: Dynamic Optimization. For batch processes, optimize the entire parameter trajectory, not just fixed setpoints. BO has been successfully applied to dynamic optimization, for instance, to find optimal temperature and pressure profiles that minimize the total production cost in a pharmaceutical intermediate concentration process [29].

Experimental Protocol: A Standard BO Workflow for Reaction Optimization

The table below outlines a generalized, step-by-step protocol for implementing Bayesian Optimization, synthesizing methodologies from multiple case studies [16] [1] [31].

Table 1: Standard Experimental Protocol for a Bayesian Optimization Campaign

| Step | Procedure | Details & Technical Specifications |

|---|---|---|

| 1. Define Search Space | Identify parameters and their ranges. | Continuous: Temp. (25-95°C), Time (minutes to hours), Concentration (0.1-2.0 eq.). Categorical: Solvent (DMF, DMSO, MeOH, etc.), Catalyst (PTSA, AcOH, none) [31]. Apply constraints (e.g., T < solvent boiling point) [1]. |

| 2. Select BO Framework | Choose software and algorithmic components. | Frameworks: Summit, Minerva, BioKernel, JMP Pro [16] [26] [1]. Surrogate Model: Gaussian Process (Matern or RBF kernel) [26] [32]. Acquisition Function: For single-objective: UCB or EI. For multi-objective: TSEMO or q-NEHVI [16] [1]. |

| 3. Initial Sampling | Generate the first set of experiments. | Use Sobol sequences or Latin Hypercube Sampling to create a space-filling design for the initial batch (e.g., 8-16 experiments) [1] [28]. |

| 4. Run Experiments | Execute reactions and analyze outcomes. | Utilize automated platforms (e.g., robotic liquid handlers, flow reactors) or manual execution. Analyze yields/conversion via HPLC, GC, or inline spectroscopy (IR, NMR) [31] [27]. |

| 5. Update Model & Suggest Next | Input results into the BO loop. | The surrogate model is updated with new data. The acquisition function then suggests the next experiment or parallel batch of experiments with the highest acquisition value (e.g., expected improvement) [16]. |

| 6. Iterate | Repeat steps 4 and 5. | Continue until convergence (e.g., no significant improvement over 2-3 iterations) or upon exhausting the experimental budget [16] [29]. |
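The stopping rule in step 6 can be sketched as a simple check on the running best result. The `patience` and `min_delta` thresholds and the yield values are illustrative assumptions; appropriate values depend on the measurement noise of the assay.

```python
from itertools import accumulate


def has_converged(best_so_far, patience=3, min_delta=0.5):
    """True if the best result improved by less than min_delta over `patience` iterations."""
    if len(best_so_far) <= patience:
        return False
    return best_so_far[-1] - best_so_far[-1 - patience] < min_delta


# Hypothetical yields (%) measured in successive BO iterations.
yields = [40.0, 55.0, 52.0, 67.0, 66.5, 67.2, 67.3]
running_best = list(accumulate(yields, max))
print(running_best, has_converged(running_best))
```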

Research Reagent Solutions

The following table lists key reagents and materials commonly used in BO-guided reaction optimization campaigns, along with their primary functions.

Table 2: Essential Reagents and Materials for Reaction Optimization

| Reagent/Material | Function in Optimization | Example from Literature |

|---|---|---|

| N-Iodosuccinimide (NIS) | Halogenating agent for functional group transformation. | Used as an iodinating agent in the optimization of terminal alkyne iodination [31]. |

| Polar Solvents (DMF, DMSO) | High-polarity solvents to dissolve reactants and influence reaction mechanism. | Commonly included in solvent screens for various reactions, including Suzuki couplings [1] [31]. |

| Non-Precious Metal Catalysts (Ni) | Earth-abundant, lower-cost alternative to precious metal catalysts like Pd. | A Ni-based catalyst was optimized in a Suzuki coupling reaction for pharmaceutical process development [1]. |

| Chloramine Salts | Oxidizing agent in halogenation reactions. | Used as an oxidant with an iodine salt in an alternative route for alkyne iodination [31]. |

| Tetraalkylammonium Salts (e.g., TBAI) | Phase-transfer catalysts or iodide sources. | Listed as a potential iodine source in a multi-parameter optimization study [31]. |

Workflow Diagrams

Bayesian Optimization Core Loop

High-Throughput Experimentation (HTE) Integration

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My Bayesian optimization loop is converging on candidates with high affinity but poor solubility. How can I adjust the process? A: This indicates an imbalance in your multi-objective function. The algorithm is prioritizing affinity. Implement a constrained optimization approach or adjust the weights in your objective function.

- Protocol: Setting Up a Weighted Sum Objective Function

- Define Normalized Objectives: Scale each property (e.g., pIC50 for affinity, LogS for solubility, pLD50 for toxicity) to a 0-1 range, where 1 is ideal.

- Assign Weights: Assign a weight (w) to each objective based on project priorities (e.g., w_affinity=0.5, w_solubility=0.3, w_toxicity=0.2). Ensure weights sum to 1.

- Combine: Compute the total score for a molecule: Score = (w_affinity * Norm_Affinity) + (w_solubility * Norm_Solubility) + (w_toxicity * Norm_Toxicity).

- Optimize: Use this Score as the single objective for your Bayesian optimizer to maximize.
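The weighted-sum protocol above can be sketched as follows. The weights are the example values from the protocol; the (worst, ideal) ranges used for normalization are hypothetical placeholders that each project would need to set for its own assays.

```python
WEIGHTS = {"affinity": 0.5, "solubility": 0.3, "toxicity": 0.2}

# Hypothetical (worst, ideal) values per property. For toxicity (pLD50),
# lower is treated as better, so the ideal value is below the worst value.
RANGES = {
    "affinity": (5.0, 9.0),      # pIC50
    "solubility": (-6.0, -2.0),  # LogS
    "toxicity": (4.0, 1.0),      # pLD50
}


def normalize(value, worst, ideal):
    """Map a raw property to [0, 1], where 1 is ideal; values outside are clipped."""
    scaled = (value - worst) / (ideal - worst)
    return min(1.0, max(0.0, scaled))


def weighted_score(properties):
    """Single objective for the optimizer: weighted sum of normalized properties."""
    return sum(
        WEIGHTS[name] * normalize(properties[name], *RANGES[name])
        for name in WEIGHTS
    )


molecule = {"affinity": 7.0, "solubility": -4.0, "toxicity": 2.5}
print(weighted_score(molecule))
```

Raising `w_solubility` relative to `w_affinity` is the direct way to counter the affinity-dominated convergence described in Q1.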

Q2: The acquisition function in my Bayesian optimizer is not exploring the chemical space effectively and gets stuck. What can I do? A: This is often due to over-exploitation. The Upper Confidence Bound (UCB) acquisition function is tunable for this.

- Protocol: Tuning the UCB Acquisition Function

- Identify Parameter: The UCB function is μ + κ * σ, where μ is the mean prediction, σ is the uncertainty, and κ is the tunable parameter.

- Adjust κ: A low κ (e.g., 0.1-1.0) favors exploitation (refining known good areas). A high κ (e.g., 5.0-10.0) favors exploration (probing high-uncertainty areas).

- Implement a Schedule: Start with a high κ for broad exploration and gradually decrease it over iterations to refine the best candidates.
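A κ schedule of the kind described in this protocol might look like the sketch below. The geometric decay from 5.0 to 0.5 is one possible choice, not a prescribed one, and the two candidate predictions are invented to show how the preferred candidate changes as κ shrinks.

```python
def kappa_schedule(iteration, n_iterations, kappa_start=5.0, kappa_end=0.5):
    """Geometric decay from kappa_start (iteration 0) to kappa_end (last iteration)."""
    if n_iterations <= 1:
        return kappa_end
    fraction = iteration / (n_iterations - 1)
    return kappa_start * (kappa_end / kappa_start) ** fraction


def ucb(mu, sigma, kappa):
    """Upper Confidence Bound: mean prediction plus kappa times uncertainty."""
    return mu + kappa * sigma


# Hypothetical predictions (mean, std) for two candidate molecules.
well_known = (0.80, 0.02)
unexplored = (0.70, 0.15)

for iteration in (0, 9):
    kappa = kappa_schedule(iteration, n_iterations=10)
    preferred = max(
        ("well_known", well_known), ("unexplored", unexplored),
        key=lambda item: ucb(*item[1], kappa),
    )[0]
    print(iteration, round(kappa, 2), preferred)
```

Early in the campaign the high κ favors the unexplored candidate; by the final iteration the low κ favors the candidate with the higher predicted mean.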

Q3: How do I handle the computational cost of evaluating toxicity for every candidate in a large virtual library? A: Use a tiered filtering approach. Employ fast, cheap filters first before running expensive simulations.

- Protocol: Tiered Virtual Screening Workflow

- Step 1 - Rule-Based Filters: Apply hard filters (e.g., PAINS, REOS) to remove molecules with obvious structural alerts. This can eliminate 10-20% of the library.

- Step 2 - QSAR Models: Use pre-trained Quantitative Structure-Activity Relationship (QSAR) models for rapid toxicity (e.g., hERG, Ames) and solubility prediction.

- Step 3 - Multi-Objective Bayesian Optimization: Apply Bayesian optimization on the filtered library, using the predictions from Step 2 as objectives.

- Step 4 - Experimental Validation: Only the top-ranked candidates from the optimization require costly MD simulations or experimental assays.

Q4: My property predictions (e.g., LogS) have high uncertainty, which misleads the Bayesian model. How can I account for this? A: Bayesian optimization naturally handles uncertainty. You should ensure this predictive uncertainty is propagated correctly to the acquisition function.

- Checklist for Predictive Model Uncertainty:

- Model Calibration: Ensure your underlying machine learning models (e.g., for solubility) are well-calibrated. Use calibration plots.

- Use Probabilistic Models: Employ models that output a mean and variance (e.g., Gaussian Process models, Bayesian Neural Networks) rather than just a point estimate.

- Acquisition Function: Use an acquisition function like Expected Improvement (EI) or UCB that explicitly incorporates prediction uncertainty (σ) to balance exploration and exploitation.

Data Presentation

Table 1: Comparison of Multi-Objective Optimization Strategies in Virtual Screening

| Strategy | Key Principle | Pros | Cons | Best for |

|---|---|---|---|---|

| Weighted Sum | Combines objectives into a single score. | Simple, fast, works with standard BO. | Sensitive to weight choice; may miss Pareto-optimal solutions. | Projects with clear, fixed priorities. |

| Constrained Optimization | Optimizes one objective subject to constraints on others. | Intuitive, mirrors experimental design. | Can be inefficient if feasible region is small. | Ensuring a candidate meets a minimum safety threshold. |

| Pareto Optimization | Seeks a set of non-dominated solutions (Pareto front). | Finds diverse trade-off options. | Computationally intensive; harder to analyze. | Exploratory phases where trade-offs are unknown. |

Table 2: Typical Ranges for Key Molecular Properties in Drug Discovery

| Property | Metric | Ideal Range | High-Risk Range |

|---|---|---|---|

| Affinity | pIC50 | >6.3 (IC50 below ~500 nM) | <5.0 (IC50 above 10 µM) |

| Solubility | LogS | >-4.0 | <-6.0 |

| Toxicity (hERG) | pIC50 | <5.0 | >5.0 |

Experimental Protocols

Protocol: Standard Workflow for a Multi-Objective Bayesian Optimization Cycle

- Initialization: Select a small, diverse set of molecules (50-100) from the chemical space to form the initial training set.

- Property Evaluation: Calculate or predict the multi-objective properties (Affinity, Solubility, Toxicity) for the initial set.

- Model Training: Train a separate surrogate model (e.g., Gaussian Process) for each objective property using the initial data.

- Acquisition: Use a multi-objective acquisition function (e.g., Expected Hypervolume Improvement) to select the next most promising molecule(s) to "evaluate."

- Update: "Evaluate" the selected molecule(s) (i.e., get predictions from your oracle functions) and add the new data to the training set.

- Iteration: Repeat steps 3-5 for a predefined number of iterations or until convergence.

Workflow Diagrams

Bayesian Optimization Workflow

Tiered Screening Protocol

The Scientist's Toolkit

Table 3: Research Reagent Solutions for In Silico Multi-Objective Optimization

| Item | Function | Example Tools / Libraries |

|---|---|---|

| Cheminformatics Library | Handles molecular representation, fingerprinting, and basic descriptor calculation. | RDKit, OpenBabel |

| Descriptor Calculator | Generates quantitative numerical representations of molecular structures. | Mordred, PaDEL-Descriptor |

| Machine Learning Framework | Builds and trains surrogate models for property prediction. | scikit-learn, PyTorch, TensorFlow |

| Bayesian Optimization Library | Provides algorithms for efficient global optimization of black-box functions. | BoTorch, GPyOpt, Scikit-Optimize |

| Molecular Docking Software | Predicts binding affinity and pose of a ligand to a protein target. | AutoDock Vina, GOLD, Glide |

| ADMET Prediction Platform | Provides pre-trained or trainable models for solubility, toxicity, and other properties. | ADMETlab, OCHEM, proprietary software |

This technical support center provides troubleshooting guides and FAQs for researchers implementing Expert-Guided Multi-Objective Bayesian Optimization (MOBO) within the CheapVS framework for virtual screening, specifically on EGFR and DRD2 targets [33].

Frequently Asked Questions (FAQs)

FAQ: How does CheapVS incorporate human expertise into the optimization process? CheapVS uses a preferential multi-objective Bayesian optimization framework. It captures expert chemical intuition by having chemists provide pairwise comparisons of candidates, which guide the trade-offs between multiple drug properties like binding affinity, solubility, and toxicity. This feedback is translated into a latent utility function that the BO uses to prioritize subsequent screening candidates [33].

FAQ: My optimization is failing due to occasional errors from the docking model. How can I recover without restarting?

Bayesian optimization loops can be designed to recover from intermittent errors. If an evaluation fails, you can fix the issue (e.g., restarting a crashed service), and then restart the optimization from the last successful step using the data, model, and acquisition state stored in the optimization history. For stateful acquisition rules like TrustRegion, ensuring this state is correctly reloaded is crucial [34].

FAQ: Why might my Bayesian optimization perform poorly in molecule design? Common pitfalls in BO for molecule design include an incorrect prior width in the surrogate model, over-smoothing, and inadequate maximization of the acquisition function. Addressing these hyperparameter tuning issues is critical for achieving state-of-the-art performance [22].

FAQ: How can I handle experimental noise in my assay data during active learning? In noisy environments, a retest policy can be integrated into the batched Bayesian optimization process. This policy selectively chooses experiments to repeat based on their importance or uncertainty. To maintain a consistent experimental budget, each retest replaces one new candidate in a batch. This approach has been shown to help correctly identify more active compounds despite noise [35].

Troubleshooting Guides

Problem: Optimization process runs out of memory.

- Potential Cause: The search space may be too complex, or the surrogate model may be evaluating overly large datasets during acquisition function optimization [34] [36].

- Solutions:

- Simplify the Search Space: Reduce the number of parameters being optimized or decrease the complexity of their distributions [36].

- Batch Acquisition Evaluations: Configure your acquisition function optimizer to evaluate the acquisition function in smaller batches rather than on the entire candidate set at once [34].

- Increase Memory Allocation: If simplification is not possible, allocate more memory to the optimization job [36].
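The batching suggestion above can be illustrated without reference to any particular library. In this sketch, `chunked` and `argmax_in_chunks` are hypothetical helpers, and the quadratic `toy_acquisition` merely stands in for a real acquisition function; the point is that only one chunk of candidates and scores is held in memory at a time.

```python
from itertools import islice

def chunked(iterable, size):
    """Yield successive lists of at most `size` items from any iterable."""
    iterator = iter(iterable)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk

def argmax_in_chunks(candidates, acquisition, chunk_size=1024):
    """Return the best candidate while scoring only one chunk at a time."""
    best_x, best_value = None, float("-inf")
    for chunk in chunked(candidates, chunk_size):
        values = acquisition(chunk)  # memory cost is bounded by chunk_size
        for x, value in zip(chunk, values):
            if value > best_value:
                best_x, best_value = x, value
    return best_x, best_value

# Toy stand-in for an acquisition function, peaked at x = 42.
def toy_acquisition(xs):
    return [-(x - 42.0) ** 2 for x in xs]

# A generator of 100,000 candidates that is never materialized in full.
candidates = (i / 1000 for i in range(100_000))
best_x, best_value = argmax_in_chunks(candidates, toy_acquisition, chunk_size=512)
print(best_x)
```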

Problem: The algorithm appears to be exploring poorly and gets stuck in a local optimum.

- Potential Cause: Inadequate trade-off between exploration and exploitation, potentially due to a poorly tuned acquisition function or kernel hyperparameters [22] [35].

- Solutions:

- Check Hyperparameters: Review the width of the surrogate model's prior and the lengthscale of its kernel. An incorrectly set prior can hinder exploration [22].

- Adjust Acquisition Function: For noisy environments, consider using the Upper Confidence Bound (UCB) with a higher β parameter to weight exploration more heavily [22] [35].

- Validate with a Retest Policy: In physically noisy assays, implement a retest policy to confirm promising candidates and prevent the algorithm from being misled by erroneous high readings [35].
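A small numerical example shows how β shifts the balance. It assumes the common convention UCB = mean + sqrt(β) · std; some libraries multiply the standard deviation by β directly, so check the convention of the tool in use. The candidate names and their posterior values are invented for illustration.

```python
import math

def ucb(mean, std, beta):
    # Assumed convention: the exploration weight is sqrt(beta).
    return mean + math.sqrt(beta) * std

# Hypothetical posterior (mean, std) for two candidates:
# "A" is well characterized, "B" is uncertain with a lower predicted mean.
candidates = {"A": (0.80, 0.05), "B": (0.60, 0.30)}

def pick(beta):
    return max(candidates, key=lambda name: ucb(*candidates[name], beta))

print(pick(0.1))  # small beta favours the confident candidate
print(pick(4.0))  # large beta favours the uncertain candidate
```

With β = 0.1 the scores are roughly 0.816 for A and 0.695 for B, so A is chosen; with β = 4 they become 0.90 and 1.20, so the search moves to the uncertain candidate B.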

Problem: Expert preferences do not seem to be guiding the search effectively.

- Potential Cause: The pairwise comparison data might be too sparse or inconsistent to learn a reliable utility function [33].

- Solutions:

- Increase Query Frequency: Present the expert with a slightly larger number of pairwise comparisons per optimization cycle to gather more preference data.

- Clarify Objectives: Ensure that the drug properties used for comparisons are well-defined and understood by the expert to maintain consistency in feedback.

Experimental Protocols

Protocol 1: Running the CheapVS Framework for EGFR/DRD2

This protocol outlines the core methodology for hit identification on EGFR and DRD2 targets as described in the CheapVS study [33].

- Library Preparation: Obtain a chemical library (e.g., 100,000 candidates). For initial setup, a small random subset (e.g., 5 initial points) is evaluated to seed the model [33] [34].

- Expert Preference Elicitation: Present the domain expert (medicinal chemist) with pairwise comparisons of candidate molecules. The expert chooses the preferred candidate based on the trade-off between multiple properties (e.g., binding affinity vs. solubility).

- Model Fitting: Fit a multi-output Gaussian process surrogate model to the available data, which includes both evaluated property vectors and the inferred preferences.

- Candidate Selection: Using a preferential MOBO acquisition function (which leverages the learned utility function), select the most promising batch of candidates for the next round of evaluation.

- Iterative Optimization: Repeat the preference elicitation, model fitting, and candidate selection steps for the desired number of optimization cycles or until a performance threshold is met.

- Hit Validation: The final output is a shortlist of top-ranking candidates. In the referenced study, this process identified 16/37 known EGFR drugs and 37/58 known DRD2 drugs after screening only 6% of the library [33].

Protocol 2: Implementing a Retest Policy for Noisy Assays

This protocol mitigates the impact of experimental noise, which is common in biochemical assays [35].

- Define Batch Size: Set a fixed batch size (e.g., 100 compounds per batch [35]).

- Rank Candidates: Use your acquisition function to rank all unevaluated candidates.

- Identify Retest Candidates: Select a subset of the highest-value or most uncertain previously tested candidates for retesting. The number of retests (n_retest) should be predefined.

- Form Final Batch: The next batch consists of n_retest retest candidates and (batch_size - n_retest) new candidates from the top of the ranking.

- Update Model: Update the surrogate model with the new results, incorporating both new evaluations and retest data.
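The batch-forming step of Protocol 2 can be sketched as follows. This is one possible reading of the protocol rather than the implementation from [35]: the function `form_batch`, the compound identifiers, and the scores are hypothetical, and a single score per compound is assumed to encode either acquisition value (new candidates) or retest priority (previously tested candidates).

```python
def form_batch(new_scores, retest_scores, batch_size, n_retest):
    """Fill a fixed-size batch with n_retest repeats plus top-ranked new candidates.

    new_scores:    {compound_id: acquisition value} for unevaluated compounds
    retest_scores: {compound_id: retest priority} for already tested compounds
    """
    if not 0 <= n_retest <= batch_size:
        raise ValueError("n_retest must lie between 0 and batch_size")
    # Never request more retests than there are tested compounds.
    n_retest = min(n_retest, len(retest_scores))
    retests = sorted(retest_scores, key=retest_scores.get, reverse=True)[:n_retest]
    n_new = batch_size - n_retest  # each retest replaces one new candidate
    new = sorted(new_scores, key=new_scores.get, reverse=True)[:n_new]
    return retests, new

# Hypothetical scores for illustration.
new_scores = {"cpd_11": 0.91, "cpd_12": 0.40, "cpd_13": 0.77, "cpd_14": 0.65}
retest_scores = {"cpd_01": 0.30, "cpd_02": 0.85, "cpd_03": 0.55}

retests, new = form_batch(new_scores, retest_scores, batch_size=4, n_retest=1)
print(retests, new)
```

Here the batch contains one retest (cpd_02) and the three highest-ranked new compounds, so the experimental budget per batch stays constant.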

The following table summarizes key quantitative results from the CheapVS case study on EGFR and DRD2 targets, demonstrating its high efficiency [33].

Table 1: Summary of CheapVS Performance on EGFR and DRD2 Targets

| Metric | EGFR Target | DRD2 Target |
|---|---|---|
| Library Size | 100,000 compounds | 100,000 compounds |
| Screening Fraction | 6% | 6% |
| Known Drugs Recovered | 16 out of 37 | 37 out of 58 |
| Recovery Rate | 43.2% | 63.8% |

Research Reagent Solutions

Table 2: Key Resources for Implementing Expert-Guided MOBO

| Resource Name | Type | Function in the Experiment |
|---|---|---|
| Chemical Library | Data | A large collection (e.g., 100K candidates) of chemical compounds for virtual screening [33]. |
| Docking Model (e.g., AlphaFold3, Chai-1) | Software/Tool | Computationally measures the binding affinity between a ligand and the target protein (e.g., EGFR, DRD2) [33]. |
| Multi-output Gaussian Process | Surrogate Model | Models the multiple, correlated drug properties and learns the latent utility function from expert preferences [33] [37]. |
| Therapeutics Data Commons | Data Platform | Provides open-access, curated datasets and algorithms for benchmarking AI models across various stages of drug discovery [38]. |

Workflow and System Diagrams

[Diagrams not reproduced here: CheapVS High-Level Workflow; Expert Preference Integration; Error Recovery Process.]

In the competitive landscape of pharmaceutical development, maximizing yield and quality in formulation and bioprocessing is paramount. Traditional optimization methods, such as one-factor-at-a-time (OFAT) approaches, are inefficient for complex, multi-parameter reactions and often fail to identify global optima due to their inability to account for factor interactions [16]. Bayesian Optimization (BO) has emerged as a powerful machine learning framework that transforms reaction engineering and bioprocess development by enabling efficient, cost-effective optimization of complex systems [16].

BO is a sample-efficient global optimization strategy that excels where evaluations are expensive and the search space is high-dimensional [13] [16]. It operates by constructing probabilistic surrogate models of the objective function (e.g., yield, purity) and using acquisition functions to intelligently guide the selection of subsequent experiments by balancing exploration of uncertain regions with exploitation of known promising areas [13]. This approach is particularly valuable in bioprocessing, where experiments are resource-intensive and the relationships between critical process parameters (CPPs) and critical quality attributes (CQAs) are often complex and non-linear.
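The surrogate-plus-acquisition loop described above can be sketched in a few lines of NumPy. This is a toy illustration under several assumptions, not a recommended production setup: the objective `toy_yield` is an invented one-dimensional response, the Gaussian process uses a zero mean and a fixed RBF lengthscale with no hyperparameter fitting, and the acquisition is an upper confidence bound with an arbitrary weight of 2.

```python
import numpy as np

def rbf_kernel(a, b, lengthscale=0.2):
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / lengthscale) ** 2)

def gp_posterior(x_train, y_train, x_test, jitter=1e-6):
    """Zero-mean GP posterior mean and standard deviation on x_test."""
    K = rbf_kernel(x_train, x_train) + jitter * np.eye(len(x_train))
    K_star = rbf_kernel(x_train, x_test)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_star.T @ alpha
    v = np.linalg.solve(L, K_star)
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 1e-12, None)
    return mean, np.sqrt(var)

def toy_yield(x):
    # Hypothetical response surface with its optimum at x = 0.7.
    return np.exp(-((x - 0.7) ** 2) / 0.02)

grid = np.linspace(0.0, 1.0, 201)       # candidate settings of one parameter
tested = np.zeros(grid.size, dtype=bool)
tested[[20, 100, 180]] = True           # seed experiments at 0.1, 0.5, 0.9

for _ in range(10):                     # ten sequential "experiments"
    x_obs = grid[tested]
    mean, std = gp_posterior(x_obs, toy_yield(x_obs), grid)
    ucb = mean + 2.0 * std              # exploitation term + exploration term
    ucb[tested] = -np.inf               # do not repeat an experiment
    tested[np.argmax(ucb)] = True

x_obs = grid[tested]
best_x = x_obs[np.argmax(toy_yield(x_obs))]
print(round(float(best_x), 3))
```

Real campaigns would replace the grid with the actual search space, fit the kernel hyperparameters to data, and typically use one of the packages listed in the next section.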

The integration of BO into bioprocess development aligns with the industry's shift toward Quality by Design (QbD) and Process Analytical Technology (PAT), enabling data-driven, intelligent optimization of multi-parameter processes [39] [40]. With the global bioprocess optimization market projected for substantial growth, driven by demands for biopharmaceuticals and advanced therapies, adopting BO frameworks provides a strategic advantage in accelerating development while maintaining rigorous quality standards [40].

Essential Bayesian Optimization Frameworks and Tools

Implementing BO requires specialized software tools. The table below summarizes key Bayesian Optimization packages relevant to chemical and bioprocess applications.

Table 1: Selected Bayesian Optimization Software Packages

| Package Name | Core Models | Key Features | Applicability to Bioprocessing |
|---|---|---|---|
| BoTorch [13] | Gaussian Processes (GP), others | Multi-objective optimization, built on PyTorch | High - flexible for complex, multi-response problems |
| Ax/Dragonfly [13] | GP | Multi-fidelity optimization, modular framework | High - supports various experiment types and data sources |
| Summit [16] | GP (TSEMO algorithm) | Specialized for chemical reaction optimization, multi-objective | Very High - includes benchmarks and domain-specific features |
| COMBO [13] | GP | Multi-objective optimization | Medium - general-purpose but capable |
| Reasoning BO [4] | GP + Large Language Models (LLMs) | Incorporates scientific reasoning, knowledge graphs | Emerging - useful when leveraging domain knowledge |

The Feature Adaptive Bayesian Optimization (FABO) Framework

A significant challenge in applying BO to materials and molecules is selecting the appropriate numerical representation (feature set). The Feature Adaptive Bayesian Optimization (FABO) framework addresses this by dynamically identifying the most informative features during the optimization campaign [2]. FABO starts with a complete, high-dimensional representation of the material or molecule and, at each cycle, refines this representation using feature selection methods (e.g., mRMR, Spearman ranking) to retain only the most relevant features influencing performance [2]. This ensures the representation is both compact and informative, significantly enhancing BO efficiency, especially in novel tasks where prior knowledge is limited.
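The per-cycle feature refinement can be illustrated with a Spearman-based ranking, one of the selection methods mentioned above. This sketch is not the FABO implementation: `select_features`, the toy descriptor matrix, and the choice of keeping the top two features are assumptions for illustration, and mRMR-style redundancy filtering is omitted.

```python
def ranks(values):
    """Average ranks (1-based), with ties sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def pearson(a, b):
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5 if var_a > 0 and var_b > 0 else 0.0

def spearman(a, b):
    return pearson(ranks(a), ranks(b))

def select_features(X, y, k):
    """Indices of the k features with the largest |Spearman rho| against y."""
    n_features = len(X[0])
    scores = [abs(spearman([row[j] for row in X], y)) for j in range(n_features)]
    return sorted(range(n_features), key=lambda j: scores[j], reverse=True)[:k]

# Toy data: 5 evaluated materials, 3 descriptors each, and a measured property.
X = [[1, 5, 9], [2, 3, 7], [3, 8, 8], [4, 1, 2], [5, 6, 1]]
y = [1.0, 4.0, 9.0, 16.0, 25.0]
print(select_features(X, y, k=2))
```

In this toy data the first descriptor rises monotonically with the property and the third falls almost monotonically, so both are retained while the weakly related second descriptor is dropped. In an adaptive scheme the selection would be recomputed each cycle as new evaluations arrive.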

Frequently Asked Questions (FAQs) on Bayesian Optimization

Q1: My bioprocess has multiple critical quality attributes (CQAs) like yield and purity. How can Bayesian Optimization handle multiple, potentially competing, objectives?

Bayesian Optimization can effectively handle multi-objective problems through Multi-Objective Bayesian Optimization (MOBO). Instead of seeking a single optimal point, MOBO identifies a Pareto front—a set of solutions where improving one objective necessitates worsening another [16]. Frameworks like Summit implement algorithms such as the Thompson Sampling Efficient Multi-Objective (TSEMO) algorithm. This algorithm uses Gaussian Process models for each objective and an acquisition function that guides experiments toward populating the Pareto frontier, allowing you to make informed trade-off decisions based on your specific quality targets [16].
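The notion of a Pareto front can be made concrete with a short non-dominated filter. This only illustrates the definition and is not the TSEMO algorithm; the (yield, purity) values are invented, and both objectives are assumed to be maximized.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(points):
    """Keep the points that no other point dominates (all objectives maximized)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical (yield, purity) results from five runs.
runs = [(0.90, 0.80), (0.85, 0.95), (0.70, 0.99), (0.80, 0.90), (0.60, 0.70)]
front = pareto_front(runs)
print(front)
```

The run (0.80, 0.90) is excluded because (0.85, 0.95) is better on both objectives, whereas the three remaining runs each represent a different yield/purity trade-off.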

Q2: The initial experiments in my BO campaign are yielding poor results. Is the algorithm failing, and how can I improve its start?

It is common for BO to require a few cycles to model the complex response surface effectively. The performance is sensitive to the initial set of experiments, or "seed" data [4]. To ensure a robust start:

- Use Space-Filling Designs: Employ design of experiments (DoE) methods, such as Latin Hypercube Sampling, for the initial batch to ensure broad exploration of the search space [16].

- Incorporate Prior Knowledge: If available, use historical data or expert intuition to bias the initial sampling toward regions believed to be more promising. Emerging frameworks like Reasoning BO leverage Large Language Models (LLMs) to inject such domain priors directly, improving initial performance [4].

- Ensure Proper Feature Representation: If using FABO, starting with a comprehensive feature set is crucial, as a suboptimal initial representation can severely hinder finding the global optimum [2].
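As an illustration of the space-filling suggestion above, here is a minimal Latin Hypercube sampler using only the standard library. The function name `latin_hypercube` and the example bounds (a temperature range and a pH range) are assumptions; established DoE or statistics packages provide more refined variants.

```python
import random

def latin_hypercube(n_samples, bounds, seed=None):
    """Draw n_samples points so that each dimension has one point per stratum.

    bounds: list of (low, high) tuples, one per parameter.
    """
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)  # decouple the strata across dimensions
        columns.append(
            [low + (s + rng.random()) / n_samples * (high - low) for s in strata]
        )
    return [tuple(column[i] for column in columns) for i in range(n_samples)]

# Hypothetical search space: temperature in degrees C and pH.
design = latin_hypercube(8, [(20.0, 80.0), (6.0, 8.0)], seed=1)
for temperature, ph in design:
    print(f"{temperature:6.2f}  {ph:4.2f}")
```

Each of the eight equal-width temperature intervals, and each of the eight pH intervals, contains exactly one initial experiment, which gives broader coverage than the same number of purely random draws tends to.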

Q3: My experimental measurements are sometimes noisy. How robust is Bayesian Optimization to this noise?

BO, particularly when using Gaussian Process (GP) surrogates, is inherently capable of handling noisy observations. You can explicitly model the noise by specifying a likelihood function (e.g., a Gaussian likelihood) for the GP. The GP will then estimate the underlying function while accounting for the measurement uncertainty, preventing the algorithm from overfitting to noisy data points [13] [16]. The acquisition function will naturally balance the need to explore noisy regions to reduce uncertainty with the need to exploit confidently known optima.
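For reference, the standard Gaussian process posterior under a Gaussian likelihood shows where the noise enters. The notation is an assumption introduced here rather than taken from the cited sources: a zero-mean prior with kernel $k$, training inputs $x_1, \dots, x_n$ with observed values $y$, kernel matrix $K_{ij} = k(x_i, x_j)$, the vector $k_*$ with entries $k(x_i, x_*)$, and noise variance $\sigma_n^2$.

```latex
\begin{aligned}
\mu(x_*) &= k_*^\top \left(K + \sigma_n^2 I\right)^{-1} y, \\
\sigma^2(x_*) &= k(x_*, x_*) - k_*^\top \left(K + \sigma_n^2 I\right)^{-1} k_* .
\end{aligned}
```

With $\sigma_n^2 > 0$ the posterior mean no longer passes exactly through each observation, and the predictive variance at a tested point stays above zero, which is why repeated measurements of the same condition remain informative.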

Q4: I am optimizing categorical variables, like different cell culture media or resin types. Can Bayesian Optimization handle these alongside continuous parameters like temperature and pH?

Yes, this is a key strength of modern BO implementations. While GPs traditionally work with continuous inputs, kernels have been developed to handle mixed spaces containing both continuous and categorical parameters [16]. Software packages like BoTorch and Ax support these complex search spaces, allowing you to simultaneously optimize discrete choices (e.g., catalyst type, solvent) and continuous parameters (e.g., concentration, reaction time) within a single optimization campaign [13].
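One simple way to build a kernel over a mixed space is to multiply an RBF kernel on the continuous parameters by an exponential Hamming-distance kernel on the categorical ones. The sketch below illustrates that construction only; it is not claimed to be the kernel that BoTorch or Ax uses internally, and the function `mixed_kernel`, its parameters, and the example settings are hypothetical.

```python
import math

def mixed_kernel(x1, x2, lengthscale=1.0, mismatch_penalty=1.0):
    """Similarity between two settings given as (continuous_values, categories).

    Continuous part: RBF kernel on the Euclidean distance.
    Categorical part: exp(-penalty * number of differing categories).
    """
    (cont1, cat1), (cont2, cat2) = x1, x2
    squared_distance = sum((a - b) ** 2 for a, b in zip(cont1, cont2))
    k_continuous = math.exp(-0.5 * squared_distance / lengthscale ** 2)
    n_mismatches = sum(a != b for a, b in zip(cat1, cat2))
    k_categorical = math.exp(-mismatch_penalty * n_mismatches)
    return k_continuous * k_categorical

# Hypothetical settings: (scaled temperature, scaled pH) and (medium, resin).
run_a = ((0.5, 0.2), ("medium_A", "resin_X"))
run_b = ((0.5, 0.2), ("medium_B", "resin_X"))
run_c = ((0.9, 0.2), ("medium_A", "resin_X"))

print(mixed_kernel(run_a, run_a))  # identical settings
print(mixed_kernel(run_a, run_b))  # same numbers, different medium
print(mixed_kernel(run_a, run_c))  # same categories, different temperature
```

Both factors are valid kernels, so their product is as well; in practice the lengthscale and mismatch penalty would be learned from data, often separately for each parameter.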

Troubleshooting Common Bayesian Optimization Workflow Issues

Problem: BO Algorithm Gets Stuck in a Local Optimum

- Symptoms: The algorithm repeatedly suggests experiments in a small region with only marginal improvement and fails to reach higher-performing regions that earlier screening had indicated.

- Possible Causes & Solutions:

- Cause 1: Overly Greedy Exploitation. The acquisition function's balance is skewed too heavily toward exploitation.

- Solution: Use an acquisition function like Upper Confidence Bound (UCB) and increase its