Bayesian Optimization in Molecular Discovery: A Guide to Data-Efficient Property Prediction and Drug Design

Abstract

This article provides a comprehensive overview of Bayesian optimization (BO) for molecular property prediction, a powerful machine learning framework that is transforming data-efficient drug and materials discovery. It covers the foundational principles of BO, including surrogate models and acquisition functions, and explores cutting-edge methodological advances such as adaptive feature selection, multi-fidelity approaches, and ranking-based surrogates. The content addresses key challenges like high-dimensional search spaces and noisy data, offering practical optimization strategies. Furthermore, it validates these approaches through comparative analysis of performance across diverse molecular optimization tasks and real-world applications in autonomous discovery platforms, providing researchers and drug development professionals with the insights needed to implement BO in their workflows.

What is Bayesian Optimization? Core Principles for Navigating Chemical Space

Framing Molecular Discovery as a Global Optimization Problem

The process of molecular discovery, particularly in the field of drug development, is inherently a complex global optimization problem. Researchers aim to find molecules with an optimal combination of properties—such as high binding affinity, low toxicity, and good solubility—within a vast and high-dimensional chemical space. Bayesian optimization (BO) has emerged as a powerful machine learning framework to solve these black-box optimization problems where the objective function is expensive to evaluate and lacks an analytical form [1] [2]. By leveraging a surrogate model to approximate the unknown landscape and an acquisition function to guide the selection of promising candidates, BO efficiently balances exploration of unknown regions with exploitation of known promising areas, significantly accelerating the discovery process [1] [3] [2]. This article details practical protocols and applications for implementing BO in molecular discovery campaigns.

Core Components of Bayesian Optimization

A successful Bayesian optimization pipeline consists of several key algorithmic building blocks. The table below summarizes their functions and common implementations.

Table 1: Core Components of a Bayesian Optimization Pipeline

| Component | Function | Common Choices & Notes |
|---|---|---|
| Surrogate Model | Models the posterior distribution of the objective function; predicts mean and uncertainty. | Gaussian Process (GP) is standard for its uncertainty quantification [1] [4]. Bayesian Neural Networks are also used [5]. |
| Acquisition Function | Guides the selection of the next experiment by balancing exploration and exploitation. | Expected Improvement (EI), Upper Confidence Bound (UCB) [4] [3], and information-based methods (e.g., BALD [6]) are popular. |
| Molecular Representation | Converts molecular structure into a numerical feature vector for the surrogate model. | Fixed fingerprints (e.g., ECFP), learned representations (e.g., from BERT [6]), or adaptive representations (e.g., FABO [4]). |
| Experimental Goal | Defines the success criteria for the optimization campaign. | Can be single-objective (e.g., maximize affinity) [2], multi-objective [7] [3], or target a specific property subset [3]. |

Application Notes & Experimental Protocols

Protocol 1: Single-Objective Hit Optimization with Fixed Representation

This protocol is designed for the common scenario of optimizing a single primary molecular property, such as binding affinity in virtual screening.

Detailed Methodology:

Problem Formulation:

- Objective Function: Define the property to be optimized (e.g., negative binding energy from a docking simulation).

- Search Space: Define the chemical library or generative space of molecules to be explored.

Initialization:

Molecular Representation:

- Using a pre-defined method, convert each molecule in the search space and the training set into a fixed numerical vector. Common choices include Extended-Connectivity Fingerprints (ECFPs) or learned representations from a pre-trained model like MolBERT [6].

Bayesian Optimization Loop: Repeat until the experimental budget (e.g., number of evaluations) is exhausted:

- Model Training: Train a Gaussian Process (GP) surrogate model on the current set of labeled data (D). The GP models the underlying property landscape, providing a mean and variance prediction for every molecule in the search space [1] [2].

- Candidate Selection: Using the GP's predictions, calculate an acquisition function across the search space. For single-objective optimization, Expected Improvement (EI) is a standard choice [2]. The molecule with the maximum acquisition value is selected for evaluation.

- Expensive Evaluation: Evaluate the selected molecule using the expensive objective function (e.g., run a docking simulation or wet-lab experiment) to obtain its true property value, y_new.

- Data Update: Augment the training dataset: D = D ∪ {(x_new, y_new)}.

Output: Return the molecule with the best observed objective function value from the entire campaign.
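The loop in Protocol 1 can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a reference implementation: the surrogate here is a crude distance-based stand-in (named `toy_surrogate`, hypothetical) rather than a Gaussian Process, molecules are represented by a single made-up numeric descriptor, and the objective is a toy function. Only the loop structure (initialize, select by EI, evaluate, update, return the best observation) mirrors the protocol.

```python
import math
import random


def expected_improvement(mean, std, best):
    """Closed-form EI for maximization under a Gaussian prediction."""
    if std <= 0.0:
        return max(mean - best, 0.0)
    z = (mean - best) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best) * cdf + std * pdf


def toy_surrogate(labeled, x):
    """Stand-in for a GP (assumption): inverse-distance-weighted mean, with
    the distance to the nearest labeled point as a crude uncertainty."""
    dists = [abs(x - xi) for xi, _ in labeled]
    weights = [1.0 / (d + 1e-9) for d in dists]
    mean = sum(w * yi for w, (_, yi) in zip(weights, labeled)) / sum(weights)
    return mean, min(dists)


def run_bo(pool, objective, n_init=3, budget=10, seed=0):
    rng = random.Random(seed)
    # Initialization: label a small random subset of the pool.
    labeled = [(x, objective(x)) for x in rng.sample(pool, n_init)]
    while len(labeled) < budget:
        seen = {x for x, _ in labeled}
        candidates = [x for x in pool if x not in seen]
        if not candidates:
            break
        best = max(y for _, y in labeled)
        # Candidate selection: maximize EI over the unlabeled molecules.
        x_new = max(
            candidates,
            key=lambda x: expected_improvement(*toy_surrogate(labeled, x), best),
        )
        # Expensive evaluation and data update: D = D ∪ {(x_new, y_new)}.
        labeled.append((x_new, objective(x_new)))
    # Output: best observed molecule over the whole campaign.
    return max(labeled, key=lambda pair: pair[1]), labeled


# Hypothetical 1-D descriptor pool and objective, for illustration only.
pool = [i / 20 for i in range(21)]
(best_x, best_y), history = run_bo(pool, lambda x: -(x - 0.7) ** 2)
print(best_x, best_y, len(history))
```

In practice the stand-in surrogate would be replaced by a GP from a library such as those listed in the toolkit table, while the surrounding loop stays the same.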

Protocol 2: Multi-Objective & Preference-Guided Optimization

Drug discovery requires balancing multiple, often competing, properties. This protocol uses Preferential Multi-Objective Bayesian Optimization to incorporate expert knowledge [7].

Detailed Methodology:

Problem Formulation:

- Objective Functions: Define the multiple properties to be considered (e.g., binding affinity, solubility, synthetic accessibility).

- Expert Preference Elicitation: Present the chemist with pairs of candidate molecules and their simulated properties. The expert indicates their preferred candidate based on an intuitive trade-off between the properties [7].

Preference Learning:

- From the collected pairwise comparisons, learn a latent utility function that captures the expert's implicit weighting of the different objectives. This utility function consolidates the multi-objective problem into a single-objective problem that reflects domain knowledge.

Bayesian Optimization Loop:

- Surrogate Modeling: Train a separate GP model for each molecular property of interest.

- Acquisition: Use a multi-objective acquisition function like Expected Hypervolume Improvement (EHVI) [7] [3] or optimize the expected utility.

- Evaluation & Update: Evaluate the proposed candidate across all objectives, update the respective GP models, and obtain new expert preferences on the latest candidates to refine the utility function.
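The preference-learning step can be illustrated with a deliberately simple model. The sketch below assumes a linear utility over property values and fits it to pairwise choices with a Bradley-Terry likelihood; the method in [7] may well use a richer (for example GP-based) preference model, so treat this only as an illustration of how pairwise comparisons turn into a scalar utility. The property values and the function names are hypothetical.

```python
import math


def fit_linear_utility(pairs, n_objectives, lr=0.5, epochs=500):
    """Fit weights w of u(z) = w . z from (preferred, rejected) property
    pairs by gradient ascent on the Bradley-Terry log-likelihood."""
    w = [0.0] * n_objectives
    for _ in range(epochs):
        grad = [0.0] * n_objectives
        for preferred, rejected in pairs:
            diff = [a - b for a, b in zip(preferred, rejected)]
            margin = sum(wi * di for wi, di in zip(w, diff))
            p_correct = 1.0 / (1.0 + math.exp(-margin))
            for j, dj in enumerate(diff):
                # d/dw log(sigmoid(w . diff)) = (1 - sigmoid) * diff
                grad[j] += (1.0 - p_correct) * dj
        w = [wi + lr * gj / len(pairs) for wi, gj in zip(w, grad)]
    return w


def utility(w, props):
    return sum(wi * pi for wi, pi in zip(w, props))


# Hypothetical (affinity, solubility) values scaled to [0, 1].
# In each pair the expert preferred the first candidate.
pairs = [
    ((0.9, 0.3), (0.4, 0.5)),
    ((0.7, 0.6), (0.4, 0.5)),
    ((0.8, 0.4), (0.4, 0.5)),
    ((0.6, 0.6), (0.4, 0.5)),
]
w = fit_linear_utility(pairs, n_objectives=2)
print(w)
```

The learned weights can then score new candidates with `utility(w, props)`, which is the single-objective quantity the BO loop would optimize in place of the raw objectives.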

Protocol 3: Adaptive Representation with FABO

The choice of molecular representation is critical. The Feature Adaptive Bayesian Optimization (FABO) framework dynamically learns the most relevant features during the BO process, which is especially useful for novel tasks lacking prior knowledge [4].

Detailed Methodology:

Initialization:

- Begin with a large, comprehensive set of molecular features that capture both chemical and geometric (e.g., for materials) information. For example, start with Revised Autocorrelation Calculations (RACs) and stoichiometric features [4].

- Initialize with a small, randomly selected labeled dataset.

Adaptive BO Loop: At each cycle:

- Feature Selection: Apply a feature selection algorithm to the currently available labeled data to identify the most informative features. The Maximum Relevancy Minimum Redundancy (mRMR) method is an effective choice [4]. This step reduces dimensionality and tailors the representation to the task.

- Model Training & Candidate Selection: Train the GP surrogate model using only the adapted, task-relevant feature set. Use the acquisition function to select the next molecule for evaluation.

- The process repeats, with the feature set being refined at each iteration based on newly acquired data.
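The feature-selection step can be sketched as a greedy mRMR pass. The scoring used here (absolute Pearson correlation for both relevance and redundancy, combined as a difference) is an assumption chosen for brevity; the exact criterion used in FABO [4] may differ. Feature names and values are hypothetical.

```python
import math


def pearson(a, b):
    """Pearson correlation; returns 0.0 for a constant column."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    if va == 0.0 or vb == 0.0:
        return 0.0
    return cov / math.sqrt(va * vb)


def mrmr_select(columns, target, k):
    """Greedy mRMR: at each step pick the feature with the highest
    (relevance to target) - (mean redundancy with selected features)."""
    relevance = {name: abs(pearson(col, target)) for name, col in columns.items()}
    selected = []
    remaining = set(columns)
    while remaining and len(selected) < k:

        def score(name):
            if not selected:
                return relevance[name]
            redundancy = sum(
                abs(pearson(columns[name], columns[s])) for s in selected
            ) / len(selected)
            return relevance[name] - redundancy

        best = max(sorted(remaining), key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


# Hypothetical descriptors for four labeled molecules.
columns = {
    "desc_a": [1.0, 1.0, -1.0, -1.0],
    "desc_a_variant": [2.2, 1.8, -2.2, -1.8],  # nearly a rescaled copy of desc_a
    "desc_b": [1.0, -1.0, 1.0, -1.0],
}
target = [4.0, 2.0, -2.0, -4.0]  # 3 * desc_a + 1 * desc_b
print(mrmr_select(columns, target, k=2))  # -> ['desc_a', 'desc_b']
```

Note that `desc_a_variant` is almost as relevant as `desc_a` but is skipped in the second round because it is redundant with the feature already selected, which is the behavior the protocol relies on to keep the representation compact.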

The Scientist's Toolkit

This section lists key resources and software for implementing Bayesian optimization in molecular discovery.

Table 2: Essential Research Reagent Solutions for Bayesian Optimization

| Category | Tool / Resource | Function & Application Notes |
|---|---|---|
| Software Libraries | BoTorch, Ax [2] | Flexible, modular Python frameworks for implementing BO, supporting advanced features like multi-objective optimization. |
| | GAUCHE [1] [2] | A library specifically designed for Gaussian processes in chemical and scientific applications. |
| Molecular Representations | Extended-Connectivity Fingerprints (ECFPs) | Fixed, circular topological fingerprints; a standard baseline for molecular representation. |
| | MolBERT / Pre-trained Transformers [6] | Provides high-quality, contextual molecular representations learned from large unlabeled datasets; improves data efficiency. |
| | RACs (Revised Autocorrelation Calculations) [4] | Hand-crafted physical-chemical descriptors particularly useful for representing materials like Metal-Organic Frameworks (MOFs). |
| Feature Selection | mRMR (Max-Relevance Min-Redundancy) [4] | Feature selection method that balances relevance to the target and redundancy among features; used in the FABO framework. |
| Surrogate Models | Gaussian Process (GP) Regression [1] [4] | The gold standard for BO due to its native uncertainty estimates. Can be combined with informed priors for better performance [5]. |
| Experimental Goals | BAX Framework (InfoBAX, SwitchBAX) [3] | A framework for targeting specific subsets of the design space (e.g., finding all materials with a property above a threshold), beyond simple optimization. |

Bayesian optimization (BO) has emerged as a powerful, data-efficient strategy for navigating complex scientific design spaces, particularly in molecular property optimization (MPO) where traditional methods struggle with high dimensionality and expensive experimental evaluations. The core challenge in MPO involves identifying molecules with optimal functional properties from combinatorial chemical spaces that can exceed 100,000 candidates, while constrained to fewer than 100 property evaluations via simulations or wet-lab experiments [8]. BO addresses this through a principled framework that balances exploration of uncertain regions with exploitation of promising areas, making it indispensable for modern molecular discovery in pharmaceuticals, materials science, and chemical engineering. The effectiveness of BO hinges on two fundamental components: probabilistic surrogate models that approximate the black-box objective function, and acquisition functions that guide the sequential selection of evaluation points by quantifying potential utility [9]. This article examines the operational principles of these components within the BO cycle, providing detailed protocols for their implementation in molecular property prediction research.

The Bayesian Optimization Cycle: Core Components and Workflow

Theoretical Foundation and Mathematical Framework

The molecular property optimization problem is formally posed as finding a molecule \( m^* \) from a discrete set \( \mathcal{M} \) that maximizes a black-box objective function \( F(m) \), which maps molecules to property values [8]. This function is typically expensive to evaluate and often noisy. BO solves this through sequential decision-making: at each iteration \( t \), it uses all available data \( \mathcal{D}_{1:t} = \{(m_1, y_1), \ldots, (m_t, y_t)\} \) to build a probabilistic surrogate model of \( F \), then selects the next candidate \( m_{t+1} \) by maximizing an acquisition function \( \alpha(m) \). The core BO equation is:

\[ m_{t+1} = \arg\max_{m \in \mathcal{M}} \alpha(m \mid \mathcal{D}_{1:t}) \]

This process continues until meeting a termination criterion (e.g., evaluation budget or convergence threshold). The strength of BO lies in its ability to quantify uncertainty and strategically reduce it through intelligent experiment selection [9] [10].

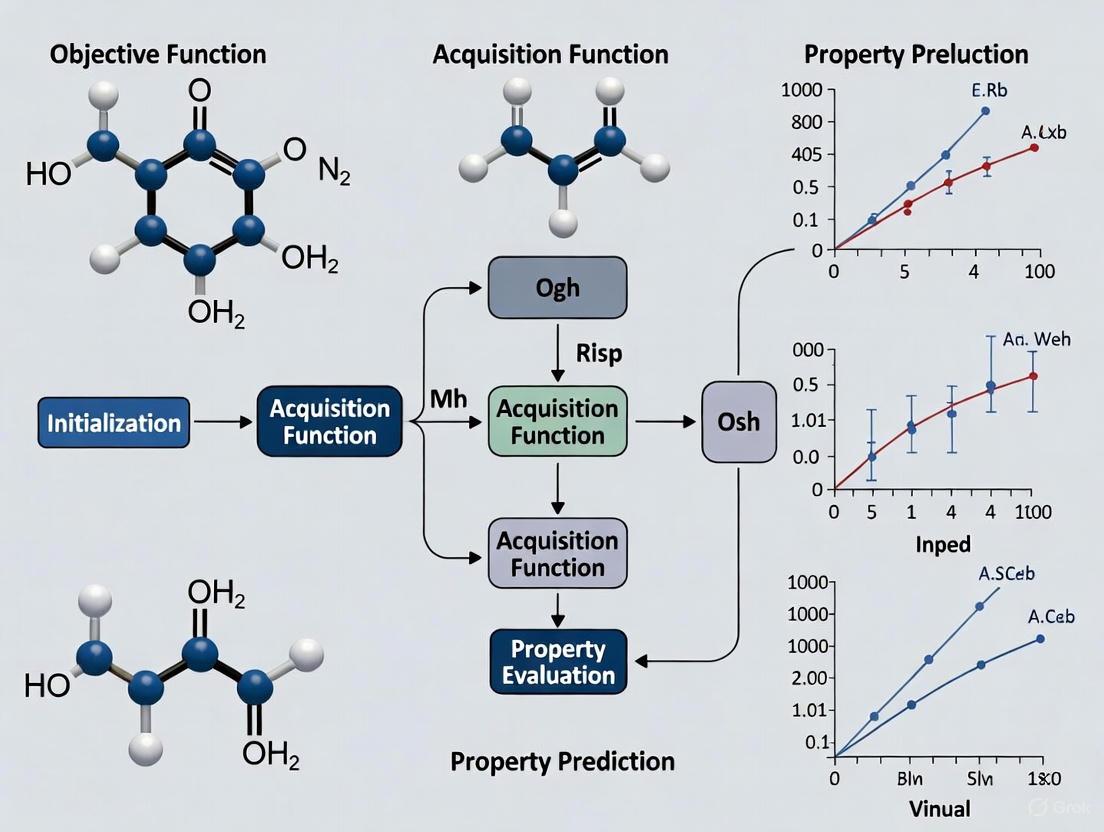

Visualizing the Bayesian Optimization Workflow

The following diagram illustrates the complete Bayesian optimization cycle as applied to molecular property prediction:

Surrogate Models in Bayesian Optimization

Gaussian Process Fundamentals

Gaussian processes (GPs) serve as the predominant surrogate model in Bayesian optimization due to their flexibility, analytical tractability, and native uncertainty quantification [8] [9]. A GP defines a distribution over functions, completely specified by a mean function \( \mu(m) \) and covariance kernel \( k(m, m') \):

\[ f(m) \sim \mathcal{GP}(\mu(m), k(m, m')) \]

Given a dataset \( \mathcal{D} = \{(m_i, y_i)\}_{i=1}^n \) with \( y_i = F(m_i) + \varepsilon_i \) and \( \varepsilon_i \sim \mathcal{N}(0, \lambda_i) \), the posterior predictive distribution at a new point \( m \) is Gaussian with closed-form expressions for mean and variance [8]:

\[ \mathbb{E}[f(m) \mid \mathcal{D}] = \mu(m) + \mathbf{k}_n(m)^\top (\mathbf{K}_n + \mathbf{\Lambda}_n)^{-1} (\mathbf{y}_n - \mathbf{u}_n) \]

\[ \mathbb{V}[f(m) \mid \mathcal{D}] = k(m, m) - \mathbf{k}_n(m)^\top (\mathbf{K}_n + \mathbf{\Lambda}_n)^{-1} \mathbf{k}_n(m) \]

where \( \mathbf{k}_n(m) = [k(m, m_1), \ldots, k(m, m_n)] \), \( \mathbf{K}_n \) is the covariance matrix between training points, \( \mathbf{y}_n \) is the vector of observed values, \( \mathbf{u}_n \) is the vector of mean values at training points, and \( \mathbf{\Lambda}_n \) is a diagonal matrix of measurement noise variances [8].
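The two posterior expressions can be checked numerically with a short, dependency-free sketch. It assumes a constant prior mean (so every entry of the mean vector is `prior_mean`), an RBF kernel with unit hyperparameters, and molecules represented as small feature tuples; all of these are illustrative choices rather than anything prescribed by [8]. The linear systems are solved with a hand-written Cholesky factorization to keep the example self-contained.

```python
import math


def rbf(x, z, lengthscale=1.0, variance=1.0):
    sq = sum((a - b) ** 2 for a, b in zip(x, z))
    return variance * math.exp(-0.5 * sq / lengthscale ** 2)


def cholesky(a):
    """Lower-triangular L with L L^T = a (a must be positive definite)."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            low[i][j] = math.sqrt(s) if i == j else s / low[j][j]
    return low


def chol_solve(low, b):
    """Solve (L L^T) x = b by forward then backward substitution."""
    n = len(b)
    z = [0.0] * n
    for i in range(n):
        z[i] = (b[i] - sum(low[i][k] * z[k] for k in range(i))) / low[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (z[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


def gp_posterior(train_x, train_y, query, noise_vars, prior_mean=0.0):
    n = len(train_x)
    # K_n + Lambda_n, with Lambda_n = diag(noise_vars)
    k_mat = [
        [rbf(train_x[i], train_x[j]) + (noise_vars[i] if i == j else 0.0)
         for j in range(n)]
        for i in range(n)
    ]
    low = cholesky(k_mat)
    k_vec = [rbf(query, xi) for xi in train_x]  # k_n(m)
    alpha = chol_solve(low, [y - prior_mean for y in train_y])
    mean = prior_mean + sum(k * a for k, a in zip(k_vec, alpha))
    v = chol_solve(low, k_vec)
    var = rbf(query, query) - sum(k * vi for k, vi in zip(k_vec, v))
    return mean, max(var, 0.0)  # clamp tiny negative rounding errors


train_x = [(0.0,), (1.0,), (2.0,)]
train_y = [0.0, 1.0, 0.5]
noise = [1e-6] * 3
print(gp_posterior(train_x, train_y, (1.0,), noise))   # near a training point
print(gp_posterior(train_x, train_y, (10.0,), noise))  # far from all data
```

Near an observed molecule the posterior mean tracks the observation and the variance collapses toward the noise level, while far from the data the prediction reverts to the prior mean with variance close to \( k(m, m) \).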

Advanced Surrogate Modeling Techniques

Table 1: Comparison of Gaussian Process Surrogate Models for Molecular Optimization

| Model Type | Key Features | Molecular Applications | Advantages | Limitations |
|---|---|---|---|---|
| Conventional GP (cGP) | Standard kernel functions (RBF, Matérn) | Single-property optimization [10] | Mathematical rigor, uncertainty quantification [10] | Cannot capture property correlations [10] |
| Multi-Task GP (MTGP) | Shared kernel across related tasks | Correlated material properties [10] | Leverages correlations, improves data efficiency [10] | Complex kernel design [10] |
| Deep GP (DGP) | Hierarchical composition of GPs | Complex, non-linear property relationships [10] | Captures complex patterns [10] | Computationally intensive [10] |
| Sparse GP (SAAS) | Sparsity-inducing priors for high dimensions | Molecular descriptor libraries [8] | Automatic relevance determination, handles 100+ descriptors [8] | Requires Bayesian inference [8] |

Molecular Representations for Surrogate Modeling

The choice of molecular representation critically impacts BO performance. Common featurization approaches include:

- Descriptor-based feature vectors: Computed physicochemical properties (void fraction, pore diameters, surface area) that provide interpretable representations [11]

- Fingerprints: Binary vectors encoding molecular substructures [8]

- Learned embeddings: Neural network-generated representations via pretrained transformers (e.g., BERT) on large molecular databases [6]

The MolDAIS framework demonstrates how adaptive subspace identification within large descriptor libraries enables effective optimization in 100+ dimensional spaces using sparsity-inducing techniques [8].

Acquisition Functions: Strategic Guidance of Experiments

Taxonomy of Acquisition Functions

Acquisition functions formalize the trade-off between exploration (sampling uncertain regions) and exploitation (sampling promising regions) by quantifying the expected utility of evaluating a candidate point. The following diagram illustrates the decision logic for common acquisition functions:

Quantitative Comparison of Acquisition Strategies

Table 2: Performance Comparison of Acquisition Functions in Molecular Optimization

| Acquisition Function | Mathematical Formulation | Optimization Type | Molecular Application Results | Computational Complexity |
|---|---|---|---|---|
| Expected Improvement (EI) | \( \mathbb{E}[\max(f(m) - f(m^+), 0)] \) | Single-objective | Identifies optimal MOFs for methane storage [11] | Moderate |
| Probability of Improvement (PI) | \( P(f(m) \geq f(m^+) + \xi) \) | Single-objective | Selects informative MOFs for adsorption modeling [11] | Low |
| Upper Confidence Bound (UCB) | \( \mu(m) + \kappa\sigma(m) \) | Single-objective | Molecular property optimization with trade-off parameter κ [8] | Low |
| BALD | \( \mathbb{I}[\theta, y \mid m, \mathcal{D}] \) | Active Learning | Toxic compound identification with 50% fewer iterations [6] | High (requires posterior) |
| GP Standard Deviation | \( \sigma(m) \) | Pure Exploration | Uncertainty sampling for broad coverage [11] | Low |
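For a Gaussian posterior with mean μ and standard deviation σ, the first three rows of the table have closed forms. The sketch below writes them out and compares two made-up candidates, one with a slightly better mean and low uncertainty, one with a worse mean and high uncertainty. The numbers and the default values of `xi` and `kappa` are illustrative assumptions.

```python
import math


def _pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best):
    """E[max(f - best, 0)] for f ~ N(mu, sigma^2)."""
    if sigma <= 0.0:
        return max(mu - best, 0.0)
    z = (mu - best) / sigma
    return (mu - best) * _cdf(z) + sigma * _pdf(z)


def probability_of_improvement(mu, sigma, best, xi=0.0):
    """P(f >= best + xi) for f ~ N(mu, sigma^2)."""
    if sigma <= 0.0:
        return 1.0 if mu >= best + xi else 0.0
    return _cdf((mu - best - xi) / sigma)


def upper_confidence_bound(mu, sigma, kappa=2.0):
    return mu + kappa * sigma


best = 0.9                    # incumbent value f(m^+)
safe = (1.0, 0.1)             # (mu, sigma): small, fairly certain gain
risky = (0.8, 0.6)            # (mu, sigma): lower mean, high uncertainty
for name, fn in [
    ("EI", lambda c: expected_improvement(*c, best)),
    ("PI", lambda c: probability_of_improvement(*c, best)),
    ("UCB", lambda c: upper_confidence_bound(*c)),
]:
    print(name, round(fn(safe), 4), round(fn(risky), 4))
```

With these inputs PI ranks the safe candidate higher, whereas EI and UCB (at κ = 2) prefer the uncertain one, which illustrates why PI is usually regarded as the most exploitative of the three.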

Integrated Experimental Protocols

Protocol 1: Molecular Property Optimization with Adaptive Descriptors

Objective: Identify molecules with optimal target properties from large chemical libraries using the MolDAIS framework [8].

Materials and Reagents:

- Chemical Library: >100,000 candidate molecules (e.g., ZINC database, CoRE MOFs [11])

- Descriptor Software: RDKit, Dragon for molecular descriptor calculation

- BO Framework: Custom implementation with SAAS prior or adaptive screening variants

Procedure:

Featurization Phase:

- Compute comprehensive descriptor library (150+ descriptors) for all molecules in search space

- Standardize descriptors to zero mean and unit variance

Initial Experimental Design:

- Select 5-10 initial points via Latin hypercube sampling across descriptor space

- Evaluate initial molecules via simulation or experiment to create training set \( \mathcal{D}_0 \)

BO Iteration Loop:

- Train GP surrogate with SAAS prior on current data \( \mathcal{D}_t \)

- Optimize acquisition function (EI recommended) to select next candidate \( m_{t+1} \)

- Evaluate property of \( m_{t+1} \) via experiment/simulation

- Update dataset: \( \mathcal{D}_{t+1} = \mathcal{D}_t \cup \{(m_{t+1}, y_{t+1})\} \)

- Check convergence (minimum improvement threshold or evaluation budget)

Termination:

- Return best-performing molecule after 50-100 evaluations

- Analyze identified descriptor subspace for interpretability
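Two of the bookkeeping steps in this procedure, descriptor standardization and the convergence check, are easy to get subtly wrong, so a small sketch may help. The handling of constant descriptors (mapped to zero) and the `patience` parameter of the stopping rule are assumptions introduced here for illustration; the source only states "minimum improvement threshold or evaluation budget".

```python
import statistics


def standardize_columns(rows):
    """Scale each descriptor column to zero mean and unit variance.
    Constant columns carry no information and are mapped to 0.0."""
    cols = list(zip(*rows))
    means = [statistics.fmean(c) for c in cols]
    stds = [statistics.pstdev(c) for c in cols]
    scaled = [
        [(v - m) / s if s > 0 else 0.0 for v, m, s in zip(row, means, stds)]
        for row in rows
    ]
    return scaled, means, stds


def should_stop(best_so_far, budget, min_improvement=1e-3, patience=5):
    """best_so_far[t] is the best objective value seen after t+1 evaluations.
    Stop when the budget is spent or the best value has improved by less
    than min_improvement over the last `patience` evaluations."""
    if len(best_so_far) >= budget:
        return True
    if len(best_so_far) <= patience:
        return False
    return best_so_far[-1] - best_so_far[-1 - patience] < min_improvement


# Hypothetical descriptor rows: two informative columns and one constant.
rows = [[1.0, 10.0, 5.0], [2.0, 20.0, 5.0], [3.0, 30.0, 5.0]]
scaled, means, stds = standardize_columns(rows)
print(scaled[0])
print(should_stop([0.1, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5], budget=50))
```

The means and standard deviations are returned so that the same transformation can be reapplied to molecules added to the search space later.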

Validation: Benchmark against random search and conventional BO on public molecular datasets (Tox21, ClinTox [6]). Expected performance: Identifies near-optimal candidates with 50% fewer evaluations than conventional approaches [8].

Protocol 2: Multi-Objective Materials Discovery with Hierarchical GPs

Objective: Discover high-entropy alloy compositions optimizing multiple correlated properties (e.g., low thermal expansion coefficient and high bulk modulus) [10].

Materials:

- Search Space: FeCrNiCoCu high-entropy alloy composition space

- Characterization: High-throughput atomistic simulations for property evaluation

- Modeling Framework: MTGP or DGP with advanced kernel structures

Procedure:

Multi-Objective Framework Setup:

- Define property targets and constraints (e.g., coefficient of thermal expansion (CTE) below a target value, bulk modulus (BM) above a threshold)

- Formulate weighted objective function or Pareto optimization scheme

Surrogate Model Configuration:

- Implement MTGP with coregionalization kernel to capture property correlations

- Alternative: DGP with 2-3 hidden layers for hierarchical representation learning

Parallel BO Execution:

- Use batch acquisition function (e.g., q-EI) for parallel evaluation of 3-5 compositions

- Evaluate candidate compositions via atomistic simulations

- Update surrogate model with all new data points

Iteration and Analysis:

- Continue for 20-30 iterations (100-150 total evaluations)

- Analyze predicted property correlations and composition-property relationships

- Validate top candidates with additional characterization

Validation: Compare against cGP-BO on FeCrNiCoCu HEA system. Expected performance: MTGP-BO and DGP-BO achieve 30-50% faster convergence by exploiting property correlations [10].
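When the Pareto option is chosen instead of a weighted objective, the evaluated compositions need to be filtered down to the non-dominated set. A minimal sketch for the two properties named in this protocol (CTE minimized, bulk modulus maximized) follows; the numeric values are invented for illustration and do not come from [10].

```python
def dominates(a, b):
    """a and b are (cte, bulk_modulus) tuples. a dominates b if it is no
    worse on both objectives (lower CTE, higher BM) and strictly better
    on at least one."""
    no_worse = a[0] <= b[0] and a[1] >= b[1]
    strictly_better = a[0] < b[0] or a[1] > b[1]
    return no_worse and strictly_better


def pareto_front(points):
    """Return the non-dominated points, preserving input order."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (CTE, bulk modulus) results for five alloy compositions.
evaluated = [
    (12.0, 150.0),
    (10.0, 140.0),
    (11.0, 160.0),
    (13.0, 155.0),
    (10.5, 135.0),
]
print(pareto_front(evaluated))  # -> [(10.0, 140.0), (11.0, 160.0)]
```

The quadratic scan is adequate for the 100-150 evaluations this protocol anticipates; larger campaigns would typically rely on the non-dominated sorting utilities shipped with a BO library.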

Table 3: Essential Research Reagents and Computational Tools for Molecular BO

| Category | Specific Tools/Resources | Function | Application Context |
|---|---|---|---|
| Molecular Representations | RDKit, Dragon descriptors, MolBERT embeddings [6] | Convert molecular structures to feature vectors | Create input representations for surrogate models |
| Surrogate Modeling | GPflow, GPyTorch, Stan (for SAAS) | Build probabilistic models of molecular property functions | Implement cGP, MTGP, DGP, or sparse GP models |
| Acquisition Optimization | BoTorch, SciPy optimize | Maximize acquisition functions to select candidates | Implement EI, UCB, PI, and information-theoretic functions |
| Experimental Platforms | High-throughput simulation (GCMC [11]), automated synthesis robots | Evaluate candidate molecules/properties | Generate training data for BO cycles |
| Benchmark Datasets | Tox21 [6], ClinTox [6], CoRE MOFs [11] | Validate BO performance | Compare algorithms on public molecular property data |

Bayesian optimization represents a paradigm shift in data-efficient molecular discovery, enabling researchers to navigate vast chemical spaces with minimal experimental resources. The interplay between surrogate models and acquisition functions creates a powerful framework for iterative experimental design: surrogate models provide probabilistic estimates of molecular properties, while acquisition functions strategically guide experimentation toward maximally informative candidates. For molecular scientists, mastering this cycle enables accelerated discovery of novel materials, pharmaceuticals, and functional compounds while dramatically reducing experimental costs. The protocols and analyses presented here provide both theoretical foundation and practical methodologies for implementing BO in diverse molecular optimization scenarios, from single-property drug candidate identification to multi-objective materials design.

Bayesian optimization (BO) has emerged as a powerful paradigm for the sample-efficient optimization of expensive black-box functions, making it particularly well-suited for molecular property prediction and design in drug discovery. The core challenge in this field lies in navigating the vast, high-dimensional chemical space with a limited budget for costly simulations or wet-lab experiments. This document provides detailed application notes and protocols for implementing the three key components of a Bayesian optimization framework—Gaussian Processes (GPs), Random Forests (RFs), and the Expected Improvement (EI) acquisition function—specifically within the context of molecular property optimization (MPO). We frame this within a broader thesis on advancing Bayesian optimization for drug design, providing researchers and scientists with practical, experimentally validated methodologies.

Key Components: Technical Specifications and Performance

The following table summarizes the core technical aspects and recent performance findings for each key component in the context of molecular property research.

Table 1: Key Components for Bayesian Molecular Optimization

| Component | Key Function | Recent Findings & Performance | Theoretical Advances |
|---|---|---|---|
| Gaussian Process (GP) | Probabilistic surrogate model for the black-box molecular property function. | Using Matérn kernels enables standard GP-BO to achieve top-tier results in high-dimensional settings, often surpassing specialized methods [12]. | A robust initialization strategy mitigates gradient vanishing in SE kernels, making them competitive [12]. |
| Expected Improvement (EI) | Acquisition function that balances exploration and exploitation by quantifying potential improvement. | GP-EI with BPMI/BSPMI incumbents achieves sublinear cumulative regret (no-regret) for SE and Matérn kernels [13] [14]. | EI has been reinterpreted as a variational approximation of information-theoretic acquisition functions, leading to novel hybrids like VES-Gamma [15]. |
| Random Forest (RF) | Non-parametric ensemble model that can be used as a surrogate or for hyperparameter tuning. | A Bayesian-optimized RF model achieved R² values of 0.915 (training) and 0.965 (independent test) in predicting loess collapsibility, demonstrating high reliability [16]. | Integrated with BO for hyperparameter optimization, RF models show marked improvements in optimizing search efficiency, especially with sparse target data [16] [17]. |

Integrated Workflow for Molecular Property Optimization

The synergy between GPs, EI, and RFs enables a powerful, data-efficient workflow for molecular discovery. The following diagram illustrates the typical closed-loop Bayesian optimization process, adapted for molecular property prediction.

Detailed Experimental Protocols

Protocol 1: High-Dimensional Molecular Optimization with Gaussian Processes

This protocol leverages recent findings on the robustness of standard GPs with Matérn kernels for high-dimensional Bayesian optimization [12] [8].

- Objective: To identify a molecule with an optimal target property from a large, high-dimensional chemical library using a Gaussian Process surrogate model.

- Materials & Reagents:

- Molecular Library: A discrete set of >100,000 molecules (e.g., from ZINC or Enamine databases).

- Featurization: A comprehensive library of molecular descriptors (e.g., RDKit descriptors, MOE descriptors) or a pretrained molecular transformer model (e.g., MolBERT) for generating fixed representations [6] [8].

- Software: A BO software package supporting GPs (e.g., BoTorch, GPyOpt); when the SAAS prior is needed, choose a package that provides it (e.g., BoTorch).

- Step-by-Step Procedure:

- Featurization: Encode all molecules in the library into a numerical feature vector using the chosen descriptor set or pretrained model.

- Initial Design: Randomly select a small initial set of molecules (e.g., 10-20) from the library, ensuring diversity (e.g., via scaffold splitting) [6].

- Data Collection: Obtain the target property value (e.g., binding affinity, solubility) for each molecule in the initial set via simulation or experiment.

- Model Training: Initialize a Gaussian Process surrogate model with a Matérn kernel (e.g., ν=5/2). For very high-dimensional descriptor spaces (>100), employ the SAAS (Sparse Axis-Aligned Subspace) prior to promote sparsity and adaptively identify relevant features [8].

- Candidate Selection: Using the trained GP, compute the Expected Improvement (EI) acquisition function across the entire molecular library. Select the molecule that maximizes EI.

- Iterative Loop: Evaluate the selected molecule, update the training dataset, and retrain the GP model. Repeat candidate selection, evaluation, and retraining until the evaluation budget (typically <100 evaluations) is exhausted or a performance plateau is reached.

- Validation: The success of the optimization is measured by the discovered molecule's property value and the rate of convergence. Performance is benchmarked against other MPO methods (e.g., graph-based BO, SMILES-based BO) on the same task [8].
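The Matérn kernel with ν = 5/2 named in the Model Training step has a simple closed form. The sketch below writes it with one lengthscale per descriptor (an ARD parameterization, which is an assumption here) to show how a very large lengthscale effectively switches a descriptor off. This is the mechanism a sparsity-inducing prior exploits, but the SAAS prior itself is not implemented here.

```python
import math


def matern52(x, z, lengthscales, variance=1.0):
    """Matern-5/2 kernel: variance * (1 + s + s^2 / 3) * exp(-s),
    with s = sqrt(5) * r and r the lengthscale-scaled Euclidean distance."""
    r = math.sqrt(sum(((a - b) / l) ** 2 for a, b, l in zip(x, z, lengthscales)))
    s = math.sqrt(5.0) * r
    return variance * (1.0 + s + s * s / 3.0) * math.exp(-s)


# Two hypothetical descriptor vectors that differ only in the second feature.
mol_a = (0.0, 0.0)
mol_b = (0.0, 5.0)

# Both descriptors relevant: the molecules look dissimilar.
print(matern52(mol_a, mol_b, lengthscales=[1.0, 1.0]))

# Second descriptor given a huge lengthscale: it no longer affects similarity.
print(matern52(mol_a, mol_b, lengthscales=[1.0, 1e6]))
```

In a fitted model the lengthscales would be learned from the data rather than set by hand, so the set of descriptors that end up with small lengthscales can be read as the task-relevant subspace.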

Protocol 2: Tuning Predictive Models with Bayesian-Optimized Random Forests

This protocol uses the EI acquisition function to tune the hyperparameters of a Random Forest model, which can then be used for fast, interpretable property prediction [16].

- Objective: To optimize the hyperparameters of a Random Forest regressor for accurately predicting a molecular property, minimizing the root mean squared error (RMSE) on a validation set.

- Materials & Reagents:

- Dataset: A labeled molecular dataset (e.g., Tox21, ClinTox) split into training, validation, and test sets using scaffold splitting [6].

- Software: A machine learning library (e.g., scikit-learn) and a Bayesian optimization package (e.g., scikit-optimize).

- Step-by-Step Procedure:

- Define Search Space: Define the Bayesian optimization search space for key RF hyperparameters: number of trees (n_estimators: 50-500), maximum tree depth (max_depth: 3-20), and minimum samples per leaf (min_samples_leaf: 1-10).

- Set Objective Function: The objective function for the BO is the RMSE achieved by the RF model on the held-out validation set.

- Initialization: Start with a small number (e.g., 10) of random points in the hyperparameter space.

- BO Loop: For a fixed number of iterations (e.g., 50):

  - The GP surrogate models the relationship between hyperparameters and validation RMSE.

  - The EI acquisition function identifies the most promising hyperparameter set to evaluate next.

  - The RF model is trained with the proposed hyperparameters and evaluated on the validation set.

  - The result is used to update the GP surrogate.

- Final Model: Train a final RF model using the best-found hyperparameters on the combined training and validation set, and report its performance on the independent test set.

- Validation: The model's performance is quantified using R² and RMSE on the independent test set. A successfully tuned model should achieve high R² values, as demonstrated by the result of 0.965 reported in a recent study [16].
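The search space and the random initialization from this protocol can be written down without committing to a particular BO package. The sketch below covers only those two steps plus the final selection of the best trial; training the Random Forest and fitting the GP surrogate are left to the libraries named above. The integer ranges come from the procedure, while the function names and the example RMSE values are hypothetical.

```python
import random

# Inclusive integer ranges taken from the protocol's search-space definition.
SEARCH_SPACE = {
    "n_estimators": (50, 500),
    "max_depth": (3, 20),
    "min_samples_leaf": (1, 10),
}


def sample_config(rng):
    """Draw one hyperparameter configuration uniformly from the space."""
    return {name: rng.randint(lo, hi) for name, (lo, hi) in SEARCH_SPACE.items()}


def initial_design(n_points=10, seed=0):
    """Random initial configurations used to seed the surrogate model."""
    rng = random.Random(seed)
    return [sample_config(rng) for _ in range(n_points)]


def best_trial(trials):
    """trials is a list of (config, validation_rmse); lower RMSE is better."""
    return min(trials, key=lambda trial: trial[1])


configs = initial_design()
print(configs[0])

# Made-up validation RMSE values standing in for real model evaluations.
trials = [(configs[0], 0.91), (configs[1], 0.47), (configs[2], 0.63)]
print(best_trial(trials))
```

Because the objective is an error metric, the BO loop minimizes it, so an EI implementation written for maximization should be applied to the negated RMSE.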

Protocol 3: No-Regret Molecular Design with Expected Improvement

This protocol focuses on the critical implementation details of the EI acquisition function to ensure robust and theoretically sound performance in noisy experimental settings [13] [14].

- Objective: To implement a no-regret Bayesian optimization algorithm for molecular design using the Expected Improvement acquisition function with a theoretically robust incumbent strategy.

- Materials & Reagents:

- Software: A Bayesian optimization library that allows custom incumbent selection (e.g., BoTorch).

- Setup: A trained GP surrogate model and a pool of unlabeled, featurized molecules.

- Step-by-Step Procedure:

- Incumbent Selection: Choose an incumbent strategy based on the noise level of your experimental data.

  - For low-noise settings: Use the Best Posterior Mean Incumbent (BPMI), which finds the molecule with the best predicted mean across the entire domain. This offers the strongest theoretical guarantees but is computationally more expensive [14].

  - For high-noise or large-scale settings: Use the Best Sampled Posterior Mean Incumbent (BSPMI), which selects the best mean from the set of already-sampled molecules. This is computationally cheaper and maintains no-regret properties [13] [14].

  - Avoid the Best Observation Incumbent (BOI) in high-noise settings, as it can be brittle and lead to poor cumulative regret [14].

- EI Calculation: Calculate the Expected Improvement for each molecule in the pool. The standard EI formula is EI(x) = E[max(0, f(x) - f_incumbent)], where f_incumbent is the value from the chosen incumbent strategy.

- Molecule Selection: Select the molecule with the maximum EI value for the next experiment.

- Iteration: Update the GP model with the new data and repeat the process.

- Validation: The algorithm's performance is tracked via cumulative regret. A no-regret algorithm shows sublinear growth in cumulative regret, meaning the average regret R_T/T approaches zero as the number of iterations T increases [13] [14].
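The three incumbent choices and the regret bookkeeping used for validation can be made concrete with a small sketch. The posterior means, observations, and molecule names below are invented, and the regret functions assume the true optimum is known, which holds only on benchmark problems.

```python
def best_observation_incumbent(observed_y):
    """BOI: best raw (possibly noisy) observation."""
    return max(observed_y)


def best_sampled_posterior_mean_incumbent(posterior_mean, sampled):
    """BSPMI: best posterior mean among already-sampled molecules."""
    return max(posterior_mean[m] for m in sampled)


def best_posterior_mean_incumbent(posterior_mean):
    """BPMI: best posterior mean over the entire pool."""
    return max(posterior_mean.values())


def cumulative_regret(true_values, optimum):
    """R_T = sum over t of (f* - f(x_t)), reported for every T."""
    total, out = 0.0, []
    for value in true_values:
        total += optimum - value
        out.append(total)
    return out


def average_regret(true_values, optimum):
    return [r / (t + 1) for t, r in enumerate(cumulative_regret(true_values, optimum))]


# Hypothetical GP posterior means over a four-molecule pool.
posterior_mean = {"mol_a": 0.62, "mol_b": 0.71, "mol_c": 0.55, "mol_d": 0.80}
sampled = ["mol_a", "mol_b"]
observed_y = [0.95, 0.70]  # the first measurement is a noisy outlier

print(best_observation_incumbent(observed_y))                          # 0.95
print(best_sampled_posterior_mean_incumbent(posterior_mean, sampled))  # 0.71
print(best_posterior_mean_incumbent(posterior_mean))                   # 0.8

# True (noise-free) values of the molecules chosen at each iteration.
print(average_regret([0.5, 0.8, 1.0, 1.0], optimum=1.0))
```

In this example the noisy outlier inflates the BOI value well above what the model believes is achievable, which would suppress EI across the pool; the two posterior-mean incumbents are not affected in the same way.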

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagents and Computational Tools for Bayesian Molecular Optimization

| Item Name | Function / Role | Specifications / Examples |
|---|---|---|
| Molecular Descriptor Libraries | Provides a fixed, chemically meaningful numerical representation of molecules for the surrogate model. | RDKit descriptors, Dragon descriptors; used in frameworks like MolDAIS for adaptive feature selection [8]. |
| Pretrained Molecular Transformer (MolBERT) | Provides high-quality, context-aware molecular representations that disentangle feature learning from uncertainty estimation, drastically improving data efficiency [6]. | A BERT model pretrained on 1.26 million compounds; integrated into the AL pipeline to structure the embedding space [6]. |
| Sparse Axis-Aligned Subspace (SAAS) Prior | A Bayesian prior applied to the GP surrogate model that promotes sparsity, allowing it to ignore irrelevant descriptors and focus on task-relevant features in high-dimensional spaces [8]. | Key component of the MolDAIS framework; enables efficient optimization in descriptor libraries with thousands of features [8]. |
| Benchmark Molecular Datasets | Standardized datasets for training, validating, and benchmarking model performance in fair and comparable ways. | Tox21 (12 toxicity pathways), ClinTox (FDA-approved vs. failed drugs) [6]. |
| Scaffold Splitting Algorithm | A data splitting method that partitions molecules based on core structural scaffolds, ensuring that test sets contain novel chemotypes not seen during training. This tests a model's true generalization ability [6]. | Bemis-Murcko scaffold representation; crucial for evaluating real-world utility in drug discovery [6]. |

Abstract

This application note addresses the central challenge of balancing exploration and exploitation within Bayesian optimization (BO) frameworks for molecular property prediction and materials discovery. Designed for researchers and drug development professionals, it details practical protocols and frameworks that dynamically manage this trade-off, enabling efficient navigation of high-dimensional chemical spaces with minimal experimental resource expenditure.

The application of Bayesian optimization (BO) in molecular sciences represents a paradigm shift in the acceleration of drug design and materials discovery. BO is a sample-efficient, sequential strategy for the global optimization of expensive-to-evaluate "black-box" functions, a category that includes complex laboratory experiments and detailed molecular simulations [9]. Its core strength lies in a principled balance between exploration (probing regions of high uncertainty in the search space) and exploitation (refining knowledge in areas known to yield good results) [9].

However, the vastness and high dimensionality of molecular search spaces pose a critical challenge. The effectiveness of BO is heavily dependent on the numerical representation, or featurization, of molecules and materials [4] [8]. High-dimensional representations can cripple BO performance due to the "curse of dimensionality," while an incomplete representation that misses key features can bias the search irrevocably [4]. This note presents advanced frameworks, together with step-by-step protocols, that integrate adaptive feature selection and sophisticated surrogate models to overcome this challenge, ensuring robust and data-efficient optimization.

Core Principles and Adaptive Frameworks

The power of Bayesian optimization stems from three core components: Bayesian inference for updating beliefs with new evidence, a Gaussian Process (GP) as a probabilistic surrogate model of the objective function, and an acquisition function to manage the exploration-exploitation trade-off [9].

Key Components of Bayesian Optimization

- Gaussian Process Surrogate Model: A GP defines a distribution over functions, providing for any input (e.g., a molecular descriptor vector) both a prediction (mean) and a measure of uncertainty (variance) [9] [10]. This uncertainty quantification is essential for guiding the search.

- Acquisition Functions: This function uses the GP's output to score the utility of evaluating any candidate point. Common functions include:

- Expected Improvement (EI): Selects points offering the highest expected improvement over the current best observation [4] [8].

- Upper Confidence Bound (UCB): Selects points by maximizing an upper confidence bound on the prediction, with a tunable parameter to balance exploration and exploitation [4] [9].

- Probability of Improvement (PI) and Bayesian Active Learning by Disagreement (BALD) are other examples used in different contexts [9] [6].
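To make the role of the tunable parameter concrete, here is a minimal sketch of UCB and PI for a maximization problem, assuming the surrogate returns a Gaussian mean and standard deviation per candidate. The `mean + beta * std` form of UCB is one common convention; the candidate names and posterior values are hypothetical.

```python
import math


def normal_cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def upper_confidence_bound(mean, std, beta=2.0):
    """UCB score: larger beta rewards uncertainty (exploration) more."""
    return mean + beta * std


def probability_of_improvement(mean, std, best, xi=0.0):
    """Probability that the candidate beats `best` by at least `xi`."""
    if std <= 0.0:
        return 1.0 if mean > best + xi else 0.0
    return normal_cdf((mean - best - xi) / std)


# Hypothetical posterior (mean, std) for two candidate molecules.
candidates = {
    "mol_A": (0.80, 0.05),  # well characterized, good predicted value
    "mol_B": (0.60, 0.30),  # poorly characterized, lower predicted value
}


def pick_by_ucb(beta):
    """Name of the candidate with the highest UCB score for a given beta."""
    return max(
        candidates,
        key=lambda name: upper_confidence_bound(*candidates[name], beta=beta),
    )


print("beta=0.5 ->", pick_by_ucb(0.5))  # exploitation-leaning choice
print("beta=2.0 ->", pick_by_ucb(2.0))  # exploration-leaning choice
```

With a small `beta` the confident candidate wins; raising `beta` shifts the choice to the uncertain one, which is the exploration-exploitation dial described above.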

Advanced Frameworks for Adaptive Representation

Traditional BO uses a fixed molecular representation, which can be suboptimal. Recent frameworks address this by dynamically adapting the feature set during optimization.

- Feature Adaptive Bayesian Optimization (FABO): This framework integrates feature selection directly into the BO cycle. It starts with a complete, high-dimensional feature set and, at each cycle, uses methods like Maximum Relevancy Minimum Redundancy (mRMR) or Spearman ranking to identify and retain the most informative features for the specific task. This leads to a compact, task-relevant representation that enhances BO efficiency without requiring prior expert knowledge [4].

- Molecular Descriptors with Actively Identified Subspaces (MolDAIS): MolDAIS leverages the sparse axis-aligned subspace (SAAS) prior to induce sparsity in the feature space. It constructs parsimonious GP models that actively identify and focus on a low-dimensional, task-relevant subspace from a large library of molecular descriptors as new data is acquired [8].

Quantitative Performance Comparison

The following tables summarize the performance of adaptive BO methods against baselines in various molecular and materials optimization tasks.

Table 1: Performance in Molecular and Materials Discovery Tasks

| Framework | Optimization Task | Search Space Size | Performance vs. Baseline | Key Metric |

|---|---|---|---|---|

| FABO [4] | MOF for CO₂ uptake & band gap | ~8,500 - 9,500 materials | Outperformed random search and fixed-representation BO | Accelerated identification of top performers |

| MolDAIS [8] | Molecular Property Optimization | >100,000 molecules | Outperformed state-of-the-art methods (graphs, SMILES, embeddings) | Identified near-optimal candidates in <100 evaluations |

| BioKernel [9] | Limonene production in E. coli | 4-dimensional input space | 78% fewer evaluations than grid search | Converged to optimum in 18 vs. 83 points |

| Pretrained BERT + BALD [6] | Toxic compound identification (Tox21, ClinTox) | ~1,484 - 8,000 compounds | 50% fewer iterations than conventional active learning | Equivalent identification accuracy |

Table 2: Comparison of Feature Selection and Surrogate Model Methods

| Method | Feature Selection / Model Approach | Key Advantage | Best Suited For |

|---|---|---|---|

| mRMR [4] | Selects features balancing relevance to target and redundancy among themselves | Creates a compact, non-redundant feature set | General-purpose optimization with a full feature pool |

| Spearman Ranking [4] | Univariate ranking based on monotonic correlation with target | Computational efficiency and simplicity | Quick initialization or low-dimensional targets |

| SAAS Prior [8] | Fully Bayesian GP with sparsity-inducing prior | Automatically identifies sparse, relevant subspaces | High-dimensional descriptor libraries with inherent sparsity |

| Mutual Information (MI) / MIC [8] | Screening variants for scalable subspace selection | Runtime efficiency with retained interpretability | Large feature sets where full SAAS is prohibitive |

| Multi-task GP (MTGP) [10] | Models correlations between distinct material properties | Leverages information from correlated objectives | Multi-objective optimization with related properties |

Detailed Experimental Protocols

Protocol 1: Implementing FABO for MOF Discovery

Application: Discovering metal-organic frameworks (MOFs) with optimal properties like gas uptake or electronic band gap [4].

Workflow Overview:

FABO Cycle for MOF Discovery

Step-by-Step Procedure:

Problem Formulation:

- Objective: Define the target property (e.g., CO₂ uptake at 16 bar, electronic band gap).

- Search Space: Define the database of MOF candidates (e.g., QMOF, CoRE-2019) [4].

Initialization and Featurization:

- Feature Pool: Represent each MOF with a comprehensive set of features, including:

- Initial Sampling: Select a small initial set of MOFs (e.g., via random sampling or Latin Hypercube) for labeling.

Closed-Loop FABO Cycle:

- Data Labeling: Obtain the target property value for the selected MOF via simulation or experiment.

- Adaptive Feature Selection:

- Using all data acquired so far, apply a feature selection method.

- For mRMR: Iteratively select features that maximize relevance (F-statistic with target) and minimize redundancy (average correlation with already-selected features) [4].

- For Spearman: Rank all features by the absolute value of their Spearman rank correlation coefficient with the target and select the top k.

- Surrogate Model Update: Train a Gaussian Process model using only the currently selected, adapted feature set.

- Candidate Selection:

- Using an acquisition function (e.g., EI, UCB) on the updated GP model, evaluate all MOFs in the database.

- Select the MOF with the highest acquisition function value as the next candidate for labeling.

Termination: The cycle repeats until a convergence criterion is met (e.g., a performance target is achieved, a maximum number of iterations is reached, or the improvement between cycles becomes negligible).
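The adaptive feature selection step can be illustrated with a small, dependency-free sketch. It is not the FABO code from [4]: the mRMR variant below uses the absolute Pearson correlation as the relevance score (a stand-in for the F-statistic mentioned above) and the difference form "relevance minus mean redundancy"; the feature names and values are hypothetical.

```python
import math


def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2.0 + 1.0
        i = j + 1
    return ranks


def pearson(a, b):
    """Pearson correlation; returns 0.0 if either input is constant."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0.0 or var_b == 0.0:
        return 0.0
    return cov / math.sqrt(var_a * var_b)


def spearman(a, b):
    """Spearman rank correlation = Pearson correlation of the ranks."""
    return pearson(average_ranks(a), average_ranks(b))


def select_spearman(features, target, k):
    """Top-k feature names by |Spearman correlation| with the target."""
    scores = {name: abs(spearman(col, target)) for name, col in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]


def select_mrmr(features, target, k):
    """Greedy mRMR: maximize relevance minus mean redundancy with the selected set."""
    relevance = {name: abs(pearson(col, target)) for name, col in features.items()}
    selected, remaining = [], sorted(features)

    def score(name):
        if not selected:
            return relevance[name]
        redundancy = sum(
            abs(pearson(features[name], features[s])) for s in selected
        ) / len(selected)
        return relevance[name] - redundancy

    while remaining and len(selected) < k:
        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


# Hypothetical standardized features for four labeled MOFs.
features = {
    "feat_a": [-1.0, -1.0, 1.0, 1.0],
    "feat_a_noisy": [-0.9, -1.1, 0.9, 1.1],  # near-duplicate of feat_a
    "feat_b": [-1.0, 1.0, -1.0, 1.0],
}
target = [-5.0, -1.0, 1.0, 5.0]

print("Spearman top-2:", select_spearman(features, target, 2))
print("mRMR top-2:", select_mrmr(features, target, 2))
```

On this toy data the univariate Spearman ranking keeps both copies of the same signal, whereas mRMR skips the near-duplicate and picks the complementary feature, which is the trade-off summarized in Table 2.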

Protocol 2: Molecular Optimization with MolDAIS

Application: Single- or multi-objective optimization of molecular properties from large chemical libraries [8].

Workflow Overview:

MolDAIS Framework for Molecular Optimization

Step-by-Step Procedure:

Problem Formulation:

- Define the molecular property(s) to optimize.

- Define the discrete molecular search space (e.g., a chemical library with over 100,000 molecules) [8].

Featurization:

- Compute a comprehensive library of molecular descriptors for every molecule in the search space. This can include simple atom counts, topological indices, quantum-chemical descriptors, etc. [8].

MolDAIS Initialization:

- Impose a SAAS prior on the GP surrogate model. This prior assumes that only a sparse subset of the descriptor dimensions is actively relevant to the property [8].

- Begin with a small initial dataset.

Closed-Loop Optimization:

- Acquire Data: Obtain the property value for the proposed molecule.

- Update Surrogate Model: Perform Bayesian inference (e.g., using Hamiltonian Monte Carlo) to update the GP model. This process automatically infers the "active" descriptors (those whose inverse length scales are not shrunk toward zero) and shrinks the influence of irrelevant ones.

- Propose Next Candidate: Optimize the acquisition function (e.g., EI) over the entire molecular search space using the updated model to find the most promising molecule to evaluate next.

Termination: Cycle continues until the evaluation budget is exhausted or convergence is achieved.
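For reference, the SAAS prior is usually written as a hierarchy of half-Cauchy distributions over the inverse squared length scales of an RBF-type kernel. The form below follows the general SAAS literature; the symbols \(\tau\), \(\rho_d\), \(\alpha\), and \(\sigma_k^2\), and the assumption that MolDAIS uses exactly this parameterization, are not taken from [8].

\[
\begin{aligned}
\tau &\sim \mathcal{HC}(\alpha), \\
\rho_d &\sim \mathcal{HC}(\tau), \quad d = 1, \dots, D, \\
k(x, x') &= \sigma_k^2 \exp\Big(-\tfrac{1}{2} \sum_{d=1}^{D} \rho_d \,(x_d - x'_d)^2\Big).
\end{aligned}
\]

Here \(\mathcal{HC}\) denotes a half-Cauchy distribution. A small global scale \(\tau\) pulls most \(\rho_d\) toward zero, which switches the corresponding descriptors off in the kernel, while the heavy tails let a few \(\rho_d\) grow large once the data support them.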

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Datasets

| Item | Function / Description | Example Use Case |

|---|---|---|

| Gaussian Process (GP) Regressor | Core surrogate model for BO; provides predictions with uncertainty estimates. | Modeling the relationship between molecular features and a target property [4] [10]. |

| Molecular Descriptor Libraries | Precomputed sets of numerical features (e.g., RACs, topological indices) representing molecular structure. | Featurizing molecules for input into the BO model [4] [8]. |

| mRMR Algorithm | Feature selection method to maximize relevance and minimize redundancy. | Creating a compact, informative feature set within FABO [4]. |

| SAAS Prior | A sparsity-inducing prior for GPs that promotes the use of only a subset of input features. | Enabling automatic feature selection in the MolDAIS framework [8]. |

| QMOF Database | A database of over 8,000 MOFs with computed electronic properties from DFT. | Search space for MOF band gap or electronic property optimization [4]. |

| CoRE MOF Database | A database of thousands of MOFs with gas adsorption data. | Search space for optimizing MOFs for gas storage or separation [4]. |

| Tox21/ClinTox Datasets | Publicly available datasets with toxicology data for thousands of compounds. | Benchmarking optimization and active learning for drug safety [6]. |

Advanced BO Strategies and Real-World Applications in Molecular Design

Molecular property optimization (MPO) is a central challenge in fields ranging from drug discovery to materials science. The effectiveness of these optimization campaigns critically depends on the molecular representation used. Traditional fixed representations, such as fingerprints and descriptors, often struggle in high-dimensional, low-data regimes. This article details the application of two advanced Bayesian optimization (BO) frameworks—MolDAIS (Molecular Descriptors with Actively Identified Subspaces) and FABO (Feature Adaptive Bayesian Optimization)—that overcome these limitations by dynamically adapting their molecular representations to identify optimal candidates with exceptional sample efficiency [8] [18].

While both MolDAIS and FABO share the core principle of adaptive representation within a Bayesian optimization context, they are distinct frameworks with different methodological approaches and origins.

MolDAIS: Molecular Descriptors with Actively Identified Subspaces

MolDAIS is a flexible BO framework designed for data-efficient chemical design. Its core innovation lies in adaptively identifying a sparse, task-relevant subspace from a large, precomputed library of molecular descriptors during the optimization process. This approach avoids the high dimensionality and potential irrelevance of fixed representations by focusing the surrogate model on a minimal set of informative features [19] [8].

FABO: Feature Adaptive Bayesian Optimization

FABO is a framework that integrates feature selection directly into the Bayesian optimization process. It uses Gaussian processes to dynamically adapt material representations throughout the optimization cycles, allowing it to automatically identify molecular representations that align with human chemical intuition for known tasks and discover effective representations for novel tasks where prior knowledge is unavailable [18].

Comparative Analysis

Table: Framework Comparison: MolDAIS vs. FABO

| Feature | MolDAIS | FABO |

|---|---|---|

| Core Adaptation Mechanism | Active identification of sparse subspaces from descriptor libraries [8] | Integrated feature selection within the BO process [18] |

| Primary Representation | Precomputed molecular descriptor libraries | Molecular features adapted via Gaussian processes |

| Key Innovation | Sparsity-inducing priors (SAAS) & screening variants (MI, MIC) for scalability [8] | Dynamic adaptation of representations across BO cycles [18] |

| Reported Performance | Identifies near-optimal molecules from >100k library in <100 evaluations [8] | Outperforms random search and fixed-representation baselines [18] |

| Interpretability | High; provides a compact set of relevant molecular descriptors [8] | High; identifies representations aligned with chemical intuition [18] |

Experimental Protocols and Performance Benchmarks

This section provides detailed methodologies for implementing and evaluating the MolDAIS and FABO frameworks, based on published results.

MolDAIS Protocol: Single-Objective Molecular Optimization

The following protocol is adapted from the MolDAIS quick start example and methodological paper [19] [8].

1. Problem Initialization

- Define Search Space: Compile a list of candidate molecules as SMILES strings.

- Compute Target Properties: Obtain or calculate the target property values (e.g., logP) for a labeled subset, formatted as a PyTorch tensor.

- Initialize Problem Object: Create a `MolDAIS.Problem` object, specifying the SMILES list, target values, and an experiment name. Execute `problem.compute_descriptors()` to featurize the molecules.

2. Optimizer Configuration

Configure the OptimizerParameters object. Critical parameters include:

- `sparsity_method`: Feature selection method ('MI' for Mutual Information or 'MIC').

- `acq_fun`: Acquisition function ('EI' for Expected Improvement).

- `num_sparsity_feats`: Number of features to select (e.g., 10).

- `total_sample_budget`: Total function evaluations (e.g., 7).

- `initialization_budget`: Initial random samples for model warm-up (e.g., 2).

3. Execution and Analysis

- Instantiate the `MolDAIS` class with the problem and parameters.

- Run the optimization via `mol_dais.configuration.optimize()`.

- Access results: `mol_dais.results.best_molecules` and `mol_dais.results.best_values`.

- Visualize convergence: `mol_dais.configuration.plot_convergence()`.

4. Performance Benchmark

In rigorous testing, MolDAIS demonstrated the ability to identify high-performing molecules from large chemical libraries. The table below summarizes its data-efficient performance across various tasks [8].

Table: MolDAIS Performance Benchmarks

| Optimization Task | Search Space Size | Evaluation Budget | Key Performance Outcome |

|---|---|---|---|

| Single-objective MPO | >100,000 molecules | <100 evaluations | Identified near-optimal candidates [8] |

| Multi-objective MPO | Large-scale libraries | <100 evaluations | Consistently outperformed state-of-the-art baselines [8] |

| Organic Electrode Discovery | Real-world experimental space | Low budget (specific n not stated) | Found candidates matching/surpassing state-of-the-art at lower cost [20] |

FABO Protocol: Adaptive Representation in BO

The general workflow for FABO, which integrates feature selection with the BO loop, can be summarized as follows [18]:

1. Initialization

- Start with a full set of molecular features or descriptors.

- Define the black-box objective function to be optimized.

2. Iterative Optimization Loop

- Surrogate Modeling: Train a Gaussian process model on the currently collected data, using the dynamically adapted feature subset.

- Feature Selection: The model updates its hypothesis about the relevant feature subspace based on the accumulated data.

- Acquisition and Evaluation: Use an acquisition function to select the next promising molecule for evaluation based on the adapted model.

- Data Update: Augment the dataset with the new evaluation result.

3. Output

After the evaluation budget is exhausted, the framework returns the best-performing molecule(s) found, along with the final adapted molecular representation.

The Scientist's Toolkit: Essential Research Reagents

The following table catalogs the key computational tools and components required to implement adaptive representation frameworks like MolDAIS and FABO.

Table: Key Research Reagent Solutions for Adaptive Molecular Optimization

| Tool/Component | Function | Example/Note |

|---|---|---|

| Molecular Descriptor Libraries | Provides a comprehensive set of featurizations for molecules, serving as the initial input for frameworks like MolDAIS. | RDKit 2D descriptors (200 features), Morgan Fingerprints (ECFP) [21] [8] |

| Sparsity-Inducing Surrogate Models | The core model that actively identifies a sparse, relevant subset of features from the full library during optimization. | Gaussian Process with SAAS (Sparse Axis-Aligned Subspace) prior [8] |

| Bayesian Optimization Backbone | Provides the algorithmic engine for sample-efficient, sequential experimental design. | Bayesian Optimization loop with acquisition functions like Expected Improvement (EI) [19] [8] |

| Domain-Specific Objective Function | The expensive black-box function representing the molecular property to be optimized. | Can be a high-fidelity simulation, a machine learning model, or an experimental measurement [8] [20] |

Workflow Visualization

The following diagram illustrates the core adaptive loop of the MolDAIS framework, from molecular search space to iterative subspace refinement.

Diagram: The MolDAIS Adaptive Bayesian Optimization Loop

MolDAIS and FABO represent a significant shift from static to adaptive molecular representations in Bayesian optimization. By actively learning which features matter most for a specific task, these frameworks achieve remarkable sample efficiency, making them exceptionally well-suited for real-world applications where data is scarce and costly to acquire. Their ability to provide interpretable insights into the key molecular descriptors driving property optimization further enhances their utility for researchers and drug development professionals aiming to accelerate the discovery of novel molecules and materials.

Leveraging Multi-Fidelity Bayesian Optimization for Experimental Funnels

Multi-fidelity Bayesian Optimization (MFBO) is an advanced machine learning framework that accelerates scientific discovery by intelligently integrating data sources of varying cost and accuracy. In the context of molecular property prediction and materials research, it addresses a critical challenge: experimental resources are finite and high-fidelity measurements (e.g., precise biological activity assays) are often expensive and time-consuming. MFBO leverages cheaper, lower-fidelity approximations (e.g., computational simulations or rapid preliminary screens) to guide the optimization process more efficiently than using high-fidelity data alone [22]. By building a probabilistic model that understands the relationships between different information sources, MFBO strategically decides which experiment to perform and at what fidelity, maximizing learning while minimizing total cost [22] [23]. This document provides detailed application notes and protocols for implementing MFBO within experimental funnels for molecular property optimization, framed within a broader thesis on Bayesian optimization for molecular research.

Core Concepts and Quantitative Foundations

Bayesian Optimization Refresher

Bayesian Optimization (BO) is a sample-efficient strategy for optimizing expensive-to-evaluate black-box functions [24]. It operates through two key components:

- Surrogate Model: Typically a Gaussian Process (GP), which provides a probabilistic approximation of the objective function, giving a mean prediction and uncertainty estimate at any point in the search space [24] [25].

- Acquisition Function: A policy that uses the surrogate's predictions to select the next most promising point to evaluate by balancing exploration (high uncertainty) and exploitation (high predicted value) [24]. Common acquisition functions include Expected Improvement (EI) and Upper Confidence Bound (UCB) [25].
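As a concrete picture of what the surrogate provides, the sketch below computes the posterior mean and variance of a zero-mean GP with an RBF kernel in pure Python. It is a teaching example under stated assumptions (fixed hyperparameters, a tiny training set, a one-dimensional hypothetical descriptor), not a replacement for a GP library.

```python
import math


def rbf(x1, x2, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel between two feature vectors."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x1, x2))
    return variance * math.exp(-0.5 * sq_dist / length_scale ** 2)


def cholesky(A):
    """Lower-triangular L with L @ L.T == A (A symmetric positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L


def solve_lower(L, b):
    """Solve L y = b by forward substitution."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y


def solve_lower_transposed(L, y):
    """Solve L.T x = y by back substitution."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def gp_posterior(X_train, y_train, x_new, noise=1e-6):
    """Posterior mean and variance of a zero-mean GP at x_new."""
    K = [
        [rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X_train)]
        for i, a in enumerate(X_train)
    ]
    L = cholesky(K)
    alpha = solve_lower_transposed(L, solve_lower(L, y_train))
    k_star = [rbf(a, x_new) for a in X_train]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    variance = rbf(x_new, x_new) - sum(t * t for t in v)
    return mean, max(variance, 0.0)


# Hypothetical 1-D descriptor values and measured properties.
X_train = [[0.0], [1.0], [2.0]]
y_train = [0.1, 0.8, 0.3]

mean_near, var_near = gp_posterior(X_train, y_train, [1.0])
mean_far, var_far = gp_posterior(X_train, y_train, [10.0])
print("at a measured point:", mean_near, var_near)
print("far from the data:  ", mean_far, var_far)
```

The variance collapses near measured points and returns to the prior variance far from them; acquisition functions use exactly this contrast to decide where exploration is worthwhile.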

Multi-Fidelity Extension

MFBO extends this core BO framework by incorporating multiple information sources, or fidelities. A high-fidelity (HF) source represents the expensive, accurate measurement (e.g., final experimental validation), while one or more low-fidelity (LF) sources provide cheaper, less accurate approximations (e.g., computational models or simplified experimental assays) [22]. The multi-fidelity surrogate model, often a multi-task GP, learns the correlation between these fidelities, allowing knowledge transfer from abundant LF data to inform predictions at the HF level [22].

When Does MFBO Succeed? A Quantitative Guide

The performance advantage of MFBO over single-fidelity BO (SFBO) is not guaranteed; it depends critically on the characteristics of the low-fidelity source. Systematic studies on synthetic functions (e.g., Branin, Park) have quantified the conditions for success [22].

Table 1: Impact of Low-Fidelity Source Characteristics on MFBO Performance

| Characteristic | Favorable Condition for MFBO | Unfavorable Condition for MFBO | Quantitative Impact on Performance (Δ) |

|---|---|---|---|

| Cost Ratio (ρ) (Cost of LF / Cost of HF) | Low (e.g., ρ = 0.1) | High (e.g., ρ = 0.5) | Inverse correlation; lower cost yields higher performance gain (Δ) [22] |

| Informativeness (R²) (Correlation between LF and HF) | High (e.g., R² > 0.9) | Low (e.g., R² < 0.75) | Direct correlation; higher R² yields higher performance gain (Δ) [22] |

| Combined Effect | Cheap & Informative LF | Expensive & Non-informative LF | Maximum Δ observed in favorable scenarios (e.g., 0.53); negative Δ (worse than SFBO) in unfavorable scenarios [22] |

The performance gain, Δ, is a key metric comparing the normalized performance of MFBO against SFBO, with positive values indicating an advantage for MFBO [22]. The data shows a clear gradient where progression towards cheaper and more informative LF sources provides better MFBO performance [22].

MFBO Protocol for Molecular Property Optimization

This protocol outlines the steps for employing MFBO to optimize a target molecular property (e.g., drug candidate binding affinity).

Pre-Experimental Planning

Step 1: Define Fidelity Hierarchy

- High-Fidelity (HF): Define the primary, expensive experimental endpoint. Example: IC₅₀ determination from a full, validated biochemical assay. Cost is normalized to 1.

- Low-Fidelity (LF): Identify one or more cheaper, faster proxies. Examples:

- Assign Costs: Quantify the relative cost (time, resources) of each LF source relative to HF. Example: Computational docking cost ρ = 0.01; HTS assay cost ρ = 0.1 [22].

Step 2: Characterize Fidelity Relationship

- If historical data exists, compute the correlation (e.g., R²) between the LF and HF outputs for a set of representative molecules. An R² > 0.8 suggests high informativeness [22].

- If no data exists, use domain expertise to estimate the expected correlation and proceed; the MFBO model will refine this understanding during optimization.
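A quick way to run the check in Step 2 on historical data is shown below. The sketch takes R² to be the squared Pearson correlation between paired LF and HF measurements, which is one reasonable reading of the text; the data values are hypothetical, and the 0.8 cut-off is the guideline quoted above.

```python
def r_squared(low_fidelity, high_fidelity):
    """Squared Pearson correlation between paired LF and HF measurements."""
    n = len(high_fidelity)
    mean_lf = sum(low_fidelity) / n
    mean_hf = sum(high_fidelity) / n
    cov = sum(
        (l - mean_lf) * (h - mean_hf)
        for l, h in zip(low_fidelity, high_fidelity)
    )
    var_lf = sum((l - mean_lf) ** 2 for l in low_fidelity)
    var_hf = sum((h - mean_hf) ** 2 for h in high_fidelity)
    if var_lf == 0.0 or var_hf == 0.0:
        return 0.0  # a constant signal carries no ranking information
    return cov * cov / (var_lf * var_hf)


def is_informative(low_fidelity, high_fidelity, threshold=0.8):
    """True if the LF source clears the informativeness guideline."""
    return r_squared(low_fidelity, high_fidelity) > threshold


# Hypothetical paired measurements for four reference molecules.
hf_assay = [5.0, 6.0, 7.0, 8.0]        # e.g., values from the validated assay
lf_good_proxy = [4.8, 6.3, 6.9, 8.2]   # tracks the HF values closely
lf_poor_proxy = [7.0, 5.0, 8.0, 6.0]   # essentially unrelated to HF

print("good proxy R^2:", round(r_squared(lf_good_proxy, hf_assay), 3))
print("poor proxy R^2:", round(r_squared(lf_poor_proxy, hf_assay), 3))
```

A proxy that fails this check is a candidate for the unfavorable regime in Table 1, where MFBO can perform worse than single-fidelity BO.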

Step 3: Initial Experimental Design

- Using a space-filling design (e.g., Latin Hypercube Sampling), select an initial set of 5-10 molecules to be evaluated at the HF level.

- Optionally, evaluate a larger set of molecules (e.g., 50-100) at the primary LF level to seed the model with initial data [22].

Iterative MFBO Loop

The core optimization process is an iterative cycle.

Step 4: Model Initialization

- Fit a multi-fidelity Gaussian Process surrogate model to all available data. The model should use a kernel designed to capture correlations across fidelities (e.g., as provided in BoTorch) [22].

Step 5: Candidate Suggestion via Acquisition Function

- Use a cost-aware acquisition function to select the next molecule and fidelity level to evaluate. The function balances three factors:

- Potential Improvement: Based on the surrogate model's prediction.

- Uncertainty Reduction: The value of exploring uncertain regions.

- Cost: The relative expense of the fidelity level.

- Common choices are the Multi-Fidelity Expected Improvement or Upper Confidence Bound.
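One simple way to fold cost into the decision is to rank every (molecule, fidelity) pair by expected improvement per unit cost. The sketch below shows that heuristic only; it is not the acquisition function used in [22], and the candidate records, field names, and numbers are hypothetical.

```python
import math


def expected_improvement(mean, std, incumbent):
    """Closed-form EI for maximization under a Gaussian posterior."""
    if std <= 0.0:
        return max(0.0, mean - incumbent)
    z = (mean - incumbent) / std
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mean - incumbent) * cdf + std * pdf


def cost_aware_score(candidate, incumbent):
    """Expected improvement divided by the relative cost of the fidelity."""
    ei = expected_improvement(candidate["mean"], candidate["std"], incumbent)
    return ei / candidate["cost"]


def suggest(candidates, incumbent):
    """Return the (molecule, fidelity) pair with the best score."""
    best = max(candidates, key=lambda c: cost_aware_score(c, incumbent))
    return best["molecule"], best["fidelity"]


# Hypothetical surrogate predictions; HF cost is normalized to 1.
candidates = [
    {"molecule": "mol_1", "fidelity": "HF", "mean": 0.90, "std": 0.20, "cost": 1.0},
    {"molecule": "mol_1", "fidelity": "LF", "mean": 0.85, "std": 0.30, "cost": 0.1},
    {"molecule": "mol_2", "fidelity": "HF", "mean": 0.60, "std": 0.10, "cost": 1.0},
]

choice = suggest(candidates, incumbent=0.80)
print("next experiment:", choice)
```

Dividing by cost alone always leans toward the cheap fidelity, so practical multi-fidelity acquisition functions also discount LF evaluations by how much they are expected to reveal about the HF objective.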

Step 6: Execution and Data Incorporation

- Execute the experiment as suggested by the acquisition function (e.g., synthesize the proposed molecule and run it at the specified fidelity level).

- Add the new data point (molecule, fidelity, observed result) to the training dataset.

Step 7: Termination Check

- The loop (Steps 4-6) repeats until a stopping criterion is met, such as:

- Exhaustion of the experimental budget.

- Convergence (minimal improvement over several iterations).

- Discovery of a molecule meeting the target property threshold.
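The three stopping criteria can be combined into a single check that runs at the end of each iteration. The function below is a sketch with hypothetical parameter names and default values (`patience`, `min_delta`); appropriate thresholds depend on the assay and are not specified in the source.

```python
def stop_reason(best_history, spent, budget, target=None, patience=5, min_delta=1e-3):
    """Return why the loop should stop, or None to keep going.

    best_history: best HF value found so far, recorded after each iteration.
    spent, budget: cumulative cost so far and total allowed cost (HF units).
    target: optional property threshold that counts as success.
    """
    if target is not None and best_history and best_history[-1] >= target:
        return "target reached"
    if spent >= budget:
        return "budget exhausted"
    if len(best_history) > patience:
        recent_gain = best_history[-1] - best_history[-1 - patience]
        if recent_gain < min_delta:
            return "converged"
    return None


# Hypothetical campaign state after a few iterations.
print(stop_reason([0.10, 0.50], spent=3.0, budget=10.0, target=0.9))
print(stop_reason([0.10, 0.95], spent=3.0, budget=10.0, target=0.9))
```

Checking the target first means a success is reported even when the budget runs out on the same iteration.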

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for MFBO Implementation

| Item Name | Function/Description | Example in Molecular Context |

|---|---|---|

| High-Fidelity Assay Kit | Provides the definitive, gold-standard measurement of the target molecular property. | Validated enzyme activity assay kit for precise IC₅₀ determination. |

| Low-Fidelity Proxy Assay | Enables rapid, cheaper approximation of the property for high-throughput screening. | Fluorescence-based initial screening assay; Computational docking software. |

| Multi-Fidelity BO Software | Computational backbone for implementing the MFBO algorithm and surrogate modeling. | BoTorch (PyTorch-based) or NUBO frameworks [22] [24]. |

| Chemical Library | A diverse set of molecules (virtual or physical) representing the search space for optimization. | Commercially available scaffold library; In-house virtual compound database. |

| Automation & LIMS | Laboratory automation systems and a Laboratory Information Management System to track experiments and data. | Liquid handling robots for assay plating; Electronic lab notebook for data logging. |

Performance Benchmarking and Analysis

Expected Outcomes

When applied under favorable conditions (cheap, informative LF), MFBO significantly reduces the total cost required to find an optimal solution compared to SFBO. The following table synthesizes performance gains observed in benchmark studies.

Table 3: MFBO Performance in Benchmark Studies

| Optimization Task / Function | Key MFBO Parameters | Performance Gain (Δ) vs. SFBO | Notes |

|---|---|---|---|

| Branin (Synthetic) | LF Cost ρ=0.1, LF R²>0.9 | Δ = 0.53 (Maximum discount) | MFBO achieves lower regrets quicker by exploiting LF data [22] |

| Park (Synthetic) | LF Cost ρ=0.1, LF R²>0.9 | Δ = 0.33 (Maximum discount) | Demonstrates effectiveness in higher-dimensional spaces [22] |

| Direct Arylation (Chemical Rxn) | Not Specified | 60.7% yield (MFBO) vs 25.2% yield (BO) | Example of LLM-enhanced Reasoning BO framework [28] |

| Bioprocess Optimization | Not Specified | 36% productivity increase | Bayesian Exp. Design optimized biomass formation [29] |

Advanced Integration: LLM-Enhanced Reasoning BO

Emerging frameworks are augmenting MFBO with Large Language Models (LLMs) to address limitations like local optima convergence and lack of interpretability. The "Reasoning BO" framework uses LLMs to generate and refine scientific hypotheses, which are then used to guide the BO sampling process [28]. For instance, in a chemical reaction yield optimization task (Direct Arylation), this hybrid approach achieved a final yield of 94.39%, compared to 76.60% for Vanilla BO, by leveraging domain knowledge and real-time reasoning [28]. This points to a future where MFBO is not just data-efficient but also scientifically insightful.

Multi-fidelity Bayesian Optimization represents a paradigm shift for efficient experimental design in molecular property prediction. Its successful application hinges on the careful selection of low-fidelity sources that are both inexpensive and informative relative to the high-fidelity goal. By following the protocols outlined herein—from pre-experimental planning and fidelity characterization to the execution of the iterative MFBO loop—researchers can systematically reduce the time and cost associated with molecular discovery and optimization. Integrating these data-driven strategies into experimental funnels promises to accelerate the pace of research in drug development and materials science.

Rank-Based Bayesian Optimization (RBO) for Rough Property Landscapes

Bayesian optimization (BO) has become an indispensable tool for autonomous decision-making across diverse applications, from autonomous vehicle control to accelerated drug and materials discovery [30]. With the growing interest in self-driving laboratories, BO of chemical systems is crucial for machine learning (ML)-guided experimental planning [31]. Traditional BO typically employs a regression surrogate model to predict the distribution of unseen parts of the search space. However, for molecular selection tasks where the goal is to pick top candidates with respect to a distribution, the relative ordering of their properties may be more important than their exact values [30] [31]. This insight has led to the development of Rank-based Bayesian Optimization (RBO), which utilizes a ranking model as the surrogate instead of traditional regression approaches [31].

The fundamental shift in RBO addresses key challenges in molecular property prediction, particularly when dealing with rough structure-property landscapes and activity cliffs—situations where small changes in molecular structure correspond to large fluctuations in property values [31]. These challenging landscapes are prevalent in drug discovery, where optimal compounds are often found precisely at these activity cliffs [31]. Regression models struggle with such rough landscapes because they attempt to predict exact property values, while ranking models focus solely on relative ordering, effectively reducing the impact of sharp changes in the functional space [31].

Theoretical Foundation: RBO vs. Regression BO

Core Conceptual Differences

Rank-Based Bayesian Optimization represents a paradigm shift from conventional regression-based BO by reformulating the surrogate modeling task from value prediction to ordinal ranking. The table below summarizes the fundamental differences between these approaches:

Table 1: Fundamental Differences Between Regression BO and Rank-Based BO

| Aspect | Regression BO | Rank-Based BO (RBO) |
|---|---|---|
| Surrogate Output | Predicts exact property values | Predicts relative rankings between candidates |
| Loss Function | Mean Squared Error (MSE) | Pairwise ranking loss (e.g., marginal ranking loss) |
| Primary Focus | Accurate value estimation | Correct ordinal relationships |
| Handling Activity Cliffs | Struggles with sharp property changes | Robust to rough landscapes and outliers |
| Data Efficiency | Requires more data for accurate regression | Effective ranking even in low-data regimes |
| Uncertainty Quantification | Predictive variance on values | Uncertainty in ranking orders |

Mathematical Formulation of Ranking Loss

The ranking loss used for Learning to Rank (LTR) tasks in RBO is typically formulated as a pairwise marginal ranking loss. Unlike point-wise loss functions like MSE that map a scalar prediction and ground truth to a scalar loss value, pairwise loss functions map a pair of predictions and a pair of ground truths to a scalar loss [31]. The pairwise marginal ranking loss has the form:

\[ \mathcal{L}(y_1, y_2, \hat{y}_1, \hat{y}_2) = \max\big(0,\; -\operatorname{sign}(y_1 - y_2)\,(\hat{y}_1 - \hat{y}_2) + m\big) \]

Where \((y_1, y_2)\) is the ground truth pair, \((\hat{y}_1, \hat{y}_2)\) is the predicted pair, and \(m\) is a margin parameter that allows for predicted rank overlap (typically set to \(m=0\) for no margin) [31]. For correctly ranked predicted pairs, the sign of the second argument will be negative and \(\mathcal{L}=0\). During training, the dataset is collated into \((N^2-N)/2\) unique pair combinations, where \(N\) is the number of data points [31].
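As a minimal sketch of the loss above, the following pure-Python snippet evaluates the pairwise marginal ranking loss for a single pair and averages it over all unique pairs. The function names and the toy values are illustrative assumptions, not part of the reference implementation in [31].

```python
from itertools import combinations


def sign(x: float) -> int:
    """Return -1, 0, or +1 according to the sign of x."""
    return (x > 0) - (x < 0)


def pairwise_margin_ranking_loss(y1, y2, yhat1, yhat2, m=0.0):
    """max(0, -sign(y1 - y2) * (yhat1 - yhat2) + m) for a single pair."""
    return max(0.0, -sign(y1 - y2) * (yhat1 - yhat2) + m)


def mean_pairwise_loss(y_true, y_pred, m=0.0):
    """Average the pair loss over all (N^2 - N) / 2 unique pairs."""
    pairs = list(combinations(range(len(y_true)), 2))
    total = sum(
        pairwise_margin_ranking_loss(y_true[i], y_true[j], y_pred[i], y_pred[j], m)
        for i, j in pairs
    )
    return total / len(pairs)


# Toy property values and two sets of surrogate scores (illustrative only).
y_true = [0.1, 0.5, 0.9]
good_scores = [-2.0, 0.0, 7.0]  # same ordering as y_true, different scale
bad_scores = [7.0, 0.0, -2.0]   # reversed ordering

print(mean_pairwise_loss(y_true, good_scores))  # 0.0
print(mean_pairwise_loss(y_true, bad_scores))   # 6.0
```

The first result illustrates the point made in the text: scores on an entirely different scale from the property values still incur zero loss as long as their ordering is correct.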

Quantitative Performance Comparison

Performance Across Chemical Datasets

Comprehensive investigations of RBO's optimization performance compared to conventional BO on various chemical datasets demonstrate similar or improved optimization performance using ranking models [31]. The following table summarizes key quantitative findings from these studies:

Table 2: Performance Comparison of RBO vs. Regression BO on Chemical Datasets

| Dataset Characteristics | Regression BO Performance | RBO Performance | Key Observations |
|---|---|---|---|
| Rough structure-property landscapes | Suboptimal due to activity cliffs | Superior - robust to roughness | RBO maintains performance where regression fails |
| Smooth property landscapes | Excellent performance | Comparable performance | Both methods perform well in smooth spaces |
| Low-data regimes | Struggles with accurate value prediction | Superior - effective ranking with limited data | Ranking ability maintained at early BO iterations |
| High-data regimes | Excellent with sufficient data | Excellent performance | Both methods converge with ample data |
| Presence of activity cliffs | Predictive accuracy reduced | Minimal performance degradation | Ranking unaffected by sharp property changes |
| Correlation with surrogate ability | Moderate correlation | High correlation | Surrogate ranking ability strongly predicts BO performance |

Performance Metrics and Statistical Significance

Studies have demonstrated that RBO consistently achieves lower objective values and exhibits greater stability across runs compared to traditional approaches [32]. Statistical tests further confirm that RBO significantly outperforms Random Search at the 1% significance level [32]. The high correlation between surrogate ranking ability and BO performance makes RBO particularly valuable for optimization campaigns where early performance is critical [31].

Experimental Protocols and Implementation

RBO Workflow Implementation

Figure: RBO experimental workflow, showing the implementation steps for Rank-Based Bayesian Optimization.

Detailed Protocol Steps

Molecular Representation and Featurization

For RBO implementation, molecules must be converted into numerical representations suitable for machine learning models. Two primary approaches are recommended:

Extended-Connectivity Fingerprints (ECFP): Use Morgan fingerprints with radius 3, implemented in cheminformatics software RDKit, to create 2048-dimensional bit vectors hashed from local structures of the molecular graph [31]. The Tanimoto distance kernel is particularly effective when using Morgan fingerprint representations [31].

Graph Neural Networks (GNN): Represent molecules as graphs with atoms as nodes and bonds as edges, using the node and edge features defined in the Open Graph Benchmark [31]. GNNs based on the ChemProp architecture, with two message-passing layers and a final variational-inference Bayesian layer to produce uncertainty estimates, are particularly effective [31].
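To make the Tanimoto similarity used with fingerprint representations concrete, the sketch below computes it for fingerprints stored as sets of on-bit indices. The bit indices are hand-written placeholders; in practice they would be read off the 2048-bit Morgan fingerprints generated by RDKit. The function names are assumptions made for this illustration.

```python
def tanimoto_similarity(bits_a: set, bits_b: set) -> float:
    """|A ∩ B| / |A ∪ B| for two fingerprints given as sets of on-bit indices."""
    union = len(bits_a | bits_b)
    if union == 0:
        # Convention assumed here: two empty fingerprints count as identical.
        return 1.0
    return len(bits_a & bits_b) / union


def tanimoto_kernel_matrix(fingerprints):
    """Pairwise Tanimoto similarities, usable as a kernel (Gram) matrix."""
    return [[tanimoto_similarity(a, b) for b in fingerprints] for a in fingerprints]


# Hypothetical on-bit indices of three fingerprints (illustrative only).
fp_a = {3, 17, 256, 1024}
fp_b = {3, 17, 300}
fp_c = {5, 900}

for row in tanimoto_kernel_matrix([fp_a, fp_b, fp_c]):
    print(row)
```

Each diagonal entry equals 1, and molecules sharing no substructure bits get a similarity of 0, which is the behavior a Gaussian process with a Tanimoto kernel relies on.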

Surrogate Model Training Protocol

Ranking Model Implementation:

- Model Architecture Selection: Choose between Multi-Layer Perceptron (MLP), Bayesian Neural Network (BNN), or Graph Neural Network (GNN) architectures [31].

- Pairwise Training Data Preparation: Collate the dataset into \((N^2-N)/2\) unique pair combinations for training [31].

- Loss Function Implementation: Implement the pairwise marginal ranking loss with margin parameter \(m=0\) [31].

- Probabilistic Output: For Bayesian models, include regularization via KL divergence over weight distributions of variational layers [31].

- Model Training: Train until convergence with appropriate validation on ranking metrics.
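The pair-collation step in this protocol can be sketched as follows. The first helper enumerates every unique pair with its ordering label; the second draws a random subset of pairs without enumerating them all, which is one possible way (an assumption of this sketch, not a procedure specified in [31]) to keep memory bounded on larger datasets. All names are hypothetical.

```python
import random
from itertools import combinations


def all_pairs(targets):
    """Every unique index pair (i, j), i < j, with sign(targets[i] - targets[j])."""
    out = []
    for i, j in combinations(range(len(targets)), 2):
        diff = targets[i] - targets[j]
        out.append((i, j, (diff > 0) - (diff < 0)))
    return out


def sampled_pairs(targets, n_pairs, seed=0):
    """Draw n_pairs distinct index pairs at random, without listing all pairs.

    Uses simple rejection sampling, so it is intended for n_pairs much
    smaller than the total number of pairs.
    """
    n = len(targets)
    total = (n * n - n) // 2
    if n_pairs > total:
        raise ValueError(f"requested {n_pairs} pairs but only {total} exist")
    rng = random.Random(seed)
    chosen = set()
    while len(chosen) < n_pairs:
        i, j = sorted(rng.sample(range(n), 2))
        chosen.add((i, j))
    out = []
    for i, j in sorted(chosen):
        diff = targets[i] - targets[j]
        out.append((i, j, (diff > 0) - (diff < 0)))
    return out


# Four measured property values give (16 - 4) / 2 = 6 pairs (toy data).
print(all_pairs([0.3, 0.1, 0.1, 0.9]))

# Subsample 20 of the 1225 pairs available for 50 data points.
print(len(sampled_pairs([float(k) for k in range(50)], n_pairs=20, seed=1)))
```

Pairs with tied targets receive label 0 and therefore contribute only the margin term to the loss; whether to keep or drop them is a design choice left open here.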

Comparative Regression Model Implementation:

- Model Architecture: Use the same base architecture as ranking models for fair comparison.

- Loss Function: Implement Mean Squared Error (MSE) loss for regression tasks.

- Probabilistic Extensions: For Bayesian models, include appropriate uncertainty quantification methods.

Acquisition Function and Selection

- Acquisition Function Choice: Expected Improvement (EI) is commonly used, but other functions like Upper Confidence Bound (UCB) can be implemented [33].

- Candidate Selection: Choose top candidates based on acquisition function optimization for experimental evaluation.

- Batch Selection: For parallel experimentation, select multiple candidates using appropriate batch selection strategies.
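The acquisition step can be illustrated with closed-form Expected Improvement and Upper Confidence Bound scores. This sketch assumes the surrogate supplies a Gaussian predictive mean and standard deviation for each candidate and that the objective is maximized. The parameters `xi` and `beta`, the greedy top-k batch rule, and the candidate names are illustrative assumptions. How these scores are adapted to the output of a ranking surrogate is not specified here.

```python
import math


def normal_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best, xi=0.0):
    """EI for maximization under a Gaussian prediction N(mu, sigma^2)."""
    improvement = mu - best - xi
    if sigma <= 0.0:
        return max(0.0, improvement)  # no uncertainty: plain improvement
    z = improvement / sigma
    return improvement * normal_cdf(z) + sigma * normal_pdf(z)


def upper_confidence_bound(mu, sigma, beta=2.0):
    """UCB for maximization: mean plus beta standard deviations."""
    return mu + beta * sigma


def select_batch(candidates, scores, k):
    """Greedy batch: the k candidates with the highest acquisition score."""
    order = sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)
    return [candidates[i] for i in order[:k]]


# Hypothetical surrogate predictions for three unlabeled molecules.
names = ["mol_A", "mol_B", "mol_C"]
mus = [0.20, 0.50, 0.40]
sigmas = [0.30, 0.05, 0.20]
best_so_far = 0.45

ei = [expected_improvement(m, s, best_so_far) for m, s in zip(mus, sigmas)]
print(select_batch(names, ei, k=2))
```

Greedy top-k selection ignores redundancy between the chosen candidates; diversity-aware batch strategies are an alternative when parallel experiments are expensive.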

Evaluation Metrics and Validation

Implement comprehensive evaluation strategies including:

- Ranking Performance Metrics: Kendall's tau and Spearman's rank correlation between predicted and true rankings.

- Optimization Efficiency: Number of iterations to reach target performance, best value found over iterations.

- Statistical Validation: Multiple runs with different random seeds to ensure result robustness.
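The two ranking metrics listed above can be computed as in the following sketch. It implements Kendall's tau in its tau-a form and Spearman's rho through the rank-difference formula, both under the simplifying assumption that there are no tied values. The function names and example data are illustrative.

```python
from itertools import combinations


def kendall_tau(x, y):
    """Kendall's tau-a: (concordant - discordant) / total number of pairs."""
    concordant = discordant = 0
    for i, j in combinations(range(len(x)), 2):
        s = (x[i] - x[j]) * (y[i] - y[j])
        if s > 0:
            concordant += 1
        elif s < 0:
            discordant += 1
    n_pairs = len(x) * (len(x) - 1) // 2
    return (concordant - discordant) / n_pairs


def ranks(values):
    """Ranks 1..N in ascending order (assumes no tied values)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        result[idx] = rank
    return result


def spearman_rho(x, y):
    """Spearman's rho via 1 - 6 * sum(d^2) / (N (N^2 - 1)); valid without ties."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1.0 - 6.0 * d2 / (n * (n * n - 1))


true_values = [1.0, 2.0, 3.0, 4.0]
predicted = [0.1, 0.7, 0.4, 0.9]  # one swapped pair (illustrative)

print(kendall_tau(true_values, predicted))   # 4/6, one discordant pair out of six
print(spearman_rho(true_values, predicted))  # 0.8
```

For data with ties, tie-corrected variants of both statistics should be used instead of these simplified forms.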

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools and Resources for RBO Implementation

| Tool/Resource | Type | Function in RBO Research | Implementation Notes |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular featurization via ECFP fingerprints | Open-source, provides Morgan fingerprint implementation |
| PyTorch | Deep Learning Framework | Model implementation for MLP, BNN | Enables custom ranking loss implementation |
| PyTorch Geometric | GNN Library | Graph-based molecular representation | Implements message-passing layers for molecules |
| GPyTorch/GAUCHE | Gaussian Process Library | Baseline GP regression models | Provides Tanimoto kernel for molecular similarity |
| BayesMallows | Bayesian Ranking Models | Alternative ranking model implementation | Based on Mallows model for permutations |
| RBO Code Repository | Reference Implementation | Complete RBO workflow | Available at github.com/gkwt/rbo |

Application Guidelines and Decision Framework

When to Use RBO vs. Regression BO

The decision to implement Rank-Based Bayesian Optimization should be guided by specific characteristics of the optimization problem:

Use RBO when:

- Dealing with rough structure-property landscapes with activity cliffs [31]

- Working in low-data regimes where ranking is easier than regression [31]

- The primary goal is candidate prioritization rather than exact property prediction [30]

- Facing noisy or unreliable absolute measurements but reliable comparative assessments [32]

Use Regression BO when:

- Working with smooth, well-behaved property landscapes [31]

- Exact property values are critical for downstream applications

- Sufficient data is available for accurate regression modeling

- Uncertainty quantification on absolute values is required

Implementation Considerations for Molecular Optimization

For researchers implementing RBO in molecular property prediction, several practical considerations are essential:

- Representation Choice: ECFP fingerprints work well for smaller molecules, while GNN representations may capture complex structural relationships better for larger compounds [31].

- Model Selection: BNN and GNN models provide inherent uncertainty quantification valuable for BO, while standard MLPs are simpler but lack uncertainty estimates [31].

- Computational Resources: The number of training pairs grows quadratically with dataset size, so the pairwise ranking loss needs more memory than a point-wise loss; for large datasets, train on a sampled subset of pairs rather than all of them [31].

- Integration with Experimental Platforms: For self-driving laboratories, ensure RBO workflow integration with automated synthesis and characterization platforms [30].

Rank-Based Bayesian Optimization represents a significant advancement for molecular optimization tasks, particularly those characterized by rough property landscapes and activity cliffs. By focusing on relative rankings rather than exact values, RBO demonstrates enhanced robustness and performance in challenging optimization scenarios prevalent in drug discovery and materials science.

The experimental protocols and implementation guidelines provided here offer researchers a comprehensive framework for applying RBO to their molecular optimization challenges. As the field advances, future developments will likely focus on hybrid approaches combining the strengths of ranking and regression models, improved uncertainty quantification for ranking, and enhanced scalability for high-throughput experimentation environments.

Integrating Pretrained Models and Active Learning for Enhanced Sample Efficiency

The discovery of molecules with optimal functional properties is a central challenge across diverse fields such as energy storage, catalysis, and chemical sensing [8]. However, molecular property optimization (MPO) remains difficult due to the combinatorial size of chemical space and the substantial cost of acquiring property labels via simulations or wet-lab experiments [8]. Bayesian optimization (BO) offers a principled framework for sample-efficient discovery in such settings, but its effectiveness depends critically on the quality of the molecular representation used to train the underlying probabilistic surrogate model [8].

Traditional machine learning approaches for molecular property prediction often struggle in low-data regimes due to high dimensionality or poorly structured latent spaces [8] [6]. Active learning (AL) provides a promising alternative by strategically selecting informative molecules for labeling, thereby reducing experimental costs [6]. However, conventional active learning typically trains models on labeled examples alone, neglecting valuable information present in unlabeled molecular data [6].

This application note explores the integration of pretrained models with active learning frameworks to enhance sample efficiency in molecular property prediction and optimization. We demonstrate how this synergy addresses critical challenges in data-scarce environments while providing practical protocols for implementation in drug discovery and materials science applications.

Background and Significance

Molecular Property Optimization Challenges

Molecular property optimization can be formally posed as a global optimization task where the goal is to identify the optimal molecule m★ that maximizes an objective function F(m) from a discrete set of candidate molecules [8]. This becomes computationally intractable when molecular sets approach ∼10⁴ compounds or more, compounded by expensive function evaluations that often require sophisticated simulations or physical experiments [8].