Bayesian vs. Random Search for Chemical ML: A Practical Guide for Efficient Reaction Optimization

Abstract

This article provides a comprehensive comparison of Bayesian and Random Search optimization for machine learning in chemical applications. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of both methods, their practical implementation in chemical synthesis and materials discovery, strategies for troubleshooting common challenges, and a rigorous validation of their performance. By synthesizing the latest advances and real-world case studies, this guide empowers chemists to select and apply the most efficient optimization strategy to accelerate their research, from reaction parameter tuning to autonomous experimentation in pharmaceutical development.

Understanding the Optimization Landscape: From Grid Search to Intelligent Algorithms

The Hyperparameter Optimization Problem in Chemical Machine Learning

In the field of chemical machine learning (ML), the performance of predictive models hinges critically on the selection of appropriate hyperparameters. These settings control the learning process itself and can dramatically influence a model's ability to accurately predict molecular properties, reaction outcomes, or optimize synthetic processes. Within this context, a fundamental methodological debate exists regarding the most effective and efficient strategies for hyperparameter optimization (HPO). This guide objectively compares two predominant approaches—Bayesian optimization and random search—within the specific experimental constraints of chemical research, providing researchers with data-driven insights to inform their methodological choices.

The core challenge in chemical ML stems from the resource-intensive nature of experimental validation. Unlike purely computational domains where model evaluations are relatively cheap, many chemical ML applications ultimately require costly wet-lab experiments, high-throughput screening, or computationally expensive quantum calculations to generate training data or validate predictions. This reality places a premium on optimization algorithms that reach good solutions with as few function evaluations as possible, whether a single evaluation means training and validating a model under one hyperparameter setting or running one experiment under one set of reaction conditions. Sample efficiency is therefore a paramount concern.

Fundamental Optimization Strategies: A Comparative Framework

Random Search: Foundations and Characteristics

Random search operates on a simple premise: hyperparameter combinations are sampled randomly from predefined distributions until a satisfactory solution is found or a computational budget is exhausted. While seemingly naive, this approach possesses several notable characteristics when applied to chemical ML problems:

- Exploration Capability: Because it samples the entire hyperparameter space without bias toward any region, random search explores the global landscape broadly (though not exhaustively), which can be advantageous when prior knowledge is limited.

- Implementation Simplicity: The algorithm is straightforward to implement and parallelize, as all evaluations are independent.

- No Learning Mechanism: Crucially, random search lacks any mechanism to learn from previous evaluations. Each new sample is generated without considering the performance of previous trials, often resulting in inefficient resource utilization for expensive chemical objectives.
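A minimal random search loop makes the "no learning mechanism" point concrete: every trial is drawn independently of earlier results, so the trials could just as easily run in parallel. The sketch below is illustrative only; the objective `toy_yield`, its two variables, and their bounds are hypothetical stand-ins for an expensive model fit or experiment, and all parameters are assumed to be continuous and sampled uniformly.

```python
import numpy as np

def random_search(objective, bounds, n_trials, seed=0):
    """Sample each parameter uniformly within its bounds, evaluate, keep the best.

    No information from earlier trials is used to choose later ones."""
    rng = np.random.default_rng(seed)
    lows = np.array([lo for lo, _ in bounds], dtype=float)
    highs = np.array([hi for _, hi in bounds], dtype=float)
    best_x, best_y = None, -np.inf
    history = []
    for _ in range(n_trials):
        x = rng.uniform(lows, highs)   # independent draw, one value per parameter
        y = objective(x)
        history.append(y)
        if y > best_y:                 # track the incumbent (maximization)
            best_x, best_y = x, y
    return best_x, best_y, history

# Hypothetical "yield" surface over temperature (°C) and catalyst loading (mol%)
def toy_yield(x):
    temp, loading = x
    return 90 * np.exp(-((temp - 80) / 30) ** 2 - ((loading - 2.5) / 1.5) ** 2)

best_x, best_y, history = random_search(toy_yield, [(25, 150), (0.5, 5.0)], n_trials=50)
print(best_x, round(best_y, 1))
```

Because nothing in the loop depends on `history`, splitting the 50 trials across workers would give the same distribution of results, which is the parallelization advantage noted above.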

Bayesian Optimization: A Probabilistic Approach

Bayesian optimization (BO) represents a more sophisticated paradigm that addresses the limitations of random search through a probabilistic framework. As highlighted in recent literature, "Bayesian optimization is a sample-efficient and low-sample-cost global optimization strategy. It leverages probabilistic surrogate models and systematically explores the entire search space to achieve global optimization of complex systems" [1]. The BO framework consists of two core components:

- Probabilistic Surrogate Model: Typically a Gaussian process (GP) that approximates the unknown objective function and provides both predictive mean and uncertainty estimates. The Gaussian process is often chosen because "Gaussian distributions are maximum entropy distributions and therefore minimize the amount of prior knowledge that is being put into the assumption" [2], making them a principled choice under high uncertainty.

- Acquisition Function: A criterion that balances exploration of uncertain regions with exploitation of promising areas by leveraging the surrogate model's predictions. Common acquisition functions include Expected Improvement (EI), Upper Confidence Bound (UCB), and knowledge gradient [1] [3].
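For a Gaussian process surrogate with predictive mean μ(x) and standard deviation σ(x), Expected Improvement and Upper Confidence Bound have standard closed forms. They are written here for maximization, with f⁺ the best value observed so far; the trade-off parameters ξ ≥ 0 and κ > 0 and this notation are conventions chosen for illustration, not taken from the cited works:

```latex
\[
\mathrm{EI}(\mathbf{x}) = \bigl(\mu(\mathbf{x}) - f^{+} - \xi\bigr)\,\Phi(Z) + \sigma(\mathbf{x})\,\varphi(Z),
\qquad Z = \frac{\mu(\mathbf{x}) - f^{+} - \xi}{\sigma(\mathbf{x})}
\]
\[
\mathrm{UCB}(\mathbf{x}) = \mu(\mathbf{x}) + \kappa\,\sigma(\mathbf{x})
\]
```

Here Φ and φ are the standard normal CDF and PDF, and EI is taken as 0 where σ(x) = 0. In both expressions the μ term rewards points predicted to be good (exploitation) and the σ term rewards points the model is unsure about (exploration); ξ and κ shift the balance between the two.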

Table 1: Core Components of Bayesian Optimization for Chemical Applications

| Component | Common Implementations | Role in Optimization | Chemical Relevance |
|---|---|---|---|
| Surrogate Model | Gaussian Process, Random Forests, Bayesian Neural Networks | Approximates expensive objective function | Handles noisy experimental data; Quantifies prediction uncertainty |
| Acquisition Function | Expected Improvement (EI), Upper Confidence Bound (UCB), Thompson Sampling | Guides selection of next experiment | Balances exploring new conditions vs exploiting known productive regions |
| Domain Handling | Mixed-variable approaches, Latent variable methods | Manages continuous and categorical parameters | Essential for chemical spaces (solvents, catalysts, ligands, temperatures) |

The iterative BO process—surrogate modeling, acquisition optimization, experimental evaluation, and model updating—creates an efficient learning loop that becomes increasingly informed with each evaluation. This methodology is particularly well-suited to chemical applications where "the objective function is typically calculated with a numerically costly black-box simulation" [4].
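The four-step loop described above can be sketched in a few dozen lines of NumPy and SciPy. This is a simplified illustration, not the implementation used in any cited study: it assumes continuous inputs scaled to the unit cube, a Gaussian process with a fixed RBF length scale (no kernel hyperparameter fitting), Expected Improvement as the acquisition function, and acquisition optimization by scoring random candidate points. `toy_objective` is a hypothetical stand-in for an expensive experiment or simulation.

```python
import numpy as np
from scipy.stats import norm

def rbf_kernel(A, B, length_scale=0.2):
    # Squared-exponential (RBF) kernel with unit prior variance
    sq = np.sum(A**2, 1)[:, None] + np.sum(B**2, 1)[None, :] - 2 * A @ B.T
    return np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale**2)

def gp_posterior(X, y, Xq, noise=1e-4):
    # Exact GP regression on standardized targets, solved via Cholesky
    y_mean, y_std = y.mean(), y.std() + 1e-12
    ys = (y - y_mean) / y_std
    L = np.linalg.cholesky(rbf_kernel(X, X) + noise * np.eye(len(X)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, ys))
    Ks = rbf_kernel(X, Xq)
    mean = Ks.T @ alpha
    v = np.linalg.solve(L, Ks)
    var = np.maximum(1.0 - np.sum(v**2, axis=0), 1e-12)
    return mean * y_std + y_mean, np.sqrt(var) * y_std

def expected_improvement(mean, std, best, xi=0.01):
    # Closed-form EI for maximization
    z = (mean - best - xi) / std
    return (mean - best - xi) * norm.cdf(z) + std * norm.pdf(z)

def bayes_opt(objective, dim=2, n_init=5, n_iter=15, n_cand=2000, seed=0):
    rng = np.random.default_rng(seed)
    X = rng.uniform(0.0, 1.0, size=(n_init, dim))       # initial design on the unit cube
    y = np.array([objective(x) for x in X])
    for _ in range(n_iter):
        cand = rng.uniform(0.0, 1.0, size=(n_cand, dim))  # random candidate points
        mean, std = gp_posterior(X, y, cand)               # 1. fit surrogate
        ei = expected_improvement(mean, std, y.max())      # 2. score candidates
        x_next = cand[np.argmax(ei)]                       # 3. choose next point
        X = np.vstack([X, x_next])
        y = np.append(y, objective(x_next))                # 4. evaluate, update data
    return X, y

# Hypothetical objective on [0, 1]^2 with its maximum (0) at (0.3, 0.7)
def toy_objective(x):
    return -((x[0] - 0.3) ** 2 + (x[1] - 0.7) ** 2)

X, y = bayes_opt(toy_objective)
print(X[np.argmax(y)].round(3), y.max().round(4))
```

In practice, libraries such as BoTorch or GPyOpt handle kernel fitting, noise estimation, and acquisition optimization far more carefully; the point here is only to show how the surrogate, the acquisition function, and the data update interact within one iteration.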

Comparative Performance Analysis: Quantitative Benchmarks

Performance Metrics and Experimental Protocols

To objectively compare Bayesian and random search approaches, we examine their performance across several chemical ML scenarios using standardized evaluation metrics:

- Hypervolume Indicator: Measures the volume of objective space dominated by the solutions found, considering both convergence and diversity in multi-objective optimization [5].

- Sample Efficiency: The number of experimental evaluations required to reach a target performance threshold, critically important for resource-constrained chemical research.

- Best Achievable Performance: The optimal objective value (e.g., yield, selectivity, computational accuracy) identified within a fixed evaluation budget.
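For two maximized objectives, the hypervolume reduces to the area of the union of rectangles spanned by each solution and a reference point, which one sweep over the points can compute. The sketch below assumes both objectives are maximized and normalized; the reference point (here the origin) is a user choice that strongly affects the value, the objective values are hypothetical, and this sweep only works for two objectives.

```python
import numpy as np

def hypervolume_2d(points, ref):
    """Area dominated by `points` (both objectives maximized), bounded below by `ref`."""
    pts = np.asarray([p for p in points if p[0] > ref[0] and p[1] > ref[1]], dtype=float)
    if len(pts) == 0:
        return 0.0
    pts = pts[np.argsort(-pts[:, 0])]  # sweep from the largest first objective down
    area, best_y = 0.0, ref[1]
    for x, y in pts:
        if y > best_y:                 # dominated points add no new area
            area += (x - ref[0]) * (y - best_y)
            best_y = y
    return area

# Hypothetical normalized (yield, selectivity) pairs for three conditions
front = [(0.90, 0.60), (0.76, 0.92), (0.50, 0.95)]
print(round(hypervolume_2d(front, ref=(0.0, 0.0)), 4))
```

Adding a dominated point such as (0.5, 0.5) leaves the value unchanged, which is why the indicator rewards only genuine improvements to the set of trade-offs found.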

Recent studies have established rigorous benchmarking protocols using both synthetic test functions and real chemical datasets. For instance, benchmarking often begins with "algorithmic quasi-random Sobol sampling to select initial experiments, aiming to sample experimental configurations diversely spread across the reaction condition space" [5], ensuring fair initialization for subsequent optimization cycles. Evaluations typically employ repeated runs with different random seeds to account for stochastic variability, with performance measured across progressively increasing batch sizes to reflect realistic experimental constraints.
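SciPy's `scipy.stats.qmc` module provides a scrambled Sobol generator that can produce such a space-filling initial design. The variable names and bounds below are hypothetical; categorical variables such as solvent or ligand would need a separate encoding step that this sketch omits. Sobol points are best drawn in powers of two, which is why `random_base2` is used.

```python
from scipy.stats import qmc

# Hypothetical continuous reaction variables and their bounds:
# temperature (°C), residence time (min), reagent equivalents
l_bounds = [25.0, 1.0, 1.0]
u_bounds = [120.0, 30.0, 3.0]

sampler = qmc.Sobol(d=3, scramble=True)   # scrambled Sobol sequence
unit_points = sampler.random_base2(m=3)    # 2**3 = 8 points in [0, 1)^3
initial_design = qmc.scale(unit_points, l_bounds, u_bounds)
print(initial_design.round(2))
```

Scrambling changes the points from run to run, so repeated benchmark runs with different seeds (as described above) also start from different, but still well-spread, initial designs.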

Empirical Results in Chemical Applications

Evidence from multiple chemical domains demonstrates Bayesian optimization's superior efficiency compared to random search:

In chemical reaction optimization, a comprehensive study comparing seven optimization strategies found that Bayesian approaches "exhibited the best performance across both benchmarks, with particularly strong gains in hypervolume improvement" [1]. For challenging transformations like nickel-catalyzed Suzuki reactions exploring 88,000 possible conditions, BO successfully identified conditions achieving 76% yield and 92% selectivity where traditional approaches failed [5].

In molecular conformer generation, BO demonstrated remarkable efficiency gains. For molecules with four or more rotatable bonds, "BOA typically requires 10² energy evaluations to find top candidates, while systematic search typically evaluates 10⁴ conformers" [3]. Despite using 100-fold fewer evaluations, BO found lower-energy conformations than systematic search 20-40% of the time for flexible molecules [3].

Table 2: Performance Comparison in Chemical Optimization Tasks

| Application Domain | Optimization Method | Key Performance Metric | Result | Evaluation Budget |
|---|---|---|---|---|
| Reaction Optimization | Bayesian Optimization (TSEMO) | Hypervolume Improvement | Best performance across benchmarks [1] | 50-100 experiments |
| Reaction Optimization | Random Search Baseline | Hypervolume Improvement | Consistently outperformed by BO [5] | Same budget |
| Conformer Generation | Bayesian Optimization (BOA) | Energy Evaluations Needed | 10² evaluations [3] | Fixed convergence criteria |
| Conformer Generation | Systematic Search (Confab) | Energy Evaluations Needed | 10⁴ evaluations (median) [3] | Same convergence criteria |
| Ni-catalyzed Suzuki | Bayesian Optimization | Yield/Selectivity Identified | 76% yield, 92% selectivity [5] | 96-well HTE campaign |
| Ni-catalyzed Suzuki | Chemist-designed HTE | Yield/Selectivity Identified | Failed to find successful conditions [5] | 2 HTE plates |

The performance advantage of Bayesian optimization is most pronounced in large, combinatorial condition spaces and when experimental resources are limited. As one study notes, "Bayesian optimization uses uncertainty-guided ML to balance exploration and exploitation of reaction spaces, identifying optimal reaction conditions in only a small subset of experiments" [5].

Methodological Implementation: Workflows and Reagents

Bayesian Optimization Workflow for Chemical ML

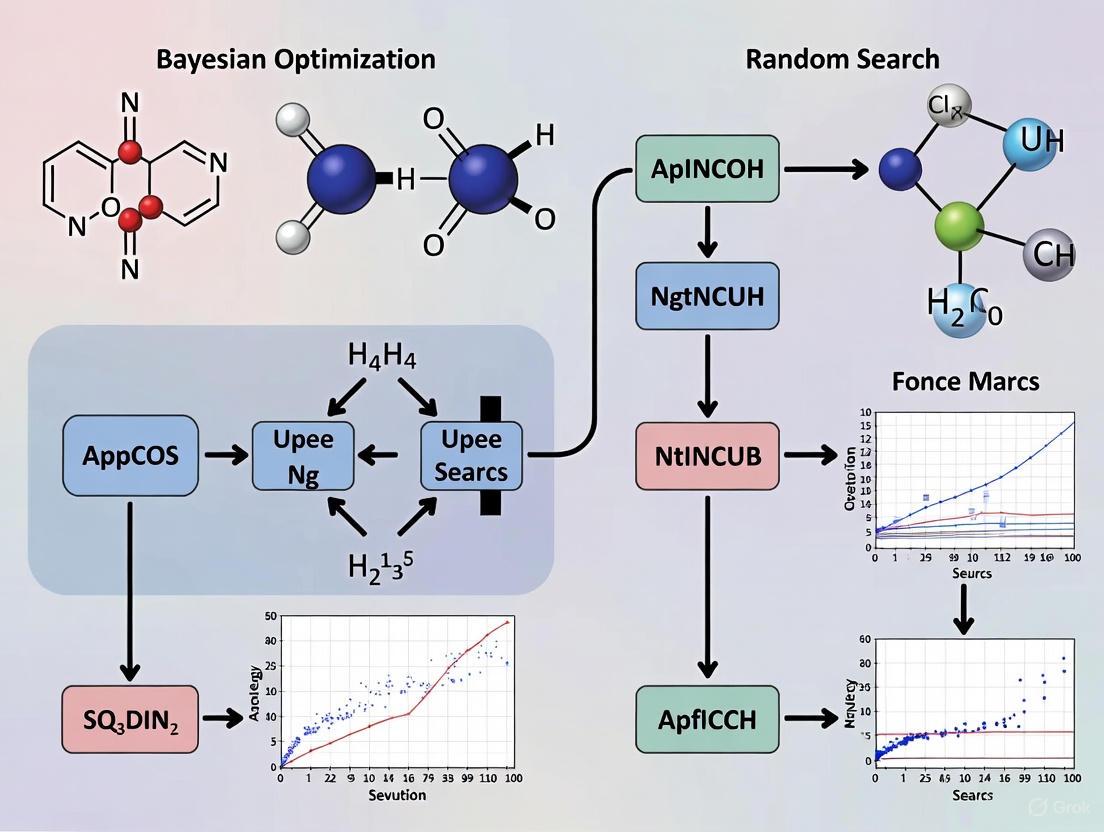

The Bayesian optimization process follows a structured, iterative workflow that can be adapted to various chemical ML applications. The following diagram illustrates this process for a typical hyperparameter optimization task in chemical ML:

Bayesian Optimization Workflow

Essential Computational Reagents for Optimization Experiments

Implementing effective hyperparameter optimization requires both software tools and methodological components that serve as essential "research reagents" in computational experiments:

Table 3: Essential Research Reagents for Chemical ML Optimization

| Reagent Category | Specific Tools/Components | Function in Optimization | Representative Examples |
|---|---|---|---|
| Optimization Frameworks | Summit, GPyOpt, BoTorch, MLrMBO | Provides algorithmic implementations | Summit specializes in chemical reaction optimization [1] |
| Surrogate Models | Gaussian Processes, Random Forests, Bayesian Neural Networks | Approximates expensive objective functions | GPs with Matérn kernels for chemical landscapes [4] |
| Acquisition Functions | EI, UCB, q-NEHVI, TSEMO | Guides experimental selection | q-NEHVI for parallel multi-objective optimization [5] |
| Chemical Representations | Morgan Fingerprints, RDKit Descriptors, SMILES | Encodes molecular structures | Used in ADMET prediction benchmarks [6] |
| Benchmarking Resources | ChemBench, TDC, MoleculeNet | Provides standardized evaluation | ChemBench for LLM evaluation in chemistry [7] |

Advanced Considerations: Methodological Refinements

Multi-Objective Optimization in Chemical Applications

Chemical optimization problems frequently involve multiple, competing objectives—such as maximizing yield while minimizing cost, waste, or hazardous byproducts. Bayesian optimization extends naturally to these scenarios through specialized acquisition functions like q-Noisy Expected Hypervolume Improvement (q-NEHVI) and Thompson Sampling Efficient Multi-Objective (TSEMO) algorithms [1] [5].

These multi-objective approaches identify Pareto-optimal solutions representing the best possible trade-offs between competing objectives. For instance, in pharmaceutical process development, BO has successfully identified reaction conditions achieving >95% yield and selectivity for both Ni-catalyzed Suzuki couplings and Pd-catalyzed Buchwald-Hartwig reactions, directly translating to improved process conditions at scale [5].
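Identifying the Pareto-optimal set from a batch of evaluated conditions only needs a dominance check: a result is discarded if another result is at least as good in every objective and strictly better in at least one. The quadratic-time sketch below assumes all objectives are maximized, and the (yield, selectivity) values are invented for illustration.

```python
import numpy as np

def pareto_front(Y):
    """Boolean mask of non-dominated rows of Y (all objectives maximized)."""
    Y = np.asarray(Y, dtype=float)
    mask = np.ones(len(Y), dtype=bool)
    for i in range(len(Y)):
        # row j dominates row i if it is >= in every objective and > in at least one
        dominated = np.all(Y >= Y[i], axis=1) & np.any(Y > Y[i], axis=1)
        mask[i] = not dominated.any()
    return mask

# Hypothetical (yield %, selectivity %) results from one batch of experiments
results = np.array([[78, 90], [88, 70], [60, 95], [70, 85], [88, 65]])
mask = pareto_front(results)
print(results[mask])
```

Here (70, 85) is dominated by (78, 90), and (88, 65) by (88, 70), so three trade-off points remain; choosing among them is a decision for the chemist, not the algorithm.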

Mixed-Variable Optimization Strategies

Chemical hyperparameter spaces often contain both continuous variables (temperature, concentration, learning rates) and categorical variables (solvent identity, catalyst type, neural network architectures). Random search handles this mix trivially, sampling each variable from its own distribution, but Bayesian optimization requires specialized approaches because standard GP kernels assume continuous inputs.

Advanced techniques include:

- Latent Variable Approaches: "Discrete variables are relaxed into continuous latent variables" [4], allowing standard continuous optimization methods to be applied before mapping back to the original categorical space.

- Specialized Kernels: Composite kernels that combine continuous kernels (e.g., RBF) with discrete kernels for categorical variables, enabling GPs to handle mixed spaces directly [4].

- Random Forest Surrogates: Tree-based models that natively handle mixed variable types and provide uncertainty estimates through methods like jackknife-based variance estimation [4].
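A composite kernel of the kind described above can be as simple as a product of an RBF term on the continuous variables and an overlap (Hamming-distance) term on the categorical ones. This is a minimal sketch of one common construction, not the specific kernel of reference [4]; the variable layout (continuous entries first), the fixed `length_scale` and `theta` values, the helper name `mixed_kernel`, and the solvent and ligand labels are illustrative assumptions.

```python
import numpy as np

def mixed_kernel(x1, x2, n_cont, length_scale=1.0, theta=1.0):
    """Product kernel for mixed inputs (hypothetical helper).

    The first `n_cont` entries are continuous (assumed pre-scaled); the rest
    are categorical labels compared only for equality."""
    c1 = np.asarray(x1[:n_cont], dtype=float)
    c2 = np.asarray(x2[:n_cont], dtype=float)
    k_cont = np.exp(-0.5 * np.sum((c1 - c2) ** 2) / length_scale**2)  # RBF term
    mismatches = sum(u != v for u, v in zip(x1[n_cont:], x2[n_cont:]))  # Hamming distance
    k_cat = np.exp(-theta * mismatches)                                # overlap term
    return k_cont * k_cat

# Hypothetical conditions: (scaled temperature, scaled concentration, solvent, ligand)
a = (0.5, 0.2, "DMF", "XPhos")
b = (0.5, 0.2, "THF", "XPhos")
c = (0.9, 0.2, "THF", "SPhos")

print(mixed_kernel(a, a, n_cont=2), mixed_kernel(a, b, n_cont=2), mixed_kernel(a, c, n_cont=2))
```

Two conditions that differ only in solvent get a similarity of exp(−θ) regardless of which solvents they are. That is the main limitation of plain overlap kernels: they encode no chemical similarity between categories, which is one motivation for the latent-variable approaches listed above.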

The following diagram illustrates the comparative decision process for selecting between Bayesian and random search approaches based on project constraints:

Optimization Method Selection Guide

Limitations and Benchmarking Challenges

Despite its demonstrated advantages, Bayesian optimization faces several important limitations in chemical ML contexts. Any comparison of optimization algorithms also depends heavily on sound benchmarking practice, which is particularly challenging in chemical domains:

- Data Quality Issues: Public chemical datasets often contain "inconsistent SMILES representations, duplicate measurements with varying values, and inconsistent binary labels" [6], complicating fair algorithm comparisons.

- Standardization Deficiencies: "The chemical structures in a benchmark dataset should be standardized according to an accepted convention" [8], yet this is frequently not the case in widely used benchmarks.

- Experimental Artifacts: Combining data from multiple sources may introduce biases; as one analysis of a benchmark compiled from 55 papers observes, "it is highly unlikely that the authors of these 55 papers employed the same experimental procedures" [8].

- Representation Challenges: Optimal model and feature choices "are highly dataset-dependent" [6], making universally optimal hyperparameters difficult to identify.

Additionally, Bayesian optimization with Gaussian processes encounters scalability limitations with large datasets due to O(n³) computational complexity in the number of observations [2]. For very high-dimensional problems (>50 parameters) with large evaluation budgets, random search can sometimes prove more practical despite lower sample efficiency.

Within the context of chemical machine learning, where experimental evaluations are costly and optimization efficiency directly impacts research productivity, Bayesian optimization emerges as the more sample-efficient approach. The empirical evidence reviewed here consistently shows BO reaching better solutions with fewer evaluations than random search, particularly in the sample-constrained scenarios common in chemical research.

Random search maintains utility in specific situations: initial exploration of entirely unknown response surfaces, high-dimensional problems with very large evaluation budgets, and when implementation simplicity is paramount. However, for most chemical ML applications involving expensive function evaluations and moderate-dimensional search spaces, Bayesian optimization provides substantially better performance per unit computational or experimental resource.

As chemical ML continues to evolve, ongoing developments in Bayesian optimization—including transfer learning approaches that incorporate knowledge from related chemical tasks, multi-fidelity methods that combine cheap approximations with precise measurements, and more scalable surrogate models—will further extend its advantages for the hyperparameter optimization problems fundamental to advancing computational chemistry and drug discovery.

In the realm of chemical machine learning research, hyperparameter optimization is paramount for developing predictive models for tasks ranging from molecular property prediction to reaction optimization. Among available strategies, Grid Search remains a foundational, exhaustive method. This guide objectively compares Grid Search's performance against Random and Bayesian optimization, providing structured experimental data and protocols. Framed within the critical thesis of Bayesian versus Random Search for chemical ML, we demonstrate that while Grid Search provides a comprehensive baseline, its computational inefficiency and limitations in high-dimensional spaces make it increasingly unsuitable for modern, resource-intensive drug discovery applications.

Hyperparameters are external configuration variables that govern a machine learning model's training process and architecture. Unlike model parameters learned during training, hyperparameters must be set beforehand and critically impact model performance, influencing generalization, convergence, and predictive accuracy [9] [10]. In chemical ML, where datasets can be small, noisy, and high-dimensional—such as in ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) prediction or reaction yield optimization—systematic hyperparameter tuning is not merely beneficial but essential for achieving reliable, statistically significant results [6] [1].

Hyperparameter optimization methods exist on a spectrum from simple exhaustive to sophisticated sequential approaches. Grid Search represents the exhaustive end of this spectrum. It operates on a simple principle: specify a finite set of values for each hyperparameter, then evaluate the model performance for every possible combination in this predefined grid [9] [11]. Its primary appeal lies in its thoroughness; given sufficient computational resources, it is guaranteed to find the best combination within the provided grid. However, this thoroughness is also the source of its major limitations, especially when contrasted with Random Search (a more efficient stochastic sampling method) and Bayesian Optimization (a sequential, model-based approach that uses past evaluations to inform future trials) [1] [10].

This guide provides a detailed, data-driven comparison of these methods, contextualized for researchers and scientists in drug development and chemical synthesis.

Methodological Deep Dive: Grid Search

Core Algorithm and Workflow

Grid Search Cross-Validation (GridSearchCV) is the standard implementation, combining the exhaustive search with cross-validation to robustly estimate model performance [9]. The algorithm follows these steps:

- Parameter Space Definition: The user defines a discrete grid of hyperparameter values. For example, for a Random Forest model, one might specify `'n_estimators': [50, 100, 150]` and `'max_depth': [None, 10, 20]`.

- Cartesian Product Generation: The algorithm generates the complete set of hyperparameter combinations from the grid.

- Model Training and Validation: For each unique combination, it trains a model and evaluates its performance using k-fold cross-validation. The average performance across all folds is the score for that combination.

- Optimal Selection: After all combinations are evaluated, the algorithm selects the configuration with the highest average score as optimal [9] [11].
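These four steps map directly onto scikit-learn's `GridSearchCV`. The sketch below uses the Random Forest grid from the example above on the Breast Cancer Wisconsin dataset bundled with scikit-learn, which the benchmarking protocol later in this guide also mentions; ROC-AUC as the scoring metric and `random_state=0` are assumptions chosen for illustration.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV

X, y = load_breast_cancer(return_X_y=True)

# Step 1: discrete grid of values for each hyperparameter
param_grid = {
    "n_estimators": [50, 100, 150],
    "max_depth": [None, 10, 20],
}

# Steps 2-3: all 3 x 3 = 9 combinations, each scored by 5-fold CV (45 fits)
search = GridSearchCV(
    RandomForestClassifier(random_state=0),
    param_grid,
    cv=5,
    scoring="roc_auc",
)
search.fit(X, y)

# Step 4: the combination with the highest mean CV score
print(search.best_params_, round(search.best_score_, 3))
```

By default `GridSearchCV` also refits the best configuration on the full dataset, so `search.best_estimator_` is ready to use after the search.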

The following diagram illustrates this exhaustive workflow.

Computational Complexity and the "Curse of Dimensionality"

The primary weakness of Grid Search is its computational cost, which scales exponentially with the number of hyperparameters—a phenomenon known as the "curse of dimensionality" [10]. The total number of model evaluations is the product of the number of values for each hyperparameter.

For a grid with d hyperparameters, each with n values, the total number of combinations is n^d [10]. For instance, a grid with 6 hyperparameters, each with just 4 values, results in 4⁶ = 4,096 unique combinations. With 5-fold cross-validation, this necessitates 20,480 model fits, which can be computationally prohibitive for complex models like deep neural networks or large chemical datasets [9] [11].
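The counting rule is simple enough to check directly. The helper below (a hypothetical name) multiplies the number of values per hyperparameter, then the number of cross-validation folds.

```python
from math import prod

def grid_cost(values_per_param, cv_folds=5):
    """Return (number of grid combinations, total model fits under k-fold CV)."""
    combos = prod(values_per_param)
    return combos, combos * cv_folds

print(grid_cost([4] * 6))  # 6 hyperparameters with 4 values each
```

This prints `(4096, 20480)`, matching the figures above; adding a seventh hyperparameter with four values would multiply both numbers by four again.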

Comparative Performance Analysis

Empirical Results from Benchmarking Studies

Empirical studies across various domains, including chemical ML, consistently reveal the performance trade-offs between different optimization strategies. The following table synthesizes key quantitative findings from the literature.

Table 1: Comparative Performance of Hyperparameter Optimization Methods

| Method | Key Principle | Computational Efficiency | Best Performance Found | Ideal Use Case |
|---|---|---|---|---|
| Grid Search | Exhaustive search over a finite grid [9] | Very low; scales poorly with parameters [10] | Guaranteed best in grid [9] | Small, low-dimensional parameter spaces (e.g., <5 parameters) [11] |
| Random Search | Random sampling from parameter distributions [9] | High; cost is user-defined (`n_iter`) [10] | Near-optimal; often finds good solutions faster [9] [10] | High-dimensional spaces, continuous parameters, limited budget [9] [11] |
| Bayesian Optimization | Sequential model-based optimization [1] | Very high; sample-efficient [1] | Often finds superior solutions with fewer trials [1] | Expensive-to-evaluate models (e.g., neural networks, chemical simulations) [1] |

A landmark study in hyperparameter optimization demonstrated that for high-dimensional spaces, Random Search can often find models that are as good as or better than those found by Grid Search, but with far fewer trials [9]. This is because in most models, only a few hyperparameters significantly impact performance. Random Search's random sampling has a higher probability of finding good values for these important parameters across a wider range, whereas Grid Search wastes resources exhaustively testing less important ones [9] [10].
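The argument can be made concrete with a toy budget of nine trials over two hyperparameters scaled to [0, 1], assuming (hypothetically) that only the first one affects performance. A 3 × 3 grid spends its nine trials on only three distinct values of the important parameter, whereas nine random draws try nine.

```python
import numpy as np

rng = np.random.default_rng(0)
budget = 9

# Grid: 3 values per parameter -> 3 x 3 = 9 trials
levels = np.linspace(0.1, 0.9, 3)
grid = np.array([(a, b) for a in levels for b in levels])

# Random: 9 independent draws from the same unit square
random_pts = rng.uniform(0, 1, size=(budget, 2))

# If only parameter 0 matters, count the distinct values of it each method tries
print(len(np.unique(grid[:, 0])), len(np.unique(random_pts[:, 0])))
```

This prints `3 9`: the grid repeats each value of the important parameter three times while varying the unimportant one.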

Case Study: ADMET Prediction in Drug Discovery

A recent benchmarking study for ML in ADMET predictions highlights the practical impact of model optimization. The study involved rigorous feature selection and model tuning across multiple public datasets [6]. While it emphasized the importance of systematic tuning, it also found that the best model and feature choices were highly dataset-dependent. The research employed extensive hyperparameter optimization, underscoring that for a fair comparison between complex algorithms like Random Forests, Support Vector Machines, and Message Passing Neural Networks, each must be tuned properly; in that process, the efficiency of the optimizer directly determines feasibility [6].

Case Study: Optimization for Chemical Synthesis

Bayesian Optimization has emerged as a particularly powerful tool for chemical synthesis optimization. It transforms reaction engineering by efficiently optimizing complex, multi-variable systems (e.g., temperature, catalyst, solvent) where traditional methods fail [1]. Unlike Grid Search, Bayesian Optimization uses a probabilistic surrogate model, like a Gaussian Process, to approximate the objective function (e.g., reaction yield). An acquisition function then guides the selection of the next experiment by balancing exploration (testing uncertain regions) and exploitation (refining known good regions) [1]. This model-based approach is drastically more sample-efficient than Grid Search, making it ideal for resource-intensive wet-lab experiments or large-scale virtual screening in drug discovery [1] [12].

Table 2: Computational Cost Analysis: Grid Search vs. Random Search

| Metric | Grid Search | Random Search |
|---|---|---|
| Configurations Evaluated | 648 (full grid) [11] | 60 (`n_iter`) [11] |
| Model Fits (with cv=5) | 3,240 [11] | 300 [11] |
| Typical Performance | Finds best in grid | Finds near-optimal solution |
| Search Space Flexibility | Limited to discrete values | Handles both discrete and continuous distributions |

The Scientist's Toolkit: Essential Reagents for Hyperparameter Optimization

This table details key computational "reagents" and their functions for implementing hyperparameter optimization experiments, particularly in a chemical ML context.

Table 3: Key Research Reagent Solutions for Optimization Experiments

| Item / Tool | Function / Purpose | Example in Chemical ML Context |
|---|---|---|
| Scikit-learn (GridSearchCV, RandomizedSearchCV) | Provides core implementations for Grid and Random Search with cross-validation [9]. | Tuning a Random Forest classifier for predicting molecular activity from fingerprints [11]. |
| scipy.stats Distributions (uniform, loguniform, randint) | Defines parameter distributions for Random and Bayesian Search [9]. | Sampling learning rates log-uniformly for a neural network predicting reaction yields. |
| Bayesian Optimization Frameworks (e.g., Summit) | Specialized libraries for implementing Bayesian Optimization with chemical applications [1]. | Multi-objective optimization of a chemical reaction for both yield and space-time-yield [1]. |
| Gaussian Process (GP) Surrogate Model | Core of Bayesian Optimization; models the objective function and its uncertainty [1]. | Modeling the complex, non-linear relationship between reaction parameters and enantioselectivity. |
| Acquisition Function (e.g., EI, UCB) | Guides the next experiment by balancing exploration and exploitation [1]. | Deciding the next set of reaction conditions to test in an automated flow reactor. |
| RDKit Cheminformatics Toolkit | Generates molecular features (descriptors, fingerprints) used as model input [6]. | Creating Morgan fingerprints as input for an ADMET classification model. |

Experimental Protocol: Benchmarking Optimizers

To objectively compare Grid Search, Random Search, and Bayesian Optimization, follow this detailed experimental protocol.

Dataset and Model Selection

- Dataset: Use a standardized benchmark relevant to chemical research. The Therapeutics Data Commons (TDC) provides curated datasets for ADMET properties [6]. For a simpler, publicly available alternative, the Breast Cancer Wisconsin dataset is a common choice for initial benchmarking [11].

- Model: Select a model with multiple hyperparameters. A Support Vector Machine (SVM) or Random Forest is a suitable starting point due to their common use and tunability [9] [11].

- Performance Metric: Use a relevant metric such as accuracy, ROC-AUC, or mean squared error, and ensure it is consistent across all evaluations.

Defining the Search Space

Create equivalent search spaces for all three methods. For example, for an SVM with an RBF kernel:

- Grid Search Space: Define discrete values.

- Random Search / Bayesian Optimization Space: Define distributions for the same parameters.
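A minimal sketch of equivalent search spaces using scikit-learn and SciPy follows. The specific ranges for `C` and `gamma` are assumptions, chosen to span several orders of magnitude; log-uniform distributions are used because both parameters act multiplicatively. `ParameterGrid` and `ParameterSampler` are used only to show what each method would evaluate.

```python
from scipy.stats import loguniform
from sklearn.model_selection import ParameterGrid, ParameterSampler

# Grid Search: discrete values (hypothetical ranges for an RBF-kernel SVC)
param_grid = {
    "C": [0.1, 1, 10, 100],
    "gamma": [1e-3, 1e-2, 1e-1, 1],
}

# Random Search / Bayesian Optimization: same parameters and ranges as distributions
param_distributions = {
    "C": loguniform(0.1, 100),
    "gamma": loguniform(1e-3, 1),
}

print(len(ParameterGrid(param_grid)))  # 16 grid points
samples = list(ParameterSampler(param_distributions, n_iter=3, random_state=0))
for params in samples:
    print(params)
```

Keeping the same bounds in both spaces matters: if the distributions extend beyond the grid, any advantage for random search may simply reflect a larger search space.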

Execution and Evaluation

- Grid Search: Run `GridSearchCV` with the defined `param_grid`. Record the best score and the total computation time.

- Random Search: Run `RandomizedSearchCV` with the `param_distributions` and set `n_iter` to a fraction of the Grid Search combinations (e.g., 20 or 60) [9] [11]. Record the best score and computation time.

- Bayesian Optimization: Use a framework like Summit or Scikit-optimize. Configure it with the same parameter distributions and run it for the same number of iterations (`n_iter`) as Random Search. Record the best score and time.

- Analysis: Compare the final performance (best validation score) of all methods against the computational cost (total runtime or number of model fits). Plot the optimization trajectory (best score found vs. iteration) for each method to visualize their convergence speed.
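The Grid and Random Search arms of this protocol can be sketched as follows, assuming scikit-learn, an RBF SVM with feature standardization in a pipeline, ROC-AUC scoring, and hypothetical ranges for `C` and `gamma`; `n_iter=8` (half of the 16-point grid) is an arbitrary illustrative budget. The Bayesian arm is omitted; scikit-optimize's `BayesSearchCV`, for example, offers a similar fit-and-score interface. The best-so-far trajectory is computed from `cv_results_`, whose rows follow the order in which candidates were generated.

```python
import time
import numpy as np
from scipy.stats import loguniform
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

CV = 5
X, y = load_breast_cancer(return_X_y=True)
model = make_pipeline(StandardScaler(), SVC(kernel="rbf"))  # step name: "svc"

param_grid = {"svc__C": [0.1, 1, 10, 100], "svc__gamma": [1e-3, 1e-2, 1e-1, 1]}
param_dist = {"svc__C": loguniform(0.1, 100), "svc__gamma": loguniform(1e-3, 1)}

searches = {
    "grid": GridSearchCV(model, param_grid, cv=CV, scoring="roc_auc"),
    "random": RandomizedSearchCV(model, param_dist, n_iter=8, cv=CV,
                                 scoring="roc_auc", random_state=0),
}

results = {}
for name, search in searches.items():
    start = time.perf_counter()
    search.fit(X, y)
    elapsed = time.perf_counter() - start
    scores = search.cv_results_["mean_test_score"]
    trajectory = np.maximum.accumulate(scores)  # best score found so far, per candidate
    n_fits = len(scores) * CV
    results[name] = (search.best_score_, elapsed, n_fits, trajectory)
    print(f"{name}: best AUC={search.best_score_:.3f}, fits={n_fits}, time={elapsed:.2f}s")
```

Plotting each `trajectory` against the candidate index gives the convergence comparison described in the Analysis step; repeating the random arm over several `random_state` values shows how much of any difference is due to chance.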

The fundamental difference in how these strategies explore the hyperparameter space is visualized below.

Within the broader thesis evaluating Bayesian versus Random Search for chemical machine learning, Grid Search stands as a critical but limited baseline. Its exhaustive nature provides a guaranteed result within a defined space, making it a useful tool for small-scale problems or for establishing a performance baseline. However, its severe computational inefficiency and poor scalability render it impractical for the high-dimensional, resource-constrained environments typical of modern chemical and drug discovery research.

For scientists and researchers, the evidence is clear: Random Search is a better default than Grid Search for most scenarios, offering a better balance of performance and cost. For the most computationally expensive models, such as deep neural networks for molecular property prediction or complex experimental optimization, Bayesian Optimization represents the state-of-the-art, leveraging intelligent sampling to achieve maximum performance with minimal experimental or computational burden. Grid Search's role is thus foundational but increasingly peripheral in the advanced toolkit of the modern chemical data scientist.

In the computationally intensive field of chemical machine learning (ML), where models predict molecular properties, optimize reaction conditions, or discover new drugs, hyperparameter tuning is a critical step for achieving peak model performance. This process involves adjusting the configuration settings that govern the ML algorithms themselves. For researchers and drug development professionals, the choice of tuning strategy directly impacts project timelines, computational costs, and the quality of results. The debate often centers on the trade-offs between sophisticated, informed methods like Bayesian Optimization and simpler, stochastic approaches like Random Search.

Within this context, Random Search offers a compelling proposition for high-dimensional problems common in chemical informatics. Its stochastic efficiency—the ability to find good solutions quickly through random sampling—makes it particularly suitable when dealing with the complex, often poorly understood relationships between many hyperparameters and model performance. This guide provides an objective comparison of these methods, supported by experimental data and protocols, to inform strategic decisions in chemical ML research.

Understanding the Key Hyperparameter Tuning Methods

Three primary methods dominate the hyperparameter tuning landscape, each with a distinct approach to navigating the search space.

Grid Search is a traditional, exhaustive method. It performs an uninformed search, meaning it does not learn from previous iterations. It operates by evaluating every single combination of hyperparameters within a pre-defined grid. While this approach guarantees finding the best combination within the specified range, it is computationally expensive and scales poorly as the number of hyperparameters increases. Its performance is also restricted by the user's specified parameter range, and it can only perform discrete searches, even for continuous hyperparameters [13] [14].

Random Search, another uninformed search method, addresses some of Grid Search's limitations. Instead of an exhaustive sweep, it evaluates a specific number of hyperparameter sets selected at random from the search space. This makes it less computationally demanding than Grid Search and allows it to explore a broader and more continuous range of values for each hyperparameter. However, because of its random nature, it runs the risk of missing the optimal set of hyperparameters [14] [15].

Bayesian Optimization is an informed search method that uses probabilistic models to guide the search. It builds a surrogate model, often a Gaussian Process, that predicts the score of any hyperparameter configuration together with an estimate of its uncertainty. Crucially, it uses this model to decide which hyperparameters to evaluate next based on previous results, which typically lets it reach the optimal region in far fewer evaluations than uninformed methods. The process is guided by an acquisition function, such as Expected Improvement (EI) or Upper Confidence Bound (UCB), which balances exploration (testing uncertain areas) and exploitation (testing promising areas) [13] [16]. Its main drawbacks are that each iteration is slower, because the model must be refit, and that the process is inherently sequential, making it harder to parallelize than Random Search [17].

Comparative Analysis: Performance and Efficiency

Independent studies and practical experiments consistently reveal the trade-offs between these tuning methods. The following table summarizes a typical comparative study based on tuning a random forest classifier [14].

Table 1: Comparative performance of hyperparameter tuning methods on a model tuning task.

| Method | Total Trials | Trials to Optimum | Best F1-Score | Run Time | Key Characteristics |
|---|---|---|---|---|---|
| Grid Search | 810 | 680 | 0.98 | Longest | Exhaustive, high computational cost |
| Random Search | 100 | 36 | 0.94 | Shortest | Fast, parallelizable, risk of missing optimum |
| Bayesian Optimization | 100 | 67 | 0.98 | Medium | Informed, sample-efficient, sequential |

The data shows that Random Search reached its best result in the fewest iterations (36) and with the shortest total run time, although that result (F1 = 0.94) was lower than what the other two methods achieved. Bayesian Optimization matched Grid Search's F1-Score of 0.98 with far fewer trials (100 vs. 810). This illustrates the core strength of Random Search: it is fast and cheap, and it performs especially well when only a few hyperparameters truly matter, because it quickly samples good values for those key parameters [14] [15].

Relevance to High-Dimensional Spaces in Chemical Research

The efficiency of Random Search is particularly valuable in chemical ML, where datasets are often high-dimensional. Research has shown a surprising phenomenon in such spaces: small random subsets of features (as low as 0.02-1%) can sometimes match or even outperform the predictive performance of both full feature sets and computationally selected features [18]. This challenges the assumption that meticulously selected features are always superior and suggests that, in high-dimensional scenarios, an arbitrary set of features can sometimes perform as well as a carefully selected one. This finding reinforces the value of Random Search's stochastic approach, as an exhaustive or highly guided search may not yield significantly better results while consuming vastly more resources.

Furthermore, the performance bounds of chemical datasets themselves must be considered. Experimental data in chemistry is often costly to collect, leading to small datasets with significant experimental errors. Studies have demonstrated that some reported ML models in drug and materials discovery may have reached the intrinsic performance limits of their datasets, potentially "fitting noise" [19]. In such cases, an exhaustive tuning method like Grid Search is unlikely to yield meaningful improvements and wastes computational resources. The efficiency of Random Search makes it a pragmatic choice for establishing a realistic performance baseline.

Experimental Protocols in Practice

To illustrate how these methods are applied in a real-world context, here are the detailed protocols for two key experiments cited in this guide.

Protocol 1: Comparative Hyperparameter Tuning for a Classifier

This protocol outlines the study comparing Grid, Random, and Bayesian optimization for a random forest model [14].

- Objective: To identify the optimal hyperparameters for a random forest classifier (n_estimators, max_depth, min_samples_split) that maximize the F1-Score on a digit recognition dataset.

- Dataset: The load_digits dataset from Scikit-learn.

- Search Space:
  - n_estimators: [100, 200, 300, 400, 500]
  - max_depth: [5, 10, 15, 20, None]
  - min_samples_split: [2, 5, 10]

- Methodologies:
  - Grid Search: All 75 (5 x 5 x 3) unique hyperparameter combinations were evaluated using GridSearchCV.
  - Random Search: 100 hyperparameter sets were sampled randomly from the search space using RandomizedSearchCV.
  - Bayesian Optimization: 100 trials were conducted using the Optuna library, which uses a Tree-structured Parzen Estimator to model the search space and suggest promising parameters.

- Evaluation Metric: The models were evaluated using the F1-Score on a held-out test set. The number of trials, time to find the best parameters, and the final score were recorded.
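A minimal scikit-learn sketch of the Grid and Random Search arms of Protocol 1. Several details are assumptions not stated in the protocol: an 80/20 stratified train/test split, 5-fold cross-validation, and macro-averaged F1 (the digits data has ten classes, so some averaging has to be chosen). The Optuna/TPE arm is omitted to keep the block dependency-light, and run_searches is a hypothetical helper name.

```python
from sklearn.datasets import load_digits
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import f1_score
from sklearn.model_selection import (GridSearchCV, ParameterGrid,
                                     RandomizedSearchCV, train_test_split)

# Search space from Protocol 1 (5 x 5 x 3 = 75 combinations).
PARAM_GRID = {
    "n_estimators": [100, 200, 300, 400, 500],
    "max_depth": [5, 10, 15, 20, None],
    "min_samples_split": [2, 5, 10],
}


def run_searches(param_grid, n_iter=100, cv=5, seed=0, n_jobs=None):
    """Tune a random forest on load_digits with Grid and Random Search.

    Returns {method: (best_params, macro-F1 on a held-out 20% test split)}.
    """
    X, y = load_digits(return_X_y=True)
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.2, stratify=y, random_state=seed)
    # When every entry is a list, RandomizedSearchCV samples without replacement,
    # so n_iter cannot usefully exceed the number of grid points.
    n_iter = min(n_iter, len(ParameterGrid(param_grid)))
    rf = RandomForestClassifier(random_state=seed)  # each search clones this estimator
    searches = {
        "grid": GridSearchCV(rf, param_grid, scoring="f1_macro", cv=cv, n_jobs=n_jobs),
        "random": RandomizedSearchCV(rf, param_grid, n_iter=n_iter, scoring="f1_macro",
                                     cv=cv, n_jobs=n_jobs, random_state=seed),
    }
    results = {}
    for name, search in searches.items():
        search.fit(X_tr, y_tr)  # refit=True (default) retrains the best model on X_tr
        y_pred = search.predict(X_te)
        results[name] = (search.best_params_, f1_score(y_te, y_pred, average="macro"))
    return results
```

Calling run_searches(PARAM_GRID) performs the full sweep, which fits several hundred forests and may take minutes; passing a much smaller dictionary is a quick way to check the pipeline before the real run.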

Protocol 2: High-Dimensional Feature Selection with Evolutionary Algorithms

This protocol is based on a study proposing a hybrid algorithm for high-dimensional feature selection, which aligns with the challenges in chemical data analysis [20].

- Objective: To select an optimal subset of features from high-dimensional datasets that maximizes classification performance while minimizing the number of features selected.

- Dataset: 16 classification datasets from UCI and scikit-feature repositories, with feature counts ranging from 166 to 24,482.

- Algorithm (DR-RPMODE): The proposed method is a two-stage hybrid algorithm.

- Dimensionality Reduction (DR) Phase: Uses "freezing" and "activation" operators to rapidly remove a large portion of irrelevant and redundant features.

- Multi-Objective Differential Evolution (RPMODE) Phase: A multi-objective evolutionary algorithm searches the reduced feature space. It includes redundancy handling to remove duplicate solutions and preference handling that prioritizes classification performance over feature-set size.

- Comparison: DR-RPMODE was compared against seven other feature selection algorithms.

- Evaluation Metrics: Performance was measured using Hypervolume (HV) and Inverted Generational Distance (IGD) to assess the quality of the Pareto front (the trade-off between model performance and feature count), as well as final classification accuracy.
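For readers unfamiliar with the two front-quality metrics, here is a minimal sketch of how HV and IGD are usually computed for a two-objective front in which both objectives are minimized (for example, classification error and fraction of features selected). It is a generic textbook version, not the DR-RPMODE authors' code; the reference point for HV and the reference front for IGD are inputs the user must choose, and the HV routine assumes every point is better than the reference point in both objectives.

```python
import numpy as np


def pareto_front(points):
    """Return the non-dominated rows of `points` (all objectives minimized)."""
    pts = np.asarray(points, dtype=float)
    keep = []
    for i, p in enumerate(pts):
        # p is dominated if some row is <= p in every objective and < p in at least one.
        dominated = np.any(np.all(pts <= p, axis=1) & np.any(pts < p, axis=1))
        if not dominated:
            keep.append(i)
    return pts[keep]


def hypervolume_2d(points, ref):
    """Area dominated by the Pareto front of `points` and bounded by the reference point."""
    front = pareto_front(points)
    front = front[np.argsort(front[:, 0])]  # ascending obj 1, hence descending obj 2
    hv, prev_y = 0.0, ref[1]
    for x, y in front:
        hv += (ref[0] - x) * (prev_y - y)  # horizontal slab contributed by this point
        prev_y = y
    return hv


def igd(front, reference_front):
    """Mean distance from each reference point to its nearest point in `front` (lower is better)."""
    a = np.asarray(front, dtype=float)
    r = np.asarray(reference_front, dtype=float)
    dists = np.linalg.norm(r[:, None, :] - a[None, :, :], axis=2)
    return dists.min(axis=1).mean()
```

Higher HV means the front pushes further toward low error and few features, while IGD measures how closely the front tracks a reference (ideally the true) trade-off curve.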

Workflow and Logical Relationships

The following diagram illustrates the logical workflow and high-level decision process for selecting a hyperparameter tuning strategy, particularly within the context of a chemical ML project.

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational tools and concepts essential for implementing hyperparameter tuning in chemical ML research.

Table 2: Essential computational reagents for hyperparameter tuning experiments.

| Tool / Concept | Type | Primary Function in Tuning |
|---|---|---|
| Scikit-learn (GridSearchCV, RandomizedSearchCV) | Software Library | Provides easy-to-use, parallelizable implementations of Grid and Random Search for standard ML models. |
| Optuna / BayesianOptimization | Software Library | Frameworks specifically designed for implementing Bayesian Optimization, handling the probabilistic modeling and acquisition function selection. |
| Gaussian Process (GP) | Probabilistic Model | Serves as the surrogate model in Bayesian Optimization, estimating the distribution of the objective function and its uncertainty. |
| Acquisition Function (e.g., EI, UCB) | Algorithmic Component | Guides the Bayesian search by balancing exploration and exploitation to select the next hyperparameters to evaluate. |
| High-Dimensional Dataset (e.g., from microarrays, RNA-Seq) | Data | The complex, high-feature-count data common in chemical and biological research, where efficient tuning methods are most valuable. |
| Multi-Objective Evolutionary Algorithm (MOEA) | Optimization Algorithm | Used for complex optimization tasks like feature selection, where multiple conflicting objectives (e.g., accuracy vs. feature count) must be balanced. |

The choice between Random Search and Bayesian Optimization is not about identifying a universally superior method, but about selecting the right tool for the specific research context. Random Search stands out for its remarkable stochastic efficiency, especially in high-dimensional spaces prevalent in chemical ML. Its speed, simplicity, and easy parallelization make it an excellent choice for initial model development, rapid prototyping, and when computational resources are a primary constraint.

For chemical researchers, this efficiency is key. When dealing with thousands of molecular descriptors or spectral features, and when dataset noise inherently limits potential performance, the brute-force approach of Grid Search is often impractical and unnecessary. Bayesian Optimization remains a powerful alternative when model evaluations are extremely time-consuming and sample efficiency is paramount, but its sequential nature and computational overhead can be a bottleneck. By understanding the performance trade-offs and experimental protocols outlined in this guide, scientists can make informed decisions, embracing the stochastic efficiency of Random Search to accelerate the journey from data to discovery.

In the fields of chemical machine learning (ML) and materials science, researchers are consistently confronted with the formidable challenge of navigating vast, high-dimensional search spaces to discover optimal molecules, reaction conditions, or material formulations. Traditional optimization methods often require an impractical number of experiments, which are both time-consuming and resource-intensive. Within this context, two algorithmic strategies have emerged as prominent contenders: the straightforward stochastic sampling of Random Search and the intelligent, sequential model-based approach of Bayesian Optimization (BO). While Random Search has been praised for its simplicity and surprising effectiveness, Bayesian Optimization represents a paradigm shift towards sample-efficient, intelligent search. This guide provides an objective comparison of these methods, underpinned by experimental data and benchmarks from recent literature, to equip researchers and drug development professionals with the knowledge to select the optimal strategy for their specific discovery campaigns.

The core distinction lies in their operational philosophy. Random Search evaluates hyperparameter configurations independently, performing a non-adaptive exploration of the search space [13]. In contrast, Bayesian Optimization constructs a probabilistic surrogate model of the objective function and uses an acquisition function to guide the selection of subsequent experiments based on all previous results. This allows it to balance the exploration of uncertain regions with the exploitation of known promising areas, leading to a more informed and efficient search process [13] [21].

Theoretical Foundations: How the Algorithms Operate

Random Search Mechanics

Random Search operates on a simple principle: it randomly samples a pre-defined number of configurations from the hyperparameter space and evaluates them. Its primary advantage is that it avoids the exponential growth in cost that exhaustive methods like Grid Search suffer as dimensionality increases [22] [23]. The method is simple to implement and provides a probabilistic guarantee of finding a solution within a top quantile of all possible solutions. For instance, to have a 95% probability (p=0.95) of finding a solution in the top 5% of all possible solutions (quantile q=0.95), only 60 random samples are required, a number that holds regardless of the search space's dimensionality [24]. However, its simplicity is also its key weakness: it treats all regions of the space as equally promising and does not learn from past evaluations.
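The 60-sample figure follows from a one-line calculation. Each independent draw misses the top 5% with probability q = 0.95, so n draws all miss with probability q^n, and the requirement 1 - q^n ≥ p gives n ≥ ln(1 - p) / ln(q). The sketch below evaluates that bound, assuming independent draws whose quantiles are measured with respect to the sampling distribution. For p = q = 0.95 it returns 59, which is usually rounded up to the quoted 60.

```python
import math


def samples_needed(p: float, q: float) -> int:
    """Smallest n with 1 - q**n >= p, i.e. the chance that at least one of n
    independent draws lands in the top (1 - q) fraction of the space is >= p."""
    return math.ceil(math.log(1 - p) / math.log(q))


print(samples_needed(0.95, 0.95))  # 59
print(samples_needed(0.99, 0.95))  # 90
```

Note that the bound depends only on p and q, not on how many hyperparameters define the space, which is the dimension-independence mentioned above.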

Bayesian Optimization Mechanics

Bayesian Optimization is a more sophisticated, sequential strategy designed for the global optimization of black-box functions that are expensive to evaluate. Its core cycle involves two key components [21]:

- A surrogate model is used to approximate the unknown objective function. The most common choice is a Gaussian Process (GP), which provides a posterior distribution that estimates the function and its uncertainty at every point in the search space. Alternative surrogate models include Random Forests (RF) [25] [1].

- An acquisition function leverages the surrogate's predictions to decide the next most promising point to evaluate. It systematically balances exploration (sampling regions of high uncertainty) and exploitation (sampling regions with a high predicted mean). Common acquisition functions include Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI) [25] [1].

Table 1: Core Components of Bayesian Optimization

| Component | Description | Common Examples |
|---|---|---|
| Surrogate Model | Probabilistic model that approximates the expensive black-box function. | Gaussian Process (GP), Random Forest (RF) |
| Acquisition Function | Decision-making function that selects the next experiment by balancing exploration and exploitation. | Expected Improvement (EI), Upper Confidence Bound (UCB) |

The following diagram illustrates the iterative workflow of a standard Bayesian Optimization cycle, as applied in an experimental setting.

Performance Benchmarking: Experimental Data and Comparisons

Quantitative Performance in Materials Science

A comprehensive benchmarking study published in npj Computational Materials evaluated BO performance across five diverse experimental materials systems, including carbon nanotube-polymer blends, silver nanoparticles, and lead-halide perovskites. The study employed metrics like acceleration factor (how much faster an algorithm finds a target objective value compared to random search) to ensure a fair comparison [25].

The results demonstrated that BO, particularly with an anisotropic kernel (GP-ARD) or Random Forest (RF) as the surrogate model, consistently and significantly outperformed random search. The data revealed that the choice of surrogate model is critical, with GP-ARD and RF showing comparable and robust performance, both surpassing the commonly used GP with an isotropic kernel [25].

Table 2: Benchmarking Results Across Materials Science Domains [25]

| Materials System | Key Optimization Objective | Best Performing BO Method | Acceleration Factor vs. Random Search |
|---|---|---|---|
| Pb-Halide Perovskites (PVSK) | Maximize Photoluminescence Quantum Yield | GP-ARD | ~5x |
| Silver Nanoparticles (AgNP) | Maximize Photonic Density of States | Random Forest (RF) | ~2x |
| Polymer Blends (P3HT/CNT) | Maximize Electrical Conductivity | GP-ARD | ~3x |
| Additive Manufacturing (AutoAM) | Maximize Toughness | GP-ARD / RF | ~3x |

Efficiency Gains and Computational Cost

Beyond acceleration factors, other studies have highlighted the raw sample efficiency of Bayesian Optimization. One analysis reported that BO could achieve the same F1 score as Grid Search or Random Search but in 7x fewer iterations and with a 5x faster execution time, converging on the optimal configuration much earlier [13]. Furthermore, the 2020 NeurIPS Black-Box Optimization Challenge, which focused on tuning ML models, concluded that Bayesian Optimization was "superior to random search," establishing its effectiveness on a competitive platform [26].

It is important to note that the relative advantage of BO is most pronounced in scenarios with moderate to high evaluation costs. For small models or very cheap objective functions, the computational overhead of building and updating the surrogate model may negate its sample-efficiency benefits, making random search a practical choice [13].

Table 3: Method Comparison Overview

| Criterion | Random Search | Bayesian Optimization |
|---|---|---|
| Search Strategy | Independent, random sampling | Sequential, model-based guidance |
| Sample Efficiency | Low | High (e.g., 7x fewer iterations [13]) |
| Computational Overhead | Very Low | Moderate to High (model training) |
| Theoretical Guarantees | Probabilistic (e.g., 60 samples for top 5% [24]) | Convergence to the optimum under surrogate-model assumptions [21] |
| Handling of Noise | Inherently robust | Requires specific robust models [1] |
| Ideal Use Case | Low-cost objectives, large budgets, initial screening | Expensive experiments, limited budget, complex landscapes |

Experimental Protocols and Case Studies in Chemistry

Detailed Methodology for a BO Campaign

A typical experimental protocol for applying BO to a chemical synthesis problem, as detailed in multiple studies [25] [1], involves several key stages:

- Problem Formulation: Define the input parameters (e.g., temperature, concentration, catalyst type) and the objective function to optimize (e.g., reaction yield, selectivity, space-time yield).

- Initial Design: A small set of initial experiments (typically 5-10) is selected using a space-filling design like Latin Hypercube Sampling or chosen randomly to seed the model.

- Iterative Optimization Loop: The core cycle from Figure 1 is executed:

- The surrogate model (e.g., GP) is trained on all available data.

- The acquisition function (e.g., EI, UCB) is optimized to propose the next experiment.

- The proposed experiment is conducted in the lab, and the result is measured.

- The new data point is added to the dataset.

- Termination: The loop continues until a stopping criterion is met, such as a performance threshold, a maximum number of experiments, or convergence of the proposed conditions.
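The loop above can be sketched in a few dozen lines of Python. This is a minimal illustration, not the protocol used in [25] or [1]. The "experiment" is a hypothetical toy_yield function of two inputs normalized to [0, 1] (for instance, scaled temperature and concentration). The initial design is a hand-rolled Latin Hypercube, the surrogate is scikit-learn's GaussianProcessRegressor with a Matérn 5/2 kernel, and EI is maximized over random candidate points rather than with a dedicated optimizer.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def toy_yield(X):
    """Hypothetical stand-in for a measured yield: a smooth bump peaking at (0.7, 0.3)."""
    X = np.atleast_2d(X)
    return np.exp(-((X[:, 0] - 0.7) ** 2 + (X[:, 1] - 0.3) ** 2) / 0.1)


def latin_hypercube(n, dim, rng):
    """Basic LHS on [0, 1]^dim: exactly one point in each of n strata along every axis."""
    strata = np.column_stack([rng.permutation(n) for _ in range(dim)])
    return (strata + rng.uniform(size=(n, dim))) / n


def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization (0 where sigma == 0)."""
    safe = np.where(sigma > 0, sigma, 1.0)  # placeholder avoids division by zero
    z = (mu - best) / safe
    return np.where(sigma > 0, (mu - best) * norm.cdf(z) + sigma * norm.pdf(z), 0.0)


def run_bo(objective, dim=2, n_init=6, n_iter=20, n_candidates=2000, seed=0):
    rng = np.random.default_rng(seed)
    X = latin_hypercube(n_init, dim, rng)  # initial space-filling design
    y = objective(X)
    for _ in range(n_iter):  # termination: fixed experimental budget
        # (1) Train the surrogate on all data collected so far.
        gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), alpha=1e-6,
                                      normalize_y=True, random_state=seed).fit(X, y)
        # (2) Propose the next experiment by maximizing EI over random candidates.
        cand = rng.uniform(size=(n_candidates, dim))
        mu, sigma = gp.predict(cand, return_std=True)
        x_next = cand[np.argmax(expected_improvement(mu, sigma, y.max()))]
        # (3) "Run" the experiment and (4) add the result to the dataset.
        X = np.vstack([X, x_next])
        y = np.append(y, objective(x_next))
    return X, y


X, y = run_bo(toy_yield)
print(f"best yield {y.max():.3f} at {X[np.argmax(y)].round(2)}")
```

In a real campaign, the call to objective would be replaced by running the proposed experiment and recording the measured result; categorical inputs such as catalyst type would also need an encoding that this sketch does not attempt.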

Case Study: Multi-Objective Reaction Optimization

The Lapkin research group has been instrumental in demonstrating BO's power in chemical synthesis. In one landmark study, they used a multi-objective BO algorithm called Thompson Sampling Efficient Multi-Objective (TSEMO) to optimize a reaction with the objectives of maximizing space-time yield (STY) and minimizing the environmental factor (E-factor) [1]. Their framework, after 68-78 iterations, successfully mapped the Pareto front—the set of optimal trade-offs between the two objectives. This showcases BO's ability to handle complex, real-world optimization problems with competing goals, a task for which random search is profoundly inefficient.

The Scientist's Toolkit: Essential Research Reagents and Software

Implementing these optimization strategies requires a combination of software and conceptual tools.

Table 4: Key Research Reagent Solutions for Optimization

| Item / Solution | Function / Description | Examples |
|---|---|---|
| Gaussian Process (GP) Surrogate | Models the objective function and quantifies prediction uncertainty; the core of sample-efficient BO. | GPyOpt, BoTorch, GPax [21] |
| Acquisition Function | Decides the next experiment by balancing exploration and exploitation. | Expected Improvement (EI), Upper Confidence Bound (UCB) [25] [1] |
| High-Throughput (HTE) Robotics | Automates the execution of experiments, enabling rapid data generation for the optimization loop. | Self-driving lab platforms [25] |
| Bayesian Optimization Software | Integrated packages that provide surrogates, acquisition functions, and optimization loops. | BoTorch, Optuna, SMAC3, Summit [1] [21] |
| Feature Selection Method | Dynamically identifies the most relevant features in complex material representations during BO. | Maximum Relevance Minimum Redundancy (mRMR) [27] |

Critical Considerations and the Path Forward

Limitations and Challenges of Bayesian Optimization

Despite its strengths, BO is not a universal solution. Its performance can be sensitive to the choice of surrogate model and its hyperparameters. For instance, a standard GP with an isotropic kernel can be outperformed by a Random Forest on some problems, and a GP with an anisotropic kernel (Automatic Relevance Determination, ARD) is often recommended for robustness [25]. Furthermore, BO struggles with high-dimensional search spaces (the "curse of dimensionality") and with categorical variables. The computational cost of training the surrogate model can also become a bottleneck for very large datasets.

Emerging Innovations

Research is actively addressing these limitations. The Feature Adaptive Bayesian Optimization (FABO) framework integrates feature selection directly into the BO cycle, dynamically identifying the most informative features and making BO effective for complex material representations without prior knowledge [27]. Other advancements include:

- Multi-fidelity and transfer learning: Using cheap, low-accuracy data to inform the optimization of expensive experiments [1] [21].

- Noise-robust models: Enhancing BO for the noisy data common in real-world chemical experiments [1].

- Open-source software: The development of powerful, accessible libraries like BoTorch and Ax is lowering the barrier to entry for researchers [21].

The experimental evidence is clear: Bayesian Optimization provides a statistically superior and more sample-efficient paradigm for optimizing expensive black-box functions compared to Random Search. Its ability to intelligently guide experiments by learning from past results leads to dramatic accelerations, often 2x to 5x faster, in discovering optimal materials and reaction conditions [25].

The choice between the two methods should be guided by the cost and context of the research problem. Random Search remains a valid, easy-to-implement option for problems with low evaluation costs, very high-dimensional spaces where BO struggles, or as an initial baseline. However, for the vast majority of chemical and materials discovery campaigns—where each experiment consumes valuable time, resources, and expert effort—Bayesian Optimization is the unequivocally recommended strategy. Its intelligent, sample-efficient search aligns perfectly with the core goals of modern research: to accelerate discovery and reduce costs. By leveraging the growing ecosystem of advanced algorithms and software tools, scientists can harness this powerful paradigm to drive their discovery pipelines forward.

Bayesian Optimization (BO) is a powerful, sequential strategy for global optimization of black-box functions that are expensive to evaluate [21]. This sample-efficient approach is particularly valuable in chemical and materials research, where experiments or simulations are costly and time-consuming. With suitable surrogate models, BO can navigate the complex, and sometimes high-dimensional, design spaces common in molecular property optimization, catalyst discovery, and materials synthesis [28]. Its core strength lies in two fundamental components: the surrogate model, which approximates the unknown objective function and quantifies uncertainty, and the acquisition function, which guides the search by balancing exploration of uncertain regions with exploitation of promising areas [29]. This balance typically lets BO identify optimal solutions with significantly fewer evaluations than random search, which matters most in resource-intensive chemical research [25].

Surrogate Models: Gaussian Process and Alternatives

The surrogate model forms the probabilistic foundation of BO by building a statistical approximation of the expensive black-box function using observed data [30]. This model provides both a prediction (mean) and uncertainty estimate (variance) at any point in the design space, enabling informed decision-making about where to sample next.

Table 1: Comparison of Primary Surrogate Models Used in Bayesian Optimization

| Model | Key Features | Mathematical Foundation | Best Use Cases | Performance Notes |
|---|---|---|---|---|
| Gaussian Process (GP) | Flexible, probabilistic, provides uncertainty quantification | Defined by mean function and covariance kernel; posterior is Gaussian [30] | Low-to-medium dimensional problems, smooth objective functions | Strong performance with anisotropic kernels; higher computational cost ($O(n^3)$ in the number of observations) [25] |
| GP with Automatic Relevance Determination (ARD) | Adaptive length scales for each input dimension | Anisotropic kernels with an individual characteristic length scale $l_j$ for each dimension $j$ [25] | High-dimensional spaces with irrelevant features | Most robust performance in materials optimization; identifies feature importance [25] |
| Random Forest (RF) | Non-parametric, ensemble method, no distributional assumptions | Multiple decision trees; uncertainty from tree variance [25] | Discrete spaces, mixed variable types, larger datasets | Comparable to GP-ARD; lower computational cost; minimal tuning [25] |
| Sparse Axis-Aligned Subspace (SAAS) | Sparsity-inducing prior for high-dimensional spaces | Bayesian treatment with hierarchical priors to shrink irrelevant parameters [28] | Molecular optimization with large descriptor libraries | Effectively identifies task-relevant subspaces; improves sample efficiency [28] |
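A short sketch of how an ARD kernel exposes feature relevance, using scikit-learn's Matern kernel: passing an array as length_scale gives each input its own length scale. The objective is a hypothetical function of three normalized inputs, the third of which has no effect. After fitting, that input's length scale should be far larger than the others, meaning the surrogate has learned to ignore it.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern

rng = np.random.default_rng(0)
X = rng.uniform(size=(40, 3))
# Hypothetical objective: depends on x0 and x1 but not on x2.
y = np.sin(6 * X[:, 0]) + 0.5 * np.sin(3 * X[:, 1])

# ARD: an array-valued length_scale makes the kernel anisotropic (one scale per input).
kernel = Matern(length_scale=np.ones(3), nu=2.5)
gp = GaussianProcessRegressor(kernel=kernel, alpha=1e-6, normalize_y=True,
                              n_restarts_optimizer=3, random_state=0).fit(X, y)

length_scales = gp.kernel_.length_scale  # fitted values, one per input dimension
print(np.round(length_scales, 2))        # the x2 entry should be by far the largest
```

A long length scale means predictions change slowly along that axis, so in a materials campaign it flags a descriptor the surrogate treats as irrelevant. A value pressed against the default upper bound (1e5) may trigger a ConvergenceWarning, which is expected in this setting.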

Gaussian Process in Detail

Gaussian Processes offer a principled probabilistic framework for surrogate modeling. A GP is defined by a prior mean function $\mu_0(x) \equiv m(x)$ and a prior covariance kernel $\Sigma_0(x, x') \equiv K(x, x')$, so that the function values at the observed inputs $X_n$ follow the prior distribution $f(X_n) \sim \mathcal{N}(m(X_n), K(X_n, X_n))$ [30]. After observing data $\mathcal{D}_n = \{(x_i, y_i)\}_{i=1}^{n}$ with observation-noise variance $\sigma_\varepsilon^2$, the posterior predictive distribution at test points $X_*$ is Gaussian with mean and variance given by:

$\mu_n(X_*) = K(X_*, X_n)[K(X_n, X_n) + \sigma_\varepsilon^2 I]^{-1}(y - m(X_n)) + m(X_*)$

$\sigma_n^2(X_*) = K(X_*, X_*) - K(X_*, X_n)[K(X_n, X_n) + \sigma_\varepsilon^2 I]^{-1}K(X_n, X_*)$ [30]

The Matérn 5/2 kernel is particularly popular for practical optimization due to its flexibility [25] [30].
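The posterior mean and variance equations translate directly into NumPy. This sketch assumes a constant prior mean m(x), a single isotropic Matérn 5/2 length scale, and a small observation-noise variance added to the diagonal of K(X_n, X_n). It solves linear systems through a Cholesky factorization rather than forming the matrix inverse explicitly, which is the usual numerically stable way to evaluate these expressions.

```python
import numpy as np


def matern52(A, B, length_scale=1.0, variance=1.0):
    """Matérn 5/2 kernel matrix K(A, B) with one (isotropic) length scale."""
    d = np.linalg.norm(A[:, None, :] - B[None, :, :], axis=-1) / length_scale
    s = np.sqrt(5.0) * d
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)


def gp_posterior(X_n, y, X_star, noise_var=1e-6, mean=0.0, **kern):
    """Posterior mean and variance at X_star for a GP with constant prior mean `mean`."""
    K_nn = matern52(X_n, X_n, **kern) + noise_var * np.eye(len(X_n))
    K_sn = matern52(X_star, X_n, **kern)
    K_ss = matern52(X_star, X_star, **kern)
    L = np.linalg.cholesky(K_nn)                         # K_nn = L @ L.T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y - mean))
    mu = K_sn @ alpha + mean                             # posterior mean
    v = np.linalg.solve(L, K_sn.T)                       # v.T @ v = K_sn K_nn^-1 K_ns
    var = np.diag(K_ss) - np.sum(v**2, axis=0)           # posterior variance
    return mu, np.maximum(var, 0.0)                      # clip tiny negative round-off
```

At the training inputs the posterior mean reproduces the observations and the variance collapses to roughly the noise level; far from the data the variance returns to the prior variance, which is exactly the uncertainty signal acquisition functions exploit.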

Acquisition Functions: Balancing Exploration and Exploitation

Acquisition functions guide the optimization process by quantifying the potential utility of evaluating the objective function at any given point. They automatically balance exploration (sampling uncertain regions) and exploitation (sampling areas with high predicted performance) to efficiently locate the global optimum [29] [30].

Table 2: Key Acquisition Functions and Their Characteristics

| Acquisition Function | Mathematical Formulation | Exploration-Exploitation Balance | Performance Notes |
|---|---|---|---|
| Expected Improvement (EI) | $\alpha_{EI}(X_*) = (\mu_n(X_*) - y^{best})\Phi(z) + \sigma_n(X_*)\phi(z)$, where $z = \frac{\mu_n(X_*) - y^{best}}{\sigma_n(X_*)}$ [30] | Automatic balance based on improvement probability | Most widely used; strong empirical performance across domains [25] [30] |
| Upper Confidence Bound (UCB) | $a(x;\lambda) = \mu(x) + \lambda\sigma(x)$ [29] | Explicitly tunable via the λ parameter | Simple interpretation; λ controls the exploration-exploitation tradeoff [29] |
| Probability of Improvement (PI) | $\mathrm{PI}(x) = \Phi\left(\frac{\mu(x) - f(x^*)}{\sigma(x)}\right)$ [29] | Tends toward exploitation with increasing samples | Can get stuck in local optima; less popular than EI [29] |

Expected Improvement Deep Dive

Expected Improvement is perhaps the most widely used acquisition function due to its strong empirical performance and theoretical foundation. EI measures the expected value of the improvement $I(x) = \max(f(x) - f(x^*), 0)$ over the current best observation $f(x^*)$ [29]. Under a Gaussian process surrogate, this expectation has the closed form:

$\text{EI}(x) = \begin{cases} (\mu(x) - f(x^*))\Phi(Z) + \sigma(x)\phi(Z) & \text{if } \sigma(x) > 0 \\ 0 & \text{if } \sigma(x) = 0 \end{cases}$

where $Z = \frac{\mu(x) - f(x^*)}{\sigma(x)}$, and $\Phi$ and $\phi$ are the standard normal CDF and PDF [29]. This formulation balances the desire to sample points with high predicted mean (exploitation) and high uncertainty (exploration) without requiring additional tuning parameters.
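A sketch of the three acquisition functions discussed above, written for maximization and vectorized over candidate points. In practice mu and sigma would come from a surrogate's posterior mean and standard deviation; here they are made-up numbers chosen to expose the exploration-exploitation difference. EI follows the piecewise definition (0 where σ = 0); for PI at σ = 0 the sketch uses the limiting value of Φ, a convention assumed here rather than taken from the cited sources.

```python
import numpy as np
from scipy.stats import norm


def expected_improvement(mu, sigma, f_best):
    """Closed-form EI for maximization; 0 wherever sigma == 0."""
    mu, sigma = np.asarray(mu, float), np.asarray(sigma, float)
    safe_sigma = np.where(sigma > 0, sigma, 1.0)  # placeholder avoids division by zero
    z = (mu - f_best) / safe_sigma
    ei = (mu - f_best) * norm.cdf(z) + sigma * norm.pdf(z)
    return np.where(sigma > 0, ei, 0.0)


def upper_confidence_bound(mu, sigma, lam=2.0):
    """UCB a(x; lambda) = mu + lambda * sigma; larger lambda means more exploration."""
    return np.asarray(mu, float) + lam * np.asarray(sigma, float)


def probability_of_improvement(mu, sigma, f_best):
    """PI = Phi((mu - f_best) / sigma); uses the sigma -> 0 limit (0, 0.5 or 1) at sigma == 0."""
    mu, sigma = np.asarray(mu, float), np.asarray(sigma, float)
    safe_sigma = np.where(sigma > 0, sigma, 1.0)
    limit = np.where(mu > f_best, 1.0, np.where(mu == f_best, 0.5, 0.0))
    return np.where(sigma > 0, norm.cdf((mu - f_best) / safe_sigma), limit)


# Two hypothetical candidates, incumbent best f_best = 0.9.
# A: confident and slightly better; B: uncertain and worse on average.
mu = np.array([1.0, 0.8])
sigma = np.array([0.05, 0.6])
print(expected_improvement(mu, sigma, 0.9))        # EI ranks B above A (exploration)
print(probability_of_improvement(mu, sigma, 0.9))  # PI ranks A above B (exploitation)
print(upper_confidence_bound(mu, sigma, lam=2.0))  # with lam = 2, UCB also favors B
```

The comparison mirrors the table: PI only asks whether a point is likely to beat the incumbent, so it favors the safe candidate A, while EI also weighs how large the improvement could be and is drawn to the uncertain candidate B.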

Experimental Protocols and Benchmarking Methodology

Rigorous benchmarking across diverse materials systems provides compelling evidence for BO's superiority over random search in chemical applications [25]. The standard evaluation framework involves pool-based active learning with carefully designed metrics to quantify performance.

Benchmarking Framework

The pool-based active learning framework evaluates BO algorithms by simulating materials optimization campaigns [25]. The process begins with a small initial dataset (typically 5-10 points) selected via space-filling design. In each iteration, the surrogate model is trained on all available data, the acquisition function selects the next point to evaluate, and this point is added to the training set. This process continues until reaching the evaluation budget [25].

Key performance metrics include:

- Acceleration Factor: The ratio of iterations required by random search versus BO to reach the same objective value [25]

- Enhancement Factor: The relative improvement in final performance compared to random search [25]

- Simple Regret: The difference between the true optimum and the best value found during optimization
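The three metrics can be computed from per-run traces of measured objective values. A minimal sketch, assuming a maximization objective, one trace per method, and a target value both methods eventually reach. The enhancement factor is taken here as the ratio of best values found within the same budget, which is one reasonable reading of "relative improvement" and assumes positive objective values. In an actual benchmark these quantities would be averaged over many random seeds.

```python
import numpy as np


def iterations_to_reach(trace, target):
    """1-based number of evaluations until the best-so-far value first reaches target (None if never)."""
    best_so_far = np.maximum.accumulate(np.asarray(trace, dtype=float))
    hits = np.flatnonzero(best_so_far >= target)
    return int(hits[0]) + 1 if hits.size else None


def acceleration_factor(trace_random, trace_bo, target):
    """Evaluations random search needs to reach target, divided by those BO needs."""
    n_rs = iterations_to_reach(trace_random, target)
    n_bo = iterations_to_reach(trace_bo, target)
    return None if n_rs is None or n_bo is None else n_rs / n_bo


def enhancement_factor(trace_random, trace_bo, budget):
    """Assumed definition: ratio of best values found within the same budget (positive objectives)."""
    return max(trace_bo[:budget]) / max(trace_random[:budget])


def simple_regret(trace, true_optimum):
    """Gap between the true optimum and the best value found (maximization)."""
    return true_optimum - max(trace)


# Hypothetical single-seed traces (maximization).
random_trace = [0.1, 0.3, 0.2, 0.5, 0.6, 0.8]
bo_trace = [0.1, 0.5, 0.8, 0.9]
print(acceleration_factor(random_trace, bo_trace, target=0.8))  # 2.0 (6 vs. 3 evaluations)
```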

Chemical Discovery Applications

In automated chemical discovery, Bayesian Optimization has demonstrated remarkable efficiency. A Bayesian Oracle system was able to rediscover eight historically important reactions (including aldol condensation, Buchwald-Hartwig amination, and Suzuki coupling) by performing >500 reactions and retaining both positive and negative results [31]. The system encoded chemist intuition as probabilistic models connecting reagents and process variables to observed reactivity, with Bayes' theorem providing the framework for continuously refining beliefs as new experimental data arrived [31].

For molecular property optimization, the MolDAIS framework combines Bayesian Optimization with adaptive subspace identification to efficiently navigate large molecular descriptor libraries [28]. By imposing sparsity-inducing priors, MolDAIS automatically identifies low-dimensional, property-relevant subspaces during optimization, enabling identification of near-optimal candidates from chemical libraries exceeding 100,000 molecules using fewer than 100 property evaluations [28].

Research Reagent Solutions: Bayesian Optimization Software

Table 3: Essential Software Tools for Bayesian Optimization in Chemical Research

| Package Name | Primary Surrogate Models | Key Features | License | Reference |
|---|---|---|---|---|
| BoTorch | GP, others | Multi-objective optimization, built on PyTorch | MIT | [21] |
| Ax | GP, others | Modular framework built on BoTorch | MIT | [21] |
| Dragonfly | GP | Multi-fidelity optimization | Apache | [21] |
| GPyOpt | GP | Parallel optimization | BSD | [21] |
| SMAC3 | GP, RF | Hyperparameter tuning | BSD | [21] |
| MolDAIS | GP with SAAS prior | Specialized for molecular descriptor libraries | - | [28] |

Performance Comparison: Bayesian vs Random Search

Comprehensive benchmarking across five experimental materials systems provides quantitative evidence of BO's superiority over random search [25]. The performance advantage varies based on the specific surrogate model and acquisition function selection.

Table 4: Performance Comparison of Bayesian Optimization vs Random Search

| Optimization Method | Surrogate Model | Acceleration Factor | Key Advantages | Limitations |
|---|---|---|---|---|
| Random Search | None | 1.0x (baseline) | Simple, embarrassingly parallel | No information gain between evaluations |
| Bayesian Optimization | GP (Isotropic) | 1.5-3x | Better than random, simple to implement | Struggles with high-dimensional spaces [25] |
| Bayesian Optimization | GP (ARD) | 3-8x | Automatic relevance detection, robust [25] | Higher computational cost |
| Bayesian Optimization | Random Forest | 3-7x | No distribution assumptions, handles discrete spaces [25] | Uncertainty estimates less calibrated than GP |
| Bayesian Optimization | SAAS (MolDAIS) | 5-10x+ | Extreme sample efficiency for molecular design [28] | Complex implementation |

The acceleration factors demonstrate that well-configured BO algorithms typically identify optimal solutions 3-8x faster than random search in materials optimization tasks [25]. In specific chemical applications, the performance gap can be even more substantial. For Direct Arylation reaction optimization, advanced BO frameworks achieved 94.39% yield compared to 76.60% with basic approaches, an absolute gain of 17.8 percentage points, or about 23% in relative terms [32].

The experimental evidence overwhelmingly supports Bayesian Optimization as superior to random search for chemical and materials research. The core components—surrogate models and acquisition functions—work in concert to provide sample-efficient optimization of expensive black-box functions. Gaussian Processes with anisotropic kernels typically offer the most robust performance, while Random Forest provides a compelling alternative with lower computational overhead [25]. For molecular optimization, sparse models like SAAS dramatically improve efficiency in high-dimensional descriptor spaces [28]. Expected Improvement consistently demonstrates strong performance across diverse chemical applications, making it the default acquisition function choice [25] [30]. The quantitative benchmarking reveals that properly configured BO algorithms typically identify optimal conditions 3-8x faster than random search, with even greater acceleration factors in specialized molecular design applications [25] [28]. This significant performance advantage, combined with growing accessibility through open-source software, establishes Bayesian Optimization as the method of choice for data-efficient chemical discovery.

Implementing Optimization Strategies in Real-World Chemical Workflows

In chemical synthesis, particularly in pharmaceutical development, researchers must optimize several reaction objectives at once. The primary goals often include maximizing chemical yield, which improves process efficiency and reduces waste, and enhancing selectivity, which minimizes byproducts and simplifies purification [5]. In process chemistry, the requirements are more stringent still. They add economic, environmental, health, and safety considerations that often necessitate lower-cost, earth-abundant catalysts and greener solvents [5].

The traditional one-factor-at-a-time (OFAT) approach is highly inefficient for multi-parameter reactions. It ignores interactions between factors and often fails to identify globally optimal conditions [1]. High-throughput experimentation (HTE) enables highly parallel execution of many reactions. However, each additional parameter multiplies the size of the search space, so exhaustive screening quickly becomes intractable [5]. This creates a pressing need for optimization strategies that can navigate complex chemical landscapes efficiently.
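A quick calculation shows the multiplicative growth. The parameter names and level counts below are hypothetical and chosen only for illustration.

```python
from math import prod

# Hypothetical number of levels per reaction parameter (illustration only).
levels = {
    "ligand": 24,
    "solvent": 12,
    "base": 6,
    "temperature": 5,
    "concentration": 4,
}

# Each added parameter multiplies, rather than adds to, the number of conditions.
running = 1
for name, n in levels.items():
    running *= n
    print(f"after adding {name:<13} -> {running:,} conditions")

total = prod(levels.values())
plates_needed = -(-total // 96)  # ceiling division: full 96-well plates to screen everything
print(total, plates_needed)  # -> 34560 360
```

Five modest parameters already give 34,560 conditions, or 360 full 96-well plates. One more parameter with ten levels would raise that to 3,600 plates.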

Within this context, Bayesian optimization has emerged as a powerful machine learning approach for efficiently optimizing complex reaction systems [1]. This guide compares Bayesian optimization with random search across critical chemical objectives, including yield, selectivity, and multi-goal optimization.

Core Concepts: Bayesian Optimization vs. Random Search

Understanding the Mechanisms

Bayesian optimization is a sample-efficient global optimization strategy that uses probabilistic surrogate models to approximate the objective function in the chemical space of interest [1]. Its core strength lies in systematically balancing exploration of unknown regions with exploitation of promising areas identified through previous experiments [5] [1]. The process iteratively uses an acquisition function to select the most informative next experiments based on predictions and uncertainty estimates from the surrogate model [1].

In contrast, random search represents a baseline approach where experimental conditions are selected randomly from the defined search space without leveraging information from previous experiments. While simple to implement, it lacks any guiding intelligence to direct the search toward optimal regions, making it inefficient for exploring high-dimensional chemical spaces [5].
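Random search takes only a few lines to implement. This sketch draws conditions uniformly at random without replacement from a discrete candidate list. The catalyst and solvent labels and the `toy_yield` function are hypothetical stand-ins for a real experiment.

```python
import random
from itertools import product
from typing import Callable, List, Sequence, Tuple

Condition = Tuple[str, str, int]


def random_search(candidates: Sequence[Condition],
                  evaluate: Callable[[Condition], float],
                  budget: int,
                  seed: int = 0) -> Tuple[Condition, float, List[float]]:
    """Evaluate `budget` conditions drawn uniformly at random without replacement.

    Earlier results never influence later draws, which is what makes this a
    baseline rather than an adaptive strategy.
    """
    rng = random.Random(seed)
    chosen = rng.sample(list(candidates), k=budget)
    results = [evaluate(c) for c in chosen]
    best_i = max(range(len(results)), key=results.__getitem__)
    return chosen[best_i], results[best_i], results


def toy_yield(condition: Condition) -> float:
    """Hypothetical yield model standing in for a real experiment."""
    catalyst, solvent, temp_c = condition
    base = {"cat_A": 30.0, "cat_B": 50.0, "cat_C": 20.0}[catalyst]
    bonus = {"solv_1": 10.0, "solv_2": 25.0, "solv_3": 0.0}[solvent]
    return base + bonus - abs(temp_c - 80) / 4


space = list(product(["cat_A", "cat_B", "cat_C"], ["solv_1", "solv_2", "solv_3"], [40, 60, 80, 100]))
best, best_yield, trace = random_search(space, toy_yield, budget=10, seed=1)
print(best, best_yield)
```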

Key Components of Bayesian Optimization

Bayesian optimization relies on two fundamental components:

- Surrogate Models: Typically Gaussian Process Regressors (GPR) that predict reaction outcomes and their uncertainties for all potential reaction conditions, providing probabilistic estimates that guide the search process [5] [27].

- Acquisition Functions: Selection criteria such as Expected Improvement (EI), Upper Confidence Bound (UCB), and multi-objective variants like q-Noisy Expected Hypervolume Improvement (q-NEHVI). These criteria choose the next experiments by balancing the exploration-exploitation trade-off [5] [1].
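For reference, these are the standard closed forms of the two single-objective criteria named above, written for maximization. They assume the surrogate's prediction at $x$ is Gaussian with mean $\mu(x)$ and standard deviation $\sigma(x)$. The remaining symbols are notation introduced here rather than taken from the cited works: $f^{*}$ is the best value observed so far, $\xi \ge 0$ and $\kappa > 0$ are user-chosen exploration parameters, and $\Phi$ and $\varphi$ are the standard normal CDF and PDF.

```latex
\mathrm{EI}(x) =
\begin{cases}
\bigl(\mu(x) - f^{*} - \xi\bigr)\,\Phi(Z) + \sigma(x)\,\varphi(Z), & \sigma(x) > 0,\\
0, & \sigma(x) = 0,
\end{cases}
\qquad
Z = \frac{\mu(x) - f^{*} - \xi}{\sigma(x)},
\qquad
\mathrm{UCB}(x) = \mu(x) + \kappa\,\sigma(x)
```

A larger $\sigma(x)$ raises both scores, which is how each criterion rewards exploring uncertain regions.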

The following diagram illustrates the iterative workflow of Bayesian optimization in chemical reaction optimization:

Experimental Comparison: Performance Metrics and Data

Case Study: Nickel-Catalyzed Suzuki Reaction Optimization

In a direct experimental validation, researchers applied Bayesian optimization (the Minerva framework) in a 96-well HTE campaign for a nickel-catalyzed Suzuki reaction, exploring a search space of 88,000 possible conditions [5]. The Bayesian approach identified conditions giving 76% area percent (AP) yield and 92% selectivity for this challenging transformation involving non-precious metal catalysis. Notably, two chemist-designed HTE plates following traditional approaches failed to find successful reaction conditions. This highlights Bayesian optimization's ability to navigate complex chemical landscapes with unexpected reactivity [5].

Pharmaceutical Process Development Applications

Extending to industrial applications, Bayesian optimization was deployed in pharmaceutical process development for two active pharmaceutical ingredient (API) syntheses [5]. For both a Ni-catalyzed Suzuki coupling and a Pd-catalyzed Buchwald-Hartwig reaction, the approach identified multiple conditions achieving >95 area percent yield and selectivity, which translated directly into improved process conditions at scale [5]. In one case, the Bayesian optimization framework identified improved process conditions in just 4 weeks, compared with a previous 6-month development campaign [5].

Quantitative Performance Benchmarking

Table 1: Performance Comparison of Optimization Algorithms in Virtual Benchmarking Studies

| Optimization Method | Batch Size | Hypervolume (% of reference) | Key Strengths | Limitations |
|---|---|---|---|---|
| Bayesian Optimization (q-NEHVI) | 96 | ~98 | Excellent parallel performance, handles multiple objectives | Higher computational complexity |
| Bayesian Optimization (TS-HVI) | 96 | ~95 | Scalable for high parallelization | Slightly lower hypervolume |
| Bayesian Optimization (q-NParEgo) | 96 | ~92 | Good balance of performance and speed | Less optimal for complex spaces |
| Random Search (Sobol Sampling) | 96 | ~65 | Simple implementation, unbiased | Inefficient for large spaces |

Table 2: Multi-Objective Optimization Performance in Pharmaceutical Applications

| Application Context | Optimization Method | Yield Achieved | Selectivity Achieved | Development Time | Key Outcomes |
|---|---|---|---|---|---|
| Ni-catalyzed Suzuki reaction | Bayesian Optimization | >95% AP | >95% AP | 4 weeks | Multiple optimal conditions identified |
| Pd-catalyzed Buchwald-Hartwig | Bayesian Optimization | >95% AP | >95% AP | 4 weeks | Directly transferable to scale |
| Ni-catalyzed Suzuki reaction | Traditional HTE | Failed | Failed | 6 months | No successful conditions found |
| Pharmaceutical process development | Random Search | Variable, typically suboptimal | Variable, typically suboptimal | 6+ months | Inefficient resource use |

Technical Implementation: Methodologies and Protocols

Experimental Workflow for Bayesian Optimization

The Bayesian optimization workflow for chemical reaction optimization follows a systematic protocol:

Search Space Definition: The reaction condition space is represented as a discrete combinatorial set of candidate conditions. It is built from the reagents, solvents, temperatures, and other parameters deemed plausible for a given chemical transformation. Enumerating the space explicitly allows impractical conditions (e.g., temperatures exceeding a solvent's boiling point) to be filtered out automatically [5].
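The following sketch builds such a discrete space and applies the boiling-point rule. The ligand labels and the choice of bases and temperatures are hypothetical. The boiling points are standard values at atmospheric pressure. A real campaign might relax the rule for sealed vessels.

```python
from itertools import product

# Normal boiling points (deg C, 1 atm) of the solvents used in this illustration.
BOILING_POINT_C = {"THF": 66, "1,4-dioxane": 101, "toluene": 111, "DMF": 153}

ligands = ["L1", "L2", "L3"]       # hypothetical ligand labels
bases = ["K3PO4", "Cs2CO3"]
temperatures_c = [60, 80, 100, 120]


def is_practical(condition) -> bool:
    """Reject conditions whose temperature exceeds the solvent's boiling point."""
    _ligand, solvent, _base, temp_c = condition
    return temp_c <= BOILING_POINT_C[solvent]


# Iterating over the dict yields its keys, i.e. the solvent names.
full_space = list(product(ligands, BOILING_POINT_C, bases, temperatures_c))
search_space = [c for c in full_space if is_practical(c)]
print(len(full_space), len(search_space))  # -> 96 66
```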

Initial Sampling: Quasi-random Sobol sampling selects the initial experiments so that they cover the reaction condition space as evenly as possible. This increases the likelihood of discovering regions that contain optima [5].
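This dependency-free sketch shows quasi-random initialization on a discrete grid. It uses a Halton sequence as a stand-in for Sobol. Both are low-discrepancy sequences, and SciPy's `scipy.stats.qmc.Sobol` provides a true Sobol generator. Each point in the unit cube is mapped onto indices of the discrete parameters. The grid sizes are hypothetical.

```python
from typing import List, Sequence, Tuple

PRIMES = (2, 3, 5, 7, 11, 13, 17, 19, 23, 29)


def radical_inverse(i: int, base: int) -> float:
    """Van der Corput radical inverse: reflect the base-`base` digits of i about the radix point."""
    result, scale = 0.0, 1.0 / base
    while i > 0:
        i, digit = divmod(i, base)
        result += digit * scale
        scale /= base
    return result


def halton(n: int, dims: int) -> List[List[float]]:
    """First n points of a Halton sequence in [0, 1)^dims (index 0, the origin, is skipped)."""
    if dims > len(PRIMES):
        raise ValueError("add more prime bases for higher dimensions")
    return [[radical_inverse(i, p) for p in PRIMES[:dims]] for i in range(1, n + 1)]


def to_grid_indices(points: List[List[float]], levels: Sequence[int]) -> List[Tuple[int, ...]]:
    """Map each unit-cube coordinate onto an index of a parameter with n levels."""
    return [tuple(min(int(u * n), n - 1) for u, n in zip(pt, levels)) for pt in points]


levels = [4, 3, 5]  # hypothetical: 4 ligands, 3 solvents, 5 temperatures
initial_batch = to_grid_indices(halton(8, len(levels)), levels)
print(initial_batch)
```

In the first dimension, the eight points visit each of the four ligand levels exactly twice. A plain random draw rarely achieves that kind of balance.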

Surrogate Model Training: Using initial experimental data, a Gaussian Process regressor is trained to predict reaction outcomes and their uncertainties for all reaction conditions [5] [27].
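The sketch below shows what "training" a GP surrogate involves in the simplest case. It uses a zero-mean GP on mean-centered outcomes, a squared-exponential kernel, and fixed, hand-chosen hyperparameters, and it fits by Cholesky factorization. Libraries such as scikit-learn and BoTorch also fit the kernel hyperparameters by maximizing the marginal likelihood, which this sketch omits. The inputs and yields in the example are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def rbf(a: Vector, b: Vector, length_scale: float, variance: float) -> float:
    """Squared-exponential (RBF) kernel with one shared length scale."""
    sq_dist = sum((x - y) ** 2 for x, y in zip(a, b))
    return variance * math.exp(-0.5 * sq_dist / length_scale ** 2)


def cholesky(K: List[List[float]]) -> List[List[float]]:
    """Lower-triangular L with K = L L^T; K must be symmetric positive definite."""
    n = len(K)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = K[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def solve_lower(L: List[List[float]], b: Sequence[float]) -> List[float]:
    """Forward substitution: solve L x = b."""
    x: List[float] = []
    for i, row in enumerate(L):
        x.append((b[i] - sum(row[k] * x[k] for k in range(i))) / row[i])
    return x


def solve_upper_from_lower(L: List[List[float]], b: Sequence[float]) -> List[float]:
    """Back substitution: solve L^T x = b using the lower factor L."""
    n = len(L)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


class GaussianProcess:
    """Minimal GP regressor with fixed kernel hyperparameters (no likelihood fitting)."""

    def __init__(self, length_scale: float = 1.0, variance: float = 1.0, noise: float = 1e-6):
        self.length_scale, self.variance, self.noise = length_scale, variance, noise

    def fit(self, X: List[Vector], y: List[float]) -> "GaussianProcess":
        self.X = [list(x) for x in X]
        self.y_mean = sum(y) / len(y)  # model deviations from the mean outcome
        centered = [v - self.y_mean for v in y]
        K = [[rbf(a, b, self.length_scale, self.variance) + (self.noise if i == j else 0.0)
              for j, b in enumerate(self.X)] for i, a in enumerate(self.X)]
        self.L = cholesky(K)
        self.alpha = solve_upper_from_lower(self.L, solve_lower(self.L, centered))  # K^-1 (y - mean)
        return self

    def predict(self, x: Vector) -> Tuple[float, float]:
        """Posterior mean and standard deviation of the latent function at x."""
        k = [rbf(x, xi, self.length_scale, self.variance) for xi in self.X]
        mean = self.y_mean + sum(ki * ai for ki, ai in zip(k, self.alpha))
        v = solve_lower(self.L, k)
        var = max(self.variance - sum(vi * vi for vi in v), 0.0)
        return mean, math.sqrt(var)


X = [[0.0], [0.5], [1.0]]  # hypothetical temperatures scaled to [0, 1]
y = [40.0, 70.0, 55.0]     # hypothetical yields (%)
gp = GaussianProcess(length_scale=0.3, variance=100.0, noise=1e-4).fit(X, y)
for x in ([0.5], [0.25], [5.0]):
    print(x, gp.predict(x))
```

The uncertainty is near zero at a measured condition and grows between and beyond the data. Far from all observations the prediction reverts to the mean yield with the full prior standard deviation. This is the signal an acquisition function uses to decide where to explore.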

Acquisition Function Evaluation: An acquisition function balancing exploration and exploitation evaluates all reaction conditions and selects the most promising next batch of experiments [1].
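This minimal sketch scores every candidate with closed-form Expected Improvement, given surrogate predictions, and keeps the top q as the next batch. The candidate names and predicted means and standard deviations are hypothetical. Greedy top-q selection is the simplest possible batch rule. It is not the batch-aware procedure of any specific framework.

```python
import math
from typing import Dict, List, Tuple


def norm_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def norm_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, best: float, xi: float = 0.0) -> float:
    """Closed-form EI for maximization under a Gaussian predictive distribution."""
    if sigma <= 0.0:
        return 0.0
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * norm_cdf(z) + sigma * norm_pdf(z)


def select_batch(predictions: Dict[str, Tuple[float, float]], best: float, q: int) -> List[str]:
    """Rank all candidates by EI and return the top q.

    Greedy top-q ignores correlations within the batch; batch-aware criteria
    such as q-EI or q-NEHVI account for them.
    """
    scores = {c: expected_improvement(mu, sd, best) for c, (mu, sd) in predictions.items()}
    return sorted(scores, key=scores.get, reverse=True)[:q]


# Hypothetical surrogate predictions: (mean yield %, standard deviation).
preds = {
    "cond_A": (72.0, 2.0),   # confident, slightly above the incumbent
    "cond_B": (60.0, 15.0),  # poor mean but very uncertain
    "cond_C": (55.0, 1.0),   # confident and poor
    "cond_D": (68.0, 8.0),   # near the incumbent, moderately uncertain
}
print(select_batch(preds, best=70.0, q=2))  # -> ['cond_D', 'cond_B']
```

The candidate with the highest predicted mean, cond_A, is not selected. Its small uncertainty leaves little room for improvement over the incumbent. In contrast, cond_D and cond_B pair reasonable means with larger uncertainty.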

Iterative Refinement: The process repeats for multiple iterations, usually terminating upon convergence, stagnation in improvement, or exhaustion of the experimental budget [5].
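The stopping rules can be expressed as a small driver loop. In this sketch, `propose` and `evaluate` are hypothetical callbacks for the surrogate-plus-acquisition step and the actual experiment, respectively. Stagnation is assumed to mean `patience` consecutive batches that improve the best result by less than `min_gain`. The cited work may define convergence differently.

```python
from typing import Callable, List, Sequence


def run_campaign(propose: Callable[[List[float]], Sequence[object]],
                 evaluate: Callable[[object], float],
                 max_experiments: int,
                 patience: int = 2,
                 min_gain: float = 0.5) -> List[float]:
    """Run propose -> evaluate batches until the budget is spent or progress stalls."""
    history: List[float] = []
    best = float("-inf")
    stalled = 0
    while len(history) < max_experiments:
        # Trim the batch so the total never exceeds the experimental budget.
        batch = list(propose(history))[: max_experiments - len(history)]
        if not batch:
            break  # nothing left to propose, e.g. candidate pool exhausted
        results = [evaluate(c) for c in batch]
        history.extend(results)
        new_best = max(best, max(results))
        stalled = stalled + 1 if new_best - best < min_gain else 0
        best = new_best
        if stalled >= patience:
            break  # stagnation: too many batches without meaningful gain
    return history


# Hypothetical demo: results plateau near 70-71 and a later batch would have reached 99.
outcomes = iter([50, 60, 65, 70, 71, 70, 69, 68, 70, 69, 71, 70, 71, 70, 69, 70, 99, 99, 99, 99])
history = run_campaign(lambda hist: range(4), lambda _c: next(outcomes), max_experiments=20)
print(len(history), max(history))  # -> 16 71
```

The demo stops after four batches, before the batch that would have reached 99%. This illustrates the trade-off in choosing `patience` and `min_gain`.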

Advanced Methodologies for Complex Objectives

For multi-objective optimization, specialized acquisition functions have been developed to handle competing objectives:

- q-Expected Hypervolume Improvement (q-EHVI): Calculates the expected improvement in hypervolume, the volume of objective space that the selected reaction conditions dominate, bounded by a reference point [5].

- Scalable Alternatives: Methods including q-NParEgo, Thompson sampling with hypervolume improvement (TS-HVI), and q-Noisy Expected Hypervolume Improvement address computational limitations of q-EHVI for large batch sizes [5].
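For two maximized objectives such as yield and selectivity, the hypervolume can be computed exactly by sweeping the Pareto front. The sketch below assumes a user-chosen reference point that both objectives must exceed. The outcome pairs are hypothetical. Percentages like those in Table 1 are presumably this quantity expressed relative to a reference hypervolume.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # (yield %, selectivity %), both maximized


def pareto_front(points: Sequence[Point]) -> List[Point]:
    """Points not dominated by any other point when both objectives are maximized."""
    return [p for p in points
            if not any(q[0] >= p[0] and q[1] >= p[1] and q != p for q in points)]


def hypervolume_2d(points: Sequence[Point], ref: Point) -> float:
    """Area dominated by the points and bounded below by the reference point."""
    front = sorted((p for p in pareto_front(points) if p[0] > ref[0] and p[1] > ref[1]),
                   key=lambda p: p[0], reverse=True)
    area, prev_y = 0.0, ref[1]
    for x, y in front:  # x descending, so y ascends along the Pareto front
        area += (x - ref[0]) * (y - prev_y)
        prev_y = y
    return area


results = [(90, 40), (70, 80), (50, 95), (60, 60)]  # hypothetical outcomes
print(pareto_front(results))               # -> [(90, 40), (70, 80), (50, 95)]
print(hypervolume_2d(results, ref=(0, 0)))  # -> 7150.0
```

The dominated point (60, 60) contributes nothing. A new condition only raises the hypervolume if it extends the front, which is exactly what q-EHVI rewards in expectation.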

Recent advances include feature adaptive Bayesian optimization (FABO), which dynamically identifies the most informative features influencing material performance at each optimization cycle, enabling efficient optimization without prior representation knowledge [27].

Research Reagent Solutions and Experimental Materials

Table 3: Essential Research Reagents and Materials for Optimization Campaigns

| Reagent/Material Category | Specific Examples | Function in Optimization | Application Context |
|---|---|---|---|
| Non-Precious Metal Catalysts | Nickel-based catalysts | Cost-effective alternative to precious metals | Suzuki reactions, cross-couplings [5] |
| Ligand Libraries | Diverse phosphine ligands, N-heterocyclic carbenes | Modulate catalyst activity and selectivity | Transition metal catalysis [5] |
| Solvent Systems | Solvents compliant with pharmaceutical solvent-selection guidelines | Reaction medium; influences kinetics and selectivity | Green chemistry applications [5] |
| High-Throughput Equipment | 96-well plates, automated liquid handlers | Enable parallel reaction execution | HTE optimization campaigns [5] |
| Analytical Tools | UPLC/HPLC systems, mass spectrometers | Quantify yield and selectivity | Reaction outcome analysis [5] |

The experimental evidence reviewed here indicates that Bayesian optimization substantially outperforms random search on the chemical objectives considered. The advantage is greatest for complex multi-goal optimization involving yield, selectivity, and process considerations. Key advantages include:

- Superior Efficiency: Bayesian optimization identifies high-performing conditions in far fewer experimental cycles. In one reported pharmaceutical case, it shortened process development from six months to four weeks [5].

- Complex Landscape Navigation: Effectively handles high-dimensional search spaces and unexpected chemical reactivity where traditional methods fail [5].

- Multi-Objective Capability: Advanced acquisition functions successfully balance competing objectives, identifying Pareto-optimal conditions that satisfy multiple constraints [5] [1].

Random search remains useful mainly as a baseline for initial space exploration or when computational resources are severely constrained. For most practical applications in chemical synthesis and pharmaceutical development, Bayesian optimization is the more efficient approach, shortening discovery timelines and improving process robustness.

The integration of Bayesian optimization with high-throughput experimentation and automated platforms represents the future of chemical reaction optimization, enabling more efficient exploration of vast chemical spaces while satisfying the multiple objectives required for sustainable and economical chemical processes.

In chemical machine learning (ML) research, the efficiency of discovering new molecules or optimizing reactions hinges on the strategy used to navigate the complex, high-dimensional search space. This space is typically composed of both continuous variables (such as temperature, concentration, or molecular orbital energies) and categorical variables (such as catalyst type, solvent class, or functional groups). The design of this search space and the optimization algorithm used to explore it are critical. Within the broader thesis of Bayesian versus Random Search for chemical ML, evidence indicates that Bayesian Optimization (BO), with its ability to intelligently balance exploration and exploitation, generally outperforms Random Search (RS), especially when dealing with the mixed-variable landscapes common in chemistry applications. This guide provides an objective comparison of these methods, supported by experimental data and detailed protocols, to inform researchers and scientists in drug development and materials science.
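This minimal sketch shows one common way to present a mixed search space to a surrogate model. Continuous variables are min-max scaled to [0, 1], and categorical variables are one-hot encoded. The variable names and ranges are hypothetical. One-hot encoding is only one option; mixed-variable kernels and learned molecular descriptors are common alternatives.

```python
from typing import Dict, List, Sequence, Tuple

# Hypothetical mixed search space: two continuous ranges and one categorical choice.
CONTINUOUS: Dict[str, Tuple[float, float]] = {
    "temperature_c": (25.0, 120.0),
    "concentration_m": (0.05, 0.5),
}
CATEGORICAL: Dict[str, Sequence[str]] = {"solvent": ["THF", "toluene", "DMF"]}


def encode(condition: Dict[str, object]) -> List[float]:
    """Min-max scale continuous variables to [0, 1] and one-hot encode categoricals."""
    vec: List[float] = []
    for name, (lo, hi) in CONTINUOUS.items():
        vec.append((float(condition[name]) - lo) / (hi - lo))
    for name, choices in CATEGORICAL.items():
        vec.extend(1.0 if condition[name] == c else 0.0 for c in choices)
    return vec


print(encode({"temperature_c": 120.0, "concentration_m": 0.05, "solvent": "toluene"}))
# -> [1.0, 0.0, 0.0, 1.0, 0.0]
```

One-hot vectors place every pair of solvents at the same distance. Any chemical similarity between solvents must therefore come from richer descriptors rather than from this encoding.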

Hyperparameter tuning is the process of finding the optimal configuration of parameters that are not learned during model training. For chemistry ML models, these could be parameters related to the neural network architecture or the learning process itself. The choice of tuning algorithm significantly impacts the speed and success of the search.