Benchmarking Few-Shot Learning for Molecular Property Prediction: A Comprehensive Guide for Drug Discovery

Abstract

This article provides a systematic benchmark and comprehensive analysis of few-shot learning (FSL) approaches for molecular property prediction, a critical capability in early-stage drug discovery and materials design where labeled experimental data is scarce. We first establish the foundational challenges of data scarcity and distribution shifts inherent in molecular datasets. We then categorize and evaluate the landscape of FSL methodologies, including meta-learning, graph neural networks, and multi-task learning, analyzing their mechanisms and application contexts. A dedicated troubleshooting section addresses pervasive optimization challenges like negative transfer and structural heterogeneity, offering practical mitigation strategies. Finally, we present a rigorous comparative validation of representative methods across standard benchmarks, discussing performance trends, dataset characteristics, and evaluation protocols. This guide is tailored for researchers and drug development professionals seeking to implement robust, data-efficient molecular property prediction systems.

The Data Scarcity Challenge: Foundations of Few-Shot Molecular Property Prediction

Molecular Property Prediction (MPP) is a fundamental task in computational chemistry and drug discovery, aiming to estimate the properties of molecules using models trained on compounds with known characteristics [1] [2]. By accelerating the identification of promising lead compounds and anticipating therapeutic efficacy or toxicity, MPP helps to reduce the high costs and daunting attrition rates associated with traditional drug development [1] [3]. The core challenge in MPP lies in learning effective molecular representations from which properties can be predicted [1] [2] [3].

MPP is a natural setting for few-shot learning: in real-world drug discovery, labeled experimental data for novel molecular structures or rare disease targets is often severely limited [4] [5]. This guide compares the performance and methodologies of contemporary approaches developed to tackle this challenge.

Experimental Protocols and Performance Comparison

Evaluating MPP models typically involves benchmark datasets like those from MoleculeNet and the Therapeutics Data Commons (TDC), which cover properties related to physiology, biophysics, physical chemistry, and ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) [3] [6]. A critical step in ensuring a model can generalize to new chemical space is the scaffold split, where molecules are divided into training and test sets based on their core structural motifs (Bemis-Murcko scaffolds) [3] [6]. Performance is most often measured by the Area Under the Receiver Operating Characteristic Curve (AUROC) for classification tasks and the Root Mean Square Error (RMSE) for regression tasks [1].
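
As a concrete reference for the two metrics named above, the following is a minimal pure-Python sketch. It assumes binary labels encoded as 0/1 and computes AUROC through the rank-sum (Mann-Whitney U) formulation with average ranks for ties. The function names `auroc` and `rmse` are illustrative and are not taken from any of the cited works; in practice a library implementation would normally be used instead.

```python
import math


def auroc(labels, scores):
    """AUROC via the rank-sum (Mann-Whitney U) statistic; tied scores share an average rank."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUROC needs at least one positive and one negative label")
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the current group of tied scores.
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # 1-based average rank of the tie group
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    pos_rank_sum = sum(r for r, y in zip(ranks, labels) if y == 1)
    u_stat = pos_rank_sum - n_pos * (n_pos + 1) / 2
    return u_stat / (n_pos * n_neg)


def rmse(targets, preds):
    """Root mean square error for regression targets."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(targets, preds)) / len(targets))
```

For example, `auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])` evaluates to 0.75, because three of the four positive-negative pairs are ranked correctly.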

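The table below summarizes the performance reported for several recent models on public benchmarks. The cited sources state these results largely as qualitative claims, so the entries are not directly comparable numbers.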

Performance Comparison on Benchmark Datasets

| Model Name | Core Approach | Key Features | Reported Performance (Dataset) |
|---|---|---|---|
| CFS-HML [7] | Heterogeneous Meta-Learning | Combines GNNs & self-attention; property-shared & property-specific features | "Substantial improvement in predictive accuracy", excels with few training samples |
| PG-DERN [5] | Meta-Learning (MAML) | Dual-view encoder (node & subgraph); relation graph learning | "Outperforms state-of-the-art methods" on four benchmark datasets |
| CLAPS [2] | Contrastive Learning (SSL) | Attention-guided positive sample selection; Transformer encoder | "Outperforms the state-of-the-art (SOTA) methods in most cases" on various benchmarks |
| MolFCL [3] | Contrastive & Prompt Learning | Fragment-based augmented graphs; functional group prompts | Outperforms SOTA baselines on 23 molecular property prediction datasets |
| MolVision [8] [9] | Multimodal (Vision-Language) | Integrates molecular images with SMILES/SELFIES text; uses LoRA fine-tuning | Multimodal fusion "significantly enhances generalization"; improves with fine-tuning |

Technical Approaches and Methodologies

Modern MPP models can be categorized by their technical approach, each with distinct strengths for handling data scarcity.

Molecular Representations

The choice of how a molecule is represented for a model is fundamental [1]:

- Fixed Representations (e.g., ECFP fingerprints): Pre-defined vectors signifying the presence of specific structural patterns [1].

- SMILES Strings: Linear text notations of molecular structure, processed by models like RNNs or Transformers [1] [2].

- Molecular Graphs: Treats atoms as nodes and bonds as edges, processed natively by Graph Neural Networks (GNNs) [1] [2].

Key Technical Paradigms

- Meta-Learning: Designed for few-shot scenarios, it learns a generalizable model initialization from many related property prediction tasks. This allows for fast adaptation to a new property with only a handful of examples [7] [4] [5].

- Self-Supervised Contrastive Learning: Leverages large unlabeled molecular databases. It pre-trains a model by learning to identify different augmented views ("positive samples") of the same molecule while distinguishing them from other molecules ("negative samples") [2] [3]. The quality of the augmentations is critical; methods that incorporate chemical knowledge, like fragment reactions, avoid destroying meaningful molecular semantics [3].

- Multimodal Learning: Aims to overcome the limitations of a single representation by combining multiple views, such as molecular structure images and textual SMILES strings, to provide a more robust and informative feature set [8] [9].
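
To make the contrastive objective described in the list above more concrete, here is a minimal sketch of a one-directional InfoNCE-style loss in pure Python. It assumes that `view_a[i]` and `view_b[i]` are embeddings of two augmented views of the same molecule, and that every other row of `view_b` acts as a negative. The function names and the temperature value are illustrative assumptions; the cited methods use their own encoders, augmentations, and loss variants.

```python
import math


def cosine(u, v):
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def info_nce(view_a, view_b, temperature=0.1):
    """Mean InfoNCE-style loss: row i of view_a should match row i of view_b."""
    losses = []
    for i, anchor in enumerate(view_a):
        logits = [cosine(anchor, other) / temperature for other in view_b]
        # Log-sum-exp with the maximum subtracted for numerical stability.
        top = max(logits)
        log_denominator = top + math.log(sum(math.exp(x - top) for x in logits))
        losses.append(log_denominator - logits[i])
    return sum(losses) / len(losses)
```

The loss is small when each anchor is most similar to its own positive view and grows when the positives are misaligned, which is the signal that drives pre-training.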

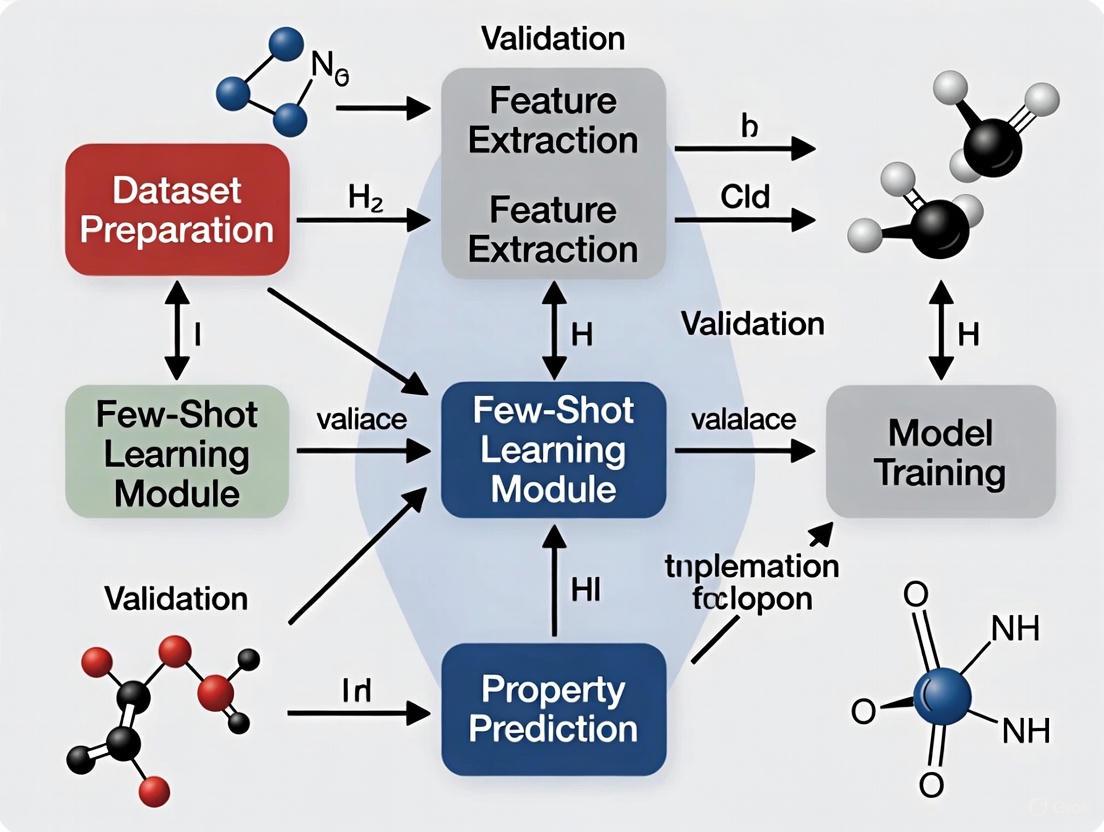

The following diagram illustrates a generic workflow that underlies many advanced MPP methods, particularly those using contrastive and self-supervised learning.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful MPP research relies on a suite of computational tools and datasets. The table below details key resources mentioned in the reviewed literature.

| Item Name | Type | Function / Application |
|---|---|---|
| RDKit [1] [9] | Software | Open-source cheminformatics toolkit; computes 2D descriptors, generates molecular images from SMILES, and handles scaffold splitting. |
| ZINC15 [2] [3] | Database | A large, publicly available database of commercially available chemical compounds; used for self-supervised pre-training. |
| MoleculeNet [1] [3] | Benchmark Suite | A collection of standardized datasets for molecular machine learning; used for training and benchmarking models. |
| Therapeutics Data Commons (TDC) [3] | Benchmark Suite | Provides datasets and tools for systematic evaluation across the entire therapeutic pipeline, including ADMET properties. |
| LoRA (Low-Rank Adaptation) [8] [9] | Fine-tuning Method | A parameter-efficient fine-tuning technique that significantly reduces the number of trainable parameters for adapting large foundation models. |
| Extended-Connectivity Fingerprints (ECFP) [1] [3] | Molecular Representation | A circular fingerprint that encodes the presence of substructures; a traditional and strong baseline for MPP models. |
| BERT / Transformer Architecture [2] [6] | Model Architecture | A powerful neural network architecture adapted from NLP; used to process SMILES strings and learn contextual molecular representations. |

The landscape of Molecular Property Prediction is rapidly evolving to address the critical challenge of data scarcity in drug discovery. While no single approach is universally superior, meta-learning frameworks like CFS-HML and PG-DERN are explicitly designed for few-shot scenarios, showing strong empirical results [7] [5]. Meanwhile, self-supervised contrastive learning methods like MolFCL and CLAPS demonstrate that pre-training on vast unlabeled corpora can yield powerful and generalizable representations that benefit downstream property prediction [2] [3]. The emerging trend of multimodal learning, as seen in MolVision, suggests that combining multiple molecular representations can further enhance model robustness and generalization [8] [9]. For researchers, the choice of model depends on the specific context—particularly the amount of available labeled data and the level of interpretability required.

In the field of molecular property prediction, a critical bottleneck impedes progress: the scarcity of high-quality, annotated data. Traditional supervised learning models require vast amounts of labeled data, which is often unavailable due to the high cost, time, and expertise required for wet-lab experiments [10]. This data scarcity defines the few-shot problem—a fundamental challenge in applying artificial intelligence to early-stage drug discovery and materials design [10]. This article examines the core challenges of few-shot learning (FSL) in molecular property prediction, benchmarks current methodological approaches, and provides experimental protocols for evaluating model performance in data-scarce environments.

Core Challenges in Few-Shot Molecular Property Prediction

The few-shot problem in molecular property prediction is characterized by two interconnected challenges that severely hamper model generalization.

Cross-Property Generalization Under Distribution Shifts

Different molecular property prediction tasks often correspond to distinct structure-property mappings with weak correlations, differing significantly in label spaces and underlying biochemical mechanisms [10]. This creates severe distribution shifts that hinder effective knowledge transfer between tasks. For instance, a model trained to predict solubility may struggle to generalize to toxicity prediction because the fundamental biochemical mechanisms and feature representations differ substantially, leading to performance degradation when learning from limited examples [10].

Cross-Molecule Generalization Under Structural Heterogeneity

Molecules involved in different—or even the same—properties can exhibit significant structural diversity [10]. This structural heterogeneity means that models tend to overfit the structural patterns of limited training molecules and fail to generalize to structurally diverse compounds. The risk of overfitting and memorization under limited molecular property annotations significantly hampers generalization ability to new rare chemical properties or novel molecular structures [10].

Methodological Approaches to Few-Shot Learning

Researchers have developed several algorithmic strategies to address these challenges. The table below summarizes the core methodological families and their applications to molecular property prediction.

Table 1: Few-Shot Learning Methodological Approaches

| Method Category | Core Principle | Key Algorithms | Molecular Application Examples |
|---|---|---|---|
| Meta-Learning [11] [12] | "Learning to learn" across multiple tasks to enable rapid adaptation | MAML [12], Task-Adaptive Meta-Learning [13] | Heterogeneous meta-learning for property prediction [7] |
| Metric-Based [11] [12] | Learning similarity metrics in embedding space for classification | Prototypical Networks [12], Matching Networks [11], Relation Networks [11] | Molecular similarity assessment for property inference |
| Data-Level [11] | Generating additional training samples to overcome data scarcity | GANs [12], VAEs [12], Data Augmentation | Synthetic molecular generation for rare properties |
| Transfer Learning [11] [14] | Leveraging pre-trained models and fine-tuning on target tasks | Pre-trained GNNs [7], Foundation Models | Transferring knowledge from large molecular databases to rare properties |
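
As an illustration of the metric-based family in the table above, the sketch below shows the classification rule used by Prototypical Networks: each class is summarized by the mean of its support embeddings, and a query is assigned to the nearest prototype under squared Euclidean distance. The embeddings are assumed to come from some already-trained molecular encoder, which is not shown; the function names are hypothetical.

```python
from collections import defaultdict


def class_prototypes(support_embeddings, support_labels):
    """Average the support embeddings of each class to obtain one prototype per class."""
    sums = {}
    counts = defaultdict(int)
    for emb, label in zip(support_embeddings, support_labels):
        if label not in sums:
            sums[label] = [0.0] * len(emb)
        sums[label] = [s + e for s, e in zip(sums[label], emb)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}


def predict(query_embedding, prototypes):
    """Return the label of the prototype closest to the query (squared Euclidean distance)."""
    def sq_dist(u, v):
        return sum((a - b) ** 2 for a, b in zip(u, v))

    return min(prototypes, key=lambda label: sq_dist(query_embedding, prototypes[label]))
```

With two support molecules per class, for instance, a query embedding is labeled "active" or "inactive" according to which class mean it lies closer to, without any gradient step on the new task.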

Experimental Benchmarking of FSL Methods

To quantitatively assess the performance of various FSL approaches, researchers have established standardized evaluation protocols centered on the N-way-K-shot classification framework [11] [15]. In this paradigm, N represents the number of classes, and K represents the number of labeled examples ("shots") per class provided in the support set [11]. Each training episode consists of a support set (containing K labeled examples for each of N classes) and a query set (containing new examples for classification based on learned representations) [11].
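
The episode structure described above can be sketched as follows. This is a minimal illustration, assuming the data is available as a dictionary that maps each class to a list of example identifiers; `sample_episode` and its parameters are hypothetical names, not part of any cited benchmark's API.

```python
import random


def sample_episode(examples_by_class, n_way, k_shot, n_query, rng):
    """Draw one N-way K-shot episode as (support, query) lists of (example, class) pairs."""
    # Only classes with enough examples for both sets can take part in the episode.
    eligible = sorted(c for c, ex in examples_by_class.items() if len(ex) >= k_shot + n_query)
    if len(eligible) < n_way:
        raise ValueError("not enough classes with k_shot + n_query examples")
    support, query = [], []
    for cls in rng.sample(eligible, n_way):
        picked = rng.sample(examples_by_class[cls], k_shot + n_query)
        support += [(x, cls) for x in picked[:k_shot]]  # K labeled shots per class
        query += [(x, cls) for x in picked[k_shot:]]    # held-out examples of the same class
    return support, query
```

Passing an explicit `random.Random(seed)` instance as `rng` keeps episodes reproducible, which matters when different methods are compared on the same sampled tasks.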

Benchmark Results on Molecular Datasets

The following table summarizes results reported in recent studies on standard molecular property prediction benchmarks. The cited sources describe these results qualitatively, so the table supports only a coarse comparison of FSL approaches.

Table 2: Experimental Performance Comparison of FSL Methods on Molecular Property Prediction

| Model/Approach | Benchmark Dataset | Setting | Performance Metric | Score | Key Innovation |
|---|---|---|---|---|---|
| HSL-RG [13] | Multiple real-life benchmarks | Few-shot | Accuracy | Reported as superior to SOTA (exact values not provided in source) | Hierarchical structure learning on relation graphs |
| Context-informed via Heterogeneous Meta-Learning [7] | MoleculeNet | Few-shot | Predictive Accuracy | Substantial improvement with fewer samples | Combines GNNs with self-attention encoders |
| Traditional Supervised Learning [10] | ChEMBL | Data-rich | Generalization Ability | Fails with scarce data | Requires large annotated datasets |

Detailed Experimental Protocol

For researchers seeking to replicate or extend these benchmarks, the following experimental protocol provides a standardized methodology:

Dataset Preparation: Utilize established molecular benchmarks such as those from MoleculeNet [7] [10]. For few-shot scenarios, construct multiple tasks by sampling subsets of properties with limited annotations.

Task Formulation: Adopt the N-way-K-shot framework [11] [15]. For each training episode, randomly select N property classes, with K labeled examples per class in the support set and a query set containing different examples from the same N classes.

Model Training:

- For meta-learning approaches: Implement an episodic training strategy where models learn across multiple tasks [12].

- For metric-based approaches: Train models to learn optimal distance metrics in embedding space [11].

- Implement a two-phase optimization for heterogeneous meta-learning: update property-specific features within individual tasks (inner loop) and jointly update all parameters (outer loop) [7].
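
The inner-loop and outer-loop structure mentioned in the training steps above can be illustrated with a deliberately small example. The sketch below is first-order MAML on a scalar toy model `y = w * x`, not the heterogeneous scheme of [7]: here the inner loop adapts the single shared weight, whereas [7] updates only property-specific features in the inner loop. All names, learning rates, and the toy tasks are assumptions made for illustration.

```python
def mse(w, data):
    """Mean squared error of the 1-D model y = w * x on (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def mse_grad(w, data):
    """Derivative of the mean squared error with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in data) / len(data)


def inner_adapt(w, support, inner_lr=0.1, steps=1):
    """Inner loop: a few gradient steps on one task's support set."""
    for _ in range(steps):
        w = w - inner_lr * mse_grad(w, support)
    return w


def fomaml_step(w, tasks, inner_lr=0.1, outer_lr=0.05):
    """Outer loop: update the shared initialization from query-set gradients.

    First-order approximation: the gradient is evaluated at the adapted weight
    and the dependence of the adapted weight on w is ignored.
    """
    meta_grad = 0.0
    for support, query in tasks:
        w_task = inner_adapt(w, support, inner_lr)
        meta_grad += mse_grad(w_task, query)
    return w - outer_lr * meta_grad / len(tasks)
```

On two toy tasks with true slopes 1 and 3, repeated calls to `fomaml_step` move the initialization toward a value between the two slopes, from which a single inner step adapts quickly to either task.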

Evaluation: Assess model performance on completely unseen property classes to measure generalization capability [11]. Repeat the evaluation over multiple random samplings of support and query sets, and report the mean and spread, so that results do not hinge on a single draw.
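
One simple way to aggregate scores over repeated samplings is a mean with a normal-approximation confidence interval, sketched below. The choice of a 95% interval (`z = 1.96`) and the function name `summarize` are assumptions; the cited works may report standard deviations or other summaries instead.

```python
import math
import statistics


def summarize(scores, z=1.96):
    """Return (mean, half-width of an approximate 95% confidence interval)."""
    mean = statistics.fmean(scores)
    if len(scores) < 2:
        # A single episode gives no estimate of the spread.
        return mean, float("nan")
    half_width = z * statistics.stdev(scores) / math.sqrt(len(scores))
    return mean, half_width
```

The interval narrows with the square root of the number of sampled episodes, so reporting how many episodes were used is as important as the mean itself.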

Visualization of Few-Shot Learning Framework

The following diagram illustrates the structural relationship between core components in a typical few-shot molecular property prediction system, highlighting both global and local learning pathways:

The Scientist's Toolkit: Research Reagent Solutions

Implementing effective few-shot learning for molecular property prediction requires specialized computational "reagents." The table below details essential resources for building robust FSL pipelines.

Table 3: Essential Research Reagents for Few-Shot Molecular Property Prediction

| Research Reagent | Function/Purpose | Example Implementations |
|---|---|---|
| Benchmark Datasets | Standardized evaluation and comparison | MoleculeNet [7] [10], ChEMBL [10] |
| Graph Neural Networks | Molecular structure representation learning | GIN [7], Pre-GNN [7] |
| Meta-Learning Algorithms | Cross-task knowledge transfer | MAML [12], Heterogeneous Meta-Learning [7] |
| Relation Graph Constructs | Global-level molecular knowledge communication | Graph Kernels [13] |
| Self-Supervised Learning Signals | Local-level transformation-invariant representations | Structure Optimization [13] |

The few-shot problem, characterized by scarce annotations and real-world data limitations, presents both a significant challenge and opportunity for advancing molecular property prediction. Benchmark results demonstrate that approaches combining hierarchical structure learning with meta-learning, such as HSL-RG [13], and context-informed heterogeneous meta-learning [7] show particular promise in addressing cross-property and cross-molecule generalization challenges. As the field evolves, future research directions should focus on developing more sophisticated approaches for handling distribution shifts, structural heterogeneity, and integrating domain knowledge to enable accurate molecular property prediction with minimal labeled data.

In the field of AI-driven drug discovery, Few-Shot Molecular Property Prediction (FSMPP) has emerged as a critical approach for identifying promising molecular candidates when experimental data is scarce. Among the core challenges in FSMPP, cross-property generalization under distribution shifts presents a particularly difficult problem that limits the real-world application of predictive models. This challenge arises when a model trained on a set of molecular properties must generalize to predict novel properties with limited labeled examples, while contending with distributional differences between the source and target properties [4]. These distribution shifts occur because each property corresponds to a different prediction task that may follow a distinct data distribution, or may be inherently weakly related to others from a biochemical perspective [4]. The ability to transfer knowledge across these heterogeneous prediction tasks is paramount for developing robust FSMPP systems that can accelerate early-stage drug discovery and materials design.

This comparison guide provides an objective analysis of contemporary approaches addressing cross-property generalization under distribution shifts, examining their methodological frameworks, experimental protocols, and comparative performance across benchmark datasets. By synthesizing findings from cutting-edge research, we aim to establish a clear benchmarking framework that helps researchers and drug development professionals select appropriate methodologies for their specific FSMPP challenges.

Methodological Approaches Compared

Recent research has produced several innovative frameworks specifically designed to tackle the challenge of cross-property generalization in FSMPP. The table below summarizes four representative approaches that have demonstrated state-of-the-art performance.

Table 1: Representative FSMPP Models Addressing Cross-Property Generalization

| Model Name | Core Methodology | Key Innovation | Distribution Shift Handling |
|---|---|---|---|
| KRGTS [16] | Knowledge-enhanced Relation Graph & Task Sampling | Constructs molecule-property multi-relation graph to capture many-to-many relationships | Leverages highly related auxiliary tasks to provide relevant information for target properties |
| Meta-DREAM [17] | Disentangled Graph Encoder with Soft Clustering | Explicitly discriminates underlying factors of tasks and groups them into clusters | Maintains knowledge generalization within clusters and customization among clusters |
| CFS-HML [7] | Heterogeneous Meta-Learning | Combines GNNs with self-attention encoders for property-specific and property-shared features | Employs inner loop for property-specific updates and outer loop for joint updates of all parameters |
| PG-DERN [5] | Dual-View Encoder & Relation Graph Learning | Integrates node and subgraph information with property-guided feature augmentation | Transfers information from similar properties to novel properties to improve feature representation |

Architectural Commonalities and Variations

Despite their different implementations, these models share several architectural commonalities aimed at addressing distribution shifts. All four approaches incorporate some form of graph-based representation learning to capture molecular structures, and most employ meta-learning strategies to enable rapid adaptation to new properties with limited data [7] [16] [17]. Additionally, they explicitly model relationships between properties rather than treating each property prediction task in isolation.

The primary variation lies in how they conceptualize and leverage these inter-property relationships. KRGTS focuses on constructing explicit molecule-property relationship graphs [16], while Meta-DREAM employs factor disentanglement and soft clustering to group related tasks [17]. CFS-HML differentiates between property-shared and property-specific knowledge through heterogeneous meta-learning [7], and PG-DERN uses a dual-view encoder combined with property-guided feature augmentation [5].

Experimental Benchmarking Framework

Standardized Evaluation Protocols

To ensure fair comparison across FSMPP methods, researchers have converged on standardized evaluation protocols centered around the meta-learning paradigm. The typical experimental setup involves organizing molecular properties into meta-training, meta-validation, and meta-testing sets, with strict separation to ensure no property overlap between meta-training and meta-testing phases [4] [16].

The standard protocol involves:

- Task Construction: Each property is treated as a separate prediction task, with molecules divided into support (training) and query (testing) sets for few-shot learning [16] [17].

- Episodic Training: Models are trained using episodes, where each episode samples a subset of tasks from the meta-training set [7] [5].

- Few-Shot Evaluation: Model performance is evaluated on novel properties from the meta-test set with K-shot learning scenarios (typically 1, 5, 10, or 20 shots) [16] [17].

- Cross-Property Generalization Assessment: The key evaluation metric is how well models trained on source properties can predict novel target properties with limited examples, despite distribution shifts [4].
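
The property-level separation in this protocol can be sketched as a small helper that partitions property names into disjoint meta-training, meta-validation, and meta-testing sets. The random assignment, the function name `split_properties`, and the placeholder assay names in the usage note are assumptions for illustration; published benchmarks usually fix which properties are held out.

```python
import random


def split_properties(properties, n_val, n_test, seed=0):
    """Partition property names into disjoint meta-train / meta-val / meta-test lists."""
    props = sorted(set(properties))  # de-duplicate and fix the order before shuffling
    if n_val + n_test >= len(props):
        raise ValueError("no properties would be left for meta-training")
    random.Random(seed).shuffle(props)
    meta_test = props[:n_test]
    meta_val = props[n_test:n_test + n_val]
    meta_train = props[n_test + n_val:]
    return meta_train, meta_val, meta_test
```

For a dataset with 12 properties named `assay_0` to `assay_11`, calling `split_properties(names, n_val=1, n_test=3)` yields 8 meta-training, 1 meta-validation, and 3 meta-testing properties with no overlap between the sets.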

Performance is typically measured using standard classification metrics including AUC-ROC, AUC-PR, and accuracy, with results averaged across multiple runs and task samples to reduce sampling variance [16] [17] [5].

Benchmark Datasets

The following table outlines the key benchmark datasets used for evaluating cross-property generalization in FSMPP, along with their characteristics and prevalence in literature.

Table 2: Benchmark Datasets for FSMPP Cross-Property Generalization

| Dataset Name | Molecule Count | Property Count | Key Characteristics | Usage in Literature |
|---|---|---|---|---|
| Tox21 | ~12,000 compounds | 12 toxicity assays | Nuclear receptor and stress response pathways | Used in [16] [17] [5] |
| SIDER | 1,427 drugs | 27 system organ classes | Adverse drug reactions grouped by organ class | Used in [16] [17] |
| MUV | ~90,000 compounds | 17 validation screens | Designed for virtual screening with low hit rates | Used in [16] [5] |
| BBBP | ~2,000 compounds | 1 (blood-brain barrier penetration) | Membrane permeability property | Used in [5] |
| ClinTox | ~1,500 compounds | 2 clinical toxicity measures | Comparison of FDA approval and clinical toxicity | Used in [17] |

Comparative Performance Analysis

Quantitative Results Across Datasets

Rigorous experimental evaluations have been conducted to compare the performance of FSMPP methods under varying few-shot scenarios. The table below synthesizes performance metrics reported across multiple studies, focusing on the critical few-shot setting where distribution shifts pose the greatest challenge.

Table 3: Comparative Performance Analysis (AUC-ROC) in Few-Shot Settings

| Model | 5-shot Tox21 | 5-shot SIDER | 5-shot MUV | 10-shot Tox21 | 10-shot SIDER | 10-shot MUV |
|---|---|---|---|---|---|---|
| KRGTS [16] | 0.783 | 0.682 | 0.751 | 0.812 | 0.724 | 0.792 |
| Meta-DREAM [17] | 0.769 | 0.674 | 0.739 | 0.806 | 0.715 | 0.781 |
| CFS-HML [7] | 0.758 | 0.665 | 0.728 | 0.794 | 0.706 | 0.772 |
| PG-DERN [5] | 0.772 | 0.671 | 0.742 | 0.802 | 0.712 | 0.778 |

The performance trends reveal several patterns. First, every method scores lower at 5 shots than at 10 shots on all three datasets, highlighting the fundamental challenge of few-shot learning under distribution shifts. Second, KRGTS posts the highest AUC-ROC in every column, and Meta-DREAM ranks second in all 10-shot settings and on 5-shot SIDER, although PG-DERN is slightly ahead of Meta-DREAM on 5-shot Tox21 and MUV [16] [17] [5]. The gap between the best and worst method never exceeds 0.025 AUC-ROC in any column and the table reports no variance, so these results suggest, rather than establish, that explicitly modeling inter-property relationships helps with cross-property generalization.

Impact of Auxiliary Tasks and Relationship Modeling

A key finding across multiple studies is the importance of selecting appropriate auxiliary tasks to mitigate distribution shifts. KRGTS demonstrates that highly related auxiliary properties significantly improve performance on target properties, whereas weakly related or unrelated auxiliary properties yield diminishing returns and can even introduce noise [16]. Similarly, Meta-DREAM shows that clustering related tasks and maintaining separate generalization patterns within each cluster leads to more robust performance across diverse property types [17].

The relationship between the number of auxiliary tasks and model performance follows a consistent pattern: initial performance improvements as more tasks are added, followed by a plateau and eventual degradation when too many tasks are included [16] [17]. This pattern underscores the importance of selective task sampling rather than leveraging all available auxiliary properties indiscriminately.
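
The idea of selective task sampling can be illustrated with a simple top-k filter. The sketch below is not the KRGTS task sampler: it assumes binary labels stored as dictionaries from molecule identifier to 0/1, and it uses label agreement on shared molecules as a hypothetical proxy for how related two properties are. The threshold `min_score` and both function names are likewise assumptions.

```python
def label_agreement(labels_a, labels_b):
    """Fraction of shared molecules on which two binary properties agree (a crude proxy)."""
    shared = labels_a.keys() & labels_b.keys()
    if not shared:
        return 0.0
    return sum(labels_a[m] == labels_b[m] for m in shared) / len(shared)


def select_auxiliary(target_labels, candidate_labels, k, min_score=0.5):
    """Keep at most k auxiliary properties whose relatedness score reaches min_score."""
    scored = [
        (label_agreement(target_labels, labels), name)
        for name, labels in candidate_labels.items()
    ]
    kept = [(score, name) for score, name in scored if score >= min_score]
    kept.sort(key=lambda item: (-item[0], item[1]))  # best score first, ties broken by name
    return [name for _, name in kept[:k]]
```

Capping the number of auxiliary tasks at `k` and discarding weakly related ones reflects the plateau-then-degradation pattern described above; the right values for `k` and `min_score` would have to be chosen on meta-validation properties.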

Architectural Workflows and System Diagrams

Knowledge-Enhanced Relation Graph Architecture

The KRGTS framework introduces a sophisticated architecture for capturing molecule-property relationships that directly addresses distribution shifts through structured knowledge representation.

Diagram 1: KRGTS Framework for Cross-Property Generalization

Disentangled Factor Learning Architecture

Meta-DREAM addresses distribution shifts through explicit factor disentanglement and cluster-aware learning, providing an alternative approach to the relationship modeling in KRGTS.

Diagram 2: Meta-DREAM Disentangled Factor Learning

Benchmark Datasets and Evaluation Frameworks

Successful research in FSMPP cross-property generalization requires familiarity with established benchmarks and evaluation frameworks. The following table outlines key resources available to researchers in this field.

Table 4: Essential Research Resources for FSMPP

| Resource Name | Type | Description | Access Information |
|---|---|---|---|
| MoleculeNet | Benchmark Dataset Collection | Curated collection of molecular property prediction datasets | Publicly available at https://moleculenet.org/ [7] |
| FS-Mol | Few-Shot Benchmark | Specifically designed for few-shot molecular property evaluation | Available from https://github.com/microsoft/FS-Mol [18] |
| KRGTS Codebase | Implementation | Reference implementation of the KRGTS framework | https://github.com/Vencent-Won/KRGTS-public [16] |
| CFS-HML Codebase | Implementation | Reference implementation of the CFS-HML model | https://github.com/xuejunhao123/CFS-HML [7] |
| Awesome FSMPP Literature | Literature Survey | Curated collection of FSMPP research papers | https://github.com/Vencent-Won/Awesome-Literature-on-Few-shot-Molecular-Property-Prediction [19] |

The comparative analysis presented in this guide reveals that while significant progress has been made in addressing cross-property generalization under distribution shifts, substantial challenges remain. Methods that explicitly model molecule-property relationships through structured graphs (KRGTS) or factor disentanglement (Meta-DREAM) currently demonstrate state-of-the-art performance, particularly in challenging low-shot scenarios [16] [17]. However, even the best-performing models experience significant performance degradation when distribution shifts are pronounced and labeled examples are extremely scarce.

Future research directions likely to advance the field include: (1) development of more sophisticated relationship quantification methods that better capture biochemical similarities between properties, (2) integration of large-scale pre-training approaches with meta-learning frameworks to learn more transferable molecular representations, and (3) creation of more comprehensive benchmark datasets that specifically stress-test cross-property generalization under controlled distribution shifts [4] [19]. As these methodological improvements mature, FSMPP systems have the potential to dramatically accelerate early-stage drug discovery by enabling accurate property prediction for novel molecular structures with minimal experimental data.

In Few-Shot Molecular Property Prediction (FSMPP), cross-molecule generalization under structural heterogeneity presents a fundamental obstacle. This challenge arises when machine learning models, trained on a limited set of labeled molecules, must accurately predict the properties of novel, structurally diverse compounds. The core of the problem lies in the immense and complex nature of chemical space; molecules can vary dramatically in their size, topology, and constituent functional groups, leading to significant shifts in the data distribution between the training and testing phases [4] [10]. In real-world drug discovery, this scenario is commonplace, particularly for novel molecular scaffolds or targets associated with rare diseases where annotated data is exceptionally scarce [5].

When models overfit the specific structural patterns of the few training molecules, their ability to generalize to new, heterogeneous structures is severely hampered [10]. This limitation undermines the practical utility of AI in accelerating early-stage drug discovery and materials design. Consequently, developing models robust to this heterogeneity is an active and critical area of research. This guide benchmarks contemporary approaches designed to overcome this challenge, comparing their performance and dissecting the experimental protocols that validate their efficacy.

Comparative Analysis of FSMPP Methods

The following table summarizes key methodologies, their core mechanisms for tackling structural heterogeneity, and their performance on standard benchmarks.

Table 1: Comparison of FSMPP Methods Addressing Structural Heterogeneity

| Method Name | Core Mechanism for Cross-Molecule Generalization | Reported Performance (ROC-AUC ± Std.) |
|---|---|---|
| M-GLC [20] | Constructs a tri-partite context graph (molecule-motif-property) and uses local-focus subgraph encoders to capture transferable structural priors from chemical motifs. | Tox21: 0.841 ± 0.018; SIDER: 0.902 ± 0.012; ClinTox: 0.942 ± 0.010 |
| PG-DERN [5] | Employs a dual-view encoder (node + subgraph) and a relation graph learning module to propagate information between similar molecules, guided by meta-learning. | Outperforms state-of-the-art baselines across four benchmarks (specific metrics not fully detailed in excerpt). |
| ACS [21] | A multi-task GNN training scheme using adaptive checkpointing with specialization to mitigate negative transfer and overfitting on low-data tasks. | ClinTox: ~0.92 (from graph); SIDER: ~0.88 (from graph); Tox21: ~0.83 (from graph) |
| KRGTS [22] | Features a Knowledge-enhanced Relation Graph and a Task Sampling module to improve learning of transferable knowledge across tasks and structures. | Superior to a variety of state-of-the-art methods (specific metrics not fully detailed in excerpt). |

Experimental Protocols and Benchmarking

A standardized evaluation protocol is crucial for the fair comparison of FSMPP methods. The field primarily adopts a meta-learning framework to simulate real-world low-data scenarios [20].

Standard FSMPP Evaluation Protocol

- Task Formulation: The problem is framed as a series of N-way K-shot learning tasks. Each task is a distinct molecular property prediction problem (e.g., toxicity). For each task, the model has access to a small support set (e.g., K=10 labeled molecules per class) and is evaluated on a separate query set [20] [5].

- Meta-Training and Meta-Testing: Models are trained on a set of source properties (\( \mathcal{T}_{\text{train}} \)) during a meta-training phase. Crucially, the properties used for evaluation (\( \mathcal{T}_{\text{test}} \)) are held out entirely during training, ensuring a strict separation: \( \mathcal{P}_{\text{train}} \cap \mathcal{P}_{\text{test}} = \emptyset \) [20]. This tests true generalization to novel properties and their associated molecules.

- Datasets: Common public benchmarks include the MoleculeNet datasets Tox21, SIDER, and ClinTox [21] [23].

- Splitting Strategy: To rigorously assess cross-molecule generalization, datasets are often split using the Murcko-scaffold protocol [21]. This method groups molecules based on their core molecular scaffold, ensuring that molecules in the training and test sets have distinct core structures. This directly tests a model's ability to handle structural heterogeneity and avoid over-relying on scaffold-specific features.
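The protocol above can be sketched in a few lines of plain Python. This is a minimal illustration, not the code of any cited method: the helper names `split_properties` and `sample_episode`, the toy molecule identifiers, and the binary (2-way) setting are assumptions made for the example.

```python
import random

def split_properties(properties, n_test, seed=0):
    """Hold out n_test properties for meta-testing; the rest are used for meta-training."""
    rng = random.Random(seed)
    shuffled = list(properties)
    rng.shuffle(shuffled)
    return shuffled[n_test:], shuffled[:n_test]

def sample_episode(labeled, k_shot, n_query, rng):
    """Draw one 2-way K-shot episode for a single property.

    labeled: list of (molecule_id, label) pairs with binary labels 0/1.
    Returns (support, query); the support set holds K molecules per class.
    """
    support, pool = [], []
    for cls in (0, 1):
        members = [item for item in labeled if item[1] == cls]
        if len(members) < k_shot:
            raise ValueError(f"class {cls} has fewer than {k_shot} molecules")
        rng.shuffle(members)
        support.extend(members[:k_shot])
        pool.extend(members[k_shot:])  # everything else is eligible for the query set
    rng.shuffle(pool)
    return support, pool[:n_query]

# Toy data: six hypothetical properties and 40 hypothetical molecules for one of them.
properties = [f"prop_{i}" for i in range(6)]
train_props, test_props = split_properties(properties, n_test=2)

rng = random.Random(1)
toy_labels = [(f"mol_{i}", i % 2) for i in range(40)]
support, query = sample_episode(toy_labels, k_shot=10, n_query=16, rng=rng)
```

Because the support molecules are removed from the pool before the query set is drawn, the two sets never overlap, and the held-out properties never appear during meta-training.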

Method-Specific Workflows

Table 2: Detailed Experimental Workflows of Representative Methods

| Method | Key Workflow Steps | Primary Datasets Used |

|---|---|---|

| M-GLC [20] | 1. Motif Extraction: Identify recurring chemical sub-structures (motifs) from molecular graphs. 2. Graph Construction: Build a global heterogeneous graph linking molecules, properties, and motifs. 3. Subgraph Encoding: For each molecule-property pair, extract and encode a local subgraph from the global context graph. 4. Meta-Learning: Train the model using episodic sampling from the meta-training set of properties. | Tox21, SIDER, ClinTox, and others (5 total) |

| ACS [21] | 1. Multi-Task Pre-training: Train a shared GNN backbone on multiple property prediction tasks simultaneously. 2. Adaptive Checkpointing: Monitor validation loss for each task independently and save the best-performing model parameters (backbone + task-specific head) for that task. 3. Specialization: The final model for a specific task is its specialized checkpoint, mitigating interference from other tasks. | ClinTox, SIDER, Tox21 |

| PG-DERN [5] | 1. Dual-View Encoding: Generate molecular representations from both an atomic (node) view and a substructural (subgraph) view. 2. Relation Graph Learning: Construct a graph where molecules are nodes, and edges represent molecular similarity to enable information propagation. 3. Meta-Optimization: Use a MAML-based strategy to learn good initial parameters that can rapidly adapt to new properties with few gradient steps. | Four benchmark datasets (specific names not listed in excerpt) |

Workflow Visualization: M-GLC Framework

The M-GLC framework provides a cohesive architecture for integrating global and local structural information. The diagram below illustrates its core workflow.

Diagram Title: M-GLC Framework for FSMPP

This workflow begins by integrating molecules, properties, and chemical motifs into a unified graph structure. The subsequent local subgraph sampling and encoding are critical steps that allow the model to focus on the most relevant structural context for each prediction task.

The Scientist's Toolkit: Key Research Reagents

Table 3: Essential Resources for FSMPP Research

| Resource Name | Type | Primary Function in FSMPP Research |

|---|---|---|

| MoleculeNet Benchmarks [21] [23] | Dataset | Standardized datasets (e.g., Tox21, SIDER, ClinTox) for training and fairly benchmarking model performance. |

| Open Molecules 2025 (OMol25) [24] | Dataset | A large, diverse dataset of quantum chemistry calculations used for pre-training foundational models on atomic-level interactions. |

| Meta's Universal Model for Atoms (UMA) [24] | Pre-trained Model | A foundational model providing accurate predictions of atomic interactions, serving as a versatile base for downstream fine-tuning. |

| FGBench [25] | Dataset & Benchmark | Provides fine-grained, functional group-annotated data for probing and improving model reasoning about structure-property relationships. |

| Graph Neural Networks (GNNs) [21] [23] [20] | Model Architecture | The core deep learning architecture for learning meaningful representations directly from molecular graph structures. |

| Meta-Learning Algorithms (e.g., MAML) [5] | Training Algorithm | Enables models to learn a general initialization from many few-shot tasks, allowing for rapid adaptation to novel properties with minimal data. |

Molecular property prediction is fundamental to early-stage drug discovery and materials design, serving as a critical component in hit identification, lead optimization, and toxicity assessment. However, the field faces a fundamental challenge: the high cost and complexity of wet-lab experiments result in severely limited annotated data for many properties and molecular structures. This data scarcity has propelled few-shot molecular property prediction (FSMPP) to the forefront of computational molecular research [10]. FSMPP addresses this limitation by developing models capable of learning from only a handful of labeled examples, enabling generalization across both novel molecular structures and rarely annotated properties [10].

Within this context, public molecular databases serve as the foundational bedrock for developing, benchmarking, and validating FSMPP approaches. These repositories provide the essential training data, standardized evaluation frameworks, and realistic testing scenarios necessary to advance the field. The ChEMBL database, in particular, has emerged as a preeminent resource, containing millions of experimentally derived compound activities and properties curated from scientific literature [10]. Other critical databases include BindingDB, PubChem, and MoleculeNet, each contributing unique dimensions to molecular benchmarking. This guide provides a systematic analysis of these molecular databases, comparing their structural characteristics, application contexts, and utility in benchmarking few-shot learning approaches for molecular property prediction.

Comparative Analysis of Molecular Databases for Few-Shot Learning

Database Characteristics and Application Contexts

Table 1: Key Molecular Databases for Few-Shot Learning Benchmarking

| Database Name | Primary Focus | Data Volume | Key Characteristics | Few-Shot Relevance |

|---|---|---|---|---|

| ChEMBL [10] [26] | Bioactive molecules, drug-like compounds | >2.5M compounds, 16K targets | Experimentally measured binding, functional and ADMET data; Multiple data sources with varying protocols | Provides real-world data scarcity scenario; Natural task distribution for meta-learning |

| PharmaBench [27] | ADMET properties | 52,482 entries across 11 properties | LLM-curated experimental conditions; Standardized units and conditions; Drug-discovery focused compounds | Enhanced data quality for low-data regimes; Addresses molecular weight bias in earlier sets |

| CARA [26] | Compound activity prediction | Not specified | Distinguishes VS vs LO assays; Real-world train-test splits; Accounts for temporal bias | Models practical deployment scenarios; Separates structurally diverse vs congeneric compounds |

| FS-Mol [26] | Few-shot QSAR | Not specified | Designed specifically for few-shot learning; Binary classification tasks | Built for FSMPP evaluation; Contains scaffold-based splits |

| MoleculeNet [27] | Broad molecular machine learning | >700K compounds across 17 datasets | Aggregates multiple property types; Includes physical chemistry and physiology | Standardized evaluation benchmarks; Diverse property types |

Critical Data Challenges in Real-World Applications

The systematic analysis of ChEMBL and related databases reveals several critical challenges that directly impact few-shot learning performance:

Data Scarcity and Imbalance: Analysis of ChEMBL demonstrates severe annotation scarcity, with significant imbalances in IC50 distributions across targets spanning several orders of magnitude [10]. This creates natural few-shot scenarios where certain properties or targets have limited examples.

Assay Type Heterogeneity: CARA's distinction between Virtual Screening (VS) and Lead Optimization (LO) assays highlights a fundamental division in molecular data [26]. VS assays typically contain structurally diverse compounds with diffuse similarity patterns, while LO assays contain congeneric compounds with high structural similarity and aggregated distributions. This dichotomy necessitates different few-shot learning strategies for each scenario.
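One rough way to see the difference between diffuse and congeneric compound sets is to compare their mean pairwise Tanimoto similarity. The sketch below is only an illustration of that idea and is not CARA's actual assay classification rule: fingerprints are assumed to be precomputed and represented as Python sets of on-bit indices, and the toy bit sets are invented for the example.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def mean_pairwise_similarity(fingerprints):
    """Average Tanimoto similarity over all unordered compound pairs in an assay."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        raise ValueError("need at least two compounds")
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

# Toy assays: a congeneric series sharing most bits vs. a structurally diverse set.
congeneric = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3, 6}]
diverse = [{1, 2}, {3, 4}, {5, 6, 1}]

lo_like = mean_pairwise_similarity(congeneric)  # high similarity, LO-like
vs_like = mean_pairwise_similarity(diverse)     # low similarity, VS-like
```

Any threshold used to separate the two regimes would be a modelling choice that has to be validated against the assay metadata.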

Temporal and Spatial Biases: Molecular data often exhibits temporal biases where older compounds dominate training sets, and spatial biases where data clusters in specific regions of chemical space [21]. These distributional shifts can lead to overoptimistic performance estimates if not properly accounted for in benchmarking.

Experimental Condition Variability: As highlighted in PharmaBench's curation process, experimental conditions such as pH levels, measurement techniques, and buffer compositions significantly impact property measurements [27]. This variability introduces noise that few-shot models must overcome.

Table 2: Data Challenge Analysis in Molecular Databases

| Challenge Type | Impact on Few-Shot Learning | Databases Addressing Challenge |

|---|---|---|

| Annotation Scarcity | Creates natural few-shot scenarios; Risk of overfitting | ChEMBL, FS-Mol |

| Assay Type Heterogeneity | Requires different generalization strategies for VS vs LO | CARA, ChEMBL |

| Temporal Bias | Inflates performance without time-split validation | CARA, ChEMBL |

| Experimental Variability | Introduces noise in learning signals | PharmaBench, ChEMBL |

| Molecular Weight Bias | Limits applicability to drug-discovery compounds | PharmaBench, CARA |

Experimental Protocols for Benchmarking Few-Shot Learning Approaches

Data Partitioning Strategies

Robust evaluation of few-shot molecular property prediction methods requires careful data partitioning to avoid data leakage and ensure realistic performance estimates:

Scaffold-Based Splits: This approach partitions molecules by their Bemis-Murcko scaffolds, so that all molecules sharing a core scaffold fall into the same split and the test set contains only scaffolds unseen during training [21]. This evaluates a model's ability to generalize to novel molecular architectures, a more challenging and realistic scenario for drug discovery applications.
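A scaffold split can be sketched as a grouped split over precomputed scaffold keys. The function below is a simplified, hypothetical variant of the greedy procedure used by common toolkits, not the exact protocol of [21]. It assumes each molecule already has a scaffold string (for example, one produced with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`); the toy scaffold list is invented for the example.

```python
from collections import defaultdict

def scaffold_split(scaffolds, test_fraction=0.2):
    """Split molecule indices so that no scaffold appears in both sets.

    scaffolds[i] is the scaffold key of molecule i (assumed precomputed,
    e.g. a Bemis-Murcko scaffold SMILES string).
    """
    groups = defaultdict(list)
    for idx, key in enumerate(scaffolds):
        groups[key].append(idx)

    # Fill training with the largest scaffold groups first, so rare scaffolds
    # tend to end up in the test set.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_train_target = len(scaffolds) - int(round(test_fraction * len(scaffolds)))

    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= n_train_target:
            train.extend(group)
        else:
            test.extend(group)  # a whole group moves together, never split
    return train, test

# Toy example: three scaffolds of sizes 5, 3, and 2.
toy_scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCCCC1"] * 2
train_idx, test_idx = scaffold_split(toy_scaffolds, test_fraction=0.2)
```

Because groups are assigned whole, the realized test fraction can deviate from the requested one when a few scaffolds dominate the dataset.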

Temporal Splits: As implemented in CARA, temporal splitting trains models on older compounds and tests on newer ones [26]. This mirrors real-world discovery pipelines where models predict properties for newly synthesized compounds, preventing inflated performance from similar structures across splits.
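In its simplest form, a temporal split is a cutoff on a date field. The sketch below assumes each record carries a publication or registration year; the field names `id` and `year` and the toy records are hypothetical, and CARA's own implementation may differ.

```python
def temporal_split(records, cutoff_year):
    """Train on records up to and including cutoff_year, test on later ones."""
    train = [r for r in records if r["year"] <= cutoff_year]
    test = [r for r in records if r["year"] > cutoff_year]
    return train, test

# Toy records with hypothetical compound identifiers.
records = [
    {"id": "cpd_a", "year": 2012},
    {"id": "cpd_b", "year": 2016},
    {"id": "cpd_c", "year": 2019},
    {"id": "cpd_d", "year": 2021},
    {"id": "cpd_e", "year": 2023},
]
older, newer = temporal_split(records, cutoff_year=2019)
```

Every training record predates every test record, which mirrors the prospective setting in which a model is asked about compounds synthesized after it was trained.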

Task-Type Specific Splits: CARA implements distinct splitting strategies for Virtual Screening versus Lead Optimization assays [26]. For VS tasks, random splitting may be appropriate given structural diversity, while for LO tasks, more careful partitioning is needed to avoid data leakage from highly similar compounds.

Few-Shot Episode Construction: Following meta-learning paradigms, FS-Mol and related benchmarks construct evaluation episodes containing support sets (for training) and query sets (for testing) [10]. These episodes sample tasks from different protein targets or property measurements to assess cross-property generalization.

Evaluation Metrics and Performance Assessment

Comprehensive benchmarking requires multiple metrics to capture different dimensions of few-shot performance:

ROC-AUC (Receiver Operating Characteristic - Area Under Curve): Particularly valuable for virtual screening tasks where ranking capability is crucial [26]. It measures the model's ability to prioritize active compounds over inactive ones across different classification thresholds.

PR-AUC (Precision-Recall Area Under Curve): More informative than ROC-AUC for imbalanced datasets where inactive compounds significantly outnumber actives [26]. This is common in real-world screening scenarios.
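The difference between the two ranking metrics is easy to see on a small imbalanced example. The following is a dependency-free sketch: ROC-AUC is computed as the fraction of active/inactive pairs ranked correctly, and PR-AUC is approximated by average precision. The toy labels and scores are invented, and a production pipeline would normally rely on a tested library implementation instead.

```python
def roc_auc(labels, scores):
    """Probability that a random active outscores a random inactive (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(labels, scores):
    """Step-wise area under the precision-recall curve (assumes untied scores)."""
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("at least one active is required")
    ranked = sorted(zip(scores, labels), key=lambda t: -t[0])
    hits, total = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank  # precision at each recovered active
    return total / n_pos

# Toy screen: 2 actives among 10 compounds, one active ranked first, one ranked fourth.
labels = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]

auc = roc_auc(labels, scores)           # 0.875
ap = average_precision(labels, scores)  # 0.75
```

With only two actives, a single active slipping from rank 2 to rank 4 barely moves ROC-AUC but lowers average precision noticeably, which is why PR-based metrics are often preferred for imbalanced screens.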

RMSE (Root Mean Square Error): Appropriate for regression tasks such as predicting binding affinity values or physicochemical properties [21]. It quantifies the magnitude of prediction errors in the original unit of measurement.

Few-Shot Adaptation Speed: Measures how quickly models converge to satisfactory performance with limited labeled examples [10]. This is particularly important for practical applications where annotation resources are constrained.

Methodological Approaches in Few-Shot Molecular Property Prediction

Technical Frameworks for Addressing FSMPP Challenges

The survey by Wang et al. [10] organizes FSMPP methods into a coherent taxonomy addressing two core challenges: cross-property generalization under distribution shifts and cross-molecule generalization under structural heterogeneity. These approaches can be categorized into three primary frameworks:

Meta-Learning Approaches: Methods like MAML (Model-Agnostic Meta-Learning) learn superior parameter initializations that enable rapid adaptation to new properties with limited examples [10] [5]. These frameworks train across diverse property prediction tasks, extracting transferable knowledge that facilitates quick learning of novel properties.

Multi-Task Learning with Negative Transfer Mitigation: Techniques like Adaptive Checkpointing with Specialization (ACS) address the challenge of negative transfer in multi-task learning [21]. ACS combines shared backbones with task-specific heads, implementing adaptive checkpointing when negative transfer is detected. This approach has demonstrated effectiveness in ultra-low data regimes, achieving accurate predictions with as few as 29 labeled samples.
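The per-task checkpointing idea can be sketched independently of any deep learning framework. The class below is a simplified illustration and not the ACS implementation from [21]: the name `PerTaskCheckpointer`, the dictionary-based model states, and the validation-loss history are all assumptions made for the example.

```python
import copy

class PerTaskCheckpointer:
    """Keep, for every task, the backbone + head snapshot with the lowest validation loss."""

    def __init__(self, task_names):
        self.best_loss = {t: float("inf") for t in task_names}
        self.best_state = {t: None for t in task_names}

    def update(self, val_losses, backbone_state, head_states):
        """Snapshot the shared backbone and the task head whenever a task improves."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved

# Simulated training run: the low-data task degrades after epoch 1
# while the data-rich task keeps improving.
checkpointer = PerTaskCheckpointer(["common", "rare"])
history = [
    {"common": 0.70, "rare": 0.90},
    {"common": 0.50, "rare": 0.60},
    {"common": 0.40, "rare": 0.80},
]
for epoch, val_losses in enumerate(history):
    backbone_state = {"epoch": epoch}  # stand-in for real backbone weights
    head_states = {t: {"epoch": epoch} for t in val_losses}
    checkpointer.update(val_losses, backbone_state, head_states)
```

At the end of the run, the low-data task keeps its epoch-1 snapshot while the data-rich task keeps the epoch-2 snapshot, so later updates that only benefit other tasks cannot overwrite a task's best model.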

Property-Guided Architectures: Methods like PG-DERN incorporate chemical domain knowledge through dual-view encoders and relation graph learning modules [5]. These approaches explicitly model relationships between molecules and transfer information from chemically similar properties to novel prediction tasks.

Visualization of Few-Shot Molecular Property Prediction Workflow

The following diagram illustrates the complete workflow for few-shot molecular property prediction, integrating database handling, model training, and evaluation components:

Molecular Data Characteristics and Their Impact on Model Performance

The following diagram illustrates the relationship between molecular data characteristics and their impact on few-shot learning approaches:

Table 3: Key Research Reagent Solutions for Molecular Data Analysis

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |

|---|---|---|---|

| Primary Data Repositories | ChEMBL, BindingDB, PubChem | Source of experimental compound activity data | Foundation for constructing benchmark datasets; Source of few-shot tasks |

| Curated Benchmarks | PharmaBench, CARA, FS-Mol, MoleculeNet | Pre-processed datasets with standardized splits | Model evaluation and comparison; Few-shot learning research |

| Data Processing Tools | RDKit, LLM-based curation systems [27] | Molecular standardization, feature generation, condition extraction | Handles molecular heterogeneity; Extracts experimental conditions |

| Evaluation Frameworks | Scaffold splitting, Temporal splitting protocols | Prevent data leakage; Ensure realistic performance estimation | Model validation under real-world conditions |

| Specialized Model Architectures | ACS [21], CFG-HML [7], PG-DERN [5] | Address FSMPP challenges like negative transfer | Production-level molecular property prediction |

The systematic analysis of ChEMBL and related molecular databases reveals a rapidly evolving landscape where data quality, methodological innovation, and realistic benchmarking converge to advance few-shot molecular property prediction. Key insights emerge from this comparative analysis:

First, the distinction between Virtual Screening and Lead Optimization assays represents a critical consideration for both database construction and model development. These different assay types demand distinct few-shot learning strategies due to their fundamentally different data distribution patterns [26]. Second, temporal and spatial biases in molecular data significantly impact model generalizability, necessitating time-aware splitting protocols and specialized architectures like ACS that mitigate negative transfer [21]. Third, recent advances in data curation, particularly LLM-assisted approaches like those used in PharmaBench, demonstrate promising pathways for enhancing data quality and standardization in molecular databases [27].

As the field progresses, successful few-shot molecular property prediction will increasingly depend on the synergistic combination of high-quality databases, sophisticated benchmarking methodologies, and specialized model architectures capable of navigating the complex landscape of molecular data characteristics. The continued development of comprehensive, realistic, and well-structured molecular databases remains fundamental to translating few-shot learning advancements into practical drug discovery applications.

Methodological Landscape: From Meta-Learning to Multi-Modal Fusion

Molecular property prediction (MPP) is a fundamental task in drug discovery and materials science, aiming to predict the physicochemical, biological, and toxicological properties of compounds from their structural information. However, the high cost and complexity of wet-lab experiments often result in scarce molecular annotations, creating a significant bottleneck for traditional supervised learning approaches [4] [10]. In response to this challenge, few-shot molecular property prediction (FSMPP) has emerged as a promising paradigm that enables models to learn from only a handful of labeled examples [10].

The core challenge of FSMPP lies in its two-fold generalization problem: (1) cross-property generalization under distribution shifts, where models must transfer knowledge across different property prediction tasks that may have weakly correlated data distributions and biochemical mechanisms; and (2) cross-molecule generalization under structural heterogeneity, where models tend to overfit limited molecular structures and fail to generalize to structurally diverse compounds [10]. To systematically address these challenges, researchers have developed numerous methods that can be organized into a unified taxonomy spanning data-level, model-level, and learning paradigm-level approaches.

This guide provides an objective comparison of FSMPP methods within this unified taxonomy, presenting experimental data and detailed methodologies to help researchers and drug development professionals select appropriate approaches for their specific low-data scenarios.

A Unified Taxonomy for FSMPP Methods

The following diagram illustrates the comprehensive taxonomy of few-shot molecular property prediction methods, organized across data, model, and learning paradigm levels:

Figure 1: Unified taxonomy of few-shot molecular property prediction methods organized across data, model, and learning paradigm levels.

Data-Level Methods

Data-level approaches focus on enhancing the quantity or quality of training data to mitigate the challenges of limited annotations:

Data Augmentation: These methods generate synthetic molecular samples or tasks to expand the training distribution. For example, Motif-based Task Augmentation (MTA) generates new labeled samples by retrieving highly relevant molecular motifs, effectively creating new training tasks for meta-learning [28].

Multi-Task Learning: Approaches like Adaptive Checkpointing with Specialization (ACS) leverage correlations among related molecular properties to improve predictive performance. ACS employs a shared graph neural network backbone with task-specific heads and uses adaptive checkpointing to mitigate negative transfer between tasks, particularly effective under severe task imbalance [21].

Model-Level Methods

Model-level approaches design specialized architectures and representation learning strategies to enhance few-shot generalization:

Multi-Modal Fusion Architectures: Methods like AttFPGNN-MAML incorporate hybrid feature representations by combining graph neural network embeddings with multiple molecular fingerprints (MACCS, ErG, and PubChem) to enrich molecular representations and model task-specific intermolecular relationships [28].

Attribute-Guided Representation Learning: The Attribute-guided Prototype Network (APN) extracts and leverages high-level molecular attributes, including 14 different fingerprint types and deep attributes from self-supervised learning, to guide graph-based molecular encoders through dual-channel attention mechanisms [29] [30].

Graph Neural Networks: Approaches like Hierarchically Structured Learning on Relation Graphs (HSL-RG) explore molecular structural semantics at both global and local levels by constructing relation graphs with graph kernels and employing self-supervised learning for transformation-invariant representations [13].

Learning Paradigm-Level Methods

Learning paradigm-level approaches reformulate the optimization process itself to enable effective learning from limited data:

Meta-Learning (Optimization-Based): Model-Agnostic Meta-Learning (MAML) and its variants learn optimal initial parameters that can quickly adapt to new tasks with few gradient steps. ProtoMAML combines prototype networks with MAML to leverage both metric-based and optimization-based meta-learning [28].

Metric-Based Methods: Prototypical networks and relation networks learn embedding spaces and similarity measures that enable quick adaptation to new tasks without extensive fine-tuning. APN enhances this paradigm by incorporating attribute-guided prototype refinement [29].

Multi-Task Training Schemes: Methods like ACS implement specialized training schemes that balance shared representation learning with task-specific specialization through adaptive checkpointing and negative transfer mitigation [21].

Comparative Performance Analysis

Experimental Setup & Benchmarking Protocols

Standardized evaluation protocols are essential for fair comparison across FSMPP methods. Most studies use the following experimental framework:

Dataset Splitting: Methods are typically evaluated on benchmark datasets like Tox21, SIDER, MUV, and FS-Mol using Murcko scaffold splits to ensure that test molecules are structurally distinct from training molecules, better simulating real-world discovery scenarios [21].

Task Formulation: The FSMPP problem is commonly formulated as a 2-way K-shot classification task, where each task contains a support set (with K labeled examples per class) for model adaptation and a query set for evaluation [28] [29].

Evaluation Metrics: Common metrics include ROC-AUC (Area Under the Receiver Operating Characteristic Curve), PR-AUC (Area Under the Precision-Recall Curve), and F1-score, with results averaged over multiple randomly sampled tasks to reduce sampling variance [29] [30].

Table 1: Performance comparison of FSMPP methods across benchmark datasets

| Method | Taxonomy Category | Tox21 (5-shot ROC-AUC) | SIDER (5-shot ROC-AUC) | MUV (5-shot PR-AUC) | FS-Mol (16-shot ROC-AUC) |

|---|---|---|---|---|---|

| APN [29] | Model-Level + Paradigm-Level | 80.40% | 76.32% | 65.18% | - |

| AttFPGNN-MAML [28] | Model-Level + Paradigm-Level | - | - | - | 78.91% |

| ACS [21] | Data-Level + Paradigm-Level | 79.85% | 75.64% | - | - |

| HSL-RG [13] | Model-Level | 78.95% | 74.83% | 63.42% | - |

| Meta-MGNN [28] | Paradigm-Level | 76.52% | 73.45% | 61.87% | - |

| PAR [28] | Paradigm-Level | 77.18% | 74.26% | 62.95% | - |

Impact of Shot Number and Data Regime

The performance of FSMPP methods varies significantly with the number of available labeled examples (shots) and the specific data regime:

Table 2: Performance comparison across different shot numbers on Tox21 dataset

| Method | 5-shot ROC-AUC | 10-shot ROC-AUC | Improvement (percentage points) |

|---|---|---|---|

| APN [29] [30] | 80.40% | 84.54% | +4.14 |

| ACS [21] | 79.85% | 83.72% | +3.87 |

| HSL-RG [13] | 78.95% | 82.91% | +3.96 |

| Siamese Network [30] | 72.36% | 76.84% | +4.48 |

| MetaGAT [30] | 77.15% | 81.03% | +3.88 |

Advanced methods like APN and ACS demonstrate stronger performance in ultra-low-data regimes (5-shot) and maintain consistent improvements as more samples become available. The performance gap between simpler approaches (e.g., Siamese Networks) and more sophisticated methods is more pronounced in the lowest-data scenarios [21] [30].

Analysis of Molecular Representation Strategies

The choice of molecular representation significantly impacts few-shot prediction performance:

Table 3: Effect of molecular representation choices on Tox21 10-shot performance

| Representation Strategy | Example Method | ROC-AUC | Key Advantages |

|---|---|---|---|

| Graph + Multi-Fingerprint Fusion | AttFPGNN-MAML [28] | 83.72% | Combines structural and expert-knowledge representations |

| Attribute-Guided (Triple Fingerprint) | APN [29] [30] | 84.46% | Leverages complementary fingerprint combinations |

| 3D Graph Representation | DLF-MFF [31] | 82.91% | Captures spatial molecular geometry |

| Hierarchical Relation Graphs | HSL-RG [13] | 82.89% | Models global and local structural semantics |

| Single Fingerprint (Best Performing) | APN with RDK5 [30] | 82.15% | Simple yet effective path-based representation |

Methods that integrate multiple complementary representations consistently outperform single-representation approaches. For instance, APN demonstrates that combining multiple fingerprint types (e.g., hashap, avalon, and ecfp4) achieves better performance than any single fingerprint alone [30]. Similarly, AttFPGNN-MAML shows that fusing graph neural network embeddings with molecular fingerprints creates more expressive representations that capture both structural and chemical features [28].

Experimental Protocols and Methodologies

Key Experimental Workflows

The following diagram illustrates a typical experimental workflow for developing and evaluating FSMPP methods:

Figure 2: Standard experimental workflow for FSMPP method development and evaluation.

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key computational resources and datasets for FSMPP research

| Resource Name | Type | Description | Key Applications |

|---|---|---|---|

| FS-Mol [28] | Dataset | Comprehensive few-shot learning dataset with ~8,000 assays | Benchmarking FSMPP methods across diverse properties |

| MoleculeNet [28] [21] | Dataset | Curated benchmark collection including Tox21, SIDER, MUV | Standardized evaluation and comparison |

| Uni-Mol [30] | Pre-trained Model | Self-supervised learning framework for molecular structures | Generating deep molecular attributes for APN |

| RDKit | Software | Cheminformatics toolkit for molecular manipulation | Fingerprint generation and molecular representation |

| Meta-Learning Libraries (PyTorch, TensorFlow) | Framework | Deep learning frameworks with meta-learning extensions | Implementing MAML and prototypical networks |

The unified taxonomy of data-level, model-level, and learning paradigm-level methods provides a systematic framework for understanding and advancing few-shot molecular property prediction. Experimental comparisons reveal that hybrid approaches combining multiple strategies—such as APN (attribute-guided model with metric-based learning) and AttFPGNN-MAML (multi-modal fusion with optimization-based meta-learning)—typically achieve state-of-the-art performance across diverse benchmarks.

Key insights for researchers and drug development professionals include:

Method Selection Guidance: For scenarios with extremely limited data (≤5 shots), attribute-guided and multi-modal fusion methods generally outperform simpler approaches. In slightly higher-data regimes (10+ shots), the performance gap narrows, but advanced methods still provide meaningful improvements.

Representation Importance: Molecular representation choices significantly impact performance, with multi-modal approaches that combine structural graphs, molecular fingerprints, and chemical attributes demonstrating consistent advantages.

Future Directions: Promising research avenues include developing more sophisticated negative transfer mitigation strategies for multi-task learning, creating larger and more diverse few-shot benchmarks, and exploring foundation models pre-trained on extensive unlabeled molecular databases that can be efficiently adapted to few-shot property prediction tasks.

As the field progresses, this unified taxonomy and comparative analysis provides a foundation for selecting, developing, and evaluating FSMPP methods that can accelerate drug discovery and materials design in data-scarce environments.

The accurate prediction of molecular properties is a cornerstone of modern drug discovery and materials science. However, the field is persistently hampered by the "low data problem" – the scarcity of expensive, experimentally derived labeled data for training robust machine learning models [28]. This challenge is particularly acute for novel drug targets or emerging molecular families, where available data can be exceptionally limited. Few-shot learning, a subfield of machine learning where models must learn from a very small number of examples, has emerged as a promising framework to address this bottleneck [28]. Within this framework, meta-learning has proven particularly powerful. Often termed "learning to learn," meta-learning algorithms simulate the few-shot learning scenario during training by exposing a model to a wide variety of tasks, enabling it to accumulate generalized knowledge that can be rapidly adapted to new, unseen tasks with minimal data [32].

Among the most influential meta-learning strategies is Model-Agnostic Meta-Learning (MAML), which learns a superior initial model parameterization that can be quickly fine-tuned for new tasks via a few gradient steps [28]. A notable adaptation that combines the parameter optimization of MAML with the representational power of prototype networks is ProtoMAML [28]. This guide provides a comparative analysis of MAML, ProtoMAML, and their molecular adaptations, benchmarking their performance and detailing their experimental protocols to serve researchers and professionals in computational drug discovery.

Core Concepts: MAML and ProtoMAML

Model-Agnostic Meta-Learning (MAML)

The core objective of MAML is not to learn a single model for all tasks, but to learn an optimal initial set of model parameters that are highly sensitive to the loss functions of new tasks. This allows for rapid and efficient adaptation (fine-tuning) using only a small support set from a novel task. The algorithm operates through a bi-level optimization process:

- Inner Loop (Task-Specific Adaptation): For each task in a training batch, the model's initial parameters are updated with one or more gradient steps using the task's support set.

- Outer Loop (Meta-Optimization): The initial parameters are then updated by evaluating the performance of the adapted models on their respective query sets and aggregating the gradients across all tasks [28].
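The two loops can be made concrete on a deliberately tiny problem. The sketch below applies MAML to a one-parameter regression model `y = w * x`, where the gradient and second derivative are available in closed form, so the meta-gradient through the inner step can be written out by hand. The task definitions, learning rates, and function names are assumptions chosen for illustration; molecular models would use a GNN and automatic differentiation instead.

```python
def loss(w, xs, ys):
    """Mean squared error of the model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def grad(w, xs, ys):
    """Derivative of the loss with respect to w."""
    return 2.0 * sum(x * (w * x - y) for x, y in zip(xs, ys)) / len(xs)

def hess(xs):
    """Second derivative of the loss with respect to w (constant in w)."""
    return 2.0 * sum(x * x for x in xs) / len(xs)

def maml_step(w, tasks, inner_lr, outer_lr):
    """One meta-update with a single inner gradient step per task."""
    meta_grad = 0.0
    for (xs_s, ys_s), (xs_q, ys_q) in tasks:
        # Inner loop: adapt the shared initialization on the support set.
        w_adapted = w - inner_lr * grad(w, xs_s, ys_s)
        # Outer loop: query-loss gradient, chained through the inner step.
        d_adapted_d_w = 1.0 - inner_lr * hess(xs_s)
        meta_grad += grad(w_adapted, xs_q, ys_q) * d_adapted_d_w
    return w - outer_lr * meta_grad / len(tasks)

# Two toy tasks with true slopes 1.0 and 3.0, sharing the same inputs.
support_x, query_x = [1.0, 2.0], [0.5, 1.5]
tasks = [
    ((support_x, [a * x for x in support_x]), (query_x, [a * x for x in query_x]))
    for a in (1.0, 3.0)
]

w = 0.0
for _ in range(100):
    w = maml_step(w, tasks, inner_lr=0.1, outer_lr=0.5)

# Effect of one adaptation step on the first task, starting from the learned w.
(xs_s, ys_s), (xs_q, ys_q) = tasks[0]
before = loss(w, xs_q, ys_q)
after = loss(w - 0.1 * grad(w, xs_s, ys_s), xs_q, ys_q)
```

In this symmetric setup, the learned initialization settles midway between the two task optima, and a single support-set step then moves it toward whichever task is presented. Dropping the `d_adapted_d_w` factor would give the cheaper first-order approximation.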

ProtoMAML: A Hybrid Approach

ProtoMAML is a hybrid algorithm that integrates the prototypical networks approach into the MAML framework [28]. Prototypical networks learn an embedding space in which a single prototype (typically the mean of support embeddings) represents each class. Classification is performed by finding the nearest prototype for a given query sample.

In ProtoMAML, the model learned via the MAML algorithm is specifically designed to produce high-quality embeddings for this prototype-based classification. The model is adapted on a support set to compute task-specific prototypes. The loss on the query set, which drives the meta-optimization, is computed based on the Euclidean distance between query embeddings and these class prototypes [28]. This fusion leverages MAML's strength in finding easily adaptable parameters while benefiting from the simplicity and efficacy of prototype-based reasoning in few-shot classification.
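The prototype-based part of this procedure can be sketched in a few lines. The example below is a minimal illustration, not the code from [28]: the 2-D embeddings are hypothetical stand-ins for encoder outputs, and it assumes squared Euclidean distance with a softmax over negative distances, as is common in prototypical-network formulations.

```python
import math

def class_prototypes(support_embeddings, support_labels):
    """Return the mean support embedding for each class label."""
    grouped = {}
    for emb, label in zip(support_embeddings, support_labels):
        grouped.setdefault(label, []).append(emb)
    return {
        label: [sum(dim) / len(embs) for dim in zip(*embs)]
        for label, embs in grouped.items()
    }

def class_probabilities(query_embedding, protos):
    """Softmax over negative squared Euclidean distances to the prototypes."""
    logits = {
        label: -sum((q - p) ** 2 for q, p in zip(query_embedding, proto))
        for label, proto in protos.items()
    }
    top = max(logits.values())  # subtract the maximum for numerical stability
    weights = {label: math.exp(v - top) for label, v in logits.items()}
    total = sum(weights.values())
    return {label: wt / total for label, wt in weights.items()}

def query_loss(query_embeddings, query_labels, protos):
    """Mean cross-entropy on the query set, the quantity meta-optimization would minimize."""
    losses = [
        -math.log(class_probabilities(emb, protos)[label])
        for emb, label in zip(query_embeddings, query_labels)
    ]
    return sum(losses) / len(losses)

# Toy embeddings for a binary task (hypothetical values).
support = [[0.0, 0.0], [0.0, 2.0], [4.0, 4.0], [6.0, 4.0]]
labels = [0, 0, 1, 1]
protos = class_prototypes(support, labels)
probs = class_probabilities([1.0, 1.0], protos)
print(protos, probs)
```

In a full ProtoMAML setup, the embeddings would be produced by the adapted encoder, and `query_loss` would be backpropagated through the adaptation step to update the shared initialization.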

Molecular Adaptations and Benchmark Performance

The standard MAML and ProtoMAML frameworks are model-agnostic but require careful integration with domain-specific model architectures to achieve peak performance on molecular data.

AttFPGNN-MAML: A State-of-the-Art Implementation

A leading molecular adaptation is AttFPGNN-MAML, which incorporates a hybrid molecular representation to enrich the input to the meta-learner [28]. Its architecture, detailed in the experimental protocols section, combines a Graph Neural Network (GNN) with traditional molecular fingerprints, processed through an attention mechanism to generate task-specific representations. This model is then trained using the ProtoMAML strategy.

The table below summarizes the performance of AttFPGNN-MAML against other few-shot learning methods on the MoleculeNet benchmark.

Table 1: Performance Comparison on MoleculeNet Few-Shot Tasks (ROC-AUC)

| Model / Method | BBBP | Tox21 | SIDER | ClinTox | Average |
|---|---|---|---|---|---|
| AttFPGNN-MAML | 0.915 | 0.783 | 0.605 | 0.918 | 0.805 |
| Matching Networks | 0.851 | 0.737 | 0.584 | 0.817 | 0.747 |
| Prototypical Networks | 0.879 | 0.751 | 0.598 | 0.882 | 0.778 |
| MAML (with GNN) | 0.901 | 0.769 | 0.613 | 0.901 | 0.796 |
| Meta-MGNN | 0.893 | 0.775 | 0.601 | 0.910 | 0.795 |

As shown in Table 1, AttFPGNN-MAML achieves state-of-the-art or highly competitive performance, leading in three out of the four tasks and achieving the highest average ROC-AUC [28]. This demonstrates the effectiveness of combining a rich, hybrid molecular representation with the ProtoMAML learning strategy.
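The summary claims can be checked directly against the reported numbers. The short script below simply re-enters the ROC-AUC values from Table 1 and recomputes the unweighted average and the per-task leader; the variable names are illustrative.

```python
# ROC-AUC values copied from Table 1, in the order BBBP, Tox21, SIDER, ClinTox.
tasks = ["BBBP", "Tox21", "SIDER", "ClinTox"]
table1 = {
    "AttFPGNN-MAML": [0.915, 0.783, 0.605, 0.918],
    "Matching Networks": [0.851, 0.737, 0.584, 0.817],
    "Prototypical Networks": [0.879, 0.751, 0.598, 0.882],
    "MAML (with GNN)": [0.901, 0.769, 0.613, 0.901],
    "Meta-MGNN": [0.893, 0.775, 0.601, 0.910],
}

# Unweighted mean across the four tasks for each method.
averages = {method: sum(scores) / len(scores) for method, scores in table1.items()}

# Best-scoring method for each task.
leaders = {
    task: max(table1, key=lambda method: table1[method][i])
    for i, task in enumerate(tasks)
}

for method, avg in averages.items():
    print(f"{method:22s} average = {avg:.4f}")
print(leaders)
```

The per-task leaders show where the ranking is not uniform: MAML with a GNN encoder has the highest SIDER score, while AttFPGNN-MAML leads on the other three tasks.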

Performance Across Different Data Regimes

The utility of a model often depends on the volume of available data. The following table compares AttFPGNN-MAML with other methods on the FS-Mol dataset across varying support set sizes, illustrating its robustness in ultra-low data regimes.

Table 2: Performance on FS-Mol at Different Support Set Sizes (Average ROC-AUC)

| Model / Method | 16-shot | 32-shot | 64-shot | 128-shot |
|---|---|---|---|---|
| AttFPGNN-MAML | 0.672 | 0.685 | 0.701 | 0.723 |
| Prototypical Networks | 0.645 | 0.661 | 0.678 | 0.699 |
| MAML (with GNN) | 0.663 | 0.677 | 0.692 | 0.725 |
| IterRefLSTM | 0.658 | 0.669 | 0.684 | 0.711 |
| PAR | 0.649 | 0.665 | 0.681 | 0.706 |

AttFPGNN-MAML outperforms the other meta-learning methods at the lower support set sizes (16, 32, and 64-shot), underscoring its ability to leverage limited data [28]. At the 128-shot level, standard MAML with a GNN encoder edges slightly ahead (0.725 vs. 0.723), suggesting that the relative advantage of the more complex hybrid architecture is most pronounced when data is scarcest.

Experimental Protocols for Molecular Meta-Learning

For researchers seeking to reproduce or build upon these methods, a detailed understanding of the experimental setup is crucial. This section outlines the standard protocol for training and evaluating models like AttFPGNN-MAML.

The end-to-end workflow of a molecular meta-learning study runs from data preparation through episodic training to final evaluation on held-out tasks. Its key components are described below.

Key Experimental Components

Problem Formulation and Data Splitting

In molecular few-shot learning, a "task" typically represents a specific binary property prediction, such as toxicity or bioactivity for a particular assay [28]. The entire dataset is divided into a meta-training set of tasks, a meta-validation set for hyperparameter tuning, and a meta-test set of held-out tasks for final evaluation. A Murcko-scaffold split is critical: it assigns molecules that share a core scaffold to the same partition, which prevents data leakage between partitions and creates a more realistic and challenging evaluation of the model's ability to generalize to novel molecular scaffolds [21].
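The grouping logic behind a scaffold split can be sketched without any chemistry library. The example below is a minimal illustration under stated assumptions: `scaffold_split` is a hypothetical helper, the scaffold strings are supplied by hand, and the largest-groups-first ordering is one common convention rather than the protocol of any specific benchmark. In practice the scaffold identifiers would typically be generated with a cheminformatics toolkit such as RDKit.

```python
from collections import defaultdict

def scaffold_split(scaffolds, train_fraction=0.8):
    """Split molecule indices so that no scaffold appears on both sides.

    `scaffolds` holds one scaffold identifier (for example a scaffold
    SMILES string) per molecule, in dataset order.
    """
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)

    # Place the largest scaffold groups first; ties are broken by first index
    # so that the result is deterministic.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    train_budget = train_fraction * len(scaffolds)

    train, test = [], []
    for group in ordered:
        # A whole group goes to one side; it is never divided.
        if len(train) + len(group) <= train_budget:
            train.extend(group)
        else:
            test.extend(group)
    return train, test

# Hypothetical scaffold identifiers for ten molecules.
scaffolds = ["c1ccccc1"] * 4 + ["c1ccncc1"] * 3 + ["C1CCCCC1"] * 2 + ["c1ccoc1"]
train_idx, test_idx = scaffold_split(scaffolds)
print("train:", train_idx)
print("test: ", test_idx)
```

Because whole groups are assigned at once, the realized train fraction can deviate from `train_fraction`. This is the price of guaranteeing that no scaffold is shared between partitions.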

The AttFPGNN-MAML Architecture

The high performance of AttFPGNN-MAML stems from its hybrid model architecture, whose main components are listed below.

Key Components:

- Graph Neural Network (GNN): Processes the molecular graph, using a message-passing mechanism to capture structural information [28].

- Molecular Fingerprint Module: Extracts complementary chemical information using predefined fingerprints (e.g., MACCS, ErG, PubChem) to ensure a comprehensive representation [28].

- Feature Fusion & Attention: The GNN and fingerprint vectors are concatenated and passed through a fully connected layer. An instance attention module then refines these representations, making them specific to the context of the current task [28].

- ProtoMAML Training: The final task-specific representations are fed into the ProtoMAML algorithm, which learns to generate effective prototypes and classify query samples based on their distance to these prototypes [28].
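The fusion and attention steps in the list above can be illustrated with a dependency-free sketch. This is a simplified illustration, not the AttFPGNN-MAML module itself: it assumes scaled dot-product self-attention across the molecules of one task, uses identity projections instead of learned weights, and omits the fully connected layer. The function names and toy vectors are hypothetical.

```python
import math

def fuse(gnn_embedding, fingerprint):
    """Concatenate the learned graph embedding with the fixed fingerprint vector."""
    return list(gnn_embedding) + list(fingerprint)

def instance_attention(representations):
    """Refine each molecule's vector by attending over all molecules in the task.

    Scaled dot-product attention with identity projections (no learned weights).
    """
    dim = len(representations[0])
    refined = []
    for query in representations:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in representations
        ]
        top = max(scores)  # subtract the maximum for numerical stability
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Each refined vector is a weighted average of all vectors in the task.
        refined.append([
            sum(w * value[i] for w, value in zip(weights, representations))
            for i in range(dim)
        ])
    return refined

# Three molecules in one task: toy GNN outputs and toy binary fingerprints.
gnn_out = [[0.2, 0.9], [0.1, 0.8], [0.9, 0.1]]
fingerprints = [[1, 0, 1], [1, 0, 0], [0, 1, 0]]
fused = [fuse(g, f) for g, f in zip(gnn_out, fingerprints)]
task_specific = instance_attention(fused)
print(task_specific)
```

The point of the sketch is that the same molecule receives a different representation depending on which other molecules appear in the task, which is what makes the output task-specific.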

Training Regime and Hyperparameters

Models are trained using the episodic framework. Common hyperparameters include:

- Inner Loop Optimizer: A single gradient step with a learning rate between 0.01 and 0.1.

- Outer Loop Optimizer: Adam optimizer with a meta-learning rate between 0.0001 and 0.001.

- Training Duration: Typically several thousand to tens of thousands of episodes to ensure convergence.
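The settings listed above can be collected into a small configuration object, together with a helper that draws one episode from a task. This is a sketch under stated assumptions: `MetaTrainingConfig` and `sample_episode` are hypothetical names, the default values are picked from within the ranges quoted above, and `support_size` and the toy task are illustrative.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class MetaTrainingConfig:
    inner_steps: int = 1        # single inner-loop gradient step
    inner_lr: float = 0.05      # within the quoted 0.01 to 0.1 range
    meta_lr: float = 0.0005     # within the quoted 0.0001 to 0.001 range (Adam)
    num_episodes: int = 10_000  # several thousand to tens of thousands
    support_size: int = 16      # illustrative few-shot budget per episode

def sample_episode(task_examples, config, rng):
    """Draw one episode: a small labelled support set and a disjoint query set."""
    shuffled = list(task_examples)
    rng.shuffle(shuffled)
    support = shuffled[:config.support_size]
    query = shuffled[config.support_size:]
    return support, query

# Hypothetical binary task with 40 labelled molecules (identifier, label).
task_examples = [(f"mol_{i}", i % 2) for i in range(40)]
config = MetaTrainingConfig()
support, query = sample_episode(task_examples, config, random.Random(0))
print(len(support), "support examples,", len(query), "query examples")
```

For strongly imbalanced assays, a stratified variant that samples actives and inactives separately may be preferable, since a purely random support set can end up containing a single class.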

The Scientist's Toolkit: Essential Research Reagents

The following table lists key computational "reagents" and resources essential for conducting research in molecular meta-learning.

Table 3: Essential Research Reagents and Resources

| Item | Function & Application | Example Sources / Implementations |
|---|---|---|
| Benchmark Datasets | Provide standardized tasks and splits for fair model comparison and benchmarking. | MoleculeNet [28], FS-Mol [28] |
| Graph Neural Network Libraries | Provide building blocks for creating GNN-based molecular encoders. | PyTorch Geometric, Deep Graph Library (DGL) |
| Meta-Learning Frameworks | Offer pre-implemented versions of MAML and other meta-learning algorithms. | Torchmeta, Learn2Learn |
| Molecular Fingerprinting Tools | Generate fixed-length vector representations of molecules based on chemical structure. | RDKit (for MACCS, PubChem-like fingerprints) |
| Scaffold Splitting Utilities | Ensure realistic data splits based on molecular Bemis-Murcko scaffolds to avoid over-optimistic performance estimates. | RDKit (for scaffold generation) |
| AttFPGNN-MAML Code | A complete, reproducible implementation of the state-of-the-art model. | Public GitHub repository (sanomics-lab/AttFPGNN-MAML) [28] |

In the challenging domain of few-shot molecular property prediction, meta-learning strategies like MAML and ProtoMAML provide powerful tools to overcome data scarcity. The benchmarking results show that molecularly adapted models, particularly AttFPGNN-MAML, achieve state-of-the-art or highly competitive performance by combining hybrid molecular representations with robust meta-learning algorithms, with the largest gains in the lowest-data regimes. The continued refinement of these protocols, especially through the advanced cross-modal and prototype-guided methods reported in other molecular AI research [33], promises to further enhance the precision, interpretability, and overall impact of these models in accelerating scientific discovery.

The accurate prediction of molecular properties is a critical challenge in drug discovery and materials science. Traditional methods, reliant on quantum chemistry calculations, are computationally prohibitive for real-time predictions and high-throughput screening. In recent years, Graph Neural Networks (GNNs) have emerged as a powerful paradigm for molecular representation learning, treating atoms as nodes and bonds as edges in a molecular graph. This approach has fundamentally shifted the field from reliance on hand-engineered descriptors to automated, data-driven feature extraction.

A significant contemporary challenge lies in the scarcity of high-quality, labeled molecular data, which has spurred growing interest in few-shot learning (FSL) scenarios. Within this context, benchmarking various GNN architectures becomes essential for understanding their capabilities and limitations in transferring knowledge from data-rich to data-poor molecular properties. This guide provides a systematic comparison of GNN architectures serving as molecular encoders, evaluating their performance, architectural nuances, and suitability for few-shot molecular property prediction (FSMPP).

Architectural Paradigms in Molecular Graph Neural Networks

Molecular GNNs have evolved from simple graph convolutional networks to sophisticated models that incorporate 3D structural information and physical inductive biases. The core of these models is the message-passing mechanism, where nodes (atoms) iteratively aggregate information from their neighbors (connected atoms) to update their own representations. This process directly mirrors the local nature of chemical interactions.
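A single round of message passing can be written out explicitly for a small molecule. The sketch below is a minimal illustration, assuming sum aggregation with no learned weights and one-hot atom-type features; real molecular GNNs apply learned transformations at each step. The graph is the heavy-atom skeleton of ethanol (C-C-O).

```python
def message_passing_round(node_features, neighbors):
    """One round: each atom adds the sum of its neighbours' features to its own."""
    updated = {}
    for atom, feats in node_features.items():
        aggregated = [0.0] * len(feats)
        for nb in neighbors[atom]:
            for i, value in enumerate(node_features[nb]):
                aggregated[i] += value
        updated[atom] = [own + agg for own, agg in zip(feats, aggregated)]
    return updated

def readout(node_features):
    """Sum-pool the atom vectors into one molecule-level vector."""
    return [sum(dim) for dim in zip(*node_features.values())]

# Atoms 0 and 1 are carbons, atom 2 is oxygen; features are [is_carbon, is_oxygen].
features = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}
bonds = {0: [1], 1: [0, 2], 2: [1]}

after_one = message_passing_round(features, bonds)
after_two = message_passing_round(after_one, bonds)
print(after_one)   # the terminal carbon (atom 0) has not yet seen the oxygen
print(after_two)   # after two rounds, information from the oxygen has reached atom 0
print(readout(after_two))
```

Each additional round extends an atom's receptive field by one bond, which is why the number of message-passing layers controls how much of the molecule each atom representation can reflect.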

Evolution of Message-Passing Schemes

Early GNNs for molecules utilized basic spatial convolution operators. However, a key advancement came with models that incorporate geometric equivariance. Standard GNNs are invariant to rotations and translations, which is desirable for many graph-level tasks. However, molecular properties often depend on the 3D spatial arrangement of atoms. E(3)-equivariant GNNs are designed to transform predictably under rotations, translations, and reflections of the 3D molecular structure, allowing them to better capture geometric and electronic properties.

- SchNet: A pioneering model that uses continuous-filter convolutional layers to model quantum interactions in molecules. It is invariant to rotations and translations, making it suitable for learning scalar molecular properties [34].

- PaiNN (Polarizable Atom Interaction Neural Network): An advancement over SchNet that introduces an equivariant message-passing mechanism. It can represent both scalar (e.g., energy) and vector (e.g., dipole moment) properties, improving predictions for spectroscopic properties [34].

- DetaNet and EnviroDetaNet: These represent the state-of-the-art in E(3)-equivariant models. EnviroDetaNet integrates intrinsic atomic properties, spatial features, and, crucially, atomic environment information from pre-trained models. This allows it to capture both local and global molecular features, addressing a limitation of earlier GNNs that could suffer from "message over-smoothing" and a poor understanding of global context [34].
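The distinction between invariant and equivariant quantities that separates these models can be verified numerically. The sketch below is an illustration with hypothetical coordinates and point charges for a water-like geometry: interatomic distances (the kind of input an invariant model such as SchNet relies on) do not change under rotation, whereas a vector quantity such as a point-charge dipole rotates together with the molecule.

```python
import math

def rotate_z(point, angle):
    """Rotate a 3D point about the z-axis by `angle` radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)

def pairwise_distances(coords):
    """All interatomic distances, in a fixed pair order."""
    return [math.dist(a, b) for i, a in enumerate(coords) for b in coords[i + 1:]]

def dipole(charges, coords):
    """Point-charge dipole vector: sum of charge times position."""
    return tuple(sum(q * p[i] for q, p in zip(charges, coords)) for i in range(3))

# Hypothetical water-like geometry (O, H, H) with illustrative partial charges.
coords = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
charges = [-0.8, 0.4, 0.4]

angle = math.pi / 3
rotated = [rotate_z(p, angle) for p in coords]

# Invariance: distances are unchanged by the rotation.
print(pairwise_distances(coords))
print(pairwise_distances(rotated))

# Equivariance: the dipole of the rotated molecule equals the rotated dipole.
print(dipole(charges, rotated))
print(rotate_z(dipole(charges, coords), angle))
```

An equivariant network is built so that its vector-valued outputs satisfy the second relation by construction, rather than having to learn it from rotated training examples.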

Table 1: Comparison of Core GNN Architectures for Molecular Representation.

| Model | Core Message-Passing Mechanism | Equivariance | Key Innovation | Typical Application |
|---|---|---|---|---|
| SchNet | Continuous-filter convolution | E(3)-Invariant | Modeling quantum interactions with continuous filters | Prediction of potential energy surfaces, fundamental molecular properties [34] |
| PaiNN | Equivariant message-passing | E(3)-Equivariant | Learning on irreducible representations for scalar and vector features | Prediction of dipole moments, polarizability, and spectroscopic properties [34] |
| DetaNet | E(3)-equivariant self-attention | E(3)-Equivariant | Combining equivariance with self-attention mechanisms | Multi-task spectral prediction (IR, Raman, UV, NMR) [34] |
| EnviroDetaNet | Environment-aware equivariant MP | E(3)-Equivariant | Integration of pre-trained atomic environment embeddings | Robust property prediction with limited data, complex molecular systems [34] |
| KPGT | Knowledge-guided graph transformer | N/A | Pre-training a graph transformer with domain knowledge | Learning robust molecular representations for drug discovery [35] |

The architectural evolution highlights a clear trend: from invariant to equivariant models, and from models that treat atoms as simple physical particles to those that incorporate rich chemical and environmental context. This is particularly important for FSMPP, where a model's ability to generalize from limited data depends on the quality and completeness of its inherent molecular representation.

Performance Benchmarking and Quantitative Comparison

Empirical performance on standardized benchmarks is the ultimate test for any model. The following comparative data illustrates the effectiveness of advanced GNNs against traditional and contemporary baselines.

Performance on Quantum Chemical Properties

The QM9 dataset is a standard benchmark for predicting quantum mechanical properties of small organic molecules. Performance on a subset of these properties, particularly those sensitive to 3D geometry, effectively distinguishes model capabilities.

Table 2: Benchmarking Performance on QM9 Property Prediction (Mean Absolute Error).

| Molecular Property | SchNet | PaiNN | DetaNet | EnviroDetaNet | EnviroDetaNet (50% Data) |
|---|---|---|---|---|---|
| Hessian Matrix | - | - | 0.105 (Baseline) | 0.061 (41.9% reduction) | 0.077 (39.6% reduction vs. baseline) [34] |
| Dipole Moment | 0.028 | 0.012 | 0.033 (Baseline) | 0.017 (48.5% reduction) | - [34] |
| Polarizability | - | - | 0.089 (Baseline) | 0.043 (52.2% reduction) | 0.051 (46.1% reduction vs. baseline) [34] |
| Hyperpolarizability | - | - | 0.241 (Baseline) | 0.153 (36.5% reduction) | - [34] |