Benchmarking in Computational Chemistry: A Guide to Validating Models for Drug Discovery and Biomedical Research

Abstract

This article provides a comprehensive guide to benchmarking in computational chemistry, a critical process for validating the accuracy and reliability of models that predict molecular properties and behaviors. Tailored for researchers, scientists, and drug development professionals, it covers foundational concepts, methodological applications, troubleshooting strategies, and comparative validation techniques. By exploring current frameworks, statistical metrics, and real-world case studies—from predicting toxicokinetic properties to assessing machine-learned interatomic potentials—this resource offers practical insights for implementing robust benchmarking practices. The goal is to empower scientists to select and develop trustworthy computational tools that accelerate innovation in biomedical and clinical research.

What is Model Benchmarking? Core Concepts and Critical Importance in Computational Chemistry

In computational chemistry, benchmarking is the systematic process of evaluating and comparing the performance of computational models against reliable experimental data to assess their accuracy and reliability. This process serves as a critical bridge between theoretical predictions and real-world observations, establishing confidence in computational methods used for predicting molecular properties and behaviors. The fundamental purpose of benchmarking is to rigorously quantify how well a computational model reproduces physically observable phenomena, thereby guiding method selection and improvement and establishing each method's domain of applicability [1] [2].

Benchmarking differs from, yet complements, the broader concepts of verification and validation (V&V). Verification addresses whether a computational model is solved correctly ("solving the equations right"), while validation determines whether the correct model is being solved ("solving the right equations") [3] [2]. Benchmarking operates primarily within the validation domain, providing the empirical evidence needed to assess a model's physical accuracy. As computational simulations increasingly inform critical decisions in drug discovery and materials design, rigorous benchmarking has become indispensable for transitioning from qualitative demonstrations to quantitatively reliable predictions [4].

The Critical Importance of Benchmarking

Benchmarking provides the essential foundation for establishing credibility in computational models, particularly as these models are increasingly used to reduce reliance on costly physical experiments [3]. In high-consequence fields like drug development and nuclear safety, where computational predictions may inform regulatory decisions or safety assessments, comprehensive benchmarking is not merely academic but a practical necessity [2]. The benchmarking process creates a structured framework for method selection, enabling researchers to choose the most appropriate computational approach for their specific problem from among multiple competing methods [5].

The recent emergence of machine-learned interatomic potentials (MLIPs) highlights benchmarking's role in driving methodological progress. As noted in evaluations of models trained on the Open Molecules 2025 (OMol25) dataset, "trust is especially critical here because scientists need to rely on these models to produce physically sound results that translate to and can be used for scientific research" [6]. Benchmarking creates a competitive yet collaborative environment where "better benchmarks and evaluations have been essential for progress and advancing many fields of ML" [6]. This friendly competition, often facilitated by public leaderboards, accelerates innovation while maintaining rigorous standards [7] [6].

Furthermore, benchmarking identifies limitations and weaknesses in current methodologies, directing future development efforts. For instance, benchmarking revealed that MLIPs with similar training errors can exhibit significantly different performance on real-world tasks like molecular dynamics simulations [7]. Similarly, in drug discovery, benchmarking has exposed concerning inconsistencies in binding pose prediction, with one study finding that "only 26% of noncovalently bound ligands and 46% of covalent inhibitors could be accurately regenerated within 2.0 Å RMSD of the experimental pose" [4]. These performance gaps, uncovered through systematic benchmarking, highlight where methodological improvements are most urgently needed.

Key Components of a Benchmarking Framework

Defining Purpose and Scope

The first step in any benchmarking study involves clearly defining its purpose and scope. Studies generally fall into three categories: method development benchmarks (conducted by method developers to demonstrate advantages of a new approach), neutral benchmarks (independent comparisons of existing methods), and community challenges (organized competitions like CASP for protein structure prediction) [5]. The scope must balance comprehensiveness with practical constraints, ensuring the benchmark addresses chemically relevant questions without becoming unmanageably large [5].

Selection of Methods

Method selection should be guided by the benchmark's purpose. Neutral benchmarks should strive to include all available methods for a specific analysis, functioning as a comprehensive review, while method development benchmarks may compare against a representative subset of state-of-the-art and baseline methods [5]. Inclusion criteria should be clearly defined and applied consistently, such as requiring freely available software implementations that can be successfully installed without excessive troubleshooting [5].

Selection of Benchmark Datasets

The choice of reference datasets fundamentally influences benchmarking outcomes. Two primary dataset types are used: experimental data and simulated data. Experimental data provides real-world relevance but may have measurement uncertainties, while simulated data offers known "ground truth" but must accurately reflect real systems [5]. High-quality benchmarks employ diverse datasets representing various conditions and system types to thoroughly test method robustness [7]. For example, the MLIPAudit framework includes "organic small molecules, flexible peptides, folded protein domains, molecular liquids and solvated systems" to comprehensively evaluate machine-learned interatomic potentials [7].

Performance Metrics and Evaluation Criteria

Quantitative performance metrics enable objective method comparison. Common metrics in computational chemistry include Mean Absolute Error (MAE), Root Mean Square Error (RMSE), and the coefficient of determination (R²) [8] [1]. Different metrics emphasize different aspects of performance: MAE weights each error in proportion to its magnitude, RMSE penalizes larger errors more heavily, and R² measures the fraction of variance in the reference values that the predictions explain. Selection should align with the benchmark's goals, often requiring multiple metrics to fully characterize performance [5].
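As a minimal sketch of how these three metrics relate, the following Python function computes them for paired reference and predicted values. The function name and the sample numbers are illustrative assumptions, not values from any cited benchmark, and R² is taken here as the coefficient of determination (1 - SS_res/SS_tot); some studies report the squared Pearson correlation instead.

```python
import math


def regression_metrics(reference, predicted):
    """Return MAE, RMSE, and R² for paired reference/predicted values."""
    if len(reference) != len(predicted) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(reference)
    errors = [p - r for r, p in zip(reference, predicted)]

    mae = sum(abs(e) for e in errors) / n              # average error magnitude
    ss_res = sum(e * e for e in errors)                # residual sum of squares
    rmse = math.sqrt(ss_res / n)                       # squares emphasize outliers

    mean_ref = sum(reference) / n
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    if ss_tot == 0.0:
        raise ValueError("R² is undefined when all reference values are equal")
    r2 = 1.0 - ss_res / ss_tot                         # coefficient of determination
    return {"mae": mae, "rmse": rmse, "r2": r2}


# Hypothetical data: three small errors and one large one.
reference = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 4.8]
metrics = regression_metrics(reference, predicted)
print(metrics)
```

In this toy case the single large error raises RMSE (about 0.42) well above MAE (0.30), which is the behavior that makes reporting both metrics informative.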

Experimental Protocols and Methodologies

Benchmarking Neural Network Potentials

A recent benchmark evaluating OMol25-trained neural network potentials (NNPs) on experimental reduction potential and electron affinity data exemplifies rigorous methodology [8]. For reduction potential prediction, researchers obtained experimental data from a compiled dataset of 193 main-group and 120 organometallic species. The protocol involved:

- Geometry Optimization: Optimizing non-reduced and reduced structures for each species using each NNP with geomeTRIC 1.0.2 [8]

- Solvent Correction: Calculating solvent-corrected electronic energies using the Extended Conductor-like Polarizable Continuum Solvation Model (CPCM-X) [8]

- Energy Difference Calculation: Computing the predicted reduction potential (in volts) as the difference between the electronic energies (in electronvolts) of the non-reduced and reduced structures [8]

For electron affinity benchmarking, the protocol omitted solvent correction and compared predicted versus experimental gas-phase values for simple main-group organic/inorganic species and organometallic coordination complexes [8].
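The energy-difference step shared by both protocols can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the benchmark's own code: the function name is hypothetical, the energies are assumed to be supplied in Hartree, the process is assumed to involve one electron, and no reference-electrode shift is applied.

```python
HARTREE_TO_EV = 27.211386245988  # CODATA 2018 conversion factor


def energy_difference_ev(e_nonreduced_hartree, e_reduced_hartree):
    """Energy released on adding one electron, in eV.

    For a one-electron process this number is numerically equal to the
    predicted potential in volts (reduction potential, with solvent-corrected
    energies) or to the electron affinity in eV (with gas-phase energies).
    """
    return (e_nonreduced_hartree - e_reduced_hartree) * HARTREE_TO_EV


# Hypothetical energies: the reduced species is 0.1 Hartree more stable.
example_value = energy_difference_ev(-100.0, -100.1)
print(f"{example_value:.3f} eV")
```

The same function serves both protocols; only the input energies differ (solvent-corrected for reduction potentials, gas-phase for electron affinities).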

Validation of Fluid Correlation Peaks

An example from materials science demonstrates experimental validation of computational predictions for fluid systems [9]. Researchers tested the universality of correlation peaks in radial distribution functions (RDFs, g(r)) through:

- Experimental System Preparation: Creating 2D colloidal suspensions with tunable interactions using rotating electric fields [9]

- Microscopy and Tracking: Using optical microscopy to track individual particle positions over time [9]

- RDF Calculation: Computing experimental g(r) from particle position data [9]

- Peak Analysis: Decomposing g(r) into correlation peaks pₐ(r) representing different coordination spheres using Voronoi decomposition and shortest path graph concepts [9]

- Comparison with Simulation: Comparing experimentally derived peak parameters (norm, mean distance, mean square displacement) with molecular dynamics simulations of 2D and 3D systems [9]
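The RDF calculation step above can be illustrated with a short NumPy sketch for a 2D system. It assumes a square periodic box, as in a simulation; microscopy data with open boundaries would need an edge correction instead of the minimum-image convention. The function name and parameters are illustrative and do not come from the cited study.

```python
import numpy as np


def radial_distribution_2d(positions, box_length, r_max, n_bins):
    """Estimate g(r) for points in a square periodic box of side box_length.

    r_max should not exceed box_length / 2 for the minimum-image convention.
    """
    positions = np.asarray(positions, dtype=float)
    n = len(positions)

    # Pairwise displacement vectors, wrapped to the nearest periodic image.
    delta = positions[:, None, :] - positions[None, :, :]
    delta -= box_length * np.round(delta / box_length)
    dist = np.sqrt((delta ** 2).sum(axis=-1))

    # Each unordered pair once (upper triangle, excluding the diagonal).
    pair_dist = dist[np.triu_indices(n, k=1)]
    counts, edges = np.histogram(pair_dist, bins=n_bins, range=(0.0, r_max))

    # Normalize by the pair count expected for an ideal gas in each annulus.
    shell_area = np.pi * (edges[1:] ** 2 - edges[:-1] ** 2)
    density = n / box_length ** 2
    g = 2.0 * counts / (n * density * shell_area)
    r = 0.5 * (edges[1:] + edges[:-1])
    return r, g


# Uncorrelated random points should give g(r) close to 1 at all distances.
rng = np.random.default_rng(0)
points = rng.uniform(0.0, 20.0, size=(400, 2))
r, g = radial_distribution_2d(points, box_length=20.0, r_max=5.0, n_bins=10)
```

For a structured fluid, the peaks of the resulting g(r) are what the peak analysis step then decomposes into coordination-sphere contributions.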

Quantitative Benchmarking Results: A Case Study

The benchmarking study of OMol25-trained NNPs provides illustrative quantitative results comparing multiple methods across different chemical systems [8]. The table below summarizes performance metrics for reduction potential prediction on the main-group (OROP) and organometallic (OMROP) sets:

Table 1: Performance of Computational Methods for Predicting Reduction Potentials [8]

| Method | Set | MAE (V) | RMSE (V) | R² |
|---|---|---|---|---|
| B97-3c | OROP | 0.260 (0.018) | 0.366 (0.026) | 0.943 (0.009) |
| B97-3c | OMROP | 0.414 (0.029) | 0.520 (0.033) | 0.800 (0.033) |
| GFN2-xTB | OROP | 0.303 (0.019) | 0.407 (0.030) | 0.940 (0.007) |
| GFN2-xTB | OMROP | 0.733 (0.054) | 0.938 (0.061) | 0.528 (0.057) |
| eSEN-S | OROP | 0.505 (0.100) | 1.488 (0.271) | 0.477 (0.117) |
| eSEN-S | OMROP | 0.312 (0.029) | 0.446 (0.049) | 0.845 (0.040) |
| UMA-S | OROP | 0.261 (0.039) | 0.596 (0.203) | 0.878 (0.071) |
| UMA-S | OMROP | 0.262 (0.024) | 0.375 (0.048) | 0.896 (0.031) |
| UMA-M | OROP | 0.407 (0.082) | 1.216 (0.271) | 0.596 (0.124) |
| UMA-M | OMROP | 0.365 (0.038) | 0.560 (0.064) | 0.775 (0.053) |

For electron affinity prediction, the study reported these results:

Table 2: Performance of Computational Methods for Predicting Electron Affinities (Main-Group Species) [8]

| Method | MAE (eV) | RMSE (eV) | R² |
|---|---|---|---|
| r2SCAN-3c | 0.130 | 0.176 | 0.984 |
| ωB97X-3c | 0.154 | 0.206 | 0.977 |
| g-xTB | 0.222 | 0.279 | 0.958 |
| GFN2-xTB | 0.236 | 0.313 | 0.947 |
| eSEN-S | 0.527 | 0.664 | 0.763 |
| UMA-S | 0.189 | 0.256 | 0.965 |
| UMA-M | 0.349 | 0.453 | 0.889 |

These quantitative results reveal several important patterns. First, performance varies significantly across different chemical domains (main-group vs. organometallic species). Second, no single method outperforms all others across all metrics and systems, highlighting the importance of context-dependent method selection. Third, despite not explicitly incorporating charge-based physics, some NNPs (particularly UMA-S) achieve accuracy competitive with traditional computational methods [8].
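The context dependence noted above can be checked directly from the Table 1 values. The sketch below copies the reported MAE, RMSE, and R² figures (without the parenthesized uncertainties) and picks the best method for each set and metric; the helper name and data layout are illustrative choices.

```python
# (MAE in V, RMSE in V, R²) from Table 1; parenthesized uncertainties omitted.
TABLE_1 = {
    ("B97-3c", "OROP"): (0.260, 0.366, 0.943),
    ("B97-3c", "OMROP"): (0.414, 0.520, 0.800),
    ("GFN2-xTB", "OROP"): (0.303, 0.407, 0.940),
    ("GFN2-xTB", "OMROP"): (0.733, 0.938, 0.528),
    ("eSEN-S", "OROP"): (0.505, 1.488, 0.477),
    ("eSEN-S", "OMROP"): (0.312, 0.446, 0.845),
    ("UMA-S", "OROP"): (0.261, 0.596, 0.878),
    ("UMA-S", "OMROP"): (0.262, 0.375, 0.896),
    ("UMA-M", "OROP"): (0.407, 1.216, 0.596),
    ("UMA-M", "OMROP"): (0.365, 0.560, 0.775),
}

# Column index and whether a larger value is better.
METRICS = {"MAE": (0, False), "RMSE": (1, False), "R2": (2, True)}


def best_method(dataset, metric):
    """Return the method with the best value of `metric` on `dataset`."""
    index, higher_is_better = METRICS[metric]
    candidates = {
        method: values[index]
        for (method, subset), values in TABLE_1.items()
        if subset == dataset
    }
    pick = max if higher_is_better else min
    return pick(candidates, key=candidates.get)


winners = {
    (dataset, metric): best_method(dataset, metric)
    for dataset in ("OROP", "OMROP")
    for metric in METRICS
}
for key, method in winners.items():
    print(key, method)
```

On these numbers B97-3c leads on OROP and UMA-S leads on OMROP for all three metrics. The ranking ignores the reported uncertainties, and the OROP MAE gap between B97-3c (0.260) and UMA-S (0.261) is far smaller than either uncertainty, so it should not be read as a meaningful difference.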

Table 3: Key Research Reagent Solutions for Computational Benchmarking

| Resource Category | Specific Examples | Function and Purpose |
|---|---|---|
| Reference Datasets | OMol25 dataset [6], Experimental reduction potential data [8], Experimental electron affinity data [8] | Provide high-quality reference data for training and benchmarking computational models |
| Benchmarking Frameworks | MLIPAudit [7], CASP challenges [4] | Standardized platforms for evaluating and comparing model performance |
| Experimental Validation Systems | 2D colloidal suspensions with tunable interactions [9] | Enable direct experimental testing of computational predictions under controlled conditions |
| Software Tools | geomeTRIC [8], CPCM-X solvation model [8], LAMMPS [9] | Implement geometry optimization, solvation corrections, and molecular dynamics simulations |
| Statistical Analysis Methods | Mean Absolute Error, Root Mean Square Error, R² [8] [1] | Quantify model performance and enable objective comparisons |

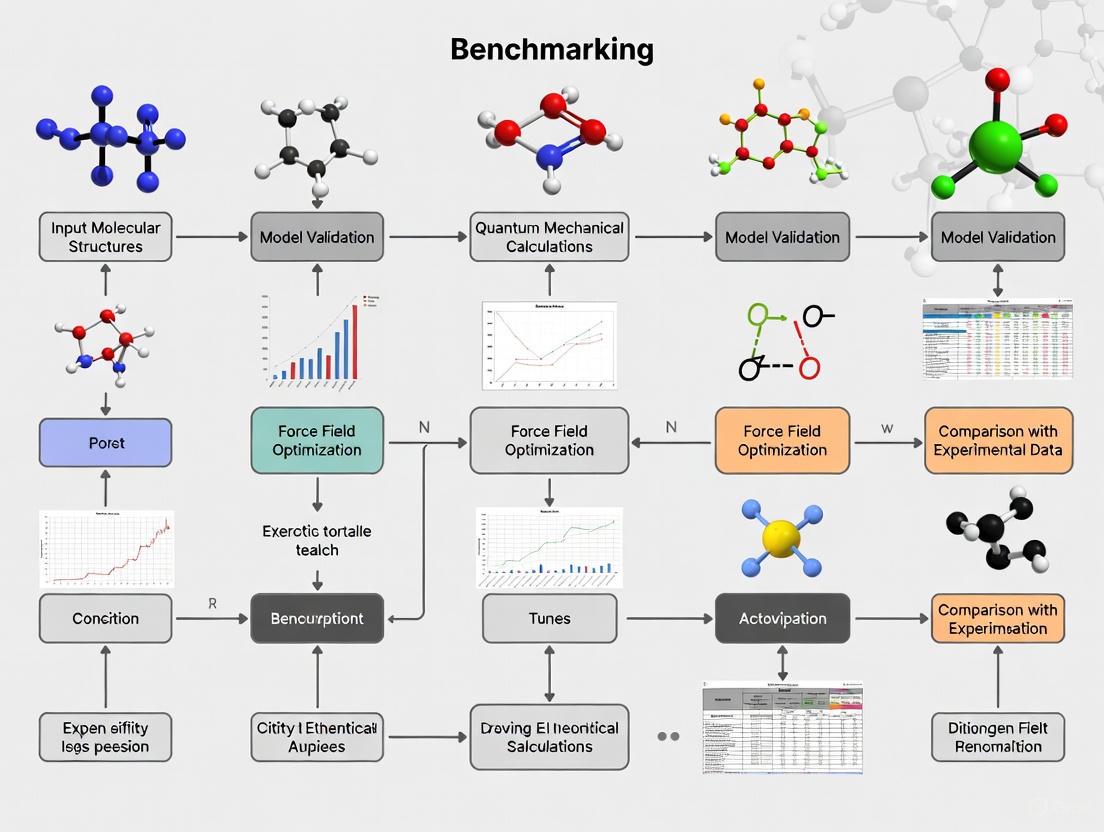

Workflow Diagram of the Benchmarking Process

The following diagram visualizes the systematic workflow for benchmarking computational models against experimental data:

Diagram 1: Benchmarking Process Workflow

Current Challenges and Future Directions

Despite methodological advances, benchmarking in computational chemistry faces persistent challenges. Data quality and availability remain significant constraints, particularly for systems requiring complex experimental measurements [4]. Overlap between training and evaluation datasets can lead to overoptimistic performance estimates, while structurally complex or flexible binding sites present particular difficulties for methods like molecular docking [4]. The field also grapples with establishing standardized evaluation protocols that balance comprehensiveness with practical feasibility [7].
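A first-pass check for the training/evaluation overlap mentioned above can be as simple as a set intersection on molecule identifiers. The sketch below assumes each structure already carries a canonical identifier string (the placeholder keys shown are hypothetical); exact matching will not catch near-duplicates such as close analogs, which need a similarity-based check.

```python
def overlap_report(train_ids, test_ids):
    """Return identifiers shared by both sets and the leaked test fraction."""
    train_set, test_set = set(train_ids), set(test_ids)
    shared = train_set & test_set
    fraction = len(shared) / len(test_set) if test_set else 0.0
    return sorted(shared), fraction


# Hypothetical identifiers standing in for canonical structure keys.
train_ids = ["MOL-A", "MOL-B", "MOL-C"]
test_ids = ["MOL-C", "MOL-D"]
shared, leaked_fraction = overlap_report(train_ids, test_ids)
print(shared, leaked_fraction)
```

Reporting the leaked fraction alongside headline metrics makes it easier to judge how much an apparent performance gain might owe to memorized examples.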

Future progress requires addressing several critical needs. There is a growing consensus for developing diverse, high-quality datasets that reflect real-world applications rather than idealized systems [4]. The community would benefit from blinded evaluation methods to reduce unconscious bias, and continuous benchmarking platforms that track performance improvements over time [4]. As noted in drug discovery, "unlike protein structure prediction, which has been continually improved through CASP for over 30 years, the small molecule drug discovery community lacks equivalent, sustained frameworks for progress" [4].

Emerging approaches show promise for addressing these challenges. Community-driven benchmarking initiatives like MLIPAudit create shared reference points for assessing model accuracy, robustness, and generalization [7]. Multi-fidelity benchmarks that incorporate both high-level theoretical reference data and experimental measurements can provide more comprehensive validation [8]. Uncertainty quantification is increasingly recognized as essential for establishing predictive credibility, moving beyond point estimates to probabilistic predictions that acknowledge methodological limitations [3].

Benchmarking constitutes the essential process that connects computational predictions with experimental reality in chemistry. Through systematic comparison against reliable reference data, benchmarking transforms abstract computational methods into validated tools for scientific discovery and application. The process requires careful design—from defining clear objectives and selecting appropriate methods to choosing representative datasets and meaningful performance metrics.

As computational methods grow increasingly complex, particularly with the rise of machine learning approaches, rigorous benchmarking becomes ever more critical. It provides the evidentiary foundation needed to establish trust in computational predictions, especially when those predictions inform high-stakes decisions in drug development, materials design, or safety assessment. By identifying both strengths and limitations of computational approaches, benchmarking not only guides current method selection but also illuminates the path for future methodological improvements. In this way, benchmarking serves as both the quality control mechanism for existing methods and the innovation engine driving computational chemistry forward.

In the rapidly advancing field of computational chemistry, benchmarking has emerged as the cornerstone of scientific progress and validation. As large atomistic models (LAMs) and complex computational methods transform drug discovery and materials science, rigorous benchmarking provides the essential framework for distinguishing genuine advancements from mere algorithmic artifacts. Benchmarking serves as the critical evaluation mechanism that ensures computational tools meet the stringent requirements of scientific accuracy, reliability, and reproducibility before they can be trusted in real-world applications such as drug design and material development [10] [4].

The fundamental importance of benchmarking stems from its role in bridging the gap between theoretical development and practical application. Unlike fields where validation is straightforward, computational chemistry deals with complex molecular systems where even minor errors in energy calculations (on the scale of 1 kcal/mol) can lead to erroneous conclusions about molecular stability or binding affinity [11]. As noted in recent assessments of the field, the lack of sustained, community-wide benchmarking efforts has significantly impeded progress in critical areas like binding pose and activity prediction, where one study found that only 26% of noncovalently bound ligands and 46% of covalent inhibitors could be regenerated within 2.0 Å RMSD of the experimental pose [4].

This whitepaper establishes a comprehensive framework for understanding why benchmarking is non-negotiable in computational chemistry research. By examining current benchmarking methodologies, analyzing performance data across domains, and providing practical implementation protocols, we demonstrate how systematic evaluation accelerates scientific discovery while preventing costly missteps in downstream applications.

The Benchmarking Imperative: Addressing Critical Gaps

The Universality Challenge in Atomistic Modeling

The pursuit of universal potential energy surfaces (PES) represents one of the most ambitious goals in computational chemistry, yet benchmarking reveals significant gaps between current capabilities and this ideal. Recent analyses through the LAMBench framework demonstrate that even state-of-the-art large atomistic models (LAMs) struggle with true universality across diverse chemical domains [10]. These models exhibit dramatically variable performance when applied across different research domains, particularly when trained on domain-specific data such as the MPtrj dataset for inorganic materials (using PBE/PBE+U functionals) versus small molecules requiring higher-level ωB97M functionals [10].

The fundamental challenge lies in the inherent incompatibilities between data generated across different computational chemistry domains. Variations in exchange-correlation functionals, basis sets, and pseudopotentials create systematic discrepancies that prevent seamless integration of training data [10]. This fragmentation directly impedes the development of truly universal models, as evidenced by benchmarking results showing that models excelling in one domain (e.g., inorganic materials) often underperform in others (e.g., biomolecular systems) [10].

The Reproducibility Crisis in Predictive Modeling

Beyond accuracy metrics, benchmarking reveals critical limitations in model reproducibility and stability—factors essential for reliable scientific application. In clinical diagnostic applications, large language models exhibit concerning variability, generating different responses even when input prompts, model architecture, and parameters remain identical [12]. This inconsistency poses substantial risks in diagnostic settings where the same patient case might yield divergent suggestions, potentially undermining clinical decision-making [12].

Similar challenges manifest in molecular dynamics simulations, where non-conservative models—those predicting forces directly rather than deriving them from energy gradients—can demonstrate high apparent accuracy in static evaluations yet prove unstable in actual simulations [10] [13]. The LAMBench evaluations systematically document this phenomenon, showing that models failing conservativeness requirements generate unreliable molecular dynamics trajectories despite excellent performance on energy prediction benchmarks [10].
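One way to probe conservativeness is to compare a model's predicted forces with the negative finite-difference gradient of its own predicted energy. The sketch below does this for a toy harmonic bond in NumPy; the toy potential, the artificial non-conservative term, and all function names are assumptions made for illustration and are not taken from LAMBench.

```python
import numpy as np

K_BOND, R_EQ = 2.0, 1.0  # toy force constant and equilibrium distance


def toy_energy(pos):
    """Harmonic bond energy between atoms 0 and 1."""
    d = np.linalg.norm(pos[0] - pos[1])
    return 0.5 * K_BOND * (d - R_EQ) ** 2


def toy_forces(pos):
    """Analytic forces, i.e. the exact negative gradient of toy_energy."""
    vec = pos[0] - pos[1]
    d = np.linalg.norm(vec)
    f0 = -K_BOND * (d - R_EQ) * vec / d
    return np.array([f0, -f0])


def nonconservative_forces(pos):
    """Adds a force term with no counterpart in the energy function."""
    extra = np.array([[0.0, 0.05, 0.0], [0.0, 0.0, 0.0]])
    return toy_forces(pos) + extra


def numerical_forces(energy_fn, pos, step=1e-4):
    """Central-difference estimate of -dE/dx for every coordinate."""
    pos = np.asarray(pos, dtype=float)
    forces = np.zeros_like(pos)
    for idx in np.ndindex(pos.shape):
        plus, minus = pos.copy(), pos.copy()
        plus[idx] += step
        minus[idx] -= step
        forces[idx] = -(energy_fn(plus) - energy_fn(minus)) / (2.0 * step)
    return forces


def max_force_mismatch(energy_fn, force_fn, pos):
    """Largest absolute gap between predicted and energy-derived forces."""
    return float(np.max(np.abs(force_fn(pos) - numerical_forces(energy_fn, pos))))


positions = np.array([[0.0, 0.0, 0.0], [1.2, 0.1, 0.0]])
mismatch_ok = max_force_mismatch(toy_energy, toy_forces, positions)
mismatch_bad = max_force_mismatch(toy_energy, nonconservative_forces, positions)
print(mismatch_ok, mismatch_bad)
```

A mismatch near numerical noise indicates forces consistent with the energy surface, while a persistent gap (here about 0.05) flags a force field that can add or remove energy during dynamics.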

Table 1: Performance Variability of Computational Models Across Domains

| Model Category | Primary Domain | Transfer Performance | Critical Limitations |
|---|---|---|---|
| Domain-Specific LAMs (MACE-MP-0, SevenNet-0) | Inorganic Materials | Poor transfer to biomolecular systems | Trained on PBE/PBE+U level data incompatible with chemical accuracy requirements |
| Small Molecule LAMs (AIMNet, Nutmeg) | Organic/Small Molecules | Limited transfer to materials science | Requires hybrid functionals (ωB97M) not used in materials science |
| Universal Models (UMA, eSEN) | Multiple Domains | Moderate cross-domain performance | Performance variations across chemical spaces; computational expense |
| Clinical LLMs | Medical Diagnostics | Variable across clinical specialties | Output variability even with identical inputs |

The Applicability Domain Problem

Benchmarking consistently reveals that models perform substantially worse on out-of-distribution examples compared to their advertised capabilities on in-distribution test sets. This applicability domain problem particularly impacts real-world deployment where models encounter chemical spaces not represented in their training data [14]. Comprehensive benchmarking of quantitative structure-activity relationship (QSAR) models for toxicokinetic and physicochemical properties demonstrates that prediction accuracy decreases markedly when compounds fall outside the model's defined applicability domain [14].

The consequences of this limitation are particularly significant in drug discovery, where activity cliffs—cases where small structural changes cause dramatic affinity differences—often prove most valuable for optimization yet represent precisely the scenarios where models frequently fail [4]. Without rigorous benchmarking that specifically tests these edge cases, models may appear deceptively competent while failing in the most critical applications.
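A simple distance-based applicability domain check illustrates the idea discussed in this section. The sketch below is one common heuristic and not the method used in the cited QSAR benchmark: a query compound is treated as in-domain if its mean distance to its k nearest training compounds (in some descriptor space) does not exceed a percentile threshold derived from the training set itself. The descriptors, k, and the percentile are all assumed values.

```python
import numpy as np


def fit_domain_threshold(train_x, k=3, percentile=95.0):
    """Threshold on mean k-nearest-neighbor distance within the training set."""
    train_x = np.asarray(train_x, dtype=float)
    d = np.linalg.norm(train_x[:, None, :] - train_x[None, :, :], axis=-1)
    np.fill_diagonal(d, np.inf)  # a point is not its own neighbor
    knn_mean = np.sort(d, axis=1)[:, :k].mean(axis=1)
    return float(np.percentile(knn_mean, percentile))


def in_domain(train_x, query_x, threshold, k=3):
    """Boolean flag per query point: True if inside the applicability domain."""
    train_x = np.asarray(train_x, dtype=float)
    query_x = np.asarray(query_x, dtype=float)
    d = np.linalg.norm(query_x[:, None, :] - train_x[None, :, :], axis=-1)
    knn_mean = np.sort(d, axis=1)[:, :k].mean(axis=1)
    return knn_mean <= threshold


# Hypothetical 2D descriptors: training compounds on a 5 x 5 grid.
train = np.array([[i, j] for i in range(5) for j in range(5)], dtype=float)
queries = np.array([[2.1, 2.0], [50.0, 50.0]])
threshold = fit_domain_threshold(train)
flags = in_domain(train, queries, threshold)
print(flags)
```

Reporting benchmark metrics separately for in-domain and out-of-domain compounds makes the accuracy drop described above visible instead of averaging it away.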

Current Benchmarking Frameworks and Performance Metrics

Established Benchmarking Systems

The computational chemistry community has developed several specialized benchmarking frameworks to address distinct evaluation needs. The table below summarizes key frameworks and their primary applications:

Table 2: Specialized Benchmarking Frameworks in Computational Chemistry

| Benchmark Framework | Primary Focus | Key Metrics | Domain Coverage |
|---|---|---|---|
| LAMBench [10] | Large Atomistic Models (LAMs) | Generalizability, Adaptability, Applicability | Broad coverage across materials, molecules, and biomolecules |
| QUID [11] | Ligand-Pocket Interactions | Interaction energy accuracy, Force prediction | Non-covalent interactions in biological systems |
| MOFSimBench [13] | Metal-Organic Frameworks | Structure optimization, Molecular dynamics stability, Host-guest interactions | Porous materials for catalysis and storage |
| ADMET Benchmarking [14] | Toxicokinetic Properties | Regression R², Balanced accuracy, Applicability domain adherence | Drug-like molecules and industrial chemicals |

Quantitative Performance Comparisons

Rigorous benchmarking provides crucial quantitative comparisons between computational methods. Recent evaluations of machine learning interatomic potentials (MLIPs) on MOFSimBench reveal significant performance variations across different simulation tasks [13]:

Table 3: MLIP Performance on MOFSimBench Tasks (100 structures)

| Model | Structure Optimization (<10% volume change) | MD Stability (<10% volume change) | Bulk Modulus MAE | Host-Guest Interaction MAE |
|---|---|---|---|---|
| PFP v8.0.0 | 92/100 | 89/100 | 1.98 GPa | 0.029 eV |
| eSEN-OAM | 88/100 | 91/100 | 1.52 GPa | 0.031 eV |
| orb-v3-omat+D3 | 87/100 | 88/100 | 2.15 GPa | 0.035 eV |
| uma-s-1p1 | 86/100 | Not tested | 2.01 GPa | 0.033 eV |

For ligand-pocket interactions, the QUID benchmark establishes a "platinum standard" through agreement between complementary coupled cluster (CC) and quantum Monte Carlo (QMC) methods, achieving remarkable interaction energy agreement of 0.5 kcal/mol [11]. This high-accuracy benchmark reveals that while several dispersion-inclusive density functional approximations provide reasonable energy predictions, their atomic van der Waals forces often differ substantially in magnitude and orientation [11]. Meanwhile, semiempirical methods and empirical force fields require significant improvements in capturing non-covalent interactions, particularly for out-of-equilibrium geometries [11].
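Differences in force magnitude and orientation can be quantified per atom with a few lines of NumPy. The following sketch is illustrative only: the function name and the sample vectors are assumptions, and it requires non-zero force vectors because the angle is undefined otherwise.

```python
import numpy as np


def force_deviation(reference_forces, test_forces):
    """Per-atom magnitude difference and angle (degrees) between force sets.

    Both inputs have shape (n_atoms, 3); zero-length vectors are not handled.
    """
    ref = np.asarray(reference_forces, dtype=float)
    tst = np.asarray(test_forces, dtype=float)
    ref_norm = np.linalg.norm(ref, axis=1)
    tst_norm = np.linalg.norm(tst, axis=1)

    magnitude_error = tst_norm - ref_norm
    cosine = (ref * tst).sum(axis=1) / (ref_norm * tst_norm)
    angle_deg = np.degrees(np.arccos(np.clip(cosine, -1.0, 1.0)))
    return magnitude_error, angle_deg


# Hypothetical forces: atom 0 is misoriented, atom 1 has the wrong magnitude.
reference = [[1.0, 0.0, 0.0], [0.0, 2.0, 0.0]]
approximate = [[0.0, 1.0, 0.0], [0.0, 1.0, 0.0]]
magnitude_error, angle_deg = force_deviation(reference, approximate)
print(magnitude_error, angle_deg)
```

Separating the two quantities matters because a method can reproduce force magnitudes while pointing them in the wrong direction, which an energy-only comparison would not reveal.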

In ADMET prediction, comprehensive benchmarking of twelve QSAR tools shows that models for physicochemical properties (average R² = 0.717) generally outperform those for toxicokinetic properties (average R² = 0.639 for regression, average balanced accuracy = 0.780 for classification) [14]. This performance gap highlights the greater complexity of biological interactions compared to pure compound characteristics.
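Balanced accuracy, used above for the classification models, is the mean of sensitivity and specificity, which keeps a majority class from dominating the score. A minimal Python sketch with hypothetical binary labels (1 = active, 0 = inactive):

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity for binary labels (1 and 0)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("both classes must be present in y_true")
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return 0.5 * (sensitivity + specificity)


# Hypothetical imbalanced set: 2 actives, 6 inactives.
y_true = [1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 0, 0, 0, 0, 0, 0, 1]
score = balanced_accuracy(y_true, y_pred)
plain_accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(score, plain_accuracy)
```

Here plain accuracy is 0.75 while balanced accuracy is about 0.67, because only half of the minority-class actives were recovered.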

Experimental Protocols for Rigorous Benchmarking

Protocol 1: Evaluating Model Generalizability

The LAMBench framework provides a systematic methodology for assessing model generalizability across three critical dimensions: in-distribution performance, out-of-distribution performance, and cross-domain transfer capability [10].

Procedure:

- Dataset Curation: Compile diverse test sets representing distinct chemical domains (biomolecules, electrolytes, metal complexes, materials)

- In-Distribution Testing: Evaluate performance on test sets randomly split from training data distribution

- Out-of-Distribution Testing: Assess performance on chemically distinct systems not represented in training data

- Cross-Domain Transfer: Measure performance when models trained in one domain (e.g., materials) are applied to another (e.g., biomolecules)

- Statistical Analysis: Compute metrics including Mean Absolute Error (MAE), Root Mean Square Error (RMSE), and failure rate analysis

Key Considerations: Domain-specific functional preferences create inherent incompatibilities; for example, materials science typically employs PBE/PBE+U functionals while chemical accuracy requires hybrid functionals like ωB97M [10]. Benchmarking must therefore account for these fundamental methodological differences when assessing cross-domain performance.
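The statistical analysis step of the procedure above can be sketched as a per-domain summary. The record layout, the domain labels, and the definition of a "failure" as an absolute error above a chosen threshold are assumptions for illustration; LAMBench's own definitions may differ.

```python
from collections import defaultdict


def summarize_by_domain(records, failure_threshold):
    """Per-domain MAE and fraction of predictions with |error| > threshold.

    records: iterable of (domain, reference_value, predicted_value) tuples.
    """
    grouped = defaultdict(list)
    for domain, reference, predicted in records:
        grouped[domain].append(abs(predicted - reference))

    summary = {}
    for domain, errors in grouped.items():
        summary[domain] = {
            "mae": sum(errors) / len(errors),
            "failure_rate": sum(e > failure_threshold for e in errors) / len(errors),
        }
    return summary


# Hypothetical results for a model trained on materials data.
records = [
    ("materials", 1.0, 1.1),
    ("materials", 2.0, 2.0),
    ("biomolecules", 1.0, 1.6),
    ("biomolecules", 2.0, 2.2),
]
summary = summarize_by_domain(records, failure_threshold=0.5)
print(summary)
```

Keeping the in-distribution and transfer domains in separate rows, rather than pooling them into one MAE, is what exposes cross-domain degradation.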

Protocol 2: Assessing Reproducibility and Repeatability

For clinical and diagnostic applications, a specialized statistical framework quantifies both repeatability (agreement under identical conditions) and reproducibility (agreement under different, pre-specified conditions) [12].

Procedure:

- Experimental Setup: Select multiple models (commercial and open-source) representing different architectures and scales

- Prompt Variation: Utilize multiple validated prompts designed to elicit distinct reasoning strategies (chain-of-thought, differential diagnosis, intuitive, analytic, and Bayesian reasoning)

- Repeated Sampling: Execute numerous independent runs (R=100) per prompt-case-model combination

- Semantic Consistency Assessment: Measure stability in output meaning across repeated runs using embedding-based similarity metrics

- Internal Consistency Quantification: Assess token-level generation variability across repetitions

Validation Datasets: Employ both standardized benchmarks (e.g., MedQA with 518 USMLE-style questions) and real-world challenging cases (e.g., 90 rare disease cases from Undiagnosed Diseases Network) [12].
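As a rough sketch of the semantic consistency step, repeated outputs for the same prompt can be embedded and compared pairwise. The example below assumes the embeddings have already been computed by some text-embedding model (not shown) and uses mean pairwise cosine similarity as the stability score; the cited framework's exact metric may differ.

```python
import numpy as np


def mean_pairwise_cosine(embeddings):
    """Average cosine similarity over all distinct pairs of run embeddings."""
    e = np.asarray(embeddings, dtype=float)
    if len(e) < 2:
        raise ValueError("need at least two runs to measure repeatability")
    unit = e / np.linalg.norm(e, axis=1, keepdims=True)
    similarity = unit @ unit.T
    upper = np.triu_indices(len(e), k=1)  # each pair once, no self-pairs
    return float(similarity[upper].mean())


# Hypothetical embeddings of three repeated runs for one prompt-case pair.
stable_runs = [[1.0, 0.0], [1.0, 0.0], [2.0, 0.0]]
unstable_runs = [[1.0, 0.0], [0.0, 1.0]]
stable_score = mean_pairwise_cosine(stable_runs)
unstable_score = mean_pairwise_cosine(unstable_runs)
print(stable_score, unstable_score)
```

A score near 1 means the repeated runs say essentially the same thing; averaging this score over prompts and cases gives one repeatability figure per model.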

Protocol 3: Validating Practical Applicability

The MOFSimBench framework provides a comprehensive protocol for evaluating practical model performance on real-world simulation tasks [13].

Procedure:

- Structure Optimization Task
  - Input: 100 diverse MOF/COF/zeolite structures
  - Method: Full geometry optimization using target MLIP
  - Metric: Volume change percentage compared to DFT-optimized reference structures
  - Success Criterion: |ΔV| < 10% compared to DFT reference

- Molecular Dynamics Stability Assessment
  - Input: 100 structures after optimization
  - Method: NPT simulation (50 ps, 300 K, 1 bar) after equilibration
  - Metric: Volume change between initial and final structures
  - Success Criterion: |ΔV| < 10% during production MD

- Bulk Property Prediction
  - Input: Strain series for each optimized structure
  - Method: Birch-Murnaghan equation of state fitting
  - Metric: Mean Absolute Error (MAE) for bulk modulus compared to DFT
  - Quality Control: Exclude structures where fitted minimum volume deviates >1% from optimized volume

- Host-Guest Interaction Accuracy
  - Input: 26 MOF structures with CO₂/H₂O adsorbates at multiple interaction distances
  - Method: Single-point energy and force calculations
  - Metric: MAE for interaction energies and forces compared to DFT reference across repulsion, equilibrium, and weak-attraction regimes
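The volume-change criterion and the bulk modulus fit from the protocol above can be sketched with NumPy alone. The sketch relies on the fact that the third-order Birch-Murnaghan energy is a cubic polynomial in V^(-2/3), so a polynomial fit recovers the equilibrium volume and bulk modulus. Function names, units (eV and Å³), and the synthetic test data are assumptions; this is not MOFSimBench's implementation.

```python
import numpy as np

EV_PER_A3_TO_GPA = 160.2176634  # 1 eV/Å³ expressed in GPa


def volume_change_ok(volume, reference_volume, tolerance=0.10):
    """Success criterion: relative volume change below the tolerance."""
    return abs(volume - reference_volume) / reference_volume < tolerance


def birch_murnaghan_energy(v, e0, v0, b0, b0_prime):
    """Third-order Birch-Murnaghan E(V); used here to make synthetic data."""
    eta = (v0 / np.asarray(v, dtype=float)) ** (2.0 / 3.0)
    return e0 + 9.0 * v0 * b0 / 16.0 * (
        (eta - 1.0) ** 3 * b0_prime + (eta - 1.0) ** 2 * (6.0 - 4.0 * eta)
    )


def fit_birch_murnaghan(volumes, energies):
    """Return (V0, B0) from a cubic fit of E against x = V^(-2/3)."""
    v = np.asarray(volumes, dtype=float)
    e = np.asarray(energies, dtype=float)
    x = v ** (-2.0 / 3.0)
    shift = x.mean()                      # center x for better conditioning
    poly = np.poly1d(np.polyfit(x - shift, e, 3))
    slope, curvature = poly.deriv(1), poly.deriv(2)

    lo, hi = (x - shift).min(), (x - shift).max()
    minima = [
        root.real
        for root in slope.roots
        if abs(root.imag) < 1e-12 and lo <= root.real <= hi and curvature(root.real) > 0
    ]
    if not minima:
        raise ValueError("no energy minimum inside the sampled volume range")

    u0 = minima[0]
    x0 = u0 + shift
    v0 = x0 ** -1.5                       # invert x = V^(-2/3)
    b0 = (4.0 / 9.0) * curvature(u0) * x0 ** 3.5   # B0 = V0 * d²E/dV² at V0
    return v0, b0


# Synthetic strain series with known V0 = 100 Å³ and B0 = 0.1 eV/Å³.
volumes = np.linspace(94.0, 106.0, 9)
energies = birch_murnaghan_energy(volumes, -10.0, 100.0, 0.1, 5.0)
v0_fit, b0_fit = fit_birch_murnaghan(volumes, energies)

# Quality control from the protocol: fitted minimum within 1% of optimized volume.
optimized_volume = 100.2  # hypothetical optimizer result
keep_structure = abs(v0_fit - optimized_volume) / optimized_volume <= 0.01
print(v0_fit, b0_fit * EV_PER_A3_TO_GPA, keep_structure)
```

The per-structure bulk moduli that pass the quality-control filter would then be compared with the DFT values to obtain the MAE reported in the benchmark tables.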

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Essential Benchmarking Tools and Resources

| Tool/Resource | Type | Primary Function | Key Applications |
|---|---|---|---|
| LAMBench [10] | Benchmarking System | Evaluating Large Atomistic Models | Generalizability, adaptability, and applicability assessment |
| QUID Framework [11] | Quantum-Chemical Benchmark | Platinum-standard interaction energies | Ligand-pocket non-covalent interactions |
| MOFSimBench [13] | Specialized Benchmark | MLIP evaluation for porous materials | MOF structure, stability, and host-guest properties |
| OPERA [14] | QSAR Toolsuite | Predicting physicochemical properties | ADMET profiling and chemical safety assessment |
| torch-dftd [13] | Dispersion Correction | Adding dispersion forces to MLIPs | Accurate non-covalent interaction modeling |
| RDKit [14] | Cheminformatics | Chemical structure standardization | Data curation and descriptor calculation |
| PBE0+MBD [11] | Density Functional | Reference quantum calculations | Generating high-quality training and benchmark data |

Implementation Framework: Integrating Benchmarking into Research Workflows

Establishing a Continuous Benchmarking Culture

The most effective benchmarking extends beyond periodic validation to become an integral part of the research lifecycle. This requires adopting several key practices:

Pre-registration of Benchmarking Protocols: Before model development begins, researchers should pre-register their intended benchmarking strategies, including datasets, evaluation metrics, and comparison baselines. This approach prevents retrospective benchmark selection that potentially inflates perceived performance [4].

Blinded Evaluation Methods: Following the successful model of the Critical Assessment of Structure Prediction (CASP) in protein folding, small molecule drug discovery should implement blinded evaluations using unreleased experimental data to prevent unconscious optimization toward known results [4].

Multi-dimensional Performance Tracking: Rather than relying on single metrics, comprehensive benchmarking should simultaneously track accuracy, computational efficiency, robustness to input variations, and failure modes across diverse chemical spaces [10] [13].

Community-Wide Benchmarking Initiatives

Individual efforts alone cannot address the systemic benchmarking challenges in computational chemistry. The field requires coordinated community initiatives:

Standardized Dataset Generation: Following the example of the OMol25 dataset—which comprises over 100 million quantum chemical calculations requiring 6 billion CPU-hours—the community should prioritize creating shared, high-accuracy datasets spanning diverse chemical domains [15].

Open Leaderboards and Transparent Reporting: Initiatives like the interactive LAMBench leaderboard provide ongoing community assessment of model capabilities, enabling researchers to identify strengths and limitations before applying models to specific research problems [10].

Cross-disciplinary Benchmarking Consortia: Successful benchmarking requires integration across traditionally separate domains. The collaboration between quantum chemists, materials scientists, and pharmaceutical researchers in developing QUID demonstrates the power of cross-domain collaboration in establishing meaningful benchmarks [11].

Benchmarking is a non-negotiable foundation for reliable computational chemistry research. As the field progresses toward increasingly complex models and applications, systematic evaluation becomes ever more critical for distinguishing genuine advances from methodological artifacts. The frameworks, protocols, and resources outlined in this guide provide a roadmap for integrating rigorous benchmarking throughout the research lifecycle.

The evidence is clear: without comprehensive benchmarking, computational chemistry risks generating elegant but unreliable models that fail in critical applications. From drug discovery to materials design, the consequences of unvalidated models include wasted resources, missed opportunities, and ultimately, erosion of trust in computational methods. By embracing the benchmarking imperative, the research community can accelerate genuine progress while ensuring the reliability and reproducibility that form the bedrock of scientific integrity.

As the field stands at what many call "an AlphaFold moment" for atomistic simulation [15], the establishment of robust, community-wide benchmarking practices will determine whether this promise translates into genuine scientific advancement or remains unfulfilled. The tools, frameworks, and methodologies now exist to make comprehensive benchmarking routine rather than exceptional; the responsibility lies with the research community to implement them consistently and rigorously.

In computational chemistry and drug discovery, benchmarking is the systematic process of evaluating and comparing the performance of predictive models against standardized datasets and metrics. This process is fundamental for assessing model robustness, reliability, and practical utility in real-world applications such as toxicity prediction and molecular property estimation [14]. The reliability of any computational model is intrinsically linked to three interconnected concepts: the Training Set, which provides the foundational data for model building; the Applicability Domain (AD), which defines the chemical space where model predictions are reliable; and Validation Metrics, which quantitatively measure model performance [16]. Together, these components form a critical framework for establishing confidence in computational predictions, guiding researchers in identifying the most suitable tools for chemical safety assessment, drug discovery, and material design [14].

Core Terminology Deep Dive

Applicability Domain (AD)

The Applicability Domain (AD) represents the "response and chemical structure space in which the model makes predictions with a given reliability" [17]. It establishes the boundaries within which a model can be confidently applied, based on the chemical space covered by its training data. Predictions for molecules falling outside the AD are considered unreliable [17].

Key Methods for Defining AD:

- Novelty Detection: Identifies compounds dissimilar to training set molecules using only their explanatory variables, without considering the underlying classifier or class labels [17].

- Confidence Estimation: Uses information from the trained classifier itself, typically measuring the distance of a compound to the model's decision boundary [17].

- Subgroup Discovery (SGD): Identifies domains of applicability as logical conjunctions of simple conditions (e.g., on lattice vectors or bond distances) where model error is substantially lower than its global average [18].
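
As a rough illustration of the novelty-detection idea, the sketch below flags a query compound as inside or outside the AD from its mean Tanimoto similarity to the k nearest training compounds. The fingerprints are hypothetical sets of on-bit indices, and `k=3` and the `0.5` threshold are illustrative assumptions, not values taken from the cited work.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto similarity between two fingerprints stored as sets of on-bits."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def knn_similarity(query: set, training: list, k: int = 3) -> float:
    """Mean similarity of the query to its k most similar training compounds."""
    if not training:
        raise ValueError("training set must not be empty")
    sims = sorted((tanimoto(query, t) for t in training), reverse=True)
    top = sims[:k]
    return sum(top) / len(top)


def in_domain(query: set, training: list, k: int = 3, threshold: float = 0.5) -> bool:
    """Novelty detection: uses only feature-space proximity, no labels or model."""
    return knn_similarity(query, training, k) >= threshold


# Hypothetical fingerprints (sets of on-bit indices), for illustration only.
training = [{1, 2, 3, 4}, {1, 2, 3, 5}, {2, 3, 4, 6}, {1, 3, 4, 7}]
print(in_domain({1, 2, 3, 8}, training))   # close to the training compounds
print(in_domain({20, 21, 22}, training))   # shares no bits with the training set
```

In practice the threshold would be tuned on held-out data, for example by checking how prediction error grows as similarity to the training set falls.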

Training Sets

Training sets consist of chemical compounds with known experimental or calculated properties used to build predictive models. The composition and quality of training data directly influence model performance and the extent of its applicability domain [14].

Critical Aspects of Training Set Construction:

- Data Curation: Requires standardization of chemical structures, removal of duplicates, handling of inorganic and organometallic compounds, neutralization of salts, and identification of outliers [14].

- Chemical Space Analysis: Involves mapping training compounds against reference chemical spaces (e.g., approved drugs, industrial chemicals, natural products) to understand coverage limitations [14].

- Assessing Modelability: Indexes such as the Rivality Index (RI) can predict dataset suitability for modeling before model building, with high positive RI values indicating compounds outside the AD [16].

Validation Metrics

Validation metrics provide quantitative measures of model performance and prediction reliability, enabling comparison between different modeling approaches [14] [19].

Classification Metrics:

- Area Under the Receiver Operating Characteristic Curve (AUC-ROC): Measures the trade-off between true positive and false positive rates across different classification thresholds [19].

- Area Under the Precision-Recall Curve (AUC-PR): Particularly valuable for imbalanced datasets where inactive compounds significantly outnumber actives [19].

- Balanced Accuracy: The average of sensitivity and specificity; appropriate for imbalanced sets, where plain accuracy is dominated by the majority class [16].
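
A minimal sketch of two of these metrics, using only the standard library. The labels and scores are hypothetical; AUC-ROC is computed here through its rank interpretation (the probability that a randomly chosen active is scored above a randomly chosen inactive), which is one of several equivalent ways to obtain it.

```python
def balanced_accuracy(y_true, y_pred):
    """Average of sensitivity and specificity for binary labels (1 = active)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2


def auc_roc(y_true, scores):
    """AUC-ROC as the fraction of (active, inactive) pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical imbalanced set: 2 actives, 8 inactives.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.4, 0.5, 0.1, 0.1, 0.2, 0.3, 0.1, 0.2, 0.3]

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(accuracy)                           # 0.9
print(balanced_accuracy(y_true, y_pred))  # 0.75
print(auc_roc(y_true, scores))            # 0.9375
```

Plain accuracy looks high here because inactives dominate, while balanced accuracy exposes that only half of the actives were recovered.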

Regression Metrics:

- Coefficient of Determination (R²): Measures the proportion of variance in the response variable explained by the model [14].

- Mean Absolute Error (MAE): Average magnitude of errors between predicted and experimental values [1].

- Root Mean Square Error (RMSE): Places higher weight on larger errors due to squaring of differences [1].
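
The three regression metrics can be written in a few lines. The values below are hypothetical and serve only to show that RMSE exceeds MAE when errors are uneven.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean square error; squaring weights large errors more heavily."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical experimental and predicted values.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.1, 1.9, 3.2, 4.4]
print(round(mae(y_true, y_pred), 4))   # 0.2
print(round(rmse(y_true, y_pred), 4))  # 0.2345
print(round(r2(y_true, y_pred), 4))    # 0.956
```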

Uncertainty Quantification Metrics:

- Spearman's Rank Correlation: Assesses how well uncertainty estimates rank the observed errors [20].

- Negative Log Likelihood (NLL): Measures how well the uncertainty distribution explains the observed errors [20].

- Error-Based Calibration: Checks whether the average absolute error or RMSE matches the predicted uncertainty across uncertainty ranges; reported to be superior to the other metrics listed here [20].

Experimental Protocols for Benchmarking

Comprehensive Model Benchmarking Framework

Table 1: Key Steps in Model Benchmarking

| Step | Protocol Description | Purpose |

|---|---|---|

| Dataset Collection | Gather experimental data from literature and databases (e.g., ChEMBL, PHYSPROP) using systematic search terms and API access [14]. | Ensures comprehensive coverage of chemical space and endpoints. |

| Data Curation | Standardize structures, remove duplicates, neutralize salts, identify outliers using Z-scores, and resolve inconsistent values across datasets [14]. | Improves data quality and reliability for model training and validation. |

| Chemical Space Analysis | Plot datasets against reference chemical spaces (approved drugs, industrial chemicals) using molecular fingerprints and PCA [14]. | Determines chemical categories covered and identifies potential biases. |

| Model Training | Implement multiple algorithms (RF, SVM, Neural Networks) with appropriate hyperparameter settings and validation techniques [17]. | Enables fair comparison of different modeling approaches. |

| Performance Evaluation | Assess models using multiple metrics (AUC-ROC, R², etc.) with emphasis on performance inside the applicability domain [14]. | Provides comprehensive assessment of model strengths and limitations. |

Establishing the Applicability Domain

Table 2: Methods for Defining Applicability Domain

| Method Category | Specific Techniques | Implementation Considerations |

|---|---|---|

| Novelty Detection | Leverage, vicinity, distance to training set centroid, k-NN similarity [14] [17]. | Does not use class label information; based solely on feature space proximity. |

| Confidence Estimation | Class probability estimates, distance to decision boundary, classifier stability [17]. | Uses information from the trained classifier; generally more powerful than novelty detection. |

| Hybrid Approaches | ADAN (6 measurements), Consensus models, Random Forest with v-NN [16]. | Combines multiple approaches; often provides systematically better performance. |

Figure 1: Workflow for Comprehensive Model Benchmarking in Computational Chemistry

Quantitative Benchmarks and Performance Standards

Performance Across Chemical Properties

Table 3: Benchmarking Results for Property Prediction Models

| Property Type | Best Performing Models | Performance Metrics | Chemical Space Coverage |

|---|---|---|---|

| Physicochemical (PC) Properties | OPERA, Random Forests | R² average = 0.717 [14] | Drugs, industrial chemicals, pesticides [14] |

| Toxicokinetic (TK) Properties | Ensemble methods, SVM | R² average = 0.639 (regression); balanced accuracy = 0.780 (classification) [14] | Relevant for ADMET profiling [14] |

| Bioactivity Prediction | Deep Learning, SVM, Random Forests | AUC-ROC: 0.8-0.9 range [19] | Diverse targets from ChEMBL (1300+ assays) [19] |

Uncertainty Quantification Benchmarking

Evaluating uncertainty quantification methods requires metrics that differ from those used for standard model validation [20]. The error-based calibration approach introduced by Levi et al. has been shown to be superior to metrics such as Spearman's rank correlation, miscalibration area, and negative log likelihood [20]. It checks that predicted uncertainties and observed errors follow the expected statistical relationship: for a suitably large subset of predictions with predicted uncertainty $\sigma$, the average absolute error should approximate $\sqrt{\frac{2}{\pi}}\sigma$ and the root mean square error should approximate $\sigma$ [20].
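
One way to sketch this check is to sort predictions by predicted uncertainty, split them into bins, and compare observed and expected error in each bin. The binning scheme, the use of the root mean variance as the per-bin reference for RMSE, and the synthetic Gaussian data are assumptions made for illustration; they are not a reproduction of the procedure in [20].

```python
import math
import random


def calibration_table(sigmas, errors, n_bins=5):
    """Per-bin comparison of observed errors with predicted uncertainties."""
    if len(sigmas) < n_bins:
        raise ValueError("need at least one prediction per bin")
    pairs = sorted(zip(sigmas, errors))  # sort by predicted sigma
    size = len(pairs) // n_bins
    rows = []
    for b in range(n_bins):
        start = b * size
        end = (b + 1) * size if b < n_bins - 1 else len(pairs)
        chunk = pairs[start:end]
        n = len(chunk)
        mean_sigma = sum(s for s, _ in chunk) / n
        rows.append({
            # Root mean variance: expected RMSE if uncertainties are calibrated.
            "rmv": math.sqrt(sum(s * s for s, _ in chunk) / n),
            "rmse": math.sqrt(sum(e * e for _, e in chunk) / n),
            # Expected mean absolute error for Gaussian errors.
            "expected_mae": math.sqrt(2 / math.pi) * mean_sigma,
            "mae": sum(abs(e) for _, e in chunk) / n,
        })
    return rows


# Synthetic, perfectly calibrated data: each error is drawn from N(0, sigma).
rng = random.Random(0)
sigmas = [rng.uniform(0.1, 1.0) for _ in range(10000)]
errors = [rng.gauss(0.0, s) for s in sigmas]

for row in calibration_table(sigmas, errors):
    print({k: round(v, 3) for k, v in row.items()})
```

For a calibrated model the `rmse` and `rmv` columns track each other across bins, as do `mae` and `expected_mae`; systematic gaps indicate over- or under-confident uncertainty estimates.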

Figure 2: Applicability Domain Assessment Workflow for Individual Predictions

The Scientist's Toolkit: Essential Research Reagents

Table 4: Essential Tools for Computational Chemistry Benchmarking

| Tool Category | Specific Tools | Function and Application |

|---|---|---|

| Chemical Databases | ChEMBL, PubChem, DrugBank, PHYSPROP | Sources of experimental data for training and validation [14] |

| Descriptor Calculation | RDKit, CDK, jCompoundMapper | Generation of molecular fingerprints and descriptors [14] |

| Modeling Algorithms | Random Forest, SVM, Neural Networks, k-NN | Core algorithms for building predictive models [17] |

| Validation Metrics | AUC-ROC, AUC-PR, R², Balanced Accuracy | Quantitative assessment of model performance [19] |

| Uncertainty Quantification | Ensemble methods, Latent Space Distance, Evidential Regression | Estimating prediction reliability and confidence intervals [20] |

Robust benchmarking in computational chemistry requires meticulous attention to training set composition, clear definition of applicability domains, and appropriate selection of validation metrics. The integration of these three components forms the foundation for developing reliable predictive models that can accelerate drug discovery and chemical safety assessment. Current research indicates that while no single algorithm universally outperforms others across all chemical domains, systematic benchmarking enables identification of optimal approaches for specific prediction tasks [14] [19]. Future methodological improvements should focus on developing more sophisticated applicability domain definitions, standardized benchmarking protocols, and enhanced uncertainty quantification techniques to further increase confidence in computational predictions.

The Role of Benchmarking in New Approach Methodologies (NAMs) and Reducing Animal Testing

The pharmaceutical and regulatory landscape is undergoing a fundamental transformation, marked by a strategic shift away from traditional animal testing toward human-relevant New Approach Methodologies (NAMs). This transition, championed by regulatory bodies including the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA), leverages advanced computational models, in vitro systems, and AI-driven analytics to evaluate drug safety and efficacy [21] [22] [23]. Benchmarking serves as the critical bridge connecting innovative computational methodologies to regulatory acceptance and real-world application. By providing a rigorous, standardized framework for validation, benchmarking ensures that computational models in chemistry and biology are predictive, reliable, and trustworthy enough to inform high-stakes decisions in drug development, thereby accelerating the reduction of animal use in accordance with the 3Rs principles (Replace, Reduce, Refine) [22] [23].

The Critical Function of Benchmarking in Computational NAMs

Within computational chemistry and drug discovery, benchmarking is not merely a performance check; it is the foundational process that establishes scientific validity and regulatory confidence. It systematically answers the question: "Can this model reliably recapitulate or predict complex biological and chemical phenomena for its intended purpose?"

Fragmented, domain-specific benchmarks have historically impeded progress toward universal models. The LAMBench benchmark, for instance, was created to address the lack of comprehensive evaluation frameworks for Large Atomistic Models (LAMs) that aim to approximate a universal potential energy surface [10]. It assesses models on three core capabilities:

- Generalizability: Accuracy across diverse, unseen atomistic systems.

- Adaptability: Capacity to be fine-tuned for tasks beyond primary training, such as structure-property relationships.

- Applicability: Stability and efficiency in real-world simulations, like molecular dynamics [10].

Similarly, in the field of binding prediction, Kramer et al. highlight the lack of sustained community benchmarks akin to the CASP challenge for protein structure prediction. This gap hinders the reliable comparison of methods for predicting ligand binding poses and affinities, which is a cornerstone of structure-based drug design [4].

The Critical Gap in Pose and Activity Prediction

The absence of long-term, blinded community benchmarks for binding pose and activity prediction (P-AP) has significantly hampered progress in computational drug discovery. Unlike protein structure prediction, which has advanced through the decades-long CASP challenge, P-AP lacks an equivalent framework. This makes it difficult for researchers to compare methods and track genuine improvements, ultimately limiting the adoption of reliable computational tools in the drug discovery pipeline [4].

Quantitative Benchmarking of Computational Chemistry Models

Robust benchmarking requires standardized datasets and protocols to objectively compare the performance of different computational methods. The following examples illustrate how this is practiced in the field.

Benchmarking Neural Network Potentials on Charge-Related Properties

A key study benchmarked Neural Network Potentials (NNPs) trained on Meta's Open Molecules 2025 (OMol25) dataset against experimental data for reduction potential and electron affinity. The results were compared to traditional low-cost computational methods, revealing the strengths and weaknesses of data-driven NNPs, even when they do not explicitly model charge-based physics [8].

Table 1: Performance of Computational Methods in Predicting Experimental Reduction Potentials (Mean Absolute Error, V)

| Method | Main-Group Set (OROP) | Organometallic Set (OMROP) |

|---|---|---|

| B97-3c (DFT) | 0.260 | 0.414 |

| GFN2-xTB (SQM) | 0.303 | 0.733 |

| eSEN-S (OMol25 NNP) | 0.505 | 0.312 |

| UMA-S (OMol25 NNP) | 0.261 | 0.262 |

| UMA-M (OMol25 NNP) | 0.407 | 0.365 |

Source: Adapted from [8]. MAE values in Volts (V). Lower is better.

Benchmarking on Non-Equilibrium Structures

The Wiggle150 benchmark addresses a critical gap by focusing on highly strained, non-equilibrium molecular conformations. This is essential for validating models used in ab initio molecular dynamics and reaction-path exploration, where molecules frequently adopt geometries far from their equilibrium states. The benchmark comprises 150 strained conformations of three molecules (adenosine, benzylpenicillin, and efavirenz), with reference energies derived from high-level DLPNO-CCSD(T)/CBS calculations. In this challenging test, the neural network potential AIMNet2 was identified as particularly robust among the methods surveyed [24].

Experimental Protocol for Benchmarking Reduction Potentials

The methodology for benchmarking computational models against experimental reduction potentials, as detailed in [8], involves a precise multi-step workflow:

- Structure Optimization: The non-reduced and reduced structures of each species are optimized using the NNP (or other method) in question, typically employing a geometry optimization library like geomeTRIC.

- Solvent Correction: Each optimized structure is processed through an implicit solvation model, such as the Extended Conductor-like Polarizable Continuum Model (CPCM-X), to obtain a solvent-corrected electronic energy.

- Energy Difference Calculation: The predicted reduction potential is calculated as the difference in electronic energy (in electronvolts) between the non-reduced and reduced structures. This value is directly comparable to the experimental reduction potential in volts.

- Statistical Comparison: The predicted values are compared against the experimental dataset. Standard metrics like Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and the coefficient of determination (R²) are computed to quantify accuracy.
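
The last two steps reduce to simple arithmetic once the solvent-corrected energies are available. The sketch below assumes the energies are reported in hartree (a common output unit, but an assumption here) and uses hypothetical energies and experimental values; optimization and solvation are outside its scope.

```python
HARTREE_TO_EV = 27.211386245988  # CODATA conversion factor


def reduction_potential_v(e_nonreduced_hartree, e_reduced_hartree):
    """Energy difference (non-reduced minus reduced) in eV, read as volts."""
    return (e_nonreduced_hartree - e_reduced_hartree) * HARTREE_TO_EV


def mean_absolute_error(predicted, experimental):
    """MAE between predicted and experimental potentials, in volts."""
    return sum(abs(p - x) for p, x in zip(predicted, experimental)) / len(predicted)


# Hypothetical solvent-corrected energies (hartree): (non-reduced, reduced).
energies = [(-100.000, -100.100), (-250.500, -250.580), (-75.200, -75.310)]
# Hypothetical experimental reduction potentials (V).
experimental = [2.60, 2.30, 3.10]

predicted = [reduction_potential_v(ox, red) for ox, red in energies]
print([round(v, 3) for v in predicted])
print(round(mean_absolute_error(predicted, experimental), 3))
```

RMSE and R² can be computed from the same two lists in the usual way.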

A Framework for Generating High-Quality Negative Data

A major challenge in validating virtual high-throughput screening (vHTS) pipelines is the lack of high-quality negative data (i.e., confirmed non-binders). An innovative computational approach generates such data without additional experiments:

- Ligand Randomization: Docking known ligands into unrelated protein structures from the Protein Data Bank (PDB) to create a set of confirmed non-binders.

- Isomer Generation: Creating structural isomers of known active ligands, which are highly likely to be inactive, providing negative data points that are closely matched in molecular properties to the positives.

This method produces practically unlimited negative data that is superior in quality and quantity to previously available sets, enabling rigorous validation of every step in a vHTS pipeline to ensure it adds genuine enrichment over random selection [25].

The Path to Regulatory Acceptance: A Tiered Workflow

For a NAM to be adopted in regulatory decision-making, it must undergo a rigorous evaluation process with regulatory agencies. The following workflow outlines the key stages and interactions for achieving regulatory acceptance.

Diagram 1: Path to Regulatory Acceptance of NAMs. The workflow illustrates the iterative stages of engagement with regulators like the EMA, from initial briefing to full qualification. ITF: Innovation Task Force. Adapted from [23].

The context of use is a formal description of the specific circumstances under which the NAM is applied and is the cornerstone of this process. Regulatory requirements are most stringent for NAMs intended to replace animal studies in safety assessment, where a formal qualification opinion from the Committee for Medicinal Products for Human Use (CHMP) may be required. For applications in primary pharmacology or proof-of-concept, acceptance can be achieved on a case-by-case basis within a marketing authorization application [23].

The successful implementation and benchmarking of NAMs rely on a suite of computational and experimental tools.

Table 2: Key Research Reagent Solutions for NAMs Implementation

| Tool / Resource | Type | Function in NAMs & Benchmarking |

|---|---|---|

| Organoids & 3D Cell Cultures | In Vitro System | Provides complex, human-relevant tissue models for efficacy and toxicity testing, bridging the gap between 2D cells and in vivo models [22]. |

| Organs-on-a-Chip | In Vitro System | Microphysiological systems that mimic human organ function and interaction for high-fidelity safety and ADME profiling [21] [22]. |

| OMol25 Dataset | Computational Data | A massive dataset of >100M quantum chemical calculations used to pre-train universal neural network potentials for molecular modeling [8]. |

| LAMBench | Benchmarking Software | A comprehensive benchmarking system to evaluate the generalizability, adaptability, and applicability of Large Atomistic Models [10]. |

| Wiggle150 | Benchmarking Dataset | A curated set of 150 highly strained molecular conformations with reference energies for testing model robustness on non-equilibrium structures [24]. |

| ChemBench | Benchmarking Framework | An automated framework with >2,700 curated questions to evaluate the chemical knowledge and reasoning abilities of Large Language Models [26]. |

Benchmarking is the linchpin in the transition to a modern, human-relevant paradigm for drug safety and efficacy evaluation. Through rigorous, community-driven benchmarks like LAMBench, Wiggle150, and ChemBench, computational models achieve the validation necessary to gain the confidence of researchers and regulators. As the FDA's plan to phase out animal testing for monoclonal antibodies demonstrates, the future of drug development is inextricably linked to the continued development and validation of NAMs [21]. By adhering to structured benchmarking protocols and engaging early with regulatory pathways, the scientific community can accelerate the adoption of these innovative tools, ultimately leading to safer, more effective medicines developed through more efficient and ethical means.

Benchmarking is an indispensable practice in computational chemistry and drug development, serving as the foundational process for assessing the accuracy, reliability, and performance of computational models and experimental workflows. Within computational chemistry research, benchmarking is formally defined as the systematic process of comparing a model's predictions against reference data—whether experimental results or higher-level theoretical calculations—to establish its predictive validity and domain of applicability [27]. This process enables researchers to quantify progress, validate new methodologies, and make informed decisions based on empirical evidence rather than intuition alone.

In the broader thesis of computational model validation, benchmarking represents the critical bridge between theoretical development and practical application. It transforms abstract algorithms into trusted tools for scientific discovery and industrial application. As computational methods increasingly inform critical decisions in drug development and materials design, the rigor of benchmarking practices directly impacts the pace of innovation and the reliability of outcomes across chemical sciences and related fields [4] [28].

Critical Gaps in Current Benchmarking Practices

Methodological and Data Quality Deficiencies

Current benchmarking approaches across computational chemistry and pharmaceutical development exhibit significant shortcomings that undermine their utility and reliability.

Table 1: Primary Deficiencies in Traditional Benchmarking Approaches

| Deficiency Category | Specific Limitations | Impact on Decision-Making |

|---|---|---|

| Data Completeness | Infrequent updates failing to incorporate new data [29] | Decisions based on outdated information leading to risk underestimation |

| Data Quality | Overly broad categorization (e.g., "oncology" vs. specific cancer subtypes) [29] | Inaccurate probability of success assessments for specific targets |

| Methodological Rigor | Overly simplistic Probability of Success (POS) calculations multiplying phase transition rates [29] | Systematic overestimation of drug development success rates |

| Domain Applicability | Inadequate handling of innovative development paths (e.g., skipped phases, dual phases) [29] | Poor benchmarking for non-standard development approaches |

The pharmaceutical industry exemplifies these challenges, where traditional benchmarking often relies on static datasets that "are updated infrequently and therefore don't draw on the most up-to-date information" [29]. This temporal decay in data relevance is particularly problematic in fast-evolving fields. Furthermore, simplistic methodological approaches, such as multiplying phase transition probabilities to determine overall likelihood of success, systematically "overestimate a drug's success rate, resulting in less-than-ideal data for decision-making" [29].
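
The simplistic calculation described above is a plain product of per-phase transition rates. The sketch below shows only that arithmetic, with hypothetical rates chosen for illustration; it is not a recommended estimator.

```python
import math


def naive_pos(phase_transition_rates):
    """Naive probability of success: product of phase transition rates."""
    return math.prod(phase_transition_rates)


# Hypothetical transition rates, for illustration only.
rates = {
    "Phase I to Phase II": 0.60,
    "Phase II to Phase III": 0.35,
    "Phase III to submission": 0.60,
    "Submission to approval": 0.90,
}
print(round(naive_pos(rates.values()), 4))  # 0.1134
```

Because the product treats every program as an average program passing through every phase in sequence, it cannot reflect program-specific factors or non-standard paths such as skipped or combined phases.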

Community and Infrastructure Limitations

Beyond methodological issues, the field suffers from insufficient community infrastructure and standardized practices for sustained benchmarking. Unlike protein structure prediction, which has benefited from decades of continuous community evaluation through the Critical Assessment of Structure Prediction (CASP), small molecule drug discovery "lacks equivalent, sustained frameworks for progress" [4]. This infrastructure gap manifests through several critical challenges:

- Dataset Contamination: Widespread overlap between training and evaluation datasets leads to overly optimistic performance estimates [4].

- Evaluation Complexity: Structurally complex or flexible binding sites, non-physical poses, and variable experimental data quality complicate fair comparisons [4].

- Temporal Validation Deficits: Absence of prospective, time-stamped evaluations that simulate real-world discovery scenarios [4].

- Unconfirmed Decoys: Use of unvalidated negative examples in binding affinity predictions [4].

These limitations collectively hinder reliable comparison of computational methods and obscure genuine performance improvements, ultimately slowing the translation of methodological advances into practical discovery tools.

Domain-Specific Benchmarking Challenges

Pharmaceutical Development and Clinical Translation

In drug development, benchmarking serves crucial functions in risk management, resource allocation, and regulatory strategy, yet significant gaps persist between benchmarking practices and decision-making needs. Recent empirical analyses of FDA approvals (2006-2022) reveal an average Likelihood of Approval (LoA) rate of 14.3% across leading pharmaceutical companies, with substantial variation ranging from 8% to 23% [30]. This heterogeneity underscores the limitations of one-size-fits-all benchmarking approaches.

Clinical development benchmarking extends beyond success rates to encompass operational metrics including site performance, protocol amendments, fair market value, and patient enrollment [31]. Each domain presents unique benchmarking challenges:

- Protocol Design: Benchmarking protocol amendment experiences and their impact on study duration requires "systematic assessments of the number and causes of protocol amendments" to create better, less costly protocols [31].

- Site Selection: Benchmarking first-patient-in cycle times across regions to improve site activation and trial initiation [31].

- Investigator Payments: Fair market value benchmarking to establish appropriate payments based on therapeutic area, region, and trial phase [31].

The transition between clinical phases represents a particularly critical benchmarking gap, with traditional approaches often failing to account for program-specific factors that significantly influence transition probabilities.

Computational Chemistry and Method Validation

Theoretical chemistry faces distinct benchmarking challenges rooted in the relationship between computational predictions and experimental validation. A concerning trend identified in the literature is the practice of "theory benchmarking theory," where "the quality of a model is thereby no longer measured through any relation to experiment, but purely to the similarity to another model" [27]. This self-referential approach has become so prevalent that "many manuscripts dedicated to quantum chemistry benchmarks do not feature a single experimental result" [27].

The GMTKN30 database exemplifies this issue, containing "only a small amount of experimental reference data" with "14/30 sets us[ing] as reference data estimated CCSD(T)/CBS limits" [27]. This reliance on theoretical rather than experimental benchmarks creates circular validation that may not reflect real-world predictive performance.

The recent introduction of massive datasets like Meta's Open Molecules 2025 (OMol25), containing "over 100 million quantum chemical calculations" representing "biomolecules, electrolytes, and metal complexes," offers potential improvements through unprecedented chemical diversity and data quality [15]. However, the scale of such datasets "will make training challenging for organizations without access to large numbers of GPUs," potentially creating resource-based disparities in benchmarking capabilities [15].

Table 2: Comparison of Computational Chemistry Datasets and Benchmarks

| Dataset/Benchmark | Size | Diversity | Reference Quality | Key Limitations |

|---|---|---|---|---|

| OMol25 (2025) | >100 million calculations [15] | High (biomolecules, electrolytes, metal complexes) [15] | ωB97M-V/def2-TZVPD level of theory [15] | Computational resource requirements limit accessibility |

| GMTKN30 | 30 benchmark sets [27] | Moderate (main-group organic) [27] | Primarily CCSD(T)/CBS estimates [27] | Limited experimental validation; theory-only references |

| ANI Series | Millions of structures [15] | Low (simple organic, 4 elements) [15] | ωB97X/6-31G(d) level of theory [15] | Limited element coverage and chemical diversity |

Experimental Protocols for Robust Benchmarking

Framework for Predictive Model Validation

Robust benchmarking requires standardized experimental protocols that ensure fair comparison and reproducible results. Based on community best practices, the following methodology provides a template for comprehensive model evaluation:

1. Dataset Curation and Validation

- Collect experimental data from diverse sources including literature, public databases (e.g., PubChem, DrugBank), and proprietary collections [14].

- Standardize chemical representations using automated procedures (e.g., RDKit Python package) to address inorganic/organometallic compounds, mixtures, and unusual elements [14].

- Resolve duplicates by averaging continuous values with standardized standard deviation <0.2; remove compounds with greater variance [14].

- Identify and exclude response outliers using Z-score analysis (Z-score >3 considered outliers) [14].
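
The duplicate and outlier rules above can be sketched with the standard library. Two points are assumptions rather than details confirmed by the text: "standardized standard deviation" is taken to mean the sample standard deviation divided by the absolute mean, and the Z-score is computed with the sample standard deviation over all retained values. Structure standardization (for example with RDKit) is not shown.

```python
from collections import defaultdict
from statistics import mean, stdev


def resolve_duplicates(records, max_rel_sd=0.2):
    """Average replicate values per compound; drop compounds that disagree.

    records: iterable of (compound_id, value) pairs.
    """
    grouped = defaultdict(list)
    for compound_id, value in records:
        grouped[compound_id].append(value)
    resolved = {}
    for compound_id, values in grouped.items():
        if len(values) == 1:
            resolved[compound_id] = values[0]
            continue
        m, sd = mean(values), stdev(values)
        # Assumed definition: standard deviation relative to the mean.
        rel_sd = sd / abs(m) if m != 0 else (0.0 if sd == 0 else float("inf"))
        if rel_sd < max_rel_sd:
            resolved[compound_id] = m
    return resolved


def remove_outliers(values_by_id, z_max=3.0):
    """Drop compounds whose response lies more than z_max SDs from the mean."""
    values = list(values_by_id.values())
    if len(values) < 2:
        return dict(values_by_id)
    m, sd = mean(values), stdev(values)
    if sd == 0:
        return dict(values_by_id)
    return {k: v for k, v in values_by_id.items() if abs(v - m) / sd <= z_max}


# Hypothetical measurements.
records = [("A", 1.0), ("A", 1.1), ("B", 1.0), ("B", 3.0), ("C", 2.0)]
print(resolve_duplicates(records))  # A averaged, B dropped, C kept

dataset = {f"cpd{i}": v for i, v in enumerate([1.0, 1.1, 0.9, 1.2, 0.8] * 4)}
dataset["suspect"] = 10.0
print("suspect" in remove_outliers(dataset))  # False
```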

2. Chemical Space Analysis

- Establish reference chemical space using representative compounds (e.g., ECHA database for industrial chemicals, DrugBank for approved drugs) [14].

- Compute molecular descriptors (e.g., FCFP fingerprints with radius 2 folded to 1024 bits) using standardized tools [14].

- Apply Principal Component Analysis (PCA) to visualize dataset coverage relative to reference space [14].

3. Model Evaluation and Applicability Domain Assessment

- Perform external validation using temporally or structurally distinct test sets [32] [14].

- Define and apply applicability domain (AD) methods (e.g., leverage, structural vicinity) to identify reliable predictions [14].

- Employ multiple metrics including R² for regression, balanced accuracy for classification, and domain-specific measures [32] [14].

4. Performance Benchmarking

- Compare against established baselines and state-of-the-art methods [14].

- Conduct statistical significance testing on performance differences [32].

- Report performance stratified by applicability domain inclusion [14].

Community-Wide Evaluation Frameworks

Inspired by successful initiatives in structural biology, emerging frameworks for community-wide benchmarking address the need for standardized, blinded evaluations:

Temporal Splitting Protocol

- Sort drug-indication associations by approval date [32]

- Use earlier approvals for training, later approvals for testing [32]

- Reflects prospective, real-world prediction more faithfully than random splits [32]
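A temporal split reduces to sorting by date and cutting at a threshold. In the sketch below, the record layout (dictionaries with an `approval_date` field) and the explicit `cutoff` argument are assumptions for illustration; the source does not specify how the split point is chosen.

```python
from datetime import date


def temporal_split(records, cutoff):
    """Split drug-indication records into (train, test) by approval date.

    Records approved before `cutoff` go to training, the rest to testing.
    Each record is assumed to be a dict with an 'approval_date' entry.
    """
    ordered = sorted(records, key=lambda r: r["approval_date"])
    train = [r for r in ordered if r["approval_date"] < cutoff]
    test = [r for r in ordered if r["approval_date"] >= cutoff]
    return train, test
```

Because no test record predates any training record, the model cannot benefit from information that would not have been available at prediction time.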

Leave-One-Out Cross-Validation

- Iteratively exclude all associations for specific indications or drug classes [32]

- Tests model performance on completely novel therapeutic areas [32]

- Particularly relevant for drug repurposing applications [32]
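Because whole indications or drug classes are held out rather than single records, this protocol is in effect a leave-one-group-out scheme. A minimal generator for it might look as follows; the dictionary-based record layout and the `group_key` parameter are hypothetical.

```python
def leave_one_group_out(associations, group_key):
    """Yield (held_out_group, train, test) for every distinct group.

    All associations sharing the held-out value of `group_key`
    (for example an indication or a drug class) form the test set,
    so the model never sees that group during training.
    """
    groups = sorted({a[group_key] for a in associations})
    for g in groups:
        train = [a for a in associations if a[group_key] != g]
        test = [a for a in associations if a[group_key] == g]
        yield g, train, test
```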

Stratified Performance Reporting

- Report metrics stratified by chemical classes, target families, and disease areas [14]

- Include applicability domain coverage statistics [14]

- Disclose performance variation across different data segments [14]
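A stratified report can be assembled from per-compound results with a simple grouping step. The sketch assumes each result row carries a stratum label, an applicability-domain flag, and an absolute error; these field names are illustrative and not taken from the source.

```python
from collections import defaultdict


def stratified_report(rows):
    """Summarize error and AD coverage per stratum.

    Each row is assumed to be a dict with keys 'stratum' (e.g. a
    chemical class), 'in_ad' (bool) and 'abs_error' (float).
    """
    by_stratum = defaultdict(list)
    for row in rows:
        by_stratum[row["stratum"]].append(row)

    report = {}
    for stratum, items in by_stratum.items():
        inside = [r["abs_error"] for r in items if r["in_ad"]]
        report[stratum] = {
            "n": len(items),
            "ad_coverage": len(inside) / len(items),
            "mae_all": sum(r["abs_error"] for r in items) / len(items),
            # None when no compound of this stratum falls inside the AD.
            "mae_in_ad": sum(inside) / len(inside) if inside else None,
        }
    return report
```

Reporting `mae_all` next to `mae_in_ad` makes it visible when a good headline number depends on excluding a large share of a stratum from the applicability domain.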

Essential Research Reagents and Computational Tools

The Scientist's Toolkit for Benchmarking Studies

Robust benchmarking requires both computational tools and experimental data resources. The following table catalogs essential resources identified through recent benchmarking initiatives:

Table 3: Essential Resources for Computational Chemistry Benchmarking

| Resource Category | Specific Tools/Databases | Primary Function | Key Features/Benefits |
|---|---|---|---|
| Quantum Chemical Datasets | OMol25 [15], ANI series [15], SPICE [15] | Training and validation of neural network potentials | High-accuracy calculations, diverse chemical spaces, extensive coverage |
| Drug Discovery Databases | Therapeutic Targets Database (TTD) [32], Comparative Toxicogenomics Database (CTD) [32], DrugBank [14] | Ground truth mapping for drug-indication associations | Manually curated interactions, standardized identifiers, multiple evidence levels |
| QSAR Modeling Platforms | OPERA [14], admetSAR [14] | Prediction of physicochemical and toxicokinetic properties | Applicability domain assessment, batch prediction capabilities, open-source availability |
| Cheminformatics Tools | RDKit [14], CDK (Chemistry Development Kit) [14] | Molecular standardization, descriptor calculation, fingerprint generation | Open-source, comprehensive functionality, Python integration |
| Clinical Development Data | ClinicalTrials.gov [30], internal pharmaceutical company databases [31] | Clinical trial success rates, operational metrics | Real-world development outcomes, comprehensive trial metadata |

A Roadmap for Community Action

Addressing the benchmarking gaps in computational chemistry and drug development requires coordinated community action. Based on identified challenges and emerging best practices, we propose the following roadmap:

1. Establish Sustained Benchmarking Infrastructure

- Create blinded evaluation platforms for pose and activity prediction similar to CASP [4]

- Develop mechanisms for continuous dataset updates while maintaining backward compatibility [4]

- Implement temporal validation as a standard practice rather than an exception [32]

2. Improve Dataset Quality and Diversity

- Prioritize experimental validation alongside theoretical improvements [27]

- Expand chemical space coverage to include under-represented compound classes [14]

- Develop standardized protocols for data curation and annotation [14]

3. Enhance Methodological Rigor

- Replace simplistic success rate calculations with nuanced risk assessment models [29]

- Develop standardized metrics that reflect real-world utility rather than theoretical performance [4]

- Implement improved statistical methodologies that account for program-specific factors [29]

4. Foster Cross-Community Collaboration

- Encourage collaboration between computational and experimental researchers [27]

- Establish partnerships between academia, industry, and regulatory agencies [4]

- Develop shared standards for data reporting and method documentation [28]

The implementation of this roadmap requires commitment across the research community but offers substantial rewards. As noted in recent literature, pairing "robust benchmarking with modern cheminformatic and bioinformatic tools like molecular dynamics simulations and machine learning" presents "a clear opportunity to raise the standard of computer-aided drug discovery" [4]. By addressing current gaps through sustained, community-wide effort, we can accelerate the translation of computational innovations into practical solutions for chemical and pharmaceutical challenges.

How to Benchmark: Frameworks, Tools, and Real-World Applications in Drug Discovery

Within computational chemistry and quantitative structure-activity relationship (QSAR) modeling, benchmarking is the process of objectively evaluating and validating computational models against known experimental data. This practice is fundamental for assessing model predictivity, reliability, and applicability domain, ensuring that in silico predictions can be trusted for decision-making in drug discovery and chemical safety assessment [1]. Because cost and time constraints make it impossible to test every compound experimentally, robust computational methods are a necessity [14]. A systematic benchmarking workflow, from rigorous data curation to comprehensive performance assessment, provides researchers, regulatory authorities, and industry professionals with a framework to identify optimal computational tools for predicting crucial physicochemical (PC) and toxicokinetic (TK) properties [14].

Foundational Principles of Model Validation

Validation of computational results against experimental data is paramount. This process ensures the accuracy and reliability of computational models, allowing researchers to confidently predict molecular properties and behaviors [1]. Key components of this validation include benchmarking, which evaluates models against known experimental results; model validation, which assesses how well computational predictions align with experimental observations; and error analysis, which quantifies discrepancies [1]. Without this rigorous process, models risk producing inaccurate predictions, leading to flawed scientific conclusions and poor decision-making in critical applications like drug development, where 40–60% of drug failures in clinical trials stem from PC and bioavailability deficiencies [14].

Error Analysis and Statistical Assessment

Comprehensive error analysis is essential for understanding model limitations. Systematic errors introduce consistent bias from improperly calibrated instruments or flawed theoretical assumptions, while random errors cause unpredictable fluctuations and can be reduced by increasing sample size [1]. Statistical techniques for validation include:

- Descriptive statistics (mean, median, standard deviation) to summarize dataset features

- Inferential statistics to draw conclusions about populations based on sample data

- Regression analysis to model relationships between variables

- Confidence intervals to provide plausible value ranges for population parameters [1]

Advanced approaches like machine learning techniques (random forests, neural networks) can identify complex patterns in large datasets, while Bayesian statistics incorporate prior knowledge and update probabilities as new data becomes available [1].
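To make the distinction between systematic and random error concrete, the sketch below summarizes prediction residuals by their mean (an estimate of systematic bias), their standard deviation (random scatter), and a confidence interval for the bias. The normal-approximation interval with z = 1.96 and the function name are assumptions chosen for brevity; for small samples a t-based interval would be more appropriate.

```python
from math import sqrt
from statistics import mean, stdev


def error_summary(y_exp, y_pred, z=1.96):
    """Summarize residuals (prediction minus experiment).

    bias : mean signed error, an estimate of systematic error
    sd   : sample standard deviation of residuals (random scatter)
    ci95 : normal-approximation interval for the bias; its width
           shrinks as 1/sqrt(n), which is why larger samples reduce
           the impact of random error.
    """
    residuals = [p - e for e, p in zip(y_exp, y_pred)]
    bias = mean(residuals)
    spread = stdev(residuals)
    half_width = z * spread / sqrt(len(residuals))
    return {"bias": bias, "sd": spread, "ci95": (bias - half_width, bias + half_width)}
```

If the interval for the bias excludes zero, the model is consistently over- or under-predicting, which points to a systematic problem rather than noise.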

Stage 1: Data Curation and Standardization

High-quality molecular datasets are the foundation of reliable QSAR modeling and drug discovery [33]. The data curation process transforms raw, often inconsistent chemical data into standardized, ready-to-use datasets for cheminformatic analysis. This initial stage is critical, as many molecular databases contain inaccuracies such as invalid structures, duplicates, and experimental outliers that compromise model performance and reproducibility [33] [14]. Automated workflows help researchers retrieve chemical data (SMILES) from the web, check their correctness, and curate them to produce consistent datasets [34].

Data Collection and Curation Protocols

A robust curation procedure involves multiple steps to ensure data integrity. For substances lacking SMILES notation, isomeric SMILES should be retrieved using the PubChem PUG REST service from CAS numbers or chemical names [14]. The subsequent standardization and curation process should implement an automated procedure that addresses several key aspects:
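As a rough illustration of the retrieval step, the sketch below builds a PUG REST request that looks up a compound by name and asks for its isomeric SMILES as plain text. It rests on several assumptions: CAS numbers are resolved through the `name` namespace (they are typically registered as synonyms), the property is requested as `IsomericSMILES`, and the helper names are hypothetical. The current PubChem documentation should be checked before relying on the exact property name.

```python
from urllib.parse import quote
from urllib.request import urlopen

PUG_BASE = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"


def smiles_lookup_url(identifier):
    """Build a PUG REST URL for a chemical name or CAS number.

    Assumption: CAS numbers are looked up via the 'name' namespace.
    """
    encoded = quote(identifier, safe="")
    return f"{PUG_BASE}/compound/name/{encoded}/property/IsomericSMILES/TXT"


def fetch_isomeric_smiles(identifier, timeout=10):
    """Return the first SMILES PubChem reports for the identifier.

    Requires network access; urlopen raises an HTTPError when the
    identifier cannot be resolved.
    """
    with urlopen(smiles_lookup_url(identifier), timeout=timeout) as response:
        lines = response.read().decode("utf-8").split()
    return lines[0] if lines else None
```

An identifier can match more than one PubChem record, so taking the first returned line is a simplification; a production workflow would need to flag such ambiguous cases for review.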

Table 1: Key Steps in Molecular Data Curation

| Curation Step | Description | Implementation |
|---|---|---|
| Structure Validation | Identify and remove inorganic/organometallic compounds, mixtures, and compounds with unusual elements | RDKit Python package functions [14] |
| Salt Neutralization | Remove counterions to standardize to the parent structure | Automated in-house procedures [14] |
| Duplicate Removal | Identify and remove duplicates at SMILES level | Structural comparison algorithms [33] |
| Outlier Detection | Remove intra- and inter-dataset outliers with inconsistent values | Z-score calculation (Z-score >3 considered outliers) [14] |
| Unit Standardization | Convert all data to consistent units for comparison | Appropriate conversion factors applied across datasets [14] |

For duplicate compounds, specific protocols must be followed. With continuous data, duplicates whose standardized standard deviation (standard deviation/mean, i.e., the coefficient of variation) exceeds 0.2 should be considered ambiguous and removed; otherwise, their experimental values should be averaged [14]. For binary classification data, only compounds whose duplicates share the same response value should be retained [14]. Tools like MEHC-curation implement a three-stage pipeline (validation, cleaning, normalization) with integrated duplicate removal and error tracking, making high-quality curation accessible to non-experts [33].
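The duplicate rules for continuous and binary data can be expressed compactly. The following is a sketch under stated assumptions rather than the implementation used in [14] or in MEHC-curation: duplicates are grouped by identical SMILES strings, the population standard deviation is used, and the function names are hypothetical.

```python
from collections import defaultdict
from statistics import mean, pstdev


def resolve_continuous_duplicates(records, max_cv=0.2):
    """records: iterable of (smiles, value). Returns (kept, removed).

    Duplicates are averaged when standard deviation / |mean| <= max_cv
    and dropped as ambiguous otherwise.
    """
    grouped = defaultdict(list)
    for smiles, value in records:
        grouped[smiles].append(value)

    kept, removed = {}, []
    for smiles, values in grouped.items():
        mu, sd = mean(values), pstdev(values)
        if sd == 0:
            kept[smiles] = mu          # single value or exact replicates
        elif mu == 0 or sd / abs(mu) > max_cv:
            removed.append(smiles)     # ratio undefined or too variable
        else:
            kept[smiles] = mu
    return kept, removed


def resolve_binary_duplicates(records):
    """Keep only compounds whose duplicate labels all agree."""
    grouped = defaultdict(set)
    for smiles, label in records:
        grouped[smiles].add(label)
    return {s: labels.pop() for s, labels in grouped.items() if len(labels) == 1}
```

Because the ratio is taken relative to the mean, the rule is sensitive to the scale of the endpoint: values that are close to zero (for example on a log scale) can appear highly variable even when replicates agree well in absolute terms.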

Data Curation Workflow

[Figure: data curation workflow, from initial collection to finalized datasets]

Stage 2: Chemical Space Analysis and Applicability Domain

The applicability of benchmarking results is strictly limited to the chemical space covered by the datasets used for model evaluation [14]. Analyzing this chemical space ensures that validation results remain relevant to the specific categories of chemicals under investigation, such as pharmaceuticals, industrial chemicals, or natural products. Understanding the applicability domain (AD) of QSAR models is crucial for identifying when models can provide reliable predictions for query chemicals based on their similarity to the training set compounds [14].
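One widely used way to operationalize an applicability domain is the leverage approach: a query compound is considered inside the domain when its leverage with respect to the training descriptor matrix stays below a warning threshold. The sketch below uses NumPy and assumes descriptors are already centered and scaled; the threshold h* = 3(p + 1)/n is a common convention rather than something prescribed by the source, and the function name is illustrative.

```python
import numpy as np


def leverage_ad(X_train, X_query):
    """Return (leverages, inside_flags, h_star) for query compounds.

    Leverage of a query x is h = x^T (X^T X)^-1 x, computed against the
    training descriptor matrix X (n compounds, p descriptors).
    Assumption: warning leverage h* = 3 * (p + 1) / n.
    """
    Xt = np.asarray(X_train, dtype=float)
    Xq = np.asarray(X_query, dtype=float)
    n, p = Xt.shape
    # Pseudo-inverse guards against collinear descriptors.
    core = np.linalg.pinv(Xt.T @ Xt)
    h = np.einsum("ij,jk,ik->i", Xq, core, Xq)
    h_star = 3 * (p + 1) / n
    return h, h <= h_star, h_star
```

A query far from the bulk of the training descriptors receives a high leverage and is flagged as outside the domain, which is the signal to treat its prediction with caution.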

Chemical Space Mapping Methodology

To obtain a meaningful view of the chemical space covered by validation datasets, chemicals should be plotted against a reference chemical space encompassing the main chemical categories of practical interest. This reference space should include data from:

- The ECHA database of substances registered under REACH as representative of industrial chemicals

- DrugBank as representative of approved drugs

- The Natural Products Atlas as representative of natural chemical products [14]

The technical process for chemical space analysis involves:

- Standardization of all compound structures

- Fingerprint generation using functional-class circular fingerprints (FCFP) with a radius of 2 folded to 1024 bits computed using CDK

- Dimensionality reduction via principal component analysis (PCA) with two components applied to the descriptor matrix

- Visualization by plotting each collected dataset on the obtained two-dimensional chemical space defined by the PCA to determine coverage of relevant chemical categories [14]

This analysis confirms the validity of benchmarking results for specific chemical categories and helps researchers select appropriate models for their specific chemical classes of interest.
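The projection step can be sketched with NumPy alone. The example assumes the fingerprints have already been computed elsewhere and are available as a binary matrix (one row per compound, one column per bit); fitting the PCA on the reference space and then projecting each dataset onto it is the design assumed here, and the function names are illustrative.

```python
import numpy as np


def fit_pca_2d(reference_fps):
    """Fit a two-component PCA on the reference fingerprint matrix.

    Returns the column means and the first two principal axes,
    obtained from the SVD of the mean-centered matrix.
    """
    X = np.asarray(reference_fps, dtype=float)
    mean = X.mean(axis=0)
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:2]


def project(fps, mean, components):
    """Project fingerprints onto the reference 2D chemical space."""
    return (np.asarray(fps, dtype=float) - mean) @ components.T
```

Projecting every validation dataset with the same `mean` and `components` keeps all plots in one coordinate system, so gaps in coverage relative to the reference compounds become directly visible.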

Stage 3: Model Training and Hyperparameter Tuning

With curated datasets and a characterized chemical space, the workflow proceeds to model development. Machine learning methods for developing classification QSAR models should incorporate calculation and selection of chemical descriptors, tuning of model hyperparameters, and methods to handle class imbalance [34]. Automated workflows implementing six machine learning methods can efficiently develop QSAR models, with the additional capability to predict external chemicals [34].
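The source does not state which technique is used to handle unequal class sizes. One simple and widely used option, shown here as an assumption, is to weight each class inversely to its frequency, which is the same heuristic scikit-learn applies for `class_weight="balanced"`.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count).

    Rare classes receive larger weights, so errors on them contribute
    more to a weighted training loss.
    """
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {c: n_samples / (n_classes * count) for c, count in counts.items()}
```

For a dataset with 90 inactive and 10 active compounds, the active class receives a weight of 5.0 and the inactive class about 0.56, so both classes carry equal total weight during training.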

Machine Learning Workflow for QSAR Modeling

[Figure: model training and validation workflow for QSAR modeling]

Stage 4: Performance Assessment and Benchmarking Metrics

The performance assessment stage quantitatively evaluates model predictivity using appropriate statistical metrics and validation procedures. For QSAR models predicting PC and TK properties, benchmarking typically emphasizes the performance of models inside their applicability domain [14]. External validation on compounds not used for model training provides the most realistic assessment of how models will perform on new, previously unseen chemicals.

Key Performance Metrics for Model Benchmarking

Different metrics are required for regression (continuous) and classification (categorical) models:

Table 2: Performance Metrics for Computational Model Assessment

| Model Type | Key Metrics | Interpretation and Application |
|---|---|---|
| Regression Models | R² (Coefficient of Determination) | Proportion of variance explained by the model; R² average of 0.717 for PC properties and 0.639 for TK properties reported in benchmarks [14] |
| | Mean Absolute Error (MAE) | Average magnitude of errors between predicted and experimental values [1] |
| | Root Mean Square Error (RMSE) | Standard deviation of prediction errors, giving higher weight to large errors [1] |