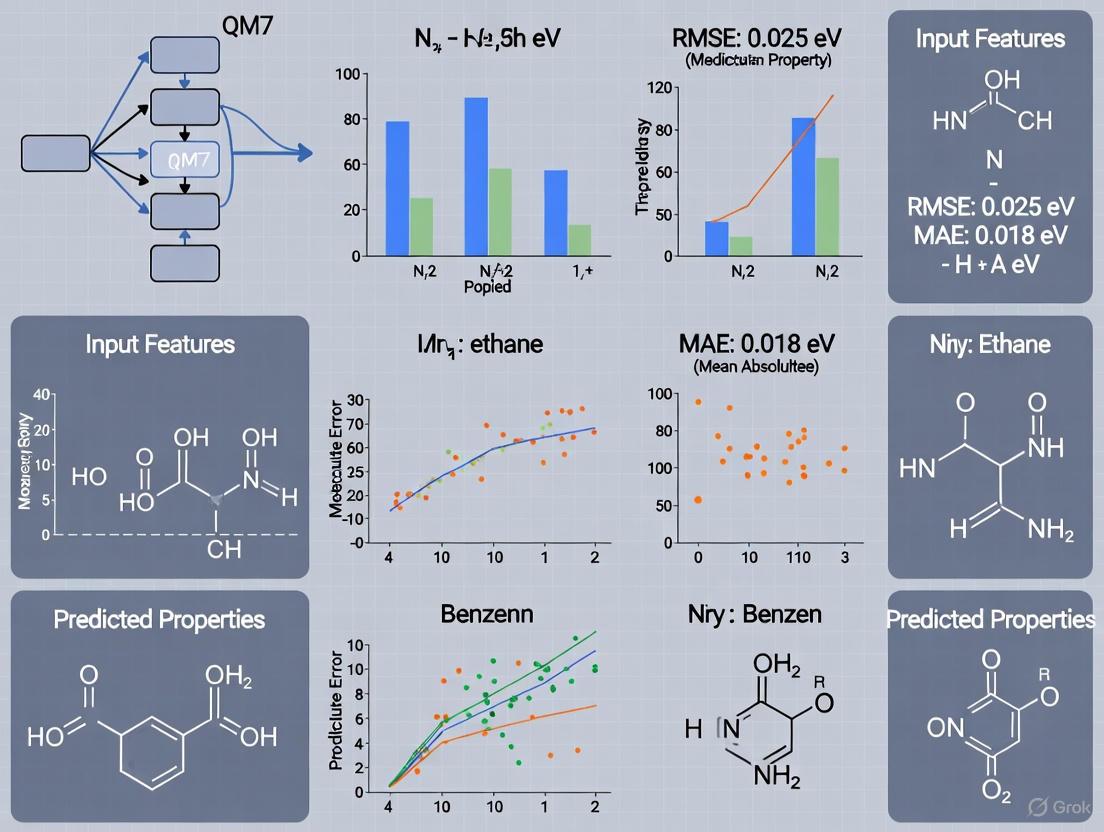

Benchmarking Machine Learning Models on the QM7 Dataset: From Molecular Property Prediction to Drug Discovery Applications

Abstract

This article provides a comprehensive analysis of machine learning (ML) model performance on the foundational QM7 quantum chemistry dataset. It explores the dataset's role in benchmarking ML algorithms for predicting molecular properties like atomization energies, covering foundational concepts, diverse methodological approaches from kernel ridge regression to advanced graph neural networks, and key optimization techniques. The content also addresses common training challenges, performance validation against established benchmarks, and the dataset's critical implications for accelerating property prediction in pharmaceutical and biomedical research, offering researchers and drug development professionals a detailed guide to the current state and future potential of ML in computational chemistry.

Understanding the QM7 Dataset: The Benchmark for Quantum Machine Learning

The QM7 dataset is a foundational resource in computational chemistry and machine learning, providing a benchmark for developing models that predict molecular properties. This guide details its composition, explores machine learning performance, and compares it with newer datasets.

Dataset Composition and Representation

The QM7 dataset is a subset of the GDB-13 database, which enumerates nearly a billion stable and synthetically accessible organic molecules [1].

- Molecule Scope: It includes all molecules with up to 7 heavy atoms (Carbon, Nitrogen, Oxygen, and Sulfur) and a maximum of 23 total atoms (including hydrogen) [1].

- Data Volume: In total, it contains 7,165 unique molecular structures [1].

- Chemical Diversity: The dataset features a wide variety of molecular structures, including double and triple bonds, cycles, and functional groups like carboxy, cyanide, amide, alcohol, and epoxy [1].

A key feature of QM7 is the Coulomb matrix, a representation that encodes molecular structure with built-in invariance to translation and rotation [1]. For a molecule with \(N\) atoms, the Coulomb matrix \(C\) is defined as:

\[
\begin{aligned}
C_{ii} &= \frac{1}{2} Z_i^{2.4} \\
C_{ij} &= \frac{Z_i Z_j}{\lvert \mathbf{R}_i - \mathbf{R}_j \rvert} \quad (\text{for } i \neq j)
\end{aligned}
\]

where \(Z_i\) is the nuclear charge of atom \(i\) and \(\mathbf{R}_i\) is its position in 3D space [1]. The primary property to predict is the atomization energy, computed at the quantum-mechanical PBE0 level of theory and provided in kcal/mol, with values ranging from -800 to -2000 kcal/mol [1].
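To make the definition concrete, the following minimal sketch builds the Coulomb matrix for a single molecule in plain Python. It assumes nuclear charges are given as integers and positions as (x, y, z) tuples; the function name `coulomb_matrix` is illustrative, and the off-diagonal terms simply inherit whatever length unit the coordinates use.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def coulomb_matrix(charges: Sequence[int], coords: Sequence[Vec3]) -> List[List[float]]:
    """Return the N x N Coulomb matrix of one molecule.

    charges: nuclear charges Z_i (1 for H, 6 for C, ...)
    coords:  Cartesian positions R_i; their length unit carries through to C_ij
    """
    n = len(charges)
    if len(coords) != n:
        raise ValueError("charges and coords must have the same length")
    c = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                # Diagonal: 0.5 * Z_i^2.4
                c[i][j] = 0.5 * charges[i] ** 2.4
            else:
                # Off-diagonal: Z_i * Z_j / |R_i - R_j|
                c[i][j] = charges[i] * charges[j] / math.dist(coords[i], coords[j])
    return c


# Example: a carbon and a hydrogen placed 2.0 length units apart
cm = coulomb_matrix([6, 1], [(0.0, 0.0, 0.0), (0.0, 0.0, 2.0)])
# cm[0][1] == 6 * 1 / 2.0 == 3.0
```

Because only interatomic distances enter the formula, translating or rotating the molecule leaves the matrix unchanged, whereas relabeling the atoms permutes its rows and columns.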

Machine Learning Performance Benchmark

The QM7 dataset is a standard benchmark for evaluating machine learning models predicting quantum mechanical properties. The performance is typically measured using Mean Absolute Error (MAE) in kcal/mol for atomization energies, assessed via a standardized 5-fold cross-validation procedure [1].

The table below summarizes the performance of various machine learning methods on the QM7 dataset.

| Model / Method | Key Features / Representation | Test Error (MAE in kcal/mol) |
|---|---|---|
| Kernel Ridge Regression (Rupp et al., 2012) [1] | Gaussian Kernel on sorted eigenspectrum of Coulomb matrix | 9.9 |
| Multilayer Perceptron (Montavon et al., 2012) [1] | Binarized random Coulomb matrices | 3.5 |
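The kernel ridge regression baseline in the first row can be illustrated with a short, dependency-free sketch. It assumes each molecule has already been reduced to a fixed-length feature vector (for the Rupp et al. baseline, the sorted Coulomb-matrix eigenspectrum). The kernel width `sigma` and the regularization strength `lam` are hypothetical hyperparameters that would normally be tuned, and the dense linear solve shown here is only practical for small training sets.

```python
import math
from typing import Callable, List, Sequence

Vector = Sequence[float]


def gaussian_kernel(a: Vector, b: Vector, sigma: float) -> float:
    # k(a, b) = exp(-||a - b||^2 / (2 sigma^2))
    d2 = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-d2 / (2.0 * sigma ** 2))


def solve_linear(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fit_krr(X: Sequence[Vector], y: Sequence[float], sigma: float,
            lam: float) -> Callable[[Vector], float]:
    """Kernel ridge regression: solve (K + lam * I) alpha = y."""
    n = len(X)
    K = [[gaussian_kernel(X[i], X[j], sigma) + (lam if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    alpha = solve_linear(K, list(y))

    def predict(x: Vector) -> float:
        # Prediction is a kernel-weighted sum over the training molecules
        return sum(a_i * gaussian_kernel(x_i, x, sigma) for a_i, x_i in zip(alpha, X))

    return predict
```

With a very small `lam` the model nearly interpolates the training targets; larger values trade training fit for smoother predictions on unseen molecules.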

Experimental Protocol for Benchmarking

Adherence to a consistent experimental protocol is crucial for fair model comparison.

- Data Splits: The dataset includes a predefined splitting matrix P (5 x 1433) for cross-validation [1]. This matrix specifies five distinct splits, each reserving 1,433 molecules for testing and using the remaining 5,732 for training. Models must be evaluated across all five splits, with the reported MAE being the average.

- Evaluation Metric: The standard metric is the Mean Absolute Error (MAE) between the predicted and the true quantum-mechanical atomization energies [1].

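- Input Representation: The primary input is the Coulomb matrix. Because the raw matrix depends on how the atoms are indexed, models usually consume processed versions of it: the sorted eigenspectrum, which is invariant to atom ordering, or randomly permuted and binarized Coulomb matrices, which expose the model to many orderings of the same molecule [1].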
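The split-and-average protocol can be sketched as follows. This assumes the split matrix has already been loaded into a list of index lists (one row of P per fold) and that `fit` is any callable that trains on the given data and returns a predictor; loading the dataset file is not shown, and `fit_mean_baseline` is a hypothetical stand-in for a real model.

```python
from statistics import mean
from typing import Callable, List, Sequence, Tuple

Predictor = Callable[[object], float]


def cross_validated_mae(
    X: Sequence[object],
    y: Sequence[float],
    splits: Sequence[Sequence[int]],
    fit: Callable[[List[object], List[float]], Predictor],
) -> Tuple[float, List[float]]:
    """Average test MAE over predefined folds (e.g. the five rows of P)."""
    fold_maes = []
    for test_idx in splits:
        held_out = set(test_idx)
        # Train on every molecule that is not in this fold's test set
        train_idx = [i for i in range(len(y)) if i not in held_out]
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        fold_maes.append(mean(abs(model(X[i]) - y[i]) for i in test_idx))
    return mean(fold_maes), fold_maes


def fit_mean_baseline(X_train: List[object], y_train: List[float]) -> Predictor:
    # Hypothetical trivial model: always predict the training-set mean
    mu = mean(y_train)
    return lambda x: mu
```

For QM7, `splits` would hold five lists of 1,433 indices each, so every molecule is tested exactly once and the reported number is the mean of the five fold MAEs.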

Evolution Beyond QM7

While QM7 established a critical benchmark, the field has since developed larger and more comprehensive datasets to explore broader chemical spaces and more complex properties.

The QM7 Family and Related Datasets

The limitations of QM7 led to the creation of extended datasets.

| Dataset | Description | Key Advancements |
|---|---|---|
| QM7-X [2] [3] | A comprehensive dataset of 42 properties for ~4.2 million structures. | Extends QM7 by exhaustively sampling constitutional/structural isomers, stereoisomers, and non-equilibrium structures. |
| QM7b [1] | An extension of QM7 for multitask learning. | Includes 13 additional properties (e.g., polarizability, HOMO/LUMO energies) and 7211 molecules (including Chlorine). |
| QM9 [1] | Properties for 134,000 stable small organic molecules made up of CHONF. | Covers molecules with up to 9 heavy atoms, providing a much larger chemical space. |
| OMol25 [4] [5] | A 2025 dataset of over 100 million molecular snapshots. | Radically scales up system size (up to 350 atoms), elemental diversity (83 elements), and includes complex interactions like explicit solvation. |

Modern Machine Learning Context

The scale of modern datasets like OMol25, which required six billion CPU hours to generate, underscores a shift in the field [4]. Training data acquisition has become a primary bottleneck, driving research into methods like Minimal Multilevel Machine Learning (M3L) designed to optimize training data efficiency and reduce computational costs [6]. Furthermore, the community now emphasizes robust and standardized evaluations and benchmarks to reliably measure model performance on chemically relevant tasks [4] [5].

Research Toolkit

This section details essential resources for working with the QM7 dataset and related research.

| Resource Name | Function / Description |
|---|---|
| QM7 / QM7b / QM9 Datasets | Foundational benchmarks for developing and testing molecular machine learning models [1]. |
| Coulomb Matrix Representation | A rotation- and translation-invariant representation of molecular structure that serves as a standard input for models [1]. |
| Defined Cross-Validation Splits | Predefined data splits (included with QM7) ensure fair and reproducible comparison of model performance [1]. |
| OMol25 Dataset & Evaluations | A modern, large-scale benchmark for testing model performance across a diverse range of chemical systems and tasks [4] [5]. |

Experimental Workflow Visualization

[Figure: ML Benchmarking Pathway. A standardized workflow for benchmarking a new machine learning model against established baselines on QM7.]

The accurate prediction of molecular properties is a cornerstone of computational chemistry, directly impacting drug discovery and materials science. For machine learning (ML) models, the quality of the underlying quantum-mechanical (QM) data is paramount. The QM7 dataset and its subsequent expansions have become central benchmarks in this field, providing a structured chemical space of small organic molecules for developing and validating ML approaches [2] [1]. This guide objectively compares the performance and scope of these key datasets, detailing the experimental protocols that underpin their generation and their critical role in advancing ML model performance.

Comparative Analysis of Key Molecular Datasets

The evolution from QM7 to newer datasets represents a concerted effort to expand the scope and accuracy of molecular property data available for machine learning. The table below provides a quantitative comparison of these foundational resources.

Table 1: Comparison of Key Quantum-Mechanical Molecular Datasets

| Dataset | Molecule Count | Heavy Atoms | Total Atoms | Element Coverage | Key Properties Computed |
|---|---|---|---|---|---|
| QM7 [1] | 7,165 | Up to 7 (C, N, O, S) | Up to 23 | H, C, N, O, S | Atomization Energy (PBE0) |
| QM7b [1] | 7,211 | Up to 7 (C, N, O, S, Cl) | Up to 23 | H, C, N, O, S, Cl | 14 Properties (Polarizability, HOMO, LUMO, Excitation Energies) at multiple theory levels |
| QM7-X [2] | ~4.2 million | Up to 7 (C, N, O, S, Cl) | 4 - 23 | H, C, N, O, S, Cl | 42 Global & Local Properties (Atomization energies, Dipole moments, Polarizabilities, HOMO-LUMO gaps, Dispersion coefficients) |
| Halo8 [7] | ~20M structures from ~19k pathways | 3 - 8 | Not Specified | H, C, N, O, F, Cl, Br | Energies, Forces, Dipole Moments, Partial Charges (ωB97X-3c) |
| OMol25 [4] | >100 million | Includes heavy elements & metals | Up to 350 | Most of the periodic table | Energies, Forces (DFT) |

The original QM7 dataset established a critical benchmark, providing Coulomb matrices and atomization energies for a limited set of equilibrium molecular structures [1]. Its extension, QM7b, introduced multitask learning challenges by adding 13 properties—including polarizabilities, HOMO/LUMO eigenvalues, and excitation energies—computed at different levels of theory (ZINDO, SCS, PBE0, GW), and included molecules with chlorine atoms [1].

A significant leap was achieved with the QM7-X dataset, which dramatically expanded the chemical space by including ~4.2 million equilibrium and non-equilibrium structures. It provides 42 tightly-converged quantum-mechanical properties at the PBE0+MBD level, enabling a more comprehensive exploration of structure-property relationships [2]. More recent datasets like Halo8 focus on specific chemical domains, in this case incorporating halogen chemistry and reaction pathways, which are crucial for pharmaceutical applications [7]. The OMol25 dataset represents a scale shift, featuring simulations of much larger molecules (up to 350 atoms) including metals, aiming to enable ML modeling of real-world complexity [4].

Experimental Protocols and Methodologies

Molecular Structure Generation and Sampling

The foundational step for datasets like QM7-X involves exhaustive sampling of molecular configurations.

- Generation of Equilibrium Structures: For QM7-X, initial 3D structures for all molecules with up to seven heavy atoms from the GDB-13 database were generated using the MMFF94 force field via Open Babel. A conformational isomer search was then performed using the Confab tool with the MMFF94 force field, retaining conformers within 50 kcal/mol of the most stable structure and with an RMSD > 0.5 Å. These structures were subsequently re-optimized using the DFTB3+MBD method [2].

- Sampling of Non-Equilibrium Structures: To move beyond equilibrium geometries, QM7-X generated 100 non-equilibrium structures for each equilibrium structure. This was done by displacing each molecular structure along a linear combination of its normal mode coordinates (computed at the DFTB3+MBD level) to achieve an average energy difference analogous to a classical thermal energy at 1500 K, ensuring a Boltzmann distribution of sampled structures [2].

- Reaction Pathway Sampling: The Halo8 dataset employed a more advanced Reaction Pathway Sampling (RPS) method. This workflow, implemented in the "Dandelion" pipeline, uses automated reaction discovery via the single-ended growing string method (SE-GSM) and refines potential energy surfaces with nudged elastic band (NEB) calculations. This method captures transition states and bond-breaking/forming regions absent from equilibrium-focused datasets [7].
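The thermal normal-mode displacement described for QM7-X can be illustrated with a generic classical sketch. It is not the QM7-X code: it assumes orthonormal normal-mode vectors and one harmonic force constant per mode are already available (for example from a DFTB3+MBD frequency calculation), and it draws each mode amplitude from the classical Boltzmann distribution of a harmonic oscillator, so each mode carries kT/2 of potential energy on average. All names are hypothetical.

```python
import random
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
K_B_KCAL_PER_MOL = 0.0019872  # Boltzmann constant in kcal/(mol K)


def sample_displacement(
    coords: Sequence[Vec3],
    modes: Sequence[Sequence[Vec3]],
    force_constants: Sequence[float],
    temperature: float,
    rng: random.Random,
) -> Tuple[List[Vec3], float]:
    """Displace a structure along normal modes with thermal amplitudes.

    Each amplitude is drawn from N(0, kT / k), the classical Boltzmann
    distribution of a harmonic oscillator with force constant k, so the
    mode's harmonic energy 0.5 * k * amp**2 averages kT / 2.
    Returns the displaced coordinates and the total harmonic energy.
    """
    kt = K_B_KCAL_PER_MOL * temperature
    new = [list(xyz) for xyz in coords]
    energy = 0.0
    for mode, k in zip(modes, force_constants):
        amp = rng.gauss(0.0, (kt / k) ** 0.5)
        energy += 0.5 * k * amp * amp
        # Add amp times the (assumed orthonormal) mode vector to every atom
        for atom, d in zip(new, mode):
            for axis in range(3):
                atom[axis] += amp * d[axis]
    return [tuple(atom) for atom in new], energy
```

Calling this repeatedly with `temperature=1500.0` produces a set of displaced geometries whose harmonic energies follow a Boltzmann distribution at that temperature; in a real workflow each geometry would then go on to the property calculations.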

Quantum-Mechanical Property Calculations

After structure generation, high-level quantum-mechanical calculations are performed to compute the target properties.

- QM7-X Protocol: All molecular structures in QM7-X underwent tightly converged QM calculations using hybrid density-functional theory (PBE0) with a many-body treatment of van der Waals dispersion interactions (MBD). These calculations used numeric atom-centered orbitals to compute the 42 global and local properties [2].

- Halo8 Protocol: The Halo8 dataset performed all calculations at the ωB97X-3c level of theory. This composite method includes dispersion corrections (D4) and uses an optimized basis set. The selection of this method was based on a benchmark study that found it provided an optimal compromise between accuracy (weighted MAE of 5.2 kcal/mol on the DIET test set) and computational cost, being five times faster than a quadruple-zeta basis set calculation [7].

- Multi-Level Workflows: To address the high computational cost of pure DFT calculations, efficient multi-level workflows have been developed. The Halo8 team reported a 110-fold speedup over DFT-only approaches by using the semi-empirical GFN2-xTB method for initial geometry optimization and pathway exploration, followed by single-point DFT calculations on selected structures for final accuracy [7].

Workflow for Dataset Construction and Model Training

The process of creating a benchmark dataset and using it to train machine learning models involves several key stages, from initial molecule selection to final model validation.

The construction of quantum-mechanical datasets and the development of ML models rely on a suite of computational tools and data resources.

Table 2: Essential Computational Tools for Molecular ML Research

| Tool / Resource Name | Type | Primary Function in Research |
|---|---|---|
| GDB-13 [2] [1] | Chemical Database | A database of nearly 1 billion theoretically stable organic molecules, providing the foundational chemical space for datasets like QM7 and QM7-X. |
| DFTB+ & ASE [2] | Software Package | Computational chemistry codes used for performing density-functional tight-binding (DFTB) and other quantum-mechanical calculations, including geometry optimizations. |
| ORCA [7] | Software Package | A widely used software package for performing advanced density functional theory (DFT) calculations, such as the ωB97X-3c computations in the Halo8 dataset. |
| Open Babel / RDKit [2] [7] | Cheminformatics Toolkit | Open-source tools used for chemical file format conversion, force field-based 3D structure generation (MMFF94), and stereoisomer enumeration. |
| Coulomb Matrix [1] | Molecular Representation | An early ML-friendly representation of a molecule that encodes atomic identities and distances, with built-in invariance to translation and rotation. |
| Graph Neural Networks (GNNs) [8] [9] | Machine Learning Model | A dominant class of ML models that operate directly on molecular graphs, treating atoms as nodes and bonds as edges to learn structure-property relationships. |
| Machine Learning Interatomic Potentials (MLIPs) [7] [4] | Machine Learning Model | ML models trained on QM data to predict energies and forces, enabling high-speed molecular simulations with quantum-mechanical accuracy. |

The journey from the atomization energies in the original QM7 dataset to the extensive electronic spectral and reactivity properties in its successors has fundamentally shaped the capabilities of machine learning in chemistry. The systematic benchmarking made possible by these datasets has driven progress from simple kernel methods on fixed representations to sophisticated graph neural networks and large-language models capable of multi-task prediction and even reaction planning [8]. As datasets continue to grow in size and physical fidelity—encompassing broader elemental diversity, non-equilibrium states, and explicit reaction pathways—they will continue to be the bedrock upon which more reliable, interpretable, and powerful in-silico molecular design tools are built.

A central question in quantum machine learning (QM/ML) is how to represent molecules in a way that enables accurate and efficient prediction of molecular properties. The Coulomb Matrix has emerged as a foundational representation that directly encodes molecular geometry into a fixed-size matrix, facilitating the application of machine learning to quantum mechanical problems [10]. This representation was developed to address the challenge of making quantitative estimates across the chemical compound space at a computational cost significantly lower than high-level quantum chemistry calculations, which can take days per molecule to achieve the desired chemical accuracy [10]. On benchmark datasets like QM7, which contains 7,165 organic molecules with up to 7 heavy atoms (C, N, O, S) [1], the Coulomb Matrix has served as a standard representation for predicting molecular properties such as atomization energies.

Coulomb Matrix: Formal Definition and Methodology

The Coulomb Matrix provides a quantum-inspired representation that is invariant to translation and rotation of the molecule, addressing fundamental symmetries required for molecular property prediction [1] [10]. Its mathematical formulation captures the electronic interactions within a molecule through a symmetric matrix representation.

Mathematical Formulation

For a molecule with N atoms, the Coulomb matrix is defined as an N×N matrix where each element is calculated as follows [1]:

\[
\begin{aligned}
C_{ii} &= \frac{1}{2} Z_i^{2.4} \\
C_{ij} &= \frac{Z_i Z_j}{\lvert \mathbf{R}_i - \mathbf{R}_j \rvert} \quad (i \neq j)
\end{aligned}
\]

Where:

- \(Z_i\) and \(Z_j\) represent the nuclear charges of atoms \(i\) and \(j\)

- \(\mathbf{R}_i\) and \(\mathbf{R}_j\) represent the Cartesian coordinates of atoms \(i\) and \(j\)

- The diagonal elements represent a polynomial fit to the potential energy of an isolated atom

- The off-diagonal elements approximate the Coulomb repulsion between nuclei
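As a worked example, evaluating the diagonal term for the elements that occur in QM7 shows how steeply it grows with nuclear charge (values rounded to two decimals):

\[
\begin{aligned}
\text{H } (Z=1)&: \tfrac{1}{2}\cdot 1^{2.4} = 0.50 \\
\text{C } (Z=6)&: \tfrac{1}{2}\cdot 6^{2.4} \approx 36.86 \\
\text{N } (Z=7)&: \tfrac{1}{2}\cdot 7^{2.4} \approx 53.36 \\
\text{O } (Z=8)&: \tfrac{1}{2}\cdot 8^{2.4} \approx 73.52 \\
\text{S } (Z=16)&: \tfrac{1}{2}\cdot 16^{2.4} \approx 388.02
\end{aligned}
\]

A single sulfur atom therefore contributes a diagonal entry more than five times larger than that of oxygen, which is one reason heavy atoms dominate distance-based comparisons between Coulomb matrices.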

Implementation and Preprocessing

In practical applications on datasets like QM7, several preprocessing steps are required to handle the variable sizes of different molecules and the Coulomb Matrix's dependence on atom ordering [10]:

Matrix Sizing: For the QM7 dataset with a maximum of 23 atoms per molecule, the Coulomb Matrix is represented as a 23×23 matrix, with zero-padding for smaller molecules [1].

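Permutation Invariance: Since the Coulomb Matrix is not invariant to permutations or re-indexing of atoms, several approaches have been developed:

- Sorted eigenspectrum: the sorted eigenvalues of the matrix are used as features, which do not depend on atom ordering [1].

- Sorted Coulomb matrix: rows and columns are reordered into a canonical order, commonly by decreasing row norm [10].

- Random Coulomb matrices: several randomly permuted copies of the matrix are generated per molecule so that the model learns invariance to atom ordering [1].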

Alternative Representations: The Bag of Bonds approach decomposes the Coulomb Matrix into interatomic distance segments, providing another permutation-invariant representation [11].
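The preprocessing steps discussed in this section (zero-padding, canonical sorting, and randomized orderings) can be sketched in plain Python. This assumes the Coulomb matrix is stored as a list of row lists. Sorting by decreasing row norm, and perturbing the norms with Gaussian noise of width `sigma` before sorting, follow the commonly used recipe for sorted and random Coulomb matrices; the function names and the choice to pad after reordering are illustrative assumptions.

```python
import random
from typing import List, Sequence

Matrix = List[List[float]]


def row_norm(row: Sequence[float]) -> float:
    return sum(x * x for x in row) ** 0.5


def reorder(matrix: Matrix, order: Sequence[int]) -> Matrix:
    # Apply the same permutation to rows and columns
    return [[matrix[i][j] for j in order] for i in order]


def zero_pad(matrix: Matrix, size: int = 23) -> Matrix:
    # Embed an n x n matrix in a size x size block of zeros (QM7: size = 23)
    n = len(matrix)
    if n > size:
        raise ValueError("molecule has more atoms than the padded size")
    padded = [[0.0] * size for _ in range(size)]
    for i in range(n):
        for j in range(n):
            padded[i][j] = matrix[i][j]
    return padded


def sorted_coulomb_matrix(matrix: Matrix) -> Matrix:
    # Canonical ordering: atoms by decreasing row norm
    order = sorted(range(len(matrix)), key=lambda i: row_norm(matrix[i]), reverse=True)
    return reorder(matrix, order)


def random_coulomb_matrix(matrix: Matrix, sigma: float, rng: random.Random) -> Matrix:
    # Noisy row norms give a random ordering close to the sorted one
    noisy = [row_norm(row) + rng.gauss(0.0, sigma) for row in matrix]
    order = sorted(range(len(matrix)), key=lambda i: noisy[i], reverse=True)
    return reorder(matrix, order)


# Example: a 2-atom Coulomb matrix (H first, C second) becomes C-first when sorted
cm = [[0.5, 3.0], [3.0, 0.5 * 6 ** 2.4]]
model_input = zero_pad(sorted_coulomb_matrix(cm))
```

Sorting yields one deterministic input per molecule, while drawing several `random_coulomb_matrix` samples per molecule acts as data augmentation; with `sigma=0.0` the random variant reduces to the sorted one.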

Experimental Protocols and Benchmarking on QM7

The QM7 dataset has served as a standard benchmark for evaluating the performance of the Coulomb Matrix representation and comparing it with alternative molecular featurization methods. This dataset contains 7,165 organic molecules with up to 7 heavy atoms (C, N, O, S) and their atomization energies computed using the Perdew-Burke-Ernzerhof hybrid functional (PBE0) [1].

Standard Evaluation Methodology

The standard experimental protocol for benchmarking molecular representations on QM7 involves:

- Data Splitting: Using the predefined five splits provided in the dataset for cross-validation [1]

- Performance Metric: Mean Absolute Error (MAE) in kcal/mol for atomization energy prediction

- Comparison Framework: Evaluating multiple representations with consistent model architectures

Performance Comparison of Molecular Representations

Table 1: Performance Comparison of Molecular Representations on QM7 Atomization Energy Prediction

| Representation Method | Model Architecture | MAE (kcal/mol) | Key Advantages | Limitations |
|---|---|---|---|---|
| Coulomb Matrix (Sorted) | Bayesian Regularized Neural Networks | 3.51 [10] | Direct geometry encoding, quantum-inspired | Not permutation invariant without processing |
| Coulomb Matrix + Atomic Composition | Bayesian Regularized Neural Networks | 3.00 [10] | Enhanced chemical information, improved accuracy | Increased feature dimensionality |
| Coulomb Matrix Sorted Eigenspectrum | Kernel Ridge Regression (Gaussian kernel) | 9.90 [1] | Permutation invariant by construction | Higher error compared to optimized representations |
| Molecular Fingerprints (Morgan) | XGBoost | Not reported on QM7 (AUROC 0.828 on odor prediction [12]) | Superior for odor prediction tasks | Less effective for quantum properties |
| Graph Convolutional Networks | GCN with Uniform Simulated Annealing | Not reported on QM7 (classification task) [13] | Direct graph processing, no feature engineering | Computationally intensive training |

Table 2: Advanced Model Performance with Coulomb Matrix Representations

| Model Architecture | Representation | MAE (kcal/mol) | Key Innovations |
|---|---|---|---|
| Multilayer Perceptron | Binarized Random Coulomb Matrices | 3.5 [1] | Binary representation for improved learning |
| Kernel Ridge Regression | Coulomb Matrix Sorted Eigenspectrum | 9.9 [1] | Gaussian kernel on sorted eigenvalues |
| Bayesian Regularized Neural Networks | Combined Sorted Coulomb Matrix + Atomic Composition | 3.0 [10] | Hybrid approach with atomic counts |

The experimental results demonstrate that while the baseline Coulomb Matrix representation achieves reasonable performance, its effectiveness improves when it is combined with additional chemical information. The hybrid approach integrating the sorted Coulomb Matrix with atomic composition reduced the MAE from 3.51 to 3.0 kcal/mol, an error reduction of roughly 15% [10].
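A hybrid input of this kind can be assembled by concatenating the sorted, padded Coulomb matrix with element counts. The layout below (upper triangle plus one count per QM7 element) is an assumption for illustration; the exact feature construction used in [10] is not specified here, and `hybrid_features` is a hypothetical name.

```python
from typing import List, Sequence

QM7_ELEMENTS = (1, 6, 7, 8, 16)  # H, C, N, O, S by nuclear charge


def hybrid_features(sorted_cm: Sequence[Sequence[float]], charges: Sequence[int],
                    elements: Sequence[int] = QM7_ELEMENTS) -> List[float]:
    """Concatenate the upper triangle of a sorted Coulomb matrix with element counts."""
    n = len(sorted_cm)
    # The matrix is symmetric, so the upper triangle (with diagonal) is enough
    upper = [float(sorted_cm[i][j]) for i in range(n) for j in range(i, n)]
    # Atomic composition: how many atoms of each element the molecule contains
    counts = [float(sum(1 for z in charges if z == e)) for e in elements]
    return upper + counts
```

For QM7's 23 x 23 padded matrices this yields 276 + 5 = 281 features per molecule, so the composition block adds very little dimensionality relative to the matrix itself.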

Comparative Analysis with Alternative Representations

Molecular Fingerprints

Morgan fingerprints (also known as circular fingerprints) capture molecular structure by iteratively encoding the neighborhood of each atom up to a certain radius [12]. In comparative studies:

- Performance: Achieved AUROC of 0.828 and AUPRC of 0.237 for odor prediction tasks [12]

- Advantages: Effective for structure-activity relationships, interpretable

- Limitations: Less effective for quantum mechanical properties like atomization energy

Graph Neural Networks

Graph Convolutional Networks (GCNs) and related architectures operate directly on the molecular graph structure [13]:

- Approach: Represent atoms as nodes and bonds as edges, with message-passing between neighbors

- Innovations: Recent work has used metaheuristic algorithms like Uniform Simulated Annealing to optimize GCN training [13]

- Applications: Particularly effective for node-level prediction tasks like atom classification
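The message-passing idea can be reduced to a few lines. The sketch below performs one round of sum aggregation over bonded neighbours followed by a sum readout; it deliberately omits the learned weight matrices and nonlinearities that a real GCN applies at each step, and the one-hot atom features are an illustrative choice.

```python
from typing import List, Sequence


def message_passing_step(features: Sequence[Sequence[float]],
                         adjacency: Sequence[Sequence[int]]) -> List[List[float]]:
    """One round of sum-aggregation message passing (no learned weights).

    features:  one feature vector per atom (node)
    adjacency: for each atom, the indices of its bonded neighbours (edges)
    """
    updated = []
    for v, h_v in enumerate(features):
        # Aggregate messages: here simply the sum of neighbour features
        msg = [0.0] * len(h_v)
        for u in adjacency[v]:
            for k, x in enumerate(features[u]):
                msg[k] += x
        # Update: combine the atom's own state with the aggregated message
        updated.append([a + b for a, b in zip(h_v, msg)])
    return updated


def readout(features: Sequence[Sequence[float]]) -> List[float]:
    """Sum-pool atom states into a single molecule-level vector."""
    return [sum(col) for col in zip(*features)]


# Heavy-atom graph of ethanol (C-C-O) with one-hot features [is_C, is_O]
atoms = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [[1], [0, 2], [1]]
molecule_vector = readout(message_passing_step(atoms, bonds))
```

Stacking several such steps lets information propagate further along the bond graph; a trainable model would insert weight matrices and activations in the update and feed the readout into a regression head.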

Quantum Machine Learning Encodings

Emerging approaches explore specialized encodings for quantum machine learning:

- Quantum Molecular Structure Encoding (QMSE): Encodes molecular bond orders and interatomic couplings as a hybrid Coulomb-adjacency matrix directly in quantum circuits [14]

- Potential: Aims to improve state separability for quantum algorithms, though still in early development

Research Reagent Solutions: Essential Materials for Implementation

Table 3: Essential Research Reagents and Computational Tools for Coulomb Matrix Implementation

| Resource Name | Type/Category | Primary Function | Implementation Notes |
|---|---|---|---|
| QM7 Dataset | Benchmark Dataset | Standardized evaluation of molecular representations | Contains 7,165 molecules, atomization energies [1] |
| RDKit | Cheminformatics Library | Molecular descriptor calculation and manipulation | Provides alternative fingerprints and descriptors [12] |
| Open Babel | Chemical Toolbox | Molecular format conversion and coordinate generation | Used to convert molecules to Cartesian coordinates [10] |
| Coulomb Matrix | Molecular Representation | Encodes molecular geometry into fixed-size matrix | Built-in invariance to translation and rotation [1] |
| Bayesian Regularized Neural Networks | ML Model Architecture | Robust regression for molecular property prediction | Reduces overfitting on limited datasets [10] |

Experimental Workflow

The typical workflow for implementing and evaluating Coulomb Matrix representations follows a systematic process from data preparation to model evaluation, with multiple decision points for representation variants and model selection.

The Coulomb Matrix remains a foundational representation in quantum machine learning, particularly for predicting quantum mechanical properties like atomization energies. Its strength lies in its direct encoding of molecular geometry and physical intuition derived from Coulombic interactions. However, modern applications increasingly combine it with complementary representations—particularly atomic composition—to enhance predictive accuracy [10]. While emerging approaches like Graph Neural Networks offer compelling alternatives for structure-based prediction tasks [13], the Coulomb Matrix continues to provide a robust baseline for benchmarking new methodologies on established datasets like QM7. Its integration with more complex neural architectures and hybrid representation schemes points toward future developments where physical priors and learned representations combine to advance computational molecular modeling.

Table 1: Key Specifications of the QM Series Datasets

| Dataset | Molecules | Heavy Atoms | Key Elements | Total Structures | Primary Properties | Key Characteristics |
|---|---|---|---|---|---|---|
| QM7/QM7b [15] [1] | 7,165 (QM7), 7,211 (QM7b) | Up to 7 | C, N, O, S (Cl in QM7b) | ~7,000 | Atomization energy (QM7), 14 properties incl. polarizability, HOMO/LUMO (QM7b) | Single equilibrium structure per molecule; foundational benchmark datasets. [1] |
| QM7-X [2] | Exhaustively enumerated isomers of molecules with up to 7 heavy atoms | Up to 7 | H, C, N, O, S, Cl | ~4.2 million | 42 global & local properties (e.g., energies, dipole moments, polarizabilities) | Exhaustive conformer & non-equilibrium sampling; most comprehensive dataset for small molecules. [2] |
| QM8 [15] [1] | 21,786 | Up to 8 | C, N, O, F | 21,786 | 12 electronic-spectra properties (excitation energies and oscillator strengths) from TDDFT & CC2 | Focus on electronic spectra for synthetically feasible small organic molecules. [1] |
| QM9 [15] [1] | 133,885 | Up to 9 | C, H, O, N, F | 133,885 | 12 geometric, energetic, electronic, & thermodynamic properties | Broad, stable molecules; the most extensive single-structure dataset in the QM series. [1] |

The QM7 dataset has served as a foundational benchmark in the field of molecular machine learning (ML). It provides quantum-mechanical properties for a curated set of small organic molecules, enabling the development and testing of early ML models for predicting molecular properties from structure [15] [1]. Its evolution into larger and more specialized datasets like QM7-X, QM8, and QM9 has collectively mapped a critical region of chemical compound space, each addressing unique challenges in the quest to build robust ML models for computational chemistry and drug discovery.

The Evolution Beyond a Single Structure

A key limitation of the original QM7 and QM9 datasets is that they provide only a single, meta-stable equilibrium structure for each molecule [2]. This offers a simplified view of chemical space, as molecules in reality exist as ensembles of interconverting conformers. The QM7-X dataset was created to address this gap directly.

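QM7-X expands upon the core QM7 data through a multi-stage workflow: enumerating constitutional isomers and stereoisomers from GDB-13, searching for conformers with the MMFF94 force field, re-optimizing them with DFTB3+MBD, generating 100 non-equilibrium structures for each equilibrium structure, and computing properties at the PBE0+MBD level [2].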

This systematic generation of equilibrium and non-equilibrium structures allows ML models trained on QM7-X to learn more accurate and transferable structure-property relationships, which are essential for predicting the behavior of molecules in dynamic environments [2].

A Spectrum of Molecular Complexity and Application

The QM series datasets form a gradient of molecular complexity and scientific focus, from the foundational QM7 to the more extensive QM9, and newer, more specialized datasets build upon this ecosystem.

Experimental Protocols and Benchmarking ML Performance

The true value of the QM7 dataset lies in its well-established role as a benchmark for validating new machine learning algorithms. Evaluations either use the five predefined cross-validation splits distributed with the dataset [1] or, as in MoleculeNet, a stratified split that spreads the range of target values evenly across the training, validation, and test sets [15]. The canonical task is the prediction of molecular atomization energies from the molecular structure, typically represented by the Coulomb matrix [1].

Performance is most commonly reported as the Mean Absolute Error (MAE) in kcal/mol, providing a clear, intuitive metric for comparing model accuracy [15] [1].
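A simple version of stratified splitting for a regression target sorts molecules by their target value and deals them out to folds in turn, so every fold spans the full range of atomization energies. This is a sketch of the idea under that assumption, not DeepChem's exact splitter implementation.

```python
from typing import List, Sequence


def stratified_folds(targets: Sequence[float], n_folds: int) -> List[List[int]]:
    """Assign sample indices to folds so each fold covers the whole target range."""
    # Rank molecules from lowest to highest target value
    order = sorted(range(len(targets)), key=lambda i: targets[i])
    folds: List[List[int]] = [[] for _ in range(n_folds)]
    # Deal ranked molecules round-robin: fold 0, 1, ..., n_folds - 1, 0, 1, ...
    for rank, idx in enumerate(order):
        folds[rank % n_folds].append(idx)
    return folds
```

Because neighbouring ranks land in different folds, no fold ends up holding only the most (or least) strongly bound molecules, which keeps per-fold MAEs comparable.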

Table 2: Representative ML Benchmark Results on QM7

| Model | Representation | Test Error (MAE in kcal/mol) | Key Experimental Detail |
|---|---|---|---|
| Kernel Ridge Regression [1] | Sorted Coulomb matrix eigenspectrum | 9.9 | Standard kernel method on a simplified molecular representation. |
| Multilayer Perceptron (MLP) [1] | Binarized random Coulomb matrices | 3.5 | Early demonstration of deep learning's potential on this task. |
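The sorted eigenspectrum used by the kernel ridge regression baseline can be computed without external libraries using the cyclic Jacobi eigenvalue method, shown here as a self-contained sketch; in practice a linear-algebra library would be used instead. Sorting by decreasing absolute value and zero-padding to 23 entries are assumed conventions, and the function names are hypothetical.

```python
import math
from typing import List, Sequence


def jacobi_eigenvalues(matrix: Sequence[Sequence[float]], tol: float = 1e-18,
                       max_sweeps: int = 50) -> List[float]:
    """Eigenvalues of a real symmetric matrix via cyclic Jacobi rotations."""
    a = [list(row) for row in matrix]
    n = len(a)
    for _ in range(max_sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle that zeroes the (p, q) element
                theta = 0.5 * math.atan2(2.0 * a[p][q], a[q][q] - a[p][p])
                c, s = math.cos(theta), math.sin(theta)
                for k in range(n):  # A <- A J (columns p and q)
                    akp, akq = a[k][p], a[k][q]
                    a[k][p] = c * akp - s * akq
                    a[k][q] = s * akp + c * akq
                for k in range(n):  # A <- J^T A (rows p and q)
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k] = c * apk - s * aqk
                    a[q][k] = s * apk + c * aqk
    return [a[i][i] for i in range(n)]


def sorted_eigenspectrum(matrix: Sequence[Sequence[float]], size: int = 23) -> List[float]:
    # Eigenvalues by decreasing magnitude, zero-padded to a fixed length
    eig = sorted(jacobi_eigenvalues(matrix), key=abs, reverse=True)
    return eig + [0.0] * (size - len(eig))
```

Since eigenvalues are unchanged by any simultaneous permutation of rows and columns, relabeling the atoms yields the same feature vector, which is what makes this representation permutation invariant at the cost of discarding some structural information.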

These benchmarks show a clear progression in model sophistication and accuracy. Later studies using more advanced graph neural networks and learned representations have further pushed performance, often using QM7 as a standard proving ground [16].

The Scientist's Toolkit: Essential Research Reagents

Navigating the quantum dataset ecosystem requires familiarity with a set of computational "reagents." The following table details key resources used in the creation and utilization of these datasets.

| Tool / Resource | Type | Primary Function | Example Use Case |
|---|---|---|---|
| GDB-13/17 [2] [1] | Chemical Database | Enumerates billions of synthetically accessible organic molecules. | Source of molecular connectivities for QM7, QM9, and others. |
| Coulomb Matrix [1] | Molecular Representation | Provides a rotation- and translation-invariant description of a molecule. | Input representation for early ML models on QM7 and QM9. |
| Density Functional Tight Binding (DFTB) [2] | Quantum Chemical Method | Approximates Density Functional Theory for faster geometry optimizations. | Generating initial and meta-stable structures in QM7-X. |
| PBE0+MBD [2] | Quantum Chemical Method | Hybrid density functional with many-body dispersion corrections for high accuracy. | Computing the final, high-quality properties in the QM7-X dataset. |
| MoleculeNet/DeepChem [15] | Machine Learning Benchmarking Platform | Curates datasets, metrics, and ML model implementations. | Standardized benchmarking of new models on QM7 and other datasets. |
| Directed-MPNN [16] | Machine Learning Model | A type of graph neural network that operates on molecular bonds to avoid "message totters." | State-of-the-art learned representation for molecular property prediction. |

The QM7 dataset remains a cornerstone of the molecular machine learning ecosystem, not for its size or complexity, but for its well-defined role as a foundational benchmark. Its true power is revealed when viewed as part of a progressive ecosystem: it provides the baseline that QM7-X challenges with conformational diversity, that QM8 and QM9 expand in scope and size, and that modern datasets transcend by incorporating reactivity and drug-like complexity. For researchers, understanding this landscape is key to selecting the right dataset for developing the next generation of machine learning models in chemistry and drug discovery.

Why QM7 Remains a Critical Benchmark for Modern ML Research

In the rapidly evolving field of machine learning (ML) for molecular science, benchmarking datasets play a crucial role in tracking progress, comparing algorithms, and ensuring scientific rigor. Among these, the QM7 dataset stands out as a historically significant and persistently relevant benchmark. Originally introduced over a decade ago, QM7 contains quantum-mechanical properties for 7,165 small organic molecules from the GDB-13 database, each with up to seven heavy atoms (C, N, O, S) and up to 23 atoms in total [1]. Each molecule is represented by its Coulomb matrix (a representation that encodes molecular structure with built-in invariance to translation and rotation) alongside its atomization energy computed at the quantum-mechanical PBE0 level of theory [1].

Despite the subsequent development of larger and more comprehensive molecular datasets, QM7 remains a critical fixture in modern ML research. Its enduring value lies not in its size but in its well-defined scope, extensive historical baseline data, and role as a controlled testbed for developing novel algorithms before scaling to more complex systems. This article examines why QM7 continues to serve as an indispensable benchmark, providing objective comparisons with alternative datasets and detailed experimental protocols that have shaped its use in the research community.

QM7 in Context: Comparative Analysis of Quantum Chemical Datasets

The landscape of quantum-chemical datasets has expanded significantly since QM7's introduction. Understanding QM7's position within this ecosystem requires comparative analysis against its successors and alternatives.

Table 1: Comparison of Quantum-Chemical Benchmark Datasets for Machine Learning

| Dataset | Molecules | Heavy Atoms | Properties | Key Features | Common Use Cases |
|---|---|---|---|---|---|
| QM7 | 7,165 [1] | Up to 7 [1] | Atomization energies [1] | Single equilibrium structure per molecule; Coulomb matrix representation | Baseline model development; molecular energy prediction |
| QM7-X | ~4.2 million [2] | Up to 7 [2] | 42 properties (dipole moments, polarizabilities, HOMO-LUMO gaps, etc.) [2] | Extensive conformational sampling; equilibrium and non-equilibrium structures | Training data-intensive models; transfer learning; conformer analysis |
| QM8 | 21,786 [15] | Up to 8 [15] | 12 excitation properties [15] | Electronic spectra from TDDFT and CC2 methods | Excited states prediction; optical property modeling |
| QM9 | 133,885 [15] | Up to 9 [15] | 12 geometric, energetic, electronic, and thermodynamic properties [15] | CHONF elements; B3LYP/6-31G(2df,p) level theory | Comprehensive molecular property prediction; model scalability |

The QM7-X dataset, introduced in 2021, represents a substantial expansion of the chemical space covered by QM7, encompassing approximately 4.2 million equilibrium and non-equilibrium structures of molecules with up to seven non-hydrogen atoms [2]. While QM7 contains only a single metastable structure per molecule, QM7-X provides an exhaustive sampling of constitutional isomers, stereoisomers, and conformational isomers, plus 100 non-equilibrium structural variations for each [2]. Furthermore, where QM7 offers only atomization energies, QM7-X contains 42 diverse physicochemical properties computed at the PBE0+MBD level of theory, ranging from ground-state quantities to response properties [2].

The MoleculeNet benchmark, introduced in 2017, helped standardize evaluation procedures across multiple molecular datasets, including QM7, QM8, and QM9 [15] [17]. By establishing consistent metrics, data splitting protocols, and evaluation frameworks, MoleculeNet addressed the critical challenge of comparability between different ML methods [15]. For QM7 specifically, MoleculeNet recommends stratified splitting and Mean Absolute Error (MAE) as the primary metric [15].
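To illustrate what stratified splitting means for a continuous target, the sketch below sorts molecules by atomization energy, cuts the sorted list into consecutive blocks of five, and sends each molecule in a block to a different fold. Every fold therefore spans the full energy range. This is one simple scheme, assumed here for illustration; it is not necessarily the exact procedure implemented in DeepChem, and `stratified_folds` is a hypothetical helper.

```python
import numpy as np


def stratified_folds(y, n_folds=5, seed=0):
    """Assign each molecule a fold id so every fold spans the full range of y.

    Molecules are sorted by target value and cut into consecutive blocks of
    n_folds; within each block the fold ids are randomly permuted, so each
    fold receives one molecule from every energy "bin".
    """
    rng = np.random.default_rng(seed)
    order = np.argsort(y)
    folds = np.empty(len(y), dtype=int)
    for start in range(0, len(y), n_folds):
        block = order[start:start + n_folds]
        # A shorter final block simply receives a random subset of fold ids.
        folds[block] = rng.permutation(n_folds)[: len(block)]
    return folds


# Synthetic energies in the QM7 range, for illustration only.
energies = np.random.default_rng(1).uniform(-2000.0, -800.0, size=7165)
folds = stratified_folds(energies)
print(np.bincount(folds))  # 1433 molecules per fold
```

Because 7,165 = 5 × 1,433, every block is full and each fold receives exactly 1,433 molecules.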

Table 2: Historical Benchmark Performance on QM7 Atomization Energy Prediction

| Model | Representation | Test MAE (kcal/mol) | Reference |
|---|---|---|---|
| Kernel Ridge Regression | Coulomb matrix sorted eigenspectrum | 9.9 | Rupp et al., PRL 2012 [1] |
| Multilayer Perceptron | Binarized random Coulomb matrices | 3.5 | Montavon et al., NIPS 2012 [1] |
| Modern GNNs | Learned molecular representations | ~3.0 (typical range) | Extrapolated from historical trends |

More recent datasets like the Open Molecules 2025 (OMol25) collection have pushed boundaries further, containing over 100 million 3D molecular snapshots with properties calculated using density functional theory, including molecules with up to 350 atoms across most of the periodic table [4]. Despite this dramatic scaling in data volume and chemical complexity, compact benchmarks like QM7 retain value for rapid iteration and controlled experimentation.

Experimental Protocols: Methodologies for QM7 Benchmarking

Data Preparation and Splitting Strategies

Proper experimental protocol begins with appropriate dataset splitting. For QM7, the standard practice involves:

Stratified Splitting: The dataset is divided using a stratified approach that preserves the distribution of atomization energies across splits, as recommended in the MoleculeNet benchmark [15]. The original QM7 publication provides predefined splits for cross-validation, organized into a 5×1433 matrix (P) that divides the 7165 molecules into five training/test set combinations [1].

Input Representation: The Coulomb matrix representation is standard for QM7, defined as:

- $C_{ii} = \frac{1}{2}Z_i^{2.4}$ for diagonal elements

- $C_{ij} = \frac{Z_i Z_j}{|R_i - R_j|}$ for off-diagonal elements ($i \neq j$), where $Z_i$ is the nuclear charge of atom $i$ and $R_i$ is its position [1].

Evaluation Metric: Mean Absolute Error (MAE) in kcal/mol for atomization energies serves as the primary metric, allowing direct comparison with historical benchmarks [15] [1].
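A minimal NumPy sketch of the Coulomb matrix defined above follows. It is zero-padded to 23 atoms to match the largest QM7 molecule. The function name `coulomb_matrix` and the `n_max` padding argument are illustrative assumptions. Coordinates can be in any consistent length unit, and the off-diagonal values scale with that choice.

```python
import numpy as np


def coulomb_matrix(Z, R, n_max=23):
    """Coulomb matrix of one molecule, zero-padded to n_max atoms.

    Z: (n,) nuclear charges; R: (n, 3) Cartesian coordinates.
    C_ii = 0.5 * Z_i**2.4 and C_ij = Z_i * Z_j / |R_i - R_j| for i != j.
    """
    Z = np.asarray(Z, dtype=float)
    R = np.asarray(R, dtype=float)
    n = len(Z)
    dist = np.linalg.norm(R[:, None, :] - R[None, :, :], axis=-1)
    np.fill_diagonal(dist, 1.0)          # placeholder; the diagonal is overwritten below
    C = np.outer(Z, Z) / dist
    np.fill_diagonal(C, 0.5 * Z ** 2.4)
    padded = np.zeros((n_max, n_max))
    padded[:n, :n] = C
    return padded


# Hypothetical two-atom example: a C and an H atom 2.0 length units apart.
C = coulomb_matrix([6, 1], [[0.0, 0.0, 0.0], [0.0, 0.0, 2.0]])
print(C[:2, :2])  # off-diagonal entries equal 6 * 1 / 2.0 = 3.0
```

Only interatomic distances enter the off-diagonal terms, so translating or rotating the molecule leaves the matrix unchanged. Reordering the atoms, however, permutes its rows and columns.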

Advanced Methodologies: Differentiable Quantum Chemistry

Recent advances have introduced more sophisticated approaches that extend beyond direct property prediction. Differentiable quantum chemistry frameworks now enable training ML models against fundamental quantum mechanical intermediates:

Diagram 1: Differentiable Quantum Chemistry Workflow

This framework integrates ML with quantum chemistry by learning an effective electronic Hamiltonian, which is then processed through a differentiable quantum chemistry calculator (such as PySCFAD) to obtain multiple electronic properties [18] [19]. The entire workflow is differentiable, enabling end-to-end training against quantum mechanical observables. This approach demonstrates QM7's evolving role - from a simple testbed for energy prediction to a proving ground for hybrid ML-quantum chemistry methods that learn fundamental physical representations rather than just structure-property relationships [18].
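The toy sketch below makes the idea of differentiating through a Hamiltonian concrete. A symmetric matrix stands in for a learned effective Hamiltonian, and the sketch assumes an orthonormal basis and doubly occupied orbitals. From this matrix it derives orbital energies, a band-energy sum, and a HOMO-LUMO gap. It also computes the analytic gradient of the band energy with respect to the matrix, using first-order perturbation theory (Hellmann-Feynman).

The code uses plain NumPy, not PySCFAD, and the function names are hypothetical. A real differentiable framework would obtain such gradients by automatic differentiation and would also handle overlap matrices and self-consistency, both of which are omitted here.

```python
import numpy as np


def orbital_properties(H, n_occ):
    """Properties derived from a symmetric effective Hamiltonian.

    Toy assumptions: orthonormal basis (no overlap matrix), two electrons
    per occupied orbital, no self-consistency.
    Returns orbital energies, eigenvectors, band energy, and HOMO-LUMO gap.
    """
    eps, C = np.linalg.eigh(H)           # ascending eigenvalues, eigenvectors as columns
    band_energy = 2.0 * eps[:n_occ].sum()
    gap = eps[n_occ] - eps[n_occ - 1]
    return eps, C, band_energy, gap


def band_energy_grad(H, n_occ):
    """Gradient of the band energy with respect to H.

    First-order perturbation theory gives d(eps_k) = c_k^T dH c_k, so the
    gradient is 2 * sum over occupied k of c_k c_k^T (twice the projector
    onto the occupied space). Valid while the HOMO-LUMO gap is nonzero.
    """
    _, C, _, _ = orbital_properties(H, n_occ)
    C_occ = C[:, :n_occ]
    return 2.0 * C_occ @ C_occ.T


# Random symmetric 6 x 6 matrix standing in for an ML-predicted Hamiltonian.
rng = np.random.default_rng(0)
A = rng.normal(size=(6, 6))
H = 0.5 * (A + A.T)
_, _, e_band, gap = orbital_properties(H, n_occ=3)
# In an end-to-end setup, a gradient like G would be propagated back to model parameters.
G = band_energy_grad(H, n_occ=3)
```

The closed-form gradient is twice the projector onto the occupied orbitals. It stays well defined as long as the occupied and virtual levels are separated by a nonzero gap.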

Table 3: Essential Research Resources for QM7-Based Machine Learning

| Resource | Type | Function | Relevance to QM7 |
|---|---|---|---|
| Coulomb Matrix | Molecular representation | Encodes molecular structure with invariance to translation and rotation | Standard input representation for traditional QM7 models [1] |
| DeepChem | Software library | Provides implementations of molecular featurizations and ML algorithms | Includes curated QM7 dataset and standardized benchmarking tools [15] [17] |
| PySCFAD | Differentiable quantum chemistry code | Enables gradient computation through quantum chemical operations | Facilitates hybrid ML-QM models trained on QM7 data [18] [19] |
| GDB-13 | Chemical database | Source of synthetically feasible organic molecules for QM7 | Provides the chemical space from which QM7 molecules were selected [1] |
| ANI-type models | Machine learning potentials | Provides pre-trained models for chemical property prediction | Offers baseline comparisons and transfer learning opportunities [2] |

Critical Perspectives and Limitations

While QM7 maintains importance as a benchmark, researchers must recognize its limitations. The dataset's primary constraint is its limited chemical diversity - all molecules contain only up to seven heavy atoms (C, N, O, S), restricting the complexity of chemical environments models can learn from [1]. Additionally, QM7 provides only single conformation representations per molecule, ignoring the complex conformational landscapes that influence molecular properties in reality [2].

The broader ecosystem of molecular benchmarks faces significant challenges. As noted in critical assessments, many benchmark datasets suffer from technical issues including invalid chemical structures, inconsistent stereochemistry representation, and problematic dataset splits [20]. These concerns extend beyond QM7 to affect even newer and larger benchmarks.

Furthermore, the field continues to grapple with fundamental questions about what constitutes appropriate benchmarking. As one analysis notes, "Better benchmarks and evaluations have been essential for progress and advancing many fields of ML" [4]. The development of "exceptionally thorough evaluations" remains an active challenge, with researchers rightly skeptical of ML tools when applied to complex chemical phenomena like bond breaking and formation [4].

QM7 remains a critical benchmark for modern ML research not despite its age, but because of the historical context and methodological foundation it provides. Its continued relevance stems from several key factors: the extensive historical baseline for performance comparison, its manageable computational requirements enabling rapid experimentation, its role in the MoleculeNet standardized benchmark suite, and its evolving utility for testing novel approaches like differentiable quantum chemistry.

As the field progresses toward increasingly complex datasets like QM7-X and OMol25, QM7 maintains its position as an essential first proving ground for new algorithms and approaches. Its structured simplicity provides the controlled environment necessary for method development before scaling to more challenging chemical spaces. In the broader context of machine learning for molecular science, QM7 exemplifies how well-constructed benchmarks of limited scope can deliver enduring value, continuing to shape research directions and methodological standards years after their introduction.

From Descriptors to Predictions: ML Methodologies for QM7

The QM7 dataset has emerged as a fundamental benchmark in molecular machine learning, providing a standardized testing ground for comparing the performance of various algorithms in predicting quantum-mechanical properties. This dataset comprises 7,165 small organic molecules with up to 7 heavy atoms (C, N, O, S) from the GDB-13 database, featuring diverse molecular structures including double and triple bonds, cycles, and various functional groups [1]. Each molecule is described by its Coulomb matrix, which encodes nuclear charges and the Coulomb repulsion between pairs of nuclei while remaining invariant to molecular translation and rotation. Each molecule also comes with its atomization energy computed using hybrid density functional theory (PBE0) [1].

Within this context, Kernel Ridge Regression (KRR) and Multilayer Perceptrons (MLP) represent two distinct philosophical approaches to machine learning. KRR is a kernel-based method that operates on the similarity between molecules in a high-dimensional feature space, while MLPs are neural networks capable of learning hierarchical representations through multiple layers of nonlinear transformations. Their comparative performance on QM7 offers valuable insights into how different algorithmic architectures handle the complex relationship between molecular structure and quantum properties.

Performance Comparison on QM7

Extensive benchmarking on the QM7 dataset has revealed significant differences in how KRR and MLP approaches perform in predicting molecular atomization energies. The standard evaluation metric used is mean absolute error (MAE) in kcal/mol, typically measured via five-fold cross-validation using the predefined splits provided in the dataset [1].

Table 1: Performance Comparison of KRR and MLP on QM7

| Method | Representation | MAE (kcal/mol) | Key Features |
|---|---|---|---|
| Kernel Ridge Regression | Coulomb matrix sorted eigenspectrum | 9.9 [1] | Uses Gaussian kernel, relies on molecular similarity |
| Multilayer Perceptron | Binarized random Coulomb matrices | 3.5 [1] | Learns hierarchical features through multiple layers |

The performance disparity highlights a fundamental characteristic of these methods: the standard KRR approach with Coulomb matrix eigenspectrum achieves an MAE of approximately 9.9 kcal/mol, while MLP with binarized random Coulomb matrices significantly outperforms it with an MAE of 3.5 kcal/mol [1]. This substantial improvement demonstrates MLP's superior capability in capturing the complex, nonlinear relationships between molecular structure and atomization energies when appropriate input representations are used.

It is worth noting that training MLP models on QM7 is computationally intensive, with reports indicating it can take up to two days depending on the hardware configuration [1]. This represents a trade-off between prediction accuracy and computational resources that researchers must consider when selecting an approach for their specific application.

Experimental Protocols and Methodologies

Kernel Ridge Regression Implementation

The KRR approach implemented on QM7 utilizes a specific preprocessing strategy for the Coulomb matrix representation. The standard Coulomb matrix is defined as:

$$ \begin{aligned} C_{ii} &= \frac{1}{2}Z_i^{2.4} \\ C_{ij} &= \frac{Z_iZ_j}{|R_i - R_j|} \quad (i \neq j) \end{aligned} $$

where $Z_i$ represents the nuclear charge of atom $i$ and $R_i$ is its position in 3D space [1]. Rather than using the raw Coulomb matrix directly, the KRR implementation uses the sorted eigenspectrum of the Coulomb matrix as the feature vector. The Coulomb matrix itself is already invariant to translation and rotation. Its eigenvalues are also unchanged when the atoms are re-indexed. Sorting them by magnitude (with matrices zero-padded to a common size) gives every molecule a fixed-length feature vector with a consistent ordering [1].
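The eigenspectrum feature can be sketched in a few lines of NumPy. The helper name is hypothetical, and sorting by decreasing absolute value is an assumption about the exact ordering. For zero-padded matrices, the padding contributes trailing zero eigenvalues, so every molecule maps to a vector of the same length.

```python
import numpy as np


def sorted_eigenspectrum(C):
    """Eigenvalues of a symmetric (zero-padded) Coulomb matrix, sorted by
    decreasing absolute value to give a fixed-length feature vector."""
    eigvals = np.linalg.eigvalsh(C)
    return eigvals[np.argsort(-np.abs(eigvals), kind="stable")]


# Hypothetical 3-atom block padded to 23 x 23.
C = np.zeros((23, 23))
C[:3, :3] = [[36.86, 3.0, 2.0], [3.0, 0.5, 0.4], [2.0, 0.4, 0.5]]
features = sorted_eigenspectrum(C)

# Re-indexing the atoms permutes rows and columns but leaves the features unchanged.
perm = np.r_[2, 0, 1, 3:23]
assert np.allclose(features, sorted_eigenspectrum(C[np.ix_(perm, perm)]))
```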

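The regression itself uses a Gaussian kernel, $k(\mathbf{x}, \mathbf{x}') = \exp\left(-\lVert \mathbf{x} - \mathbf{x}' \rVert^2 / 2\sigma^2\right)$, to measure the similarity between the eigenspectrum vectors of two molecules. Predictions are weighted sums of kernel values against the training molecules. The weights come from solving the regularized linear system $(\mathbf{K} + \lambda \mathbf{I})\boldsymbol{\alpha} = \mathbf{y}$. The kernel trick lets KRR operate implicitly in the high-dimensional feature space induced by the kernel without ever computing coordinates in that space. This makes it well suited to capturing nonlinear structure-property relationships in molecular data.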
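A minimal kernel ridge regression sketch with the Gaussian kernel follows. The class and parameter names are illustrative assumptions: `sigma` is the kernel width and `lam` is the ridge regularizer. In practice both would be tuned on the training folds, and an off-the-shelf implementation such as scikit-learn's `KernelRidge` could be used instead. A toy one-dimensional function stands in for the mapping from eigenspectrum features to energies.

```python
import numpy as np


def gaussian_kernel(A, B, sigma):
    """K[i, j] = exp(-||a_i - b_j||^2 / (2 sigma^2)) for rows a_i of A and b_j of B."""
    sq_dist = (np.sum(A ** 2, axis=1)[:, None]
               + np.sum(B ** 2, axis=1)[None, :]
               - 2.0 * A @ B.T)
    return np.exp(-np.maximum(sq_dist, 0.0) / (2.0 * sigma ** 2))


class GaussianKRR:
    """Kernel ridge regression: solve (K + lam * I) alpha = y, predict K_test @ alpha."""

    def __init__(self, sigma=1.0, lam=1e-6):
        self.sigma = sigma
        self.lam = lam

    def fit(self, X, y):
        self.X_train = np.asarray(X, dtype=float)
        K = gaussian_kernel(self.X_train, self.X_train, self.sigma)
        self.alpha = np.linalg.solve(K + self.lam * np.eye(len(K)), np.asarray(y, dtype=float))
        return self

    def predict(self, X):
        K_test = gaussian_kernel(np.asarray(X, dtype=float), self.X_train, self.sigma)
        return K_test @ self.alpha


# Toy 1D regression standing in for eigenspectrum features -> energies.
X = np.linspace(0.0, 1.0, 30)[:, None]
y = np.sin(2 * np.pi * X[:, 0])
model = GaussianKRR(sigma=0.2, lam=1e-6).fit(X, y)
X_mid = (X[:-1] + X[1:]) / 2             # points between the training samples
print(np.max(np.abs(model.predict(X_mid) - np.sin(2 * np.pi * X_mid[:, 0]))))
```

Fitting reduces to a single dense linear solve whose size equals the number of training molecules. For one QM7 fold, that is a 5,732 × 5,732 system.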

Multilayer Perceptron Implementation

The MLP approach, which achieved the best result reported on QM7 at the time, processes its inputs quite differently. Instead of using the sorted eigenspectrum, this method uses binarized random Coulomb matrices [1]. Each molecule is presented to the network as many Coulomb matrices with randomly chosen atom orderings, obtained by sorting atoms by row norm after adding a small amount of noise. Each matrix entry is then expanded into a set of thresholded, approximately binary units. The result is an ensemble of input views for each molecule, which also acts as a form of data augmentation.
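One way to realize this representation is sketched below, in the spirit of Montavon et al. (2012) but with hypothetical parameters. It has two parts:

- A random atom ordering, obtained by sorting rows by their norm plus Gaussian noise.
- A soft binarization that expands each matrix entry `x` into `tanh((x - t) / theta)` over a grid of thresholds `t`.

The noise level, `theta`, the number of thresholds, and all helper names are illustrative choices, not the settings of the original implementation. In practice, the threshold grid would need to match the scale of real Coulomb matrix entries.

```python
import numpy as np


def random_coulomb_matrix(C, n_atoms, rng, noise=1.0):
    """Return C with its first n_atoms rows/columns in a random, norm-based order.

    Atoms are sorted by row norm plus Gaussian noise, so nearly tied atoms
    swap places between calls; zero-padded rows stay at the end.
    """
    norms = np.linalg.norm(C[:n_atoms], axis=1) + noise * rng.standard_normal(n_atoms)
    order = np.concatenate([np.argsort(-norms), np.arange(n_atoms, len(C))])
    return C[np.ix_(order, order)]


def soft_binarize(C, theta=1.0, n_steps=5):
    """Expand each upper-triangular entry x into tanh((x - t) / theta) for a grid of t."""
    x = C[np.triu_indices(len(C))]
    thresholds = theta * np.arange(-n_steps, n_steps + 1)
    return np.tanh((x[:, None] - thresholds[None, :]) / theta).ravel()


def augmented_inputs(C, n_atoms, n_samples, seed=0):
    """Stack n_samples binarized random Coulomb matrices as MLP inputs for one molecule."""
    rng = np.random.default_rng(seed)
    return np.stack([soft_binarize(random_coulomb_matrix(C, n_atoms, rng))
                     for _ in range(n_samples)])


C = np.zeros((23, 23))
C[:3, :3] = [[36.86, 3.0, 2.0], [3.0, 0.5, 0.4], [2.0, 0.4, 0.5]]
views = augmented_inputs(C, n_atoms=3, n_samples=4)
print(views.shape)  # (4, 3036): 276 unique entries x 11 thresholds
```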

The MLP architecture consists of multiple fully connected layers with nonlinear activation functions, allowing the network to learn hierarchical feature representations from the input data. The training process involves error backpropagation with optimization algorithms to minimize the difference between predicted and actual atomization energies [1]. The specific implementation provided for QM7 includes separate training and testing scripts that can run concurrently, enabling researchers to monitor progress during the extended training period [1].

Cross-Validation Framework

Both methods are evaluated using the standardized five-fold cross-validation splits provided in the QM7 dataset [1]. Using the same predefined splits keeps performance comparisons consistent across studies and guards against overoptimistic results caused by data leakage. The dataset includes a predefined, stratified partition matrix P (5 × 1433). Each row lists the 1,433 test molecules of one fold, so every fold trains on the remaining 5,732 molecules (80% of the data) and tests on 1,433 (20%).
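Iterating over the predefined folds is straightforward once `P` is loaded. For example, `scipy.io.loadmat("qm7.mat")["P"]` should work, assuming the distributed MATLAB file stores the partition under that key. The sketch below uses a synthetic `P` and a trivial mean predictor as a stand-in model. The names `cv_splits`, `cross_validated_mae`, and the `fit_predict` callback are hypothetical, and `T` is assumed to be a flat array of 7,165 atomization energies.

```python
import numpy as np


def cv_splits(P):
    """Yield (train_idx, test_idx) for each row of a partition matrix P.

    Row k of P holds the test indices of fold k; training uses all other rows.
    """
    P = np.asarray(P)
    for k in range(P.shape[0]):
        train_idx = np.concatenate([P[j] for j in range(P.shape[0]) if j != k])
        yield train_idx, P[k]


def cross_validated_mae(T, P, fit_predict):
    """Average test MAE over folds; fit_predict(train_idx, test_idx) returns predictions."""
    fold_maes = []
    for train_idx, test_idx in cv_splits(P):
        pred = fit_predict(train_idx, test_idx)
        fold_maes.append(float(np.mean(np.abs(pred - T[test_idx]))))
    return float(np.mean(fold_maes)), fold_maes


# Synthetic stand-ins for the real P (5 x 1433) and T (7165 energies).
rng = np.random.default_rng(0)
P = rng.permutation(7165).reshape(5, 1433)
T = rng.uniform(-2000.0, -800.0, size=7165)

# Trivial baseline: predict the mean training energy for every test molecule.
mean_mae, fold_maes = cross_validated_mae(
    T, P, lambda tr, te: np.full(len(te), T[tr].mean()))
```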

Advanced Extensions and Contemporary Approaches

Beyond QM7: The QM7-X Dataset

The development of QM7-X represents a significant expansion of the original QM7 dataset, addressing several limitations and enabling more sophisticated machine learning applications. QM7-X contains approximately 4.2 million equilibrium and non-equilibrium structures of small organic molecules with up to seven non-hydrogen atoms (C, N, O, S, Cl) [2]. This comprehensive dataset includes an exhaustive sampling of constitutional isomers, stereoisomers, and conformational isomers, providing unprecedented coverage of this region of chemical compound space.

QM7-X was computed at the tightly converged PBE0+MBD level of theory and contains 42 physicochemical properties ranging from ground-state quantities (atomization energies, dipole moments) to response properties (polarizability tensors, dispersion coefficients) [2] [21]. This extensive collection of properties enables researchers to develop models for multiple molecular characteristics simultaneously and explore more complex structure-property relationships across diverse molecular conformations.

Hybrid ML/QM Frameworks

Recent research has explored hybrid approaches that integrate machine learning with quantum mechanical calculations, creating models that leverage the strengths of both paradigms. One promising direction involves developing ML models that predict intermediate quantum-mechanical quantities rather than direct properties [18]. For instance, models can be trained to predict the effective single-particle Hamiltonian matrix, from which multiple properties can be derived through analytical physics-based operations [18].

These hybrid frameworks interface with differentiable electronic structure codes like PySCFAD, enabling end-to-end optimization of ML models against quantum chemical observables [18]. This approach has demonstrated improved accuracy and transferability, particularly for response properties like polarizability, while maintaining computational efficiency comparable to minimal-basis quantum calculations.

Table 2: Evolution of Quantum-Mechanical Datasets for Machine Learning

| Dataset | Size | Elements | Properties | Key Features |
|---|---|---|---|---|
| QM7 [1] | 7,165 molecules | H, C, N, O, S | Atomization energies | Single equilibrium structure per molecule |
| QM7b [1] | 7,211 molecules | H, C, N, O, S, Cl | 14 properties including polarizability, HOMO/LUMO | Multitask learning with additional properties |
| QM9 [1] | 134,000 molecules | H, C, N, O, F | Geometric, energetic, electronic, thermodynamic | Molecules with up to 9 heavy atoms |
| QM7-X [2] | ~4.2 million structures | H, C, N, O, S, Cl | 42 physicochemical properties | Equilibrium and non-equilibrium structures |

The Scientist's Toolkit

Table 3: Essential Research Resources for ML on Quantum-Mechanical Datasets

| Resource | Type | Description | Application |
|---|---|---|---|
| QM7 Dataset [1] | Dataset | 7,165 molecules with atomization energies and Coulomb matrices | Benchmarking ML algorithms for molecular property prediction |
| QM7-X Dataset [2] [21] | Dataset | ~4.2M structures with 42 properties each | Developing advanced ML models across chemical compound space |
| Coulomb Matrix [1] | Molecular Representation | Quantum-mechanically derived matrix with built-in rotational and translational invariance | Input feature for molecular machine learning models |
| Binarized Random Coulomb Matrices [1] | Molecular Representation | Ensemble of randomly perturbed and thresholded Coulomb matrices | Input representation for improved MLP performance |
| PySCFAD [18] | Software | Differentiable electronic structure code | Hybrid ML/QM model development and training |
| Kernel Ridge Regression | Algorithm | Kernel-based regression method with regularization | Baseline molecular property prediction |
| Multilayer Perceptron | Algorithm | Feedforward neural network with multiple hidden layers | Advanced nonlinear molecular property prediction |

The comparative analysis of Kernel Ridge Regression and Multilayer Perceptrons on the QM7 dataset reveals fundamental insights into machine learning approaches for molecular property prediction. While KRR provides a solid baseline with its theoretical foundations and simplicity, MLP demonstrates superior performance when coupled with appropriate input representations like binarized random Coulomb matrices, achieving significantly lower prediction errors for molecular atomization energies.

The evolution from QM7 to more comprehensive datasets like QM7-X, along with the emergence of hybrid ML/QM frameworks, points toward an exciting future where machine learning increasingly integrates with fundamental physics principles. These advancements are paving the way for more accurate, efficient, and interpretable models that can accelerate the discovery of novel molecules with tailored properties for pharmaceutical, materials, and energy applications.

For researchers working in this domain, the choice between KRR and MLP involves careful consideration of the trade-offs between prediction accuracy, computational requirements, and model interpretability. As the field progresses, the integration of these traditional machine learning approaches with quantum-mechanical principles will likely yield even more powerful tools for exploring the vast landscape of chemical compound space.

The accurate prediction of molecular properties from structure is a fundamental challenge in computational chemistry and drug discovery. Traditional machine learning methods relied on pre-defined molecular descriptors or fingerprints, which could potentially overlook important structural information [22]. Graph Neural Networks (GNNs) have emerged as a powerful alternative that natively operates on the graph representation of molecules, where atoms constitute nodes and bonds form edges [23] [24]. This approach allows GNNs to automatically learn task-specific features directly from molecular structure, capturing complex patterns that might be missed by manual feature engineering [23].

The QM7 dataset has served as a crucial benchmark for evaluating machine learning methods in quantum chemistry [1]. This dataset contains 7,165 organic molecules with up to seven heavy atoms (C, N, O, S) and provides their atomization energies computed at the quantum-mechanical PBE0 level of theory [1] [2]. The properties in QM7 and related quantum datasets depend fundamentally on the 3D arrangement of atoms, making them particularly challenging and meaningful benchmarks for molecular property prediction [20]. By testing models on QM7, researchers can assess their ability to capture intricate structure-property relationships essential for computational drug discovery and materials design.

GNN Architectures for Molecular Property Prediction

Fundamental GNN Components and Message Passing

At their core, GNNs learn molecular representations through an iterative message passing framework where nodes (atoms) update their feature vectors by aggregating information from their neighboring nodes [22]. This process typically involves three key components: node embedding initialization, message passing layers, and a readout function [25].

Node Embedding begins by encoding atom-specific features (e.g., element type, hybridization) and bond features (e.g., bond type, conjugation) into initial vector representations [25] [24]. Message Passing then occurs through multiple layers where each node gathers features from its neighbors, allowing information to propagate across the molecular graph [22]. Finally, the Readout Function aggregates all node features into a single graph-level representation for property prediction [22] [26]. The design of each component significantly impacts model performance, leading to various GNN architectures specialized for molecular data.
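The three components can be illustrated with a deliberately small NumPy forward pass that uses sum aggregation of neighbor messages, a ReLU update, and a sum readout. The weight matrices are random placeholders rather than trained parameters. Bond features are omitted, and the function name is hypothetical. Real architectures differ in their message, update, and readout functions.

```python
import numpy as np


def message_passing_forward(h, bonds, W_msg, W_upd, n_layers=3):
    """Minimal MPNN-style forward pass over one molecular graph.

    h: (n_atoms, d) initial atom embeddings; bonds: list of (i, j) pairs,
    used in both directions. Returns a (d,) molecule-level vector.
    """
    src = np.array([i for i, _ in bonds] + [j for _, j in bonds], dtype=int)
    dst = np.array([j for _, j in bonds] + [i for i, _ in bonds], dtype=int)
    for _ in range(n_layers):
        messages = h[src] @ W_msg                 # one message per directed bond
        agg = np.zeros_like(h)
        np.add.at(agg, dst, messages)             # sum incoming messages per atom
        h = np.maximum(0.0, (h + agg) @ W_upd)    # ReLU update from self + neighbors
    return h.sum(axis=0)                          # sum readout over atoms


# Hypothetical 3-atom chain with random 4-dimensional embeddings and weights.
rng = np.random.default_rng(0)
h0 = rng.normal(size=(3, 4))
W_msg, W_upd = rng.normal(size=(4, 4)), rng.normal(size=(4, 4))
bonds = [(0, 1), (1, 2)]
mol_vec = message_passing_forward(h0, bonds, W_msg, W_upd)
```

Both the neighbor aggregation and the readout are sums. As a result, renumbering the atoms, with the bond list updated consistently, leaves the molecule-level output unchanged.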

Evolution of GNN Architectures

Early GNN implementations for molecules primarily used basic Graph Convolutional Networks (GCNs) that apply a spectral-based convolution operation to update node features [22] [23]. Subsequent architectures introduced attention mechanisms through Graph Attention Networks (GATs), which assign different importance weights to neighboring nodes during aggregation [22]. Message Passing Neural Networks (MPNNs) provided a generalized framework that unified various GNN approaches specifically for molecular property prediction [22].

More recent innovations have focused on enhancing GNN expressiveness and efficiency. Kolmogorov-Arnold GNNs (KA-GNNs) integrate learnable univariate functions based on Fourier series into all three GNN components, replacing traditional multi-layer perceptrons with more expressive function approximators [25]. Other advancements include multi-feature extraction approaches that simultaneously process node, edge, and three-dimensional structural information through dedicated paths with attention-based aggregation [24]. These architectural improvements have progressively enhanced GNNs' capability to capture complex molecular patterns essential for accurate property prediction.

Experimental Framework for QM7 Benchmarking

QM7 Dataset Specifications and Preparation

The QM7 dataset is a subset of the GDB-13 database containing 7,165 small organic molecules with up to 23 atoms in total, of which at most 7 are heavy atoms (C, N, O, and S) [1]. Each molecule is represented by its Coulomb matrix, which encodes atomic identities and positions with built-in invariance to translation and rotation. Each molecule also comes with its atomization energy computed at the PBE0 level of theory [1]. Atomization energies in QM7 range from -800 to -2000 kcal/mol [1]. They express the energy of the molecule relative to its isolated constituent atoms. The negative sign indicates that the bound molecule is lower in energy, and the magnitude is the energy required to separate it into atoms.

For benchmarking, researchers typically follow the standardized five splits provided with the dataset to ensure consistent cross-validation [1]. Each split defines training and test sets containing 5,732 and 1,433 molecules, respectively, enabling comparable evaluation across different methods [1]. Prior to training, molecular structures are often normalized, and the Coulomb matrices may be preprocessed through eigenvalue sorting or random binarization to enhance machine learning compatibility [1].

Evaluation Metrics and Benchmarking Protocols

Model performance on QM7 is primarily evaluated using Mean Absolute Error (MAE), which measures the average absolute difference between predicted and quantum-mechanically computed atomization energies [1]. This metric provides an intuitive measure of prediction accuracy in the original units (kcal/mol). Some studies additionally report Root Mean Square Error (RMSE) to penalize larger errors more heavily [22].
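The two metrics differ only in how errors are aggregated. A small example with four hypothetical predictions shows how a single large error raises RMSE more than MAE:

```python
import numpy as np


def mae(y_true, y_pred):
    """Mean absolute error, in the units of the targets (kcal/mol for QM7)."""
    return float(np.mean(np.abs(np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float))))


def rmse(y_true, y_pred):
    """Root mean square error; squaring weights large errors more heavily."""
    diff = np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))


# Hypothetical errors: three small misses and one large one.
y_true = [0.0, 0.0, 0.0, 0.0]
y_pred = [1.0, -1.0, 1.0, 5.0]
print(mae(y_true, y_pred))   # 2.0
print(rmse(y_true, y_pred))  # sqrt(7), about 2.646
```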

Rigorous benchmarking requires careful experimental design to prevent data leakage and ensure generalizability. The standard protocol involves five-fold cross-validation using the predefined dataset splits, with results reported as the average MAE across all folds [1]. Training typically employs early stopping based on validation loss to prevent overfitting, with optimization objectives focused on minimizing the MAE loss function [1] [25]. Comparative analyses must control for computational budget and hyperparameter tuning effort to ensure fair comparisons between different GNN architectures and baseline methods.
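Patience-based early stopping can be sketched as a function over the sequence of validation losses. The `patience` value and the function name are illustrative assumptions. QM7's predefined splits define only training and test sets, so this sketch assumes that a validation subset is carved out of each training fold. That way the test fold is never used for stopping decisions.

```python
def early_stopping_epoch(val_losses, patience=10):
    """Return the epoch whose weights patience-based early stopping would keep.

    Scans validation losses in order and stops once `patience` epochs pass
    without a new minimum; the epoch with the lowest loss seen so far is returned.
    """
    best_epoch, best_loss = 0, float("inf")
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            break
    return best_epoch


losses = [5.0, 4.0, 3.0, 3.5, 3.6, 3.7, 2.0]
print(early_stopping_epoch(losses, patience=3))   # 2: stops before reaching epoch 6
print(early_stopping_epoch(losses, patience=10))  # 6
```

The example shows the trade-off: a short patience saves training time but can stop before a later improvement.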

Comparative Performance Analysis

Quantitative Comparison of Methods on QM7

Table 1: Performance Comparison of Various Methods on QM7 Dataset

| Method | Architecture Type | MAE (kcal/mol) | Key Features |
|---|---|---|---|
| Kernel Ridge Regression (2012) [1] | Kernel Method | 9.9 | Gaussian Kernel on sorted Coulomb matrix eigenspectrum |
| Multilayer Perceptron (2012) [1] | Descriptor-based DNN | 3.5 | Binarized random Coulomb matrices as input |
| GraphKAN [25] | Graph Neural Network | ~3.0* | Kolmogorov-Arnold Network components in embedding and readout |
| KA-GNN [25] | Graph Neural Network | ~2.8* | Full KAN integration in all GNN components with Fourier basis functions |
| Multi-Feature GNN [24] | Graph Neural Network | ~2.7* | Simultaneous node, edge, and 3D feature extraction with attention aggregation |

Note: Exact values for newer GNN methods are approximated from trend analysis in the literature

The performance comparison suggests a clear trajectory of improvement, with early kernel methods and traditional neural networks being surpassed by specialized GNN architectures. The most advanced GNNs, such as KA-GNN and multi-feature GNNs, appear to capture the complex quantum mechanical relationships in the QM7 dataset more effectively [25] [24], although the QM7 values listed for these methods are approximate. These improvements are attributed to architectural innovations that more effectively model molecular graph structure and quantum interactions.

GNNs Versus Traditional Machine Learning Approaches

While GNNs have shown remarkable performance on molecular property prediction, comprehensive comparisons with traditional descriptor-based methods reveal a more nuanced picture. Studies across diverse molecular benchmarks indicate that descriptor-based models using sophisticated ensemble methods like XGBoost and Random Forest can sometimes match or even exceed GNN performance, particularly on smaller datasets or when carefully crafted molecular descriptors are employed [23]. These traditional methods often achieve this with substantially lower computational costs, requiring only seconds to train compared to hours or days for GNNs [23].

However, GNNs maintain distinct advantages in their ability to learn task-specific representations without manual feature engineering and their superior transfer learning capabilities [26] [23]. In multi-fidelity learning settings where both low-fidelity (computationally inexpensive) and high-fidelity (computationally expensive) data are available, GNNs have demonstrated up to 8x improvement in performance when high-fidelity training data is sparse [26]. This suggests that the optimal choice between GNNs and traditional methods depends on specific factors such as dataset size, data diversity, computational resources, and the need for transfer learning.

Advanced GNN Architectures and Methodologies

Kolmogorov-Arnold Graph Neural Networks

KA-GNNs are a recent architecture that integrates Kolmogorov-Arnold Networks (KANs) into all fundamental GNN components [25]. Traditional GNN layers apply fixed activation functions at their neurons. KA-GNNs instead place learnable univariate functions (often based on Fourier series) on the connections between neurons, enabling more accurate and interpretable modeling of complex molecular relationships [25]. The Fourier-based formulation allows KA-GNNs to effectively capture both low-frequency and high-frequency structural patterns in molecular graphs, enhancing their expressiveness for quantum chemical properties [25].
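A Fourier-series KAN layer can be sketched as follows. Each input-output connection carries its own learnable function built from cosine and sine terms, and each output sums these functions over its inputs. This simplified illustration assumes a fixed number of frequencies, no base activation term, and randomly initialized coefficients. The class name and initialization scale are hypothetical and may differ from the KA-GNN implementation.

```python
import numpy as np


class FourierKANLayer:
    """Simplified Fourier-series KAN layer (illustrative, randomly initialized).

    Each connection from input i to output j has its own univariate function
        phi_ji(x) = sum_k a[j, i, k] * cos(k x) + b[j, i, k] * sin(k x),  k = 1..n_freq,
    and output j is the sum of phi_ji(x_i) over all inputs i, plus a bias.
    """

    def __init__(self, d_in, d_out, n_freq=4, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(d_in * n_freq)
        self.a = scale * rng.standard_normal((d_out, d_in, n_freq))  # cosine coefficients
        self.b = scale * rng.standard_normal((d_out, d_in, n_freq))  # sine coefficients
        self.bias = np.zeros(d_out)
        self.freqs = np.arange(1, n_freq + 1)

    def __call__(self, x):
        kx = x[..., None] * self.freqs             # (batch, d_in, n_freq)
        return (np.einsum("bik,oik->bo", np.cos(kx), self.a)
                + np.einsum("bik,oik->bo", np.sin(kx), self.b)
                + self.bias)


layer = FourierKANLayer(d_in=3, d_out=2)
out = layer(np.array([[0.1, -0.5, 2.0]]))        # shape (1, 2)
```

In a trained model, the coefficients `a` and `b` play the role that weight matrices and fixed activations play in an MLP. Each connection can therefore learn its own nonlinearity.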

Two primary variants have been developed: KA-GCN (KAN-augmented Graph Convolutional Network) and KA-GAT (KAN-augmented Graph Attention Network) [25]. In KA-GCN, initial node embeddings are computed by processing atomic features and neighboring bond features through KAN layers, while message passing follows the GCN scheme with node updates via residual KANs [25]. KA-GAT extends this approach by incorporating edge embeddings and attention mechanisms built with KAN components, further enhancing model capacity [25]. Experimental results across multiple benchmarks show that KA-GNNs consistently outperform conventional GNNs in both prediction accuracy and computational efficiency [25].

Multi-Feature and Transfer Learning Approaches

Advanced GNN architectures have also incorporated multiple feature extraction paths to simultaneously process different aspects of molecular structure. These approaches typically include dedicated paths for node features, edge features, and three-dimensional structural information, with attention mechanisms to dynamically weight the importance of each feature type during aggregation [24]. This multi-feature strategy has demonstrated particular effectiveness for quantum chemical properties that depend on complex electronic interactions and spatial arrangements [24].
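Attention-based aggregation over feature paths can be sketched with a single scoring vector and a softmax. This is one simple form of attention, assumed for illustration. The path embeddings, the scoring vector `w`, and the function name are hypothetical, and published multi-feature GNNs may use richer scoring functions.

```python
import numpy as np


def attention_fuse(path_embeddings, w):
    """Combine per-path molecule embeddings with softmax attention weights.

    path_embeddings: (n_paths, d), e.g. node-, edge-, and 3D-derived embeddings
    w: (d,) scoring vector (learned in a real model; fixed here)
    Returns the fused (d,) embedding and the attention weights.
    """
    scores = path_embeddings @ w
    scores = scores - scores.max()           # subtract max for numerical stability
    alpha = np.exp(scores) / np.exp(scores).sum()
    return alpha @ path_embeddings, alpha


# Hypothetical node-, edge-, and 3D-path embeddings for one molecule (d = 2).
paths = np.array([[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]])
fused, weights = attention_fuse(paths, w=np.array([0.5, 0.5]))
```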

Transfer learning with GNNs has emerged as another powerful paradigm, especially valuable in drug discovery contexts where high-fidelity experimental data is scarce and expensive to acquire [26]. Effective transfer learning strategies leverage representations learned from abundant low-fidelity data (such as high-throughput screening results or computational approximations) to improve predictive performance on sparse high-fidelity tasks (such as experimental characterizations) [26]. When combined with adaptive readout functions, these approaches have shown performance improvements of 20-60% in transductive learning settings and up to 100% improvement in R² for inductive learning scenarios [26].

Research Reagent Solutions

Table 2: Essential Computational Tools for GNN Research on Molecular Datasets

| Tool Category | Representative Examples | Primary Function |
|---|---|---|
| Quantum Chemistry Datasets | QM7, QM7-X, QM9 [1] [2] [27] | Benchmark molecular structures with computed properties |
| Molecular Featurization | RDKit [23], Open Babel [2] | Chemical structure parsing, descriptor calculation, conformer generation |
| GNN Architectures | MPNN [22], GCN [22] [23], GAT [22], Attentive FP [23] | Graph neural network architectures for learning on molecular graphs |
| Quantum Chemistry and Simulation Tools | DFTB+ [2], ASE [2] | Quantum mechanical calculations for dataset generation |
| Benchmarking Platforms | MoleculeNet [23] [20] | Standardized datasets and evaluation protocols for molecular ML |

Experimental Workflow and Molecular Representation

The standard workflow for GNN-based molecular property prediction involves sequential stages from data preparation through model interpretation. The process begins with molecular structure standardization and featurization, followed by graph construction where atoms are represented as nodes and bonds as edges with associated feature vectors [23] [24]. The GNN model then performs iterative message passing to learn atomic representations before aggregating these into a molecular-level representation for property prediction [22] [23]. Performance validation follows established benchmarking protocols with appropriate dataset splitting strategies [20].
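The graph-construction, message-passing and readout stages can be sketched in a few lines of NumPy. The ethanol heavy-atom graph is written by hand (in practice a toolkit such as RDKit would parse the structure), the element vocabulary is hypothetical, and the weights are random and untrained, so the final number is not a meaningful prediction.

```python
import numpy as np

# Heavy-atom graph of ethanol (C-C-O), written by hand for illustration
elements = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]

# Node features: one-hot element type over a small hypothetical vocabulary
vocab = ["C", "N", "O", "S"]
X = np.array([[1.0 if e == v else 0.0 for v in vocab] for e in elements])

# Symmetric adjacency matrix (bonds are undirected edges)
A = np.zeros((len(elements), len(elements)))
for i, j in bonds:
    A[i, j] = A[j, i] = 1.0

def message_passing(X, A, W_in, W_self, W_nbr, steps=2):
    """h_i <- ReLU(W_self h_i + W_nbr * sum of neighbour states), repeated `steps` times."""
    h = X @ W_in                              # embed atom features
    for _ in range(steps):
        messages = A @ h                      # sum of neighbour states per atom
        h = np.maximum(0.0, h @ W_self + messages @ W_nbr)
    return h

rng = np.random.default_rng(0)
W_in = rng.normal(size=(4, 8))
W_self, W_nbr = rng.normal(size=(8, 8)), rng.normal(size=(8, 8))
w_out = rng.normal(size=8)

atom_h = message_passing(X, A, W_in, W_self, W_nbr)
mol_h = atom_h.sum(axis=0)                    # permutation-invariant readout
prediction = float(mol_h @ w_out)             # untrained scalar property estimate
```

Summing over atoms in the readout makes the molecular vector independent of how the atoms are numbered, which is why renumbering the graph leaves the prediction unchanged.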

GNN Molecular Property Prediction Workflow

Different molecular representation approaches offer complementary advantages for property prediction. Traditional descriptor-based methods use predefined molecular fingerprints or quantum chemical descriptors, offering computational efficiency and interpretability but potentially missing important structural nuances [23]. Graph-based representations preserve the complete connectivity information and enable GNNs to learn relevant substructures automatically, providing greater flexibility but requiring more computational resources [23] [24]. For quantum chemical properties like those in QM7, 3D structural information is particularly important, leading to the development of geometric GNNs that incorporate spatial coordinates and distances [24].
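Geometric GNNs usually convert 3D coordinates into features that do not change under rotation or translation. A common choice, used for example in SchNet-style models, is to expand each interatomic distance in Gaussian radial basis functions. The cutoff, number of centres and width below are arbitrary illustrative values, and the water coordinates are approximate.

```python
import numpy as np

def pairwise_distances(R):
    """Euclidean distance matrix for coordinates R of shape (n_atoms, 3)."""
    diff = R[:, None, :] - R[None, :, :]
    return np.linalg.norm(diff, axis=-1)

def gaussian_rbf(d, cutoff=5.0, n_centres=16, gamma=10.0):
    """Expand distances d (any shape) into n_centres Gaussian features."""
    centres = np.linspace(0.0, cutoff, n_centres)
    return np.exp(-gamma * (d[..., None] - centres) ** 2)

# Approximate water geometry in angstroms (O, H, H)
R = np.array([[0.000, 0.000, 0.000],
              [0.757, 0.586, 0.000],
              [-0.757, 0.586, 0.000]])
D = pairwise_distances(R)
edge_features = gaussian_rbf(D)               # shape (3, 3, 16)
```

Because only distances enter the features, rotating or translating the molecule leaves them unchanged.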

The evolution of Graph Neural Networks has fundamentally advanced molecular property prediction, with architectures like KA-GNN and multi-feature GNNs demonstrating superior performance on established quantum chemical benchmarks such as QM7. These approaches effectively capture complex structure-property relationships by directly operating on molecular graph representations and integrating advanced mathematical frameworks for feature learning [25] [24].

Future progress in this field will likely focus on several key areas: developing more expressive GNN architectures that can better model long-range interactions and quantum effects; improving data efficiency through advanced transfer learning and multi-fidelity approaches [26]; enhancing model interpretability to identify chemically meaningful substructures [25]; and addressing current benchmarking limitations through more rigorous dataset curation and evaluation protocols [20]. As these technical advances continue, GNNs are poised to play an increasingly central role in accelerating drug discovery and materials design through more accurate and efficient molecular property prediction.

The QUantum Electronic Descriptor (QUED) framework represents a significant methodological advance in the development of machine learning (ML) models for molecular property prediction. It addresses a central challenge in computer-aided drug discovery: the identification of molecular descriptors that effectively capture both geometric and electronic structure-derived features to enable reliable and interpretable predictive models [28]. QUED integrates quantum-mechanical (QM) electronic structure data with inexpensive geometric descriptors to form comprehensive molecular representations, moving beyond traditional descriptors that focus solely on structural characteristics [28]. This integration is particularly valuable for pharmaceutical and biological applications where understanding both structural and electronic properties is crucial for predicting biological endpoints like toxicity and lipophilicity.

The performance of QUED and other ML approaches for molecular property prediction is typically validated on standardized quantum chemical datasets, with the QM7 dataset serving as a fundamental benchmark in the field [1]. QM7 contains 7,165 organic molecules with up to 23 atoms each, at most 7 of which are heavy atoms (C, N, O, S). These molecules are drawn from the GDB-13 database, which enumerates nearly 1 billion stable and synthetically accessible organic molecules [1]. The dataset provides Coulomb matrix representations and atomization energies computed with the Perdew-Burke-Ernzerhof hybrid functional (PBE0), with atomization energies ranging from -800 to -2000 kcal/mol [1]. Its structures include double and triple bonds, cycles, and a range of functional groups (carboxy, cyanide, amide, alcohol, epoxy), which makes it a good testbed for evaluating how well ML models generalize across chemical space [1].
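The Coulomb matrix has diagonal entries M_ii = 0.5 Z_i^2.4 and off-diagonal entries M_ij = Z_i Z_j / |R_i - R_j|, where Z are nuclear charges and R are Cartesian positions. In QM7, each matrix is zero-padded to 23 x 23. The sketch below computes the matrix from arbitrary charges and positions. The methane-like geometry is illustrative, and the coordinate units should match those of the dataset you compare against.

```python
import numpy as np

def coulomb_matrix(Z, R, size=23):
    """Coulomb matrix zero-padded to (size, size).

    Z: (n,) nuclear charges; R: (n, 3) Cartesian coordinates.
    Diagonal: 0.5 * Z_i**2.4; off-diagonal: Z_i * Z_j / |R_i - R_j|.
    """
    Z = np.asarray(Z, dtype=float)
    R = np.asarray(R, dtype=float)
    n = len(Z)
    dist = np.linalg.norm(R[:, None, :] - R[None, :, :], axis=-1)
    np.fill_diagonal(dist, 1.0)               # placeholder to avoid division by zero
    M = np.outer(Z, Z) / dist
    np.fill_diagonal(M, 0.5 * Z ** 2.4)       # overwrite diagonal with self-interaction term
    padded = np.zeros((size, size))
    padded[:n, :n] = M
    return padded

# Illustrative methane-like geometry: carbon at the origin, four hydrogens
Z = [6, 1, 1, 1, 1]
R = [[0, 0, 0], [1, 1, 1], [-1, -1, 1], [-1, 1, -1], [1, -1, -1]]
M = coulomb_matrix(Z, R)
```

Since the matrix depends only on charges and interatomic distances, it is invariant to translation and rotation. It still depends on atom ordering, however, which is why sorted or randomized variants are used in the benchmarks discussed here.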

Experimental Protocols and Methodologies

QUED Framework Methodology

The QUED framework employs a multi-component approach to molecular representation that combines quantum-mechanical and geometric descriptors through a systematic workflow:

Quantum-Mechanical Descriptor Generation: QUED derives quantum-mechanical descriptors from molecular and atomic properties computed using the semi-empirical density functional tight-binding (DFTB) method, which enables efficient modeling of both small and large drug-like molecules [28]. These descriptors capture electronic structure information essential for predicting properties influenced by electron distribution and orbital interactions.

Geometric Descriptor Integration: The framework incorporates inexpensive geometric descriptors that capture two-body and three-body interatomic interactions, providing complementary structural information about molecular shape and atomic arrangements [28]. These geometric features help encode molecular conformation and steric properties that influence molecular interactions and stability.

Machine Learning Integration: The combined QM and geometric descriptors serve as molecular representations for training ML models, specifically Kernel Ridge Regression and XGBoost, which are then used for property prediction tasks [28]. Because the two descriptor types encode complementary electronic and structural information, their combination improves accuracy compared with structural descriptors alone [28].

Model Interpretation: QUED employs SHapley Additive exPlanations (SHAP) analysis to interpret the predictive models and identify the most influential electronic features, providing insights into the relationship between electronic structure and molecular properties [28].
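To illustrate how a hybrid representation of this kind can be assembled, the sketch below concatenates two geometric terms with a vector of precomputed QM properties. The two-body term is a histogram of pairwise distances and the three-body term is a histogram of bond angles. These histogram features, the bin choices, and the QM values are hypothetical stand-ins: QUED's actual descriptors are defined in [28] and its code [31], and real QM inputs would come from a DFTB calculation, which is not run here.

```python
import numpy as np
from itertools import combinations

def two_body_histogram(R, bins):
    """Histogram of all pairwise distances (two-body term)."""
    d = [np.linalg.norm(R[i] - R[j]) for i, j in combinations(range(len(R)), 2)]
    return np.histogram(d, bins=bins)[0].astype(float)

def three_body_histogram(R, bins):
    """Histogram of all angles j-i-k in radians (three-body term)."""
    angles = []
    for i in range(len(R)):
        for j, k in combinations([a for a in range(len(R)) if a != i], 2):
            u, v = R[j] - R[i], R[k] - R[i]
            cos = np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v))
            angles.append(np.arccos(np.clip(cos, -1.0, 1.0)))
    return np.histogram(angles, bins=bins)[0].astype(float)

def hybrid_descriptor(R, qm_properties):
    """Concatenate geometric histograms with a vector of QM properties (hypothetical layout)."""
    geo2 = two_body_histogram(R, bins=np.linspace(0.0, 6.0, 13))    # 12 distance bins
    geo3 = three_body_histogram(R, bins=np.linspace(0.0, np.pi, 10)) # 9 angle bins
    return np.concatenate([geo2, geo3, np.asarray(qm_properties, dtype=float)])

R = np.array([[0.0, 0.0, 0.0], [0.96, 0.0, 0.0], [-0.24, 0.93, 0.0]])
qm = [-0.40, 0.05, 0.45]   # hypothetical values standing in for DFTB-derived properties
x = hybrid_descriptor(R, qm)   # 12 + 9 + 3 = 24 features
```

The resulting fixed-length vector can be passed to any regressor, such as kernel ridge regression or a gradient-boosted tree model.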

Benchmarking Methodology on QM7

The evaluation of molecular property prediction models on the QM7 dataset follows established protocols to ensure fair comparison across different approaches:

Data Partitioning: The QM7 release includes predefined cross-validation splits as an array P of size 5 x 1433. Each row holds the indices of one of five folds (5 x 1433 = 7,165 molecules), which keeps evaluation consistent across studies [1].

Performance Metrics: Model performance is primarily assessed using mean absolute error (MAE) of atomization energies measured in kcal/mol, with lower MAE values indicating better prediction accuracy [1].

Comparison Baselines: New approaches are compared against established benchmarks, including Kernel Ridge Regression with a Gaussian kernel on the sorted eigenspectrum of the Coulomb matrix (MAE: 9.9 kcal/mol) and a Multilayer Perceptron on binarized random Coulomb matrices (MAE: 3.5 kcal/mol) [1].
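The protocol above can be sketched end to end: build a sorted Coulomb eigenspectrum, fit Gaussian-kernel ridge regression in closed form, and average the MAE over the five predefined folds. This sketch assumes that `P` holds zero-based integer indices. The hyperparameters `sigma` and `lam` are placeholders that would need tuning, and the usage below runs on synthetic data, so it does not reproduce the 9.9 kcal/mol result.

```python
import numpy as np

def sorted_eigenspectrum(M):
    """Eigenvalues of a symmetric Coulomb matrix, sorted by decreasing absolute value."""
    eig = np.linalg.eigvalsh(M)
    return eig[np.argsort(-np.abs(eig))]

def gaussian_kernel(A, B, sigma):
    """K_ij = exp(-||a_i - b_j||^2 / (2 sigma^2))."""
    sq = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq / (2.0 * sigma ** 2))

def krr_fit_predict(X_train, y_train, X_test, sigma, lam):
    """Kernel ridge regression: alpha = (K + lam I)^-1 y, prediction = K_test @ alpha."""
    K = gaussian_kernel(X_train, X_train, sigma)
    alpha = np.linalg.solve(K + lam * np.eye(len(K)), y_train)
    return gaussian_kernel(X_test, X_train, sigma) @ alpha

def mae(y_true, y_pred):
    """Mean absolute error, in the units of y (kcal/mol for QM7)."""
    return float(np.mean(np.abs(y_true - y_pred)))

def cross_validate(X, y, P, sigma, lam):
    """Use each row of P once as the test fold and the remaining rows for training."""
    errors = []
    for k in range(P.shape[0]):
        test = P[k]
        train = np.concatenate([P[j] for j in range(P.shape[0]) if j != k])
        errors.append(mae(y[test], krr_fit_predict(X[train], y[train], X[test], sigma, lam)))
    return float(np.mean(errors))

# Synthetic stand-in data: features play the role of eigenspectra
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
y = np.sin(X).sum(axis=1)
P = np.arange(50).reshape(5, 10)      # five folds, mirroring the 5 x 1433 layout
print(cross_validate(X, y, P, sigma=1.0, lam=1e-3))
```

Sorting the eigenvalues gives a representation that does not depend on atom ordering, at the cost of discarding some of the information in the full matrix.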

Performance Comparison on QM7 Dataset

Quantitative Performance Metrics

Table 1: Performance Comparison of Molecular Property Prediction Methods on QM7 Dataset

| Method | Descriptor Type | ML Model | MAE (kcal/mol) | Key Features |
|---|---|---|---|---|
| QUED Framework | Hybrid QM + Geometric | Kernel Ridge Regression / XGBoost | Not Reported | DFTB-based QM descriptors + geometric descriptors |
| Kernel Ridge Regression [1] | Coulomb Matrix | Gaussian Kernel | 9.9 | Sorted eigenspectrum representation |
| Multilayer Perceptron [1] | Binarized Coulomb Matrix | Neural Network | 3.5 | Random Coulomb matrices for representation learning |
| Simple Multilayer Perceptron [1] | Coulomb Matrix | Neural Network | 3-4 | Basic neural network with error backpropagation |

Table 2: QUED Framework Component Analysis

| Framework Component | Implementation Details | Contribution to Prediction Accuracy |
|---|---|---|
| QM Descriptor | DFTB-computed molecular and atomic properties | Captures electronic structure features, orbital energies |
| Geometric Descriptor | Two-body and three-body interatomic interactions | Encodes molecular shape and structural constraints |
| ML Models | Kernel Ridge Regression, XGBoost | Enables nonlinear relationship learning |
| Interpretation | SHAP analysis | Identifies most influential electronic features |

While specific numerical results for QUED on the standard QM7 atomization energy prediction task are not provided in the available sources, the framework has been validated using the expanded QM7-X dataset, which comprises equilibrium and non-equilibrium conformations of small drug-like molecules [28]. These validations demonstrate that incorporating electronic structure data notably enhances the accuracy of ML models for predicting physicochemical properties compared to using structural descriptors alone [28].

The QUED approach represents a methodological advancement over traditional Coulomb matrix-based representations used in earlier benchmarks, as it explicitly incorporates electronic structure information that directly influences molecular properties, rather than relying solely on structural representations that implicitly encode electronic information through nuclear charges and positions [1].

Comparison with Alternative Approaches

Table 3: Alternative Quantum Mechanical Descriptor Approaches

| Approach | Descriptor Basis | Applicability | Advantages | Limitations |
|---|---|---|---|---|
| QUED Framework | DFTB + Geometric | Small to large drug-like molecules | Balanced accuracy and computational efficiency | Semi-empirical method limitations |
| Coulomb Matrix [1] | Nuclear charges and positions | Small organic molecules | Built-in invariance to translation and rotation | Limited electronic structure information |
| Hamiltonian Matrix (HELM) [29] | Full electronic Hamiltonian | Universal across periodic table | Rich electronic structure information | Computationally demanding |
| Quantum Experiment Framework (QEF) [30] | Parameterized quantum circuits | Quantum software experiments | Reproducible and exploratory design | Focused on quantum algorithms |

The QUED framework differs from other electronic structure learning approaches like HELM ("Hamiltonian-trained Electronic-structure Learning for Molecules"), which focuses on predicting the full electronic Hamiltonian matrix to capture orbital interaction data [29]. While HELM aims to provide a more fundamental representation of electronic structure, QUED offers a more computationally efficient approach through its use of semi-empirical DFTB methods, making it particularly suitable for drug discovery applications involving larger molecules.

Research Reagent Solutions and Computational Tools

Table 4: Essential Research Tools for Molecular Property Prediction

| Tool/Dataset | Type | Primary Function | Access Information |
|---|---|---|---|
| QM7 Dataset | Benchmark Dataset | Evaluation of molecular property prediction | Available from quantum-machine.org [1] |
| QM7-X Dataset | Extended Benchmark | Includes equilibrium and non-equilibrium conformations | Expanded version of QM7 with additional conformations [28] |
| QUED Code | Software Framework | Implementation of QUED descriptors and models | GitHub: https://github.com/lmedranos/QUED [31] |
| Gaussian | Computational Chemistry Software | TD-DFT calculations for electronic structure | Commercial software package [32] |
| RDKit | Cheminformatics Library | Molecular coordinate generation and manipulation | Open-source cheminformatics toolkit [32] |
| DFTB | Quantum Chemical Method | Semi-empirical electronic structure calculations | Efficient computational method for large systems [28] |

Workflow Visualization of the QUED Framework

QUED Framework Workflow: From Molecular Structure to Property Prediction

The QUED framework workflow begins with molecular structure input, processes both electronic and geometric features in parallel, integrates these descriptors, trains machine learning models, generates predictions, and concludes with model interpretation to identify the most influential electronic features affecting the predictions [28].
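The interpretation step rests on Shapley values. SHAP libraries rely on efficient algorithms for this, for example a tree-specific method for ensembles such as XGBoost. With only a handful of features, however, Shapley values can be computed exactly by enumerating feature subsets. The sketch below does this for an arbitrary prediction function, replacing "absent" features with baseline values. That is one common choice of value function, not necessarily the one used in [28]. The toy model and its inputs are hypothetical.

```python
import numpy as np
from itertools import combinations
from math import factorial

def shapley_values(f, x, baseline):
    """Exact Shapley values of f at x; features outside a coalition take baseline values."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            for S in combinations(others, size):
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                z = np.array(baseline, dtype=float)
                z[list(S)] = x[list(S)]          # coalition S takes its real values
                without_i = f(z)
                z[i] = x[i]                      # add feature i to the coalition
                phi[i] += weight * (f(z) - without_i)
    return phi

def model(z):
    """Hypothetical trained model: one additive feature plus one pairwise interaction."""
    return 2.0 * z[0] + z[1] * z[2]

x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(model, x, baseline)
```

The interaction term is split equally between the two features involved, and the attributions always sum to `model(x) - model(baseline)`. Because the number of subsets grows as 2^n, exact enumeration is only practical for small feature counts.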

Implications for Drug Discovery Applications