Best Practices for Validating Target Prediction Methods: A Strategic Guide for Drug Discovery


Abstract

This article provides a comprehensive framework for validating computational target prediction methods, a critical step for ensuring reliability in drug discovery and repurposing. Aimed at researchers and drug development professionals, it covers the foundational principles of in silico prediction, a comparative analysis of modern methodological approaches, strategies for troubleshooting and optimizing performance, and robust validation techniques. By synthesizing insights from recent benchmark studies and real-world case studies, this guide empowers scientists to make informed decisions, improve predictive accuracy, and confidently integrate these tools into their R&D workflows to accelerate therapeutic development.

Understanding the Landscape and Importance of Rigorous Validation

The Critical Role of Target Prediction in Modern Drug Discovery and Repurposing

Target prediction stands as a foundational pillar in modern drug discovery, critically determining the success of both de novo drug development and strategic drug repurposing. This process involves identifying biological macromolecules—most commonly proteins—that interact with drug compounds to produce therapeutic effects. In the context of drug repurposing, defined as finding new therapeutic uses for existing drugs or drug candidates outside their original medical indication, accurate target prediction enables researchers to bypass much of the early discovery and safety testing, substantially reducing development timelines from 10-17 years to 3-12 years and cutting costs from billions to approximately $300 million on average [1]. The strategic importance of target prediction has intensified with the growing recognition that traditional single-gene, single-disease, single-drug discovery paradigms yield diminishing returns, necessitating approaches that comprehend complex interactions across multiple biological pathways [2].

Methodological Approaches in Target Prediction

Disease-Centric Approaches

Disease-centric approaches begin with comprehensive analysis of pathological mechanisms to identify potential intervention points. These methods systematically explore biomolecules such as genes or proteins underlying disease cascades [2].

Differential Gene Expression Analysis: This technique identifies genes differentially expressed in disease states compared to normal conditions or across disease stages. For example, in Alzheimer's disease research, scientists extracted microarray data from Gene Expression Omnibus (GEO) datasets to identify differentially expressed genes (DEGs), then performed protein-protein interaction (PPI) network analysis and functional enrichment to pinpoint central targets like PTGS2 (COX-2) [2].

Weighted Gene Co-expression Network Analysis (WGCNA): WGCNA has emerged as a powerful tool for retrieving patterns of gene co-expression, identifying gene modules associated with specific traits, and obtaining insights into complex disease mechanisms [2].

Multi-Omics Integration: Combining genomics, transcriptomics, and proteomics data provides a systems-level view of disease processes. In hepatocellular carcinoma (HCC) research, investigators identified 756 differentially expressed genes from GEO datasets, then performed survival and pathway analyses to identify eight hub genes (CDK1, CCNB1, CCNA2, TOP2A, AURKA, AURKB, KIF20A, and MELK) strongly associated with patient prognosis [2].

Drug-Centric Approaches

Drug-centric approaches leverage existing pharmacological data to reveal new target interactions, capitalizing on previously characterized compounds.

Adverse Effect Analysis: Investigating mechanisms behind adverse drug reactions can unveil potential targets, as these unintended effects may represent desirable therapeutic actions in other disease contexts. For instance, the hypertrichosis side effect of the antihypertensive drug minoxidil led to its repurposing as a topical treatment for alopecia [1].

Chemical Similarity and Side Effect Clustering: Drugs with structural similarities or comparable side effect profiles often share target interactions, enabling prediction of off-target effects [2].

Drug-Target Interaction (DTI) Prediction: Computational DTI methods leverage growing chemical and biological data to predict novel interactions, helping to mitigate the high costs and low success rates of traditional development [3].

Artificial Intelligence and Advanced Computational Methods

Artificial intelligence methods extend target prediction by integrating heterogeneous data sources and detecting complex patterns that are difficult to identify through manual analysis.

Heterogeneous Data Integration: AI algorithms excel at combining diverse datasets—including chemical structures, omics data, clinical records, and scientific literature—to generate multifaceted hypotheses for target identification [2].

Large Language Models and AlphaFold: Emerging technologies like large language models can process biomedical literature at scale, while AlphaFold-predicted protein structures expand the scope of targetable proteins for virtual screening [3].

Deep Learning Applications: In psoriasis research, scientists constructed a genome-wide genetic and epigenetic network comprising PPI and Gene Regulatory Networks, then applied deep learning to identify potential drug candidates based on predicted target interactions [2].

Table 1: Key Methodological Approaches in Target Prediction

| Approach Category | Specific Methods | Primary Application | Data Requirements |
|---|---|---|---|
| Disease-Centric | Differential Gene Expression Analysis | Identifying disease-associated targets | Transcriptomic data (e.g., from GEO) |
| Disease-Centric | Weighted Gene Co-expression Network Analysis (WGCNA) | Discovering gene modules in complex diseases | Multi-sample gene expression data |
| Disease-Centric | Pathway and Network Analysis | Mapping disease-relevant biological networks | PPI data, pathway databases |
| Drug-Centric | Adverse Effect Analysis | Repurposing based on side effects | Clinical safety profiles, adverse event reports |
| Drug-Centric | Chemical Similarity Clustering | Predicting targets based on structural analogs | Chemical structures, bioactivity data |
| Drug-Centric | Drug-Target Interaction Prediction | Identifying novel drug-target pairs | Heterogeneous drug and target data |
| AI & Computational | Deep Learning Networks | Complex pattern recognition in biological data | Multi-omics, chemical, and clinical data |
| AI & Computational | Large Language Models | Extracting insights from biomedical literature | Scientific literature, clinical notes |
| AI & Computational | Structure-Based Prediction | Leveraging protein structural information | Experimental or predicted 3D structures |

Experimental Validation Frameworks

Computational Validation Workflows

Computational validation provides the initial assessment of predicted targets before committing to resource-intensive experimental work.

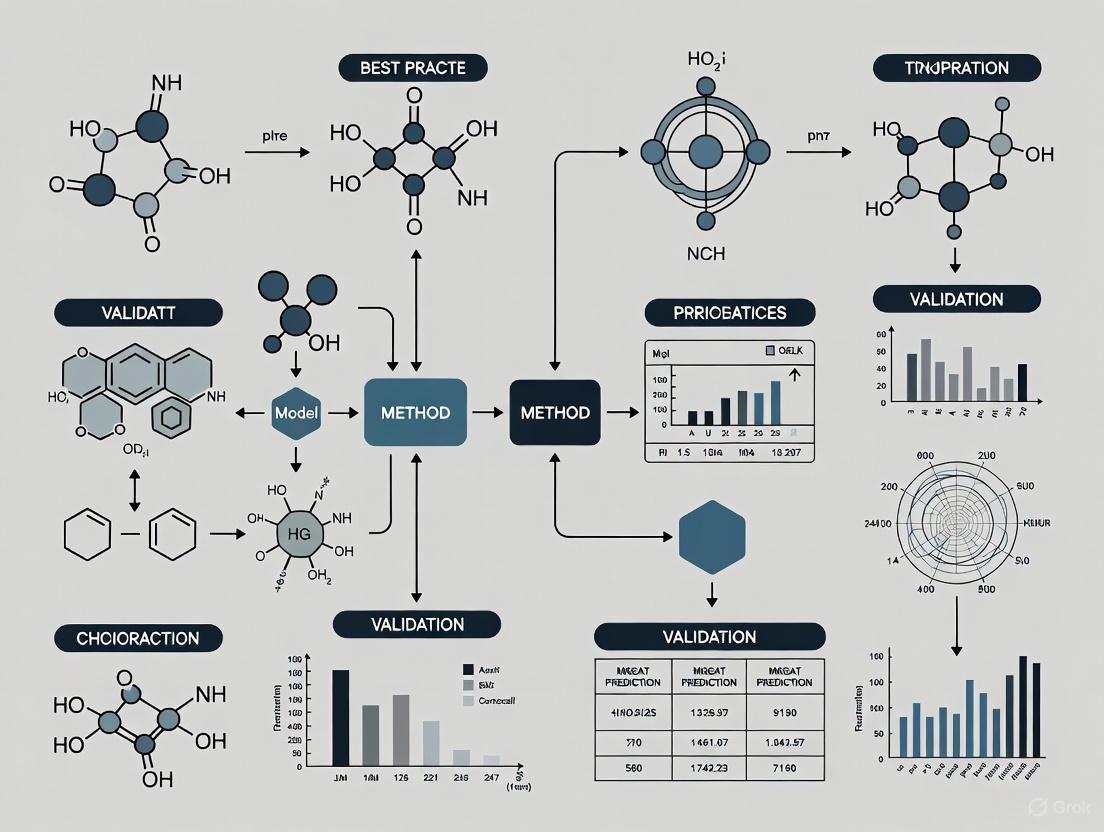

The following diagram illustrates a comprehensive computational validation workflow for target prediction:

Workflow Description: This computational pipeline begins with Homology Modeling to generate 3D protein structures when experimental structures are unavailable [2]. The subsequent Binding Site Analysis identifies and characterizes potential binding pockets, analyzing amino acids lining these cavities to determine druggability potential [2]. Virtual Screening then assesses interactions between the target and compound libraries, typically using molecular docking software like AutoDock to prioritize candidates based on binding affinity and complementarity [4] [2]. Molecular Dynamics Simulations evaluate the stability of predicted drug-target complexes under simulated physiological conditions, providing insights into binding kinetics and residence time [2]. Finally, Druggability Assessment ranks targets based on comprehensive scoring systems that incorporate structural, chemical, and biological factors to prioritize targets with the highest therapeutic potential [2].

Experimental Validation Workflows

Following computational predictions, experimental validation confirms target engagement and pharmacological activity in biologically relevant systems.

The following diagram illustrates the sequential experimental validation process:

Workflow Description: Experimental validation begins with In Vitro Assays using purified targets or cellular models to confirm compound binding and functional effects [2]. The Cellular Thermal Shift Assay (CETSA) has emerged as a crucial method for validating direct target engagement in intact cells and tissues, providing quantitative, system-level confirmation of binding. For example, researchers applied CETSA with high-resolution mass spectrometry to quantify drug-target engagement of DPP9 in rat tissue, confirming dose- and temperature-dependent stabilization ex vivo and in vivo [4]. Ex Vivo Models using patient-derived cells or tissue samples provide human-relevant context while maintaining controlled experimental conditions [2]. In Vivo Models assess target engagement and therapeutic effects in whole organisms, addressing complexity that reductionist systems cannot capture [2]. Successful candidates then advance to Clinical Trials, where phase II trials may begin directly for repurposed drugs, as established safety profiles often allow skipping phase I trials [5] [1].

Table 2: Key Experimental Techniques for Target Validation

| Technique Category | Specific Methods | Key Applications in Target Validation | Advantages |
|---|---|---|---|
| Computational | Molecular Docking (AutoDock, SwissDock) | Predicting binding modes and affinities | High-throughput, low cost |
| Computational | Molecular Dynamics Simulations | Assessing binding stability and kinetics | Provides temporal resolution |
| Computational | Pharmacophore Modeling | Identifying essential interaction features | Captures key chemical features |
| Biophysical | Cellular Thermal Shift Assay (CETSA) | Measuring target engagement in cells | Native cellular environment |
| Biophysical | Surface Plasmon Resonance (SPR) | Quantifying binding kinetics | Label-free, real-time monitoring |
| Biophysical | Isothermal Titration Calorimetry (ITC) | Measuring binding thermodynamics | Provides full thermodynamic profile |
| Cell-Based | High-Content Screening (HCS) | Multiparametric analysis of cellular phenotypes | High information content |
| Cell-Based | RNA Interference (RNAi) | Functional validation of target importance | Established, versatile methodology |
| Cell-Based | CRISPR-Cas9 Knockout | Determining target essentiality | Precise, permanent gene modification |
| In Vivo | Disease Models | Evaluating therapeutic efficacy in whole organisms | Full biological complexity |
| In Vivo | Pharmacokinetic/Pharmacodynamic (PK/PD) | Linking exposure to target engagement | Clinically translatable parameters |

Successful target prediction and validation require specialized research reagents and computational resources. The following table lists essential resources for target prediction research:

Table 3: Essential Research Reagent Solutions for Target Prediction

| Resource Category | Specific Resources | Key Function | Application Context |
|---|---|---|---|
| Bioinformatics Databases | Gene Expression Omnibus (GEO) [2] | Repository of transcriptomic data | Identifying differentially expressed genes |
| Bioinformatics Databases | Protein Data Bank (PDB) | Repository of 3D protein structures | Structure-based drug design |
| Bioinformatics Databases | Molecular Signatures Database (MSigDB) [2] | Collection of annotated gene sets | Pathway analysis and functional enrichment |
| Protein Interaction Resources | BioGRID, IntAct, MINT, DIP [2] | Protein-protein interaction data | Network-based target identification |
| Protein Interaction Resources | STRING Database | Known and predicted protein interactions | Pathway reconstruction |
| Computational Tools | AutoDock, SwissDock [4] | Molecular docking and virtual screening | Predicting drug-target binding |
| Computational Tools | Cytoscape [2] | Network visualization and analysis | Biological network exploration |
| Computational Tools | R/Bioconductor | Statistical analysis of omics data | Differential expression analysis |
| Experimental Assay Systems | CETSA [4] | Cellular target engagement validation | Confirming compound binding in cells |
| Experimental Assay Systems | High-Content Screening Systems | Multiparametric cellular phenotyping | Functional validation of target modulation |
| Experimental Assay Systems | Patient-Derived Cells/Tissues [2] | Biologically relevant experimental models | Translational target validation |

Best Practices for Validating Target Prediction Methods

Establishing Robust Validation Frameworks

Rigorous validation of target prediction methodologies requires multifaceted approaches that address both computational and biological dimensions.

Benchmarking Against Known Interactions: Utilize established drug-target pairs from databases like DrugBank and ChEMBL as positive controls to determine method accuracy, reporting standard metrics including sensitivity, specificity, and area under the receiver operating characteristic curve [3].

Experimental Cross-Validation: Implement orthogonal validation techniques to confirm predictions, such as combining CETSA for direct binding confirmation with functional assays to establish pharmacological relevance [4].

Clinical Corroboration: Whenever possible, leverage clinical data from electronic health records or biobanks to assess whether predicted targets show association with relevant human phenotypes [2].
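The benchmarking metrics named above (sensitivity, specificity, and area under the ROC curve) can be computed directly from a list of scored drug-target pairs. The following is a minimal sketch, not the procedure of any cited study: the labels and scores are hypothetical toy values, and AUROC is computed through its rank interpretation (the probability that a randomly chosen known interaction outscores a randomly chosen non-interaction).

```python
def sensitivity_specificity(labels, scores, threshold):
    """Sensitivity and specificity when pairs with score >= threshold are called positive."""
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= threshold)
    fn = sum(1 for y, s in zip(labels, scores) if y == 1 and s < threshold)
    tn = sum(1 for y, s in zip(labels, scores) if y == 0 and s < threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    sens = tp / (tp + fn) if tp + fn else 0.0
    spec = tn / (tn + fp) if tn + fp else 0.0
    return sens, spec


def auroc(labels, scores):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative pair")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical toy benchmark: 1 = pair listed as a known interaction, 0 = assumed negative.
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.92, 0.80, 0.35, 0.60, 0.30, 0.20, 0.10]

sens, spec = sensitivity_specificity(labels, scores, threshold=0.5)
area = auroc(labels, scores)
```

Sensitivity and specificity depend on the chosen threshold, whereas AUROC summarizes ranking quality across all thresholds, which is one reason both kinds of metric are usually reported together.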

Addressing Data Quality and Integration Challenges

The performance of target prediction methods depends heavily on data quality and integration strategies.

Heterogeneous Data Integration: Combine multiple data types—chemical, genetic, proteomic, and clinical—to overcome limitations of homogeneous datasets and improve prediction accuracy through complementary evidence [2].

Data Sparsity Management: Apply "guilt-by-association" principles and matrix factorization techniques to address incomplete data in drug-target networks [3].

Context-Specific Validation: Account for biological context—including tissue type, cellular state, and disease stage—as target relevance may vary significantly across conditions [2].
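To make the "guilt-by-association" idea concrete, the sketch below scores candidate targets for a sparsely annotated drug by borrowing annotations from similar drugs, weighted by similarity. It is an illustrative assumption about how such a score could be formed, not the formulation used in [3]; the drug names, similarity values, and the function `gba_scores` are hypothetical.

```python
def gba_scores(query_drug, drug_similarity, known_targets):
    """Score targets for query_drug from the known targets of similar drugs.

    drug_similarity: dict mapping (drug_a, drug_b) -> similarity in [0, 1]
    known_targets:   dict mapping drug -> set of annotated targets
    Returns a dict target -> similarity-weighted share of supporting neighbours.
    """
    scores = {}
    total_weight = 0.0
    for other, targets in known_targets.items():
        if other == query_drug:
            continue
        # Similarities may be stored in either key order.
        sim = drug_similarity.get(
            (query_drug, other), drug_similarity.get((other, query_drug), 0.0)
        )
        total_weight += sim
        for target in targets:
            scores[target] = scores.get(target, 0.0) + sim
    if total_weight == 0.0:
        return {}  # no similar annotated drug: nothing can be inferred
    return {target: s / total_weight for target, s in scores.items()}


# Hypothetical toy network: the query drug has no annotations of its own.
similarity = {("query", "drugA"): 0.8, ("query", "drugB"): 0.2, ("drugC", "query"): 0.0}
annotations = {"drugA": {"T1", "T2"}, "drugB": {"T2", "T3"}, "drugC": {"T4"}}

predicted = gba_scores("query", similarity, annotations)
```

A target supported by several similar drugs (here `T2`) receives the highest score, which is how association-based methods fill gaps in an incomplete interaction matrix.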

Emerging Trends and Technologies

The field of target prediction continues to evolve with several promising directions enhancing accuracy and translational potential.

Advanced AI Architectures: Graph neural networks and transformer-based models show exceptional promise for capturing complex relationships in heterogeneous biological networks, potentially surpassing current machine learning approaches [3].

Multi-Scale Modeling: Integrating molecular-level target predictions with tissue- and organism-level physiological models will improve translation from in silico predictions to clinical outcomes [2].

Real-World Data Integration: Growing availability of real-world evidence from electronic health records and wearable sensors provides unprecedented opportunities to validate targets in human populations [2].

Target prediction represents a critical nexus in modern drug discovery and repurposing, determining the efficiency and success of therapeutic development. The methodologies reviewed—spanning disease-centric approaches, drug-centric strategies, and advanced computational intelligence—provide researchers with powerful tools to identify novel therapeutic applications for existing compounds. The validation frameworks presented establish rigorous standards for confirming target engagement and pharmacological relevance. As the field advances, integration of multi-scale data, application of sophisticated AI methodologies, and adherence to robust validation practices will further enhance our ability to identify therapeutically valuable targets, ultimately accelerating the delivery of effective treatments to patients while reducing development costs and attrition rates.

In modern drug discovery, the accurate prediction of drug-target interactions (DTIs) is a critical step for understanding mechanisms of action, identifying repurposing opportunities, and elucidating polypharmacological effects [6] [3]. Computational DTI prediction methods have evolved into two principal paradigms: ligand-centric and target-centric approaches. These methodologies differ fundamentally in their underlying principles, data requirements, and practical applications. Within the context of validating target prediction methods, understanding this dichotomy is essential for selecting appropriate tools and interpreting their results accurately. This technical guide provides an in-depth examination of both approaches, their comparative performance, experimental validation protocols, and emerging trends that are shaping the future of computational drug discovery.

Core Methodological Principles

Ligand-Centric Approaches

Ligand-centric methods, also known as similarity-based or ligand-based approaches, operate on the principle that structurally similar molecules are likely to share similar biological targets [7] [8]. These methods predict targets for a query molecule by calculating its similarity to a large library of compounds with known target annotations [9]. The core mechanism involves:

- Molecular Representation: Compounds are encoded as chemical fingerprints that capture structural and/or physicochemical properties. Common representations include ECFP4, FCFP4, MACCS, and Morgan fingerprints [6] [9].

- Similarity Calculation: Similarity metrics such as Tanimoto coefficient or Dice score quantify the structural resemblance between molecules [6].

- Target Inference: Targets are ranked based on the similarity scores between the query molecule and reference ligands in the knowledge-base [8].

A key advantage of ligand-centric methods is their extensive coverage of the target space, as they can potentially identify any target that has at least one known ligand [7]. This makes them particularly valuable for exploratory research where the relevant targets may not be known in advance.
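The three steps above (representation, similarity calculation, target inference) can be sketched in a few lines. This is a simplified illustration under stated assumptions: fingerprints are represented as plain sets of on-bit indices rather than computed from structures, the toy knowledge base is hypothetical, and each target is scored by its single most similar reference ligand, which is only one of several possible ranking rules.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_targets(query_fp, knowledge_base):
    """Rank targets by the highest similarity between the query and any reference ligand.

    knowledge_base: iterable of (ligand_fingerprint, target_name) pairs.
    """
    best = {}
    for ligand_fp, target in knowledge_base:
        sim = tanimoto(query_fp, ligand_fp)
        if sim > best.get(target, -1.0):
            best[target] = sim
    return sorted(best.items(), key=lambda item: item[1], reverse=True)


# Hypothetical toy data: bit indices stand in for real fingerprint bits.
query = {1, 2, 3, 4}
toy_knowledge_base = [
    ({1, 2, 3, 5}, "T_A"),
    ({1, 2, 3, 4, 6}, "T_B"),
    ({7, 8}, "T_A"),
    ({1, 9}, "T_C"),
]

ranking = rank_targets(query, toy_knowledge_base)
```

Because any target with at least one annotated ligand appears in the ranking, the approach inherits the broad target coverage described above; in practice the fingerprints would come from a cheminformatics toolkit rather than hand-written sets.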

Target-Centric Approaches

Target-centric methods reverse the prediction logic by building individual predictive models for each target of interest [7] [10]. These approaches include:

- Machine Learning Models: Quantitative Structure-Activity Relationship (QSAR) models trained using algorithms such as Random Forest, Naïve Bayes, or deep learning [6] [11].

- Structure-Based Methods: Molecular docking simulations that predict binding poses and affinities based on the three-dimensional structure of target proteins [12].

- Proteochemometric Modeling: Integrated models that consider both ligand and target properties to predict interactions [10].

Target-centric methods typically offer higher precision for well-characterized targets but are inherently limited to targets with sufficient training data (known actives and inactives) or reliable structural models [7].

Table 1: Fundamental Comparison of Core Approaches

| Feature | Ligand-Centric | Target-Centric |
|---|---|---|
| Basic Principle | Chemical similarity principle: similar molecules have similar targets [7] [8] | Model-based prediction for each specific target [7] [10] |
| Target Coverage | High (any target with ≥1 known ligand) [7] | Limited to targets with sufficient data for model building [7] |
| Data Requirements | Library of target-annotated molecules [8] | Sufficient active/inactive compounds per target or protein structures [6] [12] |
| Typical Algorithms | Similarity searching, k-nearest neighbors [8] [9] | QSAR, Random Forest, Naïve Bayes, molecular docking [6] [12] |
| Best Suited For | Exploratory target fishing, novel target discovery [7] [13] | Focused investigation on predefined targets [7] [10] |

Performance Benchmarking and Validation

Quantitative Performance Metrics

Rigorous benchmarking is essential for evaluating and comparing target prediction methods. Standard validation metrics include precision (the proportion of correct predictions among all predicted targets), recall (the proportion of known targets that are correctly predicted), and the Matthews Correlation Coefficient (MCC), which provides a balanced measure that takes all four confusion matrix categories into account [8]. The areas under the ROC and precision-recall curves (AUC) are also commonly reported, though their relevance to actual drug discovery decisions has been questioned [14].
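As a worked illustration of these definitions, the sketch below computes precision, recall, and MCC for a single query molecule from its predicted and known target sets. The function name and the toy target identifiers are hypothetical, and the set of candidate targets (needed to count true negatives) is assumed to be the full list of targets the method could have predicted.

```python
import math


def target_prediction_metrics(predicted, known, candidate_targets):
    """Precision, recall, and MCC for one query molecule.

    predicted:         targets returned by the method
    known:             experimentally annotated targets of the query
    candidate_targets: every target the method could have returned
    """
    predicted, known = set(predicted), set(known)
    tp = len(predicted & known)
    fp = len(predicted - known)
    fn = len(known - predicted)
    tn = len(set(candidate_targets) - predicted - known)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "mcc": mcc}


# Hypothetical toy query: 10 candidate targets, 3 predicted, 4 known.
candidates = [f"T{i}" for i in range(1, 11)]
metrics = target_prediction_metrics(
    predicted={"T1", "T2", "T3"},
    known={"T1", "T2", "T4", "T5"},
    candidate_targets=candidates,
)
```

Because the number of candidate targets is usually in the thousands while a molecule has only a handful of true targets, true negatives dominate the confusion matrix, which is why MCC, precision, and recall are more informative here than plain accuracy.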

Recent large-scale benchmarking studies have revealed significant performance differences between methods. A 2025 systematic comparison of seven target prediction methods using a shared benchmark of FDA-approved drugs found that MolTarPred was the most effective ligand-centric method, particularly when using Morgan fingerprints with Tanimoto scores [6]. Separate work on consensus strategies, which combine predictions from multiple models, reported true positive rates of 0.98 with false negative rates of 0 in the top 20% of target profiles [10].

Practical Performance Considerations

In practical applications, ligand-centric methods have demonstrated remarkable performance despite their relative simplicity. Studies estimate that researchers need to test only approximately five predicted targets to find two true targets with submicromolar potency, though significant variability exists across different query molecules [7]. Furthermore, approved drugs present a particular challenge for prediction, as their targets are generally harder to predict than those of non-drug molecules [8].

The expansion of bioactivity knowledge-bases has substantially improved performance. One study increased the knowledge-base from 281,270 to 887,435 ligand-target associations, resulting in significantly enhanced prediction capabilities [8]. This highlights the critical importance of data quality and comprehensiveness for accurate target prediction.

Table 2: Performance Benchmarks of Representative Methods

| Method | Type | Precision | Recall | Key Findings |
|---|---|---|---|---|
| MolTarPred (optimized) | Ligand-centric | Not specified | Varies with filtering | Most effective in 2025 benchmark; Morgan fingerprints with Tanimoto score perform best [6] |
| Ligand-centric baseline | Ligand-centric | 0.348 | 0.423 | Average across clinical drugs; large drug-dependent variability [8] |
| EviDTI (Deep Learning) | Target-centric | 0.819 | Competitive | Integrates 2D/3D drug structures and target sequences; provides uncertainty estimates [11] |
| Consensus TCM | Hybrid | TPR: 0.98 | FNR: 0.0 | Top 20% of target profiles; demonstrates power of ensemble strategies [10] |

Experimental Design and Methodological Protocols

Benchmarking Protocol for Ligand-Centric Methods

A robust benchmarking protocol for ligand-centric target prediction should include the following key steps [8]:

Knowledge-Base Construction:

- Retrieve bioactivity data from curated databases (ChEMBL, BindingDB)

- Filter for high-confidence interactions (e.g., confidence score ≥7 in ChEMBL)

- Apply activity thresholds (e.g., IC50, Ki, Kd < 1-10 μM)

- Include diverse target classes (enzymes, membrane receptors, ion channels)

Query Set Preparation:

- Use approved drugs with documented targets as positive controls

- Ensure no overlap between query molecules and knowledge-base compounds

- Include molecules with varying degrees of polypharmacology

Similarity Calculation and Target Ranking:

- Compute molecular fingerprints for all compounds

- Calculate similarity metrics between query and knowledge-base molecules

- Rank targets based on similarity scores of their associated ligands

- Apply similarity thresholds to filter background noise [9]

Performance Evaluation:

- Calculate precision, recall, MCC for each query molecule

- Analyze performance variation across different target classes and molecule types

- Compare against negative controls (random molecules)
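The knowledge-base construction and query-set preparation steps above amount to a sequence of filters. The sketch below shows one way to express them, assuming bioactivity records have already been exported as dictionaries; the field names (`compound_id`, `target_id`, `confidence`, `activity_nm`) and the toy records are hypothetical, and the 10 μM default is simply the upper end of the threshold range mentioned in the protocol.

```python
def build_knowledge_base(records, query_ids, min_confidence=7, max_activity_nm=10_000.0):
    """Keep high-confidence, sufficiently potent records whose compound is not a query.

    records:   iterable of dicts with the hypothetical keys
               'compound_id', 'target_id', 'confidence', 'activity_nm'
    query_ids: compound identifiers reserved for the benchmark query set
    Returns a set of (compound_id, target_id) ligand-target associations.
    """
    query_ids = set(query_ids)
    kept = set()
    for rec in records:
        if rec["confidence"] < min_confidence:
            continue  # low-confidence target assignment
        if rec["activity_nm"] >= max_activity_nm:
            continue  # too weak to count as an active interaction
        if rec["compound_id"] in query_ids:
            continue  # avoid leakage between query set and knowledge base
        kept.add((rec["compound_id"], rec["target_id"]))
    return kept


# Hypothetical toy records (activities in nM).
toy_records = [
    {"compound_id": "C1", "target_id": "T1", "confidence": 9, "activity_nm": 50.0},
    {"compound_id": "C2", "target_id": "T1", "confidence": 5, "activity_nm": 20.0},
    {"compound_id": "C3", "target_id": "T2", "confidence": 8, "activity_nm": 25_000.0},
    {"compound_id": "Q1", "target_id": "T2", "confidence": 9, "activity_nm": 5.0},
    {"compound_id": "C1", "target_id": "T3", "confidence": 7, "activity_nm": 9_000.0},
]

knowledge_base = build_knowledge_base(toy_records, query_ids={"Q1"})
```

Removing query compounds before any similarity search is what keeps the benchmark honest: a query that is also a reference ligand would trivially retrieve its own targets with a similarity of 1.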

Validation Framework for Target-Centric Methods

Validating target-centric approaches requires distinct considerations [10]:

Dataset Curation:

- Collect balanced sets of active and inactive compounds for each target

- Apply stringent criteria for activity thresholds (typically IC50 ≤ 10 μM for actives)

- Address dataset bias through careful negative example selection

- Implement temporal splits to simulate realistic prediction scenarios

Model Training and Evaluation:

- Apply appropriate cross-validation strategies (k-fold, leave-one-out)

- Use strict separation between training, validation, and test sets

- Evaluate extrapolation capability through cold-start scenarios [11]

- Assess performance on novel structural scaffolds not present in training data

Uncertainty Quantification:

- Implement confidence estimation for predictions

- Use evidential deep learning or Bayesian approaches for reliability scores [11]

- Calibrate prediction probabilities to reflect true confidence levels
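The temporal split and cold-start evaluation mentioned in this framework can be sketched as follows. This is a minimal illustration rather than the protocol of [10] or [11]: it assumes each interaction record carries a hypothetical `year` field for when it was first reported, and it treats "cold start" as test records whose compound (or target) never appears in the training data.

```python
def temporal_split(records, cutoff_year):
    """Train on interactions reported before cutoff_year, test on those from it onward."""
    train = [r for r in records if r["year"] < cutoff_year]
    test = [r for r in records if r["year"] >= cutoff_year]
    return train, test


def cold_start_subset(train, test, key="compound_id"):
    """Test records whose compound (or target, via key) is absent from the training set."""
    seen = {r[key] for r in train}
    return [r for r in test if r[key] not in seen]


# Hypothetical toy interaction records.
interactions = [
    {"compound_id": "C1", "target_id": "T1", "year": 2015},
    {"compound_id": "C2", "target_id": "T1", "year": 2017},
    {"compound_id": "C1", "target_id": "T2", "year": 2020},
    {"compound_id": "C3", "target_id": "T2", "year": 2021},
]

train_set, test_set = temporal_split(interactions, cutoff_year=2019)
cold_compounds = cold_start_subset(train_set, test_set, key="compound_id")
cold_targets = cold_start_subset(train_set, test_set, key="target_id")
```

Reporting performance separately on the cold-start subsets shows how much of a model's apparent accuracy comes from compounds or targets it has effectively already seen.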

Advanced Strategies and Emerging Trends

Confidence Estimation and Reliability Scoring

A significant advancement in target prediction is the development of reliability scores for individual predictions. Recent research has demonstrated that the similarity between a query molecule and a target's reference ligands can serve as a quantitative measure of prediction confidence [9]. Fingerprint-specific similarity thresholds have been established to distinguish true positives from background noise, significantly enhancing the practical utility of predictions.

Evidential deep learning represents another promising approach for uncertainty quantification. The EviDTI framework provides well-calibrated uncertainty estimates alongside interaction predictions, enabling researchers to prioritize the most reliable predictions for experimental validation [11]. This addresses a critical limitation of traditional deep learning models, which often produce overconfident predictions for out-of-distribution samples.

Integration and Consensus Strategies

Consensus approaches that combine predictions from multiple models or similarity metrics have consistently demonstrated superior performance compared to individual methods [10]. Ensemble strategies mitigate the limitations of individual approaches by leveraging complementary strengths. For instance, integrating predictions from models using different molecular fingerprints (ECFP4, MACCS, Morgan) can capture diverse aspects of molecular similarity, resulting in more robust target profiles.
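One simple way to build such a consensus is to aggregate the ranked target lists produced with different fingerprints. The sketch below uses mean reciprocal rank as the aggregation rule; this rule is an assumption chosen for illustration and is not claimed to be the scheme used in [10]. The per-fingerprint rankings are hypothetical.

```python
def consensus_ranking(rankings):
    """Combine ranked target lists from several models by mean reciprocal rank.

    rankings: list of lists, each ordered from most to least likely target.
    A target missing from a model's list contributes 0 for that model.
    """
    totals = {}
    for ranked in rankings:
        for position, target in enumerate(ranked, start=1):
            totals[target] = totals.get(target, 0.0) + 1.0 / position
    n_models = len(rankings)
    scored = {target: total / n_models for target, total in totals.items()}
    # Sort by descending score, breaking ties alphabetically for reproducibility.
    return sorted(scored.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical rankings from three fingerprint-specific models.
ecfp4_ranking = ["T1", "T2", "T3"]
maccs_ranking = ["T2", "T1", "T4"]
morgan_ranking = ["T2", "T3", "T1"]

consensus = consensus_ranking([ecfp4_ranking, maccs_ranking, morgan_ranking])
```

Targets that rank highly under several representations rise to the top, while a target proposed by only one model (here `T4`) is pushed down, which is the complementary-strengths effect described above.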

Hybrid frameworks that combine ligand-centric and target-centric elements represent the cutting edge of DTI prediction. These systems leverage both chemical similarity and target-based information to generate predictions with enhanced accuracy and coverage [11] [10]. The integration of AlphaFold-predicted protein structures with ligand-based similarity metrics is particularly promising for expanding target coverage to proteins without experimentally determined structures.

Handling Polypharmacology and Promiscuity

Modern target prediction must account for the pervasive nature of polypharmacology, where drugs typically interact with multiple targets. Current estimates indicate that approved drugs have an average of 8-11.5 targets with submicromolar affinity [7] [8]. Advanced prediction methods now incorporate promiscuity analysis to identify molecules with appropriate polypharmacological profiles for specific therapeutic applications, such as multi-target drugs for complex diseases or selective inhibitors to minimize side effects.

Table 3: Key Resources for Target Prediction Research

| Resource | Type | Function | Key Features |
|---|---|---|---|
| ChEMBL Database | Bioactivity database | Source of curated ligand-target interactions | Experimentally validated bioactivities, confidence scores, extensive coverage [6] [8] |
| BindingDB | Bioactivity database | Binding affinity data for protein targets | Focus on measured binding affinities, complements ChEMBL [9] |
| RDKit | Cheminformatics toolkit | Molecular fingerprint calculation and manipulation | Open-source, multiple fingerprint types, similarity metrics [9] |
| Molecular Fingerprints | Molecular representation | Encode chemical structures as numerical vectors | ECFP4, FCFP4, MACCS, Morgan fingerprints capture different aspects [6] [9] |
| ProtTrans | Protein language model | Protein sequence representation and feature extraction | Pre-trained deep learning models for protein sequences [11] |
| EviDTI Framework | Prediction platform | DTI prediction with uncertainty quantification | Evidential deep learning, multi-dimensional representations [11] |

The ligand-centric and target-centric prediction paradigms offer complementary approaches to drug-target interaction prediction, each with distinct strengths and limitations. Ligand-centric methods provide broad target coverage and are particularly valuable for exploratory research, while target-centric approaches offer higher precision for well-characterized targets. The emerging trend toward hybrid frameworks that integrate multiple data modalities and prediction strategies represents the most promising direction for the field.

Robust validation remains paramount, requiring carefully designed benchmarking protocols that account for real-world application scenarios. The development of reliable confidence estimates and the strategic use of consensus approaches can significantly enhance the practical utility of prediction tools. As bioactivity databases continue to expand and computational methods become increasingly sophisticated, target prediction will play an ever more central role in accelerating drug discovery and repurposing efforts.

The accurate prediction of drug-target interactions (DTIs) is a critical foundation in modern drug discovery, holding the potential to significantly reduce the high costs and extensive timelines associated with bringing new therapeutics to market [3]. Traditional drug development is characterized by low success rates, often attributed to insufficient efficacy or unforeseen safety concerns arising from incomplete target understanding [15]. In silico DTI prediction methods have emerged as powerful alternatives, yet they face three persistent core challenges: reliability, referring to the accuracy and biological relevance of predictions; consistency, concerning the reproducibility of results across different methods and datasets; and data sparsity, stemming from the vast interaction space and limited experimentally validated data [3] [16]. These challenges are interconnected, as data sparsity impedes the training of reliable models, and unreliable models produce inconsistent results. This guide examines these challenges within the context of validating target prediction methods and provides a detailed overview of advanced computational strategies and experimental protocols designed to overcome them.

The table below summarizes the core challenges and the quantitative evidence of their impact on DTI prediction, as revealed by recent studies.

Table 1: Core Challenges in Drug-Target Interaction Prediction

| Challenge | Quantitative Evidence & Impact | Source |
|---|---|---|
| Data Sparsity & Imbalance | Positive-to-negative sample ratio typically below 1:100; promotes overfitting and poor generalization to unseen compounds. [17] | GHCDTI Framework [17] |
| Model Consistency | Systematic comparison of 7 methods showed significant variability in performance and output. [16] | Benchmark Study [16] |
| Prediction Reliability | A state-of-the-art model achieved an AUROC of 0.966 and AUPR of 0.901, yet real-world validation remains crucial. [18] | MVPA-DTI Model [18] |

Advanced Computational Strategies for Robust DTI Prediction

Overcoming Data Sparsity with Heterogeneous Networks and Contrastive Learning

Data sparsity arises from the immense number of potential drug-target pairs compared to the relatively small number of known interactions. This creates a severe class imbalance problem that can lead models to overfit on the few available positive examples.

- Heterogeneous Network Integration: Modern approaches construct heterogeneous networks that incorporate not only drugs and targets but also additional biological entities like diseases and side effects. For example, the MVPA-DTI model integrates drugs, proteins, diseases, and side effects from multisource data to systematically characterize multidimensional associations [18]. This provides a richer context and allows the model to infer new interactions through related entities, effectively mitigating data sparsity.

- Contrastive Learning with Adaptive Sampling: To handle extreme class imbalance, the GHCDTI framework employs a multi-level contrastive learning strategy with adaptive positive sampling [17]. This technique enhances generalization by maximizing the agreement between different augmented views of the same data point (e.g., topological and frequency-domain views of a protein structure), ensuring the model learns robust features even from limited positive examples.

Enhancing Reliability with Multimodal Feature Extraction and Deeper Networks

Reliability is compromised when models fail to capture complex biochemical features or are limited by their architectural depth.

- Leveraging Large Language Models (LLMs) and 3D Information: The MVPA-DTI model employs a molecular attention transformer to extract 3D conformation features from drug structures and uses the protein-specific LLM Prot-T5 to derive biophysically meaningful features from protein sequences [18]. This integration of structural and sequential information provides a more comprehensive representation, leading to more reliable predictions.

- Dynamic Weighting and Residual Connections: Many graph neural network-based models are shallow and suffer from performance degradation when made deeper (the over-smoothing problem). The DDGAE model addresses this with a Dynamic Weighting Residual Graph Convolutional Network (DWR-GCN) module, which allows the construction of deeper networks capable of capturing higher-level semantic information without performance loss [19].

Ensuring Consistency through Rigorous Benchmarking and High-Confidence Filtering

Inconsistency across different prediction methods undermines their practical utility and makes it difficult for researchers to trust and compare results.

- Systematic Benchmarking: A 2025 study systematically compared seven target prediction methods (MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred) using a shared benchmark dataset of FDA-approved drugs [16]. This type of standardized evaluation is critical for assessing the consistency and real-world performance of various approaches.

- High-Confidence Filtering: The same study explored model optimization strategies, finding that high-confidence filtering can improve the precision of predictions. However, this comes at the cost of reduced recall, making it a strategic choice depending on the application (e.g., less ideal for broad drug repurposing efforts) [16].
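The precision/recall trade-off described above can be illustrated with a small sketch. It rests on assumptions: the study applied high-confidence filtering to the knowledge base, whereas this example uses a generic score cut-off on the predictions themselves as a stand-in, and all drug names, target names, and scores are hypothetical.

```python
def precision_recall(predicted, actual):
    """Precision and recall of a set of predicted pairs against known true pairs."""
    tp = len(predicted & actual)
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(actual) if actual else 0.0
    return precision, recall


def filter_by_confidence(scored, min_conf):
    """Keep only the (drug, target) pairs whose score reaches the cut-off."""
    return {pair for pair, score in scored.items() if score >= min_conf}


# Hypothetical predictions: (drug, target) -> confidence score.
scored = {
    ("drugA", "T1"): 0.95,
    ("drugA", "T2"): 0.80,
    ("drugA", "T3"): 0.40,
    ("drugB", "T1"): 0.35,
    ("drugB", "T4"): 0.90,
    ("drugB", "T5"): 0.30,
}
# Hypothetical ground truth of experimentally confirmed interactions.
known = {("drugA", "T1"), ("drugA", "T3"), ("drugB", "T4"), ("drugB", "T5")}

p_all, r_all = precision_recall(filter_by_confidence(scored, 0.0), known)
p_hi, r_hi = precision_recall(filter_by_confidence(scored, 0.85), known)
print(f"unfiltered:      precision={p_all:.2f} recall={r_all:.2f}")
print(f"high-confidence: precision={p_hi:.2f} recall={r_hi:.2f}")
```

In this toy data the filter removes both false positives but also discards two true interactions, which is the behaviour that makes strict filtering a poor fit for broad repurposing screens.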

Experimental Protocols for Method Validation

Validating computational predictions with experimental evidence is paramount. The following protocols provide a path from in silico prediction to in vitro and in vivo confirmation.

Table 2: The Scientist's Toolkit: Key Reagents and Experimental Methods for Validation

| Research Reagent / Method | Function in Validation | Example Usage |

|---|---|---|

| CRISPR-Cas9 | Gene editing tool for creating knock-out or knock-in cell lines to study target function and drug mechanism. [20] | Validating that a drug's effect is lost when its putative target gene is knocked out. |

| siRNA/shRNA | Gene knockdown tools to transiently reduce target protein expression and observe phenotypic consequences. [15] | Confirming the role of a target in a disease-relevant cellular pathway. |

| Tool Antibodies/Small-Molecule (SMOL) Compounds | Selective inhibitors or binders used to pharmacologically modulate the target of interest. [15] | Testing if pharmacological inhibition replicates the phenotypic effect of genetic knockdown. |

| Molecular Docking & Free Energy Calculations | Computational simulations to predict the binding pose and affinity of a drug to its target. [17] [21] | Providing a structural hypothesis for the interaction before wet-lab experiments. |

| AlphaFold Protein Structure Database | Source of high-quality predicted protein structures for targets with unknown experimental structures. [22] | Enabling structure-based drug design and docking for a wider range of targets. |

Detailed Validation Workflow: A Case Study on Fenofibric Acid

A 2025 study on fenofibric acid exemplifies a robust validation pipeline [16]. The workflow can be summarized as follows:

- In Silico Prediction: Multiple target prediction methods (e.g., MolTarPred) were used to generate hypotheses. The study found that using Morgan fingerprints with Tanimoto scores provided optimal performance for this task [16].

- Hypothesis Generation: The models predicted fenofibric acid, a drug used for lipid management, as a potential THRB (thyroid hormone receptor beta) modulator [16].

- Experimental Validation:

  - In Vitro Assays: The interaction between fenofibric acid and THRB was tested using binding affinity assays (e.g., Surface Plasmon Resonance) and functional cell-based assays to confirm the predicted modulation of the receptor's activity.

  - Phenotypic Confirmation: The therapeutic implication—repurposing for thyroid cancer—was then investigated in relevant thyroid cancer cell lines, measuring outcomes like cell proliferation and apoptosis.

- Result: The case study successfully demonstrated the drug's potential for repurposing as a THRB modulator for thyroid cancer treatment, validating the initial computational prediction [16].
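The in silico step of the workflow above relies on fingerprint similarity. The following is a minimal sketch of ligand-centric target ranking with the Tanimoto coefficient. It is not the MolTarPred implementation: fingerprints are represented as plain sets of on-bit indices rather than real Morgan fingerprints, and the ligand names, target names, and bit sets are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def rank_targets(query_fp, ligands, similarity=tanimoto):
    """Score each target by its most similar known ligand, best first."""
    best = {}
    for _name, target, fp in ligands:
        score = similarity(query_fp, fp)
        best[target] = max(best.get(target, 0.0), score)
    return sorted(best.items(), key=lambda item: item[1], reverse=True)


# Hypothetical knowledge base: (ligand name, annotated target, on-bit indices).
known_ligands = [
    ("ligand_1", "TARGET_X", {1, 4, 7, 9, 12}),
    ("ligand_2", "TARGET_Y", {2, 3, 5, 8}),
    ("ligand_3", "TARGET_X", {1, 4, 9, 15}),
]
query = {1, 4, 7, 9}  # hypothetical fingerprint of the query drug

ranking = rank_targets(query, known_ligands)
for target, score in ranking:
    print(f"{target}: {score:.2f}")
```

Scoring a target by its single nearest ligand is one simple design choice; averaging over the top few neighbours is a common alternative.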

The diagram below illustrates the logical flow of this integrated computational and experimental validation workflow.

The fields of artificial intelligence and bioinformatics are rapidly developing sophisticated solutions to the long-standing challenges of reliability, consistency, and data sparsity in DTI prediction. The integration of heterogeneous biological data, advanced neural network architectures, and protein language models is steadily enhancing the robustness of computational predictions. However, as these models become more complex, the importance of rigorous benchmarking and experimental validation only increases. The future of reliable target discovery lies in a continuous, iterative cycle where computational predictions inform targeted experiments, and experimental results, in turn, refine and improve the computational models. By adhering to the best practices and validation protocols outlined in this guide, researchers can better navigate the complexities of DTI prediction and contribute to the accelerated development of new therapeutics.

This whitepaper provides an in-depth technical examination of core classification metrics—Accuracy, Precision, Recall, and F1 Score—within the critical context of validating target prediction methods in biomedical research. For researchers, scientists, and drug development professionals, robust model evaluation is paramount to ensuring the reliability and translational potential of computational predictions. This guide details the mathematical definitions, interpretive nuances, and practical application of these metrics, supported by structured data summaries, methodological protocols for metric evaluation, and visualizations of their conceptual relationships. Adherence to these evaluation best practices mitigates the risk of biased performance assessment, particularly when dealing with the imbalanced datasets typical in early-stage research, thereby strengthening the path from in silico prediction to clinical development.

In the domain of drug discovery, computational target prediction methods have become indispensable for identifying and prioritizing novel therapeutic targets [23]. These methods, which include ligand-based, structure-based, and chemogenomic approaches, typically function as binary classifiers, predicting whether a small molecule will interact with a specific biomacromolecular target [23]. The transition of a predicted target from an academic finding to a viable candidate for a clinical development program requires rigorous and persuasive validation [24].

Performance metrics are the cornerstone of this validation process. They provide a quantitative foundation for assessing a model's predictive power, guiding model selection, and communicating the potential of a target to stakeholders [25] [23]. However, no single metric can capture all the desirable properties of a model [25]. A nuanced understanding of multiple metrics—specifically, what aspect of performance each measures and what its limitations are—is therefore essential. Misapplication of these metrics, such as relying solely on accuracy for imbalanced data, can lead to overly optimistic and misleading conclusions, ultimately wasting valuable resources [26] [27]. This guide deconstructs the key metrics of Accuracy, Precision, Recall, and F1 Score to build a comprehensive framework for robust model evaluation in biomedical research.

Foundational Concepts: The Confusion Matrix

All metrics discussed in this whitepaper are derived from the confusion matrix, a table that summarizes the performance of a binary classification algorithm by cross-tabulating the actual class labels with the predicted class labels [28] [29] [30]. The four fundamental outcomes in a binary confusion matrix are:

- True Positive (TP): The model correctly predicts the positive class (e.g., a true drug-target interaction is correctly identified) [25] [30].

- True Negative (TN): The model correctly predicts the negative class (e.g., the absence of an interaction is correctly identified) [25] [30].

- False Positive (FP): The model incorrectly predicts the positive class (a "false alarm"); also known as a Type I error [25] [30].

- False Negative (FN): The model incorrectly predicts the negative class (a "miss"); also known as a Type II error [25] [30].
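As a minimal sketch of how the four outcomes above are tallied, the function below counts them from paired label lists. The encoding (1 for "interaction", 0 for "no interaction") and the example labels are assumptions made for illustration.

```python
def confusion_counts(y_true, y_pred):
    """Count TP, TN, FP and FN for binary labels (1 = interaction, 0 = none)."""
    tp = tn = fp = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == 1 and actual == 1:
            tp += 1  # correctly predicted interaction
        elif predicted == 0 and actual == 0:
            tn += 1  # correctly predicted absence
        elif predicted == 1 and actual == 0:
            fp += 1  # false alarm (Type I error)
        else:
            fn += 1  # miss (Type II error)
    return {"TP": tp, "TN": tn, "FP": fp, "FN": fn}


# Hypothetical ground truth and model output for eight compound-target pairs.
y_true = [1, 0, 0, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 1, 0, 0]
counts = confusion_counts(y_true, y_pred)
print(counts)
```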

The following diagram illustrates the logical structure of the confusion matrix and the flow of decisions that lead to each of these four outcomes.

Metric Definitions and Computational Methodologies

This section provides the formal definitions, mathematical formulas, and interpretive guidance for each core performance metric.

Accuracy

Accuracy measures the overall correctness of the model across both positive and negative classes [26] [27]. It answers the question: "Out of all predictions, how many were correct?"

Formula: [ \text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} ]

Interpretation and Use Case: While accuracy is intuitive and easy to communicate, it can be highly misleading for imbalanced datasets, where one class significantly outnumbers the other [26] [29] [27]. In target prediction, where active compounds are often rare, a model that always predicts "no interaction" would achieve a high accuracy but would be practically useless [25]. Therefore, accuracy is most informative when used in combination with other metrics and primarily when class distribution is balanced [26] [31].
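A short worked example makes the problem concrete. Assume a hypothetical screen with 10 true interactions and 1,000 non-interactions, and a model that always predicts "no interaction", so that TP = 0, TN = 1000, FP = 0, and FN = 10:

[ \text{Accuracy} = \frac{0 + 1000}{0 + 1000 + 0 + 10} \approx 0.990 ]

The model scores about 99% accuracy while identifying none of the 10 true interactions.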

Precision

Precision (also known as Positive Predictive Value or PPV) measures the reliability of positive predictions [26] [27]. It answers the question: "Out of all instances predicted as positive, what fraction is actually positive?"

Formula: [ \text{Precision} = \frac{TP}{TP + FP} ]

Interpretation and Use Case: A high precision indicates a low rate of false positives [26]. This is critical in scenarios where the cost of a false positive is high. In the context of target prediction and drug discovery, precision is crucial when optimizing a lead series, as pursuing false-positive interactions wastes significant time and resources [23]. For instance, in virtual screening, high precision means that the compounds flagged for experimental testing are highly likely to be true binders.

Recall

Recall (also known as Sensitivity or True Positive Rate - TPR) measures the model's ability to identify all relevant positive instances [26] [30]. It answers the question: "Out of all actual positives, what fraction did the model correctly identify?"

Formula: [ \text{Recall} = \frac{TP}{TP + FN} ]

Interpretation and Use Case: A high recall indicates a low rate of false negatives [26]. This metric should be prioritized when the cost of missing a positive instance is unacceptably high. In biomedical research, recall is paramount in safety assessment (e.g., predicting off-target interactions that could cause toxicity) and in early target identification, where failing to identify a true therapeutic target (a false negative) could mean missing a potential treatment [25] [30].

F1 Score

The F1 Score is the harmonic mean of precision and recall, providing a single metric that balances both concerns [26] [32].

Formula: [ \text{F1 Score} = 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} = \frac{2TP}{2TP + FP + FN} ]

Interpretation and Use Case: The F1 score is particularly valuable for imbalanced datasets where both false positives and false negatives carry costs, and a trade-off must be found [26] [31] [32]. It is a more robust metric than accuracy in such scenarios because it only considers the positive class and its associated errors (FP and FN), ignoring the true negatives which can inflate accuracy [26]. The F1 score reaches its best value at 1 (perfect precision and recall) and worst at 0. A generalized version, the F-beta score, allows for weighting recall higher than precision or vice versa, depending on the specific business or research problem [31].
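The sketch below computes precision, recall, and the F-beta score directly from confusion-matrix counts, with beta = 1 giving the F1 score. The counts are hypothetical, and returning 0.0 when a denominator is zero is a convention chosen here, not something the formulas prescribe.

```python
def precision(tp, fp):
    """TP / (TP + FP); 0.0 by convention when nothing was predicted positive."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp, fn):
    """TP / (TP + FN); 0.0 by convention when there are no actual positives."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def f_beta(tp, fp, fn, beta=1.0):
    """F-beta score; beta > 1 weights recall more, beta < 1 weights precision more."""
    p = precision(tp, fp)
    r = recall(tp, fn)
    if p == 0.0 and r == 0.0:
        return 0.0
    b2 = beta ** 2
    return (1 + b2) * p * r / (b2 * p + r)


tp, fp, fn = 30, 10, 20  # hypothetical counts: precision 0.75, recall 0.60
f1 = f_beta(tp, fp, fn)
f2 = f_beta(tp, fp, fn, beta=2.0)
print(f"F1={f1:.3f}  F2={f2:.3f}")
```

Because recall is the weaker of the two values in this example, the recall-weighted F2 score comes out lower than F1.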

Table 1: Summary of Core Binary Classification Metrics

| Metric | Formula | Interpretation | Optimal Context in Target Validation |

|---|---|---|---|

| Accuracy | (TP + TN) / (TP+TN+FP+FN) | Overall correctness of the model | Balanced high-throughput screens; initial coarse-grained model assessment [26] |

| Precision | TP / (TP + FP) | Reliability of positive predictions | Lead optimization phase, where false positives are costly [26] [23] |

| Recall | TP / (TP + FN) | Ability to find all positive instances | Safety pharmacology & toxicology screening; novel target identification [26] [25] |

| F1 Score | 2TP / (2TP + FP + FN) | Harmonic mean of Precision and Recall | Imbalanced datasets; when a balanced view of FP and FN is needed [26] [31] |

Experimental Protocols for Metric Evaluation

Robust validation requires more than just calculating metrics; it demands a rigorous experimental design to prevent over-optimism and ensure generalizability.

Data Partitioning Strategies

Model development should be split into distinct phases to avoid information leakage between training and evaluation [25] [23].

- Training Set: Used to train the model's parameters.

- Validation Set: Used for iterative model tuning and hyperparameter optimization (internal validation).

- Test Set: A fully blinded set, withheld until the final model is selected, used for the final calculation of performance metrics (external validation) [25] [23].

The single train-test split is effective only if both sets are large and representative. For smaller datasets, cross-validation (CV) schemes are preferred [23].

Cross-Validation for Robust Estimation

n-Fold Cross-Validation is a standard protocol for obtaining a robust performance estimate [23].

1. Procedure: Randomly partition the dataset into n equal-sized folds (typically 5 or 10).

2. Iteration: Iteratively train the model on n-1 folds and validate on the remaining 1 fold.

3. Aggregation: Calculate the desired metric (e.g., F1 Score) for each iteration and report the average and standard deviation across all n folds.
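The three steps above can be sketched with the standard library alone. The `fit_and_score` callback is a hypothetical placeholder for whatever trains a model on the training indices and returns a metric on the held-out fold; the dummy scorer exists only so that the example runs.

```python
import random
import statistics


def k_fold_indices(n_samples, n_folds, seed=0):
    """Shuffle sample indices and deal them into n_folds near-equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::n_folds] for i in range(n_folds)]


def cross_validate(n_samples, n_folds, fit_and_score, seed=0):
    """Train on n_folds - 1 folds, score on the remaining one, and aggregate."""
    folds = k_fold_indices(n_samples, n_folds, seed)
    scores = []
    for i, test_idx in enumerate(folds):
        train_idx = [j for k, fold in enumerate(folds) if k != i for j in fold]
        scores.append(fit_and_score(train_idx, test_idx))
    return statistics.mean(scores), statistics.stdev(scores)


def dummy_scorer(train_idx, test_idx):
    """Placeholder: returns the held-out fraction instead of a real metric."""
    return len(test_idx) / (len(train_idx) + len(test_idx))


mean_score, std_score = cross_validate(20, 5, dummy_scorer)
print(f"mean={mean_score:.3f} std={std_score:.3f}")
```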

Designed-Fold Cross-Validation is critical for target prediction to avoid over-optimism [23].

1. Cluster Compounds: Cluster compounds based on structural similarity (e.g., using molecular fingerprints).

2. Form Folds: Assign all compounds from a given cluster to the same fold. This ensures that the model is tested on structurally novel compounds not seen during training.

3. Execute CV: Perform the n-fold CV procedure using these cluster-based folds.

This "realistic split" provides a more challenging and realistic estimate of a model's ability to generalize to new chemical scaffolds [23].
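The cluster-to-fold assignment described above might look like the following sketch. It assumes cluster labels have already been computed (for example, by clustering molecular fingerprints) and uses a simple greedy rule, largest cluster first into the currently smallest fold, which is one possible balancing heuristic rather than a prescribed method. The cluster labels are hypothetical.

```python
from collections import defaultdict


def cluster_folds(cluster_ids, n_folds):
    """Assign whole clusters to folds so no cluster is split across folds."""
    members = defaultdict(list)
    for idx, cid in enumerate(cluster_ids):
        members[cid].append(idx)
    folds = [[] for _ in range(n_folds)]
    # Greedy balancing: place the largest clusters first, each into the
    # fold that currently holds the fewest compounds.
    for cid in sorted(members, key=lambda c: len(members[c]), reverse=True):
        smallest = min(folds, key=len)
        smallest.extend(members[cid])
    return folds


# Hypothetical cluster label for each of ten compounds.
cluster_ids = ["A", "A", "B", "C", "C", "C", "D", "B", "E", "E"]
folds = cluster_folds(cluster_ids, n_folds=3)
for i, fold in enumerate(folds):
    print(i, sorted(fold), sorted({cluster_ids[j] for j in fold}))
```

Each fold can then serve as the held-out set in an ordinary n-fold loop, so every test compound belongs to a cluster the model never saw during training.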

The following workflow diagram outlines the key steps in this rigorous validation process.

Threshold Tuning and Metric Calculation

Most classifiers output a continuous score or probability. A classification threshold must be applied to convert these scores into class labels [26] [31]. The choice of threshold directly impacts the confusion matrix and all derived metrics.

Protocol for Threshold Optimization:

1. Generate Scores: Obtain the model's prediction scores for the validation set.

2. Vary Threshold: Test a range of thresholds from 0 to 1.

3. Calculate Metrics: For each threshold, calculate the confusion matrix and the target metric(s) (e.g., F1 Score).

4. Select Optimal Threshold: Choose the threshold that maximizes the target metric for the specific application (e.g., maximize Recall for safety screening, or maximize F1 for a general-purpose balance).

5. Apply to Test Set: Use this optimized threshold when evaluating the final model on the blinded test set.
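A minimal sketch of the threshold sweep in the protocol above, using F1 as the target metric. The validation scores and labels are hypothetical, the grid of 101 evenly spaced thresholds is an arbitrary choice, and ties are resolved in favour of the lowest threshold.

```python
def f1_at_threshold(scores, labels, threshold):
    """F1 score when scores >= threshold are labelled positive."""
    tp = fp = fn = 0
    for score, label in zip(scores, labels):
        predicted = 1 if score >= threshold else 0
        if predicted == 1 and label == 1:
            tp += 1
        elif predicted == 1 and label == 0:
            fp += 1
        elif predicted == 0 and label == 1:
            fn += 1
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def best_threshold(scores, labels, steps=100):
    """Return the lowest grid threshold in [0, 1] that maximizes F1."""
    candidates = [i / steps for i in range(steps + 1)]
    return max(candidates, key=lambda t: f1_at_threshold(scores, labels, t))


# Hypothetical validation-set scores and true labels.
val_scores = [0.92, 0.85, 0.70, 0.55, 0.40, 0.30, 0.20, 0.10]
val_labels = [1, 1, 0, 1, 0, 0, 0, 0]

t_star = best_threshold(val_scores, val_labels)
print(t_star, round(f1_at_threshold(val_scores, val_labels, t_star), 3))
```

The selected threshold is then frozen and applied unchanged to the blinded test set, so the test metrics are not tuned on the data they are reported for.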

Table 2: Key Reagents and Resources for Validation of Target Prediction Methods

| Tool / Reagent | Category | Function in Validation |

|---|---|---|

| Benchmark Datasets | Data | Provide a standardized, publicly available ground-truth set for fair comparison between different prediction methods [23]. |

| Chemical Clustering Tool | Software | Enables realistic train-test splits by grouping compounds by structural similarity to assess performance on novel chemotypes [23]. |

| Curated Bioactivity Database | Data | Sources like ChEMBL provide the known positive and negative interaction data required to build confusion matrices and calculate metrics [23]. |

| Metric Calculation Library | Software | Libraries like scikit-learn in Python provide optimized functions for computing accuracy, precision, recall, F1, and other metrics from label vectors [31] [32]. |

| Cross-Validation Framework | Software | Automated tools for implementing n-fold and cluster-based validation schemes, ensuring rigorous and reproducible performance estimation [23]. |

The rigorous validation of computational target prediction models is a non-negotiable step in modern drug discovery. As detailed in this whitepaper, a nuanced understanding and correct application of performance metrics—Accuracy, Precision, Recall, and F1 Score—are fundamental to this process. No single metric is sufficient; a thoughtful combination, interpreted in the context of the specific biological question and the inherent imbalance of most biomedical datasets, is required. By adopting the experimental protocols outlined herein, including rigorous data partitioning, realistic cross-validation, and conscious threshold tuning, researchers can generate reliable, interpretable, and persuasive evidence of model performance. This disciplined approach to model evaluation de-risks the translational pathway and strengthens the foundation upon which critical decisions in drug development are made.

A Comparative Guide to Modern Target Prediction Methods and Tools

The landscape of small-molecule drug discovery has progressively shifted from traditional phenotypic screening toward more precise, target-based approaches, placing a greater emphasis on understanding mechanisms of action (MoA) and target identification [33] [6]. In this context, revealing hidden polypharmacology—the ability of a drug to interact with multiple targets—has emerged as a powerful strategy to reduce both time and costs in drug development, primarily through off-target drug repurposing [33] [6]. For instance, drugs like Gleevec and Viagra, originally developed for leukemia and hypertension, were successfully repurposed for gastrointestinal stromal tumors and erectile dysfunction, respectively, by understanding their off-target effects [6].

However, despite the significant potential of in silico target prediction, the reliability and consistency of these methods remain a considerable challenge across different tools and methodologies [33] [6]. The field is characterized by a diverse array of computational approaches, including target-centric methods that build predictive models for each target, ligand-centric methods that focus on the similarity between a query molecule and known ligands, and newer deep learning frameworks that integrate multiple tasks [6] [34]. This whitepaper provides a systematic comparison of leading target prediction tools, including MolTarPred, DeepTarget, and DeepDTAGen, within the critical context of best practices for validating these methods. The objective is to furnish researchers, scientists, and drug development professionals with a technical guide to inform their selection and application of these powerful technologies.

Tool Comparison: Performance Metrics and Methodologies

This section provides a detailed comparison of the core target prediction tools, summarizing their key attributes, performance, and underlying algorithms. A systematic evaluation is crucial for understanding their respective strengths and optimal applications.

Table 1: Comprehensive Comparison of Target Prediction Tools

| Tool Name | Primary Approach | Data Source | Core Algorithm / Technique | Key Performance Highlights |

|---|---|---|---|---|

| MolTarPred [33] [6] [35] | Ligand-centric | ChEMBL 20 [6] | 2D similarity search using molecular fingerprints (MACCS, Morgan) [6] | Most effective method in a 2025 systematic comparison; outperformed 6 other methods on a shared benchmark of FDA-approved drugs [33] [6]. |

| DeepTarget [36] | Context-centric integration | DepMap Consortium (genetic & drug screens in cancer cells) [36] | AI model trained on cellular context data, not chemical structure [36] | Better than state-of-the-art tools (e.g., RoseTTAFold All-Atom) in 7/8 tests predicting primary targets; accurately predicted Ibrutinib's secondary target (EGFR) in lung cancer [36]. |

| DeepDTAGen [34] | Multitask Deep Learning | KIBA, Davis, BindingDB [34] | Multitask learning with FetterGrad algorithm for DTA prediction & target-aware drug generation [34] | On KIBA: MSE=0.146, CI=0.897, r²m=0.765; outperformed GraphDTA, DeepDTA, and traditional ML models [34]. |

| CMTNN [6] | Target-centric | ChEMBL 34 [6] | Multitask Neural Network (ONNX runtime) [6] | Included in systematic comparison; specific performance metrics not detailed in results. |

| RF-QSAR [6] | Target-centric | ChEMBL 20 & 21 [6] | Random Forest QSAR model [6] | Included in systematic comparison; specific performance metrics not detailed in results. |
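The MSE and CI values quoted for DeepDTAGen in the table above are regression metrics for binding affinity rather than classification metrics. The sketch below uses the common definitions of mean squared error and the concordance index (the fraction of comparable pairs whose predicted order matches the true order, with tied predictions counted as half). Whether the cited work computes CI in exactly this way is an assumption, and the affinity values are hypothetical.

```python
def mse(y_true, y_pred):
    """Mean squared error between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of pairs with different true values that are ranked correctly."""
    concordant = 0.0
    comparable = 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue  # pairs with equal true affinity are not comparable
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5  # tied prediction counts as half
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    return concordant / comparable if comparable else 0.0


# Hypothetical measured and predicted affinities for four drug-target pairs.
y_true = [5.0, 6.2, 7.1, 8.4]
y_pred = [5.3, 6.0, 7.5, 7.4]
print(f"MSE={mse(y_true, y_pred):.4f}  CI={concordance_index(y_true, y_pred):.4f}")
```

Lower MSE is better, while CI ranges from 0.5 for random ordering to 1.0 for a perfect ranking.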

Experimental Protocols and Benchmarking Insights

The performance data for several tools, particularly MolTarPred, stems from a precise comparative study published in Digital Discovery in 2025 [33] [6]. The experimental methodology of this study provides a robust framework for validation.

- Benchmark Dataset Curation: The researchers constructed a shared benchmark dataset of FDA-approved drugs sourced from the ChEMBL database (version 34) to ensure a fair comparison [6]. To prevent over-optimism and data leakage, these approved drug molecules were explicitly excluded from the main knowledge base used by the prediction tools during the testing phase [6].

- Database Preparation Protocol: The ChEMBL 34 database was hosted locally in PostgreSQL, and bioactivity data was retrieved via pgAdmin4. The team filtered records to include only those with standard values (IC50, Ki, or EC50) below 10,000 nM [6]. To ensure data quality, they excluded entries associated with non-specific or multi-protein targets and removed duplicate compound-target pairs, resulting in 1,150,487 unique ligand-target interactions for the analysis [6]. A high-confidence filtered database was also created, requiring a minimum confidence score of 7, which corresponds to "direct protein complex subunits assigned" in ChEMBL [6].

- Evaluation of Optimization Strategies: The study also explored how model components influence performance. For MolTarPred, it was found that using Morgan fingerprints with a Tanimoto similarity score outperformed the use of MACCS fingerprints with Dice scores [33] [6]. Furthermore, the use of high-confidence filtering, while improving precision, was noted to reduce recall, making it less ideal for broad drug repurposing campaigns where the goal is to identify all potential targets [33].
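The filtering logic in the database preparation step above can be sketched as follows. This is an illustration under stated assumptions, not the study's SQL: the record field names (`compound`, `target`, `type`, `value_nm`, `confidence`) are hypothetical and do not correspond to actual ChEMBL column names, the example records are invented, and the exclusion of non-specific or multi-protein targets is not modelled.

```python
POTENCY_TYPES = {"IC50", "Ki", "EC50"}


def prepare_interactions(records, max_nm=10_000, min_confidence=None):
    """Filter bioactivity records and return unique (compound, target) pairs."""
    seen = set()
    kept = []
    for rec in records:
        if rec["type"] not in POTENCY_TYPES:
            continue  # keep only IC50, Ki or EC50 measurements
        if rec["value_nm"] >= max_nm:
            continue  # keep only values below the potency cut-off
        if min_confidence is not None and rec["confidence"] < min_confidence:
            continue  # optional high-confidence filter
        pair = (rec["compound"], rec["target"])
        if pair in seen:
            continue  # drop duplicate compound-target pairs
        seen.add(pair)
        kept.append(pair)
    return kept


# Hypothetical bioactivity records.
records = [
    {"compound": "C1", "target": "T1", "type": "IC50", "value_nm": 50, "confidence": 9},
    {"compound": "C1", "target": "T1", "type": "Ki", "value_nm": 80, "confidence": 9},
    {"compound": "C2", "target": "T2", "type": "EC50", "value_nm": 25000, "confidence": 9},
    {"compound": "C3", "target": "T3", "type": "IC50", "value_nm": 900, "confidence": 5},
    {"compound": "C4", "target": "T4", "type": "Kd", "value_nm": 10, "confidence": 9},
]

full_db = prepare_interactions(records)
high_conf_db = prepare_interactions(records, min_confidence=7)
print(full_db)
print(high_conf_db)
```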

A Framework for Validating Target Prediction Methods

Robust validation is the cornerstone of reliable target prediction research. The following workflow and framework synthesize best practices from the analyzed studies, providing a roadmap for researchers to critically assess and apply these tools.

Diagram 1: Target prediction validation workflow.

The Scientist's Toolkit: Essential Reagents and Materials

The transition from in silico prediction to validated biological insight requires a suite of experimental reagents and systems. The following table details key materials essential for the confirmatory stages of target prediction research.

Table 2: Key Research Reagent Solutions for Experimental Validation

| Reagent / Material | Primary Function in Validation | Application Example |

|---|---|---|

| Cancer Cell Line Panel [36] | Provides cellular context to test if a drug's effect is specific to certain genetic backgrounds (e.g., mutant vs. wild-type). | DeepTarget used 371 cancer cell lines from DepMap to identify context-specific targets [36]. |

| Recombinant Target Protein | Used in biophysical assays (e.g., SPR, ITC) and biochemical assays to measure direct binding affinity and kinetics. | Validating a predicted drug-target interaction requires a purified, functional protein. |

| Validated Bioactivity Assays (e.g., Ki, IC50, EC50) | Quantifies the strength and potency of a drug-target interaction in a standardized system. | The ChEMBL database is built on curated bioactivity data from such assays [6]. |

| Primary & Secondary Antibodies | Enables detection of target protein expression, phosphorylation status, and downstream pathway modulation via Western Blot/IF. | Confirming that Ibrutinib treatment affects EGFR signaling pathways in lung cancer cells [36]. |

| Phenotypic Assay Reagents (e.g., viability, apoptosis) | Measures the ultimate functional effect of a drug (e.g., cell death) in a disease-relevant model. | Testing if Ibrutinib kills lung cancer cells with mutant EGFR more effectively [36]. |

Best Practices and Interpretation of Results

A critical practice is to move beyond simple binary predictions and consider the cellular and disease context. As demonstrated by DeepTarget, a drug's primary target in one tissue (e.g., BTK for Ibrutinib in blood cancer) can be secondary in another, where a different target (e.g., mutant EGFR in lung cancer) drives the therapeutic effect [36]. This highlights that context-specificity is a feature, not a bug, in polypharmacology.

Furthermore, the choice of a tool should be aligned with the research goal. For broad drug repurposing where maximizing potential leads is key, a high-recall method is preferable, even if it sacrifices some precision [33]. Conversely, when resources for experimental validation are limited, applying high-confidence filters or using tools that provide reliability scores (like MolTarPred) can improve prospective hit rates [33] [35].

Diagram 2: The iterative cycle of prediction and validation.

The systematic comparison of leading target prediction tools reveals a maturing field where different methodologies excel in different domains. MolTarPred has established itself as a high-performance ligand-centric tool, while DeepTarget introduces a paradigm-shifting, context-aware approach that more closely mirrors the biological reality of drug action [33] [36]. Meanwhile, multitask learning frameworks like DeepDTAGen represent the cutting edge, combining predictive and generative capabilities in a unified model [34].

The ultimate value of these in silico tools is realized only when they are embedded within a rigorous validation framework that includes carefully designed benchmark datasets, context-aware analysis, and a clear understanding of the trade-off between precision and recall. By adhering to these best practices, researchers can leverage these powerful computational methods to accelerate drug discovery, unlock novel therapeutic applications for existing drugs, and systematically decode the complex polypharmacology of small molecules.

The application of artificial intelligence (AI) in target prediction and drug discovery represents a paradigm shift, moving from labor-intensive, human-driven workflows to AI-powered discovery engines capable of compressing traditional timelines [37]. As of 2025, over 75 AI-derived molecules have reached clinical stages, demonstrating the tangible impact of these technologies [37]. However, this rapid advancement necessitates rigorous benchmarking frameworks to differentiate genuine progress from hype and to establish trust in AI predictions, which must be reproducible, explainable, and capable of generalizing beyond their training data [38] [39].

This technical guide provides a comprehensive overview of benchmarking practices for three dominant AI architectures—Graph Neural Networks (GNNs), Transformer-based models, and Generative Models—within the context of validating target prediction methods. We focus on practical experimental protocols, performance metrics, and material requirements to equip researchers with the tools needed for robust model evaluation.

Core Architectures in Molecular AI

- Graph Neural Networks (GNNs): GNNs operate on graph-structured data, where nodes (atoms) and edges (bonds) encode molecular information. Through iterative message passing, nodes aggregate information from their neighbors to learn representations that capture both molecular structure and chemical properties [40] [41]. GNNs have become a foundational architecture for molecular property prediction, and the same message-passing approach also powers applications outside chemistry, such as Google Maps' traffic prediction and Pinterest's recommendation systems [42] [43].

- Graph Transformers: An evolution of the transformer architecture, Graph Transformers replace the standard message passing of GNNs with a global self-attention mechanism, allowing each node (or edge) to attend to all other nodes (or edges) in the graph [40] [41]. This architecture addresses limitations of traditional GNNs, such as over-smoothing and over-squashing, which hinder learning of long-range interactions in graphs [41].

- Generative Models: In drug discovery, generative models like Generative Adversarial Networks (GANs), Diffusion Models, and Variational Autoencoders (VAEs) are primarily used for de novo molecular design [44] [45]. These models learn the underlying distribution of chemical space and can generate novel molecular structures with desired properties, enabling accelerated hit identification and lead optimization [37].
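To make the message-passing idea from the GNN description above concrete, here is a deliberately simplified sketch: one round of unweighted sum aggregation over a tiny molecular graph. Real GNN layers add learned weight matrices and nonlinearities, which are omitted here; the two-element atom features ([is carbon, is oxygen]) and the ethanol heavy-atom graph are illustrative assumptions.

```python
def message_passing_step(features, edges):
    """One round of sum aggregation: each node adds its neighbours' features."""
    neighbours = {node: [] for node in features}
    for a, b in edges:  # bonds are undirected
        neighbours[a].append(b)
        neighbours[b].append(a)
    updated = {}
    for node, own in features.items():
        aggregated = list(own)
        for nb in neighbours[node]:
            aggregated = [x + y for x, y in zip(aggregated, features[nb])]
        updated[node] = aggregated
    return updated


# Heavy atoms of ethanol (C-C-O) with features [is_carbon, is_oxygen].
features = {0: [1, 0], 1: [1, 0], 2: [0, 1]}
edges = [(0, 1), (1, 2)]

h1 = message_passing_step(features, edges)
h2 = message_passing_step(h1, edges)
print(h1)
print(h2)
```

After one round the terminal carbon (node 0) has no information about the oxygen; after two rounds it does. Needing one round per bond of separation is why distant atoms interact poorly in shallow message-passing networks, the limitation that global attention in Graph Transformers is meant to address.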

Quantitative Performance Comparison

Table 1: Comparative Performance of AI Architectures on Key Molecular Tasks

| Architecture | Representative Models | Sterimol Parameters (MAE) | Binding Energy Estimation (RMSE) | Long-Range Task Performance | Inference Speed |
|---|---|---|---|---|---|
| GNNs | ChemProp, GIN-VN, SchNet, PaiNN | Baseline | Baseline | Limited by over-squashing [41] | Baseline |
| Graph Transformers | Graphormer, Transformer-M, ESA | On par with GNNs [40] | On par with GNNs [40] | State-of-the-art [41] | Faster than GNNs [40] |
| Generative Models | GANs, Diffusion Models, VAEs | Not Primary Use Case | Not Primary Use Case | Varies by Architecture | Computationally Expensive [44] |

Table 2: Domain-Specific Application Strengths

| Architecture | Primary Drug Discovery Applications | Key Strengths | Notable Real-World Examples |
|---|---|---|---|
| GNNs | Molecular property prediction, Binding affinity estimation [40] | Strong performance on local structural features [41] | SchNet, PaiNN for quantum property prediction [40] |
| Graph Transformers | Molecular representation learning, Transfer learning [40] [41] | Superior generalization, long-range dependency modeling [41] | Edge-Set Attention (ESA) outperforming GNNs on 70+ tasks [41] |
| Generative Models | De novo molecular design, Lead optimization [37] | Exploration of novel chemical space, multi-parameter optimization | Exscientia's AI-designed drugs in clinical trials [37] |

Experimental Benchmarking Methodologies

Standardized Evaluation Protocols

Robust benchmarking requires standardized evaluation protocols that simulate real-world scenarios. Key methodological considerations include:

- Generalization Testing: To evaluate real-world utility, models should be tested on left-out protein superfamilies not present in the training data. This simulates the scenario of predicting interactions for novel protein families discovered in the future [38].

- Context-Enriched Training: Incorporating pretraining on quantum mechanical atomic-level properties and auxiliary task training has been shown to enhance model performance, particularly for Graph Transformer architectures [40].

- Rigorous Dataset Selection: Benchmarks should utilize diverse datasets that challenge different model capabilities:

  - BDE Dataset: Focused on reaction-centric properties of organometallic catalysts, testing binding energy estimation from molecular graphs [40].

  - Kraken Dataset: Contains DFT-computed conformer ensembles for organophosphorus ligands, evaluating 3D molecular descriptor prediction [40].

  - tmQMg Dataset: Comprises over 60,000 transition-metal complexes, testing generalization capabilities for challenging chemical spaces [40].

Emerging Benchmarking Frameworks

The field is addressing limitations in standardized evaluation through new approaches:

- Synthetic Graph Generation: Tools like SkyMap generate synthetic graph datasets with fine-grained control over topology and feature distribution parameters, enabling more comprehensive GNN benchmarking. SkyMap achieves a 64% lower Wasserstein distance compared to previous generators, better replicating the learnability of real-world graphs [43].

- Structure-Aware Evaluation: The recently released SAIR (Structurally Augmented IC50 Repository) dataset provides over 5 million protein-ligand structures paired with experimental binding affinities, creating a common testbed for rigorous, head-to-head benchmarking of structure-aware AI models [39].

Visualization of Key Architectures and Workflows

Diagram 1: AI Architecture Comparison

Diagram 2: Model Validation Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Tools for AI Validation

| Tool/Resource | Function | Application in Validation |
|---|---|---|
| SAIR Dataset [39] | Open dataset of 5M+ protein-ligand structures with experimental binding affinities | Training and benchmarking structure-aware AI models for binding affinity prediction |
| PoseBusters [39] | Python-based tool for evaluating physical plausibility of protein-ligand structures | Validating structural predictions and filtering unrealistic molecular conformations |
| SkyMap [43] | Generative graph model for creating synthetic benchmark datasets | Testing GNN performance across diverse graph topologies and feature distributions |
| ECFP/RDKit Fingerprints [40] | Traditional molecular fingerprints for compound representation | Baseline comparisons against graph-based methods |
| Open Graph Benchmark (OGB) [40] [43] | Curated collection of benchmark graph datasets | Standardized evaluation of graph learning algorithms |
| AutomationStudio (Exscientia) [37] | Automated synthesis and testing platform | Closing the design-make-test-learn cycle with experimental validation |

Benchmarking AI models for target prediction requires a multifaceted approach that evaluates not only traditional performance metrics but also generalizability, computational efficiency, and utility in real-world drug discovery settings. Graph Transformers have emerged as compelling alternatives to GNNs, offering competitive performance with added advantages in speed and flexibility, particularly when enhanced with context-enriched training [40]. The Edge-Set Attention architecture demonstrates how purely attention-based approaches can outperform both GNNs and more complex transformers across diverse tasks [41].

For generative models, the most meaningful benchmarks extend beyond molecular generation to include experimental validation of synthesized compounds and progression through clinical stages [37]. As the field matures, the development of standardized benchmarking frameworks—such as the SAIR dataset for structure-aware AI and synthetic graph generators like SkyMap—will be crucial for advancing the field and building trustworthy AI for drug discovery [39] [43]. Future validation efforts must prioritize generalizability across novel protein families and transparent reporting of model limitations to fully realize the potential of AI in transforming target prediction and drug development.

The accurate prediction of drug-target interactions (DTIs) is a critical and rate-limiting step in modern drug discovery, essential for identifying new therapeutic targets, repurposing existing drugs, and reducing the high failure rates in clinical trials [46] [47]. While artificial intelligence (AI) and machine learning (ML) models have demonstrated potential to accelerate this process, their reliability hinges on rigorous validation against high-quality benchmark datasets. State-of-the-art deep learning models frequently fail to generalize to novel structures because they exploit topological shortcuts in training data rather than learning the underlying chemical and biological principles that govern molecular interactions [46]. This validation gap underscores the indispensable role of carefully curated, multimodal databases in developing truly predictive computational models. Without standardized benchmarking against datasets like ChEMBL, BindingDB, and DrugBank, the field cannot distinguish models that have genuinely learned the principles of molecular recognition from those that have merely memorized annotation patterns in biased training sets.

Core Datasets for Drug-Target Interaction Prediction

The foundation of robust DTI prediction research rests on the appropriate selection and use of primary data sources. The table below summarizes the core characteristics of three indispensable databases.

Table 1: Core Benchmark Databases for Drug-Target Interaction Research

| Database | Primary Focus | Key Data Types | Notable Features | Common Applications |
|---|---|---|---|---|
| ChEMBL [47] [48] | Bioactivity data | Bioactivity values (e.g., IC50, Ki, Kd), pChEMBL values, DTP scores | Manually curated; extensive bioactivity data from scientific literature; drug discovery data | Training ML models on quantitative bioactivity; drug repurposing |
| BindingDB [46] [47] | Binding affinities | Experimental binding affinities (Kd, Ki, IC50), protein targets, chemical structures | Focuses on measured binding affinities; rich interaction data | Validating binding predictions; benchmarking DTI models |
| DrugBank [47] [48] | Drug and target information | Comprehensive drug data, target sequences, mechanisms, drug interactions | Detailed drug information with validated target links | Gold-standard data for validation; understanding drug mechanisms |

In-Depth Database Profiles

ChEMBL is a manually curated database of bioactive molecules with drug-like properties, providing access to quantitative bioactivity data for a vast array of compounds and targets [47]. Its pChEMBL values offer a standardized metric for bioactivity, enabling consistent model training and comparison. ChEMBL's size and diversity make it particularly valuable for training deep learning models that require large volumes of reliable data.

BindingDB specializes in recording measured binding affinities between chemical substances and proteins [46]. This singular focus makes it invaluable for validating the predictive accuracy of DTI models, especially for structure-based approaches. However, the distribution of its data presents challenges, as the number of annotations for proteins and ligands follows a fat-tailed distribution, creating significant annotation imbalance where a few "hub" nodes have disproportionately more binding records [46].

DrugBank serves as a comprehensive knowledge repository for drug and target information, containing detailed data on FDA-approved and experimental drugs, their mechanisms, and interactions [48]. Its rigorously validated drug-target pairs are often used as gold-standard references for benchmarking the performance of novel prediction algorithms, particularly in real-world scenarios.

Critical Challenges and Best Practices in Dataset Utilization

The Topological Shortcut Problem

A fundamental challenge in DTI prediction is the tendency of ML models to rely on topological shortcuts present in benchmark data. Instead of learning the complex relationships between molecular structures and their binding affinities, models may exploit a simpler correlation: proteins and ligands with many known interactions (high-degree nodes in the protein-ligand interaction network) are more likely to have additional predicted interactions [46]. This occurs because of annotation imbalance, where the distribution of positive and negative annotations is highly skewed. In typical training data, most proteins and ligands have either only binding or only non-binding annotations, creating degree ratios (ρ) clustered near 1 or 0 [46]. Consequently, models achieve apparently strong performance on standard benchmarks while failing to generalize to novel targets or compounds.
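The degree ratio can be made concrete with a small calculation. The sketch below assumes that ρ for a node is the fraction of its annotations that are positive (binding); this reading is consistent with the description above, but the exact definition used in [46] is not reproduced here. The annotation records and the `shortcut_score` helper are hypothetical and serve only to show how a pair could be scored without looking at any molecular structure.

```python
from collections import defaultdict

# Hypothetical annotations: (protein, ligand, label); 1 = binding, 0 = non-binding.
annotations = [
    ("P1", "L1", 1), ("P1", "L2", 1), ("P1", "L3", 1),
    ("P2", "L1", 0), ("P2", "L4", 0),
    ("P3", "L2", 1), ("P3", "L4", 0),
]


def degree_ratios(annotations, index):
    """Assumed definition: rho = positive annotations / all annotations of a node.

    index=0 groups records by protein, index=1 groups them by ligand.
    """
    positives = defaultdict(int)
    totals = defaultdict(int)
    for record in annotations:
        node, label = record[index], record[2]
        totals[node] += 1
        positives[node] += label
    return {node: positives[node] / totals[node] for node in totals}


protein_rho = degree_ratios(annotations, index=0)
ligand_rho = degree_ratios(annotations, index=1)


def shortcut_score(protein, ligand):
    """Score a pair from annotation statistics alone, ignoring all structure."""
    return (protein_rho[protein] + ligand_rho[ligand]) / 2


print(protein_rho)
print(shortcut_score("P1", "L3"))
```

If a structure-free baseline of this kind approaches the benchmark score of a deep model, the benchmark is probably rewarding the shortcut rather than learned molecular recognition.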

Addressing Data Limitations with Advanced Methodologies

Table 2: Strategies to Overcome Common Data Limitations

| Challenge | Impact on Model Performance | Recommended Mitigation Strategy |
|---|---|---|
| Annotation Imbalance | Models bias predictions toward highly annotated nodes, poor generalization [46] | Network-based sampling (e.g., using distant pairs as negatives), unsupervised pre-training [46] |
| Data Sparsity | Limited coverage of the chemical and target space reduces predictive power | Integrate heterogeneous data sources (e.g., side effects, gene expression) [48] |
| Validation Bias | Overly optimistic performance estimates in real-world applications | Implement cold-start testing (evaluating on novel proteins/ligands) [46] |

Advanced Methodologies:

- Network-Based Sampling: The AI-Bind pipeline introduces a robust approach for generating negative samples by selecting protein-ligand pairs with the longest shortest path distances on the interaction network, ensuring these pairs are biologically distant and unlikely to interact [46].

- Heterogeneous Data Integration: Models like DrugMAN overcome chemogenomic data limitations by integrating multiple functional networks—including drug-side effect associations, disease relationships, and gene expression data—to create enriched feature representations for drugs and targets [48].

- Cross-Validation Strategies: Implementing both warm-start (random split) and cold-start (novel proteins or drugs) testing scenarios provides a more realistic assessment of model performance in genuine discovery contexts where predicting interactions for novel structures is essential [48].
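The network-based sampling idea can be sketched with a breadth-first search over the bipartite interaction network. This is an illustration of the general principle rather than the AI-Bind implementation: the binding pairs, the `min_distance` cutoff, and the decision to skip unreachable pairs are all assumptions made for the example.

```python
from collections import deque

# Hypothetical known binding pairs (protein, ligand) forming a chain-like network.
positives = [
    ("P1", "L1"), ("P2", "L1"), ("P2", "L2"),
    ("P3", "L2"), ("P3", "L3"), ("P4", "L3"),
]


def build_graph(pairs):
    """Undirected bipartite graph: proteins and ligands are both nodes."""
    graph = {}
    for protein, ligand in pairs:
        graph.setdefault(protein, set()).add(ligand)
        graph.setdefault(ligand, set()).add(protein)
    return graph


def bfs_distances(graph, source):
    """Shortest path length (in edges) from `source` to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in graph[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist


def distant_negatives(pairs, min_distance):
    """Protein-ligand pairs at least `min_distance` apart, farthest first.

    Pairs in different connected components are skipped here (an assumption).
    """
    graph = build_graph(pairs)
    proteins = sorted({p for p, _ in pairs})
    ligands = sorted({l for _, l in pairs})
    negatives = []
    for protein in proteins:
        dist = bfs_distances(graph, protein)
        for ligand in ligands:
            d = dist.get(ligand)
            if d is not None and d >= min_distance:
                negatives.append((protein, ligand, d))
    return sorted(negatives, key=lambda item: -item[2])


negatives = distant_negatives(positives, min_distance=5)
print(negatives)
```

In a bipartite network, protein-ligand distances are always odd, so a distance of 1 is a known interaction and the smallest candidate negative distance is 3.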

Experimental Protocols for Robust Model Validation

Standardized Benchmarking Workflow

A comprehensive validation strategy must assess model performance across multiple scenarios, from optimistic warm-start to challenging cold-start conditions. The following workflow diagram illustrates a rigorous experimental protocol for benchmarking DTI prediction methods.

Implementation Protocol

Data Preparation:

- Data Collection: Download and combine interaction data from multiple sources (ChEMBL, BindingDB, DrugBank) to create a comprehensive dataset [47] [48].

- Conflict Resolution: Handle duplicate entries for the same drug-target pair by taking the median of reported bioactivity values to minimize assay-specific variability [47].

- Threshold Application: Define binding interactions using established bioactivity thresholds (e.g., pChEMBL >5 for actives, DTP >0) and verify inactive interactions against databases like PubChem [47].
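The conflict-resolution and thresholding steps above can be sketched as follows. The records are invented pChEMBL-style values, and the cutoff of 5 follows the example threshold in the text; pairs that fall below it are treated only as candidate inactives, since the protocol calls for verifying inactives against an external source.

```python
from collections import defaultdict
from statistics import median

# Hypothetical merged records: (drug, target, pChEMBL-style value).
records = [
    ("drugA", "T1", 6.2), ("drugA", "T1", 6.8), ("drugA", "T1", 7.4),
    ("drugB", "T1", 4.1), ("drugB", "T1", 4.9),
    ("drugC", "T2", 5.0),
]

ACTIVE_THRESHOLD = 5.0  # example cutoff from the text: pChEMBL > 5


def consolidate(records):
    """Collapse duplicate drug-target measurements to their median."""
    grouped = defaultdict(list)
    for drug, target, value in records:
        grouped[(drug, target)].append(value)
    return {pair: median(values) for pair, values in grouped.items()}


def label_pairs(consolidated, threshold=ACTIVE_THRESHOLD):
    """1 = active (value strictly above threshold), 0 = candidate inactive."""
    return {pair: int(value > threshold) for pair, value in consolidated.items()}


consolidated = consolidate(records)
labels = label_pairs(consolidated)
print(consolidated)
print(labels)
```

The median is less sensitive than the mean to a single outlying assay, which is the motivation given for using it when reports for the same pair disagree.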

Experimental Splits:

- Warm-Start Evaluation: Randomly split all drug-target pairs (80% training, 20% testing) to establish baseline performance [47].

- Cold-Start Evaluations:

  - Cold-Drug: Place all interactions for specific drugs in the test set, ensuring these drugs are completely absent from training.

  - Cold-Target: Place all interactions for specific protein targets in the test set, ensuring these targets are completely absent from training.

  - Cold-Both: Test on drug-target pairs where both the drug and target are novel [48].
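One way to build the three cold-start test sets at once is to hold out a set of drugs and a set of targets, then sort every pair by which of its members is held out. The sketch below is a minimal illustration under that assumption; the function name, the `holdout_fraction` parameter, and the synthetic pair grid are hypothetical and not taken from the cited studies.

```python
import random


def cold_start_split(pairs, holdout_fraction=0.25, seed=0):
    """Partition (drug, target) pairs into train and three cold-start test sets."""
    rng = random.Random(seed)
    drugs = sorted({d for d, _ in pairs})
    targets = sorted({t for _, t in pairs})
    n_drugs = max(1, int(len(drugs) * holdout_fraction))
    n_targets = max(1, int(len(targets) * holdout_fraction))
    held_drugs = set(rng.sample(drugs, n_drugs))
    held_targets = set(rng.sample(targets, n_targets))

    split = {"train": [], "cold_drug": [], "cold_target": [], "cold_both": []}
    for drug, target in pairs:
        new_drug = drug in held_drugs
        new_target = target in held_targets
        if new_drug and new_target:
            split["cold_both"].append((drug, target))
        elif new_drug:
            split["cold_drug"].append((drug, target))
        elif new_target:
            split["cold_target"].append((drug, target))
        else:
            split["train"].append((drug, target))
    return split


# Synthetic example: every combination of 8 drugs and 4 targets.
pairs = [(f"D{i}", f"T{j}") for i in range(8) for j in range(4)]
split = cold_start_split(pairs)
print({name: len(items) for name, items in split.items()})
```

Because held-out drugs and targets never appear in the training partition, none of the three test sets can be solved by memorizing entity-level annotation patterns. The warm-start baseline remains a plain random split over all pairs.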

Performance Metrics:

- Calculate AUROC (Area Under the Receiver Operating Characteristic curve) to measure overall ranking capability