Beyond Accuracy: A Strategic Guide to Metric Selection for Hyperparameter Optimization in Chemistry ML

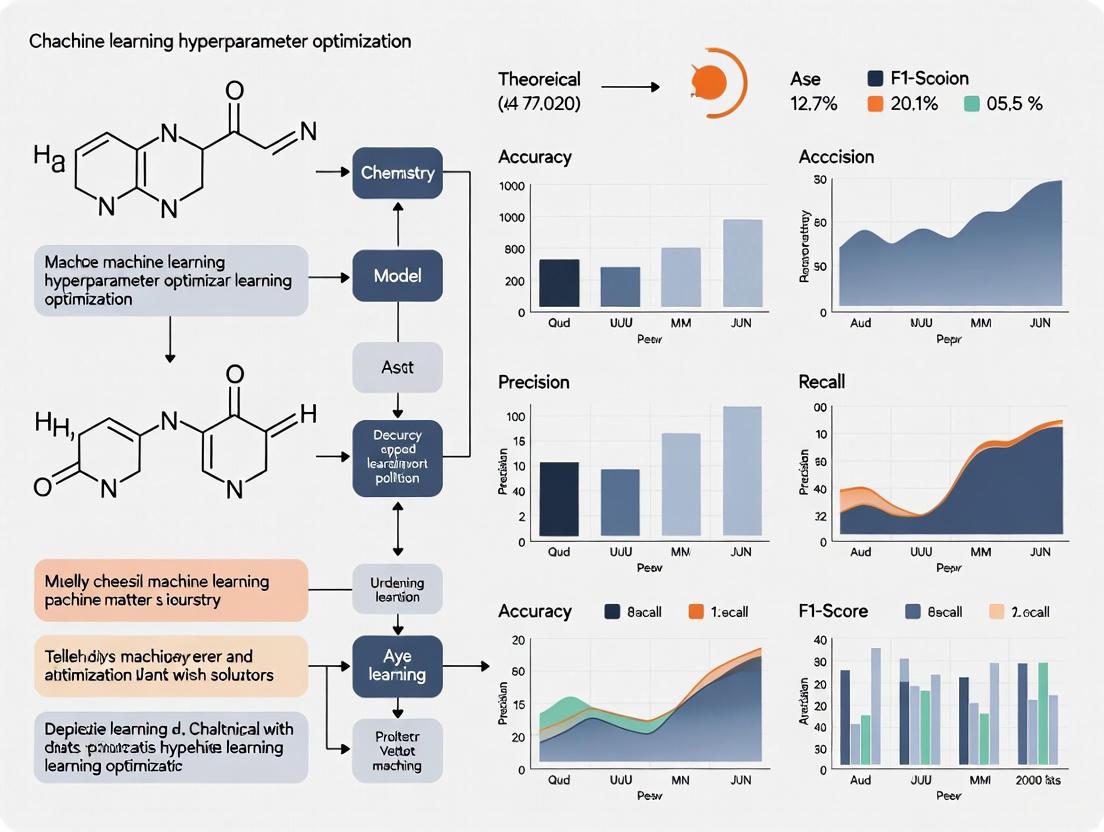

Selecting the right evaluation metrics is a critical, yet often overlooked, step in hyperparameter optimization for chemistry machine learning.


Abstract

Selecting the right evaluation metrics is a critical, yet often overlooked, step in hyperparameter optimization for chemistry machine learning. This article provides a comprehensive framework for researchers and drug development professionals to navigate this complex landscape. It covers the foundational reasons why standard metrics fail with chemical data, introduces domain-specific metrics for drug discovery applications, outlines advanced methodologies for robust model tuning in low-data and imbalanced scenarios, and provides a rigorous protocol for validating and comparing model performance to ensure reliable, trustworthy predictions in biomedical research.

Why Standard Metrics Fail in Chemistry: The Case for Domain-Specific Evaluation

The Pitfalls of Accuracy and F1-Score with Imbalanced Chemical Data

## FAQs on Metric Selection and Model Evaluation

### FAQ 1: Why are standard metrics like accuracy misleading for my imbalanced chemical dataset?

In imbalanced datasets, where one class is significantly underrepresented, a model can achieve high accuracy by simply always predicting the majority class. This creates a false impression of good performance while completely failing to identify the critical minority class.

The table below summarizes the performance of various machine learning models on imbalanced data, demonstrating how their effectiveness decreases as the imbalance becomes more severe [1].

| Machine Learning Model | Performance Trend as Imbalance Increases | Performance Stability on Imbalanced Data |
|---|---|---|
| Logistic Regression (LR) | Decreases | Unstable |
| Decision Tree (DT) | Decreases | Unstable |
| Support Vector Classifier (SVC) | Decreases | Unstable |
| Gaussian Naive Bayes (GNB) | Decreases | Relatively Stable |
| Bernoulli Naive Bayes (BNB) | Decreases | Most Stable |
| K-Nearest Neighbors (KNN) | Decreases | Relatively Stable |
| Random Forest (RF) | Decreases | Relatively Stable |
| Gradient Boosted Decision Trees (GBDT) | Decreases | Relatively Stable |

For example, in a dataset with 95% non-toxic and 5% toxic compounds, a model that labels everything as non-toxic would be 95% "accurate" but useless for identifying toxicants. The model is biased toward the majority class because it lacks sufficient examples of the minority class to learn meaningful patterns [2] [1].
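The 95%/5% example can be checked in a few lines. This is a minimal sketch on made-up labels (the 100-compound set is an assumption for illustration, not data from the cited studies):

```python
# Toy screening set: 95 non-toxic (0) and 5 toxic (1) compounds.
y_true = [0] * 95 + [1] * 5
# A "model" that always predicts the majority class.
y_pred = [0] * len(y_true)

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
true_pos = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
recall = true_pos / sum(y_true)  # fraction of toxic compounds that were found

print(f"accuracy={accuracy:.2f}, recall={recall:.2f}")
```

The accuracy is high only because the majority class dominates the count; recall on the toxic class is zero.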

### FAQ 2: If accuracy is flawed, shouldn't I just use the F1-Score?

The F1-Score, which is the harmonic mean of precision and recall, is often recommended over accuracy for imbalanced data. However, it has its own significant pitfalls and should not be the sole metric for hyperparameter optimization or model selection [3].

The F1-Score ignores true negatives entirely: it is computed from true positives, false positives, and false negatives only. As a result, it cannot show whether the model handles the (often enormous) majority class well, and its value depends on which class is designated positive and on the decision threshold. A study on genotoxicity prediction found that while F1 is useful, it should be considered alongside other metrics for a complete picture [4].

A more robust approach is to use a suite of metrics. The following diagram illustrates the recommended workflow for a comprehensive evaluation.

For hyperparameter optimization, metrics like the Area Under the Precision-Recall Curve (AUPRC) and G-Mean are often more reliable objectives than F1-Score [5] [1]. A study analyzing model stability proposed the AFG metric—the arithmetic mean of AUC, F-measure, and G-mean—as a robust single metric for evaluation [1].
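To see why a precision-recall summary can be a stricter objective than ROC AUC under imbalance, the sketch below computes both on a made-up ranking. The scores are hypothetical, and average precision is used here as one common step-wise estimate of AUPRC; the cited studies may compute the area differently.

```python
def roc_auc(y_true, scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(y_true, scores):
    """Mean of the precision values at the rank of each positive.

    Assumes at least one positive; ties between scores are broken by input order.
    """
    ranked = sorted(zip(scores, y_true), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    return total / hits

# 2 actives among 20 compounds; two inactives are ranked above the second active.
y_true = [1, 0, 0, 1] + [0] * 16
scores = [0.95, 0.90, 0.85, 0.80] + [0.10] * 16

auc = roc_auc(y_true, scores)           # 34/36, about 0.94
ap = average_precision(y_true, scores)  # (1/1 + 2/4) / 2 = 0.75
```

Two misranked inactives barely move the ROC AUC because there are many negatives, but they halve the precision at the second active.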

### FAQ 3: What are the best metrics to guide hyperparameter tuning for my model?

When performing hyperparameter optimization on imbalanced chemical data, your choice of optimization metric is critical. You should select metrics that are sensitive to the performance on both the majority and minority classes.

The table below compares key metrics used in recent chemical ML studies for evaluating models on imbalanced data [4] [5] [1].

| Metric | Definition | Interpretation | Advantage for Imbalanced Data |
|---|---|---|---|
| AUPRC (Area Under the Precision-Recall Curve) | Area under the plot of Precision vs. Recall | Closer to 1.0 is better. More informative than ROC AUC under imbalance. | Focuses directly on the minority (positive) class, ignoring true negatives. |
| G-Mean | √(Sensitivity × Specificity) | Geometric mean of class-wise accuracy. Higher is better. | Measures balanced performance between both majority and minority classes. |
| MCC (Matthews Correlation Coefficient) | (TP×TN − FP×FN) / √((TP+FP)(TP+FN)(TN+FP)(TN+FN)) | A value between -1 and +1. +1 is perfect prediction, 0 is no better than chance. | Uses all four confusion matrix categories; reliable for imbalance. |
| AFG | (AUC + F1 + G-Mean) / 3 | Arithmetic mean of three metrics. Higher is better. | Provides a stable, combined assessment from multiple perspectives [1]. |

For example, a study predicting clinical trial outcomes used MCC as a key performance metric because it is considered a more reliable statistical measure for biomedical imbalanced data [5]. Another study systematically analyzing model performance on imbalanced data used a combination of AUC, F-measure, and G-mean [1].
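As a minimal sketch, the confusion-matrix metrics above can be computed directly from the four counts. The function name and the example counts are illustrative assumptions; the formulas are the standard definitions of F1, G-Mean, and MCC.

```python
import math

def imbalance_metrics(tp, fp, fn, tn):
    """Threshold-dependent metrics from confusion-matrix counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0        # sensitivity
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0    # uses TP, FP, FN only
    g_mean = math.sqrt(recall * specificity)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "specificity": specificity,
            "f1": f1, "g_mean": g_mean, "mcc": mcc}

# Hypothetical toxicity screen: 100 compounds, 5 of them toxic.
m = imbalance_metrics(tp=4, fp=6, fn=1, tn=89)
# Same positives, ten times more correctly rejected negatives.
m_more_negatives = imbalance_metrics(tp=4, fp=6, fn=1, tn=889)
```

Comparing `m` with `m_more_negatives` shows that F1 is unchanged when only the true-negative count grows, while G-Mean and MCC respond to it. This is one reason to report them side by side.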

### FAQ 4: What experimental protocols can I use to validate my metric choice?

A robust experimental protocol involves comparing your model's performance using different metrics across multiple validation techniques and data-balancing methods.

Step 1: Dataset Curation and Splitting. Curate your dataset carefully, as done in a genotoxicity study that started with 9,411 chemicals and refined it to 4,171 based on quality criteria [4]. Split the data into training and test sets with a stratified split, so that the imbalance ratio is roughly preserved in each.

Step 2: Apply Data-Balancing Techniques (on training set only). Apply the data-balancing methods exclusively to the training set to avoid data leakage. A typical protocol tests several methods [2] [4]:

- Random Oversampling (ROS): Randomly duplicates minority class samples.

- SMOTE: Generates synthetic minority samples by interpolating between existing ones.

- Random Undersampling (RUS): Randomly removes majority class samples.

- Sample Weight (SW): Assigns higher misclassification costs to minority class samples during model training.
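Two of these options, random oversampling and sample weighting, are simple enough to sketch without a library. The helper names and the toy data are assumptions for illustration; the weight formula `n_samples / (n_classes * class_count)` is the common inverse-frequency convention. Both helpers are meant to be called on the training split only.

```python
import random

def random_oversample(X, y, seed=0):
    """Duplicate minority-class rows at random until all classes have equal size."""
    rng = random.Random(seed)
    by_class = {}
    for row, label in zip(X, y):
        by_class.setdefault(label, []).append(row)
    target = max(len(rows) for rows in by_class.values())
    X_out, y_out = [], []
    for label, rows in by_class.items():
        extra = [rng.choice(rows) for _ in range(target - len(rows))]
        X_out.extend(rows + extra)
        y_out.extend([label] * target)
    return X_out, y_out

def balanced_class_weights(y):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    counts = {label: y.count(label) for label in set(y)}
    return {label: len(y) / (len(counts) * n) for label, n in counts.items()}

# Hypothetical training split: 18 inactive (0) and 2 active (1) compounds.
X_train = [[i] for i in range(20)]
y_train = [0] * 18 + [1] * 2

X_bal, y_bal = random_oversample(X_train, y_train)
weights = balanced_class_weights(y_train)  # the minority class gets weight 5.0
```

Oversampling changes the training data, whereas weighting leaves the data untouched and changes only the cost of each error. The test set is never resampled in either case.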

Step 3: Model Training with Hyperparameter Optimization. Use the training set (balanced or weighted) to train your model. Search the hyperparameter space efficiently with a strategy such as Bayesian Optimization or RandomizedSearchCV, using a robust metric like AUPRC or G-Mean as the scoring function [6] [7].

Step 4: Comprehensive Evaluation. Evaluate the final model on the untouched test set using the full suite of metrics discussed in FAQ 3. This workflow is summarized in the following diagram.

## The Scientist's Toolkit: Research Reagent Solutions

| Tool Category | Specific Tool/Method | Brief Function/Explanation |
|---|---|---|
| Data Balancing | SMOTE & Variants | Generates synthetic minority samples to balance class distribution. Variants (Borderline-SMOTE, SVM-SMOTE) improve on noise handling [2]. |
| Data Balancing | Random Undersampling (RUS) | Randomly removes majority class samples. Risk of losing important information but is computationally efficient [2] [4]. |
| Data Balancing | Sample Weight (SW) | Adjusts the loss function to make misclassifying a minority sample more costly than a majority sample. Does not alter the dataset itself [4]. |
| Robust Metrics | AUPRC | Best practice metric for hyperparameter tuning when primary interest is in the minority class [5]. |
| Robust Metrics | G-Mean | Best practice metric that ensures both classes are recognized well, measuring balanced performance [1]. |
| Robust Metrics | MCC | Best practice, robust metric that considers all four confusion matrix categories [5]. |
| Hyperparameter Optimization | Bayesian Optimization | A smart search algorithm that uses a probabilistic model to find the best hyperparameters efficiently [6] [7]. |
| Hyperparameter Optimization | RandomizedSearchCV | Randomly samples hyperparameters from distributions. More efficient than a full grid search for large parameter spaces [6]. |
| Advanced Algorithms | Bilevel Optimization (MUBO) | A novel undersampling approach that uses optimization to select an optimal subset of majority data, avoiding the pitfalls of random sampling and synthetic data [8]. |

Understanding False Positives and False Negatives

In clinical development, statistical errors are a major contributor to costs and delays. False positives occur when ineffective treatments appear promising, leading to expensive follow-up testing and unnecessary patient risk. False negatives are effective treatments that are wrongly eliminated from the development pipeline, resulting in missed healthcare and economic opportunities [9].

The burden of false negatives is particularly high because these treatments are typically not tested further, limiting the information available about them. Simulations show that underpowered early-phase trials significantly contribute to this problem [9].

Frequently Asked Questions (FAQs)

1. What are the real-world consequences of false negatives in drug discovery? False negatives lead to the loss of effective treatments, which represents a significant missed opportunity for public health. From a commercial perspective, this also results in the loss of potential profits that could have been reinvested into research and development. Simulations suggest that improving phase II trial power from 50% to 80% can increase productivity by over 60% and profits by over 50% [9].

2. How can machine learning models in chemistry produce false positives? In high-throughput screening for drug discovery, false positives can occur even with advanced techniques like mass spectrometry, which is generally less prone to artefacts than classical assays. Specific, unreported mechanisms can cause compounds to be misidentified as hits, wasting significant time and resources to resolve [10].

3. Why is hyperparameter optimization crucial for ML in chemistry? Hyperparameters are external model configurations not learned from data, such as learning rate or number of trees in a random forest. Effective tuning is critical for preventing overfitting or underfitting and achieving higher accuracy on unseen data [6]. For chemistry applications like retrosynthesis prediction or catalytic design, proper tuning ensures the model generalizes well to real-world data [11].

4. What are the best strategies for hyperparameter tuning? The most effective strategies are [6] [12]:

- GridSearchCV: A brute-force technique that tests all possible combinations in a defined grid.

- RandomizedSearchCV: Randomly samples combinations from the given ranges, often more efficient than grid search.

- Bayesian Optimization: A smarter approach that builds a probabilistic model to predict performance and learns from past results.
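The difference between the first two strategies is easiest to see by listing the candidates each one would evaluate. The sketch below only builds those candidate lists; the hyperparameter names and values are hypothetical (random-forest style), and the model training and scoring that GridSearchCV or RandomizedSearchCV would perform is left out.

```python
import itertools
import random

# Hypothetical search grid for a random-forest-like model.
grid = {
    "n_estimators": [100, 300, 500],
    "max_depth": [4, 8, 16],
    "min_samples_leaf": [1, 5],
}

# Grid search: every combination, so 3 * 3 * 2 = 18 candidates.
grid_candidates = [dict(zip(grid, values))
                   for values in itertools.product(*grid.values())]

# Random search: a fixed budget of independent draws from the same ranges.
rng = random.Random(0)
random_candidates = [{name: rng.choice(values) for name, values in grid.items()}
                     for _ in range(6)]
```

The grid's cost multiplies with every added hyperparameter, while the random-search budget stays whatever you set it to.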

Troubleshooting Guides

Troubleshooting Underpowered Clinical Trials

Problem: Early-phase clinical trials (like Phase II) are often underpowered, leading to an unacceptably high rate of false negatives, where effective treatments are incorrectly eliminated [9].

Solution:

- Increase Sample Size: The additional costs of larger sample sizes are offset by the increase in overall development productivity [9].

- Use Advanced Statistical Methods: Implement techniques like CUPED variance reduction, which can increase experiment sensitivity by 30-50%, or sequential testing [13].

- Adopt a "Worth-the-Cost" Mindset: View the increased investment in Phase II power as a strategy to avoid the greater loss of abandoning a potentially successful therapy [9].

Troubleshooting False Positives in High-Throughput Screening

Problem: False-positive hits in high-throughput screening plague drug discovery, consuming resources and time to resolve [10].

Solution:

- Develop Validation Pipelines: Create specific pipelines to detect and identify the mechanisms of false-positive hits [10].

- Utilize Advanced Detection Techniques: Employ methods that can rapidly identify such compounds at the initial screen [10].

- Leverage Mass Spectrometry: Use techniques like RapidFire MRM that are free from artefacts that trouble classical assays (e.g., fluorescence interference) and negate the need for coupling enzymes [10].

Troubleshooting Poor Hyperparameter Tuning

Problem: Default or incorrect hyperparameters lead to suboptimal machine learning models, which is especially problematic for chemistry applications like retrosynthesis or catalyst design [14] [11].

Solution:

- Implement Systematic Tuning: Use Grid Search or Random Search for optimization. For greater efficiency, try Bayesian Optimization [6] [14].

- Define Appropriate Search Spaces: Establish meaningful hyperparameter ranges based on domain knowledge. Use logarithmic scales for parameters like learning rate that span multiple orders of magnitude [15].

- Run Parallel Training Jobs: Configure your system to run multiple training jobs concurrently to explore different hyperparameter combinations simultaneously [15].

- Implement Early Stopping: Automatically terminate poorly performing training jobs to save computational resources [15].
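The logarithmic-scale advice can be sketched as follows. The range 1e-5 to 1e-1 and the helper name are assumptions chosen for illustration.

```python
import math
import random

def sample_log_uniform(low, high, rng):
    """Draw a value whose base-10 logarithm is uniform between log10(low) and log10(high)."""
    return 10 ** rng.uniform(math.log10(low), math.log10(high))

rng = random.Random(42)
learning_rates = [sample_log_uniform(1e-5, 1e-1, rng) for _ in range(1000)]

# Each decade receives a similar share of the samples.
share_below_1e_3 = sum(lr < 1e-3 for lr in learning_rates) / len(learning_rates)
```

Roughly half of the draws fall below 1e-3, the midpoint on the log scale. A plain uniform draw over the same range would put about 99% of its samples above 1e-3 and leave the small learning rates almost unexplored.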

Quantitative Impact of Statistical Errors

The table below summarizes simulation results from 100 potential treatments entering Phase II, assuming 25% are truly effective. It demonstrates how different statistical power and significance levels impact development outcomes [9].

Table 1: Clinical Development Scenarios and Outcomes

| Scenario | Phase II Parameters | Effective Treatments Passing Phase II | Effective Treatments Successfully Launched | Key Outcome |
|---|---|---|---|---|
| Scenario 1: Status Quo | α=5%; Power=50% | 12.5 out of 25 | 10.1 out of 25 | High rate of false negatives (12.5 effective treatments lost) |
| Scenario 2: High Power | α=5%; Power=80% | 20.0 out of 25 | 16.2 out of 25 | 60.4% increase in productivity vs. Status Quo |
| Scenario 3: Stringent Alpha | α=1%; Power=50% | 12.5 out of 25 | 10.1 out of 25 | No meaningful advantage vs. Status Quo |
| Scenario 4: Optimal | α=20%; Power=95% | 23.8 out of 25 | 19.2 out of 25 | Maximizes successful launches, but with more Phase III testing |

Experimental Protocols

Protocol: Simulating Clinical Development Outcomes

This methodology is used to study the impact of statistical error thresholds on clinical development productivity [9].

- Create a Hypothetical Scenario: Define a cohort of 100 potential treatments entering Phase II trials. Assume a base case where 25% are truly "effective" treatments and 75% are "ineffective" [9].

- Set Statistical Parameters: Define Type-I (α) and Type-II (β) error rates for Phase II and Phase III.

  - Status Quo Scenario: Phase II: α=5%, Power (1-β)=50%. Phase III: α=0.25% (simulating two successful trials), Power=90% [9].

- Model the Pipeline Flow:

  - Calculate the number of "effective" and "ineffective" treatments that pass Phase II.

  - These "positives" proceed to Phase III.

  - Apply the Phase III statistical parameters to determine final successes and failures [9].

- Calculate Economic Impact: Assign costs to Phase II and Phase III studies and a return on investment for successful treatments to compute overall productivity and profit for the development portfolio [9].
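The pipeline-flow step can be sketched as expected-value arithmetic. One assumption is made here and should be checked against the original study: Phase III is modelled as two trials that must both succeed, each with 90% power, giving a combined power of 0.9² = 0.81. That reading reproduces the launched counts in Table 1 above, but it is an inference from the numbers, not something the protocol states. Costs and revenues are not modelled.

```python
def simulate_pipeline(n_treatments=100, share_effective=0.25,
                      alpha_p2=0.05, power_p2=0.50,
                      alpha_p3=0.0025, power_p3_single=0.90, n_p3_trials=2):
    """Expected number of treatments at each stage (no random sampling)."""
    effective = n_treatments * share_effective
    ineffective = n_treatments - effective
    eff_pass = effective * power_p2        # true positives after Phase II
    ineff_pass = ineffective * alpha_p2    # false positives after Phase II
    power_p3 = power_p3_single ** n_p3_trials  # ASSUMPTION: both trials must succeed
    return {
        "effective_passing_p2": eff_pass,
        "ineffective_passing_p2": ineff_pass,
        "effective_launched": eff_pass * power_p3,
        "ineffective_launched": ineff_pass * alpha_p3,
    }

status_quo = simulate_pipeline(power_p2=0.50)
high_power = simulate_pipeline(power_p2=0.80)
gain = high_power["effective_launched"] / status_quo["effective_launched"] - 1
```

With these inputs the status quo launches about 10.1 effective treatments and the high-power scenario about 16.2, a gain of roughly 60%. That is consistent with the table, whose 60.4% figure appears to be computed from the rounded counts.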

Protocol: Hyperparameter Tuning with Bayesian Optimization

This protocol describes a smarter alternative to grid and random search for optimizing machine learning models [6].

1. Define the Search Space: Specify the hyperparameters to tune and their potential value ranges (e.g., learning rate, number of layers in a neural network).

2. Build a Surrogate Model: Create a probabilistic model (e.g., Gaussian Process, Random Forest Regression) that predicts model performance based on hyperparameters. This models P(score | hyperparameters) [6].

3. Select the Next Parameters: Use an acquisition function to decide the most promising hyperparameter combination to test next, balancing exploration and exploitation.

4. Evaluate and Update: Run a training job with the selected hyperparameters, get the evaluation score, and update the surrogate model with the new result.

5. Iterate: Repeat steps 3 and 4 until a stopping condition is met (e.g., a set number of iterations or no significant improvement).
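The loop above can be sketched for a single hyperparameter in plain Python. Everything specific here is an assumption for illustration: the surrogate is a small zero-mean Gaussian process with a squared-exponential kernel, the acquisition function is an upper confidence bound (one common choice; the protocol does not name one), and `validation_score` is a hypothetical stand-in for an actual training job.

```python
import math

def rbf(a, b, length=1.0):
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def gp_posterior(xs, ys, x_new, jitter=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    K = [[rbf(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new) for a in xs]
    mean = sum(k * w for k, w in zip(k_star, solve(K, ys)))
    var = 1.0 - sum(k * v for k, v in zip(k_star, solve(K, k_star)))
    return mean, math.sqrt(max(var, 0.0))

def validation_score(log_lr):
    """Hypothetical objective: peaks at log10(learning rate) = -3."""
    return 1.0 - (log_lr + 3.0) ** 2 / 10.0

# Step 1: search space, here log10(learning rate) from -6 to -1.
candidates = [-6.0 + 0.1 * i for i in range(51)]
xs = [candidates[0], candidates[-1]]        # two initial evaluations
ys = [validation_score(x) for x in xs]

def ucb(x):
    """Upper confidence bound: predicted score plus an exploration bonus."""
    mean, std = gp_posterior(xs, ys, x)     # step 2: surrogate prediction
    return mean + 2.0 * std

for _ in range(10):                         # step 5: fixed iteration budget
    untried = [c for c in candidates if c not in xs]
    x_next = max(untried, key=ucb)          # step 3: acquisition
    xs.append(x_next)                       # step 4: evaluate and update
    ys.append(validation_score(x_next))

best_score, best_log_lr = max(zip(ys, xs))
```

In practice a maintained optimization library would replace the hand-written surrogate; the sketch is only meant to make the evaluate-update-select cycle concrete.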

Workflow Visualization

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions

| Item / Solution | Function in Experimentation |
|---|---|
| Experimentation Platforms (e.g., Statsig) | Provides enterprise-grade A/B testing infrastructure with advanced statistical methods (CUPED, sequential testing) to reduce false positives and increase sensitivity [13]. |
| Hyperparameter Optimization Frameworks (e.g., SageMaker, Scikit-learn) | Automates the search for optimal ML model configurations using methods like GridSearchCV, RandomizedSearchCV, or Bayesian optimization, improving model accuracy and generalizability [6] [15]. |
| Model Explainability Tools (e.g., SHAP, LIME) | Provides post-hoc explanations for model predictions, helping to audit for bias, build trust, and understand model behavior in chemistry ML applications [14]. |
| Mass Spectrometry-Based Screening | Used in high-throughput screening to directly detect enzyme reaction products, avoiding artefacts from classical assays and reducing false positives [10]. |
| Warehouse-Native Experimentation Deployment | Allows teams to run experiments while maintaining complete data control within their own data warehouses (e.g., Snowflake, BigQuery), ensuring data integrity and security [13]. |

Troubleshooting Guides

Data Integration and Management

Problem: Inability to integrate disparate data formats into a unified analytical dataset.

- Symptoms: Errors during data ingestion, inability to map metadata across sources, inconsistent data interpretation.

- Diagnosis: This is typically caused by a lack of standardized data collection protocols and the inherent heterogeneity of multi-modal data (e.g., combining DICOM images with FASTQ genomics files) [16] [17].

- Solution:

- Implement Robust Data Pipelines: Utilize platforms that offer centralized data ingestion from cloud storage (S3, Blob) and proprietary tools [16].

- Standardize with Ontologies: Employ a centralized ontology management system, such as one built on the OHDSI vocabulary, to ensure consistent interpretation of clinical concepts across datasets [16].

- Automate Harmonization: Leverage LLM-based models and scalable pipelines to automate data cleaning, harmonization, and vocabulary mapping, transforming raw data into AI-ready formats [16].

Problem: Data silos and lack of interoperability hindering a holistic view.

- Symptoms: Incomplete patient journeys, duplication of effort, difficulty correlating findings from different experiments.

- Diagnosis: Data stored in isolated systems across different departments (e.g., internal labs, clinical trial sites, EHRs) without a unified ecosystem [17] [18].

- Solution:

- Adopt a FAIR Data Ecosystem: Ensure data is Findable, Accessible, Interoperable, and Reusable across the organization [17].

- Build Cloud-Native Infrastructures: Use API interfaces and gateways for real-time data transfer from instruments and automated data registration to reduce manual burdens [17].

- Foster Cross-Functional Collaboration: Encourage collaboration between data engineers, scientists, and clinicians to streamline workflows and break down silos [16].

Rare Event Detection and Analysis

Problem: Failure to detect rare adverse events or safety signals in pre-marketing studies.

- Symptoms: Unexpected safety issues emerge only after a drug is on the market, despite passing clinical trials.

- Diagnosis: Traditional randomized controlled trials (RCTs) are inherently limited in size and duration, making them underpowered to detect rare events [19]. For example, to detect a doubling of a 0.1% event rate, a study would need over 50,000 participants for sufficient power [19].

- Solution:

- Implement Post-Marketing Surveillance: Develop comprehensive plans for ongoing monitoring of adverse events once the drug is on the market [19] [20].

- Leverage Real-World Evidence (RWE): Analyze data from claims databases, EHRs, and patient registries to identify potential safety signals in larger, more diverse populations [20] [21].

- Use Advanced Statistical Signal Detection: Apply disproportionality analysis methods (e.g., the Information Component in the WHO VigiBase) to spontaneous report databases to identify emerging safety signals [21].

Problem: High false-positive burden in signal detection.

- Symptoms: Too many spurious associations, wasting resources on follow-up investigations.

- Diagnosis: This can occur when using overly broad term groupings in analyses or when statistical methods are not properly calibrated [21].

- Solution:

- Optimize Terminology Level: For overall timeliness and accuracy, perform quantitative signal detection at the MedDRA Preferred Term (PT) level, rather than higher-level groupings [21].

- Explore Custom Groupings: For specific investigations, evaluate tighter, custom-made groupings of MedDRA PTs that are clinically very similar to improve signal-to-noise ratio [21].

Machine Learning and Hyperparameter Optimization

Problem: Machine learning model performs poorly on new data despite high training accuracy.

- Symptoms: High training scores but low test scores, indicating overfitting and poor generalizability.

- Diagnosis: This is often a result of suboptimal hyperparameters that are tuned for the training set but do not generalize.

- Solution:

- Prioritize Generalizability in Optimization: When tuning hyperparameters, use an objective function that measures generalizability, such as the mean k-fold cross-validation score (e.g., mean 5-fold R² score) [22].

- Employ Bio-Optimized Algorithms (BoAs): Utilize advanced optimization algorithms for hyperparameter tuning. These can handle efficient exploration-exploitation trade-offs and are effective for complex optimization problems [23].

- Implement a Rigorous Validation Workflow: The methodology should include data preprocessing (outlier removal, normalization), splitting into train/test sets, and using an optimization algorithm to maximize the chosen generalizability metric [22].

Problem: Inefficient and slow hyperparameter tuning process.

- Symptoms: Tuning experiments take days or weeks, slowing down research and development cycles.

- Diagnosis: Manual or grid-search approaches are computationally expensive and inefficient for high-dimensional parameter spaces.

- Solution:

- Adopt Hybrid Bio-Optimized Algorithms: Leverage novel hybrid algorithms that combine the strengths of multiple optimizers. For instance, algorithms that integrate strong exploration capacity with faster convergence can significantly speed up the tuning process [23].

- Use Population-Based Methods: Algorithms like Particle Swarm Optimization (PSO) or the Sparrow Search Algorithm (SSA) are designed for global search and can efficiently navigate complex parameter spaces [23].
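As a minimal sketch of a population-based search, the block below implements a basic global-best Particle Swarm Optimization over two log-scaled hyperparameters. The coefficients (inertia 0.7, cognitive and social 1.5) are conventional textbook values rather than settings from the cited work, and `validation_loss` is a hypothetical smooth surrogate for a cross-validated loss.

```python
import random

def pso_minimize(loss, bounds, n_particles=20, n_iter=100, seed=0,
                 inertia=0.7, cognitive=1.5, social=1.5):
    """Global-best PSO; positions are clipped to the given bounds."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]            # each particle's personal best
    best_val = [loss(p) for p in pos]
    g = min(range(n_particles), key=best_val.__getitem__)
    g_pos, g_val = best_pos[g][:], best_val[g]  # swarm-wide best
    for _ in range(n_iter):
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                vel[i][d] = (inertia * vel[i][d]
                             + cognitive * rng.random() * (best_pos[i][d] - pos[i][d])
                             + social * rng.random() * (g_pos[d] - pos[i][d]))
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            val = loss(pos[i])
            if val < best_val[i]:
                best_pos[i], best_val[i] = pos[i][:], val
                if val < g_val:
                    g_pos, g_val = pos[i][:], val
    return g_pos, g_val

def validation_loss(params):
    """Hypothetical loss with its minimum at log10(C) = 1, log10(gamma) = -2."""
    log_c, log_gamma = params
    return (log_c - 1.0) ** 2 + (log_gamma + 2.0) ** 2

best_params, best_loss = pso_minimize(validation_loss, bounds=[(-3, 3), (-5, 1)])
```

In a real tuning run each call to `loss` is a full cross-validation, so the particle count and iteration budget set the total compute cost (here 20 + 20 × 100 evaluations).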

Frequently Asked Questions (FAQs)

Q1: What exactly is "multi-modal data" in the context of biopharma? A1: Multi-modal data refers to aggregated datasets that contain multiple data formats from various sources [17]. In biopharma, this can include:

- Omics data: Genomics, transcriptomics, proteomics (often massive and complex) [16].

- Clinical data: Electronic Health Records (EHRs), clinical trial data (often unstructured) [16].

- Imaging data: DICOM formats from MRIs, CT scans, and flow cytometry data [16] [17].

- Real-World Data (RWD): Claims data, patient-reported outcomes, and disease registries [24] [20].

The goal is to integrate these diverse types to form a complete view of a patient's biology and response to therapy [17].

Q2: Why are rare adverse events so difficult to detect in clinical trials? A2: Premarketing clinical trials are limited in size, typically involving only 500 to 3,000 participants for a relatively short duration [19]. This sample size is insufficient to reliably detect rare events, because the statistical power to identify an adverse event depends on its frequency. The table below shows the statistical power to detect a doubling in the rate of an adverse event at different sample sizes, which indicates the sample size needed to reach 80% power [19].

Table 1: Statistical Power to Detect a Doubling of Adverse Event Rates at Different Sample Sizes

| Sample Size | Power to Detect Increase from 5% to 10% | Power to Detect Increase from 1% to 2% | Power to Detect Increase from 0.1% to 0.2% |
|---|---|---|---|
| 1,000 | 82% | 17% | 5% |
| 5,000 | >99% | 80% | 7% |
| 10,000 | >99% | >98% | 17% |
| 50,000 | >99% | >99% | 79% |

As shown, detecting a doubling of a very rare event (0.1% to 0.2%) with roughly 80% power requires on the order of 50,000 participants, which is far beyond the scope of most pre-approval trials [19].
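The dependence of power on event frequency can be illustrated with a textbook approximation. The sketch assumes two equal-sized arms and a two-sided z-test with a normal approximation; the source does not state which design or test produced its table, so these numbers will not match the table exactly, especially for small samples and very rare events.

```python
from statistics import NormalDist

def power_two_proportions(p_control, p_treated, n_per_arm, alpha=0.05):
    """Approximate power of a two-sided z-test comparing two event rates."""
    norm = NormalDist()
    se = (p_control * (1 - p_control) / n_per_arm
          + p_treated * (1 - p_treated) / n_per_arm) ** 0.5
    z_crit = norm.inv_cdf(1 - alpha / 2)
    # Probability of landing in the far rejection tail is ignored (negligible here).
    return norm.cdf(abs(p_treated - p_control) / se - z_crit)

common = power_two_proportions(0.05, 0.10, n_per_arm=500)
rare_small = power_two_proportions(0.001, 0.002, n_per_arm=500)
rare_large = power_two_proportions(0.001, 0.002, n_per_arm=25_000)
```

With the same 500 participants per arm, the common event is detected with high power while the rare event is almost never detected; only the much larger study brings the rare-event power up to a useful level.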

Q3: What are the best practices for selecting metrics for hyperparameter optimization in chemistry-focused ML? A3:

- Prioritize Generalization Metrics: Avoid optimizing solely for training accuracy. Instead, use metrics derived from a robust validation method, such as the mean k-fold R² score, which provides a better estimate of how the model will perform on unseen data [22].

- Align Metric with the Business Objective: For a regression task like predicting chemical concentration in a drying process, R², Root Mean Square Error (RMSE), and Mean Absolute Error (MAE) are highly relevant [22].

- Optimize for the Metric: Frame hyperparameter tuning as an optimization problem where the goal is to find the parameters that maximize (or minimize) your chosen validation metric. This can be effectively done using bio-optimization algorithms [23].

Q4: Our organization is drowning in data but gaining few insights. What is the first step? A4: The first step is to shift focus from simple data collection to building data literacy and a coherent data strategy [18]. This involves:

- Assessing Data Literacy: Ensure your team has the skills to analyze data, ask the right questions, and communicate insights effectively.

- Investing in AI-Ready Data Platforms: Implement tools that automate the ingestion, harmonization, and standardization of raw, messy datasets into structured, analysis-ready formats [16].

- Fostering a Data-Driven Culture: Encourage interdisciplinary collaboration where evaluating data is as natural as collecting it [18].

Experimental Protocols & Methodologies

Protocol 1: Hyperparameter Optimization for a Concentration Prediction Model

This protocol is based on a study that used machine learning to model a pharmaceutical lyophilization (freeze-drying) process [22].

1. Objective: Accurately predict the concentration (C) of a chemical in a 3D space, given coordinates X, Y, Z.

2. Dataset:

   - Source: Over 46,000 data points from numerical simulation of mass transfer equations [22].

   - Variables: Inputs are spatial coordinates X(m), Y(m), Z(m); target output is concentration C (mol/m³) [22].

3. Preprocessing:

   - Outlier Removal: Use the Isolation Forest (IF) algorithm with a contamination parameter of 0.02 to identify and remove anomalous data points [22].

   - Feature Normalization: Apply Min-Max scaling to all input features.

   - Data Splitting: Split the cleaned data randomly into a training set (~80%) and a test set (~20%) [22].

4. Machine Learning Models:

   - Train three models: Ridge Regression (RR), Support Vector Regression (SVR), and Decision Tree (DT) [22].

5. Hyperparameter Optimization:

   - Algorithm: Use the Dragonfly Algorithm (DA) to tune the hyperparameters of each model [22].

   - Objective Function: Maximize the mean 5-fold R² score on the training data to ensure model generalizability [22].

6. Performance Evaluation:

   - Evaluate the final tuned models on the held-out test set using:

     - R² score

     - Root Mean Square Error (RMSE)

     - Mean Absolute Error (MAE) [22]
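The objective function and the three evaluation metrics from this protocol can be sketched in plain Python. The interleaved fold assignment, the `fit_line` toy model, and the synthetic data are assumptions for illustration; the cited study's models and Dragonfly optimizer are not reproduced here.

```python
import math

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def mean_kfold_r2(fit, X, y, k=5):
    """Mean R² over k interleaved folds; `fit(X, y)` must return a predict function."""
    scores = []
    for fold in range(k):
        test_idx = set(range(fold, len(X), k))
        X_tr = [x for i, x in enumerate(X) if i not in test_idx]
        y_tr = [v for i, v in enumerate(y) if i not in test_idx]
        predict = fit(X_tr, y_tr)
        y_te = [y[i] for i in sorted(test_idx)]
        y_hat = [predict(X[i]) for i in sorted(test_idx)]
        scores.append(r2_score(y_te, y_hat))
    return sum(scores) / k

def fit_line(X, y):
    """Toy one-feature least-squares model standing in for RR, SVR, or DT."""
    mx, my = sum(X) / len(X), sum(y) / len(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(X, y)) / sum((a - mx) ** 2 for a in X)
    return lambda x: my + slope * (x - mx)

# Synthetic, noise-free data: the cross-validated R² should be essentially 1.
X = [i / 10 for i in range(50)]
y = [2.0 * x + 1.0 for x in X]
cv_r2 = mean_kfold_r2(fit_line, X, y, k=5)
```

An optimizer would call `mean_kfold_r2` once per candidate hyperparameter setting and keep the setting with the highest value. The test-set R², RMSE, and MAE are computed only once, after tuning is finished.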

Table 2: Key Reagents and Computational Tools for ML Experiments

| Item Name | Function / Explanation |
|---|---|
| Isolation Forest Algorithm | An unsupervised ensemble method for detecting outliers in datasets, crucial for ensuring data quality before model training [22]. |
| Dragonfly Algorithm (DA) | A bio-optimization algorithm used for hyperparameter tuning, effectively navigating the complex parameter space to find optimal model settings [22]. |
| Support Vector Regression (SVR) | A machine learning model effective at capturing nonlinear relationships. When optimized with DA, it demonstrated superior performance for spatial concentration prediction (R² test score of 0.999) [22]. |
| Structured Data Repository | A centralized system (e.g., in PostgreSQL or Snowflake) that stores harmonized data using standardized vocabularies, making it AI-ready and easily accessible for analysis [16]. |
| OHDSI Vocabulary / OMOP CDM | An open, community-maintained common data model and standardized vocabulary that can serve as the basis of a centralized ontology management system, ensuring consistent interpretation of clinical concepts across disparate datasets [16]. |

Protocol 2: Signal Detection in Spontaneous Reporting Databases

This protocol outlines the methodology for timely quantitative signal detection using disproportionality analysis, as investigated by the IMI PROTECT consortium [21].

1. Objective: Identify disproportionate reporting patterns that may indicate a potential safety signal.

2. Data Source: A large spontaneous reporting database, such as the WHO Global ICSR Database, VigiBase [21].

3. Data Stratification: Adjust for confounding factors like country of origin and year of submission through Mantel-Haenszel-type stratification [21].

4. Disproportionality Analysis:

- Metric: Calculate the Information Component (IC), a Bayesian shrinkage measure of disproportionality based on the logarithm of the observed-to-expected reporting ratio.

- Key Metric: Compute IC025, the lower bound of the 95% two-sided credibility interval of the IC [21].

5. Signaling Logic:

- A potential signal is indicated when the IC025 value first exceeds zero for a given drug-adverse event pair in a retrospective quarterly analysis [21].

6. Terminological Level:

- The PROTECT study recommends performing this analysis at the MedDRA Preferred Term (PT) level for the best overall timeliness, rather than using higher-level term groupings [21].
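The point estimate of the IC can be sketched as below. This uses the commonly published shrinkage form, log2((observed + 0.5) / (expected + 0.5)), which is an assumption about the exact variant used in the study. The report counts are invented, stratification is omitted, and IC025 is not computed because it requires a gamma-distribution quantile that the Python standard library does not provide.

```python
import math

def information_component(n_drug_event, n_drug, n_event, n_total):
    """Shrinkage log2 observed-to-expected ratio for one drug-event pair.

    Expected count assumes the drug and the event are reported independently.
    """
    expected = n_drug * n_event / n_total
    return math.log2((n_drug_event + 0.5) / (expected + 0.5))

# Hypothetical database: 1,000,000 reports, 1,000 for the drug, 2,000 for the event.
ic_signal = information_component(n_drug_event=20, n_drug=1000, n_event=2000, n_total=1_000_000)
ic_null = information_component(n_drug_event=2, n_drug=1000, n_event=2000, n_total=1_000_000)
```

Here the expected count is 2, so 20 observed reports give an IC of about 3.0, while exactly 2 observed reports give an IC of 0. The signalling rule in step 5 is applied to IC025 and not to this point estimate, which guards against flagging pairs with very few reports.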

Workflow Visualizations

Multi-Modal Data Processing Workflow

AI-Ready Data Processing Pipeline

Hyperparameter Optimization with Bio-Algorithms

ML Hyperparameter Tuning Loop

Signal Detection and Management Process

Pharmacovigilance Signal Detection Flow

Frequently Asked Questions

Q1: What are the most critical categories of metrics for R&D, and why? R&D success is measured across multiple dimensions. The most critical categories include [25]:

- Innovation Metrics: Measure the output and impact of R&D (e.g., products launched, patents filed).

- Time-to-Market Metrics: Measure the speed and efficiency of the development process.

- Financial Metrics: Evaluate the return on investment (ROI) and financial efficiency of R&D activities.

- Project Success Metrics: Track the health and success rate of the R&D project portfolio.

Using a balanced set of metrics from these categories prevents over-optimizing one area at the expense of others and provides a holistic view of R&D performance [26] [27].

Q2: How do I move from generic to tailored metrics for my chemistry ML project? Tailoring metrics requires aligning them with your specific research and strategic goals [26] [27]. Follow these steps:

- Define Strategic Objectives: Start with the primary goal of your ML research (e.g., accelerating reaction optimization, improving predictive accuracy for ADMET properties).

- Map Objectives to Metrics: Identify which metrics directly indicate progress toward your goals. For example, if your goal is efficient optimization, a key metric would be the number of experiments to convergence using a Bayesian optimization algorithm [28].

- Select Leading and Lagging Indicators: Combine process metrics (leading) with outcome metrics (lagging). For instance, model performance on a held-out test set (lagging) should be analyzed alongside the hyperparameter optimization efficiency (leading) that got you there [29].

- Incorporate Domain-Specific KPIs: Beyond standard ML metrics, include chemistry-specific outcomes such as yield, impurity levels, or cost savings achieved per initiative [26] [28].

Q3: Why is it important to include failed experiments in R&D reports? Including failed or discontinued projects is critical for transparency and improved future decision-making [26]. It helps:

- Build Institutional Knowledge: Documenting what doesn't work prevents future teams from repeating the same mistakes.

- Refine Models: Failed experiments provide valuable data to improve machine learning models, helping them learn the boundaries of a successful reaction space [28].

- Demonstrate a Learning Culture: It shows that the team is learning and that processes are evolving, which is essential for innovation-driven companies [26].

Q4: My ML model performs well in validation but poorly in real-world chemistry applications. What could be wrong? This is a classic sign of overfitting to your validation set or a train-test distribution mismatch. To address this:

- Ensure Robust Data Splitting: Use scaffold-based or temporal splits for training and testing instead of random splits to better simulate real-world performance on novel molecules [30].

- Quantify Generalization: Use frameworks like AU-GOOD to evaluate your model's performance under increasing dissimilarity between train and test sets [30].

- Tune Hyperparameters Correctly: Use a nested cross-validation approach, where hyperparameter optimization is performed only within the training portion of each outer cross-validation fold. This keeps information from the outer test folds out of model selection and provides a less biased estimate of generalization performance [29].
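As an illustration of the scaffold-based split in the first bullet, here is a minimal sketch that keeps every scaffold entirely on one side of the split. It assumes the scaffold key for each molecule has already been computed with a cheminformatics toolkit; the function name, the "largest groups go to training" heuristic, and the example keys are assumptions made for illustration.

```python
from collections import defaultdict

def scaffold_group_split(scaffolds, test_fraction=0.2):
    """Split indices so that no scaffold appears in both train and test.

    `scaffolds[i]` is a precomputed scaffold key for molecule i. Large
    scaffold groups are placed in the training set first, so the test set
    is made up of the rarer scaffolds.
    """
    groups = defaultdict(list)
    for idx, key in enumerate(scaffolds):
        groups[key].append(idx)

    max_train = len(scaffolds) - round(test_fraction * len(scaffolds))
    train, test = [], []
    # Largest groups first; ties broken by key for reproducibility.
    for key, members in sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0])):
        if len(train) + len(members) <= max_train:
            train.extend(members)
        else:
            test.extend(members)
    return train, test

# Hypothetical scaffold keys for ten molecules.
scaffolds = ["A", "A", "A", "A", "B", "B", "B", "C", "C", "D"]
train_idx, test_idx = scaffold_group_split(scaffolds, test_fraction=0.3)
```

Because whole groups are assigned at once, the realized test fraction can differ from the requested one when scaffold groups are large.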

Q5: How can AI and automation improve R&D reporting and decision-making? AI-enhanced tools can revolutionize R&D by providing [26] [27]:

- Improved Accuracy: Automated data collection and reporting reduce human error.

- Enhanced Efficiency: Automation saves time and resources, allowing teams to focus on innovation.

- Deeper Insights: AI algorithms can identify patterns and trends in R&D data that might be missed by human analysis, such as identifying risks in the R&D portfolio or suggesting which metrics to prioritize [26].

Troubleshooting Guides

Problem 1: Poor Model Generalization to Novel Chemical Spaces

Problem Description: A machine learning model trained for property prediction performs well on its test set but fails to make accurate predictions for new types of molecules outside its training domain.

Diagnosis and Solution Protocol:

| Step | Action | Rationale & Details |

|---|---|---|

| 1 | Audit Your Data Splitting Strategy | Random splits often create artificially high performance. Implement a scaffold split, where molecules with different core structures are separated into train and test sets, or a temporal split based on the date the data was acquired [30]. |

| 2 | Implement Rigorous Evaluation | Use the AU-GOOD framework or similar to quantify your model's Out-of-Distribution (OOD) generalization. This provides a performance metric under increasing train-test dissimilarity [30]. |

| 3 | Re-tune Hyperparameters with OOD in Mind | During hyperparameter optimization, use a nested cross-validation procedure. This ensures that the model selection process itself does not overfit to a particular validation set and gives a less optimistic estimate of performance on new data [29]. |
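The nested cross-validation procedure in step 3 can be sketched with scikit-learn as below. The synthetic dataset, the random forest, the parameter grid, and the choice of average precision as the score are all placeholders. For an OOD-focused study, the stratified folds shown here would be swapped for a scaffold- or time-based splitter.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score

# Synthetic, imbalanced stand-in for a featurized compound set.
X, y = make_classification(n_samples=300, n_features=20, weights=[0.85, 0.15],
                           random_state=0)

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

# Inner loop: hyperparameter search, scored by average precision.
search = GridSearchCV(
    RandomForestClassifier(n_estimators=50, random_state=0),
    param_grid={"max_depth": [3, None], "min_samples_leaf": [1, 5]},
    scoring="average_precision",
    cv=inner_cv,
)

# Outer loop: each outer test fold is never seen by the inner search.
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="average_precision")
print(f"Nested CV average precision: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

The spread of the outer scores is as informative as their mean: a wide spread suggests that the selected hyperparameters are sensitive to the particular split.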

Problem 2: Inefficient Hyperparameter Optimization for Chemistry ML

Problem Description: The process of finding the best hyperparameters for a chemistry machine learning model is taking too long, consuming excessive computational resources, and failing to find a good set of parameters.

Diagnosis and Solution Protocol:

| Step | Action | Rationale & Details |

|---|---|---|

| 1 | Select the Right HPO Algorithm | Move beyond grid search. For many chemistry ML problems, Bayesian Optimization (BO) is more sample-efficient because it builds a probabilistic model to balance exploration and exploitation, finding good parameters in fewer trials [29] [31]. For very large search spaces, Hyperband is efficient because it terminates poorly performing trials early [31]. |

| 2 | Define a Logical Search Space | Base your hyperparameter ranges on literature and prior knowledge. For example, the learning rate for a neural network is typically searched on a log scale (e.g., 1e-5 to 1e-2). Avoid overly broad spaces that waste resources [31]. |

| 3 | Use a Multi-Objective Approach for Conflicting Goals | In chemistry, objectives often conflict (e.g., maximizing yield while minimizing impurities). Use a multi-objective optimizer like TSEMO to discover a set of optimal solutions (the Pareto front), allowing you to understand the trade-offs [28]. |
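To make the log-scale advice in step 2 concrete, the sketch below samples a small search space with the standard library only. The hyperparameter names and ranges are hypothetical; only the 1e-5 to 1e-2 learning-rate range comes from the table.

```python
import random

def sample_config(rng):
    """Draw one hyperparameter configuration from a hypothetical search space."""
    return {
        # Log-uniform: each decade between 1e-5 and 1e-2 is equally likely.
        "learning_rate": 10 ** rng.uniform(-5, -2),
        # A linear scale is adequate for a bounded quantity like dropout.
        "dropout": rng.uniform(0.0, 0.5),
        # Discrete choice for an architectural setting.
        "num_layers": rng.choice([2, 3, 4]),
    }

rng = random.Random(42)
configs = [sample_config(rng) for _ in range(1000)]

# Share of draws below 1e-4: about one third under log-uniform sampling,
# versus roughly 1% if the same range were sampled on a linear scale.
share_small = sum(c["learning_rate"] < 1e-4 for c in configs) / len(configs)
```

Sampling the exponent rather than the value is what gives small learning rates a fair share of the trials.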

Problem 3: Selecting Metrics for a Multi-Objective Optimization Campaign

Problem Description: Your research involves optimizing a chemical reaction or a molecular property where multiple, competing outcomes are important, and you are unsure how to define and track success.

Experimental Protocol (Based on Lithium-Halogen Exchange Optimization [28]):

- Define Primary Objectives: Clearly state the conflicting goals. In the referenced study, the objectives were to maximize reaction yield and minimize impurity formation [28].

- Establish Baseline Performance: Run initial experiments (e.g., using Latin Hypercube Sampling) to understand the baseline performance and the inherent trade-off between your objectives [28].

- Execute Multi-Objective Algorithm: Employ a suitable algorithm such as TSEMO (Thompson Sampling Efficient Multi-Objective Optimization). This algorithm uses Gaussian Process surrogate models and suggests new experiments to improve the Pareto front iteratively [28].

- Analyze the Pareto Front: Upon completion, analyze the set of non-dominated optimal solutions. This front visually represents the best possible trade-offs, enabling informed decisions based on the desired balance of objectives [28].
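The final step, extracting the non-dominated solutions, can be sketched in a few lines. The data are invented and the function is a plain pairwise dominance check, not part of TSEMO or the referenced study.

```python
def pareto_front(points):
    """Return the non-dominated (yield, impurity) pairs.

    Yield is maximized and impurity is minimized. A point is dominated if
    another point is at least as good on both objectives and strictly better
    on at least one.
    """
    front = []
    for i, (y_i, imp_i) in enumerate(points):
        dominated = any(
            (y_j >= y_i and imp_j <= imp_i) and (y_j > y_i or imp_j < imp_i)
            for j, (y_j, imp_j) in enumerate(points)
            if j != i
        )
        if not dominated:
            front.append((y_i, imp_i))
    return sorted(front)

# Hypothetical (yield %, impurity %) results from an optimization campaign.
results = [(62, 4.0), (75, 6.5), (70, 5.0), (75, 9.0), (55, 4.5), (88, 12.0)]
front = pareto_front(results)
```

Here (75, 9.0) and (55, 4.5) drop out because another experiment matches or beats them on both objectives. The four remaining points are the trade-off curve to choose from.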

Key Metrics for R&D and Chemistry ML

The table below summarizes essential metrics, categorized from generic R&D to those specific to chemistry and ML hyperparameter optimization.

| Category | Metric | Formula / Description | Application Notes |

|---|---|---|---|

| Innovation | New Product Success Rate | (Number of Successful Products / Total Projects) × 100 [25] | Measures the effectiveness of the development pipeline. |

| Innovation | Revenue from New Products | Sum of revenue generated from new products/innovations [25] | Ties R&D activity directly to financial impact. |

| Time-to-Market | Average Time-to-Market | (Sum of Individual TTM Durations) / (Total New Products Launched) [25] | Tracks development speed; critical for competitive advantage. |

| Financial | R&D Effectiveness Index (RDEI) | (PV of Revenue from Products) / (PV of Cumulative R&D Costs) [25] | A powerful metric for evaluating the financial return on R&D over time (e.g., 5 years). |

| Cost | Cost Savings from R&D | Sum of cost savings from process improvements or new methods [26] [25] | Highlights R&D's role in improving operational efficiency. |

| Chemistry ML HPO | Hyperparameter Optimization Efficiency | Number of experimental trials or computational cost to reach target performance [28] [29] | A key leading indicator for the efficiency of your ML research process. |

| Chemistry ML HPO | Multi-Objective Performance | Hypervolume of the Pareto front [28] | Quantifies the quality and coverage of solutions found in a multi-objective optimization. |

| Chemistry ML HPO | Model Generalization Score | Performance on a rigorously held-out test set (e.g., via scaffold split) or AU-GOOD score [29] [30] | The ultimate test of a model's real-world utility. |
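For the hypervolume entry in the table, a two-objective version is simple enough to compute directly. The sketch below assumes yield is maximized and impurity minimized; the reference point and both fronts are hypothetical.

```python
def hypervolume_2d(front, ref_yield, ref_impurity):
    """Area dominated by a (yield, impurity) front relative to a reference point.

    Yield is maximized and impurity minimized, so the reference point should be
    worse than every solution on both objectives (low yield, high impurity).
    """
    # Express both objectives as gains over the reference point.
    gains = [(y - ref_yield, ref_impurity - imp) for y, imp in front]
    gains = [(gy, gi) for gy, gi in gains if gy > 0 and gi > 0]

    area, best_gi = 0.0, 0.0
    # Sweep from highest to lowest yield, adding each new horizontal strip.
    for gy, gi in sorted(gains, reverse=True):
        if gi > best_gi:
            area += gy * (gi - best_gi)
            best_gi = gi
    return area

# Hypothetical Pareto fronts from two optimizer runs (yield %, impurity %).
front_a = [(62, 4.0), (70, 5.0), (75, 6.5), (88, 12.0)]
front_b = [(60, 5.0), (72, 8.0), (80, 12.0)]
hv_a = hypervolume_2d(front_a, ref_yield=0, ref_impurity=20)
hv_b = hypervolume_2d(front_b, ref_yield=0, ref_impurity=20)
```

A larger hypervolume means the front covers more of the objective space, so the two runs must share the same reference point for the comparison to be meaningful.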

The Scientist's Toolkit: Research Reagent Solutions

This table details key components used in advanced, computer-driven chemistry experimentation as described in the referenced study [28].

| Item | Function in the Experiment |

|---|---|

| Syringe Pumps (Harvard apparatus) | Precisely deliver reagent streams at controlled flow rates for continuous flow chemistry. |

| T-mixer / Microchip Reactor | Provides rapid and efficient mixing of reagents, critical for ultra-fast reactions like lithiation. The type of mixer can define the reaction regime (mixing vs. reaction-controlled). |

| Static Mixer Tubing | A section of tubing where mixed reagents reside for a precise "residence time" before quenching. |

| Bayesian Optimization Algorithm (TSEMO) | The software "reagent." It suggests the next best set of reaction conditions (temperature, time, stoichiometry) to efficiently map the performance landscape. |

| Gaussian Process (GP) Surrogate Model | A probabilistic model that predicts reaction outcomes (yield, impurity) for untested conditions based on acquired data, guiding the optimization algorithm. |

A Toolbox of Chemistry-Aware Metrics for Hyperparameter Optimization

In the field of chemistry and drug discovery, machine learning (ML) models often sift through thousands of compounds to identify the most promising candidates. When optimizing these models, selecting the right evaluation metric is as crucial as selecting the right algorithm. For tasks where the goal is to ensure that the top few predictions are highly reliable—such as selecting compounds for costly experimental validation—Precision-at-K (P@K) is an indispensable metric.

This guide provides technical support for researchers implementing P@K, addressing common challenges and detailing its proper application within ML hyperparameter optimization pipelines.

Frequently Asked Questions (FAQs)

1. What is Precision-at-K (P@K) and why is it important for chemical ML?

Precision-at-K is a ranking metric that measures the proportion of relevant items found within the top K predictions of a model [32]. It is defined as:

P@K = (Number of relevant items in top K) / K [33]

In the context of chemical ML, a "relevant item" could be a truly active compound, a drug with known efficacy for a specific disease, or a molecule with a desired property [34] [35]. P@K is particularly important because it focuses evaluation on the top of the ranking list, which directly corresponds to the shortlist of candidates a researcher would select for further testing [34]. This makes it more actionable than metrics that evaluate the entire list.
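A direct implementation of the definition above, using made-up scores and labels; the function name is illustrative.

```python
def precision_at_k(scores, labels, k):
    """Fraction of the k highest-scoring compounds that are relevant (label 1)."""
    if not 0 < k <= len(scores):
        raise ValueError("k must be between 1 and the number of compounds")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    return sum(label for _, label in ranked[:k]) / k

# Hypothetical predicted activity scores and experimental labels (1 = active).
scores = [0.91, 0.15, 0.78, 0.66, 0.42, 0.88, 0.05, 0.59]
labels = [1,    0,    0,    1,    0,    1,    0,    1]
p_at_3 = precision_at_k(scores, labels, k=3)
p_at_5 = precision_at_k(scores, labels, k=5)
```

In this example two of the top three compounds are active (P@3 = 2/3) and four of the top five are active (P@5 = 0.8).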

2. How do I define "relevance" for my chemical dataset?

Defining relevance is a critical, problem-dependent step. Relevance is typically a binary label (relevant or not relevant) derived from your experimental or historical data [32] [36]. Common approaches include:

- Using Experimental Data: Treating compounds whose activity value meets a specific potency threshold (e.g., an IC50 below a chosen cutoff) as relevant [36].

- Leveraging Known Associations: In drug repurposing, drugs already approved for a specific disease (as curated from databases like the Comparative Toxicogenomics Database) are considered relevant for that indication [35].

- Defining by Similarity: In some recommendation-like tasks, a compound could be deemed relevant to a query compound if it shares a sufficient number of key properties or structural features [37].

3. My P@K value is low. What are the primary areas to troubleshoot?

A low P@K value indicates that few of your top-K predictions are relevant. Focus your troubleshooting on these areas:

- Relevance Definition: Re-examine your criteria for "relevance." An improperly set threshold can mislabel compounds and skew the metric [36].

- Feature Quality: The features (e.g., molecular fingerprints, descriptors) used to represent your compounds may not adequately capture the properties necessary for the model to distinguish relevant from irrelevant candidates.

- Hyperparameters: The model's performance is highly sensitive to its hyperparameters [38]. Tuning them is essential for optimizing P@K [39].

- Model Choice: The chosen algorithm might not be well-suited for the underlying data structure. For graph-based molecular data, Graph Neural Networks (GNNs) often outperform other models, but their configuration is key [38].

4. What is the difference between P@K and Recall-at-K?

While P@K focuses on the accuracy of your shortlist, Recall-at-K focuses on its coverage. Recall-at-K measures the proportion of all possible relevant items that were captured in your top-K recommendations [32].

Recall@K = (Number of relevant items in top K) / (Total number of relevant items) [32] [36]

The choice between them depends on the cost of false positives versus false negatives in your project. P@K is preferred when the cost of validating a false positive (a dud candidate) is high [32] [34].
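The two formulas share a numerator and differ only in the denominator, which the following sketch makes explicit. The scores and labels are invented for illustration.

```python
def top_k_hits(scores, labels, k):
    """Number of relevant items (label 1) among the k highest-scoring items."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sum(labels[i] for i in order[:k])

def precision_at_k(scores, labels, k):
    return top_k_hits(scores, labels, k) / k

def recall_at_k(scores, labels, k):
    total_relevant = sum(labels)
    if total_relevant == 0:
        raise ValueError("recall is undefined when there are no relevant items")
    return top_k_hits(scores, labels, k) / total_relevant

# Hypothetical screen: 10 compounds, 4 of them active.
scores = [0.95, 0.90, 0.85, 0.70, 0.65, 0.50, 0.40, 0.30, 0.20, 0.10]
labels = [1,    1,    0,    1,    0,    0,    1,    0,    0,    0]

for k in (2, 5, 10):
    print(k, precision_at_k(scores, labels, k), recall_at_k(scores, labels, k))
```

As K grows from 2 to 10, precision falls from 1.0 to 0.4 while recall rises from 0.5 to 1.0. That is the trade-off to weigh against the cost of each wasted assay.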

5. When should I use P@K versus other metrics like NDCG or AUC?

The optimal metric aligns with your research goal and user behavior.

- Use P@K when the order of items within the top-K is not critical, and you only care about how many of them are correct. It is simple and highly interpretable [32] [33].

- Use NDCG (Normalized Discounted Cumulative Gain) when the ranking order within the top-K list matters. NDCG rewards models for placing the most relevant items at the very top positions [32] [35] [40].

- Use AUC (Area Under the ROC Curve) when you want to evaluate the model's overall ranking performance across all possible thresholds, not just a specific K. However, it can be misleading with highly imbalanced datasets common in drug discovery [34] [35].

The table below summarizes this comparison:

| Metric | Best Used For | Key Advantage | Key Limitation |

|---|---|---|---|

| Precision-at-K (P@K) | Evaluating a shortlist of top-K candidates. | Simple, intuitive, directly maps to a user action. | Ignores the ranking order within the top-K. |

| Recall-at-K (R@K) | Ensuring all relevant candidates are captured in the shortlist. | Measures coverage of relevant items. | Does not account for the number of irrelevant items in the shortlist. |

| NDCG | Evaluating a ranked list where the order of results is critical. | Rank-aware; rewards placing highly relevant items first. | More complex to calculate and interpret [33]. |

| AUC-ROC | Overall performance evaluation across all thresholds. | Provides a single, general measure of ranking quality. | Can be overly optimistic with imbalanced data [34]. |
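The "ignores ranking order" limitation of P@K is easiest to see on two rankings that contain the same hits in different positions. The sketch below uses the linear-gain form of DCG, which for binary labels gives the same values as the exponential-gain form. The rankings are hypothetical.

```python
import math

def dcg_at_k(relevances, k):
    """Discounted cumulative gain: relevance divided by log2(rank + 1)."""
    return sum(rel / math.log2(rank + 1)
               for rank, rel in enumerate(relevances[:k], start=1))

def ndcg_at_k(ranked_relevances, k):
    """DCG of the model's ranking divided by the DCG of the ideal ranking."""
    ideal = dcg_at_k(sorted(ranked_relevances, reverse=True), k)
    return dcg_at_k(ranked_relevances, k) / ideal if ideal > 0 else 0.0

# Two hypothetical rankings with the same three actives in the top 5.
# Entries are the true labels listed in the order each model ranked them.
hits_first = [1, 1, 1, 0, 0, 0, 0, 0]
hits_last  = [0, 0, 1, 1, 1, 0, 0, 0]

p5_first = sum(hits_first[:5]) / 5
p5_last = sum(hits_last[:5]) / 5
ndcg_first = ndcg_at_k(hits_first, 5)
ndcg_last = ndcg_at_k(hits_last, 5)
```

Both rankings score P@5 = 0.6, but NDCG@5 is 1.0 for the first and roughly 0.62 for the second, because the second places its actives lower in the list.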

Troubleshooting Guides

Issue: Inconsistent P@K Values Across Experiments

Problem: Your P@K values vary significantly when you run the same experiment multiple times, making it difficult to judge model improvements.

Solution: Implement a robust cross-validation strategy and ensure your data splitting method is consistent.

- Stratified Splitting: When creating training/test splits, use stratified methods to ensure the proportion of relevant compounds is approximately the same in all splits. This prevents a scenario where one split has most of the active compounds, which would drastically change the P@K calculation.

- Fix Random Seeds: Set the random seed for your ML framework (e.g., NumPy, TensorFlow, PyTorch) and any data splitting functions. This ensures that your experiments are reproducible.

- Averaging: Calculate P@K for each query (e.g., for each target disease or query compound) and then report the average across all of them. Do not calculate a global P@K by pooling all predictions together, as this can be biased toward queries with many relevant items [32].
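The difference between per-query averaging and pooling is shown below on a deliberately lopsided, invented example with two target diseases.

```python
def precision_at_k(scores, labels, k):
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sum(labels[i] for i in order[:k]) / k

def mean_precision_at_k(per_query, k):
    """Average P@K over queries; `per_query` maps query -> (scores, labels)."""
    values = [precision_at_k(s, l, k) for s, l in per_query.values()]
    return sum(values) / len(values)

# Hypothetical predictions for two target diseases.
per_query = {
    "disease_A": ([0.9, 0.8, 0.7, 0.2], [1, 1, 1, 0]),   # many known actives
    "disease_B": ([0.6, 0.5, 0.4, 0.3], [0, 0, 1, 0]),   # few known actives
}
macro_p2 = mean_precision_at_k(per_query, k=2)

# Pooling all predictions into one ranking lets disease_A dominate the top.
pooled_scores = [s for scores, _ in per_query.values() for s in scores]
pooled_labels = [l for _, labels in per_query.values() for l in labels]
pooled_p2 = precision_at_k(pooled_scores, pooled_labels, k=2)
```

The pooled P@2 is 1.0 even though the model finds nothing useful in the top 2 for disease_B. The per-query average of 0.5 exposes that failure.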

Issue: Optimizing Hyperparameters for P@K

Problem: Standard hyperparameter tuning seems to have little effect on improving your P@K score.

Solution: Employ advanced hyperparameter optimization (HPO) techniques that are designed to directly optimize for your target metric.

- Choose the Right Tuner:

  - Grid Search: Systematically works through multiple hyperparameter combinations. It is thorough but computationally expensive [39].

  - Random Search: Randomly samples hyperparameters from a defined space. Often more efficient than grid search for high-dimensional spaces [39].

  - Bayesian Optimization: A more sophisticated method that builds a probabilistic model of the function mapping hyperparameters to the objective (P@K). It intelligently selects the most promising hyperparameters to evaluate next, making it highly efficient for expensive-to-train models like GNNs [39] [38].

- Define the Search Space: Based on your model (e.g., Random Forest, GNN), identify the key hyperparameters that most influence learning. For GNNs, this could include the number of layers, hidden units, dropout rate, and learning rate [38].

- Set the Objective: Configure your HPO library (e.g., scikit-learn, Optuna) to use P@K as the scoring function to maximize.
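One way to set P@K as the objective in scikit-learn is to pass a callable with the `(estimator, X, y)` signature as the `scoring` argument. The sketch below does this for P@10 on a synthetic dataset. The data, model, grid, and the name `precision_at_10` are placeholders, and a similar function could serve as the objective in other tuners.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold

def precision_at_10(estimator, X, y):
    """Scorer with the (estimator, X, y) signature accepted by scikit-learn."""
    scores = estimator.predict_proba(X)[:, 1]
    top = np.argsort(scores)[::-1][:10]
    return float(np.mean(np.asarray(y)[top]))

# Synthetic stand-in for a featurized library with about 10% actives.
X, y = make_classification(n_samples=400, n_features=20, weights=[0.9, 0.1],
                           random_state=0)

search = GridSearchCV(
    RandomForestClassifier(n_estimators=50, random_state=0),
    param_grid={"max_depth": [3, 6, None], "min_samples_leaf": [1, 5]},
    scoring=precision_at_10,  # P@10 is the quantity being maximized
    cv=StratifiedKFold(n_splits=4, shuffle=True, random_state=0),
)
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```

Because P@10 on a single fold can only take values in steps of 0.1, ties between configurations are common. Averaging over more folds or repeats makes the objective less coarse.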

The following workflow diagram illustrates a robust hyperparameter tuning process aimed at optimizing P@K:

Issue: The Value of K is Arbitrary

Problem: It's unclear what value of K to choose for the P@K metric.

Solution: The value of K should reflect a real-world constraint or objective in your research pipeline [32] [33].

- Budget Constraints: If your lab can only validate 20 compounds per week, set K=20. P@20 will then directly measure the expected hit rate of your model under that budget.

- UI/Display Limitations: If your web platform only displays 10 recommendations to a scientist, set K=10.

- Benchmarking: Use the same K as reported in similar literature to ensure fair comparisons. In drug repurposing, common benchmarks use K=10 or K=25 to measure accuracy [35].

Experimental Protocols

Protocol: Benchmarking a Model Using P@K

This protocol outlines how to evaluate a candidate ML model using the P@K metric in a cheminformatics context, such as a virtual screening task.

1. Hypothesis: A Graph Neural Network (GNN) model will achieve a higher P@10 than a Random Forest model in identifying active compounds from a virtual library.

2. Materials (Research Reagent Solutions):

| Item | Function in Experiment |

|---|---|

| Chemical Dataset (e.g., from ChEMBL) | Provides the compounds (SMILES strings) and associated activity labels (active/inactive). |

| Molecular Feature Generator (e.g., RDKit) | Converts SMILES strings into features (e.g., ECFP fingerprints, graph structures). |

| ML Libraries (e.g., Scikit-learn, PyTorch Geometric) | Provides the algorithms for model building, training, and evaluation. |

| Evaluation Framework (Custom Python scripts) | Implements the P@K calculation logic and manages the experimental pipeline. |

3. Methodology:

- Data Preprocessing:

  - Load the dataset of compounds and their activity labels.

  - Define "relevance": For example, compounds with IC50 ≤ 10 μM are labeled "active" (relevant).

  - Split the data into 70% training, 15% validation, and 15% test sets using stratified splitting to preserve the ratio of active compounds.

- Model Training & Hyperparameter Tuning:

  - Train both a GNN and a Random Forest model on the training set.

  - Use the validation set and a Bayesian optimization tuner to find the best hyperparameters for each model, using P@10 as the optimization objective.

- Evaluation:

  - For each compound in the test set, obtain the model's prediction (a relevance score or probability of being active).

  - Sort the test compounds by their predicted score in descending order.

  - From this ranked list, identify the top 10 compounds.

  - Calculate P@10: Count how many of these top 10 compounds are actually active (based on your label). Divide this count by 10.

- Reporting:

  - Report the P@10 for both the GNN and Random Forest models.

  - Perform statistical significance testing to determine whether the difference in performance is unlikely to be due to random chance.
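The protocol does not prescribe a particular significance test. One option is a paired bootstrap over the test compounds, sketched below with simulated scores standing in for the two models' predictions. All names and data here are hypothetical.

```python
import random

def precision_at_k(scores, labels, k):
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sum(labels[i] for i in order[:k]) / k

def bootstrap_p_at_k_difference(scores_a, scores_b, labels, k=10,
                                n_resamples=2000, seed=0):
    """95% percentile interval for P@K(model A) - P@K(model B).

    The same resampled test compounds are scored by both models (paired
    bootstrap), so the interval reflects test-set sampling variability only.
    """
    rng = random.Random(seed)
    n = len(labels)
    diffs = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]
        lab = [labels[i] for i in idx]
        a = precision_at_k([scores_a[i] for i in idx], lab, k)
        b = precision_at_k([scores_b[i] for i in idx], lab, k)
        diffs.append(a - b)
    diffs.sort()
    return diffs[int(0.025 * n_resamples)], diffs[int(0.975 * n_resamples) - 1]

# Hypothetical test set: 200 compounds, 20 actives. Model A's scores are
# informative, model B's scores are random noise.
rng = random.Random(1)
labels = [1] * 20 + [0] * 180
scores_a = [rng.gauss(1.5 if y else 0.0, 1.0) for y in labels]
scores_b = [rng.random() for _ in labels]
low, high = bootstrap_p_at_k_difference(scores_a, scores_b, labels)
```

If the interval excludes zero, the difference in P@10 is unlikely to be an artifact of which compounds happened to land in the test set. It does not account for variability from retraining, which would require repeating the whole pipeline with different seeds or splits.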

The logical flow of this benchmarking experiment is shown below:

Frequently Asked Questions (FAQs)

FAQ 1: Why are standard metrics like accuracy misleading for rare event models in chemistry? Standard metrics like accuracy can be highly misleading for imbalanced datasets because a model can achieve a high score by simply always predicting the majority class (e.g., "no event"), thereby missing all the rare but critical events you are trying to detect. For rare events, you should prioritize metrics that are sensitive to the correct identification of the minority class, such as Precision, Recall, F1-Score, and the area under the Precision-Recall curve (AUPRC) [41].
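A small numeric illustration of this point, using an invented screen with 1% positives; the helper names are illustrative.

```python
def confusion_counts(y_true, y_pred):
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    return tp, fp, fn, tn

def summarize(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(y_true), "precision": precision,
            "recall": recall, "f1": f1}

# Hypothetical screen: 1,000 compounds, 10 of them toxic (1% positives).
y_true = [1] * 10 + [0] * 990

always_negative = [0] * 1000                          # never flags anything
imperfect = [1] * 7 + [0] * 3 + [1] * 20 + [0] * 970  # finds 7 of 10, 20 false alarms

baseline = summarize(y_true, always_negative)
model = summarize(y_true, imperfect)
```

The trivial "always negative" baseline reaches 99% accuracy with zero recall. The imperfect model has lower accuracy (97.7%) yet recovers 7 of the 10 toxic compounds, which only the recall and F1 values reveal.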

FAQ 2: My model has high performance on training data but fails on new data. What is happening? This is a classic sign of overfitting [42] [43]. It occurs when a model learns the training data too well, including its noise and irrelevant patterns, but fails to generalize to unseen data. This is a significant risk in low-data regimes common with rare chemical events. Mitigation strategies include applying regularization techniques, using cross-validation, and simplifying the model architecture [43].

FAQ 3: What is the minimum amount of data needed to start building a rare event prediction model? While there is no universal minimum, the challenge is more about the number of rare event examples available. The "events per variable" (EPV) ratio is a useful guideline, though it may not fully account for the complexity of rare event data [41]. In practice, the model needs enough data to learn the underlying patterns of both common and rare events. Some general rules of thumb suggest having more than three weeks of data for periodic trends or a few hundred data buckets for non-periodic data [44].

FAQ 4: How can I improve a model that is failing to detect any rare events (low recall)? To improve recall:

- Data-Level Processing: Address the class imbalance directly by using resampling techniques, such as oversampling the rare event class or undersampling the majority class [45].

- Algorithm-Level Approach: Use cost-sensitive learning, where a higher penalty is assigned to misclassifying a rare event, which incentivizes the model to find them [45] [41].

- Model and Metric Selection: Choose algorithms known to handle imbalanced data well, such as ensemble methods, and focus your hyperparameter optimization on improving the recall metric [45] [43].

FAQ 5: Can complex non-linear models be trusted for rare event prediction with small datasets? Yes, but it requires careful implementation. Traditionally, linear models are preferred for small datasets due to their simplicity and lower risk of overfitting. However, recent research shows that properly tuned and regularized non-linear models (like Neural Networks) can perform on par with or even outperform linear regression, even in low-data scenarios. The key is to use automated workflows that incorporate robust hyperparameter optimization designed specifically to mitigate overfitting [43].

Troubleshooting Guides

Problem 1: Model with Persistently Low Recall

Symptoms: The model identifies most of the common class (non-events) correctly but fails to flag known rare events (e.g., a successful reaction or a toxic compound). The confusion matrix shows a high number of false negatives.

Diagnosis and Solution Protocol:

Audit and Preprocess the Data:

- Check for Balance: Calculate the percentage of rare events in your dataset. If it falls into the "extremely rare" (0-1%) or "very rare" (1-5%) category [45], resampling is crucial.

- Apply Resampling: Use techniques like SMOTE (Synthetic Minority Over-sampling Technique) to generate synthetic examples of the rare event class and balance the dataset [45].

- Handle Missing Data: Identify and impute or remove samples with missing values to prevent bias [42].

Reframe the Modeling Objective:

- Utilize Cost-Sensitive Learning: If your algorithm supports it, adjust the class weight parameters to make misclassifying a rare event more "costly" than misclassifying a common event [45] [41].

- Switch to Ensemble Methods: Implement algorithms like Random Forests or Gradient Boosting, which can be more effective for imbalanced data [45] [41].

Re-tune Hyperparameters with a New Objective:

- Optimize for Recall: During hyperparameter optimization, use Recall or the F1-Score as the primary scoring metric instead of accuracy to directly guide the model towards better rare event detection [43].

Problem 2: Model Fails to Generalize (Overfitting)

Symptoms: Excellent performance (e.g., low error, high accuracy) on the training dataset, but performance drops significantly on the validation or test set.

Diagnosis and Solution Protocol:

Implement Rigorous Validation:

- Use Cross-Validation (CV): Replace a simple train/test split with k-fold cross-validation to get a more robust estimate of model performance [42] [43].

- Incorporate an Extrapolation Test: For chemistry ML, where predicting outside the trained range is common, use a sorted CV approach. This involves sorting the data by the target value and validating on the top and bottom partitions to explicitly test extrapolation capability [43].

Apply Regularization and Simplify the Model:

- Add Penalties: Use L1 (Lasso) or L2 (Ridge) regularization in your model to penalize complex models and prevent overfitting [41] [46].

- Reduce Model Complexity: If you are using a neural network, reduce the number of layers or neurons. For tree-based models, reduce the maximum depth or increase the minimum samples required to split a node [42].

Adopt an Advanced Hyperparameter Optimization Workflow:

- Use a Combined Metric: Implement a Bayesian optimization process that uses a combined objective function. This function should average performance from both interpolation (standard k-fold CV) and extrapolation (sorted CV) tests to select models that generalize better [43].

- Prevent Data Leakage: Always reserve a completely external test set (e.g., 20% of data) that is not used during any model training or hyperparameter optimization steps, ensuring a final, unbiased evaluation [43].

Experimental Protocols & Data

Detailed Methodology: Benchmarking ML Models in Low-Data Regimes

This protocol is adapted from recent research on applying non-linear models to small chemical datasets [43].

1. Objective: To compare the performance of Multivariate Linear Regression (MVL) against non-linear algorithms (Random Forest, Gradient Boosting, Neural Networks) for predicting chemical properties from small datasets (N < 50).

2. Data Preparation and Curation:

- Source: Use a curated CSV database containing molecular structures and their associated target property.

- Descriptors: Use consistent molecular descriptors (e.g., steric and electronic parameters) for all models to ensure a fair comparison.

- Train-Test Split: Reserve 20% of the initial data (or a minimum of 4 data points) as an external test set. The split should be "even" to ensure a balanced representation of target values across both sets. This test set is only used for the final evaluation.

3. Hyperparameter Optimization with a Combined Metric:

- Tool: Use an automated workflow (e.g., ROBERT software) that employs Bayesian optimization.

- Objective Function: The optimization aims to minimize a combined Root Mean Squared Error (RMSE) calculated from:

- Interpolation RMSE: Derived from a 10-times repeated 5-fold cross-validation on the training/validation data.

- Extrapolation RMSE: Derived from a selective sorted 5-fold CV, which sorts data by the target value and uses the highest RMSE from the top and bottom partitions.

- Output: The best set of hyperparameters for each algorithm, as determined by the lowest combined RMSE.
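The combined objective in step 3 can be sketched as below. This is not the ROBERT implementation: the equal weighting of the two terms, the single-descriptor least-squares line standing in for the model, and all function names are assumptions made for illustration.

```python
import math
import random

def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def fit_line(xs, ys):
    """Least-squares line for one descriptor; stands in for any model."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return lambda x: my + slope * (x - mx)

def fold_rmse(xs, ys, val_idx):
    """Train on everything outside `val_idx`, report RMSE on `val_idx`."""
    val = set(val_idx)
    train = [i for i in range(len(xs)) if i not in val]
    model = fit_line([xs[i] for i in train], [ys[i] for i in train])
    return rmse([ys[i] for i in val_idx], [model(xs[i]) for i in val_idx])

def combined_rmse(xs, ys, n_folds=5, n_repeats=10, seed=0):
    n, rng = len(xs), random.Random(seed)
    # Interpolation: repeated k-fold CV on shuffled indices.
    interp = []
    for _ in range(n_repeats):
        idx = list(range(n))
        rng.shuffle(idx)
        interp += [fold_rmse(xs, ys, idx[f::n_folds]) for f in range(n_folds)]
    # Extrapolation: sort by target, hold out the lowest and highest partitions
    # in turn, and keep the worse of the two errors.
    order = sorted(range(n), key=lambda i: ys[i])
    size = n // n_folds
    extrap = max(fold_rmse(xs, ys, order[:size]), fold_rmse(xs, ys, order[-size:]))
    return (sum(interp) / len(interp) + extrap) / 2

# Hypothetical small dataset: 30 points, one descriptor, noisy linear target.
rng = random.Random(1)
xs = [i / 3 for i in range(30)]
ys = [2.0 * x + rng.gauss(0, 0.5) for x in xs]
score = combined_rmse(xs, ys)
```

In a hyperparameter search, `combined_rmse` would be evaluated once per candidate configuration and the optimizer would minimize it, so configurations that interpolate well but extrapolate poorly are penalized.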

4. Model Evaluation and Scoring:

- Primary Metrics: Use scaled RMSE (as a percentage of the target value range) for easier interpretation.

- Validation Performance: Evaluate using 10x5-fold CV on the training data to mitigate the effects of any specific data split.

- Final Test: Evaluate the final model on the held-out external test set.

- Scoring System: A comprehensive score (e.g., on a scale of 10) should be calculated based on:

- Predictive ability (CV and test set scaled RMSE).

- Level of overfitting (difference between CV and test RMSE).

- Extrapolation ability.

- Prediction uncertainty.

- Robustness checks (e.g., performance after y-shuffling).

The table below summarizes key quantitative concepts and benchmarks for rare event modeling in chemistry ML.

Table 1: Key Metrics and Benchmarks for Rare Event Models

| Concept / Metric | Description / Benchmark | Relevance to Rare Events |

|---|---|---|

| Levels of Rarity [45] | R1: 0-1% (Extremely Rare); R2: 1-5% (Very Rare); R3: 5-10% (Moderately Rare); R4: >10% (Frequently-Rare) | Helps categorize the problem's difficulty and select appropriate techniques. |

| Scaled RMSE [43] | RMSE expressed as a percentage of the target value range. | Allows for easier comparison of model performance across different chemical datasets and properties. |

| Events Per Variable (EPV) [41] | A guideline for the minimum number of rare events needed per predictor variable. | Helps assess the stability of model estimates; low EPV can lead to "sparse data bias." |

| Combined RMSE Metric [43] | An objective function averaging interpolation and extrapolation CV performance. | Crucial for hyperparameter optimization in small-data chemistry, as it directly penalizes overfitting and promotes generalizability. |

| Model Generalization Score [43] | A multi-component score (e.g., out of 10) evaluating prediction, overfitting, and uncertainty. | Provides a standardized, holistic view of model trustworthiness for decision-making. |
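Two of the table's entries reduce to short calculations, sketched here. How the boundary values (exactly 1%, 5%, 10%) are assigned is an assumption, since the table gives overlapping endpoints, and the function names and example numbers are hypothetical.

```python
def rarity_level(n_events, n_total):
    """Map an event rate to the R1-R4 rarity levels listed in Table 1."""
    rate = 100.0 * n_events / n_total
    if rate <= 1:
        return "R1 (extremely rare)"
    if rate <= 5:
        return "R2 (very rare)"
    if rate <= 10:
        return "R3 (moderately rare)"
    return "R4 (frequently rare)"

def scaled_rmse(y_true, y_pred):
    """RMSE as a percentage of the observed target range."""
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    return 100.0 * mse ** 0.5 / (max(y_true) - min(y_true))

level = rarity_level(n_events=12, n_total=400)        # 3% event rate
error = scaled_rmse([1.0, 2.0, 3.0, 5.0], [1.5, 2.0, 2.5, 5.0])
```

With 12 events in 400 samples the dataset falls in level R2. The example predictions give a scaled RMSE of about 8.8% of the target range.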

Workflow Visualization

Low-Data ML Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Rare Event Chemistry ML

| Item / "Reagent" | Function & Explanation |

|---|---|

| Automated ML Workflows (e.g., ROBERT) [43] | Software that automates data curation, hyperparameter optimization, and model evaluation. Reduces human bias and ensures reproducibility, which is critical in low-data regimes. |

| Bayesian Optimization [43] [46] | A probabilistic, sample-efficient global optimization method. Ideal for tuning hyperparameters when each model evaluation is computationally expensive, as is often the case in chemistry. |

| Combined Validation Metric [43] | A custom objective function that tests a model's performance on both interpolation (within data range) and extrapolation (outside data range), safeguarding against over-optimistic results. |

| Resampling Techniques (e.g., SMOTE) [45] | Algorithms used to rebalance imbalanced datasets by generating synthetic samples of the minority class, directly addressing the "Curse of Rarity." |

| Regularization Methods (L1/L2) [41] [46] | Techniques that add a penalty to the model's loss function to discourage complexity, thereby directly combating overfitting in small or noisy datasets. |

| Interpretability Tools (e.g., SHAP, LIME) | Post-hoc analysis tools that help explain the predictions of complex "black-box" models, building trust and providing chemical insights, which is essential for adoption in research [41]. |

Frequently Asked Questions

Q1: What are pathway impact metrics and why are they important for chemistry ML?

Pathway impact metrics are quantitative measures that assess the biological significance of machine learning model predictions by analyzing their effects on known biological pathways. Unlike traditional performance metrics that only measure statistical accuracy, pathway impact metrics evaluate whether molecular property predictions make biological sense in the context of established signaling networks and metabolic pathways. In chemistry ML applications such as drug discovery, these metrics help ensure that predicted compounds with favorable binding affinities also demonstrate biologically relevant mechanisms of action, reducing late-stage attrition in drug development pipelines.

Q2: My ML model shows excellent accuracy but poor pathway impact scores. What could be wrong?

This discrepancy typically has one of three root causes. First, the biological feature representation may be incomplete: the molecular descriptors capture chemical properties but carry no pathway context. Second, the annotation database may be limiting: pathway databases can contain outdated or incomplete gene-protein relationships. Third, the optimization strategy may be deficient: hyperparameter optimization targets accuracy metrics alone, with no biological constraints. To troubleshoot, verify that the biological features are complete, update the pathway annotations, and modify your hyperparameter optimization to include pathway impact metrics as additional loss components or constraints.

Q3: How do I select appropriate pathway databases for my cheminformatics research?

Database selection should be guided by organism coverage, annotation depth, update frequency, and chemical specificity. The table below summarizes these characteristics for major pathway databases:

| Database | Organism Coverage | Annotation Depth | Update Frequency | Chemical Specificity |

|---|---|---|---|---|

| KEGG | Broad | Medium-High | Regular | Medium |

| Reactome | Human-focused | High | Continuous | High |

| WikiPathways | Multiple | Variable | Community-driven | Variable |

| BioCarta | Human | Medium | Irregular | Low-Medium |

| NCI-PID | Human | Medium | Periodic | Medium |

Q4: What are the practical steps to integrate pathway metrics into hyperparameter optimization?

Implementation requires both computational and biological considerations. Begin by defining a combined objective function that incorporates both traditional metrics (like RMSE) and pathway impact scores. Select appropriate optimization algorithms capable of handling multi-objective functions, such as evolutionary approaches or Bayesian optimization with constraints. Establish validation protocols that include biological ground truth testing beyond standard train-test splits. Finally, implement iterative refinement cycles where hyperparameters are adjusted based on both statistical and biological performance feedback.

Troubleshooting Guides

Issue: Discrepancy Between Model Accuracy and Biological Relevance

Symptoms: High statistical accuracy (low RMSE, high AUC) but poor performance on pathway impact metrics, leading to biologically implausible predictions.

Investigation Procedure:

Verify Feature Representation

- Audit input features for pathway-relevant biological context

- Check if molecular descriptors include target pathway information

- Validate feature importance alignment with biological knowledge

Analyze Pathway Database Compatibility

- Confirm organism-specific pathway coverage

- Verify annotation currency and completeness

- Test multiple pathway analysis methods for consistency

Diagnose Optimization Bias

- Audit hyperparameter optimization objectives for biological metrics

- Check if regularization sufficiently prevents biological overfitting

- Validate training/validation splits for biological representativeness

Resolution Protocols:

For Feature Deficiency:

For Optimization Issues: Implement multi-objective optimization that balances accuracy and biological relevance:
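A minimal sketch of such an objective, assuming both scores are already scaled to [0, 1] with higher meaning better. The function names, the toy scoring formulas inside `evaluate`, and the default weights are illustrative assumptions, not part of any specific library. Random search stands in for whichever optimizer you actually use.

```python
import random

def combined_score(stat_score, pathway_score, alpha=0.7, beta=0.3):
    """Weighted sum of a statistical score and a pathway-impact score.

    Assumes both inputs are scaled to [0, 1] with higher = better.
    """
    return alpha * stat_score + beta * pathway_score

def evaluate(params):
    """Hypothetical stand-in for training a model and scoring it.

    Replace with real training plus pathway analysis; these toy formulas
    exist only so the example runs end to end.
    """
    lr, reg = params["learning_rate"], params["l2"]
    stat = 1.0 - abs(lr - 0.01) * 10   # toy statistical score, peaks at lr = 0.01
    path = 1.0 - abs(reg - 0.1) * 2    # toy pathway score, peaks at l2 = 0.1
    return max(stat, 0.0), max(path, 0.0)

def random_search(n_trials=50, seed=0):
    """Sample hyperparameters at random and keep the best combined score."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_trials):
        params = {"learning_rate": rng.uniform(0.001, 0.1),
                  "l2": rng.uniform(0.0, 0.5)}
        stat, path = evaluate(params)
        score = combined_score(stat, path)
        if best is None or score > best["score"]:
            best = {"params": params, "stat": stat, "path": path, "score": score}
    return best

best = random_search()
print(best["params"], round(best["score"], 3))
```

Recording `stat` and `path` separately alongside the combined score makes it easy to see which component drives a given trial, and to re-weight later without rerunning the search.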

Issue: High Computational Overhead from Pathway Analysis

Symptoms: Unacceptable increase in training time when incorporating pathway metrics, making hyperparameter optimization computationally prohibitive.

Optimization Strategies:

Implement Multi-Fidelity Methods

- Use early stopping for poor biological performers

- Apply successive halving algorithms to terminate unpromising trials

- Implement caching for pathway metric computations

Parallelization Approach

- Distribute pathway analysis across multiple workers

- Precompute pathway metrics for common molecular structures

- Implement asynchronous evaluation of biological metrics

Experimental Protocol for Efficiency:

The following workflow balances computational efficiency with biological assessment:
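One way to sketch such a workflow in Python, under stated assumptions: `stat_score` and `pathway_score` are hypothetical placeholders for a cheap low-fidelity model evaluation and an expensive pathway analysis, and their internals are toy random functions. The sketch screens every configuration with the cheap score first, computes the pathway metric only for survivors, and caches it so that later rungs reuse the result.

```python
import random
from functools import lru_cache

pathway_calls = 0  # counts how often the expensive metric is really computed

@lru_cache(maxsize=None)
def pathway_score(config_id):
    """Hypothetical expensive pathway metric, cached per configuration."""
    global pathway_calls
    pathway_calls += 1
    return random.Random(config_id).random()

def stat_score(config_id, budget):
    """Hypothetical low-fidelity statistical score; noise shrinks as budget grows."""
    true_quality = random.Random(config_id + 12345).random()
    noise = random.Random(config_id * 1000 + budget).gauss(0, 0.2 / budget)
    return true_quality + noise

def successive_halving(config_ids, eta=2, min_budget=1):
    """Keep the top 1/eta of configurations at each rung, multiplying the budget by eta."""
    survivors = list(config_ids)
    budget, rung = min_budget, 0
    while len(survivors) > 1:
        def score(c):
            s = stat_score(c, budget)
            if rung > 0:              # skip pathway analysis in the first screen
                s += pathway_score(c)
            return s
        ranked = sorted(survivors, key=score, reverse=True)
        survivors = ranked[: max(1, len(ranked) // eta)]
        budget *= eta
        rung += 1
    return survivors[0]

winner = successive_halving(range(16))
print(winner, pathway_calls)
```

With 16 starting configurations and `eta=2`, the pathway metric is computed for only the 8 configurations that survive the first screen; every later rung hits the cache.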

Experimental Protocols

Protocol 1: Pathway-Centric Hyperparameter Optimization

Objective: Identify hyperparameters that maximize both predictive accuracy and biological relevance through pathway impact analysis.

Methodology:

Define Multi-Objective Function:
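A weighted-sum form consistent with the two weights is sketched here as an assumption. The symbols $S_{\text{stat}}$ and $S_{\text{path}}$ are illustrative names for a statistical performance score and a pathway impact score, both taken as "higher is better" and evaluated at hyperparameters $\theta$. An error metric such as RMSE would first need to be converted to that orientation, for example by negating it.

$$
J(\theta) = \alpha \, S_{\text{stat}}(\theta) + \beta \, S_{\text{path}}(\theta)
$$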

Where α and β are weights determined by domain importance.

Configure Optimization Space:

- Standard hyperparameters (learning rate, layers, etc.)

- Biological constraint parameters (pathway significance thresholds)

- Feature selection parameters (biological vs. chemical feature balance)

Implement SPIA-Based Validation:

Execute Iterative Optimization: Apply Bayesian optimization or evolutionary algorithms to navigate the hyperparameter space while monitoring both objective components.

Validation Framework:

| Validation Type | Procedure | Success Criteria |

|---|---|---|

| Statistical | k-fold cross-validation | AUC > 0.8, RMSE below dataset threshold |

| Biological | Pathway impact analysis | SPIA p < 0.05, meaningful pathway activation |

| Experimental | Wet-lab validation | Directionally consistent with predictions |

Protocol 2: Bias Detection in Pathway Analysis

Objective: Identify and mitigate systematic biases in pathway impact assessment that could skew hyperparameter selection.

Methodological Steps:

Null Distribution Establishment:

- Generate random gene expression profiles with equivalent statistics

- Apply pathway analysis to establish baseline significance

- Calculate false positive rates for each pathway

Pathway-Specific Bias Assessment:

- Test whether p-values are uniformly distributed under the null hypothesis

- Identify pathways with inherent bias toward significance

- Apply correction factors for biased pathways
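The null-distribution checks above can be sketched in plain Python. This is a minimal illustration under assumptions: the p-values are simulated rather than produced by a real pathway analysis on randomized profiles, and the two pathway names are hypothetical. For each pathway it computes the Kolmogorov-Smirnov distance from Uniform(0, 1) and the empirical false positive rate at a chosen significance level.

```python
import random

def ks_uniform_statistic(pvalues):
    """Kolmogorov-Smirnov distance between empirical p-values and Uniform(0, 1)."""
    xs = sorted(pvalues)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        # Compare the uniform CDF (x itself) with the empirical CDF
        # just after and just before the i-th sorted point.
        d = max(d, (i + 1) / n - x, x - i / n)
    return d

def false_positive_rate(pvalues, alpha=0.05):
    """Fraction of null p-values that would be called significant at alpha."""
    return sum(p < alpha for p in pvalues) / len(pvalues)

rng = random.Random(42)
# Simulated null p-values: one calibrated pathway, one biased toward significance.
null_pvalues = {
    "well_calibrated_pathway": [rng.random() for _ in range(2000)],
    "biased_pathway": [rng.random() ** 2 for _ in range(2000)],
}

report = {name: (ks_uniform_statistic(p), false_positive_rate(p))
          for name, p in null_pvalues.items()}
for name, (d, fpr) in report.items():
    print(f"{name}: KS distance = {d:.3f}, FPR at 0.05 = {fpr:.3f}")
```

A pathway whose null p-values drift far from uniform, or whose false positive rate is well above the nominal level, is a candidate for a correction factor before its score enters the hyperparameter objective.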

Comparative Method Evaluation: Implement multiple pathway analysis approaches (SPIA, GSEA, GSA, PADOG) and compare their sensitivity to hyperparameter changes.

Experimental Design:

Research Reagent Solutions

| Research Tool | Function | Application Context |

|---|---|---|

| KEGG Pathway Database | Provides curated pathway information | Biological feature generation, validation |

| SPIA Algorithm | Topology-based pathway impact analysis | Pathway significance scoring in model validation |

| Hyperopt | Bayesian optimization framework (Tree-structured Parzen Estimator) | Hyperparameter optimization; multiple objectives are combined into a single scalar loss |

| ReactomePA | Pathway analysis toolkit | Alternative pathway impact assessment |

| CMA-ES | Evolutionary optimization algorithm | Complex hyperparameter spaces with biological constraints |

| Molecular Signatures DB | Gene set enrichment resources | Biological context for compound activity prediction |

Frequently Asked Questions & Troubleshooting Guide

FAQ 1: My molecular embeddings fail to separate active and inactive compounds in my benchmark. What could be wrong?

This is often a data issue: the embeddings may have been trained on a dataset that does not represent your chemical space.

Troubleshooting steps:

- Verify Training Data: Check whether the pretrained model was trained on a broad database such as ZINC or on a more specialized dataset [47] [48].

- Check Domain Shift: The benchmark by Praski et al. (2025) suggests that many advanced models fail to generalize beyond their training data. Use traditional ECFP fingerprints as a robust baseline to check whether the deep learning model is actually underperforming [49].

- Try a Different Model: Graph Neural Network (GNN) performance is highly sensitive to hyperparameters. If you are using a GNN, explore automated Hyperparameter Optimization (HPO) or Neural Architecture Search (NAS) to find a better model configuration [38].

FAQ 2: How do I choose between a Graph Neural Network and a molecular fingerprint for my similarity search?

The choice is a trade-off between potential performance and computational simplicity.

Solution:

- Start with Fingerprints: For most applications, begin with Extended Connectivity Fingerprints (ECFP) and the Tanimoto coefficient. They are computationally efficient, well understood, and surprisingly hard to outperform in many traditional benchmarks [47] [49].

- Consider a GNN for Specialized Tasks: Use GNN-based embeddings if you have a large labeled dataset for fine-tuning or need to capture complex graph topology that fingerprints might miss. Be prepared for a more complex training and optimization process [38] [48].
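As a minimal sketch of the fingerprint baseline, the Tanimoto coefficient between two fingerprints is the size of their shared on-bits divided by the size of their combined on-bits. The example below assumes fingerprints are already available as sets of on-bit indices; the molecule names and bit values are made up for illustration, and in practice the bits would come from a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for fingerprints given as sets of on-bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    if union == 0:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / union

def rank_by_similarity(query_fp, library):
    """Return (name, similarity) pairs sorted from most to least similar."""
    scored = ((name, tanimoto(query_fp, fp)) for name, fp in library.items())
    return sorted(scored, key=lambda item: item[1], reverse=True)

# Hypothetical fingerprints: each set lists the indices of bits that are on.
query = {1, 5, 9, 42}
library = {
    "mol_a": {1, 5, 9, 42},   # identical to the query
    "mol_b": {1, 5, 77},      # shares two bits with the query
    "mol_c": {100, 200},      # shares nothing with the query
}

ranking = rank_by_similarity(query, library)
for name, sim in ranking:
    print(f"{name}: {sim:.2f}")
```

Because the coefficient depends only on set sizes, this baseline is cheap enough to run against a whole library before investing in a learned embedding.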

FAQ 3: The similarity measure from my embeddings is not symmetric. Is this a problem?

Yes, this indicates a problem. A proper similarity or distance measure should be symmetric: the similarity of A to B must equal that of B to A [50].

Troubleshooting steps:

- Inspect the Metric: Confirm that the distance function you are using is inherently symmetric (Euclidean distance is). If it is, the asymmetry is likely introduced earlier, in the embedding generation process.

- Check Model Architecture: Verify that your model architecture is invariant to the order of input atoms; otherwise the same molecule can receive different embeddings depending on how its atoms are listed. Architectures like MolE, which use disentangled attention, are designed to be order-invariant [48].
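A quick way to inspect a measure is to evaluate it in both directions on a sample of pairs. The sketch below is an illustration under assumptions: the helper `asymmetric_pairs` and the sample data are hypothetical, Euclidean distance serves as the symmetric case, and the Tversky index with unequal weights serves as a known asymmetric measure for contrast.

```python
import math
from itertools import combinations

def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(u, v)))

def tversky(a, b, alpha=1.0, beta=0.0):
    """Tversky index on sets; asymmetric whenever alpha != beta."""
    a, b = set(a), set(b)
    common = len(a & b)
    denom = common + alpha * len(a - b) + beta * len(b - a)
    return common / denom if denom else 0.0

def asymmetric_pairs(items, measure, tol=1e-9):
    """Return index pairs (i, j) where measure(i, j) differs from measure(j, i)."""
    return [(i, j) for i, j in combinations(range(len(items)), 2)
            if abs(measure(items[i], items[j]) - measure(items[j], items[i])) > tol]

embeddings = [(0.0, 1.0), (2.0, 3.0), (-1.0, 0.5)]   # toy embedding vectors
bitsets = [{1, 2, 3}, {2, 3}, {3, 4, 5, 6}]          # toy fingerprints

print(asymmetric_pairs(embeddings, euclidean))  # no violations expected
print(asymmetric_pairs(bitsets, tversky))       # every pair violates symmetry
```

If the measure passes this check on fixed vectors but your end-to-end similarity still differs by direction, the embeddings themselves are changing between calls, which points back to the model or its input ordering.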

FAQ 4: I have limited labeled data for my target property. Can I still use deep metric learning?

Yes. Working with limited labeled data is a primary strength of pretrained foundation models.

Solution:

- Leverage Pretrained Models: Use a model like MolE, which has been pretrained on hundreds of millions of unlabeled molecules. This self-supervised step lets the model learn general chemical structure, which can then be adapted to your specific task with limited labeled data [48].

- Apply Domain Adaptation: Techniques like Metric learning-enhanced Optimal Transport (MROT) are explicitly designed to align the data distributions of a source (training) domain and a target (test) domain, improving generalization when data is limited or heterogeneous [51].

Data Presentation: Metrics & Model Comparison

Table 1: Comparison of Molecular Similarity Approaches

| Approach | Key Feature | Pros | Cons | Typical Metric |

|---|---|---|---|---|

| Molecular Fingerprints (ECFP) [47] [49] | Predefined molecular representation based on subgraph presence. | Fast, interpretable, robust performance, hard to outperform. | May not preserve full graph topology; handcrafted. | Tanimoto Coefficient |

| Graph Neural Networks (GNNs) [47] [38] | Learns embeddings directly from the molecular graph structure. | Can capture complex topological patterns; data-driven. | Performance sensitive to hyperparameters; can be outperformed by fingerprints [49]. | Euclidean Distance |

| Graph Transformers (e.g., MolE) [48] | Uses self-attention on molecular graphs; captures long-range dependencies. | Powerful pretraining strategies; state-of-the-art on some ADMET tasks. | Computationally more intensive than some GNNs. | Euclidean Distance |

| Deep Metric Learning (Triplet Loss) [47] | Learns a metric space where similar molecules are closer. | Creates a continuous, unbounded similarity space. | Requires careful construction of triplets for training. | Euclidean Distance |

| Model / Representation | Architecture / Type | Pretraining Dataset Size | State-of-the-art (SOTA) on TDC Tasks (out of 22) | Key Finding |

|---|---|---|---|---|

| MolE [48] | Graph Transformer | ~842 million molecules | 10 | A foundation model that achieves top performance on many ADMET tasks. |

| ECFP Fingerprints [49] | Hashed Fingerprint | Not Applicable | - | Negligible or no improvement over this baseline was found for nearly all neural models in a large-scale study. |

| CLAMP [49] | Fingerprint-based | Not Specified | - | The only model in a large benchmark to perform statistically significantly better than ECFP. |

| Various Pretrained GNNs [49] | Graph Neural Network | Varies (e.g., 2M for ContextPred [48]) | - | Generally exhibited poor performance across tested benchmarks compared to fingerprints. |

Experimental Protocols

Protocol 1: Training a Molecular Embedding Model with Triplet Loss

This protocol is based on the methodology described by Coupry et al. (2022) [47].

Objective: To train a Graph Neural Network (GNN) to generate molecular embeddings where Euclidean distance directly quantifies molecular similarity.
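To make the objective concrete, the standard triplet loss penalizes a triplet whenever the anchor is not closer to the positive than to the negative by at least a margin. The sketch below is a plain-Python illustration on fixed vectors, not the cited authors' implementation: the margin value and the example embeddings are assumptions, and real training would compute this on GNN outputs inside a framework with automatic differentiation.

```python
import math

def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(u, v)))

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Standard triplet loss: max(0, d(a, p) - d(a, n) + margin)."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)

anchor = (0.0, 0.0)
positive = (0.0, 1.0)        # similar molecule, distance 1 from the anchor
far_negative = (3.0, 4.0)    # dissimilar molecule, distance 5 from the anchor
near_negative = (1.0, 0.0)   # dissimilar molecule, distance 1 from the anchor

satisfied = triplet_loss(anchor, positive, far_negative)
violated = triplet_loss(anchor, positive, near_negative)
print(satisfied, violated)
```

The first triplet already respects the margin and contributes no loss; the second places a dissimilar molecule as close as the similar one, so it is penalized and would drive an update.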

Materials: See "Research Reagent Solutions" below.

Procedure:

- Dataset Generation:

- Obtain a large set of public compounds (e.g., from the ZINC database).

- Filter compounds based on criteria such as molecular weight (<650 daltons) and allowed elements.

- Cluster molecules using a minimal definition of similarity (e.g., sharing the same Reduced Graph and Graph Frame).

- For each cluster, define triplets for training:

- Anchor (A): A randomly selected molecule.