Beyond Exhaustive Screening: How Active Learning Delivers Unprecedented Efficiency Gains in Research and Drug Discovery


Abstract

This article explores the paradigm shift from exhaustive, manual screening to AI-driven active learning (AL) across scientific research and drug discovery. It details the foundational principles of AL, a machine learning approach that iteratively selects the most informative data points for labeling, dramatically reducing experimental and screening workloads. We examine its methodologies in systematic literature reviews and drug synergy screening, where it has demonstrated workload reductions of over 40% and 80%, respectively. The article also addresses key troubleshooting and optimization strategies for implementation, including handling class imbalance and selecting appropriate models. Finally, we present a comparative analysis of AL's performance against traditional methods, validating its potential to accelerate evidence synthesis and de-risk the R&D pipeline, ultimately paving the way for faster scientific breakthroughs.

The Foundational Shift: Understanding Active Learning and Its Core Efficiency Advantage

In scientific research, particularly in data-intensive fields like drug development, traditional methods for screening materials or literature are often slow, resource-intensive, and incremental. Active learning (AL), a machine learning paradigm, offers an alternative by shifting from passive data consumption to an iterative querying process in which the model chooses what to label next. This guide compares the performance of active learning against traditional exhaustive screening methods, using experimental data and detailed methodologies to quantify its efficiency gains.

Experimental Protocols & Workflows

In the studies discussed here, active learning is framed as sequential Bayesian experimental design: a feedback loop in which a model selects the most informative data points for experimental validation and refines its predictions with each iteration.

Core Active Learning Protocol for Materials Screening

The following workflow, adapted from a study on electrolyte discovery, illustrates the standard AL cycle [1]:

Key Methodological Details [1]:

- Initial Dataset: The process begins with a small, labeled dataset (e.g., 58 data points of anode-free battery cycling profiles).

- Surrogate Model: A Gaussian process regression (GPR) model is trained, often using Bayesian Model Averaging (BMA) to mitigate overfitting and quantify prediction uncertainty from small, noisy data.

- Virtual Search Space: A large space of potential candidates is defined (e.g., 1 million electrolytes filtered from chemical databases). The model predicts the performance of all unlabeled entries in this space.

- Query Strategy (Acquisition Function): The algorithm intelligently selects the next experiments by balancing exploration (testing uncertain regions) and exploitation (testing candidates predicted to be optimal). This is the core of "intelligent querying."

- Experimental Feedback: The selected candidates are tested experimentally (e.g., cycled in real batteries), and the results are added to the training dataset.

- Iteration: The cycle repeats, with the model becoming increasingly adept at identifying high-performing candidates.
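The cycle above can be sketched in a few lines. The example below is a minimal illustration under stated assumptions, not the cited study's implementation: it uses scikit-learn's `GaussianProcessRegressor` as a single surrogate (no Bayesian Model Averaging), an upper-confidence-bound score as the acquisition function, a randomly generated descriptor matrix in place of the electrolyte search space, and a hypothetical `run_experiment` function standing in for real battery cycling.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, WhiteKernel

rng = np.random.default_rng(0)

# Hypothetical stand-in for the virtual search space: 2,000 candidates, 5 descriptors each.
candidates = rng.normal(size=(2000, 5))

def run_experiment(x):
    """Toy 'experiment' (assumption): a hidden response plus measurement noise."""
    return float(-np.sum((x - 0.5) ** 2) + rng.normal(scale=0.1))

# Small initial labeled set, mirroring the data-scarce starting condition.
labeled_idx = [int(i) for i in rng.choice(len(candidates), size=20, replace=False)]
y = [run_experiment(candidates[i]) for i in labeled_idx]

kappa = 2.0       # exploration weight in the UCB score (assumed value)
batch_size = 10   # candidates tested per campaign
for campaign in range(3):
    # Surrogate model: predicts a mean and a standard deviation for every candidate.
    gpr = GaussianProcessRegressor(kernel=RBF() + WhiteKernel(),
                                   normalize_y=True, random_state=0)
    gpr.fit(candidates[labeled_idx], y)
    mean, std = gpr.predict(candidates, return_std=True)

    # Acquisition: high predicted mean (exploitation) plus high uncertainty (exploration).
    ucb = mean + kappa * std
    ucb[labeled_idx] = -np.inf            # never re-query tested candidates
    batch = np.argsort(ucb)[-batch_size:]

    # Experimental feedback: "test" the batch and grow the training set.
    for i in batch:
        labeled_idx.append(int(i))
        y.append(run_experiment(candidates[i]))

print(f"Best observed response after {len(y)} experiments: {max(y):.3f}")
```

Raising `kappa` shifts the selection toward uncertain regions, while lowering it favors candidates the model already predicts to perform well.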

Protocol for Systematic Review Screening

In systematic reviews, AL is implemented using tools like ASReview. The workflow differs slightly: instead of searching a virtual candidate space, the model prioritizes an existing set of retrieved records for screening [2].

Key Methodological Details [2]:

- Feature Extraction: Text data from abstracts is transformed into quantitative features using methods like TF-IDF, Doc2Vec, or Bidirectional Encoder Representations from Transformers (BERT).

- Model Training: Classifiers such as Random Forests, Support Vector Machines, or Naive Bayes learn to predict the relevance of an abstract based on reviewer labels.

- Stopping Criteria: Heuristic rules determine when to stop screening. One effective criterion is to stop once a consecutive run of screened records equal to a set percentage of the dataset (e.g., 5%) has turned up no relevant record.
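A heuristic stopping rule of this kind is simple to express in code. The function below is a hedged sketch: the name `should_stop` and its parameters are hypothetical, and the default thresholds (a minimum of 20% screened, then a 5% consecutive irrelevant run) are taken from the criterion reported for [2] rather than from any tool's built-in API.

```python
def should_stop(labels, total_records, min_screened_frac=0.20, irrelevant_run_frac=0.05):
    """Decide whether screening can stop.

    labels: screening decisions so far, in order (1 = relevant, 0 = irrelevant).
    total_records: size of the full record set being screened.
    """
    n_screened = len(labels)
    # Condition 1: a minimum share of the dataset must have been screened.
    if n_screened < min_screened_frac * total_records:
        return False
    # Condition 2: the most recent run of records contains no relevant item.
    run_needed = max(1, int(irrelevant_run_frac * total_records))
    if n_screened < run_needed:
        return False
    return not any(labels[-run_needed:])


# Usage with a hypothetical 1,000-record review:
history = [1] * 50 + [0] * 160     # 50 relevant records early, then 160 irrelevant in a row
print(should_stop(history, total_records=1000))
```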

Performance Comparison: Active Learning vs. Exhaustive Screening

Quantitative data from controlled simulations and experiments across different domains validate the efficiency of active learning.

Table 1: Efficiency Gains in Materials Science Discovery

| Performance Metric | Traditional Exhaustive Screening | Active Learning Approach | Experimental Context |
|---|---|---|---|
| Search Space Size | Not applicable (relies on intuition) | 1 million electrolyte candidates [1] | Electrolyte solvents for anode-free batteries |
| Initial Training Data | N/A | 58 data points [1] | In-house cycling dataset |
| Candidates Identified | Slow, incremental discovery | 4 high-performing solvents in ~7 campaigns [1] | Rivaling state-of-the-art performance |
| Experimental Efficiency | High resource expenditure | Rapid convergence on optimal candidates [1] | Managed data-scarce, noisy settings |

Table 2: Efficiency Gains in Systematic Review Automation

| Performance Metric | Traditional Manual Screening | ML Screening with Active Learning | Experimental Context |
|---|---|---|---|
| Screening Workload Reduction | Baseline (0%) | 58% (SD = 19%) [2] | 27 systematic reviews in education |
| Estimated Time Saved | Baseline (0 days) | 1.66 days (SD = 1.80) [2] | Abstract screening phase |
| Optimal Stopping Criterion | Screen 100% of records | Stop after screening at least 20% of records and then a consecutive 5% with no relevant record [2] | Retrieved 95% of relevant abstracts |
| Top-Performing Model | N/A | Random Forests with BERT [2] | Feature extraction with semantic context |

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational and experimental components essential for implementing an active learning framework in a scientific screening context.

Table 3: Essential Components for an Active Learning Screening Pipeline

| Item Name | Function / Explanation |
|---|---|
| Gaussian Process Regression (GPR) | A surrogate model that provides predictions with uncertainty estimates, crucial for the Bayesian optimization core of AL [1]. |
| Bayesian Model Averaging (BMA) | A technique that combines multiple models (e.g., with different kernels) to improve prediction accuracy and robustness with small datasets [1]. |
| Acquisition Function | The algorithm (e.g., Expected Improvement, Upper Confidence Bound) that decides which experiment to run next by balancing exploration and exploitation. |
| BERT (Feature Extraction) | A state-of-the-art natural language processing model for converting text (e.g., abstracts, chemical descriptions) into meaningful numerical features [2]. |
| Random Forests Classifier | A powerful ensemble learning method that was identified as a top performer for classifying research abstracts during systematic reviews [2]. |
| Cu\|\|LiFePO4 Coin Cell | A standard experimental testing configuration used to validate the battery performance of electrolyte candidates identified by the AL model [1]. |
| Heuristic Stopping Rule | A pre-defined criterion that automatically halts the screening process once a target level of exhaustiveness is reached, preventing unnecessary work [2]. |

In the field of drug development and scientific research, the explosion of data has made traditional manual screening methods impractical. Active learning, a subfield of machine learning, offers a framework for substantial efficiency gains over exhaustive screening by strategically using human expertise. This approach creates an iterative human-in-the-loop (HITL) cycle where models and humans collaborate to accelerate discovery while ensuring reliability. This guide explores the core mechanisms of this cycle, provides quantitative evidence of its performance, and details its practical application in scientific domains.

The Active Learning & Human-in-the-Loop Workflow

The Human-in-the-Loop (HITL) model is an approach that integrates human judgment directly into the AI development process, creating a continuous feedback loop that combines the scalability of machines with the nuanced understanding of humans [3]. In an Active Learning (AL) framework, this collaboration becomes a powerful, iterative cycle for efficient model training.

The core of this process is an automated loop that selectively identifies the most valuable data points for a human expert to label. The foundational cycle involves three key stages: Select, Label, and Retrain [4].

The Iterative HITL Cycle

- Select: A machine learning model, even a weak one, is applied to a large pool of unlabeled data. The core of Active Learning is the strategy used to select which data points are most valuable for labeling. Common sampling strategies include [4]:

  - Uncertainty Sampling: The model selects examples where its prediction confidence is lowest (e.g., probabilities near 0.5 in binary classification).

  - Diversity Sampling: The model selects a diverse set of examples to ensure broad coverage of the data space and avoid redundancy.

  - Query-by-Committee: Multiple models "vote" on unlabeled examples; samples with the most disagreement are selected.

- Label: A human expert—such as a researcher or data annotator—labels only the small batch of data selected in the previous step. This targeted labeling ensures human effort is applied efficiently to the most impactful cases [3] [4]. In a HITL system, this step often uses a user-friendly interface to streamline the review and correction process [3].

- Retrain: The model is updated (retrained or fine-tuned) using the newly labeled, high-value data. This expands the model's knowledge base with the most critical information [4].

- Repeat: The cycle continues—the newly retrained model selects the next batch of uncertain/diverse samples, which are then labeled by a human and used for further retraining. This loop continues until model performance on a validation set plateaus or a predefined stopping criterion is met [4].
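The Select, Label, Retrain loop can be illustrated with a small pool-based simulation. This is a sketch under stated assumptions rather than a reference implementation: the data come from scikit-learn's `make_classification`, the classifier is a plain `LogisticRegression`, and the "human expert" is simulated by looking up the known label in `y_true`.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Synthetic, imbalanced pool (assumption): roughly 10% of items belong to the positive class.
X, y_true = make_classification(n_samples=1000, n_features=20,
                                weights=[0.9, 0.1], random_state=0)
rng = np.random.default_rng(0)

# Seed set: a few examples of each class so the first model can be fit.
pos = rng.choice(np.flatnonzero(y_true == 1), size=5, replace=False)
neg = rng.choice(np.flatnonzero(y_true == 0), size=5, replace=False)
labeled = set(int(i) for i in np.concatenate([pos, neg]))

batch_size = 20
for cycle in range(5):
    # Retrain: fit the model on everything labeled so far.
    idx = sorted(labeled)
    model = LogisticRegression(max_iter=1000).fit(X[idx], y_true[idx])

    # Select: uncertainty sampling picks the pool items with probability closest to 0.5.
    pool = np.array([i for i in range(len(X)) if i not in labeled])
    p_positive = model.predict_proba(X[pool])[:, 1]
    query = pool[np.argsort(np.abs(p_positive - 0.5))[:batch_size]]

    # Label: in a real system a human annotates `query`; here y_true plays the oracle.
    labeled.update(int(i) for i in query)

print(f"Labeled {len(labeled)} of {len(X)} items after 5 cycles")
```

Swapping the selection line for a committee-disagreement or diversity score changes the strategy without altering the rest of the loop.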

Quantitative Efficiency Gains: Active Learning vs. Exhaustive Screening

The primary advantage of the Active Learning HITL cycle is its dramatic improvement in efficiency compared to exhaustive manual screening. The following table summarizes quantitative results from multiple scientific studies.

Table 1: Document Screening Efficiency - Systematic Food Safety Review [5]

| Active Learning Model | Mean Recall Achieved | Records Screened to Achieve Recall | Work Saved Over Sampling (at 95% Recall) |
|---|---|---|---|
| Naive Bayes / TF-IDF | 99.2% ± 0.8% | 62.6% ± 3.2% | High |
| Logistic Regression / Doc2Vec | 97.9% ± 2.7% | 58.9% ± 2.9% | High |
| Regression / TF-IDF | 98.8% ± 0.4% | 57.6% ± 3.2% | High |
| Manual (Random) Screening | ~95-100% | ~100% | 0% |

Table 2: Electrolyte Discovery for Anode-Free Batteries [1]

| Screening Method | Search Space Size | Initial Training Data | Experiments to Identify Leads | Key Outcome |
|---|---|---|---|---|
| Active Learning | 1 million electrolytes | 58 data points | ~70 (7 campaigns) | 4 high-performing solvents identified |
| Traditional Trial-and-Error | 1 million electrolytes | N/A | Potentially thousands | Slow, incremental progress |

Table 3: General Data Labeling & Model Training [6] [7] [4]

| Metric | Active Learning (HITL) | Exhaustive/Passive Labeling |
|---|---|---|
| Labeling Cost Reduction | 30% - 70% [4] / 33% [7] | Baseline (0%) |
| Data Throughput | Up to 5x faster [7] | Baseline (1x) |
| Time to Value | 75% reduction [7] | Baseline (0%) |
| Performance Goal Achievement | Reached with 40-50% less data [4] | Requires 100% of data |

Experimental Protocols in Practice

Protocol A: Screening Scientific Literature

This protocol is based on a study that used Active Learning to screen articles for a systematic review on digital tools in food safety [5].

- Objective: To efficiently identify relevant scientific articles for full-text review within a large dataset, minimizing human screening effort.

- Dataset: 3,738 articles from a previous systematic scoping review, of which 214 were labeled as relevant via prior manual screening [5].

- Methodology:

  - Model Training: Three classification models (Naive Bayes/TF-IDF, Logistic Regression/Doc2Vec, Regression/TF-IDF) were trained on the initial labeled data to distinguish between relevant and irrelevant articles based on title and abstract.

  - Iterative Screening Loop:

    - Select: The model ranked all unlabeled articles by their predicted probability of being relevant. Because the top-ranked (most likely relevant) articles are screened first, this is certainty-based sampling rather than uncertainty sampling.

    - Label: A human reviewer screened (labeled) the top-ranked articles from the model's list.

    - Retrain: The model was updated with the new human-provided labels. This cycle was repeated.

  - Stopping Criterion: A heuristic stopping rule was applied, such as halting the process after screening 5% of the total records consecutively without finding a relevant article [5].

- Outcome Analysis: Recall (the proportion of truly relevant articles found) was measured against the total number of records screened. All Active Learning models achieved a mean recall of at least 97.9% while screening only about 58-63% of the total database, a workload reduction of roughly 37-42% compared to screening every record manually [5].
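The relationship between recall, records screened, and work saved can be made concrete with a short calculation. The sketch below uses the commonly cited definition of Work Saved over Sampling (the fraction of records left unscreened minus the recall shortfall); whether the study computed its metric in exactly this way is an assumption, and combining the reported mean values for a single model is purely illustrative. It evaluates the metric at the achieved recall rather than at a fixed 95%.

```python
def work_saved_over_sampling(n_screened, n_total, recall_achieved):
    """WSS = share of records left unscreened minus the recall shortfall (1 - recall)."""
    return (n_total - n_screened) / n_total - (1.0 - recall_achieved)


n_total = 3738                        # dataset size reported for the food-safety review
n_screened = round(0.589 * n_total)   # 58.9% screened (Logistic Regression / Doc2Vec row)
recall_achieved = 0.979               # mean recall reported for the same model

wss = work_saved_over_sampling(n_screened, n_total, recall_achieved)
print(f"Screened {n_screened} of {n_total} records; WSS = {wss:.1%}")
```

A random screening order gives a WSS of about zero at any recall level, which is why the metric is read as savings relative to unguided screening.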

Protocol B: Accelerating Materials Discovery

This protocol is based on a study that used Active Learning to discover electrolyte solvents for next-generation batteries, a common challenge in materials science and drug development [1].

- Objective: To identify optimal electrolyte solvents for anode-free lithium metal batteries from a vast chemical space of ~1 million candidates with minimal experimental testing.

- Dataset: An initial in-house dataset of only 58 anode-free battery cycling profiles [1].

- Methodology:

  - Search Space Construction: A virtual search space of 1 million potential electrolyte solvents was created from chemical databases (PubChem, eMolecules) and filtered for desirable properties [1].

  - Bayesian Active Learning Loop:

    - Model & Prediction: A Gaussian Process Regression (GPR) model was trained on the available data to predict battery performance and, crucially, to quantify the uncertainty of its predictions for every unlabeled candidate in the search space.

    - Select: The "oracle" component of the AL framework selected candidates for experimental testing using a strategy that balanced exploitation (choosing candidates predicted to be high-performing) and exploration (choosing candidates with high prediction uncertainty). This is a hallmark of Bayesian optimization.

    - Label & Experiment: The selected electrolyte candidates were commercially sourced or synthesized and then tested experimentally in real battery cells to measure their performance (capacity retention), effectively "labeling" them with ground-truth data.

    - Retrain: The new experimental results were added to the training dataset, and the GPR model was retrained to improve its predictions for the next cycle [1].

  - Campaigns: This loop was run through seven sequential campaigns, testing about ten electrolytes in each [1].

- Outcome Analysis: The workflow rapidly converged on promising solvent families, identifying four distinct electrolyte solvents that rivaled state-of-the-art performance after testing only about 70 candidates out of a million [1].

The Scientist's Toolkit: Research Reagent Solutions

Implementing an effective Active Learning HITL system requires a combination of computational tools and expert human input. The following table details key components of this toolkit.

Table 4: Essential Research Reagents & Tools for HITL Active Learning

| Item | Function in the HITL Workflow |
|---|---|
| Specialized AI Platforms (e.g., bfPREP) | Purpose-built data preparation and cleansing modules for specific industries like life sciences. They automate the standardization of complex data (e.g., clinical, omics) and incorporate human-in-the-loop validation to ensure data integrity and reproducibility [8]. |
| Active Learning Toolkits (e.g., modAL, Cleanlab) | Open-source Python libraries that provide pre-built, modular components for implementing Active Learning loops. They help with strategies like uncertainty sampling and query-by-committee, accelerating pipeline development [4]. |
| Annotation Platforms (e.g., Label Studio, CVAT) | Flexible software, either open-source or commercial, that provides user-friendly interfaces for human experts to efficiently review, correct, and label data selected by the model. They are essential for the "Label" step [4]. |
| Bayesian Optimization Libraries | Computational tools essential for sequential experimental design in data-scarce environments. They use surrogate models (e.g., Gaussian Processes) to handle noisy data and quantify prediction uncertainty, guiding the selection of experiments in materials or drug discovery [1]. |
| Domain Expert (The "Human") | The critical, non-automatable component. Scientists and researchers provide the ground-truth labels, contextual understanding, and ethical judgment required to validate model outputs and correct errors, particularly for edge cases and high-stakes decisions [3] [9]. |

The Prohibitive Cost of Comprehensiveness

In the pursuit of absolute certainty in fields like drug discovery and materials science, exhaustive screening has traditionally represented the ideal of thoroughness. This approach aims to test all possible combinations of inputs or conditions to guarantee that no potential candidate is overlooked. However, a deeper examination reveals this method to be a practically impossible standard, characterized by immense computational, temporal, and financial demands [10].

The core challenge lies in the combinatorial explosion of possibilities. For example, in synergistic drug combination screening, the experimental space can be astronomically large. The DrugComb database aggregates over 739,964 drug combinations from various campaigns [11]. In a theoretical scenario involving 1,000 sets each with 500 elements, the number of possible combinations to test reaches an incomprehensible scale, making an exhaustive search of all options computationally infeasible [12]. Furthermore, the phenomenon being sought is often rare; in widely used datasets like Oneil and ALMANAC, synergistic drug pairs constitute only 3.55% and 1.47% of combinations, respectively [11]. This means that exhaustive screening expends the vast majority of its resources confirming negative results, a highly inefficient allocation of effort.
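A quick calculation shows how fast a pairwise screening space grows and how much of it is expected to be negative. This is an illustrative sketch: the first library size matches the Oneil dimensions quoted elsewhere in this article (38 drugs, 29 cell lines), the other sizes are hypothetical, and the full pairwise grid is an idealization since real datasets do not necessarily measure every pair in every cell line.

```python
from math import comb


def pairwise_space(n_drugs, n_cell_lines):
    """Number of (drug pair, cell line) experiments in a full pairwise grid."""
    return comb(n_drugs, 2) * n_cell_lines


# (drugs, cell lines): first entry from the article, the rest hypothetical.
for n_drugs, n_cell_lines in [(38, 29), (100, 50), (1000, 100)]:
    total = pairwise_space(n_drugs, n_cell_lines)
    for hit_rate in (0.0147, 0.0355):   # synergy rates reported for ALMANAC and Oneil
        negatives = total * (1 - hit_rate)
        print(f"{n_drugs} drugs x {n_cell_lines} cell lines: {total:,} experiments, "
              f"about {negatives:,.0f} expected negatives at a {hit_rate:.2%} hit rate")
```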

Table 1: The Scale of the Screening Challenge in Different Domains

| Domain | Scope of Combinatorial Space | Key Challenge | Practical Implication |
|---|---|---|---|
| Synergistic Drug Discovery [11] | 839,797 drugs; 2,320 cell lines; >739,964 drug combinations | Synergy is a rare event (e.g., 1.47%-3.55% of pairs) | Exhaustive search is "time-consuming and expensive" |
| Metal-Organic Frameworks (MOFs) Screening [13] [14] | 1000s of MOF structures with different linkers, metal nodes, and pore geometries | Vast number of possible structures and operating conditions | High-throughput computational screening is needed but can be slow |
| Anti-Cancer Drug Screening [15] | 100s of drugs; 1000s of cancer cell lines | "Prohibitively expensive and time consuming" to test all combinations | Need for guided experimentation to identify responsive treatments |

Active Learning: A Paradigm of Strategic Efficiency

Active learning presents a powerful alternative, strategically navigating vast experimental spaces by iteratively selecting the most informative experiments to perform. This machine learning procedure breaks the discovery process into cycles [15]. In each iteration, a model trained on available data guides the selection of the next batch of experiments, the results of which are then used to refine the model for the subsequent cycle [11] [15]. This creates a closed-loop, adaptive system that continuously learns from new data, focusing resources on the most promising regions of the search space.

The quantitative benefits of this approach are substantial. Research in synergistic drug discovery demonstrates that an active learning framework can discover 60% of synergistic drug pairs by exploring only 10% of the combinatorial space [11]. This represents a dramatic reduction in experimental burden, saving an estimated 82% of experimental time and materials compared to a non-strategic approach [11]. Similarly, in anti-cancer drug response prediction, most active learning strategies are significantly more efficient than random selection at identifying effective treatments ("hits"), enabling comparable results with far less labeled data [15].

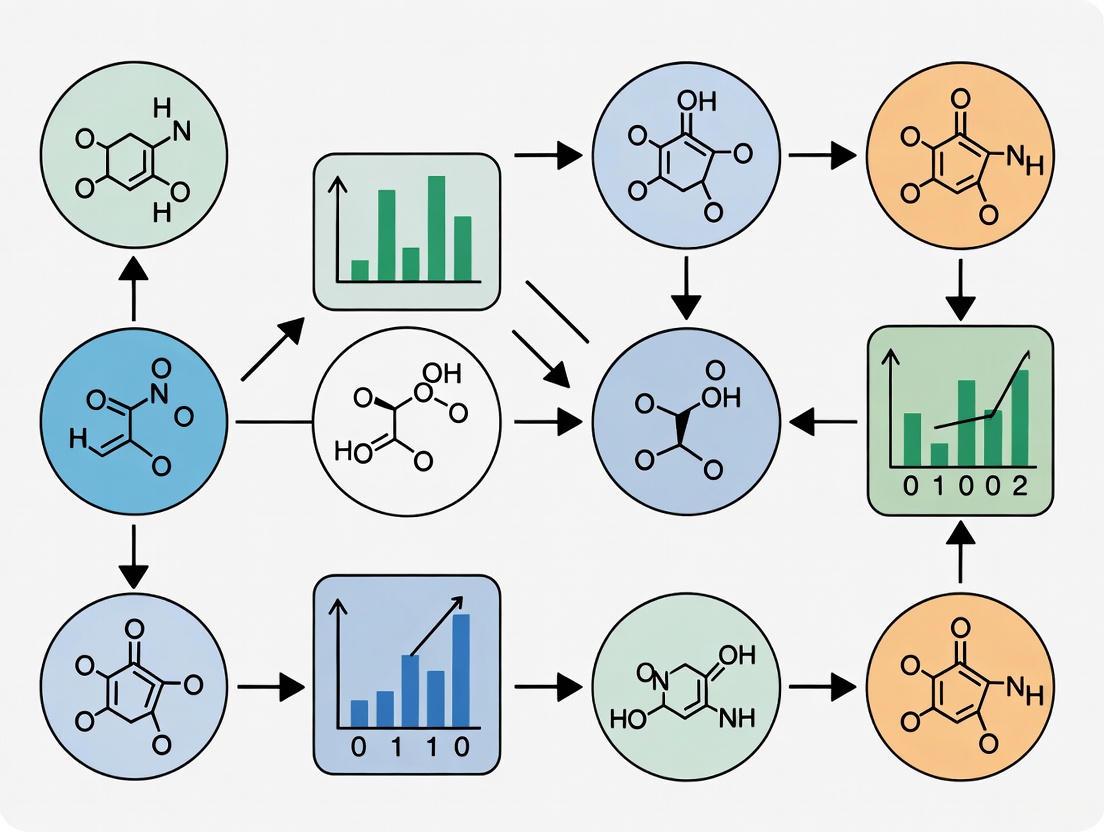

The following diagram illustrates the fundamental difference between the exhaustive screening paradigm and the iterative, efficient active learning workflow.

Diagram 1: A comparison of the exhaustive screening versus the active learning workflow.

Quantitative Comparisons: Experimental Data and Protocols

Performance Benchmarks in Drug Discovery

The superiority of active learning is not merely theoretical; it is demonstrated through rigorous, data-driven experiments. A landmark study on synergistic drug discovery provides a clear, quantitative comparison. Researchers benchmarked an active learning framework against a random selection strategy for identifying synergistic drug pairs (defined by a LOEWE score >10) from the Oneil dataset (38 drugs, 29 cell lines) [11].

Table 2: Experimental Performance: Active Learning vs. Exhaustive Search

| Metric | Exhaustive Search (Theoretical) | Active Learning Strategy |
|---|---|---|
| Total Experiments Required | 8,253 | 1,488 |
| Synergistic Pairs Identified | 300 | 300 |
| Experimental Space Explored | ~100% | 10% |
| Efficiency Gain | Baseline | 82% reduction in time/materials |

Experimental Protocol: The active learning framework, RECOVER, was pre-trained on the Oneil dataset [11]. It then iteratively selected small batches of drug combinations for experimental measurement based on its current predictions. The model was sequentially refined with data from each batch. The key was to balance exploration (testing uncertain predictions) and exploitation (testing predictions likely to be synergistic). The study found that smaller batch sizes and dynamic tuning of this balance further enhanced the synergy yield ratio [11].
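The 82% figure in Table 2 follows directly from the two experiment counts reported there, as the short check below shows. The variable names are illustrative only.

```python
baseline_experiments = 8253          # experiments reported for the comparison strategy
active_learning_experiments = 1488   # experiments reported for the active learning strategy

reduction = 1 - active_learning_experiments / baseline_experiments
print(f"Experiments saved: {reduction:.1%}")   # prints "Experiments saved: 82.0%"
```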

Performance in Anti-Cancer Drug Response Prediction

A comprehensive investigation into active learning for anti-cancer drug response prediction further validates its efficiency. The study constructed drug-specific models to predict the responses of various cancer cell lines to a specific drug, using data from the Cancer Therapeutics Response Portal v2 (CTRP) [15].

Experimental Protocol: The research team implemented and compared multiple active learning strategies over 57 drugs [15]. The process for each drug was as follows:

- Start: Begin with a small, initially labeled set of cell line responses.

- Iterate: For each active learning cycle:

  - Train a drug response prediction model on the current labeled set.

  - Use a sampling strategy (e.g., uncertainty, diversity, or hybrid methods) to select the most informative cell lines from the unlabeled pool.

  - "Experiment" on the selected cell lines (i.e., obtain their response labels from the dataset).

  - Add the newly labeled data to the training set.

- Evaluate: Track performance on two goals: a) the number of responsive treatments ("hits") identified early in the process, and b) the prediction performance of the model as the training set grows.

The results demonstrated that active learning strategies significantly improved the early identification of hits compared to random and greedy sampling methods. Some strategies also showed improved response prediction performance, confirming that active learning can simultaneously advance both hit discovery and model refinement with high data efficiency [15].

The Scientist's Toolkit: Implementing Active Learning

Adopting an active learning framework requires a combination of data, computational models, and strategic querying functions. The table below details the key components and their functions based on the protocols from the cited research.

Table 3: Research Reagent Solutions for an Active Learning Pipeline

| Component | Function in the Active Learning Workflow | Examples from Literature |
|---|---|---|
| Initial Labeled Dataset | Serves as the seed to pre-train the initial predictive model. | Oneil dataset [11]; CTRP v2 dataset [15] |
| Predictive AI Algorithm | The core model that makes predictions on unlabeled data to guide sample selection. | Multi-layer Perceptron (MLP) [11]; Random Forests, XGBoost [15] |
| Molecular & Cellular Features | Numerical representations of drugs and biological context used as model input. | Morgan fingerprints, gene expression profiles [11] |
| Query Strategy | The algorithm for selecting the most informative samples from the unlabeled pool. | Uncertainty sampling, diversity sampling, hybrid approaches [15] |
| Experimental Platform | The high-throughput system used to generate new labeled data for selected samples. | Automated drug combination screening platforms [11] |

The following diagram maps how these components interact within a typical active learning cycle for drug discovery.

Diagram 2: The key components of an active learning pipeline and their interactions.

The evidence is clear: the burden of exhaustive screening is no longer a necessary evil in research. The combinatorial explosion inherent in modern discovery problems makes a comprehensive search prohibitively costly and slow [10] [12]. Active learning emerges as a superior paradigm, using strategic, model-guided experimentation to achieve dramatic efficiency gains [11] [15]. By framing research as an iterative, adaptive process, active learning allows scientists to navigate vast combinatorial landscapes with precision, accelerating the pace of discovery in drug development, materials science, and beyond while conserving precious resources.

In fields like materials science and drug discovery, the experimental space is often astronomically large, while resources for synthesis and characterization are limited and costly. The high-throughput screening of thousands of drug combinations or the synthesis of novel alloys presents a fundamental dimensionality problem; exhaustive experimentation is simply infeasible [16] [17]. Active learning (AL) addresses this challenge through a data-centric iterative paradigm, strategically selecting the most informative data points to label, thereby maximizing model performance while minimizing experimental cost. This guide focuses on the two core mechanistic pillars that enable this intelligent selection: uncertainty sampling and diversity sampling.

Uncertainty sampling operates on the principle of querying instances where the current model is most uncertain, thereby directly reducing predictive ambiguity. In contrast, diversity sampling aims to construct a representative training set by selecting data that broadly covers the input feature space. While often presented as competing approaches, their integration into hybrid strategies has proven particularly powerful in real-world scientific applications, from synergistic drug discovery to the development of new materials [16] [11]. This guide provides an objective comparison of these strategies, complete with experimental data and protocols, to inform their application in research settings.

Core Principles and Query Strategies

Uncertainty Sampling: Targeting Model Ambiguity

Uncertainty sampling is founded on the intuitive idea that a model can improve most by learning the answers to questions it finds most ambiguous. It is most effective in the early stages of active learning when the model's decision boundaries are poorly defined [16] [18].

- Least Confidence: Selects the instance where the model has the lowest confidence in its most likely prediction. For a classification task, this is the sample where the top predicted probability is smallest [18].

- Margin Sampling: For a given instance, the "margin" is the difference between the probabilities of the first and second most likely classes. A small margin indicates a close call and high uncertainty. This method queries the data points with the smallest margins [19] [20].

- Entropy Sampling: Entropy measures the average level of "information" inherent in the variable's possible outcomes. Higher entropy signifies greater disorder and uncertainty. This strategy selects samples where the predictive probability distribution across all classes has the highest entropy, calculated as \( \text{Entropy}(x) = - \sum_{c} P(y=c \mid x) \log P(y=c \mid x) \) [19] [18].
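The three uncertainty scores can be computed directly from a model's predicted class probabilities. The sketch below is a minimal illustration; the three-item pool and its probabilities are made up to show that the strategies do not always agree on which sample to query.

```python
import math


def least_confidence(probs):
    """1 minus the top predicted probability; larger means more uncertain."""
    return 1.0 - max(probs)


def margin(probs):
    """Gap between the two most likely classes; smaller means more uncertain."""
    top, second = sorted(probs, reverse=True)[:2]
    return top - second


def entropy(probs):
    """Shannon entropy of the predictive distribution; larger means more uncertain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


# Hypothetical predicted probabilities for three unlabeled samples (three classes).
pool = {
    "a": [0.90, 0.05, 0.05],
    "b": [0.40, 0.35, 0.25],
    "c": [0.50, 0.49, 0.01],
}

by_least_confidence = max(pool, key=lambda k: least_confidence(pool[k]))
by_margin = min(pool, key=lambda k: margin(pool[k]))
by_entropy = max(pool, key=lambda k: entropy(pool[k]))
print(by_least_confidence, by_margin, by_entropy)   # prints "b c b"
```

Here margin sampling prefers sample `c`, a near tie between two classes, while least confidence and entropy prefer `b`, whose probability mass is spread across all three classes.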

Diversity Sampling: Ensuring Representativeness

Diversity sampling, also known as representative sampling, counters a key weakness of pure uncertainty sampling: the risk of querying a cluster of very similar, ambiguous points that provide redundant information. Its goal is to select a batch of data that is collectively representative of the entire underlying data distribution [19] [21].

- Cluster-Based Sampling: This method involves clustering the unlabeled data in the feature space and then selecting representative points from each cluster. This ensures that the selected batch covers the diverse regions of the input space [19].

- Core-Set Approaches: These methods aim to solve the "k-center" problem, selecting a set of points such that the maximum distance from any unlabeled point to its nearest center is minimized. This maximizes the coverage of the feature space with a limited number of samples [18].

- Density-Weighted Methods: These approaches prioritize points that lie in dense regions of the feature space, ensuring that the selected samples are not only diverse but also representative of common patterns in the data [19].
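Core-set selection is often approximated with a greedy farthest-first rule: repeatedly add the point that is farthest from everything already chosen. The sketch below illustrates that greedy heuristic on a handful of made-up 2D points; the function name and data are hypothetical, and the greedy rule is a well-known approximation to the k-center objective rather than an exact solver.

```python
import math


def k_center_greedy(points, labeled_idx, k):
    """Greedy farthest-first selection of k new centers.

    points: list of coordinate tuples; labeled_idx: non-empty list of already labeled indices.
    """
    # Distance from every point to its nearest already-selected center.
    min_dist = [min(math.dist(p, points[j]) for j in labeled_idx) for p in points]
    chosen = []
    for _ in range(k):
        # Pick the point that is currently least well covered.
        nxt = max(range(len(points)), key=lambda i: min_dist[i])
        chosen.append(nxt)
        # Update coverage distances with the new center.
        min_dist = [min(d, math.dist(p, points[nxt])) for d, p in zip(min_dist, points)]
    return chosen


# Three loose clusters; index 0 is already labeled.
points = [(0, 0), (0.1, 0), (0, 0.1), (5, 5), (5.1, 5), (10, 0), (10, 0.2)]
picked = k_center_greedy(points, labeled_idx=[0], k=2)
print(picked)   # prints "[6, 3]": one point from each uncovered cluster
```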

Hybrid Strategies: The Best of Both Worlds

Hybrid strategies combine the strengths of uncertainty and diversity sampling to avoid the pitfalls of either method used alone. They typically select data points that are both highly uncertain and diverse from each other [19] [18].

- Uncertainty + Diversity: A common framework is to first shortlist the most uncertain samples and then from this subset, select the ones that are most dissimilar to each other and to the existing labeled set. This prevents the selection of redundant, highly similar outliers [19].

- Category-Enhanced Uncertainty Sampling: This innovative approach, used in multi-class computer vision, integrates category information with uncertainty measures. It uses a pre-trained model to extract category features and then combines these with uncertainty scores to ensure balanced sampling across all classes, mitigating the "long-tail" effect where rare classes are overlooked [20].
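The "shortlist by uncertainty, then diversify" framework can be sketched as a two-step function. This is an illustrative implementation under stated assumptions: `hybrid_select` and its parameters are hypothetical names, the uncertainty scores are supplied by the caller, and diversity is measured with Euclidean distance using a farthest-first rule.

```python
import math


def hybrid_select(points, uncertainty, labeled_idx, shortlist_size, batch_size):
    """Pick a batch that is both uncertain and spread out in feature space."""
    labeled = set(labeled_idx)
    unlabeled = [i for i in range(len(points)) if i not in labeled]

    # Step 1: shortlist the most uncertain unlabeled points.
    shortlist = sorted(unlabeled, key=lambda i: uncertainty[i], reverse=True)[:shortlist_size]

    # Step 2: farthest-first selection relative to labeled points and earlier picks.
    reference = list(labeled_idx)
    batch = []
    while shortlist and len(batch) < batch_size:
        if reference:
            best = max(shortlist,
                       key=lambda i: min(math.dist(points[i], points[j]) for j in reference))
        else:
            best = shortlist[0]   # nothing to compare against yet: take the most uncertain
        batch.append(best)
        reference.append(best)
        shortlist.remove(best)
    return batch


# Made-up data: indices 1-3 are near-duplicates with the highest uncertainty.
points = [(0, 0), (1, 0), (1.05, 0), (1.1, 0), (4, 4), (9, 9)]
uncertainty = [0.0, 0.90, 0.95, 0.92, 0.80, 0.10]
batch = hybrid_select(points, uncertainty, labeled_idx=[0], shortlist_size=4, batch_size=2)
print(batch)   # prints "[4, 3]"
```

Pure uncertainty sampling would have chosen indices 2 and 3, two nearly identical points; the hybrid rule keeps one of that cluster and adds the distinct point at index 4.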

Experimental Comparison and Performance Benchmarks

Benchmark in Materials Science Regression

A comprehensive 2025 benchmark study evaluated 17 active learning strategies within an Automated Machine Learning (AutoML) framework across 9 materials science regression tasks. The study highlighted the varying effectiveness of strategies at different stages of data acquisition [16].

Table 1: Performance of AL Strategies in AutoML for Materials Science [16]

| Strategy Type | Example Strategies | Early-Stage Performance | Late-Stage Performance | Key Characteristics |
|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperformed random sampling and geometry-only heuristics. | Performance gap narrowed, converging with other methods. | Selects informative samples, improving model accuracy quickly. |
| Diversity-Hybrid | RD-GS | Clearly outperformed random sampling and geometry-only heuristics. | Performance gap narrowed, converging with other methods. | Balances exploration of the feature space with model uncertainty. |
| Geometry-Only | GSx, EGAL | Underperformed compared to uncertainty and hybrid methods. | Converged with all other methods. | Relies on data distribution geometry without model uncertainty. |

The study concluded that early in the acquisition process, uncertainty-driven and diversity-hybrid strategies are superior, as they more efficiently identify informative samples. However, as the labeled set grows, the law of diminishing returns sets in, and the performance of all strategies converges [16].

Application in Synergistic Drug Discovery

A 2025 study on synergistic drug combination screening provides a compelling case for the efficiency gains of active learning. The research demonstrated that active learning could discover 60% of synergistic drug pairs by exploring only 10% of the combinatorial space, resulting in savings of 82% of experimental time and materials compared to a random approach [11].

The study further investigated the critical factor of batch size in the active learning loop. It found that the synergy yield ratio was significantly higher with smaller batch sizes. This underscores the importance of iterative, adaptive re-training of the model, as smaller batches allow the algorithm to more dynamically incorporate feedback from previous experiments [11].

Investigation in Anti-Cancer Drug Response Prediction

A 2024 analysis compared active learning strategies for building drug-specific anti-cancer response prediction models across 57 drugs. The performance was evaluated based on the early identification of responsive treatments ("hits") and the improvement in prediction model performance [15].

Table 2: AL Strategy Performance in Anti-Cancer Drug Response [15]

| Strategy | Hit Identification | Model Performance | Remarks |
|---|---|---|---|
| Uncertainty-Based | Significant improvement over random and greedy methods. | Improvement for some drugs and analysis runs. | Effective for rapidly finding responsive treatments. |
| Diversity-Based | Significant improvement over random and greedy methods. | Not explicitly detailed in results. | Helps in covering the variety of cell lines. |
| Hybrid Approaches | Significant improvement over random and greedy methods. | Improvement for some drugs and analysis runs. | Combines strengths for a more robust selection. |
| Random Sampling | Baseline method. | Baseline performance. | Used as a control for comparison. |

The study demonstrated that most active learning strategies were more efficient than random selection for identifying effective treatments, with hybrid and uncertainty-based approaches also showing benefits for improving response modeling in certain experimental settings [15].

Experimental Protocols for Key Studies

Protocol: Benchmarking AL Strategies in AutoML

The following methodology was used in the comprehensive benchmark of active learning strategies with AutoML for small-sample regression in materials science [16].

- Data Setup: The process begins with an unlabeled dataset. A small initial labeled set \(L_0\) of size \(n_{\text{init}}\) is created by random sampling from the unlabeled pool \(U\).

- Iterative AL Loop: For a predefined number of steps:
  a. Model Training: An AutoML model is fitted on the current labeled set \(L\). The AutoML system automatically handles model selection, hyperparameter tuning, and data preprocessing, using 5-fold cross-validation for validation [16].
  b. Query Selection: The trained model is used to evaluate all samples in \(U\). Based on a specific AL strategy (e.g., LCMD for uncertainty), the most informative sample (or batch of samples) \(x^*\) is selected from \(U\).
  c. Oracle Labeling: The selected sample \(x^*\) is labeled (e.g., through a simulated experiment or database lookup) to obtain its target value \(y^*\).
  d. Set Update: The newly labeled sample \((x^*, y^*)\) is added to the labeled set, \(L = L \cup \{(x^*, y^*)\}\), and removed from the unlabeled pool \(U\).

- Performance Tracking: In each iteration, the model's performance is evaluated on a held-out test set using metrics like Mean Absolute Error (MAE) and the Coefficient of Determination (\(R^2\)).

- Comparison: The performance trajectories of all 17 AL strategies are compared against a random sampling baseline.
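The loop above can be illustrated with a deliberately small, self-contained sketch. Everything specific here is an assumption made for illustration: a 1-nearest-neighbour regressor stands in for the AutoML model, a synthetic function stands in for the oracle, and a "query the point farthest from the labeled set" rule stands in for the benchmarked strategies. It is not the setup used in [16].

```python
import math
import random

def oracle(x):
    # Stand-in for an experiment or database lookup (hypothetical target function).
    return math.sin(3 * x) + 0.5 * x

def predict(labeled, x):
    # 1-nearest-neighbour regressor: a deliberately tiny stand-in for AutoML.
    return min(labeled, key=lambda pair: abs(pair[0] - x))[1]

def mae(labeled, test):
    return sum(abs(predict(labeled, x) - y) for x, y in test) / len(test)

def run(strategy, steps=15, n_init=3, seed=0):
    rng = random.Random(seed)
    pool = [i / 100 for i in range(301)]                 # unlabeled pool U
    test = [(x, oracle(x)) for x in (rng.uniform(0, 3) for _ in range(200))]
    init = rng.sample(pool, n_init)                      # initial labeled set L0
    labeled = [(x, oracle(x)) for x in init]
    pool = [x for x in pool if x not in init]
    history = [mae(labeled, test)]                       # performance tracking
    for _ in range(steps):
        if strategy == "random":
            x_star = rng.choice(pool)                    # random baseline
        else:
            # Query the pool point farthest from anything already labeled.
            x_star = max(pool, key=lambda x: min(abs(x - lx) for lx, _ in labeled))
        labeled.append((x_star, oracle(x_star)))         # oracle labeling, add to L
        pool.remove(x_star)                              # remove x* from U
        history.append(mae(labeled, test))
    return history

for name in ("random", "farthest"):
    h = run(name)
    print(name, "initial MAE:", round(h[0], 3), "final MAE:", round(h[-1], 3))
```

Comparing the two MAE trajectories mirrors the benchmark's final step, in which each strategy is judged against a random sampling baseline.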

Protocol: Active Learning for Drug Synergy Screening

The guide for active learning in synergistic drug discovery outlines the following experimental workflow [11].

- Problem Framing: The goal is to prioritize drug pairs for experimental testing from a vast combinatorial space (e.g., 8397 drugs × 2320 cell lines).

- Model and Data Setup:
  a. Feature Selection: The algorithm uses molecular features (e.g., Morgan fingerprints) and cellular context features (e.g., gene expression profiles of cancer cell lines). The study found that cellular environment features significantly enhanced predictions, while the specific molecular encoding had a limited impact [11].
  b. Algorithm Selection: The study benchmarks algorithms (e.g., Logistic Regression, XGBoost, Neural Networks) for data efficiency, selecting one that performs well with small training data.

- Iterative Screening Campaign:
  a. Initialization: The model is pre-trained on any existing public synergy data (e.g., Oneil dataset).
  b. Batch Selection: The model scores all untested drug-cell combinations. A batch of candidates is selected based on a query strategy (e.g., high predicted synergy or high uncertainty). The study emphasizes the use of small batch sizes for dynamic tuning of the exploration-exploitation trade-off.
  c. Wet-Lab Experimentation: The selected batch of drug combinations is synthesized and tested in the laboratory for synergy.
  d. Model Retraining: The new experimental results are added to the training set, and the model is retrained.

- Evaluation: The process is repeated. Success is measured by the rate of synergistic hit discovery over successive batches and the overall performance of the predictive model.

Workflow Visualization

The following diagram illustrates the standard pool-based active learning workflow, common to both experimental protocols described above.

The Scientist's Toolkit: Key Research Reagents and Materials

The implementation of active learning in experimental sciences relies on specific computational and data resources. The following table details key "reagents" used in the featured studies.

Table 3: Essential Research Reagents for Active Learning in Drug Discovery

| Reagent / Resource | Type | Function in Active Learning Workflow | Example Sources |
|---|---|---|---|
| Morgan Fingerprints | Molecular Descriptor | Encodes the structure of a molecule as a bit vector, serving as a key input feature for the predictive model. | RDKit, Open Babel [11] |
| Gene Expression Profiles | Cellular Feature | Provides genomic context of the targeted cell line, significantly enhancing synergy prediction accuracy. | GDSC, CCLE [11] |
| Pre-trained VGG16 | Computer Vision Model | Used in enhanced uncertainty sampling to extract deep image features for assigning category information without model retraining. | PyTorch/TensorFlow Model Zoo [20] |
| Synergy Datasets | Benchmark Data | Used for pre-training and benchmarking models. Provides experimental ground truth for synergy scores. | DrugComb, Oneil, ALMANAC [11] |
| AutoML Framework | Software Tool | Automates the process of model selection, hyperparameter tuning, and validation within the AL loop. | AutoSklearn, TPOT, H2O.ai [16] |

Uncertainty and diversity sampling are not merely abstract algorithms but are proven, core mechanisms for achieving dramatic efficiency gains in resource-intensive research. Quantitative benchmarks show that uncertainty-driven and hybrid strategies can reduce the required experimental volume by over 80% in drug discovery and achieve higher model accuracy with fewer data points in materials science. The choice of strategy is context-dependent: uncertainty sampling excels at rapid initial learning, while diversity methods ensure robustness and coverage. For the practicing scientist, the most effective approach often lies in a hybrid strategy, dynamically balancing exploration and exploitation, ideally implemented within an automated ML framework to adaptively guide high-value experimentation.

Active Learning in Action: Methodologies and Real-World Applications

Systematic reviews, which form the foundation for evidence-based medicine and policy, are notoriously labor-intensive and time-consuming. The traditional process of manually screening thousands of titles and abstracts represents a significant bottleneck, often requiring teams of researchers months of dedicated effort. As the volume of scientific literature grows exponentially, this challenge intensifies, creating an urgent need for more efficient screening methodologies. In response, active learning (AL) systems have emerged as a transformative solution, leveraging artificial intelligence to prioritize records for review and dramatically reduce screening workload while maintaining high recall of relevant studies.

Active learning represents a paradigm shift from traditional screening approaches. Unlike passive machine learning that requires a pre-labeled dataset, AL operates through an interactive human-in-the-loop process where the model iteratively improves its predictions by selecting the most informative records for human annotation. This creates a positive feedback loop: as reviewers label more records, the model becomes increasingly accurate at identifying relevant studies, allowing researchers to discover the majority of relevant publications after screening only a fraction of the total records [22] [23].

Performance Comparison: Quantifying the Efficiency Gains

Extensive simulation studies across diverse research domains have quantified the substantial efficiency gains achievable through active learning compared to traditional screening methods. The performance is typically evaluated using metrics such as Work Saved over Sampling (WSS), which measures the proportion of records not needing screening compared to random sampling while achieving a specific recall level, and recall, which indicates the proportion of total relevant records identified at a given screening point [23].
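To make these metrics concrete, the sketch below computes recall after a fixed screening fraction and WSS at a target recall from a list of relevance labels in the order the records were screened. It uses the common formulation WSS@r = (records left unscreened when recall r is reached) / N minus (1 - r); the function names and the toy ranking are assumptions for illustration, and individual studies may differ in rounding details.

```python
import math

def recall_at(labels_in_screening_order, fraction):
    """Share of all relevant records found after screening `fraction` of the records."""
    n_screened = math.ceil(fraction * len(labels_in_screening_order))
    found = sum(labels_in_screening_order[:n_screened])
    return found / sum(labels_in_screening_order)

def wss_at(labels_in_screening_order, recall=0.95):
    """Work Saved over Sampling: fraction of records left unscreened when the target
    recall is reached, minus the (1 - recall) that random-order screening would save."""
    n = len(labels_in_screening_order)
    needed = math.ceil(recall * sum(labels_in_screening_order))
    found = 0
    for n_screened, label in enumerate(labels_in_screening_order, start=1):
        found += label
        if found >= needed:
            return (n - n_screened) / n - (1 - recall)
    raise ValueError("empty screening order")

# Toy ranking (assumed): 100 records, 5 relevant, found at positions 1, 2, 4, 7 and 20.
order = [0] * 100
for position in (1, 2, 4, 7, 20):
    order[position - 1] = 1

print("recall after 10% screened:", recall_at(order, 0.10))
print("WSS@95:", round(wss_at(order, 0.95), 3))
```

In this toy ranking, four of the five relevant records appear within the first ten screened, and the fifth appears at position 20, so 80 of 100 records remain unscreened when full recall is reached.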

Table 1: Overall Performance Metrics of Active Learning Models

| Metric | Performance Range | Interpretation | Key Findings |
|---|---|---|---|
| WSS@95 | 63.9% to 91.7% | Work saved while finding 95% of relevant records | Naive Bayes + TF-IDF consistently among top performers [23] |
| Recall after 10% screening | 53.6% to 99.8% | Proportion of relevant records found early | Significant front-loading of relevant record identification [23] |
| Average Time to Discovery (ATD) | 1.4% to 11.7% | Average proportion of records screened per relevant found | Lower values indicate better overall efficiency [23] |

Table 2: Performance by Model Configuration (Selected Examples)

| Model Configuration | Feature Extractor | Recall Achieved | Workload Reduction | Notable Characteristics |
|---|---|---|---|---|
| Naive Bayes + TF-IDF | TF-IDF | 99.2% ± 0.8% | Screened only 62.6% of records | Strong overall performance, works well with small training sets [5] [23] |
| Logistic Regression + Doc2Vec | Doc2Vec | 97.9% ± 2.7% | Screened only 58.9% of records | Contextual understanding of text [5] |
| Logistic Regression + TF-IDF | TF-IDF | 98.8% ± 0.4% | Screened only 57.6% of records | Balanced performance across domains [5] |
| Support Vector Machine | TF-IDF | Varies by dataset | Competitive workload reduction | Default in several screening tools [23] [24] |

The evidence consistently demonstrates that active learning significantly outperforms random screening across all measured parameters. Large-scale simulation studies encompassing over 29,000 runs confirm that while the extent of improvement varies by dataset, model choice, and screening stage, the advantage of AL is clear and substantial [24]. This makes AL-aided screening particularly valuable for rapid evidence synthesis in emerging research areas or urgent health crises where traditional systematic reviews would be prohibitively time-consuming.

Methodology: Experimental Protocols and Validation

Simulation Study Design

Robust evaluation of active learning performance relies on carefully designed simulation studies that mimic the human screening process using pre-labeled datasets where all relevant records are already known. The standard protocol involves:

- Dataset Selection: Utilizing benchmark systematic review datasets with known relevance labels, such as the SYNERGY dataset, which spans medicine, psychology, computational sciences, and biology, with dataset sizes from 238 to 48,375 records and relevance densities from 0.25% to more than 20% [24].

- Initialization: Typically starting with a minimal training set of one known relevant and one known irrelevant record to represent a challenging real-world scenario with limited prior knowledge [23].

- Iteration Process: The model is retrained after each labeling decision, predicting relevance scores for all unscreened records and presenting the highest-ranked record next [25] [23].

- Performance Assessment: Metrics like WSS, recall, and Time to Discovery (TD) are calculated across multiple runs (often 15 repetitions) with different random seeds to account for variability in initial training sets [23].

This simulation approach allows researchers to comprehensively evaluate model performance without the cost and time of actual human screening, while providing standardized conditions for comparing different algorithmic approaches.

Stopping Rules and Criteria

A critical methodological challenge in active learning implementation is determining the optimal point to stop screening. Unlike traditional reviews that screen all records, AL requires careful consideration of stopping rules to balance efficiency against the risk of missing relevant studies. The research describes several approaches:

- Statistical Stopping Rules: Methods that estimate the total number of relevant records and stop when a pre-specified recall level is reached with statistical confidence [22].

- Heuristic Stopping Rules: Practical approaches including (1) stopping after screening a fixed percentage of records; (2) stopping after a predetermined number of consecutive irrelevant records (e.g., 50-100); (3) identifying key papers beforehand and stopping once all are found [26].

- The SAFE Procedure: A conservative, multi-phase heuristic that combines screening a random set for training, applying active learning, searching with a different model, and final quality evaluation [26].
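The consecutive-irrelevant heuristic from the list above is simple enough to sketch directly. The function name, the threshold of 50, and the toy screening order are assumptions chosen for illustration; the example is constructed so that the rule fires before the last relevant record is reached, which is exactly the risk these rules must be transparent about.

```python
def stop_index(labels_in_screening_order, max_consecutive_irrelevant=50):
    """Return how many records are screened before the heuristic fires:
    stop once `max_consecutive_irrelevant` irrelevant records occur in a row."""
    run = 0
    for n_screened, label in enumerate(labels_in_screening_order, start=1):
        run = 0 if label else run + 1      # a relevant record resets the counter
        if run >= max_consecutive_irrelevant:
            return n_screened
    return len(labels_in_screening_order)  # rule never fired: everything was screened

# Toy screening order (assumed): early relevant records, a long irrelevant stretch,
# then one late relevant record at position 68.
labels = [1, 1, 0, 1, 0, 0, 1] + [0] * 60 + [1] + [0] * 40

stopped_at = stop_index(labels, max_consecutive_irrelevant=50)
recall_at_stop = sum(labels[:stopped_at]) / sum(labels)
print(stopped_at, recall_at_stop)
```

Here the reviewer stops after 57 of 108 records with four of the five relevant records found; raising the threshold to 100 would mean screening every record in this toy example.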

The emerging consensus emphasizes that stopping rules should be transparent about the risk of missing relevant studies and tailored to the specific review context, with more stringent rules applied for clinical guideline development versus rapid reviews [22].

The Researcher's Toolkit: Essential Components for AL Implementation

Table 3: Active Learning Screening Toolkit

| Component | Function | Examples & Notes |
|---|---|---|
| Classification Algorithms | Predict relevance of unscreened records | Naive Bayes, Logistic Regression, Support Vector Machines, Random Forest [23] [24] |
| Feature Extraction Methods | Convert text to machine-readable features | TF-IDF (term frequency-inverse document frequency), Doc2Vec, SBERT (Sentence-BERT) [25] [27] |
| Stopping Rule Modules | Determine when to stop screening | Statistical methods, heuristic rules (e.g., consecutive irrelevant records), SAFE procedure [22] [26] |
| Benchmark Datasets | Validate and compare model performance | SYNERGY dataset (multi-disciplinary), Cohen dataset (medical), Radjenović dataset (computer science) [25] [24] |
| Screening Software | Implement complete AL workflow | ASReview, Abstrackr, Rayyan, Colandr [28] [23] |

Successful implementation of active learning for systematic review screening requires appropriate selection and configuration of each toolkit component. Research indicates that feature extraction choice (particularly TF-IDF) often influences performance more than classifier selection [27]. Additionally, the optimal component combination may vary depending on specific dataset characteristics such as domain, size, and relevance density, highlighting the value of flexible software platforms that support multiple model configurations.

Active learning represents a significant advancement in systematic review methodology, addressing the critical bottleneck of literature screening through intelligent prioritization. The evidence demonstrates that AL can reduce screening workload by approximately 60-92% while maintaining 95% recall, substantially accelerating the evidence synthesis process without compromising rigor [23] [24]. This efficiency gain makes systematic reviews more feasible for resource-constrained teams and enables more timely evidence updates as new research emerges.

The implementation ecosystem for AL-assisted screening has matured considerably, with user-friendly software tools like ASReview making these techniques accessible to non-specialists [28]. As the field continues to evolve, standardization of evaluation metrics and stopping criteria will further enhance the reliability and transparency of AL-aided reviews. For the research community engaged in evidence synthesis, particularly in fast-moving fields like drug development, embracing active learning methodologies offers a practical path toward maintaining comprehensive, up-to-date systematic reviews in the face of exponentially growing scientific literature.

The traditional approach to drug discovery has long relied on exhaustive, high-throughput screening (HTS) of compound libraries, a process that is both resource-intensive and time-consuming. In this paradigm, researchers experimentally test hundreds of thousands—or even millions—of compounds against biological targets, hoping to find a few promising hits. While effective, this brute-force method requires enormous investments in time, materials, and cost, creating a significant bottleneck in the early stages of drug development [29]. The field is now undergoing a fundamental transformation with the adoption of active learning (AL), an artificial intelligence (AI)-driven approach that strategically selects the most informative experiments to perform, dramatically accelerating the discovery process.

Active learning represents a paradigm shift from exhaustive screening to intelligent, iterative exploration. Instead of testing all possible compounds or combinations, AL algorithms use machine learning models to predict the most promising candidates, experimentally test a small batch of these predictions, then use the results to refine the model for the next selection cycle [11]. This creates an efficient "design-make-test-analyze" (DMTA) loop that continuously improves its targeting of the chemical space. Framed within the broader thesis of active learning's efficiency gains over exhaustive screening, this article provides a comparative analysis of how AI-driven approaches are revolutionizing the optimization of molecular properties and the identification of synergistic drug combinations, complete with experimental data and protocols for research implementation.

Active learning systems for drug discovery typically comprise three core components: (1) an initial dataset of known measurements, (2) a machine learning algorithm that predicts molecular properties or synergistic potential, and (3) a selection criterion that prioritizes which experiments to perform next based on the algorithm's predictions and uncertainties [11]. This framework creates a closed-loop system that learns from each experimental batch to improve subsequent selections.

The power of this approach lies in its efficient navigation of the vast combinatorial search space. For example, in synergistic drug combination screening—where the number of possible drug pairs grows quadratically with the number of candidate compounds—exhaustive experimental screening is practically infeasible. Research demonstrates that active learning can discover 60% of synergistic drug pairs by exploring just 10% of the combinatorial space, achieving an 82% reduction in experimental requirements compared to random screening [11]. This extraordinary efficiency gain forms the cornerstone of the computational revolution in drug discovery.
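The quadratic growth mentioned above is easy to verify: n candidate drugs yield n(n-1)/2 unordered pairs, and each pair can be tested in every cell line. The short sketch below uses made-up library sizes purely for illustration; the function name `search_space` is hypothetical.

```python
from math import comb

def search_space(n_drugs, n_cell_lines=1):
    """Number of unordered drug pairs, multiplied by the number of cell lines."""
    return comb(n_drugs, 2) * n_cell_lines

# Illustrative library sizes (assumed, not taken from the cited studies).
for n_drugs in (100, 1000, 10000):
    total = search_space(n_drugs, n_cell_lines=10)
    print(f"{n_drugs} drugs x 10 cell lines: {total:,} experiments, "
          f"10% budget = {total // 10:,}")
```

A tenfold increase in library size raises the number of pairwise experiments roughly a hundredfold, which is why even a 10% exploration budget becomes substantial for large libraries.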

Visualizing the Active Learning Workflow

The following diagram illustrates the iterative workflow of an active learning framework for drug discovery, highlighting its efficient, closed-loop nature:

Comparative Analysis: Exhaustive Screening vs. Active Learning

The efficiency gains of active learning become strikingly evident when examining quantitative performance metrics across multiple studies. The following table summarizes key comparative findings from recent research implementations:

Table 1: Quantitative Comparison of Screening Efficiency Across Methodologies

| Screening Method | Experimental Scale | Synergistic Pairs Identified | Hit Rate | Resource Savings | Study/Platform |
|---|---|---|---|---|---|
| Exhaustive Screening | 8,253 measurements | 300 pairs | 3.6% | Baseline | Oneil Dataset [11] |
| Active Learning | 1,488 measurements | 300 pairs | 20.2% | 82% reduction | RECOVER Framework [11] |
| Traditional HTS | 496 combinations tested | 51 synergistic pairs | 10.3% | Baseline | NCATS Pancreatic Cancer Study [30] |
| ML-Predicted Combinations | 88 combinations tested | 51 synergistic pairs | 58.0% | ~82% fewer tests | NCATS/UNC/MIT Collaboration [30] |
| Ultra-Low Data Screening | 110 affinity evaluations | 5 top-1% hits | 97% probability | ~99.99% reduction | CDDD+MLP with PADRE [31] |

The data demonstrates that active learning and AI-guided approaches consistently achieve comparable or superior results while requiring dramatically fewer experimental resources. The hit rate for synergistic combinations increases from approximately 3.6% with exhaustive screening to over 20% with active learning—a more than 5-fold improvement in discovery efficiency [11]. Similarly, in a pancreatic cancer drug combination study, machine learning models achieved a 58% hit rate—identifying 51 synergistic pairs from just 88 tested combinations—compared to a 10.3% hit rate through traditional high-throughput screening [30].
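The hit rates and savings quoted above follow directly from the counts in the table. As a quick check, the snippet below recomputes them; the dictionary labels are shorthand introduced here, while the counts are those reported in the table.

```python
# (synergistic pairs found, experiments run) as reported in the comparison table.
rows = {
    "Exhaustive (Oneil)": (300, 8253),
    "Active learning (RECOVER)": (300, 1488),
    "Traditional HTS (NCATS)": (51, 496),
    "ML-predicted (NCATS/UNC/MIT)": (51, 88),
}

hit_rate = {name: hits / tested for name, (hits, tested) in rows.items()}
for name, rate in hit_rate.items():
    print(f"{name}: hit rate {rate:.1%}")

# Relative improvement and the share of experiments avoided.
fold = hit_rate["Active learning (RECOVER)"] / hit_rate["Exhaustive (Oneil)"]
saved_al = 1 - 1488 / 8253
saved_ml = 1 - 88 / 496
print(f"fold improvement: {fold:.2f}")
print(f"measurements saved (AL): {saved_al:.1%}, tests saved (ML): {saved_ml:.1%}")
```

The recomputed values match the table: hit rates of 3.6%, 20.2%, 10.3%, and 58.0%, a roughly 5.5-fold improvement for active learning, and about 82% fewer experiments in both comparisons.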

Experimental Protocols: Implementing Active Learning for Drug Discovery

Protocol 1: Active Learning for Synergistic Drug Combination Discovery

This protocol is adapted from the RECOVER framework and related studies [11]:

Initial Data Compilation: Collect a training dataset of known drug combination outcomes, such as the Oneil dataset (15,117 measurements across 38 drugs and 29 cell lines) or ALMANAC (304,549 experiments) [11].

Feature Representation:

- Molecular Encoding: Generate Morgan fingerprints (ECFP4) for each drug using RDKit with 1024-bit length and radius 2.

- Cellular Context: Incorporate gene expression profiles of cancer cell lines from the Genomics of Drug Sensitivity in Cancer (GDSC) database. Research indicates that as few as 10 carefully selected genes may be sufficient for accurate predictions [11].

Model Selection & Training:

- Implement a multi-layer perceptron (MLP) architecture with three hidden layers (64 neurons each).

- Use Bilinear operation or Sum operation to combine drug pair representations.

- Train initially on available data using 5-fold cross-validation with a 90/10 train/validation split.

Iterative Active Learning Cycle:

- Prediction Phase: Use the trained model to predict synergy scores (e.g., LOEWE or Bliss scores) for all unexplored drug pairs in the virtual library.

- Selection Phase: Apply an acquisition function (e.g., uncertainty sampling, expected improvement) to select the most informative batch of combinations (typically 10-100 pairs) for experimental testing.

- Experimental Phase: Test selected combinations using cell-based assays (e.g., PANC-1 cell viability assays for pancreatic cancer).

- Retraining Phase: Incorporate new experimental results into the training set and update the model parameters.

- Repeat cycles until desired number of synergistic pairs is identified or resources are exhausted.
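The selection phase of this cycle can be sketched with a simple acquisition rule. The example below uses an upper-confidence-bound style score (mean prediction plus a multiple of the spread across an ensemble), which is one common way to trade off exploitation and exploration; it is an assumption made for illustration, not the acquisition function reported for RECOVER. The candidate identifiers and predicted scores are invented.

```python
import statistics

def select_batch(ensemble_predictions, batch_size, beta=1.0):
    """ensemble_predictions maps a candidate ID to the synergy scores predicted by
    several models; rank by mean + beta * std and return the top `batch_size` IDs."""
    def score(item):
        _, preds = item
        return statistics.mean(preds) + beta * statistics.pstdev(preds)
    ranked = sorted(ensemble_predictions.items(), key=score, reverse=True)
    return [candidate for candidate, _ in ranked[:batch_size]]

# Hypothetical candidates: (drug 1, drug 2, cell line) -> predictions from 3 models.
candidates = {
    ("drugA", "drugB", "cell1"): [12.0, 11.0, 13.0],  # high mean, models agree
    ("drugA", "drugC", "cell1"): [2.0, 18.0, 10.0],   # lower mean, models disagree
    ("drugB", "drugC", "cell1"): [1.0, 2.0, 0.0],     # low mean, models agree
}

exploit = select_batch(candidates, batch_size=1, beta=0.0)  # pure exploitation
explore = select_batch(candidates, batch_size=1, beta=1.0)  # rewards uncertainty
print(exploit, explore)
```

With `beta=0.0` the rule picks the combination with the highest predicted synergy; with `beta=1.0` it prefers the combination the models disagree on most, which is the kind of exploration-exploitation tuning that small batches make practical.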

Protocol 2: Ultra-Low Data Hit Identification Using Active Learning

This protocol is designed for resource-limited settings where only minimal experimental capacity is available [31]:

Library Preparation: Select a diverse virtual compound library such as the Developmental Therapeutics Program repository (DTP) or Enamine Discovery Diversity Set 10 (DDS-10).

Initial Sampling: Randomly select 20-30 compounds from the library for initial activity testing to create a foundational dataset.

Model Implementation:

- Utilize Continuous and Data-Driven Descriptors (CDDD) for molecular representation.

- Implement a Multi-Layer Perceptron (MLP) model augmented with the Pairwise Difference Regression (PADRE) data augmentation technique.

- Train the model on the initial data to predict molecular activity.
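The pairwise-difference idea behind PADRE can be sketched as follows, based on how the technique is generally described: the model is trained on pairs of samples to predict the difference between their target values, and a prediction for a new compound is obtained by averaging over all training "anchors". This is a minimal sketch under those assumptions, not the implementation used in [31]; the helper names and the toy difference model are hypothetical, and real descriptors (e.g., CDDD vectors) would replace the one-dimensional toy features.

```python
from itertools import product

def make_pairs(X, y):
    """Turn n labeled samples into n*n training pairs:
    features = x_i ++ x_j ++ (x_i - x_j), target = y_i - y_j."""
    pairs, targets = [], []
    for (xi, yi), (xj, yj) in product(list(zip(X, y)), repeat=2):
        diff = [a - b for a, b in zip(xi, xj)]
        pairs.append(list(xi) + list(xj) + diff)
        targets.append(yi - yj)
    return pairs, targets

def predict_with_anchors(diff_model, X_train, y_train, x_new):
    """Estimate y for x_new as the average of y_j + diff_model(x_new, x_j) over all
    training anchors j; the spread of the estimates serves as an uncertainty proxy."""
    estimates = []
    for xj, yj in zip(X_train, y_train):
        diff = [a - b for a, b in zip(x_new, xj)]
        estimates.append(yj + diff_model(list(x_new) + list(xj) + diff))
    mean = sum(estimates) / len(estimates)
    spread = (sum((e - mean) ** 2 for e in estimates) / len(estimates)) ** 0.5
    return mean, spread

# Toy data (assumed): activity is exactly 2 * descriptor.
X_train = [[0.0], [1.0], [2.0]]
y_train = [0.0, 2.0, 4.0]
pairs, targets = make_pairs(X_train, y_train)

# Hypothetical "trained" difference model: here it simply reads the difference feature.
diff_model = lambda features: 2.0 * features[-1]
mean, spread = predict_with_anchors(diff_model, X_train, y_train, [3.0])
print(len(pairs), mean, spread)
```

Three labeled compounds become nine training pairs, which is why this kind of augmentation is attractive when only 20-30 initial measurements are available.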

Active Learning Execution:

- For each of 5-10 iterative cycles:
  - Use the model to predict activity for all untested compounds.
  - Select the top 10-20 most promising candidates based on predicted activity and uncertainty metrics.
  - Experimentally test the selected compounds (e.g., via docking scores or biochemical assays).
  - Add results to the training set and retrain the model.

- Total experimental budget: ~110 affinity evaluations.

Validation: Confirm identified hits through secondary assays. This approach has demonstrated 97-100% probability of identifying at least five top-1% hits from diverse compound libraries [31].

Molecular Representation Methods: The Foundation of AI-Driven Discovery

The performance of active learning systems depends critically on how molecular structures are represented computationally. The following table compares key molecular representation methods and their applications in drug discovery:

Table 2: Comparison of Molecular Representation Methods in AI-Driven Drug Discovery

| Representation Method | Type | Key Features | Best Applications | Performance Notes |
|---|---|---|---|---|
| Morgan Fingerprints (ECFP) [32] [11] | Traditional | Circular atom environments encoded as bit vectors; computationally efficient | Similarity searching, QSAR, virtual screening | With MLP, achieved highest prediction performance in synergy detection [11] |
| Graph Neural Networks (GCN/GAT) [32] [11] | AI-Driven | Directly operates on molecular graph structure; captures spatial relationships | Molecular property prediction, scaffold hopping | DeepDDS GCN uses topology for synergy prediction; excellent for novel scaffold identification [11] |
| Transformer Models (ChemBERT) [32] [11] | AI-Driven | Treats SMILES as chemical language; self-attention mechanisms | Large-scale molecular representation, transfer learning | Pre-trained on ChEMBL; requires fine-tuning for specific tasks [11] |
| Multimodal Fusion (MD-Syn) [33] | Hybrid AI | Combines 1D (SMILES) and 2D (graph) representations with attention mechanisms | Synergistic drug combination prediction | Achieved AUROC of 0.919; integrates chemical and genomic data [33] |

Recent advances in molecular representation have significantly enhanced scaffold hopping—the identification of novel core structures that retain biological activity. AI-driven approaches, particularly graph neural networks and transformer models, can capture complex structure-activity relationships that enable identification of structurally diverse compounds with similar target effects, expanding the explorable chemical space beyond traditional medicinal chemistry constraints [32].

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing active learning approaches requires specific computational and experimental resources. The following table details key solutions and their applications:

Table 3: Essential Research Reagent Solutions for Active Learning-Driven Drug Discovery

| Research Tool | Type | Function & Application | Implementation Example |
|---|---|---|---|
| CETSA (Cellular Thermal Shift Assay) [34] | Experimental Assay | Measures target engagement in intact cells and tissues; validates direct binding | Quantifying drug-target engagement of DPP9 in rat tissue [34] |
| Morgan Fingerprints (ECFP4) [11] | Computational Descriptor | Encodes molecular structure as binary vectors for similarity searching and ML | Molecular representation in RECOVER framework for synergy prediction [11] |
| Graph Convolutional Networks (GCN) [32] [33] | AI Algorithm | Learns molecular representations directly from graph structure of compounds | Feature extraction in MD-Syn for drug combination prediction [33] |
| Multi-Head Attention Mechanisms [33] | AI Algorithm | Identifies salient features in complex datasets; improves model interpretability | Identifying key molecular interactions in MD-Syn framework [33] |
| Protein-Protein Interaction (PPI) Networks [33] | Biological Data | Maps cellular context for drug actions; identifies compensatory pathways | Modeling higher-order relationships in GraphSynergy for combination prediction [33] |
| AutoDock & SwissADME [34] | Computational Tools | Predicts binding poses (docking) and drug-likeness properties (ADME) | Pre-screening filtration before synthesis and in vitro testing [34] |

Signaling Pathways in Synergistic Drug Action

Understanding the biological mechanisms underlying drug synergy is crucial for rational combination design. The following diagram illustrates a generalized signaling pathway framework where synergistic combinations often emerge:

Synergistic drug combinations often emerge when simultaneously targeting parallel signaling pathways (e.g., PI3K/AKT/mTOR and RAS/RAF/MEK/ERK pathways) or when inhibiting a primary pathway while blocking compensatory resistance mechanisms [30] [33]. This systems-level understanding enables more rational design of combination therapies that AI models can then optimize through active learning approaches.

The integration of active learning methodologies into drug discovery represents a fundamental shift from brute-force screening to intelligent, data-driven exploration. The experimental data and comparative analyses presented demonstrate that AI-guided approaches can achieve comparable or superior results to exhaustive methods while requiring dramatically fewer resources—typically reducing experimental burden by 80% or more [30] [31] [11].

The implications for research and development are profound. Active learning enables resource-constrained laboratories to pursue meaningful drug discovery programs, democratizing access to what was once the exclusive domain of well-funded institutions and pharmaceutical giants [31] [29]. Furthermore, as active learning frameworks continue to evolve—incorporating emerging technologies like hybrid quantum-classical computing and multimodal molecular representations—their efficiency and applicability will only expand [35].

For researchers implementing these approaches, success factors include: (1) selecting appropriate molecular representations for the specific discovery task, (2) incorporating relevant cellular context features, particularly gene expression profiles, and (3) implementing thoughtful exploration-exploitation strategies that balance risk and reward in the candidate selection process [33] [11]. As the field advances, the integration of active learning into standard drug discovery workflows promises to accelerate the development of novel therapeutics across diverse disease areas, ultimately translating computational efficiencies into clinical breakthroughs.

In fields such as drug development and materials science, the high cost of acquiring labeled data through expert-driven processes creates a critical need for data-efficient machine learning methodologies. Active Learning (AL) has emerged as a powerful solution to this challenge, strategically selecting the most informative data points for labeling to maximize model performance while minimizing annotation costs [21] [16]. This approach is particularly valuable for systematic reviews and research screening tasks, where exhaustive manual screening of thousands of articles or compounds represents a significant bottleneck in the research pipeline [5] [24].

This technical deep dive examines the core components of effective AL systems: feature extraction techniques that transform raw data into meaningful representations, model training approaches that enable intelligent sample selection, and query strategies that determine which unlabeled instances would be most valuable for annotation. By understanding how these components interact within AL frameworks, researchers and drug development professionals can significantly accelerate their screening processes while maintaining rigorous standards of evidence collection.

Feature Extraction: Transforming Raw Data into Meaningful Representations

Feature extraction serves as the foundational step in active learning pipelines, converting unstructured data into numerical representations that machine learning models can process effectively. The choice of feature extraction method significantly impacts the performance of subsequent AL cycles by determining how well the underlying patterns in the data can be captured and utilized.

Text-Based Feature Extraction Methods

In research domains involving literature analysis, such as systematic reviews of digital food safety tools or medical literature, textual data from titles and abstracts must be converted into vector representations. The following table summarizes prominent feature extraction techniques used in AL applications:

Table 1: Comparison of Feature Extraction Methods in Active Learning

| Method | Type | Key Characteristics | Performance in AL Studies |
|---|---|---|---|
| TF-IDF | Statistical | Term Frequency-Inverse Document Frequency; weights terms by how distinctive they are for a document | Typically outperforms Doc2Vec at finding relevant articles early in screening [5] |
| Doc2Vec | Document Embedding | Learns document-level representations using neural networks | Achieves 97.9% recall while screening only 58.9% of records in food safety reviews [5] |
| Word Embeddings | Distributed Representation | Captures semantic meaning through dense vectors | Frequently used in systematic review software; enables semantic understanding [24] |

Text preprocessing forms an essential prerequisite to feature extraction, involving tokenization, stopword removal, and stemming/lemmatization to reduce noise and dimensionality. Research indicates that eliminating stopwords alone can result in a 35–45% reduction in text size, allowing models to focus on more meaningful content [36].
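
To make the preprocessing and TF-IDF steps concrete, the following is a minimal sketch using only the Python standard library. The stopword list, the `tokenize` and `tfidf` helper names, and the example abstracts are illustrative assumptions, not taken from the cited studies, and the weighting shown (relative term frequency times log(N / document frequency)) is only one common TF-IDF variant.

```python
import math
import re
from collections import Counter

# Tiny illustrative stopword list (an assumption; real pipelines use fuller lists).
STOPWORDS = {"a", "an", "and", "the", "of", "in", "for", "to", "is", "on", "with"}

def tokenize(text):
    """Lowercase, split on non-letters, and drop stopwords."""
    return [t for t in re.findall(r"[a-z]+", text.lower()) if t not in STOPWORDS]

def tfidf(docs):
    """Return one {term: weight} dict per document, weight = tf * log(N / df)."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents each term occurs.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens) or 1  # guard against empty documents
        vectors.append(
            {t: (c / total) * math.log(n_docs / df[t]) for t, c in counts.items()}
        )
    return vectors

# Hypothetical titles standing in for screened records.
abstracts = [
    "Active learning for screening of the literature",
    "Screening of drug combinations in cancer",
    "A survey of food safety tools",
]
vectors = tfidf(abstracts)
```

In this sketch a term that appears in only one record (such as "active") receives a higher weight than one shared across records (such as "screening"), which is the property that lets a classifier separate relevant from irrelevant records.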

Domain-Specific Feature Extraction

Beyond text applications, AL systems in materials science and drug development utilize specialized feature extraction techniques tailored to their data types. These include geometrical features capturing structural relationships, statistical features describing distributions, and texture-based features characterizing surface patterns [37]. The effectiveness of these extraction methods directly influences how efficiently an AL system can identify promising candidates for experimental validation with limited labeling budgets.

Model Training: Algorithms for Intelligent Sample Selection

The core objective of model training in active learning is to develop a predictive system that can not only accurately classify instances but also quantify its own uncertainty to guide the query strategy. Various machine learning approaches have been benchmarked for their effectiveness in AL pipelines across different research domains.

Model Architectures and Performance

In systematic review applications, simulation studies have evaluated numerous classifier and feature extractor combinations to determine optimal configurations. A large-scale simulation study totaling over 29,000 runs demonstrated that in every scenario tested, active learning outperformed random screening, though the extent of improvement varied across datasets, models, and screening progression stages [24].

Table 2: Model Performance in Active Learning Applications

| Model Category | Specific Algorithms | Performance Characteristics | Application Context |
|---|---|---|---|
| Traditional ML | Naive Bayes/TF-IDF, Logistic Regression/TF-IDF | Achieves 99.2% recall while screening only 62.6% of records [5] | Digital food safety literature screening |
| Ensemble Methods | Random Forest, Tree-based ensembles | Effective for uncertainty estimation in regression tasks [16] | Materials property prediction |
| Deep Learning | Neural networks with embedding layers | Shows promise but not widely adopted in systematic review simulations [24] | Complex pattern recognition tasks |

The integration of Automated Machine Learning (AutoML) with active learning has enabled the construction of robust prediction models while substantially reducing the volume of labeled data required. Benchmark studies in materials science have demonstrated that uncertainty-driven and diversity-hybrid strategies clearly outperform random sampling early in the acquisition process [16].

Training Protocols and Experimental Setup

Effective AL implementation requires careful attention to training protocols. The standard pool-based AL framework begins with a small set of labeled samples \(L = \{(x_i, y_i)\}_{i=1}^{l}\) and a large pool of unlabeled data \(U = \{x_i\}_{i=l+1}^{n}\). Through iterative cycles, the model selects the most informative sample \(x^*\) from \(U\), obtains its label \(y^*\) through human annotation, and updates the training set: \(L = L \cup \{(x^*, y^*)\}\) [16].
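
The pool-based cycle can be sketched in a few lines of standard-library Python. Everything specific here is an assumption made for illustration: the one-dimensional feature, the nearest-centroid "model", the `oracle` rule standing in for the human annotator, and the removal of the queried sample from the pool after labeling.

```python
import random

def oracle(x):
    """Stand-in for the human annotator (hypothetical rule: relevant if x >= 5)."""
    return 1 if x >= 5.0 else 0

def fit_centroids(labeled):
    """Nearest-centroid 'model': the mean feature value of each class."""
    centroids = {}
    for cls in (0, 1):
        values = [x for x, y in labeled if y == cls]
        centroids[cls] = sum(values) / len(values)
    return centroids

def uncertainty(x, centroids):
    """Higher (closer to zero) when x is nearly equidistant from both centroids."""
    return -abs(abs(x - centroids[0]) - abs(x - centroids[1]))

def active_learning_loop(labeled, pool, budget):
    """Run `budget` query cycles and return the updated labeled set and pool."""
    labeled, pool = list(labeled), list(pool)
    for _ in range(budget):
        if not pool:
            break
        model = fit_centroids(labeled)                            # retrain on L
        x_star = max(pool, key=lambda x: uncertainty(x, model))   # select x* from U
        y_star = oracle(x_star)                                   # obtain label y*
        labeled.append((x_star, y_star))                          # L = L ∪ {(x*, y*)}
        pool.remove(x_star)                                       # assumed: x* leaves U
    return labeled, pool

rng = random.Random(0)
pool = [rng.uniform(0.0, 10.0) for _ in range(200)]
seed = [(0.5, 0), (9.5, 1)]  # one example per class to bootstrap the model
labeled, remaining = active_learning_loop(seed, pool, budget=10)
```

The seed set contains one example of each class so that both centroids are defined from the first cycle. A real pipeline would swap in a proper classifier and a human labeling step at the two marked points.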

Studies typically employ cross-validation with 5 folds for model validation, and performance is evaluated using metrics such as Mean Absolute Error (MAE) and Coefficient of Determination (\(R^2\)) for regression tasks, or recall and Work Saved over Sampling (WSS) for classification tasks [16]. The initial labeled set size (\(n_{\text{init}}\)) varies by application, with some systematic review simulations starting with just two records (one relevant and one irrelevant) to minimize prior knowledge requirements [24].
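
For the regression metrics, a short worked example may help. The sketch below computes MAE and \(R^2\) from their textbook definitions. The function names and the four data points are made up for illustration and do not come from the cited benchmark.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def r_squared(y_true, y_pred):
    """1 - (residual sum of squares / total sum of squares)."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Hypothetical property values and model predictions.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 4.0]

mae = mean_absolute_error(y_true, y_pred)  # (0.5 + 0 + 0.5 + 0) / 4 = 0.25
r2 = r_squared(y_true, y_pred)             # 1 - 0.5 / 5.0 = 0.9
```

In an AL benchmark these values would be recomputed after each acquisition step, giving a curve of error against the number of labeled samples.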

Query Strategies: Algorithms for Optimal Sample Selection

Query strategies form the decision engine of active learning systems, determining which unlabeled instances would provide the maximum information gain if labeled. These strategies balance the competing objectives of exploration (sampling diverse regions of the feature space) and exploitation (focusing on uncertain regions near the decision boundary).

Fundamental Query Strategies

The most established query strategies in active learning include:

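Uncertainty Sampling: Selects instances where the model's prediction is least confident. Variants include:

- Least Confidence: \(x^*_{LC} = \arg\max_{x\in\mathcal{U}} \left(1 - P_\theta(\hat{y} \mid x)\right)\), where \(\hat{y}\) is the most probable label for \(x\) under the model

- Margin Sampling: \(x^*_{Margin} = \arg\min_{x\in\mathcal{U}} \left(P_\theta(y_1 \mid x) - P_\theta(y_2 \mid x)\right)\), where \(y_1\) and \(y_2\) are the two most probable labels

- Entropy Sampling: \(x^*_{Ent} = \arg\max_{x\in\mathcal{U}} \left(-\sum_{y} P_\theta(y \mid x) \log P_\theta(y \mid x)\right)\) [38]

Query by Committee (QBC): Maintains a committee of diverse models \(\{h_1, \ldots, h_M\}\) and queries points with maximum predictive disagreement, measured by vote entropy: \(x^*_{QBC} = \arg\max_{x\in\mathcal{U}} -\sum_{c} \frac{v_c(x)}{M} \log \frac{v_c(x)}{M}\), where \(v_c(x)\) is the number of committee members voting for class \(c\) [38]

Expected Model Change (EMC): Selects instances expected to induce the largest changes to the current model parameters: \(x^*_{EMC} = \arg\max_{x\in\mathcal{U}} \mathbb{E}_{y} \left\| \nabla_\theta L(\theta; x, y) \right\|\), where \(L(\theta; x, y)\) here denotes the training loss rather than the labeled set [38]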
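
The uncertainty and committee scores above translate directly into code. The following sketch implements least confidence, margin, entropy, and vote entropy for a single instance. The predicted probabilities and record names are invented for illustration, and Expected Model Change is left out because it needs access to model gradients.

```python
import math

def least_confidence(probs):
    """1 - probability of the most likely class (higher = more uncertain)."""
    return 1.0 - max(probs)

def margin(probs):
    """Gap between the two most likely classes (lower = more uncertain)."""
    top, second = sorted(probs, reverse=True)[:2]
    return top - second

def entropy(probs):
    """Shannon entropy of the predictive distribution (higher = more uncertain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def vote_entropy(votes, n_classes):
    """Entropy of committee votes; `votes` holds one predicted class per member."""
    m = len(votes)
    score = 0.0
    for c in range(n_classes):
        frac = votes.count(c) / m
        if frac > 0:
            score -= frac * math.log(frac)
    return score

# Hypothetical class probabilities for three unlabeled records.
pool_probs = {
    "doc_a": [0.95, 0.05],
    "doc_b": [0.55, 0.45],
    "doc_c": [0.70, 0.30],
}
pick_lc = max(pool_probs, key=lambda k: least_confidence(pool_probs[k]))
pick_margin = min(pool_probs, key=lambda k: margin(pool_probs[k]))
pick_entropy = max(pool_probs, key=lambda k: entropy(pool_probs[k]))
```

For binary problems the three uncertainty variants rank instances identically, so all of them select `doc_b` here. They can diverge once there are three or more classes.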

Advanced and Hybrid Approaches

Recent advancements in query strategies have addressed limitations in traditional approaches:

Diversity-Driven Methods: Techniques such as core-set selection and k-center greedy algorithms promote coverage of the feature space to prevent sampling redundancy [38].

Density-Weighted Methods: Combine uncertainty with representativeness using formulations such as \(\mathrm{Score}(x) = \mathrm{Unc}(x) \cdot \rho(x)\), where \(\rho(x)\) represents data density [38].

Knowledge-Driven Active Learning (KAL): Incorporates domain knowledge by ranking unlabeled instances according to how much the model's predictions violate expert-defined rules, improving interpretability and efficiency [38].
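
Two of these ideas are simple enough to sketch with the standard library: k-center greedy selection and a density-weighted score. The Gaussian-kernel density estimate, the `bandwidth` parameter, and the toy 2-D points are assumptions chosen for illustration. The source does not specify how \(\rho(x)\) is computed.

```python
import math

def k_center_greedy(points, labeled_idx, k):
    """Pick k indices, each time taking the point farthest from all chosen centers."""
    # Distance from every point to its nearest already-labeled point.
    min_dist = [min(math.dist(p, points[j]) for j in labeled_idx) for p in points]
    selected = []
    for _ in range(k):
        idx = max(range(len(points)), key=lambda i: min_dist[i])
        selected.append(idx)
        # The new center may now be the nearest one for some points.
        for i, p in enumerate(points):
            min_dist[i] = min(min_dist[i], math.dist(p, points[idx]))
    return selected

def density(points, i, bandwidth=1.0):
    """Average Gaussian similarity of point i to the other points (one possible rho)."""
    others = [p for j, p in enumerate(points) if j != i]
    sims = (
        math.exp(-math.dist(points[i], p) ** 2 / (2 * bandwidth ** 2))
        for p in others
    )
    return sum(sims) / len(others)

def density_weighted_scores(points, uncertainties, bandwidth=1.0):
    """Score(x) = Unc(x) * rho(x) for every point in the pool."""
    return [u * density(points, i, bandwidth) for i, u in enumerate(uncertainties)]

# Toy pool: two tight pairs and one isolated point; index 0 is already labeled.
points = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.1, 5.0), (10.0, 0.0)]
picked = k_center_greedy(points, labeled_idx=[0], k=2)
scores = density_weighted_scores(points, [1.0] * len(points))
```

The two strategies pull in different directions on this toy pool: k-center greedy reaches first for the isolated point to maximize coverage, while density weighting down-ranks that same point as unrepresentative. Hybrid methods try to balance these effects.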

In systematic review applications, these strategies are typically paired with a stopping criterion, such as halting once a consecutive run of records equal to a set percentage of the total (e.g., 5%) has been screened without identifying a relevant article [5].

Comparative Performance Analysis

Rigorous evaluation of active learning components requires standardized benchmarks across diverse domains. The following experimental data illustrates the performance gains achievable through well-designed AL systems.

Efficiency Gains in Systematic Reviews

A large-scale simulation study using the SYNERGY dataset (spanning medicine, psychology, computational sciences, and biology) demonstrated consistent advantages of AL over random screening:

Table 3: Active Learning Performance in Systematic Review Screening

| Model Configuration | Recall Achievement | Work Saved | Stopping Point |
|---|---|---|---|
| Naive Bayes/TF-IDF | 99.2 ± 0.8% | 37.4% of records not screened | After viewing 62.6% of records [5] |
| Logistic Regression/Doc2Vec | 97.9 ± 2.7% | 41.1% of records not screened | After viewing 58.9% of records [5] |
| Logistic Regression/TF-IDF | 98.8 ± 0.4% | 42.4% of records not screened | After viewing 57.6% of records [5] |

The study found that performance gains varied across datasets, models, and screening progression, ranging from considerable to near-flawless results. All models outperformed random screening at any recall level, demonstrating the consistent value of AL approaches [24].

Performance in Materials Science Regression

A comprehensive benchmark of 17 active learning strategies with AutoML for small-sample regression in materials science revealed:

- Early in the acquisition process, uncertainty-driven (LCMD, Tree-based-R) and diversity-hybrid (RD-GS) strategies clearly outperformed geometry-only heuristics (GSx, EGAL) and random baseline.

- These approaches selected more informative samples, improving model accuracy during the critical data-scarce phase.

- As the labeled set grew, the performance gap narrowed and all methods eventually converged, indicating diminishing returns from AL under AutoML with sufficient data [16].

Experimental Protocols and Workflows

Implementing effective active learning systems requires structured experimental protocols that account for domain-specific constraints and evaluation metrics.

Standard AL Workflow for Systematic Reviews

The typical workflow for AL-assisted systematic review screening follows this process:

Diagram 1: Active Learning Workflow for Systematic Reviews

This workflow incorporates key decision points including:

- Prior Knowledge Selection: Starting with known relevant and irrelevant documents to bootstrap the AL process

- Iterative Screening Cycles: Typically reviewing records in batches of one to N documents per cycle, with the model retrained between cycles

- Stopping Criteria: Usually based on finding no new relevant documents within a defined interval (e.g., 5% of total records screened consecutively without a hit) [5]
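
The consecutive-irrelevant stopping rule can be expressed as a small function. This is a sketch under stated assumptions: the function name, the rounding of the window with `math.ceil`, and the example screening order are all hypothetical. Only the 5% figure comes from the text.

```python
import math

def stopping_index(labels_in_screening_order, total_records, percent=5):
    """Return how many records are screened when the rule fires, or None if never.

    The rule fires after `percent`% of the total records have been screened
    consecutively without a relevant one (1 = relevant, 0 = irrelevant).
    """
    window = math.ceil(total_records * percent / 100)
    consecutive_irrelevant = 0
    for screened, is_relevant in enumerate(labels_in_screening_order, start=1):
        consecutive_irrelevant = 0 if is_relevant else consecutive_irrelevant + 1
        if consecutive_irrelevant >= window:
            return screened
    return None

# Hypothetical run: 100 records, relevant ones surface early in the ranking.
order = [1, 1, 0, 1, 0, 0, 1] + [0] * 93
stop_at = stopping_index(order, total_records=len(order))
```

In this example the last relevant record appears at position 7 and the window is 5 records, so screening stops after record 12 with all four relevant records found. A run whose relevant records are spread more thinly could stop too early, which is why the window size is a trade-off between recall and workload.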

Benchmarking Protocol for AL Strategies

Comprehensive evaluation of AL components follows standardized protocols:

Diagram 2: AL Benchmarking Protocol

This protocol emphasizes:

- Cross-Validation: Typically 5-fold cross-validation for robust performance estimation [16]

- Multiple Replications: Running simulations with different random seeds to account for variability

- Comparison Metrics: Evaluating using domain-appropriate metrics including Work Saved over Sampling (WSS) at target recall levels (e.g., WSS@95%), Mean Absolute Error (MAE) for regression, and Area Under the Label Complexity Curve (AULC) [38]
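
As an illustration of the replication and WSS steps, the sketch below compares one hypothetical AL screening order against random orders drawn with several seeds. It assumes the common definition WSS@R = (N - k)/N - (1 - R), where k is the number of records screened when recall R is first reached. The source does not spell out the formula, and the screening order is invented.

```python
import math
import random

def wss_at_recall(labels_in_screening_order, target_recall=0.95):
    """Work Saved over Sampling at the first point where target recall is reached."""
    n_total = len(labels_in_screening_order)
    n_relevant = sum(labels_in_screening_order)
    needed = math.ceil(target_recall * n_relevant)
    found = 0
    for screened, is_relevant in enumerate(labels_in_screening_order, start=1):
        found += is_relevant
        if found >= needed:
            return (n_total - screened) / n_total - (1.0 - target_recall)
    raise ValueError("target recall never reached")

# Hypothetical AL order: 10 relevant records, all within the first 28 of 100.
al_order = [1, 0, 0] * 10 + [0] * 70
wss_al = wss_at_recall(al_order)

# Random-screening baseline, replicated over several seeds.
random_wss = []
for seed in range(5):
    shuffled = list(al_order)
    random.Random(seed).shuffle(shuffled)
    random_wss.append(wss_at_recall(shuffled))
mean_random_wss = sum(random_wss) / len(random_wss)
```

Here the AL order reaches the target after 28 records, giving WSS@95% of 0.72 - 0.05 = 0.67, while the random baseline is expected to sit far lower because the last relevant record usually appears late in a shuffled order.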

The Researcher's Toolkit: Essential Components for AL Implementation

Successful implementation of active learning systems requires careful selection of computational tools and methodological components. The following table outlines key "research reagent solutions" for building effective AL pipelines:

Table 4: Essential Research Reagents for Active Learning Systems

| Component | Representative Options | Function | Implementation Considerations |
|---|---|---|---|
| Feature Extractors | TF-IDF, Doc2Vec, Word Embeddings, Domain-Specific Features | Transform raw data into machine-readable numerical representations | TF-IDF often outperforms embeddings early in screening; domain-specific features may be needed for specialized data [5] [36] |
| Classification Models | Logistic Regression, Random Forest, Support Vector Machines, Neural Networks | Make predictions and quantify uncertainty for query strategy | Simpler models often suffice; Logistic Regression with TF-IDF is a strong baseline for text [24] |
| Query Strategies | Uncertainty Sampling, QBC, Diversity Methods, Hybrid Approaches | Select the most informative unlabeled instances for labeling | Uncertainty sampling provides strong baselines; hybrid methods address redundancy [38] |
| Benchmarking Tools | ASReview, ALdataset, OpenAL, CDALBench | Standardized evaluation and comparison of AL strategies | Essential for reproducible research; ASReview enables large-scale simulations [24] |