Beyond Gradient Descent: Implementing Random Search for Efficient Chemical Machine Learning

This article provides a comprehensive guide for researchers and drug development professionals on implementing random search in chemical machine learning applications.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing random search in chemical machine learning applications. It explores the foundational principles that make random search a powerful, computationally inexpensive tool for navigating vast chemical spaces, from hyperparameter tuning to reaction optimization. We detail practical methodologies, including integration with active learning and tools like LabMate.ML, and address key challenges such as the curse of dimensionality. The content offers a critical validation against other optimization methods, highlighting scenarios where random search outperforms more complex algorithms and where hybrid approaches excel. Finally, we synthesize key takeaways and future directions for deploying these strategies to accelerate drug discovery and materials development.

Why Random Search? Foundations for Navigating Chemical Space

The exploration of chemical space, estimated to contain over 10⁶⁰ potential drug-like molecules, represents one of the most formidable search challenges in modern science. Traditional brute-force computational methods are often computationally intractable for navigating these vast, high-dimensional spaces. Probabilistic sampling has emerged as a core principle enabling efficient exploration by strategically guiding the search toward regions of high promise while quantifying uncertainty inherent in predictive models. This paradigm shift from deterministic to probabilistic frameworks allows researchers to balance the exploration of novel chemical territories with the exploitation of known promising regions, thereby dramatically accelerating molecular discovery and optimization.

In chemical machine learning (ML), probabilistic sampling involves using probability distributions to represent beliefs about molecular properties, reaction outcomes, or structural stability. These distributions are iteratively updated as new data is acquired, allowing the search algorithm to intelligently prioritize which experiments or simulations to perform next. This approach is particularly valuable in drug discovery and materials science, where the cost of wet-lab experiments or high-fidelity simulations remains high, making efficient in-silico screening paramount.

Quantitative Performance of Probabilistic Methods

The adoption of probabilistic methods is driven by their demonstrated superior performance in key metrics such as prediction accuracy, data efficiency, and computational cost reduction compared to traditional approaches. The tables below summarize quantitative findings from recent studies.

Table 1: Performance Comparison of Probabilistic Models vs. Traditional Methods

| Model / Method | Test Case | Key Performance Metric | Result | Reference |
|---|---|---|---|---|
| Gaussian Process Regression (GPR) | H₂/air auto-ignition chemistry | R²test (vs. Direct Integration) | 0.997 | [1] |
| Gaussian Process Autoregressive Regression (GPAR) | H₂/air auto-ignition chemistry | R²test (vs. Direct Integration) | 0.998 | [1] |
| Artificial Neural Network (ANN) | H₂/air auto-ignition chemistry | R²test (vs. Direct Integration) | 0.988 | [1] |
| CSearch (Global Optimization) | Molecular docking for 4 target receptors | Computational Efficiency (vs. Virtual Library Screening) | 300-400x more efficient | [2] |
| Active Probabilistic Drug Discovery (APDD) | Lead molecule discovery on DUD-E, LIT-PCBA | Cost Reduction in Wet Experiments | ~70% reduction | [3] |
| Active Probabilistic Drug Discovery (APDD) | Lead molecule discovery on DUD-E, LIT-PCBA | Cost Reduction in Computational Docking | ~80% reduction | [3] |

Table 2: Inference Speed Comparison for Chemical Integrators

| Model | Speed-up Factor (vs. 0D Reactor Model) | Uncertainty Quantification | Key Strength |
|---|---|---|---|
| Gaussian Process (GPR/GPAR) | 1.9 - 2.1 | Native | High data efficiency & accuracy |
| Artificial Neural Network (ANN) | Up to 3.0 | Not Native | Pure inference speed |

Detailed Experimental Protocols

This section provides detailed, actionable protocols for implementing key probabilistic sampling methods as described in recent literature.

Protocol: Chemical Space Exploration with CSearch

Objective: To efficiently discover molecules with optimized binding affinity for a specific protein target using the CSearch global optimization algorithm [2].

Materials & Setup:

- Objective Function: A pre-trained Graph Neural Network (GNN) model that approximates docking energies for the target receptor.

- Fragment Database: A curated set of ~190,000 non-redundant molecular fragments (e.g., from Enamine Fragment Collection).

- Initial Bank: 60 diverse, drug-like molecules (e.g., curated from DrugspaceX).

- Similarity Metric: Tanimoto similarity based on Morgan Fingerprints (radius 2, 2048 bits).

Procedure:

- Initialization: Select the initial bank of 60 molecules with the best objective function values from the curated pool. Calculate the initial diversity radius (Rcut) as half the average pairwise distance between all initial bank molecules.

- CSA Cycle:

  a. Seed Selection: Randomly select six chemicals from the current bank that have not been used as seeds in this cycle.

  b. Trial Generation (Virtual Synthesis):

     - For each seed, perform virtual synthesis using BRICS rules [2].

     - Generate up to 60 new trial molecules by combining a fragment from the seed molecule with a fragment from a randomly selected initial bank molecule.

     - Generate up to 60 additional trial molecules by combining a fragment from the seed with a fragment from a randomly selected set of 100 fragments from the fragment database.

     - Prioritize fragment selection based on frequency in PubChem to improve synthetic accessibility.

  c. Bank Update: For each trial chemical:

     - If its objective value is better than that of the nearest bank chemical within Rcut, it replaces that bank chemical.

     - If it is farther than Rcut from all bank members but has a better objective value than the worst in the bank, it replaces the worst bank chemical.

     - Otherwise, it is discarded.

- Annealing: After all bank chemicals have been used as seeds, reduce Rcut by a factor (e.g., 0.4^0.05) to gradually focus the search.

- Termination: Repeat the CSA cycle for a fixed number of iterations (e.g., 50 cycles) or until convergence. The final bank contains the optimized molecules.
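The bank update and annealing rules above can be sketched in a few lines. This is a minimal illustration, not the CSearch implementation from [2]: the function names `update_bank` and `anneal_radius` are hypothetical, molecules are stood in for by plain numbers, and the sketch assumes that lower objective values are better (as with docking energies).

```python
from typing import Callable, List, TypeVar

M = TypeVar("M")  # any molecule representation (toy example below uses floats)


def update_bank(bank: List[M], trial: M,
                objective: Callable[[M], float],
                distance: Callable[[M, M], float],
                r_cut: float) -> bool:
    """Apply the three-way bank update rule to one trial; returns True if accepted.

    Assumption: lower objective values are better.
    """
    nearest = min(range(len(bank)), key=lambda i: distance(trial, bank[i]))
    trial_score = objective(trial)
    if distance(trial, bank[nearest]) <= r_cut:
        # Trial lies inside the diversity radius of its nearest bank member:
        # it may only replace that member.
        if trial_score < objective(bank[nearest]):
            bank[nearest] = trial
            return True
        return False
    # Trial is farther than r_cut from every bank member:
    # it may replace the worst member of the bank.
    worst = max(range(len(bank)), key=lambda i: objective(bank[i]))
    if trial_score < objective(bank[worst]):
        bank[worst] = trial
        return True
    return False


def anneal_radius(r_cut: float, n_cycles: int, factor: float = 0.4 ** 0.05) -> float:
    """Shrink the diversity radius by `factor` once per completed cycle."""
    return r_cut * factor ** n_cycles


# Toy usage: "molecules" are numbers, the objective has its minimum at 3.0.
toy_objective = lambda m: (m - 3.0) ** 2
toy_distance = lambda a, b: abs(a - b)
toy_bank = [0.0, 10.0]
update_bank(toy_bank, 1.0, toy_objective, toy_distance, r_cut=2.0)
```

With the example factor of 0.4^0.05, the radius falls to 40% of its starting value after 20 cycles, so the search moves gradually from diversity-preserving to locally focused replacement.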

Protocol: Reactivity Discovery with the Bayesian Oracle

Objective: To autonomously interpret chemical reactivity data from a robotic platform and discover novel reactions using a probabilistic model [4].

Materials & Setup:

- Robotic Chemistry Platform: A system (e.g., Chemputer) capable of automated liquid handling, reagent dispensing, and reaction execution.

- Online Analytics: HPLC, NMR, and/or MS for real-time reaction analysis.

- Probabilistic Model: A Bayesian model where compounds are assigned latent "property" variables (0 to 1) and reactivity is modeled as a joint probability distribution.

Procedure:

- Theory Encoding: Encode the chemist's initial understanding of reactivity as prior probability distributions within the Bayesian model. This makes initial biases explicit and quantifiable.

- Experiment Execution & Observation:

- The robotic platform performs combinatorial reactions from a set of starting materials.

- Online analytical instruments provide observations (e.g., evidence of product formation).

- Probabilistic Inference:

- Use Markov Chain Monte Carlo (MCMC) or variational inference to update the posterior distributions of the model parameters based on the new observational data.

- Anomaly Detection & Query:

- The Oracle calculates the likelihood of each experimental outcome. Outcomes with very low likelihood are flagged as "surprising" or anomalous.

- The model can be queried to predict the outcomes of untried experiments.

- Expert Intervention & Model Update:

- A chemist validates the shortlist of unexpected reactivity, potentially isolating novel products.

- Based on this validation, the expert can refine the theory (e.g., by defining new abstract properties), and the probabilistic model is updated instantly.

- Iteration: Steps 2-5 are repeated, continuously refining the model's understanding of the chemical space and guiding the discovery process.
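The "encode prior, observe, update, flag surprises" loop can be illustrated with a much simpler model than the one in [4]. The sketch below swaps in a conjugate Beta-Bernoulli belief about whether a single class of reagent pairs reacts, in place of the joint model with latent property variables and MCMC inference described above. The class `BetaBelief`, the function `flag_surprises`, and the 0.1 threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class BetaBelief:
    """Beta(alpha, beta) belief about the probability that a reaction occurs."""
    alpha: float = 1.0
    beta: float = 1.0

    def predictive(self, reacted: bool) -> float:
        """Probability the current belief assigns to the observed outcome."""
        p_react = self.alpha / (self.alpha + self.beta)
        return p_react if reacted else 1.0 - p_react

    def update(self, reacted: bool) -> None:
        """Conjugate posterior update after one observation."""
        if reacted:
            self.alpha += 1.0
        else:
            self.beta += 1.0


def flag_surprises(belief: BetaBelief, outcomes: Sequence[bool],
                   threshold: float = 0.1) -> List[int]:
    """Return indices of outcomes whose predictive probability was below threshold."""
    surprising = []
    for i, reacted in enumerate(outcomes):
        if belief.predictive(reacted) < threshold:
            surprising.append(i)   # shortlist for expert validation
        belief.update(reacted)     # the belief is refined either way
    return surprising


# The chemist's prior says this class of reagent pairs almost never reacts.
prior = BetaBelief(alpha=1.0, beta=19.0)
shortlist = flag_surprises(prior, [False, False, True, False])
```

Here the third experiment is shortlisted because the prior assigned it a probability below the threshold. After the update, the belief has shifted, so a repeat of the same outcome would be less surprising.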

Protocol: Enhanced Sampling for Transition State Characterization

Objective: To achieve a probabilistic characterization of transition states in enzymatic reactions using a machine learning-based enhanced sampling scheme [5].

Materials & Setup:

- System Setup: A solvated molecular system of the enzyme and substrate, modeled with a suitable molecular mechanics force field or machine learning potential.

- Enhanced Sampling Software: A molecular dynamics package (e.g., PLUMED) that supports the implementation of bias potentials and machine-learned collective variables (CVs).

Procedure:

- Committor Analysis & ML-CV Training:

- Run short simulations from the putative transition state region to determine the committor probability for each configuration (i.e., the probability to reach the product state before the reactant state).

- Train a neural network to approximate the committor function, using structural descriptors (e.g., distances, angles) of the catalytic pocket as input.

- Biased Simulation: Use the machine-learned committor as the CV in an enhanced sampling method (e.g., Metadynamics or Variational Free Energy Dynamics) to drive and accelerate the sampling of the transition state ensemble.

- Ensemble Analysis: Analyze the sampled configurations to characterize the transition state ensemble statistically, identifying key structural features and interactions (e.g., the role of specific water molecules).

- Free Energy Calculation: Reconstruct the free energy landscape along the learned CV to quantify the stability of different transition states and map out the reaction mechanism.
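The committor estimate in the first step is a simple counting exercise: launch many short trajectories from one configuration and record the fraction that reach the product state first. The toy sketch below assumes an unbiased one-dimensional random walk in place of molecular dynamics; the function name and the lattice positions for the reactant and product states are hypothetical.

```python
import random


def estimate_committor(start: int, n_shots: int, rng: random.Random,
                       reactant: int = 0, product: int = 10) -> float:
    """Fraction of trajectories from `start` that hit `product` before `reactant`."""
    hits = 0
    for _ in range(n_shots):
        pos = start
        # Propagate until the walker is absorbed by one of the two states.
        while reactant < pos < product:
            pos += rng.choice((-1, 1))
        hits += pos >= product
    return hits / n_shots


rng = random.Random(0)
q_mid = estimate_committor(start=5, n_shots=2000, rng=rng)
```

For this toy walk the exact committor is start / product, so the midpoint should come out close to 0.5, the value that defines the transition state ensemble. In the protocol above, estimates of this kind become the training labels for the neural-network committor model.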

Visualizing Probabilistic Sampling Workflows

The following diagrams, generated with Graphviz, illustrate the logical flow of the core protocols described above.

Diagram 1: CSearch Global Optimization

Diagram 2: Bayesian Oracle Workflow

Table 3: Essential Research Reagents & Computational Tools

| Item / Resource | Type | Function / Application | Example / Source |
|---|---|---|---|
| BRICS Rules | Reaction Rules | Defines 16 types of reaction points for fragment-based virtual synthesis, ensuring chemical validity and synthesizability of generated molecules. | RDKit [2] |
| Morgan Fingerprints | Molecular Descriptor | A circular fingerprint representing a molecule's structure; used to calculate molecular similarity (Tanimoto) and diversity in chemical space. | RDKit [2] |
| Gaussian Process (GP) Models | Probabilistic ML Model | Used as a surrogate model or direct predictor; provides uncertainty quantification for each prediction, crucial for data-efficient optimization. | [1] [6] |
| Graph Neural Network (GNN) | Machine Learning Model | Learns from graph-structured data (atoms as nodes, bonds as edges); excels at predicting molecular properties like docking scores. | [2] |
| Bayesian Optimization | Hyperparameter Tuning | A sample-efficient global optimization strategy for black-box functions; ideal for tuning model hyperparameters or guiding experiments. | [7] |
| Committor Function | Analysis / ML Target | A key quantity in rare-event theory; its machine-learned approximation serves as an optimal collective variable for enhanced sampling. | [5] |
| Fragment Database | Chemical Library | A curated collection of small molecular building blocks used for in-silico compound assembly via virtual synthesis. | Enamine Fragment Collection [2] |
| Markov Chain Monte Carlo (MCMC) | Inference Algorithm | A class of algorithms for sampling from complex probability distributions, used for Bayesian inference in probabilistic models. | [4] |

Random search (RS) represents a family of powerful, derivative-free optimization methods ideally suited for complex chemical research problems where the relationship between parameters and outcomes is unknown, discontinuous, or difficult to model. This Application Note elucidates the mathematical foundations of random search, demonstrating its capacity to identify optimal experimental conditions by evaluating only a minimal fraction (0.03%–0.04%) of the possible search space [8]. We provide detailed protocols for implementing RS in chemical machine learning (ML) applications, including drug discovery and reaction optimization. Structured data presentations and visual workflows guide researchers in deploying RS to efficiently navigate high-dimensional experimental landscapes, significantly accelerating materials development and synthetic chemistry pipelines while minimizing resource expenditure.

In chemical research and development, optimizing reaction conditions, molecular properties, and synthesis parameters traditionally depends on extensive domain expertise and laborious, systematic exploration of variable space. The complexity of these optimization landscapes, often characterized by numerous categorical and continuous parameters, presents a significant bottleneck. Random search algorithms offer a mathematically grounded alternative, capable of identifying high-performing experimental conditions with minimal data requirements [8] [9].

The fundamental power of random search lies in its probabilistic guarantees. If promising regions make up a fraction p of the search space, then N independent, uniformly sampled trials all miss them with probability (1 - p)^N, which shrinks exponentially with N. For p = 5%, 60 random configurations are enough to find at least one good configuration with better than 95% probability (1 - 0.95^60 ≈ 0.954) [9]. This note details practical implementations of RS that leverage these principles for chemical ML, providing actionable protocols and analytical tools for research scientists.
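The guarantee is easy to check numerically. The short sketch below assumes independent, uniform sampling; the helper names `prob_at_least_one_hit` and `min_trials` are illustrative, not from the cited sources.

```python
import math


def prob_at_least_one_hit(good_fraction: float, n_trials: int) -> float:
    """P(at least one of n uniform random trials lands in the good region)."""
    return 1.0 - (1.0 - good_fraction) ** n_trials


def min_trials(good_fraction: float, confidence: float = 0.95) -> int:
    """Smallest n such that (1 - good_fraction)^n <= 1 - confidence."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - good_fraction))


p60 = prob_at_least_one_hit(0.05, 60)   # about 0.954
n_needed = min_trials(0.05)             # 59
```

The 60-trial figure quoted above is therefore a round number: 59 trials already clear the 95% threshold when 5% of the space is good.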

Mathematical Foundations of Random Search

Core Algorithm and Variants

Random search operates without gradient information, making it a direct-search, derivative-free method suitable for non-continuous or noisy functions [9]. The foundational algorithm proceeds as follows:

- Initialize with a random position x in the search space.

- Repeat until a termination criterion is met (e.g., iteration count or fitness threshold):

  - Sample a new position y from the hypersphere of a given radius surrounding the current position x.

  - Evaluate the cost function f(y).

  - Update: if f(y) < f(x), then set x = y.
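The loop above translates almost line for line into code. The sketch below is one minimal reading of it for a continuous search space, with a fixed iteration budget as the termination criterion. Drawing a normalized Gaussian direction is a standard way to sample uniformly on a hypersphere; the function names are illustrative.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple


def sample_on_hypersphere(center: Sequence[float], radius: float,
                          rng: random.Random) -> List[float]:
    """Draw a point uniformly from the sphere of given radius around center."""
    direction = [rng.gauss(0.0, 1.0) for _ in center]
    norm = math.sqrt(sum(d * d for d in direction))
    return [c + radius * d / norm for c, d in zip(center, direction)]


def fixed_step_random_search(cost: Callable[[Sequence[float]], float],
                             x0: Sequence[float], radius: float, n_iter: int,
                             rng: random.Random) -> Tuple[List[float], float]:
    """Keep a candidate only if it lowers the cost; no gradients are used."""
    x, fx = list(x0), cost(x0)
    for _ in range(n_iter):
        y = sample_on_hypersphere(x, radius, rng)
        fy = cost(y)
        if fy < fx:            # accept improvements only
            x, fx = y, fy
    return x, fx


# Toy usage: minimize a quadratic bowl starting from (2.0, -1.5).
sphere = lambda v: sum(c * c for c in v)
best_x, best_f = fixed_step_random_search(sphere, [2.0, -1.5], 0.1, 2000,
                                          random.Random(0))
```

Because the step length is fixed, the search stalls once the current point is closer to the optimum than about half the radius. That limitation is what the adaptive variants address.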

Several structured variants enhance basic RS performance [9]:

- Fixed Step Size RS (FSSRS): Samples from a hypersphere of fixed radius.

- Adaptive Step Size RS (ASSRS): Heuristically adjusts the hypersphere radius based on improvement history.

- Optimized Relative Step Size RS (ORSSRS): Approximates optimal step size via exponential decrease.
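To illustrate why adapting the radius helps, the sketch below uses a simple success-based heuristic: grow the step after an improvement and shrink it after a failure. This is an assumption made for illustration and is not the published ASSRS or ORSSRS update rule. The `grow` and `shrink` factors are arbitrary choices.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple


def adaptive_random_search(cost: Callable[[Sequence[float]], float],
                           x0: Sequence[float], radius: float, n_iter: int,
                           rng: random.Random, grow: float = 1.5,
                           shrink: float = 0.9) -> Tuple[List[float], float, float]:
    """Random search whose step radius follows the recent success history."""
    x, fx = list(x0), cost(x0)
    for _ in range(n_iter):
        # Uniform direction on the hypersphere via a normalized Gaussian draw.
        direction = [rng.gauss(0.0, 1.0) for _ in x]
        norm = math.sqrt(sum(d * d for d in direction))
        y = [xi + radius * d / norm for xi, d in zip(x, direction)]
        fy = cost(y)
        if fy < fx:
            x, fx = y, fy
            radius *= grow      # success: try bolder steps
        else:
            radius *= shrink    # failure: search closer to the current point
    return x, fx, radius


sphere = lambda v: sum(c * c for c in v)
x_best, f_best, final_radius = adaptive_random_search(
    sphere, [2.0, -1.5], 1.0, 500, random.Random(1))
```

On a smooth toy objective such as this one, the radius tracks the distance to the optimum, so the search keeps making progress instead of stalling at a resolution set by the initial step size.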

Quantitative Efficacy

Table 1: Probability of Locating Optimal Conditions with Random Search

| Fraction of Search Space Occupied by Good Conditions | Number of Random Trials | Probability of Finding ≥1 Good Configuration |
|---|---|---|
| 5% | 60 | >95% [9] |
| 1% | 300 | >95% (Calculated) |
| 10% | 29 | >95% (Calculated) |

The efficacy of RS is demonstrated in real-world chemical optimization. LabMate.ML, an adaptive ML tool integrating RS, identifies optimal conditions by sampling merely 0.03%–0.04% of the entire search space [8]. This minimal data requirement enables rapid convergence to high-performance reaction conditions for diverse chemistries, outperforming human experts in double-blind competitions [8].

Applications in Chemical Research

Reaction Condition Optimization

RS algorithms efficiently navigate complex, multi-parameter spaces to identify optimal reaction conditions. In proof-of-concept studies, LabMate.ML simultaneously optimized real-valued (e.g., temperature, concentration) and categorical (e.g., solvent, catalyst) parameters for distinctive small-molecule, glyco-, and protein chemistries [8]. The method formalizes chemical intuition autonomously, providing an interpretable framework for informed, automated experiment selection.

Compound Target and Mode-of-Action Identification

In drug discovery, identifying a compound's primary targets and mechanism of action is crucial. RS-based strategies have been employed to analyze whole-genome expression data. However, advanced algorithms like CutTree now significantly outperform exhaustive (random) library search strategies, particularly when multiple Primary Affected Genes (PAGs) are involved [10]. For example, while an exhaustive random search struggles with the combinatorial explosion of searching >10^12 combinations, CutTree successfully identified 4 out of 5 known PAGs in the yeast galactose-response pathway from just 17 experimental perturbations [10].

Predictive Modeling of Molecular Properties

Machine learning models for predicting molecular properties, such as the absorption wavelengths of microbial rhodopsins, rely on data-driven approaches. The construction of these models can benefit from efficient search strategies to explore the vast space of possible amino acid sequences and their relationships to optical properties [11]. RS provides a foundational method for initial exploration and hyperparameter tuning in such ML pipelines.

Experimental Protocols

Protocol 1: Optimizing Chemical Reactions with LabMate.ML

Objective: Identify goal-oriented optimal reaction conditions with minimal experiments.

Materials:

- Reaction Components: Substrates, reagents, solvents, catalysts.

- Lab Equipment: Suitable reaction vessels (e.g., vial or microplate), temperature control, agitation.

- Analysis Method: HPLC, GC, NMR, or other quantitative analysis.

- Software: LabMate.ML or custom RS script [8].

Table 2: Research Reagent Solutions for Reaction Optimization

| Reagent Type | Example Options | Function in Optimization |
|---|---|---|
| Solvent | DMF, THF, MeCN, Toluene, Water | Screens solvent effects on reaction rate, yield, and selectivity. |
| Catalyst | Pd(PPh₃)₄, RuPhos, BrettPhos | Varies ligand and metal catalyst to find optimal combination. |
| Base | K₂CO₃, Cs₂CO₃, Et₃N, NaO-t-Bu | Explores base impact on reaction efficiency. |
| Additive | Salts, Crown ethers, Redox agents | Modifies reaction environment to improve outcomes. |

Procedure:

- Define Search Space: List all parameters to optimize (e.g., solvent, catalyst, temperature, time) and their respective ranges or categories.

- Formulate Objective Function: Define a quantitative metric for success (e.g., reaction yield, selectivity, purity).

- Initial Random Sampling: Use the RS algorithm to select an initial set of 0.03%–0.04% of the possible experimental conditions from the full search space [8].

- Execute and Analyze: Run the selected experiments and measure the objective function for each condition.

- Adaptive Iteration: Feed results into the adaptive ML algorithm. Allow LabMate.ML to propose the next set of most informative experiments based on previous outcomes.

- Termination: Repeat step 5 until performance plateaus or the optimal condition is identified with sufficient confidence.
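For readers writing a custom RS script, the first and third steps (defining the search space and drawing the initial random conditions) might look like the sketch below. It is not LabMate.ML code. The dictionary layout, the function names, and the temperature and time ranges are illustrative assumptions; the categorical options are taken from Table 2.

```python
import random
from typing import Dict, List, Union

# Categorical parameters are lists of options; continuous ones are (low, high) tuples.
SearchSpace = Dict[str, Union[list, tuple]]

search_space: SearchSpace = {
    "solvent": ["DMF", "THF", "MeCN", "Toluene", "Water"],
    "base": ["K2CO3", "Cs2CO3", "Et3N", "NaO-t-Bu"],
    "temperature_C": (25.0, 120.0),   # hypothetical range
    "time_h": (0.5, 24.0),            # hypothetical range
}


def sample_condition(space: SearchSpace, rng: random.Random) -> dict:
    """Draw one experimental condition uniformly at random from the space."""
    condition = {}
    for name, spec in space.items():
        if isinstance(spec, tuple):
            low, high = spec
            condition[name] = rng.uniform(low, high)
        else:
            condition[name] = rng.choice(spec)
    return condition


def sample_initial_batch(space: SearchSpace, n: int, rng: random.Random) -> List[dict]:
    """Initial set of n random experiments to run before adaptive iteration."""
    return [sample_condition(space, rng) for _ in range(n)]


initial_experiments = sample_initial_batch(search_space, 10, random.Random(42))
```

Each dictionary is one experiment to run and score with the objective function. The measured results then feed the adaptive iteration step.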

Protocol 2: Data-Driven Prediction of Rhodopsin Absorption Wavelengths

Objective: Build a model to predict the absorption wavelength (λmax) of microbial rhodopsin variants based on amino acid sequence.

Materials:

- Database: Curated dataset of rhodopsin amino acid sequences and corresponding experimentally measured λmax [11].

- Computational Tools: ML framework (e.g., Python with Scikit-learn, TensorFlow), alignment software (e.g., ClustalW).

Procedure:

- Data Curation: Compile a database of wild-type and mutant rhodopsins with aligned sequences and measured λmax. The database used in the cited study contained 796 proteins [11].

- Feature Representation: Convert aligned amino acid sequences into a binary feature vector (e.g., one-hot encoding) representing the presence/absence of each amino acid at each position [11].

- Model Training with Sparse Learning: Apply a group-wise sparse learning ML method to the training set. This technique identifies "active residues" most critical to colour tuning by forcing the model to use only a sparse subset of all sequence features [11].

- Model Interpretation & Prediction: Use the trained model to:

- Predict λmax for new, uncharacterized rhodopsin sequences.

- Identify active residues by examining the model coefficients; residues with non-zero coefficients are deemed important for colour tuning.

- Quantify mutational effects based on the coefficient values, indicating the direction (red- or blue-shift) and magnitude of effect for specific amino acid changes [11].
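The binary feature representation in the second step can be sketched as follows. This is an illustrative one-hot encoder, not the code used in [11]; treating alignment gaps as all-zero blocks is an assumption.

```python
from typing import List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def one_hot_encode(aligned_seq: str, alphabet: str = AMINO_ACIDS) -> List[int]:
    """Encode an aligned sequence as a flat 0/1 vector, one block per position.

    Characters outside the alphabet (e.g. the gap symbol '-') give an all-zero block.
    """
    vector: List[int] = []
    for residue in aligned_seq:
        vector.extend(1 if residue == aa else 0 for aa in alphabet)
    return vector


features = one_hot_encode("AC-")   # 3 positions x 20 residues = 60 features
```

Because every feature corresponds to one residue at one alignment position, a non-zero model coefficient can be read directly as "this amino acid at this position shifts λmax", which is what makes the sparse model interpretable.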

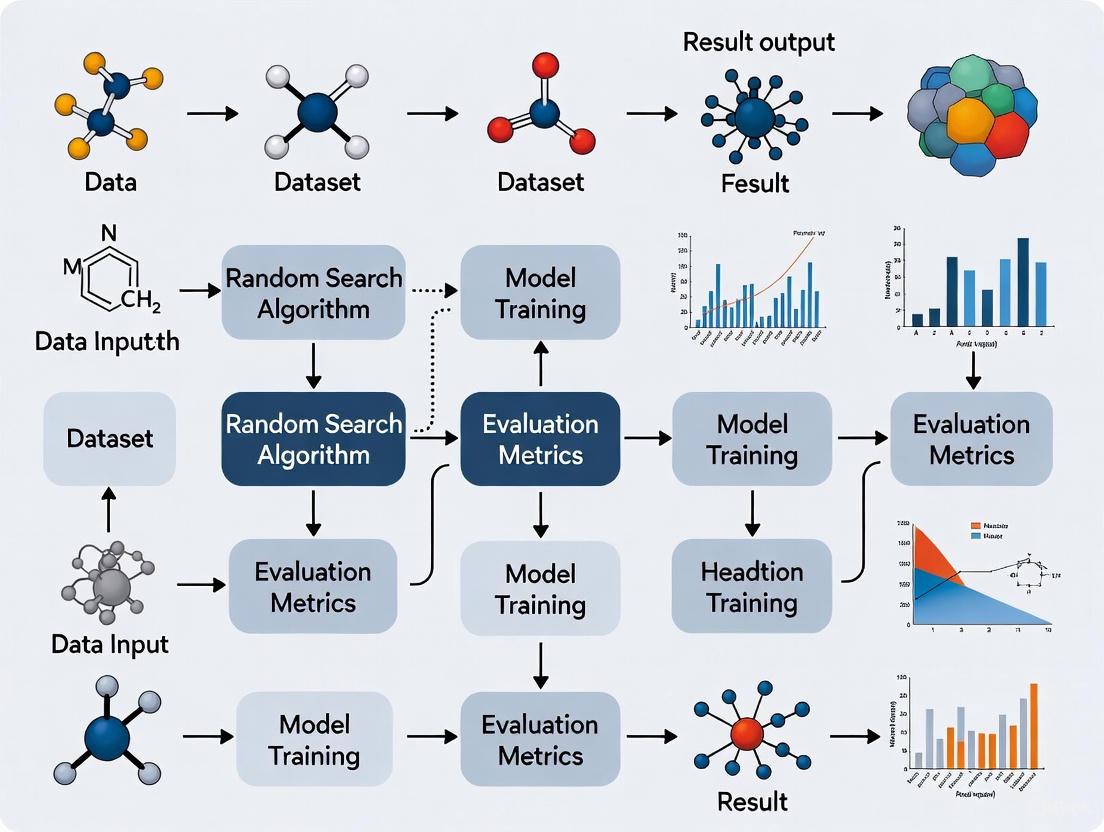

Workflow Visualization

Figure 1: Random Search Optimization Workflow. This diagram outlines the iterative process of using random search for chemical optimization, from problem definition to identifying optimal conditions.

Figure 2: Mathematical Guarantees of Random Search. This diagram visualizes the probability framework that ensures random search effectiveness with minimal experiments.

The chemical space of possible drug-like small organic molecules is estimated to exceed 10^60 compounds, a scale that exceeds the number of stars in the observable universe by many orders of magnitude [12]. This vastness presents a fundamental challenge to modern computational drug discovery: how to efficiently navigate this near-infinite space to identify viable candidate molecules. In stark contrast to this theoretical immensity, the practically accessible chemical space is significantly constrained. Make-on-demand chemical libraries, while substantial, currently contain >70 billion readily available molecules, and only approximately 13 million compounds are available in-stock from chemical suppliers [12]. This disparity of over 50 orders of magnitude between the possible and the readily available underscores the critical need for intelligent search strategies. Random search methods, when implemented with strategic biasing and machine learning acceleration, provide a powerful framework for exploring this intractable space, enabling the discovery of novel molecular scaffolds and structures that might otherwise remain inaccessible.

Core Protocols for Random Search in Chemical Space

Protocol 1: Ab Initio Random Structure Searching (AIRSS)

The AIRSS method is a theory-driven, high-throughput approach for computational materials and molecular discovery, relying on the first-principles relaxation of diverse, stochastically generated structures [13].

- Principle: Systematically generate and optimize random sensible structures to uniformly sample configuration space and identify low-energy, stable configurations.

- Procedure:

- Structure Generation: Stochastically generate initial candidate structures by placing atoms randomly within a unit cell of random shape and size. Critically, the number of atoms per cell should be varied randomly to avoid heuristic biases.

- Structural Relaxation: Subject each candidate structure to direct structural relaxation using density functional theory (DFT) to find the nearest local energy minimum.

- High-Throughput Execution: Perform relaxations in a highly parallelized manner across large computational clusters to maximize the exploration of diverse starting points.

- Analysis and Identification: Collect all relaxed structures and rank them by energy. Analyze low-energy outliers for novel structural motifs and unexpected chemical phenomena.
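The structure generation step can be sketched without any DFT machinery. The toy generator below is not the AIRSS package: it assumes an orthorhombic cell for simplicity and reads "sensible" as a minimum interatomic separation enforced by rejection sampling. All names and numerical ranges are illustrative.

```python
import math
import random
from typing import List, Sequence, Tuple


def periodic_distance(a: Sequence[float], b: Sequence[float],
                      cell: Sequence[float]) -> float:
    """Minimum-image distance between two points in an orthorhombic cell."""
    total = 0.0
    for ai, bi, length in zip(a, b, cell):
        d = abs(ai - bi)
        d = min(d, length - d)   # wrap around the periodic boundary
        total += d * d
    return math.sqrt(total)


def random_sensible_structure(rng: random.Random,
                              n_atoms_range: Tuple[int, int] = (2, 8),
                              length_range: Tuple[float, float] = (3.0, 6.0),
                              min_sep: float = 1.0,
                              max_tries: int = 1000):
    """Random cell, random atom count, random positions with no close contacts."""
    cell = [rng.uniform(*length_range) for _ in range(3)]
    n_atoms = rng.randint(*n_atoms_range)   # vary atoms per cell randomly
    atoms: List[List[float]] = []
    tries = 0
    while len(atoms) < n_atoms:
        tries += 1
        if tries > max_tries:
            raise RuntimeError("could not place atoms; relax min_sep or enlarge cell")
        candidate = [rng.uniform(0.0, length) for length in cell]
        if all(periodic_distance(candidate, a, cell) >= min_sep for a in atoms):
            atoms.append(candidate)
    return cell, atoms


cell, atoms = random_sensible_structure(random.Random(0))
```

Each generated structure would then be handed to a DFT relaxation. Since the candidates are independent, the generation and relaxation steps parallelize trivially across a cluster.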

Protocol 2: Hot Random Search (Hot-AIRSS)

Hot-AIRSS is an extension of AIRSS that integrates machine learning to enable more complex explorations, biasing the search towards low-energy regions [13].

- Principle: Combine long, machine learning-accelerated molecular dynamics (MD) anneals with direct structural relaxation to tackle complex energy landscapes.

- Procedure:

- Ephemeral Potential Generation: Construct an ephemeral data-derived potential (EDDP) on-the-fly from a subset of DFT calculations to serve as a fast, machine-learned interatomic potential.

- Stochastic Seeding: Generate initial random sensible structures, as in the standard AIRSS protocol.

- ML-Accelerated Annealing: For each candidate, perform a long, high-temperature MD simulation using the EDDP, followed by a slow cooling (annealing) process. This allows the structure to traverse energy barriers and find deeper minima.

- Final DFT Refinement: Conduct a final direct structural relaxation using DFT on the annealed structure to obtain a high-fidelity energy and geometry.

- Post-Processing: The resulting structures from the anneal-and-relax cycles are collected and analyzed alongside those from standard AIRSS runs.

Protocol 3: Datum-Derived Structure Generation

This method biases random structure generation towards a known reference structure, facilitating the discovery of structurally related but novel configurations [13].

- Principle: Generate candidates that are "close" to a reference structure in a machine-learned feature space, rather than generating from a purely uniform distribution.

- Procedure:

- Reference Selection: Choose a reference structure (e.g., a known crystal structure like diamond).

- Feature Space Definition: Use an actively learned EDDP to compute a descriptor vector for the atomic environments in the reference structure.

- Cost Function Optimization: Stochastically generate candidate structures and optimize them to minimize the difference between their EDDP environment vector and that of the reference structure.

- Exploration: The optimization process leads to the emergence of novel structures that share fundamental characteristics with the reference.

Protocol 4: Machine Learning-Guided Ultralarge Docking Screen

This protocol combines machine learning classification with molecular docking to virtually screen multi-billion compound libraries efficiently [12].

- Principle: Use a fast ML classifier to pre-filter a vast chemical library, drastically reducing the number of compounds that require computationally expensive docking simulations.

- Procedure:

- Library Preparation: Obtain a multi-billion-molecule library (e.g., Enamine REAL Space). Precompute molecular descriptors (e.g., Morgan2 fingerprints) for all compounds.

- Docking and Training Set Creation: Dock a representative subset (e.g., 1 million compounds) against the target protein using molecular docking software to generate docking scores. Define a threshold (e.g., top 1%) for "active" compounds.

- Classifier Training: Train a classification algorithm (e.g., CatBoost) on the 1-million-molecule set, using the fingerprints as features and the docking-based active/inactive labels.

- Conformal Prediction: Apply the trained classifier with the conformal prediction framework to the entire multi-billion-molecule library. At a chosen significance level (ε), the framework predicts a "virtual active" set.

- Focused Docking: Perform molecular docking only on the vastly reduced "virtual active" set (typically 1-10% of the original library) to identify final top-scoring hits.

- Experimental Validation: Select compounds from the final ranked list for synthesis and experimental binding assays.
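The conformal prediction step can be illustrated with a small class-conditional (Mondrian) inductive conformal predictor. The sketch is a generic textbook construction, not the pipeline from [12]. The function names are hypothetical, the calibration values are toy numbers, and the nonconformity score (one minus the classifier's probability for the candidate label) is an assumed choice.

```python
from typing import List, Sequence, Set


def conformal_p_value(cal_scores: Sequence[float], test_score: float) -> float:
    """Share of calibration nonconformity scores at least as large as the test score."""
    n_ge = sum(1 for s in cal_scores if s >= test_score)
    return (n_ge + 1) / (len(cal_scores) + 1)


def predict_label_set(p_active: float,
                      cal_active_probs: Sequence[float],
                      cal_inactive_probs: Sequence[float],
                      epsilon: float) -> Set[str]:
    """Labels that cannot be rejected at significance level epsilon.

    cal_active_probs: classifier P(active) for calibration compounds that are active.
    cal_inactive_probs: classifier P(active) for calibration compounds that are inactive.
    """
    nc_active: List[float] = [1.0 - p for p in cal_active_probs]
    nc_inactive: List[float] = list(cal_inactive_probs)
    labels: Set[str] = set()
    if conformal_p_value(nc_active, 1.0 - p_active) > epsilon:
        labels.add("active")
    if conformal_p_value(nc_inactive, p_active) > epsilon:
        labels.add("inactive")
    return labels


# Toy calibration data: classifier probabilities for held-out docked compounds.
cal_active = [0.9, 0.8, 0.7, 0.95, 0.6, 0.85, 0.75, 0.65, 0.9]
cal_inactive = [0.1, 0.2, 0.05, 0.3, 0.15, 0.25, 0.1, 0.2, 0.35]
label_set = predict_label_set(0.9, cal_active, cal_inactive, epsilon=0.2)
```

One possible selection rule is to send only compounds whose label set is exactly {"active"} on to focused docking. Raising ε shrinks that set and lowers docking cost, at the price of a higher tolerated error rate.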

Performance Benchmarking and Data

Table 1: Performance of Machine Learning-Guided Docking vs. Full Docking [12]

| Metric | Full Docking Screen | ML-Guided Docking Screen | Improvement Factor |
|---|---|---|---|
| Library Size Screened | 3.5 Billion | 3.5 Billion | - |
| Compounds Docked | 3.5 Billion | ~25-35 Million | >100-fold reduction |
| Computational Cost | ~493 Trillion complex predictions (for 11M compounds) | Docking of ML-predicted subset | >1,000-fold cost reduction |
| Sensitivity (Recall) | 100% (by definition) | 87-88% | - |
| Error Rate | - | Controlled to ≤ ε (e.g., 8-12%) | - |

Table 2: Key Metrics for the AIRSS Family of Methods [13]

| Method | Key Feature | Application Example | Outcome |
|---|---|---|---|
| AIRSS | High-throughput, parallel DFT relaxation of random sensible structures. | Dense hydrogen phases. | Prediction of mixed molecular-layer phases (e.g., C2/c-24). |
| Hot-AIRSS | Integration of long ML-accelerated MD anneals between DFT relaxations. | Complex boron structures in large unit cells. | Biased sampling towards low-energy configurations in complex systems. |
| Datum-Derived | Stochastic generation optimized to match a reference structure's feature vector. | Carbon allotropes from a diamond reference. | Emergence of graphite, nanotubes, and fullerene-like structures. |

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Software and Computational Tools for Chemical Space Exploration

| Tool / Resource | Type | Primary Function | Relevance to Random Search |
|---|---|---|---|
| AIRSS [13] | Software Package | ab initio random structure searching. | Core platform for generating and relaxing random sensible structures via DFT. |
| Ephemeral Data-Derived Potentials (EDDP) [13] | Machine-Learned Interatomic Potential | Accelerates molecular dynamics and structure relaxation. | Enables Hot-AIRSS by providing fast, approximate potentials for long anneals. |
| CatBoost Classifier [12] | Machine Learning Algorithm | Gradient boosting on decision trees. | High-performance, fast classifier for pre-filtering ultralarge libraries before docking. |
| Conformal Prediction (CP) Framework [12] | Statistical Framework | Provides calibrated prediction intervals and error control. | Ensures reliability of ML pre-filtering by allowing control over the false positive rate. |
| Morgan Fingerprints (ECFP) [12] | Molecular Descriptor | Encodes molecular structure as a bit string based on circular substructures. | Represents molecules for ML models in virtual screening workflows. |
| Enamine REAL / ZINC15 [12] | Chemical Database | Libraries of commercially available or make-on-demand compounds. | Source of ultralarge chemical spaces (billions of compounds) for virtual screening. |
| ChemXploreML [14] | Desktop Application | User-friendly, offline ML tool for predicting molecular properties. | Democratizes access to ML-based property prediction for researchers without deep programming skills. |
| iSIM & BitBIRCH [15] | Cheminformatics Algorithms | Efficiently calculates intrinsic similarity and clusters large molecular datasets. | Quantifies and analyzes the diversity and evolution of chemical libraries over time. |

Concluding Remarks

The problem of 10^60 molecules is not merely a theoretical curiosity but a concrete barrier to discovery. The protocols outlined herein demonstrate that random search, far from being a naive brute-force approach, is a sophisticated strategy when augmented with machine learning and physical principles. Methods like AIRSS and its derivatives leverage high-throughput computing and ML-acceleration to uncover surprises in chemical space, from self-ionizing ammonia to complex electrides [13]. Simultaneously, ML-guided docking leverages intelligent pre-screening to render billion-molecule libraries tractable, achieving over a 1000-fold reduction in computational cost while maintaining high sensitivity [12]. The future of chemical discovery lies in the continued integration of these approaches—combining the exploratory power of minimally biased random sampling with the efficiency of data-driven intelligence to navigate the astoundingly large chemical universe.

Application Note: Hyperparameter Tuning in Low-Data Chemical Regimes

Background and Principle

In chemical machine learning (ML), particularly with small datasets (n < 50), traditional non-linear models are highly susceptible to overfitting. An advanced hyperparameter tuning workflow has been developed to make these models competitive with robust multivariate linear regression (MVLR) by implementing a specialized objective function during optimization that explicitly penalizes overfitting in both interpolation and extrapolation tasks [16].

Experimental Protocol: Bayesian Hyperparameter Optimization with Combined RMSE Metric

Step 1: Data Preparation and Splitting

- Reserve 20% of the initial dataset (minimum 4 data points) as an external test set using an "even" distribution split to ensure balanced target value representation.

- Perform data curation including outlier detection and feature scaling on the remaining 80% of data.
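The "even" split in Step 1 can be sketched as follows. This is one plausible reading, not the workflow's confirmed implementation: it sorts the targets and picks test points at evenly spaced positions in that order. The function name `even_test_split` and the example values are illustrative assumptions.

```python
import math

def even_test_split(y, test_fraction=0.2, min_test=4):
    """Return (train_idx, test_idx) with test points spread evenly over sorted y."""
    n = len(y)
    n_test = max(min_test, math.ceil(test_fraction * n))
    if n_test >= n:
        raise ValueError("dataset too small for the requested test set")
    order = sorted(range(n), key=lambda i: y[i])  # indices sorted by target value
    stride = n / n_test
    # Take the point at the centre of each of n_test equal-width strides.
    test_idx = sorted(order[int((k + 0.5) * stride)] for k in range(n_test))
    held_out = set(test_idx)
    train_idx = [i for i in range(n) if i not in held_out]
    return train_idx, test_idx

# Illustrative target values (not from any cited dataset).
y = [0.3, 2.1, 1.7, 0.9, 3.4, 2.8, 1.2, 0.5, 3.9, 2.4,
     1.0, 3.1, 0.7, 1.9, 2.6, 3.6, 0.1, 1.5, 2.2, 3.0]
train_idx, test_idx = even_test_split(y)
```

With 20 points this reserves 4 for testing, one from each quarter of the target range, so the test set is not dominated by either extreme.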

Step 2: Define Optimization Objective Function

The core innovation involves using a combined Root Mean Square Error (RMSE) calculated as follows:

- Interpolation RMSE: Compute using 10-times repeated 5-fold cross-validation (10× 5-fold CV) on training/validation data.

- Extrapolation RMSE: Assess via selective sorted 5-fold CV: sort data by target value (y), partition into 5 folds, hold out the top and the bottom partition in turn, and keep the higher of the two resulting RMSE values.

- Combined Metric: Average both RMSE values to form the final objective function for Bayesian optimization.
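A minimal sketch of the combined metric is given below, under stated assumptions: fold scores are averaged (rather than pooled), and a toy one-dimensional least-squares model (`fit_predict_linear`) stands in for the real learner. All function names are hypothetical and not taken from the cited software.

```python
import math
import random

def rmse(y_true, y_pred):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true))

def fit_predict_linear(x_train, y_train, x_test):
    """Toy 1-D least-squares model standing in for the real learner."""
    n = len(x_train)
    mx, my = sum(x_train) / n, sum(y_train) / n
    sxx = sum((x - mx) ** 2 for x in x_train)
    sxy = sum((x - mx) * (y - my) for x, y in zip(x_train, y_train))
    slope = sxy / sxx if sxx else 0.0
    return [my + slope * (x - mx) for x in x_test]

def fold_rmse(x, y, test_idx, fit_predict):
    """Train on everything outside test_idx, score on test_idx."""
    held_out = set(test_idx)
    train = [i for i in range(len(y)) if i not in held_out]
    preds = fit_predict([x[i] for i in train], [y[i] for i in train],
                        [x[i] for i in test_idx])
    return rmse([y[i] for i in test_idx], preds)

def interpolation_rmse(x, y, fit_predict, repeats=10, k=5, seed=0):
    """Mean fold RMSE over `repeats` reshuffled k-fold splits."""
    rng = random.Random(seed)
    scores = []
    for _ in range(repeats):
        idx = list(range(len(y)))
        rng.shuffle(idx)
        scores.extend(fold_rmse(x, y, idx[f::k], fit_predict) for f in range(k))
    return sum(scores) / len(scores)

def extrapolation_rmse(x, y, fit_predict, k=5):
    """Sort by target, hold out the bottom and top partitions, keep the worse RMSE."""
    order = sorted(range(len(y)), key=lambda i: y[i])
    size = len(y) // k
    return max(fold_rmse(x, y, order[:size], fit_predict),
               fold_rmse(x, y, order[-size:], fit_predict))

def combined_rmse(x, y, fit_predict):
    return 0.5 * (interpolation_rmse(x, y, fit_predict)
                  + extrapolation_rmse(x, y, fit_predict))

# Synthetic data for illustration only.
rng = random.Random(1)
x = [float(i) for i in range(20)]
y = [2.0 * v + 1.0 + rng.gauss(0.0, 0.3) for v in x]
score = combined_rmse(x, y, fit_predict_linear)
```

An optimizer would call `combined_rmse` once per hyperparameter set and minimize the returned value.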

Step 3: Execute Bayesian Optimization

- For each candidate algorithm (Neural Networks, Random Forest, Gradient Boosting), run Bayesian optimization for 50-100 iterations.

- At each iteration, evaluate the hyperparameter set using the combined RMSE metric.

- Select the hyperparameter configuration that minimizes the combined RMSE score.

Step 4: Final Model Evaluation

- Train final model with optimized hyperparameters on the entire training set.

- Evaluate performance on the held-out test set.

- Generate comprehensive report including performance metrics, feature importance, and outlier analysis.

Performance Benchmarking

Table 1: Performance comparison of optimized non-linear models versus MVLR across diverse chemical datasets (18-44 data points)

| Dataset (Size) | Best Performing Model | 10× 5-Fold CV Scaled RMSE | Test Set Scaled RMSE |

|---|---|---|---|

| Liu (A) | Neural Networks | Competitive with MVLR | Outperformed MVLR |

| Doyle (F) | Neural Networks | Outperformed MVLR | Outperformed MVLR |

| Sigman (C) | Non-linear Algorithm | Competitive with MVLR | Outperformed MVLR |

| Sigman (H) | Neural Networks | Outperformed MVLR | Outperformed MVLR |

| Paton (D) | Neural Networks | Outperformed MVLR | Competitive with MVLR |


Application Note: Reaction Condition Optimization

Background and Principle

Machine learning, particularly support vector regression (SVR) with nature-inspired optimization algorithms, has demonstrated exceptional performance in modeling complex chemical processes. When optimized with the Dragonfly Algorithm (DA), SVR achieves superior predictive accuracy for critical parameters in pharmaceutical manufacturing processes such as lyophilization [17].

Experimental Protocol: SVR with Dragonfly Algorithm for Pharmaceutical Drying Optimization

Step 1: Dataset Preparation

- Collect spatial concentration distribution data (>46,000 points) with coordinates (X, Y, Z) as inputs and concentration (C) as target.

- Preprocess data using Isolation Forest algorithm for outlier detection (approximately 2% contamination parameter).

- Normalize features using Min-Max scaling.

- Split data randomly into training (~80%) and test (~20%) sets.

Step 2: Dragonfly Algorithm Hyperparameter Optimization

- Initialize dragonfly population with random positions and velocities.

- Define objective function: maximize mean 5-fold R² score.

- Update dragonfly positions using five behaviors: separation, alignment, cohesion, attraction to food, distraction from enemies.

- Iterate for 100-200 generations or until convergence.

- Extract optimal SVR hyperparameters: C (regularization), ε (epsilon-tube), and kernel parameters.

Step 3: Model Training and Validation

- Train SVR model with optimized hyperparameters on full training set.

- Validate using k-fold cross-validation (k=5).

- Evaluate on test set using R², RMSE, and MAE metrics.
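The evaluation metrics named in Step 3 follow directly from the residuals. The sketch below computes them in plain Python; the function name `regression_metrics` and the example numbers are illustrative, not values from the cited study.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return R^2, RMSE, MAE and maximum absolute error for paired values."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mean_true = sum(y_true) / n
    ss_res = sum(r * r for r in residuals)                 # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)     # total sum of squares
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(r) for r in residuals) / n,
        "max_error": max(abs(r) for r in residuals),
    }

metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
```

Computing the same dictionary separately on the training and test sets makes it easy to spot overfitting: a large gap between the two is the warning sign.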

Step 4: Process Optimization

- Use trained model to predict concentration distribution across design space.

- Identify optimal spatial configurations for maximum drying efficiency.

- Validate predictions with small-scale experimental runs.

Performance Metrics

Table 2: Performance of DA-optimized SVR for pharmaceutical drying concentration prediction

| Metric | Training Performance | Test Performance |

|---|---|---|

| R² Score | 0.999187 | 0.999234 |

| RMSE | 1.2619E-03 | 1.2619E-03 |

| MAE | 7.78946E-04 | 7.78946E-04 |

| Maximum Error | 5.18029E-03 | 5.18029E-03 |


Application Note: Initial Hit Discovery

Background and Principle

Artificial intelligence has transformed initial hit discovery by augmenting traditional medicinal chemistry approaches. AI systems can process vast chemical spaces to identify promising candidates, predict properties, and generate novel molecular structures with desired characteristics. Successful implementations have reduced discovery timelines from years to months while maintaining rigorous safety and efficacy standards [18].

Experimental Protocol: AI-Augmented Hit Discovery Workflow

Step 1: Target Identification and Validation

- Use natural language processing tools (SciBERT, BioBERT) to extract and analyze biomedical literature for novel target-disease associations.

- Leverage federated learning approaches to integrate multi-institutional datasets while preserving data privacy.

- Validate targets using graph neural networks to predict binding affinity and functional effects.

Step 2: Compound Screening and Design

- Implement deep learning models (CNNs, GNNs, Transformers) for virtual screening of compound libraries.

- Utilize generative AI models (PoLiGenX, CardioGenAI) for de novo molecular design conditioned on specific target pockets and desired properties.

- Apply multi-objective optimization to simultaneously optimize potency, selectivity, and ADMET properties.
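One common way to handle several objectives at once is to keep only Pareto-optimal candidates, that is, compounds that no other compound beats on every objective. The sketch below assumes this approach (the source does not say which multi-objective method is used); compound names and scores are hypothetical, with higher values meaning better.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(candidates):
    """candidates maps a name to a tuple of scores; returns the non-dominated names."""
    return sorted(
        name for name, scores in candidates.items()
        if not any(dominates(other_scores, scores)
                   for other, other_scores in candidates.items() if other != name)
    )

# Hypothetical (potency, selectivity, ADMET) scores, each scaled to [0, 1].
scores = {
    "cmpd_A": (0.90, 0.40, 0.70),
    "cmpd_B": (0.80, 0.80, 0.60),
    "cmpd_C": (0.70, 0.30, 0.50),  # worse than cmpd_A on all three objectives
    "cmpd_D": (0.60, 0.90, 0.90),
}
front = pareto_front(scores)
```

Here `cmpd_C` is dropped because `cmpd_A` beats it on all three objectives, while the other three represent different trade-offs and are all retained.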

Step 3: ADMET Prediction and Optimization

- Train ensemble models on curated ADMET datasets using robust cross-validation strategies.

- Implement models like AttenhERG (Attentive FP) for specific toxicity endpoints with interpretable atom-level contributions.

- Use transfer learning to adapt models to proprietary datasets with limited examples.

Step 4: Experimental Validation and Iteration

- Synthesize top-ranking compounds using AI-assisted retrosynthetic planning (e.g., LHASA-based systems).

- Test in high-throughput screening assays.

- Incorporate experimental results into models via active learning for continuous improvement.

Success Metrics

Table 3: Notable AI-assisted drug discovery achievements and their development timelines

| Compound | Organization | Therapeutic Area | AI Approach | Development Stage | Timeline |

|---|---|---|---|---|---|

| Baricitinib | BenevolentAI/Eli Lilly | COVID-19, Rheumatoid Arthritis | AI-assisted repurposing | Approved | Accelerated approval |

| INS018_055 | Insilico Medicine | Idiopathic pulmonary fibrosis (TNIK inhibitor) | Generative AI | Phase II Trials | 18 months to Phase II |

| DSP-1181 | Exscientia | Obsessive-compulsive disorder | AI-designed molecule | Phase I (Discontinued) | Accelerated design |

| Halicin | MIT | Antibiotic | Deep learning | Preclinical | Novel mechanism |


Table 4: Key research reagents and computational tools for chemical ML implementation

| Resource | Type | Function | Application Context |

|---|---|---|---|

| ROBERT Software | Computational Tool | Automated ML workflow with hyperparameter optimization | Low-data regime chemical modeling [16] |

| Cavallo Descriptors | Molecular Descriptors | Steric and electronic parameters for chemical spaces | Reaction outcome prediction [16] |

| Gnina 1.3 | Docking Software | CNN-based scoring functions for protein-ligand interactions | Structure-based drug discovery [19] |

| Therapeutics Data Commons (TDC) | Data Resource | Curated ADMET datasets for benchmarking | Model training and validation [20] |

| Dragonfly Algorithm | Optimization Method | Nature-inspired hyperparameter optimization | Pharmaceutical process modeling [17] |

| Attentive FP | Algorithm | Interpretable molecular representation with attention | Toxicity prediction (e.g., hERG) [19] |

| fastprop | Descriptor Package | Rapid molecular descriptor calculation | Property prediction without extensive tuning [19] |

| ChemProp | GNN Framework | Graph neural networks for molecular property prediction | ADMET and physicochemical properties [19] |

In the realm of chemical machine learning (ML) research, the computational expense associated with traditional optimization methods presents a significant bottleneck for exploring complex molecular systems. Gradient-based optimization algorithms, such as gradient descent, require the calculation of derivatives for all model parameters with respect to the loss function, a process that becomes prohibitively expensive for high-dimensional systems common in computational chemistry and materials discovery [21] [22]. This article examines strategic implementations of random search methodologies that circumvent these costly gradient calculations while maintaining robust exploratory capability within chemical search spaces. By leveraging heuristic approaches and intelligent sampling techniques, researchers can achieve substantial computational savings while effectively navigating the vast combinatorial landscapes of potential molecules and reactions.

The fundamental challenge stems from the computational complexity of calculating gradients across millions of parameters in modern ML architectures, particularly when coupled with expensive quantum mechanical calculations required for accurate chemical property prediction [13] [23]. Each gradient calculation requires backpropagation through deep neural networks, which involves successive application of the chain rule across all network layers—a process whose computational cost scales with both model complexity and dataset dimensionality [21] [22]. For research domains requiring repeated evaluation of candidate structures or reactions, these cumulative costs severely constrain the feasible search space, potentially overlooking novel chemical phenomena and materials.

Random Search Methodologies in Chemical ML

Theory and Advantages of Gradient-Free Optimization

Random search methodologies offer a computationally efficient alternative to gradient-based optimization by employing stochastic sampling of parameter space without derivative calculations. Where gradient descent algorithms iteratively adjust parameters in the direction of steepest descent (calculated as \( \theta_{t+1} = \theta_t - \alpha \cdot \nabla J(\theta_t) \)), random search explores the objective function through probabilistically generated candidate solutions [21] [22]. This approach provides particular advantage in chemical ML applications where the energy landscape often contains multiple local minima, discontinuous regions, and noisy evaluation metrics that challenge gradient-based methods.
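The difference between the two strategies can be illustrated on a toy one-dimensional objective. The sketch below is an assumption-laden illustration, not a chemical model: `objective` is a simple quadratic with a known minimum, and the step size, sample count, and bounds are arbitrary choices.

```python
import random

def objective(theta):
    """Toy loss J(theta) with its minimum at theta = 3."""
    return (theta - 3.0) ** 2

def gradient(theta):
    """Analytic derivative of the toy loss; gradient descent needs this, random search does not."""
    return 2.0 * (theta - 3.0)

def gradient_descent(theta0, alpha=0.1, steps=50):
    theta = theta0
    for _ in range(steps):
        theta = theta - alpha * gradient(theta)  # theta_{t+1} = theta_t - alpha * grad J(theta_t)
    return theta

def random_search(low, high, n_samples=200, seed=0):
    """Draw independent uniform candidates and keep the one with the lowest loss."""
    rng = random.Random(seed)
    return min((rng.uniform(low, high) for _ in range(n_samples)), key=objective)

theta_gd = gradient_descent(theta0=-5.0)
theta_rs = random_search(-10.0, 10.0)
```

On a smooth convex function like this one, gradient descent is the more precise of the two. The case for random search rests on objectives where `gradient` is unavailable, expensive, or misleading because of noise and local minima.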

The theoretical foundation for random search in chemical exploration builds upon the concept of sufficient uniformity in sampling, wherein a carefully constructed stochastic process can effectively explore configuration space with dramatically reduced computational overhead compared to exhaustive methods [13]. In practice, random search preserves parallelization advantages while eliminating the sequential dependency inherent in gradient-based optimization, where each parameter update must await completion of the full gradient calculation [13]. This characteristic makes random search particularly suitable for high-throughput computational screening of chemical compounds and reactions, where computational resources can be fully utilized through simultaneous evaluation of multiple candidates.
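Because random candidates do not depend on one another, their evaluation can be distributed trivially. The sketch below uses a thread pool from the Python standard library purely for illustration; `evaluate` is a placeholder, and a real workflow with CPU-bound scoring would more likely use separate processes or a cluster scheduler.

```python
import random
from concurrent.futures import ThreadPoolExecutor

def evaluate(candidate):
    """Placeholder score; a real workflow would call DFT or an ML model here."""
    x, y = candidate
    return (x - 1.0) ** 2 + (y + 2.0) ** 2

rng = random.Random(42)
candidates = [(rng.uniform(-5.0, 5.0), rng.uniform(-5.0, 5.0)) for _ in range(64)]

# No candidate depends on another, so all 64 can be scored concurrently.
with ThreadPoolExecutor(max_workers=8) as pool:
    scores = list(pool.map(evaluate, candidates))

best_score, best_candidate = min(zip(scores, candidates))
```

A gradient-based loop cannot be restructured this way, since step t+1 needs the parameters produced by step t.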

Table 1: Comparative Analysis of Optimization Approaches in Chemical ML

| Feature | Gradient-Based Methods | Random Search Methods |

|---|---|---|

| Computational Complexity | O(n·d) per iteration where n=parameters, d=data points | O(k) per iteration where k=sample size |

| Parallelization Potential | Limited by sequential parameter updates | Highly parallelizable candidate evaluation |

| Local Minima Sensitivity | High susceptibility to entrapment | Reduced sensitivity through stochastic sampling |

| Derivative Requirement | Requires differentiable cost functions | No differentiability requirement |

| Implementation Complexity | High (requires gradient computation & backpropagation) | Low (relies on sampling & evaluation) |

Implementation Frameworks for Chemical Systems

Several specialized implementations of random search have been developed specifically for chemical ML applications. The Ab Initio Random Structure Searching (AIRSS) methodology exemplifies this approach, generating diverse stochastic candidate structures which are subsequently relaxed through first-principles calculations to identify low-energy configurations [13]. This method has demonstrated particular efficacy in predicting stable crystal structures and novel molecular phases without requiring gradient calculations through the potential energy surface.

More advanced implementations, such as Hot AIRSS (hot-AIRSS), integrate machine-learned interatomic potentials with extended annealing procedures between direct structural relaxations [13]. This approach biases sampling toward low-energy configurations while maintaining the parallel advantage of random search, enabling investigation of significantly more complex systems than possible with gradient-based methods. The ephemeral data-derived potentials (EDDPs) employed in these methods accelerate calculations by several orders of magnitude compared to pure density functional theory (DFT) approaches, making large-scale exploration of compositional spaces computationally feasible [13].

Complementary to structure prediction, active learning frameworks implement random search principles for guiding experimental exploration of chemical spaces. These methodologies employ decision-making algorithms to select which experiments to perform next based on current knowledge, effectively optimizing the information gain per experimental cycle [24]. In documented cases, human-robot teams employing active learning strategies achieved prediction accuracy of 75.6 ± 1.8%, outperforming both algorithmic (71.8 ± 0.3%) and human (66.3 ± 1.8%) approaches individually [24].

Experimental Protocols and Application Notes

Protocol 1: Hot Random Search for Structure Prediction

Objective: Implement hot-AIRSS for identifying low-energy configurations of complex boron structures in large unit cells while avoiding costly gradient calculations.

Materials and Computational Requirements:

- High-throughput computing cluster with minimum 64 cores

- DFT calculation software (e.g., VASP, CASTEP)

- Machine-learning interatomic potential framework

- Structure visualization software

Procedure:

- Initialization: Define composition space and approximate volume ranges for the target system.

- Structure Generation: Stochastically generate initial candidate structures with random atomic positions, cell parameters, and symmetries constrained only by fundamental physical constraints (e.g., minimum interatomic distances) [13].

- Ephemeral Potential Construction: Train initial EDDPs on a subset of candidates evaluated with DFT calculations to create machine-learned interatomic potentials.

- Annealing Cycle: For each candidate structure:

  a. Perform extended molecular dynamics anneals using EDDPs (typically 10-100 ps at elevated temperatures).

  b. Periodically sample configurations from the trajectory for direct DFT relaxation.

  c. Select the lowest-energy configuration from the relaxation series.

- Potential Refinement: Incorporate newly relaxed structures into the training set to improve EDDP accuracy.

- Iteration: Repeat the sequence from Structure Generation through Potential Refinement for multiple generations (typically 10-20 cycles).

- Validation: Perform full DFT structural relaxation on the most promising candidates identified through the random search process.

Key Parameters:

- Annealing temperature: 1000-3000 K (system dependent)

- Number of initial candidates: 100-1000 structures per cycle

- MD time step: 1-2 fs

- Annealing duration: 10-100 ps per structure

- Selection pressure: Retain top 10-20% of candidates between cycles
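The structure-generation and selection steps of this protocol can be sketched in a few lines. This is a deliberately reduced illustration under several assumptions: a cubic periodic cell, a Lennard-Jones pair sum (`toy_energy`) standing in for EDDP or DFT energies, and no annealing or relaxation. Cell size, atom count, and distance cutoff are arbitrary reduced-unit values.

```python
import math
import random

def min_image_distance(a, b, cell):
    """Distance between two points in a cubic periodic cell of edge length `cell`."""
    d2 = 0.0
    for ai, bi in zip(a, b):
        d = abs(ai - bi) % cell
        d = min(d, cell - d)  # nearest periodic image along this axis
        d2 += d * d
    return math.sqrt(d2)

def random_structure(n_atoms, cell, min_dist, rng, max_tries=10_000):
    """Rejection-sample positions that respect a minimum interatomic distance."""
    atoms, tries = [], 0
    while len(atoms) < n_atoms:
        tries += 1
        if tries > max_tries:
            raise RuntimeError("placement failed; relax min_dist or enlarge the cell")
        p = tuple(rng.uniform(0.0, cell) for _ in range(3))
        if all(min_image_distance(p, q, cell) >= min_dist for q in atoms):
            atoms.append(p)
    return atoms

def toy_energy(atoms, cell):
    """Lennard-Jones pair sum: a cheap stand-in for an EDDP or DFT energy."""
    energy = 0.0
    for i in range(len(atoms)):
        for j in range(i + 1, len(atoms)):
            r = min_image_distance(atoms[i], atoms[j], cell)
            energy += 4.0 * ((1.0 / r) ** 12 - (1.0 / r) ** 6)
    return energy

def search_cycle(n_candidates=100, keep_fraction=0.2, seed=0):
    """Generate random structures and retain the lowest-energy fraction."""
    rng = random.Random(seed)
    cell, n_atoms, min_dist = 4.0, 8, 0.9
    pool = [random_structure(n_atoms, cell, min_dist, rng) for _ in range(n_candidates)]
    ranked = sorted(pool, key=lambda s: toy_energy(s, cell))
    return ranked[: max(1, int(keep_fraction * n_candidates))]

survivors = search_cycle()
```

In the full protocol, each structure would be annealed and relaxed before ranking, and the survivors would feed the next round of potential refinement.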

Protocol 2: Active Learning for Chemical Space Exploration

Objective: Efficiently explore the self-assembly and crystallization space of polyoxometalate clusters using human-robot collaborative teams.

Materials and Experimental Setup:

- Automated robotic synthesis platform

- In-line analytics (UV-Vis, IR, or NMR spectroscopy)

- Active learning algorithm implementation

- Chemical intuition quantification framework

Procedure:

- Experimental Design: Define the chemical parameter space (concentration, temperature, pH, stoichiometry ratios).

- Baseline Establishment: Conduct initial experiments and record prediction accuracy for each of the following approaches:

  a. Purely algorithmic selection (e.g., Bayesian optimization).

  b. Human experimenter selection based on chemical intuition.

- Team Integration: Implement collaborative decision-making where:

  a. The algorithm proposes candidate experiments based on model uncertainty and expected improvement.

  b. Human experimenters apply intuition-based filters to exclude chemically unreasonable suggestions.

  c. Final experiment selection represents consensus between approaches.

- Parallel Evaluation: Execute selected experiments using automated platforms with in-line monitoring.

- Model Updating: Incorporate results into the active learning model to improve subsequent predictions.

- Performance Metrics: Track prediction accuracy, novel discovery rate, and exploration efficiency compared to individual approaches.

Validation Metrics:

- Prediction accuracy: Percentage of correct outcome predictions

- Exploration efficiency: Rate of novel phenomenon discovery per experimental cycle

- Team performance: Comparative improvement over individual approaches
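The propose, filter, and select cycle of the team-integration step might be organized as below. Everything here is a hypothetical sketch: the condition space, the distance-based `uncertainty` proxy, the `is_reasonable` rule, and the random placeholder outcome are stand-ins for a trained model, a chemist's judgment, and a robotic experiment.

```python
import random

def propose(candidates, uncertainty, n_proposals):
    """Algorithm step: rank untested candidates by uncertainty, highest first."""
    return sorted(candidates, key=uncertainty, reverse=True)[:n_proposals]

def human_filter(proposals, is_reasonable):
    """Human step: drop suggestions judged chemically unreasonable."""
    return [c for c in proposals if is_reasonable(c)]

def select_batch(candidates, uncertainty, is_reasonable, batch_size, oversample=3):
    """Propose more than needed, filter, then keep up to `batch_size` survivors."""
    proposals = propose(candidates, uncertainty, batch_size * oversample)
    return human_filter(proposals, is_reasonable)[:batch_size]

# Hypothetical condition space: (pH, temperature in deg C), duplicates removed.
rng = random.Random(1)
space = sorted({(round(rng.uniform(0.0, 14.0), 1), rng.randrange(20, 120, 10))
                for _ in range(50)})
tested = {}  # condition -> observed outcome

def uncertainty(c):
    """Toy proxy: scaled distance to the nearest tested condition."""
    if not tested:
        return float("inf")
    return min(abs(c[0] - t[0]) / 14.0 + abs(c[1] - t[1]) / 100.0 for t in tested)

def is_reasonable(c):
    """Example intuition rule (an assumption): avoid strongly basic conditions."""
    return c[0] <= 10.0

for cycle in range(3):
    untested = [c for c in space if c not in tested]
    batch = select_batch(untested, uncertainty, is_reasonable, batch_size=4)
    for c in batch:
        tested[c] = rng.random() < 0.5  # placeholder for the robotic experiment
```

Oversampling the proposals gives the human filter room to reject suggestions without starving the batch. When the filter rejects most of them, the batch simply comes back smaller.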

Table 2: Research Reagent Solutions for Chemical ML Exploration

| Reagent Category | Specific Examples | Function in Experimental Protocol |

|---|---|---|

| Polyoxometalate Precursors | Na₆[Mo₁₂₀Ce₆O₃₆₆H₁₂(H₂O)₇₈]·200H₂O | Target compound for crystallization and self-assembly studies [24] |

| Solvent Systems | Water, acetonitrile, dimethylformamide | Mediate molecular self-assembly through solvation effects |

| Structure Directing Agents | Tetraalkylammonium salts, crown ethers | Influence supramolecular organization through templating effects |

| pH Modulators | Acids (HCl, HNO₃), bases (NaOH, NH₃) | Control protonation state and charge distribution |

| Machine Learning Potentials | Ephemeral Data-Derived Potentials (EDDPs) | Accelerate energy evaluations in structure prediction [13] |

Workflow Visualization

Diagram 1: Integrated workflow combining random structure search with human intuition filters for chemical ML applications. The process begins with definition of the target chemical space, followed by iterative generation and evaluation of candidate structures. Human intuition provides critical filtering before model updating, creating a collaborative optimization cycle that avoids costly gradient calculations while maintaining chemical relevance.

The implementation of random search methodologies in chemical ML research represents a paradigm shift in computational exploration strategies, offering substantial advantages over gradient-based approaches for navigating high-dimensional chemical spaces. By eliminating costly gradient calculations while maintaining effective exploration capabilities, these methods enable researchers to investigate larger compositional ranges and more complex systems than previously feasible. The integration of human chemical intuition with algorithmic search further enhances efficiency, demonstrating that collaborative approaches can outperform either method in isolation.

Future developments in this field will likely focus on improved sampling strategies that balance exploration and exploitation more effectively, potentially incorporating multi-fidelity modeling approaches that combine expensive high-accuracy calculations with rapid approximate evaluations. As automated experimental platforms become more sophisticated, the tight integration of computational random search with robotic synthesis and characterization will accelerate the discovery of novel materials and reactions, ultimately reducing the time from conceptual design to experimental realization in chemical research and drug development.

A Practical Toolkit: Implementing Random Search in Chemical ML Workflows

In cheminformatics and chemical machine learning (ML), the performance of models, particularly Graph Neural Networks (GNNs), is highly sensitive to their architectural choices and hyperparameters [25]. Defining the search space for these chemical parameters is therefore a critical, non-trivial task that forms the foundation of any successful ML-driven discovery pipeline. This process involves identifying the key tunable parameters that govern the model's behavior and establishing the bounds within which the optimization algorithm will search for the optimal configuration.

The adoption of automated optimization techniques like Neural Architecture Search (NAS) and Hyperparameter Optimization (HPO) is pivotal for enhancing model performance, scalability, and efficiency in key applications such as molecular property prediction, chemical reaction modeling, and de novo molecular design [25]. Framing this search as a random search strategy offers a computationally efficient alternative to exhaustive grid searches, especially when exploring a high-dimensional parameter space with many tuning parameters [26].

Core Chemical ML Parameters and Their Search Ranges

The following tables summarize the primary categories of parameters and their typical search spaces for chemical ML projects, particularly those utilizing Graph Neural Networks.

Table 1: Core Model Architecture Search Space

| Parameter Category | Specific Parameter | Typical Search Range | Description |

|---|---|---|---|

| Graph Convolution Layers | Number of Layers | [2, 6] (integers) | Depth of the GNN model. |

| Graph Convolution Layers | Hidden Layer Dimensionality | [64, 512] (integers) | Size of node/feature embeddings. |

| Graph Convolution Layers | Aggregation Function | ['sum', 'mean', 'max'] | How node features are combined. |

| Neural Network Parameters | Activation Function | ['ReLU', 'PReLU', 'ELU'] | Non-linear function applied after layers. |

| Neural Network Parameters | Dropout Rate | [0.0, 0.5] (continuous) | Fraction of input units to drop for regularization. |

| Neural Network Parameters | Batch Normalization | [True, False] | Whether to apply batch normalization. |

Table 2: Training Hyperparameter Search Space

| Parameter Category | Specific Parameter | Typical Search Range | Description |

|---|---|---|---|

| Optimization | Learning Rate | [1e-4, 1e-2] (log scale) | Step size for weight updates. |

| Optimization | Optimizer Type | ['Adam', 'AdamW', 'SGD'] | Algorithm used for gradient descent. |

| Optimization | Weight Decay | [1e-6, 1e-2] (log scale) | L2 regularization penalty. |

| Training Procedure | Batch Size | [32, 256] (integers, powers of 2) | Number of samples per gradient update. |
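The two search-space tables translate naturally into a set of per-parameter samplers. The sketch below is one way to encode them using only the Python standard library; the dictionary keys and the helper `log_uniform` are illustrative names, not part of any cited tool.

```python
import math
import random

def log_uniform(rng, low, high):
    """Sample so that log10(value) is uniform between log10(low) and log10(high)."""
    return 10 ** rng.uniform(math.log10(low), math.log10(high))

# One sampler per hyperparameter, mirroring the ranges listed in the tables.
SEARCH_SPACE = {
    "num_layers": lambda rng: rng.randint(2, 6),
    "hidden_dim": lambda rng: rng.randint(64, 512),
    "aggregation": lambda rng: rng.choice(["sum", "mean", "max"]),
    "activation": lambda rng: rng.choice(["ReLU", "PReLU", "ELU"]),
    "dropout": lambda rng: rng.uniform(0.0, 0.5),
    "batch_norm": lambda rng: rng.choice([True, False]),
    "learning_rate": lambda rng: log_uniform(rng, 1e-4, 1e-2),
    "optimizer": lambda rng: rng.choice(["Adam", "AdamW", "SGD"]),
    "weight_decay": lambda rng: log_uniform(rng, 1e-6, 1e-2),
    "batch_size": lambda rng: rng.choice([32, 64, 128, 256]),
}

def sample_config(rng):
    """Draw one full hyperparameter configuration."""
    return {name: draw(rng) for name, draw in SEARCH_SPACE.items()}

rng = random.Random(0)
configs = [sample_config(rng) for _ in range(20)]
```

Sampling the learning rate and weight decay on a log scale matters: a plain uniform draw over [1e-6, 1e-2] would place about 99% of samples above 1e-4 and almost never test the small values.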

Experimental Protocol for Random Search Optimization

This protocol provides a detailed methodology for implementing random search to define and explore hyperparameters for a chemical ML task, such as molecular property prediction using a GNN.

Materials and Software Requirements

Table 3: Essential Research Reagent Solutions and Software

| Item Name | Function / Application | Example / Note |

|---|---|---|

| Cheminformatics Datasets | Source of features and labels for model training and testing. | Includes datasets for molecules and materials from experiments or computational calculations [27]. |

| Graph Neural Network (GNN) Model | The machine learning architecture to be optimized. | Directly models molecules based on their underlying chemical structures [25]. |

| Hyperparameter Optimization Library | Software to execute the random search algorithm. | e.g., caret in R [26] or scikit-learn in Python. |

| Computational Resources | Hardware for performing computationally intensive searches. | Modern computer hardware is crucial for accelerating the development process [28]. |

Step-by-Step Procedure

Problem Formulation and Metric Definition

- Action: Clearly define the chemical ML task (e.g., predicting solubility, toxicity, or binding affinity). Select an appropriate performance metric (e.g., ROC-AUC, RMSE, MAE) that will be used to evaluate and rank different hyperparameter combinations [26].

- Rationale: The random search algorithm requires a single, quantifiable objective to guide the optimization process.

Parameter Space Definition

- Action: Specify the hyperparameter search space based on the architecture and training search-space tables above. For random search, this involves defining the statistical distribution for each parameter (e.g., uniform, log-uniform) and its bounds [26].

- Rationale: A well-defined space ensures the search is both comprehensive and computationally tractable.

Random Sampling and Model Training

- Action: Set the total number of trials (tuneLength). The algorithm will then randomly sample a unique combination of hyperparameters from the defined space for each trial. For each combination, train the model on the training set [26].

- Rationale: Random sampling avoids the curse of dimensionality that plagues grid search and has a high probability of finding a high-performing configuration quickly.
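The rationale for this step can be made quantitative with a short calculation. Assuming trials are independent and the "good" configurations occupy a fixed fraction of the sampled space, the chance that at least one of n trials lands in that fraction is 1 - (1 - fraction)^n, regardless of the number of parameters. The function names below are illustrative.

```python
def p_hit(top_fraction, n_trials):
    """Probability that at least one of n independent draws lands in the top fraction."""
    return 1.0 - (1.0 - top_fraction) ** n_trials

def grid_size(values_per_param, n_params):
    """Number of model fits a full grid needs."""
    return values_per_param ** n_params

print(round(p_hit(0.05, 60), 3))  # 0.954
print(grid_size(3, 10))           # 59049
```

Sixty random trials therefore have roughly a 95% chance of finding a configuration in the best 5% of the space, whereas a grid with only three values for each of ten hyperparameters already requires 59,049 fits.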

Model Validation and Selection

- Action: Use a robust validation method, such as repeated cross-validation, to evaluate each hyperparameter set on data held out from fitting (the validation folds) [26]. This provides a reliable estimate of model generalization.

- Rationale: Prevents overfitting to the training data and ensures the selected model is performant on unseen data.

Final Model Fitting and Evaluation

- Action: Select the hyperparameter combination that achieves the best validation score as the optimal configuration. Then train a final model on the combined training and validation data and measure its performance on a completely separate test set.

- Rationale: Provides an unbiased assessment of the model's real-world performance.
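The full procedure can be sketched end to end on a toy problem. To keep the example dependency-free, a one-dimensional ridge regression with a single hyperparameter (`lam`) stands in for the GNN, and the data are synthetic. The trial count, fold count, and sampling range are arbitrary assumptions.

```python
import math
import random

def fit_ridge_1d(xs, ys, lam):
    """Closed-form 1-D ridge regression; returns (slope, intercept)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / (sxx + lam)
    return slope, my - slope * mx

def rmse(xs, ys, model):
    slope, intercept = model
    return math.sqrt(sum((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys)) / len(xs))

def cv_rmse(xs, ys, lam, k=5):
    """Mean validation RMSE over k interleaved folds."""
    scores = []
    for f in range(k):
        val = set(range(f, len(xs), k))
        tr_x = [x for i, x in enumerate(xs) if i not in val]
        tr_y = [y for i, y in enumerate(ys) if i not in val]
        va_x = [xs[i] for i in sorted(val)]
        va_y = [ys[i] for i in sorted(val)]
        scores.append(rmse(va_x, va_y, fit_ridge_1d(tr_x, tr_y, lam)))
    return sum(scores) / k

# Synthetic data: y = 2x + 1 plus Gaussian noise.
rng = random.Random(0)
xs_all = [rng.uniform(0.0, 10.0) for _ in range(60)]
ys_all = [2.0 * x + 1.0 + rng.gauss(0.0, 0.5) for x in xs_all]
dev_x, dev_y = xs_all[:48], ys_all[:48]    # training + validation data
test_x, test_y = xs_all[48:], ys_all[48:]  # held-out test set, never used for tuning

# Random search: sample lam log-uniformly and score each trial by cross-validation.
n_trials = 30
trials = []
for _ in range(n_trials):
    lam = 10 ** rng.uniform(-3.0, 3.0)
    trials.append((cv_rmse(dev_x, dev_y, lam), lam))

best_score, best_lam = min(trials)
final_model = fit_ridge_1d(dev_x, dev_y, best_lam)  # refit on all development data
test_rmse = rmse(test_x, test_y, final_model)       # reported once, at the end
```

The test set is touched exactly once, after the hyperparameter has been fixed, which is what keeps the final number an unbiased estimate.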

Workflow Visualization

[Diagram: logical flow of the random search protocol for hyperparameter optimization.]

Advanced Considerations

While random search is a powerful and efficient baseline, several advanced considerations can further refine the process of defining your search space. It is crucial to incorporate domain knowledge from chemistry to constrain the search space intelligently. For instance, known relationships between molecular features and target properties can inform the prioritization of certain model architectures or feature combinations. Furthermore, for tasks with limited labeled data, the search space should include parameters for transfer learning or data augmentation techniques. The field is also moving towards more automated approaches, where the definition of the search space itself can be optimized, creating a feedback loop that continuously improves the chemical ML pipeline [25].

The optimization of chemical reaction conditions is a fundamental yet resource-intensive process in research and development, traditionally relying on deep expert knowledge and laborious experimentation. The LabMate.ML framework represents a significant advancement in this domain, introducing a self-evolving machine learning approach that requires only minimal experimental data to navigate complex chemical search spaces efficiently [8]. This paradigm is built upon the core principle of integrating an interpretable, adaptive machine-learning algorithm with an initial random sampling of a remarkably small fraction (0.03%–0.04%) of the total search space as input data [8]. By formalizing chemical intuition autonomously, LabMate.ML serves as a computational tool that augments rather than replaces researcher expertise, providing an innovative framework for informed, automated experiment selection toward the democratization of synthetic chemistry [8] [29].

Positioned within the broader context of implementing random search for chemical machine learning research, LabMate.ML utilizes strategic random sampling as a seeding mechanism rather than as the primary optimization driver. This initial diverse sampling of the reaction condition space provides the foundational dataset that the adaptive machine learning algorithm then builds upon to guide subsequent experiment selection [8] [30]. The ability to operate effectively with extremely limited data—typically requiring only 5-10 initial data points—and without specialized hardware makes this approach particularly valuable for research settings with limited resources or for problems where data generation is expensive or time-consuming [30]. This methodology stands in contrast to more resource-intensive approaches that depend on large historical datasets or extensive laboratory automation, instead focusing on data-efficient learning that aligns with practical laboratory constraints.
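The seeding step can be illustrated by enumerating a discretized condition space and drawing a small random subset. The sketch below is not LabMate.ML's code; the parameter names and levels are hypothetical, chosen so that 0.04% of the space corresponds to 10 experiments.

```python
import itertools
import random

# Hypothetical reaction space; names and levels are illustrative only.
space = {
    "solvent": ["DMF", "MeCN", "THF", "toluene", "EtOH",
                "DCM", "dioxane", "DMSO", "water", "MeOH"],
    "catalyst": [f"cat_{i}" for i in range(10)],
    "temperature_C": [20, 30, 40, 50, 60, 70, 80, 90, 100, 110],
    "loading_mol_pct": [1, 2, 5, 10, 20],
    "time_h": [1, 2, 4, 8, 16],
}

all_conditions = list(itertools.product(*space.values()))
n_total = len(all_conditions)             # 10 * 10 * 10 * 5 * 5 = 25,000 combinations
n_seed = max(1, round(0.0004 * n_total))  # 0.04% of the space

rng = random.Random(0)
seed_experiments = [dict(zip(space, combo))
                    for combo in rng.sample(all_conditions, n_seed)]
```

Each entry in `seed_experiments` is one set of conditions to run at the bench. The measured outcomes then become the first training data for the adaptive model.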

Performance Quantification and Comparative Analysis

The LabMate.ML approach has been rigorously validated across multiple chemical domains, demonstrating consistent performance in identifying optimal reaction conditions with minimal experimental investment. The quantitative efficacy of this paradigm is summarized in the table below, which aggregates performance metrics from prospective proof-of-concept studies.

Table 1: Quantitative Performance Metrics of LabMate.ML in Reaction Optimization

| Performance Metric | Value/Range | Context and Significance |

|---|---|---|

| Initial Search Space Sampling | 0.03%–0.04% | Fraction of total search space used as initial input data [8] |

| Training Data Requirements | 5–10 data points | Minimal number of experiments needed to initiate the adaptive learning process [30] |

| Additional Experiments for Success | 1–10 experiments | Range of additional experiments typically required to identify suitable conditions across nine case studies [30] |

| Human Competitive Performance | Comparable or superior to PhD chemists | Double-blind competitions and expert surveys confirmed performance competitive with human experts [8] [30] |

| Parameter Optimization Scope | Simultaneous optimization of real-valued and categorical features | Capability to handle diverse reaction parameters concurrently without simplification [8] |

The performance of LabMate.ML extends beyond these quantitative metrics to include qualitative advantages in formalizing chemical intuition. Through the use of interpretable random forest models, the platform affords quantitative and interpretable reactivity insights, allowing researchers to understand which parameters most significantly impact reaction outcomes [30]. This interpretability differentiates it from black-box optimization approaches and facilitates deeper chemical insight. In multiple cases, the algorithm learned novel relationships between parameters that defied the intuition of dozens of PhD-level chemists, demonstrating its capacity to uncover non-obvious chemical relationships that might be missed through traditional approaches [30].

Table 2: Application Scope of LabMate.ML Across Chemical Domains

| Chemical Domain | Optimization Objectives | Performance Outcome |
|---|---|---|
| Small-Molecule Chemistry | Goal-oriented condition identification | Successful optimization of distinctive objectives across multiple proof-of-concept studies [8] |
| Glycochemistry | Reaction condition optimization | Suitable conditions identified with minimal experimental iterations [30] |
| Protein Chemistry | Reaction condition optimization | Effective parameter optimization demonstrated in prospective studies [8] |
| Broad Organic Synthesis | Multi-parameter reaction optimization | Simultaneous optimization of various real-valued and categorical parameters [29] |

Experimental Protocol Implementation

Implementing the LabMate.ML paradigm involves a structured workflow that integrates strategic random sampling with adaptive machine learning. The following section provides detailed protocols for establishing and executing this approach within a research setting.

Protocol 1: Initial Search Space Configuration and Random Sampling

Purpose: To define the chemical reaction space and generate the initial diverse dataset required to initiate the LabMate.ML learning cycle.

Materials and Reagents:

- Chemical reactants specific to the transformation of interest

- Solvents covering diverse polarity, proticity, and coordination properties

- Catalysts and ligands appropriate for the reaction chemistry

- Additives, bases, acids, or other reagents as potentially relevant

- Laboratory equipment for conducting small-scale reactions

- Analytical instrumentation for reaction outcome quantification (e.g., HPLC, GC, NMR)

Procedure:

- Parameter Identification: Identify all categorical and continuous reaction parameters relevant to the optimization target. Categorical variables typically include solvent, catalyst, ligand, and additive identities. Continuous variables may include temperature, concentration, catalyst loading, and reaction time [8].

- Search Space Definition: Define the bounds of continuous parameters (e.g., temperature range from 25°C to 100°C) and the complete set of options for categorical parameters (e.g., solvent1, solvent2, ..., solventN) [8].

- Constraint Implementation: Incorporate practical chemical constraints to exclude unsafe or impractical condition combinations, such as temperatures exceeding solvent boiling points or incompatible reagent combinations [31].

- Random Sampling Execution: Randomly sample 0.03%–0.04% of the total defined search space. For a search space with 10,000 possible condition combinations, this corresponds to 3–4 experiments (0.0003 × 10,000 = 3 and 0.0004 × 10,000 = 4) [8].

- Experimental Execution: Conduct the randomly selected experiments at appropriate reaction scales, ensuring precise control of all parameters.

- Outcome Quantification: Analyze reaction outcomes using appropriate analytical methods, quantifying key metrics such as yield, selectivity, or conversion.

Notes: The initial random sampling is critical for establishing a diverse baseline of reaction performance across the chemical space. This diversity lets the machine learning algorithm identify promising regions for further exploration instead of prematurely exploiting potentially suboptimal areas.
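The search-space definition, constraint filtering, and random sampling steps of Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the LabMate.ML implementation: the parameter names, grids, approximate boiling points, and the helper functions `is_allowed` and `initial_sample` are all hypothetical, and continuous parameters are discretized onto a grid so that the space can be enumerated.

```python
import itertools
import math
import random

# Hypothetical search space; every name and value here is illustrative only.
solvent_boiling_c = {"DMF": 153, "THF": 66, "toluene": 111, "water": 100}  # approximate
catalysts = ["Pd(PPh3)4", "Pd(dba)2", "Ni(acac)2"]
temperatures_c = list(range(25, 101, 5))   # 25 to 100 deg C on a 5-degree grid
loadings_mol_pct = [0.5, 1.0, 2.0, 5.0, 10.0]
times_h = [1, 2, 4, 8, 16, 24]


def is_allowed(solvent, temperature_c):
    """Example constraint: never run above the solvent's boiling point."""
    return temperature_c <= solvent_boiling_c[solvent]


# Enumerate every combination, keeping only those that pass the constraint.
search_space = [
    {"solvent": s, "catalyst": c, "temperature_c": t,
     "loading_mol_pct": load, "time_h": h}
    for s, c, t, load, h in itertools.product(
        solvent_boiling_c, catalysts, temperatures_c, loadings_mol_pct, times_h)
    if is_allowed(s, t)
]


def initial_sample(space, fraction=0.0004, seed=0):
    """Uniform random sample without replacement covering `fraction` of the space."""
    n = max(1, math.ceil(fraction * len(space)))
    return random.Random(seed).sample(space, n)


initial_experiments = initial_sample(search_space)
for conditions in initial_experiments:
    print(conditions)
```

In this toy space, 5,130 combinations survive the boiling-point constraint, so a 0.04% sample rounds up to 3 experiments. Fixing the seed makes the selection reproducible, which helps when the experiment list has to be shared or audited.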

Protocol 2: Adaptive Machine Learning Optimization Cycle

Purpose: To iteratively refine reaction conditions through an adaptive learning process that balances exploration of uncertain regions with exploitation of promising conditions.

Materials and Reagents:

- Data from initial random sampling experiments

- LabMate.ML software platform (accessible as described in research publications)

- Standard laboratory equipment for additional experiments

Procedure:

- Data Input: Input the experimental conditions and corresponding outcomes from the initial random sampling into the LabMate.ML platform [30].

- Model Training: The algorithm automatically trains a random forest model to establish relationships between reaction parameters and outcomes [30].

- Condition Prediction: The trained model predicts outcomes and associated uncertainties for all untested condition combinations in the search space.

- Next-Experiment Selection: Based on the model predictions, the algorithm selects the most informative next experiment(s) to perform, balancing exploration of uncertain regions and exploitation of promising conditions [8] [30].

- Experimental Validation: Conduct the suggested experiment(s) and precisely quantify outcomes.

- Iterative Learning: Feed the results back into the algorithm to update the model and select subsequent experiments [30].

- Termination Decision: Continue iterations until satisfactory conditions are identified, performance plateaus, or experimental resources are exhausted.

Notes: The random forest model provides interpretability through feature importance metrics, revealing which parameters most significantly impact reaction outcomes. This interpretability offers additional chemical insights beyond merely identifying optimal conditions [30].
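The loop in Protocol 2 can be illustrated with a small, self-contained sketch. Several choices below are assumptions made for illustration and are not taken from LabMate.ML: a bootstrap ensemble of nearest-neighbour predictors stands in for the random forest so that only the standard library is needed, the selection rule is a simple "mean plus kappa times spread" score, and `toy_yield` is a made-up stand-in for running a real experiment.

```python
import random
import statistics


def nearest_value(x, data):
    """Predict with the outcome of the closest tested condition (1-nearest neighbour).

    Features are used unscaled here; a real application would normalize them.
    """
    return min(data, key=lambda pair: sum((a - b) ** 2 for a, b in zip(x, pair[0])))[1]


def ensemble_predict(x, tested, rng, n_members=25):
    """Mean and spread of predictions from members fit on bootstrap resamples."""
    preds = []
    for _ in range(n_members):
        resample = [rng.choice(tested) for _ in tested]
        preds.append(nearest_value(x, resample))
    return statistics.fmean(preds), statistics.pstdev(preds)


def optimise(candidates, run_experiment, n_initial=5, n_iterations=10, kappa=1.0, seed=0):
    """Random initial sample, then repeatedly test the highest-scoring untested candidate."""
    rng = random.Random(seed)
    untested = list(candidates)
    rng.shuffle(untested)
    tested = [(x, run_experiment(x)) for x in untested[:n_initial]]
    untested = untested[n_initial:]
    for _ in range(n_iterations):
        if not untested:
            break

        def score(x):
            # Exploitation (predicted mean) plus exploration (ensemble disagreement).
            mean, spread = ensemble_predict(x, tested, rng)
            return mean + kappa * spread

        best = max(untested, key=score)
        untested.remove(best)
        tested.append((best, run_experiment(best)))  # feed the result back
    return max(tested, key=lambda pair: pair[1]), tested


def toy_yield(x):
    """Hypothetical smooth response surface standing in for a wet-lab experiment."""
    temperature, loading = x
    return 100 - (temperature - 70) ** 2 / 20 - 2 * (loading - 5) ** 2


grid = [(t, load) for t in range(25, 101, 5) for load in range(1, 11)]
(best_x, best_y), history = optimise(grid, toy_yield)
print(best_x, round(best_y, 1), len(history))
```

Raising `kappa` pushes the search toward conditions where the ensemble members disagree, while `kappa = 0` makes the loop purely exploitative. The termination rule here is a fixed iteration budget; a plateau check on the best observed outcome could be added in the same place.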

Workflow Visualization

The following diagram illustrates the complete LabMate.ML workflow, integrating both the initial random sampling and the subsequent adaptive optimization cycle:

Figure 1: The LabMate.ML adaptive optimization workflow integrates initial random sampling with machine learning-guided experimentation.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of the LabMate.ML paradigm requires both computational resources and practical laboratory materials. The following table details essential research reagent solutions and their functions within the optimization framework.

Table 3: Essential Research Reagent Solutions for LabMate.ML Implementation

| Reagent Category | Specific Examples | Function in Optimization Protocol |
|---|---|---|
| Solvent Libraries | Dimethylformamide (DMF), Dimethyl sulfoxide (DMSO), Acetonitrile, Tetrahydrofuran (THF), Toluene, Water, Alcohols | Screening solvent effects on reaction outcome including polarity, proticity, and coordination ability [8] |
| Catalyst Systems | Palladium catalysts (Pd(PPh3)4, Pd(dba)2), Nickel catalysts (Ni(acac)2), Organocatalysts, Acid/base catalysts | Evaluating catalyst impact on reaction efficiency and selectivity [31] |
| Ligand Arrays | Phosphine ligands (PPh3, XPhos, SPhos), Nitrogen-based ligands, Carbene precursors | Optimizing steric and electronic properties around catalytic metal centers [31] |
| Additive Sets | Salts (LiCl, NaBr), Acids (AcOH, TFA), Bases (Et3N, K2CO3), Scavengers | Modifying reaction environment, suppressing side reactions, or enhancing selectivity [8] |
| Chemical Descriptors | Solvent polarity parameters, Molecular fingerprints, Steric and electronic parameters | Featurizing categorical variables for machine learning algorithms [31] |

The strategic selection of reagents within each category should reflect both chemical diversity and practical constraints. For instance, solvent selection might prioritize options with different polarity indexes and coordination abilities while excluding those with practical handling issues or extreme toxicity. Similarly, catalyst and ligand arrays should encompass diverse steric and electronic properties to effectively sample the chemical space. This thoughtful reagent selection enhances the efficiency of both the initial random sampling and subsequent machine learning-guided optimization cycles.

Strategic random sampling is a foundational technique in machine learning-driven chemical research, designed to navigate vast and complex search spaces efficiently. Unlike simple random sampling, strategic approaches incorporate domain knowledge to define probability distributions that bias the search towards chemically relevant or information-rich regions. This is particularly critical in fields like drug development and materials science, where the chemical space is astronomically large and conventional exhaustive screening is computationally infeasible. For instance, the REAL Space virtual library contains billions of make-on-demand molecules, making strategic sampling not just beneficial but essential for effective exploration [32]. The core challenge lies in defining a sampling distribution that balances the exploration of unknown territories with the exploitation of promising areas, thereby accelerating the discovery of novel bioactive peptides, catalysts, or materials with desired properties.

Theoretical Foundation and Key Concepts

The Role of Probability Distributions in Random Search

In random search algorithms, the probability distribution from which candidates are sampled directly controls the efficiency and effectiveness of the exploration. A uniform distribution, where every candidate has an equal probability of being selected, represents the simplest and most unbiased strategy. However, for imbalanced chemical spaces—where functional molecules are rare—uniform sampling is highly inefficient. A strategically defined, non-uniform probability distribution can prioritize candidates based on features such as predicted bioactivity, structural novelty, or synthetic accessibility. For example, in the exploration of peptide libraries for anticancer peptides (ACPs), reinforcement learning models can be used to define a posterior distribution that guides the selection of candidates likely to exhibit membranolytic activity, dramatically reducing the search space [33]. Similarly, methods like Hierarchical Correlation Reconstruction focus on predicting entire probability distributions of molecular properties, which provide a more robust foundation for sampling than single-point estimates [34].
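The difference between uniform and strategically weighted sampling can be made concrete with a short sketch. The library, the prior weights, and the function names `sample_uniform` and `sample_weighted` are hypothetical; in practice the weights would come from a predictive model or domain heuristic rather than being set by hand.

```python
import random


def sample_uniform(candidates, k, rng):
    """Every candidate is equally likely; drawn without replacement."""
    return rng.sample(candidates, k)


def sample_weighted(candidates, weights, k, rng):
    """Draw k distinct candidates, each draw proportional to the remaining weights."""
    pool = list(candidates)
    w = list(weights)
    chosen = []
    for _ in range(min(k, len(pool))):
        i = rng.choices(range(len(pool)), weights=w, k=1)[0]
        chosen.append(pool.pop(i))  # remove so the same candidate is not drawn twice
        w.pop(i)
    return chosen


rng = random.Random(0)
library = [f"peptide-{i}" for i in range(1000)]
# Hypothetical prior: pretend a model scored the first 10 entries as far more promising.
prior = [20.0 if i < 10 else 1.0 for i in range(1000)]

uniform_batch = sample_uniform(library, 50, rng)
biased_batch = sample_weighted(library, prior, 50, rng)

promising = set(library[:10])
print("uniform hits:", len(promising & set(uniform_batch)))
print("biased hits: ", len(promising & set(biased_batch)))
```

Because rare candidates carry a larger share of the probability mass under the weighted scheme, they are expected to appear more often in a batch of the same size. Keeping the weights strictly positive for all candidates preserves some exploration of regions the prior considers unpromising.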

Comparison of Sampling Strategies

The table below summarizes key strategic sampling methods and their applicability in chemical ML research.

Table 1: Key Strategic Sampling Methods for Chemical ML

| Sampling Method | Core Principle | Best-Suited Application in Chemical ML | Key Advantage |
|---|---|---|---|
| Stratified Sampling [35] [36] | Divides population into homogeneous subgroups (strata) and samples from each proportionally. | Creating balanced training/validation sets for imbalanced chemical data (e.g., active vs. inactive compounds). | Ensures representation of all important subgroups, reducing bias in model evaluation. |
| Representative Random Sampling (RRS) [37] | Generates approximately uniform random samples from a defined chemical space without full enumeration. | Providing unbiased benchmark datasets for assessing the generalizability of ML models across chemical space. | Enables provably unbiased characterization of chemical space and model transferability. |
| Active Learning / Adaptive Sampling [38] [33] | Iteratively selects samples for experimentation based on model uncertainty and predicted performance. | Optimizing expensive experimental cycles (e.g., protein engineering, high-throughput screening). | Maximizes information gain per experiment, balancing exploration and exploitation. |
| Hot Random Search (hot-AIRSS) [13] | Integrates machine-learning-accelerated molecular dynamics anneals into a high-throughput random structure search. | Crystal structure prediction and exploration of complex energy landscapes in materials science. | Preserves parallel advantage of random search while biasing sampling towards low-energy configurations. |

Protocol for Implementing Stratified Sampling in Chemical ML

Stratified sampling is a pivotal strategy for ensuring that machine learning models are trained and evaluated on data that is representative of key subpopulations, such as different molecular scaffolds or activity classes [35]. The following protocol outlines its implementation for creating a robust validation set in a molecular property prediction task.

Experimental Workflow

The diagram below illustrates the step-by-step process of applying stratified sampling to a dataset of chemical compounds.

Figure: Stratified Sampling for Chemical Data

Detailed Methodologies

Analyze Class Distribution and Define Strata

- Action: Begin by analyzing the distribution of the target variable or other critical characteristics in your dataset. For a bioactivity dataset, this typically involves calculating the proportion of active versus inactive compounds [35].

- Strata Definition: Divide the population (the entire dataset) into distinct, homogeneous subgroups (strata) based on these characteristics. In the binary case, this results in two strata: "active" and "inactive." For multi-class problems or when considering multiple factors (e.g., molecular weight bins, scaffold types), more strata can be defined. It is crucial that each data point belongs to one and only one stratum [36].
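The two steps above, inspecting the class distribution and assigning each compound to exactly one stratum, can be sketched as follows. The dataset is synthetic, and the record fields, the 350 Da molecular-weight threshold, and the helpers `stratum_key` and `build_strata` are assumptions chosen only to illustrate the idea.

```python
from collections import Counter, defaultdict

# Synthetic dataset: 100 hypothetical compounds, 5 of which are labelled active.
compounds = [
    {"id": f"cmpd-{i:03d}", "active": i % 20 == 0, "mol_weight": 150 + 4 * i}
    for i in range(100)
]


def stratum_key(compound, mw_threshold=350):
    """Map a compound to one stratum from its activity class and a molecular-weight bin."""
    activity = "active" if compound["active"] else "inactive"
    size = "light" if compound["mol_weight"] < mw_threshold else "heavy"
    return (activity, size)


def build_strata(records, key=stratum_key):
    """Partition records so that each one lands in exactly one stratum."""
    strata = defaultdict(list)
    for record in records:
        strata[key(record)].append(record)
    return dict(strata)


# Step 1: class distribution of the target variable.
class_counts = Counter("active" if c["active"] else "inactive" for c in compounds)
class_fractions = {label: n / len(compounds) for label, n in class_counts.items()}

# Step 2: strata defined by more than one factor.
strata = build_strata(compounds)

print(class_fractions)
for key, members in sorted(strata.items()):
    print(key, len(members))
```

Because the stratum key is a deterministic function of each record, the strata are mutually exclusive and together cover the whole dataset, which is the property the sampling step relies on.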

Determine Sample Size and Randomly Sample

- Proportionate Allocation: Calculate the number of instances to be sampled from each stratum. In proportionate sampling, the sample size for a stratum is proportional to its size in the total population. For example, if the "inactive" stratum constitutes 95% of the data and a 20% overall sample is required, then the instances drawn from the "inactive" stratum amount to 19% of the total data (0.20 × 0.95) and those drawn from the "active" stratum to 1% (0.20 × 0.05). Equivalently, 20% of each stratum is randomly selected [35] [36].