Beyond Memorization: A Strategic Guide to Addressing Overfitting in Predictive Models for Drug Development


Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to understand, diagnose, and prevent overfitting in predictive models. Spanning foundational theory through advanced validation, it explores how overfitting manifests in high-stakes biomedical applications such as drug-target interaction (DTI) prediction and clinical classifier development. The content delivers practical, methodology-agnostic strategies, from regularization and data augmentation to robustness testing, that help ensure models are generalizable, reliable, and fit for purpose in accelerating discovery and regulatory success.

Defining the Enemy: What Overfitting Is and Why It Plagues Predictive Modeling

FAQs on Overfitting

What is overfitting in machine learning? Overfitting occurs when a machine learning model matches its training data too closely, learning both the underlying patterns (signal) and the random fluctuations (noise) [1] [2]. This results in excellent performance on the training data but poor performance on new, unseen data, as the model fails to generalize [3] [4]. It is akin to a student memorizing textbook exercises but being unable to solve new problems on an exam [5].

Why is overfitting a critical concern in predictive model research, especially in fields like drug discovery? Overfitting undermines the primary goal of predictive modeling: to build systems that make accurate decisions on real-world data [1]. In high-stakes fields like drug discovery, an overfit model can lead to costly failures. For instance, a model might perfectly predict drug-target interactions within its training data but fail to identify truly effective compounds in a laboratory setting, misdirecting research resources and time [6].

How can I detect if my model is overfitting? The primary method is to evaluate your model on a holdout test set [1]. A significant performance gap between the training set (e.g., high accuracy) and the test set (e.g., low accuracy) is a strong indicator of overfitting [2]. Monitoring generalization curves (loss curves for both training and validation sets) is also effective; if the validation loss stops decreasing and starts to rise while the training loss continues to fall, the model is likely overfitting [4]. Techniques like k-fold cross-validation provide a more robust assessment of model generalization [1] [3].

What are the main causes of overfitting? The principal causes are an unrepresentative training set and a model that is too complex [4].

- Unrepresentative Data: The training data may be too small [3], contain excessive noise [5], or fail to capture the full statistical distribution of real-world data [4].

- Excessive Model Complexity: A model with too many parameters (e.g., a deep neural network with many layers, a high-degree polynomial) can use its high capacity to memorize noise rather than learn the general trend [1] [7].

What is the difference between overfitting and underfitting?

| Feature | Underfitting | Overfitting |

|---|---|---|

| Performance | Poor on both training and test data [8]. | Excellent on training data, poor on new/unseen data [3]. |

| Model Complexity | Too simple for the data [1]. | Too complex for the data [1]. |

| Bias & Variance | High bias, low variance [7]. | Low bias, high variance [7]. |

| Analogy | A student who only read the chapter titles [8]. | A student who memorized the entire textbook verbatim [5]. |

What is the bias-variance tradeoff? The bias-variance tradeoff is a core concept that describes the tension between underfitting and overfitting [1] [2].

- Bias is the error from erroneous assumptions in the model. High bias can cause the model to miss relevant relations, leading to underfitting [7].

- Variance is the error from sensitivity to small fluctuations in the training set. High variance can cause the model to fit the noise, leading to overfitting [7].

The goal is to find a model complexity that balances both, minimizing total error [8].
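For squared-error loss, this tension is commonly written as the decomposition below. It is the standard textbook form, not something taken from the cited sources, and the symbols are assumptions introduced here: $f$ is the true function, $\hat{f}$ is the model fitted on a randomly drawn training set, and $\sigma^2$ is the variance of the irreducible noise in $y = f(x) + \varepsilon$.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

Increasing model complexity typically lowers the first term and raises the second; the third term cannot be reduced by any choice of model.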

Troubleshooting Guide: Preventing and Addressing Overfitting

Problem: My model has a high performance gap between training and test sets.

Solution: Apply one or more of the following techniques.

1. Gather More and Better Data

- Action: Increase the size of your training dataset to provide more opportunities to learn the true signal [1] [8].

- Consideration: Ensure new data is clean and relevant. Simply adding more noisy data may not help [2].

2. Simplify the Model

- Action: Reduce model complexity. This can involve using a simpler algorithm (e.g., linear instead of polynomial), reducing the number of layers in a neural network, or decreasing the number of neurons per layer [7].

- Action: Perform feature selection to identify and remove redundant or irrelevant input features that contribute to noise [1] [2].

3. Apply Regularization

- Action: Add a penalty term to the model's loss function to discourage complexity. This technique forces the model to keep weights small unless they significantly improve the result [1] [8].

- Protocol (Conceptual): For a regression model, a regularization term is added to the loss function. Common methods include L1 (Lasso) and L2 (Ridge) regularization [8].

4. Use Early Stopping

- Action: When training iteratively, monitor performance on a validation set. Halt the training process as soon as performance on the validation set begins to degrade, even if performance on the training set is still improving [1] [3].

- Protocol: During model training, after each epoch, calculate and log loss on the validation set. Stop training when validation loss has not improved for a pre-defined number of epochs (patience).
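The patience rule in this protocol can be sketched in a few lines. This is a minimal, framework-free illustration: the function name, the `min_delta` parameter, and the loss values are hypothetical, and a real training loop would also restore the weights saved at the best epoch.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of per-epoch validation losses.

    Training stops at the first epoch where the loss has failed to improve
    by more than min_delta for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch
    # Patience never ran out: training used every available epoch.
    return len(val_losses) - 1, best_epoch


# Hypothetical validation losses: improving, then degrading.
losses = [0.90, 0.70, 0.60, 0.58, 0.59, 0.61, 0.65, 0.70]
stop_epoch, best_epoch = early_stopping_epoch(losses, patience=3)
```

Here the loss last improves at epoch 3 (zero-based), so with a patience of 3 the loop halts at epoch 6 and the epoch-3 model would be the one to keep.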

5. Implement Cross-Validation

- Action: Use k-fold cross-validation to tune model hyperparameters and assess generalizability more reliably [1].

- Protocol:

- Randomly shuffle the dataset and split it into k equal-sized folds (typically k=5 or 10).

- For each unique fold: a) Treat the current fold as the validation set. b) Train the model on the remaining k-1 folds. c) Evaluate the model on the held-out fold and retain the performance score.

- Calculate the average performance across all k folds to assess the model. This process helps ensure the model is evaluated on different data splits, reducing the chance of overfitting to a single train-test split [3].
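The protocol above can be sketched with the standard library alone. The function names and the `train_and_score` callback are hypothetical; in practice a library routine such as scikit-learn's cross-validation utilities would normally be used instead.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs for k-fold cross-validation."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # shuffle once, before splitting
    # Fold sizes differ by at most one when n_samples is not divisible by k.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, val_idx
        start += size


def cross_validate(train_and_score, n_samples, k=5, seed=0):
    """Average the scores returned by train_and_score(train_idx, val_idx)."""
    scores = [train_and_score(tr, va) for tr, va in k_fold_indices(n_samples, k, seed)]
    return sum(scores) / len(scores), scores
```

The callback is expected to train a fresh model on `train_idx` and return its score on `val_idx`, so no information from the held-out fold reaches the model during training.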

6. Leverage Ensemble Methods

- Action: Combine predictions from multiple models to reduce variance [1].

- Protocol - Bagging (Bootstrap Aggregating):

- Generate multiple random subsets (with replacement) from the training data.

- Train a separate model (often a complex one like a deep decision tree) on each subset.

- For prediction, aggregate the outputs of all models (e.g., by averaging for regression or majority vote for classification). This "smooths out" individual model predictions [1] [2].
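A minimal bagging sketch for regression follows. The base learner is a 1-nearest-neighbour regressor, chosen here only because it is a simple high-variance model; it stands in for the deep decision tree mentioned above. All names and data are hypothetical.

```python
import random


def fit_1nn(xs, ys):
    """Return a 1-nearest-neighbour regressor: a deliberately high-variance base model."""
    points = list(zip(xs, ys))

    def predict(x):
        return min(points, key=lambda p: abs(p[0] - x))[1]

    return predict


def bagging_fit(xs, ys, n_models=25, seed=0):
    """Train one base model per bootstrap sample (drawn with replacement)."""
    rng = random.Random(seed)
    n = len(xs)
    models = []
    for _ in range(n_models):
        sample = [rng.randrange(n) for _ in range(n)]  # bootstrap indices
        models.append(fit_1nn([xs[i] for i in sample], [ys[i] for i in sample]))
    return models


def bagging_predict(models, x):
    """Aggregate by averaging, as is usual for regression."""
    return sum(m(x) for m in models) / len(models)


# Hypothetical noisy data scattered around y = 2x.
rng = random.Random(1)
xs = [i / 10 for i in range(50)]
ys = [2 * x + rng.gauss(0, 0.5) for x in xs]
ensemble = bagging_fit(xs, ys)
prediction = bagging_predict(ensemble, 2.5)
```

Each individual model returns whichever noisy training value happens to be nearest, while the averaged prediction draws on several neighbouring points across bootstrap samples, which is the smoothing effect described above.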

Problem: I am concerned about generalization to real-world data.

Solution: Ensure Dataset Quality and Representativeness

- Action: Verify that your data partitions (training, validation, test) are statistically similar and representative of real-world conditions [4].

- Protocol: Shuffle your dataset thoroughly before splitting to avoid temporal or spatial biases. Ensure the data is stationary (its fundamental properties don't change over time) and that examples are independent and identically distributed [4].
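A shuffled three-way split can be sketched as follows. The function name and the 70/15/15 proportions are assumptions for illustration, and the sketch presumes the examples really are independent and identically distributed, as the protocol requires.

```python
import random


def train_val_test_split(items, val_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle a copy of `items`, then cut it into train / validation / test lists."""
    if val_frac < 0 or test_frac < 0 or val_frac + test_frac >= 1:
        raise ValueError("val_frac and test_frac must be non-negative and sum to < 1")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_test = round(len(shuffled) * test_frac)
    n_val = round(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


train, val, test = train_val_test_split(range(100))
```

Fixing the seed makes the partition reproducible, so later comparisons between models are made on identical training, validation, and test sets.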

Case Study: OverfitDTI in Drug-Target Interaction Prediction

This case study offers a counterpoint to the guidance above: the OverfitDTI framework treats overfitting as a useful property, deliberately overfitting a network so that its weights form an implicit representation of complex drug-target data [6].

1. Experimental Objective To test the hypothesis that a deliberately overfit deep neural network (DNN) can sufficiently learn the complex, nonlinear relationship between drugs and targets to accurately predict Drug-Target Interactions (DTIs) and identify new candidate compounds [6].

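2. Methodology & Workflow The OverfitDTI framework consists of two main components: supervised learning, in which the DNN is overfit on known DTIs, and unsupervised learning, in which a VAE generates latent features for drugs and targets that are absent from the training set [6].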

3. Key Research Reagent Solutions

| Item | Function in the Experiment |

|---|---|

| Deep Neural Network (DNN) | The core "reagent" to be overfit. Its weights form an implicit representation of the nonlinear drug-target relationship space [6]. |

| Drug & Target Encoders | Feature extraction tools. Convert raw drug (e.g., SMILES strings) and target (e.g., amino acid sequences) data into numerical feature vectors. Examples include Morgan Fingerprints and Convolutional Neural Networks (CNNs) [6]. |

| Variational Autoencoder (VAE) | An unsupervised learning model used to generate latent feature representations for new, unseen drugs and targets not present in the original training set, enabling their inclusion in the prediction framework [6]. |

| Benchmark Datasets (e.g., KIBA) | Public, standardized datasets used to train and evaluate the model's performance, allowing for comparison with other state-of-the-art methods [6]. |

4. Performance Metrics and Results The model's performance was evaluated on benchmark datasets using standard metrics.

| Model Configuration | Mean Square Error (MSE) - Baseline | MSE - OverfitDTI | Concordance Index (CI) - Baseline | CI - OverfitDTI |

|---|---|---|---|---|

| Morgan-CNN | Baseline Value | ~2 orders of magnitude lower [6] | Baseline Value | Improved [6] |

| GNN-CNN | Baseline Value | Small performance improvement [6] | Baseline Value | Improved [6] |
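For readers unfamiliar with the two metrics in the table, the sketch below computes them from scratch. It uses the pairwise definition of the concordance index that is common in affinity regression; whether the cited study handled ties in exactly this way is an assumption, and the numbers are hypothetical rather than taken from KIBA or from [6].

```python
def mean_squared_error(y_true, y_pred):
    """Average squared difference between measured and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order.

    Pairs with equal true values are skipped; tied predictions count as 0.5.
    """
    concordant = 0.0
    comparable = 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Hypothetical affinity values for four drug-target pairs.
measured = [5.0, 6.2, 7.1, 8.4]
predicted = [5.1, 6.0, 7.5, 7.4]
mse = mean_squared_error(measured, predicted)
ci = concordance_index(measured, predicted)
```

A lower MSE means predictions are closer to the measured values, while a CI of 1.0 means every pair is ranked correctly and 0.5 corresponds to random ordering. In this toy example one of the six pairs is ranked in the wrong order.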

5. Experimental Validation Predictions from the OverfitDTI framework led to the identification of fifteen compounds interacting with TEK, a receptor tyrosine kinase [6]. Two of these compounds, AT9283 and dorsomorphin, were experimentally validated in human umbilical vein endothelial cells (HUVECs) and demonstrated inhibitory effects on TEK, confirming the practical utility of the approach [6].

The Bias-Variance Tradeoff in Model Selection

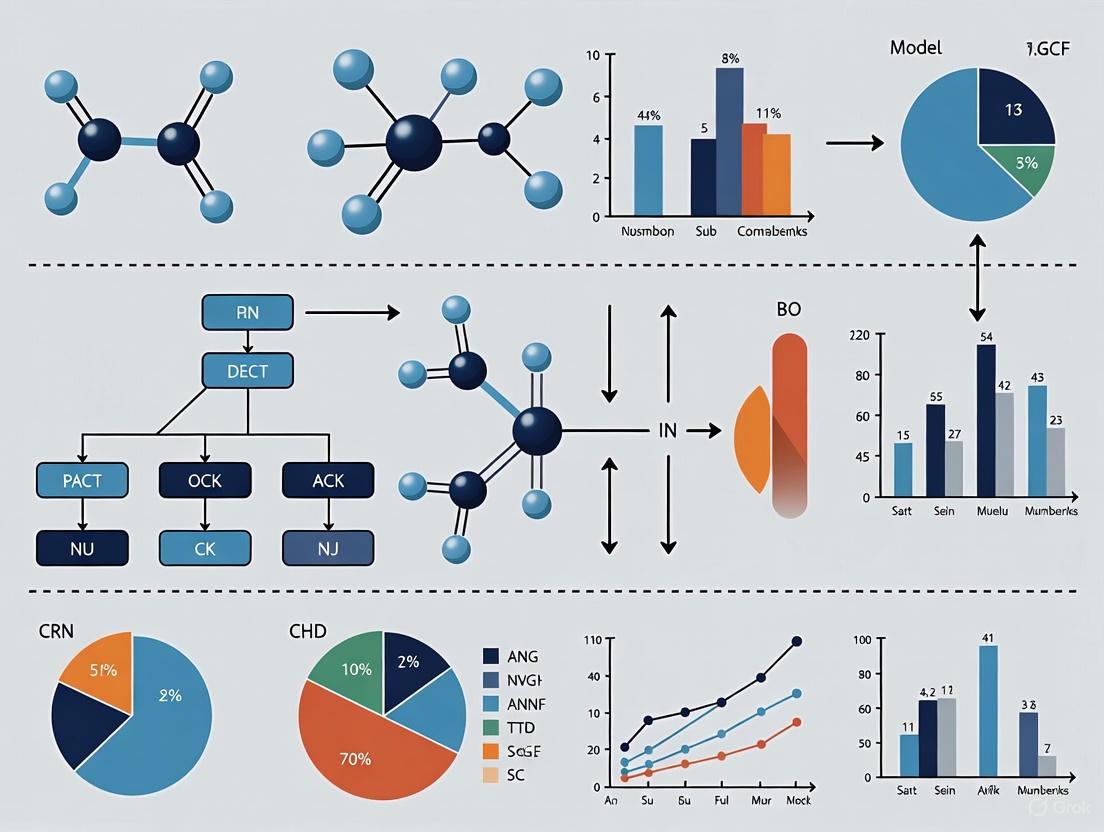

The following diagram illustrates the fundamental goal of finding the optimal model complexity that minimizes both bias and variance to achieve generalization.

Troubleshooting Guide: Diagnosing Model Performance Gaps

This guide helps researchers diagnose the cause of a performance gap between training and validation metrics, a common challenge in developing robust predictive models.

Q: What does a large gap between training and validation accuracy indicate?

A: A significantly higher training accuracy compared to validation accuracy is a classic indicator of overfitting [8] [9]. This means your model has learned the training data too well, including its noise and specific details, but fails to generalize this knowledge to unseen data (the validation set) [1] [3].

Q: How can we use loss curves to diagnose our model?

A: Monitoring training and validation loss during training is crucial. The patterns in these curves provide clear signals about your model's behavior [10].

Table: Interpreting Loss Curves

| Loss Curve Pattern | Diagnosis | Explanation |

|---|---|---|

| Training loss decreases, Validation loss decreases | Healthy Learning | The model is learning patterns that generalize well [10]. |

| Training loss decreases, Validation loss increases | Overfitting | The model is memorizing training data instead of learning generalizable patterns [10] [11]. |

| Both training and validation loss are high and stagnant | Underfitting | The model is too simple to capture the underlying patterns in the data [10] [12]. |

| Validation loss is consistently lower than training loss | Potential Data Issues | Can occur with strong regularization or if the validation set is easier than the training set [10]. |
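The four patterns in the table can be turned into a rough automated check. This is only a heuristic sketch: the function name and the `high_loss` and `tol` thresholds are hypothetical, and sensible values depend on the loss function and the dataset.

```python
def diagnose_loss_curves(train_losses, val_losses, high_loss=1.0, tol=1e-3):
    """Map per-epoch loss curves onto the four patterns in the table above."""
    train_trend = train_losses[-1] - train_losses[0]
    val_trend = val_losses[-1] - val_losses[0]
    # How far the final validation loss sits above its best (lowest) value.
    val_rise = val_losses[-1] - min(val_losses)

    if all(v < t for t, v in zip(train_losses, val_losses)):
        return "potential data issues"
    if abs(train_trend) <= tol and abs(val_trend) <= tol and train_losses[-1] >= high_loss:
        return "underfitting"
    if train_trend < -tol and val_rise > tol:
        return "overfitting"
    if train_trend < -tol and val_trend < -tol:
        return "healthy learning"
    return "inconclusive"


# Hypothetical curves: training keeps improving while validation turns upward.
label = diagnose_loss_curves([1.0, 0.6, 0.3, 0.1], [1.1, 0.8, 0.9, 1.2])
```

A check like this is best treated as a prompt to inspect the plotted curves, not as a replacement for doing so.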

The following diagram illustrates the decision-making process for diagnosing model performance based on these loss patterns.

Q: What if my validation loss is lower than my training loss?

A: While counter-intuitive, this can happen and is not always a problem. Common causes include:

- Regularization Applied During Training Only: Techniques like Dropout are active during training, intentionally "handicapping" the model, but are turned off for validation, giving the validation loss a slight advantage [11].

- Easier Validation Set: The validation set might, by chance, contain simpler examples than the training set [10].

- Metric Calculation Timing: In some frameworks, the reported training loss is averaged over the batches within an epoch, while the weights are still changing, whereas validation loss is calculated on the model state at the end of the epoch.
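The first cause can be made concrete with a small sketch of dropout. It uses the "inverted" scaling convention, in which surviving units are scaled up during training so that nothing needs to change at inference; this convention and the function signature are assumptions made for illustration.

```python
import random


def dropout(activations, rate=0.5, training=True, rng=random):
    """Inverted dropout: zero units with probability `rate` during training only."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(activations)  # inference: every unit is kept unchanged
    keep_prob = 1.0 - rate
    # Surviving units are scaled up so the expected activation is unchanged.
    return [a / keep_prob if rng.random() < keep_prob else 0.0 for a in activations]


hidden = [0.2, 1.5, 0.7, 0.9]
train_out = dropout(hidden, rate=0.5, training=True, rng=random.Random(0))
eval_out = dropout(hidden, rate=0.5, training=False)
```

During training the network sees a randomly thinned version of each layer, whereas validation uses the full layer, which is why the validation loss can come out slightly lower.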

Q: Our model is overfitting. What are the most effective mitigation strategies?

A: Overfitting is a common issue in research models. The following experimental workflow outlines a structured approach to mitigate it.

Detailed Methodologies for Mitigation

Protocol 1: Implementing K-Fold Cross-Validation K-fold cross-validation provides a more robust estimate of model performance than a single train/validation split and helps in tuning hyperparameters without overfitting to one specific validation set [1] [13].

- Shuffle your dataset randomly.

- Split the dataset into k (e.g., 5 or 10) equally sized folds or subsets.

- For each unique fold i:

  - Use fold i as the validation set.

  - Use the remaining k-1 folds as the training set.

  - Train the model on the training set and evaluate it on the validation set.

  - Retain the validation performance score.

- Analyze the model's overall performance by averaging the scores from all k iterations. A high variance in scores can indicate sensitivity to the specific data split and potential overfitting [3].
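The final step, averaging the fold scores and checking their spread, might look like the sketch below. The score lists are hypothetical, and what counts as a worrying spread depends on the metric and the dataset size.

```python
from statistics import mean, stdev


def summarize_fold_scores(scores):
    """Return the mean and sample standard deviation of per-fold scores."""
    if len(scores) < 2:
        raise ValueError("need at least two folds to estimate spread")
    return mean(scores), stdev(scores)


# Hypothetical validation accuracies from two 5-fold runs.
stable = [0.81, 0.80, 0.82, 0.79, 0.81]
unstable = [0.95, 0.62, 0.88, 0.55, 0.91]
stable_mean, stable_sd = summarize_fold_scores(stable)
unstable_mean, unstable_sd = summarize_fold_scores(unstable)
```

The two runs have similar means, but the second varies far more from fold to fold, which is the sensitivity to the data split that the protocol warns about.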

Protocol 2: Building and Evaluating a CNN with Dropout and Early Stopping This protocol details a concrete experiment to train a Convolutional Neural Network (CNN) while monitoring for and preventing overfitting [10].

Data Preparation:

- Load the Fashion-MNIST dataset (or your specific research dataset).

- Reshape images for CNN input (e.g., (28, 28, 1) for Fashion-MNIST).

- Normalize pixel values to the range [0, 1].

- Convert integer labels into one-hot encoded vectors.

- Split the data into training and test sets. Further split the training set to create a validation holdout (e.g., 20%).

Model Architecture (Example using Keras Sequential API):

Model Training with Early Stopping:

- Compile the model with an optimizer (e.g., Adam), a loss function (e.g., categorical_crossentropy), and accuracy as a metric.

- Train the model using the .fit() method, specifying the validation split.

- Implement Early Stopping: Configure a callback to monitor val_loss. Set patience to a number of epochs (e.g., 3-5), after which training stops if the validation loss fails to improve. This prevents the model from training for too long and memorizing the data [8] [1].

Evaluation and Visualization:

- Plot the training and validation loss curves over epochs to visually confirm a "good fit" (both curves decreasing and converging) [10].

- Report final model performance on the held-out test set.

Table: Research Reagent Solutions for Predictive Modeling

| Reagent / Technique | Function / Purpose | Common Examples / Parameters |

|---|---|---|

| K-Fold Cross-Validation [1] [3] | Robust model validation protocol to detect overfitting by assessing performance across multiple data splits. | k=5 or k=10 folds. |

| Dropout [8] [13] | Neural network regularization technique that randomly disables neurons during training to prevent co-adaptation. | Dropout rate of 0.2 to 0.5. |

| L1/L2 Regularization [8] [9] | Adds a penalty to the loss function based on model coefficients to discourage complexity and simplify the model. | L1 (Lasso), L2 (Ridge); regularization strength alpha. |

| Early Stopping [8] [3] | Optimization procedure that halts training when validation performance degrades to prevent overfitting. | Monitor val_loss, patience (e.g., 5 epochs). |

| Data Augmentation [8] [13] | Artificially expands the training dataset by creating modified versions of existing data to improve generalization. | Image: rotations, flips. Text: synonym replacement. |

Frequently Asked Questions (FAQs)

Q: Can a model be overfitted if we have a large amount of data? A: Yes. While having more data is one of the most effective ways to combat overfitting, it is still possible to overfit if the model architecture is excessively complex for the problem. A model with millions of parameters can still memorize patterns from a large dataset if not properly regularized [8] [3].

Q: Is some degree of overfitting always bad? A: Not necessarily. The ultimate goal is to minimize the validation loss. In practice, the point of lowest validation loss often occurs when the training loss is somewhat lower, meaning the model is slightly overfitted to the training data. The key is to manage the degree of overfitting to achieve the best generalization performance [11].

Q: We have a small dataset for a drug discovery project. How can we prevent overfitting? A: Small datasets are highly susceptible to overfitting. A multi-pronged approach is essential:

- Use Simplified Models: Start with less complex models (e.g., logistic regression) before moving to deep neural networks [14].

- Aggressive Regularization: Employ stronger L2 regularization, higher dropout rates, and consider L1 regularization for feature selection.

- Leverage Transfer Learning: Use a pre-trained model on a larger, related dataset and fine-tune its last few layers on your small, specific dataset [14].

- Cross-Validation is Critical: Use k-fold cross-validation rigorously for model selection and evaluation [15].

Troubleshooting Guide: Frequently Asked Questions

FAQ 1: Why does my model perform perfectly on training data but fail on new clinical samples?

This is a classic sign of overfitting. It occurs when your model learns the noise and specific patterns in the training data rather than the underlying generalizable trends. In biomedical contexts with high-dimensional data (many features) and small sample sizes, this risk is significantly elevated [3] [16].

- Root Cause: High-dimensional data increases the model's capacity to memorize noise. When combined with a small number of samples, the model can easily find spurious correlations that do not hold in new data [17] [18].

- Diagnosis: A significant gap between high training accuracy (e.g., 99.9%) and low testing/validation accuracy (e.g., 45%) is a key indicator [19].

- Solution: Implement the strategies detailed in the following FAQs, focusing on feature selection, regularization, and cross-validation.

FAQ 2: My dataset has thousands of genes (features) but only dozens of patients. How do I choose the right features?

In High-Dimensional Small-Sample Size (HDSSS) scenarios, feature selection is critical. Your goal is to identify the most informative features while discarding irrelevant or redundant ones [20] [21].

The table below summarizes the main categories of feature selection methods:

| Method Type | How It Works | Key Advantage | Example Techniques |

|---|---|---|---|

| Filter Methods | Selects features based on statistical measures (e.g., correlation with target) independent of the model. | Fast and computationally efficient [20]. | Correlation analysis, statistical tests (t-test, chi-square) [16]. |

| Wrapper Methods | Uses the performance of a specific predictive model to evaluate and select feature subsets. | Considers feature interactions; can yield high-performing subsets [21]. | Genetic Algorithms (GA), Particle Swarm Optimization (PSO) [21]. |

| Embedded Methods | Performs feature selection as part of the model training process itself. | Efficient and less prone to overfitting than wrapper methods [20] [22]. | Lasso Regression (L1 regularization), Decision Trees with feature importance [22]. |
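As an illustration of the filter approach in the table, the sketch below ranks features by absolute Pearson correlation with the target. The gene names and values are hypothetical. To avoid leaking information, a selection step like this should be run on the training portion of each cross-validation split, not on the full dataset.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient; returns 0.0 for a constant input."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def top_k_by_correlation(features, target, k):
    """Rank features by |r| with the target and return the k best names.

    `features` maps a feature name to its list of per-sample values.
    """
    ranked = sorted(
        features,
        key=lambda name: abs(pearson_r(features[name], target)),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical expression values for three genes across six patients.
features = {
    "gene_a": [1.0, 2.0, 3.0, 4.0, 5.0, 6.0],  # tracks the outcome
    "gene_b": [3.0, 3.0, 3.0, 3.0, 3.0, 3.0],  # constant, uninformative
    "gene_c": [6.1, 4.9, 4.2, 2.8, 2.1, 1.0],  # inversely related
}
outcome = [0.9, 2.1, 2.9, 4.2, 5.1, 5.8]
selected = top_k_by_correlation(features, outcome, k=2)
```

Because each feature is scored independently, this approach is fast but cannot detect features that are only informative in combination, which is the gap wrapper and embedded methods aim to fill.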

Feature Selection Workflow for High-Dimensional Data

FAQ 3: What are the most effective techniques to prevent overfitting during model training?

Beyond feature selection, several core techniques can be applied during the model training phase to improve generalization.

1. Regularization: These techniques penalize model complexity to prevent the model from relying too heavily on any single feature or on noise.

2. Cross-Validation (CV): This is essential for robust performance estimation. Instead of a single train-test split, CV creates multiple splits.

3. Ensemble Methods: These combine multiple simpler models (weak learners) to create a more robust and accurate predictor.

FAQ 4: How does high dimensionality directly lead to overfitting?

High dimensionality intensifies overfitting through several interconnected phenomena, often referred to as the "Curse of Dimensionality" [17] [16].

| Phenomenon | Description | Consequence for Model Training |

|---|---|---|

| Data Sparsity | Data points become spread out and isolated in a vast feature space. | The model lacks enough data to learn true patterns, causing it to fit to noise instead [16]. |

| Increased Model Complexity | More features allow the model to have more parameters and higher capacity. | The model can memorize noise and random fluctuations in the training data [23] [16]. |

| Multicollinearity | Features become highly correlated with each other due to high dimensionality. | It becomes difficult to distinguish the individual contribution of each feature, leading to unstable models [16]. |

| Chance Correlations | With thousands of features, it becomes likely that some noisy features will, by pure chance, appear correlated with the target. | The model may assign high importance to these irrelevant features, which will not generalize [23]. |
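The chance-correlation effect in the last row is easy to reproduce with a small simulation. Everything below is synthetic: both the target and the features are pure Gaussian noise, and the sample and feature counts are arbitrary choices meant to resemble a study with few patients and many measurements.

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient; returns 0.0 for a constant input."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def max_chance_correlation(n_samples=20, n_features=1000, seed=0):
    """Largest |r| between a random target and purely random features."""
    rng = random.Random(seed)
    target = [rng.gauss(0, 1) for _ in range(n_samples)]
    best = 0.0
    for _ in range(n_features):
        noise_feature = [rng.gauss(0, 1) for _ in range(n_samples)]
        best = max(best, abs(pearson_r(noise_feature, target)))
    return best


few_features = max_chance_correlation(n_features=5)
many_features = max_chance_correlation(n_features=1000)
```

With only 20 samples, the strongest correlation found among 1,000 noise features is typically large enough to look like a real signal, even though none of the features carries any information about the target.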

High Dimensionality to Overfitting Relationship

The Scientist's Toolkit: Research Reagent Solutions

This table lists key computational and methodological "reagents" for combating overfitting in biomedical research.

| Tool / Technique | Function | Key Application in Biomedical Data |

|---|---|---|

| Principal Component Analysis (PCA) | An unsupervised linear feature extraction algorithm that reduces dimensionality by projecting data onto directions of maximum variance [17]. | Preprocessing genomic or proteomic data before classification; visualizing high-dimensional data in 2D/3D. |

| Lasso (L1) Regression | An embedded feature selection method that performs regularization and variable selection simultaneously by shrinking some coefficients to zero [22]. | Identifying a small set of key biomarkers (e.g., critical genes) from thousands of potential candidates. |

| K-Fold Cross-Validation | A resampling procedure used to evaluate models on limited data samples by partitioning the data into K subsets [3]. | Robustly estimating model performance and tuning hyperparameters when patient sample size is small. |

| Decision Tree (with Pruning) | A simple, interpretable model whose complexity can be controlled by limiting its maximum depth ("pruning") [3] [23]. | Creating clinical decision rules that are easy to interpret and less prone to learning noise. |

| Autoencoders | A type of neural network used for unsupervised non-linear dimensionality reduction by learning efficient data codings [17]. | Extracting complex, non-linear features from raw biomedical data like medical images or EEG signals. |

Troubleshooting Guide: Identifying and Resolving Overfitting

This guide helps researchers diagnose and correct common overfitting issues in predictive models for drug discovery and clinical diagnostics.

Problem: My model has high accuracy on training data but poor performance on validation or real-world data.

| Checkpoint | What to Look For | Corrective Action |

|---|---|---|

| Generalization Curve | A growing gap between training and validation loss curves [4] [24]. | Implement early stopping when validation loss stops improving [1] [3]. |

| Model Complexity | A model with more parameters than justified by the dataset size [25] [26]. | Apply regularization (e.g., L1/Lasso, L2/Ridge) to penalize complexity [1] [3] [26]. |

| Data Quality & Quantity | A small training set or data that lacks diversity and contains noise [3] [27]. | Increase dataset size with clean, representative data or use data augmentation techniques [1] [3]. |

| Feature Selection | The model uses a large number of redundant or irrelevant input features [25] [3]. | Perform feature selection (pruning) to retain only the most impactful variables [1] [3]. |

| Validation Method | Error estimation is performed on the same data used for training or feature selection [25]. | Use robust protocols like nested cross-validation to get unbiased error estimates [25] [1]. |

Problem: The model fails to establish a meaningful relationship between input and output variables, leading to poor performance on both training and test data.

| Checkpoint | What to Look For | Corrective Action |

|---|---|---|

| Model Performance | High bias and low variance; poor accuracy on training data itself [1] [27]. | Increase model complexity, train for more epochs, or incorporate additional relevant features [1]. |

| Data Representation | The selected features lack the predictive power to determine the outcome. | Re-evaluate the input data; consult domain experts to identify more predictive variables. |

FAQ: Overfitting in Pharmaceutical Research

Q1: What is overfitting and why is it a critical issue in drug discovery? Overfitting occurs when a model learns the specific patterns—including noise and irrelevant details—of its training data so closely that it fails to generalize to new, unseen data [1] [3]. In drug discovery, this is profoundly dangerous because an overfit model may appear highly accurate during development but will make unreliable predictions in subsequent experiments or clinical settings [25]. This can lead to the pursuit of ineffective drug candidates, misdiagnosis in clinical tools, wasted resources, and significant ethical concerns regarding patient safety [28] [26].

Q2: How can I detect overfitting in a clinical diagnostic model? The primary method is to monitor the divergence between training and validation performance [4]. A clear sign is high accuracy on the training dataset coupled with a high error rate on a separate test or validation dataset [1] [3]. Technically, this is visualized by a generalization curve where the training loss continues to decrease while the validation loss begins to increase after a certain point [24] [4]. Using k-fold cross-validation provides a more robust assessment of model generalization by testing it on multiple held-out subsets of the data [1] [3].

Q3: What are the most effective techniques to prevent overfitting? Several strategies are commonly employed, often in combination:

- Cross-Validation: Using k-fold cross-validation to ensure the model's performance is consistent across different data splits [1] [3].

- Regularization: Applying techniques like Lasso (L1) or Ridge (L2) regression that add a penalty for model complexity, discouraging over-reliance on any single feature [1] [3] [26].

- Early Stopping: Halting the model training process once performance on a validation set stops improving [1] [3].

- Ensemble Methods: Using bagging or boosting to combine predictions from multiple weaker models. Bagging mainly reduces variance, while boosting mainly reduces bias; both can improve generalization [1] [3].

- Simplifying the Model: Reducing the number of features (pruning) or using a less complex model architecture to match the true underlying signal in the data [25] [26].

Q4: Our model performed well on internal validation data but failed with real-world patient data. What could be the cause? This is a classic symptom of overfitting, often compounded by a mismatch between your training data and the real-world data distribution [4]. Common causes include:

- Non-Stationary Data: The relationship between inputs and outputs changes over time (e.g., prescribing practices or assay protocols that shift after the training data was collected) [4].

- Sampling Bias: The training data was not representative of the broader patient population (e.g., in terms of ethnicity, disease severity, or comorbidities) [28] [4].

- Confounding Variables: Unaccounted factors in the real world influence the outcome, which were not present or controlled for in the training set [28].

- Feedback Loops: The model's predictions themselves change the environment it is predicting, leading to inaccurate future predictions [4].

Q5: How does the "bias-variance tradeoff" relate to overfitting and underfitting? The bias-variance tradeoff is a fundamental concept for understanding model behavior [27].

- High Bias (Underfitting): The model is too simple and makes strong assumptions, leading to high error on both training and test data. It fails to capture the underlying trend [1] [27].

- High Variance (Overfitting): The model is too complex and is overly sensitive to the training data. It has low error on training data but high error on test data, as it has learned the noise [1] [27]. The goal is to find the "sweet spot" between bias and variance where the model generalizes best to new data [1].

Experimental Protocol: K-Fold Cross-Validation for Robust Error Estimation

Objective: To obtain a more reliable estimate of a predictive model's generalization error than a single train/test split provides, and thereby to detect overfitting.

Methodology:

- Data Preparation: Randomly shuffle the dataset and partition it into k equally sized subsets (folds). A typical value for k is 5 or 10 [1] [3].

- Iterative Training and Validation: For each unique fold: a. Designate the current fold as the validation (holdout) set. b. Designate the remaining k-1 folds as the training set. c. Train the model from scratch on the training set. d. Evaluate the trained model on the validation set and record the performance score (e.g., accuracy, mean squared error).

- Performance Aggregation: After all k iterations, average the k recorded performance scores. This average is the final, robust estimate of the model's generalization error [3].

Experimental Protocol: Applying Regularization to Prevent Overfitting

Objective: To reduce model complexity and prevent the model from fitting noise in the training data by adding a penalty to the loss function.

Methodology:

- Model Definition: Start with your base model (e.g., a linear regression or a neural network).

- Loss Function Modification: Add a regularization term to the standard loss function (e.g., Mean Squared Error). This term penalizes large model coefficients.

- For L2 Regularization (Ridge): The penalty is the sum of the squares of the coefficients multiplied by a hyperparameter λ (lambda). This shrinks coefficients but rarely sets them to zero [1] [26].

- For L1 Regularization (Lasso): The penalty is the sum of the absolute values of the coefficients multiplied by λ. This can drive some coefficients to exactly zero, performing feature selection [1] [26].

- Hyperparameter Tuning: Use cross-validation to find the optimal value for λ, which controls the strength of the penalty. A low λ has little effect, while a very high λ can lead to underfitting.
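The difference between the two penalties is easiest to see in the simplest possible case: a single weight and no intercept, where both problems have closed-form solutions. The sketch below assumes a sum-of-squared-errors loss with the penalty added exactly as described above; the function names and data are hypothetical.

```python
def ridge_weight(xs, ys, lam):
    """Minimise sum((y - w*x)^2) + lam * w^2 for a single weight w (no intercept)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    if sxx + lam == 0:
        raise ValueError("xs must contain a non-zero value when lam is 0")
    return sxy / (sxx + lam)


def lasso_weight(xs, ys, lam):
    """Minimise sum((y - w*x)^2) + lam * |w| for a single weight w (no intercept)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    if sxx == 0:
        raise ValueError("xs must contain a non-zero value")
    # Soft-thresholding: the weight is exactly zero when |sxy| <= lam / 2.
    shrunk = max(abs(sxy) - lam / 2.0, 0.0)
    return (shrunk if sxy > 0 else -shrunk) / sxx


# Hypothetical data with a weak relationship between x and y.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [0.5, 0.1, 0.6, 0.3]
weights = {
    lam: (ridge_weight(xs, ys, lam), lasso_weight(xs, ys, lam))
    for lam in (0.0, 1.0, 10.0)
}
```

With λ = 0 both weights equal the ordinary least-squares value. As λ grows, the ridge weight shrinks smoothly but stays non-zero, while the lasso weight reaches exactly zero at λ = 10, which is the feature-selection behaviour described above.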

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Tool / Solution | Function in Mitigating Overfitting |

|---|---|

| Scikit-learn | A comprehensive Python library offering built-in implementations of cross-validation, regularization algorithms, feature selection tools, and ensemble methods [26]. |

| TensorFlow / PyTorch | Deep learning frameworks that provide functionalities like Dropout layers and Early Stopping callbacks to prevent overfitting during neural network training [24] [26]. |

| K-Fold Cross-Validation | A resampling procedure used to evaluate a model's ability to generalize to an independent dataset, providing a more reliable performance estimate [1] [3]. |

| Dropout | A regularization technique for neural networks where randomly selected neurons are ignored during training, preventing complex co-adaptations on training data [24]. |

| Data Augmentation | A technique to artificially expand the size and diversity of the training dataset by creating modified versions of existing data, improving model robustness [3] [26]. |

Troubleshooting Guides

Guide 1: How to Diagnose Underfitting and Overfitting

Problem: My model is performing poorly. How do I determine if it's underfitting or overfitting?

Diagnosis Steps:

- Analyze Learning Curves: Plot your model's performance metric (e.g., error or accuracy) on both the training and a validation set against training time or model complexity.

- Compare Performance:

- Underfitting: The model performs poorly on both the training data and unseen data (like a validation or test set). This indicates high bias and an inability to capture underlying patterns in the data [7] [29].

- Overfitting: The model performs exceptionally well on the training data but performs poorly on unseen data. This indicates high variance and that the model has learned noise and irrelevant details from the training set [7] [29].

- Check Key Indicators: Use the following table to summarize the core differences:

Table: Diagnostic Indicators for Model Behavior

| Aspect | Underfitting | Well-Fitted Model | Overfitting |

|---|---|---|---|

| Performance on Training Data | Poor [29] | Good | Excellent, often too good to be true [29] |

| Performance on New, Unseen Data | Poor [29] | Good | Poor [7] [29] |

| Model Complexity | Too simple [7] | Balanced | Too complex [7] |

| Bias and Variance | High bias, low variance [7] | Balanced | Low bias, high variance [7] |

| Analogy | A student who didn't study enough [7] | A student who understands the concepts | A student who memorized answers without understanding [7] |
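The indicators in the table can be checked numerically by comparing training and validation scores as model complexity grows. The sketch below is one way to do this, assuming a synthetic classification dataset with some label noise and a decision tree whose `max_depth` stands in for "model complexity".

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in data (assumption) with some label noise (flip_y).
X, y = make_classification(n_samples=600, n_features=20, n_informative=5,
                           flip_y=0.1, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, random_state=0)

results = {}
for depth in (1, 3, 5, 10, None):  # None lets the tree grow without a depth limit
    tree = DecisionTreeClassifier(max_depth=depth, random_state=0)
    tree.fit(X_train, y_train)
    train_acc = tree.score(X_train, y_train)
    val_acc = tree.score(X_val, y_val)
    results[depth] = (train_acc, val_acc)
    print(f"max_depth={depth}: train={train_acc:.2f}  val={val_acc:.2f}  "
          f"gap={train_acc - val_acc:.2f}")
```

Low scores on both sets at small depths point to underfitting; a training score that keeps rising while the validation score stalls or falls points to overfitting.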

The following workflow visualizes the diagnostic process and its connection to the bias-variance tradeoff:

Guide 2: How to Fix an Underfitting Model

Problem: My model has high bias and is underfitting. What can I do to improve its learning capacity?

Solution Strategies:

- Increase Model Complexity: Switch to a more powerful algorithm. For example, move from linear regression to polynomial regression or from a shallow to a deeper decision tree [7].

- Enhance Feature Engineering:

- Reduce Regularization: Regularization techniques (like L1/Lasso or L2/Ridge) are designed to punish complexity. If the model is already too simple, reducing the strength of regularization can help it learn more [7].

- Train for Longer: For iterative models like neural networks or gradient boosting, increasing the number of training epochs or iterations can allow the model to learn more complex relationships [7].

Guide 3: How to Fix an Overfitting Model

Problem: My model has high variance and is overfitting. How can I improve its generalization to new data?

Solution Strategies:

- Get More Training Data: A larger dataset helps the model learn the underlying data distribution rather than the noise, improving its ability to generalize [7].

- Simplify the Model:

- Apply Regularization: Techniques like L1 (Lasso) and L2 (Ridge) regularization add a penalty for model complexity, discouraging overfitting by keeping weight values small [7].

- Use Early Stopping: For iterative learners, monitor performance on a validation set and stop training as soon as validation performance begins to degrade [7].

- Employ Robust Validation Techniques:

- k-Fold Cross-Validation: Use this resampling technique to get a more reliable estimate of model performance on unseen data and to ensure the model is not overfitting to a particular train-test split [29].

- Hold-Out Validation Set: Keep a completely separate dataset that is never used for training or tuning, and reserve it for the final model evaluation so that no information from it leaks into the training process [29].

- Perform Feature Selection: Reduce the number of features to only the most important ones, which can decrease model complexity and noise [30].

- Use Dropout (for Neural Networks): Randomly "drop out" a proportion of neurons during training to prevent the network from becoming overly reliant on any single neuron and to encourage robust feature learning [7].
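Of the strategies above, early stopping is the one most often written by hand. The sketch below shows one possible patience-based loop, assuming a synthetic dataset and scikit-learn's `SGDRegressor` trained one epoch at a time with `partial_fit`. The patience of 5 epochs and the learning rate are illustrative choices, and the estimator keeps its small default L2 penalty.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import SGDRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumption): many features relative to the sample size.
X, y = make_regression(n_samples=300, n_features=100, n_informative=10,
                       noise=20.0, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, random_state=0)
scaler = StandardScaler().fit(X_train)          # fit on training data only
X_train, X_val = scaler.transform(X_train), scaler.transform(X_val)

model = SGDRegressor(learning_rate="constant", eta0=0.001, random_state=0)
best_val, best_epoch, patience, wait = np.inf, 0, 5, 0

for epoch in range(1, 201):
    model.partial_fit(X_train, y_train)          # one pass over the training set
    val_mse = mean_squared_error(y_val, model.predict(X_val))
    if val_mse < best_val - 1e-6:
        # Validation error improved: remember this state and reset the counter.
        best_val, best_epoch, wait = val_mse, epoch, 0
        best_coef, best_intercept = model.coef_.copy(), model.intercept_.copy()
    else:
        wait += 1
        if wait >= patience:                     # no improvement for `patience` epochs
            break

# Restore the weights from the best validation epoch.
model.coef_, model.intercept_ = best_coef, best_intercept
print(f"Stopped at epoch {epoch}; best validation MSE at epoch {best_epoch}")
```

Because the validation set steers when training stops, the final performance figure should still come from a separate test set.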

The following workflow summarizes the strategies for addressing both underfitting and overfitting:

Frequently Asked Questions (FAQs)

What is the fundamental difference between overfitting and underfitting?

The fundamental difference lies in the model's relationship with the training data and its ability to generalize. Underfitting occurs when a model is too simple to capture the underlying trend in the training data, leading to poor performance on both training and new data. Overfitting occurs when a model is too complex and learns not only the underlying trend but also the noise and random fluctuations in the training data, leading to excellent training performance but poor performance on new data [7] [29].

How can I detect overfitting without a separate test set?

Using a separate test set is the most straightforward method. However, if one is not available, resampling techniques like k-fold cross-validation are a gold standard alternative. In k-fold cross-validation, your data is split into 'k' subsets. The model is trained on k-1 folds and validated on the remaining fold, and this process is repeated k times. The average performance across all k folds provides a robust estimate of how your model will generalize to unseen data, helping to identify potential overfitting [29].

What is data leakage and how does it relate to overfitting?

Data leakage occurs when information from outside the training dataset, particularly from the test or validation set, is inadvertently used to create the model [31]. This can happen through improper data splitting, using future information to predict the past, or during faulty preprocessing (e.g., scaling the entire dataset before splitting). Data leakage creates an overly optimistic and invalid estimate of model performance because the model is effectively "cheating" by seeing information it shouldn't. This leads to a model that appears accurate during development but will fail catastrophically and unpredictably when deployed in a real-world setting, a severe form of overfitting [15] [31]. Rigorous experimental design, including a strict train-validation-test split, is crucial to prevent it [31].
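The effect of preprocessing leakage is easy to reproduce. The sketch below uses feature selection rather than scaling as the leaky step, because its effect is more visible; that substitution, the pure-noise dataset, and the choice of 20 selected features are assumptions made for illustration. Since the labels are unrelated to the features, any honest estimate should sit near chance level.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5000))   # pure noise features
y = rng.integers(0, 2, size=100)   # labels unrelated to X

# Leaky: features are selected using ALL labels, including those of the
# samples that later serve as validation folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(LogisticRegression(max_iter=1000),
                            X_leaky, y, cv=5).mean()

# Leak-free: the selector sits inside a pipeline, so it is refit on the
# training portion of every fold and never sees the validation labels.
pipe = make_pipeline(SelectKBest(f_classif, k=20),
                     LogisticRegression(max_iter=1000))
honest_acc = cross_val_score(pipe, X, y, cv=5).mean()

print(f"Leaky estimate:     {leaky_acc:.2f}")
print(f"Leak-free estimate: {honest_acc:.2f}")
```

The same pipeline pattern applies to scalers, imputers, and any other step that learns parameters from data.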

Is overfitting or underfitting a bigger problem in practice?

While both are detrimental, overfitting is often considered the more common and insidious problem in applied machine learning [29]. This is because an underfit model is easy to detect—it performs poorly from the start. An overfit model, however, can appear to be highly accurate and successful during training and initial testing, creating a false sense of security. Its failure only becomes apparent upon deployment with real, unseen data, which can have significant consequences, especially in critical fields like drug development and medical diagnosis [15] [31].

How does the bias-variance tradeoff relate to these concepts?

The bias-variance tradeoff is a fundamental framework that explains underfitting and overfitting.

- Bias is the error from erroneous assumptions in the model. High bias causes underfitting, as a simplistic model fails to capture relevant patterns [7].

- Variance is the error from sensitivity to small fluctuations in the training set. High variance causes overfitting, as a complex model learns the noise in the data [7].

The goal is to find the balance that minimizes total error, since bias and variance cannot both be driven to zero at once; a model at that balance generalizes well [7]. Increasing model complexity reduces bias but increases variance, while simplifying the model reduces variance but increases bias.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for Robust Predictive Modeling

| Tool or Material | Function / Purpose |

|---|---|

| k-Fold Cross-Validation | A resampling technique used to assess model generalizability and limit overfitting by providing a robust estimate of performance on unseen data [29]. |

| Hold-Out Validation Set | A separate dataset not used during model training, reserved for the final, unbiased evaluation of model performance [29]. |

| L1 (Lasso) & L2 (Ridge) Regularization | Penalization methods that constrain model coefficients to prevent overfitting by discouraging over-complexity [7]. |

| Sequential Feature Selection | A process to identify and use the most informative features, reducing data complexity and the risk of overfitting while improving model interpretability [31] [32]. |

| Early Stopping | A technique for iterative models where training is halted once performance on a validation set stops improving, preventing the model from over-optimizing to the training data [7]. |

| Dropout | A regularization technique specifically for neural networks that randomly ignores units during training to prevent complex co-adaptations and encourage robust learning [7]. |

| Preprocessing Pipelines | Defined workflows (e.g., for intensity normalization, voxel resampling) applied correctly after data splitting to ensure consistency and prevent data leakage [31] [32]. |

| Interpretability Frameworks (e.g., SHAP) | Tools that provide post-hoc explanations for model predictions, helping to validate that the model is relying on clinically or scientifically plausible features and not spurious correlations [32]. |

Building Robust Models: Core Techniques to Prevent Overfitting

A technical support guide for researchers battling overfitting in predictive model research.

Troubleshooting FAQs

1. My model performs well on training data but poorly on new, real-world data. What is happening?

This is a classic sign of overfitting [1] [33]. Your model has likely memorized the patterns and noise in your training dataset instead of learning the underlying relationships that generalize to new data [3]. To confirm, compare your model's performance on training versus a held-out test set; a high training accuracy coupled with low test accuracy is a key indicator [19].

2. What are the most effective first steps to combat overfitting?

The most straightforward and effective first steps are data-centric [19] [33]:

- Gather more data: Increasing your training dataset size helps the model learn the true data distribution rather than memorizing idiosyncrasies [33].

- Improve data quality: Identify and correct mislabelled instances (noisy labels) and remove duplicate data points to prevent the model from learning errors [34].

- Use data augmentation: Artificially expand your dataset by creating modified versions of your existing data (e.g., rotating images, adding noise to text) [35] [33].

3. How can I detect overfitting in my models?

The best practice is to use a robust validation strategy [15]:

- Train-Test Split: Hold out a portion of your data as a test set. A significant performance gap between the training and test sets signals overfitting [3] [1].

- K-Fold Cross-Validation: Split your data into k subsets (folds). Iteratively train on k-1 folds and validate on the remaining one. This provides a more reliable performance estimate and helps identify overfitting that might be specific to one data split [3] [1].

4. My dataset is small and cannot be easily expanded. What can I do?

For small sample sizes, a data-centric approach is particularly critical [36]:

- Leverage Data Augmentation: Systematically apply transformations to your existing data to create new, synthetic training examples. This is a primary method for alleviating data scarcity [35].

- Generate Synthetic Data: Use techniques like Conditional Tabular Generative Adversarial Networks (CTGAN) to create artificial data. Caution is required: synthetic data must be filtered and selected for quality to be effective, as directly adding it can sometimes harm performance [36].

- Apply Strong Regularization: Techniques like L1 (Lasso) or L2 (Ridge) regularization penalize model complexity during training, discouraging overfitting to a small dataset [19].

5. In drug development, what are common data pitfalls that lead to overfitting?

Beyond general issues, drug development faces specific challenges:

- Faulty Data Preprocessing: Incorrect procedures during data handling can lead to data leakage, where information from the test set leaks into the training process, creating over-optimistic and non-generalizable models [15].

- Target Leakage: The model may "cheat" by having access to data during training that would not be available at prediction time. For example, a model predicting future drug efficacy might inadvertently use data that is only available after the prediction is supposed to be made [19].

- Biased or Non-Representative Data: If the training data does not adequately represent the real-world patient population (e.g., in terms of genetics, demographics, or disease subtypes), the model will fail to generalize [34] [19].

Troubleshooting Guides

Guide 1: Implementing a Data-Centric Workflow to Mitigate Overfitting

This guide outlines a systematic, data-centric workflow to build more robust and generalizable predictive models.

Objective: To establish a reproducible process that prioritizes data quality and quantity to reduce model overfitting.

Experimental Protocol/Methodology:

Data Quality Assessment

- Remove Duplicates: Use hashing techniques (e.g., Perceptual Hashing/pHash) to identify and eliminate duplicate instances that can bias the model [34].

- Correct Noisy Labels: Apply confident learning to detect mislabeled data. Instances whose predicted probability for their assigned label falls below an optimized threshold are flagged. These labels should be corrected, ideally through human expert annotation [34].
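The two data-quality steps can be sketched as follows. This is a deliberately simplified stand-in, not the method from [34]: exact row hashing replaces perceptual hashing (which targets images), and a single fixed probability threshold replaces the optimized thresholds of confident learning. The dataset, the injected duplicates and label flips, and the `threshold` value are all hypothetical.

```python
import hashlib
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_predict

X, y = make_classification(n_samples=300, n_features=10, n_informative=5,
                           class_sep=2.0, flip_y=0.0, random_state=0)
X = np.vstack([X, X[:10]])               # inject 10 exact duplicates
y = np.concatenate([y, y[:10]])

# 1) Duplicate removal: hash each row's bytes and keep the first occurrence.
seen, keep = set(), []
for i, row in enumerate(X):
    digest = hashlib.sha256(row.tobytes()).hexdigest()
    if digest not in seen:
        seen.add(digest)
        keep.append(i)
X, y = X[keep], y[keep]

# 2) Label-noise flagging: flip a few labels, then look for samples whose
#    out-of-fold probability for their assigned label is low.
rng = np.random.default_rng(0)
noisy_idx = rng.choice(len(y), size=15, replace=False)
y_noisy = y.copy()
y_noisy[noisy_idx] = 1 - y_noisy[noisy_idx]

proba = cross_val_predict(LogisticRegression(max_iter=1000), X, y_noisy,
                          cv=5, method="predict_proba")
self_conf = proba[np.arange(len(y_noisy)), y_noisy]  # P(assigned label)
threshold = 0.2                                       # hypothetical fixed cut-off
flagged = np.where(self_conf < threshold)[0]

recovered = len(set(flagged) & set(noisy_idx))
print(f"Rows after de-duplication: {len(y)}")
print(f"Flagged {len(flagged)} samples; {recovered} of them were deliberately flipped")
```

Flagged samples are candidates for expert review, not automatic relabeling.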

Data Quantity & Diversity Enhancement

- Acquire More Data: Prioritize collecting more clean, representative data. In drug development, this could involve ensuring diverse patient cohorts in clinical trials [19] [33].

- Apply Data Augmentation: Generate new data by applying realistic transformations. The table below summarizes techniques for different data types [35] [33].

- Generate Synthetic Data (if needed): Use models like CTGAN to create synthetic data, but always apply a filtering strategy to ensure only high-quality, reliable synthetic data is added to the experimental set [36].

Robust Validation

- Implement K-Fold Cross-Validation: Use this method during model development and hyperparameter tuning to get a stable estimate of performance and avoid overfitting to a single validation split [1] [19].

- Maintain a Strict Holdout Test Set: Finally, evaluate your model on a completely unseen test set that was not used in any previous step to simulate real-world performance [15].

The following workflow diagram illustrates this structured approach:

Guide 2: Data Augmentation Protocols for Different Data Types

This guide provides specific methodologies for implementing data augmentation across common data types in scientific research.

Objective: To increase the volume and diversity of training data by creating slightly modified copies of existing data, thereby improving model generalization.

Experimental Protocol/Methodology:

The table below catalogs standard and modern augmentation techniques suitable for various data modalities.

| Data Type | Augmentation Technique | Methodology / Protocol | Key Consideration |

|---|---|---|---|

| Image Data [35] [33] | Geometric Transformations | Apply random rotations (e.g., ±15°), flips (horizontal/vertical), translations, zooms, and cropping. | Preserve the semantic label post-transformation. |

| | Color Space Adjustments | Alter brightness, contrast, saturation, and hue within a defined range. Add small amounts of noise (Gaussian). | Changes should reflect real-world variability. |

| | Advanced Synthesis | Use Generative Adversarial Networks (GANs) or Neural Rendering to create highly realistic, novel samples [35]. | Requires significant computational resources and expertise. |

| Text Data [33] | Synonym Replacement | Replace random words with their synonyms using a lexical database. | Can slightly alter meaning; validate output. |

| | Random Operations | Perform random insertion, deletion, or swapping of words. | Use with a low probability to maintain coherence. |

| | Back-Translation | Translate text to an intermediate language and then back to the original language. | Effective for paraphrasing but can be computationally expensive. |

| Time-Series Data [33] | Jittering | Add small amounts of random noise to the signal. | Noise level should be representative of sensor variance. |

| | Time Warping | Randomly stretch or compress the time series slightly. | Maintains temporal relationships but alters timing. |

| | Magnitude Warping | Randomly scale the amplitude of the signal. | Simulates changes in signal strength. |
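The time-series rows of the table can be sketched with NumPy alone. The functions below are illustrative simplifications: the sine wave stands in for a measured signal, `magnitude_scale` applies one random factor to the whole series (full magnitude warping typically varies the factor smoothly over time), and all parameter defaults are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 200)
signal = np.sin(2 * np.pi * 5 * t)        # stand-in for a measured signal

def jitter(x, sigma=0.03):
    """Add small Gaussian noise to every time step."""
    return x + rng.normal(0.0, sigma, size=x.shape)

def magnitude_scale(x, sigma=0.1):
    """Scale the whole series by one random factor close to 1."""
    return x * rng.normal(1.0, sigma)

def time_warp(x, max_warp=0.1):
    """Resample the series on a smoothly distorted time axis."""
    n = len(x)
    idx = np.arange(n, dtype=float)
    u = rng.uniform(-max_warp, max_warp)
    # Monotonic distortion with fixed endpoints: samples in the middle of
    # the series are shifted earlier or later, the ends stay in place.
    warped = idx + u * (n - 1) / np.pi * np.sin(np.pi * idx / (n - 1))
    return np.interp(warped, idx, x)

augmented = [jitter(signal), magnitude_scale(signal), time_warp(signal)]
print([a.shape for a in augmented])
```

Each function returns a series of the same length as its input, so augmented copies can be appended directly to the training set with the original label.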

The logical process for implementing a data augmentation pipeline is as follows:

Performance Data & Comparison

The following table summarizes quantitative results from a study that directly compared Model-Centric and Data-Centric approaches on well-known datasets using a ResNet-18 architecture [34].

| Dataset | Model-Centric Approach (Test Accuracy) | Data-Centric Approach (Test Accuracy) | Relative Performance Improvement |

|---|---|---|---|

| MNIST | Baseline Performance | Enhanced Performance | ≥ 3% |

| Fashion-MNIST | Baseline Performance | Enhanced Performance | ≥ 3% |

| CIFAR-10 | Baseline Performance | Enhanced Performance | ≥ 3% |

Note: The Data-Centric Approach involved data augmentation, multi-stage hashing to remove duplicates, and confident learning to correct noisy labels [34].

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table details key computational "reagents" and their functions for implementing data-centric strategies.

| Tool / Solution | Function | Application Context |

|---|---|---|

| Perceptual Hashing (pHash) | Generates a compact "fingerprint" for an image so that duplicate or near-duplicate data instances can be identified and removed [34]. | Data Cleaning |

| Confident Learning | A framework for identifying and correcting label errors in datasets by estimating the joint distribution of noisy and true labels [34]. | Data Quality Assessment |

| Conditional Tabular GAN (CTGAN) | A type of generative model that creates synthetic data samples conditioned on specific features, useful for augmenting small datasets [36]. | Data Augmentation & Synthesis |

| K-Fold Cross-Validation | A resampling procedure used to evaluate a model by partitioning the data into K subsets and repeatedly training on K-1 folds while validating on the held-out fold [3] [1]. | Model Validation |

| Regularization (L1/L2) | Techniques that add a penalty to the model's loss function to discourage complexity, helping to prevent overfitting [19]. | Model Training |

| Automated ML Platforms | Cloud-based services (e.g., Azure Automated ML) that can automatically detect overfitting and apply prevention strategies like hyperparameter tuning and cross-validation [19]. | End-to-End Model Development |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between how L1 and L2 regularization affect my model's coefficients?

A1: The core difference lies in the type of penalty applied to the coefficients:

- L1 (Lasso): Adds a penalty proportional to the sum of the absolute values of the coefficients. This can drive less important feature coefficients exactly to zero, effectively performing feature selection and creating a sparse model [37] [38].

- L2 (Ridge): Adds a penalty proportional to the sum of the squared coefficients. This forces coefficients to become smaller but rarely, if ever, zero, keeping all features in the model but reducing their influence [37] [39].

Q2: When should I choose L1 regularization over L2 for my predictive model?

A2: Opt for L1 regularization (Lasso) when:

- You are dealing with high-dimensional data with many features and you suspect only a subset is truly relevant [40] [38].

- Feature selection and model interpretability are primary goals, as it helps identify the most important predictors [37] [41].

- You need a sparse solution for computational efficiency [41].

Q3: My model with L2 regularization is not discarding any features. Is this expected behavior?

A3: Yes, this is normal and a key characteristic of L2 regularization. Unlike L1, L2 regularization shrinks coefficients towards zero but does not set them to zero [37] [39]. Therefore, it does not perform feature selection. If feature selection is needed, consider using L1 regularization or a hybrid like Elastic Net [40] [42].

Q4: How does the lambda (λ) hyperparameter affect my regularized model, and how do I choose its value?

A4: The hyperparameter lambda (often denoted as α in code) controls the strength of the penalty [37] [43]:

- lambda = 0: No regularization; the model reverts to ordinary least squares (OLS), which may overfit [37] [39].

- Very small lambda: Very mild penalty; minimal effect on coefficients, so the risk of overfitting remains.

- Very large lambda: Excessive penalty; all coefficients are heavily shrunk (to exactly zero for L1, close to zero for L2), leading to underfitting and a model that is too simple [37] [44].

The optimal value is typically found through cross-validation techniques (e.g., k-fold cross-validation), which aims to find the lambda that gives the best performance on validation data [38] [43].

Q5: I have highly correlated features in my dataset. Which regularization method is more appropriate?

A5:

- L2 (Ridge) regression is generally more effective for handling multicollinearity (highly correlated features). It shrinks the coefficients of correlated variables and distributes the effect among them more evenly without removing any [37] [39].

- L1 (Lasso) regression tends to arbitrarily select one feature from a group of correlated features and discard the others, which can be problematic for interpretation [37] [38].

- For datasets with many correlated features where you still want some level of feature selection, Elastic Net is a strong alternative as it combines both L1 and L2 penalties [40] [42].

Troubleshooting Guides

Problem: Model Performance is Poor After Applying Regularization

Potential Causes and Solutions:

| Observation | Potential Cause | Recommended Solution |

|---|---|---|

| High error on both training and test sets. | Underfitting due to excessively high lambda value [37] [44]. | Reduce the alpha hyperparameter. Perform a cross-validated search over a lower range of alpha values [44] [43]. |

| High error on test set but low error on training set. | Overfitting is not fully controlled; lambda value may be too low [8]. | Increase the alpha hyperparameter. Ensure you are correctly using a validation set to tune alpha [44]. |

| Performance is unstable; small data changes cause large model changes. | High variance not adequately controlled; model may still be too complex [8]. | For L2, try further increasing alpha. For L1, ensure it is the right method; if features are correlated, switch to L2 or Elastic Net [37] [39]. |

| L1 model is too sparse; too many features were removed. | The L1 penalty was too strong, potentially removing important features [45]. | Decrease the L1 alpha or use ElasticNet with a lower l1_ratio to blend in some L2 penalty, which can help retain groups of correlated features [40] [42]. |

Problem: Difficulty Interpreting or Implementing Regularization

1. Issue: Conceptual misunderstanding of how L1 and L2 penalties work geometrically.

The geometric difference explains why L1 leads to sparsity (feature selection) and L2 does not. The solution is found where the loss function contour touches the permissible region defined by the penalty.

Geometric Interpretation of Regularization: The optimal coefficients are found where the elliptical contours of the loss function meet the constraint region. L2's circular region often leads to solutions where all coefficients are non-zero. L1's diamond-shaped region has sharp corners on the axes, making it likely for the solution to have zero coefficients, thus enabling feature selection [45] [41].

2. Issue: Practical implementation of regularization in code.

Below is a standardized protocol for implementing and comparing L1 and L2 regularization in Python using scikit-learn.

Standardized Experimental Workflow: A systematic methodology for applying and tuning regularized models, ensuring reliable and reproducible results [42] [38] [43].
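One way such a workflow might look in scikit-learn is sketched below: split the data, place the scaler and the model in a single pipeline, tune `alpha` by cross-validation on the training portion only, and report the score on the untouched test set. The synthetic dataset, the alpha grid, and the step names `scale` and `model` are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, Ridge
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumption): 50 features, 8 of them informative.
X, y = make_regression(n_samples=300, n_features=50, n_informative=8,
                       noise=15.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0)

alphas = np.logspace(-3, 2, 12)          # candidate penalty strengths
fitted = {}

for name, estimator in {"lasso": Lasso(max_iter=10000), "ridge": Ridge()}.items():
    # Scaling lives inside the pipeline so it is refit within every CV fold.
    pipe = Pipeline([("scale", StandardScaler()), ("model", estimator)])
    search = GridSearchCV(pipe, {"model__alpha": alphas}, cv=5,
                          scoring="neg_mean_squared_error")
    search.fit(X_train, y_train)
    fitted[name] = search

    best = search.best_estimator_
    n_nonzero = int(np.count_nonzero(best.named_steps["model"].coef_))
    print(f"{name}: best alpha={search.best_params_['model__alpha']:.4g}, "
          f"non-zero coefficients={n_nonzero}, "
          f"test R^2={best.score(X_test, y_test):.3f}")
```

Comparing the number of non-zero coefficients between the two fitted models makes the sparsity difference between L1 and L2 concrete for a given dataset.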

Table 1: Core Properties of L1 and L2 Regularization

| Property | L1 (Lasso) Regularization | L2 (Ridge) Regularization |

|---|---|---|

| Penalty Term | Absolute value of coefficients (λ‖β‖₁) [37] [38] | Squared value of coefficients (λ‖β‖₂²) [37] [39] |

| Effect on Coefficients | Can shrink coefficients exactly to zero [38]. | Shrinks coefficients close to but not zero [39]. |

| Feature Selection | Yes (built-in) [37] [41]. | No [37] [39]. |

| Handling Multicollinearity | Arbitrarily chooses one feature from correlated group; not ideal for severe multicollinearity [37] [38]. | Distributes effect among correlated features; better for handling multicollinearity [37] [39]. |

| Resulting Model | Sparse model [41]. | Dense model [39]. |

| Geometric Constraint | Diamond (L1-norm) [45] [41]. | Circle (L2-norm) [45]. |

Table 2: Impact of Regularization Strength (λ/α)

| Regularization Strength | Impact on L1 (Lasso) Model | Impact on L2 (Ridge) Model | Risk |

|---|---|---|---|

| λ = 0 | Equivalent to OLS regression (no penalty) [37]. | Equivalent to OLS regression (no penalty) [37]. | Overfitting [37]. |

| Very Small λ | Mild shrinkage; few coefficients may become zero. | Mild shrinkage; all coefficients slightly reduced. | Potential Overfitting. |

| Optimal λ | Balanced bias-variance tradeoff; irrelevant features removed [38]. | Balanced bias-variance tradeoff; stable coefficients [39]. | Well-fit model. |

| Very Large λ | All coefficients forced to zero; constant output model [45]. | All coefficients forced near zero; constant output model [43]. | Underfitting [37] [44]. |
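The rows of Table 2 can be reproduced with a small sweep over the penalty strength. The sketch below is illustrative only: the dataset and the four `alpha` values are assumptions, and because scikit-learn's `Lasso` and `Ridge` scale their objectives differently, the same `alpha` does not represent the same effective penalty in both models.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, Ridge
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

X, y = make_regression(n_samples=200, n_features=30, n_informative=5,
                       noise=10.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0)
scaler = StandardScaler().fit(X_train)           # fit on training data only
X_train, X_test = scaler.transform(X_train), scaler.transform(X_test)

sweep = []
for alpha in (0.01, 1.0, 10.0, 1e4):
    lasso = Lasso(alpha=alpha, max_iter=10000).fit(X_train, y_train)
    ridge = Ridge(alpha=alpha).fit(X_train, y_train)
    sweep.append({
        "alpha": alpha,
        "lasso_nonzero": int(np.count_nonzero(lasso.coef_)),
        "ridge_nonzero": int(np.count_nonzero(ridge.coef_)),
        "ridge_max_abs_coef": float(np.max(np.abs(ridge.coef_))),
        "lasso_test_r2": lasso.score(X_test, y_test),
        "ridge_test_r2": ridge.score(X_test, y_test),
    })

for row in sweep:
    print(row)
```

At the largest value the Lasso model has no non-zero coefficients left and predicts a constant, while the Ridge coefficients are small but still non-zero.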

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools and Their Functions

| Tool / Reagent | Function in Regularization Experiments | Key Parameters |

|---|---|---|

| scikit-learn (Python) | Provides Lasso, Ridge, and ElasticNet classes for easy implementation [42] [43]. | alpha: Regularization strength. max_iter: Maximum number of iterations. |

| glmnet (R) | Efficiently fits L1, L2, and Elastic Net models; excellent for cross-validation [38]. | alpha: Mixing parameter (0 for Ridge, 1 for Lasso). lambda: Penalty strength. |

| Cross-Validation (e.g., GridSearchCV) | Hyperparameter tuning to find the optimal alpha that generalizes best to unseen data [38] [43]. | cv: Number of cross-validation folds. scoring: Metric to evaluate performance (e.g., MSE). |

| Feature Scaler (e.g., StandardScaler) | Critical pre-processing step. Rescales features to have mean=0 and std=1, ensuring the penalty is applied uniformly across all features [38]. | |

This technical support center provides practical guidance on Neural Network Pruning, a core model compression technique that removes redundant parameters from a deep learning model to reduce its size and computational demands [46]. In the context of predictive model research, particularly for applications like drug development, pruning is a critical strategy for combating overfitting. An overfitted model learns the training data too closely, including its noise and random fluctuations, resulting in poor performance on new, unseen test data [8] [1]. By simplifying the network architecture, pruning encourages the model to learn the underlying patterns in your data, thereby improving its ability to generalize—a paramount concern for robust scientific research [8] [1].

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My model's accuracy drops significantly after pruning. What is the most likely cause and how can I fix it?

- Problem: Over-pruning or an incorrect pruning strategy has removed important parameters, damaging the model's ability to make accurate predictions.

- Solutions:

- Reduce Pruning Ratio: You have likely pruned too many weights at once. Implement an iterative pruning strategy instead: prune a small percentage of weights (e.g., 10-20%), then fine-tune the model, and repeat this cycle until the target sparsity is reached [47]. This allows the network to adapt gradually.

- Re-evaluate Pruning Criterion: If you used a simple magnitude-based criterion, consider a more informed method. Interpretable pruning based on mutual information and total correlation can identify redundant neurons without losing critical information, leading to less performance degradation [48].

- Fine-tune More: After pruning, the model must be fine-tuned on the training data. Increase the number of fine-tuning epochs and use a lower learning rate to allow the remaining weights to recover the model's accuracy [46].

Q2: How do I choose between unstructured and structured pruning for my research?

- Problem: Uncertainty about the trade-offs between different pruning granularities and their impact on the final model.

- Solution: The choice depends on your deployment goal. The table below compares the two approaches.

Table 1: Unstructured vs. Structured Pruning

| Feature | Unstructured Pruning | Structured Pruning |

|---|---|---|

| Granularity | Individual weights [46] | Entire structures like neurons, channels, or filters [46] [47] |

| Primary Benefit | High compression rate; good at maintaining accuracy [46] | Direct improvement in inference speed and memory usage; hardware-friendly [49] |

| Primary Drawback | Does not reliably speed up inference on standard hardware [46] | Higher risk of accuracy loss for a given pruning rate [46] |

| Best For | Maximizing model compression for storage, not speed | Deploying models on edge devices or in real-time applications [49] [50] |

Q3: I am working with a complex, multi-component architecture. How can I prune it without breaking the data flow between components?

- Problem: Standard dependency graphs used in pruning libraries can create overly large pruning groups that span multiple components, severely degrading performance [49].

- Solution: Adopt a component-aware pruning strategy [49].

- Methodology: Extend dependency graphs to explicitly isolate individual components (e.g., an encoder, a predictor, a controller) and model the inter-component data flows.

- Why it works: This creates smaller, targeted pruning groups that preserve the functional integrity of each component and their interactions. For instance, it prevents aggressive pruning of an encoder whose output is critical for a downstream predictor [49].

Q4: My model performs well on training data but poorly on validation data, indicating overfitting. Can pruning help even if I don't care about model size?

- Problem: A model is overfitted, exhibiting high variance [8].

- Solution: Yes, absolutely. Pruning is a powerful form of regularization [51]. By removing redundant connections, you force the network to rely on more robust and generalizable pathways, effectively reducing its complexity and capacity to memorize noise [8] [1]. In this scenario, your goal is not minimal size, but optimal generalization. Use pruning to find the model complexity that gives the best performance on your validation set.

Experimental Protocols & Methodologies

Interpretable Pruning Based on Information Theory

This method uses Mutual Information (MI) and Total Correlation (TC) to identify and remove redundant neurons in an unsupervised manner, providing a transparent pruning strategy [48].

- Workflow:

- Train a dense model until convergence.

- Select Representation Layer(s): Focus pruning on the narrowest layer(s) in the network, as they are often the most representative and contain neurons with the highest disentanglement [48].

- Compute Redundancy: For the selected layer, estimate the mutual information or total correlation between different sets of neurons. Neurons with high mutual information are considered redundant [48].

- Prune Neuron: Remove the neuron(s) with the highest redundancy.

- Stopping Criterion: Use the Information Plane (IP) visualization, plotting I(X, Z) (MI between input and hidden layers) and I(Z, Y) (MI between hidden and output layers). Stop pruning when there is a significant drop in I(Z, Y) or when you reach an "elbow" in the curve, indicating that further pruning harms predictive information [48].

Diagram 1: Interpretable Pruning Workflow
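As a rough illustration of the Compute Redundancy and Prune Neuron steps, the sketch below estimates pairwise mutual information between neuron activations with a simple 2-D histogram and flags the neuron that shares the most information with the rest. The histogram estimator, the bin count, the mean-pairwise-MI score, and the function names are assumptions made for illustration; they are not taken from [48], which may rely on different estimators or on total correlation rather than pairwise MI.

```python
import numpy as np

def mutual_information(a, b, bins=16):
    """Histogram estimate of MI (in nats) between two 1-D activation vectors."""
    joint, _, _ = np.histogram2d(a, b, bins=bins)
    p_ab = joint / joint.sum()
    p_a = p_ab.sum(axis=1, keepdims=True)   # marginal of a, shape (bins, 1)
    p_b = p_ab.sum(axis=0, keepdims=True)   # marginal of b, shape (1, bins)
    nonzero = p_ab > 0                      # skip empty cells to avoid log(0)
    return float(np.sum(p_ab[nonzero] * np.log(p_ab[nonzero] / (p_a @ p_b)[nonzero])))

def most_redundant_neuron(activations, bins=16):
    """Return (index, scores) where scores[i] is neuron i's mean MI with all others.

    activations: array of shape (n_samples, n_neurons) recorded from one layer.
    """
    n = activations.shape[1]
    mi = np.zeros((n, n))
    for i in range(n):
        for j in range(i + 1, n):
            mi[i, j] = mi[j, i] = mutual_information(
                activations[:, i], activations[:, j], bins
            )
    redundancy = mi.sum(axis=1) / (n - 1)
    return int(np.argmax(redundancy)), redundancy

# Synthetic layer: neuron 3 is almost a copy of neuron 0, so the pair is redundant.
rng = np.random.default_rng(0)
z = rng.normal(size=(2000, 4))
z[:, 3] = z[:, 0] + 0.05 * rng.normal(size=2000)
idx, redundancy = most_redundant_neuron(z)
```

On this synthetic layer the flagged neuron is one of the duplicated pair. In a real pipeline the activations would come from the selected representation layer, and the stopping decision would still rest on the Information Plane check described above.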

Post-Training Pruning with Fine-Tuning

A common and straightforward paradigm for applying pruning after a model is fully trained [46] [47].

- Workflow:

- Pre-train a dense model to convergence.

- Prune the model based on a chosen criterion (e.g., weight magnitude) and a target sparsity ratio.

- Fine-tune the pruned model for several epochs to recover any lost accuracy. The learning rate for fine-tuning is typically lower than that used for initial training.

- (Optional) For higher sparsity levels, perform Iterative Pruning: Repeat the prune-and-fine-tune steps in multiple cycles, gradually increasing the sparsity [47].
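The prune-and-fine-tune cycle can be sketched on a toy linear model with plain NumPy. This is a minimal sketch under stated assumptions: the synthetic data, the helper names (`magnitude_mask`, `fine_tune`, `mse`), the learning rates, and the sparsity schedule are all hypothetical, and a real experiment would use a deep learning framework's pruning utilities instead.

```python
import numpy as np

def magnitude_mask(weights, sparsity):
    """Boolean mask keeping the largest-magnitude weights; `sparsity` is the fraction removed."""
    n_prune = int(round(sparsity * weights.size))
    mask = np.ones(weights.size, dtype=bool)
    if n_prune > 0:
        mask[np.argsort(np.abs(weights).ravel())[:n_prune]] = False
    return mask.reshape(weights.shape)

def fine_tune(w, mask, X, y, lr=0.05, epochs=200):
    """Gradient descent on mean squared error; pruned weights are held at zero."""
    w = w * mask
    for _ in range(epochs):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w = (w - lr * grad) * mask
    return w

def mse(w, X, y):
    return float(np.mean((X @ w - y) ** 2))

rng = np.random.default_rng(0)
true_w = np.zeros(20)
true_w[:5] = [3.0, -2.0, 1.5, 1.0, -0.5]            # only 5 informative features
X_train, X_val = rng.normal(size=(200, 20)), rng.normal(size=(100, 20))
y_train = X_train @ true_w + 0.1 * rng.normal(size=200)
y_val = X_val @ true_w + 0.1 * rng.normal(size=100)

# Step 1: "pre-train" the dense model to convergence.
w = fine_tune(np.zeros(20), np.ones(20, dtype=bool), X_train, y_train)

# Steps 2-4: prune by magnitude, fine-tune with a lower learning rate,
# and repeat while gradually increasing the target sparsity.
history = []
for sparsity in (0.25, 0.5, 0.75):
    mask = magnitude_mask(w, sparsity)
    w = fine_tune(w, mask, X_train, y_train, lr=0.01)
    history.append((sparsity, mse(w, X_val, y_val)))
```

Because already-pruned weights are exactly zero, they are always among the smallest magnitudes and stay pruned in later cycles. Recording the validation error at each sparsity level, as `history` does here, also gives a simple way to pick the sparsity that generalizes best.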

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Concepts for Pruning Experiments

| Item / Concept | Function / Explanation | Relevance to Research |
|---|---|---|
| Magnitude Pruning | A pruning criterion that removes weights with the smallest absolute values [49]. | A simple, highly effective baseline method for identifying "unimportant" parameters [49]. |
| Mutual Information (MI) | A measure of the mutual dependence between two random variables. In pruning, it quantifies how much information is shared between neurons [48]. | Provides an information-theoretic foundation for identifying redundant neurons, leading to more interpretable pruning decisions [48]. |
| Dependency Graph | A graph representing how layers in a neural network depend on each other (e.g., pruning a channel in a conv layer requires pruning the corresponding channel in the next layer) [49]. | Critical for structured pruning to ensure the network remains functionally consistent after pruning. Essential for complex, multi-component architectures [49]. |
| Information Plane (IP) | A plot of I(X, Z) vs. I(Z, Y), visualizing the information flow in a network [48]. | Serves as a diagnostic tool to determine the optimal stopping point for pruning, balancing compression and the retention of predictive information [48]. |
| Structured Pruning | Removes entire structural components like neurons, channels, or filters [46] [47]. | The preferred method when the research goal is to achieve faster inference times on dedicated hardware [49] [50]. |
| Fine-Tuning | The process of retraining a pruned model for a few epochs to recover performance [46] [47]. | A mandatory step in most pruning pipelines to mitigate accuracy degradation caused by the removal of parameters. |

Performance Data & Comparative Analysis

The following table summarizes quantitative findings from recent research, illustrating the effects of pruning on various models and tasks.

Table 3: Comparative Analysis of Pruning Effects from Empirical Studies

| Model / Architecture | Dataset / Task | Pruning Method | Key Result | Source Context |
|---|---|---|---|---|
| VGG16, ResNet18 | BloodMNIST (Medical Imaging) | Sparsity (50% Conv, 80% Linear layers) | Achieved ~2% average accuracy increase over dense models, demonstrating sparsity can maintain competitive performance. | [50] |
| Fully Connected Network | MNIST, Fashion MNIST | Architectural Optimisation (Neuron Rearrangement) | Improved model robustness by 2.8% to 6.0% at fixed accuracy, by moving neurons to "colder" network areas. | [52] |
| General Models | Object Detection, Segmentation | Unstructured Global Pruning | Model file size decreases linearly with pruning ratio; some models maintain high performance even at high pruning ratios (e.g., 90%). | [46] |
| TD-MPC (Control) | Control Task | Component-Aware Structured Pruning | Achieved greater sparsity with less performance degradation compared to component-agnostic methods, preserving functional integrity. | [49] |
| Multi-Component Arch. | Industrial / Control Tasks | Standard Structured Pruning | Risk of severe performance degradation because large dependency groups can span multiple critical components. | [49] |

Pruning Methodology Selection Guide

The following diagram provides a logical pathway for researchers to select an appropriate pruning strategy based on their primary goal.

Diagram 2: Pruning Strategy Selection Guide

Frequently Asked Questions (FAQs)

General Concepts

Q1: What is the primary goal of using Early Stopping and Dropout in deep learning? The primary goal of both Early Stopping and Dropout is to prevent overfitting and improve the model's ability to generalize to new, unseen data. Overfitting occurs when a model learns the patterns and noise in the training data too well, resulting in poor performance on validation or test datasets [53] [54] [55]. While they share this goal, they approach it differently: Early Stopping is a training procedure that halts the process once performance on a validation set stops improving, whereas Dropout is an architectural technique that randomly deactivates neurons during training to force the network to learn more robust features [53] [56].

Q2: Can Early Stopping and Dropout be used together? Yes, Early Stopping and Dropout are often used together as complementary regularization strategies [53] [56]. Using Dropout during training can help slow down the overfitting process, and Early Stopping can determine the optimal point to halt training, thereby conserving computational resources and ensuring the best model is selected [55].

Implementation & Configuration

Q3: How do I set the 'patience' parameter for Early Stopping?

The patience parameter determines how many epochs to wait after the last time validation performance improved before stopping the training. There is no universally optimal value. Typical patience values range from 3 to 6 epochs [57]. A lower patience might stop training too early, while a very high patience might lead to unnecessary training and overfitting [53] [58]. It's best to start with a value in this range and adjust based on the observed volatility of your validation loss curve.
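To make the effect of patience concrete, here is a minimal, framework-free sketch of the counter behind patience-based stopping. The `EarlyStopper` class, its `min_delta` argument, and the validation-loss values are hypothetical and chosen only to illustrate the trade-off; library callbacks implement the same idea with more options.

```python
class EarlyStopper:
    """Minimal patience counter for a metric that should decrease (e.g., validation loss)."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta      # smallest decrease that counts as an improvement
        self.best = float("inf")
        self.best_epoch = -1
        self.wait = 0                   # epochs since the last improvement

    def step(self, epoch, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.wait = val_loss, epoch, 0
        else:
            self.wait += 1
        return self.wait >= self.patience

# Hypothetical validation-loss curve with a small bump before the true minimum.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.59, 0.61, 0.60, 0.63, 0.64, 0.65]

def stopping_epoch(patience):
    """Return (epoch at which training stops, epoch of the best loss seen so far)."""
    stopper = EarlyStopper(patience=patience)
    for epoch, loss in enumerate(val_losses):
        if stopper.step(epoch, loss):
            return epoch, stopper.best_epoch
    return None, stopper.best_epoch

stop_low = stopping_epoch(patience=1)   # halts on the first bump, missing the later minimum
stop_mid = stopping_epoch(patience=3)   # rides out the bump and finds the lower loss
```

With a patience of 1 the run halts at the first bump and never reaches the lower loss a few epochs later, while a patience of 3 tolerates the bump. The noisier the curve, the more patience (or the larger the minimum improvement) is needed.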

Q4: What is a good starting value for the Dropout rate? A common starting point for the Dropout rate is between 0.2 and 0.5 [54] [55]. A rate of 0.5 is a frequent default for hidden layers; dropout can be viewed as training an exponentially large family of thinned networks that share weights and approximately averaging them at test time [55]. However, the optimal rate depends on the network architecture and the problem. Simpler models may require lower dropout rates, while very large, complex networks might benefit from higher rates. Treat the rate as a hyperparameter to be tuned [55].

Q5: On which layers of a neural network should I apply Dropout? Dropout is most commonly applied to fully connected (dense) layers where the risk of co-adaptation is high [55] [56]. It can also be applied to convolutional and recurrent layers, though specialized variants like DropBlock for CNNs may be more effective [56]. A typical strategy is to place Dropout layers after activation functions.

Troubleshooting

Q6: My model is stopping too early, even though the validation loss is still fluctuating. What should I do?

This is a classic sign of a patience value that is set too low. You should increase the patience parameter to allow the model to work through periods of minimal improvement or noise in the validation metric [53] [57]. Additionally, ensure that your validation dataset is large enough to provide a stable estimate of performance.

Q7: After implementing Dropout, my training loss is decreasing very slowly. Is this normal? Yes, this is an expected behavior. Dropout intentionally makes training more difficult by randomly removing parts of the network, which slows down the convergence rate [55]. This is a trade-off for better generalization. If the slowdown is excessive, you might consider slightly reducing the dropout rate or increasing the learning rate.

Q8: For a classification task, should I monitor validation loss or validation accuracy for Early Stopping? While validation loss is the most commonly monitored metric, validation accuracy can be a more intuitive and robust choice for classification problems, especially if your loss function is sensitive to small fluctuations [58]. The best practice is to monitor the metric that most closely aligns with your primary objective.

Troubleshooting Guides

Issue 1: Early Stopping is Not Triggering, Leading to Severe Overfitting

Problem: The training continues for the maximum number of epochs, and the validation loss increases significantly, indicating clear overfitting, but Early Stopping does not halt the process.

Solution:

- Step 1: Verify the Monitoring Metric and Direction: Confirm that your Early Stopping callback is correctly configured to monitor `val_loss` and set to `mode='min'`. A simple misconfiguration can prevent it from triggering.

- Step 2: Adjust the Patience Parameter: If the validation loss is noisy (goes up and down frequently), occasional small dips can keep resetting the patience counter so that training continues. Check that patience is not so high relative to the maximum number of epochs that the criterion can never be met, and consider requiring a minimum improvement (such as `min_delta` in Keras's EarlyStopping callback) so that negligible dips do not count as progress [53].

- Step 3: Check for Data Contamination: Ensure that your validation set is truly separate from the training set and that there is no data leakage. If the model sees the validation data during training in any way, the validation loss will not be a reliable indicator of generalization.

- Step 4: Use a More Robust Stopping Criterion: Consider implementing a more advanced stopping criterion. For example, the Correlation-Driven Stopping Criterion (CDSC) stops training when the rolling Pearson correlation between training and validation loss decreases below a threshold, which can be more effective than simple patience [59].
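The correlation-based idea in Step 4 can be sketched as follows. This is not the reference implementation from [59]: the window length, the threshold of 0, the function name `cdsc_stop_epoch`, and the synthetic loss curves are all assumptions made for illustration.

```python
import numpy as np

def cdsc_stop_epoch(train_loss, val_loss, window=5, threshold=0.0):
    """Return the first epoch at which the rolling Pearson correlation between
    the two loss curves falls below `threshold`, or None if it never does."""
    train_loss, val_loss = np.asarray(train_loss), np.asarray(val_loss)
    for end in range(window, len(train_loss) + 1):
        t = train_loss[end - window:end]
        v = val_loss[end - window:end]
        if t.std() == 0 or v.std() == 0:
            continue                      # correlation is undefined on a flat window
        r = np.corrcoef(t, v)[0, 1]
        if r < threshold:
            return end - 1                # index of the last epoch in the window
    return None

# Synthetic curves: training loss keeps falling, validation loss turns upward.
epochs = np.arange(30)
train = np.exp(-epochs / 8.0)
val = np.exp(-epochs / 8.0) + 0.002 * np.maximum(epochs - 12, 0) ** 2
stop = cdsc_stop_epoch(train, val)
```

While both curves fall together the correlation is strongly positive; once the validation loss starts rising as the training loss keeps falling, it turns negative. Because the window has to fill with post-minimum epochs first, the signal arrives a few epochs after the validation minimum, so it is sensible to pair it with checkpointing of the best weights.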

Issue 2: Model Performance is Poor After Adding Dropout

Problem: After introducing Dropout, the model's performance on both training and validation sets is significantly worse than before (i.e., the model is underfitting).

Solution:

- Step 1: Reduce the Dropout Rate: A high dropout rate (e.g., >0.5) can excessively cripple the network, preventing it from learning meaningful patterns. Systematically reduce the dropout rate (e.g., to 0.2 or 0.3) and re-evaluate the performance [55].

- Step 2: Adjust Network Capacity: Adding dropout regularizes the network, effectively reducing its capacity. If you are introducing a strong dropout, you may need to increase the network's capacity (e.g., add more layers or more units per layer) to compensate for the added regularization [53].

- Step 3: Verify Scaling During Inference: Ensure that during testing/inference, the weights of the neurons are scaled correctly. In many deep learning frameworks, this is handled automatically when using the standard Dropout layer. For custom implementations of classic dropout, the weights should be scaled by `(1 - dropout_rate)` at test time to account for all neurons being active [54] [55]. With the "inverted" variant, the kept activations are instead divided by `(1 - dropout_rate)` during training and nothing is rescaled at inference.

- Step 4: Review the Placement of Dropout Layers: Applying dropout to the input layer or to layers that are too small can destroy critical information. Avoid using high dropout rates on the input layer and consider removing it from very small hidden layers.
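The scaling rule in Step 3 can be checked numerically. The sketch below compares classic dropout (scale weights by the keep probability at test time) with inverted dropout (scale kept activations during training) on a single hypothetical layer; the activations, weights, and sample count are made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
p = 0.3                                     # dropout rate: probability of dropping a unit
x = rng.normal(loc=2.0, size=100)           # hypothetical layer activations
w = rng.normal(scale=0.1, size=100)         # hypothetical outgoing weights
masks = rng.random(size=(20000, 100)) >= p  # True = unit kept, one row per training pass

# Classic dropout: no scaling in training, weights scaled by (1 - p) at test time.
classic_train = (masks * x) @ w             # one pre-activation per sampled mask
classic_test = x @ (w * (1.0 - p))

# Inverted dropout: kept activations scaled by 1 / (1 - p) in training,
# so inference uses the weights unchanged.
inverted_train = (masks * x / (1.0 - p)) @ w
inverted_test = x @ w

gap_classic = abs(classic_train.mean() - classic_test)
gap_inverted = abs(inverted_train.mean() - inverted_test)
```

In both variants the average training-time pre-activation matches the test-time value, which is the purpose of the scaling. Skipping it in a custom implementation leaves test-time activations systematically larger than those seen in training.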

Experimental Protocols & Data

Protocol 1: Standardized Workflow for Implementing Early Stopping

Objective: To systematically integrate Early Stopping into the training of a deep neural network to prevent overfitting.

Methodology:

- Data Partitioning: Split the dataset into three parts: Training Set (e.g., 70%), Validation Set (e.g., 15%), and Test Set (e.g., 15%). The validation set is used exclusively for monitoring performance during training.

- Callback Configuration: Configure an Early Stopping callback with the following typical parameters:

  - `monitor='val_loss'`: Metric to monitor.

  - `mode='min'`: Direction of improvement (minimize loss).

  - `patience=10`: Number of epochs with no improvement to wait.

  - `restore_best_weights=True`: Revert model weights to the epoch with the best `val_loss`.

- Model Training: Train the model for a generously large number of epochs (e.g., 100) with the Early Stopping callback enabled. The training will halt automatically when the stopping criterion is met.

- Final Evaluation: Evaluate the final model (with the restored best weights) on the held-out test set to obtain an unbiased estimate of its generalization performance.
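The four steps of this protocol can be sketched end to end with NumPy on a toy regression problem. This is an illustrative sketch, not a prescribed implementation: the synthetic dataset, the linear model, the learning rate, and the variable names are assumptions, and in practice a framework's Early Stopping callback would replace the hand-written loop.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 200, 150                                  # few samples relative to features
X = rng.normal(size=(n, d))
y = X[:, :5] @ np.array([2.0, -1.0, 0.5, 1.5, -2.0]) + rng.normal(size=n)

# Step 1: 70/15/15 split into training, validation, and test indices.
idx = rng.permutation(n)
train, val, test = idx[:140], idx[140:170], idx[170:]

def mse(w, rows):
    return float(np.mean((X[rows] @ w - y[rows]) ** 2))

# Steps 2-3: train for up to max_epochs, monitoring validation loss (mode "min")
# with patience=10 and keeping a copy of the best weights.
w = np.zeros(d)
best_w, best_val, wait = w.copy(), np.inf, 0
patience, max_epochs, stopped_epoch = 10, 100, None
for epoch in range(max_epochs):
    grad = 2.0 * X[train].T @ (X[train] @ w - y[train]) / len(train)
    w -= 0.01 * grad
    val_loss = mse(w, val)
    if val_loss < best_val:
        best_w, best_val, wait = w.copy(), val_loss, 0
    else:
        wait += 1
        if wait >= patience:
            stopped_epoch = epoch
            break

# Step 4: restore the best weights, then evaluate once on the held-out test set.
w = best_w
test_loss = mse(w, test)
baseline_loss = mse(np.zeros(d), test)           # untrained model, for reference
```

The test set is touched only once, after the weights have been restored, so `test_loss` is not biased by the epoch selection performed on the validation set.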

Protocol 2: Evaluating the Impact of Different Dropout Rates

Objective: To empirically determine the optimal dropout rate for a given model and dataset.

Methodology: