Beyond Missing Values: A 2025 Guide to Incomplete Data Model Validation in Drug Development



Abstract

This guide addresses the critical challenge of incomplete data in biomedical research, moving beyond simple identification to provide a comprehensive framework for validation. Tailored for researchers, scientists, and drug development professionals, it covers the foundational costs of poor data, methodological techniques for handling missingness, advanced troubleshooting for complex datasets, and strategies for building robust, validated models that meet regulatory standards. The article synthesizes modern approaches, including AI-driven validation and continuous monitoring, to ensure data integrity from pre-clinical research to clinical trials.

The High Stakes of Incomplete Data in Clinical and Pre-Clinical Research

Understanding Incomplete Data in Research

In almost all clinical and scientific research, missing data presents a common challenge that can significantly reduce statistical power and produce biased estimates if not handled properly [1]. Incomplete data extends beyond simple missing values to encompass various mechanisms and patterns that researchers must understand to select appropriate handling methods.

Frequently Asked Questions

What are the different mechanisms by which data can be missing?

Data can be missing through three primary mechanisms, each with different implications for analysis [1] [2]:

- Missing Completely at Random (MCAR): The probability of missing data is unrelated to any observed or unobserved variables

- Missing at Random (MAR): The missingness relates to other observed variables but not the missing values themselves

- Missing Not at Random (MNAR): The missingness relates to the unobserved missing values themselves

When is complete case analysis (listwise deletion) acceptable?

Complete case analysis may be valid only when data are MCAR or in some specific situations when data are MAR [2]. However, this approach reduces statistical power by decreasing sample size and may introduce bias if the missingness mechanism isn't truly MCAR [1].

What is the fundamental difference between deterministic and stochastic imputation?

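Deterministic imputation replaces each missing value with a single fixed estimate (for example, a mean or a regression prediction). Stochastic imputation instead draws values from a predictive distribution, and multiple imputation repeats such draws to generate several plausible values for each missing data point, so that the uncertainty of the imputation process carries through to the analysis [3].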

Troubleshooting Common Experimental Data Issues

Problem: High Percentage of Missing Biomarker Measurements

Issue: More than 30% of participants lack complete biomarker panels in a longitudinal drug efficacy study.

Solution: Implement Multiple Imputation by Chained Equations (MICE) to preserve sample size and statistical power while accounting for uncertainty.

Protocol:

- Perform diagnostic analysis to assess missingness pattern

- Create 5-20 imputed datasets using predictive mean matching

- Analyze each complete dataset separately

- Pool results using Rubin's rules
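
The pooling step can be sketched as follows. This is a minimal illustration of Rubin's rules, assuming each imputed dataset has already produced a point estimate and its variance; the function name `pool_rubin` and the numbers are hypothetical and do not come from any cited study.

```python
from statistics import mean, variance

def pool_rubin(estimates, within_variances):
    """Pool m point estimates and their variances with Rubin's rules."""
    m = len(estimates)
    if m < 2 or m != len(within_variances):
        raise ValueError("need at least two estimates, one variance per estimate")
    q_bar = mean(estimates)          # pooled point estimate
    w = mean(within_variances)       # average within-imputation variance
    b = variance(estimates)          # between-imputation variance (m - 1 denominator)
    total = w + (1 + 1 / m) * b      # total variance of the pooled estimate
    return q_bar, total

# Hypothetical treatment-effect estimates from m = 5 imputed datasets
estimates = [1.92, 2.10, 2.05, 1.88, 2.01]
variances = [0.040, 0.038, 0.042, 0.041, 0.039]
pooled, total_var = pool_rubin(estimates, variances)
print(f"pooled estimate = {pooled:.3f}, SE = {total_var ** 0.5:.3f}")
```

The between-imputation term is what separates this from simply averaging the results: it widens the standard error to reflect how much the estimates disagree across imputed datasets.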

Problem: Differential Missingness Between Treatment Arms

Issue: Missing outcome data occurs more frequently in the placebo group than active treatment.

Solution: Apply inverse probability weighting to correct for potential bias.

Protocol:

- Model the probability of missingness using baseline covariates

- Calculate inverse probability weights for complete cases

- Apply weights to the analysis

- Conduct sensitivity analysis for MNAR assumption
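
As a rough sketch of the weighting steps, the example below estimates the probability of being observed as the observed fraction within each stratum of one baseline covariate. That is a deliberately simple stand-in for a fitted missingness model (such as logistic regression on several covariates); the field names `severity` and `outcome` and the data are hypothetical.

```python
from collections import defaultdict

def ipw_mean(records, stratum_key, outcome_key):
    """Inverse-probability-weighted mean of an outcome with missing values.

    The probability of being observed is estimated per stratum of a
    baseline covariate (assumption: a stand-in for a fitted model).
    """
    totals = defaultdict(int)
    observed = defaultdict(int)
    for r in records:
        totals[r[stratum_key]] += 1
        if r[outcome_key] is not None:
            observed[r[stratum_key]] += 1

    weighted_sum = 0.0
    weight_total = 0.0
    for r in records:
        y = r[outcome_key]
        if y is None:
            continue  # only complete cases enter the weighted analysis
        p_observed = observed[r[stratum_key]] / totals[r[stratum_key]]
        weight = 1.0 / p_observed
        weighted_sum += weight * y
        weight_total += weight
    if weight_total == 0:
        raise ValueError("no observed outcomes")
    return weighted_sum / weight_total

# Hypothetical data: half of the high-severity outcomes are missing
records = (
    [{"severity": "high", "outcome": 10.0}] * 2
    + [{"severity": "high", "outcome": None}] * 2
    + [{"severity": "low", "outcome": 20.0}] * 4
)
obs = [r["outcome"] for r in records if r["outcome"] is not None]
print("complete-case mean:", sum(obs) / len(obs))
print("IPW mean:", ipw_mean(records, "severity", "outcome"))
```

In this toy data the complete-case mean over-represents the low-severity group, while the weighted mean restores each stratum to its share of the full sample. The correction is only as good as the missingness model, which is why the sensitivity analysis in the last step still matters.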

Problem: Missing Covariate Data in Predictive Model Development

Issue: Developing a clinical risk prediction model with incomplete predictor variables.

Solution: Apply bootstrapping followed by deterministic imputation, which is particularly suited for prediction models as it doesn't require the outcome variable in the imputation model and simplifies model deployment [3].

Protocol:

- Generate multiple bootstrap samples from original incomplete data

- Perform deterministic imputation separately within each sample

- Develop prediction models within each complete bootstrap sample

- Aggregate model performance across bootstrap samples

Experimental Protocols for Handling Missing Data

Protocol 1: Multiple Imputation Workflow

Protocol 2: Data Missingness Assessment

Objective: Systematically evaluate pattern and mechanism of missing data.

Procedure:

- Calculate percentage missing for each variable

- Create missingness pattern visualizations

- Test for associations between missingness and observed variables

- Document potential mechanisms (MCAR, MAR, MNAR)

Interpretation:

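- MCAR: No systematic pattern; missingness shows no association with observed variables

- MAR: Missingness correlates with observed variables

- MNAR: Missingness likely relates to the unobserved values themselves; this cannot be confirmed from the observed data alone and usually rests on subject-matter knowledge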
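
The assessment procedure above can be sketched with a few helper functions. This is an illustrative outline only: missing values are assumed to be stored as `None`, and the variable names (`age`, `biomarker`) and rows are hypothetical.

```python
def percent_missing(rows, variables):
    """Percentage of missing (None) values for each variable."""
    n = len(rows)
    return {v: 100.0 * sum(r[v] is None for r in rows) / n for v in variables}

def missingness_patterns(rows, variables):
    """Count rows by pattern; 'X' marks a missing value, '.' an observed one."""
    counts = {}
    for r in rows:
        pattern = "".join("X" if r[v] is None else "." for v in variables)
        counts[pattern] = counts.get(pattern, 0) + 1
    return counts

def mean_by_missingness(rows, target, covariate):
    """Mean of an observed covariate, split by whether `target` is missing."""
    groups = {True: [], False: []}
    for r in rows:
        if r[covariate] is not None:
            groups[r[target] is None].append(r[covariate])
    return {("missing" if k else "observed"): (sum(v) / len(v) if v else None)
            for k, v in groups.items()}

# Hypothetical rows: the biomarker is missing mainly for older participants
rows = [
    {"age": 34, "biomarker": 1.2},
    {"age": 41, "biomarker": 0.9},
    {"age": 67, "biomarker": None},
    {"age": 72, "biomarker": None},
    {"age": 38, "biomarker": 1.1},
    {"age": None, "biomarker": 1.4},
]
variables = ["age", "biomarker"]
print(percent_missing(rows, variables))
print(missingness_patterns(rows, variables))
print(mean_by_missingness(rows, target="biomarker", covariate="age"))
```

A clear difference in mean age between participants with and without a biomarker value argues against MCAR and is consistent with MAR. It cannot rule out MNAR, because that depends on values that were never observed.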

Research Reagent Solutions

| Reagent/Method | Primary Function | Application Context |

|---|---|---|

| Multiple Imputation | Accounts for imputation uncertainty | Final analysis for publication |

| Deterministic Imputation | Creates single complete dataset | Clinical prediction model development [3] |

| Complete Case Analysis | Uses only complete observations | Preliminary analysis or MCAR data [2] |

| Maximum Likelihood | Model-based handling of missing data | Structural equation modeling |

| Sensitivity Analysis | Tests robustness to missing data assumptions | All studies with missing data |

Advanced Method Selection Framework

Performance Metrics for Imputation Methods

| Method | Bias Reduction | Variance Handling | Computational Intensity | Deployment Ease |

|---|---|---|---|---|

| Complete Case | Low; unbiased only under MCAR | Poor | Low | High |

| Single Imputation | Variable | Underestimates | Medium | High |

| Multiple Imputation | High | Proper accounting | High | Medium |

| Deterministic Imputation | Medium when outcome omitted [3] | Requires bootstrapping [3] | Low | Very High [3] |

Best Practices for Prevention and Documentation

Preventive Measures [1]:

- Limit data collection to essential variables

- Develop comprehensive study documentation

- Train all study personnel thoroughly

- Conduct pilot studies to identify issues

- Set a priori targets for acceptable missing data levels

- Aggressively engage participants at risk of dropout

Documentation Requirements:

- Percentage missing for each variable

- Suspected mechanism of missingness

- Methods used to handle missing data

- Results of sensitivity analyses

- Justification for chosen handling method

Successful handling of incomplete data requires understanding the missingness mechanisms, selecting appropriate methods based on the research context, and thoroughly documenting the process to ensure the validity and reliability of research findings.

Frequently Asked Questions (FAQs)

Q1: What are the most common data quality issues in scientific research? Researchers commonly face data quality issues that can invalidate findings. The most frequent problems include:

- Incomplete Data: Essential information is missing from datasets, which can hinder accurate analysis and lead to biased results in clinical or experimental data [4].

- Inaccurate Data: This includes incorrect values entered during manual input, or data that is technically correct but wrong in context or meaning (a problem known as low veracity) [5] [4].

- Duplicate Entries: The same data point or record is entered more than once, which can skew aggregates and analytical outcomes [6] [4].

- Inconsistent Data: Mismatches in formats, units, or schemas occur when integrating data from diverse sources, such as different lab equipment or clinical systems [6] [4].

- Outdated Data: Data that is no longer current or useful can lead to misleading insights, especially in fast-moving research fields [6].

Q2: What is the concrete financial impact of poor data quality? The financial toll of poor data quality is staggering and goes far beyond simple cleanup costs.

- Organizational Costs: Gartner research indicates that poor data quality costs organizations an average of $12.9 million annually [7] [5].

- Revenue Loss: MIT Sloan Management Review research found that companies lose 15-25% of their annual revenue due to poor data quality [7].

- Stock Devaluation: Flawed data can directly impact market value. For example, Unity, a video game software company, saw its stock drop by 37% in 2022 after inaccurate data compromised a machine learning algorithm [5].

Q3: How does poor data quality specifically compromise research validity? Poor data quality directly undermines the scientific method by introducing bias, error, and unreliability.

- Compromised Predictive Models: In clinical prediction modeling, missing or erroneous predictor values can lead to inaccurate risk assessments for new patients, potentially affecting treatment decisions [8].

- Skewed Results and Insights: Duplicate records inflate data volume and can distort statistical analysis and aggregates, while inconsistent data from multiple sources can lead to incorrect conclusions [6] [4].

- Wasted Resources: Engineers and analysts can spend as much as half their time fixing data issues instead of conducting new research or delivering new features [5].

Q4: What methodologies can be used to validate data models with incomplete data? Several robust statistical methods are employed to handle missing data during model validation and application.

- Targeted Validation Sampling: This involves selecting the most informative subset of patients or data points for rigorous validation (e.g., through chart review) to efficiently improve data quality and completeness for the entire dataset [9].

- Submodels: Using models that are based only on the variables observed for a new patient, avoiding the need to impute missing values for that specific case [8].

- Multiple Imputation: Using statistical techniques to fill in missing values with multiple plausible estimates, creating several complete datasets for analysis [8].

Quantitative Impact of Poor Data Quality

The following tables summarize key statistics that highlight the scale and impact of data quality issues.

Table 1: Financial and Operational Costs

| Metric | Impact | Source |

|---|---|---|

| Average Annual Cost per Organization | $12.9 million | Gartner [7] [5] |

| Annual Revenue Loss | 15-25% | MIT Sloan & Cork University [7] |

| Time Spent Fixing Data Issues | Up to 50% for data teams | Alation [5] |

| Stock Price Impact (Unity Case) | 37% drop | IBM (via Alation) [5] |

Table 2: Prevalence of Data Quality Issues

| Metric | Prevalence | Source |

|---|---|---|

| Data Meeting Basic Quality Standards | Only 3% of companies | Harvard Business Review [7] |

| New Records with Critical Errors | 47% | MIT Sloan & Thomas Redman [7] |

| Data Duplication | Affects 10-30% of business records | Industry Analysis [7] |

| Data Decay (Email Invalidation) | 28% within 12 months | ZeroBounce [7] |

Experimental Protocols for Data Validation

Protocol 1: Targeted Validation with Enriched Chart Review

This protocol is designed for robust and efficient data quality improvement in electronic health record (EHR) research [9].

Objective: To ensure the quality and promote the completeness of EHR data for operationalizing a whole-person health measure (like the allostatic load index) by validating a targeted subset of patient records.

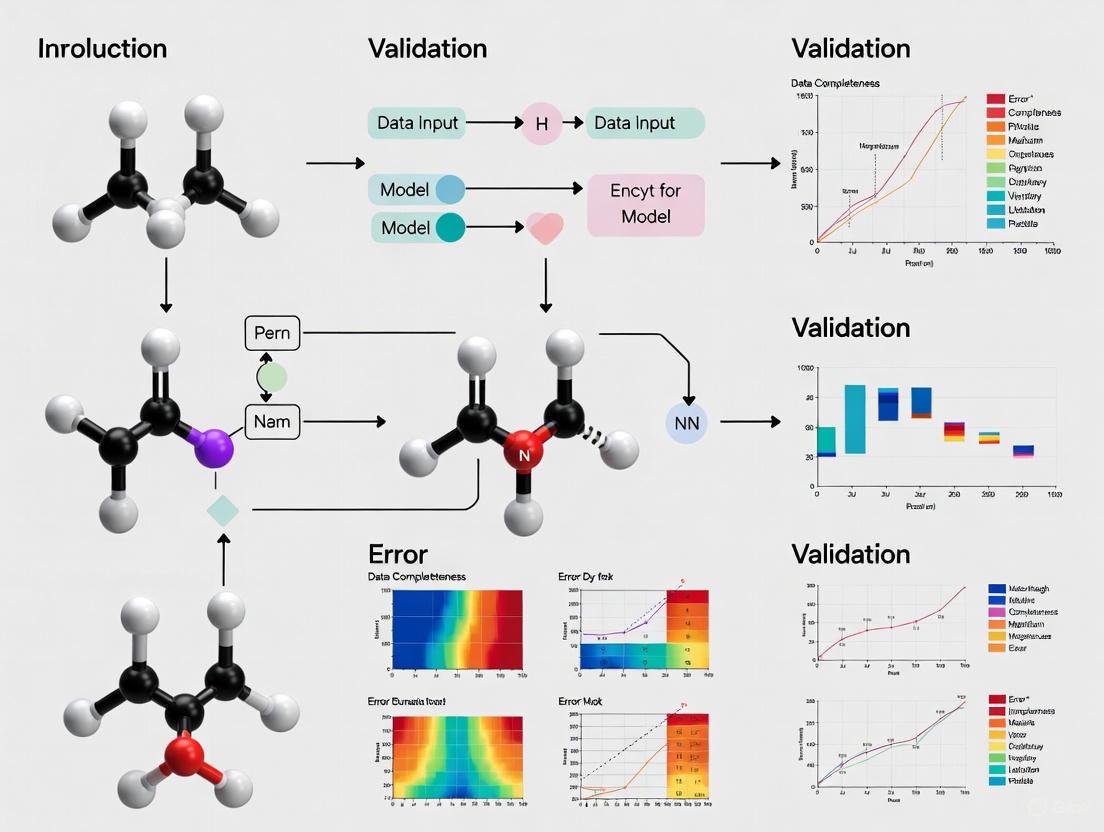

Workflow: The following diagram illustrates the targeted validation workflow.

Methodology:

- Initial Sampling & Analysis: Extract a representative sample from the EHR (e.g., 1000 patients). Perform a preliminary analysis and fit initial statistical models to this data [9].

- Targeted Patient Selection: Using the results from the initial model, calculate residuals to identify which patients are most "informative" (e.g., those whose outcomes are poorly predicted by the initial model). Select a subset (e.g., 100 patients) from this group for validation. This "residual sampling" method is statistically efficient for uncovering data issues [9].

- Enriched Chart Review: Conduct a thorough chart review of the selected patients. This review should not only check for errors but also actively recover missing data by looking for auxiliary information present in the patients' charts. The cited study found this protocol increased the number of non-missing data components per patient from 6 to 7, on average [9].

- Model Incorporation: Integrate the now-validated and more complete data from the chart review back into the statistical models. This creates a more accurate and reliable final model for prediction [9].
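
The targeted selection step can be illustrated with a small sketch. It assumes a single predictor and a least-squares line as the "initial model", and ranks patients by absolute residual. The cited study's actual model and sampling design may differ, and the field names `id`, `x`, and `y` are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = a + b * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b

def select_for_validation(patients, k):
    """Return the ids of the k patients with the largest absolute residuals."""
    a, b = fit_line([p["x"] for p in patients], [p["y"] for p in patients])
    ranked = sorted(patients,
                    key=lambda p: abs(p["y"] - (a + b * p["x"])),
                    reverse=True)
    return [p["id"] for p in ranked[:k]]

# Hypothetical sample: patient 7 has an outcome the model predicts poorly
patients = [{"id": i, "x": float(i), "y": 2.0 * i} for i in range(1, 11)]
patients[6]["y"] = 40.0
print(select_for_validation(patients, k=3))
```

Patients flagged this way are candidates for chart review; a large residual may point to a data error, a recoverable missing value, or a genuinely unusual patient.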

Protocol 2: Handling Missing Predictors for New Patients

This protocol addresses the challenge of applying a pre-existing prediction model to a new individual with missing data [8].

Objective: To generate a valid prediction for a single new patient when one or more predictor variables required by the model are missing.

Workflow: The diagram below shows the decision pathway for handling missing data for a new patient.

Methodology:

- Submodels: If the prediction model was developed with this in mind, it may have accompanying "submodels" that are based on different combinations of observed variables. For a new patient, you would use the submodel that corresponds to the specific set of predictors that are available for that individual [8].

- Marginalization: If the full model's distribution is known, you can statistically "average over" or marginalize the missing variables to obtain a prediction based only on the observed values. This method integrates out the uncertainty of the missing data [8].

- Single Imputation: For a single new patient, you can use an imputation method (like fully conditional specification) to generate a plausible value for the missing predictor based on the patient's other observed characteristics. This imputed value is then used alongside the truly observed values to generate a prediction [8].
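
The submodel approach can be sketched as a lookup keyed by the set of predictors that are actually observed. All coefficients and predictor names below are hypothetical; each submodel is assumed to have been fitted separately on the development data using only its listed predictors.

```python
import math

# Hypothetical coefficients; one logistic submodel per pattern of
# available predictors (assumed to be fitted during model development).
SUBMODELS = {
    frozenset({"age", "sbp", "biomarker"}):
        {"intercept": -6.0, "age": 0.04, "sbp": 0.015, "biomarker": 0.8},
    frozenset({"age", "sbp"}):
        {"intercept": -5.2, "age": 0.045, "sbp": 0.017},
    frozenset({"age"}):
        {"intercept": -3.1, "age": 0.05},
}

def predict_risk(patient):
    """Predict risk with the submodel matching the patient's observed predictors."""
    available = frozenset(k for k, v in patient.items() if v is not None)
    coefs = SUBMODELS.get(available)
    if coefs is None:
        raise KeyError(f"no submodel for predictors: {sorted(available)}")
    linear = coefs["intercept"] + sum(coefs[k] * patient[k] for k in available)
    return 1.0 / (1.0 + math.exp(-linear))

# A new patient whose biomarker was not measured
new_patient = {"age": 60, "sbp": 140, "biomarker": None}
print(round(predict_risk(new_patient), 3))
```

The design choice here is to fail loudly when no submodel exists for a missingness pattern, rather than silently falling back to an imputed value, so the gap in model coverage is visible at deployment time.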

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Data Quality Management

| Tool Category | Function | Example Use-Case |

|---|---|---|

| Data Profiling & Cleansing Tools | Automatically scans data columns for nulls, outliers, and pattern violations. Assesses data integrity and uncovers hidden relationships or orphaned records [5]. | Identifying a higher-than-expected rate of missing values in a key biomarker column before analysis. |

| Deduplication Engines | Uses fuzzy matching algorithms to identify and merge duplicate records across different systems (e.g., CRM, EHR). Metrics like Levenshtein distance count the minimum number of single-character edits needed to make two strings identical [5]. | Merging patient records that were created multiple times due to slight variations in name spelling (e.g., "Jon" vs "John"). |

| Validation Frameworks | Allows researchers to codify custom business or logic rules (e.g., "date of diagnosis must precede date of treatment") in SQL or other languages to automatically flag invalid records [5]. | Ensuring that all lab values in a dataset fall within physiologically plausible ranges. |

| Data Catalogs | Provides a centralized inventory of an organization's data assets. Helps uncover "dark data" by making it discoverable and includes metadata like lineage and ownership, which is critical for assessing trustworthiness [6]. | A research team discovering an existing, relevant dataset from another department that was previously unknown to them. |

| Statistical Software with Multiple Imputation | Advanced statistical packages (e.g., R, Python libraries) that implement rigorous methods for handling missing data, such as multiple imputation, which is a gold standard for addressing missingness in research datasets. | Creating multiple complete versions of a dataset with missing values imputed, analyzing each one, and pooling the results to get accurate estimates that account for the uncertainty of the imputation. |
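
To make the deduplication row concrete, here is a minimal sketch of Levenshtein distance and a naive pairwise duplicate check. The helper `likely_duplicates` and its threshold are illustrative assumptions; production deduplication engines typically add blocking, normalization, and multi-field scoring.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # delete ca
                current[j - 1] + 1,            # insert cb
                previous[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        previous = current
    return previous[-1]

def likely_duplicates(names, max_distance=1):
    """Pairs of names within max_distance edits (case-insensitive)."""
    pairs = []
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            if levenshtein(first.lower(), second.lower()) <= max_distance:
                pairs.append((first, second))
    return pairs

print(levenshtein("Jon", "John"))
print(likely_duplicates(["Jon Smith", "John Smith", "Maria Lopez"]))
```

Flagged pairs should be treated as candidates for review rather than merged automatically, since two distinct patients can have names one edit apart.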

FAQs on Laboratory Errors and Data Quality

What are the most common root causes of errors in a laboratory environment? Errors in laboratories stem from a combination of human, procedural, and system-level causes. A prevalent issue is the tendency to blame individuals instead of identifying weaknesses in the quality system itself [10]. The most common failure in root cause analysis is incorrectly citing "lack of training" as a root cause when a training program already exists; the real cause is often deeper, such as why the training wasn't retained or applied [10]. Other frequent causes include patient identification errors, specimen mislabeling, the use of expired reagents, and improper sample storage [11].

Which phase of the testing process is most vulnerable to errors? The pre-analytical phase, which includes steps like test ordering, patient preparation, and sample collection and transportation, is the most vulnerable. Studies indicate that errors in its earliest steps, often called the pre-pre-analytical phase, can account for up to 70% of all mistakes in laboratory diagnostics [12]. In contrast, the analytical phase has seen a significant reduction in error rates due to improved technology and standardization [12].

How can we prevent recurring data entry and specimen identification errors? Prevention requires a systemic approach that often combines technology and standardized procedures [11].

- For Data Entry: Implement systems that require double entry of critical data, use input masking for data validation (e.g., for dates, IDs), and leverage a user-friendly order entry interface with drop-down menus to reduce free-text errors [11].

- For Specimen Identification: Assign a unique ID to every specimen and its derivatives, utilize a barcode system, and enforce a two-point verification protocol (e.g., verifying patient name and date of birth) [11].

What is a robust methodology for investigating the root cause of a lab error? A highly effective method is the "Rule of 3 Whys" [10]. This involves iteratively asking "why" to move beyond symptoms and uncover a systemic cause.

- Why #1: Identify the immediate reason for the error.

- Why #2: Investigate why that immediate reason occurred.

- Why #3: Uncover the process or system failure that allowed it to happen. For example, if staff cannot locate a spill kit, asking "why" three times might reveal that the kit was stored in an unlabeled cupboard, not simply that staff "forgot" its location [10].

What are the essential practices for ensuring data quality and validation? Ensuring data quality involves proactive and reactive measures [13] [14]:

- Define Clear Validation Rules: Establish rules for data type, range, format, and pattern matching (e.g., for email addresses, numeric ranges) [14].

- Automate Validation Processes: Use automated checks to reduce human error and improve efficiency [15] [13].

- Conduct Regular Audits and Monitoring: Schedule regular data reviews to detect stale, incomplete, or incorrect data [13].

- Establish Data Governance: Assign clear ownership of data assets and define roles and policies to enforce accountability [13].
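
A small sketch of automated validation rules follows. It checks completeness, a numeric range, and a date-ordering rule. The field names and the plausibility bounds for potassium are assumptions chosen for illustration, not clinical reference limits.

```python
from datetime import date

REQUIRED = ("patient_id", "diagnosis_date", "treatment_date", "potassium_mmol_l")

def validate_record(record):
    """Return a list of rule violations for one record (empty list = valid)."""
    problems = []
    for field in REQUIRED:
        if record.get(field) is None:
            problems.append(f"missing required field: {field}")
    value = record.get("potassium_mmol_l")
    if value is not None and not (1.0 <= value <= 10.0):  # assumed bounds
        problems.append(f"potassium out of plausible range: {value}")
    dx, tx = record.get("diagnosis_date"), record.get("treatment_date")
    if dx is not None and tx is not None and dx > tx:
        problems.append("diagnosis date is after treatment date")
    return problems

# Hypothetical records
records = [
    {"patient_id": "P001", "diagnosis_date": date(2024, 1, 10),
     "treatment_date": date(2024, 2, 1), "potassium_mmol_l": 4.2},
    {"patient_id": "P002", "diagnosis_date": date(2024, 3, 5),
     "treatment_date": date(2024, 3, 1), "potassium_mmol_l": 42.0},
]
for rec in records:
    print(rec["patient_id"], validate_record(rec))
```

Returning a list of violations instead of raising on the first one makes it easier to audit how often each rule fails across a dataset.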

Troubleshooting Guides

Guide 1: Troubleshooting Pre-Analytical Data & Specimen Errors

| Symptom | Common Root Cause | Corrective & Preventive Actions |

|---|---|---|

| Inaccurate or Incomplete Patient Data [13] [11] | Manual data entry errors; lack of validation rules. | Implement internal double-entry systems; use input masking and drop-down menus in the order entry interface [11]. |

| Mislabeled or Swapped Specimens [11] | Failure to use at least two unique patient identifiers; no barcode system. | Use a barcoding system for all specimens; enforce a two-person verification system during collection and labeling [11]. |

| Missing or Incomplete Data [13] | Required fields not enforced during data entry; unclear procedures. | Apply data validation rules to enforce completeness; conduct regular audits to identify workflow gaps [11] [14]. |

Experimental Protocol: Two-Point Verification for Specimen Handling

- Purpose: To prevent patient misidentification and specimen swapping.

- Methodology:

- Upon specimen collection, ask the patient to state their full name and date of birth.

- Match these two identifiers against the requisition form and patient ID band.

- Label the specimen container at the bedside with a pre-printed barcode that includes the two unique identifiers.

- Before processing in the lab, a second staff member independently verifies that the information on the specimen label matches the requisition form.

Guide 2: Troubleshooting Analytical & Data Integrity Errors

| Symptom | Common Root Cause | Corrective & Preventive Actions |

|---|---|---|

| Contamination [11] | Deviation from hygiene protocols; cross-contamination from equipment. | Enforce strict PPE use and surface disinfection; separate clean and contaminated material workflows; perform regular air quality monitoring [11]. |

| Use of Expired Reagents [11] | Inadequate inventory management; lack of visible expiration date labeling. | Implement a digital inventory system with alert functions; follow the "first-expired, first-out" (FEFO) principle; clearly label all reagents [11]. |

| Inconsistent Data Across Systems [13] | Siloed systems; lack of standardized data definitions and formats. | Apply consistent formats and naming conventions; define a "single source of truth" for shared data; use data governance platforms to harmonize assets [13]. |

Experimental Protocol: Data Validation and Cleansing

- Purpose: To identify and rectify errors, inconsistencies, and duplicates within a dataset to ensure its accuracy and reliability [13] [14].

- Methodology:

- Data Profiling: Analyze the dataset to understand its structure, content, and quality by assessing value distributions and identifying null values [15].

- Validation & Cleansing:

- Perform format validation (e.g., email structure) and range validation (e.g., plausible numeric values) [13].

- Execute de-duplication using fuzzy or rule-based matching to merge duplicate records [13].

- Correct inaccurate data, such as misspelled names or inconsistent formats, based on predefined business rules [13].

- Verification: After cleansing, re-profile the data to verify that error rates have been reduced and data quality dimensions (completeness, accuracy, uniqueness) are improved.

Quantitative Data on Laboratory Errors

Table 1: Error Distribution Across the Total Testing Process (TTP)

| Testing Phase | Description of Phase | Estimated Frequency of Errors | Potential Impact on Patient Safety |

|---|---|---|---|

| Pre-Pre-Analytical | Test ordering, patient preparation, and sample collection [12]. | Up to 70% of all lab errors originate here [12]. | High risk of diagnostic errors and inappropriate care [12]. |

| Analytical | Sample analysis and testing within the laboratory [12]. | As low as 447 errors per million tests (0.0447%) in modern labs [12]. | Lower due to quality controls, but immunoassay interference remains a concern [12]. |

| Post-Post-Analytical | Result interpretation, patient notification, and follow-up [12]. | Failure to inform patients of abnormal results occurs in ~7.1% of cases [12]. | High risk of missed/delayed diagnosis and lack of treatment [12]. |

Table 2: Common Data Quality Problems and Fixes

| Data Quality Problem | Description | Prevention Strategy |

|---|---|---|

| Incomplete Data [13] | Missing or incomplete information in a dataset. | Implement data validation processes to ensure required fields are filled; improve data collection methods [13]. |

| Inaccurate Data [13] | Errors, discrepancies, or inconsistencies within the data. | Implement rigorous data validation and cleansing procedures; use data quality monitoring with alerts [13]. |

| Duplicate Data [13] | Multiple entries for the same entity across systems. | Implement de-duplication processes and use unique identifiers (e.g., customer IDs) [13]. |

| Outdated Data [13] | Information that is no longer current or relevant. | Establish data aging policies and regular data update/refresh procedures [13]. |

Workflow Diagrams

[Figure: Root Cause Analysis (RCA) Workflow]

[Figure: Total Testing Process (TTP) Error Mapping]

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Quality Control Consideration |

|---|---|---|

| Barcoded Specimen Containers [11] | Provides a unique identifier for each sample, preventing misidentification and swapping throughout the testing workflow. | Ensure compatibility with laboratory scanners and the Laboratory Information System (LIS). |

| Digital Reagent Inventory [11] | A tracking system that monitors reagent stock levels and expiration dates, sending alerts for replenishment. | Must be regularly updated and reviewed to prevent the use of expired reagents. |

| Personal Protective Equipment (PPE) [11] | Protects specimens from operator contamination and protects staff from biohazards. | Adherence to strict hygiene protocols and a defined cleaning schedule is critical for effectiveness. |

| Quality Control (QC) Materials | Used to monitor the precision and accuracy of analytical instruments and assays. | QC materials should be stored and handled according to manufacturer specifications to maintain integrity. |

Technical Support & Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q: What is the recommended method for handling missing covariate data in clinical risk prediction models?

A: For clinical risk prediction models where the goal is to predict outcomes for future patients, deterministic imputation (single imputation) is often better suited than multiple imputation. In deterministic imputation, the outcome is not included in the imputation model, making it easier to apply to future patients where the outcome is unknown. This method is computationally efficient for model deployment [3].

Q: What is the correct sequence for combining internal validation and imputation for incomplete data?

A: You should perform bootstrapping prior to imputation. Imputing before bootstrapping lets information leak between the data used for development and the data used for validation, which is not methodologically sound. The recommended "Val-MI" approach (resampling for validation first, then multiple imputation within each resample) provides largely unbiased performance estimates [3] [16].

Q: What are the core data integrity principles required for FDA submissions?

A: FDA submissions must adhere to ALCOA+ principles, which require data to be Attributable, Legible, Contemporaneous, Original, and Accurate, with the "+" representing Complete, Consistent, Enduring, and Available. These principles underpin Good Clinical Practice (GCP) guidelines, and submission data are checked against them to demonstrate adherence to data integrity standards [17].

Q: What common data integrity issues lead to FDA 483 Observations?

A: Common citations include unvalidated computer systems, missing audit trails, and missing data. To minimize these risks, ensure your submission software and processes are fully validated, maintain complete timestamped audit logs, and implement ongoing oversight with periodic reviews of system logs [18].

Q: How should prognostic models be validated when limited incomplete data is available?

A: Implement a generic framework for validation based on uncertainty propagation. This can be achieved using sensitivity indices, correlation coefficients, Monte Carlo simulations, and analytical approaches to quantify uncertainty in model outputs, enabling validation even with data limitations [19].
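
One ingredient of such a framework, Monte Carlo propagation, can be sketched as follows. The logistic model, its coefficients, and the assumed normal distribution for the unmeasured biomarker are all hypothetical; the cited framework [19] may be structured differently.

```python
import math
import random
from statistics import mean, quantiles

def risk_model(age, biomarker):
    """Hypothetical logistic prognostic model (coefficients are illustrative)."""
    return 1.0 / (1.0 + math.exp(-(-4.0 + 0.03 * age + 0.9 * biomarker)))

def propagate(age, biomarker_mean, biomarker_sd, n_draws=5000, seed=0):
    """Monte Carlo propagation of uncertainty in an unmeasured biomarker.

    Returns the mean predicted risk and the 2.5% and 97.5% quantiles.
    """
    rng = random.Random(seed)
    risks = [risk_model(age, rng.gauss(biomarker_mean, biomarker_sd))
             for _ in range(n_draws)]
    cuts = quantiles(risks, n=40)  # cut points at 2.5%, 5%, ..., 97.5%
    return mean(risks), cuts[0], cuts[-1]

avg, low, high = propagate(age=65, biomarker_mean=1.5, biomarker_sd=0.4)
print(f"mean risk = {avg:.3f}, 95% interval = ({low:.3f}, {high:.3f})")
```

The width of the resulting interval shows how much of the uncertainty in the prediction is attributable to the missing input, which is the kind of information a sensitivity index summarizes.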

Experimental Protocols for Incomplete Data Handling

Protocol: Bootstrapping with Deterministic Imputation for Clinical Prediction Models

This protocol is appropriate when developing and validating clinical risk prediction models with missing covariate data [3].

- Bootstrap Resampling: Draw multiple bootstrap samples (with replacement) from the original incomplete dataset.

- Deterministic Imputation: For each bootstrap sample, fit a separate deterministic imputation model for each missing variable using observed data within that sample. Crucially, do not include the outcome variable in the imputation models.

- Model Development: Develop the clinical prediction model (e.g., using logistic regression) on each completed bootstrap sample.

- Performance Validation: Apply each developed model to the original dataset (or out-of-bag samples) to estimate predictive performance metrics (e.g., AUC, calibration).

- Aggregate Results: Aggregate the performance estimates across all bootstrap samples to obtain a robust, internally validated measure of model performance, correcting for optimism.
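
The ordering of these steps (resample first, then impute and fit inside each bootstrap sample) can be sketched as below. To stay self-contained, the example makes several simplifying assumptions: mean imputation stands in for the deterministic imputation model, a one-predictor least-squares line stands in for the clinical prediction model, and mean squared error replaces AUC or calibration. The data are simulated.

```python
import random

def fit_line(xs, ys):
    """Least-squares fit of y = a + b * x (stand-in for the prediction model)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx if sxx else 0.0
    return my - b * mx, b

def develop(sample):
    """Impute within the sample (mean of observed x; outcome not used), then fit."""
    observed = [x for x, _ in sample if x is not None]
    if not observed:
        raise ValueError("sample has no observed predictor values")
    fill = sum(observed) / len(observed)
    a, b = fit_line([fill if x is None else x for x, _ in sample],
                    [y for _, y in sample])
    return fill, a, b

def mse(model, data):
    """Mean squared error, imputing missing x with the model's own fill value."""
    fill, a, b = model
    return sum((y - (a + b * (fill if x is None else x))) ** 2
               for x, y in data) / len(data)

def optimism_corrected_mse(data, n_boot=200, seed=0):
    rng = random.Random(seed)
    apparent = mse(develop(data), data)
    optimism = 0.0
    for _ in range(n_boot):
        boot = [rng.choice(data) for _ in data]  # resample first ...
        model = develop(boot)                     # ... then impute and fit inside
        optimism += mse(model, data) - mse(model, boot)
    return apparent + optimism / n_boot

# Simulated data: (predictor, outcome) pairs with about 20% missing predictors
gen = random.Random(1)
data = []
for _ in range(60):
    x = gen.uniform(0, 10)
    y = 2.0 + 0.5 * x + gen.gauss(0, 1)
    data.append((None if gen.random() < 0.2 else x, y))
print(round(optimism_corrected_mse(data), 3))
```

Because the fill value is stored with each fitted model, the same static imputation can be applied to a future patient without access to the development data or the outcome.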

Protocol: Combining Internal Validation and Multiple Imputation (Val-MI)

This method provides largely unbiased estimates of predictive performance measures for prognostic models developed on incomplete data [16].

- Data Splitting: Split the incomplete dataset into training and test sets using a resampling method (e.g., bootstrapping or cross-validation).

- Multiple Imputation on Training Data: Perform multiple imputation (M times) only on the training set to create M completed training datasets. The outcome can be included in the imputation model for this step.

- Model Training: Develop the prognostic model on each of the M imputed training datasets.

- Imputation on Test Data: For each of the M models, impute the missing data in the test set using the imputation model derived from its corresponding training set.

- Performance Assessment: Evaluate the model's performance on each of the M imputed test sets.

- Performance Pooling: Combine the M performance estimates (e.g., using Rubin's rules or similar) to obtain a final, optimism-corrected estimate of predictive performance.

Data Presentation

Quantitative Data Tables

Table 1: Comparison of Imputation Methods for Clinical Prediction Models

| Feature | Deterministic Imputation | Multiple Imputation (MI) |

|---|---|---|

| Core Principle | Single, fixed value replaces missing data [3] | Multiple plausible values sampled from a distribution [3] |

| Inclusion of Outcome in Imputation Model | Must not be included [3] | Must be included to ensure unbiased results [3] |

| Computational Efficiency at Deployment | High (static model, fast prediction) [3] | Low (requires development data, intensive computation) [3] |

| Primary Use Case | Prognostic clinical risk prediction models [3] | Estimation and inference in clinical research [3] |

| Handling of Imputation Uncertainty | Accounted for via bootstrapping [3] | Accounted for via between- and within-imputation variance [3] |

Table 2: Key FDA Validation Rules for SDTM Submission Data

| Validation Aspect | Key Requirements & Rules |

|---|---|

| Conformance to Standards | Data must align with CDISC SDTM Implementation Guide (IG) for domain structures, variables, and controlled terminology [17]. |

| Dataset Structure | Must follow prescribed row/column structure with correct required, expected, and permissible variables [17]. |

| Consistency Across Datasets | Relationships between datasets (e.g., DM vs. AE) must be maintained with consistent unique subject identifiers (USUBJID) [17]. |

| Controlled Terminology | Values must conform to CDISC Controlled Terminology for uniformity [17]. |

| Referential Integrity | Values in related datasets must match (e.g., subjects in AE dataset must exist in DM dataset) [17]. |

| Metadata Compliance | Define.xml must accurately describe structures, variables, and terminology [17]. |
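
The referential integrity and consistency rules in the table can be illustrated with a short pre-check. This is a sketch on in-memory rows with hypothetical subject identifiers; it is not a substitute for a full conformance validator such as Pinnacle 21.

```python
def orphan_subjects(dm_rows, ae_rows):
    """USUBJID values present in AE but absent from DM."""
    dm_ids = {row["USUBJID"] for row in dm_rows}
    return sorted({row["USUBJID"] for row in ae_rows} - dm_ids)

def duplicate_subjects(dm_rows):
    """USUBJID values that appear more than once in DM (one row per subject expected)."""
    seen, dupes = set(), set()
    for row in dm_rows:
        (dupes if row["USUBJID"] in seen else seen).add(row["USUBJID"])
    return sorted(dupes)

# Hypothetical Demographics (DM) and Adverse Events (AE) rows
dm = [
    {"USUBJID": "STUDY1-001", "SEX": "F"},
    {"USUBJID": "STUDY1-002", "SEX": "M"},
]
ae = [
    {"USUBJID": "STUDY1-002", "AETERM": "HEADACHE"},
    {"USUBJID": "STUDY1-003", "AETERM": "NAUSEA"},
]
print("AE subjects missing from DM:", orphan_subjects(dm, ae))
print("Duplicate subjects in DM:", duplicate_subjects(dm))
```

Running checks like these before formal validation helps catch subject-level mismatches early, when they are cheaper to trace back to the source data.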

Visualizations

[Figure: Workflow for Validating Models with Incomplete Data]

[Figure: Data Integrity Control System for FDA Submissions]

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Data Validation

| Tool / Solution | Function & Explanation |

|---|---|

| Pinnacle 21 | Industry-standard software for automated validation of datasets against FDA submission guidelines (e.g., SDTM, SEND). It checks for compliance, errors, and formatting issues before submission [17]. |

| Deterministic Imputation | A single imputation method where a static model replaces missing values with fixed predictions. Essential for prognostic model deployment where the outcome is unknown for future patients [3]. |

| Bootstrap Resampling | A statistical technique that involves repeatedly sampling from a dataset with replacement. Used for internal validation of predictive performance, especially when combining with imputation methods [3]. |

| Uncertainty Propagation Framework | A methodological approach using sensitivity indices and Monte Carlo simulations to quantify uncertainty in prognostic model outputs, enabling validation even with limited or incomplete data [19]. |

| Electronic Submissions Gateway (ESG) | The FDA's mandatory portal for all electronic regulatory submissions. Using it with proper AS2 protocols and encryption is essential for successful data transmission and integrity [18]. |

Technical Support Center: Troubleshooting Data Integrity in Clinical Research

This technical support center provides troubleshooting guides and FAQs to help researchers, scientists, and drug development professionals address the critical challenge of flawed and incomplete data in clinical trials. The content is framed within the broader context of handling incomplete data model validation research.

Troubleshooting Guide: Common Data Flaws and Solutions

Table: Common Data Flaws, Consequences, and Resolution Strategies

| Data Flaw Type | Real-World Consequence | Recommended Resolution Strategy |

|---|---|---|

| Inconsistent/Incomplete Data [20] | Delayed trial timelines, jeopardized approvals, biased outcomes, and incorrect conclusions. | Implement Electronic Data Capture (EDC) systems, standardize data collection procedures, and conduct regular staff training [20]. |

| Missing Data [20] | Gaps in trial results, reduced statistical power, and increased risk of biased conclusions. | Use statistical imputation techniques and improve patient follow-up practices. For new predictions, use submodels or marginalization methods [8] [20]. |

| Poor Quality Data (Human Error) [20] | Dramatically altered analyses (e.g., incorrect dosage evaluation), compromising trial validity. | Deploy automated validation tools and real-time data monitoring with built-in edit checks to minimize manual entry errors [21] [20]. |

| Non-Compliant Data [22] | Regulatory submission rejections, costly delays, and failure to gain drug approval. | Use validation tools (e.g., Pinnacle 21) to check against FDA rules and ensure adherence to CDISC standards like SDTM and ADaM [20] [22]. |

| Non-Representative Data [23] | Biased results that fail to capture how different demographic groups respond to treatments. | Set specific inclusion goals for underrepresented populations and use AI-powered tools to broaden recruitment strategies [23] [24]. |

Frequently Asked Questions (FAQs)

Q1: What is the most effective strategy to prevent data inconsistencies across multiple trial sites?

A: The most effective strategy is a combination of technology and standardization [20].

- Implement EDC Systems: These digital systems reduce reliance on paper forms and incorporate built-in validation checks, improving data accuracy by over 30% [20].

- Adopt Standardized Data Models: Align data organization with CDISC standards, such as the Study Data Tabulation Model (SDTM), to ensure interoperability and streamline regulatory submissions [20].

- Establish Uniform SOPs: Create and enforce Standard Operating Procedures and data dictionaries for all sites to minimize site-to-site variability [20].

Q2: How can we proactively identify and handle missing data in clinical prediction models?

A: Proactive handling requires robust study design and statistical techniques.

- Targeted Validation Designs: For data sourced from Electronic Health Records (EHR), use statistically efficient validation studies. Methods like residual sampling can help select the most informative subset of patient records for manual chart review to recover missing data and correct errors [9].

- Employ Robust Statistical Methods: Utilize techniques like semiparametric maximum likelihood estimation to incorporate all available patient information, even when some data is missing [9].

- Apply Methods for New Patients: When applying a model to a new patient with missing data, use methods based on submodels, marginalization over missing variables, or imputation to generate a valid prediction [8].

Q3: What framework should we use to ensure data integrity from the start of a trial?

A: Embed data integrity into your processes by adhering to the ALCOA+ principles [25] [22]. This framework ensures data is:

- Attributable: Traceable to its source.

- Legible: Clearly documented.

- Contemporaneous: Recorded at the time it is generated.

- Original: The first recorded instance.

- Accurate: Correct and error-free.

The "+" adds that data must be Complete, Consistent, Enduring, and Available [25] [22]. Implementing this framework requires clear policies, regular training, and technological solutions that enforce these principles by design [25].

Q4: Can AI and automation help with data quality, and what are the key challenges?

A: Yes, AI is transformative but requires careful implementation.

- Opportunities: AI can dramatically improve data quality and efficiency. It is used for automated data validation, predicting patient enrollment with 85% accuracy, and identifying data anomalies. AI integration can accelerate trial timelines by 30–50% [23] [21] [26].

- Challenges: Key challenges include algorithmic bias (if training data is flawed, AI may produce discriminatory outcomes), accountability issues when errors occur, and regulatory uncertainty [23] [26]. Ensuring AI systems are transparent, equitable, and used with human oversight is critical [23].

Q5: Our global trial faces varying data privacy laws. How can we ensure compliance?

A: Managing global data privacy requires a proactive and layered approach.

- Understand Key Regulations: Key frameworks include the EU's General Data Protection Regulation (GDPR) and the US Health Insurance Portability and Accountability Act (HIPAA). Be aware that laws in India, Brazil, and Canada also introduce unique requirements [20].

- Implement Strict Access Controls: Ensure data is accessible only to authorized personnel and is stored and transmitted securely [25] [20].

- Plan for Data Transfers: For cross-border data transfer under GDPR, mechanisms like Standard Contractual Clauses (SCCs) or local data storage may be necessary [20].

- Prioritize Consent Management: Implement clear processes for obtaining participant consent, detailing how their data will be used, stored, and shared, and allowing for easy withdrawal [23] [20].

Experimental Protocols for Data Validation

Protocol 1: Targeted Chart Review for EHR Data Enrichment

This protocol is designed to validate and enrich Electronic Health Record (EHR) data for research, which is often prone to missingness and errors [9].

- Objective: To ensure the quality and completeness of EHR data for a defined study (e.g., operationalizing a whole-person health measure like the allostatic load index).

- Preliminary Data Extraction: Extract a preliminary dataset from the EHR for the patient cohort.

- Patient Selection for Validation: Employ a targeted sampling strategy (e.g., residual sampling) on the preliminary data to identify the ~10% of patients whose records are most informative for validation. This maximizes efficiency versus random sampling [9].

- Chart Review Execution: For each selected patient record, manually review the original clinical charts (the source documents). This "enriched validation" protocol involves:

- Error Checking: Verify that the extracted EHR data matches the source document.

- Data Recovery: Actively search for and record any missing data points using auxiliary information found in the charts [9].

- Data Integration and Analysis: Update the master dataset with the validated and recovered data. Use robust statistical methods (e.g., semiparametric maximum likelihood estimation) that incorporate all available data, both validated and non-validated [9].

Protocol 2: FDA Validation Rules Compliance

This protocol ensures clinical trial data is compliant with FDA standards, which is mandatory for submission [22].

- Planning: Establish and document validation protocols. Determine which FDA validation rules (business rules, study data validator rules) and technical standards (e.g., CDISC formats) will be applied. Share these guidelines with all stakeholders, including external vendors [22].

- Implementation: Configure validation software (e.g., Pinnacle 21 Enterprise) to execute the planned checks automatically [22].

- Testing & Correction: Run the validation tools on the clinical datasets. Address all flagged issues:

- Errors: Critical issues that must be resolved before submission.

- Warnings: Issues that may need clarification but do not necessarily block submission [22].

- Ongoing Monitoring: Integrate validation checks throughout the trial lifecycle, not just at the end. This allows for early detection and correction of issues [22].

Workflow Visualization: Clinical Data Validation

The diagram below illustrates the core workflow for validating clinical data, from problem identification to analysis, incorporating strategies like targeted sampling.

Data Validation and Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Solutions for Managing Clinical Trial Data

| Tool / Solution | Primary Function | Relevance to Data Integrity |

|---|---|---|

| Electronic Data Capture (EDC) Systems [20] [27] | Digital platform for input, storage, and management of clinical trial data. | Reduces manual entry errors via built-in validation checks; provides real-time data access and audit trails. |

| AI-Powered Validation Tools [21] [27] | Automated software using artificial intelligence to check for data discrepancies and anomalies. | Identifies inconsistencies and patterns faster than manual methods, improving data cleaning efficiency. |

| Pinnacle 21 Enterprise [22] | A specialized software platform for clinical data validation. | Automates compliance checks against FDA validation rules and CDISC standards to prepare data for regulatory submission. |

| CDISC Standards (SDTM/ADaM) [20] | A set of standardized models for organizing and presenting clinical trial data. | Ensures data consistency and interoperability across studies and sites, which is critical for regulatory compliance. |

| Data Visualization Platforms (e.g., Tableau, Power BI) [27] | Tools that transform complex datasets into intuitive dashboards and visual reports. | Enables real-time monitoring of study progress and quick identification of trends and outliers for proactive decision-making. |

Building Your Validation Toolkit: Techniques for Robust Data Handling

FAQs on Core Data Validation Checks

1. What is the difference between the 'Presence' and 'Completeness' checks? While both deal with data existence, "Presence" is a record-level check verifying that a required data record exists at all. "Completeness" is an attribute-level check, measuring the percentage of fields populated with non-null values within a record against the expectation of 100% fulfillment [28]. For example, a patient's record might be present, but critical attributes like phone number or email address could be missing, rendering the data incomplete for communication purposes [28].

2. Why is the 'Uniqueness' check critical in patient datasets? The Uniqueness dimension ensures that each patient or event is recorded only once. Violations can lead to duplicate records for a single patient (e.g., "Thomas" and "Tom") [28]. This can cause misdiagnoses, double-counting in reports, and fragmented clinical information, severely impacting the integrity of research analyses and patient safety [28] [29].

3. What are common technical causes for 'Completeness' errors? Common technical root causes include ETL (Extract, Transform, Load) process failures, such as data truncation during loading if target attributes are not large enough to capture the full length of the data values [28]. Other causes include system interoperability issues and human error during manual data entry [29].

4. How can I validate the 'Accuracy' of a patient's data? Data Accuracy is the degree to which data correctly represents the real-world object or event. It can be measured by comparing data values to a reliable reference source. For instance, you could validate a patient's diagnostic code against the latest version of the ICD (International Classification of Diseases) standard [28].

Troubleshooting Guides

Issue 1: High Number of Duplicate Patient Records

- Problem: One physical patient is represented by multiple records in the system, often with slight variations in identifying information (e.g., "Jon Snow" vs. "Jonathan Snow") [28].

- Solution:

- Implement Identity Resolution Algorithms: Use deterministic or probabilistic matching on multiple attributes.

- Create a Single Patient View: Consolidate duplicates by merging records and retaining the most accurate and recent information from all sources.

- Establish Data Entry Protocols: Enforce standardized formats for names and addresses at the point of entry to prevent future duplicates [30].

Issue 2: Persistent Missing Values in Critical Patient Attributes

- Problem: Essential fields like "Allergies" or "Primary Care Physician" are frequently found to be empty, compromising data completeness [28] [29].

- Solution:

- Enforce Validation at Source: Configure your data entry systems (EHR/EDC) to mandate the completion of critical fields before saving a record [30].

- Implement Automated Data Quality Rules: Create database rules or scripts that regularly scan for and report on records with null values in key columns.

- Staff Training and Clear Definitions: Ensure all personnel understand the importance of each field. Maintain a centralized data dictionary that clearly defines each attribute to avoid confusion [30].

Quantitative Data on Data Quality Dimensions

The table below summarizes the core data quality dimensions relevant to patient dataset validation, their definitions, and examples of common issues [28].

| Data Quality Dimension | Core Question | Example of Data Issue |

|---|---|---|

| Presence / Completeness | Is all the expected data available and populated? | A patient record exists, but the email address and phone number fields are left blank, making follow-up impossible [28]. |

| Uniqueness | Is each entity or event recorded only once? | A single patient is recorded twice, initially as "Thomas" and later by a nickname "Tom," leading to double-counting [28]. |

| Accuracy | Does the data correctly reflect reality? | A patient's weight is recorded as 200 kg due to a data entry error, when their actual weight is 80 kg [28]. |

| Consistency | Does data remain uniform across systems and over time? | The order dataset shows one gown ordered, but the shipping dataset for the same order indicates three gowns to be shipped [28]. |

| Validity | Does the data conform to the required format, type, or range? | A patient's age is entered as "fifty" instead of the numeric value "50," violating the field's data type rule [28]. |

Experimental Protocol for Data Validation

Objective: To systematically identify and measure violations of Presence, Completeness, and Uniqueness in a given patient dataset.

Materials:

- Source Patient Dataset: The dataset to be validated (e.g., an export from an Electronic Health Record system).

- Reference Data: A trusted source for validation, such as an official patient master index or a previously validated dataset.

- Data Processing Environment: A SQL database, Python/Pandas script, or a specialized data quality tool (e.g., an AI-powered data mapping tool) [30].

- Validation Framework Scripts: Pre-written queries or functions to execute the checks below.

Methodology:

- Presence Check:

- Reconcile the record count of your source dataset against a reference dataset or a known baseline.

- SQL Example: `SELECT COUNT(*) FROM source_patient_data;`

- Compare this count to the count in the reference system. A discrepancy indicates missing or extra records [28].

- Completeness Check:

- For each critical attribute (e.g., `patient_id`, `last_name`, `email`), calculate the percentage of non-null and non-empty values.

- SQL Example: `SELECT 100.0 * COUNT(NULLIF(patient_id, '')) / COUNT(*) AS id_completeness_percent FROM source_patient_data;` Multiplying by 100.0 avoids integer division, which would truncate the ratio to 0 in many SQL engines, and NULLIF treats empty strings as missing.

- Perform this for all key columns to identify fields with unacceptable levels of missingness [28].

- Uniqueness Check:

- Check for duplicate values in attributes that must be unique, such as `patient_id` or a composite key of `ssn`, `last_name`, and `date_of_birth`.

- SQL Example: `SELECT patient_id, COUNT(*) FROM source_patient_data GROUP BY patient_id HAVING COUNT(*) > 1;`

- This query will return all patient IDs that are duplicated within the dataset [28].

- Analysis and Reporting:

- Compile the results from all checks into a validation report.

- Quantify the error rates for each dimension (e.g., "Completeness for 'email' field is 85%").

- Document specific record IDs or examples for each type of failure to facilitate root cause analysis and data correction.
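
The three checks in this methodology can be run end to end from Python using the built-in `sqlite3` module. The sketch below assumes a hypothetical four-row `source_patient_data` table and a hypothetical reference count of five records; the completeness query uses `100.0` and `NULLIF` so that integer division and empty strings do not distort the percentage.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE source_patient_data (patient_id TEXT, last_name TEXT, email TEXT)"
)
# Hypothetical rows: one null email, one empty email, one duplicated ID, one null ID.
rows = [
    ("P001", "Smith", "smith@example.org"),
    ("P002", "Jones", None),
    ("P002", "Jones", ""),
    (None, "Lee", "lee@example.org"),
]
conn.executemany("INSERT INTO source_patient_data VALUES (?, ?, ?)", rows)

# Presence: reconcile the record count against a known baseline.
expected_count = 5  # hypothetical count reported by the reference system
record_count = conn.execute("SELECT COUNT(*) FROM source_patient_data").fetchone()[0]
missing_records = expected_count - record_count

# Completeness: share of rows whose email is neither NULL nor an empty string.
email_completeness = conn.execute(
    "SELECT 100.0 * COUNT(NULLIF(email, '')) / COUNT(*) FROM source_patient_data"
).fetchone()[0]

# Uniqueness: patient IDs that occur more than once.
duplicate_ids = conn.execute(
    "SELECT patient_id, COUNT(*) FROM source_patient_data "
    "GROUP BY patient_id HAVING COUNT(*) > 1"
).fetchall()

print(missing_records, email_completeness, duplicate_ids)
```

The three results map directly onto the entries of the validation report: a record-count discrepancy, a per-field completeness percentage, and a list of duplicated identifiers for root cause analysis.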

Research Reagent Solutions

The table below lists key tools and conceptual "reagents" essential for conducting rigorous data validation in a research context.

| Reagent / Tool | Function in Validation Experiment |

|---|---|

| SQL Database System | The primary environment for running structured queries to perform record counts, null checks, and identify duplicates across large datasets. |

| Reference Data / Master Patient Index | Serves as the "gold standard" or control group against which the accuracy and presence of records in the test dataset are validated [28]. |

| Data Profiling Tool | Software that automatically scans data to uncover patterns, statistics, and anomalies, providing a first-pass assessment of completeness and uniqueness [30]. |

| AI-Powered Data Mapping Tool | Uses machine learning to automatically detect, map, and align data formats from multiple sources, helping to identify inconsistencies and duplicates in complex datasets [30]. |

Data Validation Workflow Diagram

The diagram below visualizes the sequential and iterative process of applying core validation checks to a patient dataset.

Patient Record Consistency Diagram

This diagram illustrates the logical relationships and potential consistency issues between different subject areas within a patient data ecosystem, such as between ordering and shipping systems.

FAQs on Data Quality Fundamentals

Q1: What are the core characteristics of high-quality data in a research context? High-quality data is defined by several key characteristics, often measured as metrics during profiling [31]:

- Validity: Data adheres to predefined rules and standards (e.g., a patient's age must be a plausible number; a value like "150" would be invalid) [31].

- Accuracy: Data correctly represents the real-world values it is intended to capture (e.g., a lab result is recorded as "5.0 mmol/L" and not "50 mmol/L") [31].

- Completeness: All necessary data is present, with no missing values in critical fields that could bias analysis [31].

- Consistency: Data is coherent and does not contradict itself across different systems or time points (e.g., a patient's status is not "In Progress" in one record and "Completed" in another for the same visit) [31].

- Uniformity: Data follows a standard format, facilitating comparison and analysis (e.g., all dates are in the YYYY-MM-DD format) [32] [31].

Q2: Our clinical data has many missing values. What is the best approach to handle this? The approach depends on the pattern and extent of the missingness. Common methodologies include [32] [31]:

- Removal: Discard records with missing values if the amount is minimal and unlikely to affect overall results.

- Imputation: Fill missing values with a statistical estimate like the mean, median, or mode. More advanced techniques include K-Nearest Neighbors (KNN) or Multiple Imputation by Chained Equations (MICE), which provide more accurate estimates by considering relationships between variables [32]. This is crucial for building complete predictive models where removing data would cause significant information loss [32].

Q3: How can we automatically ensure text labels on our data visualizations (e.g., bar charts) are always readable? You can implement an algorithm that dynamically selects the text color based on the background color to ensure high contrast. In tools like R, this can be achieved by using the `prismatic::best_contrast()` function within the `ggplot2` plotting system to automatically choose white or black text for optimal readability [33].

Troubleshooting Guides

Guide 1: Resolving Duplicate Patient Records

- Problem: Suspected duplicate records for the same patient across merged datasets from multiple clinical sites, leading to incorrect patient counts and skewed analysis.

- Investigation: Profile the data to identify records with similar names, birth dates, or patient IDs. Look for variations like "John Smith," "J. Smith," and "Johnathan Smith" that may refer to the same individual [32].

- Solution: Implement a deduplication process with the following steps [32]:

- Exact Matching: Identify and merge records identical across all key fields.

- Fuzzy Matching: Use algorithms to detect non-exact matches based on similarities.

- Confidence Scoring: Assign a score to potential duplicates; automatically merge high-confidence matches and flag low-confidence ones for manual review.

- Validation: Test deduplication rules on a sample dataset before full-scale application.
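
The exact-match, fuzzy-match, and confidence-scoring steps above can be sketched with Python's standard `difflib` module. This is an illustrative sketch only: the two thresholds, the rule that a differing date of birth means a different patient, and the function names are all assumptions that would need tuning and validation on real data.

```python
from difflib import SequenceMatcher

MERGE_THRESHOLD = 0.90   # hypothetical: auto-merge at or above this score
REVIEW_THRESHOLD = 0.60  # hypothetical: flag for manual review at or above this score


def normalize(name):
    """Lower-case the name, drop periods, and collapse repeated whitespace."""
    return " ".join(name.lower().replace(".", " ").split())


def match_confidence(rec_a, rec_b):
    """Score a candidate pair between 0.0 and 1.0."""
    if rec_a["dob"] != rec_b["dob"]:
        return 0.0  # assumption: different birth dates are never merged
    name_a, name_b = normalize(rec_a["name"]), normalize(rec_b["name"])
    if name_a == name_b:
        return 1.0  # exact match after normalization
    return SequenceMatcher(None, name_a, name_b).ratio()  # fuzzy match


def classify_pair(rec_a, rec_b):
    """Turn the confidence score into a merge, review, or distinct decision."""
    score = match_confidence(rec_a, rec_b)
    if score >= MERGE_THRESHOLD:
        return "merge"
    if score >= REVIEW_THRESHOLD:
        return "review"
    return "distinct"


a = {"name": "John Smith", "dob": "1980-01-01"}
b = {"name": "Johnathan Smith", "dob": "1980-01-01"}
print(classify_pair(a, b))
```

Routing mid-range scores to manual review rather than merging them automatically keeps a human in the loop for the ambiguous cases, which matters because an incorrect merge is harder to undo than a missed duplicate.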

Guide 2: Correcting Inconsistent Data Formats from Multi-Site Trials

- Problem: Inconsistent formatting of dates, numerical values, and categorical responses (e.g., "N/A", "Not Applicable") from different sources, making aggregation and analysis impossible [31].

- Investigation: Use data profiling to uncover the different formats and representations present in the dataset.

- Solution: Apply data standardization [32]:

- Document Rules: Create a comprehensive guide for standardization rules (e.g., all dates must be in ISO 8601 format YYYY-MM-DD, all "N/A" variants become "Not Applicable") [32].

- Automate Transformation: Use data quality tools to enforce these rules across the dataset.

- Preserve Originals: If feasible, store the original, unstandardized data in a separate field for auditability [32].
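
A minimal sketch of these standardization rules is shown below. The accepted input date formats, the list of "N/A" variants, and the field names are assumptions for illustration; in particular, treating slash-separated dates as day/month/year is a site-specific decision that must be documented, because the same string can be read as month/day/year.

```python
from datetime import datetime

# Assumed input formats seen across sites (day/month order is an assumption).
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")
NA_VARIANTS = {"n/a", "na", "n.a.", "not applicable"}


def standardize_date(raw):
    """Return the date in ISO 8601 (YYYY-MM-DD), or None if no format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).strftime("%Y-%m-%d")
        except ValueError:
            continue
    return None  # unparseable values are left for manual review


def standardize_category(raw):
    """Map every 'N/A' variant to the single label 'Not Applicable'."""
    cleaned = raw.strip()
    return "Not Applicable" if cleaned.lower() in NA_VARIANTS else cleaned


def standardize_record(record):
    """Standardize a record while keeping the original values for auditability."""
    return {
        "visit_date": standardize_date(record["visit_date"]),
        "visit_date_raw": record["visit_date"],
        "response": standardize_category(record["response"]),
        "response_raw": record["response"],
    }


print(standardize_record({"visit_date": "05/03/2024", "response": " N/A "}))
```

Returning `None` for unrecognized dates, rather than guessing, keeps ambiguous values visible so they can be queried with the originating site.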

Guide 3: Identifying and Handling Anomalous Laboratory Readings

- Problem: Potential outliers in laboratory data that could distort statistical analysis or indicate data entry errors.

- Investigation: Use visual and numerical methods for outlier detection [32] [31]:

- Visualization: Create box plots, histograms, or scatterplots to spot extreme values.

- Statistical Methods: Apply Z-scores or the Interquartile Range (IQR) method to mathematically flag outliers.

- Solution: Treat outliers based on context [32] [31]:

- Consult Experts: Before removal, determine if an outlier is a legitimate, albeit rare, occurrence (e.g., a genuinely abnormal lab result).

- Take Action: Choose to remove, cap (replace with a max/min value), or transform the outlier. In cases like fraud detection, the outlier itself may be the subject of interest.
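
The IQR method and the capping option can be sketched with Python's `statistics` module. The lab values below are hypothetical, and the conventional multiplier of 1.5 is a default rather than a rule; flagged values should still go to a domain expert before anything is removed or capped.

```python
import statistics


def iqr_fences(values, k=1.5):
    """Return the (lower, upper) fences: Q1 - k*IQR and Q3 + k*IQR."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def flag_outliers(values, k=1.5):
    """List the values that fall outside the IQR fences."""
    low, high = iqr_fences(values, k)
    return [v for v in values if v < low or v > high]


def cap_outliers(values, k=1.5):
    """Replace values outside the fences with the nearest fence value."""
    low, high = iqr_fences(values, k)
    return [min(max(v, low), high) for v in values]


# Hypothetical lab readings in mmol/L; the last value looks like a decimal-point slip.
lab_values = [4.8, 5.0, 5.1, 5.2, 5.3, 5.4, 5.6, 50.0]
flagged = flag_outliers(lab_values)
capped = cap_outliers(lab_values)
print(flagged)
```

The IQR fences are computed from quartiles, so a single extreme value does not inflate them the way it inflates a mean and standard deviation in a Z-score calculation on a small sample.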

Experimental Protocols for Data Validation

Protocol 1: Quantitative Data Quality Assessment

Purpose: To establish a baseline measurement of data health for a given dataset using standardized metrics [31].

Methodology:

- Inspection and Profiling: Use data profiling tools to scan the dataset and compile statistics [31].

- Metric Calculation: Calculate the following key metrics for critical data fields. The results should be summarized in a table for easy comparison and monitoring.

Table: Data Quality Assessment Metrics

| Quality Dimension | Metric | Calculation Method | Target Threshold |

|---|---|---|---|

| Completeness | Percentage of non-null values | (Number of non-null entries / Total entries) * 100 | > 99% for critical fields |

| Validity | Percentage of valid entries | (Number of entries adhering to rules / Total entries) * 100 | 100% |

| Accuracy | Error rate | (Number of incorrect entries / Total entries) * 100 | < 0.1% |

| Consistency | Number of logical conflicts | Count of records violating cross-field rules (e.g., discharge date before admission) | 0 |

| Uniqueness | Percentage of duplicate records | (Number of duplicate records / Total records) * 100 | 0% |

* Note: Measuring accuracy often requires verification against an external, trusted source of truth [31].
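
The calculation methods in the table can be expressed as small functions. The sketch below uses hypothetical records, a hypothetical plausibility rule for age, and counts missing entries as not adhering to the validity rule; accuracy is omitted because, as noted, it needs an external source of truth.

```python
from datetime import date

MAX_PLAUSIBLE_AGE = 120  # hypothetical validity rule

# Hypothetical records: one missing age, one implausible age,
# one repeated patient_id, one discharge date before admission.
records = [
    {"patient_id": "P001", "age": 54, "admitted": date(2024, 1, 3), "discharged": date(2024, 1, 7)},
    {"patient_id": "P002", "age": None, "admitted": date(2024, 2, 1), "discharged": date(2024, 2, 2)},
    {"patient_id": "P002", "age": 150, "admitted": date(2024, 3, 9), "discharged": date(2024, 3, 5)},
    {"patient_id": "P004", "age": 37, "admitted": date(2024, 4, 1), "discharged": date(2024, 4, 1)},
]


def completeness_pct(rows, field):
    """(Number of non-null entries / total entries) * 100."""
    return 100.0 * sum(r[field] is not None for r in rows) / len(rows)


def validity_pct(rows, field, is_valid):
    """(Entries adhering to the rule / total entries) * 100; nulls do not adhere."""
    ok = sum(r[field] is not None and is_valid(r[field]) for r in rows)
    return 100.0 * ok / len(rows)


def duplicate_pct(rows, key):
    """(Records repeating an already-seen key / total records) * 100."""
    seen, duplicates = set(), 0
    for r in rows:
        if r[key] in seen:
            duplicates += 1
        seen.add(r[key])
    return 100.0 * duplicates / len(rows)


def consistency_conflicts(rows):
    """Count records whose discharge date precedes the admission date."""
    return sum(r["discharged"] < r["admitted"] for r in rows)


report = {
    "completeness_age": completeness_pct(records, "age"),
    "validity_age": validity_pct(records, "age", lambda a: 0 <= a <= MAX_PLAUSIBLE_AGE),
    "duplicates_patient_id": duplicate_pct(records, "patient_id"),
    "consistency_conflicts": consistency_conflicts(records),
}
print(report)
```

Each value in `report` can be compared against the target threshold in the table and tracked over time as the dataset is cleaned.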

Protocol 2: Handling Missing Data in Longitudinal Studies

Purpose: To methodically address missing data points in time-series or repeated-measures data to prevent bias in analysis.

Methodology:

- Pattern Analysis: Determine the mechanism of missingness: Missing Completely at Random (MCAR), Missing at Random (MAR), or Missing Not at Random (MNAR). This guides the choice of imputation method [32].

- Technique Selection: Choose an appropriate imputation method based on the pattern and data structure [32]:

- For MCAR/MAR: Use Multiple Imputation (e.g., with R's `mice` package) to create several complete datasets and account for imputation uncertainty, or K-Nearest Neighbors (KNN) imputation as a single-imputation alternative.

- For Numerical Variables: Mean/Median imputation is fast but can distort variance.

- For Categorical Variables: Mode imputation is common.

- Validation: Use cross-validation techniques to assess the performance and impact of the chosen imputation method on downstream analysis [32].
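
The caution that mean/median imputation "can distort variance" is easy to see on a small example. The sketch below uses hypothetical weights and sex labels; it shows simple single imputation only and is not a replacement for multiple imputation.

```python
import statistics


def impute_median(values):
    """Fill missing numeric entries (None) with the median of observed values."""
    fill = statistics.median(v for v in values if v is not None)
    return [fill if v is None else v for v in values]


def impute_mode(values):
    """Fill missing categorical entries (None) with the most common observed value."""
    fill = statistics.mode(v for v in values if v is not None)
    return [fill if v is None else v for v in values]


# Hypothetical repeated measures with two missing weights and one missing label.
weights = [22.0, None, 25.0, 31.0, None, 28.0]
sexes = ["M", "F", None, "F"]

observed = [w for w in weights if w is not None]
imputed_weights = impute_median(weights)
imputed_sexes = impute_mode(sexes)

# Every filled value sits at the centre of the data, so the spread shrinks.
print(statistics.variance(observed), statistics.variance(imputed_weights))
```

In this example the sample variance falls from 15.0 to 9.0 after imputation, which is why standard errors computed on median-imputed data tend to be too small.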

Data Quality Workflow Visualization

Data Quality Management Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Tools for Data Profiling and Cleansing

| Tool Name | Type / Category | Primary Function in Research |

|---|---|---|

| Great Expectations [34] | Open-source Validation Framework | Enforces data quality by allowing teams to define, document, and automate "expectations" (rules) that data must meet, integrating directly into CI/CD pipelines. |

| OvalEdge [34] | Unified Data Governance Platform | Combines data cataloging, lineage visualization, and quality monitoring to provide a single source of truth, automate anomaly detection, and assign data ownership. |

| Soda Core & Soda Cloud [34] | Data Quality Monitoring | Provides a framework for defining data tests as code (Soda Core) and a cloud platform for real-time monitoring, anomaly detection, and collaborative alerting (Soda Cloud). |

| Informatica Cloud Data Quality [34] [35] | Enterprise Data Quality | Offers deep capabilities for profiling, standardization, and deduplication with prebuilt rules and AI, often used in regulated environments for governance. |

| Monte Carlo [34] | Data Observability Platform | Uses AI to automatically detect anomalies in data freshness, volume, and schema, mapping lineage to identify root causes of data incidents and reduce downtime. |

| Genedata Profiler [36] | Specialized Multi-Omics Platform | An end-to-end enterprise platform for securely integrating, harmonizing, and analyzing complex translational and clinical data, such as molecular and biomarker data, in a validation-ready environment. |

Foundational Concepts: Understanding Missing Data Mechanisms

What are the three primary types of missing data, and why are these distinctions critical for pre-clinical research?

Incomplete data presents a significant challenge in pre-clinical studies, and proper handling begins with understanding the underlying missing data mechanism. Rubin (1976) classified these mechanisms into three categories that determine the statistical methods required for valid inference [37].

Missing Completely at Random (MCAR): The probability of data being missing is unrelated to any observed or unobserved variables [37] [38]. For example, a sample might be lost due to a freezer malfunction, where the missingness is purely random [39]. Under MCAR, the complete cases remain a random subset of the original sample, making analysis less complex but often unrealistic in practice [37] [40].

Missing at Random (MAR): The probability of missingness depends on observed variables but not on the unobserved missing values themselves [37] [39]. For instance, in a study where older mice are less likely to have a specific biomarker measured due to procedural difficulties, the missingness relates to the observed variable (age) rather than the unobserved biomarker value itself [38]. Modern missing data methods generally rely on the MAR assumption [37].

Missing Not at Random (MNAR): The probability of missingness depends on the unobserved missing values themselves [37] [38]. This occurs when, for example, compounds with higher toxicity levels (the missing values) are less likely to have complete assay results because they damaged the testing equipment [37]. MNAR is the most complex scenario and requires specialized handling techniques [37].

How can I determine whether my pre-clinical data are MCAR, MAR, or MNAR?

Diagnosing the missing data mechanism involves both statistical tests and logical deduction based on study design and data collection procedures.

Statistical Tests for MCAR: Formal tests like Little's MCAR test can examine whether missingness patterns are completely random across all variables. A significant p-value suggests violation of the MCAR assumption [40].

Pattern Examination: Conduct descriptive analyses comparing records with and without missing values across other measured variables. Systematic differences suggest data are not MCAR [37] [38]. For example, if animals with higher baseline weight measurements are more likely to have missing endpoint data, this argues against MCAR and is consistent with MAR, with weight influencing missingness. Observed data alone cannot rule out MNAR.

Study Process Knowledge: Understanding data collection protocols is crucial. If research staff document reasons for missing measurements (e.g., equipment failure, sample degradation, technical errors), these records can help classify the missing mechanism [38].
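
The pattern examination described above can be sketched as a comparison of an observed covariate between records with and without a missing endpoint. The animal records and field names are hypothetical, and the sketch is descriptive only: a clear difference argues against MCAR, but it cannot distinguish MAR from MNAR.

```python
import statistics


def compare_by_missingness(rows, covariate, target):
    """Summarize a covariate separately for rows with and without the target."""
    with_missing = [r[covariate] for r in rows if r[target] is None]
    with_observed = [r[covariate] for r in rows if r[target] is not None]
    mean_missing = statistics.mean(with_missing)
    mean_observed = statistics.mean(with_observed)
    return {
        "n_missing": len(with_missing),
        "n_observed": len(with_observed),
        "mean_missing": mean_missing,
        "mean_observed": mean_observed,
        "difference": mean_missing - mean_observed,
    }


# Hypothetical animals: heavier animals tend to lack the endpoint measurement.
animals = [
    {"baseline_weight": 24.0, "endpoint": 1.2},
    {"baseline_weight": 25.0, "endpoint": 1.4},
    {"baseline_weight": 26.0, "endpoint": 1.1},
    {"baseline_weight": 30.0, "endpoint": None},
    {"baseline_weight": 32.0, "endpoint": None},
]

summary = compare_by_missingness(animals, "baseline_weight", "endpoint")
print(summary)
```

With real data the difference would normally be accompanied by a formal test or a logistic regression of the missingness indicator on observed covariates, and interpreted alongside the documented reasons for missing measurements.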

The following diagram illustrates the logical relationship between the missing data mechanisms and their defining characteristics:

Troubleshooting Guides: Addressing Specific Experimental Scenarios

How should I handle missing biomarker data in longitudinal animal studies?

Longitudinal pre-clinical studies frequently encounter missing biomarker measurements at various timepoints. The appropriate handling method depends on the suspected missing mechanism.

Scenario 1: Sporadic missingness across timepoints without a discernible pattern

- Suspected mechanism: MCAR

- Recommended approach: Multiple imputation using time as a variable [38] [41]

- Protocol:

- Structure data in long format with time as a variable

- Use multiple imputation methods that incorporate the longitudinal structure

- Include baseline characteristics and previous measurements as predictors

- Pool results across imputed datasets for final analysis

Scenario 2: Increased missingness in later study phases, particularly for specific treatment groups

- Suspected mechanism: MAR (missingness related to treatment group and time)

- Recommended approach: Maximum likelihood estimation or multiple imputation including treatment-time interactions [38] [41]

- Protocol:

- Include treatment group, time, and their interaction in imputation models

- Incorporate auxiliary variables that may predict missingness

- Consider sensitivity analyses to test robustness of findings

Scenario 3: Missing values concentrated among extremely high or low measurements

- Suspected mechanism: MNAR

- Recommended approach: Pattern-mixture models or selection models [37]

- Protocol:

  - Document evidence suggesting MNAR (e.g., assay detection limits)

  - Implement MNAR-specific methods with explicit modeling of missingness mechanism

  - Conduct comprehensive sensitivity analyses under different MNAR assumptions

What strategies effectively address missing data in high-content screening assays?

High-content screening generates multidimensional data where missing values can arise from technical failures or quality control exclusions.

Prevention Strategies:

- Implement randomized plate designs to distribute potential technical artifacts

- Include quality control wells in each plate to monitor assay performance

- Establish automated quality metrics to flag potentially unreliable measurements [38]

Imputation Methods for Multiparametric Data:

- k-Nearest Neighbors (kNN) Imputation: Identify compounds with similar phenotypic profiles and impute missing values based on nearest neighbors [41]

- Iterative Imputation: Use multivariate imputation by chained equations (MICE) to leverage correlations between parameters [41]

- Hybrid Methods: Combine clustering with imputation, such as the FCKI method that integrates fuzzy c-means, kNN, and iterative imputation [41]
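To make the kNN idea concrete, the following is a minimal standard-library sketch, not the implementation used in [41] or in any particular package. It assumes features are already on a comparable scale, measures distance only over features observed in both profiles, and fills each gap with the unweighted mean of the k nearest donors. The function name and toy profiles are hypothetical.

```python
from math import sqrt


def knn_impute(rows, k=2):
    """Fill None entries with the mean of that feature over the k nearest rows."""

    def distance(a, b):
        # Use only features observed in both rows; average so that rows with
        # different numbers of shared features remain comparable.
        shared = [(x - y) ** 2 for x, y in zip(a, b)
                  if x is not None and y is not None]
        if not shared:
            return float("inf")
        return sqrt(sum(shared) / len(shared))

    imputed = [list(r) for r in rows]
    for i, row in enumerate(rows):
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Donors must have feature j observed (assumes at least one exists).
            donors = [(distance(row, other), other[j])
                      for m, other in enumerate(rows)
                      if m != i and other[j] is not None]
            donors.sort(key=lambda d: d[0])
            nearest = [v for _, v in donors[:k]]
            imputed[i][j] = sum(nearest) / len(nearest)
    return imputed


# Hypothetical phenotypic profiles (rows = compounds, columns = features).
profiles = [
    [1.0, 2.0, 3.0],
    [1.1, 2.1, 2.9],
    [5.0, 6.0, 7.0],
    [1.05, None, 3.1],
]
filled = knn_impute(profiles, k=2)
print(filled[3])
```

Here the incomplete profile borrows from the two compounds it most resembles and ignores the distant third one. In practice the choice of k and of the distance metric should be checked against masked known values.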

The following workflow diagram illustrates the recommended process for handling missing data in high-content screening:

Comparative Methodologies: Structured Analysis of Imputation Techniques

What are the advantages and limitations of different imputation methods for pre-clinical data?

Table 1: Comparison of Missing Data Handling Methods for Pre-Clinical Research

| Method | Best For Mechanism | Advantages | Limitations | Software Implementation |
|---|---|---|---|---|
| Complete Case Analysis | MCAR | Simple, unbiased if truly MCAR | Inefficient, biased if not MCAR | Standard statistical packages |
| Mean/Median Imputation | MCAR | Simple, preserves sample size | Distorts distribution, underestimates variance | Standard statistical packages |
| k-Nearest Neighbors (kNN) | MAR | Uses local similarity structure | Computationally intensive for large datasets [41] | Python: sklearn, R: VIM |
| Multiple Imputation | MAR | Accounts for imputation uncertainty, provides valid inference | Complex implementation, requires careful model specification [38] | R: mice, Python: sklearn IterativeImputer (with sample_posterior=True, repeated across random seeds) |
| Maximum Likelihood | MAR | Efficient, uses all available data | Requires specialized software, computationally intensive [38] | R: nlme, OpenMx |
| Hybrid Methods (FCKI) | MAR, MNAR | High accuracy, handles complex patterns [41] | Complex implementation, computationally demanding [41] | Custom implementation required |

How do I implement multiple imputation correctly for pre-clinical data validation studies?

Proper implementation of multiple imputation requires careful attention to model specification and the analysis workflow.

Inclusion of Auxiliary Variables: Include variables that are associated with missingness or with the incomplete variables, even if they are not in the final analysis model. This makes the MAR assumption more plausible and improves imputation accuracy [38].

Model Specification: Use appropriate imputation models for different variable types (linear regression for continuous, logistic for binary, multinomial for categorical). For longitudinal data, include time structure and random effects if appropriate.

Number of Imputations: Current recommendations suggest 20-100 imputations depending on the percentage of missing data, with higher missing rates requiring more imputations.

Analysis and Pooling: Analyze each imputed dataset separately using standard complete-data methods, then combine results using Rubin's rules, which account for both within- and between-imputation variability.
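As an illustration of the pooling step, the sketch below applies Rubin's rules to a set of per-imputation estimates and their squared standard errors. The pooled estimate is the mean of the estimates, and the total variance is the mean within-imputation variance plus (1 + 1/m) times the between-imputation variance. The function name and numbers are hypothetical, and degrees-of-freedom corrections are omitted for brevity.

```python
from math import sqrt
from statistics import mean, variance


def pool_rubin(estimates, variances):
    """Pool m point estimates and their variances using Rubin's rules."""
    m = len(estimates)
    pooled_estimate = mean(estimates)
    within = mean(variances)        # average within-imputation variance
    between = variance(estimates)   # between-imputation variance (divisor m - 1)
    total = within + (1 + 1 / m) * between
    return pooled_estimate, sqrt(total)


# Hypothetical treatment-effect estimates from m = 5 imputed datasets.
estimates = [1.0, 1.2, 0.8, 1.1, 0.9]
variances = [0.04, 0.05, 0.04, 0.05, 0.04]

pooled, pooled_se = pool_rubin(estimates, variances)
print(f"pooled estimate = {pooled:.3f}, pooled SE = {pooled_se:.3f}")
```

The pooled standard error exceeds what any single imputed dataset would report, which is how multiple imputation carries the uncertainty due to missingness into the final inference.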

Advanced Experimental Protocols: Implementing Cutting-Edge Imputation Strategies

Protocol: Hybrid missing data imputation integrating fuzzy clustering and kNN (FCKI method)

The FCKI method represents an advanced approach that combines multiple algorithms to improve imputation accuracy, particularly for complex pre-clinical datasets with nontrivial missing patterns [41].

Step 1: Data Preprocessing

- Standardize all continuous variables to mean 0 and standard deviation 1

- Convert categorical variables to appropriate numeric representations

- Identify missing data patterns and percentages by variable

Step 2: Fuzzy Clustering Partitioning

- Apply fuzzy c-means clustering to partition dataset into overlapping clusters

- Determine optimal number of clusters using validity indices

- Each record belongs to multiple clusters with different membership degrees
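The membership degrees mentioned in Step 2 can be illustrated with the standard fuzzy c-means membership update for fixed cluster centers. This is a sketch under stated assumptions: the fuzzifier m = 2 and the toy centers are illustrative choices, and the code does not reproduce the full FCKI procedure from [41].

```python
from math import dist


def fuzzy_memberships(point, centers, m=2.0):
    """Membership of `point` in each cluster (standard FCM update, fuzzifier m)."""
    distances = [dist(point, c) for c in centers]
    # A point lying exactly on one or more centers is shared among those only.
    if any(d == 0 for d in distances):
        on_center = [1.0 if d == 0 else 0.0 for d in distances]
        return [z / sum(on_center) for z in on_center]
    exponent = 2.0 / (m - 1.0)
    return [1.0 / sum((d_i / d_k) ** exponent for d_k in distances)
            for d_i in distances]


# Hypothetical two-cluster example in two dimensions.
centers = [(0.0, 0.0), (4.0, 0.0)]
u = fuzzy_memberships((1.0, 0.0), centers)
print([round(x, 3) for x in u])
```

Memberships sum to one across clusters, so a record near a boundary contributes to several clusters at once instead of being forced into a single partition.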

Step 3: Local kNN Imputation within Clusters

- For each missing value, identify the most relevant cluster based on membership degrees

- Within selected cluster, automatically determine optimal k value using similarity measures

- Find k-nearest neighbors based on available variables using appropriate distance metrics

Step 4: Iterative Imputation Refinement

- Apply iterative imputation using the global correlation structure among selected neighbors

- Cycle through missing variables, updating imputations in each iteration

- Continue until convergence criteria are met (minimal change in imputed values)

Step 5: Validation and Sensitivity Analysis

- Assess imputation quality using root mean square error (RMSE) on artificially masked data

- Compare distribution of imputed versus observed values

- Conduct sensitivity analysis to evaluate robustness to missing data assumptions
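The masked-data check in Step 5 can be sketched as follows. The helper hides a random subset of observed values, runs an imputation function, and scores the imputations against the hidden truth. Mean imputation stands in here purely as a placeholder imputer. The function names, masking fraction, and data are hypothetical.

```python
import random
from math import sqrt
from statistics import mean


def masked_rmse(values, impute, mask_fraction=0.2, seed=0):
    """RMSE of `impute` on observed values that were artificially hidden."""
    rng = random.Random(seed)
    observed_idx = [i for i, v in enumerate(values) if v is not None]
    n_mask = max(1, int(len(observed_idx) * mask_fraction))
    masked_idx = rng.sample(observed_idx, n_mask)
    masked = list(values)  # copy so the caller's data stay untouched
    for i in masked_idx:
        masked[i] = None
    completed = impute(masked)
    errors = [(completed[i] - values[i]) ** 2 for i in masked_idx]
    return sqrt(mean(errors))


def mean_impute(column):
    """Placeholder imputer: replace None with the mean of observed values."""
    observed = [v for v in column if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in column]


# Hypothetical biomarker column with two genuinely missing values.
biomarker = [4.1, 3.9, None, 4.4, 4.0, 3.8, None, 4.2, 4.3, 4.0]
rmse = masked_rmse(biomarker, mean_impute)
print(f"masked RMSE = {rmse:.3f}")
```

Repeating the procedure over several seeds and masking fractions gives a more stable comparison between candidate imputers. Random masking mimics MCAR, so the resulting RMSE may be optimistic if the real missingness is MAR or MNAR.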

Protocol: Sensitivity analysis for potential MNAR mechanisms

When MNAR cannot be ruled out, sensitivity analysis is essential to evaluate how conclusions might change under different missingness assumptions.

Step 1: Pattern-Mixture Model Framework

- Stratify data based on missingness patterns

- Specify different distributional assumptions for each pattern

- Incorporate uncertainty about MNAR mechanism through prior distributions

Step 2: Selection Model Implementation

- Model the joint distribution of the data and missingness mechanism

- Specify selection function that links probability of missingness to unobserved values

- Estimate parameters using maximum likelihood or Bayesian methods

Step 3: Multiple Imputation under MNAR

- Implement MNAR-based imputation models that explicitly model the missingness mechanism

- Specify delta parameters representing the influence of unobserved values on missingness

- Vary delta parameters across plausible ranges to assess robustness
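One simple way to picture the delta-based analysis in Step 3 is shown below. It is a deliberately simplified sketch: delta is treated as a shift added to a MAR-style imputation (here, the observed mean), which is one common reading of delta adjustment. A real analysis would combine this with proper multiple imputation. The function name, delta grid, and data are hypothetical.

```python
from statistics import mean


def delta_adjusted_mean(observed, n_missing, delta):
    """Overall mean when each missing value is imputed as observed mean + delta."""
    base = mean(observed)
    imputed = [base + delta] * n_missing
    return mean(list(observed) + imputed)


# Hypothetical endpoint values for four animals; two further animals are missing.
observed = [10.0, 12.0, 11.0, 13.0]

results = {}
for delta in (-2.0, -1.0, 0.0, 1.0, 2.0):
    results[delta] = delta_adjusted_mean(observed, n_missing=2, delta=delta)
    print(f"delta = {delta:+.1f} -> estimated mean = {results[delta]:.3f}")
```

Setting delta to zero reproduces the MAR-style result. The range of delta over which the study conclusion stays unchanged indicates how robust that conclusion is to departures from MAR.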

The following diagram illustrates the sensitivity analysis process for MNAR data:

Research Reagent Solutions: Essential Materials for Missing Data Studies

Table 2: Key Computational Tools for Implementing Advanced Imputation Methods

| Tool/Resource | Primary Function | Application Context | Implementation Considerations |
|---|---|---|---|
| R mice Package | Multiple imputation by chained equations | Flexible imputation of mixed data types | Supports various imputation models; requires programming expertise |
| Python scikit-learn IterativeImputer | Multivariate imputation | Python-based data analysis workflows | Integrates with scikit-learn pipelines; marked experimental and enabled via an explicit import; operates on numeric data, so categorical variables must be encoded first |
| Fuzzy C-Means Algorithms | Soft clustering for hybrid imputation | Complex datasets with overlapping patterns | Available in R (ppclust) and Python (skfuzzy); requires parameter tuning |
| kNN Imputation Implementations | Nearest neighbor-based imputation | Datasets with local similarity structures | Sensitive to distance metrics and k selection; available in most platforms |
| Maximum Likelihood Estimation Software | Direct likelihood-based analysis | Longitudinal and multilevel data | Implemented in specialized packages (OpenMx, nlme); model specification critical |
| Sensitivity Analysis Tools | MNAR mechanism evaluation | Studies with potential MNAR data | Often requires custom programming; available in R (brms, mitools) |

Frequently Asked Questions: Addressing Common Implementation Challenges

What percentage of missing data is acceptable in pre-clinical studies?

There is no universal threshold for acceptable missing data, as the impact depends on the missingness mechanism, analysis method, and study objectives. The following rules of thumb are nevertheless commonly used as a starting point:

- <5% missingness: Generally minimal impact regardless of mechanism or method

- 5-20% missingness: Requires appropriate statistical methods (multiple imputation, maximum likelihood)

- >20% missingness: Substantial concerns about precision and potential bias; requires robust methods and comprehensive sensitivity analyses

- >40% missingness: Severe concerns about reliability regardless of method used

The critical factor is not merely the percentage missing, but whether the missingness mechanism has been properly addressed and the analysis method provides valid statistical inference [37] [38].
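For screening a dataset against the rules of thumb above, a small helper along the following lines may be useful. It is only a sketch: the band labels paraphrase the guideline text, the handling of the exact boundaries (5%, 20%, 40%) is an assumption, and the variable names are hypothetical.

```python
def missingness_report(columns):
    """Map each variable name to (percent missing, guideline band)."""
    report = {}
    for name, values in columns.items():
        pct = 100.0 * sum(v is None for v in values) / len(values)
        if pct < 5:
            band = "minimal impact expected"
        elif pct <= 20:
            band = "use principled methods (MI, maximum likelihood)"
        elif pct <= 40:
            band = "substantial concern; add sensitivity analyses"
        else:
            band = "severe concern regardless of method"
        report[name] = (round(pct, 1), band)
    return report


# Hypothetical study variables with differing amounts of missing data.
study = {
    "body_weight": [20.1, 21.3, 19.8, 22.0, 20.7, 21.1, 20.4, 19.9, 21.8, 20.0],
    "alt_u_per_l": [35, None, 41, 38, None, 36, 40, 37, 39, None],
    "cytokine_pg_ml": [None, None, 12.4, None, None, 10.9, None, 11.7, None, 12.0],
}

report = missingness_report(study)
for name, (pct, band) in report.items():
    print(f"{name}: {pct}% missing -> {band}")
```

Such a report only flags where attention is needed. It says nothing about the missingness mechanism, which remains the deciding factor.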

How can I minimize missing data in prospective pre-clinical studies?

Prevention represents the optimal strategy for handling missing data. Implement these practices in study design and conduct:

- Protocol Design: Include explicit procedures for data collection, handling of missed assessments, and contingency plans for technical failures [38]

- Training: Standardize procedures across all technical staff to reduce operator-dependent missingness [38]

- Pilot Studies: Conduct small-scale pilot studies to identify potential sources of missing data before main study initiation [38]

- Quality Control Systems: Implement real-time quality monitoring to detect systematic missingness patterns early

- Automated Data Capture: Where possible, use automated systems to reduce manual data entry errors

When should I consult a statistician about missing data in my validation research?

Engage statistical expertise in these scenarios:

- Planning Stage: When designing studies with potential for missing data

- >10% missingness: When any variable exceeds 10% missingness

- Complex Mechanisms: When missingness may be MNAR or have complex patterns

- Regulatory Submissions: When studies will support regulatory submissions that require a documented justification of how missing data were handled

- Novel Methods: When considering advanced imputation methods beyond simple approaches

Early statistical consultation can improve study design, minimize missing data, and ensure appropriate analysis methods.

Leveraging Automated Tools and AI for Real-Time Validation in High-Throughput Experiments

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: Our AI model's performance is degrading over time with new experimental data. What could be causing this and how can we address it?

A: This is a classic case of model drift, a common challenge in adaptive AI systems. To address this:

- Implement a Predetermined Change Control Plan (PCCP) as outlined in recent FDA guidance to manage controlled model updates without complete revalidation [42].

- Establish continuous performance monitoring with statistical control charts to detect performance decay early [42].

- Maintain version control for both your AI models and the validation datasets to track performance changes systematically [42].
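The control-chart monitoring mentioned above can be sketched with a simple Shewhart-style rule. The code flags any new performance score that falls outside the baseline mean plus or minus three standard deviations. The function names, the choice of accuracy as the metric, the three-sigma threshold, and the scores are illustrative assumptions and are not taken from the FDA guidance.

```python
from statistics import mean, stdev


def control_limits(baseline, n_sigma=3.0):
    """Lower and upper control limits from baseline validation scores."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - n_sigma * spread, center + n_sigma * spread


def flag_drift(baseline, new_scores, n_sigma=3.0):
    """Indices of new scores that fall outside the control limits."""
    lower, upper = control_limits(baseline, n_sigma)
    return [i for i, s in enumerate(new_scores) if s < lower or s > upper]


# Hypothetical accuracy per validation batch.
baseline_accuracy = [0.91, 0.92, 0.90, 0.93, 0.91, 0.92]
new_accuracy = [0.92, 0.91, 0.84, 0.90]

flagged = flag_drift(baseline_accuracy, new_accuracy)
print("batches outside control limits:", flagged)
```

A flagged batch is a prompt for investigation, not proof of drift. Production monitoring would typically add run rules and track input-data distributions alongside the performance metric.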

Q2: We're experiencing overwhelming data volumes from our HCS (High Content Screening) systems. How can we manage the data analysis and storage burden?

A: This is a frequently reported challenge in HTS laboratories [43]. Solutions include:

- Deploy specialized database management systems like the Cellomics Store, an enterprise-class relational database specifically designed to manage, track, and archive large volumes of HCS data and images [43].

- Implement automated data reduction pipelines that extract key features in real-time, similar to the AMDEE project's event-driven data infrastructure [44].

- Utilize AI-powered analysis tools like Cellomics BioApplications which provide sophisticated image analysis algorithms to make complex measurements without requiring imaging expertise [43].

Q3: How can we validate AI systems that continuously learn and adapt, given our traditional validation frameworks are designed for static software?

A: This requires shifting from static to continuous validation approaches [42]:

- Adopt a risk-based validation framework that integrates traditional deterministic validation with agile, data-centric controls [42].

- Implement the ALCOA++ principles (Attributable, Legible, Contemporaneous, Original, Accurate, Complete, Consistent, Enduring, Available, Traceable) for AI/ML systems, ensuring data integrity throughout the model lifecycle [42].