Beyond Tanimoto: The Evolution of Active Learning and Molecular Similarity in Modern Drug Discovery

Abstract

This article explores the transformative integration of active learning with advanced molecular similarity metrics, moving beyond traditional Tanimoto coefficients to accelerate drug discovery. We examine the foundational shift from structure-based to function-aware similarity indices and their role in guiding iterative experimentation. The content details cutting-edge methodological frameworks, including paired-molecule learning and evolutionary algorithms, that efficiently navigate ultra-large chemical spaces. Practical strategies for overcoming data imbalance and ensuring model generalizability are discussed, supported by comparative validation across real-world case studies targeting proteins like SARS-CoV-2 Mpro and CDK2. Aimed at researchers and drug development professionals, this analysis synthesizes key advancements and future directions for deploying these powerful computational strategies in biomedical research.

From Structural Fingerprints to Functional Predictions: The Foundation of Molecular Similarity

The Tanimoto Coefficient (TC), particularly when applied to molecular fingerprints, has served as a cornerstone of ligand-based virtual screening for decades. Its simplicity, interpretability, and computational efficiency have cemented its status as a default similarity metric in cheminformatics. The underlying principle of ligand-based discovery—that structurally similar molecules are likely to exhibit similar biological activities—relies heavily on such similarity measures to identify novel hit compounds. However, growing evidence from recent computational studies reveals critical blind spots in TC-driven approaches, constraining their ability to identify functionally active but structurally diverse chemotypes. As the field of drug discovery increasingly prioritizes the identification of novel scaffolds to overcome resistance and explore new chemical space, understanding these limitations becomes paramount. This analysis synthesizes current research to objectively quantify the Tanimoto Coefficient's performance gaps, compare it with emerging machine learning and alternative similarity measures, and provide a roadmap for its contextually appropriate use within modern, active-learning-driven discovery frameworks.

A primary and quantifiable limitation of structural similarity metrics like TC is that they fail to capture many functionally related compounds. A 2025 study reported that approximately 60% of similarly bioactive ligand pairs in the ChEMBL database have a Tanimoto Coefficient below 0.30, a value commonly taken to indicate substantial structural dissimilarity [1]. In other words, a search that relies on TC alone would overlook most actives that do not resemble the known active ligand. This blind spot directly constrains hit finding in virtual screening campaigns, particularly for targets where diverse chemotypes can elicit similar biological responses.
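To make the 0.30 threshold concrete, the following minimal sketch computes the Tanimoto Coefficient on fingerprints represented as sets of on-bit indices. The bit sets are invented toy values, not real fingerprints, and the helper names (`tanimoto`, `fraction_below`) are illustrative rather than taken from any cited tool.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def fraction_below(pairs, threshold=0.30):
    """Fraction of fingerprint pairs whose Tanimoto coefficient is below `threshold`."""
    values = [tanimoto(a, b) for a, b in pairs]
    return sum(v < threshold for v in values) / len(values)


# Toy on-bit sets, invented for illustration only.
ligand_1 = {1, 4, 7, 9, 12}
ligand_2 = {1, 4, 7, 9, 15}    # close analog: 4 shared bits out of 6 distinct bits
ligand_3 = {2, 4, 20, 31, 40}  # different scaffold: 1 shared bit out of 9 distinct bits

print(tanimoto(ligand_1, ligand_2))  # well above 0.30
print(tanimoto(ligand_1, ligand_3))  # well below 0.30
print(fraction_below([(ligand_1, ligand_2), (ligand_1, ligand_3)]))
```

If `ligand_1` and `ligand_3` happened to bind the same target, a TC-ranked search seeded with `ligand_1` would place `ligand_3` far down the list, which is the situation the 60% figure describes.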

Quantitative Performance Comparison of Similarity Metrics

Performance Benchmarks in Virtual Screening

The following table summarizes the performance of the Tanimoto Coefficient against modern machine learning-based and alternative similarity metrics in key drug-discovery tasks.

Table 1: Performance Comparison of Similarity Metrics in Virtual Screening

| Metric / Model | Key Feature | Performance Benchmark | Key Limitation |
|---|---|---|---|
| Tanimoto Coefficient (TC) | 2D structural similarity based on molecular fingerprints | Mean rank of next active given a known active: 45.2 (ADRA2B target) [1] | Struggles to identify structurally dissimilar bioactive compounds (60% of bioactive pairs have TC < 0.30) [1] |
| Bioactivity Similarity Index (BSI) | Machine learning model predicting shared protein target binding | Mean rank of next active: 3.9 (ADRA2B target). Top 2% enrichment factor outperforms TC [1] | Requires training data; group-specific models need sufficient target-specific bioactivity data [1] |
| Baroni-Urbani-Buser (BUB) | Alternative binary similarity coefficient for interaction fingerprints | Identified as a top-performing alternative to TC for protein-ligand interaction fingerprints [2] | Less familiar to researchers; requires specialized implementation [2] |
| ChemBERTa (Cosine Similarity) | Molecular embedding from a transformer model | Mean rank of next active: 54.9 (ADRA2B target) [1] | Underperforms compared to TC and BSI in retrospective screening [1] |
| CLAMP (Cosine Similarity) | Molecular embedding from a specialized model | Mean rank of next active: 28.6 (ADRA2B target) [1] | Better than ChemBERTa but still significantly outperformed by BSI [1] |

Comparative Performance of Alternative Similarity Coefficients

Beyond machine learning models, the evaluation of alternative binary similarity coefficients for specialized tasks like analyzing protein-ligand interaction fingerprints (IFPs) further contextualizes the Tanimoto Coefficient's performance. A large-scale comparison of 44 similarity metrics evaluated their performance in virtual screening scenarios across ten protein targets using metrics like AUC values and the sum of ranking differences (SRD) [2].

Table 2: Alternative Similarity Coefficients for Interaction Fingerprints

| Similarity Coefficient | Type | Description | Performance Note |
|---|---|---|---|
| Tanimoto (JT) | Asymmetric (A) | $JT = \frac{a}{a + b + c}$ | Common baseline; viable but alternatives identified [2] |
| Simple Matching (SM) | Symmetric (S) | $SM = \frac{a + d}{p}$ | Considers shared absence of features (d) [2] |
| Baroni-Urbani-Buser (BUB) | Intermediate (I) | $BUB = \frac{\sqrt{ad} + a}{\sqrt{ad} + a + b + c}$ | Top performer, good balance [2] |
| Hawkins–Dotson (HD) | Intermediate (I) | $HD = \frac{1}{2} \left( \frac{a}{a + b + c} + \frac{d}{b + c + d} \right)$ | Good performance for interaction fingerprints [2] |

This research concluded that while Tanimoto is a viable metric, the Baroni-Urbani-Buser (BUB) and Hawkins–Dotson (HD) coefficients often represent superior choices for comparing interaction fingerprints [2]. The optimal metric can also depend on specific IFP configuration, such as the use of general interaction definitions and filtering rules [2].
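The four formulas in Table 2 can be evaluated directly from the contingency counts of two bit vectors. The sketch below assumes the usual convention, which the table does not spell out: `a` counts bits set in both fingerprints, `b` and `c` count bits set in only the first or only the second, `d` counts bits unset in both, and `p = a + b + c + d`. The function names and the zero-denominator handling are choices made for this example, not taken from FPKit.

```python
from math import sqrt


def contingency(x, y):
    """Return (a, b, c, d) for two equal-length bit vectors."""
    if len(x) != len(y):
        raise ValueError("fingerprints must have the same length")
    a = sum(1 for i, j in zip(x, y) if i and j)          # on in both
    b = sum(1 for i, j in zip(x, y) if i and not j)      # on only in x
    c = sum(1 for i, j in zip(x, y) if not i and j)      # on only in y
    d = sum(1 for i, j in zip(x, y) if not i and not j)  # off in both
    return a, b, c, d


def jaccard_tanimoto(a, b, c, d):
    return a / (a + b + c) if a + b + c else 0.0


def simple_matching(a, b, c, d):
    return (a + d) / (a + b + c + d)


def baroni_urbani_buser(a, b, c, d):
    root = sqrt(a * d)
    denom = root + a + b + c
    return (root + a) / denom if denom else 0.0


def hawkins_dotson(a, b, c, d):
    on_part = a / (a + b + c) if a + b + c else 0.0
    off_part = d / (b + c + d) if b + c + d else 0.0
    return 0.5 * (on_part + off_part)


# Toy 8-bit interaction fingerprints, invented for illustration.
reference = [1, 1, 0, 0, 1, 0, 0, 0]
query = [1, 0, 0, 0, 1, 1, 0, 0]
counts = contingency(reference, query)
for fn in (jaccard_tanimoto, simple_matching, baroni_urbani_buser, hawkins_dotson):
    print(fn.__name__, round(fn(*counts), 3))
```

On sparse fingerprints `d` is large, so coefficients that include it (SM, BUB, HD) reward shared absences to different degrees, while Tanimoto ignores them entirely.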

Experimental Protocols for Benchmarking Similarity Methods

Protocol 1: Evaluating Bioactivity-Based Similarity (BSI)

The development and validation of the Bioactivity Similarity Index (BSI) provide a robust protocol for benchmarking any similarity method against a bioactivity ground truth [1].

- Objective: To train and evaluate a machine learning model that predicts the probability of two molecules sharing a protein target, moving beyond structural similarity.

- Training Data: Bioactivity data from ChEMBL, organized by protein families from the Pfam database.

- Cross-Validation: Leave-one-protein-out cross-validation is used to ensure models generalize to proteins not seen during training.

- Model Training: Models are trained on pairs of molecules known to bind the same target (positive pairs) and pairs known to bind different targets (negative pairs). The model learns relationships between molecular features and bioactivity outcomes that are not obvious from structure alone.

- Performance Evaluation:

  - Enrichment Factor (EF₂%): Measures the model's ability to rank true active pairs highly within the top 2% of screened candidates.

  - Retrospective Virtual Screening: In a simulated screen for the ADRA2B target, the mean rank of the next active molecule, given a known active, was calculated. BSI improved this rank to 3.9 from 45.2 with TC [1].
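The two evaluation quantities in this protocol can be sketched as follows. This is one plausible reading of the metrics, not the code used in [1]: the enrichment factor is taken as the hit rate in the top-scoring fraction divided by the overall hit rate, and the "rank of the next active" as the 1-based position of the first active in a best-first ranking. All data below are toy values.

```python
import math


def enrichment_factor(scores, labels, fraction=0.02):
    """EF at `fraction`: hit rate in the top slice divided by the overall hit rate."""
    if len(scores) != len(labels) or not scores:
        raise ValueError("scores and labels must be non-empty and equal in length")
    n_total = len(scores)
    n_actives = sum(labels)
    if n_actives == 0:
        raise ValueError("at least one active is required")
    # Size of the top slice; the small tolerance guards against float round-off.
    n_top = max(1, math.ceil(fraction * n_total - 1e-9))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = sum(label for _, label in ranked[:n_top])
    return (hits / n_top) / (n_actives / n_total)


def mean_rank_of_next_active(rankings):
    """Average 1-based rank of the first active across several best-first label lists."""
    ranks = []
    for ranked_labels in rankings:
        ranks.append(ranked_labels.index(1) + 1)  # raises ValueError if no active
    return sum(ranks) / len(ranks)


# Toy screen: 100 candidates scored 100..1, five actives (label 1) at fixed positions.
scores = list(range(100, 0, -1))
labels = [1 if i in (0, 10, 20, 30, 40) else 0 for i in range(100)]
print(enrichment_factor(scores, labels))  # the top 2% holds 1 of the 5 actives
print(mean_rank_of_next_active([[0, 0, 1, 0], [1, 0, 0, 0]]))
```

A random ranking gives an enrichment factor near 1, so values well above 1 indicate useful early retrieval; for the mean rank, lower is better.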

Protocol 2: Benchmarking Similarity Coefficients for Interaction Fingerprints

This protocol assesses the performance of different similarity coefficients for quantifying the similarity of binding poses [2].

- Objective: To identify the most effective similarity metric for comparing protein-ligand interaction fingerprints (IFPs) in a virtual screening context.

- Data Curation: A dataset of protein-ligand complexes for ten diverse protein targets (e.g., from DUD datasets) is prepared. A reference complex with a known active ligand is selected for each target.

- Fingerprint Generation: Interaction fingerprints (e.g., using a SIFt-like method) are generated for the reference ligand and a set of query molecules (including known actives and decoys) from their docked poses. Each bit represents a specific interaction type with a specific protein residue.

- Similarity Calculation: A wide array of similarity coefficients (Tanimoto, BUB, HD, etc.) is used to calculate the similarity between the reference IFP and each query IFP.

- Performance Analysis:

  - Primary Metric: Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve to evaluate the ability of each metric to distinguish active from inactive compounds.

  - Statistical Ranking: The Sum of Ranking Differences (SRD) algorithm is used to compare the consistency of all metrics against an ideal reference, which is a data fusion of all metrics. This identifies the top-performing coefficients [2].
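The primary metric in this protocol, ROC AUC, can be computed without plotting a curve by using its rank interpretation: the probability that a randomly chosen active receives a higher similarity score than a randomly chosen decoy. The sketch below uses that pairwise form with invented scores; it does not implement the SRD analysis, which needs the full metric-by-compound ranking matrix.

```python
def roc_auc(scores, labels):
    """ROC AUC as the fraction of (active, decoy) pairs ranked correctly; ties count 0.5."""
    actives = [s for s, label in zip(scores, labels) if label]
    decoys = [s for s, label in zip(scores, labels) if not label]
    if not actives or not decoys:
        raise ValueError("need at least one active and one decoy")
    wins = 0.0
    for active_score in actives:
        for decoy_score in decoys:
            if active_score > decoy_score:
                wins += 1.0
            elif active_score == decoy_score:
                wins += 0.5
    return wins / (len(actives) * len(decoys))


# Toy similarity scores of five query IFPs against a reference IFP.
similarity_to_reference = [0.9, 0.8, 0.4, 0.35, 0.1]
is_active = [1, 0, 1, 0, 0]
print(roc_auc(similarity_to_reference, is_active))
```

Running the same function once per similarity coefficient, on the same actives and decoys, gives the per-metric AUC values that a comparison like the one in [2] starts from.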

The Evolving Toolkit: Integrating Tanimoto with Modern AI Frameworks

Research Reagent Solutions for Advanced Similarity Screening

Table 3: Essential Tools for Modern Similarity-Based Discovery

| Tool / Resource | Type | Function in Research | Access |
|---|---|---|---|
| FPKit | Software Package | Calculates various similarity measures and filters interaction fingerprints [2] | Open-source (Python) [2] |
| BSI (Bioactivity Similarity Index) | Machine Learning Model | Predicts functional similarity for ligand discovery, complementing TC [1] | Open-source (GitHub) [1] |
| REvoLd | Evolutionary Algorithm | Screens ultra-large make-on-demand libraries using flexible docking [3] | Within Rosetta software suite [3] |
| CTAPred | Command-Line Tool | Predicts protein targets for natural products using similarity searching [4] | Open-source (GitHub) [4] |
| Unified AL Framework | Active Learning Platform | Integrates semi-empirical calculations with adaptive screening for photosensitizer design [5] | Open-source tools and data [5] |

Integration with Active Learning and Evolutionary Algorithms

The future of ligand-based discovery lies in moving beyond static comparisons to dynamic, adaptive screening systems. Within active learning (AL) frameworks, similarity metrics can play a role in guiding the iterative selection of informative molecules for expensive calculations or experiments [5].

For instance, a unified AL framework for photosensitizer design integrates a graph neural network surrogate model with acquisition strategies that balance exploration (diversity-based) and exploitation (property-based) [5]. A pure TC-based search, by comparison, amounts to a weak exploitation strategy: it retrieves close analogs of known actives but carries no estimate of what the model does not yet know. The AL framework instead uses ensemble-based uncertainty quantification to select the molecules that are most informative for the model, leading to more data-efficient discovery [5].

Similarly, for structure-based screening, evolutionary algorithms like REvoLd efficiently navigate ultra-large combinatorial libraries (e.g., Enamine REAL space) without exhaustive enumeration [3]. REvoLd uses flexible docking with RosettaLigand as a fitness function and incorporates crossover and mutation steps to evolve promising ligands, demonstrating hit rate improvements by factors of 869 to 1622 compared to random selection [3]. This represents a powerful alternative to similarity-based screening for exploring vast synthetic spaces.

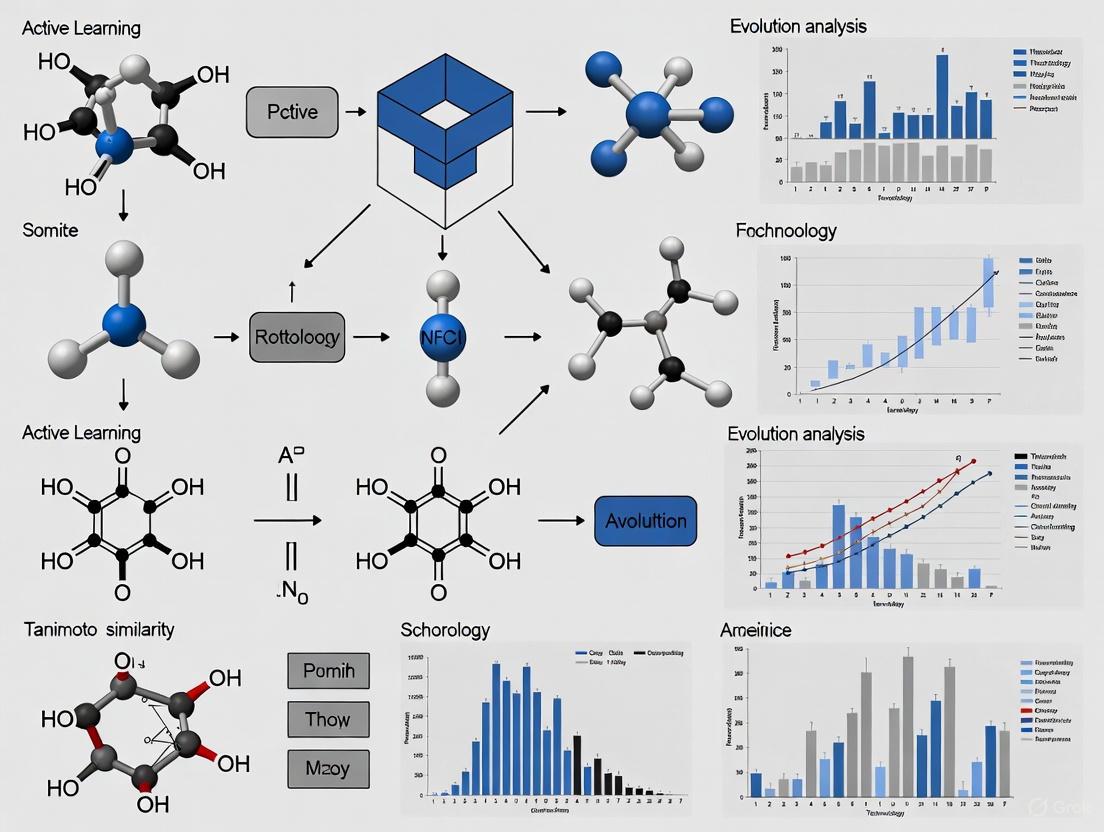

Visualizing the Evolving Workflow in Ligand-Based Discovery

The following diagram illustrates the paradigm shift from a traditional, static similarity screening to an integrated, active learning-driven workflow that mitigates the blind spots of the Tanimoto Coefficient.

Modern Ligand Discovery Workflow contrasts traditional Tanimoto-based screening with an integrated approach using multi-parameter similarity and active learning.

The evidence is clear: the Tanimoto Coefficient, while useful for identifying close structural analogs, possesses significant and quantifiable blind spots in ligand-based discovery. Its inability to consistently connect structurally dissimilar compounds with similar bioactivities limits its utility as a standalone tool for scaffold hopping and exploring novel chemical space. However, it is not obsolete. The path forward involves a nuanced, context-dependent application:

- Use TC for Initial Triage: It remains a computationally cheap and effective tool for initial filtering and finding close-in analogs.

- Complement with Bioactivity-Aware Metrics: For critical scaffold hopping and hit expansion, employ machine learning models like BSI that are explicitly trained on bioactivity data [1].

- Select the Right Tool for the Task: When analyzing binding poses, prefer alternative coefficients like BUB or HD for interaction fingerprints [2].

- Integrate into Adaptive Frameworks: Embed these similarity measures within larger active learning or evolutionary algorithms to create intelligent, self-improving discovery pipelines that efficiently navigate ultra-large chemical spaces [3] [5].

By acknowledging its limitations and strategically complementing it with next-generation methods, researchers can move beyond the blind spots of the Tanimoto Coefficient and significantly enhance the power and efficiency of ligand-based discovery.

For decades, the Tanimoto Coefficient (TC) has served as the cornerstone metric for quantifying molecular similarity in cheminformatics and drug discovery. This structure-based approach operates on the principle that structurally similar molecules are likely to exhibit similar biological activities. However, growing evidence reveals a significant limitation: structural similarity metrics frequently miss functionally related compounds. In fact, an analysis of the ChEMBL database shows that approximately 60% of similarly bioactive ligand pairs demonstrate TC values below 0.30, revealing a major blind spot in ligand-based discovery approaches [1]. This blind spot constrains the ability of researchers to identify structurally different yet functionally equivalent chemotypes, ultimately limiting the chemical space explored in virtual screening campaigns.

The emerging paradigm of bioactivity-driven similarity seeks to overcome this limitation by directly estimating the probability that two molecules share similar biological effects, regardless of their structural resemblance. This article explores the development and validation of the Bioactivity Similarity Index (BSI), a machine learning model that represents a significant evolution beyond traditional structural similarity. By framing this advancement within the context of active learning and similarity evolution research, we examine how BSI complements rather than replaces existing methods, extending hit-finding capabilities to remote chemotypes that are structurally dissimilar yet functionally equivalent [1].

Understanding the Bioactivity Similarity Index (BSI)

Conceptual Foundation and Machine Learning Architecture

The Bioactivity Similarity Index (BSI) is a machine learning model specifically designed to estimate the probability that two molecules bind the same or related protein receptors. Unlike traditional fingerprint-based methods, BSI learns the complex relationships between molecular structures and their biological activities directly from bioactivity data [1].

The model was trained using a leave-one-protein-out cross-validation strategy across Pfam-defined protein groups, particularly focusing on learning from dissimilar pairs [1]. This rigorous training approach ensures that the model generalizes well across different protein families and does not simply learn to recognize obvious structural analogs. The developers further created a cross-family model (BSI-Large) that, while slightly less performant than protein group-specific models, demonstrates superior generalization capabilities and can be fine-tuned to specific protein families with limited data [1].
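The leave-one-protein-out idea can be sketched as a simple grouped split: every sample associated with one protein is held out in turn, and the model is trained on samples from all remaining proteins. The record layout and the protein and sample names below are hypothetical; the actual BSI data pipeline is not described in this article.

```python
from collections import defaultdict


def leave_one_protein_out(records):
    """Yield (held_out_protein, train_samples, test_samples) for each protein.

    `records` is an iterable of (protein_id, sample) tuples; a sample could be a
    molecule pair with its label, but any object works for this sketch.
    """
    by_protein = defaultdict(list)
    for protein_id, sample in records:
        by_protein[protein_id].append(sample)

    for held_out in sorted(by_protein):
        test = list(by_protein[held_out])
        train = [
            sample
            for protein_id, samples in by_protein.items()
            if protein_id != held_out
            for sample in samples
        ]
        yield held_out, train, test


# Hypothetical records: protein identifiers and sample names are placeholders.
records = [
    ("protein_A", "pair_1"),
    ("protein_A", "pair_2"),
    ("protein_B", "pair_3"),
    ("protein_C", "pair_4"),
]
for held_out, train, test in leave_one_protein_out(records):
    print(held_out, "train:", train, "test:", test)
```

Because no sample from the held-out protein ever appears in training, the test score estimates how the model behaves on a target it has not seen, which is the generalization property the paragraph above describes.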

Comparative Performance Analysis

In retrospective validation on new ChEMBL v35 data, BSI demonstrates strong early-retrieval performance, significantly outperforming both traditional Tanimoto similarity and modern molecular embedding methods across multiple protein families [1].

Table 1: Early-Retrieval Performance Comparison (EF₂%) [1]

| Method | Enrichment Factor (EF₂%) | Relative Performance |
|---|---|---|
| BSI (Group-Specific) | Highest | Best |
| BSI-Large | Competitive | Strong |
| Tanimoto Coefficient (TC) | Lower | Poor |
| ChemBERTa (Cosine Similarity) | Low | Poor |
| CLAMP (Cosine Similarity) | Low | Poor |

In a realistic virtual-screening scenario targeting ADRA2B, BSI reduced the mean rank of the next active compound, given a known active, from 45.2 with TC to 3.9 [1]. This is roughly an 11.6-fold improvement in retrieval efficiency.

Table 2: Virtual Screening Performance on ADRA2B [1]

| Method | Mean Rank of Next Active | Performance Improvement |
|---|---|---|
| BSI | 3.9 | 11.6x |
| Tanimoto Coefficient (TC) | 45.2 | Baseline |
| ChemBERTa | 54.9 | 0.8x |
| CLAMP | 28.6 | 1.6x |
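The "Performance Improvement" column follows from dividing the TC baseline rank by each method's mean rank, so values above 1 indicate that the next active is found earlier than with TC. A short check of that arithmetic, using only the mean ranks reported in the table:

```python
# Mean rank of the next active on ADRA2B, as reported in the table above [1].
mean_rank = {"BSI": 3.9, "TC": 45.2, "ChemBERTa": 54.9, "CLAMP": 28.6}

baseline = mean_rank["TC"]
# Fold improvement = baseline rank / method rank (values above 1 beat TC).
fold_improvement = {name: round(baseline / rank, 1) for name, rank in mean_rank.items()}

for name, fold in fold_improvement.items():
    print(f"{name}: {fold}x")
```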

Experimental Protocols and Validation Methodologies

Model Training and Validation Framework

The development of BSI followed rigorous machine learning practices to ensure robust performance and generalizability. The training incorporated a leave-one-protein-out cross-validation approach across Pfam-defined protein groups, with particular emphasis on learning from dissimilar pairs to capture non-obvious bioactivity relationships [1].

The validation strategy employed multiple approaches:

- Retrospective validation using ChEMBL v35 data to assess early-retrieval performance

- Virtual-screening-like scenarios against specific targets including ADRA2B

- Comparison benchmarks against established methods (TC) and modern baselines (ChemBERTa, CLAMP)

- Enrichment factor calculations particularly focusing on early recognition capabilities (EF₂%) [1]

This validation framework is designed so that performance assessments reflect realistic application scenarios rather than optimized benchmark conditions.

Active Learning Integration in Similarity Methods

The broader context of active learning research reveals powerful strategies for optimizing model performance while minimizing experimental costs. Active learning approaches employ iterative strategies where a model guides the acquisition of additional data for training [6]. In practice, a machine learning model is initially trained on a small portion of available data, then iteratively selects the most informative samples to acquire for subsequent training cycles [6].

This approach has demonstrated remarkable efficiency in related domains. For instance, in developing predictors for metabolic soft spots (sites of metabolism, or SoMs), active learning required only 20% of the labeled atoms used by classical approaches to reach competitive performance [6]. This demonstrates how active learning can maximize the value of experimental data by strategically focusing annotation efforts on the most informative samples.

Active Learning Workflow for Bioactivity Model Development

Comparative Analysis of Similarity Methods

Performance Across Diverse Molecular Scenarios

The transition from structure-based to bioactivity-driven similarity represents an evolutionary step in molecular comparison methods. Each approach offers distinct advantages depending on the specific application context.

Table 3: Similarity Method Comparison Framework

| Method Type | Key Principle | Strengths | Limitations |
|---|---|---|---|
| Structural Similarity | Structural resemblance predicts bioactivity | Simple, interpretable, computationally efficient | Misses structurally dissimilar actives: about 60% of similarly bioactive pairs have TC < 0.30 [1] |
| Molecular Embeddings | Learned representations from large datasets | Captures complex structural patterns | Performance varies; limited bioactivity correlation |
| Bioactivity Similarity | Direct probability estimation of shared targets | Identifies functionally similar chemotypes | Requires sufficient bioactivity data for training |

Integration with Pharmacophore-Based Approaches

Pharmacophore-informed methods offer a complementary approach to bioactivity-driven similarity. Tools like TransPharmer integrate ligand-based interpretable pharmacophore fingerprints with generative pre-training transformer frameworks for de novo molecule generation [7]. These methods are particularly strong at scaffold hopping, that is, producing structurally distinct but pharmaceutically related compounds, which aligns closely with the objectives of bioactivity-driven similarity [7].

In validation studies, TransPharmer generated novel PLK1 inhibitors with submicromolar activities, with the most potent compound (IIP0943) exhibiting a potency of 5.1 nM while featuring a new 4-(benzo[b]thiophen-7-yloxy)pyrimidine scaffold distinct from known inhibitors [7]. This demonstrates how pharmacophore awareness can guide the discovery of structurally novel bioactive ligands.

Implementation and Practical Applications

Practical Implementation Framework

Implementing bioactivity-driven similarity in drug discovery workflows requires both computational resources and strategic planning. The BSI framework is publicly available, providing researchers with direct access to this methodology [1].

BSI Implementation Workflow for Virtual Screening

The Scientist's Toolkit: Essential Research Reagent Solutions

Successfully implementing bioactivity-driven similarity methods requires both computational tools and experimental resources.

Table 4: Essential Research Reagent Solutions for Bioactivity-Driven Discovery

| Resource Category | Specific Tools/Sources | Function in Research |
|---|---|---|
| Bioactivity Databases | ChEMBL, PubChem, BindingDB, IUPHAR/BPS, Probes & Drugs [8] | Provide curated bioactivity data for model training and validation |
| Metabolism Prediction | FAME 3 [6] | Predicts sites of metabolism for compound optimization |
| Target Prediction | CTAPred [4] | Similarity-based target prediction for natural products |
| Chemical Language Models | CLMs with SMILES representation [9] | De novo molecular design leveraging structural and bioactivity data |
| Pharmacophore Tools | TransPharmer [7] | Pharmacophore-informed generative models for scaffold hopping |

The introduction of the Bioactivity Similarity Index represents a significant advancement in molecular similarity assessment, addressing critical limitations of traditional structure-based methods. By directly estimating the probability of shared bioactivity rather than relying on structural resemblance as a proxy, BSI enables researchers to identify functionally equivalent chemotypes that would be missed by conventional approaches.

The integration of bioactivity-driven similarity with active learning frameworks and pharmacophore-based methods creates a powerful ecosystem for drug discovery. These approaches collectively enable more efficient exploration of chemical space, identification of structurally novel bioactive compounds, and optimization of experimental resources through strategic data acquisition. As these methodologies continue to evolve and integrate, they promise to accelerate the discovery of new therapeutic agents by focusing on what ultimately matters most in drug discovery: biological activity rather than structural appearance alone.

In data-driven fields such as drug discovery and materials science, the high cost of acquiring labeled data creates a significant bottleneck. Experimental measurements and high-fidelity simulations often require expert knowledge, specialized equipment, and time-consuming procedures, rendering exhaustive exploration of vast chemical spaces economically and practically infeasible. This data scarcity necessitates highly efficient data acquisition strategies. Active Learning (AL), a subfield of machine learning, directly addresses this challenge by enabling models to intelligently select the most informative data points for labeling, thereby maximizing knowledge gain while minimizing experimental costs [10] [5]. This guide objectively compares the performance of various AL strategies and experimental protocols, providing a framework for their application within drug discovery, with a specific focus on the role of molecular similarity analysis.

Performance Benchmarking of Active Learning Strategies

Comparative Performance in Materials Science Regression

A comprehensive benchmark study evaluating 17 different AL strategies within an Automated Machine Learning (AutoML) framework for small-sample regression tasks in materials science reveals distinct performance trends [10]. The table below summarizes the key findings.

Table 1: Benchmark Performance of Active Learning Strategies in AutoML for Materials Science [10]

| Strategy Category | Example Strategies | Performance in Early Stages (Data-Scarce) | Performance in Later Stages (Data-Rich) | Key Characteristics |
|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperforms baseline | Converges with other methods | Selects points where model prediction is least certain |
| Diversity-Hybrid | RD-GS | Clearly outperforms baseline | Converges with other methods | Balances uncertainty with diversity of selected samples |
| Geometry-Only | GSx, EGAL | Underperforms vs. top strategies | Converges with other methods | Selects samples based on feature space coverage only |
| Baseline | Random-Sampling | Reference for comparison | Reference for comparison | Selects data points at random |

The study concluded that during the initial, data-scarce phase of learning, uncertainty-driven and diversity-hybrid strategies are most effective. However, as the volume of labeled data increases, the performance advantage of these sophisticated strategies diminishes, and all methods eventually converge, indicating diminishing returns from AL under AutoML [10].

Performance in Drug Discovery Applications

In structure-based drug discovery, AL and evolutionary algorithms demonstrate remarkable efficiency when navigating ultra-large chemical libraries.

Table 2: Performance of Efficient Screening Algorithms in Ultra-Large Libraries [3]

| Method | Chemical Space Searched | Key Performance Metric | Result |
|---|---|---|---|
| REvoLd (Evolutionary Algorithm) [3] | Enamine REAL (20B+ molecules) | Hit rate improvement vs. random | 869 to 1622-fold enrichment |
| REvoLd (Evolutionary Algorithm) [3] | Enamine REAL (20B+ molecules) | Molecules docked per target | ~49,000 - 76,000 |
| Unified AL for Photosensitizer Design [5] | Custom library (655,197 molecules) | Test-set MAE improvement vs. static baselines | 15-20% improvement |

The REvoLd algorithm achieved its performance by exploring combinatorial libraries without exhaustive enumeration, using an evolutionary protocol with a population of 200 initial ligands, allowing 50 individuals to advance, and running for 30 generations to balance convergence and exploration [3].
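The population settings quoted above (200 initial ligands, 50 survivors, 30 generations) can be placed in a generic evolutionary loop to show why such a search touches only a small part of a combinatorial library. The sketch below is not REvoLd: molecules are reduced to pairs of fragment indices, `toy_fitness` is an arbitrary stand-in for a RosettaLigand docking score, and the crossover, mutation rate, and refill-to-200 rule are assumptions made for illustration.

```python
import random

N_FRAGMENTS_A = 1000  # hypothetical building-block counts: 10^6 possible products
N_FRAGMENTS_B = 1000
POPULATION_SIZE, N_SURVIVORS, N_GENERATIONS = 200, 50, 30


def toy_fitness(molecule):
    """Placeholder score (higher is better); NOT a docking score."""
    frag_a, frag_b = molecule
    return -abs(frag_a - 700) - abs(frag_b - 250)


def random_molecule(rng):
    return (rng.randrange(N_FRAGMENTS_A), rng.randrange(N_FRAGMENTS_B))


def crossover(parent_1, parent_2, rng):
    """Child takes one fragment from each parent."""
    if rng.random() < 0.5:
        return (parent_1[0], parent_2[1])
    return (parent_2[0], parent_1[1])


def mutate(molecule, rng, rate=0.2):
    """Replace each fragment with a random one with probability `rate`."""
    frag_a, frag_b = molecule
    if rng.random() < rate:
        frag_a = rng.randrange(N_FRAGMENTS_A)
    if rng.random() < rate:
        frag_b = rng.randrange(N_FRAGMENTS_B)
    return (frag_a, frag_b)


def evolve(seed=0):
    rng = random.Random(seed)
    scored = {}  # cache, so each distinct molecule is "docked" only once

    def score(molecule):
        if molecule not in scored:
            scored[molecule] = toy_fitness(molecule)
        return scored[molecule]

    population = [random_molecule(rng) for _ in range(POPULATION_SIZE)]
    initial_best = max(score(m) for m in population)
    for _ in range(N_GENERATIONS):
        # Selection: keep the best distinct individuals (elitism).
        survivors = sorted(set(population), key=score, reverse=True)[:N_SURVIVORS]
        children = []
        while len(survivors) + len(children) < POPULATION_SIZE:
            parent_1, parent_2 = rng.sample(survivors, 2)
            children.append(mutate(crossover(parent_1, parent_2, rng), rng))
        population = survivors + children
    best = max(population, key=score)
    return best, score(best), initial_best, len(scored)


best, best_score, initial_best, n_scored = evolve()
print(best, best_score, initial_best, n_scored)
```

With these settings at most 200 + 30 × 150 = 4,700 molecules are ever scored out of the 10^6 in the toy library, which mirrors the way an evolutionary search avoids exhaustive enumeration.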

Experimental Protocols and Workflows

A Standardized Pool-Based Active Learning Protocol

The benchmark for materials science followed a rigorous, generalizable pool-based AL protocol, which can be adapted to various domains [10].

- Initialization: A small set of labeled samples $L = \{(x_i, y_i)\}_{i=1}^{l}$ is randomly drawn from the entire dataset. The remaining large pool of unlabeled data is denoted $U = \{x_i\}_{i=l+1}^{n}$.

- Iterative Active Learning Loop:

  - Model Training: A predictive model is trained on the current labeled set $L$. In the benchmarked AutoML framework, the model family and hyperparameters are automatically optimized in each iteration.

  - Informativeness Scoring: The trained model is used to evaluate all samples in the unlabeled pool $U$. A query strategy (e.g., uncertainty estimation) scores each sample based on its potential informativeness.

  - Query and Label: The highest-scoring sample $x^*$ is selected from $U$, and its target value $y^*$ is acquired (e.g., via experiment or simulation).

  - Set Update: The newly labeled sample $(x^*, y^*)$ is added to $L$, and $x^*$ is removed from $U$.

- Termination: The loop repeats until a predefined stopping criterion is met, such as the exhaustion of a data acquisition budget or the achievement of a target model performance.

The workflow for this standard protocol is visualized below.

Standard AL Workflow
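The pool-based protocol above maps onto a short loop. The sketch below is a deliberately small stand-in for the benchmarked setup: the "model" is a 1-nearest-neighbour regressor on scalar inputs rather than an AutoML pipeline, uncertainty is the spread of predictions across bootstrap resamples of the labeled set, and `oracle` is a hypothetical function that plays the role of the experiment or simulation.

```python
import random
import statistics


def nearest_label(x, labeled):
    """1-nearest-neighbour prediction from a list of (x, y) pairs."""
    return min(labeled, key=lambda pair: abs(pair[0] - x))[1]


def ensemble_uncertainty(x, labeled, rng, n_members=10):
    """Std. dev. of predictions from 1-NN models built on bootstrap resamples of L."""
    predictions = []
    for _ in range(n_members):
        resample = [rng.choice(labeled) for _ in labeled]
        predictions.append(nearest_label(x, resample))
    return statistics.pstdev(predictions)


def active_learning(pool, oracle, n_initial=3, budget=10, seed=0):
    rng = random.Random(seed)
    unlabeled = list(pool)
    rng.shuffle(unlabeled)
    # Initialization: random labeled set L; everything else stays in the pool U.
    labeled = [(x, oracle(x)) for x in unlabeled[:n_initial]]
    unlabeled = unlabeled[n_initial:]

    for _ in range(budget):  # termination: fixed acquisition budget
        if not unlabeled:
            break
        # Model training is implicit here: a 1-NN model simply stores L.
        # Informativeness scoring over every sample in U.
        scores = [ensemble_uncertainty(x, labeled, rng) for x in unlabeled]
        best_index = max(range(len(unlabeled)), key=scores.__getitem__)
        # Query and label the highest-scoring sample, then update both sets.
        x_star = unlabeled.pop(best_index)
        labeled.append((x_star, oracle(x_star)))
    return labeled, unlabeled


pool = [i / 10 for i in range(50)]
labeled, unlabeled = active_learning(pool, oracle=lambda x: x * x)
print(len(labeled), len(unlabeled))
```

Swapping `ensemble_uncertainty` for a different scoring function, such as distance to the nearest labeled sample, changes the query strategy without touching the rest of the loop.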

Specialized Workflow for Multi-Target Drug Discovery

A more complex, unified AL framework was developed for photosensitizer design and multi-target inhibitor generation, illustrating how AL can be tailored for specific discovery goals [5] [11]. Key aspects of the protocol include:

- Surrogate Model: A Graph Neural Network (GNN) or a Sequence-to-Sequence Variational Autoencoder (Seq2Seq VAE) is trained as a fast, approximate predictor for expensive-to-compute properties (e.g., excited-state energies, docking scores).

- Hybrid Acquisition Strategy: The query strategy combines multiple criteria:

  - Uncertainty Estimation: Using ensemble methods to identify molecules where the surrogate model's predictions are uncertain.

  - Physics/Property-Based: Prioritizing molecules that meet specific objective criteria (e.g., favorable S1/T1 energy levels).

  - Diversity-Based: Ensuring exploration of broad chemical space, especially in early cycles.

- High-Fidelity Labeling: A small subset of molecules selected by the AL strategy is evaluated with high-fidelity methods (e.g., ML-xTB quantum calculations, molecular docking) to generate accurate labels.

- Iterative Refinement: The newly labeled, high-value data is added to the training set, and the surrogate model is retrained, closing the AL loop.

This sophisticated, multi-stage workflow is summarized in the following diagram.

Advanced AL for Drug Discovery
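One simple way to combine the three acquisition criteria listed above is a weighted sum of normalized terms. The sketch below is an assumption about how such a score could be assembled, not the scoring rule used in [5] or [11]: uncertainty is the spread of ensemble predictions, the property term rewards a predicted mean close to a target value, and the diversity term is a precomputed novelty value per candidate (for example, distance to the nearest labeled molecule).

```python
import statistics


def min_max(values):
    """Rescale values to [0, 1]; a constant list maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


def hybrid_acquisition(ensemble_predictions, target_value, novelty, weights=(1.0, 1.0, 1.0)):
    """Weighted sum of normalized uncertainty, property closeness, and novelty."""
    uncertainty = [statistics.pstdev(preds) for preds in ensemble_predictions]
    closeness = [-abs(statistics.fmean(preds) - target_value) for preds in ensemble_predictions]
    w_unc, w_prop, w_div = weights
    terms = zip(min_max(uncertainty), min_max(closeness), min_max(novelty))
    return [w_unc * u + w_prop * p + w_div * d for u, p, d in terms]


def select_batch(scores, batch_size):
    """Indices of the `batch_size` highest-scoring candidates."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:batch_size]


# Toy data: three candidates, each with predictions from a 3-member ensemble.
ensemble_predictions = [[2.0, 2.0, 2.0], [1.0, 3.0, 2.0], [5.0, 5.1, 4.9]]
novelty = [0.1, 0.5, 0.9]
scores = hybrid_acquisition(ensemble_predictions, target_value=2.0, novelty=novelty)
print(select_batch(scores, batch_size=1))
```

Shifting the weights over cycles, for example a larger diversity weight early and a larger property weight later, is one way to express the "exploration first, exploitation later" schedule described in the list.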

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and resources essential for implementing the AL strategies and protocols discussed in this guide.

Table 3: Essential Research Reagents for Active Learning in Molecular Discovery

| Reagent / Resource | Type | Function & Application | Example Use Case |
|---|---|---|---|
| Enamine REAL Space | Ultra-Large Chemical Library | Provides a synthetically accessible combinatorial space of billions of molecules for virtual screening [3]. | Benchmarking evolutionary algorithms and AL for hit identification [3]. |
| RosettaLigand (REvoLd) | Software Suite / Protocol | Enables flexible protein-ligand docking, a key scoring function for structure-based AL [3]. | Evaluating binding affinity within the REvoLd evolutionary algorithm [3]. |
| ML-xTB Pipeline | Computational Chemistry Method | Provides quantum chemical accuracy at ~1% the cost of TD-DFT, enabling affordable high-fidelity labeling [5]. | Generating labeled data for photosensitizer properties (S1/T1 energies) [5]. |
| Bioactivity Similarity Index (BSI) | Machine Learning Model | A learned similarity metric that identifies functionally similar molecules beyond structural resemblance [1]. | Enhancing ligand-based virtual screening by finding remote bioactive chemotypes [1]. |
| Graph Neural Network (GNN) | Surrogate Model | Learns from molecular graph structures to predict properties, enabling fast inference in AL loops [5]. | Serving as the surrogate model for predicting molecular properties in a unified AL framework [5]. |
| Seq2Seq VAE | Generative & Surrogate Model | Learns a latent representation of molecules; can be fine-tuned with AL to generate novel, optimized compounds [11]. | Generating multi-target inhibitor candidates in an iterative AL workflow [11]. |

Beyond Tanimoto: The Evolution of Similarity in Active Learning

Molecular similarity is a cornerstone of cheminformatics, but traditional structural metrics like the Tanimoto Coefficient (TC) have limitations. While the TC is a validated and appropriate choice for fingerprint-based similarity, often producing rankings closest to a composite of multiple metrics [12], it can miss critical bioactivity relationships. It has been reported that approximately 60% of similarly bioactive ligand pairs in the ChEMBL database have a TC less than 0.30, creating a major blind spot for ligand-based discovery [1].
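For reference, the Tanimoto Coefficient itself is simple to compute once fingerprints are expressed as sets of on-bit indices. The sketch below uses hypothetical bit indices rather than fingerprints derived from real molecules, and the zero returned for two empty fingerprints is a convention assumed here.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient: shared on-bits divided by the union of on-bits."""
    if not fp_a and not fp_b:
        return 0.0  # assumed convention for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Toy fingerprints (hypothetical bit indices).
query = {1, 4, 7, 9, 12}
analog = {1, 4, 7, 9, 15}
remote = {2, 4, 20, 31, 40, 41}

close_tc = tanimoto(query, analog)   # 4 shared bits out of 6 in the union
remote_tc = tanimoto(query, remote)  # 1 shared bit out of 10 in the union
```

The second pair scores well below 0.30, so if both molecules happened to be active on the same target, a TC-based search seeded with one would be unlikely to surface the other.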

This limitation has driven the development of advanced, learned similarity measures. The Bioactivity Similarity Index (BSI) is a machine learning model that estimates the probability that two molecules bind the same protein receptors [1]. In retrospective validation, BSI significantly outperforms TC and modern molecular embeddings (ChemBERTa, CLAMP). In a virtual screening scenario for the ADRA2B target, the mean rank of the next active molecule given a known active was 3.9 for BSI versus 45.2 for TC [1]. This demonstrates that integrating learned bioactivity similarity into AL and screening workflows can dramatically enhance the discovery of functionally relevant, yet structurally diverse, chemotypes.
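One plausible way to compute a "mean rank of the next active" figure is sketched below. The exact evaluation protocol in [1] is not reproduced here; this version assumes that each known active is used as a query in turn, the remaining library is sorted by similarity, and the 1-based rank of the first other active is recorded. The toy fingerprints and molecule names are hypothetical, and any similarity function (TC, a learned index, embedding cosine) could be passed in.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def rank_of_next_active(query, library, actives, similarity):
    """1-based rank of the first other active when sorting by similarity."""
    candidates = [m for m in library if m != query]
    ranked = sorted(candidates, key=lambda m: similarity(query, m), reverse=True)
    for rank, mol in enumerate(ranked, start=1):
        if mol in actives:
            return rank
    raise ValueError("library contains no other active")


def mean_rank_of_next_active(library, actives, similarity):
    ranks = [rank_of_next_active(q, library, actives, similarity) for q in actives]
    return sum(ranks) / len(ranks)


# Hypothetical toy library: three actives (A*) and three decoys (D*).
fps = {
    "A1": {1, 2, 3, 4},
    "A2": {1, 2, 3, 5},
    "A3": {1, 10, 11, 12, 13},
    "D1": {1, 2, 3, 4, 6},
    "D2": {10, 11, 12, 14},
    "D3": {20, 21},
}
library = list(fps)
actives = ["A1", "A2", "A3"]

mean_rank = mean_rank_of_next_active(
    library, actives, lambda a, b: tanimoto(fps[a], fps[b])
)
```

A lower value means the next active appears earlier in the ranked list, which is the sense in which 3.9 improves on 45.2.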

The exploration of chemical space represents one of the most significant challenges in modern drug discovery, with an estimated 10^60 drug-like molecules presenting an insurmountable obstacle for conventional screening methods. Traditional virtual screening approaches, which rely heavily on structural similarity metrics like the Tanimoto coefficient (TC), have long been constrained by a major blind spot: they miss functionally related compounds that are structurally dissimilar. Indeed, 60% of similarly bioactive ligand pairs in the ChEMBL database show TC < 0.30, creating a fundamental limitation that constrains ligand-based discovery [1]. This critical gap in conventional methodologies has catalyzed the emergence of a powerful synergistic approach that combines advanced bioactivity-aware similarity indices with intelligent active learning optimization frameworks.

This integration represents a paradigm shift from exhaustive screening to targeted, intelligent exploration. While traditional methods treat molecular comparison and optimization as separate challenges, the combined approach creates a closed-loop system where each component informs and enhances the other. Advanced similarity metrics such as the Bioactivity Similarity Index (BSI) enable the identification of functionally analogous compounds that structural methods would miss, while active learning frameworks like DANTE (Deep Active Optimization with Neural-Surrogate-Guided Tree Exploration) efficiently navigate the vast chemical space to identify optimal candidates with minimal data requirements [1] [13]. This synergy is particularly transformative for resource-intensive applications in early drug discovery, where it enables researchers to prioritize the most promising compounds for synthesis and testing, dramatically reducing both time and cost while expanding the exploration of novel chemotypes.

Performance Benchmarking: Quantitative Superiority of Integrated Approaches

Comparative Performance in Virtual Screening

The integration of advanced similarity measures with active learning frameworks demonstrates consistent and substantial improvements across multiple drug discovery benchmarks. The table below summarizes key performance metrics from recent studies comparing traditional and advanced methods.

Table 1: Performance comparison of similarity and optimization methods in drug discovery tasks

| Method Category | Specific Method | Key Performance Metric | Result | Reference |

|---|---|---|---|---|

| Similarity Metrics | Tanimoto Coefficient (TC) | Mean rank of next active (ADRA2B target) | 45.2 | [1] |

| | Bioactivity Similarity Index (BSI) | Mean rank of next active (ADRA2B target) | 3.9 | [1] |

| | ChemBERTa (cosine similarity) | Mean rank of next active (ADRA2B target) | 54.9 | [1] |

| | CLAMP (cosine similarity) | Mean rank of next active (ADRA2B target) | 28.6 | [1] |

| Active Optimization | DANTE | Success rate in high-dimensional problems (up to 2000 dimensions) | 80-100% | [13] |

| | Bayesian Optimization (BO) | Success rate in high-dimensional problems (up to 100 dimensions) | Lower than DANTE | [13] |

| Molecular Optimization | MoGA-TA | Multi-objective optimization efficiency | Significantly improved | [14] |

| | NSGA-II | Multi-objective optimization efficiency | Lower than MoGA-TA | [14] |

Case Study: SARS-CoV-2 Main Protease Inhibitor Discovery

A recent application targeting the SARS-CoV-2 main protease (Mpro) demonstrates the practical impact of this synergistic approach. Researchers integrated the FEgrow software for building congeneric series with active learning to prioritize compounds from on-demand libraries. This approach successfully identified novel designs showing activity in fluorescence-based Mpro assays, with several compounds exhibiting high similarity to known COVID Moonshot hits [15]. The active learning workflow enabled efficient exploration of the combinatorial space of possible linkers and functional groups, demonstrating that the most promising compounds could be identified by evaluating only a fraction of the total chemical space. This case study exemplifies the transformative potential of combining structural growing algorithms with intelligent selection frameworks in a real-world drug discovery campaign.

Methodological Deep Dive: Experimental Protocols and Workflows

Bioactivity Similarity Index (BSI) Implementation

The Bioactivity Similarity Index addresses fundamental limitations of structural similarity by directly estimating the probability that two molecules share protein targets. The experimental protocol involves several meticulously designed stages:

Table 2: Key components of the Bioactivity Similarity Index methodology

| Component | Specification | Rationale |

|---|---|---|

| Training Data | ChEMBL database (version 33) with pChEMBL values | Utilizes experimentally validated bioactivity data |

| Active Definition | pChEMBL > 6.5 (approximately Ki < 300 nM) | Standardized definition of active compounds |

| Inactive Definition | pChEMBL < 4.5 (approximately Ki > 30 μM) or explicitly marked inactive | Clear threshold for non-binders |

| Training Strategy | Leave-one-protein-out (LOPO) across Pfam-defined protein groups | Prevents overfitting and ensures generalization |

| Architecture | Deep learning model | Captures complex, non-linear relationships between structure and bioactivity |

The BSI methodology represents a shift from chemical structure comparison to bioactivity prediction. By training on protein families and employing a leave-one-protein-out validation strategy, BSI achieves robust performance across diverse target classes [1]. In retrospective validation on ChEMBL v35 data, BSI demonstrated strong early-retrieval performance: group-specific models delivered the best enrichment, while the cross-family model (BSI-Large) remained competitive and generalized better with lower data requirements.
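The activity thresholds in Table 2 translate directly into a labeling rule. The sketch below assumes the usual definition of pChEMBL as the negative base-10 logarithm of the molar value; the function names and the "ambiguous" bucket for compounds between the two thresholds are illustrative choices, not part of the published protocol.

```python
import math

ACTIVE_PCHEMBL = 6.5    # roughly Ki < 300 nM
INACTIVE_PCHEMBL = 4.5  # roughly Ki > 30 uM


def pchembl_from_nm(value_nm: float) -> float:
    """Convert a potency in nM to pChEMBL = -log10(value in mol/L)."""
    return -math.log10(value_nm * 1e-9)


def label(pchembl: float, flagged_inactive: bool = False) -> str:
    """Apply the thresholds; the middle band is left unlabeled (assumption)."""
    if flagged_inactive or pchembl < INACTIVE_PCHEMBL:
        return "inactive"
    if pchembl > ACTIVE_PCHEMBL:
        return "active"
    return "ambiguous"


potent = label(pchembl_from_nm(10))       # 10 nM  -> pChEMBL about 8.0
middle = label(pchembl_from_nm(1_000))    # 1 uM   -> pChEMBL about 6.0
weak = label(pchembl_from_nm(100_000))    # 100 uM -> pChEMBL about 4.0
```

Leaving a gap between the two thresholds keeps borderline measurements out of both classes, which is one common way to reduce label noise in paired training data.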

Active Learning Optimization Framework

Active learning optimization frameworks address the challenge of identifying optimal solutions in complex, high-dimensional spaces with limited data. The DANTE pipeline exemplifies this approach through several key innovations:

Diagram 1: DANTE active optimization workflow

The DANTE algorithm introduces several key mechanisms that enhance its performance:

Conditional Selection: This mechanism addresses the "value deterioration problem" by comparing the Data-driven Upper Confidence Bound (DUCB) of root nodes against leaf nodes. If any leaf node has a higher DUCB, it becomes the new root for stochastic rollout, encouraging selection of higher-value nodes [13].

Local Backpropagation: Unlike conventional methods that update values along entire search paths, local backpropagation updates only between root and selected leaf nodes. This prevents irrelevant nodes from influencing current decisions and enables the algorithm to escape local optima by creating local DUCB gradients [13].

Neural Surrogate Model: DANTE employs deep neural networks as surrogate models to approximate high-dimensional nonlinear distributions, overcoming limitations of traditional machine learning models that struggle with complex relationships in high-dimensional spaces [13].
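The conditional selection rule can be illustrated in isolation. In the sketch below the DUCB values are assumed to be computed elsewhere (the formula from [13] is not reproduced), the `Node` class is hypothetical, and promoting the highest-scoring leaf when several leaves beat the root is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    ducb: float  # supplied externally; not derived in this sketch


def conditional_selection(root: Node, leaves: list) -> Node:
    """Return the node to use as root for the next stochastic rollout."""
    best_leaf = max(leaves, key=lambda node: node.ducb, default=root)
    # A leaf replaces the root only if its DUCB is strictly higher.
    return best_leaf if best_leaf.ducb > root.ducb else root


root = Node("root", 1.0)
leaves = [Node("leaf_a", 0.5), Node("leaf_b", 1.4), Node("leaf_c", 1.2)]
new_root = conditional_selection(root, leaves)
unchanged = conditional_selection(Node("root", 2.0), leaves)
```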

Similarity-Based Active Learning (SBAL) for Molecular Optimization

The MoGA-TA algorithm exemplifies the direct integration of similarity metrics with optimization frameworks. This approach uses Tanimoto similarity-based crowding distance calculation and a dynamic acceptance probability population update strategy for multi-objective drug molecular optimization [14]. The experimental protocol involves:

Population Initialization: Start with a population of molecules, typically based on known active compounds or diverse chemical scaffolds.

Decoupled Crossover and Mutation: Apply genetic operations in chemical space to generate new candidate molecules while maintaining chemical feasibility.

Tanimoto-Based Crowding Distance: Calculate crowding distance using Tanimoto similarity to better capture molecular structural differences, enhancing search space exploration and maintaining population diversity.

Dynamic Acceptance Probability: Employ a dynamic strategy that balances exploration and exploitation during evolution, with higher acceptance rates early for broad exploration and lower rates later for convergence.

This methodology has demonstrated significant improvements in success rate, dominating hypervolume, geometric mean, and internal similarity compared to traditional multi-objective optimization approaches like NSGA-II [14].
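Two of these steps lend themselves to a short illustration. The formulas below are placeholders, not the ones published for MoGA-TA [14]: crowding is approximated as the mean Tanimoto distance (1 - TC) to the rest of the population, and the acceptance probability is assumed to decay linearly from `p_start` to `p_end` over the run.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def crowding_distance(index: int, fingerprints: list) -> float:
    """Mean Tanimoto distance from one molecule to all others (assumed form)."""
    others = [fp for i, fp in enumerate(fingerprints) if i != index]
    target = fingerprints[index]
    return sum(1.0 - tanimoto(target, fp) for fp in others) / len(others)


def acceptance_probability(generation: int, n_generations: int,
                           p_start: float = 0.9, p_end: float = 0.1) -> float:
    """Linear decay: permissive early (exploration), strict late (convergence)."""
    fraction = generation / max(n_generations - 1, 1)
    return p_start + (p_end - p_start) * fraction


# Hypothetical population: two close analogs and one structural outlier.
population = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}]
outlier_crowding = crowding_distance(2, population)
analog_crowding = crowding_distance(0, population)
schedule = [acceptance_probability(g, 10) for g in range(10)]
```

Under this placeholder definition the structural outlier receives the largest crowding value, so a diversity-preserving selection step would favor keeping it in the population.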

Successful implementation of integrated active learning and similarity approaches requires specific computational tools and data resources. The following table details key components of the research infrastructure:

Table 3: Essential research reagents and resources for integrated active learning and similarity methods

| Resource Category | Specific Tool/Database | Key Function | Access Information |

|---|---|---|---|

| Bioactivity Databases | ChEMBL (v34+) | Provides experimentally validated bioactivity data for training and validation | https://www.ebi.ac.uk/chembl/ [1] [16] |

| | BindingDB | Curated database of protein-ligand binding affinities | https://www.bindingdb.org/ [16] |

| Similarity Tools | BSI (Bioactivity Similarity Index) | Predicts functional similarity beyond structural metrics | https://github.com/gschottlender/bioactivity-similarity-index [1] |

| | RDKit | Cheminformatics toolkit for fingerprint generation and similarity calculations | https://www.rdkit.org/ [14] |

| Active Learning Platforms | FEgrow | Open-source package for building congeneric series with active learning interface | https://github.com/cole-group/FEgrow [15] |

| | DANTE | Deep active optimization pipeline for high-dimensional problems | Reference implementation from Nature Computational Science [13] |

| Target Prediction | MolTarPred | Ligand-centric target prediction using similarity searching | Stand-alone code available [16] |

| | CMTNN | ChEMBL Multitask Neural Network for target prediction | Stand-alone code available [16] |

This toolkit enables researchers to implement the complete workflow from target identification and compound comparison to optimized selection and experimental prioritization. The integration of these resources creates a powerful infrastructure for modern, data-driven drug discovery.

Integrated Workflow: From Target Identification to Compound Prioritization

The complete integration of advanced similarity with active learning creates a cohesive workflow for drug discovery. The following diagram illustrates this synergistic relationship and how information flows between components:

Diagram 2: Integrated drug discovery workflow

This integrated workflow demonstrates how advanced similarity and active learning create a synergistic cycle of continuous improvement:

Knowledge Foundation: The process begins with target identification and known active compounds, establishing the foundation for both similarity comparisons and initial training data for active learning models.

Expanded Candidate Identification: Advanced similarity methods like BSI enable identification of functionally similar but structurally diverse compounds that would be missed by traditional Tanimoto-based approaches [1].

Intelligent Prioritization: Active learning frameworks efficiently navigate the expanded chemical space, selecting the most informative compounds for evaluation and minimizing resource-intensive synthetic and testing efforts [13] [15].

Iterative Refinement: Experimental results feed back into both similarity models and active learning algorithms, creating a continuous improvement cycle that enhances prediction accuracy and optimization efficiency with each iteration.

This synergistic approach fundamentally transforms the drug discovery process from a sequential, resource-intensive pipeline to an intelligent, adaptive system that learns from both computational predictions and experimental results to accelerate the identification of optimized therapeutic candidates.

The integration of advanced similarity metrics with active learning frameworks represents a fundamental shift in computational drug discovery. By moving beyond the limitations of structural similarity and embracing bioactivity-aware comparison methods, while simultaneously replacing exhaustive screening with intelligent optimization, this synergistic approach delivers substantial improvements in efficiency, success rates, and chemical space exploration. The quantitative evidence points in the same direction across multiple benchmarks: on the ADRA2B target, BSI lowers the mean rank of the next active from 45.2 (Tanimoto similarity) to 3.9, and active optimization frameworks like DANTE identify optimal solutions in problems with up to 2000 dimensions, where previous methods were limited to 100 dimensions [1] [13].

As chemical and biological datasets continue to grow in size and complexity, this synergistic approach will become increasingly essential for navigating the expanding search space of drug discovery. The integration of these methodologies creates a foundation for increasingly autonomous discovery systems that can efficiently leverage both existing knowledge and experimental data to accelerate the identification of novel therapeutic agents. This represents not just an incremental improvement but a fundamental transformation in how we explore chemical space and optimize molecular properties, ultimately leading to more efficient drug discovery pipelines and expanded therapeutic possibilities.

Frameworks in Action: Implementing Active Learning with Next-Generation Similarity

The application of active learning (AL) in drug discovery has emerged as a powerful strategy to steer iterative experimentation, accelerating the identification of potent compounds while managing resource constraints [17] [18]. Traditional exploitative AL methods, which select compounds based on predicted absolute potency, often face limitations in early project stages: scarce data can lead to poorly calibrated models, and excessive exploitation can restrict scaffold diversity because the model keeps selecting close analogs [18]. In the broader evolution of molecular similarity analysis, the Tanimoto coefficient has long served as the foundational metric for fingerprint-based comparisons [12]. However, new paradigms are emerging that directly address the optimization objective itself. The ActiveDelta paradigm represents a significant methodological shift by leveraging paired molecular representations to directly predict property improvements rather than absolute values. This guide provides a comprehensive performance comparison of ActiveDelta against standard active learning implementations, detailing experimental protocols and key resources for adoption by computational chemists and drug discovery scientists.

Comparative Performance Analysis

Quantitative Benchmarking Results

ActiveDelta's performance was rigorously evaluated against standard active learning (Std-AL) implementations across 99 Ki datasets from ChEMBL with simulated time-splits [18]. The following tables summarize the key quantitative findings.

Table 1: Performance in Identifying Top Potent Compounds During Active Learning

| Model | Avg. Number of Most Potent Compounds Identified | Standard Deviation | Key Advantage |

|---|---|---|---|

| AD-Chemprop | ~85 | ± ~3 | Superior hit identification & model accuracy |

| AD-XGBoost | ~83 | ± ~4 | Superior hit identification |

| Std-AL Chemprop | ~75 | ± ~4 | - |

| Std-AL XGBoost | ~72 | ± ~5 | - |

| Std-AL Random Forest | ~65 | ± ~5 | - |

Table 2: Performance on External Test Set Evaluation

| Model | Ability to Identify Top Potency Compounds | Chemical Diversity (Murcko Scaffolds) |

|---|---|---|

| AD-Chemprop | Most Accurate | More Diverse |

| AD-XGBoost | Superior | More Diverse |

| Std-AL Chemprop | Moderate | Less Diverse |

| Std-AL XGBoost | Lower | Less Diverse |

| Std-AL Random Forest | Lowest | Least Diverse |

The statistical analysis based on the Wilcoxon signed-rank test confirmed that the improvements offered by ActiveDelta implementations were significant [18].

Comparison with Advanced Similarity and Active Learning Approaches

While Tanimoto similarity remains a robust baseline for structural comparison [12], new bioactivity-focused metrics have emerged. The Bioactivity Similarity Index (BSI), a machine learning model, demonstrates that significant functional similarity can exist even between structurally dissimilar compounds (Tanimoto Coefficient < 0.30) [1]. Other advanced AL frameworks integrate generative AI with physics-based simulations for de novo molecular design [19], or employ evolutionary algorithms for ultra-large library screening [3]. ActiveDelta distinguishes itself by focusing on a highly efficient and interpretable strategy for optimizing potency within existing chemical series, demonstrating particular strength in low-data regimes where it mitigates the risk of over-exploitation and analog bias [17] [18].

Experimental Protocols

Core ActiveDelta Methodology

The fundamental innovation of ActiveDelta is its training on molecular pairs to predict relative potency improvements (ΔKi), rather than predicting absolute Ki values from single molecules [18].

Workflow Diagram: ActiveDelta vs. Standard Active Learning

Detailed Benchmarking Protocol

The comparative data presented in this guide was generated under the following experimental conditions [18]:

- Datasets: 99 Ki datasets curated from ChEMBL using the SIMPD algorithm for time-split simulation. An 80:20 train-test split was used.

- Initialization: Each active learning run began with only two randomly selected data points from the training set.

- Iteration: In each cycle, the model selected one additional compound from the learning set (the pool of available molecules) to be added to its training data.

- Model Implementations:

  - ActiveDelta Models (AD-CP, AD-XGB): Used a cross-merged training set. The most potent molecule in the training set was paired with every molecule in the learning set. The model predicted the potency change (ΔKi) for each pair, and the molecule predicted to deliver the greatest improvement was selected.

  - Standard Models (Std-AL): Trained on single molecules to predict absolute Ki. The molecule with the best predicted Ki was selected.

- Evaluation: Performance was measured by the model's ability to identify compounds in the top 10% of potency within the learning set and the external test set. Chemical diversity was assessed by comparing the Murcko scaffolds of the discovered hits.
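The pairing and selection logic of the ActiveDelta models can be sketched as follows. Several details are assumptions made for illustration: potency is treated as a pKi-like value where higher is better, cross-merging is taken to include every ordered pair (self-pairs included), and `toy_predict_delta` is a hypothetical stand-in for a trained Chemprop or XGBoost pair model.

```python
from itertools import product


def cross_merge(train):
    """Build paired training data: ((mol_i, mol_j), potency_j - potency_i)."""
    return [
        ((mol_i, mol_j), y_j - y_i)
        for (mol_i, y_i), (mol_j, y_j) in product(train, repeat=2)
    ]


def select_next(train, learning_set, predict_delta):
    """Pair the most potent training molecule with each candidate and
    return the candidate with the largest predicted improvement."""
    reference, _ = max(train, key=lambda item: item[1])
    return max(learning_set, key=lambda cand: predict_delta(reference, cand))


# Hypothetical data: molecule identifiers with pKi-like potencies.
train = [("m1", 5.0), ("m2", 6.2)]
learning_set = ["c1", "c2", "c3"]
toy_delta = {"c1": -0.3, "c2": 0.8, "c3": 0.1}


def toy_predict_delta(reference, candidate):
    # Stand-in for a model trained on cross_merge(train).
    return toy_delta[candidate]


pairs = cross_merge(train)
chosen = select_next(train, learning_set, toy_predict_delta)
```

Because every pair of labeled molecules becomes a training example, two starting compounds already yield four pairs, which is one reason the paired formulation is attractive when labeled data is scarce.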

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item/Resource | Function/Role in the Workflow | Implementation Notes |

|---|---|---|

| Chemprop | Graph-based deep learning model for molecular property prediction. | Used in both single-molecule (Std-AL) and two-molecule (ActiveDelta) modes. For AD, number_of_molecules=2 [18]. |

| XGBoost | Tree-based machine learning algorithm. | Used with concatenated molecular fingerprints for paired predictions in the ActiveDelta implementation [18]. |

| Radial (Morgan) Fingerprints | A molecular representation capturing atomic environments. | Radius 2, 2048 bits. Used as input for fingerprint-based models like XGBoost and Random Forest [18]. |

| ChEMBL Database | A manually curated database of bioactive molecules. | Source of the 99 Ki benchmarking datasets for method validation [18]. |

| SIMPD Algorithm | Simulated Medicinal Chemistry Project Data algorithm. | Used to create realistic time-split training and test sets for benchmarking [18]. |

| Murcko Scaffolds | A method to define the core structure of a molecule. | Used as the metric to assess the chemical diversity of the hits identified by different AL strategies [18]. |

| Tanimoto Coefficient | A classical metric for quantifying molecular similarity based on fingerprint overlap. | Serves as a baseline for structural comparison; foundational to understanding the evolution of similarity analysis [12]. |

The ActiveDelta paradigm demonstrates a statistically significant advantage over standard active learning for exploitative molecular optimization. By directly modeling the objective of finding potency improvements through paired molecular representations, AD-Chemprop and AD-XGBoost consistently identify more potent and chemically diverse inhibitors, especially in challenging low-data scenarios typical of early drug discovery projects [18]. This approach complements other advanced techniques like generative AI [19] and learned bioactivity similarity indices [1], offering a robust, efficient, and readily implementable strategy for accelerating hit finding and optimization campaigns.

Similarity-Quantized Relative Learning (SQRL) represents a paradigm shift in molecular activity prediction by reformulating the fundamental learning objective from predicting absolute property values to learning relative differences between structurally similar compounds [20]. This approach directly addresses a critical challenge in computational drug discovery: making accurate predictions with limited and noisy experimental data, a common scenario in real-world pharmaceutical research and development [21].

The SQRL framework is inspired by the practical reasoning of medicinal chemists, who often analyze how specific structural modifications influence molecular properties relative to a known parent compound or within matched molecular pairs [20]. By leveraging precomputed molecular similarities to create informative training pairs, SQRL enhances the performance of various machine learning architectures, including graph neural networks, leading to significantly improved accuracy and generalization in low-data regimes commonly encountered in drug discovery pipelines [20].

Core Conceptual Workflow

The following diagram illustrates the fundamental transformation process of the SQRL framework from a standard dataset to a similarity-quantized relative representation.

Experimental Protocols and Methodologies

SQRL Framework Implementation

The SQRL methodology reformulates molecular activity prediction as a relative difference learning task. Given a standard dataset of molecular structures and their properties, denoted as 𝒟 = {(x_i, y_i)} where x_i represents molecule i and y_i denotes its corresponding property value, the goal is to learn a function f: 𝒳 × 𝒳 → ℝ that predicts the relative difference in property values between two molecules [20].

The framework constructs a new relative dataset 𝒟_rel through a systematic dataset matching process:

Formal Dataset Transformation Protocol:

$$\mathcal{D}_{\text{rel}} = \{((x_i, x_j), \Delta y_{ij}) \mid (x_i, y_i), (x_j, y_j) \in \mathcal{D},\ d(x_i, x_j) \le \alpha\}$$

Here $\Delta y_{ij} = y_i - y_j$ is the difference in property values for the pair, $d: \mathcal{X} \times \mathcal{X} \to \mathbb{R}_{\ge 0}$ is a distance function in the molecular input space, and $\alpha \in \mathbb{R}_{> 0}$ is a distance threshold that determines which molecular pairs are considered sufficiently similar for inclusion in the relative training dataset [20]. This threshold is typically chosen based on the distribution of distances in the training data, often as a value smaller than the average pairwise distance, to focus learning on the most structurally similar and informative compound pairs.
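The pairing rule above can be written as a short filter over ordered pairs. This sketch takes Δy_ij as y_i − y_j (an assumption about the sign convention), uses Tanimoto distance on hypothetical bit-set fingerprints as the distance d, and excludes self-pairs; none of these choices is claimed to match the reference implementation of [20].

```python
from itertools import permutations


def tanimoto_distance(fp_a, fp_b) -> float:
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return 1.0 - (shared / union if union else 0.0)


def build_relative_dataset(data, distance, alpha):
    """Keep ordered pairs (i != j) whose distance is at most alpha."""
    return [
        ((x_i, x_j), y_i - y_j)
        for (x_i, y_i), (x_j, y_j) in permutations(data, 2)
        if distance(x_i, x_j) <= alpha
    ]


# Hypothetical dataset: (fingerprint, property value).
data = [
    (frozenset({1, 2, 3, 4}), 6.0),
    (frozenset({1, 2, 3, 5}), 6.5),
    (frozenset({10, 11, 12}), 4.0),
]
relative = build_relative_dataset(data, tanimoto_distance, alpha=0.5)
```

With `alpha=0.5` only the two close analogs are paired (in both orders); the structurally unrelated third molecule contributes no training pairs.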

Model Optimization and Loss Function

The SQRL framework employs a dual-component architecture consisting of a representation function g: 𝒳 → ℝ^d that converts molecular compounds into d-dimensional real-valued vectors, and a prediction model f: ℝ^d → ℝ that uses the difference between molecular representations to predict property differences [20].

The optimization process minimizes the following objective function:

$$\min_{\theta} \mathcal{L}(\theta) = \min_{\theta} \sum_{((x_i, x_j),\, \Delta y_{ij}) \in \mathcal{D}_{\text{rel}}} \ell\big(f(g(x_i) - g(x_j)),\ \Delta y_{ij}\big)$$

Where θ represents the parameters of both f and g (if learnable), and ℓ is typically the mean squared error loss function. This approach enables the model to learn from local structural changes and their consistent effects on molecular properties across similar compounds, rather than attempting to learn absolute property values from limited data [20].
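As a concrete instance of this objective, the sketch below fixes g to precomputed representation vectors and takes f to be a linear map, so the only parameters are a weight vector fitted by gradient descent on the mean squared error. The data, learning rate, and epoch count are illustrative assumptions; a practical model would use a learned representation such as a GNN.

```python
from itertools import permutations


def train_linear_delta_model(pairs, dim, lr=0.1, epochs=500):
    """Fit w so that w . (g_i - g_j) approximates delta_y under MSE loss."""
    w = [0.0] * dim
    for _ in range(epochs):
        grad = [0.0] * dim
        for (g_i, g_j), delta_y in pairs:
            diff = [a - b for a, b in zip(g_i, g_j)]
            error = sum(wk * dk for wk, dk in zip(w, diff)) - delta_y
            for k in range(dim):
                grad[k] += 2.0 * error * diff[k] / len(pairs)
        w = [wk - lr * gk for wk, gk in zip(w, grad)]
    return w


# Hypothetical fixed representations g(x) and a hidden linear property.
true_w = [1.0, -2.0]
reps = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
values = [sum(w * g for w, g in zip(true_w, rep)) for rep in reps]

# Relative dataset: ordered pairs with delta_y = y_i - y_j.
pairs = [
    ((reps[i], reps[j]), values[i] - values[j])
    for i, j in permutations(range(len(reps)), 2)
]
learned_w = train_linear_delta_model(pairs, dim=2)
```

The model never sees an absolute property value, only differences, yet it recovers the weights that generated them; any constant offset in the property is simply not identifiable from relative data.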

Comparative Performance Analysis

Quantitative Benchmarking Against Established Methods

Table 1: Performance Comparison of Molecular Activity Prediction Methods

| Method Category | Specific Method | Key Approach | Performance Highlights | Data Efficiency |

|---|---|---|---|---|

| Relative Learning | SQRL (Proposed) | Similarity-thresholded relative difference prediction | Superior in low-data regimes; Enhanced generalization | Excellent |

| Traditional Similarity | Tanimoto Coefficient (TC) | Structural fingerprint similarity | Limited functional relevance; ~60% of similarly bioactive pairs have TC < 0.30 [1] | Poor |

| Learned Similarity | Bioactivity Similarity Index (BSI) | Machine learning-based binding probability | Strong top-2% enrichment (EF₂%); ADRA2B mean rank 3.9 vs. 45.2 for TC [1] | Good with protein data |

| Evolutionary Screening | REvoLd (Rosetta) | Evolutionary algorithm in combinatorial space | 869-1622x hit rate improvement [3] | Computationally intensive |

| Deep Learning | Standard GNNs | Absolute property prediction | Often outperformed by simpler models in low-data [20] | Variable |

Performance in Virtual Screening Scenarios

In realistic virtual-screening-like scenarios, SQRL and other advanced similarity methods demonstrate significant advantages over traditional approaches. When tested against the target ADRA2B, the mean rank of the next active compound given a known active improved dramatically from 45.2 using traditional Tanimoto similarity to 3.9 using the learned Bioactivity Similarity Index approach [1]. General-purpose embedding methods did not close this gap: CLAMP reached a mean rank of 28.6, while ChemBERTa, at 54.9, ranked the next active later than Tanimoto similarity itself [1]. The comparison highlights the substantial gap between a bioactivity-trained similarity index and both traditional and generic embedding-based metrics in practical drug discovery applications.

The enrichment capabilities of these methods further demonstrate their utility for early retrieval of active compounds. BSI achieves strong early-retrieval performance in the top 2% enrichment factor (EF₂%), with protein group-specific models delivering the best enrichment while cross-family models (BSI-Large) remain competitive for general applications [1].
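The enrichment factor at a given fraction follows the standard definition: the hit rate among the top-scored fraction divided by the hit rate in the whole library. The sketch below uses hypothetical scores and activity flags; rounding the cutoff to a whole number of compounds (with a minimum of one) is a choice made here.

```python
def enrichment_factor(scores, is_active, fraction=0.02):
    """EF = (actives in top fraction / size of top fraction)
            / (total actives / library size)."""
    n = len(scores)
    n_top = max(1, round(fraction * n))
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    hits_top = sum(is_active[i] for i in order[:n_top])
    total_actives = sum(is_active)
    return (hits_top / n_top) / (total_actives / n)


# Hypothetical library of 100 compounds, 5 of them active.
scores = [100 - i for i in range(100)]              # index 0 scores highest
is_active = [i in {0, 1, 10, 50, 90} for i in range(100)]

ef_2_percent = enrichment_factor(scores, is_active, fraction=0.02)
```

Here both compounds in the top 2% are active against a 5% base rate, giving an enrichment factor of 20, the maximum achievable for this library at that cutoff.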

Integration with Active Learning and Evolutionary Frameworks

Synergies with Evolutionary Screening Algorithms

The SQRL framework demonstrates natural compatibility with evolutionary algorithms for drug discovery, such as REvoLd, which implements an evolutionary approach to search combinatorial make-on-demand chemical spaces efficiently [3]. REvoLd explores vast search spaces of combinatorial libraries for protein-ligand docking with full ligand and receptor flexibility through RosettaLigand, achieving remarkable improvements in hit rates by factors between 869 and 1622 compared to random selections in benchmark studies across five drug targets [3].

The relationship between active learning, evolutionary methods, and relative learning approaches can be visualized as a synergistic workflow:

Addressing the Tanimoto Blind Spot

Traditional structural similarity metrics like the Tanimoto Coefficient present a significant limitation for modern drug discovery: they miss many functionally related compounds. Research reveals that approximately 60% of similarly bioactive ligand pairs in ChEMBL databases show Tanimoto Coefficients below 0.30, creating a major blind spot that constrains ligand-based discovery [1]. This limitation motivates approaches like SQRL and learned similarity indices that can identify structurally different yet functionally equivalent chemotypes that structure-based similarity fails to detect.

The SQRL framework complements rather than replaces structure-based similarity, effectively extending hit finding to remote chemotypes that are structurally dissimilar yet functionally equivalent [1]. By learning from relative differences within localized regions of chemical space, SQRL can generalize to novel structural motifs that would be missed by traditional similarity searches.

Research Reagent Solutions and Computational Tools

Table 2: Essential Research Tools for Advanced Molecular Similarity and Screening

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| SQRL Framework | Machine Learning | Similarity-thresholded relative difference learning | Low-data molecular activity prediction |

| BSI (Bioactivity Similarity Index) | Learned Similarity | Estimates binding probability to same protein | Virtual screening, hit expansion |

| REvoLd | Evolutionary Algorithm | Ultra-large library screening with flexible docking | Structure-based drug discovery |

| Enamine REAL Space | Chemical Library | Make-on-demand combinatorial compounds | Virtual HTS, synthetic access |

| RosettaLigand | Docking Software | Flexible protein-ligand docking | Structural validation, binding mode prediction |

| Graph Neural Networks | Architecture | Molecular representation learning | Feature extraction for SQRL |

| Tanimoto Coefficient | Similarity Metric | Structural fingerprint comparison | Baseline comparisons |

| ChEMBL | Database | Bioactivity data for training | Model development, validation |

Hit expansion, the process of evolving initial, often weak, binding molecules (hits) into more potent and selective leads, is a critical stage in early drug discovery. Traditional methods can be computationally intensive and may not efficiently explore the vast combinatorial chemical space of possible derivatives. The integration of active learning—a machine learning paradigm that intelligently selects the most informative data points for model training—is revolutionizing this process by prioritizing computational resources on the most promising candidates.

This guide examines the FEgrow workflow, an open-source software package that represents a significant advancement in structure-based hit expansion. FEgrow uniquely couples molecular building with active learning to efficiently explore congeneric series. We will objectively compare its performance, experimental data, and methodology against other contemporary computational approaches, framing the discussion within research on chemical space analysis and the critical role of molecular similarity, often quantified by the Tanimoto index.

FEgrow Workflow: An Active Learning-Driven Approach

FEgrow is an open-source software package designed for building and optimizing congeneric series of compounds directly within protein binding pockets. Its core functionality involves taking a known ligand core and a receptor structure, then using hybrid machine learning/molecular mechanics (ML/MM) potential energy functions to optimize the bioactive conformers of supplied linkers and functional groups [22] [23]. Recent developments have significantly enhanced its capabilities, transforming it into a tool for automated de novo design.

The figure below illustrates the core active learning workflow that automates and accelerates the hit expansion process.

Figure 1. The FEgrow Active Learning Workflow. The process iterates through building, optimizing, and scoring compounds, with an active learning oracle selecting the most informative candidates for the next cycle, thereby efficiently searching the combinatorial space [22].

Key Methodological Components

- Hybrid ML/MM Optimization: FEgrow employs machine learning-augmented molecular mechanics functions for efficient and accurate conformer optimization, balancing computational speed with physical reliability [22] [24].

- Interaction-Based Scoring: The scoring function incorporates favorable interactions made by crystallographic fragments, grounding the design in experimentally observed binding modes [23].

- On-Demand Library Integration: A key feature is the optional seeding of the chemical space with molecules readily available from on-demand chemical libraries, ensuring that designed compounds are synthetically accessible and can be rapidly procured for testing [22] [25].

Performance Comparison with Alternative Methods

To objectively evaluate FEgrow's position in the computational toolkit, we compare its performance, resource requirements, and typical use cases against other state-of-the-art methodologies.

Table 1: Comparative Analysis of Computational Hit Expansion and Virtual Screening Methods.

| Method | Core Approach | Typical Library Size | Computational Demand | Key Advantage | Key Limitation | Experimental Validation |

|---|---|---|---|---|---|---|

| FEgrow (with Active Learning) | Structure-based optimization & growing with iterative learning [22] | Millions of possible R-group/linker combinations | Moderate (CPU/GPU for ML/MM) | Efficient exploration of congeneric series; direct synthetic access via on-demand libraries [23] | Primarily suited for hit expansion from a known core | 3/19 compounds showed weak activity in Mpro assay [24] |

| HIDDEN GEM | Docking, generative AI, and similarity searching [25] | Ultra-large (e.g., 37 Billion) | High (GPU for AI, massive CPU for similarity search) | Exceptional enrichment from billion-scale libraries; identifies purchasable hits [25] | Requires significant resources for similarity search | Computational benchmark; high enrichment factors vs. random screening [25] |

| DeepDocking | Machine learning pre-filter to reduce docking load [25] | Ultra-large (Billions) | High (GPU for model training/inference) | Significantly reduces number of docking calculations [25] | Quality dependent on initial docking set; GPU-dependent | Computational benchmark on known actives |

| V-SYNTHES | Docks building blocks, then constructs & docks top combinations [25] | Combinatorial libraries (Billions) | Moderate to High | Leverages combinatorial library structure [25] | Requires proprietary library chemistry knowledge | Computational benchmark on known actives |

Analysis of Comparative Performance Data

The comparative data reveals a clear trade-off between exploration scope and resource efficiency. HIDDEN GEM and DeepDocking are designed for the monumental task of screening ultra-large libraries (billions of compounds), achieving high enrichment but at a significant computational cost [25]. In contrast, FEgrow operates on a different premise. It excels in the focused exploration of chemical space around a known hit, a process known as hit expansion. Its integration with active learning makes this exploration highly efficient, and its direct link to on-demand libraries provides a rapid path to experimental testing [22] [23].

In a test case targeting the SARS-CoV-2 main protease (Mpro), the FEgrow workflow successfully identified compounds with high similarity to those discovered by the large-scale COVID Moonshot effort. Ultimately, 19 designed compounds were ordered and tested, of which three demonstrated weak activity in a biochemical assay [22] [24]. This highlights a key outcome: FEgrow can automatically generate credible, purchasable hits using only structural information from a fragment screen.

Experimental Protocols & Benchmarking

Detailed Protocol: FEgrow for SARS-CoV-2 Mpro

The following protocol outlines the key steps from the published study that serves as a benchmark for FEgrow's performance [22] [23] [24].

Input Preparation:

- Receptor Structure: Obtain a high-resolution crystal structure of the SARS-CoV-2 Mpro protein. The study used structures derived from a fragment screen.

- Ligand Core: Define the core scaffold of the initial hit or fragment from crystallographic data.

- R-Group/Linker Libraries: Prepare SMILES strings of the linkers and functional groups to be grown from the core.

Active Learning Configuration:

- Define the stopping criteria for the active learning loop (e.g., number of iterations, convergence of predicted scores).

- Set parameters for the hybrid ML/MM optimization.

Workflow Execution:

- Run the FEgrow active learning workflow. The software will iteratively:

  - Propose new compounds by attaching linkers and R-groups.

  - Optimize their conformations in the binding pocket.

  - Score them based on the energy function and interaction fingerprints.

  - Use the active learning oracle to select the most promising candidates for the next iteration.

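The propose, score, and select cycle described above can be sketched in a few lines of Python. This is a generic illustration, not FEgrow's API: `expensive_score` is a hypothetical stand-in for the build, optimize, and score step, the additive surrogate is a deliberately simple assumption, and the loop stops after a fixed number of cycles.

```python
import random
from statistics import mean


def expensive_score(linker, rgroup):
    """Hypothetical stand-in for build + optimize + score (lower is better)."""
    return -(linker % 7) - 2.0 * (rgroup % 5) + 0.1 * ((linker * rgroup) % 3)


def fit_additive_surrogate(scored):
    """Toy surrogate: mean observed score per linker and per R-group."""
    by_linker, by_rgroup = {}, {}
    for (linker, rgroup), score in scored.items():
        by_linker.setdefault(linker, []).append(score)
        by_rgroup.setdefault(rgroup, []).append(score)
    overall = mean(scored.values())
    linker_mean = {k: mean(v) for k, v in by_linker.items()}
    rgroup_mean = {k: mean(v) for k, v in by_rgroup.items()}

    def predict(linker, rgroup):
        # Unseen linkers or R-groups fall back to the overall mean.
        return (linker_mean.get(linker, overall)
                + rgroup_mean.get(rgroup, overall) - overall)

    return predict


def active_learning(linkers, rgroups, batch_size=10, n_cycles=5, seed=0):
    """Greedy active learning over all linker/R-group combinations."""
    rng = random.Random(seed)
    pool = [(l, r) for l in linkers for r in rgroups]
    scored = {}
    batch = rng.sample(pool, batch_size)  # the first batch is random
    for _ in range(n_cycles):
        for candidate in batch:
            scored[candidate] = expensive_score(*candidate)
        predict = fit_additive_surrogate(scored)
        unscored = [c for c in pool if c not in scored]
        if not unscored:
            break
        # Select the candidates the surrogate predicts to score best.
        unscored.sort(key=lambda c: predict(*c))
        batch = unscored[:batch_size]
    return scored


scored = active_learning(range(20), range(20))
best = min(scored, key=scored.get)
print(f"scored {len(scored)} of 400 combinations; best so far: {best}")
```

Only 50 of the 400 combinations are passed to the expensive scorer here. A production workflow would typically replace the greedy selection with an acquisition rule that also rewards uncertainty, so that under-sampled regions are not ignored.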

Post-Processing & Prioritization:

- After the active learning cycle completes, analyze the top-ranked compounds.

- Filter and prioritize candidates based on score, chemical attractiveness, and, most importantly, similarity to commercially available compounds in on-demand libraries (e.g., Enamine).
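As a rough sketch of this prioritization step, the snippet below keeps only designs that have a sufficiently similar purchasable analog and then ranks them by score. The fingerprints are plain sets of on-bit indices, and the compound names, catalog IDs, scores, and the 0.5 threshold are all hypothetical values chosen for illustration.

```python
def tanimoto(a, b):
    """Tanimoto coefficient of two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def prioritize(designs, library, min_similarity=0.5, top_n=3):
    """Rank designs (name -> (score, fingerprint)); lower score is better."""
    shortlisted = []
    for name, (score, fp) in designs.items():
        # Find the closest purchasable analog in the on-demand library.
        analog, sim = max(
            ((lib_name, tanimoto(fp, lib_fp)) for lib_name, lib_fp in library.items()),
            key=lambda pair: pair[1],
        )
        if sim >= min_similarity:
            shortlisted.append((score, name, analog, round(sim, 2)))
    return sorted(shortlisted)[:top_n]


designs = {
    "d1": (-9.1, {1, 2, 3, 4}),
    "d2": (-8.4, {1, 2, 7, 8}),
    "d3": (-7.9, {5, 6, 9}),
}
library = {"cat-A": {1, 2, 3, 5}, "cat-B": {5, 6, 9, 10}, "cat-C": {11, 12}}

print(prioritize(designs, library))
# [(-9.1, 'd1', 'cat-A', 0.6), (-7.9, 'd3', 'cat-B', 0.75)]
```

Design `d2` scores well but is dropped because its nearest catalog compound has a Tanimoto similarity of only about 0.33, which mirrors the emphasis on synthetic accessibility in the step above.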

Experimental Validation:

- Procure the top-prioritized compounds.

- Test activity in a relevant biochemical assay (e.g., a fluorescence-based Mpro protease assay).

Quantifying Success and Chemical Space

A critical aspect of cheminformatics workflows is the quantification of molecular similarity, which directly impacts the selection of compounds in steps like the "Similarity" step of HIDDEN GEM and the analysis of FEgrow's outputs.

Table 2: Key Metrics and Reagents for Analysis in Hit Expansion.

| Metric / Reagent | Function & Explanation | Relevance to Workflow |

|---|---|---|

| Tanimoto Coefficient | A measure of structural similarity between two molecules based on their 2D fingerprints [12]. Ranges from 0 (no similarity) to 1 (identical). | Used for chemical similarity searching and analyzing the diversity of generated libraries. It is often the metric of choice for fingerprint-based similarity [26] [12]. |

| iSIM (Intrinsic Similarity) | A computationally efficient method to calculate the average pairwise Tanimoto similarity within a large compound set in O(N) time [27]. | Crucial for analyzing the diversity of large libraries or generated sets (a low iSIM Tanimoto value, iT, indicates a diverse set) without the prohibitive cost of O(N²) pairwise comparisons [27]. |

| BitBIRCH Algorithm | A clustering algorithm designed to group large numbers of compounds represented by binary fingerprints efficiently [27]. | Used to dissect the chemical space of generated compounds or screening libraries into meaningful clusters to assess diversity and coverage [27]. |

| On-Demand Library (e.g., Enamine REAL Space) | A virtual catalog of billions of chemically feasible and synthesizable compounds that can be rapidly procured [25]. | Bridges computational design and experimental testing. FEgrow and HIDDEN GEM both use these to identify purchasable analogs of computationally designed hits [22] [25]. |

| Molecular Fingerprint (e.g., MACCS) | A binary vector representing the presence or absence of specific substructures or patterns in a molecule [26]. | The fundamental representation for calculating Tanimoto similarity and other cheminformatics analyses. Choice of fingerprint can influence results [26]. |
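To make the first two rows of Table 2 concrete, the sketch below computes the Tanimoto coefficient for binary fingerprints and contrasts the explicit O(N²) pairwise average with an iSIM-style estimate built from per-bit column sums. The column-sum formula is written here as an assumption about how iSIM is formulated; consult [27] for the authoritative definition. The three 4-bit fingerprints are toy data.

```python
from itertools import combinations


def tanimoto(a, b):
    """Tanimoto coefficient of two binary fingerprints given as 0/1 lists."""
    both = sum(x & y for x, y in zip(a, b))
    either = sum(x | y for x, y in zip(a, b))
    return both / either if either else 0.0


def mean_pairwise_tanimoto(fps):
    """Explicit average over all N*(N-1)/2 pairs: O(N^2) comparisons."""
    pairs = list(combinations(fps, 2))
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


def isim_tanimoto(fps):
    """iSIM-style estimate from column sums: one pass over the N fingerprints."""
    n = len(fps)
    col_sums = [sum(col) for col in zip(*fps)]
    # Per bit: pairs where both molecules have the bit on ...
    shared_on = sum(k * (k - 1) / 2 for k in col_sums)
    # ... and pairs where exactly one molecule has it on.
    mismatched = sum(k * (n - k) for k in col_sums)
    total = shared_on + mismatched
    return shared_on / total if total else 0.0


fps = [[1, 1, 0, 0], [1, 0, 1, 0], [1, 1, 1, 0]]
print(round(mean_pairwise_tanimoto(fps), 4))  # 0.5556
print(round(isim_tanimoto(fps), 4))           # 0.5556
```

The two values coincide for this toy set because every pair has the same number of bits in its union. In general the column-sum form is a ratio of sums rather than a mean of ratios, so it tracks the pairwise average without necessarily reproducing it exactly.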

The Scientist's Toolkit: Essential Research Reagents & Software

Successful implementation of these advanced computational workflows relies on a suite of software tools and chemical resources.

Table 3: Essential Research Reagents and Software Solutions.

| Category | Item | Function in Research |

|---|---|---|

| Software & Packages | FEgrow | Open-source Python package for structure-based hit optimization and active learning-driven hit expansion [22]. |

| Software & Packages | HIDDEN GEM Scripts | Custom scripts integrating docking, generative models, and similarity searching (implementation details in [25]). |

| Software & Packages | KNIME / JChem | Cheminformatics platform used for workflow automation, compound database management, and similarity calculations [12]. |

| Software & Packages | Docking Software | Programs like AutoDock Vina, GOLD, or Glide used for structure-based scoring in initialization and final steps [25]. |

| Chemical Libraries | Enamine REAL Space | An ultra-large library of over 37 billion make-on-demand compounds for virtual screening and analog sourcing [25]. |

| Chemical Libraries | Enamine Hit Locator Library (HLL) | A diverse, drug-like library of ~460,000 compounds, often used as an initial set for docking-based screenings [25]. |

| Chemical Libraries | ChEMBL | A manually curated database of bioactive molecules with drug-like properties, used for model training and validation [25]. |

| Computational Resources | GPU (e.g., NVIDIA GTX 1080 Ti) | Accelerates generative model training and inference in workflows like HIDDEN GEM and FEgrow's ML potentials [25]. |

| Computational Resources | CPU Cluster | Handles large-scale docking simulations and massive similarity searches against ultra-large libraries [25]. |

The landscape of computational hit discovery and expansion is diverse, with tools optimized for different stages of the pipeline. FEgrow, with its integrated active learning workflow, establishes a powerful and efficient paradigm for hit expansion. It is not necessarily a direct competitor to ultra-large screeners like HIDDEN GEM but rather a complementary tool. While HIDDEN GEM is designed for the initial "needle in a haystack" search across billions of molecules, FEgrow excels in the subsequent "needle sharpening" phase, optimally exploring the local chemical space around a confirmed hit.

The experimental validation of FEgrow, in which three of the 19 tested compounds showed weak activity against a therapeutically relevant target, underscores its practical utility. For research teams with a known protein structure and a starting fragment or hit, FEgrow offers a streamlined, automated, and computationally efficient path to generating candidate compounds for further development.

The landscape of early-stage drug discovery has been fundamentally transformed by the emergence of ultra-large, make-on-demand compound libraries. These libraries, such as the Enamine REAL space, contain billions of readily synthesizable molecules, presenting an unprecedented opportunity for hit identification [3] [28]. However, this opportunity comes with a significant computational challenge: exhaustive virtual screening of these libraries using flexible docking protocols remains prohibitively expensive due to the immense computational resources required [3]. This review examines evolutionary algorithms, with particular focus on REvoLd within the Rosetta software suite, as a powerful solution for navigating these vast chemical spaces. We position these algorithms within the broader context of active learning and Tanimoto similarity evolution analysis, comparing their performance against alternative methodologies for structure-based drug discovery.

REvoLd: Algorithmic Framework and Implementation

Core Evolutionary Mechanism

REvoLd (RosettaEvolutionaryLigand) implements an evolutionary algorithm specifically engineered for combinatorial make-on-demand chemical spaces. The algorithm mimics Darwinian evolution by maintaining a population of ligand individuals that undergo iterative selection, mutation, and crossover based on a docking score fitness function [28]. Its key innovation lies in exploiting the combinatorial nature of make-on-demand libraries, where molecules are defined by chemical reactions and lists of purchasable substrates, rather than treating compounds as static entities [3] [28].

Each individual in the REvoLd population represents a specific molecule defined by a reaction and a list of fragments used for that reaction. The algorithm begins with a random population generation, where initial molecules are created by selecting a random reaction and suitable synthons for each of the reaction's positions [28]. The fitness of each molecule is evaluated using the RosettaLigand protocol, which provides full ligand and receptor flexibility during docking, with the lowest calculated interface energy serving as the fitness score [3] [28].
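The representation described above, an individual as a reaction plus one synthon per reaction position, lends itself to a compact sketch. The code below is a generic evolutionary loop written under stated assumptions and is not REvoLd's implementation: the two reactions and their synthon pools are invented, `toy_fitness` stands in for the RosettaLigand interface energy, and the mutation and crossover operators are simplified.

```python
import random

# Hypothetical combinatorial space: reaction -> synthon pool per position.
REACTIONS = {
    "amide_coupling": [list(range(50)), list(range(40))],
    "suzuki": [list(range(30)), list(range(30))],
}


def toy_fitness(individual):
    """Stand-in for a docking interface energy (lower is better)."""
    reaction, synthons = individual
    offset = 0.0 if reaction == "amide_coupling" else 1.0
    return offset + 0.1 * sum(abs(s - 17) for s in synthons) - 10.0


def random_individual(rng):
    """Pick a random reaction and a random synthon for each position."""
    reaction = rng.choice(sorted(REACTIONS))
    return (reaction, tuple(rng.choice(pool) for pool in REACTIONS[reaction]))


def mutate(individual, rng):
    """Swap one synthon for another from the same reaction position."""
    reaction, synthons = individual
    pos = rng.randrange(len(synthons))
    new = list(synthons)
    new[pos] = rng.choice(REACTIONS[reaction][pos])
    return (reaction, tuple(new))


def crossover(a, b, rng):
    """Mix synthons of parents sharing a reaction; otherwise keep parent a."""
    if a[0] != b[0]:
        return a
    return (a[0], tuple(rng.choice(pair) for pair in zip(a[1], b[1])))


def evolve(pop_size=20, n_elite=5, generations=15, seed=0):
    rng = random.Random(seed)
    population = [random_individual(rng) for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        # Deduplicate while keeping order, then rank by fitness.
        ranked = sorted(dict.fromkeys(population), key=toy_fitness)
        history.append(toy_fitness(ranked[0]))
        elite = ranked[:n_elite]  # elitism: the fittest survive unchanged
        children = []
        while len(elite) + len(children) < pop_size:
            p1, p2 = rng.choice(elite), rng.choice(elite)
            children.append(mutate(crossover(p1, p2, rng), rng))
        population = elite + children
    return min(population, key=toy_fitness), history


best, history = evolve()
print(best, round(toy_fitness(best), 2))
```

Because every individual is built from a listed reaction and its own synthon pools, each molecule the search visits stays inside the make-on-demand space. This is the property the combinatorial representation is meant to guarantee.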

Selection and Reproduction Operators

REvoLd incorporates multiple selection mechanisms to maintain evolutionary pressure while preventing premature convergence:

- ElitistSelector: Preserves the fittest individuals unchanged between generations