Beyond the Data Desert: Strategies for Comprehensive Chemical Space Coverage in AI-Driven Drug Discovery

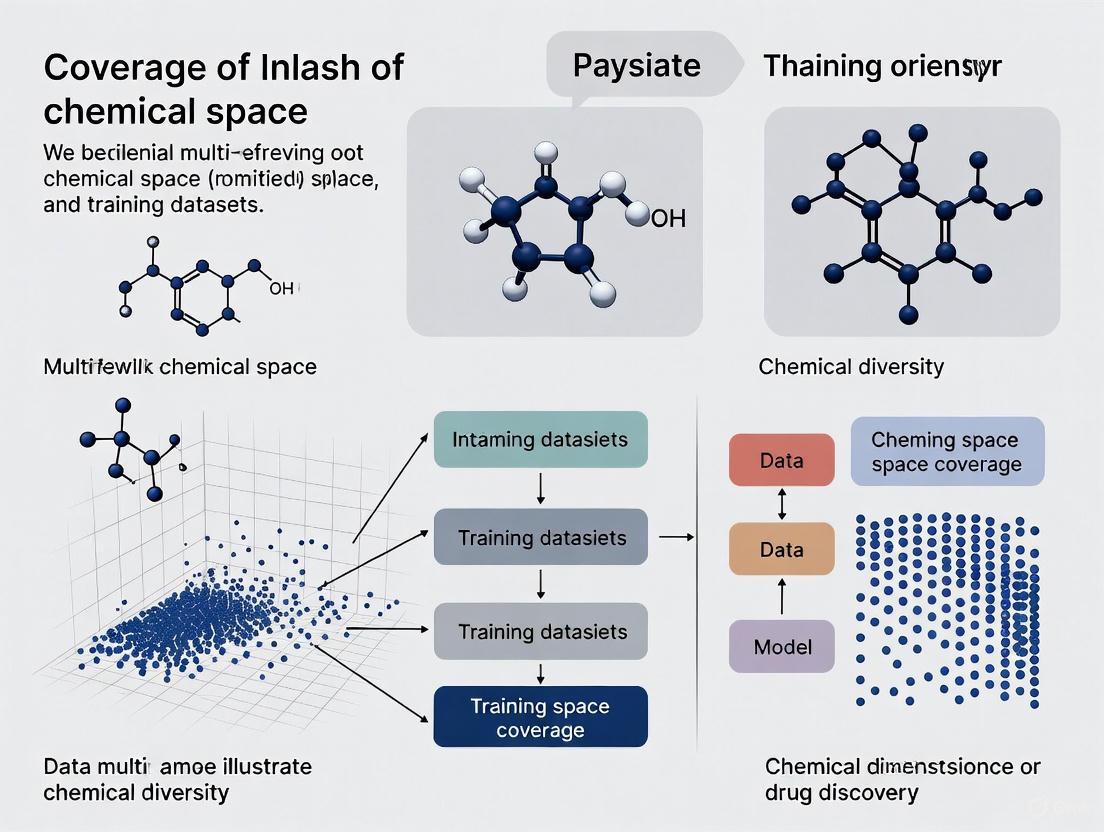

Abstract

This article addresses the critical challenge of limited chemical space coverage in training datasets for AI-driven drug discovery. For researchers and drug development professionals, we explore the foundational concepts of chemical space and its biologically relevant regions (BioReCS), highlighting significant coverage gaps in existing public datasets. The article details innovative methodological solutions, including the generation of massive, diverse datasets like OMol25 and MolPILE, and advanced sampling techniques for reaction pathways. We provide actionable troubleshooting strategies to overcome biases and represent underexplored chemical subspaces, such as metal-containing molecules and macrocycles. Finally, we present rigorous validation frameworks and comparative analyses that demonstrate how improved data coverage directly translates to enhanced model generalizability and performance in real-world discovery pipelines, from molecular property prediction to virtual screening.

Mapping the Void: Understanding Chemical Space and Its Coverage Gaps

Defining Chemical Space and the Biologically Relevant Chemical Space (BioReCS)

FAQs: Core Concepts and Definitions

What is Chemical Space (CS)? Chemical Space (CS), also referred to as the "chemical universe," is a concept used to encompass all possible chemical compounds. It is often visualized as a multidimensional space where each dimension represents a distinct molecular property (either structural or functional), and each molecule occupies a specific coordinate based on its properties [1]. The total number of theoretically possible small organic molecules is estimated to be on the order of 10^60, making this space extraordinarily vast and heterogeneous [2].

What is the Biologically Relevant Chemical Space (BioReCS)? The Biologically Relevant Chemical Space (BioReCS) is a critical subspace of the total chemical universe. It comprises molecules that exhibit a biological effect, which can be either beneficial (e.g., therapeutic drugs, agrochemicals) or detrimental (e.g., toxic compounds, allergens) [1]. BioReCS spans multiple application domains, including drug discovery, agrochemistry, food science, and natural product research [1].

Why is defining the BioReCS important for drug discovery? A deeper understanding of BioReCS is fundamental because exploring it has greatly enhanced our understanding of biology and led to the development of many modern drugs [3]. Accurately predicting the properties of molecules within BioReCS, particularly their Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET), is crucial for reducing clinical attrition rates. Approximately 40–45% of clinical failures are still attributed to ADMET liabilities [4].

What are the main challenges in exploring the BioReCS? The primary challenge is the immense size and diversity of the space, coupled with significant data limitations. Key issues include:

- Data Sparsity: Available experimental data covers only a tiny fraction of the total chemical space [4] [2].

- Limited Data Diversity: Many datasets are biased towards heavily explored regions, leaving "dark regions" of BioReCS underexplored [1].

- Generalization Failures: Machine learning models trained on narrow datasets often perform poorly when predicting properties for novel molecular scaffolds outside their training distribution [4] [2].

Troubleshooting Guide: Addressing Chemical Space Coverage Issues

Problem 1: My Model Fails on Novel Molecular Scaffolds

Symptoms: High predictive accuracy for molecules similar to your training set, but significant performance degradation on new compound classes or scaffolds.

Diagnosis: This indicates a fundamental coverage issue in your training dataset. The model has not learned a broad enough representation of BioReCS to generalize effectively.

Solutions:

- Utilize Federated Learning: Federated learning is a technique that enables multiple organizations to collaboratively train a machine learning model without sharing their proprietary data. This approach systematically expands the chemical space a model can learn from, altering the geometry of the learned representation and expanding its applicability domain. Federated models have been shown to consistently outperform isolated models, with performance gains scaling with the number and diversity of participants [4].

- Leverage Foundation Models: Employ large-scale molecular foundation models (FMs) like MIST, which are pre-trained on billions of diverse molecules. These models learn generalizable chemical concepts and can be fine-tuned for specific tasks with limited data, demonstrating robust performance across diverse chemical benchmarks, from physiology to quantum chemistry [2].

- Incorporate Negative Data: Include data on dark chemical matter (compounds repeatedly inactive in screens) or specifically curated inactive molecules. This helps the model learn the boundaries between bioactive and non-bioactive regions of chemical space [1].
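Before applying any of these remedies, it helps to confirm that the failure really is scaffold-related. The sketch below is one minimal way to do that: it holds out whole scaffold families so the test set contains only scaffolds the model never saw. It assumes scaffold keys (for example Bemis-Murcko scaffold SMILES) have already been computed with a cheminformatics toolkit. The function name and the toy scaffold labels are illustrative and do not come from the cited work.

```python
from collections import defaultdict


def scaffold_grouped_split(scaffolds, test_fraction=0.2):
    """Split molecule indices so that no scaffold appears in both sets.

    `scaffolds` holds one precomputed scaffold key per molecule.
    """
    groups = defaultdict(list)
    for index, key in enumerate(scaffolds):
        groups[key].append(index)

    # Large scaffold families fill the training set first; the rarer
    # scaffolds end up in the test set, which makes the evaluation hard.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train_cutoff = (1.0 - test_fraction) * len(scaffolds)

    train, test = [], []
    for members in ordered:
        if len(train) + len(members) <= train_cutoff:
            train.extend(members)
        else:
            test.extend(members)
    return sorted(train), sorted(test)


# Toy labels standing in for real scaffold keys (hypothetical data).
scaffolds = ["benzene", "benzene", "benzene", "indole", "indole",
             "pyridine", "macrolide", "benzene", "indole", "steroid"]
train_idx, test_idx = scaffold_grouped_split(scaffolds, test_fraction=0.25)
```

A large drop in accuracy between a random split and this scaffold-grouped split is a reasonable indication that the training set covers too narrow a region of chemical space.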

Problem 2: Modeling Underexplored Regions of BioReCS

Symptoms: Difficulty in applying standard chemoinformatic tools to specific compound classes, leading to their exclusion from analyses.

Diagnosis: Traditional molecular descriptors and modeling tools are often optimized for small organic molecules, creating a barrier for underexplored chemical subspaces [1].

Solutions:

- Adopt Universal Molecular Descriptors: Move beyond traditional descriptors by implementing more general-purpose molecular representations. Promising options include:

  - MAP4 Fingerprint: A MinHashed atom-pair fingerprint designed to be usable across different scales, from small molecules to peptides [1].

  - Neural Network Embeddings: Use embeddings from chemical language models (like MIST) that learn chemically meaningful representations from molecular structure data [1] [2].

  - The Smirk Tokenizer: A novel tokenization scheme that comprehensively captures nuclear, electronic, and geometric features of molecules, enabling models to handle diverse chemistries, including organometallics and isotopes [2].

- Targeted Data Generation: For critical but underexplored classes like metallodrugs, macrocycles, and PROTACs, initiate focused data generation and curation efforts to populate these regions in chemical databases [1].

Problem 3: Accounting for Ionization States in Property Prediction

Symptoms: Discrepancies between predicted and experimental properties like solubility, permeability, and binding affinity, especially for ionizable compounds.

Diagnosis: Standard chemical space analyses often assume neutral charge states. However, approximately 80% of contemporary drugs are ionizable. The ionization state profoundly impacts a molecule's behavior in physiological environments, and ignoring it leads to inaccurate predictions [1].

Solutions:

- Implement pH-Aware Descriptors: Calculate molecular descriptors (especially lipophilicity, logP) using the predominant ionization state at physiological pH, rather than relying solely on the neutral structure [1].

- Dynamic Protonation in Simulations: When running molecular dynamics simulations, use tools that allow for dynamic protonation state changes or ensure structures are properly pre-processed to reflect their charged state at the relevant pH.
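As a worked illustration of a pH-aware descriptor, the sketch below converts a neutral-form logP into a distribution coefficient (logD) at a chosen pH using the Henderson-Hasselbalch relationship. It assumes a monoprotic compound and that only the neutral species partitions into the organic phase; both are simplifications, and the example logP and pKa values are hypothetical.

```python
import math


def ionized_to_neutral_ratio(pka, ph=7.4, kind="acid"):
    """Henderson-Hasselbalch ratio [ionized]/[neutral] for a monoprotic site."""
    if kind == "acid":
        return 10 ** (ph - pka)
    if kind == "base":
        return 10 ** (pka - ph)
    raise ValueError("kind must be 'acid' or 'base'")


def fraction_ionized(pka, ph=7.4, kind="acid"):
    """Fraction of the compound present in its charged form at the given pH."""
    ratio = ionized_to_neutral_ratio(pka, ph, kind)
    return ratio / (1.0 + ratio)


def log_d(log_p, pka, ph=7.4, kind="acid"):
    """logD assuming only the neutral species enters the organic phase."""
    return log_p - math.log10(1.0 + ionized_to_neutral_ratio(pka, ph, kind))


# Hypothetical carboxylic acid: logP 3.0, pKa 4.4, evaluated at pH 7.4.
example_log_d = log_d(3.0, 4.4, ph=7.4, kind="acid")
example_ionized = fraction_ionized(4.4, ph=7.4, kind="acid")
```

For this hypothetical acid the compound is almost entirely ionized at pH 7.4, so its effective lipophilicity is roughly three log units lower than the neutral-form logP suggests. Polyprotic and zwitterionic compounds need a full microspecies treatment instead.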

Experimental Protocols for Enhancing Dataset Coverage

Protocol: Building a General Neural Network Potential for Diverse Molecules

This protocol is adapted from the development of the EMFF-2025 model, a general neural network potential (NNP) for C, H, N, O-based high-energy materials, and illustrates a transfer learning approach to efficiently model a chemical subspace [5].

1. Objective: Create a machine learning potential that achieves Density Functional Theory (DFT)-level accuracy for predicting structural, mechanical, and decomposition properties of a class of molecules, but at a fraction of the computational cost.

2. Methodology:

- Base Model: Start with a pre-trained NNP model (e.g., the DP-CHNO-2024 model was used as a base for EMFF-2025) [5].

- Transfer Learning: Employ a transfer learning strategy. Use a Deep Potential Generator (DP-GEN) framework to incorporate a small amount of new training data from DFT calculations on structures not included in the original database [5].

- Validation: Rigorously benchmark the new general model (e.g., EMFF-2025) against DFT calculations and experimental data. Key metrics include Mean Absolute Error (MAE) for energy (target: < 0.1 eV/atom) and forces (target: < 2 eV/Å) [5].

3. Chemical Space Analysis:

- Principal Component Analysis (PCA): Integrate the NNP with PCA to map the chemical space and structural evolution of the studied molecules across different temperatures [5].

- Correlation Heatmaps: Use correlation heatmaps to uncover intrinsic relationships and formation mechanisms of structural motifs within the chemical subspace [5].
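The validation step in this protocol reduces to comparing mean absolute errors against the stated targets. The sketch below shows that check in its simplest form. The energy and force values are made-up placeholders rather than results from the EMFF-2025 work, and the function names are illustrative.

```python
ENERGY_MAE_TARGET = 0.1  # eV/atom, target quoted in the protocol
FORCE_MAE_TARGET = 2.0   # eV/Å, target quoted in the protocol


def mean_absolute_error(predicted, reference):
    """Average absolute deviation between model predictions and DFT values."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)


def passes_benchmark(energy_mae, force_mae):
    """True when both errors fall below the protocol's targets."""
    return energy_mae < ENERGY_MAE_TARGET and force_mae < FORCE_MAE_TARGET


# Placeholder per-atom energies (eV/atom) and force components (eV/Å).
nnp_energies, dft_energies = [-5.02, -4.87, -5.31], [-5.00, -4.90, -5.35]
nnp_forces, dft_forces = [0.4, -1.1, 2.3], [0.5, -0.9, 2.0]

energy_mae = mean_absolute_error(nnp_energies, dft_energies)
force_mae = mean_absolute_error(nnp_forces, dft_forces)
benchmark_ok = passes_benchmark(energy_mae, force_mae)
```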

Diagram: NNP Development and Mapping Workflow

Protocol: Implementing a Federated Learning Workflow for ADMET Prediction

This protocol outlines the steps for a multi-partner federated learning project to build robust ADMET models, as demonstrated by initiatives like MELLODDY [4].

1. Objective: Train predictive ADMET models on diverse, distributed proprietary datasets without centralizing sensitive data, thereby expanding the effective chemical coverage of the models.

2. Methodology:

- Network Setup: Partners join a secured federated network (e.g., the Apheris Federated ADMET Network). Each partner retains full governance and ownership of their local data [4].

- Model Training: A global model is trained collaboratively. In each round, the model is sent to partners, who train it locally on their data. Only the model updates (gradients), not the data, are sent back to a central server for aggregation [4].

- Rigorous Benchmarking:

  - Perform sanity and assay consistency checks on data.

  - Use scaffold-based cross-validation to evaluate model performance.

  - Benchmark against null models and established baselines to confirm true performance gains [4].

3. Expected Outcome: A federated model that systematically outperforms models trained on any single partner's data, with an expanded applicability domain and increased robustness for predicting novel scaffolds [4].
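The server-side aggregation in the model training step can be sketched as a sample-weighted average of the partners' updates, in the style of federated averaging (FedAvg). The protocol above does not state which aggregation rule the network uses, so the weighting scheme, the function name, and the example numbers here are assumptions for illustration only.

```python
def federated_average(updates):
    """Combine partner updates into one global update.

    `updates` is a list of (parameter_vector, n_local_samples) pairs.
    Each partner's vector is weighted by its share of the total samples,
    so no raw data ever needs to leave a partner's site.
    """
    total_samples = sum(n for _, n in updates)
    if total_samples <= 0:
        raise ValueError("at least one partner must contribute samples")

    dimension = len(updates[0][0])
    aggregated = [0.0] * dimension
    for parameters, n_samples in updates:
        if len(parameters) != dimension:
            raise ValueError("all partners must send vectors of equal length")
        weight = n_samples / total_samples
        for i, value in enumerate(parameters):
            aggregated[i] += weight * value
    return aggregated


# Two hypothetical partners with 100 and 300 local compounds.
global_update = federated_average([([1.0, 2.0], 100), ([3.0, 4.0], 300)])
```

Production systems typically add secure aggregation or differential privacy on top of this step; those layers are outside the scope of this sketch.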

Diagram: Federated Learning Architecture

Research Reagent Solutions: Essential Tools for Chemical Space Exploration

The following table details key computational tools and data resources for addressing chemical space coverage challenges.

Table: Key Resources for BioReCS Research

| Resource Name | Type | Primary Function | Relevance to BioReCS Coverage |
|---|---|---|---|
| ChEMBL [1] | Public Database | Curated database of bioactive molecules with drug-like properties. | Provides a vast source of annotated bioactive molecules for training models on heavily explored regions. |
| PubChem [1] | Public Database | Public repository of chemical substances and their biological activities. | A key resource for poly-active and promiscuous structures, and a source for negative data (inactive compounds). |
| Federated ADMET Network [4] | Computational Framework | Enables collaborative training of ML models across proprietary datasets. | Systematically expands the chemical space a model can learn from without sharing raw data. |
| MIST Foundation Model [2] | AI Model (Transformer) | A family of large-scale molecular foundation models. | Provides a pre-trained model that has learned general chemical concepts, enabling fine-tuning for diverse tasks with limited data. |
| EMFF-2025 [5] | Neural Network Potential (NNP) | A general ML potential for C, H, N, O-based materials. | Demonstrates a transfer learning protocol for achieving high accuracy in a chemical subspace with minimal new data. |
| MAP4 Fingerprint [1] | Molecular Descriptor | A structure-inclusive, general-purpose molecular fingerprint. | Aims to be a universal descriptor for entities ranging from small molecules to biomolecules. |
| InertDB [1] | Curated Dataset | A collection of experimentally determined and AI-generated inactive molecules. | Helps define the non-biologically relevant chemical space, improving model discrimination. |

Frequently Asked Questions (FAQs)

Q1: My dataset is small (N ≤ 300). Why do my complex models perform well in training but fail in real-world predictions?

This is a classic sign of overfitting. In small datasets, sophisticated models like Random Forests or Neural Networks can memorize the noise in the training data rather than learning the underlying pattern. One study on digital mental health interventions found that for datasets of 300 or fewer samples, the difference between cross-validation results and holdout test performance could be as high as 0.12 in AUC (a key performance metric). Simpler models like Naive Bayes showed less overfitting under these conditions [6]. The solution is to use simpler models for small datasets, be skeptical of high cross-validation scores, and prioritize collecting more data.

Q2: When generating a synthetic dataset, should I prioritize creating a massive number of data points or focus on maximizing diversity?

Once a baseline dataset size is achieved, diversity often becomes more critical than sheer size. Research on building energy prediction models found that after the dataset contained approximately 1,440 samples, focusing on increasing the diversity of building shapes led to better model performance than simply adding more similar data points [7]. Similarly, the Massive Atomic Diversity (MAD) dataset contains fewer than 100,000 structures, yet models trained on it rival those trained on much larger datasets because its structures are aggressively modified to maximize atomic diversity [8].

Q3: Can I trust a model to predict the properties of a molecule that is very different from anything in my training set?

Extrapolation, or predicting far outside the range of your training data, is inherently risky and prone to large errors. Systematic analyses show that prediction errors become "much larger" during extrapolation compared to interpolation. For tasks requiring extrapolation, linear machine learning methods (e.g., Partial Least Squares regression) are often more reliable and preferable to complex, non-linear models [9]. Always define your model's "applicability domain" to understand its limits.

Q4: Is there a minimum dataset size that guarantees a good model?

There is no universal minimum, but domain-specific guidelines are emerging. For predicting dropout in digital mental health interventions, studies suggest a minimum of N = 500 to 1,000 data points to mitigate overfitting and see performance converge [6]. Furthermore, a new algorithmic framework from MIT researchers demonstrates that the optimal dataset size is problem-specific and can be mathematically identified, often being smaller than traditionally assumed, by exploiting the underlying structure of the problem [10].

Q5: How can I possibly screen a chemical library of billions or trillions of compounds?

A combination of machine learning and molecular docking can make this feasible. A state-of-the-art workflow involves training a machine learning classifier (like CatBoost) on the docking scores of a small, representative subset (e.g., 1 million compounds) of the vast library. This model then pre-screens the entire multi-billion-compound library, reducing the number of compounds that require computationally expensive docking by over 1,000-fold [11].

Troubleshooting Guides

Problem: High Performance Variance in Small Datasets

Symptoms: Model performance (e.g., AUC, R²) is excellent during cross-validation but drops significantly on a separate holdout test set or when deployed.

Diagnosis: This is typically caused by overfitting on a small dataset (N ≤ 300), where the model learns spurious correlations specific to the training data [6].

Solution:

- Simplify the Model: Switch from a complex model (e.g., Neural Network, Random Forest) to a simpler one (e.g., Logistic Regression, Naive Bayes). Research shows simpler models are less prone to overfitting on small data [6].

- Reduce Feature Count: Use feature selection to retain only the most informative variables. One study found that a hand-selected set of 13 behavioral features outperformed a larger set of 129 features [6].

- Prioritize Data Collection: If possible, aim to collect data until you reach a more stable dataset size (e.g., N ≥ 500) [6].
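To make the symptom measurable, the gap between cross-validated and holdout AUC can be tracked directly. The sketch below computes AUC from its pairwise-ranking definition and reports the gap. The labels, scores, and the cross-validation figure are invented for illustration.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a positive outranks a negative.

    Ties between a positive and a negative count as half a win.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("both classes must be present")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


def overfitting_gap(cv_auc, holdout_auc):
    """Positive values mean cross-validation was more optimistic than holdout."""
    return cv_auc - holdout_auc


# Invented holdout predictions and an invented cross-validation AUC.
holdout_auc = roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1])
gap = overfitting_gap(cv_auc=0.87, holdout_auc=holdout_auc)
```

A gap approaching the 0.12 AUC reported for N ≤ 300 [6] suggests the cross-validation estimate should not be trusted.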

Problem: Poor Model Generalization Across Diverse Chemical Structures

Symptoms: The model performs well on molecules similar to the training set but fails on novel scaffolds or structural types.

Diagnosis: The training dataset has insufficient coverage of the relevant chemical space [8] [12].

Solution:

- Assess Data Diversity: Use dimensionality reduction techniques like PCA, UMAP, or sketch-map to visualize your dataset's chemical space. Check if your test compounds fall outside the clusters formed by your training data [8].

- Augment with Synthetic Data: Generate synthetic data that expands the boundaries of your training set. The MAD dataset philosophy recommends applying "systematic perturbations" and "aggressive modifications" to existing stable structures to massively increase atomic diversity [8].

- Define the Applicability Domain: Implement a quantitative measure to define the model's applicability domain. Predictions for molecules outside this domain should be treated with extreme caution [9].
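One common way to quantify the applicability domain is the similarity of a query molecule to its nearest neighbor in the training set. The sketch below uses Tanimoto similarity on fingerprints represented as sets of on-bit indices. The 0.4 cutoff and the toy fingerprints are assumptions for illustration; a suitable threshold depends on the fingerprint type and should be calibrated on held-out data.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def in_applicability_domain(query_fp, training_fps, threshold=0.4):
    """Return (inside_domain, nearest_similarity) for a query fingerprint."""
    nearest = max(tanimoto(query_fp, ref) for ref in training_fps)
    return nearest >= threshold, nearest


# Toy fingerprints; real ones would come from a cheminformatics toolkit.
training_fps = [{1, 2, 3, 4}, {2, 3, 5}]
familiar = in_applicability_domain({1, 2, 3}, training_fps)
novel = in_applicability_domain({7, 8, 9}, training_fps)
```

Predictions for queries such as the second one, which shares no features with any training molecule, are the ones to treat with extreme caution.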

Problem: Inefficient Screening of Ultra-Large Chemical Libraries

Symptoms: Virtual screening of a multi-billion-compound library is computationally prohibitive using traditional methods like molecular docking alone.

Diagnosis: The direct docking approach does not scale to the size of modern make-on-demand chemical libraries [11].

Solution: Implement a Machine Learning-Accelerated Workflow [11]:

- Sample and Dock: Randomly sample a manageable subset (e.g., 1 million compounds) from the multi-billion library and dock them against your target.

- Train a Classifier: Train a machine learning classifier (CatBoost with Morgan fingerprints is a top performer) to predict "high-scoring" compounds based on the docking results from step 1.

- Pre-Screen with ML: Use the trained model to pre-screen the entire multi-billion library and select a much smaller subset (e.g., 20-25 million compounds) predicted to be high-scoring.

- Dock the Promising Subset: Perform molecular docking only on this pre-screened subset to identify final hits. This workflow can reduce computational cost by over 1,000-fold.
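The classifier in this workflow needs binary labels derived from the docking scores of the sampled subset. A minimal sketch of that labeling step follows. It assumes that lower (more negative) docking scores are better, which is the usual convention but should be checked for the docking program in use. The function name and the synthetic scores are illustrative.

```python
def label_top_fraction(docking_scores, fraction=0.01):
    """Label the best-scoring fraction of compounds 1 and the rest 0.

    Assumes lower docking scores indicate better predicted binding.
    """
    if not docking_scores:
        return []
    n_top = max(1, round(len(docking_scores) * fraction))
    ranked = sorted(range(len(docking_scores)), key=lambda i: docking_scores[i])
    top = set(ranked[:n_top])
    return [1 if i in top else 0 for i in range(len(docking_scores))]


# Synthetic scores for 200 compounds; index 199 has the best (lowest) score.
scores = [-float(i) for i in range(200)]
labels = label_top_fraction(scores, fraction=0.01)
```

The resulting labels are heavily imbalanced by construction, which is one reason class-conditional calibration is useful in the pre-screening step.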

Experimental Protocols & Data

Protocol 1: Generating a Diverse Synthetic Dataset for Atomistic Machine Learning

This protocol is inspired by the construction of the Massive Atomic Diversity (MAD) dataset [8].

Objective: To create a compact yet highly diverse dataset for training robust, general-purpose machine-learning interatomic potentials.

Methodology:

- Seed Selection: Start with a set of stable, equilibrium structures from existing databases (e.g., organic molecules, inorganic crystals).

- Systematic Perturbation: Apply aggressive modifications to the seed structures to explore a wide energy landscape. Key operations include:

  - Rattling: Introduce random atomic displacements.

  - Random Strain: Apply random strain tensors to cell vectors.

  - Random Composition: Create new structures by randomizing atomic species within a given lattice.

  - Generate Derivatives: Create surfaces from bulk materials and clusters from molecules.

- Consistent Property Calculation: Calculate target properties (e.g., energy, forces) for all generated structures using a highly consistent level of theory (e.g., identical DFT settings) to ensure a coherent structure-energy mapping.

- Validation: Characterize the final dataset using latent space descriptors (e.g., feature vectors from a trained model) and visualize with PCA or sketch-map to confirm broad coverage of the chemical space.
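Two of the perturbations listed above, rattling and random strain, are simple enough to sketch with the standard library. The displacement width and strain magnitude below are arbitrary illustrative values, not the settings used to build the MAD dataset, and the function names are hypothetical.

```python
import random


def rattle(positions, sigma=0.1, rng=random):
    """Add independent Gaussian noise (standard deviation sigma) to every coordinate."""
    return [[c + rng.gauss(0.0, sigma) for c in atom] for atom in positions]


def random_strain(cell, max_strain=0.05, rng=random):
    """Deform a 3x3 cell (rows are lattice vectors) by a random symmetric strain.

    Fractional coordinates are unchanged by this operation; Cartesian
    positions must be rescaled with the same deformation matrix.
    """
    strain = [[0.0] * 3 for _ in range(3)]
    for i in range(3):
        for j in range(i, 3):
            strain[i][j] = strain[j][i] = rng.uniform(-max_strain, max_strain)
    deform = [[(1.0 if i == j else 0.0) + strain[i][j] for j in range(3)]
              for i in range(3)]
    # new_cell = cell x (identity + strain)
    return [[sum(row[k] * deform[k][j] for k in range(3)) for j in range(3)]
            for row in cell]


rng = random.Random(0)  # fixed seed so the perturbations are reproducible
seed_positions = [[0.0, 0.0, 0.0], [2.0, 2.0, 2.0]]
seed_cell = [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 4.0]]
rattled = rattle(seed_positions, sigma=0.1, rng=rng)
strained = random_strain(seed_cell, max_strain=0.05, rng=rng)
```

Each perturbed structure would then be passed to the same DFT setup as the seeds, in line with the consistent property calculation step.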

Protocol 2: Machine Learning-Accelerated Virtual Screening of Billion-Compound Libraries

This protocol details the workflow proven to reduce docking computation by over 1,000-fold [11].

Objective: To efficiently identify top-scoring ligands for a protein target from a multi-billion-scale chemical library.

Methodology:

- Library Preparation: Obtain the ultra-large library (e.g., Enamine REAL, ZINC). Precompute molecular descriptors (Morgan fingerprints are recommended) for all compounds.

- Reference Docking: Randomly sample 1 million compounds from the library. Dock all 1 million compounds against the prepared protein target structure.

- Model Training:

  - Label Data: Define the top 1% of scoring compounds from the reference docking as the "active" class.

  - Train Classifiers: Train an ensemble of five CatBoost classifiers using the Morgan fingerprints and the assigned labels. Use 80% of the data for training and 20% for calibration.

- Conformal Prediction:

  - Use the trained models within the Mondrian Conformal Prediction (CP) framework to predict the activity of the remaining billions of unscreened compounds.

  - Set the significance level (ε) to achieve the desired balance between sensitivity and the size of the output set. This step selects the compounds that will be explicitly docked.

- Final Docking and Validation: Perform molecular docking on the much smaller subset of compounds selected by the CP step. Experimentally test the top-ranking compounds from this final list to validate the hits.
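The conformal prediction step can be illustrated with a small class-conditional (Mondrian) inductive conformal predictor. The sketch assumes a classifier has already produced class probabilities and uses one minus the probability of a class as the nonconformity score, which is a common choice but not necessarily the one used in [11]. All probabilities and names below are hypothetical.

```python
from collections import defaultdict


def mondrian_p_values(calibration, test_probs):
    """Class-conditional conformal p-values for one test compound.

    `calibration` is a list of (probabilities_by_class, true_label) pairs
    from the held-out calibration set; `test_probs` maps each class to the
    predicted probability for the test compound.
    """
    scores_by_class = defaultdict(list)
    for probs, label in calibration:
        scores_by_class[label].append(1.0 - probs[label])

    p_values = {}
    for label, prob in test_probs.items():
        alpha = 1.0 - prob
        reference = scores_by_class[label]
        # Count calibration examples of this class that conform no better.
        at_least = sum(1 for s in reference if s >= alpha)
        p_values[label] = (at_least + 1) / (len(reference) + 1)
    return p_values


def prediction_set(p_values, epsilon):
    """Keep every class whose p-value exceeds the significance level."""
    return {label for label, p in p_values.items() if p > epsilon}


calibration = (
    [({"active": p, "inactive": 1 - p}, "active") for p in (0.9, 0.8, 0.7, 0.6)]
    + [({"active": 1 - p, "inactive": p}, "inactive") for p in (0.95, 0.9, 0.85, 0.8)]
)
p_values = mondrian_p_values(calibration, {"active": 0.75, "inactive": 0.25})
selected = prediction_set(p_values, epsilon=0.25)
```

Compounds whose prediction set contains "active" are the ones forwarded to docking. Raising ε shrinks that set at the cost of missing more true high scorers.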

Quantitative Data on Dataset Size and Model Performance

The following table summarizes key quantitative findings from research on dataset sizes [7] [6].

Table 1: Empirical Guidelines for Dataset Sizes and Model Behavior

| Field / Context | Key Finding on Dataset Size | Quantitative Impact |
|---|---|---|
| Digital Mental Health (Dropout Prediction) | Overfitting is substantial for N ≤ 300. | Train-test performance gap up to 0.12 AUC. |
| Digital Mental Health (Dropout Prediction) | Overfitting is substantially reduced for N ≥ 500. | Train-test performance gap reduced to avg. 0.02 AUC. |
| Digital Mental Health (Dropout Prediction) | Model performance convergence point. | N = 750 - 1,500. |
| Building Energy Prediction | Point where diversity matters more than size. | After dataset size reaches ~1,440 samples. |

Workflow Diagrams

Diagram 1: ML-Accelerated Virtual Screening Workflow

Diagram 2: Solving Small Dataset & Diversity Problems

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Datasets for Chemical Space Research

| Tool / Resource | Function / Description | Key Application |
|---|---|---|
| MAD Dataset [8] | A compact, universal dataset of atomic structures designed for "Massive Atomic Diversity" via systematic perturbations. | Training robust, general-purpose interatomic potentials that perform well on both organic and inorganic systems. |
| CatBoost Classifier [11] | A high-performance gradient-boosted decision tree algorithm, used here with binary molecular fingerprints as input features. | The core ML model in ultra-large virtual screening workflows for its balance of speed and accuracy. |
| Conformal Prediction (CP) Framework [11] | A statistical framework that provides valid measures of confidence for ML predictions, allowing control over error rates. | Pre-screening chemical libraries to define a subset of compounds for docking at a user-specified error rate. |
| Morgan Fingerprints (ECFP) [11] | A circular fingerprint that captures molecular substructures around each atom, providing a numerical representation of a molecule. | The molecular descriptor of choice for training QSAR models in virtual screening due to its strong benchmark performance. |
| Sketch-map [8] | A non-linear dimensionality reduction technique specifically designed to map high-dimensional atomistic configuration spaces. | Visualizing and assessing the diversity and coverage of a dataset within the broader chemical space. |

Frequently Asked Questions (FAQs)

FAQ 1: Why should we target the Beyond Rule-of-5 (bRo5) chemical space for difficult drug targets? Targets with large, flat, or relatively featureless binding sites, such as those involved in protein-protein interactions (PPIs), are often difficult to drug with conventional small molecules [13]. bRo5 compounds (typically with molecular weight > 500 Da) are beneficial for such targets because their larger size enables them to form sufficient contacts with the target protein to achieve high affinity and selectivity [13] [14]. Some bRo5 compounds, particularly macrocycles, can exhibit "chameleonic" properties, meaning they can adopt different conformations in different environments (e.g., changing polarity to cross cell membranes), which can enable improved cell permeability despite their size [15].

FAQ 2: What are the key experimental challenges in working with macrocycles and other bRo5 compounds? A major challenge is accurately characterizing their conformational behavior. Due to their size and flexibility, these molecules do not exist in a single 3D structure but as an ensemble of conformations [15]. This makes techniques like X-ray crystallography insufficient on their own, as the crystal environment captures only a limited set of conformations [15]. Furthermore, standard cellular permeability assays (e.g., Caco-2) often fail with bRo5 compounds due to technical issues like low detection sensitivity and poor compound recovery [16].

FAQ 3: How can we effectively profile the permeability of bRo5 compounds? Traditional high-throughput cellular permeability assays often yield unreliable data for bRo5 compounds. An equilibrated Caco-2 assay has been developed to address this. Key modifications from the standard protocol include [16]:

- A Pre-incubation Step: Compound solutions are added to the donor compartments and receiver buffer to the receiver compartments for 60-90 minutes before the main assay. The pre-incubation solutions are then removed.

- Use of Bovine Serum Albumin (BSA): Adding BSA (1% w/v) to the transport buffer (HBSS, pH 7.4) helps reduce nonspecific compound binding to the apparatus.

- Optimized Analytics: Using sensitive LC-MS/MS methods for detection.

This optimized setup allows for permeability measurement closer to equilibrium, significantly improving data quality, recovery, and the ability to predict human absorption for bRo5 compounds [16].

FAQ 4: What are the primary mechanisms of action for metal-based drugs? Metal-based drugs can operate via several distinct mechanisms, which provides a framework for their classification [17]:

- Covalent Binding: The metal complex undergoes ligand exchange, and the metal ion covalently binds to biomolecules like DNA or proteins, inhibiting their function (e.g., Cisplatin) [17].

- Enzyme Inhibition via Mimicry: The metal complex or species structurally mimics a natural substrate or metabolite, allowing it to competitively inhibit enzymes without direct coordination to the enzyme (e.g., Vanadium-oxo species mimicking phosphate) [17].

- Redox Activation: The metal ion can undergo changes in its oxidation state within the biological environment, leading to the generation of reactive oxygen species (ROS) or other reactive intermediates that cause cellular damage [17].

Troubleshooting Guides

Problem: Low Permeability in bRo5 Compound Candidates

Potential Cause: The compound may lack adequate "chameleonic" properties, so it remains in a high-polarity conformation that cannot traverse the lipid cell membrane [15].

Solution:

- Analyze Conformational Ensembles: Use NMR spectroscopy in both polar (e.g., DMSO) and nonpolar solvents to determine the ensemble of conformations the compound adopts in different environments. Computational methods alone may not be reliable for this [15].

- Calculate 3D Polar Surface Area (PSA): Calculate the solvent-accessible 3D-PSA (SA-3D-PSA) for the conformers identified in the nonpolar environment. A lower minimum SA-3D-PSA is correlated with higher passive cell permeability [15].

- Promote Intramolecular Hydrogen Bonding (IMHB): Design compounds that can form stable IMHBs. These bonds can shield polar groups in a nonpolar environment (like a cell membrane), effectively reducing the molecule's apparent polarity and improving permeability [15].

Problem: Poor Recovery or Inconclusive Results in Standard Caco-2 Assays

Potential Cause: bRo5 compounds frequently exhibit low permeability and high nonspecific binding to plasticware, leading to concentrations below the detection limit in the receiver compartment [16].

Solution: Implement the equilibrated Caco-2 assay protocol as described in FAQ 3 [16]. Key steps to verify:

- Ensure a pre-incubation step of 60-90 minutes is performed.

- Confirm that HBSS buffer with 1% (w/v) BSA is used in both donor and receiver compartments during the main incubation.

- Use a sensitive LC-MS/MS method for analytical detection.

- Validate the assay with known reference compounds to establish in vitro-in vivo correlation.

Problem: Lack of Chemical Diversity in an In-House Macrocycle Library

Potential Cause: Traditional organic synthesis of macrocycles is often step-intensive and low-yielding, limiting the structural diversity that can be produced and screened [18].

Solution: Utilize cheminformatics-based enumeration to create large virtual libraries of macrocyclic scaffolds.

- Tool: Use software like the publicly available PKS Enumerator [18].

- Methodology: The software allows you to define constitutional constraints, such as the types and numbers of structural motifs (derived from known bioactive macrolides), ring size, and the maximum library size [18].

- Application: Enumerate a virtual library (e.g., the V1M library of 1 million macrolide scaffolds). This library can then be profiled with molecular descriptors, analyzed for diversity, and used for virtual screening to prioritize the most promising candidates for synthesis [18].
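The idea of constraint-based enumeration can be shown with a generic toy example. The sketch below is not the PKS Enumerator algorithm or its interface. It simply lists cyclic arrangements of building blocks whose ring-atom contributions sum to a target ring size, removes rotational duplicates, and stops at a maximum library size. The motif names and sizes are hypothetical.

```python
from itertools import islice


def canonical_rotation(sequence):
    """Return the lexicographically smallest rotation of a cyclic sequence."""
    return min(sequence[i:] + sequence[:i] for i in range(len(sequence)))


def enumerate_ring_scaffolds(motif_sizes, ring_size, max_library_size):
    """List unique cyclic motif arrangements that close a ring of `ring_size`.

    `motif_sizes` maps a motif name to the number of ring atoms it adds.
    """
    if ring_size <= 0 or any(size <= 0 for size in motif_sizes.values()):
        raise ValueError("ring size and motif sizes must be positive")

    def linear_sequences(prefix, remaining):
        if remaining == 0:
            yield prefix
            return
        for name, size in motif_sizes.items():
            if size <= remaining:
                yield from linear_sequences(prefix + (name,), remaining - size)

    def unique_rings():
        seen = set()
        for sequence in linear_sequences((), ring_size):
            ring = canonical_rotation(sequence)
            if ring not in seen:  # skip rotations of a ring already emitted
                seen.add(ring)
                yield ring

    return list(islice(unique_rings(), max_library_size))


# Hypothetical motifs contributing 2 or 3 ring atoms each.
library = enumerate_ring_scaffolds({"A": 2, "B": 3}, ring_size=10,
                                   max_library_size=1000)
```

A real enumeration would also track stereochemistry and substituents and would convert each arrangement into a concrete structure for descriptor calculation.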

Experimental Protocols & Data

Table 1: Key Molecular Descriptors for Macrolactones (Macrocyclic Lactones) from MacrolactoneDB Analysis

Analysis of nearly 14,000 macrolactones provides a benchmark for the properties of this structural class [19].

| Molecular Descriptor | Mean Value ± Standard Deviation | Fraction Exceeding Threshold* |
|---|---|---|
| Molecular Weight (MW) | 787 ± 339 g mol⁻¹ | 82% (MW > 500) |
| Topological Polar Surface Area (TPSA) | 213 ± 139 Ų | 71% (TPSA > 140) |
| SlogP | 3.10 ± 2.65 | 22% (SlogP > 5) |
| Hydrogen Bond Acceptors (HBA) | 12.7 ± 6.36 | 58% (HBA > 10) |
| Hydrogen Bond Donors (HBD) | 4.63 ± 4.88 | 23% (HBD > 5) |
| Number of Rotatable Bonds (NRB) | 9.21 ± 7.98 | 31% (NRB > 10) |
| Ring Size (RS) | 17.4 ± 5.99 atoms | Not Applicable |

*Lipinski's Rule of 5 thresholds: MW ≤ 500, SlogP ≤ 5, HBD ≤ 5, HBA ≤ 10 [19]. The TPSA (≤ 140 Ų) and NRB (≤ 10) cutoffs are the related Veber criteria and are not part of the Rule of 5 itself.
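The four Rule of 5 thresholds in the footnote translate directly into a small check. The sketch below applies them to the mean descriptor values from the table, treating those means as if they described a single hypothetical "average" macrolactone. The function name is illustrative.

```python
# Upper limits taken from the table footnote.
RO5_LIMITS = {"MW": 500.0, "SlogP": 5.0, "HBD": 5.0, "HBA": 10.0}


def ro5_violations(descriptors):
    """Return the names of the Rule of 5 descriptors that exceed their limit."""
    return [name for name, limit in RO5_LIMITS.items()
            if descriptors[name] > limit]


# Mean values from the table, used here as one hypothetical compound.
mean_macrolactone = {"MW": 787.0, "SlogP": 3.10, "HBD": 4.63, "HBA": 12.7}
violations = ro5_violations(mean_macrolactone)
```

The average macrolactone exceeds the molecular weight and hydrogen bond acceptor limits, which is consistent with these being the two most frequently violated Rule of 5 criteria in the table.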

Table 2: Research Reagent Solutions for Key Experiments

| Reagent / Resource | Function | Example Application |
|---|---|---|
| PKS Enumerator Software | Cheminformatics tool to enumerate virtual libraries of macrocycle scaffolds with user-defined constraints [18]. | Generating diverse, synthetically-inspired macrocyclic libraries for virtual screening [18]. |
| Equilibrated Caco-2 Assay | A modified cellular assay with pre-incubation and BSA to reliably measure permeability of low-permeability bRo5 compounds [16]. | Predicting human intestinal absorption (fa) for bRo5 compounds and PROTACs [16]. |
| Cambridge Structural Database (CSD) | A repository of experimental small-molecule crystal structures [15]. | Analyzing solid-state conformations and intramolecular hydrogen bonding propensity [15]. |
| FTMap Server | Computational mapping of protein binding sites to identify "hot spots" that contribute most to binding energy [13]. | Assessing if a protein target has a "complex" hot spot structure that would benefit from a bRo5 ligand [13]. |

Workflow and Pathway Visualizations

Diagram: Workflow for Conformational Analysis of a bRo5 Compound

This workflow outlines an integrated experimental-computational approach to characterize the conformational ensemble of a flexible bRo5 molecule like rifampicin, which is critical for understanding its permeability [15].

Diagram: Classification of Metal-Based Drug Mechanisms

This diagram categorizes the primary modes of action (MoA) for metallodrugs, highlighting the key characteristics and examples for each class [17].

Frequently Asked Questions (FAQs)

FAQ 1: What is the core problem of chemical space coverage in public databases? The core problem is a significant imbalance. Specific chemical subspaces (ChemSpas), primarily small organic and "drug-like" molecules, are heavily explored and over-represented. In contrast, other functionally important regions, such as metal-containing molecules, macrocycles, and peptides, are severely underrepresented "dark regions". This skews machine learning model training and limits discovery in areas like inorganic chemistry and underexplored biological target classes [20] [1].

FAQ 2: Which specific compound classes are considered "Dark Regions"? Dark regions, as identified in analyses of the Biologically Relevant Chemical Space (BioReCS), consistently include [20] [1]:

- Metal-containing molecules and metallodrugs: Often filtered out by tools designed for organic chemistry.

- Macrocycles: Compounds containing large rings (≥12 atoms).

- Beyond Rule of 5 (bRo5) compounds: Molecules beyond traditional drug-like property space.

- Mid-sized peptides and PROTACs (Proteolysis-Targeting Chimeras).

- Protein-Protein Interaction (PPI) inhibitors.

- Compounds with undesirable effects: Such as toxic chemicals, which are less studied than beneficial ones.

FAQ 3: How does data imbalance impact Machine Learning (ML) in drug discovery? Imbalanced data leads to biased ML models with poor predictive accuracy for the underrepresented classes. Models trained on existing public data may fail to recognize active compounds from dark regions, limiting their robustness and applicability for virtual screening in new therapeutic areas. This creates a critical bottleneck for generalizable AI in chemistry [21].

FAQ 4: What strategies can mitigate the data imbalance problem? Researchers can employ several technical strategies to address this challenge [21]:

- Data Resampling: Artificially balancing datasets by oversampling minority classes or undersampling majority classes.

- Data Augmentation: Using physical models or Large Language Models (LLMs) to generate new, plausible chemical structures in dark regions.

- Algorithmic Approaches: Using ML models and loss functions specifically designed to handle class imbalance.

- Feature Engineering: Developing universal molecular descriptors that work across diverse chemical classes (e.g., small molecules, peptides, metallodrugs).
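As an illustration of the data resampling strategy, the sketch below randomly oversamples minority classes until every class matches the largest one. The record layout, the `label` key, and the class names are hypothetical. This is one simple resampling scheme, not the specific method of any cited work.

```python
import random
from collections import Counter


def oversample_minority(records, label_key="label", seed=0):
    """Randomly duplicate minority-class records until all classes match the largest."""
    rng = random.Random(seed)
    by_class = {}
    for rec in records:
        by_class.setdefault(rec[label_key], []).append(rec)
    target = max(len(group) for group in by_class.values())
    balanced = []
    for group in by_class.values():
        balanced.extend(group)
        # Draw with replacement to fill the gap to the majority-class size.
        balanced.extend(rng.choices(group, k=target - len(group)))
    return balanced


# Hypothetical toy dataset: 6 "organic" records versus 2 "metal_complex" records.
toy = [{"id": i, "label": "organic"} for i in range(6)]
toy += [{"id": 100 + i, "label": "metal_complex"} for i in range(2)]
balanced = oversample_minority(toy)
counts = Counter(rec["label"] for rec in balanced)
print(counts)
```

Resampling of this kind should be applied to the training split only, so that duplicated records do not leak into validation or test sets.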

Troubleshooting Guides

Problem: My ML Model Fails to Predict Activity in Underexplored Chemical Classes

This is a classic symptom of a model trained on an imbalanced dataset that lacks sufficient examples from the target chemical space.

Investigation & Diagnosis:

- Audit Your Training Data: Compare the chemical diversity of your training set against the target domain.

- Quantify the Imbalance: Calculate the distribution of key chemical features (e.g., presence of metals, molecular weight, specific functional groups) in your data. The following table helps benchmark your dataset's composition against known public resources.

Table 1: Quantifying Chemical Space Coverage in Public Databases

| Database / Dataset | Primary Chemical Focus (Heavily Explored) | Notable Omissions / Dark Regions | Key Statistics |

|---|---|---|---|

| ChEMBL [20] | Small organic molecules, bioactive compounds. | Metal-containing molecules, macrocycles, peptides. | ~2.4 million compounds; major source of poly-active and promiscuous structures. |

| PubChem [20] | Small organic molecules, broad bioactivity. | Similar to ChEMBL; default filters often remove inorganics. | One of the largest aggregate public repositories. |

| OMol25 [22] | Biomolecules, electrolytes, metal complexes. | Aims for broad coverage by including previously underrepresented classes. | >100M calculations; includes SPICE, Transition1x, and metal complexes combinatorially generated via Architector. |

| Halo8 [23] | Halogen-containing (F, Cl, Br) reaction pathways. | Focused on addressing a specific coverage gap (halogens). | ~20M calculations from 19,000 pathways; incorporates systematic halogen substitution. |

| MolPILE [24] | Small, synthesizable organic compounds. | Curated for "real-world" feasibility, which may exclude some dark regions. | 222 million compounds; created via rigorous, automated curation from multiple large-scale databases. |

| Common Dark Regions [20] [1] | --- | Metallodrugs, Macrocycles, bRo5 compounds, PROTACs, PPI inhibitors, Toxic chemicals. | Often excluded due to modeling challenges and lack of standardized descriptors. |
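The "quantify the imbalance" step can be approximated with very little tooling. The sketch below estimates the fraction of metal-containing entries in a list of SMILES strings by inspecting bracket atoms. The metal list is deliberately incomplete and the function names are assumptions for illustration. A production audit would rely on a cheminformatics toolkit rather than a regular expression.

```python
import re

# Illustrative, deliberately incomplete set of metal symbols (an assumption).
METALS = {"Li", "Na", "K", "Mg", "Ca", "Fe", "Co", "Ni", "Cu", "Zn",
          "Ru", "Rh", "Pd", "Ag", "Pt", "Au", "Gd"}

# In SMILES, metals must be written as bracket atoms such as [Pt] or [Fe+2].
# The optional digits skip an isotope label before the element symbol.
BRACKET_ATOM = re.compile(r"\[\d*([A-Z][a-z]?)")


def contains_metal(smiles):
    """True if any bracket atom in the SMILES string is a listed metal."""
    return any(symbol in METALS for symbol in BRACKET_ATOM.findall(smiles))


def metal_fraction(smiles_list):
    """Fraction of entries that contain at least one listed metal."""
    if not smiles_list:
        return 0.0
    return sum(contains_metal(s) for s in smiles_list) / len(smiles_list)


# Toy sample: ethanol, benzene, cisplatin, paracetamol.
sample = ["CCO", "c1ccccc1", "N[Pt](N)(Cl)Cl", "CC(=O)Nc1ccc(O)cc1"]
fraction = metal_fraction(sample)
print(f"metal-containing fraction: {fraction:.2f}")
```

Running the same count on both the training set and the intended application domain gives a first, coarse indication of whether metal-containing chemistry is represented at all.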

Solution: Implement a Data Augmentation Pipeline Follow this experimental protocol to enrich your dataset and improve model generalizability.

Protocol: Multi-Level Data Augmentation for Dark Regions

Objective: Systematically augment training data to better represent a target dark region (e.g., metal complexes).

Research Reagent Solutions:

Table 2: Essential Tools for Data Augmentation and Analysis

| Reagent / Tool | Function / Application | Example Use Case |

|---|---|---|

| RDKit [23] | Open-source cheminformatics toolkit. | Molecular standardization, descriptor calculation, and structure-based filtering. |

| GFN2-xTB [23] | Semi-empirical quantum chemical method. | Fast geometry optimization and preliminary energy calculations for generated structures. |

| Architector [22] | Computational package for generating metal complexes. | Combinatorially generates 3D structures of metal complexes from metal/ligand combinations. |

| LHASA / SMARTS Patterns [24] | Reaction rule representations. | Defining and applying known chemical reactions for in silico compound generation. |

| MAP4 Fingerprint [1] | A general-purpose molecular descriptor. | Creating a unified representation for diverse molecules (small molecules to peptides). |

Step-by-Step Workflow:

- Identify Target Dark Region: Define the scope (e.g., "macrocyclic peptides with 15-25 amino acids").

- Seed Collection: Gather all available known actives and inactives from specialized databases (e.g., Peptipedia for peptides, MetAP DB for metallodrugs) [20].

- Structure Generation:

  - Combinatorial Generation: Use tools like Architector for metal complexes [22] or apply SMARTS-based reaction rules to available building blocks [24].

  - AI-Based Generation: Employ deep generative models or LLMs fine-tuned on the seed collection to propose novel, synthetically accessible structures [21].

- Structure Optimization & Validation: Optimize generated structures using a fast method like GFN2-xTB [23]. Filter out chemically unreasonable or unstable molecules.

- Descriptor Calculation & Integration: Calculate universal descriptors (e.g., MAP4) [1] for all new and existing data to create a consistent feature space.

- Balanced Dataset Creation: Combine the newly generated structures with the original training data, using resampling techniques to create a more balanced distribution for model training [21].

The following diagram visualizes this multi-level augmentation workflow.

Problem: Lack of Universal Descriptors for Mixed Compound Classes

Symptoms: Poor ML performance when your dataset contains a mix of small molecules, peptides, and metal complexes.

Solution: Employ structure-inclusive, general-purpose molecular descriptors.

Experimental Protocol:

- Move Beyond Traditional Fingerprints: Standard fingerprints like ECFP may not capture relevant features for non-organic structures [24].

- Evaluate Universal Descriptors: Test the performance of modern descriptors such as the MAP4 fingerprint [1] and learned model embeddings.

- Benchmarking: Train identical ML models on the same dataset but using different descriptor sets (ECFP, MAP4, Model Embeddings). Compare model performance on a held-out test set containing diverse molecule types to identify the most robust descriptor for your specific mixed-class problem.

This case study investigates a critical data gap in pharmaceutical research: the systematic underrepresentation of halogenated compounds in machine learning training datasets. Despite approximately 25% of pharmaceuticals containing halogens like fluorine, chlorine, and bromine, existing quantum chemical datasets predominantly focus on limited chemical spaces without adequate halogen coverage [23]. This discrepancy creates significant performance limitations in machine learning interatomic potentials (MLIPs) when applied to halogen-containing drug molecules, potentially compromising the accuracy of computational drug discovery pipelines.

The Halo8 dataset, a comprehensive collection incorporating approximately 20 million quantum chemical calculations from 19,000 unique reaction pathways, directly addresses this gap by systematically integrating fluorine, chlorine, and bromine chemistry into reaction pathway sampling [23]. By examining this solution, we demonstrate how improved halogen representation enables more accurate modeling of halogen-specific phenomena—including halogen bonding in transition states, polarizability changes during bond breaking, and unique mechanistic patterns—ultimately strengthening computational approaches to pharmaceutical development.

Halogen atoms play crucial roles across pharmaceutical chemistry, with fluorine appearing in approximately 25% of small-molecule drugs and numerous materials [23]. Despite this pharmaceutical relevance, halogen representation in quantum chemical datasets remains severely limited. The QM series datasets, which laid the groundwork for MLIP development, focus primarily on H, C, N, O, and F atoms, with fluorine appearing in less than 1% of QM7-X structures [23]. The ANI series expanded this foundation with extensive conformational sampling, and ANI-2x notably included both fluorine and chlorine atoms, though these datasets emphasize equilibrium and near-equilibrium configurations rather than reactive processes [23].

Transition1x marked a significant advance as the first large-scale dataset for chemical reactions but focused exclusively on C, N, and O heavy atoms without including halogens [23]. This absence presents critical challenges for MLIPs when modeling halogen-specific reactive phenomena. The unique electronic properties of halogens—including their polarizability, specific bonding patterns, and influence on molecular conformation—are insufficiently captured in current models trained on halogen-deficient datasets.

Table: Halogen Representation in Major Chemical Datasets

| Dataset | Elements Covered | Halogen Coverage | Primary Focus |

|---|---|---|---|

| QM Series | H, C, N, O, (F in <1%) | Limited Fluorine | Equilibrium structures |

| ANI Series | H, C, N, O, F, Cl | Fluorine, Chlorine | Equilibrium and near-equilibrium configurations |

| Transition1x | H, C, N, O | None | Reaction pathways |

| Halo8 | H, C, N, O, F, Cl, Br | Comprehensive: F, Cl, Br | Reaction pathways with halogens |

Quantitative Evidence of the Representation Gap

Statistical Analysis of Dataset Composition

The underrepresentation of halogens in training data has measurable consequences for model performance. The Halo8 dataset comprises approximately 20 million individual structures derived from about 19,000 unique reaction pathways, with each path containing approximately 1,000 structural snapshots along the reaction coordinate [23]. Within this dataset, halogen-containing molecules account for 10.7 million structures (3.8M with fluorine, 3.7M with chlorine, and 3.1M with bromine) from 9,341 reactions, while recalculated Transition1x molecules contribute 9.4 million structures from 9,835 reactions [23].

Analysis of chemical space coverage reveals that existing datasets without deliberate halogen inclusion fail to capture critical regions of pharmaceutical relevance. When examining the pharmacological space, recent studies analyzing ChEMBL34 found that 81% of approved drugs contain at least one aromatic ring [25], yet the complex interplay between aromaticity and halogen substituents remains poorly represented in standard training datasets.

Performance Implications for Predictive Modeling

The selection of computational methods for dataset generation profoundly impacts model accuracy, particularly for halogenated systems. Benchmarking studies conducted for the Halo8 dataset revealed that the widely used ωB97X/6-31G(d) level—employed for Transition1x—showed unacceptably high weighted MAEs of 15.2 kcal/mol on the DIET test set, with most HAL59 subset entries unable to be calculated due to basis set limitations for heavier elements [23].

In contrast, the ωB97X-3c composite method achieved 5.2 kcal/mol accuracy—comparable to quadruple-zeta quality—while requiring only 115 minutes per calculation, representing a five-fold speedup compared to the quadruple-zeta level [23]. This methodological advancement enables practical generation of high-quality data for halogen-containing systems at manageable computational cost.

Table: Performance Comparison of Computational Methods for Halogenated Systems

| Computational Method | Weighted MAE (DIET set) | Computational Time | Feasibility for Large-Scale Dataset Generation |

|---|---|---|---|

| ωB97X/6-31G(d) | 15.2 kcal/mol | Not specified | Limited (basis set issues for heavier elements) |

| ωB97X-D4/def2-QZVPPD | 4.5 kcal/mol | 571 minutes | Low (computationally prohibitive) |

| ωB97X-3c | 5.2 kcal/mol | 115 minutes | High (optimal accuracy/efficiency balance) |
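The "five-fold speedup" follows directly from the timings in the table above. The short calculation below reproduces it, together with the accuracy cost of moving from the quadruple-zeta reference to ωB97X-3c. The dictionary layout is only an illustration; the numbers are copied from the table.

```python
# Values copied from the method comparison table.
methods = {
    "wB97X-D4/def2-QZVPPD": {"mae_kcal": 4.5, "minutes": 571},
    "wB97X-3c": {"mae_kcal": 5.2, "minutes": 115},
}

reference = methods["wB97X-D4/def2-QZVPPD"]
candidate = methods["wB97X-3c"]

# Ratio of wall times: how many times faster the composite method is.
speedup = reference["minutes"] / candidate["minutes"]
# Extra weighted MAE accepted in exchange for that speedup.
mae_penalty = candidate["mae_kcal"] - reference["mae_kcal"]

print(f"speedup: {speedup:.2f}x")
print(f"MAE penalty: {mae_penalty:.1f} kcal/mol")
```

The trade is roughly a 0.7 kcal/mol increase in weighted MAE for a speedup of about 4.97×.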

Experimental Protocols for Addressing Halogen Underrepresentation

Halogen-Enhanced Reaction Pathway Sampling

The Halo8 dataset employs a sophisticated multi-level computational workflow that achieves a 110-fold speedup over pure DFT approaches, making comprehensive reaction sampling for halogenated systems computationally feasible [23]. The protocol consists of four key phases:

Reactant Selection and Preparation

- Extract chlorine-containing molecules from GDB-8, a subset of GDB-13 containing molecules with up to 8 heavy atoms

- Systematically substitute each chlorine atom with fluorine and bromine, generating two additional molecules from each parent molecule

- Employ RDKit for stereoisomer enumeration and canonical SMILES generation

- Generate 3D coordinates using the MMFF94 force field and OpenBabel with conformer searching

- Optimize final structures using GFN2-xTB to ensure diverse starting geometries
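The halogen substitution step can be sketched at the string level. The example below assumes that every chlorine in the parent is replaced at once, giving one all-fluorine and one all-bromine analogue. That reading of "two additional molecules from each parent" is an interpretation, and the function name is hypothetical. The published workflow uses RDKit for enumeration and canonicalization, which this sketch does not attempt.

```python
def halogen_variants(smiles):
    """Return fluorine and bromine analogues of a chlorine-containing SMILES.

    Plain token replacement works here because "Cl" is an unambiguous atom
    token in SMILES, but the output is neither canonicalized nor sanitized.
    """
    if "Cl" not in smiles:
        return {}
    return {
        "F": smiles.replace("Cl", "F"),
        "Br": smiles.replace("Cl", "Br"),
    }


variants = halogen_variants("ClCC(Cl)C=O")
print(variants)  # {'F': 'FCC(F)C=O', 'Br': 'BrCC(Br)C=O'}
```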

Reaction Discovery and Characterization

- Process each molecule through the Dandelion computational pipeline

- Conduct product search via single-ended growing string method (SE-GSM) to explore possible bond rearrangements

- Perform landscape exploration using nudged elastic band (NEB) calculations with climbing image for improved transition state location

- Apply filtering criteria to ensure chemical validity, excluding trivial pathways with strictly uphill energy trajectories or negligible energy variations

Pathway Optimization and Validation

- Implement redundancy filtering: sample new bands only when cumulative sum of Fmax exceeds 0.1 eV/Å since last inclusion

- Require pathways to exhibit proper transition state characteristics (single imaginary frequency)

- Perform final refinement through single-point DFT calculations on selected structures along each pathway
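One possible reading of the redundancy filter is sketched below: a band is kept once the running sum of its maximum force (Fmax) since the last kept band exceeds the threshold, after which the sum restarts. The function name, the input format, and the reset behaviour are assumptions made for illustration. The Dandelion implementation may differ in detail.

```python
def select_bands(fmax_per_band, threshold=0.1):
    """Return indices of bands kept by a cumulative-Fmax criterion.

    fmax_per_band: maximum force (eV/Å) for each successive NEB band.
    A band is kept once the running sum since the last kept band
    exceeds `threshold`; the running sum then restarts from zero.
    """
    kept = []
    running = 0.0
    for index, fmax in enumerate(fmax_per_band):
        running += fmax
        if running > threshold:
            kept.append(index)
            running = 0.0
    return kept


# Hypothetical Fmax trace for five successive bands.
kept = select_bands([0.05, 0.04, 0.03, 0.20, 0.01])
print(kept)  # [2, 3]
```

The effect is that nearly converged, slowly changing bands are sampled sparsely, while bands that still carry large forces are sampled more densely.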

Quantum Chemical Computation

- Execute all DFT calculations using ORCA 6.0.1 with the command `!wB97X-3c notrah nososcf`

- Validate consistent use of standard DIIS for SCF convergence

- Address previously identified bugs in force computation to ensure accuracy
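For readers who want to reproduce single calculations, the sketch below writes a minimal ORCA input around the keyword line quoted above. The helper name `orca_input` and the example geometry are assumptions. The source lists no further keywords, so none are added here. A real workflow would likely need extra settings, for example for gradients or parallel execution.

```python
def orca_input(atoms, charge=0, multiplicity=1,
               keywords="wB97X-3c notrah nososcf"):
    """Build a minimal ORCA input string.

    atoms: list of (symbol, x, y, z) tuples with Cartesian coordinates in Å.
    """
    lines = [f"! {keywords}", f"* xyz {charge} {multiplicity}"]
    for symbol, x, y, z in atoms:
        lines.append(f"{symbol:<2} {x:12.6f} {y:12.6f} {z:12.6f}")
    lines.append("*")  # closes the coordinate block
    return "\n".join(lines) + "\n"


# Illustrative, unoptimized geometry for hydrogen fluoride.
text = orca_input([("H", 0.0, 0.0, 0.0), ("F", 0.0, 0.0, 0.92)])
print(text)
```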

Active Learning for Chemical Space Diversification

The QDπ dataset employs a query-by-committee active learning strategy to maximize chemical diversity while minimizing redundant information in training data [26]. This approach is particularly valuable for ensuring adequate coverage of halogen-containing compounds without prohibitive computational expense:

Committee Model Training

- Train 4 independent machine learning potential (MLP) models against the developing dataset with different random seeds

- Calculate energy and force standard deviations between the 4 models for each structure in source databases

- Set inclusion thresholds at 0.015 eV/atom for energy and 0.20 eV/Å for force standard deviations

Structure Selection and Inclusion

- Select random subsets of up to 20,000 structures from candidates exceeding threshold deviations

- Label selected structures with ωB97M-D3(BJ)/def2-TZVPPD and include in dataset

- Continue active learning cycles until all structures are either included or excluded
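The committee-based selection rule can be sketched as follows. The thresholds are those quoted above. The remaining choices are assumptions made for illustration and should be checked against [26]: the population standard deviation, taking the maximum over force components, and flagging a structure when either threshold is exceeded.

```python
from statistics import pstdev

ENERGY_TOL = 0.015  # eV/atom
FORCE_TOL = 0.20    # eV/Å


def committee_deviation(energies, forces, n_atoms):
    """Return (energy std per atom, max force-component std) for one structure.

    energies: one total energy (eV) per committee model.
    forces: one flat list of force components (eV/Å) per committee model.
    """
    energy_std = pstdev(energies) / n_atoms
    # zip(*forces) groups the same force component across all models.
    force_std = max(pstdev(component) for component in zip(*forces))
    return energy_std, force_std


def needs_labeling(energies, forces, n_atoms):
    """True if the committee disagrees enough to justify a reference calculation."""
    energy_std, force_std = committee_deviation(energies, forces, n_atoms)
    return energy_std > ENERGY_TOL or force_std > FORCE_TOL


# Hypothetical predictions from 4 models for a 2-atom structure.
same_forces = [[0.1, 0.0, -0.1]] * 4
agree = needs_labeling([-10.00, -10.01, -10.00, -10.01], same_forces, n_atoms=2)
disagree = needs_labeling([-10.0, -10.2, -9.8, -10.0], same_forces, n_atoms=2)
print(agree, disagree)  # False True
```

Structures on which the committee already agrees add little new information, so only the flagged ones are sent for reference labeling.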

Dataset Extension via Molecular Dynamics

- For small databases with few optimized structures, employ active learning with MD simulation

- Perform MD sampling using one of the 4 MLP models with varying simulation lengths

- Apply same tolerance thresholds for candidate selection

- Terminate procedure when models agree within specified tolerance for all explored samples

Technical Support Center: Troubleshooting Halogen Representation Issues

Frequently Asked Questions

Q1: How can I determine if my dataset has sufficient halogen diversity for my specific application?

A1: Implement the following diagnostic protocol:

- Calculate the iSIM Tanimoto (iT) value for your dataset, which corresponds to the internal diversity of the set (lower iT values indicate more diverse collections) [27]

- Perform complementary similarity analysis to identify molecules in the lowest 5th percentile (medoid-like) and highest 5th percentile (outlier molecules) [27]

- Use BitBIRCH clustering algorithm to dissect the chemical space and identify gaps in halogen coverage [27]

- Compare your dataset's halogen percentage against the pharmaceutical industry baseline of 25% halogen-containing compounds [23]
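The iSIM Tanimoto value can be computed from per-bit column sums without enumerating molecule pairs. The sketch below follows that column-sum formulation as commonly described for iSIM. Treat the exact formula and the function name as assumptions to verify against [27]. Fingerprints are plain lists of 0/1 bits here to keep the example dependency-free.

```python
def isim_tanimoto(fingerprints):
    """Compute the iSIM Tanimoto (iT) value of a set of binary fingerprints.

    fingerprints: list of equal-length lists of 0/1 bits.
    Runs in O(N * M) time by working with per-bit column sums.
    """
    n = len(fingerprints)
    column_sums = [sum(bits) for bits in zip(*fingerprints)]
    # Molecule pairs that share an "on" bit, summed over all bit positions.
    shared_on = sum(k * (k - 1) / 2 for k in column_sums)
    # Molecule pairs in which exactly one member has the bit on.
    mismatched = sum(k * (n - k) for k in column_sums)
    denominator = shared_on + mismatched
    return shared_on / denominator if denominator else 0.0


# For two molecules the value reduces to the ordinary Tanimoto coefficient.
pair = [[1, 1, 1, 0], [0, 1, 1, 1]]
print(isim_tanimoto(pair))  # 0.5
```

Computing iT separately for the halogenated subset and for the full dataset shows whether the halogen-containing molecules are diverse or merely numerous.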

Q2: What are the specific technical challenges in modeling bromine and chlorine compared to fluorine?

A2: The challenges vary by halogen:

- Fluorine: Strong C-F bonds and pronounced electrostatic effects require accurate polarization treatment

- Chlorine: Larger atomic radius and polarizability demand appropriate basis sets with sufficient flexibility

- Bromine: Significant relativistic effects and diffuse electron distributions necessitate specialized pseudopotentials or all-electron basis sets paired with a relativistic treatment

- General: The ωB97X-3c method provides balanced performance across all three halogens at manageable computational cost [23]

Q3: How can I improve model transferability to novel halogenated compounds not in the training set?

A3: Implement strategic data augmentation:

- Apply systematic halogen substitution to existing non-halogenated compounds in your dataset [23]

- Include diverse bonding environments (aryl halides, alkyl halides, halogen bonding complexes)

- Sample along reaction coordinates involving halogen transfer or participation [23]

- Incorporate transition states with halogen bonding interactions

- Use active learning to identify and fill gaps in halogen chemical space [26]

Troubleshooting Guides

Problem: Poor Model Performance on Halogenated Compound Property Prediction

Symptoms

- High prediction errors for energies and forces of halogen-containing molecules

- Inaccurate geometry optimization for halogen bonding complexes

- Failure to reproduce known halogen substitution effects on molecular properties

Diagnostic Steps

- Quantify Representation Gap: Calculate the percentage of halogen-containing compounds in your dataset and compare to the 25% pharmaceutical industry benchmark [23]

- Assess Chemical Diversity: Use UMAP visualization with PubChem fingerprints to identify clustering patterns and gaps in halogen chemical space [25]

- Evaluate Methodological Adequacy: Verify that your computational method provides sufficient accuracy for halogen interactions (weighted MAE < 6 kcal/mol on relevant benchmarks) [23]

Solutions

- Immediate: Incorporate the Halo8 or QDπ datasets to bolster halogen coverage [23] [26]

- Medium-term: Implement active learning with halogen-focused candidate selection [26]

- Long-term: Develop organization-specific halogen-enriched datasets using the provided experimental protocols

Problem: Computational Bottlenecks in Halogen Dataset Generation

Symptoms

- Prohibitive computation times for electronic structure calculations on halogenated systems

- Memory issues when processing heavier halogens (bromine, iodine)

- Difficulty converging self-consistent field calculations for halogenated compounds

Optimization Strategies

- Method Selection: Adopt the ωB97X-3c composite method, which provides 5× speedup versus quadruple-zeta methods while maintaining accuracy [23]

- Multi-level Workflows: Implement the Halo8 approach combining xTB initial sampling with DFT refinement (110× speedup versus pure DFT) [23]

- Active Learning: Use query-by-committee approaches to minimize redundant calculations [26]


Research Reagent Solutions

Table: Essential Resources for Halogen-Inclusive Pharmaceutical Research

| Resource Name | Type | Key Features | Application in Halogen Research |

|---|---|---|---|

| Halo8 Dataset | Quantum Chemical Dataset | 20M structures, F/Cl/Br coverage, ωB97X-3c level | Training MLIPs for halogenated pharmaceuticals; reaction pathway analysis [23] |

| QDπ Dataset | Curated Chemical Dataset | 1.6M structures, active learning selection, 13 elements | Developing universal MLPs with optimized halogen diversity [26] |

| ChEMBL34 | Bioactivity Database | Manually curated bioactive molecules, drug-like properties | Mapping pharmacological space of halogen-containing drugs [25] |

| Dandelion Pipeline | Computational Workflow | Multi-level (xTB/DFT) reaction sampling, 110× speedup | Efficient generation of halogen reaction pathway data [23] |

| BitBIRCH Algorithm | Clustering Tool | O(N) complexity, Tanimoto similarity | Analyzing chemical diversity and identifying halogen coverage gaps [27] |

| iSIM Framework | Diversity Metric | Intrinsic similarity quantification, complementary similarity | Assessing and optimizing halogen representation in custom datasets [27] |

The systematic underrepresentation of halogenated compounds in pharmaceutical datasets constitutes a critical data quality issue with far-reaching implications for drug discovery pipelines. This case study demonstrates that targeted interventions—including strategic dataset development (Halo8, QDπ), optimized computational methods (ωB97X-3c), and intelligent sampling strategies (active learning)—can effectively address this representation gap.

The integration of these approaches enables substantial performance improvements in MLIPs for halogen-containing pharmaceuticals, ultimately enhancing the accuracy and efficiency of computational drug discovery. As the field advances, the ongoing development of diverse, well-curated datasets incorporating comprehensive halogen chemistry will be essential for realizing the full potential of machine learning in pharmaceutical sciences.

Future efforts should focus on expanding halogen diversity to include less common halogens, improving modeling of halogen bonding in complex biological environments, and developing more efficient active learning strategies specifically optimized for halogen chemical space. Through continued attention to dataset quality and diversity, the computational chemistry community can ensure that machine learning models remain reliable and effective tools for pharmaceutical innovation.

Building Better Datasets: Innovative Methods for Expansive Chemical Space Coverage

A fundamental challenge in creating machine learning (ML) models for molecular science is the lack of comprehensive training data that combines broad chemical diversity with a high level of accuracy. The "chemical space" is a multidimensional concept where molecular properties define coordinates and relationships between compounds. A specific and critical subset is the Biologically Relevant Chemical Space (BioReCS), which encompasses molecules with biological activity. Current datasets often fail to represent this space adequately, limiting the generalization ability of ML models in critical areas like drug discovery and materials science [28] [1].

Two recent, massive-scale datasets, Open Molecules 2025 (OMol25) and MolPILE, represent significant leaps forward in addressing this coverage issue. This guide distills their methodologies and provides a practical troubleshooting framework for researchers undertaking similar dataset creation projects.

The table below summarizes the core specifications of the OMol25 and MolPILE datasets, highlighting their scale and primary focus.

Table 1: Core Specifications of OMol25 and MolPILE Datasets

| Feature | OMol25 | MolPILE |

|---|---|---|

| Total Size | Over 100 million DFT calculations [28] | 222 million compounds [29] |

| Primary Content | High-accuracy Density Functional Theory (DFT) calculations [28] | Diverse collection of chemical structures for representation learning [29] |

| Level of Theory | ωB97M-V/def2-TZVPD [28] | N/A (compounds from various existing databases) [29] |

| Key Innovation | Unprecedented elemental, chemical, and structural diversity with high-accuracy quantum chemistry [28] | Large-scale, rigorously curated collection from 6 databases via an automated pipeline [29] |

| Stated Goal | Enable ML models with quantum chemical accuracy at a fraction of the computational cost [28] | Serve as a standardized, "ImageNet-like" resource for molecular representation learning [29] |

Key Experimental Protocols and Methodologies

Protocol: Constructing a Comprehensive Dataset like OMol25

The OMol25 project provides a detailed methodology for building a dataset that blends breadth and quantum chemical accuracy [28] [30].

- Define Coverage Areas: Deliberately select diverse regions of chemical space. OMol25 focused on three key areas:

  - Biomolecules: Structures were sourced from the RCSB PDB and BioLiP2 datasets. Random docked poses were generated, and tools like Schrödinger were used to sample different protonation states and tautomers extensively [30].

  - Electrolytes: Molecular dynamics simulations were run for various disordered systems (aqueous solutions, ionic liquids). Clusters were extracted, and systems relevant to battery chemistry, including oxidized/reduced clusters, were investigated [30].

  - Metal Complexes: Combinatorially generated using combinations of metals, ligands, and spin states with the Architector package. Reactive species were generated using the artificial force-induced reaction (AFIR) scheme [30].

- Incorporate Existing Datasets: Recalculate and integrate data from established community datasets (e.g., SPICE, Transition1x, ANI-2x) at a consistent, high level of theory to ensure broad coverage and data uniformity [28] [30].

- Execute High-Accuracy Calculations: Perform quantum chemical calculations at a high, consistent level of theory. OMol25 used the ωB97M-V functional with the def2-TZVPD basis set and a large integration grid to ensure accuracy for non-covalent interactions and gradients [28] [30].

- Generate Reactive Structures: Use specialized methods like AFIR to create reactive pathways and sample structures along them, ensuring the dataset includes underrepresented transition states and reaction intermediates [30].

Protocol: Curating a Diverse Compound Library like MolPILE

MolPILE emphasizes a robust, automated curation process to ensure data quality from heterogeneous sources [29].

- Source Selection: Identify and gather data from multiple, large-scale public and proprietary databases (MolPILE used 6 sources) [29].

- Automated Curation Pipeline: Develop and run a standardized data pipeline to:

  - Remove Duplicates: Identify and merge duplicate compounds based on structural fingerprints.

  - Standardize Formats: Convert all structures into a consistent representation (e.g., SMILES, SDF).

  - Validate Structures: Perform basic chemical validity checks.

- Analyze Chemical Diversity: Use molecular descriptors and visualization techniques to profile the resulting dataset's coverage of chemical space and identify potential biases or gaps [29].
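The curation steps above can be sketched as a small merge, validate, and deduplicate loop. This is not the MolPILE pipeline. The function names are hypothetical, the validity check is purely syntactic, and the default `canonical_key` only strips whitespace. A real pipeline would pass a toolkit-based canonicalization or structural hash as `canonical_key` so that different spellings of the same molecule collapse to one entry.

```python
def is_plausible(smiles):
    """Cheap syntactic check: non-empty, balanced parentheses and brackets."""
    if not smiles:
        return False
    depth = {"(": 0, "[": 0}
    closers = {")": "(", "]": "["}
    for char in smiles:
        if char in depth:
            depth[char] += 1
        elif char in closers:
            depth[closers[char]] -= 1
            if depth[closers[char]] < 0:
                return False
    return all(count == 0 for count in depth.values())


def curate(sources, canonical_key=str.strip):
    """Merge several SMILES lists, dropping invalid and duplicate entries."""
    seen = set()
    curated = []
    for source in sources:
        for raw in source:
            key = canonical_key(raw)
            if not is_plausible(key) or key in seen:
                continue
            seen.add(key)
            curated.append(key)
    return curated


# Two hypothetical source databases with overlap and malformed entries.
merged = curate([["CCO", " CCO ", "C1CC1("], ["c1ccccc1", "CCO", ""]])
print(merged)  # ['CCO', 'c1ccccc1']
```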

FAQs and Troubleshooting Guides

FAQ 1: How do I choose between a quantum chemistry dataset (like OMol25) and a structural library (like MolPILE) for my project?

Answer: The choice depends entirely on your project's goal.

- Use a quantum chemistry dataset (OMol25) when you need high-fidelity energetic and electronic properties. This is essential for tasks like force field development, molecular dynamics simulations, reaction modeling, and predicting spectroscopic properties. The trade-off is that these datasets are computationally very expensive to create and often require more expertise to utilize fully.

- Use a structural library (MolPILE) for molecular representation learning, virtual screening, and predicting quantitative structure-activity relationships (QSAR). These datasets are ideal for training foundation models to understand general chemical structure and its relationship to broad properties, but they do not contain quantum mechanical energy data.

FAQ 2: What are the most common data quality issues in large molecular datasets, and how can I mitigate them?

Answer: The most common issues stem from inconsistency and bias.

- Problem: Inconsistent Calculation Methods. Mixing data from different levels of theory or basis sets introduces noise and systematic errors, crippling model accuracy.

- Solution: Adopt a uniform, high-level method for all calculations, as OMol25 did with ωB97M-V/def2-TZVPD [28]. If using existing data, rigorously filter for consistency.

- Problem: Underrepresentation of Critical ChemSpas. Many datasets are biased toward small, organic, drug-like molecules, so models trained on them perform poorly on metal complexes, peptides, and "beyond Rule of 5" molecules [1].

- Problem: Lack of Negative Data. Models trained only on active or successful compounds cannot distinguish inactive or failed ones.

- Solution: Incorporate negative data, such as "dark chemical matter" (compounds repeatedly inactive in assays) or databases of putative inactive molecules like InertDB [1].

FAQ 3: My model trained on a large dataset is overfitting. What scaling techniques should I consider?

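Answer: On massive datasets, overfitting and scaling problems often appear together: model complexity drives the former, while compute and memory limits drive the latter. The techniques below address one or both.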

- Implement Distributed Computing: Use frameworks like Apache Hadoop or Spark to distribute data and computation across multiple machines, enabling parallel processing and faster training on the full dataset [31].

- Apply Feature Selection/Reduction: Use techniques like Principal Component Analysis (PCA) to reduce the dimensionality of your feature space, which decreases computational load and can improve generalization [31].

- Utilize Batch Processing: Divide the dataset into smaller batches and train the model incrementally. This keeps training manageable from a memory perspective; batching alone does not prevent overfitting, so pair it with regularization or validation-based early stopping [31].

- Consider a Simpler Model: Complex models with many parameters can struggle to scale. In some scenarios, a simpler model (e.g., linear models, shallow decision trees) may generalize better on large, high-dimensional data [31].

Essential Research Reagent Solutions

The following table lists key computational "reagents" and resources essential for large-scale molecular dataset creation and utilization.

Table 2: Key Research Reagents and Computational Tools

| Item / Resource | Function | Example in Use |
|---|---|---|
| ωB97M-V Functional | A state-of-the-art range-separated hybrid meta-GGA density functional that accurately models various interactions, including non-covalent forces. | Used as the consistent level of theory for all 100M+ calculations in the OMol25 dataset [28] [30]. |
| Automated Curation Pipeline | Software to standardize, deduplicate, and validate chemical structures from diverse sources. | Core to the MolPILE construction process, ensuring a clean and consistent dataset from 6 source databases [29]. |
| Neural Network Potentials (NNPs) | Machine learning models trained on quantum data to predict potential energy surfaces with near-quantum accuracy at a fraction of the cost. | Models like eSEN and UMA were trained on OMol25 and demonstrate state-of-the-art accuracy for molecular modeling [30]. |
| Universal Descriptors (e.g., MAP4) | Molecular fingerprints designed to be consistent across different compound classes (small molecules, peptides, etc.). | Crucial for exploring the entire BioReCS, as they allow for the comparison of diverse molecules like small organics and metallodrugs [1]. |

Workflow Visualization: Dataset Creation and Application

The diagram below illustrates the core workflow for creating and applying a massive-scale molecular dataset, integrating the methodologies of OMol25 and MolPILE.

Workflow for Creating and Applying a Molecular Dataset

Workflow Visualization: Model Training Strategies

For projects focused on training models from large datasets, the following diagram outlines the key strategic decisions and paths.

Model Training Strategy Decision Map

Frequently Asked Questions (FAQs)

1. What is the core chemical space limitation that Halo8 and Transition1x address? Most quantum chemical datasets focus predominantly on equilibrium and near-equilibrium structures, which prevents Machine Learning Interatomic Potentials (MLIPs) from accurately modeling chemical reactions that involve bond breaking, bond forming, and transition states [32] [23]. Transition1x and Halo8 systematically incorporate molecular configurations on and around reaction pathways, providing the data needed to train next-generation ML models for reactive systems [32] [23].

2. How does Halo8 improve upon the Transition1x dataset? Halo8 significantly expands chemical diversity by incorporating halogen chemistry (fluorine, chlorine, bromine), which is critically important in pharmaceuticals and materials science but was missing from Transition1x [23]. It also uses a more advanced and accurate density functional theory (DFT) method, ωB97X-3c, and includes additional molecular properties like dipole moments and partial charges [23].

3. My ML model performs poorly on predicting reaction barriers. Could the training data be the issue? Yes. Models trained only on popular benchmarks like ANI1x or QM9, which lack sufficient sampling of transition state regions, will inherently fail to learn the features of reaction pathways [32]. Retraining your model on a combination of equilibrium data and reaction-pathway data from Transition1x or Halo8 should lead to substantial improvements in predicting reaction barriers and energies [32].

4. What is the practical impact of using different DFT levels between these datasets? The DFT level directly impacts the accuracy of computed energies and forces. Transition1x uses ωB97x/6-31G(d), while Halo8 uses the ωB97X-3c composite method [32] [23]. The latter provides accuracy comparable to quadruple-zeta basis sets and a much better treatment of dispersion interactions, which is crucial for halogen-containing systems, at a reasonable computational cost [23]. Mixing data from different DFT levels without recalculation can introduce systematic errors.

Troubleshooting Guides

Issue 1: Low Model Accuracy on Halogenated Compounds

Problem: Your MLIP shows high prediction errors when applied to molecules containing fluorine, chlorine, or bromine.

Solution:

- Root Cause: The model was likely trained on datasets with limited or no halogen coverage (e.g., QM9, ANI1x, or the original Transition1x) [23].

- Recommended Action: Fine-tune or retrain your model using the Halo8 dataset. Halo8 contains approximately 10.7 million structures of halogen-containing molecules from 9,341 reactions, providing the necessary diversity for the model to learn halogen-specific interactions [23].

- Validation: After retraining, validate the model's performance on the HAL59 benchmark subset, which focuses on halogen dimer interactions [23].
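Before retraining, it can help to confirm that halogens really are the source of the error. The sketch below splits prediction errors by whether a molecule contains F, Cl, or Br and reports the mean absolute error for each group. The record layout (`elements`, `reference`, `predicted`) and the numbers are hypothetical stand-ins for your own evaluation data.

```python
from statistics import mean

HALOGENS = {"F", "Cl", "Br"}


def mae_by_halogen(records):
    """Return the mean absolute error separately for halogenated and other molecules."""
    groups = {"halogenated": [], "non_halogenated": []}
    for rec in records:
        key = "halogenated" if HALOGENS & set(rec["elements"]) else "non_halogenated"
        groups[key].append(abs(rec["predicted"] - rec["reference"]))
    # Skip empty groups so mean() is never called on an empty list.
    return {name: mean(errors) for name, errors in groups.items() if errors}


# Hypothetical evaluation records (energies in arbitrary units).
evaluation = [
    {"elements": ["C", "H", "Cl"], "reference": 1.0, "predicted": 1.5},
    {"elements": ["C", "H", "F"], "reference": 2.0, "predicted": 2.25},
    {"elements": ["C", "H", "O"], "reference": 1.0, "predicted": 1.125},
    {"elements": ["C", "H", "N"], "reference": 3.0, "predicted": 3.0},
]

report = mae_by_halogen(evaluation)
for group, error in report.items():
    print(f"{group}: MAE = {error:.4f}")
```

A large gap between the two groups supports the root cause described above; comparable errors suggest the problem lies elsewhere.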

Issue 2: Inadequate Sampling of Transition State Regions

Problem: Your model fails to accurately identify or describe transition states and reaction barriers.

Solution:

- Root Cause: The training data does not sufficiently cover the high-energy regions of the potential energy surface (PES) where transition states exist [32].

- Recommended Action: Incorporate the Transition1x dataset into your training pipeline. It provides 9.6 million DFT calculations specifically sampled from configurations on and around reaction pathways using the Nudged Elastic Band (NEB) method [32].

- Workflow Integration: The data selection protocol in Transition1x ensures diverse sampling by including intermediate NEB paths that are significantly different from each other, preventing overfitting to specific regions [32].

Issue 3: Dataset Inconsistencies and Integration Errors

Problem: You encounter errors or performance drops when combining data from multiple sources (e.g., Transition1x and Halo8) for training.

Solution:

- Root Cause: Underlying differences in DFT software, versions, and calculation methods create systematic inconsistencies, even when the level of theory is nominally the same [23].

- Recommended Action: Use the recalculated Transition1x structures that are included within the Halo8 dataset. The creators of Halo8 recalculated the original Transition1x reactions at the ωB97X-3c level to ensure internal consistency across the entire dataset [23].

- Preventive Step: When building a new dataset from multiple sources, always check for consistency in computed properties and, if necessary, recalculate data to a unified standard.
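One simple way to run the consistency check in the preventive step is to compare a relative quantity, such as reaction barrier heights, for the reactions that two sources have in common. The sketch below is an assumption-laden illustration: the dictionaries, the reaction identifiers, the barrier values, and the 0.05 eV tolerance are all hypothetical.

```python
from statistics import mean, stdev


def barrier_offset(barriers_a, barriers_b):
    """Mean and spread of barrier differences over reactions present in both sources."""
    shared = sorted(set(barriers_a) & set(barriers_b))
    if len(shared) < 2:
        raise ValueError("need at least two shared reactions to estimate an offset")
    diffs = [barriers_a[rxn] - barriers_b[rxn] for rxn in shared]
    return mean(diffs), stdev(diffs), len(shared)


# Hypothetical barrier heights in eV, keyed by reaction identifier.
source_a = {"rxn-1": 1.50, "rxn-2": 2.25, "rxn-3": 0.75}
source_b = {"rxn-1": 1.25, "rxn-2": 2.00, "rxn-3": 0.50, "rxn-4": 3.00}

offset, spread, n_shared = barrier_offset(source_a, source_b)
needs_recalculation = abs(offset) > 0.05  # hypothetical tolerance in eV
print(f"offset = {offset:.3f} eV over {n_shared} shared reactions, spread = {spread:.3f} eV")
```

A nonzero mean offset with a small spread points to a systematic difference between sources; a large spread suggests the discrepancy is structure-dependent, in which case recalculation at a unified level is the safer choice.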

Dataset Comparison & Specifications

The table below summarizes the core quantitative data for the Transition1x and Halo8 datasets, enabling a direct comparison of their scope and methodologies.

| Feature | Transition1x | Halo8 |
|---|---|---|
| Total Structures | 9.6 million DFT calculations [32] | ~20 million DFT calculations [23] |
| Reaction Pathways | 10,073 organic reactions [32] | ~19,000 unique reaction pathways [23] |
| Heavy Atoms Covered | C, N, O [32] | C, N, O, F, Cl, Br [23] |
| Source Molecules | GDB-7 (up to 7 heavy atoms) [32] | GDB-13 (3-8 heavy atoms), incl. systematic halogen substitution [23] |
| Level of Theory | ωB97x/6-31G(d) [32] | ωB97X-3c [23] |
| Primary Sampling Method | Nudged Elastic Band (NEB) with Climbing Image (CINEB) [32] | Reaction Pathway Sampling (RPS) / Multi-level workflow with NEB/CINEB [23] |
| Key Properties | Energies, Forces [32] | Energies, Forces, Dipole moments, Partial charges [23] |

Experimental Protocols

Protocol 1: Generating Reaction Pathways with Nudged Elastic Band (NEB)

This methodology is central to the creation of the Transition1x dataset [32].

Reactant and Product Preparation:

- Obtain initial 3D geometries for the reactant and product pair.

- Relax both endpoints using a geometry optimizer (e.g., BFGS) until the maximum force on any atom is below 0.01 eV/Å [32].

Initial Path Generation:

- Create an initial guess for the Minimum Energy Path (MEP) by interpolating between the relaxed reactant and product.

- Refine this initial path using the Image Dependent Pair Potential (IDPP) [32].

NEB Optimization:

Climbing Image NEB (CINEB):

- Switch to the CINEB algorithm to ensure the highest energy image converges to the true transition state.

- Continue optimization until the maximum perpendicular force is below a tight threshold of 0.05 eV/Å [32].

Data Selection:

- From the optimization iterations, save intermediate paths and their associated DFT calculations (energies and forces).

- A path is included in the dataset only if the cumulative sum of the maximal perpendicular force since the last saved path exceeds 0.1 eV/Å, ensuring diverse and non-redundant sampling [32].
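The data selection rule in the last step can be sketched as a short loop. This is an illustration of the cumulative-force criterion as described above, not code from the Transition1x authors; the function name and the example force values are assumptions.

```python
def select_paths(fmax_per_iteration, threshold=0.1):
    """Return indices of optimization iterations whose paths are kept.

    A path is kept once the running sum of the maximal perpendicular force
    (eV/Å) accumulated since the last kept path exceeds the threshold.
    """
    selected = []
    cumulative = 0.0
    for iteration, fmax in enumerate(fmax_per_iteration):
        cumulative += fmax
        if cumulative > threshold:
            selected.append(iteration)
            cumulative = 0.0  # restart the sum after each saved path
    return selected


# Hypothetical Fmax values (eV/Å) from successive NEB iterations.
fmax_history = [0.5, 0.2, 0.06, 0.05, 0.04, 0.04, 0.04, 0.02, 0.02]
kept = select_paths(fmax_history)
print(kept)
```

Early in the optimization the forces are large, so nearly every path is kept. As the band converges and the forces shrink, paths are saved less often, which is what keeps near-duplicate structures out of the dataset.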

Protocol 2: Multi-Level Computational Workflow for Halo8

This efficient protocol, implemented via the Dandelion pipeline, was used to generate the Halo8 dataset and achieves a ~110-fold speedup over pure DFT workflows [23].

Reactant Selection and Preparation:

Product Search:

- Use Single-Ended Growing String Method (SE-GSM) with automatically generated driving coordinates to discover possible reaction products from the reactant [23].

Landscape Exploration:

- For successfully identified reactant-product pairs, perform NEB with a climbing image to locate the transition state and map the minimum energy pathway [23].

- Apply filtering to exclude chemically invalid pathways (e.g., those with strictly uphill energy profiles or lacking a single imaginary frequency) [23].

DFT Refinement:

- Perform final single-point DFT calculations at the ωB97X-3c level of theory on selected structures along each converged pathway to obtain highly accurate energies, forces, and electronic properties [23].
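The pathway filter in the landscape exploration step can be expressed as a pair of simple checks. The sketch below covers only the two criteria named above (strictly uphill profiles and the imaginary-frequency count). It assumes that imaginary modes are reported as negative wavenumbers, a common convention in quantum chemistry output, and all function names and values are hypothetical rather than taken from the Dandelion pipeline.

```python
def is_strictly_uphill(energies):
    """True if every image is higher in energy than the one before it."""
    return all(later > earlier for earlier, later in zip(energies, energies[1:]))


def count_imaginary_modes(frequencies):
    """Count imaginary modes, assuming they are reported as negative wavenumbers."""
    return sum(1 for freq in frequencies if freq < 0)


def is_valid_pathway(energies, ts_frequencies):
    """Keep a pathway only if it has a barrier and exactly one imaginary mode at the TS."""
    if len(energies) < 3:
        return False  # need reactant, at least one intermediate image, and product
    if is_strictly_uphill(energies):
        return False  # no maximum along the path, so no transition state
    return count_imaginary_modes(ts_frequencies) == 1


# Hypothetical energy profile (eV) and transition-state frequencies (cm^-1).
profile = [0.0, 0.5, 1.2, 0.8, -0.3]
ts_modes = [-450.0, 120.0, 300.0]
print(is_valid_pathway(profile, ts_modes))
```

Additional criteria, such as rejecting paths with negligible energy variation, would slot in as further early returns in `is_valid_pathway`.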

The Scientist's Toolkit: Essential Research Reagents

The table below lists key computational tools and data resources used in the creation and application of these reaction pathway datasets.

| Resource Name | Type | Function in Research |
|---|---|---|
| Nudged Elastic Band (NEB) | Algorithm | Locates minimum energy paths and transition states between reactant and product states [32]. |
| Climbing Image NEB (CINEB) | Algorithm | An enhanced NEB variant that ensures one image converges to the saddle point (transition state) [32] [23]. |
| ωB97X-3c | DFT Method | A composite quantum chemistry method providing high accuracy for energies and non-covalent interactions at low computational cost, used in Halo8 [23]. |
| Dandelion Pipeline | Software Workflow | An automated, multi-level computational pipeline for efficient reaction discovery and pathway sampling [23]. |
| GDB-13 | Molecular Database | A database of nearly one billion enumerated small organic molecules (up to 13 heavy atoms), used for reactant selection in Halo8 [23]. |
| ASE (Atomic Simulation Environment) | Python Library | A versatile toolkit for setting up, manipulating, running, visualizing, and analyzing atomistic simulations [23]. |

Experimental Workflow Diagrams

Transition1x Data Generation Workflow

Halo8 Multi-Level Computational Pipeline

Leveraging Multi-Level Computational Workflows for Efficient Data Generation

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ 1: What is a multi-level computational workflow and why is it used for generating chemical data?

Multi-level workflows combine different levels of computational chemistry methods to balance speed and accuracy. They typically use a fast, approximate method (like xTB) to explore vast chemical spaces and identify promising regions, followed by accurate but expensive quantum chemical methods (like DFT) for final refinement [23]. This approach is essential because screening billions of molecules with high-level methods alone is computationally infeasible [11].
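The funnel structure of such a workflow can be sketched generically. In the example below, `toy_cheap_score` and `toy_expensive_score` are hypothetical stand-ins for a fast method (such as xTB) and an accurate one (such as DFT); they are toy functions, not calls to any real quantum chemistry package, and lower scores are assumed to be better.

```python
def multilevel_screen(candidates, cheap_score, expensive_score, top_k):
    """Rank all candidates with the cheap method, then refine only the best top_k."""
    ranked = sorted(candidates, key=cheap_score)
    shortlist = ranked[:top_k]
    return {candidate: expensive_score(candidate) for candidate in shortlist}


call_log = []  # records every expensive evaluation, to show how few are needed


def toy_cheap_score(x):
    """Stand-in for a fast, approximate method."""
    return abs(x - 42)


def toy_expensive_score(x):
    """Stand-in for an accurate but costly method."""
    call_log.append(x)
    return (x - 42) ** 2 + 0.5


refined = multilevel_screen(range(100), toy_cheap_score, toy_expensive_score, top_k=10)
best = min(refined, key=refined.get)
print(f"{len(call_log)} expensive evaluations for 100 candidates; best = {best}")
```

The saving comes from the ratio of candidates to expensive evaluations. The design only works when the cheap method ranks candidates well enough that good ones survive into the shortlist.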

FAQ 2: My workflow fails during the reaction pathway sampling (RPS) step. What could be wrong?

- Problem: Trivial or repetitive pathways are being generated.

- Solution: Apply stringent filtering criteria. Exclude pathways with strictly uphill energy trajectories, negligible energy variations, or repetitive structures. To avoid redundancy, include a new band only when the cumulative sum of Fmax since the last included band exceeds 0.1 eV/Å [23].

- Problem: The workflow is too slow.

- Solution: Ensure you are using the multi-level protocol, which can achieve a 110-fold speedup over pure DFT approaches. Use xTB for initial reaction discovery and transition state location before proceeding to single-point DFT calculations on selected structures [23].

FAQ 3: How can I improve the chemical space coverage of my dataset, especially for halogen-containing molecules?

- Use systematic halogen substitution on existing molecular sets. For example, extract chlorine-containing molecules from a database like GDB-8, then substitute each chlorine atom with fluorine and bromine to maximize diversity with minimal effort [23].

- Incorporate specialized datasets like Halo8, which systematically includes fluorine, chlorine, and bromine chemistry into reaction pathway sampling, addressing a critical gap in many existing datasets [23].
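The systematic substitution strategy can be illustrated at the string level. The sketch below enumerates every combination of F, Cl, and Br at the chlorine positions of a SMILES string. It is a naive text-based illustration under the assumption that `Cl` in the input always denotes chlorine; it does not validate chemistry or merge symmetry-equivalent results, and the function name is hypothetical.

```python
import re
from itertools import product


def halogen_variants(smiles, replacements=("F", "Cl", "Br")):
    """Enumerate SMILES strings with each chlorine replaced by F, Cl, or Br."""
    parts = re.split(r"Cl", smiles)
    n_sites = len(parts) - 1  # number of chlorine atoms found
    variants = set()
    for combo in product(replacements, repeat=n_sites):
        pieces = [parts[0]]
        for halogen, tail in zip(combo, parts[1:]):
            pieces.append(halogen)
            pieces.append(tail)
        variants.add("".join(pieces))
    return sorted(variants)


print(halogen_variants("CCl"))
print(len(halogen_variants("ClCCl")))
```

Because the enumeration works on text, strings such as `FCCl` and `ClCF` are both emitted even though they describe the same molecule. In practice the output would be canonicalized and deduplicated with a cheminformatics toolkit before any calculations are run.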

FAQ 4: What level of theory should I use for my DFT calculations on halogenated systems?