Beyond the Simulation: Why Experimental Data is the Keystone of Valid Computational Models in Biomedicine


Abstract

This article explores the critical, multi-faceted role of experimental data in grounding computational models used in drug development and biomedical research. It establishes the foundational principle that models are hypotheses requiring empirical proof, explores methodologies for integrating diverse data types, addresses common challenges like validation and statistical power, and provides a framework for rigorous model evaluation. Aimed at researchers and drug development professionals, the content synthesizes current insights to advocate for a synergistic model-data paradigm that enhances predictive accuracy, fosters innovation, and builds confidence in computational tools for clinical translation.

The Bedrock of Predictiveness: How Experimental Data Transforms Models from Theory to Tool

Defining Mechanistic vs. Purely Data-Driven Models

In the realm of scientific computing, particularly within biological sciences and drug development, computational models are indispensable for integrating complex knowledge and generating testable predictions. These models largely fall into two broad, seemingly divergent categories: mechanistic and purely data-driven models [1]. A full understanding of complex biological processes, such as cell signaling, requires knowledge of protein structure, interactions, and how pathways control phenotypes. Computational models provide a framework for integrating this knowledge to predict the effects of perturbations and interventions in health and disease [1]. The careful implementation and integration of both mechanistic and data-driven approaches can provide new understanding for how manipulating system variables impacts cellular decisions, a principle that extends to pharmaceutical research and development [1] [2].

This guide defines the two modeling paradigms, outlines their methodologies and applications, and frames them within a broader thesis: experimental data is indispensable for validating computational predictions [3].

Core Concepts and Definitions

Mechanistic Models

Mechanistic models are built on established causal relationships and prior biological knowledge. They synthesize biophysical understanding of network interactions to predict system behavior, such as protein concentrations or post-translational modifications, in response to perturbations [1]. These models are grounded in physical laws and are typically expressed through kinetic, constitutive, and conservation equations, often in the form of ordinary or partial differential equations (ODEs/PDEs) [1] [4].

In the conceptual "cue-signal-response" paradigm, mechanistic models are most appropriate for understanding the cue-signal processes, which are governed by knowable reaction rate laws [1]. Their strength lies in their ability to adapt to different physical scenarios and provide a transparent, interpretable framework for analyzing the system. However, they are often populated with numerous parameters that can be difficult to measure directly, leading to challenges with uncertainty and parameter estimation [1].
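To make the idea of kinetic and conservation equations concrete, the following minimal sketch simulates reversible ligand-receptor binding as a single ODE. It is an illustration only: the reaction scheme, the parameter values, and the function names `complex_rate` and `simulate_complex` are assumptions chosen for this example and do not come from the cited references.

```python
# Sketch of a mechanistic model: reversible ligand-receptor binding, L + R <-> C.
# All numbers are illustrative assumptions, not measured constants.

def complex_rate(c, ligand, r_total, k_on, k_off):
    """dC/dt from mass-action kinetics; free receptor follows from conservation."""
    free_receptor = r_total - c
    return k_on * ligand * free_receptor - k_off * c


def simulate_complex(ligand, r_total, k_on, k_off, t_end, dt=0.01):
    """Integrate dC/dt from C(0) = 0 with fixed-step fourth-order Runge-Kutta."""
    c = 0.0
    args = (ligand, r_total, k_on, k_off)
    for _ in range(int(round(t_end / dt))):
        k1 = complex_rate(c, *args)
        k2 = complex_rate(c + 0.5 * dt * k1, *args)
        k3 = complex_rate(c + 0.5 * dt * k2, *args)
        k4 = complex_rate(c + dt * k3, *args)
        c += dt * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
    return c


LIGAND = 10.0   # nM, assumed constant (ligand in excess)
R_TOTAL = 1.0   # nM, total receptor (conserved)
K_ON = 0.1      # 1/(nM*min), assumed
K_OFF = 0.5     # 1/min, assumed

c_final = simulate_complex(LIGAND, R_TOTAL, K_ON, K_OFF, t_end=20.0)

# Analytic equilibrium for comparison: C* = R_total * L / (L + Kd), Kd = k_off / k_on.
c_equilibrium = R_TOTAL * LIGAND / (LIGAND + K_OFF / K_ON)
print(f"simulated C = {c_final:.6f} nM, analytic C* = {c_equilibrium:.6f} nM")
```

Even in this toy case the two rate constants would have to be measured or estimated from data, which is where the parameter-uncertainty challenge described above enters.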

Purely Data-Driven Models

Purely data-driven models, in contrast, use computational algorithms to analyze data without requiring explicit prior mechanistic knowledge [1]. These models, which include machine learning (ML) and deep learning (DL) techniques, excel at identifying complex patterns within high-dimensional data to produce accurate predictions for tasks like forecasting and classification [2].

Within the "cue-signal-response" framework, data-driven models are ideal for distilling the complex relationships at the signal-response level, where the mechanistic links between multivariate signaling changes and phenotypic outcomes may be poorly defined [1]. Their primary limitation is a frequent lack of transparency, often functioning as "black boxes" that provide little insight into the underlying biological reasoning behind their predictions [2].

A Comparative Analysis

The table below summarizes the key characteristics of mechanistic and data-driven models for a direct, structured comparison.

Table 1: Comparative characteristics of mechanistic and data-driven models.

| Characteristic | Mechanistic Models | Purely Data-Driven Models |
|---|---|---|
| Fundamental Basis | Physical laws, causal relationships, and prior biological knowledge [1] | Identified patterns and statistical relationships within data [2] |
| Primary Strength | Transparency, interpretability, causal pathway analysis [2] | Handling high-dimensional data, pattern recognition without needing mechanistic knowledge [1] |
| Typical Formulation | Differential equations (ODEs, PDEs) [1] [4] | Machine learning algorithms (e.g., regression, clustering, classification) [1] |
| Data Requirements | Can be constructed with limited data, but require data for parameter estimation [1] | Require large volumes of data for training and validation [1] |
| Handling of Uncertainty | Parameters are difficult to measure; models can be "sloppy" or non-identifiable [1] | Predictions can be unstable or unreliable without sufficient and representative data [2] |
| Best-suited Application | Understanding biophysical basis of signal transduction (Cue-Signal) [1] | Predicting phenotypes from multivariate signaling data (Signal-Response) [1] |

The Critical Role of Experimental Data and Validation

Experimental data serves as the cornerstone for both developing and establishing confidence in computational models. The processes of verification and validation (V&V) are essential for generating evidence that a computer model yields results with sufficient accuracy for its intended use [5].

- Verification is the process of determining that a model implementation accurately represents the developer's conceptual description and solution. It answers the question, "Are we solving the equations correctly?" This involves checking for numerical errors, such as discretization error and computer round-off errors [5] [4].

- Validation is the process of assessing a model's accuracy by comparing its computational predictions to experimental data. It answers the question, "Are we solving the correct equations?" [5] [4]. A model should be validated across a range of conditions relevant to its intended use.
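One common way to check for discretization error during verification is a step-refinement study: solve a problem with a known exact solution at two step sizes and confirm that the error shrinks at the rate the numerical scheme should deliver. The sketch below does this for forward Euler on a simple decay equation. The test problem and the function names are assumptions chosen for illustration, not a procedure taken from [5] or [4].

```python
import math


def euler_decay(k, y0, t_end, n_steps):
    """Forward-Euler solution of dy/dt = -k*y at t_end."""
    dt = t_end / n_steps
    y = y0
    for _ in range(n_steps):
        y += dt * (-k * y)
    return y


def observed_order(k=1.0, y0=1.0, t_end=1.0, n_coarse=100):
    """Estimate the convergence order by halving the step size once."""
    exact = y0 * math.exp(-k * t_end)
    err_coarse = abs(euler_decay(k, y0, t_end, n_coarse) - exact)
    err_fine = abs(euler_decay(k, y0, t_end, 2 * n_coarse) - exact)
    return math.log2(err_coarse / err_fine)


order = observed_order()
# Forward Euler is first-order accurate, so the observed order should be near 1.
print(f"observed convergence order: {order:.3f}")
```

An observed order far from the theoretical value would point to an implementation error rather than a flaw in the underlying equations, which is exactly the distinction between verification and validation.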

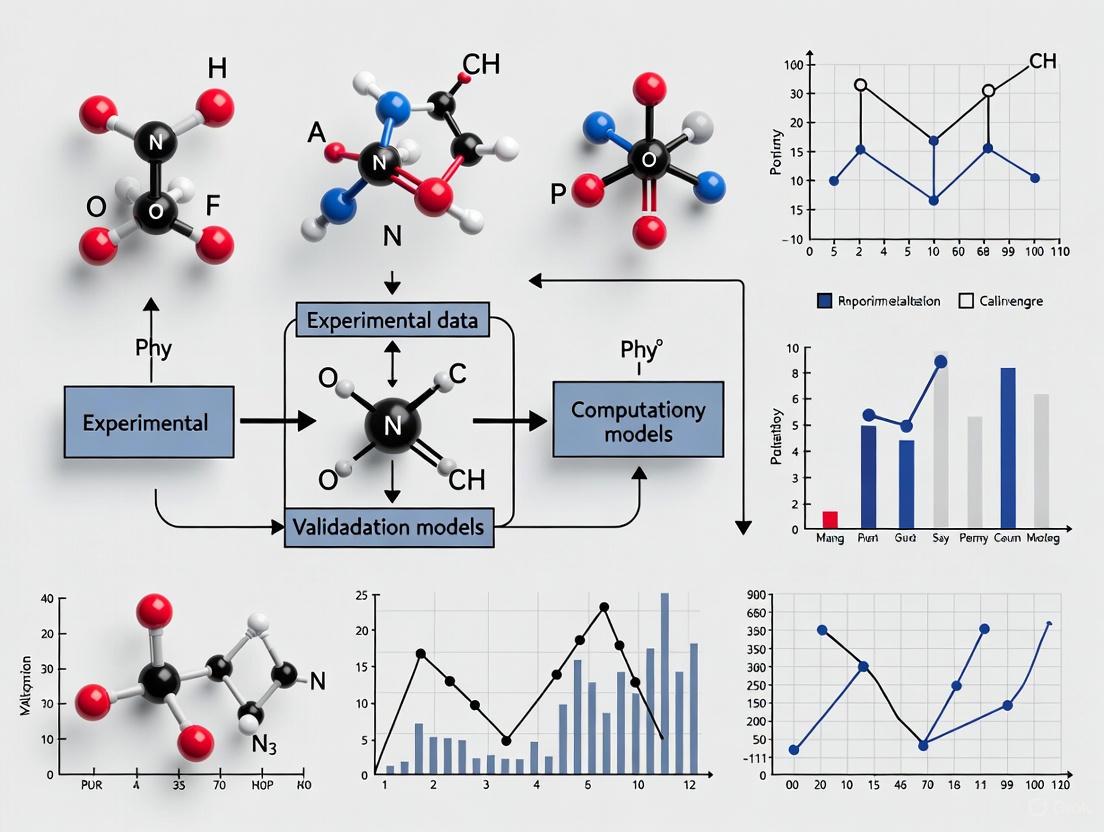

The following workflow diagram illustrates the integrated process of model development, verification, and validation within an experimental research framework.

Diagram 1: Integrated model development and validation workflow.

Validation is not a single step but a quantitative process. The concept of a validation metric is crucial for moving beyond qualitative, graphical comparisons to computable measures that quantitatively assess computational accuracy against experimental data over a range of conditions [4]. These metrics should account for both computational numerical errors and experimental measurement uncertainties.
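As a hedged illustration of what such a computable measure might look like, the sketch below scales each prediction error by the combined experimental and numerical uncertainty and reports the root mean square of the scaled errors. This particular formula, the function name `normalized_discrepancy`, and the example numbers are assumptions for illustration; they are not the specific validation metric defined in [4].

```python
import math


def normalized_discrepancy(predicted, measured_mean, measured_se, numerical_error=0.0):
    """Root-mean-square error in units of combined uncertainty.

    Values near 1 mean the mismatch is about as large as the uncertainty;
    values well above 1 indicate disagreement that uncertainty cannot explain.
    """
    if not (len(predicted) == len(measured_mean) == len(measured_se)):
        raise ValueError("all inputs must have the same length")
    total = 0.0
    for p, m, se in zip(predicted, measured_mean, measured_se):
        combined_variance = se ** 2 + numerical_error ** 2
        total += (p - m) ** 2 / combined_variance
    return math.sqrt(total / len(predicted))


# Hypothetical predictions and measurements at three experimental conditions.
predicted = [1.0, 2.1, 2.9]
measured_mean = [1.1, 2.0, 3.2]
measured_se = [0.1, 0.1, 0.2]   # standard error of each measured mean

score = normalized_discrepancy(predicted, measured_mean, measured_se)
score_with_numerical = normalized_discrepancy(
    predicted, measured_mean, measured_se, numerical_error=0.1
)
print(f"metric = {score:.3f}; with numerical error included = {score_with_numerical:.3f}")
```

Including the numerical error term widens the tolerance, so a model is not penalized for discrepancies that the solver itself could have produced.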

Methodologies and Protocols

Key Protocols for Mechanistic Modeling

Constructing and testing a mechanistic model involves several critical steps to ensure its robustness and reliability.

- Parameter Estimation and Inference: Model parameters are often estimated by fitting the model to experimental data. Common methods include Maximum Likelihood Estimation (MLE) and, for non-normally distributed errors, profile likelihood. When parameter distributions are of interest, Bayesian inference can be used to update prior probability distributions with experimental data to generate posterior distributions [1].

- Sensitivity Analysis: This process identifies which parameters and processes most significantly impact model output.

  - Local Sensitivity Analysis: Calculates the change in model output for a perturbation in a single parameter. It is simpler but can be misleading, because it ignores interactions between parameters and holds only near the chosen baseline values [1].

  - Global Sensitivity Analysis: A more sophisticated method where all parameters are perturbed simultaneously, providing a more realistic assessment of parameter importance. Methods include Partial Rank Correlation Coefficient (PRCC) with Latin Hypercube Sampling (LHS) and the extended Fourier Amplitude Sensitivity Test (eFAST) [1].

- Addressing Sloppiness and Identifiability: Most mechanistic models are "sloppy," meaning only a few parameter combinations control model output. Sloppiness analysis helps quantify uncertainty and identify these key combinations. Additionally, models must be checked for structural identifiability (whether a unique parameter set can be determined) and practical identifiability (whether confidence intervals can be determined from available data) [1].
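Of the steps listed above, local sensitivity analysis is the simplest to sketch. The example below computes normalized sensitivity coefficients by central finite differences for a toy steady-state binding model. The model, its parameter values, and the function names are assumptions chosen for illustration; global methods such as PRCC with LHS or eFAST are not shown.

```python
def bound_complex(params):
    """Toy model: steady-state complex for L + R <-> C with ligand in excess."""
    return params["r_total"] * params["ligand"] / (params["ligand"] + params["k_d"])


def local_sensitivity(model, params, name, rel_step=1e-4):
    """Normalized sensitivity (dY/Y)/(dp/p), one parameter at a time."""
    base = model(params)
    step = params[name] * rel_step
    up = dict(params)
    up[name] = params[name] + step
    down = dict(params)
    down[name] = params[name] - step
    derivative = (model(up) - model(down)) / (2.0 * step)
    return derivative * params[name] / base


# Illustrative baseline values (assumed, not measured).
PARAMS = {"ligand": 10.0, "r_total": 1.0, "k_d": 5.0}

sensitivities = {name: local_sensitivity(bound_complex, PARAMS, name) for name in PARAMS}
for name, value in sensitivities.items():
    print(f"{name}: {value:+.4f}")
```

For this model the coefficients can be checked by hand: the output scales linearly with `r_total` (coefficient 1), while `ligand` and `k_d` have coefficients of +1/3 and -1/3 at these baseline values. The result is valid only near the baseline, which is the limitation that motivates global methods.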

Key Protocols for Data-Driven Modeling

The development of data-driven models follows a different, data-centric pipeline.

- Data Collection and Preprocessing: The foundation of any data-driven model is a high-quality dataset with enough independent observations and broadly measured features to capture the relevant biochemistry. Data must be cleaned, normalized, and split into training and testing sets [1].

- Model Training and Selection: An algorithm (e.g., regression, random forest, neural network) is trained on the data to learn the mapping from inputs to outputs. Model selection techniques, potentially using information criteria, are used to choose the best-performing algorithm and architecture while guarding against overfitting [1].

- Validation and Performance Assessment: The trained model's performance is rigorously assessed using the held-out test data. Performance is quantified using metrics relevant to the task, such as accuracy, precision, recall, or mean squared error. This step is the data-driven equivalent of mechanistic model validation and is crucial for establishing predictive credibility [1] [3].
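The three steps above can be sketched end to end with a deliberately simple model. The example below generates synthetic data, splits it into training and test sets, fits a straight line by ordinary least squares, and reports mean squared error on the held-out data. The synthetic data-generating rule, the 75/25 split, and all function names are assumptions for illustration; a real pipeline would use measured data and usually a richer model.

```python
import random


def make_dataset(n, seed=0):
    """Synthetic stand-in for measurements: y = 2x + 1 plus Gaussian noise."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.uniform(0.0, 10.0)
        y = 2.0 * x + 1.0 + rng.gauss(0.0, 0.5)
        data.append((x, y))
    return data


def train_test_split(data, test_fraction=0.25, seed=1):
    """Shuffle a copy of the data and hold out a fraction for testing."""
    shuffled = data[:]
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def fit_line(train):
    """Ordinary least squares for a single feature; returns (slope, intercept)."""
    n = len(train)
    mean_x = sum(x for x, _ in train) / n
    mean_y = sum(y for _, y in train) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in train)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in train)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_squared_error(model, data):
    slope, intercept = model
    return sum((y - (slope * x + intercept)) ** 2 for x, y in data) / len(data)


dataset = make_dataset(200)
train, test = train_test_split(dataset)
model = fit_line(train)
test_mse = mean_squared_error(model, test)
print(f"slope = {model[0]:.3f}, intercept = {model[1]:.3f}, test MSE = {test_mse:.3f}")
```

The key design point is that `test` is never seen by `fit_line`, so the reported error estimates performance on new observations rather than goodness of fit.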

The workflow for a data-driven approach, highlighting its reliance on large datasets, is shown below.

Diagram 2: Data-driven model development workflow.

Synergistic Integration: Hybrid Approaches

The dichotomy between mechanistic and data-driven modeling is not rigid. A powerful emerging trend is their hybridization to leverage the strengths of both paradigms [2]. In animal production systems, for example, synergy is being achieved by:

- Using data streams (e.g., from sensors) to apply mechanistic models in real-time with new resolution.

- Augmenting a machine learning framework with parameters or outcomes generated by a mechanistic model.

- Using data-driven methods to parameterize a mechanistic model for individual animals or farms, while the mechanistic model provides biological bounds [2].

This hybrid approach aims to advance both predictive capabilities and system understanding, moving the field towards intelligent, knowledge-based systems in biology and medicine [2].

The Scientist's Toolkit

The table below lists key resources and their functions that are essential for research involving computational modeling and its experimental validation.

Table 2: Key research resources and computational tools.

| Resource / Tool | Category | Primary Function in Research |
|---|---|---|
| COPASI [1] | Software | An open-source platform for simulating and analyzing biochemical networks via ODEs. |
| MATLAB [1] | Software | A proprietary numerical computing environment used for algorithm development, parameter estimation, and data analysis. |
| Bayesian Inference Tools (e.g., Stan, PyMC3) [1] | Methodology/Software | A statistical framework and associated software for parameter estimation and uncertainty quantification. |
| Sensitivity Analysis Tools (e.g., for PRCC, eFAST) [1] | Methodology/Software | Algorithms and code for performing local and global sensitivity analysis on model parameters. |
| Cancer Genome Atlas (TCGA) [3] | Data Repository | A public database providing large-scale genomic and associated clinical data, crucial for training and testing data-driven models in oncology. |
| High Throughput Experimental Materials Database [3] | Data Repository | A database of experimental materials science data, useful for validating computational predictions of material properties. |
| Color Contrast Checkers [6] | Accessibility Tool | Tools to ensure sufficient contrast in data visualizations, making them accessible to a wider audience, including those with low vision. |

Mechanistic and purely data-driven models represent two powerful but distinct paradigms for computational research. Mechanistic models offer causal, interpretable insights based on biophysical principles, while data-driven models excel at extracting complex patterns from large, high-dimensional datasets. Neither approach is universally superior; each has its own set of strengths, limitations, and ideal application domains.

The credibility and utility of both model types are inextricably linked to rigorous experimental data through robust validation protocols. As the field progresses, the most significant advances will likely come from the strategic integration of these approaches into hybrid models. Such synergy leverages the interpretability of mechanistic frameworks with the predictive power of data-driven analytics, thereby accelerating discovery and innovation in drug development and biomedical science.

The principle of falsifiability, introduced by philosopher Karl Popper, serves as a cornerstone for distinguishing scientific theories from non-scientific claims [7] [8]. Popper argued that for a theory to be considered scientific, it must be capable of being refuted by empirical observations [8]. This principle creates a fundamental asymmetry: while no number of confirming observations can definitively verify a universal theory, a single genuine counter-instance can falsify it [7]. In contemporary scientific research, this philosophical foundation provides critical guidance for evaluating computational models, particularly as these models become increasingly central to biomedical research and drug development [9] [10].

Computational models are conjecture-driven frameworks that require rigorous testing against empirical evidence [9]. When positioned within Popper's critical rationalism, these models should not be viewed as verified truths but rather as refinable hypotheses that remain provisionally accepted only until they encounter contradictory evidence [8]. This perspective is particularly valuable in computational biology and drug development, where models must make testable predictions that can be potentially falsified by experimental data [9] [10]. The process of model corroboration—encompassing both calibration and validation—represents the practical application of falsificationist principles to computational science [10].

Core Theoretical Framework: From Popper to Computational Models

The Logical Structure of Falsifiability

Popper's falsification principle addresses two fundamental problems in philosophy of science: the problem of induction and the problem of demarcation [7]. The problem of induction recognizes that general laws cannot be conclusively verified through limited observations, no matter how numerous [7] [8]. For example, observing millions of white swans does not prove "all swans are white," but observing one black swan definitively falsifies this claim [8]. This deductive inference, known as modus tollens, provides the logical foundation for falsification [7]. The problem of demarcation, in turn, asks what separates scientific theories from non-scientific claims, and Popper's answer is that scientific theories are those capable of being refuted by observation [7] [8].

For computational models, this translates to a critical methodology: models must generate specific, risky predictions that could, in principle, be contradicted by experimental evidence [7] [8]. A model that is compatible with all possible outcomes—that cannot be falsified—fails as a scientific tool [7]. As Popper observed in his critique of psychoanalysis, theories that can explain everything after the fact actually explain nothing, because they make no testable predictions [7].

Falsifiability Versus Verification in Model Development

The transition from verification-oriented to falsification-oriented modeling represents a paradigm shift in computational science [9]. Traditional approaches often seek continual confirmation of models through accumulating supportive evidence [8]. In contrast, the falsificationist framework emphasizes deliberate attempts to disprove the model's predictions [8]. This approach embraces negative results as opportunities for scientific progress, recognizing that models are not final truths but provisional approximations that are refined through critical testing [8].

In practice, this means computational biologists should design experiments specifically to challenge their models' predictions, rather than merely seeking confirmatory evidence [10]. This methodological shift encourages the development of more robust models that make precise, testable predictions rather than vague, untestable claims [9].

Application to Computational Model Corroboration

The Corroboration Pipeline: Calibration and Validation

The process of computational model corroboration integrates falsificationist principles into practical research workflows [10]. This process consists of two critical phases:

- Calibration: The identification of model parameters that enable the recapitulation of the biological process of interest [10]. This phase uses optimization algorithms to minimize discrepancies between model predictions and calibration datasets.

- Validation: The assessment of model accuracy through comparison with experimental data not used during calibration [10]. This phase represents the true test of a model's predictive power beyond the conditions used for its training.

This corroboration pipeline embodies the Popperian view that scientific knowledge is provisional—the best we can do at the moment—and must be subjected to continuous critical testing [8] [10].
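The two phases can be sketched with a toy growth model. In the example below, a logistic growth rate is calibrated by grid search on early time points and then validated against later time points that played no part in the fit. The logistic form, the synthetic "measurements", the 10% acceptance threshold, and all names are assumptions for illustration; they are not the model or data used in [10].

```python
import math

N0, CAPACITY = 1.0, 100.0   # assumed known initial size and carrying capacity


def logistic(t, rate):
    """Closed-form logistic growth curve."""
    return CAPACITY / (1.0 + (CAPACITY / N0 - 1.0) * math.exp(-rate * t))


def synthetic_measurements(times, true_rate, rel_noise):
    """Stand-in for experimental data: model output with alternating +/- error."""
    return [(t, logistic(t, true_rate) * (1.0 + rel_noise * (-1) ** i))
            for i, t in enumerate(times)]


def sum_squared_error(rate, data):
    return sum((n - logistic(t, rate)) ** 2 for t, n in data)


def calibrate(data, candidates):
    """Calibration: pick the candidate rate that minimizes squared error."""
    return min(candidates, key=lambda rate: sum_squared_error(rate, data))


def mean_relative_error(rate, data):
    """Validation score on data that were not used for calibration."""
    return sum(abs(logistic(t, rate) - n) / n for t, n in data) / len(data)


calibration_data = synthetic_measurements(range(0, 7), true_rate=0.4, rel_noise=0.02)
validation_data = synthetic_measurements([8, 10, 12], true_rate=0.4, rel_noise=0.01)

candidate_rates = [i / 1000.0 for i in range(10, 1001)]
fitted_rate = calibrate(calibration_data, candidate_rates)
validation_error = mean_relative_error(fitted_rate, validation_data)

ACCEPTANCE_THRESHOLD = 0.10   # chosen before looking at the validation data
print(f"fitted rate = {fitted_rate:.3f} per day, validation error = {validation_error:.3%}")
print("model survives this test" if validation_error < ACCEPTANCE_THRESHOLD
      else "model is contradicted by the validation data")
```

Passing such a test corroborates the model only for the conditions tested; it remains open to refutation by new data.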

Figure 1: The iterative cycle of model development, testing, and refinement based on falsificationist principles.

Experimental Design for Effective Falsification

Different experimental models provide varying levels of stringency for testing computational models [10]. The selection of appropriate experimental frameworks is crucial for meaningful falsification attempts. Research demonstrates that 3D cell culture models often reveal discrepancies in computational models that 2D monolayers cannot detect [10]. For example, parameters calibrated solely with 2D proliferation data may fail to predict growth dynamics in 3D environments that more closely resemble in vivo conditions [10].

This underscores the importance of selecting experimental systems with sufficient complexity to provide rigorous tests of computational models. When models are calibrated with oversimplified experimental data, they may achieve the appearance of validation within limited contexts while failing to capture essential biological complexities [10].

Case Study: Ovarian Cancer Model Corroboration

Experimental Framework and Protocol Design

A comparative study of ovarian cancer computational models illustrates the critical role of experimental design in falsification-based corroboration [10]. Researchers developed an in-silico model of ovarian cancer cell growth and metastasis, then calibrated it using different experimental approaches [10]:

- 2D Monolayer Cultures: Traditional cell culture on flat surfaces

- 3D Cell Culture Models: Including organotypic models and bioprinted multi-spheroids

- Combined 2D/3D Datasets: Integration of data from both experimental frameworks

The organotypic model specifically co-cultured PEO4 ovarian cancer cells with healthy omentum-derived fibroblasts and mesothelial cells to better replicate the metastatic microenvironment [10]. This complex model provided a more rigorous test of the computational model's predictions compared to simplified 2D systems.

Table 1: Key Experimental Models for Computational Model Corroboration in Cancer Research

| Experimental Model | Key Features | Applications in Model Corroboration | Limitations |
|---|---|---|---|
| 2D Monolayer [10] | Cells grown on flat surfaces in monolayers; technical simplicity | Proliferation measurement via MTT assay; initial parameter estimation | Does not recapitulate 3D tissue architecture and cell-cell interactions |
| 3D Organotypic Model [10] | Co-culture of cancer cells with fibroblasts and mesothelial cells in collagen matrix | Study of adhesion and invasion capabilities; simulation of tumor microenvironment | Increased technical complexity; longer establishment time |
| 3D Bioprinted Multi-spheroids [10] | Cancer cells printed in PEG-based hydrogels using Rastrum 3D bioprinter | Quantification of proliferation in 3D context; real-time monitoring with IncuCyte S3 | Specialized equipment requirements; optimization of printing parameters |

Parameter Divergence Across Experimental Models

The ovarian cancer case study revealed significant differences in parameter sets when the same computational model was calibrated with different experimental data [10]. Parameters that accurately described proliferation in 2D monolayers failed to predict growth dynamics in 3D environments, suggesting that fundamental biological processes may operate differently across experimental contexts [10]. This parameter divergence serves as potential falsification evidence, indicating when models have insufficient biological realism.

Notably, models calibrated with 3D data often demonstrated superior predictive accuracy when validated against independent datasets, particularly for simulating treatment response [10]. This finding underscores the importance of using biologically relevant experimental systems for model corroboration.

Table 2: Comparative Analysis of Parameter Sets from Different Experimental Models

| Parameter Type | 2D Monolayer-Derived Values | 3D Organotypic-Derived Values | Combined 2D/3D Calibration | Biological Interpretation |
|---|---|---|---|---|
| Proliferation Rate | 0.45 ± 0.08 day⁻¹ | 0.28 ± 0.05 day⁻¹ | 0.36 ± 0.07 day⁻¹ | Reduced proliferation in 3D models reflects spatial constraints |
| Drug Sensitivity (Cisplatin) | IC₅₀ = 18.3 μM | IC₅₀ = 42.7 μM | IC₅₀ = 29.5 μM | Increased resistance in 3D environments due to diffusion barriers |
| Cell-Adhesion Strength | 0.12 ± 0.03 a.u. | 0.37 ± 0.06 a.u. | 0.24 ± 0.08 a.u. | Enhanced cell-matrix interactions in 3D architectures |
| Invasion Capacity | 0.08 ± 0.02 a.u. | 0.51 ± 0.09 a.u. | 0.31 ± 0.12 a.u. | More representative invasion metrics in tissue-like environments |
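A short calculation shows why the difference between the 2D and 3D proliferation rates in Table 2 matters for prediction. The sketch below assumes simple unconstrained exponential growth over one week, which is an assumption made here purely for illustration; the growth law of the actual in-silico model may differ.

```python
import math

RATE_2D = 0.45   # per day, 2D monolayer-derived value from Table 2
RATE_3D = 0.28   # per day, 3D organotypic-derived value from Table 2
DAYS = 7.0       # illustrative prediction horizon (assumed)


def fold_change(rate_per_day, days):
    """Population fold change under assumed exponential growth."""
    return math.exp(rate_per_day * days)


fold_2d = fold_change(RATE_2D, DAYS)
fold_3d = fold_change(RATE_3D, DAYS)
overestimate = fold_2d / fold_3d

print(f"2D-calibrated prediction: {fold_2d:.1f}-fold growth")
print(f"3D-calibrated prediction: {fold_3d:.1f}-fold growth")
print(f"ratio: {overestimate:.2f}")
```

Under this assumption the 2D-calibrated rate predicts roughly 23-fold growth in a week against roughly 7-fold for the 3D-calibrated rate, a difference of more than threefold that would be readily exposed by a 3D validation experiment.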

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Experimental Model Corroboration

| Reagent/Material | Specification | Experimental Function | Application Context |
|---|---|---|---|
| PEO4 Cell Line [10] | High-grade serous ovarian cancer (HGSOC) with platinum resistance | In vitro model of recurrent ovarian cancer; GFP-labeled for tracking in co-cultures | 2D monolayers, 3D organotypic models, bioprinted spheroids |
| Collagen I [10] | 5 ng/μl concentration in fibroblast solution | Extracellular matrix component for 3D organotypic model structure | Organotypic model foundation layer |
| PEG-based Hydrogel [10] | 1.1 kPa stiffness, RGD-functionalized | Synthetic matrix for 3D cell encapsulation and spheroid formation | Bioprinting of multi-spheroids for proliferation studies |
| CellTiter-Glo 3D [10] | Luminescent ATP quantification assay | 3D viability measurement in hydrogel-encapsulated spheroids | End-point assessment of treatment response in 3D models |
| IncuCyte S3 Live Cell Analysis [10] | Real-time imaging and phase count analysis | Non-invasive monitoring of cell growth within hydrogels | Longitudinal proliferation tracking in 3D culture |

Methodological Guidelines for Robust Falsification

Experimental Design Principles

Based on the case study findings and falsification theory, several methodological guidelines emerge for effective computational model corroboration:

- Orthogonal Method Corroboration: Combine multiple experimental approaches that measure the same biological phenomenon through different mechanisms [9]. For example, genome-wide copy number aberration (CNA) calls from whole-genome sequencing (WGS) data can be corroborated with low-depth WGS of thousands of single cells rather than reverting to lower-resolution fluorescence in situ hybridization (FISH) analysis [9].

- Resolution-Appropriate Validation: Select validation methods with sufficient resolution to test the model's specific predictions. High-depth targeted sequencing provides better variant detection than Sanger sequencing for low-frequency variants [9].

- Progressive Complexity Testing: Begin with simplified systems but progressively test models against increasingly complex experimental frameworks. This graded approach helps identify the boundaries of a model's predictive power [10].

Figure 2: A framework for orthogonal method corroboration, emphasizing the integration of different experimental approaches to test model predictions.

Data Analysis and Interpretation Framework

The interpretation of corroboration experiments requires careful consideration of falsificationist principles:

- Distinguishing Falsification from Anomaly: A single contradictory result may indicate limitations in specific model assumptions rather than requiring complete model rejection [8]. This reflects the Quine-Duhem thesis, which recognizes that theories are tested in networks rather than isolation [8].

- Quantitative Falsification Criteria: Establish predetermined thresholds for what constitutes falsification. For example, deviations beyond specific statistical confidence intervals or failure to predict key qualitative behaviors can serve as formal falsification criteria.

- Multi-scale Validation: Test models across different biological scales (molecular, cellular, tissue) to identify scale-specific limitations and failures [10].
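A predetermined falsification criterion of the kind described above can be written down before the experiment is run. The sketch below flags a prediction as falsified when it falls outside the confidence interval of the replicate mean. The normal approximation, the 95% level, the replicate values, and the function name `falsified` are all assumptions for illustration; with few replicates a t-based interval would be wider and more appropriate.

```python
import math
from statistics import NormalDist, mean, stdev


def falsified(prediction, replicates, confidence=0.95):
    """Return (is_falsified, (lower, upper)) for a model prediction.

    The interval is a normal-approximation confidence interval for the
    mean of the experimental replicates.
    """
    n = len(replicates)
    if n < 2:
        raise ValueError("at least two replicates are needed")
    center = mean(replicates)
    standard_error = stdev(replicates) / math.sqrt(n)
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)
    lower, upper = center - z * standard_error, center + z * standard_error
    return not (lower <= prediction <= upper), (lower, upper)


# Hypothetical replicate measurements of a growth rate (per day).
replicates = [0.26, 0.30, 0.28, 0.27, 0.29]

for predicted_rate in (0.45, 0.28):
    rejected, (low, high) = falsified(predicted_rate, replicates)
    verdict = "falsified" if rejected else "not falsified"
    print(f"prediction {predicted_rate:.2f}: {verdict} (interval {low:.3f} to {high:.3f})")
```

In line with the first guideline, a single rejection of this kind is a prompt to examine which assumption failed, not automatic grounds for discarding the whole model.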

The principle of falsifiability provides both a philosophical foundation and practical framework for advancing computational model development [7] [8]. By treating models as refinable hypotheses rather than verified truths, researchers can foster a culture of critical testing that progressively eliminates inadequate representations of biological systems [8] [10]. This approach embraces disconfirmation as an essential driver of scientific progress, recognizing that computational models are valuable not when they avoid falsification, but when they survive increasingly stringent attempts to disprove them [8].

The integration of falsificationist principles with modern computational approaches requires thoughtful experimental design, appropriate selection of model systems, and rigorous validation protocols [10]. As computational models grow in complexity and impact across biomedical research, maintaining this critical perspective ensures that these powerful tools remain grounded in empirical reality, driving meaningful advances in drug development and therapeutic innovation [9] [10].

The translation of findings from animal research to human clinical applications represents a critical juncture in biomedical science. Despite substantial global investment in preclinical research, a significant translation gap persists, limiting the efficiency of drug development and therapeutic innovation. This guide examines the quantitative evidence of this gap, explores the foundational principles of model validation, and provides a structured framework for enhancing the predictive power of animal studies through rigorous design and integration with computational modeling. The content is framed within the broader thesis that high-quality, reproducible experimental data is the cornerstone for validating and refining computational models, ultimately creating a synergistic cycle that accelerates research from bench to bedside.

Quantifying the Translation Gap

Understanding the current efficacy of animal-to-human translation requires a clear-eyed analysis of quantitative data. A 2024 umbrella review, which synthesized results from 122 systematic reviews encompassing 54 distinct human diseases and 367 therapeutic interventions, provides the most recent and comprehensive metrics on this process [11].

The review analyzed the proportion of therapies that successfully transition from animal studies to various stages of human application. The findings reveal that while initial transition appears promising, the rate of final regulatory approval is remarkably low, indicating systemic issues in the translational pipeline [11].

Table 1: Success Rates for Translating Therapies from Animal Studies to Human Application

| Stage of Development | Success Rate | Typical Timeframe (Median Years) |
|---|---|---|
| Advancement to any human study | 50% | 5 years |
| Advancement to a Randomized Controlled Trial (RCT) | 40% | 7 years |
| Achievement of regulatory approval | 5% | 10 years |

Furthermore, the same study investigated the consistency, or concordance, between positive results in animal studies and their corresponding human clinical trials. A meta-analysis showed an 86% concordance rate, suggesting that when animal studies yield positive results, they are likely to be positive in humans as well [11]. This high concordance, juxtaposed with the low final approval rate, points to potential deficiencies in the predictive validity of animal models for safety outcomes, as well as possible flaws in the design of both animal studies and early clinical trials.
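The figures in Table 1 can be re-expressed as stage-to-stage rates to show where most attrition occurs. The calculation below assumes that the three stages are nested and that each percentage is a share of the same starting pool of animal-tested therapies. That reading is an assumption made here for illustration and should be checked against [11].

```python
# Cumulative success rates from Table 1, as fractions of therapies tested in animals.
STAGE_RATES = {
    "any human study": 0.50,
    "RCT": 0.40,
    "regulatory approval": 0.05,
}


def conditional_rates(cumulative):
    """Share of therapies at one stage that reach the next stage."""
    stages = list(cumulative.items())
    result = {}
    for (prev_name, prev_rate), (name, rate) in zip(stages, stages[1:]):
        result[f"{prev_name} -> {name}"] = rate / prev_rate
    return result


steps = conditional_rates(STAGE_RATES)
for transition, rate in steps.items():
    print(f"{transition}: {rate:.1%}")
```

On this reading, about 80% of therapies that enter human studies go on to an RCT, but only about 12.5% of those that reach an RCT gain approval, which locates the sharpest drop at the final stage.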

Foundational Principles of Model Validation

To bridge the translation gap, a deliberate and critical approach to animal model selection and validation is essential. The concept of "fit-for-purpose" validation is paramount, meaning the model must be appropriately selected and evaluated for its ability to answer the specific clinical question at hand [12].

Key Validities of Animal Models

- Face Validity: This refers to how closely the animal model resembles the human disease in its phenomenological manifestations, including symptoms and biomarkers. A model with high face validity replicates the observable characteristics of the human condition [12].

- Predictive Validity: This is the most critical validity for translation. It measures how accurately the model's response to therapeutic interventions predicts the outcome in human patients. Assessing both efficacy and safety endpoints in the animal model is crucial for enhancing predictive validity [12].

Strategic Guidance for Model Selection and Use

- Define the Clinical Question Clearly: The choice of model should be driven by a precise clinical problem, not by model availability alone. This ensures the model is fit-for-purpose from the outset [12].

- Embrace Standardization and Rigor: Proper experimental design, execution, and reporting are fundamental to generating reproducible and translatable data. This includes blinding, randomization, appropriate statistical power, and detailed reporting of methodologies [12].

- Incorporate Humanized Models: The use of humanized mouse models, which incorporate human genes, cells, or tissues, can significantly improve the clinical relevance of preclinical findings by providing a more human-like biological context [12].

- Facilitate Back-Translation: Findings from clinical trials that were not predicted by animal testing should be used to refine and improve the animal models. This cyclical process of learning from clinical outcomes continuously enhances model validity [12].
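The call for appropriate statistical power can be made concrete with a standard sample-size approximation for comparing two group means. The sketch below uses the normal-approximation formula n = 2(z₁₋α/₂ + z₁₋β)² / d² per group, where d is the standardized effect size. The default significance level and power, and the function name `animals_per_group`, are assumptions for illustration; an exact t-based calculation gives slightly larger numbers for small groups.

```python
import math
from statistics import NormalDist


def animals_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate per-group sample size for a two-sided, two-group comparison.

    effect_size is the standardized difference between group means
    (difference divided by the common standard deviation).
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2.0 * (z_alpha + z_beta) ** 2 / effect_size ** 2)


for d in (1.0, 0.5):
    print(f"effect size {d}: about {animals_per_group(d)} animals per group")
```

Halving the expected effect size roughly quadruples the required group size, which is why underpowered studies are common when effects are modest.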

An Integrated Workflow for Enhanced Translation

Improving translation requires a systematic approach that integrates robust experimental design with computational modeling. The following workflow and diagram outline this integrated strategy.

Diagram 1: Integrated experimental and computational workflow for improving translation.

Experimental Data as the Bedrock for Computational Models

Computational models are powerful tools for synthesizing knowledge and generating hypotheses, but their predictive power is contingent on the quality of the experimental data used to build and constrain them [13]. This reliance underscores the critical role of robust experimental data in the translational pipeline.

- Constraining Models: Computational models require accurate parameters, such as binding constants and molecular concentrations specific to a cell type or functional component. Currently, modellers often face data scarcity, forcing them to estimate a significant portion of parameters, which can reduce model reliability [13].

- External Validity Testing: Once built, a computational model must be tested against experimental data to establish its external validity—how well it represents biologically knowable states. A valid model can make predictions that go beyond currently available data, guiding future experimental directions [13].

The integration of FAIR (Findable, Accessible, Interoperable, and Reusable) data principles is vital here. Making experimental data machine-actionable and reusable dramatically accelerates the development and validation of computational models [14] [13].

Successful translational research relies on a suite of tools, reagents, and databases. The following table details key resources for enhancing the validity and analysis of pathway models and experimental data.

Table 2: Research Reagent Solutions for Pathway Modeling and Analysis

| Resource Name | Type | Primary Function |
|---|---|---|
| PathVisio [14] | Software Tool | Pathway editing and creation, supporting community curation and standard data formats. |
| WikiPathways [14] | Database | Community-curated pathway database allowing for direct editing and extension of pathway models. |
| BioModels [14] | Database | Repository of peer-reviewed, computational models of biological processes. |
| Complex Portal [14] | Database | Provides identifiers and details for protein complexes, enabling precise annotation in models. |
| FAIR Data Principles [14] [13] | Framework | A set of principles to make data Findable, Accessible, Interoperable, and Reusable for both humans and machines. |
| FindSim [13] | Framework | A framework for integrating multiscale models with experimental datasets for validation. |
| Humanized Mouse Models [12] | Experimental Model | Provides a more human-relevant in vivo context for testing therapeutic interventions. |

Visualizing the Validation Cycle for Computational Models

The validity of a computational model is assessed on two fronts: its internal soundness and its external biological relevance. The following diagram illustrates this collaborative validation cycle, which is fundamental to generating translatable insights.

Diagram 2: The internal and external validation cycle for computational models.

Internal vs. External Validity

- Internal Validity: This pertains to the model's construction. Is it sound, logically consistent, and independently reproducible? Ensuring internal validity involves rigorous code review, reproducibility audits, and adherence to modeling standards [13].

- External Validity: This assesses how well the model's output fits with existing experimental data and its ability to make accurate predictions about biological reality. It answers the question: "How can we be sure that the model is representative of in vivo states?" [13].

Fostering Collaboration to Close the Gap

A proposed solution to bridge the data scarcity gap is the creation of an incentivized experimental database [13]. In this framework, computational modellers could submit a "wish list" of critical experiments needed to parameterize or test their models. Experimentalists could then conduct these experiments, funded by microgrants, and submit the FAIR-compliant data. This approach directly incentivizes the generation of high-value data that accelerates model development and validation, fostering deeper collaboration between computational and experimental scientists [13].

Closing the translation gap from animal models to human physiology is a multifaceted challenge that demands a concerted shift in research practices. The quantitative evidence clearly shows that the current success rate from bench to regulatory approval is unacceptably low, despite high initial concordance. Addressing this requires an unwavering commitment to rigorous, fit-for-purpose animal model validation, the generation of high-quality, FAIR experimental data, and the deep integration of computational modeling into the translational pipeline. By treating experimental and computational research as synergistic partners—where models are refined by data and data collection is guided by models—the scientific community can enhance the predictive power of preclinical research, ultimately accelerating the delivery of safe and effective therapies to patients.

In the validation of computational models, the integration of diverse experimental data is paramount. While artificial intelligence has revolutionized biomolecular structure prediction, these models often lack dynamic information and require experimental validation to accurately represent biological reality. This technical guide explores the principles and methodologies for reconciling sparse, approximate, and sometimes contradictory experimental data into a unified, coherent framework. We focus on integrative structural biology, demonstrating how combining computational predictions with experimental restraints bridges the gap between static snapshots and dynamic ensembles, thereby enhancing the reliability of models for drug development.

Computational models, particularly AI-based structure prediction tools, have achieved remarkable accuracy but face inherent limitations. They primarily provide static structural snapshots and may struggle with transient complexes, conformational dynamics, and condition-specific interactions. These limitations underscore the critical role of diverse experimental data in validating and refining computational outputs.

The central challenge lies in the nature of experimental data itself: techniques such as crosslinking mass spectrometry (XL-MS), covalent labeling, chemical shift perturbation (CSP), and deep mutational scanning (DMS) provide valuable but often sparse or approximate structural insights [15]. Individually, each method offers limited information; collectively, they provide complementary restraints that can guide computational models toward higher accuracy and biological relevance. This guide outlines a systematic approach for reconciling these disparate data types into a coherent framework that enhances predictive power and experimental validation.

Methodological Framework: Principles of Data Integration

Successful data integration follows a core set of principles that ensure robustness and interpretability. The approach must be both efficient and flexible enough to handle diverse forms of experimental information while accounting for the uncertainties and biases inherent in each experimental method.

The Maximum Entropy Approach for Dynamic Ensembles

Modern integrative modeling increasingly utilizes the maximum entropy principle to build dynamic ensembles from diverse data sources. This approach prioritizes agreement with experimental data without introducing unnecessary bias, allowing researchers to resolve structural heterogeneity and interpret low-resolution data [16] [17]. By combining experiments with physics-based simulations, this method reveals both stable structures and transient, functionally important intermediates that are often missed by static structure determination alone.
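
The reweighting idea can be sketched for the simplest case: one ensemble-averaged observable and a uniform prior over simulation frames. Under those assumptions the maximum entropy solution has the form w_i ∝ exp(-λ·f_i), with λ chosen so that the weighted average matches the experiment. The function name, the bisection bounds, and the toy distances below are hypothetical, and experimental error is ignored for brevity.

```python
from math import exp


def maxent_weights(values, target, lam_lo=-50.0, lam_hi=50.0, tol=1e-10):
    """Reweight ensemble members so the weighted mean of `values` hits `target`.

    Starts from uniform weights and finds the Lagrange multiplier by bisection.
    """
    def reweight(lam):
        shift = min(lam * v for v in values)  # keeps every exponent <= 0
        raw = [exp(-(lam * v - shift)) for v in values]
        total = sum(raw)
        weights = [r / total for r in raw]
        return sum(w * v for w, v in zip(weights, values)), weights

    for _ in range(200):
        lam = 0.5 * (lam_lo + lam_hi)
        mean, weights = reweight(lam)
        if abs(mean - target) < tol:
            break
        # The weighted mean decreases monotonically as lambda increases.
        if mean > target:
            lam_lo = lam
        else:
            lam_hi = lam
    return weights, lam


# Toy ensemble: one inter-residue distance (in Å) per simulated conformation.
distances = [10.0, 12.0, 15.0, 20.0, 25.0]
weights, lam = maxent_weights(distances, target=14.0)
reweighted_mean = sum(w * d for w, d in zip(weights, distances))
print([round(w, 3) for w in weights], round(reweighted_mean, 3))
```

Practical implementations handle many observables at once and include an error model for each measurement; this sketch only shows why the reweighted ensemble stays as close as possible to the simulation prior while matching the data.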

Unified Statistical Framework for Multi-Data Integration

A coherent statistical framework must account for varying levels of precision and potential conflicts between different data types. Bayesian approaches are particularly valuable, as they incorporate prior structural knowledge while weighting experimental evidence according to its reliability. This enables the reconciliation of seemingly disparate results by quantifying uncertainties and identifying the structural models that best satisfy all available experimental restraints simultaneously.
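
A minimal sketch of reliability-weighted scoring follows, assuming independent Gaussian errors for every restraint and a user-supplied log prior. The data-type names, the uncertainties, and the two candidate models are invented for illustration. Real crosslink restraints are usually treated as upper bounds rather than Gaussian targets, so this is a simplification.

```python
from math import log, pi


def log_posterior(predicted, observed, sigma, log_prior=0.0):
    """Log prior plus Gaussian log-likelihood summed over all data types.

    predicted, observed: dict mapping data type -> list of values.
    sigma: dict mapping data type -> assumed measurement uncertainty.
    """
    total = log_prior
    for name, obs_values in observed.items():
        s = sigma[name]
        for p, o in zip(predicted[name], obs_values):
            total += -0.5 * ((p - o) / s) ** 2 - log(s * (2 * pi) ** 0.5)
    return total


# Hypothetical data: two crosslink distances (Å) and two CSP values (ppm).
observed = {"xlms": [18.0, 22.0], "csp": [0.10, 0.05]}
sigma = {"xlms": 5.0, "csp": 0.02}  # XL-MS is treated as far less precise

model_a = {"xlms": [20.0, 25.0], "csp": [0.11, 0.05]}
model_b = {"xlms": [18.0, 22.0], "csp": [0.20, 0.15]}

score_a = log_posterior(model_a, observed, sigma)
score_b = log_posterior(model_b, observed, sigma)
print(round(score_a, 2), round(score_b, 2))
```

Model B reproduces the crosslink distances exactly, yet model A scores higher because its small crosslink deviations lie well within the 5 Å uncertainty while model B misses the precise CSP data by several standard deviations.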

Different experimental techniques provide complementary structural information at various resolutions and temporal scales. The table below summarizes key experimental methods, their structural insights, and integration applications.

Table 1: Key Experimental Techniques for Data Integration

| Technique | Structural Information Provided | Spatial Resolution | Temporal Resolution | Primary Integration Application |
|---|---|---|---|---|
| Crosslinking Mass Spectrometry (XL-MS) [15] | Distance restraints between reactive residues | Low (∼5-25 Å) | Snapshots | Defining proximity and interaction interfaces |
| Covalent Labeling [15] | Surface accessibility and solvent exposure | Low | Snapshots | Mapping surface topology and binding interfaces |
| Chemical Shift Perturbation (CSP) [15] | Local structural and chemical environment changes | Medium (residue-level) | Dynamic | Identifying binding sites and conformational changes |
| Deep Mutational Scanning (DMS) [15] | Functional impact of mutations; binding energetics | Low (residue-level) | Functional | Mapping critical interaction residues and stability |
| Hydrogen-Deuterium Exchange MS (HDX-MS) [16] | Solvent accessibility and dynamics | Low | Millisecond-second | Probing dynamics and conformational changes |
| Cryo-Electron Microscopy (cryo-EM) [16] | 3D density maps | High (near-atomic to low) | Snapshots | Providing overall structural framework |
| Nuclear Magnetic Resonance (NMR) [16] | Distance restraints, dynamics, atomic coordinates | High (atomic) | Picosecond-second | Providing atomic coordinates and dynamics |

Detailed Experimental Protocols

Crosslinking Mass Spectrometry (XL-MS) Protocol:

- Sample Preparation: Purify protein complex under native conditions.

- Crosslinking Reaction: Add lysine-reactive crosslinker (e.g., DSSO) at optimized molar ratio.

- Quenching: Terminate reaction with ammonium bicarbonate.

- Digestion: Digest with trypsin overnight at 37°C.

- Liquid Chromatography-Mass Spectrometry: Analyze peptides using LC-MS/MS with stepped collision energy.

- Data Analysis: Identify crosslinked peptides using specialized software (e.g., XlinkX, pLink).

- Validation: Filter crosslinks using FDR cutoff and validate with reciprocal analysis.

Chemical Shift Perturbation (CSP) NMR Protocol:

- Sample Preparation: Prepare ¹⁵N-labeled protein in appropriate buffer.

- Titration Series: Collect 2D ¹H-¹⁵N HSQC spectra with increasing ligand/protein concentrations.

- Resonance Assignment: Assign backbone resonances using triple-resonance experiments.

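- Chemical Shift Analysis: Calculate combined chemical shift changes: Δδ = √((ΔδH)² + (α·ΔδN)²), where ΔδH and ΔδN are the ¹H and ¹⁵N shift changes and α is a scaling factor that down-weights the larger ¹⁵N chemical shift range.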

- Threshold Determination: Identify significant perturbations (typically > mean + 1SD).

- Mapping: Map significant CSPs to structural models to identify interaction surfaces.
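
The chemical shift analysis and threshold steps above can be sketched in a few lines. The scaling factor α = 0.14 is an assumed default (values of roughly 0.1 to 0.2 are common choices), and the residue numbers and shift changes are made-up example data.

```python
from math import sqrt
from statistics import mean, stdev


def combined_csp(delta_h, delta_n, alpha=0.14):
    """Combined shift change: sqrt(dH^2 + (alpha * dN)^2), all in ppm."""
    return sqrt(delta_h ** 2 + (alpha * delta_n) ** 2)


def significant_residues(shifts, alpha=0.14, n_sd=1.0):
    """Return per-residue CSPs, the cutoff, and residues above mean + n_sd * SD.

    shifts maps residue number -> (delta_H, delta_N) between free and bound.
    """
    csp = {res: combined_csp(dh, dn, alpha) for res, (dh, dn) in shifts.items()}
    values = list(csp.values())
    cutoff = mean(values) + n_sd * stdev(values)
    hits = sorted(res for res, value in csp.items() if value > cutoff)
    return csp, cutoff, hits


# Hypothetical titration endpoint: residue -> (ΔδH, ΔδN) in ppm.
shifts = {
    10: (0.01, 0.05),
    11: (0.02, 0.10),
    12: (0.15, 0.80),
    13: (0.01, 0.02),
    14: (0.03, 0.10),
    15: (0.02, 0.05),
}
csp, cutoff, hits = significant_residues(shifts)
print(round(cutoff, 3), hits)
```

Because a few strongly perturbed residues inflate both the mean and the standard deviation, some workflows recompute the cutoff after excluding the initial hits; that refinement is omitted here.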

Computational Integration Strategies

The GRASP Framework for Assisted Structure Prediction

GRASP represents a significant advancement in integrating diverse experimental information for protein complex structure prediction. This tool efficiently incorporates restraints from crosslinking, covalent labeling, chemical shift perturbation, and deep mutational scanning, outperforming existing tools in both simulated and real-world experimental scenarios [15]. GRASP has demonstrated particular efficacy in predicting antigen-antibody complex structures, even surpassing AlphaFold3 when utilizing experimental DMS or covalent-labeling restraints.

The power of GRASP lies in its ability to integrate multiple forms of restraints simultaneously, enabling true integrative modeling. This capability has been showcased in modeling protein structural interactomes under near-cellular conditions using previously reported large-scale in situ crosslinking data for mitochondria [15].

Integration with Molecular Simulations

Physics-based simulations provide the necessary framework for interpreting dynamic experimental data. Molecular dynamics simulations can reconcile disparate experimental results by:

- Sampling conformational landscapes consistent with experimental observables

- Identifying transition pathways between experimentally observed states

- Calculating theoretical experimental observables for direct comparison

- Testing hypotheses about allosteric mechanisms and transient interactions

Enhanced sampling methods are particularly valuable for connecting experimental data to slow, large-scale conformational changes that are critical for biological function but difficult to observe directly [16].

Visualization and Workflow Diagrams

Data Integration Workflow

Iterative Refinement Process

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Integrative Studies

| Reagent/Material | Function/Purpose | Application Examples |
|---|---|---|
| Lysine-Reactive Crosslinkers (e.g., DSSO, BS³) | Covalently link proximal lysine residues for distance restraints | XL-MS studies of protein complexes [15] |
| ¹⁵N/¹³C-labeled Compounds | Isotopic labeling for NMR spectroscopy | Backbone assignment and CSP experiments [15] |
| Size Exclusion Chromatography Matrices | Protein complex purification under native conditions | Sample preparation for multiple techniques |
| Cryo-EM Grids (e.g., Quantifoil) | Support for vitrified samples for electron microscopy | High-resolution single-particle analysis [16] |

- Hydrogen-Deuterium Exchange Buffers: Enable measurement of solvent accessibility and protein dynamics through mass spectrometry [16].

- Site-Directed Mutagenesis Kits: Systematically alter protein sequences for deep mutational scanning studies [15].

- Paramagnetic Spin Labels (e.g., MTSL): Provide long-range distance restraints for NMR studies of large complexes [16].

Data Analysis and Quantitative Framework

Effective integration requires quantitative metrics for evaluating agreement between models and experimental data. The table below summarizes key validation metrics for different experimental data types.

Table 3: Quantitative Validation Metrics for Experimental Data Integration

| Data Type | Agreement Metric | Optimal Range | Interpretation |
|---|---|---|---|
| XL-MS | Satisfaction of distance restraints | >85% satisfied | Higher percentage indicates better model agreement with proximity data |
| CSP | Correlation between predicted and observed CSP | R² > 0.7 | Strong correlation indicates accurate binding interface prediction |
| DMS | Recovery of critical binding residues | AUC > 0.8 | Better discrimination of functional vs. neutral mutations |
| Covalent Labeling | Correlation with solvent accessibility | R² > 0.6 | Accurate representation of surface topology |
| Cryo-EM | Map-model correlation (FSC) | FSC₀.₁₄₃ > 0.5 | High-resolution agreement with density data |

- Statistical Validation: The Bayesian information criterion (BIC) can indicate whether adding a data type improves model quality enough to justify the added complexity, which guards against overfitting.

- Cross-Validation Approaches: Leave-one-out validation determines the predictive power of the integrated model for unseen data.
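
As an example of the first metric in Table 3, the sketch below computes the fraction of crosslinks satisfied by a model. It assumes Cα coordinates keyed by hypothetical residue labels and a 25 Å cutoff borrowed from the upper end of the XL-MS range in Table 1; in practice the cutoff depends on the crosslinker and should be chosen accordingly.

```python
from math import dist


def crosslink_satisfaction(coords, crosslinks, max_distance=25.0):
    """Return the fraction of crosslinks within `max_distance` and the violations.

    coords: residue label -> (x, y, z) of the C-alpha atom, in Å.
    crosslinks: list of (residue_a, residue_b) pairs identified by XL-MS.
    """
    violations = [
        (a, b) for a, b in crosslinks if dist(coords[a], coords[b]) > max_distance
    ]
    fraction = 1 - len(violations) / len(crosslinks)
    return fraction, violations


# Hypothetical model coordinates and crosslink list for a two-chain complex.
coords = {
    "A:K10": (0.0, 0.0, 0.0),
    "A:K45": (12.0, 5.0, 3.0),
    "B:K7": (40.0, 0.0, 0.0),
    "B:K88": (20.0, 10.0, 0.0),
}
crosslinks = [
    ("A:K10", "A:K45"),
    ("A:K10", "B:K7"),
    ("A:K45", "B:K88"),
    ("A:K10", "B:K88"),
]
fraction, violations = crosslink_satisfaction(coords, crosslinks)
print(f"{fraction:.0%} satisfied; violated: {violations}")
```

Listing the violated pairs alongside the percentage is useful because a cluster of violations in one region often points to a conformational state that the model does not capture.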

Case Studies and Applications

Antigen-Antibody Complex Prediction

GRASP has demonstrated remarkable performance in predicting antigen-antibody complex structures, outperforming AlphaFold3 when utilizing experimental DMS or covalent-labeling restraints [15]. This application highlights how integrative approaches can surpass purely AI-based methods when experimental data guides the modeling process.

Mitochondrial Interactome Modeling

The application of GRASP to model protein structural interactomes under near-cellular conditions using large-scale in situ crosslinking data showcases the power of integration for systems-level structural biology [15]. This approach moves beyond individual complexes to map interaction networks within functional cellular contexts.

Modeling Transient Complexes and Allosteric Mechanisms

Integrative approaches combining NMR, HDX-MS, and molecular dynamics simulations have revealed transient intermediates and allosteric pathways in signaling proteins [16]. These applications demonstrate how diverse data integration captures dynamic processes essential for biological function.

The integration of diverse experimental data provides an essential framework for validating and refining computational models. By reconciling disparate results into coherent structural ensembles, researchers can bridge the gap between static snapshots and dynamic biological reality. The continued development of integrative tools like GRASP, combined with advances in experimental techniques and simulation methods, promises to expand our understanding of biomolecular function and accelerate drug discovery.

Future directions include the development of more automated integration pipelines, improved methods for handling time-resolved data, and approaches for integrating cellular-scale data with molecular structural information. As these methods mature, the reconciliation of disparate experimental results will become increasingly central to computational model validation in structural biology and drug development.

From Lab to Code: Methodologies for Integrating Experimental Data into Computational Frameworks

Leveraging High-Throughput Data for Model Calibration

In the realm of computational biology and materials science, the predictive power of models hinges on their alignment with empirical reality. High-throughput experimental data has emerged as a transformative force in model calibration, providing the volume and diversity of evidence required to refine complex computational simulations. This process establishes a critical feedback loop where models are iteratively improved using experimental data, thereby enhancing their reliability for predicting new phenomena. The integration of these data-rich approaches is foundational to advancing research in drug development and materials engineering, where accurate predictions can significantly accelerate discovery timelines and improve outcomes.

The transition towards data-driven calibration represents a paradigm shift from traditional methods that often relied on limited datasets and manual parameter tuning. Modern high-throughput platforms can generate thousands to millions of data points, enabling the calibration of increasingly complex models that would otherwise be underdetermined. This technical guide examines the methodologies, protocols, and practical implementations of high-throughput data for model calibration, providing researchers with the framework to enhance the validity and predictive capacity of their computational models within the broader context of scientific research.

Foundational Calibration Methodologies

Statistical Frameworks for Functional Data

The calibration of high-throughput functional assays for clinical variant classification exemplifies the rigorous statistical approach required to turn raw experimental data into clinically actionable insights. Under current clinical guidelines, using functional data as evidence for pathogenicity assertions requires establishing thresholds that distinguish functionally normal from abnormal variants. However, threshold-based approaches often lack formal calibration, in which a variant's posterior probability of pathogenicity is estimated directly from raw experimental scores and then mapped to discrete evidence strengths [18].

To address this limitation, researchers have developed a method that jointly models assay score distributions of synonymous variants and variants appearing in population databases (e.g., gnomAD) with score distributions of known pathogenic and benign variants. This multi-sample skew normal mixture model is learned using a constrained expectation-maximization algorithm that preserves the monotonicity of pathogenicity posteriors. The model subsequently calculates variant-specific evidence strengths for clinical use, demonstrating improved variant classification accuracy that directly enhances genetic diagnosis and medical management for individuals affected by Mendelian disorders [18].
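
The final step, turning an assay score into a posterior probability of pathogenicity, can be sketched with two already-fitted skew normal components. This is not the constrained EM procedure from [18]; the component parameters and the prior below are hypothetical placeholders that would come from such a fit.

```python
from math import erf, exp, pi, sqrt


def skew_normal_pdf(x, loc, scale, shape):
    """Skew normal density: (2 / scale) * phi(z) * Phi(shape * z)."""
    z = (x - loc) / scale
    phi = exp(-0.5 * z * z) / sqrt(2 * pi)
    big_phi = 0.5 * (1 + erf(shape * z / sqrt(2)))
    return 2 / scale * phi * big_phi


def posterior_pathogenic(score, prior, pathogenic, benign):
    """Bayes' rule for a two-component mixture of score densities."""
    f_path = skew_normal_pdf(score, *pathogenic)
    f_benign = skew_normal_pdf(score, *benign)
    return prior * f_path / (prior * f_path + (1 - prior) * f_benign)


# Hypothetical fitted components as (loc, scale, shape); lower score = loss of function.
pathogenic_component = (-1.5, 1.0, -2.0)
benign_component = (0.0, 0.5, 1.0)
prior = 0.1  # assumed prior probability that a variant is pathogenic

p_low = posterior_pathogenic(-2.5, prior, pathogenic_component, benign_component)
p_normal = posterior_pathogenic(0.0, prior, pathogenic_component, benign_component)
print(round(p_low, 4), round(p_normal, 6))
```

With unconstrained skewed components the posterior is not guaranteed to change monotonically with the score, which is the motivation for the monotonicity constraint described above.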

Bayesian Optimization in Materials Science

In computational materials science, Bayesian optimization (BO) has emerged as a gradient-free efficient global optimization algorithm capable of calibrating constitutive models for crystal plasticity finite element models (CPFEM). These models establish structure-property linkages by relating microstructures to homogenized material properties. Recent advances have implemented asynchronous parallel constrained BO algorithms to calibrate phenomenological constitutive models for various alloys, significantly reducing computational overhead while maintaining calibration accuracy [19].

The Bayesian optimization framework is particularly valuable for handling expensive-to-evaluate computer models where gradient information is unavailable or costly to obtain. By building a probabilistic surrogate model of the objective function and using an acquisition function to guide the search process, BO efficiently navigates high-dimensional parameter spaces. This approach has proven effective for inverse identification of crystal plasticity parameters, enabling more accurate predictions of material behavior under various loading conditions [19].
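
The surrogate-plus-acquisition loop can be illustrated with a deliberately small, sequential example: a one-dimensional Gaussian process with a fixed kernel and an expected-improvement acquisition function evaluated on a grid. This is not the asynchronous parallel constrained algorithm of [19], and the quadratic misfit stands in for an expensive simulation; every name and setting here is an illustrative assumption.

```python
from math import erf, exp, pi, sqrt


def rbf(a, b, length=0.2):
    """Squared-exponential kernel between two scalar parameter values."""
    return exp(-0.5 * ((a - b) / length) ** 2)


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [r] for row, r in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    x = [0.0] * n
    for row in reversed(range(n)):
        tail = sum(aug[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (aug[row][n] - tail) / aug[row][row]
    return x


def gp_posterior(xs, ys, x_new, noise=1e-6):
    """Posterior mean and variance of a zero-mean GP at x_new."""
    k_mat = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
             for i, a in enumerate(xs)]
    k_vec = [rbf(a, x_new) for a in xs]
    mean = sum(k * w for k, w in zip(k_vec, solve(k_mat, ys)))
    var = 1.0 - sum(k * v for k, v in zip(k_vec, solve(k_mat, k_vec)))
    return mean, max(var, 1e-12)


def expected_improvement(mean, var, best):
    """Expected improvement for minimization."""
    sd = sqrt(var)
    z = (best - mean) / sd
    cdf = 0.5 * (1 + erf(z / sqrt(2)))
    pdf = exp(-0.5 * z * z) / sqrt(2 * pi)
    return (best - mean) * cdf + sd * pdf


def calibrate(objective, candidates, initial, n_iter=10):
    """Sequentially evaluate the candidate with the highest expected improvement."""
    xs = list(initial)
    ys = [objective(x) for x in xs]
    for _ in range(n_iter):
        centre = sum(ys) / len(ys)
        spread = sqrt(sum((y - centre) ** 2 for y in ys) / len(ys)) or 1.0
        zs = [(y - centre) / spread for y in ys]  # standardize for the zero-mean GP
        best = min(zs)
        pool = [c for c in candidates if c not in xs]
        nxt = max(pool, key=lambda c: expected_improvement(*gp_posterior(xs, zs, c), best))
        xs.append(nxt)
        ys.append(objective(nxt))
    i = min(range(len(ys)), key=ys.__getitem__)
    return xs[i], ys[i]


def misfit(k):
    """Toy stand-in for the simulation-versus-experiment error."""
    return (k - 0.3) ** 2


grid = [i / 20 for i in range(21)]
best_k, best_misfit = calibrate(misfit, grid, initial=[0.0, 0.5, 1.0])
print(best_k, best_misfit)
```

Each iteration spends one objective evaluation where the surrogate predicts either a low misfit or high uncertainty, which is what makes the approach attractive when a single model run is costly.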

Table 1: High-Throughput Calibration Methodologies Across Disciplines

| Methodology | Core Application | Key Algorithm | Advantages |
|---|---|---|---|
| Skew Normal Mixture Model | Clinical variant classification [18] | Constrained expectation-maximization | Preserves monotonicity of pathogenicity posteriors; enables variant-specific evidence strengths |
| Bayesian Optimization | Crystal plasticity models [19] | Asynchronous parallel constrained BO | Gradient-free; efficient global optimization; handles expensive-to-evaluate models |
| Quantile-Quantile Calibration | Linking high-content & high-throughput data [20] | Least squares regression of QQ-plot | Translates between measurement techniques; determines linear relationship between observables |
| Calibration-Free Quantification | Organic reaction screening [21] | GC-MS/GC-Polyarc-FID with retention indexing | Eliminates need for product references; uniform detector response across analytes |

Experimental Protocols and Workflows

Calibration Linking High-Content and High-Throughput Data

The integration of high-content single-cell measurements with high-throughput techniques requires a systematic calibration approach to maximize parameter identifiability. The following protocol outlines the general procedure for linking these complementary data types [20]:

Identical Cell Population Measurement: Measure the same cell population using both high-content (e.g., microscopy) and high-throughput (e.g., flow cytometry) techniques to determine a subset of matching quantities, defined as free variables (e.g., the cell volume $V_{cell}$ and the concentration of a fluorescently labeled marker $C_{cell}$).

Quantile-Quantile Plot Analysis: For $N_C$ high-content measurements $\{X_{C,i}\}_{i=1,\dots,N_C}$ and $N_T$ high-throughput measurements $\{X_{T,i}\}_{i=1,\dots,N_T}$ (where $N_T > N_C$), create a QQ-plot of the ordered measurements (sample quantiles). The quantiles follow the linear relationship:

$$X_T(Y) = \frac{m}{m'}\,X_C(Y) + \frac{d - d'}{m'}$$

where $Y$ is the quantity of interest, and $X_C$ and $X_T$ are the high-content and high-throughput observables, each linearly related to $Y$ through slopes $m$, $m'$ and intercepts $d$, $d'$ (that is, $Y = m\,X_C + d = m'\,X_T + d'$).

Least Squares Regression: Perform a least squares fit of the QQ-plot to estimate the slope $m/m'$ and intercept $(d-d')/m'$, which together enable translation between $X_C$ and $X_T$.

Mathematical Modeling: Express quantities of interest (high-content information dependent on free variables) through a mathematical model with estimated parameters.

Data Translation: Translate high-throughput measurements via calibration into the single-cell measurement context and through the fixed parameter model into cell population quantities.

This calibration procedure can be generally applied to combine experimental data generated by different techniques, provided the free variables can be measured by all techniques used for data generation [20].
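
The quantile-quantile and least squares steps can be sketched as follows. The function names and the synthetic measurements are illustrative assumptions; the larger high-throughput sample is interpolated at the plotting positions of the smaller high-content sample before an ordinary least squares line is fitted.

```python
def quantile(sorted_values, p):
    """Linearly interpolated quantile of pre-sorted data for 0 <= p <= 1."""
    pos = p * (len(sorted_values) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(sorted_values) - 1)
    return sorted_values[lo] + (pos - lo) * (sorted_values[hi] - sorted_values[lo])


def qq_calibration(high_content, high_throughput):
    """Fit X_T = slope * X_C + intercept to the QQ-plot of the two samples."""
    xc = sorted(high_content)
    xt_sorted = sorted(high_throughput)
    n = len(xc)
    probs = [i / (n - 1) for i in range(n)]
    xt = [quantile(xt_sorted, p) for p in probs]  # matching quantiles of X_T
    mean_c = sum(xc) / n
    mean_t = sum(xt) / n
    slope = (sum((c - mean_c) * (t - mean_t) for c, t in zip(xc, xt))
             / sum((c - mean_c) ** 2 for c in xc))
    intercept = mean_t - slope * mean_c
    return slope, intercept


# Synthetic example: the high-throughput readout equals 2 * X_C + 3.
microscopy = [3.0, 1.0, 5.0, 2.0, 4.0]  # N_C = 5 high-content values
cytometry = [2 * x / 2 + 3 for x in range(2, 11)]  # N_T = 9 values from 5 to 13
slope, intercept = qq_calibration(microscopy, cytometry)
print(slope, intercept)
```

The fitted slope corresponds to the ratio m/m' and the intercept to (d-d')/m' in the relationship above, so a high-content value can be translated into the high-throughput scale as slope × value + intercept.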

High-Content to High-Throughput Calibration Workflow

Calibration-Free Quantification for Reaction Screening

The accelerated generation of reaction data through high-throughput experimentation (HTE) necessitates efficient analytical workflows. The following protocol enables quantitative analysis of reaction arrays with combinatorial product spaces without requiring isolated product references for external calibrations [21]:

Automated Reaction Setup: Utilize a Python-programmable liquid handler (e.g., OT-2) to prepare reaction arrays from stock solutions of substrates, reagents, and catalysts in 96-position reaction blocks.

Reaction Processing: Subject reaction mixtures to appropriate conditions (irradiation or heating), then use the liquid handler for automated workup including filtration, dilution, and transfer to GC vials.

Parallel GC Analysis: Analyze each sample using parallel GC-MS and GC-Polyarc-FID systems:

- GC-MS: Provides structural information for product identification

- GC-Polyarc-FID: Enables quantification through uniform carbon-specific detection via methane conversion (except for sulfur-containing solvents and fully fluorinated analytes)

Retention Index Calibration: Perform two additional calibration measurements with commercially available alkane standards to calculate Kováts retention indices (RIs) for all peaks.

Peak Mapping: Match peaks between GC-MS and GC-Polyarc-FID chromatograms using retention indices to correlate structural identity with quantitative data.

Automated Data Processing: Use open-source software (e.g., pyGecko Python library) to:

- Parse raw data files (converted to open mzML/mzXML formats)

- Perform smoothing, background subtraction, peak detection, and integration

- Calculate the expected mass-to-charge (m/z) ratios of products based on plate layout

- Match product MS peaks to corresponding FID peaks using RIs

- Calculate yields as relative peak areas compared to internal standard

This workflow enables accurate quantification of diverse reaction products without molecule-specific calibration, significantly accelerating high-throughput screening for reaction discovery and optimization [21].
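
The retention-index, peak-matching, and yield steps can be sketched without reference to any particular library. The code below does not use the pyGecko API; all function names are hypothetical. It uses the linear (temperature-programmed, van den Dool and Kratz) form of the retention index rather than the logarithmic isothermal Kováts form, and it assumes a detector response proportional to carbon count, as described for the Polyarc-FID above.

```python
from bisect import bisect_right


def retention_index(t, alkanes):
    """Linear retention index from bracketing n-alkanes.

    alkanes: list of (carbon_number, retention_time), sorted by retention time.
    """
    times = [rt for _, rt in alkanes]
    i = bisect_right(times, t) - 1
    if i < 0 or i >= len(alkanes) - 1:
        raise ValueError("retention time outside the alkane ladder")
    (n, t_n), (n_next, t_next) = alkanes[i], alkanes[i + 1]
    return 100 * (n + (n_next - n) * (t - t_n) / (t_next - t_n))


def match_peaks(ms_peaks, fid_peaks, tolerance=5.0):
    """Pair each identified MS peak with the closest FID peak by retention index.

    ms_peaks: name -> RI; fid_peaks: list of (RI, area). Returns name -> area.
    """
    matches = {}
    for name, ri_ms in ms_peaks.items():
        ri_fid, area = min(fid_peaks, key=lambda peak: abs(peak[0] - ri_ms))
        if abs(ri_fid - ri_ms) <= tolerance:
            matches[name] = area
    return matches


def carbon_normalized_yield(area_product, carbons_product, area_standard,
                            carbons_standard, mol_standard, mol_limiting):
    """Yield from per-carbon peak areas relative to an internal standard."""
    mol_product = ((area_product / carbons_product)
                   / (area_standard / carbons_standard) * mol_standard)
    return mol_product / mol_limiting


# Hypothetical run: C10 to C12 alkane ladder and one product peak.
ladder = [(10, 5.0), (11, 6.0), (12, 7.2)]
ri = retention_index(6.6, ladder)
areas = match_peaks({"product": ri}, [(1148.0, 800.0), (1302.0, 1000.0)])
yield_fraction = carbon_normalized_yield(
    areas["product"], 12, 1000.0, 10, mol_standard=0.05, mol_limiting=0.1
)
print(round(ri), areas, round(yield_fraction, 3))
```

The 5-unit retention index tolerance is an arbitrary illustrative choice; a suitable window depends on how reproducibly the two instruments elute the same compounds.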

Data Management and Analysis Techniques

Quantitative Data Comparison Frameworks

Effective model calibration requires appropriate data visualization and comparison methodologies. The selection between charts and tables depends on the specific analytical goals and audience needs [22]:

Table 2: Data Presentation Modalities for Calibration Results

| Aspect | Charts | Tables |
|---|---|---|
| Primary Function | Show patterns, trends, and relationships [22] | Present detailed, exact figures [22] |
| Data Complexity | Illustrate complex relationships through visuals [22] | Can handle multidimensional information [22] |
| Analysis Strength | Identifying patterns and trends [22] | Precise, detailed analysis and comparisons [22] |
| Interpretation Speed | Quick to interpret for overview & general trends [22] | Requires more time and attention to understand details [22] |
| Best Use Cases | Presentations, reports where visual impact is key [22] | Academic, scientific, or detailed financial analysis [22] |

For calibration data, a combined approach often proves most effective: charts summarize key trends and relationships, while supplementary tables provide the precise values needed for detailed model parameterization. This dual approach accommodates both the need for quick insight and technical precision in computational model development.

Automated Data Processing Pipelines

The substantial data volumes generated by high-throughput experimentation necessitate automated processing workflows. The pyGecko Python library exemplifies this approach for gas chromatography data, providing [21]:

Format Flexibility: Parsing capabilities for proprietary vendor files through conversion to open mzML and mzXML formats using the msConvert tool from ProteoWizard.

Streamlined Processing: Automated peak detection, integration, and background subtraction following data parsing.

Retention Index Calculation: Determination of Kováts retention indices for all detected peaks using alkane standard calibrations.

Cross-Platform Correlation: Matching of product identification (GC-MS) with quantification (GC-Polyarc-FID) through retention index alignment.

High-Throughput Capability: Processing of full 96-reaction arrays in under one minute.

Result Visualization: Generation of heatmaps and export in standardized formats (e.g., Open Reaction Database schema).

Such automated pipelines are essential for maintaining the velocity of high-throughput experimentation and ensuring consistent, reproducible data processing for model calibration.

Automated GC Data Processing Pipeline

Essential Research Reagents and Tools

Table 3: Research Reagent Solutions for High-Throughput Calibration

| Reagent/Tool | Function | Application Context |
|---|---|---|
| Python-Programmable Liquid Handler | Automated reaction setup and workup [21] | High-throughput experimentation (e.g., OT-2 system) |
| GC-MS System | Product identification through structural characterization [21] | Reaction screening and analysis |
| GC-Polyarc-FID System | Quantification via uniform carbon-specific detection [21] | Calibration-free yield determination |
| Alkane Standards | Retention index calibration for peak alignment [21] | Chromatographic method standardization |
| pyGecko Python Library | Automated processing of GC raw data [21] | High-throughput data analysis pipeline |
| Skew Normal Mixture Model | Statistical modeling of assay score distributions [18] | Clinical variant classification calibration |
| Bayesian Optimization Framework | Efficient parameter space exploration [19] | Crystal plasticity model calibration |

High-throughput data has fundamentally transformed model calibration across scientific disciplines, enabling more robust and predictive computational models through rigorous, data-driven parameter estimation. The methodologies and protocols outlined in this technical guide provide researchers with a framework for leveraging these powerful approaches in their own work. As high-throughput technologies continue to evolve, their integration with computational modeling will undoubtedly yield increasingly accurate representations of complex biological and materials systems, ultimately accelerating scientific discovery and innovation.

The future of model calibration lies in the continued development of automated, integrated workflows that seamlessly connect experimental data generation with computational analysis. Such advances will further close the gap between empirical observation and theoretical prediction, enhancing our ability to model and manipulate complex systems across the scientific spectrum.

Connecting Blood Biomarkers to Drug Action at the Disease Site

The integration of blood-based biomarkers (BBBM) into the drug development pipeline represents a paradigm shift in connecting systemic drug action to pathological processes at the disease site. This technical guide examines the critical framework for validating computational models of drug-biomarker-disease interactions through rigorous experimental protocols. By establishing standardized methodologies and multi-omic approaches, researchers can bridge the translational gap between peripheral biomarker measurements and central pathophysiology, ultimately accelerating therapeutic development for complex diseases including Alzheimer's disease, cancer, and chronic pain disorders. The convergence of artificial intelligence, molecular profiling, and experimental validation creates an unprecedented opportunity to advance precision medicine through biomarker-driven insights.

Blood-based biomarkers serve as accessible proxies for monitoring drug pharmacodynamics and disease progression at the actual site of pathology, which is often difficult to access directly. The fundamental challenge lies in establishing validated quantitative relationships between peripheral biomarker measurements and central disease processes. This requires sophisticated computational models grounded in robust experimental data [23] [24].

The drug development landscape is increasingly reliant on BBBM for participant stratification, treatment monitoring, and therapeutic decision-making. In Alzheimer's disease (AD), for example, biomarkers including plasma phosphorylated tau (p-tau217) and amyloid-β42/40 ratio now enable non-invasive detection of pathology that was previously only measurable via cerebrospinal fluid analysis or PET imaging [23]. Similarly, in oncology, biomarkers like mesothelin provide critical information on tumor dynamics and treatment response [25]. The growing market for biomarker discovery—projected to reach $54.19 billion by 2033—reflects their expanding role in pharmaceutical development [26].

Table 1: Classes of Blood-Based Biomarkers in Drug Development

| Biomarker Class | Representative Analytes | Primary Applications in Drug Development | Technical Considerations |
|---|---|---|---|
| Amyloid Pathology | Aβ42/40 ratio, p-tau181, p-tau217 | Target engagement, patient stratification, dose optimization | Standardization across platforms, pre-analytical factors |
| Neuroinflammation | GFAP, YKL-40, IL-6, TNF-α | Monitoring treatment effects on neuroinflammatory pathways | Differentiation from systemic inflammation |
| Neuronal Injury | Neurofilament Light Chain (NFL) | Monitoring disease progression and neuroprotective effects | Specificity for neuronal subpopulations |
| Systemic Inflammation | CRP, IL-6, TNF-α, IL-1β | Assessing peripheral inflammatory status | Interaction with central processes |
| Metabolic Dysregulation | Insulin, lipids, adipokines | Evaluating metabolic contributions to pathology | Diurnal and nutritional influences |

Computational Frameworks for Modeling Biomarker-Disease-Drug Interactions

Knowledge Graph Approaches for Therapeutic Hypothesis Generation

Biological knowledge graphs (KGs) provide powerful computational frameworks for connecting drug actions to disease sites via biomarker patterns. These graphs are constructed with head entity-relation-tail entity (h, r, t) triples where entities correspond to biological nodes (drugs, diseases, genes, pathways, proteins) and relations represent the links between them [27]. Knowledge base completion (KBC) models predict unknown relationships within these graphs, generating testable hypotheses about drug-disease connections.

A reinforcement learning-based symbolic reasoning approach (exemplified by AnyBURL) mines logical rules that explain potential therapeutic mechanisms [27]. For example, a validated rule for drug repositioning can be read as: "Compound X treats disease Y because it binds to gene A, which is activated by compound B, which is in trial for disease Y" [27]. Such rules generate evidence chains connecting drug candidates to diseases via biologically plausible pathways.
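To make the rule-to-evidence-chain step concrete, the following is a minimal sketch of how a rule of this shape could be applied to a set of (h, r, t) triples. It is not the AnyBURL implementation. The relation names (`binds`, `activates`, `in_trial_for`, `treats`), the entity names, and the helper functions are all hypothetical placeholders chosen to mirror the quoted example.

```python
from collections import defaultdict

# Toy knowledge graph as (head, relation, tail) triples.
# All entities and relation names are hypothetical placeholders.
TRIPLES = [
    ("compound_X", "binds", "gene_A"),
    ("compound_B", "activates", "gene_A"),
    ("compound_B", "in_trial_for", "disease_Y"),
    ("compound_Z", "binds", "gene_C"),  # no supporting chain for this one
]


def index_triples(triples):
    """Index triples by (relation, head) and by (relation, tail)."""
    by_head = defaultdict(set)  # (relation, head) -> set of tails
    by_tail = defaultdict(set)  # (relation, tail) -> set of heads
    for h, r, t in triples:
        by_head[(r, h)].add(t)
        by_tail[(r, t)].add(h)
    return by_head, by_tail


def apply_rule(triples):
    """Apply: treats(X, Y) <= binds(X, A), activates(B, A), in_trial_for(B, Y).

    Returns (X, "treats", Y, evidence_chain) tuples, where the evidence
    chain lists the three supporting triples.
    """
    by_head, by_tail = index_triples(triples)
    predictions = []
    for compound, relation, gene in triples:
        if relation != "binds":
            continue
        for activator in sorted(by_tail[("activates", gene)]):
            for disease in sorted(by_head[("in_trial_for", activator)]):
                chain = [
                    (compound, "binds", gene),
                    (activator, "activates", gene),
                    (activator, "in_trial_for", disease),
                ]
                predictions.append((compound, "treats", disease, chain))
    return predictions


predictions = apply_rule(TRIPLES)
for head, relation, tail, chain in predictions:
    print(head, relation, tail, "via", chain)
```

In this toy graph only `compound_X` has a complete chain to `disease_Y`; `compound_Z` binds a gene with no activator in trial, so the rule produces no hypothesis for it.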

Addressing the Biological Relevance Challenge in Computational Predictions

A significant limitation of knowledge graph approaches is the generation of biologically irrelevant or mechanistically insignificant paths. Automated filtering pipelines address this by incorporating disease-specific biological context. The multi-stage filtering approach includes:

- Rule Filtering: Prioritizes rules based on confidence scores and biological plausibility

- Significant Path Filtering: Retains paths with strong statistical support

- Gene/Pathway Filtering: Focuses on paths containing disease-relevant genes and pathways identified through prior landscape analysis [27]

This automated filtering dramatically reduces the volume of evidence chains requiring expert review—by 85% in cystic fibrosis and 95% in Parkinson's disease case studies—while maintaining biologically meaningful connections [27].
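The three filtering stages can be sketched as successive passes over a list of evidence chains. This is an illustrative outline only: the `EvidenceChain` fields, the thresholds, and the gene names are assumptions made for the example, and the source does not specify how confidence or statistical support is scored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvidenceChain:
    rule_confidence: float  # confidence of the rule that produced the chain
    path_p_value: float     # hypothetical significance score for the path
    genes: frozenset        # genes traversed by the chain


def filter_chains(chains, disease_genes, min_confidence=0.5, max_p_value=0.05):
    """Apply rule, significant-path, and gene/pathway filters in order."""
    # Stage 1: rule filtering on confidence (threshold is an assumption).
    kept = [c for c in chains if c.rule_confidence >= min_confidence]
    # Stage 2: significant path filtering (threshold is an assumption).
    kept = [c for c in kept if c.path_p_value <= max_p_value]
    # Stage 3: keep chains that touch at least one disease-relevant gene.
    kept = [c for c in kept if c.genes & disease_genes]
    return kept


# Hypothetical disease landscape and candidate chains.
disease_genes = frozenset({"GENE_A", "GENE_B"})
chains = [
    EvidenceChain(0.9, 0.01, frozenset({"GENE_A"})),  # passes all stages
    EvidenceChain(0.2, 0.01, frozenset({"GENE_A"})),  # low rule confidence
    EvidenceChain(0.9, 0.50, frozenset({"GENE_B"})),  # weak path support
    EvidenceChain(0.9, 0.01, frozenset({"GENE_Q"})),  # no disease-relevant gene
]

kept = filter_chains(chains, disease_genes)
reduction = 1 - len(kept) / len(chains)
print(f"kept {len(kept)} of {len(chains)} chains ({reduction:.0%} reduction)")
```

Ordering the cheap rule-level filter first means the later, more specific checks run on fewer chains, which matters when the unfiltered set is large.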

Molecular Docking and Binding Affinity Predictions

Molecular docking simulations predict how drug compounds interact with target proteins at the atomic level, providing insights into binding affinities and potential efficacy. These computational methods are particularly valuable for screening vast chemical libraries—which now contain over 11 billion compounds—to prioritize candidates for experimental testing [28]. Advanced approaches incorporating quantum computing enable more accurate simulation of quantum effects in molecular interactions, though these methods remain emerging technologies in drug discovery [28].

Experimental Validation Methodologies

Standardization and Quantification of Biomarker Assays

Standardization of biomarker measurements is a prerequisite for correlating peripheral drug exposure with target engagement at disease sites. The CentiMarker approach addresses this challenge by transforming raw biomarker values to a standardized scale from 0 (normal) to 100 (near-maximum abnormal), analogous to the Centiloid scale for amyloid PET imaging [29].

The CentiMarker calculation protocol involves:

- CentiMarker-0 (CM-0) Dataset Identification: Using data from healthy controls to establish the normal baseline

- CentiMarker-100 (CM-100) Dataset Identification: Using data from severe cases to establish the abnormal extreme

- Outlier Exclusion: Removing values above Q3 + 1.5×IQR or below Q1 − 1.5×IQR

- Linear Transformation: Converting raw values to the 0-100 scale using established anchors [29]

This standardization enables quantitative comparison of treatment effects across different biomarkers, cohorts, and analytical platforms, facilitating more robust correlations between drug exposure and biomarker response.
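The protocol above can be sketched in a few lines. This is a minimal illustration, not the published CentiMarker code. It assumes that the anchors are the means of the CM-0 and CM-100 datasets after outlier exclusion, and that outliers are removed with the conventional two-sided 1.5×IQR fence; the function names and example values are hypothetical.

```python
import numpy as np


def exclude_outliers(values):
    """Drop values outside the 1.5 x IQR fences (assumed two-sided)."""
    values = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    mask = (values >= q1 - 1.5 * iqr) & (values <= q3 + 1.5 * iqr)
    return values[mask]


def centimarker(raw, cm0_values, cm100_values):
    """Map raw biomarker values onto a 0-100 scale.

    Anchors are taken as the post-exclusion means of the CM-0 and CM-100
    datasets (an assumption made for this sketch).
    """
    anchor0 = exclude_outliers(cm0_values).mean()
    anchor100 = exclude_outliers(cm100_values).mean()
    raw = np.asarray(raw, dtype=float)
    return 100.0 * (raw - anchor0) / (anchor100 - anchor0)


# Hypothetical raw values in arbitrary units.
cm0 = [0.8, 0.9, 1.0, 1.1, 1.2, 5.0]   # healthy controls; 5.0 is an outlier
cm100 = [2.8, 2.9, 3.0, 3.1, 3.2]      # severe cases
scores = centimarker([1.0, 2.0, 3.0], cm0, cm100)
print(scores)
```

Because the transformation divides by the signed difference between anchors, the same function handles biomarkers that fall with disease (where the CM-100 anchor is below the CM-0 anchor) without special casing.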

In Vitro Binding Assays for Target Engagement Validation

Surface-based binding assays provide experimental confirmation of computationally predicted drug-target interactions. The mesothelin-Fn3 binding study exemplifies this approach [25]:

Experimental Protocol:

- Protein Expression: Full-length mesothelin, single domains, or domain combinations are expressed on the yeast surface

- Binding Incubation: Engineered fibronectin type III (Fn3) domains are introduced to the displayed mesothelin domains

- Quantification: Fn3 binding to specific mesothelin domains is measured via flow cytometry

- Validation: Experimental binding data is compared with computational predictions from AlphaFold3 and molecular dynamics simulations [25]

This methodology validates both the specific binding interaction and the computational models that predicted it, strengthening confidence in the drug-biomarker-disease connection.

Multi-Omic Biomarker Discovery and Validation

Comprehensive biomarker discovery requires rigorous multi-cohort, multi-platform approaches to ensure biological reproducibility. The pain biomarker study exemplifies this methodology with separate microarray and RNA sequencing studies, each employing multiple independent cohorts [30]:

Experimental Workflow:

- Sample Collection: Whole blood collected in PAXgene tubes for RNA stabilization

- RNA Extraction: Total RNA isolated with quality control measures

- Platform-Specific Processing:

  - Microarrays: Normalization (RMA for technical variability, z-scoring for biological variability by gender)

  - RNA Sequencing: Minimum TPM count threshold of 0.1 for transcript inclusion

- Multi-Stage Analysis:

  - Discovery: Within-subject comparisons across pain state transitions

  - Validation: Cross-sectional analysis of severe chronic pain cases

  - Testing: Independent cohort prediction of pain states and future healthcare utilization

- Convergence Analysis: Identification of biomarkers reproducibly identified across both platforms [30]

This robust design controls for technical variability while identifying biologically reproducible biomarker signatures.
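Three of the computational steps in this workflow (the TPM inclusion threshold, z-scoring within gender, and cross-platform convergence) can be sketched as follows. This is a simplified illustration under stated assumptions: the source does not say how the 0.1 TPM threshold is applied across samples, so the sketch uses the mean TPM per transcript, and all function names and data values are hypothetical. `GENE_1` and `GENE_2` are placeholder identifiers.

```python
import numpy as np


def filter_by_tpm(tpm_by_transcript, min_tpm=0.1):
    """Keep transcripts whose mean TPM across samples meets the threshold.

    Using the mean is an assumption; the study may apply the cutoff differently.
    """
    return {
        name: values
        for name, values in tpm_by_transcript.items()
        if np.mean(values) >= min_tpm
    }


def zscore_within_groups(values, groups):
    """Z-score values separately within each group (e.g., by gender)."""
    values = np.asarray(values, dtype=float)
    groups = np.asarray(groups)
    z = np.empty_like(values)
    for g in np.unique(groups):
        mask = groups == g
        z[mask] = (values[mask] - values[mask].mean()) / values[mask].std(ddof=1)
    return z


def convergent_biomarkers(microarray_hits, rnaseq_hits):
    """Return biomarkers identified on both platforms."""
    return sorted(set(microarray_hits) & set(rnaseq_hits))


# Hypothetical inputs.
tpm = {"CD55": [4.2, 3.9, 5.1], "GENE_1": [0.02, 0.05, 0.01]}
expressed = filter_by_tpm(tpm)

z = zscore_within_groups([1, 2, 3, 10, 20, 30], ["F", "F", "F", "M", "M", "M"])

shared = convergent_biomarkers(["CD55", "ANXA1", "GENE_1"], ["ANXA1", "CD55", "GENE_2"])
print(sorted(expressed), z, shared)
```

Z-scoring within each group puts measurements from groups with different baseline expression on a common scale before they are pooled.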

Figure: Multi-Omic Biomarker Discovery Workflow

Integrative Analysis: Connecting Peripheral Measurements to Central Pathology

Accounting for Biological Determinants of Biomarker Variability

Interpretation of biomarker data requires understanding the biological factors that influence measurements independent of drug action or disease status. Key determinants include:

- Nutritional Status: Deficiencies in vitamins E, D, B12, and antioxidants can induce oxidative stress and subsequent neuroinflammation, altering biomarker levels [23]

- Inflammatory States: Chronic inflammation characterized by elevated IL-6, IL-18, and TNF-α promotes amyloid plaque formation and tau pathology [23]

- Metabolic Dysregulation: Insulin resistance, dyslipidemia, and thyroid imbalance contribute significant variability in AD biomarkers [23]

- Demographic Factors: Age, sex, and APOE-ε4 genotype introduce additional variability that must be accounted for in analysis [23]

These factors can alter expression of key biomarkers—Aβ, p-tau, and neurofilament light chain (NFL)—by 20-30% between individuals with similar disease burden, potentially obscuring drug effects [23].
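One common way to account for such determinants is to regress the biomarker on the covariates and analyze the adjusted values. The sketch below uses ordinary least squares for this; it is a generic illustration, not a method described in the cited work. The covariates (age and a binary APOE-ε4 carrier flag), the coefficients, and the data are hypothetical, and a real analysis would need to consider nonlinearity, interactions, and whether a covariate lies on the causal path of the disease.

```python
import numpy as np


def adjust_for_covariates(biomarker, covariates):
    """Remove the linear contribution of covariates from a biomarker.

    Fits ordinary least squares with an intercept and returns the residuals
    re-centered on the original mean, so adjusted values keep their units.
    """
    y = np.asarray(biomarker, dtype=float)
    covariates = np.asarray(covariates, dtype=float)
    design = np.column_stack([np.ones(len(y)), covariates])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    residuals = y - design @ coef
    return residuals + y.mean()


# Hypothetical cohort: biomarker driven entirely by age and APOE-e4 status.
age = np.array([60.0, 65.0, 70.0, 75.0, 80.0])
apoe4 = np.array([0.0, 1.0, 0.0, 1.0, 1.0])
biomarker = 1.0 + 0.02 * age + 0.3 * apoe4

adjusted = adjust_for_covariates(biomarker, np.column_stack([age, apoe4]))
print(adjusted)
```

In this constructed example all between-individual variation is explained by the covariates, so the adjusted values collapse to the cohort mean; with real data, the remaining spread is the portion available for detecting disease or drug effects.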

Cross-Species Translation of Biomarker Signals

Translating biomarker signals from preclinical models to human applications requires careful consideration of species-specific biology. The following table outlines key methodological considerations:

Table 2: Experimental Models for Biomarker-Drug Action Validation

| Model System | Applications | Strengths | Limitations for Biomarker Translation |
|---|---|---|---|
| Yeast Surface Display | Domain-level binding validation | High-throughput, controlled expression environment | Lack of physiological cellular context |
| Cell-Based Assays | Functional pathway analysis | Human cellular context, manipulable pathways | Simplified model of complex tissue environments |
| Animal Models | In vivo target engagement, biodistribution | Intact biological system, pharmacokinetic data | Species differences in drug metabolism and target biology |
| Human Cohort Studies | Clinical validation, natural history | Direct human relevance, individual variability | Confounding factors, ethical constraints on tissue access |

Case Studies in Biomarker-Enabled Drug Development

Alzheimer's Disease: Biomarker-Stratified Clinical Trials

The Dominantly Inherited Alzheimer Network Trial Unit (DIAN-TU-001) exemplifies biomarker-driven trial design, using mutation status to enroll participants years before symptom onset [29]. The trial incorporated multiple fluid biomarkers (Aβ42/40, p-tau species, NFL) to monitor disease progression and treatment response. Standardization of these biomarkers using the CentiMarker approach enabled quantitative comparison of treatment effects across different analytes, demonstrating that gantenerumab reduced amyloid pathology while solanezumab showed limited effects [29].

Fragile X Syndrome: Computational Prediction with Experimental Validation

A knowledge graph approach identified sulindac and ibudilast as repurposing candidates for Fragile X syndrome [27]. Computational predictions generated evidence chains connecting these drugs to disease biology via inflammatory pathways. Subsequent preclinical validation demonstrated strong correlation between automatically extracted paths and experimentally derived transcriptional changes, confirming the biological plausibility of the predictions [27]. This integration of computational and experimental approaches provides a robust framework for connecting drug action to disease pathology via biomarker modulation.

Chronic Pain: Blood Biomarkers for Objective Monitoring

A multi-platform biomarker discovery program identified reproducible blood gene expression signatures for chronic pain states [30]. The top biomarkers included decreased expression of CD55 (a complement cascade regulator) and increased expression of ANXA1 (a glucocorticoid-mediated response effector) [30]. These biomarkers not only provided objective measures of pain severity but also informed drug repurposing analyses, identifying lithium, ketamine, and carvedilol as potential treatments. The study demonstrated how biomarker profiles could be translated into clinically actionable reports for personalized treatment matching [30].

Figure: Integrated Computational-Experimental Workflow

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential Research Reagents and Platforms for Biomarker-Drug Connection Studies

| Reagent/Platform | Function | Application Examples | Technical Considerations |
|---|---|---|---|
| PAXgene Blood RNA Tubes | RNA stabilization from whole blood | Gene expression biomarker studies in pain research [30] | Standardized processing protocols required |
| Olink Explore HT | High-throughput proteomics | UK Biobank Pharma Proteomics Project profiling ~5,400 proteins [26] | Low sample volume requirements |
| Seer Proteograph | Unbiased proteomic profiling | 20,000-sample cancer biomarker study with AI analysis [26] | Compatibility with mass spectrometry |
| Certified Reference Materials (CRMs) | Assay standardization | IFCC CSF Aβ42 standardization [29] | Traceability to SI units |
| Yeast Surface Display | Domain-level binding validation | Mesothelin-Fn3 interaction mapping [25] | Controlled glycosylation patterns |