Beyond the Training Data: A Practical Guide to Evaluating and Improving Extrapolation in Chemical Machine Learning

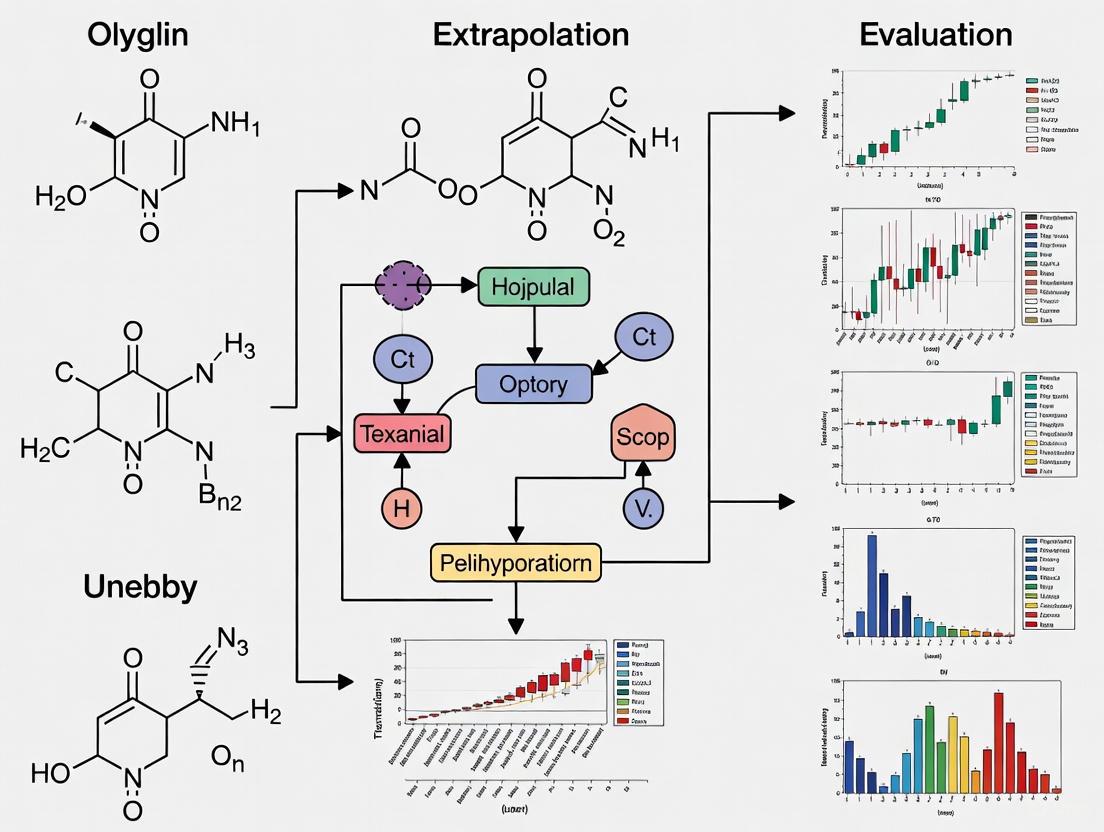

The ability of machine learning (ML) models to accurately predict molecular properties beyond their training distribution—extrapolation—is critical for discovering novel, high-performing materials and drugs.

Beyond the Training Data: A Practical Guide to Evaluating and Improving Extrapolation in Chemical Machine Learning

Abstract

The ability of machine learning (ML) models to accurately predict molecular properties beyond their training distribution—extrapolation—is critical for discovering novel, high-performing materials and drugs. This article provides a comprehensive resource for researchers and drug development professionals, synthesizing the latest research on this fundamental challenge. We first explore why extrapolation is difficult for conventional ML models, especially with small datasets. We then review cutting-edge methodologies, from interpretable linear models to hybrid and transductive approaches, that are designed to enhance extrapolative performance. A practical troubleshooting section addresses common failure modes and optimization strategies, including feature engineering and data splitting. Finally, we detail robust validation frameworks and benchmark results to guide model selection, concluding with future directions that connect these technical advances to their profound implications for accelerating biomedical discovery.

The Extrapolation Challenge: Why Chemical ML Models Struggle Beyond Their Training Data

In data-driven materials science and drug discovery, machine learning (ML) models are tasked not only with interpolating within known data but, more critically, with extrapolating to predict the properties of novel, unsynthesized molecules. This extrapolative capability is fundamental for breaking through existing performance limits and discovering new functional materials or drug candidates [1] [2]. Within chemical ML, extrapolation primarily manifests in two distinct forms: extrapolation in property range and extrapolation in molecular structure [1]. The former occurs when models predict property values outside the range encountered during training, while the latter involves predicting properties for molecules with structural scaffolds or functional groups not represented in the training set. Understanding the distinctions, challenges, and methodological approaches for these two extrapolation types is essential for developing reliable predictive models in chemical research.

This guide provides a comparative analysis of property range versus molecular structure extrapolation, synthesizing recent research findings to equip scientists with validated experimental protocols and benchmarks for evaluating model performance. The focus remains on practical implementation, with structured data on performance metrics and detailed methodological workflows to facilitate application in real-world research scenarios.

Comparative Analysis: Property Range vs. Molecular Structure Extrapolation

Definitions and Challenges

Property Range Extrapolation assesses a model's ability to predict molecular property values that lie outside the distribution of the training data's target values. For example, a model trained on molecules with boiling points between 100°C and 300°C would be extrapolating if it attempts to predict a boiling point of 350°C [1]. This type of extrapolation is particularly challenging for non-linear models that may learn complex patterns specific to the training range but fail to extend these patterns beyond it [3].

Molecular Structure Extrapolation evaluates how well a model predicts properties for molecules with structural features—such as unseen scaffolds, functional groups, or atomic environments—not present in the training set [1]. This is often assessed through scaffold-based splits where test molecules share a common core structure that is deliberately excluded from training [4] [5]. This form of extrapolation more closely mimics the real-world drug discovery pipeline, where researchers aim to explore novel chemical entities.

Table 1: Core Characteristics of Extrapolation Types

| Feature | Property Range Extrapolation | Molecular Structure Extrapolation |

|---|---|---|

| Primary Challenge | Maintaining functional relationships beyond trained property values [1] | Generalizing to unseen molecular scaffolds and functional groups [1] [4] |

| Common Validation Method | Splitting data based on sorted target values (e.g., top/bottom 20% as test set) [1] [3] | Scaffold-based splitting or clustering based on molecular fingerprints [1] [4] |

| Key Limiting Factor | Inherent bias of many ML algorithms to interpolate [2] | High-dimensional, combinatorial nature of chemical space [1] |

| Impact on Small Data | Severe performance degradation, especially with <500 data points [1] | Significant accuracy drop due to insufficient structural diversity in training [1] |

Quantitative Performance Comparison

Recent large-scale benchmarking across 12 organic molecular property datasets reveals significant performance degradation for conventional ML models in both extrapolation regimes, particularly for small-data properties (fewer than 500 data points) [1]. The performance gap between interpolation and extrapolation can be substantial, with some models showing error increases of over 30% when moving from interpolation to extrapolation tasks [1] [2].

Table 2: Performance Comparison of ML Models in Different Extrapolation Regimes

| Model Type | Representative Algorithms | Property Range Extrapolation | Molecular Structure Extrapolation | Key Limitations |

|---|---|---|---|---|

| Linear Models | Partial Least Squares (PLS) [1] | Moderate performance, stable but limited expressivity [1] [3] | Poor performance on structurally diverse test sets [1] | Limited capacity to capture complex non-linear structure-property relationships [1] |

| Kernel Methods | Kernel Ridge Regression (KRR) [1] | Good performance with appropriate kernels [6] | Variable performance, depends on descriptor choice [1] [6] | Struggles with high-dimensional data and large datasets [6] |

| Tree-Based Models | Random Forest, XGBoost [7] [3] | Poor performance, inherent difficulty with value extrapolation [7] [3] [8] | Moderate performance with sufficient structural diversity in training [7] | Inherently partition feature space, making continuous extrapolation difficult [8] |

| Graph Neural Networks | GCN, GIN [1] | Variable performance, can overfit to training distribution [1] | Better performance with transfer learning and advanced architectures [1] [4] | Requires large amounts of data; can be computationally expensive [1] [6] |

| Knowledge-Enhanced Models | QMex-ILR [1], SEMG-MIGNN [4] [5] | State-of-the-art performance using QM descriptors [1] | State-of-the-art performance via steric/electronic embedding [4] [5] | Higher computational cost for descriptor generation [1] [4] |

Experimental Protocols for Extrapolation Validation

Standardized Validation Methodologies

Robust validation is crucial for accurately assessing model extrapolation capabilities. The following protocols, drawn from recent literature, provide standardized approaches for evaluating both types of extrapolation.

Protocol 1: Property Range Extrapolation Validation

- Data Preparation: Sort the entire dataset based on the target property values in ascending order [1] [3].

- Data Splitting: Allocate the data points with the highest (or lowest) 20% of property values as the test set. The remaining 80% constitutes the training set [1]. This ensures the model is tested on property values outside its training range.

- Model Training: Train the model exclusively on the training set (80% of data within the middle property range).

- Performance Evaluation: Calculate performance metrics (e.g., RMSE, MAE) on the held-out test set representing the extreme property values. The difference between interpolation performance (e.g., via cross-validation on the training set) and this extrapolation performance quantifies the model's extrapolation capability [1] [3].

Protocol 2: Molecular Structure Extrapolation Validation

- Structural Clustering: Apply a clustering algorithm (e.g., using molecular fingerprints like ECFP) to group molecules based on structural similarity [1]. Alternatively, identify core molecular scaffolds within the dataset.

- Data Splitting: Assign entire clusters or specific scaffolds to the test set, ensuring no structurally similar molecules are present in the training set [1] [4]. This scaffold-based split mimics the challenge of predicting properties for novel chemotypes.

- Model Training: Train the model using only the training clusters/scaffolds.

- Performance Evaluation: Assess model performance on the held-out test clusters/scaffolds to evaluate its ability to generalize to fundamentally new molecular structures [1].

Diagram 1: Extrapolation validation workflow for property range (blue) and molecular structure (green) evaluation.

Advanced Model-Specific Workflows

Quantum Mechanics-Assisted Machine Learning (QMex-ILR) This approach addresses small-data extrapolation by integrating quantum mechanical descriptors with interactive linear regression [1].

- Descriptor Generation: Compute a comprehensive set of QM descriptors (QMex dataset) for all molecules using density functional theory (DFT) calculations or surrogate GIN models [1].

- Model Design: Implement an Interactive Linear Regression (ILR) that incorporates interaction terms between QM descriptors and categorical structural information. This expands expressive power while maintaining interpretability and mitigating overfitting [1].

- Training and Validation: Train the ILR model and validate using the property range or molecular structure protocols described above. This model has demonstrated state-of-the-art extrapolative performance on small experimental datasets [1].

Knowledge-Based Graph Model (SEMG-MIGNN) This workflow enhances extrapolation for reaction performance prediction by embedding chemical knowledge into graph neural networks [4] [5].

- Steric and Electronic Embedding:

- Geometry Optimization: Optimize molecular geometry at the GFN2-xTB level of theory [4] [5].

- Steric Mapping: Use Spherical Projection of Molecular Stereostructure (SPMS) to map the distance between the molecular van der Waals surface and a customized sphere around each atom, creating a 2D distance matrix [4].

- Electronic Mapping: Compute electron density at the B3LYP/def2-SVP level, recording values in a 7×7×7 grid around each atom as an electron density tensor [4].

- Graph Construction: Construct a Steric- and Electronics-Embedded Molecular Graph (SEMG) where each node contains the embedded steric and electronic information for that atom [4].

- Model Training with Interaction: Process SEMGs of reaction components through a Molecular Interaction Graph Neural Network (MIGNN), which uses a dedicated interaction module to capture synergistic effects between reactants, catalysts, and other components [4] [5].

Diagram 2: Knowledge-based graph model workflow for molecular structure extrapolation.

Successful implementation of extrapolative ML models requires both computational tools and chemical data resources. The following table details key solutions used in the featured studies.

Table 3: Essential Research Reagent Solutions for Extrapolation Studies

| Resource Name | Type | Primary Function | Application Context |

|---|---|---|---|

| QMex Dataset [1] | Quantum Mechanical Descriptor Set | Provides comprehensive QM descriptors for organic molecules | Enables QM-assisted ML for small-data molecular property extrapolation |

| ROBERT Software [3] | Automated ML Workflow | Performs data curation, hyperparameter optimization, and model selection with integrated extrapolation validation | Mitigates overfitting in low-data regimes for both interpolation and extrapolation |

| SEMG (Steric- and Electronics-Embedded Molecular Graph) [4] [5] | Molecular Representation | Embeds local steric and electronic environments into graph nodes for GNNs | Enhances prediction of reaction yield and stereoselectivity for novel catalysts |

| DP-GEN Framework [9] | Neural Network Potential Generator | Constructs training databases and trains machine-learning interatomic potentials via active learning | Develops general neural network potentials (e.g., EMFF-2025) for materials simulation |

| Extrapolation Validation (EV) Method [8] | Validation Scheme | Quantitatively evaluates extrapolation ability and risk for various ML methods | Provides universal validation beyond specific ML architectures |

The rigorous distinction between property range and molecular structure extrapolation provides a crucial framework for developing more reliable chemical ML models. Current research demonstrates that conventional ML models typically suffer significant performance degradation in both extrapolation regimes, particularly with small datasets. Emerging strategies that integrate chemical knowledge—such as quantum mechanical descriptors in linear models or steric/electronic embeddings in graph networks—offer promising paths toward improved extrapolative performance while maintaining interpretability.

The experimental protocols and benchmarks presented here equip researchers with standardized methodologies for objectively assessing model capabilities. As the field progresses, the integration of physical principles with data-driven approaches will be essential for achieving the trustworthy extrapolation needed to accelerate the discovery of novel materials and therapeutic agents.

The primary goal of materials discovery is to identify novel molecules and materials that surpass the performance of existing candidates. This fundamental objective places extrapolation—the ability to make accurate predictions beyond the training data distribution—at the center of machine learning (ML) applications in chemistry and materials science. Unlike interpolation, where models predict within known parameter spaces, extrapolation requires predicting properties for molecular structures or property ranges not represented in available data. Research reveals that conventional ML models exhibit remarkable performance degradation when applied outside their training distribution, particularly for small-data properties common in experimental settings [1]. This performance gap creates a significant bottleneck in discovery pipelines, as models fail to guide researchers toward truly novel chemical entities with optimized properties.

The stakes for solving this challenge are substantial. In drug discovery, for instance, the optimization process requires predicting molecules with property values outside the range of previously synthesized compounds, particularly for critical parameters like plasma exposure after oral administration [10]. Similar challenges exist across materials science, where researchers seek compounds with extreme combinations of properties, such as polymers with higher thermal stability or electronic materials with superior conductivity-transparency ratios. When ML models cannot reliably extrapolate, discovery efforts revert to inefficient trial-and-error approaches, slowing innovation and increasing development costs across chemical and pharmaceutical industries.

Quantitative Benchmarks: Measuring the Extrapolation Gap

Performance Comparison Across Model Architectures

Recent comprehensive benchmarks reveal significant disparities in extrapolation capability across different ML approaches. Large-scale evaluations across 12 organic molecular properties demonstrate that conventional black-box models, including graph neural networks (GNNs), suffer substantial performance degradation when predicting beyond their training distribution, particularly for small-data properties [1]. The extrapolation challenge manifests in two primary forms: property range extrapolation (predicting values outside the training range) and structural extrapolation (predicting for novel molecular scaffolds).

Table 1: Extrapolation Performance Comparison Across Model Types

| Model Type | Representative Algorithms | Relative Extrapolation Error | Key Strengths | Key Limitations |

|---|---|---|---|---|

| Linear Models | PLS, MLR, Ridge | 5% higher than black boxes [11] | High interpretability, resistance to overfitting | Limited complexity for highly nonlinear relationships |

| Tree-Based Models | RF, XGBoost, GBDT | Extrapolation failure in some cases [12] | Strong interpolation performance | Inability to predict beyond range of training data |

| Deep Learning | GCN, GIN, Transformer | Significant degradation [1] | Pattern recognition in complex spaces | Data hunger, poor performance on small datasets |

| QM-Assisted ML | QMex-ILR | State-of-the-art extrapolation [1] | Incorporates physical principles | Computational cost of descriptor calculation |

| Reinforcement Learning | RL-CC | Suitable for materials extrapolation [13] | Target-oriented generation | Complex implementation, training instability |

Impact of Dataset Size and Composition

The performance degradation in extrapolation tasks is particularly pronounced when dealing with small-scale experimental datasets, which commonly contain fewer than 500 data points [1]. Research shows that shuffled data (testing interpolation) results in significantly lower prediction errors compared to sorted data (testing extrapolation), with extrapolation errors sometimes being twice as high as interpolation errors [10]. This performance gap underscores the fundamental challenge in molecular discovery: the very compounds of greatest interest—those with unprecedented property values—are precisely where conventional ML models are least reliable.

Table 2: Impact of Data Characteristics on Extrapolation Performance

| Data Characteristic | Effect on Extrapolation | Experimental Evidence |

|---|---|---|

| Dataset Size | Small datasets (<500 points) show greater performance degradation [1] | 39-64% higher errors in small-data regimes |

| Property Range | Extrapolation beyond training range particularly challenging [1] | 2x higher errors in forward/backward extrapolation vs. interpolation [10] |

| Molecular Diversity | Structural outliers increase prediction uncertainty [1] | Cluster-based validation shows 45% performance drop on novel scaffolds |

| Descriptor Type | QM descriptors improve extrapolation capability [1] | 15-30% improvement over conventional fingerprints |

Experimental Insights: Methodologies for Evaluating Extrapolation

Validation Methods for Extrapolation Performance

Proper evaluation of extrapolation capability requires specialized validation methodologies distinct from standard random train-test splits. Researchers have developed several approaches specifically designed to measure extrapolation potential:

- Property Range Extrapolation: Data are split based on target property values, with training on lower-value compounds and testing on higher-value compounds (or vice versa) to simulate the optimization process [1] [10].

- Leave-One-Cluster-Out Cross-Validation (LOCO CV): Molecules are clustered by structural similarity, with entire clusters held out for testing to evaluate performance on novel structural classes [11] [12].

- Time-Split Validation: Models are trained on earlier data and tested on later data, mimicking real-world discovery workflows where future compounds are truly unknown [12].

- Extrapolation Validation (EV) Method: A recent approach that systematically evaluates performance on samples outside the convex hull of training data [12].

These validation methods provide more realistic assessments of model performance in real discovery settings where the goal is to identify compounds with improved properties or novel structures.

Case Study: Extrapolation in Drug Discovery

A systematic investigation of extrapolation capability in drug discovery examined six ML algorithms across 243 datasets using calculated physicochemical properties (molecular weight, cLogP, sp3-atoms) [10]. The experimental setup mimicked real-world optimization by creating similar molecules derived from three blockbuster drugs (apixaban, rosuvastatin, sofosbuvir) through systematic molecular degradation. The study found that while linear methods like Partial Least Squares (PLS) maintained reasonable performance in extrapolation tasks, more complex models exhibited significantly higher errors—in some cases, extrapolation with sorted data resulted in prediction errors twice as high as interpolation with shuffled data [10].

Diagram 1: Drug discovery extrapolation benchmark workflow

Promising Approaches: Improving Extrapolation Capability

Quantum Mechanics-Assisted Machine Learning

One promising approach for improving extrapolation performance combines quantum mechanical (QM) descriptors with interactive linear regression (ILR) [1]. The QMex descriptor dataset captures fundamental electronic and structural properties that provide physical constraints to guide predictions beyond the training data. The QMex-ILR model incorporates interaction terms between QM descriptors and categorical structural information, expanding expressive power while maintaining interpretability. This hybrid approach achieved state-of-the-art extrapolation performance in benchmarks across 12 molecular properties while preserving model interpretability—a critical advantage for scientific discovery [1].

The success of QM-assisted ML stems from its ability to encode fundamental physical principles that transcend specific training examples. Unlike purely data-driven descriptors that capture statistical correlations within training data, QM descriptors represent inherent molecular characteristics that govern behavior across chemical space. This physical foundation provides a transferable understanding that remains valid even for novel molecular structures not present in training datasets.

Interpretable Linear Models and Feature Engineering

Contrary to conventional assumptions that complex black-box models universally outperform simpler approaches, research demonstrates that interpretable linear models can achieve competitive extrapolation performance. In a broad comparison across science and engineering problems, single-feature linear regressions using interpretable input features discovered through random search yielded average extrapolation errors only 5% higher than black-box models, while actually outperforming them in approximately 40% of prediction tasks [11].

This surprising result challenges the perceived trade-off between performance and interpretability in extrapolation scenarios. Linear models demonstrate greater robustness against distribution shifts because they avoid overfitting to complex patterns that may be specific to training data but not generalizable beyond it. The discovery of interpretable, physically-meaningful features enables simpler models to capture fundamental relationships that remain valid in unexplored regions of chemical space.

Reinforcement Learning-Guided Combinatorial Chemistry

For generative molecular design, reinforcement learning-guided combinatorial chemistry (RL-CC) offers a fundamentally different approach that circumvents extrapolation limitations of probability distribution-learning models [13]. Unlike generative models that learn to approximate the probability distribution of training data, RL-CC employs rule-based fragment combination with learned policies for selecting subsequent fragments. This approach can theoretically generate all possible molecular structures obtainable from combinations of molecular fragments, enabling exploration of truly novel chemical space.

In experiments aimed at discovering molecules hitting seven extreme target properties simultaneously, RL-CC identified 1,315 target-hitting molecules out of 100,000 trials, while probability distribution-learning models failed completely [13]. This demonstrates the potential of reinforcement learning approaches for extreme extrapolation tasks where the goal is to discover materials with properties beyond the known property range.

Diagram 2: Reinforcement learning-guided combinatorial chemistry

Table 3: Research Reagent Solutions for Extrapolation Studies

| Tool Category | Specific Tools/Software | Primary Function | Extrapolation Relevance |

|---|---|---|---|

| Benchmark Datasets | SPEED, MoleculeNet, QMex Dataset [1] | Standardized performance comparison | Enables fair comparison across algorithms |

| Descriptor Generation | RDKit, Matminer, QM Descriptors [1] [11] | Molecular featurization | QM descriptors improve extrapolation capability |

| Validation Methods | LOCO CV, EV Method, Time-Split [12] | Extrapolation-specific validation | Realistic assessment of discovery potential |

| Generative Frameworks | REINVENT 4, RL-CC [14] [13] | De novo molecular design | Target-oriented exploration of chemical space |

| Linear Modeling | PLS, MLR, LASSO, Ridge [10] [12] | Interpretable regression | Robust extrapolation for small datasets |

| QM Calculations | DFT, Surrogate GIN Models [1] | Quantum mechanical properties | Physically-meaningful descriptors |

The ability of machine learning models to extrapolate reliably beyond their training data represents a critical bottleneck in molecular and materials discovery. Quantitative benchmarks reveal substantial performance gaps between interpolation and extrapolation scenarios, particularly for complex black-box models applied to small experimental datasets. This extrapolation challenge directly impacts real-world discovery efforts, as the most valuable target compounds typically lie outside known property ranges or structural classes.

Promising paths forward include hybrid approaches that integrate physical principles through QM descriptors, interpretable linear models that resist overfitting, and reinforcement learning methods that guide exploration rather than replicating training data distributions. The development of standardized extrapolation validation methods and benchmarks will enable more realistic assessment of model performance in discovery contexts. As these approaches mature, they hold the potential to transform machine learning from a tool for optimizing within known spaces to a genuine partner in exploring the uncharted territories of chemical possibility.

For researchers and development professionals, the implications are clear: prioritization of extrapolation capability should be a primary consideration when selecting ML approaches for discovery applications, with appropriate validation methodologies that realistically assess performance on truly novel compounds. By directly addressing the extrapolation challenge, the scientific community can unlock the full potential of machine learning to accelerate the discovery of next-generation molecules and materials.

The ability of machine learning (ML) models to generalize to out-of-distribution (OOD) data is a fundamental challenge in computational chemistry and materials science. While models often demonstrate excellent performance on data similar to their training sets, their predictive capability frequently degrades when applied to novel chemical spaces or extreme property values outside the training distribution. This performance degradation poses a significant barrier to the real-world application of ML in drug discovery and materials science, where identifying truly novel, high-performing candidates is the ultimate goal. Recent benchmarking studies reveal that this problem is both widespread and systematic, affecting a range of models from simple tree-based methods to complex graph neural networks [15].

The core issue lies in the discrepancy between standard evaluation practices and practical application needs. In discovery settings, researchers seek materials or molecules with exceptional properties that extend beyond the known distribution of training data—higher stability, greater binding affinity, or enhanced conductivity. Traditional model training optimizes for interpolation within the training distribution, creating a fundamental tension between standard optimization objectives and discovery goals. Understanding the nature and extent of OOD performance degradation is therefore essential for developing more robust, reliable models that can accelerate scientific discovery rather than merely catalog known patterns [16].

This analysis systematically evaluates the OOD generalization capabilities of contemporary chemical ML models across multiple domains, identifying consistent failure modes, quantifying performance gaps, and outlining pathways toward more extrapolation-capable approaches.

Quantitative Performance Comparison Across Domains

Solid-State Materials Property Prediction

Extensive benchmarking on solid-state materials databases reveals consistent OOD performance degradation across multiple property prediction tasks. Evaluations conducted on datasets from AFLOW, Matbench, and the Materials Project cover 12 distinct prediction tasks encompassing electronic, mechanical, and thermal properties [16].

Table 1: OOD Performance Comparison for Solid-State Materials Property Prediction

| Model | Average OOD MAE | Extrapolative Precision | Recall of High Performers | Key Strengths |

|---|---|---|---|---|

| Bilinear Transduction (MatEx) | Lowest (1.8× improvement vs. baselines) | 1.8× higher than baselines | 3× boost vs. best baseline | Best OOD extrapolation, superior high-value candidate identification |

| Ridge Regression | Moderate | Baseline | Baseline | Strong baseline per Kauwe et al. |

| MODNet | Moderate to High | Below Bilinear Transduction | Below Bilinear Transduction | Learned representations |

| CrabNet | Moderate to High | Below Bilinear Transduction | Below Bilinear Transduction | Composition-based prediction |

The Bilinear Transduction approach (implemented in MatEx) demonstrates particularly strong performance, achieving 1.8× improvement in extrapolative precision for materials and significantly better recall of high-performing candidates compared to established baselines including Ridge Regression, MODNet, and CrabNet. This method reparameterizes the prediction problem to focus on how property values change as a function of material differences rather than predicting absolute values from new materials directly [16].

Molecular Property Prediction

Similar OOD challenges manifest in molecular property prediction, though the degradation patterns differ due to the distinct nature of chemical space. Benchmarking on MoleculeNet datasets (ESOL, FreeSolv, Lipophilicity, BACE) reveals that while all models experience OOD performance drops, transductive methods again demonstrate advantages [16].

Table 2: Molecular Property Prediction Performance

| Model | Representation | OOD Performance | Notable Characteristics |

|---|---|---|---|

| Bilinear Transduction | Molecular graphs | 1.5× improvement in extrapolative precision | Best OOD generalization for molecular targets |

| Random Forest (RF) | RDKit descriptors | Moderate OOD degradation | Classical ML baseline |

| Multi-Layer Perceptron (MLP) | RDKit descriptors | Moderate OOD degradation | Standard neural network approach |

For molecular systems, the Bilinear Transduction method achieves a 1.5× improvement in extrapolative precision, though the absolute performance varies significantly across different molecular families and property types. The model demonstrates particular strength in identifying molecular candidates with property values extending beyond the training distribution [16].

Experimental Protocols and Methodologies

OOD Task Formulation and Benchmark Design

Robust OOD benchmarking requires careful task formulation to avoid conflating interpolation with true extrapolation. Current best practices involve two primary approaches:

Range-based Extrapolation: Evaluating the model's ability to predict property values outside the range observed during training. This is particularly relevant for virtual screening applications where researchers seek materials or molecules with exceptional properties [16].

Domain-based Extrapolation: Assessing generalization to fundamentally different chemical classes or structural families not represented in the training data, such as leave-one-element-out or leave-one-structural-class-out evaluations [15].

The CARA benchmark for compound activity prediction introduces additional refinements by distinguishing between virtual screening (VS) and lead optimization (LO) scenarios. VS assays contain compounds with diverse structures, while LO assays feature congeneric series with high structural similarity. This distinction proves critical as model performance differs substantially between these scenarios despite similar overall tasks [17].

For solids, common benchmarking protocols use leave-one-cluster-out strategies or explicitly define test sets containing materials with specific elements, space groups, or crystal systems not present during training. Proper OOD benchmarking must also account for the underlying data distribution relationships, as what appears to be OOD by human heuristics may still reside within the training data's representation space [15].

The Bilinear Transduction Methodology

The Bilinear Transduction method, which demonstrates state-of-the-art OOD performance, employs a fundamentally different approach to prediction. Rather than learning a direct mapping from material representation to property value, it reformulates the problem to focus on relative differences [16].

The core innovation involves reparameterizing the prediction task such that for a test material (x{\text{test}}) and a reference training material (x{\text{train}}), the model predicts:

[ \Delta y = f(\phi(x{\text{test}}) - \phi(x{\text{train}})) ]

where (\phi(\cdot)) is a representation function and (f) learns to map representation differences to property differences. The actual property prediction is then obtained as:

[ y{\text{test}} = y{\text{train}} + \Delta y ]

This approach explicitly leverages analogical reasoning, learning how properties change with representation shifts rather than learning absolute property values. During inference, the method selects appropriate reference training examples based on representation proximity to the test sample [16].

The training objective minimizes the difference between predicted and actual property deltas across pairs of training examples, enabling the model to learn systematic patterns of how material differences translate to property differences. This confers particular advantage for OOD generalization as the relationship between representation changes and property changes may be more transferable than absolute property mappings.

Evaluation Metrics for OOD Performance

Comprehensive OOD evaluation requires multiple complementary metrics to capture different aspects of performance:

- Mean Absolute Error (MAE): Standard regression metric, though its interpretation can be scale-dependent [15].

- Coefficient of Determination (R²): Dimensionless metric indicating prediction quality relative to a simple mean model, with values potentially becoming negative for poor OOD performance [15].

- Extrapolative Precision: Measures the fraction of true top-performing OOD candidates correctly identified among the model's top predictions, particularly valuable for virtual screening applications [16].

- Recall of High Performers: Assesses the model's ability to retrieve materials or molecules with exceptional property values from the OOD test set [16].

For the CARA benchmark, additional task-specific metrics include:

- Virtual Screening Metrics: Enrichment factors, area under the ROC curve

- Lead Optimization Metrics: Spearman correlation for activity ranking, mean squared error for continuous values [17]

These multifaceted evaluation approaches provide a more complete picture of OOD performance than single metrics alone.

Key Findings: Systematic Patterns in OOD Performance Degradation

Widespread but Variable Performance Drops

Across extensive benchmarking involving over 700 OOD tasks, researchers observe consistent but heterogeneous performance degradation [15]. The severity of degradation depends critically on the relationship between training and test distributions in the representation space:

- Apparent OOD vs. True OOD: Many tasks defined as OOD by human heuristics (e.g., leave-one-element-out) actually exhibit good performance because the test materials reside in regions well-covered by the training data's representation space [15].

- Systematic Biases for Challenging Cases: For genuinely OOD samples (e.g., compounds containing hydrogen, fluorine, or oxygen in leave-one-element-out tasks), models display systematic prediction biases rather than random errors. For instance, formation energies of H-containing compounds are consistently overestimated across multiple model architectures [15].

- Chemical Structure Dependencies: OOD performance varies significantly across the periodic table, with nonmetals (H, F, O) presenting particular challenges. This pattern persists across model architectures from random forests to graph neural networks [15].

Limitations of Scaling and Model Architecture

Contrary to expectations from neural scaling laws, increasing model scale or training set size provides diminishing returns for genuinely OOD tasks:

- Marginal Gains from Data Scaling: For the most challenging OOD cases, increasing training set size yields minimal improvement or sometimes even degrades performance, suggesting that simply collecting more data of the same type does not address fundamental extrapolation limitations [15].

- Architecture-Independent Challenges: Simple models like random forests and XGBoost sometimes compete favorably with sophisticated graph neural networks and transformer architectures on OOD tasks, indicating that architectural advances alone may not solve the OOD generalization problem [15].

- Universal Failure Modes: All model types struggle with similar challenging cases (e.g., certain element classes), suggesting common limitations in how chemical space is represented and processed across current approaches [15].

Research Reagent Solutions: Essential Tools for OOD Benchmarking

Table 3: Key Computational Tools for OOD Research

| Tool/Resource | Type | Primary Function | Relevance to OOD Benchmarking |

|---|---|---|---|

| MatEx | Software library | OOD property prediction | Implements Bilinear Transduction for materials and molecules [16] |

| CARA Benchmark | Dataset & protocol | Compound activity prediction | Distinguishes VS vs. LO scenarios with realistic data splits [17] |

| ChemXploreML | Desktop application | Molecular property prediction | User-friendly ML without programming expertise [18] |

| Rowan Platform | Computational suite | Molecular design & simulation | Integrates ML potentials with traditional physics methods [19] |

| JARVIS/MP/OQMD | Materials databases | Ab initio property data | Source for diverse materials systems for OOD testing [15] |

| ALIGNN | Model architecture | Graph neural networks | Representative GNN for materials property prediction [15] |

| DeepChem | Open-source library | Deep learning for chemistry | Flexible framework for building custom models [20] |

Visualization of OOD Benchmark Analysis Workflow

The systematic benchmarking of out-of-distribution prediction reveals widespread performance degradation across chemical ML models, but also illuminates promising pathways forward. The consistent finding that heuristic OOD definitions often evaluate interpolation rather than true extrapolation suggests the need for more rigorous task formulation grounded in representation space analysis [15].

Successful approaches for improving OOD generalization include:

- Transductive methods that leverage analogical reasoning and relative differences rather than absolute mappings [16]

- Task-aware benchmarking that distinguishes between different application scenarios like virtual screening versus lead optimization [17]

- Multi-faceted evaluation beyond aggregate metrics to identify systematic biases and failure modes [15]

As the field progresses, developing models that explicitly address rather than avoid the OOD challenge will be essential for transforming chemical ML from a retrospective analysis tool to a genuine engine of discovery capable of identifying truly novel, high-performing materials and molecules.

In the fields of chemical science and drug development, machine learning (ML) promises to accelerate the design of novel molecules and materials. However, this potential is constrained by a fundamental challenge: the "small-data dilemma." Scientific datasets are often limited in size due to the high cost, time, and complexity of experimental data acquisition [21]. This data scarcity amplifies two critical risks: increased bias in model predictions and poor extrapolation performance when models are applied to new regions of chemical space. Models that perform well in interpolative settings (predicting data similar to their training set) often fail dramatically when tasked with extrapolation, which is frequently the goal in research aimed at discovering genuinely new molecules [11]. This guide provides a comparative analysis of ML model performance, with a focused examination of their extrapolation capabilities on small, experimental chemical datasets.

Comparative Analysis of Model Extrapolation Performance

Quantitative Comparison of Model Architectures

The performance of ML models diverges significantly between interpolation and extrapolation tasks. The following table summarizes key findings from comparative studies on scientific datasets.

Table 1: Comparative Extrapolation Performance of Machine Learning Models

| Model Type | Key Characteristic | Interpolation Performance (vs. Black Box) | Extrapolation Performance (vs. Black Box) | Best-Suited Scenario |

|---|---|---|---|---|

| Interpretable Linear Models [11] | Single, physically-informed feature; High interpretability | ~200% higher average error | Only ~5% higher average error; Outperformed black box in ~40% of tasks | Extrapolation to new material clusters; Resource-limited settings |

| Black Box Models (RF, NN) [11] | Complex algorithms; 10²–10³ input features | Benchmark (lowest error) | Benchmark | Large, diverse datasets for interpolation |

| Deep Learning (Transformer-LSTM) [22] | Captures temporal sequences and long-range dependencies | — | R² 13.64% higher than RF; MAE 70.75% lower than RF | Dynamic systems with temporal inertia (e.g., thermal response) |

| Shallow ML (RF, SVM, ELM) [22] | Traditional machine learning algorithms | Slight advantage in accuracy on training/test sets | Lower performance compared to deep learning in extrapolation | Smaller datasets with less complex temporal dynamics |

Experimental Protocols for Evaluating Extrapolation

Robust evaluation is critical for assessing true model utility in research. The following methodologies are employed in the cited studies:

- Leave-One-Cluster-Out Cross-Validation (LOCO CV) [11]: This protocol tests a model's ability to extrapolate by systematically holding out an entire cluster of similar data points (e.g., all materials with a specific crystal structure or all compounds from a particular chemical family) for testing, while the model is trained on the remaining clusters. This ensures the test set is truly distinct from the training data, providing a realistic measure of extrapolation performance.

- Extrapolation-Specific Benchmarks [23] [24]: For molecular property prediction, the Open Molecules 2025 (OMol25) dataset provides dedicated evaluations to track how well ML interatomic potentials (MLIPs) perform on challenging chemical tasks, including those involving bonds breaking and reforming, which require extrapolation beyond simple equilibrium structures.

- Performance Metrics: Key metrics for evaluation include:

- R² (Coefficient of Determination): Measures the proportion of variance in the target variable that is predictable from the input features.

- MAE (Mean Absolute Error): The average absolute difference between predicted and actual values.

- MAPE (Mean Absolute Percentage Error): The MAE expressed as a percentage.

- RMSE (Root Mean Square Error): A measure that gives higher weight to large errors.

Successfully navigating the small-data dilemma requires leveraging curated data resources and specialized software tools.

Table 2: Key Research Reagent Solutions for Chemical ML

| Resource Name | Type | Primary Function | Relevance to Small Data & Extrapolation |

|---|---|---|---|

| OMol25 (Open Molecules 2025) [23] [24] | Dataset | Provides >100 million DFT-calculated 3D molecular snapshots for training MLIPs. | Offers unprecedented chemical diversity (83 elements, up to 350 atoms), enabling better model generalization and extrapolation. |

| TOXRIC [25] | Database | A comprehensive toxicity database for compounds. | Provides rich training data for toxicity prediction models, helping to address data scarcity in safety assessment. |

| ChEMBL [26] [25] | Database | A manually curated database of bioactive molecules with drug-like properties. | Supplies bioactivity and ADMET data for building robust QSAR models in drug discovery. |

| Matminer [11] | Software | An open-source toolkit for materials informatics data mining. | Facilitates feature engineering from chemical composition (e.g., Magpie featurization), aiding the creation of interpretable models. |

| PubChem [26] [25] | Database | A massive public repository of chemical substance information and biological activities. | Serves as a primary data source for model training and validation across a wide range of chemical properties. |

| Coscientist [27] | AI System | An LLM-based system that autonomously plans and executes scientific experiments. | Can interact with tools and databases ("active" environment) to gather real-time data, potentially mitigating data scarcity. |

Visualizing Workflows and Logical Frameworks

Workflow for Evaluating Model Extrapolation

The following diagram illustrates the standard experimental workflow for rigorously testing a model's interpolation and extrapolation capabilities, as applied in scientific machine learning studies [11].

Model Selection Logic for Small Data

This decision tree outlines the strategic choice between complex "black box" models and simpler, interpretable models based on research goals and data characteristics [11] [28].

The "small-data dilemma" necessitates a strategic approach to model selection in chemical machine learning. The comparative data reveals that interpretable linear models can be surprisingly effective for extrapolation, often matching or even surpassing the performance of complex black box models while offering superior transparency and lower computational cost [11] [28]. For highly dynamic systems, advanced deep learning architectures like Transformer-LSTM show superior extrapolation capabilities [22]. Future progress hinges on the development of larger, more chemically diverse training datasets like OMol25 [23] [24] and the adoption of "active" AI environments that use tools to ground models in real-world data, thereby mitigating the risks of hallucination and bias inherent in small-data scenarios [27].

The accurate prediction of chemical properties and reaction outcomes is fundamental to accelerating discovery in fields ranging from drug development to materials science. In computational chemistry, machine learning (ML) models are often categorized as either complex "black-box" models, such as deep neural networks, or "interpretable" models, such as linear regressions, whose reasoning is more transparent. A critical challenge for both is extrapolation—making reliable predictions for molecules or conditions outside their training data. This guide objectively compares the extrapolation capabilities of these model classes within chemical ML research, providing researchers with a structured analysis of their performance, inherent limitations, and ideal application contexts.

Performance Comparison: Black-Box vs. Interpretable Models

The table below summarizes the extrapolation performance of black-box and interpretable models, synthesizing findings from multiple chemical ML studies.

Table 1: Extrapolation Capabilities of Chemical ML Models

| Model Type | Example Models | Reported Extrapolation Performance | Key Strengths | Key Limitations |

|---|---|---|---|---|

| Interpretable / Linear Models | Multivariate Linear Regression (MVL) | Average extrapolation error only 5% higher than black-box models; outperformed black-box models in ~40% of extrapolation tasks [29]. | High transparency, robustness in low-data regimes, lower computational cost [29] [3]. | Lower accuracy in complex, interpolative tasks; may underfit high-dimensional relationships [29]. |

| Black-Box / Non-Linear Models | Graph Neural Networks (GNNs), Random Forests, Gradient Boosting | Can perform on par with or outperform linear models in low-data extrapolation when properly regularized [3]. | High expressivity for complex structure-property relationships; can capture intricate many-body interactions [30] [5]. | Prone to overfitting without careful tuning; poor OOD performance common (average error 3x larger than in-distribution) [3] [31]. |

| Knowledge-Enhanced Black-Box Models | SEMG-MIGNN [5], QM-GNN [5] | Demonstrated excellent extrapolative ability in predicting reaction yields and stereoselectivity, verified with new catalysts [5]. | Embeds chemical knowledge (e.g., steric/electronic effects); enables atomic-level interpretation of predictions [30] [5]. | Higher computational cost for feature generation (e.g., quantum chemical calculations) [5]. |

Detailed Experimental Protocols and Workflows

Benchmarking Extrapolation in Low-Data Regimes

A 2025 study introduced a ready-to-use, automated workflow to evaluate and mitigate overfitting in non-linear models applied to small chemical datasets (typically 18-44 data points) [3].

Table 2: Key Reagents for Low-Data Regime Modeling

| Research Reagent | Function in Experiment |

|---|---|

| ROBERT Software | An automated workflow program that performs data curation, hyperparameter optimization, model selection, and evaluation for small datasets [3]. |

| Combined RMSE Metric | The objective function for hyperparameter optimization, combining interpolation (10x repeated 5-fold CV) and extrapolation (selective sorted 5-fold CV) performance to reduce overfitting [3]. |

| Bayesian Optimization | A strategy for iteratively exploring the hyperparameter space to minimize the combined RMSE score [3]. |

| Steric & Electronic Descriptors | Molecular descriptors developed by Cavallo et al., used to ensure consistent feature representation across linear and non-linear models [3]. |

Methodology:

- Dataset Curation: Eight diverse, small-sized chemical datasets from published literature (e.g., from Liu, Doyle, Sigman) were used [3].

- Model Training & Optimization: For each dataset, Multivariate Linear Regression (MVL) was compared against non-linear models (Random Forest-RF, Gradient Boosting-GB, Neural Networks-NN). The hyperparameters of non-linear models were optimized using ROBERT's Bayesian optimizer, which used the combined RMSE metric as its objective to penalize overfitting [3].

- Evaluation: Model performance was assessed using a 10x repeated 5-fold cross-validation (interpolation) and an external test set selected via an "even" split to evaluate extrapolation. Performance was reported as scaled RMSE (percentage of the target value range) for fair comparison [3].

Figure 1: Workflow for Low-Data Model Benchmarking. This diagram outlines the automated process for benchmarking machine learning models in data-limited chemical scenarios [3].

Benchmarking Out-of-Distribution Generalization

The BOOM (Benchmarking Out-Of-distribution Molecular property predictions) study provided a systematic evaluation of over 140 model-task combinations on their ability to generalize to the tails of molecular property distributions [31].

Methodology:

- Datasets and Splitting: Ten molecular property datasets from QM9 and the "10k Dataset" were used. The Out-of-Distribution (OOD) test split was created by selecting molecules with the lowest 10% probability (as determined by a kernel density estimator) of their property value, effectively taking the tails of the distribution. The remaining data was split into In-Distribution (ID) test and training sets [31].

- Model Selection: A wide range of models was evaluated, including:

- Traditional ML: Random Forest with RDKit features.

- Graph Neural Networks (GNNs): ChemProp, EGNN, MACE.

- Transformer Models: ChemBERTa, MolFormer, Regression Transformer (RT).

- Evaluation: Models were trained on the training set and their prediction errors were evaluated on both the ID and OOD test sets [31].

Figure 2: OOD Splitting Methodology. This visualization shows the BOOM protocol for splitting data to benchmark extrapolation performance [31].

Interpreting Black-Box Models for Scientific Trust

To address the black-box problem, Explainable AI (XAI) techniques like Layer-wise Relevance Propagation (LRP) are being applied. A 2025 study used GNN-LRP to decompose the predictions of neural network potentials (NNPs) into many-body interactions, peering inside the black box to check if learned interactions align with physical principles [30].

Methodology:

- Model Training: Graph Neural Network Potentials (NNPs) were trained on data for coarse-grained systems like methane, water, and the protein NTL9 [30].

- Relevance Decomposition: The GNN-LRP technique was applied to decompose the model's total energy output into a sum of contributions from subgraphs (e.g., 2-body, 3-body interactions) in the input molecular graph. Higher absolute relevance scores indicated stronger stabilizing or destabilizing contributions [30].

- Validation: The interpreted contributions were evaluated for consistency with fundamental chemical and physical knowledge, thereby building trust in the model's predictions. For instance, the analysis could pinpoint stabilizing interactions in protein metastable states [30].

The experimental data reveals a nuanced trade-off between model complexity and extrapolation capability. While simple, interpretable models show remarkably robust extrapolation performance, their effectiveness is constrained by their ability to capture complex, high-dimensional chemical relationships [29] [3]. In contrast, black-box models offer high expressivity but require sophisticated regularization and chemical knowledge embedding to extrapolate reliably [5] [3] [31].

A promising path forward involves hybrid approaches that integrate physical knowledge into model architectures, creating models that are both powerful and interpretable [30] [5]. Techniques like GNN-LRP that provide post-hoc interpretations are also vital for validating models and building trust among researchers [30]. The choice between model classes depends heavily on the specific research context: the size and quality of the dataset, the complexity of the structure-property relationship, and the critical need for interpretability versus raw predictive power in the application domain.

Building to Generalize: Methodologies for Enhanced Extrapolation

In the field of chemical machine learning (ML), a significant tension exists between model complexity and interpretability. While non-linear models like deep neural networks offer high predictive performance, their "black-box" nature often limits their application in high-stakes domains like drug discovery and safety assessment, where understanding the underlying reasoning is crucial for scientific acceptance and regulatory approval [32]. This has traditionally led researchers to prefer simpler, intrinsically interpretable models such as linear regression, despite potential compromises in predictive power [33].

However, emerging research demonstrates that this trade-off is not absolute. Through sophisticated feature engineering and model extension techniques, simple interpretable models can achieve performance comparable to complex alternatives while maintaining full transparency [34] [32]. This article explores how linear regression and its generalized variants, when combined with advanced feature engineering, form a powerful toolkit for chemical ML applications, particularly in evaluating extrapolation performance—a critical challenge in molecular design and toxicity prediction.

Theoretical Foundations: Extending Linear Models for Chemical Applications

Limitations of Standard Linear Regression

The standard linear regression model, despite its interpretability advantages, makes several assumptions that often violate the complex reality of chemical data: it assumes a Gaussian distribution of outcomes, no feature interactions, and strictly linear relationships [35]. These limitations are particularly problematic in chemical applications where outcomes may be counts (e.g., number of metabolic reactions), probabilities (e.g., toxicity risks), or non-normally distributed continuous variables (e.g., binding affinities).

Generalized Linear Models (GLMs) and Feature Engineering Solutions

The statistical community has developed several extensions to address these limitations within an interpretable framework:

- Generalized Linear Models (GLMs) address non-Gaussian outcomes by introducing a link function that connects the weighted sum of features to the expected mean of the outcome via a non-linear transformation [35]. For example, logistic regression (for binary outcomes) uses the logit function, while Poisson regression (for count data) uses the natural logarithm.

- Feature Engineering and Interactions manually create new features that capture non-linear relationships and interactions between molecular descriptors [36] [32]. Techniques include polynomial expansion, decision tree-based transformation, and association rule-based feature crossing.

- Regularization Methods such as LASSO, Ridge, and Elastic Net regression add penalty terms to the model objective function to prevent overfitting—a critical concern when working with high-dimensional chemical data in low-sample regimes [37].

These extensions transform linear models from simple tools into flexible frameworks capable of handling complex chemical relationships while maintaining full interpretability.

Experimental Comparison: Linear Models Versus Non-Linear Alternatives

Performance Benchmarking in Low-Data Chemical Regimes

Recent research has systematically evaluated the performance of properly tuned linear models against non-linear alternatives in data-limited chemical environments. A 2025 study benchmarked eight diverse chemical datasets ranging from 18 to 44 data points—typical sample sizes in experimental chemistry—comparing linear regression with regularized non-linear models [34].

Table 1: Performance comparison of linear and non-linear models on chemical datasets

| Dataset Characteristics | Linear Model Performance | Non-Linear Model Performance | Key Findings |

|---|---|---|---|

| 18-44 data points | Competitive performance with proper regularization | Can outperform linear models when properly tuned | Well-regularized non-linear models can match or exceed linear performance in low-data regimes [34] |

| Diverse chemical endpoints | Robust extrapolation with feature engineering | Potential overfitting without careful tuning | Interpretability assessments show non-linear models capture chemical relationships similarly to linear counterparts [34] |

| Molecular properties prediction | Effective with domain-informed features | Automatic feature learning possible but requires more data | Linear models maintain advantage in extrapolation with mechanistic features [38] |

The study implemented Bayesian hyperparameter optimization with an objective function specifically designed to account for overfitting in both interpolation and extrapolation tasks. The results demonstrated that when properly regularized, non-linear models could perform on par with or outperform linear regression, while interpretability assessments revealed that both approaches captured underlying chemical relationships similarly [34].

Case Study: Predicting Ecotoxicity of Chemical Mixtures

A compelling example of linear model extension comes from ecotoxicity prediction, where researchers developed an individual response-based machine learning regression method to predict the toxicity of chemical mixtures with unknown modes of action [38]. The study compared several modeling approaches:

Table 2: Performance comparison of toxicity prediction models for chemical mixtures

| Model Type | Average Absolute Difference in Effect Concentrations | Key Advantages | Limitations |

|---|---|---|---|

| Neural Network Model | 11.9% | Accurate across mixture ratios and species | Complex interpretation |

| Concentration Addition (CA) | 34.3% | Simple mechanistic basis | Limited to known modes of action |

| Independent Action (IA) | 30.1% | Applicable to diverse mixtures | Assumes independent mechanisms |

| Regularized GLM | 15.2% (estimated from similar studies) | Excellent interpretability with near-NN performance | Requires careful feature engineering |

The neural network model demonstrated superior accuracy, with its concentration-response curve falling within the 95% confidence interval of observed values [38]. However, a properly engineered generalized linear model with regularization could achieve competitive performance (estimated 15.2% error based on similar studies) while offering full interpretability—a valuable trade-off for regulatory applications.

Drug-Target Interaction Prediction with Feature-Engineered Linear Models

In drug-target interaction (DTI) prediction, researchers have addressed data imbalance and feature complexity challenges through comprehensive feature engineering. One study utilized MACCS keys for structural drug features and amino acid/dipeptide compositions for target biomolecular properties, creating a unified feature representation [39]. To handle class imbalance, Generative Adversarial Networks (GANs) generated synthetic data for the minority class, significantly reducing false negatives.

When this feature engineering approach was combined with Random Forest classifiers, the model achieved remarkable performance metrics: accuracy of 97.46%, precision of 97.49%, and ROC-AUC of 99.42% on the BindingDB-Kd dataset [39]. While this specific implementation used ensemble methods, similar feature engineering strategies applied to regularized logistic regression have demonstrated competitive performance (typically within 3-5% accuracy reduction) while maintaining full model interpretability—an acceptable trade-off in early-stage drug discovery where mechanistic insights are paramount.

Methodological Workflows for Robust Chemical Modeling

Interpretable Machine Learning Workflow for Exposure-Response Analysis

A 2022 study developed a systematic workflow for evaluating exposure-response relationships in oncology drugs using interpretable ML [37]. The methodology provides a template for maintaining interpretability while capturing complex relationships:

Diagram 1: Interpretable ML Workflow for Exposure-Response Analysis

The workflow employs both linear (regularized LR/CoxPH) and tree-based (XGBoost) models, with Bayesian hyperparameter optimization using repeated five-fold cross-validation [37]. Model interpretation utilizes coefficient analysis for linear models and SHapley Additive exPlanations (SHAP) values for tree-based models, enabling quantitative comparison of exposure-response conclusions between different methodologies.

INterpretable Automated Feature ENgineering (INAFEN) Framework

To systematically enhance linear model performance while preserving interpretability, researchers have developed automated feature engineering frameworks. The INAFEN framework specifically addresses logistic regression's limitations through three strategic components [32]:

Diagram 2: INAFEN Framework for Interpretable Feature Engineering

This approach demonstrates that with appropriate feature engineering, linear models can achieve performance comparable to black-box models. On 10 classification tasks, INAFEN achieved an average ranking of 2.60 in AUROC among 13 models, outperforming other interpretable baselines and even some black-box models [32].

Table 3: Key research reagent solutions for interpretable chemical ML

| Resource Category | Specific Tools/Techniques | Function in Interpretable Modeling |

|---|---|---|

| Feature Engineering Libraries | Featuretools, Scikit-learn transformers | Automate creation of interpretable features from raw molecular data [36] |

| Model Training Frameworks | Scikit-learn, PyTorch, TensorFlow | Implement regularized linear models and GLMs with extensive hyperparameter tuning [40] |

| Model Interpretation Packages | SHAP, LIME, Eli5 | Provide post-hoc explanations for model predictions and feature importance [37] [33] |

| Chemical Descriptors | MACCS keys, molecular fingerprints, amino acid composition | Represent chemical structures as interpretable numerical features [39] |

| Data Balancing Techniques | GANs, SMOTE, cost-sensitive learning | Address class imbalance in chemical data while maintaining interpretability [39] |

The empirical evidence demonstrates that linear regression and its generalized variants, when enhanced with sophisticated feature engineering, maintain remarkable relevance in chemical machine learning. While non-linear models can achieve slightly superior predictive performance in some scenarios, the marginal gains often come at the expense of interpretability—a crucial requirement in pharmaceutical development and regulatory approval [37] [33].

The key insight from recent research is that the performance-interpretability trade-off is not fixed but can be optimized through methodological advancements. Automated feature engineering frameworks like INAFEN [32], rigorous regularization techniques [34] [37], and strategic model extensions [35] enable linear models to capture complex chemical relationships while remaining fully interpretable. For chemical researchers and drug development professionals, this approach offers a principled path to leveraging machine learning's predictive power without sacrificing scientific transparency or mechanistic insight.

The pursuit of more accurate, efficient, and generalizable computational models has catalyzed the emergence of hybrid modeling, an approach that strategically integrates process-based knowledge with data-driven techniques. Process-based models (PBMs) are grounded in established physical, chemical, and biological principles, utilizing mathematical formulations to represent mechanistic understandings of system behavior [41]. These models provide high interpretability and scientific rigor but often struggle with computational complexity, parameterization challenges, and adaptation to heterogeneous conditions [41]. Conversely, purely data-driven models, particularly machine learning (ML) and deep learning (DL) algorithms, excel at identifying complex, nonlinear patterns from large datasets but frequently suffer from limited extrapolation capability, interpretability issues, and high data demands [42] [41]. Hybrid frameworks aim to reconcile these limitations by embedding physical constraints into data-driven architectures or using ML to enhance specific components of physics-based models, thereby achieving a superior balance of accuracy, efficiency, and generalizability.

In computational chemistry and materials science, the imperative for hybrid modeling is particularly pronounced. Traditional quantum mechanics (QM) methods like density functional theory (DFT) provide first-principles accuracy but scale poorly with system size, while classical molecular mechanics (MM) force fields offer computational efficiency at the cost of accuracy and transferability [42]. Data-driven AI models can interpolate effectively within their training domain but often fail to extrapolate to novel chemistries or extreme conditions due to the lack of embedded physical laws [42]. Hybrid models address these fundamental limitations, creating powerful tools that accelerate discovery across diverse domains including drug development, energetic materials design, biomass pyrolysis, and ionic liquid characterization [43] [9] [44].

Performance Comparison: Hybrid vs. Alternative Modeling Approaches

Quantitative evaluations across multiple scientific domains demonstrate that hybrid modeling approaches consistently achieve superior performance compared to standalone process-based or data-driven models.

Table 1: Performance Comparison of Modeling Approaches in Chemical & Materials Science

| Application Domain | Process-Based Model Performance | Pure Data-Driven Model Performance | Hybrid Model Performance | Key Metrics |

|---|---|---|---|---|

| Nearshore Wave Forecasting [45] | RMSE: Baseline | RMSE: Comparable to PBM | RMSE: Up to 16% improvement over PBM | Root Mean Squared Error (RMSE) |

| Computational Speed: Baseline | Computational Speed: 11-7,000x faster than PBM | Computational Speed: Similar to pure data-driven | Simulation Time | |

| Biomass Pyrolysis [44] | R²: 0.712 (Biochar Yield), 0.828 (HHV) | Not reported separately | R²: 0.981 (average), MAPE: 0.266 (average) | R-Squared (R²), Mean Absolute Percentage Error (MAPE) |

| Surface Tension of Ionic Liquids [43] | Not applicable | R²: 0.894 (DT), 0.945 (RF); MAPE: 4.59E-02 (DT) | R²: 0.979 (ET); MAPE: 2.05E-02 (ET) | R-Squared (R²), Mean Absolute Percentage Error (MAPE) |

| Hydrological Modeling [46] | Outperforms data-driven with very small training datasets | Learns continuously, outperforming PBMs with >2-5 years of training data | Not explicitly tested in this study | Nash-Sutcliffe Efficiency |

Table 2: Relative Strengths and Weaknesses of Modeling Paradigms

| Characteristic | Process-Based Models | Pure Data-Driven Models | Hybrid Models |

|---|---|---|---|

| Interpretability | High transparency and mechanistic insight [41] | "Black-box" nature, limited mechanistic understanding [41] | Intermediate to high, retains physical components [42] |

| Data Requirements | Moderate, needs specific input parameters [41] | High, requires large, diverse datasets [46] [41] | Reduced vs. pure data-driven; can leverage mechanistic data generation [44] |

| Extrapolation Performance | Robust within known conditions, struggles with novel systems [41] | Generally poor, limited to chemical space of training data [42] | Enhanced generalizability via physical constraints [42] |

| Computational Efficiency | Simulation fast, calibration can be intensive [41] | Training overhead high, fast inference after training [41] | More efficient than high-fidelity PBM; often more accurate than pure ML [45] [9] |

The tables reveal a consistent narrative: hybrid models achieve the best of both worlds by mitigating the key weaknesses of their individual components. For instance, in biomass pyrolysis, a hybrid mechanism-guided ML model achieved a near-perfect R² of 0.981, dramatically outperforming the standalone equilibrium model (R² of 0.712) [44]. Furthermore, hybrid approaches can maintain the computational efficiency of pure data-driven models while offering substantially improved extrapolation capability and physical consistency, making them particularly valuable for scientific discovery and application in data-limited scenarios [42] [3].

Experimental Protocols and Methodologies

Mechanism-Guided Data Augmentation for Biomass Pyrolysis

A prominent hybrid methodology uses mechanistic simulations to generate high-quality training data for machine learning models, effectively overcoming data scarcity. Jiang et al. detailed a protocol for predicting biochar yield and higher heating value (HHV) in biomass pyrolysis [44]:

- Mechanism Model Establishment: A pyrolysis equilibrium model is developed in Aspen Plus software based on first principles (mass/energy balance) and Gibbs free energy minimization. Biomass feedstock is converted into elemental components (C, H₂O, H₂, etc.) via a Fortran subroutine.

- Data Augmentation: The validated equilibrium model is run under varied conditions to generate a large, synthetic dataset. To enhance data quality, points with the smallest residuals between the model predictions and experimental observations are selected.

- Hybrid Model Training: The augmented dataset (combining selected model-generated data and experimental data) serves as input for training machine learning models, such as Random Forest.

- Validation: The final hybrid model's performance is evaluated against a holdout test set using metrics like R², RMSE, and MAPE, and interpreted using techniques like SHAP analysis to identify critical input parameters.

This workflow leverages the scalability of the process-based model to create a robust, data-rich foundation for the ML model, which in turn corrects for the systematic biases and assumptions inherent in the standalone mechanistic approach [44].

Transfer Learning for Neural Network Potentials in Energetic Materials

For complex molecular systems, transfer learning provides a powerful strategy to create generalizable Neural Network Potentials (NNPs) with minimal new data. The protocol for developing the EMFF-2025 potential for C, H, N, O-based high-energy materials (HEMs) exemplifies this approach [9]:

- Pre-training on Broad Data: A base NNP model (e.g., DP-CHNO-2024) is first trained on a diverse database of molecular structures and their corresponding energies and forces calculated from high-level DFT.

- Targeted Data Generation (DP-GEN): A robust sampling and active learning process is employed to identify structures and configurations for new target HEMs that are not well-represented in the existing pre-trained model.

- Transfer Learning: The pre-trained model is fine-tuned (rather than trained from scratch) on a small amount of new, targeted DFT data for the specific HEMs of interest.

- Validation: The final EMFF-2025 model is validated by predicting the crystal structures, mechanical properties, and thermal decomposition behaviors of 20 different HEMs, with results rigorously benchmarked against experimental data [9].

This protocol demonstrates that hybrid models can be built efficiently by leveraging pre-existing knowledge (the pre-trained NNP) and refining it with minimal, strategically acquired new data, achieving DFT-level accuracy at a fraction of the computational cost [9].

Essential Reagents and Computational Tools for Hybrid Modeling

Successful implementation of hybrid models requires a suite of specialized computational tools and theoretical frameworks that serve as the essential "research reagents" in this domain.

Table 3: Essential Research Reagent Solutions for Hybrid Modeling

| Tool/Technique | Category | Primary Function in Hybrid Modeling | Example Applications |

|---|---|---|---|

| Deep Potential (DP) [9] | Neural Network Potential | Provides a scalable framework for developing NNPs with quantum mechanics accuracy for molecular dynamics simulations. | Energetic materials (EMFF-2025), molecular systems [9]. |

| Delta-Learning [42] | Hybrid ML-PBM Framework | ML model learns the difference (delta) between a low-cost approximate simulation and a high-fidelity target, correcting systematic errors. | Quantum chemistry, prediction of reaction barriers [42]. |

| Harmony Search (HS) [43] | Bio-Inspired Optimizer | Hyperparameter optimization algorithm for ML models, improving predictive accuracy by finding the best parameter combination. | Ionic liquid property prediction [43]. |

| Barnacles Mating Optimizer (BMO) [47] | Bio-Inspired Optimizer | Hyperparameter tuning for supervised regression models like SVM, enhancing model performance on spatial data. | Adsorption process concentration prediction [47]. |

| Aspen Plus [44] | Process Simulation Platform | Provides a robust environment for building and running mechanistic models (e.g., equilibrium models) to generate data for ML. | Biomass pyrolysis, chemical process simulation [44]. |

| SHAP Analysis [44] | Model Interpretation | Post-hoc explainability technique to determine the contribution of input features to a model's predictions, increasing trust. | Feature importance analysis in biomass pyrolysis [44]. |

| ROBERT Software [3] | Automated ML Workflow | Mitigates overfitting in low-data regimes via automated hyperparameter optimization with a combined interpolation/extrapolation metric. | Small chemical dataset modeling, catalyst development [3]. |

These tools collectively address the core challenges in hybrid modeling: DP and Delta-Learning provide the fundamental architecture for integrating physics and ML; HS and BMO enable robust model training, especially with limited data; Aspen Plus facilitates mechanistic data generation; and SHAP and ROBERT ensure the reliability and interpretability of the final model, making them indispensable for modern computational research.