Beyond the Training Set: Evaluating and Enhancing Out-of-Distribution Robustness in Molecular Property Predictors


Abstract

The accurate prediction of molecular properties for compounds outside a model's training distribution is a critical frontier in AI-driven drug discovery. This article provides a comprehensive analysis for researchers and drug development professionals, exploring the fundamental challenges of out-of-distribution (OOD) generalization. We systematically review the performance of state-of-the-art machine learning models, including graph neural networks and transformers, on established OOD benchmarks like BOOM. The content delves into innovative methodological strategies, from transductive learning and meta-learning to advanced uncertainty quantification, that aim to improve extrapolation. Finally, we present a rigorous framework for the validation and comparative analysis of molecular property predictors, underscoring the imperative of robust OOD evaluation for successful real-world application in biomedical research.

The OOD Generalization Challenge: Why Molecular Discovery is an Inherently Out-of-Distribution Problem

The discovery of high-performance materials and molecules often depends on identifying extremes—candidates with property values that fall outside the known distribution of existing data [1] [2]. Consequently, the ability to extrapolate to out-of-distribution (OOD) property values has become critical for both solid-state materials and molecular design [2]. In molecular contexts, "out-of-distribution" can refer to two distinct but sometimes overlapping concepts: extrapolation in the input space (unseen molecular structures, scaffolds, or chemical spaces) and extrapolation in the output space (unseen ranges of property values) [1] [2]. This distinction is crucial because models that perform well on one type of extrapolation may struggle with the other, leading to potentially misleading predictions in real-world drug discovery applications where both types of shifts commonly occur.

The practical implications of this challenge are significant. In critical applications such as drug screening or design, misleading estimations of molecular properties can result in tremendous waste of wet-lab resources and delay the discovery of novel therapies [3]. Molecular representation learning models typically assume that training and testing graphs come from identical distributions, but this closed-world assumption often breaks down when models are deployed in real-world scenarios [3] [4]. For example, a model trained on drugs inhibiting Gram-negative pathogens may perform poorly when screening for antibiotics against Gram-positive bacteria due to different pharmacological mechanisms [3].

Defining the OOD Spectrum in Molecular Science

Input Space Extrapolation: Navigating Structural Shifts

When OOD generalization is defined with respect to the input molecular space, extrapolation often involves generalization to unseen classes of molecular structures, scaffolds, or chemical environments [1] [2]. This includes scenarios such as training on artificial molecules and predicting natural products, or training on certain molecular scaffolds and predicting on entirely different scaffold classes [1]. In practice, this type of extrapolation frequently reduces to interpolation because test sets often remain within the same overall distribution as the training data in the representation space [1] [2]. This pattern is observed in predictive models using leave-one-cluster-out strategies and generative approaches aimed at generalizing to structures with varying atomic compositions or sizes [2].

Property Value Extrapolation: Targeting Performance Extremes

The second notion of extrapolation concerns the range of the predictive function, that is, output property values, which may or may not correlate with extrapolation in the input materials space [2]. Work on this setting focuses on zero-shot extrapolation to property value ranges beyond the training distribution, which is particularly hard for classical machine learning models [1] [2]. When OOD generalization targets the range of the predictive function, traditional regression models struggle, which has led some researchers to recast the problem as classification for identifying OOD materials [1] [2].

Table: Comparison of OOD Types in Molecular Context

| Aspect | Input Space Extrapolation | Property Value Extrapolation |
|---|---|---|
| Definition | Generalization to unseen molecular structures/scaffolds | Generalization to unseen ranges of property values |
| Common Challenges | Often reduces to interpolation in representation space | Classical ML models struggle with regression extrapolation |
| Typical Approaches | Leave-one-cluster-out strategies, generative models | Classification of OOD materials, transductive methods |
| Practical Impact | Screening novel structural classes | Discovering high-performance extremes |

Methodological Frameworks for Molecular OOD Prediction

Bilinear Transduction for Property Value Extrapolation

Bilinear Transduction represents a transductive approach to OOD property prediction that reparameterizes the prediction problem [1] [2]. Rather than making property value predictions directly on new candidate materials, this method makes predictions based on a known training example and the difference in representation space between the two materials [1] [2]. During inference, property values are predicted similarly—based on a chosen training example and the difference between it and the new sample [2]. This approach enables extrapolation by learning how property values change as a function of material differences rather than predicting these values directly from new materials [1] [2].

The core innovation of this method lies in its ability to leverage analogical input-target relations in both training and test sets, enabling generalization beyond the training target support [1] [2]. Experimental results demonstrate that this approach improves extrapolative precision by 1.8× for materials and 1.5× for molecules, while boosting recall of high-performing candidates by up to 3× [2].

Prototypical Graph Reconstruction for Input Space Detection

For input space OOD detection in molecular graphs, the PGR-MOOD framework introduces a novel approach using diffusion model-based reconstruction [3]. This method addresses two significant challenges: (1) the inadequacy of Euclidean distance metrics for capturing complex graph structure similarities, and (2) the computational inefficiency of iterative denoising processes when applied to large molecular libraries [3].

PGR-MOOD operates by creating a series of prototypical graphs that align with in-distribution (ID) samples while distancing themselves from OOD ones [3]. During testing, it measures similarity between input molecules and these pre-constructed prototypical graphs using Fused Gromov-Wasserstein (FGW) distance, which comprehensively quantifies matching degree based on both discrete edges and continuous node features [3]. This approach eliminates the need to reconstruct every test graph, enabling scalable OOD detection for large molecular databases [3].
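For reference, the standard fused Gromov-Wasserstein formulation between a test graph G (node features a_i, intra-graph structure matrix C_1, node weights μ) and a prototype P (node features b_j, structure matrix C_2, node weights ν) is written below, together with one natural way to turn prototype distances into an OOD score. The trade-off α ∈ [0, 1], the exponent q, the feature metric d, and taking the minimum over the M prototypes are assumptions made for illustration; the exact score used by PGR-MOOD is specified in [3].

$$
\mathrm{FGW}_{q,\alpha}(G, P) = \min_{\pi \in \Pi(\mu, \nu)} \sum_{i,j,k,l} \Big[ (1-\alpha)\, d(a_i, b_j)^q + \alpha\, \big| C_1(i,k) - C_2(j,l) \big|^q \Big]\, \pi_{ij}\, \pi_{kl}
$$

$$
s_{\mathrm{OOD}}(G) = \min_{m = 1, \dots, M} \mathrm{FGW}_{q,\alpha}(G, P_m)
$$

Here Π(μ, ν) is the set of couplings whose row sums equal μ and whose column sums equal ν. Setting α = 0 leaves only the node-feature (Wasserstein) term and α = 1 only the structural (Gromov-Wasserstein) term, which is how FGW weighs discrete edges and continuous node features jointly. Solvers for this problem are available, for example, in the POT (Python Optimal Transport) library.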

Consistent Semantic Representation Learning

The Consistent Semantic Representation Learning (CSRL) framework addresses challenges posed by activity cliffs and complex molecular entanglements that hinder accurate invariant substructure identification [4]. This approach explores the potential correlation between consistent semantic information across different molecular representation forms and molecular property prediction under distribution shifts [4].

CSRL comprises two key modules: a Semantic Uni-code (SUC) module that adjusts incorrect embeddings into correct embeddings across different molecular representation forms, and a Consistent Semantic Extractor (CSE) that leverages non-semantic information as training labels to guide the discriminator's learning [4]. This framework suppresses the model's reliance on non-semantic information in different molecular representation embeddings, enhancing OOD generalization capability [4].

Experimental Comparison of OOD Methodologies

Performance Evaluation on Molecular Benchmarks

Evaluations across several molecular benchmarks reveal marked performance differences between OOD methodologies. On molecular graph datasets from MoleculeNet, including ESOL (aqueous solubility), FreeSolv (hydration free energies), Lipophilicity (distribution coefficients), and BACE (binding affinities), the reconstruction-based PGR-MOOD detector outperforms conventional baselines at OOD detection [3], while transductive methods improve extrapolation to unseen property values [2].

Table: OOD Detection Performance on Molecular Graphs (AUC Scores) [3]

| Method | ESOL | FreeSolv | Lipophilicity | BACE | Average |
|---|---|---|---|---|---|
| Random Forest | 0.742 | 0.768 | 0.715 | 0.731 | 0.739 |
| MLP | 0.751 | 0.781 | 0.724 | 0.748 | 0.751 |
| GCN | 0.793 | 0.812 | 0.768 | 0.792 | 0.791 |
| GIN | 0.811 | 0.834 | 0.785 | 0.816 | 0.812 |
| PGR-MOOD | 0.892 | 0.908 | 0.861 | 0.887 | 0.887 |

The PGR-MOOD framework demonstrates an average improvement of 8.54% in detection AUC and 8.15% in AUPR compared to baseline methods, accompanied by a 13.7% reduction in FPR95 (false positive rate at 95% true positive rate) [3]. These improvements come with substantially reduced computational costs in testing time and memory consumption, addressing critical constraints for large-scale molecular screening applications [3].
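The detection metrics quoted above can be computed directly from per-molecule OOD scores. The sketch below assumes OOD samples are the positive class and that a higher score means "more likely OOD"; it uses the pairwise (Mann-Whitney) form of AUROC, which is O(n·m) and meant only for illustration. The function names and scores are hypothetical.

```python
import math


def auroc(scores_id, scores_ood):
    """Pairwise (Mann-Whitney) AUROC with OOD as the positive class; ties count as 1/2."""
    wins = 0.0
    for s_ood in scores_ood:
        for s_id in scores_id:
            if s_ood > s_id:
                wins += 1.0
            elif s_ood == s_id:
                wins += 0.5
    return wins / (len(scores_id) * len(scores_ood))


def fpr_at_tpr(scores_id, scores_ood, tpr=0.95):
    """False positive rate on ID samples at the highest threshold that flags >= `tpr` of OOD samples."""
    ranked = sorted(scores_ood, reverse=True)
    # number of OOD samples that must be flagged; the epsilon guards against float round-up
    k = max(1, math.ceil(tpr * len(ranked) - 1e-9))
    threshold = ranked[k - 1]
    false_pos = sum(1 for s in scores_id if s >= threshold)
    return false_pos / len(scores_id)


if __name__ == "__main__":
    id_scores = [0.1, 0.2, 0.3, 0.4]     # hypothetical OOD scores of in-distribution molecules
    ood_scores = [0.35, 0.5, 0.6, 0.7]   # hypothetical OOD scores of OOD molecules
    print(auroc(id_scores, ood_scores))       # 0.9375
    print(fpr_at_tpr(id_scores, ood_scores))  # 0.25
```

AUPR follows the same pattern, replacing the pairwise comparison with precision averaged over recall levels; library implementations such as scikit-learn's are preferable at scale.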

Property Value Extrapolation Performance

For property value extrapolation, Bilinear Transduction has been evaluated against established baselines including Ridge Regression, MODNet, and CrabNet across multiple materials and molecular datasets [1] [2]. The method consistently outperforms or performs comparably to baseline methods across diverse prediction tasks, with particularly strong performance in identifying top OOD candidates—the 30% of test samples with the highest property values [2].

Table: Extrapolative Precision on Molecular Property Prediction [2]

| Method | Molecular Datasets | Extrapolative Precision | OOD Recall (relative to Ridge Regression) |
|---|---|---|---|
| Ridge Regression | ESOL, FreeSolv, Lipophilicity, BACE | 0.18 | 1.0× |
| MODNet | ESOL, FreeSolv, Lipophilicity, BACE | 0.22 | 1.2× |
| CrabNet | ESOL, FreeSolv, Lipophilicity, BACE | 0.25 | 1.4× |
| Bilinear Transduction | ESOL, FreeSolv, Lipophilicity, BACE | 0.33 | 1.5× |

The Bilinear Transduction method improves extrapolative precision by 1.5× for molecules and boosts recall of high-performing candidates by up to 3× compared to non-transductive baselines [2]. This enhanced capability to identify true high-performance extremes while minimizing false positives significantly streamlines the virtual screening process in drug discovery pipelines [2].

Research Reagent Solutions: Computational Tools for OOD Molecular Prediction

Table: Essential Computational Tools for OOD Molecular Property Prediction

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| MatEx (Materials Extrapolation) | Software Library | Implements Bilinear Transduction for OOD property prediction | GitHub: learningmatter-mit/matex [2] |
| PGR-MOOD | Framework | Prototypical graph reconstruction for molecular OOD detection | Anonymous code: https://anonymous.4open.science/r/PGR-MOOD-53B3 [3] |
| DrugOOD | Benchmark Dataset | Curated molecular datasets with systematic OOD splits | Publicly available [4] |
| ADMEOOD | Benchmark Dataset | ADME property prediction with distribution shifts | Publicly available [4] |
| MoleculeNet | Benchmark Suite | Multiple molecular property prediction tasks | Publicly available [2] |
| CSRL Framework | Software Library | Consistent semantic representation learning for molecules | Details in publication [4] |

The evolving landscape of OOD molecular property prediction reveals a critical distinction between input space and property value extrapolation, each demanding specialized methodological approaches [1] [2] [3]. Transductive methods like Bilinear Transduction demonstrate significant advantages for property value extrapolation, while reconstruction-based approaches such as PGR-MOOD offer scalable solutions for input space OOD detection [2] [3]. The emerging paradigm of consistent semantic representation learning further addresses fundamental challenges posed by activity cliffs and molecular entanglement [4].

For researchers and drug development professionals, these advanced OOD detection and prediction capabilities enable more reliable virtual screening, reduce resource waste on false leads, and accelerate the discovery of novel molecular entities with extreme properties [2] [3]. As the field progresses, integrating these complementary approaches into unified frameworks promises to enhance the trustworthiness and real-world applicability of molecular property predictors across the drug discovery pipeline [5] [4].

The pursuit of novel therapeutics demands the discovery of materials and molecules with exceptional, often unprecedented, properties. By definition, these high-performing candidates possess property values that fall outside the distribution of known compounds, making the ability to extrapolate—to make accurate predictions on Out-of-Distribution (OOD) data—a cornerstone of accelerated drug discovery [2]. The failure of machine learning models to generalize in this context poses a significant bottleneck. Traditional models frequently experience a performance drop when encountering OOD samples and, more dangerously, can produce overconfident mispredictions, where the model assigns high confidence to an incorrect prediction [6]. Such errors are not merely statistical artifacts; they misdirect experimental resources, compromise virtual screening efforts, and can ultimately derail development pipelines, incurring substantial costs and delays. This guide objectively evaluates the OOD robustness of contemporary molecular property predictors, comparing their performance across key benchmarks to identify methodologies capable of navigating the challenging landscape of real-world drug discovery.

Quantitative Performance Comparison of OOD Prediction Methods

A critical evaluation of OOD performance requires testing models on standardized benchmarks where property values in the test set lie outside the range of the training data. The following tables compare established baselines with a transductive approach, Bilinear Transduction, on solid-state materials and molecules [2].

Table 1: OOD Prediction Performance on Solid-State Materials (Mean Absolute Error) [2]

| Dataset | Property | Ridge Regression | MODNet | CrabNet | Bilinear Transduction |
|---|---|---|---|---|---|
| AFLOW | Bulk Modulus (GPa) | 74.0 ± 3.8 | 93.06 ± 3.7 | 59.25 ± 3.2 | 47.4 ± 3.4 |
| AFLOW | Debye Temperature (K) | 0.45 ± 0.03 | 0.62 ± 0.03 | 0.38 ± 0.02 | 0.31 ± 0.02 |
| AFLOW | Shear Modulus (GPa) | 0.69 ± 0.03 | 0.78 ± 0.04 | 0.55 ± 0.02 | 0.42 ± 0.02 |
| Matbench | Band Gap (eV) | 6.37 ± 0.28 | 3.26 ± 0.13 | 2.70 ± 0.13 | 2.54 ± 0.16 |
| Matbench | Yield Strength (MPa) | 972 ± 34 | 731 ± 82 | 740 ± 49 | 591 ± 62 |
| Materials Project | Bulk Modulus (GPa) | 151 ± 14 | 60.1 ± 3.9 | 57.8 ± 4.2 | 45.8 ± 3.9 |

Table 2: Extrapolative Precision for Identifying Top-Tier Candidates [2]

| System | Extrapolative Precision Improvement (Bilinear Transduction vs. Baselines) |
|---|---|
| Solid-State Materials | 1.8x |
| Molecules | 1.5x |

Table 3: OOD Classification Performance [1]

| System | Metric | Improvement (Bilinear Transduction vs. Baselines) |
|---|---|---|
| Materials | True Positive Rate (TPR) | 3.0x |
| Materials | Precision | 2.0x |
| Molecules | True Positive Rate (TPR) | 2.5x |
| Molecules | Precision | 1.5x |

The data demonstrates that Bilinear Transduction consistently achieves a lower Mean Absolute Error (MAE) on OOD predictions across a variety of material properties. More importantly for discovery applications, it significantly boosts extrapolative precision and the recall of high-performing OOD candidates, meaning a higher percentage of its predicted top candidates are truly top-tier, reducing the resources wasted on false leads [2] [1].

Detailed Experimental Protocols and Methodologies

Benchmarking OOD Property Prediction

Objective: To evaluate a model's zero-shot extrapolation capability, i.e., its ability to predict property values for samples that lie outside the range of the training data distribution [2].

Datasets: The protocol utilizes established benchmarks:

- Solids: AFLOW, Matbench, and the Materials Project (MP) datasets, covering properties like band gap, bulk/shear modulus, and Debye temperature [2].

- Molecules: Datasets from MoleculeNet, including ESOL (solubility), FreeSolv (hydration free energy), and Lipophilicity [2].

OOD Splitting: The held-out dataset is divided into an in-distribution (ID) validation set and an OOD test set of equal size. The OOD test set contains samples with property values strictly greater than the maximum value seen in the training set to focus evaluation on pure extrapolation [2].

Evaluation Metrics:

- OOD Mean Absolute Error (MAE): Measures prediction accuracy on the OOD test set [2].

- Extrapolative Precision: The fraction of true top OOD candidates (e.g., top 30% by property value in the entire held-out set) correctly identified among the model's top predicted candidates [2].

- Recall: The proportion of actual top OOD candidates successfully retrieved by the model [2].
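The splitting rule and the top-candidate metrics above can be sketched as follows. This is a minimal illustration under stated assumptions, not the MatEx implementation: the function names, `ood_frac`, drawing the equal-size ID validation set at random from the remaining samples, and counting a sample as a predicted top candidate when its prediction clears the same threshold that defines the true top 30% are all choices made for this sketch.

```python
import random


def value_split(values, ood_frac=0.1, seed=0):
    """Hypothetical property-value split: the highest values form the OOD test set,
    and an ID validation set of the same size is drawn at random from the rest."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    n_ood = max(1, int(ood_frac * len(values)))
    cutoff = values[order[len(values) - n_ood - 1]]  # largest value that stays in-distribution
    ood_test = [i for i in order if values[i] > cutoff]  # strictly above every training value
    rest = [i for i in order if values[i] <= cutoff]
    random.Random(seed).shuffle(rest)
    id_val, train = rest[:len(ood_test)], rest[len(ood_test):]
    return train, id_val, ood_test


def extrapolative_metrics(y_true, y_pred, top_frac=0.3):
    """Precision and recall for retrieving the top `top_frac` of samples by true value.
    A sample is a predicted top candidate when its prediction clears the same
    threshold that defines the true top set (an assumption of this sketch)."""
    n = len(y_true)
    k = max(1, round(top_frac * n))
    threshold = sorted(y_true, reverse=True)[k - 1]
    actual = {i for i in range(n) if y_true[i] >= threshold}
    predicted = {i for i in range(n) if y_pred[i] >= threshold}
    hits = len(actual & predicted)
    precision = hits / len(predicted) if predicted else 0.0
    recall = hits / len(actual)
    return precision, recall
```

For instance, with true values 1 to 10 the top-30% threshold is 8; a model whose predictions clear 8 for four samples, two of which are truly in the top three, scores precision 0.5 and recall 2/3.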

Bilinear Transduction Workflow

The core innovation of this approach is a reparameterization of the prediction problem to facilitate extrapolation [2] [1].

- Input Representation: Materials (e.g., compositions) or molecules (e.g., graphs) are converted into a fixed-length vector representation.

- Bilinear Model: Instead of predicting a property value \( y \) for a new test material \( x_t \) directly, the model learns to predict the value based on a known training example \( x_s \) and their difference in representation space.

- Inference: For a test sample \( x_t \), a training sample \( x_s \) is selected (e.g., via similarity), and the property value is predicted as \( \hat{y}_t = y_s + f(x_s, x_t - x_s) \), where \( f \) is the learned bilinear function. This allows the model to learn how property values change as a function of material differences, which is more amenable to extrapolation than predicting absolute values from new materials [2] [1].
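A minimal sketch of this reparameterized predictor is shown below, assuming raw fixed-length feature vectors, a bilinear \( f(x_s, \Delta) = \Delta^\top W [x_s; 1] \) fitted by ridge least squares over all ordered training pairs, and nearest-neighbour anchor selection. The networks and training details of the published method [2] are not reproduced; the ridge constant, the bias augmentation, and all function names are hypothetical simplifications.

```python
def _solve(a, b):
    """Solve the square system a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def _pair_features(x_s, delta):
    """Flattened outer product of delta and [x_s, 1], so delta^T W [x_s; 1] is linear in these features."""
    x_aug = list(x_s) + [1.0]
    return [d * x for d in delta for x in x_aug]


def fit_bilinear(X, y, ridge=1e-8):
    """Fit W on all ordered training pairs (s, t) so that y_t - y_s ~ f(x_s, x_t - x_s)."""
    feats, targets = [], []
    for s in range(len(X)):
        for t in range(len(X)):
            if s != t:
                delta = [a - b for a, b in zip(X[t], X[s])]
                feats.append(_pair_features(X[s], delta))
                targets.append(y[t] - y[s])
    p = len(feats[0])
    # ridge-regularized normal equations: (F^T F + ridge * I) w = F^T r
    gram = [[sum(f[i] * f[j] for f in feats) + (ridge if i == j else 0.0) for j in range(p)]
            for i in range(p)]
    rhs = [sum(f[i] * r for f, r in zip(feats, targets)) for i in range(p)]
    return _solve(gram, rhs)


def predict_bilinear(w, X, y, x_t):
    """y_hat_t = y_s + f(x_s, x_t - x_s), with x_s the nearest training sample (Euclidean)."""
    s = min(range(len(X)), key=lambda i: sum((a - b) ** 2 for a, b in zip(X[i], x_t)))
    delta = [a - b for a, b in zip(x_t, X[s])]
    return y[s] + sum(wi * fi for wi, fi in zip(w, _pair_features(X[s], delta)))


if __name__ == "__main__":
    X = [[float(i), float(j)] for i in range(3) for j in range(3)]  # toy 2-D "representations"
    y = [2 * a + 3 * b + 1 for a, b in X]                           # training labels span 1 to 11
    w = fit_bilinear(X, y)
    print(predict_bilinear(w, X, y, [4.0, 5.0]))  # close to the true value 24.0
```

The toy target is linear, so an ordinary linear model would extrapolate equally well here; the example only checks that pair construction, anchor selection, and the prediction rule fit together (training labels top out at 11, yet the prediction at (4, 5) lands near 24).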

Evaluating and Mitigating Overconfident Errors

Objective: To assess and improve a model's uncertainty estimation, particularly for OOD samples, to reduce overconfident incorrect predictions [6].

Protocol:

- Model Modification: Replace the standard Softmax output layer in a classifier with a normalizing flow-based density estimator, as in the Posterior Network (AttFpPost). This enhances the model's ability to distinguish between in-distribution and out-of-distribution data [6].

- Evaluation Scenarios: The model is tested on:

  - Synthetic OOD Data: To simulate domain shift.

  - ADMET Prediction Tasks: Critical tasks in drug development (Absorption, Distribution, Metabolism, Excretion, and Toxicity).

  - Ligand-Based Virtual Screening (LBVS): Assessing early enrichment capability [6].

Outcome: Models equipped with improved uncertainty quantification (like AttFpPost) demonstrate a marked reduction in overconfident errors on OOD samples compared to vanilla models using Softmax [6].
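The mechanism behind this reduction can be illustrated with a toy example. The sketch assumes a 1-D latent feature and Gaussian class-conditional densities in place of the normalizing flows used by the Posterior Network approach behind AttFpPost [6]; the prior value of 1.0, the data, and the function names are illustrative assumptions. A normalized class posterior keeps only the ratio of class densities, so it can report near-certainty at a point far from every training molecule, whereas Dirichlet parameters \( \alpha_c = \text{prior} + N_c\, p(z \mid c) \) keep the magnitude of the density as evidence and fall back to the uniform prior far from the data.

```python
import math


def gaussian_logpdf(z, mean, std):
    return -0.5 * ((z - mean) / std) ** 2 - math.log(std * math.sqrt(2 * math.pi))


def fit_classes(z_values, labels):
    """Per-class (count, mean, std) of a 1-D latent feature: a stand-in for per-class normalizing flows."""
    stats = {}
    for c in sorted(set(labels)):
        zs = [z for z, lab in zip(z_values, labels) if lab == c]
        mean = sum(zs) / len(zs)
        std = math.sqrt(sum((z - mean) ** 2 for z in zs) / len(zs)) or 1.0
        stats[c] = (len(zs), mean, std)
    return stats


def normalized_posterior(z, stats):
    """Softmax over log N_c + log p(z | c): total density is discarded, so confidence can saturate."""
    logits = {c: math.log(n) + gaussian_logpdf(z, m, s) for c, (n, m, s) in stats.items()}
    top = max(logits.values())
    exps = {c: math.exp(v - top) for c, v in logits.items()}
    total = sum(exps.values())
    return {c: e / total for c, e in exps.items()}


def posterior_network(z, stats, prior=1.0):
    """Dirichlet parameters alpha_c = prior + N_c * p(z | c); evidence shrinks to the prior far from data."""
    alpha = {c: prior + n * math.exp(gaussian_logpdf(z, m, s)) for c, (n, m, s) in stats.items()}
    alpha0 = sum(alpha.values())
    return {c: a / alpha0 for c, a in alpha.items()}, alpha0


if __name__ == "__main__":
    z = [-1.0, -0.5, 0.0, 0.5, 1.0, 4.0, 4.5, 5.0, 5.5, 6.0]
    labels = [0] * 5 + [1] * 5
    stats = fit_classes(z, labels)
    print(normalized_posterior(50.0, stats))  # class 1 receives probability ~1.0 at a far-OOD point
    print(posterior_network(50.0, stats))     # uniform probabilities; total evidence equals the prior mass
```

The low total evidence \( \alpha_0 \) at the far point is what a downstream filter could use to flag a molecule as OOD instead of trusting its predicted class.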

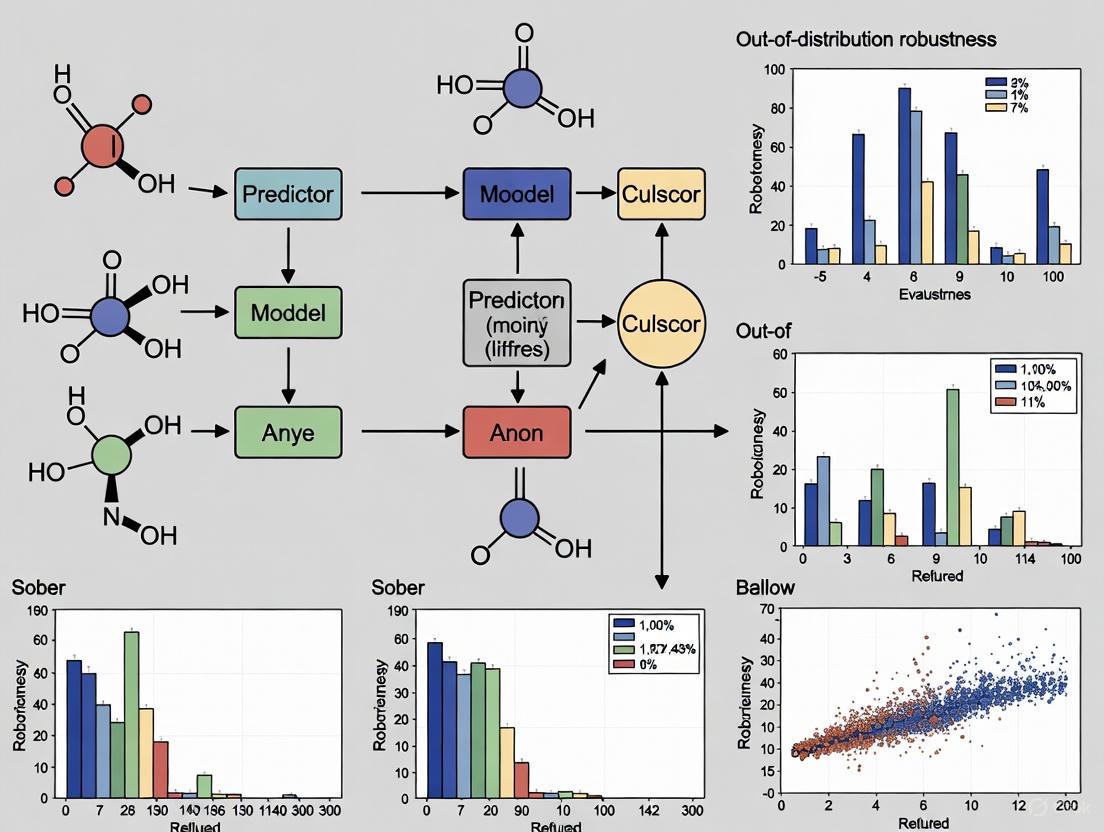

Visualizing the OOD Generalization Challenge and Solutions

The following diagrams illustrate the core problem of OOD generalization in drug discovery and the logical workflow of a robust evaluation protocol.

Figure: The OOD Generalization Gap in Drug Discovery

Figure: Protocol for Robust OOD Model Evaluation

The Scientist's Toolkit: Key Research Reagents and Solutions

This section details essential computational tools and datasets used in the featured experiments for benchmarking OOD robustness.

Table 4: Essential Research Toolkit for OOD Robustness Evaluation

| Item Name | Type | Function/Benefit | Source/Implementation |
|---|---|---|---|
| Bilinear Transduction | Algorithm | Enables extrapolation by learning property changes as a function of input differences. | GitHub: learningmatter-mit/matex [2] |
| AttFpPost (Posterior Network) | Model Architecture | Reduces overconfident errors on OOD samples via normalizing flows for better uncertainty estimation. | Citation: Patterns Journal [6] |
| AFLOW, Matbench, Materials Project | Data Benchmarks | Curated datasets for solid-state materials property prediction, enabling standardized OOD testing. | AFLOW API; Matbench [2] |
| MoleculeNet | Data Benchmarks | A collection of molecular property datasets (ESOL, FreeSolv, etc.) for benchmarking OOD generalization in molecules. | MoleculeNet [2] |
| GUEST Toolbox | Software Tool | A Python tool for the fair design and benchmarking of Drug-Target Interaction (DTI) prediction models, addressing data leakage. | GitHub: ML4BM-Lab/GraphEmb [7] |
| CleverHans & Foolbox | Software Library | Frameworks for generating adversarial examples to test and enhance model robustness against malicious inputs. | CleverHans GitHub; Foolbox Docs [8] |

The quantitative data and experimental protocols presented in this guide underscore a critical finding: traditional machine learning models exhibit significant vulnerabilities when predicting Out-of-Distribution properties, leading to overconfident errors that directly impede the drug discovery process. The evaluation of methods like Bilinear Transduction and uncertainty-aware models such as AttFpPost demonstrates that algorithmic choices which explicitly account for OOD generalization—through transduction or enhanced uncertainty quantification—can deliver substantially improved extrapolative precision and recall. For researchers and development professionals, this implies that the selection of a molecular property predictor must be guided not only by its in-distribution accuracy but, more importantly, by its rigorously tested OOD robustness. Integrating these robust methodologies and the accompanying toolkit into discovery pipelines is no longer optional but essential for efficiently identifying genuine, high-performance candidates and building a more trustworthy AI-driven future for pharmaceuticals.

The application of machine learning (ML) in molecular and materials discovery represents a paradigm shift in scientific research. However, a critical challenge undermines its real-world utility: models often fail to make accurate predictions on out-of-distribution (OOD) data. Molecular discovery is inherently an OOD prediction problem; discovering novel molecules that extend the boundaries of known chemistry requires models that can generalize to regions of chemical space beyond the training distribution [9]. Despite the importance of OOD performance, traditional benchmarks have predominantly evaluated models on in-distribution (ID) data, where test sets are randomly drawn from the same distribution as training data. This approach has led to overly optimistic performance assessments and models ill-equipped for practical discovery tasks [10].

This guide examines emerging benchmarks specifically designed for evaluating OOD generalization in molecular and materials property prediction. We focus on the recently introduced BOOM (Benchmarks for Out-Of-distribution Molecular property predictions) framework alongside other complementary initiatives [9] [11] [12]. By comparing their methodologies, experimental protocols, and key findings, we provide researchers with a comprehensive understanding of the current landscape and performance gaps in OOD prediction.

Benchmark Framework Comparison

The pressing need for systematic OOD evaluation has spurred the development of several benchmarking frameworks across domains. These frameworks employ different strategies to create meaningful distribution shifts between training and test data.

Table 1: Overview of OOD Benchmarking Frameworks

| Framework | Domain | OOD Splitting Strategy | Core Evaluation Focus | Key Contribution |
|---|---|---|---|---|
| BOOM [9] [12] | Molecular Property Prediction | Property-value based (tail-end of distribution) | Extrapolation to extreme property values | First large-scale benchmark for OOD molecular property prediction |
| Structure-based OOD Materials Benchmark [10] | Materials Property Prediction | Structure-based clustering (5 methods) | Generalization to novel material structures | Comprehensive benchmark for inorganic materials using structure-based GNNs |
| ImageNet-X/FS-X [13] [14] | Computer Vision | Semantic & covariate shifts | Detection under challenging real-world shifts | Benchmark for vision-language models with progressive difficulty |
| OpenMIBOOD [15] | Medical Imaging | Covariate-shifted ID, near-OOD, far-OOD | OOD detection in medical contexts | Domain-specific benchmark for healthcare AI reliability |
| MatEx (Bilinear Transduction) [2] | Molecules & Materials | Property-value based (zero-shot extrapolation) | Transductive extrapolation to high-value candidates | Novel method improving recall of high-performing OOD candidates |

BOOM: A Deep Dive into Molecular OOD Benchmarking

Experimental Design and Methodology

BOOM addresses a significant gap in molecular ML by providing the first standardized benchmark for assessing OOD generalization in molecular property prediction. Its methodology is built around several key design choices:

Property-based OOD Splitting: Unlike input-based splitting strategies, BOOM defines OOD with respect to model outputs, creating test sets from molecules with property values at the tail ends of the distribution. This directly aligns with molecule discovery goals where researchers seek materials with exceptional properties [9].

Dataset Composition: BOOM incorporates 10 molecular property datasets: 8 from QM9 (including isotropic polarizability, HOMO-LUMO gap, and dipole moment) and 2 from the 10k Dataset (density and solid heat of formation) [9].

Splitting Protocol: For each property, BOOM fits a kernel density estimator to the property values and selects molecules with the lowest probabilities (lowest 10% for QM9, lowest 1000 molecules for 10k Dataset) for the OOD test set. The remaining molecules are used for training and ID testing with random sampling [9].

Model Coverage: The benchmark evaluates over 140 combinations of models and tasks, including traditional ML (Random Forest with RDKit features), graph neural networks (GNNs) like Chemprop and TGNN, and transformer-based models (ChemBERTa, MolFormer) [9] [12].
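The KDE-based splitting protocol described above can be sketched for a single property as follows. This assumes a 1-D Gaussian KDE with a normal-reference rule-of-thumb bandwidth and a random ID test fraction; BOOM's actual bandwidth selection and ID split sizes may differ, and the function names and toy values are hypothetical. For the 10k Dataset, the OOD set is a fixed count of 1000 molecules, which corresponds to replacing `n_ood` with a constant.

```python
import math
import random


def normal_reference_bandwidth(values):
    """Rule-of-thumb bandwidth 1.06 * std * n^(-1/5) (an assumption; BOOM's choice may differ)."""
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    return 1.06 * std * n ** (-1 / 5)


def kde_density(values, bandwidth):
    """Gaussian kernel density estimate at each value, fitted on all values."""
    n = len(values)
    norm = 1.0 / (n * bandwidth * math.sqrt(2 * math.pi))
    return [norm * sum(math.exp(-0.5 * ((v - u) / bandwidth) ** 2) for u in values) for v in values]


def kde_ood_split(values, ood_frac=0.1, id_test_frac=0.1, seed=0):
    """Lowest-density fraction -> OOD test; the rest is split at random into train and ID test."""
    dens = kde_density(values, normal_reference_bandwidth(values))
    order = sorted(range(len(values)), key=lambda i: dens[i])  # least probable first
    n_ood = round(ood_frac * len(values))
    ood_test, rest = order[:n_ood], order[n_ood:]
    random.Random(seed).shuffle(rest)
    n_id = round(id_test_frac * len(values))
    return rest[n_id:], rest[:n_id], ood_test  # train, ID test, OOD test


if __name__ == "__main__":
    core = [0.1 * i for i in range(-40, 40)]  # dense bulk of a toy property distribution
    tails = [10.0, 11.0, 12.0, 13.0, 14.0, -10.0, -11.0, -12.0, -13.0, -14.0]
    values = core + tails
    train, id_test, ood_test = kde_ood_split(values)
    print(sorted(values[i] for i in ood_test))  # nine of the ten tail values
```

Because the selection is by density rather than by raw value, both tails of the distribution end up in the OOD test set, which matches the "tail ends" framing above.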

Figure: BOOM experimental workflow, from dataset preparation through performance evaluation.

Key Experimental Findings from BOOM

BOOM's comprehensive evaluation reveals significant challenges in OOD generalization:

No Universal Performer: No single model achieved strong OOD generalization across all tasks. Even the top-performing model exhibited an average OOD error 3× larger than its in-distribution error [9] [12].

Inductive Bias Advantage: Deep learning models with high inductive bias (particularly certain GNN architectures) performed well on OOD tasks with simple, specific properties, suggesting that architectural choices should align with property characteristics [9].

Foundation Model Limitations: Current chemical foundation models with transfer and in-context learning showed promise for data-limited scenarios but did not demonstrate strong OOD extrapolation capabilities, indicating room for improvement in pretraining strategies [9].

Representation Impact: The choice of molecular representation (SMILES, graphs, descriptors) significantly influenced OOD performance, with different representations excelling on different property prediction tasks [9].

Complementary OOD Benchmarks in Materials Science

Structure-based Materials Benchmark

A 2024 benchmark study focused on structure-based graph neural networks for inorganic materials property prediction proposed five distinct categories of OOD test sets based on crystal structure clustering [10]. This approach addresses the limitation of composition-based descriptors by incorporating structure-based representations like Orbital Field Matrix (OFM) for clustering.

Key findings from this benchmark include:

- Performance Overestimation: State-of-the-art GNN models that top leaderboards in conventional benchmarks (e.g., coGN, coNGN) showed significant performance drops on OOD test sets, demonstrating that reported superior performances were overestimated due to dataset redundancy [10].

- Generalization Gap: All algorithms performed worse on the OOD tasks than on their baseline MatBench tasks; this consistent drop highlights a generalization gap in realistic materials property prediction [10].

- Robust Performers: CGCNN, ALIGNN, and DeeperGATGNN demonstrated more robust OOD performance compared to current top MatBench models, providing insights for architectural improvements [10].

Transductive Approaches for OOD Extrapolation

The MatEx framework introduces a different approach to OOD property prediction using Bilinear Transduction, which reformulates the prediction problem by learning how property values change as a function of material differences rather than predicting values directly from new materials [2].

Table 2: Performance Comparison of OOD Methods on Solid-State Materials

| Method | Bulk Modulus MAE | Debye Temperature MAE | Shear Modulus MAE | Extrapolative Precision | OOD Recall |
|---|---|---|---|---|---|
| Bilinear Transduction [2] | Lower than baselines | Lower than baselines | Lower than baselines | 1.8× improvement | 3× boost |
| Ridge Regression [2] | Higher | Higher | Higher | Baseline | Baseline |
| MODNet [2] | Higher | Higher | Higher | Lower | Lower |
| CrabNet [2] | Higher | Higher | Higher | Lower | Lower |

This method demonstrated significant improvements in extrapolative precision (1.8× for materials, 1.5× for molecules) and boosted recall of high-performing candidates by up to 3× compared to conventional approaches [2].

Experimental Protocols and Methodologies

OOD Splitting Strategies

Different benchmarks employ distinct strategies for creating meaningful train-test splits:

- Property-based Splitting (BOOM): Uses kernel density estimation on property values to identify tail-end samples for OOD testing [9].

- Structure-based Clustering: Employs structural descriptors and clustering algorithms to identify novel material structures absent from training [10].

- Scaffold-based Splitting: Groups molecules by their Bemis-Murcko scaffolds and assigns entire scaffolds to different splits, testing generalization to novel molecular frameworks [16].

- Similarity-based Splitting: Uses chemical similarity clustering (K-means on ECFP4 fingerprints) to create challenging OOD splits where test molecules are distant from training examples in chemical space [16].
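Scaffold-based and cluster-based splits share one mechanism: every molecule carries a group key, and whole groups are assigned to either train or test. The sketch below assumes the keys are precomputed (in practice, scaffold SMILES could come from RDKit's `MurckoScaffold.MurckoScaffoldSmiles`, and cluster ids from K-means on ECFP4 fingerprints). The seeded random group order, greedy filling to `test_frac`, and the function name are assumptions; other implementations instead sort groups by size.

```python
import random
from collections import defaultdict


def group_split(group_keys, test_frac=0.2, seed=0):
    """Assign whole groups (scaffold SMILES or cluster ids) to either train or test, never both."""
    groups = defaultdict(list)
    for idx, key in enumerate(group_keys):
        groups[key].append(idx)
    keys = sorted(groups)  # deterministic order before the seeded shuffle
    random.Random(seed).shuffle(keys)
    target = test_frac * len(group_keys)
    train, test = [], []
    for key in keys:
        # fill the test set group by group until it reaches the target size
        (test if len(test) < target else train).extend(groups[key])
    return sorted(train), sorted(test)


if __name__ == "__main__":
    # hypothetical precomputed Bemis-Murcko scaffolds for ten molecules ("" = acyclic)
    scaffolds = ["c1ccccc1", "c1ccccc1", "C1CCCCC1", "C1CCCCC1", "c1ccncc1",
                 "c1ccncc1", "", "", "c1ccoc1", "c1ccoc1"]
    train_idx, test_idx = group_split(scaffolds)
    print(train_idx, test_idx)
```

Because groups are never divided, the realized test fraction can overshoot `test_frac` by up to one group; that is the usual price of keeping scaffolds or clusters intact.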

Evaluation Metrics

Comprehensive OOD evaluation requires multiple metrics:

- Performance Degradation: Comparison of ID vs. OOD performance (e.g., MAE ratio) [9] [10].

- Extrapolative Precision: Fraction of true top OOD candidates correctly identified among predicted top candidates [2].

- OOD Recall: Ability to retrieve high-performing OOD candidates [2].

- Ranking Consistency: Correlation between ID and OOD performance rankings across models [16].
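These metrics are simple to compute once each model has been scored on both splits. The sketch assumes per-model MAE values are already available (the numbers in the usage block are hypothetical) and reports the OOD/ID error ratio alongside Pearson and Spearman correlations; which correlation a given study reports should be checked against the source, e.g. [16]. The helper names are illustrative.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error of one model on one split."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def ranks(values):
    """0-based rank of each value (assumes no ties); Spearman's rho is pearson_r on ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    r = [0] * len(values)
    for pos, i in enumerate(order):
        r[i] = pos
    return r


if __name__ == "__main__":
    # hypothetical per-model errors on the ID and OOD test sets of one task
    id_mae = [0.10, 0.12, 0.15, 0.20]
    ood_mae = [0.45, 0.33, 0.50, 0.61]
    print([o / i for i, o in zip(id_mae, ood_mae)])  # OOD/ID degradation ratio per model
    print(pearson_r(id_mae, ood_mae))                # ranking consistency (Pearson)
    print(pearson_r(ranks(id_mae), ranks(ood_mae)))  # ranking consistency (Spearman)
```

A degradation ratio of 3 corresponds to the "OOD error 3× larger than in-distribution error" pattern reported for BOOM, and a low ID/OOD correlation signals that leaderboard rankings on random splits should not be trusted for discovery tasks.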

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for OOD Molecular Property Prediction

| Tool/Resource | Type | Function | Relevance to OOD Evaluation |
|---|---|---|---|
| QM9 Dataset [9] | Dataset | 133,886 small organic molecules with quantum chemical properties | Primary benchmark dataset for molecular OOD evaluation |
| RDKit [9] [2] | Software | Cheminformatics and molecular descriptor generation | Featurization for traditional ML models and fingerprint generation |
| Graph Neural Networks [9] [10] | Model Architecture | Message-passing networks on molecular graphs | State-of-the-art for structure-property relationship learning |
| SMILES [9] [2] | Representation | String-based molecular representation | Input for transformer-based models and language approaches |
| Kernel Density Estimation [9] | Statistical Method | Probability density function estimation | Identifying low-probability samples for OOD test set creation |
| Bilinear Transduction [2] | Algorithm | Transductive extrapolation method | Improving recall of high-performing OOD candidates |

Performance Insights and Research Implications

The collective findings from these benchmarks reveal several critical patterns:

ID Performance ≠ OOD Performance: Strong in-distribution performance does not guarantee out-of-distribution generalization. The correlation between ID and OOD performance varies significantly based on the splitting strategy, with scaffold splitting showing stronger correlation (Pearson r ∼ 0.9) than cluster-based splitting (r ∼ 0.4) [16].

Architecture Matters: Model architecture significantly impacts OOD robustness. GNNs with strong inductive biases often outperform more flexible transformer architectures on OOD tasks, particularly for properties with clear structure-property relationships [9] [10].

Data Generation Impact: How OOD data is generated substantially influences benchmark difficulty. Cluster-based splitting using chemical similarity poses the hardest challenge for both classical ML and GNN models [16].

Domain-Specific Challenges: OOD detection methods that perform well in computer vision domains do not necessarily translate to scientific applications, underscoring the need for domain-specific benchmarks [15].

The following diagram summarizes the relationship between different OOD splitting strategies and their impact on model generalization:

The development of specialized benchmarks like BOOM represents a crucial step toward more reliable and deployable molecular machine learning models. The consistent finding across all benchmarks—that current state-of-the-art models struggle with OOD generalization—highlights a fundamental challenge in the field.

Moving forward, researchers should:

- Prioritize OOD performance alongside ID metrics when developing new models

- Consider multiple OOD splitting strategies to comprehensively assess generalization

- Explore architectural innovations that explicitly incorporate inductive biases for molecular systems

- Develop transductive methods and transfer learning strategies specifically designed for OOD scenarios

As the field progresses, these OOD benchmarks will play an increasingly vital role in guiding the development of molecular property predictors that can truly accelerate scientific discovery by reliably identifying novel materials with exceptional properties.

The pursuit of reliable machine learning (ML) models for molecular property prediction represents a cornerstone of modern computational chemistry and drug discovery. These models promise to accelerate the identification of novel molecules with desirable properties, from pharmaceutical compounds to sustainable energy materials. However, their real-world utility hinges on a critical factor: robustness to Out-of-Distribution (OOD) data. Molecular discovery is, by its very nature, an OOD problem; the goal is to identify molecules that extend beyond the boundaries of known chemical space or exhibit properties that extrapolate beyond the training data [9]. A model that performs excellently on in-distribution (ID) data but fails on OOD data offers limited practical value, potentially misguiding discovery campaigns.

Recent large-scale benchmarking studies have provided stark, quantitative evidence of a significant performance gap between ID and OOD settings. This guide synthesizes the latest evidence on this gap, compares the OOD performance of various molecular property prediction models, and details the experimental methodologies and emerging solutions aimed at building more robust ML systems for science.

Empirical Evidence: Documenting the Performance Gap

Systematic evaluations reveal that OOD generalization remains a formidable challenge for state-of-the-art models. The BOOM (Benchmarking Out-Of-distribution Molecular property predictions) study, a comprehensive analysis of over 140 model-and-task combinations, found that even the top-performing models exhibited an average OOD error that was 3x larger than their in-distribution error [9]. This finding is pivotal, as it demonstrates that the gap is not a minor inconvenience but a substantial degradation in model performance.

Table: Summary of OOD Performance Gaps from Key Studies

| Study / Benchmark | Key Finding on OOD Performance | Context / Models Evaluated |
|---|---|---|
| BOOM Benchmark [9] | Top-performing model's average OOD error was 3x larger than its ID error. | Evaluation of 12+ ML models across 10 molecular property prediction tasks. |
| ACS for Multi-Task GNNs [17] | Adaptive Checkpointing with Specialization (ACS) outperformed standard MTL by up to 10.8% and single-task learning by 15.3% on ClinTox, mitigating negative transfer. | Multi-task Graph Neural Networks on MoleculeNet benchmarks (ClinTox, SIDER, Tox21). |
| OOD Detection Survey [18] | ML models are vulnerable to distribution shifts; performance can be severely impacted by covariate and concept shifts. | Broad survey of distribution shift handling methods in machine learning. |
| Molecular Property Prediction Review [16] | The correlation between ID and OOD performance is highly dependent on the data splitting strategy, weakening significantly under challenging splits. | Evaluation of 12 models, including Random Forests and GNNs, across 8 datasets with 7 splitting strategies. |

The performance drop is not uniform across all OOD scenarios. The relationship between ID and OOD performance is strongly influenced by how the OOD data is generated. For instance, while a strong positive correlation (Pearson r ~ 0.9) between ID and OOD performance exists under simple scaffold splits, this correlation weakens significantly (Pearson r ~ 0.4) under more challenging, cluster-based data splits [16]. This indicates that model selection based solely on ID performance is an unreliable strategy for applications requiring OOD robustness.
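Whether ID metrics can stand in for OOD metrics during model selection can be checked directly. Collect one ID score and one OOD score per model, then correlate the two across models. The sketch below uses made-up MAE values for five hypothetical models. With real benchmark results, an r near 0.9 would suggest that the ID ranking carries over, while an r near 0.4 would not. On Python 3.10+, `statistics.correlation` computes the same coefficient.

```python
import math
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical per-model MAEs (same model order in both lists); not real results.
id_mae = [0.10, 0.12, 0.15, 0.20, 0.25]
ood_mae = [0.45, 0.30, 0.50, 0.40, 0.70]
print(f"ID/OOD correlation across models: r = {pearson_r(id_mae, ood_mae):.2f}")
```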

Experimental Protocols: How the OOD Gap is Measured

Benchmark Creation and OOD Splitting Strategies

A critical step in quantifying OOD performance is the methodology used to split data into in-distribution and out-of-distribution sets. The following workflows and strategies are central to current research.

Diagram: Workflow for Benchmarking OOD Generalization

The diagram above outlines the general workflow for creating an OOD benchmark. The specific splitting strategies are crucial and include:

- Property-Based Splitting (Output Space): This method, used in the BOOM benchmark, defines OOD with respect to the model's prediction target. A kernel density estimator is fitted to the distribution of a molecular property's values. Molecules with the lowest probability densities—those at the tail ends of the distribution—are assigned to the OOD test set. This directly tests a model's ability to extrapolate to novel property values, which is central to molecule discovery [9].

- Scaffold-Based Splitting (Input Space): This approach groups molecules based on their Bemis-Murcko scaffolds (the core molecular framework). The test set contains molecules with scaffolds that are absent from the training set. This evaluates a model's ability to generalize to novel chemical structures [17] [16].

- Cluster-Based Splitting (Input Space): Molecules are clustered using their chemical fingerprints (e.g., ECFP4) and a clustering algorithm like K-means. Entire clusters are held out for the test set, creating a more challenging OOD scenario that often poses the hardest generalization challenge for models [16].
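Scaffold-based and cluster-based splits share one mechanic. Every molecule carries a group key (its Bemis-Murcko scaffold or its fingerprint cluster ID), and each whole group goes to one side of the split. The sketch below assumes the keys are already computed; with RDKit, scaffold SMILES are commonly obtained via `MurckoScaffold.MurckoScaffoldSmiles`. The randomized group order and the `group_holdout_split` helper are illustrative assumptions. Some implementations instead place the largest groups in the training set deterministically.

```python
import random
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence, Tuple


def group_holdout_split(group_keys: Sequence[Hashable], test_fraction: float = 0.2,
                        seed: int = 0) -> Tuple[List[int], List[int]]:
    """Assign whole groups (scaffolds or clusters) to train or test, never both."""
    groups: Dict[Hashable, List[int]] = defaultdict(list)
    for idx, key in enumerate(group_keys):
        groups[key].append(idx)

    keys = sorted(groups, key=str)  # deterministic order before shuffling
    random.Random(seed).shuffle(keys)

    target = test_fraction * len(group_keys)
    train: List[int] = []
    test: List[int] = []
    for key in keys:
        # Fill the test set group by group until it reaches the target size.
        if len(test) < target:
            test.extend(groups[key])
        else:
            train.extend(groups[key])
    return sorted(train), sorted(test)


# Hypothetical precomputed scaffold keys for ten molecules.
scaffolds = ["c1ccccc1"] * 5 + ["C1CCCCC1"] * 3 + ["c1ccncc1"] * 2
train_idx, test_idx = group_holdout_split(scaffolds, test_fraction=0.2, seed=0)
print(train_idx, test_idx)
```

For a cluster-based split, the same function applies unchanged: replace the scaffold strings with K-means cluster IDs computed on ECFP4 fingerprints.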

Model Training and Evaluation

Once splits are established, models are trained exclusively on the training set. Their performance is then evaluated separately on the ID test set (drawn from the same distribution as the training data) and the OOD test set. The key metrics, such as Mean Absolute Error (MAE) for regression or Area Under the Curve (AUC) for classification, are compared directly to calculate the performance gap [9] [16].
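For classification tasks, the ID/OOD comparison amounts to computing the same metric on both test sets. As an illustration, ROC AUC can be computed from its rank (Mann-Whitney) interpretation: the probability that a randomly chosen positive is scored above a randomly chosen negative, with ties counted as one half. The quadratic loop is there for clarity only. In practice, a library routine such as scikit-learn's `roc_auc_score` is the usual choice. The `auc_gap` helper is hypothetical.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """ROC AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


def auc_gap(id_labels: Sequence[int], id_scores: Sequence[float],
            ood_labels: Sequence[int], ood_scores: Sequence[float]) -> float:
    """ID AUC minus OOD AUC; positive values mean the model degrades out of distribution."""
    return roc_auc(id_labels, id_scores) - roc_auc(ood_labels, ood_scores)


print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
```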

Comparative Analysis of Model Performance

The BOOM benchmark provides a broad overview of how different model classes handle OOD data. The evaluation included traditional machine learning models, Graph Neural Networks (GNNs) with various inductive biases, and transformer-based chemical foundation models.

Table: OOD Performance of Model Classes on Molecular Property Prediction

| Model Class | Example Models | Key OOD Findings | Notable Strengths & Weaknesses |
|---|---|---|---|
| Traditional ML | Random Forest (with RDKit features) | Struggles with challenging OOD splits like cluster-based. | Simple, but relies heavily on the quality and completeness of hand-crafted molecular descriptors. |
| Graph Neural Networks (GNNs) | Chemprop, TGNN, IGNN, EGNN, MACE | Performance varies with architecture and inductive bias. Models with high inductive bias can perform well on OOD tasks with simple, specific properties [9]. | Strong permutation invariance. E(3)-invariant/equivariant models can better capture geometric physics. |
| Transformers / Foundation Models | MolFormer, ChemBERTa, Regression Transformer, ModernBERT | Current chemical foundation models do not show strong OOD extrapolation capabilities consistently across tasks [9]. | Promising for limited data via transfer learning, but pretraining on large corpora does not guarantee OOD robustness. |
| Specialized GNN Architectures | D-MPNN, ACS (Multi-task GNN) | Can match or surpass performance of other models; ACS effectively mitigates negative transfer in imbalanced data [17]. | Architectural choices like directed messaging (D-MPNN) or adaptive checkpointing (ACS) can enhance robustness. |

A critical finding is that no single existing model achieves strong OOD generalization across all diverse tasks [9]. This underscores OOD property prediction as a "frontier challenge" for the field. Furthermore, the assumption that large foundation models will automatically solve this problem is not yet supported by evidence; their pretraining on vast chemical datasets does not necessarily confer robust OOD extrapolation capabilities [9].

Mitigation Strategies: Bridging the OOD Gap

Several advanced techniques have been developed to specifically address and reduce the OOD performance gap.

Diagram: The ACS Method for Mitigating Negative Transfer

- Adaptive Checkpointing with Specialization (ACS): Designed for multi-task GNNs, ACS combats negative transfer—where updates from one task degrade performance on another. It uses a shared backbone for general representation learning but employs task-specific heads. Crucially, it checkpoints the best backbone-head pair for each task whenever a new minimum validation loss is achieved for that task. This approach allows beneficial parameter sharing while protecting individual tasks from detrimental interference, significantly improving performance in low-data and imbalanced regimes [17].

- Confidence Optimal Transport (COT/COTT): This method addresses the underestimation of OOD error by leveraging optimal transport theory to provide more robust estimates of model performance on OOD data without labels. It is particularly effective in the presence of pseudo-label shift (discrepancy between predicted and true OOD label distributions). An empirically-motivated variant, COTT, further improves accuracy by applying thresholding to individual transport costs [19].

- Architectural Inductive Biases: Incorporating physical and chemical priors into model architectures can enhance OOD generalization. For instance, E(3)-equivariant GNNs, which respect the symmetries of 3D space, can better leverage geometric information, potentially leading to more robust predictions on unseen molecular structures [9].
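The checkpointing rule at the heart of ACS can be illustrated without any GNN. The sketch below is a hypothetical bookkeeping class and not the authors' code. After each epoch it receives the shared backbone state, the per-task head states, and the per-task validation losses. Whenever a task reaches a new validation minimum, the class stores a copy of the backbone together with that task's head. The model states are stand-in dictionaries.

```python
import copy
import math
from typing import Any, Dict, Iterable, Optional, Tuple


class ACSCheckpointer:
    """Per-task 'best backbone + head' snapshots, updated on new validation minima."""

    def __init__(self, tasks: Iterable[str]) -> None:
        self.best_loss: Dict[str, float] = {t: math.inf for t in tasks}
        self.best_epoch: Dict[str, Optional[int]] = {t: None for t in self.best_loss}
        self.snapshots: Dict[str, Optional[Tuple[Any, Any]]] = {t: None for t in self.best_loss}

    def update(self, epoch: int, backbone_state: Any, head_states: Dict[str, Any],
               val_losses: Dict[str, float]) -> None:
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_epoch[task] = epoch
                # Deep copies so later training steps cannot overwrite the snapshot.
                self.snapshots[task] = (copy.deepcopy(backbone_state),
                                        copy.deepcopy(head_states[task]))


# Toy training trace: task B keeps improving after task A has started to overfit.
history = {"A": [0.9, 0.5, 0.6, 0.7], "B": [0.8, 0.7, 0.4, 0.45]}
ckpt = ACSCheckpointer(history)
for epoch in range(4):
    backbone = {"shared_weight": epoch}  # stand-in for backbone parameters
    heads = {t: {"head_weight": 10 * epoch} for t in history}
    ckpt.update(epoch, backbone, heads, {t: losses[epoch] for t, losses in history.items()})

print(ckpt.best_epoch)
```

When training ends, each task is served by its own snapshot. This keeps a task from inheriting a backbone that later updates, driven by other tasks, have made worse for it.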

Table: Essential Research Reagents for OOD Molecular Property Prediction

| Resource Name | Type | Primary Function in OOD Research |
|---|---|---|
| BOOM Benchmark [9] | Benchmark Suite | Standardized benchmark for assessing OOD generalization performance across 10 molecular property datasets. |
| QM9 Dataset [9] | Molecular Dataset | A well-known dataset of 133,886 small organic molecules with quantum mechanical properties, used for training and evaluation. |
| MoleculeNet [17] | Benchmark Suite | A collection of molecular datasets for benchmarking ML models, often used with scaffold splitting for OOD evaluation. |
| RDKit [9] | Cheminformatics Library | Used to generate molecular descriptors, fingerprints, and scaffolds for featurization and data splitting. |
| Graph Neural Networks (GNNs) | Model Architecture | Learns directly from molecular graph structure, providing a strong inductive bias for molecular data. |
| ACS Training Scheme [17] | Algorithm/Method | A training scheme for multi-task GNNs that mitigates negative transfer, enabling accurate prediction with as few as 29 labeled samples. |
| COT/COTT Algorithm [19] | Algorithm/Method | Provides robust estimates of model performance on OOD data without requiring labeled OOD examples. |

The evidence is clear and consistent: a significant performance gap, quantified as a 3x increase in error, exists between in-distribution and out-of-distribution settings for molecular property predictors. This gap poses a substantial risk to the reliability of AI-driven discovery pipelines. Addressing this challenge requires a multi-faceted approach: using rigorous benchmarking practices like those in BOOM, adopting advanced mitigation strategies like ACS and COT, and developing models with stronger physical and chemical inductive biases. For researchers and professionals in drug development, moving beyond in-distribution metrics and proactively evaluating OOD robustness is no longer optional but essential for building trustworthy and effective predictive models.

Architectural and Algorithmic Innovations for Improved OOD Extrapolation

The discovery of high-performance materials and molecules fundamentally depends on identifying extremes with property values that fall outside known distributions [2] [1]. Traditional machine learning models excel at interpolation within their training data but face significant challenges when making predictions for out-of-distribution (OOD) property values, a critical capability for accelerating scientific discovery [2]. This limitation is particularly problematic in virtual screening workflows, where the objective is to identify high-performing OOD candidates from known compounds with unknown properties [2] [1]. Transductive learning approaches, particularly Bilinear Transduction, have emerged as a promising framework for addressing this fundamental challenge in molecular and materials informatics.

The core problem stems from how conventional machine learning models generalize. Classical supervised learning typically struggles with extrapolating property predictions through regression when test samples fall outside the training distribution [1]. Consequently, many approaches have shifted toward classifying OOD materials instead of performing direct regression [1]. Bilinear Transduction represents a paradigm shift in this landscape by reformulating the prediction problem itself, moving from absolute property prediction to relative difference estimation, enabling more accurate zero-shot extrapolation to unprecedented property ranges [2] [1].

Understanding Transductive Learning: A Conceptual Framework

Inductive Versus Transductive Learning Paradigms

In machine learning, a critical distinction exists between inductive and transductive learning approaches [20]. Inductive learning follows the traditional supervised pattern: reasoning from observed training cases to general rules, which are then applied to test cases [20]. This approach builds a predictive model from seen data samples in the form of weights that can be applied to unseen samples [7]. Most conventional machine learning models used in materials informatics operate under this paradigm.

In contrast, transductive learning represents a different reasoning approach: moving from observed, specific training cases to specific test cases without intermediary general rules [20]. Transductive methods do not build a predictive model with weights that can be applied to unseen samples [7]. Instead, they use all available data—both training and test instances—to generate predictions directly. This fundamental difference in approach enables transductive methods to leverage relationships between test samples and training data more effectively, particularly valuable when dealing with distribution shifts [7].

The Problem of Data Leakage in Transductive Evaluation

A significant challenge in evaluating transductive methodologies lies in preventing data leakage during feature generation [7]. When improperly implemented, transductive approaches can exhibit artificially inflated performance metrics because information from the test set may inadvertently influence feature creation [7]. This has been particularly observed in drug-target interaction prediction, where transductive models have demonstrated near-optimal performance due to evaluation artifacts rather than genuine predictive capability [7]. Proper benchmarking requires careful experimental design to ensure fair comparison between inductive and transductive approaches, often involving specific dataset splitting strategies that isolate test information during training [7].

Bilinear Transduction: Core Methodology and Implementation

Theoretical Foundation and Mathematical Reformulation

Bilinear Transduction addresses the OOD prediction problem through a fundamental reparameterization of the prediction task [1] [21]. Rather than making property value predictions directly from a new candidate material's features, predictions derive from a known training example and the difference in representation space between the two materials [1]. This approach enables extrapolation by learning how property values change as a function of material differences rather than predicting these values from new materials in isolation [2].

The core innovation lies in decomposing the input variable into an anchor (a variable in the input space) and a delta (the difference between the input variable and the anchor) [21]. During inference, property values are predicted based on a chosen training example and the difference between it and the new sample [1]. This transformation effectively converts an out-of-support learning problem into an out-of-combination problem, which can be more tractable if the reparameterized training and test data distributions satisfy certain assumptions [21].
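To make the reparameterization concrete, the sketch below implements a deliberately tiny bilinear predictor on a 1-D toy problem. It assumes hand-chosen feature maps φ(Δx) = [Δx, 1] and ψ(x̄) = [x̄, 1] and the bilinear form ŷ = φ(Δx)ᵀ W ψ(x̄), so that W can be fitted by least squares over all training pairs. Published implementations such as MatEx learn neural embeddings instead. The anchor rule (nearest training points) and all helper names are assumptions. Because the toy target is linear, an ordinary linear regressor would also extrapolate it, so the example demonstrates the mechanics rather than the advantage. Training inputs span x in [0, 2]. The query x = 2.5 is represented by anchors near the upper edge, combined with differences that already occur among training pairs.

```python
from itertools import product
from typing import List, Sequence


def pair_features(delta: float, anchor: float) -> List[float]:
    """Flattened outer product of phi(delta) = [delta, 1] and psi(anchor) = [anchor, 1]."""
    return [p * q for p in (delta, 1.0) for q in (anchor, 1.0)]


def solve_linear(a: List[List[float]], b: List[float]) -> List[float]:
    """Gauss-Jordan elimination with partial pivoting for a small dense system."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_bilinear(xs: Sequence[float], ys: Sequence[float], ridge: float = 1e-8) -> List[float]:
    """Least-squares fit of W (flattened) on all (target x_i, anchor x_j) training pairs."""
    dim = 4
    ata = [[0.0] * dim for _ in range(dim)]
    aty = [0.0] * dim
    for (xi, yi), xj in product(zip(xs, ys), xs):
        f = pair_features(xi - xj, xj)  # y_i is predicted from anchor x_j and delta x_i - x_j
        for r in range(dim):
            aty[r] += f[r] * yi
            for c in range(dim):
                ata[r][c] += f[r] * f[c]
    for r in range(dim):
        ata[r][r] += ridge  # tiny ridge term for numerical safety
    return solve_linear(ata, aty)


def predict_transductive(w: Sequence[float], x_new: float,
                         train_xs: Sequence[float], k: int = 3) -> float:
    """Average the bilinear prediction over the k training anchors closest to x_new."""
    anchors = sorted(train_xs, key=lambda a: abs(x_new - a))[:k]
    preds = [sum(wi * fi for wi, fi in zip(w, pair_features(x_new - a, a))) for a in anchors]
    return sum(preds) / len(preds)


train_xs = [i / 10 for i in range(21)]        # support of x: [0, 2]
train_ys = [2.0 * x + 1.0 for x in train_xs]  # toy linear property
weights = fit_bilinear(train_xs, train_ys)
print(round(predict_transductive(weights, 2.5, train_xs), 3))
```

The anchor choice matters in the transductive setting. It determines whether each (anchor, difference) combination at test time lies close to combinations seen during training.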

Implementation Workflow

The following diagram illustrates the conceptual workflow of Bilinear Transduction for molecular property prediction:

The Bilinear Transduction workflow implements a distinct process compared to traditional inductive learning. For solid-state materials, the approach typically utilizes stoichiometry-based representations to capture compositionally driven property variation [2]. For molecular systems, inputs commonly consist of molecular graphs encoded as SMILES (Simplified Molecular Input Line Entry System) representations or related formats [2] [22]. The model learns analogical input-target relations across training and test sets, enabling generalization beyond the training target support [2] [1].

Experimental Protocols and Benchmarking Standards

Comprehensive evaluation of Bilinear Transduction involves multiple benchmark datasets spanning both solid-state materials and molecular systems [2] [1]. For solids, common benchmarks include AFLOW (containing material property values from high-throughput calculations), Matbench (an automated leaderboard for benchmarking ML algorithms on solid material properties), and the Materials Project (providing materials and properties derived from high-throughput calculations) [2]. For molecular systems, datasets from MoleculeNet are frequently employed, covering graph-to-property prediction tasks including ESOL (aqueous solubility), FreeSolv (hydration free energies), Lipophilicity (octanol/water distribution coefficients), and BACE (binding affinities) [2].

Performance evaluation typically focuses on extrapolation capability measured by mean absolute error (MAE) for OOD predictions [2] [1]. Additional metrics include extrapolative precision (measuring the fraction of true top OOD candidates correctly identified) and recall of high-performing candidates [2]. Proper benchmarking requires carefully designed train-test splits that ensure test samples represent genuine OOD cases with property values outside the training distribution [2] [1].

Performance Comparison: Bilinear Transduction vs. Alternative Methods

Solid-State Materials Property Prediction

Table 1: Performance comparison (Mean Absolute Error) for solid-state materials property prediction on AFLOW dataset

| Property | Ridge Regression | MODNet | CrabNet | Bilinear Transduction |
|---|---|---|---|---|
| Band Gap [eV] | 2.59 ± 0.03 | 2.65 ± 0.04 | 1.47 ± 0.03 | 1.51 ± 0.04 |
| Bulk Modulus [GPa] | 74.0 ± 3.8 | 93.06 ± 3.7 | 59.25 ± 3.2 | 47.4 ± 3.4 |
| Debye Temperature [K] | 0.45 ± 0.03 | 0.62 ± 0.03 | 0.38 ± 0.02 | 0.31 ± 0.02 |
| Shear Modulus [GPa] | 0.69 ± 0.03 | 0.78 ± 0.04 | 0.55 ± 0.02 | 0.42 ± 0.02 |
| Thermal Conductivity [W/mK] | 1.07 ± 0.05 | 1.5 ± 0.05 | 0.97 ± 0.03 | 0.83 ± 0.04 |

Table 2: Performance comparison for materials property prediction across multiple benchmarks

| Dataset | Property | Ridge Regression | MODNet | CrabNet | Bilinear Transduction |
|---|---|---|---|---|---|
| Matbench | Band Gap [eV] | 6.37 ± 0.28 | 3.26 ± 0.13 | 2.70 ± 0.13 | 2.54 ± 0.16 |
| Matbench | Refractive Index | 14.4 ± 2.0 | 4.24 ± 0.48 | 3.92 ± 0.5 | 3.81 ± 0.49 |
| Matbench | Yield Strength [MPa] | 972 ± 34 | 731 ± 82 | 740 ± 49 | 591 ± 62 |
| Materials Project | Bulk Modulus [GPa] | 151 ± 14 | 60.1 ± 3.9 | 57.8 ± 4.2 | 45.8 ± 3.9 |

Bilinear Transduction consistently outperforms or performs comparably to established baseline methods across diverse materials property prediction tasks [2] [1]. The method demonstrates particular strength in predicting mechanical properties like bulk modulus and shear modulus, where it achieves significant reductions in MAE compared to alternatives [1]. Quantitative analysis reveals that Bilinear Transduction improves extrapolative precision by 1.8× for materials and boosts recall of high-performing candidates by up to 3× compared to conventional approaches [2].

Molecular Property Prediction

For molecular systems, Bilinear Transduction has shown similar advantages in OOD prediction tasks [2]. On molecular property prediction benchmarks, it achieves 1.5× better extrapolative precision than traditional approaches [2]. Relative to non-transductive baselines, the true positive rate of OOD classification for molecules improves by 2.5×, alongside the 1.5× precision gain [1].

The performance advantages appear most pronounced in challenging extrapolation scenarios where the target property values substantially exceed the ranges observed in training data [2]. This capability is particularly valuable for discovery-oriented research where identifying exceptional materials or molecules with unprecedented properties is the primary objective [2] [1].

Research Reagent Solutions: Essential Tools for Implementation

Table 3: Key research reagents and computational tools for implementing bilinear transduction

| Tool/Dataset | Type | Purpose | Access |
|---|---|---|---|
| MatEx | Software Library | Implementation of Bilinear Transduction for materials | https://github.com/learningmatter-mit/matex [2] |
| AFLOW | Dataset | Material properties from high-throughput calculations | Public database [2] |
| Matbench | Benchmark | Automated leaderboard for material property prediction | Public benchmark [2] |
| Materials Project | Dataset | Computed materials properties and crystal structures | Public database [2] |
| MoleculeNet | Benchmark | Molecular property prediction tasks | Public benchmark [2] |
| SMILES | Representation | Molecular structure encoding | Standard chemical notation [22] |
| COCOA | Algorithm | Compositional conservatism with anchor-seeking | https://github.com/runamu/compositional-conservatism [21] |

Successful implementation of Bilinear Transduction requires appropriate computational frameworks and datasets. The MatEx (Materials Extrapolation) library provides an open-source implementation specifically designed for OOD property prediction in materials and molecules [2]. For molecular representation, SMILES strings serve as the fundamental input format, with potential enhancements through positional embeddings in transformer architectures [22].

Recent advancements include integration with reinforcement learning frameworks through approaches like COmpositional COnservatism with Anchor-seeking (COCOA), which combines Bilinear Transduction with learned reverse dynamics models to encourage conservatism in the compositional input space [21]. This integration has demonstrated improved performance in offline reinforcement learning benchmarks, suggesting promising avenues for further development in molecular and materials design [21].

Bilinear Transduction represents a significant advancement in transductive learning approaches for zero-shot property prediction, directly addressing the critical challenge of out-of-distribution robustness in molecular property predictors [2] [1]. By reformulating the prediction problem from absolute property estimation to relative difference calculation, the method enables more accurate identification of high-performing materials and molecules with exceptional properties [2].

The consistent performance advantages demonstrated across diverse benchmark datasets suggest that Bilinear Transduction and related transductive approaches offer a promising path forward for discovery-oriented research [2] [1]. However, proper implementation requires careful attention to potential data leakage issues that can inflate performance metrics in transductive settings [7]. Future research directions likely include integration with large language models for molecular representation [22], application to emerging challenges in drug-target interaction prediction [7], and development of more sophisticated anchor selection strategies [21].

As the field progresses, transductive learning approaches like Bilinear Transduction are poised to play an increasingly important role in accelerating the discovery of novel materials and molecules with exceptional properties, potentially transforming early-stage discovery workflows across materials science and drug development [2] [1].

In the field of drug discovery, molecular property prediction models play a crucial role in prioritizing compounds for experimental validation. However, a significant limitation persists: these models typically demonstrate strong performance on compounds similar to those in their training data (in-distribution, or ID) but suffer substantial performance degradation when applied to novel, structurally distinct compounds (out-of-distribution, or OOD). This covariate shift problem is particularly problematic in real-world discovery pipelines, where the most valuable compounds for advancing research often lie beyond the chemical space represented in training datasets [23]. The fundamental challenge stems from the scarcity of labeled data, as experimental validation remains costly and time-consuming, resulting in training sets that are both small and biased toward narrow regions of chemical space.

The evaluation of OOD robustness has emerged as a critical focus in machine learning research. Heuristic assessments often lead to biased conclusions about model generalizability, as many supposedly "OOD" tests actually reflect interpolation rather than true extrapolation, potentially overestimating both generalizability and the benefits of model scaling [24]. This review compares contemporary strategies for improving OOD generalization in molecular property prediction, with particular emphasis on meta-learning approaches that leverage abundant unlabeled data to "densify" scarce labeled distributions and bridge the ID-OOD performance gap.

Methodological Comparison: Strategies for OOD Generalization

Featured Approach: Meta-Learning with Scarce Data Densification

A novel meta-learning framework addresses OOD generalization by explicitly interpolating the scarce labeled training distribution with abundant unlabeled data. This approach utilizes a permutation-invariant learnable set function, or "mixer," that combines labeled training points with context points from the unlabeled dataset. The method operates through two core components: (1) a standard meta-learner (MLP) that maps input data to feature representations, and (2) the learnable set function that mixes labeled and unlabeled representations at a specific layer. This densification strategy encourages the model to generalize more robustly under covariate shift by effectively expanding the training distribution toward regions of chemical space represented in the unlabeled data [23].

The meta-learning process employs a context set ($\mathcal{D}_{\text{context}}$) and a meta-validation set ($\mathcal{D}_{\text{mvalid}}$) drawn from the unlabeled pool, enabling the model to learn an interpolation function that improves generalization to OOD compounds. This approach is particularly valuable in drug discovery settings, where advancing research requires predictions about compounds with substantial distributional shifts from known molecules.
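A set function of this kind can be sketched in the Deep Sets style. Each context point is encoded, and the encodings are mean-pooled, which makes the result independent of context order. The pooled summary is then mapped back to the representation size and blended with the labeled point's hidden vector. The weights, the fixed blending gate, and the `set_mixer` name below are hypothetical stand-ins. In the method described in [23], the mixer is learned jointly with the meta-learner.

```python
import math
from typing import List, Sequence

Vector = List[float]
Matrix = Sequence[Sequence[float]]


def matvec(w: Matrix, x: Sequence[float]) -> Vector:
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def relu(x: Sequence[float]) -> Vector:
    return [max(0.0, v) for v in x]


def set_mixer(h_labeled: Sequence[float], context: Sequence[Sequence[float]],
              phi_w: Matrix, rho_w: Matrix, gate: float) -> Vector:
    """Blend a labeled point's hidden vector with a permutation-invariant context summary."""
    encoded = [relu(matvec(phi_w, c)) for c in context]        # per-point encoder phi
    pooled = [sum(col) / len(encoded) for col in zip(*encoded)]  # mean pooling over the set
    summary = matvec(rho_w, pooled)                              # decoder rho back to hidden size
    return [(1.0 - gate) * h + gate * s for h, s in zip(h_labeled, summary)]


# Hypothetical 2-D hidden vectors: one labeled molecule and three unlabeled context molecules.
h = [1.0, -0.5]
context = [[0.2, 0.8], [1.0, 0.0], [-0.4, 0.6]]
phi_w = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
rho_w = [[1.0, 0.0, 0.5], [0.0, 1.0, -0.5]]

mixed = set_mixer(h, context, phi_w, rho_w, gate=0.3)
shuffled = set_mixer(h, context[::-1], phi_w, rho_w, gate=0.3)
print(mixed, all(math.isclose(a, b) for a, b in zip(mixed, shuffled)))
```

Mean pooling is the simplest permutation-invariant aggregator. Attention-based pooling is a common alternative, and reordering the context set leaves the output unchanged in either case.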

Alternative Paradigms for OOD Generalization

Context-Informed Heterogeneous Meta-Learning

Another advanced meta-learning approach for few-shot molecular property prediction employs a heterogeneous architecture that extracts both property-shared and property-specific molecular features. This method utilizes graph neural networks combined with self-attention encoders to capture contextual information, with an adaptive relational learning module that infers molecular relations based on shared features. The framework employs a heterogeneous meta-learning strategy where property-specific features update within individual tasks (inner loop) while all parameters update jointly (outer loop). This division enables more effective capture of both general and contextual information, leading to significant improvements in predictive accuracy, especially with limited training samples [25].
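The inner/outer division can be illustrated with a toy model that has one shared parameter and one property-specific parameter. The sketch below is a first-order simplification and not the cited architecture. Each task is a line y = 3x + c with its own offset c. The inner loop adapts only the specific offset on a support set. The outer loop then updates both the shared slope and the offset's initialization from the query loss. All names, learning rates, and task definitions are hypothetical.

```python
from typing import Sequence, Tuple

SUPPORT_X = [-1.0, -0.5, 0.0, 0.5, 1.0]  # support points for inner-loop adaptation
QUERY_X = [-0.75, -0.25, 0.25, 0.75]     # query points for the outer-loop loss


def mse_grads(w: float, b: float, xs: Sequence[float],
              ys: Sequence[float]) -> Tuple[float, float]:
    """Gradients of the mean squared error of y_hat = w * x + b with respect to (w, b)."""
    n = len(xs)
    residuals = [w * x + b - y for x, y in zip(xs, ys)]
    grad_w = 2.0 * sum(r * x for r, x in zip(residuals, xs)) / n
    grad_b = 2.0 * sum(residuals) / n
    return grad_w, grad_b


def adapt_specific(w: float, b0: float, xs: Sequence[float], ys: Sequence[float],
                   lr: float = 0.25, steps: int = 10) -> float:
    """Inner loop: update only the property-specific offset b, starting from b0; w stays fixed."""
    b = b0
    for _ in range(steps):
        b -= lr * mse_grads(w, b, xs, ys)[1]
    return b


def meta_train(offsets: Sequence[float], epochs: int = 200,
               outer_lr: float = 0.5) -> Tuple[float, float]:
    """Outer loop: update the shared slope w and the offset initialization b0 (first-order)."""
    w, b0 = 0.0, 0.0
    for _ in range(epochs):
        for c in offsets:  # each offset c defines a hypothetical task y = 3x + c
            support_y = [3.0 * x + c for x in SUPPORT_X]
            query_y = [3.0 * x + c for x in QUERY_X]
            b = adapt_specific(w, b0, SUPPORT_X, support_y)
            grad_w, grad_b = mse_grads(w, b, QUERY_X, query_y)
            w -= outer_lr * grad_w   # property-shared parameter
            b0 -= outer_lr * grad_b  # first-order update of the specific parameter's init
    return w, b0


w, b0 = meta_train(offsets=[-2.0, 0.0, 1.0, 4.0])
new_task_b = adapt_specific(w, b0, SUPPORT_X, [3.0 * x + 10.0 for x in SUPPORT_X])
print(round(w, 4), round(new_task_b, 2))
```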

Semi-Supervised Learning with Multi-Mode Augmentation

Beyond meta-learning, enhanced semi-supervised learning (SSL) methods offer alternative pathways for leveraging unlabeled data. One approach addresses limitations of traditional SSL in small-sample environments through multi-mode augmentation, combining intra-class random augmentation with inter-class mixed augmentation. This strategy simultaneously improves both intra-class and inter-class sample completeness, creating more robust feature representations. The method incorporates an uncertainty-aware pseudo-label selection mechanism based on model prediction statistics, improving pseudo-label quality while maximizing retention of unlabeled samples. When combined with exponential moving average techniques, this approach demonstrates strong performance even with extremely limited labeled and unlabeled data [26].
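Two ingredients of this recipe can be sketched without any deep-learning framework: uncertainty-aware pseudo-label selection and an exponential moving average (EMA) of model weights. The version below is a generic illustration rather than the cited method. It assumes several stochastic forward passes per unlabeled sample (for example, with dropout active). A pseudo-label is kept only when the mean class probability is high and its spread across passes is low. A teacher's parameters are updated as an EMA of the student's. The thresholds, the decay value, and the function names are hypothetical.

```python
import statistics
from typing import Dict, Optional, Sequence


def select_pseudo_label(prob_samples: Sequence[Sequence[float]], min_conf: float = 0.8,
                        max_std: float = 0.1) -> Optional[int]:
    """Return a class index if the prediction is confident and stable across passes, else None."""
    n_classes = len(prob_samples[0])
    means = [statistics.fmean(p[k] for p in prob_samples) for k in range(n_classes)]
    best = max(range(n_classes), key=lambda k: means[k])
    spread = statistics.pstdev([p[best] for p in prob_samples])
    return best if means[best] >= min_conf and spread <= max_std else None


def ema_update(teacher: Dict[str, float], student: Dict[str, float],
               decay: float = 0.99) -> Dict[str, float]:
    """teacher <- decay * teacher + (1 - decay) * student, parameter by parameter."""
    return {name: decay * teacher[name] + (1.0 - decay) * student[name] for name in teacher}


# Three stochastic passes for two unlabeled samples (hypothetical probabilities).
stable = [[0.90, 0.10], [0.88, 0.12], [0.92, 0.08]]
unstable = [[0.95, 0.05], [0.50, 0.50], [0.99, 0.01]]  # confident on average, but erratic
print(select_pseudo_label(stable), select_pseudo_label(unstable))

teacher = ema_update({"w": 1.0}, {"w": 2.0}, decay=0.9)
print(teacher)
```

The second sample shows why the spread check matters. Its mean confidence clears the threshold, yet the passes disagree strongly, so the sample is left unlabeled.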

Comparative Performance Analysis

Table 1: Comparative Performance of OOD Generalization Methods

| Method | Approach Category | Key Mechanism | Reported Performance Advantage | Data Requirements |
|---|---|---|---|---|
| Meta-Learning with Densification [23] | Meta-learning | Interpolates labeled data with unlabeled context points | Significant gains over SOTA under substantial covariate shift | Scarce labeled + abundant unlabeled |
| Context-Informed Heterogeneous Meta-Learning [25] | Few-shot learning | Separates property-shared and property-specific features | Superior few-shot accuracy, especially with minimal samples | Few-shot setting |
| Multi-Mode Augmentation SSL [26] | Semi-supervised learning | Combines intra-class and inter-class augmentation | Outperforms MixMatch, UDA, FreeMatch on the STL-10/CIFAR-10 image benchmarks | Limited labeled and unlabeled data |
| Traditional Supervised Baselines | Supervised learning | Standard empirical risk minimization | Poor OOD performance due to covariate shift | Large labeled datasets |

Table 2: OOD Evaluation Metrics and Method Characteristics

| Method | Evaluation Paradigm | Handles Distribution Shifts | Main Advantages | Limitations |
|---|---|---|---|---|
| Meta-Learning with Densification [23] | OOD performance testing | Yes, via explicit interpolation | Actively densifies training distribution | Complex training pipeline |
| Heterogeneous Meta-Learning [25] | OOD performance testing | Yes, through contextual modeling | Excellent in few-shot scenarios | Requires task structure for meta-learning |
| Multi-Mode Augmentation SSL [26] | OOD performance testing | Yes, via diverse augmentation | Works with very limited data | Domain-specific augmentations needed |
| Heuristic OOD Evaluation [24] | OOD performance prediction | No, primarily for assessment | Reveals true extrapolation capability | Evaluation method, not solution |

Experimental Protocols and Methodological Details

Meta-Learning Densification Framework

The experimental protocol for the meta-learning densification approach involves several carefully designed components. The method addresses molecular property prediction under covariate shift, given a small labeled dataset $\mathcal{D}_{\text{train}} = \{(x_i, y_i)\}_{i=1}^{n}$ and abundant unlabeled molecules $\mathcal{D}_{\text{unlabeled}} = \{x_j\}_{j=1}^{m}$. The goal is to learn a predictive model $f: \mathcal{X} \to \mathcal{Y}$ that generalizes to a distributionally shifted test set $\mathcal{D}_{\text{test}}$ [23].

The core innovation lies in the mixing function $\mu_\lambda$, which learns to combine each labeled data point $x_i \sim \mathcal{D}_{\text{train}}$ with a variable number of context points $\{c_{ij}\}_{j=1}^{m_i} \sim \mathcal{D}_{\text{context}}$ drawn from the unlabeled pool. For each labeled point in a minibatch of size $B$, the number of context points $m_i$ follows a discrete uniform distribution, $m_i \sim \mathcal{U}_{\text{int}}(0, M)$, where $M$ controls the maximum number of context samples. The mixing operation occurs at a specific layer $l_{\text{mix}}$. There, the layer-$l_{\text{mix}}$ representation of $x_i$ and the set $C_i^{(l_{\text{mix}})}$ of its context points' representations are combined into an enriched representation that incorporates information from both the labeled and unlabeled distributions [23]:

$$\tilde{x}_i^{(l_{\text{mix}})} = \mu_\lambda\left(\left\{x_i^{(l_{\text{mix}})}, C_i^{(l_{\text{mix}})}\right\}\right)$$
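A minimal sketch of the mixing step follows. It assumes representations are plain vectors already computed up to layer $l_{\text{mix}}$. The mixer here (`set_mixer`) mean-pools the set and gates the result with a per-dimension weight `lam`. It is a hypothetical stand-in for the learnable $\mu_\lambda$ of [23], not its actual parameterization. It only illustrates two properties the text relies on: permutation invariance over $\{x_i\} \cup C_i$, and a variable context size $m_i \sim \mathcal{U}_{\text{int}}(0, M)$, taken here as inclusive of both endpoints.

```python
import random
from typing import List, Sequence

Vector = List[float]

def set_mixer(x_i: Sequence[float], context: Sequence[Sequence[float]], lam: Sequence[float]) -> Vector:
    """Hypothetical stand-in for mu_lambda({x_i, C_i}).

    The set {x_i} plus its context points is mean-pooled, so order does not matter.
    A per-dimension gate lam in [0, 1] then moves x_i toward the pooled vector.
    """
    members = [list(x_i)] + [list(c) for c in context]
    pooled = [sum(col) / len(members) for col in zip(*members)]
    return [(1.0 - g) * a + g * p for a, p, g in zip(x_i, pooled, lam)]

def densify_minibatch(
    labeled: Sequence[Sequence[float]],
    pool: List[Vector],
    lam: Sequence[float],
    max_context: int,
    rng: random.Random,
) -> List[Vector]:
    """Mix each labeled representation with m_i ~ U_int(0, M) context points.

    `labeled` and `pool` hold layer-l_mix representations of labeled molecules
    and of unlabeled context molecules. M = max_context must not exceed len(pool).
    """
    mixed = []
    for x_i in labeled:
        m_i = rng.randint(0, max_context)  # inclusive on both ends
        context = rng.sample(pool, m_i)    # m_i distinct context points
        mixed.append(set_mixer(x_i, context, lam))
    return mixed

rng = random.Random(0)
labeled = [[1.0, 0.0], [0.0, 1.0]]
pool = [[0.5, 0.5], [2.0, 2.0], [-1.0, 1.0]]
print(densify_minibatch(labeled, pool, lam=[0.5, 0.5], max_context=2, rng=rng))
```

Because `set_mixer` pools with a mean, reordering the context points leaves the output unchanged. A draw of $m_i = 0$ returns the labeled representation untouched, so undensified examples still appear during training.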

Diagram 1: Meta-Learning Densification Workflow. Illustrates how labeled and unlabeled data interact through the mixing layer to produce OOD-resistant predictions.

Heterogeneous Meta-Learning Protocol

The context-informed few-shot learning approach employs a dual-component architecture where graph neural networks extract property-specific molecular features while self-attention encoders capture property-shared characteristics. The experimental protocol involves an adaptive relational learning module that infers molecular relations based on shared features. The heterogeneous meta-learning strategy implements a two-loop optimization process: inner-loop updates refine property-specific features within individual tasks, while outer-loop updates jointly optimize all parameters across tasks [25].
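The two-loop schedule can be illustrated with a toy scalar model $\hat{y} = w x + b$. Here $w$ plays the role of the property-shared parameters and $b$ the property-specific ones. This is a hypothetical, first-order sketch, not the architecture or optimizer of [25]. Query gradients are taken at the adapted $b$ without differentiating through the inner loop, as in first-order MAML.

```python
from typing import List, Sequence, Tuple

Pairs = Sequence[Tuple[float, float]]  # (x, y) examples for one property (task)

def mse_grads(w: float, b: float, data: Pairs) -> Tuple[float, float]:
    """Gradients of the mean squared error of y_hat = w * x + b w.r.t. (w, b)."""
    n = len(data)
    gw = sum(2.0 * (w * x + b - y) * x for x, y in data) / n
    gb = sum(2.0 * (w * x + b - y) for x, y in data) / n
    return gw, gb

def inner_adapt(w: float, b0: float, support: Pairs, lr: float, steps: int) -> float:
    """Inner loop: only the property-specific parameter b moves, on the support set."""
    b = b0
    for _ in range(steps):
        _, gb = mse_grads(w, b, support)
        b -= lr * gb
    return b

def outer_step(w: float, b0: float, tasks: List[Tuple[Pairs, Pairs]],
               inner_lr: float, outer_lr: float, inner_steps: int) -> Tuple[float, float]:
    """Outer loop: shared w and the initial b0 are updated jointly from query losses."""
    gw_sum, gb_sum = 0.0, 0.0
    for support, query in tasks:
        b_task = inner_adapt(w, b0, support, inner_lr, inner_steps)
        # First-order approximation: use query gradients at the adapted b
        # and apply them to b0 directly, ignoring d(b_task)/d(b0).
        gw, gb = mse_grads(w, b_task, query)
        gw_sum += gw
        gb_sum += gb
    k = len(tasks)
    return w - outer_lr * gw_sum / k, b0 - outer_lr * gb_sum / k

# Toy tasks: every property shares slope 2 but has its own offset c.
tasks = []
for c in (-1.0, 0.0, 1.0):
    support = [(x, 2.0 * x + c) for x in (0.0, 1.0)]
    query = [(x, 2.0 * x + c) for x in (2.0, 3.0)]
    tasks.append((support, query))

w, b0 = 0.0, 0.0
for _ in range(200):
    w, b0 = outer_step(w, b0, tasks, inner_lr=0.1, outer_lr=0.05, inner_steps=50)
print(round(w, 3))  # close to the shared slope 2.0
```

On tasks that share a slope but differ in offset, the outer loop drives `w` toward the shared slope. The inner loop recovers each task's own offset.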

This approach is evaluated under rigorous few-shot learning scenarios on real molecular datasets from MoleculeNet, with performance measured through metrics such as mean absolute error and coefficient of determination (R²) for regression tasks, demonstrating superior performance compared to alternatives, particularly when training samples are severely limited [25].

Evaluation Metrics for OOD Generalization

Robust evaluation of OOD generalization requires moving beyond heuristic assessments. Current research emphasizes that many supposedly "OOD" tests actually reflect interpolation rather than true extrapolation, potentially leading to overestimated generalizability [24]. Proper OOD evaluation aims not only to assess whether a model's OOD capability is strong but also to characterize the types of distribution shifts a model can effectively address and identify safe versus risky input regions [27].
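A simple way to probe the interpolation concern is to count how many test labels fall outside the range of the training labels. This output-space check is an assumption for illustration, not the evaluation protocol of [24] or [27]. Input-space novelty would need a separate structural similarity measure, and the function name is hypothetical.

```python
from typing import Sequence

def fraction_outside_train_range(train_y: Sequence[float], test_y: Sequence[float]) -> float:
    """Share of test targets lying outside [min(train_y), max(train_y)].

    A value near 0 means the split only asks the model to interpolate in
    property space, however novel the test structures are.
    """
    lo, hi = min(train_y), max(train_y)
    outside = sum(1 for y in test_y if y < lo or y > hi)
    return outside / len(test_y)

print(fraction_outside_train_range([1.0, 2.0, 3.0], [0.5, 1.5, 2.5, 3.5]))  # 0.5
```

A structure-based split whose test labels all lie within the training range would score 0.0 here. Such a split probes structural novelty but not extrapolation in property values.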

Established evaluation paradigms include OOD performance testing (with test data), OOD performance prediction (without test data), and OOD intrinsic property characterization. For molecular property prediction, metrics like mean absolute error (MAE) and coefficient of determination (R²) are commonly employed, with R² being particularly valuable as a dimensionless accuracy measure that can be compared across different OOD test sets [24].
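Both metrics are simple to compute. The sketch below, with hypothetical function names, also shows why R² is the one to compare across test sets. Rescaling the targets, for instance by a change of units, rescales MAE but leaves R² unchanged.

```python
from typing import Sequence

def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute error, in the units of the target."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def r2(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination, 1 - SS_res / SS_tot (dimensionless)."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    if ss_tot == 0.0:
        raise ValueError("R² is undefined when all targets are identical")
    return 1.0 - ss_res / ss_tot

y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 4.5]
scaled_true = [10 * y for y in y_true]
scaled_pred = [10 * y for y in y_pred]
print(mae(y_true, y_pred), mae(scaled_true, scaled_pred))  # MAE grows tenfold
print(r2(y_true, y_pred), r2(scaled_true, scaled_pred))    # R² stays at about 0.85
```

R² on an OOD test set can be negative. This happens when the model does worse than simply predicting that test set's mean.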

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for OOD Molecular Property Prediction

| Research Reagent | Function | Example Implementation |
|---|---|---|
| Learnable Set Function (Mixer) | Interpolates labeled and unlabeled distributions | Permutation-invariant function μ_λ [23] |
| Graph Neural Networks | Encodes molecular structure information | GIN, Pre-GNN [25] |
| Self-Attention Encoders | Captures property-shared features | Transformer-based architectures [25] |
| Multi-Mode Augmentation | Enhances sample diversity | Random + mixed augmentation strategies [26] |
| Meta-Learning Framework | Enables few-shot adaptation | MAML-inspired algorithms [23] [25] |
| Uncertainty-Aware Selection | Improves pseudo-label quality | Confidence-based filtering [26] |

The integration of meta-learning strategies with unlabeled data densification represents a promising direction for addressing the fundamental challenge of OOD generalization in molecular property prediction. These approaches actively use abundant unlabeled molecular data to expand the effective training distribution. In doing so, they mitigate the covariate shift problems that plague traditional supervised methods. The results reported in the studies summarized above indicate that methods such as meta-learning densification and heterogeneous meta-learning outperform conventional approaches. The advantage is clearest in challenging few-shot scenarios and under significant distribution shifts.

Future research directions should focus on developing more sophisticated interpolation strategies, improving the scalability of meta-learning approaches to extremely large unlabeled datasets, and establishing more rigorous OOD evaluation benchmarks that accurately distinguish between interpolation and true extrapolation. As these methodologies mature, they hold significant potential for accelerating drug discovery by providing more reliable predictions for novel compound classes that diverge from established chemical spaces.

Molecular property prediction stands as a critical task in computational chemistry and drug discovery, where accurately forecasting properties like toxicity, solubility, or bioactivity can dramatically accelerate materials research and therapeutic development. Traditional Graph Neural Networks (GNNs) have emerged as powerful tools for this task, operating directly on the graph structure of molecules where atoms represent nodes and bonds represent edges. However, these standard models face significant challenges in real-world applications where they must generalize to molecular structures and property values beyond their training distribution—a capability known as out-of-distribution (OOD) robustness.

The limitations of conventional GNNs have spurred interest in more advanced architectures that better capture the physical and geometric principles governing molecular systems. Among these, E(3)-equivariant architectures and hybrid models have shown particular promise. E(3)-equivariant Graph Neural Networks explicitly embed the symmetries of 3D Euclidean space—translation, rotation, and reflection—directly into their architecture, ensuring predictions transform consistently with molecular orientation. Hybrid models combine complementary architectural paradigms, such as integrating transformer components with GNNs or incorporating quantum-inspired elements, to overcome limitations of single-architecture approaches.

This guide provides a systematic comparison of these advanced architectures, focusing on their performance, robustness, and applicability across diverse molecular prediction tasks, with particular emphasis on their OOD generalization capabilities—a crucial consideration for real-world deployment where novel molecular scaffolds are frequently encountered.

Theoretical Foundations: From Invariance to Equivariance

The Geometric Principles of E(3)-Equivariance

E(3)-equivariant networks fundamentally differ from standard GNNs through their explicit handling of 3D geometric symmetries. The E(3) group encompasses all translations, rotations, and reflections in 3D Euclidean space. For molecular systems, where properties should not depend on arbitrary orientation or placement in space, leveraging these symmetries is crucial for physical meaningfulness and data efficiency.

Equivariance refers to the property that when the input to a network undergoes a transformation (e.g., rotation), the representation at each layer transforms in a corresponding way. Formally, a function 𝑓: 𝑋 → 𝑌 is equivariant to a group 𝐺 if for any transformation 𝑔 ∈ 𝐺, 𝑓(𝑔·𝑥) = 𝑔·𝑓(𝑥). This contrasts with invariance, where 𝑓(𝑔·𝑥) = 𝑓(𝑥). For molecular systems, invariance is desired for scalar outputs like energy, while equivariance is essential for vector or tensor outputs like forces or dipole moments [28].
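The distinction can be checked numerically with two hypothetical toy functions of atomic coordinates. The first is an inverse-distance "energy", which depends only on interatomic distances and is therefore E(3)-invariant. The second is a centered dipole-like vector $\sum_i q_i (r_i - \bar{r})$, which rotates and reflects with the molecule but ignores translations. Neither is a physical model. They only illustrate $f(g\cdot x) = f(x)$ versus $f(g\cdot x) = g\cdot f(x)$.

```python
import math
from typing import List, Sequence

Vec3 = List[float]

def rigid_transform(R: Sequence[Sequence[float]], t: Sequence[float],
                    points: Sequence[Sequence[float]]) -> List[Vec3]:
    """Apply x -> R x + t to each point (R orthogonal: rotation and/or reflection)."""
    return [[sum(R[r][k] * p[k] for k in range(3)) + t[r] for r in range(3)] for p in points]

def toy_energy(coords: Sequence[Sequence[float]]) -> float:
    """Sum of 1/d_ij over atom pairs: depends on distances only, hence invariant."""
    e = 0.0
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            e += 1.0 / math.dist(coords[i], coords[j])
    return e

def toy_dipole(coords: Sequence[Sequence[float]], charges: Sequence[float]) -> Vec3:
    """sum_i q_i (r_i - centroid): rotates with the molecule, unchanged by translation."""
    n = len(coords)
    centroid = [sum(p[k] for p in coords) / n for k in range(3)]
    return [sum(q * (p[k] - centroid[k]) for p, q in zip(coords, charges)) for k in range(3)]

coords = [[0.0, 0.0, 0.0], [0.96, 0.0, 0.0], [-0.24, 0.93, 0.3]]
charges = [-0.8, 0.4, 0.4]
theta = 0.6
# Rotation about z combined with a reflection through the xy-plane.
R = [[math.cos(theta), -math.sin(theta), 0.0],
     [math.sin(theta), math.cos(theta), 0.0],
     [0.0, 0.0, -1.0]]
t = [1.0, -2.0, 0.5]
moved = rigid_transform(R, t, coords)

print(toy_energy(coords), toy_energy(moved))  # equal up to rounding: invariance
rotated_dipole = rigid_transform(R, [0.0, 0.0, 0.0], [toy_dipole(coords, charges)])[0]
print(toy_dipole(moved, charges), rotated_dipole)  # equal up to rounding: equivariance
```

For the dipole, the group element acts on the output through $R$ alone, because the translation cancels when the centroid is subtracted.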

Standard GNNs that operate on 3D coordinates typically obtain invariance in one of two ways. They either approximate it through rotational data augmentation, or they restrict their inputs to invariant quantities such as interatomic distances. Augmentation is computationally inefficient and only approximate. Invariant-only inputs may fail to capture important geometric dependencies, such as directional information. E(3)-equivariant models like EGNNs (E(n) Equivariant Graph Neural Networks) instead build equivariance directly into their operations. They do so through carefully designed coordinate updates and message-passing schemes that preserve transformation properties across layers [29] [30].
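A single EGNN-style layer can be sketched from the published E(n)-equivariant update rules, with edge attributes omitted:

- messages $m_{ij} = \phi_e(h_i, h_j, \lVert x_i - x_j\rVert^2)$;
- coordinate update $x_i' = x_i + C\sum_{j\neq i}(x_i - x_j)\,\phi_x(m_{ij})$, with $C = 1/(N-1)$;
- feature update $h_i' = \phi_h(h_i, \sum_j m_{ij})$.

For brevity, the learned MLPs $\phi_e$, $\phi_x$, $\phi_h$ are replaced by fixed hand-picked functions. Node features are scalars and the graph is fully connected. These simplifications are assumptions, and the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = List[float]

def egnn_layer(h: Sequence[float], x: Sequence[Sequence[float]]) -> Tuple[List[float], List[Vec3]]:
    """One EGNN-style layer on a fully connected graph with scalar node features."""
    n = len(h)
    c = 1.0 / (n - 1)  # normalization C = 1/(N - 1)
    new_h, new_x = [], []
    for i in range(n):
        shift = [0.0, 0.0, 0.0]
        m_i = 0.0
        for j in range(n):
            if j == i:
                continue
            diff = [x[i][k] - x[j][k] for k in range(3)]
            d2 = sum(v * v for v in diff)        # invariant squared distance
            m_ij = math.tanh(h[i] * h[j] - d2)   # fixed stand-in for phi_e
            weight = 0.1 * m_ij                  # fixed stand-in for phi_x
            shift = [s + weight * dv for s, dv in zip(shift, diff)]
            m_i += m_ij                          # aggregate incoming messages
        new_x.append([x[i][k] + c * shift[k] for k in range(3)])
        new_h.append(h[i] + m_i)                 # fixed stand-in for phi_h
    return new_h, new_x

def rigid_transform(R: Sequence[Sequence[float]], t: Sequence[float],
                    points: Sequence[Sequence[float]]) -> List[Vec3]:
    """Apply x -> R x + t to each point (R orthogonal)."""
    return [[sum(R[r][k] * p[k] for k in range(3)) + t[r] for r in range(3)] for p in points]

h = [0.5, -0.3, 1.2]
x = [[0.0, 0.0, 0.0], [1.0, 0.5, 0.0], [0.2, 1.1, 0.7]]
R = [[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, -1.0]]  # 90° turn about z plus a reflection
t = [3.0, -2.0, 1.0]

h1, x1 = egnn_layer(h, x)
h2, x2 = egnn_layer(h, rigid_transform(R, t, x))
# Both deviations are rounding-level: features are invariant, coordinates equivariant.
print(max(abs(a - b) for a, b in zip(h1, h2)))
print(max(abs(a - b) for p, q in zip(x2, rigid_transform(R, t, x1)) for a, b in zip(p, q)))
```

Messages depend on coordinates only through squared distances. As a result, the features stay invariant and the coordinate updates stay equivariant, including under reflections, without any data augmentation.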

Hybrid Architecture Paradigms

Hybrid architectures seek to combine the strengths of multiple approaches to overcome limitations of individual paradigms:

- Graph Transformer Hybrids: Integrate the global receptive field of transformers with the structural inductive biases of GNNs, using attention mechanisms to capture long-range dependencies in molecular graphs [31] [32].

- Quantum-Classical Hybrids: Incorporate quantum-inspired components or quantum neural networks to enhance modeling of complex quantum chemical relationships, particularly valuable for data-sparse scenarios [33].

- Multi-Scale and Multi-Fidelity Models: Combine information from different levels of theory (e.g., DFT calculations with experimental data) or different molecular representations to improve generalization [31] [34].
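The graph-transformer pattern, local message passing plus global attention, can be sketched as a single layer. This minimal illustration rests on several assumptions: identity query/key/value projections instead of learned ones, single-head attention, mean aggregation over neighbors, and a plain residual sum. It is not the specific architecture of [31] or [32], and the function names are hypothetical.

```python
import math
from typing import Dict, List, Sequence

Vector = List[float]

def local_message_passing(h: Sequence[Sequence[float]], adj: Dict[int, List[int]]) -> List[Vector]:
    """Mean aggregation over graph neighbors: the GNN's structural inductive bias."""
    out = []
    for i, hi in enumerate(h):
        nbrs = adj.get(i, [])
        if not nbrs:
            out.append([0.0] * len(hi))
            continue
        out.append([sum(h[j][k] for j in nbrs) / len(nbrs) for k in range(len(hi))])
    return out

def global_attention(h: Sequence[Sequence[float]]) -> List[Vector]:
    """Scaled dot-product self-attention over all nodes, ignoring graph edges."""
    d = len(h[0])
    out = []
    for hi in h:
        scores = [sum(a * b for a, b in zip(hi, hj)) / math.sqrt(d) for hj in h]
        top = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - top) for s in scores]
        z = sum(weights)
        out.append([sum(w * hj[k] for w, hj in zip(weights, h)) / z for k in range(d)])
    return out

def hybrid_layer(h: Sequence[Sequence[float]], adj: Dict[int, List[int]],
                 use_attention: bool = True) -> List[Vector]:
    """Residual sum of node features, local messages, and (optionally) global attention."""
    local = local_message_passing(h, adj)
    glob = global_attention(h) if use_attention else [[0.0] * len(v) for v in h]
    return [[a + b + c for a, b, c in zip(hi, li, gi)] for hi, li, gi in zip(h, local, glob)]

h = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
adj = {0: [1], 1: [0], 2: [3], 3: [2]}  # two disconnected components
h_mod = [list(v) for v in h]
h_mod[3] = [2.0, 2.0]  # perturb a node far from node 0
print(hybrid_layer(h, adj)[0] != hybrid_layer(h_mod, adj)[0])  # True
print(hybrid_layer(h, adj, use_attention=False)[0]
      == hybrid_layer(h_mod, adj, use_attention=False)[0])  # True
```

Node 0 and node 3 lie in different connected components, so only the attention path lets a change at node 3 reach node 0. This is the long-range dependence the hybrid design is meant to supply.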

These hybrid approaches aim to balance the expressive power of large, general models with the sample efficiency of specialized architectures incorporating domain knowledge.

Architectural Comparison: Capabilities and Trade-offs