Beyond Trial and Error: Avoiding Critical Hyperparameter Tuning Mistakes in Chemical Machine Learning

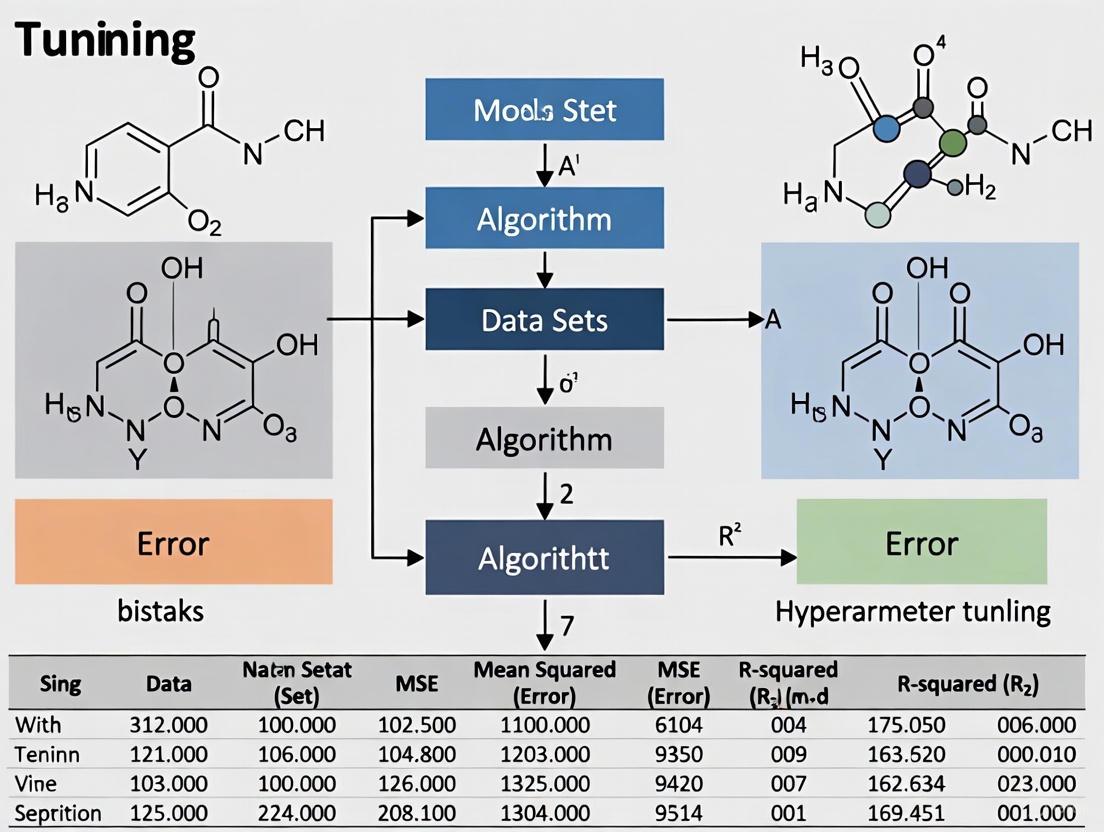

Hyperparameter tuning is a critical, yet often overlooked, step in developing robust machine learning models for chemical and pharmaceutical research.

Abstract

Hyperparameter tuning is a critical, yet often overlooked, step in developing robust machine learning models for chemical and pharmaceutical research. Neglecting this process leads to suboptimal models, flawed predictions, and wasted resources in high-stakes areas like drug discovery and molecular property prediction. This article provides a comprehensive guide for chemists and researchers, detailing common pitfalls in hyperparameter optimization (HPO) for chemical ML. It explores foundational concepts, compares modern tuning methodologies like Bayesian optimization and Hyperband, and offers practical troubleshooting strategies. By synthesizing the latest research, this guide aims to equip scientists with the knowledge to build more accurate, reliable, and efficient predictive models, ultimately accelerating biomedical innovation.

Why Hyperparameter Tuning is Non-Negotiable in Chemical ML

Frequently Asked Questions

Q1: What is the fundamental difference between a model parameter and a hyperparameter?

A model parameter is an internal variable that the model learns automatically from the training data. Examples include the weights and biases in a neural network or the coefficients in a linear regression [1] [2] [3]. In contrast, a hyperparameter is a configuration variable that is set before the training process begins and cannot be learned from the data [1] [4] [5]. They control the learning process itself.
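To make the distinction concrete, here is a minimal sketch using closed-form ridge regression on synthetic data. The regularization strength `alpha` is a hyperparameter fixed before fitting, while the weight vector is a parameter learned from the data. The data, the `fit_ridge` helper, and the `alpha` values are illustrative assumptions and do not come from the cited sources.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))             # 50 synthetic samples, 3 descriptors
true_w = np.array([1.5, -2.0, 0.5])
y = X @ true_w + rng.normal(scale=0.1, size=50)


def fit_ridge(X, y, alpha):
    """Return the weights learned for a given regularization strength."""
    n_features = X.shape[1]
    # Closed-form ridge solution: (X^T X + alpha * I)^-1 X^T y
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)


# alpha is chosen by the researcher (hyperparameter);
# the returned weights are learned from the data (parameters).
w_weak = fit_ridge(X, y, alpha=0.01)
w_strong = fit_ridge(X, y, alpha=100.0)

print("weights with alpha=0.01:", np.round(w_weak, 2))
print("weights with alpha=100: ", np.round(w_strong, 2))
```

Changing `alpha` changes which weights the fit arrives at, but the weights themselves are never set by hand.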

Q2: Can you provide examples relevant to chemical machine learning?

- Model Parameters (Learned from data): The specific weight coefficients in a model predicting reaction yields [3] or the learned patterns in a Graph Neural Network that maps molecular structure to a property like solubility [6].

- Hyperparameters (Set by the researcher): The learning rate for the optimization algorithm, the number of hidden layers in a neural network, the number of trees in a Random Forest model, or the regularization strength used to prevent overfitting [1] [4] [2]. In cheminformatics, the architecture of a Graph Neural Network (GNN) is a critical hyperparameter [6].

Q3: Why is correctly distinguishing them crucial in chemical ML projects?

Misunderstanding these concepts leads to fundamental errors. Parameters must be learned from the training data alone; adjusting them by hand, particularly in response to validation or test results, risks data leakage and undermines the model's validity. Conversely, failing to optimize hyperparameters results in suboptimal model performance. Proper hyperparameter tuning is essential to prevent overfitting, especially in low-data chemical regimes, and to achieve a model that generalizes well to new, unseen molecules or reactions [7].

Troubleshooting Guides

Problem: Model is Overfitting to Training Data

Description: The model performs exceptionally well on your training set of chemical reactions but fails to predict outcomes for new, unseen reactions accurately.

Diagnosis: This is a classic sign of overfitting, where the model has learned the noise in the training data rather than the underlying chemical principles.

Solution:

- Implement Hyperparameter Tuning: Use automated methods to find optimal regularization hyperparameters.

- Leverage Bayesian Optimization: This is a highly efficient method for tuning hyperparameters in computationally expensive chemical ML models [4] [7]. It builds a probabilistic model of the objective function (e.g., validation score) and uses it to select the most promising hyperparameters to evaluate next.

- Incorporate Extrapolation Metrics: For chemical models that need to predict beyond the training data space, use a hyperparameter optimization objective that penalizes overfitting in both interpolation and extrapolation, as demonstrated in automated workflows like ROBERT [7].

Problem: Inefficient or Failed Reaction Optimization Campaign

Description: A high-throughput experimentation (HTE) campaign for reaction optimization is not converging to high-yielding conditions efficiently.

Diagnosis: The algorithmic hyperparameters guiding the experimental search (e.g., in a Bayesian Optimization framework) may be poorly chosen, or the search space is too large for naive methods.

Solution:

- Adopt Scalable Multi-Objective Algorithms: For large-scale parallel experiments (e.g., 96-well plates), use acquisition functions like q-NParEgo or Thompson sampling with hypervolume improvement (TS-HVI) that are designed for large batch sizes [8].

- Define the Search Space Carefully: Represent the reaction condition space as a discrete combinatorial set of plausible conditions (solvents, ligands, catalysts, temperatures) guided by chemical domain knowledge to filter out impractical combinations [8].

- Use a Robust Workflow: Implement a full ML-driven workflow that includes initial quasi-random sampling (e.g., Sobol sampling) to explore the space, followed by iterative cycles of model training and guided experiment selection [8].
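As a rough illustration of the search-space step, the sketch below enumerates a discrete combinatorial condition space, filters it with one domain-knowledge rule, and draws an initial batch. The reagent labels, the approximate boiling points, and the `is_plausible` rule are hypothetical. Uniform random sampling stands in for Sobol sampling so that the example needs only the standard library.

```python
import itertools
import random

solvents = ["DMF", "THF", "toluene", "MeCN"]
ligands = ["L1", "L2", "L3"]              # placeholder ligand labels
catalysts = ["Pd(OAc)2", "NiCl2"]
temperatures_c = [25, 60, 100]

# Approximate boiling points (deg C), used only to illustrate a filter rule.
boiling_point_c = {"DMF": 153, "THF": 66, "toluene": 111, "MeCN": 82}


def is_plausible(solvent, ligand, catalyst, temp_c):
    """Reject conditions that heat a solvent above its boiling point."""
    return temp_c < boiling_point_c[solvent]


# Full combinatorial space, reduced to chemically plausible conditions.
search_space = [
    cond
    for cond in itertools.product(solvents, ligands, catalysts, temperatures_c)
    if is_plausible(*cond)
]

# Initial exploratory batch (random sampling as a stand-in for Sobol).
random.seed(0)
initial_batch = random.sample(search_space, k=8)

print(len(search_space), "plausible conditions")
for cond in initial_batch:
    print(cond)
```

In a real campaign the batch size would match the plate format, and later batches would be chosen by the acquisition function rather than at random.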

Definitions and Comparisons

Table 1: Core Differences Between Model Parameters and Hyperparameters

| Aspect | Model Parameters | Model Hyperparameters |
|---|---|---|
| Definition | Variables learned from the data during training [1] [2] | Configuration variables set before training begins [1] [4] |
| Purpose | Make predictions on new data [1] [3] | Control the learning process and model structure [1] [2] |
| Determined By | Optimization algorithms (e.g., Gradient Descent) [1] | The researcher via hyperparameter tuning [1] [5] |
| Examples in ML | Weights & biases in a Neural Network; Coefficients in Linear Regression [1] [3] | Learning rate, number of layers, number of estimators [1] [4] |
| Examples in Chemistry | Learned structure-property relationships in a GNN [6] | GNN architecture, number of trees in a Random Forest model [6] [7] |

Table 2: Common Categories of Hyperparameters in Chemical Machine Learning

| Category | Description | Examples |
|---|---|---|
| Architecture Hyperparameters | Control the model's structure and complexity [2]. | Number of layers in a neural network, number of neurons per layer, number of trees in a Random Forest [1] [2]. |
| Optimization Hyperparameters | Govern how the model is updated during training [2]. | Learning rate, batch size, number of iterations/epochs [1] [4] [2]. |
| Regularization Hyperparameters | Used to prevent overfitting by adding constraints [2]. | Dropout rate, L1/L2 regularization strength [4] [2]. |

Experimental Protocols & Workflows

Protocol: Hyperparameter Optimization for Low-Data Chemical Models

This protocol is adapted from workflows designed to make non-linear models competitive with linear regression in low-data regimes [7].

1. Data Preparation: Split the data into an external test set (e.g., 20% with even distribution of target values) and a training/validation set. The test set is held back until the final model is selected.

2. Define the Objective Function: Create a combined metric that assesses both interpolation and extrapolation performance to aggressively combat overfitting. For example, use a combined Root Mean Squared Error (RMSE) calculated from:

   - Interpolation: A 10-times repeated 5-fold cross-validation.

   - Extrapolation: A selective sorted 5-fold CV that tests performance on the highest and lowest data points.

3. Execute Bayesian Optimization: Run Bayesian optimization (e.g., via Optuna or Ray Tune) to explore the hyperparameter space. The objective is to minimize the combined RMSE metric from step 2.

4. Final Evaluation: Train a final model with the best-found hyperparameters on the entire training/validation set and evaluate it once on the held-out test set.
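The combined objective can be sketched as follows. This is a simplified illustration, not the ROBERT implementation: the model is closed-form ridge regression, the data are synthetic, the two RMSE terms are simply averaged (an assumption), and a small grid over `alpha` replaces Bayesian optimization to keep the example dependency-light. All function names are hypothetical.

```python
import numpy as np


def fit_ridge(X, y, alpha):
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)


def rmse(y_true, y_pred):
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def fold_rmse(X, y, val_idx, alpha):
    """Train on everything except val_idx and return the RMSE on val_idx."""
    train_mask = np.ones(len(y), dtype=bool)
    train_mask[val_idx] = False
    w = fit_ridge(X[train_mask], y[train_mask], alpha)
    return rmse(y[val_idx], X[val_idx] @ w)


def interpolation_rmse(X, y, alpha, n_repeats=10, n_folds=5, seed=0):
    """Repeated k-fold CV with random (shuffled) folds."""
    rng = np.random.default_rng(seed)
    scores = []
    for _ in range(n_repeats):
        folds = np.array_split(rng.permutation(len(y)), n_folds)
        scores.extend(fold_rmse(X, y, f, alpha) for f in folds)
    return float(np.mean(scores))


def extrapolation_rmse(X, y, alpha, n_folds=5):
    """Sorted CV: validate only on the lowest-target and highest-target folds."""
    folds = np.array_split(np.argsort(y), n_folds)
    return float(np.mean([fold_rmse(X, y, folds[0], alpha),
                          fold_rmse(X, y, folds[-1], alpha)]))


def combined_rmse(X, y, alpha):
    # Assumption: equal weighting of the two terms.
    return 0.5 * (interpolation_rmse(X, y, alpha) + extrapolation_rmse(X, y, alpha))


# Synthetic training/validation data (the external test set is held out elsewhere).
rng = np.random.default_rng(1)
X = rng.normal(size=(40, 5))
y = X @ np.array([2.0, -1.0, 0.0, 0.5, 0.0]) + rng.normal(scale=0.3, size=40)

candidate_alphas = [0.01, 0.1, 1.0, 10.0, 100.0]
scores = {a: combined_rmse(X, y, a) for a in candidate_alphas}
best_alpha = min(scores, key=scores.get)
print("best alpha:", best_alpha, "combined RMSE:", round(scores[best_alpha], 3))
```

In a real workflow, `combined_rmse` would be the function handed to the optimizer, and the held-out test set would be touched only once, after `best_alpha` is fixed.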

Workflow: Machine Learning-Driven Reaction Optimization

This workflow outlines the process for using ML to guide high-throughput experimentation, as reported in platforms like Minerva [8].

The Scientist's Toolkit

Table 3: Essential Software Tools for Hyperparameter Optimization

| Tool Name | Type | Key Features | Best For |
|---|---|---|---|
| Optuna [9] [4] | Open-source Python library | Efficient sampling and pruning algorithms; defines search space with Python syntax (conditionals, loops); easy to use [9]. | Users who want a modern, flexible, and highly customizable tuning framework. |
| Ray Tune [9] | Open-source Python library | Integrates with many other optimization libraries (Ax, HyperOpt); scales without code changes; supports any ML framework [9]. | Large-scale distributed tuning and integrating with the Ray ecosystem. |
| HyperOpt [9] | Open-source Python library | Optimizes over complex search spaces (real-valued, discrete, conditional); uses Tree of Parzen Estimators (TPE) [9]. | Problems with complicated, conditional parameter spaces. |
| Scikit-Learn GridSearchCV/RandomizedSearchCV [4] [5] | Built-in Scikit-Learn methods | Simple to implement; integrated with the scikit-learn ecosystem; RandomizedSearchCV is faster than exhaustive grid search [4] [5]. | Quick and simple tuning for small to medium-sized datasets and search spaces. |

Troubleshooting Guides

Guide 1: Addressing Performance Degradation in Multi-Task Learning

Problem: My multi-task graph neural network (GNN) is performing worse than single-task models, especially on tasks with limited data.

Explanation: This is a classic symptom of Negative Transfer (NT), where parameter updates from one task degrade performance on another. This occurs due to task imbalance, gradient conflicts, or mismatches in data distribution and optimal learning rates across tasks [10].

Solution: Implement Adaptive Checkpointing with Specialization (ACS) [10].

Methodology:

- Architecture: Use a single, shared GNN as a backbone to learn general molecular representations. Attach separate, task-specific Multi-Layer Perceptron (MLP) heads for each property prediction task [10].

- Training: Monitor the validation loss for each task individually throughout the training process.

- Checkpointing: Save a checkpoint of the model (both the shared backbone and the task-specific head) whenever the validation loss for a particular task reaches a new minimum.

- Output: After training, you will have a specialized backbone-head pair for each task, effectively mitigating the interference from unrelated tasks [10].
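The per-task checkpointing logic can be sketched without any deep learning framework. In the example below the "model" is a plain dictionary and the validation losses are hard-coded, both purely hypothetical stand-ins for a real GNN backbone, MLP heads, and training loop. Only the bookkeeping mirrors the steps described above.

```python
import copy

tasks = ["tox", "solubility"]   # hypothetical task names

# Stand-in for real weights; a real setup would hold GNN and MLP state dicts.
model = {"backbone": {"step": 0}, "heads": {t: {"step": 0} for t in tasks}}

# Hypothetical per-epoch validation losses, as if produced by a training loop.
val_losses = {
    "tox":        [0.90, 0.70, 0.65, 0.72, 0.80],
    "solubility": [1.20, 1.00, 0.95, 0.85, 0.88],
}

best_loss = {t: float("inf") for t in tasks}
checkpoints = {}

for epoch in range(5):
    # ... one epoch of multi-task training would update the model here ...
    model["backbone"]["step"] = epoch
    for t in tasks:
        model["heads"][t]["step"] = epoch

    # Monitor each task separately; checkpoint on a new per-task minimum.
    for t in tasks:
        loss = val_losses[t][epoch]
        if loss < best_loss[t]:
            best_loss[t] = loss
            checkpoints[t] = {
                "epoch": epoch,
                "backbone": copy.deepcopy(model["backbone"]),
                "head": copy.deepcopy(model["heads"][t]),
            }

for t in tasks:
    print(t, "-> best epoch", checkpoints[t]["epoch"], "loss", best_loss[t])
```

Each task ends up with its own backbone-head snapshot, taken at the epoch where that task's validation loss was lowest.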

Guide 2: Handling Highly Imbalanced Drug Discovery Datasets

Problem: My model is biased towards the majority class (e.g., non-antibacterial compounds) and fails to identify the rare, active candidates I'm interested in.

Explanation: Standard machine learning models often ignore the minority class in imbalanced datasets, treating them as noise. This is a common issue in drug discovery where active compounds are rare [11].

Solution: Apply the Class Imbalance Learning with Bayesian Optimization (CILBO) pipeline [11].

Methodology:

- Algorithm Selection: Start with an interpretable model like Random Forest.

- Feature Representation: Use RDKit fingerprints to generate topological structure representations of molecules [11].

- Hyperparameter Optimization: Use Bayesian Optimization to find the best hyperparameters. Crucially, include `class_weight` (to penalize mistakes on the minority class) and `sampling_strategy` (e.g., for oversampling) among the parameters to optimize [11].

- Validation: Employ rigorous k-fold cross-validation (e.g., 30 repetitions of 5-fold) to evaluate the model's performance robustly [11].
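To give a feel for what a class-weight setting does, the sketch below computes the common "balanced" heuristic, `n_samples / (n_classes * class_count)`, which is the formula scikit-learn applies for `class_weight="balanced"`. The label counts are invented for illustration. In a CILBO-style search this would be one candidate value among several, not necessarily the one the optimizer selects.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class inversely to its frequency."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * n) for cls, n in counts.items()}


# Hypothetical screening set: 95 inactive (0) and 5 active (1) compounds.
labels = [0] * 95 + [1] * 5
weights = balanced_class_weights(labels)

print({cls: round(w, 3) for cls, w in weights.items()})
```

With these counts, a misclassified active compound is penalized about 19 times more heavily than a misclassified inactive one.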

Guide 3: Improving Generalization on Molecules with 'Activity Cliffs'

Problem: My model makes inaccurate predictions for molecules that have high structural similarity but vastly different properties (activity cliffs).

Explanation: Standard training methods struggle to learn distinctive representations for molecules that form activity cliffs, as they are often treated too similarly during the learning process [12].

Solution: Reformulate the problem using a curriculum-aware training approach [12].

Methodology:

- Task Reformulation: Frame molecular property prediction as a node classification problem on a molecular graph.

- Specialized Tasks: Introduce two auxiliary learning tasks:

  - A node-level task that forces the model to discriminate between structurally similar molecules with different properties.

  - An edge-level task that focuses on learning the nuanced relationships between molecular substructures that lead to property changes [12].

- Integration: This method can be integrated with various base GNN models, whether they are pre-trained or randomly initialized [12].

Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical hyperparameters to optimize for a Graph Neural Network applied to molecular property prediction?

The performance of GNNs is highly sensitive to architectural and training hyperparameters. Key areas to focus on include [13] [6]:

- Graph Architecture: Message-passing depth, aggregation function (sum, mean, max), and node/edge feature representation.

- Network Architecture: The choice of activation functions and the number of layers and units in the downstream MLP predictor.

- Optimization: Learning rate, batch size, and the choice of optimizer (e.g., Adam, SGD).

Automated Hyperparameter Optimization (HPO) and Neural Architecture Search (NAS) are crucial but computationally expensive techniques for tackling this complexity [6].

FAQ 2: I have very little labeled data for my molecular property of interest. What are my options?

In this "ultra-low data regime," standard single-task learning is likely to fail [14]. Your best options are:

- Multi-Task Learning (MTL): Leverage correlations with other, better-labeled molecular properties to improve predictive performance. The ACS method is particularly designed for this scenario, as it mitigates negative transfer from task imbalance [10].

- Advanced Architectures: Consider models like ASE-Mol, which uses a Mixture-of-Experts (MoE) approach to focus on predictive molecular substructures, improving performance on imbalanced data [15].

- Note of Caution: A systematic study found that basic AI models often fail to produce acceptable results on small, imbalanced clinical/chemical datasets, even after hyperparameter tuning. This underscores the need for specialized techniques like MTL rather than relying on standard models [14].

FAQ 3: Beyond hyperparameter tuning, what other strategies can improve my model's robustness and interpretability?

- Robustness: To handle activity cliffs, adopt the curriculum-aware training method that creates auxiliary tasks to help the model discriminate between deceptively similar molecules [12].

- Interpretability: The CILBO pipeline uses Random Forest models with molecular fingerprints, which are generally easier to interpret than deep learning models. The ASE-Mol framework also provides interpretability by design, as it identifies and leverages positive and negative substructures (e.g., via BRICS decomposition) that contribute to a molecular property [11] [15].

Table 1: Comparative Performance of Optimization Techniques on Molecular Property Benchmarks (ROC-AUC)

| Technique | Benchmark Dataset | Reported Performance | Key Advantage |
|---|---|---|---|
| Adaptive Checkpointing with Specialization (ACS) [10] | ClinTox | 15.3% improvement over Single-Task Learning (STL) | Mitigates negative transfer in multi-task learning with imbalanced data. |
| Class Imbalance Learning with Bayesian Optimization (CILBO) [11] | Antibacterial Discovery | ROC-AUC: 0.99 (on test set) | Effectively handles class imbalance; achieves performance comparable to a complex D-MPNN GNN. |
| ACS [10] | Multiple MoleculeNet Benchmarks (ClinTox, SIDER, Tox21) | 11.5% average improvement over other node-centric message passing methods. | Provides consistent gains across diverse property prediction tasks. |

Table 2: The Scientist's Toolkit: Essential Reagents & Computational Resources

| Item / Resource | Function / Role in Experiment |
|---|---|
| RDKit Fingerprint | A topological representation of a molecule's structure, used as input features for machine learning models [11]. |
| Graph Neural Network (GNN) | The core architecture for modeling molecules as graphs, where atoms are nodes and bonds are edges [10] [6]. |
| Bayesian Optimization | A sequential design strategy for globally optimizing black-box functions (like model performance), used for efficient hyperparameter tuning [11]. |
| Multi-Layer Perceptron (MLP) Head | A task-specific neural network module attached to a shared backbone, which makes the final property prediction [10]. |
| Message Passing Neural Network (MPNN) | A specific framework for GNNs that operates by passing and updating messages between nodes in a graph [10]. |

FAQs: Navigating Hyperparameter Challenges

FAQ 1: Does extensive Hyperparameter Optimization (HPO) always lead to better models in cheminformatics?

No. Contrary to intuition, extensive HPO does not always yield better models and can even be detrimental. A recent study on solubility prediction found that, when models were compared using the same statistical measures, HPO did not consistently produce superior models, likely because of overfitting to the validation set. The research showed that a set of sensible, pre-selected hyperparameters could achieve similar predictive performance while reducing computational effort by a factor of approximately 10,000 [16]. This suggests that for many applications, especially with smaller datasets, the risk of overfitting via HPO can outweigh its benefits.

FAQ 2: What is the relationship between model architecture selection and hyperparameter tuning?

The choice of model architecture and hyperparameter tuning are deeply intertwined. The performance of a model is highly sensitive to both its architectural choices and its hyperparameters [6]. However, it's crucial to recognize that a more complex architecture does not automatically guarantee better performance and often requires more intensive HPO. For instance, while nested graph networks (e.g., ALIGNN) can capture critical structural information like bond angles, they also significantly increase the number of trainable parameters and training costs [17]. In some cases, simpler models with well-chosen preset hyperparameters can be more efficient and effective, highlighting a trade-off between architectural complexity and tuning effort [16] [18].

FAQ 3: Which hyperparameters are most critical for avoiding over-smoothing in deep Graph Neural Networks (GNNs)?

Building deeper GNNs for complex molecular representations often leads to the over-smoothing problem, where node representations become indistinguishable. Key architectural strategies and their associated hyperparameters to combat this include [17]:

- Dense Connectivity: The density of connections between layers is a critical design choice. Architectures like DenseGNN use Dense Connectivity Networks (DCN) to create more direct and dense information propagation paths, which helps reduce information loss and supports the construction of very deep networks.

- Number of Graph Convolution (GC) Layers: While increasing GC layers is necessary for capturing wider molecular contexts, it is also the primary cause of over-smoothing. Using DCN and residual connection strategies allows for building deeper GNNs (e.g., 5 GC processing blocks) without the typical performance penalty.

- Local Structure Order Parameters Embedding (LOPE): The use of LOPE optimizes atomic embeddings and allows the model to train efficiently with a minimal level of edge connections, which helps maintain accuracy while reducing computational costs.

Troubleshooting Guides

Problem 1: Model performance is highly sensitive to small changes in a hyperparameter.

- The Culprit: An unfit range of values during HPO. Using a grid that is too fine or that does not cover the parameter's effective range can lead to misleading conclusions about model stability and performance [19].

- Solution:

  - Initial Coarse Search: Start with a coarse-grained grid over a large range of values to identify regions where the model performance is most sensitive [19].

  - Fine-Tuning: Once interesting areas are identified, perform a second, finer-grained search within that narrower range to pinpoint the optimal value.

  - Sensitivity Analysis: Always plot the model's performance metric against the hyperparameter values. This visual inspection helps understand the parameter's influence and confirms that the chosen value lies in a stable, optimal region [19].
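A coarse-to-fine search over a single hyperparameter might look like the sketch below. The `validation_error` function is a toy stand-in for training and scoring a real model, and its minimum near a learning rate of 3e-3 is an arbitrary assumption made only so the example has something to find.

```python
import numpy as np


def validation_error(learning_rate):
    """Toy stand-in for a real validation metric; minimum near lr = 3e-3."""
    return (np.log10(learning_rate) - np.log10(3e-3)) ** 2


# Stage 1: coarse search, one value per decade from 1e-6 to 1.
coarse_grid = np.logspace(-6, 0, num=7)
coarse_scores = np.array([validation_error(lr) for lr in coarse_grid])
i = int(np.argmin(coarse_scores))

# Stage 2: fine search between the neighbours of the best coarse value.
lo = coarse_grid[max(i - 1, 0)]
hi = coarse_grid[min(i + 1, len(coarse_grid) - 1)]
fine_grid = np.logspace(np.log10(lo), np.log10(hi), num=21)
fine_scores = np.array([validation_error(lr) for lr in fine_grid])
best_lr = float(fine_grid[np.argmin(fine_scores)])

print(f"coarse best: {coarse_grid[i]:.0e}, refined best: {best_lr:.2e}")
```

The `(fine_grid, fine_scores)` pairs are what one would plot for the sensitivity analysis, ideally on a logarithmic x-axis.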

Problem 2: After extensive HPO, the model performs well on validation data but generalizes poorly to new data.

- The Culprit: Overfitting by hyperparameter optimization. This occurs when the hyperparameters are tuned too specifically to the validation set, capturing its noise rather than underlying data patterns [16].

- Solution:

  - Re-evaluate the Need for HPO: For many standard tasks, consider using established, pre-set hyperparameters from literature, which can provide robust performance with a fraction of the computational cost [16] [18].

  - Use a Hold-Out Test Set: Strictly separate a portion of your data as a final test set. Do not use this set for any step of the HPO process. The performance on this set is the true indicator of generalizability.

  - Nested Cross-Validation: For a rigorous evaluation, use nested cross-validation, where an inner loop is used for HPO and an outer loop is used for model assessment. This provides a nearly unbiased estimate of the model's performance on unseen data.
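Nested cross-validation can be sketched in a few lines. The example below uses closed-form ridge regression on synthetic data, with a small grid over `alpha` as the inner "HPO" step. The helper names, the data, and the 5-outer/3-inner fold counts are illustrative assumptions.

```python
import numpy as np


def fit_ridge(X, y, alpha):
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)


def rmse(y_true, y_pred):
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def split_folds(n_samples, n_folds, rng):
    return np.array_split(rng.permutation(n_samples), n_folds)


def cv_rmse(X, y, alpha, folds):
    """Mean validation RMSE of ridge(alpha) over the given folds."""
    scores = []
    for val_idx in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), val_idx)
        w = fit_ridge(X[train_idx], y[train_idx], alpha)
        scores.append(rmse(y[val_idx], X[val_idx] @ w))
    return float(np.mean(scores))


rng = np.random.default_rng(0)
X = rng.normal(size=(60, 4))
y = X @ np.array([1.0, -2.0, 0.5, 0.0]) + rng.normal(scale=0.2, size=60)
alphas = [0.01, 0.1, 1.0, 10.0]

outer_scores, chosen_alphas = [], []
for test_idx in split_folds(len(y), 5, rng):            # outer loop: assessment
    train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
    X_tr, y_tr = X[train_idx], y[train_idx]

    # Inner loop: pick alpha using only the outer-training data.
    inner_folds = split_folds(len(y_tr), 3, rng)
    best_alpha = min(alphas, key=lambda a: cv_rmse(X_tr, y_tr, a, inner_folds))

    # Refit on all outer-training data, score once on the outer test fold.
    w = fit_ridge(X_tr, y_tr, best_alpha)
    outer_scores.append(rmse(y[test_idx], X[test_idx] @ w))
    chosen_alphas.append(best_alpha)

nested_estimate = float(np.mean(outer_scores))
print("chosen alphas:", chosen_alphas)
print("nested CV RMSE:", round(nested_estimate, 3))
```

The outer test fold never influences the choice of `alpha`, which is what keeps the estimate from being inflated by the tuning step.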

Problem 3: High computational cost and memory footprint of GNNs hinder deployment.

- The Culprit: Full-precision, complex GNN models with high parameter counts.

- Solution: Model Quantization. This technique reduces memory and computational costs by representing the model's weights and activations with fewer bits [20].

  - Methodology: Integrate quantization algorithms like DoReFa-Net into the GNN training procedure. This allows for flexible bit-widths (e.g., INT8, INT4) [20].

  - Protocol:

    - Train a full-precision model (e.g., GCN or GIN) on your dataset (e.g., ESOL, QM9).

    - Apply Post-Training Quantization (PTQ) using the chosen algorithm to quantize weights and activations.

    - Evaluate the predictive performance (RMSE, MAE) of the quantized model on the test set.

  - Performance Consideration: The effectiveness is architecture and task-dependent. For example, on quantum mechanical tasks like predicting dipole moments (QM9 dataset), models can maintain strong performance up to 8-bit precision. However, aggressive quantization to 2-bit precision can severely degrade performance [20].
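The effect of bit-width on weight fidelity can be illustrated with a generic symmetric uniform quantizer. This is not the DoReFa-Net algorithm, only a simple stand-in showing why 2-bit quantization is so much lossier than 8-bit. The random weight vector and the `quantize_uniform` helper are assumptions for illustration.

```python
import numpy as np


def quantize_uniform(w, n_bits):
    """Symmetric uniform quantization: snap w to signed integer levels and back."""
    n_levels = 2 ** (n_bits - 1) - 1           # 127 for 8 bits, 1 for 2 bits
    scale = np.max(np.abs(w)) / n_levels
    w_int = np.clip(np.round(w / scale), -n_levels, n_levels)
    return w_int * scale                        # de-quantized weights


rng = np.random.default_rng(0)
weights = rng.normal(scale=0.5, size=1000)      # stand-in for one layer's weights

errors = {}
for bits in (8, 4, 2):
    w_q = quantize_uniform(weights, bits)
    errors[bits] = float(np.sqrt(np.mean((weights - w_q) ** 2)))
    print(f"{bits}-bit: {len(np.unique(w_q))} distinct values, "
          f"RMS error {errors[bits]:.4f}")
```

At 2 bits this scheme leaves only three representable values per tensor, which gives some intuition for the severe degradation reported at that precision.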

The table below synthesizes key quantitative evidence from recent studies on hyperparameter optimization in cheminformatics.

Table 1: Evidence on Hyperparameter Optimization from Recent Cheminformatics Studies

| Study Focus | Key Finding on HPO | Quantitative Result / Implication | Proposed Alternative |
|---|---|---|---|
| Solubility Prediction [16] | HPO can lead to overfitting without performance gain. | Similar results achieved with ~10,000x less computational effort. | Use of pre-set hyperparameters. |
| Model Quantization [20] | Lower bit quantization reduces resource use but impacts accuracy. | Performance maintained at 8-bit; severe degradation at 2-bit. | Use DoReFa-Net algorithm for flexible bit-width quantization. |
| Model Architecture [17] | Deeper GNNs face over-smoothing; requires architectural HPO. | DenseGNN with 5 GC blocks achieved SOTA performance without over-smoothing. | Use Dense Connectivity Networks & LOPE embedding. |

Experimental Protocols from Key Studies

Protocol 1: Evaluating the Impact of HPO vs. Pre-set Parameters (Solubility Prediction) [16]

- Datasets: Use seven thermodynamic and kinetic solubility datasets (e.g., AQUA, ESOL, CHEMBL).

- Data Cleaning: Apply a standardized protocol: Remove duplicates, standardize SMILES using MolVS, and filter by experimental conditions (temperature 25±5°C, pH 7±1).

- Model Training:

- HPO Condition: Perform hyperparameter optimization for state-of-the-art graph-based methods (e.g., ChemProp, AttentiveFP) as described in original studies.

- Pre-set Condition: Train the same models using a fixed set of sensible, pre-selected hyperparameters.

- Evaluation: Compare the Root Mean Squared Error (RMSE) and curated RMSE (cuRMSE) of models from both conditions on a held-out test set. Use identical statistical measures for a fair comparison.
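The data-cleaning step of this protocol can be sketched with plain Python. The records below are invented, and SMILES standardization with MolVS is deliberately omitted because it needs an external library, so duplicates are detected here by exact string match only (an assumption that would be too weak for real data).

```python
# Hypothetical solubility records; values are illustrative only.
records = [
    {"smiles": "CCO",      "temp_c": 25.0, "ph": 7.0, "log_s": 1.10},
    {"smiles": "CCO",      "temp_c": 24.0, "ph": 7.2, "log_s": 1.05},   # duplicate
    {"smiles": "c1ccccc1", "temp_c": 37.0, "ph": 7.0, "log_s": -1.64},  # too warm
    {"smiles": "CC(=O)O",  "temp_c": 22.0, "ph": 4.5, "log_s": 1.22},   # too acidic
    {"smiles": "CCN",      "temp_c": 30.0, "ph": 8.0, "log_s": 1.30},   # on the limits
]


def remove_duplicates(rows):
    """Keep the first record for each SMILES string."""
    seen, unique = set(), []
    for row in rows:
        if row["smiles"] not in seen:
            seen.add(row["smiles"])
            unique.append(row)
    return unique


def within_conditions(row, temp_c=25.0, temp_tol=5.0, ph=7.0, ph_tol=1.0):
    """Keep measurements taken at 25±5 °C and pH 7±1."""
    return abs(row["temp_c"] - temp_c) <= temp_tol and abs(row["ph"] - ph) <= ph_tol


cleaned = [row for row in remove_duplicates(records) if within_conditions(row)]
print([row["smiles"] for row in cleaned])
```

Applying the identical cleaning function to every dataset is what makes the later HPO versus pre-set comparison fair.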

Protocol 2: Quantizing a GNN for Efficient Molecular Property Prediction [20]

- Dataset Preparation: Select a molecular property dataset (e.g., ESOL for solubility, QM9 for dipole moment). Split the data randomly into training (80%), validation (10%), and test (10%) sets.

- Base Model Training: Train a full-precision GNN model (e.g., Graph Convolutional Network - GCN or Graph Isomorphism Network - GIN) on the training set.

- Quantization:

- Apply the DoReFa-Net quantization algorithm to the trained model.

- Quantize both weights and activations to target bit-widths (e.g., FP16, INT8, INT4, INT2).

- Validation & Testing: Use the validation set to monitor the quantized model's performance. Finally, evaluate the model on the test set using RMSE and Mean Absolute Error (MAE).

- Comparison: Compare the performance and computational efficiency (model size, inference speed) of the quantized models against the full-precision baseline.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Software Tools and Datasets for Cheminformatics HPO

| Item Name | Type | Function in Research |
|---|---|---|
| ChemProp [16] [18] | Software (GNN) | A message-passing neural network for molecular property prediction; frequently used as a benchmark in HPO studies. |
| Transformer CNN [16] | Software (NLP/CNN) | A representation learning method using SMILES; shown to provide high accuracy with minimal hyperparameter tuning. |
| RDKit [21] | Software Toolkit | A core cheminformatics library used for SMILES standardization, descriptor calculation, and molecular fingerprinting. |
| DenseGNN [17] | Software (GNN) | A GNN architecture designed with Dense Connectivity to overcome over-smoothing in deep networks. |
| DoReFa-Net [20] | Algorithm | A quantization technique used to reduce the memory and computational footprint of GNNs post-training. |
| ESOL, FreeSolv, QM9 [20] | Benchmark Datasets | Publicly available datasets for water solubility, hydration free energy, and quantum mechanical properties; standard for evaluating model performance. |

Workflow Diagram: HPO Decision Path

Workflow Diagram: GNN Quantization Protocol

Troubleshooting Guides

FAQ 1: Why does my hyperparameter tuning fail to improve model performance, despite extensive computation?

Issue: After running a long hyperparameter optimization, the performance of your chemical model shows no significant improvement over the default settings.

Diagnosis and Solutions:

Potential Cause 1: Overfitting of the Hyperparameter Search. The optimization process may have overfitted to your validation set, especially if the hyperparameter space was large and the computational budget was very high. A study on solubility prediction found that hyperparameter optimization did not always result in better models and could be due to this type of overfitting [16].

- Fix: Validate the final model on a completely held-out test set that was not used during the tuning process. Consider using pre-set hyperparameters, which can yield similar performance while cutting computational effort by a factor of roughly 10,000 [16].

Potential Cause 2: Inadequate Data Quality or Feature Engineering. The model's performance is fundamentally limited by its input data. In chemical ML, data can be sparse, noisy, or lack the necessary features for the model to learn effectively [22] [23].

- Fix: Prioritize data curation. This includes cleaning data, removing duplicates, standardizing chemical representations (e.g., SMILES), and addressing class imbalance. Feature engineering—creating informative descriptors that capture relevant chemical properties—can be more impactful than hyperparameter tuning alone [23] [16].

Potential Cause 3: Insufficient Model Complexity or Training Time. The chosen model architecture might be too simple to capture the complex relationships in high-dimensional chemical data, or it may not have been trained for a sufficient number of epochs [23].

- Fix: Experiment with different, more complex model architectures (e.g., switching from a simple linear model to a graph neural network). Ensure the model is trained for an adequate number of epochs, using techniques like early stopping to prevent overfitting while allowing for convergence [23].

FAQ 2: How can I accelerate hyperparameter optimization for large-scale chemical models?

Issue: Hyperparameter tuning for complex models like graph neural networks or large language models in chemistry is prohibitively slow and computationally expensive.

Diagnosis and Solutions:

- Solution: Implement Training Performance Estimation (TPE; not to be confused with the Tree of Parzen Estimators sampler, which shares the acronym). This technique predicts the final performance of a model configuration after only a fraction (e.g., 20%) of the total training budget. Research has shown that TPE can reduce total tuning time and compute budgets by up to 90% during hyperparameter optimization and neural architecture selection for large chemical models [24].

- Solution: Adopt Advanced Optimization Frameworks. Replace exhaustive methods like `GridSearchCV` with modern frameworks like Optuna, which uses Bayesian optimization to efficiently navigate the hyperparameter space. Unlike blind search methods, Optuna learns from past trials to suggest more promising hyperparameters next [25].

- Solution: Use Pruning to Stop Unpromising Trials Early. Optuna and similar frameworks can automatically stop trials that are performing poorly early in the training process. This prevents wasting computational resources on hyperparameter sets that are unlikely to yield good results, which is particularly valuable for training deep learning models [25].
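The idea behind pruning can be shown with a simplified median-stopping rule. This sketch is written in the spirit of median-based pruners but is not Optuna's implementation. The learning curves are hard-coded hypothetical values, and a trial is stopped as soon as its loss at a given epoch is worse than the median of completed trials at that same epoch.

```python
from statistics import median

# Hypothetical validation-loss curves (one value per epoch) for four trials.
learning_curves = {
    "trial_a": [1.00, 0.80, 0.60, 0.50],
    "trial_b": [1.20, 1.10, 1.05, 1.00],
    "trial_c": [0.90, 0.70, 0.50, 0.40],
    "trial_d": [1.10, 0.78, 0.70, 0.65],
}


def run_with_median_pruning(curves, warmup_steps=1):
    completed = []      # full curves of trials that were not pruned
    results = {}
    for name, curve in curves.items():
        pruned_at = None
        for step, loss in enumerate(curve):
            reference = [c[step] for c in completed]
            # Prune if worse than the median of completed trials at this step.
            if step >= warmup_steps and reference and loss > median(reference):
                pruned_at = step
                break
        if pruned_at is None:
            completed.append(curve)
        epochs_run = len(curve) if pruned_at is None else pruned_at + 1
        results[name] = {"pruned_at": pruned_at, "epochs_run": epochs_run}
    return results


results = run_with_median_pruning(learning_curves)
epochs_used = sum(r["epochs_run"] for r in results.values())
epochs_full = sum(len(c) for c in learning_curves.values())

for name, r in results.items():
    print(name, r)
print(f"epochs used: {epochs_used} of {epochs_full}")
```

The saving grows with the cost of each epoch, which is why pruning matters most for expensive deep learning models.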

FAQ 3: Why do traditional tuning methods like Grid Search perform poorly in chemical ML?

Issue: Traditional hyperparameter tuning methods such as GridSearchCV and RandomizedSearchCV are ineffective or too slow for high-dimensional chemical machine learning tasks.

Diagnosis: Chemical machine learning often involves exploring a high-dimensional chemical space with complex, non-linear relationships [26]. Traditional methods are not designed for this complexity:

- GridSearchCV is exhaustive and computationally expensive. It becomes impractical as the number of hyperparameters increases because the number of combinations grows exponentially [25].

- RandomizedSearchCV, while faster, still performs a blind search with no learning from previous trials. It might miss the optimal combination due to its random sampling approach and its performance depends heavily on luck and the number of iterations [25].
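The exponential growth of a grid is easy to verify by counting. In the sketch below, the grid values are arbitrary examples, and the random-search budget of 20 configurations is an assumption chosen for contrast.

```python
import itertools
import random

grid = {
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "num_layers": [2, 3, 4, 5],
    "hidden_units": [64, 128, 256],
    "dropout": [0.0, 0.1, 0.3, 0.5],
    "batch_size": [32, 64, 128],
}

# Grid search: the number of trainings multiplies with every added hyperparameter.
n_combinations = 1
cumulative_sizes = []
for name, values in grid.items():
    n_combinations *= len(values)
    cumulative_sizes.append(n_combinations)
    print(f"after adding {name:<14} -> {n_combinations} combinations")

all_configs = list(itertools.product(*grid.values()))

# Random search: a fixed budget, independent of how many hyperparameters exist.
rng = random.Random(0)
random_configs = [
    {name: rng.choice(values) for name, values in grid.items()}
    for _ in range(20)
]
print(f"grid search: {len(all_configs)} trainings, "
      f"random search: {len(random_configs)} trainings")
```

Neither approach uses the results of earlier trials to choose later ones, which is the gap that model-based methods aim to fill.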

Solution: Transition to a smarter search strategy. Bayesian optimization, as implemented in Optuna, builds a probabilistic model of the objective function. It balances the exploration of new areas of the hyperparameter space with the exploitation of known good areas, leading to a more efficient and effective search [25].

Table: Comparison of Traditional vs. Modern Hyperparameter Tuning Methods

| Method | Core Principle | Advantages | Disadvantages | Best for Chemical ML? |
|---|---|---|---|---|
| GridSearchCV | Exhaustive search over a predefined grid | Thorough, guarantees finding best combo on the grid | Computationally prohibitive for high dimensions; doesn't learn from trials | No |
| RandomizedSearchCV | Random sampling over a distribution | Faster than grid search; good for a large number of parameters | Blind search; may miss optimum; performance is luck-dependent | For quick, initial explorations |
| Bayesian Optimization (e.g., Optuna) | Builds a surrogate model to guide search | Efficient, learns from past trials; supports pruning and dynamic search spaces | More complex to set up | Yes, ideal for complex, expensive-to-train models |

Experimental Protocols & Workflows

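Workflow 1: Accelerated Hyperparameter Optimization using Training Performance Estimation (TPE)

This methodology, derived from scaling studies of deep chemical models, enables efficient identification of optimal training settings [24]. Here, TPE stands for Training Performance Estimation; it should not be confused with the Tree-structured Parzen Estimator, which shares the acronym.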

1. Objective: Quickly identify near-optimal hyperparameters (e.g., learning rate, batch size) for large-scale model training.

2. Procedure:

- Step 1: Define a search space for the hyperparameters of interest.

- Step 2: Sample a set of model configurations (hyperparameter combinations) from this space.

- Step 3: Train each model configuration for a small number of epochs (e.g., 10 epochs, ~20% of the full budget).

- Step 4: Record the loss/performance of each model at this early stage.

- Step 5: Perform a linear regression between the early performance (e.g., loss after 10 epochs) and the final performance (e.g., loss after 50 epochs). Studies have reported a high coefficient of determination (R² = 0.98) and Spearman's rank correlation (ρ = 1.0) for this relationship [24].

- Step 6: Use this regression model to predict the final performance of all configurations. Select and fully train only the configurations predicted to perform best.
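Steps 5 and 6 can be sketched in a few lines of plain Python. This is a minimal illustration, not the procedure from [24]: it assumes a small calibration subset of configurations has been trained to completion so that the regression can be fitted, and every loss value and configuration name below is made up.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Made-up calibration data: configurations trained to completion, so both the
# early loss (after 10 epochs) and the final loss (after 50 epochs) are known.
calib_early = [0.90, 0.75, 0.62, 0.55]
calib_final = [0.50, 0.41, 0.33, 0.29]
slope, intercept = fit_line(calib_early, calib_final)

# Made-up early losses for new configurations trained for 10 epochs only.
early_losses = {"cfg_a": 0.80, "cfg_b": 0.58, "cfg_c": 0.70, "cfg_d": 0.52}
predicted_final = {name: slope * loss + intercept for name, loss in early_losses.items()}

# Keep the two configurations with the lowest predicted final loss for full training.
shortlist = sorted(predicted_final, key=predicted_final.get)[:2]
print(shortlist)
```

The regression is only as trustworthy as the correlation between early and final loss, so it is worth checking that correlation on your own models before relying on the shortlist.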

Workflow 2: Dynamic Hyperparameter Optimization with Optuna for Model Selection

This protocol is useful when the best model architecture (e.g., Random Forest vs. XGBoost) is not known in advance [25].

1. Objective: Dynamically optimize both the model type and its hyperparameters simultaneously.

2. Procedure:

- Step 1: Define an objective function that takes an Optuna `trial` object and returns a performance metric (e.g., validation loss).

- Step 2: Within the objective function, use `trial.suggest_categorical()` to let Optuna choose between different model types (e.g., "xgb", "rf", "svm").

- Step 3: Use conditional hyperparameter spaces. For example, if the model type "xgb" is selected, then suggest XGBoost-specific hyperparameters like `max_depth` and `learning_rate`.

- Step 4: Run the Optuna study over a large number of trials. The framework will automatically learn which model types and hyperparameter combinations yield the best results, dynamically focusing its search.

Diagram: Dynamic Hyperparameter Optimization with Optuna

The Scientist's Toolkit: Key Research Reagents & Solutions

Table: Essential Tools for Scalable Chemical Machine Learning

| Tool / Solution | Function | Relevance to Chemical ML |

|---|---|---|

| Optuna [25] | A hyperparameter optimization framework that uses Bayesian optimization. | Efficiently navigates complex hyperparameter spaces of chemical models; supports pruning and dynamic search spaces. |

| Training Performance Estimation (TPE) [24] | A method to predict final model performance from early training results. | Drastically reduces HPO compute time (by up to 90%) for large-scale models like ChemGPT and graph neural networks. |

| Define-by-Run API [25] | A programming paradigm where the hyperparameter search space is defined dynamically during the trial. | Allows the model type itself to be a hyperparameter, enabling flexible exploration of architectures (e.g., SVM, XGBoost, Neural Networks). |

| Pruning [25] | Automatically stops unpromising trials during the optimization process. | Saves immense computational resources by halting training for poorly performing hyperparameter sets early on. |

| Synthetic Data Generation [23] | The creation of artificial data to augment real-world datasets. | Can help overcome the "small data" challenge common in materials and chemicals, where each data point can be costly to acquire [22]. |

| TransformerCNN [16] | A representation learning method based on Natural Language Processing of SMILES strings. | Provides a high-accuracy alternative to graph-based methods for molecular property prediction, often with less computational demand. |

Key Quantitative Findings

Table: Empirical Results on Hyperparameter Tuning Efficiency and Performance

| Study Focus | Method Compared | Key Metric | Result / Finding | Implication |

|---|---|---|---|---|

| General HPO Efficiency [25] | GridSearchCV/RandomizedSearchCV vs. Optuna | Computational Efficiency | Optuna uses Bayesian optimization to find good solutions faster than exhaustive or random methods. | Enables feasible tuning for complex chemical models. |

| Scalability for Deep Chemical Models [24] | Standard HPO vs. HPO with Training Performance Estimation (TPE) | Time/Compute Budget | TPE reduced total time and compute budgets by up to 90% during HPO. | Makes large-scale neural scaling experiments practical. |

| Solubility Prediction Models [16] | Hyperparameter Optimization vs. Pre-set Parameters | Computational Effort & RMSE | Using pre-set parameters yielded similar performance but was ~10,000 times faster. | Questions the necessity of extensive HPO in all scenarios; warns of overfitting. |

| Model Performance [16] | Graph-based methods (ChemProp, AttentiveFP) vs. TransformerCNN | Prediction Accuracy | TransformerCNN provided better results for 26 out of 28 pairwise comparisons. | Suggests exploring alternative architectures can be more impactful than tuning a single architecture. |

From Grid Search to Bayesian Optimization: A Practical Guide to HPO Methods

FAQs on Hyperparameter Tuning Methods

Q1: What are the fundamental differences between manual, grid, and random search?

Manual search involves a human-driven, trial-and-error approach where a data scientist adjusts hyperparameters based on intuition, domain knowledge, and observation of previous results. It is not an exhaustive search and relies heavily on the practitioner's experience and educated guesses [27]. In contrast, grid search is a systematic method that pre-specifies a set of values for each hyperparameter and then exhaustively evaluates every possible combination in this grid. It methodically searches the entire predefined space [28] [29]. Random search, unlike grid search, does not test every combination. Instead, it randomly samples a fixed number of hyperparameter sets from a predefined search space (either uniform or log-uniform), allowing for a broader exploration of the space with a lower computational cost [29].

Q2: My grid search experiments are taking too long to complete. How can I optimize this process?

Grid search is computationally intensive because its time complexity grows exponentially with the number of hyperparameters [28]. For large hyperparameter spaces, consider these strategies:

- Switch to Random or Bayesian Search: For a similar computational budget, random search can often find a good combination faster than grid search by exploring a wider range of values [28] [29]. For even greater efficiency, Bayesian optimization uses information from prior trials to intelligently select the next set of hyperparameters to evaluate, often requiring far fewer iterations [30] [29].

- Reduce the Search Space: Limit the number of hyperparameters you tune simultaneously and narrow their value ranges based on domain knowledge or literature [28] [31].

- Use Parallelization: Both grid and random search can run a large number of parallel jobs since each hyperparameter set is evaluated independently. Ensure your tuning setup leverages this capability [28].
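As a minimal sketch of the parallelization point, the example below evaluates independently sampled configurations concurrently. The `evaluate` function is a hypothetical stand-in for model training, and the search ranges are assumptions made for illustration.

```python
import random
from concurrent.futures import ThreadPoolExecutor

def evaluate(config):
    """Hypothetical stand-in for training a model and returning a validation loss."""
    return (config["lr"] - 0.01) ** 2 + 0.001 * config["depth"]

rng = random.Random(0)
configs = [
    {"lr": 10 ** rng.uniform(-4, -1), "depth": rng.randint(2, 8)}
    for _ in range(16)
]

# Each configuration is independent, so evaluations can be dispatched concurrently.
with ThreadPoolExecutor(max_workers=4) as pool:
    losses = list(pool.map(evaluate, configs))

best_loss, best_config = min(zip(losses, configs), key=lambda pair: pair[0])
print(best_config, best_loss)
```

For CPU-bound or GPU-bound training, a process pool or a cluster scheduler is usually the better fit; threads are used here only to keep the sketch short.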

Q3: When should I prefer manual search over automated methods like grid or random search?

Manual search can be effective when you have deep domain expertise and a clear understanding of how different hyperparameters influence the model. It is often used for an initial, coarse exploration of the hyperparameter space or when computational resources are extremely limited. However, for a comprehensive and reproducible optimization process, automated methods like grid search (for small spaces), random search, or Bayesian optimization (for larger spaces) are generally recommended, as they are less prone to human bias and can more reliably find a high-performing configuration [27] [29].

Q4: How can I ensure my hyperparameter tuning is reproducible?

Reproducibility is crucial for scientific rigor. For grid search, the results are deterministic; using the same hyperparameter grid will produce identical results [28]. For stochastic methods like random search, you should set a random seed. Using the same seed will allow you to reproduce the exact sequence of hyperparameter configurations in subsequent tuning jobs [28]. Always log the hyperparameters, the resulting model performance metrics, and the seed value for every experiment [31].
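The effect of a fixed seed can be checked directly. The sketch below uses a hypothetical search space and a local random generator, so that the seed alone determines the sampled configurations.

```python
import random

def sample_configs(n_trials, seed):
    """Draw n_trials configurations from a hypothetical search space."""
    rng = random.Random(seed)  # local generator: the seed fully fixes the sequence
    return [
        {
            "learning_rate": 10 ** rng.uniform(-5, -2),  # log-uniform in [1e-5, 1e-2]
            "dropout": rng.uniform(0.0, 0.5),
            "batch_size": rng.choice([32, 64, 128]),
        }
        for _ in range(n_trials)
    ]

run_1 = sample_configs(5, seed=42)
run_2 = sample_configs(5, seed=42)
run_3 = sample_configs(5, seed=7)
print(run_1 == run_2)  # same seed, same configurations
print(run_1 == run_3)  # different seed
```

Logging the seed next to the results is what makes it possible to regenerate the same trial sequence later.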

Q5: What are the common pitfalls when tuning hyperparameters for Graph Neural Networks (GNNs) in cheminformatics?

In cheminformatics, where GNNs are common, key pitfalls include:

- Overlooking the Cost: The performance of GNNs is highly sensitive to architectural choices and hyperparameters, making optimal configuration a non-trivial and computationally expensive task [6].

- Using an Inefficient Search Strategy: Relying solely on manual or full grid search for a large number of hyperparameters can become prohibitively slow, hindering research progress [6].

- Ignoring Automated Methods: Leveraging automated Hyperparameter Optimization (HPO) and Neural Architecture Search (NAS) is critical for enhancing the performance, scalability, and efficiency of GNNs in key cheminformatics applications [6].

Troubleshooting Guides

Problem: Experiments are slow and lack direction.

- Symptoms: Long wait times for results, experiments frequently hitting dead ends, debugging errors like NaNs, and inconsistent logging [32].

- Solution: Move away from a purely manual, intuition-based workflow. Implement a structured automated strategy.

- Action Plan:

- Define a Clear Search Space: Start by defining bounded ranges for your most important hyperparameters (e.g., learning rate, batch size, model depth) [31].

- Choose an Efficient Algorithm: For an initial broad search, use random search. Once you have a promising region, use Bayesian optimization (e.g., with Optuna) to refine the parameters efficiently [30] [28] [29].

- Automate and Log: Use a framework that automates the experiment orchestration and, crucially, logs all parameters, metrics, and outcomes for every trial. This provides a clear record of "what worked and why" [32].

Problem: The model is overfitting despite hyperparameter tuning.

- Symptoms: High performance on training data but poor performance on validation or test data.

- Solution: Integrate regularization techniques directly into your hyperparameter search.

- Action Plan:

- Tune Regularization Hyperparameters: Include parameters like `weight_decay` (L2 regularization), dropout rate, and learning rate in your search space [31].

- Use Cross-Validation: Implement cross-validation within your tuning workflow (e.g., using `GridSearchCV` or `RandomizedSearchCV`). This helps ensure that the selected hyperparameters generalize well and are not overfit to a single validation split [29].

- Incorporate Early Stopping: Configure your training to stop automatically when the validation performance stops improving, preventing the model from over-optimizing on the training data [29].
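The early-stopping rule in the action plan can be expressed as a small patience-based check. This is a framework-independent sketch; the `patience` and `min_delta` parameters and the validation curve are illustrative assumptions.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return the index of the epoch at which training would stop.

    Training stops once the validation loss has failed to improve by more
    than min_delta for `patience` consecutive epochs.
    """
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses) - 1  # rule never triggered: training ran to the end

# Made-up validation curve: improves at first, then starts to overfit.
curve = [0.90, 0.70, 0.60, 0.58, 0.59, 0.61, 0.63, 0.66]
print(early_stopping_epoch(curve, patience=3))
```

In practice the weights from the best epoch (index 3 in this curve) are the ones to keep, not those from the epoch where training stopped.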

Problem: The tuning process is computationally too expensive.

- Symptoms: Jobs running for days, high cloud computing costs, inability to test a sufficient number of configurations.

- Solution: Optimize your tuning strategy and resource allocation.

- Action Plan:

- Limit the Number of Hyperparameters: Focus on tuning the 3-5 most impactful parameters first. This dramatically reduces the search space's complexity [28].

- Use an Early Stopping Strategy: Employ algorithms like Hyperband, which automatically stop poorly performing trials early, reallocating resources to more promising configurations [28].

- Prioritize Methods: If you must choose, prefer random search over grid search for higher-dimensional spaces. For the best efficiency, invest in Bayesian optimization frameworks [29].

Comparison of Hyperparameter Optimization Methods

The table below summarizes the core characteristics of manual, grid, and random search, helping you select the right strategy.

| Feature | Manual Search | Grid Search | Random Search |

|---|---|---|---|

| Core Principle | Human-guided, based on intuition and experience [27]. | Exhaustive search over all combinations in a predefined grid [29]. | Random sampling from a predefined search space [29]. |

| Best Use Case | Initial exploration; low-dimensional spaces; expert-driven fine-tuning [27]. | Small, well-understood hyperparameter spaces where an exhaustive search is feasible [28]. | Larger hyperparameter spaces where an exhaustive search is computationally prohibitive [29]. |

| Key Advantages | Leverages domain knowledge; low computational cost for a few trials. | Methodical and comprehensive; guaranteed to find the best point in the grid; simple and reproducible [28] [29]. | Better than grid search for the same budget; highly parallelizable; explores search space more broadly [29]. |

| Key Limitations | Not reproducible; prone to bias; non-exhaustive; doesn't scale [27]. | Computationally expensive (curse of dimensionality); inefficient for irrelevant parameters [29]. | Can miss the optimum; lacks the intelligence of a guided search; may require many iterations. |

| Reproducibility | Low | High (identical with same grid) | Medium-High (with fixed random seed) [28]. |

Experimental Protocol for Method Comparison

This protocol outlines a structured experiment to compare manual, grid, and random search for a Graph Neural Network on a molecular property prediction task.

1. Objective: To compare the efficiency and final performance of Manual, Grid, and Random Search strategies in optimizing a GNN for a binary classification task (e.g., predicting molecular toxicity).

2. Materials (Research Reagent Solutions):

| Item | Function / Description |

|---|---|

| Cheminformatics Dataset (e.g., Tox21, ESOL) | Standardized benchmark dataset for molecular property prediction [6]. |

| Graph Neural Network Model (e.g., GCN, GIN) | The machine learning model whose hyperparameters are being optimized [6]. |

| Hyperparameter Optimization Library (e.g., Scikit-learn, Optuna) | Frameworks to implement Grid and Random Search [29]. |

| Validation Metric (e.g., AUC-ROC, F1-Score) | The objective metric used to evaluate and compare model performance [31]. |

3. Methodology:

- Step 1 - Define a Common Search Space: Fix the hyperparameters and their ranges for all methods to ensure a fair comparison. A sample space is shown below.

- Step 2 - Implement the Three Strategies:

- Manual Search: An expert is given 20 trials to find the best configuration using any logic they see fit.

- Grid Search: Use `GridSearchCV` to evaluate all combinations in the grid. For the space below, this would be 3 x 3 x 3 x 2 = 54 trials.

- Random Search: Use `RandomizedSearchCV` to evaluate 54 trials (matching the computational budget of grid search).

- Step 3 - Evaluate: Train a final model with each best-found configuration and evaluate all of them on the same held-out test set. Compare the performance metrics (AUC-ROC, F1-Score) and the total computational time/resources consumed.

Example Hyperparameter Search Space:
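One hypothetical space that matches the 3 x 3 x 3 x 2 = 54 count is sketched below. The hyperparameter names and candidate values are illustrative assumptions, not values taken from the protocol; the code also shows how the grid and a budget-matched random sample can be generated from the same definition.

```python
import itertools
import random

# Hypothetical search space: names and values are illustrative assumptions,
# chosen only to reproduce the 3 x 3 x 3 x 2 = 54 grid size.
search_space = {
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "hidden_dim": [64, 128, 256],
    "num_layers": [2, 3, 4],
    "dropout": [0.0, 0.2],
}

# Grid search: every combination of the listed values.
names = list(search_space)
grid_trials = [
    dict(zip(names, combo)) for combo in itertools.product(*search_space.values())
]

# Random search: the same number of trials, each value drawn independently.
rng = random.Random(0)
random_trials = [
    {name: rng.choice(values) for name, values in search_space.items()}
    for _ in range(len(grid_trials))
]

print(len(grid_trials), len(random_trials))
```

With scikit-learn, a dictionary of this shape is what `GridSearchCV` accepts as `param_grid` and `RandomizedSearchCV` accepts as `param_distributions` (with `n_iter=54`), provided the GNN is wrapped in a scikit-learn-compatible estimator.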

Workflow Diagram for Hyperparameter Tuning

The diagram below illustrates a generalized, robust workflow for hyperparameter tuning that incorporates the best practices of the methods discussed.

Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: My Gaussian Process (GP) model produces poor predictions and seems overfit, even with limited data. What is the cause and solution?

A: This is a common pitfall when the GP hyperparameters (e.g., kernel length scales) are poorly chosen based on the standard Log Marginal Likelihood (LML) objective in data-scarce settings [33]. The LML can lead to distorted predictions and overfitting when the dataset is very small [33].

- Solution: Implement an uncertainty-aware objective function for hyperparameter tuning, such as the Hybrid Loss (HL) method [33]. The HL integrates information from the predictive variance to mitigate LML's shortcomings, resulting in more accurate predictions and surrogate models less prone to overfitting [33].

Q2: For optimizing a chemical reaction with numerous categorical variables (e.g., solvent or catalyst types), which optimizer should I choose and why?

A: The Tree-structured Parzen Estimator (TPE) is particularly suited for this scenario [34]. While Gaussian Processes (GP) struggle with non-continuous variables, TPE handles categorical and discrete values exceptionally well [34]. Its ability to model complex, high-dimensional search spaces efficiently makes it a robust choice for such chemical optimization tasks [35].

Q3: How can I effectively optimize my model when I have fewer than 50 experimental data points?

A: In low-data regimes, use automated, ready-to-use workflows that mitigate overfitting through Bayesian hyperparameter optimization [36]. These frameworks incorporate an objective function specifically designed to account for overfitting in both interpolation and extrapolation [36]. Benchmarking on chemical datasets as small as 18-44 data points has shown that properly tuned non-linear models can perform on par with or outperform traditional linear regression [36].

Q4: My optimization process is getting trapped in local minima. How can I encourage more global exploration?

A: Consider using evolutionary algorithms like the Paddy field algorithm, which are biologically inspired and designed to avoid early convergence on local minima [35]. These algorithms propagate parameters without direct inference of the underlying objective function, maintaining strong performance across diverse optimization benchmarks and demonstrating a robust ability to bypass local optima in search of global solutions [35].

Optimization Selector Guide

Table: Choosing Between Gaussian Processes and TPE for Chemical ML

| Criterion | Gaussian Process (GP) | Tree-structured Parzen Estimator (TPE) |

|---|---|---|

| Primary Strength | Provides uncertainty estimates; Excellent for modeling continuous functions [37] [38] [39]. | Efficient in high-dimensional and complex search spaces; Handles categorical variables well [34] [35]. |

| Best For | Problems where quantifying prediction confidence is critical; Low-to-medium dimensional continuous spaces [37] [39]. | Problems with many hyperparameters, categorical variables, or when computational efficiency is a concern [34]. |

| Computational Scaling | Scales poorly with many hyperparameters (computationally expensive) [34]. | More efficient and faster for large, complex search spaces [34]. |

| Handling of Variables | Struggles with non-continuous (categorical/discrete) variables [34]. | Naturally handles categorical and discrete values [34]. |

Experimental Protocols & Data

Detailed Methodology: TPE for Drug Solubility Prediction

This protocol outlines the procedure for predicting drug solubility using Decision Tree regression optimized with TPE, as demonstrated in a study analyzing the crystallization of salicylic acid [40].

Dataset Preparation:

- Source: Utilize a dataset of 217 data points, each with 15 input features including pressure, temperature, and various solvent properties [40].

- Preprocessing: Employ the Isolation Forest (iForest) algorithm for anomaly detection and outlier removal. This method explicitly isolates anomalies and is computationally efficient, requiring low memory resources [40].

Model Training with Hyperparameter Optimization:

- Base Model: Implement a Decision Tree Regressor (DT) as the base model [40].

- Ensemble Method: Use a Bagging ensemble method combining multiple Decision Tree models to reduce overfitting and improve handling of outliers [40].

- Hyperparameter Tuning: Apply the Tree-structured Parzen Estimator (TPE) to optimize the hyperparameters of the Bagging-DT model. TPE performs sequential model-based optimization: rather than evaluating a fixed grid, it uses the losses observed in earlier trials to decide which configuration to try next, with loss minimization as the criterion [40].

- TPE Mechanism: The algorithm models the hyperparameter space by building two density distributions: \(l(x)\) for hyperparameter configurations that yielded good results (loss below a threshold \(y^*\)), and \(g(x)\) for bad configurations (loss above \(y^*\)). It then selects the next hyperparameter set by maximizing the ratio \(l(x)/g(x)\), effectively guiding the search towards promising regions [34] [40].
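The density-ratio idea can be illustrated for a single continuous hyperparameter. The sketch below is a deliberately simplified toy, not the implementation used in [40] or in any library: it assumes Gaussian kernel density estimates with a fixed bandwidth, treats the best 25% of trials as "good", and scores a fixed list of candidates, whereas full TPE implementations draw their candidates from the "good" density. All observations are made up.

```python
import math

def kde(points, bandwidth):
    """Gaussian kernel density estimate built from 1-D points."""
    def density(x):
        total = sum(math.exp(-0.5 * ((x - p) / bandwidth) ** 2) for p in points)
        return total / (len(points) * bandwidth * math.sqrt(2 * math.pi))
    return density

def tpe_suggest(history, candidates, gamma=0.25, bandwidth=0.5):
    """Pick the candidate maximizing l(x) / g(x), given (x, loss) observations."""
    ranked = sorted(history, key=lambda pair: pair[1])
    n_good = max(1, int(gamma * len(ranked)))  # trials with loss below the threshold
    good = [x for x, _ in ranked[:n_good]]
    bad = [x for x, _ in ranked[n_good:]]
    l, g = kde(good, bandwidth), kde(bad, bandwidth)
    return max(candidates, key=lambda x: l(x) / (g(x) + 1e-12))

# Made-up history for one hyperparameter, x = log10(learning rate).
history = [(-5.0, 0.90), (-4.5, 0.70), (-4.0, 0.50), (-3.5, 0.30),
           (-3.0, 0.20), (-2.5, 0.35), (-2.0, 0.60), (-1.5, 0.95)]
candidates = [-5.0 + 0.25 * i for i in range(15)]  # fixed candidate values
print(tpe_suggest(history, candidates))
```

The suggestion lands between the two best observations, where the "good" density is high and the "bad" density is low.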

Validation:

- Evaluate the final Bagging-DT model with optimized hyperparameters on training, validation, and test sets. The reported best model achieved the highest R² scores and lowest error rates across all sets [40].

Key Experimental Workflow

The following diagram illustrates the core iterative workflow of a Bayesian optimization process, common to both GP and TPE-based approaches.

Table: Performance Comparison of Optimization Algorithms

| Algorithm | Problem Type | Key Performance Findings |

|---|---|---|

| TPESampler (Optuna) | Hyperparameter Tuning (e.g., XGBoost) | Efficiently finds strong hyperparameter combinations (e.g., max_depth, learning_rate) within a limited number of trials (e.g., 20) [34]. |

| Paddy Algorithm | Chemical & Mathematical Benchmarking | Demonstrates robust versatility, maintaining strong performance across all benchmarks (mathematical functions, molecule generation, experimental planning) and effectively avoids local optima [35]. |

| Gaussian Process (TSEMO) | Multi-objective Chemical Reaction Optimization | Successfully obtained Pareto frontiers for reaction objectives (e.g., Space-Time Yield, E-factor) within 68-78 iterations; showed best performance in hypervolume improvement despite relatively high cost [37]. |

| Bagging-DT with TPE | Drug Solubility Prediction | Achieved the highest R² scores and lowest error rates in training, validation, and test sets for predicting salicylic acid solubility [40]. |

The Scientist's Toolkit

Research Reagent Solutions

Table: Essential Computational Tools for Bayesian Optimization

| Tool / Component | Function / Application |

|---|---|

| Optuna | A hyperparameter optimization framework that implements TPE (via TPESampler) for efficient and scalable optimization of machine learning models [34]. |

| Gaussian Process Regressor | A surrogate model that provides a probabilistic estimate of the objective function and, crucially, quantifies the uncertainty of its own predictions [38] [39]. |

| Expected Improvement (EI) | An acquisition function that selects the next experiment by maximizing the expected improvement over the current best observation, balancing exploration and exploitation [34] [38]. |

| Summit | A chemical optimization toolkit that includes implementations of various strategies, including TSEMO, for optimizing chemical reactions with multiple objectives [37]. |

| Hyperopt | A Python library for serial and parallel optimization over awkward search spaces, which includes the TPE algorithm [35]. |
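To show how Expected Improvement trades off exploration and exploitation, the sketch below evaluates its standard closed form for a Gaussian predictive distribution in the minimization setting. The predicted means, standard deviations, and the current best loss are hypothetical numbers.

```python
import math

def expected_improvement(mu, sigma, best_loss):
    """Expected improvement for minimization under a Gaussian prediction N(mu, sigma^2)."""
    if sigma <= 0.0:
        return max(best_loss - mu, 0.0)
    z = (best_loss - mu) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (best_loss - mu) * cdf + sigma * pdf

best_loss = 0.30  # hypothetical best validation loss observed so far

# Candidate A: slightly better predicted mean, low uncertainty (exploitation).
ei_a = expected_improvement(mu=0.29, sigma=0.01, best_loss=best_loss)
# Candidate B: worse predicted mean, high uncertainty (exploration).
ei_b = expected_improvement(mu=0.35, sigma=0.20, best_loss=best_loss)
print(ei_a, ei_b)
```

With these numbers candidate B scores higher even though its predicted mean is worse, because its large uncertainty leaves room for a substantial improvement.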

Frequently Asked Questions (FAQs)

Q1: My Hyperband run is finishing quickly but returning a poor configuration. What is the most likely cause?

A1: This is often caused by setting the maximum budget (R) too low or the reduction factor (η) too high. This forces Hyperband to eliminate promising configurations before they have enough resources to demonstrate their potential. In chemical ML, where models often require significant epochs to learn complex structure-property relationships, an insufficient max budget is a common mistake.

Q2: Why is BOHB not converging to a good solution in my molecular property prediction task?

A2: This typically stems from an improperly defined search space. If your defined ranges for hyperparameters like learning_rate or max_depth do not encompass the optimal values, the optimizer cannot find them. Always start with a broad search space and consult literature for similar chemical datasets to define sensible bounds [41].

Q3: How do I choose between Hyperband and BOHB for my experiment?

A3: The choice depends on your computational resources and prior knowledge.

- Use Hyperband when you have limited prior knowledge about which hyperparameters work best for your dataset and you want a robust, quick answer. It is highly efficient at rapidly allocating resources [42].

- Use BOHB when you have a more constrained search space from prior experiments and seek the best possible performance. It uses the intelligence of Bayesian optimization to find superior configurations, making it ideal for the final tuning stage of a high-impact model [41] [43].

Q4: What is the "budget" in the context of tuning models for chemical data?

A4: The "budget" can be any resource that correlates with the performance of a model configuration. The most common types are:

- Number of training epochs/iterations

- Size of a data subset (e.g., using a fraction of your molecular dataset)

- Other computational resources

Troubleshooting Guides

Issue: The Optimization Process is Taking Too Long

Possible Causes and Solutions:

- Cause 1: Excessively large search space.

- Solution: Narrow your hyperparameter ranges. For instance, instead of searching `n_estimators` from 100 to 2000, search from 50 to 500 based on initial coarse-grained trials.

- Cause 2: Maximum budget (`R`) is set too high.

- Solution: Reduce `R`. A model's performance often plateaus; identify this point through small-scale experiments and set `R` just beyond it.

- Cause 3: The reduction factor (`η`) is too low (e.g., η=2).

- Solution: Increase `η` to a value like 3 or 4. This will more aggressively eliminate configurations in each successive round, speeding up the overall process [42].
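The influence of `η` on the total cost can be estimated with a back-of-the-envelope calculation. The sketch below relies on the approximation that Hyperband runs `s_max + 1` brackets and that each bracket consumes roughly `(s_max + 1) * R` units of resource; the value `R = 81` is an illustrative assumption.

```python
def hyperband_budget(max_budget, eta):
    """Approximate total resource used by Hyperband: (s_max + 1)^2 * R."""
    s_max = 0
    while eta ** (s_max + 1) <= max_budget:  # integer floor(log_eta(R))
        s_max += 1
    n_brackets = s_max + 1
    return n_brackets, n_brackets * n_brackets * max_budget

for eta in (2, 3, 4):
    n_brackets, total = hyperband_budget(max_budget=81, eta=eta)
    print(f"eta={eta}: {n_brackets} brackets, ~{total} epochs in total")
```

A larger `η` yields fewer brackets and a smaller total budget, at the price of discarding configurations on less evidence.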

Issue: Results Are Not Reproducible

Possible Causes and Solutions:

- Cause 1: Non-deterministic model training.

- Solution: Set random seeds for all relevant components (Python, NumPy, TensorFlow/PyTorch, etc.) at the start of your training script.

- Cause 2: Using a parallel or distributed setup with unpredictable scheduling.

- Solution: If possible, configure the system to use a fixed number of workers and note that perfect reproducibility can be challenging in highly parallel environments.
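A seeding helper along the following lines covers Cause 1. It is a sketch that assumes NumPy and PyTorch are optional dependencies and skips whichever one is not installed; other frameworks would need their own seeding calls.

```python
import random

def seed_everything(seed):
    """Seed the random number generators that are available in this environment."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass  # NumPy not installed
    try:
        import torch
        torch.manual_seed(seed)
        torch.cuda.manual_seed_all(seed)  # ignored when CUDA is unavailable
    except ImportError:
        pass  # PyTorch not installed

seed_everything(1234)
first = random.random()
seed_everything(1234)
second = random.random()
print(first == second)
```

Seeding alone does not guarantee bit-identical results on GPUs, where some operations are non-deterministic by default.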

Issue: Optimization Gets Stuck in a Local Minimum

Possible Causes and Solutions:

- Cause: The probabilistic model in BOHB is over-exploiting and not exploring enough.

- Solution: BOHB has an intrinsic exploration/exploitation trade-off. You can adjust parameters that control this balance (e.g., the acquisition function). However, this is an advanced setting; a more straightforward fix is to ensure your search space is appropriate and that you are running the optimization for a sufficient number of iterations.

Performance Comparison of Hyperparameter Optimization Methods

The table below summarizes the key characteristics of different hyperparameter tuning strategies, highlighting why Hyperband and BOHB are superior for resource-intensive tasks.

Table 1: Comparison of Hyperparameter Optimization Methods

| Method | Key Principle | Strengths | Weaknesses | Best Use-Cases |

|---|---|---|---|---|

| Grid Search [44] | Exhaustive search over a defined grid | Simple to implement, guarantees to find the best point in the grid | Computationally intractable for high-dimensional spaces, wastes resources on poor parameters | Small, low-dimensional search spaces |

| Random Search [44] | Randomly samples from the search space | More efficient than grid search, better for high-dimensional spaces | May still waste resources evaluating clearly bad configurations, no learning from past trials | A better default than grid search for most cases, moderate-dimensional spaces |

| Bayesian Optimization [44] | Builds a probabilistic model to guide the search | Sample-efficient, intelligent search; good for expensive functions | Computational overhead of the surrogate model, can get stuck in local minima | Optimizing very expensive black-box functions with a limited budget |

| Hyperband [45] [42] | Uses successive halving with dynamic resource allocation | Very fast, good at quickly finding a decent configuration, resource-efficient | May discard promising configurations early due to aggressive pruning | Fast, preliminary model tuning and large-scale problems with limited resources |

| BOHB [41] [43] | Combines Bayesian Optimization with Hyperband | Best of both worlds: sample-efficient and fast, state-of-the-art performance | More complex to set up and run | Final-stage tuning for high-performance models and complex, resource-intensive tasks |

Detailed Experimental Protocols

Protocol 1: Implementing Hyperband for a Molecular Property Predictor

This protocol outlines the steps to apply the Hyperband algorithm to tune a graph neural network predicting solubility.

- Define the Resource: The budget is defined as the number of training epochs. Set the maximum budget `R` = 81 epochs and the reduction factor `η` = 3.

- Define the Search Space:

  - `learning_rate`: Log-uniform distribution between `1e-4` and `1e-2`.

  - `graph_layer_size`: Uniform integer distribution between `64` and `512`.

  - `batch_size`: Categorical choice of `32`, `64`, `128`.

- Calculate Brackets: Hyperband will calculate the number of brackets (`s_max + 1`). For `R` = 81 and `η` = 3, `s_max` is 4, leading to 5 brackets.

- Run Successive Halving: Each bracket `s` starts with on the order of `η^s` configurations (the original algorithm uses `n = ⌈(s_max + 1)/(s + 1) · η^s⌉`). In the first bracket (s=4), this gives `3^4 = 81` configurations, each trained for `R/η^s = 81/81 = 1` epoch.

- Iterate and Promote: Keep the best `1/η` fraction of configurations (e.g., 27 out of 81) and promote them to the next round with a budget of `η * previous_budget = 3` epochs. Repeat until only one configuration remains, trained with the full 81 epochs.

- Select Best: After running all brackets, the best configuration is the one that achieved the highest validation performance across all brackets.
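The bracket arithmetic in this protocol can be reproduced with a few lines of Python. The sketch below follows the bracket-size formula from the original Hyperband algorithm, `n = ⌈(s_max + 1)/(s + 1) · η^s⌉`, and assumes that `R` is an exact power of `η` so that all budgets are whole epochs; some implementations round the bracket sizes differently.

```python
def hyperband_schedule(max_budget, eta):
    """Return {s: [(n_configs, epochs_per_config), ...]} for every bracket."""
    s_max = 0
    while eta ** (s_max + 1) <= max_budget:  # integer floor(log_eta(R))
        s_max += 1
    schedule = {}
    for s in range(s_max, -1, -1):
        # Initial number of configurations: ceil((s_max + 1) / (s + 1) * eta^s).
        n = -(-(s_max + 1) * eta ** s // (s + 1))
        r = max_budget // eta ** s  # initial epochs per configuration
        # Successive halving: keep 1/eta of the configurations, multiply budget by eta.
        schedule[s] = [(n // eta ** i, r * eta ** i) for i in range(s + 1)]
    return schedule

schedule = hyperband_schedule(max_budget=81, eta=3)
print(len(schedule))  # number of brackets
print(schedule[4])    # rounds of the most aggressive bracket
```

The most aggressive bracket reproduces the sequence described above: 81 configurations at 1 epoch, then 27 at 3 epochs, and so on until a single configuration is trained for 81 epochs.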

The following diagram illustrates the workflow and resource allocation for one bracket of the Hyperband algorithm.

Protocol 2: Applying BOHB to Optimize a Reaction Yield Prediction Model

This protocol describes how to use BOHB to fine-tune a transformer-based model for predicting chemical reaction yields.

- Setup: Install the necessary libraries (`hpbandster`, or `Optuna`, which supports a BOHB-style setup).

- Define the Worker: Create a worker class that defines the objective function. This function:

- Takes a hyperparameter configuration as input.

- Builds the model architecture (e.g., number of transformer layers, attention heads) based on the configuration.

- Trains the model for the allocated budget (number of epochs).

- Returns the validation loss (e.g., Mean Squared Error) as the performance metric.

- Define the Search Space:

  - `learning_rate`: `hp.loguniform('lr', low=log(1e-5), high=log(1e-2))` [41]

  - `n_layers`: `hp.quniform('n_layers', 2, 8, 1)`

  - `dropout_rate`: `hp.uniform('dropout', 0.1, 0.5)`

  - `ffn_dim`: `hp.quniform('ffn_dim', 128, 512, 32)`

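- Run BOHB: Initialize the BOHB optimizer with the configuration space and run the optimization. BOHB will internally manage the Hyperband structure while using a model-based sampler (in the original BOHB formulation, a TPE-style kernel density estimator rather than a Gaussian process) to model which regions of the search space yield low loss and to suggest promising hyperparameters for new configurations [41].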

- Analysis: After the optimization completes, retrieve the best configuration and use it to train a final model on the full training set.

The diagram below shows how BOHB integrates Bayesian optimization with the Hyperband structure.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a Hyperband/BOHB Experiment in Chemical ML

| Item | Function | Example in Chemical ML Context |
|---|---|---|
| Configuration Space | Defines the hyperparameters and their possible values to be searched. | `{'learning_rate': loguniform(1e-5, 1e-2), 'fingerprint_size': [512, 1024, 2048]}` [41] |
| Budget Parameter (R) | The maximum amount of resource allocated to a single configuration. | Maximum number of training epochs (e.g., 100), or the size of the molecular data subset (e.g., 50% of data). |
| Reduction Factor (η) | Controls the aggressiveness of configuration elimination. A standard value is 3. | An η=3 means only the top 1/3 of configurations are promoted to the next, higher-budget round. |
| Objective Function | The function that evaluates a configuration's performance at a given budget. | A function that takes hyperparameters, builds a model, trains it for 'b' epochs, and returns the validation RMSE for a property prediction. |
| Optimization Framework | Software library that implements the algorithm. | Popular choices include hpbandster, Optuna, Ray Tune, and scikit-optimize. |

Frequently Asked Questions (FAQs)

Q1: Why should I move beyond simple grid or random search for hyperparameter optimization in chemical ML?

While grid and random search are straightforward to implement, they scale poorly with dimensionality: the size of a grid grows exponentially with the number of hyperparameters, and random search never learns from past trials. [46] Bayesian Optimization methods, like those in Optuna, are far more sample-efficient. They build a probabilistic model of your objective function to propose the next promising hyperparameters, balancing exploration of unknown regions against exploitation of known good ones. [47] This can cut your search time by 10x or more, a critical advantage when each model training is computationally expensive, such as with large molecular property predictors. [47]
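The exponential growth of a grid is easy to verify directly. The short sketch below enumerates a grid with five candidate values per hyperparameter (an arbitrary choice for illustration) and counts the points as hyperparameters are added.

```python
from itertools import product

values = [0.1, 0.2, 0.3, 0.4, 0.5]   # 5 candidate values per hyperparameter

# Enumerate the full grid for 1 to 5 hyperparameters and record its size.
grid_sizes = {
    n_params: len(list(product(values, repeat=n_params)))
    for n_params in range(1, 6)
}

for n_params, size in grid_sizes.items():
    print(f"{n_params} hyperparameters -> {size} grid points")
```

With five hyperparameters the grid already requires 5^5 = 3125 full training runs, before any repeats or cross-validation.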

Q2: My model's performance has plateaued during HPO. How can I break through this?

Performance plateaus often signal that your search is stuck in a local optimum. To address this:

- Adopt Advanced Metaheuristics: Algorithms like the Multi-Strategy Parrot Optimizer (MSPO) or Hierarchically Self-Adaptive PSO (HSAPSO) integrate strategies like chaotic parameters and nonlinear inertia weight to enhance global exploration ability and escape local optima. [48] [49] These have shown success in demanding fields like breast cancer image classification and drug target identification.

- Use Aggressive Pruning: Libraries like Optuna and Ray Tune can automatically stop underperforming trials early (a process called "pruning"). [47] This re-allocates computational resources from hopeless configurations to more promising ones, allowing you to explore a wider search space with the same budget.
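As a rough illustration of how pruning reallocates compute, the sketch below implements a simple median stopping rule in plain Python: a trial is stopped when its intermediate loss is worse than the median of what earlier trials reported at the same epoch. It is written in the spirit of the pruners mentioned above, not as a copy of any library's implementation; the function names and loss curves are made up for the example.

```python
from statistics import median


def should_prune(history, step, loss, min_trials=3):
    """True if `loss` at `step` is worse than the median of earlier trials."""
    previous = history.get(step, [])
    if len(previous) < min_trials:    # too little evidence: keep training
        return False
    return loss > median(previous)    # lower loss is better


def run_trial(loss_curve, history):
    """Replay a loss curve epoch by epoch; return the epochs actually used."""
    for step, loss in enumerate(loss_curve):
        if should_prune(history, step, loss):
            return step + 1           # stopped early at this epoch
        history.setdefault(step, []).append(loss)
    return len(loss_curve)


history = {}
curves = [
    [1.0, 0.8, 0.6],
    [0.9, 0.7, 0.5],
    [1.1, 0.9, 0.7],
    [2.0, 1.9, 1.8],   # clearly worse from the first epoch
]
epochs_used = [run_trial(curve, history) for curve in curves]
print(epochs_used)
```

The first three trials run to completion because there is not yet enough history to judge them; the fourth is stopped after a single epoch.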

Q3: I'm tuning for multiple objectives (e.g., model accuracy and inference speed). Which HPO techniques support this?

Single-objective optimization that only considers accuracy is often insufficient for real-world deployment. You need to find the Pareto front—the set of optimal trade-offs between your objectives. [47] Modern libraries like Optuna and Ray Tune have built-in support for multi-objective optimization. [46] [50] You can directly specify multiple metrics (e.g., accuracy and inference_time), and the optimizer will return a set of non-dominated solutions, allowing you to choose the best compromise for your specific application, such as a model that is both accurate and fast enough for high-throughput virtual screening. [46]
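The idea of a non-dominated set can be sketched without any HPO library. The example below filters a handful of candidate models down to their Pareto front for two objectives, accuracy (higher is better) and inference time (lower is better). The candidate names and numbers are invented for illustration.

```python
def dominates(a, b):
    """True if model `a` is at least as good as `b` everywhere and better somewhere."""
    no_worse = a["accuracy"] >= b["accuracy"] and a["inference_ms"] <= b["inference_ms"]
    better = a["accuracy"] > b["accuracy"] or a["inference_ms"] < b["inference_ms"]
    return no_worse and better


def pareto_front(models):
    """Return the models that no other model dominates."""
    return [m for m in models if not any(dominates(other, m) for other in models)]


# Hypothetical results from four tuned models.
candidates = [
    {"name": "A", "accuracy": 0.91, "inference_ms": 40.0},
    {"name": "B", "accuracy": 0.89, "inference_ms": 12.0},
    {"name": "C", "accuracy": 0.88, "inference_ms": 30.0},  # dominated by B
    {"name": "D", "accuracy": 0.93, "inference_ms": 95.0},
]

front = pareto_front(candidates)
print([m["name"] for m in front])
```

Model C drops out because B is both more accurate and faster; choosing among A, B, and D is then an application decision, for example favoring B for high-throughput screening.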

Q4: How can I visualize and interpret the impact of hyperparameters on my molecular model's predictions?

For models using SMILES strings, the XSMILES tool is designed for this purpose. [51] It provides interactive visualizations that coordinate a 2D molecular diagram with a bar chart of the SMILES string. This allows you to see how attribution scores from Explainable AI (XAI) techniques are mapped to both atoms and non-atom tokens (like brackets), helping you debug your model and identify learned chemical patterns that influence predictions. [51]

Troubleshooting Guides

Issue: Reproducibility Problems in HPO Experiments

Problem: Your HPO results are not consistent across different runs, making it difficult to trust or report your findings.

Diagnosis and Solution:

| Step | Action | Rationale |
|---|---|---|
| 1 | Set random seeds for all components (Python, NumPy, TensorFlow/PyTorch, etc.). | Ensures the model initializes and trains identically each time. |
| 2 | Seed the sampler in your HPO library (e.g., in Optuna, pass `optuna.samplers.TPESampler(seed=...)` to `optuna.create_study()` via its `sampler` argument). | Makes the hyperparameter search sequence reproducible. [47] |
| 3 | Ensure your training/validation data split is fixed and repeatable. | Prevents performance metrics from varying due to different data splits. |
| 4 | Version control your code, search space definition, and environment. | Provides a complete snapshot for replicating the exact experimental conditions. |
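Steps 1 to 3 can be illustrated with the standard library alone. The sketch below uses a dedicated, seeded random generator for the data split and another for the search, so neither depends on global state. It is a simplified stand-in: a real project would also seed NumPy, the deep learning framework, and the HPO library's sampler. The function names are hypothetical.

```python
import random


def fixed_split(items, val_fraction=0.2, seed=42):
    """Shuffle with a local seeded RNG and return (train, validation)."""
    rng = random.Random(seed)            # independent of the global RNG
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_val = int(len(shuffled) * val_fraction)
    return shuffled[n_val:], shuffled[:n_val]


def random_search_learning_rates(n_trials, seed):
    """Return the sequence of log-uniform learning rates a search would try."""
    rng = random.Random(seed)
    return [10 ** rng.uniform(-5, -2) for _ in range(n_trials)]


train_ids, val_ids = fixed_split(range(100))
run_1 = random_search_learning_rates(5, seed=0)
run_2 = random_search_learning_rates(5, seed=0)
print(run_1 == run_2)   # identical seeds give identical search sequences
```

Passing the seed explicitly, rather than calling a global seeding function once at import time, keeps the split and the search reproducible even when other code consumes random numbers in between.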

Issue: Managing Computational Cost and Time

Problem: Hyperparameter searches are taking too long or consuming excessive resources.

Diagnosis and Solution:

| Strategy | Implementation | Benefit |
|---|---|---|
| Early Stopping | Use schedulers like HyperBand/ASHA in Ray Tune or Optuna's pruning. | Automatically terminates poorly-performing trials, saving >50% of compute time. [52] |
| Distributed Parallelism | Use Ray Tune to parallelize trials across multiple GPUs/nodes. | Reduces wall-clock time significantly; can scale to hundreds of parallel workers. [52] |
| Multi-Fidelity Optimization | Start trials with small subsets of data or fewer training epochs. | Quickly approximates model potential before committing full resources. |

Issue: Navigating Complex, Conditional Search Spaces

Problem: Your model has hyperparameters that are only active when another hyperparameter has a specific value (e.g., the choice of optimizer determines which related parameters are valid).

Diagnosis and Solution: Modern HPO frameworks like Optuna use a "define-by-run" API. This allows you to define the search space dynamically within your objective function. [50]

This imperative style seamlessly handles conditional hierarchies, preventing the search algorithm from wasting trials on invalid parameter combinations. [50]
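The conditional structure can be sketched in plain Python. In Optuna the same branching would be expressed with `trial.suggest_categorical` and `trial.suggest_float` calls inside the objective; the library-free version below only illustrates the principle that child hyperparameters are drawn on the branch where they apply. The optimizer names, ranges, and the `sample_config` helper are assumptions chosen for the example.

```python
import random


def sample_config(rng):
    """Draw one configuration; child hyperparameters exist only on their branch."""
    config = {
        "optimizer": rng.choice(["adam", "sgd"]),
        "lr": 10 ** rng.uniform(-5, -2),          # shared by both branches
    }
    if config["optimizer"] == "sgd":
        config["momentum"] = rng.uniform(0.0, 0.99)   # only meaningful for SGD
    else:
        config["beta1"] = rng.uniform(0.8, 0.99)      # only meaningful for Adam
    return config


rng = random.Random(0)
samples = [sample_config(rng) for _ in range(50)]
print(samples[0])
```

Because `momentum` is never sampled for Adam (and `beta1` never for SGD), no trial is spent on a combination the training code would ignore or reject.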

Experimental Protocols & Data Presentation

Protocol: Benchmarking HPO Algorithms on a Molecular Property Prediction Task

This protocol outlines a fair comparison of different HPO methods for a chemical ML task.

1. Objective: Minimize the Mean Squared Error (MSE) on a validation set for a molecular property prediction model (e.g., predicting solubility from SMILES strings).

2. Dataset: Use a standardized public dataset (e.g., from MoleculeNet). Perform a fixed 80/20 train/validation split.

3. Model: A common architecture, such as a Graph Convolutional Network (GCN) or an LSTM-based SMILES parser.

4. Search Space:

- `learning_rate`: LogUniform between 1e-5 and 1e-2

- `batch_size`: Choice of [32, 64, 128, 256]

- `layer_size`: Choice of [64, 128, 256]

- `num_layers`: IntUniform between 1 and 5

- `dropout_rate`: Uniform between 0.1 and 0.5

5. HPO Methods to Compare:

- Baseline: Random Search

- Bayesian Optimization (via Optuna)

- Population-Based Training (via Ray Tune)

6. Evaluation:

- Run each HPO method for a fixed number of trials (e.g., 50) or a fixed time budget (e.g., 24 hours).

- Record the best validation score and the time to find it.

- Run the final best configuration on a held-out test set.
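A skeleton of the random-search baseline from this protocol might look like the following. It samples from the search space in step 4, runs a fixed number of trials, and records the best score along with the trial index and elapsed time at which it was found. The objective `toy_validation_mse` is a placeholder that stands in for training a real model and has no chemical meaning; all function names are hypothetical.

```python
import math
import random
import time


def toy_validation_mse(config):
    """Placeholder for 'train the model and return validation MSE'."""
    return (math.log10(config["learning_rate"]) + 3) ** 2 + (config["dropout_rate"] - 0.2) ** 2


def sample(rng):
    """One draw from the benchmark search space."""
    return {
        "learning_rate": 10 ** rng.uniform(-5, -2),
        "batch_size": rng.choice([32, 64, 128, 256]),
        "layer_size": rng.choice([64, 128, 256]),
        "num_layers": rng.randint(1, 5),
        "dropout_rate": rng.uniform(0.1, 0.5),
    }


def run_random_search(n_trials=50, seed=0):
    """Return the best score, its configuration, and when it was found."""
    rng = random.Random(seed)
    start = time.perf_counter()
    best = {"score": float("inf"), "config": None, "trial": None, "seconds": None}
    for trial in range(n_trials):
        config = sample(rng)
        score = toy_validation_mse(config)
        if score < best["score"]:
            best = {"score": score, "config": config, "trial": trial,
                    "seconds": time.perf_counter() - start}
    return best


result = run_random_search()
print(result["trial"], round(result["score"], 4))
```

Running every HPO method through the same harness, with the same trial count, seed handling, and data split, is what makes the comparison fair.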

Quantitative Comparison of HPO Techniques

The following table summarizes the typical performance characteristics of various HPO algorithms, as observed in literature and practice. [46] [13] [47]

| Optimization Algorithm | Sample Efficiency | Best For | Key Advantages | Known Limitations |
|---|---|---|---|---|
| Grid Search | Very Low | Small, low-dimensional search spaces (2-3 params). | Simple, exhaustive, highly interpretable results. | Intractable for complex spaces; wastes resources. |
| Random Search | Low | Moderate-dimensional spaces; initial exploration. | Better than grid for same budget; easy to implement. | Still inefficient; does not learn from past trials. |
| Bayesian Opt. (Optuna) | High | Expensive black-box functions; limited trial budgets. | Sample-efficient; smart trade-off (explore/exploit). | Overhead can be high for very cheap functions. |
| Metaheuristics (PSO, MSPO) | Medium-High | Complex, non-convex spaces; escaping local minima. | Strong global search; good for novel architectures. | Often have many parameters of their own to tune; complex. |
| Population-Based (PBT) | Varies | Dynamic schedules; large-scale parallel resources. | Optimizes during training; discovers adaptive schedules. | Requires significant parallel resources. |

Workflow and Relationship Visualizations

HPO for Chemical ML Workflow

HPO Algorithm Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

| Tool/Library | Primary Function | Application in Chemical ML HPO |
|---|---|---|
| Optuna | Define-by-run HPO framework | Optimizes hyperparameters for SMILES-based RNNs or GCNs; supports multi-objective optimization for balancing accuracy/model size. [50] [47] |
| Ray Tune | Scalable experiment execution | Orchestrates distributed HPO sweeps across clusters; integrates with Optuna, HyperOpt; implements PBT for adaptive schedules. [52] |
| KerasTuner | Native Keras/TensorFlow HPO | Tunes standard Keras models with minimal code changes; suitable for prototyping feedforward networks on molecular fingerprints. |
| XSMILES | Interactive visualization for SMILES/XAI | Interprets attributions for atom and non-atom tokens; debugs model behavior by linking SMILES strings to molecular diagrams. [51] |
| Scikit-learn | ML library with basic HPO | Provides `GridSearchCV` and `RandomizedSearchCV` for tuning models on pre-computed molecular descriptor arrays. |
| RDKit | Cheminformatics library | Generates molecular features and fingerprints; converts SMILES to 2D diagrams for visualization tools like XSMILES. [51] |

Technical Support Center

This technical support center provides troubleshooting guides and frequently asked questions (FAQs) for researchers applying Bayesian Optimization (BO) to molecular property prediction, framed within the context of a thesis on hyperparameter tuning mistakes in chemical machine learning research.

Frequently Asked Questions (FAQs)

FAQ 1: My BO algorithm seems stuck in a local optimum and isn't exploring the chemical space effectively. What can I do?

Answer: This is a common issue where the acquisition function over-emphasizes exploitation. We recommend these steps:

- Implement a Pareto-Aware Multi-Objective Approach: For molecular design, switch from a single-objective to a multi-objective BO (MOBO) using a Pareto-based acquisition function like Expected Hypervolume Improvement (EHVI). Benchmark studies show EHVI consistently outperforms scalarized alternatives in Pareto front coverage, convergence speed, and chemical diversity, especially in low-data regimes where trade-offs are nontrivial [53].

- Integrate Linguistic Reasoning: A framework like "Reasoning BO" can be used, which incorporates a Large Language Model (LLM) to guide the sampling process. The LLM uses domain knowledge to generate scientific hypotheses and assign confidence scores to candidate experiments, helping the algorithm escape local optima by leveraging global heuristics [54].
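EHVI builds on the hypervolume indicator: the area (in two objectives) dominated by the current Pareto front relative to a reference point. EHVI is the expectation of the improvement in that area under the surrogate model's predictive distribution. The sketch below computes only the deterministic part, the hypervolume and the improvement from one known candidate, for two maximized objectives. The objective values are invented and the function name is hypothetical.

```python
def hypervolume_2d(points, reference):
    """Area dominated by `points` (both objectives maximized) above `reference`."""
    ref_x, ref_y = reference
    # Sweep from the largest first objective downward, adding each new strip.
    candidates = sorted((p for p in points if p[0] > ref_x and p[1] > ref_y), reverse=True)
    area, best_y = 0.0, ref_y
    for x, y in candidates:
        if y > best_y:                      # point extends the front upward
            area += (x - ref_x) * (y - best_y)
            best_y = y
    return area


# Hypothetical front for two maximized objectives, e.g. (potency, solubility).
front = [(3.0, 1.0), (2.0, 2.0), (1.0, 3.0)]
reference = (0.0, 0.0)

current = hypervolume_2d(front, reference)
improvement = hypervolume_2d(front + [(2.5, 2.5)], reference) - current
print(current, improvement)
```

A candidate that lands inside the already dominated region yields zero improvement, which is how a hypervolume-based acquisition function steers sampling toward gaps in the front rather than toward a single scalarized score.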

FAQ 2: How should I handle the mix of categorical and continuous variables in my reaction optimization?

Answer: Optimizing a space with both categorical (e.g., solvent, ligand) and continuous (e.g., temperature, concentration) variables is a key strength of BO.

- Representation is Key: Represent the reaction condition space as a discrete combinatorial set of plausible conditions, which allows for automatic filtering of impractical combinations (e.g., unsafe reagent pairs) [8].

- Use the Right Surrogate Model: Gaussian Process (GP) surrogates with specialized kernels can handle such mixed spaces. Furthermore, tree-based models like XGBoost have also demonstrated excellent predictive performance (R² ≥ 0.9) on mixed-variable problems in chemical process optimization, such as ultrafiltration process design [55].
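The discrete combinatorial representation described above can be sketched by enumerating every combination of categorical and discretized continuous settings, then filtering out impractical ones before the optimizer ever sees them. The solvents, ligands, temperatures, and the single exclusion rule below are illustrative assumptions; the rule assumes an open vessel at atmospheric pressure, where THF boils at about 66 °C.

```python
from itertools import product

solvents = ["DMF", "THF", "water"]
ligands = ["PPh3", "XPhos"]
temperatures_c = [25, 60, 100]


def is_practical(solvent, ligand, temp_c):
    """Illustrative filter: reject temperatures above the solvent's boiling point."""
    if solvent == "THF" and temp_c > 66:
        return False
    return True


all_conditions = list(product(solvents, ligands, temperatures_c))
search_space = [c for c in all_conditions if is_practical(*c)]

print(len(all_conditions), "combinations,", len(search_space), "after filtering")
```

The optimizer then ranks only the entries of `search_space`, so it cannot propose a condition that the filter has ruled out.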

FAQ 3: For a new optimization campaign with limited budget, should I use a single-fidelity or multi-fidelity approach?

Answer: The choice depends on the availability of lower-fidelity data sources.

- When to Use Multi-Fidelity BO (MFBO): If you have access to faster, cheaper, but less accurate data sources (e.g., computational simulations, low-resolution experiments), MFBO can significantly speed up discovery. A systematic evaluation recommends using MFBO when the low-fidelity function is "informative" and its cost is substantially lower than the high-fidelity experiment [56].

- Best Practices: Follow established guidelines for MFBO in materials and molecular research, which include careful selection of acquisition functions and a systematic evaluation of the cost-informativeness trade-off [56].