Bridging the Digital-Experimental Divide: A Practical Guide to Validating Molecular Property Predictions

Abstract

This article addresses the critical challenge of validating computational molecular property predictions against experimental data, a central task in modern drug discovery. As machine learning models become indispensable for prioritizing compounds, ensuring their reliability is paramount. We explore the foundational causes of data discrepancies, showcase advanced methodological frameworks like multi-task and transfer learning designed for low-data regimes, and provide actionable strategies for troubleshooting common issues such as negative transfer and data heterogeneity. A strong emphasis is placed on rigorous validation protocols and the use of tools like AssayInspector for data consistency assessment, providing researchers and drug development professionals with a comprehensive roadmap to enhance the predictive accuracy and regulatory confidence of their computational models.

The Data Foundation: Understanding Sources of Error in Molecular Property Prediction

The Critical Impact of Data Heterogeneity and Distributional Misalignments

In computational drug discovery, the accuracy of molecular property prediction models is foundational to virtual screening and compound optimization. However, the performance of these models is critically limited by data heterogeneity and distributional misalignments across experimental sources. These challenges introduce inconsistencies that obscure biological signals and ultimately compromise predictive reliability [1]. As machine learning (ML) becomes increasingly embedded in early-stage drug development, understanding and addressing these data quality issues has become a prerequisite for building trustworthy predictive pipelines. This guide provides a comparative analysis of contemporary methodologies designed to mitigate these challenges, offering researchers a framework for selecting appropriate tools and strategies based on empirical performance data and methodological rigor.

Comparative Analysis of Methodologies and Performance

The table below summarizes core methodologies addressing data heterogeneity, their technical approaches, and performance characteristics.

Table 1: Comparative Analysis of Molecular Property Prediction Methods

| Method | Core Approach | Technical Innovation | Reported Performance Gain | Primary Application Context |
|---|---|---|---|---|
| AssayInspector [1] | Data Consistency Assessment | Statistical tests, visualization, and alerts for dataset discrepancies. | Prevents performance degradation from naive data integration. | Pre-modeling data quality control for ADME/Tox properties. |
| CFS-HML [2] | Heterogeneous Meta-Learning | Separates property-specific & shared knowledge; graph neural networks with self-attention. | Substantial improvement in few-shot predictive accuracy. | Few-shot learning with limited labeled data. |
| MolFCL [3] | Contrastive & Prompt Learning | Fragment-based graph augmentation; functional group prompt tuning. | Outperforms baselines on 23 property prediction tasks. | General molecular property prediction with interpretability. |
| AAIS [4] | Adversarial Data Augmentation | Adaptive augmentation using influence functions for imbalanced data. | 1%-15% AUC; 1%-35% F1-score. | Class-imbalanced, multi-task classification. |
| ProtoMol [5] | Prototype-Guided Multimodal Learning | Aligns molecular graphs & text via a unified prototype space. | Outperforms state-of-the-art baselines. | Integrating structural and textual molecular information. |

Quantitative performance is a key differentiator. The AAIS framework reports improvements of 1-15% in AUC and 1-35% in F1-score, particularly for class-imbalanced and multi-task learning problems [4]. MolFCL is reported to outperform state-of-the-art baselines on 23 diverse molecular property prediction datasets [3]. CFS-HML targets data-scarce settings, with its reported advantage growing as the number of training samples shrinks [2].

Experimental Protocols and Workflows

Data Consistency Assessment with AssayInspector

The AssayInspector package provides a systematic workflow for detecting data misalignments prior to model training. The methodology is model-agnostic and can be applied to both regression and classification tasks involving physicochemical and pharmacokinetic data [1].

Table 2: Key Research Reagent Solutions for Data Consistency Assessment

| Item/Tool | Function | Application Context |
|---|---|---|
| AssayInspector | Python package for data consistency assessment. | Identifies outliers, batch effects, and endpoint discrepancies across datasets. |
| Two-sample KS Test | Statistical comparison of endpoint distributions. | Detects significant differences in regression task endpoints (e.g., half-life). |
| Chi-square Test | Statistical comparison of class distributions. | Assesses consistency in classification task labels across sources. |
| UMAP | Dimensionality reduction for chemical space visualization. | Maps dataset coverage and identifies potential applicability domains. |
| Tanimoto Coefficient | Molecular similarity metric based on ECFP4 fingerprints. | Quantifies structural similarity and divergence between data sources. |

The experimental protocol involves three key phases. First, Descriptive Analysis generates summary statistics (mean, standard deviation, quartiles for regression; class counts for classification) for each data source. Second, Statistical Testing applies the two-sample Kolmogorov-Smirnov test to compare endpoint distributions for regression tasks and the Chi-square test for classification tasks. Finally, Visualization and Alert Generation creates property distribution plots, chemical space maps via UMAP, and feature similarity plots, culminating in an insight report that flags conflicting, divergent, or redundant datasets [1].
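The first two phases can be illustrated with a minimal, standard-library sketch. It is not AssayInspector's API: the helper names `describe` and `ks_statistic` and the endpoint values are hypothetical, and the code computes only the KS statistic (the largest gap between the two empirical distribution functions), not a p-value. In practice a library routine such as `scipy.stats.ks_2samp` would normally be used to obtain both.

```python
import statistics
from bisect import bisect_right


def describe(values):
    """Summary statistics for one source's regression endpoint."""
    q1, median, q3 = statistics.quantiles(values, n=4)
    return {
        "n": len(values),
        "mean": statistics.fmean(values),
        "std": statistics.stdev(values),
        "q1": q1,
        "median": median,
        "q3": q3,
    }


def ks_statistic(sample_a, sample_b):
    """Two-sample KS statistic: largest gap between the empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    gap = 0.0
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)  # fraction of a that is <= x
        cdf_b = bisect_right(b, x) / len(b)  # fraction of b that is <= x
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


# Hypothetical log-transformed half-life values from two data sources.
source_a = [0.2, 0.4, 0.5, 0.7, 0.9, 1.1]
source_b = [1.0, 1.2, 1.5, 1.6, 1.9, 2.2]

summary_a = describe(source_a)
summary_b = describe(source_b)
d_stat = ks_statistic(source_a, source_b)
print(summary_a["mean"], summary_b["mean"], d_stat)
```

A statistic close to 1 means the two endpoint distributions barely overlap, which is the kind of misalignment the insight report is meant to flag before the sources are merged.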

Diagram: AssayInspector workflow for data consistency assessment.

Fragment-Based Contrastive Learning with MolFCL

The MolFCL framework introduces a novel approach to molecular representation learning that integrates chemical prior knowledge through a two-stage process: pre-training with fragment-based contrastive learning and fine-tuning with functional group-based prompt learning [3].

Pre-training Phase: The model first decomposes molecules into smaller fragments using the BRICS algorithm, which preserves the reaction relationships between fragments. This creates an augmented molecular graph that incorporates both atomic-level and fragment-level perspectives without violating the original molecular environment. A contrastive learning framework then trains the model to maximize the similarity (using NT-Xent loss) between the original molecular graph and its augmented counterpart while minimizing similarity with other molecules in the batch [3].
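To make the contrastive objective concrete, the following is a minimal sketch of the NT-Xent loss in plain Python. It assumes the embeddings of the original and fragment-augmented graphs are already available as lists of floats; the function names and the temperature of 0.5 are illustrative choices, not values taken from MolFCL.

```python
import math


def cosine(u, v):
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nt_xent(originals, augmented, temperature=0.5):
    """NT-Xent loss over N (original, augmented) embedding pairs."""
    z = list(originals) + list(augmented)
    n = len(originals)
    total = 0.0
    for i in range(2 * n):
        positive = (i + n) % (2 * n)  # the other view of the same molecule
        logits = {
            k: cosine(z[i], z[k]) / temperature
            for k in range(2 * n)
            if k != i  # an anchor is never compared with itself
        }
        log_denominator = math.log(sum(math.exp(v) for v in logits.values()))
        total += log_denominator - logits[positive]
    return total / (2 * n)


# Hypothetical 2-D embeddings for two molecules.
originals = [[1.0, 0.0], [0.0, 1.0]]
well_aligned = [[0.9, 0.1], [0.1, 0.9]]  # each view stays close to its molecule
misaligned = [[0.1, 0.9], [0.9, 0.1]]    # views swapped between molecules

loss_aligned = nt_xent(originals, well_aligned)
loss_misaligned = nt_xent(originals, misaligned)
print(loss_aligned, loss_misaligned)
```

The loss is lower when each augmented view is most similar to its own molecule, which is the behavior the pre-training stage is optimizing for.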

Fine-tuning Phase: For downstream property prediction tasks, MolFCL introduces a functional group-based prompt learning mechanism. This approach incorporates knowledge of functional groups and their corresponding atomic signals to guide the model's attention toward chemically meaningful substructures during property prediction, enhancing both performance and interpretability [3].

Diagram: MolFCL pre-training with fragment-based contrastive learning.

Discussion

The comparative analysis reveals that addressing data heterogeneity requires a multifaceted approach tailored to specific research contexts. For organizations aggregating data from multiple public sources, AssayInspector provides an essential first line of defense against dataset misalignments that can systematically degrade model performance [1]. In scenarios characterized by extreme data scarcity, such as predicting properties for novel chemotypes, CFS-HML's meta-learning framework offers a robust solution by effectively separating property-specific and property-shared knowledge [2].

For most general-purpose molecular property prediction tasks, MolFCL is a compelling option because of its reported performance across diverse benchmarks and its integration of chemical prior knowledge without altering the molecular environment [3]. In specialized contexts involving class imbalance, a common challenge in toxicity prediction, AAIS provides targeted augmentation of influential samples near decision boundaries, which is reported to substantially improve minority-class performance [4]. Finally, for research requiring integration of structural and textual information, ProtoMol reports state-of-the-art results through its unified prototype space and hierarchical cross-modal alignment [5].

The progression of methodologies from simply aggregating larger datasets to intelligently reconciling and augmenting existing data reflects a maturation of the field. The most impactful advances now come from strategies that explicitly acknowledge and address the fundamental challenges of experimental noise, contextual dependency, and distributional shift inherent to biochemical data.

The accuracy of machine learning (ML) models in molecular property prediction is fundamentally constrained by the quality and consistency of their training data. Within drug discovery, this challenge is particularly acute for preclinical safety and pharmacokinetic (ADME) property prediction, where high-stakes decisions rely on sparse, heterogeneous datasets often compiled from multiple public and proprietary sources [1]. The integration of diverse datasets presents a significant opportunity to increase sample sizes and expand chemical space coverage. However, this practice is undermined by a critical, often overlooked problem: significant distributional misalignments and annotation inconsistencies between gold-standard data sources and popular benchmarks [1]. These discrepancies, arising from differences in experimental protocols, measurement conditions, and chemical space coverage, introduce noise that can degrade model performance, leading to unreliable predictions that misguide the drug discovery process. This guide systematically analyzes the nature and impact of these discrepancies, providing researchers with methodologies for their detection and mitigation to ensure more robust molecular property prediction.

Systematic Analysis of Dataset Discrepancies

Nature and Origins of Data Inconsistencies

The discrepancies between gold-standard and benchmark data sources are not merely random noise but stem from systematic differences that can profoundly impact model generalization.

- Experimental Protocol Variations: Data for properties like half-life and clearance are often aggregated from different laboratories employing varied experimental conditions (e.g., in vitro versus in vivo studies, different cell lines, or measurement techniques) [1]. These procedural differences introduce batch effects that create distributional shifts between datasets ostensibly measuring the same property.

- Chemical Space Coverage Differences: Gold-standard datasets like those from Obach et al. and Lombardo et al., often curated from literature with rigorous quality control, may cover different regions of chemical space compared to larger, more automated benchmark collections such as Therapeutic Data Commons (TDC) [1]. When models are trained on one distribution and tested on another, performance typically degrades.

- Temporal and Spatial Data Disparities: Temporal differences, such as variations in measurement years, can lead to inflated performance estimates when using random dataset splits rather than time-split evaluations that better reflect real-world prediction scenarios [6]. Spatial disparities refer to differences in how data points cluster within the latent feature space, affecting knowledge transfer between tasks [6].
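A time-split evaluation of the kind mentioned above can be sketched in a few lines. The record fields (`smiles`, `year`, `value`) and the cutoff year are hypothetical; the point is only that every test measurement postdates every training measurement, unlike a random split.

```python
def time_split(records, cutoff_year):
    """Train on measurements up to cutoff_year, test on later ones."""
    train = [r for r in records if r["year"] <= cutoff_year]
    test = [r for r in records if r["year"] > cutoff_year]
    return train, test


# Hypothetical measurements annotated with the year they were reported.
records = [
    {"smiles": "CCO", "year": 2012, "value": 1.2},
    {"smiles": "CCN", "year": 2015, "value": 0.8},
    {"smiles": "CCC", "year": 2019, "value": 2.1},
    {"smiles": "CCCl", "year": 2021, "value": 1.7},
]

train, test = time_split(records, cutoff_year=2016)
print(len(train), len(test))
```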

Quantifying the Impact on Predictive Performance

The consequences of these discrepancies are not merely theoretical: systematic analyses have documented measurable impacts on model performance, as summarized in the table below.

Table 1: Impact of Dataset Discrepancies on Model Performance

| Discrepancy Type | Affected Molecular Properties | Observed Impact on Models | Key Evidence |
|---|---|---|---|
| Distributional Misalignment | Half-life, Clearance, Aqueous Solubility | Decreased predictive accuracy when integrating datasets without addressing misalignments [1] | Naive data integration degraded performance despite larger training set [1] |
| Annotation Inconsistency | ADME properties, Toxicity endpoints | Introduction of label noise; conflicting learning signals [1] | Inconsistent property annotations between gold-standard and benchmark sources [1] |
| Task Imbalance | Multiple properties in MTL settings | Negative transfer in Multi-Task Learning [6] | Performance drops of up to 15.3% on ClinTox dataset due to gradient conflicts [6] |

The performance degradation illustrated in Table 1 demonstrates that simply aggregating more data, without rigorous consistency assessment, can be counterproductive. For instance, one study found that data standardization, despite harmonizing discrepancies and increasing training set size, did not always lead to improved predictive performance [1].

Experimental Protocols for Data Consistency Assessment

Methodological Framework for Discrepancy Detection

A systematic approach to data consistency assessment prior to model training is essential for reliable molecular property prediction. The following workflow outlines a comprehensive methodology for identifying and diagnosing dataset discrepancies.

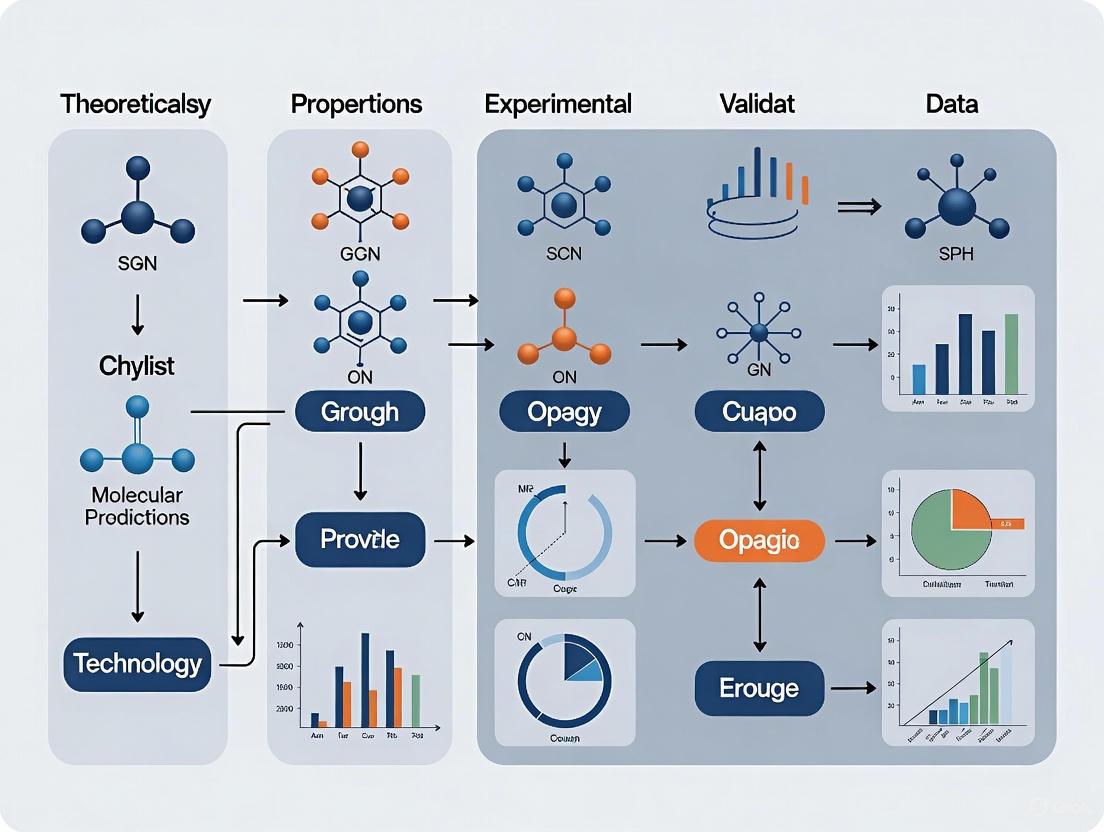

Diagram 1: Experimental workflow for data consistency assessment, illustrating the stepwise methodology from data input to integration decision-making.

The experimental workflow involves both quantitative and visual diagnostics to assess dataset compatibility:

- Step 1: Generate Descriptive Statistics: Compute fundamental parameters for each data source, including sample counts, endpoint statistics (mean, standard deviation, quartiles for regression; class ratios for classification), and molecular diversity metrics [1].

- Step 2: Statistical Distribution Comparison: Apply statistical tests such as the two-sample Kolmogorov-Smirnov test for regression tasks or Chi-square tests for classification tasks to identify significant differences in endpoint distributions between sources [1].

- Step 3: Chemical Space and Feature Similarity Analysis: Calculate within-source and between-source molecular similarity using Tanimoto coefficients for ECFP4 fingerprints or standardized Euclidean distance for molecular descriptors [1]. Visualize chemical space coverage using UMAP to identify potential applicability domain mismatches [1].

- Step 4: Outlier and Batch Effect Detection: Identify statistical outliers, out-of-range data points, and systematic batch effects that may indicate experimental artifacts or data quality issues [1].

- Step 5: Generate Insight Report: Compile diagnostic results into a comprehensive report flagging dissimilar datasets based on descriptor profiles, datasets with conflicting annotations for shared molecules, and datasets with significantly different endpoint distributions [1].

- Step 6: Informed Data Integration Decision: Based on the insight report, make strategic decisions about whether and how to integrate datasets, potentially excluding sources with irreconcilable differences or applying appropriate normalization techniques.
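Two of the diagnostics in the steps above, between-source Tanimoto similarity (Step 3) and conflicting annotations for shared molecules (Step 5), can be sketched as follows. This is an illustration under stated assumptions rather than AssayInspector's implementation: fingerprints are represented as sets of on-bit indices (as would be derived upstream from ECFP4 fingerprints), molecules are keyed by a canonical identifier, and all names, values, and the tolerance are hypothetical.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as on-bit sets."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def nearest_neighbor_similarity(source, reference):
    """For each fingerprint in source, similarity to its closest match in reference."""
    return [max(tanimoto(fp, ref) for ref in reference) for fp in source]


def conflicting_annotations(source_a, source_b, tolerance):
    """Shared molecules whose endpoint values differ by more than tolerance."""
    shared = source_a.keys() & source_b.keys()
    return {
        mol: (source_a[mol], source_b[mol])
        for mol in sorted(shared)
        if abs(source_a[mol] - source_b[mol]) > tolerance
    }


# Hypothetical fingerprints (on-bit indices) for two small sources.
fps_a = [{1, 2, 3}, {10, 11}]
fps_b = [{2, 3, 4}, {20, 21}]
coverage = nearest_neighbor_similarity(fps_a, fps_b)

# Hypothetical endpoint values keyed by canonical SMILES.
values_a = {"CCO": 1.2, "c1ccccc1": 0.5}
values_b = {"CCO": 2.4, "c1ccccc1": 0.55, "CCN": 1.0}
conflicts = conflicting_annotations(values_a, values_b, tolerance=0.3)
print(coverage, conflicts)
```

Low nearest-neighbor similarities suggest that one source covers chemistry the other does not, while a non-empty `conflicts` mapping points to molecules that need manual review before integration.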

Implementation with Specialized Tools

The AssayInspector package provides a model-agnostic, Python-based implementation of this methodological framework [1]. Its functionalities are specifically designed for comparing experimental datasets from distinct sources before aggregation in ML pipelines, supporting both regression and classification tasks with built-in chemical descriptor calculation and comprehensive visualization capabilities.
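For classification endpoints, the Chi-square comparison of class distributions can be sketched in the same spirit. The function below is a hand-written illustration, not part of AssayInspector, and the class counts are hypothetical. It returns only the test statistic; for a 2x2 table (one degree of freedom) the 5% critical value is 3.841, and a routine such as `scipy.stats.chi2_contingency` would normally be used to obtain a p-value.

```python
def chi_square_statistic(counts_by_source):
    """Chi-square statistic for a table with one row of class counts per source.

    Assumes every class occurs in at least one source, so that no expected
    count is zero.
    """
    row_totals = [sum(row) for row in counts_by_source]
    col_totals = [sum(col) for col in zip(*counts_by_source)]
    grand_total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(counts_by_source):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed - expected) ** 2 / expected
    return statistic


# Hypothetical [inactive, active] counts reported by two sources.
source_counts = [
    [80, 20],  # source A: 20% actives
    [50, 50],  # source B: 50% actives
]
CRITICAL_VALUE_DF1 = 3.841  # 5% significance level, one degree of freedom

chi2 = chi_square_statistic(source_counts)
distributions_differ = chi2 > CRITICAL_VALUE_DF1
print(chi2, distributions_differ)
```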

Computational Tools for Discrepancy Mitigation

Specialized Software for Data Consistency Assessment

The following table catalogs key computational tools and their specific applications in addressing dataset discrepancies.

Table 2: Research Reagent Solutions for Data Consistency Assessment

| Tool/Resource | Primary Function | Application Context | Key Features |
|---|---|---|---|
| AssayInspector [1] | Data consistency assessment prior to modeling | Physicochemical & ADME property prediction | Statistical comparisons, chemical space visualization, outlier detection, insight reports |
| ACS (Adaptive Checkpointing with Specialization) [6] | Mitigating negative transfer in Multi-Task Learning | Low-data regime property prediction | Task-specific early stopping, shared backbone with specialized heads |
| GSCDB138 [7] | Gold-standard benchmark for quantum chemistry | Density functional theory validation | 138 rigorously curated datasets with gold-standard accuracy |
| DES370K/DES5M [8] | Noncovalent interaction energy benchmarks | Force field and functional development | CCSD(T)/CBS interaction energies for 3,691 distinct dimers |
| Mol2vec with CatBoost [9] | NLP-based molecular featurization | Large-scale ionic liquid property screening | Natural language processing of SMILES strings for rich molecular representation |

Advanced Modeling Strategies for Handling Data Heterogeneity

Beyond assessment tools, specialized modeling approaches can inherently mitigate the effects of dataset discrepancies:

- Adaptive Checkpointing with Specialization (ACS): This training scheme for multi-task graph neural networks addresses negative transfer resulting from task imbalance and data distribution differences [6]. By combining a shared, task-agnostic backbone with task-specific heads and implementing adaptive checkpointing when negative transfer signals are detected, ACS preserves the benefits of multi-task learning while protecting individual tasks from detrimental parameter updates [6].

- Multi-Task Learning with Robust Architectures: When properly regularized, MTL can leverage correlations among related molecular properties to improve predictive performance, particularly in low-data regimes [6] [10]. However, careful architecture design is required to prevent gradient conflicts and capacity mismatches that exacerbate the impact of dataset discrepancies [6].

- Natural Language Processing (NLP) Featurization: Approaches like Mol2vec, which generate molecular embeddings from SMILES strings, have demonstrated superior predictive performance compared to traditional featurization techniques like Morgan fingerprints or quantum chemistry-derived descriptors for certain applications [9]. These methods may be less sensitive to certain types of experimental noise in the source data.

The reliability of molecular property prediction models is inextricably linked to the consistency of their underlying training data. Significant discrepancies between gold-standard and benchmark data sources—including distributional misalignments, annotation inconsistencies, and chemical space coverage differences—represent a critical challenge that can severely degrade model performance if left unaddressed. Through systematic data consistency assessment using specialized tools like AssayInspector, and the implementation of robust modeling strategies such as ACS for multi-task learning, researchers can identify and mitigate these discrepancies. The methodological framework presented in this guide provides a pathway toward more reliable integration of heterogeneous data sources, ultimately supporting the development of more accurate and generalizable predictive models in drug discovery and materials science. Future advancements will likely involve more sophisticated data quality metrics integrated directly into model training pipelines, as well as continued expansion of carefully curated gold-standard databases that serve as authoritative references for method validation.

In the pursuit of accelerating drug discovery and materials design, researchers increasingly rely on a hybrid approach, integrating rich in silico predictions with robust experimental validation. However, a significant and often underestimated challenge arises from the inherent variations in experimental protocols and computational conditions, which can introduce inconsistencies that compromise the reliability and reproducibility of data. These discrepancies are particularly pronounced in molecular property prediction, where differences in experimental assays, measurement techniques, and computational model training can lead to misaligned data distributions and conflicting annotations. For instance, substantial distributional misalignments and inconsistent property annotations have been identified between gold-standard data sources and popular benchmarks like the Therapeutic Data Commons [1]. This protocol-induced variability poses a major obstacle for machine learning models, as naive integration of heterogeneous data often degrades predictive performance instead of enhancing it [1]. This guide objectively compares the capabilities and limitations of experimental and in silico approaches, providing a structured framework for navigating protocol-induced variations to achieve more reliable molecular property prediction.

The table below summarizes the core characteristics of experimental and in silico data, highlighting key sources of variation that researchers must navigate.

Table 1: Characteristics and Variability Sources in Experimental vs. In Silico Data

| Aspect | Experimental Data | In Silico Data |
|---|---|---|
| Primary Nature | Direct physical measurement [11] | Computational simulation or prediction [11] |
| Typical Variability Sources | Experimental conditions (temperature, pressure) [9]; measurement techniques (e.g., different spectrometers) [11]; sample preparation protocols (e.g., lyophilization) [11]; biological system heterogeneity (e.g., cell lines, model organisms) | Model architecture and training schemes (e.g., MTL, STL) [6]; input data representation (e.g., fingerprints, 3D geometries) [12] [9]; algorithmic parameters and assumptions; training data quality and coverage [1] |
| Inherent Trade-offs | Cost: High (specialized equipment, reagents) [9]; Time: Slow (days to months) [9]; Coverage: Limited by practical constraints | Cost: Relatively low (computational resources) [9]; Time: Fast (seconds to days) [9]; Coverage: Can screen millions of candidates [9] |
| Key Challenges | Data scarcity for many molecular properties [6]; batch effects and inter-lab protocol differences [1]; difficulty in controlling all variables | Out-of-distribution (OOD) extrapolation [13]; data misalignments between sources [1]; model interpretability ("black box" issue) [14] |

Detailed Experimental Protocols and Methodologies

Key Experimental Protocols in Molecular Sciences

Understanding the specific methodologies behind data generation is crucial for interpreting results and identifying the root causes of variation.

Protocol for Neutron Scattering of Lyophilised Proteins: This protocol aims to characterize the dynamics of proteins in dehydrated (lyophilised) and weakly hydrated states, which is critical for pharmaceutical stability [11].

- Sample Preparation: Protein samples (e.g., apoferritin, insulin) are prepared at specific hydration levels, defined as h (grams of D2O per gram of protein). A system is considered lyophilised at h ≤ 0.05 and weakly hydrated at 0.05 < h < 0.38 [11].

- Data Collection: Experiments are performed using backscattering spectrometers (e.g., OSIRIS, IRIS) at facilities like the ISIS pulsed neutron and muon source. These instruments probe molecular dynamics within a temporal window of ~150 picoseconds [11].

- Key Measurement: The primary observable is the mean squared displacement (⟨u²(T)⟩) of protein hydrogen atoms, which is derived from the Quasi-elastic Neutron Scattering (QENS) data and plotted as a function of temperature [11].

- Validation Insight: The experimental ⟨u²(T)⟩ is used to authenticate corresponding in silico molecular dynamics (MD) protocols, serving as a ground-truth benchmark for validating the simulated dynamical behavior of the proteins [11].

High-Throughput Screening for Ionic Liquid Properties: This approach involves the direct experimental measurement of key physicochemical properties for various ionic liquid (IL) candidates [9].

- Property Measurement: Critical properties such as viscosity, density, surface tension, and ionic conductivity are measured for a library of ILs. For example, viscosity data is collected across a temperature range of 278.15–353.15 K at varying pressures [9].

- Data Aggregation: Large datasets are compiled from diverse sources, including the NIST ILThermo database. This process inherently introduces variability due to differences in experimental setups and conditions across different research groups [9].

- Application: The collected data serves as a gold-standard benchmark for training and validating machine learning models, highlighting the practical challenge of integrating heterogeneous experimental data from multiple sources [1] [9].

Key In Silico Protocols in Molecular Property Prediction

Computational protocols must be carefully designed to ensure they generate representative and reliable data.

Molecular Dynamics (MD) Protocol for Lyophilised Proteins: A critical protocol comparison study revealed that the method of constructing simulation models significantly impacts their dynamical accuracy [11].

- Protocol 1 (Traditional): The same starting protein structure is used for both hydrated and dry models. Water molecules are simply added or removed, followed by an equilibration phase. This protocol was found to poorly reproduce the water-induced dynamical enhancement observed experimentally [11].

- Protocol 2 (Experimentally Representative): The weakly hydrated system model undergoes a mild equilibration. The dry model is then created from this hydrated model by in silico lyophilisation (removal of water), directly mimicking the experimental process. This protocol proved superior in reproducing the experimental mean squared displacement and the dynamical transition at ~220 K [11].

- Key Analysis: The Mean Squared Displacement (MSD) of protein hydrogen atoms is calculated from the MD trajectory and validated against experimental neutron scattering data [11].
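The key analysis step can be sketched as below. This is a deliberately simplified illustration: it uses the first frame as the only time origin, takes coordinates as plain tuples, and ignores the time-window averaging and instrument resolution that a real comparison with neutron scattering data would need to account for. The trajectory values are hypothetical.

```python
def mean_squared_displacement(frames):
    """MSD per frame, averaged over atoms, relative to the first frame.

    frames[t][i] is the (x, y, z) position of atom i at frame t.
    """
    reference = frames[0]
    msd = []
    for frame in frames:
        squared = [
            sum((c - c0) ** 2 for c, c0 in zip(position, start))
            for position, start in zip(frame, reference)
        ]
        msd.append(sum(squared) / len(squared))
    return msd


# Hypothetical two-atom, two-frame trajectory (arbitrary length units).
trajectory = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(1.0, 0.0, 0.0), (1.0, 2.0, 0.0)],
]

msd = mean_squared_displacement(trajectory)
print(msd)
```

In the protocol described above, only the protein hydrogen atoms would be passed in, and the resulting curve would be computed at each simulated temperature for comparison with the experimental values.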

Machine Learning (ML) Training Schemes for Multi-Task Property Prediction: These protocols address the challenge of learning from limited and imbalanced data, a common scenario in molecular sciences [6].

- Architecture: A shared graph neural network (GNN) backbone learns general-purpose molecular representations. This is connected to task-specific multi-layer perceptron (MLP) heads that make individual property predictions [6].

- Adaptive Checkpointing with Specialization (ACS): This training scheme monitors the validation loss for each task independently. It checkpoints the best-performing model parameters (both shared backbone and task-specific head) for a given task whenever that task's validation loss reaches a new minimum. This approach mitigates "negative transfer," where updates from one task degrade performance on another, especially under severe task imbalance [6].

- Validation: Models are validated on standardized benchmarks like MoleculeNet (e.g., ClinTox, SIDER, Tox21) using scaffold splits to ensure realistic performance estimates [6].
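The checkpointing rule described above can be sketched independently of any deep learning framework. The class below is a hypothetical illustration of the per-task bookkeeping only, not the published ACS implementation: model parameters are stood in for by plain dictionaries, and the validation losses are invented to show one task degrading while another keeps improving.

```python
import copy


class TaskCheckpointer:
    """Keeps, for each task, the parameters seen at its lowest validation loss."""

    def __init__(self, tasks):
        self.best_loss = {task: float("inf") for task in tasks}
        self.best_state = {task: None for task in tasks}

    def update(self, val_losses, backbone_state, head_states):
        """Checkpoint backbone and head for every task that reached a new minimum."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved


# Hypothetical per-epoch validation losses for two tasks.
history = [
    {"task_a": 0.70, "task_b": 0.90},
    {"task_a": 0.55, "task_b": 0.80},
    {"task_a": 0.62, "task_b": 0.75},  # task_a degrades while task_b improves
]

checkpointer = TaskCheckpointer(["task_a", "task_b"])
for epoch, losses in enumerate(history):
    backbone = {"epoch": epoch}  # stand-in for shared GNN parameters
    heads = {task: {"epoch": epoch} for task in losses}  # stand-in for MLP heads
    checkpointer.update(losses, backbone, heads)

print(checkpointer.best_state["task_a"], checkpointer.best_state["task_b"])
```

Each task ends up with the backbone and head from its own best epoch, so a later update that helps one task cannot overwrite the parameters that worked best for another.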

Natural Language Processing (NLP) Featurization for Large-Scale Screening: This protocol enables the rapid prediction of properties for very large chemical databases [9].

- Featurization: Molecular structures in SMILES notation are converted into numerical vectors (embeddings) using techniques like Mol2vec, which treats molecular substructures analogously to words in a sentence [9].

- Model Training and Prediction: These Mol2vec embeddings are used as input features for machine learning models (e.g., CatBoost) to predict various IL properties. This method has demonstrated superior predictive performance compared to traditional featurization techniques like Morgan fingerprints or quantum chemistry-derived sigma profiles, while being computationally less expensive [9].

- Application: The trained model is deployed to screen a database of ~10.6 million generated ionic liquids, identifying candidates with optimal property combinations for specific applications like CO2 capture or biomass processing [9].
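The featurization step can be illustrated with a toy sketch. It assumes, as in the original Mol2vec description, that a molecule vector is the sum of the vectors of its substructure "words" and that unseen substructures fall back to a shared unknown token. The identifiers, the two-dimensional embedding table, and the function name are hypothetical; a real pipeline would derive substructure identifiers from the SMILES string and load a trained embedding model.

```python
def molecule_vector(substructure_ids, embeddings, unknown="UNK"):
    """Sum the embedding vectors of a molecule's substructure identifiers."""
    dimension = len(embeddings[unknown])
    vector = [0.0] * dimension
    for sub_id in substructure_ids:
        # Substructures missing from the vocabulary use the unknown-token vector.
        word_vector = embeddings.get(sub_id, embeddings[unknown])
        vector = [v + w for v, w in zip(vector, word_vector)]
    return vector


# Hypothetical embedding table keyed by substructure identifier.
embeddings = {
    "id_a": [1.0, 0.0],
    "id_b": [0.0, 2.0],
    "UNK": [0.5, 0.5],
}

# A molecule "sentence": id_a appears twice, id_x is not in the vocabulary.
sentence = ["id_a", "id_b", "id_a", "id_x"]
features = molecule_vector(sentence, embeddings)
print(features)
```

The resulting fixed-length vector is what would be passed to the downstream regressor, regardless of how many substructures the molecule contains.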

Visualization of Workflows and Relationships

Experimental and Computational Validation Workflow

The following diagram illustrates an integrated workflow that leverages both in silico and experimental data, emphasizing the critical validation feedback loop necessary to manage protocol variations.

Diagram 1: Integrated Validation Workflow. This diagram outlines a robust framework for aligning experimental and in silico data. It begins with parallel workstreams for computational prediction and experimental benchmarking, which converge at a critical Data Consistency Assessment (DCA) node [1]. A detected discrepancy feeds back into protocol refinement, creating a cycle that enhances model reliability and data concordance.
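The comparison at the heart of this feedback loop can be sketched as a small report that scores predictions against measurements and flags the compounds that disagree most. The function name, compound identifiers, values, and tolerance are all hypothetical; the choice of MAE and RMSE as summary metrics is an illustrative assumption rather than a prescription from the workflow.

```python
import math


def validation_report(predicted, measured, tolerance):
    """Compare predictions with measurements for compounds present in both."""
    shared = sorted(predicted.keys() & measured.keys())
    residuals = {mol: predicted[mol] - measured[mol] for mol in shared}
    n = len(shared)
    mae = sum(abs(r) for r in residuals.values()) / n
    rmse = math.sqrt(sum(r * r for r in residuals.values()) / n)
    flagged = [mol for mol in shared if abs(residuals[mol]) > tolerance]
    return {"n": n, "mae": mae, "rmse": rmse, "flagged": flagged}


# Hypothetical predicted and experimentally measured property values.
predicted = {"mol_1": 1.0, "mol_2": 2.0, "mol_3": 3.0}
measured = {"mol_1": 1.5, "mol_2": 2.0, "mol_3": 5.0, "mol_4": 0.1}

report = validation_report(predicted, measured, tolerance=1.0)
print(report)
```

Flagged compounds are the natural starting point for the protocol-refinement branch: each one indicates either a model weakness or an experimental inconsistency worth investigating.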

The High-Throughput In Silico Screening Pipeline

For large-scale discovery projects, the following pipeline demonstrates how computational models are used to efficiently navigate vast chemical spaces.

Diagram 2: High-Throughput Screening Pipeline. This sequence illustrates the scalable process for screening massive molecular databases, from featurization using NLP techniques like Mol2vec [9] to final experimental validation of a shortlist of top candidates, creating an iterative feedback loop for model improvement.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key computational and experimental tools that form the essential toolkit for modern research in molecular property prediction and validation.

Table 2: Key Research Reagent Solutions for Molecular Property Prediction

| Tool / Solution | Type | Primary Function | Relevance to Protocol Variation |
|---|---|---|---|
| AssayInspector [1] | Software Package | Systematically identifies data misalignments, outliers, and batch effects across experimental datasets. | Critical for pre-modeling Data Consistency Assessment (DCA) to diagnose and manage variability before data integration. |
| GEO-BERT [12] | Pre-trained Deep Learning Model | A geometry-based model for molecular property prediction that incorporates 3D structural information. | Provides a robust, pre-validated starting point for predictions, reducing variability from model architecture choices. |
| ACS (Adaptive Checkpointing) [6] | ML Training Scheme | Mitigates negative transfer in multi-task learning by saving task-specific model checkpoints. | Manages variability introduced by imbalanced training data across multiple property prediction tasks. |
| OSIRIS/IRIS Spectrometers [11] | Experimental Instrument | Neutron backscattering spectrometers for measuring atomic mean squared displacement in proteins. | Provides high-quality, standardized experimental data for validating computational models of molecular dynamics. |
| Mol2vec [9] | NLP Featurization Algorithm | Generates molecular embeddings from SMILES strings for use in machine learning models. | Offers a consistent and effective featurization method, reducing variability compared to other descriptor types. |
| Bilinear Transduction [13] | ML Prediction Method | A transductive approach designed to improve out-of-distribution (OOD) property value extrapolation. | Addresses variability and performance drops when predicting properties outside the training data distribution. |

Navigating the variations between experimental and in silico data is not merely a technical hurdle but a fundamental aspect of modern molecular research. The reliability of predictive models and the success of discovery pipelines hinge on a rigorous, systematic approach to protocol design and data integration. Key to this process is the implementation of robust validation cycles, where computational predictions are continuously refined against high-quality experimental benchmarks, and experimental protocols are informed by computational insights. Tools like AssayInspector for data consistency assessment [1] and advanced modeling techniques like ACS [6] and Bilinear Transduction [13] provide the necessary methodology to mitigate the risks of data heterogeneity and negative transfer. By adopting the structured frameworks and tools outlined in this guide, researchers and drug development professionals can enhance the concordance between in silico predictions and experimental results, thereby accelerating the reliable discovery of novel molecules and materials.

In modern drug discovery, the optimization of a candidate molecule extends far beyond its primary pharmacological activity. A compound's journey from administration to its site of action and eventual elimination is governed by a core set of molecular properties. These properties—categorized as Absorption, Distribution, Metabolism, Excretion (ADME), toxicity, and physicochemical profiles—are critical determinants of clinical success and safety [15]. High-profile failures in late-stage development and post-marketing withdrawals are often attributable to unforeseen ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) liabilities, which account for a significant portion of clinical attrition [16] [17]. Consequently, the early and accurate prediction of these properties has become a cornerstone of efficient drug discovery pipelines, enabling researchers to identify and eliminate problematic candidates before substantial resources are invested.

The rise of artificial intelligence (AI) and machine learning (ML) has fundamentally transformed the predictive toxicology and ADME profiling landscape [18] [16]. These computational approaches leverage vast, heterogeneous datasets to uncover complex relationships between molecular structure and biological properties that are often imperceptible to traditional methods. However, the predictive accuracy and real-world utility of these models are intrinsically linked to the quality and consistency of the underlying experimental data against which they are validated [1]. This guide provides a comparative examination of key molecular properties, the experimental protocols used to measure them, and the computational tools that predict them, all framed within the critical context of validation against empirical evidence.

Core Molecular Properties and Their Target Values

The optimization of drug candidates requires a delicate balance of multiple properties. The following table summarizes the desired ranges for key parameters, which serve as a guideline for candidate selection and design. These values are particularly representative of small-molecule drugs but must be adapted for novel modalities like PROTACs [19].

Table 1: Target Values for Key Molecular Properties in Lead Optimization

| Property | Desired Value / Range | Significance & Rationale |
|---|---|---|
| T. b. brucei pEC50 | >7.0 [20] | Measures potent antiparasitic activity (used here as a model of primary pharmacological activity). |
| Selectivity Index (SI) | ≥100-fold [20] | Ratio of cytotoxic concentration (e.g., in MRC5 cells) to efficacy concentration; ensures a sufficient therapeutic window. |
| Molecular Weight (MW) | ≤360 Da [20] (For bRo5: ≤950 Da [19]) | Lower MW generally favors better absorption and permeability. Higher thresholds are considered for beyond Rule of 5 (bRo5) modalities. |
| Calculated logP (clogP) | ≤3 [20] | Controls lipophilicity; lower values reduce metabolic clearance and potential toxicity risks. |
| LogD at pH 7.4 | ≤2 [20] | Measures distribution between oil and water at physiological pH; critical for membrane permeability and solubility. |
| Topological Polar Surface Area (TPSA) | 40 < TPSA < 90 Ų [20] | Predicts passive cellular absorption and blood-brain barrier penetration. |
| Hydrogen Bond Donors (HBD) | ≤3 [19] (For oral PROTACs: ≤2 [19]) | Critical for permeability; a lower count is a strong predictor of better oral absorption, especially for larger molecules. |
| Lipophilic Ligand Efficiency (LLE) | ≥4 [20] | Balances potency and lipophilicity (LLE = pEC50 - logD); higher values indicate a more efficient and lead-like compound. |
| Thermodynamic Aqueous Solubility | >100 μM [20] | Ensures sufficient compound dissolution for bioavailability in gastrointestinal fluids. |
| Human Liver Microsome CLint | <47 μL/min/mg protein [20] | Indicates low intrinsic metabolic clearance, predicting a longer half-life in vivo. |
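The thresholds in Table 1 can be applied programmatically as a first-pass filter. The following is a minimal sketch, not a validated scoring scheme: the `Compound` class, its field names, and the example values are hypothetical, and the cut-offs are simply the small-molecule values from the table (the bRo5 and PROTAC variants are not handled).

```python
from dataclasses import dataclass


@dataclass
class Compound:
    """Hypothetical container for measured or calculated properties."""
    pec50: float              # potency, -log10(EC50 in mol/L)
    selectivity_index: float  # cytotoxic / efficacy concentration ratio (fold)
    mw: float                 # molecular weight, Da
    clogp: float              # calculated logP
    logd: float               # logD at pH 7.4
    tpsa: float               # topological polar surface area, square angstroms
    hbd: int                  # hydrogen bond donor count
    solubility_um: float      # thermodynamic aqueous solubility, uM
    hlm_clint: float          # human liver microsome CLint, uL/min/mg protein


def lle(pec50: float, logd: float) -> float:
    """Lipophilic ligand efficiency as defined in Table 1: pEC50 - logD."""
    return pec50 - logd


def check_profile(c: Compound) -> dict:
    """Map each Table 1 criterion to True (met) or False (not met)."""
    return {
        "pEC50 > 7.0": c.pec50 > 7.0,
        "SI >= 100": c.selectivity_index >= 100,
        "MW <= 360": c.mw <= 360,
        "clogP <= 3": c.clogp <= 3,
        "logD <= 2": c.logd <= 2,
        "40 < TPSA < 90": 40 < c.tpsa < 90,
        "HBD <= 3": c.hbd <= 3,
        "LLE >= 4": lle(c.pec50, c.logd) >= 4,
        "solubility > 100 uM": c.solubility_um > 100,
        "HLM CLint < 47": c.hlm_clint < 47,
    }


# Illustrative values only; not taken from any cited study.
example = Compound(pec50=7.4, selectivity_index=250, mw=342.4, clogp=2.6,
                   logd=1.8, tpsa=78.0, hbd=2, solubility_um=150.0,
                   hlm_clint=30.0)
failures = [rule for rule, ok in check_profile(example).items() if not ok]
print(f"LLE = {lle(example.pec50, example.logd):.1f}, failed criteria: {failures}")
```

Returning a per-criterion dictionary rather than a single pass/fail flag keeps the reason for a rejection visible, which matters when the thresholds are treated as guidelines rather than hard rules.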

Computational Prediction: Models, Tools, and Workflows

The adoption of AI/ML has provided powerful in silico tools for early property prediction, helping to triage compounds before they enter costly experimental assays.

AI/ML Models for Toxicity and ADMET Prediction

A diverse ecosystem of models and architectures has been developed to address various property prediction tasks.

Table 2: Key AI/ML Models and Tools for Molecular Property Prediction

| Model / Tool Category | Examples & Key Features | Primary Applications |
|---|---|---|
| Graph Neural Networks (GNNs) | MoleculeFormer [21]: Integrates atom and bond graphs with 3D structural information and molecular fingerprints. HRGCN+ [21], FP-GNN [21]: Combine graph networks with molecular descriptors/fingerprints. | General molecular property prediction, including efficacy, toxicity, and ADME tasks. Excels at capturing local and global structural features. |
| Transformer-based Models | Models inspired by natural language processing that treat molecules as sequences (e.g., SMILES) or graphs. [18] | Activity and property prediction; can capture long-range dependencies in molecular structures. |
| Federated Learning Platforms | Apheris Federated ADMET Network [17], MELLODDY [17]: Enable collaborative training on distributed, proprietary datasets without sharing raw data. | Cross-pharma QSAR and ADMET model improvement, significantly expanding the chemical space and applicability domain. |
| Public Benchmark Suites | TDC (Therapeutic Data Commons) [1], ChEMBL [16], Tox21 [18]: Curated public datasets for model training and benchmarking. | Provides standardized benchmarks for comparing model performance across various ADMET and toxicity endpoints. |
| Traditional Machine Learning | Random Forest (RF), Support Vector Machines (SVM), XGBoost [18] [21]: Often use molecular fingerprints or descriptors as input. | A robust and interpretable approach for various classification (e.g., toxicity) and regression (e.g., solubility) tasks. |

A Workflow for Reliable Model Development and Application

Developing and applying predictive models requires a systematic workflow to ensure reliability and relevance. The following diagram illustrates a robust pipeline that incorporates critical data consistency checks.

Model Development Workflow

A critical yet often overlooked step is the Data Consistency Assessment (DCA). Before model training, data from different sources (e.g., public benchmarks like TDC and gold-standard literature sources) must be rigorously checked for distributional misalignments, inconsistent annotations, and batch effects. Tools like AssayInspector [1] have been developed specifically for this purpose, providing statistics and visualizations to identify discrepancies that could otherwise lead to poorly performing and misleading models.
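To make the idea of a consistency check concrete, the sketch below compares two hypothetical sources of the same endpoint in two ways: a two-sample Kolmogorov-Smirnov statistic for distributional misalignment, and a pairwise comparison of molecules annotated in both sources. This is not the AssayInspector API; the function names, the 0.3 flag threshold, the 0.5 log-unit tolerance, and all data values are assumptions chosen for illustration.

```python
from bisect import bisect_right
from statistics import mean


def ks_statistic(a, b):
    """Two-sample KS statistic: the largest gap between the two empirical CDFs."""
    a_sorted, b_sorted = sorted(a), sorted(b)
    gap = 0.0
    for x in a_sorted + b_sorted:
        cdf_a = bisect_right(a_sorted, x) / len(a_sorted)
        cdf_b = bisect_right(b_sorted, x) / len(b_sorted)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


def compare_sources(a, b, ks_threshold=0.3):
    """Summarize the misalignment between two sets of endpoint values."""
    d = ks_statistic(a, b)
    return {"ks": d, "mean_shift": mean(b) - mean(a), "flag": d > ks_threshold}


def conflicting_labels(src_a, src_b, tol=0.5):
    """Molecules present in both sources whose values differ by more than tol."""
    shared = src_a.keys() & src_b.keys()
    return sorted(m for m in shared if abs(src_a[m] - src_b[m]) > tol)


# Hypothetical log-solubility values from two sources.
source_a = [-3.1, -2.8, -4.0, -3.5, -2.2]
source_b = [-2.1, -1.9, -3.0, -2.4, -1.3]
report = compare_sources(source_a, source_b)

# Hypothetical SMILES-keyed annotations for the overlap check.
labels_a = {"CCO": -0.2, "c1ccccc1": -1.6}
labels_b = {"CCO": -0.3, "c1ccccc1": -2.6, "CCN": 0.1}
conflicts = conflicting_labels(labels_a, labels_b)
print(report, conflicts)
```

A flagged pair of sources is a prompt for inspection (different assay conditions, units, or curation rules), not an automatic reason to discard data.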

Addressing the Data Scarcity Challenge: Federated Learning

A major limitation in ADMET modeling is the scarcity of high-quality, diverse data, much of which resides in siloed proprietary databases within pharmaceutical companies. Federated learning has emerged as a powerful solution to this problem [17]. This approach allows multiple organizations to collaboratively train a model without centralizing or directly sharing their confidential data. Instead, model updates are shared and aggregated. This process systematically expands the model's applicability domain, leading to more robust predictions for novel chemical scaffolds. The MELLODDY project demonstrated that such cross-pharma federated learning can unlock significant performance benefits in QSAR models without compromising proprietary information [17].
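The "share updates, not data" step can be illustrated with a size-weighted parameter average in the style of federated averaging. This is a sketch under assumptions: the guide does not state which aggregation rule MELLODDY or any other platform uses, and real systems add secure aggregation and other safeguards that are omitted here. Parameters are represented as plain lists of floats for simplicity.

```python
def federated_average(client_weights, client_sizes):
    """Aggregate per-client parameter vectors, weighting each by local dataset size.

    client_weights: list of equal-length lists of floats (one list per client)
    client_sizes:   number of local training examples held by each client
    """
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Two hypothetical partners: only these parameter vectors leave each site,
# never the underlying compounds or assay results.
partner_updates = [[1.0, 2.0], [3.0, 4.0]]
partner_sizes = [100, 300]
global_params = federated_average(partner_updates, partner_sizes)
print(global_params)
```

Weighting by dataset size lets larger partners contribute proportionally more to the shared model; an unweighted mean is the other common choice when partners prefer not to reveal dataset sizes.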

Experimental Protocols for Property Validation

Computational predictions must be grounded and validated using robust experimental assays. The following section details standard protocols for measuring critical properties.

Key Research Reagent Solutions

Table 3: Essential Materials and Assays for Experimental Profiling

| Research Reagent / Assay | Function & Application |
|---|---|
| Caco-2 Cells | A human colon adenocarcinoma cell line used in a transwell setup to model passive intestinal permeability and active transport [19]. |
| Liver Microsomes / Hepatocytes | Subcellular fractions or primary cells (human, rat, mouse) used to determine a compound's intrinsic metabolic clearance (CLint) [20] [19]. |
| Plasma Protein Binding Assay | Determines the fraction of a drug that is unbound (fu) in plasma, which influences volume of distribution and efficacy [15]. |
| Exposed Polar Surface Area (ePSA) | A chromatographic surrogate measurement for passive permeability, especially useful for challenging compounds like PROTACs where cell-based assays can be problematic [19]. |
| hERG Assay | Evaluates a compound's potential to block the hERG potassium channel, a key predictor of cardiotoxicity risk (e.g., Torsades de Pointes) [18]. |
| MTT / CCK-8 Assay | In vitro cytotoxicity tests that measure cell viability and proliferation, used to calculate a compound's selectivity index [16]. |
| FAERS Database | The FDA Adverse Event Reporting System, a database of post-marketing adverse event reports used for mining clinical toxicity signals [16]. |

Detailed Experimental Methodologies

Metabolic Stability in Hepatocytes

Objective: To determine the intrinsic clearance (CLint) of a compound using cryopreserved hepatocytes, predicting its metabolic stability in vivo [19].

Protocol:

- Incubation Setup: Cryopreserved hepatocytes (e.g., female CD-1 mouse) are thawed and viability is confirmed to be >70% via trypan blue staining.

- Reaction: The test compound (1 µM final concentration) is incubated with hepatocytes (0.2 × 10^6 cells per mL) in Krebs-Henseleit buffer (pH 7.4) at 37°C under 5% CO₂.

- Sampling: Aliquots are taken at multiple time points (e.g., 0, 10, 20, 40, 60, and 90 minutes) and the reaction is quenched with an acetonitrile solution containing an internal standard.

- Analysis: Samples are analyzed using UHPLC-MS/MS to determine the parent compound's concentration over time.

- Calculation: The depletion rate of the parent compound is fitted to a first-order decay model to calculate the half-life (t1/2) and subsequently the CLint (in µL/min/10^6 cells).
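The calculation step can be sketched as a log-linear least-squares fit. The code below assumes first-order depletion and the incubation conditions stated in the protocol (0.2 × 10^6 cells per mL); the concentration series is synthetic, generated with a known rate constant so the fit can be checked, and the function names are hypothetical.

```python
import math


def fit_first_order(times_min, conc):
    """Elimination rate constant k (1/min) from the slope of ln(conc) vs time."""
    y = [math.log(c) for c in conc]
    n = len(times_min)
    t_mean = sum(times_min) / n
    y_mean = sum(y) / n
    sxy = sum((t - t_mean) * (yi - y_mean) for t, yi in zip(times_min, y))
    sxx = sum((t - t_mean) ** 2 for t in times_min)
    return -sxy / sxx  # the slope is negative for a depleting compound


def half_life_min(k):
    """Half-life in minutes for a first-order process."""
    return math.log(2) / k


def clint_hepatocytes(k, million_cells_per_ml=0.2):
    """CLint in uL/min/10^6 cells: k (1/min) * 1000 uL/mL / (10^6 cells/mL)."""
    return k * 1000.0 / million_cells_per_ml


# Synthetic percent-remaining data at the protocol's time points (k = 0.02 / min).
times = [0, 10, 20, 40, 60, 90]
remaining = [100.0 * math.exp(-0.02 * t) for t in times]

k = fit_first_order(times, remaining)
t_half = half_life_min(k)
clint = clint_hepatocytes(k)
print(f"k = {k:.4f} 1/min, t1/2 = {t_half:.1f} min, CLint = {clint:.0f} uL/min/10^6 cells")
```

With real data, time points where the compound falls below the quantification limit are usually excluded before fitting, since they distort the log-linear slope.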

Validation Note: For beyond-Rule-of-5 molecules like PROTACs, standard in vitro-in vivo extrapolation (IVIVE) using predicted fraction unbound in incubation (fu,inc) can systematically under-predict clearance. Using experimentally determined fu,inc values is recommended to overcome this bias [19].

Permeability Assessment using the Caco-2 Assay

Objective: To measure the apparent permeability (Papp) of a compound, predicting its absorption potential in the human intestine [19].

Protocol:

- Cell Culture: Caco-2 cells (TC7 clone) are seeded onto transwell filters and cultured for 14-21 days to form a confluent, differentiated monolayer.

- Experiment: The test compound is added to the donor compartment (apical for A→B, basolateral for B→A), with HBSS buffer in the receiver compartment. The monolayer integrity is verified using a tightness marker like melagatran.

- Incubation: The plate is incubated for 2 hours at 37°C in 5% CO₂ and 100% humidity.

- Sampling and Analysis: Samples are taken from both compartments at the beginning (t0) and end (t120) of the experiment and analyzed via UHPLC-MS/MS.

- Calculation: Papp (in cm/s) is calculated using the formula:

$P_{app} = \dfrac{dQ/dt}{A \cdot C_0}$, where $dQ/dt$ is the rate of compound appearance in the receiver compartment, $A$ is the membrane surface area, and $C_0$ is the initial donor concentration. Mass balance (recovery) is also checked.
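A short worked example of the Papp formula and the mass-balance check follows. It assumes the receiver compartment starts empty and that appearance is linear over the incubation, so dQ/dt is approximated by the final receiver amount divided by the duration. The insert area (0.33 cm²), amounts, and concentration are illustrative values, not taken from the cited protocol.

```python
def papp_cm_per_s(receiver_nmol_end, duration_s, area_cm2, c0_um):
    """Apparent permeability in cm/s.

    Units: dQ/dt in nmol/s, area in cm^2, C0 in uM (1 uM = 1 nmol/cm^3),
    so (nmol/s) / (cm^2 * nmol/cm^3) = cm/s.
    """
    dq_dt = receiver_nmol_end / duration_s
    return dq_dt / (area_cm2 * c0_um)


def recovery(donor_nmol_end, receiver_nmol_end, donor_nmol_start):
    """Mass balance: fraction of the dosed compound found at the end of the assay."""
    return (donor_nmol_end + receiver_nmol_end) / donor_nmol_start


# Illustrative A->B experiment: 2 h incubation, 10 uM donor, 0.33 cm^2 insert.
papp = papp_cm_per_s(receiver_nmol_end=0.2376, duration_s=2 * 3600,
                     area_cm2=0.33, c0_um=10.0)
rec = recovery(donor_nmol_end=1.5, receiver_nmol_end=0.2376, donor_nmol_start=2.0)
print(f"Papp = {papp:.2e} cm/s, recovery = {rec:.1%}")
```

A low recovery value signals that compound was lost to nonspecific binding or cellular retention, in which case the computed Papp understates true permeability.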

Validation Note: The standard Caco-2 assay can be challenging for low-solubility, high-lipophilicity compounds like PROTACs due to poor recovery from nonspecific binding. Modifications such as adding serum (FCS) to the buffer can improve recovery but may not fully restore predictiveness for absorption. In such cases, surrogate measures like ePSA or adherence to descriptor guidelines (HBD ≤ 3, MW ≤ 950) are often more reliable for optimization [19].

The journey toward a safe and effective drug is a continuous process of optimization and validation. Success hinges on a deeply integrated strategy that leverages the predictive power of modern AI/ML tools while maintaining a firm grounding in robust experimental science. Computational models, especially those trained on diverse, high-quality data via federated learning or rigorously curated public sources, provide an indispensable filter for prioritizing compounds. However, their predictions must be continuously validated and refined using the gold standard of experimental assays. As drug discovery pushes into new chemical modalities, the close collaboration between computational scientists, medicinal chemists, and experimental biologists becomes ever more critical. This synergy ensures that in silico models are informed by biological reality and that experimental resources are focused on the most promising candidates, ultimately accelerating the delivery of new therapies to patients.

Advanced Computational Frameworks for Robust Prediction

Leveraging Multi-Task Learning (MTL) to Overcome Data Scarcity

Data scarcity remains a significant bottleneck in scientific fields, particularly in molecular property prediction for drug discovery and materials science. The process of experimentally determining molecular properties is often time-consuming and expensive, resulting in limited labeled datasets that can hinder the development of robust machine learning models [6] [22]. Multi-task Learning (MTL) has emerged as a powerful paradigm to address this challenge by simultaneously learning multiple related tasks, thereby allowing models to leverage shared information and representations across tasks [23]. This approach mirrors human learning processes where knowledge gained from one task enhances understanding of related tasks, ultimately enabling more accurate predictions even when data for any single task is limited [23] [6]. Within the context of validating molecular property predictions against experimental data, MTL provides a framework for building more reliable and data-efficient models that can accelerate scientific discovery.

MTL Fundamentals and Relevance to Data Scarcity

Core Principles of Multi-Task Learning

Multi-task Learning represents a fundamental shift from single-task learning (STL) paradigms. While STL trains isolated models on individual tasks, MTL jointly learns multiple related tasks by leveraging both task-specific and shared information [23]. This collaborative approach offers several key benefits: streamlined model architectures, improved generalization, and better performance, particularly on tasks with limited data [23]. The paradigm draws inspiration from human learning, in which knowledge gained on one task improves understanding of related tasks [23].

Formally, MTL can be understood through its shared representation learning framework. A typical MTL architecture consists of:

- Shared Backbone: Common layers that learn representations beneficial for all tasks

- Task-Specific Heads: Specialized layers that process shared representations for individual task outputs

- Joint Optimization: A training procedure that balances learning across all tasks simultaneously

This structure enables the model to discover and utilize underlying commonalities between tasks while maintaining specialized capabilities for each specific prediction objective [6].

MTL's Mechanism for Addressing Data Limitations

MTL mitigates data scarcity through several interconnected mechanisms. By pooling information across tasks, MTL increases the effective sample size available for learning generalizable representations [10]. The shared representations learned across tasks act as a form of regularization, preventing overfitting to small datasets by encouraging the model to focus on generally useful features [23] [6]. Additionally, MTL facilitates inductive transfer, where training signals from data-rich tasks help improve performance on data-poor tasks [6]. This cross-task knowledge sharing is particularly valuable in domains like molecular property prediction, where different properties may share underlying structural determinants that the model can discover through joint training [10] [22].

MTL Methodologies for Molecular Property Prediction

Architectural Approaches

Molecular property prediction has seen significant advances through the application of specialized MTL architectures, particularly graph neural networks (GNNs) that naturally represent molecular structures.

Table 1: MTL Architectural Approaches for Molecular Property Prediction

| Architecture Type | Key Characteristics | Advantages | Limitations |
|---|---|---|---|
| Shared-Backbone with Task-Specific Heads | Single GNN backbone with dedicated MLP heads for each task [6] | Promotes feature transfer; Computationally efficient | Potential gradient conflicts between tasks |
| Adaptive Checkpointing (ACS) | Saves best backbone-head pairs when validation loss minimizes [6] | Mitigates negative transfer; Handles task imbalance | Increased storage requirements |
| MT2ST Framework | Transitions from MTL to STL using Diminish or Switch strategies [24] | Balances generalization and specialization | Complex training scheduling |

The ACS Training Scheme

Adaptive Checkpointing with Specialization (ACS) represents a recent advancement specifically designed to address negative transfer (NT)—the phenomenon where updates from one task detrimentally affect another [6]. The ACS methodology employs:

- Shared Task-Agnostic Backbone: A single GNN based on message passing that learns general-purpose molecular representations

- Task-Specific MLP Heads: Dedicated networks for each property prediction task

- Validation-Loss Monitoring: Tracking validation loss for every task throughout training

- Adaptive Checkpointing: Saving the best backbone-head pair for each task when its validation loss reaches a new minimum

This approach allows each task to effectively obtain a specialized model while still benefiting from shared representations during training [6].
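The checkpointing logic described above can be sketched independently of any deep learning framework. The code below is an illustration of the bookkeeping only, not the authors' implementation: `train_step` and `validate` are hypothetical callables supplied by the caller, the "model" is a toy dictionary, and the validation curves are scripted so the behavior is easy to follow.

```python
import copy


def train_with_task_checkpoints(backbone, heads, train_step, validate, n_epochs):
    """Keep, for every task, a snapshot of the backbone-head pair at its best epoch."""
    best_loss = {task: float("inf") for task in heads}
    checkpoints = {}
    for epoch in range(n_epochs):
        train_step(backbone, heads, epoch)  # one joint multi-task update
        for task, head in heads.items():
            loss = validate(backbone, head, task)
            if loss < best_loss[task]:
                # New minimum for this task: snapshot the shared backbone
                # together with this task's head.
                best_loss[task] = loss
                checkpoints[task] = (copy.deepcopy(backbone),
                                     copy.deepcopy(head), epoch)
    return checkpoints, best_loss


# Toy stand-ins: the backbone is a counter, and validation losses are scripted.
scripted = {"tox": [0.9, 0.6, 0.7, 0.8], "sol": [1.0, 0.9, 0.5, 0.6]}


def toy_train_step(backbone, heads, epoch):
    backbone["w"] += 1.0


def toy_validate(backbone, head, task):
    return scripted[task][int(backbone["w"]) - 1]


checkpoints, best_loss = train_with_task_checkpoints(
    backbone={"w": 0.0}, heads={"tox": {"b": 0.0}, "sol": {"b": 0.0}},
    train_step=toy_train_step, validate=toy_validate, n_epochs=4)
print({task: ckpt[2] for task, ckpt in checkpoints.items()}, best_loss)
```

In this toy run the two tasks reach their minima at different epochs, so each ends up with a different backbone snapshot. That is the storage cost noted in the architecture table: one backbone copy per task rather than one overall.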

Experimental Comparison of MTL Approaches

Performance Benchmarks on Molecular Datasets

Comprehensive evaluation across multiple molecular property benchmarks demonstrates the effectiveness of MTL approaches in data-scarce scenarios.

Table 2: Performance Comparison of MTL Methods on Molecular Property Benchmarks (AUROC Scores)

| Method | ClinTox | SIDER | Tox21 | Data Efficiency | NT Resistance |
|---|---|---|---|---|---|
| Single-Task Learning (STL) | 0.783 | 0.805 | 0.821 | Low | N/A |
| Standard MTL | 0.812 | 0.823 | 0.839 | Medium | Low |
| MTL with Global Loss Checkpointing | 0.815 | 0.826 | 0.842 | Medium | Medium |
| ACS | 0.902 | 0.835 | 0.851 | High | High |
| MT2ST Framework | 0.856 | 0.830 | 0.845 | High | Medium |

Data sources: [6] [24] - Performance metrics normalized to AUROC where applicable

Notably, ACS demonstrates an 11.5% average improvement relative to other methods based on node-centric message passing and shows particular effectiveness on imbalanced datasets like ClinTox, where it improves upon STL by 15.3% [6].

Ultra-Low Data Regime Performance

The most significant advantages of MTL emerge in ultra-low data regimes. In practical applications such as predicting sustainable aviation fuel properties, ACS enables accurate predictions with as few as 29 labeled samples—capabilities unattainable with single-task learning or conventional MTL [6]. This dramatic improvement in data efficiency stems from MTL's ability to leverage correlated information across tasks, effectively amplifying the signal from limited labeled data.

Table 3: Performance in Ultra-Low Data Regime (Mean Absolute Error)

| Training Set Size | Single-Task Learning | Standard MTL | ACS |
|---|---|---|---|
| 100 samples | 0.89 | 0.76 | 0.71 |
| 50 samples | 1.12 | 0.91 | 0.79 |
| 29 samples | 1.45 | 1.22 | 0.83 |

Experimental Protocols for MTL in Molecular Science

Benchmark Dataset Preparation

Proper experimental validation of MTL approaches requires careful dataset preparation and benchmarking:

Dataset Selection: Standard benchmarks include ClinTox (distinguishing FDA-approved drugs from compounds failing clinical trials due to toxicity), SIDER (27 side effect classification tasks), and Tox21 (12 toxicity endpoints) [6].

Data Splitting: Murcko-scaffold splits assign all molecules that share a scaffold to the same partition, so test molecules are structurally distinct from those seen in training; this prevents artificial inflation of performance metrics [6].

Task Imbalance Handling: Techniques like loss masking address missing labels common in real-world molecular datasets without discarding valuable partial data [6].

Evaluation Metrics: Area Under the Receiver Operating Characteristic curve (AUROC) provides consistent evaluation across classification tasks, while Mean Absolute Error (MAE) suits regression tasks [6].
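Loss masking, mentioned above for handling missing labels, can be illustrated with a binary cross-entropy that skips unlabeled molecule-task pairs. This is a framework-free sketch under assumptions: missing labels are encoded as `None`, predictions are probabilities, and the loss is averaged over labeled entries only. A production model would typically apply the same idea with tensor masks.

```python
import math


def masked_bce(probs, labels):
    """Mean binary cross-entropy over labeled entries only.

    probs:  per-molecule lists of predicted probabilities, one per task
    labels: same shape, with 0/1 labels and None where no measurement exists
    """
    total, count = 0.0, 0
    for p_row, y_row in zip(probs, labels):
        for p, y in zip(p_row, y_row):
            if y is None:
                continue  # masked: contributes neither loss nor gradient
            p = min(max(p, 1e-7), 1 - 1e-7)  # clamp to avoid log(0)
            total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
            count += 1
    return total / count if count else 0.0


# Two molecules, two tasks; each molecule was measured in only one assay.
predictions = [[0.9, 0.2], [0.4, 0.7]]
measured = [[1, None], [None, 1]]
loss = masked_bce(predictions, measured)
print(f"masked BCE = {loss:.4f}")
```

Because masked entries are skipped rather than imputed, a molecule measured in a single assay still contributes to training the shared representation without injecting fabricated labels into the other tasks.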

Model Training and Optimization

Effective MTL implementation requires specialized training protocols:

Gradient Conflict Management: Techniques like gradient surgery or uncertainty weighting balance learning across tasks with conflicting gradients [6] [25].

Dynamic Weighting: The MT2ST framework's Diminish strategy employs time-dependent weighting that reduces auxiliary task influence using the function $\gamma_k(t) = \gamma_{k,0} \cdot e^{-\eta_k t^{\nu_k}}$, where $\gamma_{k,0}$ is the initial weight, $\eta_k$ is the decay rate, and $\nu_k$ is the curvature parameter [24].

Validation-Based Checkpointing: ACS continuously monitors validation loss for each task, checkpointing the best model parameters when new minima occur [6].
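The decaying auxiliary weight can be sketched numerically. The code assumes the weighting has the form γ_k(t) = γ_{k,0} · exp(−η_k · t^ν_k) and that the total loss is the primary loss plus the weighted auxiliary losses; the combination rule, parameter values, and function names are assumptions for illustration rather than details confirmed by [24].

```python
import math


def diminish_weight(t, gamma0, eta, nu):
    """Auxiliary-task weight at training step t: gamma0 * exp(-eta * t**nu)."""
    return gamma0 * math.exp(-eta * t ** nu)


def combined_loss(primary_loss, aux_losses, t, schedules):
    """Primary loss plus each auxiliary loss scaled by its decaying weight.

    schedules: one (gamma0, eta, nu) tuple per auxiliary task.
    """
    weighted = sum(diminish_weight(t, *params) * loss
                   for loss, params in zip(aux_losses, schedules))
    return primary_loss + weighted


# One auxiliary task with illustrative schedule parameters.
schedule = [(1.0, 0.1, 1.0)]
w_start = diminish_weight(0, *schedule[0])
w_later = diminish_weight(10, *schedule[0])
loss_later = combined_loss(0.5, [2.0], 10, schedule)
print(f"weight at t=0: {w_start:.3f}, at t=10: {w_later:.3f}, loss at t=10: {loss_later:.3f}")
```

Larger values of the decay rate shorten the multi-task phase, while the curvature parameter controls whether the auxiliary influence fades gradually or drops off sharply late in training.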

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of MTL for molecular property prediction requires both computational tools and domain-specific resources.

Table 4: Essential Research Reagents for MTL in Molecular Property Prediction

| Resource | Type | Function | Implementation Examples |
|---|---|---|---|
| Graph Neural Networks | Algorithm | Learns molecular representations from structure | Message Passing Neural Networks, D-MPNN [6] |
| Benchmark Datasets | Data | Provides standardized evaluation | MoleculeNet (ClinTox, SIDER, Tox21) [6] |
| Multi-Task Optimization | Algorithm | Balances learning across tasks | Gradient Surgery, Uncertainty Weighting [6] [25] |
| Validation Frameworks | Methodology | Prevents overfitting in low-data regimes | Murcko Scaffold Splits, Temporal Splits [6] |
| Domain Knowledge | Expertise | Guides task grouping and interpretation | Medicinal Chemistry, QSAR Principles [22] |

Validation Against Experimental Data

The ultimate test for any MTL approach in molecular sciences is validation against experimental data. This process involves:

Prospective Validation: Predicting properties for novel molecular structures not included in training data, then experimentally verifying these predictions [10].

Temporal Validation: Using time-split evaluations where models train on older data and test on newer experimental results, better simulating real-world discovery scenarios [6].

Domain Shift Assessment: Evaluating model performance on molecular classes structurally distinct from training data to assess generalization capabilities [6].

In real-world applications like sustainable aviation fuel property prediction, MTL has demonstrated remarkable practical utility, achieving correlation coefficients >0.9 with experimental measurements even with limited training data [6]. Similarly, in pharmaceutical contexts, MTL models have successfully predicted complex properties like toxicity and membrane permeability, guiding experimental prioritization of promising drug candidates [10] [22].
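A temporal validation of this kind reduces to two steps: split records by measurement date, then score predictions on the newer records against their experimental values. The sketch below uses hypothetical records in which the `predicted` field stands in for the output of a model trained only on pre-cutoff data; the field names, cutoff year, and values are illustrative.

```python
import math


def temporal_split(records, cutoff_year):
    """Older records for training, records from cutoff_year onward for testing."""
    train = [r for r in records if r["year"] < cutoff_year]
    test = [r for r in records if r["year"] >= cutoff_year]
    return train, test


def mae(predicted, observed):
    """Mean absolute error between predictions and experimental values."""
    return sum(abs(p - o) for p, o in zip(predicted, observed)) / len(observed)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical measurements with model predictions attached.
records = [
    {"year": 2019, "measured": 1.2, "predicted": 1.1},
    {"year": 2020, "measured": 2.5, "predicted": 2.4},
    {"year": 2021, "measured": 3.1, "predicted": 3.0},
    {"year": 2023, "measured": 2.0, "predicted": 2.2},
    {"year": 2023, "measured": 3.0, "predicted": 2.7},
    {"year": 2024, "measured": 4.0, "predicted": 4.3},
]
train, test = temporal_split(records, cutoff_year=2022)
obs = [r["measured"] for r in test]
pred = [r["predicted"] for r in test]
test_mae = mae(pred, obs)
test_r = pearson_r(obs, pred)
print(f"held-out n = {len(test)}, MAE = {test_mae:.3f}, r = {test_r:.3f}")
```

Reporting MAE alongside the correlation coefficient is useful because a model can rank compounds well (high r) while still being systematically offset from the measured values.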

Multi-task learning represents a paradigm shift in addressing data scarcity challenges in molecular property prediction and related scientific domains. By leveraging shared representations across related tasks, MTL enables more robust predictions in data-limited scenarios that are common in experimental sciences. Among current approaches, Adaptive Checkpointing with Specialization (ACS) demonstrates particular promise for handling real-world task imbalances and mitigating negative transfer, while hybrid approaches like MT2ST effectively balance multi-task generalization with single-task specialization.

The validation of MTL predictions against experimental data remains crucial for establishing trust and utility in scientific applications. As MTL methodologies continue evolving, their integration with domain knowledge and experimental design holds potential to significantly accelerate discovery cycles in fields ranging from drug development to materials science. For researchers working with scarce data, MTL offers a principled framework for maximizing insights from limited experimental resources while maintaining rigorous validation against empirical measurements.

Adaptive Checkpointing with Specialization (ACS) to Mitigate Negative Transfer

Data scarcity remains a major obstacle to effective machine learning in molecular property prediction and design, affecting diverse domains such as pharmaceuticals, solvents, polymers, and energy carriers [26]. While machine learning models have shown promise in accelerating the de novo design of high-performance molecules and mixtures, their predictive accuracy relies heavily on the availability and quality of training data [26]. In many practical applications, including pharmaceutical development and sustainable fuel design, the scarcity of reliable, high-quality labels impedes the development of robust molecular property predictors [26] [27].

Multi-task learning (MTL) has emerged as a promising approach to alleviate these data bottlenecks by exploiting correlations among related molecular properties [26]. Through inductive transfer, MTL leverages training signals from one task to improve another, allowing the model to discover and utilize shared structures for more accurate predictions across all tasks [26]. However, in practice, MTL is frequently undermined by negative transfer—performance drops that occur when updates driven by one task are detrimental to another [26]. This problem is particularly pronounced in real-world scenarios with severe task imbalance, where certain tasks have far fewer labels than others [26]. Adaptive Checkpointing with Specialization (ACS) represents a novel training scheme for multi-task graph neural networks specifically designed to counteract these effects while preserving the benefits of MTL [28] [26].

Understanding ACS: Mechanism and Workflow

Core Architectural Principles

ACS integrates a shared, task-agnostic backbone with task-specific trainable heads to balance inductive transfer with protection against negative transfer [26]. The backbone of the architecture is a single graph neural network (GNN) based on message passing, which learns general-purpose latent representations of molecules [26]. These representations are then processed by task-specific multi-layer perceptron (MLP) heads that provide specialized learning capacity for each individual property prediction task [26].

This hybrid design enables ACS to promote knowledge sharing across sufficiently correlated tasks while shielding individual tasks from deleterious parameter updates that cause negative transfer [26]. During training, the system monitors the validation loss of every task and checkpoints the best backbone-head pair whenever the validation loss of a given task reaches a new minimum [26]. Thus, each task ultimately obtains a specialized backbone-head pair optimized for its specific characteristics [26].

The ACS Workflow

The following diagram illustrates the complete ACS workflow, from molecular input to specialized task prediction:

Experimental Comparison: ACS Versus Alternative Approaches

Benchmark Dataset Performance

To evaluate its effectiveness, ACS was tested on multiple molecular property benchmarks from MoleculeNet, including ClinTox, SIDER, and Tox21 [26]. These datasets represent realistic challenges in molecular informatics: ClinTox distinguishes FDA-approved drugs from compounds that failed clinical trials due to toxicity; SIDER comprises 27 binary classification tasks indicating side effect presence; and Tox21 measures 12 in-vitro toxicity endpoints [26]. The following table summarizes the performance comparison between ACS and alternative methods:

Table 1: Performance comparison (ROC-AUC %) on MoleculeNet benchmarks

| Method | ClinTox | SIDER | Tox21 |
|---|---|---|---|
| GCN | 62.5 ± 2.8 | 53.6 ± 3.2 | 70.9 ± 2.6 |
| GIN | 58.0 ± 4.4 | 57.3 ± 1.6 | 74.0 ± 0.8 |
| D-MPNN | 90.5 ± 5.3 | 63.2 ± 2.3 | 68.9 ± 1.3 |
| SchNet | 71.5 ± 3.7 | 53.9 ± 3.7 | 77.2 ± 2.3 |
| MSR | 86.6 ± 1.2 | 61.4 ± 7.3 | 72.1 ± 5.0 |
| STL | 73.7 ± 12.5 | 60.0 ± 4.4 | 73.8 ± 5.9 |
| MTL | 76.7 ± 11.0 | 60.2 ± 4.3 | 79.2 ± 3.9 |
| MTL-GLC | 77.0 ± 9.0 | 61.8 ± 4.2 | 79.3 ± 4.0 |
| ACS | 85.0 ± 4.1 | 61.5 ± 4.3 | 79.0 ± 3.6 |

The results show that ACS is competitive with or better than recent supervised methods across diverse benchmark datasets [26]. Notably, ACS achieves an 11.5% average improvement relative to other methods based on node-centric message passing [26]. D-MPNN (90.5) and MSR (86.6) score higher than ACS (85.0) on ClinTox, but ACS maintains strong results across all three benchmarks without significant performance variations [26].

Comparative Analysis of Training Schemes

To isolate the specific contribution of the ACS methodology, researchers conducted controlled comparisons against multiple baseline training schemes [26]. The following table compares these approaches across key characteristics:

Table 2: Training scheme comparison

| Training Scheme | Parameter Sharing | Checkpointing | Negative Transfer Mitigation | Data Efficiency |
|---|---|---|---|---|
| Single-Task Learning (STL) | None | Task-specific | Not applicable | Low |
| Multi-Task Learning (MTL) | Full shared backbone | None | None | Moderate |
| MTL with Global Loss Checkpointing (MTL-GLC) | Full shared backbone | Global validation loss | Limited | Moderate |
| ACS | Shared backbone with task-specific heads | Adaptive task-specific | Active monitoring and specialization | High |

These comparisons reveal that ACS's gains stem specifically from its ability to mitigate negative transfer rather than merely from its architectural advantages [26]. Notably, single-task learning—which devotes separate backbone-head pairs to each task and removes all parameter sharing—has greater learning capacity than MTL-based approaches but fails to match ACS's performance, particularly in low-data regimes [26].

Experimental Protocol and Methodologies

Benchmark Experimental Setup

The validation of ACS followed rigorous experimental protocols to ensure fair comparison with existing methods [26]. For benchmark evaluations on the ClinTox, SIDER, and Tox21 datasets, the researchers employed a Murcko-scaffold splitting protocol to prevent the artificial performance inflation that can occur with random splits [26]. This approach better reflects real-world prediction scenarios by ensuring that molecules sharing the same scaffold do not appear in both training and test sets [26].
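A scaffold split can be sketched in a few lines once each molecule has a scaffold key. The sketch below is illustrative only and is not taken from the ACS code: it assumes the keys were computed beforehand (for example as Murcko scaffold SMILES with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`), and the function name `scaffold_split` and its default fractions are hypothetical.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Split molecule indices so that no scaffold spans two subsets.

    `scaffolds` holds one scaffold key per molecule (assumed to be
    precomputed, e.g. a Murcko scaffold SMILES). Returns three lists of
    indices: (train, valid, test).
    """
    groups = defaultdict(list)
    for idx, key in enumerate(scaffolds):
        groups[key].append(idx)

    # Largest scaffold groups first; ties broken by first index so the
    # split is deterministic.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(scaffolds)
    train_cap = frac_train * n
    valid_cap = (frac_train + frac_valid) * n
    train, valid, test = [], [], []
    for group in ordered:
        # A whole group always lands in exactly one subset.
        if len(train) + len(group) <= train_cap:
            train.extend(group)
        elif len(train) + len(valid) + len(group) <= valid_cap:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test
```

Because whole groups are assigned greedily, the realized subset sizes only approximate the requested fractions, and small datasets can end up with an empty test set. That is the usual trade-off of scaffold-based splitting.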

All experiments implemented ACS using a message-passing graph neural network as the shared backbone with task-specific multi-layer perceptron heads [26]. The training process monitored validation loss for each task independently, checkpointing parameters when a task achieved a new minimum loss [26]. This approach allows different tasks to effectively specialize at different points during the training process, circumventing the synchronization requirement that plagues conventional MTL [26].
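The per-task checkpointing rule described above can be illustrated with a small bookkeeping class. This is a minimal sketch under stated assumptions, not the authors' implementation: the class name `TaskCheckpointer` is hypothetical, and parameter states are represented as plain dictionaries (in a PyTorch setting they would typically be `state_dict()` snapshots).

```python
import copy


class TaskCheckpointer:
    """Keep, per task, the parameters seen at that task's lowest validation loss."""

    def __init__(self, task_names):
        self.best_loss = {name: float("inf") for name in task_names}
        self.best_epoch = {name: None for name in task_names}
        self.best_state = {name: None for name in task_names}

    def update(self, epoch, val_losses, backbone_state, head_states):
        """Snapshot the backbone and head for every task at a new minimum.

        Returns the list of tasks checkpointed at this epoch.
        """
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_epoch[task] = epoch
                # Deep copies so later training steps cannot mutate the snapshot.
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved
```

Each task ends up with its own backbone and head snapshot, possibly from different epochs. This is how tasks can specialize at different points in training instead of sharing one global stopping point.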

Ultra-Low Data Regime Validation

To test ACS's performance in extremely challenging conditions, researchers conducted a real-world case study predicting 15 physicochemical properties of sustainable aviation fuel (SAF) molecules [26] [29]. This scenario is particularly relevant to validating predictions against experimental data because SAF development represents a "high-impact, real-world challenge where experimental data is extremely limited and labor-intensive to obtain" [29].

In this practical application, ACS demonstrated robust predictive performance with as few as 29 labeled samples—a data regime where conventional single-task learning and traditional MTL approaches typically fail [26] [29]. The methodology achieved over 20% higher predictive accuracy than conventional training methods in these ultra-low-data settings [29].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key research reagents and computational resources for ACS implementation

| Resource | Function | Availability |
|---|---|---|
| ACS Code Repository | Complete implementation of Adaptive Checkpointing with Specialization | GitHub: BasemEr/acs [28] |
| MoleculeNet Benchmarks | Standardized datasets for molecular property prediction | moleculenet.org [26] |
| Graph Neural Network Framework | Backbone architecture for molecular representation learning | PyTorch/PyTorch Geometric [28] |
| Sustainable Aviation Fuel Datasets | Domain-specific experimental data for validation | Custom collection [26] [29] |
| TensorBoard Logging | Training monitoring and visualization | Built-in with ACS code [28] |

Case Study: Sustainable Aviation Fuel Design

The application of ACS to sustainable aviation fuel (SAF) property prediction exemplifies its value in experimental research contexts [29]. In this real-world scenario, researchers applied ACS to predict 15 different physicochemical properties relevant to aviation fuel performance, including flammability limits and volatility characteristics [29]. These predictions are already generating new leads in SAF development and helping overcome challenges in the clean energy transition [29].

A key advantage in this application domain is ACS's ability to leverage relationships between molecular properties—for example, the correlation between a molecule's flammability limits and its volatility—to enhance predictive performance despite minimal training data [29]. The accurate predictions generated by ACS are being fed into fuel design tools targeting novel SAF formulations for industrial partners [26] [29].

Adaptive Checkpointing with Specialization represents a significant advancement in molecular property prediction, particularly for data-scarce scenarios common in frontier research areas. By effectively mitigating negative transfer while preserving the benefits of multi-task learning, ACS enables reliable prediction with dramatically reduced data requirements—capabilities unattainable with single-task learning or conventional MTL [26].

The robust performance of ACS across diverse benchmarks and its successful application to sustainable aviation fuel design demonstrate its potential to accelerate discovery cycles in pharmaceutical development, materials science, and clean energy research [29]. As experimental data remains costly and time-consuming to acquire, methodologies like ACS that maximize knowledge extraction from limited samples will play an increasingly crucial role in bridging computational prediction and experimental validation.

Transfer Learning Guided by Task Similarity (MoTSE Framework)

Predicting the properties of small molecules is a crucial task in drug development and computational chemistry. A significant challenge in this field is that many molecular property datasets contain only a limited amount of data, which hinders the application of powerful deep learning models that typically require large training sets [30]. Transfer learning has emerged as a promising strategy to mitigate this data scarcity problem by leveraging knowledge from related tasks. The core premise is that models pretrained on large source datasets can be fine-tuned to achieve high performance on smaller target datasets. However, the success of this strategy depends critically on selecting source tasks that are "similar" to the target task. The Molecular Tasks Similarity Estimator (MoTSE) framework addresses this challenge by providing an effective and interpretable computational method for estimating task similarity, thereby guiding transfer learning for molecular property prediction [30].

Performance Comparison of Transfer Learning Strategies

The table below summarizes the performance of various molecular property prediction strategies, highlighting the advantages of the MoTSE-guided transfer learning approach.

Table 1: Performance Comparison of Molecular Property Prediction Strategies

| Method / Model | Key Approach | Reported Performance / Findings | Applicability / Notes |
|---|---|---|---|
| MoTSE Framework [30] | Transfer learning guided by a novel task similarity estimator. | Task similarity from MoTSE consistently improved transfer learning prediction performance on molecular properties. | Provides interpretable insights into intrinsic relationships between molecular properties. |
| Functional Group LLMs (FGBench) [31] | Uses functional group-level information for reasoning in Large Language Models. | Current LLMs struggle with FG-level property reasoning, highlighting a need for enhanced capabilities. | Focuses on fine-grained structure-property relationships (e.g., single FG impact, multiple FG interactions). |
| OMol25-Trained NNPs [32] | Neural Network Potentials (NNPs) pretrained on a massive computational chemistry dataset (OMol25). | For organometallic reduction potentials, UMA-S NNP (MAE=0.262 V) outperformed B97-3c DFT (MAE=0.414 V). | Effective for charge-related properties like reduction potential, even without explicit Coulombic physics. |
| PaiNN with TL [33] | Message Passing Neural Network (PaiNN) with pre-training on large datasets with cheap ab initio labels. | Excellent results for HOPV (HOMO-LUMO-gaps); less successful for Freesolv (solvation energies). | Success depends on the similarity between pre-training and fine-tuning tasks/labels. |
| Direct Data Integration [1] | Naive aggregation of datasets from different sources (e.g., TDC, Obach, Lombardo) without correcting for misalignments. | Often degrades model performance due to distributional shifts and inconsistent annotations. | Highlights the need for tools like AssayInspector for data consistency assessment prior to modeling. |
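To make the last row concrete, one simple pre-aggregation check is to compare the annotations of molecules that appear in two sources. The sketch below is a generic illustration and does not reproduce AssayInspector's API. The function name `shared_molecule_discrepancy` is hypothetical, and it assumes each source is a mapping from a molecule identifier to a value in the same units.

```python
from statistics import mean, median


def shared_molecule_discrepancy(source_a, source_b):
    """Compare annotations for molecules present in both sources.

    Each source maps a molecule identifier (e.g. a canonical SMILES) to a
    measured value. Returns None when the sources share no molecules.
    """
    shared = sorted(set(source_a) & set(source_b))
    if not shared:
        return None
    diffs = [source_a[m] - source_b[m] for m in shared]
    return {
        "n_shared": len(shared),
        # A mean far from zero suggests a systematic offset between sources.
        "mean_diff": mean(diffs),
        # A large median absolute difference suggests inconsistent annotations.
        "median_abs_diff": median(abs(d) for d in diffs),
    }
```

A clear offset or a large spread on the shared molecules is a signal to investigate assay conditions or units before merging, rather than a reason to pool the data as-is.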

Experimental Protocols and Methodologies

The MoTSE Framework Workflow

The MoTSE framework introduces a systematic, data-driven approach to transfer learning. Its methodology can be summarized in the following key steps [30]:

- Task Similarity Estimation: The core of the framework is the MoTSE algorithm, which takes multiple molecular property prediction tasks as input. It employs an effective computational method to accurately measure the pairwise similarity between these tasks. This process is designed to be interpretable, helping researchers understand the intrinsic relationships between different molecular properties.

- Source Task Selection: Based on the calculated similarity matrix, the framework identifies the source tasks that are most similar to a given target task with limited data.

- Guided Transfer Learning: Instead of a random or heuristic choice, the transfer learning process is initiated using a model pre-trained on the source tasks identified as most similar by MoTSE.

- Fine-tuning and Prediction: The pre-trained model is subsequently fine-tuned on the data from the target task, leading to a more accurate and robust predictor for the desired molecular property.
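The source-selection step can be sketched as a ranking over a precomputed similarity table. This is a minimal illustration that assumes the similarity scores already exist. It does not implement the MoTSE estimator itself, and the function name, task names, and scores used with it are hypothetical.

```python
def select_source_tasks(similarity, target, top_k=1):
    """Rank candidate source tasks by similarity to `target`.

    `similarity` maps a target task name to {source task name: score},
    where a higher score means more similar. The target itself is never
    returned as its own source.
    """
    scores = similarity[target]
    candidates = [(name, s) for name, s in scores.items() if name != target]
    # Highest score first; ties broken alphabetically for reproducibility.
    candidates.sort(key=lambda item: (-item[1], item[0]))
    return [name for name, _ in candidates[:top_k]]


# Illustrative scores only, not measured values.
toy_similarity = {
    "solubility": {"solubility": 1.0, "logP": 0.82, "toxicity": 0.31},
}
best_sources = select_source_tasks(toy_similarity, "solubility", top_k=1)
```

The nested mapping is a deliberate choice: it lets the score from task A to task B differ from the score from B to A, should the similarity measure be directional.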

[Diagram: logical workflow of the MoTSE framework]

Benchmarking OMol25 Neural Network Potentials

A separate study benchmarked the performance of OMol25-trained Neural Network Potentials (NNPs) on predicting experimental electrochemical properties, providing a comparison point for data-driven models [32]. The experimental protocol was as follows:

- Objective: To evaluate the ability of pretrained NNPs (eSEN-S, UMA-S, UMA-M) to predict experimental reduction potentials and electron affinities for main-group and organometallic species.

- Data: Experimental reduction-potential data for 192 main-group and 120 organometallic species, and experimental electron-affinity data for 37 simple main-group species and 11 organometallic complexes.

- Methodology:

  - For each species, the non-reduced and reduced structures were geometry-optimized using the OMol25 NNP.

  - The electronic energy of each optimized structure was calculated, with a solvent correction (CPCM-X) applied for reduction potential predictions.

  - The reduction potential was derived from the difference in electronic energy between the non-reduced and reduced structures.

  - The Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and coefficient of determination (R²) were computed against experimental values.

- Comparison: The accuracy of the NNPs was compared to that of low-cost Density Functional Theory (DFT) methods (B97-3c) and semiempirical quantum mechanical (SQM) methods (GFN2-xTB).
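The final two methodology steps reduce to simple arithmetic, sketched below. Several points are assumptions rather than details confirmed by the benchmark: energies are taken in Hartree and already include any solvent correction, the sign convention is non-reduced minus reduced, no reference-electrode shift is applied, and R² is computed as 1 − SS_res/SS_tot (some studies report the squared Pearson correlation instead). The function names are hypothetical.

```python
import math

HARTREE_TO_EV = 27.211386245988


def reduction_potential(e_nonreduced_ha, e_reduced_ha):
    """Energy difference (non-reduced minus reduced), converted to eV.

    For a one-electron reduction this is numerically a potential in volts.
    """
    return (e_nonreduced_ha - e_reduced_ha) * HARTREE_TO_EV


def regression_metrics(predicted, experimental):
    """Return (MAE, RMSE, R^2) of predictions against experimental values."""
    n = len(experimental)
    errors = [p - y for p, y in zip(predicted, experimental)]
    mae = sum(abs(e) for e in errors) / n
    ss_res = sum(e * e for e in errors)
    rmse = math.sqrt(ss_res / n)
    mean_y = sum(experimental) / n
    ss_tot = sum((y - mean_y) ** 2 for y in experimental)
    r2 = 1.0 - ss_res / ss_tot
    return mae, rmse, r2
```

Reporting all three metrics together is useful because they capture different things. MAE and RMSE measure absolute accuracy, while R² shows whether trends across species are captured even when a constant offset remains.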

Functional Group-Level Reasoning with FGBench

The FGBench dataset and benchmark were introduced to probe and enhance the reasoning capabilities of LLMs at a more granular, chemically meaningful level [31]. The methodology for constructing and using FGBench involves:

- Data Construction: A novel pipeline was developed to create 625K molecular property reasoning problems. This pipeline uses a "validation-by-reconstruction" strategy to ensure high-quality molecular comparisons and precise annotation of functional groups, including their locations within the molecule.

- Task Dimensions: The problems are organized into three categories to mirror scientific reasoning:

  - Single Functional Group Impact: Assessing the effect of a single functional group on a property.

  - Multiple Functional Group Interactions: Reasoning about the interplay between multiple functional groups.

  - Direct Molecular Comparisons: Comparing two molecules that differ by specific functional group modifications.

- Question-Answer Formats: Each dimension includes both Boolean (trend-based) and value-based (quantitative) question-answer pairs.

- Benchmarking: A curated subset of 7K problems was used to evaluate state-of-the-art LLMs, revealing their current limitations in functional group-level reasoning.

[Diagram: logical process for functional group-level reasoning]

The Scientist's Toolkit

This section details key computational reagents and resources essential for research in molecular property prediction and transfer learning.

Table 2: Key Research Reagent Solutions for Molecular Property Prediction

| Tool / Resource | Type | Primary Function in Research |
|---|---|---|
| MoTSE [30] | Computational Framework | Accurately estimates similarity between molecular property prediction tasks to guide effective transfer learning. |
| FGBench Dataset [31] | Specialized Dataset | Provides 625K problems for training and benchmarking models on functional group-level molecular property reasoning. |
| OMol25 Dataset & NNPs [32] | Pretrained Models & Data | Offers massive-scale computational chemistry data and pretrained Neural Network Potentials for predicting energies and properties of molecules in various states. |
| AssayInspector [1] | Data Analysis Tool | A model-agnostic Python package designed to systematically identify data misalignments, outliers, and batch effects across heterogeneous molecular datasets before aggregation. |
| Therapeutic Data Commons (TDC) [1] | Data Benchmark | Provides standardized benchmarks and aggregated datasets for molecular property prediction, though requires careful consistency assessment. |
| Position Weight Matrix (PWM) [34] | Computational Biology Tool | Represents the likelihood of each nucleotide at each position in a DNA binding motif; used in HMMs for sequence recognition tasks, analogous to molecular pattern detection. |

Data Augmentation Strategies through Multi-Task Graph Neural Networks