Bridging the Gap: Machine Learning Methods for Comparing Computational and Experimental Spectroscopy Data

Abstract

This article explores the transformative role of machine learning (ML) in bridging computational and experimental spectroscopy, a critical synergy for researchers in chemistry, materials science, and drug development. It covers the foundational challenges of automating structure prediction from spectra and the high computational cost of traditional simulations. The piece details methodological advances, including ML models that predict spectra from structures, identify structural models from data, and directly extract structural parameters. It further addresses troubleshooting experimental artifacts and optimizing models, and provides a framework for the rigorous validation and benchmarking of computational tools. The conclusion synthesizes how these integrated approaches are paving the way for accelerated, high-throughput discovery in biomedical and clinical research.

The Synergy of Spectroscopy and Machine Learning: Foundations and Core Challenges

Automated structure prediction from spectroscopic data represents a pivotal challenge at the intersection of analytical chemistry, machine learning, and molecular discovery. Despite the widespread availability of techniques such as Infrared (IR) and Nuclear Magnetic Resonance (NMR) spectroscopy, interpreting spectral data to determine complete molecular structures has traditionally required extensive expert knowledge and manual effort. The sheer complexity of molecular structure space, combined with the subtle, overlapping features present in experimental spectra, has made full automation an elusive goal [1]. Recent advances in machine learning, however, are beginning to transform this landscape, enabling new approaches that can directly predict molecular connectivity from spectral inputs, thereby accelerating research across chemical synthesis, drug development, and materials science.

This Application Note frames these developments within the broader context of comparing computational and experimental spectroscopy data. We present quantitative benchmarks for current methodologies, detailed experimental protocols for implementation, and visual workflows to guide researchers in navigating this rapidly evolving field.

Current Methodologies and Performance Benchmarks

The integration of machine learning with spectroscopy has catalyzed the development of models that address the inverse problem of structure elucidation—deriving molecular structure from spectral data rather than predicting spectra from known structures.

Machine Learning Approaches for IR and NMR Spectroscopy

Infrared Spectroscopy: Traditional analysis of IR spectra has been largely limited to identifying a handful of characteristic functional groups, leaving the information-rich "fingerprint region" (400–1500 cm⁻¹) underutilized [2]. A recent transformer-based model demonstrates that complete molecular structure prediction directly from IR spectra is now achievable. This approach uses an autoregressive encoder-decoder architecture trained on a large corpus of simulated and experimental data. The model takes both the IR spectrum and the chemical formula as inputs and generates the molecular structure as a SMILES string, effectively learning the complex mapping between spectral features and structural elements [2].

NMR Spectroscopy: For NMR, a major challenge in automation has been the difficulty of interpreting complex 1D ¹H NMR spectra with overlapping peaks and variable coupling patterns. A machine learning framework combining a convolutional neural network (CNN) for substructure prediction with a graph generation algorithm has been developed to address this [3]. The model estimates the probability that each of hundreds of candidate substructures is present, then uses these probabilities to construct and rank candidate constitutional isomers, mimicking the reasoning process of expert chemists but at a vastly increased scale and speed [3].

Quantitative Performance Comparison

The table below summarizes the performance of these state-of-the-art methods for automated structure elucidation, providing key benchmarks for researchers.

Table 1: Performance Benchmarks for Automated Structure Prediction from Spectra

| Spectroscopic Method | ML Model Architecture | Key Input Features | Top-1 Accuracy (%) | Top-10 Accuracy (%) | Molecular Scope |
|---|---|---|---|---|---|
| IR Spectroscopy [2] | Transformer (encoder-decoder) | IR spectrum, Chemical formula | 44.4 | 69.8 | 6-13 heavy atoms |
| NMR Spectroscopy [3] | CNN + Graph Generator | ¹H NMR spectrum, ¹³C NMR shifts, Molecular formula | 67.4 | 95.8 | ≤10 non-hydrogen atoms (C, H, O, N) |
| IR Spectroscopy - Scaffold Prediction [2] | Transformer (encoder-decoder) | IR spectrum, Chemical formula | 84.5 | 93.0 | 6-13 heavy atoms |

These results highlight several key insights. The NMR-based approach achieves higher overall accuracy, reflecting the information-rich nature of NMR data for determining atomic connectivity; note, however, that the two methods were evaluated on different datasets and molecular size ranges, so the comparison is indicative rather than head-to-head. The IR-based method, while less accurate for full structure prediction, performs strongly at identifying the core molecular scaffold, which can be invaluable for rapid compound characterization. In both cases, providing the chemical formula as a prior constraint significantly narrows the chemical search space and improves model performance [2] [3].

Detailed Experimental Protocols

Protocol A: Molecular Structure Elucidation from IR Spectra

This protocol details the procedure for utilizing a transformer model to predict molecular structures from experimental IR spectra, based on the methodology described in [2].

1. Sample Preparation and Data Acquisition

- Prepare a pure sample of the unknown compound at a relatively high concentration, suitable for IR spectroscopy.

- Acquire the IR spectrum using a standard FTIR spectrometer. The spectrum should cover the mid-IR region (e.g., 400–4000 cm⁻¹) with a resolution of approximately 4-16 cm⁻¹.

- Determine the chemical formula of the unknown compound using high-resolution mass spectrometry (HRMS).

2. Data Preprocessing

- Convert the raw spectrum into a one-dimensional vector of intensity values.

- Normalize the intensity values across the spectrum, for example, to a range of 0 to 1.

- Discretize the spectrum to a fixed sequence length (e.g., 400 tokens). A sequence length of 400, corresponding to a resolution of ~16 cm⁻¹, has been shown to balance information content and model performance [2].

- For optimal results, focus the model's attention on the most informative spectral regions. A merged input combining the 400–2000 cm⁻¹ window (the fingerprint region plus the double-bond and carbonyl stretching region) with the C-H stretching window (2800–3300 cm⁻¹) is recommended.
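The preprocessing steps above can be sketched with NumPy. This is a minimal sketch under our own assumptions, not the pipeline from [2]: the helper name `preprocess_ir`, linear interpolation, min-max scaling, and sharing the 400 points between the two windows in proportion to their widths are all illustrative choices.

```python
import numpy as np


def preprocess_ir(wavenumbers, intensities,
                  windows=((400.0, 2000.0), (2800.0, 3300.0)),
                  n_points=400):
    """Hypothetical helper: resample an IR spectrum onto a fixed-length vector.

    Each (low, high) window in cm^-1 gets a share of n_points proportional
    to its width; the concatenated vector is min-max scaled to [0, 1].
    """
    wn = np.asarray(wavenumbers, dtype=float)
    y = np.asarray(intensities, dtype=float)
    order = np.argsort(wn)  # np.interp requires increasing x values
    wn, y = wn[order], y[order]

    widths = np.array([high - low for low, high in windows])
    counts = np.round(n_points * widths / widths.sum()).astype(int)
    counts[-1] = n_points - counts[:-1].sum()  # make the total exactly n_points

    segments = [np.interp(np.linspace(low, high, n), wn, y)
                for (low, high), n in zip(windows, counts)]
    x = np.concatenate(segments)

    span = x.max() - x.min()
    if span == 0:  # flat spectrum: avoid division by zero
        return np.zeros_like(x)
    return (x - x.min()) / span


# Example: a synthetic carbonyl band near 1715 cm^-1 on a 1 cm^-1 grid.
wn = np.linspace(4000, 400, 3601)
vec = preprocess_ir(wn, np.exp(-((wn - 1715) / 15) ** 2))
print(vec.shape)  # (400,)
```

Allocating points in proportion to window width keeps the point spacing roughly uniform across both windows, so neither region is over- or under-sampled relative to the other.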

3. Model Inference and Structure Generation

- Input the preprocessed spectral vector and the chemical formula into the pretrained transformer model.

- The model will autoregressively generate a ranked list of candidate molecular structures in the form of SMILES strings.

- The top-10 predictions should be considered, as the correct structure is found within them in 69.8% of cases for molecules with 6-13 heavy atoms [2].
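Top-k accuracy, the metric behind the 69.8% figure, counts a prediction as correct when the true structure appears among the first k ranked candidates. A minimal sketch follows. Because different SMILES strings can encode the same molecule, comparisons should pass through a canonicalization function (RDKit's `Chem.CanonSmiles` is one option); the identity default and the toy SMILES lists here are illustrative assumptions.

```python
from typing import Callable, Sequence


def top_k_accuracy(ranked_predictions: Sequence[Sequence[str]],
                   targets: Sequence[str],
                   k: int,
                   canonicalize: Callable[[str], str] = lambda s: s) -> float:
    """Fraction of targets found among the first k ranked SMILES.

    The identity default only matches identical strings; pass a real
    canonicalizer so that equivalent SMILES compare equal.
    """
    if len(ranked_predictions) != len(targets):
        raise ValueError("need one ranked list per target")
    if not targets:
        raise ValueError("no targets given")
    hits = 0
    for ranked, target in zip(ranked_predictions, targets):
        top_k = {canonicalize(s) for s in ranked[:k]}
        hits += canonicalize(target) in top_k
    return hits / len(targets)


preds = [["CCO", "OCC"], ["CC(=O)O", "CCO"], ["c1ccccc1"]]
truth = ["CCO", "CCO", "CCN"]
print(top_k_accuracy(preds, truth, k=1))  # 1/3
print(top_k_accuracy(preds, truth, k=2))  # 2/3
```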

4. Validation

- Validate the top-ranking candidate structures by comparing their predicted spectra with the experimental data or by using orthogonal analytical techniques such as NMR or LC-MS.
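One simple way to compare a candidate's computed spectrum with the experimental one is cosine similarity on a shared wavenumber grid. The sketch below assumes both spectra are already on comparable intensity scales and that each candidate's spectrum comes from a separate frequency calculation. Cosine similarity is our choice of metric rather than one prescribed by [2], and the Gaussian bands are synthetic.

```python
import numpy as np


def spectral_cosine(exp_wn, exp_y, calc_wn, calc_y):
    """Cosine similarity between an experimental and a computed spectrum.

    The computed spectrum is linearly interpolated onto the experimental
    wavenumber grid and treated as zero outside its own range.
    """
    exp_wn = np.asarray(exp_wn, dtype=float)
    exp_y = np.asarray(exp_y, dtype=float)
    calc_wn = np.asarray(calc_wn, dtype=float)
    calc_y = np.asarray(calc_y, dtype=float)
    order = np.argsort(calc_wn)  # np.interp needs increasing sample points
    calc_on_exp = np.interp(exp_wn, calc_wn[order], calc_y[order],
                            left=0.0, right=0.0)
    denom = np.linalg.norm(exp_y) * np.linalg.norm(calc_on_exp)
    return float(exp_y @ calc_on_exp / denom) if denom > 0 else 0.0


def rank_by_similarity(exp_wn, exp_y, candidates):
    """Sort {name: (calc_wn, calc_y)} from most to least similar."""
    scores = {name: spectral_cosine(exp_wn, exp_y, wn, y)
              for name, (wn, y) in candidates.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


wn = np.linspace(400.0, 4000.0, 3601)
experimental = np.exp(-((wn - 1700.0) / 20.0) ** 2)
candidates = {
    "candidate_A": (wn, np.exp(-((wn - 1705.0) / 20.0) ** 2)),
    "candidate_B": (wn, np.exp(-((wn - 1600.0) / 20.0) ** 2)),
}
ranking = rank_by_similarity(wn, experimental, candidates)
print(ranking[0][0])  # candidate_A
```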

Protocol B: Molecular Structure Elucidation from 1D NMR Spectra

This protocol outlines the use of a convolutional neural network and graph generator for structure elucidation from routine 1D NMR data, as presented in [3].

1. Sample Preparation and Data Acquisition

- Dissolve the unknown compound in a deuterated NMR solvent.

- Acquire a ¹H NMR spectrum with a sufficient number of scans to achieve a good signal-to-noise ratio.

- Acquire a ¹³C NMR spectrum with ¹H decoupling.

- Determine the molecular formula via HRMS.

2. Data Preprocessing

- Fourier-transform the ¹H free induction decay (FID) to obtain the frequency-domain spectrum, then perform phase correction and baseline correction.

- Identify and remove solvent peaks and peaks from labile protons (e.g., OH, NH₂).

- For the ¹H NMR spectrum, use the full spectral data as input to the model. The complex splitting patterns and integrations provide critical structural information.

- For the ¹³C NMR spectrum, extract a list of chemical shifts. Integrations and multiplicities are not required.
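The solvent-removal and ¹³C encoding steps can be sketched as follows. The ppm windows, the binary 1-ppm binning of ¹³C shifts over 0–220 ppm, and the helper names are assumptions for illustration; the input encoding used in [3] may differ. The residual solvent positions (7.26 ppm for CHCl₃ in CDCl₃, 2.50 ppm for DMSO-d₅ in DMSO-d₆) are standard values.

```python
import numpy as np

# Residual solvent 1H signals (ppm): CHCl3 in CDCl3 and DMSO-d5 in DMSO-d6.
RESIDUAL_1H = {"CDCl3": 7.26, "DMSO-d6": 2.50}


def mask_regions(ppm, intensity, regions):
    """Return a copy of the spectrum with each (low, high) ppm window set to zero."""
    ppm = np.asarray(ppm, dtype=float)
    masked = np.asarray(intensity, dtype=float).copy()
    for low, high in regions:
        masked[(ppm >= low) & (ppm <= high)] = 0.0
    return masked


def encode_c13_shifts(shifts, low=0.0, high=220.0, n_bins=220):
    """Binary vector marking which 1-ppm bins contain at least one 13C shift."""
    counts, _ = np.histogram(shifts, bins=n_bins, range=(low, high))
    return (counts > 0).astype(np.float32)


ppm = np.linspace(0.0, 10.0, 1001)
h1 = np.ones_like(ppm)  # placeholder 1H spectrum
solvent = RESIDUAL_1H["CDCl3"]
h1_clean = mask_regions(ppm, h1, [(solvent - 0.05, solvent + 0.05)])
c13 = encode_c13_shifts([20.5, 128.0, 128.3, 170.1])
print(int(c13.sum()))  # 3: the two aromatic shifts share one bin
```

The same `mask_regions` call can remove exchangeable OH/NH signals once their positions are known, for example from a D₂O exchange experiment.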

3. Substructure Prediction and Graph Generation

- Input the preprocessed ¹H NMR spectrum, the list of ¹³C NMR chemical shifts, and the molecular formula into the trained CNN.

- The model will output a probability score for each of the 957 defined substructures, creating a "substructure probability profile."

- This profile is then used by a graph generation algorithm, which assembles candidate molecular graphs (constitutional isomers) that are consistent with both the molecular formula and the predicted substructures.
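To make ranking against a substructure probability profile concrete, one plausible scoring rule treats each substructure as an independent Bernoulli variable and scores a candidate by the log-likelihood of its presence/absence pattern. This is an illustrative assumption, not the scoring used in [3]. The step that enumerates candidate isomers from the formula is not shown, and the three-substructure profile is a toy example.

```python
import numpy as np


def profile_log_likelihood(presence, probs, eps=1e-9):
    """Log-likelihood of a candidate's substructure pattern under the profile.

    presence: 0/1 vector, 1 if the candidate contains substructure i.
    probs: predicted probability that substructure i is present.
    Substructures are treated as independent, which is a simplification.
    """
    p = np.clip(np.asarray(probs, dtype=float), eps, 1.0 - eps)
    b = np.asarray(presence, dtype=float)
    return float(np.sum(b * np.log(p) + (1.0 - b) * np.log(1.0 - p)))


def rank_candidates(candidates, probs):
    """Sort {name: presence vector} from most to least likely."""
    return sorted(candidates,
                  key=lambda name: profile_log_likelihood(candidates[name], probs),
                  reverse=True)


probs = [0.9, 0.1, 0.8]  # toy profile over three substructures
cands = {"A": [1, 0, 1], "B": [0, 1, 0], "C": [1, 1, 1]}
print(rank_candidates(cands, probs))  # ['A', 'C', 'B']
```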

4. Analysis and Validation

- The framework outputs a probabilistically ranked list of candidate constitutional isomers.

- The top candidate is correct 67.4% of the time for molecules with up to 10 non-hydrogen atoms, and it appears in the top-10 candidates 95.8% of the time [3].

- Perform experimental validation of the top candidate(s) using 2D NMR experiments or other spectroscopic data.

Workflow Visualization

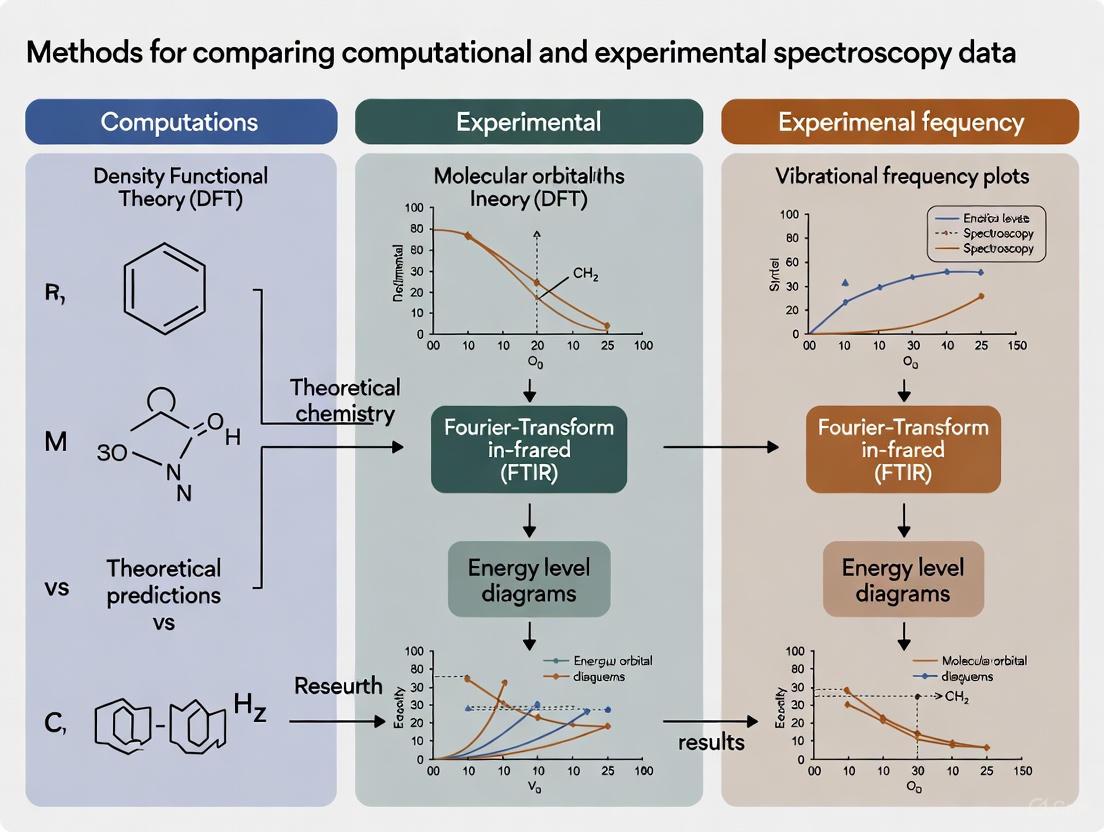

The following diagram illustrates the logical flow and core components of a generalized machine learning system for automated structure prediction from spectra, integrating key elements from both the IR and NMR methodologies discussed.

Automated Structure Elucidation Workflow

The workflow begins with the input of raw spectral data and a chemical formula. After preprocessing, the features are fed into a machine learning model (e.g., a Transformer or CNN). In the NMR framework, this model outputs a set of predicted substructures and their probabilities, and a graph generation algorithm uses this profile, along with the chemical formula, to systematically construct and rank candidate molecular structures; the IR transformer instead decodes ranked candidate SMILES strings directly. In both cases, the final output is a ranked list of constitutional isomers, which must be validated experimentally [2] [3].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of automated structure elucidation requires careful attention to experimental materials and computational resources. The following table details key components of the research toolkit.

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Specification / Details | Primary Function in Workflow |
|---|---|---|
| FTIR Spectrometer | Mid-IR range (400-4000 cm⁻¹), resolution ~4-16 cm⁻¹ | Acquire experimental IR spectra for model input. |
| NMR Spectrometer | Capable of ¹H and ¹³C experiments, with deuterated solvent. | Acquire ¹H and ¹³C NMR spectra for model input [3]. |
| High-Resolution Mass Spectrometer (HRMS) | Sufficient resolution to determine elemental composition. | Provide accurate chemical formula, a critical prior for the model [2] [3]. |
| Deuterated NMR Solvents | e.g., CDCl₃, DMSO-d₆ | Dissolve samples for NMR analysis without introducing interfering signals. |
| Neural Network Potentials (NNPs) | Pre-trained models (e.g., eSEN and UMA, trained on datasets such as OMol25) | Provide fast, accurate energy calculations for geometry optimization of predicted structures in validation [4]. |
| Chromatography Software Suites | e.g., GC×GC Software for image-based fingerprinting | Process and analyze complex 2D chromatographic data for complementary untargeted analysis [5]. |
| Quantum Chemistry Packages | e.g., Psi4, with density functionals like r²SCAN-3c, ωB97X-3c | Perform reference calculations for benchmarking and validation of predicted structures and properties [4]. |
| MestReNova | Or equivalent NMR processing software | Process raw FIDs, perform phase and baseline correction, and remove solvent peaks [3]. |

Quantum chemical calculations are indispensable in modern scientific research, providing deep insights into molecular structure, reactivity, and properties from first principles. In the specific context of comparing computational and experimental spectroscopy data, these methods serve as a critical bridge for interpreting complex spectral signatures and validating theoretical models against empirical evidence. Density functional theory (DFT) has emerged as the most widely used computational approach, offering a balance between accuracy and computational cost for systems of practical scientific interest [6]. Despite advances in computational hardware and algorithms, researchers consistently face a fundamental computational bottleneck that limits the scope, accuracy, and applicability of these calculations across various domains, including drug development and materials science.

This bottleneck manifests as a critical trade-off between three competing factors: the size and complexity of the chemical system being studied, the level of theory and its inherent accuracy, and the computational resources required in terms of time, memory, and processing power. For spectroscopy researchers, this triad dictates which systems can be realistically modeled, which properties can be reliably predicted, and how meaningfully computational results can be compared with experimental data.

The Core Bottlenecks in Quantum Chemistry

The Scalability Challenge: Computational Cost vs. System Size

The most fundamental limitation arises from the unfavorable scaling of computational methods with system size. The electronic Schrödinger equation, which describes the behavior of electrons in a molecule, becomes prohibitively expensive to solve exactly as the number of electrons increases.

Table 1: Computational Scaling of Common Quantum Chemical Methods

| Method | Computational Scaling | Typical System Size Limit (Atoms) | Primary Limitation |
|---|---|---|---|
| Hartree-Fock (HF) | O(N⁴) | 50-100 | Neglects electron correlation |
| Density Functional Theory (DFT) | O(N³) to O(N⁴) | 100-500 | Accuracy depends on functional choice |
| Møller-Plesset Perturbation Theory (MP2) | O(N⁵) | 50-200 | Second-order treatment of correlation; unreliable for strongly correlated systems |
| Coupled Cluster (CCSD(T)) | O(N⁷) | 10-50 | "Gold standard" but prohibitively expensive |
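The practical meaning of these exponents is easiest to see by asking how cost grows when the system doubles in size: under O(Nᵖ) scaling, the cost multiplies by 2ᵖ. The sketch uses the formal exponents from the table (DFT at its lower bound). Here N is usually the number of basis functions, which grows roughly with the number of atoms. Prefactors, integral screening, and local-correlation approximations change real timings considerably, so these are idealized ratios.

```python
# Formal scaling exponents from the table above (DFT at its lower bound).
SCALING = {"HF": 4, "DFT": 3, "MP2": 5, "CCSD(T)": 7}


def relative_cost(size_ratio: float, exponent: int) -> float:
    """Cost multiplier when system size grows by size_ratio under O(N^exponent)."""
    return size_ratio ** exponent


for method, p in SCALING.items():
    print(f"{method}: doubling N multiplies cost by {relative_cost(2, p):g}")
# HF: 16, DFT: 8, MP2: 32, CCSD(T): 128
```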

The computational cost manifests not only in time but also in memory and storage requirements. For example, the QeMFi dataset, a multifidelity quantum chemical dataset, required calculations across 135,000 molecular geometries at five different levels of theory (basis sets ranging from STO-3G to def2-TZVP), representing a massive computational undertaking even for small- to medium-sized organic molecules [7].

System Complexity and Methodological Limitations

Beyond simple atom count, molecular complexity introduces additional challenges that exacerbate the computational bottleneck:

- Strong Electron Correlation: Systems with degenerate or near-degenerate electronic states, such as transition metal complexes and open-shell molecules, challenge single-reference methods like standard DFT [8].

- Intermolecular Interactions: Modeling crystalline materials requires accounting for long-range interactions and periodic boundary conditions, necessitating more expensive periodic-DFT calculations rather than discrete cluster approaches [6].

- Solvation and Environmental Effects: Implicit solvation models provide reasonable approximations, but explicit solvent modeling dramatically increases system size and requires extensive conformational sampling.

- Excited States: Time-dependent DFT (TD-DFT) calculations for spectroscopic properties like UV-Vis spectra are considerably more demanding than ground-state calculations [7].

Practical Implications for Spectroscopy Research

The computational bottleneck directly impacts research workflows in computational spectroscopy, creating several practical constraints:

- Model Simplification Necessity: Researchers must often simplify molecular models to make calculations tractable, potentially sacrificing chemical realism. For crystalline materials, this presents a dilemma between periodic calculations that capture long-range order and discrete cluster approaches that are computationally cheaper but may miss crucial lattice effects [6].

- Basis Set Compromises: The choice of basis set represents a critical trade-off. Larger basis sets (e.g., def2-TZVP) provide better accuracy but dramatically increase computational cost compared to smaller basis sets (e.g., STO-3G) [7].

- Property-Dependent Limitations: Some molecular properties are more sensitive to computational limitations than others. While ground-state geometries can often be determined with reasonable accuracy, properties like reaction barriers, weak intermolecular interactions, and spectroscopic line shapes remain challenging [9].

Table 2: Impact of Computational Level on Predicted Properties

| Property | Low-Cost Method (e.g., B3LYP/6-31G) | High-Cost Method (e.g., CCSD(T)/CBS) | Experimental Reference |
|---|---|---|---|
| Enthalpy of Formation (kcal/mol) | MAE: 3-5 kcal/mol [9] | MAE: <1 kcal/mol [9] | Thermochemical measurements |
| Vibrational Frequencies (cm⁻¹) | Scale factor ~0.96-0.98 | Scale factor ~0.99-1.00 | IR/Raman spectroscopy |
| Reaction Barriers (kcal/mol) | Often underestimated | Within chemical accuracy (±1 kcal/mol) | Kinetic measurements |
| Band Gaps (eV) | Strong functional dependence | More consistent across systems | UV-Vis spectroscopy |
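Frequency scale factors like those in the table are applied by multiplying computed harmonic frequencies before comparison with IR/Raman bands, and the resulting stick spectrum is usually broadened into line shapes. A minimal sketch, assuming Lorentzian line shapes with a 10 cm⁻¹ full width at half maximum and a single scale factor of 0.97; both are illustrative choices, not recommendations from [9], and `broadened_ir` is a hypothetical helper.

```python
import numpy as np


def broadened_ir(freqs_cm1, intensities, scale=0.97, fwhm=10.0, grid=None):
    """Scale harmonic frequencies and sum Lorentzian bands on a wavenumber grid.

    Each band's peak height equals its input intensity (arbitrary units).
    """
    if grid is None:
        grid = np.arange(400.0, 4001.0, 1.0)  # 400-4000 cm^-1, 1 cm^-1 steps
    centers = scale * np.asarray(freqs_cm1, dtype=float)
    heights = np.asarray(intensities, dtype=float)
    gamma = fwhm / 2.0  # half width at half maximum
    lines = heights[:, None] * gamma ** 2 / (
        (grid[None, :] - centers[:, None]) ** 2 + gamma ** 2)
    return grid, lines.sum(axis=0)


grid, spectrum = broadened_ir([1800.0, 3100.0], [1.0, 0.4], scale=0.97)
print(grid[np.argmax(spectrum)])  # 1746.0
```

The broadened curve can then be compared with an experimental spectrum on the same grid, for example with a cosine or Pearson similarity.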

Emerging Strategies to Overcome Computational Limitations

Multifidelity Machine Learning Approaches

A promising strategy to circumvent the quantum chemical bottleneck involves multifidelity machine learning (MFML) methods that leverage calculations at multiple levels of theory [7]. These approaches use many inexpensive, low-fidelity calculations (e.g., with small basis sets) combined with fewer high-fidelity calculations to predict properties that would otherwise require expensive high-fidelity computations throughout.

The QeMFi dataset was specifically designed to enable development and benchmarking of such methods, providing properties computed at five different basis set fidelities for 135,000 molecular geometries [7]. This allows researchers to build models that achieve high-fidelity accuracy at a fraction of the computational cost.
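The simplest two-level form of this idea is Δ-learning: train a model on the difference between high- and low-fidelity results for a subset of molecules, then add the predicted correction to cheap low-fidelity values elsewhere. The NumPy sketch below implements that with Gaussian-kernel ridge regression. It is a simplified stand-in rather than the MFML method of [7], which combines several fidelities. The 1-D descriptor, the toy "low" and "high" fidelity functions, and the `gamma` and `lam` values are illustrative assumptions.

```python
import numpy as np


def gaussian_kernel(A, B, gamma):
    """k(a, b) = exp(-gamma * ||a - b||^2) for all row pairs of A and B."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-gamma * sq_dist)


class DeltaKRR:
    """Learn the high-minus-low fidelity correction with kernel ridge regression."""

    def __init__(self, gamma=1.0, lam=1e-4):
        self.gamma = gamma
        self.lam = lam

    def fit(self, X, y_low, y_high):
        self.X_ = np.asarray(X, dtype=float)
        delta = np.asarray(y_high, dtype=float) - np.asarray(y_low, dtype=float)
        K = gaussian_kernel(self.X_, self.X_, self.gamma)
        self.alpha_ = np.linalg.solve(K + self.lam * np.eye(len(K)), delta)
        return self

    def predict(self, X, y_low):
        K = gaussian_kernel(np.asarray(X, dtype=float), self.X_, self.gamma)
        return np.asarray(y_low, dtype=float) + K @ self.alpha_


def low_fidelity(x):   # toy "cheap" property
    return np.sin(x[:, 0])


def high_fidelity(x):  # toy "expensive" property: cheap value + smooth correction
    return np.sin(x[:, 0]) + 0.3 * x[:, 0]


x_train = np.linspace(0.0, 3.0, 20)[:, None]
x_test = np.linspace(0.05, 2.95, 10)[:, None]
model = DeltaKRR().fit(x_train, low_fidelity(x_train), high_fidelity(x_train))
pred = model.predict(x_test, low_fidelity(x_test))
mae_delta = np.abs(pred - high_fidelity(x_test)).mean()
mae_low = np.abs(low_fidelity(x_test) - high_fidelity(x_test)).mean()
print(mae_delta < mae_low)  # True
```

Learning the correction rather than the property itself tends to work because the high-minus-low difference is often smoother and smaller in magnitude than the property, so fewer expensive calculations are needed to fit it.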

MFML Workflow for Quantum Chemistry

Quantum-Informed Machine Learning Representations

Another innovative approach involves developing molecular representations that explicitly incorporate quantum-chemical information without requiring full quantum calculations for every new molecule. Gomes, Boiko, and colleagues have created stereoelectronics-infused molecular graphs (SIMGs) that encode information about orbitals and their interactions, providing machine learning models with crucial quantum-mechanical details that traditional molecular representations lack [10].

This approach is particularly valuable for drug discovery applications where the chemical space is vast but experimental data is scarce. By infusing machine learning with quantum chemical insight, researchers can achieve accurate predictions while sidestepping the computational bottleneck of traditional quantum chemistry.

Hybrid Quantum-Classical Computational Methods

For the most challenging electronic structure problems, hybrid quantum-classical methods represent a cutting-edge approach that distributes the computational load between classical and quantum processors. The variational quantum eigensolver (VQE) uses quantum computers to prepare trial wavefunctions while relying on classical computers for optimization [8].

Recent advances like the pUCCD-DNN method combine a paired unitary coupled-cluster ansatz with deep neural network optimization, reducing the mean absolute error of calculated energies by two orders of magnitude compared to traditional methods while minimizing the number of quantum hardware calls required [8]. Though still emerging, these methods point toward a future where computational bottlenecks may be substantially alleviated through specialized hardware.

Experimental Protocols for Methodological Validation

Protocol: Benchmarking Density Functionals for Thermochemical Predictions

Purpose: To evaluate the accuracy of different density functionals for predicting standard enthalpies of formation (ΔHf°) relevant to drug molecule stability and reactivity.

Procedure:

- Molecular Selection: Curate a diverse set of molecules including linear, branched, and cyclic hydrocarbons with available experimental ΔHf° data.

- Computational Setup: Perform geometry optimization and frequency calculations using Gaussian 16 with target functionals (e.g., M06-2X, MN12-SX, MN15) and the cc-pVTZ basis set [9].

- Frequency Analysis: Confirm the absence of imaginary frequencies for optimized structures and calculate zero-point energy (ZPE) corrections.

- Energy Evaluation: Compute single-point electronic energies at the same level of theory.

- Enthalpy Calculation: Derive ΔHf° values using the atom equivalent method, where carbon and hydrogen energy equivalents are obtained via least-squares fitting to experimental data.

- Error Analysis: Calculate mean absolute errors (MAE) and root mean square errors (RMSE) for each functional relative to experimental values.

Validation: Compare performance across functionals. In the reported benchmark, MN15 gave the best accuracy, with an MAE of 1.70 kcal/mol when ZPE corrections were included [9].
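The atom-equivalent step reduces to linear least squares. If ΔHf° = 627.5095 × (E - Σᵢ nᵢεᵢ) in kcal/mol, with E the electronic energy plus ZPE in hartree and nᵢ the number of atoms of element i, then the equivalents εᵢ solve E - ΔHf°/627.5095 = Σᵢ nᵢεᵢ across the fitting molecules. Below is a minimal NumPy sketch under that assumed form, together with the MAE and RMSE from the error-analysis step. The helper names are hypothetical. The energies in the usage example are synthetic, built from made-up equivalents so that the fit can be checked; only the ΔHf° values are approximate literature numbers.

```python
import numpy as np

HARTREE_TO_KCAL = 627.5095  # kcal/mol per hartree


def fit_atom_equivalents(counts, energies_h, dhf_exp):
    """Least-squares atom equivalents eps (hartree) for the assumed model
    dHf = HARTREE_TO_KCAL * (E - counts @ eps).

    counts: (n_molecules, n_elements) atom counts, e.g. columns [C, H]
    energies_h: electronic energy + ZPE per molecule, in hartree
    dhf_exp: experimental enthalpies of formation, in kcal/mol
    """
    target = (np.asarray(energies_h, dtype=float)
              - np.asarray(dhf_exp, dtype=float) / HARTREE_TO_KCAL)
    eps, *_ = np.linalg.lstsq(np.asarray(counts, dtype=float), target, rcond=None)
    return eps


def predict_dhf(counts, energies_h, eps):
    """Enthalpies of formation (kcal/mol) from energies and fitted equivalents."""
    return HARTREE_TO_KCAL * (np.asarray(energies_h, dtype=float)
                              - np.asarray(counts, dtype=float) @ eps)


def mae_rmse(pred, ref):
    err = np.asarray(pred, dtype=float) - np.asarray(ref, dtype=float)
    return float(np.mean(np.abs(err))), float(np.sqrt(np.mean(err ** 2)))


# Self-check on synthetic energies built from made-up equivalents (not real data).
counts = np.array([[1, 4], [2, 6], [3, 8], [6, 6]])  # CH4, C2H6, C3H8, C6H6
dhf_ref = np.array([-17.9, -20.0, -25.0, 19.8])       # approx. gas-phase kcal/mol
true_eps = np.array([-38.0, -0.6])
energies = counts @ true_eps + dhf_ref / HARTREE_TO_KCAL
eps = fit_atom_equivalents(counts, energies, dhf_ref)
mae, rmse = mae_rmse(predict_dhf(counts, energies, eps), dhf_ref)
```

With real DFT energies, the errors are nonzero. Reporting them on molecules held out from the fit gives a fairer picture than errors on the fitting set itself.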

Protocol: Multifidelity Machine Learning for Spectroscopic Properties

Purpose: To develop accurate predictors of quantum chemical properties while minimizing computational cost through multifidelity learning.

Procedure:

- Data Collection: Access the QeMFi dataset containing 135,000 molecular geometries with properties computed at five basis set fidelities (STO-3G to def2-TZVP) [7].

- Feature Engineering: Compute molecular descriptors or graph representations incorporating stereoelectronic effects [10].

- Model Architecture: Design a multifidelity neural network that takes low-fidelity predictions as input and learns corrections to achieve high-fidelity accuracy.

- Training Strategy: Employ a transfer learning approach where models are pre-trained on abundant low-fidelity data and fine-tuned on scarce high-fidelity data.

- Validation: Assess model performance on held-out test molecules using mean absolute error metrics and compare computational time versus traditional quantum chemical approaches.

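Application: This protocol is intended to enable accurate prediction of vertical excitation energies and oscillator strengths for spectroscopic analysis at a fraction of the cost of running high-fidelity calculations for every molecule; the saving (on the order of tenfold in favorable cases) depends on how many high-fidelity calculations the model requires.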
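The size of the saving can be estimated before any training by comparing the cost of the mixed training set with the cost of computing every target molecule at high fidelity. The sketch below is simple arithmetic with hypothetical per-calculation costs. Real timings depend on the basis sets, code, and hardware, and the ratio ignores model-training time.

```python
def multifidelity_cost_ratio(n_low, cost_low, n_high, cost_high, n_target):
    """Cost of a multifidelity training set relative to computing all n_target
    molecules at high fidelity. Costs are per calculation, in any common unit."""
    mf_cost = n_low * cost_low + n_high * cost_high
    return mf_cost / (n_target * cost_high)


# Hypothetical numbers: 1,000 cheap calculations at 1 unit each plus
# 50 expensive ones at 100 units each, versus 1,000 expensive calculations.
print(multifidelity_cost_ratio(1000, 1.0, 50, 100.0, 1000))  # 0.06
```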

Table 3: Key Software and Databases for Computational Spectroscopy

| Resource | Type | Primary Function | Application in Spectroscopy |
|---|---|---|---|
| Gaussian 16 | Software Package | Quantum chemical calculations | Geometry optimization, frequency analysis, TD-DFT spectra [9] |
| ORCA | Software Package | Quantum chemical calculations (DFT and wavefunction methods) | TD-DFT calculations with various functionals and basis sets [7] |
| CASTEP | Software Package | Periodic DFT code | Vibrational properties of crystalline materials [6] |
| QeMFi Dataset | Database | Multifidelity quantum properties | Training ML models for spectroscopic predictions [7] |
| WS22 Database | Database | Diverse molecular geometries | Benchmark set for method development [7] |

Computational-Experimental Spectroscopy Workflow

The computational bottleneck in quantum chemical calculations remains a significant challenge, particularly in the context of computational spectroscopy where researchers seek to bridge theoretical models with experimental observations. The fundamental limitations of scaling with system size, accuracy trade-offs, and resource constraints necessitate strategic approaches that balance computational feasibility with scientific rigor.

Emerging methodologies, particularly multifidelity machine learning and quantum-informed representations, offer promising pathways to circumvent these limitations without sacrificing predictive accuracy. By leveraging computational hierarchies and learning from available data, researchers can extend the reach of quantum chemistry to larger systems and more complex properties relevant to drug development and materials design.

For computational spectroscopy specifically, the iterative process of model validation against experimental data remains crucial. As methods continue to evolve, the integration of computational predictions with experimental spectroscopy is likely to deepen our understanding of molecular structure and dynamics and to accelerate scientific discovery across chemical and pharmaceutical domains.

Spectroscopy, the study of the interaction between matter and electromagnetic radiation, serves as a fundamental tool across chemistry, materials science, and drug development [11]. However, a significant gap has long existed between theoretical computational spectroscopy and experimental spectroscopic data. Theoretical simulations, while powerful, are constrained by the high computational cost of underlying quantum chemical calculations [11]. Conversely, interpreting complex experimental spectra often requires extensive expert knowledge and may miss compounds not present in existing spectral libraries [11].

Machine learning (ML) has emerged as a transformative bridge connecting these two domains. ML algorithms have revolutionized computational spectroscopy by enabling orders-of-magnitude faster predictions of electronic properties, thereby facilitating high-throughput screening and expanding libraries with synthetic data [11]. Simultaneously, ML techniques are increasingly applied to process and interpret high-dimensional experimental spectral data, extracting meaningful patterns that elude conventional analysis [12] [13]. This article explores these advancements through structured application notes, detailed protocols, and key resources, providing researchers with practical frameworks for leveraging ML in spectroscopic research.

Application Notes: Current State and Quantitative Comparisons

ML Approaches in Spectroscopy

Machine learning applications in spectroscopy primarily fall into supervised, unsupervised, and reinforcement learning paradigms [11]. In spectroscopic contexts, supervised learning typically involves predicting spectral properties (regression) or classifying samples based on spectral features. Unsupervised techniques like principal component analysis or clustering find patterns in spectral data without pre-defined labels, proving valuable for exploratory analysis [11] [12]. Reinforcement learning, though less common, holds promise for strategic tasks like molecular design [11].

ML models can learn different levels of quantum chemical outputs. As illustrated in Figure 1, learning secondary outputs (e.g., dipole moments) or tertiary outputs (e.g., spectra) from molecular structures are currently the most common and practical approaches [11].

Comparative Performance of ML Methods

Table 1 summarizes quantitative comparisons of different ML and statistical methods across various spectroscopic applications, demonstrating their performance in real-world tasks.

Table 1: Comparative Performance of ML and Statistical Methods in Spectroscopy

| Application Domain | Methods Compared | Key Performance Metrics | Reference |
|---|---|---|---|
| Raman Spectroscopy (Glucose, acetate, sulfate quantification) | Convolutional Neural Network (CNN) vs. Partial Least Squares (PLS) | CNN trained on 8 spectrometers significantly outperformed PLS models | [13] |
| Hazelnut Authentication (Cultivar & origin) | NIR vs. hNIR vs. MIR with PLS-DA | NIR: ≥93% accuracy, MIR: ≥93% accuracy, hNIR: effective for cultivar only | [14] |
| Food Authentication | Benchtop NIR vs. Handheld NIR vs. MIR | Benchtop NIR showed superior performance for hazelnut authentication | [14] |
| Biomedical Imaging | ML vs. Traditional Multivariate Statistics | ML excels at identifying essential features in massive datasets with subtle patterns | [15] |

Standardized Platforms and Benchmarking

The field has seen recent development of standardized platforms to address fragmentation in ML spectroscopy research. SpectrumLab represents one such unified platform, integrating data processing tools, model development interfaces, and evaluation protocols [16]. Its associated SpectrumBench covers 14 spectroscopic tasks and over 10 spectrum types, featuring data from over 1.2 million distinct chemical substances [16]. These resources help establish consistent benchmarks for comparing ML approaches across different spectroscopic modalities.

Experimental Protocols

Protocol 1: Developing an ML Model for Spectrum Prediction from Molecular Structure

This protocol outlines the procedure for training a machine learning model to predict spectroscopic properties from molecular structures, applicable to various spectroscopic types including IR, NMR, and UV-Vis.

Materials and Data Requirements

- Molecular Structure Data: Obtain molecular structures in SMILES, InChI, or 3D coordinate formats from databases like PubChem or internal compound libraries.

- Reference Spectral Data: Acquire corresponding experimental or high-quality theoretical spectra for training and validation.

- Computational Resources: Access to computing hardware with adequate CPU/GPU capabilities for model training.

- Software Environment: Python with specialized libraries (e.g., PyTorch, TensorFlow, scikit-learn) and spectroscopic ML toolkits such as SpectrumLab [16].

Procedure

Data Preprocessing:

- Convert molecular structures to suitable representations (e.g., molecular graphs, fingerprints, SMILES-based embeddings) [16].

- Apply appropriate spectral preprocessing: normalize, baseline correct, and optionally reduce dimensionality of spectral data [17].

- Split dataset into training, validation, and test sets (typical ratio: 70/15/15).
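The preprocessing and splitting steps can be sketched with NumPy alone. This is one possible implementation under our own assumptions: the baseline uses an iterative polynomial fit in the spirit of the modified-polynomial (ModPoly) approach, normalization is to unit vector norm, and the split is a seeded random 70/15/15 shuffle. `modpoly_baseline`, `vector_normalize`, and `split_indices` are hypothetical helpers, not SpectrumLab functions.

```python
import numpy as np


def modpoly_baseline(x, y, degree=3, n_iter=100):
    """Iterative polynomial baseline: refit while clipping points above the fit."""
    work = np.asarray(y, dtype=float).copy()
    baseline = np.polyval(np.polyfit(x, work, degree), x)
    for _ in range(n_iter):
        work = np.minimum(work, baseline)  # peaks are pulled down to the fit
        baseline = np.polyval(np.polyfit(x, work, degree), x)
    return baseline


def vector_normalize(y):
    """Scale a spectrum to unit Euclidean norm."""
    norm = np.linalg.norm(y)
    return y / norm if norm > 0 else y


def split_indices(n, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle 0..n-1 and cut into train/validation/test index arrays."""
    idx = np.random.default_rng(seed).permutation(n)
    n_train = int(round(fractions[0] * n))
    n_val = int(round(fractions[1] * n))
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


x = np.linspace(0.0, 1.0, 500)
raw = 0.5 + 0.2 * x + np.exp(-((x - 0.5) / 0.02) ** 2)  # sloped baseline + one band
baseline = modpoly_baseline(x, raw, degree=1)
corrected = vector_normalize(raw - baseline)
train_idx, val_idx, test_idx = split_indices(100)
print(len(train_idx), len(val_idx), len(test_idx))  # 70 15 15
```

For datasets of closely related molecules, a random split can place near-duplicate structures in both training and test sets. Grouping by molecular scaffold before splitting is a stricter alternative.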

Model Selection and Architecture Design:

- For structured molecular input, consider graph neural networks (GNNs) to capture molecular topology [16].

- For sequence-based representations (SMILES), recurrent or transformer architectures may be suitable.

- Design the output layer to match the discretized spectral grid (e.g., one output per bin spanning 500-4000 cm⁻¹ for IR spectra).

Model Training:

- Initialize model with appropriate weight initialization strategy.

- Select loss function (e.g., mean squared error for regression, cross-entropy for classification).

- Train model with batch optimization, monitoring validation loss to prevent overfitting.

- Employ early stopping and learning rate scheduling as needed.
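Early stopping needs only a running record of the best validation loss. The framework-agnostic sketch below stops once the loss has failed to improve for `patience` consecutive epochs. The class name and defaults are illustrative; Keras ships a comparable `EarlyStopping` callback. In practice, the model weights from `best_epoch` would be checkpointed and restored.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved by more than
    min_delta for `patience` consecutive epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, val_loss, epoch):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


stopper = EarlyStopping(patience=3)
val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.75, 0.69]
for epoch, loss in enumerate(val_losses):
    if stopper.step(loss, epoch):
        print(f"stop at epoch {epoch}; best epoch {stopper.best_epoch}")  # 5; 2
        break
```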

Model Validation:

- Evaluate model on held-out test set using metrics relevant to application (e.g., mean absolute error, Pearson correlation).

- Perform statistical testing to confirm significance of results.

- Compare against baseline methods (e.g., PLS, random forests) to establish improvement.
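For the validation steps, per-spectrum metrics can be computed directly, and a paired bootstrap over test spectra is one simple way to test whether a model beats a baseline. A minimal sketch follows, assuming predicted and reference spectra share one grid (rows are spectra). The paired bootstrap is our choice of test (a Wilcoxon signed-rank test is a common alternative). The data are synthetic stand-ins for a model and a noisier baseline.

```python
import numpy as np


def per_spectrum_metrics(pred, ref):
    """Row-wise MAE and Pearson r for arrays of shape (n_spectra, n_points).

    Pearson r is undefined (division by zero) for a perfectly flat spectrum.
    """
    pred = np.asarray(pred, dtype=float)
    ref = np.asarray(ref, dtype=float)
    mae = np.abs(pred - ref).mean(axis=1)
    pc = pred - pred.mean(axis=1, keepdims=True)
    rc = ref - ref.mean(axis=1, keepdims=True)
    r = (pc * rc).sum(axis=1) / np.sqrt((pc ** 2).sum(axis=1) * (rc ** 2).sum(axis=1))
    return mae, r


def paired_bootstrap_ci(err_model, err_baseline, n_boot=10_000, alpha=0.05, seed=0):
    """Mean paired difference (model - baseline) and its percentile bootstrap CI."""
    diff = np.asarray(err_model, dtype=float) - np.asarray(err_baseline, dtype=float)
    rng = np.random.default_rng(seed)
    boot_means = rng.choice(diff, size=(n_boot, diff.size), replace=True).mean(axis=1)
    low, high = np.quantile(boot_means, [alpha / 2, 1 - alpha / 2])
    return float(diff.mean()), float(low), float(high)


# Synthetic stand-ins: 50 reference spectra, a close model, a noisier baseline.
rng = np.random.default_rng(1)
ref = rng.random((50, 100))
model_pred = ref + 0.01 * rng.standard_normal(ref.shape)
baseline_pred = ref + 0.10 * rng.standard_normal(ref.shape)
mae_model, r_model = per_spectrum_metrics(model_pred, ref)
mae_base, _ = per_spectrum_metrics(baseline_pred, ref)
mean_diff, ci_low, ci_high = paired_bootstrap_ci(mae_model, mae_base)
# A CI lying entirely below zero means the model's MAE is lower than the baseline's.
print(ci_high < 0)  # True
```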

Protocol 2: ML-Assisted Analysis of Protein Structural Changes via Spectroscopy

This protocol describes an unsupervised ML approach for analyzing protein structural changes upon interaction with nanoparticles using multi-spectral data, adapted from Franzese et al. [12].

Materials

- Protein Samples: Purified protein of interest (e.g., fibrinogen) at physiological concentrations.

- Spectroscopic Instruments: UV Resonance Raman Spectrometer, Circular Dichroism Spectrometer, UV Absorbance Spectrophotometer.

- Nanoparticles: Hydrophobic carbon and hydrophilic silicon dioxide nanoparticles of controlled size and surface chemistry.

- Software: Python with scikit-learn, pandas, numpy; specialized tools for manifold learning.

Procedure

Sample Preparation and Data Acquisition:

- Prepare protein solutions with and without nanoparticles under controlled conditions (temperature, pH, buffer).

- Acquire spectral measurements using multiple techniques (UV Resonance Raman, Circular Dichroism, UV absorbance) across relevant experimental conditions (e.g., temperature series).

- Record control spectra for buffers and nanoparticles alone.

Multi-Spectral Data Integration:

- Preprocess individual spectra: normalize, align, and remove scattering artifacts.

- Fuse multi-source spectral data into a unified data structure, maintaining sample correspondence.

- Apply dimensionality reduction (e.g., PCA) to identify major sources of variance.
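The following sketch covers the fusion and PCA steps. It assumes each technique's preprocessed spectra are stored as a matrix whose rows are the same samples in the same order. The block scaling is an assumption, not the procedure of Franzese et al. [12]: each column is autoscaled, and each block is then divided by the square root of its column count so that every technique contributes equal total variance. The random arrays are placeholders for real spectra.

```python
import numpy as np
from sklearn.decomposition import PCA

def fuse_blocks(blocks):
    """Fuse per-technique spectral blocks whose rows are the same samples in the same order."""
    blocks = [np.asarray(B, dtype=float) for B in blocks]
    if len({B.shape[0] for B in blocks}) != 1:
        raise ValueError("all blocks must contain the same samples in the same order")
    scaled = []
    for B in blocks:
        Z = (B - B.mean(axis=0)) / (B.std(axis=0) + 1e-12)   # autoscale each column
        scaled.append(Z / np.sqrt(B.shape[1]))               # equal total variance per block
    return np.hstack(scaled)

# Hypothetical spectra for 30 samples from three techniques.
rng = np.random.default_rng(0)
raman, cd, uv = rng.random((30, 500)), rng.random((30, 60)), rng.random((30, 200))
fused = fuse_blocks([raman, cd, uv])
pca = PCA(n_components=3)
scores = pca.fit_transform(fused)
print(fused.shape, scores.shape, pca.explained_variance_ratio_.round(3))
```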

Unsupervised ML Analysis:

- Implement manifold learning techniques (e.g., t-SNE, UMAP) to visualize high-dimensional spectral patterns.

- Apply clustering algorithms (e.g., k-means, DBSCAN) to identify distinct structural states.

- Quantify spectral changes using appropriate similarity metrics between clusters.
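The following sketch implements the clustering step with scikit-learn's `PCA` and `KMeans`. t-SNE is available as `sklearn.manifold.TSNE`, and UMAP requires the separate `umap-learn` package. Several choices here are illustrative assumptions: the number of clusters, the number of principal components, and the use of cosine similarity between cluster-mean spectra as the similarity metric. The synthetic data contain three well-separated groups.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA

def cluster_states(spectra, n_states=3, n_pcs=5, seed=0):
    """Project spectra onto principal components, then group them with k-means."""
    scores = PCA(n_components=n_pcs, random_state=seed).fit_transform(spectra)
    labels = KMeans(n_clusters=n_states, n_init=10, random_state=seed).fit_predict(scores)
    return scores, labels

def centroid_cosine(spectra, labels):
    """Cosine similarity between the mean spectra of every pair of clusters."""
    means = np.array([spectra[labels == k].mean(axis=0) for k in np.unique(labels)])
    means /= np.linalg.norm(means, axis=1, keepdims=True)
    return means @ means.T

# Hypothetical data: three structural states, 20 noisy spectra each.
rng = np.random.default_rng(0)
centers = 3.0 * rng.normal(size=(3, 200))
spectra = np.vstack([c + rng.normal(size=(20, 200)) for c in centers])
true_state = np.repeat([0, 1, 2], 20)

scores, labels = cluster_states(spectra)
similarity = centroid_cosine(spectra, labels)
print(np.round(similarity, 2))
```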

Interpretation and Validation:

- Correlate identified clusters with experimental conditions (e.g., temperature, nanoparticle type).

- Identify spectral features contributing to cluster separation using explainable AI techniques if needed.

- Validate structural interpretations against known protein structural benchmarks.

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 2 catalogues key software, tools, and resources that form the essential toolkit for implementing ML in spectroscopic research.

Table 2: Essential Research Reagents and Computational Solutions for ML in Spectroscopy

| Tool/Resource | Type | Primary Function | Application in Spectroscopy |
|---|---|---|---|
| Python with pandas, scikit-learn | Programming Library | Data manipulation, traditional ML | General-purpose data preprocessing, classical ML models |
| SpectrumLab/SpectrumWorld | Specialized Platform | Unified framework for spectroscopic ML | Standardized data processing, model development, and evaluation [16] |
| PyTorch/TensorFlow | Deep Learning Framework | Neural network development | Building custom architectures for spectral prediction |
| SHAP/LIME | Explainable AI Library | Model interpretation | Identifying influential spectral features in black-box models [18] |
| Jupyter AI | AI-Assisted Development | Code generation and model prototyping | Simplifying creation of ML models for spectral analysis [19] |
| Anaconda Navigator | Package/Environment Management | Python environment and dependency management | Isolating spectroscopic ML project environments [19] |
| Genedata Biopharma Platform | Enterprise Informatics Platform | Integrated data management and analysis | Streamlining capture, integration, and analysis of diverse spectral data types [20] |

Emerging Trends and Unresolved Challenges

The integration of machine learning with spectroscopy continues to evolve rapidly, with several emerging trends and persistent challenges shaping its trajectory:

- Multimodal Large Language Models: Recent initiatives are incorporating multi-modal large language models (MLLMs) to bridge heterogeneous data modalities in spectroscopy, though this approach remains underexplored compared to single-modal methods [16].

- Explainability and Trust: The "black box" nature of complex ML models remains a significant barrier, especially in regulated applications. Explainable AI techniques like SHAP and LIME are becoming essential for identifying chemically meaningful spectral features and building trust in model predictions [18].

- Data Scarcity and Standardization: Unlike other AI-rich fields, spectroscopic imaging suffers from limited publicly available datasets [15]. Creating standardized benchmark datasets encompassing diverse imaging modalities and spectral ranges is critical for future progress.

- Foundation Models: While foundation models have shown promising progress in scientific discovery, spectroscopy foundation models remain underexplored, largely due to the inherent multimodal nature of spectroscopic data [16].

Machine learning has established itself as an important bridge between theoretical and experimental spectroscopy. ML approaches predict spectral properties rapidly from molecular structures and extract subtle patterns from complex experimental data. In doing so, they are accelerating research and opening new possibilities in fields ranging from drug development to materials science. Standardized platforms such as SpectrumLab, together with robust methodological protocols and specialized toolkits, give researchers increasingly capable means to apply these technologies. ML methods continue to evolve and to address the challenges of interpretability, data scarcity, and multimodal integration. As they do, their role in spectroscopic research is likely to grow further, leading to more efficient discovery pipelines and deeper scientific insight.

The integration of machine learning (ML) with spectroscopy has revolutionized the ability to characterize samples qualitatively and quantitatively across diverse fields such as biology, materials science, medicine, and chemistry. Spectroscopy, the study of matter through its interaction with electromagnetic radiation, faces challenges in automating the prediction of a sample's structure and composition from spectral data. Machine learning addresses these challenges by enabling computationally efficient predictions, expanding libraries of synthetic data, and facilitating high-throughput screening. While ML has significantly advanced theoretical computational spectroscopy, its full potential in processing experimental data remains underexplored, requiring sophisticated approaches to manage limited data and complex, noisy signals [11] [1].

ML techniques are generally categorized into three paradigms: supervised, unsupervised, and reinforcement learning. Each offers distinct mechanisms for learning from data, making them suitable for different spectroscopic applications. Understanding these paradigms is crucial for selecting the appropriate method for specific spectroscopic tasks, such as classification, concentration prediction, or spectral feature discovery [11].

Supervised Learning for Spectral Analysis

Core Concept and Workflow

Supervised learning involves training a model on a labeled dataset where both the input spectra and the desired output (target property) are known. The model learns a function that maps input data (e.g., a spectrum) to output labels (e.g., compound concentration or class). Training is achieved by minimizing a loss function that quantifies the error between the model's predictions and the known targets, such as the L1 or L2 norm. This process requires a sufficiently large and comprehensive training set to avoid overfitting, where models perform well on training data but generalize poorly to new data [11] [1].

In spectroscopy, supervised learning is primarily used for regression (predicting continuous values such as concentration) and classification (identifying categories such as material type). When the inputs are molecular structures, models can also be trained to predict secondary outputs of a quantum chemical calculation (e.g., electronic energies) or tertiary outputs (e.g., the final spectra) [11].

Experimental Protocol: Developing a Supervised Classification Model

- Objective: To develop a supervised learning model for classifying plastic types based on spectral data (e.g., FTIR, Raman, LIBS).

- Materials and Reagents:

- Spectral Data: Raw spectral data from public datasets or laboratory measurements.

- Pre-processing Tools: Software for cubic interpolation, normalization, S-G filtering, linear detrending, and Standard Normal Variate (SNV) transformations.

- ML Algorithms: Access to algorithms such as Support Vector Machine (SVM), Random Forest (RF), Back-Propagation Neural Network (BP), or deep learning models like 1D-ResNet and GoogLeNet.

- Validation Metrics: Accuracy, precision, recall, F1-score.

- Procedure:

- Data Pre-processing: Apply pre-processing techniques to the raw spectral data. Cubic interpolation and normalization handle scaling variations, S-G filtering reduces noise, and SNV transformations minimize scattering effects [21].

- Data Augmentation (Optional): To address limited sample size, generate synthetic spectra using a model like Conditional Generative Adversarial Networks (C-GAN). Validate generated spectra using difference spectroscopy, t-SNE, or Maximum Mean Discrepancy (MMD) to ensure consistency with real data [21].

- Feature Extraction (Optional): Use Principal Component Analysis (PCA) for dimensionality reduction and visualization to confirm that pre-processing improves feature separation [21].

- Model Training: Split the dataset into training and testing sets. Train selected classification algorithms (SVM, RF, BP, 1D-ResNet, etc.) on the training set.

- Model Evaluation: Evaluate model performance on the held-out test set using accuracy and other relevant metrics. For instance, after data augmentation, 1D-ResNet achieved a classification accuracy of 0.991 for FTIR data [21].

- Model Interpretation: Use visualization techniques like Grad-CAM to identify which spectral features (e.g., peak regions) the model uses for classification, confirming the model's reliance on chemically relevant information [21].
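The following condensed sketch illustrates the pre-processing and classical-ML steps with SciPy and scikit-learn on synthetic two-class spectra. Several details are assumptions for illustration: the order of operations (cubic interpolation, Savitzky-Golay smoothing, linear detrending, then SNV), the filter settings, the synthetic band positions, and the choice of an RBF-kernel SVM. Data augmentation and Grad-CAM are omitted.

```python
import numpy as np
from scipy.interpolate import CubicSpline
from scipy.signal import detrend, savgol_filter
from sklearn.metrics import accuracy_score, precision_recall_fscore_support
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

def preprocess(x, y, grid):
    """Cubic interpolation -> S-G smoothing -> linear detrend -> SNV (assumed order)."""
    y = CubicSpline(x, y)(grid)                          # resample onto a common axis
    y = savgol_filter(y, window_length=11, polyorder=2)  # reduce high-frequency noise
    y = detrend(y, type="linear")                        # remove a linear baseline
    return (y - y.mean()) / y.std()                      # Standard Normal Variate

# Hypothetical two-class data set: one band per class, random scaling, slope and noise.
rng = np.random.default_rng(0)
x = np.linspace(600.0, 1800.0, 400)
grid = np.linspace(600.0, 1800.0, 300)

def synthetic(center):
    band = rng.uniform(0.5, 2.0) * np.exp(-((x - center) / 15.0) ** 2)
    return band + rng.uniform(-1e-3, 1e-3) * (x - 600.0) + rng.normal(0.0, 0.02, x.size)

centers = {0: 1000.0, 1: 1450.0}
labels = np.repeat([0, 1], 60)
X = np.array([preprocess(x, synthetic(centers[k]), grid) for k in labels])

X_tr, X_te, y_tr, y_te = train_test_split(
    X, labels, test_size=0.3, stratify=labels, random_state=0)
clf = SVC(kernel="rbf").fit(X_tr, y_tr)
y_hat = clf.predict(X_te)
acc = accuracy_score(y_te, y_hat)
prec, rec, f1, _ = precision_recall_fscore_support(y_te, y_hat, average="macro")
print(f"accuracy={acc:.3f} precision={prec:.3f} recall={rec:.3f} F1={f1:.3f}")
```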

Unsupervised Learning for Spectral Pattern Discovery

Core Concept and Workflow

Unsupervised learning identifies inherent patterns, structures, or groupings in data without pre-defined labels or target properties. This paradigm is valuable when labeled data is scarce or when exploring data to generate new hypotheses. Common unsupervised techniques in spectroscopy include dimensionality reduction (e.g., Principal Component Analysis - PCA) and clustering [11] [1].

A more advanced approach is the physics-informed neural network (PINN), which incorporates physical laws into the learning process. PINNs are particularly useful for unsupervised information extraction from spectra, such as estimating agent concentrations without controlled calibration experiments. A PINN is trained with a loss function that combines the data reconstruction error with a physics-based regularization term, guiding the network toward physically plausible solutions [22].

Experimental Protocol: Unsupervised Spectral Decomposition with PINNs

- Objective: To extract component concentrations and background signals from a measured spectrum without labeled training data, using a Physics-Informed Neural Network.

- Materials and Reagents:

- Measured Spectra: The composite spectrum, \( I(\lambda) \).

- Known Reference Spectra: The specific emission spectra \( I_{0,j}(\lambda) \) for each phenomenon/agent of interest.

- PINN Framework: A neural network architecture capable of predicting the background \( I_{p,b}(\lambda) \) and component intensities \( c_{p,j} \).

- Procedure:

- Network Architecture: Design a neural network with two parts: one to infer the background spectrum \( I_{p,b}(\lambda) \), and another to predict the intensities \( c_{p,j} \) of the known phenomena.

- Physics-Informed Loss Function: Define the total loss function \( L_{tot} \) as:

\[ L_{tot} = L_{rec} + \alpha L_{reg} \]

where:

- \( L_{rec} = \sum_{\lambda} \left( I(\lambda) - \sum_{j=1}^{N} c_{p,j} I_{0,j}(\lambda) - I_{p,b}(\lambda) \right)^2 \) is the reconstruction loss.

- \( L_{reg} = \sum_{\lambda} \left( \frac{d I_{p,b}}{d \lambda} \right)^2 \) is the regularization loss enforcing background smoothness.

- \( \alpha \) is a hyperparameter weighting the regularization term [22].

- Model Training: Train the PINN by minimizing \( L_{tot} \). This unsupervised approach does not require known concentrations, only the measured spectrum and the reference spectra of the pure agents.

- Output Analysis: The trained network outputs the predicted background \( I_{p,b}(\lambda) \) and the concentrations \( c_{p,j} \) for each agent, effectively decomposing the original spectrum [22].
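The reconstruction term is linear in the concentrations \( c_{p,j} \) and in the background values. For a single spectrum, the same objective \( L_{tot} \) can therefore be minimized directly by regularized linear least squares, which is what the sketch below does. This is a substitution for illustration, not the neural network of [22], which produces the background and concentrations as network outputs. The first-difference matrix is a discrete stand-in for \( d/d\lambda \). The spectra and the value of \( \alpha \) are hypothetical.

```python
import numpy as np

def decompose(I, refs, alpha=10.0):
    """Minimize ||I - refs.T @ c - b||^2 + alpha * ||D b||^2 over concentrations c and background b.

    I: measured spectrum, shape (M,). refs: reference spectra I_0j, shape (N, M).
    """
    N, M = refs.shape
    D = np.diff(np.eye(M), axis=0)                                  # (M-1, M) first differences
    top = np.hstack([refs.T, np.eye(M)])                            # reconstruction rows
    bottom = np.hstack([np.zeros((M - 1, N)), np.sqrt(alpha) * D])  # smoothness rows
    A = np.vstack([top, bottom])
    rhs = np.concatenate([I, np.zeros(M - 1)])
    sol, *_ = np.linalg.lstsq(A, rhs, rcond=None)
    return sol[:N], sol[N:]                                         # c, background b

# Hypothetical noise-free example: two reference bands plus a constant background.
lam = np.linspace(400.0, 700.0, 150)
refs = np.array([np.exp(-((lam - 500.0) / 10.0) ** 2),
                 np.exp(-((lam - 600.0) / 10.0) ** 2)])
I = refs.T @ np.array([2.0, 0.5]) + 0.3
c, b = decompose(I, refs)
print(np.round(c, 3), round(float(b.mean()), 3))
```

In this noise-free example the background is constant, so it incurs no smoothness penalty and the true concentrations give zero loss, which the minimizer recovers. With noise or a curved background the recovery is approximate. In that case \( \alpha \) sets the trade-off between a smooth background and a close fit.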

Table 1: Unsupervised Learning Techniques and Applications in Spectroscopy

| Technique | Primary Function | Spectroscopic Application Example |
|---|---|---|
| Principal Component Analysis (PCA) | Dimensionality Reduction, Visualization | Visualizing cluster separation in plastic spectra after pre-processing [21]. |
| Clustering | Grouping Similar Data Points | Analyzing protein structural changes upon interaction with nanoparticles [12]. |
| Physics-Informed Neural Networks (PINN) | Unsupervised Information Extraction | Estimating agent concentrations from composite spectra using known physics [22]. |
| t-SNE | Non-linear Dimensionality Reduction | Validating the consistency of generated synthetic spectra with real data [21]. |

Reinforcement Learning for Spectral Data

Core Concept and Workflow

Reinforcement Learning (RL) involves an agent learning to make decisions by interacting with an environment to maximize a cumulative reward. The agent takes actions in a given state, receives feedback as rewards or penalties, and adjusts its policy to achieve long-term goals. This paradigm combines exploration (trying new actions) with exploitation (using known successful actions) [11] [1].

While applications in experimental spectroscopy are still emerging, RL is powerful in scenarios with limited initial data, allowing the agent to learn optimal strategies through interaction. In chemistry, RL has been used for tasks like transition state searches. Its potential in spectroscopy includes optimizing experimental parameters or guiding spectral analysis strategies in an automated, adaptive manner [1].
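An epsilon-greedy multi-armed bandit illustrates the exploration-exploitation trade-off. A bandit is the simplest, single-state special case of RL. In this hypothetical setting, each arm is a candidate acquisition setting and the reward is a noisy quality score. The arm means, noise level, and exploration rate are invented for illustration and do not come from [1] or [11].

```python
import numpy as np

def epsilon_greedy(pull, n_arms, n_steps=1000, eps=0.1, seed=0):
    """Epsilon-greedy bandit: explore a random arm with probability eps, else exploit."""
    rng = np.random.default_rng(seed)
    counts = np.zeros(n_arms, dtype=int)
    values = np.zeros(n_arms)                      # running mean reward of each arm
    for _ in range(n_steps):
        if rng.random() < eps:
            arm = int(rng.integers(n_arms))        # exploration: try any setting
        else:
            arm = int(np.argmax(values))           # exploitation: best setting so far
        reward = pull(arm, rng)
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]   # incremental mean update
    return counts, values

# Hypothetical environment: three acquisition settings with unknown mean quality scores.
true_means = np.array([0.2, 0.5, 0.8])
counts, values = epsilon_greedy(lambda arm, rng: true_means[arm] + rng.normal(0.0, 0.1), 3)
print(counts, np.round(values, 2))
```

A full RL formulation would add states, for example the current spectrum quality, and a policy that maps states to actions. The bandit keeps only the reward-driven choice between exploring and exploiting.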

Comparative Analysis and Selection Guide

Choosing the right ML paradigm depends on the problem structure, data availability, and desired outcome.

Table 2: Comparison of Machine Learning Paradigms for Spectroscopy

| Aspect | Supervised Learning | Unsupervised Learning | Reinforcement Learning |
|---|---|---|---|
| Data Requirement | Labeled datasets (inputs & targets) [11]. | Unlabeled data (inputs only) [11]. | An environment to interact with. |
| Primary Goal | Prediction, Classification, Regression. | Pattern discovery, Dimensionality reduction, Clustering. | Sequential decision-making, Optimization. |
| Key Strengths | High performance for well-defined tasks with sufficient labeled data. | Works without labels; good for exploratory data analysis. | Adapts and learns optimal strategies through interaction. |
| Key Challenges | Requires large, labeled datasets; prone to overfitting [11]. | Less performant than supervised; limited to specific problems [11] [22]. | Can be inefficient to train; requires careful reward design. |
| Spectroscopy Example | Classifying plastic type from FTIR spectra [21]. | Decomposing spectra into components with PINN [22]. | Optimizing experimental parameters during data acquisition. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for ML-Spectroscopy Experiments

| Item | Function in Experiment |
|---|---|
| Public/Proprietary Spectral Datasets | Provides the foundational input data for training, validating, and testing machine learning models. |
| Chemometric Software (e.g., SIMCA) | Enables Multivariate Data Analysis (MVDA), crucial for pre-processing, model building (e.g., PLS), and analysis [23] [24]. |
| Deep Learning Frameworks (e.g., TensorFlow, PyTorch) | Provides the programming environment to build and train complex neural network models like CNNs, ResNet, and PINNs [21] [22]. |
| Design of Experiments (DOE) Software (e.g., MODDE) | Helps plan efficient experiments to generate high-quality, statistically relevant data for building robust calibration models [24]. |
| Reference Analytes (e.g., Glucose, Lactate) | Used for spiking regimens to break analyte correlations and extend the calibration range of multivariate models [24]. |

Integrated Workflow and Future Perspectives

Machine learning paradigms are not mutually exclusive and can be combined into powerful hybrid workflows. For instance, unsupervised learning can pre-process data or create features for a supervised model. Furthermore, the field is moving towards more advanced physics-informed models that integrate domain knowledge, bridging the gap between purely data-driven and traditional model-based approaches [22] [11].

Future developments will likely focus on overcoming current challenges, such as the scarcity of large, curated public datasets for spectroscopic imaging [15]. Advancements in explainable AI will be crucial for building trust in clinical and diagnostic settings, while techniques that achieve high performance with minimal training data will be invaluable for specialized applications [15]. The continued integration of ML into spectroscopy promises to further automate analysis, enhance interpretability, and accelerate scientific discovery.

ML in Action: Methodologies for Predicting, Identifying, and Bypassing Models

The integration of machine learning (ML) with spectroscopy has revolutionized the process of identifying physical models from experimental data. This paradigm shift enables researchers to move beyond traditional, often manual, analysis towards automated, high-throughput screening and prediction. The core challenge lies in creating a robust pipeline that can process raw spectral data, handle experimental artifacts, and apply appropriate computational models to extract meaningful physical insights about the sample's composition, structure, and properties. This application note details the protocols and methodologies for this process, framed within the broader context of comparing computational and experimental spectroscopy data.

Comparative Analysis of Modeling Approaches

Selecting the appropriate modeling approach is critical and depends on factors such as data set size, dimensionality, and the specific analytical goal (e.g., classification or regression). The following table summarizes the performance characteristics of different algorithms as evidenced by recent comparative studies.

Table 1: Comparison of Spectral Data Modeling Approaches

| Model Category | Specific Algorithms/Approaches | Reported Performance & Optimal Use Case | Key Advantages |
|---|---|---|---|
| Traditional Chemometrics | PLS, iPLS (with classical pre-processing or wavelet transforms) [23] | Competitive or superior performance in low-dimensional data settings (e.g., 40 training samples); improved interpretability [23]. | High stability and accuracy with small sample sizes; methods are well-established and highly interpretable [23] [21]. |
| Machine Learning | SVM, Random Forest, KNN [21] | High stability and accuracy on small-sample plastic spectroscopy datasets; minimal performance difference from deep learning before data augmentation [21]. | Less computationally intensive than deep learning; effective for smaller datasets [21]. |
| Deep Learning | 1D-CNN, GoogLeNet, 1D-ResNet [23] [21] | Peak accuracy of 0.991 (FTIR data, 1D-ResNet) after data augmentation; outperforms other methods on large-sample datasets; benefits from pre-processing [23] [21]. | Superior performance on large datasets; can model complex, non-linear relationships; can learn features directly from raw data [23] [21]. |
| Data Augmentation | C-GAN (Conditional Generative Adversarial Network) [21] | Increased classification accuracy for all tested models by at least 3% after augmentation; effective for multi-class spectroscopy generation [21]. | Mitigates challenges of limited experimental data; enables more robust model training [21]. |

Experimental Protocols

Protocol 1: Pre-processing of Spectral Data

Objective: To clean, normalize, and transform raw spectral data to enhance signal quality and prepare it for downstream modeling [25].

Materials:

- Raw spectral data (e.g., from FTIR, Raman, LIBS)

- Computational software (e.g., Python with NumPy, SciPy; R; MATLAB)

Methodology:

- Data Cleaning:

- Remove spectral regions with high noise or interference.

- Correct for baseline drift using linear detrending or other algorithms.

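- Apply Savitzky-Golay smoothing or wavelet denoising to reduce high-frequency noise. The Savitzky-Golay filter replaces each point with a weighted combination of its neighbors: \[ \hat{y}_j = \frac{\sum_{i=-n}^{n} c_i\, y_{j+i}}{\sum_{i=-n}^{n} c_i} \] where \( \hat{y}_j \) is the smoothed value at point \( j \), \( y_{j+i} \) are the raw values, \( c_i \) are the filter coefficients obtained from a local least-squares polynomial fit, and the window spans \( 2n+1 \) points (\( n \) is the half-width) [25].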

- Normalization:

- Data Transformation:
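The data-cleaning steps can be sketched with SciPy's `savgol_coeffs`, `savgol_filter`, and `detrend`. The first lines link the smoothing formula to practice. SciPy returns coefficients that are already normalized to sum to 1, and for a 5-point quadratic window (\( n = 2 \)) they reproduce the classic integer set divided by 35. The retained spectral region, window length, and polynomial order in `clean` are hypothetical settings, not values from [25].

```python
import numpy as np
from scipy.signal import detrend, savgol_coeffs, savgol_filter

# Coefficients c_i for n = 2 (a window of 2n + 1 = 5 points) and a quadratic local fit.
# SciPy normalizes them to sum to 1; scaled by 35 they give (-3, 12, 17, 12, -3).
c = savgol_coeffs(5, 2)
print(np.round(c * 35))

def clean(wavenumbers, spectrum, keep=(700.0, 1800.0), window=11, polyorder=2):
    """Keep an assumed low-noise region, remove a linear baseline, then S-G smooth."""
    mask = (wavenumbers >= keep[0]) & (wavenumbers <= keep[1])   # drop noisy regions
    y = detrend(spectrum[mask], type="linear")                    # linear detrending
    y = savgol_filter(y, window_length=window, polyorder=polyorder)
    return wavenumbers[mask], y
```

A Savitzky-Golay filter of polynomial order \( p \) leaves polynomials of degree \( p \) or lower unchanged. This property makes it a convenient sanity check for a smoothing implementation.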

Workflow: The following diagram illustrates the sequential pre-processing workflow.

Protocol 2: Model Training and Interpretation for Physical Model Identification

Objective: To train and validate ML models on pre-processed spectral data for tasks like classification (e.g., plastic type) or regression (e.g., sugar content), and to interpret the model to identify physically meaningful spectral features [21] [26].

Materials:

- Pre-processed spectral data from Protocol 1.

- Computational environment with ML libraries (e.g., Scikit-learn, PyTorch, TensorFlow).

Methodology:

- Data Set Preparation:

- Split data into training, validation, and test sets.

- If sample size is insufficient, employ data augmentation techniques such as C-GAN to generate realistic synthetic spectra [21].

- Model Selection and Training:

- For small sample sizes (<100), consider traditional methods (PLS, iPLS) or ML models (SVM, Random Forest) [23] [21].

- For larger sample sizes, utilize deep learning models (1D-CNN, 1D-ResNet) [23] [21].

- Train the model by minimizing an appropriate loss function (e.g., L1 or L2 norm for regression) [1].

- Model Interpretation and Physical Insight:

- For linear models (PLS): Analyze regression coefficients and variable importance in projection (VIP) scores to identify influential wavelengths [23].

- For non-linear models (CNN, ResNet): Apply post-hoc interpretability methods like Grad-CAM to visualize which regions of the input spectrum were most critical for the model's decision, often corresponding to known peak features [21] [26].

- Validate identified features against known chemical assignments (e.g., using PCA loadings) to build the physical model [21].

Workflow: The following diagram outlines the iterative model development and interpretation process.

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item/Tool | Function/Application |
|---|---|
| Fourier Transform Infrared (FTIR) Spectroscopy | Used for plastic classification; provides vibrational spectra for functional group identification [21]. |
| Raman Spectroscopy | Complementary to FTIR; used for material characterization and classification [21]. |
| Laser-Induced Breakdown Spectroscopy (LIBS) | Provides elemental composition data; applied in plastic waste sorting and analysis [21]. |
| Near-Infrared (NIR) Hyperspectral Imaging | Enables quantification of compounds (e.g., sugar in grapes) and visualization of their spatial distribution [26]. |
| Savitzky-Golay Filter | A data smoothing and derivative calculation technique used to reduce noise in spectral data without distorting the signal [25]. |
| Standard Normal Variate (SNV) | A normalization technique applied to individual spectra to remove scattering effects [21]. |
| Principal Component Analysis (PCA) | An unsupervised method for dimensionality reduction, data exploration, and visualization of spectral clustering [25] [21]. |
| Partial Least Squares (PLS) | A core chemometric method for developing regression models relating spectral data to a response variable [23]. |
| Conditional GAN (C-GAN) | A generative model used for data augmentation to create synthetic spectral data for under-represented classes [21]. |
| Grad-CAM | A post-hoc interpretability method for deep learning models that highlights important regions in the input spectrum for a prediction [21] [26]. |

Predicting Spectra from a Given Structure or Model

Predicting spectroscopic signals from a known molecular structure is a foundational application of computational chemistry, directly supporting the elucidation of complex chemical systems in research and drug development. This capability bridges theoretical modeling and experimental science, allowing researchers to simulate spectroscopic outcomes before conducting resource-intensive laboratory analyses. Current approaches leverage machine learning (ML) to achieve computational efficiency and manage the complex relationships between 3D molecular geometry and spectral outputs [1]. For researchers comparing computational and experimental data, these methods provide rapid, cost-effective spectral predictions that can validate experimental findings or guide targeted analyses. This application note details the methodologies, protocols, and tools enabling accurate spectral prediction, framed within the broader context of ensuring data is Findable, Accessible, Interoperable, and Reusable (FAIR) [27].

The prediction of spectra from molecular structures primarily utilizes machine learning models trained on data derived from quantum chemical calculations or experimental datasets. These models learn the complex mapping between a molecule's 3D structure and its resulting spectroscopic features [1] [28].

A critical distinction in ML approaches lies in the model's learning target, which can be the primary, secondary, or tertiary output of a quantum chemical calculation, as outlined in [1]. The table below compares these strategic approaches.

Table 1: Machine Learning Strategies for Spectral Prediction Based on Quantum Chemical Outputs

| Learning Target | Description | Example Outputs | Pros and Cons |
|---|---|---|---|
| Primary Output | Learns the fundamental result of a quantum calculation. | Electronic wavefunction. | Pros: Most powerful; enables calculation of any property. Cons: Extremely complex; largely an unsolved challenge for multiple molecules/states [1]. |
| Secondary Output | Learns properties computed directly from the Schrödinger equation. | Electronic energy, dipole moment vectors, coupling constants. | Pros: Computationally efficient; retains physical interpretability for spectra generation [1]. |
| Tertiary Output | Learns the final spectrum directly. | IR, NMR, or UV-Vis spectrum. | Pros: Can be applied to both theoretical and experimental data. Cons: Loses underlying electronic structure information [1]. |

For experimental data, direct prediction of tertiary outputs (the spectra themselves) is often the only viable path, although it faces challenges such as limited data availability and inconsistencies arising from different experimental setups [1]. Direct prediction can nonetheless be highly accurate. In one study on IR spectra, a model using 3D molecular structures as input achieved a Spectral Information Similarity Metric of 0.92 on a test set, substantially outperforming the 0.57 achieved by standard Density Functional Theory (DFT) with scaled frequencies [28]. This approach also inherently accounts for anharmonic effects, offering a fast alternative to laborious anharmonic calculations [28].

Experimental and Computational Protocols

Protocol 1: Predicting IR Spectra from 3D Structures using a Neural Network

This protocol is adapted from a study that used a machine learning model to directly predict IR spectra from 3D molecular structures [28].

- Objective: To accurately predict a molecule's infrared (IR) absorption spectrum based on its three-dimensional atomic coordinates.

- Primary Application: Rapid virtual screening of molecular properties and support for experimental spectrum interpretation.

- Superiority Rationale: This method outperforms traditional DFT with scaled frequencies in accuracy and captures anharmonic effects without requiring separate, costly anharmonic calculations [28].

Table 2: Key Research Reagents and Computational Tools for IR Prediction

| Item Name | Function/Description | Critical Specifications |
|---|---|---|
| 3D Molecular Structure Database | Provides the input data (X) for the machine learning model. | Structures must be energy-minimized. Format (e.g., .xyz, .sdf) must be compatible with the model. |
| Reference IR Spectra Database | Provides the target output data (Y) for supervised learning. | Spectral data must be consistent in units (e.g., cm⁻¹), resolution, and normalization. |
| Neural Network Model | The algorithm that learns the mapping f: X → Y. | Architecture (e.g., convolutional, graph neural network) suitable for 3D structural data. |
| High-Performance Computing (HPC) Cluster | Executes the training of the neural network. | Requires significant GPU resources for processing large datasets and complex model architectures. |

Step-by-Step Procedure:

- Data Curation: Assemble a dataset of molecular 3D structures and their corresponding high-quality IR spectra. This data can be sourced from computational databases (e.g., results from ab initio methods) or curated experimental repositories.

- Data Preprocessing: Standardize all 3D structures and spectra into consistent formats. For spectra, this may involve resampling onto a common wavenumber axis and normalizing intensity values.

- Model Training: Train the neural network model in a supervised learning framework. The model's parameters are optimized by minimizing a loss function (e.g., L1 or L2 norm) that quantifies the difference between the predicted spectrum and the target spectrum [1].

- Validation and Testing: Evaluate the trained model's performance on a held-out test set of molecules not seen during training. Use metrics like the Spectral Information Similarity Metric to quantify accuracy [28].

- Prediction: Use the trained model to predict the IR spectrum for a new molecule by inputting its 3D structure.
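A simplified sketch of a spectral similarity score for the validation step follows. The exact metric definition used in [28] is not given here. The sketch therefore assumes the definition used in earlier ML IR-prediction work: SIS = 1 / (1 + SID). In this definition, the spectral information divergence (SID) is the symmetric Kullback-Leibler divergence between area-normalized spectra. Any spectral broadening applied before comparison is omitted, so check [28] for the exact variant. The function name and example spectra are hypothetical.

```python
import numpy as np

def spectral_information_similarity(pred, target, eps=1e-10):
    """SIS = 1 / (1 + SID) for two spectra on the same grid (simplified, no broadening)."""
    p = np.clip(np.asarray(pred, float), eps, None)
    t = np.clip(np.asarray(target, float), eps, None)
    p, t = p / p.sum(), t / t.sum()                                # treat spectra as distributions
    sid = np.sum(p * np.log(p / t)) + np.sum(t * np.log(t / p))   # symmetric KL divergence
    return 1.0 / (1.0 + sid)

# Hypothetical example on a 500-4000 cm^-1 grid.
wn = np.linspace(500.0, 4000.0, 876)
target = np.exp(-((wn - 1700.0) / 20.0) ** 2)      # reference band
pred = np.exp(-((wn - 1710.0) / 20.0) ** 2)        # prediction shifted by 10 cm^-1
print(round(spectral_information_similarity(pred, target), 3))
```

A score of 1 means identical normalized spectra, and the score falls toward 0 as the spectra diverge. Because the divergence is symmetrized, swapping prediction and target does not change the result.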

Protocol 2: Structure Revision via Computational NMR Prediction

This protocol outlines the use of calculated NMR chemical shifts to validate or revise proposed molecular structures, as exemplified by the structure revision of hexacyclinol [29].

- Objective: To determine the most likely molecular structure by comparing computationally predicted NMR chemical shifts with experimental data.

- Primary Application: Structure validation and revision of complex natural products or synthetic molecules.

- Superiority Rationale: Provides an objective, quantitative comparison that can override misinterpretations based on limited experimental data.

Table 3: Key Research Reagents and Computational Tools for NMR Prediction

| Item Name | Function/Description | Critical Specifications |
|---|---|---|
| Proposed Molecular Structure(s) | The candidate 2D or 3D structure(s) to be tested. | Must be drawn or generated with correct stereochemistry. |
| Quantum Chemistry Software | Performs geometry optimization and NMR calculation. | Examples: Gaussian, ORCA. Method: e.g., HF/3-21G for geometry optimization. |
| NMR Prediction Method | Calculates the NMR chemical shifts. | Method: e.g., mPW1PW91/6-31G(d,p) GIAO for carbon chemical shifts [29]. |
| Reference Standard | Provides the baseline for calculating chemical shifts (δ). | Example: Tetramethylsilane (TMS) for ¹H and ¹³C NMR. |

Step-by-Step Procedure:

- Structure Preparation: Generate 3D models for all candidate structures. For complex molecules, this may involve exploring low-energy conformers.

- Geometry Optimization: Use quantum chemical methods (e.g., HF/3-21G) to optimize the geometry of each candidate structure to its minimum energy conformation [29].

- NMR Calculation: Using the optimized geometry, calculate the NMR isotropic shielding constants with a higher-level method (e.g., mPW1PW91/6-31G(d,p) GIAO) [29].

- Shift Conversion: Convert the calculated shielding constants to chemical shifts (δ) by referencing to a calculated value for the standard (e.g., TMS).

- Statistical Comparison: Quantitatively compare the calculated shifts for each candidate structure to the experimental data. The correct structure will typically show a strong linear correlation (high R² value) and a low root-mean-square error (RMSE).

- Decision: Propose the structure with the best statistical match to the experimental data as the correct one, as was done for the diepoxide structure of hexacyclinol [29].
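The shift conversion and statistical comparison steps above can be sketched in Python as follows. This is a minimal illustration, not the workflow used in [29]: it assumes the calculated shieldings are already assigned to the same nuclei, in the same order, as the experimental shifts, and the function names (`shielding_to_shift`, `score_candidate`, `rank_candidates`) and all numbers in the example are hypothetical.

```python
import numpy as np


def shielding_to_shift(sigma, sigma_ref):
    """Convert isotropic shieldings (ppm) to chemical shifts: delta = sigma_ref - sigma."""
    return sigma_ref - np.asarray(sigma, dtype=float)


def score_candidate(calc_shifts, exp_shifts):
    """Return (R^2, RMSE) for calculated vs. experimental shifts of assigned nuclei."""
    calc = np.asarray(calc_shifts, dtype=float)
    exp = np.asarray(exp_shifts, dtype=float)
    r = np.corrcoef(calc, exp)[0, 1]  # Pearson r; r**2 equals R^2 of a linear fit
    rmse = float(np.sqrt(np.mean((calc - exp) ** 2)))
    return float(r ** 2), rmse


def rank_candidates(candidate_shieldings, exp_shifts, sigma_ref):
    """Rank candidates by RMSE (ascending), breaking ties with R^2 (descending).

    candidate_shieldings maps a candidate name to its calculated shieldings,
    listed in the same nucleus order as exp_shifts (assumed, not checked).
    """
    scores = {}
    for name, sigma in candidate_shieldings.items():
        delta = shielding_to_shift(sigma, sigma_ref)
        scores[name] = score_candidate(delta, exp_shifts)
    return sorted(scores.items(), key=lambda item: (item[1][1], -item[1][0]))


# Toy example: invented numbers for illustration only.
exp = [20.0, 75.0, 130.0, 170.0]
ranking = rank_candidates(
    {"A": [170.5, 114.0, 61.0, 19.5], "B": [160.0, 120.0, 70.0, 25.0]},
    exp,
    sigma_ref=190.0,  # hypothetical reference shielding (ppm)
)
for name, (r2, rmse) in ranking:
    print(f"{name}: R^2={r2:.4f}, RMSE={rmse:.2f} ppm")
```

Sorting on RMSE and using R² only as a tie-breaker is a design choice for this sketch; other error measures, such as mean absolute error, can be substituted in `score_candidate`.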

Workflow Visualization

The following diagram illustrates the logical workflow and decision points for the two primary protocols described in this note, highlighting their role in computational-experimental data comparison.

The Scientist's Toolkit

A successful spectral prediction strategy relies on a combination of computational methods, software, and adherence to data standards.

Table 4: Essential Resources for Spectral Prediction Research

| Category | Tool/Resource | Specific Role in Spectral Prediction |
|---|---|---|
| Computational Methods | Density Functional Theory (DFT) | Provides foundational data for training ML models or calculating NMR chemical shifts directly [29]. |
| | Machine Learning (ML) | Enables fast, accurate prediction of spectra (IR, NMR, UV) from 3D structure, capturing complex/anharmonic effects [1] [28]. |
| Software & Data | Quantum Chemistry Suites | Used for geometry optimization and ab initio calculation of spectroscopic parameters [29]. |
| | FAIR Data Repositories | Store and share spectroscopic data and associated structures, ensuring reusability and findability for the research community [27]. |
| Conceptual Framework | FAIR Data Principles | Guides the organization of data collections to be Findable, Accessible, Interoperable, and Reusable, which is critical for building robust ML models [27]. |
| | IUPAC FAIRSpec Finding Aid | A specific framework for creating metadata that makes spectroscopic data collections machine-actionable and easier to integrate into computational workflows [27]. |

In the traditional paradigm of structural biology, determining a biomolecule's three-dimensional structure from experimental Nuclear Magnetic Resonance (NMR) data is an iterative process. This process involves generating model structures, computing theoretical NMR parameters from them, and then refining the structures to minimize the discrepancy with experimental data. The direct prediction of structural parameters represents a paradigm shift, leveraging machine learning (ML) to bypass this costly refinement cycle. By establishing a direct, learned mapping from chemical structure to NMR observables, these methods accelerate structural elucidation and are reshaping workflows in structural biology and drug discovery [30] [1].

This Application Note details the protocols for implementing this approach, which is particularly powerful for high-throughput screening and the analysis of complex molecular systems where conventional methods are prohibitively slow.

Methodological Approaches

Two primary computational methodologies enable the direct prediction of NMR parameters. Their combined use offers a balance between high accuracy and computational efficiency.

Quantum Chemical Calculations

Density Functional Theory (DFT) serves as a foundational tool for the first-principles computation of NMR parameters, such as chemical shifts and J-coupling constants [30]. DFT works by modeling the electronic structure of a molecule, from which its magnetic properties can be derived.

- Principle: The chemical shift of a nucleus is intrinsically linked to the local electron density and molecular geometry. DFT approximates the electronic structure through the electron density, rather than solving the many-electron Schrödinger equation exactly, and uses it to quantify this relationship [30].

- Application: A researcher can take a proposed 3D molecular structure and use DFT to compute its theoretical NMR spectrum. This spectrum can be directly compared to experimental data for validation without iterative refinement [31].

Machine Learning (ML) Prediction

Machine Learning models, particularly in a supervised learning framework, are trained on large datasets to predict NMR parameters directly from molecular representations [1]. This bypasses the need for explicit quantum mechanical calculations during application.

- Principle: ML algorithms learn a complex function, f, that maps an input (e.g., a molecular structure) to an output (e.g., a chemical shift). The model is trained on known data pairs (structure, spectrum) to minimize a loss function [1].

- Application: Once trained, an ML model can predict the NMR spectrum of a novel compound in a fraction of a second, enabling rapid structural fingerprinting and database matching [30] [1].
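To make the supervised-learning principle above concrete, the sketch below fits a ridge regression model, a deliberately simple stand-in for the neural networks typically used in practice, by minimizing a squared-error loss plus an L2 penalty. The three-component per-nucleus descriptors and the synthetic training data are hypothetical; real models use learned or engineered representations of each atom's chemical environment.

```python
import numpy as np


def fit_ridge(X, y, alpha=1e-3):
    """Fit f(x) = x @ w + b by minimizing ||X w + b - y||^2 + alpha * ||w||^2.

    Centering X and y leaves the intercept b unpenalized.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    # Closed-form ridge solution: (Xc^T Xc + alpha I) w = Xc^T yc
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ yc)
    b = y_mean - x_mean @ w
    return w, b


def predict(X, w, b):
    """Predict chemical shifts (ppm) for new descriptor rows."""
    return np.asarray(X, dtype=float) @ w + b


# Synthetic training pairs: hypothetical descriptors -> shifts (ppm).
rng = np.random.default_rng(0)
X_train = rng.normal(size=(200, 3))
true_w = np.array([12.0, -4.0, 1.5])
y_train = X_train @ true_w + 50.0 + rng.normal(scale=0.1, size=200)

w, b = fit_ridge(X_train, y_train)
X_new = rng.normal(size=(5, 3))
print(predict(X_new, w, b))  # inference is a single matrix product
```

Once `w` and `b` are fitted, prediction costs one matrix product per batch, which illustrates why trained models are fast at inference time.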

Table 1: Comparison of Methodologies for Direct NMR Prediction

| Feature | Quantum Chemical (DFT) | Machine Learning (ML) |
|---|---|---|
| Underlying Principle | First-principles quantum mechanics | Statistical learning from data |
| Typical Input | 3D Molecular geometry | 1D/2D/3D Molecular representation |
| Primary Output | NMR parameters (δ, J) | NMR parameters (δ, J) or full spectrum |
| Computational Cost | High (hours/days per molecule) | Very low (seconds per molecule post-training) |
| Key Advantage | High accuracy; no training data needed | Extreme speed; high throughput |
| Key Limitation | Computationally expensive; sensitive to geometry | Requires large, high-quality training data |

Experimental and Computational Protocols

The following protocols outline the steps for validating a predicted molecular structure using direct NMR prediction.

Protocol 1: Validation via DFT-Predicted NMR Spectrum

This protocol is used for high-confidence validation of a single proposed structure.

- Structure Preparation (Input): Obtain a 3D atomic coordinate file of the candidate molecule. Ensure the geometry is energy-minimized.

- Quantum Chemical Calculation:

  - Software: Use a computational chemistry package (e.g., ORCA).

  - Method: Select an appropriate functional (e.g., B3LYP) and basis set (e.g., TZV-DKH) [31].

  - Calculation: Run a DFT calculation to compute the magnetic shielding tensors for all nuclei of interest.

- Data Conversion: Convert the isotropic part of each computed magnetic shielding tensor to a chemical shift (δ) by referencing it to the shielding constant of a standard compound computed at the same level of theory (e.g., tetramethylsilane, TMS, for ¹H and ¹³C).

- Comparison and Validation: Directly overlay the computationally predicted NMR spectrum with the experimental spectrum. A strong correlation between peak positions (chemical shifts) and patterns (J-couplings) validates the proposed structure [30] [31].
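The overlay step can be approximated numerically by broadening each predicted shift into a Lorentzian line on the experimental ppm axis and correlating the two traces. This sketch treats every nucleus as a singlet (J-coupling multiplets are ignored), and the default 0.02 ppm linewidth, the function names, and the example shifts are assumptions chosen for illustration.

```python
import numpy as np


def lorentzian_spectrum(shifts_ppm, ppm_grid, fwhm=0.02, intensities=None):
    """Sum of Lorentzian lines (peak height = intensity) centered at each shift."""
    shifts = np.asarray(shifts_ppm, dtype=float)
    if intensities is None:
        intensities = np.ones_like(shifts)
    intensities = np.asarray(intensities, dtype=float)
    gamma = fwhm / 2.0  # half width at half maximum (ppm)
    grid = np.asarray(ppm_grid, dtype=float)[:, None]  # shape (n_points, 1)
    lines = intensities * gamma**2 / ((grid - shifts) ** 2 + gamma**2)
    return lines.sum(axis=1)


def overlay_score(predicted, experimental):
    """Pearson correlation between two spectra sampled on the same ppm grid."""
    return float(np.corrcoef(predicted, experimental)[0, 1])


ppm = np.linspace(0.0, 10.0, 10001)
predicted = lorentzian_spectrum([1.2, 3.4, 7.1], ppm)
experimental = lorentzian_spectrum([1.25, 3.38, 7.15], ppm)  # stand-in for a measured trace
print(f"correlation = {overlay_score(predicted, experimental):.3f}")
```

Because narrow lines that miss each other by more than a few linewidths barely overlap, the correlation is sensitive to the chosen `fwhm`; matching it to the experimental linewidth is a reasonable starting point.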

Protocol 2: High-Throughput Screening via ML Prediction

This protocol is ideal for screening multiple candidate structures or for rapid identification.

- Model Selection (Input): Choose a pre-trained ML model for NMR prediction or train a new model on a relevant dataset of known structures and their NMR spectra [1].

- Structure Input: Provide the molecular representation of the candidate structure(s). This can be a SMILES string, an InChI, or a 2D molecular graph.

- Prediction: Execute the ML model to generate the predicted NMR parameters or full spectral lineshape.

- Spectral Matching: Use a similarity or distance metric (e.g., mean squared error, where lower values indicate closer agreement) to compare each ML-predicted spectrum against the experimental spectrum of the unknown. The candidate structure whose predicted spectrum agrees most closely with experiment is identified as the most probable match [1].
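The spectral-matching step can be sketched as below, assuming every ML-predicted spectrum has already been sampled on the same axis as the experimental one. Each trace is scaled to unit maximum (an assumption; area normalization is a common alternative) before the mean squared error is computed, and candidates are returned with the lowest error, that is the highest similarity, first. The names `normalize_max` and `rank_by_mse` and the toy spectra are hypothetical.

```python
import numpy as np


def normalize_max(spectrum):
    """Scale a spectrum so its largest intensity is 1 (left unchanged if all <= 0)."""
    s = np.asarray(spectrum, dtype=float)
    peak = s.max()
    return s / peak if peak > 0 else s


def rank_by_mse(predicted, experimental):
    """Rank candidate spectra against an experimental spectrum on the same axis.

    predicted maps candidate name -> intensity array; the lowest MSE comes first.
    """
    exp = normalize_max(experimental)
    scores = []
    for name, spectrum in predicted.items():
        mse = float(np.mean((normalize_max(spectrum) - exp) ** 2))
        scores.append((name, mse))
    return sorted(scores, key=lambda item: item[1])


experimental = [0.0, 1.0, 0.0, 0.5, 0.0]
candidates = {"A": [0.0, 2.0, 0.0, 1.0, 0.0], "B": [1.0, 0.0, 0.0, 0.0, 1.0]}
print(rank_by_mse(candidates, experimental))
```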

Workflow Visualization

The following diagram illustrates the logical workflow and the critical decision points for applying these direct prediction methods, contrasting them with the traditional refinement pathway.

Direct NMR Prediction Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key computational and experimental resources required for implementing the described protocols.

Table 2: Key Research Reagents and Computational Tools

| Item Name | Function / Description | Application Note |
|---|---|---|
| DFT Software (e.g., ORCA) | Software suite for quantum chemical calculations of NMR parameters (chemical shifts, J-couplings) [31]. | Essential for Protocol 1; requires significant computational resources and expertise. |
| Pre-trained ML Model | A machine learning model trained to predict NMR spectra from molecular structure representations [1]. | Core of Protocol 2; enables near-instantaneous prediction for high-throughput applications. |
| Curated NMR Database | A library of paired chemical structures and experimental NMR spectra (e.g., for small molecules or proteins). | Serves as the essential training data for developing new ML models [1]. |
| NMR Spectrometer | The experimental apparatus used to acquire the reference NMR data from the sample. | Provides the ground-truth experimental data against which all predictions are validated [30]. |
| Molecular Dynamics (MD) Software | Generates realistic 3D conformational ensembles for flexible molecules. | Can be used to provide averaged NMR predictions that account for molecular dynamics in solution [30]. |
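Averaging predictions over a conformational ensemble, as listed for MD software in the table above, can be sketched as follows. Snapshots from an MD trajectory are usually averaged with equal weights, because the simulation already samples conformations in proportion to their populations; discrete conformers from a conformer search are often combined with Boltzmann weights computed from their relative energies. The function names, the 298.15 K default, and the toy shifts and energies are illustrative assumptions.

```python
import numpy as np

R_KCAL = 1.987204e-3  # molar gas constant in kcal/(mol*K)


def boltzmann_weights(rel_energies_kcal, temperature=298.15):
    """Normalized Boltzmann populations from relative conformer energies (kcal/mol)."""
    e = np.asarray(rel_energies_kcal, dtype=float)
    # Subtracting the minimum avoids overflow; it cancels on normalization.
    factors = np.exp(-(e - e.min()) / (R_KCAL * temperature))
    return factors / factors.sum()


def ensemble_average(shifts_per_conformer, weights=None):
    """Average shifts over conformers or snapshots.

    shifts_per_conformer has shape (n_conformers, n_nuclei); equal weights are
    used when weights is None (e.g., evenly spaced MD snapshots).
    """
    s = np.asarray(shifts_per_conformer, dtype=float)
    if weights is None:
        return s.mean(axis=0)
    return np.asarray(weights, dtype=float) @ s


shifts = [[21.0, 74.5], [23.0, 76.0], [20.0, 73.0]]  # toy values (ppm)
w = boltzmann_weights([0.0, 0.8, 2.0])
print(ensemble_average(shifts, w))  # Boltzmann-weighted conformer average
print(ensemble_average(shifts))     # equal-weight (MD-style) average
```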

Vibrational spectroscopy, NMR spectroscopy, and diffraction techniques are indispensable tools in modern analytical science, providing critical insights into material composition, crystal structure, and molecular interactions. This article presents application notes and protocols for X-ray diffraction (XRD), nuclear magnetic resonance (NMR), Raman spectroscopy, and infrared (IR) spectroscopy, framed within the context of comparing computational and experimental data. The integration of these analytical techniques with advanced computational methods enables researchers to address complex challenges across pharmaceutical development, materials science, and energy storage technology. We demonstrate through detailed case studies how these methods provide complementary information for material characterization and validation of computational models.

Table 1: Core Characteristics of Analytical Techniques

| Technique | Fundamental Principle | Key Applications | Sample Requirements | Complementary Computational Methods |
|---|---|---|---|---|
| XRD | Constructive interference of X-rays from crystal lattice planes | Crystal structure determination, phase identification, polymorphism studies | Crystalline solid, powder | Periodic DFT, Rietveld refinement, Pawley method |
| NMR | Absorption of radiofrequency radiation by atomic nuclei in a magnetic field | Molecular structure elucidation, dynamics, interaction studies | Solution or solid-state | Density functional theory (DFT), ab initio calculations |
| Raman Spectroscopy | Inelastic scattering of monochromatic light | Molecular vibration analysis, phase identification, imaging | Solids, liquids, gases; minimal preparation | Cluster approaches, periodic DFT, ab initio molecular dynamics |
| IR Spectroscopy | Absorption of infrared radiation by molecular bonds | Functional group identification, quantitative analysis, reaction monitoring | Solids, liquids, gases; ATR requires minimal preparation | DFT calculations, frequency calculations, potential energy distribution (PED) analysis |

The analytical techniques discussed herein operate on different physical principles, providing complementary information for material characterization. XRD directly probes the long-range order in crystalline materials, producing sharp diffraction patterns that serve as fingerprints for phase identification [32]. In contrast, vibrational spectroscopies (Raman and IR) investigate molecular vibrations and provide information about functional groups, molecular symmetry, and intermolecular interactions [33] [6]. NMR spectroscopy offers unique capabilities for studying local electronic environments and molecular dynamics through chemical shifts and relaxation times [33].
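XRD fingerprinting rests on Bragg's law, nλ = 2d sin θ, which converts the diffraction angle 2θ of each peak into an interplanar spacing d. The sketch below assumes a Cu Kα1 source (λ ≈ 1.5406 Å), first-order reflections, and a simple tolerance test against a reference list of d-spacings; the tolerance, the function names, and the peak and reference values are hypothetical, and full phase identification would also compare relative intensities.

```python
import numpy as np

CU_KALPHA1 = 1.5406  # angstrom; assumed laboratory X-ray source


def d_spacings(two_theta_deg, wavelength=CU_KALPHA1, order=1):
    """Solve Bragg's law n*lambda = 2*d*sin(theta) for d (angstrom)."""
    theta = np.radians(np.asarray(two_theta_deg, dtype=float) / 2.0)
    return order * wavelength / (2.0 * np.sin(theta))


def match_fraction(observed_d, reference_d, tol=0.02):
    """Fraction of reference d-spacings with an observed peak within tol (angstrom)."""
    obs = np.asarray(observed_d, dtype=float)
    hits = [np.any(np.abs(obs - ref) <= tol) for ref in np.asarray(reference_d, dtype=float)]
    return float(np.mean(hits))


observed = d_spacings([20.9, 26.6, 36.5, 50.1])  # toy peak positions (degrees 2-theta)
reference = [4.25, 3.34, 2.46, 1.82]             # hypothetical reference pattern
print(np.round(observed, 3), match_fraction(observed, reference))
```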

Computational spectroscopy serves as a bridge between experimental data and molecular-level understanding, with the choice of computational approach dependent on the technique and material system. For crystalline materials, periodic density functional theory (DFT) calculations can predict vibrational properties and phonon dispersion relationships across the entire Brillouin zone, enabling direct comparison with experimental spectra [6]. The Perdew-Burke-Ernzerhof (PBE) functional, often with empirical dispersion corrections, provides a balanced approach for predicting structural and vibrational properties in diverse crystalline materials [6]. For molecular systems, non-periodic (isolated-molecule) DFT calculations using hybrid functionals such as B3LYP offer accurate predictions of vibrational frequencies and NMR parameters when combined with appropriate basis sets [6].
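When calculated harmonic frequencies are compared with experimental Raman or IR band positions, a single empirical scale factor is commonly fitted to absorb systematic errors from the harmonic approximation and the chosen functional. This practice is not described in the cited sources and is included here as an assumption. The sketch uses the least-squares estimate λ = Σ ω_calc ν_exp / Σ ω_calc², which minimizes Σ (λ ω_calc − ν_exp)², for bands whose assignments are already known; the function names and frequencies are hypothetical.

```python
import numpy as np


def fit_scale_factor(calc_freqs, exp_freqs):
    """Least-squares lambda minimizing sum((lambda * calc - exp)**2)."""
    calc = np.asarray(calc_freqs, dtype=float)
    exp = np.asarray(exp_freqs, dtype=float)
    return float(np.dot(calc, exp) / np.dot(calc, calc))


def scaled_rmse(calc_freqs, exp_freqs, scale):
    """RMS deviation (cm^-1) between scaled calculated and experimental bands."""
    calc = np.asarray(calc_freqs, dtype=float)
    exp = np.asarray(exp_freqs, dtype=float)
    return float(np.sqrt(np.mean((scale * calc - exp) ** 2)))


calc = [1052.0, 1487.0, 1715.0, 3105.0]  # toy harmonic frequencies (cm^-1)
exp = [1010.0, 1431.0, 1650.0, 2985.0]   # toy assigned experimental bands (cm^-1)
lam = fit_scale_factor(calc, exp)
print(f"scale factor = {lam:.4f}, RMSE = {scaled_rmse(calc, exp, lam):.1f} cm^-1")
```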

Pharmaceutical Analysis Case Study: Combatting Falsified Medicines

Background and Objectives

The global pharmaceutical industry faces significant challenges from falsified medicines that threaten patient safety and public health. These products often contain incorrect active pharmaceutical ingredients (APIs), harmful impurities, or exist in potentially dangerous polymorphic forms [33]. This case study demonstrates the application of attenuated total reflectance Fourier transform infrared (ATR-FTIR) spectroscopy and X-ray powder diffraction (XRPD) as nondestructive, green analytical techniques for rapid identification of falsified pharmaceutical products, particularly those targeting erectile dysfunction [33].

Experimental Protocol

Protocol 1: ATR-FTIR Analysis of Suspected Falsified Tablets