Building a Molecular Property Prediction Pipeline: Integrating Mol2Vec Embeddings with Tree-Based Models

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on constructing a high-performance molecular property prediction pipeline. We explore the synergistic combination of Mol2Vec, a powerful molecular embedding technique, with modern tree-based ensemble models like XGBoost and LightGBM. Covering the entire workflow from foundational concepts to advanced optimization, the content details how to transform chemical structures into informative numerical representations and apply robust machine learning algorithms to predict critical properties such as melting point, boiling point, and toxicity. Practical validation demonstrates that this approach can achieve high predictive accuracy (R² up to 0.93) while offering significant computational efficiency, making it an accessible yet powerful tool for accelerating drug discovery and materials design.

Molecular Representation and Machine Learning Foundations for Cheminformatics

The Critical Role of Molecular Property Prediction in Drug Discovery and Materials Science

Molecular property prediction has become a cornerstone of modern drug discovery and materials science, serving as a critical filter to prioritize compounds for costly and time-consuming experimental testing. The core challenge lies in accurately translating a molecule's structure into its resulting properties, such as biological activity, toxicity, or physicochemical characteristics. The standard computational pipeline involves two major phases: first, converting molecular structures into a machine-readable format (representation learning), and second, applying machine learning models to predict properties of interest [1] [2]. Approaches like Mol2Vec, which generates numerical vectors from molecular structures, combined with powerful tree-based models such as Random Forest or XGBoost, form a robust and interpretable framework for these predictive tasks [2]. This pipeline enables researchers to virtually screen millions of compounds, dramatically accelerating the identification of promising drug candidates and novel materials.

Molecular Representation: The Foundation of Prediction

The first and most crucial step in the prediction pipeline is molecular representation—the process of translating chemical structures into a numerical format that machine learning algorithms can process [1] [2].

- Traditional Representations: Early methods relied on expert-defined features. Molecular fingerprints, such as Extended-Connectivity Fingerprints (ECFP), encode molecular substructures as bit strings, while molecular descriptors quantify specific physicochemical properties (e.g., molecular weight, logP) [1]. The Simplified Molecular-Input Line-Entry System (SMILES) is a string-based notation that describes a molecule's structure using ASCII text [1].

- Modern AI-Driven Representations: Recent advances learn informative representations directly from data. Graph Neural Networks (GNNs) operate natively on the molecular graph, where atoms are nodes and bonds are edges [3] [1]. Mol2Vec also belongs to this data-driven family, although it learns its embeddings with a shallow Word2Vec model over substructure identifiers rather than with a graph network [4].

- The Role of Mol2Vec: Mol2Vec is an unsupervised method that learns vector representations of molecular substructures. It works by applying the Word2Vec natural language processing algorithm to sequences of molecular substructures, generating a continuous vector space where structurally similar molecules are located near each other [4]. These vectors serve as high-quality input features for downstream property prediction models.

Table 1: Comparison of Major Molecular Representation Methods

| Method Type | Example | Key Principle | Advantages | Limitations |

|---|---|---|---|---|

| String-Based | SMILES | Textual representation of molecular structure | Simple, human-readable, compact [1] | Does not explicitly capture structural topology [1] |

| Descriptor-Based | ECFP Fingerprints | Predefined substructure patterns encoded as bits | Interpretable, computationally efficient [1] | Relies on expert knowledge, may miss complex features [1] |

| Learned (Graph / Embedding) | GNNs, Mol2Vec | Learns features from the atom-bond graph (GNNs) or from substructure co-occurrence (Mol2Vec) [3] [4] | Captures complex structural relationships, data-driven [3] | Can be computationally intensive; requires significant data [5] |

Machine Learning Models: From Features to Predictions

Once a molecular representation is obtained, it is fed into a machine learning model for property prediction. While deep learning models like GNNs are state-of-the-art, tree-based models remain highly popular and effective, especially when working with fixed-input representations like Mol2Vec embeddings or fingerprints.

Tree-based models, including Random Forest and Gradient Boosting machines like XGBoost, construct multiple decision trees during training. Their collective prediction is obtained by averaging (Random Forest) or sequentially combining (XGBoost) the outputs of individual trees [2]. These models are prized for their high performance, robustness to irrelevant features, and relative interpretability.
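The difference between averaging and sequential combination can be sketched with one-split regression trees ("stumps") in plain NumPy. This is an illustrative toy, not the Random Forest or XGBoost implementation: the data, the `fit_stump` helper, the number of trees, and the learning rate are all assumptions chosen for readability.

```python
import numpy as np

def fit_stump(x, y):
    """Fit a one-split regression tree (a 'stump') on a single feature."""
    best = None
    for threshold in np.unique(x)[:-1]:          # candidate split points
        left, right = y[x <= threshold], y[x > threshold]
        sse = ((left - left.mean()) ** 2).sum() + ((right - right.mean()) ** 2).sum()
        if best is None or sse < best[0]:
            best = (sse, threshold, left.mean(), right.mean())
    _, threshold, left_value, right_value = best
    return lambda q: np.where(q <= threshold, left_value, right_value)

rng = np.random.default_rng(0)
x = rng.uniform(0.0, 6.0, size=200)
y = np.sin(x) + rng.normal(scale=0.1, size=200)

# Random-Forest style: independent stumps on bootstrap samples, then average.
bagged = []
for _ in range(20):
    idx = rng.integers(0, len(x), size=len(x))
    bagged.append(fit_stump(x[idx], y[idx]))
bagging_pred = np.mean([stump(x) for stump in bagged], axis=0)
bagging_mse = float(np.mean((y - bagging_pred) ** 2))

# Boosting style: each stump fits the residual left by the previous ones.
learning_rate = 0.3
boosting_pred = np.full_like(y, y.mean())
boosting_mse = []
for _ in range(20):
    stump = fit_stump(x, y - boosting_pred)
    boosting_pred = boosting_pred + learning_rate * stump(x)
    boosting_mse.append(float(np.mean((y - boosting_pred) ** 2)))
```

In the boosting loop the training error cannot increase from one round to the next, because every stump is a least-squares fit to the current residual. The averaged ensemble instead reduces variance by pooling trees that were trained independently.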

Cutting-edge research continues to push the boundaries of predictive modeling. For instance:

- Kolmogorov-Arnold Graph Neural Networks (KA-GNNs) integrate novel learnable activation functions into GNNs, offering improved expressivity, parameter efficiency, and interpretability by highlighting chemically meaningful substructures [3].

- Multi-Task Learning (MTL) frameworks train a single model on multiple related properties simultaneously. This can improve generalization, especially for tasks with scarce data, by leveraging shared information across tasks [5]. The Adaptive Checkpointing with Specialization (ACS) training scheme is a recent advance that mitigates "negative transfer," a common problem in MTL where learning one task interferes with another [5].

- Few-Shot and Low-Data Learning approaches, such as the MolFCL framework, use techniques like contrastive learning and functional group-based prompt learning to enable accurate predictions with very few labeled examples, a common scenario in real-world drug discovery [6] [7].

Table 2: Quantitative Performance of Selected Models on Molecular Property Benchmarks

| Model / Framework | Dataset(s) | Key Metric | Reported Performance | Key Innovation |

|---|---|---|---|---|

| ACS (Multi-Task) [5] | ClinTox, SIDER, Tox21 | ROC-AUC | Outperformed Single-Task Learning by 8.3% on average [5] | Mitigates negative transfer in multi-task learning |

| KA-GNN [3] | 7 Molecular Benchmarks | Accuracy / ROC-AUC | Consistently outperformed conventional GNNs [3] | Integrates Kolmogorov-Arnold Networks into GNNs for better expressivity |

| MolFCL [7] | 23 Property Datasets | ROC-AUC / PRC-AUC | Outperformed state-of-the-art baselines [7] | Uses fragment-based contrastive learning and functional group prompts |

| ChemXploreML [4] | Critical Temperature, etc. | R² | R² up to 0.93 for critical temperature prediction [4] | User-friendly desktop app using Mol2Vec-like embeddings |

Application Notes & Protocols

Application Note: Building a QSAR Pipeline with Mol2Vec and XGBoost

Objective: To establish a robust quantitative structure-activity relationship (QSAR) pipeline for predicting compound activity against a biological target using Mol2Vec for representation and XGBoost for modeling.

Background: This pipeline is ideal for virtual screening in early drug discovery. It balances high predictive accuracy with computational efficiency and provides insights into important molecular substructures driving the activity.

Materials:

- Compound Library: A dataset of chemical structures (e.g., in SMILES format) with associated experimental activity data (e.g., IC50, Ki).

- Software: Python environment with libraries including `gensim` (for Mol2Vec), `rdkit` (for cheminformatics), and `xgboost` (for the model).

- Computational Resources: A standard desktop computer is sufficient for datasets of up to tens of thousands of molecules.

Procedure:

- Data Preprocessing: Standardize the molecular structures from the input SMILES using RDKit (e.g., neutralize charges, remove salts).

- Generate Mol2Vec Embeddings: Use the Mol2Vec algorithm to convert each standardized molecule into a fixed-length numerical vector.

- Dataset Splitting: Split the dataset into training, validation, and test sets using a scaffold-aware split (e.g., the scaffold split method provided by TDC) to evaluate the model's ability to generalize to novel chemotypes [7].

- Model Training: Train an XGBoost regression or classification model on the training set vectors and their associated activity values.

- Hyperparameter Tuning: Optimize the XGBoost hyperparameters (e.g., `max_depth`, `learning_rate`, `n_estimators`) using the validation set.

- Model Evaluation: Assess the final model's performance on the held-out test set using metrics like Mean Squared Error (MSE) for regression or ROC-AUC for classification.

Troubleshooting:

- Poor Generalization to Test Set: Implement scaffold splitting during data partitioning to ensure a more rigorous assessment of model performance [7].

- Model Bias: If the dataset is imbalanced (e.g., many inactive compounds, few active ones), use techniques like SMOTE or adjust the `scale_pos_weight` parameter in XGBoost.
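Two of the steps above, scaffold-aware splitting and class weighting, can be sketched in pure Python. This is a minimal illustration, not the TDC splitter: it assumes a scaffold string has already been computed for every molecule (for example a Murcko scaffold SMILES from RDKit), and the function names and split fractions are hypothetical. The negative-to-positive ratio is the starting value commonly suggested for XGBoost's `scale_pos_weight`.

```python
from collections import defaultdict

def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Assign whole scaffold groups to train/valid/test, largest groups first."""
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)
    # Large scaffold families go to training; rare scaffolds end up held out.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test

def scale_pos_weight(labels):
    """Ratio of negative to positive examples (assumes at least one positive)."""
    positives = sum(1 for label in labels if label == 1)
    negatives = len(labels) - positives
    return negatives / positives

# Hypothetical precomputed scaffolds and activity labels for ten molecules.
scaffolds = ["c1ccccc1"] * 6 + ["c1ccncc1"] * 2 + ["C1CCCCC1", "c1ccoc1"]
labels = [1, 0, 0, 0, 0, 0, 0, 0, 1, 0]

train, valid, test = scaffold_split(scaffolds)
weight = scale_pos_weight(labels)
```

Because whole scaffold groups are kept together, no scaffold appears in more than one partition, which is what makes the test set a probe of generalization to unseen chemotypes.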

Protocol: Data Consistency Assessment with AssayInspector

Objective: To systematically evaluate and address data quality and consistency issues across multiple public molecular property datasets before integration into a predictive model.

Rationale: The accuracy of any predictive model is heavily dependent on the quality of its training data. Public datasets often have significant misalignments due to differences in experimental protocols, measurement conditions, or chemical space coverage. Naive integration of these datasets can introduce noise and degrade model performance [8].

Materials:

- Datasets: Two or more public or in-house datasets for the same molecular property (e.g., half-life from TDC and Obach et al. [8]).

- Software: The `AssayInspector` Python package [8].

Step-by-Step Workflow:

- Data Compilation: Gather the datasets of interest and preprocess them into a consistent format (e.g., SMILES column, property value column).

- Run Descriptive Analysis: Use AssayInspector to generate a summary report of key parameters for each dataset (number of molecules, endpoint statistics, etc.) [8].

- Visualize Distributions: Generate property distribution plots and chemical space maps (via UMAP) to visually identify misalignments and outliers [8].

- Identify Inconsistencies: Use the tool's insight report to flag datasets with significantly different endpoint distributions, conflicting annotations for shared molecules, or low molecular overlap [8].

- Make Integration Decisions: Based on the report, decide to exclude, transform, or carefully aggregate certain datasets before proceeding to model training.

This protocol is a critical pre-modeling step that ensures the reliability and generalizability of the resulting predictive model [8].
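The kind of check described in the workflow can be illustrated without any specialized tooling. The sketch below is not the AssayInspector API; it is a hypothetical stand-in that compares two small dictionaries mapping SMILES to a measured value and reports molecular overlap, the shift in mean endpoint value, and shared molecules whose annotations disagree by more than an assumed tolerance.

```python
from statistics import mean

def compare_datasets(a, b, tolerance=0.5):
    """Summarise overlap and disagreement between two {smiles: value} datasets."""
    shared = sorted(set(a) & set(b))
    conflicts = [s for s in shared if abs(a[s] - b[s]) > tolerance]
    return {
        "n_shared": len(shared),
        "overlap_fraction": len(shared) / min(len(a), len(b)),
        "mean_shift": mean(b.values()) - mean(a.values()),
        "conflicts": conflicts,
    }

# Hypothetical half-life values (hours) keyed by canonical SMILES.
source_a = {"CCO": 1.0, "CCN": 2.0, "c1ccccc1": 4.0}
source_b = {"CCO": 1.1, "CCN": 3.5, "CC(=O)O": 6.0}
report = compare_datasets(source_a, source_b)
```

Here `report["conflicts"]` lists the molecules that would need manual review before the two sources are merged. A real assessment would also compare full distributions and chemical space coverage, as the protocol describes.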

Table 3: Key Software Tools and Datasets for Molecular Property Prediction

| Tool / Resource | Type | Function in Research | Access / Reference |

|---|---|---|---|

| RDKit | Cheminformatics Library | Open-source toolkit for cheminformatics; used for molecule standardization, descriptor calculation, and fingerprint generation. | https://www.rdkit.org |

| Therapeutic Data Commons (TDC) | Data Repository | Provides curated, standardized benchmarks for molecular property prediction, including ADMET and toxicity datasets. | https://tdc.hms.harvard.edu |

| ChemXploreML | Desktop Application | User-friendly app that automates molecular representation and model training, making ML accessible to non-experts [4]. | MIT McGuire Group |

| AssayInspector | Data Quality Tool | Python package for assessing consistency across molecular datasets before integration, preventing performance degradation [8]. | GitHub |

| ZINC15 | Compound Database | Publicly accessible database of commercially available compounds for virtual screening; used for pre-training models [7]. | http://zinc15.docking.org |

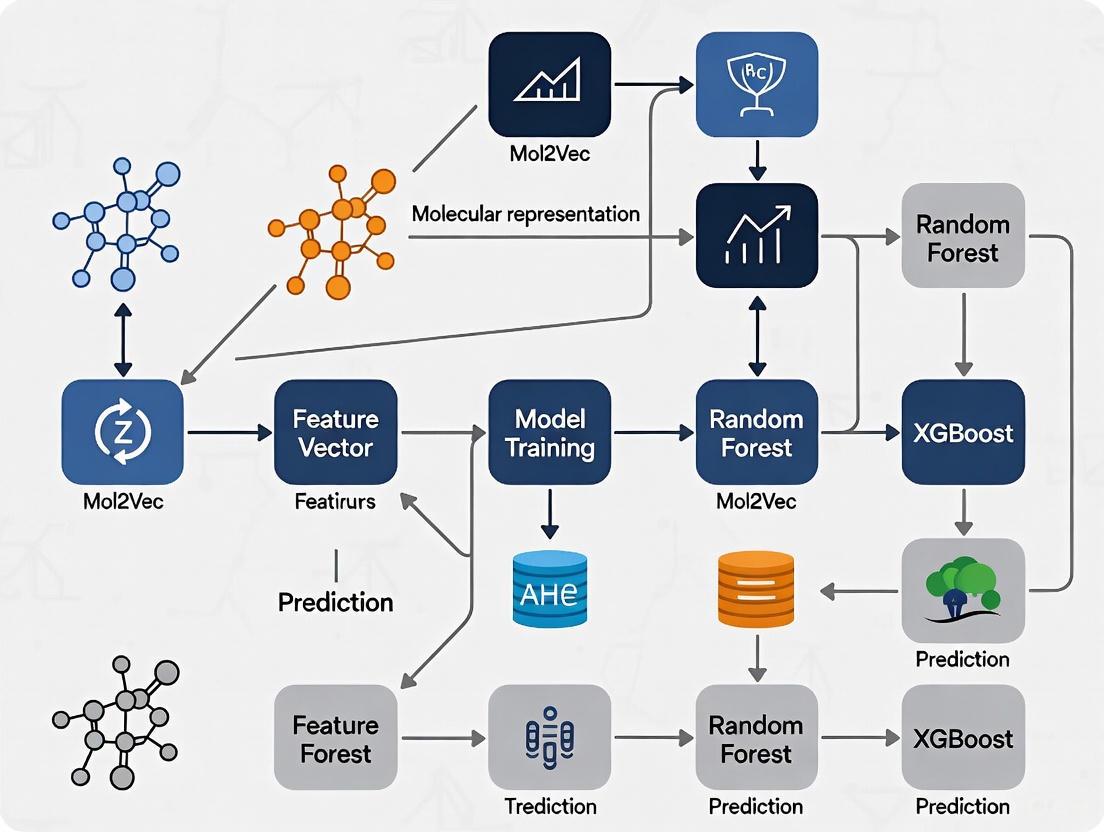

Workflow Visualization

The following diagram illustrates the integrated pipeline for molecular property prediction, highlighting the key steps from data preparation to model deployment, including the critical data consistency check.

The translation of molecular structures into information-rich numerical representations is a cornerstone of modern chemoinformatics and a critical step for harnessing machine learning in drug discovery and materials science [9]. Molecular representation learning has emerged as a powerful paradigm, moving beyond handcrafted descriptors to automatically learn salient features from molecular data [1]. Effective molecular representation bridges the gap between chemical structures and their biological, chemical, or physical properties, enabling various drug discovery tasks including virtual screening, activity prediction, and scaffold hopping [1]. These techniques allow researchers to efficiently navigate the vast chemical space and prioritize compounds with therapeutic potential, significantly accelerating the early stages of drug development [10] [1].

Classical Molecular Representation Methods

Molecular Descriptors and Fingerprints

Traditional molecular representation methods rely on explicit, rule-based feature extraction techniques, exemplified by molecular descriptors and fingerprints [1]. Molecular descriptors quantify physical or chemical properties of molecules, while fingerprints typically encode substructural information as binary strings or numerical values [1].

Molecular fingerprints are feature extraction methods based on identifying small subgraphs within a molecule and detecting their presence or counting their occurrences, yielding binary and count variants, respectively [9]. They can be broadly classified into substructural and hashed types. Substructural fingerprints detect predefined patterns determined by expert chemists, while hashed fingerprints define general shapes of extracted subgraphs and convert them into numerical identifiers using a modulo function into a fixed-length output vector [9].
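The modulo folding used by hashed fingerprints can be shown in a few lines. This is a toy sketch under stated assumptions: the integer identifiers are made up, the 16-position vector is far shorter than a practical fingerprint, and `fold_fingerprint` is a hypothetical helper rather than a toolkit function.

```python
def fold_fingerprint(identifiers, n_bits=16, count=False):
    """Fold integer subgraph identifiers into a fixed-length vector via modulo."""
    vector = [0] * n_bits
    for identifier in identifiers:
        position = identifier % n_bits
        vector[position] = vector[position] + 1 if count else 1
    return vector

# Toy identifiers; real ones come from hashing the extracted subgraphs.
identifiers = [35, 19, 35, 7]
binary_fp = fold_fingerprint(identifiers)              # presence/absence variant
count_fp = fold_fingerprint(identifiers, count=True)   # occurrence-count variant
```

Identifiers 35 and 19 are different subgraphs but both map to position 3, which is a small instance of the bit collisions that fixed-length hashed fingerprints are subject to.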

Table 1: Common Molecular Fingerprint Types and Their Characteristics

| Fingerprint Type | Description | Key Features | Common Uses |

|---|---|---|---|

| Extended Connectivity Fingerprint (ECFP) | Circular neighborhoods capturing atom environments [9] | Atom-centered circular substructures, radius-dependent | Similarity searching, QSAR [1] |

| Topological Torsion (TT) | Paths of length 4 in the molecular graph [9] | Linear sequences of atoms and bonds | Molecular similarity, virtual screening |

| Atom Pair (AP) | Shortest paths between atom pairs [9] | Atom-type and distance information | Similarity searching, clustering |

Although not task-adaptive, hashed fingerprints remain widely used in chemoinformatics and molecular machine learning due to their flexibility, computational efficiency, and consistently strong performance [9]. In many cases, they continue to outperform more complex approaches, such as Graph Neural Networks (GNNs) [9].

String-Based Representations

The Simplified Molecular Input Line Entry System (SMILES) provides a compact and efficient way to encode chemical structures as strings and has become a widely used method for molecular representation [1]. Despite its simplicity and convenience, SMILES has inherent limitations in capturing the full complexity of molecular interactions [1]. As drug discovery tasks grow more sophisticated, traditional string-based representations often fall short in reflecting the intricate relationships between molecular structure and key drug-related characteristics such as biological activity and physicochemical properties [1].

Modern AI-Driven Embedding Approaches

Graph-Based Neural Representations

Graph neural networks offer a natural framework for molecular representation since molecules are inherently graph-structured with atoms as nodes and bonds as edges [9]. Most GNN architectures follow a message-passing framework where initial atom embeddings consist of elementary chemical descriptors, and in each GNN layer, atoms receive embeddings from their neighbors and update their own embedding accordingly [9]. To obtain a whole-molecule embedding, atom embeddings are aggregated using a readout function such as channel-wise average or sum [9].
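A single message-passing step and the readout can be written out in NumPy to make the mechanics concrete. The sketch assumes a three-atom toy graph, a two-feature atom descriptor, sum aggregation, and an identity weight matrix so the arithmetic stays visible; trained architectures use learned weights and several layers.

```python
import numpy as np

def message_passing_layer(h, adjacency, weight):
    """Each atom sums its neighbours' embeddings, adds its own, then transforms."""
    messages = adjacency @ h                          # sum over bonded neighbours
    return np.maximum(0.0, (h + messages) @ weight)   # linear update followed by ReLU

# Heavy atoms of ethanol (C-C-O) as a toy graph: bonds 0-1 and 1-2.
adjacency = np.array([[0, 1, 0],
                      [1, 0, 1],
                      [0, 1, 0]], dtype=float)
# Initial atom features: [is_carbon, is_oxygen], a deliberately tiny descriptor set.
h0 = np.array([[1, 0], [1, 0], [0, 1]], dtype=float)
weight = np.eye(2)                                    # identity weights for illustration

h1 = message_passing_layer(h0, adjacency, weight)
molecule_sum = h1.sum(axis=0)                         # sum readout
molecule_mean = h1.mean(axis=0)                       # channel-wise average readout
```

After one layer the central carbon's embedding already reflects both of its neighbours, and either readout collapses the per-atom matrix into one fixed-length vector for the whole molecule.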

The Graph Isomorphism Network (GIN) is one of the most widely used GNN architectures, as it was proven to be as expressive as the Weisfeiler-Lehman isomorphism test in distinguishing non-isomorphic graphs [9]. Recent advancements include models like ChemXTree, a feature-enhanced GNN framework that integrates a Gate Modulation Feature Unit and neural decision tree in the output layer to improve predictive accuracy for drug discovery tasks [11].

Language Model-Based Approaches

Inspired by advances in natural language processing, transformer models have been adapted for molecular representation by treating molecular sequences as a specialized chemical language [1]. Unlike traditional methods like ECFP fingerprints that encode predefined substructures, this approach tokenizes molecular strings at the atomic or substructure level [1]. Each token is mapped into a continuous vector, and these vectors are then processed by architectures like Transformers or BERT [1].

The Mol2Vec Approach

Mol2Vec is an unsupervised machine learning approach to learn vector representations of molecular substructures, inspired by natural language processing techniques like Word2vec [12]. Similar to how Word2vec models place vectors of closely related words in close proximity in the vector space, Mol2vec learns vector representations of molecular substructures that point in similar directions for chemically related substructures [12].

Compounds can be encoded as vectors by summing the vectors of the individual substructures, and these representations can be fed into supervised machine learning approaches to predict compound properties [12]. The resulting Mol2vec model is pretrained once, yields dense vector representations, and overcomes drawbacks of common compound feature representations such as sparseness and bit collisions [12].

Experimental Protocols and Implementation

Protocol: Generating Mol2Vec Embeddings

Objective: Generate continuous vector representations for molecules using the Mol2Vec approach.

Materials:

- Chemical compound dataset in SMILES format

- Mol2Vec pretrained model

- RDKit or similar cheminformatics toolkit

- Python environment with mol2vec library

Procedure:

- Data Preparation:

  - Convert SMILES strings to RDKit molecule objects

  - Standardize molecules (neutralization, tautomer canonicalization)

  - Curate dataset to remove duplicates and invalid structures

- Substructure Identification:

  - Apply Morgan circular fingerprints with radius 1-2

  - Extract molecular substructures using the RDKit Morgan algorithm

  - Create a "sentence" representation for each molecule where "words" are substructure identifiers

- Model Training:

  - Initialize the Word2Vec model with desired dimensions (typically 100-300)

  - Set window size based on molecular complexity (typically 10-20)

  - Train on the corpus of molecular "sentences" for multiple epochs

  - Save the pretrained substructure embeddings

- Molecular Vector Generation:

  - For new molecules, identify substructures using the same procedure

  - Retrieve corresponding vectors from the pretrained model

  - Sum all substructure vectors to obtain the molecular embedding

- Validation:

  - Assess embedding quality using similarity search for known similar compounds

  - Evaluate performance on benchmark tasks like molecular property prediction

  - Compare with traditional fingerprints for well-established structure-activity relationships
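The similarity-search check in the validation step can be sketched with cosine similarity over embedding vectors. The three-dimensional vectors below are placeholders for real molecular embeddings, and `cosine_similarity_search` is a hypothetical helper written for this example.

```python
import numpy as np

def cosine_similarity_search(query, library, top_k=3):
    """Rank library vectors by cosine similarity to the query vector."""
    library = np.asarray(library, dtype=float)
    query = np.asarray(query, dtype=float)
    norms = np.linalg.norm(library, axis=1) * np.linalg.norm(query)
    scores = library @ query / np.where(norms == 0.0, 1.0, norms)  # guard zero vectors
    order = np.argsort(-scores)[:top_k]
    return [(int(i), float(scores[i])) for i in order]

# Placeholder embeddings: entries 0 and 1 are near-duplicates, entry 2 is unrelated.
library = [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.0, 1.0, 0.0]]
hits = cosine_similarity_search([1.0, 0.0, 0.0], library, top_k=2)
```

If known analogues of a query compound do not appear near the top of such a ranking, that is a signal to revisit the standardization or training settings before moving on to property prediction.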

Protocol: Molecular Property Prediction with Tree-Based Models

Objective: Predict molecular properties using Mol2Vec embeddings with tree-based ensemble models.

Materials:

- Molecular embeddings (from the preceding protocol, "Generating Mol2Vec Embeddings")

- Property annotation data

- LightGBM or XGBoost libraries

- Scikit-learn for model evaluation

Procedure:

- Feature Preparation:

  - Split data into training, validation, and test sets (e.g., 70/15/15)

  - Feature scaling is generally unnecessary for tree-based models, which are insensitive to monotonic rescaling of individual features; apply it only if a baseline model in the comparison requires it

  - Address class imbalance through weighting or sampling techniques

- Model Training:

  - Initialize LightGBM or XGBoost classifier/regressor

  - Set hyperparameters (learning rate, max depth, number of estimators)

  - Use cross-validation to optimize hyperparameters

  - Train with multiple random seeds for robustness assessment

- Model Evaluation:

  - Predict on held-out test set

  - Calculate performance metrics: ROC-AUC, accuracy, precision, and recall for classification; RMSE, MAE, and R² for regression

  - Compare with baseline models (random forest, SVM, neural networks)

  - Perform statistical significance testing on performance differences

- Model Interpretation:

  - Analyze feature importance scores

  - Apply SHAP analysis to understand embedding contribution

  - Identify key substructures driving predictions
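A compact sketch of the split, train, and evaluate steps is given below. It assumes `X` is a NumPy array of embeddings and `y` a NumPy array of binary labels; the hyperparameter values are placeholders rather than recommendations, and the random split is used only for brevity. The XGBoost import is placed inside the training function so that the two helper functions can be used even where XGBoost is not installed.

```python
import numpy as np

def three_way_split(n_samples, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle indices and cut them into train/validation/test parts."""
    order = np.random.default_rng(seed).permutation(n_samples)
    n_train = int(round(fractions[0] * n_samples))
    n_valid = int(round(fractions[1] * n_samples))
    return order[:n_train], order[n_train:n_train + n_valid], order[n_train + n_valid:]

def roc_auc(y_true, scores):
    """ROC-AUC as the probability that a positive outranks a negative."""
    y_true, scores = np.asarray(y_true), np.asarray(scores, dtype=float)
    pos, neg = scores[y_true == 1], scores[y_true == 0]
    wins = (pos[:, None] > neg[None, :]).sum() + 0.5 * (pos[:, None] == neg[None, :]).sum()
    return float(wins / (len(pos) * len(neg)))

def train_and_evaluate(X, y, seed=0):
    """Fit an XGBoost classifier on the training part and score the test part."""
    from xgboost import XGBClassifier   # imported here so the helpers work without it
    train, valid, test = three_way_split(len(y), seed=seed)
    model = XGBClassifier(n_estimators=300, max_depth=6, learning_rate=0.05,
                          random_state=seed)
    model.fit(X[train], y[train], eval_set=[(X[valid], y[valid])], verbose=False)
    return roc_auc(y[test], model.predict_proba(X[test])[:, 1])

train_idx, valid_idx, test_idx = three_way_split(100)
example_auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```

For the multi-seed robustness check, a call such as `[train_and_evaluate(X, y, seed=s) for s in range(5)]` yields a list of scores whose mean and standard deviation can be reported.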

Performance Benchmarking and Applications

Comparative Performance of Representation Methods

Recent comprehensive benchmarking studies have evaluated numerous molecular representation approaches across multiple datasets and tasks. Surprisingly, many sophisticated neural models show negligible or no improvement over traditional molecular fingerprints, with only specialized fingerprint-based models demonstrating statistically significant advantages [9].

Table 2: Performance Comparison of Molecular Representation Methods on Benchmark Tasks

| Representation Method | BBBP (ROC-AUC) | BACE (ROC-AUC) | HIV (ROC-AUC) | ClinTox (ROC-AUC) |

|---|---|---|---|---|

| ECFP Fingerprints | Baseline | Baseline | Baseline | Baseline |

| D-MPNN | 71.0 (0.3) | 80.9 (0.6) | 77.1 (0.5) | 90.6 (0.6) |

| Attentive FP | 64.3 (1.8) | 78.4 (0.02) | 75.7 (1.4) | 84.7 (0.3) |

| N-Gram RF | 69.7 (0.6) | 77.9 (1.5) | 77.2 (0.1) | 77.5 (4.0) |

| PretrainGNN | 68.7 (1.3) | 84.5 (0.7) | 79.9 (0.7) | 72.6 (1.5) |

| GROVERbase | 70.0 (0.1) | 82.6 (0.7) | 62.5 (0.0) | N/A |

| ChemXTree | ~76.0* | ~86.0* | ~78.0* | ~91.0* |

Note: Values for ChemXTree are approximate based on reported improvements in [11]. Standard deviations shown in parentheses where available.

Application in Anticancer Ligand Prediction

The ACLPred framework demonstrates a successful application of molecular representation with tree-based models for predicting anticancer ligands [10]. Using Light Gradient Boosting Machine with molecular descriptors, ACLPred achieved prediction accuracy of 90.33% with AUROC of 97.31% on independent test data [10].

Key aspects of this implementation include:

- Feature Selection: Multistep feature selection with variance threshold, correlation filter, and Boruta algorithm to identify significant molecular descriptors [10]

- Model Architecture: LightGBM classifier optimized through hyperparameter tuning and cross-validation [10]

- Interpretability: SHAP analysis revealed that topological features made major contributions to decision-making, providing model interpretability [10]
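The first two stages of such a feature-selection cascade, the variance threshold and the correlation filter, can be sketched in NumPy. This is a generic illustration and not the ACLPred code: the thresholds and the toy descriptor matrix are assumptions, and the Boruta step is not shown.

```python
import numpy as np

def variance_filter(X, threshold=0.0):
    """Indices of columns whose variance exceeds the threshold."""
    return np.flatnonzero(X.var(axis=0) > threshold)

def correlation_filter(X, max_abs_corr=0.95):
    """Greedily keep columns that are not highly correlated with any kept column."""
    corr = np.abs(np.corrcoef(X, rowvar=False))
    kept = []
    for j in range(X.shape[1]):
        if all(corr[j, k] <= max_abs_corr for k in kept):
            kept.append(j)
    return np.array(kept, dtype=int)

# Toy descriptor matrix: column 1 is constant, column 2 duplicates column 0 (scaled).
X = np.array([[1.0, 5.0, 2.0, 1.0],
              [2.0, 5.0, 4.0, 0.0],
              [3.0, 5.0, 6.0, 1.0],
              [4.0, 5.0, 8.0, 0.0]])

variable = variance_filter(X)                            # drops the constant column
selected = variable[correlation_filter(X[:, variable])]  # drops the redundant column
```

Running the variance filter first matters here, because a constant column has an undefined correlation coefficient.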

Table 3: Key Research Reagent Solutions for Molecular Embedding Experiments

| Resource | Type | Function | Implementation Example |

|---|---|---|---|

| RDKit | Cheminformatics Library | Molecular manipulation, descriptor calculation, fingerprint generation | Convert SMILES to molecular graphs, calculate molecular descriptors [10] |

| PaDELPy | Descriptor Calculation | Compute molecular descriptors and fingerprints | Generate 1D/2D molecular descriptors for feature engineering [10] |

| LightGBM | Machine Learning Library | Gradient boosting framework for tabular data | Build predictive models for molecular property prediction [10] |

| Mol2Vec | Embedding Algorithm | Generate continuous vector representations of molecules | Create unsupervised molecular embeddings for downstream tasks [12] |

| SHAP | Interpretation Framework | Explain machine learning model predictions | Interpret tree-based model decisions and identify important features [10] |

| MoleculeNet | Benchmark Suite | Standardized datasets for molecular machine learning | Evaluate model performance across multiple tasks [11] |

Molecular embedding techniques represent a critical advancement in computational chemistry and drug discovery, enabling the translation of chemical structures into machine-readable vectors. While traditional fingerprints like ECFP remain surprisingly competitive, modern approaches like Mol2Vec and graph neural networks offer complementary advantages for specific applications [9]. The integration of these representations with powerful tree-based models creates a robust pipeline for molecular property prediction, as demonstrated by frameworks like ACLPred for anticancer ligand prediction [10]. As the field evolves, the optimal approach likely involves selecting representation methods based on specific task requirements, data availability, and interpretability needs, with hybrid methods showing particular promise for addressing the complex challenges in computational drug discovery.

Mol2Vec is an unsupervised machine learning approach that generates continuous vector representations of molecular substructures, drawing direct inspiration from Natural Language Processing (NLP) techniques. The fundamental analogy at the heart of Mol2Vec is to consider a molecule as a "sentence" and its constituent substructures as "words." This conceptual framework allows the application of the powerful Word2vec algorithm to the chemical domain, enabling the embedding of chemical intuition into a high-dimensional vector space. By training on a large corpus of molecules, the model learns to position chemically similar substructures close to one another in a 300-dimensional space, effectively capturing latent structure-property relationships without the need for supervised labeling. This methodology represents a paradigm shift from traditional binary fingerprints to continuous, information-rich vector representations that have demonstrated state-of-the-art performance for both classification and regression tasks in molecular property prediction [13] [14].

The core innovation of Mol2Vec lies in its ability to capture the context of molecular substructures much like Word2vec captures semantic meaning in language. Just as the word "king" is spatially related to "man" and "queen" in the vector space of Word2vec, chemically related functional groups and substructures exhibit specific spatial relationships in the Mol2vec embedding space. This capability to encode chemical similarity and relationships in a continuous space provides a rich foundation for building predictive models in cheminformatics and drug discovery [13].

How Mol2Vec Works: From Molecules to Vector Embeddings

The Mol2Vec Pipeline: A Step-by-Step Protocol

The process of generating Mol2Vec embeddings follows a systematic pipeline that transforms raw molecular structures into meaningful vector representations. The following workflow illustrates this complete process:

Step 1: Corpus Preparation The process begins with assembling a large and chemically diverse corpus of molecules from databases such as ChEMBL (containing bioactivity data) and ZINC (containing commercially available compounds). The original Mol2Vec publication utilized approximately 19.9 million molecules from these sources. Each molecule is converted into its canonical SMILES representation using RDKit, ensuring a standardized molecular representation [13].

Step 2: Substructure Identification Using Morgan Fingerprints For each molecule in the corpus, all substructures contributing to a Morgan fingerprint with a radius of one are extracted. The Morgan algorithm generates atom identifiers that represent specific circular substructures around each atom, effectively breaking down each molecule into its fundamental structural components. These identifiers serve as the "words" that form the molecular "sentence" and have the same order as the canonical SMILES representation [13] [15].
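The conversion of a molecule into a substructure "sentence" can be sketched as follows. The ordering logic is kept in a pure-Python helper that takes an RDKit-style `bitInfo` mapping (identifier to a tuple of `(atom_index, radius)` pairs), and a thin wrapper calls RDKit. Both function names are hypothetical, and the wrapper uses the long-standing `AllChem.GetMorganFingerprint` call; newer RDKit releases also offer a fingerprint-generator interface, so treat the exact call as an assumption about the installed version.

```python
def sentence_from_bit_info(bit_info, n_atoms, radius=1):
    """Order Morgan identifiers atom by atom, radius 0 first, as string 'words'."""
    per_atom = [[None] * (radius + 1) for _ in range(n_atoms)]
    for identifier, environments in bit_info.items():
        for atom_index, env_radius in environments:
            per_atom[atom_index][env_radius] = identifier
    return [str(i) for atom in per_atom for i in atom if i is not None]

def smiles_to_sentence(smiles, radius=1):
    """Parse a SMILES string with RDKit and return its substructure 'sentence'."""
    from rdkit import Chem                # lazy import: RDKit is only needed here
    from rdkit.Chem import AllChem
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"could not parse SMILES: {smiles!r}")
    bit_info = {}
    AllChem.GetMorganFingerprint(mol, radius, bitInfo=bit_info)
    return sentence_from_bit_info(bit_info, mol.GetNumAtoms(), radius)

# Hand-made bitInfo for a three-atom molecule (identifiers are placeholders).
toy_bit_info = {111: ((0, 0), (1, 0)), 222: ((2, 0),), 333: ((0, 1),), 444: ((1, 1),)}
toy_sentence = sentence_from_bit_info(toy_bit_info, n_atoms=3)
```

In the toy example, atom 2 has no radius-1 identifier, so it contributes a single word. This can happen with real molecules when an environment is not reported separately.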

Step 3: Word2Vec Model Training The collection of molecular "sentences" is used to train a Word2Vec model, specifically using the Skip-gram architecture with a window size of 10 and 300-dimensional embeddings. The Skip-gram model was selected as it better captures spatial relationships through its weighting of context words. During training, rare substructures occurring fewer than three times in the corpus are replaced with an "UNSEEN" token, which typically learns a vector close to zero [13] [15].
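The training step can be sketched with gensim using the settings named in the text (Skip-gram, window 10, 300 dimensions, rare identifiers mapped to "UNSEEN"). The helper names are hypothetical, the rare-word replacement is done explicitly before training, and the remaining Word2Vec parameters are left at gensim defaults, which is an assumption rather than a statement about the original training run.

```python
from collections import Counter

def replace_rare(sentences, min_count=3, token="UNSEEN"):
    """Replace identifiers seen fewer than min_count times with a shared token."""
    counts = Counter(word for sentence in sentences for word in sentence)
    return [[w if counts[w] >= min_count else token for w in s] for s in sentences]

def train_substructure_embeddings(sentences, seed=0):
    """Train a Skip-gram Word2Vec model on substructure sentences with gensim."""
    from gensim.models import Word2Vec   # lazy import: gensim is only needed here
    return Word2Vec(
        sentences=replace_rare(sentences),
        vector_size=300,   # embedding dimensionality from the text
        window=10,         # context window from the text
        sg=1,              # 1 selects Skip-gram (0 would be CBOW)
        min_count=1,       # rare words were already mapped to UNSEEN
        seed=seed,
    )

# Tiny toy corpus: "c" and "d" each occur once and are therefore replaced.
toy_corpus = [["a", "b", "a"], ["a", "c", "b"], ["b", "d"]]
cleaned = replace_rare(toy_corpus)
```

A trained model exposes the learned vectors through `model.wv`, so `model.wv["UNSEEN"]` gives the fallback vector used for identifiers that were rare or absent during training.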

Step 4: Molecular Vector Generation To represent an entire molecule as a single vector, all substructure vectors for that molecule are summed together. If an unknown identifier is encountered during featurization of new data, the "UNSEEN" vector is employed. This additive approach preserves information about all substructures present in the molecule while generating a fixed-length representation regardless of molecular size [15].
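The summation with the "UNSEEN" fallback amounts to a lookup and an element-wise sum. The sketch below uses a plain dictionary with three-dimensional toy vectors standing in for the trained 300-dimensional embeddings; `molecule_vector` is a hypothetical helper name.

```python
import numpy as np

def molecule_vector(sentence, vectors, unseen="UNSEEN"):
    """Sum substructure vectors; unknown identifiers fall back to the UNSEEN vector."""
    return np.sum([vectors.get(word, vectors[unseen]) for word in sentence], axis=0)

# Toy lookup table standing in for trained substructure embeddings.
vectors = {
    "111": np.array([1.0, 0.0, 2.0]),
    "222": np.array([0.5, 1.0, 0.0]),
    "UNSEEN": np.array([0.0, 0.0, 0.0]),
}
# "999" is not in the table, so it contributes the UNSEEN vector.
embedding = molecule_vector(["111", "222", "111", "999"], vectors)
```

The output length depends only on the embedding dimensionality, not on the number of substructures, which is why molecules of any size map to vectors of the same shape.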

Key Research Reagents and Computational Tools

Table 1: Essential Research Reagents and Software for Mol2Vec Implementation

| Resource Name | Type | Function/Purpose | Source/Reference |

|---|---|---|---|

| RDKit | Cheminformatics Library | Converts molecules to canonical SMILES; generates Morgan fingerprints for substructure extraction | [13] [16] |

| Gensim | NLP Library | Implements Word2Vec algorithm for training substructure embeddings | [13] |

| ChEMBL Database | Chemical Database | Provides bioactive molecules for corpus building; together with ZINC it made up the ~19.9 million-molecule training corpus | [13] |

| ZINC Database | Chemical Database | Supplies commercially available compounds to diversify training corpus | [13] |

| Mol2Vec Python Package | Specialized Library | Offers implemented Mol2Vec methodology for generating molecular vectors | [17] |

| Scikit-learn | Machine Learning Library | Provides Random Forest and other ML algorithms for property prediction | [13] |

| XGBoost/LightGBM | Gradient Boosting Frameworks | Ensemble methods for regression and classification tasks | [18] [16] |

Performance Benchmarking and Experimental Validation

Quantitative Assessment Across Diverse Molecular Properties

Extensive validation studies have demonstrated Mol2Vec's performance across various molecular property prediction tasks. The following table summarizes key benchmarking results from multiple studies:

Table 2: Performance Benchmarking of Mol2Vec Embeddings Across Various Tasks

| Dataset/Property | Task Type | Model Architecture | Performance Metrics | Comparative Performance |

|---|---|---|---|---|

| ESOL (Solubility) | Regression | GBM with Mol2Vec | R²cv = 0.86 | Superior to Morgan FP-GBM (R²cv = 0.66) [13] |

| Ames Mutagenicity | Classification | RF with Mol2Vec | State-of-the-art AUC | Recommended architecture for classification [13] |

| Tox21 | Classification | RF with Mol2Vec | State-of-the-art AUC | Outperformed SVM, NBC, CNN on toxicity targets [13] |

| Kinase Specificity | Proteochemometrics | PCM with Mol2Vec | High accuracy in cross-validation | Effective for unknown compound-target pairs [13] |

| Polymer Properties | Regression | ML with Mol2Vec | Improved accuracy | Effective even with small datasets (n=214) [19] |

| Critical Temperature | Regression | GBR with Mol2Vec | R² = 0.93 | Slightly higher accuracy than VICGAE embeddings [16] |

| Lipophilicity | Regression | GBFS with Mol2Vec | Superior performance | Outperformed state-of-the-art benchmarks [18] |

The table demonstrates that Mol2Vec embeddings consistently deliver competitive or superior performance compared to traditional molecular representations like Morgan fingerprints and other state-of-the-art algorithms. Notably, the combination of Mol2Vec with tree-based methods like Random Forest (for classification) and Gradient Boosting Machines (for regression) appears particularly effective across diverse chemical tasks [13] [18].

Comparative Analysis of Embedding Approaches

The performance of Mol2Vec must be understood in the context of alternative molecular representation approaches. The following diagram compares the key characteristics of different representation methods:

Mol2Vec offers distinct advantages over traditional fingerprints: (1) Lower dimensionality (300 dimensions vs. 2048-4096 bits for Morgan fingerprints) reduces memory requirements and training time; (2) Continuous representations capture nuanced similarity relationships beyond binary presence/absence; (3) Chemical intuition emerges through the spatial arrangement of related substructures in the embedding space [13] [20]. Compared to other learned representations like Graph Neural Networks (GNNs) or transformer models (e.g., MOLFORMER), Mol2Vec provides a favorable balance between predictive accuracy and computational requirements, making it particularly suitable for research environments with limited computational resources [18] [16].

Application Notes and Protocols for Molecular Property Prediction

Protocol: Building a Molecular Property Prediction Pipeline with Mol2Vec and Tree-Based Models

This protocol details the complete workflow for predicting molecular properties using Mol2Vec embeddings combined with tree-based ensemble methods.

Step 1: Data Preparation and Preprocessing

- Input: Collect SMILES strings of target molecules and their corresponding property values (experimental or computational)

- Preprocessing: Standardize SMILES representations using RDKit's CanonSmiles function

- Data Splitting: Implement appropriate cross-validation splits based on research question:

  - Random Split: Standard validation for generalizability

  - Scaffold Split: Tests performance on novel molecular scaffolds

  - Temporal Split: Mimics real-world discovery scenarios
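A scaffold split can be sketched without any cheminformatics dependency once each molecule has a scaffold key. The helper below is a minimal illustration, not a reference implementation: `scaffold_split` is a hypothetical name, and the scaffold strings are assumed to have been computed beforehand (for example as Murcko scaffold SMILES with RDKit).

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8):
    """Split sample indices so that no scaffold appears in both sets.

    `scaffolds` holds one scaffold key per molecule (assumed to be
    precomputed, e.g. a Murcko scaffold SMILES string).
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest scaffold groups are assigned first, so rare scaffolds
    # tend to end up in the test set.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n_train_target = frac_train * len(scaffolds)
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= n_train_target:
            train.extend(group)
        else:
            test.extend(group)
    return sorted(train), sorted(test)


# Toy scaffold keys for ten molecules (illustrative only).
scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCCCC1", "c1ccoc1"]
train_idx, test_idx = scaffold_split(scaffolds, frac_train=0.8)
print("train:", train_idx)
print("test:", test_idx)
```

Because whole scaffold groups are assigned together, the realized train fraction can deviate from `frac_train` when a few scaffolds dominate the dataset.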

Step 2: Mol2Vec Embedding Generation

- Load Pretrained Model: Utilize the pretrained Mol2Vec model (300 dimensions, trained on ZINC and ChEMBL)

- Generate Molecular Vectors:

- Handle Unknown Substructures: Replace vectors for unrecognized substructures with the "UNSEEN" vector
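The vector-generation step amounts to summing the vectors of a molecule's substructure "words", with a fallback vector for identifiers the model has not seen. The sketch below illustrates only that summation. It assumes the substructure identifiers have already been extracted, and the lookup table, identifiers, and the `embed_molecule` helper are made up for illustration. A real pipeline would take the vectors from the pretrained 300-dimensional model.

```python
def embed_molecule(sentence, vectors, unseen_key="UNSEEN"):
    """Sum substructure vectors; unknown identifiers use the unseen vector."""
    dim = len(vectors[unseen_key])
    total = [0.0] * dim
    for word in sentence:
        vec = vectors.get(word, vectors[unseen_key])
        total = [t + v for t, v in zip(total, vec)]
    return total


# Toy 3-dimensional lookup table; identifiers and values are illustrative.
toy_vectors = {
    "sub_a": [0.1, 0.2, 0.0],
    "sub_b": [0.0, -0.1, 0.3],
    "UNSEEN": [0.05, 0.05, 0.05],
}

# "sub_x" is not in the table, so it falls back to the "UNSEEN" vector.
sentence = ["sub_a", "sub_b", "sub_x"]
embedding = embed_molecule(sentence, toy_vectors)
print(embedding)
```

The resulting fixed-length vector is what the tree-based models in Step 4 consume as a feature row.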

Step 3: Feature Selection and Engineering (Optional but Recommended)

- Gradient-Boosted Feature Selection (GBFS): Implement correlation analysis and importance weighting to identify the most relevant dimensions for the target property

- Dimensionality Reduction: For very small datasets (<500 molecules), consider PCA or UMAP to reduce dimensionality while preserving variance

Step 4: Model Training and Hyperparameter Optimization

- Algorithm Selection:

  - For classification tasks (e.g., mutagenicity, toxicity): Use Random Forest with balanced class weights

  - For regression tasks (e.g., solubility, lipophilicity): Use Gradient Boosting Machines (XGBoost, LightGBM, CatBoost)

- Hyperparameter Tuning:

  - Random Forest: Optimize number of trees (100-1000), maximum depth (3-20), and minimum samples per leaf (1-5)

  - Gradient Boosting: Optimize learning rate (0.01-0.3), number of estimators (100-2000), and maximum depth (3-8)

- Validation: Implement nested cross-validation, tuning hyperparameters in the inner loop and scoring on the outer folds, so that tuning does not inflate the performance estimate
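Nested cross-validation for the gradient boosting case might look like the sketch below. It uses scikit-learn's `GradientBoostingRegressor` as a stand-in for XGBoost or LightGBM, a synthetic matrix in place of real Mol2Vec embeddings, and deliberately small search budgets so that it runs quickly. The learning-rate and depth ranges follow the protocol above, and the estimator range is shortened for the demo.

```python
import numpy as np
from scipy.stats import loguniform, randint
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import KFold, RandomizedSearchCV, cross_val_score

# Synthetic stand-in for a (molecules x embedding dimensions) matrix.
X, y = make_regression(n_samples=120, n_features=20, noise=5.0, random_state=0)

# Inner loop: randomized hyperparameter search.
param_distributions = {
    "learning_rate": loguniform(0.01, 0.3),
    "n_estimators": randint(50, 151),   # shortened range for the demo
    "max_depth": randint(3, 9),         # integers 3..8
}
inner_cv = KFold(n_splits=3, shuffle=True, random_state=0)
search = RandomizedSearchCV(
    GradientBoostingRegressor(random_state=0),
    param_distributions=param_distributions,
    n_iter=4,
    cv=inner_cv,
    scoring="r2",
    random_state=0,
)

# Outer loop: each outer fold reruns the whole search on its training part,
# so the outer test fold never influences hyperparameter choice.
outer_cv = KFold(n_splits=3, shuffle=True, random_state=1)
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="r2")
print("outer-fold R2:", np.round(outer_scores, 3))
```

The mean and spread of `outer_scores` give the performance estimate. A final model is then typically refit on all data with a fresh search.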

Step 5: Model Interpretation and Validation

- Feature Importance: Analyze which embedding dimensions contribute most to predictions

- Chemical Interpretation: Project molecules into 2D space using UMAP or t-SNE to identify clusters of compounds with similar properties

- External Validation: Test model on completely held-out datasets to assess generalizability

Case Study: Predicting Aqueous Solubility (ESOL Dataset)

The ESOL (Estimated Solubility) dataset provides an excellent benchmark for demonstrating Mol2Vec's capabilities in regression tasks. The protocol for this specific application follows the general workflow but with these specific parameters:

- Dataset: 1,144 compounds with experimental aqueous solubility values [13]

- Mol2Vec Parameters: 300-dimensional embeddings generated from pretrained model

- Model: Gradient Boosting Machine (GBM) with 2000 estimators, maximum tree depth of 3, and learning rate of 0.1

- Performance: Achieved R²cv value of 0.86, significantly outperforming Morgan fingerprint-based GBM models (R²cv = 0.66) [13]

The superior performance on this dataset highlights Mol2Vec's particular advantage in low-data scenarios, where the pre-trained embeddings effectively transfer chemical knowledge learned from larger molecular corpora.
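The model configuration listed above can be expressed with scikit-learn as in the following sketch. It is one way to obtain a cross-validated R², not necessarily the exact procedure of the cited study. The data are synthetic placeholders for Mol2Vec vectors and solubility values, and `cross_validated_r2` is a hypothetical helper name.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import KFold, cross_val_predict


def cross_validated_r2(X, y, n_estimators=2000, max_depth=3,
                       learning_rate=0.1, n_splits=5):
    """R2 computed on out-of-fold predictions from k-fold cross-validation."""
    model = GradientBoostingRegressor(
        n_estimators=n_estimators,
        max_depth=max_depth,
        learning_rate=learning_rate,
        random_state=0,
    )
    cv = KFold(n_splits=n_splits, shuffle=True, random_state=0)
    y_pred = cross_val_predict(model, X, y, cv=cv)
    return r2_score(y, y_pred)


# Synthetic placeholder data; real use would pass the embedding matrix
# and the measured solubility values instead.
X_demo, y_demo = make_regression(n_samples=150, n_features=30,
                                 noise=10.0, random_state=0)

# A smaller ensemble keeps the demo quick; the defaults mirror the case study.
r2_cv = cross_validated_r2(X_demo, y_demo, n_estimators=200)
print(f"cross-validated R2 on synthetic data: {r2_cv:.3f}")
```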

Advanced Applications and Integration Strategies

Proteochemometric Modeling with Mol2Vec and ProtVec

Mol2Vec embeddings can be combined with ProtVec vectors (which apply the same Word2Vec concept to protein sequences) to create powerful proteochemometric (PCM) models that predict compound-target interactions. This integration enables the modeling of interactions between small molecules and their protein targets without requiring sequence alignments, making it particularly valuable for proteins with low sequence similarity to well-characterized targets [13] [14].

The PCM modeling approach employs specialized cross-validation strategies to assess different aspects of model performance:

- CV1: Tests performance on unknown compound-target pairs (easiest scenario)

- CV2: Tests performance on new targets by leaving out entire targets during training

- CV3: Tests performance on unknown compounds

- CV4: Tests performance on both unknown compounds and new targets (most challenging) [13]
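The four schemes differ only in which entities are withheld from training. The sketch below shows one plausible reading of them on a list of (compound, target) pairs. The `pcm_split` helper and its arguments are hypothetical, and the choice to discard partially overlapping pairs in CV4 is an assumption made here to avoid leakage.

```python
def pcm_split(pairs, scheme, held_out_compounds=(), held_out_targets=(),
              held_out_pairs=()):
    """Return (train, test) lists of (compound, target) pairs for CV1-CV4."""
    compounds = set(held_out_compounds)
    targets = set(held_out_targets)
    pair_set = set(held_out_pairs)

    train, test = [], []
    for pair in pairs:
        compound, target = pair
        new_compound = compound in compounds
        new_target = target in targets
        if scheme == "CV1":    # unseen pairs; both entities may be in training
            (test if pair in pair_set else train).append(pair)
        elif scheme == "CV2":  # entire targets left out
            (test if new_target else train).append(pair)
        elif scheme == "CV3":  # entire compounds left out
            (test if new_compound else train).append(pair)
        elif scheme == "CV4":  # both compound and target unseen
            if new_compound and new_target:
                test.append(pair)
            elif not new_compound and not new_target:
                train.append(pair)
            # Pairs sharing exactly one held-out entity are dropped.
        else:
            raise ValueError(f"unknown scheme: {scheme}")
    return train, test


pairs = [(c, t) for c in ("c1", "c2", "c3") for t in ("t1", "t2")]
cv2_train, cv2_test = pcm_split(pairs, "CV2", held_out_targets=["t2"])
cv4_train, cv4_test = pcm_split(pairs, "CV4", held_out_compounds=["c3"],
                                held_out_targets=["t2"])
print("CV2 test:", cv2_test)
print("CV4 train:", cv4_train, "test:", cv4_test)
```

In a PCM model each pair would then be featurized by concatenating the compound's Mol2Vec vector with the target's ProtVec vector.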

For PCM tasks, Mol2Vec combined with Random Forest classification is specifically recommended based on rigorous validation across kinase specificity datasets [13].

Integration with Feature Selection Workflows

Recent research demonstrates that combining Mol2Vec with sophisticated feature selection methods further enhances performance while maintaining computational efficiency. The Gradient-Boosted Feature Selection (GBFS) workflow integrates Mol2Vec embeddings with statistical analysis and multicollinearity mitigation strategies to identify the most relevant substructure features for specific property prediction tasks [18].

This approach has shown particular promise for predicting quantum mechanical properties (e.g., using the QM9 dataset) and experimentally-measured physicochemical properties like lipophilicity. The feature selection process not only improves model performance but also enhances interpretability by identifying which specific substructural elements contribute most significantly to the target property [18].

Mol2Vec represents a significant advancement in molecular representation learning, effectively bridging the gap between traditional cheminformatics and modern natural language processing techniques. Its ability to capture complex structural and chemical relationships in a continuous 300-dimensional space has been rigorously validated across diverse molecular property prediction tasks, from aqueous solubility and toxicity to kinase specificity and polymer properties.

The combination of Mol2Vec embeddings with tree-based ensemble methods like Random Forest and Gradient Boosting Machines provides a particularly powerful and computationally efficient approach for molecular property prediction pipelines. This methodology demonstrates competitive or superior performance compared to more computationally intensive approaches like graph neural networks or transformer models, while offering advantages in model interpretability and resource requirements.

For researchers implementing molecular property prediction pipelines, Mol2Vec offers a robust foundation that balances chemical intuition, predictive accuracy, and computational efficiency. As the field advances, the integration of Mol2Vec with more sophisticated feature selection methods and its application to emerging areas like polymer informatics and proteochemometric modeling continue to expand its utility in accelerating drug discovery and materials design.

Molecular property prediction is a critical task in cheminformatics and drug discovery, aiming to link molecular structures with experimentally measurable biological activities or physicochemical properties [21]. In this context, tree-based ensemble models—particularly gradient boosting frameworks like XGBoost, LightGBM, and CatBoost—have emerged as powerhouse algorithms that consistently deliver state-of-the-art performance on tabular molecular data [21] [22]. Their robustness, predictive accuracy, and computational efficiency make them particularly well-suited for handling the unique challenges presented by cheminformatics datasets, which often feature high dimensionality, significant class imbalance, and potential measurement noise [21].

These algorithms excel at learning complex structure-activity relationships from molecular descriptors or embeddings, enabling researchers to build predictive models for applications ranging from virtual screening and toxicity prediction to drug sensitivity analysis [21] [16]. By leveraging ensemble techniques that combine multiple weak learners (decision trees), these methods effectively capture nonlinear relationships in molecular data while resisting overfitting through advanced regularization techniques [21] [23]. This application note explores the technical foundations of these algorithms, provides performance comparisons specific to molecular data, and outlines detailed experimental protocols for their implementation in molecular property prediction pipelines.

Algorithm Comparative Analysis

Key Characteristics and Performance

The three dominant gradient boosting implementations share a common foundation but incorporate distinct architectural innovations that yield different performance characteristics across various molecular datasets.

Table 1: Comparative Analysis of Gradient Boosting Algorithms for Molecular Data

| Feature | XGBoost | LightGBM | CatBoost |

|---|---|---|---|

| Tree Structure | Level-wise (breadth-first) tree growth [21] | Leaf-wise tree growth with depth limitation [21] [23] | Symmetric (oblivious) trees with same splits per level [21] [24] |

| Handling Categorical Features | Requires extensive preprocessing/encoding [23] [24] | Optimized handling but may require encoding [23] | Native handling without preprocessing [23] [24] |

| Training Efficiency | Moderate training speed [21] [23] | Fastest training, especially on large datasets [21] [22] [23] | Competitive training speed [23] |

| Regularization Approach | L1/L2 regularization [21] [23] | Gradient-based One-Side Sampling (GOSS) [21] [23] | Ordered boosting, symmetric trees [21] [24] |

| Molecular Property Prediction Performance | Best overall predictive performance in QSAR benchmarks [21] | Excellent performance with significantly faster training times [21] | Competitive performance, excels with categorical features [25] [16] |

| Ideal Use Cases | General QSAR modeling when accuracy is priority [21] | Large-scale virtual screening, high-throughput datasets [21] [22] | Datasets with mixed feature types, smaller datasets [21] [24] |

XGBoost employs regularized learning with L1 and L2 regularization to prevent overfitting, making it particularly robust for molecular datasets where generalization is crucial [21] [23]. Its second-order (Newton-style) optimization, which uses both the gradient and the Hessian of the loss, contributes to faster convergence compared to traditional first-order gradient boosting [21]. For molecular data, XGBoost has demonstrated superior predictive performance in comprehensive QSAR benchmarks encompassing 157,590 models across 16 datasets and 94 endpoints [21].
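The role of the second-order terms and the L2 penalty can be seen in the closed-form leaf weight and split gain used by XGBoost-style boosting. The sketch below covers only the L2 term (`reg_lambda`) and the per-split penalty (`gamma`) and leaves out the L1 term for brevity. The function names and toy targets are illustrative.

```python
def leaf_weight(grad_sum, hess_sum, reg_lambda=1.0):
    """Optimal leaf value: a Newton step shrunk by the L2 penalty."""
    return -grad_sum / (hess_sum + reg_lambda)


def split_gain(g_left, h_left, g_right, h_right, reg_lambda=1.0, gamma=0.0):
    """Loss reduction from splitting a node into left and right children."""
    def score(g, h):
        return g * g / (h + reg_lambda)

    parent = score(g_left + g_right, h_left + h_right)
    return 0.5 * (score(g_left, h_left) + score(g_right, h_right) - parent) - gamma


# Squared-error loss 0.5 * (pred - y)^2 gives gradient (pred - y), Hessian 1.
targets = [1.0, 1.2, 3.0, 3.2]      # toy property values
preds = [0.0, 0.0, 0.0, 0.0]        # current model output
grads = [p - y for p, y in zip(preds, targets)]
hess = [1.0] * len(targets)

# Candidate split: first two samples go left, last two go right.
g_l, h_l = sum(grads[:2]), sum(hess[:2])
g_r, h_r = sum(grads[2:]), sum(hess[2:])

gain = split_gain(g_l, h_l, g_r, h_r, reg_lambda=1.0)
print("gain:", gain)
print("left leaf:", leaf_weight(g_l, h_l), "right leaf:", leaf_weight(g_r, h_r))
```

Raising `reg_lambda` shrinks both the leaf values and the gain, and a positive `gamma` rejects splits whose gain is too small. This is how regularization prunes weak splits.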

LightGBM introduces several efficiency optimizations including Gradient-based One-Side Sampling (GOSS) which retains instances with larger gradients while randomly sampling those with smaller gradients, and Exclusive Feature Bundling (EFB) which combines mutually exclusive features to reduce dimensionality [21] [23]. These innovations make it exceptionally efficient for large molecular datasets such as high-throughput screening data, where it can significantly reduce training time without substantial accuracy loss [21].
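The GOSS idea described above can be sketched in a few lines: keep the instances with the largest absolute gradients, sample a fraction of the remainder, and up-weight the sampled instances so that the gradient statistics stay approximately unbiased. The `goss_sample` helper and its default rates are illustrative and are not LightGBM's internal code.

```python
import random


def goss_sample(gradients, top_rate=0.2, other_rate=0.1, seed=0):
    """Return (indices, weights) chosen by Gradient-based One-Side Sampling."""
    n = len(gradients)
    n_top = int(top_rate * n)
    n_other = int(other_rate * n)

    # Rank instances by absolute gradient, largest first.
    order = sorted(range(n), key=lambda i: abs(gradients[i]), reverse=True)
    top, rest = order[:n_top], order[n_top:]

    # Randomly sample from the small-gradient instances.
    rng = random.Random(seed)
    sampled = rng.sample(rest, min(n_other, len(rest)))

    # Sampled instances are amplified by (1 - a) / b to compensate.
    amplify = (1.0 - top_rate) / other_rate
    indices = top + sampled
    weights = [1.0] * len(top) + [amplify] * len(sampled)
    return indices, weights


toy_gradients = [0.1 * i for i in range(20)]
indices, weights = goss_sample(toy_gradients, top_rate=0.2, other_rate=0.1)
print(indices)
print(weights)
```

With these rates only 30% of the instances are used to evaluate splits in a given iteration, which is where the training-time savings come from.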

CatBoost's distinctive approach includes ordered boosting which prevents target leakage and overfitting by using a permutation-driven training scheme, and symmetric tree structures that serve as an implicit regularization mechanism [21] [24]. While categorical features are less common in traditional molecular descriptors [21], CatBoost's robust handling of mixed data types and strong performance on smaller datasets make it valuable for specialized molecular prediction tasks [25] [16].

Molecular Data Performance Benchmarks

Comprehensive benchmarking studies provide empirical evidence for algorithm selection in molecular property prediction pipelines.

Table 2: Performance Benchmarks on Molecular and Materials Datasets

| Dataset Domain | Best Performing Algorithm | Key Metric | Performance Notes |

|---|---|---|---|

| QSAR (94 endpoints, 1.4M compounds) | XGBoost [21] | Predictive Accuracy | Overall best performance across diverse endpoints [21] |

| Concrete Compressive Strength (Multiple datasets) | CatBoost [25] | R²: 0.92-0.95 | Exceptional inter-dataset stability and generalization [25] |

| Molecular Properties (CRC Handbook) | Multiple [16] | R² up to 0.93 | All gradient boosting variants performed well with Mol2Vec embeddings [16] |

| Large-scale QSAR | LightGBM [21] | Training Time | Fastest training, especially beneficial for large datasets [21] |

| Critical Temperature Prediction | All Boosted Models [16] | R² = 0.93 | Excellent performance with Mol2Vec embeddings [16] |

In large-scale QSAR benchmarking encompassing 1.4 million compounds, XGBoost achieved the best overall predictive performance, while LightGBM required the least training time, particularly for larger datasets [21]. This comprehensive analysis demonstrated that all gradient boosting implementations substantially outperformed traditional machine learning approaches for molecular property prediction tasks.

For specific molecular properties such as critical temperature and critical pressure, gradient boosting models combined with Mol2Vec embeddings achieved R² values up to 0.93, demonstrating their capability to capture complex structure-property relationships [16]. The performance advantage was consistent across diverse molecular families including hydrocarbons, halogenated compounds, oxygenated species, and heterocyclic molecules [16].

Experimental Protocols

Molecular Property Prediction with Gradient Boosting and Mol2Vec Embeddings

Purpose: To predict molecular properties (e.g., bioactivity, solubility, toxicity) using Mol2Vec molecular embeddings combined with gradient boosting algorithms.

Background: Mol2Vec generates unsupervised vector representations of molecular substructures, creating meaningful embeddings that capture chemical similarity [18] [16]. When combined with gradient boosting models, these embeddings enable accurate prediction of molecular properties without requiring extensive feature engineering.

Materials and Reagents:

- Chemical Compounds: Dataset of molecules with associated property measurements

- Computational Resources: Workstation or cluster with adequate RAM and CPU resources

- Software: Python environment with RDKit, gensim (Mol2Vec), and gradient boosting libraries (XGBoost, LightGBM, CatBoost)

Table 3: Research Reagent Solutions for Molecular Property Prediction

| Reagent/Resource | Specification | Function/Purpose |

|---|---|---|

| RDKit | Version 2022.09.5 or later [26] | Cheminformatics toolkit for molecule handling and descriptor calculation |

| Mol2Vec Implementation | Python implementation from original paper [16] | Generation of molecular embeddings from SMILES strings |

| Gradient Boosting Libraries | XGBoost 1.5+, LightGBM 3.3+, CatBoost 1.0+ [16] | Implementation of tree-based ensemble algorithms for model training |

| Chemical Datasets | Curated datasets from ChEMBL, TDC, or MoleculeNet [21] [26] | Standardized benchmarks for model training and validation |

| Optuna Hyperparameter Optimization | Version 2.10+ [16] | Automated hyperparameter tuning for optimal model performance |

Procedure:

Data Preparation and Preprocessing

- Obtain molecular structures in SMILES format and corresponding property values

- Curate dataset to remove duplicates and correct obvious errors

- Apply appropriate data splitting techniques (scaffold split for generalizability) [26]

- Standardize molecular structures using RDKit (normalization, tautomer standardization)
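The curation steps above can be sketched as a small function that canonicalizes each structure and drops repeats. The `curate` helper is hypothetical. Its default canonicalizer only strips whitespace so that the example runs without dependencies, whereas a real pipeline would pass an RDKit-based canonicalization function. Keeping the first occurrence of a duplicate is one possible policy, and averaging replicate measurements is another.

```python
def strip_only(smiles):
    """Placeholder canonicalizer: a real pipeline would use RDKit here."""
    return smiles.strip()


def curate(records, canonicalize=strip_only):
    """Canonicalize SMILES, skip empty entries, keep the first of duplicates."""
    seen = set()
    curated = []
    for smiles, value in records:
        key = canonicalize(smiles)
        if not key or key in seen:
            continue
        seen.add(key)
        curated.append((key, value))
    return curated


# Toy records: (SMILES, property value). Values are illustrative only.
records = [("CCO", 1.0), (" CCO ", 1.1), ("c1ccccc1", 2.0), ("", 0.0)]
clean = curate(records)
print(clean)
```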

Molecular Embedding Generation

- Generate Mol2Vec embeddings for all compounds in the dataset

- Utilize the pre-trained Mol2Vec model or train a custom model on relevant chemical space

- Validate embedding quality through chemical similarity analysis and visualization

Model Training and Hyperparameter Optimization

- Implement multiple gradient boosting algorithms (XGBoost, LightGBM, CatBoost)

- Perform randomized or Bayesian hyperparameter optimization

- Employ nested cross-validation to ensure robust performance estimation

- Monitor training with appropriate validation metrics to prevent overfitting

Model Evaluation and Interpretation

- Evaluate final models on held-out test set using multiple metrics (RMSE, R², etc.)

- Analyze feature importance to identify influential molecular substructures

- Apply SHAP analysis for model interpretability and mechanistic insights [24]

Troubleshooting:

- Poor performance may indicate inadequate chemical space coverage in training data

- Overfitting can be addressed through increased regularization or ensemble simplification

- Training instability may require learning rate reduction or different tree growth strategies

Comprehensive Benchmarking Protocol for Algorithm Selection

Purpose: To systematically compare gradient boosting algorithms for specific molecular prediction tasks and identify the optimal approach.

Procedure:

Dataset Characterization

- Analyze dataset size, feature dimensionality, and class distribution

- Assess dataset balance and identify potential biases

- Perform chemical space analysis to understand structural diversity

Standardized Implementation

- Implement all algorithms with consistent preprocessing and evaluation frameworks

- Use identical cross-validation splits for fair comparison

- Apply comparable hyperparameter optimization effort to each algorithm

Multi-dimensional Evaluation

- Assess predictive performance using task-appropriate metrics

- Evaluate computational efficiency (training time, inference speed)

- Analyze model robustness and stability across multiple runs

- Consider model interpretability and feature importance consistency

Optimal Algorithm Selection

- Select algorithm based on project priorities (accuracy vs. speed vs. interpretability)

- Document performance characteristics for future reference

- Establish implementation best practices for selected algorithm
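A minimal version of this benchmarking loop is sketched below. It reuses one seeded `KFold` object so every model sees identical folds, and it records both accuracy and wall-clock time. Two scikit-learn ensembles are used as stand-ins so the example has no extra dependencies. The scikit-learn-style regressors shipped with XGBoost, LightGBM, and CatBoost could be added to the `candidates` dictionary in the same way, assuming those packages are installed.

```python
import time

from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import KFold, cross_val_score

# Synthetic stand-in for an embedding matrix and property values.
X, y = make_regression(n_samples=150, n_features=20, noise=5.0, random_state=0)

# A fixed seed makes the folds identical for every candidate model.
cv = KFold(n_splits=3, shuffle=True, random_state=0)

candidates = {
    "random_forest": RandomForestRegressor(n_estimators=100, random_state=0),
    "gradient_boosting": GradientBoostingRegressor(n_estimators=100, random_state=0),
}

results = {}
for name, model in candidates.items():
    start = time.perf_counter()
    scores = cross_val_score(model, X, y, cv=cv, scoring="r2")
    results[name] = {
        "mean_r2": scores.mean(),
        "std_r2": scores.std(),
        "seconds": time.perf_counter() - start,
    }

for name, row in results.items():
    print(f"{name}: R2={row['mean_r2']:.3f} +/- {row['std_r2']:.3f}, "
          f"time={row['seconds']:.2f}s")
```

Repeating the loop with several seeds gives a view of stability across runs, which the protocol lists as a separate evaluation dimension.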

Implementation Guidelines

Algorithm Selection Framework

Choosing the appropriate gradient boosting algorithm depends on specific dataset characteristics and project requirements. The following decision framework provides guidance for algorithm selection:
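One way to encode the guidance from the comparison table as a first-pass heuristic is sketched below. The `suggest_booster` function and its size thresholds are hypothetical and serve only as a starting point. The benchmarking protocol above remains the more reliable basis for a final choice.

```python
def suggest_booster(n_samples, has_categorical_features=False,
                    priority="accuracy", large_threshold=100_000,
                    small_threshold=1_000):
    """Suggest a gradient boosting library from coarse dataset traits.

    The thresholds are illustrative assumptions, not benchmarked cut-offs.
    """
    # Mixed feature types or small datasets: CatBoost.
    if has_categorical_features or n_samples < small_threshold:
        return "CatBoost"
    # Large-scale screening or speed as the main concern: LightGBM.
    if priority == "speed" or n_samples >= large_threshold:
        return "LightGBM"
    # General QSAR modeling where accuracy is the priority: XGBoost.
    return "XGBoost"


for size in (500, 50_000, 2_000_000):
    print(size, "->", suggest_booster(size))
```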

Critical Implementation Considerations

Hyperparameter Optimization: Extensive hyperparameter tuning is crucial for maximizing predictive performance. Key hyperparameters include learning rate, tree depth, regularization terms, and subsampling ratios [21]. Automated optimization frameworks like Optuna [16] or RandomizedSearchCV can systematically explore the hyperparameter space.

Data Consistency Assessment: Before model training, rigorously assess data quality and consistency, particularly when integrating datasets from multiple sources. Tools like AssayInspector can identify distributional misalignments, outliers, and batch effects that could compromise model performance [26].

Feature Importance Interpretation: While all gradient boosting algorithms provide feature importance metrics, these should be interpreted with caution as different algorithms may rank molecular features differently due to variations in regularization and tree structures [21]. Expert chemical knowledge should always complement data-driven interpretations.

Molecular Representations: The choice of molecular representation significantly impacts model performance. While this protocol focuses on Mol2Vec embeddings, alternative representations including ECFP fingerprints, traditional molecular descriptors, or learned representations from graph neural networks may be preferable for specific applications [16] [26].

XGBoost, LightGBM, and CatBoost represent the state-of-the-art in tree-based ensemble learning for molecular property prediction, each offering distinct advantages for different scenarios within the drug discovery pipeline. XGBoost generally achieves the highest predictive accuracy, LightGBM offers exceptional computational efficiency for large-scale screening applications, and CatBoost provides robust performance with minimal preprocessing requirements. By implementing the standardized protocols and decision frameworks outlined in this application note, researchers can systematically leverage these powerful algorithms to accelerate molecular design and optimization campaigns. The integration of these methods with modern molecular representations like Mol2Vec creates a robust foundation for predictive modeling in cheminformatics, ultimately contributing to more efficient drug discovery processes.

The prediction of molecular properties such as melting point (MP), boiling point (BP), toxicity, and bioactivity constitutes a critical foundation in chemical research and drug discovery. These properties determine the behavior, efficacy, and safety profiles of chemical compounds, influencing their application in pharmaceuticals, materials science, and industrial chemistry. Accurate prediction of these properties enables researchers to screen compounds virtually, significantly reducing the reliance on time-consuming and resource-intensive experimental methods [27] [16]. The advent of artificial intelligence (AI) and machine learning (ML) has revolutionized this field, shifting the paradigm from experience-driven to data-driven evaluation [27]. This document outlines detailed application notes and protocols for predicting these key molecular properties with pipelines built on Mol2Vec embeddings and tree-based models.

Key Molecular Properties and Determining Factors

Melting Point and Boiling Point

Melting point is the temperature at which a substance transitions from solid to liquid, while boiling point is the temperature at which its vapor pressure equals the surrounding atmospheric pressure [28]. Both are physical properties governed largely by the strength of intermolecular forces; melting point additionally depends on how efficiently molecules pack in the crystal lattice.

Primary Determining Factors:

- Intermolecular Forces: The relative strength of cohesive intermolecular interactions follows this general order: Ionic > Hydrogen bonding > Dipole-dipole > Van der Waals dispersion forces [29] [28] [30]. Molecules with functional groups capable of stronger interactions typically exhibit higher melting and boiling points.

- Molecular Weight and Surface Area: For molecules with similar functional groups, boiling points increase with molecular weight and surface area. Larger molecules with greater surface areas can form more intermolecular interactions [29] [30].

- Molecular Symmetry and Branching: Symmetrical molecules pack more efficiently in solid crystal structures, leading to higher melting points [28]. In contrast, branching tends to decrease boiling points by reducing molecular surface area and thus weakening Van der Waals dispersion forces [30].

Table 1: Factors Affecting Melting and Boiling Points of Organic Compounds

| Factor | Effect on Boiling Point | Effect on Melting Point | Example Comparison |

|---|---|---|---|

| Intermolecular Forces | Increases with stronger forces | Increases with stronger forces | Butane (BP: -0.5°C) vs. 1-butanol (BP: 117°C) [30] |

| Molecular Weight | Increases with higher molecular weight | Generally increases with higher molecular weight | CH₄ (BP: -161.5°C) < C₂H₆ (BP: -88.6°C) < C₃H₈ (BP: -42°C) [31] |

| Branching | Decreases with increased branching | Variable; often increases with symmetry | Pentane (BP: 36°C) > 2,2-dimethylpropane (BP: 9.5°C) [28] [30] |

| Hydrogen Bonding | Significantly increases | Significantly increases | Dimethyl ether (BP: -24.8°C) < Ethanol (BP: 78.4°C) [31] |

Toxicity and Bioactivity

Toxicity refers to the potential of a substance to cause harm to living organisms, while bioactivity describes its effect on a living organism or tissue, encompassing both therapeutic and adverse effects [32].

Key Aspects and Endpoints:

- ADMET Profiles: Toxicity is a crucial component of the comprehensive ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) evaluation, which determines a drug's fate in the body and its clinical viability [27].

- Common Toxicity Endpoints: These include acute toxicity (e.g., LD₅₀), organ-specific toxicities (e.g., hepatotoxicity, cardiotoxicity, nephrotoxicity), carcinogenicity, and genotoxicity [27] [32].

- Molecular Targets: Bioactivity often involves interaction with specific biological targets like enzymes (e.g., beta-secretase in Alzheimer's disease), ion channels (e.g., hERG channel linked to cardiotoxicity), and receptors [27] [33].

Table 2: Common Toxicity Endpoints and Relevant Assays

| Toxicity Endpoint | Description | Typical Assays/Measurements |

|---|---|---|

| Acute Toxicity | Adverse effects following a single dose or short-term exposure | LD₅₀ (median lethal dose), IGC₅₀ (50% growth inhibitory concentration) [27] |

| Hepatotoxicity | Drug-induced liver injury | Elevated ALT, AST, bilirubin levels [27] |

| Cardiotoxicity | Adverse cardiac effects, including arrhythmia risk; often linked to hERG channel inhibition | hERG channel inhibition assays [27] [32] |

| Nephrotoxicity | Kidney damage | Serum creatinine, blood urea nitrogen measurements [27] |

| Carcinogenicity | Potential to cause cancer | Long-term animal studies, in vitro mutagenicity tests [27] |

| Genotoxicity | Damage to genetic information | Ames test, chromosomal aberration assays [32] |

Computational Prediction Methodology

Molecular Representation with Mol2Vec

A fundamental challenge in computational molecular property prediction is transforming molecular structures into machine-readable numerical representations. Mol2Vec is an unsupervised machine learning approach that generates molecular embeddings by learning from representations of molecular substructures [16] [34]. It treats a molecule as a "sentence" composed of "words" (its substructures) and produces a fixed-dimensional vector that captures essential chemical and structural information, making it suitable for use with machine learning algorithms.

Tree-Based Ensemble Models

Tree-based ensemble methods have demonstrated remarkable success in capturing complex structure-property relationships in molecular data [16]. These models combine multiple decision trees to improve predictive performance and robustness.

Commonly Used Tree-Based Models:

- Gradient Boosting Regression (GBR): Builds trees sequentially, with each tree correcting errors of its predecessor.

- XGBoost: An optimized gradient boosting system known for its speed and performance [16].

- LightGBM (LGBM): A gradient boosting framework that uses tree-based learning algorithms, designed for high efficiency and low memory usage [16].

- CatBoost: A gradient boosting algorithm effective for datasets with categorical features [16].

Integrated Prediction Pipeline

The integration of Mol2Vec embeddings with tree-based models creates a powerful pipeline for molecular property prediction. The typical workflow, as implemented in platforms like ChemXploreML, involves several key stages [16] [34]:

- Data Collection and Standardization: Molecular structures are gathered from reliable sources (e.g., CRC Handbook, PubChem) and standardized using canonical SMILES representations.

- Molecular Embedding: Mol2Vec generates numerical feature vectors for each molecule.

- Model Training and Validation: Tree-based models are trained on the embedded data using cross-validation techniques to prevent overfitting.

- Performance Evaluation: Models are assessed using metrics such as R² (coefficient of determination) for regression tasks and AUC (Area Under the ROC Curve) for classification tasks.

Figure 1: Workflow of molecular property prediction pipeline using Mol2Vec and tree-based models.

Experimental Protocols

Protocol: Predicting Melting and Boiling Points with ChemXploreML

Purpose: To predict melting point (MP) and boiling point (BP) of organic compounds using a Mol2Vec and tree-based model pipeline.

Materials and Software:

- ChemXploreML desktop application [16] [34]

- Dataset of molecular structures with experimental MP/BP values (e.g., from CRC Handbook of Chemistry and Physics)

- Python environment with RDKit, scikit-learn, and Mol2Vec dependencies

Procedure:

Data Preparation:

- Compile a dataset of organic compounds with known melting and boiling points. The dataset should include canonical SMILES representations for each compound.

- Use RDKit to validate and standardize all SMILES strings to ensure consistent molecular representation [16].

Molecular Embedding:

- Input the standardized SMILES strings into the Mol2Vec algorithm to generate 300-dimensional molecular embeddings for each compound [16] [34].

- Alternatively, consider using VICGAE (Variance-Invariance-Covariance regularized GRU Auto-Encoder) embeddings (32 dimensions) for computationally efficient processing with comparable performance [16].

Model Training:

- Split the dataset into training (70-80%), validation (10-15%), and test (10-15%) sets using scaffold splitting to ensure generalizability to novel chemical structures [16].

- Configure tree-based models (XGBoost, CatBoost, LightGBM) in ChemXploreML and train them on the Mol2Vec-embedded training data [16].

- Perform hyperparameter optimization using frameworks like Optuna to maximize model performance [16].

Validation and Prediction:

- Evaluate model performance on the test set using R² values and root-mean-square error (RMSE).

- For reference, established pipelines have achieved R² values up to 0.93 for well-distributed properties like critical temperature [16] [34].

- Use the trained model to predict MP/BP for new compounds of interest.
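For reference, the two regression metrics named in the validation step can be computed directly from their definitions, as in the sketch below. The temperatures are toy values chosen for easy arithmetic, not experimental data.

```python
import math


def rmse(y_true, y_pred):
    """Root-mean-square error between measured and predicted values."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Toy boiling points in kelvin (illustrative values only).
y_true = [300.0, 350.0, 400.0, 450.0]
y_pred = [310.0, 340.0, 410.0, 440.0]

print("RMSE:", rmse(y_true, y_pred))
print("R2:", r_squared(y_true, y_pred))
```

RMSE is reported in the units of the property, which makes it easier to judge against experimental uncertainty, while R² is unitless and depends on the spread of the test set.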

Protocol: Assessing Toxicity and Bioactivity with AI Models

Purpose: To predict toxicity endpoints and bioactivity of candidate compounds using advanced AI frameworks.

Materials and Software:

- Toxicity databases (e.g., Tox21, ClinTox, hERG Central) [32]

- AI prediction platforms (e.g., ImageMol, DLF-MFF) [33] [35]

- Molecular descriptor calculation tools (e.g., RDKit)

Procedure:

Data Collection and Preprocessing:

- Curate a dataset of compounds with known toxicity endpoints or bioactivity profiles from public databases like Tox21 (8,249 compounds across 12 toxicity targets) or hERG Central (300,000+ records of hERG channel inhibition) [32].

- Standardize molecular structures and calculate relevant molecular descriptors (e.g., molecular weight, logP, topological polar surface area) [32].

Model Selection and Training:

- For comprehensive molecular representation, consider multi-feature fusion models like DLF-MFF that integrate molecular fingerprints, 2D/3D molecular graphs, and molecular images [35].

- Implement appropriate data splitting strategies (e.g., scaffold split) to evaluate model generalizability to structurally novel compounds [33].

- For classification tasks (e.g., toxic vs. non-toxic), use tree-based classifiers or deep learning models trained on molecular representations.

Performance Evaluation:

- Assess classification models using AUC (Area Under the ROC Curve), with state-of-the-art models like ImageMol achieving AUC values of 0.847-0.975 on benchmark toxicity datasets [33].

- For regression tasks (predicting continuous values like IC₅₀), use RMSE (Root Mean Square Error) and R² values.

- Apply interpretability techniques such as SHAP analysis to identify molecular features contributing to toxicity predictions [32].
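The AUC used above for classification can be read as the probability that a randomly chosen toxic compound receives a higher score than a randomly chosen non-toxic one. The sketch below computes it from that pairwise definition. The `roc_auc` helper and the example labels and scores are illustrative, and the quadratic loop is intended for clarity rather than for large datasets.

```python
def roc_auc(labels, scores):
    """ROC AUC from the pairwise ranking definition (ties count as 0.5)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# 1 = toxic, 0 = non-toxic; scores are predicted probabilities (made up).
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
auc = roc_auc(labels, scores)
```

Because AUC depends only on the ranking of scores, it is insensitive to the classification threshold, which is useful for imbalanced toxicity datasets where the toxic class is rare.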

Experimental Validation:

Table 3: Key Resources for Molecular Property Prediction Research

| Resource | Type | Function/Application |

|---|---|---|

| CRC Handbook of Chemistry and Physics | Database | Provides reliable experimental data for melting points, boiling points, and other physicochemical properties for model training and validation [16] [34] |

| RDKit | Software | Open-source cheminformatics toolkit used for SMILES standardization, molecular descriptor calculation, and fingerprint generation [16] |

| PubChem | Database | Public repository of chemical compounds and their biological activities, providing molecular structures and bioactivity data [16] |

| Tox21 | Database | Curated dataset of ~12,000 compounds tested against 12 toxicity targets across nuclear receptor and stress response pathways [33] [32] |

| Mol2Vec | Algorithm | Generates molecular embeddings from substructure representations for machine learning applications [16] [34] |

| XGBoost | Algorithm | Optimized gradient boosting tree-based model for regression and classification tasks in molecular property prediction [16] |

| Optuna | Software | Hyperparameter optimization framework for efficiently tuning machine learning models [16] |

| hERG Assay Kits | Experimental Reagent | In vitro testing systems for assessing compound binding to hERG potassium channels, predicting cardiotoxicity risk [27] [32] |

| Human Liver Microsomes | Experimental Reagent | In vitro system for evaluating metabolic stability and metabolite formation, predicting hepatic clearance and toxicity [27] |

The accurate prediction of key molecular properties including melting point, boiling point, toxicity, and bioactivity is fundamental to advancing chemical research and streamlining drug discovery. This document has outlined the theoretical foundations, computational methodologies, and detailed experimental protocols for assessing these properties, with a specific focus on pipelines utilizing Mol2Vec embeddings and tree-based models. The integration of these advanced computational approaches enables researchers to rapidly screen compound libraries, prioritize promising candidates, and identify potential toxicity liabilities early in the development process. As AI and machine learning technologies continue to evolve, molecular property prediction will become increasingly accurate and integral to the design of novel compounds with optimized characteristics for therapeutic and industrial applications.

Within a molecular property prediction pipeline utilizing Mol2Vec embeddings and tree-based models, the consistency and quality of input structural data are paramount. The performance of advanced machine learning algorithms, including Gradient Boosting Regression (GBR), XGBoost, and LightGBM (LGBM), is contingent upon the integrity of the molecular representations from which features are derived [16]. SMILES (Simplified Molecular Input Line Entry System) strings, while a universal linear notation for molecules, can exhibit significant representational variability for the same chemical compound due to factors such as tautomerism, ionization states, and disparate atom-ordering algorithms from different sources [36]. This variability introduces noise that can obscure fundamental structure-property relationships, ultimately compromising the predictive accuracy of the model.

The RDKit toolkit addresses this critical data preprocessing challenge directly. As an open-source cheminformatics library, it provides a robust set of data structures and algorithms for manipulating chemical information [37]. This application note details standardized protocols using RDKit's MolStandardize module to canonicalize and clean molecular structures, transforming raw, heterogeneous SMILES data into a consistent, standardized representation. By integrating these protocols at the outset of a Mol2Vec and tree-based model pipeline, researchers can ensure that the subsequent steps of feature generation (embedding) and model training are performed on a reliable chemical dataset, thereby enhancing the robustness and generalizability of the resulting property prediction models [16] [36].

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table catalogues the essential software tools and their specific functions within a cheminformatics pipeline focused on molecular standardization and property prediction.

Table 1: Essential Research Reagents & Solutions for Molecular Standardization and Property Prediction

| Item Name | Function/Application | Key Features / Notes |

|---|---|---|

| RDKit [37] | Core open-source toolkit for cheminformatics; used for reading molecules, structural manipulation, and descriptor calculation. | Business-friendly BSD license; Python 3.x wrappers via Boost.Python; provides 2D/3D molecular operations and descriptor generation for machine learning. |

| RDKit MolStandardize Module [36] [38] | Provides functions for standardizing molecular representations and normalizing functional groups. | Includes methods for cleanup, desalting, metal disconnection, reionization, and tautomer enumeration; allows definition and application of custom standardization rules. |

| Mol2Vec [12] | Generates vector representations (embeddings) of molecular substructures in an unsupervised manner. | Inspired by Word2vec models from Natural Language Processing; produces a single, dense vector for an entire molecule by summing the vectors of its substructures. |

| Tree-Based Ensemble Models (e.g., LightGBM, XGBoost, CatBoost) [16] [10] | Machine learning algorithms used for the final molecular property prediction task. | Known for handling complex, non-linear structure-property relationships; LGBM has demonstrated high accuracy, e.g., 90.33% in anticancer ligand prediction [10]. |

| Python Scientific Stack (e.g., scikit-learn, Pandas, NumPy) [16] | Provides the computational environment for data handling, preprocessing, and model implementation. | Essential for scripting the analysis pipeline, from data loading and RDKit processing to model training and validation. |

Application Notes: SMILES Standardization with RDKit

The Critical Role of Standardization in Prediction Pipelines

In the context of molecular property prediction, the primary objective of SMILES standardization is to represent all molecules from diverse sources in a single, consistent manner [36]. Chemical intuition suggests that different representations of the same molecule should yield identical or highly similar feature vectors. Without standardization, a single compound recorded in different tautomeric, ionization, or salt forms can be treated as several distinct entities by the Mol2Vec algorithm, leading to fragmented and unreliable feature sets. Research has shown that the choice of molecular embedding, such as Mol2Vec, significantly impacts the performance of subsequent machine learning models [16]. Standardization acts as a foundational step to mitigate this source of noise, ensuring that the learned embeddings reflect genuine chemical similarities rather than representational artifacts.

The standardization process specifically addresses several chemical challenges:

- Tautomerism: A molecule can exist as multiple tautomers, which are constitutional isomers that readily interconvert. The `TautomerEnumerator` is used to select a canonical tautomeric form [36].

- Charged States and Salts: Molecules may be represented with irrelevant counterions or in non-standard ionization states. The standardization pipeline includes steps to disconnect metals, remove salts, and neutralize molecules where appropriate [36] [38].

- Fragment Handling: Input data often contains salts or mixtures. The `FragmentParent` function helps isolate the largest organic covalent unit, which is typically the chemical structure of interest [36].

Core Standardization Functions and Parameters

RDKit's MolStandardize module encapsulates a series of operations designed to address the challenges outlined above. The key functions and their parameters are summarized below.

Table 2: Core Functions and Parameters in RDKit's MolStandardize Module

| Function / Class | Key Parameters / Attributes | Primary Action |

|---|---|---|

| `Cleanup(mol)` [36] | (Convenience function, parameters internal) | Performs a composite cleanup: removes hydrogens, disconnects metal atoms, normalizes functional groups, and reionizes the molecule. |

| `FragmentParent(clean_mol)` [36] | - | Returns the largest covalent fragment in the molecule, effectively desalting and removing small organic fragments. |

| `Uncharger()` [36] | - | Attempts to neutralize the molecule while preserving the natural representation of zwitterions. |

| `TautomerEnumerator()` [36] | `Canonicalize(mol)` | Applies a set of transformation rules to generate the canonical tautomer for the molecule. |

| `Normalizer()` [38] | `normalize(mol)`, `normalizeInPlace(mol)` | Applies a set of chemical transformations (e.g., sulfoxide normalization, nitro group normalization) to standardize functional groups. Can be initialized with custom rules. |

Experimental Protocols

Basic Molecular Standardization Workflow

This protocol describes a standard pipeline for converting a raw SMILES string into a standardized molecule, ready for the generation of Mol2Vec embeddings or other molecular descriptors.

- Input Raw SMILES. Begin with a SMILES string, for example, `'C1=CC(=C(C=C1Cl)Cl)NC(C)(C)C(=O)O'`.

- Convert to Molecule Object. Use `mol = Chem.MolFromSmiles(smiles)` to parse the SMILES string and create an RDKit molecule object.

- Initial Cleanup. Apply `clean_mol = rdMolStandardize.Cleanup(mol)`. This step removes hydrogen atoms, disconnects metal atoms, normalizes the molecule (applying functional group transformations), and reionizes it so that the strongest acidic groups are ionized first [36].

- Select Parent Fragment. For molecules with multiple fragments (e.g., salts), isolate the largest covalent unit using `parent_clean_mol = rdMolStandardize.FragmentParent(clean_mol)`.

- Neutralize Charge. Use the `Uncharger` to attempt neutralization: `uncharger = rdMolStandardize.Uncharger()` followed by `uncharged_parent_clean_mol = uncharger.uncharge(parent_clean_mol)`.

- Canonicalize Tautomer. Enumerate and select the canonical tautomer using a `TautomerEnumerator`: `te = rdMolStandardize.TautomerEnumerator()` and `taut_uncharged_parent_clean_mol = te.Canonicalize(uncharged_parent_clean_mol)` [36].

- Output Standardized SMILES. Convert the final, standardized molecule back to a canonical SMILES string using `Chem.MolToSmiles(taut_uncharged_parent_clean_mol)` for downstream processing.
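The steps of this protocol can be collected into a single helper. The sketch below chains the RDKit calls named in the protocol. The wrapper name `standardize_smiles` and the choice to return `None` for unparsable input are assumptions made for illustration, and the default settings of each RDKit class are used throughout.

```python
from rdkit import Chem
from rdkit.Chem.MolStandardize import rdMolStandardize


def standardize_smiles(smiles):
    """Return a standardized canonical SMILES, or None if parsing fails."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:  # RDKit returns None for invalid SMILES
        return None

    # Remove Hs, disconnect metals, normalize functional groups, reionize.
    clean_mol = rdMolStandardize.Cleanup(mol)
    # Keep the largest covalent fragment (drops counterions and solvents).
    parent = rdMolStandardize.FragmentParent(clean_mol)
    # Neutralize charges where possible.
    uncharged = rdMolStandardize.Uncharger().uncharge(parent)
    # Pick the canonical tautomer.
    canonical = rdMolStandardize.TautomerEnumerator().Canonicalize(uncharged)
    return Chem.MolToSmiles(canonical)


# Sodium acetate and differently written acetic acid should converge.
salt_form = standardize_smiles("CC(=O)[O-].[Na+]")
acid_form = standardize_smiles("OC(C)=O")

# The example molecule from the protocol above.
example = standardize_smiles("C1=CC(=C(C=C1Cl)Cl)NC(C)(C)C(=O)O")
```

Applying this function to every record before embedding means that a salt and its parent acid map to the same string and therefore to the same Mol2Vec vector. Constructing the `Uncharger` and `TautomerEnumerator` once outside the function would be faster when processing large datasets.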

Advanced Protocol: Implementing Custom Standardization Rules

For specialized chemical registries or to handle specific functional groups, researchers can define and apply their own normalization rules alongside or in place of the defaults [38].

- Define Custom Transformations. Create a text file listing the custom reaction patterns in SMARTS (SMILES Arbitrary Target Specification) format. For example, to break a bond to an alkali metal: `[Li,Na,K]-[O;H1]>>[O;H1]`.

- Initialize a Custom Normalizer. In your Python script, read the transformation file and create a `Normalizer` object (referred to here as `nrm`).

- Integrate into Workflow. The custom `Normalizer` object (`nrm`) can be used in place of the default `Cleanup` step or applied at any point in the standardization pipeline via `normalized_mol = nrm.normalize(mol)` [38].

- Capture and Analyze Logs (Optional). To programmatically determine which rules were applied to which molecules, you can capture the RDKit logs using Python's `logging` module and parse the output with regular expressions to extract the names of the applied transformations [38].
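The optional log-analysis step can be sketched with the standard `re` module. The example below assumes that the captured log text contains one line of the form `Rule applied: <rule name>` per applied transformation. That line format, the sample log text, and the `applied_rules` helper are assumptions for illustration and should be checked against the output of your RDKit version.

```python
import re

# Assumed log-line format: "... Rule applied: <rule name>"
RULE_PATTERN = re.compile(r"Rule applied:\s*(.+)")


def applied_rules(log_text):
    """Extract the names of normalization rules reported in captured logs."""
    return [m.group(1).strip() for m in RULE_PATTERN.finditer(log_text)]


# Illustrative captured output (hypothetical, not copied from a real run).
sample_log = """\
[12:00:00] Initializing Normalizer
[12:00:00] Running Normalizer
[12:00:00] Rule applied: Sulfoxide to -S+(O-)-
[12:00:00] Running Normalizer
"""

rules = applied_rules(sample_log)
```

Storing the extracted rule names alongside each molecule makes it possible to audit which records were modified by custom rules before they enter the embedding step.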

Workflow Visualization: From Raw SMILES to Standardized Representation

The following diagram, generated using the DOT language, illustrates the logical flow of the molecular standardization process and its position within a larger property prediction pipeline.

Diagram 1: Molecular Standardization and Property Prediction Workflow.

Integration into a Molecular Property Prediction Pipeline