ChemSpaceAL: An Efficient Active Learning Methodology for Targeted Molecular Generation in Drug Discovery



Abstract

This article explores ChemSpaceAL, a computationally efficient active learning methodology for targeted molecular generation in drug discovery. Because it requires evaluating only a strategically selected subset of generated molecules, the approach can align generative AI models with specific protein targets at modest computational cost. We examine several aspects of the method:

- its foundational principles;
- its methodology as applied to proteins such as c-Abl kinase and the HNH domain of Cas9;
- troubleshooting approaches for molecular stability;
- validation showing that it reproduced two FDA-approved c-Abl inhibitors exactly while also generating novel compounds for challenging targets.

This resource gives researchers and drug development professionals practical guidance for implementing the methodology to navigate chemical space more effectively.

The Chemical Space Challenge: Why Active Learning is Revolutionizing Targeted Molecular Generation

The Vastness of Chemical Space and Drug Discovery Limitations

The concept of "chemical space" represents the multi-dimensional universe of all possible molecules, a domain so vast that it is estimated to contain at least 10^63 small, drug-like molecules [1]. This number, which exceeds the count of atoms in our solar system, presents both the ultimate resource and the fundamental challenge for modern drug discovery [2]. The pharmaceutical industry has explored only a minuscule fraction of this potential universe, creating a critical bottleneck in identifying novel therapeutic compounds [1]. This application note examines the quantitative dimensions of this challenge and details the experimental protocols, including the ChemSpaceAL methodology, that are enabling researchers to navigate this expanse more efficiently for targeted molecular generation.

Quantifying Chemical Space and Exploration Limits

The Scale of Chemical Space

The disconnect between theoretically possible and practically accessible chemical compounds defines the primary limitation in drug discovery. The table below summarizes key quantitative measures of this challenge.

Table 1: The Scale of Chemical Space and Current Exploration

| Metric | Scale/Number | Contextual Reference |
|---|---|---|
| Theoretical Drug-Like Chemical Space | 10^63 molecules | Estimated from combining up to 30 C, N, O, S atoms [1] |
| Commercially Available "In-Stock" Compounds | ~13 million compounds | Illustrates limited coverage of chemical space [3] |
| Make-on-Demand Libraries | >70 billion molecules | Readily available from suppliers like Enamine [3] |
| Virtual Corporate Libraries (e.g., Merck MASSIV) | 10^20 molecules | Similar to the number of stars in the universe [1] |

The Exploration Bottleneck

The fundamental limitation is straightforward: the growth of make-on-demand and virtual libraries has outpaced the ability to screen them exhaustively. While structure-based virtual screens have reached billions of compounds, these efforts demand substantial computational resources, making them impractical for the largest libraries and impossible for the theoretical entirety of chemical space [3]. As noted by researchers, the number of possibilities is now too large to navigate without sophisticated computational guidance [1].

Methodologies for Navigating Chemical Space

To overcome these limitations, researchers have developed specialized methodologies that combine computational efficiency with experimental validation.

Protocol 1: Machine Learning-Guided Docking Screen

This workflow combines machine learning (ML) with molecular docking to enable rapid virtual screening of multi-billion-compound libraries, achieving a computational cost reduction of more than 1,000-fold [3].

Table 2: Key Research Reagents & Solutions for ML-Guided Docking

| Reagent/Solution | Function in Protocol |
|---|---|
| Enamine REAL Space | Source of billions of synthetically accessible rule-of-four (Ro4) molecules for screening [3] |
| CatBoost Classifier | Machine learning algorithm that provides an optimal balance between speed and accuracy for classification [3] |
| Morgan2 Fingerprints (ECFP4) | Molecular descriptors that represent chemical structures for machine learning processing [3] |
| Mondrian Conformal Prediction (CP) Framework | A method that uses significance levels to control error rates and identify virtual active compounds for docking [3] |

Experimental Workflow:

- Benchmark Docking: Conduct molecular docking screens against the target protein using a randomly sampled subset of ~1 million compounds from a make-on-demand library (e.g., Enamine REAL Space).

- Classifier Training: Train a machine learning classifier (e.g., CatBoost) on the docking results. Molecular structures, encoded as Morgan2 fingerprints, serve as the features. The labels are derived from the docking scores: the top-scoring 1% of compounds typically define the "active" class, and the remainder define the "inactive" class.

- Conformal Prediction: Apply the trained model within the Mondrian Conformal Prediction framework to the entire multi-billion-compound library. For a user-defined significance level (ε), the framework classifies compounds as "virtual active" or "virtual inactive". It keeps the expected error rate within each class at or below ε, provided the library compounds are exchangeable with the calibration data.

- Targeted Docking: Perform molecular docking only on the vastly reduced set of compounds predicted as "virtual active," which can represent just ~10% of the original library while retaining high sensitivity.

- Experimental Validation: Synthesize or procure and then test the top-ranking compounds from the final docking list in biochemical or cellular assays to confirm biological activity.

The following diagram illustrates this efficient workflow:

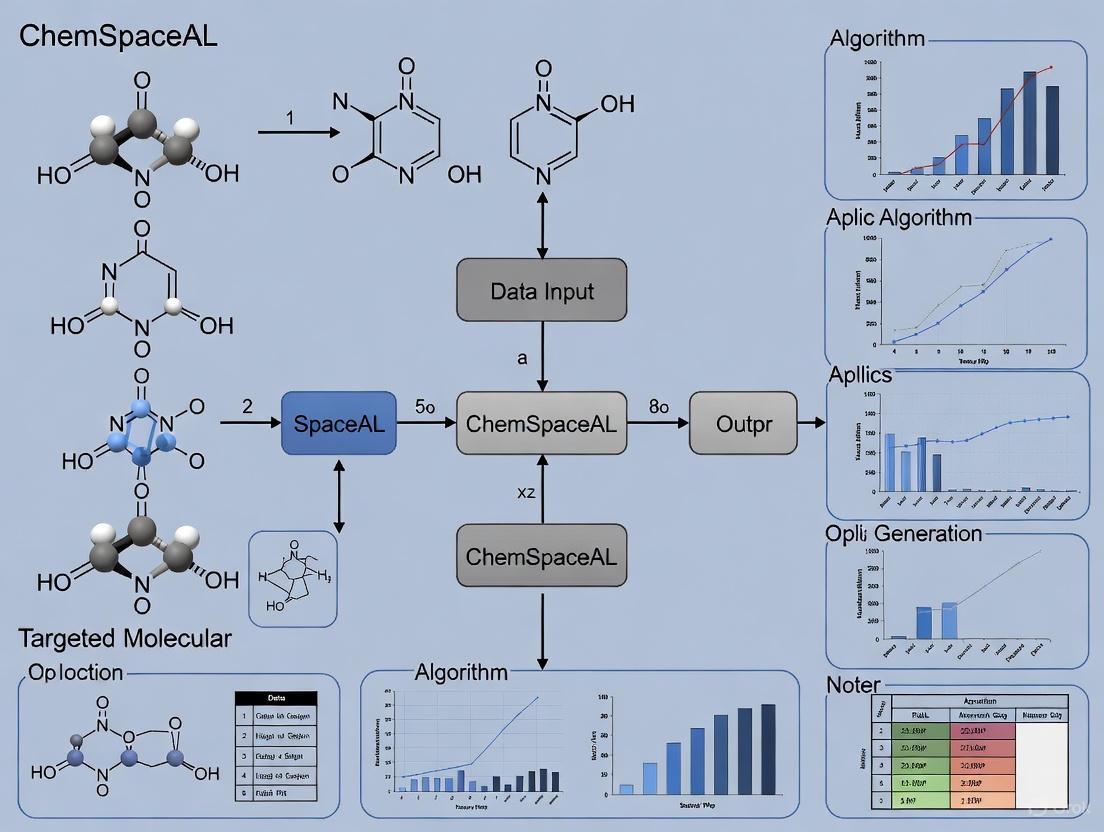
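
The labeling and conformal prediction steps in the workflow above can be sketched in plain Python. This is a minimal illustration, not the protocol's published code, and it rests on several assumptions:

- Lower docking scores are better.
- The classifier's predicted probability of the "active" class is given as input (in practice a CatBoost model would supply it).
- The nonconformity measure is 1 − P(label), which is one common choice among several.

The function names `label_top_fraction`, `mondrian_p_values`, `prediction_set`, and `is_virtual_active` are hypothetical.

```python
from typing import Dict, List, Sequence, Set


def label_top_fraction(docking_scores: Sequence[float], fraction: float = 0.01) -> List[int]:
    """Label the best-scoring `fraction` of compounds active (1), the rest inactive (0).

    Assumes lower (more negative) docking scores are better.
    """
    n_active = max(1, round(len(docking_scores) * fraction))
    ranked = sorted(range(len(docking_scores)), key=lambda i: docking_scores[i])
    active = set(ranked[:n_active])
    return [1 if i in active else 0 for i in range(len(docking_scores))]


def mondrian_p_values(
    cal_prob_active: Sequence[float], cal_labels: Sequence[int], test_prob_active: float
) -> Dict[int, float]:
    """Class-conditional (Mondrian) inductive conformal p-values for one test compound.

    Nonconformity of label c for a compound = 1 - P(c | x). A test compound is compared
    only with calibration compounds whose true label is c, which is what makes it Mondrian.
    """
    def nonconformity(p_active: float, label: int) -> float:
        return 1.0 - (p_active if label == 1 else 1.0 - p_active)

    p_values = {}
    for label in (0, 1):
        cal_scores = [
            nonconformity(p, y) for p, y in zip(cal_prob_active, cal_labels) if y == label
        ]
        test_score = nonconformity(test_prob_active, label)
        n_at_least = sum(1 for s in cal_scores if s >= test_score)
        p_values[label] = (n_at_least + 1) / (len(cal_scores) + 1)
    return p_values


def prediction_set(p_values: Dict[int, float], epsilon: float) -> Set[int]:
    """Keep every label whose p-value exceeds the significance level epsilon."""
    return {label for label, p in p_values.items() if p > epsilon}


def is_virtual_active(p_values: Dict[int, float], epsilon: float) -> bool:
    """Send a compound to docking only if 'active' is the sole label in its set."""
    return prediction_set(p_values, epsilon) == {1}
```

Under this sketch, only compounds for which `is_virtual_active` returns True would move on to targeted docking. Compounds whose prediction set contains both labels, or neither, remain undecided at that significance level.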

Protocol 2: ChemSpaceAL Active Learning for Molecular Generation

The ChemSpaceAL methodology is a computationally efficient active learning framework applied to protein-specific molecular generation. It requires the evaluation of only a subset of generated data to successfully align a generative model with a specified objective [4] [5].

Experimental Workflow:

- Initialization: Start with a pre-trained generative molecular model (e.g., a GPT-based molecular generator).

- Generation and Sampling: The generator produces a large sample of molecular structures.

- Informed Selection: An acquisition function selects the most informative subset of these generated molecules for evaluation based on the current model's state and uncertainty.

- Objective Evaluation: The selected molecules are scored using an objective function relevant to the target (e.g., a docking score, a predictive model of binding, or a quantitative structure-activity relationship (QSAR) model).

- Active Learning Loop: The evaluated molecules and their scores are added to the training set. The generative model is fine-tuned on this updated dataset, improving its understanding of the structure-activity relationship for the specific target.

- Iteration: The generation, selection, evaluation, and fine-tuning steps are repeated for multiple cycles. Each cycle steers the generator further toward regions of chemical space that yield molecules with the desired properties.
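
The loop structure of this cycle can be shown with a deliberately tiny toy. Here the "generator" is just a categorical distribution over a fixed set of candidate strings, and "fine-tuning" is a multiplicative reweighting. Everything in it is a hypothetical stand-in. A real ChemSpaceAL run uses a GPT-based generator, a docking-based objective, and an informed acquisition function. This sketch instead uses a random subset as a placeholder selector, so only the generate, select, evaluate, update pattern is illustrated.

```python
import random
from typing import Callable, Dict


def toy_active_learning(
    weights: Dict[str, float],
    objective: Callable[[str], float],
    n_generate: int = 200,
    n_evaluate: int = 20,
    n_cycles: int = 5,
    learning_rate: float = 0.5,
    seed: int = 0,
) -> Dict[str, float]:
    """Toy generate -> select -> evaluate -> fine-tune loop.

    `weights` is a hypothetical generator (unnormalized probabilities over candidates);
    `objective` returns a non-negative score where higher is better.
    """
    rng = random.Random(seed)
    weights = dict(weights)  # do not mutate the caller's dictionary
    for _ in range(n_cycles):
        # Generation: sample candidates from the current generator
        candidates = list(weights)
        samples = rng.choices(candidates, weights=[weights[c] for c in candidates], k=n_generate)
        # Selection: evaluate only a subset of the unique samples (placeholder: random)
        unique = sorted(set(samples))
        selected = rng.sample(unique, min(n_evaluate, len(unique)))
        # Evaluation: score the selected subset with the (expensive) objective
        scores = {c: objective(c) for c in selected}
        # "Fine-tuning": shift probability mass toward high-scoring candidates
        for c, s in scores.items():
            weights[c] *= 1.0 + learning_rate * s
    total = sum(weights.values())
    return {c: w / total for c, w in weights.items()}
```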

When applied to c-Abl kinase, this method learned to generate molecules similar to FDA-approved inhibitors without prior knowledge and reproduced two of them exactly [4] [5]. The following diagram illustrates the active learning cycle:

Integrated Discovery Platforms

The methodologies described are embedded within broader AI-driven drug discovery platforms. These platforms demonstrate the real-world application and validation of these approaches.

Table 3: Leading AI-Driven Discovery Platforms and Technologies

| Platform/Company | Core Approach | Key Achievement |
|---|---|---|
| Exscientia | Generative AI for small-molecule design; integrated "Centaur Chemist" approach. | Produced the first AI-designed drug (DSP-1181) to enter Phase I trials; reports design cycles ~70% faster than industry norms [6]. |
| Insilico Medicine | Generative AI for target identification and molecular design. | Advanced an idiopathic pulmonary fibrosis drug from target discovery to Phase I in 18 months [6]. |
| Schrödinger | Physics-based simulations combined with machine learning. | Advanced the TYK2 inhibitor, zasocitinib (TAK-279), into Phase III clinical trials [6]. |
| Quantum-Classical Hybrid | Quantum Circuit Born Machine (QCBM) integrated with classical LSTM model. | Generated novel molecular fragments to target the historically "undruggable" KRAS protein for cancer therapy [7]. |

The vastness of chemical space is no longer an impenetrable barrier but a frontier that can be systematically navigated. Methodologies like machine learning-guided docking and the ChemSpaceAL active learning framework represent a paradigm shift in drug discovery. By leveraging these computational protocols, researchers can transition from inefficient, broad screening to intelligent, targeted exploration. This allows for the rapid identification and generation of novel, potent, and specific therapeutic candidates, dramatically accelerating the journey from concept to clinic.

The discovery of novel molecules is a cornerstone of pharmaceutical research and materials science, yet it has traditionally been a time-consuming and resource-intensive process. Generative artificial intelligence (AI) has emerged as a transformative force in this domain, enabling the rapid exploration of vast chemical spaces to design compounds with desired properties [8] [9]. Among the various generative approaches, Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Transformer-based models have demonstrated remarkable success in de novo molecular design [10] [11]. These technologies are reshaping the drug discovery pipeline, offering the potential to significantly accelerate the identification of lead compounds and optimize their therapeutic characteristics.

Framed within the context of advanced research methodologies like ChemSpaceAL, an efficient active learning framework for protein-specific molecular generation, this article provides detailed application notes and experimental protocols for employing these generative models in targeted molecular design [5]. The ChemSpaceAL methodology demonstrates how active learning can successfully fine-tune generative models toward specific objectives, such as generating inhibitors for particular protein targets, by requiring the evaluation of only a subset of the generated data [5]. We present a comprehensive technical resource for researchers and drug development professionals, featuring standardized protocols, performance comparisons, and practical implementation guidelines to bridge the gap between algorithmic innovation and laboratory application.

Generative Model Architectures: Technical Foundations

Variational Autoencoders (VAEs) for Molecular Representation

Variational Autoencoders provide a probabilistic framework for learning continuous latent representations of molecular structures [10] [12]. In molecular design, VAEs typically operate on Simplified Molecular-Input Line-Entry System (SMILES) representations or molecular graphs, mapping them to a structured latent space where similar molecules are clustered together [13]. The encoder network processes input molecules and outputs parameters (mean and variance) defining a probability distribution in the latent space, while the decoder network reconstructs molecules from points sampled from this distribution [12].

A critical advantage of VAEs in molecular design is their explicitly defined latent space, which facilitates meaningful interpolation and optimization [10] [12]. This structured space enables researchers to navigate chemical space systematically by moving in directions that correspond to gradual changes in molecular properties. However, VAEs can produce less sharply defined samples than other generative models (the "blurriness" familiar from image generation). They may also struggle to generate highly complex molecular structures with consistently high validity [10] [14]. VAE training maximizes the Evidence Lower Bound (ELBO), which balances reconstruction accuracy against regularization of the latent space toward a prior distribution [12].
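
The ELBO can be made concrete for the common case of a diagonal Gaussian encoder and a standard normal prior, where the KL term has a closed form. This is a minimal plain-Python sketch under those assumptions. The reconstruction term is passed in as a precomputed negative log-likelihood; for SMILES this would typically be the decoder's token-level cross-entropy. The `beta` weight on the KL term is an illustrative addition of the kind that annealing schedules adjust during training.

```python
import math
import random
from typing import List, Sequence


def gaussian_kl(mu: Sequence[float], logvar: Sequence[float]) -> float:
    """KL(N(mu, diag(exp(logvar))) || N(0, I)), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))


def negative_elbo(recon_nll: float, mu: Sequence[float], logvar: Sequence[float],
                  beta: float = 1.0) -> float:
    """Loss to minimize: reconstruction NLL plus the beta-weighted KL regularizer."""
    return recon_nll + beta * gaussian_kl(mu, logvar)


def reparameterize(mu: Sequence[float], logvar: Sequence[float],
                   rng: random.Random) -> List[float]:
    """Sample z = mu + sigma * eps with eps ~ N(0, 1).

    In an autodiff framework this form keeps z differentiable with respect to mu and sigma.
    """
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]
```

A latent code that exactly matches the prior (zero mean, unit variance) incurs no KL penalty. Shifting one dimension's mean to 1 costs 0.5 nats.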

Generative Adversarial Networks (GANs) for Realistic Molecular Generation

Generative Adversarial Networks employ an adversarial training framework where two neural networks—a generator and a discriminator—compete against each other [10] [12]. The generator creates synthetic molecules from random noise vectors, while the discriminator attempts to distinguish between real molecules from the training data and synthetic ones produced by the generator [12]. Through this adversarial process, the generator progressively learns to produce increasingly realistic molecular structures.

GANs are particularly valued for their ability to generate high-quality, sharp outputs that closely resemble real molecules [10] [12]. This makes them well-suited for applications requiring high structural fidelity. However, GAN training can be unstable and prone to mode collapse, where the generator produces limited diversity in its outputs [12] [14]. Additionally, GANs have no encoder that maps existing molecules into their latent (noise) space, which makes controlled generation and optimization more challenging than with VAEs. Techniques such as Wasserstein GANs with gradient penalty and spectral normalization have been developed to stabilize training and improve performance [14].
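
The Wasserstein GAN with gradient penalty (WGAN-GP) replaces the original GAN loss with a Wasserstein critic loss plus a penalty on the critic's gradient norm. The penalty is evaluated at random interpolates between real and generated samples. The standard formulation (Gulrajani et al., who used λ = 10) is reproduced here for reference:

- P_r and P_g denote the real and generated distributions.
- D is the critic.
- Because the penalty is computed at interpolates x̂, it presumes a continuous (or continuously relaxed) input representation.

```latex
\begin{aligned}
\mathcal{L}_D &= \mathbb{E}_{\tilde{x}\sim P_g}\big[D(\tilde{x})\big]
  - \mathbb{E}_{x\sim P_r}\big[D(x)\big]
  + \lambda\,\mathbb{E}_{\hat{x}}\Big[\big(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\big)^2\Big],
  \qquad \hat{x} = \epsilon x + (1-\epsilon)\tilde{x},\ \epsilon \sim U[0,1] \\
\mathcal{L}_G &= -\,\mathbb{E}_{\tilde{x}\sim P_g}\big[D(\tilde{x})\big]
\end{aligned}
```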

Transformer-Based Models for Sequential Molecular Design

Transformer architectures, originally developed for natural language processing, have been successfully adapted for molecular design by treating SMILES strings as sequential data [10] [11]. Transformers utilize a self-attention mechanism that allows them to capture long-range dependencies within molecular representations, effectively understanding the complex relationships between different parts of a molecule [10].

The standout advantage of Transformers is their exceptional ability to model context and complex relationships within molecular structures [10]. This enables them to generate highly valid and novel molecules while maintaining structural coherence. However, Transformers require large datasets for effective training and have significant computational demands during both training and inference [10]. Their autoregressive nature, generating sequences token-by-token, can also lead to error propagation in longer sequences. Despite these challenges, Transformer-based models have demonstrated state-of-the-art performance in various molecular generation tasks, particularly when fine-tuned for specific property optimization [5].
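
Self-attention and autoregressive generation can be illustrated with a single-head, scaled dot-product attention layer that uses a causal mask. The mask ensures that each SMILES token attends only to itself and to earlier tokens. This is a minimal plain-Python sketch for intuition. Real models use learned query/key/value projections, multiple heads, and tensor libraries, none of which appear here, and the toy matrices in the example are made up.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product a @ b."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax; entries equal to -inf receive exactly 0 weight."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(q: Matrix, k: Matrix, v: Matrix) -> Tuple[Matrix, Matrix]:
    """Return (outputs, attention weights) for softmax(QK^T / sqrt(d_k) + mask) V."""
    d_k = len(q[0])
    k_t = [list(col) for col in zip(*k)]  # K transposed
    scores = matmul(q, k_t)
    weights = []
    for i, row in enumerate(scores):
        # Causal mask: position i may not attend to later positions j > i
        masked = [s / math.sqrt(d_k) if j <= i else float("-inf") for j, s in enumerate(row)]
        weights.append(softmax(masked))
    return matmul(weights, v), weights
```

Because the first token can only see itself, its output equals its own value vector. Later tokens mix information from every earlier position, which is how long-range context enters each prediction.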

Table 1: Comparative Analysis of Generative Model Architectures in Molecular Design

| Feature | VAEs | GANs | Transformers |
|---|---|---|---|
| Core Architecture | Encoder-Decoder with probabilistic latent space [12] | Generator-Discriminator in adversarial setup [12] | Self-attention based autoregressive model [10] |
| Molecular Representation | SMILES, Molecular graphs [13] | SMILES, Molecular graphs [12] | SMILES strings (as sequences) [10] [11] |
| Latent Space | Explicit, structured, continuous [10] [12] | Implicit, less structured [12] | No continuous latent space (sequential generation) |
| Training Stability | Generally more stable [12] | Often unstable, prone to mode collapse [12] [14] | Stable with proper regularization [10] |
| Sample Quality | Can be blurrier; may lack detail [10] | High-quality, sharp samples [10] [12] | High validity and novelty [10] |
| Strength | Meaningful latent space interpolation, uncertainty estimation [10] [12] | High realism and structural detail [10] [12] | Captures complex long-range dependencies in molecular structure [10] |
| Key Challenge | Ensuring generated molecular validity [13] | Training instability, limited output diversity [12] [14] | High computational requirements, data hunger [10] |

Application Notes: Performance Benchmarks

In practical applications, each class of generative models exhibits distinct performance characteristics that make it suitable for different parts of the molecular design pipeline. Quantitative evaluation typically relies on three metrics [13]:

- Validity: the percentage of generated structures that correspond to chemically valid molecules.
- Uniqueness: the proportion of valid generated molecules that are not duplicates of one another.
- Novelty: the proportion of generated molecules not found in the training data, sometimes also quantified as structural dissimilarity from known compounds.
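
These three metrics can be computed as below once a validity check is available. This is a minimal sketch that follows the definitions above. It assumes a caller-supplied `canonicalize` function (a hypothetical name) that returns a canonical SMILES string, or `None` for an invalid input. With RDKit, such a function would typically call `Chem.MolFromSmiles`, which returns `None` for strings it cannot parse, and then `Chem.MolToSmiles` on the resulting molecule. Here novelty is computed over the unique valid molecules; some benchmarks use slightly different denominators.

```python
from typing import Callable, Dict, Iterable, Optional, Sequence

Canonicalizer = Callable[[str], Optional[str]]


def generation_metrics(generated: Sequence[str], training_smiles: Iterable[str],
                       canonicalize: Canonicalizer) -> Dict[str, float]:
    """Validity, uniqueness, and novelty of a batch of generated SMILES.

    `canonicalize` must return a canonical SMILES string, or None for an invalid input,
    so that different spellings of the same molecule are counted once.
    """
    canonical = [canonicalize(s) for s in generated]
    valid = [c for c in canonical if c is not None]
    unique = set(valid)
    training = {c for c in map(canonicalize, training_smiles) if c is not None}
    novel = unique - training
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }
```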

VAEs have demonstrated strong performance in scaffold hopping and molecular optimization tasks where exploring continuous transitions between molecular structures is valuable [13]. Their probabilistic nature makes them particularly useful when dealing with uncertain or incomplete data, as they can generate diverse potential solutions. In benchmark studies, VAEs have shown validity rates typically ranging from 60% to 90%, depending on the complexity of the molecular representation and architecture refinements [13].

GANs excel in generating highly realistic molecular structures with precise structural details, achieving validity rates that can exceed 80% with advanced architectures [12]. However, they may struggle with ensuring broad chemical diversity without techniques like minibatch discrimination or experience replay. When successfully trained, GANs can produce molecules with optimized properties such as enhanced binding affinity or improved solubility profiles [12] [9].

Transformers have set new standards for validity and novelty in molecular generation, with some implementations achieving validity rates exceeding 90% while maintaining high uniqueness [10] [5]. Their ability to capture complex, long-range dependencies in molecular structures makes them particularly effective for designing complex macrocycles and other structurally challenging compounds. In the ChemSpaceAL framework, Transformer-based models (GPT-based molecular generators) were successfully fine-tuned to generate molecules similar to known c-Abl kinase inhibitors, even reproducing two existing inhibitors exactly without prior knowledge of their existence [5].

Table 2: Quantitative Performance Benchmarks in Targeted Molecular Generation

| Model Architecture | Validity Rate (%) | Uniqueness (%) | Novelty (Tanimoto-based score) | Optimization Efficiency |
|---|---|---|---|---|
| VAE (Standard) | 60-80% [13] | ~70% | 0.3-0.5 | Moderate |
| VAE (with Cyclical Annealing) | 85-95% [13] | ~75% | 0.4-0.6 | High |
| GAN (Standard) | 70-85% [12] | ~65% | 0.3-0.5 | Moderate |
| GAN (with Advanced Regularization) | 80-90% [12] | ~70% | 0.4-0.6 | High |
| Transformer (GPT-based) | >90% [5] | >80% | 0.5-0.7 | High |
| ChemSpaceAL (Active Learning + Transformer) | >95% [5] | >85% | 0.6-0.8 | Very High |
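
Because the table reports novelty on a Tanimoto basis, it may help to see how such a score is commonly computed. The sketch below makes two assumptions:

- A fingerprint is represented as the set of its "on" bit indices (for example, taken from an RDKit Morgan fingerprint).
- Nearest-neighbor novelty is defined as one minus the maximum Tanimoto similarity to a reference set. The table does not state how its values were derived, so this definition is an assumption.

For real bit vectors, RDKit's `DataStructs.TanimotoSimilarity` computes the same similarity directly.

```python
from typing import Iterable, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto similarity |A & B| / |A | B| for fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0  # two empty fingerprints: 0.0 by convention


def nearest_neighbor_novelty(query: Set[int], reference: Iterable[Set[int]]) -> float:
    """1 - (maximum Tanimoto similarity between `query` and any reference fingerprint)."""
    return 1.0 - max((tanimoto(query, ref) for ref in reference), default=0.0)
```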

Experimental Protocols

Protocol 1: Targeted Molecular Generation using ChemSpaceAL Framework

The ChemSpaceAL methodology combines active learning with generative models to efficiently fine-tune molecular generation toward specific objectives with minimal data evaluation [5].

Step 1: Pre-training a Base Generative Model

- Select a suitable architecture (e.g., GPT-based model for SMILES generation) [5].

- Pre-train the model on a large-scale chemical database (e.g., ZINC, ChEMBL) to learn general chemical language and rules [5].

- Validate base model performance on standard metrics: validity, uniqueness, and novelty.

Step 2: Objective Function Definition

- Define a target-specific objective function incorporating key properties:
  - Binding affinity predictions (e.g., using docking scores)
  - Physicochemical properties (e.g., LogP, molecular weight)
  - ADMET properties (Absorption, Distribution, Metabolism, Excretion, Toxicity)
  - Structural constraints (e.g., specific substructures or scaffolds) [5]
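
A weighted sum is one straightforward way to combine these components into a single objective score. The sketch is illustrative only:

- The weights, the docking-score range, and the logP and molecular-weight windows are hypothetical values chosen for the example.
- The structural constraint is treated as a hard pass/fail filter.
- An ADMET prediction would be added as one more desirability term in the same way.

In practice the inputs would come from a docking program and from RDKit descriptors.

```python
from typing import Dict

# Hypothetical weights, for illustration only
WEIGHTS: Dict[str, float] = {"docking": 0.6, "logp": 0.2, "mol_weight": 0.2}


def window_desirability(value: float, low: float, high: float, tolerance: float) -> float:
    """1.0 inside [low, high], falling linearly to 0.0 at `tolerance` outside the window."""
    if low <= value <= high:
        return 1.0
    distance = low - value if value < low else value - high
    return max(0.0, 1.0 - distance / tolerance)


def docking_desirability(score: float, best: float = -12.0, worst: float = -4.0) -> float:
    """Map a docking score (more negative is better) linearly onto [0, 1]."""
    fraction = (worst - score) / (worst - best)
    return min(1.0, max(0.0, fraction))


def objective(docking_score: float, logp: float, mol_weight: float,
              has_required_scaffold: bool, weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of desirabilities; molecules failing the structural constraint score 0."""
    if not has_required_scaffold:
        return 0.0
    return (weights["docking"] * docking_desirability(docking_score)
            + weights["logp"] * window_desirability(logp, 1.0, 3.0, tolerance=2.0)
            + weights["mol_weight"] * window_desirability(mol_weight, 250.0, 500.0, tolerance=100.0))
```

Mapping each property onto [0, 1] before weighting keeps any one raw unit (kcal/mol, Da) from dominating the sum.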

Step 3: Active Learning Loop

- Generate an initial set of molecules (e.g., 10,000 compounds) using the base model.

- Select a subset (e.g., 1,000 compounds) for evaluation based on diversity sampling or uncertainty metrics.

- Compute objective function scores for the evaluated subset.

- Fine-tune the generative model on the scored molecules to align its output with the objective.

- Iterate the process until performance plateaus or desired objective scores are achieved [5].
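
For the diversity-sampling option in the selection step, a greedy MaxMin picker is one common choice. It repeatedly adds the candidate whose nearest already-selected neighbor is farthest away. The sketch swaps in that specific technique as an illustration and makes two assumptions: fingerprints are given as sets of on-bit indices, and distance is Tanimoto distance (one minus Tanimoto similarity). Its cost grows with pool size times batch size.

```python
from typing import List, Sequence, Set


def tanimoto_distance(a: Set[int], b: Set[int]) -> float:
    """1 - Tanimoto similarity for fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return 1.0 - (len(a & b) / union if union else 0.0)


def maxmin_select(fingerprints: Sequence[Set[int]], k: int, start: int = 0) -> List[int]:
    """Greedy MaxMin diversity picking; returns indices of the selected molecules."""
    n = len(fingerprints)
    if k <= 0 or n == 0:
        return []
    selected = [start]
    chosen = {start}
    # Distance from every candidate to its nearest already-selected molecule
    nearest = [tanimoto_distance(fp, fingerprints[start]) for fp in fingerprints]
    while len(selected) < min(k, n):
        best = max((i for i in range(n) if i not in chosen), key=lambda i: nearest[i])
        selected.append(best)
        chosen.add(best)
        for i, fp in enumerate(fingerprints):
            nearest[i] = min(nearest[i], tanimoto_distance(fp, fingerprints[best]))
    return selected
```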

Step 4: Validation and Analysis

- Generate final candidate molecules using the fine-tuned model.

- Validate candidates through more rigorous computational methods (e.g., molecular dynamics simulations).

- Select top candidates for experimental synthesis and testing.

Protocol 2: Latent Space Optimization with Reinforcement Learning

This protocol adapts the MOLRL (Molecule Optimization with Latent Reinforcement Learning) framework for optimizing molecules in the latent space of a pre-trained generative model using Proximal Policy Optimization (PPO) [13].

Step 1: Pre-training a VAE with Structured Latent Space

- Train a VAE model with a cyclical annealing schedule to mitigate posterior collapse and encourage a continuous latent space [13].

- Use SMILES or molecular graph representations as input.

- Validate reconstruction performance and latent space continuity through perturbation analysis [13].
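
A cyclical annealing schedule repeatedly ramps the weight β on the KL term of the ELBO from 0 up to its maximum and then holds it. The decoder therefore periodically trains with a weak KL penalty before regularization is restored. The sketch follows the commonly used linear-ramp form. The number of cycles and the ramp fraction are illustrative defaults, not settings taken from MOLRL.

```python
def cyclical_beta(step: int, total_steps: int, n_cycles: int = 4,
                  ramp_fraction: float = 0.5, beta_max: float = 1.0) -> float:
    """KL weight for a training step under a cyclical linear annealing schedule.

    Each cycle lasts total_steps / n_cycles steps; beta rises linearly from 0 to
    beta_max over the first `ramp_fraction` of the cycle, then stays at beta_max.
    """
    period = total_steps / n_cycles
    position = (step % period) / period  # progress through the current cycle, in [0, 1)
    return beta_max * min(1.0, position / ramp_fraction)
```

With 100 steps and 4 cycles, β climbs from 0 to 1 over steps 0 to 12.5, holds at 1 until step 25, and then drops back to 0 to start the next cycle.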

Step 2: Latent Space Exploration with PPO

- Initialize the PPO agent with policy and value networks.

- Define state as current position in latent space, action as movement in latent space, and reward based on property optimization goals [13].

- Train the agent to navigate toward regions of latent space that decode to molecules with desired properties.

- Use a trust region constraint to ensure movements in latent space produce structurally similar molecules [13].
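
PPO's clipped surrogate objective keeps each policy update close to the previous policy, which is one way to read the trust-region requirement above. The sketch computes that objective from per-sample probability ratios and advantage estimates, both assumed to be supplied by the surrounding training loop. It illustrates the standard PPO-Clip formula, with 0.2 as a commonly used clipping default, and is not MOLRL's implementation.

```python
from typing import Sequence


def ppo_clipped_objective(ratios: Sequence[float], advantages: Sequence[float],
                          clip_eps: float = 0.2) -> float:
    """Mean of min(r * A, clip(r, 1 - eps, 1 + eps) * A); PPO maximizes this quantity.

    ratios[i] = pi_new(a_i | s_i) / pi_old(a_i | s_i) for a latent-space move a_i taken
    from latent position s_i; advantages[i] estimates how much better that move was than
    expected under the property-based reward.
    """
    terms = []
    for r, a in zip(ratios, advantages):
        clipped_r = max(1.0 - clip_eps, min(r, 1.0 + clip_eps))
        terms.append(min(r * a, clipped_r * a))
    return sum(terms) / len(terms)
```

Taking the minimum means a large ratio cannot earn extra credit for a good move (the gain is capped at 1 + ε). A bad move, by contrast, is still fully penalized.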

Step 3: Multi-Objective Optimization

- Implement a weighted sum approach or constrained optimization for multiple properties.

- Simultaneously optimize for target affinity, synthetic accessibility, and favorable ADMET properties [13].

- Balance exploration and exploitation through entropy regularization.

Step 4: Scaffold-Constrained Generation

- Encode a desired scaffold into latent space.

- Constrain the optimization to regions around the scaffold embedding.

- Decode optimized latent vectors to generate novel molecules preserving the core scaffold while optimizing properties [13].
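
One simple way to realize the scaffold constraint is to project every proposed latent vector back onto a ball of fixed radius around the scaffold's embedding. The sketch assumes three things, none of which comes from MOLRL:

- a Euclidean latent space;
- a scaffold embedding already produced by the VAE encoder;
- a hand-chosen radius.

The names `project_to_ball` and `constrained_step` are hypothetical, and decoding the result back to SMILES is left to the model.

```python
import math
from typing import List, Sequence


def project_to_ball(z: Sequence[float], center: Sequence[float], radius: float) -> List[float]:
    """Euclidean projection of z onto the ball of `radius` around `center`."""
    diff = [zi - ci for zi, ci in zip(z, center)]
    dist = math.sqrt(sum(d * d for d in diff))
    if dist <= radius:
        return list(z)
    scale = radius / dist
    return [ci + d * scale for ci, d in zip(center, diff)]


def constrained_step(z: Sequence[float], direction: Sequence[float], step_size: float,
                     scaffold_embedding: Sequence[float], radius: float) -> List[float]:
    """Move along `direction` (e.g., a policy action), then project back near the scaffold."""
    proposal = [zi + step_size * di for zi, di in zip(z, direction)]
    return project_to_ball(proposal, scaffold_embedding, radius)
```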

Protocol 3: Transformer Fine-Tuning for Property-Guided Generation

This protocol details the process of fine-tuning pre-trained Transformer models for targeted molecular generation, leveraging transfer learning from large chemical corpora.

Step 1: Model Initialization

- Initialize with a Transformer model pre-trained on a large chemical dataset (e.g., SMILES from PubChem) [5].

- Add task-specific layers if needed for property prediction.

Step 2: Transfer Learning with Property-Guided Data

- Curate a dataset of molecules with known target properties.

- Fine-tune the model using teacher forcing on sequences associated with desired properties.

- Alternatively, implement reinforcement learning fine-tuning with policy gradients toward property optimization [5].

Step 3: Conditional Generation

- Implement a controlled generation mechanism using conditional tokens or embeddings.

- Guide generation toward specific property profiles by conditioning on property value ranges [5].

- Decode with nucleus (top-p) sampling to maintain diversity while ensuring quality, or with beam search when a small set of high-likelihood sequences is preferred over diversity.
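
Conditioning and decoding can be sketched together:

1. A target property value is mapped to a discrete control token that would be prepended to the generation prompt.
2. Each next token is drawn by nucleus (top-p) sampling. This keeps the smallest set of most probable tokens whose cumulative probability reaches p and renormalizes over that set.

The `<logp_k>` token format and the bin edges are hypothetical and would have to match the tokens the model was fine-tuned with. The next-token distribution is assumed to be supplied by the model.

```python
import random
from typing import Dict, Sequence


def property_token(logp: float, bin_edges: Sequence[float] = (1.0, 3.0, 5.0)) -> str:
    """Map a target logP onto a hypothetical control token such as '<logp_1>'."""
    index = sum(1 for edge in bin_edges if logp >= edge)
    return f"<logp_{index}>"


def nucleus_filter(probs: Dict[str, float], top_p: float) -> Dict[str, float]:
    """Keep the smallest high-probability set with cumulative mass >= top_p, renormalized."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for token, p in ranked:
        kept.append((token, p))
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(p for _, p in kept)
    return {token: p / total for token, p in kept}


def nucleus_sample(probs: Dict[str, float], top_p: float, rng: random.Random) -> str:
    """Draw one next token from the nucleus-filtered distribution."""
    filtered = nucleus_filter(probs, top_p)
    tokens = list(filtered)
    return rng.choices(tokens, weights=[filtered[t] for t in tokens], k=1)[0]
```

For example, `property_token(2.4)` yields `"<logp_1>"`. With `top_p=0.75`, a next-token distribution of C 0.5, O 0.3, N 0.15, S 0.05 is cut down to C and O, renormalized to 0.625 and 0.375.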

Step 4: Iterative Refinement

- Implement an iterative refinement process where generated molecules are scored, and high-scoring examples are incorporated back into the training set.

- Continue fine-tuning in this iterative manner until convergence.

Workflow Visualization

Diagram 1: Targeted Molecular Generation Workflow

Diagram 2: ChemSpaceAL Active Learning Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for AI-Driven Molecular Design

| Resource Category | Specific Tools & Databases | Key Functionality | Application Context |
|---|---|---|---|
| Chemical Databases | ZINC, ChEMBL, PubChem [13] | Source of known molecules for training; provides initial chemical space representation | Pre-training generative models; establishing baseline distributions |
| Property Prediction | RDKit, OpenBabel, Schrödinger Suite [13] | Calculation of molecular descriptors; prediction of physicochemical & ADMET properties | Objective function formulation; candidate molecule evaluation |
| Docking & Simulation | AutoDock Vina, GROMACS, AMBER [9] | Molecular docking; binding affinity prediction; molecular dynamics simulations | Validating target engagement; assessing binding stability |
| Generative Modeling | PyTorch, TensorFlow, Hugging Face [5] | Implementation of VAE, GAN, and Transformer architectures | Building and training generative models for molecular design |
| Active Learning Framework | ChemSpaceAL Python Package [5] | Efficient fine-tuning toward specific objectives with minimal data evaluation | Targeted molecular generation for specific protein targets |
| Analysis & Visualization | RDKit, Matplotlib, Plotly [13] | Molecular visualization; latent space projection; performance metric tracking | Interpreting model results; analyzing chemical space coverage |

Generative AI models including Transformers, VAEs, and GANs have fundamentally transformed the paradigm of molecular design, enabling the rapid exploration of vast chemical spaces that were previously inaccessible to traditional methods. When integrated with advanced frameworks like ChemSpaceAL, these models demonstrate remarkable efficiency in targeting specific molecular optimization objectives with minimal data evaluation requirements [5]. The protocols and application notes presented here provide researchers with practical guidance for implementing these cutting-edge technologies in drug discovery and materials science applications.

As the field continues to evolve, we anticipate further convergence of these architectural approaches—such as VAE-Transformer hybrids and GANs with structured latent spaces—that will combine the strengths of each paradigm while mitigating their individual limitations. The ongoing development of more sophisticated active learning and reinforcement learning methodologies will further enhance the precision and efficiency of targeted molecular generation, accelerating the discovery of novel therapeutics and functional materials to address pressing challenges in human health and technology.

Active learning (AL) is a subfield of machine learning that addresses a fundamental challenge in scientific research: the high cost and difficulty of acquiring labeled data [15] [16]. In domains like materials science and drug discovery, experimental synthesis and characterization require expert knowledge, expensive equipment, and time-consuming procedures, making large-scale data collection impractical [16]. Active learning solves this through an iterative process where a model sequentially selects the most informative data points for experimentation, thereby maximizing knowledge gain while minimizing resource expenditure [15].

The core AL cycle operates as follows [15]:

1. A model is initially trained on a small labeled dataset.
2. The model identifies which additional data points would most improve it.
3. An experiment is performed to obtain those data.
4. The model is updated with the new information.

This loop repeats until a stopping criterion is met, such as reaching sufficient model accuracy or exhausting resources [16]. In computational and experimental sciences, this approach has demonstrated remarkable efficiency; studies show it can reduce the number of experiments needed by over 60% compared to traditional approaches [16].

Core Principles and Query Strategies

Active learning strategies are built upon several foundational principles that guide the selection of informative experiments. Understanding these principles is crucial for selecting and designing effective AL protocols for specific applications.

Table 1: Fundamental Active Learning Query Strategies

| Principle | Mechanism | Typical Use Cases |
|---|---|---|
| Uncertainty Sampling | Selects data points where the model's predictive uncertainty is highest [15] [16]. | Ideal for refining decision boundaries in classification or reducing variance in regression [16]. |
| Diversity Sampling | Chooses points that are diverse or representative of the overall data distribution [16]. | Useful for initial model exploration and ensuring broad coverage of the experimental space [16]. |
| Expected Model Change | Selects points that are expected to cause the greatest change to the current model parameters [16]. | Effective when the model needs significant updating from specific informative instances. |
| Hybrid Methods | Combines multiple principles, such as uncertainty and diversity, to balance exploration and exploitation [16]. | Applied in complex scenarios like materials formulation design to prevent myopic sampling [16]. |
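
Uncertainty sampling and a simple uncertainty-diversity hybrid can be sketched for a regression setting. Assume an ensemble of models (for example, the trees of a random forest) gives several predictions per pool candidate, and the spread of those predictions serves as the uncertainty. Both functions are illustrative assumptions:

- The hybrid rule here multiplies uncertainty by the distance to items already chosen for the batch. It is a generic heuristic, not any specific published strategy.
- The distance function is supplied by the caller.

```python
from statistics import pvariance
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


def select_by_uncertainty(pool_predictions: Sequence[Sequence[float]], batch_size: int) -> List[int]:
    """Pick the pool items whose ensemble predictions disagree the most (highest variance)."""
    variances = [pvariance(preds) for preds in pool_predictions]
    ranked = sorted(range(len(variances)), key=lambda i: variances[i], reverse=True)
    return ranked[:batch_size]


def select_hybrid(pool_features: Sequence[T], pool_predictions: Sequence[Sequence[float]],
                  batch_size: int, distance: Callable[[T, T], float]) -> List[int]:
    """Greedy batch: uncertainty weighted by distance to items already in the batch."""
    variances = [pvariance(preds) for preds in pool_predictions]
    selected: List[int] = []
    while len(selected) < min(batch_size, len(pool_features)):
        def score(i: int) -> float:
            if not selected:
                return variances[i]
            nearest = min(distance(pool_features[i], pool_features[j]) for j in selected)
            return variances[i] * nearest
        candidates = [i for i in range(len(pool_features)) if i not in selected]
        selected.append(max(candidates, key=score))
    return selected
```

Pure uncertainty sampling can fill a batch with near-duplicates that are all uncertain for the same reason. The distance factor in the hybrid discourages that myopic behavior.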

Each strategy possesses distinct strengths. Uncertainty-driven methods (e.g., LCMD, Tree-based-R) and diversity-hybrid approaches (e.g., RD-GS) have been shown to outperform random sampling and geometry-only heuristics significantly, particularly in the early stages of the AL process when labeled data is scarce [16]. As the labeled set grows, the performance gap between different strategies typically narrows, indicating diminishing returns from active learning under a fixed computational budget [16].

ChemSpaceAL: A Case Study in Targeted Molecular Generation

The ChemSpaceAL methodology provides a powerful illustration of how active learning principles can be applied to the challenge of targeted molecular generation for drug discovery [5]. This approach fine-tunes generative models to design molecules that interact with specific protein targets, demonstrating how AL can guide exploration of vast chemical spaces with high efficiency.

Methodology and Workflow

ChemSpaceAL operates through a structured pipeline that integrates a generative model with an active learning selector. The process begins with a pre-trained generative model, such as a GPT-based architecture for molecular structures. This generator creates a large sample space of candidate molecules. The key innovation is that only a subset of these generated molecules is evaluated by the objective function—for example, a docking simulation that predicts binding affinity to a target protein. An active learning selector then analyzes these evaluated candidates and identifies the most informative ones for retraining the generative model. This cycle iteratively steers the generator toward regions of chemical space that are more likely to contain molecules with the desired properties [5].
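
The selector described above can be approximated in three steps. First, cluster the generated molecules (for instance, in a descriptor space). Second, score only the evaluated subset. Third, allocate a training-set budget across clusters in proportion to each cluster's mean score. This is a hedged sketch of that idea, not the ChemSpaceAL package's code, and it carries several assumptions and limitations:

- Cluster assignments and non-negative scores (higher is better) are assumed inputs.
- Integer rounding may leave the allocation slightly below the budget.
- Training molecules are drawn with replacement.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence


def allocate_by_cluster_score(eval_clusters: Sequence[int], eval_scores: Sequence[float],
                              budget: int) -> Dict[int, int]:
    """Split `budget` training slots across clusters in proportion to mean evaluated score."""
    sums: Dict[int, float] = defaultdict(float)
    counts: Dict[int, int] = defaultdict(int)
    for cluster, score in zip(eval_clusters, eval_scores):
        sums[cluster] += score
        counts[cluster] += 1
    means = {c: sums[c] / counts[c] for c in sums}
    if not means:
        return {}
    total = sum(means.values())
    if total <= 0:
        # No signal yet: fall back to an even split across clusters
        return {c: budget // len(means) for c in means}
    return {c: int(budget * m / total) for c, m in means.items()}


def sample_training_set(pool_by_cluster: Dict[int, List[str]], allocation: Dict[int, int],
                        rng: random.Random) -> List[str]:
    """Draw each cluster's share of generated molecules (with replacement)."""
    training: List[str] = []
    for cluster, n in allocation.items():
        members = pool_by_cluster.get(cluster, [])
        if n > 0 and members:
            training.extend(rng.choices(members, k=n))
    return training
```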

Application and Validation

The effectiveness of ChemSpaceAL was validated through two compelling case studies. When applied to c-Abl kinase, a protein with known FDA-approved small-molecule inhibitors, the model learned to generate molecules structurally similar to these inhibitors without any prior knowledge of their existence. Remarkably, it reproduced two of the exact inhibitors [5]. In a more challenging scenario targeting the HNH domain of the CRISPR-associated Cas9 enzyme—a protein without commercially available inhibitors—the methodology successfully identified novel candidate molecules, demonstrating its potential for pioneering new therapeutic avenues [5].

Benchmarking Active Learning Strategies

Evaluating the performance of different AL strategies requires rigorous benchmarking under standardized conditions. A comprehensive study compared 17 active learning strategies against a random sampling baseline within an Automated Machine Learning (AutoML) framework, using materials science regression tasks as a testbed [16]. This setup is particularly relevant as AutoML can dynamically switch between model families during the AL process, testing the robustness of each sampling strategy.

Table 2: Performance Comparison of Active Learning Strategies in AutoML

| Strategy Type | Examples | Early-Stage Performance | Late-Stage Performance | Key Characteristics |
|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R [16] | Outperform baseline [16] | Converge with other methods [16] | Effective for rapid initial improvement [16] |
| Diversity-Hybrid | RD-GS [16] | Outperform baseline [16] | Converge with other methods [16] | Balances exploration with exploitation [16] |
| Geometry-Only | GSx, EGAL [16] | Underperform uncertainty/hybrid [16] | Converge with other methods [16] | Relies on data distribution structure [16] |
| Random Sampling | Random [16] | Serves as baseline [16] | Converges with other methods [16] | Requires more data to achieve same accuracy [16] |

The benchmark revealed that during early acquisition phases, uncertainty-driven and diversity-hybrid strategies clearly outperformed geometry-only heuristics and random sampling by selecting more informative samples [16]. However, as the labeled set grew, the performance gap narrowed, with all strategies eventually converging, indicating diminishing returns from active learning under AutoML once sufficient data is acquired [16]. This underscores the particular value of AL in data-scarce environments, which is typical in experimental sciences.

Experimental Protocol: Implementing an Active Learning Cycle

This protocol provides a step-by-step methodology for implementing a pool-based active learning cycle for a regression task, applicable to molecular optimization or materials design.

Initial Setup and Data Preparation

- Define Objective: Clearly specify the target property to be optimized (e.g., binding affinity, catalytic activity, solubility).

- Prepare Feature Representations: Encode molecular or material candidates as feature vectors (e.g., using fingerprints, descriptors, or structural representations).

- Establish Labeled/Unlabeled Split: Create an initial dataset where:

  - the labeled dataset $L = \{(x_i, y_i)\}_{i=1}^{l}$ contains $l$ samples with feature vectors $x_i \in \mathbb{R}^d$ and measured target values $y_i \in \mathbb{R}$;

  - the unlabeled pool $U = \{x_i\}_{i=l+1}^{n}$ contains the remaining $n - l$ candidates awaiting evaluation [16].

- Select Initial Design: Randomly sample $n_{\text{init}}$ candidates (typically 1-5% of the pool) to create the initial labeled set [16]. Space-filling designs are also appropriate for initial sampling [17].
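A minimal sketch of the initial design step, assuming candidates are already encoded as plain Python feature vectors. It shows uniform random selection and, as one example of a space-filling design, a greedy farthest-point (maximin) rule. The function names and the 3% fraction are illustrative choices, not taken from [16] or [17].

```python
import math
import random


def random_design(n_pool, n_init, seed=0):
    """Uniform random initial design: indices of the first points to label."""
    return random.Random(seed).sample(range(n_pool), n_init)


def maximin_design(X, n_init, seed=0):
    """Greedy farthest-point (maximin) design, one simple space-filling option."""
    rng = random.Random(seed)
    chosen = [rng.randrange(len(X))]
    # Distance from every pool point to its nearest already-chosen point
    nearest = [math.dist(x, X[chosen[0]]) for x in X]
    while len(chosen) < n_init:
        nxt = max(range(len(X)), key=nearest.__getitem__)  # farthest from the design so far
        chosen.append(nxt)
        nearest = [min(d, math.dist(x, X[nxt])) for d, x in zip(nearest, X)]
    return chosen


rng = random.Random(42)
X = [[rng.random() for _ in range(8)] for _ in range(1000)]  # toy x_i in R^8
n_init = round(0.03 * len(X))                                 # 3% of the pool
labeled = maximin_design(X, n_init)                           # indices to send for labeling
unlabeled = sorted(set(range(len(X))) - set(labeled))         # the pool U
```

Random selection is cheaper; the maximin rule spreads the first labels across feature space, which can help when the pool is clustered.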

Active Learning Iteration Loop

1. Model Training: Fit a surrogate model (e.g., Gaussian Process, Random Forest, or AutoML system) to the current labeled set $L$. Use cross-validation (e.g., 5-fold) for validation and hyperparameter tuning [16].

2. Query Selection: Apply the chosen AL strategy to select the most informative candidate $x^*$ from the unlabeled pool $U$. For uncertainty sampling, this is the point with the highest predictive variance; for diversity sampling, the point most dissimilar to the existing labeled points [16].

3. Experiment and Labeling: Obtain the target value $y^*$ for the selected candidate $x^*$ through experimental measurement or computational simulation (e.g., synthesis and characterization, or molecular docking).

4. Dataset Update: Expand the training set, $L \leftarrow L \cup \{(x^*, y^*)\}$, and remove the candidate from the unlabeled pool, $U \leftarrow U \setminus \{x^*\}$ [16].

5. Performance Assessment: Evaluate the updated model on a held-out test set using metrics relevant to the task (e.g., Mean Absolute Error or $R^2$ for regression; success rate for optimization) [16].

6. Stopping Check: Repeat steps 1-5 until a stopping criterion is met (e.g., performance plateau, budget exhaustion, or discovery of a satisfactory candidate) [16].
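The loop above can be illustrated with a self-contained toy in Python. Assumptions: a 1-D synthetic `oracle` stands in for the experiment, a bootstrap ensemble of k-nearest-neighbour regressors stands in for the surrogate (a Gaussian Process or AutoML system would be used in practice), and predictive variance across ensemble members drives uncertainty sampling. The stopping criterion is a fixed query budget.

```python
import math
import random
from statistics import mean, pvariance


def oracle(x):
    """Hypothetical expensive labeling step (stands in for an assay or docking run)."""
    return math.sin(3 * x) + 0.5 * x


def knn_predict(train, x, k=3):
    """Toy surrogate: k-nearest-neighbour regression on 1-D inputs."""
    nearest = sorted(train, key=lambda xy: abs(xy[0] - x))[:k]
    return mean(y for _, y in nearest)


def ensemble_predict(train, x, n_models=10, seed=0):
    """Bootstrap ensemble of surrogates: returns (mean prediction, predictive variance)."""
    rng = random.Random(seed)  # fixed seed -> same bootstrap resamples for every x
    preds = []
    for _ in range(n_models):
        boot = [rng.choice(train) for _ in train]  # resample L with replacement
        preds.append(knn_predict(boot, x))
    return mean(preds), pvariance(preds)


def mae(train, test_x):
    """Mean absolute error of the ensemble mean on held-out points."""
    return mean(abs(ensemble_predict(train, x)[0] - oracle(x)) for x in test_x)


rng = random.Random(0)
pool = [i / 200 for i in range(201)]            # candidate inputs in [0, 1]
test_x = [rng.random() for _ in range(50)]      # held-out test set
init = rng.sample(pool, 5)                      # initial design
labeled = [(x, oracle(x)) for x in init]        # L
unlabeled = [x for x in pool if x not in init]  # U
budget = 15                                     # stopping criterion: query budget

mae_history = [mae(labeled, test_x)]
for _ in range(budget):
    # Query selection: the candidate with the highest predictive variance
    x_star = max(unlabeled, key=lambda x: ensemble_predict(labeled, x)[1])
    labeled.append((x_star, oracle(x_star)))    # label x* and add (x*, y*) to L
    unlabeled.remove(x_star)                    # remove x* from U
    mae_history.append(mae(labeled, test_x))    # performance assessment
```

`mae_history` records the held-out error after every query, which is the quantity one would monitor for a performance plateau.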

Table 3: Key Research Reagent Solutions for Active Learning-Driven Molecular Optimization

| Tool/Resource | Function | Application Context |
|---|---|---|
| Generative Model (e.g., GPT-based) [5] | Creates novel molecular structures in the desired chemical space. | Core engine for molecular generation; provides candidate molecules for evaluation. |
| Surrogate Model (e.g., Gaussian Process, Random Forest, AutoML) [16] [17] | Predicts properties of candidate molecules and estimates prediction uncertainty. | Guides active learning selection by identifying promising candidates and uncertain predictions. |
| Evaluation Function (e.g., Molecular Docking, QSAR Model) [5] | Provides the target property value (e.g., binding affinity, solubility) for candidate molecules. | Serves as the experimental proxy or "oracle" that scores candidate molecules. |
| Chemical Feature Representation (e.g., Fingerprints, Descriptors) | Encodes molecular structures as numerical feature vectors for machine learning. | Enables similarity comparison and model training by converting structures to data. |
| Active Learning Selector [5] | Implements query strategy (uncertainty, diversity, etc.) to choose the most informative experiments. | Decision core that determines which candidates to evaluate in each iteration. |
| Automated Machine Learning (AutoML) [16] | Automates the selection and hyperparameter optimization of surrogate models. | Reduces manual tuning effort and adapts the model architecture throughout the AL process. |

ChemSpaceAL's Strategic Position in the Evolving Molecular Generation Landscape

The application of generative artificial intelligence (AI) in drug discovery represents a paradigm shift, moving beyond traditional virtual screening toward the de novo design of molecules. However, a significant challenge persists: the sheer size of chemical space makes it computationally prohibitive to locate the regions containing molecules with the desired characteristics for a specific protein target. Generative models (GMs) initially trained on broad chemical databases lack inherent target specificity, and directly evaluating millions of generated molecules using resource-intensive physics-based simulations is infeasible [18] [19]. Within this landscape, ChemSpaceAL establishes its strategic position as an efficient active learning (AL) methodology that bridges this gap. By requiring the evaluation of only a small, strategically selected subset of generated molecules, it successfully aligns a generative model with a specified objective, such as binding to a particular protein [18] [5]. This protocol details the application of ChemSpaceAL for targeted molecular generation, providing a structured framework for researchers to implement and build upon this methodology.

The core innovation of ChemSpaceAL is its computationally efficient AL loop, which uses a "cheap upsampling method" to amplify the signal from a sparse set of expensive evaluations [20]. The methodology operates on the key insight that molecules which are physically close in a carefully constructed chemical space proxy—defined by molecular descriptors—are likely to have similar binding scores for a given target [20]. This allows the algorithm to generalize from a few evaluated molecules to a much larger set of unevaluated neighbors, dramatically improving sample efficiency.

Table 1: Core Stages of the ChemSpaceAL Workflow

| Stage | Key Action | Primary Outcome |
|---|---|---|
| 1. Pretraining | Train a GPT-based model on millions of diverse SMILES strings. | A foundational model with a broad understanding of drug-like chemical space. |
| 2. Molecular Generation & Clustering | Generate 100,000 unique molecules and cluster them in a PCA-reduced descriptor space. | A structured map of the generated chemical space, enabling strategic sampling. |
| 3. Strategic Sampling & Evaluation | Sample ~1% (10 molecules per cluster) for docking and scoring. | A computationally affordable set of protein-ligand binding scores. |
| 4. Active Learning Set Construction | Sample molecules from clusters proportionally to their mean scores; combine with top performers. | An augmented training set that directs the model toward high-scoring regions. |
| 5. Model Fine-tuning | Fine-tune the pretrained generator on the constructed AL training set. | An aligned model that generates a higher proportion of target-specific molecules. |

The following diagram illustrates the logical flow and iterative nature of this process.

Application Notes: Protocol for Targeted Molecular Generation

This section provides a detailed, step-by-step protocol for applying the ChemSpaceAL methodology to a protein target of interest, based on the demonstrations for c-Abl kinase and the Cas9 HNH domain [18] [21].

Pretraining the Generative Model

- Objective: To create a foundational model capable of generating a wide array of valid, drug-like molecules.

- Procedure:

- Data Curation: Combine large-scale molecular datasets such as ChEMBL, GuacaMol, MOSES, and BindingDB. After deduplication, this can yield a pretraining set of approximately 5.6 million unique SMILES strings [18].

- Model Selection & Training: Utilize a Generative Pretrained Transformer (GPT) architecture, which is an autoregressive model well-suited for sequence generation [18]. Train the model to predict the next token in the SMILES string sequence. This step equips the model with a general "understanding" of chemical rules and structures.
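To make the next-token objective concrete, the sketch below splits SMILES strings into tokens with a regular expression of the kind widely used for chemical language models, then forms the shifted input/target pair for autoregressive training. The regex, the special tokens (`<bos>`, `<eos>`, `<pad>`), and the function names are assumptions; ChemSpaceAL's own tokenizer and vocabulary may differ.

```python
import re

# Regex SMILES tokenizer (assumed vocabulary): bracket atoms, two-letter halogens,
# organic-subset atoms, bonds, branches, ring-closure digits and %NN labels.
SMILES_REGEX = re.compile(
    r"(\[[^\]]+\]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize(smiles):
    """Split a SMILES string into tokens, failing loudly on unknown characters."""
    tokens = SMILES_REGEX.findall(smiles)
    if "".join(tokens) != smiles:  # a character was skipped by the regex
        raise ValueError(f"untokenizable SMILES: {smiles}")
    return tokens


def next_token_pairs(smiles, bos="<bos>", eos="<eos>"):
    """Autoregressive example: the target at position t is the token at t + 1."""
    seq = [bos] + tokenize(smiles) + [eos]
    return seq[:-1], seq[1:]


def build_vocab(corpus, specials=("<pad>", "<bos>", "<eos>")):
    """Map every token seen in the corpus (plus special tokens) to an integer id."""
    tokens = sorted({t for smi in corpus for t in tokenize(smi)})
    return {tok: i for i, tok in enumerate(list(specials) + tokens)}


inputs, targets = next_token_pairs("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
vocab = build_vocab(["CO", "CCl"])
```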

Iterative Active Learning for Target Alignment

- Objective: To steer the generative model from broad chemical space toward a specific protein target over a few iterations (e.g., 3-5 cycles).

- Procedure for iteration i:

- Molecular Generation: Use the current model (pretrained for iteration 0, or fine-tuned for subsequent iterations) to generate 100,000 unique, valid molecules. Canonicalize their SMILES strings to ensure uniqueness [18] [21].

- Chemical Space Mapping:

- Descriptor Calculation: For each generated molecule, calculate a set of 196 RDKit molecular descriptors. These capture physicochemical properties and functional group counts [20].

- Dimensionality Reduction: Project the high-dimensional descriptor vectors into a lower-dimensional space (e.g., 120 principal components) using a precomputed PCA transformation fitted on the pretraining set. This serves as the "chemical space proxy" [18] [20].

- Clustering: Apply k-means clustering (with k=100 and k-means++ initialization) on the projected descriptors. This partitions the 100,000 molecules into 100 groups with similar properties [18] [20].

- Strategic Sampling & Binding Evaluation:

- Sampling: Randomly select 10 molecules from each of the 100 clusters, resulting in a representative subset of 1,000 molecules (~1% of the total) [18].

- Molecular Docking: Dock each of the 1,000 sampled molecules to the protein target's binding site using a docking tool like DiffDock. This step is computationally intensive, taking approximately 16 hours on a modern GPU [21] [20].

- Pose Scoring: Analyze the top-ranked docking pose for each molecule. Use a tool like ProLIF to generate a protein-ligand interaction fingerprint. Calculate a Binding Score as a weighted sum of interactions. For example: Ionic Interaction × 7 + H-Bond × 3.5 + Hydrophobic Interaction × 1 [20].

- Active Learning Set Construction:

- Identify all evaluated molecules that meet a predefined success criterion (e.g., a Binding Score above a threshold $T$). Include replicas of these molecules in the new AL training set [18].

- For the remaining slots in the training set (e.g., 5,000 molecules), sample from the unevaluated molecules in the generated set. The sampling probability for a cluster should be proportional to the average Binding Score of the evaluated molecules within that cluster [20]. This is the crucial step that upsamples molecules from promising regions without needing to dock them.

- Model Fine-tuning: Continue training (fine-tune) the generative model on the newly constructed AL training set using a reduced learning rate. This updates the model's parameters to make it more likely to generate molecules similar to those in the high-scoring regions of chemical space [18] [21].

Critical Parameters for Implementation

- Binding Score Threshold ($T$): For targets with known binders, $T$ can be set to the score of a known inhibitor [18]. For novel targets, a statistical threshold can be used (e.g., a score above which a molecule has a high probability of being a binder) [20].

- Cluster Sampling: The number of clusters (k=100) and samples per cluster (n=10) are chosen to give a total budget of 100 × 10 = 1,000 dockings per iteration. Both can be adjusted to match available computational resources [18] [20].

- Filters: Implement ADMET and functional group filters between generation and clustering to ensure drug-likeness and remove undesirable moieties [18].

The following diagram visualizes the strategic sampling and training set construction logic, which is the core of the methodology's efficiency.

Performance and Validation

The ChemSpaceAL methodology has been quantitatively validated on both a target with known inhibitors and one without.

Case Study 1: c-Abl Kinase

c-Abl kinase was used to validate the approach. The model was fine-tuned without prior knowledge of FDA-approved inhibitors such as imatinib and bosutinib. After five AL iterations, the model's output distribution shifted significantly in the chemical space proxy toward the region containing these known inhibitors. Remarkably, the model generated imatinib and bosutinib exactly [18]. The quantitative improvement is summarized in the table below.

Table 2: Performance Metrics for c-Abl Kinase Alignment over 5 AL Iterations

| Model (Pretraining Set) | Initial % > Threshold | Final % > Threshold | Increase (percentage points) | Key Observation |
|---|---|---|---|---|
| C Model (Combined Dataset) | 38.8% | 91.6% | +52.8 | Reproduced two known inhibitors exactly. |
| M Model (MOSES Dataset) | 21.7% | 80.3% | +58.6 | Mean similarity to known inhibitors increased each iteration. |

Case Study 2: Cas9 HNH Domain

For this target with no commercially available inhibitors, success was measured by the increase in molecules surpassing a binding score threshold associated with a high likelihood of binding (score > 11) [20].

Table 3: Performance Comparison of Active Learning Strategies for Cas9 HNH Domain

| Active Learning Strategy | Final Performance (% of molecules with score > 11) | Relative Efficiency |
|---|---|---|
| Naïve AL (Training on replicas of ~300 hits) | 44% | Baseline |
| Uniform Sampling (AL set sampled uniformly from all clusters) | 51% | Moderately improved |
| ChemSpaceAL (Strategic sampling from high-score clusters) | 76% | Dramatically superior |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of ChemSpaceAL relies on a suite of software tools and datasets. The following table details these essential components.

Table 4: Key Research Reagents and Computational Tools for ChemSpaceAL

| Category | Item / Software | Function in the Protocol |
|---|---|---|
| Generative Model | GPT-based Architecture (e.g., as implemented in ChemSpaceAL) | The core engine for generating novel molecular structures as SMILES strings. |
| Chemical Informatics | RDKit | Calculates molecular descriptors, canonicalizes SMILES, and handles chemical data processing. |
| Docking & Pose Prediction | DiffDock | Predicts the binding pose of a ligand to a protein target quickly and accurately. |
| Interaction & Scoring | ProLIF (Protein-Ligand Interaction Fingerprints) | Analyzes docking poses to quantify specific interactions (H-bonds, ionic, hydrophobic). |
| Dataset | Combined ChEMBL, MOSES, GuacaMol, BindingDB | Provides a broad, diverse foundation of drug-like molecules for pretraining the generative model. |
| Methodology Package | ChemSpaceAL Python Package | The open-source software that integrates the entire workflow, facilitating reproducibility [21]. |

The convergence of targeted molecular generation and precision gene editing is forging a new paradigm in therapeutic development. This Application Note details the integration of the ChemSpaceAL active learning methodology for generating protein-specific molecules with CRISPR-Cas9 genome engineering protocols to create a powerful, unified pipeline for advanced therapeutic discovery. We frame these techniques within the context of a broader thesis on targeted molecular generation, providing researchers with detailed protocols for applying these cutting-edge tools to overcome longstanding challenges in drug development, particularly in oncology. The workflows described herein enable the rapid identification of novel chemical scaffolds and the subsequent genetic manipulation of biological systems to enhance therapeutic efficacy and combat resistance mechanisms [4] [22].

ChemSpaceAL Methodology for Targeted Molecular Generation

Core Principles and Workflow

The ChemSpaceAL framework implements an efficient active learning methodology to navigate vast chemical spaces for targeted molecular generation. This approach requires evaluation of only a subset of generated data to successfully align a generative model with a specified objective, dramatically reducing computational overhead compared to exhaustive screening methods [4] [5].

- Key Innovation: The model learns to generate molecules with desired characteristics without prior knowledge of existing inhibitors and can reproduce known active compounds through its exploration process.

- Architecture: Built upon a GPT-based molecular generator that can be fine-tuned toward specific protein targets using an active learning loop.

- Validation: Successfully demonstrated on both well-characterized targets (c-Abl kinase with FDA-approved inhibitors) and novel targets (HNH domain of CRISPR-associated protein Cas9) without commercially available inhibitors [4].

Implementation Protocol

Protocol Title: Protein-Specific Molecular Generation Using ChemSpaceAL

Objective: To generate novel, target-specific small molecule inhibitors using active learning-guided exploration of chemical space.

Materials and Reagents:

- ChemSpaceAL Python package (open-source)

- Hardware: Standard computational workstation with GPU acceleration recommended

- Target protein structure or relevant molecular descriptors

Procedure:

1. Target Specification: Define the target protein of interest (e.g., c-Abl kinase).

2. Initialization: Start from the GPT-based molecular generator pretrained on a broad, drug-like chemical space.

3. Active Learning Loop:

   a. Generation: The model generates a batch of molecular structures.

   b. Selection: A subset of these molecules is selected for evaluation. In ChemSpaceAL, this is a fixed number of molecules sampled from each cluster of the generated set in descriptor space; other active learning frameworks instead rank candidates with acquisition functions such as expected improvement or uncertainty.

   c. Evaluation: The selected molecules are evaluated against the target objective (e.g., docking score, predictive model output).

   d. Update: The evaluation results are used to update the generative model, refining its understanding of the chemical space region of interest.

4. Iteration: Repeat steps 3a-3d for a predetermined number of cycles or until convergence criteria are met (e.g., reproduction of known actives or identification of novel scaffolds with high predicted activity).

5. Output: The final model generates candidate molecules for synthesis and experimental validation.

Technical Notes: The methodology is particularly valuable for targets with limited known actives, as it can discover novel scaffolds without relying on extensive structure-activity relationship data. For c-Abl kinase, the model learned to generate molecules similar to known inhibitors without prior knowledge and reproduced two FDA-approved inhibitors exactly [5].

Advanced Applications of Kinase Inhibitors

Key Kinase Targets and Their Inhibitors

Protein kinases represent one of the most successful target classes for molecular therapeutics, particularly in oncology. The development of isoform-selective compounds remains a primary focus to minimize off-target effects and overcome resistance mechanisms [23] [24]. The table below summarizes key kinase targets, their inhibitors, and clinical applications.

Table 1: Key Protein Kinase Targets and Their Clinically Relevant Inhibitors

| Kinase Target | Role in Cellular Function and Disease | Representative Inhibitors | Clinical Applications | Primary Resistance Mechanisms |
|---|---|---|---|---|
| BCR-ABL | Promotes unchecked cell proliferation in CML via constitutive tyrosine kinase activity | Imatinib, Nilotinib, Ponatinib | Chronic Myeloid Leukemia (CML) | T315I mutation, incomplete leukemia stem cell eradication [24] |
| EGFR | Transmembrane receptor tyrosine kinase regulating cell proliferation; mutated in NSCLC | Osimertinib, Gefitinib, Erlotinib | Non-Small Cell Lung Cancer (NSCLC) | T790M mutation, MET amplification, phenotypic transformation [22] |
| ALK | Drives tumorigenesis in NSCLC and lymphoma through fusion proteins | Crizotinib, Ceritinib, Lorlatinib | NSCLC, Anaplastic Large Cell Lymphoma | ALK secondary mutations, CNS metastases [24] |
| KRAS G12C | GTPase with constitutive activation in codon 12 mutations; prevalent in NSCLC | Sotorasib, Adagrasib | NSCLC, Colorectal Cancer | Secondary KRAS mutations, adaptive feedback reactivation [22] |
| FLT3 | Essential for hematopoiesis; mutations drive AML progression | Sorafenib, Gilteritinib | Acute Myeloid Leukemia (AML) | F691L gatekeeper mutation, D835 loop mutations [24] |
| VEGFR | Key regulator of angiogenesis, supporting tumor vascularization | Sorafenib, Sunitinib, Pazopanib | Renal Cell Carcinoma, Hepatocellular Carcinoma | Upregulation of alternative angiogenic factors (FGF, PDGF) [24] |

Experimental Protocol: CRISPR-Cas9 Screening for Kinase Inhibitor Resistance Mechanisms

Protocol Title: Genome-wide CRISPR Knockout Screening for Kinase Inhibitor Resistance Genes

Objective: To identify genetic drivers of resistance to targeted kinase inhibitors in cancer models.

Materials and Reagents:

- GeCKO v2 or similar genome-wide CRISPR knockout library

- Cas9-expressing cell line (e.g., A549, H1975 for NSCLC models)

- Target kinase inhibitor (e.g., Osimertinib for EGFR, Sotorasib for KRAS G12C)

- Lentiviral packaging system (psPAX2, pMD2.G)

- Polybrene (8 μg/mL)

- Puromycin for selection

- Next-generation sequencing platform

Procedure:

- Library Preparation: Amplify the CRISPR knockout library and prepare high-titer lentivirus.

- Cell Transduction: Transduce Cas9-expressing cells at low MOI (0.3-0.5) so that most transduced cells integrate a single guide RNA (sgRNA). Maintain a representation of at least 500 transduced cells per sgRNA.

- Selection: Apply puromycin selection (1-5 μg/mL, concentration dependent on cell line) for 5-7 days to eliminate non-transduced cells.

- Treatment: Split cells into treatment groups: (1) vehicle control (DMSO) and (2) target kinase inhibitor at clinically relevant concentration (e.g., IC50-IC80).

- Passaging: Culture cells for 3-4 weeks, maintaining inhibitor pressure and sufficient cell representation (>500 cells/sgRNA throughout).

- Genomic DNA Extraction: Harvest cells at endpoint and extract genomic DNA from both treatment and control arms.

- sgRNA Amplification & Sequencing: Amplify integrated sgRNA sequences with barcoded primers for multiplexing, and sequence them on an Illumina platform.

- Bioinformatic Analysis: Align sequences to reference library, count sgRNA reads, and use MAGeCK or similar algorithms to identify significantly enriched/depleted sgRNAs and genes in treatment versus control.
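Two calculations behind this protocol can be sketched in Python: how many cells to transduce for a given coverage and MOI, assuming Poisson-distributed integration, and a simple normalized log2 fold change per sgRNA collapsed to genes by the median. The fold-change part is a deliberately simplified illustration of what enrichment analysis measures; it is not MAGeCK's algorithm, and the library size and read counts are hypothetical.

```python
import math
from statistics import median


def cells_to_transduce(n_sgrnas, coverage=500, moi=0.3):
    """Cells to plate so that `coverage` transduced cells carry each sgRNA.

    Assumes Poisson integration: fraction of cells transduced = 1 - exp(-MOI).
    """
    frac_transduced = 1 - math.exp(-moi)
    return math.ceil(n_sgrnas * coverage / frac_transduced)


def single_integration_fraction(moi):
    """Among transduced cells, the Poisson fraction carrying exactly one sgRNA."""
    return moi * math.exp(-moi) / (1 - math.exp(-moi))


def log2_fold_changes(treated, control, pseudocount=0.5):
    """Per-sgRNA log2(treated / control) after normalizing to reads per million."""
    t_total, c_total = sum(treated.values()), sum(control.values())
    lfc = {}
    for guide in control:
        t = treated.get(guide, 0) / t_total * 1e6 + pseudocount
        c = control[guide] / c_total * 1e6 + pseudocount
        lfc[guide] = math.log2(t / c)
    return lfc


def gene_scores(lfc, guide_to_gene):
    """Collapse sgRNA-level changes to genes by the median (a simple heuristic)."""
    by_gene = {}
    for guide, value in lfc.items():
        by_gene.setdefault(guide_to_gene[guide], []).append(value)
    return {gene: median(values) for gene, values in by_gene.items()}


# Hypothetical read counts for two genes with two sgRNAs each
control = {"g1_a": 500, "g1_b": 450, "g2_a": 480, "g2_b": 520}
treated = {"g1_a": 2000, "g1_b": 1800, "g2_a": 120, "g2_b": 130}
genes = {"g1_a": "GENE1", "g1_b": "GENE1", "g2_a": "GENE2", "g2_b": "GENE2"}
scores = gene_scores(log2_fold_changes(treated, control), genes)  # GENE1 enriched, GENE2 depleted
```

At MOI 0.3, roughly 26% of cells are transduced and about 86% of those carry a single sgRNA, which is why a low MOI is preferred.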

Technical Notes: This approach has successfully identified genes like ITGA8 as key determinants of EGFR-TKI sensitivity in lung adenocarcinoma [25]. For KRAS G12C-mutant models, similar screens have revealed "collateral dependencies" and synergistic drug combinations that enhance KRAS inhibition efficacy [22].

CRISPR-Cas9 Technology for Overcoming Therapeutic Resistance

CRISPR-Cas Systems and Their Applications

CRISPR-Cas systems have evolved beyond simple gene editing tools to encompass a versatile toolkit for genetic manipulation. The table below compares key CRISPR systems and their research applications in therapeutic development.

Table 2: Comparison of CRISPR-Cas Systems for Therapeutic Development Applications

| CRISPR System | Key Characteristics | Therapeutic/Research Applications | Advantages | Limitations |
|---|---|---|---|---|
| CRISPR-Cas9 | DSB creation with NGG PAM; blunt ends [26] | Gene knockout, knock-in (with HDR), large-scale screening [22] | Well-characterized, high efficiency, numerous variants available | Higher off-target potential compared to other systems, limited by PAM |
| CRISPR-Cas12a (Cpf1) | DSB creation with TTTV PAM; sticky ends [26] | Precise knock-in (e.g., CAR integration), multiplexed editing [26] | Lower off-target rate, simpler gRNA structure, multiplex editing capability | Typically lower editing efficiency than Cas9, narrower PAM options |
| CRISPR-dCas9 (CRISPRi/a) | Nuclease-deficient; transcriptional modulation [26] | Gene expression perturbation (knockdown or activation) [25] [26] | Avoids DNA damage, reversible effects, precise expression control | Modest expression changes, requires sustained expression |
| CRISPR-CasRx | RNA-targeting Cas13 variant [25] | RNA knockdown, splicing modulation | Targets RNA without genomic alteration, transient effect | Limited to RNA-level effects, potential collateral RNase activity |

Experimental Protocol: Enhancing CRISPR-Cas9 Knock-in Efficiency in Primary T Cells

Protocol Title: DNA-PK Inhibitor-Enhanced CRISPR-Cas9 Knock-in for T-Cell Engineering

Objective: To achieve high-efficiency, site-specific integration of therapeutic transgenes (e.g., CAR, TCR) into the TRAC locus of primary human T cells.

Materials and Reagents:

- Primary human T cells from leukapheresis product

- CRISPR-Cas9 ribonucleoprotein (RNP) complex:

- High-fidelity Cas9 protein

- Synthetic sgRNA targeting TRAC locus

- DNA-PK inhibitor (e.g., Samotolisib, M3814, PI-103)

- HDR template: ssODN (for short insertions) or AAV vector (for large payloads such as a CAR expression cassette)

- Electroporation system (e.g., Lonza 4D-Nucleofector)

- GMP-compatible T-cell media with IL-7/IL-15

Procedure:

- T Cell Activation: Activate isolated T cells with CD3/CD28 beads for 24-48 hours.

- RNP Complex Formation: Complex synthetic sgRNA with Cas9 protein (3:1 molar ratio) and incubate 10-20 minutes at room temperature.

- DNA-PK Inhibitor Treatment: Pre-treat cells with DNA-PK inhibitor (e.g., 1 μM Samotolisib) for 1-2 hours before electroporation.

- Electroporation: Combine the RNP complex and HDR template with 1-2 × 10^6 T cells in an electroporation cuvette. Use an appropriate program (e.g., EO-115 on the 4D-Nucleofector).

- Recovery: Immediately transfer cells to pre-warmed media containing DNA-PK inhibitor.

- Inhibitor Washout: After 16-24 hours, wash cells and resuspend in fresh media with IL-7/IL-15 (10-20 ng/mL each).

- Expansion and Analysis: Culture cells for 7-14 days, monitoring integration efficiency by flow cytometry and functional assays.
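The RNP formulation step is mostly unit conversion. The sketch below assumes the 3:1 ratio is sgRNA:Cas9, a Cas9 mass of about 160 kDa, a 100-nt sgRNA at roughly 320 Da per nucleotide, and hypothetical stock concentrations; substitute the values from your reagent data sheets. It relies on 1 μM = 1 pmol/μL.

```python
def rnp_recipe(cas9_pmol, guide_ratio=3.0, cas9_mw=160_000, guide_nt=100,
               cas9_stock_uM=20.0, guide_stock_uM=100.0):
    """Amounts, masses and pipetting volumes for one RNP reaction.

    All defaults are assumptions for illustration, not values from the protocol.
    """
    guide_pmol = cas9_pmol * guide_ratio  # sgRNA:Cas9 molar ratio (assumed direction)
    guide_mw = guide_nt * 320             # rough average mass per RNA nucleotide, Da
    return {
        "cas9_pmol": cas9_pmol,
        "guide_pmol": guide_pmol,
        # pmol x g/mol = pg; divide by 1e6 to get micrograms
        "cas9_ug": cas9_pmol * cas9_mw / 1e6,
        "guide_ug": guide_pmol * guide_mw / 1e6,
        # 1 uM = 1 pmol/uL, so volume (uL) = amount (pmol) / concentration (uM)
        "cas9_ul": cas9_pmol / cas9_stock_uM,
        "guide_ul": guide_pmol / guide_stock_uM,
    }


recipe = rnp_recipe(cas9_pmol=50)  # hypothetical 50 pmol Cas9 per electroporation
```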

Technical Notes: Samotolisib has demonstrated GMP-compatibility with no negative impact on T-cell viability, phenotype, expansion, or effector function [27]. This protocol has achieved knock-in efficiencies sufficient for clinical product generation. The use of DNA-PK inhibitors enhances HDR by temporarily inhibiting the competing NHEJ pathway [27].

Integrated Workflow Visualization

Molecular Generation to Therapeutic Implementation Workflow

The following diagram illustrates the integrated research-to-application pipeline, from initial molecular discovery through validation and therapeutic engineering:

CRISPR-Cas9 Mechanism and DNA Repair Pathways

The following diagram details the molecular mechanism of CRISPR-Cas9 and the key cellular DNA repair pathways it harnesses for different editing outcomes:

Essential Research Reagent Solutions

Table 3: Key Research Reagents for Integrated Kinase Inhibitor and CRISPR-Cas9 Studies

| Reagent/Category | Specific Examples | Function/Application | Implementation Notes |
|---|---|---|---|
| CRISPR-Cas9 Systems | High-fidelity SpCas9, Cas12a (Cpf1), dCas9-KRAB | Gene knockout, knock-in, transcriptional modulation | Cas12a offers lower off-target rates; dCas9 systems avoid DNA damage [26] |
| DNA Repair Modulators | Samotolisib, M3814, PI-103 (DNA-PK inhibitors) | Enhance HDR efficiency in primary cells | GMP-compatible samotolisib shows no negative impact on T-cell function [27] |
| Delivery Systems | Lipid nanoparticles (LNPs), Electroporation, AAV | In vivo and ex vivo delivery of editing components | LNPs favor liver accumulation; suitable for redosing [28] |
| Kinase Inhibitors | Osimertinib (EGFR), Sotorasib (KRAS G12C), Gilteritinib (FLT3) | Target validation, resistance mechanism studies | Used in combination screens with CRISPR libraries to identify resistance mechanisms [24] [22] |
| Cell Engineering Tools | CRISPR-Cas9 RNP complexes, CAR/TCR templates | Generation of universal CAR-T cells, TCR insertion | Cas12a demonstrated superior multi-gene knock-in capability for bispecific CARs [26] |
| Screening Libraries | Genome-wide CRISPR knockout (GeCKO), custom sgRNA sets | High-throughput identification of resistance genes and synthetic lethal interactions | Requires deep sequencing and specialized analysis tools (MAGeCK) [22] |

Implementing ChemSpaceAL: A Step-by-Step Guide to Protein-Specific Molecular Generation

This document outlines the architecture and protocols for a targeted molecular generation system that integrates a GPT-based molecular generator with an active learning (AL) loop. This framework, known as the ChemSpaceAL methodology, is designed to efficiently explore vast chemical spaces and generate novel compounds with high binding affinity for specific protein targets [18]. The approach addresses a fundamental challenge in drug discovery: the computational intractability of exhaustively evaluating all possible generated molecules. By leveraging strategic sampling and machine learning, it aligns a generative model toward a specified objective with minimal resource expenditure [18].

System Architecture and Workflow

The architecture consists of two core components: a GPT-based generative model pretrained on extensive chemical databases, and an active learning loop that iteratively refines the model's output based on selective feedback from a scoring function.

The following diagram illustrates the integrated workflow of the GPT-based generator and the active learning loop:

Core Component 1: The GPT-Based Molecular Generator

The foundation of this architecture is a Generative Pre-trained Transformer (GPT) model, which treats molecular structures as a chemical language.

Model Design and Pre-training

The generator is built on a transformer decoder architecture [29] [30]. Its pre-training process enables it to learn the fundamental "syntax" and "grammar" of chemistry.

- Representation: Molecules are represented as SMILES (Simplified Molecular Input Line Entry System) strings, a one-dimensional text-based notation [18] [30].

- Architecture: The model uses an autoregressive training objective, predicting the next token in a sequence based on the preceding tokens [30].

- Pre-training Data: The model is trained on several million unique, valid drug-like SMILES strings drawn from public databases (e.g., ChEMBL, GuacaMol, MOSES, BindingDB) [18]. This teaches the model general chemical rules and the structure of drug-like molecules.

This pre-training is crucial for enabling the model to generate a wide array of chemically valid and diverse molecules from the outset [18].

Core Component 2: The Active Learning Loop

The active learning loop is the iterative process that steers the general-purpose generator toward a specific target. The following diagram details the data flow and key operations within a single cycle:

Step-by-Step AL Protocol

This section provides a detailed methodology for executing the active learning cycle.

Step 1: Molecular Generation

- Action: Use the current GPT model to generate a large set of molecules (e.g., 100,000 molecules that are unique after SMILES canonicalization) [18].

- Purpose: To create a diverse pool of candidates for evaluation.
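Step 1 can be sketched as a draw-and-deduplicate loop. This is a minimal illustration, not ChemSpaceAL's own code. Here `sample` stands in for one autoregressive draw from the GPT model. `canonicalize` stands in for a toolkit call that returns a canonical SMILES string, or `None` for an invalid string; one example would be a wrapper around RDKit's `Chem.MolFromSmiles` and `Chem.MolToSmiles`. The names `collect_unique`, `target_n`, and `max_draws` are hypothetical.

```python
from typing import Callable, Optional


def collect_unique(
    sample: Callable[[], str],
    canonicalize: Callable[[str], Optional[str]],
    target_n: int,
    max_draws: int,
) -> list[str]:
    """Draw SMILES until `target_n` unique canonical forms are collected.

    `canonicalize` must return None for strings that are not valid molecules.
    `max_draws` bounds the loop in case the model emits many duplicates.
    """
    seen: set[str] = set()
    unique: list[str] = []
    for _ in range(max_draws):
        canon = canonicalize(sample())
        if canon is None or canon in seen:
            continue  # skip invalid strings and duplicates of earlier draws
        seen.add(canon)
        unique.append(canon)
        if len(unique) == target_n:
            break
    return unique
```

The `max_draws` cap is a safeguard assumed here. It stops a model that produces mostly duplicates or invalid strings from looping indefinitely.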

Step 2: Chemical Space Mapping

- Action:

- Calculate molecular descriptors (e.g., Morgan fingerprints, molecular weight, LogP) for each generated molecule [18].

- Project the descriptor vectors into a space reduced by Principal Component Analysis (PCA). This space is constructed once, from the descriptors of all molecules in the original pretraining set, so that coordinates remain consistent across AL iterations [18].

- Apply k-means clustering on the generated molecules within this reduced space to group molecules with similar properties [18].

- Purpose: To structure the generated chemical space and enable efficient, representative sampling.
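The mapping step comes down to two operations. Each descriptor vector is projected onto a PCA basis fitted once on the pretraining set, and the projected points are then clustered. The sketch below assumes the basis is already available as a mean vector and a list of component rows, for instance the `mean_` and `components_` attributes of a fitted scikit-learn `PCA`. It implements plain Lloyd's k-means in pure Python so the example is self-contained; a production pipeline would more likely call scikit-learn's `KMeans`. All function names are illustrative.

```python
import random
from typing import Optional, Sequence

Vector = list[float]


def project(x: Sequence[float], mean: Sequence[float],
            components: Sequence[Sequence[float]]) -> Vector:
    """Project one descriptor vector onto a fixed PCA basis (rows of `components`)."""
    centered = [xi - mi for xi, mi in zip(x, mean)]
    return [sum(c * v for c, v in zip(comp, centered)) for comp in components]


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def kmeans(points: list[Vector], k: int, iters: int = 100, seed: int = 0) -> Optional[list[int]]:
    """Lloyd's k-means; returns one cluster label per point."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]  # random initial centroids
    labels: Optional[list[int]] = None
    for _ in range(iters):
        # Assignment step: nearest centroid by squared Euclidean distance.
        new_labels = [min(range(k), key=lambda j: sq_dist(p, centroids[j])) for p in points]
        if new_labels == labels:
            break  # assignments are stable, so the algorithm has converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # keep the previous centroid if a cluster becomes empty
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels
```

Keeping the basis fixed is what makes cluster positions comparable from one AL iteration to the next. Refitting PCA on each new batch would move the coordinate system along with the model.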

Step 3: Strategic Sampling and Evaluation

- Action:

- Sample a small subset (e.g., ~1%) of molecules from each cluster [18].

- Subject this sampled subset to a computationally expensive scoring function. In the referenced protocol, this involves molecular docking against the target protein (e.g., using AutoDock Vina) followed by scoring with an attractive interaction-based function [18].

- Establish a score threshold for "good" molecules. This can be derived from known inhibitors (e.g., the lowest score among FDA-approved inhibitors of the target) [18].

- Purpose: To gain an understanding of the binding potential within each region of the chemical space without the cost of docking all 100,000 molecules.
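The sampling and thresholding logic of Step 3 can be sketched under a few stated assumptions. Cluster labels come from the previous step. The per-cluster sample size is rounded to the nearest integer with a floor of one molecule, a choice made here so that small clusters are still probed. Scores follow the convention of the attractive interaction-based function, where higher means stronger predicted binding. The docking call itself is out of scope. `sample_per_cluster`, `threshold_from_references`, and `meets_threshold` are hypothetical helpers.

```python
import random


def sample_per_cluster(labels, fraction=0.01, seed=0):
    """Pick about `fraction` of the molecule indices in each cluster.

    Returns {cluster_label: sorted list of sampled molecule indices}.
    Sample size is rounded to the nearest integer, with a minimum of one.
    """
    rng = random.Random(seed)
    members = {}
    for idx, label in enumerate(labels):
        members.setdefault(label, []).append(idx)
    picked = {}
    for label, idxs in members.items():
        n = min(len(idxs), max(1, round(fraction * len(idxs))))
        picked[label] = sorted(rng.sample(idxs, n))
    return picked


def threshold_from_references(reference_scores):
    """Threshold = lowest score among known inhibitors (higher score = better)."""
    return min(reference_scores)


def meets_threshold(score, threshold):
    """A molecule counts as 'good' if it scores at least as well as the weakest reference."""
    return score >= threshold
```

Only the indices returned by `sample_per_cluster` would be sent to docking. The remaining molecules in each cluster inherit that cluster's estimated score potential.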

Step 4: Active Learning Training Set Construction

- Action: Create a new dataset for fine-tuning the GPT model by:

- Proportional Sampling: Sampling molecules from each cluster proportionally to the mean scores of the evaluated molecules within that cluster. Clusters with higher average scores contribute more molecules to the training set [18].

- Elite Inclusion: Adding replicas of the evaluated molecules whose scores meet the predefined threshold [18].

- Purpose: To create a biased dataset that over-represents high-scoring regions of the chemical space, teaching the model to generate more molecules with desirable properties.
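Step 4 combines a proportional allocation with elite replication. This minimal sketch makes several assumptions for illustration; they are not the exact rules of the ChemSpaceAL package:

- Each cluster's weight is the mean score of its evaluated members.
- Clusters with no evaluated member, or with a negative mean, receive weight zero.
- Allocated counts are rounded with the largest-remainder method.
- Molecules are drawn with replacement within a cluster.

`n_replicas` is a hypothetical parameter for how many copies of each above-threshold molecule are added.

```python
import random


def allocate(total, weights):
    """Split `total` slots across keys in proportion to non-negative weights.

    Uses largest-remainder rounding so the counts sum exactly to `total`.
    """
    weight_sum = sum(weights.values())
    if weight_sum <= 0:
        raise ValueError("at least one cluster needs a positive weight")
    raw = {key: total * w / weight_sum for key, w in weights.items()}
    counts = {key: int(value) for key, value in raw.items()}
    leftover = total - sum(counts.values())
    # Give the remaining slots to the keys with the largest fractional parts.
    by_remainder = sorted(raw, key=lambda key: raw[key] - counts[key], reverse=True)
    for key in by_remainder[:leftover]:
        counts[key] += 1
    return counts


def build_al_training_set(cluster_members, scores, threshold, total, n_replicas, seed=0):
    """Build a fine-tuning set biased toward high-scoring clusters.

    cluster_members: {cluster_label: [smiles, ...]} for all generated molecules
    scores: {smiles: score} for the evaluated (docked) subset only
    """
    rng = random.Random(seed)
    weights = {}
    for label, members in cluster_members.items():
        evaluated = [scores[s] for s in members if s in scores]
        mean = sum(evaluated) / len(evaluated) if evaluated else 0.0
        weights[label] = max(0.0, mean)  # unevaluated or negative-mean clusters get weight 0
    training = []
    # Proportional sampling: draw with replacement inside each cluster.
    for label, n in allocate(total, weights).items():
        if n > 0:
            training.extend(rng.choices(cluster_members[label], k=n))
    # Elite inclusion: replicate every evaluated molecule at or above the threshold.
    for smiles, score in scores.items():
        if score >= threshold:
            training.extend([smiles] * n_replicas)
    return training
```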

Step 5: Model Fine-tuning

- Action: Fine-tune the pre-trained GPT model on the constructed AL training set [18].

- Purpose: To align the model's generative policy with the objective of producing molecules that score well against the target.

This cycle (Steps 1-5) is repeated for multiple iterations, progressively shifting the model's output distribution toward the desired chemical space [18].
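The outer loop that ties Steps 1 to 5 together can be written with each step injected as a function. This is a hypothetical orchestration sketch, not the ChemSpaceAL package API. The callables stand in for generation, clustering, sampling plus docking, training-set construction, and fine-tuning. Each iteration records the mean score of the evaluated subset, which can be compared with the mean scores reported for the c-Abl case study.

```python
from typing import Any, Callable


def run_active_learning(
    model: Any,
    n_iterations: int,
    generate: Callable[[Any], list],
    cluster: Callable[[list], list],
    sample_and_score: Callable[[list, list], dict],
    build_training_set: Callable[[list, list, dict], list],
    fine_tune: Callable[[Any, list], Any],
) -> tuple[Any, list[float]]:
    """Repeat Steps 1-5 and track the mean evaluated score per iteration."""
    history: list[float] = []
    for _ in range(n_iterations):
        molecules = generate(model)                               # Step 1: generation
        labels = cluster(molecules)                               # Step 2: mapping and clustering
        scores = sample_and_score(molecules, labels)              # Step 3: {molecule: score}
        training = build_training_set(molecules, labels, scores)  # Step 4: AL training set
        model = fine_tune(model, training)                        # Step 5: fine-tuning
        history.append(sum(scores.values()) / len(scores) if scores else float("nan"))
    return model, history
```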

Experimental Protocols and Validation

Benchmarking Protocol: Evaluating Generated Compounds

To validate the designed molecules, a comprehensive benchmarking protocol should be employed. The following metrics, commonly reported on established structure-based benchmarks such as CrossDocked2020, provide a multi-faceted evaluation [30]:

Table 1: Key Metrics for Evaluating Generated Molecules

| Metric | Description | Measurement Tool | Optimal Range/Value |
|---|---|---|---|
| Binding Affinity | Estimated strength of binding to the target protein. | Docking Score (AutoDock Vina) [30] | Lower (more negative) is better. |
| Drug-Likeness (QED) | Quantitative Estimate of Drug-likeness. | RDKit [29] [30] | 0 to 1 (Higher is better). |
| Synthetic Accessibility (SAS) | Estimated ease of synthesizing the molecule. | RDKit [29] [30] | 1 to 10 (Lower is better). |
| Lipophilicity (LogP) | Octanol-water partition coefficient, a measure of lipophilicity. | RDKit [30] | 0–5 for oral drugs [30]. |
| Molecular Diversity | Structural diversity of the generated set. | Pairwise Tanimoto similarity between Morgan fingerprints [30] | Higher diversity (lower mean similarity) is better. |
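One common way to summarise diversity is internal diversity: one minus the mean pairwise Tanimoto similarity over the generated set. The sketch below assumes each fingerprint is given as a set of on-bit indices, a stand-in for Morgan bit vectors; with RDKit one would typically use `DataStructs.BulkTanimotoSimilarity`. It also adopts a convention chosen here, namely that two empty fingerprints have similarity 0.

```python
from itertools import combinations


def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity of two on-bit sets."""
    union = a | b
    if not union:
        return 0.0  # convention assumed here for two empty fingerprints
    return len(a & b) / len(union)


def internal_diversity(fps: list) -> float:
    """1 - mean pairwise Tanimoto similarity over all unordered pairs."""
    pairs = list(combinations(fps, 2))
    if not pairs:
        raise ValueError("need at least two fingerprints")
    return 1.0 - sum(tanimoto(a, b) for a, b in pairs) / len(pairs)
```

The pairwise loop is quadratic in the number of molecules. For sets the size of a 100,000-molecule generation round, a random subsample or a bulk similarity routine is the practical choice.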

Case Study Protocol: Targeting c-Abl Kinase

A practical validation of the ChemSpaceAL methodology involves applying it to a specific target with known inhibitors.

- Target Protein: c-Abl kinase (PDB ID: 1IEP), a well-known anticancer target with multiple FDA-approved inhibitors (e.g., imatinib, nilotinib) [18].

- Objective: Fine-tune the generative model to produce molecules similar to these known inhibitors without prior knowledge of their structures [18].

- Validation Method:

- After multiple AL iterations, calculate the Tanimoto similarity (based on molecular fingerprints) between the generated molecular ensemble and each known inhibitor. Success is indicated by a consistent increase in these similarity scores [18].

- Inspect the final set of generated molecules for the exact replication of known inhibitors (e.g., imatinib and bosutinib were reproduced exactly in the referenced study) [18].
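The validation step can be sketched as a per-inhibitor report. Generated molecules are keyed by canonical SMILES, and their fingerprints are given as sets of on-bit indices (again a simplification of real Morgan bit vectors). For each known inhibitor, the report gives the maximum and mean Tanimoto similarity to the generated ensemble, plus whether the inhibitor's canonical SMILES appears verbatim. The exact-match test is only meaningful if both sides were canonicalized with the same toolkit. Summarising the ensemble by max and mean is an assumption made here, and `reference_report` is a hypothetical name.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def reference_report(generated: dict, references: dict) -> dict:
    """Compare a generated ensemble against known inhibitors.

    generated: {canonical_smiles: fingerprint_bits}
    references: {name: (canonical_smiles, fingerprint_bits)}
    """
    report = {}
    for name, (ref_smiles, ref_fp) in references.items():
        sims = [tanimoto(fp, ref_fp) for fp in generated.values()]
        report[name] = {
            "max_tanimoto": max(sims),
            "mean_tanimoto": sum(sims) / len(sims),
            "exact_match": ref_smiles in generated,  # exact reproduction of the inhibitor
        }
    return report
```

Running this after every AL iteration gives the similarity trajectories described above. Both summary values should rise if the model is moving toward the inhibitors' region of chemical space.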

Table 2: Performance Progression for c-Abl Kinase Case Study

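| AL Iteration | % of Molecules Meeting Score Threshold (C Model) | Mean Score (C Model) | % of Molecules Meeting Score Threshold (M Model) | Mean Score (M Model) |
|---|---|---|---|---|
| 0 (Pre-AL) | 38.8% | 32.8 | 21.7% | 30.3 |
| 3 | 81.2% | 44.0 | 68.8% | 39.9 |
| 5 | 91.6% | 46.0 | 80.3% | 41.0 |

Scores in this table come from the attractive interaction-based scoring function, for which higher values indicate stronger predicted binding. This is the opposite of the sign convention for raw AutoDock Vina docking scores.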

The Scientist's Toolkit: Research Reagent Solutions

The following table details key software and data resources required to implement the ChemSpaceAL methodology.

Table 3: Essential Research Reagents and Resources

| Item | Type | Function / Description | Example / Source |
|---|---|---|---|
| Pretraining Datasets | Data | Provide a diverse foundation of chemical knowledge for the GPT model. | ChEMBL, GuacaMol, MOSES, BindingDB [18] |
| Molecular Generator | Software | The core GPT model that generates novel molecular structures as SMILES strings. | Transformer decoder architecture [29] [18] |
| Descriptor Calculator | Software | Computes numerical representations of molecules for chemical space mapping. | RDKit (for Morgan fingerprints, etc.) [30] |
| Docking Software | Software | Predicts the binding pose and affinity of a molecule to a protein target. | AutoDock Vina [30] |
| Protein Data Bank (PDB) | Data | Source for 3D structures of the target proteins. | PDB ID 1IEP for c-Abl kinase [18] |
| ChemSpaceAL Package | Software | Open-source Python package facilitating the implementation of the AL workflow [18]. | ChemSpaceAL [18] |

The development of robust molecular machine learning (ML) models is fundamentally constrained by existing pretraining datasets. These datasets often lack the scale, diversity, and rigorous curation that models need to generalize across the varied chemical tasks encountered in drug discovery [31]. Because the size, diversity, and quality of pretraining data critically determine the generalization ability of foundation models [31], this application note details a comprehensive pretraining strategy designed to overcome these limitations. It covers the construction of a multi-source molecular dataset, effective pretraining methodologies, and protocols for integrating this chemical knowledge into the targeted molecular generation pipeline of the ChemSpaceAL framework.

Data Curation and Processing Protocol

A high-quality pretraining dataset is the cornerstone of an effective molecular representation learning strategy. The protocol described herein emphasizes scalability, diversity, and quality control.

Source Data Aggregation

The foundation of a comprehensive pretraining dataset is built upon large, general-purpose chemical databases that aggregate experimentally synthesized compounds from multiple suppliers and sources [31]. We recommend sourcing from the following, noting their key characteristics in Table 1:

- UniChem & PubChem: Large-scale repositories containing experimentally verified compounds, providing broad coverage of chemical space [31].

- ZINC: A curated database of commercially available compounds, often used for virtual screening [31].

- ChEMBL: A database of bioactive molecules with drug-like properties, useful for incorporating bio-relevant chemical knowledge [31].

Table 1: Key Characteristics of Primary Data Sources

| Database | Primary Content | Scale (Approx. Molecules) | Key Strengths |
|---|---|---|---|
| UniChem/PubChem | Experimentally synthesized compounds | ~200 Million (aggregate) | High diversity, real-world compounds [31] |
| ZINC | Commercially available compounds | Tens of Millions | Synthetically accessible, drug-like focus [31] |
| ChEMBL | Bioactive molecules | Millions | Bio-relevant, associated with target data [31] |

Multi-Step Processing and Filtering Workflow

Raw data from source databases must undergo a uniform processing pipeline to ensure quality and consistency. The workflow involves three sequential stages, implemented using cheminformatics toolkits like RDKit [32]:

- Preprocessing: Initial data retrieval and parsing of molecular structures from source formats (e.g., SDF, SMILES).

- Standardization: Normalization of molecular structures, including neutralization of charges, standardization of tautomers, and removal of explicit hydrogens to create a consistent representation.

- Filtering: Application of rules to remove undesirable structures. This includes deduplication, removal of molecules with invalid valences or with atoms inappropriate for small-molecule drug discovery (whether to keep metal-containing organometallics is context-dependent), and exclusion of extremely large molecules such as peptides and polymers [31].

This pipeline yields a standardized, non-redundant dataset of small molecules suitable for pretraining. The final step involves merging the processed datasets from all sources and performing a global deduplication to create the final pretraining corpus [31].
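The filter and merge stages can be sketched as below. `standardize` is a hypothetical callable that parses and standardizes one raw SMILES string. It returns the canonical SMILES, element set, and heavy-atom count, or `None` if the string cannot be parsed. With RDKit it might be built from the `rdMolStandardize` module, for example `Uncharger` and `TautomerEnumerator`. The allowed-element set and the heavy-atom cap of 70 are illustrative assumptions, not values from the cited work. The result maps each unique canonical SMILES to the set of sources it came from, which performs the global deduplication in the same pass.

```python
from typing import Callable, Iterable, NamedTuple, Optional


class Parsed(NamedTuple):
    smiles: str               # canonical SMILES after standardization
    elements: frozenset[str]  # element symbols present in the molecule
    heavy_atoms: int          # number of non-hydrogen atoms


# Hypothetical rule set; adjust to the project's chemistry.
ALLOWED_ELEMENTS = frozenset({"H", "B", "C", "N", "O", "F", "Si", "P", "S", "Cl", "Br", "I"})


def build_corpus(
    sources: dict[str, Iterable[str]],
    standardize: Callable[[str], Optional[Parsed]],
    allowed: frozenset = ALLOWED_ELEMENTS,
    max_heavy_atoms: int = 70,
) -> dict[str, set[str]]:
    """Standardize, filter, and globally deduplicate molecules from several sources."""
    corpus: dict[str, set[str]] = {}
    for source_name, raw_smiles in sources.items():
        for raw in raw_smiles:
            parsed = standardize(raw)
            if parsed is None:
                continue  # unparsable string or invalid valence
            if not parsed.elements <= allowed or parsed.heavy_atoms > max_heavy_atoms:
                continue  # disallowed element or too large (e.g., peptide, polymer)
            corpus.setdefault(parsed.smiles, set()).add(source_name)
    return corpus
```

Keeping the source provenance instead of a flat set costs little memory. It also makes it easy to audit how much each database contributes after deduplication.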

Pretraining Methodology and Experimental Protocols

The curated dataset enables the pretraining of molecular encoders through self-supervised tasks that learn general chemical knowledge without requiring property labels.

Selecting a Molecular Representation

The choice of molecular representation dictates the model architecture and the type of structural information that can be learned. Common representations include:

- 2D Molecular Graphs: Atoms as nodes and bonds as edges; captures topological connectivity [33].

- SMILES Strings: Text-based representation; amenable to language model architectures [33].

- Molecular Images: 2D depictions of structures; allows leveraging powerful vision foundation models [32].

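In the ChemSpaceAL framework, the generator itself is a GPT model trained on SMILES strings, so a text-based representation is the natural fit for generation. Graph-based encoders, which natively encode structural connectivity, remain valuable for learning complementary molecular representations, such as those produced by the multi-task pretraining described below.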

Multi-Task Pretraining Framework (M4 Paradigm)

To learn comprehensive chemical knowledge, we adopt a multi-task pretraining paradigm. This approach forces the model to integrate different facets of molecular information, leading to more robust and generalizable representations [33]. A highly effective framework, termed M4, incorporates the following four tasks:

- Molecular Fingerprint Prediction: A supervised task where the model predicts pre-defined molecular fingerprints (e.g., ECFP). This teaches the model to recognize substructural features and their correlations with chemical properties [33].

- Functional Group Prediction: A supervised task that identifies specific functional groups (e.g., carbonyl, hydroxyl) within the molecule. This injects critical chemical prior knowledge, guiding the model to recognize key determinants of molecular reactivity and function [33].

- 2D Atomic Distance Prediction: A self-supervised task where the model predicts the topological distance between atom pairs in the molecular graph. This enhances the model's understanding of long-range atomic interactions and overall molecular topology [33].

- 3D Bond Angle Prediction: A self-supervised task that predicts the spatial bond angles from a low-energy molecular conformation. This incorporates crucial 3D stereochemical information, making the model conformation-aware [33].
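Two of the M4 targets can be derived directly from structure without labels. As an illustration, not the M4 reference implementation, the sketch below computes the 2D topological distance matrix by breadth-first search over a bond list. It also computes a bond angle in degrees from the 3D coordinates of three bonded atoms. In practice the coordinates would come from a low-energy conformer, for example one generated with RDKit's ETKDG embedding. Marking unreachable atom pairs with `-1` is an assumption of this sketch.

```python
import math
from collections import deque


def topological_distances(n_atoms, bonds):
    """All-pairs shortest-path lengths (in bonds) via BFS from every atom."""
    adj = [[] for _ in range(n_atoms)]
    for i, j in bonds:
        adj[i].append(j)
        adj[j].append(i)
    dist = [[-1] * n_atoms for _ in range(n_atoms)]  # -1 marks unreachable pairs
    for src in range(n_atoms):
        dist[src][src] = 0
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if dist[src][v] == -1:
                    dist[src][v] = dist[src][u] + 1
                    queue.append(v)
    return dist


def bond_angle(a, b, c):
    """Angle in degrees at atom b between bonds b-a and b-c, from 3D coordinates."""
    u = [ai - bi for ai, bi in zip(a, b)]
    v = [ci - bi for ci, bi in zip(c, b)]
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(x * x for x in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("coincident atoms have no defined angle")
    cos_angle = sum(x * y for x, y in zip(u, v)) / (norm_u * norm_v)
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))
```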

These tasks are balanced during training using a Dynamic Adaptive Multitask Learning strategy, which automatically adjusts the loss weight of each task to optimize learning [33].
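The update rule of the Dynamic Adaptive Multitask Learning strategy is not spelled out here. The sketch below therefore swaps in a different, well-known heuristic, Dynamic Weight Average (DWA), purely to show how loss weights can be adjusted automatically. It should not be read as the M4 strategy itself. Under DWA, tasks whose loss is falling more slowly receive larger weights, and the weights sum to the number of tasks. The default `temperature=2.0` and the equal-weight warm-up for the first two epochs are assumptions of this sketch.

```python
import math


def dynamic_weight_average(loss_history, temperature=2.0):
    """DWA task weights from per-task loss histories.

    loss_history: {task: [loss_epoch_1, loss_epoch_2, ...]} (most recent last)
    Returns {task: weight}; the weights sum to the number of tasks.
    """
    tasks = list(loss_history)
    k = len(tasks)
    if any(len(loss_history[t]) < 2 for t in tasks):
        return {t: 1.0 for t in tasks}  # equal weights until two epochs are observed
    # Ratio of the last two losses: close to 1 means the task is learning slowly.
    ratios = {t: loss_history[t][-1] / loss_history[t][-2] for t in tasks}
    exps = {t: math.exp(r / temperature) for t, r in ratios.items()}
    z = sum(exps.values())
    return {t: k * e / z for t, e in exps.items()}
```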

Diagram 1: M4 Multi-Task Pretraining Framework

Integration with the ChemSpaceAL Pipeline

The pretrained molecular encoder serves as a foundational component within the broader ChemSpaceAL active learning methodology for targeted molecular generation.

Workflow Integration Protocol

The integration protocol involves transferring the knowledge from the pretrained model to the generative active learning cycle, as illustrated in the workflow below.

Diagram 2: Integration into ChemSpaceAL Workflow

The specific integration points are: