Comparative Analysis of Neural Network Architectures for Chemical Property Prediction: From GNNs to KANs

Abstract

The accurate prediction of molecular properties is a cornerstone of modern chemical and pharmaceutical research, directly impacting drug discovery and materials science. This article provides a comprehensive comparison of contemporary neural network architectures designed for this critical task. We explore the foundational principles of Graph Neural Networks (GNNs), including GIN, EGNN, and Graphormer, and investigate the emergence of novel frameworks like Kolmogorov-Arnold Networks (KANs) integrated into graph-based models (KA-GNNs). The discussion extends to methodological applications, practical troubleshooting for data scarcity and model generalization, and a rigorous validation of architectural performance across standardized benchmarks. Aimed at researchers and development professionals, this review synthesizes current advancements to guide the selection and optimization of predictive models, ultimately streamlining the path from computational screening to experimental validation.

From Molecules to Graphs: Foundational Architectures for Molecular Representation

The Shift from Traditional Descriptors to Graph-Based Learning

The field of computational chemistry is undergoing a significant transformation, moving away from reliance on handcrafted molecular descriptors toward end-to-end graph-based learning. This paradigm shift is powered by the emergence of Graph Neural Networks (GNNs), which directly process molecular structures as graphs, inherently capturing atomic interactions and topological information that traditional methods often miss. Traditional Quantitative Structure-Property Relationship (QSPR) models depend on expert-derived molecular descriptors—such as 0D (atomic properties), 1D (functional groups), and 2D (topological indices) descriptors—which can be time-consuming to generate and may omit critical structural information [1]. In contrast, GNNs operate directly on the molecular graph, where atoms are represented as nodes and bonds as edges, enabling automated, data-driven feature extraction that has demonstrated superior performance across a wide range of chemical property prediction tasks [2] [3]. This article objectively compares the performance of these approaches, detailing experimental protocols and providing quantitative evidence from recent studies to guide researchers in selecting appropriate architectures for drug discovery and materials science applications.

Performance Comparison: Traditional Descriptors vs. Graph-Based Learning

Quantitative Benchmarking Across Prediction Tasks

Recent comprehensive studies directly benchmark the performance of traditional machine learning methods using molecular fingerprints against various GNN architectures. The results consistently demonstrate the advantage of graph-based learning. In a large-scale assessment of ecotoxicity prediction, Graph Convolutional Networks (GCN) achieved the highest performance, with Area Under the ROC Curve (AUC) values ranging between 0.982 and 0.992 in same-species predictions for fish, crustaceans, and algae [3]. These models significantly outperformed traditional machine learning approaches (KNN, NB, RF, SVM, XGB) using Morgan, MACCS, and Mol2vec fingerprints [3].

Similar advantages are observed in reaction yield prediction. As shown in Table 1, Message Passing Neural Networks (MPNN) achieved an R² value of 0.75 when predicting yields for cross-coupling reactions, surpassing other GNN architectures and traditional descriptor-based methods [2].

Table 1: Performance of various GNN architectures for predicting yields in cross-coupling reactions [2]

| GNN Architecture | R² Score | MAE | RMSE |
|---|---|---|---|
| MPNN | 0.75 | - | - |
| ResGCN | - | - | - |
| GraphSAGE | - | - | - |
| GAT | - | - | - |
| GATv2 | - | - | - |
| GCN | - | - | - |
| GIN | - | - | - |

Performance in Molecular Generation and Optimization

Because a trained GNN predictor is differentiable with respect to its input graph, it can be used in reverse for molecular generation by optimizing the input rather than the weights. Research demonstrates that direct inverse design generators (DIDgen) built on GNNs can generate molecules with specific target properties, such as HOMO-LUMO gaps, with hit rates comparable to or better than state-of-the-art genetic algorithms like JANUS [4]. This approach hits target electronic properties with high precision while consistently generating more diverse molecular structures [4]. Furthermore, the method produced a dataset of 1,617 new molecules with DFT-verified properties, which serves as a benchmark for QM9-trained models [4].

Experimental Protocols and Methodologies

Protocol 1: Molecular Property Prediction with GNNs

Objective: To predict molecular properties (e.g., ecotoxicity, energy gaps) from graph-structured molecular data.

Dataset Preparation: Publicly available datasets such as QM9 (for electronic properties) [4] [5] or ADORE (for ecotoxicity) [3] are commonly used. Molecules are represented as graphs where nodes are atoms (with features like atomic number, hybridization) and edges are bonds (with features like bond order, aromaticity) [1].

Model Architecture and Training:

- Graph Construction: Molecules are converted from SMILES strings to graph representations using tools like PyTorch Geometric [6] [7].

- GNN Layer: Architectures like GCN, GAT, or MPNN are employed. For example, a GCN layer updates node representations by aggregating features from neighboring nodes [3].

- Readout Layer: Node representations are aggregated into a graph-level representation using sum, mean, or attention-based pooling [8].

- Prediction Head: A fully connected network maps the graph representation to the target property (e.g., toxicity class, energy gap) [3].

- Training: Models are trained using appropriate loss functions (e.g., cross-entropy for classification, mean squared error for regression) with optimization techniques like Adam [7].
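The readout choices named in the protocol above (sum, mean, attention-based pooling) can be compared with a minimal pure-Python sketch. The function names and the scoring vector `gate` are hypothetical, introduced only for illustration; a real pipeline would rely on a library such as PyTorch Geometric, and the attention scoring used in [8] may differ from the dot-product form assumed here.

```python
import math

def sum_readout(node_feats):
    """Sum node feature vectors column-wise into one graph-level vector."""
    return [sum(col) for col in zip(*node_feats)]

def mean_readout(node_feats):
    """Average node feature vectors; insensitive to molecule size."""
    n = len(node_feats)
    return [s / n for s in sum_readout(node_feats)]

def attention_readout(node_feats, gate):
    """Softmax-weighted sum of node features.

    Assumption: each node's score is the dot product of its features
    with a (normally learned) vector `gate`.
    """
    scores = [sum(f * g for f, g in zip(h, gate)) for h in node_feats]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(node_feats[0])
    return [sum(w * h[d] for w, h in zip(weights, node_feats)) for d in range(dim)]
```

Sum pooling preserves information about molecule size, mean pooling removes it, and attention pooling lets the model decide which atoms matter most. With a zero `gate` vector, attention pooling reduces to mean pooling.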

Protocol 2: Inverse Molecular Design with GNNs

Objective: To generate novel molecular structures with desired properties by optimizing the input graph of a pre-trained GNN predictor [4].

Workflow:

- Pre-trained Predictor: A GNN is first trained to predict a target property (e.g., HOMO-LUMO gap) from molecular graphs.

- Gradient Ascent: Starting from a random graph or existing molecule, the molecular graph (both adjacency matrix and node features) is iteratively optimized via gradient ascent to maximize the predicted target property [4].

- Valence Constraints: Chemical validity is enforced through constrained graph construction. The adjacency matrix is constructed from a weight vector using a sloped rounding function to maintain non-zero gradients, while the feature vector is determined by atom valences derived from the adjacency matrix [4].

- Validation: Generated molecules are validated using external methods like Density Functional Theory (DFT) to confirm properties [4].
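The valence-constraint step can be illustrated with a short sketch. It assumes the sloped rounding function has the form round(x) + slope * (x - round(x)), which keeps values near integers while leaving a constant non-zero derivative; the exact form and slope used in [4] are not given here, so both are assumptions, as are the function names.

```python
import math

def sloped_round(x, slope=0.1):
    """Round to the nearest integer but keep a small linear slope.

    The derivative is `slope` almost everywhere instead of zero, so
    gradient-based optimization can still move the underlying weight.
    """
    nearest = math.floor(x + 0.5)
    return nearest + slope * (x - nearest)

def adjacency_from_weights(weights, n_atoms, slope=0.1):
    """Build a symmetric bond-order matrix from upper-triangle weights."""
    adj = [[0.0] * n_atoms for _ in range(n_atoms)]
    k = 0
    for i in range(n_atoms):
        for j in range(i + 1, n_atoms):
            adj[i][j] = adj[j][i] = sloped_round(weights[k], slope)
            k += 1
    return adj

# Hypothetical weights for a 3-atom graph: bonds (0,1), (0,2), (1,2).
adj = adjacency_from_weights([0.9, 0.1, 2.2], n_atoms=3)
valences = [sum(row) for row in adj]  # row sums approximate atom valences
```

Because the matrix is filled symmetrically from a single weight vector, every candidate graph stays undirected without an extra constraint.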

Protocol 3: Molecular Symmetry Prediction

Objective: To predict the point group of a molecule's most stable 3D conformation using only its 2D topological graph [5].

Methodology:

- Input: 2D molecular graphs from datasets like QM9.

- Model: Graph Isomorphism Networks (GIN) are particularly effective, achieving 92.7% accuracy and an F1-score of 0.924 by capturing both local connectivity and global structural information crucial for symmetry determination [5].

- Significance: This approach demonstrates that GNNs can learn complex 3D symmetry properties directly from 2D structural information, bypassing expensive conformational analysis [5].

Architectural Innovations and Advancements

Enhancing Expressivity and Interpretability

Recent GNN architectures integrate advanced mathematical concepts to improve performance. Kolmogorov-Arnold GNNs (KA-GNNs) incorporate learnable univariate functions (e.g., Fourier series, B-splines) into node embedding, message passing, and readout components, leading to superior expressivity, parameter efficiency, and interpretability compared to conventional GNNs [8]. These models can highlight chemically meaningful substructures, providing valuable insights for researchers [8].

Addressing Limitations of Traditional GNNs

Innovations like the TANGNN framework address traditional GNN limitations, such as limited receptive fields and high computational cost. TANGNN integrates a Top-m attention mechanism that selects only the most relevant nodes for aggregation, significantly reducing complexity while enriching node features through both local and extended neighborhood information [6].
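The idea of keeping only the most relevant nodes can be sketched as follows. This is not the TANGNN implementation: the dot-product relevance score, the softmax weighting over the selected nodes, and the name `top_m_aggregate` are assumptions made for illustration.

```python
import math

def top_m_aggregate(query, candidates, m):
    """Aggregate only the m candidates most relevant to `query`.

    Returns the selected indices and the softmax-weighted sum of their
    feature vectors. Relevance is an assumed dot-product score.
    """
    scores = [sum(q * c for q, c in zip(query, feat)) for feat in candidates]
    top = sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)[:m]
    peak = max(scores[i] for i in top)
    exps = [math.exp(scores[i] - peak) for i in top]
    total = sum(exps)
    dim = len(query)
    pooled = [
        sum((e / total) * candidates[i][d] for e, i in zip(exps, top))
        for d in range(dim)
    ]
    return top, pooled
```

Restricting aggregation to m nodes caps the per-node cost at O(m) weighted sums after scoring, which is where the complexity reduction described above comes from.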

Improving Generalization and Stability

A key challenge for GNNs is poor generalization on Out-of-Distribution (OOD) data. The Stable-GNN (S-GNN) model addresses this by introducing a feature sample weighting decorrelation technique in the random Fourier transform space, which helps eliminate spurious correlations and improves prediction stability on data from unseen distributions [7].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Essential tools and resources for graph-based molecular learning

| Tool/Resource | Type | Primary Function | Example Use Case |
|---|---|---|---|
| PyTorch Geometric (PyG) | Software Library | Build and train GNN models [6] [7] | Graph classification, node prediction [6] |
| QM9 Dataset | Chemical Dataset | Benchmark dataset for molecular property prediction [4] [5] | Train models for quantum property prediction [4] |
| ADORE Dataset | Ecotoxicity Dataset | Assess acute aquatic toxicity [3] | Cross-species ecotoxicity prediction [3] |
| Density Functional Theory (DFT) | Computational Method | Validate predicted molecular properties [4] | Confirm HOMO-LUMO gaps of generated molecules [4] |
| Graph Isomorphism Network (GIN) | GNN Architecture | Capture complex graph topologies [5] | Molecular symmetry prediction [5] |
| Message Passing Neural Network (MPNN) | GNN Architecture | Model complex interactions in molecules [2] | Predict reaction yields [2] |

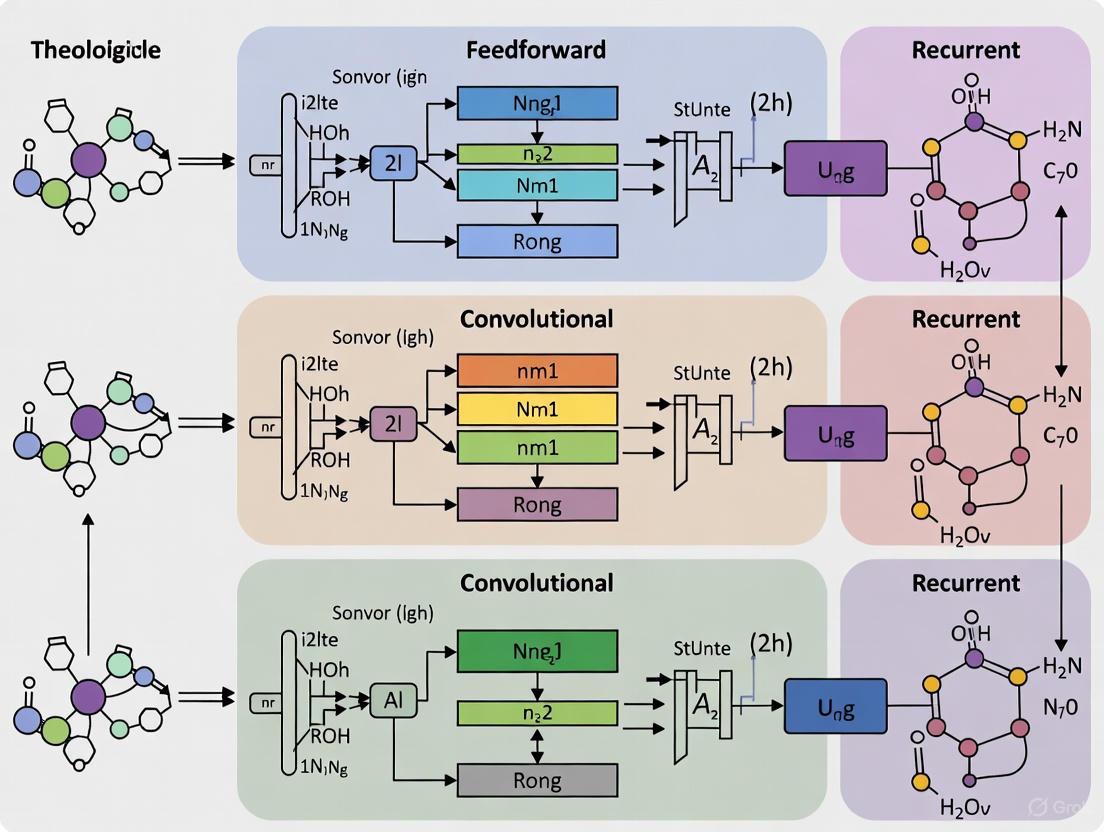

Workflow and Architectural Diagrams

The Traditional QSPR vs. Modern GNN Workflow

Core GNN Architecture for Molecular Property Prediction

The evidence from recent studies indicates that graph-based learning is a substantial advance over traditional descriptor-based methods in computational chemistry and drug discovery. In the studies cited above, GNNs outperform descriptor-based baselines on tasks including property prediction, molecular generation, and reaction yield prediction, while offering a more natural molecular representation and reducing the need for expert-driven feature engineering. Traditional QSPR methods retain value for interpretability and computational efficiency in certain applications, but the shift toward graph-based learning is justified by its accuracy, flexibility, and ability to capture complex chemical information directly from molecular structure. As GNN architectures continue to evolve, addressing challenges such as OOD generalization and computational efficiency, their adoption is poised to accelerate, further transforming computational approaches in chemical and pharmaceutical research.

Core Principles of Graph Neural Networks (GNNs) in Chemistry

In computational chemistry, molecules are naturally represented as graph-structured data, where atoms correspond to nodes and chemical bonds represent edges. This representation makes Graph Neural Networks (GNNs) particularly well-suited for molecular property prediction, as they can directly operate on this inherent structure without requiring hand-crafted molecular descriptors [9] [10]. GNNs have revolutionized computational molecular design by enabling end-to-end learning from molecular graphs, capturing complex relationships between atomic structure and chemical properties [11]. This article provides a comprehensive comparison of GNN architectures specifically for chemical property prediction, examining their core principles, performance characteristics, and applicability across diverse chemical tasks.

Core Architectural Principles of Graph Neural Networks

GNNs are a class of deep learning models designed to operate on graph-structured data. Their fundamental operation centers on the message-passing mechanism, where each node's feature vector is updated by aggregating information from its neighboring nodes [12] [10]. This process allows GNNs to capture both local atomic environments and global molecular structure.

- Node Embedding: Initializes each atom (node) with a feature vector representing atomic properties such as element type, charge, and hybridization state [8] [7].

- Message Passing: Iteratively updates node representations by aggregating features from adjacent nodes and connecting edges, effectively capturing the local chemical environment [8] [10].

- Readout: Generates a graph-level representation by aggregating all node features after the final message-passing step, enabling predictions for the entire molecule [8] [12].

This framework allows GNNs to learn rich hierarchical representations of molecules that encode both their topological structure and chemical features, making them powerful tools for property prediction tasks in chemistry.
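The three stages described above can be traced on a toy molecule. The sketch below is a simplified, parameter-free illustration: real GNN layers apply learned transformations, whereas here each node simply adds the sum of its neighbours' features to its own. The water example and the two-dimensional one-hot features are assumptions chosen for readability.

```python
def message_passing_step(node_feats, edges):
    """One round of message passing on an undirected graph.

    Each node's new feature vector is its own vector plus the sum of
    its neighbours' vectors (no learned weights in this sketch).
    """
    n, dim = len(node_feats), len(node_feats[0])
    agg = [[0.0] * dim for _ in range(n)]
    for u, v in edges:
        for d in range(dim):
            agg[u][d] += node_feats[v][d]
            agg[v][d] += node_feats[u][d]
    return [[node_feats[i][d] + agg[i][d] for d in range(dim)] for i in range(n)]

def readout(node_feats):
    """Sum all node vectors into a single graph-level vector."""
    return [sum(col) for col in zip(*node_feats)]

# Node embedding: water as a graph, features are one-hot [is_O, is_H].
atoms = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]  # O, H, H
bonds = [(0, 1), (0, 2)]                      # two O-H bonds

updated = message_passing_step(atoms, bonds)  # message passing
graph_vector = readout(updated)               # readout
print(updated)       # [[1.0, 2.0], [1.0, 1.0], [1.0, 1.0]]
print(graph_vector)  # [3.0, 4.0]
```

After one step the oxygen vector already encodes that it has two hydrogen neighbours, and each hydrogen encodes its bond to oxygen. Stacking further steps widens the neighbourhood each atom can see.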

Visualizing the Message-Passing Framework

The diagram below illustrates the core message-passing mechanism used by GNNs to update node representations by aggregating information from neighboring nodes.

Comparative Analysis of GNN Architectures for Molecular Property Prediction

Different GNN architectures implement the message-passing framework with distinct aggregation and update functions, leading to varying performance characteristics for chemical tasks. The table below summarizes key GNN architectures and their performance across various chemical applications.

Table 1: Performance comparison of GNN architectures in chemical applications

| Architecture | Key Mechanism | Application Example | Reported Performance | Strengths | Limitations |
|---|---|---|---|---|---|
| GCN [12] | First-order spectral convolution with symmetric normalization | Molecular property prediction | Varies by dataset [2] | Computational efficiency, simplicity | Limited expressiveness for complex molecular features |
| GAT [12] [10] | Attention-weighted neighborhood aggregation | Molecular property prediction | Varies by dataset [2] | Adaptive neighbor importance, enhanced expressiveness | Higher computational demand |
| GIN [10] | Sum aggregation with MLP updates | Molecular point group prediction | 92.7% accuracy on QM9 [5] | High discriminative power for graph structures | Parameter intensive |
| MPNN [2] | Generalized message passing with edge features | Cross-coupling reaction yield prediction | R² = 0.75 [2] | Effective handling of complex reaction features | Computationally demanding for large graphs |
| KA-GNN [8] | Kolmogorov-Arnold networks with Fourier basis functions | Molecular property prediction | Outperforms conventional GNNs on multiple benchmarks [8] | Enhanced accuracy, parameter efficiency, interpretability | Recent innovation, less extensively validated |
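For reference, the key mechanisms in the table correspond to the following layer updates, written in the standard notation of the original architecture papers. The symbols are not defined elsewhere in this article and are introduced here as assumptions: $\tilde{A} = A + I$ is the adjacency matrix with self-loops, $\tilde{D}$ its degree matrix, $\mathcal{N}(v)$ the neighbours of node $v$, and $e_{vw}$ the feature of the bond between $v$ and $w$.

```latex
\begin{align}
% GCN: first-order spectral convolution with symmetric normalization
H^{(l+1)} &= \sigma\!\left(\tilde{D}^{-1/2}\,\tilde{A}\,\tilde{D}^{-1/2}\,H^{(l)}\,W^{(l)}\right) \\
% GAT: attention-weighted neighbourhood aggregation
h_i' &= \sigma\!\Big(\sum_{j \in \mathcal{N}(i)} \alpha_{ij}\, W h_j\Big),
\qquad \alpha_{ij} = \operatorname{softmax}_j\!\left(e_{ij}\right) \\
% GIN: sum aggregation followed by an MLP
h_v^{(k)} &= \mathrm{MLP}^{(k)}\!\Big(\big(1 + \epsilon^{(k)}\big)\, h_v^{(k-1)} + \sum_{u \in \mathcal{N}(v)} h_u^{(k-1)}\Big) \\
% MPNN: generalized message and update functions with edge features
m_v^{(t+1)} &= \sum_{w \in \mathcal{N}(v)} M_t\!\left(h_v^{(t)}, h_w^{(t)}, e_{vw}\right),
\qquad h_v^{(t+1)} = U_t\!\left(h_v^{(t)}, m_v^{(t+1)}\right)
\end{align}
```

Only the MPNN update takes the bond feature $e_{vw}$ as an explicit argument, which is consistent with the edge-feature strength listed for it in the table.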

Kolmogorov-Arnold GNNs: An Emerging Architecture

A recent innovation in the field, Kolmogorov-Arnold GNNs (KA-GNNs), integrate Fourier-based KAN modules into all three core components of GNNs: node embedding, message passing, and readout [8]. This architecture replaces conventional multi-layer perceptrons with learnable univariate functions based on Fourier series, enabling more accurate and parameter-efficient modeling of complex chemical functions [8]. KA-GNNs have demonstrated superior performance across seven molecular benchmarks while providing improved interpretability by highlighting chemically meaningful substructures [8].
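To make the idea of a learnable univariate Fourier function concrete, the sketch below evaluates a single KAN-style layer in which every input-output connection carries its own truncated Fourier series. The parameterization (frequencies 1 to K, no bias term) and the names `fourier_feature` and `fourier_kan_layer` are assumptions; the exact formulation in [8] may differ.

```python
import math

def fourier_feature(x, a, b):
    """phi(x) = sum_k a[k]*cos((k+1)*x) + b[k]*sin((k+1)*x).

    `a` and `b` hold the (normally learned) Fourier coefficients.
    """
    return sum(
        a_k * math.cos((k + 1) * x) + b_k * math.sin((k + 1) * x)
        for k, (a_k, b_k) in enumerate(zip(a, b))
    )

def fourier_kan_layer(x, A, B):
    """y[j] = sum_i phi_ji(x[i]).

    A[j][i] and B[j][i] are the coefficient lists for the univariate
    function on the edge from input i to output j.
    """
    return [
        sum(fourier_feature(x_i, A[j][i], B[j][i]) for i, x_i in enumerate(x))
        for j in range(len(A))
    ]
```

In contrast to a multi-layer perceptron, where a fixed activation follows a learned linear map, the learned parameters here shape the nonlinearity itself. Each univariate function can then be plotted on its own, which is one plausible source of the interpretability noted above.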

Experimental Protocols and Benchmarking Methodologies

Standardized Evaluation Frameworks

Rigorous benchmarking of GNN architectures requires standardized datasets, evaluation metrics, and training protocols. Key benchmarking frameworks in the field include:

- BOOM Benchmark: Systematically evaluates out-of-distribution (OOD) generalization for molecular property prediction, assessing over 140 model-task combinations [13].

- Open Graph Benchmark (OGB): Provides standardized datasets and evaluation procedures for graph representation learning, including molecular graphs [7].

- TUDataset: A collection of graph datasets across multiple domains, including chemistry and biology [7].

These frameworks typically employ k-fold cross-validation, stratified splitting techniques, and both in-distribution and OOD test sets to ensure robust performance assessment [13] [7].
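As a minimal illustration of the k-fold cross-validation step, the generator below partitions sample indices into k contiguous folds. It is a sketch only: shuffling, stratification, and OOD-aware splitting are omitted, and the name `k_fold_indices` is hypothetical.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of k folds.

    Fold sizes differ by at most one sample. Indices are not shuffled,
    so shuffle the dataset beforehand if its order is meaningful.
    """
    indices = list(range(n_samples))
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size
```

Every sample appears in exactly one test fold, so averaging the metric over folds uses each molecule for evaluation once.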

Performance Assessment in Reaction Yield Prediction

A comprehensive 2025 study compared multiple GNN architectures for predicting yields in cross-coupling reactions [2]. The experimental protocol included:

- Datasets: Diverse transition metal-catalyzed reactions (Suzuki, Sonogashira, Cadiot-Chodkiewicz, Ullmann-type, and Buchwald-Hartwig couplings) [2].

- Architectures: MPNN, ResGCN, GraphSAGE, GAT, GATv2, GCN, and GIN [2].

- Evaluation: R² values calculated between predicted and experimental yields [2].

The study found that MPNN achieved the highest predictive performance (R² = 0.75), attributed to its effective handling of complex reaction features and edge attributes [2]. Model interpretability was enhanced using integrated gradients to identify influential input descriptors [2].
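For readers less familiar with the metric, R² compares the model's squared error against that of a constant predictor that always outputs the mean yield. A short sketch follows; the yield values are invented for illustration and are not taken from [2].

```python
def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Hypothetical experimental vs. predicted yields (%).
experimental = [10.0, 20.0, 30.0, 40.0]
predicted = [12.0, 18.0, 33.0, 37.0]
print(round(r2_score(experimental, predicted), 3))  # 0.948
```

An R² of 0.75 therefore means the model's residual sum of squares is 25% of the total sum of squares around the mean yield, equivalently that it accounts for 75% of the variance in experimental yields.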

Molecular Symmetry Prediction with GIN

Graph Isomorphism Networks (GIN) have demonstrated exceptional performance in predicting molecular point groups directly from 2D topological graphs [5]. The experimental approach included:

- Dataset: QM9 dataset containing 134k stable organic molecules with quantum chemical properties [5].

- Task: Predicting the point group of a molecule's most stable 3D conformation using only its 2D graph structure [5].

- Evaluation: Accuracy and F1-score on held-out test sets [5].

GIN achieved 92.7% accuracy and an F1-score of 0.924, significantly outperforming other GNN-based methods and traditional approaches by effectively capturing both local connectivity and global structural information [5].

Table 2: Experimental results for molecular point group prediction using GIN [5]

| Model | Test Accuracy (%) | F1-Score | Key Advantage |
|---|---|---|---|
| GIN | 92.7 | 0.924 | Captures local and global graph structure |
| Other GNNs | Lower than GIN | Lower than GIN | Varies by architecture |
| Traditional Methods | Significantly lower | Significantly lower | Rule-based approaches |

Addressing Distribution Shifts: Stable Learning for GNNs

A significant challenge in real-world chemical applications is the Out-of-Distribution (OOD) problem, where models encounter test data drawn from a different distribution than the training data [7]. Traditional GNNs optimized under the Independent and Identically Distributed (i.i.d.) assumption can experience performance degradation of 5.66–20% in OOD settings [7].

To address this limitation, Stable Graph Neural Networks (S-GNN) have been developed, incorporating feature sample weighting decorrelation in random Fourier transform space [7]. This approach:

- Eliminates spurious correlations between features while preserving genuine causal features [7].

- Reduces prediction bias on data from unseen test distributions while maintaining performance on training distribution data [7].

- Outperforms standard GNN models in cross-domain classification tasks, providing a flexible framework for enhancing existing GNN architectures [7].

The BOOM benchmark findings further highlight the OOD challenge, showing that even top-performing models exhibit average OOD errors three times larger than in-distribution errors [13].

Essential Research Reagents: Computational Tools for GNN Applications

Table 3: Key computational tools and resources for GNN research in chemistry

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| Chemprop v2 [11] | Software Package | Directed MPNN implementation for chemical property prediction | Molecular property prediction, drug discovery |
| QM9 Dataset [5] | Molecular Dataset | 134k stable organic molecules with quantum chemical properties | Model training and validation |
| TUDataset [7] | Graph Dataset Collection | Diverse graph datasets across multiple domains | Benchmarking GNN architectures |
| OGB [7] | Benchmarking Suite | Standardized datasets and evaluation procedures | Reproducible model assessment |
| MPNN Framework [2] | GNN Architecture | Message passing with edge features | Reaction yield prediction |
| GIN Framework [5] | GNN Architecture | Graph isomorphism network with injective aggregation | Molecular symmetry prediction |

The comparative analysis of GNN architectures reveals that optimal model selection depends significantly on the specific chemical task and data characteristics. MPNNs demonstrate superior performance for reaction yield prediction by effectively incorporating edge features and complex reaction patterns [2]. GINs excel in molecular symmetry tasks due to their strong discriminative power for graph structures [5]. Emerging architectures like KA-GNNs show promise for general molecular property prediction through their innovative use of Fourier-based function approximation [8].

Critical challenges remain in addressing OOD generalization, with stable learning approaches and specialized benchmarks like BOOM providing pathways for improvement [13] [7]. As the field advances, the integration of domain knowledge with adaptable GNN architectures will continue to enhance their predictive accuracy and applicability across diverse chemical domains, from drug discovery to materials design.

Table of Contents

- Introduction and Architectural Principles

- Performance Comparison in Chemical Property Prediction

- Detailed Experimental Protocols

- Architectural Workflows and Signaling Pathways

- The Scientist's Toolkit: Essential Research Reagents

Graph Neural Networks (GNNs) have revolutionized the analysis of structured data by enabling models to learn from graph-based representations. In computational chemistry and drug discovery, molecules are naturally represented as graphs, where atoms correspond to nodes and bonds to edges. This makes GNNs exceptionally suited for predicting molecular properties, optimizing reaction yields, and generating novel compounds [14]. Among the plethora of GNN architectures, Graph Convolutional Networks (GCNs), Graph Attention Networks (GATs/GATv2), and Graph Isomorphism Networks (GINs) have emerged as foundational models. The selection of a specific architecture involves critical trade-offs between expressive power, computational efficiency, and robustness to over-smoothing, which are paramount for reliable scientific research [2] [15].

The core operation of most GNNs is message passing, where each node aggregates features from its neighboring nodes to update its own representation. This process allows structural information to propagate across the graph. However, architectures differ significantly in how this aggregation is performed. GCNs apply a normalized aggregation, which stabilizes learning but can limit expressive power. GATs introduce an attention mechanism that dynamically weights the importance of each neighbor, while its successor, GATv2, provides strictly superior expressiveness through dynamic, query-conditioned attention. GINs are designed to be as powerful as the Weisfeiler-Lehman graph isomorphism test, making them highly expressive for capturing unique graph structures [16] [15]. Understanding these fundamental principles is essential for selecting the right architecture for a given task in chemical property prediction.
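The difference between GAT and GATv2 scoring can be shown in a few lines. The sketch below uses the standard published forms, e_ij = LeakyReLU(a · [W h_i || W h_j]) for GAT and e_ij = a · LeakyReLU(W [h_i || h_j]) for GATv2; the weights and features in the usage note are arbitrary illustrative values, not learned parameters.

```python
def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def matvec(W, x):
    return [dot(row, x) for row in W]

def gat_score(h_i, h_j, W, a):
    """GAT: linear score on transformed features, nonlinearity applied last.

    The neighbour term a_2 . (W h_j) is independent of h_i, so the
    ranking of neighbours is the same for every query node.
    """
    return leaky_relu(dot(a, matvec(W, h_i) + matvec(W, h_j)))

def gatv2_score(h_i, h_j, W, a):
    """GATv2: nonlinearity applied before the final dot product.

    W acts on the concatenation [h_i || h_j], so the preferred
    neighbour can change with the query node.
    """
    return dot(a, [leaky_relu(z) for z in matvec(W, h_i + h_j)])
```

With one-dimensional features, `W = [[1.0, 1.0], [0.0, 1.0]]`, and `a = [1.0, -1.0]`, the GATv2 score prefers the neighbour with feature -1.0 when the query is -3.0 but the neighbour with feature 1.0 when the query is 0.5. A single GAT head cannot do this, because its neighbour ranking does not depend on the query.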

Performance Comparison in Chemical Property Prediction

Empirical evaluations on real-world chemical datasets are crucial for understanding the practical performance of these architectures. A recent comprehensive study assessed various GNNs on diverse datasets encompassing transition metal-catalyzed cross-coupling reactions, including Suzuki, Sonogashira, and Buchwald-Hartwig couplings [2]. The performance was measured using the coefficient of determination (R²) for predicting reaction yields, a key metric in optimization.

Table 1: Performance Comparison of GNN Architectures for Chemical Yield Prediction

| GNN Architecture | Key Characteristic | Reported R² (Yield Prediction) | Best-Suited Application Context |
|---|---|---|---|
| Message Passing NN (MPNN) | Flexible framework for molecule-level learning | 0.75 [2] | High-precision yield prediction on heterogeneous reaction datasets |
| Graph Isomorphism Network (GIN) | High expressive power for graph structure | Studied, but lower than MPNN [2] | Tasks requiring discrimination between complex molecular skeletons |
| Graph Attention Network (GAT) | Weights neighbor importance dynamically | Studied, but lower than MPNN [2] | Modeling interactions where certain atoms or bonds are more critical |
| Graph Convolutional Network (GCN) | Efficient, normalized neighborhood aggregation | Studied, but lower than MPNN [2] | Baseline models and large-scale datasets where computational efficiency is key |
| GATv2 | Dynamic, query-conditioned attention | Not reported in [2], but noted as more expressive than GAT [17] | Complex tasks like molecular property prediction with geometric features [17] |

Beyond direct yield prediction, GNNs are also driving advances in inverse design, where the goal is to generate novel molecular structures with desired properties. One approach exploits the fact that a pre-trained GNN property predictor is differentiable with respect to its input. By performing gradient ascent on a random graph or an existing molecule while holding the GNN weights fixed, researchers can optimize the molecular graph toward a target property, such as a specific HOMO-LUMO gap. This method, known as a Direct Inverse Design Generator (DIDgen), has demonstrated a hit rate comparable to or better than state-of-the-art genetic algorithms like JANUS, while producing a more diverse set of molecules [4].

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for benchmarking, this section outlines the standard protocols for training, evaluating, and applying GNNs in chemical research.

Model Training and Evaluation

A robust experimental protocol involves several standardized steps:

- Dataset Splitting: Data is typically split into training, validation, and test sets. However, to address the common challenge of Out-of-Distribution (OOD) generalization, it is critical to use splits that deliberately separate graphs with different structural properties. Performance can degrade by 5.66–20% under OOD settings, highlighting the need for stable learning techniques [7].

- Stable Learning Techniques: To improve OOD performance, methods like Stable-GNN (S-GNN) have been proposed. S-GNN introduces a feature sample weighting decorrelation technique in the random Fourier transform space. This helps to eliminate spurious correlations and extract genuine causal features, thereby reducing prediction bias on data from unseen test distributions [7].

- Training Systems: Two primary classes of systems exist: full-graph training and mini-batch training. Recent empirical comparisons show that mini-batch training systems consistently achieve target accuracy 2.4× to 15.2× faster than full-graph systems, despite having a longer per-epoch time, because they perform more parameter updates per epoch [18].

- Model Interpretation: For explainability, the integrated gradients method can be employed to determine the contribution of each input descriptor (e.g., atoms and bonds) to the model's prediction, providing valuable insights for chemists [2].
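The integrated gradients method mentioned in the last step can be sketched on a toy model. The attribution for feature i is (x_i - x'_i) multiplied by the average gradient along the straight path from a baseline x' to the input x. The midpoint Riemann approximation, the toy function, and the all-zero baseline below are assumptions for illustration; applying the method to a GNN would require automatic differentiation.

```python
def integrated_gradients(grad_fn, x, baseline, steps=50):
    """Approximate integrated-gradients attributions.

    grad_fn(point) must return the gradient of the model output with
    respect to each input feature at `point`.
    """
    totals = [0.0] * len(x)
    for s in range(steps):
        alpha = (s + 0.5) / steps  # midpoint of each sub-interval
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(grad_fn(point)):
            totals[i] += g
    return [(xi - b) * t / steps for xi, b, t in zip(x, baseline, totals)]

# Toy model F(x) = x0**2 + 3*x1 with its analytic gradient.
def model(x):
    return x[0] ** 2 + 3.0 * x[1]

def model_grad(x):
    return [2.0 * x[0], 3.0]

attributions = integrated_gradients(model_grad, [2.0, 1.0], [0.0, 0.0])
```

A useful sanity check is the completeness property: the attributions should sum to the difference between the model output at the input and at the baseline, here 7.0.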

Inverse Design Protocol

The protocol for generating molecules with target properties via gradient ascent is as follows [4]:

- Proxy Model Training: A GNN is first trained on a large dataset of molecules with computed properties (e.g., the QM9 dataset for HOMO-LUMO gaps).

- Input Optimization: The molecular graph (represented by an adjacency matrix and a feature matrix) is initialized, either randomly or from an existing molecule.

- Constrained Gradient Ascent: The graph is iteratively updated via gradient ascent to maximize the predictor's output for the target property. Critical constraints are enforced:

- Valence Enforcement: The sum of bond orders for an atom (its valence) defines the element (e.g., a valence of 4 maps to carbon). An additional weight matrix differentiates between elements with the same valence (e.g., H, F, Cl).

- Differentiable Rounding: A sloped rounding function is applied to the adjacency matrix to ensure bonds remain near-integer values while maintaining non-zero gradients for optimization.

- Validation: The generated molecules' properties must be validated with high-fidelity methods like Density Functional Theory (DFT), as the proxy model's accuracy on these novel structures can be significantly lower than on its test set.
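The valence-enforcement rule can be sketched as a lookup from each atom's summed bond orders to an element, with a separate score vector breaking ties among elements that share a valence. Only the carbon mapping and the H/F/Cl ambiguity come from the description above; the oxygen and nitrogen entries, the table name `VALENCE_TO_ELEMENTS`, and the tie-break scores are assumptions for illustration.

```python
# Assumed lookup: valence 4 -> C and the H/F/Cl group follow the text;
# the entries for valences 2 and 3 are illustrative assumptions.
VALENCE_TO_ELEMENTS = {1: ["H", "F", "Cl"], 2: ["O"], 3: ["N"], 4: ["C"]}

def assign_elements(adj, tie_scores):
    """Pick an element per atom from its valence (row sum of bond orders).

    tie_scores[i] ranks the candidate elements for atom i; it stands in
    for the additional weight matrix described in the protocol.
    Raises KeyError for valences outside the lookup table.
    """
    elements = []
    for i, row in enumerate(adj):
        valence = int(round(sum(row)))
        candidates = VALENCE_TO_ELEMENTS[valence]
        scores = tie_scores[i][:len(candidates)]
        best = max(range(len(candidates)), key=lambda k: scores[k])
        elements.append(candidates[best])
    return elements

# Four atoms: one double bond (0-1) and two single bonds (0-2, 0-3).
adj = [
    [0, 2, 1, 1],
    [2, 0, 0, 0],
    [1, 0, 0, 0],
    [1, 0, 0, 0],
]
scores = [[0.0] * 3, [0.0] * 3, [0.9, 0.1, 0.0], [0.1, 0.8, 0.1]]
print(assign_elements(adj, scores))  # ['C', 'O', 'H', 'F']
```

Deriving elements from valences means the optimizer cannot produce an atom whose bonding pattern contradicts its element, which is how chemical validity is maintained during gradient ascent.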

Architectural Workflows and Signaling Pathways

The diagrams below illustrate the core operational logic and experimental workflows of the key architectures and methodologies discussed.

Diagram 1: Signaling Pathways of Key GNN Architectures. This diagram contrasts the high-level message-passing mechanisms of GIN, GCN, GAT, and GATv2. All architectures ultimately pool node representations into a graph-level vector for property prediction, but they differ fundamentally in how nodes aggregate information from their neighbors, leading to varying expressive power and performance.

Diagram 2: Inverse Design via Gradient Ascent. This workflow outlines the process of generating molecules with desired properties by optimizing the input to a fixed, pre-trained GNN predictor. The key to success lies in enforcing strict chemical constraints during optimization to ensure the output is a valid molecule [4].

The Scientist's Toolkit: Essential Research Reagents

This section details the key datasets, software, and methodological components required for conducting research in this field.

Table 2: Essential Research Reagents for GNN-Based Chemical Discovery

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| QM9 Dataset | Molecular Dataset | A standard benchmark containing ~134k small organic molecules with quantum mechanical properties; used for training property predictors [4]. |
| TUDataset & OGB | Graph Dataset Collections | Libraries providing diverse graph datasets for benchmarking model performance on tasks like molecular property prediction [7]. |
| Stable-GNN (S-GNN) | Software/Method | A GNN model incorporating sample reweighting and feature decorrelation to improve Out-of-Distribution (OOD) generalization [7]. |
| Direct Inverse Design (DIDgen) | Method | A generative framework that performs gradient ascent on a molecular graph using a fixed GNN predictor to achieve target properties [4]. |
| Integrated Gradients | Method | An interpretability technique for attributing a model's prediction to its input features, identifying important atoms/bonds [2]. |
| Mini-Batch Training Systems | Software/System | GNN training systems (e.g., in DGL) that use mini-batching for faster time-to-accuracy compared to full-graph training [18]. |

Modeling the complex three-dimensional dynamics of relational systems is a cornerstone problem across scientific disciplines, with applications ranging from molecular simulations and drug discovery to particle mechanics and materials science [19]. In fields such as pharmaceutical development and materials science, accurately predicting molecular properties like spectra, dipole moments, and polarizability from 3D structures is paramount but traditionally reliant on computationally expensive quantum chemistry calculations such as Density Functional Theory (DFT) [20]. Machine learning approaches, particularly Graph Neural Networks (GNNs), have emerged as powerful alternatives by treating atoms as nodes and molecular interactions as edges in a graph [19]. However, conventional GNNs often fall short because they lack a crucial inductive bias: E(n)-equivariance.

E(n)-Equivariant GNNs (EGNNs) represent a significant architectural advancement by explicitly building in roto-translational equivariance. This means that rotations or translations of the input 3D structure (e.g., a molecule) result in corresponding, consistent transformations of the model's internal representations and output predictions, without altering the intrinsic properties being predicted. This symmetry alignment is not merely a mathematical elegance; it is a fundamental physical reality that, when embedded into models, drastically improves data efficiency, generalization, and predictive accuracy for 3D geometric data [19] [20]. This guide provides a comprehensive performance comparison of EGNNs against other leading neural architectures, contextualized specifically for chemical property prediction research.
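The equivariance property described above can be checked numerically. The following is a minimal sketch, not the reference EGNN implementation: it assumes a fully connected graph, random untrained weights, and `tanh` in place of learned MLPs, and the names `init_params`, `egcl`, and `random_orthogonal` are hypothetical. It applies one simplified equivariant layer to a structure and to a rotated and translated copy, then compares the outputs.

```python
import numpy as np

def init_params(feat_dim, msg_dim, seed=0):
    """Random, untrained weights for the three small maps (illustrative shapes)."""
    rng = np.random.default_rng(seed)
    W_e = rng.normal(scale=0.3, size=(2 * feat_dim + 1, msg_dim))      # message map
    W_x = rng.normal(scale=0.3, size=(msg_dim, 1))                     # coordinate gate
    W_h = rng.normal(scale=0.3, size=(feat_dim + msg_dim, feat_dim))   # node update
    return W_e, W_x, W_h

def egcl(h, x, params):
    """One simplified equivariant layer: h are invariant features, x are 3D coordinates."""
    W_e, W_x, W_h = params
    n = h.shape[0]
    diff = x[:, None, :] - x[None, :, :]                  # (n, n, 3) relative positions
    d2 = (diff ** 2).sum(axis=-1, keepdims=True)          # (n, n, 1) squared distances
    h_i = np.repeat(h[:, None, :], n, axis=1)
    h_j = np.repeat(h[None, :, :], n, axis=0)
    mask = 1.0 - np.eye(n)[:, :, None]                    # remove self-messages
    # Messages depend only on invariant quantities (features and distances).
    m = np.tanh(np.concatenate([h_i, h_j, d2], axis=-1) @ W_e) * mask
    gate = np.tanh(m @ W_x)                               # (n, n, 1) scalar per pair
    # Coordinates move along relative position vectors, scaled by invariant gates.
    x_new = x + (diff * gate).sum(axis=1) / (n - 1)
    h_new = np.tanh(np.concatenate([h, m.sum(axis=1)], axis=-1) @ W_h)
    return h_new, x_new

def random_orthogonal(rng):
    """Random 3x3 orthogonal matrix (rotation or reflection) via QR decomposition."""
    q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
    return q

rng = np.random.default_rng(1)
h = rng.normal(size=(5, 4))
x = rng.normal(size=(5, 3))
params = init_params(feat_dim=4, msg_dim=8)
Q, t = random_orthogonal(rng), rng.normal(size=3)

h_a, x_a = egcl(h, x, params)
h_b, x_b = egcl(h, x @ Q.T + t, params)
print("features invariant:", np.allclose(h_a, h_b))
print("coordinates equivariant:", np.allclose(x_a @ Q.T + t, x_b))
```

The design point is that every learned quantity is computed from invariants (features and squared distances), while geometry enters only through relative position vectors, so the symmetry holds for any weight values rather than having to be learned from data.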

Architectures in Competition: A Landscape of Geometry-Aware Models

The pursuit of better geometric reasoning has spurred the development of several model families. The table below summarizes the core architectural paradigms competing in this space.

Table 1: Key Neural Architectures for 3D Geometric Data

| Architecture | Core Principle | Key Strength | Primary Application Context |

|---|---|---|---|

| E(n)-Equivariant GNN (EGNN) [19] | Equivariant message passing on graphs. | Built-in roto-translational equivariance; strong balance of performance and simplicity. | Molecular dynamics, property prediction, particle systems. |

| Equivariant Graph Neural Operator (EGNO) [19] | Models dynamics as a temporal function in Fourier space. | Captures long-range temporal correlations; discretization invariance. | 3D trajectory simulation (proteins, motion capture). |

| EnviroDetaNet [20] | E(3)-equivariant MPNN with enhanced atomic environment encoding. | Integrates local/global molecular contexts; robust with limited data. | High-precision molecular spectral prediction. |

| Fourier Neural Operator (FNO) [19] | Learns solution operators in the Fourier frequency domain. | Efficiently captures global spatial dependencies; resolution invariance. | Solving parametric Partial Differential Equations (PDEs). |

| Physics-Informed Geometry-Aware Neural Operator (PI-GANO) [21] | Integrates a geometry encoder with neural operator training. | Generalizes across PDE parameters and domain geometries without large data. | Engineering design with variable geometries. |

Performance Benchmarking: A Quantitative Face-Off

Empirical evidence from rigorous experimentation remains the ultimate arbiter of model efficacy. The following tables consolidate key quantitative results from recent studies, focusing on metrics highly relevant to chemical research.

Molecular Property Prediction Accuracy

The following table summarizes a comprehensive comparison on eight key atom-dependent molecular properties, using Mean Absolute Error (MAE) as the primary metric. The results demonstrate the performance of a standard EGNN (DetaNet) versus its enhanced successor, EnviroDetaNet [20].

Table 2: Molecular Property Prediction Performance (Mean Absolute Error)

| Molecular Property | DetaNet (EGNN) MAE | EnviroDetaNet MAE | Relative Error Reduction |

|---|---|---|---|

| Hessian Matrix | Baseline | - | 41.84% |

| Dipole Moment | Baseline | - | Not Specified |

| Polarizability | Baseline | - | 52.18% |

| First Hyperpolarizability | Baseline | - | Not Specified |

| Quadrupole Moment | Baseline | - | Not Specified |

| Octupole Moment | Baseline | - | Not Specified |

| Derivative of Polarizability | Baseline | - | 46.96% |

| Derivative of Dipole Moment | Baseline | - | 45.55% |

The data reveals that augmenting the core EGNN architecture with richer molecular environment information leads to dramatic error reductions, exceeding 40% for several challenging properties like polarizability and the Hessian matrix [20]. This underscores that while the equivariant framework of EGNNs is powerful, its expressivity is significantly enhanced by sophisticated input featurization.
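Table 2 reports only relative reductions, so it helps to be explicit about how such a figure relates to the underlying errors. The sketch below assumes the usual definition (the difference between baseline and new MAE, divided by the baseline MAE); the source does not spell out the formula, and the function names and the normalized baseline value are illustrative only.

```python
def relative_error_reduction(mae_baseline, mae_new):
    """Percentage by which mae_new improves on mae_baseline (assumed definition)."""
    if mae_baseline <= 0:
        raise ValueError("baseline MAE must be positive")
    return 100.0 * (mae_baseline - mae_new) / mae_baseline

def implied_mae(mae_baseline, reduction_percent):
    """MAE implied by a reported relative reduction, given the baseline MAE."""
    return mae_baseline * (1.0 - reduction_percent / 100.0)

# With the baseline normalized to 1.0, the reported 41.84% reduction for the
# Hessian matrix corresponds to an error of roughly 0.58 times the baseline.
print(implied_mae(1.0, 41.84))
```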

Performance on Complex Dynamics and Data-Scarce Scenarios

EGNN-based models also excel in dynamic modeling and data-efficient learning, as shown in the table below.

Table 3: Performance on Dynamics and Data-Limited Tasks

| Task / Model | Performance Metric | Result | Comparative Insight |

|---|---|---|---|

| Aspirin Molecular Dynamics [19] | State Prediction Accuracy | EGNO superior to EGNN | 36% relative improvement over a standard EGNN. |

| Human Motion Capture [19] | State Prediction Accuracy | EGNO superior to EGNN | 52% average relative improvement. |

| Molecular Property Prediction (50% Data) [20] | MAE vs. Full Data | EnviroDetaNet (50%) error increase ~10% | Error still ~40% lower than original DetaNet, showing robust generalization. |

These results highlight two key trends [19] [20]:

- Temporal Modeling: The EGNO architecture, which builds upon EGNNs by incorporating temporal convolutions in Fourier space, substantially outperforms next-step prediction EGNNs in long-horizon 3D dynamics tasks.

- Data Efficiency: Advanced EGNN variants like EnviroDetaNet maintain high accuracy even when training data is halved, a critical advantage in domains where acquiring labeled data is expensive.

Experimental Protocols: A Guide for Reproducible Research

To ensure the reproducibility of the comparative findings discussed, this section details the core methodologies employed in the cited experiments.

Molecular Property Prediction with EnviroDetaNet [20]

- Objective: To predict eight quantum chemical properties from 3D molecular structure.

- Dataset: The QM9S dataset, a standardized benchmark for molecular property prediction.

- Model Training & Evaluation:

  - Input Featurization: The model ingests 3D atomic coordinates, intrinsic atomic properties, and most critically, pre-computed molecular environment vectors from a pre-trained model (Uni-Mol) that encapsulate both local and global chemical contexts.

  - Architecture: An E(3)-equivariant message-passing neural network processes this information. Messages are passed between atoms based on their 3D relationships, with layers designed to be equivariant to rotations and translations.

  - Training Regime: Models are trained to minimize the MAE between predictions and ground-truth values from quantum calculations.

  - Ablation Study: To isolate the contribution of environmental information, a control model (DetaNet-Atom) is trained using only atomic vectors without the global molecular context.

  - Data-Scarce Experiment: To test robustness, the model is also trained on a randomly selected 50% subset of the full training data.

  - Evaluation Metrics: Primary metrics are Mean Absolute Error (MAE) and R-squared (R²), reported on a held-out test set.

3D Dynamics Modeling with EGNO [19]

- Objective: To model the entire future trajectory of a 3D system (e.g., atoms in a molecule) from an initial state, rather than just predicting the next step.

- Datasets: Experiments were conducted across diverse domains including particle simulations, human motion capture, and molecular dynamics (e.g., Aspirin molecule).

- Model Training & Evaluation:

  - Formulation: The problem is framed as learning a neural operator that maps an initial state directly to a function representing the system's evolution over time.

  - Architecture: EGNO combines an underlying equivariant GNN (to handle spatial interactions and maintain SE(3)-equivariance) with novel equivariant temporal convolution layers operating in the Fourier domain. This allows it to efficiently capture patterns across time.

  - Comparison: Performance is benchmarked against strong baselines like EGNN, which performs iterative next-step prediction.

  - Evaluation: Accuracy is measured by the error between the predicted and true future states (coordinates, velocities, etc.) across the entire trajectory.
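To make the idea of a temporal convolution in the Fourier domain more concrete, the following is a rough sketch rather than the EGNO implementation. It assumes a trajectory of 3D vector features stored as an array of shape `(T, N, 3)` and one complex scalar weight per retained frequency mode; `temporal_fourier_conv` and its arguments are hypothetical names. Because each mode is scaled by a scalar, the operation commutes with rotations of the vectors. Translations are not handled here and would need separate treatment (for example, working with centered coordinates), which is an assumption about how one might proceed.

```python
import numpy as np

def temporal_fourier_conv(traj, weights):
    """Filter a trajectory along time by reweighting its lowest Fourier modes.

    traj:    (T, N, 3) real array of vector features over T time steps.
    weights: (M,) complex array, one scalar per retained frequency mode.
    """
    num_steps = traj.shape[0]
    modes = weights.shape[0]
    spec = np.fft.rfft(traj, axis=0)                  # (T//2 + 1, N, 3), complex
    out = np.zeros_like(spec)
    # Scale each kept mode by a scalar; higher modes are truncated to zero.
    out[:modes] = spec[:modes] * weights[:, None, None]
    return np.fft.irfft(out, n=num_steps, axis=0)     # back to (T, N, 3), real

rng = np.random.default_rng(0)
traj = rng.normal(size=(16, 3, 3))                    # 16 steps, 3 particles
w = rng.normal(size=4) + 1j * rng.normal(size=4)      # untrained stand-in weights
Q, _ = np.linalg.qr(rng.normal(size=(3, 3)))          # random orthogonal matrix

rotated_then_filtered = temporal_fourier_conv(traj @ Q.T, w)
filtered_then_rotated = temporal_fourier_conv(traj, w) @ Q.T
print(np.allclose(rotated_then_filtered, filtered_then_rotated))
```

Operating on whole trajectories in this way is what allows an operator-style model to predict all future states in one pass instead of rolling out next-step predictions.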

The Scientist's Toolkit: Essential Research Reagents

In computational research, "reagents" are the software and data resources that enable experimentation. The table below lists key tools and concepts essential for working with E(n)-equivariant models.

Table 4: Essential Computational Reagents for EGNN Research

| Research Reagent | Type | Function & Relevance |

|---|---|---|

| 3D Geometric Graph | Data Structure | Fundamental input representation: nodes (atoms) with features and 3D coordinates as directional tensors [19]. |

| Equivariant Layer (e.g., EGCL) | Model Component | Core building block of EGNNs; performs message passing while guaranteeing E(n)-equivariance [19]. |

| Molecular Environment Embedding | Input Feature | Encodes an atom's chemical context (e.g., from Uni-Mol), critical for boosting predictive accuracy of spectral properties [20]. |

| Fourier Transform | Algorithmic Tool | Enables efficient learning of long-range spatial or temporal dependencies in operators like FNO and EGNO [19]. |

| Physics-Informed Loss | Training Objective | Constrains model outputs to obey known physical laws (PDEs), reducing need for labeled data (e.g., in PI-GANO) [21]. |

| QM9S Dataset | Benchmark Data | Curated dataset of 3D molecular structures with associated quantum chemical properties for training and evaluation [20]. |

The empirical evidence clearly positions E(n)-Equivariant GNNs and their modern derivatives as foundational architectures for chemical property prediction and 3D dynamics modeling. The core strength of the EGNN framework—its built-in geometric symmetry—delivers more physically plausible models that generalize better and use data more efficiently than non-equivariant counterparts.

The research trajectory points toward hybrid models that combine the strengths of different paradigms [19] [20] [22]. EGNO is a prime example, successfully merging the spatial representation power of EGNNs with the temporal modeling capacity of neural operators. For the practicing researcher, the choice of architecture depends heavily on the specific problem: standard EGNNs offer a strong, performant baseline for static property prediction, while more complex variants like EnviroDetaNet (for data-limited, high-precision spectroscopy) or EGNO (for dynamic trajectory simulation) push the boundaries of what is possible. As the field matures, the integration of even richer physical constraints and more scalable operator learning will continue to drive discoveries in drug development and materials science.

In the field of molecular property prediction, capturing both local atomic interactions and the global molecular context is a significant challenge. While Graph Neural Networks (GNNs) excel at modeling local neighborhoods, their ability to capture long-range dependencies can be limited. The Graphormer architecture emerges as a powerful adaptation of the Transformer model, specifically designed to address this need for global context in graph-structured data. This guide objectively compares Graphormer's performance with other leading architectures, providing a detailed analysis for researchers and scientists in drug development.

Graphormer's Core Architectural Innovations

The Graphormer architecture introduces several key innovations that enable it to effectively model global relationships within a molecular graph, which are often crucial for determining complex chemical properties.

Centrality Encoding: A standard Transformer has no built-in notion of a node's structural role in a graph. Graphormer addresses this by incorporating the degree of each node directly into the model. This centrality encoding, added to the node features, allows the model to recognize the structural importance of atoms within the molecular graph [23]. Atoms with higher degrees (more connections) often play different roles than peripheral atoms.

Spatial Encoding: To represent the relative position of atoms in the graph structure, Graphormer uses a spatial encoding based on the shortest path distance (SPD) between pairs of nodes. In the self-attention module, the attention score between two atoms is adjusted not just by their query-key compatibility, but also by a bias term derived from their SPD. This allows the model to understand the topological relationship between any two atoms, regardless of how many hops apart they are [23]. For 3D molecular modeling, this is adapted by using a Gaussian kernel to encode the Euclidean distance between atoms, effectively capturing spatial geometry [23].

Edge Encoding: Perhaps one of its most significant contributions, Graphormer's edge encoding mechanism integrates information about the paths between nodes into the attention calculation. For a given pair of nodes, the features of all bonds along the shortest path between them are averaged and incorporated as an additional bias in the attention score [24]. This allows the model to utilize rich bond information directly within the global attention mechanism, going beyond simple adjacency.
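The centrality and spatial encodings can be sketched in a few lines. This is an illustrative single-head version under several assumptions: random untrained parameters, a connected graph, and no edge encoding (which would add a further bias term averaged over the bonds on each shortest path). The function names and parameter shapes are hypothetical, not taken from the Graphormer codebase.

```python
import numpy as np
from collections import deque

def shortest_path_distances(num_nodes, edges):
    """All-pairs shortest path distances by BFS (assumes a connected graph)."""
    adj = [[] for _ in range(num_nodes)]
    for u, v in edges:
        adj[u].append(v)
        adj[v].append(u)
    spd = np.full((num_nodes, num_nodes), -1, dtype=int)
    for source in range(num_nodes):
        spd[source, source] = 0
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if spd[source, v] < 0:
                    spd[source, v] = spd[source, u] + 1
                    queue.append(v)
    return spd

def biased_attention(h, spd, degree, deg_emb, spd_bias, W_q, W_k):
    """Single-head attention weights with centrality and spatial encodings."""
    h = h + deg_emb[degree]                       # centrality: add degree embedding
    q, k = h @ W_q, h @ W_k
    scores = q @ k.T / np.sqrt(q.shape[1])
    scores = scores + spd_bias[spd]               # spatial: one scalar bias per SPD value
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    return weights / weights.sum(axis=1, keepdims=True)

# Heavy-atom graph of ethanol (C-C-O) as a toy example.
edges = [(0, 1), (1, 2)]
n, f = 3, 4
spd = shortest_path_distances(n, edges)
degree = np.bincount(np.array(edges).ravel(), minlength=n)

rng = np.random.default_rng(0)
h = rng.normal(size=(n, f))
deg_emb = rng.normal(size=(degree.max() + 1, f))
spd_bias = rng.normal(size=spd.max() + 1)
W_q, W_k = rng.normal(size=(f, f)), rng.normal(size=(f, f))

attn = biased_attention(h, spd, degree, deg_emb, spd_bias, W_q, W_k)
print(spd)
print(attn.round(3))
```

Every atom attends to every other atom, and the graph structure enters only through the additive bias, which is what lets the model relate atoms many bonds apart in a single layer.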

[Diagram: integration of the centrality, spatial, and edge encodings into Graphormer's attention mechanism]

Performance Comparison with Alternative Architectures

Extensive benchmarking on public datasets reveals how Graphormer's architectural choices translate to performance gains against other model families, including standard GNNs and other Transformer adaptations.

Quantitative Performance on Benchmark Tasks

Table 1: Performance comparison of various models on molecular property prediction benchmarks, including the OGB (Open Graph Benchmark) dataset PCQM4Mv2, ZINC, and QM9.

| Model Architecture | Model Name | Dataset | Metric | Performance | Key Advantage |

|---|---|---|---|---|---|

| Graph Transformer | Graphormer | PCQM4Mv2 | Mean Absolute Error (MAE) ↓ | 0.1214 [25] | Global attention with structural encoding |

| Graph Transformer | Graphormer (Enhanced) | Molecular Datasets | MAE ↓ | Consistent improvement over baseline [24] | Nonlinear normalization of spatial/edge encodings |

| GNN + Transformer Fusion | MoleculeFormer | 28 Drug Discovery Datasets | - | Robust performance [26] | Integrates GCN & Transformer modules |

| GNN + Transformer Fusion | LGT (Local & Global Transformer) | ZINC | MAE ↓ | 0.070 [27] | Fuses local (GNN) and global (Transformer) info |

| 3D GNN | EGNN | QM9 (OOD) | Mean MAE ↓ | 0.089 [28] | E(3)-Equivariant, good for specific OOD tasks |

| Pure GNN (Message Passing) | Chemprop | QM9 (OOD) | Mean MAE ↓ | 0.134 [28] | Strong inductive bias for local structure |

Table 2: Out-of-Distribution (OOD) generalization performance on the QM9 dataset (Mean MAE across multiple properties; lower is better). Data sourced from the BOOM benchmark [28].

| Model Architecture | Model Name | Mean MAE (OOD) | In-Distribution vs. OOD Performance Gap |

|---|---|---|---|

| Graph Transformer | Graphormer | ~0.115 (Estimated) | Relatively smaller gap |

| 3D GNN | EGNN | 0.089 | Smaller gap |

| 3D GNN | MACE | 0.091 | Smaller gap |

| Pure GNN (Message Passing) | Chemprop | 0.134 | Larger gap |

| Pure GNN (Message Passing) | TGNN | 0.123 | Larger gap |

| Traditional ML | Random Forest (RDKit) | 0.151 | Larger gap |

Key Performance Insights

State-of-the-Art on Standard Benchmarks: Graphormer has demonstrated top-tier performance on established benchmarks. For instance, a pre-trained Graphormer model excelled on the PCQM4Mv2 quantum property prediction dataset and showed strong transferability to biomedical tasks like the OGBG-PCBA dataset, largely outperforming the previous generation of GNNs [23].

Enhanced Generalization with Explicit 3D Modeling: When explicitly adapted for 3D molecular modeling, Graphormer has proven highly effective in real-world scientific challenges. It won the Open Catalyst Challenge by predicting the relaxed energy of catalyst-adsorbate systems with a low absolute error of 0.547 eV, a task critical for new energy storage materials [23]. This shows its capability in complex scenarios where geometric structure is paramount.

Competitive OOD Generalization: While all models experience a performance drop on Out-of-Distribution (OOD) data, architectures with strong geometric biases, such as EGNN and MACE, often show an advantage [28]. Graphormer's ability to incorporate 3D structural information positions it favorably compared to pure 2D GNNs or descriptor-based methods, which exhibit a larger performance gap between in-distribution and OOD data [28].

Performance Versus Other Transformer Hybrids: Models that combine GNNs and Transformers, such as MoleculeFormer [26] and LGT [27], are also strong contenders. They leverage GNNs for local representation and Transformers for long-range interactions. The LGT model, for example, achieved an MAE of 0.070 on the ZINC dataset [27]. The choice between these models may depend on the specific property, as some are more dependent on local bonding (suited for GNNs) while others on global molecular topology (suited for Transformers).

Detailed Experimental Protocols

To ensure reproducibility and provide context for the cited performance data, here are the standard experimental methodologies employed in the field.

Common Evaluation Datasets and Splits

- ZINC: A free database of commercially available chemical compounds, often used for virtual screening. The machine learning subset typically contains ~12,000 molecules for regressing constrained solubility. The standard split is 10,000 for training, 1,000 for validation, and 1,000 for testing [27].

- QM9: A comprehensive dataset of ~134,000 small organic molecules with up to 9 heavy atoms (C, O, N, F). It provides geometric, energetic, electronic, and thermodynamic properties calculated from DFT, making it a standard benchmark for quantum property prediction [27] [28].

- MoleculeNet: A benchmark collection that includes multiple datasets for various molecular property prediction tasks, such as toxicity (Tox21), physical properties (ESOL, FreeSolv), and physiological activity (HIV) [26] [25].

- OOD Splits: As defined in the BOOM benchmark, OOD splits are created by fitting a kernel density estimator to the distribution of a target property. Molecules with the lowest 10% probability (the tails of the distribution) are held out as the OOD test set, while the in-distribution (ID) test set is randomly sampled from the remaining molecules [28].
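The OOD split procedure described above can be sketched as follows. This is not the BOOM benchmark code: it assumes a Gaussian kernel, a normal-reference rule of thumb for the bandwidth, and a hypothetical function name `kde_ood_split`. It scores each molecule by the estimated density of its property value and holds out the lowest-density fraction.

```python
import numpy as np

def kde_ood_split(y, ood_fraction=0.1, bandwidth=None):
    """Return (remaining_idx, ood_idx), holding out the lowest-density samples.

    y: 1D array of target property values, one per molecule.
    """
    y = np.asarray(y, dtype=float)
    n = y.size
    if bandwidth is None:
        # Normal-reference rule of thumb; an assumption, not the benchmark's setting.
        bandwidth = 1.06 * y.std() * n ** (-1.0 / 5.0)
    z = (y[:, None] - y[None, :]) / bandwidth
    density = np.exp(-0.5 * z ** 2).sum(axis=1) / (n * bandwidth * np.sqrt(2.0 * np.pi))
    n_ood = int(round(ood_fraction * n))
    order = np.argsort(density)               # lowest estimated density first
    return order[n_ood:], order[:n_ood]

rng = np.random.default_rng(0)
y = np.append(rng.normal(size=200), 10.0)     # synthetic property values plus one extreme
remaining_idx, ood_idx = kde_ood_split(y, ood_fraction=0.1)

# The in-distribution test set is then sampled at random from the remainder.
id_test_idx = rng.choice(remaining_idx, size=len(ood_idx), replace=False)
print(len(ood_idx), len(id_test_idx), 200 in ood_idx)
```

Splitting on the property distribution rather than at random means the OOD test set probes extrapolation to unusual property values, which is the regime most relevant to discovering molecules with extreme properties.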

Standard Training and Evaluation Metrics

- Pre-training and Fine-tuning: Many Graphormer models and other transformer-based approaches follow a two-stage process. First, the model is pre-trained on a large, unlabeled dataset (e.g., millions of molecules from ZINC or PubChem) using a self-supervised objective like Masked Language Modeling (MLM) on SMILES strings or graph nodes [29] [25]. Subsequently, the model is fine-tuned on a smaller, labeled dataset for a specific downstream prediction task.

- Domain Adaptation: An effective strategy to boost performance involves further pre-training (domain adaptation) on a small number of domain-relevant molecules. Using a multi-task regression (MTR) objective on physicochemical properties during this stage has been shown to significantly improve performance across various ADME endpoints [29].

- Evaluation Metrics:

  - Regression Tasks: Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE) are standard for quantifying the difference between predicted and true property values. R² score (coefficient of determination) is also used to measure the proportion of variance explained by the model.

  - Classification Tasks: ROC-AUC (Area Under the Receiver Operating Characteristic Curve) is the most common metric for binary classification tasks, measuring the model's ability to distinguish between classes.
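For reference, the metrics listed above can be written out directly. The sketch below uses only the Python standard library and plain lists; in practice, a library such as scikit-learn would normally be used instead. ROC-AUC is computed here through its pairwise-ranking interpretation: the fraction of positive-negative pairs in which the positive example receives the higher score, with ties counted as one half.

```python
import math

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def roc_auc(labels, scores):
    """ROC-AUC as the probability that a positive outranks a negative."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

print(mae([1, 2, 3], [1, 2, 4]), rmse([1, 2, 3], [1, 2, 4]), r2_score([1, 2, 3], [1, 2, 4]))
print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
```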

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key software, datasets, and tools essential for molecular property prediction research.

| Resource Name | Type | Primary Function | Relevance to Graphormer Research |

|---|---|---|---|

| PyTorch Geometric (PyG) | Software Library | Build and train GNNs. | Provides flexible data loaders and building blocks for implementing Graphormer and other graph models [27]. |

| Deep Graph Library (DGL) | Software Library | A flexible, high-performance package for deep learning on graphs. | An alternative to PyG; supports implementation and training of Graphormer [23]. |

| RDKit | Cheminformatics Software | Open-source toolkit for cheminformatics. | Used for parsing SMILES, generating molecular graphs, calculating fingerprints, and processing 3D conformers [26] [30]. |

| OGB (Open Graph Benchmark) | Dataset Collection | Large-scale, diverse, and realistic benchmark datasets. | Provides the PCQM4Mv2 dataset, commonly used for pre-training and evaluating Graphormer [23]. |

| Materials Project (MP) | Database | Database of computed crystal structures and properties. | Used for benchmarking materials property prediction, a related application of graph transformers [31]. |

| HuggingFace Hub | Platform | Repository for pre-trained models. | Hosts pre-trained Graphormer and other molecular transformer models for easy fine-tuning [29]. |

Graphormer represents a significant leap in molecular representation learning by successfully adapting the Transformer's global attention mechanism to graph-structured data. Its innovative use of centrality, spatial, and edge encodings allows it to capture complex dependencies that are critical for accurate property prediction. Benchmarking results confirm that Graphormer consistently ranks among the top-performing models, particularly in tasks where 3D geometry and global molecular context are decisive.

Pure GNNs like Chemprop remain strong, computationally efficient, and highly interpretable baselines, and specialized 3D GNNs like EGNN show exceptional OOD generalization for specific tasks; Graphormer, by comparison, offers a powerful and versatile balance. Its win in the Open Catalyst Challenge and its strong performance across standard benchmarks underscore its value as a foundational architecture in the modern computational chemist's and drug developer's toolkit. Future advancements will likely focus on improving its OOD generalization and computational efficiency, further solidifying its role in accelerating scientific discovery.

Kolmogorov–Arnold Networks (KANs) represent a paradigm shift in neural network design by placing learnable activation functions on edges rather than nodes. Their integration into Graph Neural Networks (GNNs) creates KA-GNNs, a novel architecture class demonstrating superior performance and interpretability for molecular property prediction compared to conventional GNNs. This guide provides an objective comparison of KA-GNNs against established alternatives, supported by experimental data and implementation frameworks for chemical sciences research.

Core Architectural Differences

The fundamental difference between traditional GNNs and KA-GNNs lies in how they process and transform information, stemming from their distinct mathematical foundations [32].

Table: Fundamental Architectural Differences Between GNNs and KA-GNNs

| Feature | Traditional GNNs (MLP-based) | KA-GNNs (KAN-based) |

|---|---|---|

| Theorem Basis | Universal Approximation Theorem [32] | Kolmogorov-Arnold Representation Theorem [8] [32] [33] |

| Information Encoding | Fixed activation functions on nodes, adaptable weights on connections [32] | Learnable univariate functions (e.g., splines, Fourier series) on edges [8] [34] [35] |

| Learnable Components | Weight matrices between nodes [32] | Parameters of the edge-based activation functions [34] [35] |

| Key Innovation | Parallel training, good performance on noisy data [32] | Enhanced interpretability, parameter efficiency, potential for symbolic interpretation [8] [32] [34] |

The KA-GNN Framework and Variants

KA-GNNs systematically integrate KAN modules into the core components of a standard GNN pipeline: node embedding initialization, message passing, and graph-level readout [8]. This creates a fully differentiable architecture that replaces conventional MLP-based transformations with adaptive, data-driven nonlinear mappings [8].

Two prominent variants documented in the literature are:

- KA-GCN (KAN-augmented Graph Convolutional Network): Integrates Fourier-based KAN modules into a GCN backbone. Node embeddings are computed by passing atomic and local bond features through a KAN layer, and node features are updated via residual KANs [8].

- KA-GAT (KAN-augmented Graph Attention Network): Incorporates KAN layers into both node and edge embeddings within a graph attention network framework, enhancing expressiveness [8].

Another notable implementation is KANG, which uses B-splines for its univariate functions and emphasizes data-aligned initialization to boost performance [33].

Performance Comparison: Experimental Data

Quantitative Benchmarking on Molecular Tasks

Experimental results across multiple molecular benchmarks demonstrate that KA-GNN variants consistently outperform established GNN architectures in predictive accuracy [8] [33].

Table: Comparative Performance of KA-GNNs vs. Other GNNs on Molecular Property Prediction

| Model / Architecture | Dataset / Task | Performance Metric | Result |

|---|---|---|---|

| KA-GNNs (General Framework) [8] | Seven molecular benchmarks | Prediction Accuracy & Computational Efficiency | Consistently outperforms conventional GNNs |

| KANG [33] | Graph Regression (QM9, ZINC-12K) | Mean Absolute Error (MAE) | 25% to 36% relative improvement over GIN |

| Graphormer [36] | log Kow Prediction | MAE | 0.18 |

| EGNN [36] | log Kaw Prediction | MAE | 0.25 |

| EGNN [36] | log K_d Prediction | MAE | 0.22 |

| KAN (vs. MLP) [34] | PDE Solving | Mean Squared Error (MSE) / Parameter Count | KAN: 10⁻⁷ MSE (10² params); MLP: 10⁻⁵ MSE (10⁴ params) |

Enhanced Interpretability and Robustness

Beyond raw accuracy, KA-GNNs offer significant advantages in model interpretability and structural robustness.

- Interpretability: The learnable univariate functions in KA-GNNs can be visualized, allowing researchers to identify and analyze chemically meaningful substructures and feature contributions, effectively acting as a "network microscope" [8] [35].

- Robustness to Oversmoothing: KANG maintains its expressive power in deeper networks, mitigating the oversmoothing problem common in traditional GNNs, where node representations become indistinguishable [33].

Experimental Protocols and Methodologies

KA-GNN Implementation Workflow

[Diagram: generalized experimental workflow for implementing and training a KA-GNN for molecular property prediction]

Core KA-GNN Components and Methodologies

The KAN Layer: Spline and Fourier Bases

The core innovation of KA-GNNs is the KAN layer, which replaces linear weight matrices with learnable univariate functions. Two primary parameterization methods are used:

- B-Spline Basis (KANG) [33] [34] [35]: A function $\phi(x)$ is represented as

$$\phi(x) = w_b \, b(x) + w_s \, \mathrm{spline}(x), \qquad \mathrm{spline}(x) = \sum_i c_i \, B_{i,k}(x).$$

Here, $B_{i,k}$ are B-spline basis functions of degree $k$, and $c_i$ are learnable coefficients. This offers local support and smoothness.

- Fourier Basis (KA-GNN) [8]: Uses a Fourier series to parameterize the univariate functions:

$$\phi(x) \sim \sum_k \bigl( a_k \cos(k x) + b_k \sin(k x) \bigr).$$

This approach is theorized to better capture both low and high-frequency patterns in graph data and provides strong approximation guarantees grounded in Carleson's theorem [8].
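A Fourier-parameterized KAN layer can be sketched compactly. The version below is a minimal illustration, not the KA-GNN authors' code: it assumes a truncated series with integer harmonics 1 to K, random untrained coefficients, and a hypothetical class name `FourierKANLayer`. Each output is the sum, over input dimensions, of a separate learnable univariate function of that input.

```python
import numpy as np

class FourierKANLayer:
    """y_o = sum_i phi_{o,i}(x_i), with phi a truncated Fourier series."""

    def __init__(self, in_dim, out_dim, num_harmonics=4, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / (in_dim * num_harmonics)
        # One (a_k, b_k) coefficient pair per (output, input, harmonic) triple.
        self.a = rng.normal(scale=scale, size=(out_dim, in_dim, num_harmonics))
        self.b = rng.normal(scale=scale, size=(out_dim, in_dim, num_harmonics))
        self.k = np.arange(1, num_harmonics + 1)

    def __call__(self, x):
        # x: (batch, in_dim) -> kx: (batch, in_dim, num_harmonics)
        kx = x[:, :, None] * self.k
        cos_part = np.einsum("bik,oik->bo", np.cos(kx), self.a)
        sin_part = np.einsum("bik,oik->bo", np.sin(kx), self.b)
        return cos_part + sin_part

layer = FourierKANLayer(in_dim=3, out_dim=2)
x = np.random.default_rng(1).normal(size=(5, 3))
print(layer(x).shape)
```

Unlike an MLP layer, there is no separate weight matrix followed by a fixed activation: the nonlinearity itself carries the parameters. A consequence of using integer harmonics is that each function is periodic with period 2π, so input features would typically need to be scaled into a suitable range; how the published models handle this is not stated in the source.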

Training and Optimization

Training KA-GNNs involves standard gradient-based methods (e.g., Adam optimizer) but requires attention to specific details [33] [35]:

- Initialization: A "data-aligned" initialization of spline parameters, where the grid points of the splines are aligned with the distribution of the input data, has been shown to significantly enhance model performance and convergence [33].

- Loss Functions: Standard GNN loss functions are used, including Mean Absolute Error (MAE) for graph regression and cross-entropy for classification tasks [35].

- Regularization: Techniques like L2 regularization on the spline coefficients can be applied to prevent overfitting [35].
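The initialization and regularization points above can be illustrated with a small sketch. It reflects one plausible reading of "data-aligned" initialization, namely placing spline grid points at quantiles of the observed inputs so that each interval covers a similar number of samples; whether KANG uses exactly this procedure is not confirmed by the source. The function names and the synthetic feature are hypothetical.

```python
import numpy as np

def data_aligned_grid(x, grid_size):
    """Grid points at evenly spaced quantiles of x (assumed form of data alignment)."""
    return np.quantile(x, np.linspace(0.0, 1.0, grid_size + 1))

def uniform_grid(x, grid_size):
    """Conventional evenly spaced grid over the observed range, for comparison."""
    return np.linspace(x.min(), x.max(), grid_size + 1)

def l2_penalty(coeffs, lam=1e-4):
    """L2 regularization term on spline coefficients, to be added to the task loss."""
    return lam * float(np.sum(np.square(coeffs)))

rng = np.random.default_rng(0)
feature = rng.lognormal(size=1000)            # skewed synthetic input feature

aligned_counts, _ = np.histogram(feature, bins=data_aligned_grid(feature, 5))
uniform_counts, _ = np.histogram(feature, bins=uniform_grid(feature, 5))
print("aligned:", aligned_counts)
print("uniform:", uniform_counts)
```

On skewed features, a uniform grid leaves most intervals nearly empty, so the corresponding spline coefficients receive little gradient signal; aligning the grid with the data spreads the samples across intervals.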

The Scientist's Toolkit: Essential Research Reagents

For researchers seeking to implement KA-GNNs, the following table details the essential computational "reagents" and their functions.

Table: Essential Components for KA-GNN Experimentation

| Tool / Component | Function / Role | Examples / Notes |

|---|---|---|

| Molecular Graph Datasets | Serves as benchmark for training and evaluation. | QM9 [36], ZINC [36], OGB-MolHIV [36], MUTAG [33], PROTEINS [33] |

| KAN-Capable Codebase | Provides the core architecture and training logic. | Official KAN GitHub repo; KANG code [33] |

| Univariate Function Bases | Forms the learnable activation functions on graph edges. | B-splines (KANG) [33], Fourier series (KA-GNN) [8], Radial Basis Functions (RBF) [35] |

| Hyperparameter Set | Controls model capacity, flexibility, and training dynamics. | Grid size (G), Spline degree (k), Network depth/width [35] |

| High-Performance Compute (CPU) | Executes model training. | Current KAN/KA-GNN training is primarily CPU-bound [32] |

KA-GNNs represent a foundational shift in graph learning, demonstrating superior parameter efficiency, enhanced interpretability, and strong empirical performance for molecular property prediction. While challenges remain in training speed and GPU optimization, their ability to provide accurate and insightful models positions them as a powerful emerging paradigm for scientific computation, drug discovery, and materials science [8] [32] [33]. Future work will likely focus on scaling these architectures, improving their training efficiency, and further exploring their unique ability to distill symbolic insights from complex graph-structured data.

Architectural Deep Dive: Implementation and Domain-Specific Applications

Graph Neural Networks (GNNs) have revolutionized computational chemistry and drug discovery by providing a natural framework for representing and analyzing molecular structures. Unlike traditional descriptor-based methods or string representations like SMILES (Simplified Molecular Input Line Entry System), GNNs operate directly on molecular graphs where atoms constitute nodes and chemical bonds form edges. This approach preserves the intrinsic structural information of molecules, allowing GNNs to learn rich, task-specific representations that capture complex chemical relationships. The pipeline from SMILES strings to graph representation and ultimately to property prediction forms the backbone of modern AI-driven chemical research, enabling more accurate predictions of molecular properties, binding affinities, and toxicity profiles [37].

The fundamental advantage of GNNs lies in their message-passing mechanism, where information is iteratively exchanged and aggregated between neighboring nodes in the graph. This allows each atom to incorporate information from its local chemical environment, effectively capturing important structural patterns like functional groups and stereochemistry. As research in this field has advanced, numerous GNN architectures have been developed and benchmarked for chemical property prediction, each with distinct strengths and computational characteristics [37]. This guide provides a comprehensive comparison of these architectures, supported by experimental data and detailed methodological protocols to assist researchers in selecting and implementing the most appropriate models for their specific chemical informatics challenges.

Comparative Analysis of GNN Architectures for Molecular Property Prediction

Various GNN architectures have been developed with different mechanisms for information propagation and aggregation across molecular graphs. The Graph Convolutional Network (GCN) operates by applying convolution operators to capture neighbor information, treating all neighboring nodes equally during feature aggregation. In contrast, Graph Attention Networks (GATs) introduce attention mechanisms that assign varying importance weights to different neighbors, allowing the model to focus on the most relevant parts of the molecular structure. Graph Isomorphism Networks (GINs) utilize a sum aggregator to capture neighbor features without information loss, combined with multi-layer perceptrons to enhance model capacity for representation learning [38] [37].
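The three aggregation schemes can be contrasted on a toy graph. The sketch below is a simplified illustration with dense adjacency matrices and no learned feature transformation (the weight matrix is taken as the identity, and the MLP that follows GIN aggregation is omitted); the function names and attention vectors are hypothetical.

```python
import numpy as np

def gcn_aggregate(A, H):
    """GCN-style: symmetric degree-normalized average over neighbors and self."""
    A_hat = A + np.eye(A.shape[0])                     # add self-loops
    deg = A_hat.sum(axis=1)
    return (A_hat / np.sqrt(np.outer(deg, deg))) @ H   # D^-1/2 (A + I) D^-1/2 H

def gat_aggregate(A, H, a_src, a_dst):
    """GAT-style: neighbors weighted by a softmax over pairwise attention scores."""
    A_hat = A + np.eye(A.shape[0])
    e = (H @ a_src)[:, None] + (H @ a_dst)[None, :]
    e = np.where(e > 0, e, 0.2 * e)                    # LeakyReLU
    e = np.where(A_hat > 0, e, -np.inf)                # attend only to neighbors and self
    e = e - e.max(axis=1, keepdims=True)
    alpha = np.exp(e)
    alpha = alpha / alpha.sum(axis=1, keepdims=True)
    return alpha @ H

def gin_aggregate(A, H, eps=0.0):
    """GIN-style: unnormalized sum, which preserves neighbor multiplicity."""
    return (1.0 + eps) * H + A @ H

# Three-atom chain (0-1-2) with a single scalar feature per atom.
A = np.array([[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]])
H = np.array([[1.0], [2.0], [3.0]])
print(gcn_aggregate(A, H).ravel())
print(gat_aggregate(A, H, a_src=np.array([0.5]), a_dst=np.array([-0.5])).ravel())
print(gin_aggregate(A, H).ravel())
```

The sum aggregator is the only one of the three whose output scales with the number of neighbors, which is the sense in which GIN avoids the information loss of averaging.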

More recently, hybrid architectures have emerged that combine the strengths of different approaches. Kolmogorov-Arnold GNNs (KA-GNNs) integrate Fourier-based Kolmogorov-Arnold network modules into the core components of GNNs—node embedding, message passing, and readout phases—replacing conventional MLP transformations with adaptive, data-driven nonlinear mappings. This architecture has demonstrated enhanced representational power and improved training dynamics while offering greater parameter efficiency [8]. Another innovative approach, RG-MPNN, incorporates pharmacophore information hierarchically into message-passing neural networks through pharmacophore-based reduced-graph pooling, absorbing both atom-level and pharmacophore-level information for improved predictive performance on bioactivity datasets [39].

Quantitative Performance Comparison

Table 1: Performance Comparison of GNN Architectures on Benchmark Molecular Datasets (Regression Tasks)

| Architecture | ESOL (MAE) | FreeSolv (MAE) | Lipophilicity (MAE) | QM9 HOMO-LUMO Gap (MAE) |
|---|---|---|---|---|
| GCN | 0.58 [37] | 1.15 [37] | 0.65 [37] | 0.12 [4] |
| GAT | 0.63 [37] | 1.37 [37] | 0.69 [37] | - |
| GIN | 0.59 [37] | 1.33 [37] | 0.66 [37] | - |
| KA-GNN | - | - | - | 0.09 [8] |
| RG-MPNN | - | - | 0.61 [39] | - |
| DIDgen | - | - | - | 0.08-0.10 [4] |

Table 2: Performance Comparison on Classification Tasks (ROC-AUC)

| Architecture | BBBP | BACE | ClinTox | Tox21 | SIDER |
|---|---|---|---|---|---|
| GCN | 0.69 [37] | 0.78 [37] | 0.86 [37] | 0.76 [37] | 0.60 [37] |
| GAT | 0.70 [37] | 0.76 [37] | 0.89 [37] | 0.76 [37] | 0.61 [37] |
| GIN | 0.71 [37] | 0.77 [37] | 0.88 [37] | 0.77 [37] | 0.62 [37] |
| RG-MPNN | 0.73 [39] | 0.81 [39] | 0.91 [39] | 0.79 [39] | 0.65 [39] |

Table 3: Computational Efficiency Comparison

| Architecture | Training Time (relative to GCN) | Memory Usage | Interpretability |
|---|---|---|---|
| GCN | 1.0x | Low | Medium |
| GAT | 1.3-1.5x [38] | Medium | High (via attention) |
| GIN | 1.1x | Low | Low |
| KA-GNN | 0.9x [8] | Low | High |
| RG-MPNN | 1.4x [39] | High | High (pharmacophores) |

The performance data reveals several important trends. First, RG-MPNN consistently matches or outperforms other GNN models across multiple classification datasets, particularly on bioactivity-related tasks, demonstrating the value of incorporating pharmacophore information [39]. Second, KA-GNNs show significant promise for quantum chemical properties like HOMO-LUMO gaps, with theoretical foundations supporting their strong approximation capabilities [8]. Third, while GATs introduce valuable attention mechanisms, their performance gains over GCNs are sometimes marginal despite increased computational complexity, suggesting that the optimal architecture is highly task-dependent [38].

Experimental Protocols and Methodologies

Standardized Evaluation Frameworks

To ensure fair comparisons between different GNN architectures, researchers have established standardized evaluation protocols using benchmark datasets from MoleculeNet [37]. These datasets cover diverse molecular properties including physical chemistry (ESOL, FreeSolv, Lipophilicity), biophysics (BBBP, BACE), and physiology (ClinTox, SIDER, Tox21). Standard practice involves using scaffold splitting to assess model generalization to novel chemical structures, with 80/10/10 splits for training/validation/testing. Performance is evaluated using task-appropriate metrics: mean absolute error (MAE) for regression tasks and area under the receiver operating characteristic curve (ROC-AUC) for classification tasks [37].
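The scaffold-splitting protocol described above can be sketched as follows. This is a minimal illustration, not the MoleculeNet implementation: it assumes each molecule already carries a scaffold key (in practice this would typically be a Bemis-Murcko scaffold computed with a cheminformatics toolkit), and the function name `scaffold_split` and the placeholder keys are hypothetical.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Assign whole scaffold groups to train/valid/test, largest groups first."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest groups first; ties broken by first index so the split is deterministic.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(scaffolds)
    train_cap = frac_train * n
    valid_cap = (frac_train + frac_valid) * n
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= train_cap:
            train.extend(group)
        elif len(train) + len(valid) + len(group) <= valid_cap:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


# Placeholder scaffold keys (assumption): ten molecules over four scaffolds.
scaffolds = ["A"] * 6 + ["B"] * 2 + ["C", "D"]
train, valid, test = scaffold_split(scaffolds)
```

Because groups are never divided, no scaffold appears in more than one subset, which is what makes the test set a probe of generalization to unseen chemotypes rather than to near-duplicates of training molecules.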

For quantum chemical properties, the QM9 dataset, which contains approximately 130,000 small organic molecules with DFT-calculated properties, serves as the primary benchmark [4]. Models are typically evaluated using 5-fold cross-validation with random splits, and performance is measured by MAE against DFT-calculated values. When a predictor is used to guide molecule generation, it is particularly important to validate the generated molecules with DFT calculations, as GNN predictors may perform significantly worse on out-of-distribution molecules than on their test set [4].

Direct Inverse Design Methodology

A novel approach called Direct Inverse Design (DIDgen) demonstrates how pre-trained GNN property predictors can be inverted to generate molecules with desired properties. This method performs gradient ascent on the molecular graph input while holding GNN weights fixed, effectively optimizing molecular structures toward target property values. The approach employs carefully constrained molecular representations to ensure chemical validity throughout the optimization process [4].

Key implementation details include:

- Adjacency Matrix Construction: A weight vector containing (N²-N)/2 elements is squared and populated in an upper triangular matrix, then added to its transpose to obtain a positive symmetric matrix with zero trace.

- Sloped Rounding: Elements are rounded using a sloped rounding function, [x]_sloped = [x] + a(x - [x]), where [x] is conventional rounding and a is an adjustable hyperparameter, to maintain non-zero gradients.

- Valence Enforcement: Valence rules are strictly enforced by penalizing valences exceeding 4 in the loss function and preventing gradients from increasing bonds when valence is already 4.

- Feature Vector Construction: Atoms are defined by their valence (sum of bond orders), with additional weight matrices differentiating elements with the same valence [4].
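The adjacency construction, sloped rounding, and valence penalty listed above can be sketched in plain Python as follows. This is an illustrative forward computation only, under several assumptions: the function names are hypothetical, the default slope `a=0.1` is arbitrary, and the gradient-based optimization and gradient masking described in [4] would require an automatic differentiation framework that is not shown here.

```python
def sloped_round(x, a=0.1):
    """[x]_sloped = [x] + a * (x - [x]); the slope a keeps gradients non-zero."""
    r = round(x)  # note: Python rounds exact halves to the nearest even integer
    return r + a * (x - r)


def build_adjacency(weights, n_atoms, a=0.1):
    """Square each weight, round it, and mirror it into a symmetric matrix."""
    assert len(weights) == (n_atoms * n_atoms - n_atoms) // 2
    adj = [[0.0] * n_atoms for _ in range(n_atoms)]
    k = 0
    for i in range(n_atoms):
        for j in range(i + 1, n_atoms):  # upper triangle only, diagonal stays 0
            bond = sloped_round(weights[k] ** 2, a)
            adj[i][j] = bond
            adj[j][i] = bond  # equivalent to adding the transpose
            k += 1
    return adj


def valence_penalty(adj, max_valence=4):
    """Loss term that grows when an atom's summed bond orders exceed the limit."""
    return sum(max(0.0, sum(row) - max_valence) for row in adj)


# Three atoms need (9 - 3) / 2 = 3 weights, one per atom pair.
adj = build_adjacency([1.0, 0.0, 1.4], n_atoms=3)
penalty = valence_penalty(adj)
```

Squaring the weights guarantees non-negative bond orders, and filling only the upper triangle before mirroring guarantees symmetry and a zero diagonal, so the representation cannot encode self-bonds or directed bonds.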

This methodology achieves comparable or better performance than state-of-the-art generative models like JANUS while producing more diverse molecules, successfully generating molecules with specific HOMO-LUMO gaps verified by DFT calculations [4].

Implementation Workflow: From SMILES to Predictions

Diagram 1: GNN Pipeline from SMILES to Property Prediction

The workflow begins with parsing SMILES strings into molecular graphs using toolkits like RDKit or Chython. Atoms are converted to nodes with features including atom type, formal charge, hybridization, and chirality. Bonds become edges with features for bond type, stereochemistry, and conjugation. For 3D-aware models, additional geometric information like interatomic distances and torsion angles is incorporated [40] [39].
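As a rough illustration of the featurization step, the sketch below encodes a hand-specified molecule as node features and a bidirectional edge list. It assumes the atom and bond attributes have already been extracted (a toolkit such as RDKit would normally supply them from the SMILES string), and the vocabularies and the `featurize` helper are hypothetical choices rather than a standard feature set.

```python
# Small illustrative vocabularies (assumption); real pipelines use larger ones.
ATOM_TYPES = ["C", "N", "O"]
HYBRIDIZATIONS = ["SP", "SP2", "SP3"]
BOND_TYPES = ["SINGLE", "DOUBLE", "TRIPLE", "AROMATIC"]


def one_hot(value, choices):
    """Return a one-hot list marking the position of value in choices."""
    return [1.0 if value == c else 0.0 for c in choices]


def featurize(atoms, bonds):
    """Turn atom/bond records into node features, edge index, and edge features."""
    x = [
        one_hot(a["symbol"], ATOM_TYPES)
        + [float(a["charge"])]
        + one_hot(a["hyb"], HYBRIDIZATIONS)
        for a in atoms
    ]
    edge_index, edge_attr = [], []
    for i, j, bond_type, conjugated in bonds:
        feat = one_hot(bond_type, BOND_TYPES) + [1.0 if conjugated else 0.0]
        # Store each bond in both directions so messages flow both ways.
        for src, dst in ((i, j), (j, i)):
            edge_index.append((src, dst))
            edge_attr.append(feat)
    return x, edge_index, edge_attr


# Heavy atoms of ethanol (SMILES "CCO"), written out by hand.
atoms = [
    {"symbol": "C", "charge": 0, "hyb": "SP3"},
    {"symbol": "C", "charge": 0, "hyb": "SP3"},
    {"symbol": "O", "charge": 0, "hyb": "SP3"},
]
bonds = [(0, 1, "SINGLE", False), (1, 2, "SINGLE", False)]
x, edge_index, edge_attr = featurize(atoms, bonds)
```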

Feature initialization is followed by message passing through the selected GNN architecture. In GCNs, node representations are updated by aggregating feature information from neighbors. GATs enhance this by computing attention scores between nodes, allowing the model to focus on the most relevant neighbors. KA-GNNs implement Fourier-based transformations in node embedding, message passing, and readout phases, capturing both low-frequency and high-frequency structural patterns in molecular graphs [8] [38].
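The attention scores used by GAT-style layers can be illustrated with a short sketch. It assumes node features have already been linearly projected, uses a hand-picked attention vector `a_vec` in place of learned parameters, and follows the common formulation of a LeakyReLU over the concatenated pair of features followed by a softmax over neighbors. The function names are illustrative.

```python
import math


def leaky_relu(value, slope=0.2):
    return value if value > 0 else slope * value


def attention_weights(h, neighbors, node, a_vec):
    """Softmax-normalized attention of `node` over its neighbors."""
    scores = []
    for j in neighbors[node]:
        concat = h[node] + h[j]  # list concatenation: [h_i || h_j]
        scores.append(leaky_relu(sum(w * v for w, v in zip(a_vec, concat))))
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Toy inputs (assumption): atom 1 has two neighbors with different features.
h = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [0.0, 2.0]}
neighbors = {1: [0, 2]}
alpha = attention_weights(h, neighbors, node=1, a_vec=[0.5, 0.5, 1.0, 1.0])
```

The weights sum to one for each node, so the attended aggregate is a convex combination of neighbor features in which higher-scoring neighbors contribute more.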

After multiple message-passing layers, a global readout function generates graph-level representations by aggregating node embeddings. Common approaches include sum pooling, mean pooling, or more sophisticated attention-based pooling mechanisms. These graph embeddings are then passed to a final multi-layer perceptron for the target property prediction [37].
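The two simplest readout functions mentioned above can be written in a few lines. This is a minimal sketch with made-up node embeddings, and the helper names are illustrative.

```python
def sum_pool(node_embeddings):
    """Add node embeddings component-wise to get one graph-level vector."""
    return [sum(column) for column in zip(*node_embeddings)]


def mean_pool(node_embeddings):
    """Average node embeddings component-wise."""
    n = len(node_embeddings)
    return [total / n for total in sum_pool(node_embeddings)]


# Made-up embeddings for a two-atom molecule.
embeddings = [[1.0, 2.0], [3.0, 4.0]]
graph_sum = sum_pool(embeddings)
graph_mean = mean_pool(embeddings)
```

Sum pooling grows with the number of atoms, which can suit properties that scale with molecular size, while mean pooling is size-invariant; which one works better is generally an empirical question for the task at hand.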

Essential Research Reagents and Computational Tools

Table 4: Essential Research Tools for GNN Implementation

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| Deep Learning Frameworks | PyTorch [4], TensorFlow [4], PyTorch Geometric | Core infrastructure for building and training GNN models |
| Molecular Processing | RDKit, Chython [40] | SMILES parsing, molecular graph construction, feature generation |
| GNN Libraries | DGL (Deep Graph Library), PyTorch Geometric | Pre-built GNN layers, graph data structures, and processing utilities |
| Benchmark Datasets | MoleculeNet [37], QM9 [4], TUM | Standardized datasets for model evaluation and comparison |
| Specialized Architectures | Graphormer [40], KA-GNN [8], RG-MPNN [39] | Task-specific model implementations for advanced applications |
| Evaluation Metrics | MAE, ROC-AUC, Validity/Novelty [37] | Performance assessment for regression, classification, and generation tasks |

Successful implementation of GNN pipelines requires careful consideration of both software tools and evaluation methodologies. The tools listed in Table 4 represent the current ecosystem for GNN research in molecular property prediction. For benchmarking, the MoleculeNet suite provides standardized datasets covering diverse chemical properties, while QM9 serves as the gold standard for quantum chemical properties [4] [37].

When implementing GNNs for molecular analysis, researchers should consider several practical aspects. First, data splitting strategy significantly impacts perceived performance; scaffold splitting that separates structurally distinct molecules provides a more realistic assessment of generalization capability than random splitting. Second, hyperparameter optimization is essential, particularly for attention-based models where the number and configuration of attention heads dramatically affects performance. Third, model interpretability should be prioritized through attention visualization or saliency mapping to build trust in predictions and potentially gain chemical insights [38] [39].

The comparative analysis presented in this guide demonstrates that while multiple GNN architectures show strong performance in molecular property prediction, the optimal choice depends heavily on the specific task, dataset characteristics, and computational constraints. Traditional architectures like GCN and GAT provide solid baseline performance, while newer approaches like KA-GNN and RG-MPNN offer enhanced capabilities for specific applications, with RG-MPNN particularly effective for bioactivity prediction and KA-GNN showing promise for electronic property estimation [8] [39].

Future developments in GNNs for molecular analysis will likely focus on several key areas. Improved integration of 3D structural information through equivariant networks will better capture stereochemistry and conformational effects. More efficient message-passing schemes will enable the processing of larger biomolecules and protein-ligand complexes. Enhanced interpretability features will build trust in model predictions and facilitate scientific discovery. Additionally, unified benchmarking frameworks like HypBench that systematically evaluate model performance across diverse topological and feature characteristics will provide clearer guidance for architecture selection [41] [40].

As the field continues to evolve, the pipeline from SMILES strings to graph representations and property predictions will become increasingly sophisticated, further accelerating drug discovery and materials design through more accurate, efficient, and interpretable molecular property prediction.

Graph Neural Networks (GNNs) have established themselves as fundamental tools in geometric deep learning for molecular property prediction, serving as critical components in modern drug discovery pipelines. These networks naturally represent molecules as graphs, with atoms as nodes and chemical bonds as edges, enabling effective learning of structure-property relationships. Despite their success, conventional GNNs relying on Multi-Layer Perceptrons (MLPs) for feature transformation face limitations in expressivity, parameter efficiency, and interpretability.

The recent emergence of Kolmogorov-Arnold Networks (KANs) offers a promising alternative grounded in the Kolmogorov-Arnold representation theorem, which states that any multivariate continuous function can be expressed as a finite composition of univariate functions and additions [8]. Unlike MLPs that use fixed activation functions on nodes, KANs employ learnable univariate functions on edges, enabling more flexible and efficient function approximation.
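For reference, the classical form of the Kolmogorov-Arnold representation theorem can be written as below. The symbols are the conventional ones and are not taken from [8]: f is a continuous function on the unit cube, and the outer functions Φ_q and inner functions φ_{q,p} are continuous univariate functions.

```latex
f(x_1, \dots, x_n) \;=\; \sum_{q=0}^{2n} \Phi_q\!\left( \sum_{p=1}^{n} \phi_{q,p}(x_p) \right),
\qquad f \in C\big([0,1]^n\big)
```

A KAN layer mirrors the inner sum: every input-output connection carries its own learnable univariate function, and each output simply adds up the results.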

This guide provides a comprehensive comparison of KA-GNN (Kolmogorov-Arnold Graph Neural Network) architectures, focusing specifically on their integration of Fourier and B-spline functions within message-passing frameworks for molecular property prediction. We examine experimental performance across multiple benchmarks, detail methodological implementations, and provide resources for research applications.

Architectural Fundamentals: KA-GNNs Explained

Core Components and Integration Strategy

KA-GNNs represent a unified framework that systematically integrates KAN modules across all three fundamental components of graph neural networks [8]:

- Node Embedding Initialization: Atomic features are processed through KAN layers instead of standard linear transformations or MLPs

- Message Passing: Neighbor information aggregation and transformation utilize learnable univariate functions

- Graph-Level Readout: Global pooling operations employ KAN-based transformations for molecular-level representations
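To illustrate what a Fourier-based KAN transformation might look like in any of these three components, the sketch below implements a single layer in which every input-output connection applies its own truncated Fourier series. The exact parameterization used in [8] is not given here, so this form, the coefficient layout, and the function name `fourier_kan_layer` are assumptions made for illustration; in a trained model the coefficients would be learned.

```python
import math


def fourier_kan_layer(x, cos_coef, sin_coef):
    """Apply one KAN-style layer with Fourier-series edge functions.

    cos_coef[j][i][k-1] and sin_coef[j][i][k-1] hold the coefficients of
    cos(k * x_i) and sin(k * x_i) on the edge from input i to output j.
    """
    outputs = []
    for a_j, b_j in zip(cos_coef, sin_coef):
        total = 0.0
        for x_i, a_ji, b_ji in zip(x, a_j, b_j):
            # Univariate function on edge (i -> j): a truncated Fourier series.
            for k, (a, b) in enumerate(zip(a_ji, b_ji), start=1):
                total += a * math.cos(k * x_i) + b * math.sin(k * x_i)
        outputs.append(total)  # each output is a plain sum of edge functions
    return outputs


# Two inputs, one output, one harmonic per edge (hand-picked coefficients).
x = [0.0, math.pi / 2]
cos_coef = [[[1.0], [0.0]]]
sin_coef = [[[0.0], [2.0]]]
y = fourier_kan_layer(x, cos_coef, sin_coef)
```

Unlike an MLP layer, there is no fixed activation applied after a weighted sum: the nonlinearity lives in the per-edge functions themselves, and adding harmonics lets each edge represent higher-frequency variation in its input.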